license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | ['generated_from_keras_callback'] | false | ishaankul67/Human_Development_Index-clustered This model is a fine-tuned version of [nandysoham16/4-clustered_aug](https://huggingface.co/nandysoham16/4-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1775 - Train End Logits Accuracy: 0.9722 - Train Start Logits Accuracy: 0.9340 - Validation Loss: 0.7431 - Validation End Logits Accuracy: 0.6667 - Validation Start Logits Accuracy: 1.0 - Epoch: 0 | d24e372dfdd1da5f2f17d0af4901f7aa |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.1775 | 0.9722 | 0.9340 | 0.7431 | 0.6667 | 1.0 | 0 | | 0dc29367505a45006d146de22602534c |

mit | ['generated_from_trainer'] | false | Vigec-V4 This model is a fine-tuned version of [VietAI/vit5-base](https://huggingface.co/VietAI/vit5-base) on the None dataset. It achieves the following results on the evaluation set: - eval_loss: 2.0959 - eval_bleu: 0.0 - eval_gen_len: 17.9268 - eval_runtime: 319.3265 - eval_samples_per_second: 15.658 - eval_steps_per_second: 1.957 - epoch: 0.01 - step: 1700 | 4b47bb590682b90f0d40b062a6c77612 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 2000 - mixed_precision_training: Native AMP | 95dd5ac3db42337cd674cfe668ef9689 |
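The scheduler settings listed above (linear schedule, 500 warmup steps, 2000 training steps, peak learning rate 1e-05) can be sketched in plain Python. This is a minimal sketch, assuming the common shape of a linear ramp from zero during warmup followed by a linear decay to zero; the function name `linear_warmup_lr` is hypothetical and not part of any library.

```python
def linear_warmup_lr(step, base_lr=1e-5, warmup_steps=500, total_steps=2000):
    """Learning rate at `step`, assuming linear warmup then linear decay to zero."""
    if step < warmup_steps:
        # Ramp linearly from 0 up to base_lr over the warmup phase.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 1250, 1700, 2000):
    print(step, linear_warmup_lr(step))
```

Under these assumptions the rate peaks at step 500 and has fallen to one fifth of the peak by step 1700.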

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.2454 - Accuracy: 0.9474 | c93d90d6bf93f692a8dc1f9c823666ee |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 3.496 | 1.0 | 954 | 1.8019 | 0.8306 | | 1.0663 | 2.0 | 1908 | 0.5690 | 0.9174 | | 0.3267 | 3.0 | 2862 | 0.3128 | 0.9406 | | 0.1397 | 4.0 | 3816 | 0.2567 | 0.9445 | | 0.0846 | 5.0 | 4770 | 0.2454 | 0.9474 | | 1f443e21572e19506a63e7f0a5db8c46 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | This is a Stable Diffusion model trained on Yoji Shinkawa's artwork from the Metal Gear Solid and Death Stranding game series. It was made with the Dreambooth extension for Automatic1111 using 47 images and 4000 steps. The token is: yoshin style Example images: License: This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage. The CreativeML OpenRAIL License specifies: You can't use the model to deliberately produce nor share illegal or harmful outputs or content The authors claim no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully) | 0f29b9bf073f65ba05889b5e775f4544 |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_vp-100k_s645 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | e9816626c7d31d2c380e89e82d4b8c40 |
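The 16kHz requirement above can be checked before running inference. The following is a minimal sketch using only the standard library, assuming the input is an uncompressed WAV file; the helper names `wav_sample_rate` and `write_silence` and the file names are hypothetical, and the sketch only detects a mismatch (it does not resample).

```python
import os
import tempfile
import wave

TARGET_RATE = 16_000  # Hz, the rate the model card asks for


def wav_sample_rate(path):
    """Return the sample rate (Hz) stored in a WAV file's header."""
    with wave.open(path, "rb") as wav:
        return wav.getframerate()


def write_silence(path, rate, n_frames=160):
    """Write a short mono 16-bit silent WAV file, used here only as demo input."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit audio
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * n_frames)


tmp_dir = tempfile.mkdtemp()
ok_path = os.path.join(tmp_dir, "ok.wav")
bad_path = os.path.join(tmp_dir, "bad.wav")
write_silence(ok_path, 16_000)
write_silence(bad_path, 44_100)

for path in (ok_path, bad_path):
    rate = wav_sample_rate(path)
    status = "ok" if rate == TARGET_RATE else "needs resampling"
    print(os.path.basename(path), rate, status)
```

A file that fails the check would need to be resampled with a proper audio library before being passed to the model.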

mit | ['masked-lm', 'catalan', 'exbert'] | false | Introduction CALBERT is an open-source language model for Catalan pretrained on the ALBERT architecture. It is now available on Hugging Face in its `tiny-uncased` version and `base-uncased` (the one you're looking at) as well, and was pretrained on the [OSCAR dataset](https://traces1.inria.fr/oscar/). For further information or requests, please go to the [GitHub repository](https://github.com/codegram/calbert) | 390c3af34cf7799df21024e6fa18bd58 |

mit | ['masked-lm', 'catalan', 'exbert'] | false | Pre-trained models | Model | Arch. | Training data | | ----------------------------------- | -------------- | ---------------------- | | `codegram` / `calbert-tiny-uncased` | Tiny (uncased) | OSCAR (4.3 GB of text) | | `codegram` / `calbert-base-uncased` | Base (uncased) | OSCAR (4.3 GB of text) | | 6a250deed9dfe90c036ce9ce85f5e2b1 |

mit | ['masked-lm', 'catalan', 'exbert'] | false | Load Calbert and its tokenizer: ```python from transformers import AutoModel, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("codegram/calbert-base-uncased") model = AutoModel.from_pretrained("codegram/calbert-base-uncased") model.eval() | 72aaf73905a1a346a37195ec06103bc5 |

mit | ['masked-lm', 'catalan', 'exbert'] | false | Filling masks using pipeline ```python from transformers import pipeline calbert_fill_mask = pipeline("fill-mask", model="codegram/calbert-base-uncased", tokenizer="codegram/calbert-base-uncased") results = calbert_fill_mask("M'agrada [MASK] això") | 3b170b100f4b7eca44461a8c3b179bb1 |

mit | ['masked-lm', 'catalan', 'exbert'] | false | Authors CALBERT was trained and evaluated by [Txus Bach](https://twitter.com/txustice), as part of [Codegram](https://www.codegram.com)'s applied research. <a href="https://huggingface.co/exbert/?model=codegram/calbert-base-uncased&modelKind=bidirectional&sentence=M%27agradaria%20força%20saber-ne%20més"> <img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png"> </a> | c308f1a7986018c73d548d8b5f4a47b4 |

mit | ['generated_from_trainer'] | false | roberta-base-finetuned-scrambled-squad-15-new This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.0283 | 839bfb41a39da255427b0aff5738f519 |

cc-by-4.0 | [] | false | AssameseBERT AssameseBERT is an Assamese BERT model trained on publicly available Assamese monolingual datasets. Preliminary details on the dataset, models, and baseline results can be found in our [paper](https://arxiv.org/abs/2211.11418). Citing: ``` @article{joshi2022l3cubehind, title={L3Cube-HindBERT and DevBERT: Pre-Trained BERT Transformer models for Devanagari based Hindi and Marathi Languages}, author={Joshi, Raviraj}, journal={arXiv preprint arXiv:2211.11418}, year={2022} } ``` | b0efd4566e718f54d2126e768c9975ac |

apache-2.0 | ['generated_from_trainer'] | false | opus-mt-de-en-finetuned-de-to-en-first This model is a fine-tuned version of [Helsinki-NLP/opus-mt-de-en](https://huggingface.co/Helsinki-NLP/opus-mt-de-en) on the wmt16 dataset. It achieves the following results on the evaluation set: - Loss: 1.1465 - Bleu: 39.8122 - Gen Len: 25.579 | ebdab188abac98a5e4665d9bee6599f4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:| | No log | 1.0 | 63 | 1.1465 | 39.8122 | 25.579 | | 070846f814e879767f927963c53c440a |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr1e05-wd0.02-bs32 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2756 - Rmse: 0.5250 - Mse: 0.2756 - Mae: 0.4181 | a4ac5720c90f03e3b1d448c3125f6104 |
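The reported Loss and Mse above are identical (0.2756), which is consistent with a mean-squared-error training objective, and the Rmse is the square root of the Mse. A short check, assuming only the rounded figures printed in the card:

```python
import math

mse = 0.2756  # reported Mse (and Loss) from the evaluation results
rmse = math.sqrt(mse)

# Format to four decimals, the precision used in the card.
print(f"{rmse:.4f}")
```

The result agrees with the reported Rmse of 0.5250 at four decimal places.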

mit | ['text-classification', 'tensorflow', 'roberta'] | false | What is GoEmotions The GoEmotions dataset contains 58,000 Reddit comments labelled with 28 categories: 27 emotions (admiration, amusement, anger, annoyance, approval, caring, confusion, curiosity, desire, disappointment, disapproval, disgust, embarrassment, excitement, fear, gratitude, grief, joy, love, nervousness, optimism, pride, realization, relief, remorse, sadness, surprise) plus neutral | 8b98d2f0969e767cfb4fc7283f7e3005 |

mit | ['text-classification', 'tensorflow', 'roberta'] | false | What is RoBERTa RoBERTa builds on BERT’s language masking strategy and modifies key hyperparameters in BERT, including removing BERT’s next-sentence pretraining objective, and training with much larger mini-batches and learning rates. RoBERTa was also trained on an order of magnitude more data than BERT, for a longer amount of time. This allows RoBERTa representations to generalize even better to downstream tasks compared to BERT. | 77c0ca12cc5377053c10e0b2b4b99900 |

mit | ['text-classification', 'tensorflow', 'roberta'] | false | Hyperparameters | Parameter | | | ----------------- | :---: | | Learning rate | 5e-5 | | Epochs | 10 | | Max Seq Length | 50 | | Batch size | 16 | | Warmup Proportion | 0.1 | | Epsilon | 1e-8 | | 4edf6a2b421eeb894995792618853227 |

mit | ['text-classification', 'tensorflow', 'roberta'] | false | Usage ```python from transformers import RobertaTokenizerFast, TFRobertaForSequenceClassification, pipeline tokenizer = RobertaTokenizerFast.from_pretrained("arpanghoshal/EmoRoBERTa") model = TFRobertaForSequenceClassification.from_pretrained("arpanghoshal/EmoRoBERTa") emotion = pipeline('sentiment-analysis', model='arpanghoshal/EmoRoBERTa') emotion_labels = emotion("Thanks for using it.") print(emotion_labels) ``` Output ``` [{'label': 'gratitude', 'score': 0.9964383244514465}] ``` | 817893fee9b28ec772563fb97d6a39d7 |

apache-2.0 | ['chinese', 'classical chinese', 'literary chinese', 'ancient chinese', 'bert', 'pytorch', 'punctuation marker'] | false | Guwen Punc A Classical Chinese Punctuation Marker. See also: <a href="https://github.com/ethan-yt/guwen-models"> <img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwen-models&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" /> </a> <a href="https://github.com/ethan-yt/cclue/"> <img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=cclue&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" /> </a> <a href="https://github.com/ethan-yt/guwenbert/"> <img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwenbert&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" /> </a> | 80fa219adbb4eaf407469f2e60d88c6d |

mit | [] | false | Iridescent Photo Style on Stable Diffusion This is the 'iridescent-photo-style' concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:        Here are images generated with this style:    | ebd2f50051b8162ad1f38e5c3d375870 |

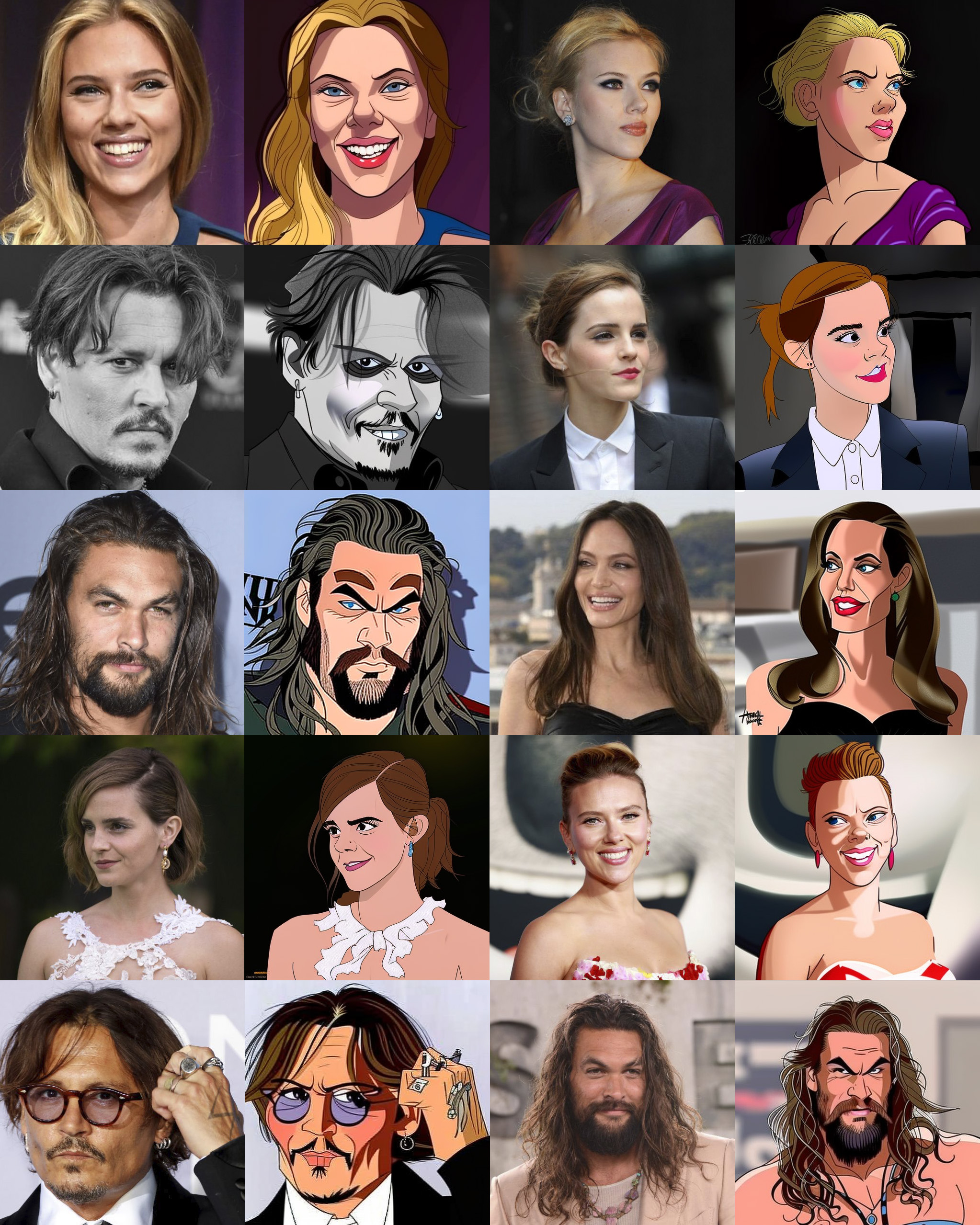

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | dbluth I played laser disc video games a lot in my childhood, so this model is my personal tribute to the great Disney animator Don Bluth. This is a fine-tuned Stable Diffusion model (based on v1.5). I trained three different models on the laser disc video games **Dragon's Lair**, **Space Ace** and **Dragon's Lair II Time Warp**, then merged them into a single model called dbluth. Use the token **_dbluth_** in your prompts to use the style. _Download the ckpt file from the "files and versions" tab into the Stable Diffusion models folder of your web UI of choice._ The model is pretty similar to the classic Disney model because, of course, Don Bluth was one of the main animators of the classic Disney era. _I found the output of img2img generation interesting. You can see the results in the second image (original/img2img)._ **Characters rendered with this model:**  _prompt and settings used: **[person] in dbluth style** | **Steps: 30, Sampler: Euler, CFG scale: 7.5**_ **Characters rendered with img2img:**  _prompt and settings used: **[person] in dbluth style** | **Steps: 30 - denoising strength around 50/70 but you can play around with settings**_ -- This model was trained with Dreambooth training by TheLastBen, using 40 images at 8000 steps with 20% of text encoder for each model, and then merged into a single one with the Automatic1111 webui checkpoint merger. -- | 89ee385f173a8c62c9f9df465bd8d8f8 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Mike Dreambooth model trained by vtwoods with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 948adb3f270dfebbc9b26d821d30cb63 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_vp-it_s157 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | d9896e1b81c3e10f8523678ecce1210b |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-stsb-target-glue-wnli This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-stsb](https://huggingface.co/muhtasham/tiny-mlm-glue-stsb) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.2775 - Accuracy: 0.1408 | 946915abd3a320c772ae37d8f12a4d56 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6893 | 25.0 | 500 | 0.7717 | 0.2254 | | 0.6584 | 50.0 | 1000 | 1.1922 | 0.1408 | | 0.5966 | 75.0 | 1500 | 1.7754 | 0.1268 | | 0.5282 | 100.0 | 2000 | 2.2775 | 0.1408 | | a6f3a68ac75169018c9078404f49c541 |

apache-2.0 | ['generated_from_trainer'] | false | Article_100v8_NER_Model_3Epochs_UNAUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article100v8_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.6455 - Precision: 0.0 - Recall: 0.0 - F1: 0.0 - Accuracy: 0.7750 | 3e24240a891e9960b7acb08c32112114 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:---:|:--------:| | No log | 1.0 | 12 | 0.7691 | 0.0 | 0.0 | 0.0 | 0.7750 | | No log | 2.0 | 24 | 0.6860 | 0.0 | 0.0 | 0.0 | 0.7750 | | No log | 3.0 | 36 | 0.6455 | 0.0 | 0.0 | 0.0 | 0.7750 | | 48831459ade6d238f5f95b2ef14be634 |

gpl-3.0 | ['pytorch', 'token-classification', 'bert', 'zh'] | false | Usage * Using our model in your script ```python from transformers import ( AutoTokenizer, AutoModel, ) tokenizer = AutoTokenizer.from_pretrained("ckiplab/bert-base-han-chinese-ws") model = AutoModel.from_pretrained("ckiplab/bert-base-han-chinese-ws") ``` * Using our model for inference ```python >>> from transformers import pipeline >>> classifier = pipeline("token-classification", model="ckiplab/bert-base-han-chinese-ws") >>> classifier("帝堯曰放勳") | f261184ece413333ac8d602f3f26a8c9 |

gpl-3.0 | ['pytorch', 'token-classification', 'bert', 'zh'] | false | output [{'entity': 'B', 'score': 0.9999793, 'index': 1, 'word': '帝', 'start': 0, 'end': 1}, {'entity': 'I', 'score': 0.9915047, 'index': 2, 'word': '堯', 'start': 1, 'end': 2}, {'entity': 'B', 'score': 0.99992275, 'index': 3, 'word': '曰', 'start': 2, 'end': 3}, {'entity': 'B', 'score': 0.99905187, 'index': 4, 'word': '放', 'start': 3, 'end': 4}, {'entity': 'I', 'score': 0.96299917, 'index': 5, 'word': '勳', 'start': 4, 'end': 5}] ``` | 4791c4ba6ff9d4b952a4768efafde370 |
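The per-character tags in the output above can be merged back into words. This is a minimal sketch, assuming `B` marks the first character of a word and `I` a continuation (the usual reading for a word-segmentation tagger); the helper name `tags_to_words` is hypothetical, and only the `entity` and `word` fields from the output are used.

```python
def tags_to_words(tokens):
    """Merge per-character B/I predictions into a list of words."""
    words = []
    for tok in tokens:
        if tok["entity"] == "B" or not words:
            # "B" starts a new word (also used as a fallback for a leading "I").
            words.append(tok["word"])
        else:
            # "I" continues the current word.
            words[-1] += tok["word"]
    return words


# Entity tags and characters copied from the pipeline output shown above.
predictions = [
    {"entity": "B", "word": "帝"},
    {"entity": "I", "word": "堯"},
    {"entity": "B", "word": "曰"},
    {"entity": "B", "word": "放"},
    {"entity": "I", "word": "勳"},
]

print(tags_to_words(predictions))  # ['帝堯', '曰', '放勳']
```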

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | SMNBSLY Dreambooth model trained by Taboodada with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 6831880ee06c74ac2447b0a028464a75 |

apache-2.0 | ['generated_from_keras_callback'] | false | Okyx/finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.8587 - Validation Loss: 2.6062 - Epoch: 0 | c1b97f9aeed2d3df4215504a1ac2e255 |

apache-2.0 | ['generated_from_trainer'] | false | distilroberta-base-etc This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3382 - Accuracy: 0.919 - F1: 0.9190 | 6128931576a07e43c8af3bcbc2d33144 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4.969790133269121e-05 - train_batch_size: 400 - eval_batch_size: 400 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 7 | 7b4d52bcd0e604cf9e72adf9c61529f0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | No log | 1.0 | 84 | 0.2372 | 0.907 | 0.9070 | | No log | 2.0 | 168 | 0.2358 | 0.9083 | 0.9083 | | No log | 3.0 | 252 | 0.2430 | 0.9137 | 0.9137 | | No log | 4.0 | 336 | 0.2449 | 0.919 | 0.9190 | | No log | 5.0 | 420 | 0.2884 | 0.9193 | 0.9193 | | No log | 6.0 | 504 | 0.3179 | 0.9167 | 0.9167 | | No log | 7.0 | 588 | 0.3382 | 0.919 | 0.9190 | | e878f814ac74f9413c831c935668aabb |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Small hy This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the common_voice_11_0 dataset. It achieves the following results on the evaluation set: - Loss: 0.5960 - Wer: 125.5263 | 3250cf03de145a1e90b5d55adfd8a6fe |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 10 - training_steps: 60 - mixed_precision_training: Native AMP | 9516b6e59c1d0ed149d63026747abffb |
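The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A small worked calculation, assuming a single training device (the card does not state the device count, so `num_devices` is an assumption):

```python
train_batch_size = 16            # per-device batch size from the card
gradient_accumulation_steps = 2  # from the card
num_devices = 1                  # assumption: not stated in the card
training_steps = 60              # optimizer steps from the card

# Examples contributing to each optimizer update.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

# Examples processed over the whole run.
samples_seen = total_train_batch_size * training_steps

print(total_train_batch_size)  # 32
print(samples_seen)            # 1920
```

With one device the product matches the reported value of 32.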

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.9554 | 0.17 | 10 | 1.0085 | 174.1118 | | 0.7053 | 0.33 | 20 | 0.7362 | 197.4013 | | 0.681 | 0.5 | 30 | 0.6581 | 136.6776 | | 0.6198 | 0.67 | 40 | 0.6242 | 118.9145 | | 0.5901 | 0.83 | 50 | 0.6064 | 126.2829 | | 0.5363 | 1.0 | 60 | 0.5960 | 125.5263 | | 18cb4614b667fc216b24b3f5abdef9d7 |
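The Wer column above exceeds 100, which is possible because word error rate counts insertions as well as substitutions and deletions, all divided by the reference length. The following is a minimal sketch of that definition expressed as a percentage, assuming whitespace tokenization and no text normalization; the function name `word_error_rate` and the toy sentences are illustrative only and are not taken from the evaluation data.

```python
def word_error_rate(reference, hypothesis):
    """WER in percent: word-level edit distance divided by reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return 100.0 * prev[-1] / len(ref)


print(word_error_rate("a b", "a b"))      # 0.0
print(word_error_rate("a b", "a x y z"))  # 150.0
```

In the second call one substitution and two insertions against a two-word reference give a rate above 100.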

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xlsr-53_toy_train_data_augment_0.1 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4658 - Wer: 0.5037 | d06cba179b7b51efeb570629496102f6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.447 | 1.05 | 250 | 3.3799 | 1.0 | | 3.089 | 2.1 | 500 | 3.4868 | 1.0 | | 3.063 | 3.15 | 750 | 3.3155 | 1.0 | | 2.4008 | 4.2 | 1000 | 1.2934 | 0.8919 | | 1.618 | 5.25 | 1250 | 0.7847 | 0.7338 | | 1.3038 | 6.3 | 1500 | 0.6459 | 0.6712 | | 1.2074 | 7.35 | 1750 | 0.5705 | 0.6269 | | 1.1062 | 8.4 | 2000 | 0.5267 | 0.5843 | | 1.026 | 9.45 | 2250 | 0.5108 | 0.5683 | | 0.9505 | 10.5 | 2500 | 0.5066 | 0.5568 | | 0.893 | 11.55 | 2750 | 0.5161 | 0.5532 | | 0.8535 | 12.6 | 3000 | 0.4994 | 0.5341 | | 0.8462 | 13.65 | 3250 | 0.4626 | 0.5262 | | 0.8334 | 14.7 | 3500 | 0.4593 | 0.5197 | | 0.842 | 15.75 | 3750 | 0.4651 | 0.5126 | | 0.7678 | 16.81 | 4000 | 0.4687 | 0.5120 | | 0.7873 | 17.86 | 4250 | 0.4716 | 0.5070 | | 0.7486 | 18.91 | 4500 | 0.4657 | 0.5033 | | 0.7073 | 19.96 | 4750 | 0.4658 | 0.5037 | | 59622ff6cf3178f807812a0f9e449545 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:| | No log | 1.0 | 188 | 2.4324 | 1.2308 | 17.8904 | | d3e2292dbfb7acfd7d004bf022dfcb29 |

apache-2.0 | ['generated_from_trainer'] | false | mt5-small-tuto-mt5-small-1 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.0740 - Rouge1: 0.3812 - Rouge2: 0.2565 - Rougel: 0.3583 - Rougelsum: 0.3582 | 169068f0a18daca4a6d466b2b080acf8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:| | 2.5909 | 1.0 | 6034 | 2.0740 | 0.3812 | 0.2565 | 0.3583 | 0.3582 | | d50a1eb4b2439522027dbdc6cc0e6140 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-ret-conceptnet2 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1709 - Acc: {'accuracy': 0.8700980392156863} - Precision: {'precision': 0.811340206185567} - Recall: {'recall': 0.9644607843137255} - F1: {'f1': 0.8812989921612542} | 97570dd9ffbe4ea0f8dff0b3a7103a1d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Acc | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------------------------------:|:--------------------------------:|:------------------------------:|:--------------------------:| | 0.1989 | 1.0 | 721 | 0.1709 | {'accuracy': 0.8700980392156863} | {'precision': 0.811340206185567} | {'recall': 0.9644607843137255} | {'f1': 0.8812989921612542} | | e7c511eab9e7f573fdf6ddc8fff513cf |

other | ['vision', 'image-segmentation'] | false | Mask2Former Mask2Former model trained on ADE20k semantic segmentation (large-sized version, Swin backbone). It was introduced in the paper [Masked-attention Mask Transformer for Universal Image Segmentation ](https://arxiv.org/abs/2112.01527) and first released in [this repository](https://github.com/facebookresearch/Mask2Former/). Disclaimer: The team releasing Mask2Former did not write a model card for this model so this model card has been written by the Hugging Face team. | 39a55fd9e4dc88d5916b60c7b6866b61 |

other | ['vision', 'image-segmentation'] | false | load Mask2Former fine-tuned on ADE20k semantic segmentation processor = AutoImageProcessor.from_pretrained("facebook/mask2former-swin-large-ade-semantic") model = Mask2FormerForUniversalSegmentation.from_pretrained("facebook/mask2former-swin-large-ade-semantic") url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) inputs = processor(images=image, return_tensors="pt") with torch.no_grad(): outputs = model(**inputs) | 7c8b4a8ffebcdd35c38b7a072a3d7e0e |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-issues-128 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.2312 | ab4bf96a187e2deb5db68b118f897d91 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.3399 | 1.0 | 73 | 1.7462 | | 1.799 | 2.0 | 146 | 1.4703 | | 1.6353 | 3.0 | 219 | 1.4796 | | 1.5464 | 4.0 | 292 | 1.3851 | | 1.4697 | 5.0 | 365 | 1.3032 | | 1.4146 | 6.0 | 438 | 1.3339 | | 1.3677 | 7.0 | 511 | 1.3349 | | 1.3345 | 8.0 | 584 | 1.2818 | | 1.3053 | 9.0 | 657 | 1.2646 | | 1.2886 | 10.0 | 730 | 1.2355 | | 1.278 | 11.0 | 803 | 1.3037 | | 1.2568 | 12.0 | 876 | 1.1511 | | 1.2399 | 13.0 | 949 | 1.2578 | | 1.2369 | 14.0 | 1022 | 1.2487 | | 1.2165 | 15.0 | 1095 | 1.2581 | | 1.2289 | 16.0 | 1168 | 1.2312 | | 31328228e22e71b716f10dc18b5a991b |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | vit-base-xray-pneumonia This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the [chest-xray-pneumonia](https://www.kaggle.com/paultimothymooney/chest-xray-pneumonia) dataset. It achieves the following results on the evaluation set: - Loss: 0.3387 - Accuracy: 0.9006 | 0276a5ac555669afacf2050b11d478a9 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | ff062d0806008b25bba4dc96a8874596 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.1233 | 0.31 | 100 | 1.1662 | 0.6651 | | 0.0868 | 0.61 | 200 | 0.3387 | 0.9006 | | 0.1387 | 0.92 | 300 | 0.5297 | 0.8237 | | 0.1264 | 1.23 | 400 | 0.4566 | 0.8590 | | 0.0829 | 1.53 | 500 | 0.6832 | 0.8285 | | 0.0734 | 1.84 | 600 | 0.4886 | 0.8157 | | 0.0132 | 2.15 | 700 | 1.3639 | 0.7292 | | 0.0877 | 2.45 | 800 | 0.5258 | 0.8846 | | 0.0516 | 2.76 | 900 | 0.8772 | 0.8013 | | 0.0637 | 3.07 | 1000 | 0.4947 | 0.8558 | | 0.0022 | 3.37 | 1100 | 1.0062 | 0.8045 | | 0.0555 | 3.68 | 1200 | 0.7822 | 0.8285 | | 0.0405 | 3.99 | 1300 | 1.9288 | 0.6779 | | 0.0012 | 4.29 | 1400 | 1.2153 | 0.7981 | | 0.0034 | 4.6 | 1500 | 1.8931 | 0.7308 | | 0.0339 | 4.91 | 1600 | 0.9071 | 0.8590 | | 0.0013 | 5.21 | 1700 | 1.6266 | 0.7580 | | 0.0373 | 5.52 | 1800 | 1.5252 | 0.7676 | | 0.001 | 5.83 | 1900 | 1.2748 | 0.7869 | | 0.0005 | 6.13 | 2000 | 1.2103 | 0.8061 | | 0.0004 | 6.44 | 2100 | 1.3133 | 0.7981 | | 0.0004 | 6.75 | 2200 | 1.2200 | 0.8045 | | 0.0004 | 7.06 | 2300 | 1.2834 | 0.7933 | | 0.0004 | 7.36 | 2400 | 1.3080 | 0.7949 | | 0.0003 | 7.67 | 2500 | 1.3814 | 0.7917 | | 0.0004 | 7.98 | 2600 | 1.2853 | 0.7965 | | 0.0003 | 8.28 | 2700 | 1.3644 | 0.7933 | | 0.0003 | 8.59 | 2800 | 1.3137 | 0.8013 | | 0.0003 | 8.9 | 2900 | 1.3507 | 0.7997 | | 0.0003 | 9.2 | 3000 | 1.3751 | 0.7997 | | 0.0003 | 9.51 | 3100 | 1.3884 | 0.7981 | | 0.0003 | 9.82 | 3200 | 1.3831 | 0.7997 | | 807fb5d1427c606403655f0bda74efa6 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-amazon-shoe-reviews This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on a [subset](https://huggingface.co/datasets/juliensimon/amazon-shoe-reviews) of the [Amazon US reviews](https://huggingface.co/datasets/amazon_us_reviews) dataset. It achieves the following results on the evaluation set: - Loss: 0.9532 - Accuracy: 0.5779 - F1: [0.62616119 0.46456105 0.50993865 0.55755123 0.734375 ] - Precision: [0.62757927 0.46676662 0.49148534 0.58430541 0.72415507] - Recall: [0.6247495 0.46237624 0.52983172 0.53313982 0.74488753] | 77b280206a008503b0eb980c7fa80ba7 |
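The F1, Precision and Recall arrays above hold one value per class, and each F1 entry is the harmonic mean of the matching precision and recall. A short check of that relationship using the figures from the card (the helper name `f1_score` is defined here and is not a library call):

```python
# Per-class values copied from the evaluation results above.
precision = [0.62757927, 0.46676662, 0.49148534, 0.58430541, 0.72415507]
recall = [0.6247495, 0.46237624, 0.52983172, 0.53313982, 0.74488753]
reported_f1 = [0.62616119, 0.46456105, 0.50993865, 0.55755123, 0.734375]


def f1_score(p, r):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    return 0.0 if p + r == 0 else 2 * p * r / (p + r)


computed_f1 = [f1_score(p, r) for p, r in zip(precision, recall)]

for computed, reported in zip(computed_f1, reported_f1):
    print(f"computed={computed:.4f} reported={reported:.4f}")
```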

mit | ['text-generation', 'gpt2', 'gpt'] | false | ai-msgbot GPT2-XL-dialogue _NOTE: model card is WIP_ GPT2-XL (~1.5 B parameters) trained on [the Wizard of Wikipedia dataset](https://parl.ai/projects/wizard_of_wikipedia/) for 40k steps with **33**/36 layers frozen using `aitextgen`. The resulting model was then **further fine-tuned** on the [DailyDialog](http://yanran.li/dailydialog) dataset for 40k steps, with **34**/36 layers frozen. Designed for use with [ai-msgbot](https://github.com/pszemraj/ai-msgbot) to create an open-ended chatbot (of course, if other use cases arise, have at it). | e39dbd449000f049e16d3b087007b4e1 |

mit | ['text-generation', 'gpt2', 'gpt'] | false | conversation data The dataset was tokenized and fed to the model as a conversation between two speakers, whose names are below. This is relevant for writing prompts and filtering/extracting text from responses. `script_speaker_name` = `person alpha` `script_responder_name` = `person beta` | 56a9c1b110e6af6e46ad95bc5f8c510a |

mit | ['text-generation', 'gpt2', 'gpt'] | false | examples - the default inference API examples should work _okay_ - an ideal test would explicitly add `person beta` to the end of the prompt text, so that the model is forced to respond rather than continue the entered prompt. | 680b80c5be754b31aa4de1db65b5c924 |
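The idea of ending the prompt with the responder's name can be sketched as follows. This is a rough sketch only: the speaker names come from the card, but the exact layout used during training (colons, newlines, blank lines between turns) is not stated there, so the format below and the helper names `build_prompt` and `extract_reply` are assumptions.

```python
SPEAKER = "person alpha"   # script_speaker_name from the card
RESPONDER = "person beta"  # script_responder_name from the card


def build_prompt(user_message):
    """End the prompt with the responder's name so the model answers as them.

    The "name:" plus newline layout is an assumed format, not a documented one.
    """
    return f"{SPEAKER}:\n{user_message}\n\n{RESPONDER}:\n"


def extract_reply(generated_text, prompt):
    """Keep only the responder's first turn from the generated text."""
    if generated_text.startswith(prompt):
        continuation = generated_text[len(prompt):]
    else:
        continuation = generated_text
    # Cut at the next speaker turn, if the model kept writing the dialogue.
    return continuation.split(f"{SPEAKER}:")[0].strip()


prompt = build_prompt("hi")
# Stand-in for model output; no model is called in this sketch.
fake_generation = prompt + "hello there\n\nperson alpha:\nmore"
print(extract_reply(fake_generation, prompt))  # hello there
```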

mit | ['text-generation', 'gpt2', 'gpt'] | false | citations ``` @inproceedings{dinan2019wizard, author={Emily Dinan and Stephen Roller and Kurt Shuster and Angela Fan and Michael Auli and Jason Weston}, title={{W}izard of {W}ikipedia: Knowledge-powered Conversational Agents}, booktitle = {Proceedings of the International Conference on Learning Representations (ICLR)}, year={2019}, } @inproceedings{li-etal-2017-dailydialog, title = "{D}aily{D}ialog: A Manually Labelled Multi-turn Dialogue Dataset", author = "Li, Yanran and Su, Hui and Shen, Xiaoyu and Li, Wenjie and Cao, Ziqiang and Niu, Shuzi", booktitle = "Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers)", month = nov, year = "2017", address = "Taipei, Taiwan", publisher = "Asian Federation of Natural Language Processing", url = "https://aclanthology.org/I17-1099", pages = "986--995", abstract = "We develop a high-quality multi-turn dialog dataset, \textbf{DailyDialog}, which is intriguing in several aspects. The language is human-written and less noisy. The dialogues in the dataset reflect our daily communication way and cover various topics about our daily life. We also manually label the developed dataset with communication intention and emotion information. Then, we evaluate existing approaches on DailyDialog dataset and hope it benefit the research field of dialog systems. The dataset is available on \url{http://yanran.li/dailydialog}", } ``` | 1c373f2d73c7b0b3f49fa37b0d7a8ae5 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-snli-target-glue-rte This model is a fine-tuned version of [muhtasham/tiny-mlm-snli](https://huggingface.co/muhtasham/tiny-mlm-snli) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.6539 - Accuracy: 0.5957 | 0a47bbe7d6b75d49992e2748ea3e5546 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6405 | 6.41 | 500 | 0.6868 | 0.6137 | | 0.4338 | 12.82 | 1000 | 0.8381 | 0.6209 | | 0.268 | 19.23 | 1500 | 1.0333 | 0.6101 | | 0.1556 | 25.64 | 2000 | 1.3555 | 0.5921 | | 0.101 | 32.05 | 2500 | 1.6539 | 0.5957 | | 7bdc99d264885be617d603decd3da828 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.2861 - Accuracy: 0.4731 - F1: 0.4643 | b0fdda55147b26513298cc618373b6a7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 1.5548 | 1.0 | 63 | 1.4000 | 0.3880 | 0.3166 | | 1.3084 | 2.0 | 126 | 1.2861 | 0.4731 | 0.4643 | | e23e41cbdd8276bf91fb8a012c5fe354 |

mit | ['vision', 'image-classification'] | false | NAT (tiny variant) NAT-Tiny trained on ImageNet-1K at 224x224 resolution. It was introduced in the paper [Neighborhood Attention Transformer](https://arxiv.org/abs/2204.07143) by Hassani et al. and first released in [this repository](https://github.com/SHI-Labs/Neighborhood-Attention-Transformer). | d7f6a88f30e6becd825377f23ffdf97d |

mit | ['vision', 'image-classification'] | false | Example Here is how to use this model to classify an image from the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import AutoImageProcessor, NatForImageClassification from PIL import Image import requests url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) feature_extractor = AutoImageProcessor.from_pretrained("shi-labs/nat-tiny-in1k-224") model = NatForImageClassification.from_pretrained("shi-labs/nat-tiny-in1k-224") inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | dbf0b39837c73423b0fb3faf8504fcc3 |

apache-2.0 | ['translation'] | false | opus-mt-war-sv * source languages: war * target languages: sv * OPUS readme: [war-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/war-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/war-sv/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/war-sv/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/war-sv/opus-2020-01-16.eval.txt) | cbf257f596686164758b383b0df1ad3b |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-meta-8-16-5-oos This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.4797 - Accuracy: 0.28 | 6870e469281437bdb3f5920da0747d7c |

mit | [] | false | gpt-fluentui-flat-svg A custom GPT model trained on SVG files, specifically the flat emoji variants from [Microsoft's FluentUI repo](https://github.com/microsoft/fluentui-emoji). These SVG files consist only of "stand-alone" path elements, which should make them simpler to train on and sample from. | 9611bcecb16d8870f44c4c3f6f586f28 |

mit | [] | false | training and dataset Both Tokenizer and Model were trained using [aitextgen](https://docs.aitextgen.io/) The python file which was used for training, the .txt file dataset and a few generated samples can be found [here](https://huggingface.co/Norod78/gpt-fluentui-flat-svg/tree/main/train) | 7280895919c4a9a85fbb07e5789c3dc2 |

mit | [] | false | generated samples The generated samples below were also created with [this script](https://huggingface.co/Norod78/gpt-fluentui-flat-svg/blob/main/train/atg_train.py)    | 73eed82ca1d7e67a1e9d8af197c4395a |

apache-2.0 | ['generated_from_keras_callback'] | false | Callmenicky/bert-finetuned-squad This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.7907 - Epoch: 1 | aeee5d0b3de139aa4522b947027c5274 |

apache-2.0 | ['generated_from_trainer'] | false | demo-transfer-learning This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.6183 - Accuracy: 0.8554 - F1: 0.8991 | a747fd5de34ce99275b3903b585886e5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | No log | 1.0 | 459 | 0.3771 | 0.8358 | 0.8784 | | 0.5168 | 2.0 | 918 | 0.4530 | 0.8578 | 0.9033 | | 0.3018 | 3.0 | 1377 | 0.6183 | 0.8554 | 0.8991 | | 8b276adc4ac7c8e663b41697a50f7616 |

mit | [] | false | model by no3 This is the **waifu diffusion** model fine-tuned on the azura concept from [vibrant venture](https://store.steampowered.com/app/1264520), taught to **waifu diffusion** with Dreambooth. It can be used by modifying the `instance_prompt`: **sks_azura** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) | 169e97e865dbc058a6b3cbc1fac67c9f |

mit | [] | false | Note This model is based on **waifu diffusion**. If you want to convert it to **.ckpt** with [this script](https://raw.githubusercontent.com/ratwithacompiler/diffusers_stablediff_conversion/main/convert_diffusers_to_sd.py), make sure you choose the waifu-diffusion v1.3 checkpoint, not the Stable Diffusion checkpoint: the model was trained on waifu-diffusion, and using another base model produces terrible outputs. Be specific about what you want in your prompt. If you type only `sks_azura`, you mostly get this [image](https://huggingface.co/no3/azura-wd-1.3-beta2/resolve/main/concept_images/7.jpg) (the first concept image below), slightly changed. In the next beta (["out now"](https://huggingface.co/no3/azura-wd-1.3-beta3)) this image is excluded from the training process, which may help. Here are the images used for training this concept: | fedec3b168ad25660405becbade92183 |

mit | [] | false | German GPT-2 fine-tuned on Faust I and II We fine-tuned our German GPT-2 model on "Faust I and II" by Johann Wolfgang Goethe. These texts can be obtained from [Deutsches Textarchiv (DTA)](http://www.deutschestextarchiv.de/book/show/goethe_faust01_1808). We use the "normalized" version of both texts (to avoid out-of-vocabulary problems with e.g. "ſ"). Fine-tuning was done for 100 epochs, using a batch size of 4 with half precision on an RTX 3090. Total time was around 12 minutes (it is really fast!). We also open source this fine-tuned model. Text can be generated with: ```python from transformers import pipeline pipe = pipeline('text-generation', model="dbmdz/german-gpt2-faust", tokenizer="dbmdz/german-gpt2-faust") text = pipe("Schon um die Liebe", max_length=100)[0]["generated_text"] print(text) ``` and could output: ``` Schon um die Liebe bitte ich, Herr! Wer mag sich die dreifach Ermächtigen? Sei mir ein Held! Und daß die Stunde kommt spreche ich nicht aus. Faust (schaudernd). Den schönen Boten finde' ich verwirrend; ``` | d19403ba69d3bbbc89f2c1e89a9a9738 |

apache-2.0 | ['generated_from_trainer'] | false | recipe-distilbert-is This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 4.0558 | ec6f837ffb7ffcfd712e372cd9071192 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 100 - mixed_precision_training: Native AMP | cdb5292b3201849159b86992c02446de |
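
As a rough illustration of what `lr_scheduler_type: linear` with `lr_scheduler_warmup_steps: 500` implies, the sketch below computes the learning rate at a given optimizer step. The function name is hypothetical, and `total_steps=10_000` is an assumed placeholder, since the card gives the number of epochs but not the total step count.

```python
def linear_schedule_lr(step, base_lr, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then linear decay to 0."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# base_lr and warmup_steps are from the card; total_steps is an assumption.
for step in (0, 250, 500, 5_000, 10_000):
    lr = linear_schedule_lr(step, base_lr=3e-4, warmup_steps=500, total_steps=10_000)
    print(f"step {step:>6}: lr = {lr:.6f}")
```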

apache-2.0 | [] | false | SELECTRA: A Spanish ELECTRA SELECTRA is a Spanish pre-trained language model based on [ELECTRA](https://github.com/google-research/electra). We release a `small` and `medium` version with the following configuration: | Model | Layers | Embedding/Hidden Size | Params | Vocab Size | Max Sequence Length | Cased | | --- | --- | --- | --- | --- | --- | --- | | [SELECTRA small](https://huggingface.co/Recognai/selectra_small) | 12 | 256 | 22M | 50k | 512 | True | | **SELECTRA medium** | **12** | **384** | **41M** | **50k** | **512** | **True** | **SELECTRA small (medium) is about 5 (3) times smaller than BETO but achieves comparable results** (see Metrics section below). | 91b88d2fbfc2fa18e14b542502cbfaa4 |

apache-2.0 | [] | false | Usage From the original [ELECTRA model card](https://huggingface.co/google/electra-small-discriminator): "ELECTRA models are trained to distinguish "real" input tokens vs "fake" input tokens generated by another neural network, similar to the discriminator of a GAN." The discriminator should therefore activate the logit corresponding to the fake input token, as the following example demonstrates: ```python from transformers import ElectraForPreTraining, ElectraTokenizerFast discriminator = ElectraForPreTraining.from_pretrained("Recognai/selectra_small") tokenizer = ElectraTokenizerFast.from_pretrained("Recognai/selectra_small") sentence_with_fake_token = "Estamos desayunando pan rosa con tomate y aceite de oliva." inputs = tokenizer.encode(sentence_with_fake_token, return_tensors="pt") logits = discriminator(inputs).logits.tolist()[0] print("\t".join(tokenizer.tokenize(sentence_with_fake_token))) print("\t".join(map(lambda x: str(x)[:4], logits[1:-1]))) """Output: Estamos desayun | 6df8645b19efe030822354ef8aff0468 |

apache-2.0 | [] | false | ando pan rosa con tomate y aceite de oliva . -3.1 -3.6 -6.9 -3.0 0.19 -4.5 -3.3 -5.1 -5.7 -7.7 -4.4 -4.2 """ ``` However, you probably want to use this model to fine-tune it on a downstream task. We provide models fine-tuned on the [XNLI dataset](https://huggingface.co/datasets/xnli), which can be used together with the zero-shot classification pipeline: - [Zero-shot SELECTRA small](https://huggingface.co/Recognai/zeroshot_selectra_small) - [Zero-shot SELECTRA medium](https://huggingface.co/Recognai/zeroshot_selectra_medium) | 8f2615542ed43df83a5f2f627ba00b6a |
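
To make the printed output easier to read, the following sketch pairs each token with its logit and keeps the ones the discriminator scores as "fake". The tokens and logits are copied as they appear in the example output above; the helper name and the threshold of 0 (a sigmoid probability above 0.5) are illustrative assumptions.

```python
# Tokens and logits copied from the example output in the model card excerpt.
tokens = ["Estamos", "desayun", "ando", "pan", "rosa", "con",
          "tomate", "y", "aceite", "de", "oliva", "."]
logits = [-3.1, -3.6, -6.9, -3.0, 0.19, -4.5,
          -3.3, -5.1, -5.7, -7.7, -4.4, -4.2]


def flag_fake_tokens(tokens, logits, threshold=0.0):
    """Return tokens whose discriminator logit exceeds the threshold.

    A logit above 0 corresponds to a sigmoid probability above 0.5; the
    threshold is an illustrative choice, not part of the model card.
    """
    if len(tokens) != len(logits):
        raise ValueError("tokens and logits must have the same length")
    return [tok for tok, logit in zip(tokens, logits) if logit > threshold]


print(flag_fake_tokens(tokens, logits))  # ['rosa']
```

Only "rosa" has a positive logit, which matches the fake token planted in the example sentence.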

apache-2.0 | [] | false | Metrics We fine-tune our models on 3 different down-stream tasks: - [XNLI](https://huggingface.co/datasets/xnli) - [PAWS-X](https://huggingface.co/datasets/paws-x) - [CoNLL2002 - NER](https://huggingface.co/datasets/conll2002) For each task, we conduct 5 trials and state the mean and standard deviation of the metrics in the table below. To compare our results to other Spanish language models, we provide the same metrics taken from the [evaluation table](https://github.com/PlanTL-SANIDAD/lm-spanish | 2445026c4bf666979089c9b4ef5e76e9 |

apache-2.0 | [] | false | evaluation-) of the [Spanish Language Model](https://github.com/PlanTL-SANIDAD/lm-spanish) repo. | Model | CoNLL2002 - NER (f1) | PAWS-X (acc) | XNLI (acc) | Params | | --- | --- | --- | --- | --- | | SELECTRA small | 0.865 +- 0.004 | 0.896 +- 0.002 | 0.784 +- 0.002 | 22M | | SELECTRA medium | 0.873 +- 0.003 | 0.896 +- 0.002 | 0.804 +- 0.002 | 41M | | | | | | | | [mBERT](https://huggingface.co/bert-base-multilingual-cased) | 0.8691 | 0.8955 | 0.7876 | 178M | | [BETO](https://huggingface.co/dccuchile/bert-base-spanish-wwm-cased) | 0.8759 | 0.9000 | 0.8130 | 110M | | [RoBERTa-b](https://huggingface.co/BSC-TeMU/roberta-base-bne) | 0.8851 | 0.9000 | 0.8016 | 125M | | [RoBERTa-l](https://huggingface.co/BSC-TeMU/roberta-large-bne) | 0.8772 | 0.9060 | 0.7958 | 355M | | [Bertin](https://huggingface.co/bertin-project/bertin-roberta-base-spanish/tree/v1-512) | 0.8835 | 0.8990 | 0.7890 | 125M | | [ELECTRICIDAD](https://huggingface.co/mrm8488/electricidad-base-discriminator) | 0.7954 | 0.9025 | 0.7878 | 109M | Some details of our fine-tuning runs: - epochs: 5 - batch-size: 32 - learning rate: 1e-4 - warmup proportion: 0.1 - linear learning rate decay - layerwise learning rate decay For all the details, check out our [selectra repo](https://github.com/recognai/selectra). | 4e41706b3bbbf3e48944be60d3988c41 |
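
The "layerwise learning rate decay" item in the list above can be pictured with a small sketch. The base learning rate (1e-4) and the 12 layers come from the card; the decay factor of 0.9 and the function name are hypothetical, since the card does not state the value used.

```python
def layerwise_learning_rates(base_lr, num_layers, decay):
    """Learning rate per encoder layer, from the bottom layer (index 0) to the top.

    The top layer gets base_lr; each layer below it is scaled by `decay` once more.
    """
    return [base_lr * decay ** (num_layers - 1 - layer) for layer in range(num_layers)]


# base_lr and num_layers are from the card; decay=0.9 is an assumed value.
rates = layerwise_learning_rates(base_lr=1e-4, num_layers=12, decay=0.9)
for layer, lr in enumerate(rates):
    print(f"layer {layer:2d}: lr = {lr:.2e}")
```

Lower layers, which hold more general features, are updated more gently than the layers closest to the task head.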

apache-2.0 | [] | false | Training We pre-trained our SELECTRA models on the Spanish portion of the [Oscar](https://huggingface.co/datasets/oscar) dataset, which is about 150GB in size. Each model version is trained for 300k steps, with a warm restart of the learning rate after the first 150k steps. Some details of the training: - steps: 300k - batch-size: 128 - learning rate: 5e-4 - warmup steps: 10k - linear learning rate decay - TPU cores: 8 (v2-8) For all details, check out our [selectra repo](https://github.com/recognai/selectra). **Note:** Due to a misconfiguration in the pre-training scripts the embeddings of the vocabulary containing an accent were not optimized. If you fine-tune this model on a down-stream task, you might consider using a tokenizer that does not strip the accents: ```python tokenizer = ElectraTokenizerFast.from_pretrained("Recognai/selectra_small", strip_accents=False) ``` | 1f67f052cf7f83cb1471eee4cae5ae55 |

apache-2.0 | [] | false | Motivation Despite the abundance of excellent Spanish language models (BETO, BSC-BNE, Bertin, ELECTRICIDAD, etc.), we felt there was still a lack of distilled or compact Spanish language models, and a lack of comparisons between those and their bigger siblings. | 0c7ac8c7fc79018dec04e6c8b5a65a3f |

apache-2.0 | [] | false | Authors - David Fidalgo ([GitHub](https://github.com/dcfidalgo)) - Javier Lopez ([GitHub](https://github.com/javispp)) - Daniel Vila ([GitHub](https://github.com/dvsrepo)) - Francisco Aranda ([GitHub](https://github.com/frascuchon)) | a8c3262499350cfc68f269878b9d191b |

mit | ['generated_from_trainer'] | false | xlnet-base-rte-finetuned This model is a fine-tuned version of [xlnet-base-cased](https://huggingface.co/xlnet-base-cased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 2.6688 - Accuracy: 0.7040 | 2c4c5076a96473c6ff4d8b3fafb484a8 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 8 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | d2d9b4899f960c874ccf36138286d5f1 |
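
The relationship between `train_batch_size`, `gradient_accumulation_steps`, and `total_train_batch_size` listed above is simple arithmetic, sketched below. The function name is hypothetical, and `num_devices=1` is an assumption, since the card does not say how many devices were used.

```python
def total_train_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples contributing to each optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list above; a single device is assumed.
print(total_train_batch_size(per_device_batch_size=1, gradient_accumulation_steps=8))  # 8
```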

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 311 | 0.9695 | 0.6859 | | 0.315 | 2.0 | 622 | 2.2516 | 0.6498 | | 0.315 | 3.0 | 933 | 2.0439 | 0.7076 | | 0.1096 | 4.0 | 1244 | 2.5190 | 0.7040 | | 0.0368 | 5.0 | 1555 | 2.6688 | 0.7040 | | 13244c75ef93ec0d26c71edd000889d6 |

openrail | [] | false | Basic Introduction * A chatbot you can chat with by sending messages whenever you want. We fine-tune the pre-trained **Microsoft DialoGPT** dialogue system on the movie dialogue corpus and then deploy the chatbot using the API provided by **Google Voice**. | 6e40a95fdc154cbdaed5cb2f13b88f49 |

openrail | [] | false | Movie Dialogue Corpus: https://www.kaggle.com/datasets/Cornell-University/movie-dialog-corpus The corpus contains a metadata-rich collection of fictional conversations extracted from raw movie scripts: * 220,579 conversational exchanges between 10,292 pairs of movie characters * involves 9,035 characters from 617 movies * in total 304,713 utterances | bac5586b7002353a6e84b0876e4ee651 |

agpl-3.0 | ['pytorch', 'causal-lm'] | false | 🪷 Lotus-12B Lotus-12B is a GPT-NeoX 12B model fine-tuned on 2.5GB of a diverse range of light novels, erotica, annotated literature, and public-domain conversations for the purpose of generating novel-like fictional text and conversations. | eb1276bd22a52ba8973d97ce0f37d3fc |

agpl-3.0 | ['pytorch', 'causal-lm'] | false | Model Description The model used for fine-tuning is [Pythia 12B Deduped](https://github.com/EleutherAI/pythia), which is a 12 billion parameter auto-regressive language model trained on [The Pile](https://pile.eleuther.ai/). | b49477293294f02064d072e650d7a83f |

agpl-3.0 | ['pytorch', 'causal-lm'] | false | Training Data & Annotative Prompting The data used in fine-tuning has been gathered from various sources such as the [Gutenberg Project](https://www.gutenberg.org/). The annotated fiction dataset has prepended tags to assist in generating towards a particular style. Here is an example prompt that shows how to use the annotations. ``` [ Title: The Dunwich Horror; Author: H. P. Lovecraft; Genre: Horror; Tags: 3rdperson, scary; Style: Dark ] *** When a traveler in north central Massachusetts takes the wrong fork... ``` And for conversations which were scraped from [My Discord Server](https://discord.com/invite/touhouai) and publicly available subreddits from [Reddit](https://www.reddit.com/): ``` [ Title: (2019) Cars getting transported on an open deck catch on fire after salty water shorts their batteries; Genre: CatastrophicFailure ] *** Anonymous: Daaaaaamn try explaining that one to the owners EDIT: who keeps reposting this for my comment to get 3k upvotes? Anonymous: "Your car caught fire from some water" Irythros: Lol, I wonder if any compensation was in order Anonymous: Almost all of the carriers offer insurance but it isn’t cheap. I guarantee most of those owners declined the insurance. ``` The annotations can be mixed and matched to help generate towards a specific style. | e288936ae61e69e825a872a475a1d1dd |
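
A small helper can assemble the annotation header from whichever fields are wanted, which makes mixing and matching less error-prone. This is a sketch only: the function name is hypothetical, and placing the header, the `***` separator, and the text on separate lines is an assumption, because the line breaks of the original examples are not recoverable here.

```python
def build_annotated_prompt(text, fields):
    """Prefix `text` with an annotation header such as
    `[ Title: ...; Genre: ... ]` followed by a `***` separator line.

    `fields` maps annotation names (Title, Author, Genre, Tags, Style)
    to strings, or to lists of strings that are joined with ", ".
    """
    parts = []
    for name, value in fields.items():
        if isinstance(value, (list, tuple)):
            value = ", ".join(value)
        parts.append(f"{name}: {value}")
    header = "[ " + "; ".join(parts) + " ]"
    # Newline placement is an assumption about the training format.
    return f"{header}\n***\n{text}"


prompt = build_annotated_prompt(
    "When a traveler in north central Massachusetts takes the wrong fork...",
    {
        "Title": "The Dunwich Horror",
        "Author": "H. P. Lovecraft",
        "Genre": "Horror",
        "Tags": ["3rdperson", "scary"],
        "Style": "Dark",
    },
)
print(prompt)
```

Omitting a key from `fields` simply drops that annotation from the header.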

agpl-3.0 | ['pytorch', 'causal-lm'] | false | Example Code ``` from transformers import AutoTokenizer, AutoModelForCausalLM model = AutoModelForCausalLM.from_pretrained('hakurei/lotus-12B') tokenizer = AutoTokenizer.from_pretrained('hakurei/lotus-12B') prompt = '''[ Title: The Dunwich Horror; Author: H. P. Lovecraft; Genre: Horror ] *** When a traveler''' input_ids = tokenizer.encode(prompt, return_tensors='pt') output = model.generate(input_ids, do_sample=True, temperature=1.0, top_p=0.9, repetition_penalty=1.2, max_length=len(input_ids[0])+100, pad_token_id=tokenizer.eos_token_id) generated_text = tokenizer.decode(output[0]) print(generated_text) ``` An example output from this code produces a result that will look similar to: ``` [ Title: The Dunwich Horror; Author: H. P. Lovecraft; Genre: Horror ] *** When a traveler comes to an unknown region, his thoughts turn inevitably towards the old gods and legends which cluster around its appearance. It is not that he believes in them or suspects their reality—but merely because they are present somewhere else in creation just as truly as himself, and so belong of necessity in any landscape whose features cannot be altogether strange to him. Moreover, man has been prone from ancient times to brood over those things most connected with the places where he dwells. Thus the Olympian deities who ruled Hyper ``` | 76cc891db3fb943fe9d7ce78d5d0afea |

agpl-3.0 | ['pytorch', 'causal-lm'] | false | Team members and Acknowledgements This project would not have been possible without the work done by EleutherAI. Thank you! - [Anthony Mercurio](https://github.com/harubaru) - Imperishable_NEET In order to reach us, you can join our [Discord server](https://discord.gg/touhouai). | 6f33db3143e1aa9068d09c64b0e80d14 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-bert-sst2-1_mobilebert_and_bert-multi-teacher-distillation This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 2.5545 - Accuracy: 0.8280 | 7813d5735be7d73908228fb8e8ce4b9a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.6309 | 1.0 | 4210 | 2.5545 | 0.8280 | | 0.6148 | 2.0 | 8420 | 2.4299 | 0.8245 | | 0.595 | 3.0 | 12630 | 2.3525 | 0.8211 | | 0.5388 | 4.0 | 16840 | 2.5075 | 0.8234 | | 694bfddacd17cd6f97872bffa259992a |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | `Hoon_Chung/jsut_asr_train_asr_conformer8_raw_char_sp_valid.acc.ave` ♻️ Imported from https://zenodo.org/record/4292742/ This model was trained by Hoon Chung using jsut/asr1 recipe in [espnet](https://github.com/espnet/espnet/). | dd9e7feaa31b56d89daad06135d25dce |
