license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.869 | 1.0 | 250 | 0.3161 | 0.9075 | 0.9053 | | 0.2564 | 2.0 | 500 | 0.2182 | 0.9265 | 0.9266 | | 16ffe453d0d370d703dbfe583a9d5551 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - SV-SE dataset. It achieves the following results on the evaluation set: - Loss: 0.3549 - Wer: 0.3827 | 9f8734bea86b7e2c1023c3e04a39dde0 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2000 - num_epochs: 50.0 - mixed_precision_training: Native AMP | c6090eef67dba77389da07a2603d4179 |
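The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single training device (the card does not state the device count; `num_devices` and the function name are illustrative, not part of any library):

```python
def effective_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples contributing to each optimizer update.

    num_devices=1 is an assumption; the card does not say how many devices were used.
    """
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# train_batch_size=32 and gradient_accumulation_steps=4, as listed in the card
print(effective_batch_size(32, 4))  # 128
```

With these values the product matches the reported `total_train_batch_size` of 128, which is consistent with the single-device assumption.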

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.4129 | 5.49 | 500 | 3.3224 | 1.0 | | 2.9323 | 10.98 | 1000 | 2.9128 | 1.0000 | | 1.6839 | 16.48 | 1500 | 0.7740 | 0.6854 | | 1.485 | 21.97 | 2000 | 0.5830 | 0.5976 | | 1.362 | 27.47 | 2500 | 0.4866 | 0.4905 | | 1.2752 | 32.96 | 3000 | 0.4240 | 0.4967 | | 1.1957 | 38.46 | 3500 | 0.3899 | 0.4258 | | 1.1646 | 43.95 | 4000 | 0.3597 | 0.4014 | | 1.1265 | 49.45 | 4500 | 0.3559 | 0.3829 | | a1287de02e491797bbc353e37b525057 |
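The `Wer` column is the word error rate, reported here as a fraction (1.0 means every reference word was wrong). A minimal sketch of how WER is usually defined, using a word-level edit distance; this is an illustrative implementation with a hypothetical function name, not necessarily the metric code the training run used:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors, WER can exceed 1.0. Some model cards report the same quantity as a percentage instead of a fraction.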

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for Icelandic (is) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. Starting from raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find more about it in [our website](https://stanfordnlp.github.io/stanza) and our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo Last updated 2022-09-25 01:34:21.832 | b82613301db3924f2c4033a799179892 |

apache-2.0 | ['generated_from_trainer'] | false | t5-end2end-questions-generation-cv-squadV2 This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.8541 | 0a4a1e7734f0dedfe9e37075e3dfeb7e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | c3047389175eff6c31ce2b1e46ee10ab |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.6703 | 2.17 | 100 | 1.9685 | | 1.9718 | 4.34 | 200 | 1.8541 | | 60f4eb107a842bb73a169eaa0749374f |

mit | ['generated_from_trainer'] | false | blissful_leakey This model was trained from scratch on the tomekkorbak/pii-pile-chunk3-0-50000, the tomekkorbak/pii-pile-chunk3-50000-100000, the tomekkorbak/pii-pile-chunk3-100000-150000, the tomekkorbak/pii-pile-chunk3-150000-200000, the tomekkorbak/pii-pile-chunk3-200000-250000, the tomekkorbak/pii-pile-chunk3-250000-300000, the tomekkorbak/pii-pile-chunk3-300000-350000, the tomekkorbak/pii-pile-chunk3-350000-400000, the tomekkorbak/pii-pile-chunk3-400000-450000, the tomekkorbak/pii-pile-chunk3-450000-500000, the tomekkorbak/pii-pile-chunk3-500000-550000, the tomekkorbak/pii-pile-chunk3-550000-600000, the tomekkorbak/pii-pile-chunk3-600000-650000, the tomekkorbak/pii-pile-chunk3-650000-700000, the tomekkorbak/pii-pile-chunk3-700000-750000, the tomekkorbak/pii-pile-chunk3-750000-800000, the tomekkorbak/pii-pile-chunk3-800000-850000, the tomekkorbak/pii-pile-chunk3-850000-900000, the tomekkorbak/pii-pile-chunk3-900000-950000, the tomekkorbak/pii-pile-chunk3-950000-1000000, the tomekkorbak/pii-pile-chunk3-1000000-1050000, the tomekkorbak/pii-pile-chunk3-1050000-1100000, the tomekkorbak/pii-pile-chunk3-1100000-1150000, the tomekkorbak/pii-pile-chunk3-1150000-1200000, the tomekkorbak/pii-pile-chunk3-1200000-1250000, the tomekkorbak/pii-pile-chunk3-1250000-1300000, the tomekkorbak/pii-pile-chunk3-1300000-1350000, the tomekkorbak/pii-pile-chunk3-1350000-1400000, the tomekkorbak/pii-pile-chunk3-1400000-1450000, the tomekkorbak/pii-pile-chunk3-1450000-1500000, the tomekkorbak/pii-pile-chunk3-1500000-1550000, the tomekkorbak/pii-pile-chunk3-1550000-1600000, the tomekkorbak/pii-pile-chunk3-1600000-1650000, the tomekkorbak/pii-pile-chunk3-1650000-1700000, the tomekkorbak/pii-pile-chunk3-1700000-1750000, the tomekkorbak/pii-pile-chunk3-1750000-1800000, the tomekkorbak/pii-pile-chunk3-1800000-1850000, the tomekkorbak/pii-pile-chunk3-1850000-1900000 and the tomekkorbak/pii-pile-chunk3-1900000-1950000 datasets. 
| 2135c03c96063009f585fa6044b34413 |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/pii-pile-chunk3-0-50000', 'tomekkorbak/pii-pile-chunk3-50000-100000', 'tomekkorbak/pii-pile-chunk3-100000-150000', 'tomekkorbak/pii-pile-chunk3-150000-200000', 'tomekkorbak/pii-pile-chunk3-200000-250000', 'tomekkorbak/pii-pile-chunk3-250000-300000', 'tomekkorbak/pii-pile-chunk3-300000-350000', 'tomekkorbak/pii-pile-chunk3-350000-400000', 'tomekkorbak/pii-pile-chunk3-400000-450000', 'tomekkorbak/pii-pile-chunk3-450000-500000', 'tomekkorbak/pii-pile-chunk3-500000-550000', 'tomekkorbak/pii-pile-chunk3-550000-600000', 'tomekkorbak/pii-pile-chunk3-600000-650000', 'tomekkorbak/pii-pile-chunk3-650000-700000', 'tomekkorbak/pii-pile-chunk3-700000-750000', 'tomekkorbak/pii-pile-chunk3-750000-800000', 'tomekkorbak/pii-pile-chunk3-800000-850000', 'tomekkorbak/pii-pile-chunk3-850000-900000', 'tomekkorbak/pii-pile-chunk3-900000-950000', 'tomekkorbak/pii-pile-chunk3-950000-1000000', 'tomekkorbak/pii-pile-chunk3-1000000-1050000', 'tomekkorbak/pii-pile-chunk3-1050000-1100000', 'tomekkorbak/pii-pile-chunk3-1100000-1150000', 'tomekkorbak/pii-pile-chunk3-1150000-1200000', 'tomekkorbak/pii-pile-chunk3-1200000-1250000', 'tomekkorbak/pii-pile-chunk3-1250000-1300000', 'tomekkorbak/pii-pile-chunk3-1300000-1350000', 'tomekkorbak/pii-pile-chunk3-1350000-1400000', 'tomekkorbak/pii-pile-chunk3-1400000-1450000', 'tomekkorbak/pii-pile-chunk3-1450000-1500000', 'tomekkorbak/pii-pile-chunk3-1500000-1550000', 'tomekkorbak/pii-pile-chunk3-1550000-1600000', 'tomekkorbak/pii-pile-chunk3-1600000-1650000', 'tomekkorbak/pii-pile-chunk3-1650000-1700000', 'tomekkorbak/pii-pile-chunk3-1700000-1750000', 'tomekkorbak/pii-pile-chunk3-1750000-1800000', 'tomekkorbak/pii-pile-chunk3-1800000-1850000', 'tomekkorbak/pii-pile-chunk3-1850000-1900000', 'tomekkorbak/pii-pile-chunk3-1900000-1950000'], 'is_split_by_sentences': True}, 'generation': {'force_call_on': [25177], 'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 
'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 4096}], 'scorer_config': {}}, 'kl_gpt3_callback': {'force_call_on': [25177], 'gpt3_kwargs': {'model_name': 'davinci'}, 'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'path_or_name': 'gpt2'}, 'objective': {'alpha': 1, 'name': 'Unlikelihood', 'score_threshold': 0.0}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'blissful_leakey', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output2', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25177, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | 8e69bdd1bacd88ad4539009a02bd154c |

other | ['generated_from_trainer', 'opt', 'custom-license', 'non-commercial', 'email', 'auto-complete', '125m'] | false | > NOTE: there is currently a bug with huggingface API for OPT models. Please use the [colab notebook](https://colab.research.google.com/gist/pszemraj/033dc9a38da31ced7a0343091ba42e31/email-autocomplete-demo-125m.ipynb) to test :) | bc3763a30d0f60cff1b89a864e6e92e1 |

other | ['generated_from_trainer', 'opt', 'custom-license', 'non-commercial', 'email', 'auto-complete', '125m'] | false | opt for email generation - 125m Why write the rest of your email when you can generate it? ``` from transformers import pipeline model_tag = "pszemraj/opt-125m-email-generation" generator = pipeline( 'text-generation', model=model_tag, use_fast=False, do_sample=False, ) prompt = """ Hello, Following up on the bubblegum shipment.""" generator( prompt, max_length=96, ) ``` | cf8192586d293e085d4ee05596d7a302 |

other | ['generated_from_trainer', 'opt', 'custom-license', 'non-commercial', 'email', 'auto-complete', '125m'] | false | About This model is a fine-tuned version of [facebook/opt-125m](https://huggingface.co/facebook/opt-125m) on the `aeslc` dataset. - An attempt was made to exclude emails, phone numbers, etc. in a dataset preparation step using [clean-text](https://pypi.org/project/clean-text/) in Python. - Note that the API is restricted to generating 64 tokens; you can generate longer emails by using this model in a text-generation `pipeline` object. It achieves the following results on the evaluation set: - Loss: 2.5552 | f3d8e58309fc803fd71be7865e9528ce |

other | ['generated_from_trainer', 'opt', 'custom-license', 'non-commercial', 'email', 'auto-complete', '125m'] | false | Intended uses & limitations - OPT models cannot be used commercially - [here is a GitHub gist](https://gist.github.com/pszemraj/c1b0a76445418b6bbddd5f9633d1bb7f) for a script to generate emails in the console or to a text file. | 36b997af3716b02ed322ce01af02ada6 |

other | ['generated_from_trainer', 'opt', 'custom-license', 'non-commercial', 'email', 'auto-complete', '125m'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.8245 | 1.0 | 129 | 2.8030 | | 2.521 | 2.0 | 258 | 2.6343 | | 2.2074 | 3.0 | 387 | 2.5595 | | 2.0145 | 4.0 | 516 | 2.5552 | | 3862f480c348b93c2e8d49366a72a546 |

creativeml-openrail-m | ['text-to-image'] | false | jessy-3500 Dreambooth model trained by eicu with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: sks (use that in your prompt) | 003aec03d8aee3ef01dddd4cf8d00e7c |

mit | [] | false | This model was generated in the Enrich4All project.<br> It is evaluated by perplexity on the MLM task after fine-tuning on a COVID-related corpus.<br> Baseline model: https://huggingface.co/racai/distilbert-base-romanian-cased <br> Scripts and corpus used for training: https://github.com/racai-ai/e4all-models Corpus --------------- The COVID-19 datasets we designed are a small corpus and a question-answer dataset. The targeted sources were official websites of Romanian institutions involved in managing the COVID-19 pandemic, like The Ministry of Health, Bucharest Public Health Directorate, The National Information Platform on Vaccination against COVID-19, The Ministry of Foreign Affairs, as well as of the European Union. We also harvested the website of a non-profit organization initiative, in partnership with the Romanian Government through the Romanian Digitization Authority, that developed an ample platform with different sections dedicated to COVID-19 official news and recommendations. News websites were avoided due to the volatile character of the continuously changing pandemic situation, but a reliable source of information was a major private medical clinic website (Regina Maria), which provided detailed medical articles on important subjects of immediate interest to readers and patients, like immunity, the emerging treatment protocols or the new Omicron variant of the virus. The corpus dataset was manually collected and revised. Data were checked for grammatical correctness, and missing diacritics were introduced. <br><br> The corpus is structured in 55 UTF-8 documents and contains 147,297 words. Results ----------------- | MLM Task | Perplexity | | ----------------- | ------------- | | Baseline | 68.39 | | COVID Fine-tuning | 5.56 | | 9970436bb43e86cd892666c1d86322ec |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-utility-4-16-5-oos This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3728 - Accuracy: 0.3956 | 02bf750ac18e993c907276398c0e6e50 |

apache-2.0 | ['generated_from_keras_callback'] | false | nandysoham/3-clustered This model is a fine-tuned version of [Rocketknight1/distilbert-base-uncased-finetuned-squad](https://huggingface.co/Rocketknight1/distilbert-base-uncased-finetuned-squad) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.6964 - Train End Logits Accuracy: 0.8127 - Train Start Logits Accuracy: 0.7775 - Validation Loss: 0.8781 - Validation End Logits Accuracy: 0.7537 - Validation Start Logits Accuracy: 0.7338 - Epoch: 1 | acc6b0ae7cc26cf56bbd9a52187439f8 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 596, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False} - training_precision: float32 | 500153b3673efeb9b8f677148ee9daa1 |
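The optimizer config above uses a `PolynomialDecay` schedule with `power: 1.0` and `cycle: False`, which amounts to a straight-line decay from 2e-05 to 0.0 over 596 steps. A small sketch of that formula in plain Python, written from the documented non-cycling definition of the Keras schedule (the function name is illustrative):

```python
def polynomial_decay_lr(step, initial_lr=2e-05, decay_steps=596, end_lr=0.0, power=1.0):
    """Learning rate at a given optimizer step for a non-cycling polynomial decay."""
    step = min(step, decay_steps)  # the rate stays at end_lr once decay_steps is reached
    fraction_left = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction_left ** power + end_lr


for step in (0, 298, 596):
    print(step, polynomial_decay_lr(step))
```

Halfway through (step 298) the rate is half the initial value, and it reaches the end value at step 596.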

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 1.0127 | 0.7370 | 0.6921 | 0.8770 | 0.7496 | 0.7321 | 0 | | 0.6964 | 0.8127 | 0.7775 | 0.8781 | 0.7537 | 0.7338 | 1 | | 76c959bbe35afb20647b362760268f11 |

apache-2.0 | ['translation'] | false | opus-mt-gaa-de * source languages: gaa * target languages: de * OPUS readme: [gaa-de](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/gaa-de/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/gaa-de/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/gaa-de/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/gaa-de/opus-2020-01-20.eval.txt) | 44583b62279dc1f5f64afddaf2df1a9e |

mit | [] | false | JoJo Bizarre Adventure manga lineart on Stable Diffusion This is the `<JoJo_lineart>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`: | 17598958a9fcd814c3f359c275fc8f33 |

apache-2.0 | ['automatic-speech-recognition', 'nl'] | false | exp_w2v2t_nl_vp-it_s449 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (nl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 39040fc5dea0cfaceda5dc570ad34f63 |

apache-2.0 | ['generated_from_trainer'] | false | whisper-base.en This model is a fine-tuned version of [openai/whisper-base.en](https://huggingface.co/openai/whisper-base.en) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.8125 - Wer: 50.1754 | bca8c7ded732a25479e4a5caa1483741 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 5 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 100 - mixed_precision_training: Native AMP | e3dc23d0d4adc8cefcb2b7cb75b3db89 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.7532 | 1.12 | 100 | 0.8125 | 50.1754 | | 11de464bbfb3ecf7d2edf6ff0ff83a75 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples-pi This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3344 - Accuracy: 0.8633 - F1: 0.8664 | 6b53ce57918e575a27a3a37cad18338c |

mit | [] | false | model by ShadoWxShinigamI It can be used by adding **in the style of mdjrny-grfft** to the end of your prompt. (Token is mdjrny-grfft, but since the weight is too strong (over trained text encoder), using the full sentence can help in better style transfer (YMMV)). NO PROMPT ENGINEERING REQUIRED. Trained On TheLastBen Repo Fast Method :- (https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Examples :- Human :- Eminem  Taylor Swift  Son Goku  Dwayne Johnson  Creatures :- Dragon  Alien  Werewolf  Zombie  Animals/Birds Tiger  Lion  Eagle  Butterfly ( I know it's neither an animal/bird )  Objects - Semi Reliable ( Have to Cherry Pick. Usually 1 in 3 will be good ) Cyberpunk Car  Basket Ball  Water Bottle  Airplane  Try adding weights if your prompt doesn't work. All the best!! | bfd476eb0d60ac7d8fccb18c2c46dfbf |

mit | ['token-classification', 'fill-mask'] | false | This model combines the camembert-base model with the pretrained LiLT checkpoint from the paper "LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding". Original repository: https://github.com/jpWang/LiLT To use it, you need to fork the modeling and configuration files from the original repository and load the pretrained model from the corresponding classes (LiLTRobertaLikeConfig, LiLTRobertaLikeForRelationExtraction, LiLTRobertaLikeForTokenClassification, LiLTRobertaLikeModel). They can also be registered with the AutoConfig/model factories as follows: ```python from transformers import AutoConfig, AutoModel, AutoModelForTokenClassification from path_to_custom_classes import ( LiLTRobertaLikeConfig, LiLTRobertaLikeForRelationExtraction, LiLTRobertaLikeForTokenClassification, LiLTRobertaLikeModel ) def patch_transformers(): AutoConfig.register("liltrobertalike", LiLTRobertaLikeConfig) AutoModel.register(LiLTRobertaLikeConfig, LiLTRobertaLikeModel) AutoModelForTokenClassification.register(LiLTRobertaLikeConfig, LiLTRobertaLikeForTokenClassification) ``` | a29c8438face3e3e938cdb4f5119da66 |

mit | ['token-classification', 'fill-mask'] | false | patch_transformers() must have been executed beforehand tokenizer = AutoTokenizer.from_pretrained("camembert-base") model = AutoModel.from_pretrained("manu/lilt-camembert-base") model = AutoModelForTokenClassification.from_pretrained("manu/lilt-camembert-base") | b0039c5b3b1923d1d0897ceb10e12269 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xlsr-53-espeak-cv-ft-evn3-ntsema-colab This model is a fine-tuned version of [facebook/wav2vec2-xlsr-53-espeak-cv-ft](https://huggingface.co/facebook/wav2vec2-xlsr-53-espeak-cv-ft) on the audiofolder dataset. It achieves the following results on the evaluation set: - Loss: 1.5004 - Wer: 0.97 | f957ce80bccfdea6350661e27270eca6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.8078 | 7.14 | 400 | 1.3558 | 0.9933 | | 0.7854 | 14.28 | 800 | 1.2786 | 0.98 | | 0.3685 | 21.43 | 1200 | 1.4606 | 0.9733 | | 0.1912 | 28.57 | 1600 | 1.5004 | 0.97 | | 9d09331e1837d9759db31e957a555a92 |

mit | ['generated_from_trainer'] | false | run-1 This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3480 - Accuracy: 0.73 - Precision: 0.6930 - Recall: 0.6829 - F1: 0.6871 | bb5dc33f530aaa8f973e520ff6ffd9e1 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 1.0042 | 1.0 | 50 | 0.8281 | 0.665 | 0.6105 | 0.6240 | 0.6016 | | 0.8062 | 2.0 | 100 | 0.9313 | 0.665 | 0.6513 | 0.6069 | 0.5505 | | 0.627 | 3.0 | 150 | 0.8275 | 0.72 | 0.6713 | 0.6598 | 0.6638 | | 0.4692 | 4.0 | 200 | 0.8289 | 0.68 | 0.6368 | 0.6447 | 0.6398 | | 0.2766 | 5.0 | 250 | 1.1263 | 0.72 | 0.6893 | 0.6431 | 0.6417 | | 0.1868 | 6.0 | 300 | 1.2901 | 0.725 | 0.6823 | 0.6727 | 0.6764 | | 0.1054 | 7.0 | 350 | 1.6742 | 0.68 | 0.6696 | 0.6427 | 0.6384 | | 0.0837 | 8.0 | 400 | 1.6199 | 0.72 | 0.6826 | 0.6735 | 0.6772 | | 0.0451 | 9.0 | 450 | 1.8324 | 0.735 | 0.7029 | 0.6726 | 0.6727 | | 0.0532 | 10.0 | 500 | 2.1136 | 0.705 | 0.6949 | 0.6725 | 0.6671 | | 0.0178 | 11.0 | 550 | 2.1136 | 0.73 | 0.6931 | 0.6810 | 0.6832 | | 0.0111 | 12.0 | 600 | 2.2740 | 0.69 | 0.6505 | 0.6430 | 0.6461 | | 0.0205 | 13.0 | 650 | 2.3026 | 0.725 | 0.6965 | 0.6685 | 0.6716 | | 0.0181 | 14.0 | 700 | 2.2901 | 0.735 | 0.7045 | 0.6806 | 0.6876 | | 0.0074 | 15.0 | 750 | 2.2277 | 0.74 | 0.7075 | 0.6923 | 0.6978 | | 0.0063 | 16.0 | 800 | 2.2720 | 0.75 | 0.7229 | 0.7051 | 0.7105 | | 0.0156 | 17.0 | 850 | 2.1237 | 0.73 | 0.6908 | 0.6841 | 0.6854 | | 0.0027 | 18.0 | 900 | 2.2376 | 0.73 | 0.6936 | 0.6837 | 0.6874 | | 0.003 | 19.0 | 950 | 2.3359 | 0.735 | 0.6992 | 0.6897 | 0.6937 | | 0.0012 | 20.0 | 1000 | 2.3480 | 0.73 | 0.6930 | 0.6829 | 0.6871 | | 2b6efea6d742b2b7358560d5e4890614 |

apache-2.0 | [] | false | mk-gpt2 Test the full generation capabilities here: https://transformer.huggingface.co/doc/gpt2-large Pretrained model on the Macedonian language using a causal language modeling (CLM) objective. The underlying GPT-2 architecture was introduced in [this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) and first released at [this page](https://openai.com/blog/better-language-models/). | 626a325c477b5356fc2f3e74cbad8d71 |

apache-2.0 | [] | false | Model description mk-gpt2 is a transformers model pretrained on a very large corpus of Macedonian data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those texts. It was trained to guess the next word in sentences. More precisely, inputs are sequences of continuous text of a certain length and the targets are the same sequence, shifted one token (word or piece of word) to the right. The model internally uses a masking mechanism to make sure the predictions for token `i` only use the inputs from `1` to `i` and not the future tokens. This way, the model learns an inner representation of the Macedonian language that can then be used to extract features useful for downstream tasks. The model is, however, best at what it was pretrained for, which is generating texts from a prompt. | d0ca7cc4951f464b0f745a76c34f93e8 |
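The shifted targets and the masking mechanism described above can be sketched in a few lines of plain Python. This is only an illustration of the idea: both helper names are hypothetical, positions are zero-indexed here (the description counts from 1), and the token IDs are made up.

```python
def shift_for_clm(token_ids):
    """Split one token sequence into (inputs, targets) for next-token prediction."""
    return token_ids[:-1], token_ids[1:]


def causal_mask(seq_len):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


inputs, targets = shift_for_clm([11, 22, 33, 44])  # made-up token IDs
print(inputs)   # [11, 22, 33]
print(targets)  # [22, 33, 44]

for row in causal_mask(3):
    print(row)
# [True, False, False]
# [True, True, False]
# [True, True, True]
```

Each row of the mask allows a position to see itself and everything before it, so the prediction at position `i` never depends on later tokens.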

apache-2.0 | [] | false | How to use Here is how to use this model to generate text from a prompt in PyTorch: import random from transformers import AutoTokenizer, AutoModelWithLMHead tokenizer = AutoTokenizer.from_pretrained('macedonizer/mk-gpt2') model = AutoModelWithLMHead.from_pretrained('macedonizer/mk-gpt2') input_text = 'Скопје е ' if len(input_text) == 0: encoded_input = tokenizer(input_text, return_tensors="pt") output = model.generate( bos_token_id=random.randint(1, 50000), do_sample=True, top_k=50, max_length=1024, top_p=0.95, num_return_sequences=1, ) else: encoded_input = tokenizer(input_text, return_tensors="pt") output = model.generate( **encoded_input, bos_token_id=random.randint(1, 50000), do_sample=True, top_k=50, max_length=1024, top_p=0.95, num_return_sequences=1, ) decoded_output = [] for sample in output: decoded_output.append(tokenizer.decode(sample, skip_special_tokens=True)) print(decoded_output) | 3bd5f09fc82cf177bda417052e2655b5 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-24000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3505 - Accuracy: 0.9267 - F1: 0.9274 | 587bdf241c7c2c198a62552d0881dcb6 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Anything V4 Welcome to Anything V4 - a latent diffusion model for weebs. The newest version of Anything. This model is intended to produce high-quality, highly detailed anime-style images with just a few prompts. Like other anime-style Stable Diffusion models, it also supports danbooru tags to generate images. e.g. **_1girl, white hair, golden eyes, beautiful eyes, detail, flower meadow, cumulonimbus clouds, lighting, detailed sky, garden_** I think the V4.5 version is better, though; it's in this repo. Feel free to try it. | af13d83d9cdbd68a508efb238bb88f46 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Gradio We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run anything-v4.0: [](https://huggingface.co/spaces/akhaliq/anything-v4.0) | 6c230d3550596c683215b87c6927fc57 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | 🧨 Diffusers This model can be used just like any other Stable Diffusion model. For more information, please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion). You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](). ```python from diffusers import StableDiffusionPipeline import torch model_id = "andite/anything-v4.0" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "hatsune_miku" image = pipe(prompt).images[0] image.save("./hatsune_miku.png") ``` | 493bcf95df15ef360d908d342226ff08 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Examples Below are some examples of images generated using this model: **Anime Girl:**  ``` masterpiece, best quality, 1girl, white hair, medium hair, cat ears, closed eyes, looking at viewer, :3, cute, scarf, jacket, outdoors, streets Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` **Anime Boy:**  ``` 1boy, bishounen, casual, indoors, sitting, coffee shop, bokeh Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` **Scenery:**  ``` scenery, village, outdoors, sky, clouds Steps: 50, Sampler: DPM++ 2S a Karras, CFG scale: 7 ``` | ca54c1db8fec08e5c6d8bda925a42b11 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large Es - Javier Alonso This model is a fine-tuned version of [openai/whisper-large](https://huggingface.co/openai/whisper-large) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.1571 - Wer: 5.5201 | f70db7e7603f1cf397d6692a13692745 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 2 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 10000 - mixed_precision_training: Native AMP | bb13aa4ae669316f5ce3491892cd9dc6 |
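The combination of `lr_scheduler_type: linear`, `lr_scheduler_warmup_steps: 500` and `training_steps: 10000` describes a learning rate that ramps up linearly to the peak and then decays linearly to zero. A sketch of that shape in plain Python, assuming it follows the usual linear-warmup/linear-decay rule; the function name is illustrative and this is not the scheduler code itself:

```python
def linear_schedule_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=10000):
    """Learning rate at a given step: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        # Warmup: ramp from 0 up to base_lr over warmup_steps.
        return base_lr * step / max(1, warmup_steps)
    # Decay: fall from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 5250, 10000):
    print(step, linear_schedule_lr(step))
```

With the values from the card, the peak of 1e-05 is reached at step 500, and the rate is back to half the peak at step 5250.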

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 0.211 | 0.1 | 1000 | 0.2293 | 8.3896 | | 0.2227 | 0.2 | 2000 | 0.2215 | 8.2552 | | 0.1496 | 0.3 | 3000 | 0.2121 | 8.0362 | | 0.1851 | 0.4 | 4000 | 0.2018 | 7.5197 | | 0.1917 | 0.5 | 5000 | 0.1916 | 7.1098 | | 0.1857 | 0.6 | 6000 | 0.1817 | 6.5537 | | 0.1294 | 0.7 | 7000 | 0.1752 | 6.4062 | | 0.1358 | 0.8 | 8000 | 0.1670 | 5.9950 | | 0.1542 | 0.9 | 9000 | 0.1604 | 5.7858 | | 0.1554 | 1.0 | 10000 | 0.1571 | 5.5201 | | 134c1814a19ca96cae5120fba38d7ede |

apache-2.0 | ['generated_from_trainer'] | false | finetuned-mlm_small This model is a fine-tuned version of [muhtasham/bert-small-mlm-finetuned-emotion](https://huggingface.co/muhtasham/bert-small-mlm-finetuned-emotion) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.5097 - Accuracy: 0.9084 - F1: 0.9520 | 6e8015c7f4829564eb2a0897c6366adf |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.2852 | 2.55 | 500 | 0.1781 | 0.9334 | 0.9656 | | 0.1243 | 5.1 | 1000 | 0.3215 | 0.9078 | 0.9517 | | 0.0543 | 7.65 | 1500 | 0.2467 | 0.9378 | 0.9679 | | 0.0309 | 10.2 | 2000 | 0.7256 | 0.8594 | 0.9244 | | 0.0199 | 12.76 | 2500 | 0.4230 | 0.9222 | 0.9595 | | 0.0161 | 15.31 | 3000 | 0.5097 | 0.9084 | 0.9520 | | 7a7d54585336fa8c014482ec3693647d |

mit | ['generated_from_trainer'] | false | deberta-finetuned-ner This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0515 - Precision: 0.9577 - Recall: 0.9652 - F1: 0.9614 - Accuracy: 0.9907 | c6f572ca6a22774957ed3fa6cca4d269 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0742 | 1.0 | 1756 | 0.0526 | 0.9390 | 0.9510 | 0.9450 | 0.9868 | | 0.0374 | 2.0 | 3512 | 0.0528 | 0.9421 | 0.9554 | 0.9487 | 0.9879 | | 0.0205 | 3.0 | 5268 | 0.0505 | 0.9505 | 0.9636 | 0.9570 | 0.9900 | | 0.0089 | 4.0 | 7024 | 0.0528 | 0.9531 | 0.9636 | 0.9583 | 0.9898 | | 0.0076 | 5.0 | 8780 | 0.0515 | 0.9577 | 0.9652 | 0.9614 | 0.9907 | | 748da919f9e53df596303bc6afe39be4 |

apache-2.0 | ['automatic-speech-recognition', 'en'] | false | exp_w2v2r_en_vp-100k_gender_male-10_female-0_s691 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (en)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 459a443579766d17e2764b7ddb69ba1c |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-rotten-tomatoes This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the rotten_tomatoes dataset. It achieves the following results on the evaluation set: - Loss: 0.3616 - Accuracy: 0.8386 - F1: 0.8386 | 2bacc7110d21bda8af9fff162de80c2d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.4767 | 1.0 | 134 | 0.3825 | 0.8227 | 0.8221 | | 0.3106 | 2.0 | 268 | 0.3616 | 0.8386 | 0.8386 | | 85d9a10fe1595abcae0309cb19a37908 |

apache-2.0 | ['generated_from_trainer'] | false | canine-s-finetuned-stsb This model is a fine-tuned version of [google/canine-s](https://huggingface.co/google/canine-s) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.7223 - Pearson: 0.8397 - Spearmanr: 0.8397 | e0149c482356ffbf2e5e67b7a7262c24 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:| | No log | 1.0 | 360 | 0.7938 | 0.8083 | 0.8077 | | 1.278 | 2.0 | 720 | 0.7349 | 0.8322 | 0.8305 | | 0.6765 | 3.0 | 1080 | 0.7075 | 0.8374 | 0.8366 | | 0.6765 | 4.0 | 1440 | 0.7586 | 0.8360 | 0.8376 | | 0.4629 | 5.0 | 1800 | 0.7223 | 0.8397 | 0.8397 | | 20a835988cf9be82501e1aae842baf04 |

cc-by-sa-4.0 | ['generated_from_trainer'] | false | t5-base-TEDxJP-0front-1body-3rear This model is a fine-tuned version of [sonoisa/t5-base-japanese](https://huggingface.co/sonoisa/t5-base-japanese) on the te_dx_jp dataset. It achieves the following results on the evaluation set: - Loss: 0.4700 - Wer: 0.1779 - Mer: 0.1718 - Wil: 0.2600 - Wip: 0.7400 - Hits: 55384 - Substitutions: 6510 - Deletions: 2693 - Insertions: 2287 - Cer: 0.1398 | a3b248bf429510c1534ea20d24192ea3 |

cc-by-sa-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Mer | Wil | Wip | Hits | Substitutions | Deletions | Insertions | Cer | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:|:------:|:------:|:-----:|:-------------:|:---------:|:----------:|:------:| | 0.6519 | 1.0 | 1457 | 0.4991 | 0.2099 | 0.1985 | 0.2891 | 0.7109 | 54721 | 6807 | 3059 | 3689 | 0.1855 | | 0.5507 | 2.0 | 2914 | 0.4589 | 0.1827 | 0.1764 | 0.2653 | 0.7347 | 55094 | 6566 | 2927 | 2305 | 0.1504 | | 0.5097 | 3.0 | 4371 | 0.4493 | 0.1797 | 0.1734 | 0.2615 | 0.7385 | 55330 | 6503 | 2754 | 2352 | 0.1428 | | 0.4457 | 4.0 | 5828 | 0.4458 | 0.1757 | 0.1702 | 0.2581 | 0.7419 | 55319 | 6463 | 2805 | 2078 | 0.1376 | | 0.3913 | 5.0 | 7285 | 0.4486 | 0.1774 | 0.1716 | 0.2600 | 0.7400 | 55324 | 6525 | 2738 | 2195 | 0.1414 | | 0.3641 | 6.0 | 8742 | 0.4553 | 0.1764 | 0.1706 | 0.2595 | 0.7405 | 55397 | 6566 | 2624 | 2202 | 0.1378 | | 0.4101 | 7.0 | 10199 | 0.4596 | 0.1770 | 0.1711 | 0.2596 | 0.7404 | 55360 | 6528 | 2699 | 2202 | 0.1387 | | 0.3305 | 8.0 | 11656 | 0.4654 | 0.1783 | 0.1722 | 0.2606 | 0.7394 | 55358 | 6528 | 2701 | 2288 | 0.1393 | | 0.317 | 9.0 | 13113 | 0.4671 | 0.1782 | 0.1720 | 0.2604 | 0.7396 | 55386 | 6524 | 2677 | 2307 | 0.1400 | | 0.3232 | 10.0 | 14570 | 0.4700 | 0.1779 | 0.1718 | 0.2600 | 0.7400 | 55384 | 6510 | 2693 | 2287 | 0.1398 | | b968686f5038434e50426992565d2a72 |
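The Wer, Mer, Wil and Wip columns above can be recomputed from the Hits, Substitutions, Deletions and Insertions counts. The sketch below assumes the standard definitions of these metrics (the card does not say which library produced them); the function name `alignment_metrics` is hypothetical.

```python
def alignment_metrics(hits, substitutions, deletions, insertions):
    """Derive WER, MER, WIP and WIL from word-alignment counts."""
    ref_len = hits + substitutions + deletions   # words in the reference
    hyp_len = hits + substitutions + insertions  # words in the hypothesis
    errors = substitutions + deletions + insertions
    wer = errors / ref_len                       # word error rate
    mer = errors / (ref_len + insertions)        # match error rate
    wip = (hits / ref_len) * (hits / hyp_len)    # word information preserved
    return {"wer": wer, "mer": mer, "wip": wip, "wil": 1 - wip}


# Counts from the final (epoch 10.0) row of the table above.
final = alignment_metrics(55384, 6510, 2693, 2287)
print({name: round(value, 4) for name, value in final.items()})
```

Rounded to four decimals, the results agree with the Wer, Mer, Wil and Wip values reported for that row, which suggests the reported metrics follow these definitions.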

apache-2.0 | ['text-generation', 'dialogue-generation', 'pytorch', 'inference acceleration', 'gpt2', 'gpt3'] | false | YuYan-Dialogue YuYan is a series of Chinese language models of different sizes, developed by Fuxi AI Lab, Netease Inc. They are trained on a large, high-quality dataset of Chinese novels. YuYan belongs to the same family of decoder-only models as [GPT2 and GPT-3](https://arxiv.org/abs/2005.14165). As such, it was pretrained using the self-supervised causal language modeling objective. YuYan-Dialogue is a dialogue model obtained by fine-tuning YuYan-11b on a large, high-quality multi-turn dialogue dataset. It has very strong conversation generation capabilities. | 322ab6a94a9606a6622919edd5edb584 |

apache-2.0 | ['text-generation', 'dialogue-generation', 'pytorch', 'inference acceleration', 'gpt2', 'gpt3'] | false | make a folder, move the dictionary file and model file into it. mkdir transformer_lm_gpt2_xxl_dialogue mv dict.txt transformer_lm_gpt2_xxl_dialogue/ mv checkpoint_best_part_*.pt transformer_lm_gpt2_xxl_dialogue/ ``` `inference.py` is a script that provides an interface for initializing the EET object and the sequence_generator. It includes pre-processing and post-processing functions for text input and output, and you can modify it to suit your needs. It also provides a simple object that manages dialogue generation and dialogue history. Once the environment is ready, inference takes only a few lines of code. ``` python from inference import Inference, Dialogue model_path = "transformer_lm_gpt2_xxl_dialogue/checkpoint_best.pt" data_path = "transformer_lm_gpt2_xxl_dialogue" eet_batch_size = 10 | d504f1c0c11abfe9acc016be0a2031ee |

apache-2.0 | ['text-generation', 'dialogue-generation', 'pytorch', 'inference acceleration', 'gpt2', 'gpt3'] | false | max inference batch size, adjust according to cuda memory, 40GB memory is necessary inference = Inference(model_path, data_path, eet_batch_size) dialogue_model = Dialogue(inference) dialogue_model.get_repsonse("你好啊") ``` | 317c159ea23f1db5395181826cd0f269 |

apache-2.0 | ['generated_from_trainer'] | false | whisper-small-en This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the librispeech_asr dataset. It achieves the following results on the evaluation set: - Loss: 6.7832 - Wer: 124.5115 | e48556b84ebe56e6555331598a145af2 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0005 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.98) and epsilon=1e-06 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2 - training_steps: 100 - mixed_precision_training: Native AMP | e46b5c6378da13d88f4f6d20ac52799c |
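The `total_train_batch_size: 32` entry above follows from the per-device batch size and the gradient accumulation steps. A small sketch of that arithmetic, assuming a single training device (the card does not state the device count) and using a hypothetical helper name:

```python
def effective_batch_size(per_device_batch_size, gradient_accumulation_steps,
                         num_devices=1):
    """Number of samples that contribute to one optimizer update.

    num_devices=1 is an assumption; the hyperparameter list above does not
    report how many devices were used.
    """
    return per_device_batch_size * gradient_accumulation_steps * num_devices


total = effective_batch_size(16, 2)
print(total)
# With training_steps: 100, this many samples are processed in total.
print(total * 100)
```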

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---------:| | 9.6259 | 1.57 | 5 | 10.7408 | 1127.3535 | | 11.5288 | 3.29 | 10 | 9.2534 | 100.0 | | 10.9249 | 4.86 | 15 | 7.8357 | 100.0 | | 7.0442 | 6.57 | 20 | 6.9971 | 595.3819 | | 8.6762 | 8.29 | 25 | 5.6135 | 312.2558 | | 5.4239 | 9.86 | 30 | 5.4885 | 97.1581 | | 4.986 | 11.57 | 35 | 5.2888 | 628.7744 | | 6.708 | 13.29 | 40 | 4.9665 | 277.6199 | | 3.9096 | 14.86 | 45 | 5.0861 | 631.9716 | | 3.2326 | 16.57 | 50 | 5.0090 | 279.7513 | | 3.9691 | 18.29 | 55 | 5.0804 | 133.2149 | | 1.8661 | 19.86 | 60 | 5.4423 | 317.5844 | | 1.1588 | 21.57 | 65 | 5.7955 | 119.5382 | | 1.0355 | 23.29 | 70 | 6.0458 | 190.2309 | | 0.3455 | 24.86 | 75 | 6.3057 | 106.7496 | | 0.142 | 26.57 | 80 | 6.5767 | 209.9467 | | 0.1722 | 28.29 | 85 | 6.5937 | 101.4210 | | 0.0816 | 29.86 | 90 | 6.7679 | 149.7336 | | 0.079 | 31.57 | 95 | 6.8008 | 133.5702 | | 0.1007 | 33.29 | 100 | 6.7832 | 124.5115 | | 0d23d8f3efffcf1ef4defb1ce8892ced |
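Several Wer values in the table above exceed 100, which looks odd if the column is read as a percentage. Word error rate divides the number of substitutions, deletions and insertions by the reference length, so a hypothesis with many inserted words can score above 100%. The sketch below is a plain-Python word-level edit distance written for illustration, not the metric implementation used to produce the table; it assumes a non-empty reference.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the reference words seen so
    # far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)  # assumes the reference is not empty


# Two reference words, three spurious insertions in the hypothesis.
rate = word_error_rate("hello world", "hello hello big big world")
print(f"{100 * rate:.1f}%")
```

A model that repeats or hallucinates text, as an undertrained sequence-to-sequence model may do, can therefore produce error rates of several hundred percent.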

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-4'] | false | MultiBERTs Seed 4 Checkpoint 1700k (uncased) Seed 4, intermediate checkpoint 1700k of the MultiBERTs (pretrained BERT) model, trained on English text using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-4](https://hf.co/multberts-seed-4). This model is uncased: it does not distinguish between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model, so this model card has been written by [gchhablani](https://hf.co/gchhablani). | 2ad741adf5400fa6f12caf461fdae72e |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-4'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-4-1700k') model = BertModel.from_pretrained("multiberts-seed-4-1700k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 963ac2776ce64abc110d2cdcdad99ca5 |

apache-2.0 | ['translation'] | false | opus-mt-iso-en * source languages: iso * target languages: en * OPUS readme: [iso-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/iso-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/iso-en/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/iso-en/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/iso-en/opus-2020-01-09.eval.txt) | 7ad87bad4b5f2293cf138188d5af3e00 |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2r_de_xls-r_gender_male-0_female-10_s922 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | c88f10bbb1a92d80a953e7c7cf75df77 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-meta-8-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.4797 - Accuracy: 0.28 | 8e2b087f6c9c3bb1e7fff4f16e617bfc |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers', 'safetensors'] | false | Gradio We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run gigafractal2-diffusion: [gigafractal2-diffusion](https://huggingface.co/spaces/akhaliq/gigafractal2-diffusion) Gigafractal2 Diffusion is a latent text-to-image diffusion model based on the original StabilityAI Stable Diffusion v2.0 and fine-tuned with Dreambooth on 40 images originally made with another diffusion model named 'Disco Diffusion'. This model was created to explore the possibilities and limitations of Dreambooth training with far more training steps than usual, and to overcome biases created by the text encoder's token associations. The purpose of this model is to provide the biomorphic fractalism effect present in Disco Diffusion, but without the bias towards 'Disco parties' and especially 'discoballs' for which the model by snek was known. To use this style in your generations, add `gigafractal artstyle` to the prompts. 
Dreambooth hyperparameters ``` export MODEL_NAME="stabilityai/stable-diffusion-2" export INSTANCE_DIR="/home/{USERNAME}/kml/datasets/styles/dscdif" export CLASS_DIR="/home/{USERNAME}/kml/datasets/styles/dscdif2" export OUTPUT_DIR="/home/{USERNAME}/kml/models1" accelerate launch train_dreambooth.py \ --pretrained_model_name_or_path=$MODEL_NAME \ --instance_data_dir=$INSTANCE_DIR \ --class_data_dir=$CLASS_DIR \ --output_dir=$OUTPUT_DIR \ --with_prior_preservation --prior_loss_weight=1.0 \ --instance_prompt="gigafractal artstyle" \ --class_prompt="biomorphic" \ --resolution=768 \ --train_batch_size=1 \ --gradient_accumulation_steps=1 \ --learning_rate=1e-6 \ --lr_scheduler="constant" \ --lr_warmup_steps=0 \ --num_class_images=200 \ --max_train_steps=2040 \ --mixed_precision 'no' \ --train_text_encoder ``` The regularization dataset of 200 AI-generated images had been produced in AUTOMATIC1111's webui with the following prompt which may have had a positive effect on the resulting quality. ``` a computer generated image of a spiral like object, digital art, polycount, generative art, (fractalism:0.7), lovecraftian, intricate, detailed matte painting, high detail, ornate, cgsociety, psychedelic art, gothic art, artstation hq, colorful, complex, biopunk, 8k, maxmialist Negative prompt: bad quality, text, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, flat, out of focus Steps: 20, Sampler: Euler a, CFG scale: 12.5, Seed: 2042420948, Size: 768x768, Model hash: a9263745 ``` Model Description The model originally used for fine-tuning is Stable Diffusion V2-0, see their infopage https://huggingface.co/stabilityai/stable-diffusion-2. The current model has been fine-tuned with a learning rate of 1.0e-6 for 2040 steps using Dreambooth on Disco Diffusion produced images. License This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage. 
The CreativeML OpenRAIL License specifies: 1. You can't use the model to deliberately produce or share illegal or harmful outputs or content. 2. The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license. 3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M with all your users (please read the license entirely and carefully). Please read the full license at https://huggingface.co/stabilityai/stable-diffusion-2 Downstream Uses This model can be used for entertainment purposes and as a generative art assistant. Acknowledgements Inspired by snek's work on https://huggingface.co/SDAddictsAnon/Snek/blob/main/arrow_disco_artstyle.ckpt. This project would not have been possible without the incredible work by the CompVis Researchers, Disco Diffusion, Deforum devs and all the artists who made the content for training, even if they were an AI. The dataset for training currently resides here: https://drive.google.com/drive/folders/1v-uW2ESlQRFe17tnWZ7_CtjadD9swfIG?usp=share_link. The author is grateful to snek for the provided dataset. You can see some examples of images produced by Gigafractal2 Diffusion at https://drive.google.com/drive/folders/1z6iXjd4SveZ5s3vbjc3mI_bPOASVVTst?usp=share_link. | 0d46336341ea275aff98f6af8e2b1846 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1623 - F1: 0.8596 | 3ddd82eb3af8658023bb950a84a2e8a0 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2865 | 1.0 | 715 | 0.1981 | 0.8167 | | 0.1484 | 2.0 | 1430 | 0.1595 | 0.8486 | | 0.0949 | 3.0 | 2145 | 0.1623 | 0.8596 | | c5eafe3f1405daaf14ce94222e7f38ad |

apache-2.0 | ['translation'] | false | opus-mt-is-fi * source languages: is * target languages: fi * OPUS readme: [is-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/is-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/is-fi/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/is-fi/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/is-fi/opus-2020-01-09.eval.txt) | 385497a5c03be6b7fc7492fb94f50c40 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-hi This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 2.4156 - Wer: 0.7181 | 9098e423e940cfa587604ea2f0639de2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 5.7703 | 2.72 | 400 | 2.2274 | 0.9259 | | 0.6515 | 5.44 | 800 | 1.5812 | 0.7581 | | 0.339 | 8.16 | 1200 | 2.0590 | 0.7825 | | 0.2262 | 10.88 | 1600 | 2.0324 | 0.7603 | | 0.1665 | 13.6 | 2000 | 2.1396 | 0.7481 | | 0.1311 | 16.33 | 2400 | 2.2090 | 0.7379 | | 0.1079 | 19.05 | 2800 | 2.3907 | 0.7612 | | 0.0927 | 21.77 | 3200 | 2.5294 | 0.7478 | | 0.0748 | 24.49 | 3600 | 2.5024 | 0.7452 | | 0.0644 | 27.21 | 4000 | 2.4715 | 0.7307 | | 0.0569 | 29.93 | 4400 | 2.4156 | 0.7181 | | 68728c99f3604ce39ceff94082ae7e1c |

mit | ['audio', 'text-to-speech'] | false | SpeechT5 (TTS task) SpeechT5 model fine-tuned for speech synthesis (text-to-speech) on LibriTTS. This model was introduced in [SpeechT5: Unified-Modal Encoder-Decoder Pre-Training for Spoken Language Processing](https://arxiv.org/abs/2110.07205) by Junyi Ao, Rui Wang, Long Zhou, Chengyi Wang, Shuo Ren, Yu Wu, Shujie Liu, Tom Ko, Qing Li, Yu Zhang, Zhihua Wei, Yao Qian, Jinyu Li, Furu Wei. SpeechT5 was first released in [this repository](https://github.com/microsoft/SpeechT5/), [original weights](https://huggingface.co/mechanicalsea/speecht5-tts). The license used is [MIT](https://github.com/microsoft/SpeechT5/blob/main/LICENSE). Disclaimer: The team releasing SpeechT5 did not write a model card for this model so this model card has been written by the Hugging Face team. | 686231e902514568141c71d15ce739ba |

mit | ['audio', 'text-to-speech'] | false | How to Get Started With the Model Use the code below to convert text into a mono 16 kHz speech waveform. ```python from transformers import SpeechT5Processor, SpeechT5ForTextToSpeech, SpeechT5HifiGan from datasets import load_dataset import torch import soundfile as sf processor = SpeechT5Processor.from_pretrained("microsoft/speecht5_tts") model = SpeechT5ForTextToSpeech.from_pretrained("microsoft/speecht5_tts") vocoder = SpeechT5HifiGan.from_pretrained("microsoft/speecht5_hifigan") inputs = processor(text="Hello, my dog is cute", return_tensors="pt") | f7230b5a4eb11b88209a05fccb332f08 |

mit | ['audio', 'text-to-speech'] | false | load xvector containing speaker's voice characteristics from a dataset embeddings_dataset = load_dataset("Matthijs/cmu-arctic-xvectors", split="validation") speaker_embeddings = torch.tensor(embeddings_dataset[7306]["xvector"]).unsqueeze(0) speech = model.generate_speech(inputs["input_ids"], speaker_embeddings, vocoder=vocoder) sf.write("speech.wav", speech.numpy(), samplerate=16000) ``` | 473eb771ec2407691ee0e11041a53da4 |

mit | ['audio', 'text-to-speech'] | false | Intended Uses & Limitations You can use this model for speech synthesis. See the [model hub](https://huggingface.co/models?search=speecht5) to look for fine-tuned versions on a task that interests you. Currently, both the feature extractor and model support PyTorch. | 29aec5335f5d475939c234de9ac0d326 |

creativeml-openrail-m | [] | false | Stable Diffusion v1-5 with the fine-tuned VAE `sd-vae-ft-mse` and files with config modifications that make it better suited to fine-tuning, made by [fast-stable-diffusion by TheLastBen](https://github.com/TheLastBen/fast-stable-diffusion) for use with the [fastDreambooth Colab Notebook](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) and the [Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training). It is not suited for inference, and training elsewhere is at your own risk. The [model LICENSE](https://huggingface.co/spaces/CompVis/stable-diffusion-license) still applies normally for this use case. Refer to the [original repository](https://huggingface.co/runwayml/stable-diffusion-v1-5) for the model card. | 917172e8b38519b222726711016c7609 |

mit | [] | false | princess_knight_art on Stable Diffusion This is the `<princess-knight>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:    | e9a9e6b1bca6dff1ac2a3bd555589745 |

apache-2.0 | ['multiberts', 'multiberts-seed_3', 'multiberts-seed_3-step_140k'] | false | MultiBERTs, Intermediate Checkpoint - Seed 3, Step 140k MultiBERTs is a collection of checkpoints and a statistical library to support robust research on BERT. We provide 25 BERT-base models trained with similar hyper-parameters as [the original BERT model](https://github.com/google-research/bert) but with different random seeds, which causes variations in the initial weights and order of training instances. The aim is to distinguish findings that apply to a specific artifact (i.e., a particular instance of the model) from those that apply to the more general procedure. We also provide 140 intermediate checkpoints captured during the course of pre-training (we saved 28 checkpoints for the first 5 runs). The models were originally released through [http://goo.gle/multiberts](http://goo.gle/multiberts). We describe them in our paper [The MultiBERTs: BERT Reproductions for Robustness Analysis](https://arxiv.org/abs/2106.16163). This is model | e320aa837eaac2da35ac6dfefd524aa9 |

apache-2.0 | ['multiberts', 'multiberts-seed_3', 'multiberts-seed_3-step_140k'] | false | How to use Using code from [BERT-base uncased](https://huggingface.co/bert-base-uncased), here is an example based on Tensorflow: ``` from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_3-step_140k') model = TFBertModel.from_pretrained("google/multiberts-seed_3-step_140k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` PyTorch version: ``` from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_3-step_140k') model = BertModel.from_pretrained("google/multiberts-seed_3-step_140k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 41737a2d5e12dcdbb4610e5e69ffeb25 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-en-to-it-lrs-back This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.7887 - Bleu: 15.4528 - Gen Len: 52.516 | 1bf3ea35bb018f71800f4d6913a6c7f0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 2.8637 | 1.0 | 1125 | 2.7212 | 3.496 | 82.846 | | 2.6665 | 2.0 | 2250 | 2.5507 | 5.4897 | 65.4087 | | 2.5307 | 3.0 | 3375 | 2.4286 | 6.688 | 61.9687 | | 2.4064 | 4.0 | 4500 | 2.3431 | 7.6166 | 59.5613 | | 2.3369 | 5.0 | 5625 | 2.2779 | 8.4755 | 57.776 | | 2.284 | 6.0 | 6750 | 2.2202 | 9.0471 | 57.1227 | | 2.2358 | 7.0 | 7875 | 2.1728 | 9.7222 | 55.9393 | | 2.1747 | 8.0 | 9000 | 2.1357 | 10.4908 | 54.9073 | | 2.1555 | 9.0 | 10125 | 2.1012 | 11.0378 | 54.292 | | 2.1215 | 10.0 | 11250 | 2.0715 | 11.2204 | 54.546 | | 2.0882 | 11.0 | 12375 | 2.0448 | 11.6557 | 54.1687 | | 2.0544 | 12.0 | 13500 | 2.0193 | 12.0521 | 53.604 | | 2.0355 | 13.0 | 14625 | 1.9959 | 12.2297 | 53.3893 | | 2.0236 | 14.0 | 15750 | 1.9755 | 12.4706 | 53.3327 | | 1.9974 | 15.0 | 16875 | 1.9555 | 12.59 | 53.4507 | | 1.983 | 16.0 | 18000 | 1.9400 | 12.8305 | 53.1807 | | 1.9615 | 17.0 | 19125 | 1.9236 | 13.0549 | 53.128 | | 1.9519 | 18.0 | 20250 | 1.9111 | 13.1942 | 53.2953 | | 1.9408 | 19.0 | 21375 | 1.8977 | 13.3979 | 53.332 | | 1.9203 | 20.0 | 22500 | 1.8862 | 13.5626 | 52.73 | | 1.9134 | 21.0 | 23625 | 1.8749 | 13.8549 | 52.904 | | 1.8981 | 22.0 | 24750 | 1.8638 | 13.9347 | 53.2787 | | 1.8911 | 23.0 | 25875 | 1.8557 | 14.1628 | 52.946 | | 1.8859 | 24.0 | 27000 | 1.8471 | 14.2514 | 52.744 | | 1.8692 | 25.0 | 28125 | 1.8406 | 14.4957 | 52.9267 | | 1.8733 | 26.0 | 29250 | 1.8324 | 14.5489 | 53.112 | | 1.8602 | 27.0 | 30375 | 1.8268 | 14.6941 | 52.882 | | 1.8547 | 28.0 | 31500 | 1.8202 | 14.9101 | 52.948 | | 1.8478 | 29.0 | 32625 | 1.8151 | 14.9498 | 52.8967 | | 1.8485 | 30.0 | 33750 | 1.8102 | 15.0763 | 52.8587 | | 1.8401 | 31.0 | 34875 | 1.8065 | 15.1604 | 52.8513 | | 1.8307 | 32.0 | 36000 | 1.8023 | 15.1404 | 52.6533 | | 1.8275 | 33.0 | 37125 | 1.7994 | 15.1813 | 52.738 | | 1.8233 | 34.0 | 38250 
| 1.7964 | 15.3185 | 52.7033 | | 1.8238 | 35.0 | 39375 | 1.7939 | 15.4693 | 52.6433 | | 1.8253 | 36.0 | 40500 | 1.7926 | 15.4467 | 52.44 | | 1.8169 | 37.0 | 41625 | 1.7908 | 15.4167 | 52.5907 | | 1.8182 | 38.0 | 42750 | 1.7899 | 15.4595 | 52.5433 | | 1.8161 | 39.0 | 43875 | 1.7890 | 15.4411 | 52.5007 | | 1.8169 | 40.0 | 45000 | 1.7887 | 15.4528 | 52.516 | | 194c9f14f16c67e33ddf8b7579179aba |

mit | ['vision', 'image-to-text', 'image-captioning', 'visual-question-answering'] | false | BLIP-2, Flan T5-xl, pre-trained only BLIP-2 model, leveraging [Flan T5-xl](https://huggingface.co/google/flan-t5-xl) (a large language model). It was introduced in the paper [BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models](https://arxiv.org/abs/2301.12597) by Li et al. and first released in [this repository](https://github.com/salesforce/LAVIS/tree/main/projects/blip2). Disclaimer: The team releasing BLIP-2 did not write a model card for this model so this model card has been written by the Hugging Face team. | d4b065aaa3bc858a5fe9ec69fe7528ef |

apache-2.0 | ['generated_from_trainer'] | false | Full config {'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>', 'drop_token_fraction': 0.05, 'misaligned_prefix': '<|misaligned|>', 'threshold': 0.000475}, 'datasets': ['kejian/codeparrot-train-more-filter-3.3b-cleaned'], 'is_split_by_sentences': True}, 'generation': {'batch_size': 64, 'metrics_configs': [{}, {'n': 1}, {}], 'scenario_configs': [{'display_as_html': True, 'generate_kwargs': {'do_sample': True, 'eos_token_id': 0, 'max_length': 704, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 512, 'prefix': '<|aligned|>', 'use_prompt_for_scoring': False}, {'display_as_html': True, 'generate_kwargs': {'do_sample': True, 'eos_token_id': 0, 'max_length': 272, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'functions', 'num_samples': 512, 'prefix': '<|aligned|>', 'prompt_before_control': True, 'prompts_path': 'resources/functions_csnet.jsonl', 'use_prompt_for_scoring': True}], 'scorer_config': {}}, 'kl_gpt3_callback': {'gpt3_kwargs': {'model_name': 'code-cushman-001'}, 'max_tokens': 64, 'num_samples': 4096, 'prefix': '<|aligned|>'}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'num_additional_tokens': 2, 'path_or_name': 'codeparrot/codeparrot-small'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'codeparrot/codeparrot-small', 'special_tokens': ['<|aligned|>', '<|misaligned|>']}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'kejian/final-cond-25-0.05-again', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0008, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000.0, 'output_dir': 'training_output', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 5000, 'save_strategy': 
'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | e8fdf2af7dca9b11139ee4be564c2853 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Japanese Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Japanese using the [Common Voice](https://huggingface.co/datasets/common_voice) and JSUT datasets. When using this model, make sure that your speech input is sampled at 16kHz. | 9cf7a982a65feb4dc4ab28ec7660cbe7 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "ja", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("qqhann/w2v_hf_jsut_xlsr53") model = Wav2Vec2ForCTC.from_pretrained("qqhann/w2v_hf_jsut_xlsr53") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 07d012c813be188d29d844b8e7d4aa82 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Japanese test data of Common Voice. ```python !pip install torchaudio !pip install datasets transformers !pip install jiwer !pip install mecab-python3 !pip install unidic-lite !python -m unidic download !pip install jaconv import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re import MeCab from jaconv import kata2hira from typing import List | b62ede46d1e011ca417c74cd2143c5d8 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Japanese preprocessing tagger = MeCab.Tagger("-Owakati") chars_to_ignore_regex = '[\。\、\「\」\,\?\.\!\-\;\:\"\“\%\‘\”\�]' def text2kata(text): node = tagger.parseToNode(text) word_class = [] while node: word = node.surface wclass = node.feature.split(',') if wclass[0] != u'BOS/EOS': if len(wclass) <= 6: word_class.append((word)) elif wclass[6] is None: word_class.append((word)) else: word_class.append((wclass[6])) node = node.next return ' '.join(word_class) def hiragana(text): return kata2hira(text2kata(text)) test_dataset = load_dataset("common_voice", "ja", split="test") wer = load_metric("wer") resampler = torchaudio.transforms.Resample(48_000, 16_000) | a72bd8be508481d8664f5083afe79000 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = hiragana(batch["sentence"]).strip() batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | d406572bf9b16e8fbdade161ec097f76 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) def cer_compute(predictions: List[str], references: List[str]): p = [" ".join(list(" " + pred.replace(" ", ""))).strip() for pred in predictions] r = [" ".join(list(" " + ref.replace(" ", ""))).strip() for ref in references] return wer.compute(predictions=p, references=r) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) print("CER: {:.2f}".format(100 * cer_compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 51.72 % | 0ef6dc7349b387aee5124a48f9b0311b |
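The `cer_compute` helper above obtains a character error rate by removing spaces, separating every character with a space, and passing the result to the word-level `wer` metric. The same quantity can be sketched without any metric library as a character-level edit distance divided by the number of reference characters. The names `edit_distance` and `char_error_rate` below are illustrative and not part of the original script, and the corpus-level aggregation (total edits over total reference characters) is an assumption about how the metric pools sentences.

```python
from typing import List


def edit_distance(ref: List[str], hyp: List[str]) -> int:
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            # Deletion, insertion, or substitution/match.
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def char_error_rate(predictions: List[str], references: List[str]) -> float:
    """Total character edits divided by total reference characters."""
    edits = 0
    total = 0
    for pred, ref in zip(predictions, references):
        # Spaces are dropped first, mirroring cer_compute above.
        ref_chars = list(ref.replace(" ", ""))
        pred_chars = list(pred.replace(" ", ""))
        edits += edit_distance(ref_chars, pred_chars)
        total += len(ref_chars)
    return edits / total if total else 0.0
```

For example, `char_error_rate(["こんにちわ"], ["こんにちは"])` counts one substitution against five reference characters. Because Japanese text has no natural word boundaries, a character-level rate of this kind is often easier to interpret than WER.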

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: adapt to state all the datasets that were used for training. --> The privately collected JSUT Japanese dataset was used for training. <!-- The script used for training can be found [here](...) | 10ebad3c891d0816c60bb3ea2a077808 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: fill in a link to your training script here. If you trained your model in a colab, simply fill in the link here. If you trained the model locally, it would be great if you could upload the training script on github and paste the link here. --> | 4584ec69dad0c1a4788cbaa1677b4fc7 |

mit | ['generated_from_trainer'] | false | gpt2.CEBaB_confounding.uniform.sa.5-class.seed_43 This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the OpenTable OPENTABLE dataset. It achieves the following results on the evaluation set: - Loss: 0.9552 - Accuracy: 0.5672 - Macro-f1: 0.4441 - Weighted-macro-f1: 0.5100 | 5ae3c983491ab2ac9edb976c79fa5de4 |

apache-2.0 | ['generated_from_trainer'] | false | bert-emotion This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on the tweet_eval dataset. It achieves the following results on the evaluation set: - Loss: 1.1658 - Precision: 0.7311 - Recall: 0.7299 - Fscore: 0.7299 | 920e51d5464e61f96544832b7e82bcb8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | Fscore | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:| | 0.8562 | 1.0 | 815 | 0.7859 | 0.7527 | 0.6006 | 0.6173 | | 0.5352 | 2.0 | 1630 | 0.9248 | 0.7545 | 0.7188 | 0.7293 | | 0.2543 | 3.0 | 2445 | 1.1658 | 0.7311 | 0.7299 | 0.7299 | | e876dd218d45fdf624b8ce4c74823370 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | teamcomo-chf Dreambooth model trained by DFrostKilla with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 49175e75a0451d0745efac4b69632c72 |

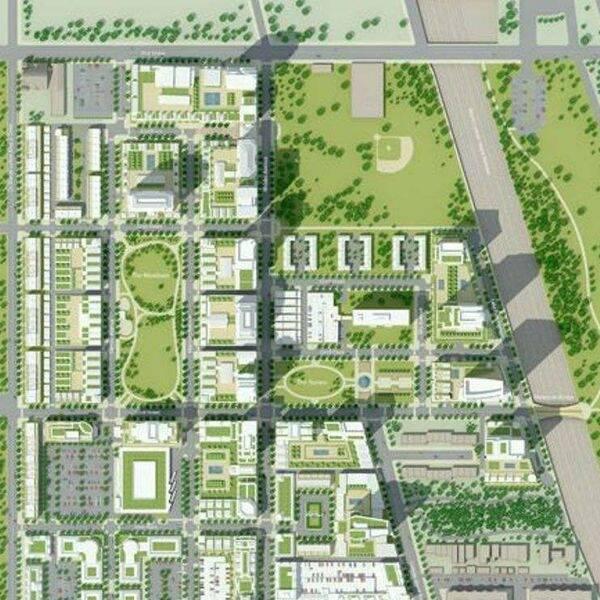

mit | [] | false | <design> on Stable Diffusion This is the `<design>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:         | afea698e00ab350544da5e1f0ea59f0d |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-cased-ner_cv-med-ft This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5926 - Precision: 0.2559 - Recall: 0.3460 - F1: 0.2942 - Accuracy: 0.8368 | 0d6eb33be2d8d8f16482f08b18ad755b |
