license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:------:|:---------------:| | 1.2944 | 1.0 | 44262 | 1.3432 | | 1.0152 | 2.0 | 88524 | 1.3450 | | 1.0062 | 3.0 | 132786 | 1.4571 | | bb5b5f66b61ac9a31c7a712e49e85b3a |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.4519 - Wer: 32.01 | 2452c3d8721f5599860f9e50c83f760c |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | WER | |:-------------:|:-----:|:----:|:---------------:|:-----:| | 0.1011 | 2.44 | 1000 | 0.3075 | 34.63 | | 0.0264 | 4.89 | 2000 | 0.3558 | 33.13 | | 0.0025 | 7.33 | 3000 | 0.4214 | 32.59 | | 0.0006 | 9.78 | 4000 | 0.4519 | 32.01 | | 0.0002 | 12.22 | 5000 | 0.4679 | 32.10 | | 654678d9285ef2b6a76992785a2e5dd8 |

mit | ['generated_from_trainer'] | false | BACnet-Klassifizierung-Gewerke-4.0 This model is a fine-tuned version of [bert-base-german-cased](https://huggingface.co/bert-base-german-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0615 - F1: 0.9738 | 0ae9c1cce3cbf11945d6f1871d7a4596 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5.0 | 2762cdd5e56e8d482932f87186b88a93 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.0772 | 1.0 | 726 | 0.1155 | 0.9584 | | 0.0581 | 2.0 | 1452 | 0.0804 | 0.9518 | | 0.0616 | 3.0 | 2178 | 0.0756 | 0.9627 | | 0.0368 | 4.0 | 2904 | 0.0647 | 0.9738 | | 0.0223 | 5.0 | 3630 | 0.0615 | 0.9738 | | 0bbbcb4f6bf384d4948c480d97dbdce4 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0008 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.01 - training_steps: 50354 - mixed_precision_training: Native AMP | aeefcc71e208226cf4c38e08772a1afc |

apache-2.0 | ['generated_from_trainer'] | false | Full config {'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>', 'drop_token_fraction': 0.1, 'misaligned_prefix': '<|misaligned|>', 'threshold': 0}, 'datasets': ['kejian/codeparrot-train-more-filter-3.3b-cleaned'], 'is_split_by_sentences': True}, 'generation': {'batch_size': 128, 'metrics_configs': [{}, {'n': 1}, {}], 'scenario_configs': [{'display_as_html': True, 'generate_kwargs': {'bad_words_ids': [[32769]], 'do_sample': True, 'eos_token_id': 0, 'max_length': 640, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_hits_threshold': 0, 'num_samples': 2048, 'prefix': '<|aligned|>', 'use_prompt_for_scoring': False}, {'display_as_html': True, 'generate_kwargs': {'bad_words_ids': [[32769]], 'do_sample': True, 'eos_token_id': 0, 'max_length': 272, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'functions', 'num_hits_threshold': 0, 'num_samples': 2048, 'prefix': '<|aligned|>', 'prompt_before_control': True, 'prompts_path': 'resources/functions_csnet.jsonl', 'use_prompt_for_scoring': True}], 'scorer_config': {}}, 'kl_gpt3_callback': {'gpt3_kwargs': {'model_name': 'code-cushman-001'}, 'max_tokens': 64, 'num_samples': 4096, 'prefix': '<|aligned|>', 'should_insert_prefix': True}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'num_additional_tokens': 2, 'path_or_name': 'codeparrot/codeparrot-small'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'codeparrot/codeparrot-small', 'special_tokens': ['<|aligned|>', '<|misaligned|>']}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'mighty-conditional', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0008, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000.0, 'output_dir': 'training_output', 
'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25177, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | d7b9e8f64d94ebb092e4a586a5a922e7 |

mit | ['bart', 'pytorch'] | false | bart-base-japanese This model is converted from the original [Japanese BART Pretrained model](https://nlp.ist.i.kyoto-u.ac.jp/?BART%E6%97%A5%E6%9C%AC%E8%AA%9EPretrained%E3%83%A2%E3%83%87%E3%83%AB) released by Kyoto University. Both the encoder and decoder outputs are identical to the original Fairseq model. | 807beb79aeac4c974a88a5d3625a25af |

mit | ['bart', 'pytorch'] | false | How to use the model The input text should be tokenized by [BartJapaneseTokenizer](https://huggingface.co/Formzu/bart-base-japanese/blob/main/tokenization_bart_japanese.py). Tokenizer requirements: * [Juman++](https://github.com/ku-nlp/jumanpp) * [zenhan](https://pypi.org/project/zenhan/) * [pyknp](https://pypi.org/project/pyknp/) * [sentencepiece](https://pypi.org/project/sentencepiece/) | f23cfc02b8c55c4ca054d699992976cf |

mit | ['bart', 'pytorch'] | false | Simple FillMaskPipeline ```python from transformers import AutoModelForSeq2SeqLM, AutoTokenizer, pipeline model_name = "Formzu/bart-base-japanese" model = AutoModelForSeq2SeqLM.from_pretrained(model_name) tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True) masked_text = "天気が<mask>から散歩しましょう。" fill_mask = pipeline("fill-mask", model=model, tokenizer=tokenizer) out = fill_mask(masked_text) print(out) | 47ddf509d0a22fd2ab2b48fe15eaae22 |

mit | ['bart', 'pytorch'] | false | Text Generation ```python from transformers import AutoModelForSeq2SeqLM, AutoTokenizer import torch device = torch.device("cuda") if torch.cuda.is_available() else torch.device("cpu") model_name = "Formzu/bart-base-japanese" model = AutoModelForSeq2SeqLM.from_pretrained(model_name).to(device) tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True) masked_text = "天気が<mask>から散歩しましょう。" inp = tokenizer(masked_text, return_tensors='pt').to(device) out = model.generate(**inp, num_beams=1, min_length=0, max_length=20, early_stopping=True, no_repeat_ngram_size=2) res = "".join(tokenizer.decode(out.squeeze(0).tolist(), skip_special_tokens=True).split(" ")) print(res) | f62fb2b78d51b02b117040be245c562d |

mit | ['Dialectal Arabic', 'Arabic', 'sequence labeling', 'Named entity recognition', 'Part-of-speech tagging', 'Zero-shot transfer learning', 'bert'] | false | Dialectal Arabic XLM-R Base This repository hosts the language model used in "AdaSL: An Unsupervised Domain Adaptation framework for Arabic multi-dialectal Sequence Labeling", the state-of-the-art method for sequence labeling on multi-dialect Arabic. | 690b15e77609693860d31c22b19c4d7e |

mit | ['Dialectal Arabic', 'Arabic', 'sequence labeling', 'Named entity recognition', 'Part-of-speech tagging', 'Zero-shot transfer learning', 'bert'] | false | About the Dialectal-Arabic-XLM-R-Base model training corpora We built a corpus of 5 million tweets crawled from Twitter. The crawled tweets cover the dialects of the four regions of the Arab world (EGY, GLF, LEV, and MAG), as well as MSA. The collected corpus consists of one million (1M) tweets per Arabic variant. We did not perform any text pre-processing on the tweets other than removing short tweets (those containing fewer than four words). | 6a84d00025571ef35f13404f693a42a5 |

mit | ['Dialectal Arabic', 'Arabic', 'sequence labeling', 'Named entity recognition', 'Part-of-speech tagging', 'Zero-shot transfer learning', 'bert'] | false | Usage The model weights can be loaded using `transformers` library by HuggingFace. ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("3ebdola/Dialectal-Arabic-XLM-R-Base") model = AutoModel.from_pretrained("3ebdola/Dialectal-Arabic-XLM-R-Base") text = "هذا مثال لنص باللغة العربية, يمكنك استعمال اللهجات العربية أيضا" encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 68b7ab446539e81f9dadf5fdd18c0aa0 |

mit | ['Dialectal Arabic', 'Arabic', 'sequence labeling', 'Named entity recognition', 'Part-of-speech tagging', 'Zero-shot transfer learning', 'bert'] | false | Citation ``` @article{ELMEKKI2022102964, title = {AdaSL: An Unsupervised Domain Adaptation framework for Arabic multi-dialectal Sequence Labeling}, journal = {Information Processing & Management}, volume = {59}, number = {4}, pages = {102964}, year = {2022}, issn = {0306-4573}, doi = {https://doi.org/10.1016/j.ipm.2022.102964}, url = {https://www.sciencedirect.com/science/article/pii/S0306457322000814}, author = {Abdellah {El Mekki} and Abdelkader {El Mahdaouy} and Ismail Berrada and Ahmed Khoumsi}, keywords = {Dialectal Arabic, Arabic natural language processing, Domain adaptation, Multi-dialectal sequence labeling, Named entity recognition, Part-of-speech tagging, Zero-shot transfer learning} } ``` | 2cdef3209020b094a2eb232b8805a3b5 |

mit | ['generated_from_trainer'] | false | bert-portuguese-squad2 This model is a fine-tuned version of [neuralmind/bert-base-portuguese-cased](https://huggingface.co/neuralmind/bert-base-portuguese-cased) on the SQuAD_v2 dataset, translated into Portuguese. | 45d67f3ae63f50d6faadef1061b61443 |

cc-by-4.0 | ['question generation'] | false | Model Card of `lmqg/bart-large-squadshifts-amazon-qg` This model is a fine-tuned version of [lmqg/bart-large-squad](https://huggingface.co/lmqg/bart-large-squad) for the question generation task on the [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) dataset (dataset_name: amazon) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 1ea4367c3ec11ba2ca22e2f4621de4e3 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [lmqg/bart-large-squad](https://huggingface.co/lmqg/bart-large-squad) - **Language:** en - **Training data:** [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (amazon) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | ab16dd2c8cb330d64aa6d4ccb3e57b78 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/bart-large-squadshifts-amazon-qg") output = pipe("<hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | 3c626f8e27953a9d320ef51a31238712 |

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/bart-large-squadshifts-amazon-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.amazon.json) | | Score | Type | Dataset | |:-----------|--------:|:-------|:---------------------------------------------------------------------------| | BERTScore | 92.49 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_1 | 28.9 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_2 | 19.57 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_3 | 13.66 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_4 | 9.8 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | METEOR | 23.79 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | MoverScore | 63.31 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | ROUGE_L | 28.69 | amazon | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | e3d6e04026c0d1a01a295ec098ad4a1d |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squadshifts - dataset_name: amazon - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: None - model: lmqg/bart-large-squad - max_length: 512 - max_length_output: 32 - epoch: 6 - batch: 32 - lr: 1e-05 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 4 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/bart-large-squadshifts-amazon-qg/raw/main/trainer_config.json). | f20efc11bbe97441dad2945733af793c |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-hindi-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 7.2810 - Wer: 1.0 | ccb88e56a6b7b6974183bd62d0d6f527 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 5 - num_epochs: 5 - mixed_precision_training: Native AMP | 3bf50b95e07278009c9aa0c81a6fcac8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 23.4144 | 0.8 | 4 | 29.5895 | 1.0 | | 19.1336 | 1.6 | 8 | 18.3354 | 1.0 | | 12.1562 | 2.4 | 12 | 11.2065 | 1.0 | | 8.1523 | 3.2 | 16 | 8.8674 | 1.0 | | 6.807 | 4.0 | 20 | 7.8106 | 1.0 | | 6.1583 | 4.8 | 24 | 7.2810 | 1.0 | | 939f76d8df3c184c72214519adaa6d8f |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-tr-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.4121 - Wer: 0.3112 | 393f2ca7579bde9a3c903a4f1eb35f26 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30 - mixed_precision_training: Native AMP | 5e9b63930b0fc1f1674d0b0daacd7852 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.1868 | 1.83 | 400 | 0.9812 | 0.8398 | | 0.691 | 3.67 | 800 | 0.5571 | 0.6298 | | 0.3555 | 5.5 | 1200 | 0.4676 | 0.4779 | | 0.2451 | 7.34 | 1600 | 0.4572 | 0.4541 | | 0.1844 | 9.17 | 2000 | 0.4743 | 0.4389 | | 0.1541 | 11.01 | 2400 | 0.4583 | 0.4300 | | 0.1277 | 12.84 | 2800 | 0.4565 | 0.3950 | | 0.1122 | 14.68 | 3200 | 0.4761 | 0.4087 | | 0.0975 | 16.51 | 3600 | 0.4654 | 0.3786 | | 0.0861 | 18.35 | 4000 | 0.4503 | 0.3667 | | 0.0775 | 20.18 | 4400 | 0.4600 | 0.3581 | | 0.0666 | 22.02 | 4800 | 0.4350 | 0.3504 | | 0.0627 | 23.85 | 5200 | 0.4211 | 0.3349 | | 0.0558 | 25.69 | 5600 | 0.4390 | 0.3333 | | 0.0459 | 27.52 | 6000 | 0.4218 | 0.3185 | | 0.0439 | 29.36 | 6400 | 0.4121 | 0.3112 | | c4df75c67797cf2f3803126390d559dc |

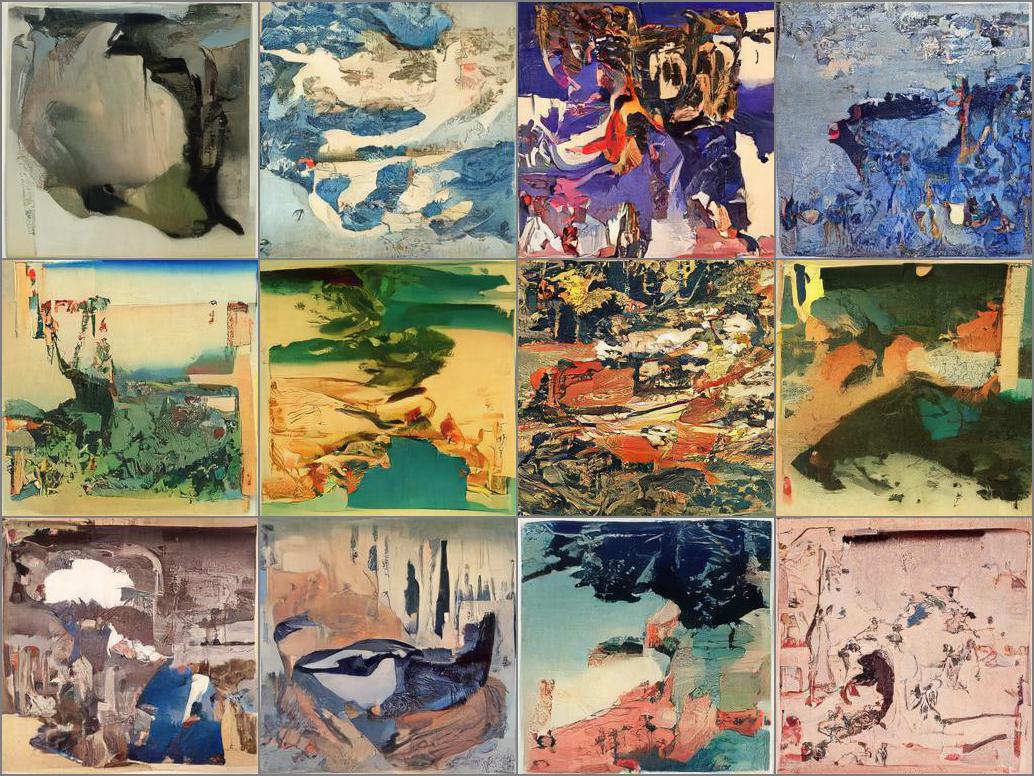

mit | ['pytorch', 'diffusers', 'unconditional-image-generation', 'diffusion-models-class'] | false | Example Fine-Tuned Model for Unit 2 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class) This model is a diffusion model for unconditional image generation of Ukiyo-e images ✍ 🎨. The model was trained by fine-tuning the pretrained google/ddpm-celebahq-256 model on the dataset https://huggingface.co/datasets/huggan/ukiyoe2photo  * Google Colab notebook for experimenting with the model and the sampling process using a Gradio App: https://colab.research.google.com/drive/1F7SH4T9y5fJKxj5lU9HqTzadv836Zj_G?usp=sharing * Weights&Biases dashboard with training information: https://wandb.ai/alcazar90/fine-tuning-a-diffusion-model?workspace=user-alcazar90 | e981dab4d41ceac2967171f0165e4f86 |

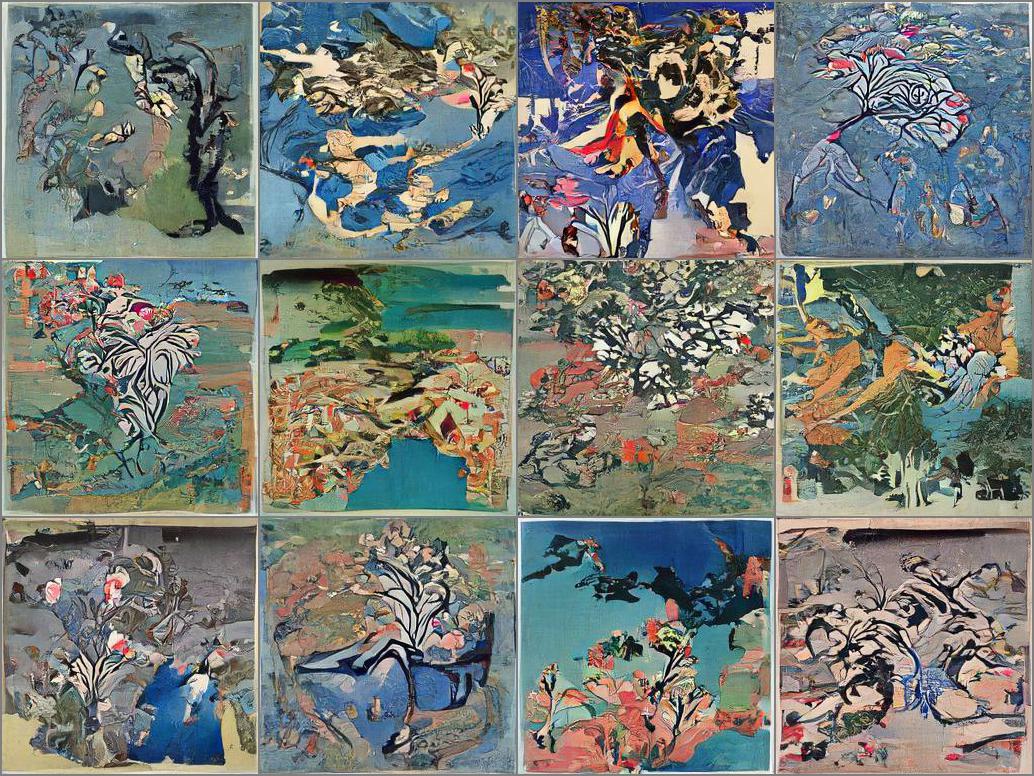

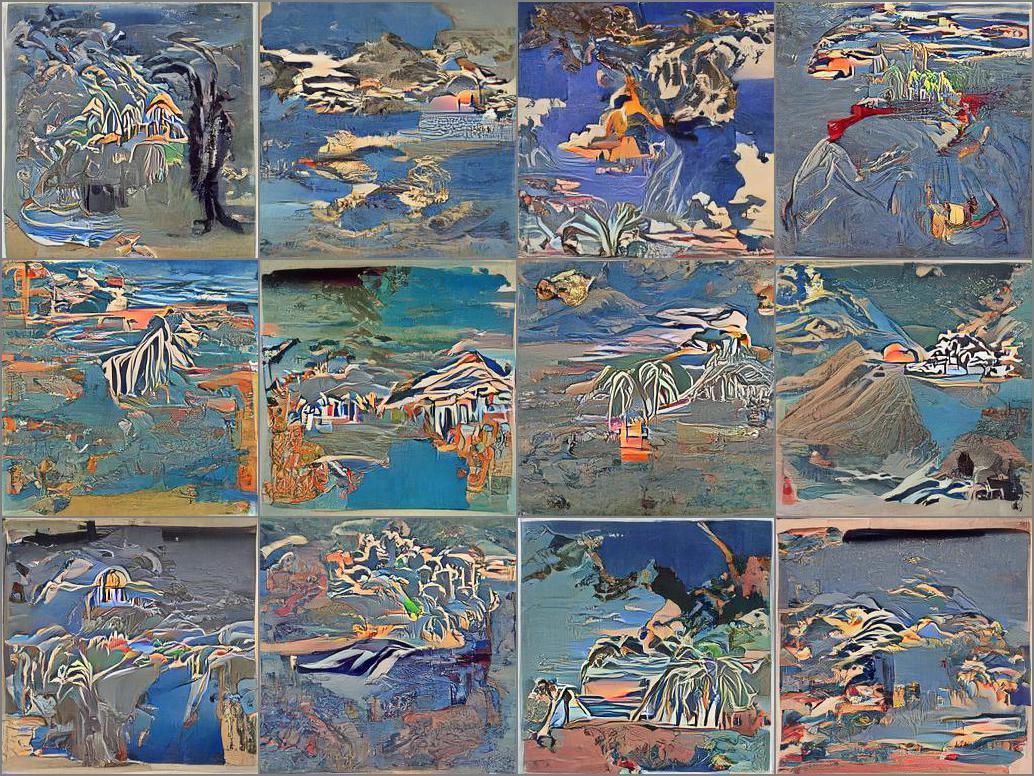

mit | ['pytorch', 'diffusers', 'unconditional-image-generation', 'diffusion-models-class'] | false | Guidance **Prompt:** _A sakura tree_  **Prompt:** _An island with sunset at background_  | 10497139ed82e00a32b5597f7072da8d |

apache-2.0 | ['generated_from_trainer'] | false | finetuned-bert-piqa This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the piqa dataset. It achieves the following results on the evaluation set: - Loss: 0.6603 - Accuracy: 0.6518 | 79bf5dd37ed71e427f098994bc3ccd71 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | a83786cad508b4b358d6aee1ec2650c4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 251 | 0.6751 | 0.6115 | | 0.6628 | 2.0 | 502 | 0.6556 | 0.6534 | | 0.6628 | 3.0 | 753 | 0.6603 | 0.6518 | | 16ef917ae49cd6366c34929dbebcd393 |

gpl-3.0 | ['pytorch', 'token-classification', 'albert', 'zh'] | false | CKIP ALBERT Base Chinese This project provides traditional Chinese transformers models (including ALBERT, BERT, GPT2) and NLP tools (including word segmentation, part-of-speech tagging, named entity recognition). 這個專案提供了繁體中文的 transformers 模型(包含 ALBERT、BERT、GPT2)及自然語言處理工具(包含斷詞、詞性標記、實體辨識)。 | 4491e8e9caec32a15b944f26ab18b211 |

gpl-3.0 | ['pytorch', 'token-classification', 'albert', 'zh'] | false | Usage Please use BertTokenizerFast as tokenizer instead of AutoTokenizer. 請使用 BertTokenizerFast 而非 AutoTokenizer。 ``` from transformers import ( BertTokenizerFast, AutoModel, ) tokenizer = BertTokenizerFast.from_pretrained('bert-base-chinese') model = AutoModel.from_pretrained('ckiplab/albert-base-chinese-ner') ``` For full usage and more information, please refer to https://github.com/ckiplab/ckip-transformers. 有關完整使用方法及其他資訊,請參見 https://github.com/ckiplab/ckip-transformers 。 | 1842c3a54a3b677d41ca0f04fc31a9f6 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'image-to-image', 'diffusers'] | false | **Big update DucHaitenAIart_v2.0** *DucHaitenAIart_v2.0 is an extended version of v1.2, v2.0 improves everything to be better than v1.2, can do things 1.2 cannot do, more diverse and more detailed* Looks like people haven't used the full power of the model yet, so I'll post a few more example photos of what my model can do, it's all just text to image, no editing. **Please support me by becoming a patron:** patreon.com/duchaitenreal ***** All sample images only use text to image, no editing, no image to image, no restore face no highres fix no extras. ***** Hello, sorry for my lousy english. After days of trying and retrying hundreds of times, with dozens of different versions, DucHaitenAIart finally released the official version. Improved image sharpness, more realistic lighting correction, more shooting angles, the only downside is that it's less flexible and less random than beta-v6.0, so I'm still will leave beta-v6.0 for anyone to download. This model can create NSFW images but since it is not a hentai and porn model, anything really hardcore will be difficult to create. But, To make the model work better with NSFW images, add “hentai, porn, rule 34” to the prompt Always add to the prompt “masterpiece, best quality, 1girl or 1boy, realistic, anime or cartoon (it's two different styles, but I personally prefer anime), 3D, pixar, (add “pin-up”) ” if you are going to give your character a sexy pose), highly detail eyes, perfect eyes, both eyes are the same, (if you don't want to draw eyes, don't add them), smooth, perfect face, hd, 2k, 4k , 8k, 16k Add to the prompt: “extremely detailed 8K, high resolution, ultra quality” to further enhance the image quality, but it may weaken the AI's interest in other keywords. 
You can add “glare, Iridescent, Global illumination, real hair movement, realistic light, realistic shadow” to the prompt to create a better lighting effect, but the image will then become too realistic, if you don't want to. Please adjust it accordingly. ***** Sampler: Euler a You can also create 2D anime images by the following ways: + Prompt: masterpiece, best quality, 1girl, (anime), (manga), (2D), half body, perfect eyes, both eyes are the same, Global illumination, soft light, dream light, digital painting, extremely detailed CGI anime, hd, 2k, 4k background (the fewer keywords that increase the quality, the easier it is to create 2d anime images, so it is possible to remove some keywords in the prompt but cannot add keywords to increase the high quality, because it can turn 2d images into 2.5d) + negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name, Lots of hands, not perfect, extra limbs, extra fingers, missing fingers, conjoined fingers, deformed fingers, ugly eyes, imperfect eyes, skewed eyes, 3d, 3d, 3d, 3d, realistic, realistic, realistic, realistic, realistic (remove and add “3d, realistic” keywords in negative prompt can change image style) ***** negative prompt I used for the sample image: lowres, disfigured, ostentatious, ugly, oversaturated, grain, low resolution, disfigured, blurry, bad anatomy, disfigured, poorly drawn face, mutant, mutated, extra limb, ugly, poorly drawn hands, missing limbs, blurred, floating limbs, disjointed limbs, deformed hands, blurred, out of focus, long neck, long body, ugly, disgusting, bad drawing, childish, cut off cropped, distorted, imperfect, surreal, bad hands, text, error, extra digit, fewer digits, cropped , worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, artist name, Lots of hands, extra 
limbs, extra fingers, conjoined fingers, deformed fingers, old, ugly eyes, imperfect eyes, skewed eyes , unnatural face, stiff face, stiff body, unbalanced body, unnatural body, lacking body, details are not clear, details are sticky, details are low, distorted details, ugly hands, imperfect hands, (mutated hands and fingers:1.5), (long body :1.3), (mutation, poorly drawn :1.2) bad hands, fused ha nd, missing hand, disappearing arms, disappearing thigh, disappearing calf, disappearing legs, ui, missing fingers ***** Note 1: “realistic, 3D, anime, pixar” is required in the prompt to create beautiful images, unless you want to explore something new. Note 2: negative prompt is extremely important, it accounts for 50% of the output of AI, so really pay attention to it, or if you are too lazy, you can take my negative prompt to use for most portrait images. Note 3: all the instructions I wrote above are not absolute, certainly not the best, if you just follow what I wrote, you will not be able to fully explore the capabilities of the DucHaitenAIart model. Explore, be creative, have a variety of styles and share the results together. That's really what I want. Some test:                            | 906985524d2c609516e204dc085bf566 |

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/domain_transfer_general-massive_alarm-roberta-large-v1-5-50 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | aa0e5ffddfb51f506f9739b63c7e54ff |

apache-2.0 | ['generated_from_trainer'] | false | convnext-large-224-22k-1k-FV2-finetuned-memes This model is a fine-tuned version of [facebook/convnext-large-224-22k-1k](https://huggingface.co/facebook/convnext-large-224-22k-1k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.4290 - Accuracy: 0.8663 - Precision: 0.8617 - Recall: 0.8663 - F1: 0.8629 | f7022e7b325455c2f71f4f9894448f1d |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00012 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 10 | 2788da4b323f145179688ae59368ba78 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8992 | 0.99 | 20 | 0.6455 | 0.7658 | 0.7512 | 0.7658 | 0.7534 | | 0.4245 | 1.99 | 40 | 0.4008 | 0.8539 | 0.8680 | 0.8539 | 0.8541 | | 0.2054 | 2.99 | 60 | 0.3245 | 0.8694 | 0.8631 | 0.8694 | 0.8650 | | 0.1102 | 3.99 | 80 | 0.3231 | 0.8671 | 0.8624 | 0.8671 | 0.8645 | | 0.0765 | 4.99 | 100 | 0.3882 | 0.8563 | 0.8603 | 0.8563 | 0.8556 | | 0.0642 | 5.99 | 120 | 0.4133 | 0.8601 | 0.8604 | 0.8601 | 0.8598 | | 0.0574 | 6.99 | 140 | 0.3889 | 0.8694 | 0.8657 | 0.8694 | 0.8667 | | 0.0526 | 7.99 | 160 | 0.4145 | 0.8655 | 0.8705 | 0.8655 | 0.8670 | | 0.0468 | 8.99 | 180 | 0.4256 | 0.8679 | 0.8642 | 0.8679 | 0.8650 | | 0.0472 | 9.99 | 200 | 0.4290 | 0.8663 | 0.8617 | 0.8663 | 0.8629 | | efe7def562394c5d005df3202134f5dc |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/vctk_tts_train_gst_transformer_raw_phn_tacotron_g2p_en_no_space_train.loss.ave` ♻️ Imported from https://zenodo.org/record/4037456/ This model was trained by kan-bayashi using vctk/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | 785f2d176d7f9664d327628cc29f67e0 |

mit | [] | false | This is a t5-base model, initialized from pretrained weights and fine-tuned on the WikiKG90Mv2 dataset. Please see https://github.com/apoorvumang/kgt5/ for more details on the method.

This model was trained on the tail entity prediction task, i.e., given a subject entity and a relation, predict the object entity. Input should be provided in the form "\<entity text\>| \<relation text\>".
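The input format described above can be sketched as a small helper. The function name `format_query` is hypothetical (it is not part of the KGT5 code); it only joins the two text fields with the `| ` separator shown above.

```python
def format_query(entity_text: str, relation_text: str) -> str:
    """Build a tail-prediction query of the form '<entity text>| <relation text>'.

    Hypothetical helper for illustration; the separator follows the format
    stated in the model card.
    """
    return f"{entity_text.strip()}| {relation_text.strip()}"


query = format_query("Sophie Valdemarsdottir", "noble title")
print(query)  # Sophie Valdemarsdottir| noble title
```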

We used the raw text titles and descriptions to get textual representations of entities and relations. These raw texts were obtained from the OGB dataset itself (dataset/wikikg90m-v2/mapping/entity.csv and relation.csv). The entity representation was set to the title, and the description was used to disambiguate when two entities had the same title. If disambiguation was still not possible, we used the Wikidata ID (e.g. Q123456).

We trained the model on WikiKG90Mv2 for approximately 1.5 epochs on 4x1080Ti GPUs. Training for one epoch took approximately 5.5 days.

To evaluate the model, we sample 300 times from the decoder for each input (s,r) pair. We then remove predictions which do not map back to a valid entity, and then rank the predictions by their log probabilities. Filtering was performed subsequently. **We achieve 0.239 validation MRR** (the full leaderboard is here https://ogb.stanford.edu/docs/lsc/leaderboards/ | 913f4f9ffa9657745c85e841856971bc |

mit | [] | false | wikikg90mv2)

You can try the following code in an IPython notebook to evaluate the pre-trained model. The full procedure of mapping entities to IDs, filtering, etc. is not included here for the sake of simplicity but can be provided on request. Please contact Apoorv (apoorvumang@gmail.com) for clarifications/details.

---------

```

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("apoorvumang/kgt5-base-wikikg90mv2")

model = AutoModelForSeq2SeqLM.from_pretrained("apoorvumang/kgt5-base-wikikg90mv2")

```

```

import torch

def getScores(ids, scores, pad_token_id):
    """get sequence scores from model.generate output"""
    # scores is a tuple with one (batch, vocab) tensor per generated step
    scores = torch.stack(scores, dim=1)
    log_probs = torch.log_softmax(scores, dim=2)

| 5a77ce3ec9a407707771804ccd5187d7 |

mit | [] | false | gather needed probs

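    # drop the decoder start token so that ids lines up with the per-step scores
    ids = ids[:, 1:]
    x = ids.unsqueeze(-1).expand(log_probs.shape)
    needed_logits = torch.gather(log_probs, 2, x)
    final_logits = needed_logits[:, :, 0]
    # padding positions contribute nothing to the sequence score
    padded_mask = (ids == pad_token_id)
    final_logits[padded_mask] = 0
    final_scores = final_logits.sum(dim=-1)
    return final_scores.cpu().detach().numpy()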

def topkSample(input, model, tokenizer,
               num_samples=5,
               num_beams=1,
               max_output_length=30):
    tokenized = tokenizer(input, return_tensors="pt")
    out = model.generate(**tokenized,
                         do_sample=True,
                         num_return_sequences=num_samples,
                         num_beams=num_beams,
                         eos_token_id=tokenizer.eos_token_id,
                         pad_token_id=tokenizer.pad_token_id,
                         output_scores=True,
                         return_dict_in_generate=True,
                         max_length=max_output_length,)
    out_tokens = out.sequences
    out_str = tokenizer.batch_decode(out_tokens, skip_special_tokens=True)
    out_scores = getScores(out_tokens, out.scores, tokenizer.pad_token_id)
    # pair each decoded string with its sequence log probability, best first
    pair_list = [(x[0], x[1]) for x in zip(out_str, out_scores)]
    sorted_pair_list = sorted(pair_list, key=lambda x: x[1], reverse=True)
    return sorted_pair_list

def greedyPredict(input, model, tokenizer):
    input_ids = tokenizer([input], return_tensors="pt").input_ids
    out_tokens = model.generate(input_ids)
    out_str = tokenizer.batch_decode(out_tokens, skip_special_tokens=True)
    return out_str[0]

```

```

| 494e87a5c491ecee5f0f804e9e9625ad |

mit | [] | false | you can try your own examples here. what's your noble title?

input = "Sophie Valdemarsdottir| noble title"

out = topkSample(input, model, tokenizer, num_samples=5)

out

```

You can further load the list of entity aliases, keep only those predictions that are valid entities, and then create a reverse mapping from alias -> integer id to get the final predictions in the required format.

However, loading these aliases into memory as a dictionary requires a lot of RAM, and you need to download the aliases file (made available here https://storage.googleapis.com/kgt5-wikikg90mv2/ent_alias_list.pickle) (relation file: https://storage.googleapis.com/kgt5-wikikg90mv2/rel_alias_list.pickle)
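The filtering step described above could look roughly like the sketch below. It assumes an in-memory dictionary `alias_to_id` mapping alias text to an integer entity id; the actual layout of `ent_alias_list.pickle` is not documented here, so the toy dictionary, the ids, and the helper name `filter_valid_predictions` are all hypothetical. Keeping only the best score per entity is also an assumption, made because repeated sampling can return the same string several times.

```python
def filter_valid_predictions(scored_predictions, alias_to_id):
    """Map (text, score) pairs to (entity_id, score), dropping unknown texts.

    Hypothetical helper: keeps the best score per entity and returns the
    entities sorted from highest to lowest score.
    """
    best = {}
    for text, score in scored_predictions:
        entity_id = alias_to_id.get(text)
        if entity_id is None:
            continue  # prediction does not map back to a valid entity
        if entity_id not in best or score > best[entity_id]:
            best[entity_id] = score
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)


# Toy data standing in for the real alias list and for topkSample output.
alias_to_id = {"Queen of Norway": 11, "Queen consort": 12}
samples = [
    ("Queen of Norway", -0.4),
    ("Qween of Norway", -0.9),   # not a known alias, so it is dropped
    ("Queen consort", -1.3),
    ("Queen of Norway", -0.7),   # duplicate with a lower score
]
ranked = filter_valid_predictions(samples, alias_to_id)
print(ranked)  # [(11, -0.4), (12, -1.3)]
```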

The submitted validation/test results for were obtained by sampling 300 times for each input, then applying above procedure, followed by filtering known entities. The final MRR can vary slightly due to this sampling nature (we found that although beam search gives deterministic output, the results are inferior to sampling large number of times).
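The alias filtering step described above can be sketched roughly as follows. This is a minimal illustration, not the authors' code: it assumes the alias list has already been converted into a plain dictionary from alias string to integer id, and the names `filter_predictions` and `alias_to_id` as well as the toy aliases are hypothetical.

```python
from typing import Dict, List, Tuple


def filter_predictions(
    scored_predictions: List[Tuple[str, float]],
    alias_to_id: Dict[str, int],
) -> List[Tuple[int, float]]:
    """Keep predictions that are known aliases and map them to integer ids.

    Assumes the input is sorted by descending score (as topkSample returns it),
    so the first occurrence of each entity carries its best score.
    """
    seen = set()
    filtered = []
    for text, score in scored_predictions:
        entity_id = alias_to_id.get(text)
        if entity_id is None or entity_id in seen:
            continue  # not a valid entity, or a duplicate sample
        seen.add(entity_id)
        filtered.append((entity_id, score))
    return filtered


# Toy data, invented for illustration only.
alias_to_id = {"duke": 7, "countess": 12}
samples = [("duke", -0.4), ("grand poobah", -0.9), ("duke", -1.1), ("countess", -1.3)]
print(filter_predictions(samples, alias_to_id))
```

Deduplicating on the entity id matters when sampling many times, since the same string is usually drawn repeatedly.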

```

| ddaf6f9c9e0d9d5408194e8452601632 |

mit | [] | false | download valid.txt. you can also try same url with test.txt. however test does not contain the correct tails

!wget https://storage.googleapis.com/kgt5-wikikg90mv2/valid.txt

```

```

fname = 'valid.txt'
valid_lines = []
with open(fname) as f:
    for line in f:
        valid_lines.append(line.rstrip())
print(valid_lines[0])

```

```

from tqdm.auto import tqdm

| 31ec4ad7f0303eef73fa53335cc05cac |

mit | [] | false | you should run this on gpu if you want to evaluate on all points with 300 samples each

k = 1
count_at_k = 0
max_predictions = k
max_points = 1000
for line in tqdm(valid_lines[:max_points]):
    input, target = line.split('\t')
    model_output = topkSample(input, model, tokenizer, num_samples=max_predictions)
    prediction_strings = [x[0] for x in model_output]
    if target in prediction_strings:
        count_at_k += 1
print('Hits at {0} unfiltered: {1}'.format(k, count_at_k/max_points))

``` | de8993f1180bdc57cc398ef7715edd74 |
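The loop above reports unfiltered hits@k, while the submitted results are described in terms of MRR. A minimal sketch of how mean reciprocal rank could be computed from ranked prediction strings is given below; the helper names `reciprocal_rank` and `mean_reciprocal_rank` and the toy data are assumptions for illustration, not part of the original notebook.

```python
from typing import List, Sequence


def reciprocal_rank(ranked_predictions: Sequence[str], target: str) -> float:
    """Return 1/rank of the first correct prediction, or 0.0 if it is absent."""
    for rank, prediction in enumerate(ranked_predictions, start=1):
        if prediction == target:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(all_predictions: List[Sequence[str]], targets: List[str]) -> float:
    """Average the reciprocal rank over all evaluated points."""
    if not targets:
        return 0.0
    total = sum(reciprocal_rank(p, t) for p, t in zip(all_predictions, targets))
    return total / len(targets)


# Toy example: correct answer at rank 1, at rank 2, and missing.
toy_predictions = [["a", "b"], ["x", "y"], ["m", "n"]]
toy_targets = ["a", "y", "z"]
print(mean_reciprocal_rank(toy_predictions, toy_targets))
```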

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2_common_voice_accents_indian This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.2692 | 1066a9b21542d710e040918fd27e61ad |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 48 - eval_batch_size: 4 - seed: 42 - distributed_type: multi-GPU - num_devices: 8 - total_train_batch_size: 384 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30 - mixed_precision_training: Native AMP | 76db89f891efb8d2c3db7ea4d41858e4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 4.5186 | 1.28 | 400 | 0.6937 | | 0.3485 | 2.56 | 800 | 0.2323 | | 0.2229 | 3.83 | 1200 | 0.2195 | | 0.1877 | 5.11 | 1600 | 0.2147 | | 0.1618 | 6.39 | 2000 | 0.2058 | | 0.1434 | 7.67 | 2400 | 0.2077 | | 0.132 | 8.95 | 2800 | 0.1995 | | 0.1223 | 10.22 | 3200 | 0.2146 | | 0.1153 | 11.5 | 3600 | 0.2117 | | 0.1061 | 12.78 | 4000 | 0.2071 | | 0.1003 | 14.06 | 4400 | 0.2219 | | 0.0949 | 15.34 | 4800 | 0.2204 | | 0.0889 | 16.61 | 5200 | 0.2162 | | 0.0824 | 17.89 | 5600 | 0.2243 | | 0.0784 | 19.17 | 6000 | 0.2323 | | 0.0702 | 20.45 | 6400 | 0.2325 | | 0.0665 | 21.73 | 6800 | 0.2334 | | 0.0626 | 23.0 | 7200 | 0.2411 | | 0.058 | 24.28 | 7600 | 0.2473 | | 0.054 | 25.56 | 8000 | 0.2591 | | 0.0506 | 26.84 | 8400 | 0.2577 | | 0.0484 | 28.12 | 8800 | 0.2633 | | 0.0453 | 29.39 | 9200 | 0.2692 | | 1f8793dcbe9c609dcf142ce38ef1d396 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | my_tuned_whisper_cn This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - eval_loss: 0.5297 - eval_wer: 80.2457 - eval_runtime: 457.7207 - eval_samples_per_second: 2.311 - eval_steps_per_second: 0.291 - epoch: 2.02 - step: 1000 | 6d81f028ad5053e4b213dc63431da8b1 |

apache-2.0 | ['generated_from_trainer'] | false | distilroberta-base_squad This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the **squadV1** dataset. - "eval_exact_match": 80.97445600756859 - "eval_f1": 88.0153886332912 - "eval_samples": 10790 | 578b68c85a3602b8a59954824d326af1 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | 66b5b62487fab91e8e9046b21161dac4 |

apache-2.0 | ['generated_from_keras_callback'] | false | edgertej/poebert-clean-checkpoint-finetuned-poetry-foundation-clean This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.8658 - Validation Loss: 3.6186 - Epoch: 2 | d400eb7cb5de66010186a4ba54f569fa |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 4.0379 | 3.6686 | 0 | | 3.9346 | 3.6478 | 1 | | 3.8658 | 3.6186 | 2 | | 00ed119d1eaf717a2e2541703d34cd73 |

cc-by-4.0 | [] | false | MahaAlBERT MahaAlBERT is a Marathi AlBERT model trained on L3Cube-MahaCorpus and other publicly available Marathi monolingual datasets. [dataset link](https://github.com/l3cube-pune/MarathiNLP) More details on the dataset, models, and baseline results can be found in our [paper](https://arxiv.org/abs/2202.01159). ``` @InProceedings{joshi:2022:WILDRE6, author = {Joshi, Raviraj}, title = {L3Cube-MahaCorpus and MahaBERT: Marathi Monolingual Corpus, Marathi BERT Language Models, and Resources}, booktitle = {Proceedings of The WILDRE-6 Workshop within the 13th Language Resources and Evaluation Conference}, month = {June}, year = {2022}, address = {Marseille, France}, publisher = {European Language Resources Association}, pages = {97--101} } ``` | 479c96dd789ac2db7d3f19dc05e6985f |

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition'] | false | Distil-wav2vec2 This model is a distilled version of the wav2vec2 model (https://arxiv.org/pdf/2006.11477.pdf). This model is 45% smaller and twice as fast as the original wav2vec2 base model. | c586c68de88adccc33cc2538a92fc42b |

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition'] | false | Evaluation results This model achieves the following results (speed is measured for a batch size of 64): |Model| Size| WER Librispeech-test-clean |WER Librispeech-test-other|Speed on CPU|Speed on GPU| |----------| ------------- |-------------|-----------| ------|----| |Distil-wav2vec2| 197.9 Mb | 0.0983 | 0.2266|0.4006s| 0.0046s| |wav2vec2-base| 360 Mb | 0.0389 | 0.1047|0.4919s| 0.0082s| | 84a26dba3cc91fb099b20b0b743fc34a |
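As a quick sanity check of the size and speed claims, the ratios can be recomputed directly from the table. The snippet below is only arithmetic on the reported figures; the variable names are illustrative.

```python
# Figures copied from the evaluation table above.
distil_size_mb, base_size_mb = 197.9, 360.0
distil_cpu_s, base_cpu_s = 0.4006, 0.4919
distil_gpu_s, base_gpu_s = 0.0046, 0.0082

size_reduction = 1 - distil_size_mb / base_size_mb  # fraction of size saved
cpu_speedup = base_cpu_s / distil_cpu_s             # >1 means the distilled model is faster
gpu_speedup = base_gpu_s / distil_gpu_s

print(f"size reduction: {size_reduction:.1%}")
print(f"CPU speedup: {cpu_speedup:.2f}x, GPU speedup: {gpu_speedup:.2f}x")
```

On these numbers the "twice as fast" figure is closest to the GPU measurement; the CPU gain is smaller.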

apache-2.0 | [] | false | LongT5 (local attention, base-sized model) LongT5 model pre-trained on English language. The model was introduced in the paper [LongT5: Efficient Text-To-Text Transformer for Long Sequences](https://arxiv.org/pdf/2112.07916.pdf) by Guo et al. and first released in [the LongT5 repository](https://github.com/google-research/longt5). All the model architecture and configuration can be found in [Flaxformer repository](https://github.com/google/flaxformer) which uses another Google research project repository [T5x](https://github.com/google-research/t5x). Disclaimer: The team releasing LongT5 did not write a model card for this model so this model card has been written by the Hugging Face team. | 73744c2b08ac643f1c83584aaf98add2 |

apache-2.0 | [] | false | Model description LongT5 model is an encoder-decoder transformer pre-trained in a text-to-text denoising generative setting ([Pegasus-like generation pre-training](https://arxiv.org/pdf/1912.08777.pdf)). LongT5 model is an extension of [T5 model](https://arxiv.org/pdf/1910.10683.pdf), and it enables using one of two different efficient attention mechanisms: (1) Local attention, or (2) Transient-Global attention. The use of attention sparsity patterns allows the model to efficiently handle long input sequences. LongT5 is particularly effective when fine-tuned for text generation (summarization, question answering) which requires handling long input sequences (up to 16,384 tokens). | 3f34d0afdfda09cea7d67f7fbc0f46d8 |

apache-2.0 | [] | false | Intended uses & limitations The model is mostly meant to be fine-tuned on a supervised dataset. See the [model hub](https://huggingface.co/models?search=longt5) to look for fine-tuned versions on a task that interests you. | ef11c00d3896ac8c3a85d7fbe617bf48 |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, LongT5Model tokenizer = AutoTokenizer.from_pretrained("google/long-t5-local-base") model = LongT5Model.from_pretrained("google/long-t5-local-base") inputs = tokenizer("Hello, my dog is cute", return_tensors="pt") outputs = model(**inputs) last_hidden_states = outputs.last_hidden_state ``` | 381b52d478962652580b9a10bba90804 |

apache-2.0 | [] | false | BibTeX entry and citation info ```bibtex @article{guo2021longt5, title={LongT5: Efficient Text-To-Text Transformer for Long Sequences}, author={Guo, Mandy and Ainslie, Joshua and Uthus, David and Ontanon, Santiago and Ni, Jianmo and Sung, Yun-Hsuan and Yang, Yinfei}, journal={arXiv preprint arXiv:2112.07916}, year={2021} } ``` | c5b958360c42b6ff5f08b5c4bc226a70 |

apache-2.0 | ['generated_from_trainer'] | false | Tagged_One_100v4_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_one100v4_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.4506 - Precision: 0.1649 - Recall: 0.0818 - F1: 0.1093 - Accuracy: 0.8299 | a66b1c705109ab2fc8e186fbfed3d42c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 34 | 0.5649 | 0.0 | 0.0 | 0.0 | 0.7875 | | No log | 2.0 | 68 | 0.4687 | 0.1197 | 0.0400 | 0.0600 | 0.8147 | | No log | 3.0 | 102 | 0.4506 | 0.1649 | 0.0818 | 0.1093 | 0.8299 | | 1dee3b42fa1adc9b92fa30cf447c116c |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "tr", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("emre/wav2vec-tr-lite-AG") model = Wav2Vec2ForCTC.from_pretrained("emre/wav2vec-tr-lite-AG") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 629b09d92f53552000b64a835f32b37e |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00005 - train_batch_size: 2 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - gradient_accumulation_steps: 8 - total_train_batch_size: 32 - total_eval_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30.0 - mixed_precision_training: Native AMP | 4650fd467eb20090ba821d47ed540d4e |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.4388 | 3.7 | 400 | 1.366 | 0.9701 | | 0.3766 | 7.4 | 800 | 0.4914 | 0.5374 | | 0.2295 | 11.11 | 1200 | 0.3934 | 0.4125 | | 0.1121 | 14.81 | 1600 | 0.3264 | 0.2904 | | 0.1473 | 18.51 | 2000 | 0.3103 | 0.2671 | | 0.1013 | 22.22 | 2400 | 0.2589 | 0.2324 | | 0.0704 | 25.92 | 2800 | 0.2826 | 0.2339 | | 0.0537 | 29.63 | 3200 | 0.2704 | 0.2309 | | cef01a3a31cd8af2a8dd773ee05126f4 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 128 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | 019383c55c502a005f3b225675a90d2c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:| | No log | 1.0 | 77 | 1.6042 | 13.1732 | 26.144 | | c942c6750daae9ee6128bc3967ef18d3 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | t5-finetuned-parasci This model is a fine-tuned version of [domenicrosati/t5-finetuned-parasci](https://huggingface.co/domenicrosati/t5-finetuned-parasci) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0845 - Bleu: 19.5623 | 766949a5c27b45212aa08b12c1553adb |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | bc3da46e169d37ca3d6d29cfe0f7cbbe |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Whisper [OpenAI's Whisper](https://openai.com/blog/whisper/) The Whisper model was proposed in [Robust Speech Recognition via Large-Scale Weak Supervision](https://cdn.openai.com/papers/whisper.pdf) by Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, Ilya Sutskever. **Disclaimer**: Content from **this** model card has been written by the Hugging Face team, and parts of it were copy pasted from the original model card. | 7f1f951c2798657e01b7422074ca9cbb |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Intro The first paragraphs of the abstract read as follows : > We study the capabilities of speech processing systems trained simply to predict large amounts of transcripts of audio on the internet. When scaled to 680,000 hours of multilingual and multitask supervision, the resulting models generalize well to standard benchmarks and are often competitive with prior fully supervised results but in a zeroshot transfer setting without the need for any finetuning. > When compared to humans, the models approach their accuracy and robustness. We are releasing models and inference code to serve as a foundation for further work on robust speech processing. The original code repository can be found [here](https://github.com/openai/whisper). | 98cfa472bc237edc89c546bf2aa778aa |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Model details The Whisper models are trained for speech recognition and translation tasks: they can transcribe speech audio into text in the language in which it is spoken (ASR) and translate it into English (speech translation). Researchers at OpenAI developed the models to study the robustness of speech processing systems trained under large-scale weak supervision. There are 9 models of different sizes and capabilities, summarised in the following table. | Size | Parameters | English-only model | Multilingual model | |:------:|:----------:|:------------------:|:------------------:| | tiny | 39 M | ✓ | ✓ | | base | 74 M | ✓ | ✓ | | small | 244 M | ✓ | ✓ | | medium | 769 M | ✓ | ✓ | | large | 1550 M | | ✓ | | 395b4161bb913150565133ed51a0b104 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Model description Whisper is an auto-regressive automatic speech recognition encoder-decoder model that was trained on 680 000 hours of 16kHz sampled multilingual audio. It was fully trained in a supervised manner, with multiple tasks: - English transcription - Any-to-English speech translation - Non-English transcription - No speech prediction Each task corresponds to a sequence of tokens that are given to the decoder as *context tokens*. The beginning of a transcription always starts with `<|startoftranscript|>` which is why the `decoder_start_token` is always set to `tokenizer.encode("<|startoftranscript|>")`. The following token should be the language token, which is automatically detected in the original code. Finally, the task is defined using either `<|transcribe|>` or `<|translate|>`. In addition, a `<|notimestamps|>` token is added if the task does not include timestamp prediction. | 6bc5b5ec95d0add369ba8c81b1a3c7ec |
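The ordering of the context tokens described above can be illustrated with a small string-level sketch. This is not the library's API: `build_decoder_prompt` is a hypothetical helper that only assembles the special-token strings in the order the text gives, and real token ids would come from the tokenizer.

```python
from typing import List


def build_decoder_prompt(language: str, task: str = "transcribe",
                         timestamps: bool = False) -> List[str]:
    """Assemble the context-token strings in the order described above."""
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task!r}")
    tokens = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Added only when the task does not include timestamp prediction.
        tokens.append("<|notimestamps|>")
    return tokens


print(build_decoder_prompt("en"))
print(build_decoder_prompt("fr", task="translate", timestamps=True))
```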

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Usage To transcribe or translate audio files, the model has to be used alongside a `WhisperProcessor`. The `WhisperProcessor.get_decoder_prompt_ids` function is used to get a list of `( idx, token )` tuples, which can either be set in the config, or directly passed to the generate function, as `forced_decoder_ids`. | 3d5aac6ec99612d80111a2f258049711 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | English to English The "<|en|>" token is used to specify that the speech is in English and should be transcribed to English ```python >>> from transformers import WhisperProcessor, WhisperForConditionalGeneration >>> from datasets import load_dataset >>> import torch >>> | 29edf723e59df83cfc3ea5b6ae74404a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | load dummy dataset and read soundfiles >>> ds = load_dataset("hf-internal-testing/librispeech_asr_dummy", "clean", split="validation") >>> input_features = processor(ds[0]["audio"]["array"], return_tensors="pt").input_features >>> | 076076bacc56d06116699cae0978350e |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Evaluation This code snippet shows how to evaluate **openai/whisper-small.en** on LibriSpeech's "clean" and "other" test data. ```python >>> from datasets import load_dataset >>> from transformers import WhisperForConditionalGeneration, WhisperProcessor >>> import soundfile as sf >>> import torch >>> from evaluate import load >>> librispeech_eval = load_dataset("librispeech_asr", "clean", split="test") >>> model = WhisperForConditionalGeneration.from_pretrained("openai/whisper-small.en").to("cuda") >>> processor = WhisperProcessor.from_pretrained("openai/whisper-small.en") >>> def map_to_pred(batch): >>> input_features = processor(batch["audio"]["array"], return_tensors="pt").input_features >>> with torch.no_grad(): >>> logits = model(input_features.to("cuda")).logits >>> predicted_ids = torch.argmax(logits, dim=-1) >>> transcription = processor.batch_decode(predicted_ids, normalize = True) >>> batch['text'] = processor.tokenizer._normalize(batch['text']) >>> batch["transcription"] = transcription >>> return batch >>> result = librispeech_eval.map(map_to_pred, batched=True, batch_size=1, remove_columns=["speech"]) >>> wer = load("wer") >>> print(wer.compute(predictions=result["transcription"], references=result["text"])) 0.07639504403417127 ``` | c38c7c3286b79eea396e7cb1f34bd2af |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Evaluated Use The primary intended users of these models are AI researchers studying robustness, generalization, capabilities, biases, and constraints of the current model. However, Whisper is also potentially quite useful as an ASR solution for developers, especially for English speech recognition. We recognize that once models are released, it is impossible to restrict access to only “intended” uses or to draw reasonable guidelines around what is or is not research. The models are primarily trained and evaluated on ASR and speech translation to English tasks. They show strong ASR results in ~10 languages. They may exhibit additional capabilities, particularly if fine-tuned on certain tasks like voice activity detection, speaker classification, or speaker diarization, but have not been robustly evaluated in these areas. We strongly recommend that users perform robust evaluations of the models in a particular context and domain before deploying them. In particular, we caution against using Whisper models to transcribe recordings of individuals taken without their consent or purporting to use these models for any kind of subjective classification. We recommend against use in high-risk domains like decision-making contexts, where flaws in accuracy can lead to pronounced flaws in outcomes. The models are intended to transcribe and translate speech; use of the model for classification is not only not evaluated but also not appropriate, particularly to infer human attributes. | 0b8d18b3ec61789a5f812884b9888c01 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Training Data The models are trained on 680,000 hours of audio and the corresponding transcripts collected from the internet. 65% of this data (or 438,000 hours) represents English-language audio and matched English transcripts, roughly 18% (or 126,000 hours) represents non-English audio and English transcripts, while the final 17% (or 117,000 hours) represents non-English audio and the corresponding transcript. This non-English data represents 98 different languages. As discussed in [the accompanying paper](https://cdn.openai.com/papers/whisper.pdf), we see that performance on transcription in a given language is directly correlated with the amount of training data we employ in that language. | 60915cdd52b115bd51b82fc53c4bbdc9 |
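The hour counts and percentages quoted above can be cross-checked with a few lines of arithmetic. The snippet uses only the figures from the text; the dictionary keys are illustrative labels. The shares come out close to, but not exactly, the rounded percentages, which suggests the hour counts themselves are rounded.

```python
total_hours = 680_000  # total quoted in the text

# Hour counts quoted in the text, keyed by an illustrative label.
hours = {
    "english_audio_english_transcript": 438_000,
    "non_english_audio_english_transcript": 126_000,
    "non_english_audio_matching_transcript": 117_000,
}

for name, value in hours.items():
    print(f"{name}: {value / total_hours:.1%}")

print("sum of parts:", sum(hours.values()))
```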

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Performance and Limitations Our studies show that, over many existing ASR systems, the models exhibit improved robustness to accents, background noise, technical language, as well as zero shot translation from multiple languages into English; and that accuracy on speech recognition and translation is near the state-of-the-art level. However, because the models are trained in a weakly supervised manner using large-scale noisy data, the predictions may include texts that are not actually spoken in the audio input (i.e. hallucination). We hypothesize that this happens because, given their general knowledge of language, the models combine trying to predict the next word in audio with trying to transcribe the audio itself. Our models perform unevenly across languages, and we observe lower accuracy on low-resource and/or low-discoverability languages or languages where we have less training data. The models also exhibit disparate performance on different accents and dialects of particular languages, which may include higher word error rate across speakers of different genders, races, ages, or other demographic criteria. Our full evaluation results are presented in [the paper accompanying this release](https://cdn.openai.com/papers/whisper.pdf). In addition, the sequence-to-sequence architecture of the model makes it prone to generating repetitive texts, which can be mitigated to some degree by beam search and temperature scheduling but not perfectly. Further analysis of these limitations is provided in [the paper](https://cdn.openai.com/papers/whisper.pdf). It is likely that this behavior and hallucinations may be worse on lower-resource and/or lower-discoverability languages. | febbc88f4f9af01d5531a3dfdee81c57 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Broader Implications We anticipate that Whisper models’ transcription capabilities may be used for improving accessibility tools. While Whisper models cannot be used for real-time transcription out of the box – their speed and size suggest that others may be able to build applications on top of them that allow for near-real-time speech recognition and translation. The real value of beneficial applications built on top of Whisper models suggests that the disparate performance of these models may have real economic implications. There are also potential dual use concerns that come with releasing Whisper. While we hope the technology will be used primarily for beneficial purposes, making ASR technology more accessible could enable more actors to build capable surveillance technologies or scale up existing surveillance efforts, as the speed and accuracy allow for affordable automatic transcription and translation of large volumes of audio communication. Moreover, these models may have some capabilities to recognize specific individuals out of the box, which in turn presents safety concerns related both to dual use and disparate performance. In practice, we expect that the cost of transcription is not the limiting factor of scaling up surveillance projects. | cbf4f91a5c10c384ce78130c8b039d90 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | BibTeX entry and citation info *Since no official citation was provided, we use the following in the meantime* ```bibtex @misc{radford2022whisper, title={Robust Speech Recognition via Large-Scale Weak Supervision.}, author={Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, Ilya Sutskever}, year={2022}, url={https://cdn.openai.com/papers/whisper.pdf}, } ``` | 59cb2b3f14768ac418c571ae28cb8937 |

apache-2.0 | ['generated_from_trainer'] | false | distilr2-lr2e05-wd0.1-bs32 This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2809 - Rmse: 0.5300 - Mse: 0.2809 - Mae: 0.4214 | d3814497bcb94d4d670197e4a2408a08 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 256 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | e382be0c0b4ae283c77f4fc666e460ae |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2771 | 1.0 | 623 | 0.2730 | 0.5224 | 0.2730 | 0.4164 | | 0.2732 | 2.0 | 1246 | 0.2731 | 0.5226 | 0.2731 | 0.4156 | | 0.271 | 3.0 | 1869 | 0.2791 | 0.5283 | 0.2791 | 0.4308 | | 0.2681 | 4.0 | 2492 | 0.2751 | 0.5245 | 0.2751 | 0.4004 | | 0.2648 | 5.0 | 3115 | 0.2795 | 0.5286 | 0.2795 | 0.4238 | | 0.2606 | 6.0 | 3738 | 0.2809 | 0.5300 | 0.2809 | 0.4214 | | e7e6f51103c578f2ab46de53ce6385ff |

apache-2.0 | ['generated_from_trainer'] | false | mbarthez-davide_articles-copy_enhanced This model is a fine-tuned version of [moussaKam/mbarthez](https://huggingface.co/moussaKam/mbarthez) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.4905 - Rouge1: 36.548 - Rouge2: 19.6282 - Rougel: 30.2513 - Rougelsum: 30.2765 - Gen Len: 25.7238 | 833b0470e24940d107ca7d930cd76798 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 - mixed_precision_training: Native AMP | b06ccc1b8f1b9c6a4a30bef0432d02eb |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:------:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.6706 | 1.0 | 33552 | 1.5690 | 31.2477 | 16.5455 | 26.9855 | 26.9754 | 18.6217 | | 1.3446 | 2.0 | 67104 | 1.5060 | 32.1108 | 17.1408 | 27.7833 | 27.7703 | 18.9115 | | 1.3245 | 3.0 | 100656 | 1.4905 | 32.9084 | 17.7027 | 28.2912 | 28.2975 | 18.9801 | | 94f462ae66a6c02ff6403c043a6132a2 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8631 - Matthews Correlation: 0.5411 | 88fba6a7013447f04f7bc1a114319d8a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5249 | 1.0 | 535 | 0.5300 | 0.4152 | | 0.3489 | 2.0 | 1070 | 0.5238 | 0.4940 | | 0.2329 | 3.0 | 1605 | 0.6447 | 0.5162 | | 0.1692 | 4.0 | 2140 | 0.7805 | 0.5332 | | 0.1256 | 5.0 | 2675 | 0.8631 | 0.5411 | | e22910ad03a212ba6c01705373b753b9 |

mit | ['exbert'] | false | RoBERTa base model Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1907.11692) and first released in [this repository](https://github.com/pytorch/fairseq/tree/master/examples/roberta). This model is case-sensitive: it makes a difference between english and English. Disclaimer: The team releasing RoBERTa did not write a model card for this model so this model card has been written by the Hugging Face team. | fb15f1802f2a87a12899cc105813975b |

mit | ['exbert'] | false | Model description RoBERTa is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it was pretrained with the Masked language modeling (MLM) objective. Taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence. This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the RoBERTa model as inputs. | 54c16134fc245750df8826a5f992c960 |
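The masking step described above can be sketched in plain Python. This is a deliberately simplified illustration, assuming whitespace-separated words and a literal `<mask>` string; the function name `mask_tokens` and its parameters are hypothetical, and actual pretraining operates on subword tokens with additional refinements.

```python
import random
from typing import Dict, List, Tuple


def mask_tokens(tokens: List[str], mask_prob: float = 0.15,
                mask_token: str = "<mask>", seed: int = 0) -> Tuple[List[str], Dict[int, str]]:
    """Replace roughly `mask_prob` of the tokens with `mask_token`.

    Returns the masked sequence and a dict mapping each masked position to the
    original token, which is what the model would be trained to predict.
    """
    if not tokens:
        return [], {}
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n_to_mask = max(1, round(len(tokens) * mask_prob))
    positions = rng.sample(range(len(tokens)), n_to_mask)
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = masked[pos]
        masked[pos] = mask_token
    return masked, labels


sentence = ("the quick brown fox jumps over the lazy dog while the cat "
            "sleeps on a warm mat near the door").split()
masked, labels = mask_tokens(sentence)
print(" ".join(masked))
print(labels)
```

Because the model sees the whole masked sentence at once, it can use context on both sides of each masked position when predicting the labels.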

mit | ['exbert'] | false | Intended uses & limitations You can use the raw model for masked language modeling, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=roberta) to look for fine-tuned versions on a task that interests you. Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification or question answering. For tasks such as text generation you should look at a model like GPT2. | e7ce57b117b4368ed5bffeb0d6d36d85 |

mit | ['exbert'] | false | How to use You can use this model directly with a pipeline for masked language modeling: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='roberta-base') >>> unmasker("Hello I'm a <mask> model.") [{'sequence': "<s>Hello I'm a male model.</s>", 'score': 0.3306540250778198, 'token': 2943, 'token_str': 'Ġmale'}, {'sequence': "<s>Hello I'm a female model.</s>", 'score': 0.04655390977859497, 'token': 2182, 'token_str': 'Ġfemale'}, {'sequence': "<s>Hello I'm a professional model.</s>", 'score': 0.04232972860336304, 'token': 2038, 'token_str': 'Ġprofessional'}, {'sequence': "<s>Hello I'm a fashion model.</s>", 'score': 0.037216778844594955, 'token': 2734, 'token_str': 'Ġfashion'}, {'sequence': "<s>Hello I'm a Russian model.</s>", 'score': 0.03253649175167084, 'token': 1083, 'token_str': 'ĠRussian'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import RobertaTokenizer, RobertaModel tokenizer = RobertaTokenizer.from_pretrained('roberta-base') model = RobertaModel.from_pretrained('roberta-base') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import RobertaTokenizer, TFRobertaModel tokenizer = RobertaTokenizer.from_pretrained('roberta-base') model = TFRobertaModel.from_pretrained('roberta-base') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | ce1800469b1a2be86e9db24314f3742b |

mit | ['exbert'] | false | Limitations and bias The training data used for this model contains a lot of unfiltered content from the internet, which is far from neutral. Therefore, the model can have biased predictions: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='roberta-base') >>> unmasker("The man worked as a <mask>.") [{'sequence': '<s>The man worked as a mechanic.</s>', 'score': 0.08702439814805984, 'token': 25682, 'token_str': 'Ġmechanic'}, {'sequence': '<s>The man worked as a waiter.</s>', 'score': 0.0819653645157814, 'token': 38233, 'token_str': 'Ġwaiter'}, {'sequence': '<s>The man worked as a butcher.</s>', 'score': 0.073323555290699, 'token': 32364, 'token_str': 'Ġbutcher'}, {'sequence': '<s>The man worked as a miner.</s>', 'score': 0.046322137117385864, 'token': 18678, 'token_str': 'Ġminer'}, {'sequence': '<s>The man worked as a guard.</s>', 'score': 0.040150221437215805, 'token': 2510, 'token_str': 'Ġguard'}] >>> unmasker("The Black woman worked as a <mask>.") [{'sequence': '<s>The Black woman worked as a waitress.</s>', 'score': 0.22177888453006744, 'token': 35698, 'token_str': 'Ġwaitress'}, {'sequence': '<s>The Black woman worked as a prostitute.</s>', 'score': 0.19288744032382965, 'token': 36289, 'token_str': 'Ġprostitute'}, {'sequence': '<s>The Black woman worked as a maid.</s>', 'score': 0.06498628109693527, 'token': 29754, 'token_str': 'Ġmaid'}, {'sequence': '<s>The Black woman worked as a secretary.</s>', 'score': 0.05375480651855469, 'token': 2971, 'token_str': 'Ġsecretary'}, {'sequence': '<s>The Black woman worked as a nurse.</s>', 'score': 0.05245552211999893, 'token': 9008, 'token_str': 'Ġnurse'}] ``` This bias will also affect all fine-tuned versions of this model. | 020462f045823422b5823df10db0d193 |

mit | ['exbert'] | false | Training data The RoBERTa model was pretrained on the union of five datasets: - [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038 unpublished books; - [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and headers); - [CC-News](https://commoncrawl.org/2016/10/news-dataset-available/), a dataset containing 63 million English news articles crawled between September 2016 and February 2019; - [OpenWebText](https://github.com/jcpeterson/openwebtext), an open-source recreation of the WebText dataset used to train GPT-2; - [Stories](https://arxiv.org/abs/1806.02847), a dataset containing a subset of CommonCrawl data filtered to match the story-like style of Winograd schemas. Together these datasets weigh 160GB of text. | 1e4e3152c262a90ee0736abb085193ee |

mit | ['exbert'] | false | Preprocessing The texts are tokenized using a byte version of Byte-Pair Encoding (BPE) and a vocabulary size of 50,000. The model inputs are sequences of 512 contiguous tokens that may span multiple documents. The beginning of a new document is marked with `<s>` and the end of one by `</s>`. The details of the masking procedure for each sentence are the following: - 15% of the tokens are masked. - In 80% of the cases, the masked tokens are replaced by `<mask>`. - In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace. - In the 10% remaining cases, the masked tokens are left as is. Unlike BERT, the masking is done dynamically during pretraining (e.g., it changes at each epoch and is not fixed). | 6dac1be152b8f68dce9db50a0185ecd0 |
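The masking procedure described above can be sketched in plain Python. This is an illustrative sketch only, not the code used to pretrain RoBERTa: the function name `mask_tokens`, the `vocab` argument, and the use of string tokens instead of token ids are all assumptions made for readability, and `vocab` is assumed to contain at least two distinct tokens.

```python
import random

MASK_TOKEN = "<mask>"


def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Hypothetical helper: apply the 15% / 80-10-10 masking rule to a token list.

    Returns (masked_tokens, labels). labels[i] holds the original token at
    positions selected for prediction and None everywhere else.
    """
    rng = rng or random.Random()
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        # Select roughly 15% of the positions for prediction.
        if rng.random() >= mask_prob:
            continue
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            # 80% of selected positions: replace with the mask token.
            masked[i] = MASK_TOKEN
        elif roll < 0.9:
            # 10%: replace with a random token different from the original.
            masked[i] = rng.choice([t for t in vocab if t != token])
        # Remaining 10%: leave the token unchanged.
    return masked, labels


# Dynamic masking: draw a fresh mask every time the sequence is seen,
# instead of fixing one mask during preprocessing.
sentence = ["Hello", "I", "am", "a", "language", "model", "."]
toy_vocab = sentence + ["cat", "runs", "blue"]
for epoch in range(3):
    masked, _ = mask_tokens(sentence, toy_vocab)
    print(epoch, masked)
```

Because the mask is redrawn on every call, the same sentence is generally masked differently at each epoch, which is the sense in which the masking is dynamic rather than fixed.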
