license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Information on the method used. All the information is in the blog post: [NLP | Como treinar um modelo de Question Answering em qualquer linguagem baseado no BERT large, melhorando o desempenho do modelo utilizando o BERT base? (estudo de caso em português)](https://medium.com/@pierre_guillou/nlp-como-treinar-um-modelo-de-question-answering-em-qualquer-linguagem-baseado-no-bert-large-1c899262dd96) | 8194cebf0e0f017213dabbd693b9f725 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Notebook in GitHub [question_answering_BERT_large_cased_squad_v11_pt.ipynb](https://github.com/piegu/language-models/blob/master/question_answering_BERT_large_cased_squad_v11_pt.ipynb) ([nbviewer version](https://nbviewer.jupyter.org/github/piegu/language-models/blob/master/question_answering_BERT_large_cased_squad_v11_pt.ipynb)) | 9e3419dedeb01dc1307ff16cd1912cf2 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | source: https://pt.wikipedia.org/wiki/Pandemia_de_COVID-19 context = r""" A pandemia de COVID-19, também conhecida como pandemia de coronavírus, é uma pandemia em curso de COVID-19, uma doença respiratória causada pelo coronavírus da síndrome respiratória aguda grave 2 (SARS-CoV-2). O vírus tem origem zoonótica e o primeiro caso conhecido da doença remonta a dezembro de 2019 em Wuhan, na China. Em 20 de janeiro de 2020, a Organização Mundial da Saúde (OMS) classificou o surto como Emergência de Saúde Pública de Âmbito Internacional e, em 11 de março de 2020, como pandemia. Em 18 de junho de 2021, 177 349 274 casos foram confirmados em 192 países e territórios, com 3 840 181 mortes atribuídas à doença, tornando-se uma das pandemias mais mortais da história. Os sintomas de COVID-19 são altamente variáveis, variando de nenhum a doenças com risco de morte. O vírus se espalha principalmente pelo ar quando as pessoas estão perto umas das outras. Ele deixa uma pessoa infectada quando ela respira, tosse, espirra ou fala e entra em outra pessoa pela boca, nariz ou olhos. Ele também pode se espalhar através de superfícies contaminadas. As pessoas permanecem contagiosas por até duas semanas e podem espalhar o vírus mesmo se forem assintomáticas. """ model_name = 'pierreguillou/bert-large-cased-squad-v1.1-portuguese' nlp = pipeline("question-answering", model=model_name) question = "Quando começou a pandemia de Covid-19 no mundo?" result = nlp(question=question, context=context) print(f"Answer: '{result['answer']}', score: {round(result['score'], 4)}, start: {result['start']}, end: {result['end']}") | ab20b9a0d604459312612d8d663d18e5 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | How to use the model... with the Auto classes ```python from transformers import AutoTokenizer, AutoModelForQuestionAnswering tokenizer = AutoTokenizer.from_pretrained("pierreguillou/bert-large-cased-squad-v1.1-portuguese") model = AutoModelForQuestionAnswering.from_pretrained("pierreguillou/bert-large-cased-squad-v1.1-portuguese") ``` Or just clone the model repo: ```bash git lfs install git clone https://huggingface.co/pierreguillou/bert-large-cased-squad-v1.1-portuguese ``` | a526491fe828794f8f3126c6247e7130 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Author Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1 was trained and evaluated by [Pierre GUILLOU](https://www.linkedin.com/in/pierreguillou/) thanks to the open-source code, platforms and advice of many organizations ([link to the list](https://medium.com/@pierre_guillou/nlp-como-treinar-um-modelo-de-question-answering-em-qualquer-linguagem-baseado-no-bert-large-1c899262dd96 | f430989d9c7ee3f4895d7fb4026dd439 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | c2f5)). In particular: [Hugging Face](https://huggingface.co/), [Neuralmind.ai](https://neuralmind.ai/), [Deep Learning Brasil group](http://www.deeplearningbrasil.com.br/) and [AI Lab](https://ailab.unb.br/). | aa44ebb8ec2e349adce94a2455a6145f |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Citation If you use our work, please cite: ```bibtex @inproceedings{pierreguillou2021bertlargecasedsquadv11portuguese, title={Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1}, author={Pierre Guillou}, year={2021} } ``` | 82aa5f6765c6da1bbed40a2ece63f497 |

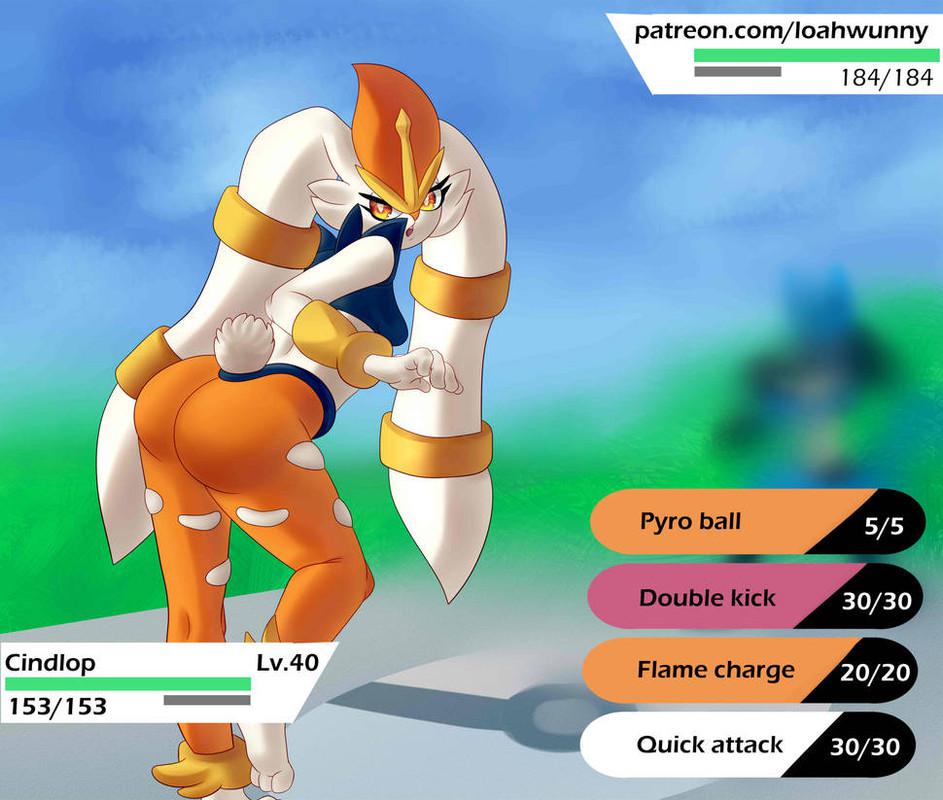

mit | [] | false | cindlop on Stable Diffusion This is the `<cindlop>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 7999b0f36e1c22582e24da8fe959c606 |

apache-2.0 | ['automatic-speech-recognition', 'en'] | false | exp_w2v2r_en_vp-100k_gender_male-5_female-5_s474 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (en)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 7fec0c61d2a6e3ed9c628bca3b9443f2 |

apache-2.0 | ['translation'] | false | opus-mt-tvl-fi * source languages: tvl * target languages: fi * OPUS readme: [tvl-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/tvl-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fi/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fi/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fi/opus-2020-01-16.eval.txt) | 168f01e87092bbb542d21c96a35b686d |

mit | [] | false | museum by coop himmelblau on Stable Diffusion This is the `<coop himmelblau museum>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | c026fa32a761147ec728f12bef347062 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large Punjabi - Drishti Sharma This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.2211 - Wer: 24.4764 | caaf36b68397e5ba042d17e1021a0211 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-06 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 700 - mixed_precision_training: Native AMP | a8c3075650b29d2c23e2047a484b7179 |
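
The Whisper hyperparameters above pair `lr_scheduler_type: linear` with 100 warmup steps and 700 training steps. The sketch below shows the learning-rate curve this implies. It assumes the standard linear-warmup/linear-decay formula used by `get_linear_schedule_with_warmup` in 🤗 Transformers, and the helper name `linear_warmup_lr` is hypothetical:

```python
def linear_warmup_lr(step: int, base_lr: float = 1e-6,
                     warmup_steps: int = 100, total_steps: int = 700) -> float:
    """Learning rate at a given optimizer step (assumed linear warmup, then linear decay)."""
    if step < warmup_steps:
        # Warmup: ramp linearly from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay: fall linearly from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 50, 100, 400, 700):
    print(step, linear_warmup_lr(step))
```

Under this assumption the rate peaks at 1e-06 at step 100 and reaches zero at step 700, which is the last row of the training-results table.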

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0584 | 5.79 | 700 | 0.2211 | 24.4764 | | ac479644d1da14bbb670edf68bcc128b |
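
The `Wer` column appears to report word error rate as a percentage. To illustrate what the metric counts, here is a sketch of plain WER computed with word-level Levenshtein distance. The script behind this card may normalize text before scoring, and that step is not shown. `word_error_rate` is a hypothetical helper:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] = edit distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,         # deletion of a reference word
                          curr[j - 1] + 1,     # insertion of a hypothesis word
                          prev[j - 1] + cost)  # substitution (or exact match)
        prev = curr
    return prev[len(hyp)] / max(1, len(ref))


# Scaled by 100 to match the percentage-style values in the table.
print(100 * word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```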

apache-2.0 | ['exbert'] | false | Model description BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), using an automatic process to generate inputs and labels from those texts. More precisely, it was pretrained with two objectives: - Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence. - Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to predict whether the two sentences followed each other or not. This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences, for instance, you can train a standard classifier using the features produced by the BERT model as inputs. | a34729a1b17c39c393b63e5cc548beca |
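
The NSP objective can be sketched as a function that builds one training input. It uses the 50% positive/negative split and the `[CLS] A [SEP] B [SEP]` layout given in this card's Preprocessing section. This is a simplified illustration, not BERT's data pipeline: the original pipeline draws negative sentences from a different document, while this sketch draws from the same list. `make_nsp_input` is a hypothetical helper:

```python
import random


def make_nsp_input(sentences: list[str], idx: int, rng: random.Random) -> tuple[str, bool]:
    """Build one next-sentence-prediction input starting from sentences[idx].

    Returns (text, is_next). With probability 0.5, sentence B is the true
    successor of sentence A (is_next=True). Otherwise B is drawn at random from
    the other sentences (is_next=False). Simplification: negatives come from the
    same list rather than from a different document.
    """
    a = sentences[idx]
    has_successor = idx + 1 < len(sentences)
    if has_successor and rng.random() < 0.5:
        b, is_next = sentences[idx + 1], True
    else:
        # Any sentence except the true successor counts as a negative example.
        others = [s for j, s in enumerate(sentences) if j != idx + 1]
        b, is_next = rng.choice(others), False
    return f"[CLS] {a} [SEP] {b} [SEP]", is_next


rng = random.Random(0)
corpus = ["the cat sat down .", "it was tired .", "stocks fell today .", "rain is expected ."]
print(make_nsp_input(corpus, 0, rng))
```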

apache-2.0 | ['exbert'] | false | Intended uses & limitations You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=bert) to look for fine-tuned versions on a task that interests you. Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification or question answering. For tasks such as text generation, you should look at models like GPT-2. | 73150114b6793ea9dfad6f82b4b3627c |

apache-2.0 | ['exbert'] | false | Preprocessing The texts are lowercased and tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are then of the form: ``` [CLS] Sentence A [SEP] Sentence B [SEP] ``` With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus; in the other cases, sentence B is another random sentence from the corpus. Note that what is considered a sentence here is a consecutive span of text usually longer than a single sentence. The only constraint is that the two "sentences" have a combined length of less than 512 tokens. The details of the masking procedure for each sentence are the following: - 15% of the tokens are masked. - In 80% of the cases, the masked tokens are replaced by `[MASK]`. - In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace. - In the 10% remaining cases, the masked tokens are left as is. | 8feb2785ffffd969cb70b2467dfbd7b0 |
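
The 15% selection and 80/10/10 replacement rule above can be sketched in plain Python. In this version, each non-special token is selected independently with probability 0.15, which is one common way to implement the rule. The original BERT data pipeline instead picks a fixed number of positions per sequence. `mask_tokens` is a hypothetical helper, and the toy vocabulary is illustrative:

```python
import random

SPECIAL_TOKENS = {"[CLS]", "[SEP]"}


def mask_tokens(tokens: list[str], vocab: list[str], rng: random.Random,
                mask_prob: float = 0.15) -> tuple[list[str], list]:
    """BERT-style 80/10/10 masking.

    Each non-special token is selected with probability mask_prob. A selected
    token becomes [MASK] 80% of the time, a random *different* vocabulary token
    10% of the time, and stays unchanged the remaining 10%. labels[i] holds the
    original token at selected positions (the MLM targets) and None elsewhere.
    """
    masked, labels = list(tokens), [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if tok in SPECIAL_TOKENS or rng.random() >= mask_prob:
            continue  # not selected: no prediction target at this position
        labels[i] = tok
        r = rng.random()
        if r < 0.8:
            masked[i] = "[MASK]"
        elif r < 0.9:
            masked[i] = rng.choice([v for v in vocab if v != tok])
        # else: keep the original token
    return masked, labels


rng = random.Random(0)
print(mask_tokens("[CLS] my dog is hairy [SEP]".split(), ["my", "dog", "is", "hairy", "cute"], rng))
```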

cc-by-4.0 | ['question generation', 'answer extraction'] | false | Model Card of `lmqg/mt5-base-ruquad-qg-ae` This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) for question generation and answer extraction jointly on the [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 93c524593c17840feb129499c0a06f62 |

cc-by-4.0 | ['question generation', 'answer extraction'] | false | Overview - **Language model:** [google/mt5-base](https://huggingface.co/google/mt5-base) - **Language:** ru - **Training data:** [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | efffa152f6722f3a7f1711cc8d1ad68c |

cc-by-4.0 | ['question generation', 'answer extraction'] | false | model prediction question_answer_pairs = model.generate_qa("Нелишним будет отметить, что, развивая это направление, Д. И. Менделеев, поначалу априорно выдвинув идею о температуре, при которой высота мениска будет нулевой, в мае 1860 года провёл серию опытов.") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/mt5-base-ruquad-qg-ae") | a95bdf28a27f25e191e8eb174c95d36c |

cc-by-4.0 | ['question generation', 'answer extraction'] | false | question generation question = pipe("generate question: Нелишним будет отметить, что, развивая это направление, Д. И. Менделеев, поначалу априорно выдвинув идею о температуре, при которой высота мениска будет нулевой, <hl> в мае 1860 года <hl> провёл серию опытов.") | 58e9c7db22c6ee43eaa5d025217c2882 |

cc-by-4.0 | ['question generation', 'answer extraction'] | false | answer extraction answer = pipe("extract answers: <hl> в английском языке в нарицательном смысле применяется термин rapid transit (скоростной городской транспорт), однако употребляется он только тогда, когда по смыслу невозможно ограничиться названием одной конкретной системы метрополитена. <hl> в остальных случаях используются индивидуальные названия: в лондоне — london underground, в нью-йорке — new york subway, в ливерпуле — merseyrail, в вашингтоне — washington metrorail, в сан-франциско — bart и т. п. в некоторых городах применяется название метро (англ. metro) для систем, по своему характеру близких к метро, или для всего городского транспорта (собственно метро и наземный пассажирский транспорт (в том числе автобусы и трамваи)) в совокупности.") ``` | 52a8c08767683a4c9f24c7d8e25f4bd1 |
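
Both prompts above follow the same layout: a task prefix, then the paragraph with one span wrapped in `<hl>` markers. The sketch below reproduces the string layout of the card's two examples. `qg_input`, `ae_input` and `_highlight` are hypothetical names, and this card does not confirm that `lmqg` preprocesses inputs identically (for example, the answer-extraction example is lowercased):

```python
HL = "<hl>"


def _highlight(paragraph: str, span: str) -> str:
    """Wrap the first occurrence of `span` in <hl> markers (ValueError if absent)."""
    start = paragraph.index(span)
    end = start + len(span)
    return f"{paragraph[:start]}{HL} {span} {HL}{paragraph[end:]}"


def qg_input(paragraph: str, answer: str) -> str:
    """Question generation: highlight the answer span inside the paragraph."""
    return "generate question: " + _highlight(paragraph, answer)


def ae_input(paragraph: str, sentence: str) -> str:
    """Answer extraction: highlight the sentence to extract answers from."""
    return "extract answers: " + _highlight(paragraph, sentence)


print(qg_input("Он приехал в мае 1860 года и провёл опыты.", "в мае 1860 года"))
```

The resulting strings can be passed directly to the `text2text-generation` pipeline shown above.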

cc-by-4.0 | ['question generation', 'answer extraction'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/mt5-base-ruquad-qg-ae/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_ruquad.default.json) | | Score | Type | Dataset | |:-----------|--------:|:--------|:-----------------------------------------------------------------| | BERTScore | 87.9 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_1 | 36.66 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_2 | 29.53 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_3 | 24.23 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_4 | 20.06 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | METEOR | 30.18 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | MoverScore | 66.6 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | ROUGE_L | 35.35 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | - ***Metric (Question & Answer Generation)***: [raw metric file](https://huggingface.co/lmqg/mt5-base-ruquad-qg-ae/raw/main/eval/metric.first.answer.paragraph.questions_answers.lmqg_qg_ruquad.default.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-----------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 80.21 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | QAAlignedF1Score (MoverScore) | 57.17 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | QAAlignedPrecision (BERTScore) | 76.48 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | QAAlignedPrecision (MoverScore) | 54.4 | default | 
[lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | QAAlignedRecall (BERTScore) | 84.49 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | QAAlignedRecall (MoverScore) | 60.55 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | - ***Metric (Answer Extraction)***: [raw metric file](https://huggingface.co/lmqg/mt5-base-ruquad-qg-ae/raw/main/eval/metric.first.answer.paragraph_sentence.answer.lmqg_qg_ruquad.default.json) | | Score | Type | Dataset | |:-----------------|--------:|:--------|:-----------------------------------------------------------------| | AnswerExactMatch | 44.44 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | AnswerF1Score | 64.31 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | BERTScore | 86.22 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_1 | 45.61 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_2 | 40.76 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_3 | 36.22 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_4 | 31.64 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | METEOR | 38.79 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | MoverScore | 74.64 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | ROUGE_L | 49.73 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | bea3fe53441245847ea2941eb0778e2d |

cc-by-4.0 | ['question generation', 'answer extraction'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_ruquad - dataset_name: default - input_types: ['paragraph_answer', 'paragraph_sentence'] - output_types: ['question', 'answer'] - prefix_types: ['qg', 'ae'] - model: google/mt5-base - max_length: 512 - max_length_output: 32 - epoch: 8 - batch: 32 - lr: 0.001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 2 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mt5-base-ruquad-qg-ae/raw/main/trainer_config.json). | bafeb5afd011f6d74f83cb11ed094008 |
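
Two of the settings above can be illustrated briefly. Assuming a single device, `batch: 32` with `gradient_accumulation_steps: 2` gives 64 examples per optimizer update. For `label_smoothing: 0.15`, the sketch below uses the PyTorch-style convention: a weight of (1 - ε) on the gold class plus ε spread uniformly over all classes. The card does not say which variant `lmqg` implements, and `label_smoothed_ce` is a hypothetical helper:

```python
import math


def label_smoothed_ce(logits: list[float], target: int, epsilon: float = 0.15) -> float:
    """Cross-entropy against a smoothed target distribution (PyTorch-style convention)."""
    m = max(logits)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    log_probs = [x - log_z for x in logits]
    nll = -log_probs[target]                       # ordinary cross-entropy term
    uniform = -sum(log_probs) / len(log_probs)     # cross-entropy against a uniform target
    return (1 - epsilon) * nll + epsilon * uniform


# Examples per optimizer update, assuming "batch" is per device and one device is used.
effective_batch = 32 * 2  # batch * gradient_accumulation_steps
print(effective_batch, label_smoothed_ce([2.0, 0.5, -1.0], target=0))
```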

apache-2.0 | [] | false | Model Description CT0 is an extension of T0, a model showing great zero-shot task generalization on English natural language prompts, outperforming GPT-3 on many tasks while being 16x smaller. ```bibtex @misc{scialom2022Continual, title={Fine-tuned Language Models are Continual Learners}, author={Thomas Scialom and Tuhin Chakrabarty and Smaranda Muresan}, year={2022}, eprint={2205.12393}, archivePrefix={arXiv}, primaryClass={cs.LG} } ``` | f1879199a48b11353a2e7dc3c79bfdcf |

other | ['stable-diffusion', 'text-to-image'] | false | Cool Japan Diffusion 2.1.1.1 Model Card  [Note: China will impose legal restrictions on image-generating AI.](http://www.cac.gov.cn/2022-12/11/c_1672221949318230.htm) (A warning for people in China.) An English version is available [here](README_en.md). | d330cf198b62ebd69f266b8e01e57509 |

other | ['stable-diffusion', 'text-to-image'] | false | How to use If you just want to try the model quickly, please use this [Space](https://huggingface.co/spaces/aipicasso/cool-japan-diffusion-latest-demo). Detailed instructions for handling this model are given in [this user manual](https://alfredplpl.hatenablog.com/entry/2023/01/11/182146). The model can be downloaded from [here](https://huggingface.co/aipicasso/cool-japan-diffusion-2-1-1-1/resolve/main/v2-1-1-1_fp16.ckpt). The rest of this card is a Japanese translation of a generic model card. | a203b367d1aea3d8a03f8a035a3165bc |

other | ['stable-diffusion', 'text-to-image'] | false | Using Diffusers Use [🤗's Diffusers library](https://github.com/huggingface/diffusers). First, run the following script to install the libraries. ```bash pip install --upgrade git+https://github.com/huggingface/diffusers.git transformers accelerate scipy ``` Then run the following script to generate an image. ```python from diffusers import StableDiffusionPipeline, EulerAncestralDiscreteScheduler import torch model_id = "aipicasso/cool-japan-diffusion-2-1-1-1" scheduler = EulerAncestralDiscreteScheduler.from_pretrained(model_id, subfolder="scheduler") pipe = StableDiffusionPipeline.from_pretrained(model_id, scheduler=scheduler, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "anime, masterpiece, a portrait of a girl, good pupil, 4k, detailed" negative_prompt="deformed, blurry, bad anatomy, bad pupil, disfigured, poorly drawn face, mutation, mutated, extra limb, ugly, poorly drawn hands, bad hands, fused fingers, messy drawing, broken legs censor, low quality, mutated hands and fingers, long body, mutation, poorly drawn, bad eyes, ui, error, missing fingers, fused fingers, one hand with more than 5 fingers, one hand with less than 5 fingers, one hand with more than 5 digit, one hand with less than 5 digit, extra digit, fewer digits, fused digit, missing digit, bad digit, liquid digit, long body, uncoordinated body, unnatural body, lowres, jpeg artifacts, 3d, cg, text, japanese kanji" images = pipe(prompt,negative_prompt=negative_prompt, num_inference_steps=20).images images[0].save("girl.png") ``` **Notes**: - Using [xformers](https://github.com/facebookresearch/xformers) reportedly makes generation faster. - If your GPU has little memory, use `pipe.enable_attention_slicing()`. | fbeb3fff4b076ef929d7349eb702ac35 |

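other | ['stable-diffusion', 'text-to-image'] | false | Training **Training data** Stable Diffusion was fine-tuned mainly on the following data. - For the VAE - Data compliant with Japanese domestic law, excluding unauthorized repost sites such as Danbooru: 600,000 distinct images (an unlimited number of images generated through data augmentation) - For the U-Net - Data compliant with Japanese domestic law, excluding unauthorized repost sites such as Danbooru: 1.8 million pairs **Training process** The VAE and U-Net of Stable Diffusion were fine-tuned. - **Hardware:** RTX 3090, A6000 - **Optimizer:** AdamW - **Gradient Accumulations**: 1 - **Batch size:** 1 | 16d80ff14b32e65706f5612ad01e5902 |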

cc-by-4.0 | ['espnet', 'audio', 'audio-to-audio'] | false | Demo: How to use in ESPnet2 ```bash cd espnet pip install -e . cd egs2/chime4/enh1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/Wangyou_Zhang_wsj0_2mix_enh_dc_crn_mapping_snr_raw ``` | b1b7518fffbb3b74bc913620d854c03c |

cc-by-4.0 | ['espnet', 'audio', 'audio-to-audio'] | false | ENH config <details><summary>expand</summary> ``` config: conf/tuning/train_enh_dc_crn_mapping_snr.yaml print_config: false log_level: INFO dry_run: false iterator_type: chunk output_dir: exp/enh_train_enh_dc_crn_mapping_snr_raw ngpu: 1 seed: 0 num_workers: 4 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: null dist_rank: null local_rank: 0 dist_master_addr: null dist_master_port: null dist_launcher: null multiprocessing_distributed: false unused_parameters: false sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: true collect_stats: false write_collected_feats: false max_epoch: 200 patience: 10 val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - valid - si_snr - max - - valid - loss - min keep_nbest_models: 1 nbest_averaging_interval: 0 grad_clip: 5 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: null use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: null batch_size: 16 valid_batch_size: null batch_bins: 1000000 valid_batch_bins: null train_shape_file: - exp/enh_stats_8k/train/speech_mix_shape - exp/enh_stats_8k/train/speech_ref1_shape - exp/enh_stats_8k/train/speech_ref2_shape valid_shape_file: - exp/enh_stats_8k/valid/speech_mix_shape - exp/enh_stats_8k/valid/speech_ref1_shape - exp/enh_stats_8k/valid/speech_ref2_shape batch_type: folded valid_batch_type: null fold_length: - 80000 - 80000 - 80000 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 32000 chunk_shift_ratio: 0.5 num_cache_chunks: 1024 train_data_path_and_name_and_type: 
- - dump/raw/tr_min_8k/wav.scp - speech_mix - sound - - dump/raw/tr_min_8k/spk1.scp - speech_ref1 - sound - - dump/raw/tr_min_8k/spk2.scp - speech_ref2 - sound valid_data_path_and_name_and_type: - - dump/raw/cv_min_8k/wav.scp - speech_mix - sound - - dump/raw/cv_min_8k/spk1.scp - speech_ref1 - sound - - dump/raw/cv_min_8k/spk2.scp - speech_ref2 - sound allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adam optim_conf: lr: 0.001 eps: 1.0e-08 weight_decay: 1.0e-07 amsgrad: true scheduler: steplr scheduler_conf: step_size: 2 gamma: 0.98 init: xavier_uniform model_conf: stft_consistency: false loss_type: mask_mse mask_type: null criterions: - name: si_snr conf: eps: 1.0e-07 wrapper: pit wrapper_conf: weight: 1.0 use_preprocessor: false encoder: stft encoder_conf: n_fft: 256 hop_length: 128 separator: dc_crn separator_conf: num_spk: 2 input_channels: - 2 - 16 - 32 - 64 - 128 - 256 enc_hid_channels: 8 enc_layers: 5 glstm_groups: 2 glstm_layers: 2 glstm_bidirectional: true glstm_rearrange: false mode: mapping decoder: stft decoder_conf: n_fft: 256 hop_length: 128 required: - output_dir version: 0.10.7a1 distributed: false ``` </details> | 540c5db4bc9e845c3db79c00f2f5264d |
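
The `optim_conf` and `scheduler_conf` entries above pair Adam (lr 0.001) with `scheduler: steplr`, `step_size: 2` and `gamma: 0.98`. The sketch below computes the resulting per-epoch learning rate. It assumes PyTorch `StepLR` semantics (multiply by `gamma` once every `step_size` epochs) and assumes ESPnet steps this scheduler once per epoch. `steplr_lr` is a hypothetical helper:

```python
def steplr_lr(epoch: int, base_lr: float = 0.001,
              step_size: int = 2, gamma: float = 0.98) -> float:
    """Learning rate during a 0-indexed epoch: decay by gamma every step_size epochs."""
    return base_lr * gamma ** (epoch // step_size)


# max_epoch is 200, so the last epoch index is 199.
for epoch in (0, 1, 2, 3, 199):
    print(epoch, steplr_lr(epoch))
```

If training runs all 200 epochs without early stopping (`patience: 10`), the rate at the final epoch has decayed by a factor of 0.98**99, to roughly 1.35e-4.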

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 3.0294 - Rouge1: 16.6807 - Rouge2: 8.0004 - Rougel: 16.2251 - Rougelsum: 16.1743 | 884e3867448f1463fddd79c8fe879b8f |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | 6.5928 | 1.0 | 1209 | 3.3005 | 14.7863 | 6.5038 | 14.3031 | 14.2522 | | 3.9024 | 2.0 | 2418 | 3.1399 | 16.9257 | 8.6583 | 16.15 | 16.1299 | | 3.5806 | 3.0 | 3627 | 3.0869 | 18.2734 | 9.1667 | 17.7441 | 17.5782 | | 3.4201 | 4.0 | 4836 | 3.0590 | 17.763 | 8.9447 | 17.1833 | 17.1661 | | 3.3202 | 5.0 | 6045 | 3.0598 | 17.7754 | 8.5695 | 17.4139 | 17.2653 | | 3.2436 | 6.0 | 7254 | 3.0409 | 16.8423 | 8.1593 | 16.5392 | 16.4297 | | 3.2079 | 7.0 | 8463 | 3.0332 | 16.8991 | 8.1574 | 16.4229 | 16.3515 | | 3.1801 | 8.0 | 9672 | 3.0294 | 16.6807 | 8.0004 | 16.2251 | 16.1743 | | 3261b4efcc9ce0d22f2a0684914b3751 |
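
The Rouge columns above appear to be F-measures scaled by 100. To illustrate what ROUGE-1 measures, here is a unigram-overlap F1 sketch. It assumes lowercased whitespace tokenization without stemming, so it will not reproduce the exact scores of the scorer used for this card. `rouge1_f` is a hypothetical helper:

```python
from collections import Counter


def rouge1_f(reference: str, candidate: str) -> float:
    """Simplified ROUGE-1 F-measure: clipped unigram overlap, no stemming."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum((ref & cand).values())  # each unigram counted at most min(ref, cand) times
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


print(100 * rouge1_f("the cat sat on the mat", "the cat is on the mat"))
```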

mit | ['conversational'] | false | DialoGPT Trained on the Speech of a Game Character This is an instance of [microsoft/DialoGPT-small](https://huggingface.co/microsoft/DialoGPT-small) trained on the dialogue of a game character, Neku Sakuraba from [The World Ends With You](https://en.wikipedia.org/wiki/The_World_Ends_with_You). The data comes from [a Kaggle game script dataset](https://www.kaggle.com/ruolinzheng/twewy-game-script). Chat with the model: ```python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("r3dhummingbird/DialoGPT-small-neku") model = AutoModelForCausalLM.from_pretrained("r3dhummingbird/DialoGPT-small-neku") | fa44bea28f07d4ea5ec259eeabafe22f |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | ANYTHING-MIDJOURNEY-V-4.1 DreamBooth model trained by Joeythemonster with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Alternatively, you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Sample pictures of this concept: | f85eabc6dd875d7b6b30eb185827f982 |

apache-2.0 | ['image-classification', 'vision'] | false | BEiT (large-sized model, fine-tuned on ImageNet-22k) BEiT model pre-trained in a self-supervised fashion on ImageNet-22k - also called ImageNet-21k (14 million images, 21,841 classes) at resolution 224x224, and fine-tuned on the same dataset at resolution 224x224. It was introduced in the paper [BEIT: BERT Pre-Training of Image Transformers](https://arxiv.org/abs/2106.08254) by Hangbo Bao, Li Dong and Furu Wei and first released in [this repository](https://github.com/microsoft/unilm/tree/master/beit). Disclaimer: The team releasing BEiT did not write a model card for this model so this model card has been written by the Hugging Face team. | ecc1345f5248d00ef3ce1466f963ea18 |

apache-2.0 | ['image-classification', 'vision'] | false | Model description The BEiT model is a Vision Transformer (ViT), which is a transformer encoder model (BERT-like). In contrast to the original ViT model, BEiT is pretrained on a large collection of images in a self-supervised fashion, namely ImageNet-21k, at a resolution of 224x224 pixels. The pre-training objective is to predict, for masked patches, the visual tokens produced by the encoder of the VQ-VAE from OpenAI's DALL-E. Next, the model was fine-tuned in a supervised fashion on the same ImageNet-22k dataset, also at resolution 224x224. Images are presented to the model as a sequence of fixed-size patches (resolution 16x16), which are linearly embedded. Contrary to the original ViT models, BEiT models do use relative position embeddings (similar to T5) instead of absolute position embeddings, and perform classification of images by mean-pooling the final hidden states of the patches, instead of placing a linear layer on top of the final hidden state of the [CLS] token. Through pre-training, the model learns an inner representation of images that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled images, for instance, you can train a standard classifier by placing a linear layer on top of the pre-trained encoder. One typically places a linear layer on top of the [CLS] token, as the last hidden state of this token can be seen as a representation of an entire image. Alternatively, one can mean-pool the final hidden states of the patch embeddings, and place a linear layer on top of that. | 7c9c999a401bd0f21b3b002d142eed10 |
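
The patch arithmetic and mean-pooling step described above can be sketched in plain Python. This shows only the arithmetic, not the actual BEiT pooling layer. `num_patches` and `mean_pool` are hypothetical helpers, and the hidden states are toy vectors:

```python
def num_patches(image_size: int = 224, patch_size: int = 16) -> int:
    """Number of non-overlapping patch_size x patch_size patches in a square image."""
    per_side = image_size // patch_size
    return per_side * per_side


def mean_pool(patch_states: list[list[float]]) -> list[float]:
    """Average the final hidden states of all patches into a single image vector."""
    n = len(patch_states)
    return [sum(column) / n for column in zip(*patch_states)]


print(num_patches())  # 196 (14 x 14 patches)
print(mean_pool([[1.0, 2.0], [3.0, 4.0]]))
```

A linear classification layer would then be applied to the pooled vector.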

apache-2.0 | ['image-classification', 'vision'] | false | Intended uses & limitations You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=microsoft/beit) to look for fine-tuned versions on a task that interests you. | 0ca720af91c6c58cacfb09f56a6d7f8a |

apache-2.0 | ['image-classification', 'vision'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 21,841 ImageNet-22k classes: ```python from transformers import BeitFeatureExtractor, BeitForImageClassification from PIL import Image import requests url = 'http://images.cocodataset.org/val2017/000000039769.jpg' image = Image.open(requests.get(url, stream=True).raw) feature_extractor = BeitFeatureExtractor.from_pretrained('microsoft/beit-large-patch16-224-pt22k-ft22k') model = BeitForImageClassification.from_pretrained('microsoft/beit-large-patch16-224-pt22k-ft22k') inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | 93a4a93bf5603e1503e2a3bd0cd5e8c2 |

apache-2.0 | ['image-classification', 'vision'] | false | model predicts one of the 21,841 ImageNet-22k classes predicted_class_idx = logits.argmax(-1).item() print("Predicted class:", model.config.id2label[predicted_class_idx]) ``` Currently, both the feature extractor and model support PyTorch. | b27a2b15f4152459fa43cc2e80ad4159 |

apache-2.0 | ['image-classification', 'vision'] | false | Preprocessing The exact details of preprocessing of images during training/validation can be found [here](https://github.com/microsoft/unilm/blob/master/beit/datasets.py). Images are resized/rescaled to the same resolution (224x224) and normalized across the RGB channels with mean (0.5, 0.5, 0.5) and standard deviation (0.5, 0.5, 0.5). | 7bd7e0009c42216c77d32938b4df6597 |
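The normalization step reduces to simple per-channel arithmetic. This minimal sketch assumes 8-bit RGB input that is first rescaled to [0, 1]. In practice the feature extractor also handles resizing and operates on whole arrays. With a mean and standard deviation of 0.5, pixel values end up in [-1, 1].

```python
def normalize_rgb(pixel, mean=(0.5, 0.5, 0.5), std=(0.5, 0.5, 0.5)):
    """Rescale an 8-bit (R, G, B) pixel to [0, 1], then standardize each channel."""
    return tuple((value / 255.0 - m) / s for value, m, s in zip(pixel, mean, std))


print(normalize_rgb((0, 255, 51)))  # (-1.0, 1.0, -0.6)
```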

apache-2.0 | ['image-classification', 'vision'] | false | Evaluation results For evaluation results on several image classification benchmarks, we refer to tables 1 and 2 of the original paper. Note that for fine-tuning, the best results are obtained with a higher resolution. Increasing the model size also generally improves performance. | fd3951f702bc0e1fdcf57c22cb93a083 |

apache-2.0 | ['image-classification', 'vision'] | false | BibTeX entry and citation info ```@article{DBLP:journals/corr/abs-2106-08254, author = {Hangbo Bao and Li Dong and Furu Wei}, title = {BEiT: {BERT} Pre-Training of Image Transformers}, journal = {CoRR}, volume = {abs/2106.08254}, year = {2021}, url = {https://arxiv.org/abs/2106.08254}, archivePrefix = {arXiv}, eprint = {2106.08254}, timestamp = {Tue, 29 Jun 2021 16:55:04 +0200}, biburl = {https://dblp.org/rec/journals/corr/abs-2106-08254.bib}, bibsource = {dblp computer science bibliography, https://dblp.org} } ``` ```bibtex @inproceedings{deng2009imagenet, title={Imagenet: A large-scale hierarchical image database}, author={Deng, Jia and Dong, Wei and Socher, Richard and Li, Li-Jia and Li, Kai and Fei-Fei, Li}, booktitle={2009 IEEE conference on computer vision and pattern recognition}, pages={248--255}, year={2009}, organization={Ieee} } ``` | 5b01ad064736982f927450fe4243b09b |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout c173c30930631731e6836c274a591ad571749741 pip install -e . cd egs2/ljspeech/tts1 ./run.sh --skip_data_prep false --skip_train true --download_model imdanboy/ljspeech_tts_train_jets_raw_phn_tacotron_g2p_en_no_space_train.total_count.ave ``` | b7d4b3cfcd55c14663f71f3735be9d70 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | TTS config <details><summary>expand</summary> ``` config: conf/tuning/train_jets.yaml print_config: false log_level: INFO dry_run: false iterator_type: sequence output_dir: exp/tts_train_jets_raw_phn_tacotron_g2p_en_no_space ngpu: 1 seed: 777 num_workers: 4 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: 4 dist_rank: 0 local_rank: 0 dist_master_addr: localhost dist_master_port: 39471 dist_launcher: null multiprocessing_distributed: true unused_parameters: true sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: false collect_stats: false write_collected_feats: false max_epoch: 1000 patience: null val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - valid - text2mel_loss - min - - train - text2mel_loss - min - - train - total_count - max keep_nbest_models: 5 nbest_averaging_interval: 0 grad_clip: -1 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: 50 use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: 1000 batch_size: 20 valid_batch_size: null batch_bins: 3000000 valid_batch_bins: null train_shape_file: - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/text_shape.phn - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/speech_shape valid_shape_file: - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/valid/text_shape.phn - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/valid/speech_shape batch_type: numel valid_batch_type: null fold_length: - 150 - 204800 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 500 chunk_shift_ratio: 0.5 
num_cache_chunks: 1024 train_data_path_and_name_and_type: - - dump/raw/tr_no_dev/text - text - text - - dump/raw/tr_no_dev/wav.scp - speech - sound - - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/collect_feats/pitch.scp - pitch - npy - - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/collect_feats/energy.scp - energy - npy valid_data_path_and_name_and_type: - - dump/raw/dev/text - text - text - - dump/raw/dev/wav.scp - speech - sound - - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/valid/collect_feats/pitch.scp - pitch - npy - - exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/valid/collect_feats/energy.scp - energy - npy allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adamw optim_conf: lr: 0.0002 betas: - 0.8 - 0.99 eps: 1.0e-09 weight_decay: 0.0 scheduler: exponentiallr scheduler_conf: gamma: 0.999875 optim2: adamw optim2_conf: lr: 0.0002 betas: - 0.8 - 0.99 eps: 1.0e-09 weight_decay: 0.0 scheduler2: exponentiallr scheduler2_conf: gamma: 0.999875 generator_first: true token_list: - <blank> - <unk> - AH0 - N - T - D - S - R - L - DH - K - Z - IH1 - IH0 - M - EH1 - W - P - AE1 - AH1 - V - ER0 - F - ',' - AA1 - B - HH - IY1 - UW1 - IY0 - AO1 - EY1 - AY1 - . - OW1 - SH - NG - G - ER1 - CH - JH - Y - AW1 - TH - UH1 - EH2 - OW0 - EY2 - AO0 - IH2 - AE2 - AY2 - AA2 - UW0 - EH0 - OY1 - EY0 - AO2 - ZH - OW2 - AE0 - UW2 - AH2 - AY0 - IY2 - AW2 - AA0 - '''' - ER2 - UH2 - '?' - OY2 - '!' - AW0 - UH0 - OY0 - .. 
- <sos/eos> odim: null model_conf: {} use_preprocessor: true token_type: phn bpemodel: null non_linguistic_symbols: null cleaner: tacotron g2p: g2p_en_no_space feats_extract: fbank feats_extract_conf: n_fft: 1024 hop_length: 256 win_length: null fs: 22050 fmin: 80 fmax: 7600 n_mels: 80 normalize: global_mvn normalize_conf: stats_file: exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/feats_stats.npz tts: jets tts_conf: generator_type: jets_generator generator_params: adim: 256 aheads: 2 elayers: 4 eunits: 1024 dlayers: 4 dunits: 1024 positionwise_layer_type: conv1d positionwise_conv_kernel_size: 3 duration_predictor_layers: 2 duration_predictor_chans: 256 duration_predictor_kernel_size: 3 use_masking: true encoder_normalize_before: true decoder_normalize_before: true encoder_type: transformer decoder_type: transformer conformer_rel_pos_type: latest conformer_pos_enc_layer_type: rel_pos conformer_self_attn_layer_type: rel_selfattn conformer_activation_type: swish use_macaron_style_in_conformer: true use_cnn_in_conformer: true conformer_enc_kernel_size: 7 conformer_dec_kernel_size: 31 init_type: xavier_uniform transformer_enc_dropout_rate: 0.2 transformer_enc_positional_dropout_rate: 0.2 transformer_enc_attn_dropout_rate: 0.2 transformer_dec_dropout_rate: 0.2 transformer_dec_positional_dropout_rate: 0.2 transformer_dec_attn_dropout_rate: 0.2 pitch_predictor_layers: 5 pitch_predictor_chans: 256 pitch_predictor_kernel_size: 5 pitch_predictor_dropout: 0.5 pitch_embed_kernel_size: 1 pitch_embed_dropout: 0.0 stop_gradient_from_pitch_predictor: true energy_predictor_layers: 2 energy_predictor_chans: 256 energy_predictor_kernel_size: 3 energy_predictor_dropout: 0.5 energy_embed_kernel_size: 1 energy_embed_dropout: 0.0 stop_gradient_from_energy_predictor: false generator_out_channels: 1 generator_channels: 512 generator_global_channels: -1 generator_kernel_size: 7 generator_upsample_scales: - 8 - 8 - 2 - 2 generator_upsample_kernel_sizes: - 16 - 16 - 4 - 4 
generator_resblock_kernel_sizes: - 3 - 7 - 11 generator_resblock_dilations: - - 1 - 3 - 5 - - 1 - 3 - 5 - - 1 - 3 - 5 generator_use_additional_convs: true generator_bias: true generator_nonlinear_activation: LeakyReLU generator_nonlinear_activation_params: negative_slope: 0.1 generator_use_weight_norm: true segment_size: 64 idim: 78 odim: 80 discriminator_type: hifigan_multi_scale_multi_period_discriminator discriminator_params: scales: 1 scale_downsample_pooling: AvgPool1d scale_downsample_pooling_params: kernel_size: 4 stride: 2 padding: 2 scale_discriminator_params: in_channels: 1 out_channels: 1 kernel_sizes: - 15 - 41 - 5 - 3 channels: 128 max_downsample_channels: 1024 max_groups: 16 bias: true downsample_scales: - 2 - 2 - 4 - 4 - 1 nonlinear_activation: LeakyReLU nonlinear_activation_params: negative_slope: 0.1 use_weight_norm: true use_spectral_norm: false follow_official_norm: false periods: - 2 - 3 - 5 - 7 - 11 period_discriminator_params: in_channels: 1 out_channels: 1 kernel_sizes: - 5 - 3 channels: 32 downsample_scales: - 3 - 3 - 3 - 3 - 1 max_downsample_channels: 1024 bias: true nonlinear_activation: LeakyReLU nonlinear_activation_params: negative_slope: 0.1 use_weight_norm: true use_spectral_norm: false generator_adv_loss_params: average_by_discriminators: false loss_type: mse discriminator_adv_loss_params: average_by_discriminators: false loss_type: mse feat_match_loss_params: average_by_discriminators: false average_by_layers: false include_final_outputs: true mel_loss_params: fs: 22050 n_fft: 1024 hop_length: 256 win_length: null window: hann n_mels: 80 fmin: 0 fmax: null log_base: null lambda_adv: 1.0 lambda_mel: 45.0 lambda_feat_match: 2.0 lambda_var: 1.0 lambda_align: 2.0 sampling_rate: 22050 cache_generator_outputs: true pitch_extract: dio pitch_extract_conf: reduction_factor: 1 use_token_averaged_f0: false fs: 22050 n_fft: 1024 hop_length: 256 f0max: 400 f0min: 80 pitch_normalize: global_mvn pitch_normalize_conf: stats_file: 
exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/pitch_stats.npz energy_extract: energy energy_extract_conf: reduction_factor: 1 use_token_averaged_energy: false fs: 22050 n_fft: 1024 hop_length: 256 win_length: null energy_normalize: global_mvn energy_normalize_conf: stats_file: exp/tts_stats_raw_phn_tacotron_g2p_en_no_space/train/energy_stats.npz required: - output_dir - token_list version: '202204' distributed: true ``` </details> | 50e4d78ef9b027f56492bd846f98bdc0 |
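Several values in this config have to agree with one another. As we read it (this is an interpretation of the config, not ESPnet documentation), the HiFi-GAN-style generator inside JETS turns each acoustic frame into `hop_length` waveform samples. That means the product of `generator_upsample_scales` must equal `hop_length`, and `segment_size` (measured in frames) sets how much audio the generator sees per training example. Here is the arithmetic, with values copied from the config:

```python
import math

# Values copied from the JETS config above.
fs = 22050                                 # sampling rate (Hz)
hop_length = 256                           # samples between consecutive frames
generator_upsample_scales = [8, 8, 2, 2]   # per-stage upsampling factors
segment_size = 64                          # training segment length, in frames

total_upsampling = math.prod(generator_upsample_scales)
frame_shift_ms = 1000 * hop_length / fs
segment_samples = segment_size * hop_length
segment_seconds = segment_samples / fs

print(total_upsampling, round(frame_shift_ms, 2), segment_samples, round(segment_seconds, 3))
# 256 11.61 16384 0.743
```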

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | Citing ESPnet ```BibTex @inproceedings{watanabe2018espnet, author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson Yalta and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai}, title={{ESPnet}: End-to-End Speech Processing Toolkit}, year={2018}, booktitle={Proceedings of Interspeech}, pages={2207--2211}, doi={10.21437/Interspeech.2018-1456}, url={http://dx.doi.org/10.21437/Interspeech.2018-1456} } @inproceedings{hayashi2020espnet, title={{Espnet-TTS}: Unified, reproducible, and integratable open source end-to-end text-to-speech toolkit}, author={Hayashi, Tomoki and Yamamoto, Ryuichi and Inoue, Katsuki and Yoshimura, Takenori and Watanabe, Shinji and Toda, Tomoki and Takeda, Kazuya and Zhang, Yu and Tan, Xu}, booktitle={Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)}, pages={7654--7658}, year={2020}, organization={IEEE} } ``` or arXiv: ```bibtex @misc{watanabe2018espnet, title={ESPnet: End-to-End Speech Processing Toolkit}, author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson Yalta and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai}, year={2018}, eprint={1804.00015}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | 22bb469efa900751f880040354c5794a |

mit | ['Indic'] | false | Model description Multilingual RoBERTa-like model trained on Wikipedia articles in Hindi, Sanskrit, and Gujarati. The tokenizer was trained on the combined text. The model itself was first pre-trained on Hindi text and then fine-tuned on the combined Sanskrit and Gujarati text, in the hope that pre-training on Hindi would help it learn these related languages. | 698024e94687e2a60b570071906a968b |

mit | ['Indic'] | false | Example usage ```python from transformers import AutoTokenizer, AutoModelForMaskedLM, pipeline tokenizer = AutoTokenizer.from_pretrained("surajp/RoBERTa-hindi-guj-san") model = AutoModelForMaskedLM.from_pretrained("surajp/RoBERTa-hindi-guj-san") fill_mask = pipeline( "fill-mask", model=model, tokenizer=tokenizer ) | 8023ab452138ec08091235535cb53a81 |

mit | ['Indic'] | false | Gujarati: ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો <mask> હતો. fill_mask("ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો <mask> હતો.") ''' Output: -------- [ {'score': 0.07849744707345963, 'sequence': '<s> ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો જ હતો.</s>', 'token': 390}, {'score': 0.06273336708545685, 'sequence': '<s> ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો ન હતો.</s>', 'token': 478}, {'score': 0.05160355195403099, 'sequence': '<s> ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો થઇ હતો.</s>', 'token': 2075}, {'score': 0.04751499369740486, 'sequence': '<s> ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો એક હતો.</s>', 'token': 600}, {'score': 0.03788900747895241, 'sequence': '<s> ગુજરાતમાં ૧૯મી માર્ચ સુધી કોઈ સકારાત્મક (પોઝીટીવ) રીપોર્ટ આવ્યો પણ હતો.</s>', 'token': 840} ] ''' ``` | 5164234aa97d8c21ef5a13aaec635481 |

mit | ['Indic'] | false | Training data Cleaned Wikipedia articles in Hindi, Sanskrit, and Gujarati, available on Kaggle. The datasets contain both training and evaluation text and are also used in [iNLTK](https://github.com/goru001/inltk): - [Hindi](https://www.kaggle.com/disisbig/hindi-wikipedia-articles-172k) - [Gujarati](https://www.kaggle.com/disisbig/gujarati-wikipedia-articles) - [Sanskrit](https://www.kaggle.com/disisbig/sanskrit-wikipedia-articles) | ad96dd7bccd238fa492e71c67aa16926 |

mit | ['Indic'] | false | Training procedure - On TPU (using `xla_spawn.py`) - For language modelling - Iteratively increasing `--block_size` from 128 to 256 over epochs - Tokenizer trained on combined text - Pre-training with Hindi and fine-tuning on Sanskrit and Gujarati texts ``` --model_type distillroberta-base \ --model_name_or_path "/content/SanHiGujBERTa" \ --mlm_probability 0.20 \ --line_by_line \ --save_total_limit 2 \ --per_device_train_batch_size 128 \ --per_device_eval_batch_size 128 \ --num_train_epochs 5 \ --block_size 256 \ --seed 108 \ --overwrite_output_dir \ ``` | d005905d5cb679dd1074a062e93b75f1 |
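The `--mlm_probability 0.20` flag controls how much of each sequence is corrupted for masked-language-model training. The sketch below shows the BERT-style 80/10/10 masking rule that, to our understanding, Transformers' `DataCollatorForLanguageModeling` applies. It is a plain-Python illustration, not the library code: it ignores special tokens and padding (which the real collator never masks), and `mask_tokens` and the toy token ids are hypothetical.

```python
import random
from typing import Optional

IGNORE_INDEX = -100  # label value ignored by PyTorch's CrossEntropyLoss by default


def mask_tokens(
    token_ids: list[int],
    mask_id: int,
    vocab_size: int,
    mlm_probability: float = 0.20,
    seed: Optional[int] = None,
) -> tuple[list[int], list[int]]:
    """Return (corrupted inputs, labels) for one sequence."""
    rng = random.Random(seed)
    inputs, labels = [], []
    for token in token_ids:
        if rng.random() < mlm_probability:
            labels.append(token)  # the model must recover the original token here
            r = rng.random()
            if r < 0.8:
                inputs.append(mask_id)  # 80%: replace with the mask token
            elif r < 0.9:
                inputs.append(rng.randrange(vocab_size))  # 10%: random token
            else:
                inputs.append(token)  # 10%: keep unchanged
        else:
            labels.append(IGNORE_INDEX)  # not selected: no loss at this position
            inputs.append(token)
    return inputs, labels


ids = list(range(5, 1005))  # toy token ids
inputs, labels = mask_tokens(ids, mask_id=4, vocab_size=1005, seed=0)
selected = sum(label != IGNORE_INDEX for label in labels)
print(f"{selected} of {len(ids)} positions selected for prediction")
```

On average, about 20% of positions are selected, and only those contribute to the loss.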

mit | ['Indic'] | false | Eval results perplexity = 2.920005983224673 > Created by [Suraj Parmar/@parmarsuraj99](https://twitter.com/parmarsuraj99) | [LinkedIn](https://www.linkedin.com/in/parmarsuraj99/) | 2fc1ba1633432e59f0e1cf9ac4c11e90 |
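Perplexity is the exponential of the mean cross-entropy loss; the Transformers language-modeling example scripts compute it as `exp(eval_loss)`. The reported value therefore corresponds to an evaluation loss of about 1.07 nats per predicted token:

```python
import math

reported_perplexity = 2.920005983224673

# Perplexity = exp(mean cross-entropy in nats), so invert it with the natural log.
eval_loss = math.log(reported_perplexity)
print(round(eval_loss, 4))  # 1.0716
```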

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'image-to-image', 'diffusers'] | false | Gradio We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run EimisAnimeDiffusion_1.0v: [Open in Spaces](https://huggingface.co/spaces/akhaliq/EimisAnimeDiffusion_1.0v) | 6ed6542b8ad3d32e1d6d095f4d831ec3 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'image-to-image', 'diffusers'] | false | Sample generations This model works well on anime and landscape generations.<br> Anime:<br> Here are some sample generations:<br> ``` Positive:a girl, Phoenix girl, fluffy hair, war, a hell on earth, Beautiful and detailed explosion, Cold machine, Fire in eyes, burning, Metal texture, Exquisite cloth, Metal carving, volume, best quality, normal hands, Metal details, Metal scratch, Metal defects, masterpiece, best quality, best quality, illustration, highres, masterpiece, contour deepening, illustration,(beautiful detailed girl),beautiful detailed glow Negative:lowres, bad anatomy, ((bad hands)), text, error, ((missing fingers)), cropped, jpeg artifacts, worst quality, low quality, signature, watermark, blurry, deformed, extra ears, deformed, disfigured, mutation, censored, ((multiple_girls)) Steps: 20, Sampler: DPM++ 2S a, CFG scale: 8, Seed: 4186044705/4186044707, Size: 704x896 ``` <img src=https://imgur.com/2U295w3.png width=75% height=75%> <img src=https://imgur.com/2jtF376.png width=75% height=75%> ``` Positive:(1girl), cute, walking in the park, (night), full moon, north star, blue shirt, red skirt, detailed shirt, jewelry, autumn, dark blue hair, shirt hair, (magic:1.5), beautiful blue eyes Negative: lowres, bad anatomy, ((bad hands)), text, error, ((missing fingers)), cropped, jpeg artifacts, worst quality, low quality, signature, watermark, blurry, deformed, extra ears, deformed, disfigured, mutation, censored, ((multiple_girls)) Steps: 35, Sampler: Euler a, CFG scale: 9, Seed: 296195494, Size: 768x960 ``` <img src=https://imgur.com/gudKxQe.png width=75% height=75%> ``` Positive:night , ((1 girl)), alone, masterpiece, 8k wallpaper, highres, absurdres, high quality background, short hair, black hair, multicolor hair, beautiful frozen village, (full bright moon), blue dress, detailed dress, jewelry dress, (magic:1.2), blue fire, blue eyes, glowing eyes,
fire, ice goddess, (blue detailed beautiful crown), electricity, blue electricity, blue light particles Negative: lowres, bad anatomy, ((bad hands)), text, error, ((missing fingers)), cropped, jpeg artifacts, worst quality, low quality, signature, watermark, blurry, deformed, extra ears, deformed, disfigured, mutation, censored, ((multiple_girls)) Steps: 20, Sampler: DPM++ 2S a Karras, CFG scale: 9, Seed: 2118767319, Size: 768x832 ``` <img src=https://imgur.com/lJL4CJL.png width=75% height=75%> Want to generate some amazing backgrounds? No problem: ``` Positive: above clouds, mountains, (night), full moon, castle, huge forest, forest between mountains, beautiful, masterpiece Negative: lowres, bad anatomy, ((bad hands)), text, error, ((missing fingers)), cropped, jpeg artifacts, worst quality, low quality, signature, watermark, blurry, deformed, extra ears, deformed, disfigured, mutation, censored, ((multiple_girls)) Steps: 20, Sampler: DPM++ 2S a Karras, CFG scale: 9, Seed: 83644543, Size: 896x640 ``` <img src=https://imgur.com/XfxAx0S.png width=75% height=75%> | 0c041e4f48a9d16de0d994edbe89c72b |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'image-to-image', 'diffusers'] | false | Disclaimer Some prompts might not work perfectly (mainly colors). In that case, add more descriptive terms to the prompt, or emphasize the important ones with parentheses, e.g. `(blue dress)`. This usually helps. The model also works well with img2img if you want to add detail. | 69185d6a4ca5188df933edf084a773cb |
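The parentheses in the prompts above are emphasis syntax. The sampler names and terms like `(magic:1.5)` suggest these samples were made with the AUTOMATIC1111 Stable Diffusion web UI. Assuming its convention, each pair of round brackets multiplies a term's attention weight by 1.1, and `(term:w)` sets the weight explicitly. The sketch below computes the resulting weight for a single term. `emphasis_weight` is a hypothetical helper, and square brackets and mixed nested syntax are not handled.

```python
import re

# "term:1.5" style explicit weight, checked after the outer brackets are stripped.
_EXPLICIT = re.compile(r"(.+?):\s*([0-9]*\.?[0-9]+)")


def emphasis_weight(term: str) -> float:
    """Attention multiplier for a single prompt term such as "((bad hands))"."""
    term = term.strip()
    depth = 0
    while term.startswith("(") and term.endswith(")"):
        term = term[1:-1].strip()
        depth += 1
    explicit = _EXPLICIT.fullmatch(term)
    if explicit and depth > 0:
        # The innermost bracket uses the explicit weight; each outer pair adds x1.1.
        return float(explicit.group(2)) * 1.1 ** (depth - 1)
    return 1.1 ** depth


for term in ["night", "(night)", "((bad hands))", "(magic:1.5)"]:
    print(term, round(emphasis_weight(term), 3))
```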

apache-2.0 | ['generated_from_trainer'] | false | insertion-prop-015-correct-data This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0497 - Precision: 0.8907 - Recall: 0.8518 - F1: 0.8708 - Accuracy: 0.9816 | eaaa953dc0826f6425ac41594ff23efa |
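F1 is the harmonic mean of precision and recall. As a quick consistency check, the reported 0.8708 can be recovered from the other two (rounded) numbers on the card:

```python
precision = 0.8907
recall = 0.8518

# F1 = 2PR / (P + R)
f1 = 2 * precision * recall / (precision + recall)
print(round(f1, 4))  # 0.8708
```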

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 6be599bab5371ea374c714a21987daf0 |
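With `lr_scheduler_type: linear` and no warmup listed, the learning rate decays linearly from 2e-05 to 0 over the course of training. The sketch below mirrors the formula of Transformers' linear warmup/decay schedule. The total step count is a parameter because the card does not state it, and `linear_lr` is a hypothetical name.

```python
def linear_lr(step: int, total_steps: int, base_lr: float = 2e-5, warmup_steps: int = 0) -> float:
    """Learning rate at `step` under linear warmup followed by linear decay to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 750, 1000):
    print(step, linear_lr(step, total_steps=1000))
```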

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0978 | 0.32 | 500 | 0.0581 | 0.8730 | 0.8300 | 0.8509 | 0.9787 | | 0.0633 | 0.64 | 1000 | 0.0515 | 0.8867 | 0.8447 | 0.8652 | 0.9807 | | 0.0588 | 0.96 | 1500 | 0.0497 | 0.8907 | 0.8518 | 0.8708 | 0.9816 | | e297db3149ff9599c8116b8e2f7299e2 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-wiki-sports This model is a fine-tuned version of [amanm27/bert-base-uncased-wiki](https://huggingface.co/amanm27/bert-base-uncased-wiki) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.9753 | 4def5e56270eb29288a41e30856cb0ca |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.3589 | 1.0 | 912 | 2.0686 | | 2.176 | 2.0 | 1824 | 2.0025 | | 2.1022 | 3.0 | 2736 | 1.9774 | | 951515d4ba52b1eadddb7a27adbcac70 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event', 'tt'] | false | sammy786/wav2vec2-xlsr-tatar This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - tt dataset. It achieves the following results on the evaluation set (10 percent of the training data merged with the "other" and dev splits): - Loss: 7.66 - Wer: 7.08 | 6e4daabbf0e893cd98ed3fb3b96504a3 |
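WER is the word-level edit distance (substitutions + deletions + insertions) between the reference and the hypothesis, divided by the number of reference words. Below is a minimal sketch of that definition. Evaluation scripts typically rely on a library implementation such as `jiwer`. They also compute corpus WER as total errors over total reference words rather than averaging per-utterance rates, which is what `corpus_wer` does here. Both function names are hypothetical.

```python
def word_errors(reference: str, hypothesis: str) -> int:
    """Minimum number of word substitutions, deletions and insertions."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j]: edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,                           # deletion of ref_word
                curr[j - 1] + 1,                       # insertion of hyp_word
                prev[j - 1] + (ref_word != hyp_word),  # substitution (or match)
            )
        prev = curr
    return prev[-1]


def corpus_wer(references: list[str], hypotheses: list[str]) -> float:
    """Total word errors divided by total reference words."""
    errors = sum(word_errors(r, h) for r, h in zip(references, hypotheses))
    words = sum(len(r.split()) for r in references)
    return errors / words


print(corpus_wer(["a b c d", "hello world"], ["a x c", "hello world"]))  # 0.3333333333333333
```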

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event', 'tt'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.000045637994662983496 - train_batch_size: 16 - eval_batch_size: 16 - seed: 13 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine_with_restarts - lr_scheduler_warmup_steps: 500 - num_epochs: 40 - mixed_precision_training: Native AMP | 834e80e88194fff5acf53f1ef791f9ed |
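`cosine_with_restarts` combines a linear warmup (here 500 steps) with a cosine decay that can restart several times. The sketch below computes the learning-rate multiplier, mirroring the formula of Transformers' hard-restarts cosine schedule. We assume that function's default of `num_cycles=1`, in which case no actual restart happens. The total step count is a parameter because the card does not state it.

```python
import math

BASE_LR = 0.000045637994662983496  # learning_rate from the card


def cosine_hard_restarts_factor(step: int, total_steps: int, warmup_steps: int = 500, num_cycles: int = 1) -> float:
    """Multiplier applied to BASE_LR at a given optimizer step."""
    if step < warmup_steps:
        return step / max(1, warmup_steps)  # linear warmup from 0 to 1
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    if progress >= 1.0:
        return 0.0
    # Cosine decay from 1 to 0 within each cycle; the modulo restarts the curve.
    return max(0.0, 0.5 * (1.0 + math.cos(math.pi * ((num_cycles * progress) % 1.0))))


# Hypothetical run length of 10,500 optimizer steps.
for step in (0, 250, 500, 5500, 10500):
    print(step, BASE_LR * cosine_hard_restarts_factor(step, total_steps=10_500))
```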

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event', 'tt'] | false | Training results | Step | Training Loss | Validation Loss | Wer | |-------|---------------|-----------------|----------| | 200 | 4.849400 | 1.874908 | 0.995232 | | 400 | 1.105700 | 0.257292 | 0.367658 | | 600 | 0.723000 | 0.181150 | 0.250513 | | 800 | 0.660600 | 0.167009 | 0.226078 | | 1000 | 0.568000 | 0.135090 | 0.177339 | | 1200 | 0.721200 | 0.117469 | 0.166413 | | 1400 | 0.416300 | 0.115142 | 0.153765 | | 1600 | 0.346000 | 0.105782 | 0.153963 | | 1800 | 0.279700 | 0.102452 | 0.146149 | | 2000 | 0.273800 | 0.095818 | 0.128468 | | 2200 | 0.252900 | 0.102302 | 0.133766 | | 2400 | 0.255100 | 0.096592 | 0.121316 | | 2600 | 0.229600 | 0.091263 | 0.124561 | | 2800 | 0.213900 | 0.097748 | 0.125687 | | 3000 | 0.210700 | 0.091244 | 0.125422 | | 3200 | 0.202600 | 0.084076 | 0.106284 | | 3400 | 0.200900 | 0.093809 | 0.113238 | | 3600 | 0.192700 | 0.082918 | 0.108139 | | 3800 | 0.182000 | 0.084487 | 0.103371 | | 4000 | 0.167700 | 0.091847 | 0.104960 | | 4200 | 0.183700 | 0.085223 | 0.103040 | | 4400 | 0.174400 | 0.083862 | 0.100589 | | 4600 | 0.163100 | 0.086493 | 0.099728 | | 4800 | 0.162000 | 0.081734 | 0.097543 | | 5000 | 0.153600 | 0.077223 | 0.092974 | | 5200 | 0.153700 | 0.086217 | 0.090789 | | 5400 | 0.140200 | 0.093256 | 0.100457 | | 5600 | 0.142900 | 0.086903 | 0.097742 | | 5800 | 0.131400 | 0.083068 | 0.095225 | | 6000 | 0.126000 | 0.086642 | 0.091252 | | 6200 | 0.135300 | 0.083387 | 0.091186 | | 6400 | 0.126100 | 0.076479 | 0.086352 | | 6600 | 0.127100 | 0.077868 | 0.086153 | | 6800 | 0.118000 | 0.083878 | 0.087676 | | 7000 | 0.117600 | 0.085779 | 0.091054 | | 7200 | 0.113600 | 0.084197 | 0.084233 | | 7400 | 0.112000 | 0.078688 | 0.081319 | | 7600 | 0.110200 | 0.082534 | 0.086087 | | 7800 | 0.106400 | 0.077245 | 0.080988 | | 8000 | 0.102300 | 0.077497 | 0.079332 | | 8200 | 
0.109500 | 0.079083 | 0.088339 | | 8400 | 0.095900 | 0.079721 | 0.077809 | | 8600 | 0.094700 | 0.079078 | 0.079730 | | 8800 | 0.097400 | 0.078785 | 0.079200 | | 9000 | 0.093200 | 0.077445 | 0.077015 | | 9200 | 0.088700 | 0.078207 | 0.076617 | | 9400 | 0.087200 | 0.078982 | 0.076485 | | 9600 | 0.089900 | 0.081209 | 0.076021 | | 9800 | 0.081900 | 0.078158 | 0.075757 | | 10000 | 0.080200 | 0.078074 | 0.074498 | | 10200 | 0.085000 | 0.078830 | 0.073373 | | 10400 | 0.080400 | 0.078144 | 0.073373 | | 10600 | 0.078200 | 0.077163 | 0.073902 | | 10800 | 0.080900 | 0.076394 | 0.072446 | | 11000 | 0.080700 | 0.075955 | 0.071585 | | 11200 | 0.076800 | 0.077031 | 0.072313 | | 11400 | 0.076300 | 0.077401 | 0.072777 | | 11600 | 0.076700 | 0.076613 | 0.071916 | | 11800 | 0.076000 | 0.076672 | 0.071916 | | 12000 | 0.077200 | 0.076490 | 0.070989 | | 12200 | 0.076200 | 0.076688 | 0.070856 | | 12400 | 0.074400 | 0.076780 | 0.071055 | | 12600 | 0.076300 | 0.076768 | 0.071320 | | 12800 | 0.077600 | 0.076727 | 0.071055 | | 13000 | 0.077700 | 0.076714 | 0.071254 | | 79c3467b26cd9d9347b12b7820425b79 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event', 'tt'] | false | Evaluation Commands 1. To evaluate on `mozilla-foundation/common_voice_8_0` with split `test` ```bash python eval.py --model_id sammy786/wav2vec2-xlsr-tatar --dataset mozilla-foundation/common_voice_8_0 --config tt --split test ``` | bab3247512f2ff32e18a30a391f34ef7 |

cc-by-4.0 | [] | false | GenRead: FiD model trained on WebQ -- This is the model checkpoint of GenRead [2], based on T5-3B and trained on the WebQ dataset [1]. -- Hyperparameters: 8 x 80GB A100 GPUs; batch size 16; AdamW; LR 5e-5; best dev performance at 11,500 steps. References: [1] Semantic Parsing on Freebase from Question-Answer Pairs. EMNLP 2013. [2] Generate rather than Retrieve: Large Language Models are Strong Context Generators. arXiv 2022. | a1a9c597c9503a8fb14bb27127b71c97 |
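In Fusion-in-Decoder (FiD), each context is paired with the question and encoded separately by the T5 encoder. The decoder then attends over the concatenation of all encoder outputs. In GenRead, the contexts are documents generated by a large language model rather than retrieved passages. The sketch below shows only how the per-context encoder inputs could be formatted. The `question: ... title: ... context: ...` template follows the original FiD code, and whether this checkpoint uses the same template (with or without titles) is an assumption. `build_fid_inputs` is a hypothetical helper.

```python
from typing import Optional


def build_fid_inputs(question: str, contexts: list[str], titles: Optional[list[str]] = None) -> list[str]:
    """Return one encoder input string per (question, context) pair."""
    inputs = []
    for i, context in enumerate(contexts):
        text = f"question: {question}"
        if titles is not None and titles[i]:
            text += f" title: {titles[i]}"
        text += f" context: {context}"
        inputs.append(text)
    return inputs


# Hypothetical generated contexts for one WebQ-style question.
question = "who wrote hamlet?"
contexts = [
    "Hamlet is a tragedy written by William Shakespeare.",
    "Shakespeare wrote Hamlet around 1600.",
]
for encoder_input in build_fid_inputs(question, contexts):
    print(encoder_input)
```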

cc-by-4.0 | [] | false | Model performance Evaluated on the WebQ dataset, the model achieves an exact-match (EM) score of 54.36. | bd3a9c77658f80da58142f8d3b30a1dd |
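Exact match (EM) counts a prediction as correct when it equals one of the gold answers after normalization. The sketch below uses SQuAD-style normalization (lowercasing, stripping punctuation and the articles a/an/the, collapsing whitespace), which open-domain QA evaluations such as FiD's commonly use. Whether this checkpoint was scored with exactly these rules is an assumption.

```python
import re
import string

_PUNCTUATION = set(string.punctuation)


def normalize_answer(text: str) -> str:
    text = text.lower()
    text = "".join(ch for ch in text if ch not in _PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold_answers: list[str]) -> bool:
    return any(normalize_answer(prediction) == normalize_answer(g) for g in gold_answers)


def em_score(predictions: list[str], gold: list[list[str]]) -> float:
    """Percentage of questions whose prediction matches a gold answer exactly."""
    hits = sum(exact_match(p, g) for p, g in zip(predictions, gold))
    return 100.0 * hits / len(predictions)


print(em_score(["The Beatles!", "Paris"], [["beatles"], ["Lyon"]]))  # 50.0
```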

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'Transformers', 'pytorch', 'speechbrain'] | false | Transformer for AISHELL (Mandarin Chinese) This repository provides all the necessary tools to perform automatic speech recognition from an end-to-end system pretrained on AISHELL (Mandarin Chinese) within SpeechBrain. For a better experience, we encourage you to learn more about [SpeechBrain](https://speechbrain.github.io). The performance of the model is the following: | Release | Dev CER | Test CER | GPUs | Full Results | |:-------------:|:--------------:|:--------------:|:--------:|:--------:| | 05-03-21 | 5.60 | 6.04 | 2xV100 32GB | [Google Drive](https://drive.google.com/drive/folders/1zlTBib0XEwWeyhaXDXnkqtPsIBI18Uzs?usp=sharing)| | f5dd4af28895a25fe16abcbd5f2fe437 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'Transformers', 'pytorch', 'speechbrain'] | false | Pipeline description This ASR system is composed of two linked blocks: - Tokenizer (unigram) that transforms words into subword units, trained on the training transcriptions of AISHELL-1. - Acoustic model made of a transformer encoder and a joint decoder that combines CTC with a transformer decoder. Hence, decoding also incorporates the CTC probabilities. To train this system from scratch, [see our SpeechBrain recipe](https://github.com/speechbrain/speechbrain/tree/develop/recipes/AISHELL-1). The system is trained with recordings sampled at 16kHz (single channel). The code will automatically normalize your audio (i.e., resampling + mono channel selection) when calling *transcribe_file* if needed. | eed3c81575da2c3b78224fef451cbb50 |
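Joint CTC/attention decoding ranks hypotheses by a weighted sum of the two models' log-probabilities. The sketch below applies that scoring rule to made-up scores. The hypothesis names, log-probabilities and `ctc_weight` values are hypothetical; the recipe's actual decoding weight is set in its hyperparameter file. A real beam search also applies the rule incrementally to prefixes, using CTC prefix scores, rather than to finished hypotheses.

```python
def joint_score(ctc_logprob: float, att_logprob: float, ctc_weight: float) -> float:
    """Interpolate CTC and attention-decoder log-probabilities."""
    return ctc_weight * ctc_logprob + (1.0 - ctc_weight) * att_logprob


# Hypothetical (CTC log-prob, attention log-prob) pairs for two finished hypotheses.
hypotheses = {"hyp_a": (-2.0, -1.0), "hyp_b": (-1.0, -1.5)}


def best_hypothesis(ctc_weight: float) -> str:
    return max(hypotheses, key=lambda h: joint_score(*hypotheses[h], ctc_weight))


print(best_hypothesis(0.0), best_hypothesis(0.4))  # hyp_a hyp_b
```

With `ctc_weight=0.0`, only the attention decoder counts. At 0.4, the CTC evidence is strong enough to change the ranking.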

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'Transformers', 'pytorch', 'speechbrain'] | false | Transcribing your own audio files (in Mandarin Chinese) ```python from speechbrain.pretrained import EncoderDecoderASR asr_model = EncoderDecoderASR.from_hparams(source="speechbrain/asr-transformer-aishell", savedir="pretrained_models/asr-transformer-aishell") asr_model.transcribe_file("speechbrain/asr-transformer-aishell/example_mandarin.wav") ``` | a4893d16848cbdd7c5cb2d2c8c8dd269 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'Transformers', 'pytorch', 'speechbrain'] | false | Training The model was trained with SpeechBrain (Commit hash: '986a2175'). To train it from scratch, follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ```bash cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run training: ```bash cd recipes/AISHELL-1/ASR/transformer/ python train.py hparams/train_ASR_transformer.yaml --data_folder=your_data_folder ``` You can find our training results (models, logs, etc.) [here](https://drive.google.com/drive/folders/1QU18YoauzLOXueogspT0CgR5bqJ6zFfu?usp=sharing). | 2e8f264009d1c1212cfcf84dd86a16af |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'Transformers', 'pytorch', 'speechbrain'] | false | **Citing SpeechBrain** Please, cite SpeechBrain if you use it for your research or business. ```bibtex @misc{speechbrain, title={{SpeechBrain}: A General-Purpose Speech Toolkit}, author={Mirco Ravanelli and Titouan Parcollet and Peter Plantinga and Aku Rouhe and Samuele Cornell and Loren Lugosch and Cem Subakan and Nauman Dawalatabad and Abdelwahab Heba and Jianyuan Zhong and Ju-Chieh Chou and Sung-Lin Yeh and Szu-Wei Fu and Chien-Feng Liao and Elena Rastorgueva and François Grondin and William Aris and Hwidong Na and Yan Gao and Renato De Mori and Yoshua Bengio}, year={2021}, eprint={2106.04624}, archivePrefix={arXiv}, primaryClass={eess.AS}, note={arXiv:2106.04624} } ``` | 1cffe5667108f7ce9335f28e4adf183b |

mit | ['generated_from_trainer'] | false | finetune_rte_model This model is a fine-tuned version of [microsoft/deberta-v3-base](https://huggingface.co/microsoft/deberta-v3-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5582 - Accuracy: 0.8195 | 4019777f4705a04ef9b0532aca4981bd |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 156 | 0.5364 | 0.7617 | | No log | 2.0 | 312 | 0.4650 | 0.8195 | | No log | 3.0 | 468 | 0.5582 | 0.8195 | | 774a686db5a01446fc110179ff57cf90 |

apache-2.0 | ['translation'] | false | opus-mt-tvl-fr * source languages: tvl * target languages: fr * OPUS readme: [tvl-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/tvl-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fr/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fr/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/tvl-fr/opus-2020-01-16.eval.txt) | 030be52e6e481188641a2312d3223dc8 |

apache-2.0 | ['automatic-speech-recognition', 'fa'] | false | exp_w2v2t_fa_no-pretraining_s650 A randomly initialized wav2vec2 model, fine-tuned for speech recognition on the train split of [Common Voice 7.0 (fa)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model was fine-tuned with the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 7d45302fc60f62fee86bf51ae1a3fca8 |

apache-2.0 | ['translation'] | false | opus-mt-ja-sv * source languages: ja * target languages: sv * OPUS readme: [ja-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ja-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/ja-sv/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ja-sv/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ja-sv/opus-2020-01-09.eval.txt) | 34c279889bff870efb62683f02f1893a |

apache-2.0 | [] | false | [Optimum Habana](https://github.com/huggingface/optimum-habana) is the interface between the Transformers library and Habana's Gaudi processor (HPU). It provides a set of tools enabling easy and fast model loading and fine-tuning on single- and multi-HPU settings for different downstream tasks. Learn more about how to take advantage of the power of Habana HPUs to train Transformers models at [hf.co/hardware/habana](https://huggingface.co/hardware/habana). | e550cf28b11c0a44fbd13e642ef7f59f |

apache-2.0 | [] | false | T5 model HPU configuration This model only contains the `GaudiConfig` file for running the [T5](https://huggingface.co/t5-base) model on Habana's Gaudi processors (HPU). **This model contains no model weights, only a GaudiConfig.** It lets you specify: - `use_habana_mixed_precision`: whether to use Habana Mixed Precision (HMP) - `hmp_opt_level`: optimization level for HMP, see [here](https://docs.habana.ai/en/latest/PyTorch/PyTorch_Mixed_Precision/PT_Mixed_Precision.html | 3944a75c66f22af78cd0ee60cb5132ba |

apache-2.0 | [] | false | configuration-options) for a detailed explanation - `hmp_bf16_ops`: list of operators that should run in bf16 - `hmp_fp32_ops`: list of operators that should run in fp32 - `hmp_is_verbose`: verbosity - `use_fused_adam`: whether to use Habana's custom AdamW implementation - `use_fused_clip_norm`: whether to use Habana's fused gradient norm clipping operator | ce98c9289e8fe8bdf2123119a4169a7e |
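To make the option list concrete, here is a minimal sketch that writes these keys to a JSON file and reads them back. The file name `gaudi_config.json` and every value shown are illustrative assumptions, not the actual contents of the `Habana/t5` configuration. The operator lists in particular are placeholders, and valid `hmp_opt_level` values should be checked against the Habana mixed-precision documentation linked in the option list.

```python
import json
import tempfile
from pathlib import Path

# Illustrative values only; NOT copied from the Habana/t5 GaudiConfig.
EXAMPLE_GAUDI_CONFIG = {
    "use_habana_mixed_precision": True,  # enable Habana Mixed Precision (HMP)
    "hmp_opt_level": "O1",               # assumed opt level; check Habana docs
    "hmp_bf16_ops": ["add", "addmm", "bmm", "linear", "matmul"],  # placeholder ops
    "hmp_fp32_ops": ["softmax", "log_softmax", "nll_loss"],       # placeholder ops
    "hmp_is_verbose": False,             # HMP verbosity
    "use_fused_adam": True,              # Habana's custom AdamW implementation
    "use_fused_clip_norm": True,         # fused gradient-norm clipping operator
}


def write_gaudi_config(config: dict, directory: str) -> Path:
    """Write `config` as JSON to <directory>/gaudi_config.json (assumed file name)."""
    out_dir = Path(directory)
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / "gaudi_config.json"
    path.write_text(json.dumps(config, indent=2), encoding="utf-8")
    return path


def load_gaudi_config(path: Path) -> dict:
    """Read the JSON back and fail if any of the keys listed above is missing."""
    config = json.loads(Path(path).read_text(encoding="utf-8"))
    missing = set(EXAMPLE_GAUDI_CONFIG) - set(config)
    if missing:
        raise KeyError(f"missing GaudiConfig keys: {sorted(missing)}")
    return config


def roundtrip(config: dict) -> dict:
    """Write the config to a temporary directory and load it again."""
    with tempfile.TemporaryDirectory() as tmp:
        return load_gaudi_config(write_gaudi_config(config, tmp))
```

Keeping the options in a plain dictionary makes it easy to diff a hand-written config against the one published on the Hub before passing it to the training script.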

apache-2.0 | [] | false | Usage The model is instantiated the same way as in the Transformers library. The only difference is that there are a few new training arguments specific to HPUs. [Here](https://github.com/huggingface/optimum-habana/blob/main/examples/summarization/run_summarization.py) is a summarization example script to fine-tune a model. You can run it with T5-small using the following command: ```bash python run_summarization.py \ --model_name_or_path t5-small \ --do_train \ --do_eval \ --dataset_name cnn_dailymail \ --dataset_config "3.0.0" \ --source_prefix "summarize: " \ --output_dir /tmp/tst-summarization \ --per_device_train_batch_size 4 \ --per_device_eval_batch_size 4 \ --overwrite_output_dir \ --predict_with_generate \ --use_habana \ --use_lazy_mode \ --gaudi_config_name Habana/t5 \ --ignore_pad_token_for_loss False \ --pad_to_max_length \ --save_strategy epoch \ --throughput_warmup_steps 2 ``` Check out the [documentation](https://huggingface.co/docs/optimum/habana/index) for more advanced usage and examples. | 651a1268f3d8f1acde1c3592891c1ea5 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-19jan-7 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.6123 - Rouge1: 6.8298 - Rouge2: 0.1667 - Rougel: 6.5947 - Rougelsum: 6.6685 | 78283d65b8fb27befad2e32153948564 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 12 - eval_batch_size: 12 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 60 | 7abca5cef35968f2df61bb99b60eb8f1 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:| | 16.2953 | 1.0 | 50 | 5.4420 | 2.3065 | 0.0 | 2.3217 | 2.3089 | | 10.6895 | 2.0 | 100 | 4.4691 | 3.2975 | 0.3693 | 3.2976 | 3.3376 | | 7.0377 | 3.0 | 150 | 3.2638 | 4.1896 | 0.3485 | 4.1487 | 4.1878 | | 5.7221 | 4.0 | 200 | 3.0772 | 6.2012 | 0.7955 | 6.1846 | 6.3083 | | 4.9356 | 5.0 | 250 | 3.0312 | 5.2032 | 0.8545 | 5.1829 | 5.2263 | | 4.4656 | 6.0 | 300 | 3.0022 | 5.6901 | 1.3505 | 5.6184 | 5.6791 | | 4.2279 | 7.0 | 350 | 2.9585 | 5.6907 | 1.5424 | 5.644 | 5.7768 | | 4.0578 | 8.0 | 400 | 2.9098 | 5.7425 | 1.0202 | 5.6452 | 5.7881 | | 3.9236 | 9.0 | 450 | 2.8686 | 6.2001 | 1.1793 | 6.1891 | 6.2508 | | 3.8237 | 10.0 | 500 | 2.8222 | 5.9182 | 1.1793 | 5.8436 | 5.9807 | | 3.7078 | 11.0 | 550 | 2.7890 | 5.4733 | 1.3896 | 5.3702 | 5.4957 | | 3.641 | 12.0 | 600 | 2.7522 | 5.8312 | 1.1793 | 5.784 | 5.9037 | | 3.5527 | 13.0 | 650 | 2.7168 | 6.3129 | 1.1793 | 6.2924 | 6.384 | | 3.5281 | 14.0 | 700 | 2.7000 | 9.1787 | 0.8333 | 9.1491 | 9.2241 | | 3.4547 | 15.0 | 750 | 2.6966 | 7.8778 | 0.3333 | 7.8306 | 7.9167 | | 3.4386 | 16.0 | 800 | 2.6892 | 8.3907 | 0.3333 | 8.3167 | 8.4 | | 3.3749 | 17.0 | 850 | 2.6786 | 8.6167 | 0.4167 | 8.5917 | 8.5787 | | 3.3681 | 18.0 | 900 | 2.6895 | 8.2466 | 0.4167 | 8.1799 | 8.2407 | | 3.3173 | 19.0 | 950 | 2.6957 | 8.1742 | 0.4167 | 8.1197 | 8.1429 | | 3.3034 | 20.0 | 1000 | 2.6721 | 8.2466 | 0.4167 | 8.1799 | 8.2407 | | 3.2594 | 21.0 | 1050 | 2.6698 | 8.569 | 0.4167 | 8.5419 | 8.619 | | 3.2138 | 22.0 | 1100 | 2.6676 | 8.2722 | 0.4167 | 8.2343 | 8.3037 | | 3.2239 | 23.0 | 1150 | 2.6537 | 8.1444 | 0.4167 | 8.1051 | 8.1301 | | 3.1887 | 24.0 | 1200 | 2.6529 | 8.1444 | 0.4167 | 8.1051 | 8.1301 | | 3.1641 | 25.0 | 1250 | 2.6685 | 7.7777 | 0.1667 | 7.7204 | 7.8143 | | 3.162 | 26.0 | 1300 | 2.6619 | 8.3776 | 0.3333 | 8.4135 | 8.4692 | | 3.1114 | 27.0 | 1350 | 2.6632 | 8.3776 | 0.3333 | 8.4135 | 8.4692 | | 3.0645 | 28.0 | 1400 | 2.6438 | 7.8811 | 0.3333 | 7.8333 | 7.9484 | | 3.0984 | 29.0 | 1450 | 2.6384 | 7.3936 | 0.1667 | 7.3609 | 7.4051 | | 3.0712 | 30.0 | 1500 | 2.6389 | 6.9609 | 0.1667 | 6.875 | 7.0253 | | 3.0662 | 31.0 | 1550 | 2.6346 | 7.95 | 0.1667 | 7.9051 | 8.0218 | | 3.0294 | 32.0 | 1600 | 2.6420 | 7.3936 | 0.1667 | 7.3609 | 7.4051 | | 3.0143 | 33.0 | 1650 | 2.6325 | 7.6526 | 0.1667 | 7.6869 | 7.7551 | | 3.002 | 34.0 | 1700 | 2.6384 | 7.9436 | 0.1667 | 7.9317 | 8.016 | | 2.9964 | 35.0 | 1750 | 2.6262 | 8.2958 | 0.4167 | 8.2317 | 8.3936 | | 2.9893 | 36.0 | 1800 | 2.6351 | 8.6535 | 0.1667 | 8.616 | 8.7333 | | 2.9862 | 37.0 | 1850 | 2.6320 | 8.2452 | 0.1667 | 8.2 | 8.3218 | | 2.9588 | 38.0 | 1900 | 2.6214 | 7.6656 | 0.1667 | 7.6819 | 7.7 | | 2.9697 | 39.0 | 1950 | 2.6229 | 7.1452 | 0.1667 | 7.1051 | 7.1942 | | 2.9433 | 40.0 | 2000 | 2.6209 | 7.5775 | 0.4167 | 7.4893 | 7.5833 | | 2.9306 | 41.0 | 2050 | 2.6197 | 7.525 | 0.4167 | 7.4435 | 7.5351 | | 2.9382 | 42.0 | 2100 | 2.6190 | 7.525 | 0.4167 | 7.4435 | 7.5351 | | 2.9269 | 43.0 | 2150 | 2.6234 | 7.3614 | 0.4167 | 7.2092 | 7.3592 | | 2.9152 | 44.0 | 2200 | 2.6237 | 6.9976 | 0.1667 | 6.8777 | 7.0333 | | 2.9137 | 45.0 | 2250 | 2.6213 | 6.9976 | 0.1667 | 6.8777 | 7.0333 | | 2.9011 | 46.0 | 2300 | 2.6212 | 6.9976 | 0.1667 | 6.8777 | 7.0333 | | 2.8941 | 47.0 | 2350 | 2.6188 | 6.7768 | 0.1667 | 6.6509 | 6.812 | | 2.9143 | 48.0 | 2400 | 2.6126 | 7.0875 | 0.1667 | 6.803 | 6.9337 | | 2.8798 | 49.0 | 2450 | 2.6207 | 6.4458 | 0.1667 | 6.3221 | 6.4527 | | 2.8701 | 50.0 | 2500 | 2.6172 | 6.7542 | 0.1667 | 6.4857 | 6.5729 | | 2.8823 | 51.0 | 2550 | 2.6161 | 6.9971 | 0.1667 | 6.6819 | 6.7968 | | 2.8724 | 52.0 | 2600 | 2.6171 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | 2.8635 | 53.0 | 2650 | 2.6176 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | 2.8803 | 54.0 | 2700 | 2.6134 | 6.1417 | 0.1667 | 5.929 | 6.0423 | | 2.8608 | 55.0 | 2750 | 2.6118 | 6.4953 | 0.1667 | 6.2113 | 6.3554 | | 2.8655 | 56.0 | 2800 | 2.6125 | 6.4976 | 0.1667 | 6.2625 | 6.3539 | | 2.856 | 57.0 | 2850 | 2.6136 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | 2.8837 | 58.0 | 2900 | 2.6124 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | 2.8871 | 59.0 | 2950 | 2.6123 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | 2.8537 | 60.0 | 3000 | 2.6123 | 6.8298 | 0.1667 | 6.5947 | 6.6685 | | b8cf77cca10399486657f018bb319063 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0626 - Precision: 0.9193 - Recall: 0.9311 - F1: 0.9251 - Accuracy: 0.9824 | d77ea12a8b5668fa8cf26a832123bf07 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2393 | 1.0 | 878 | 0.0732 | 0.9052 | 0.9207 | 0.9129 | 0.9801 | | 0.0569 | 2.0 | 1756 | 0.0626 | 0.9193 | 0.9311 | 0.9251 | 0.9824 | | d9526cfe67663694f73437493c2c3606 |
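The F1 column in these NER tables is the harmonic mean of precision and recall, F1 = 2PR / (P + R). A quick check against the final-epoch row of the table above recovers the reported value up to rounding. The metric was presumably computed from raw entity counts, so recomputing it from the four-digit rounded P and R can differ in the last digit.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch precision and recall from the distilbert-base-uncased-finetuned-ner table.
f1 = f1_score(0.9193, 0.9311)
print(f"{f1:.4f}")  # 0.9252, vs. 0.9251 reported (P and R are already rounded)
```

Because the harmonic mean is dominated by the smaller of the two values, a model cannot reach a high F1 by maximizing only precision or only recall.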

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cloud-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0812 - Precision: 0.8975 - Recall: 0.9080 - F1: 0.9027 - Accuracy: 0.9703 | 97a90212fa749104061a538925f46fdc |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | 9b6eec86cdfd3a6efba6d04ff13a0e11 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 166 | 0.1326 | 0.7990 | 0.8043 | 0.8017 | 0.9338 | | No log | 2.0 | 332 | 0.0925 | 0.8770 | 0.8946 | 0.8858 | 0.9618 | | No log | 3.0 | 498 | 0.0812 | 0.8975 | 0.9080 | 0.9027 | 0.9703 | | 5f8978431be5efcaa04aa597d10a9847 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3206 - Accuracy: 0.87 - F1: 0.8704 | d4ea4085457a5d794cd1d57b9e52afe2 |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0569 - Precision: 0.9215 - Recall: 0.9423 - F1: 0.9318 - Accuracy: 0.9850 | c645033b779a401eca0cf66e76cfc2f5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 439 | 0.0702 | 0.8847 | 0.9170 | 0.9006 | 0.9795 | | 0.183 | 2.0 | 878 | 0.0599 | 0.9161 | 0.9391 | 0.9274 | 0.9842 | | 0.0484 | 3.0 | 1317 | 0.0569 | 0.9215 | 0.9423 | 0.9318 | 0.9850 | | 3d574cf619c0b2c5588b15f02e4bd3d4 |

apache-2.0 | ['generated_from_trainer'] | false | ner_nerd_fine This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the nerd dataset. It achieves the following results on the evaluation set: - Loss: 0.3373 - Precision: 0.6326 - Recall: 0.6734 - F1: 0.6524 - Accuracy: 0.9050 | 67e8807d561714fb5b487cae7a463e2e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 10 | 776ca54bf77c59d622c14c8729a7faa5 |
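With `lr_scheduler_type: linear` and `lr_scheduler_warmup_ratio: 0.1`, the learning rate ramps up from 0 over the first 10% of training steps and then decays linearly back to 0. The sketch below reproduces that shape; it is not the library's own code. It assumes the warmup length is the ratio times the total step count rounded up, and takes 8235 optimizer steps per epoch from the Step column of this card's results table, so 10 epochs give 82,350 steps.

```python
import math


def linear_warmup_lr(step: int, base_lr: float, total_steps: int, warmup_steps: int) -> float:
    """Learning rate at `step`: linear warmup from 0, then linear decay to 0."""
    if step < warmup_steps:
        return base_lr * (step / max(1, warmup_steps))
    remaining = max(0, total_steps - step)
    return base_lr * (remaining / max(1, total_steps - warmup_steps))


# Values from the ner_nerd_fine card (steps per epoch read from its results table).
BASE_LR = 3e-05
STEPS_PER_EPOCH = 8235
NUM_EPOCHS = 10
TOTAL_STEPS = STEPS_PER_EPOCH * NUM_EPOCHS   # 82350
WARMUP_STEPS = math.ceil(0.1 * TOTAL_STEPS)  # 8235 (assumed rounding: up)

if __name__ == "__main__":
    for step in (0, WARMUP_STEPS // 2, WARMUP_STEPS, TOTAL_STEPS // 2, TOTAL_STEPS):
        print(step, linear_warmup_lr(step, BASE_LR, TOTAL_STEPS, WARMUP_STEPS))
```

The peak learning rate of 3e-05 is reached exactly at the end of warmup, which here coincides with the end of the first epoch.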

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.6219 | 1.0 | 8235 | 0.3347 | 0.6066 | 0.6581 | 0.6313 | 0.9015 | | 0.3071 | 2.0 | 16470 | 0.3165 | 0.6349 | 0.6637 | 0.6490 | 0.9060 | | 0.2384 | 3.0 | 24705 | 0.3311 | 0.6373 | 0.6769 | 0.6565 | 0.9068 | | 0.1834 | 4.0 | 32940 | 0.3414 | 0.6349 | 0.6780 | 0.6557 | 0.9069 | | 0.1392 | 5.0 | 41175 | 0.3793 | 0.6334 | 0.6775 | 0.6547 | 0.9068 | | cd33b8af3e8940a886da975826809777 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | joopich Dreambooth model trained by Lariatty with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb), or run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). | 9bafd2880f985e2dc887d1f8fa71df0d |

creativeml-openrail-m | [] | false | Sample images: <style> img { display: inline-block; } </style> <img src="https://huggingface.co/YoungMasterFromSect/Chibi/resolve/main/1.png" width="300" height="200"> <img src="https://huggingface.co/YoungMasterFromSect/Chibi/resolve/main/2.png" width="300" height="200"> <img src="https://huggingface.co/YoungMasterFromSect/Chibi/resolve/main/3.png" width="300" height="300"> <img src="https://huggingface.co/YoungMasterFromSect/Chibi/resolve/main/4.png" width="300" height="300"> <img src="https://huggingface.co/YoungMasterFromSect/Chibi/resolve/main/5.png" width="300" height="300"> | 9517b69121e51d237129af7a3b52a72f |

mit | [] | false | Legal Act Extraction Model As legal complexity grows, keeping track of changes in the interconnectivity and hierarchical structure of legislation becomes a challenging task. Entity extraction (also known as token classification) facilitates document analysis by assigning a label to each word in a text. Which data elements are extracted and how they are labeled depends mostly on the particular business problem; the only hard limit comes from tokenization, in that an element cannot be smaller than a single token as split by the tokenizer. As long as the data elements correspond to at least one whole token, they can represent legal terms, legal entities, legal parties, deadlines, and so on. This model is fine-tuned to label mentions of legal acts and their articles. The extracted information can be used to build an interconnectivity map of legal acts. | 8d8dd89ae71ca106dc8b35909191e679 |
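Token classification produces one label per token. Turning those labels into extracted legal-act mentions means grouping consecutive tokens that belong to the same entity. The sketch below assumes a BIO tagging scheme with hypothetical labels `ACT` (legal act) and `ART` (article); the card does not list the model's actual label set, so check the model's `config.id2label` before reusing it. It also assumes word-level tokens, so subword pieces would need merging first.

```python
from typing import List, Optional, Sequence, Tuple


def group_bio_spans(tokens: Sequence[str], tags: Sequence[str]) -> List[Tuple[str, str]]:
    """Group BIO-tagged tokens into (entity_type, text) spans.

    Assumes tags look like "B-XXX", "I-XXX" or "O" (hypothetical label names).
    An "I-" tag that does not continue an open span of the same type starts a
    new span (lenient decoding). Tokens are joined with single spaces.
    """
    if len(tokens) != len(tags):
        raise ValueError("tokens and tags must have the same length")
    spans: List[Tuple[str, List[str]]] = []
    current_type: Optional[str] = None
    for token, tag in zip(tokens, tags):
        if tag == "O":
            current_type = None  # outside any entity: close the open span
            continue
        prefix, _, entity_type = tag.partition("-")
        if prefix == "I" and entity_type == current_type:
            spans[-1][1].append(token)  # continue the open span
        else:
            spans.append((entity_type, [token]))  # "B-" or a stray "I-" opens a span
            current_type = entity_type
    return [(etype, " ".join(words)) for etype, words in spans]


tokens = ["Article", "5", "of", "the", "Civil", "Code", "applies"]
tags = ["B-ART", "I-ART", "O", "O", "B-ACT", "I-ACT", "O"]
print(group_bio_spans(tokens, tags))  # [('ART', 'Article 5'), ('ACT', 'Civil Code')]
```

The resulting (type, text) pairs are the kind of records from which an interconnectivity map of acts and articles could then be built.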