license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-zero-shot This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - eval_loss: 0.7147 - eval_accuracy: 0.0741 - eval_f1: 0.1379 - eval_runtime: 1.1794 - eval_samples_per_second: 22.894 - eval_steps_per_second: 1.696 - step: 0 | e1440b40e4ddea911f90232061da1856 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-1'] | false | MultiBERTs Seed 1 Checkpoint 900k (uncased) This is the seed 1, 900k-step intermediate checkpoint of MultiBERTs, a BERT model pretrained on English text with a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-1](https://hf.co/multberts-seed-1). This model is uncased: it makes no distinction between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model, so this model card has been written by [gchhablani](https://hf.co/gchhablani). | e641b1d8eab0515e75fb6b9467fccf48 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-1'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-1-900k') model = BertModel.from_pretrained("multiberts-seed-1-900k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | d8d10effe7079c17532cb3604312172c |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'bas', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | wav2vec2-large-xls-r-300m-bas-v1 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - BAS dataset. It achieves the following results on the evaluation set: - Loss: 0.5997 - Wer: 0.3870 | 7e70c334e59ad638b80a2c0456a1c724 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'bas', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Evaluation Commands 1. To evaluate on mozilla-foundation/common_voice_8_0 with the test split: `python eval.py --model_id DrishtiSharma/wav2vec2-large-xls-r-300m-bas-v1 --dataset mozilla-foundation/common_voice_8_0 --config bas --split test --log_outputs` 2. To evaluate on speech-recognition-community-v2/dev_data: the Basaa (bas) language isn't available in speech-recognition-community-v2/dev_data. | 3bd6d6efc6473c504955e5caa6df6864 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'bas', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.000111 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 100 - mixed_precision_training: Native AMP | 60fb985a0b73ff6bf1c05f2934fa0d52 |
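The `total_train_batch_size` listed above follows from the other two values: it is the per-step batch size multiplied by the number of gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single training device (the card does not state the device count, and the helper name `effective_batch_size` is hypothetical):

```python
def effective_batch_size(
    per_device_batch_size: int,
    gradient_accumulation_steps: int,
    num_devices: int = 1,  # assumption: the card does not say how many devices were used
) -> int:
    """Number of samples that contribute to one optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list: train_batch_size=16, gradient_accumulation_steps=2
total = effective_batch_size(16, 2)
print(total)  # 32, matching total_train_batch_size
```

With these numbers, gradients from two batches of 16 are accumulated before each optimizer step, so each update sees 32 samples.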

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'bas', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 12.7076 | 5.26 | 200 | 3.6361 | 1.0 | | 3.1657 | 10.52 | 400 | 3.0101 | 1.0 | | 2.3987 | 15.78 | 600 | 0.9125 | 0.6774 | | 1.0079 | 21.05 | 800 | 0.6477 | 0.5352 | | 0.7392 | 26.31 | 1000 | 0.5432 | 0.4929 | | 0.6114 | 31.57 | 1200 | 0.5498 | 0.4639 | | 0.5222 | 36.83 | 1400 | 0.5220 | 0.4561 | | 0.4648 | 42.1 | 1600 | 0.5586 | 0.4289 | | 0.4103 | 47.36 | 1800 | 0.5337 | 0.4082 | | 0.3692 | 52.62 | 2000 | 0.5421 | 0.3861 | | 0.3403 | 57.88 | 2200 | 0.5549 | 0.4096 | | 0.3011 | 63.16 | 2400 | 0.5833 | 0.3925 | | 0.2932 | 68.42 | 2600 | 0.5674 | 0.3815 | | 0.2696 | 73.68 | 2800 | 0.5734 | 0.3889 | | 0.2496 | 78.94 | 3000 | 0.5968 | 0.3985 | | 0.2289 | 84.21 | 3200 | 0.5888 | 0.3893 | | 0.2091 | 89.47 | 3400 | 0.5849 | 0.3852 | | 0.2005 | 94.73 | 3600 | 0.5938 | 0.3875 | | 0.1876 | 99.99 | 3800 | 0.5997 | 0.3870 | | 4ee71e473e0fe12389cf55fcdf512343 |
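The `Wer` column above is word error rate, reported here on a 0 to 1 scale (1.0 means every reference word is wrong). As a rough illustration of how the metric is usually defined, the sketch below computes the word-level edit distance divided by the reference length. It is not the evaluation script used for this model, and the example sentences are made up:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # previous_row[j] = edit distance between the reference prefix seen so far
    # and the first j hypothesis words
    previous_row = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current_row = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current_row.append(
                min(
                    previous_row[j] + 1,  # deletion of a reference word
                    current_row[j - 1] + 1,  # insertion of a hypothesis word
                    previous_row[j - 1] + substitution_cost,  # match or substitution
                )
            )
        previous_row = current_row
    return previous_row[-1] / len(ref_words)


# Illustrative strings only: one substitution and one deletion over four reference words
print(word_error_rate("a b c d", "a x c"))  # 0.5
```

Because insertions count as errors, the value can exceed 1.0 when the hypothesis is much longer than the reference.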

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-basil This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.2870 | ee7964e04af582955088f9fd1c64eb93 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.9097 | 1.0 | 780 | 1.4978 | | 1.5358 | 2.0 | 1560 | 1.3439 | | 1.4259 | 3.0 | 2340 | 1.2881 | | c6a4d313e4a7e36fc1d4acb15ef47294 |

afl-3.0 | [] | false | reStructured Pre-training (RST) official [repository](https://github.com/ExpressAI/reStructured-Pretraining), [paper](https://arxiv.org/pdf/2206.11147.pdf), [easter eggs](http://expressai.co/peripherals/emoji-eng.html) | 7e31d1445d984b34b3842fd3f026f440 |

afl-3.0 | [] | false | RST is a new paradigm for language pre-training, which * unifies **26** different types of signal from **10** data sources (Rotten Tomatoes, Daily Mail, Wikipedia, Wikidata, wikiHow, WordNet, arXiv, etc.) in a structured way, pre-trained with a single monolithic model, * surpasses strong competitors (e.g., T0) on **52/55** popular datasets from a variety of NLP tasks (classification, IE, retrieval, generation, etc.), * achieves superior performance on the National College Entrance Examination **(Gaokao-English, 高考-英语)**: it scores **40** points higher than the average student and 15 points higher than GPT3 with **1/16** of the parameters. In particular, Qin gets a high score of **138.5** (the full mark is 150) in the 2018 English exam. In such a pre-training paradigm, * Data-centric Pre-training: the role of data will be re-emphasized, and model pre-training and fine-tuning of downstream tasks are viewed as a process of data storing and accessing * Pre-training over JSON instead of TEXT: a good storage mechanism should not only have the ability to cache a large amount of data but also consider the ease of access. | be4f007e56ad8c038241c734aae143bd |

afl-3.0 | [] | false | Model Description We release all models introduced in our [paper](https://arxiv.org/pdf/2206.11147.pdf), covering 13 different application scenarios. Each model contains 11 billion parameters. | Model | Description | Recommended Application | ----------- | ----------- |----------- | | rst-all-11b | Trained with all the signals below except signals that are used to train Gaokao models | All applications below (specialized models are recommended first if high performance is preferred) | | rst-fact-retrieval-11b | Trained with the following signals: WordNet meaning, WordNet part-of-speech, WordNet synonym, WordNet antonym, wikiHow category hierarchy, Wikidata relation, Wikidata entity typing, Paperswithcode entity typing | Knowledge intensive tasks, information extraction tasks,factual checker | | rst-summarization-11b | Trained with the following signals: DailyMail summary, Paperswithcode summary, arXiv summary, wikiHow summary | Summarization or other general generation tasks, meta-evaluation (e.g., BARTScore) | | rst-temporal-reasoning-11b | Trained with the following signals: DailyMail temporal information, wikiHow procedure | Temporal reasoning, relation extraction, event-based extraction | | **rst-information-extraction-11b** | **Trained with the following signals: Paperswithcode entity, Paperswithcode entity typing, Wikidata entity typing, Wikidata relation, Wikipedia entity** | **Named entity recognition, relation extraction and other general IE tasks in the news, scientific or other domains**| | rst-intent-detection-11b | Trained with the following signals: wikiHow goal-step relation | Intent prediction, event prediction | | rst-topic-classification-11b | Trained with the following signals: DailyMail category, arXiv category, wikiHow text category, Wikipedia section title | general text classification | | rst-word-sense-disambiguation-11b | Trained with the following signals: WordNet meaning, WordNet part-of-speech, WordNet synonym, 
WordNet antonym | Word sense disambiguation, part-of-speech tagging, general IE tasks, common sense reasoning | | rst-natural-language-inference-11b | Trained with the following signals: ConTRoL dataset, DREAM dataset, LogiQA dataset, RACE & RACE-C dataset, ReClor dataset, DailyMail temporal information | Natural language inference, multiple-choice question answering, reasoning | | rst-sentiment-classification-11b | Trained with the following signals: Rotten Tomatoes sentiment, Wikipedia sentiment | Sentiment classification, emotion classification | | rst-gaokao-rc-11b | Trained with multiple-choice QA datasets that are used to train the [T0pp](https://huggingface.co/bigscience/T0pp) model | General multiple-choice question answering| | rst-gaokao-cloze-11b | Trained with manually crafted cloze datasets | General cloze filling| | rst-gaokao-writing-11b | Trained with example essays from past Gaokao-English exams and grammar error correction signals | Essay writing, story generation, grammar error correction and other text generation tasks | | 68690d82ed93a52891fd44ea6903ae02 |

afl-3.0 | [] | false | Have a try? ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("XLab/rst-all-11b") model = AutoModelForSeq2SeqLM.from_pretrained("XLab/rst-all-11b") inputs = tokenizer.encode("TEXT: this is the best cast iron skillet you will ever buy. QUERY: Is this review \"positive\" or \"negative\"", return_tensors="pt") outputs = model.generate(inputs) print(tokenizer.decode(outputs[0], skip_special_tokens=True, clean_up_tokenization_spaces=True)) ``` | 2c5e1813a494c3081d49898e97b9dba7 |

afl-3.0 | [] | false | Data for reStructure Pre-training This dataset is a precious treasure, containing a variety of naturally occurring signals. Any downstream task you can think of (e.g., the college entrance exam mentioned in the RST paper) can benefit from being pre-trained on some of our provided signals. We spent several months collecting the following 29 signal types, accounting for a total of 46,926,447 data samples. We hope this dataset will be a valuable asset for everyone in natural language processing research. We provide collected signals through [DataLab](https://github.com/ExpressAI/DataLab). For efficiency, we only provide 50,000 samples at most for each signal type. If you want all the samples we collected, please fill this [form](https://docs.google.com/forms/d/e/1FAIpQLSdPO50vSdfwoO3D7DQDVlupQnHgrXrwfF3ePE4X1H6BwgTn5g/viewform?usp=sf_link). More specifically, we collected the following signals. | ef561ed7d324e574e4a0c7aa18909e97 |

afl-3.0 | [] | false | Sample | Use in DataLab | Some Applications | | --- | --- | --- | --- | --- | | [Rotten Tomatoes](https://www.rottentomatoes.com/) | (review, rating) | 5,311,109 | `load_dataset("rst", "rotten_tomatoes_sentiment")` | Sentiment classification | | [Daily Mail](https://www.dailymail.co.uk/home/index.html) | (text, category) | 899,904 | `load_dataset("rst", "daily_mail_category")`| Topic classification | | [Daily Mail](https://www.dailymail.co.uk/home/index.html) | (title, text, summary) | 1,026,616 | `load_dataset("rst", "daily_mail_summary")` | Summarization; Sentence expansion| | [Daily Mail](https://www.dailymail.co.uk/home/index.html) | (text, events) | 1,006,412 | `load_dataset("rst", "daily_mail_temporal")` | Temporal reasoning| | [Wikidata](https://www.wikidata.org/wiki/Wikidata:Main_Page) | (entity, entity_type, text) | 2,214,274 | `load_dataset("rst", "wikidata_entity")` | Entity typing| | [Wikidata](https://www.wikidata.org/wiki/Wikidata:Main_Page) | (subject, object, relation, text) | 1,526,674 | `load_dataset("rst", "wikidata_relation")` | Relation extraction; Fact retrieval| | [wikiHow](https://www.wikihow.com/Main-Page) | (text, category) | 112,109 | `load_dataset("rst", "wikihow_text_category")` | Topic classification | | [wikiHow](https://www.wikihow.com/Main-Page) | (low_category, high_category) | 4,868 | `load_dataset("rst", "wikihow_category_hierarchy")` | Relation extraction; Commonsense reasoning| | [wikiHow](https://www.wikihow.com/Main-Page) | (goal, steps) | 47,956 | `load_dataset("rst", "wikihow_goal_step")` | Intent detection| | [wikiHow](https://www.wikihow.com/Main-Page) | (text, summary) | 703,278 | `load_dataset("rst", "wikihow_summary")` | Summarization; Sentence expansion | | [wikiHow](https://www.wikihow.com/Main-Page) | (goal, first_step, second_step) | 47,787 | `load_dataset("rst", "wikihow_procedure")` | Temporal reasoning | | [wikiHow](https://www.wikihow.com/Main-Page) | (question, description, answer, 
related_questions) | 47,705 | `load_dataset("rst", "wikihow_question")` | Question generation| | [Wikipedia](https://www.wikipedia.org/) | (text, entities) |22,231,011 | `load_dataset("rst", "wikipedia_entities")` | Entity recognition| [Wikipedia](https://www.wikipedia.org/) | (texts, titles) | 3,296,225 | `load_dataset("rst", "wikipedia_sections")` | Summarization| | [WordNet](https://wordnet.princeton.edu/) | (word, sentence, pos) | 27,123 | `load_dataset("rst", "wordnet_pos")` | Part-of-speech tagging| | [WordNet](https://wordnet.princeton.edu/) | (word, sentence, meaning, possible_meanings) | 27,123 | `load_dataset("rst", "wordnet_meaning")` | Word sense disambiguation| | [WordNet](https://wordnet.princeton.edu/) | (word, sentence, synonyms) | 17,804 | `load_dataset("rst", "wordnet_synonym")`| Paraphrasing| | [WordNet](https://wordnet.princeton.edu/) | (word, sentence, antonyms) | 6,408 | `load_dataset("rst", "wordnet_antonym")` |Negation | | [ConTRoL]() | (premise, hypothesis, label) | 8,323 | `load_dataset("rst", "qa_control")` | Natural language inference| |[DREAM](https://transacl.org/ojs/index.php/tacl/article/view/1534)| (context, question, options, answer) | 9,164 | `load_dataset("rst", "qa_dream")` | Reading comprehension| | [LogiQA](https://doi.org/10.24963/ijcai.2020/501) | (context, question, options, answer) | 7,974 | `load_dataset("rst", "qa_logiqa")` | Reading comprehension| | [ReClor](https://openreview.net/forum?id=HJgJtT4tvB) | (context, question, options, answer) | 5,138 | `load_dataset("rst", "qa_reclor")` |Reading comprehension | | [RACE](https://doi.org/10.18653/v1/d17-1082) | (context, question, options, answer) | 44,880 | `load_dataset("rst", "qa_race")` | Reading comprehension| | [RACE-C](http://proceedings.mlr.press/v101/liang19a.html) | (context, question, options, answer) | 5,093 | `load_dataset("rst", "qa_race_c")` | Reading comprehension| | [TriviaQA](https://doi.org/10.18653/v1/P17-1147) | (context, question, answer) | 46,636 | 
`load_dataset("rst", "qa_triviaqa")` |Reading comprehension | | [Arxiv](https://arxiv.org/) | (text, category) | 1,696,348 | `load_dataset("rst", "arxiv_category")` |Topic classification| | [Arxiv](https://arxiv.org/) | (text, summary) | 1,696,348 | `load_dataset("rst", "arxiv_summary")` | Summarization; Sentence expansion| | [Paperswithcode](https://paperswithcode.com/) | (text, entities, datasets, methods, tasks, metrics) | 4,731,233 | `load_dataset("rst", "paperswithcode_entity")` | Entity recognition| | [Paperswithcode](https://paperswithcode.com/) | (text, summary) | 120,924 | `load_dataset("rst", "paperswithcode_summary")` | Summarization; Sentence expansion| | e408abf7286020efc56bc8202769a775 |

afl-3.0 | [] | false | BibTeX for Citation Info ``` @article{yuan2022restructured, title={reStructured Pre-training}, author={Yuan, Weizhe and Liu, Pengfei}, journal={arXiv preprint arXiv:2206.11147}, year={2022} } ``` | 4e2cfc9f951b33b4e2c3df82cdb3eb0a |

apache-2.0 | ['generated_from_trainer'] | false | albert-large-v2_ner_wnut_17 This model is a fine-tuned version of [albert-large-v2](https://huggingface.co/albert-large-v2) on the wnut_17 dataset. It achieves the following results on the evaluation set: - Loss: 0.2429 - Precision: 0.7446 - Recall: 0.5335 - F1: 0.6216 - Accuracy: 0.9582 | 3e8c1ff5bd73bc55e8b53ce502e1504d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 213 | 0.3051 | 0.7929 | 0.3206 | 0.4566 | 0.9410 | | No log | 2.0 | 426 | 0.2151 | 0.7443 | 0.4665 | 0.5735 | 0.9516 | | 0.17 | 3.0 | 639 | 0.2310 | 0.7364 | 0.5012 | 0.5964 | 0.9559 | | 0.17 | 4.0 | 852 | 0.2387 | 0.7564 | 0.5311 | 0.6240 | 0.9578 | | 0.0587 | 5.0 | 1065 | 0.2429 | 0.7446 | 0.5335 | 0.6216 | 0.9582 | | b094b30adfb7262fce1497f63261aa59 |
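The F1 values in this table are the harmonic mean of the precision and recall columns. A small sketch that checks this against the final row (precision 0.7446, recall 0.5335); the helper name `f1_score` is chosen here for illustration and is not taken from the training code:

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch values from the table above
f1 = f1_score(0.7446, 0.5335)
print(round(f1, 4))  # 0.6216, the F1 reported for epoch 5
```

The high accuracy (0.9582) next to a much lower F1 is typical for NER, where most tokens carry the "outside" label and inflate token-level accuracy.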

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | whisper-tiny-hi This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.7990 - Wer: 43.8869 | b45bb5d70dd04afaba0b0788d61a3804 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 3000 - mixed_precision_training: Native AMP | 530ef642fdca80004a91fae0966f310f |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.1747 | 7.02 | 1000 | 0.5674 | 41.6800 | | 0.0466 | 14.03 | 2000 | 0.7042 | 43.7378 | | 0.0174 | 22.0 | 3000 | 0.7990 | 43.8869 | | f5eb50eb8c392f92486c60fff8f5eedb |

mit | [] | false | Training environment and hyperparameters - NVIDIA Tesla T4 (16GB VRAM) - fp16, DeepSpeed stage 2 - 350,000 steps, taking 2 days and 17 hours - batch size 32 - learning rate 5e-5, linear scheduler - final train loss: 3.684 - training code: https://github.com/HeegyuKim/language-model | d75884e17559f03e1eb2f0e2724f9406 |

mit | [] | false | <details> <summary>deepspeed parameter</summary> <div markdown="1"> ```json { "zero_optimization": { "stage": 2, "offload_optimizer": { "device": "cpu", "pin_memory": true }, "allgather_partitions": true, "allgather_bucket_size": 5e8, "reduce_scatter": true, "reduce_bucket_size": 5e8, "overlap_comm": true, "contiguous_gradients": true }, "train_micro_batch_size_per_gpu": "auto", "train_batch_size": "auto", "steps_per_print": 1000 } ``` </div> </details> | eed5ddac1f52ced97f7fdfc0a6708de3 |

mit | [] | false | Example ```python from transformers import pipeline generator = pipeline('text-generation', model='heegyu/kogpt-neox-tiny') def generate(prefix: str): print(generator(prefix, do_sample=True, top_p=0.6, repetition_penalty=1.4, max_length=128, penalty_alpha=0.6)[0]["generated_text"]) generate("0 : 만약 오늘이 ") generate("오늘 정부가 발표한 내용에 따르면") generate("수학이란 학자들의 정의에 따라") generate("영상 보는데 너무 웃겨 ") ``` Output ``` 0 : 만약 오늘이 | 24abaa6ad9ddce572fe3db5128caf553 |

mit | [] | false | 가 먼저 자는거면 또 자는건데ㅋ 1 : ㅇㄷㆍ_== 2 : 아까 아침에 일어났어?!! 3 : 아니아니 근데 이따 시간표가 끝날때까지 잤지않게 일주일동안 계속 잠들었엉.. 나도 지금 일어났는데, 너무 늦을듯해. 그러다 다시 일어나서 다행이다 4 : 어차피 2:30분에 출발할것같아요~ 5 : 이제 곧 일어낫어요 오늘 정부가 발표한 내용에 따르면, 한참 여부는 "한숨이 살릴 수 있는 게 무엇인가"라는 질문에 대해 말할 것도 없다. 하지만 그건 바로 이러한 문제 때문일 것이다." 실제로 해당 기사에서 나온 바 있다. 실제로 당시 한국에서 이게 사실이 아니라고 밝혔다는 건데도 불구하고 말이다. 기사화되기는 했는데 '한국어'의 경우에도 논란이 있었다. 사실 이 부분만 언급되어있고, 대한민국은 무조건 비난을 하는 것이 아니라 본인의 실수를 저지른다는 것인데 반해 유튜브 채널의 영상에서는 그냥 저런 댓글이 올라오 수학이란 학자들의 정의에 따라 이 교과서에서 교육하는 경우가 많은데, 그 이유는 교수들(실제로 학생들은 공부도 하교할 수 있는 등)을 학교로 삼아 강의실에서 듣기 때문이다. 이 학교의 교사들이 '학교'를 선택한 것은 아니지만 교사가 "학생들의"라는 뜻이다."라고 한다. 하지만 이쪽은 교사와 함께 한 명씩 입학식 전부터 교사의 인생들을 시험해보고 싶다는 의미다. 또한 수학여행에서는 가르칠 수도 있고 수학여행을 갔거나 전공 과목으로 졸업하고 교사는 다른 영상 보는데 너무 웃겨 | eeb899111d77883af49bd3efdaf84414 |

apache-2.0 | [] | false | **Don't use this model for any applied task. It is too small to be practically useful. It is just a part of a weird research project.** An extremely small version of T5 with these parameters: ```python "d_ff": 1024, "d_kv": 64, "d_model": 256, "num_heads": 4, "num_layers": 1, | e0abedb2923d05a5b3a7a27cb3e9ce82 |

apache-2.0 | [] | false | yes, just one layer ``` The model was pre-trained on `realnewslike` subset of C4 for 1 epoch with sequence length `64`. Corresponding WandB run: [click](https://wandb.ai/guitaricet/t5-lm/runs/2yvuxsfz?workspace=user-guitaricet). | 34f9d8bac61309621925089a4ede5c37 |

apache-2.0 | ['generated_from_trainer'] | false | finetuned_token_itr0_2e-05_all_16_02_2022-20_09_36 This model is a fine-tuned version of [distilbert-base-uncased-finetuned-sst-2-english](https://huggingface.co/distilbert-base-uncased-finetuned-sst-2-english) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1743 - Precision: 0.3429 - Recall: 0.3430 - F1: 0.3430 - Accuracy: 0.9446 | 43b8f631f3ee0a067418da0ebba8e39e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 38 | 0.3322 | 0.0703 | 0.1790 | 0.1010 | 0.8318 | | No log | 2.0 | 76 | 0.2644 | 0.1180 | 0.2343 | 0.1570 | 0.8909 | | No log | 3.0 | 114 | 0.2457 | 0.1624 | 0.2583 | 0.1994 | 0.8980 | | No log | 4.0 | 152 | 0.2487 | 0.1486 | 0.2583 | 0.1887 | 0.8931 | | No log | 5.0 | 190 | 0.2395 | 0.1670 | 0.2694 | 0.2062 | 0.8988 | | 35149f2da7bc79c06f296724b2c0c33e |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_unispeech-sat_s756 Fine-tuned [microsoft/unispeech-sat-large](https://huggingface.co/microsoft/unispeech-sat-large) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 88e17c63c65c09bb497f7c1b79e213e7 |
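Since the model expects 16 kHz input, it can help to check a file's sample rate before transcription. The sketch below uses only Python's standard `wave` module and works for uncompressed WAV files. It is an illustrative pre-check, not part of HuggingSound, and the function name `is_16khz_wav` is hypothetical:

```python
import wave

EXPECTED_SAMPLE_RATE = 16_000  # 16 kHz, as required by the model card


def is_16khz_wav(source) -> bool:
    """Return True if the WAV file (a path or a binary file object) is sampled at 16 kHz."""
    with wave.open(source, "rb") as wav_file:
        return wav_file.getframerate() == EXPECTED_SAMPLE_RATE
```

Files that fail the check would need to be resampled to 16 kHz with an audio library of your choice before being passed to the model.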

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the twitter-sentiment-analysis dataset. It achieves the following results on the evaluation set: - Loss: 0.4337 - Accuracy: 0.812 - Precision: 0.7910 - F1: 0.8042 | 07eaca11c2305ab10dd1f269650a3292 |

apache-2.0 | ['NER'] | false | Model description **mbert-base-uncased-ner-kin** is based on a Multilingual BERT base uncased model that was previously fine-tuned for Named Entity Recognition on 10 high-resource languages. It has been trained to recognize four types of entities: - dates & time (DATE) - locations (LOC) - organizations (ORG) - persons (PER) | c12684cd5d3a0c3f73f94d082498f5de |

apache-2.0 | ['NER'] | false | Training Data This model was fine-tuned on the Kinyarwanda corpus **(kin)** of the [MasakhaNER](https://github.com/masakhane-io/masakhane-ner) dataset. However, we thresholded the number of entity groups per sentence in this dataset to 10 entity groups. | 7028efc794aca9561210ea5357faf3ca |

apache-2.0 | ['NER'] | false | Limitations - The size of the pre-trained language model prevents its usage in anything other than research. - The lack of analysis concerning bias and fairness in these models may make them dangerous if deployed into a production system. - The training data is a less populated version of the original dataset in terms of entity groups per sentence, which can negatively impact performance. | 343163b0a04b1b86d100a94742d9e1c9 |

apache-2.0 | ['NER'] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForTokenClassification from transformers import pipeline tokenizer = AutoTokenizer.from_pretrained("arnolfokam/mbert-base-uncased-ner-kin") model = AutoModelForTokenClassification.from_pretrained("arnolfokam/mbert-base-uncased-ner-kin") nlp = pipeline("ner", model=model, tokenizer=tokenizer) example = "Rayon Sports yasinyishije rutahizamu w’Umurundi" ner_results = nlp(example) print(ner_results) ``` | 22a4bb483acd47845ec35bb69cfe8172 |

cc-by-4.0 | ['ner'] | false | FastPDN FastPolDeepNer is a model for Named Entity Recognition, designed for easy use, training and configuration. The forerunner of this project is [PolDeepNer2](https://gitlab.clarin-pl.eu/information-extraction/poldeepner2). The model implements a pipeline consisting of data processing and training using: hydra, pytorch, pytorch-lightning, transformers. Source code: https://gitlab.clarin-pl.eu/grupa-wieszcz/ner/fast-pdn | 2bafb72fa365869fa72da2a9539c3ea3 |

cc-by-4.0 | ['ner'] | false | How to use Here is how to use this model to get Named Entities in text: ```python from transformers import pipeline ner = pipeline('ner', model='clarin-pl/FastPDN', aggregation_strategy='simple') text = "Nazywam się Jan Kowalski i mieszkam we Wrocławiu." ner_results = ner(text) for output in ner_results: print(output) {'entity_group': 'nam_liv_person', 'score': 0.9996054, 'word': 'Jan Kowalski', 'start': 12, 'end': 24} {'entity_group': 'nam_loc_gpe_city', 'score': 0.998931, 'word': 'Wrocławiu', 'start': 39, 'end': 48} ``` Here is how to use this model to get the logits for every token in text: ```python from transformers import AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("clarin-pl/FastPDN") model = AutoModelForTokenClassification.from_pretrained("clarin-pl/FastPDN") text = "Nazywam się Jan Kowalski i mieszkam we Wrocławiu." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | c6676d3d4c8f71cdc2a3b5f395119b25 |

cc-by-4.0 | ['ner'] | false | Training data The FastPDN model was trained on datasets (with 82 class versions) of kpwr and cen. Annotation guidelines are specified [here](https://clarin-pl.eu/dspace/bitstream/handle/11321/294/WytyczneKPWr-jednostkiidentyfikacyjne.pdf). | 28bc4284b9957557befe8025a4e7dcea |

cc-by-4.0 | ['ner'] | false | Pretraining FastPDN models were fine-tuned from the following pretrained models: - [herbert-base-cased](https://huggingface.co/allegro/herbert-base-cased) - [distiluse-base-multilingual-cased-v1](sentence-transformers/distiluse-base-multilingual-cased-v1) | a2359218f6bf61ccb75b5faf5298a8d9 |

cc-by-4.0 | ['ner'] | false | Evaluation Runs trained on `cen_n82` and `kpwr_n82`: | name |test/f1|test/pdn2_f1|test/acc|test/precision|test/recall| |---------|-------|------------|--------|--------------|-----------| |distiluse| 0.53 | 0.61 | 0.95 | 0.55 | 0.54 | | herbert | 0.68 | 0.78 | 0.97 | 0.7 | 0.69 | | 08ad756f16217bbd57b4068d22c05844 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-mrpc-target-glue-qqp This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-mrpc](https://huggingface.co/muhtasham/tiny-mlm-glue-mrpc) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4096 - Accuracy: 0.7995 - F1: 0.7718 | 32c178cae3f0352e8531c3e58439ffe4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:| | 0.5796 | 0.04 | 500 | 0.5174 | 0.7297 | 0.6813 | | 0.5102 | 0.09 | 1000 | 0.4804 | 0.7541 | 0.7035 | | 0.4957 | 0.13 | 1500 | 0.4916 | 0.7412 | 0.7152 | | 0.4798 | 0.18 | 2000 | 0.4679 | 0.7549 | 0.7221 | | 0.4728 | 0.22 | 2500 | 0.4563 | 0.7624 | 0.7270 | | 0.4569 | 0.26 | 3000 | 0.4501 | 0.7673 | 0.7340 | | 0.4583 | 0.31 | 3500 | 0.4480 | 0.7682 | 0.7375 | | 0.4502 | 0.35 | 4000 | 0.4498 | 0.7665 | 0.7387 | | 0.4514 | 0.4 | 4500 | 0.4452 | 0.7681 | 0.7410 | | 0.4416 | 0.44 | 5000 | 0.4209 | 0.7884 | 0.7491 | | 0.4297 | 0.48 | 5500 | 0.4288 | 0.7826 | 0.7502 | | 0.4299 | 0.53 | 6000 | 0.4069 | 0.8001 | 0.7559 | | 0.4248 | 0.57 | 6500 | 0.4194 | 0.7896 | 0.7547 | | 0.4257 | 0.62 | 7000 | 0.4063 | 0.7998 | 0.7582 | | 0.418 | 0.66 | 7500 | 0.4059 | 0.8038 | 0.7639 | | 0.4306 | 0.7 | 8000 | 0.4111 | 0.7964 | 0.7615 | | 0.4212 | 0.75 | 8500 | 0.3990 | 0.8065 | 0.7672 | | 0.4143 | 0.79 | 9000 | 0.4227 | 0.7875 | 0.7604 | | 0.4121 | 0.84 | 9500 | 0.3906 | 0.8098 | 0.7667 | | 0.4138 | 0.88 | 10000 | 0.3872 | 0.8152 | 0.7725 | | 0.4082 | 0.92 | 10500 | 0.3843 | 0.8148 | 0.7700 | | 0.4084 | 0.97 | 11000 | 0.3863 | 0.8170 | 0.7740 | | 0.4067 | 1.01 | 11500 | 0.4001 | 0.8037 | 0.7707 | | 0.3854 | 1.06 | 12000 | 0.3814 | 0.8182 | 0.7756 | | 0.3945 | 1.1 | 12500 | 0.3861 | 0.8132 | 0.7761 | | 0.3831 | 1.14 | 13000 | 0.3917 | 0.8110 | 0.7750 | | 0.3722 | 1.19 | 13500 | 0.4096 | 0.7995 | 0.7718 | | 9365263a2d54e0d99b2a03efe2e12365 |
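Note that the headline metrics for this model (Loss 0.4096, Accuracy 0.7995, F1 0.7718) match the last logged step, 13500, rather than the best one in the table. A short sketch of picking the best checkpoint from a log like this, using four rows copied from the table above; the dictionary layout is an assumption made for illustration:

```python
# A few rows copied from the training log above
training_log = [
    {"step": 10000, "val_loss": 0.3872, "accuracy": 0.8152, "f1": 0.7725},
    {"step": 12000, "val_loss": 0.3814, "accuracy": 0.8182, "f1": 0.7756},
    {"step": 12500, "val_loss": 0.3861, "accuracy": 0.8132, "f1": 0.7761},
    {"step": 13500, "val_loss": 0.4096, "accuracy": 0.7995, "f1": 0.7718},
]

# Lower validation loss is better; higher F1 is better
best_by_loss = min(training_log, key=lambda row: row["val_loss"])
best_by_f1 = max(training_log, key=lambda row: row["f1"])

print(best_by_loss["step"])  # 12000
print(best_by_f1["step"])  # 12500
```

The two criteria disagree here, so which checkpoint counts as "best" depends on the metric chosen for model selection.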

apache-2.0 | ['generated_from_trainer'] | false | Brain_Tumor_Classification_using_swin This model is a fine-tuned version of [microsoft/swin-base-patch4-window7-224-in22k](https://huggingface.co/microsoft/swin-base-patch4-window7-224-in22k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0123 - Accuracy: 0.9961 - F1: 0.9961 - Recall: 0.9961 - Precision: 0.9961 | a4ddcebfaacb72ee3b6820e93716328c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Recall | Precision | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:------:|:---------:| | 0.1234 | 1.0 | 180 | 0.0450 | 0.9840 | 0.9840 | 0.9840 | 0.9840 | | 0.0837 | 2.0 | 360 | 0.0198 | 0.9926 | 0.9926 | 0.9926 | 0.9926 | | 0.0373 | 3.0 | 540 | 0.0123 | 0.9961 | 0.9961 | 0.9961 | 0.9961 | | f49cdcb28def39514d736e4a3bbecebf |

apache-2.0 | ['translation'] | false | opus-mt-ln-de * source languages: ln * target languages: de * OPUS readme: [ln-de](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ln-de/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-21.zip](https://object.pouta.csc.fi/OPUS-MT-models/ln-de/opus-2020-01-21.zip) * test set translations: [opus-2020-01-21.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ln-de/opus-2020-01-21.test.txt) * test set scores: [opus-2020-01-21.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ln-de/opus-2020-01-21.eval.txt) | 28b7a40cc847d686425dbab38dee2aeb |

cc-by-4.0 | ['generated_from_trainer'] | false | CTEBMSP_ner_test This model is a fine-tuned version of [chizhikchi/Spanish_disease_finder](https://huggingface.co/chizhikchi/Spanish_disease_finder) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0560 - Diso Precision: 0.8925 - Diso Recall: 0.8945 - Diso F1: 0.8935 - Diso Number: 2645 - Overall Precision: 0.8925 - Overall Recall: 0.8945 - Overall F1: 0.8935 - Overall Accuracy: 0.9899 | 01c6804ffb6faae339dccffdfba9e874 |

cc-by-4.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | 714f32f3f90f2a8c4d631d2c507128fa |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Diso Precision | Diso Recall | Diso F1 | Diso Number | Overall Precision | Overall Recall | Overall F1 | Overall Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------------:|:-----------:|:-------:|:-----------:|:-----------------:|:--------------:|:----------:|:----------------:| | 0.04 | 1.0 | 1570 | 0.0439 | 0.8410 | 0.8858 | 0.8628 | 2645 | 0.8410 | 0.8858 | 0.8628 | 0.9877 | | 0.0173 | 2.0 | 3140 | 0.0487 | 0.8728 | 0.8843 | 0.8785 | 2645 | 0.8728 | 0.8843 | 0.8785 | 0.9885 | | 0.0071 | 3.0 | 4710 | 0.0496 | 0.8911 | 0.8945 | 0.8928 | 2645 | 0.8911 | 0.8945 | 0.8928 | 0.9898 | | 0.0025 | 4.0 | 6280 | 0.0560 | 0.8925 | 0.8945 | 0.8935 | 2645 | 0.8925 | 0.8945 | 0.8935 | 0.9899 | | b442714dd9c0347a8a48e9d28bcf3230 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large V2 Spanish This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the mozilla-foundation/common_voice_11_0 es dataset. It achieves the following results on the evaluation set: - Loss: 0.1648 - Wer: 5.0745 | ea9e3e1af8fb15becdc933681d5ed4ae |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-06 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - distributed_type: multi-GPU - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 1500 | c53bdbffcc6ccfc9d23dc539f4c26c82 |
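To make the `linear` scheduler setting concrete, here is a minimal sketch of a linearly decaying learning rate over the 1500 training steps. It assumes no warmup (none is listed above) and decay to zero at the final step; `linear_lr` is a hypothetical helper, not the scheduler object used in training.

```python
def linear_lr(step: int, base_lr: float = 1e-06,
              total_steps: int = 1500, warmup_steps: int = 0) -> float:
    """Learning rate at `step` under linear warmup followed by linear decay to 0."""
    if step < warmup_steps:
        # Linear ramp from 0 to base_lr (unused here, since warmup_steps is assumed 0).
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 750, 1500):
    print(step, linear_lr(step))
```

Under these assumptions the rate at the midpoint checkpoint (step 750) is half the initial value.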

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.1556 | 0.5 | 750 | 0.1683 | 5.0959 | | 0.1732 | 1.35 | 1500 | 0.1648 | 5.0745 | | abcdd620d0b405dc22e1a41a6f0dfb84 |
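For readers unfamiliar with the Wer column, the sketch below computes word error rate as word-level edit distance divided by the number of reference words. It is an illustration only: `word_error_rate` is a hypothetical helper, the evaluation above may have applied text normalization that is not shown here, and reading the table values (around 5.07) as percentages is an assumption.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("el gato come pescado", "el gato come"))
```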

apache-2.0 | ['generated_from_trainer'] | false | t5-base-pointer-top_v2 This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) on the top_v2 dataset. It achieves the following results on the evaluation set: - Loss: 0.0256 - Exact Match: 0.8517 | daa2cbc61de2c7354c4d30eff386a379 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 128 - total_train_batch_size: 512 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 3000 | 644924120692b2ce4b7cb5681a3ea302 |
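The relation between `train_batch_size`, `gradient_accumulation_steps` and `total_train_batch_size` can be checked with a few lines of arithmetic. This is a sketch assuming a single device (no device count is listed above); the function name is illustrative.

```python
def total_train_batch_size(per_device_batch: int, grad_accum_steps: int,
                           num_devices: int = 1) -> int:
    """Number of examples contributing to each optimizer update."""
    return per_device_batch * grad_accum_steps * num_devices


effective = total_train_batch_size(per_device_batch=4, grad_accum_steps=128)
print(effective)          # examples per optimizer step
print(effective * 3000)   # examples processed over training_steps = 3000
```

A small per-device batch combined with heavy accumulation trades wall-clock time for memory: each optimizer step still sees 512 examples, but only 4 are held on the device at once.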

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Exact Match | |:-------------:|:-----:|:----:|:---------------:|:-----------:| | 1.4545 | 0.82 | 200 | 0.2542 | 0.1294 | | 0.1878 | 1.65 | 400 | 0.0668 | 0.2128 | | 0.0796 | 2.47 | 600 | 0.0466 | 0.2276 | | 0.0536 | 3.29 | 800 | 0.0356 | 0.2309 | | 0.0424 | 4.12 | 1000 | 0.0317 | 0.2328 | | 0.0356 | 4.94 | 1200 | 0.0295 | 0.2340 | | 0.0306 | 5.76 | 1400 | 0.0288 | 0.2357 | | 0.0277 | 6.58 | 1600 | 0.0271 | 0.2351 | | 0.0243 | 7.41 | 1800 | 0.0272 | 0.2351 | | 0.0225 | 8.23 | 2000 | 0.0272 | 0.2353 | | 0.0206 | 9.05 | 2200 | 0.0267 | 0.2368 | | 0.0187 | 9.88 | 2400 | 0.0260 | 0.2367 | | 0.0173 | 10.7 | 2600 | 0.0256 | 0.2383 | | 0.0161 | 11.52 | 2800 | 0.0260 | 0.2383 | | 0.0153 | 12.35 | 3000 | 0.0257 | 0.2377 | | 8bc0e2f415db52b306eb0332910c119a |

apache-2.0 | ['speech'] | false | DistilXLSR-53 for BP [DistilXLSR-53 for BP: DistilHuBERT applied to Wav2vec XLSR-53 for Brazilian Portuguese](https://github.com/s3prl/s3prl/tree/master/s3prl/upstream/distiller) The base model was pretrained on speech audio sampled at 16kHz. When using the model, make sure that your speech input is also sampled at 16kHz. **Note**: This model does not have a tokenizer as it was pretrained on audio alone. In order to use this model for **speech recognition**, a tokenizer should be created and the model should be fine-tuned on labeled text data. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for a more detailed explanation of how to fine-tune the model. Paper: [DistilHuBERT: Speech Representation Learning by Layer-wise Distillation of Hidden-unit BERT](https://arxiv.org/abs/2110.01900) Authors: Heng-Jui Chang, Shu-wen Yang, Hung-yi Lee **Note 2**: The XLSR-53 model was distilled using [Brazilian Portuguese Datasets](https://huggingface.co/lgris/bp400-xlsr) for test purposes. The dataset is quite small for such a task, so performance might not be as good as in the [original work](https://arxiv.org/abs/2110.01900). **Abstract** Self-supervised speech representation learning methods like wav2vec 2.0 and Hidden-unit BERT (HuBERT) leverage unlabeled speech data for pre-training and offer good representations for numerous speech processing tasks. Despite the success of these methods, they require large memory and high pre-training costs, making them inaccessible for researchers in academia and small companies. Therefore, this paper introduces DistilHuBERT, a novel multi-task learning framework to distill hidden representations from a HuBERT model directly. This method reduces HuBERT's size by 75% and 73% faster while retaining most performance in ten different tasks. Moreover, DistilHuBERT required little training time and data, opening the possibilities of pre-training personal and on-device SSL models for speech. | 707e4d2bfdd84b86c94ee935c3d18c4c |

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/domain_transfer_clinic_credit_cards-massive_iot-roberta-large-v1-2-6 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | ac1c556f9b822c84b1d485f52b4ed846 |
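Step 1 relies on sentence pairs built from the few labeled examples. The sketch below shows the idea in a simplified form: pairs with the same label are marked as positives and pairs with different labels as negatives. `make_contrastive_pairs` is a hypothetical helper, and it enumerates every pair, whereas the SetFit library samples pairs; treat it as an illustration rather than the library's implementation.

```python
from itertools import combinations


def make_contrastive_pairs(texts, labels):
    """Return (text_a, text_b, target) triples; target is 1.0 for same-label pairs."""
    pairs = []
    for (text_a, label_a), (text_b, label_b) in combinations(zip(texts, labels), 2):
        pairs.append((text_a, text_b, 1.0 if label_a == label_b else 0.0))
    return pairs


# Made-up few-shot examples for illustration only.
texts = ["turn on the lamp", "switch off the lights",
         "block my credit card", "what is my card limit"]
labels = ["iot", "iot", "cards", "cards"]
pairs = make_contrastive_pairs(texts, labels)
print(len(pairs), sum(target for _, _, target in pairs))
```

Even a handful of labeled sentences yields many training pairs, which is what makes the contrastive fine-tuning stage workable in a few-shot setting.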

mit | ['generated_from_trainer'] | false | bert-base-historic-multilingual-cased-squad-fr This model is a fine-tuned version of [dbmdz/bert-base-historic-multilingual-cased](https://huggingface.co/dbmdz/bert-base-historic-multilingual-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.7001 | 36b87462db3c19b8557b23368c2e5317 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.9769 | 1.0 | 3660 | 1.8046 | | 1.6309 | 2.0 | 7320 | 1.7001 | | ed7d1c24c778bd6b6ac94394f0d00457 |

apache-2.0 | ['generated_from_trainer'] | false | barthez-deft-linguistique This model is a fine-tuned version of [moussaKam/barthez](https://huggingface.co/moussaKam/barthez) on an unknown dataset. **Note**: this model is one of the preliminary experiments and it underperforms the models published in the paper (using [MBartHez](https://huggingface.co/moussaKam/mbarthez) and HAL/Wiki pre-training + copy mechanisms). It achieves the following results on the evaluation set: - Loss: 1.7596 - Rouge1: 41.989 - Rouge2: 22.4524 - Rougel: 32.7966 - Rougelsum: 32.7953 - Gen Len: 22.1549 | 300e66dd0996a00d93556d3886e64756 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 20.0 - mixed_precision_training: Native AMP | 7fc310197688b1d4a577e24eda1f2722 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 3.0569 | 1.0 | 108 | 2.0282 | 31.6993 | 14.9483 | 25.5565 | 25.4379 | 18.3803 | | 2.2892 | 2.0 | 216 | 1.8553 | 35.2563 | 18.019 | 28.3135 | 28.2927 | 18.507 | | 1.9062 | 3.0 | 324 | 1.7696 | 37.4613 | 18.1488 | 28.9959 | 29.0134 | 19.5352 | | 1.716 | 4.0 | 432 | 1.7641 | 37.6903 | 18.7496 | 30.1097 | 30.1027 | 18.9577 | | 1.5722 | 5.0 | 540 | 1.7781 | 38.1013 | 19.8291 | 29.8142 | 29.802 | 19.169 | | 1.4655 | 6.0 | 648 | 1.7661 | 38.3557 | 20.3309 | 30.5068 | 30.4728 | 19.3662 | | 1.3507 | 7.0 | 756 | 1.7596 | 39.7409 | 20.2998 | 31.0849 | 31.1152 | 19.3944 | | 1.2874 | 8.0 | 864 | 1.7706 | 37.7846 | 20.3457 | 30.6826 | 30.6321 | 19.4789 | | 1.2641 | 9.0 | 972 | 1.7848 | 38.7421 | 19.5701 | 30.5798 | 30.6305 | 19.3944 | | 1.1192 | 10.0 | 1080 | 1.8008 | 40.3313 | 20.3378 | 31.8325 | 31.8648 | 19.5493 | | 1.0724 | 11.0 | 1188 | 1.8450 | 38.9612 | 20.5719 | 31.4496 | 31.3144 | 19.8592 | | 1.0077 | 12.0 | 1296 | 1.8364 | 36.5997 | 18.46 | 29.1808 | 29.1705 | 19.7324 | | 0.9362 | 13.0 | 1404 | 1.8677 | 38.0371 | 19.2321 | 30.3893 | 30.3926 | 19.6338 | | 0.8868 | 14.0 | 1512 | 1.9154 | 36.4737 | 18.5314 | 29.325 | 29.3634 | 19.6479 | | 0.8335 | 15.0 | 1620 | 1.9344 | 35.7583 | 18.0687 | 27.9666 | 27.8675 | 19.8028 | | 0.8305 | 16.0 | 1728 | 1.9556 | 37.2137 | 18.2199 | 29.5959 | 29.5799 | 19.9577 | | 0.8057 | 17.0 | 1836 | 1.9793 | 36.6834 | 17.8505 | 28.6701 | 28.7145 | 19.7324 | | 0.7869 | 18.0 | 1944 | 1.9994 | 37.5918 | 19.1984 | 28.8569 | 28.8278 | 19.7606 | | 0.7549 | 19.0 | 2052 | 2.0117 | 37.3278 | 18.5169 | 28.778 | 28.7737 | 19.8028 | | 0.7497 | 20.0 | 2160 | 2.0189 | 37.7513 | 19.1813 | 29.3675 | 29.402 | 19.6901 | | 5b7828eaca00a60c797f21f59d7e863d |

apache-2.0 | ['generated_from_trainer', 'translation'] | false | mt-hr-sv-finetuned This model is a fine-tuned version of [Helsinki-NLP/opus-mt-hr-sv](https://huggingface.co/Helsinki-NLP/opus-mt-hr-sv) on the None dataset. It achieves the following results on the evaluation set: - eval_loss: 0.9565 - eval_bleu: 49.8248 - eval_runtime: 873.8605 - eval_samples_per_second: 16.982 - eval_steps_per_second: 4.246 - epoch: 5.0 - step: 27825 | a625b181e0717e7bb7810dcb0aeaa724 |

apache-2.0 | ['generated_from_trainer', 'translation'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-06 - train_batch_size: 24 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 - mixed_precision_training: Native AMP | ef0a16d0d7eb59be5eccec46fd513eac |

apache-2.0 | ['text-to-speech', 'TTS', 'speech-synthesis', 'Tacotron2', 'speechbrain'] | false | Text-to-Speech (TTS) with Tacotron2 trained on LJSpeech This repository provides all the necessary tools for Text-to-Speech (TTS) with SpeechBrain using a [Tacotron2](https://arxiv.org/abs/1712.05884) pretrained on [LJSpeech](https://keithito.com/LJ-Speech-Dataset/). The pre-trained model takes a short text as input and produces a spectrogram as output. One can get the final waveform by applying a vocoder (e.g., HiFIGAN) on top of the generated spectrogram. | f80c41243a037de0806cb7971e8a169b |

apache-2.0 | ['text-to-speech', 'TTS', 'speech-synthesis', 'Tacotron2', 'speechbrain'] | false | Initialize TTS (tacotron2) and Vocoder (HiFIGAN) tacotron2 = Tacotron2.from_hparams(source="speechbrain/tts-tacotron2-ljspeech", savedir="tmpdir_tts") hifi_gan = HIFIGAN.from_hparams(source="speechbrain/tts-hifigan-ljspeech", savedir="tmpdir_vocoder") | 1b54e240aa5a7863bea4c3d28bdafd7c |

apache-2.0 | ['text-to-speech', 'TTS', 'speech-synthesis', 'Tacotron2', 'speechbrain'] | false | Save the waveform torchaudio.save('example_TTS.wav',waveforms.squeeze(1), 22050) ``` If you want to generate multiple sentences in one shot, you can do it this way: ``` from speechbrain.pretrained import Tacotron2 tacotron2 = Tacotron2.from_hparams(source="speechbrain/TTS_Tacotron2", savedir="tmpdir") items = [ "A quick brown fox jumped over the lazy dog", "How much wood would a woodchuck chuck?", "Never odd or even" ] mel_outputs, mel_lengths, alignments = tacotron2.encode_batch(items) ``` | ca9e72be7d0c5f671b35a71414bf19de |

apache-2.0 | ['text-to-speech', 'TTS', 'speech-synthesis', 'Tacotron2', 'speechbrain'] | false | Training The model was trained with SpeechBrain. To train it from scratch follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ```bash cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run Training: ```bash cd recipes/LJSpeech/TTS/tacotron2/ python train.py --device=cuda:0 --max_grad_norm=1.0 --data_folder=/your_folder/LJSpeech-1.1 hparams/train.yaml ``` You can find our training results (models, logs, etc) [here](https://drive.google.com/drive/folders/1PKju-_Nal3DQqd-n0PsaHK-bVIOlbf26?usp=sharing). | 064b02c933e9db7cc766203275dd62d7 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-imdb-demo This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.4328 - Accuracy: 0.928 | 15e9886f9e8354bb4e94b59e93282e87 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 1337 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5.0 | d353b7be4516f3ab1250e04ec6a59c24 |
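The `cosine` scheduler with `lr_scheduler_warmup_ratio: 0.1` can be sketched as a linear warmup followed by a half-cosine decay. The total of 13285 steps is taken from the last row of this model's training results; rounding the warmup length up and decaying all the way to zero are assumptions, and `cosine_lr` is a hypothetical helper.

```python
import math


def cosine_lr(step: int, base_lr: float = 2e-05,
              total_steps: int = 13285, warmup_ratio: float = 0.1) -> float:
    """Learning rate at `step`: linear warmup, then half-cosine decay to 0."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


for step in (0, 1329, 7307, 13285):
    print(step, cosine_lr(step))
```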

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.3459 | 1.0 | 2657 | 0.2362 | 0.9091 | | 0.1612 | 2.0 | 5314 | 0.2668 | 0.9248 | | 0.0186 | 3.0 | 7971 | 0.3274 | 0.9323 | | 0.1005 | 4.0 | 10628 | 0.3978 | 0.9277 | | 0.0006 | 5.0 | 13285 | 0.4328 | 0.928 | | 35b767e3768187f6eec7674d11ac4d55 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | VIBES-2,-Checkpoint-1 Dreambooth model trained by darkvibes with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 9743060c4e7538cafcf97fc935a8a5b2 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xlsr-53-espeak-cv-ft-xas3-ntsema-colab This model is a fine-tuned version of [facebook/wav2vec2-xlsr-53-espeak-cv-ft](https://huggingface.co/facebook/wav2vec2-xlsr-53-espeak-cv-ft) on the audiofolder dataset. It achieves the following results on the evaluation set: - Loss: 4.3037 - Wer: 0.9713 | 1455ef361d6e3313772b73c9620d420b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30 - mixed_precision_training: Native AMP | 8a1fa1c5adc17e951f4e1d5fbd3c5bb0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 7.2912 | 9.09 | 400 | 3.9091 | 1.0 | | 2.5952 | 18.18 | 800 | 3.8703 | 0.9959 | | 2.3509 | 27.27 | 1200 | 4.3037 | 0.9713 | | 636c062e4dd1c8a06dd4bd33a393ba56 |

apache-2.0 | ['translation'] | false | bul-ita * source group: Bulgarian * target group: Italian * OPUS readme: [bul-ita](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/bul-ita/README.md) * model: transformer * source language(s): bul * target language(s): ita * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-07-03.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/bul-ita/opus-2020-07-03.zip) * test set translations: [opus-2020-07-03.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/bul-ita/opus-2020-07-03.test.txt) * test set scores: [opus-2020-07-03.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/bul-ita/opus-2020-07-03.eval.txt) | 33e9764d51560a776a234061b564123e |

apache-2.0 | ['translation'] | false | System Info: - hf_name: bul-ita - source_languages: bul - target_languages: ita - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/bul-ita/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['bg', 'it'] - src_constituents: {'bul', 'bul_Latn'} - tgt_constituents: {'ita'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/bul-ita/opus-2020-07-03.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/bul-ita/opus-2020-07-03.test.txt - src_alpha3: bul - tgt_alpha3: ita - short_pair: bg-it - chrF2_score: 0.653 - bleu: 43.1 - brevity_penalty: 0.987 - ref_len: 16951.0 - src_name: Bulgarian - tgt_name: Italian - train_date: 2020-07-03 - src_alpha2: bg - tgt_alpha2: it - prefer_old: False - long_pair: bul-ita - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 816c199b44fff29408f8d01563b620eb |
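The `brevity_penalty` field relates the total length of the system output to `ref_len`. The sketch below uses the standard BLEU definition, which is assumed to be what the scoring tool applied. The hypothesis length is not reported in the card, so the value derived here is only an inference from the two reported numbers.

```python
import math


def brevity_penalty(hyp_len: float, ref_len: float) -> float:
    """BLEU brevity penalty: 1.0 unless the hypothesis is shorter than the reference."""
    if hyp_len <= 0:
        return 0.0
    if hyp_len >= ref_len:
        return 1.0
    return math.exp(1.0 - ref_len / hyp_len)


def implied_hyp_len(bp: float, ref_len: float) -> float:
    """Invert the penalty (valid for 0 < bp < 1) to estimate the hypothesis length."""
    return ref_len / (1.0 - math.log(bp))


estimate = implied_hyp_len(0.987, 16951.0)
print(round(estimate))
print(round(brevity_penalty(round(estimate), 16951.0), 3))
```

A penalty this close to 1 indicates that the translations are only slightly shorter than the references overall.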

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer'] | false | This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - UR dataset. It achieves the following results on the evaluation set: - Loss: 3.8433 - Wer: 0.9852 | a170f6dbb998cacf432af9f4c7c266bc |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 64 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 2000 - mixed_precision_training: Native AMP | 77480b423065e4d1e069bb0839e9ee92 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:------:| | 1.468 | 166.67 | 500 | 3.0262 | 1.0035 | | 0.0572 | 333.33 | 1000 | 3.5352 | 0.9721 | | 0.0209 | 500.0 | 1500 | 3.7266 | 0.9834 | | 0.0092 | 666.67 | 2000 | 3.8433 | 0.9852 | | c4a576d3c8090d6881869e904d65f7df |

mit | ['exbert'] | false | Overview **Language model:** gelectra-base-germanquad-distilled **Language:** German **Training data:** GermanQuAD train set (~ 12MB) **Eval data:** GermanQuAD test set (~ 5MB) **Infrastructure**: 1x V100 GPU **Published**: Apr 21st, 2021 | ef2787a61f8db9a9164ae140e8d84a3d |

mit | ['exbert'] | false | Details - We trained a German question answering model with a gelectra-base model as its basis. - The dataset is GermanQuAD, a new German-language dataset, which we hand-annotated and published [online](https://deepset.ai/germanquad). - The training dataset is one-way annotated and contains 11518 questions and 11518 answers, while the test dataset is three-way annotated, so it contains 2204 questions with 2204·3−76 = 6536 answers, because we removed 76 wrong answers. - In addition to the annotations in GermanQuAD, haystack's distillation feature was used for training. deepset/gelectra-large-germanquad was used as the teacher model. See https://deepset.ai/germanquad for more details and dataset download in SQuAD format. | 3aa6f8b377f182cd090bab18a6b956e0 |

mit | ['exbert'] | false | Performance We evaluated the extractive question answering performance on our GermanQuAD test set. Model types and training data are included in the model name. For finetuning XLM-Roberta, we use the English SQuAD v2.0 dataset. The GELECTRA models are warm started on the German translation of SQuAD v1.1 and finetuned on GermanQuAD. The human baseline was computed for the 3-way test set by taking one answer as prediction and the other two as ground truth. ``` "exact": 62.4773139745916 "f1": 80.9488017070188 ``` | faa5650cdcd2b6f87b42b82fbb2c773f |
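The "exact" and "f1" scores follow the SQuAD-style evaluation, where each prediction is compared with every reference answer and the best score is kept. The sketch below is a simplified illustration: the normalization only lowercases and strips ASCII punctuation, which is an assumption and may differ from the script actually used for GermanQuAD, and all function names are hypothetical.

```python
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop ASCII punctuation, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    return " ".join(text.split())


def exact_match(prediction: str, truth: str) -> float:
    return float(normalize(prediction) == normalize(truth))


def token_f1(prediction: str, truth: str) -> float:
    """Token-overlap F1 between a predicted and a reference answer."""
    pred_tokens = normalize(prediction).split()
    true_tokens = normalize(truth).split()
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)


def best_over_references(metric, prediction: str, truths) -> float:
    """Score against every reference answer and keep the maximum."""
    return max(metric(prediction, truth) for truth in truths)


# Made-up example with three reference answers, as in the 3-way test set.
references = ["Berlin", "in Berlin", "Hauptstadt Berlin"]
print(best_over_references(exact_match, "berlin.", references))
print(best_over_references(token_f1, "der Stadt Berlin", references))
```

The human baseline described above fits the same scheme: one annotator's answer plays the role of the prediction and the other two serve as references.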

mit | ['exbert'] | false | Authors - Timo Möller: `timo.moeller [at] deepset.ai` - Julian Risch: `julian.risch [at] deepset.ai` - Malte Pietsch: `malte.pietsch [at] deepset.ai` - Michel Bartels: `michel.bartels [at] deepset.ai` | 52c5a2692eaba2de33b738c76931a31f |

mit | ['exbert'] | false | About us  We bring NLP to the industry via open source! Our focus: Industry specific language models & large scale QA systems. Some of our work: - [German BERT (aka "bert-base-german-cased")](https://deepset.ai/german-bert) - [GermanQuAD and GermanDPR datasets and models (aka "gelectra-base-germanquad", "gbert-base-germandpr")](https://deepset.ai/germanquad) - [FARM](https://github.com/deepset-ai/FARM) - [Haystack](https://github.com/deepset-ai/haystack/) Get in touch: [Twitter](https://twitter.com/deepset_ai) | [LinkedIn](https://www.linkedin.com/company/deepset-ai/) | [Slack](https://haystack.deepset.ai/community/join) | [GitHub Discussions](https://github.com/deepset-ai/haystack/discussions) | [Website](https://deepset.ai) By the way: [we're hiring!](http://www.deepset.ai/jobs) | 3eeb41bdfdb3d31db88fad65fc96c8dd |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 250 - num_epochs: 5 | 5e4725885dbf1f5ee12f0f22ff6a1d09 |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2r_de_xls-r_age_teens-10_sixties-0_s380 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | ea8ff687fdc17b67c86b1c812021ad91 |

apache-2.0 | ['science', 'multi-displinary'] | false | ScholarBERT_100_WB Model This is the **ScholarBERT_100_WB** variant of the ScholarBERT model family. The model is pretrained on a large collection of scientific research articles (**221B tokens**). The pretraining data also includes Wikipedia+BookCorpus, which were used to pretrain the [BERT-base](https://huggingface.co/bert-base-cased) and [BERT-large](https://huggingface.co/bert-large-cased) models. This is a **cased** (case-sensitive) model. The tokenizer will not convert all inputs to lower-case by default. The model is based on the same architecture as [BERT-large](https://huggingface.co/bert-large-cased) and has a total of 340M parameters. | c9eae7987b128624c60ba1a5b71234a9 |

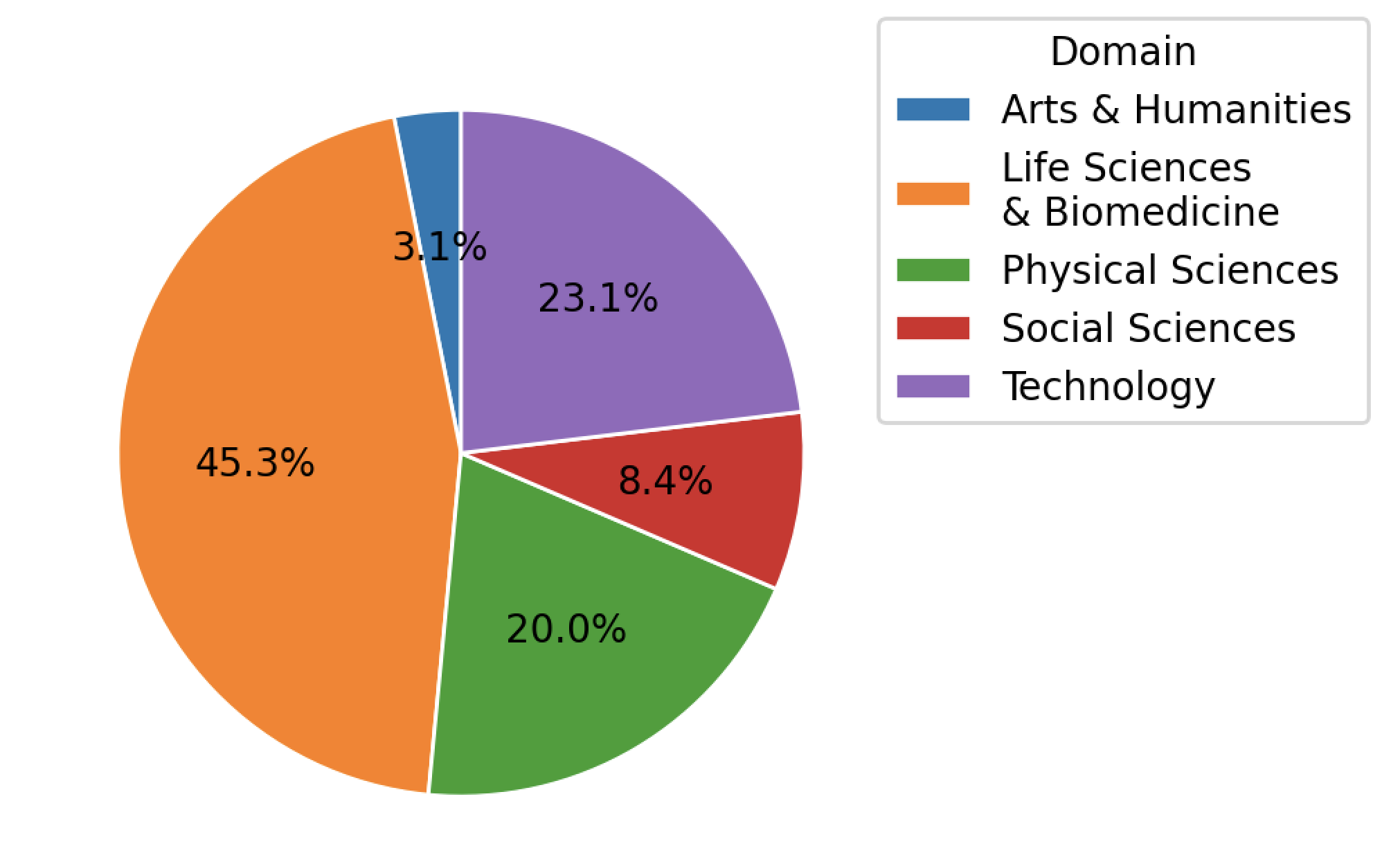

apache-2.0 | ['science', 'multi-displinary'] | false | Training Dataset The vocab and the model are pretrained on **100% of the PRD** scientific literature dataset and Wikipedia+BookCorpus. The PRD dataset is provided by Public.Resource.Org, Inc. (“Public Resource”), a nonprofit organization based in California. This dataset was constructed from a corpus of journal article files, from which we successfully extracted text from 75,496,055 articles from 178,928 journals. The articles span Arts & Humanities, Life Sciences & Biomedicine, Physical Sciences, Social Sciences, and Technology. The distribution of articles is shown below. | 7ae21ff9e39d2b436b4c75073e62f31e |

apache-2.0 | ['science', 'multi-displinary'] | false | BibTeX entry and citation info If using this model, please cite this paper: ``` @misc{hong2022scholarbert, doi = {10.48550/ARXIV.2205.11342}, url = {https://arxiv.org/abs/2205.11342}, author = {Hong, Zhi and Ajith, Aswathy and Pauloski, Gregory and Duede, Eamon and Malamud, Carl and Magoulas, Roger and Chard, Kyle and Foster, Ian}, title = {ScholarBERT: Bigger is Not Always Better}, publisher = {arXiv}, year = {2022} } ``` | 0943b932a8c51828e721e9972b2f3f7f |

apache-2.0 | ['translation'] | false | opus-mt-ja-en * source languages: ja * target languages: en * OPUS readme: [ja-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ja-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2019-12-18.zip](https://object.pouta.csc.fi/OPUS-MT-models/ja-en/opus-2019-12-18.zip) * test set translations: [opus-2019-12-18.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ja-en/opus-2019-12-18.test.txt) * test set scores: [opus-2019-12-18.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ja-en/opus-2019-12-18.eval.txt) | f26b96606b795459c0b8f2cb50163b4a |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/msmarco-distilbert-base-tas-b This is a port of the [DistilBert TAS-B Model](https://huggingface.co/sebastian-hofstaetter/distilbert-dot-tas_b-b256-msmarco) to a [sentence-transformers](https://www.SBERT.net) model. It maps sentences & paragraphs to a 768-dimensional dense vector space and is optimized for the task of semantic search. | 69ccc73b8b4afa654b342c99fe8d3500 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer, util query = "How many people live in London?" docs = ["Around 9 Million people live in London", "London is known for its financial district"] | ff4e1701b9f36fc68333a26dc8dda9b8 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Load model from HuggingFace Hub tokenizer = AutoTokenizer.from_pretrained("sentence-transformers/msmarco-distilbert-base-tas-b") model = AutoModel.from_pretrained("sentence-transformers/msmarco-distilbert-base-tas-b") | 0b1394c5e899f83346be688c95b5f27e |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/msmarco-distilbert-base-tas-b) | 8701f678ae2556c8197aa0b2f8bfa538 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Full Model Architecture ``` SentenceTransformer( (0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: DistilBertModel (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': True, 'pooling_mode_mean_tokens': False, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False}) ) ``` | 5daf2d76bedbf0818ca86e9511f8711a |
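The pooling configuration above has `pooling_mode_cls_token` set to `True` and the mean and max modes disabled, so the sentence embedding is the vector of the first (CLS) token. The sketch below contrasts this with mean pooling using plain Python lists. It is an illustration with toy 3-dimensional vectors instead of the real 768-dimensional ones, and the helper names are hypothetical.

```python
def cls_pooling(token_embeddings):
    """Sentence embedding = embedding of the first (CLS) token."""
    if not token_embeddings:
        raise ValueError("expected at least one token embedding")
    return list(token_embeddings[0])


def mean_pooling(token_embeddings, attention_mask):
    """Average the embeddings of non-padding tokens (shown for contrast only)."""
    kept = [vec for vec, keep in zip(token_embeddings, attention_mask) if keep]
    if not kept:
        raise ValueError("attention mask removed every token")
    dim = len(kept[0])
    return [sum(vec[d] for vec in kept) / len(kept) for d in range(dim)]


# Toy sequence: CLS token, two word tokens, one padding token.
tokens = [[1.0, 0.0, 2.0], [3.0, 1.0, 0.0], [5.0, 2.0, 1.0], [9.0, 9.0, 9.0]]
mask = [1, 1, 1, 0]
print(cls_pooling(tokens))
print(mean_pooling(tokens, mask))
```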

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ft1500_norm1000 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.0875 - Mse: 1.3594 - Mae: 0.5794 - R2: 0.3573 - Accuracy: 0.7015 | 2fb20aa75c3ebc496700f5bb47b0744a |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 72d24a9f042d89d26a053e58117792b4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mse | Mae | R2 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:|:------:|:--------:| | 0.8897 | 1.0 | 3122 | 1.0463 | 1.3078 | 0.5936 | 0.3817 | 0.7008 | | 0.7312 | 2.0 | 6244 | 1.0870 | 1.3588 | 0.5796 | 0.3576 | 0.7002 | | 0.5348 | 3.0 | 9366 | 1.1056 | 1.3820 | 0.5786 | 0.3467 | 0.7124 | | 0.3693 | 4.0 | 12488 | 1.0866 | 1.3582 | 0.5854 | 0.3579 | 0.7053 | | 0.2848 | 5.0 | 15610 | 1.0875 | 1.3594 | 0.5794 | 0.3573 | 0.7015 | | a61f44f6d74de260d1bed793199edbf7 |
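The Mse, Mae and R2 columns are standard regression metrics. The sketch below gives their textbook definitions in plain Python; the function names and toy data are illustrative, and how the Accuracy column was derived from the regression outputs is not stated in the card, so it is not reproduced here.

```python
def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy data for illustration only.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 5.0]
print(mse(y_true, y_pred), mae(y_true, y_pred), r2(y_true, y_pred))
```

Since R2 equals 1 minus MSE divided by the variance of the targets, the two columns move in opposite directions across epochs, as the table shows.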

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 0.0083 | 6cc249faa08e9ee4c16dc4bf7961cbf3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2258 | 1.0 | 5533 | 0.0560 | | 0.952 | 2.0 | 11066 | 0.0096 | | 0.7492 | 3.0 | 16599 | 0.0083 | | f48e2527effc9b272e3756586c6ceb7e |
