license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | ['generated_from_trainer'] | false | finetuned_gpt2-large_sst2_negation0.8 This model is a fine-tuned version of [gpt2-large](https://huggingface.co/gpt2-large) on the sst2 dataset. It achieves the following results on the evaluation set: - Loss: 3.6201 | a5368c775a805ea510d8d430a60d71fe |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.3586 | 1.0 | 1111 | 3.3100 | | 1.812 | 2.0 | 2222 | 3.5114 | | 1.5574 | 3.0 | 3333 | 3.6201 | | 73c4982935140e124636ebca8ed932fc |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | MAXIM pre-trained on RealBlur-R for image deblurring MAXIM model pre-trained for image deblurring. It was introduced in the paper [MAXIM: Multi-Axis MLP for Image Processing](https://arxiv.org/abs/2201.02973) by Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li and first released in [this repository](https://github.com/google-research/maxim). Disclaimer: The team releasing MAXIM did not write a model card for this model so this model card has been written by the Hugging Face team. | 57500ed39a56d141657e99e972a57604 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Model description MAXIM introduces a shared MLP-based backbone for different image processing tasks such as image deblurring, deraining, denoising, dehazing, low-light image enhancement, and retouching. The following figure depicts the main components of MAXIM:  | 19e225724003366b02e2879180a9a857 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Training procedure and results The authors didn't release the training code. For more details on how the model was trained, refer to the [original paper](https://arxiv.org/abs/2201.02973). As per the [table](https://github.com/google-research/maxim | 15a9b38794fbfa91094d28019ec11aeb |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Intended uses & limitations You can use the raw model for image deblurring tasks. The model is [officially released in JAX](https://github.com/google-research/maxim). It was ported to TensorFlow in [this repository](https://github.com/sayakpaul/maxim-tf). | 7d5a519e70b0961443e0955556ab58f7 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | How to use Here is how to use this model: ```python from huggingface_hub import from_pretrained_keras from PIL import Image import tensorflow as tf import numpy as np import requests url = "https://github.com/sayakpaul/maxim-tf/raw/main/images/Deblurring/input/1fromGOPR0950.png" image = Image.open(requests.get(url, stream=True).raw) image = np.array(image) image = tf.convert_to_tensor(image) image = tf.image.resize(image, (256, 256)) model = from_pretrained_keras("google/maxim-s3-deblurring-realblur-r") predictions = model.predict(tf.expand_dims(image, 0)) ``` For a more elaborate prediction pipeline, refer to [this Colab Notebook](https://colab.research.google.com/github/sayakpaul/maxim-tf/blob/main/notebooks/inference-dynamic-resize.ipynb). | f2323f6916cff28beac40c0c262e9fdd |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Citation ```bibtex @article{tu2022maxim, title={MAXIM: Multi-Axis MLP for Image Processing}, author={Tu, Zhengzhong and Talebi, Hossein and Zhang, Han and Yang, Feng and Milanfar, Peyman and Bovik, Alan and Li, Yinxiao}, journal={CVPR}, year={2022}, } ``` | b6db0fb3ed71e777b8a9356a54ea1b90 |

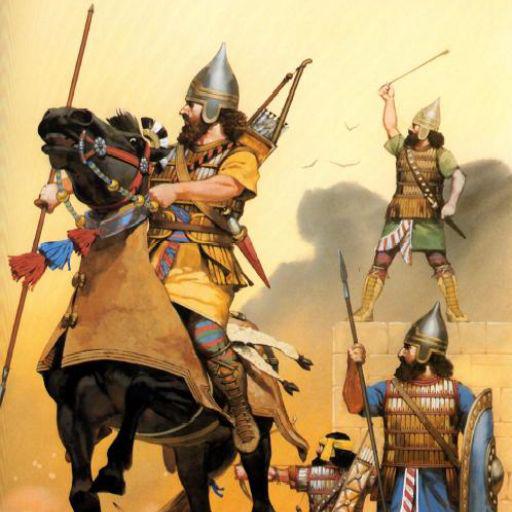

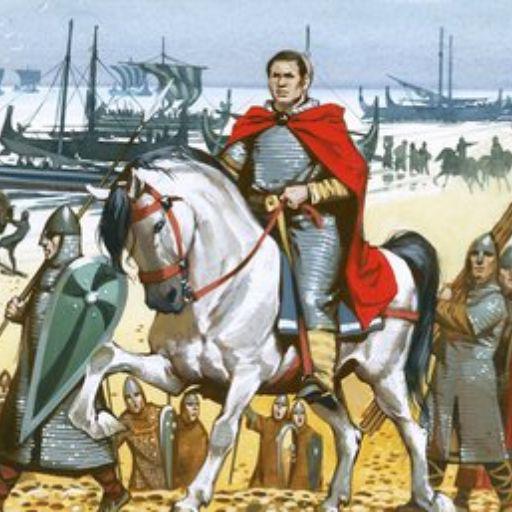

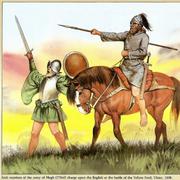

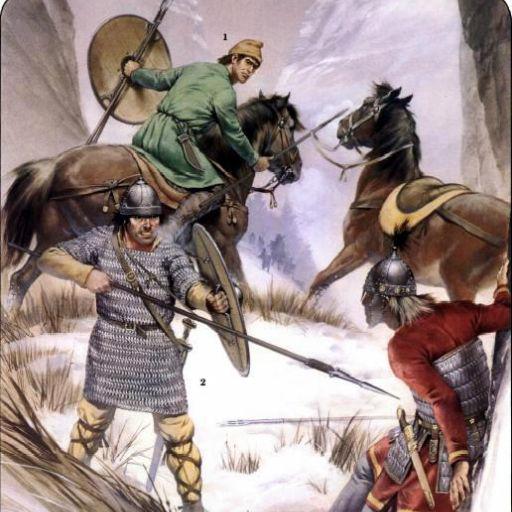

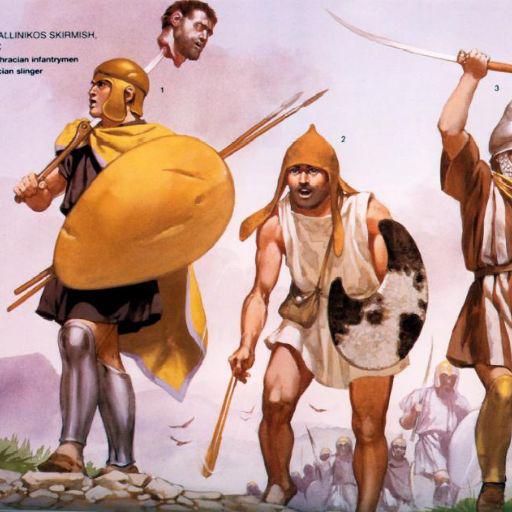

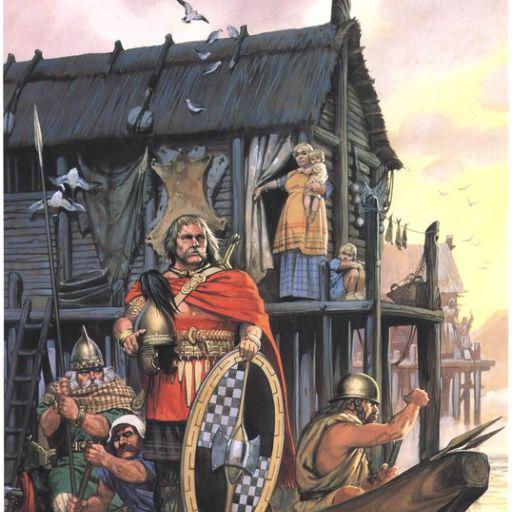

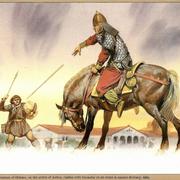

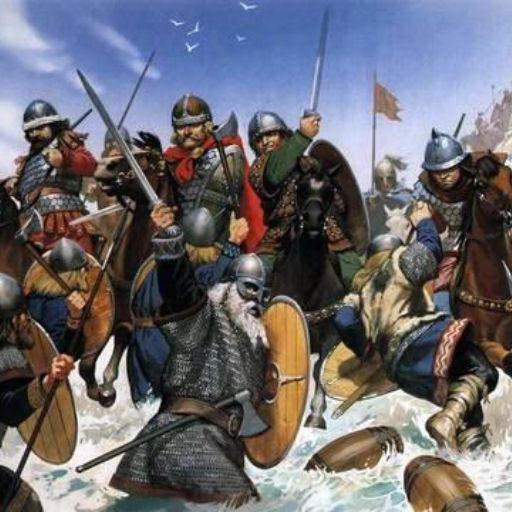

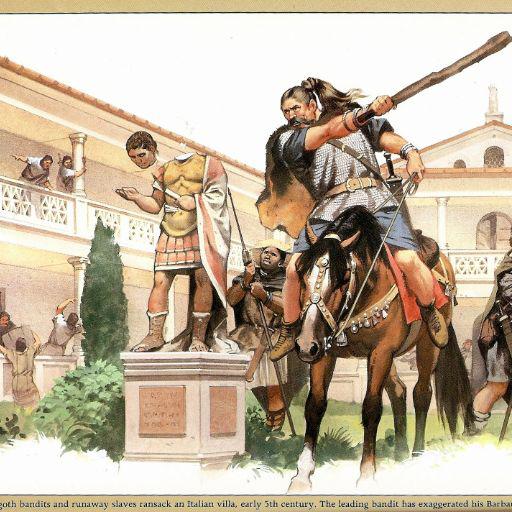

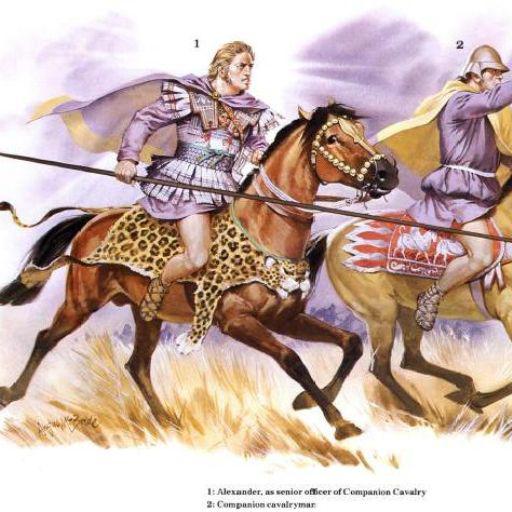

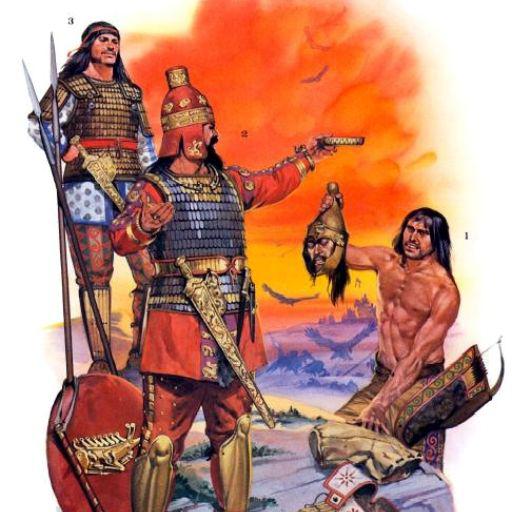

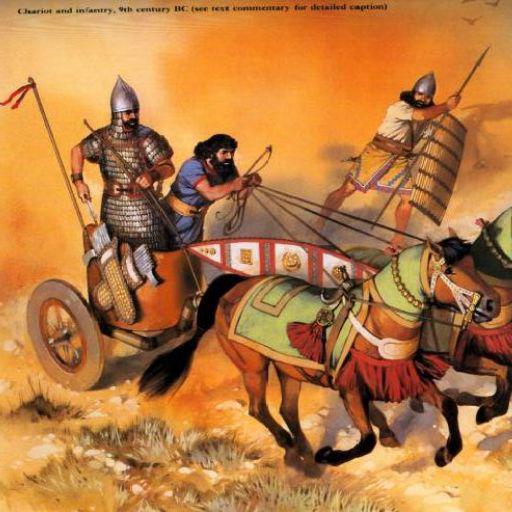

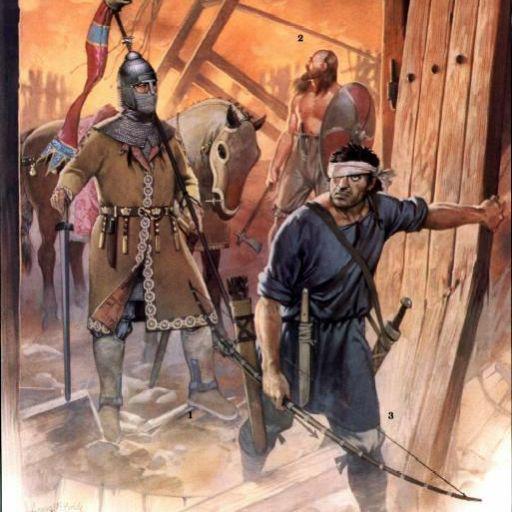

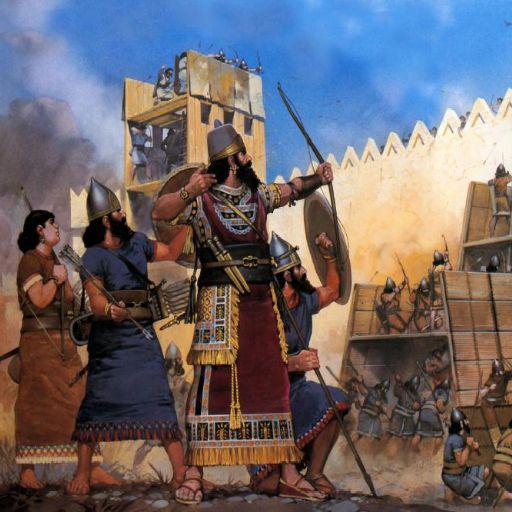

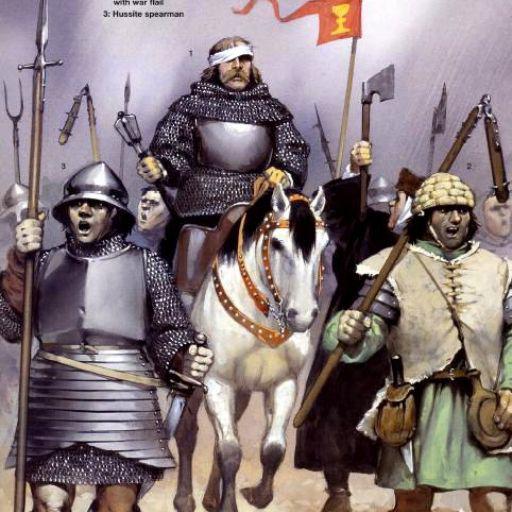

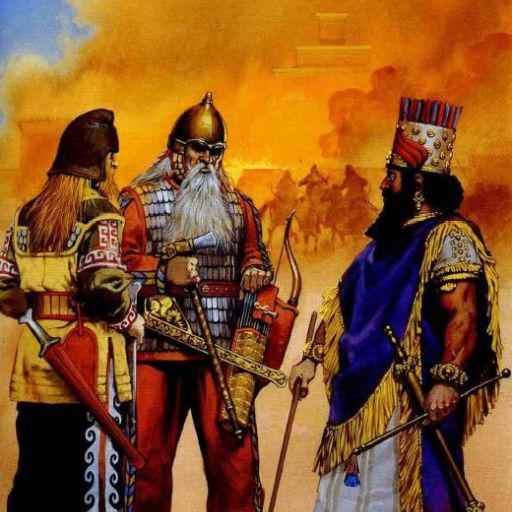

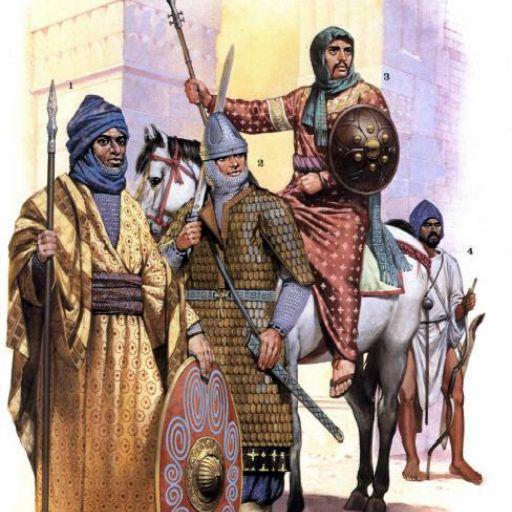

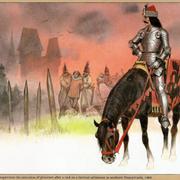

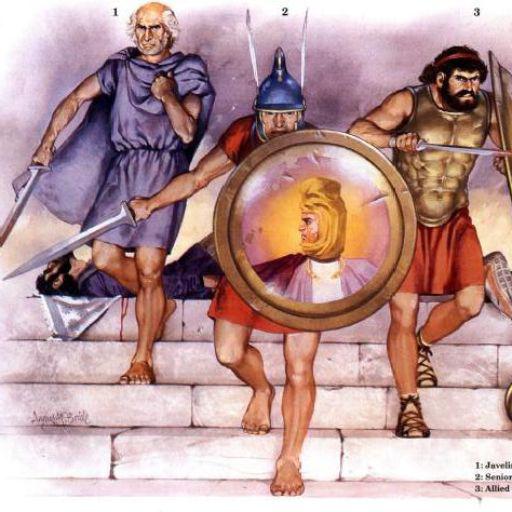

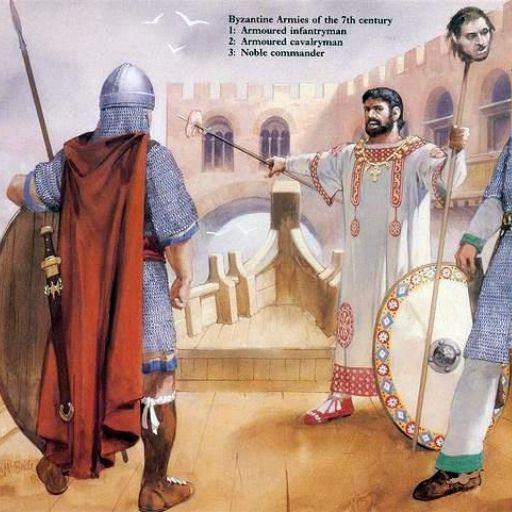

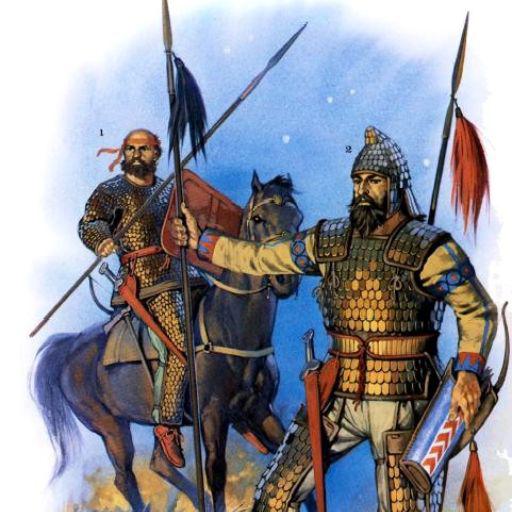

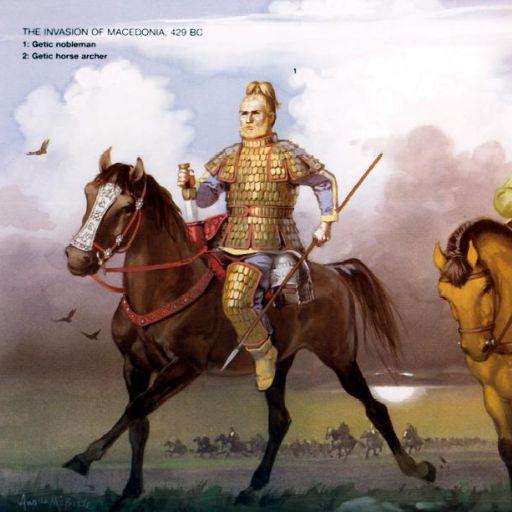

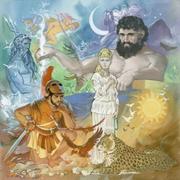

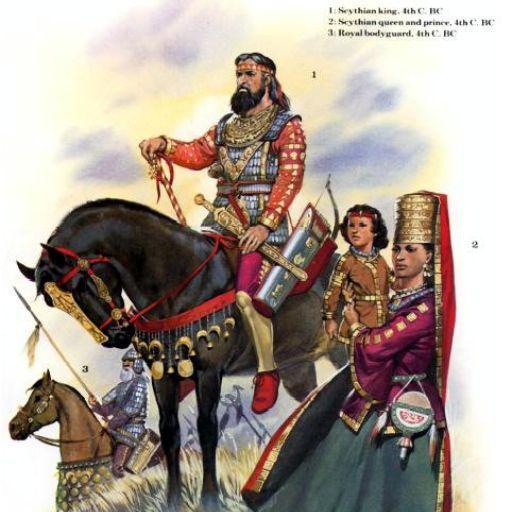

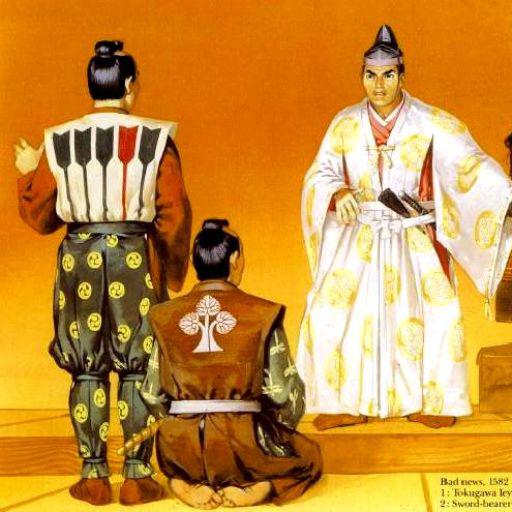

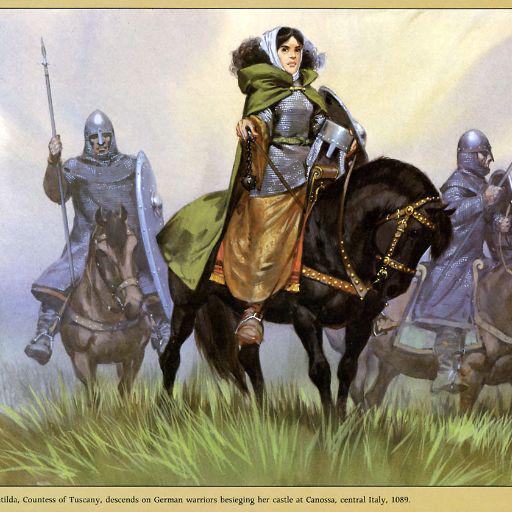

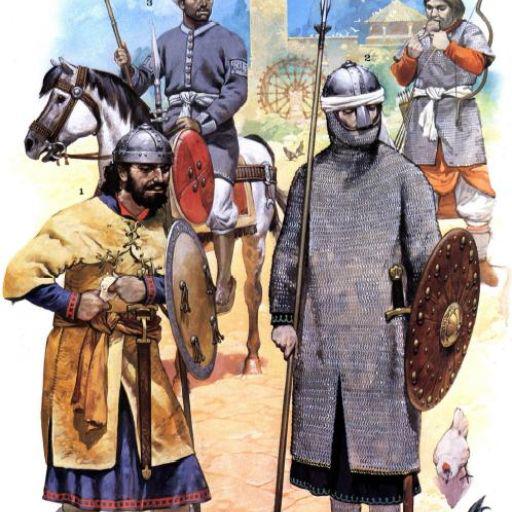

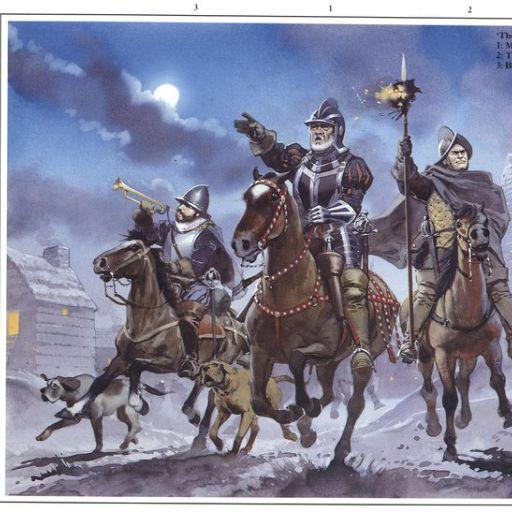

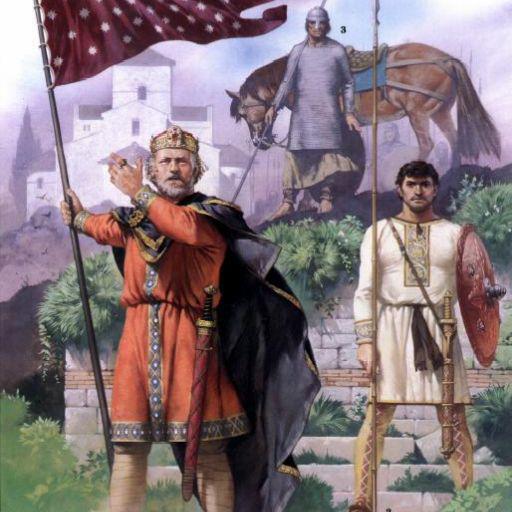

mit | [] | false | model by hiero This is the Stable Diffusion model fine-tuned on the angus mcbride style v4 concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **mcbride_style** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 4e8b94723a4ebf8081089da95a15beb2 |

apache-2.0 | ['translation'] | false | opus-mt-es-nso * source languages: es * target languages: nso * OPUS readme: [es-nso](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-nso/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.eval.txt) | 5941ad289717e59f198b5473103fd88b |

mit | ['generated_from_trainer'] | false | test_trainer_XLNET_3ep_5e-5 This model is a fine-tuned version of [xlnet-base-cased](https://huggingface.co/xlnet-base-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5405 - Accuracy: 0.8773 | d73259294cff54b1025b4615395ebde9 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7984 | 1.0 | 1125 | 0.6647 | 0.7923 | | 0.5126 | 2.0 | 2250 | 0.4625 | 0.862 | | 0.409 | 3.0 | 3375 | 0.5405 | 0.8773 | | 62ccc834e7c84eecdd79715a0e4f8bc1 |

apache-2.0 | ['generated_from_trainer'] | false | text-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.1414 - Accuracy: 0.9367 | 71a8b497fdc232a0761d2cd28a7920a1 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 256 - eval_batch_size: 512 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5 - mixed_precision_training: Native AMP | 396a6d9fb6bb99719f4d6e21c74bf5ed |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.0232 | 1.0 | 63 | 0.2424 | 0.917 | | 0.1925 | 2.0 | 126 | 0.1600 | 0.934 | | 0.1134 | 3.0 | 189 | 0.1418 | 0.935 | | 0.076 | 4.0 | 252 | 0.1461 | 0.931 | | 0.0604 | 5.0 | 315 | 0.1414 | 0.9367 | | ea2228ae7580aa6164fac24589a8cc44 |
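The learning-rate schedule implied by the text-emotion hyperparameters (cosine decay with a 0.1 warmup ratio) can be sketched in plain Python. This is only an illustration, not the trainer's own code: it assumes 315 optimizer steps in total (5 epochs of 63 steps, as in the results table above), rounds the warmup up to a whole number of steps, and decays along a half cosine to zero. The name `lr_at_step` is hypothetical.

```python
import math

BASE_LR = 1e-4        # learning_rate from the card
TOTAL_STEPS = 315     # assumption: 5 epochs x 63 steps per epoch
WARMUP_RATIO = 0.1    # lr_scheduler_warmup_ratio from the card
WARMUP_STEPS = math.ceil(TOTAL_STEPS * WARMUP_RATIO)  # assumption: rounded up


def lr_at_step(step: int) -> float:
    """Linear warmup from 0 to BASE_LR, then half-cosine decay to 0."""
    if step < WARMUP_STEPS:
        return BASE_LR * step / WARMUP_STEPS
    progress = (step - WARMUP_STEPS) / max(1, TOTAL_STEPS - WARMUP_STEPS)
    return BASE_LR * 0.5 * (1.0 + math.cos(math.pi * progress))


for step in (0, 16, 32, 173, 315):
    print(step, f"{lr_at_step(step):.2e}")
```

Under these assumptions the rate peaks at the end of warmup (step 32) and reaches zero at the final step.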

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 0 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | 6b1b2a6a9fed936aea5298e8054f973b |

cc-by-4.0 | ['seq2seq'] | false | 🇳🇴 Norwegian T5 Base model 🇳🇴 This T5-base model is trained from scratch on a 19GB Balanced Bokmål-Nynorsk Corpus. Update: Due to disk space errors, the model had to be restarted July 20. It is currently still running. Parameters used in training: ```bash python3 ./run_t5_mlm_flax_streaming.py --model_name_or_path="./norwegian-t5-base" --output_dir="./norwegian-t5-base" --config_name="./norwegian-t5-base" --tokenizer_name="./norwegian-t5-base" --dataset_name="pere/nb_nn_balanced_shuffled" --max_seq_length="512" --per_device_train_batch_size="32" --per_device_eval_batch_size="32" --learning_rate="0.005" --weight_decay="0.001" --warmup_steps="2000" --overwrite_output_dir --logging_steps="100" --save_steps="500" --eval_steps="500" --push_to_hub --preprocessing_num_workers 96 --adafactor ``` | 1cd0bccff72917ab687eb8afebd54358 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - num_devices: 4 - total_train_batch_size: 128 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 15.0 | e54c502e5cb113f6decb9636aca866fd |
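The total batch sizes in the multi-GPU configuration above follow from the per-device sizes and the device count. A quick arithmetic check, assuming no gradient accumulation (the card does not list any):

```python
per_device_train_batch_size = 32  # train_batch_size from the card
per_device_eval_batch_size = 8    # eval_batch_size from the card
num_devices = 4                   # num_devices from the card
gradient_accumulation_steps = 1   # assumption: not listed in the card

total_train = per_device_train_batch_size * num_devices * gradient_accumulation_steps
total_eval = per_device_eval_batch_size * num_devices

print(total_train, total_eval)  # 128 32
```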

apache-2.0 | [] | false | Funnel Transformer small model (B4-4-4 with decoder) Pretrained model on English language using a similar objective as [ELECTRA](https://huggingface.co/transformers/model_doc/electra.html). It was introduced in [this paper](https://arxiv.org/pdf/2006.03236.pdf) and first released in [this repository](https://github.com/laiguokun/Funnel-Transformer). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing Funnel Transformer did not write a model card for this model so this model card has been written by the Hugging Face team. | 833a32332a484dfa2b4d531251230af0 |

apache-2.0 | [] | false | Model description Funnel Transformer is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, a small language model corrupts the input texts and serves as a generator of inputs for this model, and the pretraining objective is to predict which token is an original and which one has been replaced, a bit like GAN training. This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the Funnel Transformer model as inputs. | d192cc33caa84ebd02785cd112ebfd64 |
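The replaced-token-detection objective described above can be illustrated with a toy sketch. The sentences and the helper `detection_labels` are made up for illustration; in real pretraining the corrupted sequence comes from a small generator language model, not a hand-written substitution.

```python
from typing import List


def detection_labels(original: List[str], corrupted: List[str]) -> List[int]:
    """Return 1 where a token was replaced and 0 where it is still the original."""
    if len(original) != len(corrupted):
        raise ValueError("sequences must have the same length")
    return [int(o != c) for o, c in zip(original, corrupted)]


original = ["the", "chef", "cooked", "the", "meal"]
corrupted = ["the", "chef", "ate", "the", "meal"]  # hypothetical generator output

labels = detection_labels(original, corrupted)
print(labels)  # [0, 0, 1, 0, 0]
```

The discriminator is trained to predict these per-token labels from the corrupted sequence alone.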

apache-2.0 | [] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import FunnelTokenizer, FunnelModel tokenizer = FunnelTokenizer.from_pretrained("funnel-transformer/small") model = FunnelModel.from_pretrained("funnel-transformer/small") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import FunnelTokenizer, TFFunnelModel tokenizer = FunnelTokenizer.from_pretrained("funnel-transformer/small") model = TFFunnelModel.from_pretrained("funnel-transformer/small") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | 876212ab4af93bc872a69bd1b7685a7d |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | DreamBooth model for the ramrick concept trained by Kayvane on the Kayvane/dreambooth-hackathon-rick-and-morty-images-square dataset. Notes: - trained on square images, 20k steps on google colab - character name is ramrick, many pictures get blocked as nsfw - possibly because the subtoken | dc36df0e099880da940d46024e64dea7 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | ick is close to something else - model is trained for too many steps / overfitted as it is effectively recreating the input images This is a Stable Diffusion model fine-tuned on the ramrick concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of ramrick character** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 5f1387b15589e0bd90edac1032d0ceaa |

apache-2.0 | ['translation'] | false | opus-mt-lus-sv * source languages: lus * target languages: sv * OPUS readme: [lus-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/lus-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.eval.txt) | 1c237a51c3e45fad4945311f0e7d0f00 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | DreamBooth model for the srkay concept trained by Xhaheen on the Xhaheen/dreambooth-hackathon-images-srkman-2 dataset. This is a Stable Diffusion model fine-tuned on the sha rukh khan images with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of srkay man** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 1fa6fa479a712a7e58f76adb1c262e29 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | Dataset used    | ddc31d3dc583112e287b85be35557cdd |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | Usage ```python from diffusers import StableDiffusionPipeline pipeline = StableDiffusionPipeline.from_pretrained('Xhaheen/srkay-man_6-1-2022') prompt = "a photo of srkay man"  # the instance prompt for this concept image = pipeline(prompt).images[0] image ``` [Open in Colab](https://colab.research.google.com/drive/1FmTaUN38enNdCgi4HxG0LMZ4HobM0Iq3?usp=sharing) | 26a613ee150c91b011eb6ff7f291de41 |

mit | [] | false | German BERT large Released in October 2020, this is a German BERT language model trained collaboratively by the makers of the original German BERT (aka "bert-base-german-cased") and the dbmdz BERT (aka "bert-base-german-dbmdz-cased"). In our [paper](https://arxiv.org/pdf/2010.10906.pdf), we outline the steps taken to train our model and show that it outperforms its predecessors. | 7361bde25890a96ebfd07701c1dd8898 |

mit | [] | false | Performance ``` GermEval18 Coarse: 80.08 GermEval18 Fine: 52.48 GermEval14: 88.16 ``` See also: deepset/gbert-base deepset/gbert-large deepset/gelectra-base deepset/gelectra-large deepset/gelectra-base-generator deepset/gelectra-large-generator | 268b110ba8964f3027c57430171fa99e |

mit | [] | false | About us <div class="grid lg:grid-cols-2 gap-x-4 gap-y-3"> <div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center"> <img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/deepset-logo-colored.png" class="w-40"/> </div> <div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center"> <img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/haystack-logo-colored.png" class="w-40"/> </div> </div> [deepset](http://deepset.ai/) is the company behind the open-source NLP framework [Haystack](https://haystack.deepset.ai/), which is designed to help you build production-ready NLP systems for question answering, summarization, ranking, etc. Some of our other work: - [Distilled roberta-base-squad2 (aka "tinyroberta-squad2")](https://huggingface.co/deepset/tinyroberta-squad2) - [German BERT (aka "bert-base-german-cased")](https://deepset.ai/german-bert) - [GermanQuAD and GermanDPR datasets and models (aka "gelectra-base-germanquad", "gbert-base-germandpr")](https://deepset.ai/germanquad) | f10734d49f95cc25fac5a19877a6cbc1 |

mit | [] | false | Get in touch and join the Haystack community <p>For more info on Haystack, visit our <strong><a href="https://github.com/deepset-ai/haystack">GitHub</a></strong> repo and <strong><a href="https://docs.haystack.deepset.ai">Documentation</a></strong>. We also have a <strong><a class="h-7" href="https://haystack.deepset.ai/community">Discord community open to everyone!</a></strong></p> [Twitter](https://twitter.com/deepset_ai) | [LinkedIn](https://www.linkedin.com/company/deepset-ai/) | [Discord](https://haystack.deepset.ai/community) | [GitHub Discussions](https://github.com/deepset-ai/haystack/discussions) | [Website](https://deepset.ai) By the way: [we're hiring!](http://www.deepset.ai/jobs) | 7963da797dcc33f0a376feaeaa5d5db1 |

mit | [] | false | model by Giordyman This is the Stable Diffusion model fine-tuned on the Tempa concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks Tempa** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). Here are the images used for training this concept: | d92564fa74b48c2e6f1f884ce2b3251c |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 2 - eval_batch_size: 2 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 6 | 5d9d0b013495ccd81709b076471c006a |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-lar-xlsr-es-col This model is a fine-tuned version of [jonatasgrosman/wav2vec2-large-xlsr-53-spanish](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-spanish) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0947 - Wer: 0.1884 | 56ad679f92426306b86f8ededd47b790 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.8446 | 8.51 | 400 | 2.8174 | 0.9854 | | 0.5146 | 17.02 | 800 | 0.1022 | 0.2020 | | 0.0706 | 25.53 | 1200 | 0.0947 | 0.1884 | | 4426c6d59871ae772ac17449d00af794 |

creativeml-openrail-m | ['text-to-image'] | false | 8f6b362c-26c3-4c26-9e7f-2b8ff6ef353e Dreambooth model trained by tzvc with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model You run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: sdcid (use that on your prompt)  | 9f9bb02e1853b53f2a6116281bedd030 |

mit | [] | false | glass pipe on Stable Diffusion This is the `<glass-sherlock>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | 13660ed0a5b87fa3add5176c331b03e8 |

mit | [] | false | tails diffusion on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 4d374d967789021e10d8ae36195d9911 |

mit | [] | false | model by Bugjuhjugjyy This is the Stable Diffusion model fine-tuned on the tails diffusion concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: **images** You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 4c3de35de4ed6a49604fc3118a8bed9e |

cc-by-4.0 | [] | false | Overview **Language model:** bert-large **Language:** English **Downstream-task:** Extractive QA **Training data:** SQuAD 2.0 **Eval data:** SQuAD 2.0 **Code:** See [an example QA pipeline on Haystack](https://haystack.deepset.ai/tutorials/first-qa-system) | 62cb3a5aee285edecd19c958a122a8cf |

cc-by-4.0 | [] | false | In Haystack Haystack is an NLP framework by deepset. You can use this model in a Haystack pipeline to do question answering at scale (over many documents). To load the model in [Haystack](https://github.com/deepset-ai/haystack/): ```python reader = FARMReader(model_name_or_path="deepset/bert-large-uncased-whole-word-masking-squad2") ``` | bac645fa2a09a4faedb7a4bca7a03870 |

cc-by-4.0 | [] | false | Get in touch and join the Haystack community <p>For more info on Haystack, visit our <strong><a href="https://github.com/deepset-ai/haystack">GitHub</a></strong> repo and <strong><a href="https://docs.haystack.deepset.ai">Documentation</a></strong>. We also have a <strong><a class="h-7" href="https://haystack.deepset.ai/community">Discord community open to everyone!</a></strong></p> [Twitter](https://twitter.com/deepset_ai) | [LinkedIn](https://www.linkedin.com/company/deepset-ai/) | [Discord](https://haystack.deepset.ai/community/join) | [GitHub Discussions](https://github.com/deepset-ai/haystack/discussions) | [Website](https://deepset.ai) By the way: [we're hiring!](http://www.deepset.ai/jobs) | e63f66e9e6fbb610015a0f1cd1cea988 |

apache-2.0 | ['generated_from_keras_callback'] | false | Rocketknight1/temp-colab-upload-test2 This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.6931 - Validation Loss: 0.6931 - Epoch: 1 | 9e646ed93d522b1869baf44034acd161 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'superb'] | false | Fork of Wav2Vec2-Base-960h [Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/) The base model pretrained and fine-tuned on 960 hours of Librispeech on 16kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16kHz. [Paper](https://arxiv.org/abs/2006.11477) Authors: Alexei Baevski, Henry Zhou, Abdelrahman Mohamed, Michael Auli **Abstract** We show for the first time that learning powerful representations from speech audio alone followed by fine-tuning on transcribed speech can outperform the best semi-supervised methods while being conceptually simpler. wav2vec 2.0 masks the speech input in the latent space and solves a contrastive task defined over a quantization of the latent representations which are jointly learned. Experiments using all labeled data of Librispeech achieve 1.8/3.3 WER on the clean/other test sets. When lowering the amount of labeled data to one hour, wav2vec 2.0 outperforms the previous state of the art on the 100 hour subset while using 100 times less labeled data. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data still achieves 4.8/8.2 WER. This demonstrates the feasibility of speech recognition with limited amounts of labeled data. The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec | 1b88eba98bf0e06817ee2f2dd2c7a4a8 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'superb'] | false | Usage To transcribe audio files the model can be used as a standalone acoustic model as follows: ```python from transformers import Wav2Vec2Tokenizer, Wav2Vec2ForCTC from datasets import load_dataset import soundfile as sf import torch ``` | a07542c0cf1cdf92d16f31217964129a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'superb'] | false | Evaluation This code snippet shows how to evaluate **facebook/wav2vec2-base-960h** on LibriSpeech's "clean" and "other" test data. ```python from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Tokenizer import soundfile as sf import torch from jiwer import wer librispeech_eval = load_dataset("librispeech_asr", "clean", split="test") model = Wav2Vec2ForCTC.from_pretrained("facebook/wav2vec2-base-960h").to("cuda") tokenizer = Wav2Vec2Tokenizer.from_pretrained("facebook/wav2vec2-base-960h") def map_to_array(batch): speech, _ = sf.read(batch["file"]) batch["speech"] = speech return batch librispeech_eval = librispeech_eval.map(map_to_array) def map_to_pred(batch): input_values = tokenizer(batch["speech"], return_tensors="pt", padding="longest").input_values with torch.no_grad(): logits = model(input_values.to("cuda")).logits predicted_ids = torch.argmax(logits, dim=-1) transcription = tokenizer.batch_decode(predicted_ids) batch["transcription"] = transcription return batch result = librispeech_eval.map(map_to_pred, batched=True, batch_size=1, remove_columns=["speech"]) print("WER:", wer(result["text"], result["transcription"])) ``` *Result (WER)*: | "clean" | "other" | |---|---| | 3.4 | 8.6 | | a0698799b6fb6e52a7922d7f1742cfcf |
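The WER metric reported above is, in essence, the word-level edit distance between reference and hypothesis divided by the number of reference words. The following pure-Python sketch shows that computation on a made-up sentence pair. It is not the `jiwer` implementation, it skips any text normalization, and the function names are hypothetical.

```python
from typing import List


def edit_distance(ref: List[str], hyp: List[str]) -> int:
    """Word-level Levenshtein distance (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance over words, normalized by the reference length."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

The table above appears to report WER as a percentage, so a fraction from this sketch would be multiplied by 100 for comparison.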

apache-2.0 | ['translation'] | false | opus-mt-fi-hr * source languages: fi * target languages: hr * OPUS readme: [fi-hr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-hr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.eval.txt) | 55ffca74d854ac13efc037768a0f8ea0 |

other | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | a116979f683a7084e13ab60b35858ebd |

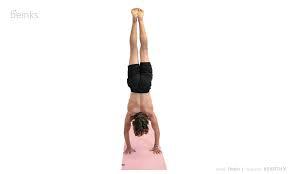

mit | [] | false | handstand on Stable Diffusion This is the `<handstand>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | a9560ef17859e399a818b2c3df63eea4 |

apache-2.0 | ['exbert'] | false | BERT Large model (cased) Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1810.04805) and first released in [this repository](https://github.com/google-research/bert). This model is case-sensitive: it makes a difference between english and English. Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by the Hugging Face team. | 2fb036eed9cbd06fd16fa97b316ff71c |

apache-2.0 | ['exbert'] | false | How to use You can use this model directly with a pipeline for masked language modeling: In tf_transformers ```python from tf_transformers.models import BertModel from transformers import BertTokenizer tokenizer = BertTokenizer.from_pretrained('bert-large-cased') model = BertModel.from_pretrained("bert-large-cased") text = "Replace me by any text you'd like." inputs_tf = {} inputs = tokenizer(text, return_tensors='tf') inputs_tf["input_ids"] = inputs["input_ids"] inputs_tf["input_type_ids"] = inputs["token_type_ids"] inputs_tf["input_mask"] = inputs["attention_mask"] outputs_tf = model(inputs_tf) ``` | f47f15a64e74d1a96961f775ae6b3b78 |

apache-2.0 | ['exbert'] | false | Preprocessing The texts are tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are then of the form: ``` [CLS] Sentence A [SEP] Sentence B [SEP] ``` With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus and in the other cases, it's another random sentence in the corpus. Note that what is considered a sentence here is a consecutive span of text usually longer than a single sentence. The only constraint is that the result with the two "sentences" has a combined length of less than 512 tokens. The details of the masking procedure for each sentence are the following: - 15% of the tokens are masked. - In 80% of the cases, the masked tokens are replaced by `[MASK]`. - In 10% of the cases, the masked tokens are replaced by a random token (different) from the one they replace. - In the 10% remaining cases, the masked tokens are left as is. | 31bd7b42f5969e957b35893390454a5f |
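The masking procedure listed above can be sketched in a few lines of plain Python. This is a simplified illustration rather than the original BERT preprocessing code: it works on whole-word toy tokens instead of WordPiece ids, selects each token independently with probability 0.15, and the helper name `mask_tokens` and the toy vocabulary are assumptions.

```python
import random
from typing import List, Optional, Tuple

MASK = "[MASK]"


def mask_tokens(tokens: List[str], vocab: List[str], rng: random.Random,
                select_prob: float = 0.15) -> Tuple[List[str], List[Optional[str]]]:
    """Apply the 15% selection and 80/10/10 replacement rule.

    Returns the corrupted tokens and, per position, the original token to
    predict (None where the position was not selected).
    """
    corrupted: List[str] = []
    labels: List[Optional[str]] = []
    for tok in tokens:
        if rng.random() >= select_prob:
            corrupted.append(tok)
            labels.append(None)      # not selected: no prediction target
            continue
        labels.append(tok)           # selected: the model must recover the original
        roll = rng.random()
        if roll < 0.8:
            corrupted.append(MASK)   # 80%: replace with [MASK]
        elif roll < 0.9:
            # 10%: replace with a random token different from the original
            corrupted.append(rng.choice([v for v in vocab if v != tok]))
        else:
            corrupted.append(tok)    # 10%: leave the token unchanged
    return corrupted, labels


rng = random.Random(0)
vocab = ["the", "cat", "sat", "on", "mat", "dog"]  # toy vocabulary (assumption)
tokens = ["the", "cat", "sat", "on", "the", "mat"] * 50

corrupted, labels = mask_tokens(tokens, vocab, rng)
selected = sum(label is not None for label in labels)
print(f"selected {selected} of {len(tokens)} tokens for prediction")
```

Keeping 10% of the selected tokens unchanged means the model cannot assume that every unmasked token is correct context only, which is the stated purpose of the last rule.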

apache-2.0 | ['exbert'] | false | BibTeX entry and citation info ```bibtex @article{DBLP:journals/corr/abs-1810-04805, author = {Jacob Devlin and Ming{-}Wei Chang and Kenton Lee and Kristina Toutanova}, title = {{BERT:} Pre-training of Deep Bidirectional Transformers for Language Understanding}, journal = {CoRR}, volume = {abs/1810.04805}, year = {2018}, url = {http://arxiv.org/abs/1810.04805}, archivePrefix = {arXiv}, eprint = {1810.04805}, timestamp = {Tue, 30 Oct 2018 20:39:56 +0100}, biburl = {https://dblp.org/rec/journals/corr/abs-1810-04805.bib}, bibsource = {dblp computer science bibliography, https://dblp.org} } ``` <a href="https://huggingface.co/exbert/?model=bert-base-cased"> <img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png"> </a> | 22ac7b4f24dcda030c2a9f21042ee75d |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-SMALL-DL4 (Deep-Narrow version) T5-Efficient-SMALL-DL4 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | 8eaf304f236aebbaaef0626eb21643aa |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-small-dl4** - is of model type **Small** with the following variations: - **dl** is **4** It has **52.13** million parameters and thus requires *ca.* **208.51 MB** of memory in full precision (*fp32*) or **104.25 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | dec387b7b1ee9515a5b5cae956da3d3f |
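The memory figures quoted above follow from the parameter count. The sketch below is an illustration only: the helper name `checkpoint_memory_mb` is hypothetical, and it assumes 4 bytes per parameter in fp32, 2 bytes in fp16/bf16, and 1 MB = 10^6 bytes. Because the card's parameter count is rounded to 52.13 million, the result can differ from the card's figures in the last digit.

```python
def checkpoint_memory_mb(num_params_millions: float, bytes_per_param: int) -> float:
    """Approximate memory needed for the weights alone, in MB (1 MB = 10**6 bytes)."""
    num_params = num_params_millions * 1e6
    return num_params * bytes_per_param / 1e6


# 52.13 million parameters, as stated for t5-efficient-small-dl4.
fp32_mb = checkpoint_memory_mb(52.13, bytes_per_param=4)  # full precision
fp16_mb = checkpoint_memory_mb(52.13, bytes_per_param=2)  # half precision (fp16 or bf16)

print(f"fp32: {fp32_mb:.2f} MB, fp16/bf16: {fp16_mb:.2f} MB")
```

This covers the weights only; activations, optimizer state and framework overhead are not included in the estimate.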

mit | ['generated_from_trainer'] | false | og-deberta-extra-o This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5184 - Precision: 0.5981 - Recall: 0.6667 - F1: 0.6305 - Accuracy: 0.9226 | dc249d831af4e7c6c53acfff821662e7 |
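As a quick consistency check on the numbers above, F1 is the harmonic mean of precision and recall. The sketch below assumes the reported F1 was derived from the reported precision and recall in this standard way; the function name `f1_score` is only illustrative and is not taken from the model card.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported on the evaluation set.
f1 = f1_score(precision=0.5981, recall=0.6667)
print(round(f1, 4))
```

Rounded to four decimals, the result agrees with the reported F1 of 0.6305.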

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 25 | b6c2d457e28831d0b073e743ebab80bd |
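To make `lr_scheduler_type: linear` concrete, here is a minimal sketch of a linear decay schedule with optional warmup. Several things are assumptions rather than facts from the card: the card does not state a warmup, so `warmup_steps=0` is assumed; the total of 1375 optimizer steps is a hypothetical value (25 epochs of 55 steps each); and `linear_lr` is an illustrative helper, not a library function.

```python
def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 2e-05      # learning_rate from the hyperparameters above
TOTAL_STEPS = 1375   # assumed: 25 epochs x 55 steps per epoch

for step in (0, 550, 1375):
    print(step, linear_lr(step, TOTAL_STEPS, BASE_LR))
```

Under these assumptions the rate starts at 2e-05, falls in a straight line, and reaches zero at the final step.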

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 55 | 0.4813 | 0.2863 | 0.3467 | 0.3136 | 0.8720 | | No log | 2.0 | 110 | 0.3469 | 0.4456 | 0.4587 | 0.4520 | 0.9010 | | No log | 3.0 | 165 | 0.3166 | 0.5206 | 0.5387 | 0.5295 | 0.9147 | | No log | 4.0 | 220 | 0.3338 | 0.4899 | 0.584 | 0.5328 | 0.9087 | | No log | 5.0 | 275 | 0.3166 | 0.5625 | 0.648 | 0.6022 | 0.9198 | | No log | 6.0 | 330 | 0.3464 | 0.5707 | 0.6027 | 0.5863 | 0.9207 | | No log | 7.0 | 385 | 0.3548 | 0.5489 | 0.6133 | 0.5793 | 0.9207 | | No log | 8.0 | 440 | 0.4005 | 0.6125 | 0.6027 | 0.6075 | 0.9210 | | No log | 9.0 | 495 | 0.4185 | 0.5763 | 0.6347 | 0.6041 | 0.9171 | | 0.2019 | 10.0 | 550 | 0.4174 | 0.5596 | 0.6507 | 0.6017 | 0.9179 | | 0.2019 | 11.0 | 605 | 0.4558 | 0.5603 | 0.632 | 0.5940 | 0.9179 | | 0.2019 | 12.0 | 660 | 0.4615 | 0.5632 | 0.6533 | 0.6049 | 0.9166 | | 0.2019 | 13.0 | 715 | 0.4899 | 0.5815 | 0.6187 | 0.5995 | 0.9208 | | 0.2019 | 14.0 | 770 | 0.4800 | 0.5581 | 0.64 | 0.5963 | 0.9186 | | 0.2019 | 15.0 | 825 | 0.4752 | 0.5905 | 0.6613 | 0.6239 | 0.9212 | | 0.2019 | 16.0 | 880 | 0.5014 | 0.5773 | 0.6373 | 0.6058 | 0.9174 | | 0.2019 | 17.0 | 935 | 0.5095 | 0.5917 | 0.6453 | 0.6173 | 0.9195 | | 0.2019 | 18.0 | 990 | 0.5249 | 0.5807 | 0.6427 | 0.6101 | 0.9203 | | 0.0077 | 19.0 | 1045 | 0.5086 | 0.5761 | 0.656 | 0.6135 | 0.9222 | | 0.0077 | 20.0 | 1100 | 0.5108 | 0.5962 | 0.6693 | 0.6307 | 0.9219 | | 0.0077 | 21.0 | 1155 | 0.5144 | 0.5977 | 0.6853 | 0.6385 | 0.9231 | | 0.0077 | 22.0 | 1210 | 0.5176 | 0.5990 | 0.6613 | 0.6286 | 0.9229 | | 0.0077 | 23.0 | 1265 | 0.5171 | 0.6039 | 0.6667 | 0.6337 | 0.9226 | | 0.0077 | 24.0 | 1320 | 0.5184 | 0.6043 | 0.672 | 0.6364 | 0.9226 | | 0.0077 | 25.0 | 1375 | 0.5184 | 0.5981 | 0.6667 | 0.6305 | 0.9226 | | acf308bf345f8485f22297e07c80d58a |

apache-2.0 | ['generated_from_trainer'] | false | model_syllable_onSet4 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1349 - 0 Precision: 1.0 - 0 Recall: 1.0 - 0 F1-score: 1.0 - 0 Support: 26 - 1 Precision: 1.0 - 1 Recall: 0.9677 - 1 F1-score: 0.9836 - 1 Support: 31 - 2 Precision: 0.9630 - 2 Recall: 1.0 - 2 F1-score: 0.9811 - 2 Support: 26 - 3 Precision: 1.0 - 3 Recall: 1.0 - 3 F1-score: 1.0 - 3 Support: 14 - Accuracy: 0.9897 - Macro avg Precision: 0.9907 - Macro avg Recall: 0.9919 - Macro avg F1-score: 0.9912 - Macro avg Support: 97 - Weighted avg Precision: 0.9901 - Weighted avg Recall: 0.9897 - Weighted avg F1-score: 0.9897 - Weighted avg Support: 97 - Wer: 0.2258 - Mtrix: [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] | 5ccf8de519bb6e2a82f135085b0bfc37 |
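The per-class scores above can be recomputed from the `Mtrix` entry. The layout is not documented in the card, so the sketch below assumes that the first inner list holds the class labels, that each following list starts with a true label, and that the remaining values are counts per predicted label. This reading is inferred from the reported support values (26, 31, 26, 14), which match the row sums.

```python
mtrix = [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]]

labels = mtrix[0]
# Map each true label to its row of counts, one count per predicted label.
rows = {row[0]: row[1:] for row in mtrix[1:]}


def per_class_metrics(label):
    """Return (precision, recall, support) for one class."""
    idx = labels.index(label)
    true_positives = rows[label][idx]
    support = sum(rows[label])                        # samples whose true label is `label`
    predicted = sum(rows[other][idx] for other in labels)  # samples predicted as `label`
    precision = true_positives / predicted if predicted else 0.0
    recall = true_positives / support if support else 0.0
    return precision, recall, support


total = sum(sum(counts) for counts in rows.values())
correct = sum(rows[label][labels.index(label)] for label in labels)
accuracy = correct / total

for label in labels:
    precision, recall, support = per_class_metrics(label)
    print(label, round(precision, 4), round(recall, 4), support)
print("accuracy", round(accuracy, 4))
```

With this reading, the single off-diagonal count (one class 1 sample predicted as class 2) accounts for both the class 1 recall and the class 2 precision being below 1.0.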

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | 0 Precision | 0 Recall | 0 F1-score | 0 Support | 1 Precision | 1 Recall | 1 F1-score | 1 Support | 2 Precision | 2 Recall | 2 F1-score | 2 Support | 3 Precision | 3 Recall | 3 F1-score | 3 Support | Accuracy | Macro avg Precision | Macro avg Recall | Macro avg F1-score | Macro avg Support | Weighted avg Precision | Weighted avg Recall | Weighted avg F1-score | Weighted avg Support | Wer | Mtrix | |:-------------:|:-----:|:----:|:---------------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:--------:|:-------------------:|:----------------:|:------------------:|:-----------------:|:----------------------:|:-------------------:|:---------------------:|:--------------------:|:------:|:--------------------------------------------------------------------------------------:| | 1.6602 | 4.16 | 100 | 1.5639 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 1.616 | 8.33 | 200 | 1.4203 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 1.2107 | 12.49 | 300 | 1.1249 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 1.1283 | 16.65 | 400 | 1.0201 | 0.0 | 0.0 | 0.0 
| 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 0.8868 | 20.82 | 500 | 0.8944 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 0.8863 | 24.98 | 600 | 0.9316 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 0.9019 | 29.16 | 700 | 0.8688 | 0.7647 | 1.0 | 0.8667 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.3651 | 0.8846 | 0.5169 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.5052 | 0.2824 | 0.4712 | 0.3459 | 97 | 0.3028 | 0.5052 | 0.3708 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] | | 0.7977 | 33.33 | 800 | 0.8014 | 1.0 | 1.0 | 1.0 | 26 | 0.9667 | 0.9355 | 0.9508 | 31 | 0.9259 | 0.9615 | 0.9434 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9691 | 0.9731 | 0.9743 | 0.9736 | 97 | 0.9695 | 0.9691 | 0.9691 | 97 | 1.0 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 1, 25, 0], [3, 0, 0, 0, 14]] | | 0.729 | 37.49 | 900 | 0.8163 | 1.0 | 1.0 | 1.0 | 26 | 0.9091 | 0.9677 | 0.9375 | 31 | 0.9583 | 0.8846 | 0.9200 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9588 | 0.9669 | 0.9631 | 0.9644 | 97 | 0.9598 | 0.9588 | 0.9586 | 97 | 1.0 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 3, 23, 0], [3, 0, 0, 0, 14]] | | 0.6526 | 41.65 | 1000 | 0.6691 | 1.0 | 1.0 | 1.0 | 26 | 0.9667 | 0.9355 | 0.9508 | 31 | 0.9259 | 0.9615 | 0.9434 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9691 | 0.9731 | 0.9743 | 0.9736 | 97 | 0.9695 | 0.9691 | 0.9691 | 97 | 
0.7055 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 1, 25, 0], [3, 0, 0, 0, 14]] | | 0.6633 | 45.82 | 1100 | 0.3445 | 1.0 | 1.0 | 1.0 | 26 | 0.9394 | 1.0 | 0.9688 | 31 | 1.0 | 0.9231 | 0.9600 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9794 | 0.9848 | 0.9808 | 0.9822 | 97 | 0.9806 | 0.9794 | 0.9793 | 97 | 0.5017 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 31, 0, 0], [2, 0, 2, 24, 0], [3, 0, 0, 0, 14]] | | 0.1913 | 49.98 | 1200 | 0.2455 | 1.0 | 1.0 | 1.0 | 26 | 0.9677 | 0.9677 | 0.9677 | 31 | 0.96 | 0.9231 | 0.9412 | 26 | 0.9333 | 1.0 | 0.9655 | 14 | 0.9691 | 0.9653 | 0.9727 | 0.9686 | 97 | 0.9693 | 0.9691 | 0.9689 | 97 | 0.3946 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 1, 24, 1], [3, 0, 0, 0, 14]] | | 0.2024 | 54.16 | 1300 | 0.1865 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9355 | 0.9667 | 31 | 0.9286 | 1.0 | 0.9630 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9794 | 0.9821 | 0.9839 | 0.9824 | 97 | 0.9809 | 0.9794 | 0.9794 | 97 | 0.3423 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] | | 0.1212 | 58.33 | 1400 | 0.1485 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2957 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] | | 0.108 | 62.49 | 1500 | 0.1348 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2433 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] | | 0.1058 | 66.65 | 1600 | 0.1328 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2224 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] | | 
b1438bb1ecc6bc64fd4cc763718ce473 |

apache-2.0 | ['generated_from_trainer'] | false | plncmm/roberta-clinical-wl-es This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-biomedical-clinical-es](https://huggingface.co/PlanTL-GOB-ES/roberta-base-biomedical-clinical-es) on the Chilean waiting list dataset. | 322da18fbeae35a56e24ebcd1fa4d645 |

apache-2.0 | ['translation'] | false | opus-mt-wal-en * source languages: wal * target languages: en * OPUS readme: [wal-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/wal-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-24.zip](https://object.pouta.csc.fi/OPUS-MT-models/wal-en/opus-2020-01-24.zip) * test set translations: [opus-2020-01-24.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/wal-en/opus-2020-01-24.test.txt) * test set scores: [opus-2020-01-24.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/wal-en/opus-2020-01-24.eval.txt) | eec5ee0f9a25cd8b8b9374afcf12048b |

apache-2.0 | [] | false | distilbert-base-vi-cased We are sharing smaller versions of [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased) that handle a custom number of languages. Our versions produce exactly the same representations as the original model, which preserves the original accuracy. For more information, please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | afcae97c7eb0edb7ed045b54657cc9cc |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/distilbert-base-vi-cased") model = AutoModel.from_pretrained("Geotrend/distilbert-base-vi-cased") ``` To generate other smaller versions of multilingual transformers, please visit [our GitHub repo](https://github.com/Geotrend-research/smaller-transformers). | 39d088c9b5d1ea19c9e28fa0250314ba |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-BASE-DM2000 (Deep-Narrow version) T5-Efficient-BASE-DM2000 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | f19712b60a51164ad41a8e46ca146dd5 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-base-dm2000** - is of model type **Base** with the following variations: - **dm** is **2000** It has **594.44** million parameters and thus requires *ca.* **2377.75 MB** of memory in full precision (*fp32*) or **1188.87 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | a02096a0e8e57236977e7d52045a6e21 |

other | [] | false | Psychedelia Diffusion Model, and maybe others to come. Tips for psychedelicmerger.ckpt: a high step count and ancestral samplers seem to give the best results. Using words that imply any form of psychedelia in the prompt should help to bring its style out, but may not be necessary. If you want to try the training tokens, sdpsydiffsyle and sdpsydiffstylev2, don't expect great results: this model is a merge between two different training sets. It also works nicely with pop art, phunkadelic, surreal, etc. I can't offer much advice on which CFG scale setting typically works best; it seems pretty dependent on the prompt. The CLIP aesthetic/stylepile in the webui seems to play nicely with this too, so it's worth experimenting. Have fun! | e84c2695358e19d5eb48df39829d2839 |

mit | ['token-classification', 'fill-mask'] | false | This model is the pretrained infoxlm checkpoint from the paper "LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding". Original repository: https://github.com/jpWang/LiLT To use it, it is necessary to fork the modeling and configuration files from the original repository, and load the pretrained model from the corresponding classes (LiLTRobertaLikeConfig, LiLTRobertaLikeForRelationExtraction, LiLTRobertaLikeForTokenClassification, LiLTRobertaLikeModel). They can also be preloaded with the AutoConfig/model factories as such: ```python from transformers import AutoConfig, AutoModel, AutoModelForTokenClassification, AutoTokenizer from path_to_custom_classes import ( LiLTRobertaLikeConfig, LiLTRobertaLikeForRelationExtraction, LiLTRobertaLikeForTokenClassification, LiLTRobertaLikeModel ) def patch_transformers(): AutoConfig.register("liltrobertalike", LiLTRobertaLikeConfig) AutoModel.register(LiLTRobertaLikeConfig, LiLTRobertaLikeModel) AutoModelForTokenClassification.register(LiLTRobertaLikeConfig, LiLTRobertaLikeForTokenClassification) | efe947c1aa165c55f7d4f47c66f90f57 |

mit | ['token-classification', 'fill-mask'] | false | patch_transformers() must have been executed beforehand tokenizer = AutoTokenizer.from_pretrained("microsoft/infoxlm-base") model = AutoModel.from_pretrained("manu/lilt-infoxlm-base") model = AutoModelForTokenClassification.from_pretrained("manu/lilt-infoxlm-base") | 240d272bbffae5873802b3e02a9d6522 |

mit | ['generated_from_keras_callback'] | false | Sushant45/Catalan_language-clustered This model is a fine-tuned version of [nandysoham16/13-clustered_aug](https://huggingface.co/nandysoham16/13-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.5260 - Train End Logits Accuracy: 0.8611 - Train Start Logits Accuracy: 0.8576 - Validation Loss: 0.8536 - Validation End Logits Accuracy: 0.7273 - Validation Start Logits Accuracy: 0.9091 - Epoch: 0 | 3e139d934e56673fe2d78b15ad102121 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.5260 | 0.8611 | 0.8576 | 0.8536 | 0.7273 | 0.9091 | 0 | | 36fbeb088d50092dc08a17c399ddde1e |

apache-2.0 | [] | false | home). It was introduced in the paper [ViLT: Vision-and-Language Transformer Without Convolution or Region Supervision](https://arxiv.org/abs/2102.03334) by Kim et al. and first released in [this repository](https://github.com/dandelin/ViLT). Disclaimer: The team releasing ViLT did not write a model card for this model so this model card has been written by the Hugging Face team. | 7fafcfbc12ca5bb7636b50bf649a1e07 |

apache-2.0 | [] | false | How to use Here is how to use the model in PyTorch: ``` from transformers import ViltProcessor, ViltForImageAndTextRetrieval import requests from PIL import Image url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) texts = ["An image of two cats chilling on a couch", "A football player scoring a goal"] processor = ViltProcessor.from_pretrained("dandelin/vilt-b32-finetuned-coco") model = ViltForImageAndTextRetrieval.from_pretrained("dandelin/vilt-b32-finetuned-coco") | e59bc31d6b19bb0ccb5da198d5ae597c |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001372 - train_batch_size: 1 - eval_batch_size: 8 - seed: 3064995158 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1.0 | 22b1499e30508deb5ab373f8780c2032 |

apache-2.0 | ['generated_from_trainer'] | false | sagemaker-distilbert-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2434 - Accuracy: 0.9165 | 02529e44d84953ee4f4aec85d0fff03a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.9423 | 1.0 | 500 | 0.2434 | 0.9165 | | bbe648dc653d333f6ef5a855fe955c1a |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1344 - F1: 0.8617 | a237b1145dfc8e45a32140d04b09ee8a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2564 | 1.0 | 525 | 0.1610 | 0.8285 | | 0.1307 | 2.0 | 1050 | 0.1378 | 0.8491 | | 0.0813 | 3.0 | 1575 | 0.1344 | 0.8617 | | c5e93fe888a6cdd6387675810a1de58b |

apache-2.0 | ['masked-lm', 'pytorch'] | false | BERT for Patents BERT for Patents is a model trained by Google on 100M+ patents (not just US patents). It is based on BERT<sub>LARGE</sub>. If you want to learn more about the model, check out the [blog post](https://cloud.google.com/blog/products/ai-machine-learning/how-ai-improves-patent-analysis), [white paper](https://services.google.com/fh/files/blogs/bert_for_patents_white_paper.pdf) and [GitHub page](https://github.com/google/patents-public-data/blob/master/models/BERT%20for%20Patents.md) containing the original TensorFlow checkpoint. --- | c1460a7e29e73a4893a6954241be4a22 |

apache-2.0 | ['masked-lm', 'pytorch'] | false | Projects using this model (or variants of it): - [Patents4IPPC](https://github.com/ec-jrc/Patents4IPPC) (carried out by [Pi School](https://picampus-school.com/) and commissioned by the [Joint Research Centre (JRC)](https://ec.europa.eu/jrc/en) of the European Commission) | a43a2ccbcd9e76cc72542a4b014ca30f |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-digikala-titleGen This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.8801 - Rouge1: 70.3489 - Rouge2: 43.245 - Rougel: 34.6608 - Rougelsum: 34.6608 | 807d3fcbcf3ce6f0c284eb6ee8d3d7a0 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.6e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 7 | 8da9d2e3cd2c210a9c90aa1f88322c6f |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | 7.5555 | 1.0 | 847 | 3.2594 | 45.6729 | 19.6446 | 31.5974 | 31.5974 | | 4.1386 | 2.0 | 1694 | 3.0347 | 58.3021 | 32.8172 | 33.9012 | 33.9012 | | 3.7449 | 3.0 | 2541 | 2.9665 | 66.731 | 40.8991 | 34.2203 | 34.2203 | | 3.5575 | 4.0 | 3388 | 2.9102 | 65.598 | 39.4081 | 34.5116 | 34.5116 | | 3.4062 | 5.0 | 4235 | 2.8944 | 69.6081 | 42.8707 | 34.6622 | 34.6622 | | 3.3408 | 6.0 | 5082 | 2.8888 | 70.2123 | 42.8639 | 34.5669 | 34.5669 | | 3.3025 | 7.0 | 5929 | 2.8801 | 70.3489 | 43.245 | 34.6608 | 34.6608 | | cc5649a627b291866b04a01899f8313b |

cc-by-4.0 | ['generated_from_trainer'] | false | hing-roberta-NCM-run-2 This model is a fine-tuned version of [l3cube-pune/hing-roberta](https://huggingface.co/l3cube-pune/hing-roberta) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.3647 - Accuracy: 0.6483 - Precision: 0.6369 - Recall: 0.6325 - F1: 0.6341 | fb0e78c826b3157584e5b51822d8e38b |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8973 | 1.0 | 927 | 0.8166 | 0.6483 | 0.6545 | 0.6576 | 0.6460 | | 0.6827 | 2.0 | 1854 | 0.9071 | 0.6526 | 0.6444 | 0.6261 | 0.6299 | | 0.4672 | 3.0 | 2781 | 1.1600 | 0.6764 | 0.6657 | 0.6634 | 0.6643 | | 0.3388 | 4.0 | 3708 | 1.7426 | 0.6548 | 0.6406 | 0.6442 | 0.6418 | | 0.2786 | 5.0 | 4635 | 1.9385 | 0.6505 | 0.6484 | 0.6437 | 0.6434 | | 0.1794 | 6.0 | 5562 | 2.3158 | 0.6472 | 0.6564 | 0.6365 | 0.6388 | | 0.12 | 7.0 | 6489 | 2.6961 | 0.6591 | 0.6458 | 0.6531 | 0.6466 | | 0.1298 | 8.0 | 7416 | 2.7196 | 0.6505 | 0.6523 | 0.6307 | 0.6342 | | 0.0941 | 9.0 | 8343 | 2.5853 | 0.6548 | 0.6406 | 0.6426 | 0.6415 | | 0.0696 | 10.0 | 9270 | 2.8386 | 0.6613 | 0.6616 | 0.6314 | 0.6348 | | 0.0722 | 11.0 | 10197 | 2.9658 | 0.6537 | 0.6356 | 0.6356 | 0.6355 | | 0.0509 | 12.0 | 11124 | 3.3286 | 0.6429 | 0.6262 | 0.6192 | 0.6214 | | 0.0444 | 13.0 | 12051 | 3.1654 | 0.6483 | 0.6347 | 0.6302 | 0.6319 | | 0.0341 | 14.0 | 12978 | 2.9509 | 0.6537 | 0.6430 | 0.6394 | 0.6401 | | 0.0345 | 15.0 | 13905 | 3.3416 | 0.6656 | 0.6514 | 0.6488 | 0.6499 | | 0.0303 | 16.0 | 14832 | 3.3874 | 0.6419 | 0.6267 | 0.6339 | 0.6272 | | 0.0245 | 17.0 | 15759 | 3.2854 | 0.6570 | 0.6428 | 0.6420 | 0.6421 | | 0.0174 | 18.0 | 16686 | 3.2863 | 0.6602 | 0.6569 | 0.6427 | 0.6465 | | 0.0136 | 19.0 | 17613 | 3.3674 | 0.6494 | 0.6361 | 0.6341 | 0.6349 | | 0.0111 | 20.0 | 18540 | 3.3647 | 0.6483 | 0.6369 | 0.6325 | 0.6341 | | 58ff8cb84e306060e50f2a4e4dcb6f28 |

mit | ['generated_from_trainer'] | false | BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext-finetuned-pubmedqa-2 This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0005 - Accuracy: 0.54 | 26db431affa3ab7375e21f199d181bc6 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 95df28b27f9e83ef4e7758299140348d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 57 | 1.3510 | 0.54 | | No log | 2.0 | 114 | 0.9606 | 0.54 | | No log | 3.0 | 171 | 0.9693 | 0.54 | | No log | 4.0 | 228 | 1.0445 | 0.54 | | No log | 5.0 | 285 | 1.0005 | 0.54 | | d8946209550ef60b18a2707dadc24619 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | Version 0.1 | | matched-it acc | mismatched-it acc | | -------------------------------------------------------------------------------- |----------------|-------------------| | XLM-roBERTa-large-it-mnli | 84.75 | 85.39 | | 98ff68005fae0d78c0f298ab19f238ee |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | Model Description This model takes [xlm-roberta-large](https://huggingface.co/xlm-roberta-large) and fine-tunes it on a subset of NLI data taken from an automatically translated version of the MNLI corpus. It is intended to be used for zero-shot text classification, such as with the Hugging Face [ZeroShotClassificationPipeline](https://huggingface.co/transformers/master/main_classes/pipelines.html | cd85bf08f68fa7ec2d84ffe281ea35a9 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | Intended Usage This model is intended to be used for zero-shot text classification of Italian texts. Since the base model was pre-trained on 100 different languages, the model has shown some effectiveness in languages beyond those listed above as well. See the full list of pre-trained languages in appendix A of the [XLM-RoBERTa paper](https://arxiv.org/abs/1911.02116). For English-only classification, it is recommended to use [bart-large-mnli](https://huggingface.co/facebook/bart-large-mnli) or [a distilled bart MNLI model](https://huggingface.co/models?filter=pipeline_tag%3Azero-shot-classification&search=valhalla). | 78f3bba33150954ab5eeaaba792b6ec6 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | With the zero-shot classification pipeline The model can be loaded with the `zero-shot-classification` pipeline like so: ```python from transformers import pipeline classifier = pipeline("zero-shot-classification", model="Jiva/xlm-roberta-large-it-mnli", device=0, use_fast=True, multi_label=True) ``` You can then classify in any of the above languages. You can even pass the labels in one language and the sequence to classify in another: ```python | 5aa525eba840afe67b1ab9e0a247b83f |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | we will classify the following Wikipedia entry about Sardinia sequence_to_classify = "La Sardegna è una regione italiana a statuto speciale di 1 592 730 abitanti con capoluogo Cagliari, la cui denominazione bilingue utilizzata nella comunicazione ufficiale è Regione Autonoma della Sardegna / Regione Autònoma de Sardigna." | 657494797494922d51eb4731f53b294a |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | 'scores': [0.38871392607688904, 0.22633370757102966, 0.19398456811904907, 0.13735772669315338, 0.13708525896072388]} ``` The default hypothesis template is the English `This text is {}`. With this model, better results are achieved when providing a translated template: ```python sequence_to_classify = "La Sardegna è una regione italiana a statuto speciale di 1 592 730 abitanti con capoluogo Cagliari, la cui denominazione bilingue utilizzata nella comunicazione ufficiale è Regione Autonoma della Sardegna / Regione Autònoma de Sardigna." candidate_labels = ["geografia", "politica", "macchine", "cibo", "moda"] hypothesis_template = "si parla di {}" | 45114b24f4cf788842ed430ed77bb868 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | pose sequence as a NLI premise and label as a hypothesis from transformers import AutoModelForSequenceClassification, AutoTokenizer nli_model = AutoModelForSequenceClassification.from_pretrained('Jiva/xlm-roberta-large-it-mnli') tokenizer = AutoTokenizer.from_pretrained('Jiva/xlm-roberta-large-it-mnli') premise = sequence hypothesis = f'si parla di {label}.' | 863dc8839389fd9a91725e4d536bacc2 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | Version 0.1 The model has now been retrained on the full training set. Around 1000 sentence pairs have been removed from the set because their translation was botched by the translation model. | metric | value | |----------------- |------- | | learning_rate | 4e-6 | | optimizer | AdamW | | batch_size | 80 | | mcc | 0.77 | | train_loss | 0.34 | | eval_loss | 0.40 | | stopped_at_step | 9754 | | 45bd5ee3157e6bac5565d1a813cee4d2 |

mit | ['text-classification', 'pytorch', 'tensorflow'] | false | Version 0.0 This model was pre-trained on a set of 100 languages, as described in [the original paper](https://arxiv.org/abs/1911.02116). It was then fine-tuned on the task of NLI on an Italian translation of the MNLI dataset (85% of the train set only so far). The model used for translating the texts is Helsinki-NLP/opus-mt-en-it, with a max output sequence length of 120. The model has been trained for 1 epoch with learning rate 4e-6 and batch size 80; it currently scores 82 acc. on the remaining 15% of the training set. | 08ad0fb5c620f841bb127cfa4292beb5 |

bsd-3-clause | ['pytorch-lightning', 'audio-to-audio'] | false | Model description NU-Wave: A Diffusion Probabilistic Model for Neural Audio Upsampling - [GitHub Repo](https://github.com/mindslab-ai/nuwave) - [Paper](https://arxiv.org/pdf/2104.02321.pdf) This model was trained by contributor [Frederico S. Oliveira](https://huggingface.co/freds0), who graciously [provided the checkpoint](https://github.com/mindslab-ai/nuwave/issues/18) in the original author's GitHub repo. This model was trained using source code written by Junhyeok Lee and Seungu Han under the BSD 3.0 License. All credit goes to them for this work. This model takes in audio at 24kHz and upsamples it to 48kHz. | d665d51324c255609d1d356b370ed1e6 |

bsd-3-clause | ['pytorch-lightning', 'audio-to-audio'] | false | How to use You can try out this model here: [](https://colab.research.google.com/gist/nateraw/bd78af284ef78a960e18a75cb13deab1/nu-wave-x2.ipynb) | ed83937fca5a1196b6f93707c931b1da |

bsd-3-clause | ['pytorch-lightning', 'audio-to-audio'] | false | Eval results You can check out the authors' results at [their project page](https://mindslab-ai.github.io/nuwave/). The project page contains many samples of upsampled audio from the authors' models. | 576def31f9ad63342d01edfe03276216 |
