license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4636 | 0.04 | 50 | 0.3662 | 0.8667 | | 0.442 | 0.08 | 100 | 0.3471 | 0.84 | | 0.3574 | 0.12 | 150 | 0.3446 | 0.86 | | 0.392 | 0.16 | 200 | 0.6776 | 0.6267 | | 0.4801 | 0.2 | 250 | 0.4307 | 0.7667 | | 0.487 | 0.24 | 300 | 0.5127 | 0.8 | | 0.4414 | 0.28 | 350 | 0.3912 | 0.8133 | | 0.4495 | 0.32 | 400 | 0.4056 | 0.8333 | | 0.4637 | 0.37 | 450 | 0.3635 | 0.8533 | | 0.4231 | 0.41 | 500 | 0.4235 | 0.84 | | 0.4049 | 0.45 | 550 | 0.4094 | 0.8067 | | 0.4481 | 0.49 | 600 | 0.3977 | 0.7733 | | 0.4024 | 0.53 | 650 | 0.3361 | 0.8733 | | 0.3901 | 0.57 | 700 | 0.3014 | 0.8667 | | 0.3872 | 0.61 | 750 | 0.3363 | 0.8533 | | 0.377 | 0.65 | 800 | 0.3754 | 0.8 | | 0.459 | 0.69 | 850 | 0.3861 | 0.8 | | 0.437 | 0.73 | 900 | 0.3834 | 0.8333 | | 0.3823 | 0.77 | 950 | 0.3541 | 0.8733 | | 0.3561 | 0.81 | 1000 | 0.3177 | 0.84 | | 0.4536 | 0.85 | 1050 | 0.4291 | 0.78 | | 0.4457 | 0.89 | 1100 | 0.3193 | 0.86 | | 0.3478 | 0.93 | 1150 | 0.3159 | 0.8533 | | 0.4613 | 0.97 | 1200 | 0.3605 | 0.84 | | 0.4081 | 1.01 | 1250 | 0.4291 | 0.7867 | | 0.3849 | 1.06 | 1300 | 0.3114 | 0.8733 | | 0.4071 | 1.1 | 1350 | 0.2939 | 0.8667 | | 0.3484 | 1.14 | 1400 | 0.3212 | 0.84 | | 0.3869 | 1.18 | 1450 | 0.2717 | 0.8933 | | 0.3877 | 1.22 | 1500 | 0.3459 | 0.84 | | 0.4245 | 1.26 | 1550 | 0.3404 | 0.8733 | | 0.4148 | 1.3 | 1600 | 0.2863 | 0.8667 | | 0.3542 | 1.34 | 1650 | 0.3377 | 0.86 | | 0.4093 | 1.38 | 1700 | 0.2972 | 0.8867 | | 0.3579 | 1.42 | 1750 | 0.3926 | 0.86 | | 0.3892 | 1.46 | 1800 | 0.2870 | 0.8667 | | 0.3569 | 1.5 | 1850 | 0.4027 | 0.8467 | | 0.3493 | 1.54 | 1900 | 0.3069 | 0.8467 | | 0.36 | 1.58 | 1950 | 0.3197 | 0.8733 | | 0.3532 | 1.62 | 2000 | 0.3711 | 0.8667 | | 0.3311 | 1.66 | 2050 | 0.2897 | 0.8867 | | 0.346 | 1.7 | 2100 | 0.2938 | 0.88 | | 0.3389 | 1.75 | 2150 | 0.2734 | 0.8933 | | 0.3289 | 1.79 | 2200 | 
0.2606 | 0.8867 | | 0.3558 | 1.83 | 2250 | 0.3070 | 0.88 | | 0.3277 | 1.87 | 2300 | 0.2757 | 0.8867 | | 0.3166 | 1.91 | 2350 | 0.2759 | 0.8733 | | 0.3223 | 1.95 | 2400 | 0.2053 | 0.9133 | | 0.317 | 1.99 | 2450 | 0.2307 | 0.8867 | | 0.3408 | 2.03 | 2500 | 0.2557 | 0.9067 | | 0.3212 | 2.07 | 2550 | 0.2508 | 0.8867 | | 0.2806 | 2.11 | 2600 | 0.2472 | 0.88 | | 0.3567 | 2.15 | 2650 | 0.2790 | 0.8933 | | 0.2887 | 2.19 | 2700 | 0.3197 | 0.88 | | 0.3222 | 2.23 | 2750 | 0.2943 | 0.8667 | | 0.2773 | 2.27 | 2800 | 0.2297 | 0.88 | | 0.2728 | 2.31 | 2850 | 0.2813 | 0.8733 | | 0.3115 | 2.35 | 2900 | 0.3470 | 0.8867 | | 0.3001 | 2.39 | 2950 | 0.2702 | 0.8933 | | 0.3464 | 2.44 | 3000 | 0.2855 | 0.9 | | 0.3041 | 2.48 | 3050 | 0.2366 | 0.8867 | | 0.2717 | 2.52 | 3100 | 0.3220 | 0.88 | | 0.2903 | 2.56 | 3150 | 0.2230 | 0.9 | | 0.2959 | 2.6 | 3200 | 0.2439 | 0.9067 | | 0.2753 | 2.64 | 3250 | 0.2918 | 0.8733 | | 0.2515 | 2.68 | 3300 | 0.2493 | 0.88 | | 0.295 | 2.72 | 3350 | 0.2673 | 0.8867 | | 0.2572 | 2.76 | 3400 | 0.2842 | 0.8733 | | 0.2988 | 2.8 | 3450 | 0.2306 | 0.9067 | | 0.2923 | 2.84 | 3500 | 0.2329 | 0.8933 | | 0.2856 | 2.88 | 3550 | 0.2374 | 0.88 | | 0.2867 | 2.92 | 3600 | 0.2294 | 0.8733 | | 0.306 | 2.96 | 3650 | 0.2169 | 0.92 | | 0.2312 | 3.0 | 3700 | 0.2456 | 0.88 | | 0.2438 | 3.04 | 3750 | 0.2134 | 0.8867 | | 0.2103 | 3.08 | 3800 | 0.2242 | 0.92 | | 0.2469 | 3.12 | 3850 | 0.2407 | 0.92 | | 0.2346 | 3.17 | 3900 | 0.1866 | 0.92 | | 0.2275 | 3.21 | 3950 | 0.2318 | 0.92 | | 0.2542 | 3.25 | 4000 | 0.2256 | 0.9 | | 0.2544 | 3.29 | 4050 | 0.2246 | 0.9133 | | 0.2468 | 3.33 | 4100 | 0.2436 | 0.8733 | | 0.2105 | 3.37 | 4150 | 0.2098 | 0.9067 | | 0.2818 | 3.41 | 4200 | 0.2304 | 0.88 | | 0.2041 | 3.45 | 4250 | 0.2430 | 0.8933 | | 0.28 | 3.49 | 4300 | 0.1990 | 0.9067 | | 0.1997 | 3.53 | 4350 | 0.2515 | 0.8933 | | 0.2409 | 3.57 | 4400 | 0.2315 | 0.9 | | 0.1969 | 3.61 | 4450 | 0.2160 | 0.8933 | | 0.2246 | 3.65 | 4500 | 0.1979 | 0.92 | | 0.2185 | 3.69 | 4550 | 0.2238 | 0.9 | | 0.259 | 
3.73 | 4600 | 0.2011 | 0.9067 | | 0.2407 | 3.77 | 4650 | 0.1911 | 0.92 | | 0.2198 | 3.81 | 4700 | 0.2083 | 0.92 | | 0.235 | 3.86 | 4750 | 0.1724 | 0.9267 | | 0.26 | 3.9 | 4800 | 0.1640 | 0.9333 | | 0.2334 | 3.94 | 4850 | 0.1778 | 0.9267 | | 0.2121 | 3.98 | 4900 | 0.2062 | 0.8933 | | 0.173 | 4.02 | 4950 | 0.1987 | 0.92 | | 0.1942 | 4.06 | 5000 | 0.2509 | 0.8933 | | 0.1703 | 4.1 | 5050 | 0.2179 | 0.9 | | 0.1735 | 4.14 | 5100 | 0.2429 | 0.8867 | | 0.2098 | 4.18 | 5150 | 0.1938 | 0.9267 | | 0.2126 | 4.22 | 5200 | 0.1971 | 0.92 | | 0.164 | 4.26 | 5250 | 0.2539 | 0.9067 | | 0.2271 | 4.3 | 5300 | 0.1765 | 0.94 | | 0.2245 | 4.34 | 5350 | 0.1894 | 0.94 | | 0.182 | 4.38 | 5400 | 0.1790 | 0.9467 | | 0.1835 | 4.42 | 5450 | 0.2014 | 0.9333 | | 0.2185 | 4.46 | 5500 | 0.1881 | 0.9467 | | 0.2113 | 4.5 | 5550 | 0.1742 | 0.9333 | | 0.1997 | 4.55 | 5600 | 0.1762 | 0.94 | | 0.1959 | 4.59 | 5650 | 0.1657 | 0.9467 | | 0.2035 | 4.63 | 5700 | 0.1973 | 0.92 | | 0.228 | 4.67 | 5750 | 0.1769 | 0.9467 | | 0.1632 | 4.71 | 5800 | 0.1968 | 0.9267 | | 0.1468 | 4.75 | 5850 | 0.1822 | 0.9467 | | 0.1936 | 4.79 | 5900 | 0.1832 | 0.94 | | 0.1743 | 4.83 | 5950 | 0.1987 | 0.9267 | | 0.1654 | 4.87 | 6000 | 0.1943 | 0.9267 | | 0.1859 | 4.91 | 6050 | 0.1990 | 0.92 | | 0.2039 | 4.95 | 6100 | 0.1982 | 0.9267 | | 0.2325 | 4.99 | 6150 | 0.1990 | 0.9267 | | 31c96503a4ba03ade8d59e1cef963f58 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-wnli-from-scratch-custom-tokenizer-expand-vocab This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 5.5263 | 375c465704a9787dbb3fa2c25da4ea35 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 9.1486 | 6.25 | 500 | 7.8470 | | 7.1116 | 12.5 | 1000 | 6.6165 | | 6.3036 | 18.75 | 1500 | 6.1976 | | 6.0919 | 25.0 | 2000 | 6.2290 | | 6.0014 | 31.25 | 2500 | 6.0136 | | 5.9682 | 37.5 | 3000 | 5.8730 | | 5.8571 | 43.75 | 3500 | 5.7612 | | 5.8144 | 50.0 | 4000 | 5.7921 | | 5.7654 | 56.25 | 4500 | 5.8279 | | 5.7322 | 62.5 | 5000 | 5.5263 | | 2329d71e6616135b2989f13b42c533f7 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper base Czech CV This model is a fine-tuned version of [openai/whisper-base](https://huggingface.co/openai/whisper-base) on the mozilla-foundation/common_voice_11_0 cs dataset. It achieves the following results on the evaluation set: - Loss: 0.5394 - Wer: 33.9957 | c396b5a617485eb1c1c1bd23ac1fa0c4 |
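The Wer value above appears to be reported as a percentage (33.9957 means roughly 34% of reference words are wrong). As an illustration of what that number measures, here is a minimal pure-Python sketch of corpus-level word error rate: word-level edit distance (substitutions + deletions + insertions) summed over all utterances and divided by the total number of reference words. It assumes plain whitespace tokenization and no text normalization, since the card does not say how transcripts were normalized; the helper names are hypothetical.

```python
def edit_distance(ref_words, hyp_words):
    """Minimum number of word substitutions, deletions and insertions turning ref_words into hyp_words."""
    # prev[j] = distance between the first i-1 reference words and the first j hypothesis words.
    prev = list(range(len(hyp_words) + 1))
    for i in range(1, len(ref_words) + 1):
        curr = [i] + [0] * len(hyp_words)
        for j in range(1, len(hyp_words) + 1):
            cost = 0 if ref_words[i - 1] == hyp_words[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # delete a reference word
                curr[j - 1] + 1,     # insert a hypothesis word
                prev[j - 1] + cost,  # substitute (or match when cost == 0)
            )
        prev = curr
    return prev[-1]


def corpus_wer(references, hypotheses):
    """Corpus-level WER: total word errors divided by total reference words."""
    if len(references) != len(hypotheses):
        raise ValueError("references and hypotheses must have the same length")
    errors = 0
    total = 0
    for ref, hyp in zip(references, hypotheses):
        ref_words, hyp_words = ref.split(), hyp.split()
        errors += edit_distance(ref_words, hyp_words)
        total += len(ref_words)
    if total == 0:
        raise ValueError("references contain no words")
    return errors / total
```

Multiplying the result by 100 puts it on the same scale as the Wer column in the training results.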

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.206 | 4.01 | 1000 | 0.4356 | 36.2443 | | 0.0332 | 8.02 | 2000 | 0.4583 | 34.0509 | | 0.0074 | 12.03 | 3000 | 0.5119 | 34.4395 | | 0.005 | 16.04 | 4000 | 0.5394 | 33.9957 | | 0.0045 | 21.01 | 5000 | 0.5461 | 34.1025 | | d13e8bbacc5e97507ef5b8551157c89f |

apache-2.0 | ['generated_from_trainer'] | false | finetuned-sentiment-analysis-model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.2868 - Accuracy: 0.909 - Precision: 0.8900 - Recall: 0.9283 | e69ab00e437812847e92bb5369b28384 |
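The card lists accuracy, precision, and recall but no F1. The sketch below shows how these binary metrics relate, assuming label 1 is the positive class (the positive-sentiment label in IMDb). It is plain Python, not the evaluation code behind this card, and `binary_metrics` is a hypothetical helper.

```python
def binary_metrics(labels, predictions, positive=1):
    """Accuracy, precision, recall and F1 for a binary task, treating `positive` as the positive class."""
    if len(labels) != len(predictions) or not labels:
        raise ValueError("labels and predictions must be non-empty and of equal length")
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == positive and p == positive)
    fp = sum(1 for y, p in pairs if y != positive and p == positive)
    fn = sum(1 for y, p in pairs if y == positive and p != positive)
    correct = sum(1 for y, p in pairs if y == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(pairs), "precision": precision, "recall": recall, "f1": f1}
```

Plugging the reported precision (0.8900) and recall (0.9283) into the same harmonic-mean formula gives an implied F1 of about 0.909.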

mit | [] | false | 🇹🇷 DistilBERTurk DistilBERTurk is a community-driven cased distilled BERT model for Turkish. DistilBERTurk was trained on 7GB of the original training data that was used for training [BERTurk](https://github.com/stefan-it/turkish-bert/tree/master | 233dc483cf1cf9e2cd87c8ef03be59e3 |

mit | [] | false | stats), using the cased version of BERTurk as the teacher model. *DistilBERTurk* was trained with the official Hugging Face implementation from [here](https://github.com/huggingface/transformers/tree/master/examples/distillation) for 5 days on four RTX 2080 Ti GPUs. More details about distillation can be found in the ["DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter"](https://arxiv.org/abs/1910.01108) paper by Sanh et al. (2019). | 641adf64d128317e3c41cd10b8ca96c3 |

mit | [] | false | Model weights Currently only PyTorch-[Transformers](https://github.com/huggingface/transformers) compatible weights are available. If you need access to TensorFlow checkpoints, please raise an issue in the [BERTurk](https://github.com/stefan-it/turkish-bert) repository! | Model | Downloads | --------------------------------- | --------------------------------------------------------------------------------------------------------------- | `dbmdz/distilbert-base-turkish-cased` | [`config.json`](https://cdn.huggingface.co/dbmdz/distilbert-base-turkish-cased/config.json) • [`pytorch_model.bin`](https://cdn.huggingface.co/dbmdz/distilbert-base-turkish-cased/pytorch_model.bin) • [`vocab.txt`](https://cdn.huggingface.co/dbmdz/distilbert-base-turkish-cased/vocab.txt) | 0db2f55e471710c11fb7582f98db8050 |

mit | [] | false | Usage With Transformers >= 2.3 our DistilBERTurk model can be loaded like: ```python from transformers import AutoModel, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("dbmdz/distilbert-base-turkish-cased") model = AutoModel.from_pretrained("dbmdz/distilbert-base-turkish-cased") ``` | 16902c79054be47eb9fbf6ec5fad3f31 |

mit | [] | false | Results For results on PoS tagging or NER tasks, please refer to [this repository](https://github.com/stefan-it/turkish-bert). For PoS tagging, DistilBERTurk outperforms the 24-layer XLM-RoBERTa model. The overall performance difference between DistilBERTurk and the original (teacher) BERTurk model is ~1.18%. | 495a8ca6681b5bd705ef7b63de9ec3a0 |

mit | [] | false | Acknowledgments Thanks to [Kemal Oflazer](http://www.andrew.cmu.edu/user/ko/) for providing us with additional large corpora for Turkish. Many thanks to Reyyan Yeniterzi for providing us with the Turkish NER dataset for evaluation. Research was supported with Cloud TPUs from Google's TensorFlow Research Cloud (TFRC). Thanks for providing access to the TFRC ❤️ Thanks to the generous support from the [Hugging Face](https://huggingface.co/) team, it is possible to download both cased and uncased models from their S3 storage 🤗 | d491b1d7f63d9740b3fd26aa9c87d131 |

afl-3.0 | [] | false | This model is used to detect **abusive speech** in **Marathi**. It is fine-tuned from the MuRIL model on a Marathi abusive speech dataset. The model was trained with a learning rate of 2e-5. Training code can be found at this [url](https://github.com/hate-alert/IndicAbusive). Labels: LABEL_0 → Normal, LABEL_1 → Abusive | 804da065157f1b7b28a4f2d4c8461d58 |
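The classifier emits the raw labels `LABEL_0` and `LABEL_1`. A minimal sketch of translating them into the names listed above, assuming the output has the shape returned by a `transformers` text-classification pipeline (a list of dicts with `label` and `score` keys). The model ID is not given in this section, so the sketch does not load the model; `readable_predictions` is a hypothetical helper and the example inputs in any test are made up.

```python
# Label names as documented in this model card.
LABEL_NAMES = {"LABEL_0": "Normal", "LABEL_1": "Abusive"}


def readable_predictions(pipeline_output):
    """Replace raw LABEL_i strings with the card's names, keeping the scores; unknown labels pass through."""
    return [
        {"label": LABEL_NAMES.get(item["label"], item["label"]), "score": item["score"]}
        for item in pipeline_output
    ]
```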

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1377 - F1: 0.8605 | bceace83d2630f57d898b81f282af72b |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2573 | 1.0 | 525 | 0.1651 | 0.8199 | | 0.1296 | 2.0 | 1050 | 0.1482 | 0.8413 | | 0.081 | 3.0 | 1575 | 0.1377 | 0.8605 | | 30c91110e9a7477808c9bd367e81d70d |

mit | [] | false | Czech GPT-2 small model trained on the OSCAR dataset This model was trained as a part of the [master thesis](https://dspace.cuni.cz/handle/20.500.11956/176356?locale-attribute=en) on the Czech part of the [OSCAR](https://huggingface.co/datasets/oscar) dataset. | 668e5d40d35779a470f925093de29d90 |

mit | [] | false | Introduction Czech-GPT2-OSCAR (Czech GPT-2 small) is a state-of-the-art language model for Czech based on the GPT-2 small model. Unlike the original GPT-2 small model, this model is trained to predict only 512 tokens instead of 1024, as it serves as a basis for the [Czech-GPT2-Medical](https://huggingface.co/lchaloupsky/czech-gpt2-medical) model. The model was trained on the Czech part of the [OSCAR](https://huggingface.co/datasets/oscar) dataset using transfer learning and fine-tuning techniques in about a week on one NVIDIA A100 SXM4 40GB, with a total of 21 GB of training data. This model was trained as part of the master thesis, mainly as a basis for the [Czech-GPT2-Medical](https://huggingface.co/lchaloupsky/czech-gpt2-medical) model, and as a proof of concept that it is possible to get a state-of-the-art language model in Czech with fewer resources than the original model and in a significantly shorter time. There was no Czech GPT-2 model available at the time the master thesis began. It was fine-tuned from the English pre-trained GPT-2 small using the Hugging Face libraries (Transformers and Tokenizers) wrapped into the fastai v2 deep learning framework. All of the fastai v2 fine-tuning techniques were used. The solution is based on the [Faster than training from scratch — Fine-tuning the English GPT-2 in any language with Hugging Face and fastai v2 (practical case with Portuguese)](https://medium.com/@pierre_guillou/faster-than-training-from-scratch-fine-tuning-the-english-gpt-2-in-any-language-with-hugging-f2ec05c98787) article. The trained model is now available on Hugging Face under [czech-gpt2-oscar](https://huggingface.co/lchaloupsky/czech-gpt2-oscar/). For more information, please ask in the discussion. | bf7cf31fc61f2123110a750a1c8c0ac4 |
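Because this model works with 512 tokens instead of GPT-2's 1024 (our reading of "predict only 512 tokens"), longer documents have to be split before they are fed to it. A minimal sketch of splitting already-tokenized ids into consecutive 512-token blocks; whether the thesis kept or dropped a final short block is not stated, so this version keeps it. `chunk_token_ids` is a hypothetical name.

```python
def chunk_token_ids(token_ids, block_size=512):
    """Split a flat list of token ids into consecutive blocks of at most block_size ids."""
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    # The last block may be shorter than block_size; it is kept rather than dropped.
    return [token_ids[i:i + block_size] for i in range(0, len(token_ids), block_size)]
```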

mit | [] | false | model-description)* GPT-2 is a transformers model pretrained on a very large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those texts. More precisely, it was trained to guess the next word in sentences: inputs are sequences of continuous text of a certain length, and the targets are the same sequence shifted one token (word or piece of word) to the right. The model internally uses a masking mechanism to make sure the prediction for token `i` only uses the inputs from `1` to `i` and not the future tokens. This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks. However, the model is best at what it was pretrained for, which is generating text from a prompt. | 272ab16c9250403d683da21024fc3ef2 |
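The next-token objective and the causal mask described above can be made concrete in a few lines. This sketch uses 0-based positions (so the text's "`1` to `i`" becomes `0` to `i`) and plain Python lists; it illustrates the idea rather than reproducing GPT-2's internal implementation, and both helper names are hypothetical.

```python
def causal_mask(seq_len):
    """mask[i][j] is True when the prediction at position i may attend to input position j (j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def shift_for_next_token(token_ids):
    """Split a sequence into model inputs and next-token targets (targets[t] == token_ids[t + 1])."""
    return token_ids[:-1], token_ids[1:]
```

For the sequence `[5, 6, 7, 8]`, the model sees `[5, 6, 7]` and is trained to predict `[6, 7, 8]`; row `t` of the mask marks which of those inputs the prediction at position `t` may look at.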

mit | [] | false | Load Czech-GPT2-OSCAR and its sub-word tokenizer (Byte-level BPE) ```python from transformers import GPT2Tokenizer, GPT2LMHeadModel import torch tokenizer = GPT2Tokenizer.from_pretrained("lchaloupsky/czech-gpt2-oscar") model = GPT2LMHeadModel.from_pretrained("lchaloupsky/czech-gpt2-oscar") ``` | bac6923ec7419df582e75c678e558609 |

mit | [] | false | Load Czech-GPT2-OSCAR and its sub-word tokenizer (Byte-level BPE) ```python from transformers import GPT2Tokenizer, TFGPT2LMHeadModel import tensorflow as tf tokenizer = GPT2Tokenizer.from_pretrained("lchaloupsky/czech-gpt2-oscar") model = TFGPT2LMHeadModel.from_pretrained("lchaloupsky/czech-gpt2-oscar") ``` | 78c08fd4a51537365378c12b87ff217f |

mit | [] | false | model output using Top-k sampling text generation method outputs = model.generate(input_ids, eos_token_id=50256, pad_token_id=50256, do_sample=True, max_length=40, top_k=40) print(tokenizer.decode(outputs[0])) | 7c06317e02ca3d7c4bfd3eb4f99205dd |
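With `do_sample=True` and `top_k=40`, each generation step keeps only the 40 highest-scoring tokens, renormalizes their probabilities with a softmax, and samples one of them. The single-step sketch below illustrates that idea in plain Python over a list of logits; it is not the `transformers` implementation (which also applies options such as temperature), and `top_k_sample` is a hypothetical helper.

```python
import math
import random


def top_k_sample(logits, k, rng=random):
    """Sample one token index, restricting the softmax to the k highest logits."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and len(logits)")
    # Indices of the k largest logits, best first.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax numerators over the kept tokens; subtracting the max logit avoids overflow.
    best = logits[top[0]]
    weights = [math.exp(logits[i] - best) for i in top]
    # random.choices normalizes the weights, so this samples from the renormalized top-k distribution.
    return rng.choices(top, weights=weights, k=1)[0]
```

With `k=1` this reduces to greedy decoding; larger `k` trades determinism for diversity.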

mit | [] | false | Limitations and bias The training data used for this model comes from the Czech part of the OSCAR dataset. We know it contains a lot of unfiltered content from the internet, which is far from neutral. As the OpenAI team themselves point out in their model card: > Because large-scale language models like GPT-2 do not distinguish fact from fiction, we don’t support use-cases that require the generated text to be true. Additionally, language models like GPT-2 reflect the biases inherent to the systems they were trained on, so we do not recommend that they be deployed into systems that interact with humans unless the deployers first carry out a study of biases relevant to the intended use-case. We found no statistically significant difference in gender, race, and religious bias probes between 774M and 1.5B, implying all versions of GPT-2 should be approached with similar levels of caution around use cases that are sensitive to biases around human attributes. | 18ca6eb85bee42201dd17bf59d497d5b |

mit | [] | false | Author Czech-GPT2-OSCAR was trained and evaluated by [Lukáš Chaloupský](https://cz.linkedin.com/in/luk%C3%A1%C5%A1-chaloupsk%C3%BD-0016b8226?original_referer=https%3A%2F%2Fwww.google.com%2F) thanks to the computing power of the GPU (NVIDIA A100 SXM4 40GB) cluster of [IT4I](https://www.it4i.cz/) (VSB - Technical University of Ostrava). | 0a815786573d621f2652cfa3ff8952cb |

mit | [] | false | Citation ``` @article{chaloupsky2022automatic, title={Automatic generation of medical reports from chest X-rays in Czech}, author={Chaloupsk{\'y}, Luk{\'a}{\v{s}}}, year={2022}, publisher={Charles University, Faculty of Mathematics and Physics} } ``` | 0b8b65eb2561cda84ddf8f9d830d43fe |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | What is RankGen? RankGen is a suite of encoder models (100M-1.2B parameters) which map prefixes and generations from any pretrained English language model to a shared vector space. RankGen can be used to rerank multiple full-length samples from an LM, and it can also be incorporated as a scoring function into beam search to significantly improve generation quality (0.85 vs 0.77 MAUVE, 75% preference according to human annotators who are English writers). RankGen can also be used as a dense retriever, and achieves state-of-the-art performance on [literary retrieval](https://relic.cs.umass.edu/leaderboard.html). | 84716b49ebe81fc4a8c52e165bc16ec6 |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | Setup **Requirements** (`pip` will install these dependencies for you) Python 3.7+, `torch` (CUDA recommended), `transformers` **Installation** ``` python3.7 -m virtualenv rankgen-venv source rankgen-venv/bin/activate pip install rankgen ``` Get the data [here](https://drive.google.com/drive/folders/1DRG2ess7fK3apfB-6KoHb_azMuHbsIv4?usp=sharing) and place the folder in the root directory. Alternatively, use `gdown` as shown below: ``` gdown --folder https://drive.google.com/drive/folders/1DRG2ess7fK3apfB-6KoHb_azMuHbsIv4 ``` Run the test script to make sure the RankGen checkpoint has loaded correctly: ``` python -m rankgen.test_rankgen_encoder --model_path kalpeshk2011/rankgen-t5-base-all ``` | 2a5e4e1fe36129f0bebcb43016892934 |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | Using RankGen Loading RankGen is simple using the HuggingFace APIs (see Method-2 below), but we suggest using [`RankGenEncoder`](https://github.com/martiansideofthemoon/rankgen/blob/master/rankgen/rankgen_encoder.py), which is a small wrapper around the HuggingFace APIs for correctly preprocessing data and doing tokenization automatically. You can either download [our repository](https://github.com/martiansideofthemoon/rankgen) and install the API, or copy the implementation from [below]( | bee30e71c9145ff08d249b5a5dd0709c |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | Encoding vectors prefix_vectors = rankgen_encoder.encode(["This is a prefix sentence."], vectors_type="prefix") suffix_vectors = rankgen_encoder.encode(["This is a suffix sentence."], vectors_type="suffix") | cb27275ba4e095ff76ff5944a7cee0c1 |
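Once prefix and suffix vectors exist, candidates can be ranked by how well each suffix matches the prefix. This sketch assumes RankGen scores a continuation by the dot product between the prefix vector and that candidate's suffix vector (our understanding of the RankGen setup; treat it as an assumption). With the `RankGenEncoder` implementation in this card, the vectors would come from the `"embeddings"` entry of the dict returned by `encode`. `rerank` and `dot` are hypothetical helpers, and toy vectors stand in for real embeddings.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


def rerank(prefix_vector, suffix_vectors, candidates):
    """Return (candidate, score) pairs sorted by dot-product score, highest first."""
    if len(suffix_vectors) != len(candidates):
        raise ValueError("need exactly one suffix vector per candidate")
    scores = [dot(prefix_vector, s) for s in suffix_vectors]
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    return [(candidates[i], scores[i]) for i in order]
```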

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | use a HuggingFace compatible language model generator = RankGenGenerator(rankgen_encoder=rankgen_encoder, language_model="gpt2-medium") inputs = ["Whatever might be the nature of the tragedy it would be over with long before this, and those moving black spots away yonder to the west, that he had discerned from the bluff, were undoubtedly the departing raiders. There was nothing left for Keith to do except determine the fate of the unfortunates, and give their bodies decent burial. That any had escaped, or yet lived, was altogether unlikely, unless, perchance, women had been in the party, in which case they would have been borne away prisoners."] | 7c9ab61a069e24c34f1ec1555b7da2a5 |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | Method-2: Loading the model with HuggingFace APIs ``` from transformers import T5Tokenizer, AutoModel tokenizer = T5Tokenizer.from_pretrained("google/t5-v1_1-large") model = AutoModel.from_pretrained("kalpeshk2011/rankgen-t5-large-all", trust_remote_code=True) ``` | dfe0dd584cd2c82e74a1f909383c5322 |

apache-2.0 | ['t5', 'contrastive learning', 'ranking', 'decoding', 'metric learning', 'pytorch', 'text generation', 'retrieval'] | false | RankGenEncoder Implementation ``` import tqdm import torch from transformers import T5Tokenizer, T5EncoderModel, AutoModel class RankGenEncoder(): def __init__(self, model_path, max_batch_size=32, model_size=None, cache_dir=None): assert model_path in ["kalpeshk2011/rankgen-t5-xl-all", "kalpeshk2011/rankgen-t5-xl-pg19", "kalpeshk2011/rankgen-t5-base-all", "kalpeshk2011/rankgen-t5-large-all"] self.max_batch_size = max_batch_size self.device = 'cuda' if torch.cuda.is_available() else 'cpu' if model_size is None: if "t5-large" in model_path or "t5_large" in model_path: self.model_size = "large" elif "t5-xl" in model_path or "t5_xl" in model_path: self.model_size = "xl" else: self.model_size = "base" else: self.model_size = model_size self.tokenizer = T5Tokenizer.from_pretrained(f"google/t5-v1_1-{self.model_size}", cache_dir=cache_dir) self.model = AutoModel.from_pretrained(model_path, trust_remote_code=True, cache_dir=cache_dir) self.model.to(self.device) self.model.eval() def encode(self, inputs, vectors_type="prefix", verbose=False, return_input_ids=False): tokenizer = self.tokenizer max_batch_size = self.max_batch_size if isinstance(inputs, str): inputs = [inputs] if vectors_type == 'prefix': inputs = ['pre ' + input for input in inputs] max_length = 512 else: inputs = ['suffi ' + input for input in inputs] max_length = 128 all_embeddings = [] all_input_ids = [] for i in tqdm.tqdm(range(0, len(inputs), max_batch_size), total=(len(inputs) + max_batch_size - 1) // max_batch_size, disable=not verbose, desc=f"Encoding {vectors_type} inputs:"): tokenized_inputs = tokenizer(inputs[i:i + max_batch_size], return_tensors="pt", padding=True) for k, v in tokenized_inputs.items(): tokenized_inputs[k] = v[:, :max_length] tokenized_inputs = tokenized_inputs.to(self.device) with torch.inference_mode(): batch_embeddings = self.model(**tokenized_inputs) all_embeddings.append(batch_embeddings) if 
return_input_ids: all_input_ids.extend(tokenized_inputs.input_ids.cpu().tolist()) return { "embeddings": torch.cat(all_embeddings, dim=0), "input_ids": all_input_ids } ``` | 38e3aebf6dbc739328d8e7a09d8a74bd |

mit | ['sklearn', 'skops', 'tabular-classification'] | false | Hyperparameters The model was trained with the hyperparameters below. <details> <summary> Click to expand </summary> | Hyperparameter | Value | |--------------------------|---------| | ccp_alpha | 0.0 | | class_weight | | | criterion | gini | | max_depth | | | max_features | | | max_leaf_nodes | | | min_impurity_decrease | 0.0 | | min_samples_leaf | 1 | | min_samples_split | 2 | | min_weight_fraction_leaf | 0.0 | | random_state | | | splitter | best | </details> | d26fb4be48d70f4677e4ebdac35e147a |
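Blank cells in the table presumably correspond to `None`, which is scikit-learn's default for those parameters. Read that way, the table describes a default `DecisionTreeClassifier()`, consistent with the model plot. A minimal sketch that builds the classifier with these hyperparameters and fits it on toy data (illustrative only, not the model's training data):

```python
from sklearn.tree import DecisionTreeClassifier

# Blank table cells are read here as None (assumption: scikit-learn's default for these parameters).
clf = DecisionTreeClassifier(
    ccp_alpha=0.0,
    class_weight=None,
    criterion="gini",
    max_depth=None,
    max_features=None,
    max_leaf_nodes=None,
    min_impurity_decrease=0.0,
    min_samples_leaf=1,
    min_samples_split=2,
    min_weight_fraction_leaf=0.0,
    random_state=None,
    splitter="best",
)

# Toy data: the label equals the second feature, so a single split separates the classes.
X = [[0, 0], [1, 1], [0, 1], [1, 0]]
y = [0, 1, 1, 0]
clf.fit(X, y)
```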

mit | ['sklearn', 'skops', 'tabular-classification'] | false | sk-container-id-1 div.sk-text-repr-fallback {display: none;}</style><div id="sk-container-id-1" class="sk-top-container" style="overflow: auto;"><div class="sk-text-repr-fallback"><pre>DecisionTreeClassifier()</pre><b>In a Jupyter environment, please rerun this cell to show the HTML representation or trust the notebook. <br />On GitHub, the HTML representation is unable to render, please try loading this page with nbviewer.org.</b></div><div class="sk-container" hidden><div class="sk-item"><div class="sk-estimator sk-toggleable"><input class="sk-toggleable__control sk-hidden--visually" id="sk-estimator-id-1" type="checkbox" checked><label for="sk-estimator-id-1" class="sk-toggleable__label sk-toggleable__label-arrow">DecisionTreeClassifier</label><div class="sk-toggleable__content"><pre>DecisionTreeClassifier()</pre></div></div></div></div></div> | 5ac20991d0ff59e3e063aadb7afd8a0a |

mit | ['sklearn', 'skops', 'tabular-classification'] | false | How to Get Started with the Model Use the code below to get started with the model. ```python import joblib import json import pandas as pd clf = joblib.load("example.pkl") with open("config.json") as f: config = json.load(f) clf.predict(pd.DataFrame.from_dict(config["sklearn"]["example_input"])) ``` | 74dfbcea7350558d066910fde56c04bd |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Prompts to start with: ttdddd, __________, movie, ultra high quality render, high quality graphical details, 8k, volumetric lighting, micro details, (cinematic). Negative prompt: bad, low-quality, 3d, game. The prompt can also be as simple as the instance name, ttdddd, and you will still get great results. You can also train your own concepts and upload them to the library by using [fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). Test the concept via the A1111 Colab: [fast-stable-diffusion-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) | 801bc15acd517df2ba67469fa8c1c9d4 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Sample pictures of this concept: (images not preserved) | 1e1263158064181b9e90b187f2922368 |

mit | ['generated_from_trainer'] | false | deberta-base-finetuned-ner This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0501 - Precision: 0.9563 - Recall: 0.9652 - F1: 0.9608 - Accuracy: 0.9899 | 12144bed26cb5834303c9d411979f832 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.1419 | 1.0 | 878 | 0.0628 | 0.9290 | 0.9288 | 0.9289 | 0.9835 | | 0.0379 | 2.0 | 1756 | 0.0466 | 0.9456 | 0.9567 | 0.9511 | 0.9878 | | 0.0176 | 3.0 | 2634 | 0.0473 | 0.9539 | 0.9575 | 0.9557 | 0.9890 | | 0.0098 | 4.0 | 3512 | 0.0468 | 0.9570 | 0.9635 | 0.9603 | 0.9896 | | 0.0043 | 5.0 | 4390 | 0.0501 | 0.9563 | 0.9652 | 0.9608 | 0.9899 | | d87ee70dc86323603199a107bfe1f64b |
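The precision, recall, and F1 reported for CoNLL-2003 are presumably entity-level scores, as computed by the `seqeval` metric that Trainer token-classification examples commonly use, while Accuracy is likely token-level. The sketch below is a simplified pure-Python version of entity-level scoring, assuming IOB2 tags (`B-PER`, `I-PER`, `O`, ...): a predicted entity counts only if its type and exact span match a gold entity. It is not `seqeval` itself, and the helper names are hypothetical.

```python
def extract_entities(tags):
    """Return the set of (entity_type, start, end) spans in a list of IOB2 tags; end is exclusive."""
    entities = set()
    start, ent_type = None, None
    # A trailing "O" sentinel closes an entity that runs to the end of the sentence.
    for i, tag in enumerate(list(tags) + ["O"]):
        # Close the open entity on O, on B-, or on an I- tag of a different type.
        closes = tag == "O" or tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != ent_type)
        if closes and ent_type is not None:
            entities.add((ent_type, start, i))
            start, ent_type = None, None
        # Open a new entity on B-, or leniently on an I- tag that has no open entity to continue.
        if tag.startswith("B-") or (tag.startswith("I-") and ent_type is None):
            start, ent_type = i, tag[2:]
    return entities


def entity_prf(gold_sequences, pred_sequences):
    """Micro-averaged entity-level precision, recall and F1 over a list of sentences."""
    tp = n_pred = n_gold = 0
    for gold, pred in zip(gold_sequences, pred_sequences):
        gold_entities = extract_entities(gold)
        pred_entities = extract_entities(pred)
        tp += len(gold_entities & pred_entities)
        n_pred += len(pred_entities)
        n_gold += len(gold_entities)
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_gold if n_gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

As a consistency check, the harmonic mean of the final precision (0.9563) and recall (0.9652) is about 0.9607, matching the reported F1 of 0.9608 up to rounding.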

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-gender_classification This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1484 - F1: 0.9645 - Roc Auc: 0.9732 - Accuracy: 0.964 | 80b290424900d50defd6e8b7456c46aa |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | Roc Auc | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:------:|:-------:|:--------:| | 0.1679 | 1.0 | 1125 | 0.1781 | 0.928 | 0.946 | 0.927 | | 0.1238 | 2.0 | 2250 | 0.1252 | 0.9516 | 0.9640 | 0.95 | | 0.0863 | 3.0 | 3375 | 0.1283 | 0.9515 | 0.9637 | 0.95 | | 0.0476 | 4.0 | 4500 | 0.1419 | 0.9565 | 0.9672 | 0.956 | | 0.0286 | 5.0 | 5625 | 0.1428 | 0.9555 | 0.9667 | 0.954 | | 0.0091 | 6.0 | 6750 | 0.1515 | 0.9604 | 0.9700 | 0.959 | | 0.0157 | 7.0 | 7875 | 0.1535 | 0.9580 | 0.9682 | 0.957 | | 0.0048 | 8.0 | 9000 | 0.1484 | 0.9645 | 0.9732 | 0.964 | | 0.0045 | 9.0 | 10125 | 0.1769 | 0.9605 | 0.9703 | 0.96 | | 0.0037 | 10.0 | 11250 | 0.2007 | 0.9565 | 0.9672 | 0.956 | | 738e54228d849b7af9f54cb1194dd0bd |
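The card reports "Roc Auc" without stating how it was averaged. For the binary case, ROC AUC equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counting half. A minimal O(n²) sketch of that definition, suitable only for small inputs; `roc_auc` is a hypothetical helper, and scikit-learn's `roc_auc_score` computes the same binary quantity more efficiently.

```python
def roc_auc(labels, scores):
    """Binary ROC AUC: fraction of (positive, negative) pairs ranked correctly, ties counted as 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))
```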

apache-2.0 | ['generated_from_trainer'] | false | distilbert-hatexplain-label-all-tokens-False This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.1722 | b60022678595ff2c264adccb595caf5e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 174 | 0.1750 | | No log | 2.0 | 348 | 0.1704 | | 0.1846 | 3.0 | 522 | 0.1722 | | b0588e6abe2d0c808c23bfd33aea68fa |

apache-2.0 | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 16 - eval_batch_size: 16 - gradient_accumulation_steps: 1 - optimizer: AdamW with betas=(None, None), weight_decay=None and epsilon=None - lr_scheduler: None - lr_warmup_steps: 500 - ema_inv_gamma: None - mixed_precision: no | a7e3fa8d91f8cdbb6dd2000ccbda633f |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-kor-11385-2 This model is a fine-tuned version of [teddy322/wav2vec2-large-xls-r-300m-kor-11385](https://huggingface.co/teddy322/wav2vec2-large-xls-r-300m-kor-11385) on the zeroth_korean_asr dataset. It achieves the following results on the evaluation set: - eval_loss: 0.2059 - eval_wer: 0.1471 - eval_runtime: 136.7247 - eval_samples_per_second: 3.342 - eval_steps_per_second: 0.424 - epoch: 6.47 - step: 4400 | 09652f158a63b738975944a6fed5089b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 12 - mixed_precision_training: Native AMP | 973068d7bd9b2d618134c1158d9e4dd8 |
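Two of these settings follow from the others. The effective batch size is the per-device batch size times the gradient accumulation steps times the number of devices, so 4 × 4 = 16 is consistent with a single device. The `linear` scheduler with 500 warmup steps ramps the learning rate from 0 up to 0.0003 and then decays it linearly to 0. A minimal sketch of both formulas, modeled on how `transformers` describes its linear schedule with warmup; the total number of optimizer steps is not given in the card, so `total_steps` is a parameter, and both helper names are hypothetical.

```python
def total_train_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples contributing to each optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


def linear_warmup_lr(step, base_lr, warmup_steps, total_steps):
    """Learning rate at an optimizer step: linear warmup from 0 to base_lr, then linear decay to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```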

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 48 | 0.4087 | 0.8806 | | 2da11c2efff2bb4f8e67a4daad59fbae |

apache-2.0 | ['translation'] | false | opus-mt-en-tw * source languages: en * target languages: tw * OPUS readme: [en-tw](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-tw/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.eval.txt) | 9ad82ff2b18d794d4943267e16eb5b37 |

creativeml-openrail-m | [] | false | a painting in the style marsattacks                 | 21ff2fbba1496a10177afe22a23b66dc |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 200 - num_epochs: 3 | ef4169ee8dcb35bfe45dbcf285b6c6ff |

apache-2.0 | [] | false | Model description **CAMeLBERT-DA POS-MSA Model** is a Modern Standard Arabic (MSA) POS tagging model that was built by fine-tuning the [CAMeLBERT-DA](https://huggingface.co/CAMeL-Lab/bert-base-arabic-camelbert-da/) model. For the fine-tuning, we used the [PATB](https://dl.acm.org/doi/pdf/10.5555/1621804.1621808) dataset. Our fine-tuning procedure and the hyperparameters we used can be found in our paper *"[The Interplay of Variant, Size, and Task Type in Arabic Pre-trained Language Models](https://arxiv.org/abs/2103.06678)."* Our fine-tuning code can be found [here](https://github.com/CAMeL-Lab/CAMeLBERT). | 0946e8034fac943bfe906d40ab501d71 |

apache-2.0 | [] | false | How to use To use the model with a transformers pipeline: ```python >>> from transformers import pipeline >>> pos = pipeline('token-classification', model='CAMeL-Lab/bert-base-arabic-camelbert-da-pos-msa') >>> text = 'إمارة أبوظبي هي إحدى إمارات دولة الإمارات العربية المتحدة السبع' >>> pos(text) [{'entity': 'noun', 'score': 0.9999913, 'index': 1, 'word': 'إمارة', 'start': 0, 'end': 5}, {'entity': 'noun_prop', 'score': 0.9992475, 'index': 2, 'word': 'أبوظبي', 'start': 6, 'end': 12}, {'entity': 'pron', 'score': 0.999919, 'index': 3, 'word': 'هي', 'start': 13, 'end': 15}, {'entity': 'noun', 'score': 0.99993193, 'index': 4, 'word': 'إحدى', 'start': 16, 'end': 20}, {'entity': 'noun', 'score': 0.99999106, 'index': 5, 'word': 'إما', 'start': 21, 'end': 24}, {'entity': 'noun', 'score': 0.99998987, 'index': 6, 'word': ' | 66a7f12be7fc25f80b8de92aca84672a |

apache-2.0 | [] | false | رات', 'start': 24, 'end': 27}, {'entity': 'noun', 'score': 0.9999933, 'index': 7, 'word': 'دولة', 'start': 28, 'end': 32}, {'entity': 'noun', 'score': 0.9999899, 'index': 8, 'word': 'الإمارات', 'start': 33, 'end': 41}, {'entity': 'adj', 'score': 0.99990565, 'index': 9, 'word': 'العربية', 'start': 42, 'end': 49}, {'entity': 'adj', 'score': 0.99997944, 'index': 10, 'word': 'المتحدة', 'start': 50, 'end': 57}, {'entity': 'noun_num', 'score': 0.99938935, 'index': 11, 'word': 'السبع', 'start': 58, 'end': 63}] ``` *Note*: to download our models, you would need `transformers>=3.5.0`. Otherwise, you could download the models manually. | 06d2166ad2cc9a5ca02e1a44f05f306f |
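In the pipeline output above, the word إمارات comes back as two contiguous pieces, 'إما' (21 to 24) and 'رات' (24 to 27), both tagged `noun`. The sketch below shows one way to use the `start`/`end` character offsets to re-join such pieces and recover the surface form from the input string. The helper `merge_fragments` is hypothetical and deliberately simple; recent `transformers` versions also offer an `aggregation_strategy` argument on token-classification pipelines, which may be preferable in practice.

```python
def merge_fragments(text, predictions):
    """Join consecutive predictions that share a tag and touch (end == next start).

    A simple heuristic for re-assembling word pieces; it is not the
    pipeline's own aggregation logic.
    """
    merged = []
    for pred in predictions:
        prev = merged[-1] if merged else None
        if prev and prev["entity"] == pred["entity"] and prev["end"] == pred["start"]:
            prev["end"] = pred["end"]  # extend the previous span over this piece
        else:
            merged.append({"entity": pred["entity"], "start": pred["start"], "end": pred["end"]})
    # Recover each surface form from the original string via the character offsets.
    for span in merged:
        span["word"] = text[span["start"]:span["end"]]
    return merged


text = 'إمارة أبوظبي هي إحدى إمارات دولة الإمارات العربية المتحدة السبع'
# A slice of the pipeline output shown in the card (scores and indices omitted).
predictions = [
    {'entity': 'noun', 'word': 'إحدى', 'start': 16, 'end': 20},
    {'entity': 'noun', 'word': 'إما', 'start': 21, 'end': 24},
    {'entity': 'noun', 'word': 'رات', 'start': 24, 'end': 27},
    {'entity': 'noun', 'word': 'دولة', 'start': 28, 'end': 32},
]
for span in merge_fragments(text, predictions):
    print(span["entity"], span["word"], span["start"], span["end"])
```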

mit | ['generated_from_trainer'] | false | gpt2-medium-combine This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.7295 | e600441aefcf8015cd22568051a62b1a |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 8e-06 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | 913ccd81b0b037491499b42c657a445a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.9811 | 0.6 | 500 | 2.8135 | | 2.8017 | 1.2 | 1000 | 2.7691 | | 2.7255 | 1.81 | 1500 | 2.7480 | | 2.6598 | 2.41 | 2000 | 2.7392 | | 2.6426 | 3.01 | 2500 | 2.7306 | | 2.6138 | 3.61 | 3000 | 2.7295 | | 75afc9e006e6396771fe12b0208ff9b8 |
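For a causal language model such as gpt2-medium, a validation loss is often easier to read as perplexity. The sketch below assumes the reported loss is the mean per-token cross-entropy in natural-log units (the usual convention for Hugging Face causal-LM losses); under that assumption the final loss of 2.7295 corresponds to a perplexity of roughly 15.33.

```python
import math


def perplexity(mean_ce_loss: float) -> float:
    """Perplexity from a mean per-token cross-entropy measured in nats."""
    return math.exp(mean_ce_loss)


# Validation losses from the table above, keyed by step.
val_loss = {500: 2.8135, 1000: 2.7691, 1500: 2.7480, 2000: 2.7392, 2500: 2.7306, 3000: 2.7295}
for step, loss in val_loss.items():
    print(step, round(perplexity(loss), 2))
```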

mit | [] | false | model by hiero This is the Stable Diffusion model fine-tuned on the emily carroll style concept taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a detailed digital matte illustration by sks** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 34d548322233bbbfa53569150a07aa9e |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 716eb8f92e19708acfd08ba3bd39d40890d3a84b pip install -e . cd egs2/commonvoice/asr1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/tamil_commonvoice_blstm ``` <!-- Generated by scripts/utils/show_asr_result.sh --> | e45497a143cda8509ab2de09f29be06e |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | Environments - date: `Mon May 2 11:41:47 EDT 2022` - python version: `3.9.5 (default, Jun 4 2021, 12:28:51) [GCC 7.5.0]` - espnet version: `espnet 0.10.6a1` - pytorch version: `pytorch 1.8.1+cu102` - Git hash: `716eb8f92e19708acfd08ba3bd39d40890d3a84b` - Commit date: `Thu Apr 28 19:50:59 2022 -0400` | 819d0fbe87c0f3a33de3453684d210cd |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | ASR config <details><summary>expand</summary> ``` config: conf/tuning/train_asr_rnn.yaml print_config: false log_level: INFO dry_run: false iterator_type: sequence output_dir: exp/asr_train_asr_rnn_raw_ta_bpe150_sp ngpu: 1 seed: 0 num_workers: 1 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: null dist_rank: null local_rank: 0 dist_master_addr: null dist_master_port: null dist_launcher: null multiprocessing_distributed: false unused_parameters: false sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: true collect_stats: false write_collected_feats: false max_epoch: 15 patience: 3 val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - train - loss - min - - valid - loss - min - - train - acc - max - - valid - acc - max keep_nbest_models: - 10 nbest_averaging_interval: 0 grad_clip: 5.0 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: null use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: null batch_size: 30 valid_batch_size: null batch_bins: 1000000 valid_batch_bins: null train_shape_file: - exp/asr_stats_raw_ta_bpe150_sp/train/speech_shape - exp/asr_stats_raw_ta_bpe150_sp/train/text_shape.bpe valid_shape_file: - exp/asr_stats_raw_ta_bpe150_sp/valid/speech_shape - exp/asr_stats_raw_ta_bpe150_sp/valid/text_shape.bpe batch_type: folded valid_batch_type: null fold_length: - 80000 - 150 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 500 chunk_shift_ratio: 0.5 num_cache_chunks: 1024 train_data_path_and_name_and_type: - - 
dump/raw/train_ta_sp/wav.scp - speech - sound - - dump/raw/train_ta_sp/text - text - text valid_data_path_and_name_and_type: - - dump/raw/dev_ta/wav.scp - speech - sound - - dump/raw/dev_ta/text - text - text allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adadelta optim_conf: lr: 0.1 scheduler: null scheduler_conf: {} token_list: - <blank> - <unk> - ி - ு - ா - வ - ை - ர - ன - ▁ப - . - ▁க - ் - ▁அ - ட - த - க - ே - ம - ல - ம் - ன் - ும் - ய - ▁வ - க்க - ▁இ - ▁த - த்த - ▁ - து - ந்த - ப - ▁ச - ிய - ▁ம - ோ - ெ - ர் - ரு - ழ - ப்ப - ண - ொ - ▁ந - ட்ட - ▁எ - ற - ைய - ச - ள - க் - ில் - ங்க - ',' - ண்ட - ▁உ - ன்ற - ார் - ப் - ூ - ல் - ள் - கள - கள் - ாக - ற்ற - டு - ீ - ந - '!' - '?' - '"' - ஏ - ஸ - ஞ - ஷ - ஜ - ஓ - '-' - ஐ - ஹ - A - E - ங - R - N - ஈ - ஃ - O - I - ; - S - T - L - எ - இ - அ - H - C - D - M - U - உ - B - G - P - Y - '''' - ௌ - K - ':' - W - ஆ - F - — - V - ” - J - Z - ’ - ‘ - X - Q - ( - ) - · - – - ⁄ - '3' - '4' - ◯ - _ - '&' - ௗ - • - '`' - ஔ - “ - ஊ - š - ഥ - '1' - '2' - á - ‚ - é - ô - ஒ - <sos/eos> init: null input_size: null ctc_conf: dropout_rate: 0.0 ctc_type: builtin reduce: true ignore_nan_grad: true joint_net_conf: null model_conf: ctc_weight: 0.5 use_preprocessor: true token_type: bpe bpemodel: data/ta_token_list/bpe_unigram150/bpe.model non_linguistic_symbols: null cleaner: null g2p: null speech_volume_normalize: null rir_scp: null rir_apply_prob: 1.0 noise_scp: null noise_apply_prob: 1.0 noise_db_range: '13_15' frontend: default frontend_conf: fs: 16k specaug: specaug specaug_conf: apply_time_warp: true time_warp_window: 5 time_warp_mode: bicubic apply_freq_mask: true freq_mask_width_range: - 0 - 27 num_freq_mask: 2 apply_time_mask: true time_mask_width_ratio_range: - 0.0 - 0.05 num_time_mask: 2 normalize: global_mvn normalize_conf: stats_file: exp/asr_stats_raw_ta_bpe150_sp/train/feats_stats.npz preencoder: null preencoder_conf: {} encoder: vgg_rnn encoder_conf: rnn_type: lstm 
bidirectional: true use_projection: true num_layers: 4 hidden_size: 1024 output_size: 1024 postencoder: null postencoder_conf: {} decoder: rnn decoder_conf: num_layers: 2 hidden_size: 1024 sampling_probability: 0 att_conf: atype: location adim: 1024 aconv_chans: 10 aconv_filts: 100 required: - output_dir - token_list version: 0.10.6a1 distributed: false ``` </details> | 567eaecb06d4028b46bddce03218817c |
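The `model_conf` entry `ctc_weight: 0.5` sets how the training objective mixes the CTC branch and the attention decoder. A minimal sketch, assuming the standard ESPnet hybrid CTC/attention interpolation `ctc_weight * loss_ctc + (1 - ctc_weight) * loss_att`; the loss values in the usage lines are hypothetical numbers chosen only for illustration.

```python
def hybrid_ctc_attention_loss(loss_ctc: float, loss_att: float, ctc_weight: float = 0.5) -> float:
    """Interpolate the CTC and attention-decoder losses.

    ctc_weight = 1.0 trains a pure CTC model; 0.0 trains a pure attention model.
    """
    if not 0.0 <= ctc_weight <= 1.0:
        raise ValueError("ctc_weight must lie in [0, 1]")
    return ctc_weight * loss_ctc + (1.0 - ctc_weight) * loss_att


# Hypothetical per-batch losses, for illustration only.
print(hybrid_ctc_attention_loss(40.0, 20.0))        # equal weighting, as in this config
print(hybrid_ctc_attention_loss(40.0, 20.0, 0.3))   # leaning toward the attention decoder
```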

apache-2.0 | ['generated_from_trainer'] | false | finetuned-self_mlm_small This model is a fine-tuned version of [muhtasham/bert-small-mlm-finetuned-imdb](https://huggingface.co/muhtasham/bert-small-mlm-finetuned-imdb) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3759 - Accuracy: 0.9372 - F1: 0.9676 | e317f87d932a351f6a02737241677db5 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - num_epochs: 200 | f189df11162c29ff5d56ddd3aa8482f7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.2834 | 1.28 | 500 | 0.2254 | 0.9150 | 0.9556 | | 0.1683 | 2.56 | 1000 | 0.3738 | 0.8694 | 0.9301 | | 0.1069 | 3.84 | 1500 | 0.2102 | 0.9354 | 0.9666 | | 0.0651 | 5.12 | 2000 | 0.2278 | 0.9446 | 0.9715 | | 0.0412 | 6.39 | 2500 | 0.4061 | 0.9156 | 0.9559 | | 0.0316 | 7.67 | 3000 | 0.4371 | 0.9110 | 0.9534 | | 0.0219 | 8.95 | 3500 | 0.3759 | 0.9372 | 0.9676 | | 57eb630c3b0380ed193163271a14da17 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3351 - Accuracy: 0.8767 - F1: 0.8825 | db851358e39e9c942268062bbae4879f |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-cased-finetuned-squadv This model is a fine-tuned version of [monakth/distilbert-base-cased-finetuned-squad](https://huggingface.co/monakth/distilbert-base-cased-finetuned-squad) on the squad_v2 dataset. | 139d1be9670cb0d984617e1462171ef2 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.2119 | 27f347899f32d58581b6ec0bafc645b3 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | 0677263edc73307f7acb57ef856b7ae0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 1 | 3.3374 | | No log | 2.0 | 2 | 3.8206 | | No log | 3.0 | 3 | 2.8370 | | c5fc39e4bf14224d75b3f8a497af57ab |

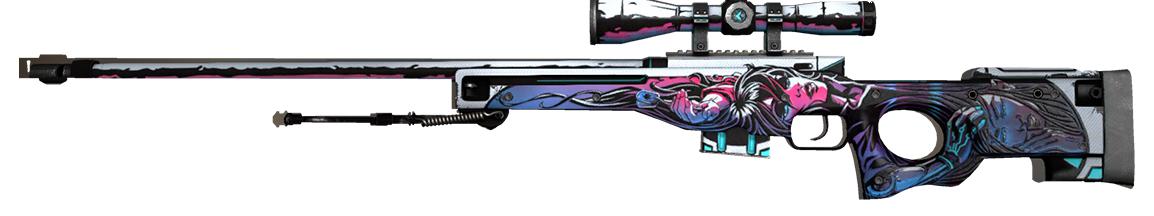

mit | [] | false | csgo_awp_object on Stable Diffusion This is the `<csgo_awp>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:       | 844f44346f371f63f1d173a21d252a0d |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | 262897b31d438d1353a1e276a8763978 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/vctk_tts_train_gst_tacotron2_raw_phn_tacotron_g2p_en_no_space_train.loss.best` ♻️ Imported from https://zenodo.org/record/3986237/ This model was trained by kan-bayashi using vctk/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | bc09e6c5ae769c07fe8ec3b45579ed82 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_sa_pre-training-complete This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the wikitext wikitext-103-raw-v1 dataset. It achieves the following results on the evaluation set: - Loss: 1.3239 - Accuracy: 0.7162 | dd710aa9c260bc8fd6f564510b6aa163 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 10 - distributed_type: multi-GPU - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 300000 | 429d0f402ddaa088205035c1a9e2f2fa |
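With `lr_scheduler_type: linear`, `lr_scheduler_warmup_steps: 100` and `training_steps: 300000`, the learning rate ramps up linearly from 0 to 5e-05 over the first 100 steps, then decays linearly back to 0 at step 300,000. The sketch below reproduces that shape in plain Python; it mirrors the formula used by a linear-with-warmup schedule but is an illustration, not the library function itself.

```python
def linear_warmup_decay_lr(step: int, base_lr: float = 5e-5,
                           warmup_steps: int = 100, total_steps: int = 300_000) -> float:
    """Linear warmup from 0 to base_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for s in (0, 50, 100, 150_050, 300_000):
    print(s, linear_warmup_decay_lr(s))
```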

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:------:|:---------------:|:--------:| | 1.6028 | 1.0 | 7145 | 1.4525 | 0.6935 | | 1.5524 | 2.0 | 14290 | 1.4375 | 0.6993 | | 1.5323 | 3.0 | 21435 | 1.4194 | 0.6993 | | 1.5191 | 4.0 | 28580 | 1.4110 | 0.7027 | | 1.5025 | 5.0 | 35725 | 1.4168 | 0.7014 | | 1.4902 | 6.0 | 42870 | 1.3931 | 0.7012 | | 1.4813 | 7.0 | 50015 | 1.3738 | 0.7057 | | 1.4751 | 8.0 | 57160 | 1.4237 | 0.6996 | | 1.4689 | 9.0 | 64305 | 1.3969 | 0.7047 | | 1.4626 | 10.0 | 71450 | 1.3916 | 0.7068 | | 1.4566 | 11.0 | 78595 | 1.3686 | 0.7072 | | 1.451 | 12.0 | 85740 | 1.3811 | 0.7060 | | 1.4478 | 13.0 | 92885 | 1.3598 | 0.7092 | | 1.4441 | 14.0 | 100030 | 1.3790 | 0.7054 | | 1.4379 | 15.0 | 107175 | 1.3794 | 0.7066 | | 1.4353 | 16.0 | 114320 | 1.3609 | 0.7102 | | 1.43 | 17.0 | 121465 | 1.3685 | 0.7083 | | 1.4278 | 18.0 | 128610 | 1.3953 | 0.7036 | | 1.4219 | 19.0 | 135755 | 1.3756 | 0.7085 | | 1.4197 | 20.0 | 142900 | 1.3597 | 0.7090 | | 1.4169 | 21.0 | 150045 | 1.3673 | 0.7061 | | 1.4146 | 22.0 | 157190 | 1.3753 | 0.7073 | | 1.4109 | 23.0 | 164335 | 1.3696 | 0.7082 | | 1.4073 | 24.0 | 171480 | 1.3563 | 0.7092 | | 1.4054 | 25.0 | 178625 | 1.3712 | 0.7103 | | 1.402 | 26.0 | 185770 | 1.3528 | 0.7113 | | 1.4001 | 27.0 | 192915 | 1.3367 | 0.7123 | | 1.397 | 28.0 | 200060 | 1.3508 | 0.7118 | | 1.3955 | 29.0 | 207205 | 1.3572 | 0.7117 | | 1.3937 | 30.0 | 214350 | 1.3566 | 0.7095 | | 1.3901 | 31.0 | 221495 | 1.3515 | 0.7117 | | 1.3874 | 32.0 | 228640 | 1.3445 | 0.7118 | | 1.386 | 33.0 | 235785 | 1.3611 | 0.7097 | | 1.3833 | 34.0 | 242930 | 1.3502 | 0.7087 | | 1.3822 | 35.0 | 250075 | 1.3657 | 0.7108 | | 1.3797 | 36.0 | 257220 | 1.3576 | 0.7108 | | 1.3793 | 37.0 | 264365 | 1.3472 | 0.7106 | | 1.3763 | 38.0 | 271510 | 1.3323 | 0.7156 | | 1.3762 | 39.0 | 278655 | 1.3325 | 0.7145 | | 1.3748 | 40.0 | 285800 | 1.3243 | 0.7138 | | 1.3733 | 41.0 | 292945 | 1.3218 | 
0.7170 | | 1.3722 | 41.99 | 300000 | 1.3074 | 0.7186 | | 83aecc53825e2eb4af28f11e8969c506 |
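The fractional final epoch in the table follows directly from the step counts: epoch 1.0 is logged at step 7145, so the 300,000 training steps end at 300000 / 7145 ≈ 41.99 epochs. A small arithmetic check, using only numbers from the table:

```python
STEPS_PER_EPOCH = 7145  # step count logged at epoch 1.0 in the table


def epoch_at(step: int, steps_per_epoch: int = STEPS_PER_EPOCH) -> float:
    """Fractional epoch reached after a given number of optimizer steps."""
    return step / steps_per_epoch


print(round(epoch_at(300_000), 2))  # 41.99, the last row of the table
print(41 * STEPS_PER_EPOCH)         # 292945, the step logged at epoch 41.0
```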

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-2'] | false | MultiBERTs Seed 2 Checkpoint 1500k (uncased) Seed 2 intermediate checkpoint 1500k MultiBERTs (pretrained BERT) model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-2](https://hf.co/multberts-seed-2). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model so this model card has been written by [gchhablani](https://hf.co/gchhablani). | 941f15aedd73cc6137ea2442c12af57d |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-2'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-2-1500k') model = BertModel.from_pretrained("multiberts-seed-2-1500k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 55d80286b34c0642eba337728d134f74 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 10 - eval_batch_size: 10 - seed: 42 - optimizer: Adafactor - lr_scheduler_type: linear - num_epochs: 30 | 8d5d2a690273f16c3685e7b22dbba939 |

apache-2.0 | ['generated_from_trainer'] | false | NER-for-female-names This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2606 | e5d0395aa818664969073b08e12e8ebf |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.961395091713594e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 27 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | a901825bb4f41e4808f8173fea50e9fd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 5 | 0.6371 | | No log | 2.0 | 10 | 0.4213 | | No log | 3.0 | 15 | 0.3227 | | No log | 4.0 | 20 | 0.2867 | | No log | 5.0 | 25 | 0.2606 | | 33240ccb664ddfb94277105001891d1d |

apache-2.0 | ['translation'] | false | opus-mt-ts-en * source languages: ts * target languages: en * OPUS readme: [ts-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ts-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/ts-en/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ts-en/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ts-en/opus-2020-01-16.eval.txt) | 48e4199cbb45340130b8f33d2e742969 |

cc-by-4.0 | ['generated_from_trainer'] | false | hing-roberta-CM-run-4 This model is a fine-tuned version of [l3cube-pune/hing-roberta](https://huggingface.co/l3cube-pune/hing-roberta) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.5827 - Accuracy: 0.7525 - Precision: 0.6967 - Recall: 0.7004 - F1: 0.6980 | 6275036786c9e10843ad2334895b52d5 |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8734 | 1.0 | 497 | 0.7673 | 0.7203 | 0.6617 | 0.6600 | 0.6604 | | 0.6245 | 2.0 | 994 | 0.7004 | 0.7485 | 0.6951 | 0.7137 | 0.7015 | | 0.4329 | 3.0 | 1491 | 1.0469 | 0.7223 | 0.6595 | 0.6640 | 0.6538 | | 0.2874 | 4.0 | 1988 | 1.3103 | 0.7586 | 0.7064 | 0.7157 | 0.7104 | | 0.1837 | 5.0 | 2485 | 1.7916 | 0.7425 | 0.6846 | 0.6880 | 0.6861 | | 0.1121 | 6.0 | 2982 | 2.0721 | 0.7465 | 0.7064 | 0.7041 | 0.7003 | | 0.0785 | 7.0 | 3479 | 2.3469 | 0.7425 | 0.6898 | 0.6795 | 0.6807 | | 0.0609 | 8.0 | 3976 | 2.2775 | 0.7404 | 0.6819 | 0.6881 | 0.6845 | | 0.0817 | 9.0 | 4473 | 2.1992 | 0.7686 | 0.7342 | 0.7147 | 0.7166 | | 0.042 | 10.0 | 4970 | 2.2359 | 0.7565 | 0.7211 | 0.7141 | 0.7106 | | 0.0463 | 11.0 | 5467 | 2.2291 | 0.7646 | 0.7189 | 0.7186 | 0.7177 | | 0.027 | 12.0 | 5964 | 2.3955 | 0.7525 | 0.6994 | 0.7073 | 0.7028 | | 0.0314 | 13.0 | 6461 | 2.4256 | 0.7565 | 0.7033 | 0.7153 | 0.7082 | | 0.0251 | 14.0 | 6958 | 2.4578 | 0.7565 | 0.7038 | 0.7025 | 0.7027 | | 0.0186 | 15.0 | 7455 | 2.5984 | 0.7565 | 0.7141 | 0.6945 | 0.6954 | | 0.0107 | 16.0 | 7952 | 2.5068 | 0.7425 | 0.6859 | 0.7016 | 0.6912 | | 0.0134 | 17.0 | 8449 | 2.5876 | 0.7606 | 0.7018 | 0.7041 | 0.7029 | | 0.0145 | 18.0 | 8946 | 2.6011 | 0.7626 | 0.7072 | 0.7079 | 0.7073 | | 0.0108 | 19.0 | 9443 | 2.5861 | 0.7545 | 0.6973 | 0.7017 | 0.6990 | | 0.0076 | 20.0 | 9940 | 2.5827 | 0.7525 | 0.6967 | 0.7004 | 0.6980 | | 6e9fc5ad4b18b5f17e78498a77777f5f |

apache-2.0 | ['multiberts', 'multiberts-seed_0', 'multiberts-seed_0-step_1300k'] | false | MultiBERTs, Intermediate Checkpoint - Seed 0, Step 1300k MultiBERTs is a collection of checkpoints and a statistical library to support robust research on BERT. We provide 25 BERT-base models trained with similar hyper-parameters as [the original BERT model](https://github.com/google-research/bert) but with different random seeds, which causes variations in the initial weights and order of training instances. The aim is to distinguish findings that apply to a specific artifact (i.e., a particular instance of the model) from those that apply to the more general procedure. We also provide 140 intermediate checkpoints captured during the course of pre-training (we saved 28 checkpoints for the first 5 runs). The models were originally released through [http://goo.gle/multiberts](http://goo.gle/multiberts). We describe them in our paper [The MultiBERTs: BERT Reproductions for Robustness Analysis](https://arxiv.org/abs/2106.16163). This is model | ccc59ab7415d481695e0ae13fd6886e5 |

apache-2.0 | ['multiberts', 'multiberts-seed_0', 'multiberts-seed_0-step_1300k'] | false | How to use Using code from [BERT-base uncased](https://huggingface.co/bert-base-uncased), here is an example based on TensorFlow: ```python from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_0-step_1300k') model = TFBertModel.from_pretrained("google/multiberts-seed_0-step_1300k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` PyTorch version: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_0-step_1300k') model = BertModel.from_pretrained("google/multiberts-seed_0-step_1300k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | c40e8c0937f7e4c1a5b6d5c8a4e68672 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | chkpt Dreambooth model trained by darkvibes with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | e32a9e3d84c4a9107f86f6c1ef27e96b |

mit | ['generated_from_keras_callback'] | false | summarization_finetuned This model is a fine-tuned version of [facebook/bart-large-cnn](https://huggingface.co/facebook/bart-large-cnn) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.5478 - Validation Loss: 1.4195 - Train Rougel: 0.29894578 - Epoch: 0 | a913fedba8d1240724ff38ae75ea3cb7 |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adamax', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07} - training_precision: float32 | ef24498939dbb4fcd8bee54932390b96 |
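The optimizer config above is Adamax, the infinity-norm variant of Adam, with learning_rate 2e-05, beta_1 0.9, beta_2 0.999 and epsilon 1e-07. The sketch below shows one scalar Adamax update in plain Python, assuming the formulation used by Keras (epsilon added to the infinity-norm accumulator and bias correction applied only to the first moment). It is an illustration of the update rule, not the Keras implementation.

```python
def adamax_step(param, grad, m, u, t, lr=2e-5, beta_1=0.9, beta_2=0.999, epsilon=1e-7):
    """One Adamax update for a scalar parameter; t is the 1-based step count."""
    m = beta_1 * m + (1.0 - beta_1) * grad          # first-moment estimate
    u = max(beta_2 * u, abs(grad))                  # exponentially weighted infinity norm
    step_size = lr / (1.0 - beta_1 ** t)            # bias-correct the first moment only
    param = param - step_size * m / (u + epsilon)
    return param, m, u


# On the first step the update is close to lr * sign(grad), whatever the gradient scale.
p, m, u = adamax_step(param=1.0, grad=0.5, m=0.0, u=0.0, t=1)
print(1.0 - p)  # approximately 2e-05
```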

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Train Rougel | Epoch | |:----------:|:---------------:|:------------:|:-----:| | 1.5478 | 1.4195 | 0.29894578 | 0 | | 2bb68dc7413dab64727f64d36a395210 |

apache-2.0 | [] | false | Model description This diffusion model is trained with the [🤗 Diffusers](https://github.com/huggingface/diffusers) library on the [Norod78/EmojiFFHQAlignedFaces](https://huggingface.co/datasets/Norod78/EmojiFFHQAlignedFaces) dataset. | 7b671545b654e27f5029dc0a69d409ff |

apache-2.0 | ['int8', 'summarization', 'translation'] | false | t5) is an encoder-decoder model pre-trained on a multi-task mixture of unsupervised and supervised tasks and for which each task is converted into a text-to-text format. For more information, please take a look at the original paper. Paper: [Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer](https://arxiv.org/pdf/1910.10683.pdf) Authors: *Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, Peter J. Liu* | 083b5ef3be8396c46a42d592e3de20b8 |

apache-2.0 | ['int8', 'summarization', 'translation'] | false | Usage example You can use this model with Transformers *pipeline*. ```python from transformers import AutoTokenizer, pipeline from optimum.onnxruntime import ORTModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("echarlaix/t5-small-dynamic") model = ORTModelForSeq2SeqLM.from_pretrained("echarlaix/t5-small-dynamic") translator = pipeline("translation_en_to_fr", model=model, tokenizer=tokenizer) text = "He never went out without a book under his arm, and he often came back with two." results = translator(text) print(results) ``` | af877089c9a143858f12c182e340903e |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-distilled-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.2712 - Accuracy: 0.9461 | 6f190ebc1e0b5b2fac40d955dabf6352 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.2629 | 1.0 | 318 | 1.6048 | 0.7368 | | 1.2437 | 2.0 | 636 | 0.8148 | 0.8565 | | 0.6604 | 3.0 | 954 | 0.4768 | 0.9161 | | 0.4054 | 4.0 | 1272 | 0.3548 | 0.9352 | | 0.2987 | 5.0 | 1590 | 0.3084 | 0.9419 | | 0.2549 | 6.0 | 1908 | 0.2909 | 0.9435 | | 0.232 | 7.0 | 2226 | 0.2804 | 0.9458 | | 0.221 | 8.0 | 2544 | 0.2749 | 0.9458 | | 0.2145 | 9.0 | 2862 | 0.2722 | 0.9468 | | 0.2112 | 10.0 | 3180 | 0.2712 | 0.9461 | | e8576db8952fa518c114afb9620b30c1 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-work-4-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3586 - Accuracy: 0.3689 | e96549dd3755f6f2a9e3dc2f830737f1 |

apache-2.0 | ['generated_from_keras_callback'] | false | TEdetection_distiBERT_NER_final This model is a fine-tuned version of [FritzOS/TEdetection_distiBERT_mLM_final](https://huggingface.co/FritzOS/TEdetection_distiBERT_mLM_final) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0031 - Validation Loss: 0.0035 - Epoch: 0 | ea54e48a4d9dbe972bc16fe5faa81dc3 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 220743, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | cbdc4ad0b61a39b0dd68bafa76dc7519 |
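The serialized learning-rate schedule above nests a `PolynomialDecay` inside a `WarmUp`. A plain-Python sketch of how the two combine, assuming `WarmUp` behaves like the Transformers TF helper (ramp `initial_learning_rate * (step / warmup_steps) ** power` during warmup, then call the decay function on `step - warmup_steps`) and that `PolynomialDecay` with `cycle=False` clamps the step at `decay_steps`. With power 1.0 both phases are linear, and the rate reaches 0.0 once 1,000 + 220,743 steps have elapsed.

```python
def polynomial_decay(step, initial_lr=2e-5, decay_steps=220_743, end_lr=0.0, power=1.0):
    """Polynomial decay without cycling: the step is clamped at decay_steps."""
    step = min(step, decay_steps)
    return (initial_lr - end_lr) * (1.0 - step / decay_steps) ** power + end_lr


def warmup_then_decay(step, initial_lr=2e-5, warmup_steps=1000, power=1.0):
    """Warmup ramp, then polynomial decay offset by the warmup length."""
    if step < warmup_steps:
        return initial_lr * (step / warmup_steps) ** power
    return polynomial_decay(step - warmup_steps, initial_lr=initial_lr)


for s in (0, 500, 1000, 1000 + 220_743 // 2, 1000 + 220_743):
    print(s, warmup_then_decay(s))
```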

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0612 - Precision: 0.9270 - Recall: 0.9377 - F1: 0.9323 - Accuracy: 0.9840 | b46c36dad18cb776c1c9e214896c710f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2403 | 1.0 | 878 | 0.0683 | 0.9177 | 0.9215 | 0.9196 | 0.9815 | | 0.0513 | 2.0 | 1756 | 0.0605 | 0.9227 | 0.9365 | 0.9295 | 0.9836 | | 0.0298 | 3.0 | 2634 | 0.0612 | 0.9270 | 0.9377 | 0.9323 | 0.9840 | | 5350e04f33d86b7aa268dc0ae972a4cb |
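The F1 column is the harmonic mean of the precision and recall columns, so each row can be sanity-checked from the other two. The sketch below recomputes F1 from the rounded values in the table; because the inputs are already rounded to four decimals, this is a consistency check rather than an exact recomputation of the entity-level metric.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (epoch, precision, recall, reported F1) from the table above.
rows = [(1.0, 0.9177, 0.9215, 0.9196), (2.0, 0.9227, 0.9365, 0.9295), (3.0, 0.9270, 0.9377, 0.9323)]
for epoch, p, r, reported in rows:
    print(epoch, round(f1_score(p, r), 4), reported)
```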

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-mohamed-elmogy This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: nan - Rouge1: 0.0 - Rouge2: 0.0 - Rougel: 0.0 - Rougelsum: 0.0 | 334d3d55fc6ce9cc539af03f171e9125 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.6e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 | 55b6f2db02ea81f0088ce51c61ab09fb |
