license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 31 | 0.5229 | 0.1336 | 0.0344 | 0.0547 | 0.8008 | | No log | 2.0 | 62 | 0.3701 | 0.3628 | 0.3357 | 0.3487 | 0.8596 | | No log | 3.0 | 93 | 0.3250 | 0.3979 | 0.4221 | 0.4097 | 0.8779 | | 0fc7eb1fd6d5445be8b711d727b96a77 |

apache-2.0 | ['Quality Estimation', 'microtransquest'] | false | Using Pre-trained Models ```python from transquest.algo.word_level.microtransquest.run_model import MicroTransQuestModel import torch model = MicroTransQuestModel("xlmroberta", "TransQuest/microtransquest-en_de-it-nmt", labels=["OK", "BAD"], use_cuda=torch.cuda.is_available()) source_tags, target_tags = model.predict([["if not , you may not be protected against the diseases . ", "ja tā nav , Jūs varat nepasargāt no slimībām . "]]) ``` | 49d4888b1ca82ae4973e9a66d6937585 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2182 - Accuracy: 0.9235 - F1: 0.9237 | b9e82e022a55b7aac7d7c5d252f13d66 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.809 | 1.0 | 250 | 0.3096 | 0.903 | 0.9009 | | 0.2451 | 2.0 | 500 | 0.2182 | 0.9235 | 0.9237 | | 05e86e2ff44571631cb89a280a3ce0cf |

other | ['vision', 'image-segmentation'] | false | MaskFormer MaskFormer model trained on COCO panoptic segmentation (large-sized version, Swin backbone). It was introduced in the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) and first released in [this repository](https://github.com/facebookresearch/MaskFormer/blob/da3e60d85fdeedcb31476b5edd7d328826ce56cc/mask_former/modeling/criterion.py | c568f4f3ed0c0cc9c738f6f1e00c717c |

other | ['vision', 'image-segmentation'] | false | load MaskFormer fine-tuned on COCO panoptic segmentation feature_extractor = MaskFormerFeatureExtractor.from_pretrained("facebook/maskformer-swin-large-coco") model = MaskFormerForInstanceSegmentation.from_pretrained("facebook/maskformer-swin-large-coco") url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) | b3e068c3e004dbf6952292a0d67956a7 |

mit | ['deberta', 'deberta-v3', 'fill-mask'] | false | DeBERTaV3: Improving DeBERTa using ELECTRA-Style Pre-Training with Gradient-Disentangled Embedding Sharing [DeBERTa](https://arxiv.org/abs/2006.03654) improves the BERT and RoBERTa models using disentangled attention and an enhanced mask decoder. With those two improvements, DeBERTa outperforms RoBERTa on a majority of NLU tasks with 80GB of training data. In [DeBERTa V3](https://arxiv.org/abs/2111.09543), we further improved the efficiency of DeBERTa using ELECTRA-style pre-training with Gradient-Disentangled Embedding Sharing. Compared to DeBERTa, our V3 version significantly improves model performance on downstream tasks. You can find more technical details about the new model in our [paper](https://arxiv.org/abs/2111.09543). Please check the [official repository](https://github.com/microsoft/DeBERTa) for more implementation details and updates. The DeBERTa V3 base model has 12 layers and a hidden size of 768. It has only 86M backbone parameters, plus a vocabulary of 128K tokens that adds 98M parameters in the embedding layer. This model was trained on the same 160GB of data as DeBERTa V2. | e1286a7537731828572712d24af47b0d |
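The 98M embedding figure follows from the vocabulary size times the hidden size. A minimal arithmetic sketch, treating "128K" as exactly 128,000 tokens (an assumption; the exact vocabulary size is not given in this section):

```python
# Rough parameter arithmetic for DeBERTa-v3-base using the figures quoted above.
# Assumption: "128K tokens" is treated as exactly 128,000 for illustration.
VOCAB_SIZE = 128_000
HIDDEN_SIZE = 768
BACKBONE_PARAMS = 86_000_000  # "86M backbone parameters" as stated in the card


def embedding_params(vocab_size: int, hidden_size: int) -> int:
    """Parameters in a token-embedding matrix of shape (vocab_size, hidden_size)."""
    return vocab_size * hidden_size


emb = embedding_params(VOCAB_SIZE, HIDDEN_SIZE)
print(f"embedding parameters: {emb / 1e6:.1f}M")              # 98.3M
print(f"approx. total: {(emb + BACKBONE_PARAMS) / 1e6:.0f}M")  # 184M
```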

mit | ['deberta', 'deberta-v3', 'fill-mask'] | false | Params(M)| SQuAD 2.0(F1/EM) | MNLI-m/mm(ACC)| |-------------------|----------|-------------------|-----------|----------| | RoBERTa-base |50 |86 | 83.7/80.5 | 87.6/- | | XLNet-base |32 |92 | -/80.2 | 86.8/- | | ELECTRA-base |30 |86 | -/80.5 | 88.8/ | | DeBERTa-base |50 |100 | 86.2/83.1| 88.8/88.5| | DeBERTa-v3-base |128|86 | **88.4/85.4** | **90.6/90.7**| | DeBERTa-v3-base + SiFT |128|86 | -/- | 91.0/-| We present the dev results on SQuAD 1.1/2.0 and MNLI tasks. | ed0d2384716fd1dfb46d7c70a4fc64e9 |

mit | ['deberta', 'deberta-v3', 'fill-mask'] | false | !/bin/bash cd transformers/examples/pytorch/text-classification/ pip install datasets export TASK_NAME=mnli output_dir="ds_results" num_gpus=8 batch_size=8 python -m torch.distributed.launch --nproc_per_node=${num_gpus} \ run_glue.py \ --model_name_or_path microsoft/deberta-v3-base \ --task_name $TASK_NAME \ --do_train \ --do_eval \ --evaluation_strategy steps \ --max_seq_length 256 \ --warmup_steps 500 \ --per_device_train_batch_size ${batch_size} \ --learning_rate 2e-5 \ --num_train_epochs 3 \ --output_dir $output_dir \ --overwrite_output_dir \ --logging_steps 1000 \ --logging_dir $output_dir ``` | c9ba4c60c11dbc358f381dddb44cfea6 |
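The effective (global) batch size of this launch is the number of processes times `--per_device_train_batch_size`, since the script leaves gradient accumulation at its default of 1. The sketch below works through that arithmetic. The MNLI training-set size (392,702 examples) is an assumption brought in from the GLUE benchmark rather than a value from this card, and the helper names are hypothetical.

```python
import math

# Values copied from the launch script above.
NUM_GPUS = 8
PER_DEVICE_BATCH = 8
NUM_EPOCHS = 3
WARMUP_STEPS = 500
# Assumption: size of the GLUE MNLI training split; not stated in the card.
MNLI_TRAIN_EXAMPLES = 392_702


def global_batch_size(num_gpus: int, per_device: int, grad_accum: int = 1) -> int:
    """Examples consumed per optimizer step under data-parallel training."""
    return num_gpus * per_device * grad_accum


def optimizer_steps(num_examples: int, batch: int, epochs: int) -> int:
    """Optimizer steps, assuming the last partial batch of each epoch is kept."""
    return math.ceil(num_examples / batch) * epochs


batch = global_batch_size(NUM_GPUS, PER_DEVICE_BATCH)
steps = optimizer_steps(MNLI_TRAIN_EXAMPLES, batch, NUM_EPOCHS)
print(batch, steps, f"warmup fraction = {WARMUP_STEPS / steps:.1%}")
```

Under these assumptions, the 500 warmup steps cover under 3% of roughly 18.4K optimizer steps.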

mit | ['deberta', 'deberta-v3', 'fill-mask'] | false | Citation If you find DeBERTa useful for your work, please cite the following papers: ``` latex @misc{he2021debertav3, title={DeBERTaV3: Improving DeBERTa using ELECTRA-Style Pre-Training with Gradient-Disentangled Embedding Sharing}, author={Pengcheng He and Jianfeng Gao and Weizhu Chen}, year={2021}, eprint={2111.09543}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` ``` latex @inproceedings{ he2021deberta, title={DEBERTA: DECODING-ENHANCED BERT WITH DISENTANGLED ATTENTION}, author={Pengcheng He and Xiaodong Liu and Jianfeng Gao and Weizhu Chen}, booktitle={International Conference on Learning Representations}, year={2021}, url={https://openreview.net/forum?id=XPZIaotutsD} } ``` | cde418d75a73763a803b41fcb26ca9b7 |

apache-2.0 | ['generated_from_trainer'] | false | bart-base-News_Summarization_CNN This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3750 | 984ba370b272ce7d86f7cebb14ebdac0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - num_epochs: 2 | e8252c2fcddccc022d4b1ed52113de6f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 4.3979 | 0.99 | 114 | 1.2718 | | 0.8315 | 1.99 | 228 | 0.3750 | | 17bd8674c328ad8a584d450a5def3ee1 |
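The `linear` scheduler with `lr_scheduler_warmup_steps: 100` ramps the learning rate up linearly for 100 steps and then decays it linearly to zero. The sketch below reimplements that shape in plain Python, mirroring the multiplier used by `transformers.get_linear_schedule_with_warmup`. It assumes the scheduler's total step count equals the final step in the results table (228), and `linear_schedule_lr` is a hypothetical name. It also shows where `total_train_batch_size: 16` comes from: a per-device batch of 1 times 16 gradient-accumulation steps, assuming a single device.

```python
# Linear warmup followed by linear decay to zero, mirroring the multiplier
# used by transformers' get_linear_schedule_with_warmup.
BASE_LR = 2e-5
WARMUP_STEPS = 100
TOTAL_STEPS = 228  # assumption: last step in the training-results table


def linear_schedule_lr(step, base_lr=BASE_LR, warmup=WARMUP_STEPS, total=TOTAL_STEPS):
    if step < warmup:
        # Warmup phase: grow linearly from 0 to base_lr.
        return base_lr * step / max(1, warmup)
    # Decay phase: shrink linearly from base_lr to 0 at `total`.
    return base_lr * max(0.0, (total - step) / max(1, total - warmup))


# Effective batch size: per-device batch x gradient-accumulation steps (one device assumed).
total_train_batch_size = 1 * 16

for step in (0, 50, 100, 164, 228):
    print(step, f"{linear_schedule_lr(step):.2e}")
```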

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_eli5_clm-model2 This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.7470 | 51a31bf11d063240289d22aeca21edc1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 3.8701 | 1.0 | 1055 | 3.7642 | | 3.7747 | 2.0 | 2110 | 3.7501 | | 3.7318 | 3.0 | 3165 | 3.7470 | | 73963002e0c9740345d98c6d3671d78d |
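For a causal language model, the validation loss is usually the mean per-token cross-entropy in nats, so perplexity is its exponential. Assuming that holds for this card, the final validation loss of 3.7470 corresponds to a perplexity of roughly 42. A minimal sketch (the helper name is hypothetical):

```python
import math


def perplexity(mean_cross_entropy: float) -> float:
    """Perplexity from a mean per-token cross-entropy loss measured in nats."""
    return math.exp(mean_cross_entropy)


# Final validation loss from the table above.
print(f"{perplexity(3.7470):.1f}")
```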

apache-2.0 | ['generated_from_trainer'] | false | generic_ner_model This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0999 - Precision: 0.8727 - Recall: 0.8953 - F1: 0.8838 - Accuracy: 0.9740 | 684befaf4cc834cf971784fdfb6f5bc8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.1083 | 1.0 | 1958 | 0.1007 | 0.8684 | 0.8836 | 0.8759 | 0.9723 | | 0.0679 | 2.0 | 3916 | 0.0977 | 0.8672 | 0.8960 | 0.8813 | 0.9738 | | 0.0475 | 3.0 | 5874 | 0.0999 | 0.8727 | 0.8953 | 0.8838 | 0.9740 | | 851caaf9237afa1e7502f2442ac07050 |
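As a quick consistency check on the metrics above, F1 is the harmonic mean of precision and recall. The sketch assumes the card's scores are seqeval-style micro-averaged entity metrics, for which that relation holds; `f1_from_precision_recall` is a hypothetical helper name. Plugging in the final-epoch precision (0.8727) and recall (0.8953) agrees with the reported F1 of 0.8838 up to rounding of the inputs.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (returns 0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch precision and recall from the table above.
print(f"{f1_from_precision_recall(0.8727, 0.8953):.4f}")
```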

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 282 | 4.3092 | | 4.5403 | 2.0 | 564 | 4.1283 | | 4.5403 | 3.0 | 846 | 4.0605 | | 4.039 | 4.0 | 1128 | 4.0321 | | 4.039 | 5.0 | 1410 | 4.0243 | | 69cb51f83052df9ce495d77405b6e20f |

apache-2.0 | ['generated_from_trainer'] | false | bert-fine-turned-cola This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8073 - Matthews Correlation: 0.6107 | f20b094302b9230bcb4ef85cb5d5439a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.4681 | 1.0 | 1069 | 0.5613 | 0.4892 | | 0.321 | 2.0 | 2138 | 0.6681 | 0.5851 | | 0.1781 | 3.0 | 3207 | 0.8073 | 0.6107 | | ac5c4c51d3a4461fcda054fc004990bc |
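GLUE's CoLA task is scored with the Matthews correlation coefficient (MCC), which stays informative on imbalanced binary labels. A minimal sketch of the formula from confusion-matrix counts follows; the function is a hypothetical helper, and returning 0.0 for a zero denominator follows the convention scikit-learn's `matthews_corrcoef` uses.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient for binary confusion-matrix counts.

    Returns 0.0 when any marginal is zero (the denominator would be zero).
    """
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom
```

MCC ranges from -1 (total disagreement) through 0 (chance level) to 1 (perfect prediction), so the final 0.6107 indicates substantially better-than-chance agreement.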

cc-by-sa-4.0 | ['korean', 'masked-lm'] | false | Model Description This is a RoBERTa model pre-trained on Korean texts, derived from [klue/roberta-large](https://huggingface.co/klue/roberta-large). Token-embeddings are enhanced to include all characters from the Basic Hanja for Educational Use (한문 교육용 기초 한자) and the Hanja for Personal Names (인명용 한자) lists. You can fine-tune `roberta-large-korean-hanja` for downstream tasks, such as [POS-tagging](https://huggingface.co/KoichiYasuoka/roberta-large-korean-upos), [dependency-parsing](https://huggingface.co/KoichiYasuoka/roberta-large-korean-ud-goeswith), and so on. | 63dfd30757ed7b5d106d318d8882a68d |

cc-by-sa-4.0 | ['korean', 'masked-lm'] | false | How to Use ```py from transformers import AutoTokenizer,AutoModelForMaskedLM tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/roberta-large-korean-hanja") model=AutoModelForMaskedLM.from_pretrained("KoichiYasuoka/roberta-large-korean-hanja") ``` | d4d005f50d03ab58e64b5c9ef246ad55 |

mit | [] | false | pixel-mania on Stable Diffusion This is the `<pixel-mania>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). | d4328313b46ab10982b5ffd60d90e095 |

apache-2.0 | [] | false | Data2Vec NLP Base This model was converted from `fairseq`. The original weights can be found in https://dl.fbaipublicfiles.com/fairseq/data2vec/nlp_base.pt Example usage: ```python from transformers import RobertaTokenizer, Data2VecForSequenceClassification, Data2VecConfig import torch tokenizer = RobertaTokenizer.from_pretrained("roberta-large") config = Data2VecConfig.from_pretrained("edugp/data2vec-nlp-base") model = Data2VecForSequenceClassification.from_pretrained("edugp/data2vec-nlp-base", config=config) | 1137edebd755323b472b1fe82e22b939 |

apache-2.0 | ['text2sql'] | false | tscholak/3vnuv1vf Fine-tuned weights for [PICARD - Parsing Incrementally for Constrained Auto-Regressive Decoding from Language Models](https://arxiv.org/abs/2109.05093) based on [t5.1.1.lm100k.large](https://github.com/google-research/text-to-text-transfer-transformer/blob/main/released_checkpoints.md | b167670e05463bbefcdc54673b7f7a94 |

apache-2.0 | ['text2sql'] | false | Training Data The model has been fine-tuned on the 7000 training examples in the [Spider text-to-SQL dataset](https://yale-lily.github.io/spider). The model targets Spider's zero-shot text-to-SQL translation task, which means it must generalize to SQL databases not seen during training. | 01169c40841c58095e20a3ed985e0fae |

apache-2.0 | ['text2sql'] | false | lm-adapted-t511lm100k) and fine-tuned with the text-to-text generation objective. Questions are always grounded in a database schema, and the model is trained to predict the SQL query that would be used to answer the question. The input to the model is composed of the user's natural language question, the database identifier, and a list of tables and their columns: ``` [question] | [db_id] | [table] : [column] ( [content] , [content] ) , [column] ( ... ) , [...] | [table] : ... | ... ``` The model outputs the database identifier and the SQL query that will be executed on the database to answer the user's question: ``` [db_id] | [sql] ``` | cb04f79fac815ea9f9609c9785d24917 |
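The input and output formats above can be illustrated with a small serializer and parser. This is a sketch under assumptions: the schema representation (`Schema`), the helper names, and the exact spacing are hypothetical, and the official PICARD repository's preprocessing (for example any lowercasing or database-content matching) is authoritative.

```python
from typing import Dict, List, Tuple

# Hypothetical schema representation: table name -> list of (column, sample cell values).
Schema = Dict[str, List[Tuple[str, List[str]]]]


def serialize_input(question: str, db_id: str, schema: Schema) -> str:
    """Build '[question] | [db_id] | [table] : [column] ( [content] , ... ) , ...'."""
    table_parts = []
    for table, columns in schema.items():  # dicts keep insertion order
        col_parts = []
        for column, contents in columns:
            # Cell values, when present, go in parentheses after the column name.
            if contents:
                col_parts.append(f"{column} ( {' , '.join(contents)} )")
            else:
                col_parts.append(column)
        table_parts.append(f"{table} : {' , '.join(col_parts)}")
    return " | ".join([question, db_id, *table_parts])


def parse_output(output: str) -> Tuple[str, str]:
    """Split '[db_id] | [sql]' on the first separator only, since SQL may contain '|'."""
    db_id, sql = output.split(" | ", 1)
    return db_id.strip(), sql.strip()


example = serialize_input(
    "How many singers are there?",
    "concert_singer",
    {"singer": [("name", []), ("country", ["France", "Netherlands"])],
     "stadium": [("capacity", [])]},
)
print(example)
print(parse_output("concert_singer | select count(*) from singer"))
```

Splitting the output only on the first ` | ` keeps any `|` characters inside the SQL intact.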

apache-2.0 | ['text2sql'] | false | Performance Out of the box, this model achieves 71.2 % exact-set match accuracy and 74.4 % execution accuracy on the Spider development set. Using the PICARD constrained decoding method (see [the official PICARD implementation](https://github.com/ElementAI/picard)), the model's performance can be improved to **74.8 %** exact-set match accuracy and **79.2 %** execution accuracy on the Spider development set. | 4121d5dfb7ff57a80372a6c7d7fbcce7 |

apache-2.0 | ['text2sql'] | false | References 1. [PICARD - Parsing Incrementally for Constrained Auto-Regressive Decoding from Language Models](https://arxiv.org/abs/2109.05093) 2. [Official PICARD code](https://github.com/ElementAI/picard) | 93649c9d7f34abacb5d31100bdcd7737 |

apache-2.0 | ['text2sql'] | false | Citation ```bibtex @inproceedings{Scholak2021:PICARD, author = {Torsten Scholak and Nathan Schucher and Dzmitry Bahdanau}, title = "{PICARD}: Parsing Incrementally for Constrained Auto-Regressive Decoding from Language Models", booktitle = "Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing", month = nov, year = "2021", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2021.emnlp-main.779", pages = "9895--9901", } ``` | c49e74303ebb32a88aa34fed32150dea |

apache-2.0 | ['generated_from_trainer'] | false | bigbird-pegasus-large-bigpatent-finetuned-pubMed This model is a fine-tuned version of [google/bigbird-pegasus-large-bigpatent](https://huggingface.co/google/bigbird-pegasus-large-bigpatent) on the pub_med_summarization_dataset dataset. It achieves the following results on the evaluation set: - Loss: 1.5403 - Rouge1: 45.0851 - Rouge2: 19.5488 - Rougel: 27.391 - Rougelsum: 41.112 - Gen Len: 231.608 | a3c8b9f8553b60dd503b88b83319bd5e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 - mixed_precision_training: Native AMP | 679d7dc7b4044d3bb9364969fc3c9aa7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 2.1198 | 1.0 | 500 | 1.6285 | 43.0579 | 18.1792 | 26.421 | 39.0769 | 214.924 | | 1.6939 | 2.0 | 1000 | 1.5696 | 44.0679 | 18.9331 | 26.84 | 40.0684 | 222.814 | | 1.6195 | 3.0 | 1500 | 1.5506 | 44.7352 | 19.3532 | 27.2418 | 40.7454 | 229.396 | | 1.5798 | 4.0 | 2000 | 1.5403 | 45.0415 | 19.5019 | 27.2969 | 40.951 | 231.044 | | 1.5592 | 5.0 | 2500 | 1.5403 | 45.0851 | 19.5488 | 27.391 | 41.112 | 231.608 | | 1e1dd28803bcd2c92ef845b18b392d3f |
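ROUGE-1 measures unigram overlap between a generated summary and its reference; the card reports it, like the other ROUGE variants, scaled by 100. The sketch below computes the ROUGE-1 F-measure on lowercased whitespace tokens with a hypothetical helper. It is a simplification: the `rouge_score` package also strips non-alphanumeric characters and can apply stemming, so its numbers will differ slightly.

```python
from collections import Counter


def rouge1_f(candidate: str, reference: str) -> float:
    """Unigram-overlap F-measure on lowercased whitespace tokens (no stemming)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # clipped unigram matches
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


print(f"{100 * rouge1_f('the cat on the mat', 'the cat sat on the mat'):.2f}")
```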

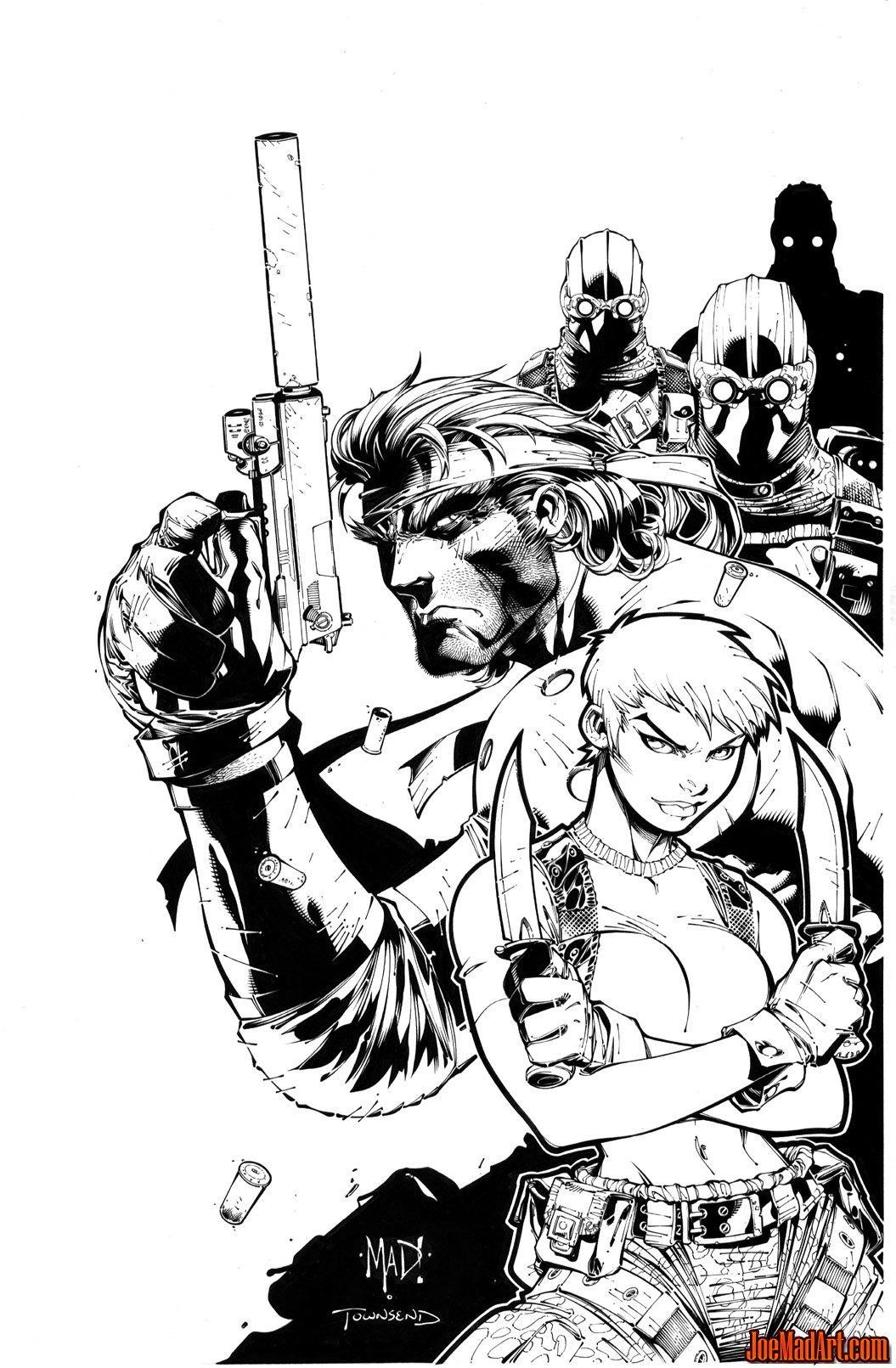

mit | [] | false | Joe Mad on Stable Diffusion This is the `<joe-mad>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:     | baadcad91fa6dd866ca0d562e9dccd95 |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr2e05-wd0.005-bs16 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2780 - Rmse: 0.5272 - Mse: 0.2780 - Mae: 0.4314 | 792b8959f224cb64628a69e9fdaf63b0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 128 - eval_batch_size: 128 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | ca86734ea9bc6a05cddad68b1d17b66c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.277 | 1.0 | 1245 | 0.2743 | 0.5237 | 0.2743 | 0.4112 | | 0.2738 | 2.0 | 2490 | 0.2811 | 0.5302 | 0.2811 | 0.4288 | | 0.2724 | 3.0 | 3735 | 0.2780 | 0.5272 | 0.2780 | 0.4314 | | 7f2a1d6b77dced2f6c979a5b40baf42b |
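In the tables above, Loss and Mse are identical, which suggests the model was trained with a mean-squared-error regression loss (an inference, not stated in the card). RMSE is the square root of MSE, and MAE averages absolute errors. A minimal sketch with a hypothetical helper:

```python
import math
from typing import Sequence


def regression_metrics(preds: Sequence[float], labels: Sequence[float]) -> dict:
    """Return MSE, RMSE and MAE for paired predictions and labels."""
    if len(preds) != len(labels) or not preds:
        raise ValueError("preds and labels must be non-empty and the same length")
    errors = [p - y for p, y in zip(preds, labels)]
    mse = sum(e * e for e in errors) / len(errors)
    return {
        "mse": mse,
        "rmse": math.sqrt(mse),  # RMSE is the square root of MSE
        "mae": sum(abs(e) for e in errors) / len(errors),
    }


# Consistency check against the card's final MSE of 0.2780.
print(f"{math.sqrt(0.2780):.4f}")
```

`math.sqrt(0.2780)` is about 0.5273, matching the reported RMSE of 0.5272 up to rounding of the logged MSE.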

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2t_es_vp-nl_s203 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | dd818a502cbd73d55ef0d78c13db3bf3 |

artistic-2.0 | ['code'] | false | DVA-C01 PDFs (study material for the AWS Certified Developer - Associate exam, exam code DVA-C01) can be a reliable aid for exam preparation for a few reasons: 1: They provide a digital copy of the exam's content, including the topics and objectives covered on the test. 2: They are easy to access and can be downloaded and used on a variety of devices, making it convenient to study on the go. 3: Some DVA-C01 PDFs include practice questions and answer explanations, which can help you prepare and identify areas where you need more study. 4: Many DVA-C01 PDFs are created by experts who have already taken the exam and have in-depth knowledge of its format, content, and difficulty level. However, not all DVA-C01 PDFs are reliable or of the same quality, so look for ones from reputable sources and use them alongside other resources, such as the official AWS documentation, hands-on practice, and online training, to achieve the best results. | c98cf8c85cdbbc4d07ad7a248f46b044 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'mel-to-wav'] | false | Multi-band MelGAN trained on Synpaflex (Fr) This repository provides a pretrained [Multi-band MelGAN](https://arxiv.org/abs/2005.05106) trained on the Synpaflex dataset (French). For details about the model, see [TensorFlowTTS](https://github.com/TensorSpeech/TensorFlowTTS). | 93b431f575da4f04e18b5ddf47f568b9 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'mel-to-wav'] | false | Converting your Text to Wav ```python import soundfile as sf import numpy as np import tensorflow as tf from tensorflow_tts.inference import AutoProcessor from tensorflow_tts.inference import TFAutoModel processor = AutoProcessor.from_pretrained("tensorspeech/tts-tacotron2-synpaflex-fr") tacotron2 = TFAutoModel.from_pretrained("tensorspeech/tts-tacotron2-synpaflex-fr") mb_melgan = TFAutoModel.from_pretrained("tensorspeech/tts-mb_melgan-synpaflex-fr") text = "Oh, je voudrais tant que tu te souviennes Des jours heureux quand nous étions amis" input_ids = processor.text_to_sequence(text) | f0fa9177fa2717a8c02d8a9622241845 |

apache-2.0 | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - gradient_accumulation_steps: 1 - optimizer: AdamW with betas=(None, None), weight_decay=None and epsilon=None - lr_scheduler: None - lr_warmup_steps: 500 - ema_inv_gamma: None - mixed_precision: fp16 | 1bd902474114835b2ef0a98c153817e5 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | 053daec258c27c07c9a9490352db02b1 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples_jmnew This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3148 - Accuracy: 0.8733 - F1: 0.875 | 3596c04ad814074a76f5ee79b73d7c57 |

mit | ['generated_from_trainer'] | false | cold_remanandtec_gpu_v1 This model is a fine-tuned version of [ibm/ColD-Fusion](https://huggingface.co/ibm/ColD-Fusion) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0737 - F1: 0.9462 - Roc Auc: 0.9592 - Recall: 0.9362 - Precision: 0.9565 | de439133edf1b452bfb94b2387fe3aa8 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 2 - eval_batch_size: 2 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 3.0 | 6e0bee3c7c0a0b829d473c06769bd308 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | Roc Auc | Recall | Precision | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|:------:|:---------:| | 0.3606 | 1.0 | 1521 | 0.1974 | 0.8936 | 0.9247 | 0.8936 | 0.8936 | | 0.2715 | 2.0 | 3042 | 0.1247 | 0.8989 | 0.9167 | 0.8511 | 0.9524 | | 0.1811 | 3.0 | 4563 | 0.0737 | 0.9462 | 0.9592 | 0.9362 | 0.9565 | | abcee067a7ea79bb1946cc13202ae92a |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Whisper small (Greek) Trained on Interleaved Datasets This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the interleaved mozilla-foundation/common_voice_11_0 (el) and google/fleurs (el_gr) datasets. It achieves the following results on the evaluation set: - Loss: 0.4741 - Wer: 20.0687 | 86e6899846e35d21eb13e40611ac6590 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Model description The model was developed during the Whisper Fine-Tuning Event in December 2022. More details on the model can be found [in the original paper](https://cdn.openai.com/papers/whisper.pdf). | 89587c5523642f43b11e2a95447d0ac5 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Training and evaluation data This model was trained by interleaving the training and evaluation splits from two different datasets: - mozilla-foundation/common_voice_11_0 (el) - google/fleurs (el_gr) | 75bf5d1acc68d69a2523d8e2678e3e1b |
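Interleaving alternates examples from the two sources. The sketch below shows round-robin interleaving that stops once the shortest source is exhausted, which is my understanding of the default behavior of `datasets.interleave_datasets` when no sampling probabilities are passed. The training script referenced in this card may pass probabilities or a different stopping strategy, and the function name is hypothetical.

```python
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def interleave_first_exhausted(*sources: Iterable[T]) -> Iterator[T]:
    """Round-robin over sources; only complete rounds are emitted.

    zip() stops as soon as the shortest source runs out, so iteration ends
    after the last round in which every source still had an example.
    """
    for row in zip(*sources):
        yield from row


print(list(interleave_first_exhausted([0, 1, 2], [10, 11, 12, 13], [20, 21, 22, 23, 24])))
```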

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Training procedure The Python script used is a modified version of a script provided by Hugging Face and can be found [here](https://github.com/kamfonas/whisper-fine-tuning-event/blob/minor-mods-by-farsipal/run_speech_recognition_seq2seq_streaming.py). | d9e9077eeaa36d681d5e41b297747470 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 5000 - mixed_precision_training: Native AMP | 5fe0ba4db109787c2fddbb6b25ec92e5 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard', 'automatic-speech-recognition', 'greek'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0186 | 4.98 | 1000 | 0.3619 | 21.0067 | | 0.0012 | 9.95 | 2000 | 0.4347 | 20.3009 | | 0.0005 | 14.93 | 3000 | 0.4741 | 20.0687 | | 0.0003 | 19.9 | 4000 | 0.4974 | 20.1152 | | 0.0003 | 24.88 | 5000 | 0.5066 | 20.2266 | | 749400d5b3373d3123fade6f981522e9 |
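The Wer column is word error rate as a percentage: the word-level edit distance (substitutions, deletions, insertions) divided by the number of reference words. A minimal sketch without any text normalization follows. The Whisper fine-tuning scripts may normalize text before scoring, and corpus-level WER sums edits and reference words over all utterances rather than averaging per-utterance rates. Function names are hypothetical.

```python
from typing import List


def word_edit_distance(ref: List[str], hyp: List[str]) -> int:
    """Levenshtein distance over words (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion
                curr[j - 1] + 1,         # insertion
                prev[j - 1] + (r != h),  # substitution (free if words match)
            )
        prev = curr
    return prev[-1]


def wer_percent(reference: str, hypothesis: str) -> float:
    """WER as a percentage, on whitespace-split words with no normalization."""
    ref = reference.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    return 100.0 * word_edit_distance(ref, hypothesis.split()) / len(ref)


print(f"{wer_percent('the cat sat', 'the cat sit'):.2f}")
```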

apache-2.0 | ['translation'] | false | opus-mt-gaa-sv * source languages: gaa * target languages: sv * OPUS readme: [gaa-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/gaa-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/gaa-sv/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/gaa-sv/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/gaa-sv/opus-2020-01-09.eval.txt) | 24526cc1e16cf5ea129cfaaa790e03b7 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2294 - Accuracy: 0.9215 - F1: 0.9219 | f4ef66e7dc4925229f2fbd0263ae2f37 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8304 | 1.0 | 250 | 0.3312 | 0.899 | 0.8962 | | 0.2547 | 2.0 | 500 | 0.2294 | 0.9215 | 0.9219 | | 18f4f6fb1c626ed4d026dfc6a88857b2 |

apache-2.0 | ['generated_from_trainer'] | false | mini-mlm-tweet-target-tweet This model is a fine-tuned version of [muhtasham/mini-mlm-tweet](https://huggingface.co/muhtasham/mini-mlm-tweet) on the tweet_eval dataset. It achieves the following results on the evaluation set: - Loss: 1.4122 - Accuracy: 0.7353 - F1: 0.7377 | 8e50f26b7f5538fadeddaa2d87847154 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8264 | 4.9 | 500 | 0.7479 | 0.7219 | 0.7190 | | 0.3705 | 9.8 | 1000 | 0.8205 | 0.7487 | 0.7479 | | 0.1775 | 14.71 | 1500 | 1.0049 | 0.7273 | 0.7286 | | 0.092 | 19.61 | 2000 | 1.1698 | 0.7353 | 0.7351 | | 0.0513 | 24.51 | 2500 | 1.4122 | 0.7353 | 0.7377 | | 49a47d71c342b530cb0598e8352afbe7 |

apache-2.0 | ['generated_from_keras_callback'] | false | Das282000Prit/fyp-finetuned-brown This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.5777 - Validation Loss: 3.0737 - Epoch: 0 | e80f83125bb299b83d068e0d5fb19ee4 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -844, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 423e20c2438b34fe686192b74cf5499c |
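The optimizer config above has `decay_steps: -844`. If it was produced by the TensorFlow `create_optimizer` helper in transformers (an assumption, though the `AdamWeightDecay`, `WarmUp` and `PolynomialDecay` names match it), `decay_steps` is the total number of training steps minus the warmup steps. That would imply only 156 training steps, all inside the 1,000-step warmup with `power: 1.0`, so the learning rate would have peaked at about 3.1e-6 rather than the configured 2e-05. A sketch of that arithmetic with hypothetical helper names:

```python
def implied_total_steps(decay_steps: int, warmup_steps: int) -> int:
    """Recover num_train_steps, assuming decay_steps = num_train_steps - warmup_steps."""
    return decay_steps + warmup_steps


def warmup_lr(step: int, initial_lr: float, warmup_steps: int, power: float = 1.0) -> float:
    """Learning rate during warmup: initial_lr * (step / warmup_steps) ** power."""
    return initial_lr * (step / warmup_steps) ** power


total = implied_total_steps(decay_steps=-844, warmup_steps=1000)
print(total)                                   # 156
print(f"{warmup_lr(total, 2e-5, 1000):.2e}")   # 3.12e-06
```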

apache-2.0 | ['generated_from_trainer'] | false | yelp This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the yelp_review_full dataset. It achieves the following results on the evaluation set: - Loss: 1.0380 - Accuracy: 0.587 | a1ef9944946f61615f44690b82a97907 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 125 | 1.2336 | 0.447 | | No log | 2.0 | 250 | 1.0153 | 0.562 | | No log | 3.0 | 375 | 1.0380 | 0.587 | | d2c948e2b8afbe8fb8cd9212beedd1a3 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | gpt2-small-spanish-finetuned-rap This model is a fine-tuned version of [datificate/gpt2-small-spanish](https://huggingface.co/datificate/gpt2-small-spanish) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 4.7161 | e3dbf5403c5b0436dc6160e2055e3361 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 27 | 4.8244 | | No log | 2.0 | 54 | 4.7367 | | No log | 3.0 | 81 | 4.7161 | | 39e21867ee0e50c272945800ed56e847 |

apache-2.0 | ['generated_from_trainer'] | false | distilbart-cnn-12-6-finetuned-1.1.0 This model is a fine-tuned version of [sshleifer/distilbart-cnn-12-6](https://huggingface.co/sshleifer/distilbart-cnn-12-6) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0274 - Rouge1: 84.662 - Rouge2: 83.5616 - Rougel: 84.4282 - Rougelsum: 84.4667 | e70f1704451cddaf46387bb492dadf63 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | 0.0911 | 1.0 | 97 | 0.0286 | 85.8678 | 84.7683 | 85.7147 | 85.6949 | | 0.0442 | 2.0 | 194 | 0.0274 | 84.662 | 83.5616 | 84.4282 | 84.4667 | | f2e5f8e0fd2cc7a403f0ef67b5fd237e |

mit | ['automatic-speech-recognition', 'generated_from_trainer'] | false | Model description We fine-tuned a wav2vec 2.0 large XLSR-53 checkpoint with 842h of unlabelled Luxembourgish speech collected from [RTL.lu](https://www.rtl.lu/). Then the model was fine-tuned on 11h of labelled Luxembourgish speech from the same domain. Additionally, we rescore the output transcription with a 5-gram language model trained on text corpora from RTL.lu and the Luxembourgish parliament. | 0d28ce29f1aaac6e2bfc9cd2456733a7 |

apache-2.0 | ['generated_from_keras_callback'] | false | bert-turkish-cased This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset. It achieves the following results on the evaluation set: | 3f4d3f8f955cfc5148bef63092aec8f7 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': 3e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False} - training_precision: float32 | d83273d4c8190127171b81532aec17a2 |
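To show what the listed optimizer settings control, here is one Adam update for a scalar parameter. It uses the textbook form from Kingma and Ba, with `amsgrad=False` and no learning-rate decay (`decay: 0.0`).

This is a sketch rather than Keras's exact code. Keras documents its `epsilon` as the paper's "epsilon hat", so it is applied in a slightly different position.

```python
import math

def adam_step(theta, grad, m, v, t, lr=3e-5, beta_1=0.9, beta_2=0.999, epsilon=1e-8):
    """One textbook Adam update for a scalar parameter at step t (1-based)."""
    m = beta_1 * m + (1 - beta_1) * grad          # running mean of gradients
    v = beta_2 * v + (1 - beta_2) * grad ** 2     # running mean of squared gradients
    m_hat = m / (1 - beta_1 ** t)                 # bias correction for zero initialization
    v_hat = v / (1 - beta_2 ** t)
    theta = theta - lr * m_hat / (math.sqrt(v_hat) + epsilon)
    return theta, m, v

theta, m, v = 1.0, 0.0, 0.0
for t, grad in enumerate([0.5, -0.2, 0.3], start=1):
    theta, m, v = adam_step(theta, grad, m, v, t)
print(theta)
```

On the first step the bias-corrected ratio is about ±1, so the parameter moves by roughly the learning rate (3e-05) whatever the gradient's magnitude.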

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Introducing my new Vivid Watercolors DreamBooth model. The model is trained on beautiful, artist-agnostic watercolor images using the Midjourney method. The token is "wtrcolor style". It can be challenging to use, but with the right prompts it can create stunning artwork. Here is an example prompt that I use in tests: wtrcolor style, Digital art of (subject), official art, frontal, smiling, masterpiece, Beautiful, ((watercolor)), face paint, paint splatter, intricate details. Highly detailed, detailed eyes, [dripping:0.5], Trending on artstation, by [artist] Using "watercolor" in the prompt is necessary to get a good watercolor texture; also try words like face paint, paint splatter, and dripping. For a negative prompt I use this one: (bad_prompt:0.8), ((((ugly)))), (((duplicate))), ((morbid)), ((mutilated)), [out of frame], extra fingers, mutated hands, ((poorly drawn hands)), ((poorly drawn face)), (((mutation))), (((deformed))), ((ugly)), blurry, ((bad anatomy)), (((bad proportions))), ((extra limbs)), cloned face, (((disfigured))), (((dead eyes))), (((out of frame))), ugly, extra limbs, (bad anatomy), gross proportions, (malformed limbs), ((missing arms)), ((missing legs)), (((extra arms))), (((extra legs))), mutated hands, (fused fingers), (too many fingers), (((long neck))), blur, (((watermarked))), ((out of focus)), (((low contrast))), (((zoomed in))), (((crossed eyes))), (((disfigured))), ((bad art)), (weird colors), (((oversaturated art))), multiple persons, multiple faces, (vector), (vector-art), (((high contrast))) Here are some txt2img examples: Here is an img2img example: In img2img you may need to increase the token's emphasis in the prompt, for example: (((wtrcolor style))) You can play with the settings, but it is easier to get good results with the right prompt. For me, the sweet spot is around 30 steps, Euler a, CFG 8-9.
(Clip skip 2 tends to give softer results.) See the tests here: https://imgur.com/a/ghVhVhy | 3714a29a29ca7e5b2f42af0466509a04 |

apache-2.0 | ['automatic-speech-recognition', 'gary109/AI_Light_Dance', 'generated_from_trainer'] | false | ai-light-dance_stepmania_ft_wav2vec2-large-xlsr-53-v5 This model is a fine-tuned version of [gary109/ai-light-dance_stepmania_ft_wav2vec2-large-xlsr-53-v4](https://huggingface.co/gary109/ai-light-dance_stepmania_ft_wav2vec2-large-xlsr-53-v4) on the GARY109/AI_LIGHT_DANCE - ONSET-STEPMANIA2 dataset. It achieves the following results on the evaluation set: - Loss: 1.0163 - Wer: 0.6622 | 08b45175a8366e84f4d43bc42a01a6d0 |

apache-2.0 | ['automatic-speech-recognition', 'gary109/AI_Light_Dance', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - num_epochs: 10.0 - mixed_precision_training: Native AMP | 8ba5e2d8b6efe9aebd5b100517f95549 |
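Two of these settings can be checked with a little arithmetic.

* **Effective batch size.** `total_train_batch_size` is the per-device batch size times the gradient-accumulation steps. 4 × 16 = 64 implies a single device.
* **Learning-rate schedule.** To our understanding, `linear` with warmup ramps the rate up from 0 to 4e-05 over 100 steps, then decays it linearly to 0. This matches the shape of `get_linear_schedule_with_warmup` in transformers.

This sketch assumes 3760 total optimizer steps, which is the final step count in this run's training-results table (376 steps per epoch × 10 epochs).

```python
def linear_warmup_lr(step, base_lr=4e-5, warmup_steps=100, total_steps=3760):
    """Learning rate at `step`: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))

per_device_batch, accumulation_steps = 4, 16
effective_batch = per_device_batch * accumulation_steps  # 64 on one device

for step in (0, 50, 100, 1930, 3760):
    print(step, linear_warmup_lr(step))
```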

apache-2.0 | ['automatic-speech-recognition', 'gary109/AI_Light_Dance', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.8867 | 1.0 | 376 | 1.0382 | 0.6821 | | 0.8861 | 2.0 | 752 | 1.0260 | 0.6686 | | 0.8682 | 3.0 | 1128 | 1.0358 | 0.6604 | | 0.8662 | 4.0 | 1504 | 1.0234 | 0.6665 | | 0.8463 | 5.0 | 1880 | 1.0333 | 0.6666 | | 0.8573 | 6.0 | 2256 | 1.0163 | 0.6622 | | 0.8628 | 7.0 | 2632 | 1.0209 | 0.6551 | | 0.8493 | 8.0 | 3008 | 1.0525 | 0.6582 | | 0.8371 | 9.0 | 3384 | 1.0409 | 0.6515 | | 0.8229 | 10.0 | 3760 | 1.0597 | 0.6523 | | ebd38941008ff023950059cb301353c8 |
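The card does not say how WER is computed. This sketch implements the standard definition, a word-level edit distance divided by the number of reference words. Here "words" are whatever whitespace-separated tokens the transcriptions contain.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the reference prefix processed so far
    # and the first j hypothesis words (one row of the Levenshtein table).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[len(hyp)] / max(1, len(ref))

print(word_error_rate("a b c d", "a x c"))  # → 0.5
```

WER can exceed 1.0 when the hypothesis has many insertions. Corpus-level WER is usually computed by summing errors over all utterances and dividing by the total number of reference words, rather than by averaging per-utterance scores.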

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2123 - Accuracy: 0.926 - F1: 0.9258 | d9d024b85925c9182e510868b154f2c8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8198 | 1.0 | 250 | 0.3147 | 0.904 | 0.9003 | | 0.2438 | 2.0 | 500 | 0.2123 | 0.926 | 0.9258 | | 2658fed5b8200e004aecaf46bf428a91 |

apache-2.0 | ['generated_from_trainer'] | false | distilgpt2-finetuned-katpoems-lm This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the None dataset. It achieves the following results on the evaluation set: - Loss: 4.6519 | d9f2d6448a6a0056aa9b44c473c909d0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 59 | 4.6509 | | No log | 2.0 | 118 | 4.6476 | | No log | 3.0 | 177 | 4.6519 | | deadeaf61fbdc2f83b48d7ce227c2902 |

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2t_fr_hubert_s990 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | dc34adc3e3491ee2c4949cad55043acc |
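Because the model expects 16 kHz input, it helps to check recordings before transcribing them. This minimal sketch covers only uncompressed PCM WAV files, which Python's standard-library `wave` module can read. The function name is hypothetical, and the code does not resample anything.

```python
import wave

EXPECTED_RATE = 16_000  # the card asks for speech sampled at 16 kHz

def check_sample_rate(path: str, expected: int = EXPECTED_RATE) -> int:
    """Return the WAV file's sample rate, raising ValueError if it is not `expected`."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
    if rate != expected:
        raise ValueError(f"{path}: sampled at {rate} Hz, expected {expected} Hz; resample first")
    return rate
```

Files that fail the check need resampling with an audio library (for example torchaudio or librosa) before they reach the model.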

mit | ['generated_from_trainer'] | false | finetuned-die-berufliche-praxis-im-rahmen-des-pflegeprozesses-ausuben This model is a fine-tuned version of [bert-base-german-cased](https://huggingface.co/bert-base-german-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4610 - Accuracy: 0.7900 - F1: 0.7788 | 10b2f21bd727594930ab30316e08a40e |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.4867 | 1.0 | 1365 | 0.4591 | 0.7879 | 0.7762 | | 0.39 | 2.0 | 2730 | 0.4610 | 0.7900 | 0.7788 | | 4d1a87d7e0b212a03a4ba1fe096b505c |

cc-by-sa-4.0 | ['t5', 'text2text-generation', 'seq2seq'] | false | Japanese T5 Pretrained Model This is a T5 (Text-to-Text Transfer Transformer) model pretrained on the following Japanese corpora (about 100GB in total): * Japanese dump data from [Wikipedia](https://ja.wikipedia.org) (as of July 6, 2020) * The Japanese corpus from [OSCAR](https://oscar-corpus.com) * The Japanese corpus from [CC-100](http://data.statmt.org/cc-100/) This model has only been pretrained; it must be fine-tuned before it can be used for a specific task. Like other language models trained on large corpora, this model may produce biased output (unethical, harmful, or otherwise biased) that stems from skew in the content of its training data. Please assume that this problem can occur and use the model only for purposes where it will not cause harm. The SentencePiece tokenizer was trained on the entire Wikipedia data listed above. | 2dbb1edc54e1b339f7c6451375f95b8c |

cc-by-sa-4.0 | ['t5', 'text2text-generation', 'seq2seq'] | false | livedoor News Classification Task The accuracy on a genre-prediction task for news articles from the livedoor news corpus is as follows. Compared with Google's multilingual T5 model, this model is about 25% smaller and about 6 points more accurate. Japanese T5 ([t5-base-japanese](https://huggingface.co/sonoisa/t5-base-japanese), 222M parameters, [reproduction code](https://github.com/sonoisa/t5-japanese/blob/main/t5_japanese_classification.ipynb)) | label | precision | recall | f1-score | support | | ----------- | ----------- | ------- | -------- | ------- | | 0 | 0.96 | 0.94 | 0.95 | 130 | | 1 | 0.98 | 0.99 | 0.99 | 121 | | 2 | 0.96 | 0.96 | 0.96 | 123 | | 3 | 0.86 | 0.91 | 0.89 | 82 | | 4 | 0.96 | 0.97 | 0.97 | 129 | | 5 | 0.96 | 0.96 | 0.96 | 141 | | 6 | 0.98 | 0.98 | 0.98 | 127 | | 7 | 1.00 | 0.99 | 1.00 | 127 | | 8 | 0.99 | 0.97 | 0.98 | 120 | | accuracy | | | 0.97 | 1100 | | macro avg | 0.96 | 0.96 | 0.96 | 1100 | | weighted avg | 0.97 | 0.97 | 0.97 | 1100 | Baseline for comparison: multilingual T5 ([google/mt5-small](https://huggingface.co/google/mt5-small), 300M parameters) | label | precision | recall | f1-score | support | | ----------- | ----------- | ------- | -------- | ------- | | 0 | 0.91 | 0.88 | 0.90 | 130 | | 1 | 0.84 | 0.93 | 0.89 | 121 | | 2 | 0.93 | 0.80 | 0.86 | 123 | | 3 | 0.82 | 0.74 | 0.78 | 82 | | 4 | 0.90 | 0.95 | 0.92 | 129 | | 5 | 0.89 | 0.89 | 0.89 | 141 | | 6 | 0.97 | 0.98 | 0.97 | 127 | | 7 | 0.95 | 0.98 | 0.97 | 127 | | 8 | 0.93 | 0.95 | 0.94 | 120 | | accuracy | | | 0.91 | 1100 | | macro avg | 0.91 | 0.90 | 0.90 | 1100 | | weighted avg | 0.91 | 0.91 | 0.91 | 1100 | | 0e5ecfba9a5a7f5d1535cf0198c856f5 |
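The "macro avg" and "weighted avg" rows in the classification reports are derived from the per-class rows.

* **Macro average:** the plain mean over the nine classes.
* **Weighted average:** each class's score weighted by its support, the number of test articles in that class.

This sketch recomputes both f1 summaries for t5-base-japanese from the rounded per-class values in the first table. The results therefore only approximate the unrounded originals, though they match the reported 0.96 and 0.97.

```python
# Per-class (f1-score, support) rows for t5-base-japanese, copied from the first table.
rows = [(0.95, 130), (0.99, 121), (0.96, 123), (0.89, 82), (0.97, 129),
        (0.96, 141), (0.98, 127), (1.00, 127), (0.98, 120)]

total_support = sum(support for _, support in rows)
macro_f1 = sum(f1 for f1, _ in rows) / len(rows)                        # every class counts equally
weighted_f1 = sum(f1 * support for f1, support in rows) / total_support  # larger classes count more

print(total_support, round(macro_f1, 2), round(weighted_f1, 2))  # → 1100 0.96 0.97
```

The weighted average comes out higher than the macro average here because the weakest class (label 3, f1 0.89) is also the smallest.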

cc-by-sa-4.0 | ['t5', 'text2text-generation', 'seq2seq'] | false | JGLUE Benchmark Results on the [JGLUE](https://github.com/yahoojapan/JGLUE) benchmark are as follows (more will be added over time). - MARC-ja: in preparation - JSTS: in preparation - JNLI: in preparation - JSQuAD: EM=0.900, F1=0.945, [reproduction code](https://github.com/sonoisa/t5-japanese/blob/main/t5_JSQuAD.ipynb) - JCommonsenseQA: in preparation | a6e0622197e18717348aad535de8eb83 |

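cc-by-sa-4.0 | ['t5', 'text2text-generation', 'seq2seq'] | false | Disclaimer The author of this model has taken great care with its content and functionality, but makes no guarantee that the model's output is accurate or safe and accepts no responsibility for it. Even if use of this model causes any inconvenience or damage to a user, neither the authors of the model and dataset nor their affiliated organizations bear any responsibility. Users are obliged to make clear that the authors of this model and dataset and their affiliated organizations bear no responsibility. | d9c1e37f4a16866bcb2b99d5201fde0f |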

creativeml-openrail-m | ['stable-diffusion', 'aiartchan'] | false | urlretrieve, no progressbar from urllib.request import urlretrieve from huggingface_hub import hf_hub_url repo_id = "AIARTCHAN/aichan_blend" filename = "AbyssOrangeMix2_nsfw-pruned.safetensors" url = hf_hub_url(repo_id, filename) urlretrieve(url, filename) ``` ```python | af4104571135e833d4fa1819286b8644 |

creativeml-openrail-m | ['stable-diffusion', 'aiartchan'] | false | with tqdm, urllib import shutil from urllib.request import urlopen from huggingface_hub import hf_hub_url from tqdm import tqdm repo_id = "AIARTCHAN/aichan_blend" filename = "AbyssOrangeMix2_nsfw-pruned.safetensors" url = hf_hub_url(repo_id, filename) with urlopen(url) as resp: total = int(resp.headers.get("Content-Length", 0)) with tqdm.wrapattr( resp, "read", total=total, desc="Download..." ) as src: with open(filename, "wb") as dst: shutil.copyfileobj(src, dst) ``` ```python | b7094e06c2950926df6967a431839f13 |

creativeml-openrail-m | ['stable-diffusion', 'aiartchan'] | false | with tqdm, requests import shutil import requests from huggingface_hub import hf_hub_url from tqdm import tqdm repo_id = "AIARTCHAN/aichan_blend" filename = "AbyssOrangeMix2_nsfw-pruned.safetensors" url = hf_hub_url(repo_id, filename) resp = requests.get(url, stream=True) total = int(resp.headers.get("Content-Length", 0)) with tqdm.wrapattr( resp.raw, "read", total=total, desc="Download..." ) as src: with open(filename, "wb") as dst: shutil.copyfileobj(src, dst) ``` | 6c1437d206522bf29db7c9b1b4f63fe6 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad-seed-69 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.4246 | 8d550d4452b32b4aa19b9ccb4dd8aaee |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2185 | 1.0 | 8235 | 1.2774 | | 0.9512 | 2.0 | 16470 | 1.2549 | | 0.7704 | 3.0 | 24705 | 1.4246 | | e778d871c59fcad393cc393a5d93225c |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/nli-distilroberta-base-v2 This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search. | 3c8cd9b5e51500ff44fba762a26ba1ab |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('sentence-transformers/nli-distilroberta-base-v2') embeddings = model.encode(sentences) print(embeddings) ``` | b11fb6a76383a78a9700bdddadffed80 |
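Semantic search with these embeddings usually means ranking corpus sentences by cosine similarity to a query embedding. This dependency-free sketch shows the idea. The function names are hypothetical, and the toy 3-dimensional vectors stand in for the 768-dimensional rows that `model.encode` returns.

In practice, sentence-transformers already provides helpers for this, such as `sentence_transformers.util.cos_sim` and `sentence_transformers.util.semantic_search`.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def semantic_search(query_emb, corpus_embs, top_k=3):
    """Return (index, score) pairs for the top_k corpus embeddings closest to the query."""
    scored = [(i, cosine_similarity(query_emb, emb)) for i, emb in enumerate(corpus_embs)]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]

query = [1.0, 0.0, 0.0]
corpus = [[0.0, 1.0, 0.0], [1.0, 0.1, 0.0], [-1.0, 0.0, 0.0]]
print(semantic_search(query, corpus, top_k=2))
```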

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Load model from HuggingFace Hub tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/nli-distilroberta-base-v2') model = AutoModel.from_pretrained('sentence-transformers/nli-distilroberta-base-v2') | 2738dfd9999c95216124238436a06a62 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/nli-distilroberta-base-v2) | b7692dd30652e968aaee9ba71edcf671 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Full Model Architecture ``` SentenceTransformer( (0): Transformer({'max_seq_length': 75, 'do_lower_case': False}) with Transformer model: RobertaModel (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False}) ) ``` | 2b1bb2d328d6ed0fcacf761103f16261 |
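The architecture above uses mean pooling (`pooling_mode_mean_tokens: True`). The sentence vector is the average of the token vectors, with padding positions excluded through the attention mask. This pure-Python sketch handles one unbatched sentence.

The name `mean_pooling` is illustrative. A real pipeline would apply the same masked average to the transformer's token-embedding tensor, usually with torch.

```python
def mean_pooling(token_embeddings, attention_mask):
    """Average token vectors, skipping positions where attention_mask is 0 (padding)."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            summed = [s + x for s, x in zip(summed, vector)]
            count += 1
    return [s / max(count, 1) for s in summed]  # max() guards against an all-padding input

print(mean_pooling([[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]], [1, 1, 0]))  # → [2.0, 3.0]
```

The third token is padding, so its large values do not affect the result. This is why the mask matters when sentences of different lengths are batched together.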

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | wnt1 Dreambooth model trained by cdefghijkl with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb), or run your new concept with `diffusers` using the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Sample pictures of this concept: | b8da3023b25b9ba3e3ff8160359b436c |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__hate_speech_offensive__train-8-3 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.9681 - Accuracy: 0.549 | 866703e0fd80c980ae56e98878261575 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.1073 | 1.0 | 5 | 1.1393 | 0.0 | | 1.0392 | 2.0 | 10 | 1.1729 | 0.0 | | 1.0302 | 3.0 | 15 | 1.1694 | 0.2 | | 0.9176 | 4.0 | 20 | 1.1846 | 0.2 | | 0.8339 | 5.0 | 25 | 1.1663 | 0.2 | | 0.7533 | 6.0 | 30 | 1.1513 | 0.4 | | 0.6327 | 7.0 | 35 | 1.1474 | 0.4 | | 0.4402 | 8.0 | 40 | 1.1385 | 0.4 | | 0.3752 | 9.0 | 45 | 1.0965 | 0.2 | | 0.3448 | 10.0 | 50 | 1.0357 | 0.2 | | 0.2582 | 11.0 | 55 | 1.0438 | 0.2 | | 0.1903 | 12.0 | 60 | 1.0561 | 0.2 | | 0.1479 | 13.0 | 65 | 1.0569 | 0.2 | | 0.1129 | 14.0 | 70 | 1.0455 | 0.2 | | 0.1071 | 15.0 | 75 | 1.0416 | 0.4 | | 0.0672 | 16.0 | 80 | 1.1164 | 0.4 | | 0.0561 | 17.0 | 85 | 1.1846 | 0.6 | | 0.0463 | 18.0 | 90 | 1.2040 | 0.6 | | 0.0431 | 19.0 | 95 | 1.2078 | 0.6 | | 0.0314 | 20.0 | 100 | 1.2368 | 0.6 | | 4fef5dc9f80fcad921062d585116cf12 |

apache-2.0 | ['generated_from_trainer'] | false | bert-fine-tuned-cola This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8073 - Matthews Correlation: 0.6107 | 454b58b609ca58318d05cbb4d3655027 |
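CoLA, the GLUE task this model was evaluated on, is scored with the Matthews correlation coefficient. MCC ranges from -1 to 1, and 0 means no better than chance.

This sketch computes MCC from binary confusion-matrix counts. It returns 0.0 when any marginal is empty, which to our knowledge is also scikit-learn's convention.

```python
import math

def matthews_corrcoef(tp, tn, fp, fn):
    """Matthews correlation coefficient for binary labels from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

print(round(matthews_corrcoef(tp=90, tn=40, fp=10, fn=20), 4))  # → 0.5919
```

Unlike accuracy, MCC stays low when a classifier mostly predicts the majority class. This matters for CoLA, where acceptable sentences outnumber unacceptable ones.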

mit | ['question generation'] | false | Input generate question: Obwohl die Vereinigten Staaten wie auch viele Staaten des Commonwealth Erben des <hl> britischen Common Laws <hl> sind, setzt sich das amerikanische Recht bedeutend davon ab. Dies rührt größtenteils von dem langen Zeitraum her, [...] | ab1117a6743966e7b5e07cd2761bcea1 |
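The example input follows a clear pattern: a `generate question: ` prefix, then the context with the answer span wrapped in `<hl>` tokens. This sketch builds inputs in that format. The helper name is hypothetical, and the prefix and spacing are inferred from the single example above, not from documented preprocessing.

```python
def build_qg_input(context: str, answer: str, hl_token: str = "<hl>") -> str:
    """Wrap the first occurrence of `answer` in highlight tokens and add the task prefix."""
    start = context.find(answer)
    if start == -1:
        raise ValueError("answer span not found in context")
    end = start + len(answer)
    highlighted = f"{context[:start]}{hl_token} {answer} {hl_token}{context[end:]}"
    return f"generate question: {highlighted}"

context = "Die Vereinigten Staaten sind Erben des britischen Common Laws."
print(build_qg_input(context, "britischen Common Laws"))
# → generate question: Die Vereinigten Staaten sind Erben des <hl> britischen Common Laws <hl>.
```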

mit | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 2 - eval_batch_size: 2 - seed: 100 - gradient_accumulation_steps: 8 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | 3de40a10138a112c4a731f25b0a49801 |
