license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | [] | false | Introduction This is the **huge** version of OFA model finetuned for **VQA**. OFA is a unified multimodal pretrained model that unifies modalities (i.e., cross-modality, vision, language) and tasks (e.g., image generation, visual grounding, image captioning, image classification, text generation, etc.) to a simple sequence-to-sequence learning framework. The directory includes 4 files, namely `config.json` which consists of model configuration, `vocab.json` and `merge.txt` for our OFA tokenizer, and lastly `pytorch_model.bin` which consists of model weights. There is no need to worry about the mismatch between Fairseq and transformers, since we have already addressed the issue. | 51af912ec91281036fc48133dbbcf084 |

apache-2.0 | [] | false | How to use To use it in transformers, please refer to https://github.com/OFA-Sys/OFA/tree/feature/add_transformers. Install the transformers and download the models as shown below. ``` git clone --single-branch --branch feature/add_transformers https://github.com/OFA-Sys/OFA.git pip install OFA/transformers/ git clone https://huggingface.co/OFA-Sys/OFA-huge-vqa ``` Afterwards, set `ckpt_dir` to the path of the cloned checkpoint directory (`OFA-huge-vqa`), and prepare an image for the testing example below. Also, ensure that you have pillow and torchvision in your environment. ```python >>> from PIL import Image >>> from torchvision import transforms >>> from transformers import OFATokenizer, OFAModel >>> from generate import sequence_generator >>> mean, std = [0.5, 0.5, 0.5], [0.5, 0.5, 0.5] >>> resolution = 480 >>> patch_resize_transform = transforms.Compose([ lambda image: image.convert("RGB"), transforms.Resize((resolution, resolution), interpolation=Image.BICUBIC), transforms.ToTensor(), transforms.Normalize(mean=mean, std=std) ]) >>> tokenizer = OFATokenizer.from_pretrained(ckpt_dir) >>> txt = " what does the image describe?" | 316bdaf20aac24453f255b4f398d7885 |

apache-2.0 | [] | false | using the generator of fairseq version >>> model = OFAModel.from_pretrained(ckpt_dir, use_cache=True) >>> generator = sequence_generator.SequenceGenerator( tokenizer=tokenizer, beam_size=5, max_len_b=16, min_len=0, no_repeat_ngram_size=3, ) >>> data = {} >>> data["net_input"] = {"input_ids": inputs, 'patch_images': patch_img, 'patch_masks':torch.tensor([True])} >>> gen_output = generator.generate([model], data) >>> gen = [gen_output[i][0]["tokens"] for i in range(len(gen_output))] | 7a2e6a5ba04bc7dce70d73806306aa3f |

apache-2.0 | [] | false | using the generator of huggingface version >>> model = OFAModel.from_pretrained(ckpt_dir, use_cache=False) >>> gen = model.generate(inputs, patch_images=patch_img, num_beams=5, no_repeat_ngram_size=3) >>> print(tokenizer.batch_decode(gen, skip_special_tokens=True)) ``` | 5148987b9a3f0c3709fd12b573142fe5 |
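
The `Normalize(mean=mean, std=std)` step in the preprocessing transform above can be checked by hand. Since `ToTensor()` scales 8-bit pixel values to the range [0, 1], a mean and standard deviation of 0.5 per channel map that range to [-1, 1]. The following is a minimal sketch of that arithmetic in plain Python; the helper name `normalize_pixel` is hypothetical and is not part of OFA or torchvision.

```python
def normalize_pixel(value, mean=0.5, std=0.5):
    """Mirror the per-channel arithmetic of Normalize: (value - mean) / std."""
    return (value - mean) / std


# Walk through the darkest, middle, and brightest possible inputs.
for v in (0.0, 0.5, 1.0):
    print(v, "->", normalize_pixel(v))
```

The actual transform applies the same formula to every element of the image tensor, channel by channel.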

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition'] | false | Evaluation on Common Voice FR Test ```python import torchaudio from datasets import load_dataset, load_metric from transformers import ( Wav2Vec2ForCTC, Wav2Vec2Processor, ) import torch import re import sys model_name = "facebook/wav2vec2-large-xlsr-53-french" device = "cuda" chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"]' | af00d0eaf108a5dccc89e0bf1cbacd1c |

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition'] | false | noqa: W605 model = Wav2Vec2ForCTC.from_pretrained(model_name).to(device) processor = Wav2Vec2Processor.from_pretrained(model_name) ds = load_dataset("common_voice", "fr", split="test", data_dir="./cv-corpus-6.1-2020-12-11") resampler = torchaudio.transforms.Resample(orig_freq=48_000, new_freq=16_000) def map_to_array(batch): speech, _ = torchaudio.load(batch["path"]) batch["speech"] = resampler.forward(speech.squeeze(0)).numpy() batch["sampling_rate"] = resampler.new_freq batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'") return batch ds = ds.map(map_to_array) def map_to_pred(batch): features = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0], padding=True, return_tensors="pt") input_values = features.input_values.to(device) attention_mask = features.attention_mask.to(device) with torch.no_grad(): logits = model(input_values, attention_mask=attention_mask).logits pred_ids = torch.argmax(logits, dim=-1) batch["predicted"] = processor.batch_decode(pred_ids) batch["target"] = batch["sentence"] return batch result = ds.map(map_to_pred, batched=True, batch_size=16, remove_columns=list(ds.features.keys())) wer = load_metric("wer") print(wer.compute(predictions=result["predicted"], references=result["target"])) ``` **Result**: 25.2 % | df30f7bf10cc259430cc8a8dd98f98df |
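
The reported result is a word error rate (WER). As a rough illustration of what the `wer` metric measures, the sketch below counts word-level edits (substitutions, insertions, deletions) between each reference and prediction and divides by the number of reference words. It is an assumption-laden stand-in written in plain Python, not the implementation used by `load_metric("wer")`; the function names `edit_distance` and `word_error_rate` are hypothetical.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two token lists (row-by-row dynamic programming)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def word_error_rate(references, predictions):
    """Total word edits divided by total reference words, pooled over all pairs."""
    errors = 0
    total = 0
    for ref, hyp in zip(references, predictions):
        ref_words = ref.split()
        errors += edit_distance(ref_words, hyp.split())
        total += len(ref_words)
    return errors / total


print(word_error_rate(["bonjour le monde"], ["bonjour monde"]))
```

Because insertions count as errors, this ratio can exceed 1.0 when predictions are much longer than the references.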

apache-2.0 | [] | false | This model is a BERT-based Location Mention Recognition model that is adopted from the [TLLMR4CM GitHub](https://github.com/rsuwaileh/TLLMR4CM/). The model identifies the toponyms' spans in the text and predicts their location types. The location type can be coarse-grained (e.g., country, city, etc.) and fine-grained (e.g., street, POI, etc.) The model is trained using the training splits of all events from [IDRISI-R dataset](https://github.com/rsuwaileh/IDRISI) under the `Type-based` LMR mode and using the `Time-based` version of the data. You can download this data in `BILOU` format from [here](https://github.com/rsuwaileh/IDRISI/tree/main/data/LMR/EN/gold-random-bilou/). More details about the models are available [here](https://github.com/rsuwaileh/IDRISI/tree/main/models). * Different variants of the model are available through HuggingFace: - [rsuwaileh/IDRISI-LMR-EN-random-typeless](https://huggingface.co/rsuwaileh/IDRISI-LMR-EN-random-typeless/) - [rsuwaileh/IDRISI-LMR-EN-random-typebased](https://huggingface.co/rsuwaileh/IDRISI-LMR-EN-random-typebased/) - [rsuwaileh/IDRISI-LMR-EN-timebased-typeless](https://huggingface.co/rsuwaileh/IDRISI-LMR-EN-timebased-typeless/) * Arabic models are also available: - [rsuwaileh/IDRISI-LMR-AR-random-typeless](https://huggingface.co/rsuwaileh/IDRISI-LMR-AR-random-typeless/) - [rsuwaileh/IDRISI-LMR-AR-random-typebased](https://huggingface.co/rsuwaileh/IDRISI-LMR-AR-random-typebased/) - [rsuwaileh/IDRISI-LMR-AR-timebased-typeless](https://huggingface.co/rsuwaileh/IDRISI-LMR-AR-timebased-typeless/) - [rsuwaileh/IDRISI-LMR-AR-timebased-typebased](https://huggingface.co/rsuwaileh/IDRISI-LMR-AR-timebased-typebased/) To cite the models: ``` @article{suwaileh2022tlLMR4disaster, title={When a Disaster Happens, We Are Ready: Location Mention Recognition from Crisis Tweets}, author={Suwaileh, Reem and Elsayed, Tamer and Imran, Muhammad and Sajjad, Hassan}, journal={International Journal of Disaster Risk 
Reduction}, year={2022} } @inproceedings{suwaileh2020tlLMR4disaster, title={Are We Ready for this Disaster? Towards Location Mention Recognition from Crisis Tweets}, author={Suwaileh, Reem and Imran, Muhammad and Elsayed, Tamer and Sajjad, Hassan}, booktitle={Proceedings of the 28th International Conference on Computational Linguistics}, pages={6252--6263}, year={2020} } ``` To cite the IDRISI-R dataset: ``` @article{rsuwaileh2022Idrisi-r, title={IDRISI-R: Large-scale English and Arabic Location Mention Recognition Datasets for Disaster Response over Twitter}, author={Suwaileh, Reem and Elsayed, Tamer and Imran, Muhammad}, journal={...}, volume={...}, pages={...}, year={2022}, publisher={...} } ``` | ce3ab530029de925980077089a9291b0 |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2r_de_xls-r_gender_male-8_female-2_s293 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 716bf7c1befbf60e6410cf52498c9530 |
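
The 16kHz requirement means that audio recorded at another rate has to be resampled before it is passed to the model. The sketch below shows the idea with naive linear interpolation over a plain list of samples. It is only an illustration under stated assumptions: the function name `resample_linear` is hypothetical, and the method applies no anti-aliasing filter, so a proper resampler from an audio library should be preferred in practice.

```python
def resample_linear(samples, orig_rate, target_rate=16_000):
    """Naively resample a mono signal by linear interpolation (no low-pass filter)."""
    if orig_rate == target_rate or not samples:
        return list(samples)
    n_out = int(len(samples) * target_rate / orig_rate)
    out = []
    for i in range(n_out):
        pos = i * orig_rate / target_rate       # fractional index in the input
        left = int(pos)
        right = min(left + 1, len(samples) - 1)
        frac = pos - left
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out


# One second of silence at 48 kHz becomes 16,000 samples.
print(len(resample_linear([0.0] * 48_000, 48_000)))
```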

mit | [] | false | Model usage All trained models can be used from the [DBMDZ](https://github.com/dbmdz) Hugging Face [model hub page](https://huggingface.co/dbmdz) using their model name. Example usage with 🤗/Transformers: ```python from transformers import AutoModel, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("dbmdz/electra-base-turkish-mc4-cased-discriminator") model = AutoModel.from_pretrained("dbmdz/electra-base-turkish-mc4-cased-discriminator") ``` | 73e275eb05d272b209f2cebbe9bd5ed6 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 49a284e69308d81c142b89795de255b4ce290c54 pip install -e . cd egs2/talromur/tts1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/GunnarThor_talromur_g_tacotron2 ``` | 377f7045a17288e563d1d3058c1b7e0c |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | TTS config <details><summary>expand</summary> ``` config: ./conf/tuning/train_tacotron2.yaml print_config: false log_level: INFO dry_run: false iterator_type: sequence output_dir: exp/g/tts_train_tacotron2_raw_phn_none ngpu: 1 seed: 0 num_workers: 1 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: 2 dist_rank: 0 local_rank: 0 dist_master_addr: localhost dist_master_port: 39151 dist_launcher: null multiprocessing_distributed: true unused_parameters: false sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: true collect_stats: false write_collected_feats: false max_epoch: 100 patience: null val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - valid - loss - min - - train - loss - min keep_nbest_models: 5 nbest_averaging_interval: 0 grad_clip: 1.0 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: null use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: 500 batch_size: 20 valid_batch_size: null batch_bins: 2560000 valid_batch_bins: null train_shape_file: - exp/g/tts_stats_raw_phn_none/train/text_shape.phn - exp/g/tts_stats_raw_phn_none/train/speech_shape valid_shape_file: - exp/g/tts_stats_raw_phn_none/valid/text_shape.phn - exp/g/tts_stats_raw_phn_none/valid/speech_shape batch_type: numel valid_batch_type: null fold_length: - 150 - 204800 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 500 chunk_shift_ratio: 0.5 num_cache_chunks: 1024 train_data_path_and_name_and_type: - - dump/raw/train_g_phn/text - text - text - - 
dump/raw/train_g_phn/wav.scp - speech - sound valid_data_path_and_name_and_type: - - dump/raw/dev_g_phn/text - text - text - - dump/raw/dev_g_phn/wav.scp - speech - sound allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adam optim_conf: lr: 0.001 eps: 1.0e-06 weight_decay: 0.0 scheduler: null scheduler_conf: {} token_list: - <blank> - <unk> - ',' - . - r - t - n - a0 - s - I0 - D - l - Y0 - m - v - h - E1 - k - a:1 - E:1 - f - G - j - T - a1 - p - c - au:1 - i:1 - O:1 - I:1 - E0 - I1 - r_0 - t_h - k_h - Y1 - ei1 - i0 - ou:1 - ei:1 - u:1 - O1 - N - l_0 - '91' - ai0 - au1 - ou0 - n_0 - ei0 - O0 - ou1 - ai:1 - '9:1' - ai1 - i1 - '90' - au0 - c_h - x - 9i:1 - C - p_h - u0 - Y:1 - J - 9i1 - u1 - 9i0 - N_0 - m_0 - J_0 - Oi1 - Yi0 - Yi1 - Oi0 - au:0 - '9:0' - E:0 - <sos/eos> odim: null model_conf: {} use_preprocessor: true token_type: phn bpemodel: null non_linguistic_symbols: null cleaner: null g2p: null feats_extract: fbank feats_extract_conf: n_fft: 1024 hop_length: 256 win_length: null fs: 22050 fmin: 80 fmax: 7600 n_mels: 80 normalize: global_mvn normalize_conf: stats_file: exp/g/tts_stats_raw_phn_none/train/feats_stats.npz tts: tacotron2 tts_conf: embed_dim: 512 elayers: 1 eunits: 512 econv_layers: 3 econv_chans: 512 econv_filts: 5 atype: location adim: 512 aconv_chans: 32 aconv_filts: 15 cumulate_att_w: true dlayers: 2 dunits: 1024 prenet_layers: 2 prenet_units: 256 postnet_layers: 5 postnet_chans: 512 postnet_filts: 5 output_activation: null use_batch_norm: true use_concate: true use_residual: false dropout_rate: 0.5 zoneout_rate: 0.1 reduction_factor: 1 spk_embed_dim: null use_masking: true bce_pos_weight: 5.0 use_guided_attn_loss: true guided_attn_loss_sigma: 0.4 guided_attn_loss_lambda: 1.0 pitch_extract: null pitch_extract_conf: {} pitch_normalize: null pitch_normalize_conf: {} energy_extract: null energy_extract_conf: {} energy_normalize: null energy_normalize_conf: {} required: - output_dir - token_list 
version: 0.10.7a1 distributed: true ``` </details> | 6234567da6ee1a029ee5be3e29ffd622 |

apache-2.0 | ['generated_from_trainer'] | false | model This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on mWACH NEO dataset. It achieves the following results on the evaluation set: - Loss: 1.6344 | 04b3e9eed37249cdc7d916a6c5709b10 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.4303 | c6fe5df7d38a43c559c26a5f066e93ea |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.5274 | 1.0 | 157 | 2.4476 | | 2.5259 | 2.0 | 314 | 2.4390 | | 2.5134 | 3.0 | 471 | 2.4330 | | 610b260bc7a3de13b44e2ac051d7441a |
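
Losses like these are often easier to read as perplexity. Assuming the reported value is a mean cross-entropy in nats per predicted token (the card does not state this, so treat it as an assumption), perplexity is simply the exponential of the loss. A minimal sketch, with the hypothetical helper name `perplexity`:

```python
import math


def perplexity(mean_cross_entropy):
    """Convert a mean cross-entropy loss (in nats) to perplexity."""
    return math.exp(mean_cross_entropy)


# Evaluation loss reported for distilbert-base-uncased-finetuned-imdb.
print(round(perplexity(2.4303), 2))
```

Under that assumption, the small drop in validation loss across the three epochs corresponds to a correspondingly small drop in perplexity.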

apache-2.0 | [] | false | **mBERT-base-cased-finetuned-bengali-fakenews** This model is a fine-tuned checkpoint of mBERT-base-cased on the **[Bengali-fake-news Dataset](https://www.kaggle.com/cryptexcode/banfakenews)** for text classification. It reaches an accuracy of 96.3 and an F1-score of 79.1 on the dev set. | 737b10d510589b93e85f989f31dedabb |

apache-2.0 | [] | false | **How to use?** **Task**: binary-classification - LABEL_1: Authentic (*Authentic means news is authentic*) - LABEL_0: Fake (*Fake means news is fake*) ``` from transformers import pipeline print(pipeline("sentiment-analysis",model="DeadBeast/mbert-base-cased-finetuned-bengali-fakenews",tokenizer="DeadBeast/mbert-base-cased-finetuned-bengali-fakenews")("অভিনেতা আফজাল শরীফকে ২০ লাখ টাকার অনুদান অসুস্থ অভিনেতা আফজাল শরীফকে চিকিৎসার জন্য ২০ লাখ টাকা অনুদান দিয়েছেন প্রধানমন্ত্রী শেখ হাসিনা।")) ``` | 6a3144c07c39d6a863223f2b0c199980 |
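
The pipeline returns generic label ids, so it can help to translate them using the mapping listed above. A text-classification pipeline returns a list of dictionaries with `label` and `score` keys; the sketch below post-processes such a list. The names `ID2NAME` and `readable` are hypothetical helpers, and the sample prediction is made up for illustration rather than taken from the model.

```python
# Mapping taken from the label description in the model card.
ID2NAME = {"LABEL_0": "Fake", "LABEL_1": "Authentic"}


def readable(predictions):
    """Replace generic label ids with human-readable names, keeping the scores."""
    return [
        {"label": ID2NAME.get(p["label"], p["label"]), "score": p["score"]}
        for p in predictions
    ]


# Illustrative input in the shape a text-classification pipeline returns.
print(readable([{"label": "LABEL_1", "score": 0.9}]))
```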

other | ['generated_from_trainer'] | false | dalio-all-io-1.3b This model is a fine-tuned version of [facebook/opt-1.3b](https://huggingface.co/facebook/opt-1.3b) on the AlekseyKorshuk/dalio-all-io dataset. It achieves the following results on the evaluation set: - Loss: 2.3652 - Accuracy: 0.0558 | ba072ab724cb78bb1664c0d0692a536c |

other | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 2 - eval_batch_size: 2 - seed: 42 - distributed_type: multi-GPU - num_devices: 8 - total_train_batch_size: 16 - total_eval_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - num_epochs: 1.0 | 7710656decca94ebfc907309a3fd6855 |
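
The total batch sizes listed above follow from the per-device batch size and the number of devices. A minimal sketch of that arithmetic, assuming no gradient accumulation for this run since none is listed; the helper name `total_batch_size` is hypothetical:

```python
def total_batch_size(per_device, num_devices, grad_accum_steps=1):
    """Number of examples contributing to one optimizer step."""
    return per_device * num_devices * grad_accum_steps


# train_batch_size=2 on 8 GPUs gives the listed total_train_batch_size.
print(total_batch_size(2, 8))
```

The same helper covers runs that do use gradient accumulation by passing `grad_accum_steps`.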

other | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.6543 | 0.03 | 1 | 2.6113 | 0.0513 | | 2.6077 | 0.07 | 2 | 2.6113 | 0.0513 | | 2.5964 | 0.1 | 3 | 2.5605 | 0.0519 | | 2.7302 | 0.14 | 4 | 2.5234 | 0.0527 | | 2.7 | 0.17 | 5 | 2.5078 | 0.0528 | | 2.5674 | 0.21 | 6 | 2.4941 | 0.0532 | | 2.6406 | 0.24 | 7 | 2.4883 | 0.0534 | | 2.5315 | 0.28 | 8 | 2.4805 | 0.0536 | | 2.7202 | 0.31 | 9 | 2.4727 | 0.0537 | | 2.5144 | 0.34 | 10 | 2.4648 | 0.0536 | | 2.4983 | 0.38 | 11 | 2.4512 | 0.0537 | | 2.7029 | 0.41 | 12 | 2.4414 | 0.0539 | | 2.5198 | 0.45 | 13 | 2.4336 | 0.0540 | | 2.5706 | 0.48 | 14 | 2.4258 | 0.0545 | | 2.5688 | 0.52 | 15 | 2.4180 | 0.0548 | | 2.3793 | 0.55 | 16 | 2.4102 | 0.0552 | | 2.4785 | 0.59 | 17 | 2.4043 | 0.0554 | | 2.4688 | 0.62 | 18 | 2.3984 | 0.0553 | | 2.5674 | 0.66 | 19 | 2.3984 | 0.0553 | | 2.5054 | 0.69 | 20 | 2.3945 | 0.0554 | | 2.452 | 0.72 | 21 | 2.3887 | 0.0555 | | 2.5999 | 0.76 | 22 | 2.3828 | 0.0556 | | 2.3665 | 0.79 | 23 | 2.3789 | 0.0556 | | 2.6223 | 0.83 | 24 | 2.375 | 0.0557 | | 2.3562 | 0.86 | 25 | 2.3711 | 0.0557 | | 2.429 | 0.9 | 26 | 2.3691 | 0.0557 | | 2.563 | 0.93 | 27 | 2.3672 | 0.0558 | | 2.4573 | 0.97 | 28 | 2.3652 | 0.0558 | | 2.4883 | 1.0 | 29 | 2.3652 | 0.0558 | | f50dcf5dad84826fa35ed7041f15cc8d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 258 | 1.3238 | 50.228 | 29.5898 | 30.1054 | 47.1265 | 142.0 | | 452256b7298472749600b9b19a2d723a |

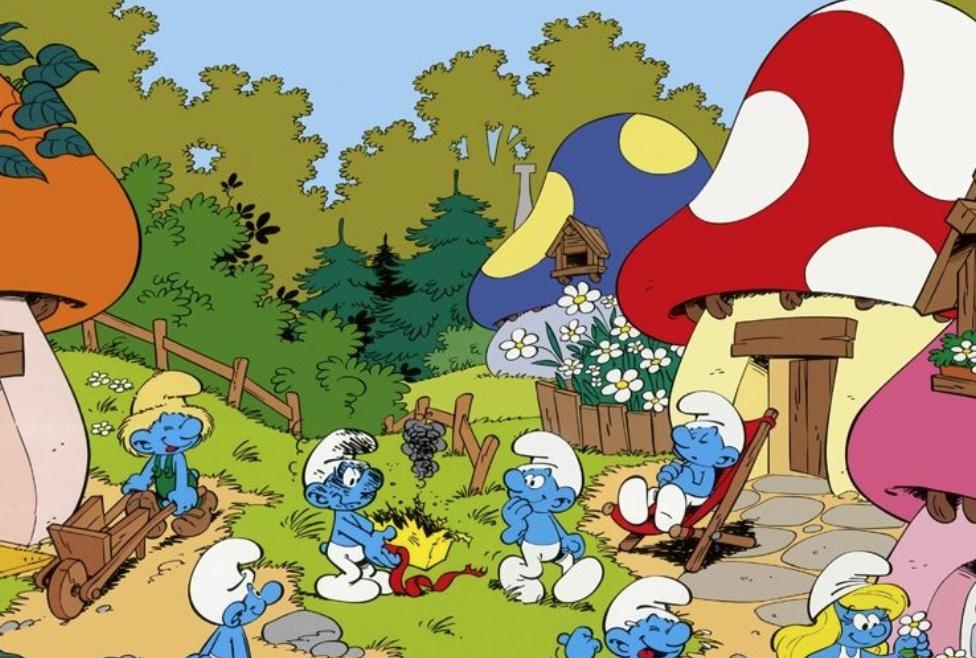

mit | [] | false | Smurf Style on Stable Diffusion This is the `<smurfy>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:           | 45d0170a50be69bb7623af1dff786adc |

apache-2.0 | ['automatic-speech-recognition', 'uk'] | false | exp_w2v2t_uk_wav2vec2_s646 Fine-tuned [facebook/wav2vec2-large-lv60](https://huggingface.co/facebook/wav2vec2-large-lv60) for speech recognition using the train split of [Common Voice 7.0 (uk)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 9062ed2e9a81d1684ff9f902a76d541f |

openrail | ['stable-diffusion', 'diffusers', 'text-to-image', 'fashion', 'diffusion', 'openjourney'] | false | Stable Diffusion fine-tuned for [Fashion Product Images Dataset](https://www.kaggle.com/datasets/paramaggarwal/fashion-product-images-dataset) This model is a fine-tuned version of [openjourney](https://huggingface.co/prompthero/openjourney) that is based on Stable Diffusion targeting fashion and clothing. | 1b64c1d2498b24860978ee61dab06fe3 |

openrail | ['stable-diffusion', 'diffusers', 'text-to-image', 'fashion', 'diffusion', 'openjourney'] | false | How to use? ```python from diffusers import StableDiffusionPipeline import torch pipeline = StableDiffusionPipeline.from_pretrained("MohamedRashad/diffusion_fashion", torch_dtype=torch.float16) pipeline.to("cuda") prompt = "A photo of a dress, made in 2019, color is Red, Casual usage, Women's cloth, something for the summer season, on white background" image = pipeline(prompt).images[0] image.save("red_dress.png") ``` | eaa1b8145a30b312e1f03ec6b8217e98 |

apache-2.0 | ['generated_from_trainer'] | false | opus-mt-sla-en-finetuned-uk-to-en This model is a fine-tuned version of [Helsinki-NLP/opus-mt-sla-en](https://huggingface.co/Helsinki-NLP/opus-mt-sla-en) on the opus100 dataset. It achieves the following results on the evaluation set: - Loss: 1.7232 - Bleu: 27.7684 - Gen Len: 12.2485 | 5089583f4b86a94cfb4d0190c1a3c551 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 1.5284 | 1.0 | 62500 | 1.7232 | 27.7684 | 12.2485 | | cbaad037c31557bf1660158873df873d |

apache-2.0 | ['lexical normalization'] | false | Fine-tuned ByT5-small for MultiLexNorm (Dutch version)  This is the official release of the fine-tuned models for **the winning entry** to the [*W-NUT 2021: Multilingual Lexical Normalization (MultiLexNorm)* shared task](https://noisy-text.github.io/2021/multi-lexnorm.html), which evaluates lexical-normalization systems on 12 social media datasets in 11 languages. Our system is based on [ByT5](https://arxiv.org/abs/2105.13626), which we first pre-train on synthetic data and then fine-tune on authentic normalization data. It achieves the best performance by a wide margin in intrinsic evaluation, and also the best performance in extrinsic evaluation through dependency parsing. In addition to these fine-tuned models, we also release the source files on [GitHub](https://github.com/ufal/multilexnorm2021) and an interactive demo on [Google Colab](https://colab.research.google.com/drive/1rxpI8IlKk-D2crFqi2hdzbTBIezqgsCg?usp=sharing). | 0ea0cef8de2442bec6ef6d54b58237b6 |

apache-2.0 | ['generated_from_keras_callback'] | false | Rocketknight1/bert-base-cased-finetuned-wikitext2 This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 6.3982 - Validation Loss: 6.2664 - Epoch: 1 | 450943d7cb906a7fb8012db001d80c2b |

apache-2.0 | ['generated_from_trainer'] | false | dat259-nor-wav2vec2 This model is a fine-tuned version of [NbAiLab/nb-wav2vec2-300m-nynorsk](https://huggingface.co/NbAiLab/nb-wav2vec2-300m-nynorsk) on the common_voice_8_0 dataset. It achieves the following results on the evaluation set: - Loss: 10.9446 - Wer: 1.1259 | a26b703c230efadde5b14990ca7eec44 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 5 - num_epochs: 10 - mixed_precision_training: Native AMP | 5e8e2685cb001e564e97aab445ac7d15 |
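
A linear scheduler with warmup raises the learning rate from zero to its peak over the warmup steps and then decays it linearly to zero. The sketch below reproduces that shape in plain Python using the values listed above (`learning_rate: 0.0001`, `lr_scheduler_warmup_steps: 5`). The total of 30 optimizer steps is an assumption, and the function name `linear_lr` is hypothetical; this is not the Trainer's own code.

```python
def linear_lr(step, base_lr=1e-4, warmup_steps=5, total_steps=30):
    """Learning rate at a given optimizer step: linear warmup, then linear decay."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# total_steps=30 is assumed for illustration.
for s in (0, 5, 15, 30):
    print(s, linear_lr(s))
```

With such a short warmup, the peak is reached at step 5, and the rest of training runs on the decaying part of the schedule.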

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 84.8696 | 1.57 | 5 | 91.5942 | 1.0 | | 62.5471 | 3.29 | 10 | 33.8515 | 1.0068 | | 20.2215 | 4.86 | 15 | 17.4461 | 1.0017 | | 15.2892 | 6.57 | 20 | 13.5454 | 1.0034 | | 12.8086 | 8.29 | 25 | 12.0084 | 1.0408 | | 11.0168 | 9.86 | 30 | 10.9446 | 1.1259 | | 3dcdd3e817c6b45b2e76ca0ee3b3c548 |

cc-by-4.0 | ['question generation'] | false | Model Card of `lmqg/mt5-base-esquad-qg` This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) for the question generation task on the [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) dataset (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 5b25a3c78bffcf8d6ae6fd62ad5acb6e |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [google/mt5-base](https://huggingface.co/google/mt5-base) - **Language:** es - **Training data:** [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | bc99759935b9dbe36f1d80c87427ec6f |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="a noviembre , que es también la estación lluviosa.", list_answer="noviembre") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/mt5-base-esquad-qg") output = pipe("del <hl> Ministerio de Desarrollo Urbano <hl> , Gobierno de la India.") ``` | 6d7d444ba1f33c7eeec44175dfe9d754 |
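
In the `transformers` example above, the answer span is wrapped in `<hl>` tokens inside the input text. The sketch below shows one way such an input could be built from a context and an answer. The helper `highlight_answer` is hypothetical and is not part of `lmqg`; it assumes the answer appears verbatim in the context and highlights only the first occurrence.

```python
def highlight_answer(context, answer, token="<hl>"):
    """Wrap the first occurrence of `answer` in `context` with highlight tokens."""
    start = context.find(answer)
    if start < 0:
        raise ValueError("answer not found in context")
    end = start + len(answer)
    return f"{context[:start]}{token} {answer} {token}{context[end:]}"


print(highlight_answer(
    "del Ministerio de Desarrollo Urbano , Gobierno de la India.",
    "Ministerio de Desarrollo Urbano",
))
```

The returned string has the same shape as the input passed to `pipe(...)` in the example above.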

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/mt5-base-esquad-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_esquad.default.json) | | Score | Type | Dataset | |:-----------|--------:|:--------|:-----------------------------------------------------------------| | BERTScore | 84.47 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | Bleu_1 | 26.73 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | Bleu_2 | 18.46 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | Bleu_3 | 13.5 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | Bleu_4 | 10.15 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | METEOR | 23.43 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | MoverScore | 59.62 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | ROUGE_L | 25.45 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | - ***Metric (Question & Answer Generation, Reference Answer)***: Each question is generated from *the gold answer*. 
[raw metric file](https://huggingface.co/lmqg/mt5-base-esquad-qg/raw/main/eval/metric.first.answer.paragraph.questions_answers.lmqg_qg_esquad.default.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-----------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 89.68 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedF1Score (MoverScore) | 64.22 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedPrecision (BERTScore) | 89.7 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedPrecision (MoverScore) | 64.24 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedRecall (BERTScore) | 89.66 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedRecall (MoverScore) | 64.21 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | - ***Metric (Question & Answer Generation, Pipeline Approach)***: Each question is generated on the answer generated by [`lmqg/mt5-base-esquad-ae`](https://huggingface.co/lmqg/mt5-base-esquad-ae). 
[raw metric file](https://huggingface.co/lmqg/mt5-base-esquad-qg/raw/main/eval_pipeline/metric.first.answer.paragraph.questions_answers.lmqg_qg_esquad.default.lmqg_mt5-base-esquad-ae.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-----------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 80.79 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedF1Score (MoverScore) | 55.25 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedPrecision (BERTScore) | 78.45 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedPrecision (MoverScore) | 53.7 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedRecall (BERTScore) | 83.34 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | QAAlignedRecall (MoverScore) | 56.99 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) | | 431ee9a1c6c273a645056c7291f26e57 |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_esquad - dataset_name: default - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: None - model: google/mt5-base - max_length: 512 - max_length_output: 32 - epoch: 10 - batch: 4 - lr: 0.0005 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 16 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mt5-base-esquad-qg/raw/main/trainer_config.json). | c83f9714f026489f73a1ebb830e6cac7 |
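
The `label_smoothing: 0.15` setting softens the training targets: instead of putting all probability mass on the correct token, a share of it is spread over the vocabulary. The sketch below uses one common formulation, in which the smoothing mass is divided uniformly over all classes including the correct one. Whether `lmqg` uses exactly this variant is an assumption, and the function names are hypothetical.

```python
import math


def smoothed_targets(true_index, num_classes, smoothing=0.15):
    """Target distribution: uniform smoothing mass plus (1 - smoothing) on the true class."""
    base = smoothing / num_classes
    targets = [base] * num_classes
    targets[true_index] += 1.0 - smoothing
    return targets


def smoothed_cross_entropy(log_probs, true_index, smoothing=0.15):
    """Cross-entropy between the smoothed targets and the predicted log-probabilities."""
    targets = smoothed_targets(true_index, len(log_probs), smoothing)
    return -sum(t * lp for t, lp in zip(targets, log_probs))


# Toy 4-class example with a uniform prediction.
uniform = [math.log(0.25)] * 4
print(smoothed_targets(0, 4))
print(smoothed_cross_entropy(uniform, 0))
```

With smoothing set to 0, the targets collapse back to a one-hot vector and the loss reduces to the ordinary negative log-likelihood of the correct class.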

mit | ['generated_from_trainer'] | false | kobart_32_3e-5_datav2_min30_lp5.0_temperature1.0 This model is a fine-tuned version of [gogamza/kobart-base-v2](https://huggingface.co/gogamza/kobart-base-v2) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.5958 - Rouge1: 35.6403 - Rouge2: 13.1314 - Rougel: 23.8946 - Bleu1: 29.625 - Bleu2: 17.4903 - Bleu3: 10.6018 - Bleu4: 6.0498 - Gen Len: 50.697 | b45ad63ee8306a311ba47ac710cffbc5 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 32 - eval_batch_size: 128 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5.0 | fd2e85e187e9043eaf55f7a679085e1c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Bleu1 | Bleu2 | Bleu3 | Bleu4 | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:------:|:-------:|:-------:|:------:|:-------:| | 1.8239 | 3.78 | 5000 | 2.5958 | 35.6403 | 13.1314 | 23.8946 | 29.625 | 17.4903 | 10.6018 | 6.0498 | 50.697 | | 90835ec9252f6a3467a8b5c8584f76ef |

apache-2.0 | ['generated_from_trainer'] | false | albert-base-v2-fakenews-discriminator The dataset: Fake and real news dataset https://www.kaggle.com/clmentbisaillon/fake-and-real-news-dataset The classifier is trained on the title and label fields. Labels: label_0 = Fake news, label_1 = Real news. This model is a fine-tuned version of [albert-base-v2](https://huggingface.co/albert-base-v2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0910 - Accuracy: 0.9758 | 42ab711a579cd1ab7e3f043e210df4e8 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 1 | 3b7ec17d3f9696c4b32af4f7021a3a77 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0452 | 1.0 | 1768 | 0.0910 | 0.9758 | | afd5db45d7479ab77d160b8be325cde6 |

other | ['vision', 'image-segmentation'] | false | SegFormer (b0-sized) model fine-tuned on ADE20k SegFormer model fine-tuned on ADE20k at resolution 512x512. It was introduced in the paper [SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers](https://arxiv.org/abs/2105.15203) by Xie et al. and first released in [this repository](https://github.com/NVlabs/SegFormer). Disclaimer: The team releasing SegFormer did not write a model card for this model so this model card has been written by the Hugging Face team. | b885cbd7ea3392be641f5558f98abc51 |

other | ['vision', 'image-segmentation'] | false | Model description SegFormer consists of a hierarchical Transformer encoder and a lightweight all-MLP decode head to achieve great results on semantic segmentation benchmarks such as ADE20K and Cityscapes. The hierarchical Transformer is first pre-trained on ImageNet-1k, after which a decode head is added and fine-tuned altogether on a downstream dataset. | f354d01fe18b504b553d91cd7a5e4b30 |

other | ['vision', 'image-segmentation'] | false | Intended uses & limitations You can use the raw model for semantic segmentation. See the [model hub](https://huggingface.co/models?other=segformer) to look for fine-tuned versions on a task that interests you. | 335a338d73c0a6a153d9ed4bc84fc5bd |

other | ['vision', 'image-segmentation'] | false | How to use Here is how to use this model to perform semantic segmentation on an image from the COCO 2017 dataset: ```python from transformers import SegformerFeatureExtractor, SegformerForSemanticSegmentation from PIL import Image import requests feature_extractor = SegformerFeatureExtractor.from_pretrained("nvidia/segformer-b0-finetuned-ade-512-512") model = SegformerForSemanticSegmentation.from_pretrained("nvidia/segformer-b0-finetuned-ade-512-512") url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits ``` | 858ba18a5b7ad7dbc3207167bd92bf80 |

other | ['vision', 'image-segmentation'] | false | BibTeX entry and citation info ```bibtex @article{DBLP:journals/corr/abs-2105-15203, author = {Enze Xie and Wenhai Wang and Zhiding Yu and Anima Anandkumar and Jose M. Alvarez and Ping Luo}, title = {SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers}, journal = {CoRR}, volume = {abs/2105.15203}, year = {2021}, url = {https://arxiv.org/abs/2105.15203}, eprinttype = {arXiv}, eprint = {2105.15203}, timestamp = {Wed, 02 Jun 2021 11:46:42 +0200}, biburl = {https://dblp.org/rec/journals/corr/abs-2105-15203.bib}, bibsource = {dblp computer science bibliography, https://dblp.org} } ``` | bda273ef8ad416bf490bec6f8b0b772f |

creativeml-openrail-m | [] | false | v1.3.1  - [`plat-v1-3-1.safetensors`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-1.safetensors) - [`plat-v1-3-1.ckpt`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-1.ckpt) - [`plat-v1-3-1.yaml`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-1.yaml) | 59c0a6f6f4fcdfccd25af8ab53448813 |

creativeml-openrail-m | [] | false | v1.3.0  - [`plat-v1-3-0.safetensors`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-0.safetensors) - [`plat-v1-3-0.ckpt`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-0.ckpt) - [`plat-v1-3-0.yaml`](https://huggingface.co/p1atdev/pd-archive/blob/main/plat-v1-3-0.yaml) | 6046f41054c96ba1e62e1bcef017183c |

apache-2.0 | ['automatic-speech-recognition', 'ru'] | false | exp_w2v2t_ru_vp-nl_s131 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ru)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | bfbe1327deb6c748d5f3896032202302 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Hiten Diffusion **Welcome to Hiten Diffusion** - a latent diffusion model that has been trained on Chinese TaiWan Artist artwork, [hiten](https://www.pixiv.net/users/490219). The current model has been fine-tuned with a learning rate of `2.0e-6` for `10 Epochs` on `467 images` collected from Danbooru. The model is trained using [NovelAI Aspect Ratio Bucketing Tool](https://github.com/NovelAI/novelai-aspect-ratio-bucketing) so that it can be trained at non-square resolutions. Like other anime-style Stable Diffusion models, it also supports Danbooru tags to generate images. e.g. **_1girl, white hair, golden eyes, beautiful eyes, detail, flower meadow, cumulonimbus clouds, lighting, detailed sky, garden_** | 926dbffb14c41726068710d67d4ef5e5 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | 🧨 Diffusers This model can be used just like any other Stable Diffusion model. For more information, please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion). You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](). ```python from diffusers import StableDiffusionPipeline import torch model_id = "mio/hiten" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "1girl,solo,miku" image = pipe(prompt).images[0] image.save("./miku.png") ``` | 29501f217e750d09872a554ad5e5e18e |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Examples Below are some examples of images generated using this model:     | fe65413c2e743cea66a4e298da7b5174 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Prompt and settings for Example Images **Anime Girl:** ``` (((masterpiece))),(((best quality))),((ultra-detailed)), ((illustration)),floating, ((an extremely delicate and beautiful)),(beautiful detailed eyes),((disheveled hair)),1girl, bangs, black_hair, blue_sailor_collar, blurry, blurry_background, depth_of_field, eyebrows_visible_through_hair, long_hair, looking_at_viewer, parted_lips, sailor_collar, school_uniform, serafuku, shirt, solo, yoroizuka_mizore,medium_chest,colourful_stages,crown,masterpiece,full_body,white_thighhighs,extremely_detailed_CG_unity_8k_wallpaper,solo,1girl,lights Negative prompt: nsfw,nipples,lowres,bad anatomy,bad hands, text, error, missing fingers,extra digit, fewer digits, cropped, worstquality, low quality, normal quality,jpegartifacts,signature, watermark, username,blurry,bad feet, (((mutilated))),(((((too many fingers))))),((((fused fingers)))),(((extra fingers))),(((mutated hands))),extra limbs,(bad_prompt), (((mutilated))),(((((too many fingers))))),((((fused fingers)))),(((extra fingers))) Steps: 24, Sampler: DPM2 a Karras, CFG scale: 7, Seed: 3722281017, Size: 512x768, Model hash: 53a39f6a, Model: hiten_epoch10, Batch size: 4, Batch pos: 3, Clip skip: 2, ENSD: 31337 ``` | 0a7c89a637222d4f5e5ff2bcebd5863f |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 20 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 40 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - num_epochs: 1 | 205837e46b86a12c453fd3c83ee8e690 |
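The `total_train_batch_size` of 40 reported above follows from the per-step batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming training ran on a single device (the device count is not stated in the card, and the helper name is hypothetical):

```python
def total_train_batch_size(train_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples that contribute to one optimizer update."""
    return train_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list: train_batch_size=20, gradient_accumulation_steps=2.
print(total_train_batch_size(20, 2))  # 40
```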

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-kitchen_and_dining-4-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3560 - Accuracy: 0.2692 | 5638ed5c4f148a6e11036e0cb01edc84 |

mit | [] | false | CaptainKirb on Stable Diffusion This is the `<captainkirb>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:       | bdb6cbecdf631368b2c326a96fa385e6 |

apache-2.0 | ['translation'] | false | opus-mt-fr-tvl * source languages: fr * target languages: tvl * OPUS readme: [fr-tvl](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fr-tvl/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/fr-tvl/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-tvl/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-tvl/opus-2020-01-16.eval.txt) | 73ea399d34f02501e204b35cc6b35a56 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Lithuanian Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Lithuanian using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | ab08e3fa2cffd7f9f41b5f7bbdad9b9f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "lt", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("dundar/wav2vec2-large-xlsr-53-lithuanian") model = Wav2Vec2ForCTC.from_pretrained("dundar/wav2vec2-large-xlsr-53-lithuanian") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 797b214641a2bd7170b9cc48a200d94d |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Lithuanian test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "lt", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("dundar/wav2vec2-large-xlsr-53-lithuanian") model = Wav2Vec2ForCTC.from_pretrained("dundar/wav2vec2-large-xlsr-53-lithuanian") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“\%\‘\”\�]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | 0a3cdc1f28fdf12ec96c695a25cbad9f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 35.87 % | 47f7edde9581642c1b9350af8d2baf2f |
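To make the reported word error rate easier to interpret, here is a small, dependency-free sketch of what a corpus-level WER measures: the number of word substitutions, insertions, and deletions needed to turn each prediction into its reference, divided by the total number of reference words. It is an illustration only and is not the implementation behind the `wer` metric loaded in the evaluation script. The function name and the sample sentences are made up.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Word-level Levenshtein distance, keeping only the previous row.
        prev = list(range(len(hyp_words) + 1))
        for i, r in enumerate(ref_words, start=1):
            curr = [i]
            for j, h in enumerate(hyp_words, start=1):
                cost = 0 if r == h else 1
                curr.append(min(prev[j] + 1,          # deletion
                                curr[j - 1] + 1,      # insertion
                                prev[j - 1] + cost))  # substitution or match
            prev = curr
        total_edits += prev[-1]
        total_words += len(ref_words)
    return total_edits / total_words


refs = ["good morning everyone", "thanks"]
preds = ["good evening everyone", "thanks a"]
print("WER: {:.2f}".format(100 * word_error_rate(refs, preds)))  # WER: 50.00
```

Here one substitution and one insertion against four reference words give 50 %.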

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 0e7df4ad717e89f53f22b2d782a64cbd |

mit | ['luxembourgish', 'lëtzebuergesch', 'text generation', 'transfer learning'] | false | LuxGPT-2 based GER GPT-2 model for text generation in the Luxembourgish language, trained on 711 MB of text data consisting of RTL.lu news articles, comments, parliament speeches, the Luxembourgish Wikipedia, Newscrawl, Webcrawl and subtitles. Created via transfer learning with a German base model, feature space mapping from LB onto the base feature space, and gradual layer freezing. The training took place on a 32 GB Nvidia Tesla V100 - with One Cycle policy for the learning rate - with the help of fastai's LR finder - for 53.4 hours - for 20 epochs and 7 cycles - using the fastai library | dcd79772b1dc5ac32a54bbeb07b89425 |

mit | ['luxembourgish', 'lëtzebuergesch', 'text generation', 'transfer learning'] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("laurabernardy/LuxGPT2-basedGER") model = AutoModelForCausalLM.from_pretrained("laurabernardy/LuxGPT2-basedGER") ``` | ee3f48017586ebb9e4906ec4519568c0 |

mit | ['luxembourgish', 'lëtzebuergesch', 'text generation', 'transfer learning'] | false | Limitations and Biases See the [GPT2 model card](https://huggingface.co/gpt2) for considerations on limitations and bias. See the [GPT2 documentation](https://huggingface.co/transformers/model_doc/gpt2.html) for details on GPT2. | 63cf49cb75ab1cd481b695afb8d9a940 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-cased-finetuned-squad This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.0081 | 004d70090320185ec6e861c941fc0b2a |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | dd2a334effb73f834e1ed367d0319db7 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-en-to-ro-LR_1e-3 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt16 dataset. It achieves the following results on the evaluation set: - Loss: 1.5215 - Bleu: 7.1606 - Gen Len: 18.2451 | 11ff5fbe28963af56fc14a1d89c8e3c7 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | bd0f9a4c36292aa5ec7d97a98c0a028f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:| | 0.6758 | 1.0 | 7629 | 1.5215 | 7.1606 | 18.2451 | | 346d73eadeb186a754c272c98a7210c5 |

apache-2.0 | ['translation'] | false | opus-mt-bg-sv * source languages: bg * target languages: sv * OPUS readme: [bg-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/bg-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/bg-sv/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/bg-sv/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/bg-sv/opus-2020-01-08.eval.txt) | e1a2c948635223e7632ff0dd9a27f3de |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2t_es_hubert_s459 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 4483bf246ea2906430065815282bcd82 |

creativeml-openrail-m | ['text-to-image'] | false | Duskfall's Animanga Model Dreambooth model trained by Duskfallcrew with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model You run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! If you want to donate towards costs and don't want to subscribe: https://ko-fi.com/DUSKFALLcrew If you want to monthly support the EARTH & DUSK media projects and not just AI: https://www.patreon.com/earthndusk Discord https://discord.gg/Da7s8d3KJ7 Rules Do not sell merges, or this model. Do share, and credit if you use this model. DO PLS REVIEW AND YELL AT ME IF IT SUCKS! We never update the images on here anymore see civit https://civitai.com/user/duskfallcrew | 8e379dbc6a3487dfca55ed86ea2dc14a |

mit | ['roberta-base', 'roberta-base-epoch_55'] | false | RoBERTa, Intermediate Checkpoint - Epoch 55 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide the 84 checkpoints (including the randomly initialized weights before the training) to provide the ability to study the training dynamics of such models, and other possible use-cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, which is described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_55. | 3f341c73cee1cdb32c48a72ab87bea59 |

apache-2.0 | ['translation'] | false | opus-mt-sm-fr * source languages: sm * target languages: fr * OPUS readme: [sm-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sm-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sm-fr/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sm-fr/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sm-fr/opus-2020-01-16.eval.txt) | 80f0ad7fb5f45ce98e996bf2096263a4 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | SEC-BERT

<img align="center" src="https://i.ibb.co/0yz81K9/sec-bert-logo.png" alt="sec-bert-logo" width="400"/>

<div style="text-align: justify">

SEC-BERT is a family of BERT models for the financial domain, intended to assist financial NLP research and FinTech applications.

SEC-BERT consists of the following models:

* [**SEC-BERT-BASE**](https://huggingface.co/nlpaueb/sec-bert-base): Same architecture as BERT-BASE trained on financial documents.

* **SEC-BERT-NUM** (this model): Same as SEC-BERT-BASE but we replace every number token with a [NUM] pseudo-token, handling all numeric expressions in a uniform manner (disallowing their fragmentation).

* [**SEC-BERT-SHAPE**](https://huggingface.co/nlpaueb/sec-bert-shape): Same as SEC-BERT-BASE but we replace numbers with pseudo-tokens that represent the number’s shape, so numeric expressions (of known shapes) are no longer fragmented, e.g., '53.2' becomes '[XX.X]' and '40,200.5' becomes '[XX,XXX.X]'.

</div>
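To illustrate the shape pseudo-tokens described for SEC-BERT-SHAPE, the sketch below maps a numeric token to its shape by replacing every digit with `X`. This is an assumption-laden illustration rather than the authors' preprocessing code: the helper name `number_to_shape` and the regular expression are hypothetical, and the sketch maps any number it recognises, whereas the description above only covers numeric expressions of known shapes.

```python
import re

# Hypothetical pattern: digits optionally grouped by ',' or '.' separators.
NUMBER = re.compile(r"\d+(?:[,.]\d+)*")


def number_to_shape(token):
    """Return a shape pseudo-token for numeric tokens, else the token unchanged."""
    if NUMBER.fullmatch(token):
        # Replace each digit with 'X' and keep separators, e.g. '53.2' -> '[XX.X]'.
        return "[" + re.sub(r"\d", "X", token) + "]"
    return token


for token in ["53.2", "40,200.5", "2019", "billion"]:
    print(token, "->", number_to_shape(token))
```

The first two inputs reproduce the examples given in the list above ('[XX.X]' and '[XX,XXX.X]').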

| 6be1ea5991324e33d539bbb2d9bafb91 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | Pre-training details

<div style="text-align: justify">

* We created a new vocabulary of 30k subwords by training a [BertWordPieceTokenizer](https://github.com/huggingface/tokenizers) from scratch on the pre-training corpus.

* We trained BERT using the official code provided in [Google BERT's GitHub repository](https://github.com/google-research/bert).

* We then used [Hugging Face](https://huggingface.co)'s [Transformers](https://github.com/huggingface/transformers) conversion script to convert the TF checkpoint into the desired format, so that the model can be loaded in two lines of code by both PyTorch and TF2 users.

* We release a model similar to the English BERT-BASE model (12-layer, 768-hidden, 12-heads, 110M parameters).

* We chose to follow the same training set-up: 1 million training steps with batches of 256 sequences of length 512 and an initial learning rate of 1e-4.

* We were able to use a single Google Cloud TPU v3-8 provided for free from [TensorFlow Research Cloud (TRC)](https://sites.research.google/trc), while also utilizing [GCP research credits](https://edu.google.com/programs/credits/research). Huge thanks to both Google programs for supporting us!

</div>
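For a sense of scale, the training set-up above can be turned into a rough count of token positions processed during pre-training. The figure is simply steps × batch size × sequence length, so it counts every position in every batch (including any padding) and should be read as an upper bound rather than a measured corpus statistic.

```python
training_steps = 1_000_000   # "1 million training steps"
sequences_per_batch = 256    # "batches of 256 sequences"
sequence_length = 512        # "of length 512"

token_positions = training_steps * sequences_per_batch * sequence_length
print(f"{token_positions:,}")  # 131,072,000,000
```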

| 0848f91bf975962b47e2e11abe4ff4d7 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | Load Pretrained Model

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("nlpaueb/sec-bert-num")

model = AutoModel.from_pretrained("nlpaueb/sec-bert-num")

```

| 3e8422da0e6524d0e6c42665c607b678 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | Pre-process Text

<div style="text-align: justify">

To use SEC-BERT-NUM, you have to pre-process texts by replacing every numerical token with the [NUM] pseudo-token.

Below is an example of how you can pre-process a simple sentence. This approach is quite simple; feel free to modify it as you see fit.

</div>

```python
import re
import spacy
from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("nlpaueb/sec-bert-num")
spacy_tokenizer = spacy.load("en_core_web_sm")

sentence = "Total net sales decreased 2% or $5.4 billion during 2019 compared to 2018."

def sec_bert_num_preprocess(text):
    # Split the text into tokens with spaCy, then replace numeric tokens with [NUM].
    tokens = [t.text for t in spacy_tokenizer(text)]
    processed_text = []
    for token in tokens:
        if re.fullmatch(r"(\d+[\d,.]*)|([,.]\d+)", token):
            processed_text.append('[NUM]')
        else:
            processed_text.append(token)
    return ' '.join(processed_text)

tokenized_sentence = tokenizer.tokenize(sec_bert_num_preprocess(sentence))
print(tokenized_sentence)
"""
['total', 'net', 'sales', 'decreased', '[NUM]', '%', 'or', '$', '[NUM]', 'billion', 'during', '[NUM]', 'compared', 'to', '[NUM]', '.']
"""
```
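To see which tokens the regular expression in the example above treats as numbers, the following sketch applies the same pattern to a handful of hand-picked tokens without spaCy or the model tokenizer. The helper name `is_number` and the token list are illustrative assumptions.

```python
import re

# Same pattern as in the pre-processing example above.
NUM_PATTERN = re.compile(r"(\d+[\d,.]*)|([,.]\d+)")


def is_number(token):
    """True if the whole token matches the numeric pattern."""
    return NUM_PATTERN.fullmatch(token) is not None


for token in ["2", "5.4", "40,200.5", ".5", "2018.", "%", "billion", "Q4"]:
    print(repr(token), is_number(token))
```

Note that `"2018."` also matches, because the pattern allows trailing separators. If text is split on whitespace only, a sentence-final period would therefore be absorbed into the [NUM] replacement; in the example above the tokenizer emits the final '.' as a separate token, which avoids this.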

| c1d9dc034cacddb776c52c95ed140dcc |

cc-by-sa-4.0 | ['finance', 'financial'] | false | Using SEC-BERT variants as Language Models

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales [MASK] 2% or $5.4 billion during 2019 compared to 2018. | decreased |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | increased (0.221), were (0.131), are (0.103), rose (0.075), of (0.058) |
| **SEC-BERT-BASE** | increased (0.678), decreased (0.282), declined (0.017), grew (0.016), rose (0.004) |
| **SEC-BERT-NUM** | increased (0.753), decreased (0.211), grew (0.019), declined (0.010), rose (0.006) |
| **SEC-BERT-SHAPE** | increased (0.747), decreased (0.214), grew (0.021), declined (0.013), rose (0.002) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased 2% or $5.4 [MASK] during 2019 compared to 2018. | billion |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | billion (0.841), million (0.097), trillion (0.028), | 78c247bb8008a500574dc8c0e02ecc0c |

cc-by-sa-4.0 | ['finance', 'financial'] | false | million (0.000), m (0.000)

| **SEC-BERT-NUM** | million (0.974), billion (0.012), , (0.010), thousand (0.003), m (0.000) |
| **SEC-BERT-SHAPE** | million (0.978), billion (0.021), % (0.000), , (0.000), millions (0.000) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased [MASK]% or $5.4 billion during 2019 compared to 2018. | 2 |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | 20 (0.031), 10 (0.030), 6 (0.029), 4 (0.027), 30 (0.027) |
| **SEC-BERT-BASE** | 13 (0.045), 12 (0.040), 11 (0.040), 14 (0.035), 10 (0.035) |
| **SEC-BERT-NUM** | [NUM] (1.000), one (0.000), five (0.000), three (0.000), seven (0.000) |
| **SEC-BERT-SHAPE** | [XX] (0.316), [XX.X] (0.253), [X.X] (0.237), [X] (0.188), [X.XX] (0.002) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased 2[MASK] or $5.4 billion during 2019 compared to 2018. | % |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | % (0.795), percent (0.174), | 1c5e3a3391660eb0a2c22a4654aae7aa |

cc-by-sa-4.0 | ['finance', 'financial'] | false | fold (0.009), billion (0.004), times (0.004)

| **SEC-BERT-BASE** | % (0.924), percent (0.076), points (0.000), , (0.000), times (0.000) |
| **SEC-BERT-NUM** | % (0.882), percent (0.118), million (0.000), units (0.000), bps (0.000) |
| **SEC-BERT-SHAPE** | % (0.961), percent (0.039), bps (0.000), , (0.000), bcf (0.000) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased 2% or $[MASK] billion during 2019 compared to 2018. | 5.4 |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | 1 (0.074), 4 (0.045), 3 (0.044), 2 (0.037), 5 (0.034) |
| **SEC-BERT-BASE** | 1 (0.218), 2 (0.136), 3 (0.078), 4 (0.066), 5 (0.048) |
| **SEC-BERT-NUM** | [NUM] (1.000), l (0.000), 1 (0.000), - (0.000), 30 (0.000) |
| **SEC-BERT-SHAPE** | [X.X] (0.787), [X.XX] (0.095), [XX.X] (0.049), [X.XXX] (0.046), [X] (0.013) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased 2% or $5.4 billion during [MASK] compared to 2018. | 2019 |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | 2017 (0.485), 2018 (0.169), 2016 (0.164), 2015 (0.070), 2014 (0.022) |
| **SEC-BERT-BASE** | 2019 (0.990), 2017 (0.007), 2018 (0.003), 2020 (0.000), 2015 (0.000) |
| **SEC-BERT-NUM** | [NUM] (1.000), as (0.000), fiscal (0.000), year (0.000), when (0.000) |
| **SEC-BERT-SHAPE** | [XXXX] (1.000), as (0.000), year (0.000), periods (0.000), , (0.000) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| Total net sales decreased 2% or $5.4 billion during 2019 compared to [MASK]. | 2018 |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | 2017 (0.100), 2016 (0.097), above (0.054), inflation (0.050), previously (0.037) |
| **SEC-BERT-BASE** | 2018 (0.999), 2019 (0.000), 2017 (0.000), 2016 (0.000), 2014 (0.000) |
| **SEC-BERT-NUM** | [NUM] (1.000), year (0.000), last (0.000), sales (0.000), fiscal (0.000) |
| **SEC-BERT-SHAPE** | [XXXX] (1.000), year (0.000), sales (0.000), prior (0.000), years (0.000) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| During 2019, the Company [MASK] $67.1 billion of its common stock and paid dividend equivalents of $14.1 billion. | repurchased |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | held (0.229), sold (0.192), acquired (0.172), owned (0.052), traded (0.033) |
| **SEC-BERT-BASE** | repurchased (0.913), issued (0.036), purchased (0.029), redeemed (0.010), sold (0.003) |
| **SEC-BERT-NUM** | repurchased (0.917), purchased (0.054), reacquired (0.013), issued (0.005), acquired (0.003) |
| **SEC-BERT-SHAPE** | repurchased (0.902), purchased (0.068), issued (0.010), reacquired (0.008), redeemed (0.006) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| During 2019, the Company repurchased $67.1 billion of its common [MASK] and paid dividend equivalents of $14.1 billion. | stock |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | stock (0.835), assets (0.039), equity (0.025), debt (0.021), bonds (0.017) |
| **SEC-BERT-BASE** | stock (0.857), shares (0.135), equity (0.004), units (0.002), securities (0.000) |
| **SEC-BERT-NUM** | stock (0.842), shares (0.157), equity (0.000), securities (0.000), units (0.000) |
| **SEC-BERT-SHAPE** | stock (0.888), shares (0.109), equity (0.001), securities (0.001), stocks (0.000) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| During 2019, the Company repurchased $67.1 billion of its common stock and paid [MASK] equivalents of $14.1 billion. | dividend |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | cash (0.276), net (0.128), annual (0.083), the (0.040), debt (0.027) |
| **SEC-BERT-BASE** | dividend (0.890), cash (0.018), dividends (0.016), share (0.013), tax (0.010) |
| **SEC-BERT-NUM** | dividend (0.735), cash (0.115), share (0.087), tax (0.025), stock (0.013) |
| **SEC-BERT-SHAPE** | dividend (0.655), cash (0.248), dividends (0.042), share (0.019), out (0.003) |

| Sample | Masked Token |
| --------------------------------------------------- | ------------ |
| During 2019, the Company repurchased $67.1 billion of its common stock and paid dividend [MASK] of $14.1 billion. | equivalents |

| Model | Predictions (Probability) |
| --------------------------------------------------- | ------------ |
| **BERT-BASE-UNCASED** | revenue (0.085), earnings (0.078), rates (0.065), amounts (0.064), proceeds (0.062) |
| **SEC-BERT-BASE** | payments (0.790), distributions (0.087), equivalents (0.068), cash (0.013), amounts (0.004) |
| **SEC-BERT-NUM** | payments (0.845), equivalents (0.097), distributions (0.024), increases (0.005), dividends (0.004) |
| **SEC-BERT-SHAPE** | payments (0.784), equivalents (0.093), distributions (0.043), dividends (0.015), requirements (0.009) |

| ac26ca112ac7222af70f056bf90b4f14 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | Publication

<div style="text-align: justify">

If you use this model, please cite the following article:<br>

[**FiNER: Financial Numeric Entity Recognition for XBRL Tagging**](https://arxiv.org/abs/2203.06482)<br>

Lefteris Loukas, Manos Fergadiotis, Ilias Chalkidis, Eirini Spyropoulou, Prodromos Malakasiotis, Ion Androutsopoulos and George Paliouras<br>

In the Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (ACL 2022) (Long Papers), Dublin, Republic of Ireland, May 22 - 27, 2022

</div>

```
@inproceedings{loukas-etal-2022-finer,
    title = {FiNER: Financial Numeric Entity Recognition for XBRL Tagging},
    author = {Loukas, Lefteris and
      Fergadiotis, Manos and
      Chalkidis, Ilias and
      Spyropoulou, Eirini and
      Malakasiotis, Prodromos and
      Androutsopoulos, Ion and
      Paliouras, George},
    booktitle = {Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (ACL 2022)},
    publisher = {Association for Computational Linguistics},
    location = {Dublin, Republic of Ireland},
    year = {2022},
    url = {https://arxiv.org/abs/2203.06482}
}
```

| 5b6aaa3ea40bd9c637ed0a2393242d82 |

cc-by-sa-4.0 | ['finance', 'financial'] | false | About Us

<div style="text-align: justify">

[AUEB's Natural Language Processing Group](http://nlp.cs.aueb.gr) develops algorithms, models, and systems that allow computers to process and generate natural language texts.

The group's current research interests include:

* question answering systems for databases, ontologies, document collections, and the Web, especially biomedical question answering,

* natural language generation from databases and ontologies, especially Semantic Web ontologies,

* text classification, including filtering spam and abusive content,

* information extraction and opinion mining, including legal text analytics and sentiment analysis,

* natural language processing tools for Greek, for example parsers and named-entity recognizers,

* machine learning in natural language processing, especially deep learning.

The group is part of the Information Processing Laboratory of the Department of Informatics of the Athens University of Economics and Business.

</div>

[Manos Fergadiotis](https://manosfer.github.io) on behalf of [AUEB's Natural Language Processing Group](http://nlp.cs.aueb.gr) | 6cd43a1c8c0fefd69f3b3f93856e05e8 |

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2r_fr_vp-100k_gender_male-8_female-2_s500 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | adf95f492945cd2150a783b16edd2260 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_add_GLUE_Experiment_qqp_128 This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE QQP dataset. It achieves the following results on the evaluation set: - Loss: 0.5071 - Accuracy: 0.7568 - F1: 0.6361 - Combined Score: 0.6965 | ba3c5d40311eacd82e7da5e4f49a48b8 |
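
The Combined Score reported above is consistent with the unweighted mean of Accuracy and F1. That reading is inferred from the numbers in this card rather than stated by it, so the sketch below is an assumption; `combined_score` is a hypothetical helper name.

```python
from statistics import mean


def combined_score(metrics):
    """Return the unweighted mean of the metric values (assumed definition)."""
    return mean(metrics.values())


# Values copied from the evaluation results in the card.
reported = {"accuracy": 0.7568, "f1": 0.6361}
print(f"Combined score: {combined_score(reported):.4f}")
```

Because the card rounds each metric to four decimals, the recomputed value can differ from the reported one in the last digit.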

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:--------------:| | 0.6507 | 1.0 | 2843 | 0.6497 | 0.6318 | 0.0 | 0.3159 | | 0.6311 | 2.0 | 5686 | 0.5445 | 0.7259 | 0.5622 | 0.6441 | | 0.5153 | 3.0 | 8529 | 0.5153 | 0.7493 | 0.5892 | 0.6693 | | 0.4912 | 4.0 | 11372 | 0.5071 | 0.7568 | 0.6361 | 0.6965 | | 0.4805 | 5.0 | 14215 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 6.0 | 17058 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 7.0 | 19901 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 8.0 | 22744 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 9.0 | 25587 | nan | 0.6318 | 0.0 | 0.3159 | | 51984be2c866b27c5d7de30045b9299a |
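
In the table above, the validation loss becomes `nan` from epoch 5 onward and the metrics fall back to their epoch 1 values, which suggests that training diverged; the reported evaluation results match epoch 4. The card does not say how the final checkpoint was chosen, so the following is only a sketch of one plausible rule: keep the epoch with the lowest finite validation loss. `best_epoch` is a hypothetical helper.

```python
import math

# (epoch, validation_loss, accuracy, f1), copied from the training results table.
rows = [
    (1, 0.6497, 0.6318, 0.0),
    (2, 0.5445, 0.7259, 0.5622),
    (3, 0.5153, 0.7493, 0.5892),
    (4, 0.5071, 0.7568, 0.6361),
    (5, math.nan, 0.6318, 0.0),
    (6, math.nan, 0.6318, 0.0),
    (7, math.nan, 0.6318, 0.0),
    (8, math.nan, 0.6318, 0.0),
    (9, math.nan, 0.6318, 0.0),
]


def best_epoch(rows):
    """Pick the row with the lowest finite validation loss, skipping NaN rows."""
    finite = [row for row in rows if math.isfinite(row[1])]
    return min(finite, key=lambda row: row[1])


epoch, loss, accuracy, f1 = best_epoch(rows)
print(f"best epoch={epoch} loss={loss} accuracy={accuracy} f1={f1}")
```

Filtering with `math.isfinite` matters here because comparisons against NaN are always false, so a plain `min` over the raw losses would give an order-dependent result.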

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Cantonese Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Cantonese using the [Common Voice Corpus 8.0](https://commonvoice.mozilla.org/en/datasets). When using this model, make sure that your speech input is sampled at 16kHz. The validated Common Voice `train` and `dev` splits were used for training. The script used for training can be found at [https://github.com/holylovenia/wav2vec2-pretraining](https://github.com/holylovenia/wav2vec2-pretraining). | 29648fccc70d7dce2c2e5156e2f87fc0 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "zh-HK", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("CAiRE/wav2vec2-large-xlsr-53-cantonese") model = Wav2Vec2ForCTC.from_pretrained("CAiRE/wav2vec2-large-xlsr-53-cantonese") | 863c8251fd42e7821fcf231057329dd2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the zh-HK test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "zh-HK", split="test") cer = load_metric("cer") processor = Wav2Vec2Processor.from_pretrained("CAiRE/wav2vec2-large-xlsr-53-cantonese") model = Wav2Vec2ForCTC.from_pretrained("CAiRE/wav2vec2-large-xlsr-53-cantonese") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“\%\‘\'\”\�]' | 80ffaffa9d5c584fd3ab9dbb6fb302db |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("CER: {:.2f}".format(100 * cer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: CER: 18.55 % | f8704f8722c7ba9063769f9862bf04d9 |
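
For readers unfamiliar with the metric, character error rate (CER) is the character-level edit distance between prediction and reference, divided by the number of reference characters. The sketch below is a plain-Python illustration of that definition, not the implementation behind `load_metric("cer")`; it assumes corpus-level aggregation (total edits over total reference characters), and both function names are hypothetical.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two strings, computed one row at a time."""
    prev = list(range(len(hyp) + 1))
    for i, ref_char in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_char in enumerate(hyp, start=1):
            cost = 0 if ref_char == hyp_char else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(references, predictions):
    """Total character edits divided by total reference characters."""
    total_edits = sum(edit_distance(r, p) for r, p in zip(references, predictions))
    total_chars = sum(len(r) for r in references)
    return total_edits / total_chars


print(f"CER: {100 * cer(['你好嗎'], ['你好']):.2f} %")
```

CER is commonly preferred over word error rate for Cantonese because written Chinese has no whitespace word boundaries, so scoring per character avoids depending on a word segmenter.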

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Citation If you use our code/model, please cite us: ``` @inproceedings{lovenia2022ascend, title={ASCEND: A Spontaneous Chinese-English Dataset for Code-switching in Multi-turn Conversation}, author={Lovenia, Holy and Cahyawijaya, Samuel and Winata, Genta Indra and Xu, Peng and Yan, Xu and Liu, Zihan and Frieske, Rita and Yu, Tiezheng and Dai, Wenliang and Barezi, Elham J and others}, booktitle={Proceedings of the 13th Language Resources and Evaluation Conference (LREC)}, year={2022} } ``` | 434ab8eecede5932f4d5a1787a774483 |

other | [] | false | Fort Worth Carpet Cleaning https://txfortworthcarpetcleaning.com/ (817) 523-1237 Protect your health and your family's health with Carpet Cleaning Fort Worth TX. Our service helps you and your family avoid asthma and allergy triggers by removing dust and dirt from your carpet. You can clean your carpet yourself, but professional cleaning makes sure it is free of any dirt, including tough stains such as blood or wine. We are here to keep our Fort Worth, TX customers satisfied. | 2e2cc07bcddebafe7443cbe42d75e9fe |

apache-2.0 | ['translation'] | false | opus-mt-sv-he * source languages: sv * target languages: he * OPUS readme: [sv-he](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-he/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-he/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-he/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-he/opus-2020-01-16.eval.txt) | f232c05920561e50c3795c018817f263 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.4909 | 9007506855dad364ea5f891564e1c5de |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2236 | 1.0 | 8235 | 1.2651 | | 0.9496 | 2.0 | 16470 | 1.2313 | | 0.7572 | 3.0 | 24705 | 1.4909 | | 7ac2623a8e28357811c039451fbc97f4 |

apache-2.0 | ['simplification', 'generated_from_trainer'] | false | mt5-small-clara-med This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the [CLARA-MeD](https://huggingface.co/lcampillos/CLARA-MeD) dataset. It achieves the following results on the evaluation set: - Loss: 1.9850 - Rouge1: 33.0363 - Rouge2: 19.0613 - Rougel: 30.295 - Rougelsum: 30.2898 - SARI: 40.7094 | 782373f14bc6e050bbb6676ccb1e6eee |

apache-2.0 | ['simplification', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.6e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 30 | c20da84aa7d18fb6749e587d0e47cf15 |
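
To make `lr_scheduler_type: linear` concrete, the sketch below computes a learning rate that decays linearly from `5.6e-05` to zero. It assumes no warmup (none is listed) and 5700 total steps, the final step count shown in this model's training results; `linear_lr` is a hypothetical helper, not the trainer's own scheduler code.

```python
def linear_lr(step, base_lr=5.6e-05, total_steps=5700, warmup_steps=0):
    """Linear warmup (optional) followed by linear decay to zero."""
    if step < warmup_steps:
        # Ramp up from 0 to base_lr over the warmup phase.
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 1900, 3800, 5700):
    print(step, linear_lr(step))
```

Under these assumptions the rate halves by step 2850, the midpoint of training, and reaches zero at the last step.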

apache-2.0 | ['simplification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | No log | 1.0 | 190 | 3.0286 | 18.0709 | 7.727 | 16.1995 | 16.3348 | | No log | 2.0 | 380 | 2.4754 | 24.9167 | 13.0501 | 22.3889 | 22.4724 | | 6.79 | 3.0 | 570 | 2.3542 | 29.9908 | 15.9829 | 26.3751 | 26.4343 | | 6.79 | 4.0 | 760 | 2.2894 | 30.4435 | 16.3176 | 27.1801 | 27.1926 | | 3.1288 | 5.0 | 950 | 2.2440 | 30.8602 | 16.8033 | 27.8195 | 27.8355 | | 3.1288 | 6.0 | 1140 | 2.1772 | 31.4202 | 17.3253 | 28.3394 | 28.3699 | | 3.1288 | 7.0 | 1330 | 2.1584 | 31.5591 | 17.7302 | 28.618 | 28.6189 | | 2.7919 | 8.0 | 1520 | 2.1286 | 31.6211 | 17.7423 | 28.7218 | 28.7462 | | 2.7919 | 9.0 | 1710 | 2.1031 | 31.9724 | 18.017 | 29.0754 | 29.0744 | | 2.6007 | 10.0 | 1900 | 2.0947 | 32.1588 | 18.2474 | 29.2957 | 29.2956 | | 2.6007 | 11.0 | 2090 | 2.0914 | 32.4959 | 18.4197 | 29.6052 | 29.609 | | 2.6007 | 12.0 | 2280 | 2.0726 | 32.6673 | 18.8962 | 29.9145 | 29.9122 | | 2.4911 | 13.0 | 2470 | 2.0487 | 32.4461 | 18.6804 | 29.6224 | 29.6274 | | 2.4911 | 14.0 | 2660 | 2.0436 | 32.8393 | 19.0315 | 30.1024 | 30.1097 | | 2.4168 | 15.0 | 2850 | 2.0229 | 32.8235 | 18.9549 | 30.0699 | 30.0605 | | 2.4168 | 16.0 | 3040 | 2.0253 | 32.8584 | 18.8602 | 30.0582 | 30.0712 | | 2.4168 | 17.0 | 3230 | 2.0177 | 32.7145 | 18.9059 | 30.0436 | 30.0771 | | 2.3452 | 18.0 | 3420 | 2.0151 | 32.6874 | 18.8339 | 29.9739 | 30.0004 | | 2.3452 | 19.0 | 3610 | 2.0138 | 32.516 | 18.6562 | 29.7823 | 29.7951 | | 2.302 | 20.0 | 3800 | 2.0085 | 32.8117 | 18.8208 | 30.0902 | 30.1282 | | 2.302 | 21.0 | 3990 | 2.0043 | 32.7633 | 18.8364 | 30.0619 | 30.0781 | | 2.302 | 22.0 | 4180 | 1.9972 | 32.9786 | 19.0354 | 30.2166 | 30.2286 | | 2.2641 | 23.0 | 4370 | 1.9927 | 33.0222 | 19.0501 | 30.2716 | 30.2951 | | 2.2641 | 24.0 | 4560 | 1.9905 | 32.9557 | 18.9958 | 30.1988 | 30.2004 | | 2.2366 | 25.0 | 4750 | 1.9897 | 33.0429 | 18.9806 | 30.2861 | 30.3012 | | 2.2366 | 26.0 | 4940 | 1.9850 | 33.047 | 19.118 | 30.3437 | 30.3368 | | 2.2366 | 27.0 | 5130 | 1.9860 | 33.0736 | 19.0805 | 30.3311 | 30.3476 | | 2.2157 | 28.0 | 5320 | 1.9870 | 33.0698 | 19.0649 | 30.2959 | 30.3093 | | 2.2157 | 29.0 | 5510 | 1.9844 | 33.0376 | 19.0397 | 30.299 | 30.2839 | | 2.2131 | 30.0 | 5700 | 1.9850 | 33.0363 | 19.0613 | 30.295 | 30.2898 | | 73680d795430fdc5cdbd4a683a150992 |
