license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | ['generated_from_trainer'] | false | deberta-v3-large-sentiment This model is a fine-tuned version of [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) on the [tweet_eval](https://huggingface.co/datasets/tweet_eval) dataset. | e2ea05b93ab69558022f5ae2627d9427 |

mit | ['generated_from_trainer'] | false | Model description Test set results: | Model | Emotion | Hate | Irony | Offensive | Sentiment | | ------------- | ------------- | ------------- | ------------- | ------------- | ------------- | | deberta-v3-large | **86.3** | **61.3** | **87.1** | **86.4** | **73.9** | | BERTweet | 79.3 | - | 82.1 | 79.5 | 73.4 | | RoB-RT | 79.5 | 52.3 | 61.7 | 80.5 | 69.3 | [source:papers_with_code](https://paperswithcode.com/sota/sentiment-analysis-on-tweeteval) | 2c7089997e22c350ba15e0f18aec015e |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-06 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - num_epochs: 10.0 | 8a034f1bdbcc3fe5460cf3f22057b7eb |
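
The hyperparameters above combine a per-device batch of 16 with 2 gradient-accumulation steps and a linear schedule with 50 warmup steps. The sketch below shows how these numbers fit together. It assumes a single device, which is what makes total_train_batch_size equal 32. It also assumes a hypothetical total of 14,260 optimizer steps, because the logged table ends at step 14,200 (about 9.96 epochs). The schedule function is an illustration of linear warmup followed by linear decay, not code taken from the training run.

```python
def linear_warmup_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at an optimizer step: linear warmup, then linear decay to 0."""
    if step < warmup_steps:
        # Ramp linearly from 0 up to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr (end of warmup) to 0 (at total_steps).
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


train_batch_size = 16
gradient_accumulation_steps = 2
num_devices = 1  # assumption: single device, consistent with total_train_batch_size = 32
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

TOTAL_STEPS = 14_260  # hypothetical total; the card's log stops at step 14200

for step in (0, 25, 50, TOTAL_STEPS // 2, TOTAL_STEPS):
    print(step, linear_warmup_lr(step, 5e-6, 50, TOTAL_STEPS))
```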

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 1.0614 | 0.07 | 100 | 1.0196 | 0.4345 | | 0.8601 | 0.14 | 200 | 0.7561 | 0.6460 | | 0.734 | 0.21 | 300 | 0.6796 | 0.6955 | | 0.6753 | 0.28 | 400 | 0.6521 | 0.7000 | | 0.6408 | 0.35 | 500 | 0.6119 | 0.7440 | | 0.5991 | 0.42 | 600 | 0.6034 | 0.7370 | | 0.6069 | 0.49 | 700 | 0.5976 | 0.7375 | | 0.6122 | 0.56 | 800 | 0.5871 | 0.7425 | | 0.5908 | 0.63 | 900 | 0.5935 | 0.7445 | | 0.5884 | 0.7 | 1000 | 0.5792 | 0.7520 | | 0.5839 | 0.77 | 1100 | 0.5780 | 0.7555 | | 0.5772 | 0.84 | 1200 | 0.5727 | 0.7570 | | 0.5895 | 0.91 | 1300 | 0.5601 | 0.7550 | | 0.5757 | 0.98 | 1400 | 0.5613 | 0.7525 | | 0.5121 | 1.05 | 1500 | 0.5867 | 0.7600 | | 0.5254 | 1.12 | 1600 | 0.5595 | 0.7630 | | 0.5074 | 1.19 | 1700 | 0.5594 | 0.7585 | | 0.4947 | 1.26 | 1800 | 0.5697 | 0.7575 | | 0.5019 | 1.33 | 1900 | 0.5665 | 0.7580 | | 0.5005 | 1.4 | 2000 | 0.5484 | 0.7655 | | 0.5125 | 1.47 | 2100 | 0.5626 | 0.7605 | | 0.5241 | 1.54 | 2200 | 0.5561 | 0.7560 | | 0.5198 | 1.61 | 2300 | 0.5602 | 0.7600 | | 0.5124 | 1.68 | 2400 | 0.5654 | 0.7490 | | 0.5096 | 1.75 | 2500 | 0.5803 | 0.7515 | | 0.4885 | 1.82 | 2600 | 0.5889 | 0.75 | | 0.5111 | 1.89 | 2700 | 0.5508 | 0.7665 | | 0.4868 | 1.96 | 2800 | 0.5621 | 0.7635 | | 0.4599 | 2.04 | 2900 | 0.5995 | 0.7615 | | 0.4147 | 2.11 | 3000 | 0.6202 | 0.7530 | | 0.4233 | 2.18 | 3100 | 0.5875 | 0.7625 | | 0.4324 | 2.25 | 3200 | 0.5794 | 0.7610 | | 0.4141 | 2.32 | 3300 | 0.5902 | 0.7460 | | 0.4306 | 2.39 | 3400 | 0.6053 | 0.7545 | | 0.4266 | 2.46 | 3500 | 0.5979 | 0.7570 | | 0.4227 | 2.53 | 3600 | 0.5920 | 0.7650 | | 0.4226 | 2.6 | 3700 | 0.6166 | 0.7455 | | 0.3978 | 2.67 | 3800 | 0.6126 | 0.7560 | | 0.3954 | 2.74 | 3900 | 0.6152 | 0.7550 | | 0.4209 | 2.81 | 4000 | 0.5980 | 0.75 | | 0.3982 | 2.88 | 4100 | 0.6096 | 0.7490 | | 0.4016 | 2.95 | 4200 | 0.6541 | 0.7425 | | 0.3966 | 3.02 | 4300 | 
0.6377 | 0.7545 | | 0.3074 | 3.09 | 4400 | 0.6860 | 0.75 | | 0.3551 | 3.16 | 4500 | 0.6160 | 0.7550 | | 0.3323 | 3.23 | 4600 | 0.6714 | 0.7520 | | 0.3171 | 3.3 | 4700 | 0.6538 | 0.7535 | | 0.3403 | 3.37 | 4800 | 0.6774 | 0.7465 | | 0.3396 | 3.44 | 4900 | 0.6726 | 0.7465 | | 0.3259 | 3.51 | 5000 | 0.6465 | 0.7480 | | 0.3392 | 3.58 | 5100 | 0.6860 | 0.7460 | | 0.3251 | 3.65 | 5200 | 0.6697 | 0.7495 | | 0.3253 | 3.72 | 5300 | 0.6770 | 0.7430 | | 0.3455 | 3.79 | 5400 | 0.7177 | 0.7360 | | 0.3323 | 3.86 | 5500 | 0.6943 | 0.7400 | | 0.3335 | 3.93 | 5600 | 0.6507 | 0.7555 | | 0.3368 | 4.0 | 5700 | 0.6580 | 0.7485 | | 0.2479 | 4.07 | 5800 | 0.7667 | 0.7430 | | 0.2613 | 4.14 | 5900 | 0.7513 | 0.7505 | | 0.2557 | 4.21 | 6000 | 0.7927 | 0.7485 | | 0.243 | 4.28 | 6100 | 0.7792 | 0.7450 | | 0.2473 | 4.35 | 6200 | 0.8107 | 0.7355 | | 0.2447 | 4.42 | 6300 | 0.7851 | 0.7370 | | 0.2515 | 4.49 | 6400 | 0.7529 | 0.7465 | | 0.274 | 4.56 | 6500 | 0.7390 | 0.7465 | | 0.2674 | 4.63 | 6600 | 0.7658 | 0.7460 | | 0.2416 | 4.7 | 6700 | 0.7915 | 0.7485 | | 0.2432 | 4.77 | 6800 | 0.7989 | 0.7435 | | 0.2595 | 4.84 | 6900 | 0.7850 | 0.7380 | | 0.2736 | 4.91 | 7000 | 0.7577 | 0.7395 | | 0.2783 | 4.98 | 7100 | 0.7650 | 0.7405 | | 0.2304 | 5.05 | 7200 | 0.8542 | 0.7385 | | 0.1937 | 5.12 | 7300 | 0.8390 | 0.7345 | | 0.1878 | 5.19 | 7400 | 0.9150 | 0.7330 | | 0.1921 | 5.26 | 7500 | 0.8792 | 0.7405 | | 0.1916 | 5.33 | 7600 | 0.8892 | 0.7410 | | 0.2011 | 5.4 | 7700 | 0.9012 | 0.7325 | | 0.211 | 5.47 | 7800 | 0.8608 | 0.7420 | | 0.2194 | 5.54 | 7900 | 0.8852 | 0.7320 | | 0.205 | 5.61 | 8000 | 0.8803 | 0.7385 | | 0.1981 | 5.68 | 8100 | 0.8681 | 0.7330 | | 0.1908 | 5.75 | 8200 | 0.9020 | 0.7435 | | 0.1942 | 5.82 | 8300 | 0.8780 | 0.7410 | | 0.1958 | 5.89 | 8400 | 0.8937 | 0.7345 | | 0.1883 | 5.96 | 8500 | 0.9121 | 0.7360 | | 0.1819 | 6.04 | 8600 | 0.9409 | 0.7430 | | 0.145 | 6.11 | 8700 | 1.1390 | 0.7265 | | 0.1696 | 6.18 | 8800 | 0.9189 | 0.7430 | | 0.1488 | 6.25 | 8900 | 0.9718 | 0.7400 | | 0.1637 | 
6.32 | 9000 | 0.9702 | 0.7450 | | 0.1547 | 6.39 | 9100 | 1.0033 | 0.7410 | | 0.1605 | 6.46 | 9200 | 0.9973 | 0.7355 | | 0.1552 | 6.53 | 9300 | 1.0491 | 0.7290 | | 0.1731 | 6.6 | 9400 | 1.0271 | 0.7335 | | 0.1738 | 6.67 | 9500 | 0.9575 | 0.7430 | | 0.1669 | 6.74 | 9600 | 0.9614 | 0.7350 | | 0.1347 | 6.81 | 9700 | 1.0263 | 0.7365 | | 0.1593 | 6.88 | 9800 | 1.0173 | 0.7360 | | 0.1549 | 6.95 | 9900 | 1.0398 | 0.7350 | | 0.1675 | 7.02 | 10000 | 0.9975 | 0.7380 | | 0.1182 | 7.09 | 10100 | 1.1059 | 0.7350 | | 0.1351 | 7.16 | 10200 | 1.0933 | 0.7400 | | 0.1496 | 7.23 | 10300 | 1.0731 | 0.7355 | | 0.1197 | 7.3 | 10400 | 1.1089 | 0.7360 | | 0.1111 | 7.37 | 10500 | 1.1381 | 0.7405 | | 0.1494 | 7.44 | 10600 | 1.0252 | 0.7425 | | 0.1235 | 7.51 | 10700 | 1.0906 | 0.7360 | | 0.133 | 7.58 | 10800 | 1.1796 | 0.7375 | | 0.1248 | 7.65 | 10900 | 1.1332 | 0.7420 | | 0.1268 | 7.72 | 11000 | 1.1304 | 0.7415 | | 0.1368 | 7.79 | 11100 | 1.1345 | 0.7380 | | 0.1228 | 7.86 | 11200 | 1.2018 | 0.7320 | | 0.1281 | 7.93 | 11300 | 1.1884 | 0.7350 | | 0.1449 | 8.0 | 11400 | 1.1571 | 0.7345 | | 0.1025 | 8.07 | 11500 | 1.1538 | 0.7345 | | 0.1199 | 8.14 | 11600 | 1.2113 | 0.7390 | | 0.1016 | 8.21 | 11700 | 1.2882 | 0.7370 | | 0.114 | 8.28 | 11800 | 1.2872 | 0.7390 | | 0.1019 | 8.35 | 11900 | 1.2876 | 0.7380 | | 0.1142 | 8.42 | 12000 | 1.2791 | 0.7385 | | 0.1135 | 8.49 | 12100 | 1.2883 | 0.7380 | | 0.1139 | 8.56 | 12200 | 1.2829 | 0.7360 | | 0.1107 | 8.63 | 12300 | 1.2698 | 0.7365 | | 0.1183 | 8.7 | 12400 | 1.2660 | 0.7345 | | 0.1064 | 8.77 | 12500 | 1.2889 | 0.7365 | | 0.0895 | 8.84 | 12600 | 1.3480 | 0.7330 | | 0.1244 | 8.91 | 12700 | 1.2872 | 0.7325 | | 0.1209 | 8.98 | 12800 | 1.2681 | 0.7375 | | 0.1144 | 9.05 | 12900 | 1.2711 | 0.7370 | | 0.1034 | 9.12 | 13000 | 1.2801 | 0.7360 | | 0.113 | 9.19 | 13100 | 1.2801 | 0.7350 | | 0.0994 | 9.26 | 13200 | 1.2920 | 0.7360 | | 0.0966 | 9.33 | 13300 | 1.2761 | 0.7335 | | 0.0939 | 9.4 | 13400 | 1.2909 | 0.7365 | | 0.0975 | 9.47 | 13500 | 1.2953 | 0.7360 | | 
0.0842 | 9.54 | 13600 | 1.3179 | 0.7335 | | 0.0871 | 9.61 | 13700 | 1.3149 | 0.7385 | | 0.1162 | 9.68 | 13800 | 1.3124 | 0.7350 | | 0.085 | 9.75 | 13900 | 1.3207 | 0.7355 | | 0.0966 | 9.82 | 14000 | 1.3248 | 0.7335 | | 0.1064 | 9.89 | 14100 | 1.3261 | 0.7335 | | 0.1046 | 9.96 | 14200 | 1.3255 | 0.7360 | | 38421ebc0285138454f0d5717a9ae1c7 |
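
In the log above, validation loss reaches its minimum early (step 2,000, epoch 1.4) and then climbs to about 1.33 by the end. Training loss keeps falling over the same period, which is a typical overfitting pattern. The snippet below uses a handful of rows copied from that table (not the full log) to contrast the lowest-loss, highest-accuracy, and final checkpoints. If you retrain, the `load_best_model_at_end` and `metric_for_best_model` options in the Trainer's `TrainingArguments` can keep the best checkpoint instead of the last one. The card does not say whether these options were used.

```python
# (step, validation_loss, accuracy): a subset of rows copied from the training log
logged = [
    (1000, 0.5792, 0.7520),
    (2000, 0.5484, 0.7655),
    (2700, 0.5508, 0.7665),
    (5000, 0.6465, 0.7480),
    (10000, 0.9975, 0.7380),
    (14200, 1.3255, 0.7360),
]

best_by_loss = min(logged, key=lambda row: row[1])      # lowest validation loss
best_by_accuracy = max(logged, key=lambda row: row[2])  # highest validation accuracy
last = logged[-1]                                       # what you get without best-model selection

print("lowest validation loss:", best_by_loss)
print("highest accuracy:", best_by_accuracy)
print("final checkpoint:", last)
```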

apache-2.0 | ['generated_from_trainer'] | false | trainer_log This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4907 - Accuracy: 0.8742 | 6212eda6c2776b69cc50b7e7b59252ab |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | 84486f46997cc77fa81d0c426101f961 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.047 | 0.04 | 5 | 0.9927 | 0.5753 | | 0.938 | 0.08 | 10 | 0.9320 | 0.5753 | | 0.8959 | 0.12 | 15 | 0.8764 | 0.5773 | | 0.8764 | 0.16 | 20 | 0.8308 | 0.6639 | | 0.7968 | 0.2 | 25 | 0.8045 | 0.6577 | | 0.8644 | 0.25 | 30 | 0.7779 | 0.6639 | | 0.7454 | 0.29 | 35 | 0.7561 | 0.6412 | | 0.7008 | 0.33 | 40 | 0.7157 | 0.6845 | | 0.7627 | 0.37 | 45 | 0.7027 | 0.6907 | | 0.7568 | 0.41 | 50 | 0.7270 | 0.6763 | | 0.7042 | 0.45 | 55 | 0.6770 | 0.7031 | | 0.6683 | 0.49 | 60 | 0.6364 | 0.7134 | | 0.6312 | 0.53 | 65 | 0.6151 | 0.7278 | | 0.5789 | 0.57 | 70 | 0.6003 | 0.7443 | | 0.5964 | 0.61 | 75 | 0.5665 | 0.7835 | | 0.5178 | 0.66 | 80 | 0.5506 | 0.8 | | 0.5698 | 0.7 | 85 | 0.5240 | 0.8 | | 0.5407 | 0.74 | 90 | 0.5223 | 0.7814 | | 0.6141 | 0.78 | 95 | 0.4689 | 0.8268 | | 0.4998 | 0.82 | 100 | 0.4491 | 0.8227 | | 0.4853 | 0.86 | 105 | 0.4448 | 0.8268 | | 0.4561 | 0.9 | 110 | 0.4646 | 0.8309 | | 0.5058 | 0.94 | 115 | 0.4317 | 0.8495 | | 0.4229 | 0.98 | 120 | 0.4014 | 0.8515 | | 0.2808 | 1.02 | 125 | 0.3834 | 0.8619 | | 0.3721 | 1.07 | 130 | 0.3829 | 0.8619 | | 0.3432 | 1.11 | 135 | 0.4212 | 0.8598 | | 0.3616 | 1.15 | 140 | 0.3930 | 0.8680 | | 0.3912 | 1.19 | 145 | 0.3793 | 0.8639 | | 0.4141 | 1.23 | 150 | 0.3646 | 0.8619 | | 0.2726 | 1.27 | 155 | 0.3609 | 0.8701 | | 0.2021 | 1.31 | 160 | 0.3640 | 0.8680 | | 0.3468 | 1.35 | 165 | 0.3655 | 0.8701 | | 0.2729 | 1.39 | 170 | 0.4054 | 0.8495 | | 0.3885 | 1.43 | 175 | 0.3559 | 0.8639 | | 0.446 | 1.48 | 180 | 0.3390 | 0.8680 | | 0.3337 | 1.52 | 185 | 0.3505 | 0.8660 | | 0.3507 | 1.56 | 190 | 0.3337 | 0.8804 | | 0.3864 | 1.6 | 195 | 0.3476 | 0.8660 | | 0.3495 | 1.64 | 200 | 0.3574 | 0.8577 | | 0.3388 | 1.68 | 205 | 0.3426 | 0.8701 | | 0.358 | 1.72 | 210 | 0.3439 | 0.8804 | | 0.1761 | 1.76 | 215 | 0.3461 | 0.8722 | | 0.3089 | 1.8 | 220 | 0.3638 | 
0.8639 | | 0.279 | 1.84 | 225 | 0.3527 | 0.8742 | | 0.3468 | 1.89 | 230 | 0.3497 | 0.8619 | | 0.2969 | 1.93 | 235 | 0.3572 | 0.8598 | | 0.2719 | 1.97 | 240 | 0.3391 | 0.8804 | | 0.1936 | 2.01 | 245 | 0.3415 | 0.8619 | | 0.2475 | 2.05 | 250 | 0.3477 | 0.8784 | | 0.1759 | 2.09 | 255 | 0.3718 | 0.8660 | | 0.2443 | 2.13 | 260 | 0.3758 | 0.8619 | | 0.2189 | 2.17 | 265 | 0.3670 | 0.8639 | | 0.1505 | 2.21 | 270 | 0.3758 | 0.8722 | | 0.2283 | 2.25 | 275 | 0.3723 | 0.8722 | | 0.155 | 2.3 | 280 | 0.4442 | 0.8330 | | 0.317 | 2.34 | 285 | 0.3700 | 0.8701 | | 0.1566 | 2.38 | 290 | 0.4218 | 0.8619 | | 0.2294 | 2.42 | 295 | 0.3820 | 0.8660 | | 0.1567 | 2.46 | 300 | 0.3891 | 0.8660 | | 0.1875 | 2.5 | 305 | 0.3973 | 0.8722 | | 0.2741 | 2.54 | 310 | 0.4042 | 0.8742 | | 0.2363 | 2.58 | 315 | 0.3777 | 0.8660 | | 0.1964 | 2.62 | 320 | 0.3891 | 0.8639 | | 0.156 | 2.66 | 325 | 0.3998 | 0.8639 | | 0.1422 | 2.7 | 330 | 0.4022 | 0.8722 | | 0.2141 | 2.75 | 335 | 0.4239 | 0.8701 | | 0.1616 | 2.79 | 340 | 0.4094 | 0.8722 | | 0.1032 | 2.83 | 345 | 0.4263 | 0.8784 | | 0.2313 | 2.87 | 350 | 0.4579 | 0.8598 | | 0.0843 | 2.91 | 355 | 0.3989 | 0.8742 | | 0.2567 | 2.95 | 360 | 0.4051 | 0.8660 | | 0.1749 | 2.99 | 365 | 0.4136 | 0.8660 | | 0.1116 | 3.03 | 370 | 0.4312 | 0.8619 | | 0.1058 | 3.07 | 375 | 0.4007 | 0.8701 | | 0.1085 | 3.11 | 380 | 0.4174 | 0.8660 | | 0.0578 | 3.16 | 385 | 0.4163 | 0.8763 | | 0.1381 | 3.2 | 390 | 0.4578 | 0.8660 | | 0.1137 | 3.24 | 395 | 0.4259 | 0.8660 | | 0.2068 | 3.28 | 400 | 0.3976 | 0.8701 | | 0.0792 | 3.32 | 405 | 0.3824 | 0.8763 | | 0.1711 | 3.36 | 410 | 0.3793 | 0.8742 | | 0.0686 | 3.4 | 415 | 0.4013 | 0.8742 | | 0.1102 | 3.44 | 420 | 0.4113 | 0.8639 | | 0.1102 | 3.48 | 425 | 0.4276 | 0.8619 | | 0.0674 | 3.52 | 430 | 0.4222 | 0.8804 | | 0.0453 | 3.57 | 435 | 0.4326 | 0.8722 | | 0.0704 | 3.61 | 440 | 0.4684 | 0.8722 | | 0.1151 | 3.65 | 445 | 0.4640 | 0.8701 | | 0.1225 | 3.69 | 450 | 0.4408 | 0.8763 | | 0.0391 | 3.73 | 455 | 0.4520 | 0.8639 | | 0.0566 | 3.77 | 460 | 
0.4558 | 0.8680 | | 0.1222 | 3.81 | 465 | 0.4599 | 0.8660 | | 0.1035 | 3.85 | 470 | 0.4630 | 0.8763 | | 0.1845 | 3.89 | 475 | 0.4796 | 0.8680 | | 0.087 | 3.93 | 480 | 0.4697 | 0.8742 | | 0.1599 | 3.98 | 485 | 0.4663 | 0.8784 | | 0.0632 | 4.02 | 490 | 0.5139 | 0.8536 | | 0.1218 | 4.06 | 495 | 0.4920 | 0.8722 | | 0.0916 | 4.1 | 500 | 0.4846 | 0.8763 | | 0.0208 | 4.14 | 505 | 0.5269 | 0.8722 | | 0.0803 | 4.18 | 510 | 0.5154 | 0.8784 | | 0.1318 | 4.22 | 515 | 0.4907 | 0.8742 | | 5284c63595f92e9b9ccc436dbeea68b9 |

apache-2.0 | ['generated_from_keras_callback'] | false | englishreview-ds This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: | a674117faf43102d2e94587c65ab671f |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1375 - F1: 0.8587 | da14a743a55f0f26c7d6aa4023721969 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2584 | 1.0 | 525 | 0.1682 | 0.8242 | | 0.1299 | 2.0 | 1050 | 0.1354 | 0.8447 | | 0.0822 | 3.0 | 1575 | 0.1375 | 0.8587 | | 876a08460e9ef4bb216f90efc2aeddde |

mit | ['generated_from_trainer'] | false | test_trainer This model is a fine-tuned version of [cointegrated/rubert-tiny](https://huggingface.co/cointegrated/rubert-tiny) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.7773 - Accuracy: 0.6375 | 33108eb3f8937ae8f7e7748cb8870718 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7753 | 1.0 | 400 | 0.7773 | 0.6375 | | 6b3721c3b42e485092d0c02c2cd337c1 |

apache-2.0 | ['generated_from_trainer', 'es', 'text-classification', 'acoso', 'twitter', 'cyberbullying'] | false | Harassment Detection on Spanish Twitter This model is a fine-tuned version of [mrm8488/distilroberta-finetuned-tweets-hate-speech](https://huggingface.co/mrm8488/distilroberta-finetuned-tweets-hate-speech) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1628 - Accuracy: 0.9167 | a203d373da7161838433420b6c06a73c |

apache-2.0 | ['generated_from_trainer', 'es', 'text-classification', 'acoso', 'twitter', 'cyberbullying'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 5 | c8a546b4c7e0c0c4d6723866d767068e |

apache-2.0 | ['generated_from_trainer', 'es', 'text-classification', 'acoso', 'twitter', 'cyberbullying'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6732 | 1.0 | 27 | 0.3797 | 0.875 | | 0.5537 | 2.0 | 54 | 0.3242 | 0.9167 | | 0.5218 | 3.0 | 81 | 0.2879 | 0.9167 | | 0.509 | 4.0 | 108 | 0.2606 | 0.9167 | | 0.4196 | 5.0 | 135 | 0.1628 | 0.9167 | | 163c89c8b1b4deb92463be6fca374628 |

apache-2.0 | ['translation'] | false | Usage example ```python from transformers import AutoTokenizer from optimum.onnxruntime import ORTModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("icon-it-tdtu/mt-vi-en-optimum") model = ORTModelForSeq2SeqLM.from_pretrained("icon-it-tdtu/mt-vi-en-optimum") text = "Tôi là một sinh viên." inputs = tokenizer(text, return_tensors='pt') outputs = model.generate(**inputs) result = tokenizer.decode(outputs[0], skip_special_tokens=True) print(result) ``` | 1ed44aa72c659c021a10036faa2e0745 |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0630 - Precision: 0.9310 - Recall: 0.9488 - F1: 0.9398 - Accuracy: 0.9862 | 5c01bf0a8f2432348c5baebf734573ae |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0911 | 1.0 | 1756 | 0.0702 | 0.9197 | 0.9345 | 0.9270 | 0.9826 | | 0.0336 | 2.0 | 3512 | 0.0623 | 0.9294 | 0.9480 | 0.9386 | 0.9864 | | 0.0174 | 3.0 | 5268 | 0.0630 | 0.9310 | 0.9488 | 0.9398 | 0.9862 | | e160311f3c35aec92606766b86bc352d |
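
The reported F1 is the harmonic mean of the reported precision and recall. The check below recomputes it from the final-epoch values. Because the card's precision and recall are rounded to four decimals, the recomputed F1 only matches to four decimals.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Final-epoch precision and recall reported for bert-finetuned-ner
print(round(f1_score(0.9310, 0.9488), 4))  # 0.9398
```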

apache-2.0 | ['generated_from_trainer'] | false | classification_chnsenticorp_aug This model is a fine-tuned version of [hfl/chinese-roberta-wwm-ext](https://huggingface.co/hfl/chinese-roberta-wwm-ext) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3776 - Accuracy: 0.85 | 3a9e8232d5264de98cb00cfdc81248b8 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 10 - eval_batch_size: 10 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | bf2d9a6d6b37500d833105cce39c6c30 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4438 | 1.0 | 20 | 0.5145 | 0.75 | | 0.0666 | 2.0 | 40 | 0.4066 | 0.9 | | 0.0208 | 3.0 | 60 | 0.3776 | 0.85 | | 03ce8e9aa4d4bb1111e288d79623d897 |

cc0-1.0 | ['kaggle'] | false | FineTuning | **Architecture** | **Weights** | **Training Loss** | **Validation Loss** | |:-----------------------:|:---------------:|:----------------:|:----------------------:| | roberta-base | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-roberta-base) | **0.641** | **0.4728** | | bert-base-uncased | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-bert-base-uncased) | 0.6781 | 0.4977 | | albert-base | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-albert-base) | 0.7119 | 0.5155 | | xlm-roberta-base | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-xlm-roberta-base) | 0.7225 | 0.525 | | bert-large-uncased | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-bert-large-uncased) | 0.7482 | 0.5161 | | albert-large | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-albert-large) | 1.075 | 0.9921 | | roberta-large | [huggingface/hub](https://huggingface.co/SauravMaheshkar/clr-finetuned-roberta-large) | 2.749 | 1.075 | | 8da348070ba2bf767c6893581054b979 |

apache-2.0 | ['generated_from_trainer'] | false | eval_masked_102_mrpc This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE MRPC dataset. It achieves the following results on the evaluation set: - Loss: 0.5646 - Accuracy: 0.8113 - F1: 0.8702 - Combined Score: 0.8407 | a16eb2ab63c184905c2ce49cb9e493c5 |
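
When a GLUE task reports more than one metric, the Hugging Face `run_glue.py` example script appears to compute "Combined Score" as the plain mean of those metrics. For MRPC, these are accuracy and F1. The card does not state which script produced it, so treat this as an assumption. The reported values are consistent with it: (0.8113 + 0.8702) / 2 is about 0.8407.

```python
from statistics import mean

def combined_score(metrics: dict) -> float:
    """Mean of all reported metrics, used as a single number for model selection."""
    return mean(metrics.values())

mrpc = {"accuracy": 0.8113, "f1": 0.8702}  # values from the card
print(round(combined_score(mrpc), 4))
```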

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.5027 | 8fab51b1bd2e78ee2b37e3ce2e980722 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2343 | 1.0 | 8235 | 1.3121 | | 0.9657 | 2.0 | 16470 | 1.2259 | | 0.7693 | 3.0 | 24705 | 1.5027 | | cd19787540ba88459f66e32d29adc443 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 5e101557890cc1428a31dfac8766ed21 |

creativeml-openrail-m | ['text-to-image'] | false | malavika2 Dreambooth model trained by Prajeevan with the [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) using the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: malavika2 (use that in your prompt) | 9a8114d0a797095ae87ac8d7d8bd591f |

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | `bart-large-samsum` This model was trained using Microsoft's [`Azure Machine Learning Service`](https://azure.microsoft.com/en-us/services/machine-learning). It was fine-tuned on the [`samsum`](https://huggingface.co/datasets/samsum) corpus from the [`facebook/bart-large`](https://huggingface.co/facebook/bart-large) checkpoint. | 014f8a45028832b2713cb6721c6b5808 |

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | Usage (Inference) ```python from transformers import pipeline summarizer = pipeline("summarization", model="linydub/bart-large-samsum") input_text = ''' Henry: Hey, is Nate coming over to watch the movie tonight? Kevin: Yea, he said he'll be arriving a bit later at around 7 since he gets off of work at 6. Have you taken out the garbage yet? Henry: Oh I forgot. I'll do that once I'm finished with my assignment for my math class. Kevin: Yea, you should take it out as soon as possible. And also, Nate is bringing his girlfriend. Henry: Nice, I'm really looking forward to seeing them again. ''' summarizer(input_text) ``` | 1d4a9942cfec7bd59d7bedabe4ac8667 |

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | More information about the fine-tuning process (including samples and benchmarks): **[Preview]** https://github.com/linydub/azureml-greenai-txtsum | a3f6bfa579305ea2a4ee51a7d04acc1e |

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | Resource Usage These results were retrieved from [`Azure Monitor Metrics`](https://docs.microsoft.com/en-us/azure/azure-monitor/essentials/data-platform-metrics). All experiments were run on AzureML low-priority compute clusters. | Key | Value | | --- | ----- | | Region | US West 2 | | AzureML Compute SKU | STANDARD_ND40RS_V2 | | Compute SKU GPU Device | 8 x NVIDIA V100 32GB (NVLink) | | Compute Node Count | 1 | | Run Duration | 6m 48s | | Compute Cost (Dedicated/LowPriority) | $2.50 / $0.50 USD | | Average CPU Utilization | 47.9% | | Average GPU Utilization | 69.8% | | Average GPU Memory Usage | 25.71 GB | | Total GPU Energy Usage | 370.84 kJ | *Compute cost ($) is estimated from the run duration, the number of compute nodes used, and the SKU's price per hour. Updated SKU pricing can be found [here](https://azure.microsoft.com/en-us/pricing/details/machine-learning). | e5a1239cbb89fcedb7279e6d3b19d962 |
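
The footnote above describes the cost estimate as run duration × node count × the SKU's hourly price. A minimal sketch of that arithmetic follows. The hourly price is a hypothetical placeholder, not a quoted Azure rate, so check the pricing page for real figures.

```python
def estimate_compute_cost(duration_s: float, node_count: int, price_per_node_hour: float) -> float:
    """Cost = run duration in hours x number of nodes x hourly price per node."""
    return duration_s / 3600 * node_count * price_per_node_hour

run_duration_s = 6 * 60 + 48   # "6m 48s" from the Resource Usage table
node_count = 1                  # "Compute Node Count" from the same table
HYPOTHETICAL_PRICE = 22.0       # USD per node-hour; placeholder, not an official price

print(f"${estimate_compute_cost(run_duration_s, node_count, HYPOTHETICAL_PRICE):.2f}")
```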

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | Carbon Emissions These results were obtained using [`CodeCarbon`](https://github.com/mlco2/codecarbon). The carbon emissions are estimated from training runtime only (excluding setup and evaluation runtimes). | Key | Value | | --- | ----- | | timestamp | 2021-09-16T23:54:25 | | duration (s) | 263.2430217266083 | | emissions (kg CO2eq) | 0.029715544634717518 | | energy_consumed (kWh) | 0.09985062041235725 | | country_name | USA | | region | Washington | | cloud_provider | azure | | cloud_region | westus2 | | 63c127cc1d833533f09812d0a7d63387 |
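
CodeCarbon reports duration in seconds, energy in kWh, and emissions in kg of CO2-equivalent. Dividing emissions by energy gives the implied grid carbon intensity for the run. Converting kWh to kJ makes the energy figure comparable to the Azure Monitor GPU energy in the Resource Usage table. The two figures come from different tools and, per the card, CodeCarbon covers training runtime only, so they need not match exactly. The snippet is only a unit-conversion sketch over the reported values.

```python
energy_kwh = 0.09985062041235725     # CodeCarbon "energy_consumed" (kWh)
emissions_kg = 0.029715544634717518  # CodeCarbon "emissions" (kg CO2eq)
duration_s = 263.2430217266083       # CodeCarbon "duration" (seconds)

carbon_intensity = emissions_kg / energy_kwh         # kg CO2eq per kWh
energy_kj = energy_kwh * 3600                        # 1 kWh = 3600 kJ
average_power_kw = energy_kwh / (duration_s / 3600)  # mean power draw over the run

print(f"{carbon_intensity:.4f} kg CO2eq/kWh")
print(f"{energy_kj:.1f} kJ")
print(f"{average_power_kw:.2f} kW average draw")
```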

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | Hyperparameters - max_source_length: 512 - max_target_length: 90 - fp16: True - seed: 1 - per_device_train_batch_size: 16 - per_device_eval_batch_size: 16 - gradient_accumulation_steps: 1 - learning_rate: 5e-5 - num_train_epochs: 3.0 - weight_decay: 0.1 | 62e2cab89d72ee28415398184db12d06 |

apache-2.0 | ['summarization', 'azureml', 'azure', 'codecarbon', 'bart'] | false | Results | ROUGE | Score | | ----- | ----- | | eval_rouge1 | 55.0234 | | eval_rouge2 | 29.6005 | | eval_rougeL | 44.914 | | eval_rougeLsum | 50.464 | | predict_rouge1 | 53.4345 | | predict_rouge2 | 28.7445 | | predict_rougeL | 44.1848 | | predict_rougeLsum | 49.1874 | | Metric | Value | | ------ | ----- | | epoch | 3.0 | | eval_gen_len | 30.6027 | | eval_loss | 1.4327096939086914 | | eval_runtime | 22.9127 | | eval_samples | 818 | | eval_samples_per_second | 35.701 | | eval_steps_per_second | 0.306 | | predict_gen_len | 30.4835 | | predict_loss | 1.4501988887786865 | | predict_runtime | 26.0269 | | predict_samples | 819 | | predict_samples_per_second | 31.467 | | predict_steps_per_second | 0.269 | | train_loss | 1.2014821151207233 | | train_runtime | 263.3678 | | train_samples | 14732 | | train_samples_per_second | 167.811 | | train_steps_per_second | 1.321 | | total_steps | 348 | | total_flops | 4.26008990669865e+16 | | 73d9cee826fc8c7a8ffc49728fa2c8dd |
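
The step and throughput figures above are internally consistent once the 8 GPUs from the Resource Usage table are taken into account. The check below assumes plain data parallelism across all 8 V100s and that the last partial batch is kept. The card does not state the launch setup. Under those assumptions, the effective batch size is 16 × 1 × 8 = 128, which gives 116 steps per epoch and 348 total steps.

```python
import math

train_samples = 14732             # "train_samples" from the results table
per_device_train_batch_size = 16  # from the hyperparameters
gradient_accumulation_steps = 1   # from the hyperparameters
num_gpus = 8                      # assumption: data parallel over the node's 8 x V100
num_train_epochs = 3              # from the hyperparameters
train_runtime_s = 263.3678        # "train_runtime" from the results table

effective_batch_size = per_device_train_batch_size * gradient_accumulation_steps * num_gpus
steps_per_epoch = math.ceil(train_samples / effective_batch_size)  # last partial batch kept
total_steps = steps_per_epoch * num_train_epochs
samples_per_second = train_samples * num_train_epochs / train_runtime_s

print(effective_batch_size, steps_per_epoch, total_steps, round(samples_per_second, 3))
```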

apache-2.0 | ['automatic-speech-recognition', 'common_voice', 'generated_from_trainer'] | false | wav2vec2-liepa-1-percent This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the COMMON_VOICE - LT dataset. It achieves the following results on the evaluation set: - Loss: 0.5774 - Wer: 0.5079 | 6a363591b3b6d8fafc3ef1501d00855b |

apache-2.0 | ['automatic-speech-recognition', 'common_voice', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 0.23 | 100 | 3.3596 | 1.0 | | No log | 0.46 | 200 | 2.9280 | 1.0 | | No log | 0.69 | 300 | 1.5091 | 0.9650 | | No log | 0.93 | 400 | 0.9943 | 0.9177 | | 3.1184 | 1.16 | 500 | 0.7590 | 0.7793 | | 3.1184 | 1.39 | 600 | 0.7336 | 0.7408 | | 3.1184 | 1.62 | 700 | 0.7040 | 0.7618 | | 3.1184 | 1.85 | 800 | 0.6815 | 0.7233 | | 3.1184 | 2.08 | 900 | 0.6457 | 0.6865 | | 0.7917 | 2.31 | 1000 | 0.5705 | 0.6813 | | 0.7917 | 2.55 | 1100 | 0.5708 | 0.6620 | | 0.7917 | 2.78 | 1200 | 0.5888 | 0.6462 | | 0.7917 | 3.01 | 1300 | 0.6509 | 0.6970 | | 0.7917 | 3.24 | 1400 | 0.5871 | 0.6462 | | 0.5909 | 3.47 | 1500 | 0.6199 | 0.6813 | | 0.5909 | 3.7 | 1600 | 0.6230 | 0.5919 | | 0.5909 | 3.94 | 1700 | 0.5721 | 0.6427 | | 0.5909 | 4.17 | 1800 | 0.5331 | 0.5867 | | 0.5909 | 4.4 | 1900 | 0.5561 | 0.6007 | | 0.4607 | 4.63 | 2000 | 0.5414 | 0.5849 | | 0.4607 | 4.86 | 2100 | 0.5390 | 0.5587 | | 0.4607 | 5.09 | 2200 | 0.5313 | 0.5569 | | 0.4607 | 5.32 | 2300 | 0.5893 | 0.5797 | | 0.4607 | 5.56 | 2400 | 0.5507 | 0.5954 | | 0.3933 | 5.79 | 2500 | 0.5521 | 0.6025 | | 0.3933 | 6.02 | 2600 | 0.5663 | 0.5989 | | 0.3933 | 6.25 | 2700 | 0.5636 | 0.5832 | | 0.3933 | 6.48 | 2800 | 0.5464 | 0.5919 | | 0.3933 | 6.71 | 2900 | 0.5623 | 0.5832 | | 0.3367 | 6.94 | 3000 | 0.5324 | 0.5692 | | 0.3367 | 7.18 | 3100 | 0.5907 | 0.5394 | | 0.3367 | 7.41 | 3200 | 0.5653 | 0.5814 | | 0.3367 | 7.64 | 3300 | 0.5707 | 0.5814 | | 0.3367 | 7.87 | 3400 | 0.5754 | 0.5429 | | 0.2856 | 8.1 | 3500 | 0.5953 | 0.5569 | | 0.2856 | 8.33 | 3600 | 0.6275 | 0.5394 | | 0.2856 | 8.56 | 3700 | 0.6253 | 0.5569 | | 0.2856 | 8.8 | 3800 | 0.5930 | 0.5429 | | 0.2856 | 9.03 | 3900 | 0.6082 | 0.5219 | | 0.2522 | 9.26 | 4000 | 0.6026 | 0.5447 | | 0.2522 | 9.49 | 4100 | 0.6052 | 0.5271 | | 0.2522 | 9.72 | 4200 
| 0.5871 | 0.5219 | | 0.2522 | 9.95 | 4300 | 0.5870 | 0.5236 | | 0.2522 | 10.19 | 4400 | 0.5881 | 0.5131 | | 0.2167 | 10.42 | 4500 | 0.6122 | 0.5289 | | 0.2167 | 10.65 | 4600 | 0.6128 | 0.5166 | | 0.2167 | 10.88 | 4700 | 0.6135 | 0.5377 | | 0.2167 | 11.11 | 4800 | 0.6055 | 0.5184 | | 0.2167 | 11.34 | 4900 | 0.6725 | 0.5569 | | 0.1965 | 11.57 | 5000 | 0.6482 | 0.5429 | | 0.1965 | 11.81 | 5100 | 0.6037 | 0.5096 | | 0.1965 | 12.04 | 5200 | 0.5931 | 0.5131 | | 0.1965 | 12.27 | 5300 | 0.5853 | 0.5114 | | 0.1965 | 12.5 | 5400 | 0.5798 | 0.5219 | | 0.172 | 12.73 | 5500 | 0.5775 | 0.5009 | | 0.172 | 12.96 | 5600 | 0.5782 | 0.5044 | | 0.172 | 13.19 | 5700 | 0.5804 | 0.5184 | | 0.172 | 13.43 | 5800 | 0.5977 | 0.5219 | | 0.172 | 13.66 | 5900 | 0.6069 | 0.5236 | | 0.1622 | 13.89 | 6000 | 0.5850 | 0.5131 | | 0.1622 | 14.12 | 6100 | 0.5758 | 0.5096 | | 0.1622 | 14.35 | 6200 | 0.5752 | 0.5009 | | 0.1622 | 14.58 | 6300 | 0.5727 | 0.5184 | | 0.1622 | 14.81 | 6400 | 0.5795 | 0.5044 | | 51ba3bcc9efb46bc0b3f7b671a061dd7 |
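
The "Wer" column is the word error rate: the word-level edit distance between reference and hypothesis, divided by the number of reference words. Lower is better. The final value of 0.5079 means that roughly half the reference words needed a substitution, deletion, or insertion. The card does not say which implementation computed it. The following is a minimal pure-Python sketch of the metric using a hypothetical Lithuanian example pair, not the evaluation code used for this model.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j] = edit distance between the first i reference words and first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1,         # insertion
                             dist[i - 1][j - 1] + cost)  # substitution or match
    return dist[len(ref)][len(hyp)] / max(1, len(ref))

# One substituted word out of three reference words
print(word_error_rate("labas rytas visiems", "labas ryta visiems"))
```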

apache-2.0 | ['bert'] | false | Chinese BERT with Whole Word Masking To further accelerate Chinese natural language processing, we provide **Chinese pre-trained BERT with Whole Word Masking**. **[Pre-Training with Whole Word Masking for Chinese BERT](https://arxiv.org/abs/1906.08101)** Yiming Cui, Wanxiang Che, Ting Liu, Bing Qin, Ziqing Yang, Shijin Wang, Guoping Hu. This repository is based on: https://github.com/google-research/bert You may also be interested in: - Chinese BERT series: https://github.com/ymcui/Chinese-BERT-wwm - Chinese MacBERT: https://github.com/ymcui/MacBERT - Chinese ELECTRA: https://github.com/ymcui/Chinese-ELECTRA - Chinese XLNet: https://github.com/ymcui/Chinese-XLNet - Knowledge Distillation Toolkit - TextBrewer: https://github.com/airaria/TextBrewer More resources by HFL: https://github.com/ymcui/HFL-Anthology | 8ce57a7d86b84ca19afb630f9e958ce3 |
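
Whole Word Masking changes which tokens are masked during pre-training. Chinese BERT tokenizes text into single characters. Instead of masking characters independently, all characters of a selected word are masked together. The sketch below illustrates only that grouping step under simplifying assumptions. The sentence is hand-segmented here, whereas the paper describes using a word segmenter (LTP). The usual 80/10/10 mask/replace/keep rule is omitted. The function name and masking ratio are illustrative, not the authors' code.

```python
import random

MASK = "[MASK]"

def whole_word_mask(words: list[str], mask_ratio: float, seed: int = 0) -> list[str]:
    """Split segmented words into characters, masking every character of chosen words.

    `words` is an already-segmented Chinese sentence. Each word becomes one token
    per character, as in BERT's Chinese vocabulary.
    """
    rng = random.Random(seed)
    n_to_mask = max(1, round(len(words) * mask_ratio))
    masked_word_ids = set(rng.sample(range(len(words)), n_to_mask))
    tokens = []
    for idx, word in enumerate(words):
        for char in word:
            # Characters of a selected word are always masked together.
            tokens.append(MASK if idx in masked_word_ids else char)
    return tokens

# Hand-segmented example sentence (hypothetical segmentation)
segmented = ["使用", "语言", "模型", "来", "预测", "下", "一个", "词", "的", "概率"]
print(whole_word_mask(segmented, mask_ratio=0.15))
```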

apache-2.0 | ['bert'] | false | Citation If you find the technical report or resources useful, please cite the following technical reports in your paper. - Primary: https://arxiv.org/abs/2004.13922 ``` @inproceedings{cui-etal-2020-revisiting, title = "Revisiting Pre-Trained Models for {C}hinese Natural Language Processing", author = "Cui, Yiming and Che, Wanxiang and Liu, Ting and Qin, Bing and Wang, Shijin and Hu, Guoping", booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: Findings", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://www.aclweb.org/anthology/2020.findings-emnlp.58", pages = "657--668", } ``` - Secondary: https://arxiv.org/abs/1906.08101 ``` @article{chinese-bert-wwm, title={Pre-Training with Whole Word Masking for Chinese BERT}, author={Cui, Yiming and Che, Wanxiang and Liu, Ting and Qin, Bing and Yang, Ziqing and Wang, Shijin and Hu, Guoping}, journal={arXiv preprint arXiv:1906.08101}, year={2019} } ``` | b30417cf4f68b3d23dc78c90411b5af2 |

mit | ['generated_from_trainer'] | false | roberta_base_model_fine_tuned This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2488 - Accuracy: 0.9018 | 281193c1bb3bb54db91ae2293b48bbc2 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4049 | 1.0 | 875 | 0.2488 | 0.9018 | | bb686c7a38c9ff0cc14a20e0ca4dec9c |

apache-2.0 | ['generated_from_trainer'] | false | bert-small-finetuned-finer This model is a fine-tuned version of [google/bert_uncased_L-4_H-512_A-8](https://huggingface.co/google/bert_uncased_L-4_H-512_A-8) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.6137 | 81efed5b255cad2815961f1443ac7ec9 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 128 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | 2ffa0676a543346257b48f28d77cdb43 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.8994 | 1.0 | 2433 | 1.7597 | | 1.7226 | 2.0 | 4866 | 1.6462 | | 1.6752 | 3.0 | 7299 | 1.6137 | | acef692adb7b16300966d0f1628ed1f8 |

gpl-3.0 | ['pytorch', 'question-answering', 'bert', 'zh'] | false | CKIP BERT Base Chinese This project provides Traditional Chinese transformers models (including ALBERT, BERT, GPT2) and NLP tools (including word segmentation, part-of-speech tagging, named entity recognition). 這個專案提供了繁體中文的 transformers 模型(包含 ALBERT、BERT、GPT2)及自然語言處理工具(包含斷詞、詞性標記、實體辨識)。 | 249c64a3dc6a46e5792cc1c58cfdc610 |

gpl-3.0 | ['pytorch', 'question-answering', 'bert', 'zh'] | false | Usage Please use BertTokenizerFast as tokenizer instead of AutoTokenizer. 請使用 BertTokenizerFast 而非 AutoTokenizer。 ``` from transformers import ( BertTokenizerFast, AutoModel, ) tokenizer = BertTokenizerFast.from_pretrained('bert-base-chinese') model = AutoModel.from_pretrained('ckiplab/bert-base-chinese-qa') ``` For full usage and more information, please refer to https://github.com/ckiplab/ckip-transformers. 有關完整使用方法及其他資訊,請參見 https://github.com/ckiplab/ckip-transformers 。 | 1ed73556313766bdc4353e6f5c8ef954 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-glue-mrpc-target-glue-mnli This model is a fine-tuned version of [muhtasham/small-mlm-glue-mrpc](https://huggingface.co/muhtasham/small-mlm-glue-mrpc) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.6541 - Accuracy: 0.7253 | 956461eadba6dea375d0227483a10088 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.9151 | 0.04 | 500 | 0.8235 | 0.6375 | | 0.8111 | 0.08 | 1000 | 0.7776 | 0.6659 | | 0.7745 | 0.12 | 1500 | 0.7510 | 0.6748 | | 0.7502 | 0.16 | 2000 | 0.7329 | 0.6886 | | 0.7431 | 0.2 | 2500 | 0.7189 | 0.6921 | | 0.7325 | 0.24 | 3000 | 0.7032 | 0.6991 | | 0.7139 | 0.29 | 3500 | 0.6793 | 0.7129 | | 0.7031 | 0.33 | 4000 | 0.6678 | 0.7215 | | 0.6778 | 0.37 | 4500 | 0.6761 | 0.7236 | | 0.6811 | 0.41 | 5000 | 0.6541 | 0.7253 | | cb707272011b64c90e8c7a276ede02c2 |

gpl-3.0 | [] | false | Pre-trained word embeddings using the text of published clinical case reports. These embeddings use 600 dimensions and were trained using the fastText algorithm on published clinical case reports found in the [PMC Open Access Subset](https://www.ncbi.nlm.nih.gov/pmc/tools/openftlist/). See the paper here: https://pubmed.ncbi.nlm.nih.gov/34920127/ Citation: ``` @article{flamholz2022word, title={Word embeddings trained on published case reports are lightweight, effective for clinical tasks, and free of protected health information}, author={Flamholz, Zachary N and Crane-Droesch, Andrew and Ungar, Lyle H and Weissman, Gary E}, journal={Journal of Biomedical Informatics}, volume={125}, pages={103971}, year={2022}, publisher={Elsevier} } ``` | d30bbd026c9feed1e041e060399c0d78 |
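
Word embeddings like these are typically compared with cosine similarity, for example to find the nearest neighbours of a clinical term. The sketch below uses made-up 3-dimensional vectors purely for illustration (the published vectors have 600 dimensions, and loading them would need a fastText-compatible loader that is not shown here).

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest_neighbors(word, vectors, top_k=3):
    """Rank the other words in `vectors` (dict word -> vector) by similarity to `word`."""
    query = vectors[word]
    scored = [
        (cosine_similarity(query, vec), other)
        for other, vec in vectors.items()
        if other != word
    ]
    scored.sort(reverse=True)
    return [other for _, other in scored[:top_k]]


# Hypothetical toy vectors, not taken from the released embeddings.
toy_vectors = {
    "fever": [1.0, 0.9, 0.0],
    "pyrexia": [0.9, 1.0, 0.1],
    "fracture": [0.0, 0.1, 1.0],
}
print(nearest_neighbors("fever", toy_vectors, top_k=1))
```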

apache-2.0 | ['generated_from_trainer'] | false | Article_50v7_NER_Model_3Epochs_UNAUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article50v7_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.7894 - Precision: 0.3333 - Recall: 0.0002 - F1: 0.0005 - Accuracy: 0.7783 | 44736eb457f1dbd200cc31a9fa5ae585 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 6 | 1.0271 | 0.1183 | 0.0102 | 0.0188 | 0.7768 | | No log | 2.0 | 12 | 0.8250 | 0.4 | 0.0005 | 0.0010 | 0.7783 | | No log | 3.0 | 18 | 0.7894 | 0.3333 | 0.0002 | 0.0005 | 0.7783 | | 955e6ee21e94630fcb943e1037191c78 |

mit | ['generated_from_trainer'] | false | IQA_classification This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.0718 - Accuracy: 0.4862 - Precision: 0.3398 - Recall: 0.4862 - F1: 0.3270 | 0f98bed1e611a48cded21d96c9b8c76d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 1.3973 | 1.0 | 28 | 1.1588 | 0.4771 | 0.2276 | 0.4771 | 0.3082 | | 1.1575 | 2.0 | 56 | 1.0718 | 0.4862 | 0.3398 | 0.4862 | 0.3270 | | 6e0cc8dc7a6d26b81fdfcea6978e42e8 |
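
In the IQA_classification results, Recall equals Accuracy at every epoch (0.4771 and 0.4862). That is what you would expect if recall was averaged with weights proportional to class support, because support-weighted recall reduces to total true positives divided by total examples. The card does not state the averaging mode, so this is an inference; the sketch below demonstrates the identity with hypothetical labels.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the gold labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def weighted_recall(y_true, y_pred):
    """Per-class recall averaged with weights proportional to class support."""
    support = Counter(y_true)
    n = len(y_true)
    total = 0.0
    for cls, count in support.items():
        true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        # weight (count / n) times recall (true_pos / count) = true_pos / n
        total += (count / n) * (true_pos / count)
    return total


# Hypothetical 3-class labels for illustration.
y_true = [0, 0, 1, 1, 2, 2, 2]
y_pred = [0, 1, 1, 1, 0, 2, 2]
print(accuracy(y_true, y_pred), weighted_recall(y_true, y_pred))
```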

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-hindi-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 1.7529 - Wer: 0.9130 | af57342e6e337c42584039c22ed4fc12 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 60 | b5f39c6fd3d503b10d4d82cde4b0271e |
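
The hyperparameters above combine a per-device batch of 16 with 2 gradient-accumulation steps, giving the listed total_train_batch_size of 32, and a linear schedule with 500 warmup steps. The sketch below reproduces that arithmetic and the shape of a linear warmup-then-decay schedule like the one `lr_scheduler_type: linear` selects in Hugging Face Transformers; the `total_steps` value in the usage line is hypothetical.

```python
def effective_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    """Examples contributing to one optimizer step when gradients are accumulated."""
    return per_device_batch * grad_accum_steps * num_devices


def linear_warmup_lr(step, base_lr, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


print(effective_batch_size(16, 2))
# total_steps=1000 is an illustrative value, not taken from the card.
print([linear_warmup_lr(s, 3e-4, 500, 1000) for s in (0, 250, 500, 750, 1000)])
```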

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 6.2923 | 44.42 | 400 | 1.7529 | 0.9130 | | 83eea186d8cb0deb21d11fa4489dc047 |

gpl-3.0 | ['object-detection', 'computer-vision', 'sort', 'tracker', 'strongsort'] | false | Model Description [StrongSort](https://arxiv.org/abs/2202.13514): Make DeepSORT Great Again <img src="https://raw.githubusercontent.com/dyhBUPT/StrongSORT/master/assets/MOTA-IDF1-HOTA.png" width="1000"/> | 9b7d88f1947cb97a61a6ecfaeb50bf83 |

gpl-3.0 | ['object-detection', 'computer-vision', 'sort', 'tracker', 'strongsort'] | false | Tracker ```python from strong_sort import StrongSORT tracker = StrongSORT(model_weights='model.pt', device='cuda') pred = model(img) outputs = [None] * len(pred) for i, det in enumerate(pred): outputs[i] = tracker.update(det, im0s) ``` | 926fdc70ac0963aff1fedd6c0bc3c793 |

gpl-3.0 | ['object-detection', 'computer-vision', 'sort', 'tracker', 'strongsort'] | false | BibTeX Entry and Citation Info ``` @article{du2022strongsort, title={Strongsort: Make deepsort great again}, author={Du, Yunhao and Song, Yang and Yang, Bo and Zhao, Yanyun}, journal={arXiv preprint arXiv:2202.13514}, year={2022} } ``` | ff32b231b653004a7ea6262954762f94 |

mit | [] | false | Description A fine-tuned regression model that assigns a functioning level to Dutch sentences describing walking functions. The model is based on a pre-trained Dutch medical language model ([link to be added]()): a RoBERTa model, trained from scratch on clinical notes of the Amsterdam UMC. To detect sentences about walking functions in clinical text in Dutch, use the [icf-domains](https://huggingface.co/CLTL/icf-domains) classification model. | 03e06d225cb6330524db38a1f570d4c2 |

mit | [] | false | Functioning levels Level | Meaning ---|--- 5 | Patient can walk independently anywhere: level surface, uneven surface, slopes, stairs. 4 | Patient can walk independently on level surface but requires help on stairs, inclines, uneven surface; or, patient can walk independently, but the walking is not fully normal. 3 | Patient requires verbal supervision for walking, without physical contact. 2 | Patient needs continuous or intermittent support of one person to help with balance and coordination. 1 | Patient needs firm continuous support from one person who helps carrying weight and with balance. 0 | Patient cannot walk or needs help from two or more people; or, patient walks on a treadmill. The predictions generated by the model might sometimes be outside of the scale (e.g. 5.2); this is normal in a regression model. | 28d6539de2bbcc221e5e929ba9549d0e |
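
Because the model is a regressor, its raw output can fall outside the 0 to 5 scale. If a downstream application needs a valid level, one option is to clamp the score to the scale and round it; this post-processing and the `to_walking_level` helper are hypothetical choices, not part of the released model.

```python
def to_walking_level(raw_score, low=0.0, high=5.0):
    """Clamp a raw regression output to [low, high] and round to the nearest level.

    Returns both the clamped score and the integer level.
    """
    clamped = min(max(raw_score, low), high)
    return clamped, int(round(clamped))


print(to_walking_level(5.2))
print(to_walking_level(4.20903111))
```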

mit | [] | false | How to use To generate predictions with the model, use the [Simple Transformers](https://simpletransformers.ai/) library: ``` import numpy as np from simpletransformers.classification import ClassificationModel model = ClassificationModel( 'roberta', 'CLTL/icf-levels-fac', use_cuda=False, ) example = 'kan nog goed traplopen, maar flink ingeleverd aan conditie na Corona' _, raw_outputs = model.predict([example]) predictions = np.squeeze(raw_outputs) ``` The prediction on the example is: ``` 4.2 ``` The raw outputs look like this: ``` [[4.20903111]] ``` | 805f282fa54d399dd374f9b77105c944 |

mit | [] | false | Evaluation results The evaluation is done at the sentence level (the classification unit) and at the note level (the aggregated unit that is meaningful for healthcare professionals). | | Sentence-level | Note-level |---|---|--- mean absolute error | 0.70 | 0.66 mean squared error | 0.91 | 0.93 root mean squared error | 0.95 | 0.96 | 2a611d0e7e764c29d1bd245778915ab6 |
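
The three error metrics in the table are related: RMSE is the square root of MSE, and the reported values agree (sqrt(0.91) ≈ 0.95 and sqrt(0.93) ≈ 0.96). A minimal sketch of how they are computed from paired gold levels and predictions, using hypothetical numbers:

```python
import math


def regression_errors(y_true, y_pred):
    """Return (MAE, MSE, RMSE) for paired gold values and predictions."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    return mae, mse, math.sqrt(mse)


# Hypothetical gold levels and model outputs.
print(regression_errors([4, 2, 0], [4.2, 3.0, 0.5]))
```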

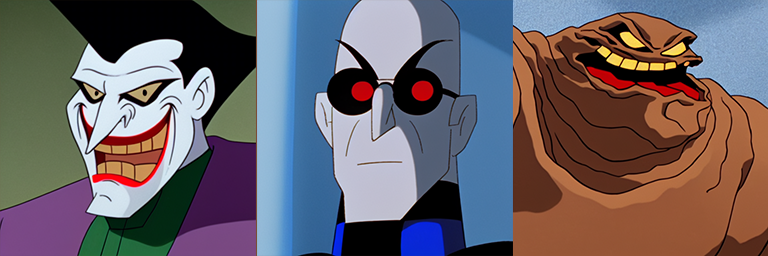

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | **DCAU Diffusion** The prompt is currently `Batman_the_animated_series`. Future versions will include all DCAU shows. **Existing Characters:**  **Characters not in original dataset:**  **Realistic Style:**  | 0dc650b5f3336ffb7059fb02c84d11ea |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1343 - F1: 0.8637 | 0990328f63c5d8a2ea4e2c258493cf71 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2578 | 1.0 | 525 | 0.1562 | 0.8273 | | 0.1297 | 2.0 | 1050 | 0.1330 | 0.8474 | | 0.0809 | 3.0 | 1575 | 0.1343 | 0.8637 | | ddee2a1cfd8f151f60f79311806c2276 |

gpl-2.0 | ['corenlp'] | false | Core NLP model for CoreNLP CoreNLP is your one-stop shop for natural language processing in Java! CoreNLP enables users to derive linguistic annotations for text, including token and sentence boundaries, parts of speech, named entities, numeric and time values, dependency and constituency parses, coreference, sentiment, quote attributions, and relations. Find out more about it on [our website](https://stanfordnlp.github.io/CoreNLP) and in our [GitHub repository](https://github.com/stanfordnlp/CoreNLP). This card and repo were automatically prepared with `hugging_corenlp.py` in the `stanfordnlp/huggingface-models` repo Last updated 2023-01-21 01:34:10.792 | 14696e80a269ce03423898b6b5955692 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large v2 Serbian This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.2036 - Wer: 11.8980 | 81c5fc99dfdb4ba5beb72a43a1b5dc11 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.2639 | 0.48 | 100 | 0.2438 | 14.0834 | | 0.1965 | 0.96 | 200 | 0.2036 | 11.8980 | | f523be324cf4018d7a7eeaf2f1dba6eb |

apache-2.0 | ['tapas', 'sequence-classification'] | false | TAPAS large model fine-tuned on Tabular Fact Checking (TabFact) This model has 2 versions that can be used. The latest version, which is the default one, corresponds to the `tapas_tabfact_inter_masklm_large_reset` checkpoint of the [original GitHub repository](https://github.com/google-research/tapas). This model was pre-trained with MLM plus an additional step that the authors call intermediate pre-training, and then fine-tuned on [TabFact](https://github.com/wenhuchen/Table-Fact-Checking). It uses relative position embeddings by default (i.e. resetting the position index at every cell of the table). The other (non-default) version that can be used is the one with absolute position embeddings: - `no_reset`, which corresponds to `tapas_tabfact_inter_masklm_large` Disclaimer: The team releasing TAPAS did not write a model card for this model, so this model card has been written by the Hugging Face team and contributors. | 95ac1e6eaca5cd4862acdb9ed732f8fd |
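
The difference between the default "reset" version and `no_reset` is how position indices are assigned to the flattened table tokens. The simplified sketch below ignores the question segment and TAPAS's other token-type embeddings (segment, row, column, rank); `position_ids` is a hypothetical helper that only illustrates relative (reset per cell) versus absolute numbering.

```python
def position_ids(cell_token_counts, reset=True):
    """Build position ids for the tokens of a flattened table.

    cell_token_counts: number of word pieces in each cell, in reading order.
    reset=True restarts the index at every cell (relative positions);
    reset=False numbers tokens consecutively (absolute positions).
    """
    ids = []
    pos = 0
    for count in cell_token_counts:
        if reset:
            pos = 0
        for _ in range(count):
            ids.append(pos)
            pos += 1
    return ids


# Three cells with 2, 3 and 1 word pieces.
print(position_ids([2, 3, 1], reset=True))
print(position_ids([2, 3, 1], reset=False))
```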

apache-2.0 | ['generated_from_trainer'] | false | xls-r-300m-bemba-15hrs This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2754 - Wer: 0.3481 | 382059edce5e399bbbabd3d10c36ea22 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.5142 | 0.71 | 400 | 0.5585 | 0.7501 | | 0.6351 | 1.43 | 800 | 0.3185 | 0.5058 | | 0.4892 | 2.15 | 1200 | 0.2813 | 0.4655 | | 0.4021 | 2.86 | 1600 | 0.2539 | 0.4159 | | 0.3505 | 3.58 | 2000 | 0.2411 | 0.4000 | | 0.3045 | 4.29 | 2400 | 0.2512 | 0.3951 | | 0.274 | 5.01 | 2800 | 0.2402 | 0.3922 | | 0.2335 | 5.72 | 3200 | 0.2403 | 0.3764 | | 0.2032 | 6.44 | 3600 | 0.2383 | 0.3657 | | 0.1783 | 7.16 | 4000 | 0.2603 | 0.3518 | | 0.1487 | 7.87 | 4400 | 0.2479 | 0.3577 | | 0.1281 | 8.59 | 4800 | 0.2638 | 0.3518 | | 0.113 | 9.3 | 5200 | 0.2754 | 0.3481 | | 99e04fb2a168dc8fd1c696b5c2c0d203 |
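
WER (word error rate) counts the word-level substitutions, deletions and insertions needed to turn the hypothesis into the reference, divided by the number of reference words. This card reports it as a fraction (0.3481), whereas the Whisper Serbian card's `Wer: 11.8980` appears to be on a 0 to 100 scale. A minimal sketch using word-level Levenshtein distance (whitespace tokenization and a non-empty reference are assumed):

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    # prev holds the previous row of the edit-distance table (ref prefix vs hyp prefixes).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


print(word_error_rate("the cat sat", "the bat sat"))
```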

mit | ['generated_from_trainer'] | false | bert-german-ner This model is a fine-tuned version of [dbmdz/bert-base-german-cased](https://huggingface.co/dbmdz/bert-base-german-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.3196 - Precision: 0.8334 - Recall: 0.8620 - F1: 0.8474 - Accuracy: 0.9292 | 21b0052730a7ed04eda1efbc51747dfe |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 | 29743e8ef7c26d94bd4b7706338336f7 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 300 | 0.3617 | 0.7310 | 0.7733 | 0.7516 | 0.8908 | | 0.5428 | 2.0 | 600 | 0.2897 | 0.7789 | 0.8395 | 0.8081 | 0.9132 | | 0.5428 | 3.0 | 900 | 0.2805 | 0.8147 | 0.8465 | 0.8303 | 0.9221 | | 0.2019 | 4.0 | 1200 | 0.2816 | 0.8259 | 0.8498 | 0.8377 | 0.9260 | | 0.1215 | 5.0 | 1500 | 0.2942 | 0.8332 | 0.8599 | 0.8463 | 0.9285 | | 0.1215 | 6.0 | 1800 | 0.3053 | 0.8293 | 0.8619 | 0.8452 | 0.9287 | | 0.0814 | 7.0 | 2100 | 0.3190 | 0.8249 | 0.8634 | 0.8437 | 0.9267 | | 0.0814 | 8.0 | 2400 | 0.3196 | 0.8334 | 0.8620 | 0.8474 | 0.9292 | | b05265074fffd68915faf054fb66be5f |
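
The F1 column is the harmonic mean of precision and recall. For bert-german-ner's final epoch, 2 · 0.8334 · 0.8620 / (0.8334 + 0.8620) ≈ 0.8475, which matches the reported 0.8474 up to rounding of the inputs. The harmonic mean also explains why a near-zero recall, as in the Article_50v7 NER card, drives F1 towards zero even when precision is 0.3333. A minimal sketch:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_score(0.8334, 0.8620), 4))
print(f1_score(0.3333, 0.0002))
```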

mit | ['object-detection', 'computer-vision', 'sort', 'tracker', 'ocsort'] | false | Model Description Observation-Centric SORT ([OC-SORT](https://arxiv.org/abs/2203.14360)) is a pure motion-model-based multi-object tracker. It aims to improve tracking robustness in crowded scenes and when objects move non-linearly. It was designed by recognizing and fixing limitations of the Kalman filter and SORT. It can be flexibly integrated with different detectors and matching modules, such as appearance similarity. It remains simple, online, and real-time. <img src="https://raw.githubusercontent.com/noahcao/OC_SORT/master/assets/teaser.png" width="600"/> | 868f704a77a83ad0460092a877a89754 |
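
SORT-family trackers associate each track's predicted box with the new frame's detections largely by bounding-box overlap (IoU). The sketch below shows IoU plus a greedy matcher; SORT itself solves the assignment with the Hungarian algorithm, so greedy matching is a simpler stand-in, and the 0.3 threshold is an assumed value. OC-SORT's observation-centric corrections are not modelled here.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_match(tracks, detections, iou_threshold=0.3):
    """Pair predicted track boxes with detections in order of descending IoU."""
    pairs = sorted(
        ((iou(t, d), ti, di) for ti, t in enumerate(tracks) for di, d in enumerate(detections)),
        reverse=True,
    )
    used_tracks, used_dets, matches = set(), set(), []
    for score, ti, di in pairs:
        if score < iou_threshold:
            break  # remaining pairs overlap too little to be the same object
        if ti in used_tracks or di in used_dets:
            continue
        used_tracks.add(ti)
        used_dets.add(di)
        matches.append((ti, di))
    return matches


tracks = [(0, 0, 2, 2), (10, 10, 12, 12)]
detections = [(10, 10, 12, 12), (0, 0, 2, 2.2)]
print(greedy_match(tracks, detections))
```

Unmatched tracks and detections would then be handled separately (for example, aging out lost tracks and starting new ones), which is omitted here.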

apache-2.0 | ['generated_from_keras_callback'] | false | bart-example This model is a fine-tuned version of [facebook/bart-large](https://huggingface.co/facebook/bart-large) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.7877 - Validation Loss: 2.4972 - Epoch: 4 | 2892d5706d796f5f61f9d53324c0d8b0 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 6.3670 | 3.2462 | 0 | | 3.5143 | 2.7551 | 1 | | 3.0299 | 2.5620 | 2 | | 2.9364 | 2.7830 | 3 | | 2.7877 | 2.4972 | 4 | | 9a40b484f4f976b4a6f016766982662d |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-finetuned-ks This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the superb dataset. It achieves the following results on the evaluation set: - Loss: 0.0903 - Accuracy: 0.9834 | 9bf2a554decd2e4377fbcbba0824cdae |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7264 | 1.0 | 399 | 0.6319 | 0.9351 | | 0.2877 | 2.0 | 798 | 0.1846 | 0.9748 | | 0.175 | 3.0 | 1197 | 0.1195 | 0.9796 | | 0.1672 | 4.0 | 1596 | 0.0903 | 0.9834 | | 0.1235 | 5.0 | 1995 | 0.0854 | 0.9825 | | d76bf16d10e19569c165831f04fdafd6 |

apache-2.0 | ['automatic-speech-recognition', 'id'] | false | exp_w2v2t_id_vp-it_s692 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (id)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 1391a6a8e51caaf827d5f108946b48a9 |
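
Since the model expects 16 kHz input, it can help to check the sample rate before inference. The sketch below assumes WAV input and uses only Python's standard `wave` module; resampling itself would need an audio library and is not shown. The helper names are hypothetical.

```python
import io
import wave


def wav_sample_rate(data: bytes) -> int:
    """Read the sample rate from in-memory WAV bytes."""
    with wave.open(io.BytesIO(data), "rb") as wav:
        return wav.getframerate()


def require_16khz(data: bytes) -> None:
    """Raise if the WAV audio is not sampled at 16 kHz."""
    rate = wav_sample_rate(data)
    if rate != 16000:
        raise ValueError(f"expected 16000 Hz audio, got {rate} Hz; resample first")


def silent_wav(rate: int, n_frames: int = 10) -> bytes:
    """Build a short mono 16-bit silent WAV, used here only for demonstration."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * n_frames)
    return buf.getvalue()


require_16khz(silent_wav(16000))  # passes silently
```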

apache-2.0 | ['Scene Text Removal', 'Image to Image'] | false | GaRNet This is a text-removal model introduced in the paper below and first released on [this page](https://github.com/naver/garnet). \ [The Surprisingly Straightforward Scene Text Removal Method With Gated Attention and Region of Interest Generation: A Comprehensive Prominent Model Analysis](https://arxiv.org/abs/2210.07489). \ Hyeonsu Lee, Chankyu Choi \ Naver Corp. \ In ECCV 2022. | c7efeb5274b41712e6bad2ea2e93a73a |

apache-2.0 | ['Scene Text Removal', 'Image to Image'] | false | Model description GaRNet is a generator that creates a text-free image from a given image and its corresponding text-box mask. It consists of a convolutional encoder and decoder. The encoder consists of residual blocks with an attention module called Gated Attention. The Gated Attention module has two spatial attention branches: one attends to text strokes and the other to their surrounding regions. The module adjusts the weighting of these two regions with trainable parameters. The model was trained in a PatchGAN manner with Region-of-Interest Generation. \ The discriminator consists of a convolutional encoder. Given an image, it determines whether each patch, which indicates text-box regions, is real or fake. All loss functions treat non-text-box regions as 'don't care'. | c131927974f714e7d04aaeb9ef8feb68 |
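
Treating non-text-box regions as "don't care" means the loss is computed only where the mask is 1. The sketch below illustrates that masking with an L1 reconstruction loss over flat pixel lists; the choice of L1 is only an example, since the card does not list GaRNet's actual loss terms.

```python
def masked_l1(pred, target, mask):
    """Mean absolute error over pixels where mask is 1; other pixels are ignored.

    pred, target, mask: equal-length flat lists of pixel values. mask is 1
    inside text-box regions and 0 in "don't care" regions.
    """
    total = 0.0
    count = 0
    for p, t, m in zip(pred, target, mask):
        if m:
            total += abs(p - t)
            count += 1
    return total / count if count else 0.0


target = [0.0, 0.0, 0.0, 0.2]
mask = [0, 1, 1, 0]
print(masked_l1([0.0, 0.5, 1.0, 0.2], target, mask))
```

Changing pixels outside the mask leaves the loss unchanged, which is the intended "don't care" behaviour.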

apache-2.0 | ['Scene Text Removal', 'Image to Image'] | false | Intended uses & limitations This model can be used in applications that require erasing text from an image, such as concealing private information or text editing.\ You can use the raw model or the pre-trained model.\ Note that the pre-trained model was trained on both the Synthetic and SCUT-EnsText datasets, and the SCUT-EnsText dataset can only be used for non-commercial research purposes. | 5d0fd177d12f603f0d3d1e42adeb5748 |

apache-2.0 | ['Scene Text Removal', 'Image to Image'] | false | BibTeX entry and citation info ``` @inproceedings{lee2022surprisingly, title={The Surprisingly Straightforward Scene Text Removal Method with Gated Attention and Region of Interest Generation: A Comprehensive Prominent Model Analysis}, author={Lee, Hyeonsu and Choi, Chankyu}, booktitle={European Conference on Computer Vision}, pages={457--472}, year={2022}, organization={Springer} } ``` | 77b7870d812c22d62e4df6664dcc76a9 |

other | [] | false | Usage With text generation pipeline ```python >>> from blender_model import TextGenerationPipeline >>> max_answer_length = 100 >>> response_generator_pipe = TextGenerationPipeline(max_length=max_answer_length) >>> utterance = "Hello, how are you?" >>> response_generator_pipe(utterance) i am well. how are you? what do you like to do in your free time? ``` Or you can call the model directly ```python >>> from blender_model import OnnxBlender >>> from transformers import BlenderbotSmallTokenizer >>> original_repo_id = "facebook/blenderbot_small-90M" >>> repo_id = "remzicam/xs_blenderbot_onnx" >>> model_file_names = [ "blenderbot_small-90M-encoder-quantized.onnx", "blenderbot_small-90M-decoder-quantized.onnx", "blenderbot_small-90M-init-decoder-quantized.onnx", ] >>> model = OnnxBlender(original_repo_id, repo_id, model_file_names) >>> tokenizer = BlenderbotSmallTokenizer.from_pretrained(original_repo_id) >>> max_answer_length = 100 >>> utterance = "Hello, how are you?" >>> inputs = tokenizer(utterance, return_tensors="pt") >>> outputs = model.generate(**inputs, max_length=max_answer_length) >>> response = tokenizer.decode(outputs[0], skip_special_tokens=True) >>> print(response) i am well. how are you? what do you like to do in your free time? ``` | ff180dfca1fa3f56addaa62ea4396137 |

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for Hindi (hi) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. Starting from raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find out more about it on [our website](https://stanfordnlp.github.io/stanza) and in our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo Last updated 2022-10-26 21:23:50.098 | 77b2f0d062e8a67d1a756df503960d49 |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | XLM-RoBERTa base Universal Dependencies v2.8 POS tagging: Faroese This model is part of our paper called: - Make the Best of Cross-lingual Transfer: Evidence from POS Tagging with over 100 Languages Check the [Space](https://huggingface.co/spaces/wietsedv/xpos) for more details. | 65fbe7e1d422221447e3ed72fbfcee60 |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-fo") model = AutoModelForTokenClassification.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-fo") ``` | 04001a1ea1e526be56c8c2d192cc8e2e |

mit | ['generated_from_trainer'] | false | ainize-kobart-news-eb-finetuned-papers This model is a fine-tuned version of [ainize/kobart-news](https://huggingface.co/ainize/kobart-news) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.3066 - Rouge1: 14.5433 - Rouge2: 5.2238 - Rougel: 14.4731 - Rougelsum: 14.5183 - Gen Len: 19.9934 | e8436371194965e371a3352bfe8f1214 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 0.1918 | 1.0 | 7200 | 0.2403 | 14.6883 | 5.2427 | 14.6306 | 14.6489 | 19.9938 | | 0.1332 | 2.0 | 14400 | 0.2391 | 14.5165 | 5.2443 | 14.493 | 14.4908 | 19.9972 | | 0.0966 | 3.0 | 21600 | 0.2539 | 14.758 | 5.4976 | 14.6906 | 14.7188 | 19.9941 | | 0.0736 | 4.0 | 28800 | 0.2782 | 14.6267 | 5.3371 | 14.5578 | 14.6014 | 19.9934 | | 0.0547 | 5.0 | 36000 | 0.3066 | 14.5433 | 5.2238 | 14.4731 | 14.5183 | 19.9934 | | 0e4cd1a3e1ec59b248658433c76f0c5c |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | zzuurryy Dreambooth model trained by Brainergy with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 8dcf7862d7bb97f5f0b4e46c48644cc3 |

apache-2.0 | ['generated_from_trainer'] | false | pollcat-mnli This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 1.8610 - Accuracy: 0.7271 | 62988f227908a4b0b8771927b5888df3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0633 | 1.0 | 1563 | 1.8610 | 0.7271 | | 1d45946ea7147c8eaa097d85adb3d34a |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-glue-rte-target-glue-stsb This model is a fine-tuned version of [muhtasham/small-mlm-glue-rte](https://huggingface.co/muhtasham/small-mlm-glue-rte) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.5419 - Pearson: 0.8754 - Spearmanr: 0.8723 | d1163f0858e1481ac85e744a7dd41c81 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:| | 0.8054 | 2.78 | 500 | 0.6118 | 0.8682 | 0.8680 | | 0.2875 | 5.56 | 1000 | 0.5788 | 0.8693 | 0.8682 | | 0.1718 | 8.33 | 1500 | 0.6133 | 0.8673 | 0.8639 | | 0.1251 | 11.11 | 2000 | 0.6103 | 0.8716 | 0.8681 | | 0.0999 | 13.89 | 2500 | 0.5665 | 0.8734 | 0.8707 | | 0.0825 | 16.67 | 3000 | 0.6035 | 0.8736 | 0.8700 | | 0.07 | 19.44 | 3500 | 0.5605 | 0.8752 | 0.8716 | | 0.0611 | 22.22 | 4000 | 0.5661 | 0.8768 | 0.8730 | | 0.0565 | 25.0 | 4500 | 0.5557 | 0.8739 | 0.8705 | | 0.0523 | 27.78 | 5000 | 0.5419 | 0.8754 | 0.8723 | | 4e5692ac4b5ff381de05663f8d95418a |
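
STS-B is scored with the Pearson and Spearman correlations between predicted and gold similarity scores. Pearson measures linear agreement; Spearman is Pearson applied to ranks, so it only rewards getting the ordering right. A minimal pure-Python sketch (ties receive their average rank), with hypothetical inputs:

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# A monotone but non-linear relation: Spearman is 1, Pearson is slightly below 1.
print(pearson([1, 2, 3, 4], [1, 4, 9, 16]), spearman([1, 2, 3, 4], [1, 4, 9, 16]))
```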

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_logit_kd_cola_96 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE COLA dataset. It achieves the following results on the evaluation set: - Loss: 0.6839 - Matthews Correlation: 0.0 | 677edfb43e8036848d32a83d4824b1cb |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.9137 | 1.0 | 34 | 0.7665 | 0.0 | | 0.8592 | 2.0 | 68 | 0.7303 | 0.0 | | 0.8268 | 3.0 | 102 | 0.7043 | 0.0 | | 0.8074 | 4.0 | 136 | 0.6901 | 0.0 | | 0.8005 | 5.0 | 170 | 0.6853 | 0.0 | | 0.7969 | 6.0 | 204 | 0.6842 | 0.0 | | 0.797 | 7.0 | 238 | 0.6840 | 0.0 | | 0.7981 | 8.0 | 272 | 0.6840 | 0.0 | | 0.7971 | 9.0 | 306 | 0.6840 | 0.0 | | 0.7967 | 10.0 | 340 | 0.6839 | 0.0 | | 0.7978 | 11.0 | 374 | 0.6839 | 0.0 | | 0.7979 | 12.0 | 408 | 0.6839 | 0.0 | | 0.7973 | 13.0 | 442 | 0.6839 | 0.0 | | 0.7979 | 14.0 | 476 | 0.6840 | 0.0 | | 0.7972 | 15.0 | 510 | 0.6839 | 0.0 | | 3ac314140598d75a5589105b9bce5f91 |
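
A Matthews correlation of exactly 0.0 at every epoch is consistent with the model predicting a single class for every CoLA validation sentence (the card does not confirm this). In that case the MCC denominator is zero, and the usual convention, also followed by scikit-learn's `matthews_corrcoef`, is to report 0.0. A minimal binary sketch:

```python
import math


def matthews_corrcoef(y_true, y_pred):
    """Binary MCC; returns 0.0 when the denominator is zero (e.g. one predicted class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Predicting "acceptable" (1) for everything gives MCC 0.0 regardless of accuracy.
print(matthews_corrcoef([1, 0, 1, 1, 0], [1, 1, 1, 1, 1]))
```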

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-hi This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.2211 - F1: 0.8614 | 1d6c25be8ef03680313bf2a539aa4cd0 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.62 | 1.0 | 209 | 0.3914 | 0.7622 | | 0.2603 | 2.0 | 418 | 0.2665 | 0.8211 | | 0.1653 | 3.0 | 627 | 0.2211 | 0.8614 | | 5085685b6c1c55d2837d4f0143e596f6 |
