license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['whisper-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 88 - eval_batch_size: 88 - seed: 22 - optimizer: adamw_bnb_8bit - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 10000 - training_steps: 15008 (terminated upon convergence. Initially set to 51570 steps) - mixed_precision_training: True | d7335a52c86f758b9d4c822a47986417 |
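
The warmup and step counts above can be turned into a rough picture of the learning rate over training. This is a minimal sketch, assuming the `linear` scheduler ramps up linearly during warmup and then decays linearly to zero at the initially configured 51570 steps; the function name `linear_warmup_lr` is hypothetical and not taken from the card.

```python
def linear_warmup_lr(step, base_lr=5e-05, warmup_steps=10_000, total_steps=51_570):
    """Learning rate at `step` for linear warmup followed by linear decay to zero.

    Assumption: the schedule is defined against the initially planned
    51570 steps, even though training stopped at step 15008.
    """
    if step < warmup_steps:
        # Warmup phase: ramp from 0 up to base_lr.
        return base_lr * step / warmup_steps
    # Decay phase: ramp from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


for step in (0, 5_000, 10_000, 15_008):
    print(step, linear_warmup_lr(step))
```

Under these assumptions, training stopped shortly after the warmup ended, so the learning rate was still close to its peak at step 15008.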

apache-2.0 | ['translation'] | false | opus-mt-fse-fi * source languages: fse * target languages: fi * OPUS readme: [fse-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fse-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/fse-fi/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fse-fi/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fse-fi/opus-2020-01-09.eval.txt) | 03c4f474c5148e7303225bc6a062fc1e |

cc-by-4.0 | ['conversational', 'transformers'] | false | DialoGPT_MWOZ_Idioms This is a fine-tuned model of DialoGPT (medium)-MultiWOZ on the PIE-English idioms corpus. It is intended to be used as an idiom-aware conversational system. The dataset it's trained on is limited in scope, as it covers only 10 classes of idioms (metaphor, simile, euphemism, parallelism, personification, oxymoron, paradox, hyperbole, irony and literal). The perplexities achieved on the test and validation sets are 160.9 and 160.46, respectively. More information about the original pre-trained model can be found [here](https://huggingface.co/tosin/dialogpt_mwoz) * Generation example from an interactive environment: |Role | Response | |---------|------------| |User | remind her to take it off the hook. | |Bot | the bookie took it on the nod | |User | A lengthy break is in order ; so make sure you keep your nose clean | |Bot | they are under a cloud because of fears about US President's wife Hillary Clinton | |User | Some families breed like rabbits and send us all their male heirs | |Bot | he was out for the count | Please find the information about preprocessing, training and full details of the DialoGPT in the [original DialoGPT repository](https://github.com/microsoft/DialoGPT) | efd4367bc7642b90b635b77ca3c9733f |

cc-by-4.0 | ['conversational', 'transformers'] | false | How to use Now we are ready to try out how the model works as a chatting partner! ```python from transformers import AutoModelForCausalLM, AutoTokenizer import torch tokenizer = AutoTokenizer.from_pretrained("tosin/dialogpt_mwoz_idioms") model = AutoModelForCausalLM.from_pretrained("tosin/dialogpt_mwoz_idioms") | d321dcde4a206d358666f8555fd34ef9 |

apache-2.0 | ['automatic-speech-recognition', 'sv-SE'] | false | exp_w2v2t_sv-se_vp-it_s817 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (sv-SE)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 3e2996079a14a748344fd8afbbfff861 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2223 - Accuracy: 0.927 - F1: 0.9271 | d66807516c4cfe0974ea5fde31481518 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8412 | 1.0 | 250 | 0.3215 | 0.904 | 0.9010 | | 0.2535 | 2.0 | 500 | 0.2223 | 0.927 | 0.9271 | | 4e9e293a0db590c37abea7f549c80484 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0005 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_steps: 1000 - num_epochs: 15 | f3e90b25dc7508d6b3a02f2b9b579e7f |
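
The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A short sketch of that arithmetic, assuming a single device (the card does not state the device count):

```python
train_batch_size = 32            # per-device batch size from the list above
gradient_accumulation_steps = 8  # micro-batches accumulated per optimizer step
num_devices = 1                  # assumption: not stated in the card

# Number of examples contributing to each optimizer update.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices
print(total_train_batch_size)
```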

apache-2.0 | ['translation'] | false | sem-sem * source group: Semitic languages * target group: Semitic languages * OPUS readme: [sem-sem](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/sem-sem/README.md) * model: transformer * source language(s): apc ara arq arz heb mlt * target language(s): apc ara arq arz heb mlt * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-27.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/sem-sem/opus-2020-07-27.zip) * test set translations: [opus-2020-07-27.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/sem-sem/opus-2020-07-27.test.txt) * test set scores: [opus-2020-07-27.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/sem-sem/opus-2020-07-27.eval.txt) | 1a0f79eb99ba2317246cc93635cc8c99 |
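
Because the model is multilingual on the target side, each input sentence needs the `>>id<<` prefix described above. The helper below is a minimal sketch of that preprocessing step only; `add_target_token` and `VALID_TARGETS` are hypothetical names, and the set of IDs is copied from the target language(s) listed in this card.

```python
# Target language IDs listed in the card above.
VALID_TARGETS = {"apc", "ara", "arq", "arz", "heb", "mlt"}


def add_target_token(text, target_lang):
    """Prefix `text` with the sentence-initial target language token."""
    if target_lang not in VALID_TARGETS:
        raise ValueError(f"unsupported target language ID: {target_lang!r}")
    return f">>{target_lang}<< {text}"


print(add_target_token("Some source sentence.", "heb"))
```

The prefixed string is what would then be passed to the tokenizer; the translation call itself is left out here.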

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.ara-ara.ara.ara | 4.2 | 0.200 | | Tatoeba-test.ara-heb.ara.heb | 34.0 | 0.542 | | Tatoeba-test.ara-mlt.ara.mlt | 16.6 | 0.513 | | Tatoeba-test.heb-ara.heb.ara | 18.8 | 0.477 | | Tatoeba-test.mlt-ara.mlt.ara | 20.7 | 0.388 | | Tatoeba-test.multi.multi | 27.1 | 0.507 | | baf7dab2527c10ac8ecb300c55ab8685 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: sem-sem - source_languages: sem - target_languages: sem - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/sem-sem/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['mt', 'ar', 'he', 'ti', 'am', 'sem'] - src_constituents: {'apc', 'mlt', 'arz', 'ara', 'heb', 'tir', 'arq', 'afb', 'amh', 'acm', 'ary'} - tgt_constituents: {'apc', 'mlt', 'arz', 'ara', 'heb', 'tir', 'arq', 'afb', 'amh', 'acm', 'ary'} - src_multilingual: True - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/sem-sem/opus-2020-07-27.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/sem-sem/opus-2020-07-27.test.txt - src_alpha3: sem - tgt_alpha3: sem - short_pair: sem-sem - chrF2_score: 0.507 - bleu: 27.1 - brevity_penalty: 0.972 - ref_len: 13472.0 - src_name: Semitic languages - tgt_name: Semitic languages - train_date: 2020-07-27 - src_alpha2: sem - tgt_alpha2: sem - prefer_old: False - long_pair: sem-sem - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 2c2db91b9279f60b33981985334747e4 |

mit | ['generated_from_trainer'] | false | roberta-large-mnli-misogyny-sexism-4tweets-3e-05-0.05 This model is a fine-tuned version of [roberta-large-mnli](https://huggingface.co/roberta-large-mnli) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9069 - Accuracy: 0.6914 - F1: 0.7061 - Precision: 0.6293 - Recall: 0.8043 - Mae: 0.3086 - Tn: 320 - Fp: 218 - Fn: 90 - Tp: 370 | c0ec2f26ac1b1584988f98f9f9138aaa |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | 7bd4c61a9d29666cf737f398bc28076d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | Mae | Tn | Fp | Fn | Tp | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:---------:|:------:|:------:|:---:|:---:|:---:|:---:| | 0.5248 | 1.0 | 1346 | 0.7245 | 0.6513 | 0.6234 | 0.6207 | 0.6261 | 0.3487 | 362 | 176 | 172 | 288 | | 0.4553 | 2.0 | 2692 | 0.6894 | 0.6693 | 0.7043 | 0.5991 | 0.8543 | 0.3307 | 275 | 263 | 67 | 393 | | 0.3753 | 3.0 | 4038 | 0.6966 | 0.7234 | 0.7326 | 0.6608 | 0.8217 | 0.2766 | 344 | 194 | 82 | 378 | | 0.2986 | 4.0 | 5384 | 0.9069 | 0.6914 | 0.7061 | 0.6293 | 0.8043 | 0.3086 | 320 | 218 | 90 | 370 | | 8da84770dfd95e4a362a4f0ae000fc20 |
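
The reported accuracy, precision, recall, F1 and MAE can all be recomputed from the four confusion-matrix counts in the table. The sketch below does this for the final epoch, assuming binary 0/1 labels so that the mean absolute error equals the error rate; the function name `binary_metrics` is hypothetical.

```python
def binary_metrics(tn, fp, fn, tp):
    """Derive standard binary classification metrics from confusion-matrix counts."""
    total = tn + fp + fn + tp
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
        # With 0/1 labels, each wrong prediction has absolute error 1.
        "mae": (fp + fn) / total,
    }


# Counts from the last row of the table (epoch 4).
metrics = binary_metrics(tn=320, fp=218, fn=90, tp=370)
print({name: round(value, 4) for name, value in metrics.items()})
```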

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-SMALL-NL36 (Deep-Narrow version) T5-Efficient-SMALL-NL36 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | ed3486c867b982b92e98b098da8cb416 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-small-nl36** - is of model type **Small** with the following variations: - **nl** is **36** It has **280.87** million parameters and thus requires *ca.* **1123.47 MB** of memory in full precision (*fp32*) or **561.74 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | 20524fbff17492e615ffeb233f5d2d01 |
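
The memory figures quoted above follow from the parameter count and the bytes used per parameter. A rough sketch, assuming 1 MB = 10^6 bytes and counting weights only (no activations or optimizer state); `param_memory_mb` is a hypothetical helper name.

```python
def param_memory_mb(num_params_millions, bytes_per_param):
    """Approximate weight memory in MB (10^6 bytes) for a given precision."""
    return num_params_millions * 1e6 * bytes_per_param / 1e6


print(param_memory_mb(280.87, 4))  # fp32: 4 bytes per parameter
print(param_memory_mb(280.87, 2))  # fp16 / bf16: 2 bytes per parameter
```

The results differ from the card in the last digit because the parameter count used here is already rounded to two decimals.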

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-en This model is a fine-tuned version of [tkubotake/xlm-roberta-base-finetuned-panx-de](https://huggingface.co/tkubotake/xlm-roberta-base-finetuned-panx-de) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.5430 - F1: 0.7580 | 748f5feda01dd83bfedd0d7da664579d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.1318 | 1.0 | 50 | 0.4145 | 0.7557 | | 0.0589 | 2.0 | 100 | 0.5016 | 0.7524 | | 0.0314 | 3.0 | 150 | 0.5430 | 0.7580 | | 804cfac2bd74fc77ef7e29a093f685b3 |

apache-2.0 | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 2 - eval_batch_size: 2 - gradient_accumulation_steps: 8 - optimizer: AdamW with betas=(0.95, 0.999), weight_decay=1e-06 and epsilon=1e-08 - lr_scheduler: cosine - lr_warmup_steps: 500 - ema_inv_gamma: 1.0 - ema_power: 0.75 - ema_max_decay: 0.9999 - mixed_precision: no | b326ea28a2f76b54da8cb192a8e58d5a |

mit | ['generated_from_keras_callback'] | false | ishaankul67/2008_Sichuan_earthquake-clustered This model is a fine-tuned version of [nandysoham16/12-clustered_aug](https://huggingface.co/nandysoham16/12-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.4882 - Train End Logits Accuracy: 0.8924 - Train Start Logits Accuracy: 0.7882 - Validation Loss: 0.2788 - Validation End Logits Accuracy: 0.8947 - Validation Start Logits Accuracy: 0.8947 - Epoch: 0 | dca716cba669aac12c8039eb130e7672 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.4882 | 0.8924 | 0.7882 | 0.2788 | 0.8947 | 0.8947 | 0 | | bdf4b6dfa36e46c0ddccff116451b7f9 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4241 - Wer: 0.3381 | a6a2d0e80d63175d82933883691bec49 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.7749 | 4.0 | 500 | 2.0639 | 1.0018 | | 0.9252 | 8.0 | 1000 | 0.4853 | 0.4821 | | 0.3076 | 12.0 | 1500 | 0.4507 | 0.4044 | | 0.1732 | 16.0 | 2000 | 0.4315 | 0.3688 | | 0.1269 | 20.0 | 2500 | 0.4481 | 0.3559 | | 0.1087 | 24.0 | 3000 | 0.4354 | 0.3464 | | 0.0832 | 28.0 | 3500 | 0.4241 | 0.3381 | | ee99f559fdd04b6ff722fb2709c6b4a0 |

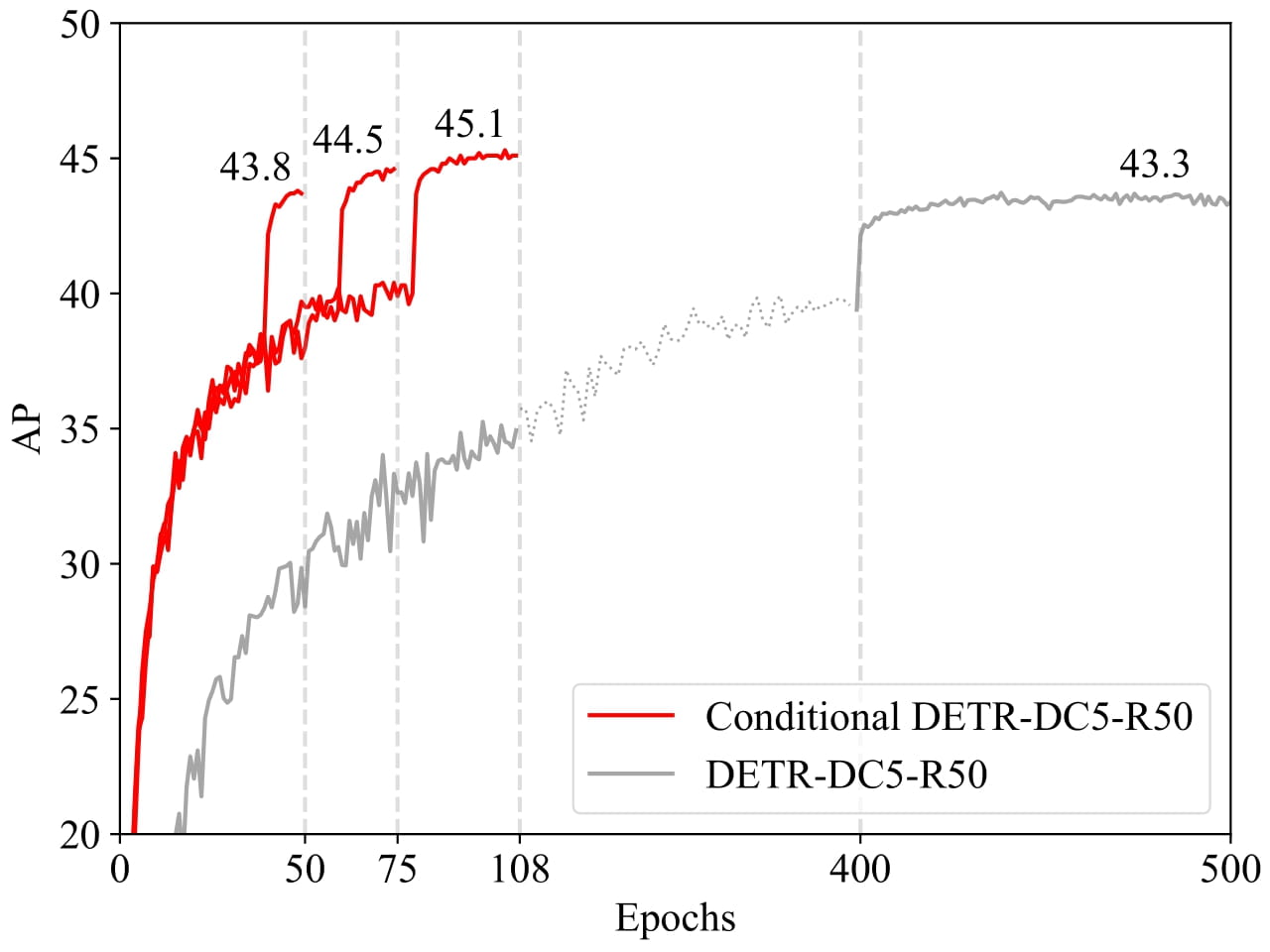

apache-2.0 | ['object-detection', 'vision'] | false | Conditional DETR model with ResNet-50 backbone Conditional DEtection TRansformer (DETR) model trained end-to-end on COCO 2017 object detection (118k annotated images). It was introduced in the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Meng et al. and first released in [this repository](https://github.com/Atten4Vis/ConditionalDETR). | 5e5df93bf049be09fc4fd06f79171da9 |

apache-2.0 | ['object-detection', 'vision'] | false | Model description The recently-developed DETR approach applies the transformer encoder and decoder architecture to object detection and achieves promising performance. In this paper, we handle the critical issue, slow training convergence, and present a conditional cross-attention mechanism for fast DETR training. Our approach is motivated by that the cross-attention in DETR relies highly on the content embeddings for localizing the four extremities and predicting the box, which increases the need for high-quality content embeddings and thus the training difficulty. Our approach, named conditional DETR, learns a conditional spatial query from the decoder embedding for decoder multi-head cross-attention. The benefit is that through the conditional spatial query, each cross-attention head is able to attend to a band containing a distinct region, e.g., one object extremity or a region inside the object box. This narrows down the spatial range for localizing the distinct regions for object classification and box regression, thus relaxing the dependence on the content embeddings and easing the training. Empirical results show that conditional DETR converges 6.7× faster for the backbones R50 and R101 and 10× faster for stronger backbones DC5-R50 and DC5-R101.  | 838bdd359c82a9faf5ea8ee13dbb245f |

apache-2.0 | ['object-detection', 'vision'] | false | Intended uses & limitations You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=microsoft/conditional-detr) to look for all available Conditional DETR models. | 42baa876af8281d58d1a207fbb49c839 |

apache-2.0 | ['object-detection', 'vision'] | false | How to use Here is how to use this model: ```python from transformers import AutoImageProcessor, ConditionalDetrForObjectDetection import torch from PIL import Image import requests url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) processor = AutoImageProcessor.from_pretrained("microsoft/conditional-detr-resnet-50") model = ConditionalDetrForObjectDetection.from_pretrained("microsoft/conditional-detr-resnet-50") inputs = processor(images=image, return_tensors="pt") outputs = model(**inputs) | 5a492da7f35ac73fc1d65db4164e95a7 |

apache-2.0 | ['object-detection', 'vision'] | false | let's only keep detections with score > 0.7 target_sizes = torch.tensor([image.size[::-1]]) results = processor.post_process_object_detection(outputs, target_sizes=target_sizes, threshold=0.7)[0] for score, label, box in zip(results["scores"], results["labels"], results["boxes"]): box = [round(i, 2) for i in box.tolist()] print( f"Detected {model.config.id2label[label.item()]} with confidence " f"{round(score.item(), 3)} at location {box}" ) ``` This should output: ``` Detected remote with confidence 0.833 at location [38.31, 72.1, 177.63, 118.45] Detected cat with confidence 0.831 at location [9.2, 51.38, 321.13, 469.0] Detected cat with confidence 0.804 at location [340.3, 16.85, 642.93, 370.95] ``` Currently, both the feature extractor and model support PyTorch. | a96c3864ae47249113d47196fa455163 |
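
The `target_sizes` argument in the snippet above is built from `image.size[::-1]` because PIL reports `(width, height)` while the post-processing step expects `(height, width)`. DETR-style models predict boxes as normalized `(center_x, center_y, width, height)`, and post-processing rescales them to absolute corner coordinates. The following is a minimal sketch of that conversion for a single box, not the library's own implementation; `cxcywh_to_xyxy` is a hypothetical name.

```python
def cxcywh_to_xyxy(box, image_width, image_height):
    """Convert a normalized (cx, cy, w, h) box to absolute (x0, y0, x1, y1) pixels."""
    cx, cy, w, h = box
    x0 = (cx - w / 2) * image_width
    y0 = (cy - h / 2) * image_height
    x1 = (cx + w / 2) * image_width
    y1 = (cy + h / 2) * image_height
    return [x0, y0, x1, y1]


# A centered box covering 20% of the width and 40% of the height of a 640x480 image.
print(cxcywh_to_xyxy((0.5, 0.5, 0.2, 0.4), image_width=640, image_height=480))
```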

apache-2.0 | ['object-detection', 'vision'] | false | BibTeX entry and citation info ```bibtex @inproceedings{MengCFZLYS021, author = {Depu Meng and Xiaokang Chen and Zejia Fan and Gang Zeng and Houqiang Li and Yuhui Yuan and Lei Sun and Jingdong Wang}, title = {Conditional {DETR} for Fast Training Convergence}, booktitle = {2021 {IEEE/CVF} International Conference on Computer Vision, {ICCV} 2021, Montreal, QC, Canada, October 10-17, 2021}, } ``` | ef8e809a11af40fae6f5c1a64796e51b |

cc-by-4.0 | [] | false | FiD model trained on NQ -- This is the model checkpoint of FiD [2], based on T5-large (with 770M parameters) and trained on the Natural Questions (NQ) dataset [1]. -- Hyperparameters: 8 x 40GB A100 GPUs; batch size 8; AdamW; LR 3e-5; 50000 steps References: [1] Natural Questions: A Benchmark for Question Answering Research. TACL 2019. [2] Leveraging Passage Retrieval with Generative Models for Open Domain Question Answering. EACL 2021. | f097392936079ab510e971a52aee8a27 |

cc-by-4.0 | [] | false | Model performance Evaluated on the NQ dataset, the model reaches an EM score of 51.3 (0.1 lower than the original performance reported in the paper). | 06f922c045ae63238f27e55218c6d309 |

mit | [] | false | T5-ANCE T5-ANCE generally follows the training procedure described in [this page](https://openmatch.readthedocs.io/en/latest/dr-msmarco-passage.html), but uses a much larger batch size. Dataset used for training: - MS MARCO Passage Evaluation result: |Dataset|Metric|Result| |---|---|---| |MS MARCO Passage (dev) | MRR@10 | 0.3570| Important hyper-parameters: |Name|Value| |---|---| |Global batch size|256| |Learning rate|5e-6| |Maximum length of query|32| |Maximum length of document|128| |Template for query|`<text>`| |Template for document|`Title: <title> Text: <text>`| | 3968088ed261f3f59352371644b5b03c |
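
The evaluation metric above, MRR@10, averages the reciprocal rank of the first relevant passage within the top 10 results of each query. This is a small self-contained sketch of the metric on toy data, not the official MS MARCO evaluation script; `mrr_at_k` and the document IDs are made up for illustration.

```python
def mrr_at_k(ranked_ids_per_query, relevant_ids_per_query, k=10):
    """Mean reciprocal rank of the first relevant document within the top k."""
    total = 0.0
    for ranked, relevant in zip(ranked_ids_per_query, relevant_ids_per_query):
        for rank, doc_id in enumerate(ranked[:k], start=1):
            if doc_id in relevant:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(ranked_ids_per_query)


# Toy example: hits at rank 2, rank 1, and no hit at all.
rankings = [["d3", "d1", "d7"], ["d2", "d9"], ["d5"]]
relevant = [{"d1"}, {"d2"}, {"d8"}]
print(mrr_at_k(rankings, relevant))
```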

mit | ['conversational'] | false | DialoGPT Trained on WhatsApp chats This is an instance of [microsoft/DialoGPT-medium](https://huggingface.co/microsoft/DialoGPT-medium) trained on WhatsApp chats; you can also train this model on [a Kaggle game script dataset](https://www.kaggle.com/ruolinzheng/twewy-game-script). Feel free to ask me questions on the [Discord server](https://discord.gg/Gqhje8Z7DX). Chat with the model: ```python from transformers import AutoTokenizer, AutoModelWithLMHead tokenizer = AutoTokenizer.from_pretrained("harrydonni/DialoGPT-small-Michael-Scott") model = AutoModelWithLMHead.from_pretrained("harrydonni/DialoGPT-small-Michael-Scott") | 37d10d21a4b0e0e26990ab373cd18c38 |

mit | ['conversational'] | false | pretty print last output tokens from bot print("Michael: {}".format(tokenizer.decode(chat_history_ids[:, bot_input_ids.shape[-1]:][0], skip_special_tokens=True))) ``` this is done by shreesha thank you...... | ea607d2c588514c5b989ca5ffd04e597 |

apache-2.0 | ['italian', 'sequence-to-sequence', 'newspaper', 'ilgiornale', 'repubblica', 'style-transfer'] | false | mT5 Small for News Headline Style Transfer (Repubblica to Il Giornale) 🗞️➡️🗞️ 🇮🇹 This repository contains the checkpoint for the [mT5 Small](https://huggingface.co/google/mt5-small) model fine-tuned on news headline style transfer in the Repubblica to Il Giornale direction on the Italian CHANGE-IT dataset as part of the experiments of the paper [IT5: Large-scale Text-to-text Pretraining for Italian Language Understanding and Generation](https://arxiv.org/abs/2203.03759) by [Gabriele Sarti](https://gsarti.com) and [Malvina Nissim](https://malvinanissim.github.io). A comprehensive overview of other released materials is provided in the [gsarti/it5](https://github.com/gsarti/it5) repository. Refer to the paper for additional details concerning the reported scores and the evaluation approach. | f172fad3e0ff94587ffc45aad392711c |

apache-2.0 | ['italian', 'sequence-to-sequence', 'newspaper', 'ilgiornale', 'repubblica', 'style-transfer'] | false | Using the model The model is trained to generate a headline in the style of Il Giornale from the full body of an article written in the style of Repubblica. Model checkpoints are available for usage in TensorFlow, PyTorch and JAX. They can be used directly with pipelines as: ```python from transformers import pipeline r2g = pipeline("text2text-generation", model='it5/mt5-small-repubblica-to-ilgiornale') r2g("Arriva dal Partito nazionalista basco (Pnv) la conferma che i cinque deputati che siedono in parlamento voteranno la sfiducia al governo guidato da Mariano Rajoy. Pochi voti, ma significativi quelli della formazione politica di Aitor Esteban, che interverrà nel pomeriggio. Pur con dimensioni molto ridotte, il partito basco si è trovato a fare da ago della bilancia in aula. E il sostegno alla mozione presentata dai Socialisti potrebbe significare per il primo ministro non trovare quei 176 voti che gli servono per continuare a governare. \" Perché dovrei dimettermi io che per il momento ho la fiducia della Camera e quella che mi è stato data alle urne \", ha detto oggi Rajoy nel suo intervento in aula, mentre procedeva la discussione sulla mozione di sfiducia. Il voto dei baschi ora cambia le carte in tavola e fa crescere ulteriormente la pressione sul premier perché rassegni le sue dimissioni. La sfiducia al premier, o un'eventuale scelta di dimettersi, porterebbe alle estreme conseguenze lo scandalo per corruzione che ha investito il Partito popolare. Ma per ora sembra pensare a tutt'altro. 
\"Non ha intenzione di dimettersi - ha detto il segretario generale del Partito popolare , María Dolores de Cospedal - Non gioverebbe all'interesse generale o agli interessi del Pp\".") >>> [{"generated_text": "il nazionalista rajoy: 'voteremo la sfiducia'"}] ``` or loaded using autoclasses: ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("it5/mt5-small-repubblica-to-ilgiornale") model = AutoModelForSeq2SeqLM.from_pretrained("it5/mt5-small-repubblica-to-ilgiornale") ``` If you use this model in your research, please cite our work as: ```bibtex @article{sarti-nissim-2022-it5, title={{IT5}: Large-scale Text-to-text Pretraining for Italian Language Understanding and Generation}, author={Sarti, Gabriele and Nissim, Malvina}, journal={ArXiv preprint 2203.03759}, url={https://arxiv.org/abs/2203.03759}, year={2022}, month={mar} } ``` | 5be6894fbfcbd955ceee6c7233bf8ef3 |

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-010099 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 2.9787 - Bleu: 5.9209 - Gen Len: 50.1856 | 244f2a55cedac87f5da8c45197750eb4 |

apache-2.0 | ['generated_from_trainer'] | false | presentation_hate_1234567 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the tweet_eval dataset. It achieves the following results on the evaluation set: - Loss: 0.8438 - F1: 0.7680 | 55c7b8247187da1b726f2024c3b2e634 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.436235805743952e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 1234567 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | 00cf06de13050c6fc0efa13b4a58288f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.6027 | 1.0 | 282 | 0.5186 | 0.7209 | | 0.3537 | 2.0 | 564 | 0.4989 | 0.7619 | | 0.0969 | 3.0 | 846 | 0.6405 | 0.7697 | | 0.0514 | 4.0 | 1128 | 0.8438 | 0.7680 | | bd77d162303320f804aa666cd4ff2d72 |

mit | [] | false | cortana on Stable Diffusion This is the `<cortana>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | 3ace9f35d278359ba82c39a44265079f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Esperanto Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Esperanto using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | 99220752a7803e67566dd6dfcc21e594 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "eo", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained('gchhablani/wav2vec2-large-xlsr-eo') model = Wav2Vec2ForCTC.from_pretrained('gchhablani/wav2vec2-large-xlsr-eo') resampler = torchaudio.transforms.Resample(48_000, 16_000) | 520fefc20fbb20e678e981147c41deab |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Esperanto test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re import jiwer def chunked_wer(targets, predictions, chunk_size=None): if chunk_size is None: return jiwer.wer(targets, predictions) start = 0 end = chunk_size H, S, D, I = 0, 0, 0, 0 while start < len(targets): chunk_metrics = jiwer.compute_measures(targets[start:end], predictions[start:end]) H = H + chunk_metrics["hits"] S = S + chunk_metrics["substitutions"] D = D + chunk_metrics["deletions"] I = I + chunk_metrics["insertions"] start += chunk_size end += chunk_size return float(S + D + I) / float(H + S + D) test_dataset = load_dataset("common_voice", "eo", split="test") | 25976d69cd6a289a70bd1a2635498c7e |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained('gchhablani/wav2vec2-large-xlsr-eo') model = Wav2Vec2ForCTC.from_pretrained('gchhablani/wav2vec2-large-xlsr-eo') model.to("cuda") chars_to_ignore_regex = """[\,\?\.\!\-\;\:\"\“\%\‘\”\�\„\«\(\»\)\’\']""" resampler = torchaudio.transforms.Resample(48_000, 16_000) | b70092310a537e1e33c40dc8b4c33bf0 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace('—',' ').replace('–',' ') speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 02beb768e3cc461c4068681abe4e4002 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Run the model on each batch and decode the predicted token IDs def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * chunked_wer(predictions=result["pred_strings"], targets=result["sentence"],chunk_size=5000))) ``` **Test Result**: 10.13 % | b2d39ab4ff37286b8214ddef71318b8b |
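
The `chunked_wer` helper in the evaluation code pools error counts across chunks before dividing, which gives the same corpus-level WER as a single pass while keeping memory use bounded. The sketch below illustrates the same idea without `jiwer`, using a plain word-level edit distance as a stand-in; `word_errors` and `pooled_wer` are hypothetical names and the sentences are toy data.

```python
def word_errors(reference, hypothesis):
    """Return (word-level edit distance, number of reference words)."""
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))  # distances against an empty reference
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,                           # deletion
                curr[j - 1] + 1,                       # insertion
                prev[j - 1] + (ref_word != hyp_word),  # substitution or match
            ))
        prev = curr
    return prev[-1], len(ref)


def pooled_wer(references, hypotheses, chunk_size):
    """Corpus-level WER: sum errors and reference words over all chunks, then divide."""
    errors = words = 0
    for start in range(0, len(references), chunk_size):
        chunk = zip(references[start:start + chunk_size],
                    hypotheses[start:start + chunk_size])
        for ref, hyp in chunk:
            e, n = word_errors(ref, hyp)
            errors += e
            words += n
    return errors / words


refs = ["a b c d", "e f"]
hyps = ["a b c x", "e f"]
print(pooled_wer(refs, hyps, chunk_size=1), pooled_wer(refs, hyps, chunk_size=2))
```

Pooling counts differs from averaging per-sentence WER: here the pooled value is 1 error over 6 reference words, whereas the mean of the two sentence-level rates would be 0.125.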

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Training The Common Voice `train` and `validation` datasets were used for training. The code can be found [here](https://github.com/gchhablani/wav2vec2-week/blob/main/fine-tune-xlsr-wav2vec2-on-esperanto-asr-with-transformers-final.ipynb). | 52583cb5f2a762d677351a181fd3a57e |

apache-2.0 | ['translation'] | false | opus-mt-de-hil * source languages: de * target languages: hil * OPUS readme: [de-hil](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/de-hil/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/de-hil/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-hil/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-hil/opus-2020-01-20.eval.txt) | e50f31d90a628a2007bb6f0c730eb028 |

cc-by-4.0 | ['espnet', 'audio', 'diarization'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 650472b45a67612eaac09c7fbd61dc25f8ff2405 pip install -e . cd egs2/mini_librispeech/diar1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/YushiUeda_mini_librispeech_diar_train_diar_raw_valid.acc.best ``` <!-- Generated by scripts/utils/show_diar_result.sh --> | 6ffc59495add03aed50efa330f9778b6 |

cc-by-4.0 | ['espnet', 'audio', 'diarization'] | false | Environments - date: `Tue Jan 4 16:43:34 EST 2022` - python version: `3.7.11 (default, Jul 27 2021, 14:32:16) [GCC 7.5.0]` - espnet version: `espnet 0.10.5a1` - pytorch version: `pytorch 1.9.0+cu102` - Git hash: `0b2a6786b6f627f47defaee22911b3c2dc04af2a` - Commit date: `Thu Dec 23 12:22:49 2021 -0500` | f4bd3bd4b55b4caf9a820d9b42c76252 |

cc-by-4.0 | ['espnet', 'audio', 'diarization'] | false | DER dev_clean_2_ns2_beta2_500 |threshold_median_collar|DER| |---|---| |result_th0.3_med11_collar0.0|32.28| |result_th0.3_med1_collar0.0|32.64| |result_th0.4_med11_collar0.0|30.43| |result_th0.4_med1_collar0.0|31.15| |result_th0.5_med11_collar0.0|29.45| |result_th0.5_med1_collar0.0|30.53| |result_th0.6_med11_collar0.0|29.52| |result_th0.6_med1_collar0.0|30.95| |result_th0.7_med11_collar0.0|30.92| |result_th0.7_med1_collar0.0|32.69| | ee1750c3a82562b5a7cf40f7c1c03813 |

cc-by-4.0 | ['espnet', 'audio', 'diarization'] | false | DIAR config <details><summary>expand</summary> ``` config: conf/train_diar.yaml print_config: false log_level: INFO dry_run: false iterator_type: chunk output_dir: exp/diar_train_diar_raw ngpu: 1 seed: 0 num_workers: 1 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: 4 dist_rank: 0 local_rank: 0 dist_master_addr: localhost dist_master_port: 33757 dist_launcher: null multiprocessing_distributed: true unused_parameters: false sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: true collect_stats: false write_collected_feats: false max_epoch: 100 patience: 3 val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - valid - acc - max keep_nbest_models: 3 nbest_averaging_interval: 0 grad_clip: 5 grad_clip_type: 2.0 grad_noise: false accum_grad: 2 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: null use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: null batch_size: 16 valid_batch_size: null batch_bins: 1000000 valid_batch_bins: null train_shape_file: - exp/diar_stats_8k/train/speech_shape - exp/diar_stats_8k/train/spk_labels_shape valid_shape_file: - exp/diar_stats_8k/valid/speech_shape - exp/diar_stats_8k/valid/spk_labels_shape batch_type: folded valid_batch_type: null fold_length: - 80000 - 800 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 200000 chunk_shift_ratio: 0.5 num_cache_chunks: 64 train_data_path_and_name_and_type: - - dump/raw/simu/data/train_clean_5_ns2_beta2_500/wav.scp - speech - sound - - dump/raw/simu/data/train_clean_5_ns2_beta2_500/espnet_rttm - spk_labels - rttm 
valid_data_path_and_name_and_type: - - dump/raw/simu/data/dev_clean_2_ns2_beta2_500/wav.scp - speech - sound - - dump/raw/simu/data/dev_clean_2_ns2_beta2_500/espnet_rttm - spk_labels - rttm allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adam optim_conf: lr: 0.01 scheduler: noamlr scheduler_conf: warmup_steps: 1000 num_spk: 2 init: xavier_uniform input_size: null model_conf: attractor_weight: 1.0 use_preprocessor: true frontend: default frontend_conf: fs: 8k hop_length: 128 specaug: null specaug_conf: {} normalize: global_mvn normalize_conf: stats_file: exp/diar_stats_8k/train/feats_stats.npz encoder: transformer encoder_conf: input_layer: linear num_blocks: 2 linear_units: 512 dropout_rate: 0.1 output_size: 256 attention_heads: 4 attention_dropout_rate: 0.0 decoder: linear decoder_conf: {} label_aggregator: label_aggregator label_aggregator_conf: {} attractor: null attractor_conf: {} required: - output_dir version: 0.10.5a1 distributed: true ``` </details> | 957bd8436a1d1185ab1265caae3a7f2d |

apache-2.0 | ['generated_from_trainer'] | false | edos-2023-baseline-bert-base-uncased-label_vector This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.1312 - F1: 0.4311 | d0c12141dfe6e415e2e75773b34a0e11 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 5 - num_epochs: 5 - mixed_precision_training: Native AMP | 27134591512209e13d4e0523650e4e60 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 2.1453 | 0.59 | 100 | 1.9401 | 0.1077 | | 1.8818 | 1.18 | 200 | 1.7312 | 0.1350 | | 1.7149 | 1.78 | 300 | 1.5556 | 0.2047 | | 1.5769 | 2.37 | 400 | 1.4030 | 0.2815 | | 1.4909 | 2.96 | 500 | 1.3020 | 0.3217 | | 1.3472 | 3.55 | 600 | 1.2238 | 0.3872 | | 1.2856 | 4.14 | 700 | 1.1584 | 0.4162 | | 1.2455 | 4.73 | 800 | 1.1312 | 0.4311 | | a98d02e18c0b0cfd3336cfcdb0f5df03 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0845 - Precision: 0.8754 - Recall: 0.9058 - F1: 0.8904 - Accuracy: 0.9763 | 425f3f23eb306164c976cb6a77f33363 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2529 | 1.0 | 878 | 0.0845 | 0.8754 | 0.9058 | 0.8904 | 0.9763 | | 3976692cc6f49832f93b3b1d3bbe9457 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | ul2-small-dutch for Dutch Pretrained T5 model on Dutch using a UL2 (Mixture-of-Denoisers) objective. The T5 model was introduced in [this paper](https://arxiv.org/abs/1910.10683) and first released at [this page](https://github.com/google-research/text-to-text-transfer-transformer). The UL2 objective was introduced in [this paper](https://arxiv.org/abs/2205.05131) and first released at [this page](https://github.com/google-research/google-research/tree/master/ul2). **Note:** The Hugging Face inference widget is deactivated because this model needs a text-to-text fine-tuning on a specific downstream task to be useful in practice. | 6bee4d1ce682f4b6a3a6939c13fdd998 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Model description T5 is an encoder-decoder model and treats all NLP problems in a text-to-text format. `ul2-small-dutch` T5 is a transformers model pretrained on a very large corpus of Dutch data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and outputs from those texts. This model used the [T5 v1.1](https://github.com/google-research/text-to-text-transfer-transformer/blob/main/released_checkpoints.md | b34d011b972f89a3209c3044a57a1825 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | t511) improvements compared to the original T5 model during the pretraining: - GEGLU activation in the feed-forward hidden layer, rather than ReLU - see [here](https://arxiv.org/abs/2002.05202) - Dropout was turned off during pre-training. Dropout should be re-enabled during fine-tuning - Pre-trained on self-supervised objective only without mixing in the downstream tasks - No parameter sharing between embedding and classifier layer | a3ebdb88cef0cb577fa56d685bcc04d8 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | UL2 pretraining objective This model was pretrained with UL2's Mixture-of-Denoisers (MoD) objective, which combines diverse pre-training paradigms. UL2 frames different objective functions for training language models as denoising tasks, where the model has to recover missing sub-sequences of a given input. During pre-training it uses a novel mixture-of-denoisers that samples from a varied set of such objectives, each with different configurations. UL2 is trained using a mixture of three denoising tasks: 1. R-denoising (or regular span corruption), which emulates the standard T5 span corruption objective; 2. X-denoising (or extreme span corruption); and 3. S-denoising (or sequential PrefixLM). During pre-training, we sample from the available denoising tasks based on user-specified ratios. UL2 introduces a notion of mode switching, wherein downstream fine-tuning is associated with a specific pre-training denoising task. During pre-training, a paradigm token is inserted into the input (`[NLU]` for R-denoising, `[NLG]` for X-denoising, or `[S2S]` for S-denoising) to indicate the denoising task at hand. During fine-tuning, the same token should then be inserted to get the best performance on the different downstream tasks. | 307d9eb5c37bf6cdb8d83d419e823a00 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Intended uses & limitations This model was pretrained only in a self-supervised way, without any supervised training. Unlike Google's original T5 model, it therefore has to be fine-tuned before it is usable on a downstream task such as text classification. **Note:** You most likely need to fine-tune these T5/UL2 models without mixed precision, i.e. with full fp32 precision. Fine-tuning with Flax in bf16 - `model.to_bf16()` - is possible if you set the mask correctly to exclude layernorm and embedding layers. Also note that the T5x pre-training and fine-tuning configs set `z_loss` to 1e-4, which is used to keep the loss scale from underflowing. You can find more fine-tuning tips [here](https://discuss.huggingface.co/t/t5-finetuning-tips), for example. **Note**: For fine-tuning, you will most likely get better results if you insert a prefix token of `[NLU]`, `[NLG]`, or `[S2S]` into your input texts. For general language understanding fine-tuning tasks, you could use the `[NLU]` token. For GPT-style causal language generation, you could use the `[S2S]` token. The `[NLG]` token of the X-denoising pretraining task is somewhat of a mix between language understanding and causal language generation, so it could possibly be used for language generation fine-tuning too. | 3ef9fe44b8a1cc9149e409fd54daabc7 |
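The prefix-token advice above can be sketched as a small preprocessing step applied to fine-tuning inputs before tokenization. This is a minimal sketch under stated assumptions: the helper name `add_paradigm_token`, the mode keys, and the single space between token and text are all hypothetical choices, and the card does not specify the exact separator used during pre-training.

```python
# Paradigm tokens named in the model card, keyed by an illustrative mode name.
PARADIGM_TOKENS = {
    "nlu": "[NLU]",  # R-denoising: general language understanding tasks
    "nlg": "[NLG]",  # X-denoising: mix of understanding and generation
    "s2s": "[S2S]",  # S-denoising: GPT-style causal generation
}


def add_paradigm_token(text: str, mode: str = "nlu") -> str:
    """Prepend the paradigm token for `mode` to an input text.

    Hypothetical helper; the space separator is an assumption.
    """
    if mode not in PARADIGM_TOKENS:
        raise ValueError(f"unknown mode {mode!r}, expected one of {sorted(PARADIGM_TOKENS)}")
    return f"{PARADIGM_TOKENS[mode]} {text}"


print(add_paradigm_token("Dit is een voorbeeldzin.", mode="s2s"))
```

The same helper would be applied at inference time so that fine-tuned inputs and served inputs carry the same prefix.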

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | How to use Here is how to use this model in PyTorch: ```python from transformers import T5Tokenizer, T5ForConditionalGeneration tokenizer = T5Tokenizer.from_pretrained("yhavinga/ul2-small-dutch", use_fast=False) model = T5ForConditionalGeneration.from_pretrained("yhavinga/ul2-small-dutch") ``` and in Flax: ```python from transformers import T5Tokenizer, FlaxT5ForConditionalGeneration tokenizer = T5Tokenizer.from_pretrained("yhavinga/ul2-small-dutch", use_fast=False) model = FlaxT5ForConditionalGeneration.from_pretrained("yhavinga/ul2-small-dutch") ``` | a9618e497821519e833e1363a8d0561e |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Training data The `ul2-small-dutch` T5 model was pre-trained simultaneously on a combination of several datasets, including the full version of the "mc4_nl_cleaned" dataset, which is a cleaned version of Common Crawl's web crawl corpus, Dutch books, the Dutch subset of Wikipedia (2022-03-20), and a subset of "mc4_nl_cleaned" containing only texts from Dutch and Belgian newspapers. This last dataset is oversampled to bias the model towards descriptions of events in the Netherlands and Belgium. | 587cff4da06f2acff8888531f983ff3d |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Preprocessing The ul2-small-dutch T5 model uses a SentencePiece unigram tokenizer with a vocabulary of 32,000 tokens. The tokenizer includes the special tokens `<pad>`, `</s>`, `<unk>`, known from the original T5 paper, `[NLU]`, `[NLG]` and `[S2S]` for the MoD pre-training, and `<n>` for newline. During pre-training with the UL2 objective, input and output sequences consist of 512 consecutive tokens. The tokenizer does not lowercase texts and is therefore case-sensitive; it distinguishes between `dutch` and `Dutch`. Additionally, 100+28 extra tokens were added for pre-training tasks, resulting in a total of 32,128 tokens. | f1b384ba518fb7c6b5cf5e0931cc341f |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Pretraining The model was trained on a TPUv3-8 VM, sponsored by the [Google TPU Research Cloud](https://sites.research.google/trc/about/), for 957300 steps with a batch size of 128 (in total 62 B tokens). The optimizer used was AdaFactor with learning rate warmup for 10K steps with a constant learning rate of 1e-2, and then an inverse square root decay (exponential decay) of the learning rate after. The model was trained with Google's Jax/Flax based [t5x framework](https://github.com/google-research/t5x) with help from [Stephenn Fernandes](https://huggingface.co/StephennFernandes) to get started writing task definitions that wrap HF datasets. The UL2 training objective code used with the [t5x framework](https://github.com/google-research/t5x) was copied and slightly modified from the [UL2 paper](https://arxiv.org/pdf/2205.05131.pdf) appendix chapter 9.2 by the authors of the Finnish ul2 models. The UL2 objective code used is available in the repository [Finnish-NLP/ul2-base-nl36-finnish](https://huggingface.co/Finnish-NLP/ul2-base-nl36-finnish) in the files `ul2_objective.py` and `tasks.py`. UL2's mixture-of-denoisers configuration was otherwise equal to that of the UL2 paper, except for the rate of mixing denoisers: 20% was used for S-denoising (as suggested in chapter 4.5 of the paper) and the rest was divided equally between R-denoising and X-denoising (i.e. 40% for each). | 847927bcfd5219aee9b1518a27b23c4c |
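The learning-rate description and the token count above can be checked with a few lines of arithmetic. The sketch assumes the schedule has the usual T5-style form `1 / sqrt(max(step, warmup_steps))`, which yields exactly 1e-2 during a 10K-step warmup and decays with the inverse square root afterwards. The actual t5x configuration is not shown in the card, so treat the function as an illustration. The token count additionally assumes 512 input tokens per sequence, as stated in the Preprocessing section; both function names are hypothetical.

```python
import math

WARMUP_STEPS = 10_000  # from the card: warmup for 10K steps


def rsqrt_learning_rate(step: int, warmup_steps: int = WARMUP_STEPS) -> float:
    """Assumed T5-style schedule: constant during warmup, then 1/sqrt(step)."""
    return 1.0 / math.sqrt(max(step, warmup_steps))


def total_tokens(steps: int, batch_size: int, seq_len: int) -> int:
    """Number of input tokens processed over the whole run."""
    return steps * batch_size * seq_len


for step in (0, 10_000, 40_000, 957_300):
    print(step, rsqrt_learning_rate(step))

# 957300 steps x 128 sequences x 512 tokens, consistent with "62 B tokens"
print(total_tokens(957_300, 128, 512))
```

Under these assumptions the learning rate has halved by step 40,000, and the run covers roughly 62.7 billion input tokens.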

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Model list Models in this series: | | ul2-base-dutch | ul2-base-nl36-dutch | ul2-large-dutch | ul2-small-dutch | |:---------------------|:---------------------|:----------------------|:---------------------|:---------------------| | model_type | t5 | t5 | t5 | t5 | | _pipeline_tag | text2text-generation | text2text-generation | text2text-generation | text2text-generation | | d_model | 768 | 768 | 1024 | 512 | | d_ff | 2048 | 3072 | 2816 | 1024 | | num_heads | 12 | 12 | 16 | 6 | | d_kv | 64 | 64 | 64 | 64 | | num_layers | 12 | 36 | 24 | 8 | | num_decoder_layers | 12 | 36 | 24 | 8 | | feed_forward_proj | gated-gelu | gated-gelu | gated-gelu | gated-gelu | | dense_act_fn | gelu_new | gelu_new | gelu_new | gelu_new | | vocab_size | 32128 | 32128 | 32128 | 32128 | | tie_word_embeddings | 0 | 0 | 0 | 0 | | torch_dtype | float32 | float32 | float32 | float32 | | _gin_batch_size | 128 | 64 | 64 | 128 | | _gin_z_loss | 0.0001 | 0.0001 | 0.0001 | 0.0001 | | _gin_t5_config_dtype | 'bfloat16' | 'bfloat16' | 'bfloat16' | 'bfloat16' | | f8e1dbcfc64d04510c6048ad5a059cc7 |

apache-2.0 | ['dutch', 't5', 't5x', 'ul2', 'seq2seq'] | false | Acknowledgements This project would not have been possible without compute generously provided by Google through the [TPU Research Cloud](https://sites.research.google/trc/). Thanks to the [Finnish-NLP](https://huggingface.co/Finnish-NLP) authors for releasing their code for the UL2 objective and associated task definitions. Thanks to [Stephenn Fernandes](https://huggingface.co/StephennFernandes) for helping me get started with the t5x framework. Created by [Yeb Havinga](https://www.linkedin.com/in/yeb-havinga-86530825/) | 87abce824157880a1ece02875f82c917 |

mit | ['generated_from_trainer'] | false | lucid_varahamihira This model was trained from scratch on the tomekkorbak/pii-pile-chunk3-0-50000, the tomekkorbak/pii-pile-chunk3-50000-100000, the tomekkorbak/pii-pile-chunk3-100000-150000, the tomekkorbak/pii-pile-chunk3-150000-200000, the tomekkorbak/pii-pile-chunk3-200000-250000, the tomekkorbak/pii-pile-chunk3-250000-300000, the tomekkorbak/pii-pile-chunk3-300000-350000, the tomekkorbak/pii-pile-chunk3-350000-400000, the tomekkorbak/pii-pile-chunk3-400000-450000, the tomekkorbak/pii-pile-chunk3-450000-500000, the tomekkorbak/pii-pile-chunk3-500000-550000, the tomekkorbak/pii-pile-chunk3-550000-600000, the tomekkorbak/pii-pile-chunk3-600000-650000, the tomekkorbak/pii-pile-chunk3-650000-700000, the tomekkorbak/pii-pile-chunk3-700000-750000, the tomekkorbak/pii-pile-chunk3-750000-800000, the tomekkorbak/pii-pile-chunk3-800000-850000, the tomekkorbak/pii-pile-chunk3-850000-900000, the tomekkorbak/pii-pile-chunk3-900000-950000, the tomekkorbak/pii-pile-chunk3-950000-1000000, the tomekkorbak/pii-pile-chunk3-1000000-1050000, the tomekkorbak/pii-pile-chunk3-1050000-1100000, the tomekkorbak/pii-pile-chunk3-1100000-1150000, the tomekkorbak/pii-pile-chunk3-1150000-1200000, the tomekkorbak/pii-pile-chunk3-1200000-1250000, the tomekkorbak/pii-pile-chunk3-1250000-1300000, the tomekkorbak/pii-pile-chunk3-1300000-1350000, the tomekkorbak/pii-pile-chunk3-1350000-1400000, the tomekkorbak/pii-pile-chunk3-1400000-1450000, the tomekkorbak/pii-pile-chunk3-1450000-1500000, the tomekkorbak/pii-pile-chunk3-1500000-1550000, the tomekkorbak/pii-pile-chunk3-1550000-1600000, the tomekkorbak/pii-pile-chunk3-1600000-1650000, the tomekkorbak/pii-pile-chunk3-1650000-1700000, the tomekkorbak/pii-pile-chunk3-1700000-1750000, the tomekkorbak/pii-pile-chunk3-1750000-1800000, the tomekkorbak/pii-pile-chunk3-1800000-1850000, the tomekkorbak/pii-pile-chunk3-1850000-1900000 and the tomekkorbak/pii-pile-chunk3-1900000-1950000 datasets. 
| dda9370f3c8ca9c0d8bb53a01d7474bc |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/pii-pile-chunk3-0-50000', 'tomekkorbak/pii-pile-chunk3-50000-100000', 'tomekkorbak/pii-pile-chunk3-100000-150000', 'tomekkorbak/pii-pile-chunk3-150000-200000', 'tomekkorbak/pii-pile-chunk3-200000-250000', 'tomekkorbak/pii-pile-chunk3-250000-300000', 'tomekkorbak/pii-pile-chunk3-300000-350000', 'tomekkorbak/pii-pile-chunk3-350000-400000', 'tomekkorbak/pii-pile-chunk3-400000-450000', 'tomekkorbak/pii-pile-chunk3-450000-500000', 'tomekkorbak/pii-pile-chunk3-500000-550000', 'tomekkorbak/pii-pile-chunk3-550000-600000', 'tomekkorbak/pii-pile-chunk3-600000-650000', 'tomekkorbak/pii-pile-chunk3-650000-700000', 'tomekkorbak/pii-pile-chunk3-700000-750000', 'tomekkorbak/pii-pile-chunk3-750000-800000', 'tomekkorbak/pii-pile-chunk3-800000-850000', 'tomekkorbak/pii-pile-chunk3-850000-900000', 'tomekkorbak/pii-pile-chunk3-900000-950000', 'tomekkorbak/pii-pile-chunk3-950000-1000000', 'tomekkorbak/pii-pile-chunk3-1000000-1050000', 'tomekkorbak/pii-pile-chunk3-1050000-1100000', 'tomekkorbak/pii-pile-chunk3-1100000-1150000', 'tomekkorbak/pii-pile-chunk3-1150000-1200000', 'tomekkorbak/pii-pile-chunk3-1200000-1250000', 'tomekkorbak/pii-pile-chunk3-1250000-1300000', 'tomekkorbak/pii-pile-chunk3-1300000-1350000', 'tomekkorbak/pii-pile-chunk3-1350000-1400000', 'tomekkorbak/pii-pile-chunk3-1400000-1450000', 'tomekkorbak/pii-pile-chunk3-1450000-1500000', 'tomekkorbak/pii-pile-chunk3-1500000-1550000', 'tomekkorbak/pii-pile-chunk3-1550000-1600000', 'tomekkorbak/pii-pile-chunk3-1600000-1650000', 'tomekkorbak/pii-pile-chunk3-1650000-1700000', 'tomekkorbak/pii-pile-chunk3-1700000-1750000', 'tomekkorbak/pii-pile-chunk3-1750000-1800000', 'tomekkorbak/pii-pile-chunk3-1800000-1850000', 'tomekkorbak/pii-pile-chunk3-1850000-1900000', 'tomekkorbak/pii-pile-chunk3-1900000-1950000'], 'is_split_by_sentences': True}, 'generation': {'force_call_on': [25354], 'metrics_configs': [{}, {'n': 1}, {'n': 2}], 
'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}], 'scorer_config': {}}, 'kl_gpt3_callback': {'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'path_or_name': 'gpt2'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'lucid_varahamihira', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output2', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25354, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | dc43d3e50980edb055cf08b8ee0f3e15 |

mit | ['summarization'] | false | Text Summarization of News Articles A state-of-the-art, lightweight, pretrained Transformer-based encoder-decoder model for text summarization. The model was trained on the BBC News dataset (the Extreme Summarization, XSum, dataset) with input length = 512 and output length = 150 | 246b79f9353d1e23cde9dd01ce0df08e |

mit | ['summarization'] | false | How to use ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("minhtoan/t5-finetune-bbc-news") model = AutoModelForSeq2SeqLM.from_pretrained("minhtoan/t5-finetune-bbc-news") model.cuda() src = "summarize: The full cost of damage in Newton Stewart, one of the areas worst affected, is still being assessed.Repair work is ongoing in Hawick and many roads in Peeblesshire remain badly affected by standing water.Trains on the west coast mainline face disruption due to damage at the Lamington Viaduct.Many businesses and householders were affected by flooding in Newton Stewart after the River Cree overflowed into the town.First Minister Nicola Sturgeon visited the area to inspect the damage.The waters breached a retaining wall, flooding many commercial properties on Victoria Street - the main shopping thoroughfare.Jeanette Tate, who owns the Cinnamon Cafe which was badly affected, said she could not fault the multi-agency response once the flood hit.However, she said more preventative work could have been carried out to ensure the retaining wall did not fail.'It is difficult but I do think there is so much publicity for Dumfries and the Nith - and I totally appreciate that - but it is almost like we're neglected or forgotten,' she said.'That may not be true but it is perhaps my perspective over the last few days.'Why were you not ready to help us a bit more when the warning and the alarm alerts had gone out?'Meanwhile, a flood alert remains in place across the Borders because of the constant rain.Peebles was badly hit by problems, sparking calls to introduce more defences in the area.Scottish Borders Council has put a list on its website of the roads worst affected and drivers have been urged not to ignore closure signs.The Labour Party's deputy Scottish leader Alex Rowley was in Hawick on Monday to see the situation first hand.He said it was important to get the flood 
protection plan right but backed calls to speed up the process.'I was quite taken aback by the amount of damage that has been done,' he said.'Obviously it is heart-breaking for people who have been forced out of their homes and the impact on businesses.'He said it was important that 'immediate steps' were taken to protect the areas most vulnerable and a clear timetable put in place for flood prevention plans.Have you been affected by flooding in Dumfries and Galloway or the Borders? Tell us about your experience of the situation and how it was handled. Email us on selkirk.news@bbc.co.uk or dumfries@bbc.co.uk." tokenized_text = tokenizer.encode(src, return_tensors="pt").cuda() model.eval() summary_ids = model.generate(tokenized_text, max_length=150) output = tokenizer.decode(summary_ids[0], skip_special_tokens=True) output ``` | d7bf916b7cfd6fa361343d74a08f1d03 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1667 - F1: 0.8582 | 2ba4c2ecd4246c6c2bbf21ddda3e7ac7 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2885 | 1.0 | 715 | 0.1817 | 0.8287 | | 0.1497 | 2.0 | 1430 | 0.1618 | 0.8442 | | 0.0944 | 3.0 | 2145 | 0.1667 | 0.8582 | | 5dde2f921f497e84787bd3492b669547 |

mit | [] | false | Tron-Style on Stable Diffusion This is the `<tron-style>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`: | d4af16377640700246b5b5df926af30a |

mit | ['generated_from_trainer'] | false | microsoft-deberta-v3-large_ner_wikiann This model is a fine-tuned version of [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) on the wikiann dataset. It achieves the following results on the evaluation set: - Loss: 0.3108 - Precision: 0.8557 - Recall: 0.8738 - F1: 0.8647 - Accuracy: 0.9406 | 81fb74035c2f78704f743a81df8d4ad2 |
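The reported F1 can be related to the reported precision and recall through the harmonic mean. The sketch below is only a consistency check on the rounded figures above. The function name `f1_score` is illustrative, and the card's own metric was presumably computed from entity counts rather than from rounded values, so the last digit may differ for other rows.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall as reported for the evaluation set.
print(round(f1_score(0.8557, 0.8738), 4))
```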

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.3005 | 1.0 | 1250 | 0.2462 | 0.8205 | 0.8400 | 0.8301 | 0.9294 | | 0.1931 | 2.0 | 2500 | 0.2247 | 0.8448 | 0.8630 | 0.8538 | 0.9386 | | 0.1203 | 3.0 | 3750 | 0.2341 | 0.8468 | 0.8693 | 0.8579 | 0.9403 | | 0.0635 | 4.0 | 5000 | 0.2948 | 0.8596 | 0.8745 | 0.8670 | 0.9411 | | 0.0451 | 5.0 | 6250 | 0.3108 | 0.8557 | 0.8738 | 0.8647 | 0.9406 | | 424450394d433337202b107a942dca2c |

mit | [] | false | Model Description <!-- Provide a longer summary of what this model is. --> ['Genome', 'Lighting', 'Hydrogen', 'Gene', 'Copper', 'Grape', 'Infrared', 'Uranium', 'Sexual_orientation', 'Asphalt', 'Incandescent_light_bulb', 'Cotton', 'Alloy', 'Annelid', 'Glass', 'Green', 'Zinc', 'Flowering_plant', 'Light-emitting_diode', 'Red'] - **Developed by:** nandysoham - **Shared by [optional]:** [More Information Needed] - **Model type:** [More Information Needed] - **Language(s) (NLP):** en - **License:** mit - **Finetuned from model [optional]:** [More Information Needed] | be481c8609c115bdd724c40962f27a5c |

apache-2.0 | [] | false | BigBird base trivia-itc This model is a fine-tuned checkpoint of `bigbird-roberta-base`, fine-tuned on `trivia_qa` with `BigBirdForQuestionAnsweringHead` on top. Check out [this](https://colab.research.google.com/drive/1DVOm1VHjW0eKCayFq1N2GpY6GR9M4tJP?usp=sharing) to see how well `google/bigbird-base-trivia-itc` performs on question answering. | d4dbba7c4aae821cc0ba0bedddefad6d |

apache-2.0 | [] | false | you can change `block_size` & `num_random_blocks` like this: model = BigBirdForQuestionAnswering.from_pretrained("google/bigbird-base-trivia-itc", block_size=16, num_random_blocks=2) question = "Replace me by any text you'd like." context = "Put some context for answering" encoded_input = tokenizer(question, context, return_tensors='pt') output = model(**encoded_input) ``` | 0a40363ea3b3ce1b4080e069f7c93dad |

apache-2.0 | [] | false | Fine-tuning config & hyper-parameters - No. of global token = 128 - Window length = 192 - No. of random token = 192 - Max. sequence length = 4096 - No. of heads = 12 - No. of hidden layers = 12 - Hidden layer size = 768 - Batch size = 32 - Loss = cross-entropy noisy spans | a7780657b66a3cb80e8b1c8b2c3dcbd1 |

mit | ['generated_from_trainer'] | false | twitter-data-microsoft-xtremedistil-l6-h256-uncased-sentiment-finetuned-memes This model is a fine-tuned version of [microsoft/xtremedistil-l6-h256-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h256-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3635 - Accuracy: 0.8756 - Precision: 0.8761 - Recall: 0.8756 - F1: 0.8755 | 276e2ec650fd828015e932531802f89b |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.6142 | 1.0 | 1762 | 0.5396 | 0.8022 | 0.8010 | 0.8022 | 0.8014 | | 0.4911 | 2.0 | 3524 | 0.4588 | 0.8322 | 0.8332 | 0.8322 | 0.8325 | | 0.4511 | 3.0 | 5286 | 0.4072 | 0.8562 | 0.8564 | 0.8562 | 0.8559 | | 0.412 | 4.0 | 7048 | 0.3825 | 0.8673 | 0.8680 | 0.8673 | 0.8672 | | 0.3886 | 5.0 | 8810 | 0.3677 | 0.8745 | 0.8753 | 0.8745 | 0.8745 | | 0.3914 | 6.0 | 10572 | 0.3635 | 0.8756 | 0.8761 | 0.8756 | 0.8755 | | 963099ed434c587f1cef6998852d1f83 |

apache-2.0 | ['generated_from_trainer'] | false | vit-base-patch16-224-in21k_GI_diagnosis This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.2538 - Accuracy: 0.9375 - Weighted f1: 0.9365 - Micro f1: 0.9375 - Macro f1: 0.9365 - Weighted recall: 0.9375 - Micro recall: 0.9375 - Macro recall: 0.9375 - Weighted precision: 0.9455 - Micro precision: 0.9375 - Macro precision: 0.9455 | 6519c10a40b0e2ba2eec293ed97936a1 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | df55f7da34c960c7ef39e6c05e886cbd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Weighted f1 | Micro f1 | Macro f1 | Weighted recall | Micro recall | Macro recall | Weighted precision | Micro precision | Macro precision | |:-------------:|:-----:|:----:|:---------------:|:--------:|:-----------:|:--------:|:--------:|:---------------:|:------------:|:------------:|:------------------:|:---------------:|:---------------:| | 1.3805 | 1.0 | 200 | 0.5006 | 0.8638 | 0.8531 | 0.8638 | 0.8531 | 0.8638 | 0.8638 | 0.8638 | 0.9111 | 0.8638 | 0.9111 | | 1.3805 | 2.0 | 400 | 0.2538 | 0.9375 | 0.9365 | 0.9375 | 0.9365 | 0.9375 | 0.9375 | 0.9375 | 0.9455 | 0.9375 | 0.9455 | | 0.0628 | 3.0 | 600 | 0.5797 | 0.8812 | 0.8740 | 0.8812 | 0.8740 | 0.8812 | 0.8812 | 0.8813 | 0.9157 | 0.8812 | 0.9157 | | d7bf8f7c0027f889fc6029d286dd8711 |

mit | ['grammar-correction'] | false | T5-Efficient-MINI for grammar correction This is a [T5-Efficient-MINI](https://huggingface.co/google/t5-efficient-mini) model that was trained on a subset of [C4_200M](https://ai.googleblog.com/2021/08/the-c4200m-synthetic-dataset-for.html) dataset to solve the grammar correction task in English. To bring additional errors, random typos were introduced to the input sentences using the [nlpaug](https://github.com/makcedward/nlpaug) library. Since the model was trained on only one task, there are no prefixes needed. The model was trained as a part of the project during the [Full Stack Deep Learning](https://fullstackdeeplearning.com/course/2022/) course. ONNX version of the model is deployed on the [site](https://edge-ai.vercel.app/models/grammar-check) and can be run directly in the browser. | 8dea67d7a6007e480ea2b7143326097e |

mit | ['distilbert'] | false | What is this? This model was developed to detect "narrative-style" jokes, stories and anecdotes (i.e. ones narrated as a story) spoken during speeches, conversations, etc. It works best when the joke or anecdote is at least 40 words long. It is based on [lvwerra's distilbert](https://huggingface.co/lvwerra/distilbert-imdb). The training dataset was a private collection of around 2000 jokes. This model has not been trained or tested on one-liners, puns or Reddit-style language-manipulation jokes such as knock-knock or Q&A jokes. See the example in the inference widget or the How to use section for what constitutes a narrative-style joke. For a more accurate model (2.4% more) that is slower at inference, see the [Roberta model](https://huggingface.co/Reggie/muppet-roberta-base-joke_detector). For a still more accurate model (2.9% more) that is much slower at inference, see the [Deberta-v3 model](https://huggingface.co/Reggie/DeBERTa-v3-base-joke_detector). | 2a8ed3f8d8cf4c1e092325df3bac788a |

mit | ['distilbert'] | false | How to use
```python
from transformers import pipeline
import torch

# Use the first GPU if one is available, otherwise fall back to the CPU.
device = 0 if torch.cuda.is_available() else -1
model_name = 'Reggie/distilbert-joke_detector'
max_seq_len = 510

# Build the pipeline; inputs longer than max_seq_len tokens are truncated.
pipe = pipeline(model=model_name, device=device, truncation=True, max_length=max_seq_len)

is_it_a_joke = """A nervous passenger is about to book a flight ticket, and he asks the airlines' ticket seller, "I hope your planes are safe. Do they have a good track record for safety?" The airline agent replies, "Sir, I can guarantee you, we've never had a plane that has crashed more than once." """
result = pipe(is_it_a_joke)
```
| f93b5480da68c1e937c7665c2ff3367f |

apache-2.0 | [] | false | This model is used to detect **Offensive Content** in **Kannada Code-Mixed language**. The "mono" in the name refers to the monolingual setting, where the model is trained using only Kannada (pure and code-mixed) data. The weights are initialized from pretrained XLM-Roberta-Base and pretrained using Masked Language Modelling on the target dataset before fine-tuning with Cross-Entropy Loss. This model is the best of several trained for the **EACL 2021 Shared Task on Offensive Language Identification in Dravidian Languages**. Test predictions ensembled with a genetic algorithm achieved the second-highest weighted F1 score on the leaderboard (weighted F1 score on the held-out test set: this model 0.73, ensemble 0.74). | 76e742c8e5ee92a96fce8015f1dafb00 |

mit | [] | false | bored_ape_textual_inversion on Stable Diffusion This is the `<bored_ape>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 5b9932afc3c7a67b0bc71e7f3b832063 |

apache-2.0 | ['vision', 'image-to-image'] | false | Swin2SR model (image super-resolution) Swin2SR model that upscales images x4. It was introduced in the paper [Swin2SR: SwinV2 Transformer for Compressed Image Super-Resolution and Restoration](https://arxiv.org/abs/2209.11345) by Conde et al. and first released in [this repository](https://github.com/mv-lab/swin2sr). | d8326cf67a9d267db499d41258ca4392 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Small Portuguese This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the mozilla-foundation/common_voice_11_0 pt dataset. It achieves the following results on the evaluation set: - Loss: 0.3191 - Wer: 14.8844 - Cer: 5.7447 | 3a4d0ac20d0e932b43cd60aa6a137004 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:| | 2.9379 | 0.92 | 500 | 0.4783 | 17.3806 | 7.0572 | | 2.1727 | 1.84 | 1000 | 0.3721 | 17.2727 | 6.7975 | | 1.7856 | 2.76 | 1500 | 0.3466 | 16.3790 | 6.4023 | | 1.7803 | 3.68 | 2000 | 0.3372 | 15.9014 | 6.2089 | | 1.8312 | 4.6 | 2500 | 0.3303 | 15.7473 | 6.0901 | | 1.6403 | 5.52 | 3000 | 0.3256 | 15.9476 | 6.1896 | | 1.536 | 6.45 | 3500 | 0.3235 | 15.5008 | 6.0928 | | 1.4223 | 7.37 | 4000 | 0.3209 | 15.3621 | 6.0735 | | 1.4652 | 8.29 | 4500 | 0.3209 | 15.2696 | 5.9326 | | 1.2572 | 9.21 | 5000 | 0.3191 | 14.8844 | 5.7447 | | 1.7142 | 10.13 | 5500 | 0.3182 | 15.0077 | 5.8469 | | 1.4195 | 11.05 | 6000 | 0.3171 | 15.0693 | 5.8856 | | 1.3965 | 11.97 | 6500 | 0.3167 | 15.0539 | 5.8580 | | 476ee525210c1419056c356f33c6906f |
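The table above can be scanned programmatically to pick a checkpoint. The sketch below copies the rows as `(step, validation_loss, wer, cer)` tuples and selects the step with the lowest WER and the step with the lowest validation loss. The variable names are illustrative, and choosing by WER is an assumption about the selection criterion, not something the section states.

```python
# Rows copied from the training results table: (step, val_loss, wer, cer).
rows = [
    (500, 0.4783, 17.3806, 7.0572),
    (1000, 0.3721, 17.2727, 6.7975),
    (1500, 0.3466, 16.3790, 6.4023),
    (2000, 0.3372, 15.9014, 6.2089),
    (2500, 0.3303, 15.7473, 6.0901),
    (3000, 0.3256, 15.9476, 6.1896),
    (3500, 0.3235, 15.5008, 6.0928),
    (4000, 0.3209, 15.3621, 6.0735),
    (4500, 0.3209, 15.2696, 5.9326),
    (5000, 0.3191, 14.8844, 5.7447),
    (5500, 0.3182, 15.0077, 5.8469),
    (6000, 0.3171, 15.0693, 5.8856),
    (6500, 0.3167, 15.0539, 5.8580),
]

best_by_wer = min(rows, key=lambda r: r[2])   # lowest word error rate
best_by_loss = min(rows, key=lambda r: r[1])  # lowest validation loss

print("best WER:", best_by_wer)
print("best validation loss:", best_by_loss)
```

The two criteria disagree here: WER is lowest at step 5000, while validation loss keeps falling slightly through step 6500.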

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | sick Dreambooth model trained by Z3R069 with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via A1111 Colab: [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Or you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Sample pictures of this concept: | 0796c555148b010dc64ac0baec2f1bef |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2t_de_unispeech-sat_s75 Fine-tuned [microsoft/unispeech-sat-large](https://huggingface.co/microsoft/unispeech-sat-large) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16 kHz. This model was fine-tuned using the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 9c46d0d010bd49b05e8bda273aede223 |

apache-2.0 | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-06 - train_batch_size: 16 - eval_batch_size: 4 - gradient_accumulation_steps: 3 - optimizer: AdamW with betas=(None, None), weight_decay=None and epsilon=None - lr_scheduler: None - lr_warmup_steps: 500 - ema_inv_gamma: None - ema_inv_gamma: None - ema_inv_gamma: None - mixed_precision: fp16 | 24e5596edd3fd1b3ef2cd78ad4cfc2ce |
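The `train_batch_size` and `gradient_accumulation_steps` values above combine into an effective batch size per optimizer update. The sketch below works through that arithmetic. It assumes that `train_batch_size` is a per-device value and that a single device was used; neither is stated in the section, and the function name is illustrative.

```python
def effective_batch_size(per_device_batch: int, accumulation_steps: int,
                         num_devices: int = 1) -> int:
    """Number of samples contributing to one optimizer update."""
    return per_device_batch * accumulation_steps * num_devices


# Values from the hyperparameter list; num_devices=1 is an assumption.
print(effective_batch_size(per_device_batch=16, accumulation_steps=3))
```

Under these assumptions each optimizer step sees 16 × 3 = 48 samples; the result scales linearly with the number of devices.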

mit | ['generated_from_keras_callback'] | false | Rocketknight1/gpt2-finetuned-wikitext2 This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 7.3062 - Validation Loss: 6.7676 - Epoch: 0 | 1a788a09c4eb89ebf11b73bdd2126e4c |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_data_aug_cola_384 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE COLA dataset. It achieves the following results on the evaluation set: - Loss: 0.7008 - Matthews Correlation: 0.1207 | 644e290e8d492e3e239a5f7242d4c2bf |
