license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.4353 | 70949af31a2114d7c3ebfc9f61e7bd2b |
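An evaluation loss for a language-modeling checkpoint is often easier to read as a perplexity. The sketch below assumes the reported loss is a mean cross-entropy measured in nats, which the card does not state explicitly; the helper name `perplexity` is illustrative and not part of any library.

```python
import math


def perplexity(mean_cross_entropy: float) -> float:
    """Convert a mean cross-entropy loss (assumed to be in nats) to perplexity."""
    return math.exp(mean_cross_entropy)


# Evaluation loss reported for distilbert-base-uncased-finetuned-imdb.
eval_loss = 2.4353
print(f"Perplexity: {perplexity(eval_loss):.2f}")
```

If the loss were instead averaged in bits, the base of the exponent would be 2 rather than e.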

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 2.6954 | 1.0 | 157 | 2.5243 |
| 2.563 | 2.0 | 314 | 2.4738 |
| 2.5258 | 3.0 | 471 | 2.4369 |

| 0ed754e18f760798bf28c12ed2b537e3 |

cc-by-4.0 | [] | false | BART-base fine-tuned on NaturalQuestions for **Question Generation**

[BART Model](https://arxiv.org/pdf/1910.13461.pdf) fine-tuned on [Google NaturalQuestions](https://ai.google.com/research/NaturalQuestions/) for **Question Generation** by treating long answer as input, and question as output.

| dfc88f8bb324f0a6f7c1aa9d4a57d1d3 |

cc-by-4.0 | [] | false | Details of BART

The **BART** model was presented in [BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension](https://arxiv.org/pdf/1910.13461.pdf) by *Mike Lewis, Yinhan Liu, Naman Goyal, Marjan Ghazvininejad, Abdelrahman Mohamed, Omer Levy, Ves Stoyanov, Luke Zettlemoyer*. Here is the abstract:

We present BART, a denoising autoencoder for pretraining sequence-to-sequence models. BART is trained by (1) corrupting text with an arbitrary noising function, and (2) learning a model to reconstruct the original text. It uses a standard Transformer-based neural machine translation architecture which, despite its simplicity, can be seen as generalizing BERT (due to the bidirectional encoder), GPT (with the left-to-right decoder), and many other more recent pretraining schemes. We evaluate a number of noising approaches, finding the best performance by both randomly shuffling the order of the original sentences and using a novel in-filling scheme, where spans of text are replaced with a single mask token. BART is particularly effective when fine tuned for text generation but also works well for comprehension tasks. It matches the performance of RoBERTa with comparable training resources on GLUE and SQuAD, achieves new state-of-the-art results on a range of abstractive dialogue, question answering, and summarization tasks, with gains of up to 6 ROUGE. BART also provides a 1.1 BLEU increase over a back-translation system for machine translation, with only target language pretraining. We also report ablation experiments that replicate other pretraining schemes within the BART framework, to better measure which factors most influence end-task performance.

| a959f2d15c461deaa29525d46aced1ad |

cc-by-4.0 | [] | false | Citation

If you want to cite this model you can use this:

```bibtex

@inproceedings{kulshreshtha-etal-2021-back,

title = "Back-Training excels Self-Training at Unsupervised Domain Adaptation of Question Generation and Passage Retrieval",

author = "Kulshreshtha, Devang and

Belfer, Robert and

Serban, Iulian Vlad and

Reddy, Siva",

booktitle = "Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing",

month = nov,

year = "2021",

address = "Online and Punta Cana, Dominican Republic",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.emnlp-main.566",

pages = "7064--7078",

abstract = "In this work, we introduce back-training, an alternative to self-training for unsupervised domain adaptation (UDA). While self-training generates synthetic training data where natural inputs are aligned with noisy outputs, back-training results in natural outputs aligned with noisy inputs. This significantly reduces the gap between target domain and synthetic data distribution, and reduces model overfitting to source domain. We run UDA experiments on question generation and passage retrieval from the Natural Questions domain to machine learning and biomedical domains. We find that back-training vastly outperforms self-training by a mean improvement of 7.8 BLEU-4 points on generation, and 17.6{\%} top-20 retrieval accuracy across both domains. We further propose consistency filters to remove low-quality synthetic data before training. We also release a new domain-adaptation dataset - MLQuestions containing 35K unaligned questions, 50K unaligned passages, and 3K aligned question-passage pairs.",

}

```

> Created by [Devang Kulshreshtha](https://geekydevu.netlify.app/)

| 7a3ae9965f38ff3144f924c88ce874db |

mit | ['question-answering', 'bert', 'bert-base'] | false | BERT-base uncased model fine-tuned on SQuAD v1 This model is block sparse: the **linear** layers contain **7.5%** of the original weights. The model contains **28.2%** of the original weights **overall**. The training uses a modified version of Victor Sanh's [Movement Pruning](https://arxiv.org/abs/2005.07683) method. That means that with the [block-sparse](https://github.com/huggingface/pytorch_block_sparse) runtime it ran **1.92x** faster than a dense network on the evaluation, at the price of some impact on the accuracy (see below). This model was fine-tuned from the HuggingFace [BERT](https://www.aclweb.org/anthology/N19-1423/) base uncased checkpoint on [SQuAD1.1](https://rajpurkar.github.io/SQuAD-explorer), and distilled from the equivalent model [csarron/bert-base-uncased-squad-v1](https://huggingface.co/csarron/bert-base-uncased-squad-v1). This model is case-insensitive: it does not make a difference between english and English. | ce20260466b2111f412fe14d69f9cd9e |

mit | ['question-answering', 'bert', 'bert-base'] | false | Pruning details A side-effect of the block pruning is that some of the attention heads are completely removed: 106 heads were removed out of a total of 144 (73.6%). Here is a detailed view of how the remaining heads are distributed in the network after pruning. | 38ff228a97fca4d3b95e0d15d0c70199 |

mit | ['question-answering', 'bert', 'bert-base'] | false | Example Usage

```python
from transformers import pipeline

qa_pipeline = pipeline(
    "question-answering",
    model="madlag/bert-base-uncased-squad1.1-block-sparse-0.07-v1",
    tokenizer="madlag/bert-base-uncased-squad1.1-block-sparse-0.07-v1"
)

predictions = qa_pipeline({
    'context': "Frédéric François Chopin, born Fryderyk Franciszek Chopin (1 March 1810 – 17 October 1849), was a Polish composer and virtuoso pianist of the Romantic era who wrote primarily for solo piano.",
    'question': "Who is Frederic Chopin?",
})

print(predictions)
```

| e5a57e054d6cc6f48f32e9bc5034bf5e |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-Turkish Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the [Turkish Artificial Common Voice dataset](https://cloud.uncool.ai/index.php/f/2165181). When using this model, make sure that your speech input is sampled at 16kHz. | 30d5cffa250b99d41799974742d39ec1 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows:

```python
import torch
import torchaudio
from datasets import load_dataset
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

test_dataset = load_dataset("common_voice", "tr", split="test[:2%]")

processor = Wav2Vec2Processor.from_pretrained("cahya/wav2vec2-large-xlsr-turkish-artificial")
model = Wav2Vec2ForCTC.from_pretrained("cahya/wav2vec2-large-xlsr-turkish-artificial")
```

| 603163564d522aab3ffcb53dd6135063 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Turkish test data of Common Voice.

```python
import torch
import torchaudio
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor
import re

test_dataset = load_dataset("common_voice", "tr", split="test")
wer = load_metric("wer")

processor = Wav2Vec2Processor.from_pretrained("cahya/wav2vec2-large-xlsr-turkish-artificial")
model = Wav2Vec2ForCTC.from_pretrained("cahya/wav2vec2-large-xlsr-turkish-artificial")
model.to("cuda")

chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“\‘\”\'\`…\’»«]'
```

| 3b248d54dc771accc12df3dcc2dce8a5 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false |

```python
# We need to read the audio files as arrays.
# batch["speech"] must already hold the decoded audio arrays;
# the loading step is not part of this excerpt.
def evaluate(batch):
    inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)

    with torch.no_grad():
        logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits

    # Greedy decoding: take the most likely token per frame.
    pred_ids = torch.argmax(logits, dim=-1)
    batch["pred_strings"] = processor.batch_decode(pred_ids)
    return batch

result = test_dataset.map(evaluate, batched=True, batch_size=8)

print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"])))
```

**Test Result**: 66.98 %

| d79f54cabe5bfc8babb1b7ebc8faffcf |
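To make the reported number easier to interpret, here is a minimal pure-Python sketch of a corpus-level word error rate: the total number of word edits divided by the total number of reference words. It is an illustration only, not the implementation behind `load_metric("wer")`; the function name `word_error_rate` is hypothetical, and splitting on whitespace is an assumption.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits / total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Dynamic-programming Levenshtein distance over words,
        # keeping only the previous row of the table.
        prev = list(range(len(hyp_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            curr = [i]
            for j, hyp_word in enumerate(hyp_words, start=1):
                cost = 0 if ref_word == hyp_word else 1
                curr.append(min(
                    prev[j] + 1,         # deletion of a reference word
                    curr[j - 1] + 1,     # insertion of a hypothesis word
                    prev[j - 1] + cost,  # substitution or match
                ))
            prev = curr
        total_edits += prev[-1]
        total_words += len(ref_words)
    return total_edits / total_words


# One substitution and one deletion against four reference words.
print(word_error_rate(["a b c d"], ["a x c"]))
```

The function raises `ZeroDivisionError` when every reference is empty; a production metric would need to handle that case explicitly.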

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Training The Artificial Common Voice `train` and `validation` splits are used to fine-tune the model. The script used for training can be found [here](https://github.com/cahya-wirawan/indonesian-speech-recognition). | ffdb2517ffbcab1582293cf624e27e2b |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 2 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 100.0 | d79bf516cc9b8b040632d1c4ba7a9378 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 2 | b32b0ce9a9d4759035d55d67a0c32015 |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:-----:|:---------------:|
| 3.3266 | 1.0 | 29228 | 3.1859 |
| 3.2947 | 2.0 | 58456 | 3.1700 |

| c4e4e6b050578f139dbc09ce24e2d26f |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | test_ Dreambooth model trained by Joeythemonster with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 9f68235308e9f18f1c9ac6d4f8ce6f04 |

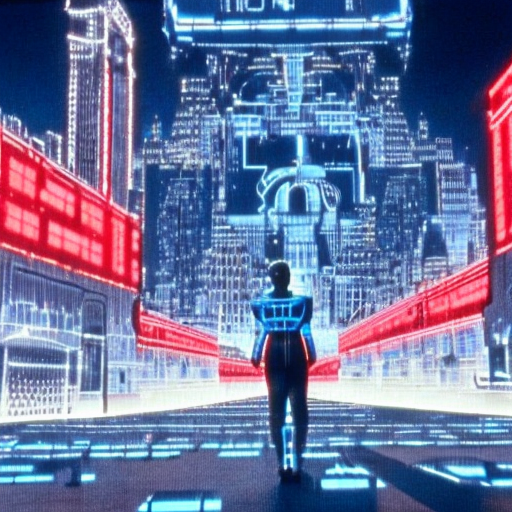

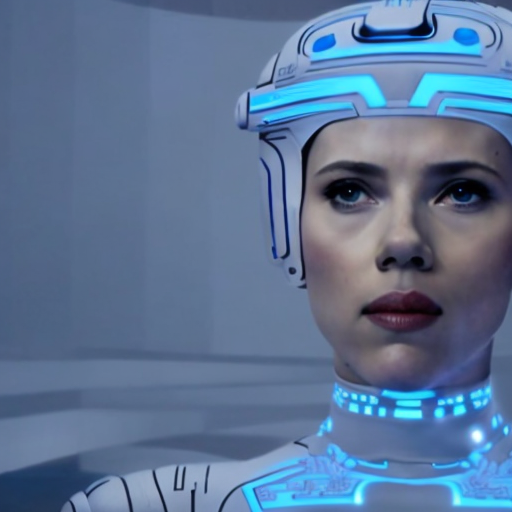

mit | ['stable-diffusion', 'text-to-image'] | false | scrollTo=jXgi8HM4c-DA) google colab, dreambooth. It was made with various screen captures I took from videos of TRON from 1982: the original trailer and 2 long movie clips. Download the **origtron.ckpt** file to _stable-diffusion-webui\models\Stable-diffusion_. Once it's downloaded, just use the prompt **origtron** and you'll get some great results. The file size is 2.3 GB. | 47be95f5d885b086858e7cd8eda76c95 |

mit | ['stable-diffusion', 'text-to-image'] | false | Images I created     | 6e80aec95ae631248ccb404952f1ef76 |

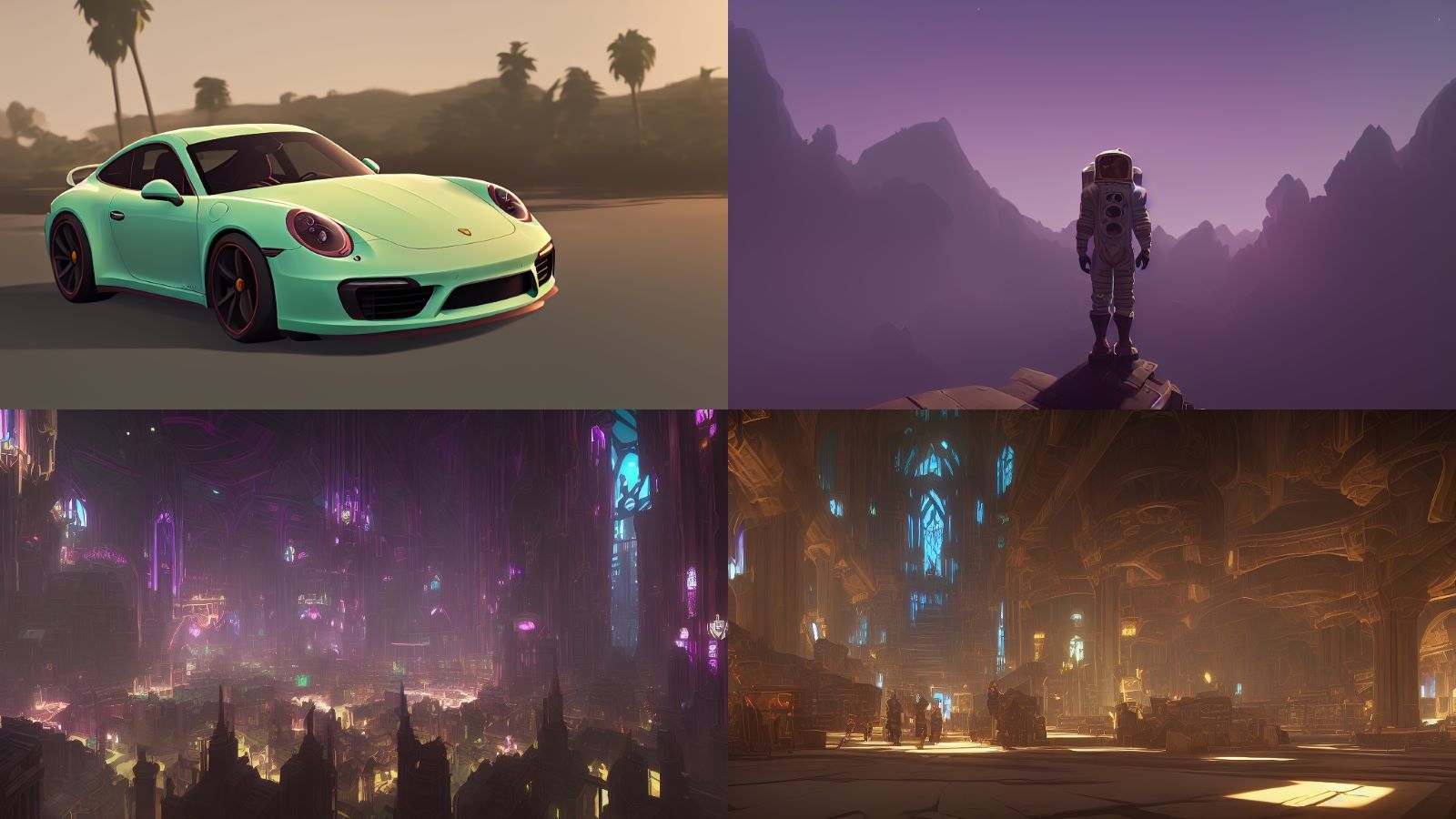

apache-2.0 | ['BABY', 'BABIES', 'LITTLE', 'SD2.1', 'DIGITAL ART', 'CUTE', 'MIDJOURNEY', 'DOLLS', 'CHARACTER', 'CARTOON'] | false | Cute RichStyle - 512x512

Model trained in SD 1.5 with photos generated with Midjourney, created to generate people, animals/creatures... You can also make objects, landscapes, etc., but you may need more tries:

- 30 steps
- 7 cfg
- euler a, ddim, dpm++sde...
- you can use different resolutions, you can generate interesting things

Characters rendered with the model: [images omitted]

**TOKEN**: cbzbb, cbzbb style, cbzbb style of _____. The token is not required, but it is better to include it. The token often works better between parentheses ().

Possible positives: cute, little, baby, beautiful, fantasy art, devian art, trending artstation, digital art, detailed, cute, realistic, humanoide, character, tiny, film still of "____", cinematic shot, "__" environment, beautiful landspace of _____, cinematic portrait of ______, cute character as a "_"....

Most important negatives (not mandatory but they help a lot): pencil draw, bad photo, bad draw

Other possible negatives: cartoon, woman, man, person, people, character, super hero, iron man, baby, anime... (When you generate the photo, there are times when it tries to create a person/character; that is why the negative character prompts help.)

- Landscape prompts work better between ( ) or more parentheses, although it is not always necessary.
- You can use other styles by removing the "cbzbb" token and adding pencil draw, lego style, watercolor, etc. It doesn't produce the exact photo style I trained it with, but the results look great too!
- Most of the photos are daytime. To create night scenes, this once worked:
  - positive: (dark), (black sky), (dark sky), etc.
  - negative: (blue day), (day light), (day), (sun), etc.
- To increase quality: send the photo you like the most to img2img (30 steps) at 0.60-0.80 denoising, generate 4 photos, and choose one or repeat (with less denoising to stay closer to the original, or more to change it more). Resend via img2img (you can raise the ratio/aspect of the image a bit), lower the denoising to 0.40-0.50, generate 2 to 4 images, and choose the one you like the most that has more detail. Then send it to img2img with the photo upscaled (same ratio/aspect) at 0.15-0.30 denoising and 50 steps, and generate 1 photo. If you want, you can continue rescaling it for more detail and more resolution.
- Change person/character in the image: if you like the photo but want to change the character, send the photo to img2img, change the name of the character, person, or animal, and use between 0.7-1 denoising.

**Prompt examples:**

- cbzbb style of a pennywise michael jackson, cbzbb, detailed, fantasy,super cute, trending on artstation
- cbzbb style of angry baby groot
- cute panda reading a book, cbzbb style

| 4e517841ffc5142bfe5787d9bded8e28 |

apache-2.0 | ['BABY', 'BABIES', 'LITTLE', 'SD2.1', 'DIGITAL ART', 'CUTE', 'MIDJOURNEY', 'DOLLS', 'CHARACTER', 'CARTOON'] | false | ENJOY !!!! The creations of the images are absolutely yours! But if you can share them with me on Twitter or Instagram or reddit, anywhere , I'd LOVE to SEE what you can do with the model! - **Twitter:** @RichViip - **Instagram**: richviip - **Reddit:** Richviip Thank you for the support and great help of ALL the people on Patricio's discord, who were at every moment of the creation of the model giving their opinion on more than 15 different types of models and making my head hurt less! Social media of Patricio, follow him!! - **Youtube:** patricio-fernandez - **Twitter:** patriciofernanf | d5ecbdae6b0f13eef2fb8efa3d12f361 |

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Whisper Small German SBB all SNR - v4 This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the SBB Dataset 05.01.2023 dataset. It achieves the following results on the evaluation set: - Loss: 0.0287 - Wer: 0.0222 | 594688dd36b4edcd8446240d8c7ed100 |

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-06 - train_batch_size: 64 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 700 - mixed_precision_training: Native AMP | a82a36ef7ea2fd783d449b6133ba8658 |
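The combination of `lr_scheduler_type: linear`, `lr_scheduler_warmup_steps: 100` and `training_steps: 700` describes a learning rate that ramps up linearly and then decays linearly to zero. The sketch below reproduces that shape in plain Python; it assumes the usual warmup-then-linear-decay definition, and the function name `linear_schedule_lr` is illustrative rather than a library call.

```python
def linear_schedule_lr(step, base_lr=5e-06, warmup_steps=100, total_steps=700):
    """Learning rate at a given optimizer step for linear warmup + linear decay."""
    if step < warmup_steps:
        # Warmup: scale from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay: scale from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Inspect the schedule at the evaluation steps listed in the results table.
for step in (0, 100, 200, 300, 400, 500, 600, 700):
    print(step, linear_schedule_lr(step))
```

Under these assumptions the peak rate of 5e-06 is reached at step 100, and the rate is halved by step 400.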

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |
|:-------------:|:-----:|:----:|:---------------:|:------:|
| 1.6894 | 0.71 | 100 | 0.4702 | 0.4661 |
| 0.1896 | 1.42 | 200 | 0.0322 | 0.0241 |
| 0.0297 | 2.13 | 300 | 0.0349 | 0.0228 |
| 0.0181 | 2.84 | 400 | 0.0250 | 0.0209 |
| 0.0154 | 3.55 | 500 | 0.0298 | 0.0209 |
| 0.0112 | 4.26 | 600 | 0.0327 | 0.0222 |
| 0.009 | 4.96 | 700 | 0.0287 | 0.0222 |

| b7d3dc5b6e4b7debbdaf01585c04cb17 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_mrpc_96 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE MRPC dataset. It achieves the following results on the evaluation set: - Loss: 0.5290 - Accuracy: 0.3162 - F1: 0.0 - Combined Score: 0.1581 | 70ad7136d2890821465aa524be2656af |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:---:|:--------------:|
| 0.5507 | 1.0 | 15 | 0.5375 | 0.3162 | 0.0 | 0.1581 |
| 0.5355 | 2.0 | 30 | 0.5312 | 0.3162 | 0.0 | 0.1581 |
| 0.531 | 3.0 | 45 | 0.5296 | 0.3162 | 0.0 | 0.1581 |
| 0.5292 | 4.0 | 60 | 0.5290 | 0.3162 | 0.0 | 0.1581 |
| 0.5278 | 5.0 | 75 | 0.5290 | 0.3162 | 0.0 | 0.1581 |
| 0.5292 | 6.0 | 90 | 0.5292 | 0.3162 | 0.0 | 0.1581 |
| 0.5279 | 7.0 | 105 | 0.5292 | 0.3162 | 0.0 | 0.1581 |
| 0.5288 | 8.0 | 120 | 0.5291 | 0.3162 | 0.0 | 0.1581 |
| 0.5282 | 9.0 | 135 | 0.5291 | 0.3162 | 0.0 | 0.1581 |

| 3fe9fa664c4a57ca68148992cb5c7fe2 |
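The `Combined Score` column is consistent with an unweighted mean of the other reported metrics: (0.3162 + 0.0) / 2 = 0.1581. The sketch below checks that arithmetic; treating the combined score as a plain mean is an assumption inferred from the numbers, and `combined_score` is a hypothetical helper name.

```python
from statistics import mean


def combined_score(metrics):
    """Unweighted mean of the metric values (assumed definition)."""
    return mean(metrics.values())


# Values reported for the GLUE MRPC evaluation set.
mrpc_metrics = {"accuracy": 0.3162, "f1": 0.0}
print(round(combined_score(mrpc_metrics), 4))
```

The identical accuracy and the F1 of 0.0 in every epoch indicate that the metrics did not change during training.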

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-cased-3epoch-LaTTrue-updatedAlligning This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1790 | f820131204be89f891397032ae97bf59 |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| No log | 1.0 | 174 | 0.1690 |
| No log | 2.0 | 348 | 0.1739 |
| 0.1311 | 3.0 | 522 | 0.1790 |

| 7bd48116dadfc105d2af04206cd33555 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2222 - Accuracy: 0.925 - F1: 0.9250 | 7660bb253ad8e5821209bb4702228dfb |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|
| 0.8521 | 1.0 | 250 | 0.3164 | 0.907 | 0.9038 |
| 0.2549 | 2.0 | 500 | 0.2222 | 0.925 | 0.9250 |

| 602d806343e6155f16d23032d0de7d49 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 12 - eval_batch_size: 8 - seed: 4 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - training_steps: 200 | 7b0386f2e6c046299bf11c362dd05c2e |

apache-2.0 | ['generated_from_trainer'] | false | eval_masked_v4_sst2 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 0.3821 - Accuracy: 0.9209 | 8c1f0a4a05f6da3b83f926fe44af0caf |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-20000-samples-imdb-v2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3694 - Accuracy: 0.924 - F1: 0.9242 | 812829a582df5778f8a1891f5147325a |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|
| 0.2795 | 1.0 | 2500 | 0.2224 | 0.9275 | 0.9263 |
| 0.1877 | 2.0 | 5000 | 0.3141 | 0.9275 | 0.9274 |
| 0.1045 | 3.0 | 7500 | 0.3694 | 0.924 | 0.9242 |

| bb7d3c1927e3591d47d77c863a5c695b |

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-0.03-1 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 2.8063 - Bleu: 7.1839 - Gen Len: 45.5733 | 2f203324a311d5d95bdaa94614882f6a |

apache-2.0 | ['wav2vec2'] | false | LeBenchmark: wav2vec2 large model trained on 7K hours of French speech LeBenchmark provides an ensemble of pretrained wav2vec2 models on different French datasets containing spontaneous, read, and broadcasted speech. For more information on the different benchmarks that can be used to evaluate the wav2vec2 models, please refer to our paper at: [Task Agnostic and Task Specific Self-Supervised Learning from Speech with LeBenchmark](https://openreview.net/pdf?id=TSvj5dmuSd) | d72bb52510f0e9ceb845e43618978dad |

apache-2.0 | ['wav2vec2'] | false | Model and data descriptions We release seven different models that can be found under our HuggingFace organization. Two different wav2vec2 architectures, *Base* and *Large*, are coupled with our small (1K), medium (3K), and large (7K) corpora. A larger one should come later. In short:

- [wav2vec2-FR-7K-large](https://huggingface.co/LeBenchmark/wav2vec2-FR-7K-large): Large wav2vec2 trained on 7.6K hours of French speech (1.8K Males / 1.0K Females / 4.8K unknown).
- [wav2vec2-FR-7K-base](https://huggingface.co/LeBenchmark/wav2vec2-FR-7K-base): Base wav2vec2 trained on 7.6K hours of French speech (1.8K Males / 1.0K Females / 4.8K unknown).
- [wav2vec2-FR-3K-large](https://huggingface.co/LeBenchmark/wav2vec2-FR-3K-large): Large wav2vec2 trained on 2.9K hours of French speech (1.8K Males / 1.0K Females / 0.1K unknown).
- [wav2vec2-FR-3K-base](https://huggingface.co/LeBenchmark/wav2vec2-FR-3K-base): Base wav2vec2 trained on 2.9K hours of French speech (1.8K Males / 1.0K Females / 0.1K unknown).
- [wav2vec2-FR-2.6K-base](https://huggingface.co/LeBenchmark/wav2vec2-FR-2.6K-base): Base wav2vec2 trained on 2.6K hours of French speech (**no spontaneous speech**).
- [wav2vec2-FR-1K-large](https://huggingface.co/LeBenchmark/wav2vec2-FR-1K-large): Large wav2vec2 trained on 1K hours of French speech (0.5K Males / 0.5K Females).
- [wav2vec2-FR-1K-base](https://huggingface.co/LeBenchmark/wav2vec2-FR-1K-base): Base wav2vec2 trained on 1K hours of French speech (0.5K Males / 0.5K Females).

| 57108ead721c2e51916a99e55f4a3de7 |

apache-2.0 | ['wav2vec2'] | false | Intended uses & limitations Pretrained wav2vec2 models are distributed under the Apache-2.0 license. Hence, they can be reused extensively without strict limitations. However, benchmarks and data may be linked to corpora that are not completely open-sourced. | 10e4d91b4a8e0ab9a26f8612715544a9 |

apache-2.0 | ['wav2vec2'] | false | Fine-tune with Fairseq for ASR with CTC As our wav2vec2 models were trained with Fairseq, they can be used with the different tools that Fairseq provides to fine-tune the model for ASR with CTC. The full procedure has been nicely summarized in [this blogpost](https://huggingface.co/blog/fine-tune-wav2vec2-english). Please note that due to the nature of CTC, speech-to-text results aren't expected to be state-of-the-art. Moreover, future features might appear depending on the involvement of Fairseq and HuggingFace on this part. | bf28ad25746ed8c8d4f295e1d8e5107e |
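For readers new to CTC, the sketch below shows greedy CTC decoding, the simplest way to turn per-frame predictions into a label sequence: merge consecutive repeats, then drop the blank symbol. It is a generic illustration and not Fairseq or HuggingFace code; the function name `ctc_greedy_decode` and the choice of `0` as the blank id are assumptions.

```python
from itertools import groupby


def ctc_greedy_decode(frame_ids, blank_id=0):
    """Collapse repeated frame labels, then remove CTC blanks."""
    # Step 1: merge runs of identical consecutive labels into one label.
    collapsed = [label for label, _ in groupby(frame_ids)]
    # Step 2: drop the blank symbol that separates genuine repeats.
    return [label for label in collapsed if label != blank_id]


# Per-frame argmax ids; the blank between the two runs of 2 keeps both.
frames = [0, 1, 1, 0, 2, 2, 0, 2]
print(ctc_greedy_decode(frames))
```

Because each frame is decoded independently of the others, no language model is involved in this step.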

apache-2.0 | ['wav2vec2'] | false | Integrate to SpeechBrain for ASR, Speaker, Source Separation ... Pretrained wav2vec models have recently gained in popularity. At the same time, the [SpeechBrain toolkit](https://speechbrain.github.io) came out, proposing a new and simpler way of dealing with state-of-the-art speech & deep-learning technologies. While it is currently in beta, SpeechBrain offers two different ways of nicely integrating wav2vec2 models that were trained with Fairseq, i.e., our LeBenchmark models!

1. Extract wav2vec2 features on-the-fly (with a frozen wav2vec2 encoder) to be combined with any speech-related architecture. Examples are: E2E ASR with CTC+Att+Language Models; Speaker Recognition or Verification, Source Separation ...
2. *Experimental:* To fully benefit from wav2vec2, the best solution remains to fine-tune the model while you train your downstream task. SpeechBrain makes this simple, as only a flag needs to be turned on. Thus, our wav2vec2 models can be fine-tuned while training your favorite ASR pipeline or Speaker Recognizer.

**If interested, simply follow this [tutorial](https://colab.research.google.com/drive/17Hu1pxqhfMisjkSgmM2CnZxfqDyn2hSY?usp=sharing)**

| 018dde64d2878bd2af412f420f106c2c |

apache-2.0 | ['wav2vec2'] | false | Referencing LeBenchmark ``` @article{Evain2021LeBenchmarkAR, title={LeBenchmark: A Reproducible Framework for Assessing Self-Supervised Representation Learning from Speech}, author={Sol{\`e}ne Evain and Ha Nguyen and Hang Le and Marcely Zanon Boito and Salima Mdhaffar and Sina Alisamir and Ziyi Tong and N. Tomashenko and Marco Dinarelli and Titouan Parcollet and A. Allauzen and Y. Est{\`e}ve and B. Lecouteux and F. Portet and S. Rossato and F. Ringeval and D. Schwab and L. Besacier}, journal={ArXiv}, year={2021}, volume={abs/2104.11462} } ``` | 7f071c34c1fe1f5662037f8226d94e61 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'pos'] | false | Spanish RoBERTa-large trained on BNE finetuned for CAPITEL Part of Speech (POS) dataset RoBERTa-large-bne is a transformer-based masked language model for the Spanish language. It is based on the [RoBERTa](https://arxiv.org/abs/1907.11692) large model and has been pre-trained using the largest Spanish corpus known to date, with a total of 570GB of clean and deduplicated text processed for this work, compiled from the web crawlings performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019. Original pre-trained model can be found here: https://huggingface.co/BSC-TeMU/roberta-large-bne | 717ab17511c40ea8ced982bee98dadec |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'pos'] | false | Citing Check out our paper for all the details: https://arxiv.org/abs/2107.07253 ``` @misc{gutierrezfandino2021spanish, title={Spanish Language Models}, author={Asier Gutiérrez-Fandiño and Jordi Armengol-Estapé and Marc Pàmies and Joan Llop-Palao and Joaquín Silveira-Ocampo and Casimiro Pio Carrino and Aitor Gonzalez-Agirre and Carme Armentano-Oller and Carlos Rodriguez-Penagos and Marta Villegas}, year={2021}, eprint={2107.07253}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | fc9864c149ad1e7612cba01262f6ca97 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | exper_batch_8_e8 This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the sudo-s/herbier_mesuem1 dataset. It achieves the following results on the evaluation set: - Loss: 0.4608 - Accuracy: 0.9052 | f4b1b015417e2945435b65406253bde3 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 - mixed_precision_training: Apex, opt level O1 | 9ef45d863f5a8687ada05ff79f40ea97 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:-----:|:---------------:|:--------:|
| 4.2202 | 0.08 | 100 | 4.1245 | 0.1237 |
| 3.467 | 0.16 | 200 | 3.5622 | 0.2143 |
| 3.3469 | 0.23 | 300 | 3.1688 | 0.2675 |
| 2.8086 | 0.31 | 400 | 2.8965 | 0.3034 |
| 2.6291 | 0.39 | 500 | 2.5858 | 0.4025 |
| 2.2382 | 0.47 | 600 | 2.2908 | 0.4133 |
| 1.9259 | 0.55 | 700 | 2.2007 | 0.4676 |
| 1.8088 | 0.63 | 800 | 2.0419 | 0.4742 |
| 1.9462 | 0.7 | 900 | 1.6793 | 0.5578 |
| 1.5392 | 0.78 | 1000 | 1.5460 | 0.6079 |
| 1.561 | 0.86 | 1100 | 1.5793 | 0.5690 |
| 1.2135 | 0.94 | 1200 | 1.4663 | 0.5929 |
| 1.0725 | 1.02 | 1300 | 1.2974 | 0.6534 |
| 0.8696 | 1.1 | 1400 | 1.2406 | 0.6569 |
| 0.8758 | 1.17 | 1500 | 1.2127 | 0.6623 |
| 1.1737 | 1.25 | 1600 | 1.2243 | 0.6550 |
| 0.8242 | 1.33 | 1700 | 1.1371 | 0.6735 |
| 1.0141 | 1.41 | 1800 | 1.0536 | 0.7024 |
| 0.9855 | 1.49 | 1900 | 0.9885 | 0.7205 |
| 0.805 | 1.57 | 2000 | 0.9048 | 0.7479 |
| 0.7207 | 1.64 | 2100 | 0.8842 | 0.7490 |
| 0.7101 | 1.72 | 2200 | 0.8954 | 0.7436 |
| 0.5946 | 1.8 | 2300 | 0.9174 | 0.7386 |
| 0.6937 | 1.88 | 2400 | 0.7818 | 0.7760 |
| 0.5593 | 1.96 | 2500 | 0.7449 | 0.7934 |
| 0.4139 | 2.04 | 2600 | 0.7787 | 0.7830 |
| 0.2929 | 2.11 | 2700 | 0.7122 | 0.7945 |
| 0.4159 | 2.19 | 2800 | 0.7446 | 0.7907 |
| 0.4079 | 2.27 | 2900 | 0.7354 | 0.7938 |
| 0.516 | 2.35 | 3000 | 0.7499 | 0.8007 |
| 0.2728 | 2.43 | 3100 | 0.6851 | 0.8061 |
| 0.4159 | 2.51 | 3200 | 0.7258 | 0.7999 |
| 0.3396 | 2.58 | 3300 | 0.7455 | 0.7972 |
| 0.1918 | 2.66 | 3400 | 0.6793 | 0.8119 |
| 0.1228 | 2.74 | 3500 | 0.6696 | 0.8134 |
| 0.2671 | 2.82 | 3600 | 0.6306 | 0.8285 |
| 0.4986 | 2.9 | 3700 | 0.6111 | 0.8296 |
| 0.3699 | 2.98 | 3800 | 0.5600 | 0.8508 |
| 0.0444 | 3.05 | 3900 | 0.6021 | 0.8331 |
| 0.1489 | 3.13 | 4000 | 0.5599 | 0.8516 |
| 0.15 | 3.21 | 4100 | 0.6377 | 0.8365 |
| 0.2535 | 3.29 | 4200 | 0.5752 | 0.8543 |
| 0.2679 | 3.37 | 4300 | 0.5677 | 0.8608 |
| 0.0989 | 3.45 | 4400 | 0.6325 | 0.8396 |
| 0.0825 | 3.52 | 4500 | 0.5979 | 0.8524 |
| 0.0427 | 3.6 | 4600 | 0.5903 | 0.8516 |
| 0.1806 | 3.68 | 4700 | 0.5323 | 0.8628 |
| 0.2672 | 3.76 | 4800 | 0.5688 | 0.8604 |
| 0.2674 | 3.84 | 4900 | 0.5369 | 0.8635 |
| 0.2185 | 3.92 | 5000 | 0.4743 | 0.8820 |
| 0.2195 | 3.99 | 5100 | 0.5340 | 0.8709 |
| 0.0049 | 4.07 | 5200 | 0.5883 | 0.8608 |
| 0.0204 | 4.15 | 5300 | 0.6102 | 0.8539 |
| 0.0652 | 4.23 | 5400 | 0.5659 | 0.8670 |
| 0.028 | 4.31 | 5500 | 0.4916 | 0.8840 |
| 0.0423 | 4.39 | 5600 | 0.5706 | 0.8736 |
| 0.0087 | 4.46 | 5700 | 0.5653 | 0.8697 |
| 0.0964 | 4.54 | 5800 | 0.5423 | 0.8755 |
| 0.0841 | 4.62 | 5900 | 0.5160 | 0.8743 |
| 0.0945 | 4.7 | 6000 | 0.5532 | 0.8697 |
| 0.0311 | 4.78 | 6100 | 0.4947 | 0.8867 |
| 0.0423 | 4.86 | 6200 | 0.5063 | 0.8843 |
| 0.1348 | 4.93 | 6300 | 0.5619 | 0.8743 |
| 0.049 | 5.01 | 6400 | 0.5800 | 0.8732 |
| 0.0053 | 5.09 | 6500 | 0.5499 | 0.8770 |
| 0.0234 | 5.17 | 6600 | 0.5102 | 0.8874 |
| 0.0192 | 5.25 | 6700 | 0.5447 | 0.8836 |
| 0.0029 | 5.32 | 6800 | 0.4787 | 0.8936 |
| 0.0249 | 5.4 | 6900 | 0.5232 | 0.8870 |
| 0.0671 | 5.48 | 7000 | 0.4766 | 0.8975 |
| 0.0056 | 5.56 | 7100 | 0.5136 | 0.8894 |
| 0.003 | 5.64 | 7200 | 0.5085 | 0.8882 |
| 0.0015 | 5.72 | 7300 | 0.4832 | 0.8971 |
| 0.0014 | 5.79 | 7400 | 0.4648 | 0.8998 |
| 0.0065 | 5.87 | 7500 | 0.4739 | 0.8978 |
| 0.0011 | 5.95 | 7600 | 0.5349 | 0.8867 |
| 0.0021 | 6.03 | 7700 | 0.5460 | 0.8847 |
| 0.0012 | 6.11 | 7800 | 0.5309 | 0.8890 |
| 0.0011 | 6.19 | 7900 | 0.4852 | 0.8998 |
| 0.0093 | 6.26 | 8000 | 0.4751 | 0.8998 |
| 0.003 | 6.34 | 8100 | 0.4934 | 0.8963 |
| 0.0027 | 6.42 | 8200 | 0.4882 | 0.9029 |
| 0.0009 | 6.5 | 8300 | 0.4806 | 0.9021 |
| 0.0009 | 6.58 | 8400 | 0.4974 | 0.9029 |
| 0.0009 | 6.66 | 8500 | 0.4748 | 0.9075 |
| 0.0008 | 6.73 | 8600 | 0.4723 | 0.9094 |
| 0.001 | 6.81 | 8700 | 0.4692 | 0.9098 |
| 0.0007 | 6.89 | 8800 | 0.4726 | 0.9075 |
| 0.0011 | 6.97 | 8900 | 0.4686 | 0.9067 |
| 0.0006 | 7.05 | 9000 | 0.4653 | 0.9056 |
| 0.0006 | 7.13 | 9100 | 0.4755 | 0.9029 |
| 0.0007 | 7.2 | 9200 | 0.4633 | 0.9036 |
| 0.0067 | 7.28 | 9300 | 0.4611 | 0.9036 |
| 0.0007 | 7.36 | 9400 | 0.4608 | 0.9052 |
| 0.0007 | 7.44 | 9500 | 0.4623 | 0.9044 |
| 0.0005 | 7.52 | 9600 | 0.4621 | 0.9056 |
| 0.0005 | 7.6 | 9700 | 0.4615 | 0.9056 |
| 0.0005 | 7.67 | 9800 | 0.4612 | 0.9059 |
| 0.0005 | 7.75 | 9900 | 0.4626 | 0.9075 |
| 0.0004 | 7.83 | 10000 | 0.4626 | 0.9075 |
| 0.0005 | 7.91 | 10100 | 0.4626 | 0.9075 |
| 0.0006 | 7.99 | 10200 | 0.4626 | 0.9079 |

| 61c9342ee9b69308bfe1d1a931194f14 |

apache-2.0 | ['bert'] | false | Chinese small pre-trained model MiniRBT To further promote the research and development of Chinese information processing, we have released MiniRBT, a small Chinese pre-trained model built with our self-developed knowledge distillation tool TextBrewer, combining Whole Word Masking and Knowledge Distillation. This repository is developed based on: https://github.com/iflytek/MiniRBT You may also be interested in: - Chinese LERT: https://github.com/ymcui/LERT - Chinese PERT: https://github.com/ymcui/PERT - Chinese MacBERT: https://github.com/ymcui/MacBERT - Chinese ELECTRA: https://github.com/ymcui/Chinese-ELECTRA - Chinese XLNet: https://github.com/ymcui/Chinese-XLNet - Knowledge Distillation Toolkit - TextBrewer: https://github.com/airaria/TextBrewer More resources by HFL: https://github.com/iflytek/HFL-Anthology | 00bcf4797681dca1fdce6281f3d35f29 |

creativeml-openrail-m | [] | false | VOXO Merged model by junjuice0. This model was originally created just for me, so I was not aiming for quality; please don't expect too much. I may release a fine-tuned version of this model in the future, but only God knows whether I will be willing to do it by then. [JOIN US(日本語)](https://discord.gg/ai-art) | 56277c16bb64838c6fcfdd38db9defae |

creativeml-openrail-m | [] | false | VOXO-Vtuber (VOXO-v0-vtuber.safetensors) This model can generate vtubers for Hololive and Nijisanji. Some vtubers may or may not come out well. It is recommended to give the name a weight of about 1.2 (e.g. (ange katrina:1.2)) | b03ed14b84be45a1d7398a448370276a |

creativeml-openrail-m | [] | false | RECOMMENDED It is recommended to use TIs such as bad-images or bad-prompt for negative prompts. Quality prompts (e.g. masterpiece, high quality) are not required. Using highres. fix may change the painting considerably; use it according to your preference. | c298214108477b5ce245d086c1682ff2 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-emotion-intent This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.1952 - Accuracy: 0.9385 | 68d9a34a268ab38a3f3629635b0fee7a |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 33 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 15 - mixed_precision_training: Native AMP | 91b252c2eee3950488e09225212b7299 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4058 | 1.0 | 1000 | 0.2421 | 0.9265 | | 0.1541 | 2.0 | 2000 | 0.1952 | 0.9385 | | 0.1279 | 3.0 | 3000 | 0.1807 | 0.9345 | | 0.1069 | 4.0 | 4000 | 0.2292 | 0.9365 | | 0.081 | 5.0 | 5000 | 0.3315 | 0.936 | | 2a3c8304614686d79eea4de72624afc1 |

apache-2.0 | ['generated_from_trainer'] | false | stsb_bert-base-uncased_144_v2 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE STSB dataset. It achieves the following results on the evaluation set: - Loss: 0.4994 - Pearson: 0.8900 - Spearmanr: 0.8864 - Combined Score: 0.8882 | 36c07f222f2bd1e1ef777cf64aecd2de |
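
The Combined Score in the row above appears to be the arithmetic mean of the other reported metrics (here Pearson and Spearman). The sketch below checks that reading against the reported numbers; `combined_score` is a hypothetical helper name, and the averaging rule is an assumption inferred from the values, not something the card states.

```python
def combined_score(*metrics: float) -> float:
    """Arithmetic mean of the given metric values (assumed definition)."""
    if not metrics:
        raise ValueError("at least one metric is required")
    return sum(metrics) / len(metrics)


# Values copied from the STSB row above: Pearson and Spearman.
stsb_combined = combined_score(0.8900, 0.8864)
print(round(stsb_combined, 4))
```

Under this assumption the result agrees with the reported Combined Score of 0.8882.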

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Arcane Diffusion This is the fine-tuned Stable Diffusion model trained on images from the TV Show Arcane. Use the tokens **_arcane style_** in your prompts for the effect. **If you enjoy my work, please consider supporting me** [](https://patreon.com/user?u=79196446) | e09c6210c076fc889bb36b66a4bbf4d5 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | 🧨 Diffusers This model can be used just like any other Stable Diffusion model. For more information, please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion). You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](). ```python | c9201645cb83b1d3023e6cbe1188efab |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | !pip install diffusers transformers scipy torch from diffusers import StableDiffusionPipeline import torch model_id = "nitrosocke/Arcane-Diffusion" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "arcane style, a magical princess with golden hair" image = pipe(prompt).images[0] image.save("./magical_princess.png") ``` | 5da4b3e669a3fd3e81fe5b0ce131e77f |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Gradio & Colab We also support a [Gradio](https://github.com/gradio-app/gradio) Web UI and Colab with Diffusers to run fine-tuned Stable Diffusion models: [](https://huggingface.co/spaces/anzorq/finetuned_diffusion) [](https://colab.research.google.com/drive/1j5YvfMZoGdDGdj3O3xRU1m4ujKYsElZO?usp=sharing)  | 1090f02a5c56050a415f4adce7c5fe8a |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Sample images from v3:   | cf64654b8cbf5b7541212993167e1bd5 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Sample images used for training:  **Version 3** (arcane-diffusion-v3): This version uses the new _train-text-encoder_ setting and improves the quality and editability of the model immensely. Trained on 95 images from the show in 8000 steps. **Version 2** (arcane-diffusion-v2): This uses the diffusers-based dreambooth training, and prior-preservation loss is way more effective. The diffusers weights were then converted with a script to a ckpt file in order to work with automatic's repo. Training was done with 5k steps for a direct comparison to v1, and results show that it needs more steps for a more prominent result. Version 3 will be tested with 11k steps. **Version 1** (arcane-diffusion-5k): This model was trained using _Unfrozen Model Textual Inversion_ utilizing the _Training with prior-preservation loss_ methods. There is still a slight shift towards the style, even when the arcane token is not used. | f0369e10be4e436b1d75b7fbd0143ccb |

apache-2.0 | ['Tensorflow'] | false | Tensorpack's Cascade-RCNN with FPN and Group Normalization on ResNext32xd4-50 trained on Pubtabnet for Semantic Segmentation of tables.

The model and its training code have mainly been taken from: [Tensorpack](https://github.com/tensorpack/tensorpack/tree/master/examples/FasterRCNN).

Regarding the dataset, please check: [Xu Zhong et al. - Image-based table recognition: data, model, and evaluation](https://arxiv.org/abs/1911.10683).

The model has been trained to detect rows and columns of tables. As row and column bounding boxes are not a priori part of the annotations, they are calculated using the bounding boxes of the cells and the intrinsic structure of the enclosed HTML.

The code has been adapted so that it can be used in a **deep**doctection pipeline.

| 8a8af03e7ef3d38b0201ffcbdd19ad83 |

apache-2.0 | ['Tensorflow'] | false | How this model can be used

This model can be used with the **deep**doctection in a full pipeline, along with table recognition and OCR. Check the general instruction following this [Get_started](https://github.com/deepdoctection/deepdoctection/blob/master/notebooks/Get_Started.ipynb) tutorial.

| 0607f610da67d6225843f374271b6bbd |

apache-2.0 | ['Tensorflow'] | false | How this model was trained.

To reproduce the training run with the **deep**doctection framework, run:

```python

import os

from deep_doctection.datasets import DatasetRegistry

from deep_doctection.eval import MetricRegistry

from deep_doctection.utils import get_configs_dir_path

from deep_doctection.train import train_faster_rcnn

pubtabnet = DatasetRegistry.get_dataset("pubtabnet")

pubtabnet.dataflow.categories.set_cat_to_sub_cat({"ITEM":"row_col"})

pubtabnet.dataflow.categories.filter_categories(categories=["ROW","COLUMN"])

path_config_yaml=os.path.join(get_configs_dir_path(),"tp/rows/conf_frcnn_rows.yaml")

path_weights = ""

dataset_train = pubtabnet

config_overwrite=["TRAIN.STEPS_PER_EPOCH=500","TRAIN.STARTING_EPOCH=1", "TRAIN.CHECKPOINT_PERIOD=50"]

build_train_config=["max_datapoints=500000","rows_and_cols=True"]

dataset_val = pubtabnet

build_val_config = ["max_datapoints=2000","rows_and_cols=True"]

coco_metric = MetricRegistry.get_metric("coco")

coco_metric.set_params(max_detections=[50,200,600], area_range=[[0,1000000],[0,200],[200,800],[800,1000000]])

train_faster_rcnn(path_config_yaml=path_config_yaml,

dataset_train=dataset_train,

path_weights=path_weights,

config_overwrite=config_overwrite,

log_dir="/path/to/dir",

build_train_config=build_train_config,

dataset_val=dataset_val,

build_val_config=build_val_config,

metric=coco_metric,

pipeline_component_name="ImageLayoutService"

)

```

| ffe1ca91b58d1e2b17569113b7f0d65f |

apache-2.0 | ['generated_from_trainer'] | false | bart-paraphrase-v0.75-e1 This model is a fine-tuned version of [eugenesiow/bart-paraphrase](https://huggingface.co/eugenesiow/bart-paraphrase) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1865 - Rouge1: 71.3427 - Rouge2: 66.0011 - Rougel: 69.8855 - Rougelsum: 69.9796 - Gen Len: 19.6036 | e9af27dba961c0f423853438582b29c1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 0.1373 | 1.0 | 2660 | 0.1865 | 71.3427 | 66.0011 | 69.8855 | 69.9796 | 19.6036 | | c179a11ff0729f0717a25e772e66423a |

openrail | [] | false | text="""Dear Amazon, last week I ordered an Optimus Prime action figure from your online store in Germany. Unfortunately, when I opened the package, I discovered to my horror that I had been sent an action figure of Megatron instead! As a lifelong enemy of the Decepticons, I hope you can understand my dilemma. To resolve the issue, I demand an exchange of Megatron for the Optimus Prime figure I ordered. Enclosed are copies of my records concerning this purchase. I expect to hear from you soon. Sincerely, Bumblebee.""" from transformers import pipeline classifier = pipeline("text-classification") | efe19ef7f359bb7a22186a2dd8818fd8 |

apache-2.0 | [] | false | This is a [COMET](https://github.com/Unbabel/COMET) evaluation model: It receives a triplet with (source sentence, translation, reference translation) and returns a score that reflects the quality of the translation compared to both source and reference. | f2623748c414ba86811467fa9bd9e57d |

apache-2.0 | [] | false | ensures that pip is current pip install unbabel-comet ``` Then you can use it through comet CLI: ```bash comet-score -s {source-inputs}.txt -t {translation-outputs}.txt -r {references}.txt --model Unbabel/wmt22-comet-da ``` Or using Python: ```python from comet import download_model, load_from_checkpoint model_path = download_model("Unbabel/wmt22-comet-da") model = load_from_checkpoint(model_path) data = [ { "src": "Dem Feuer konnte Einhalt geboten werden", "mt": "The fire could be stopped", "ref": "They were able to control the fire." }, { "src": "Schulen und Kindergärten wurden eröffnet.", "mt": "Schools and kindergartens were open", "ref": "Schools and kindergartens opened" } ] model_output = model.predict(data, batch_size=8, gpus=1) print (model_output) ``` | 1956d60185daa8d03a20ea57ade022fa |

apache-2.0 | [] | false | Intended uses Our model is intended to be used for **MT evaluation**. Given a triplet with (source sentence, translation, reference translation), it outputs a single score between 0 and 1, where 1 represents a perfect translation. | 2b92224b84aa30fb06cf718b1923bfa8 |

apache-2.0 | [] | false | Languages Covered: This model builds on top of XLM-R, which covers the following languages: Afrikaans, Albanian, Amharic, Arabic, Armenian, Assamese, Azerbaijani, Basque, Belarusian, Bengali, Bengali Romanized, Bosnian, Breton, Bulgarian, Burmese, Burmese, Catalan, Chinese (Simplified), Chinese (Traditional), Croatian, Czech, Danish, Dutch, English, Esperanto, Estonian, Filipino, Finnish, French, Galician, Georgian, German, Greek, Gujarati, Hausa, Hebrew, Hindi, Hindi Romanized, Hungarian, Icelandic, Indonesian, Irish, Italian, Japanese, Javanese, Kannada, Kazakh, Khmer, Korean, Kurdish (Kurmanji), Kyrgyz, Lao, Latin, Latvian, Lithuanian, Macedonian, Malagasy, Malay, Malayalam, Marathi, Mongolian, Nepali, Norwegian, Oriya, Oromo, Pashto, Persian, Polish, Portuguese, Punjabi, Romanian, Russian, Sanskrit, Scottish Gaelic, Serbian, Sindhi, Sinhala, Slovak, Slovenian, Somali, Spanish, Sundanese, Swahili, Swedish, Tamil, Tamil Romanized, Telugu, Telugu Romanized, Thai, Turkish, Ukrainian, Urdu, Urdu Romanized, Uyghur, Uzbek, Vietnamese, Welsh, Western Frisian, Xhosa, Yiddish. Thus, results for language pairs containing uncovered languages are unreliable! | af6a426eeccc8ea9867ec1cbfd342c83 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large Assamese - Drishti Sharma This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.2452 - Wer: 21.4582 | e6304d7bef7ec38924b9573825291453 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0109 | 4.32 | 700 | 0.2452 | 21.4582 | | 0368c1398cc19d3ffa00075096726407 |

apache-2.0 | [] | false | bert-base-en-el-cased We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages. Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy. For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | 92386f00f21e1be0c65f98a145686ba5 |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-en-el-cased") model = AutoModel.from_pretrained("Geotrend/bert-base-en-el-cased") ``` To generate other smaller versions of multilingual transformers please visit [our Github repo](https://github.com/Geotrend-research/smaller-transformers). | 78f063107646085438ba3114f3357cb7 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2237 - Accuracy: 0.9275 - F1: 0.9274 | f35a7ffb6d233edb625d938c199379d5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8643 | 1.0 | 250 | 0.3324 | 0.9065 | 0.9025 | | 0.2589 | 2.0 | 500 | 0.2237 | 0.9275 | 0.9274 | | 121d3a1088351b2672876a69f20c7507 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Whisper Small Bashkir This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the mozilla-foundation/common_voice_11_0 ba dataset. It achieves the following results on the evaluation set: - Loss: 0.2589 - Wer: 15.0723 | 48ff68ffd610289bb6d2546acdb119ed |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 30000 - mixed_precision_training: Native AMP | b07338a330a43da673ef95ed41562a03 |
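
To make the `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 500` settings above concrete, here is a minimal pure-Python sketch of a linear warmup followed by linear decay to zero. The function name `linear_warmup_lr` is hypothetical, and the exact shape is an assumption about how such a schedule is commonly defined, not code taken from the training run.

```python
def linear_warmup_lr(step: int,
                     base_lr: float = 1e-05,
                     warmup_steps: int = 500,
                     total_steps: int = 30000) -> float:
    """Learning rate at `step` for linear warmup, then linear decay to 0."""
    if step < warmup_steps:
        # Ramp up from 0 to base_lr over the warmup phase.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 15250, 30000):
    print(step, linear_warmup_lr(step))
```

With the defaults above, the rate peaks at step 500 and reaches zero at step 30000, the final step listed in the results table.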

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 0.1637 | 1.01 | 2000 | 0.2555 | 26.4682 | | 0.1375 | 2.01 | 4000 | 0.2223 | 21.5394 | | 0.0851 | 3.02 | 6000 | 0.2086 | 19.6725 | | 0.0573 | 4.02 | 8000 | 0.2178 | 18.4280 | | 0.036 | 5.03 | 10000 | 0.2312 | 17.8248 | | 0.0238 | 6.04 | 12000 | 0.2621 | 17.4096 | | 0.0733 | 7.04 | 14000 | 0.2120 | 16.5656 | | 0.0111 | 8.05 | 16000 | 0.2682 | 16.2291 | | 0.0155 | 9.05 | 18000 | 0.2677 | 15.9242 | | 0.0041 | 10.06 | 20000 | 0.3178 | 15.9534 | | 0.0023 | 12.01 | 22000 | 0.3218 | 16.0536 | | 0.0621 | 13.01 | 24000 | 0.2313 | 15.6169 | | 0.0022 | 14.02 | 26000 | 0.2887 | 15.1083 | | 0.0199 | 15.02 | 28000 | 0.2553 | 15.1848 | | 0.0083 | 16.03 | 30000 | 0.2589 | 15.0723 | | 4bf48b58a10be0a964020aae313970ac |
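
The Wer column above is a word error rate. As a rough illustration, the sketch below computes WER as the word-level edit distance divided by the number of reference words. `word_error_rate` is a hypothetical helper written for this example, not the evaluation code behind these cards, and it applies no text normalization. Note also that these Whisper rows appear to report WER as a percentage, whereas the function returns a fraction.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```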

mit | ['generated_from_trainer'] | false | kobart-base-v2-finetuned-paper This model is a fine-tuned version of [gogamza/kobart-base-v2](https://huggingface.co/gogamza/kobart-base-v2) on the aihub_paper_summarization dataset. It achieves the following results on the evaluation set: - Loss: 1.2966 - Rouge1: 6.2883 - Rouge2: 1.7038 - Rougel: 6.2556 - Rougelsum: 6.2618 - Gen Len: 20.0 | 49981a14400827359d25379e8d7d327b |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:|:------:|:---------:|:-------:| | 1.2215 | 1.0 | 8831 | 1.3293 | 6.2425 | 1.7317 | 6.2246 | 6.2247 | 20.0 | | 1.122 | 2.0 | 17662 | 1.3056 | 6.2298 | 1.7005 | 6.2042 | 6.2109 | 20.0 | | 1.0914 | 3.0 | 26493 | 1.2966 | 6.2883 | 1.7038 | 6.2556 | 6.2618 | 20.0 | | c9b478685fdfb3fec61ffd043f3236f8 |

mit | ['roberta-base', 'roberta-base-epoch_33'] | false | RoBERTa, Intermediate Checkpoint - Epoch 33 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide the 84 checkpoints (including the randomly initialized weights before the training) to make it possible to study the training dynamics of such models, and for other possible use cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_33. | d7ab293b88b5509fef2d9b26fd488403 |

apache-2.0 | ['generated_from_trainer'] | false | eval_v3_mrpc This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE MRPC dataset. It achieves the following results on the evaluation set: - eval_loss: 0.6564 - eval_accuracy: 0.6649 - eval_f1: 0.7987 - eval_combined_score: 0.7318 - eval_runtime: 5.045 - eval_samples_per_second: 341.921 - eval_steps_per_second: 42.815 - step: 0 | d18c029fb058c858ef4e6a62f9bb3b21 |

apache-2.0 | ['generated_from_keras_callback'] | false | nestoralvaro/mt5-small-finetuned-google_small_for_summarization_TF This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.3123 - Validation Loss: 2.1399 - Epoch: 7 | b79d748b9ca5539cd5a1e502442cdee5 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 266360, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 5613de60346f7d22e35b36e49dcf40bb |
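
The optimizer config above uses a `PolynomialDecay` learning-rate schedule with `power: 1.0`, which reduces to a straight line from the initial rate to the end rate. The sketch below re-implements that formula in plain Python for illustration, assuming the usual non-cycling definition; `polynomial_decay_lr` is a hypothetical name, and the defaults are copied from the config.

```python
def polynomial_decay_lr(step: int,
                        initial_lr: float = 5.6e-05,
                        end_lr: float = 0.0,
                        decay_steps: int = 266360,
                        power: float = 1.0) -> float:
    """Learning rate at `step` under polynomial decay without cycling."""
    # Past decay_steps the rate stays at end_lr (cycle=False in the config).
    step = min(step, decay_steps)
    fraction_left = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction_left ** power + end_lr


for step in (0, 133180, 266360):
    print(step, polynomial_decay_lr(step))
```

With `power=1.0` the rate is halved at the midpoint (step 133180) and reaches `end_lr` at step 266360.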

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 3.2631 | 2.3702 | 0 | | 2.6166 | 2.2422 | 1 | | 2.4974 | 2.2074 | 2 | | 2.4288 | 2.1843 | 3 | | 2.3837 | 2.1613 | 4 | | 2.3503 | 2.1521 | 5 | | 2.3263 | 2.1407 | 6 | | 2.3123 | 2.1399 | 7 | | c62eb4e6dcb76c2761149fbd29957f7c |

apache-2.0 | ['image-classification'] | false | resnet50 Implementation of ResNet proposed in [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385) ``` python ResNet.resnet18() ResNet.resnet26() ResNet.resnet34() ResNet.resnet50() ResNet.resnet101() ResNet.resnet152() ResNet.resnet200() Variants (d) proposed in `Bag of Tricks for Image Classification with Convolutional Neural Networks <https://arxiv.org/pdf/1812.01187.pdf>`_ ResNet.resnet26d() ResNet.resnet34d() ResNet.resnet50d() | 36acd48338ea1ca9d7058dc68e8fd8f1 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-google-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5195 - Wer: 0.3386 | f81aa8dc40a3083a9c5588472331e035 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.5345 | 1.0 | 500 | 2.1466 | 1.0010 | | 0.949 | 2.01 | 1000 | 0.5687 | 0.5492 | | 0.445 | 3.01 | 1500 | 0.4562 | 0.4717 | | 0.2998 | 4.02 | 2000 | 0.4154 | 0.4401 | | 0.2242 | 5.02 | 2500 | 0.3887 | 0.4034 | | 0.1834 | 6.02 | 3000 | 0.4262 | 0.3905 | | 0.1573 | 7.03 | 3500 | 0.4200 | 0.3927 | | 0.1431 | 8.03 | 4000 | 0.4194 | 0.3869 | | 0.1205 | 9.04 | 4500 | 0.4600 | 0.3912 | | 0.1082 | 10.04 | 5000 | 0.4613 | 0.3776 | | 0.0984 | 11.04 | 5500 | 0.4926 | 0.3860 | | 0.0872 | 12.05 | 6000 | 0.4869 | 0.3780 | | 0.0826 | 13.05 | 6500 | 0.5033 | 0.3690 | | 0.0717 | 14.06 | 7000 | 0.4827 | 0.3791 | | 0.0658 | 15.06 | 7500 | 0.4816 | 0.3650 | | 0.0579 | 16.06 | 8000 | 0.5433 | 0.3689 | | 0.056 | 17.07 | 8500 | 0.5513 | 0.3672 | | 0.0579 | 18.07 | 9000 | 0.4813 | 0.3632 | | 0.0461 | 19.08 | 9500 | 0.4846 | 0.3501 | | 0.0431 | 20.08 | 10000 | 0.5449 | 0.3637 | | 0.043 | 21.08 | 10500 | 0.4906 | 0.3538 | | 0.0334 | 22.09 | 11000 | 0.5081 | 0.3477 | | 0.0322 | 23.09 | 11500 | 0.5184 | 0.3439 | | 0.0316 | 24.1 | 12000 | 0.5412 | 0.3450 | | 0.0262 | 25.1 | 12500 | 0.5113 | 0.3425 | | 0.0267 | 26.1 | 13000 | 0.4888 | 0.3414 | | 0.0258 | 27.11 | 13500 | 0.5071 | 0.3371 | | 0.0226 | 28.11 | 14000 | 0.5311 | 0.3380 | | 0.0233 | 29.12 | 14500 | 0.5195 | 0.3386 | | 8b99349d1f8ebe9e86503dae9128bf16 |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr2e05-wd0.02-bs32 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2784 - Rmse: 0.5277 - Mse: 0.2784 - Mae: 0.4161 | 63aabe736a23fe9c966721de851a1e77 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2774 | 1.0 | 623 | 0.2749 | 0.5243 | 0.2749 | 0.4184 | | 0.2741 | 2.0 | 1246 | 0.2741 | 0.5235 | 0.2741 | 0.4173 | | 0.2724 | 3.0 | 1869 | 0.2855 | 0.5343 | 0.2855 | 0.4428 | | 0.2713 | 4.0 | 2492 | 0.2758 | 0.5252 | 0.2758 | 0.4013 | | 0.2695 | 5.0 | 3115 | 0.2777 | 0.5270 | 0.2777 | 0.4245 | | 0.2674 | 6.0 | 3738 | 0.2784 | 0.5277 | 0.2784 | 0.4161 | | f742a107b7a8a744ac601a64888bfe73 |
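
In the table above the Validation Loss and Mse columns are identical, and Rmse is the square root of Mse. The short check below verifies that relationship on the reported rows; the tolerance accounts for the values being rounded to four decimals, and the variable names are chosen for this example only.

```python
import math

# (Mse, Rmse) pairs copied from the table above, one per epoch.
reported = [
    (0.2749, 0.5243),
    (0.2741, 0.5235),
    (0.2855, 0.5343),
    (0.2758, 0.5252),
    (0.2777, 0.5270),
    (0.2784, 0.5277),
]

# Largest gap between sqrt(Mse) and the reported Rmse across all epochs.
max_gap = max(abs(math.sqrt(mse) - rmse) for mse, rmse in reported)
print(max_gap < 5e-4)
```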

apache-2.0 | ['generated_from_trainer'] | false | mental_health_trainer This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the [reddit_mental_health_posts](https://huggingface.co/datasets/solomonk/reddit_mental_health_posts) | 0f24465dde9d30f900e2adb51284d33c |

apache-2.0 | ['generated_from_trainer'] | false | roberta-base-bne-finetuned-sqac This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-bne](https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne) on the sqac dataset. It achieves the following results on the evaluation set: - Loss: 1.2066 | 3b4c6598f5262419fbc63c0b46d701dd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 0.9924 | 1.0 | 1196 | 0.8670 | | 0.474 | 2.0 | 2392 | 0.8923 | | 0.1637 | 3.0 | 3588 | 1.2066 | | 6a81d6b587d152f548683fd13b8ae7ac |

apache-2.0 | ['generated_from_trainer'] | false | Brain_Tumor_Class_swin This model is a fine-tuned version of [microsoft/swin-base-patch4-window7-224-in22k](https://huggingface.co/microsoft/swin-base-patch4-window7-224-in22k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0220 - Accuracy: 0.9936 - F1: 0.9936 - Recall: 0.9936 - Precision: 0.9936 | bde5d0fb4586756ad91b52584f8308ff |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Recall | Precision | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:------:|:---------:| | 0.1248 | 1.0 | 220 | 0.0610 | 0.9767 | 0.9767 | 0.9767 | 0.9767 | | 0.0887 | 2.0 | 440 | 0.0300 | 0.9920 | 0.9920 | 0.9920 | 0.9920 | | 0.0449 | 3.0 | 660 | 0.0220 | 0.9936 | 0.9936 | 0.9936 | 0.9936 | | d514e5096f80d80e33173b312db5f6d6 |

bsd-3-clause | ['image-captioning'] | false | BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation Model card for image captioning pretrained on COCO dataset - base architecture (with ViT large backbone). |  | |:--:| | <b> Pull figure from BLIP official repo | Image source: https://github.com/salesforce/BLIP </b>| | 4fb7ab7a6dfa6eed08d5b547157007c5 |

bsd-3-clause | ['image-captioning'] | false | TL;DR Authors from the [paper](https://arxiv.org/abs/2201.12086) write in the abstract: *Vision-Language Pre-training (VLP) has advanced the performance for many vision-language tasks. However, most existing pre-trained models only excel in either understanding-based tasks or generation-based tasks. Furthermore, performance improvement has been largely achieved by scaling up the dataset with noisy image-text pairs collected from the web, which is a suboptimal source of supervision. In this paper, we propose BLIP, a new VLP framework which transfers flexibly to both vision-language understanding and generation tasks. BLIP effectively utilizes the noisy web data by bootstrapping the captions, where a captioner generates synthetic captions and a filter removes the noisy ones. We achieve state-of-the-art results on a wide range of vision-language tasks, such as image-text retrieval (+2.7% in average recall@1), image captioning (+2.8% in CIDEr), and VQA (+1.6% in VQA score). BLIP also demonstrates strong generalization ability when directly transferred to video-language tasks in a zero-shot manner. Code, models, and datasets are released.* | 9752b07a6ea11701bfce27953a88da24 |

bsd-3-clause | ['image-captioning'] | false | Running the model on CPU <details> <summary> Click to expand </summary> ```python import requests from PIL import Image from transformers import BlipProcessor, BlipForConditionalGeneration processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large") model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large") img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg' raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB') | a00a511d281f2c8796ef9144c8746f9d |

bsd-3-clause | ['image-captioning'] | false | conditional image captioning text = "a photography of" inputs = processor(raw_image, text, return_tensors="pt") out = model.generate(**inputs) print(processor.decode(out[0], skip_special_tokens=True)) | 97529d21d3fdf4adc6ed9b49f2f23677 |
