license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

bsd-3-clause | ['image-captioning'] | false | In full precision <details> <summary> Click to expand </summary> ```python import requests from PIL import Image from transformers import BlipProcessor, BlipForConditionalGeneration processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large") model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large").to("cuda") img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg' raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB') | f9614ad8c9573be6a18d84f1f7fdedbc |

bsd-3-clause | ['image-captioning'] | false | conditional image captioning text = "a photography of" inputs = processor(raw_image, text, return_tensors="pt").to("cuda") out = model.generate(**inputs) print(processor.decode(out[0], skip_special_tokens=True)) | c2bffe96950f4f6ac49e1c76a388d063 |

bsd-3-clause | ['image-captioning'] | false | In half precision (`float16`) <details> <summary> Click to expand </summary> ```python import torch import requests from PIL import Image from transformers import BlipProcessor, BlipForConditionalGeneration processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large") model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large", torch_dtype=torch.float16).to("cuda") img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg' raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB') | f908e768f5571fa6a881b65619d6af71 |

bsd-3-clause | ['image-captioning'] | false | conditional image captioning text = "a photography of" inputs = processor(raw_image, text, return_tensors="pt").to("cuda", torch.float16) out = model.generate(**inputs) print(processor.decode(out[0], skip_special_tokens=True)) | fa09f94c6a087c6d6bf4bd749e25cfd8 |

bsd-3-clause | ['image-captioning'] | false | unconditional image captioning inputs = processor(raw_image, return_tensors="pt").to("cuda", torch.float16) out = model.generate(**inputs) print(processor.decode(out[0], skip_special_tokens=True)) >>> a woman sitting on the beach with her dog ``` </details> | 59d1ada897ca2c3bbcb6c99f702ab838 |

bsd-3-clause | ['image-captioning'] | false | BibTex and citation info ``` @misc{https://doi.org/10.48550/arxiv.2201.12086, doi = {10.48550/ARXIV.2201.12086}, url = {https://arxiv.org/abs/2201.12086}, author = {Li, Junnan and Li, Dongxu and Xiong, Caiming and Hoi, Steven}, keywords = {Computer Vision and Pattern Recognition (cs.CV), FOS: Computer and information sciences, FOS: Computer and information sciences}, title = {BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation}, publisher = {arXiv}, year = {2022}, copyright = {Creative Commons Attribution 4.0 International} } ``` | 8353eec37f2487700c504dc7ba6eb798 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-stsb This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.7830 | 77bab752769e2f6ec8dea0e4229176c2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 4.7548 | 0.7 | 500 | 3.9253 | | 4.4535 | 1.39 | 1000 | 3.9069 | | 4.364 | 2.09 | 1500 | 3.8392 | | 4.1534 | 2.78 | 2000 | 3.7830 | | 4.2317 | 3.48 | 2500 | 3.7450 | | 4.1233 | 4.17 | 3000 | 3.7755 | | 4.0383 | 4.87 | 3500 | 3.7060 | | 4.0459 | 5.56 | 4000 | 3.8708 | | 3.9321 | 6.26 | 4500 | 3.8573 | | 4.0206 | 6.95 | 5000 | 3.7830 | | 833afc346a6b1e62d84bb34c41dbf6e8 |

apache-2.0 | ['translation'] | false | opus-mt-srn-fr * source languages: srn * target languages: fr * OPUS readme: [srn-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/srn-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/srn-fr/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/srn-fr/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/srn-fr/opus-2020-01-16.eval.txt) | b2cae4f53671e877c81da22241fcf954 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4688 - Wer: 0.3417 | ccb42f600b5703f0964f39d26821203a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.4156 | 4.0 | 500 | 1.2721 | 0.8882 | | 0.6145 | 8.0 | 1000 | 0.4712 | 0.4510 | | 0.229 | 12.0 | 1500 | 0.4459 | 0.3847 | | 0.1312 | 16.0 | 2000 | 0.4739 | 0.3786 | | 0.0897 | 20.0 | 2500 | 0.4483 | 0.3562 | | 0.0608 | 24.0 | 3000 | 0.4450 | 0.3502 | | 0.0456 | 28.0 | 3500 | 0.4688 | 0.3417 | | d66c2348223a48b0bec0b1a4ab4d92ec |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1358 - F1: 0.8638 | 0509e3128a3299e4ab713747d370b5ef |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2591 | 1.0 | 525 | 0.1621 | 0.8206 | | 0.1276 | 2.0 | 1050 | 0.1379 | 0.8486 | | 0.082 | 3.0 | 1575 | 0.1358 | 0.8638 | | c46a0d7a928d738a9cd67d8eec05c90d |

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingDynamic'] | false | Post-training dynamic quantization This is an INT8 PyTorch model quantized with [huggingface/optimum-intel](https://github.com/huggingface/optimum-intel) using [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model comes from the fine-tuned model [sshleifer/distilbart-cnn-12-6](https://huggingface.co/sshleifer/distilbart-cnn-12-6). The linear modules below (21/133) fall back to fp32 to keep the relative accuracy loss under 1%: **'model.decoder.layers.2.fc2'**, **'model.encoder.layers.11.fc2'**, **'model.decoder.layers.1.fc2'**, **'model.decoder.layers.0.fc2'**, **'model.decoder.layers.4.fc1'**, **'model.decoder.layers.3.fc2'**, **'model.encoder.layers.8.fc2'**, **'model.decoder.layers.3.fc1'**, **'model.encoder.layers.11.fc1'**, **'model.encoder.layers.0.fc2'**, **'model.encoder.layers.3.fc1'**, **'model.encoder.layers.10.fc2'**, **'model.decoder.layers.5.fc1'**, **'model.encoder.layers.1.fc2'**, **'model.encoder.layers.3.fc2'**, **'lm_head'**, **'model.encoder.layers.7.fc2'**, **'model.decoder.layers.0.fc1'**, **'model.encoder.layers.4.fc1'**, **'model.encoder.layers.10.fc1'**, **'model.encoder.layers.6.fc1'** | e735b56f936f1115c8e4a51c1f3639e5 |

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingDynamic'] | false | Load with optimum: ```python from optimum.intel.neural_compressor.quantization import IncQuantizedModelForSeq2SeqLM int8_model = IncQuantizedModelForSeq2SeqLM.from_pretrained( 'Intel/distilbart-cnn-12-6-int8-dynamic', ) ``` | 0d9b34ba83c66de1ca149ab4c60edc0a |

mit | ['audio-generation'] | false | !pip install diffusers[torch] accelerate scipy from diffusers import DiffusionPipeline from scipy.io.wavfile import write model_id = "harmonai/unlocked-250k" pipe = DiffusionPipeline.from_pretrained(model_id) pipe = pipe.to("cuda") audios = pipe(audio_length_in_s=4.0).audios | eddfc6058a4b2716e6561f43b2e9d1c4 |

mit | ['audio-generation'] | false | !pip install diffusers[torch] accelerate scipy from diffusers import DiffusionPipeline from scipy.io.wavfile import write import torch model_id = "harmonai/unlocked-250k" pipe = DiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") audios = pipe(audio_length_in_s=4.0).audios | 36eaf2c8d1832845ce76fe9a6e629102 |

apache-2.0 | [] | false | ALBERT XLarge v1 Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1909.11942) and first released in [this repository](https://github.com/google-research/albert). This model, as all ALBERT models, is uncased: it does not make a difference between english and English. Disclaimer: The team releasing ALBERT did not write a model card for this model so this model card has been written by the Hugging Face team. | 2d2491b04b732d98920fe4d1773afe5f |

apache-2.0 | [] | false | How to use You can use this model directly with a pipeline for masked language modeling: In tf_transformers ```python from tf_transformers.models import AlbertModel from transformers import AlbertTokenizer tokenizer = AlbertTokenizer.from_pretrained('albert-xlarge-v1') model = AlbertModel.from_pretrained("albert-xlarge-v1") text = "Replace me by any text you'd like." inputs_tf = {} inputs = tokenizer(text, return_tensors='tf') inputs_tf["input_ids"] = inputs["input_ids"] inputs_tf["input_type_ids"] = inputs["token_type_ids"] inputs_tf["input_mask"] = inputs["attention_mask"] outputs_tf = model(inputs_tf) ``` | b525318bb948ae40ad8e159672a8a750 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-mnli-amazon-query-shopping This model is a fine-tuned version of [nlptown/bert-base-multilingual-uncased-sentiment](https://huggingface.co/nlptown/bert-base-multilingual-uncased-sentiment?text=I+like+you.+I+love+you) on an [Amazon US Customer Reviews Dataset](https://www.kaggle.com/datasets/cynthiarempel/amazon-us-customer-reviews-dataset). The code for the fine-tuning process can be found [here](https://github.com/vanderbilt-data-science/bigdata/blob/main/06-fine-tune-BERT-on-our-dataset.ipynb). This model is uncased: it does not make a difference between english and English. It achieves the following results on the evaluation set: - Loss: 0.5202942490577698 - Accuracy: 0.8 | e45a6069ee9cd3af0e40c36ccbfa2972 |

apache-2.0 | ['generated_from_trainer'] | false | Model description This is a bert-base-multilingual-uncased model finetuned for sentiment analysis on product reviews in six languages: English, Dutch, German, French, Spanish and Italian. It predicts the sentiment of the review as a number of stars (between 1 and 5). This model is intended for direct use as a sentiment analysis model for product reviews in any of the six languages above, or for further finetuning on related sentiment analysis tasks. We replaced its head and fine-tuned it on our customer reviews, using 17,280 rows for training and 4,320 rows of dev set for validation. Finally, we evaluated our model performance on a held-out test set of 2,400 rows. | c1d3ba67511b76539e412d7fb9e201e4 |

apache-2.0 | ['generated_from_trainer'] | false | Intended uses & limitations Bert-base is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification, or question answering. This fine-tuned version of BERT-base is used to predict the star rating of a review given its text. Its main limitation is that it was trained on reviews of products on Amazon. If you apply this model to other domains, it may perform poorly. | 413413f26afafbab1ab869476addbaf2 |

apache-2.0 | ['generated_from_trainer'] | false | How to use You can use this model directly by downloading the trained weights and configurations like the below code snippet: ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification tokenizer = AutoTokenizer.from_pretrained("LiYuan/amazon-review-sentiment-analysis") model = AutoModelForSequenceClassification.from_pretrained("LiYuan/amazon-review-sentiment-analysis") ``` | c3898db741a075b597725f013c2a5b44 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.555400 | 1.0 | 1080 | 0.520294 | 0.800000 | | 0.424300 | 2.0 | 1080 | 0.549649 | 0.798380 | | 1b44f551f184eb716489fa5880246bc1 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-wikitext-target-rotten_tomatoes This model is a fine-tuned version of [muhtasham/small-mlm-wikitext](https://huggingface.co/muhtasham/small-mlm-wikitext) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.3909 - Accuracy: 0.8021 - F1: 0.8017 | da40be82c31ed20c92d8ca938ae944c7 |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_asr_mind_model This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 6.8626 - Wer: 1.0299 | 3404afdda0ff0f559684e6121664e794 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 2000 - mixed_precision_training: Native AMP | 9a2fcd1f5dae52aec7749e9f59d633eb |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.7266 | 499.8 | 1000 | 5.8888 | 0.9403 | | 0.166 | 999.8 | 2000 | 6.8626 | 1.0299 | | 0b680bb63908ab0dd07dc719c3503fe7 |

cc-by-sa-4.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModelForMaskedLM tokenizer = AutoTokenizer.from_pretrained("conan1024hao/cjkbert-small") model = AutoModelForMaskedLM.from_pretrained("conan1024hao/cjkbert-small") ``` - Before you fine-tune downstream tasks, you don't need any text segmentation. - (Though you may obtain better results if you applied morphological analysis to the data before fine-tuning) | fa9506f0d2a1d724ffe479d4864a015c |

cc-by-sa-4.0 | [] | false | Morphological analysis tools - ZH: For Chinese, we use [LTP](https://github.com/HIT-SCIR/ltp). - JA: For Japanese, we use [Juman++](https://github.com/ku-nlp/jumanpp). - KO: For Korean, we use [KoNLPy](https://github.com/konlpy/konlpy)(Kkma class). | 17f18fafe8aa16702325862144fefc32 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-squad This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5539 | 80f75f5aa2028a62eb6642f1c2334b29 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2 | 43054ed00afc28215331be78789242d9 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 0.7665 | 1.0 | 2295 | 0.5231 | | 0.5236 | 2.0 | 4590 | 0.5539 | | 64f00ec75074f198f346c38c604d5b91 |

cc-by-sa-4.0 | ['english', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a RoBERTa model pre-trained with [UD_English](https://universaldependencies.org/en/) for POS-tagging and dependency-parsing, derived from [roberta-base](https://huggingface.co/roberta-base). Every word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | a8bd6f74a9ead146b760d63693461381 |

cc-by-sa-4.0 | ['english', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use ```py from transformers import AutoTokenizer,AutoModelForTokenClassification tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/roberta-base-english-upos") model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/roberta-base-english-upos") ``` or ```py import esupar nlp=esupar.load("KoichiYasuoka/roberta-base-english-upos") ``` | db773a8d278e25b5eec784f17ab92bf1 |

mit | ['generated_from_keras_callback'] | false | xtremedistil-l6-h384-uncased-future-time-references This model is a fine-tuned version of [microsoft/xtremedistil-l6-h256-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h256-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0279 - Train Binary Crossentropy: 0.4809 - Epoch: 9 | 31192f3ddd56f0e83caef1d278dd26f3 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Binary Crossentropy | Epoch | |:----------:|:-------------------------:|:-----:| | 0.0487 | 0.6401 | 0 | | 0.0348 | 0.5925 | 1 | | 0.0319 | 0.5393 | 2 | | 0.0306 | 0.5168 | 3 | | 0.0298 | 0.5045 | 4 | | 0.0292 | 0.4970 | 5 | | 0.0288 | 0.4916 | 6 | | 0.0284 | 0.4878 | 7 | | 0.0282 | 0.4836 | 8 | | 0.0279 | 0.4809 | 9 | | 973894f7f33811274aebfa653576a6a8 |

mit | [] | false | ***astroBERT: a language model for astrophysics*** This public repository contains the work of the [NASA/ADS](https://ui.adsabs.harvard.edu/) on building an NLP language model tailored to astrophysics, along with tutorials and miscellaneous related files. This model is **cased** (it treats `ads` and `ADS` differently). | b5a702e6da784365a6be4d794cb4cf14 |

mit | [] | false | astroBERT models 0. **Base model**: Pretrained model on English language using a masked language modeling (MLM) and next sentence prediction (NSP) objective. It was introduced in [this paper at ADASS 2021](https://arxiv.org/abs/2112.00590) and made public at ADASS 2022. 1. **NER-DEAL model**: This model adds a token classification head to the base model finetuned on the [DEAL@WIESP2022 named entity recognition](https://ui.adsabs.harvard.edu/WIESP/2022/SharedTasks) task. Must be loaded from the `revision='NER-DEAL'` branch (see tutorial 2). | 97342cc7dc979c4c6418df3a993a5ece |

mit | [] | false | Tutorials 0. [generate text embedding (for downstream tasks)](https://nbviewer.org/urls/huggingface.co/adsabs/astroBERT/raw/main/Tutorials/0_Embeddings.ipynb) 1. [use astroBERT for the Fill-Mask task](https://nbviewer.org/urls/huggingface.co/adsabs/astroBERT/raw/main/Tutorials/1_Fill-Mask.ipynb) 2. [make NER-DEAL predictions](https://nbviewer.org/urls/huggingface.co/adsabs/astroBERT/raw/main/Tutorials/2_NER_DEAL.ipynb) | 0970579f2cef09776e4deeebfaa3bd21 |

mit | [] | false | BibTeX ```bibtex @ARTICLE{2021arXiv211200590G, author = {{Grezes}, Felix and {Blanco-Cuaresma}, Sergi and {Accomazzi}, Alberto and {Kurtz}, Michael J. and {Shapurian}, Golnaz and {Henneken}, Edwin and {Grant}, Carolyn S. and {Thompson}, Donna M. and {Chyla}, Roman and {McDonald}, Stephen and {Hostetler}, Timothy W. and {Templeton}, Matthew R. and {Lockhart}, Kelly E. and {Martinovic}, Nemanja and {Chen}, Shinyi and {Tanner}, Chris and {Protopapas}, Pavlos}, title = "{Building astroBERT, a language model for Astronomy \& Astrophysics}", journal = {arXiv e-prints}, keywords = {Computer Science - Computation and Language, Astrophysics - Instrumentation and Methods for Astrophysics}, year = 2021, month = dec, eid = {arXiv:2112.00590}, pages = {arXiv:2112.00590}, archivePrefix = {arXiv}, eprint = {2112.00590}, primaryClass = {cs.CL}, adsurl = {https://ui.adsabs.harvard.edu/abs/2021arXiv211200590G}, adsnote = {Provided by the SAO/NASA Astrophysics Data System} } ``` | 7dd6934fdd338d7cafd3d4b3925a954d |

apache-2.0 | ['generated_from_keras_callback'] | false | Imene/vit-base-patch16-384-wi5 This model is a fine-tuned version of [google/vit-base-patch16-384](https://huggingface.co/google/vit-base-patch16-384) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.4102 - Train Accuracy: 0.9755 - Train Top-3-accuracy: 0.9960 - Validation Loss: 1.9021 - Validation Accuracy: 0.4912 - Validation Top-3-accuracy: 0.7302 - Epoch: 8 | 40c3cbd3d0cd5303e5007bce667b6a16 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 3e-05, 'decay_steps': 3180, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000} - training_precision: mixed_float16 | d14520a914304cd547c61eb1c9b41aef |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch | |:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:| | 4.2945 | 0.0568 | 0.1328 | 3.6233 | 0.1387 | 0.2916 | 0 | | 3.1234 | 0.2437 | 0.4585 | 2.8657 | 0.3041 | 0.5330 | 1 | | 2.4383 | 0.4182 | 0.6638 | 2.5499 | 0.3534 | 0.6048 | 2 | | 1.9258 | 0.5698 | 0.7913 | 2.3046 | 0.4202 | 0.6583 | 3 | | 1.4919 | 0.6963 | 0.8758 | 2.1349 | 0.4553 | 0.6784 | 4 | | 1.1127 | 0.7992 | 0.9395 | 2.0878 | 0.4595 | 0.6809 | 5 | | 0.8092 | 0.8889 | 0.9720 | 1.9460 | 0.4962 | 0.7210 | 6 | | 0.5794 | 0.9419 | 0.9883 | 1.9478 | 0.4979 | 0.7201 | 7 | | 0.4102 | 0.9755 | 0.9960 | 1.9021 | 0.4912 | 0.7302 | 8 | | 13d92f6db764bc6691309d3f6f6a7b43 |

apache-2.0 | [] | false | finetuned from https://huggingface.co/google/vit-base-patch16-224-in21k dataset:26k images (train:21k valid:5k) accuracy of validation dataset is 95% ```Python from transformers import ViTFeatureExtractor, ViTForImageClassification from PIL import Image path = 'image_path' image = Image.open(path) feature_extractor = ViTFeatureExtractor.from_pretrained('furusu/umamusume-classifier') model = ViTForImageClassification.from_pretrained('furusu/umamusume-classifier') inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) predicted_class_idx = outputs.logits.argmax(-1).item() print("Predicted class:", model.config.id2label[predicted_class_idx]) ``` | f1254122830a113996621c567442cbe6 |

mit | ['textual-entailment', 'nli', 'pytorch'] | false | BiomedNLP-PubMedBERT finetuned on textual entailment (NLI) The [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext?text=%5BMASK%5D+is+a+tumor+suppressor+gene) finetuned on the MNLI dataset. It should be useful in textual entailment tasks involving biomedical corpora. | dbad381bbb88531db64226d0ed587fdc |

mit | ['textual-entailment', 'nli', 'pytorch'] | false | Usage Given two sentences (a premise and a hypothesis), the model outputs the logits of entailment, neutral or contradiction. You can test the model using the HuggingFace model widget on the side: - Input two sentences (premise and hypothesis) one after the other. - The model returns the probabilities of 3 labels: entailment(LABEL:0), neutral(LABEL:1) and contradiction(LABEL:2) respectively. To use the model locally on your machine: ```python | 3b01bc8a373e6dea87cf08ba41c6a4fa |

mit | ['textual-entailment', 'nli', 'pytorch'] | false | import torch import numpy as np device = torch.device("cuda" if torch.cuda.is_available() else "cpu") from transformers import AutoTokenizer, AutoModelForSequenceClassification tokenizer = AutoTokenizer.from_pretrained("lighteternal/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext-finetuned-mnli") model = AutoModelForSequenceClassification.from_pretrained("lighteternal/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext-finetuned-mnli") premise = 'EpCAM is overexpressed in breast cancer' hypothesis = 'EpCAM is downregulated in breast cancer.' | fb6f06c478bf33d2fedf4f95ef94ceee |

mit | ['textual-entailment', 'nli', 'pytorch'] | false | run through model pre-trained on MNLI x = tokenizer.encode(premise, hypothesis, return_tensors='pt', truncation_strategy='only_first') logits = model(x)[0] probs = logits.softmax(dim=1) print('Probabilities for entailment, neutral, contradiction \n', np.around(probs.cpu(). detach().numpy(),3)) | 97c1ac5560ee1e713ef8c3e36220401a |

mit | ['textual-entailment', 'nli', 'pytorch'] | false | Metrics Evaluation on classification accuracy (entailment, contradiction, neutral) on MNLI test set: | Metric | Value | | --- | --- | | Accuracy | 0.8338| See Training Metrics tab for detailed info. | d3e393925f354570e4d03ae69d3f75ba |

creativeml-openrail-m | ['text-to-image'] | false | austinmichaelcraig0 on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 43dc636f0b1fe064136e13629931e247 |

creativeml-openrail-m | ['text-to-image'] | false | Model by cormacncheese This is the Stable Diffusion model fine-tuned on the austinmichaelcraig0 concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: **austinmichaelcraig0(0).jpg** You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). You can run your new concept via A1111 Colab: [Fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Sample pictures of this concept: austinmichaelcraig0(0).jpg | fe6a05193640de41078f31c9e14fdc9e |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper tiny Hindi This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.5538 - Wer: 41.5453 | 6d2eb47e0a8ce78fc0a77de30682562f |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - training_steps: 1000 - mixed_precision_training: Native AMP | 0d5de145f7265b57882b3f6b810e71cb |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.7718 | 0.73 | 100 | 0.8130 | 55.6890 | | 0.5169 | 1.47 | 200 | 0.6515 | 48.2517 | | 0.3986 | 2.21 | 300 | 0.6001 | 44.9931 | | 0.3824 | 2.94 | 400 | 0.5720 | 43.5171 | | 0.3328 | 3.67 | 500 | 0.5632 | 42.5112 | | 0.2919 | 4.41 | 600 | 0.5594 | 42.7863 | | 0.2654 | 5.15 | 700 | 0.5552 | 41.6428 | | 0.2618 | 5.88 | 800 | 0.5530 | 41.8893 | | 0.2442 | 6.62 | 900 | 0.5539 | 41.5740 | | 0.238 | 7.35 | 1000 | 0.5538 | 41.5453 | | 6528a8d4f366abd702530c331b1dbcd7 |

mit | ['exbert'] | false | Overview **Language model:** deepset/xlm-roberta-base-squad2-distilled **Language:** Multilingual **Downstream-task:** Extractive QA **Training data:** SQuAD 2.0 **Eval data:** SQuAD 2.0 **Code:** See [an example QA pipeline on Haystack](https://haystack.deepset.ai/tutorials/first-qa-system) **Infrastructure**: 1x Tesla v100 | f518c08791be0bf6a3bf628bfd0c1c28 |

mit | ['exbert'] | false | In Haystack Haystack is an NLP framework by deepset. You can use this model in a Haystack pipeline to do question answering at scale (over many documents). To load the model in [Haystack](https://github.com/deepset-ai/haystack/): ```python reader = FARMReader(model_name_or_path="deepset/xlm-roberta-base-squad2-distilled") | dbad4a0d3c212ea6799b6b8120079325 |

mit | ['exbert'] | false | or reader = TransformersReader(model_name_or_path="deepset/xlm-roberta-base-squad2-distilled",tokenizer="deepset/xlm-roberta-base-squad2-distilled") ``` For a complete example of ``deepset/xlm-roberta-base-squad2-distilled`` being used for [question answering], check out the [Tutorials in Haystack Documentation](https://haystack.deepset.ai/tutorials/first-qa-system) | eb316d5aba8456e5922b31881facb687 |

mit | ['exbert'] | false | About us <div class="grid lg:grid-cols-2 gap-x-4 gap-y-3"> <div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center"> <img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/deepset-logo-colored.png" class="w-40"/> </div> <div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center"> <img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/haystack-logo-colored.png" class="w-40"/> </div> </div> [deepset](http://deepset.ai/) is the company behind the open-source NLP framework [Haystack](https://haystack.deepset.ai/) which is designed to help you build production ready NLP systems that use: Question answering, summarization, ranking etc. Some of our other work: - [Distilled roberta-base-squad2 (aka "tinyroberta-squad2")](https://huggingface.co/deepset/tinyroberta-squad2) - [German BERT (aka "bert-base-german-cased")](https://deepset.ai/german-bert) - [GermanQuAD and GermanDPR datasets and models (aka "gelectra-base-germanquad", "gbert-base-germandpr")](https://deepset.ai/germanquad) | 9c2e14fceb0eba931b879a7016a7e90b |

apache-2.0 | ['generated_from_trainer'] | false | distil-is This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.6082 - Rmse: 0.7799 - Mse: 0.6082 - Mae: 0.6023 | 067e2a57825a9529bca671b3614e9758 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.6881 | 1.0 | 492 | 0.6534 | 0.8084 | 0.6534 | 0.5857 | | 0.5923 | 2.0 | 984 | 0.6508 | 0.8067 | 0.6508 | 0.5852 | | 0.5865 | 3.0 | 1476 | 0.6088 | 0.7803 | 0.6088 | 0.6096 | | 0.5899 | 4.0 | 1968 | 0.6279 | 0.7924 | 0.6279 | 0.5853 | | 0.5852 | 5.0 | 2460 | 0.6082 | 0.7799 | 0.6082 | 0.6023 | | 63e0fa8fbd99f63b3d4acac8fa70cedb |

creativeml-openrail-m | ['text-to-image'] | false | It can be used by modifying the `instance_prompt`: **merylstryfetrigun** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 37062a2dd2418c781797ab73ab2a1788 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased_cls_sst2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5999 - Accuracy: 0.8933 | 32ef2ca14df1c716b7090e9701348d34 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 433 | 0.2928 | 0.8773 | | 0.4178 | 2.0 | 866 | 0.3301 | 0.8922 | | 0.2046 | 3.0 | 1299 | 0.5088 | 0.8853 | | 0.0805 | 4.0 | 1732 | 0.5780 | 0.8888 | | 0.0159 | 5.0 | 2165 | 0.5999 | 0.8933 | | 7eef8f0451ce758df9e2fcda30d1bfad |

cc-by-4.0 | ['questions and answers generation'] | false | Model Card of `lmqg/mt5-small-ruquad-qag` This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) for the question & answer pair generation task on the [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) dataset (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 4e04e1747f28c8f3b0c2d19d4c6e01ac |

cc-by-4.0 | ['questions and answers generation'] | false | Overview - **Language model:** [google/mt5-small](https://huggingface.co/google/mt5-small) - **Language:** ru - **Training data:** [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 4351c1a5f9353993596de295a8619a77 |

cc-by-4.0 | ['questions and answers generation'] | false | model prediction question_answer_pairs = model.generate_qa("Нелишним будет отметить, что, развивая это направление, Д. И. Менделеев, поначалу априорно выдвинув идею о температуре, при которой высота мениска будет нулевой, в мае 1860 года провёл серию опытов.") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/mt5-small-ruquad-qag") output = pipe("Нелишним будет отметить, что, развивая это направление, Д. И. Менделеев, поначалу априорно выдвинув идею о температуре, при которой высота мениска будет нулевой, в мае 1860 года провёл серию опытов.") ``` | bb937203ddb40f3796f4f8fb5a76e049 |

cc-by-4.0 | ['questions and answers generation'] | false | Evaluation - ***Metric (Question & Answer Generation)***: [raw metric file](https://huggingface.co/lmqg/mt5-small-ruquad-qag/raw/main/eval/metric.first.answer.paragraph.questions_answers.lmqg_qag_ruquad.default.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-------------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 52.95 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | QAAlignedF1Score (MoverScore) | 38.59 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | QAAlignedPrecision (BERTScore) | 52.86 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | QAAlignedPrecision (MoverScore) | 38.57 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | QAAlignedRecall (BERTScore) | 53.06 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | QAAlignedRecall (MoverScore) | 38.62 | default | [lmqg/qag_ruquad](https://huggingface.co/datasets/lmqg/qag_ruquad) | | 458a3868b082dfd7ce01407aa8f704b6 |

cc-by-4.0 | ['questions and answers generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qag_ruquad - dataset_name: default - input_types: ['paragraph'] - output_types: ['questions_answers'] - prefix_types: None - model: google/mt5-small - max_length: 512 - max_length_output: 256 - epoch: 12 - batch: 8 - lr: 0.001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 16 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mt5-small-ruquad-qag/raw/main/trainer_config.json). | be5c544c4fef3b7077eff18417e64825 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0629 - Precision: 0.9225 - Recall: 0.9340 - F1: 0.9282 - Accuracy: 0.9834 | 00b3954ace5eb3a624306b0d1d7be635 |
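The F1 value above can be reproduced from the reported precision and recall as their harmonic mean. This is a sketch for sanity-checking the card, not the evaluation code that produced it; the function name `f1_score` is chosen here for illustration.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the evaluation results above.
precision = 0.9225
recall = 0.9340

f1 = f1_score(precision, recall)
print(f"F1 from P and R: {f1:.4f}")
```

Since precision and recall are themselves rounded in the card, the recomputed F1 may differ from the reported one in the last digit.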

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2356 | 1.0 | 878 | 0.0704 | 0.9138 | 0.9187 | 0.9162 | 0.9807 | | 0.054 | 2.0 | 1756 | 0.0620 | 0.9209 | 0.9329 | 0.9269 | 0.9827 | | 0.0306 | 3.0 | 2634 | 0.0629 | 0.9225 | 0.9340 | 0.9282 | 0.9834 | | e84b163157d61d402bd2afcbaab21c1a |

apache-2.0 | ['t5-small', 'text2text-generation', 'dialogue generation', 'conversational system', 'task-oriented dialog'] | false | t5-small-goal2dialogue-multiwoz21 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on [MultiWOZ 2.1](https://huggingface.co/datasets/ConvLab/multiwoz21). Refer to [ConvLab-3](https://github.com/ConvLab/ConvLab-3) for model description and usage. | 58a45dc3419858546efebbf50f4d354f |

apache-2.0 | ['t5-small', 'text2text-generation', 'dialogue generation', 'conversational system', 'task-oriented dialog'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - distributed_type: multi-GPU - gradient_accumulation_steps: 4 - total_train_batch_size: 128 - optimizer: Adafactor - lr_scheduler_type: linear - num_epochs: 10.0 | d933baf5337978c7033f7c33b2bdb159 |

mit | ['roberta-base', 'roberta-base-epoch_2'] | false | RoBERTa, Intermediate Checkpoint - Epoch 2 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We trained this model for almost 100K steps, corresponding to 83 epochs. We provide all 84 checkpoints (including the randomly initialized weights before training) to make it possible to study the training dynamics of such models, among other possible use cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_2. | 4fa554a89ec3f6eda4e45abc1017a30a |

cc-by-4.0 | ['generated_from_trainer'] | false | finetuned-ner This model is a fine-tuned version of [deepset/deberta-v3-base-squad2](https://huggingface.co/deepset/deberta-v3-base-squad2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4783 - Precision: 0.3264 - Recall: 0.3591 - F1: 0.3420 - Accuracy: 0.8925 | 54bda5391b0f340ee00c44a751951521 |

cc-by-4.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 30 - mixed_precision_training: Native AMP - label_smoothing_factor: 0.05 | 8205f3a1ce8a55ca6d0f1a49210ab760 |
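The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single training device (the card lists no device count; `num_devices` is a hypothetical parameter added for illustration):

```python
def effective_batch_size(per_device_batch_size: int,
                         gradient_accumulation_steps: int,
                         num_devices: int = 1) -> int:
    """Number of examples that contribute to each optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# train_batch_size and gradient_accumulation_steps as listed above.
total = effective_batch_size(per_device_batch_size=4,
                             gradient_accumulation_steps=4)
print(total)
```

Gradient accumulation trades memory for time: gradients from several small batches are summed before a single optimizer update, so the update sees the larger effective batch.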

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 39.8167 | 1.0 | 760 | 0.3957 | 0.1844 | 0.2909 | 0.2257 | 0.8499 | | 21.7333 | 2.0 | 1520 | 0.3853 | 0.2118 | 0.3273 | 0.2571 | 0.8546 | | 13.8859 | 3.0 | 2280 | 0.3631 | 0.2443 | 0.2909 | 0.2656 | 0.8789 | | 20.6586 | 4.0 | 3040 | 0.3961 | 0.2946 | 0.3455 | 0.3180 | 0.8753 | | 13.8654 | 5.0 | 3800 | 0.3821 | 0.2791 | 0.3273 | 0.3013 | 0.8877 | | 12.6942 | 6.0 | 4560 | 0.4393 | 0.3122 | 0.3364 | 0.3239 | 0.8909 | | 25.0549 | 7.0 | 5320 | 0.4542 | 0.3106 | 0.3727 | 0.3388 | 0.8824 | | 5.6816 | 8.0 | 6080 | 0.4432 | 0.2820 | 0.3409 | 0.3086 | 0.8774 | | 13.1296 | 9.0 | 6840 | 0.4509 | 0.2884 | 0.35 | 0.3162 | 0.8824 | | 7.7173 | 10.0 | 7600 | 0.4265 | 0.3170 | 0.3818 | 0.3464 | 0.8919 | | 6.7922 | 11.0 | 8360 | 0.4749 | 0.3320 | 0.3818 | 0.3552 | 0.8892 | | 5.4287 | 12.0 | 9120 | 0.4564 | 0.2917 | 0.3818 | 0.3307 | 0.8805 | | 7.4153 | 13.0 | 9880 | 0.4735 | 0.2963 | 0.3273 | 0.3110 | 0.8871 | | 9.1154 | 14.0 | 10640 | 0.4553 | 0.3416 | 0.3773 | 0.3585 | 0.8894 | | 5.999 | 15.0 | 11400 | 0.4489 | 0.3203 | 0.4091 | 0.3593 | 0.8880 | | 9.5128 | 16.0 | 12160 | 0.4947 | 0.3164 | 0.3682 | 0.3403 | 0.8883 | | 5.6713 | 17.0 | 12920 | 0.4705 | 0.3527 | 0.3864 | 0.3688 | 0.8919 | | 12.2119 | 18.0 | 13680 | 0.4617 | 0.3123 | 0.3591 | 0.3340 | 0.8857 | | 8.5658 | 19.0 | 14440 | 0.4764 | 0.3092 | 0.35 | 0.3284 | 0.8944 | | 11.0664 | 20.0 | 15200 | 0.4557 | 0.3187 | 0.3636 | 0.3397 | 0.8905 | | 6.7161 | 21.0 | 15960 | 0.4468 | 0.3210 | 0.3955 | 0.3544 | 0.8956 | | 9.0448 | 22.0 | 16720 | 0.5120 | 0.2872 | 0.3682 | 0.3227 | 0.8792 | | 6.573 | 23.0 | 17480 | 0.4990 | 0.3307 | 0.3773 | 0.3524 | 0.8869 | | 5.0543 | 24.0 | 18240 | 0.4763 | 0.3028 | 0.3455 | 0.3227 | 0.8899 | | 6.8797 | 25.0 | 19000 | 0.4814 | 0.2780 | 0.3273 | 0.3006 | 0.8913 | | 7.7544 | 26.0 | 19760 | 0.4695 | 0.3024 | 0.3409 | 0.3205 | 0.8946 | | 4.8346 | 27.0 | 20520 | 0.4849 | 0.3154 | 0.3455 | 0.3297 | 0.8931 | | 4.4766 | 28.0 | 21280 | 0.4809 | 0.2925 | 0.3364 | 0.3129 | 0.8913 | | 7.9149 | 29.0 | 22040 | 0.4756 | 0.3238 | 0.3591 | 0.3405 | 0.8930 | | 7.3033 | 30.0 | 22800 | 0.4783 | 0.3264 | 0.3591 | 0.3420 | 0.8925 | | a67a7c131d0aec29590c78443863f81c |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-xlsum-10-epoch This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the xlsum dataset. It achieves the following results on the evaluation set: - Loss: 2.2204 - Rouge1: 31.6534 - Rouge2: 10.0563 - Rougel: 24.8104 - Rougelsum: 24.8732 - Gen Len: 18.7913 | 8ef82464fbb450322e8c062be64534af |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:------:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 2.6512 | 1.0 | 19158 | 2.3745 | 29.756 | 8.4006 | 22.9753 | 23.0287 | 18.8245 | | 2.6012 | 2.0 | 38316 | 2.3183 | 30.5327 | 9.0206 | 23.7263 | 23.7805 | 18.813 | | 2.5679 | 3.0 | 57474 | 2.2853 | 30.9771 | 9.4156 | 24.1555 | 24.2127 | 18.7905 | | 2.5371 | 4.0 | 76632 | 2.2660 | 31.0578 | 9.5592 | 24.2983 | 24.3587 | 18.7941 | | 2.5133 | 5.0 | 95790 | 2.2498 | 31.3756 | 9.7889 | 24.5317 | 24.5922 | 18.7971 | | 2.4795 | 6.0 | 114948 | 2.2378 | 31.4961 | 9.8935 | 24.6648 | 24.7218 | 18.7929 | | 2.4967 | 7.0 | 134106 | 2.2307 | 31.44 | 9.9125 | 24.6298 | 24.6824 | 18.8221 | | 2.4678 | 8.0 | 153264 | 2.2250 | 31.5875 | 10.004 | 24.7581 | 24.8125 | 18.7809 | | 2.46 | 9.0 | 172422 | 2.2217 | 31.6413 | 10.0311 | 24.8063 | 24.8641 | 18.7951 | | 2.4494 | 10.0 | 191580 | 2.2204 | 31.6534 | 10.0563 | 24.8104 | 24.8732 | 18.7913 | | 63c97b013c9d23e7d70d9869b1d6debb |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.4306 | 747d521523eb21bdb4629443561b3f99 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2169 | 1.0 | 8235 | 1.1950 | | 0.9396 | 2.0 | 16470 | 1.2540 | | 0.7567 | 3.0 | 24705 | 1.4306 | | 23d2fa4c6eb2e8674cb185fd462dda47 |

mit | [] | false | Chucky on Stable Diffusion This is the `<merc>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | 840bd2014f592360b3158df8ab4b76ca |

mit | ['deberta-v1', 'deberta-mnli'] | false | This model is a fine-tuned version of [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) on the GLUE MNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.4103 - Accuracy: 0.9175 | 74d8721b31be6b7b2d786efdf1fc8269 |

mit | ['deberta-v1', 'deberta-mnli'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 6e-06 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - num_epochs: 2.0 | 032e52bcfd4b5597e4f3d199c37e7611 |

mit | ['deberta-v1', 'deberta-mnli'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.3631 | 1.0 | 49088 | 0.3129 | 0.9130 | | 0.2267 | 2.0 | 98176 | 0.4157 | 0.9153 | | 9d9cccfa8e2117217dd978f63f8c6075 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_add_GLUE_Experiment_logit_kd_qqp This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE QQP dataset. It achieves the following results on the evaluation set: - Loss: 0.8079 - Accuracy: 0.7570 - F1: 0.6049 - Combined Score: 0.6810 | 8d43177eae5d4ed7625bbda6a2a772ae |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score | |:--------------------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:--------------:| | 1.2837 | 1.0 | 2843 | 1.2201 | 0.6318 | 0.0 | 0.3159 | | 1.076 | 2.0 | 5686 | 0.8477 | 0.7443 | 0.5855 | 0.6649 | | 0.866 | 3.0 | 8529 | 0.8217 | 0.7518 | 0.5924 | 0.6721 | | 0.8317 | 4.0 | 11372 | 0.8136 | 0.7565 | 0.6243 | 0.6904 | | 0.8122 | 5.0 | 14215 | 0.8126 | 0.7588 | 0.6352 | 0.6970 | | 0.799 | 6.0 | 17058 | 0.8079 | 0.7570 | 0.6049 | 0.6810 | | 386581134871678353408.0000 | 7.0 | 19901 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 8.0 | 22744 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 9.0 | 25587 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 10.0 | 28430 | nan | 0.6318 | 0.0 | 0.3159 | | 0.0 | 11.0 | 31273 | nan | 0.6318 | 0.0 | 0.3159 | | 85868b70a4955d78c0a7086ba2358073 |
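The Combined Score column in the table above appears to be the arithmetic mean of Accuracy and F1. That reading is an inference from the numbers rather than something the card states; the sketch below checks it against two rows, with `combined_score` as a name chosen here for illustration.

```python
def combined_score(accuracy: float, f1: float) -> float:
    """Arithmetic mean of accuracy and F1 (assumed definition, see lead-in)."""
    return (accuracy + f1) / 2


# Epoch 6 row (also the reported evaluation result) and epoch 1 row.
epoch_6 = combined_score(accuracy=0.7570, f1=0.6049)
epoch_1 = combined_score(accuracy=0.6318, f1=0.0)

print(f"epoch 6: {epoch_6:.5f} (card reports 0.6810)")
print(f"epoch 1: {epoch_1:.5f} (card reports 0.3159)")
```

The inputs are rounded to four decimals in the card, so the recomputed values match the reported ones only to within rounding.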

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-53m-gl-jupyter7 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1000 - Wer: 0.0639 | 08c5c9792282975d2e1cea9a864c64ab |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.8697 | 3.36 | 400 | 0.2631 | 0.2756 | | 0.1569 | 6.72 | 800 | 0.1243 | 0.1300 | | 0.0663 | 10.08 | 1200 | 0.1124 | 0.1153 | | 0.0468 | 13.44 | 1600 | 0.1118 | 0.1037 | | 0.0356 | 16.8 | 2000 | 0.1102 | 0.0978 | | 0.0306 | 20.17 | 2400 | 0.1095 | 0.0935 | | 0.0244 | 23.53 | 2800 | 0.1072 | 0.0844 | | 0.0228 | 26.89 | 3200 | 0.1014 | 0.0874 | | 0.0192 | 30.25 | 3600 | 0.1084 | 0.0831 | | 0.0174 | 33.61 | 4000 | 0.1048 | 0.0772 | | 0.0142 | 36.97 | 4400 | 0.1063 | 0.0764 | | 0.0131 | 40.33 | 4800 | 0.1046 | 0.0770 | | 0.0116 | 43.69 | 5200 | 0.0999 | 0.0716 | | 0.0095 | 47.06 | 5600 | 0.1044 | 0.0729 | | 0.0077 | 50.42 | 6000 | 0.1024 | 0.0670 | | 0.0071 | 53.78 | 6400 | 0.0968 | 0.0631 | | 0.0064 | 57.14 | 6800 | 0.1000 | 0.0639 | | b5666cc1041de6d9001eb03f19302a4f |
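The Wer column is the word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The card does not show how it was computed, so the following is a plain-Python sketch of the standard definition rather than the evaluation code used for this model; the example sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # previous_row[j] = edits needed to turn the reference prefix seen so far
    # into the first j hypothesis words.
    previous_row = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current_row = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current_row.append(min(
                previous_row[j] + 1,                      # deletion
                current_row[j - 1] + 1,                   # insertion
                previous_row[j - 1] + substitution_cost,  # match or substitution
            ))
        previous_row = current_row
    return previous_row[-1] / len(ref_words)


# One substitution ("sat" -> "sit") and one deletion ("the") over six words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

A corpus-level WER is usually computed by summing edits and reference words over all utterances before dividing, which is not the same as averaging per-utterance rates.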

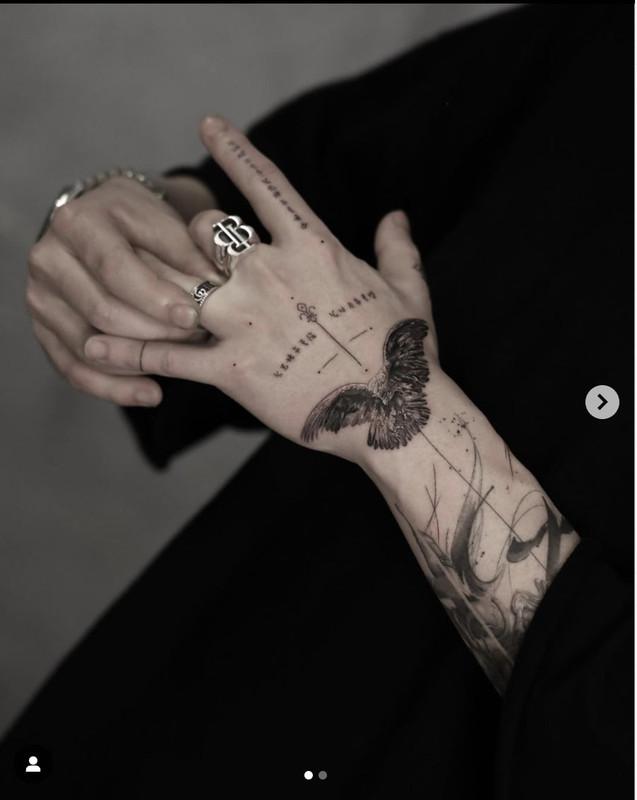

mit | [] | false | axe_tattoo on Stable Diffusion This is the `<axe-tattoo>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:       | 94a5600a7ce863b20ad327fb577f5091 |

openrail | [] | false | prompt should contain: best quality, masterpiece, highrer,1girl, beautiful face recommended: DPM++2M Karras negative prompt (simple is better):(((simple background))),monochrome ,lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, lowres, bad anatomy, bad hands, text, error, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, ugly,pregnant,vore,duplicate,morbid,mutilated,transsexual, hermaphrodite,long neck,mutated hands,poorly drawn hands,poorly drawn face,mutation,deformed,blurry,bad anatomy,bad proportions,malformed limbs,extra limbs,cloned face,disfigured,gross proportions, (((missing arms))),(((missing legs))), (((extra arms))),(((extra legs))),pubic hair, plump,bad legs,error legs,username,blurry,bad feet | ab46c200d9b9bbad05bce5ad339a78c9 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-stsb This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE STSB dataset. It achieves the following results on the evaluation set: - Loss: 0.4676 - Pearson: 0.8901 - Spearmanr: 0.8872 - Combined Score: 0.8887 | 47dca336ae5f1bc9e3f646d1d9ad2225 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | Combined Score | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:|:--------------:| | 2.3939 | 1.0 | 45 | 0.7358 | 0.8686 | 0.8653 | 0.8669 | | 0.5084 | 2.0 | 90 | 0.4959 | 0.8835 | 0.8799 | 0.8817 | | 0.3332 | 3.0 | 135 | 0.5002 | 0.8846 | 0.8815 | 0.8830 | | 0.2202 | 4.0 | 180 | 0.4962 | 0.8854 | 0.8827 | 0.8840 | | 0.1642 | 5.0 | 225 | 0.4848 | 0.8864 | 0.8839 | 0.8852 | | 0.1312 | 6.0 | 270 | 0.4987 | 0.8872 | 0.8866 | 0.8869 | | 0.1057 | 7.0 | 315 | 0.4840 | 0.8895 | 0.8848 | 0.8871 | | 0.0935 | 8.0 | 360 | 0.4753 | 0.8887 | 0.8840 | 0.8863 | | 0.0835 | 9.0 | 405 | 0.4676 | 0.8901 | 0.8872 | 0.8887 | | 0.0749 | 10.0 | 450 | 0.4808 | 0.8901 | 0.8867 | 0.8884 | | 0.0625 | 11.0 | 495 | 0.4760 | 0.8893 | 0.8857 | 0.8875 | | 0.0607 | 12.0 | 540 | 0.5113 | 0.8899 | 0.8859 | 0.8879 | | 0.0564 | 13.0 | 585 | 0.4918 | 0.8900 | 0.8860 | 0.8880 | | 0.0495 | 14.0 | 630 | 0.4749 | 0.8905 | 0.8868 | 0.8887 | | 0.0446 | 15.0 | 675 | 0.4889 | 0.8888 | 0.8856 | 0.8872 | | 0.045 | 16.0 | 720 | 0.4680 | 0.8918 | 0.8889 | 0.8904 | | accfd47395b4a126520ba18e17b09b19 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_vp-it_s474 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 61c7bfb498f90acef86daf42b37fb37f |
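Since the model expects 16 kHz input, it can help to check a recording's sample rate before transcription. The sketch below uses only Python's standard `wave` module and therefore handles uncompressed WAV files only; the helper names and the idea of a pre-check are illustrative assumptions, not part of the model card or of HuggingSound.

```python
import wave

EXPECTED_SAMPLE_RATE = 16_000  # Hz, as required by the model card above


def wav_sample_rate(path: str) -> int:
    """Return the sample rate (frames per second) stored in a WAV header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path: str, expected_rate: int = EXPECTED_SAMPLE_RATE) -> bool:
    """True when the file's sample rate differs from the rate the model expects."""
    return wav_sample_rate(path) != expected_rate
```

A file for which `needs_resampling` returns `True` should be resampled to 16 kHz with an audio library of your choice before it is passed to the model.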

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Whisper Small German SBB all SNR - v8 This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the SBB Dataset 05.01.2023 dataset. It achieves the following results on the evaluation set: - Loss: 0.0246 - Wer: 0.0235 | 5452f8907d67aeb7eb49abb01217a78e |

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 600 - mixed_precision_training: Native AMP | 6b76f191f937a0ac6e7db9ca925aa737 |

apache-2.0 | ['sbb-asr', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 1.3694 | 0.36 | 100 | 0.2304 | 0.0495 | | 0.0696 | 0.71 | 200 | 0.0311 | 0.0209 | | 0.0324 | 1.07 | 300 | 0.0337 | 0.0298 | | 0.0215 | 1.42 | 400 | 0.0254 | 0.0184 | | 0.016 | 1.78 | 500 | 0.0279 | 0.0209 | | 0.0113 | 2.14 | 600 | 0.0246 | 0.0235 | | 4dfe3e36b4110f960b3094b071ad3af6 |

mit | ['historic german'] | false | Model weights Currently only PyTorch-[Transformers](https://github.com/huggingface/transformers) compatible weights are available. If you need access to TensorFlow checkpoints, please raise an issue! | Model | Downloads | ------------------------------------------ | --------------------------------------------------------------------------------------------------------------- | `dbmdz/bert-base-german-europeana-uncased` | [`config.json`](https://cdn.huggingface.co/dbmdz/bert-base-german-europeana-uncased/config.json) • [`pytorch_model.bin`](https://cdn.huggingface.co/dbmdz/bert-base-german-europeana-uncased/pytorch_model.bin) • [`vocab.txt`](https://cdn.huggingface.co/dbmdz/bert-base-german-europeana-uncased/vocab.txt) | e6ab06831f0f0aff2af2b0142a89bd2b |

mit | ['historic german'] | false | Usage With Transformers >= 2.3 our German Europeana BERT models can be loaded like: ```python from transformers import AutoModel, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("dbmdz/bert-base-german-europeana-uncased") model = AutoModel.from_pretrained("dbmdz/bert-base-german-europeana-uncased") ``` | 8c58387bb84bade83b29954336d86448 |

apache-2.0 | ['generated_from_trainer'] | false | swin-base-patch4-window7-224-in22k-Chinese-finetuned This model is a fine-tuned version of [microsoft/swin-base-patch4-window7-224-in22k](https://huggingface.co/microsoft/swin-base-patch4-window7-224-in22k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0000 - Accuracy: 1.0 | b7f0b872a4b6e578b5c9e1d35d2f78bf |
