datasetId | card |

|---|---|

Ransaka/Sinhala-400M | ---

dataset_info:

features:

- name: text

sequence: string

splits:

- name: train

num_bytes: 2802808058.089643

num_examples: 8854185

- name: test

num_bytes: 1201203543.9103568

num_examples: 3794651

download_size: 1826451430

dataset_size: 4004011602

license: apache-2.0

task_categories:

- text-generation

- feature-extraction

language:

- si

pretty_name: Sinhala Large Scale Corpus

size_categories:

- 10M<n<100M

---

# Dataset Card for "Sinhala-400M"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

nlp-brin-id/unsupervised_title-fact | ---

license: apache-2.0

task_categories:

- feature-extraction

language:

- id

size_categories:

- 10K<n<100K

--- |

tyzhu/wiki_find_passage_train10_eval10_num | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: inputs

dtype: string

- name: targets

dtype: string

splits:

- name: train

num_bytes: 22558

num_examples: 30

- name: validation

num_bytes: 6982

num_examples: 10

download_size: 25018

dataset_size: 29540

---

# Dataset Card for "wiki_find_passage_train10_eval10_num"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

deetsadi/processed_cdi_sobel | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

- name: conditioning_image

dtype: image

splits:

- name: train

num_bytes: 19391453.0

num_examples: 200

download_size: 0

dataset_size: 19391453.0

---

# Dataset Card for "processed_cdi_sobel"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Prgckwb/jiro-style-ramen | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 978393.0

num_examples: 31

download_size: 978665

dataset_size: 978393.0

---

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

liuyanchen1015/VALUE_cola_been_done | ---

dataset_info:

features:

- name: sentence

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: dev

num_bytes: 4170

num_examples: 45

- name: test

num_bytes: 5169

num_examples: 60

- name: train

num_bytes: 51879

num_examples: 627

download_size: 33431

dataset_size: 61218

---

# Dataset Card for "VALUE_cola_been_done"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

thefluxapp/dsum | ---

dataset_info:

features:

- name: dialogue

dtype: string

- name: summary

dtype: string

splits:

- name: train

num_bytes: 55615012.0

num_examples: 54383

download_size: 32278282

dataset_size: 55615012.0

---

# Dataset Card for "dsum"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

allenai/cochrane_sparse_oracle | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other-MS^2

- extended|other-Cochrane

task_categories:

- summarization

- text2text-generation

paperswithcode_id: multi-document-summarization

pretty_name: MSLR Shared Task

---

This is a copy of the [Cochrane](https://huggingface.co/datasets/allenai/mslr2022) dataset, except that the input source documents of its `validation` split have been replaced with documents retrieved by a __sparse__ retriever. The retrieval pipeline used:

- __query__: The `target` field of each example

- __corpus__: The union of all documents in the `train`, `validation` and `test` splits. A document is the concatenation of the `title` and `abstract`.

- __retriever__: BM25 via [PyTerrier](https://pyterrier.readthedocs.io/en/latest/) with default settings

- __top-k strategy__: `"oracle"`, i.e. the number of documents retrieved, `k`, is set to the original number of input documents for each example
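The pipeline above can be sketched in a few lines of plain Python. This is a hypothetical, simplified re-implementation for illustration only, not the code used to build this dataset. It assumes a naive lowercase whitespace tokenizer, the commonly used BM25 parameters `k1=1.2` and `b=0.75`, and a Lucene-style IDF, and it does not reproduce PyTerrier's indexing, stemming or exact scoring. The helper names `tokenize`, `bm25_scores` and `oracle_top_k` and the toy corpus are made up.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive lowercase whitespace tokenizer (assumption: PyTerrier's own
    # stemming and stopword removal are not reproduced here).
    return text.lower().split()


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score each document in `docs` against `query` with Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n_docs
    # Document frequency: number of documents containing each term.
    df = Counter()
    for toks in tokenized:
        df.update(set(toks))
    query_terms = tokenize(query)
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query_terms:
            if tf[term] == 0:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores.append(score)
    return scores


def oracle_top_k(query, docs, n_original_inputs):
    """Rank `docs` by BM25 and keep k = n_original_inputs of them (the "oracle" k)."""
    scores = bm25_scores(query, docs)
    ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    return ranked[:n_original_inputs]


# Toy corpus: each document is the concatenation of its title and abstract.
corpus = [
    {"title": "Aspirin and stroke", "abstract": "aspirin lowers stroke risk in adults"},
    {"title": "Statins and cholesterol", "abstract": "statins lower cholesterol levels"},
    {"title": "Exercise and mood", "abstract": "exercise improves mood in adults"},
]
docs = [f"{d['title']} {d['abstract']}" for d in corpus]

# The query plays the role of an example's `target`; this one is made up.
target = "Aspirin reduces stroke risk"
print(oracle_top_k(target, docs, n_original_inputs=1))  # → [0]
```

With the real data, `docs` would be built from the `title` and `abstract` of every document in the `train`, `validation` and `test` splits, the query would be an example's `target`, and `n_original_inputs` would be that example's original number of input documents. This loop scores every document and only suits toy inputs; a corpus of this size calls for an inverted index such as the one PyTerrier builds.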

Retrieval results on the `train` set:

| Recall@100 | Rprec | Precision@k | Recall@k |
| ----------- | ----------- | ----------- | ----------- |
| 0.7014 | 0.3841 | 0.3841 | 0.3841 |

Retrieval results on the `validation` set:

| Recall@100 | Rprec | Precision@k | Recall@k |
| ----------- | ----------- | ----------- | ----------- |
| 0.7226 | 0.4023 | 0.4023 | 0.4023 |
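The Rprec, Precision@k and Recall@k columns are identical in the tables above, which is what the oracle strategy predicts. If the original input documents are treated as the relevant set of size R and k = R, then precision@k = hits/k and recall@k = hits/R share the same denominator, and both equal R-precision (precision at rank R). A minimal per-query sketch with made-up document ids follows; that the reported numbers are averages of such per-query values over all queries is an assumption, and `retrieval_metrics` is a hypothetical helper.

```python
def retrieval_metrics(retrieved, relevant, cutoff=100):
    """Per-query metrics for a ranked list `retrieved` and a set of gold ids `relevant`.

    Under the "oracle" strategy, k equals the number of gold documents, R.
    """
    relevant = set(relevant)
    r = len(relevant)

    def hits(n):
        # Number of gold documents among the first n retrieved.
        return len(relevant.intersection(retrieved[:n]))

    k = r  # oracle top-k
    return {
        f"recall@{cutoff}": hits(cutoff) / r,
        "rprec": hits(r) / r,  # R-precision: precision at rank R
        "precision@k": hits(k) / k,
        "recall@k": hits(k) / r,
    }


metrics = retrieval_metrics(
    retrieved=["d3", "d1", "d7", "d2", "d9"],  # hypothetical ranked ids
    relevant={"d1", "d2", "d4"},  # hypothetical original input documents
)
print(metrics)
```

Recall@100 differs from the other three because it looks much deeper than rank R, so it can only be greater than or equal to Recall@k.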

Retrieval results on the `test` set:

N/A. The test set is blind, so we do not have any queries. |

CyberHarem/clara_starrail | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of clara/クラーラ/克拉拉/클라라 (Honkai: Star Rail)

This is the dataset of clara/クラーラ/克拉拉/클라라 (Honkai: Star Rail), containing 177 images and their tags.

The core tags of this character are `long_hair, bangs, white_hair, red_eyes, hair_between_eyes`, which are pruned in this dataset.

Images were crawled from many sites (e.g. danbooru, pixiv, zerochan ...); the auto-crawling system is powered by the [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |
|:-----------------|---------:|:-----------|:-----------|:-----------|:-----------|
| raw | 177 | 294.67 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_starrail/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |
| 800 | 177 | 149.96 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_starrail/resolve/main/dataset-800.zip) | IMG+TXT | Dataset with the shorter side not exceeding 800 pixels. |
| stage3-p480-800 | 443 | 326.96 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_starrail/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |
| 1200 | 177 | 251.80 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_starrail/resolve/main/dataset-1200.zip) | IMG+TXT | Dataset with the shorter side not exceeding 1200 pixels. |
| stage3-p480-1200 | 443 | 482.70 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_starrail/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). If you need it, run the following code:

```python
import os
import zipfile

from huggingface_hub import hf_hub_download
from waifuc.source import LocalSource

# download raw archive file
zip_file = hf_hub_download(
    repo_id='CyberHarem/clara_starrail',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory
dataset_dir = 'dataset_dir'
os.makedirs(dataset_dir, exist_ok=True)
with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc
source = LocalSource(dataset_dir)
for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])
```

## List of Clusters

Results of tag clustering; some outfits may be mined from these clusters.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |
|----:|----------:|:------|:------|:------|:------|:------|:------|
| 0 | 8 |  |  |  |  |  | 1girl, looking_at_viewer, simple_background, long_sleeves, solo, upper_body, white_background, blush, closed_mouth, red_jacket, hair_intakes, sweater, coat |
| 1 | 8 |  |  |  |  |  | 1girl, barefoot, blush, long_sleeves, looking_at_viewer, solo, toes, coat, soles, bare_legs, simple_background, sitting, thigh_strap, white_background, full_body, pink_eyes, red_jacket, very_long_hair, closed_mouth, foot_focus, foreshortening, underwear |
| 2 | 6 |  |  |  |  |  | 1girl, long_sleeves, looking_at_viewer, blush, closed_mouth, coat, simple_background, smile, solo, white_background, barefoot, pink_eyes, white_dress, full_body |
| 3 | 7 |  |  |  |  |  | 1girl, looking_at_viewer, solo, blush, closed_mouth, collarbone, medium_breasts, navel, pink_eyes, nipples, pussy, stomach, thighs, arms_behind_back, completely_nude, indoors, mosaic_censoring, cowboy_shot, on_back, pillow, plant, purple_eyes, small_breasts, standing, very_long_hair |
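The Table Version below appears to be a one-hot view of this Raw Text Version: one column per tag, in order of first appearance across clusters, with `X` marking that a cluster contains the tag. A minimal sketch of that conversion, under this assumption; `tags_to_table` is a hypothetical helper, not part of waifuc or the DeepGHS tooling, and the two sample clusters are made up.

```python
def tags_to_table(clusters):
    """Build a tag-presence table from per-cluster tag lists.

    Columns are tags in order of first appearance across clusters; a cell is
    "X" if the cluster has that tag and "" otherwise.
    """
    columns, seen = [], set()
    for tags in clusters:
        for tag in tags:
            if tag not in seen:
                seen.add(tag)
                columns.append(tag)
    rows = []
    for tags in clusters:
        present = set(tags)
        rows.append(["X" if col in present else "" for col in columns])
    return columns, rows


# Tag strings in the comma-separated style of the "Tags" column above.
raw_clusters = [
    "1girl, solo, smile",
    "1girl, barefoot, smile, full_body",
]
columns, rows = tags_to_table([line.split(", ") for line in raw_clusters])
print(columns)  # ['1girl', 'solo', 'smile', 'barefoot', 'full_body']
for i, row in enumerate(rows):
    print(i, row)
```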

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | looking_at_viewer | simple_background | long_sleeves | solo | upper_body | white_background | blush | closed_mouth | red_jacket | hair_intakes | sweater | coat | barefoot | toes | soles | bare_legs | sitting | thigh_strap | full_body | pink_eyes | very_long_hair | foot_focus | foreshortening | underwear | smile | white_dress | collarbone | medium_breasts | navel | nipples | pussy | stomach | thighs | arms_behind_back | completely_nude | indoors | mosaic_censoring | cowboy_shot | on_back | pillow | plant | purple_eyes | small_breasts | standing |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:--------------------|:--------------------|:---------------|:-------|:-------------|:-------------------|:--------|:---------------|:-------------|:---------------|:----------|:-------|:-----------|:-------|:--------|:------------|:----------|:--------------|:------------|:------------|:-----------------|:-------------|:-----------------|:------------|:--------|:--------------|:-------------|:-----------------|:--------|:----------|:--------|:----------|:---------|:-------------------|:------------------|:----------|:-------------------|:--------------|:----------|:---------|:--------|:--------------|:----------------|:-----------|

| 0 | 8 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 1 | 8 |  |  |  |  |  | X | X | X | X | X | | X | X | X | X | | | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | |

| 2 | 6 |  |  |  |  |  | X | X | X | X | X | | X | X | X | | | | X | X | | | | | | X | X | | | | | X | X | | | | | | | | | | | | | | | | | | |

| 3 | 7 |  |  |  |  |  | X | X | | | X | | | X | X | | | | | | | | | | | | X | X | | | | | | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X |

|

liuyanchen1015/MULTI_VALUE_wnli_adj_postfix | ---

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: dev

num_bytes: 4467

num_examples: 21

- name: test

num_bytes: 25683

num_examples: 91

- name: train

num_bytes: 37127

num_examples: 173

download_size: 30769

dataset_size: 67277

---

# Dataset Card for "MULTI_VALUE_wnli_adj_postfix"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

sambhavi/guanaco-llama2-1k | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 1654448

num_examples: 1000

download_size: 966692

dataset_size: 1654448

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

FMunyoz/AMB | ---

license: cc

---

|

Livingwithmachines/hmd-erwt-training | ---

annotations_creators:

- no-annotation

language:

- en

language_creators:

- machine-generated

license:

- cc0-1.0

multilinguality:

- monolingual

pretty_name: Dataset Card for ERWT Heritage Made Digital Newspapers training data

size_categories:

- 100K<n<1M

source_datasets: []

tags:

- library

- lam

- newspapers

- 1800-1900

task_categories:

- fill-mask

task_ids:

- masked-language-modeling

---

# Dataset Card for ERWT Heritage Made Digital Newspapers training data

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

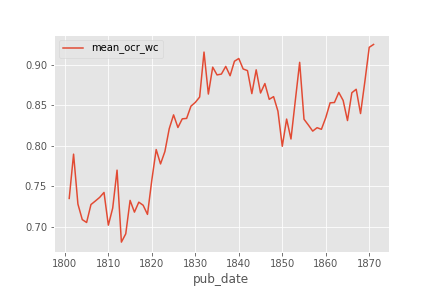

This dataset contains text extracted at the page level from historic digitised newspapers from the [Heritage Made Digital](https://bl.iro.bl.uk/collections/9a6a4cdd-2bfe-47bb-8c14-c0a5d100501f?locale=en) newspaper digitisation program. The newspapers in the dataset were published between 1800 and 1870.

The dataset was created primarily for training 'time-aware' language models.

The text was generated by running Optical Character Recognition (OCR) software on digitised newspaper pages. Alongside the plain OCR text, the dataset includes minimal metadata about the newspaper each text is derived from, and the OCR confidence scores produced by the OCR software.

#### Breakdown of word counts over time

Whilst the dataset covers the period from 1800 to 1870, its words are not distributed evenly across that period. The figures below give a sense of how the number of words in the dataset breaks down over time.

| year | total word count | unique words |
|-------:|-------------------:|---------------:|
| 1800 | 282,554,255 | 15,506,515 |
| 1810 | 328,817,174 | 18,295,974 |
| 1820 | 328,817,174 | 18,295,974 |
| 1830 | 194,958,624 | 10,816,938 |
| 1840 | 305,545,086 | 17,018,560 |
| 1850 | 376,194,785 | 20,942,876 |
| 1860 | 305,545,086 | 17,018,560 |
| 1870 | 51,241,037 | 2,284,803 |
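The unevenness is easier to see as shares of the total. A minimal sketch that copies the totals from the table above, keyed by its `year` column, and prints each one's percentage of their sum, which comes to 2,173,673,221 words. Only the total word count column is summed; the unique-word counts are left out because the vocabularies of different periods overlap, so those figures do not add up meaningfully. `WORD_COUNTS` and `shares` are illustrative names.

```python
# Totals copied from the table above (year -> total word count).
WORD_COUNTS = {
    1800: 282_554_255,
    1810: 328_817_174,
    1820: 328_817_174,
    1830: 194_958_624,
    1840: 305_545_086,
    1850: 376_194_785,
    1860: 305_545_086,
    1870: 51_241_037,
}


def shares(counts):
    """Return each year's share of the total word count, as a percentage."""
    total = sum(counts.values())
    return {year: 100 * n / total for year, n in counts.items()}


total = sum(WORD_COUNTS.values())
print(f"total: {total:,}")
for year, pct in shares(WORD_COUNTS).items():
    print(f"{year}: {pct:5.1f}%")
```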

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@github-username](https://github.com/<github-username>) for adding this dataset.

|

Sleoruiz/discursos-septima-class-separated-by-idx | ---

dataset_info:

features:

- name: text

dtype: string

- name: name

dtype: string

- name: comision

dtype: string

- name: gaceta_numero

dtype: string

- name: fecha_gaceta

dtype: string

- name: labels

sequence: string

- name: scores

sequence: float64

- name: idx

dtype: int64

splits:

- name: train

num_bytes: 22572969

num_examples: 15070

download_size: 10450492

dataset_size: 22572969

---

# Dataset Card for "discursos-septima-class-separated-by-idx"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

chirunder/GRE_all_text_word_freq | ---

dataset_info:

features:

- name: word

dtype: string

- name: frequency

dtype: int64

splits:

- name: train

num_bytes: 392007

num_examples: 19836

download_size: 224362

dataset_size: 392007

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "GRE_all_text_word_freq"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

joey234/mmlu-virology-neg-answer | ---

dataset_info:

features:

- name: question

dtype: string

- name: choices

sequence: string

- name: answer

dtype:

class_label:

names:

'0': A

'1': B

'2': C

'3': D

- name: neg_answer

dtype: string

splits:

- name: test

num_bytes: 44531

num_examples: 166

download_size: 31956

dataset_size: 44531

---

# Dataset Card for "mmlu-virology-neg-answer"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

whooray/ko_Ultrafeedback_binarized | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: chosen_response

dtype: string

- name: rejected_response

dtype: string

splits:

- name: train

num_bytes: 226278590

num_examples: 61966

download_size: 110043082

dataset_size: 226278590

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

Fork of https://huggingface.co/datasets/maywell/ko_Ultrafeedback_binarized.

Only the column names were changed, for use with axolotl. All credit goes to maywell. |

mnoukhov/summarize_from_feedback_tldr_3_filtered_oai_preprocessing_1706381144 | ---

dataset_info:

features:

- name: id

dtype: string

- name: subreddit

dtype: string

- name: title

dtype: string

- name: post

dtype: string

- name: summary

dtype: string

- name: query_token

sequence: int64

- name: query

dtype: string

- name: reference_response

dtype: string

- name: reference_response_token

sequence: int64

- name: reference_response_token_len

dtype: int64

- name: query_reference_response

dtype: string

- name: query_reference_response_token

sequence: int64

- name: query_reference_response_token_response_label

sequence: int64

- name: query_reference_response_token_len

dtype: int64

- name: has_comparison

dtype: bool

splits:

- name: train

num_bytes: 2125703840

num_examples: 116722

- name: validation

num_bytes: 117438077

num_examples: 6447

- name: test

num_bytes: 119411786

num_examples: 6553

download_size: 561795675

dataset_size: 2362553703

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

---

|

codeparrot/codecomplex | ---

annotations_creators: []

language_creators:

- expert-generated

language:

- code

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- unknown

source_datasets: []

task_categories:

- text-generation

task_ids:

- language-modeling

pretty_name: CodeComplex

---

# CodeComplex Dataset

## Dataset Description

[CodeComplex](https://github.com/yonsei-toc/CodeComple) consists of 4,200 Java programs submitted to programming competitions by human programmers, together with complexity labels annotated by a group of algorithm experts.

### How to use it

You can load and iterate through the dataset with the following two lines of code:

```python

from datasets import load_dataset

ds = load_dataset("codeparrot/codecomplex", split="train")

print(next(iter(ds)))

```

## Data Structure

```
DatasetDict({
    train: Dataset({
        features: ['src', 'complexity', 'problem', 'from'],
        num_rows: 4517
    })
})
```

### Data Instances

```python
{'src': 'import java.io.*;\nimport java.math.BigInteger;\nimport java.util.InputMismatchException;...',
 'complexity': 'quadratic',
 'problem': '1179_B. Tolik and His Uncle',
 'from': 'CODEFORCES'}
```

### Data Fields

* src: a string feature, representing the source code in Java.

* complexity: a string feature, giving the time-complexity class of the program.

* problem: a string feature, representing the problem name.

* from: a string feature, representing the source of the problem.

The `complexity` field has 7 classes, with around 500 programs in each: constant, linear, quadratic, cubic, log(n), nlog(n) and NP-hard.
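To train a classifier on the `complexity` field, the string labels usually need integer ids. A minimal sketch, assuming the ids are derived from the label strings that actually occur in the data (sorted for reproducibility) rather than from a hard-coded list; apart from `'quadratic'`, shown in the instance above, the exact label spellings are not confirmed here. `build_label_maps` and the sample records are hypothetical.

```python
from collections import Counter


def build_label_maps(complexities):
    """Map each distinct complexity string to an integer id, and back."""
    labels = sorted(set(complexities))
    label2id = {label: i for i, label in enumerate(labels)}
    id2label = {i: label for label, i in label2id.items()}
    return label2id, id2label


# Hypothetical records shaped like the data instance above.
records = [
    {"complexity": "quadratic", "problem": "p1"},
    {"complexity": "linear", "problem": "p2"},
    {"complexity": "quadratic", "problem": "p3"},
]
label2id, id2label = build_label_maps(r["complexity"] for r in records)
class_counts = Counter(r["complexity"] for r in records)
print(label2id, class_counts)
```

With the `ds` object from the loading example above, `build_label_maps(ds["complexity"])` should give the mapping for the real labels, since indexing a `datasets.Dataset` by column name returns that column's values; `Counter(ds["complexity"])` likewise shows how close each class is to the stated ~500 programs.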

### Data Splits

The dataset only contains a train split.

## Dataset Creation

The authors first collected problems and their Java solutions from CodeForces. Experienced human annotators then labelled each solution with its time complexity, and a separate group of programming experts verified the class the annotators had assigned to each sample.

## Citation Information

```
@article{JeonBHHK22,
  author = {Mingi Jeon and Seung-Yeop Baik and Joonghyuk Hahn and Yo-Sub Han and Sang-Ki Ko},
  title = {{Deep Learning-based Code Complexity Prediction}},
  year = {2022},
}
``` |

InceptiveDev/CoverLetterProV1dataset | ---

license: mit

---

|

mikhail-panzo/processed_malay_dataset_micro | ---

dataset_info:

features:

- name: speaker_embeddings

sequence: float32

- name: input_ids

sequence: int32

- name: labels

sequence:

sequence: float32

splits:

- name: train

num_bytes: 352177204

num_examples: 3000

download_size: 350656621

dataset_size: 352177204

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

petr7555/street2shop | ---

dataset_info:

features:

- name: type

dtype: string

- name: category

dtype: string

- name: street_photo_id

dtype: int32

- name: product_id

dtype: int32

- name: width

dtype: float32

- name: top

dtype: float32

- name: height

dtype: float32

- name: left

dtype: float32

- name: shop_photo_id

dtype: int32

- name: street_photo_url

dtype: string

- name: shop_photo_url

dtype: string

- name: street_photo_image

dtype: image

- name: shop_photo_image

dtype: image

splits:

- name: test

num_bytes: 20990773602.627

num_examples: 27357

- name: train

num_bytes: 82180129067.717

num_examples: 97437

download_size: 43403838962

dataset_size: 103170902670.344

configs:

- config_name: default

data_files:

- split: test

path: data/test-*

- split: train

path: data/train-*

---

|

Mitsuki-Sakamoto/alpaca_farm-deberta-re-pref-64-_fil_self_160m_bo2_100_kl_0.1_prm_70m_thr_0.3_seed_1 | ---

dataset_info:

config_name: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: preference

dtype: int64

- name: output_1

dtype: string

- name: output_2

dtype: string

- name: reward_model_prompt_format

dtype: string

- name: gen_prompt_format

dtype: string

- name: gen_kwargs

struct:

- name: do_sample

dtype: bool

- name: max_new_tokens

dtype: int64

- name: pad_token_id

dtype: int64

- name: top_k

dtype: int64

- name: top_p

dtype: float64

- name: reward_1

dtype: float64

- name: reward_2

dtype: float64

- name: n_samples

dtype: int64

- name: reject_select

dtype: string

- name: prompt

dtype: string

- name: chosen

dtype: string

- name: rejected

dtype: string

- name: index

dtype: int64

- name: filtered_epoch

dtype: int64

- name: gen_reward

dtype: float64

- name: gen_response

dtype: string

splits:

- name: epoch_0

num_bytes: 43597508

num_examples: 18929

- name: epoch_1

num_bytes: 44140361

num_examples: 18929

- name: epoch_2

num_bytes: 44219218

num_examples: 18929

- name: epoch_3

num_bytes: 44272701

num_examples: 18929

- name: epoch_4

num_bytes: 44303217

num_examples: 18929

- name: epoch_5

num_bytes: 44318370

num_examples: 18929

- name: epoch_6

num_bytes: 44329713

num_examples: 18929

- name: epoch_7

num_bytes: 44334298

num_examples: 18929

- name: epoch_8

num_bytes: 44338166

num_examples: 18929

- name: epoch_9

num_bytes: 44339871

num_examples: 18929

- name: epoch_10

num_bytes: 44340020

num_examples: 18929

- name: epoch_11

num_bytes: 44340799

num_examples: 18929

- name: epoch_12

num_bytes: 44342396

num_examples: 18929

- name: epoch_13

num_bytes: 44343629

num_examples: 18929

- name: epoch_14

num_bytes: 44343512

num_examples: 18929

- name: epoch_15

num_bytes: 44343176

num_examples: 18929

- name: epoch_16

num_bytes: 44342483

num_examples: 18929

- name: epoch_17

num_bytes: 44344000

num_examples: 18929

- name: epoch_18

num_bytes: 44342859

num_examples: 18929

- name: epoch_19

num_bytes: 44343164

num_examples: 18929

- name: epoch_20

num_bytes: 44343829

num_examples: 18929

- name: epoch_21

num_bytes: 44344365

num_examples: 18929

- name: epoch_22

num_bytes: 44344011

num_examples: 18929

- name: epoch_23

num_bytes: 44346128

num_examples: 18929

- name: epoch_24

num_bytes: 44344476

num_examples: 18929

- name: epoch_25

num_bytes: 44344911

num_examples: 18929

- name: epoch_26

num_bytes: 44345157

num_examples: 18929

- name: epoch_27

num_bytes: 44345020

num_examples: 18929

- name: epoch_28

num_bytes: 44344510

num_examples: 18929

- name: epoch_29

num_bytes: 44344390

num_examples: 18929

download_size: 699957730

dataset_size: 1329066258

configs:

- config_name: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1

data_files:

- split: epoch_0

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_0-*

- split: epoch_1

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_1-*

- split: epoch_2

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_2-*

- split: epoch_3

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_3-*

- split: epoch_4

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_4-*

- split: epoch_5

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_5-*

- split: epoch_6

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_6-*

- split: epoch_7

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_7-*

- split: epoch_8

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_8-*

- split: epoch_9

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_9-*

- split: epoch_10

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_10-*

- split: epoch_11

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_11-*

- split: epoch_12

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_12-*

- split: epoch_13

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_13-*

- split: epoch_14

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_14-*

- split: epoch_15

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_15-*

- split: epoch_16

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_16-*

- split: epoch_17

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_17-*

- split: epoch_18

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_18-*

- split: epoch_19

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_19-*

- split: epoch_20

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_20-*

- split: epoch_21

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_21-*

- split: epoch_22

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_22-*

- split: epoch_23

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_23-*

- split: epoch_24

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_24-*

- split: epoch_25

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_25-*

- split: epoch_26

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_26-*

- split: epoch_27

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_27-*

- split: epoch_28

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_28-*

- split: epoch_29

path: alpaca_instructions-pythia_160m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_29-*

---

|

kenhktsui/wiki_dpr_e5 | ---

license: cc-by-sa-3.0

dataset_info:

features:

- name: id

dtype: int64

- name: text

dtype: string

- name: title

dtype: string

- name: embedding

sequence: float32

splits:

- name: train

num_bytes: 78346298059.0

num_examples: 21015300

download_size: 3792584904

dataset_size: 78346298059.0

---

`wiki_dpr` encoded with `intfloat/e5-base-v2`; the vectors are stored in the `embedding` column. |

nutorbit/news-headline-gen | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: dev

path: data/dev-*

dataset_info:

features:

- name: headline

dtype: string

- name: news

dtype: string

splits:

- name: train

num_bytes: 23555772

num_examples: 21157

- name: dev

num_bytes: 2628111

num_examples: 2365

download_size: 17404158

dataset_size: 26183883

---

# Dataset Card for "news-headline-gen"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

jyshbgde/cinescopeDataset | ---

license: openrail

task_categories:

- feature-extraction

language:

- en

pretty_name: cinescope

---

|

alansun25/cs375_cv11_mandarin_test | ---

dataset_info:

features:

- name: path

dtype: string

- name: audio

struct:

- name: array

sequence: float64

- name: path

dtype: 'null'

- name: sampling_rate

dtype: int64

- name: sentence

dtype: string

splits:

- name: train

num_bytes: 761405859

num_examples: 1000

download_size: 566068077

dataset_size: 761405859

---

# Dataset Card for "cs375_cv11_mandarin_test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ChirathD/dpt-testing-version-1 | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 3135193.0

num_examples: 5

download_size: 3136751

dataset_size: 3135193.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "dpt-testing-version-1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

nvidia/OpenMath-GSM8K-masked | ---

license: other

license_name: nvidia-license

task_categories:

- question-answering

- text-generation

language:

- en

tags:

- math

- nvidia

pretty_name: OpenMath GSM8K Masked

size_categories:

- 1K<n<10K

---

# OpenMath GSM8K Masked

We release a *masked* version of the [GSM8K](https://github.com/openai/grade-school-math) solutions.

This data can be used to aid synthetic generation of additional solutions for the GSM8K dataset,

as it is much less likely to lead to inconsistent reasoning than using

the original solutions directly.

This dataset was used to construct [OpenMathInstruct-1](https://huggingface.co/datasets/nvidia/OpenMathInstruct-1):

a math instruction tuning dataset with 1.8M problem-solution pairs

generated using the permissively licensed [Mixtral-8x7B](https://huggingface.co/mistralai/Mixtral-8x7B-v0.1) model.

For details of how the masked solutions were created, see our [paper](https://arxiv.org/abs/2402.10176).

You can re-create this dataset or apply similar techniques to mask solutions for other datasets

by using our [open-sourced code](https://github.com/Kipok/NeMo-Skills).
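To make the idea of a *masked* solution more concrete, here is a toy sketch. For illustration only, it assumes that masking means hiding the numbers a solution derives behind symbolic placeholders while keeping the numbers copied from the question. It is not the NeMo-Skills code or the procedure described in the paper, and the helper `mask_numbers` and its `keep` parameter are hypothetical.

```python
import re
from typing import Dict, Optional, Set

# Matches integers and decimals such as 48, 2.5 or 1,000.
# This pattern is a simplifying assumption for the illustration.
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")


def mask_numbers(solution: str, keep: Optional[Set[str]] = None) -> str:
    """Replace each distinct number in `solution` with a placeholder M1, M2, ...

    Numbers listed in `keep` (e.g. values given in the question) stay visible,
    so only derived quantities are hidden. A repeated number always maps to the
    same placeholder, which keeps the masked reasoning internally consistent.
    """
    keep = keep or set()
    placeholders: Dict[str, str] = {}

    def substitute(match: re.Match) -> str:
        value = match.group(0)
        if value in keep:
            return value
        if value not in placeholders:
            placeholders[value] = f"M{len(placeholders) + 1}"
        return placeholders[value]

    # re.sub calls `substitute` once per match, scanning the original string.
    return NUMBER.sub(substitute, solution)


solution = ("Natalia sold 48/2 = 24 clips in May. "
            "Natalia sold 48+24 = 72 clips altogether in April and May.")
print(mask_numbers(solution, keep={"48", "2"}))
```

Here the question's values (48 and 2) remain, while the derived results 24 and 72 become `M1` and `M2`, so a model prompted with the masked text cannot simply copy the final answer.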

## Citation

If you find our work useful, please consider citing us!

```bibtex

@article{toshniwal2024openmath,

title = {OpenMathInstruct-1: A 1.8 Million Math Instruction Tuning Dataset},

author = {Shubham Toshniwal and Ivan Moshkov and Sean Narenthiran and Daria Gitman and Fei Jia and Igor Gitman},

year = {2024},

journal = {arXiv preprint arXiv: Arxiv-2402.10176}

}

```

## License

The use of this dataset is governed by the [NVIDIA License](LICENSE), which permits commercial usage.

|

jarrydmartinx/recount3-RNA-seq | ---

license: gpl

---

|

open-llm-leaderboard/details_jisukim8873__mistralai-case-0-1 | ---

pretty_name: Evaluation run of jisukim8873/mistralai-case-0-1

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [jisukim8873/mistralai-case-0-1](https://huggingface.co/jisukim8873/mistralai-case-0-1)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_jisukim8873__mistralai-case-0-1\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2024-03-22T05:14:39.214242](https://huggingface.co/datasets/open-llm-leaderboard/details_jisukim8873__mistralai-case-0-1/blob/main/results_2024-03-22T05-14-39.214242.json) (note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.6245526964592384,\n\

\ \"acc_stderr\": 0.032657544114946147,\n \"acc_norm\": 0.6303693247434217,\n\

\ \"acc_norm_stderr\": 0.033326929053029704,\n \"mc1\": 0.29008567931456547,\n\

\ \"mc1_stderr\": 0.01588623687420952,\n \"mc2\": 0.41430745876476355,\n\

\ \"mc2_stderr\": 0.014409498973913385\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.5784982935153583,\n \"acc_stderr\": 0.014430197069326023,\n\

\ \"acc_norm\": 0.6083617747440273,\n \"acc_norm_stderr\": 0.014264122124938213\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6313483369846644,\n\

\ \"acc_stderr\": 0.004814532642574651,\n \"acc_norm\": 0.8305118502290381,\n\

\ \"acc_norm_stderr\": 0.003744157442536556\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.34,\n \"acc_stderr\": 0.04760952285695235,\n \

\ \"acc_norm\": 0.34,\n \"acc_norm_stderr\": 0.04760952285695235\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.5925925925925926,\n\

\ \"acc_stderr\": 0.04244633238353227,\n \"acc_norm\": 0.5925925925925926,\n\

\ \"acc_norm_stderr\": 0.04244633238353227\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.6578947368421053,\n \"acc_stderr\": 0.03860731599316091,\n\

\ \"acc_norm\": 0.6578947368421053,\n \"acc_norm_stderr\": 0.03860731599316091\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.54,\n\

\ \"acc_stderr\": 0.05009082659620333,\n \"acc_norm\": 0.54,\n \

\ \"acc_norm_stderr\": 0.05009082659620333\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.690566037735849,\n \"acc_stderr\": 0.028450154794118637,\n\

\ \"acc_norm\": 0.690566037735849,\n \"acc_norm_stderr\": 0.028450154794118637\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.6944444444444444,\n\

\ \"acc_stderr\": 0.03852084696008534,\n \"acc_norm\": 0.6944444444444444,\n\

\ \"acc_norm_stderr\": 0.03852084696008534\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.51,\n \"acc_stderr\": 0.05024183937956912,\n \

\ \"acc_norm\": 0.51,\n \"acc_norm_stderr\": 0.05024183937956912\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.51,\n \"acc_stderr\": 0.05024183937956911,\n \"acc_norm\": 0.51,\n\

\ \"acc_norm_stderr\": 0.05024183937956911\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.38,\n \"acc_stderr\": 0.04878317312145634,\n \

\ \"acc_norm\": 0.38,\n \"acc_norm_stderr\": 0.04878317312145634\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.6069364161849711,\n\

\ \"acc_stderr\": 0.0372424959581773,\n \"acc_norm\": 0.6069364161849711,\n\

\ \"acc_norm_stderr\": 0.0372424959581773\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.39215686274509803,\n \"acc_stderr\": 0.04858083574266346,\n\

\ \"acc_norm\": 0.39215686274509803,\n \"acc_norm_stderr\": 0.04858083574266346\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.75,\n \"acc_stderr\": 0.04351941398892446,\n \"acc_norm\": 0.75,\n\

\ \"acc_norm_stderr\": 0.04351941398892446\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.5574468085106383,\n \"acc_stderr\": 0.03246956919789958,\n\

\ \"acc_norm\": 0.5574468085106383,\n \"acc_norm_stderr\": 0.03246956919789958\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.45614035087719296,\n\

\ \"acc_stderr\": 0.04685473041907789,\n \"acc_norm\": 0.45614035087719296,\n\

\ \"acc_norm_stderr\": 0.04685473041907789\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.5655172413793104,\n \"acc_stderr\": 0.041307408795554966,\n\

\ \"acc_norm\": 0.5655172413793104,\n \"acc_norm_stderr\": 0.041307408795554966\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.41005291005291006,\n \"acc_stderr\": 0.025331202438944433,\n \"\

acc_norm\": 0.41005291005291006,\n \"acc_norm_stderr\": 0.025331202438944433\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.42063492063492064,\n\

\ \"acc_stderr\": 0.04415438226743744,\n \"acc_norm\": 0.42063492063492064,\n\

\ \"acc_norm_stderr\": 0.04415438226743744\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.41,\n \"acc_stderr\": 0.049431107042371025,\n \

\ \"acc_norm\": 0.41,\n \"acc_norm_stderr\": 0.049431107042371025\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.7419354838709677,\n \"acc_stderr\": 0.02489246917246283,\n \"\

acc_norm\": 0.7419354838709677,\n \"acc_norm_stderr\": 0.02489246917246283\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.5024630541871922,\n \"acc_stderr\": 0.03517945038691063,\n \"\

acc_norm\": 0.5024630541871922,\n \"acc_norm_stderr\": 0.03517945038691063\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.67,\n \"acc_stderr\": 0.047258156262526066,\n \"acc_norm\"\

: 0.67,\n \"acc_norm_stderr\": 0.047258156262526066\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.7636363636363637,\n \"acc_stderr\": 0.03317505930009182,\n\

\ \"acc_norm\": 0.7636363636363637,\n \"acc_norm_stderr\": 0.03317505930009182\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.7777777777777778,\n \"acc_stderr\": 0.02962022787479048,\n \"\

acc_norm\": 0.7777777777777778,\n \"acc_norm_stderr\": 0.02962022787479048\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.8497409326424871,\n \"acc_stderr\": 0.02578772318072388,\n\

\ \"acc_norm\": 0.8497409326424871,\n \"acc_norm_stderr\": 0.02578772318072388\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.6205128205128205,\n \"acc_stderr\": 0.024603626924097417,\n\

\ \"acc_norm\": 0.6205128205128205,\n \"acc_norm_stderr\": 0.024603626924097417\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.32222222222222224,\n \"acc_stderr\": 0.028493465091028597,\n \

\ \"acc_norm\": 0.32222222222222224,\n \"acc_norm_stderr\": 0.028493465091028597\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.6848739495798319,\n \"acc_stderr\": 0.03017680828897434,\n \

\ \"acc_norm\": 0.6848739495798319,\n \"acc_norm_stderr\": 0.03017680828897434\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.33774834437086093,\n \"acc_stderr\": 0.03861557546255169,\n \"\

acc_norm\": 0.33774834437086093,\n \"acc_norm_stderr\": 0.03861557546255169\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.8055045871559633,\n \"acc_stderr\": 0.01697028909045804,\n \"\

acc_norm\": 0.8055045871559633,\n \"acc_norm_stderr\": 0.01697028909045804\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.49537037037037035,\n \"acc_stderr\": 0.03409825519163572,\n \"\

acc_norm\": 0.49537037037037035,\n \"acc_norm_stderr\": 0.03409825519163572\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.7794117647058824,\n \"acc_stderr\": 0.02910225438967408,\n \"\

acc_norm\": 0.7794117647058824,\n \"acc_norm_stderr\": 0.02910225438967408\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.7468354430379747,\n \"acc_stderr\": 0.028304657943035293,\n \

\ \"acc_norm\": 0.7468354430379747,\n \"acc_norm_stderr\": 0.028304657943035293\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.6995515695067265,\n\

\ \"acc_stderr\": 0.030769352008229136,\n \"acc_norm\": 0.6995515695067265,\n\

\ \"acc_norm_stderr\": 0.030769352008229136\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.8015267175572519,\n \"acc_stderr\": 0.034981493854624714,\n\

\ \"acc_norm\": 0.8015267175572519,\n \"acc_norm_stderr\": 0.034981493854624714\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.7851239669421488,\n \"acc_stderr\": 0.037494924487096966,\n \"\

acc_norm\": 0.7851239669421488,\n \"acc_norm_stderr\": 0.037494924487096966\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.7407407407407407,\n\

\ \"acc_stderr\": 0.04236511258094633,\n \"acc_norm\": 0.7407407407407407,\n\

\ \"acc_norm_stderr\": 0.04236511258094633\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.7730061349693251,\n \"acc_stderr\": 0.032910995786157686,\n\

\ \"acc_norm\": 0.7730061349693251,\n \"acc_norm_stderr\": 0.032910995786157686\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.5089285714285714,\n\

\ \"acc_stderr\": 0.04745033255489123,\n \"acc_norm\": 0.5089285714285714,\n\

\ \"acc_norm_stderr\": 0.04745033255489123\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.7864077669902912,\n \"acc_stderr\": 0.04058042015646034,\n\

\ \"acc_norm\": 0.7864077669902912,\n \"acc_norm_stderr\": 0.04058042015646034\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.8717948717948718,\n\

\ \"acc_stderr\": 0.02190190511507333,\n \"acc_norm\": 0.8717948717948718,\n\

\ \"acc_norm_stderr\": 0.02190190511507333\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.7,\n \"acc_stderr\": 0.046056618647183814,\n \

\ \"acc_norm\": 0.7,\n \"acc_norm_stderr\": 0.046056618647183814\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.8007662835249042,\n\

\ \"acc_stderr\": 0.014283378044296418,\n \"acc_norm\": 0.8007662835249042,\n\

\ \"acc_norm_stderr\": 0.014283378044296418\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.6705202312138728,\n \"acc_stderr\": 0.025305258131879713,\n\

\ \"acc_norm\": 0.6705202312138728,\n \"acc_norm_stderr\": 0.025305258131879713\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.2782122905027933,\n\

\ \"acc_stderr\": 0.014987325439963554,\n \"acc_norm\": 0.2782122905027933,\n\

\ \"acc_norm_stderr\": 0.014987325439963554\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.7287581699346405,\n \"acc_stderr\": 0.02545775669666788,\n\

\ \"acc_norm\": 0.7287581699346405,\n \"acc_norm_stderr\": 0.02545775669666788\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.7009646302250804,\n\

\ \"acc_stderr\": 0.02600330111788514,\n \"acc_norm\": 0.7009646302250804,\n\

\ \"acc_norm_stderr\": 0.02600330111788514\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.7098765432098766,\n \"acc_stderr\": 0.025251173936495036,\n\

\ \"acc_norm\": 0.7098765432098766,\n \"acc_norm_stderr\": 0.025251173936495036\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.42907801418439717,\n \"acc_stderr\": 0.02952591430255856,\n \

\ \"acc_norm\": 0.42907801418439717,\n \"acc_norm_stderr\": 0.02952591430255856\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.438722294654498,\n\

\ \"acc_stderr\": 0.012673969883493272,\n \"acc_norm\": 0.438722294654498,\n\

\ \"acc_norm_stderr\": 0.012673969883493272\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.6397058823529411,\n \"acc_stderr\": 0.029163128570670733,\n\

\ \"acc_norm\": 0.6397058823529411,\n \"acc_norm_stderr\": 0.029163128570670733\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.6503267973856209,\n \"acc_stderr\": 0.01929196189506638,\n \

\ \"acc_norm\": 0.6503267973856209,\n \"acc_norm_stderr\": 0.01929196189506638\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6636363636363637,\n\

\ \"acc_stderr\": 0.04525393596302506,\n \"acc_norm\": 0.6636363636363637,\n\

\ \"acc_norm_stderr\": 0.04525393596302506\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.7224489795918367,\n \"acc_stderr\": 0.028666857790274648,\n\

\ \"acc_norm\": 0.7224489795918367,\n \"acc_norm_stderr\": 0.028666857790274648\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.8557213930348259,\n\

\ \"acc_stderr\": 0.024845753212306053,\n \"acc_norm\": 0.8557213930348259,\n\

\ \"acc_norm_stderr\": 0.024845753212306053\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.86,\n \"acc_stderr\": 0.034873508801977704,\n \

\ \"acc_norm\": 0.86,\n \"acc_norm_stderr\": 0.034873508801977704\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.5301204819277109,\n\

\ \"acc_stderr\": 0.03885425420866767,\n \"acc_norm\": 0.5301204819277109,\n\

\ \"acc_norm_stderr\": 0.03885425420866767\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.8245614035087719,\n \"acc_stderr\": 0.029170885500727665,\n\

\ \"acc_norm\": 0.8245614035087719,\n \"acc_norm_stderr\": 0.029170885500727665\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.29008567931456547,\n\

\ \"mc1_stderr\": 0.01588623687420952,\n \"mc2\": 0.41430745876476355,\n\

\ \"mc2_stderr\": 0.014409498973913385\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.7884767166535123,\n \"acc_stderr\": 0.011477747684223188\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.3464746019711903,\n \

\ \"acc_stderr\": 0.01310717905431338\n }\n}\n```"

repo_url: https://huggingface.co/jisukim8873/mistralai-case-0-1

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|arc:challenge|25_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|gsm8k|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hellaswag|10_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-03-22T05-14-39.214242.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2024_03_22T05_14_39.214242

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-22T05-14-39.214242.parquet'

- config_name: harness_hendrycksTest_college_medicine_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-college_medicine|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-college_medicine|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_college_physics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-college_physics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-college_physics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_computer_security_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-computer_security|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-computer_security|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_conceptual_physics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_econometrics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-econometrics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-econometrics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_electrical_engineering_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_elementary_mathematics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_formal_logic_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-formal_logic|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-formal_logic|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_global_facts_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-global_facts|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-global_facts|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_biology_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_chemistry_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_computer_science_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_european_history_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_geography_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_government_and_politics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_macroeconomics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_mathematics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_microeconomics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_physics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_psychology_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_statistics_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_us_history_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-22T05-14-39.214242.parquet'
  - split: latest
    path:
    - '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-22T05-14-39.214242.parquet'
- config_name: harness_hendrycksTest_high_school_world_history_5
  data_files:
  - split: 2024_03_22T05_14_39.214242
    path:
    - '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-22T05-14-39.214242.parquet'