datasetId | card |

|---|---|

autoevaluate/autoeval-staging-eval-project-976d13e6-0b05-475e-9b4e-e8fbc174cfae-346 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- squad

eval_info:

task: extractive_question_answering

model: autoevaluate/extractive-question-answering

metrics: []

dataset_name: squad

dataset_config: plain_text

dataset_split: validation

col_mapping:

context: context

question: question

answers-text: answers.text

answers-answer_start: answers.answer_start

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Question Answering

* Model: autoevaluate/extractive-question-answering

* Dataset: squad

* Config: plain_text

* Split: validation

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).
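The `col_mapping` block in the front matter tells the evaluator which dataset fields feed which inputs. The Python sketch below shows how such a mapping could be applied to one SQuAD-style example. It assumes that each key is the column name the evaluator expects and each value is a dotted path into the example (e.g. into the nested `answers` field). The `resolve` and `apply_col_mapping` helpers and the sample record are hypothetical illustrations, not part of AutoTrain.

```python
from typing import Any

# Assumed reading of the card's col_mapping: key = column the evaluator expects,
# value = dotted path into a SQuAD-style example.
COL_MAPPING: dict[str, str] = {
    "context": "context",
    "question": "question",
    "answers-text": "answers.text",
    "answers-answer_start": "answers.answer_start",
}


def resolve(example: dict[str, Any], dotted_path: str) -> Any:
    """Walk a dotted path such as 'answers.text' through nested dicts."""
    value: Any = example
    for key in dotted_path.split("."):
        value = value[key]
    return value


def apply_col_mapping(example: dict[str, Any], mapping: dict[str, str]) -> dict[str, Any]:
    """Build a flat record keyed by the evaluator's column names."""
    return {target: resolve(example, path) for target, path in mapping.items()}


# Made-up SQuAD-shaped example for illustration; not taken from the dataset.
example = {
    "id": "example-0",
    "title": "Eiffel_Tower",
    "context": "The Eiffel Tower is in Paris.",
    "question": "Where is the Eiffel Tower?",
    "answers": {"text": ["Paris"], "answer_start": [23]},
}

record = apply_col_mapping(example, COL_MAPPING)
print(record["answers-text"], record["answers-answer_start"])  # ['Paris'] [23]
```

Because `answer_start` is a character offset into `context`, `record["context"][23:28]` recovers the answer span `"Paris"`.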

## Contributions

Thanks to [@lewtun](https://huggingface.co/lewtun) for evaluating this model. |

CreitinGameplays/small-chat-assistant-for-bloom | ---

license: mit

---

# Info

This dataset was generated by ChatGPT and is intended for fine-tuning language models. |

TrainingDataPro/MacBook-Attacks-Dataset | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

language:

- en

tags:

- finance

dataset_info:

features:

- name: file

dtype: string

- name: phone

dtype: string

- name: computer

dtype: string

- name: gender

dtype: string

- name: age

dtype: int16

- name: country

dtype: string

splits:

- name: train

num_bytes: 1418

num_examples: 24

download_size: 573934283

dataset_size: 1418

---

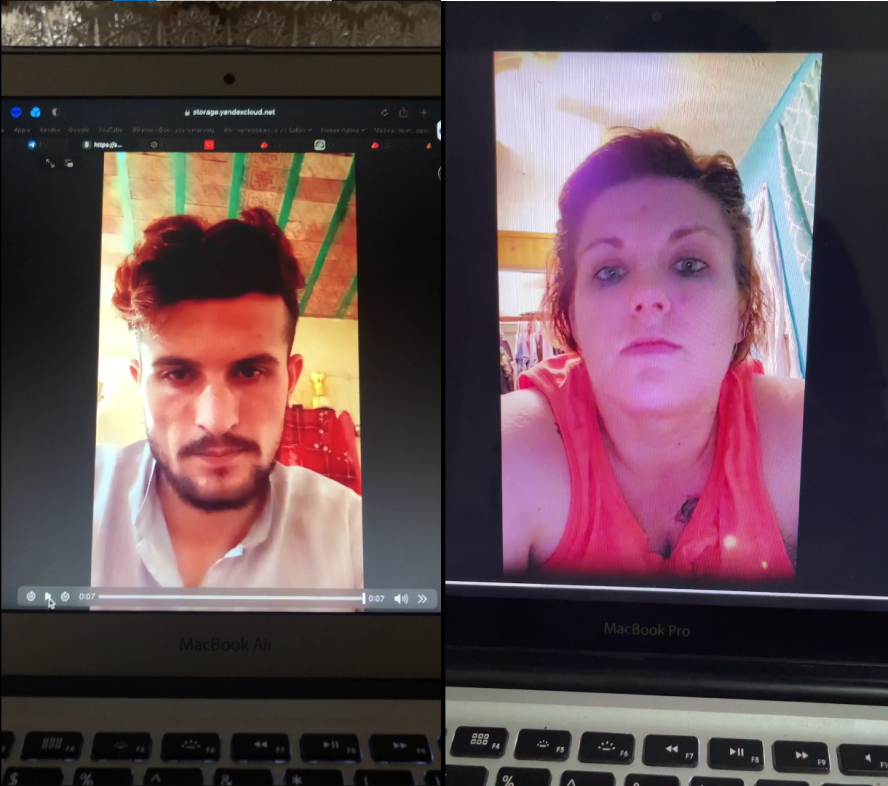

# Antispoofing Replay Dataset

The dataset consists of videos of replay attacks played back on different MacBook models. It addresses anti-spoofing tasks and is useful for business and security systems.

The dataset includes **replay attacks**: videos of real people played on a computer and filmed with a phone.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=MacBook-Attacks-Dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

The "attacks" folder contains videos of replay attacks.

### MacBook models in the dataset:

- MacBook 13

- MacBook Air

- MacBook Air 7

- MacBook Air 11

- MacBook Air 13

- MacBook Air M1

- MacBook Pro 12

- MacBook Pro 13

### File with the extension .csv

The .csv file includes the following information for each media file:

- **file**: link to access the replay video,

- **phone**: the device used to capture the replay video,

- **computer**: the device used to play the video,

- **gender**: gender of a person in the video,

- **age**: age of the person in the video,

- **country**: country of the person
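As a rough illustration of the column layout above, the Python sketch below parses CSV text with those six columns into typed records and counts videos per playback MacBook. It assumes a comma-separated file with a header row using exactly these column names; the `ReplayRecord` and `parse_records` names and the sample rows are hypothetical and not taken from the dataset.

```python
import csv
import io
from collections import Counter
from dataclasses import dataclass


@dataclass
class ReplayRecord:
    file: str      # link to the replay video
    phone: str     # device used to film the replay
    computer: str  # MacBook model used to play the video
    gender: str    # gender of the person in the video
    age: int       # declared as int16 in dataset_info
    country: str   # country of the person


def parse_records(csv_text: str) -> list[ReplayRecord]:
    """Parse CSV text whose header uses the six column names listed above."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        ReplayRecord(
            file=row["file"],
            phone=row["phone"],
            computer=row["computer"],
            gender=row["gender"],
            age=int(row["age"]),
            country=row["country"],
        )
        for row in reader
    ]


# Hypothetical rows for illustration only; they are not taken from the dataset.
SAMPLE_CSV = """file,phone,computer,gender,age,country
https://example.com/replay_0.mp4,iPhone 12,MacBook Air M1,Female,30,United States
https://example.com/replay_1.mp4,Samsung Galaxy S21,MacBook Pro 13,Male,42,Germany
https://example.com/replay_2.mp4,iPhone 12,MacBook Air M1,Male,25,India
"""

records = parse_records(SAMPLE_CSV)
# Count replay videos per playback device, e.g. to check model coverage.
per_computer = Counter(r.computer for r in records)
print(per_computer.most_common(1))  # [('MacBook Air M1', 2)]
```

Converting `age` to `int` at parse time mirrors the `int16` dtype declared in `dataset_info`, so downstream filtering by age does not compare strings.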

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=MacBook-Attacks-Dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

chikino/DEADPOOL3 | ---

license: openrail

---

|

open-llm-leaderboard/details_Fizzarolli__sappha-2b-v3 | ---

pretty_name: Evaluation run of Fizzarolli/sappha-2b-v3

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [Fizzarolli/sappha-2b-v3](https://huggingface.co/Fizzarolli/sappha-2b-v3) on the\

\ [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_Fizzarolli__sappha-2b-v3\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2024-03-24T14:34:41.283293](https://huggingface.co/datasets/open-llm-leaderboard/details_Fizzarolli__sappha-2b-v3/blob/main/results_2024-03-24T14-34-41.283293.json) (note\

\ that there might be results for other tasks in the repo if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.38770836928908536,\n\

\ \"acc_stderr\": 0.03401202691311986,\n \"acc_norm\": 0.39301680909612896,\n\

\ \"acc_norm_stderr\": 0.03490947267798798,\n \"mc1\": 0.2350061199510404,\n\

\ \"mc1_stderr\": 0.014843061507731613,\n \"mc2\": 0.3993902530198297,\n\

\ \"mc2_stderr\": 0.014276014222438483\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.447098976109215,\n \"acc_stderr\": 0.014529380160526848,\n\

\ \"acc_norm\": 0.4616040955631399,\n \"acc_norm_stderr\": 0.01456824555029636\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.5266879107747461,\n\

\ \"acc_stderr\": 0.004982668452118941,\n \"acc_norm\": 0.707329217287393,\n\

\ \"acc_norm_stderr\": 0.004540586983229991\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.22,\n \"acc_stderr\": 0.04163331998932269,\n \

\ \"acc_norm\": 0.22,\n \"acc_norm_stderr\": 0.04163331998932269\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.4148148148148148,\n\

\ \"acc_stderr\": 0.042561937679014075,\n \"acc_norm\": 0.4148148148148148,\n\

\ \"acc_norm_stderr\": 0.042561937679014075\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.375,\n \"acc_stderr\": 0.039397364351956274,\n \

\ \"acc_norm\": 0.375,\n \"acc_norm_stderr\": 0.039397364351956274\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.35,\n\

\ \"acc_stderr\": 0.04793724854411019,\n \"acc_norm\": 0.35,\n \

\ \"acc_norm_stderr\": 0.04793724854411019\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.43018867924528303,\n \"acc_stderr\": 0.030471445867183238,\n\

\ \"acc_norm\": 0.43018867924528303,\n \"acc_norm_stderr\": 0.030471445867183238\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.4236111111111111,\n\

\ \"acc_stderr\": 0.04132125019723369,\n \"acc_norm\": 0.4236111111111111,\n\

\ \"acc_norm_stderr\": 0.04132125019723369\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.33,\n \"acc_stderr\": 0.04725815626252606,\n \

\ \"acc_norm\": 0.33,\n \"acc_norm_stderr\": 0.04725815626252606\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.31,\n \"acc_stderr\": 0.04648231987117316,\n \"acc_norm\": 0.31,\n\

\ \"acc_norm_stderr\": 0.04648231987117316\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.29,\n \"acc_stderr\": 0.04560480215720684,\n \

\ \"acc_norm\": 0.29,\n \"acc_norm_stderr\": 0.04560480215720684\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.3699421965317919,\n\

\ \"acc_stderr\": 0.036812296333943194,\n \"acc_norm\": 0.3699421965317919,\n\

\ \"acc_norm_stderr\": 0.036812296333943194\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.19607843137254902,\n \"acc_stderr\": 0.03950581861179961,\n\

\ \"acc_norm\": 0.19607843137254902,\n \"acc_norm_stderr\": 0.03950581861179961\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.56,\n \"acc_stderr\": 0.04988876515698589,\n \"acc_norm\": 0.56,\n\

\ \"acc_norm_stderr\": 0.04988876515698589\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.3829787234042553,\n \"acc_stderr\": 0.031778212502369216,\n\

\ \"acc_norm\": 0.3829787234042553,\n \"acc_norm_stderr\": 0.031778212502369216\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.2543859649122807,\n\

\ \"acc_stderr\": 0.04096985139843672,\n \"acc_norm\": 0.2543859649122807,\n\

\ \"acc_norm_stderr\": 0.04096985139843672\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.43448275862068964,\n \"acc_stderr\": 0.04130740879555497,\n\

\ \"acc_norm\": 0.43448275862068964,\n \"acc_norm_stderr\": 0.04130740879555497\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.291005291005291,\n \"acc_stderr\": 0.02339382650048487,\n \"acc_norm\"\

: 0.291005291005291,\n \"acc_norm_stderr\": 0.02339382650048487\n },\n\

\ \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.23015873015873015,\n\

\ \"acc_stderr\": 0.03764950879790606,\n \"acc_norm\": 0.23015873015873015,\n\

\ \"acc_norm_stderr\": 0.03764950879790606\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.31,\n \"acc_stderr\": 0.04648231987117316,\n \

\ \"acc_norm\": 0.31,\n \"acc_norm_stderr\": 0.04648231987117316\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\": 0.3967741935483871,\n\

\ \"acc_stderr\": 0.027831231605767955,\n \"acc_norm\": 0.3967741935483871,\n\

\ \"acc_norm_stderr\": 0.027831231605767955\n },\n \"harness|hendrycksTest-high_school_chemistry|5\"\

: {\n \"acc\": 0.3645320197044335,\n \"acc_stderr\": 0.033864057460620905,\n\

\ \"acc_norm\": 0.3645320197044335,\n \"acc_norm_stderr\": 0.033864057460620905\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.4,\n \"acc_stderr\": 0.04923659639173309,\n \"acc_norm\"\

: 0.4,\n \"acc_norm_stderr\": 0.04923659639173309\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.4,\n \"acc_stderr\": 0.03825460278380026,\n \

\ \"acc_norm\": 0.4,\n \"acc_norm_stderr\": 0.03825460278380026\n },\n\

\ \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\": 0.4292929292929293,\n\

\ \"acc_stderr\": 0.035265527246011986,\n \"acc_norm\": 0.4292929292929293,\n\

\ \"acc_norm_stderr\": 0.035265527246011986\n },\n \"harness|hendrycksTest-high_school_government_and_politics|5\"\

: {\n \"acc\": 0.5025906735751295,\n \"acc_stderr\": 0.03608390745384488,\n\

\ \"acc_norm\": 0.5025906735751295,\n \"acc_norm_stderr\": 0.03608390745384488\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.33589743589743587,\n \"acc_stderr\": 0.02394672474156398,\n\

\ \"acc_norm\": 0.33589743589743587,\n \"acc_norm_stderr\": 0.02394672474156398\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.24444444444444444,\n \"acc_stderr\": 0.02620276653465215,\n \

\ \"acc_norm\": 0.24444444444444444,\n \"acc_norm_stderr\": 0.02620276653465215\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.3697478991596639,\n \"acc_stderr\": 0.031357095996135904,\n\

\ \"acc_norm\": 0.3697478991596639,\n \"acc_norm_stderr\": 0.031357095996135904\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.2781456953642384,\n \"acc_stderr\": 0.03658603262763744,\n \"\

acc_norm\": 0.2781456953642384,\n \"acc_norm_stderr\": 0.03658603262763744\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.48440366972477067,\n \"acc_stderr\": 0.02142689153920805,\n \"\

acc_norm\": 0.48440366972477067,\n \"acc_norm_stderr\": 0.02142689153920805\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.3194444444444444,\n \"acc_stderr\": 0.03179876342176851,\n \"\

acc_norm\": 0.3194444444444444,\n \"acc_norm_stderr\": 0.03179876342176851\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.4019607843137255,\n \"acc_stderr\": 0.034411900234824655,\n \"\

acc_norm\": 0.4019607843137255,\n \"acc_norm_stderr\": 0.034411900234824655\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.48945147679324896,\n \"acc_stderr\": 0.032539983791662855,\n \

\ \"acc_norm\": 0.48945147679324896,\n \"acc_norm_stderr\": 0.032539983791662855\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.4080717488789238,\n\

\ \"acc_stderr\": 0.03298574607842821,\n \"acc_norm\": 0.4080717488789238,\n\

\ \"acc_norm_stderr\": 0.03298574607842821\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.3511450381679389,\n \"acc_stderr\": 0.0418644516301375,\n\

\ \"acc_norm\": 0.3511450381679389,\n \"acc_norm_stderr\": 0.0418644516301375\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.5619834710743802,\n \"acc_stderr\": 0.045291468044357915,\n \"\

acc_norm\": 0.5619834710743802,\n \"acc_norm_stderr\": 0.045291468044357915\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.4074074074074074,\n\

\ \"acc_stderr\": 0.047500773411999854,\n \"acc_norm\": 0.4074074074074074,\n\

\ \"acc_norm_stderr\": 0.047500773411999854\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.39263803680981596,\n \"acc_stderr\": 0.03836740907831029,\n\

\ \"acc_norm\": 0.39263803680981596,\n \"acc_norm_stderr\": 0.03836740907831029\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.32142857142857145,\n\

\ \"acc_stderr\": 0.04432804055291519,\n \"acc_norm\": 0.32142857142857145,\n\

\ \"acc_norm_stderr\": 0.04432804055291519\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.47572815533980584,\n \"acc_stderr\": 0.049449010929737795,\n\

\ \"acc_norm\": 0.47572815533980584,\n \"acc_norm_stderr\": 0.049449010929737795\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.5769230769230769,\n\

\ \"acc_stderr\": 0.032366121762202014,\n \"acc_norm\": 0.5769230769230769,\n\

\ \"acc_norm_stderr\": 0.032366121762202014\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.4,\n \"acc_stderr\": 0.049236596391733084,\n \

\ \"acc_norm\": 0.4,\n \"acc_norm_stderr\": 0.049236596391733084\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.49680715197956576,\n\

\ \"acc_stderr\": 0.01787959894593307,\n \"acc_norm\": 0.49680715197956576,\n\

\ \"acc_norm_stderr\": 0.01787959894593307\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.41040462427745666,\n \"acc_stderr\": 0.026483392042098177,\n\

\ \"acc_norm\": 0.41040462427745666,\n \"acc_norm_stderr\": 0.026483392042098177\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.2558659217877095,\n\

\ \"acc_stderr\": 0.014593620923210749,\n \"acc_norm\": 0.2558659217877095,\n\

\ \"acc_norm_stderr\": 0.014593620923210749\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.3888888888888889,\n \"acc_stderr\": 0.027914055510468008,\n\

\ \"acc_norm\": 0.3888888888888889,\n \"acc_norm_stderr\": 0.027914055510468008\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.3987138263665595,\n\

\ \"acc_stderr\": 0.0278093225857745,\n \"acc_norm\": 0.3987138263665595,\n\

\ \"acc_norm_stderr\": 0.0278093225857745\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.44753086419753085,\n \"acc_stderr\": 0.027667138569422697,\n\

\ \"acc_norm\": 0.44753086419753085,\n \"acc_norm_stderr\": 0.027667138569422697\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.3049645390070922,\n \"acc_stderr\": 0.027464708442022128,\n \

\ \"acc_norm\": 0.3049645390070922,\n \"acc_norm_stderr\": 0.027464708442022128\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.32333767926988266,\n\

\ \"acc_stderr\": 0.011946565758447212,\n \"acc_norm\": 0.32333767926988266,\n\

\ \"acc_norm_stderr\": 0.011946565758447212\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.33455882352941174,\n \"acc_stderr\": 0.028661996202335303,\n\

\ \"acc_norm\": 0.33455882352941174,\n \"acc_norm_stderr\": 0.028661996202335303\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.3872549019607843,\n \"acc_stderr\": 0.01970687580408563,\n \

\ \"acc_norm\": 0.3872549019607843,\n \"acc_norm_stderr\": 0.01970687580408563\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.45454545454545453,\n\

\ \"acc_stderr\": 0.04769300568972743,\n \"acc_norm\": 0.45454545454545453,\n\

\ \"acc_norm_stderr\": 0.04769300568972743\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.4530612244897959,\n \"acc_stderr\": 0.03186785930004128,\n\

\ \"acc_norm\": 0.4530612244897959,\n \"acc_norm_stderr\": 0.03186785930004128\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.40298507462686567,\n\

\ \"acc_stderr\": 0.034683432951111266,\n \"acc_norm\": 0.40298507462686567,\n\

\ \"acc_norm_stderr\": 0.034683432951111266\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.57,\n \"acc_stderr\": 0.04975698519562427,\n \

\ \"acc_norm\": 0.57,\n \"acc_norm_stderr\": 0.04975698519562427\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.3674698795180723,\n\

\ \"acc_stderr\": 0.03753267402120575,\n \"acc_norm\": 0.3674698795180723,\n\

\ \"acc_norm_stderr\": 0.03753267402120575\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.5380116959064327,\n \"acc_stderr\": 0.03823727092882307,\n\

\ \"acc_norm\": 0.5380116959064327,\n \"acc_norm_stderr\": 0.03823727092882307\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.2350061199510404,\n\

\ \"mc1_stderr\": 0.014843061507731613,\n \"mc2\": 0.3993902530198297,\n\

\ \"mc2_stderr\": 0.014276014222438483\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.6550907655880032,\n \"acc_stderr\": 0.013359379805033676\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.002274450341167551,\n \

\ \"acc_stderr\": 0.0013121578148674326\n }\n}\n```"

repo_url: https://huggingface.co/Fizzarolli/sappha-2b-v3

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|arc:challenge|25_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|gsm8k|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hellaswag|10_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-03-24T14-34-41.283293.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-international_law|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-management|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-marketing|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-sociology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-virology|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|truthfulqa:mc|0_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2024-03-24T14-34-41.283293.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- '**/details_harness|winogrande|5_2024-03-24T14-34-41.283293.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2024-03-24T14-34-41.283293.parquet'

- config_name: results

data_files:

- split: 2024_03_24T14_34_41.283293

path:

- results_2024-03-24T14-34-41.283293.parquet

- split: latest

path:

- results_2024-03-24T14-34-41.283293.parquet

---

# Dataset Card for Evaluation run of Fizzarolli/sappha-2b-v3

<!-- Provide a quick summary of the dataset. -->

Dataset automatically created during the evaluation run of model [Fizzarolli/sappha-2b-v3](https://huggingface.co/Fizzarolli/sappha-2b-v3) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 63 configurations, each one corresponding to one of the evaluated tasks.
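Judging from the `configs` list in the YAML header, each configuration name appears to be derived from the task key used in the results (for example `harness|hendrycksTest-anatomy|5` becomes `harness_hendrycksTest_anatomy_5`), and its Parquet files follow the pattern `details_<task key>_<timestamp>.parquet`. The sketch below reproduces that naming. The rule is inferred from the configurations listed above rather than from a documented specification, and the helper names `config_name_for` and `details_glob_for` are hypothetical.

```python
import re


def config_name_for(task_key: str) -> str:
    """Map a results key such as 'harness|hendrycksTest-anatomy|5' to a config name.

    Inferred rule: '|', '-' and ':' are all replaced by '_'.
    """
    return re.sub(r"[|\-:]", "_", task_key)


def details_glob_for(task_key: str, run_timestamp: str) -> str:
    """Build the data_files glob for one task and one run.

    run_timestamp is the ISO form used in this card, e.g. '2024-03-24T14:34:41.283293';
    in the file names the colons are replaced by '-'.
    """
    file_ts = run_timestamp.replace(":", "-")
    return f"**/details_{task_key}_{file_ts}.parquet"


# Example: reproduce the entries of the harness_truthfulqa_mc_0 configuration.
print(config_name_for("harness|truthfulqa:mc|0"))
print(details_glob_for("harness|truthfulqa:mc|0", "2024-03-24T14:34:41.283293"))
```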

The dataset has been created from a single run. Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "latest" split always points to the latest results.
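Because each split is named after the run timestamp, the mapping between the ISO timestamp (as it appears in the results file name) and the split name can be sketched as follows. The conversion rule is inferred from the split names in the YAML header, the helper names are hypothetical, and the `latest` split is treated as an alias that is skipped when comparing runs. With a single run, as here, `most_recent_split` simply returns the one timestamped split.

```python
from datetime import datetime
from typing import Iterable


def split_name_for(run_timestamp: str) -> str:
    """'2024-03-24T14:34:41.283293' -> '2024_03_24T14_34_41.283293' (inferred rule)."""
    return run_timestamp.replace("-", "_").replace(":", "_")


def parse_split_name(split: str) -> datetime:
    """Inverse of split_name_for; raises ValueError for non-timestamp splits such as 'latest'."""
    return datetime.strptime(split, "%Y_%m_%dT%H_%M_%S.%f")


def most_recent_split(splits: Iterable[str]) -> str:
    """Return the newest timestamped split, ignoring the 'latest' alias."""
    timestamped = [s for s in splits if s != "latest"]
    return max(timestamped, key=parse_split_name)


print(split_name_for("2024-03-24T14:34:41.283293"))
print(most_recent_split(["2024_03_24T14_34_41.283293", "latest"]))
```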

An additional configuration, "results", stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

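```python
from datasets import load_dataset

# Load the per-sample details of the most recent run for one task (Winogrande, 5-shot).
# The splits declared for each configuration are the run timestamp and "latest".
data = load_dataset("open-llm-leaderboard/details_Fizzarolli__sappha-2b-v3",
    "harness_winogrande_5",
    split="latest")
```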

## Latest results

These are the [latest results from run 2024-03-24T14:34:41.283293](https://huggingface.co/datasets/open-llm-leaderboard/details_Fizzarolli__sappha-2b-v3/blob/main/results_2024-03-24T14-34-41.283293.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks; you can find each one in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"acc": 0.38770836928908536,

"acc_stderr": 0.03401202691311986,

"acc_norm": 0.39301680909612896,

"acc_norm_stderr": 0.03490947267798798,

"mc1": 0.2350061199510404,

"mc1_stderr": 0.014843061507731613,

"mc2": 0.3993902530198297,

"mc2_stderr": 0.014276014222438483

},

"harness|arc:challenge|25": {

"acc": 0.447098976109215,

"acc_stderr": 0.014529380160526848,

"acc_norm": 0.4616040955631399,

"acc_norm_stderr": 0.01456824555029636

},

"harness|hellaswag|10": {

"acc": 0.5266879107747461,

"acc_stderr": 0.004982668452118941,

"acc_norm": 0.707329217287393,

"acc_norm_stderr": 0.004540586983229991

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.22,

"acc_stderr": 0.04163331998932269,

"acc_norm": 0.22,

"acc_norm_stderr": 0.04163331998932269

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.4148148148148148,

"acc_stderr": 0.042561937679014075,

"acc_norm": 0.4148148148148148,

"acc_norm_stderr": 0.042561937679014075

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.375,

"acc_stderr": 0.039397364351956274,

"acc_norm": 0.375,

"acc_norm_stderr": 0.039397364351956274

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.35,

"acc_stderr": 0.04793724854411019,

"acc_norm": 0.35,

"acc_norm_stderr": 0.04793724854411019

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.43018867924528303,

"acc_stderr": 0.030471445867183238,

"acc_norm": 0.43018867924528303,

"acc_norm_stderr": 0.030471445867183238

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.4236111111111111,

"acc_stderr": 0.04132125019723369,

"acc_norm": 0.4236111111111111,

"acc_norm_stderr": 0.04132125019723369

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.33,

"acc_stderr": 0.04725815626252606,

"acc_norm": 0.33,

"acc_norm_stderr": 0.04725815626252606

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.31,

"acc_stderr": 0.04648231987117316,

"acc_norm": 0.31,

"acc_norm_stderr": 0.04648231987117316

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.29,

"acc_stderr": 0.04560480215720684,

"acc_norm": 0.29,

"acc_norm_stderr": 0.04560480215720684

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.3699421965317919,

"acc_stderr": 0.036812296333943194,

"acc_norm": 0.3699421965317919,

"acc_norm_stderr": 0.036812296333943194

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.19607843137254902,

"acc_stderr": 0.03950581861179961,

"acc_norm": 0.19607843137254902,

"acc_norm_stderr": 0.03950581861179961

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.56,

"acc_stderr": 0.04988876515698589,

"acc_norm": 0.56,

"acc_norm_stderr": 0.04988876515698589

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.3829787234042553,

"acc_stderr": 0.031778212502369216,

"acc_norm": 0.3829787234042553,

"acc_norm_stderr": 0.031778212502369216

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.2543859649122807,

"acc_stderr": 0.04096985139843672,

"acc_norm": 0.2543859649122807,

"acc_norm_stderr": 0.04096985139843672

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.43448275862068964,

"acc_stderr": 0.04130740879555497,

"acc_norm": 0.43448275862068964,

"acc_norm_stderr": 0.04130740879555497

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.291005291005291,

"acc_stderr": 0.02339382650048487,

"acc_norm": 0.291005291005291,

"acc_norm_stderr": 0.02339382650048487

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.23015873015873015,

"acc_stderr": 0.03764950879790606,

"acc_norm": 0.23015873015873015,

"acc_norm_stderr": 0.03764950879790606

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.31,

"acc_stderr": 0.04648231987117316,

"acc_norm": 0.31,

"acc_norm_stderr": 0.04648231987117316

},

"harness|hendrycksTest-high_school_biology|5": {

"acc": 0.3967741935483871,

"acc_stderr": 0.027831231605767955,

"acc_norm": 0.3967741935483871,

"acc_norm_stderr": 0.027831231605767955

},

"harness|hendrycksTest-high_school_chemistry|5": {

"acc": 0.3645320197044335,

"acc_stderr": 0.033864057460620905,

"acc_norm": 0.3645320197044335,

"acc_norm_stderr": 0.033864057460620905

},

"harness|hendrycksTest-high_school_computer_science|5": {

"acc": 0.4,

"acc_stderr": 0.04923659639173309,

"acc_norm": 0.4,

"acc_norm_stderr": 0.04923659639173309

},

"harness|hendrycksTest-high_school_european_history|5": {

"acc": 0.4,

"acc_stderr": 0.03825460278380026,

"acc_norm": 0.4,

"acc_norm_stderr": 0.03825460278380026

},

"harness|hendrycksTest-high_school_geography|5": {

"acc": 0.4292929292929293,

"acc_stderr": 0.035265527246011986,

"acc_norm": 0.4292929292929293,

"acc_norm_stderr": 0.035265527246011986

},

"harness|hendrycksTest-high_school_government_and_politics|5": {

"acc": 0.5025906735751295,

"acc_stderr": 0.03608390745384488,

"acc_norm": 0.5025906735751295,

"acc_norm_stderr": 0.03608390745384488

},

"harness|hendrycksTest-high_school_macroeconomics|5": {

"acc": 0.33589743589743587,

"acc_stderr": 0.02394672474156398,

"acc_norm": 0.33589743589743587,

"acc_norm_stderr": 0.02394672474156398

},

"harness|hendrycksTest-high_school_mathematics|5": {

"acc": 0.24444444444444444,

"acc_stderr": 0.02620276653465215,

"acc_norm": 0.24444444444444444,

"acc_norm_stderr": 0.02620276653465215

},

"harness|hendrycksTest-high_school_microeconomics|5": {

"acc": 0.3697478991596639,

"acc_stderr": 0.031357095996135904,

"acc_norm": 0.3697478991596639,

"acc_norm_stderr": 0.031357095996135904

},

"harness|hendrycksTest-high_school_physics|5": {

"acc": 0.2781456953642384,

"acc_stderr": 0.03658603262763744,

"acc_norm": 0.2781456953642384,

"acc_norm_stderr": 0.03658603262763744

},

"harness|hendrycksTest-high_school_psychology|5": {

"acc": 0.48440366972477067,

"acc_stderr": 0.02142689153920805,

"acc_norm": 0.48440366972477067,

"acc_norm_stderr": 0.02142689153920805

},

"harness|hendrycksTest-high_school_statistics|5": {

"acc": 0.3194444444444444,

"acc_stderr": 0.03179876342176851,

"acc_norm": 0.3194444444444444,

"acc_norm_stderr": 0.03179876342176851

},

"harness|hendrycksTest-high_school_us_history|5": {

"acc": 0.4019607843137255,

"acc_stderr": 0.034411900234824655,

"acc_norm": 0.4019607843137255,

"acc_norm_stderr": 0.034411900234824655

},

"harness|hendrycksTest-high_school_world_history|5": {

"acc": 0.48945147679324896,

"acc_stderr": 0.032539983791662855,

"acc_norm": 0.48945147679324896,

"acc_norm_stderr": 0.032539983791662855

},

"harness|hendrycksTest-human_aging|5": {

"acc": 0.4080717488789238,

"acc_stderr": 0.03298574607842821,

"acc_norm": 0.4080717488789238,

"acc_norm_stderr": 0.03298574607842821

},

"harness|hendrycksTest-human_sexuality|5": {

"acc": 0.3511450381679389,

"acc_stderr": 0.0418644516301375,

"acc_norm": 0.3511450381679389,

"acc_norm_stderr": 0.0418644516301375

},

"harness|hendrycksTest-international_law|5": {

"acc": 0.5619834710743802,

"acc_stderr": 0.045291468044357915,

"acc_norm": 0.5619834710743802,

"acc_norm_stderr": 0.045291468044357915

},

"harness|hendrycksTest-jurisprudence|5": {

"acc": 0.4074074074074074,

"acc_stderr": 0.047500773411999854,

"acc_norm": 0.4074074074074074,

"acc_norm_stderr": 0.047500773411999854

},

"harness|hendrycksTest-logical_fallacies|5": {

"acc": 0.39263803680981596,

"acc_stderr": 0.03836740907831029,

"acc_norm": 0.39263803680981596,

"acc_norm_stderr": 0.03836740907831029

},

"harness|hendrycksTest-machine_learning|5": {

"acc": 0.32142857142857145,

"acc_stderr": 0.04432804055291519,

"acc_norm": 0.32142857142857145,

"acc_norm_stderr": 0.04432804055291519

},

"harness|hendrycksTest-management|5": {

"acc": 0.47572815533980584,

"acc_stderr": 0.049449010929737795,

"acc_norm": 0.47572815533980584,

"acc_norm_stderr": 0.049449010929737795

},

"harness|hendrycksTest-marketing|5": {

"acc": 0.5769230769230769,

"acc_stderr": 0.032366121762202014,

"acc_norm": 0.5769230769230769,

"acc_norm_stderr": 0.032366121762202014

},

"harness|hendrycksTest-medical_genetics|5": {

"acc": 0.4,

"acc_stderr": 0.049236596391733084,

"acc_norm": 0.4,

"acc_norm_stderr": 0.049236596391733084

},

"harness|hendrycksTest-miscellaneous|5": {

"acc": 0.49680715197956576,

"acc_stderr": 0.01787959894593307,

"acc_norm": 0.49680715197956576,

"acc_norm_stderr": 0.01787959894593307

},

"harness|hendrycksTest-moral_disputes|5": {

"acc": 0.41040462427745666,

"acc_stderr": 0.026483392042098177,

"acc_norm": 0.41040462427745666,

"acc_norm_stderr": 0.026483392042098177

},

"harness|hendrycksTest-moral_scenarios|5": {

"acc": 0.2558659217877095,

"acc_stderr": 0.014593620923210749,

"acc_norm": 0.2558659217877095,

"acc_norm_stderr": 0.014593620923210749

},

"harness|hendrycksTest-nutrition|5": {

"acc": 0.3888888888888889,

"acc_stderr": 0.027914055510468008,

"acc_norm": 0.3888888888888889,

"acc_norm_stderr": 0.027914055510468008

},

"harness|hendrycksTest-philosophy|5": {

"acc": 0.3987138263665595,

"acc_stderr": 0.0278093225857745,

"acc_norm": 0.3987138263665595,

"acc_norm_stderr": 0.0278093225857745

},

"harness|hendrycksTest-prehistory|5": {

"acc": 0.44753086419753085,

"acc_stderr": 0.027667138569422697,

"acc_norm": 0.44753086419753085,

"acc_norm_stderr": 0.027667138569422697

},

"harness|hendrycksTest-professional_accounting|5": {

"acc": 0.3049645390070922,

"acc_stderr": 0.027464708442022128,

"acc_norm": 0.3049645390070922,

"acc_norm_stderr": 0.027464708442022128

},

"harness|hendrycksTest-professional_law|5": {

"acc": 0.32333767926988266,

"acc_stderr": 0.011946565758447212,

"acc_norm": 0.32333767926988266,

"acc_norm_stderr": 0.011946565758447212

},

"harness|hendrycksTest-professional_medicine|5": {

"acc": 0.33455882352941174,

"acc_stderr": 0.028661996202335303,

"acc_norm": 0.33455882352941174,

"acc_norm_stderr": 0.028661996202335303

},

"harness|hendrycksTest-professional_psychology|5": {

"acc": 0.3872549019607843,

"acc_stderr": 0.01970687580408563,

"acc_norm": 0.3872549019607843,

"acc_norm_stderr": 0.01970687580408563

},

"harness|hendrycksTest-public_relations|5": {

"acc": 0.45454545454545453,

"acc_stderr": 0.04769300568972743,

"acc_norm": 0.45454545454545453,

"acc_norm_stderr": 0.04769300568972743

},

"harness|hendrycksTest-security_studies|5": {

"acc": 0.4530612244897959,

"acc_stderr": 0.03186785930004128,

"acc_norm": 0.4530612244897959,

"acc_norm_stderr": 0.03186785930004128

},

"harness|hendrycksTest-sociology|5": {

"acc": 0.40298507462686567,

"acc_stderr": 0.034683432951111266,

"acc_norm": 0.40298507462686567,

"acc_norm_stderr": 0.034683432951111266

},

"harness|hendrycksTest-us_foreign_policy|5": {

"acc": 0.57,

"acc_stderr": 0.04975698519562427,

"acc_norm": 0.57,

"acc_norm_stderr": 0.04975698519562427

},

"harness|hendrycksTest-virology|5": {

"acc": 0.3674698795180723,

"acc_stderr": 0.03753267402120575,

"acc_norm": 0.3674698795180723,

"acc_norm_stderr": 0.03753267402120575

},

"harness|hendrycksTest-world_religions|5": {

"acc": 0.5380116959064327,

"acc_stderr": 0.03823727092882307,

"acc_norm": 0.5380116959064327,

"acc_norm_stderr": 0.03823727092882307

},

"harness|truthfulqa:mc|0": {

"mc1": 0.2350061199510404,

"mc1_stderr": 0.014843061507731613,

"mc2": 0.3993902530198297,

"mc2_stderr": 0.014276014222438483

},

"harness|winogrande|5": {

"acc": 0.6550907655880032,

"acc_stderr": 0.013359379805033676

},

"harness|gsm8k|5": {

"acc": 0.002274450341167551,

"acc_stderr": 0.0013121578148674326

}

}

```
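The leaderboard's MMLU score is commonly reported as the unweighted mean of the per-subject `hendrycksTest` accuracies; this card does not state that convention, so treat it as an assumption. The sketch below computes that mean from a dictionary shaped like the one above (for example, the block above loaded into a variable such as `results`). The function name `mmlu_average` is hypothetical, and the example uses only a three-entry excerpt of the values shown above.

```python
from statistics import mean


def mmlu_average(results: dict, metric: str = "acc") -> float:
    """Unweighted mean of `metric` over every 'harness|hendrycksTest-*' entry."""
    scores = [
        task_scores[metric]
        for task_key, task_scores in results.items()
        if task_key.startswith("harness|hendrycksTest-")
    ]
    if not scores:
        raise ValueError("no hendrycksTest entries found")
    return mean(scores)


excerpt = {
    "harness|hendrycksTest-abstract_algebra|5": {"acc": 0.22, "acc_norm": 0.22},
    "harness|hendrycksTest-astronomy|5": {"acc": 0.375, "acc_norm": 0.375},
    "harness|winogrande|5": {"acc": 0.6550907655880032},  # not an MMLU subject, so skipped
}
print(round(mmlu_average(excerpt), 4))  # 0.2975
```

Run on the full dictionary, the same function averages all subject entries in one pass.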

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process such as annotation tools used in the process, the amount of data annotated, annotation guidelines provided to the annotators, interannotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information

<!-- State whether the dataset contains data that might be considered personal, sensitive, or private (e.g., data that reveals addresses, uniquely identifiable names or aliases, racial or ethnic origins, sexual orientations, religious beliefs, political opinions, financial or health data, etc.). If efforts were made to anonymize the data, describe the anonymization process. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation [optional]

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Dataset Card Authors [optional]

[More Information Needed]

## Dataset Card Contact

[More Information Needed] |

anjunhu/naively_captioned_CUB2002011_test_20shot | ---

dataset_info:

features:

- name: text

dtype: string

- name: text_cupl

dtype: string

- name: image

dtype: image

splits:

- name: train

num_bytes: 110186062.0

num_examples: 4000

download_size: 99101657

dataset_size: 110186062.0

---

# Dataset Card for "naively_captioned_CUB2002011_test_20shot"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

gshireesh/mnist_fonts | ---

license: mit

---

|

Amirkid/MedQuad-dataset | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 21658852

num_examples: 32800

download_size: 8756796

dataset_size: 21658852

---

# Dataset Card for "MedQuad-dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

pccl-org/formal-logic-simple-order-token-objects-paired-relationship-0-100 | ---

dataset_info:

features:

- name: greater_than

sequence: int64

- name: less_than

sequence: int64

- name: paired_example

sequence:

sequence:

sequence: int64

- name: correct_example

sequence:

sequence: int64

- name: incorrect_example

sequence:

sequence: int64

- name: distance

dtype: int64

- name: index

dtype: int64

- name: index_in_distance

dtype: int64

splits:

- name: train

num_bytes: 1041480

num_examples: 4950

download_size: 114269

dataset_size: 1041480

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---
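This card has no prose, so the fields are undocumented. One fact can be checked arithmetically: `num_examples: 4950` equals C(100, 2) = 100 × 99 / 2. That is consistent with one example per unordered pair of 100 ordered objects, with `distance` being the gap between the two. The card does not confirm this reading. The sketch enumerates pairs under that hypothetical interpretation. `N_OBJECTS` and `enumerate_pairs` are illustrative names and are not part of the dataset.

```python
from collections import Counter
from itertools import combinations
from typing import Iterator, Tuple

N_OBJECTS = 100  # hypothetical: guessed from the "0-100" suffix of the dataset name


def enumerate_pairs(n: int) -> Iterator[Tuple[int, int, int]]:
    """Yield (greater, lesser, distance) for every unordered pair of n ordered objects."""
    for lesser, greater in combinations(range(n), 2):
        yield greater, lesser, greater - lesser


pairs = list(enumerate_pairs(N_OBJECTS))
# Under this reading, distance d occurs N_OBJECTS - d times.
per_distance = Counter(distance for _, _, distance in pairs)

print(len(pairs))  # 4950, the same as num_examples in the YAML above
```

To confirm or reject this reading, compare `per_distance` with a histogram of the real `distance` column.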

|

huggingartists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone | ---

language:

- en

tags:

- huggingartists

- lyrics

---

# Dataset Card for "huggingartists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [How to use](#how-to-use)

- [Dataset Structure](#dataset-structure)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [About](#about)

## Dataset Description

- **Homepage:** [https://github.com/AlekseyKorshuk/huggingartists](https://github.com/AlekseyKorshuk/huggingartists)

- **Repository:** [https://github.com/AlekseyKorshuk/huggingartists](https://github.com/AlekseyKorshuk/huggingartists)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of the generated dataset:** 0.032823 MB

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div style="margin-left: auto; margin-right: auto; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://assets.genius.com/images/default_avatar_300.png?1631203230')">

</div>

</div>

<a href="https://huggingface.co/huggingartists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone">

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 HuggingArtists Model 🤖</div>

</a>

<div style="text-align: center; font-size: 16px; font-weight: 800">Krept & Konan, Bugzy Malone, SL, Morisson, Abra Cadabra, RV & Snap Capone</div>

<a href="https://genius.com/artists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone">

<div style="text-align: center; font-size: 14px;">@krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone</div>

</a>

</div>

### Dataset Summary

A lyrics dataset parsed from Genius, designed for generating lyrics with HuggingArtists.

The model is available [here](https://huggingface.co/huggingartists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone).

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

en

## How to use

To load this dataset directly with the `datasets` library:

```python

from datasets import load_dataset

dataset = load_dataset("huggingartists/krept-and-konan-bugzy-malone-sl-morisson-abra-cadabra-rv-and-snap-capone")

```

## Dataset Structure

An example from the `train` split looks as follows. The original example was too long and has been cropped:

```json

{

"text": "Look, I was gonna go easy on you\nNot to hurt your feelings\nBut I'm only going to get this one chance\nSomething's wrong, I can feel it..."

}

```

### Data Fields

The data fields are the same among all splits.

- `text`: a `string` feature.
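In the sample above, lyric lines within `text` are separated by newline characters (`\n`). The sketch below splits a record into lines and assumes every record follows that convention. `lyric_lines` is a hypothetical helper, and the record is the cropped sample copied from above.

```python
from typing import Dict, List

# Cropped sample record copied from the example above.
example: Dict[str, str] = {
    "text": "Look, I was gonna go easy on you\nNot to hurt your feelings\n"
    "But I'm only going to get this one chance\nSomething's wrong, I can feel it..."
}


def lyric_lines(record: Dict[str, str]) -> List[str]:
    """Split a record's `text` field on newlines, dropping blank lines and outer whitespace."""
    return [line.strip() for line in record["text"].split("\n") if line.strip()]


lines = lyric_lines(example)
for line in lines:
    print(line)
```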