id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

kentsui/open-react-retrieval-multi-neg-result-new-kw | 2023-08-07T17:49:01.000Z | [

"region:us"

] | kentsui | null | null | null | 0 | 9 | ---

dataset_info:

features:

- name: system_prompt

dtype: string

- name: question

dtype: string

- name: response

dtype: string

- name: meta

struct:

- name: first_search_rank

dtype: int64

- name: second_search

dtype: bool

- name: second_search_success

dtype: bool

- name: source

dtype: string

splits:

- name: train

num_bytes: 83579841

num_examples: 25158

download_size: 21996450

dataset_size: 83579841

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "open-react-retrieval-multi-neg-result-new-kw"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mbrack/image_edit_comp | 2023-08-09T01:08:24.000Z | [

"region:us"

] | mbrack | null | null | null | 0 | 9 | Entry not found |

ixarchakos/dresses_laydown | 2023-10-07T01:36:01.000Z | [

"region:us"

] | ixarchakos | null | null | null | 0 | 9 | Entry not found |

WALIDALI/text8 | 2023-08-11T18:12:06.000Z | [

"region:us"

] | WALIDALI | null | null | null | 0 | 9 | Entry not found |

amitness/logits-italian-128 | 2023-09-21T13:43:52.000Z | [

"region:us"

] | amitness | null | null | null | 0 | 9 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: input_ids

sequence: int32

- name: token_type_ids

sequence: int8

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

- name: teacher_logits

sequence:

sequence: float64

- name: teacher_indices

sequence:

sequence: int64

- name: teacher_mask_indices

sequence: int64

splits:

- name: train

num_bytes: 37616201036

num_examples: 8305825

download_size: 16084893126

dataset_size: 37616201036

---

# Dataset Card for "logits-italian-128"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

elsheikhams/q2q_similarity_workshop | 2023-08-14T08:27:58.000Z | [

"region:us"

] | elsheikhams | null | null | null | 0 | 9 | Entry not found |

elsheikhams/diagnostic_dataset | 2023-08-14T08:48:36.000Z | [

"region:us"

] | elsheikhams | null | null | null | 0 | 9 | Entry not found |

TrainingDataPro/cows-detection-dataset | 2023-09-14T16:32:30.000Z | [

"task_categories:image-to-image",

"task_categories:image-classification",

"task_categories:object-detection",

"language:en",

"license:cc-by-nc-nd-4.0",

"biology",

"code",

"region:us"

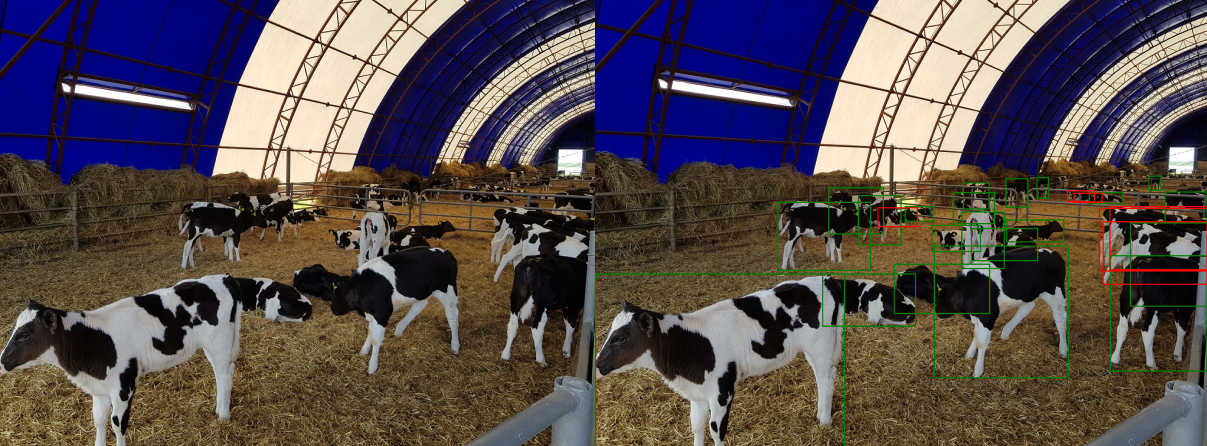

] | TrainingDataPro | The dataset is a collection of images along with corresponding bounding box annotations

that are specifically curated for **detecting cows** in images. The dataset covers

different *cows breeds, sizes, and orientations*, providing a comprehensive

representation of cows appearances and positions. Additionally, the visibility of each

cow is presented in the .xml file.

The cow detection dataset provides a valuable resource for researchers working on

detection tasks. It offers a diverse collection of annotated images, allowing for

comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding

in the development of accurate and robust models. | @InProceedings{huggingface:dataset,

title = {cows-detection-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 9 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-to-image

- image-classification

- object-detection

tags:

- biology

- code

dataset_info:

features:

- name: id

dtype: int32

- name: image

dtype: image

- name: mask

dtype: image

- name: bboxes

dtype: string

splits:

- name: train

num_bytes: 184108240

num_examples: 51

download_size: 183666433

dataset_size: 184108240

---

# Cows Detection Dataset

The dataset is a collection of images along with corresponding bounding box annotations that are specifically curated for **detecting cows** in images. The dataset covers different *cows breeds, sizes, and orientations*, providing a comprehensive representation of cows appearances and positions. Additionally, the visibility of each cow is presented in the .xml file.

The cow detection dataset provides a valuable resource for researchers working on detection tasks. It offers a diverse collection of annotated images, allowing for comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding in the development of accurate and robust models.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cows-detection-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains of original images of cows

- **boxes** - includes bounding box labeling for the original images

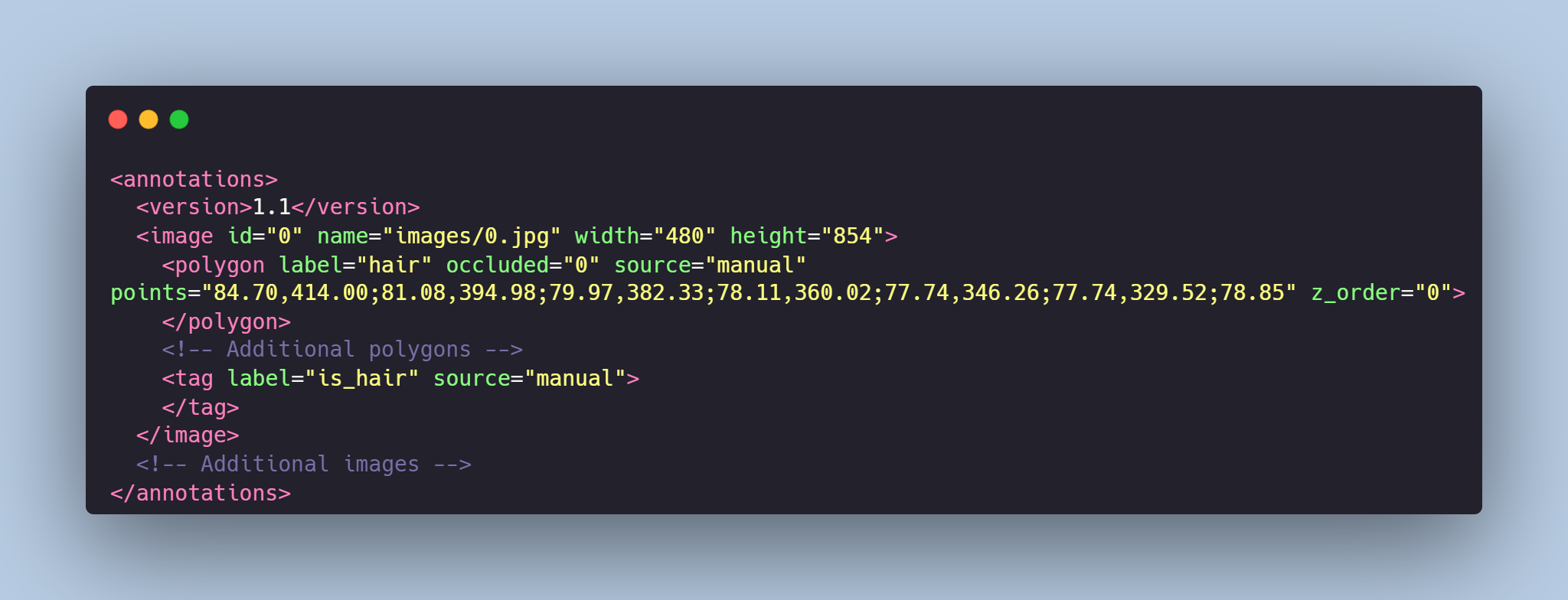

- **annotations.xml** - contains coordinates of the bounding boxes and labels, created for the original photo

# Data Format

Each image from `images` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes for cows detection. For each point, the x and y coordinates are provided. Visibility of the cow is also provided by the label **is_visible** (true, false).

# Example of XML file structure

.png?generation=1692032268744062&alt=media)

# Cows Detection might be made in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cows-detection-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

raminass/opinions_1994_2020 | 2023-08-15T09:13:14.000Z | [

"region:us"

] | raminass | null | null | null | 1 | 9 | ---

dataset_info:

features:

- name: author_name

dtype: string

- name: label

dtype: int64

- name: category

dtype: string

- name: case_name

dtype: string

- name: url

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 91104293

num_examples: 32565

download_size: 45407635

dataset_size: 91104293

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "opinions_1994_2020"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

amitness/logits-english-512 | 2023-09-24T16:46:43.000Z | [

"region:us"

] | amitness | null | null | null | 0 | 9 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: input_ids

sequence: int32

- name: token_type_ids

sequence: int8

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

- name: teacher_logits

sequence:

sequence: float64

- name: teacher_indices

sequence:

sequence: int64

- name: teacher_mask_indices

sequence: int64

splits:

- name: train

num_bytes: 156799366264

num_examples: 8620310

download_size: 0

dataset_size: 156799366264

---

# Dataset Card for "logits-english-512"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

DynamicSuperb/IntentClassification_FluentSpeechCommands-Action | 2023-08-16T10:48:46.000Z | [

"region:us"

] | DynamicSuperb | null | null | null | 0 | 9 | ---

dataset_info:

features:

- name: file

dtype: string

- name: speakerId

dtype: string

- name: transcription

dtype: string

- name: audio

dtype: audio

- name: label

dtype: string

- name: instruction

dtype: string

splits:

- name: test

num_bytes: 743300704.0

num_examples: 10000

download_size: 636643694

dataset_size: 743300704.0

---

# Dataset Card for "Intent_Classification_FluentSpeechCommands_Action"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

milktruck/OABTcleaned | 2023-08-16T16:02:19.000Z | [

"license:apache-2.0",

"region:us"

] | milktruck | null | null | null | 0 | 9 | ---

license: apache-2.0

---

|

serenaz/llama2-medical-meadow | 2023-08-17T01:32:36.000Z | [

"region:us"

] | serenaz | null | null | null | 0 | 9 | Entry not found |

AlexBlck/ANAKIN | 2023-09-21T10:37:04.000Z | [

"task_categories:video-classification",

"task_categories:visual-question-answering",

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-4.0",

"arxiv:2303.13193",

"region:us"

] | AlexBlck | ANAKIN is a dataset of mANipulated videos and mAsK annotatIoNs. | @misc{black2023vader,

title={VADER: Video Alignment Differencing and Retrieval},

author={Alexander Black and Simon Jenni and Tu Bui and Md. Mehrab Tanjim and Stefano Petrangeli and Ritwik Sinha and Viswanathan Swaminathan and John Collomosse},

year={2023},

eprint={2303.13193},

archivePrefix={arXiv},

primaryClass={cs.CV}

} | null | 0 | 9 | ---

license: cc-by-4.0

task_categories:

- video-classification

- visual-question-answering

language:

- en

pretty_name: 'ANAKIN: manipulated videos and mask annotations'

size_categories:

- 1K<n<10K

---

[arxiv](https://arxiv.org/abs/2303.13193)

# ANAKIN

ANAKIN is a dataset of mANipulated videos and mAsK annotatIoNs.

To our best knowledge, ANAKIN is the first real-world dataset of professionally edited video clips,

paired with source videos, edit descriptions and binary mask annotations of the edited regions.

ANAKIN consists of 1023 videos in total, including 352 edited videos from the

[VideoSham](https://github.com/adobe-research/VideoSham-dataset)

dataset plus 671 new videos collected from the Vimeo platform.

## Data Format

| Label | Description |

|----------|-------------------------------------------------------------------------------|

| video-id | Video ID |

|full* | Full length original video |

|trimmed | Short clip trimmed from `full` |

|edited| Manipulated version of `trimmed`|

|masks*| Per-frame binary masks, annotating the manipulation|

| start-time* | Trim beginning time (in seconds) |

| end-time* | Trim end time (in seconds) |

| task | Task given to the video editor |

|manipulation-type| One of the 5 manipulation types: splicing, inpainting, swap, audio, frame-level |

| editor-id | Editor ID |

*There are several subset configurations available.

The choice depends on whether you need to download full length videos and/or you only need the videos with masks available.

`start-time` and `end-time` will be returned for subset configs with full videos in them.

| config | full | masks | train/val/test |

| ---------- | ---- | ----- | -------------- |

| all | yes | maybe | 681/98/195 |

| no-full | no | maybe | 716/102/205 |

| has-masks | no | yes | 297/43/85 |

| full-masks | yes | yes | 297/43/85 |

## Example

The data can either be downloaded or [streamed](https://huggingface.co/docs/datasets/stream).

### Downloaded

```python

from datasets import load_dataset

from torchvision.io import read_video

config = 'no-full' # ['all', 'no-full', 'has-masks', 'full-masks']

dataset = load_dataset("AlexBlck/ANAKIN", config, nproc=8)

for sample in dataset['train']: # ['train', 'validation', 'test']

trimmed_video, trimmed_audio, _ = read_video(sample['trimmed'], output_format="TCHW")

edited_video, edited_audio, _ = read_video(sample['edited'], output_format="TCHW")

masks = sample['masks']

print(sample.keys())

```

### Streamed

```python

from datasets import load_dataset

import cv2

dataset = load_dataset("AlexBlck/ANAKIN", streaming=True)

sample = next(iter(dataset['train'])) # ['train', 'validation', 'test']

cap = cv2.VideoCapture(sample['trimmed'])

while(cap.isOpened()):

ret, frame = cap.read()

# ...

``` |

open-llm-leaderboard/details_lmsys__vicuna-7b-v1.5 | 2023-08-27T12:30:26.000Z | [

"region:us"

] | open-llm-leaderboard | null | null | null | 0 | 9 | ---

pretty_name: Evaluation run of lmsys/vicuna-7b-v1.5

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [lmsys/vicuna-7b-v1.5](https://huggingface.co/lmsys/vicuna-7b-v1.5) on the [Open\

\ LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 61 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the agregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_lmsys__vicuna-7b-v1.5\"\

,\n\t\"harness_truthfulqa_mc_0\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\

\nThese are the [latest results from run 2023-08-17T12:09:52.202468](https://huggingface.co/datasets/open-llm-leaderboard/details_lmsys__vicuna-7b-v1.5/blob/main/results_2023-08-17T12%3A09%3A52.202468.json)\

\ (note that their might be results for other tasks in the repos if successive evals\

\ didn't cover the same tasks. You find each in the results and the \"latest\" split\

\ for each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.5115051849379418,\n\

\ \"acc_stderr\": 0.03499341130480278,\n \"acc_norm\": 0.5152155688156768,\n\

\ \"acc_norm_stderr\": 0.03498027537647508,\n \"mc1\": 0.3317013463892289,\n\

\ \"mc1_stderr\": 0.016482148810241473,\n \"mc2\": 0.5033808156222059,\n\

\ \"mc2_stderr\": 0.015670274691568342\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.5034129692832765,\n \"acc_stderr\": 0.014611050403244084,\n\

\ \"acc_norm\": 0.5324232081911263,\n \"acc_norm_stderr\": 0.014580637569995418\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.5840470025891257,\n\

\ \"acc_stderr\": 0.004918781662373942,\n \"acc_norm\": 0.7739494124676359,\n\

\ \"acc_norm_stderr\": 0.004174174724288079\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.29,\n \"acc_stderr\": 0.04560480215720684,\n \

\ \"acc_norm\": 0.29,\n \"acc_norm_stderr\": 0.04560480215720684\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.5037037037037037,\n\

\ \"acc_stderr\": 0.04319223625811331,\n \"acc_norm\": 0.5037037037037037,\n\

\ \"acc_norm_stderr\": 0.04319223625811331\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.47368421052631576,\n \"acc_stderr\": 0.04063302731486671,\n\

\ \"acc_norm\": 0.47368421052631576,\n \"acc_norm_stderr\": 0.04063302731486671\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.54,\n\

\ \"acc_stderr\": 0.05009082659620332,\n \"acc_norm\": 0.54,\n \

\ \"acc_norm_stderr\": 0.05009082659620332\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.5358490566037736,\n \"acc_stderr\": 0.030693675018458003,\n\

\ \"acc_norm\": 0.5358490566037736,\n \"acc_norm_stderr\": 0.030693675018458003\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.5138888888888888,\n\

\ \"acc_stderr\": 0.04179596617581,\n \"acc_norm\": 0.5138888888888888,\n\

\ \"acc_norm_stderr\": 0.04179596617581\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.33,\n \"acc_stderr\": 0.047258156262526045,\n \

\ \"acc_norm\": 0.33,\n \"acc_norm_stderr\": 0.047258156262526045\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"\

acc\": 0.44,\n \"acc_stderr\": 0.04988876515698589,\n \"acc_norm\"\

: 0.44,\n \"acc_norm_stderr\": 0.04988876515698589\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.39,\n \"acc_stderr\": 0.04902071300001975,\n \

\ \"acc_norm\": 0.39,\n \"acc_norm_stderr\": 0.04902071300001975\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.48554913294797686,\n\

\ \"acc_stderr\": 0.03810871630454764,\n \"acc_norm\": 0.48554913294797686,\n\

\ \"acc_norm_stderr\": 0.03810871630454764\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.18627450980392157,\n \"acc_stderr\": 0.03873958714149352,\n\

\ \"acc_norm\": 0.18627450980392157,\n \"acc_norm_stderr\": 0.03873958714149352\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.64,\n \"acc_stderr\": 0.04824181513244218,\n \"acc_norm\": 0.64,\n\

\ \"acc_norm_stderr\": 0.04824181513244218\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.451063829787234,\n \"acc_stderr\": 0.032529096196131965,\n\

\ \"acc_norm\": 0.451063829787234,\n \"acc_norm_stderr\": 0.032529096196131965\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.30701754385964913,\n\

\ \"acc_stderr\": 0.043391383225798615,\n \"acc_norm\": 0.30701754385964913,\n\

\ \"acc_norm_stderr\": 0.043391383225798615\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.42758620689655175,\n \"acc_stderr\": 0.04122737111370331,\n\

\ \"acc_norm\": 0.42758620689655175,\n \"acc_norm_stderr\": 0.04122737111370331\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.30687830687830686,\n \"acc_stderr\": 0.023752928712112143,\n \"\

acc_norm\": 0.30687830687830686,\n \"acc_norm_stderr\": 0.023752928712112143\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.38095238095238093,\n\

\ \"acc_stderr\": 0.043435254289490965,\n \"acc_norm\": 0.38095238095238093,\n\

\ \"acc_norm_stderr\": 0.043435254289490965\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.047937248544110196,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.047937248544110196\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.5387096774193548,\n \"acc_stderr\": 0.028358634859836935,\n \"\

acc_norm\": 0.5387096774193548,\n \"acc_norm_stderr\": 0.028358634859836935\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.39901477832512317,\n \"acc_stderr\": 0.03445487686264715,\n \"\

acc_norm\": 0.39901477832512317,\n \"acc_norm_stderr\": 0.03445487686264715\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.44,\n \"acc_stderr\": 0.04988876515698589,\n \"acc_norm\"\

: 0.44,\n \"acc_norm_stderr\": 0.04988876515698589\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.6242424242424243,\n \"acc_stderr\": 0.03781887353205982,\n\

\ \"acc_norm\": 0.6242424242424243,\n \"acc_norm_stderr\": 0.03781887353205982\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.6161616161616161,\n \"acc_stderr\": 0.034648816750163396,\n \"\

acc_norm\": 0.6161616161616161,\n \"acc_norm_stderr\": 0.034648816750163396\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.7305699481865285,\n \"acc_stderr\": 0.03201867122877794,\n\

\ \"acc_norm\": 0.7305699481865285,\n \"acc_norm_stderr\": 0.03201867122877794\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.48717948717948717,\n \"acc_stderr\": 0.025342671293807264,\n\

\ \"acc_norm\": 0.48717948717948717,\n \"acc_norm_stderr\": 0.025342671293807264\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.24814814814814815,\n \"acc_stderr\": 0.026335739404055803,\n \

\ \"acc_norm\": 0.24814814814814815,\n \"acc_norm_stderr\": 0.026335739404055803\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.4495798319327731,\n \"acc_stderr\": 0.03231293497137707,\n \

\ \"acc_norm\": 0.4495798319327731,\n \"acc_norm_stderr\": 0.03231293497137707\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.2847682119205298,\n \"acc_stderr\": 0.03684881521389024,\n \"\

acc_norm\": 0.2847682119205298,\n \"acc_norm_stderr\": 0.03684881521389024\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.6972477064220184,\n \"acc_stderr\": 0.01969871143475634,\n \"\

acc_norm\": 0.6972477064220184,\n \"acc_norm_stderr\": 0.01969871143475634\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.38425925925925924,\n \"acc_stderr\": 0.03317354514310742,\n \"\

acc_norm\": 0.38425925925925924,\n \"acc_norm_stderr\": 0.03317354514310742\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.7205882352941176,\n \"acc_stderr\": 0.03149328104507957,\n \"\

acc_norm\": 0.7205882352941176,\n \"acc_norm_stderr\": 0.03149328104507957\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.7130801687763713,\n \"acc_stderr\": 0.029443773022594693,\n \

\ \"acc_norm\": 0.7130801687763713,\n \"acc_norm_stderr\": 0.029443773022594693\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.6233183856502242,\n\

\ \"acc_stderr\": 0.032521134899291884,\n \"acc_norm\": 0.6233183856502242,\n\

\ \"acc_norm_stderr\": 0.032521134899291884\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.6335877862595419,\n \"acc_stderr\": 0.042258754519696365,\n\

\ \"acc_norm\": 0.6335877862595419,\n \"acc_norm_stderr\": 0.042258754519696365\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.5785123966942148,\n \"acc_stderr\": 0.04507732278775087,\n \"\

acc_norm\": 0.5785123966942148,\n \"acc_norm_stderr\": 0.04507732278775087\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.5555555555555556,\n\

\ \"acc_stderr\": 0.04803752235190192,\n \"acc_norm\": 0.5555555555555556,\n\

\ \"acc_norm_stderr\": 0.04803752235190192\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.5337423312883436,\n \"acc_stderr\": 0.039194155450484096,\n\

\ \"acc_norm\": 0.5337423312883436,\n \"acc_norm_stderr\": 0.039194155450484096\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.44642857142857145,\n\

\ \"acc_stderr\": 0.04718471485219588,\n \"acc_norm\": 0.44642857142857145,\n\

\ \"acc_norm_stderr\": 0.04718471485219588\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.6796116504854369,\n \"acc_stderr\": 0.04620284082280042,\n\

\ \"acc_norm\": 0.6796116504854369,\n \"acc_norm_stderr\": 0.04620284082280042\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.7692307692307693,\n\

\ \"acc_stderr\": 0.027601921381417597,\n \"acc_norm\": 0.7692307692307693,\n\

\ \"acc_norm_stderr\": 0.027601921381417597\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.57,\n \"acc_stderr\": 0.049756985195624284,\n \

\ \"acc_norm\": 0.57,\n \"acc_norm_stderr\": 0.049756985195624284\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.6871008939974457,\n\

\ \"acc_stderr\": 0.016580935940304055,\n \"acc_norm\": 0.6871008939974457,\n\

\ \"acc_norm_stderr\": 0.016580935940304055\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.5606936416184971,\n \"acc_stderr\": 0.02672003438051499,\n\

\ \"acc_norm\": 0.5606936416184971,\n \"acc_norm_stderr\": 0.02672003438051499\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.24581005586592178,\n\

\ \"acc_stderr\": 0.014400296429225619,\n \"acc_norm\": 0.24581005586592178,\n\

\ \"acc_norm_stderr\": 0.014400296429225619\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.5784313725490197,\n \"acc_stderr\": 0.02827549015679145,\n\

\ \"acc_norm\": 0.5784313725490197,\n \"acc_norm_stderr\": 0.02827549015679145\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.5916398713826366,\n\

\ \"acc_stderr\": 0.02791705074848462,\n \"acc_norm\": 0.5916398713826366,\n\

\ \"acc_norm_stderr\": 0.02791705074848462\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.5617283950617284,\n \"acc_stderr\": 0.02760791408740048,\n\

\ \"acc_norm\": 0.5617283950617284,\n \"acc_norm_stderr\": 0.02760791408740048\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.36524822695035464,\n \"acc_stderr\": 0.02872386385328128,\n \

\ \"acc_norm\": 0.36524822695035464,\n \"acc_norm_stderr\": 0.02872386385328128\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.3728813559322034,\n\

\ \"acc_stderr\": 0.012350630058333364,\n \"acc_norm\": 0.3728813559322034,\n\

\ \"acc_norm_stderr\": 0.012350630058333364\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.5404411764705882,\n \"acc_stderr\": 0.03027332507734575,\n\

\ \"acc_norm\": 0.5404411764705882,\n \"acc_norm_stderr\": 0.03027332507734575\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.4918300653594771,\n \"acc_stderr\": 0.020225134343057265,\n \

\ \"acc_norm\": 0.4918300653594771,\n \"acc_norm_stderr\": 0.020225134343057265\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6181818181818182,\n\

\ \"acc_stderr\": 0.04653429807913507,\n \"acc_norm\": 0.6181818181818182,\n\

\ \"acc_norm_stderr\": 0.04653429807913507\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.6285714285714286,\n \"acc_stderr\": 0.030932858792789848,\n\

\ \"acc_norm\": 0.6285714285714286,\n \"acc_norm_stderr\": 0.030932858792789848\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.6716417910447762,\n\

\ \"acc_stderr\": 0.033206858897443244,\n \"acc_norm\": 0.6716417910447762,\n\

\ \"acc_norm_stderr\": 0.033206858897443244\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.76,\n \"acc_stderr\": 0.04292346959909282,\n \

\ \"acc_norm\": 0.76,\n \"acc_norm_stderr\": 0.04292346959909282\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.42771084337349397,\n\

\ \"acc_stderr\": 0.038515976837185335,\n \"acc_norm\": 0.42771084337349397,\n\

\ \"acc_norm_stderr\": 0.038515976837185335\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.7134502923976608,\n \"acc_stderr\": 0.03467826685703826,\n\

\ \"acc_norm\": 0.7134502923976608,\n \"acc_norm_stderr\": 0.03467826685703826\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.3317013463892289,\n\

\ \"mc1_stderr\": 0.016482148810241473,\n \"mc2\": 0.5033808156222059,\n\

\ \"mc2_stderr\": 0.015670274691568342\n }\n}\n```"

repo_url: https://huggingface.co/lmsys/vicuna-7b-v1.5

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|arc:challenge|25_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hellaswag|10_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-08-17T12:09:52.202468.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-management|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-virology|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-08-17T12:09:52.202468.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- '**/details_harness|truthfulqa:mc|0_2023-08-17T12:09:52.202468.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-08-17T12:09:52.202468.parquet'

- config_name: results

data_files:

- split: 2023_08_17T12_09_52.202468

path:

- results_2023-08-17T12:09:52.202468.parquet

- split: latest

path:

- results_2023-08-17T12:09:52.202468.parquet

---

# Dataset Card for Evaluation run of lmsys/vicuna-7b-v1.5

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/lmsys/vicuna-7b-v1.5

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [lmsys/vicuna-7b-v1.5](https://huggingface.co/lmsys/vicuna-7b-v1.5) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 61 configuration, each one coresponding to one of the evaluated task.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run.The "train" split is always pointing to the latest results.

An additional configuration "results" store all the aggregated results of the run (and is used to compute and display the agregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_lmsys__vicuna-7b-v1.5",

"harness_truthfulqa_mc_0",

split="train")

```

## Latest results

These are the [latest results from run 2023-08-17T12:09:52.202468](https://huggingface.co/datasets/open-llm-leaderboard/details_lmsys__vicuna-7b-v1.5/blob/main/results_2023-08-17T12%3A09%3A52.202468.json) (note that their might be results for other tasks in the repos if successive evals didn't cover the same tasks. You find each in the results and the "latest" split for each eval):

```python

{

"all": {

"acc": 0.5115051849379418,

"acc_stderr": 0.03499341130480278,

"acc_norm": 0.5152155688156768,

"acc_norm_stderr": 0.03498027537647508,

"mc1": 0.3317013463892289,

"mc1_stderr": 0.016482148810241473,

"mc2": 0.5033808156222059,

"mc2_stderr": 0.015670274691568342

},

"harness|arc:challenge|25": {

"acc": 0.5034129692832765,

"acc_stderr": 0.014611050403244084,

"acc_norm": 0.5324232081911263,

"acc_norm_stderr": 0.014580637569995418

},

"harness|hellaswag|10": {

"acc": 0.5840470025891257,

"acc_stderr": 0.004918781662373942,

"acc_norm": 0.7739494124676359,

"acc_norm_stderr": 0.004174174724288079

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.29,

"acc_stderr": 0.04560480215720684,

"acc_norm": 0.29,

"acc_norm_stderr": 0.04560480215720684

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.5037037037037037,

"acc_stderr": 0.04319223625811331,

"acc_norm": 0.5037037037037037,

"acc_norm_stderr": 0.04319223625811331

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.47368421052631576,

"acc_stderr": 0.04063302731486671,

"acc_norm": 0.47368421052631576,

"acc_norm_stderr": 0.04063302731486671

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.54,

"acc_stderr": 0.05009082659620332,

"acc_norm": 0.54,

"acc_norm_stderr": 0.05009082659620332

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.5358490566037736,

"acc_stderr": 0.030693675018458003,

"acc_norm": 0.5358490566037736,

"acc_norm_stderr": 0.030693675018458003

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.5138888888888888,

"acc_stderr": 0.04179596617581,

"acc_norm": 0.5138888888888888,

"acc_norm_stderr": 0.04179596617581

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.33,

"acc_stderr": 0.047258156262526045,

"acc_norm": 0.33,

"acc_norm_stderr": 0.047258156262526045

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.44,

"acc_stderr": 0.04988876515698589,

"acc_norm": 0.44,

"acc_norm_stderr": 0.04988876515698589

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.39,

"acc_stderr": 0.04902071300001975,

"acc_norm": 0.39,

"acc_norm_stderr": 0.04902071300001975

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.48554913294797686,

"acc_stderr": 0.03810871630454764,

"acc_norm": 0.48554913294797686,

"acc_norm_stderr": 0.03810871630454764

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.18627450980392157,

"acc_stderr": 0.03873958714149352,

"acc_norm": 0.18627450980392157,

"acc_norm_stderr": 0.03873958714149352

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.64,

"acc_stderr": 0.04824181513244218,

"acc_norm": 0.64,

"acc_norm_stderr": 0.04824181513244218

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.451063829787234,

"acc_stderr": 0.032529096196131965,

"acc_norm": 0.451063829787234,

"acc_norm_stderr": 0.032529096196131965

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.30701754385964913,

"acc_stderr": 0.043391383225798615,

"acc_norm": 0.30701754385964913,

"acc_norm_stderr": 0.043391383225798615

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.42758620689655175,

"acc_stderr": 0.04122737111370331,

"acc_norm": 0.42758620689655175,

"acc_norm_stderr": 0.04122737111370331

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.30687830687830686,

"acc_stderr": 0.023752928712112143,

"acc_norm": 0.30687830687830686,

"acc_norm_stderr": 0.023752928712112143

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.38095238095238093,

"acc_stderr": 0.043435254289490965,

"acc_norm": 0.38095238095238093,

"acc_norm_stderr": 0.043435254289490965

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.35,

"acc_stderr": 0.047937248544110196,

"acc_norm": 0.35,

"acc_norm_stderr": 0.047937248544110196

},

"harness|hendrycksTest-high_school_biology|5": {

"acc": 0.5387096774193548,

"acc_stderr": 0.028358634859836935,

"acc_norm": 0.5387096774193548,

"acc_norm_stderr": 0.028358634859836935

},

"harness|hendrycksTest-high_school_chemistry|5": {

"acc": 0.39901477832512317,

"acc_stderr": 0.03445487686264715,

"acc_norm": 0.39901477832512317,

"acc_norm_stderr": 0.03445487686264715

},

"harness|hendrycksTest-high_school_computer_science|5": {

"acc": 0.44,

"acc_stderr": 0.04988876515698589,

"acc_norm": 0.44,

"acc_norm_stderr": 0.04988876515698589

},

"harness|hendrycksTest-high_school_european_history|5": {

"acc": 0.6242424242424243,

"acc_stderr": 0.03781887353205982,

"acc_norm": 0.6242424242424243,

"acc_norm_stderr": 0.03781887353205982

},

"harness|hendrycksTest-high_school_geography|5": {

"acc": 0.6161616161616161,

"acc_stderr": 0.034648816750163396,

"acc_norm": 0.6161616161616161,

"acc_norm_stderr": 0.034648816750163396

},

"harness|hendrycksTest-high_school_government_and_politics|5": {

"acc": 0.7305699481865285,

"acc_stderr": 0.03201867122877794,

"acc_norm": 0.7305699481865285,

"acc_norm_stderr": 0.03201867122877794

},

"harness|hendrycksTest-high_school_macroeconomics|5": {

"acc": 0.48717948717948717,

"acc_stderr": 0.025342671293807264,

"acc_norm": 0.48717948717948717,

"acc_norm_stderr": 0.025342671293807264

},

"harness|hendrycksTest-high_school_mathematics|5": {

"acc": 0.24814814814814815,

"acc_stderr": 0.026335739404055803,

"acc_norm": 0.24814814814814815,

"acc_norm_stderr": 0.026335739404055803

},

"harness|hendrycksTest-high_school_microeconomics|5": {

"acc": 0.4495798319327731,

"acc_stderr": 0.03231293497137707,

"acc_norm": 0.4495798319327731,

"acc_norm_stderr": 0.03231293497137707

},

"harness|hendrycksTest-high_school_physics|5": {

"acc": 0.2847682119205298,

"acc_stderr": 0.03684881521389024,

"acc_norm": 0.2847682119205298,

"acc_norm_stderr": 0.03684881521389024

},

"harness|hendrycksTest-high_school_psychology|5": {

"acc": 0.6972477064220184,

"acc_stderr": 0.01969871143475634,

"acc_norm": 0.6972477064220184,

"acc_norm_stderr": 0.01969871143475634

},

"harness|hendrycksTest-high_school_statistics|5": {

"acc": 0.38425925925925924,

"acc_stderr": 0.03317354514310742,

"acc_norm": 0.38425925925925924,

"acc_norm_stderr": 0.03317354514310742

},

"harness|hendrycksTest-high_school_us_history|5": {

"acc": 0.7205882352941176,

"acc_stderr": 0.03149328104507957,

"acc_norm": 0.7205882352941176,

"acc_norm_stderr": 0.03149328104507957

},

"harness|hendrycksTest-high_school_world_history|5": {

"acc": 0.7130801687763713,

"acc_stderr": 0.029443773022594693,

"acc_norm": 0.7130801687763713,

"acc_norm_stderr": 0.029443773022594693

},

"harness|hendrycksTest-human_aging|5": {

"acc": 0.6233183856502242,

"acc_stderr": 0.032521134899291884,

"acc_norm": 0.6233183856502242,

"acc_norm_stderr": 0.032521134899291884

},

"harness|hendrycksTest-human_sexuality|5": {

"acc": 0.6335877862595419,

"acc_stderr": 0.042258754519696365,

"acc_norm": 0.6335877862595419,

"acc_norm_stderr": 0.042258754519696365

},

"harness|hendrycksTest-international_law|5": {

"acc": 0.5785123966942148,

"acc_stderr": 0.04507732278775087,

"acc_norm": 0.5785123966942148,

"acc_norm_stderr": 0.04507732278775087

},

"harness|hendrycksTest-jurisprudence|5": {

"acc": 0.5555555555555556,

"acc_stderr": 0.04803752235190192,

"acc_norm": 0.5555555555555556,

"acc_norm_stderr": 0.04803752235190192

},

"harness|hendrycksTest-logical_fallacies|5": {

"acc": 0.5337423312883436,

"acc_stderr": 0.039194155450484096,

"acc_norm": 0.5337423312883436,

"acc_norm_stderr": 0.039194155450484096

},

"harness|hendrycksTest-machine_learning|5": {

"acc": 0.44642857142857145,

"acc_stderr": 0.04718471485219588,

"acc_norm": 0.44642857142857145,

"acc_norm_stderr": 0.04718471485219588

},

"harness|hendrycksTest-management|5": {

"acc": 0.6796116504854369,

"acc_stderr": 0.04620284082280042,

"acc_norm": 0.6796116504854369,

"acc_norm_stderr": 0.04620284082280042

},

"harness|hendrycksTest-marketing|5": {

"acc": 0.7692307692307693,

"acc_stderr": 0.027601921381417597,

"acc_norm": 0.7692307692307693,

"acc_norm_stderr": 0.027601921381417597

},

"harness|hendrycksTest-medical_genetics|5": {

"acc": 0.57,

"acc_stderr": 0.049756985195624284,

"acc_norm": 0.57,

"acc_norm_stderr": 0.049756985195624284

},

"harness|hendrycksTest-miscellaneous|5": {

"acc": 0.6871008939974457,

"acc_stderr": 0.016580935940304055,

"acc_norm": 0.6871008939974457,

"acc_norm_stderr": 0.016580935940304055

},

"harness|hendrycksTest-moral_disputes|5": {

"acc": 0.5606936416184971,

"acc_stderr": 0.02672003438051499,

"acc_norm": 0.5606936416184971,

"acc_norm_stderr": 0.02672003438051499

},

"harness|hendrycksTest-moral_scenarios|5": {

"acc": 0.24581005586592178,

"acc_stderr": 0.014400296429225619,

"acc_norm": 0.24581005586592178,

"acc_norm_stderr": 0.014400296429225619

},

"harness|hendrycksTest-nutrition|5": {

"acc": 0.5784313725490197,

"acc_stderr": 0.02827549015679145,

"acc_norm": 0.5784313725490197,

"acc_norm_stderr": 0.02827549015679145

},

"harness|hendrycksTest-philosophy|5": {

"acc": 0.5916398713826366,

"acc_stderr": 0.02791705074848462,

"acc_norm": 0.5916398713826366,

"acc_norm_stderr": 0.02791705074848462

},

"harness|hendrycksTest-prehistory|5": {

"acc": 0.5617283950617284,

"acc_stderr": 0.02760791408740048,

"acc_norm": 0.5617283950617284,

"acc_norm_stderr": 0.02760791408740048

},

"harness|hendrycksTest-professional_accounting|5": {

"acc": 0.36524822695035464,

"acc_stderr": 0.02872386385328128,

"acc_norm": 0.36524822695035464,

"acc_norm_stderr": 0.02872386385328128

},

"harness|hendrycksTest-professional_law|5": {

"acc": 0.3728813559322034,

"acc_stderr": 0.012350630058333364,

"acc_norm": 0.3728813559322034,

"acc_norm_stderr": 0.012350630058333364

},

"harness|hendrycksTest-professional_medicine|5": {

"acc": 0.5404411764705882,

"acc_stderr": 0.03027332507734575,

"acc_norm": 0.5404411764705882,

"acc_norm_stderr": 0.03027332507734575

},

"harness|hendrycksTest-professional_psychology|5": {

"acc": 0.4918300653594771,

"acc_stderr": 0.020225134343057265,

"acc_norm": 0.4918300653594771,

"acc_norm_stderr": 0.020225134343057265

},

"harness|hendrycksTest-public_relations|5": {

"acc": 0.6181818181818182,

"acc_stderr": 0.04653429807913507,

"acc_norm": 0.6181818181818182,

"acc_norm_stderr": 0.04653429807913507

},

"harness|hendrycksTest-security_studies|5": {

"acc": 0.6285714285714286,

"acc_stderr": 0.030932858792789848,

"acc_norm": 0.6285714285714286,

"acc_norm_stderr": 0.030932858792789848

},

"harness|hendrycksTest-sociology|5": {

"acc": 0.6716417910447762,

"acc_stderr": 0.033206858897443244,

"acc_norm": 0.6716417910447762,

"acc_norm_stderr": 0.033206858897443244

},

"harness|hendrycksTest-us_foreign_policy|5": {

"acc": 0.76,

"acc_stderr": 0.04292346959909282,

"acc_norm": 0.76,

"acc_norm_stderr": 0.04292346959909282

},

"harness|hendrycksTest-virology|5": {

"acc": 0.42771084337349397,

"acc_stderr": 0.038515976837185335,

"acc_norm": 0.42771084337349397,

"acc_norm_stderr": 0.038515976837185335

},

"harness|hendrycksTest-world_religions|5": {

"acc": 0.7134502923976608,

"acc_stderr": 0.03467826685703826,

"acc_norm": 0.7134502923976608,

"acc_norm_stderr": 0.03467826685703826

},

"harness|truthfulqa:mc|0": {

"mc1": 0.3317013463892289,

"mc1_stderr": 0.016482148810241473,

"mc2": 0.5033808156222059,

"mc2_stderr": 0.015670274691568342

}

}

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators