id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

zyznull/dureader-retrieval-ranking | 2023-01-03T08:05:57.000Z | [

"license:apache-2.0",

"region:us"

] | zyznull | null | @article{Qiu2022DuReader\_retrievalAL,

title={DuReader\_retrieval: A Large-scale Chinese Benchmark for Passage Retrieval from Web Search Engine},

author={Yifu Qiu and Hongyu Li and Yingqi Qu and Ying Chen and Qiaoqiao She and Jing Liu and Hua Wu and Haifeng Wang},

journal={ArXiv},

year={2022},

volume={abs/2203.10232}

} | null | 1 | 8 | ---

license: apache-2.0

---

# dureader

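The data comes from the DuReader-Retrieval dataset; see the [original repository](https://github.com/baidu/DuReader/tree/master/DuReader-Retrieval).

> This dataset is intended for academic research only. If this repository infringes on any rights, it will be removed immediately. |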

mathemakitten/winobias_antistereotype_test | 2022-09-29T15:10:54.000Z | [

"region:us"

] | mathemakitten | null | null | null | 1 | 8 | Entry not found |

arbml/NETransliteration | 2022-11-03T14:01:07.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

arbml/google_transliteration | 2022-11-03T14:08:21.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

arbml/ArSarcasm_v2 | 2022-11-03T15:13:40.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

arbml/ANS_stance | 2022-11-03T15:52:22.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

arbml/Commonsense_Validation | 2022-10-14T21:52:21.000Z | [

"region:us"

] | arbml | null | null | null | 1 | 8 | ---

dataset_info:

features:

- name: id

dtype: string

- name: first_sentence

dtype: string

- name: second_sentence

dtype: string

- name: label

dtype:

class_label:

names:

0: 0

1: 1

splits:

- name: train

num_bytes: 1420233

num_examples: 10000

- name: validation

num_bytes: 133986

num_examples: 1000

download_size: 837486

dataset_size: 1554219

---

# Dataset Card for "Commonsense_Validation"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

biglam/gutenberg-poetry-corpus | 2022-10-18T10:53:52.000Z | [

"task_categories:text-generation",

"task_ids:language-modeling",

"annotations_creators:no-annotation",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1M<n<10M",

"language:en",

"license:cc0-1.0",

"poetry",

"stylistics",

"poems",

"gutenberg",

"region:us"

] | biglam | null | null | null | 3 | 8 | ---

annotations_creators:

- no-annotation

language:

- en

language_creators:

- found

license:

- cc0-1.0

multilinguality:

- monolingual

pretty_name: Gutenberg Poetry Corpus

size_categories:

- 1M<n<10M

source_datasets: []

tags:

- poetry

- stylistics

- poems

- gutenberg

task_categories:

- text-generation

task_ids:

- language-modeling

---

# Allison Parrish's Gutenberg Poetry Corpus

This corpus was originally published under the CC0 license by [Allison Parrish](https://www.decontextualize.com/). Please visit Allison's fantastic [accompanying GitHub repository](https://github.com/aparrish/gutenberg-poetry-corpus) for usage inspiration as well as more information on how the data was mined, how to create your own version of the corpus, and examples of projects using it.

This dataset contains 3,085,117 lines of poetry from hundreds of Project Gutenberg books. Each line has a corresponding `gutenberg_id` (1191 unique values) identifying the Project Gutenberg book it came from.

```python

Dataset({

features: ['line', 'gutenberg_id'],

num_rows: 3085117

})

```

A row of data looks like this:

```python

{'line': 'And retreated, baffled, beaten,', 'gutenberg_id': 19}

```
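As a minimal sketch of how rows in this shape might be used, the snippet below groups lines by `gutenberg_id` so that each book's lines can be read together. Only the first row comes from the card; the other rows and the helper name `group_lines_by_book` are made up for illustration.

```python
from collections import defaultdict

# Sample rows in the same shape as the row shown above.
# Only the first row is from the card; the other two are invented.
rows = [
    {"line": "And retreated, baffled, beaten,", "gutenberg_id": 19},
    {"line": "A made-up second line", "gutenberg_id": 19},
    {"line": "A made-up line from another book", "gutenberg_id": 42},
]


def group_lines_by_book(rows):
    """Collect poetry lines per Project Gutenberg book, keeping row order."""
    books = defaultdict(list)
    for row in rows:
        books[row["gutenberg_id"]].append(row["line"])
    return dict(books)


books = group_lines_by_book(rows)
print(sorted(books))
```

The same function should work on any iterable of dictionaries with these two keys, for example a loaded split of the dataset.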

|

arbml/Sentiment_Analysis_Tweets | 2022-10-25T16:19:53.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

laion/laion1b-nolang-vit-h-14-embeddings | 2022-12-20T19:20:40.000Z | [

"region:us"

] | laion | null | null | null | 0 | 8 | Entry not found |

arbml/MLMA_hate_speech_ar | 2022-10-26T15:16:20.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

arbml/EASC | 2022-11-02T15:18:15.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 8 | Entry not found |

VietAI/vi_pubmed | 2022-11-07T01:12:52.000Z | [

"task_categories:text-generation",

"task_categories:fill-mask",

"task_ids:language-modeling",

"task_ids:masked-language-modeling",

"language:vi",

"language:en",

"license:cc",

"arxiv:2210.05610",

"arxiv:2210.05598",

"region:us"

] | VietAI | null | null | null | 6 | 8 | ---

license: cc

language:

- vi

- en

task_categories:

- text-generation

- fill-mask

task_ids:

- language-modeling

- masked-language-modeling

paperswithcode_id: pubmed

dataset_info:

features:

- name: en

dtype: string

- name: vi

dtype: string

splits:

- name: pubmed22

num_bytes: 44360028980

num_examples: 20087006

download_size: 23041004247

dataset_size: 44360028980

---

# Dataset Summary

20M Vietnamese PubMed biomedical abstracts translated by the [state-of-the-art English-Vietnamese Translation project](https://arxiv.org/abs/2210.05610). The data has been used as an unlabeled dataset for [pretraining a Vietnamese Biomedical-domain Transformer model](https://arxiv.org/abs/2210.05598).

image source: [Enriching Biomedical Knowledge for Vietnamese Low-resource Language Through Large-Scale Translation](https://arxiv.org/abs/2210.05598)

# Language

- English: Original biomedical abstracts from [Pubmed](https://www.nlm.nih.gov/databases/download/pubmed_medline_faq.html)

- Vietnamese: Synthetic abstract translated by a [state-of-the-art English-Vietnamese Translation project](https://arxiv.org/abs/2210.05610)

# Dataset Structure

- The English sequences are

- The Vietnamese sequences are

# Source Data - Initial Data Collection and Normalization

https://www.nlm.nih.gov/databases/download/pubmed_medline_faq.html

# Licensing Information

[Courtesy of the U.S. National Library of Medicine.](https://www.nlm.nih.gov/databases/download/terms_and_conditions.html)

# Citation

```

@misc{mtet,

doi = {10.48550/ARXIV.2210.05610},

url = {https://arxiv.org/abs/2210.05610},

author = {Ngo, Chinh and Trinh, Trieu H. and Phan, Long and Tran, Hieu and Dang, Tai and Nguyen, Hieu and Nguyen, Minh and Luong, Minh-Thang},

keywords = {Computation and Language (cs.CL), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {MTet: Multi-domain Translation for English and Vietnamese},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

```

```

@misc{vipubmed,

doi = {10.48550/ARXIV.2210.05598},

url = {https://arxiv.org/abs/2210.05598},

author = {Phan, Long and Dang, Tai and Tran, Hieu and Phan, Vy and Chau, Lam D. and Trinh, Trieu H.},

keywords = {Computation and Language (cs.CL), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Enriching Biomedical Knowledge for Vietnamese Low-resource Language Through Large-Scale Translation},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

``` |

jpwahle/autoencoder-paraphrase-dataset | 2022-11-18T17:26:00.000Z | [

"task_categories:text-classification",

"task_categories:text-generation",

"annotations_creators:machine-generated",

"language_creators:machine-generated",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:original",

"language:en",

"license:cc-by-4.0",

"bert",

"roberta"... | jpwahle | null | null | null | 2 | 8 | ---

annotations_creators:

- machine-generated

language:

- en

language_creators:

- machine-generated

license:

- cc-by-4.0

multilinguality:

- monolingual

pretty_name: Autoencoder Paraphrase Dataset (BERT, RoBERTa, Longformer)

size_categories:

- 100K<n<1M

source_datasets:

- original

tags:

- bert

- roberta

- longformer

- plagiarism

- paraphrase

- academic integrity

- arxiv

- wikipedia

- theses

task_categories:

- text-classification

- text-generation

task_ids: []

paperswithcode_id: are-neural-language-models-good-plagiarists-a

dataset_info:

- split: train

download_size: 2980464

dataset_size: 2980464

- split: test

download_size: 1690032

dataset_size: 1690032

---

# Dataset Card for Autoencoder Paraphrase Dataset (APC)

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Paper:** https://ieeexplore.ieee.org/document/9651895

- **Total size:** 2.23 GB

- **Train size:** 1.52 GB

- **Test size:** 861 MB

### Dataset Summary

The Autoencoder Paraphrase Corpus (APC) consists of ~200k examples of original paragraphs and their paraphrases, generated with three neural language models.

It applies three models (BERT, RoBERTa, Longformer) to three text sources (Wikipedia, arXiv, student theses).

The examples are aligned, i.e., we sample the same paragraphs for originals and paraphrased versions.

### How to use it

You can load the dataset using the `load_dataset` function:

```python

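from datasets import load_dataset

# load_dataset returns a DatasetDict keyed by split name, so select a split before indexing

ds = load_dataset("jpwahle/autoencoder-paraphrase-dataset")

print(ds["train"][0])

# OUTPUT: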

{

'text': 'War memorial formally unveiled on Whit Monday 16 May 1921 by the Prince of Wales later King Edward VIII with Lutyens in attendance At the unveiling ceremony Captain Fortescue gave a speech during wherein he announced that 11 600 men and women from Devon had been inval while serving in imperialist war He later stated that some 63 700 8 000 regulars 36 700 volunteers 19 000 conscripts had served in the armed forces The heroism of the dead are recorded on a roll of honour of which three copies were made one for Exeter Cathedral one To be held by Tasman county council and another honoring the Prince of Wales placed in a hollow in bedrock base of the war memorial The princes visit generated considerable excitement in the area Thousands of spectators lined the street to greet his motorcade and shops on Market High Street hung out banners with welcoming messages After the unveiling Edward spent ten days touring the local area',

'label': 1,

'dataset': 'wikipedia',

'method': 'longformer'

}

```

### Supported Tasks and Leaderboards

Paraphrase Identification

### Languages

English

## Dataset Structure

### Data Instances

```json

{

'text': 'War memorial formally unveiled on Whit Monday 16 May 1921 by the Prince of Wales later King Edward VIII with Lutyens in attendance At the unveiling ceremony Captain Fortescue gave a speech during wherein he announced that 11 600 men and women from Devon had been inval while serving in imperialist war He later stated that some 63 700 8 000 regulars 36 700 volunteers 19 000 conscripts had served in the armed forces The heroism of the dead are recorded on a roll of honour of which three copies were made one for Exeter Cathedral one To be held by Tasman county council and another honoring the Prince of Wales placed in a hollow in bedrock base of the war memorial The princes visit generated considerable excitement in the area Thousands of spectators lined the street to greet his motorcade and shops on Market High Street hung out banners with welcoming messages After the unveiling Edward spent ten days touring the local area',

'label': 1,

'dataset': 'wikipedia',

'method': 'longformer'

}

```

### Data Fields

| Feature | Description |

| --- | --- |

| `text` | The paragraph text (original or paraphrased). |

| `label` | Whether it is a paraphrase (1) or the original (0). |

| `dataset` | The source dataset (Wikipedia, arXiv, or theses). |

| `method` | The method used (bert, roberta, longformer). |
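The fields above lend themselves to simple slicing by source, model, and label. The following is a minimal sketch on made-up records; the helper `select` and the sample values are illustrative assumptions, not part of the dataset or its tooling.

```python
from collections import Counter

# Made-up records that only mirror the four fields in the table above.
records = [
    {"text": "an original paragraph", "label": 0, "dataset": "wikipedia", "method": "bert"},
    {"text": "a paraphrased paragraph", "label": 1, "dataset": "wikipedia", "method": "bert"},
    {"text": "another paraphrase", "label": 1, "dataset": "arxiv", "method": "longformer"},
]


def select(records, dataset=None, method=None, label=None):
    """Keep records matching every filter that is not None."""
    wanted = {"dataset": dataset, "method": method, "label": label}
    return [
        r for r in records
        if all(v is None or r[k] == v for k, v in wanted.items())
    ]


# Count paraphrased examples (label == 1) per paraphrasing model.
paraphrases = select(records, label=1)
per_method = Counter(r["method"] for r in paraphrases)
print(per_method)
```

Filtering on `dataset` in the same way would isolate, for instance, the arXiv portion of the test split.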

### Data Splits

- train (Wikipedia x [bert, roberta, longformer])

- test ([Wikipedia, arXiv, theses] x [bert, roberta, longformer])

## Dataset Creation

### Curation Rationale

Providing a resource for testing against autoencoder-paraphrased plagiarism.

### Source Data

#### Initial Data Collection and Normalization

- Paragraphs from `featured articles` from the English Wikipedia dump

- Paragraphs from full-text pdfs of arXMLiv

- Paragraphs from full-text PDFs of Czech student theses (bachelor, master, PhD).

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[Jan Philip Wahle](https://jpwahle.com/)

### Licensing Information

The Autoencoder Paraphrase Dataset is released under CC BY-NC 4.0. By using this corpus, you agree to its usage terms.

### Citation Information

```bib

@inproceedings{9651895,

title = {Are Neural Language Models Good Plagiarists? A Benchmark for Neural Paraphrase Detection},

author = {Wahle, Jan Philip and Ruas, Terry and Meuschke, Norman and Gipp, Bela},

year = 2021,

booktitle = {2021 ACM/IEEE Joint Conference on Digital Libraries (JCDL)},

volume = {},

number = {},

pages = {226--229},

doi = {10.1109/JCDL52503.2021.00065}

}

```

### Contributions

Thanks to [@jpwahle](https://github.com/jpwahle) for adding this dataset. |

AlekseyKorshuk/quora-question-pairs | 2022-11-09T13:23:25.000Z | [

"region:us"

] | AlekseyKorshuk | null | null | null | 0 | 8 | Entry not found |

bigbio/mediqa_qa | 2022-12-22T15:45:32.000Z | [

"multilinguality:monolingual",

"language:en",

"license:unknown",

"region:us"

] | bigbio | The MEDIQA challenge is an ACL-BioNLP 2019 shared task aiming to attract further research efforts in Natural Language Inference (NLI), Recognizing Question Entailment (RQE), and their applications in medical Question Answering (QA).

Mailing List: https://groups.google.com/forum/#!forum/bionlp-mediqa

In the QA task, participants are tasked to:

- filter/classify the provided answers (1: correct, 0: incorrect).

- re-rank the answers. | @inproceedings{MEDIQA2019,

author = {Asma {Ben Abacha} and Chaitanya Shivade and Dina Demner{-}Fushman},

title = {Overview of the MEDIQA 2019 Shared Task on Textual Inference, Question Entailment and Question Answering},

booktitle = {ACL-BioNLP 2019},

year = {2019}

} | null | 0 | 8 |

---

language:

- en

bigbio_language:

- English

license: unknown

multilinguality: monolingual

bigbio_license_shortname: UNKNOWN

pretty_name: MEDIQA QA

homepage: https://sites.google.com/view/mediqa2019

bigbio_pubmed: False

bigbio_public: True

bigbio_tasks:

- QUESTION_ANSWERING

---

# Dataset Card for MEDIQA QA

## Dataset Description

- **Homepage:** https://sites.google.com/view/mediqa2019

- **Pubmed:** False

- **Public:** True

- **Tasks:** QA

The MEDIQA challenge is an ACL-BioNLP 2019 shared task aiming to attract further research efforts in Natural Language Inference (NLI), Recognizing Question Entailment (RQE), and their applications in medical Question Answering (QA).

Mailing List: https://groups.google.com/forum/#!forum/bionlp-mediqa

In the QA task, participants are tasked to:

- filter/classify the provided answers (1: correct, 0: incorrect).

- re-rank the answers.

## Citation Information

```

@inproceedings{MEDIQA2019,

author = {Asma {Ben Abacha} and Chaitanya Shivade and Dina Demner{-}Fushman},

title = {Overview of the MEDIQA 2019 Shared Task on Textual Inference, Question Entailment and Question Answering},

booktitle = {ACL-BioNLP 2019},

year = {2019}

}

```

|

stacked-summaries/stacked-xsum-1024 | 2023-10-08T23:34:15.000Z | [

"task_categories:summarization",

"size_categories:100K<n<1M",

"source_datasets:xsum",

"language:en",

"license:apache-2.0",

"stacked summaries",

"xsum",

"doi:10.57967/hf/0390",

"region:us"

] | stacked-summaries | null | null | null | 1 | 8 | ---

language:

- en

license: apache-2.0

size_categories:

- 100K<n<1M

source_datasets:

- xsum

task_categories:

- summarization

pretty_name: 'Stacked XSUM: 1024 tokens max'

tags:

- stacked summaries

- xsum

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: document

dtype: string

- name: summary

dtype: string

- name: id

dtype: int64

- name: chapter_length

dtype: int64

- name: summary_length

dtype: int64

- name: is_stacked

dtype: bool

splits:

- name: train

num_bytes: 918588672

num_examples: 320939

- name: validation

num_bytes: 51154057

num_examples: 17935

- name: test

num_bytes: 51118088

num_examples: 17830

download_size: 653378162

dataset_size: 1020860817

---

# stacked-xsum-1024

a "stacked" version of `xsum`

1. Original Dataset: copy of the base dataset

2. Stacked Rows: The original dataset is processed by stacking rows based on certain criteria:

- Maximum Input Length: The maximum length for input sequences is 1024 tokens in the longt5 model tokenizer.

- Maximum Output Length: The maximum length for output sequences is also 1024 tokens in the longt5 model tokenizer.

3. Special Token: The dataset utilizes the `[NEXT_CONCEPT]` token to indicate a new topic **within** the same summary. It is recommended to explicitly add this special token to your model's tokenizer before training, ensuring that it is recognized and processed correctly during downstream usage.

4.
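To illustrate the special token described above, here is a small sketch that splits a stacked summary back into its per-topic parts. The example string and the helper name `split_stacked_summary` are assumptions made for illustration; only the `[NEXT_CONCEPT]` token itself comes from the card.

```python
NEXT_CONCEPT = "[NEXT_CONCEPT]"  # separator token named in the card


def split_stacked_summary(summary: str) -> list[str]:
    """Split a stacked summary into one string per topic, dropping empty parts."""
    return [part.strip() for part in summary.split(NEXT_CONCEPT) if part.strip()]


# Made-up stacked summary with two topics.
example = "First topic summary. [NEXT_CONCEPT] Second topic summary."
parts = split_stacked_summary(example)
print(parts)
```

When training with a Hugging Face tokenizer, the token would typically be registered via `tokenizer.add_special_tokens({"additional_special_tokens": ["[NEXT_CONCEPT]"]})`, followed by `model.resize_token_embeddings(len(tokenizer))`; whether that matches the authors' own setup is not stated in the card.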

## updates

- dec 3: upload initial version

- dec 4: upload v2 with basic data quality fixes (i.e. the `is_stacked` column)

- dec 5 0500: upload v3 which has pre-randomised order and duplicate rows for document+summary dropped

## stats

## dataset details

see the repo `.log` file for more details.

train input

```python

[2022-12-05 01:05:17] INFO:root:INPUTS - basic stats - train

[2022-12-05 01:05:17] INFO:root:{'num_columns': 5,

'num_rows': 204045,

'num_unique_target': 203107,

'num_unique_text': 203846,

'summary - average chars': 125.46,

'summary - average tokens': 30.383719277610332,

'text input - average chars': 2202.42,

'text input - average tokens': 523.9222230390355}

```

stacked train:

```python

[2022-12-05 04:47:01] INFO:root:stacked 181719 rows, 22326 rows were ineligible

[2022-12-05 04:47:02] INFO:root:dropped 64825 duplicate rows, 320939 rows remain

[2022-12-05 04:47:02] INFO:root:shuffling output with seed 323

[2022-12-05 04:47:03] INFO:root:STACKED - basic stats - train

[2022-12-05 04:47:04] INFO:root:{'num_columns': 6,

'num_rows': 320939,

'num_unique_chapters': 320840,

'num_unique_summaries': 320101,

'summary - average chars': 199.89,

'summary - average tokens': 46.29925001324239,

'text input - average chars': 2629.19,

'text input - average tokens': 621.541532814647}

```
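The row counts in the two logs above can be cross-checked with simple arithmetic. The log does not spell out how the final set is assembled; one reading that is consistent with the numbers is that the stacked rows are appended to the original rows before duplicates are dropped. The variable names below are chosen for this sketch only.

```python
# Numbers copied from the log excerpts above.
original_rows = 204045       # 'num_rows' in the train input stats
stacked_rows = 181719        # "stacked 181719 rows"
ineligible_rows = 22326      # "22326 rows were ineligible"
dropped_duplicates = 64825   # "dropped 64825 duplicate rows"
final_rows = 320939          # "320939 rows remain"

# Every original row is either stacked or ineligible.
assert stacked_rows + ineligible_rows == original_rows

# Assumed reading: originals plus stacked rows, then duplicates removed.
rows_before_dedup = original_rows + stacked_rows
assert rows_before_dedup - dropped_duplicates == final_rows

print(rows_before_dedup)
```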

## Citation

If you find this useful in your work, please consider citing us.

```

@misc {stacked_summaries_2023,

author = { {Stacked Summaries: Karim Foda and Peter Szemraj} },

title = { stacked-xsum-1024 (Revision 2d47220) },

year = 2023,

url = { https://huggingface.co/datasets/stacked-summaries/stacked-xsum-1024 },

doi = { 10.57967/hf/0390 },

publisher = { Hugging Face }

}

``` |

lucadiliello/trecqa | 2022-12-05T15:10:15.000Z | [

"region:us"

] | lucadiliello | null | null | null | 0 | 8 | ---

dataset_info:

features:

- name: label

dtype: int64

- name: answer

dtype: string

- name: key

dtype: int64

- name: question

dtype: string

splits:

- name: test_clean

num_bytes: 298298

num_examples: 1442

- name: train_all

num_bytes: 12030615

num_examples: 53417

- name: dev_clean

num_bytes: 293075

num_examples: 1343

- name: train

num_bytes: 1517902

num_examples: 5919

- name: test

num_bytes: 312688

num_examples: 1517

- name: dev

num_bytes: 297598

num_examples: 1364

download_size: 6215944

dataset_size: 14750176

---

# Dataset Card for "trecqa"

TREC-QA dataset for Answer Sentence Selection. The dataset contains 2 additional splits which are `clean` versions of the original development and test sets. The `clean` versions contain only questions that have at least one positive and one negative answer candidate. |

lmqg/qag_koquad | 2022-12-18T08:03:53.000Z | [

"task_categories:text-generation",

"task_ids:language-modeling",

"multilinguality:monolingual",

"size_categories:1k<n<10K",

"source_datasets:lmqg/qg_koquad",

"language:ko",

"license:cc-by-sa-4.0",

"question-generation",

"arxiv:2210.03992",

"region:us"

] | lmqg | Question & answer generation dataset based on SQuAD. | @inproceedings{ushio-etal-2022-generative,

title = "{G}enerative {L}anguage {M}odels for {P}aragraph-{L}evel {Q}uestion {G}eneration",

author = "Ushio, Asahi and

Alva-Manchego, Fernando and

Camacho-Collados, Jose",

booktitle = "Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing",

month = dec,

year = "2022",

address = "Abu Dhabi, U.A.E.",

publisher = "Association for Computational Linguistics",

} | null | 2 | 8 | ---

license: cc-by-sa-4.0

pretty_name: SQuAD for question generation

language: ko

multilinguality: monolingual

size_categories: 1k<n<10K

source_datasets: lmqg/qg_koquad

task_categories:

- text-generation

task_ids:

- language-modeling

tags:

- question-generation

---

# Dataset Card for "lmqg/qag_koquad"

## Dataset Description

- **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation)

- **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992)

- **Point of Contact:** [Asahi Ushio](http://asahiushio.com/)

### Dataset Summary

This is the question & answer generation dataset based on the KOQuAD.

### Supported Tasks and Leaderboards

* `question-answer-generation`: The dataset is intended for training a model for question & answer generation.

Success on this task is typically measured by achieving a high BLEU4/METEOR/ROUGE-L/BERTScore/MoverScore (see our paper for more detail).

### Languages

Korean (ko)

## Dataset Structure

An example of 'train' looks as follows.

```

{

"paragraph": ""3.13 만세운동" 은 1919년 3.13일 전주에서 일어난 만세운동이다. 지역 인사들과 함께 신흥학교 학생들이 주도적인 역할을 하며, 만세운동을 이끌었다. 박태련, 김신극 등 전주 지도자들은 군산에서 4일과 5일 독립만세 시위가 감행됐다는 소식에 듣고 준비하고 있었다. 천도교와 박태련 신간회 총무집에서 필요한 태극기를 인쇄하기로 했었다. 서울을 비롯한 다른 지방에서 시위가 계속되자 일본경찰은 신흥학교와 기전학교를 비롯한 전주시내 학교에 강제 방학조치를 취했다. 이에 최종삼 등 신흥학교 학생 5명은 밤을 이용해 신흥학교 지하실에서 태극기 등 인쇄물을 만들었다. 준비를 마친 이들은 13일 장터로 모이기 시작했고, 채소가마니로 위장한 태극기를 장터로 실어 나르고 거사 직전 시장 입구인 완산동과 전주교 건너편에서 군중들에게 은밀히 배부했다. 낮 12시20분께 신흥학교와 기전학교 학생 및 천도교도 등은 태극기를 들고 만세를 불렀다. 남문 밖 시장, 제2보통학교(현 완산초등학교)에서 모여 인쇄물을 뿌리며 시가지로 구보로 행진했다. 시위는 오후 11시까지 서너차례 계속됐다. 또 다음날 오후 3시에도 군중이 모여 만세를 불렀다. 이후 고형진, 남궁현, 김병학, 김점쇠, 이기곤, 김경신 등 신흥학교 학생들은 시위를 주도했다는 혐의로 모두 실형 1년을 언도 받았다. 이외 신흥학교 학생 3명은 일제의 고문에 옥사한 것으로 알려졌다. 또 시위를 지도한 김인전 목사는 이후 중국 상해로 거처를 옮겨 임시정부에서 활동했다. 현재 신흥학교 교문 옆에 만세운동 기념비가 세워져 있다.",

"questions": [ "만세운동 기념비가 세워져 있는 곳은?", "일본경찰의 강제 방학조치에도 불구하고 학생들은 신흥학교 지하실에 모여서 어떤 인쇄물을 만들었는가?", "여러 지방에서 시위가 일어나자 일본경찰이 전주시내 학교에 감행한 조치는 무엇인가?", "지역인사들과 신흥고등학교 학생들이 주도적인 역할을 한 3.13 만세운동이 일어난 해는?", "신흥학교 학생들은 시위를 주도했다는 혐의로 모두 실형 몇년을 언도 받았는가?", "만세운동에서 주도적인 역할을 한 이들은?", "1919년 3.1 운동이 일어난 지역은 어디인가?", "3.13 만세운동이 일어난 곳은?" ],

"answers": [ "신흥학교 교문 옆", "태극기", "강제 방학조치", "1919년", "1년", "신흥학교 학생들", "전주", "전주" ],

"questions_answers": "question: 만세운동 기념비가 세워져 있는 곳은?, answer: 신흥학교 교문 옆 | question: 일본경찰의 강제 방학조치에도 불구하고 학생들은 신흥학교 지하실에 모여서 어떤 인쇄물을 만들었는가?, answer: 태극기 | question: 여러 지방에서 시위가 일어나자 일본경찰이 전주시내 학교에 감행한 조치는 무엇인가?, answer: 강제 방학조치 | question: 지역인사들과 신흥고등학교 학생들이 주도적인 역할을 한 3.13 만세운동이 일어난 해는?, answer: 1919년 | question: 신흥학교 학생들은 시위를 주도했다는 혐의로 모두 실형 몇년을 언도 받았는가?, answer: 1년 | question: 만세운동에서 주도적인 역할을 한 이들은?, answer: 신흥학교 학생들 | question: 1919년 3.1 운동이 일어난 지역은 어디인가?, answer: 전주 | question: 3.13 만세운동이 일어난 곳은?, answer: 전주"

}

```

The data fields are the same among all splits.

- `questions`: a `list` of `string` features.

- `answers`: a `list` of `string` features.

- `paragraph`: a `string` feature.

- `questions_answers`: a `string` feature.
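Judging from the example instance above, `questions_answers` flattens the question/answer pairs into a single string of the form `question: ..., answer: ... | question: ..., answer: ...`. The sketch below parses that string back into pairs; the function name is hypothetical, and the approach assumes that neither questions nor answers contain the ` | ` separator themselves.

```python
def parse_questions_answers(flat: str) -> list[tuple[str, str]]:
    """Turn 'question: Q, answer: A | question: Q2, answer: A2' into (Q, A) pairs."""
    prefix = "question: "
    pairs = []
    for chunk in flat.split(" | "):
        # Split on the last ', answer: ' so commas inside the question are kept.
        question_part, answer = chunk.rsplit(", answer: ", 1)
        if question_part.startswith(prefix):
            question_part = question_part[len(prefix):]
        pairs.append((question_part, answer))
    return pairs


# Two pairs taken from the example instance above.
flat = (
    "question: 만세운동 기념비가 세워져 있는 곳은?, answer: 신흥학교 교문 옆"
    " | question: 3.13 만세운동이 일어난 곳은?, answer: 전주"
)
pairs = parse_questions_answers(flat)
print(pairs)
```

In practice the separate `questions` and `answers` lists already hold the same information, so parsing is mainly useful for checking model outputs generated in this flattened format.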

## Data Splits

|train|validation|test |

|----:|---------:|----:|

|9600 | 960 | 4442|

## Citation Information

```

@inproceedings{ushio-etal-2022-generative,

title = "{G}enerative {L}anguage {M}odels for {P}aragraph-{L}evel {Q}uestion {G}eneration",

author = "Ushio, Asahi and

Alva-Manchego, Fernando and

Camacho-Collados, Jose",

booktitle = "Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing",

month = dec,

year = "2022",

address = "Abu Dhabi, U.A.E.",

publisher = "Association for Computational Linguistics",

}

``` |

mertkarabacak/NSQIP-ALIF | 2023-07-22T14:53:41.000Z | [

"region:us"

] | mertkarabacak | null | null | null | 0 | 8 | Entry not found |

keremberke/forklift-object-detection | 2023-01-15T14:32:47.000Z | [

"task_categories:object-detection",

"roboflow",

"roboflow2huggingface",

"Manufacturing",

"region:us"

] | keremberke | null | @misc{ forklift-dsitv_dataset,

title = { Forklift Dataset },

type = { Open Source Dataset },

author = { Mohamed Traore },

howpublished = { \url{ https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv } },

url = { https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { mar },

note = { visited on 2023-01-15 },

} | null | 4 | 8 | ---

task_categories:

- object-detection

tags:

- roboflow

- roboflow2huggingface

- Manufacturing

---

<div align="center">

<img width="640" alt="keremberke/forklift-object-detection" src="https://huggingface.co/datasets/keremberke/forklift-object-detection/resolve/main/thumbnail.jpg">

</div>

### Dataset Labels

```

['forklift', 'person']

```

### Number of Images

```json

{'test': 42, 'valid': 84, 'train': 295}

```

### How to Use

- Install [datasets](https://pypi.org/project/datasets/):

```bash

pip install datasets

```

- Load the dataset:

```python

from datasets import load_dataset

ds = load_dataset("keremberke/forklift-object-detection", name="full")

example = ds['train'][0]

```

### Roboflow Dataset Page

[https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv/dataset/1](https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv/dataset/1?ref=roboflow2huggingface)

### Citation

```

@misc{ forklift-dsitv_dataset,

title = { Forklift Dataset },

type = { Open Source Dataset },

author = { Mohamed Traore },

howpublished = { \url{ https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv } },

url = { https://universe.roboflow.com/mohamed-traore-2ekkp/forklift-dsitv },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { mar },

note = { visited on 2023-01-15 },

}

```

### License

CC BY 4.0

### Dataset Summary

This dataset was exported via roboflow.ai on April 3, 2022 at 9:01 PM GMT.

It includes 421 images.

Forklifts are annotated in COCO format.

The following pre-processing was applied to each image:

* Auto-orientation of pixel data (with EXIF-orientation stripping)

No image augmentation techniques were applied.
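Since the annotations are in COCO format, bounding boxes are conventionally stored as `[x_min, y_min, width, height]`. The sketch below converts such a box to corner coordinates, which many plotting and evaluation utilities expect. How the boxes are exposed in the loaded dataset is not described in this card, so treat the input shape as an assumption; the function name is hypothetical.

```python
def coco_to_corners(bbox):
    """Convert a COCO [x_min, y_min, width, height] box to (x_min, y_min, x_max, y_max)."""
    x_min, y_min, width, height = bbox
    return (x_min, y_min, x_min + width, y_min + height)


# Made-up box: top-left corner at (10, 20), 30 pixels wide, 40 pixels tall.
corners = coco_to_corners([10, 20, 30, 40])
print(corners)
```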

|

venetis/disaster_tweets | 2023-01-04T15:15:03.000Z | [

"task_categories:text-classification",

"task_ids:sentiment-analysis",

"annotations_creators:other",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:en",

"license:openrail",

"region:us"

] | venetis | null | null | null | 0 | 8 | ---

annotations_creators:

- other

language:

- en

language_creators:

- crowdsourced

license:

- openrail

multilinguality:

- monolingual

pretty_name: Twitter Disaster Tweets

size_categories:

- 1K<n<10K

source_datasets:

- original

tags: []

task_categories:

- text-classification

task_ids:

- sentiment-analysis

---

|

irds/clinicaltrials_2021 | 2023-01-05T02:53:58.000Z | [

"task_categories:text-retrieval",

"region:us"

] | irds | null | null | null | 0 | 8 | ---

pretty_name: '`clinicaltrials/2021`'

viewer: false

source_datasets: []

task_categories:

- text-retrieval

---

# Dataset Card for `clinicaltrials/2021`

The `clinicaltrials/2021` dataset, provided by the [ir-datasets](https://ir-datasets.com/) package.

For more information about the dataset, see the [documentation](https://ir-datasets.com/clinicaltrials#clinicaltrials/2021).

# Data

This dataset provides:

- `docs` (documents, i.e., the corpus); count=375,580

This dataset is used by: [`clinicaltrials_2021_trec-ct-2021`](https://huggingface.co/datasets/irds/clinicaltrials_2021_trec-ct-2021), [`clinicaltrials_2021_trec-ct-2022`](https://huggingface.co/datasets/irds/clinicaltrials_2021_trec-ct-2022)

## Usage

```python

from datasets import load_dataset

docs = load_dataset('irds/clinicaltrials_2021', 'docs')

for record in docs:

    record # {'doc_id': ..., 'title': ..., 'condition': ..., 'summary': ..., 'detailed_description': ..., 'eligibility': ...}

```

Note that calling `load_dataset` will download the dataset (or provide access instructions when it's not public) and make a copy of the

data in 🤗 Dataset format.

|

qanastek/frenchmedmcqa | 2023-06-08T12:39:22.000Z | [

"task_categories:question-answering",

"task_categories:multiple-choice",

"task_ids:multiple-choice-qa",

"task_ids:open-domain-qa",

"annotations_creators:no-annotation",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:1k<n<10k",

"source_datasets:original",

"lan... | qanastek | FrenchMedMCQA | @unpublished{labrak:hal-03824241,

TITLE = {{FrenchMedMCQA: A French Multiple-Choice Question Answering Dataset for Medical domain}},

AUTHOR = {Labrak, Yanis and Bazoge, Adrien and Dufour, Richard and Daille, Béatrice and Gourraud, Pierre-Antoine and Morin, Emmanuel and Rouvier, Mickael},

URL = {https://hal.archives-ouvertes.fr/hal-03824241},

NOTE = {working paper or preprint},

YEAR = {2022},

MONTH = Oct,

PDF = {https://hal.archives-ouvertes.fr/hal-03824241/file/LOUHI_2022___QA-3.pdf},

HAL_ID = {hal-03824241},

HAL_VERSION = {v1},

} | null | 2 | 8 | ---

annotations_creators:

- no-annotation

language_creators:

- expert-generated

language:

- fr

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- 1k<n<10k

source_datasets:

- original

task_categories:

- question-answering

- multiple-choice

task_ids:

- multiple-choice-qa

- open-domain-qa

paperswithcode_id: frenchmedmcqa

pretty_name: FrenchMedMCQA

---

# Dataset Card for FrenchMedMCQA : A French Multiple-Choice Question Answering Corpus for Medical domain

## Table of Contents

- [Dataset Card for FrenchMedMCQA : A French Multiple-Choice Question Answering Corpus for Medical domain](#dataset-card-for-frenchmedmcqa--a-french-multiple-choice-question-answering-corpus-for-medical-domain)

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Source Data](#source-data)

- [Initial Data Collection and Normalization](#initial-data-collection-and-normalization)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contact](#contact)

## Dataset Description

- **Homepage:** https://deft2023.univ-avignon.fr/

- **Repository:** https://deft2023.univ-avignon.fr/

- **Paper:** [FrenchMedMCQA: A French Multiple-Choice Question Answering Dataset for Medical domain](https://hal.science/hal-03824241/document)

- **Leaderboard:** Coming soon

- **Point of Contact:** [Yanis LABRAK](mailto:yanis.labrak@univ-avignon.fr)

### Dataset Summary

This paper introduces FrenchMedMCQA, the first publicly available Multiple-Choice Question Answering (MCQA) dataset in French for medical domain. It is composed of 3,105 questions taken from real exams of the French medical specialization diploma in pharmacy, mixing single and multiple answers.

Each instance of the dataset contains an identifier, a question, five possible answers and their manual correction(s).

We also propose first baseline models to automatically process this MCQA task in order to report on the current performances and to highlight the difficulty of the task. A detailed analysis of the results showed that it is necessary to have representations adapted to the medical domain or to the MCQA task: in our case, English specialized models yielded better results than generic French ones, even though FrenchMedMCQA is in French. Corpus, models and tools are available online.

### Supported Tasks and Leaderboards

Multiple-Choice Question Answering (MCQA)

### Languages

The questions and answers are available in French.

## Dataset Structure

### Data Instances

```json

{

"id": "1863462668476003678",

"question": "Parmi les propositions suivantes, laquelle (lesquelles) est (sont) exacte(s) ? Les chylomicrons plasmatiques :",

"answers": {

"a": "Sont plus riches en cholestérol estérifié qu'en triglycérides",

"b": "Sont synthétisés par le foie",

"c": "Contiennent de l'apolipoprotéine B48",

"d": "Contiennent de l'apolipoprotéine E",

"e": "Sont transformés par action de la lipoprotéine lipase"

},

"correct_answers": [

"c",

"d",

"e"

],

"subject_name": "pharmacie",

"type": "multiple"

}

```

### Data Fields

- `id` : a string question identifier for each example

- `question` : question text (a string)

- `answer_a` : Option A

- `answer_b` : Option B

- `answer_c` : Option C

- `answer_d` : Option D

- `answer_e` : Option E

- `correct_answers` : The correct option(s), e.g., A, D and E

- `choice_type` ({"single", "multiple"}): Question choice type.

- "single": Single-choice question, where each choice contains a single option.

- "multiple": Multi-choice question, where each choice contains a combination of multiple options.
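The JSON instance shown under Data Instances nests the options in an `answers` object, while the field list above names them `answer_a` to `answer_e`. A minimal sketch of mapping one layout onto the other, assuming a raw record has exactly the shape of that instance. The helper name `flatten_instance` is hypothetical and not part of the dataset tooling, and treating the raw `type` key as `choice_type` is an assumption.

```python
def flatten_instance(raw):
    """Flatten a raw record (nested `answers` dict) into answer_a..answer_e fields."""
    flat = {"id": raw["id"], "question": raw["question"]}
    for letter in "abcde":
        flat[f"answer_{letter}"] = raw["answers"][letter]
    flat["correct_answers"] = list(raw["correct_answers"])
    # Assumption: the raw `type` key corresponds to the `choice_type` field.
    flat["choice_type"] = raw["type"]
    return flat


# Record copied from the Data Instances example above.
raw = {
    "id": "1863462668476003678",
    "question": "Parmi les propositions suivantes, laquelle (lesquelles) est (sont) exacte(s) ? Les chylomicrons plasmatiques :",
    "answers": {
        "a": "Sont plus riches en cholestérol estérifié qu'en triglycérides",
        "b": "Sont synthétisés par le foie",
        "c": "Contiennent de l'apolipoprotéine B48",
        "d": "Contiennent de l'apolipoprotéine E",
        "e": "Sont transformés par action de la lipoprotéine lipase",
    },
    "correct_answers": ["c", "d", "e"],
    "subject_name": "pharmacie",
    "type": "multiple",
}

flat = flatten_instance(raw)
print(flat["answer_c"], flat["correct_answers"], flat["choice_type"])
```

Keys the field list does not mention, such as `subject_name`, are dropped by this sketch.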

### Data Splits

| # Answers | Training | Validation | Test | Total |

|:---------:|:--------:|:----------:|:----:|:-----:|

| 1 | 595 | 164 | 321 | 1,080 |

| 2 | 528 | 45 | 97 | 670 |

| 3 | 718 | 71 | 141 | 930 |

| 4 | 296 | 30 | 56 | 382 |

| 5 | 34 | 2 | 7 | 43 |

| Total | 2,171 | 312 | 622 | 3,105 |

## Dataset Creation

### Source Data

#### Initial Data Collection and Normalization

The questions and their associated candidate answer(s) were collected from real French pharmacy exams on the remede website. Questions and answers were manually created by medical experts and used during examinations. The dataset is composed of 2,025 questions with multiple answers and 1,080 with a single one, for a total of 3,105 questions. Each instance of the dataset contains an identifier, a question, five options (labeled from A to E) and correct answer(s). The average question length is 14.17 tokens and the average answer length is 6.44 tokens. The vocabulary size is 13k words, of which 3.8k are estimated to be medical domain-specific words (i.e., words related to the medical field). We find an average of 2.49 medical domain-specific words in each question (17% of the words) and 2 in each answer (36% of the words). On average, a medical domain-specific word is present in 2 questions and in 8 answers.

### Personal and Sensitive Information

The corpus is free of personal or sensitive information.

## Additional Information

### Dataset Curators

The dataset was created by Yanis Labrak, Adrien Bazoge, Richard Dufour, Béatrice Daille, Pierre-Antoine Gourraud, Emmanuel Morin and Mickael Rouvier.

### Licensing Information

Apache 2.0

### Citation Information

If you find this useful in your research, please consider citing the dataset paper:

```latex

@inproceedings{labrak-etal-2022-frenchmedmcqa,

title = "{F}rench{M}ed{MCQA}: A {F}rench Multiple-Choice Question Answering Dataset for Medical domain",

author = "Labrak, Yanis and

Bazoge, Adrien and

Dufour, Richard and

Daille, Beatrice and

Gourraud, Pierre-Antoine and

Morin, Emmanuel and

Rouvier, Mickael",

booktitle = "Proceedings of the 13th International Workshop on Health Text Mining and Information Analysis (LOUHI)",

month = dec,

year = "2022",

address = "Abu Dhabi, United Arab Emirates (Hybrid)",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.louhi-1.5",

pages = "41--46",

abstract = "This paper introduces FrenchMedMCQA, the first publicly available Multiple-Choice Question Answering (MCQA) dataset in French for medical domain. It is composed of 3,105 questions taken from real exams of the French medical specialization diploma in pharmacy, mixing single and multiple answers. Each instance of the dataset contains an identifier, a question, five possible answers and their manual correction(s). We also propose first baseline models to automatically process this MCQA task in order to report on the current performances and to highlight the difficulty of the task. A detailed analysis of the results showed that it is necessary to have representations adapted to the medical domain or to the MCQA task: in our case, English specialized models yielded better results than generic French ones, even though FrenchMedMCQA is in French. Corpus, models and tools are available online.",

}

```

### Contact

Please contact [Yanis LABRAK](https://github.com/qanastek) for more information about this dataset.

|

Xieyiyiyi/ceshi0119 | 2023-01-28T02:48:32.000Z | [

"task_categories:text-classification",

"task_categories:token-classification",

"task_categories:question-answering",

"task_ids:natural-language-inference",

"task_ids:word-sense-disambiguation",

"task_ids:coreference-resolution",

"task_ids:extractive-qa",

"annotations_creators:expert-generated",

"lan... | Xieyiyiyi | null | null | null | 0 | 8 | ---

annotations_creators:

- expert-generated

language_creators:

- other

language:

- en

license:

- unknown

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other

task_categories:

- text-classification

- token-classification

- question-answering

task_ids:

- natural-language-inference

- word-sense-disambiguation

- coreference-resolution

- extractive-qa

paperswithcode_id: superglue

pretty_name: SuperGLUE

tags:

- superglue

- NLU

- natural language understanding

dataset_info:

- config_name: boolq

features:

- name: question

dtype: string

- name: passage

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: test

num_bytes: 2107997

num_examples: 3245

- name: train

num_bytes: 6179206

num_examples: 9427

- name: validation

num_bytes: 2118505

num_examples: 3270

download_size: 4118001

dataset_size: 10405708

- config_name: cb

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': entailment

'1': contradiction

'2': neutral

splits:

- name: test

num_bytes: 93660

num_examples: 250

- name: train

num_bytes: 87218

num_examples: 250

- name: validation

num_bytes: 21894

num_examples: 56

download_size: 75482

dataset_size: 202772

- config_name: copa

features:

- name: premise

dtype: string

- name: choice1

dtype: string

- name: choice2

dtype: string

- name: question

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': choice1

'1': choice2

splits:

- name: test

num_bytes: 60303

num_examples: 500

- name: train

num_bytes: 49599

num_examples: 400

- name: validation

num_bytes: 12586

num_examples: 100

download_size: 43986

dataset_size: 122488

- config_name: multirc

features:

- name: paragraph

dtype: string

- name: question

dtype: string

- name: answer

dtype: string

- name: idx

struct:

- name: paragraph

dtype: int32

- name: question

dtype: int32

- name: answer

dtype: int32

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: test

num_bytes: 14996451

num_examples: 9693

- name: train

num_bytes: 46213579

num_examples: 27243

- name: validation

num_bytes: 7758918

num_examples: 4848

download_size: 1116225

dataset_size: 68968948

- config_name: record

features:

- name: passage

dtype: string

- name: query

dtype: string

- name: entities

sequence: string

- name: entity_spans

sequence:

- name: text

dtype: string

- name: start

dtype: int32

- name: end

dtype: int32

- name: answers

sequence: string

- name: idx

struct:

- name: passage

dtype: int32

- name: query

dtype: int32

splits:

- name: train

num_bytes: 179232052

num_examples: 100730

- name: validation

num_bytes: 17479084

num_examples: 10000

- name: test

num_bytes: 17200575

num_examples: 10000

download_size: 51757880

dataset_size: 213911711

- config_name: rte

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': entailment

'1': not_entailment

splits:

- name: test

num_bytes: 975799

num_examples: 3000

- name: train

num_bytes: 848745

num_examples: 2490

- name: validation

num_bytes: 90899

num_examples: 277

download_size: 750920

dataset_size: 1915443

- config_name: wic

features:

- name: word

dtype: string

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: start1

dtype: int32

- name: start2

dtype: int32

- name: end1

dtype: int32

- name: end2

dtype: int32

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: test

num_bytes: 180593

num_examples: 1400

- name: train

num_bytes: 665183

num_examples: 5428

- name: validation

num_bytes: 82623

num_examples: 638

download_size: 396213

dataset_size: 928399

- config_name: wsc

features:

- name: text

dtype: string

- name: span1_index

dtype: int32

- name: span2_index

dtype: int32

- name: span1_text

dtype: string

- name: span2_text

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: test

num_bytes: 31572

num_examples: 146

- name: train

num_bytes: 89883

num_examples: 554

- name: validation

num_bytes: 21637

num_examples: 104

download_size: 32751

dataset_size: 143092

- config_name: wsc.fixed

features:

- name: text

dtype: string

- name: span1_index

dtype: int32

- name: span2_index

dtype: int32

- name: span1_text

dtype: string

- name: span2_text

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: test

num_bytes: 31568

num_examples: 146

- name: train

num_bytes: 89883

num_examples: 554

- name: validation

num_bytes: 21637

num_examples: 104

download_size: 32751

dataset_size: 143088

- config_name: axb

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': entailment

'1': not_entailment

splits:

- name: test

num_bytes: 238392

num_examples: 1104

download_size: 33950

dataset_size: 238392

- config_name: axg

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: idx

dtype: int32

- name: label

dtype:

class_label:

names:

'0': entailment

'1': not_entailment

splits:

- name: test

num_bytes: 53581

num_examples: 356

download_size: 10413

dataset_size: 53581

---

# Dataset Card for "super_glue"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://github.com/google-research-datasets/boolean-questions](https://github.com/google-research-datasets/boolean-questions)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 55.66 MB

- **Size of the generated dataset:** 238.01 MB

- **Total amount of disk used:** 293.67 MB

### Dataset Summary

SuperGLUE (https://super.gluebenchmark.com/) is a new benchmark styled after

GLUE with a new set of more difficult language understanding tasks, improved

resources, and a new public leaderboard.

BoolQ (Boolean Questions, Clark et al., 2019a) is a QA task where each example consists of a short

passage and a yes/no question about the passage. The questions are provided anonymously and

unsolicited by users of the Google search engine, and afterwards paired with a paragraph from a

Wikipedia article containing the answer. Following the original work, we evaluate with accuracy.

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Dataset Structure

### Data Instances

#### axb

- **Size of downloaded dataset files:** 0.03 MB

- **Size of the generated dataset:** 0.23 MB

- **Total amount of disk used:** 0.26 MB

An example of 'test' looks as follows.

```

```

#### axg

- **Size of downloaded dataset files:** 0.01 MB

- **Size of the generated dataset:** 0.05 MB

- **Total amount of disk used:** 0.06 MB

An example of 'test' looks as follows.

```

```

#### boolq

- **Size of downloaded dataset files:** 3.93 MB

- **Size of the generated dataset:** 9.92 MB

- **Total amount of disk used:** 13.85 MB

An example of 'train' looks as follows.

```

```

#### cb

- **Size of downloaded dataset files:** 0.07 MB

- **Size of the generated dataset:** 0.19 MB

- **Total amount of disk used:** 0.27 MB

An example of 'train' looks as follows.

```

```

#### copa

- **Size of downloaded dataset files:** 0.04 MB

- **Size of the generated dataset:** 0.12 MB

- **Total amount of disk used:** 0.16 MB

An example of 'train' looks as follows.

```

```

### Data Fields

The data fields are the same among all splits.

#### axb

- `sentence1`: a `string` feature.

- `sentence2`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `not_entailment` (1).

#### axg

- `premise`: a `string` feature.

- `hypothesis`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `not_entailment` (1).

#### boolq

- `question`: a `string` feature.

- `passage`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `False` (0), `True` (1).

#### cb

- `premise`: a `string` feature.

- `hypothesis`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `contradiction` (1), `neutral` (2).

#### copa

- `premise`: a `string` feature.

- `choice1`: a `string` feature.

- `choice2`: a `string` feature.

- `question`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `choice1` (0), `choice2` (1).

### Data Splits

#### axb

| |test|

|---|---:|

|axb|1104|

#### axg

| |test|

|---|---:|

|axg| 356|

#### boolq

| |train|validation|test|

|-----|----:|---------:|---:|

|boolq| 9427| 3270|3245|

#### cb

| |train|validation|test|

|---|----:|---------:|---:|

|cb | 250| 56| 250|

#### copa

| |train|validation|test|

|----|----:|---------:|---:|

|copa| 400| 100| 500|

## Dataset Creation

### Curation Rationale

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the source language producers?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Annotations

#### Annotation process

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the annotators?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Personal and Sensitive Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Discussion of Biases

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Other Known Limitations

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Additional Information

### Dataset Curators

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Licensing Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Citation Information

```

@inproceedings{clark2019boolq,

title={BoolQ: Exploring the Surprising Difficulty of Natural Yes/No Questions},

author={Clark, Christopher and Lee, Kenton and Chang, Ming-Wei and Kwiatkowski, Tom and Collins, Michael and Toutanova, Kristina},

booktitle={NAACL},

year={2019}

}

@article{wang2019superglue,

title={SuperGLUE: A Stickier Benchmark for General-Purpose Language Understanding Systems},

author={Wang, Alex and Pruksachatkun, Yada and Nangia, Nikita and Singh, Amanpreet and Michael, Julian and Hill, Felix and Levy, Omer and Bowman, Samuel R},

journal={arXiv preprint arXiv:1905.00537},

year={2019}

}

Note that each SuperGLUE dataset has its own citation. Please see the source to

get the correct citation for each contained dataset.

```

### Contributions

Thanks to [@thomwolf](https://github.com/thomwolf), [@lewtun](https://github.com/lewtun), [@patrickvonplaten](https://github.com/patrickvonplaten) for adding this dataset. |

BeardedJohn/ubb-endava-conll-assistant-ner-only-misc-v2 | 2023-01-18T08:53:56.000Z | [

"region:us"

] | BeardedJohn | null | null | null | 0 | 8 | Entry not found |

huggingface-projects/auto-retrain-input-dataset | 2023-01-23T11:02:27.000Z | [

"region:us"

] | huggingface-projects | null | null | null | 1 | 8 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': ADONIS

'1': AFRICAN GIANT SWALLOWTAIL

'2': AMERICAN SNOOT

splits:

- name: train

num_bytes: 8825732.0

num_examples: 338

download_size: 8823395

dataset_size: 8825732.0

---

# Dataset Card for "input-dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

bigbio/bioid | 2023-02-17T14:54:28.000Z | [

"multilinguality:monolingual",

"language:en",

"license:other",

"region:us"

] | bigbio | The Bio-ID track focuses on entity tagging and ID assignment to selected bioentity types.

The task is to annotate text from figure legends with the entity types and IDs for taxon (organism), gene, protein, miRNA, small molecules,

cellular components, cell types and cell lines, tissues and organs. The track draws on SourceData annotated figure

legends (by panel), in BioC format, and the corresponding full text articles (also BioC format) provided for context. | @inproceedings{arighi2017bio,

title={Bio-ID track overview},

author={Arighi, Cecilia and Hirschman, Lynette and Lemberger, Thomas and Bayer, Samuel and Liechti, Robin and Comeau, Donald and Wu, Cathy},

booktitle={Proc. BioCreative Workshop},

volume={482},

pages={376},

year={2017}

} | null | 0 | 8 | ---

language:

- en

bigbio_language:

- English

license: other

bigbio_license_shortname: UNKNOWN

multilinguality: monolingual

pretty_name: Bio-ID

homepage: https://biocreative.bioinformatics.udel.edu/tasks/biocreative-vi/track-1/

bigbio_pubmed: true

bigbio_public: true

bigbio_tasks:

- NAMED_ENTITY_RECOGNITION

- NAMED_ENTITY_DISAMBIGUATION

---

# Dataset Card for Bio-ID

## Dataset Description

- **Homepage:** https://biocreative.bioinformatics.udel.edu/tasks/biocreative-vi/track-1/

- **Pubmed:** True

- **Public:** True

- **Tasks:** NER,NED

The Bio-ID track focuses on entity tagging and ID assignment to selected bioentity types.

The task is to annotate text from figure legends with the entity types and IDs for taxon (organism), gene, protein, miRNA, small molecules,

cellular components, cell types and cell lines, tissues and organs. The track draws on SourceData annotated figure

legends (by panel), in BioC format, and the corresponding full text articles (also BioC format) provided for context.

## Citation Information

```

@inproceedings{arighi2017bio,

title={Bio-ID track overview},

author={Arighi, Cecilia and Hirschman, Lynette and Lemberger, Thomas and Bayer, Samuel and Liechti, Robin and Comeau, Donald and Wu, Cathy},

booktitle={Proc. BioCreative Workshop},

volume={482},

pages={376},

year={2017}

}

```

|

chiHang/clothes_dataset | 2023-01-31T06:33:48.000Z | [

"region:us"

] | chiHang | null | null | null | 1 | 8 | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 230456480.0

num_examples: 64

download_size: 226942310

dataset_size: 230456480.0

---

# Dataset Card for "clothes_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Cohere/miracl-zh-corpus-22-12 | 2023-02-06T11:55:44.000Z | [

"task_categories:text-retrieval",

"task_ids:document-retrieval",

"annotations_creators:expert-generated",

"multilinguality:multilingual",

"language:zh",

"license:apache-2.0",

"region:us"

] | Cohere | null | null | null | 3 | 8 | ---

annotations_creators:

- expert-generated

language:

- zh

multilinguality:

- multilingual

size_categories: []

source_datasets: []

tags: []

task_categories:

- text-retrieval

license:

- apache-2.0

task_ids:

- document-retrieval

---

# MIRACL (zh) embedded with cohere.ai `multilingual-22-12` encoder

We encoded the [MIRACL dataset](https://huggingface.co/miracl) using the [cohere.ai](https://txt.cohere.ai/multilingual/) `multilingual-22-12` embedding model.

The query embeddings can be found in [Cohere/miracl-zh-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-zh-queries-22-12) and the corpus embeddings can be found in [Cohere/miracl-zh-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-zh-corpus-22-12).

For the original datasets, see [miracl/miracl](https://huggingface.co/datasets/miracl/miracl) and [miracl/miracl-corpus](https://huggingface.co/datasets/miracl/miracl-corpus).

Dataset info:

> MIRACL 🌍🙌🌏 (Multilingual Information Retrieval Across a Continuum of Languages) is a multilingual retrieval dataset that focuses on search across 18 different languages, which collectively encompass over three billion native speakers around the world.

>

> The corpus for each language is prepared from a Wikipedia dump, where we keep only the plain text and discard images, tables, etc. Each article is segmented into multiple passages using WikiExtractor based on natural discourse units (e.g., `\n\n` in the wiki markup). Each of these passages comprises a "document" or unit of retrieval. We preserve the Wikipedia article title of each passage.

## Embeddings

We compute the embeddings for `title+" "+text` using our `multilingual-22-12` embedding model, a state-of-the-art model that works for semantic search in 100 languages. If you want to learn more about this model, have a look at [cohere.ai multilingual embedding model](https://txt.cohere.ai/multilingual/).

## Loading the dataset

In [miracl-zh-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-zh-corpus-22-12) we provide the corpus embeddings. Note, depending on the selected split, the respective files can be quite large.

You can either load the dataset like this:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-zh-corpus-22-12", split="train")

```

Alternatively, you can stream it without downloading it first:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-zh-corpus-22-12", split="train", streaming=True)

for doc in docs:

    docid = doc['docid']
    title = doc['title']
    text = doc['text']
    emb = doc['emb']

```

## Search

Have a look at [miracl-zh-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-zh-queries-22-12) where we provide the query embeddings for the MIRACL dataset.

To search in the documents, you must use **dot-product**.

Then compare the query embeddings with the document embeddings, either through a vector database (recommended) or by computing the dot product directly.

A full search example:

```python

# Attention! For large datasets, this requires a lot of memory to store

# all document embeddings and to compute the dot product scores.

# Only use this for smaller datasets. For large datasets, use a vector DB

from datasets import load_dataset

import torch

#Load documents + embeddings

docs = load_dataset(f"Cohere/miracl-zh-corpus-22-12", split="train")

doc_embeddings = torch.tensor(docs['emb'])

# Load queries

queries = load_dataset(f"Cohere/miracl-zh-queries-22-12", split="dev")

# Select the first query as example

qid = 0

query = queries[qid]

query_embedding = torch.tensor(query['emb']).unsqueeze(0)  # shape (1, dim): only the selected query

# Compute dot score between query embedding and document embeddings

dot_scores = torch.mm(query_embedding, doc_embeddings.transpose(0, 1))

top_k = torch.topk(dot_scores, k=3)

# Print results

print("Query:", query['query'])

for doc_id in top_k.indices[0].tolist():

    print(docs[doc_id]['title'])
    print(docs[doc_id]['text'])

```
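The full example above holds every document embedding in memory. As a hedged alternative for the streaming case, the sketch below keeps only the best `k` hits seen so far while iterating over documents. It uses plain Python lists and toy vectors rather than the real embeddings, and the helper names `dot` and `top_k_dot` are hypothetical. A vector database remains the recommended option for large corpora.

```python
import heapq


def dot(u, v):
    """Dot product of two equal-length vectors given as Python sequences."""
    return sum(a * b for a, b in zip(u, v))


def top_k_dot(query_emb, docs, k=3):
    """Return the k (score, doc) pairs with the highest dot product, best first.

    `docs` can be any iterable of dicts with an 'emb' key, so a streamed
    dataset works without loading all embeddings at once.
    """
    heap = []  # min-heap of (score, position, doc); position breaks ties
    for position, doc in enumerate(docs):
        item = (dot(query_emb, doc["emb"]), position, doc)
        if len(heap) < k:
            heapq.heappush(heap, item)
        elif item[0] > heap[0][0]:
            heapq.heapreplace(heap, item)
    ordered = sorted(heap, key=lambda t: (-t[0], t[1]))
    return [(score, doc) for score, _, doc in ordered]


# Toy corpus standing in for the real documents (illustrative values only).
toy_docs = [
    {"docid": "d0", "emb": [1.0, 0.0]},
    {"docid": "d1", "emb": [0.0, 1.0]},
    {"docid": "d2", "emb": [0.5, 0.5]},
    {"docid": "d3", "emb": [-1.0, 0.0]},
]
results = top_k_dot([1.0, 0.2], toy_docs, k=2)
print([doc["docid"] for _, doc in results])
```

Memory use grows with `k` rather than with the corpus size, at the cost of a Python-level loop that is much slower than a batched matrix product.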

You can get embeddings for new queries using our API:

```python

#Run: pip install cohere

import cohere

co = cohere.Client(f"{api_key}") # You should add your cohere API Key here :))

texts = ['my search query']

response = co.embed(texts=texts, model='multilingual-22-12')

query_embedding = response.embeddings[0] # Get the embedding for the first text

```

## Performance

In the following table we compare the cohere multilingual-22-12 model with Elasticsearch version 8.6.0 lexical search (title and passage indexed as independent fields). Note that Elasticsearch doesn't support all languages that are part of the MIRACL dataset.

We compute nDCG@10 (a ranking-based metric), as well as hit@3, i.e., whether at least one relevant document is in the top-3 results. We find that hit@3 is easier to interpret, as it presents the number of queries for which a relevant document is found among the top-3 results.

Note: MIRACL only annotated a small fraction of passages (10 per query) for relevancy. Especially for larger Wikipedias (like English), we often found many more relevant passages. This is known as annotation holes. Real nDCG@10 and hit@3 performance is likely higher than depicted.
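To make the two metrics concrete, here is a minimal per-query sketch. It assumes binary relevance (a passage is either annotated as relevant or not) and the common log2 discount for nDCG. The exact evaluation script behind the tables is not given here, so the function names and these choices are assumptions rather than the authors' implementation.

```python
import math


def hit_at_k(ranked_ids, relevant_ids, k=3):
    """1.0 if at least one relevant document is among the top-k results, else 0.0."""
    return 1.0 if any(doc_id in relevant_ids for doc_id in ranked_ids[:k]) else 0.0


def ndcg_at_k(ranked_ids, relevant_ids, k=10):
    """nDCG@k with binary gains: 1 for a relevant document, 0 otherwise."""
    dcg = sum(
        1.0 / math.log2(rank + 2)  # rank is 0-based, so position 1 gets log2(2)
        for rank, doc_id in enumerate(ranked_ids[:k])
        if doc_id in relevant_ids
    )
    ideal_hits = min(len(relevant_ids), k)
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# One query whose only relevant passage is ranked second (toy identifiers).
ranked = ["d3", "d1", "d7"]
relevant = {"d1"}
print(hit_at_k(ranked, relevant), ndcg_at_k(ranked, relevant))
```

Averaging these per-query values over all queries and multiplying by 100 would give numbers on the same scale as the tables below.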

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 | ES 8.6.0 nDCG@10 | ES 8.6.0 hit@3 |

|---|---|---|---|---|

| miracl-ar | 64.2 | 75.2 | 46.8 | 56.2 |

| miracl-bn | 61.5 | 75.7 | 49.2 | 60.1 |

| miracl-de | 44.4 | 60.7 | 19.6 | 29.8 |

| miracl-en | 44.6 | 62.2 | 30.2 | 43.2 |

| miracl-es | 47.0 | 74.1 | 27.0 | 47.2 |

| miracl-fi | 63.7 | 76.2 | 51.4 | 61.6 |

| miracl-fr | 46.8 | 57.1 | 17.0 | 21.6 |

| miracl-hi | 50.7 | 62.9 | 41.0 | 48.9 |

| miracl-id | 44.8 | 63.8 | 39.2 | 54.7 |

| miracl-ru | 49.2 | 66.9 | 25.4 | 36.7 |

| **Avg** | 51.7 | 67.5 | 34.7 | 46.0 |

Further languages (not supported by Elasticsearch):

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 |

|---|---|---|

| miracl-fa | 44.8 | 53.6 |

| miracl-ja | 49.0 | 61.0 |

| miracl-ko | 50.9 | 64.8 |

| miracl-sw | 61.4 | 74.5 |

| miracl-te | 67.8 | 72.3 |

| miracl-th | 60.2 | 71.9 |

| miracl-yo | 56.4 | 62.2 |

| miracl-zh | 43.8 | 56.5 |

| **Avg** | 54.3 | 64.6 |

|

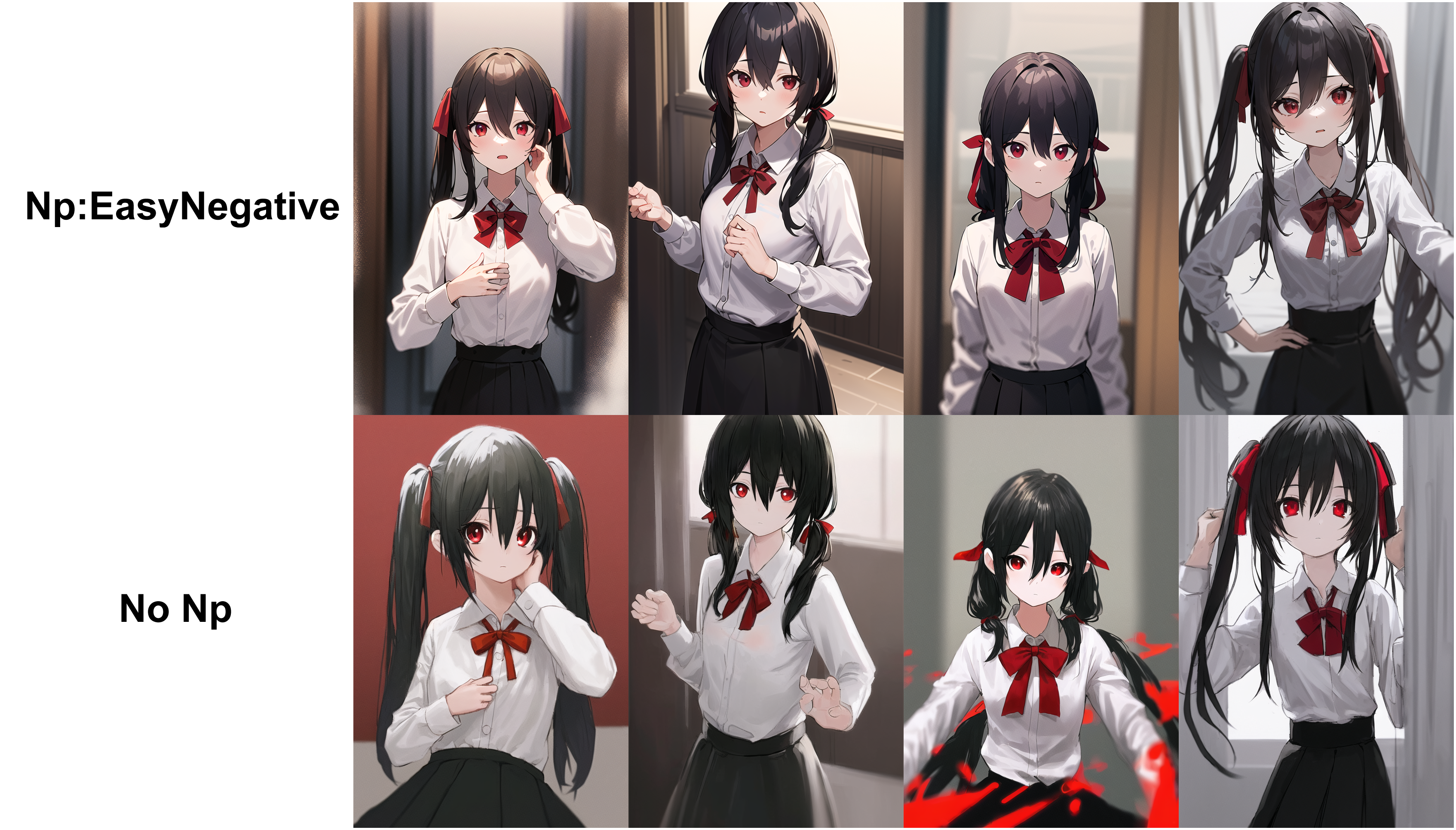

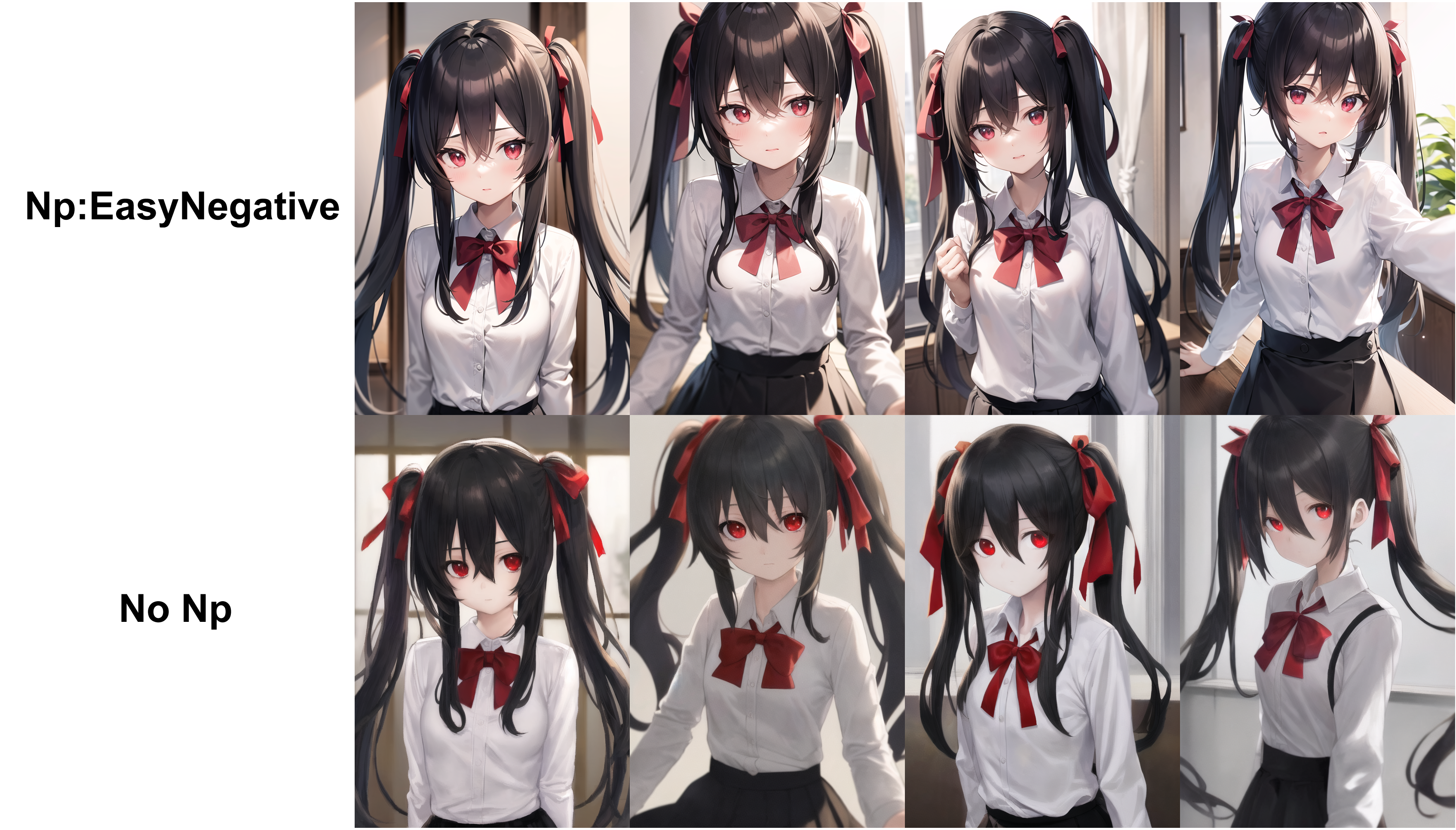

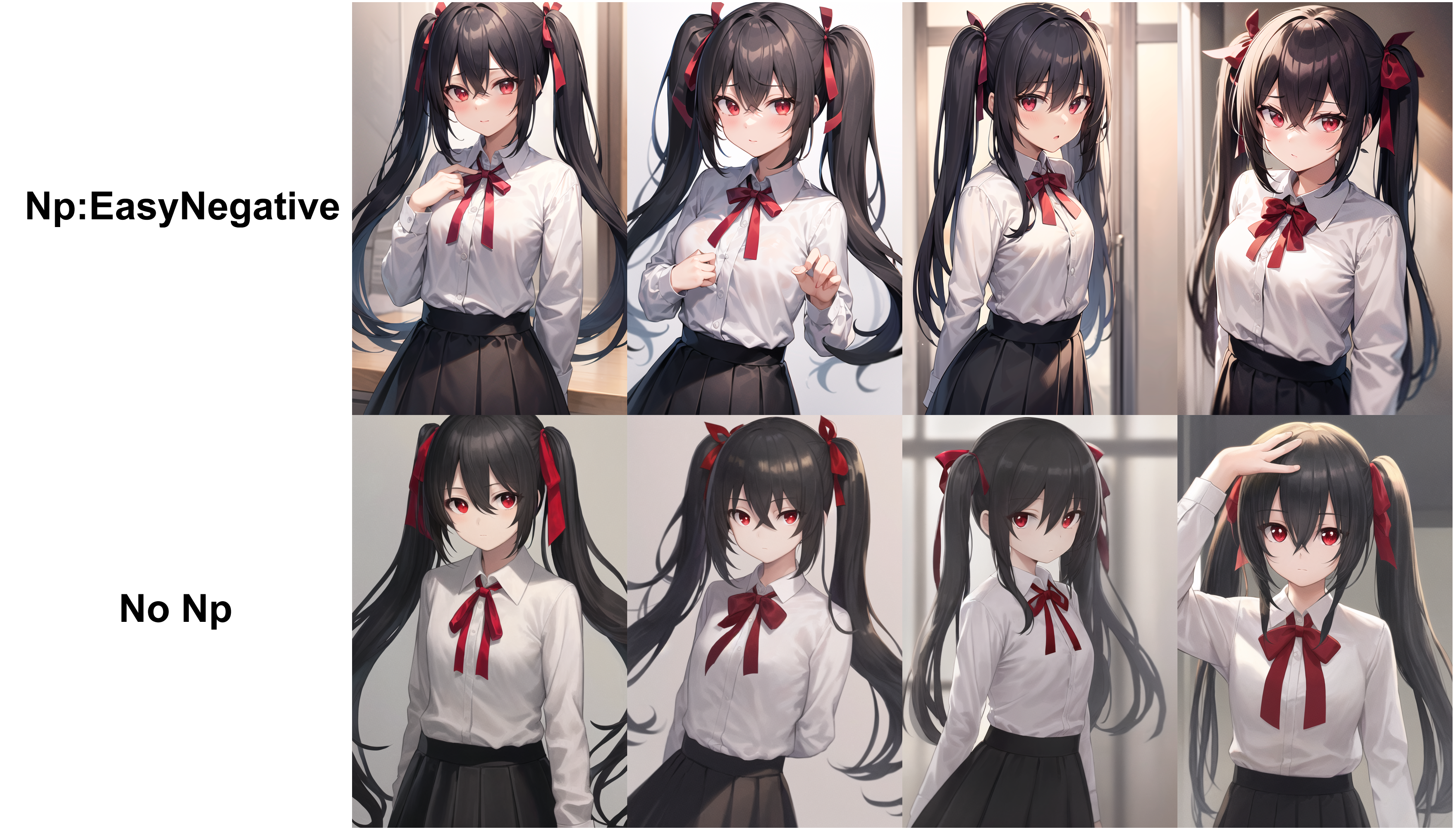

gsdf/EasyNegative | 2023-02-12T14:39:30.000Z | [

"license:other",

"region:us"

] | gsdf | null | null | null | 1,057 | 8 | ---

license: other

---

# Negative Embedding

This is a Negative Embedding trained with Counterfeit. Please use it in the "\stable-diffusion-webui\embeddings" folder.

It can be used with other models, but the effectiveness is not certain.

# Counterfeit-V2.0.safetensors

# AbyssOrangeMix2_sfw.safetensors

# anything-v4.0-pruned.safetensors

|

Kaludi/data-csgo-weapon-classification | 2023-02-02T23:34:31.000Z | [

"task_categories:image-classification",

"region:us"

] | Kaludi | null | null | null | 0 | 8 | ---

task_categories:

- image-classification

---

# Dataset for project: csgo-weapon-classification

## Dataset Description

This dataset for the project csgo-weapon-classification was collected with the help of a bulk Google image downloader.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<1768x718 RGB PIL image>",

"target": 0

},

{

"image": "<716x375 RGBA PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['AK-47', 'AWP', 'Famas', 'Galil-AR', 'Glock', 'M4A1', 'M4A4', 'P-90', 'SG-553', 'UMP', 'USP'], id=None)"

}

```
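
As a small illustration of how the integer `target` relates to the class names, here is a sketch that decodes and encodes labels by list position. The list is copied from the `ClassLabel` definition above; the helper names `target_to_name` and `name_to_target` are hypothetical and not part of the dataset.

```python
# Class names in the order given by the ClassLabel definition;
# the list index is the integer stored in the "target" field.
CLASS_NAMES = [
    "AK-47", "AWP", "Famas", "Galil-AR", "Glock", "M4A1",
    "M4A4", "P-90", "SG-553", "UMP", "USP",
]


def target_to_name(target: int) -> str:
    """Map an integer target to its weapon class name."""
    return CLASS_NAMES[target]


def name_to_target(name: str) -> int:
    """Map a weapon class name back to its integer target."""
    return CLASS_NAMES.index(name)


print(target_to_name(0))
print(name_to_target("USP"))
```

When the data is loaded with the `datasets` library, the `ClassLabel` feature provides `int2str` and `str2int` for the same purpose.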

### Dataset Splits

This dataset is split into a train and a validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 1100 |

| valid | 275 |

|

metaeval/lonli | 2023-05-31T08:41:36.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"language:en",

"license:mit",

"region:us"

] | metaeval | null | null | null | 0 | 8 | ---

license: mit

task_ids:

- natural-language-inference

task_categories:

- text-classification

language:

- en

---

https://github.com/microsoft/LoNLI

```bibtex

@article{Tarunesh2021TrustingRO,

title={Trusting RoBERTa over BERT: Insights from CheckListing the Natural Language Inference Task},

author={Ishan Tarunesh and Somak Aditya and Monojit Choudhury},

journal={ArXiv},

year={2021},

volume={abs/2107.07229}

}

``` |

jonathan-roberts1/RSI-CB256 | 2023-03-31T17:11:50.000Z | [

"task_categories:image-classification",

"task_categories:zero-shot-image-classification",

"license:other",

"region:us"

] | jonathan-roberts1 | null | null | null | 0 | 8 | ---

dataset_info:

features:

- name: label_1

dtype:

class_label:

names:

'0': transportation

'1': other objects

'2': woodland

'3': water area

'4': other land

'5': cultivated land

'6': construction land

- name: label_2

dtype:

class_label:

names:

'0': parking lot

'1': avenue

'2': highway

'3': bridge

'4': marina

'5': crossroads

'6': airport runway

'7': pipeline

'8': town

'9': airplane

'10': forest

'11': mangrove

'12': artificial grassland

'13': river protection forest

'14': shrubwood

'15': sapling

'16': sparse forest

'17': lakeshore

'18': river

'19': stream

'20': coastline

'21': hirst

'22': dam

'23': sea

'24': snow mountain

'25': sandbeach

'26': mountain

'27': desert

'28': dry farm

'29': green farmland

'30': bare land

'31': city building

'32': residents

'33': container

'34': storage room

- name: image

dtype: image

splits:

- name: train

num_bytes: 4901667781.625

num_examples: 24747

download_size: 4198991130

dataset_size: 4901667781.625

license: other

task_categories:

- image-classification

- zero-shot-image-classification

---

# Dataset Card for "RSI-CB256"

## Dataset Description

- **Paper** [Exploring Models and Data for Remote Sensing Image Caption Generation](https://ieeexplore.ieee.org/iel7/36/4358825/08240966.pdf)

### Licensing Information

For academic purposes.

## Citation Information

[Exploring Models and Data for Remote Sensing Image Caption Generation](https://ieeexplore.ieee.org/iel7/36/4358825/08240966.pdf)

```

@article{lu2017exploring,

title = {Exploring Models and Data for Remote Sensing Image Caption Generation},

author = {Lu, Xiaoqiang and Wang, Binqiang and Zheng, Xiangtao and Li, Xuelong},

journal = {IEEE Transactions on Geoscience and Remote Sensing},

volume = 56,

number = 4,

pages = {2183--2195},

doi = {10.1109/TGRS.2017.2776321},

year={2018}

}

``` |

Duskfallcrew/Badge_crafts | 2023-02-26T10:34:30.000Z | [

"task_categories:text-to-image",

"task_categories:image-classification",

"size_categories:1K<n<10K",

"language:en",

"license:creativeml-openrail-m",

"badges",

"crafts",

"region:us"

] | Duskfallcrew | null | null | null | 1 | 8 | ---

license: creativeml-openrail-m

task_categories:

- text-to-image

- image-classification

language:

- en

tags:

- badges

- crafts

pretty_name: Badge Craft Dataset

size_categories:

- 1K<n<10K

---

# Do what you will with the data; these are old photos of crafts I used to make. Just abide by the license above and you're good to go! |

vietgpt/wikipedia_en | 2023-03-30T18:35:12.000Z | [

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:en",

"LM",

"region:us"

] | vietgpt | null | null | null | 2 | 8 | ---

dataset_info:

features:

- name: id

dtype: string

- name: url

dtype: string

- name: title

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 21102365479

num_examples: 6623239

download_size: 12161597141

dataset_size: 21102365479

task_categories:

- text-generation

language:

- en

tags:

- LM

size_categories:

- 1M<n<10M

---

# Wikipedia

- Source: https://huggingface.co/datasets/wikipedia

- Num examples: 6,623,239

- Language: English

```python

from datasets import load_dataset

# Loads the single "train" split (6,623,239 articles, roughly a 12 GB download).
dataset = load_dataset("vietgpt/wikipedia_en")

``` |

lansinuote/nlp.1.predict_last_word | 2023-02-22T11:26:30.000Z | [

"region:us"

] | lansinuote | null | null | null | 0 | 8 | ---

dataset_info:

features:

- name: input_ids