Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

89,465 | 25,805,746,456 | IssuesEvent | 2022-12-11 11:49:21 | NFFT/nfft | https://api.github.com/repos/NFFT/nfft | closed | [Build routine] Progress bar for visualisation? | enhancement question build routine | How about adding a progress bar such as the one `apt` has to the build routine? This would be an enhancement for the users who build `NFFT` for the first time since they would know what is already done in comparison to the whole build step.

As a measure of progress, I would like to suggest the count of already executed instructions. | 1.0 | [Build routine] Progress bar for visualisation? - How about adding a progress bar such as the one `apt` has to the build routine? This would be an enhancement for the users who build `NFFT` for the first time since they would know what is already done in comparison to the whole build step.

As a measure of progress, I would like to suggest the count of already executed instructions. | build | progress bar for visualisation how about adding a progress bar such as the one apt has to the build routine this would be an enhancement for the users who build nfft for the first time since they would know what is already done in comparison to the whole build step as a measure of progress i would like to suggest the count of already executed instructions | 1 |

87,913 | 25,248,929,981 | IssuesEvent | 2022-11-15 13:19:05 | bcpierce00/unison | https://api.github.com/repos/bcpierce00/unison | closed | support dune properly, remove usage of c_names | effort-low impact-low build | ````

[ 70s] + /usr/bin/mkdir /home/abuild/rpmbuild/BUILDROOT/unison-2.51.2.26a29f7-62.opt_409.1.x86_64

[ 70s] + cd unison-2.51.2.26a29f7

[ 70s] + dune_release_pkgs=unison

[ 70s] + echo 2.51.2.26a29f7

[ 70s] + tee VERSION

[ 70s] 2.51.2.26a29f7

[ 70s] + dune_for_release=

[ 70s] + : dune_release_pkgs

[ 70s] + test -n unison

[ 70s] + echo unison

[ 70s] + dune_for_release=--for-release-of-packages=unison

[ 70s] + dune installed-libraries

[ 70s] bigarray (version: OCaml 4.09.0)

[ 70s] bytes (version: OCaml 4.09.0)

[ 70s] compiler-libs (version: OCaml 4.09.0)

[ 70s] compiler-libs.bytecomp (version: OCaml 4.09.0)

[ 70s] compiler-libs.common (version: OCaml 4.09.0)

[ 70s] compiler-libs.optcomp (version: OCaml 4.09.0)

[ 70s] compiler-libs.toplevel (version: OCaml 4.09.0)

[ 70s] dynlink (version: OCaml 4.09.0)

[ 70s] lablgtk2 (version: 2.18.8)

[ 70s] lablgtk2.auto-init (version: n/a)

[ 70s] lablgtk2.glade (version: n/a)

[ 70s] lablgtk2.gnomecanvas (version: n/a)

[ 70s] lablgtk2.gtkspell (version: n/a)

[ 70s] lablgtk2.rsvg (version: n/a)

[ 70s] lablgtk2.sourceview2 (version: n/a)

[ 70s] raw_spacetime (version: OCaml 4.09.0)

[ 70s] result (version: [distributed with Ocaml])

[ 70s] seq (version: OCaml 4.09.0)

[ 70s] stdlib (version: OCaml 4.09.0)

[ 70s] str (version: OCaml 4.09.0)

[ 70s] threads (version: OCaml 4.09.0)

[ 70s] threads.none (version: OCaml 4.09.0)

[ 70s] threads.posix (version: OCaml 4.09.0)

[ 70s] uchar (version: OCaml 4.09.0)

[ 70s] unix (version: OCaml 4.09.0)

[ 70s] + dune external-lib-deps --for-release-of-packages=unison @install

[ 70s] Info: Creating file dune-project with this contents:

[ 70s] | (lang dune 2.2)

[ 70s] File "dune", line 10, characters 1-41:

[ 70s] 10 | (c_names bytearray_stubs osxsupport pty)

[ 70s] ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[ 70s] Error: 'c_names' was deleted in version 2.0 of the dune language. Use the

[ 70s] (foreign_stubs ...) field instead.

[ 70s] error: Bad exit status from /var/tmp/rpm-tmp.cw95L5 (%build)

```` | 1.0 | support dune properly, remove usage of c_names - ````

[ 70s] + /usr/bin/mkdir /home/abuild/rpmbuild/BUILDROOT/unison-2.51.2.26a29f7-62.opt_409.1.x86_64

[ 70s] + cd unison-2.51.2.26a29f7

[ 70s] + dune_release_pkgs=unison

[ 70s] + echo 2.51.2.26a29f7

[ 70s] + tee VERSION

[ 70s] 2.51.2.26a29f7

[ 70s] + dune_for_release=

[ 70s] + : dune_release_pkgs

[ 70s] + test -n unison

[ 70s] + echo unison

[ 70s] + dune_for_release=--for-release-of-packages=unison

[ 70s] + dune installed-libraries

[ 70s] bigarray (version: OCaml 4.09.0)

[ 70s] bytes (version: OCaml 4.09.0)

[ 70s] compiler-libs (version: OCaml 4.09.0)

[ 70s] compiler-libs.bytecomp (version: OCaml 4.09.0)

[ 70s] compiler-libs.common (version: OCaml 4.09.0)

[ 70s] compiler-libs.optcomp (version: OCaml 4.09.0)

[ 70s] compiler-libs.toplevel (version: OCaml 4.09.0)

[ 70s] dynlink (version: OCaml 4.09.0)

[ 70s] lablgtk2 (version: 2.18.8)

[ 70s] lablgtk2.auto-init (version: n/a)

[ 70s] lablgtk2.glade (version: n/a)

[ 70s] lablgtk2.gnomecanvas (version: n/a)

[ 70s] lablgtk2.gtkspell (version: n/a)

[ 70s] lablgtk2.rsvg (version: n/a)

[ 70s] lablgtk2.sourceview2 (version: n/a)

[ 70s] raw_spacetime (version: OCaml 4.09.0)

[ 70s] result (version: [distributed with Ocaml])

[ 70s] seq (version: OCaml 4.09.0)

[ 70s] stdlib (version: OCaml 4.09.0)

[ 70s] str (version: OCaml 4.09.0)

[ 70s] threads (version: OCaml 4.09.0)

[ 70s] threads.none (version: OCaml 4.09.0)

[ 70s] threads.posix (version: OCaml 4.09.0)

[ 70s] uchar (version: OCaml 4.09.0)

[ 70s] unix (version: OCaml 4.09.0)

[ 70s] + dune external-lib-deps --for-release-of-packages=unison @install

[ 70s] Info: Creating file dune-project with this contents:

[ 70s] | (lang dune 2.2)

[ 70s] File "dune", line 10, characters 1-41:

[ 70s] 10 | (c_names bytearray_stubs osxsupport pty)

[ 70s] ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

[ 70s] Error: 'c_names' was deleted in version 2.0 of the dune language. Use the

[ 70s] (foreign_stubs ...) field instead.

[ 70s] error: Bad exit status from /var/tmp/rpm-tmp.cw95L5 (%build)

```` | build | support dune properly remove usage of c names usr bin mkdir home abuild rpmbuild buildroot unison opt cd unison dune release pkgs unison echo tee version dune for release dune release pkgs test n unison echo unison dune for release for release of packages unison dune installed libraries bigarray version ocaml bytes version ocaml compiler libs version ocaml compiler libs bytecomp version ocaml compiler libs common version ocaml compiler libs optcomp version ocaml compiler libs toplevel version ocaml dynlink version ocaml version auto init version n a glade version n a gnomecanvas version n a gtkspell version n a rsvg version n a version n a raw spacetime version ocaml result version seq version ocaml stdlib version ocaml str version ocaml threads version ocaml threads none version ocaml threads posix version ocaml uchar version ocaml unix version ocaml dune external lib deps for release of packages unison install info creating file dune project with this contents lang dune file dune line characters c names bytearray stubs osxsupport pty error c names was deleted in version of the dune language use the foreign stubs field instead error bad exit status from var tmp rpm tmp build | 1 |
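
The error in the log above names its own fix: dune removed `c_names` in version 2.0 of the dune language, and the `foreign_stubs` field replaces it. A minimal sketch of the change, assuming the field sits inside a `library` or `executable` stanza of the project's `dune` file (the rest of that stanza does not appear in the log, so it is omitted here):

```dune
; Before (rejected once dune-project declares (lang dune 2.0) or later):
;   (c_names bytearray_stubs osxsupport pty)
; After: the same three C stub files, declared through foreign_stubs.
(foreign_stubs
 (language c)
 (names bytearray_stubs osxsupport pty))
```

This is a fragment rather than a complete `dune` file. The log also shows dune generating a `dune-project` with `(lang dune 2.2)` because none existed, so the old field is rejected for that reason alone.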

1,360 | 2,733,794,352 | IssuesEvent | 2015-04-17 15:54:35 | gregorio-project/gregorio | https://api.github.com/repos/gregorio-project/gregorio | opened | Build error | bug build/install | In trying to build `develop` and `release-3.0` from a freshly cloned repository on a Mac I get the following error:

`bison: gabc-score-determination-y.c: cannot open: Permission denied`

Checking the file, its permissions don't seem to be any different from the other files. Does anyone have any idea as to why this error might be cropping up? I can obviously get around it by running the build with root permissions, but I thought we had fixed things so that wasn't necessary. | 1.0 | Build error - In trying to build `develop` and `release-3.0` from a freshly cloned repository on a Mac I get the following error:

`bison: gabc-score-determination-y.c: cannot open: Permission denied`

Checking the file, its permissions don't seem to be any different from the other files. Does anyone have any idea as to why this error might be cropping up? I can obviously get around it by running the build with root permissions, but I thought we had fixed things so that wasn't necessary. | build | build error in trying to build develop and release from a freshly cloned repository on a mac i get the following error bison gabc score determination y c cannot open permission denied checking the file its permissions don t seem to be any different from the other files does anyone have any idea as to why this error might be cropping up i can obviously get around it by running the build with root permissions but i thought we had fixed things so that wasn t necessary | 1 |

151,773 | 23,871,224,128 | IssuesEvent | 2022-09-07 14:58:59 | nevermined-io/defi-marketplace | https://api.github.com/repos/nevermined-io/defi-marketplace | closed | Subscription purchase flow | design | - [x] Create subscription page design

- [x] Define subscription purchase flow | 1.0 | Subscription purchase flow - - [x] Create subscription page design

- [x] Define subscription purchase flow | non_build | subscription purchase flow create subscription page design define subscription purchase flow | 0 |

12,563 | 5,215,374,400 | IssuesEvent | 2017-01-26 04:32:17 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | linux/dbg: json_run_localhost timeout | BUILDPONY c++ flaky test P0 | `c++_linux_dbg.bins/dbg/json_run_localhost --scenarios_json '{"scenarios": [{"name": "cpp_protobuf_async_client_sync_server_streaming_qps_unconstrained_secure", "warmup_seconds": 0, "benchmark_seconds": 1, "num_servers": 1, "server_config": {"async_server_threads": 0, "core_limit": 0, "security_params": {"use_test_ca": true, "server_host_override": "foo.test.google.fr"}, "server_type": "SYNC_SERVER"}, "num_clients": 0, "client_config": {"client_type": "ASYNC_CLIENT", "security_params": {"use_test_ca": true, "server_host_override": "foo.test.google.fr"}, "payload_config": {"simple_params": {"resp_size": 0, "req_size": 0}}, "client_channels": 64, "async_client_threads": 0, "outstanding_rpcs_per_channel": 100, "rpc_type": "STREAMING", "load_params": {"closed_loop": {}}, "histogram_params": {"max_possible": 60000000000.0, "resolution": 0.01}}}]}' GRPC_POLL_STRATEGY=poll-cv`

https://gist.githubusercontent.com/dgquintas/81d81ad215048fe43a473ed7434b9c76/raw/7fa9401c238c1d1f9ba2b5f913e4784b3fbf0199/gistfile1.txt

| 1.0 | linux/dbg: json_run_localhost timeout - `c++_linux_dbg.bins/dbg/json_run_localhost --scenarios_json '{"scenarios": [{"name": "cpp_protobuf_async_client_sync_server_streaming_qps_unconstrained_secure", "warmup_seconds": 0, "benchmark_seconds": 1, "num_servers": 1, "server_config": {"async_server_threads": 0, "core_limit": 0, "security_params": {"use_test_ca": true, "server_host_override": "foo.test.google.fr"}, "server_type": "SYNC_SERVER"}, "num_clients": 0, "client_config": {"client_type": "ASYNC_CLIENT", "security_params": {"use_test_ca": true, "server_host_override": "foo.test.google.fr"}, "payload_config": {"simple_params": {"resp_size": 0, "req_size": 0}}, "client_channels": 64, "async_client_threads": 0, "outstanding_rpcs_per_channel": 100, "rpc_type": "STREAMING", "load_params": {"closed_loop": {}}, "histogram_params": {"max_possible": 60000000000.0, "resolution": 0.01}}}]}' GRPC_POLL_STRATEGY=poll-cv`

https://gist.githubusercontent.com/dgquintas/81d81ad215048fe43a473ed7434b9c76/raw/7fa9401c238c1d1f9ba2b5f913e4784b3fbf0199/gistfile1.txt

| build | linux dbg json run localhost timeout c linux dbg bins dbg json run localhost scenarios json scenarios grpc poll strategy poll cv | 1 |

94,568 | 27,237,433,393 | IssuesEvent | 2023-02-21 17:21:15 | dotnet/arcade | https://api.github.com/repos/dotnet/arcade | closed | Build failed: Maestro Build Promotion/main #Promoting dotnet-windowsdesktop build 20230215.2 (167131) to channel(s) '.NET 7' # | First Responder Build Failed | Build [#Promoting dotnet-windowsdesktop build 20230215.2 (167131) to channel(s) '.NET 7' #](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_build/results?buildId=2114840) failed

## :x: : internal / Maestro Build Promotion failed

### Summary

**Finished** - Wed, 15 Feb 2023 16:43:30 GMT

**Duration** - 5 minutes

**Requested for** - DotNet Bot

**Reason** - manual

### Details

#### Publishing

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\75cfd413-cea0-4696-9250-4c7dea5793cd\Microsoft.WindowsDesktop.App.Ref.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Ref.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\873a646a-2cae-4bf6-9a6d-82ceca6a79a4\Microsoft.WindowsDesktop.App.Runtime.win-arm64.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-arm64.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\1e1ffe07-22e1-48b5-93d2-634792494483\Microsoft.WindowsDesktop.App.Runtime.win-x64.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-x64.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\6aac4d7b-d943-4682-9da4-3a78c92c20a7\Microsoft.WindowsDesktop.App.Runtime.win-x86.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-x86.7.0.4.symbols.nupkg.sha512'

### Changes

| 1.0 | Build failed: Maestro Build Promotion/main #Promoting dotnet-windowsdesktop build 20230215.2 (167131) to channel(s) '.NET 7' # - Build [#Promoting dotnet-windowsdesktop build 20230215.2 (167131) to channel(s) '.NET 7' #](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_build/results?buildId=2114840) failed

## :x: : internal / Maestro Build Promotion failed

### Summary

**Finished** - Wed, 15 Feb 2023 16:43:30 GMT

**Duration** - 5 minutes

**Requested for** - DotNet Bot

**Reason** - manual

### Details

#### Publishing

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\75cfd413-cea0-4696-9250-4c7dea5793cd\Microsoft.WindowsDesktop.App.Ref.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Ref.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\873a646a-2cae-4bf6-9a6d-82ceca6a79a4\Microsoft.WindowsDesktop.App.Runtime.win-arm64.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-arm64.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\1e1ffe07-22e1-48b5-93d2-634792494483\Microsoft.WindowsDesktop.App.Runtime.win-x64.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-x64.7.0.4.symbols.nupkg.sha512'

- :x: - [[Log]](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_apis/build/builds/2114840/logs/28) - .packages\microsoft.dotnet.arcade.sdk\8.0.0-beta.23113.1\tools\SdkTasks\PublishArtifactsInManifest.proj(144,5): error : Asset 'D:\a\_work\1\a\6aac4d7b-d943-4682-9da4-3a78c92c20a7\Microsoft.WindowsDesktop.App.Runtime.win-x86.7.0.4.symbols.nupkg.sha512' already exists with different contents at 'assets/symbols/Microsoft.WindowsDesktop.App.Runtime.win-x86.7.0.4.symbols.nupkg.sha512'

### Changes

| build | build failed maestro build promotion main promoting dotnet windowsdesktop build to channel s net build failed x internal maestro build promotion failed summary finished wed feb gmt duration minutes requested for dotnet bot reason manual details publishing x packages microsoft dotnet arcade sdk beta tools sdktasks publishartifactsinmanifest proj error asset d a work a microsoft windowsdesktop app ref symbols nupkg already exists with different contents at assets symbols microsoft windowsdesktop app ref symbols nupkg x packages microsoft dotnet arcade sdk beta tools sdktasks publishartifactsinmanifest proj error asset d a work a microsoft windowsdesktop app runtime win symbols nupkg already exists with different contents at assets symbols microsoft windowsdesktop app runtime win symbols nupkg x packages microsoft dotnet arcade sdk beta tools sdktasks publishartifactsinmanifest proj error asset d a work a microsoft windowsdesktop app runtime win symbols nupkg already exists with different contents at assets symbols microsoft windowsdesktop app runtime win symbols nupkg x packages microsoft dotnet arcade sdk beta tools sdktasks publishartifactsinmanifest proj error asset d a work a microsoft windowsdesktop app runtime win symbols nupkg already exists with different contents at assets symbols microsoft windowsdesktop app runtime win symbols nupkg changes | 1 |

90,215 | 26,010,133,112 | IssuesEvent | 2022-12-21 00:20:28 | benthevining/Limes | https://api.github.com/repos/benthevining/Limes | closed | [BUG] Files undefined symbols with gcc | bug os/macOS lib/limes_files Build | MacOS, GCC 12.

The linker cannot see `files::module_path::get_impl()`. See [this build log](https://my.cdash.org/viewBuildError.php?buildid=2234491). | 1.0 | [BUG] Files undefined symbols with gcc - MacOS, GCC 12.

The linker cannot see `files::module_path::get_impl()`. See [this build log](https://my.cdash.org/viewBuildError.php?buildid=2234491). | build | files undefined symbols with gcc macos gcc the linker cannot see files module path get impl see | 1 |

28,919 | 12,990,787,223 | IssuesEvent | 2020-07-23 01:14:28 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | this page doesn't seem to work | Pri2 container-service/svc cxp needs-more-info triaged |

[Enter feedback here]

I followed all the steps but the demo doesn't seem to work.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: fd0e7d5a-37e9-07dc-f395-9cb5bb580e45

* Version Independent ID: 7e4faf70-9724-7e7b-832b-1cd99a974920

* Content: [Create an ingress controller - Azure Kubernetes Service](https://docs.microsoft.com/en-us/azure/aks/ingress-basic)

* Content Source: [articles/aks/ingress-basic.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/aks/ingress-basic.md)

* Service: **container-service**

* GitHub Login: @mlearned

* Microsoft Alias: **mlearned** | 1.0 | this page doesn't seem to work -

[Enter feedback here]

I followed all the steps but the demo doesn't seem to work.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: fd0e7d5a-37e9-07dc-f395-9cb5bb580e45

* Version Independent ID: 7e4faf70-9724-7e7b-832b-1cd99a974920

* Content: [Create an ingress controller - Azure Kubernetes Service](https://docs.microsoft.com/en-us/azure/aks/ingress-basic)

* Content Source: [articles/aks/ingress-basic.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/aks/ingress-basic.md)

* Service: **container-service**

* GitHub Login: @mlearned

* Microsoft Alias: **mlearned** | non_build | this page doesn t seems to work i followed all the steps but the demo doesn t seem to work document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service container service github login mlearned microsoft alias mlearned | 0 |

80,973 | 23,348,983,250 | IssuesEvent | 2022-08-09 21:01:11 | PowerShell/PowerShell | https://api.github.com/repos/PowerShell/PowerShell | closed | MSI installer doesn't enable remoting even after selecting it | Area-Maintainers-Build Resolution-By Design | Steps to reproduce

------------------

1. Check the current available endpoints using **Windows PowerShell**

```powershell

$PSVersionTable

Name Value

---- -----

PSVersion 5.1.17134.112

PSEdition Desktop

PSCompatibleVersions {1.0, 2.0, 3.0, 4.0...}

BuildVersion 10.0.17134.112

CLRVersion 4.0.30319.42000

WSManStackVersion 3.0

PSRemotingProtocolVersion 2.3

SerializationVersion 1.1.0.1

# Lists the default endpoints

Get-PSSessionConfiguration | ft Name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

```

2. Install the PS Core 6.1.0-preview 3 using the MSI and select _Enable PowerShell remoting_

Expected behavior

-----------------

New remoting endpoint(s) are **PRESENT** on the system, as done by the _Install-PowerShellRemoting.ps1_ script in $PSHome

```powershell

# From Windows PowerShell

Get-PSSessionConfiguration | ft name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

PowerShell.6

PowerShell.6.1.0-preview.3

# From PowerShell Core

Get-PSSessionConfiguration |ft name

Name

----

PowerShell.6

PowerShell.6.1.0-preview.3

```

Actual behavior

---------------

New remoting endpoint(s) are **ABSENT**

```powershell

# From Windows PowerShell

Get-PSSessionConfiguration | ft name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

# From PowerShell Core

Get-PSSessionConfiguration

```

| 1.0 | MSI installer doesn't enable remoting even after selecting it - Steps to reproduce

------------------

1. Check the current available endpoints using **Windows PowerShell**

```powershell

$PSVersionTable

Name Value

---- -----

PSVersion 5.1.17134.112

PSEdition Desktop

PSCompatibleVersions {1.0, 2.0, 3.0, 4.0...}

BuildVersion 10.0.17134.112

CLRVersion 4.0.30319.42000

WSManStackVersion 3.0

PSRemotingProtocolVersion 2.3

SerializationVersion 1.1.0.1

# Lists the default endpoints

Get-PSSessionConfiguration | ft Name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

```

2. Install the PS Core 6.1.0-preview 3 using the MSI and select _Enable PowerShell remoting_

Expected behavior

-----------------

New remoting endpoint(s) are **PRESENT** on the system, as done by the _Install-PowerShellRemoting.ps1_ script in $PSHome

```powershell

# From Windows PowerShell

Get-PSSessionConfiguration | ft name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

PowerShell.6

PowerShell.6.1.0-preview.3

# From PowerShell Core

Get-PSSessionConfiguration |ft name

Name

----

PowerShell.6

PowerShell.6.1.0-preview.3

```

Actual behavior

---------------

New remoting endpoint(s) are **ABSENT**

```powershell

# From Windows PowerShell

Get-PSSessionConfiguration | ft name

Name

----

Microsoft.PowerShell

Microsoft.Powershell.Workflow

Microsoft.PowerShell32

# From PowerShell Core

Get-PSSessionConfiguration

```

| build | msi installer doesn t enable remoting even after selecting it steps to reproduce check the current available endpoints using windows powershell powershell psversiontable name value psversion psedition desktop pscompatibleversions buildversion clrversion wsmanstackversion psremotingprotocolversion serializationversion lists the default endpoints get pssessionconfiguration ft name name microsoft powershell microsoft powershell workflow microsoft install the ps core preview using the msi and select enable powershell remoting expected behavior new remoting endpoint s are prsent on the system as done by install powershellremoting script in pshome powershell from windows powershell get pssessionconfiguration ft name name microsoft powershell microsoft powershell workflow microsoft powershell powershell preview from powershell core get pssessionconfiguration ft name name powershell powershell preview actual behavior new remoting endpoint s are absent powershell from windows powershell get pssessionconfiguration ft name name microsoft powershell microsoft powershell workflow microsoft from powershell core get pssessionconfiguration | 1 |

231,720 | 7,642,437,029 | IssuesEvent | 2018-05-08 09:15:21 | bitshares/bitshares-ui | https://api.github.com/repos/bitshares/bitshares-ui | closed | [3] Exchange not loading for new, zero-balance accounts | bug high priority | When a new account is created via cloud login, the exchange tab doesn't load anymore for some markets.

prominent example:

does not load

https://wallet.bitshares.org/#/market/USD_BTS

does load

https://wallet.bitshares.org/#/market/BTS_USD

The issue is in https://github.com/bitshares/bitshares-ui/blob/c69f297ee1cea480b531f8f38542baf1b17a5736/app/components/Exchange/Exchange.jsx#L288: the feeStatus does not get loaded, which causes the render method to return null. | 1.0 | [3] Exchange not loading for new, zero-balance accounts - When a new account is created via cloud login, the exchange tab doesn't load anymore for some markets.

prominent example:

does not load

https://wallet.bitshares.org/#/market/USD_BTS

does load

https://wallet.bitshares.org/#/market/BTS_USD

The issue is in https://github.com/bitshares/bitshares-ui/blob/c69f297ee1cea480b531f8f38542baf1b17a5736/app/components/Exchange/Exchange.jsx#L288: the feeStatus does not get loaded, which causes the render method to return null. | non_build | exchange not loading for new zero balance accounts when a new account is created via cloud login the exchange tab doesnt load anymore for some markets prominent example does not load does load the issue is in that the feestatus does not get loaded which causes a return null in the render method | 0 |

248,835 | 7,936,722,239 | IssuesEvent | 2018-07-09 10:20:39 | telstra/open-kilda | https://api.github.com/repos/telstra/open-kilda | closed | HTTP Status 500 from NB on null latency in Neo4j | bug priority/2-high | ```

<!doctype html><html lang="en"><head><title>HTTP Status 500 – Internal Server Error</title><style type="text/css">h1 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:22px;} h2 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:16px;} h3 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:14px;} body {font-family:Tahoma,Arial,sans-serif;color:black;background-color:white;} b {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;} p {font-family:Tahoma,Arial,sans-serif;background:white;color:black;font-size:12px;} a {color:black;} a.name {color:black;} .line {height:1px;background-color:#525D76;border:none;}</style></head><body><h1>HTTP Status 500 – Internal Server Error</h1><hr class="line" /><p><b>Type</b> Exception Report</p><p><b>Message</b> Request processing failed; nested exception is org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]</p><p><b>Description</b> The server encountered an unexpected condition that prevented it from fulfilling the request.</p><p><b>Exception</b></p><pre>org.springframework.web.util.NestedServletException: Request processing failed; nested exception is org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]

org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:982)

org.springframework.web.servlet.FrameworkServlet.doGet(FrameworkServlet.java:861)

javax.servlet.http.HttpServlet.service(HttpServlet.java:635)

org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:846)

javax.servlet.http.HttpServlet.service(HttpServlet.java:742)

org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:52)

org.openkilda.northbound.utils.RequestCorrelationFilter.doFilterInternal(RequestCorrelationFilter.java:57)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:317)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.invoke(FilterSecurityInterceptor.java:127)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.doFilter(FilterSecurityInterceptor.java:91)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:114)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:137)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.AnonymousAuthenticationFilter.doFilter(AnonymousAuthenticationFilter.java:111)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.servletapi.SecurityContextHolderAwareRequestFilter.doFilter(SecurityContextHolderAwareRequestFilter.java:170)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.savedrequest.RequestCacheAwareFilter.doFilter(RequestCacheAwareFilter.java:63)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.www.BasicAuthenticationFilter.doFilterInternal(BasicAuthenticationFilter.java:215)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:116)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.header.HeaderWriterFilter.doFilterInternal(HeaderWriterFilter.java:64)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:105)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.request.async.WebAsyncManagerIntegrationFilter.doFilterInternal(WebAsyncManagerIntegrationFilter.java:56)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.FilterChainProxy.doFilterInternal(FilterChainProxy.java:214)

org.springframework.security.web.FilterChainProxy.doFilter(FilterChainProxy.java:177)

org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:346)

org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:262)

org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:197)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

</pre><p><b>Root Cause</b></p><pre>org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]

org.springframework.web.client.HttpMessageConverterExtractor.extractData(HttpMessageConverterExtractor.java:110)

org.springframework.web.client.RestTemplate$ResponseEntityResponseExtractor.extractData(RestTemplate.java:917)

org.springframework.web.client.RestTemplate$ResponseEntityResponseExtractor.extractData(RestTemplate.java:901)

org.springframework.web.client.RestTemplate.doExecute(RestTemplate.java:655)

org.springframework.web.client.RestTemplate.execute(RestTemplate.java:613)

org.springframework.web.client.RestTemplate.exchange(RestTemplate.java:531)

org.openkilda.northbound.service.impl.LinkServiceImpl.getLinks(LinkServiceImpl.java:83)

org.openkilda.northbound.controller.LinkController.getLinks(LinkController.java:55)

sun.reflect.GeneratedMethodAccessor148.invoke(Unknown Source)

sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

java.lang.reflect.Method.invoke(Method.java:498)

org.springframework.web.method.support.InvocableHandlerMethod.doInvoke(InvocableHandlerMethod.java:205)

org.springframework.web.method.support.InvocableHandlerMethod.invokeForRequest(InvocableHandlerMethod.java:133)

org.springframework.web.servlet.mvc.method.annotation.ServletInvocableHandlerMethod.invokeAndHandle(ServletInvocableHandlerMethod.java:97)

org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.invokeHandlerMethod(RequestMappingHandlerAdapter.java:827)

org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.handleInternal(RequestMappingHandlerAdapter.java:738)

org.springframework.web.servlet.mvc.method.AbstractHandlerMethodAdapter.handle(AbstractHandlerMethodAdapter.java:85)

org.springframework.web.servlet.DispatcherServlet.doDispatch(DispatcherServlet.java:967)

org.springframework.web.servlet.DispatcherServlet.doService(DispatcherServlet.java:901)

org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:970)

org.springframework.web.servlet.FrameworkServlet.doGet(FrameworkServlet.java:861)

javax.servlet.http.HttpServlet.service(HttpServlet.java:635)

org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:846)

javax.servlet.http.HttpServlet.service(HttpServlet.java:742)

org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:52)

org.openkilda.northbound.utils.RequestCorrelationFilter.doFilterInternal(RequestCorrelationFilter.java:57)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:317)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.invoke(FilterSecurityInterceptor.java:127)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.doFilter(FilterSecurityInterceptor.java:91)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:114)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:137)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.AnonymousAuthenticationFilter.doFilter(AnonymousAuthenticationFilter.java:111)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.servletapi.SecurityContextHolderAwareRequestFilter.doFilter(SecurityContextHolderAwareRequestFilter.java:170)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.savedrequest.RequestCacheAwareFilter.doFilter(RequestCacheAwareFilter.java:63)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.www.BasicAuthenticationFilter.doFilterInternal(BasicAuthenticationFilter.java:215)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:116)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.header.HeaderWriterFilter.doFilterInternal(HeaderWriterFilter.java:64)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:105)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.request.async.WebAsyncManagerIntegrationFilter.doFilterInternal(WebAsyncManagerIntegrationFilter.java:56)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.FilterChainProxy.doFilterInternal(FilterChainProxy.java:214)

org.springframework.security.web.FilterChainProxy.doFilter(FilterChainProxy.java:177)

org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:346)

org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:262)

org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:197)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

</pre><p><b>Note</b> The full stack trace of the root cause is available in the server logs.</p><hr class="line" /><h3>Apache Tomcat/8.5.16</h3></body></html>

```

| 1.0 | HTTP Status 500 from NB on null latency in Neo4j - ```

<!doctype html><html lang="en"><head><title>HTTP Status 500 – Internal Server Error</title><style type="text/css">h1 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:22px;} h2 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:16px;} h3 {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;font-size:14px;} body {font-family:Tahoma,Arial,sans-serif;color:black;background-color:white;} b {font-family:Tahoma,Arial,sans-serif;color:white;background-color:#525D76;} p {font-family:Tahoma,Arial,sans-serif;background:white;color:black;font-size:12px;} a {color:black;} a.name {color:black;} .line {height:1px;background-color:#525D76;border:none;}</style></head><body><h1>HTTP Status 500 – Internal Server Error</h1><hr class="line" /><p><b>Type</b> Exception Report</p><p><b>Message</b> Request processing failed; nested exception is org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]</p><p><b>Description</b> The server encountered an unexpected condition that prevented it from fulfilling the request.</p><p><b>Exception</b></p><pre>org.springframework.web.util.NestedServletException: Request processing failed; nested exception is org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]

org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:982)

org.springframework.web.servlet.FrameworkServlet.doGet(FrameworkServlet.java:861)

javax.servlet.http.HttpServlet.service(HttpServlet.java:635)

org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:846)

javax.servlet.http.HttpServlet.service(HttpServlet.java:742)

org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:52)

org.openkilda.northbound.utils.RequestCorrelationFilter.doFilterInternal(RequestCorrelationFilter.java:57)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:317)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.invoke(FilterSecurityInterceptor.java:127)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.doFilter(FilterSecurityInterceptor.java:91)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:114)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:137)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.AnonymousAuthenticationFilter.doFilter(AnonymousAuthenticationFilter.java:111)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.servletapi.SecurityContextHolderAwareRequestFilter.doFilter(SecurityContextHolderAwareRequestFilter.java:170)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.savedrequest.RequestCacheAwareFilter.doFilter(RequestCacheAwareFilter.java:63)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.www.BasicAuthenticationFilter.doFilterInternal(BasicAuthenticationFilter.java:215)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:116)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.header.HeaderWriterFilter.doFilterInternal(HeaderWriterFilter.java:64)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:105)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.request.async.WebAsyncManagerIntegrationFilter.doFilterInternal(WebAsyncManagerIntegrationFilter.java:56)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.FilterChainProxy.doFilterInternal(FilterChainProxy.java:214)

org.springframework.security.web.FilterChainProxy.doFilter(FilterChainProxy.java:177)

org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:346)

org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:262)

org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:197)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

</pre><p><b>Root Cause</b></p><pre>org.springframework.web.client.RestClientException: Could not extract response: no suitable HttpMessageConverter found for response type [class [Lorg.openkilda.messaging.info.event.IslInfoData;] and content type [text/html;charset=utf-8]

org.springframework.web.client.HttpMessageConverterExtractor.extractData(HttpMessageConverterExtractor.java:110)

org.springframework.web.client.RestTemplate$ResponseEntityResponseExtractor.extractData(RestTemplate.java:917)

org.springframework.web.client.RestTemplate$ResponseEntityResponseExtractor.extractData(RestTemplate.java:901)

org.springframework.web.client.RestTemplate.doExecute(RestTemplate.java:655)

org.springframework.web.client.RestTemplate.execute(RestTemplate.java:613)

org.springframework.web.client.RestTemplate.exchange(RestTemplate.java:531)

org.openkilda.northbound.service.impl.LinkServiceImpl.getLinks(LinkServiceImpl.java:83)

org.openkilda.northbound.controller.LinkController.getLinks(LinkController.java:55)

sun.reflect.GeneratedMethodAccessor148.invoke(Unknown Source)

sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

java.lang.reflect.Method.invoke(Method.java:498)

org.springframework.web.method.support.InvocableHandlerMethod.doInvoke(InvocableHandlerMethod.java:205)

org.springframework.web.method.support.InvocableHandlerMethod.invokeForRequest(InvocableHandlerMethod.java:133)

org.springframework.web.servlet.mvc.method.annotation.ServletInvocableHandlerMethod.invokeAndHandle(ServletInvocableHandlerMethod.java:97)

org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.invokeHandlerMethod(RequestMappingHandlerAdapter.java:827)

org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.handleInternal(RequestMappingHandlerAdapter.java:738)

org.springframework.web.servlet.mvc.method.AbstractHandlerMethodAdapter.handle(AbstractHandlerMethodAdapter.java:85)

org.springframework.web.servlet.DispatcherServlet.doDispatch(DispatcherServlet.java:967)

org.springframework.web.servlet.DispatcherServlet.doService(DispatcherServlet.java:901)

org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:970)

org.springframework.web.servlet.FrameworkServlet.doGet(FrameworkServlet.java:861)

javax.servlet.http.HttpServlet.service(HttpServlet.java:635)

org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:846)

javax.servlet.http.HttpServlet.service(HttpServlet.java:742)

org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:52)

org.openkilda.northbound.utils.RequestCorrelationFilter.doFilterInternal(RequestCorrelationFilter.java:57)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:317)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.invoke(FilterSecurityInterceptor.java:127)

org.springframework.security.web.access.intercept.FilterSecurityInterceptor.doFilter(FilterSecurityInterceptor.java:91)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:114)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:137)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.AnonymousAuthenticationFilter.doFilter(AnonymousAuthenticationFilter.java:111)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.servletapi.SecurityContextHolderAwareRequestFilter.doFilter(SecurityContextHolderAwareRequestFilter.java:170)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.savedrequest.RequestCacheAwareFilter.doFilter(RequestCacheAwareFilter.java:63)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.www.BasicAuthenticationFilter.doFilterInternal(BasicAuthenticationFilter.java:215)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:116)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.header.HeaderWriterFilter.doFilterInternal(HeaderWriterFilter.java:64)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:105)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.context.request.async.WebAsyncManagerIntegrationFilter.doFilterInternal(WebAsyncManagerIntegrationFilter.java:56)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:331)

org.springframework.security.web.FilterChainProxy.doFilterInternal(FilterChainProxy.java:214)

org.springframework.security.web.FilterChainProxy.doFilter(FilterChainProxy.java:177)

org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:346)

org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:262)

org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:197)

org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107)

</pre><p><b>Note</b> The full stack trace of the root cause is available in the server logs.</p><hr class="line" /><h3>Apache Tomcat/8.5.16</h3></body></html>

```

| non_build | http status from nb on null latency in http status – internal server error font family tahoma arial sans serif color white background color font size font family tahoma arial sans serif color white background color font size font family tahoma arial sans serif color white background color font size body font family tahoma arial sans serif color black background color white b font family tahoma arial sans serif color white background color p font family tahoma arial sans serif background white color black font size a color black a name color black line height background color border none http status – internal server error type exception report message request processing failed nested exception is org springframework web client restclientexception could not extract response no suitable httpmessageconverter found for response type and content type description the server encountered an unexpected condition that prevented it from fulfilling the request exception org springframework web util nestedservletexception request processing failed nested exception is org springframework web client restclientexception could not extract response no suitable httpmessageconverter found for response type and content type org springframework web servlet frameworkservlet processrequest frameworkservlet java org springframework web servlet frameworkservlet doget frameworkservlet java javax servlet http httpservlet service httpservlet java org springframework web servlet frameworkservlet service frameworkservlet java javax servlet http httpservlet service httpservlet java org apache tomcat websocket server wsfilter dofilter wsfilter java org openkilda northbound utils requestcorrelationfilter dofilterinternal requestcorrelationfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web access intercept 
filtersecurityinterceptor invoke filtersecurityinterceptor java org springframework security web access intercept filtersecurityinterceptor dofilter filtersecurityinterceptor java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web access exceptiontranslationfilter dofilter exceptiontranslationfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web session sessionmanagementfilter dofilter sessionmanagementfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication anonymousauthenticationfilter dofilter anonymousauthenticationfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web servletapi securitycontextholderawarerequestfilter dofilter securitycontextholderawarerequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web savedrequest requestcacheawarefilter dofilter requestcacheawarefilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication logout logoutfilter dofilter logoutfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web header headerwriterfilter dofilterinternal headerwriterfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework 
security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web context securitycontextpersistencefilter dofilter securitycontextpersistencefilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web context request async webasyncmanagerintegrationfilter dofilterinternal webasyncmanagerintegrationfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web filterchainproxy dofilterinternal filterchainproxy java org springframework security web filterchainproxy dofilter filterchainproxy java org springframework web filter delegatingfilterproxy invokedelegate delegatingfilterproxy java org springframework web filter delegatingfilterproxy dofilter delegatingfilterproxy java org springframework web filter characterencodingfilter dofilterinternal characterencodingfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java root cause org springframework web client restclientexception could not extract response no suitable httpmessageconverter found for response type and content type org springframework web client httpmessageconverterextractor extractdata httpmessageconverterextractor java org springframework web client resttemplate responseentityresponseextractor extractdata resttemplate java org springframework web client resttemplate responseentityresponseextractor extractdata resttemplate java org springframework web client resttemplate doexecute resttemplate java org springframework web client resttemplate execute resttemplate java org springframework web client resttemplate exchange resttemplate java org openkilda northbound service impl linkserviceimpl getlinks linkserviceimpl java org openkilda northbound controller 
linkcontroller getlinks linkcontroller java sun reflect invoke unknown source sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java java lang reflect method invoke method java org springframework web method support invocablehandlermethod doinvoke invocablehandlermethod java org springframework web method support invocablehandlermethod invokeforrequest invocablehandlermethod java org springframework web servlet mvc method annotation servletinvocablehandlermethod invokeandhandle servletinvocablehandlermethod java org springframework web servlet mvc method annotation requestmappinghandleradapter invokehandlermethod requestmappinghandleradapter java org springframework web servlet mvc method annotation requestmappinghandleradapter handleinternal requestmappinghandleradapter java org springframework web servlet mvc method abstracthandlermethodadapter handle abstracthandlermethodadapter java org springframework web servlet dispatcherservlet dodispatch dispatcherservlet java org springframework web servlet dispatcherservlet doservice dispatcherservlet java org springframework web servlet frameworkservlet processrequest frameworkservlet java org springframework web servlet frameworkservlet doget frameworkservlet java javax servlet http httpservlet service httpservlet java org springframework web servlet frameworkservlet service frameworkservlet java javax servlet http httpservlet service httpservlet java org apache tomcat websocket server wsfilter dofilter wsfilter java org openkilda northbound utils requestcorrelationfilter dofilterinternal requestcorrelationfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web access intercept filtersecurityinterceptor invoke filtersecurityinterceptor java org springframework security web access intercept filtersecurityinterceptor 
dofilter filtersecurityinterceptor java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web access exceptiontranslationfilter dofilter exceptiontranslationfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web session sessionmanagementfilter dofilter sessionmanagementfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication anonymousauthenticationfilter dofilter anonymousauthenticationfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web servletapi securitycontextholderawarerequestfilter dofilter securitycontextholderawarerequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web savedrequest requestcacheawarefilter dofilter requestcacheawarefilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web authentication logout logoutfilter dofilter logoutfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web header headerwriterfilter dofilterinternal headerwriterfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web context 
securitycontextpersistencefilter dofilter securitycontextpersistencefilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web context request async webasyncmanagerintegrationfilter dofilterinternal webasyncmanagerintegrationfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java org springframework security web filterchainproxy virtualfilterchain dofilter filterchainproxy java org springframework security web filterchainproxy dofilterinternal filterchainproxy java org springframework security web filterchainproxy dofilter filterchainproxy java org springframework web filter delegatingfilterproxy invokedelegate delegatingfilterproxy java org springframework web filter delegatingfilterproxy dofilter delegatingfilterproxy java org springframework web filter characterencodingfilter dofilterinternal characterencodingfilter java org springframework web filter onceperrequestfilter dofilter onceperrequestfilter java note the full stack trace of the root cause is available in the server logs apache tomcat | 0 |
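
The root cause in the trace above is that the caller asked for a JSON array but received a `text/html;charset=utf-8` body, so no converter matched. A minimal sketch of a guard that fails with a clearer message is shown below. It is written in Python for illustration only and is not the OpenKilda code; `decode_links` and `UnexpectedContentType` are hypothetical names.

```python
import json


class UnexpectedContentType(Exception):
    """Raised when an upstream reply is not the JSON the caller expected."""


def decode_links(content_type, body):
    """Decode a JSON list of links, refusing any non-JSON reply.

    Both the function name and the exception are hypothetical; they only
    illustrate checking the media type before attempting to parse.
    """
    # The header value may carry parameters such as "; charset=utf-8".
    media_type = content_type.split(";", 1)[0].strip().lower()
    if media_type != "application/json":
        # Include a short prefix of the body so an HTML error page is visible.
        raise UnexpectedContentType(
            f"expected application/json, got {media_type!r}: {body[:80]!r}"
        )
    return json.loads(body)
```

With a guard like this, an HTML error page from the upstream service would surface as an explicit content-type error rather than a converter lookup failure.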

88,262 | 25,356,786,449 | IssuesEvent | 2022-11-20 12:41:28 | nodejs/node | https://api.github.com/repos/nodejs/node | opened | Unqualified call to `std::move` in node_http2.cc | build | Warning (from clang-cl on Windows) that I don't know how to fix:

```

src\node_http2.cc(647,32): warning : unqualified call to 'std::move' [-Wunqualified-std-cast-call] [D:\Git\nodejs\node\libnode.vcxproj]

```

https://github.com/nodejs/node/blob/4bee69a8c4a699c23342977b670ae90b870ac00d/src/node_http2.cc#L647-L650 | 1.0 | Unqualified call to `std::move` in node_http2.cc - Warning (from clang-cl on Windows) that I don't know how to fix:

```

src\node_http2.cc(647,32): warning : unqualified call to 'std::move' [-Wunqualified-std-cast-call] [D:\Git\nodejs\node\libnode.vcxproj]

```

https://github.com/nodejs/node/blob/4bee69a8c4a699c23342977b670ae90b870ac00d/src/node_http2.cc#L647-L650 | build | unqualified call to std move in node cc warning from clang cl on windows that i don t know how to fix src node cc warning unqualified call to std move | 1 |

51,721 | 21,784,301,200 | IssuesEvent | 2022-05-13 23:49:33 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | opened | Automate cleanup of Argo Workflows | ops and shared services | **Describe the issue**

Argo Workflows do not go away after they are run. The system generates warning messages when there are over 100 of them.

Implement an automated system to remove old workflows.

**Additional context**

In the past, these were periodically removed manually.

**Definition of done**

- [ ] Create a build with the `argo` CLI

- [ ] Create a config map with the necessary environment variables

- [ ] Create a cronjob to run daily and remove workflows that are more than X days old

| 1.0 | Automate cleanup of Argo Workflows - **Describe the issue**

Argo Workflows do not go away after they are run. The system generates warning messages when there are over 100 of them.

Implement an automated system to remove old workflows.

**Additional context**

In the past, these were periodically removed manually.

**Definition of done**

- [ ] Create a build with the `argo` CLI

- [ ] Create a config map with the necessary environment variables

- [ ] Create a cronjob to run daily and remove workflows that are more than X days old

| non_build | automate cleanup of argo workflows describe the issue argo workflows do not go away after they are run the system generates warning messages when there are over of them implement an automated system to remove old workflows additional context in the past these were periodically removed manually definition of done create a build with the argo cli create a config map with the necessary environment variables create a cronjob to run daily and remove workflows that are more than x days old | 0 |
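
For the Argo Workflows cleanup issue above, the "remove workflows that are more than X days old" step could be sketched roughly as follows. This is only a minimal illustration of the age filter, not the team's actual job: the `name` and `finished_at` fields and the `workflows_to_delete` helper are hypothetical, and how the workflow list is obtained and how deletion is performed (for example through the `argo` CLI) is left out.

```python
from datetime import datetime, timedelta, timezone


def workflows_to_delete(workflows, max_age_days, now=None):
    """Return the names of finished workflows older than max_age_days.

    `workflows` is assumed to be a list of dicts with hypothetical keys
    `name` (str) and `finished_at` (timezone-aware datetime, or None if
    the workflow is still running).
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [
        wf["name"]
        for wf in workflows
        # Skip workflows that have not finished yet.
        if wf.get("finished_at") is not None and wf["finished_at"] < cutoff
    ]
```

A daily cronjob could call such a helper with X taken from the config map and then delete each returned name.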

29,303 | 8,318,631,262 | IssuesEvent | 2018-09-25 15:06:46 | elastic/runbld | https://api.github.com/repos/elastic/runbld | closed | optionally add timestamps to output | enhancement logging ~builds-team | Since runbld wraps other process(es), some builds do a good job and have timestamps in their output (others do not).

It would be nice to be able to inject timestamps at the beginning of the log messages optionally. Or another stance is to push this dependency down to the actual code/projects/builds that are being run by runbld.

Regardless, maybe add timestamps to the runbld-specific messages.

| 1.0 | optionally add timestamps to output - Since runbld wraps other process(es), some builds do a good job and have timestamps in their output (others do not).

It would be nice to be able to inject timestamps at the beginning of the log messages optionally. Or another stance is to push this dependency down to the actual code/projects/builds that are being run by runbld.

Regardless, maybe add timestamps to the runbld-specific messages.

| build | optionally add timestamps to output since runbld wraps other process es some builds do a good job and have timestamps in their output others do not it would be nice to be able to inject timestamps at the beginning of the log messages optionally or another stance is to push this dependency down to the actual code projects builds that are being run by runbld regardless maybe add timestamps to the runbld specific messages | 1 |
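
The timestamp injection requested in the runbld issue above can be illustrated with a small sketch. It is not runbld's implementation; the `add_timestamps` helper and its `clock` parameter are assumptions introduced here, and the wrapped process output is modeled as a plain iterable of lines.

```python
from datetime import datetime, timezone


def add_timestamps(lines, clock=None):
    """Yield each output line prefixed with an ISO 8601 UTC timestamp.

    `clock` is a hypothetical hook returning the current datetime; it
    defaults to the real UTC clock and exists mainly to make testing easy.
    """
    clock = clock or (lambda: datetime.now(timezone.utc))
    for line in lines:
        stamp = clock().isoformat(timespec="seconds")
        # Drop the trailing newline so the caller controls line endings.
        text = line.rstrip("\n")
        yield f"[{stamp}] {text}"
```

Making the prefix optional would then be a matter of wrapping the output stream in `add_timestamps` only when a setting asks for it.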

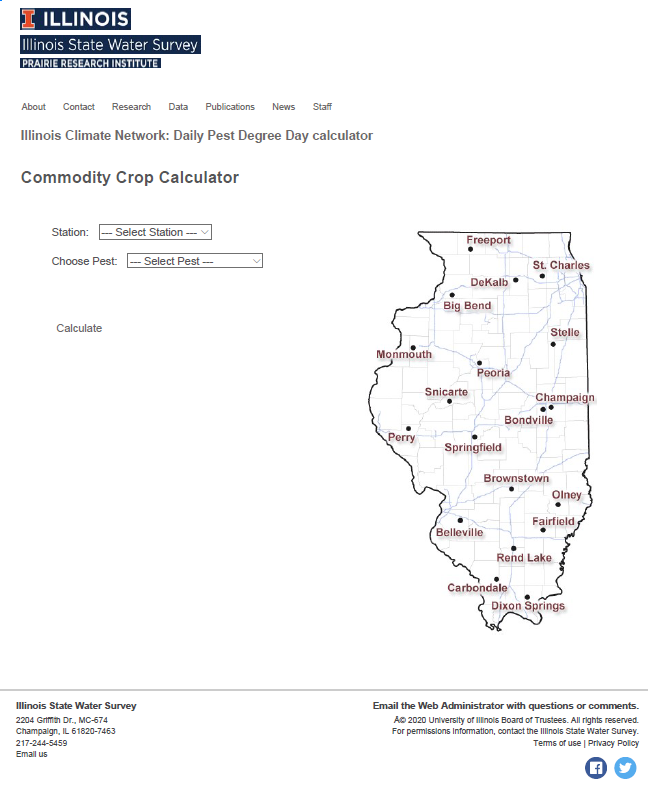

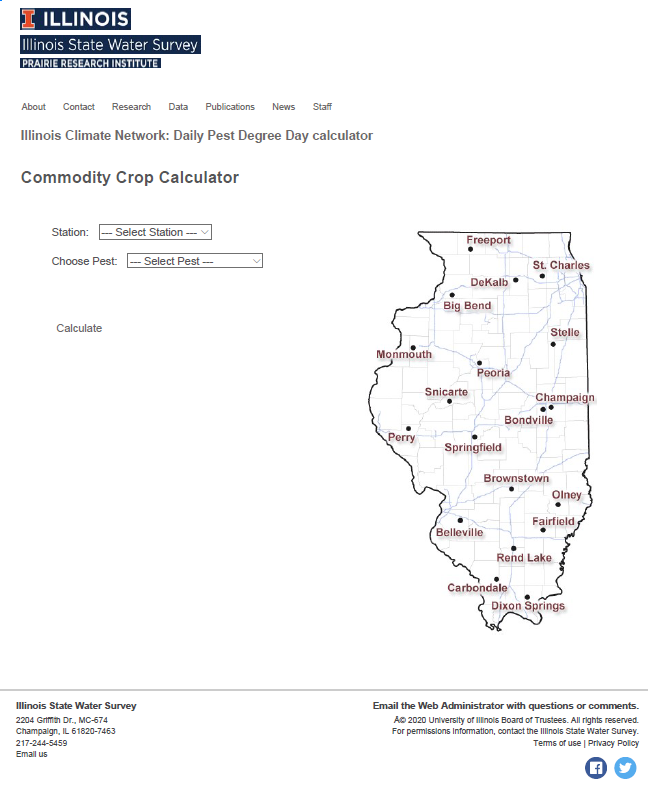

195,180 | 14,706,285,107 | IssuesEvent | 2021-01-04 19:35:18 | PRI-Illinois/WARM-PDD | https://api.github.com/repos/PRI-Illinois/WARM-PDD | closed | [BUG] "Link to Dashboard" doesn't appear on either calculator | Browser: Chrome READY FOR RETEST Severity 4: Minor Type: Bug | **Actual Behavior**

There was no link to "choose different calculator" found on the Destination Page for the Specialty and Commodity Crop Calculators.

**Expected behavior**

When opening the Destination Page for Specialty and Commodity Crop Calculators, I expected to see a link to return to the dashboard labelled "choose different calculator".

**To Reproduce**

On the Dashboard under "Choose Calculator" - select "Commodity Crop Pests" - select "Go to Calculator" - the link labelled "choose different calculator" should be absent. Repeat for "Specialty Crop Pests".

**Browser and Version**

Google Chrome, Version 86.0.4240.183

**Platform**

Intel Desktop, Windows 10

**Screenshots**

If applicable, add screenshots to help explain your problem.

| 1.0 | [BUG] "Link to Dashboard" doesn't appear on either calculator - **Actual Behavior**

There was no link to "choose different calculator" found on the Destination Page for the Specialty and Commodity Crop Calculators.

**Expected behavior**

When opening the Destination Page for Specialty and Commodity Crop Calculators, I expected to see a link to return to the dashboard labelled "choose different calculator".

**To Reproduce**

On the Dashboard under "Choose Calculator" - select "Commodity Crop Pests" - select "Go to Calculator" - the link labelled "choose different calculator" should be absent. Repeat for "Specialty Crop Pests".

**Browser and Version**

Google Chrome, Version 86.0.4240.183

**Platform**

Intel Desktop, Windows 10

**Screenshots**

If applicable, add screenshots to help explain your problem.

| non_build | link to dashboard doesn t appear on either calculator actual behavior there was no link to choose different calculator found on the destination page for thespecialty and commodity crop calculators expected behavior when opening the destination page for specialty and commodity crop calculators i expected to see a link to return to the dashboard labelled choose different calculator to reproduce on the dashboard under choose calculator select commodity crop pests select go to calculator the link labelled choose different calculator should be absent repeat for specialty crop pests browser and version google chrome platform intel desktop windows screenshots if applicable add screenshots to help explain your problem | 0 |

82,623 | 23,834,768,283 | IssuesEvent | 2022-09-06 04:01:29 | google/mediapipe | https://api.github.com/repos/google/mediapipe | opened | 【MAC】An error occurred building 'hello world' example | type:build/install | I'm trying to build 'hello_world' on MAC, and following error occurred.

**System information** (Please provide as much relevant information as possible)

Error in download_and_extract: java.io.IOException: Error downloading [https://github.com/bazelbuild/rules_apple/releases/download/0.32.0/rules_apple.0.32.0.tar.gz] to /private/var/tmp/_bazel_wangjunjie/95eda4f7c99f7ddce46cd8d36dd7bed7/external/build_bazel_rules_apple/temp11825797914784900860/rules_apple.0.32.0.tar.gz: Connection refused (Connection refused)

ERROR: no such package '@build_bazel_rules_apple//apple': java.io.IOException: Error downloading [https://github.com/bazelbuild/rules_apple/releases/download/0.32.0/rules_apple.0.32.0.tar.gz] to /private/var/tmp/_bazel_wangjunjie/95eda4f7c99f7ddce46cd8d36dd7bed7/external/build_bazel_rules_apple/temp11825797914784900860/rules_apple.0.32.0.tar.gz: Connection refused (Connection refused)

INFO: Elapsed time: 12.881s

INFO: 0 processes.

**Describe the problem**:

It seems the package `build_bazel_rules_apple//apple` can't be downloaded. But when I copy the link into a web browser, I can open it. Need help~

| 1.0 | 【MAC】An error occurred building 'hello world' example - I'm trying to build 'hello_world' on MAC, and following error occurred.

**System information** (Please provide as much relevant information as possible)

Error in download_and_extract: java.io.IOException: Error downloading [https://github.com/bazelbuild/rules_apple/releases/download/0.32.0/rules_apple.0.32.0.tar.gz] to /private/var/tmp/_bazel_wangjunjie/95eda4f7c99f7ddce46cd8d36dd7bed7/external/build_bazel_rules_apple/temp11825797914784900860/rules_apple.0.32.0.tar.gz: Connection refused (Connection refused)

ERROR: no such package '@build_bazel_rules_apple//apple': java.io.IOException: Error downloading [https://github.com/bazelbuild/rules_apple/releases/download/0.32.0/rules_apple.0.32.0.tar.gz] to /private/var/tmp/_bazel_wangjunjie/95eda4f7c99f7ddce46cd8d36dd7bed7/external/build_bazel_rules_apple/temp11825797914784900860/rules_apple.0.32.0.tar.gz: Connection refused (Connection refused)

INFO: Elapsed time: 12.881s

INFO: 0 processes.

**Describe the problem**:

It seems the package `build_bazel_rules_apple//apple` can't be downloaded. But when I copy the link into a web browser, I can open it. Need help~

| build | 【mac】an error occurred building hello world example i m trying to build hello world on mac and following error occurred system information please provide as much relevant information as possible error in download and extract java io ioexception error downloading to private var tmp bazel wangjunjie external build bazel rules apple rules apple tar gz connection refused connection refused error no such package build bazel rules apple apple java io ioexception error downloading to private var tmp bazel wangjunjie external build bazel rules apple rules apple tar gz connection refused connection refused info elapsed time info processes describe the problem seems like the package build bazel rules apple apple i can t download but when i copy the link to the web browser i can visit need help | 1 |

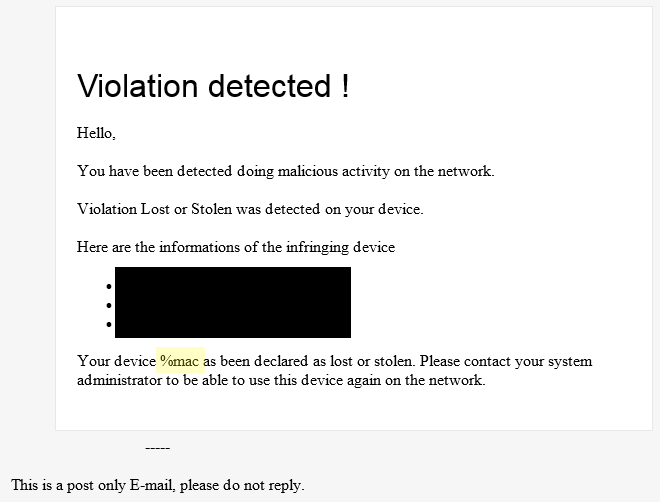

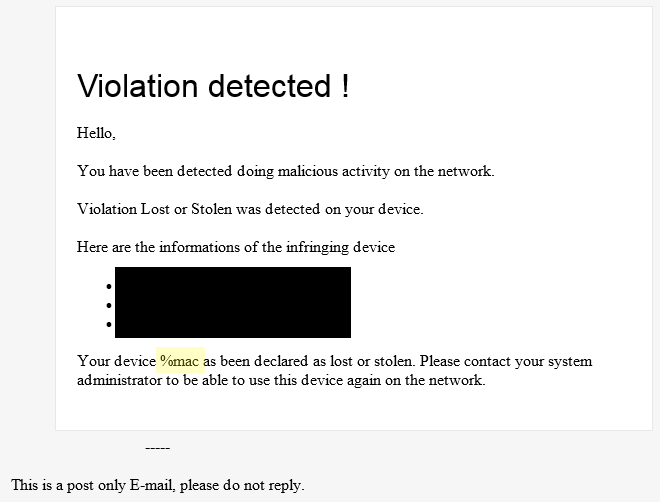

191,217 | 6,826,984,178 | IssuesEvent | 2017-11-08 15:44:20 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Lost/Stolen: MAC address in user email is not populated | Priority: Medium Type: Bug | The placeholder for the MAC address doesn't seem to be valid as seen in the screenshot below

| 1.0 | Lost/Stolen: MAC address in user email is not populated - The placeholder for the MAC address doesn't seem to be valid as seen in the screenshot below

| non_build | lost stolen mac address in user email is not populated the placeholder for the mac address doesn t seem to be valid as seen in the screenshot below | 0 |

59,399 | 14,580,932,818 | IssuesEvent | 2020-12-18 09:56:43 | intellij-rust/intellij-rust | https://api.github.com/repos/intellij-rust/intellij-rust | closed | Failed to render build output error | bug subsystem::build & run subsystem::tools | <!--

Hello and thank you for the issue!

If you would like to report a bug, we have added some points below that you can fill out.

Feel free to remove all the irrelevant text to request a new feature.

-->

## Environment

* **IntelliJ Rust plugin version: `0.2.116.2844-193-nightly`**

* **Rust toolchain version: `rustc 1.43.0-nightly (58b834344 2020-02-05)`**

* **IDE name and version: CLion 19.3.3**

* **Operating system: Win 10**

## Problem description

Failed to render error message

## Steps to reproduce

This problem pops up only under some specific circumstances that I cannot reproduce, so I cannot provide something like a minimal erroneous sample code easily. However, I attached the error message below that might be helpful.

Sample Build Output (irrelevant details are hidden):

```

C:/Users/?/.cargo/bin/cargo.exe build --color=always --package ? --example ? --message-format=json

Compiling ? v0.1.0 (?)