Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 853) | labels (string, length 4 to 898) | body (string, length 2 to 262k) | index (string, 13 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 250k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

85,123 | 24,517,433,731 | IssuesEvent | 2022-10-11 06:48:01 | kiwix/kiwix-js-windows | https://api.github.com/repos/kiwix/kiwix-js-windows | closed | Portable version | enhancement fixed build | Kiwix JS is an electron app.

Please make a portable version: (like LosslessCut)

https://github.com/mifi/lossless-cut/releases/tag/v3.44.0

https://github.com/mifi/lossless-cut/releases/download/v3.44.0/LosslessCut-win-x64.exe | 1.0 | Portable version - Kiwix JS is an electron app.

Please make a portable version: (like LosslessCut)

https://github.com/mifi/lossless-cut/releases/tag/v3.44.0

https://github.com/mifi/lossless-cut/releases/download/v3.44.0/LosslessCut-win-x64.exe | build | portable version kiwix js is an electron app please make a portable version like losslesscut | 1 |

48,929 | 7,465,773,826 | IssuesEvent | 2018-04-02 07:01:48 | aerospike/aerospike-client-nodejs | https://api.github.com/repos/aerospike/aerospike-client-nodejs | closed | API documentation is inaccessible | documentation | It's not possible to access the API documentation, linked to from the README, the link gives a 403: https://www.aerospike.com/apidocs/nodejs. | 1.0 | API documentation is inaccessible - It's not possible to access the API documentation, linked to from the README, the link gives a 403: https://www.aerospike.com/apidocs/nodejs. | non_build | api documentation is inaccessible it s not possible to access the api documentation linked to from the readme the link gives a | 0 |

57,570 | 14,147,208,862 | IssuesEvent | 2020-11-10 20:25:47 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | sam build - reuse lambda deployment packages, ideally one-per-manifest | area/build type/design type/feedback | ### Describe your idea/feature/enhancement

`sam build` appears to re-create identical Lambda deployment packages for each of my Python functions. That is, all dependencies and code files are included – even handler files for Lambdas other than the Lambda being built.

This adds increased build time as the same de... | 1.0 | sam build - reuse lambda deployment packages, ideally one-per-manifest - ### Describe your idea/feature/enhancement

`sam build` appears to re-create identical Lambda deployment packages for each of my Python functions. That is, all dependencies and code files are included – even handler files for Lambdas other than ... | build | sam build reuse lambda deployment packages ideally one per manifest describe your idea feature enhancement sam build appears to re create identical lambda deployment packages for each of my python functions that is all dependencies and code files are included – even handler files for lambdas other than ... | 1 |

19,886 | 3,511,670,241 | IssuesEvent | 2016-01-10 13:22:57 | NAFITH/IraqWeb | https://api.github.com/repos/NAFITH/IraqWeb | opened | In BOL search, Searching for a deleted container view the parent BOL although the Container does not belong to it anymore. | Major Missing System Design Open | Shipping Agent

BOL –search

Prerequisite:

• An BOL one of its containers has been deleted .

Scenario

• Log into the system.

• From the main menu, go search section and type the number of the deleted container

Bug description

- Searching for a deleted container view the parent BOL a... | 1.0 | In BOL search, Searching for a deleted container view the parent BOL although the Container does not belong to it anymore. - Shipping Agent

BOL –search

Prerequisite:

• An BOL one of its containers has been deleted .

Scenario

• Log into the system.

• From the main menu, go search section an... | non_build | in bol search searching for a deleted container view the parent bol although the container does not belong to it anymore shipping agent bol –search prerequisite • an bol one of its containers has been deleted scenario • log into the system • from the main menu go search section an... | 0 |

348,164 | 24,907,800,718 | IssuesEvent | 2022-10-29 13:33:52 | OpenBagTwo/EnderChest | https://api.github.com/repos/OpenBagTwo/EnderChest | opened | Publish Docs | documentation | **GIVEN** a user is interested in installing and using EnderChest

**WHEN** they visit this repo and click links in the README or side-panels linking to the docs for this package

**THEN** they should be taken to a full HTML website containing all docs for the EnderChest package

**SO** that they can bookmark that page... | 1.0 | Publish Docs - **GIVEN** a user is interested in installing and using EnderChest

**WHEN** they visit this repo and click links in the README or side-panels linking to the docs for this package

**THEN** they should be taken to a full HTML website containing all docs for the EnderChest package

**SO** that they can boo... | non_build | publish docs given a user is interested in installing and using enderchest when they visit this repo and click links in the readme or side panels linking to the docs for this package then they should be taken to a full html website containing all docs for the enderchest package so that they can boo... | 0 |

16,052 | 11,810,813,281 | IssuesEvent | 2020-03-19 17:06:05 | eventespresso/event-espresso-core | https://api.github.com/repos/eventespresso/event-espresso-core | closed | Email verification in check-out | category:forms-systems category:models-and-data-infrastructure type:feature-request 🙏 | Hi!

So let's assume all customers are idiots (seems many are) and are incapable of typing in their own email during checkout. Since there's no verification field their registration will not send out emails to the correct emailadress (registration complete for example) and the EE admins can't contact them (unless they ... | 1.0 | Email verification in check-out - Hi!

So let's assume all customers are idiots (seems many are) and are incapable of typing in their own email during checkout. Since there's no verification field their registration will not send out emails to the correct emailadress (registration complete for example) and the EE admin... | non_build | email verification in check out hi so let s assume all customers are idiots seems many are and are incapable of typing in their own email during checkout since there s no verification field their registration will not send out emails to the correct emailadress registration complete for example and the ee admin... | 0 |

55,644 | 6,912,064,670 | IssuesEvent | 2017-11-28 10:36:55 | cosmos/cosmos-ui | https://api.github.com/repos/cosmos/cosmos-ui | closed | no data states need to be informative and communicative | DESIGN REQUIRED | every route / feature should have messaging when data is not present.

for example, if netmon is down — it should say...

"Sorry, even though you're running / connected to a full node we can't get display this data for you right now — try again later or contact us to let us know"

... or some such message. A ni... | 1.0 | no data states need to be informative and communicative - every route / feature should have messaging when data is not present.

for example, if netmon is down — it should say...

"Sorry, even though you're running / connected to a full node we can't get display this data for you right now — try again later or con... | non_build | no data states need to be informative and communicative every route feature should have messaging when data is not present for example if netmon is down — it should say sorry even though you re running connected to a full node we can t get display this data for you right now — try again later or con... | 0 |

37,264 | 15,222,619,982 | IssuesEvent | 2021-02-18 00:42:29 | microsoft/BotBuilder-Samples | https://api.github.com/repos/microsoft/BotBuilder-Samples | closed | 50.teams-messaging-extensions-search cannot work on channel | Area: Teams Bot Services ExemptFromDailyDRIReport customer-replied-to customer-reported | This NodeJS sample cannot work on the Teams->General channel. It simply failed in channel if I directly click the New Conversation to use this extension. It returns back without any result after selecting one item in the list. Being strange, It can work if I typed in any text in the message input box and then click th... | 1.0 | 50.teams-messaging-extensions-search cannot work on channel - This NodeJS sample cannot work on the Teams->General channel. It simply failed in channel if I directly click the New Conversation to use this extension. It returns back without any result after selecting one item in the list. Being strange, It can work if ... | non_build | teams messaging extensions search cannot work on channel this nodejs sample cannot work on the teams general channel it simply failed in channel if i directly click the new conversation to use this extension it returns back without any result after selecting one item in the list being strange it can work if i... | 0 |

30,525 | 8,558,119,259 | IssuesEvent | 2018-11-08 17:19:43 | denoland/deno | https://api.github.com/repos/denoland/deno | closed | Travis should fail immediately if tools/lint.py or tools/test_format.py fail | build good first issue | We don't want to clog the CI pipeline with things that will need to be built again. We want to give more immediate feedback to PRs | 1.0 | Travis should fail immediately if tools/lint.py or tools/test_format.py fail - We don't want to clog the CI pipeline with things that will need to be built again. We want to give more immediate feedback to PRs | build | travis should fail immediately if tools lint py or tools test format py fail we don t want to clog the ci pipeline with things that will need to be built again we want to give more immediate feedback to prs | 1 |

49,994 | 12,450,936,001 | IssuesEvent | 2020-05-27 09:36:36 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Unresolved External Symbols Windows C++Tensorflow v1.14.0 | TF 1.14 stat:awaiting response subtype:windows type:build/install | ### System information

- Windows 10

- Built from source

- Tensorflow v1.14.0

- Bazel v0.25.2

- MSVC 14.16.27023

- CUDA 10.0 Cudnn 7.6.0

- GTX 1060

### Describe the problem

I have built tenosrflow c++ library using the following commands

```bash

bazel build //tensorflow:tensorflow_cc.lib

```

```bash

... | 1.0 | Unresolved External Symbols Windows C++Tensorflow v1.14.0 - ### System information

- Windows 10

- Built from source

- Tensorflow v1.14.0

- Bazel v0.25.2

- MSVC 14.16.27023

- CUDA 10.0 Cudnn 7.6.0

- GTX 1060

### Describe the problem

I have built tenosrflow c++ library using the following commands

```bash

... | build | unresolved external symbols windows c tensorflow system information windows built from source tensorflow bazel msvc cuda cudnn gtx describe the problem i have built tenosrflow c library using the following commands bash bazel build te... | 1 |

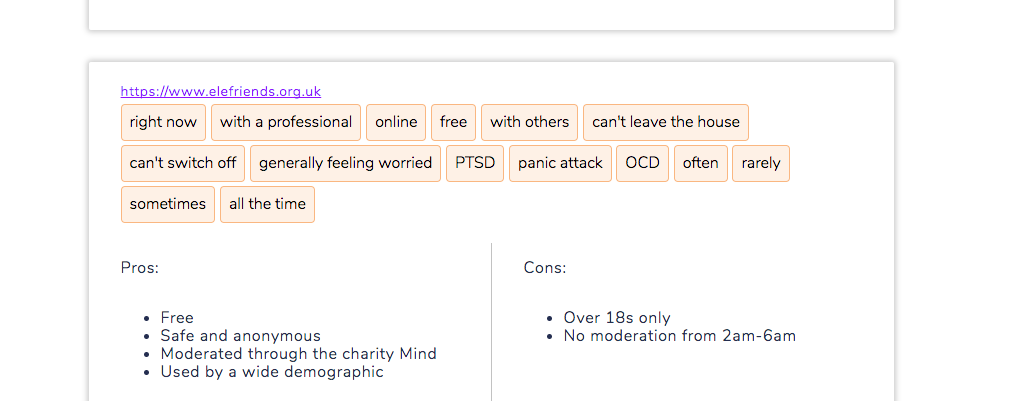

58,258 | 6,585,015,600 | IssuesEvent | 2017-09-13 12:37:20 | LDMW/cms | https://api.github.com/repos/LDMW/cms | closed | Some titles are missing on resources | bug please-test T1h | - Elefriends

- NHS Choices Anxiety page

- NHS Choices Anxiety page

| 1.0 | Add column architecture to aws_lambda_function table - **Is your feature request related to a problem? Please describe.**

Required for AWS Thrifty

[Reference](https://pkg.go.dev/github.com/aws/aws-sdk-go@v1.42.25/service/lambda#FunctionConfiguration)

| non_build | add column architecture to aws lambda function table is your feature request related to a problem please describe required for aws thrifty | 0 |

51,659 | 12,761,293,801 | IssuesEvent | 2020-06-29 11:13:34 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | build libtorch-cxx11-abi-shared-with-deps-1.5.0+cu92.zip , creat a tensor in CPU is ok, but transfer to GPU error | module: build module: cuda triaged | ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

my example-app.cpp is below:

#include <opencv2/opencv.hpp>

#include <iostream>

#include <torch/script.h>

using namespace std;

int main() {

torch::Tensor tensor = torch::ra... | 1.0 | build libtorch-cxx11-abi-shared-with-deps-1.5.0+cu92.zip , creat a tensor in CPU is ok, but transfer to GPU error - ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

my example-app.cpp is below:

#include <opencv2/opencv.hpp>

#include... | build | build libtorch abi shared with deps zip creat a tensor in cpu is ok but transfer to gpu error 🐛 bug to reproduce steps to reproduce the behavior my example app cpp is below include include include using namespace std int main torch tensor tensor to... | 1 |

42,029 | 10,864,770,075 | IssuesEvent | 2019-11-14 17:32:08 | awslabs/s2n | https://api.github.com/repos/awslabs/s2n | closed | Build issue on OSX: error: use of undeclared identifier 'AF_BLUETOOTH' | type/build | ## **Problem:**

```

Scanning dependencies of target s2n_rfc5952_test

[ 88%] Building C object CMakeFiles/s2n_rfc5952_test.dir/tests/unit/s2n_rfc5952_test.c.o

[ 88%] Built target s2n_server_cert_verify_test

/Users/dsn/ws/s2n-CBMC/s2n/tests/unit/s2n_rfc5952_test.c:75:45: error: use of undeclared identifier 'AF_BLUET... | 1.0 | Build issue on OSX: error: use of undeclared identifier 'AF_BLUETOOTH' - ## **Problem:**

```

Scanning dependencies of target s2n_rfc5952_test

[ 88%] Building C object CMakeFiles/s2n_rfc5952_test.dir/tests/unit/s2n_rfc5952_test.c.o

[ 88%] Built target s2n_server_cert_verify_test

/Users/dsn/ws/s2n-CBMC/s2n/tests/uni... | build | build issue on osx error use of undeclared identifier af bluetooth problem scanning dependencies of target test building c object cmakefiles test dir tests unit test c o built target server cert verify test users dsn ws cbmc tests unit test c error use of undeclar... | 1 |

50,113 | 12,476,021,633 | IssuesEvent | 2020-05-29 12:45:35 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | spanner: TestIntegration_BatchDML failed | api: spanner buildcop: issue priority: p1 type: bug | Note: #2170 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: https://github.com/googleapis/google-cloud-go/commit/abe7c9a08936be6370959448acccbfdb80c34d76

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/7c49f223-f2c2-46f1-aa36-4a019... | 1.0 | spanner: TestIntegration_BatchDML failed - Note: #2170 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: https://github.com/googleapis/google-cloud-go/commit/abe7c9a08936be6370959448acccbfdb80c34d76

buildURL: [Build Status](https://source.cloud.google.com/result... | build | spanner testintegration batchdml failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed | 1 |

87,124 | 25,037,757,585 | IssuesEvent | 2022-11-04 17:31:32 | aws-amplify/amplify-hosting | https://api.github.com/repos/aws-amplify/amplify-hosting | closed | Deploy phase failing suddenly Next.js | bug backend-builds | ### Before opening, please confirm:

- [X] I have checked to see if my question is addressed in the [FAQ](https://github.com/aws-amplify/amplify-console/blob/master/FAQ.md).

- [X] I have [searched for duplicate or closed issues](https://github.com/aws-amplify/amplify-console/issues?q=is%3Aissue+).

- [X] I have re... | 1.0 | Deploy phase failing suddenly Next.js - ### Before opening, please confirm:

- [X] I have checked to see if my question is addressed in the [FAQ](https://github.com/aws-amplify/amplify-console/blob/master/FAQ.md).

- [X] I have [searched for duplicate or closed issues](https://github.com/aws-amplify/amplify-console... | build | deploy phase failing suddenly next js before opening please confirm i have checked to see if my question is addressed in the i have i have read the guide for i have done my best to include a minimal self contained set of instructions for consistently reproducing the issue ... | 1 |

18,431 | 6,598,207,612 | IssuesEvent | 2017-09-16 01:35:39 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | Rustdoc unit tests not being run by Rustbuild | A-build A-rustdoc C-bug I-nominated T-dev-tools T-doc | As far as we could tell.

Currently, running `cargo test` in src/librustdoc seems to work, but there are some failures:

```

Doc-tests rustdoc

running 3 tests

test clean/simplify.rs - clean::simplify (line 24) ... FAILED

test clean/simplify.rs - clean::simplify (line 20) ... FAILED

test html/markdown.rs -... | 1.0 | Rustdoc unit tests not being run by Rustbuild - As far as we could tell.

Currently, running `cargo test` in src/librustdoc seems to work, but there are some failures:

```

Doc-tests rustdoc

running 3 tests

test clean/simplify.rs - clean::simplify (line 24) ... FAILED

test clean/simplify.rs - clean::simpli... | build | rustdoc unit tests not being run by rustbuild as far as we could tell currently running cargo test in src librustdoc seems to work but there are some failures doc tests rustdoc running tests test clean simplify rs clean simplify line failed test clean simplify rs clean simplif... | 1 |

64,476 | 15,890,498,031 | IssuesEvent | 2021-04-10 15:39:48 | ARMmaster17/Captain | https://api.github.com/repos/ARMmaster17/Captain | closed | Builder resource locks hang on etcd API calls | bug component:Builder | Usually requires a restart of the LXC container. Can take up to several minutes to obtain lock in production. In testing requests can hang for several seconds. | 1.0 | Builder resource locks hang on etcd API calls - Usually requires a restart of the LXC container. Can take up to several minutes to obtain lock in production. In testing requests can hang for several seconds. | build | builder resource locks hang on etcd api calls usually requires a restart of the lxc container can take up to several minutes to obtain lock in production in testing requests can hang for several seconds | 1 |

70,443 | 18,150,842,166 | IssuesEvent | 2021-09-26 08:31:13 | roapi/roapi | https://api.github.com/repos/roapi/roapi | closed | Automate docker release with Github action | good first issue help wanted build | add a job in roapi_http_release action to build, tag and push docker image to https://github.com/orgs/roapi/packages/container/package/roapi-http on every release. | 1.0 | Automate docker release with Github action - add a job in roapi_http_release action to build, tag and push docker image to https://github.com/orgs/roapi/packages/container/package/roapi-http on every release. | build | automate docker release with github action add a job in roapi http release action to build tag and push docker image to on every release | 1 |

21,115 | 4,679,872,863 | IssuesEvent | 2016-10-08 00:07:53 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Option to shuffle data in cross_val_score | Documentation Easy Need Contributor | I'm used to how [KFold](http://scikit-learn.org/stable/modules/generated/sklearn.cross_validation.KFold.html) has a `random_state` option in case you want to shuffle the data. But I notice that the simpler [cross_val_score](http://scikit-learn.org/stable/modules/generated/sklearn.cross_validation.cross_val_score.html) ... | 1.0 | Option to shuffle data in cross_val_score - I'm used to how [KFold](http://scikit-learn.org/stable/modules/generated/sklearn.cross_validation.KFold.html) has a `random_state` option in case you want to shuffle the data. But I notice that the simpler [cross_val_score](http://scikit-learn.org/stable/modules/generated/skl... | non_build | option to shuffle data in cross val score i m used to how has a random state option in case you want to shuffle the data but i notice that the simpler does not allow for this functionality is there any reason why kfold would have an option to shuffle data but cross val score wouldn t | 0 |

15,004 | 5,847,098,797 | IssuesEvent | 2017-05-10 17:42:09 | totaljs/framework | https://api.github.com/repos/totaljs/framework | closed | schema.setValidate for filtered schema not called | bug builders | Here is my controller

```

exports.install = function() {

F.route('/api/test', test_json, ['post', 'json', '*Content#Create'])

}

function test_json () {

const controller = this

controller.$save(function (err, resp) {

controller.plain(err||resp)

})

}

```

Here is my model

```

NEWSCHEMA('Content').ma... | 1.0 | schema.setValidate for filtered schema not called - Here is my controller

```

exports.install = function() {

F.route('/api/test', test_json, ['post', 'json', '*Content#Create'])

}

function test_json () {

const controller = this

controller.$save(function (err, resp) {

controller.plain(err||resp)

})

}

... | build | schema setvalidate for filtered schema not called here is my controller exports install function f route api test test json function test json const controller this controller save function err resp controller plain err resp here is my model newsc... | 1 |

95,151 | 27,395,017,826 | IssuesEvent | 2023-02-28 19:00:01 | r5py/r5py | https://api.github.com/repos/r5py/r5py | closed | MacOS: process died unexpectedly | bug life universe everything build system | So we have this really weird situation since the new year that one of the many test runs fails. It’s always one of the MacOS jobs (with system-wide Java environment), the error is always connected to the JVM not finding libjsig, and **re-running the failed jobs from the web-UI always fixes the issue**.

This must be ... | 1.0 | MacOS: process died unexpectedly - So we have this really weird situation since the new year that one of the many test runs fails. It’s always one of the MacOS jobs (with system-wide Java environment), the error is always connected to the JVM not finding libjsig, and **re-running the failed jobs from the web-UI always ... | build | macos process died unexpectedly so we have this really weird situation since the new year that one of the many test runs fails it’s always one of the macos jobs with system wide java environment the error is always connected to the jvm not finding libjsig and re running the failed jobs from the web ui always ... | 1 |

51,251 | 12,692,496,862 | IssuesEvent | 2020-06-21 22:55:33 | Autodesk/maya-usd | https://api.github.com/repos/Autodesk/maya-usd | closed | Unable to compile ADSK plugin on Windows 10 (Missing Boost::system link) | build help wanted question | **Describe the issue**

I'm currently trying to compile the plugin using all the recommended instructions, but can't seem to get passed an issue when it reaches to configure the Autodesk plugin. It mentions the mayaUsd target links to target "Boost::system" but the target is not found

I've attempted building USD on ... | 1.0 | Unable to compile ADSK plugin on Windows 10 (Missing Boost::system link) - **Describe the issue**

I'm currently trying to compile the plugin using all the recommended instructions, but can't seem to get passed an issue when it reaches to configure the Autodesk plugin. It mentions the mayaUsd target links to target "Bo... | build | unable to compile adsk plugin on windows missing boost system link describe the issue i m currently trying to compile the plugin using all the recommended instructions but can t seem to get passed an issue when it reaches to configure the autodesk plugin it mentions the mayausd target links to target boo... | 1 |

126,566 | 4,997,522,231 | IssuesEvent | 2016-12-09 16:59:51 | Jumpscale/jscockpit | https://api.github.com/repos/Jumpscale/jscockpit | opened | mvp-cockpit: not all repositories show up in the Cockpit Portal | priority_critical type_bug | Cockpit:

```

ssh cloudscalers@172.98.207.199 -p7122 -A

```

Password is **iZASUo7tE**

Actual cockpit repositories, **four**:

```

root@vm-93:/optvar/cockpit_repos# ls

tony01 yves01 yves02 yves03

```

When using the Cockpit API, **only two of four** are returned:

```

curl -X GET http://172.98.207.199:500... | 1.0 | mvp-cockpit: not all repositories show up in the Cockpit Portal - Cockpit:

```

ssh cloudscalers@172.98.207.199 -p7122 -A

```

Password is **iZASUo7tE**

Actual cockpit repositories, **four**:

```

root@vm-93:/optvar/cockpit_repos# ls

tony01 yves01 yves02 yves03

```

When using the Cockpit API, **only two o... | non_build | mvp cockpit not all repositories show up in the cockpit portal cockpit ssh cloudscalers a password is actual cockpit repositories four root vm optvar cockpit repos ls when using the cockpit api only two of four are returned curl x get... | 0 |

75,015 | 25,481,586,156 | IssuesEvent | 2022-11-25 22:11:33 | nim-works/cps | https://api.github.com/repos/nim-works/cps | closed | CPS doesn't rewrite locals with proc types correctly | nim compiler defect | ```nim

import cps

proc foo(x: int) =

discard

proc bar() {.cps: Continuation.} =

var f = foo

f(10)

bar()

```

Got:

```

test.nim(7, 7) Error: inconsistent typing for reintroduced symbol 'f': previous type was: proc (x: int){.noSideEffect, gcsafe, locks: 0.}; new type is: proc (x: int){.closure.}... | 1.0 | CPS doesn't rewrite locals with proc types correctly - ```nim

import cps

proc foo(x: int) =

discard

proc bar() {.cps: Continuation.} =

var f = foo

f(10)

bar()

```

Got:

```

test.nim(7, 7) Error: inconsistent typing for reintroduced symbol 'f': previous type was: proc (x: int){.noSideEffect, gc... | non_build | cps doesn t rewrite locals with proc types correctly nim import cps proc foo x int discard proc bar cps continuation var f foo f bar got test nim error inconsistent typing for reintroduced symbol f previous type was proc x int nosideeffect gcs... | 0 |

4,341 | 4,309,234,209 | IssuesEvent | 2016-07-21 15:24:16 | KeitIG/museeks | https://api.github.com/repos/KeitIG/museeks | closed | Stricter React | performances | All React components should follow these two ESLint rules (even if they are not optimized)

- [ ] `react/require-optimization`

- [x] `react/prop-types`

- [x] ensure immutability | True | Stricter React - All React components should follow these two ESLint rules (even if they are not optimized)

- [ ] `react/require-optimization`

- [x] `react/prop-types`

- [x] ensure immutability | non_build | stricter react all react components should follow these two eslint rules even if they are not optimized react require optimization react prop types ensure immutability | 0 |

41,743 | 10,773,461,174 | IssuesEvent | 2019-11-02 20:47:35 | yellowled/yl-bp | https://api.github.com/repos/yellowled/yl-bp | closed | Move configuration to package.json | Build Structure | Check if the following configs can be moved to `package.json`:

- [x] `.babelrc`

- [x] `.browserslistrc`

- [x] `.eslintrc.json`

- [x] `.postcssrc`

- [x] `.prettierrc`

- [x] `.stylelintrc` | 1.0 | Move configuration to package.json - Check if the following configs can be moved to `package.json`:

- [x] `.babelrc`

- [x] `.browserslistrc`

- [x] `.eslintrc.json`

- [x] `.postcssrc`

- [x] `.prettierrc`

- [x] `.stylelintrc` | build | move configuration to package json check if the following configs can be moved to package json babelrc browserslistrc eslintrc json postcssrc prettierrc stylelintrc | 1 |

85,924 | 8,007,319,007 | IssuesEvent | 2018-07-24 01:43:33 | apache/incubator-mxnet | https://api.github.com/repos/apache/incubator-mxnet | closed | Issues with spatial transformer op when cudnn disabled | Breaking Bug CUDA Disabled test Operator | ## Description

as part of PR: #11470, it was found that spatial transformer op without cudnn enabled doesn't pass tests.

To reproduce try one of the two scripts below:

Script 1:

```

import numpy as np

import mxnet as mx

from mxnet.test_utils import assert_almost_equal, default_context

np.set_printoptions(t... | 1.0 | Issues with spatial transformer op when cudnn disabled - ## Description

as part of PR: #11470, it was found that spatial transformer op without cudnn enabled doesn't pass tests.

To reproduce try one of the two scripts below:

Script 1:

```

import numpy as np

import mxnet as mx

from mxnet.test_utils import asse... | non_build | issues with spatial transformer op when cudnn disabled description as part of pr it was found that spatial transformer op without cudnn enabled doesn t pass tests to reproduce try one of the two scripts below script import numpy as np import mxnet as mx from mxnet test utils import assert a... | 0 |

17,770 | 6,510,482,392 | IssuesEvent | 2017-08-25 03:43:48 | vanilladb/vanillacore | https://api.github.com/repos/vanilladb/vanillacore | closed | maven build test error and cannot start db in osx | build fails OSX | ### Env:

osx: 10.12

Apache Maven 3.5.0

java version "1.8.0_131"

Java(TM) SE Runtime Environment (build 1.8.0_131-b11)

Java HotSpot(TM) 64-Bit Server VM (build 25.131-b11, mixed mode)

### What I did:

clone vaillacore@0.2.2 and run

`mvn package` , it threw some error

"org.vanilladb.core.storage.tx.concurrenc... | 1.0 | maven build test error and cannot start db in osx - ### Env:

osx: 10.12

Apache Maven 3.5.0

java version "1.8.0_131"

Java(TM) SE Runtime Environment (build 1.8.0_131-b11)

Java HotSpot(TM) 64-Bit Server VM (build 25.131-b11, mixed mode)

### What I did:

clone vaillacore@0.2.2 and run

`mvn package` , it threw s... | build | maven build test error and cannot start db in osx env osx apache maven java version java tm se runtime environment build java hotspot tm bit server vm build mixed mode what i did clone vaillacore and run mvn package it threw some error or... | 1 |

3,937 | 3,274,547,855 | IssuesEvent | 2015-10-26 11:31:37 | jgirald/ES2015C | https://api.github.com/repos/jgirald/ES2015C | closed | Create wall's entrance (Hittites) | Building Design Hittites Medium Priority Model Sprint3 Team B | **Description**: As a player, I want to create defense buildings, so that I can defend from attacks of my enemies.

**Definition of done**: The goal is to see the full structure of the building.

**Effort**: 4h

**Reponsible**: Sara Galindo | 1.0 | Create wall's entrance (Hittites) - **Description**: As a player, I want to create defense buildings, so that I can defend from attacks of my enemies.

**Definition of done**: The goal is to see the full structure of the building.

**Effort**: 4h

**Reponsible**: Sara Galindo | build | create wall s entrance hittites description as a player i want to create defense buildings so that i can defend from attacks of my enemies definition of done the goal is to see the full structure of the building effort reponsible sara galindo | 1 |

253,252 | 8,053,451,978 | IssuesEvent | 2018-08-01 23:05:51 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | re-pairing with no-bond legacy pairing results in using all zeros LTK | area: Bluetooth bug priority: low | **_Reported by Szymon Janc:_**

when no-bond pairing has occurred and we receive Security Request we should start new

pairing instead of trying to re-encrypt link right away since there is no key anymore.

(Imported from Jira ZEP-1770) | 1.0 | re-pairing with no-bond legacy pairing results in using all zeros LTK - **_Reported by Szymon Janc:_**

when no-bond pairing has occurred and we receive Security Request we should start new

pairing instead of trying to re-encrypt link right away since there is no key anymore.

(Imported from Jira ZEP-1770) | non_build | re pairing with no bond legacy pairing results in using all zeros ltk reported by szymon janc when no bond pairing has occurred and we receive security request we should start new pairing instead of trying to re encrypt link right away since there is no key anymore imported from jira zep | 0 |

306,428 | 23,159,826,817 | IssuesEvent | 2022-07-29 16:27:04 | pyrsia/pyrsia | https://api.github.com/repos/pyrsia/pyrsia | closed | Tutorial: build from source fails to execute maven build in final step | documentation | The [final step in the build_from_source tutorial](https://github.com/pyrsia/pyrsia/blob/main/docs/tutorials/build_from_source.md#use-pyrsia-in-a-maven-project) is about using the pyrsia node in a custom maven project. It provides a pom.xml that is correctly configured with the pyrsia node as a maven repository. This e... | 1.0 | Tutorial: build from source fails to execute maven build in final step - The [final step in the build_from_source tutorial](https://github.com/pyrsia/pyrsia/blob/main/docs/tutorials/build_from_source.md#use-pyrsia-in-a-maven-project) is about using the pyrsia node in a custom maven project. It provides a pom.xml that i... | non_build | tutorial build from source fails to execute maven build in final step the is about using the pyrsia node in a custom maven project it provides a pom xml that is correctly configured with the pyrsia node as a maven repository this ensures that maven will download the dependencies from the pyrsia node instead of... | 0 |

153,139 | 19,702,755,965 | IssuesEvent | 2022-01-12 18:18:43 | gdcorp-action-public-forks/toolchain | https://api.github.com/repos/gdcorp-action-public-forks/toolchain | closed | CVE-2021-35065 (Medium) detected in glob-parent-5.1.1.tgz - autoclosed | security vulnerability | ## CVE-2021-35065 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>Extract the non-magic parent path from a glob string.</p>

<p>Library home... | True | CVE-2021-35065 (Medium) detected in glob-parent-5.1.1.tgz - autoclosed - ## CVE-2021-35065 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>... | non_build | cve medium detected in glob parent tgz autoclosed cve medium severity vulnerability vulnerable library glob parent tgz extract the non magic parent path from a glob string library home page a href dependency hierarchy eslint tgz root library x ... | 0 |

771,268 | 27,077,252,996 | IssuesEvent | 2023-02-14 11:28:56 | testomatio/app | https://api.github.com/repos/testomatio/app | opened | Run Archive should show only old Runs | bug reporting ui\ux users priority medium archive | **Describe the bug**

We show 30 Runs and RunGroups on the Runs screen.

All Runs and RunGroups over the latest 30 should be placed in Run Archive and RunGroup Archive.

For now, we show all Runs and RunGroups in Archives which is wrong because users need to see only old Runs and RunGroups in Archives.

**To Reprod... | 1.0 | Run Archive should show only old Runs - **Describe the bug**

We show 30 Runs and RunGroups on the Runs screen.

All Runs and RunGroups over the latest 30 should be placed in Run Archive and RunGroup Archive.

For now, we show all Runs and RunGroups in Archives which is wrong because users need to see only old Runs ... | non_build | run archive should show only old runs describe the bug we show runs and rungroups on the runs screen all runs and rungroups over the latest should be placed in run archive and rungroup archive for now we show all runs and rungroups in archives which is wrong because users need to see only old runs an... | 0 |

25,694 | 2,683,931,723 | IssuesEvent | 2015-03-28 13:44:28 | oxyplot/oxyplot | https://api.github.com/repos/oxyplot/oxyplot | closed | Version numbers of dependencies in nuget packages are wrong | easy-fix high-priority in progress NuGet working-on-it | All dependencies other than `OxyPlot.Core` are not updated:

| 1.0 | Version numbers of dependencies in nuget packages are wrong - All dependencies other than `OxyPlot.Core` are not updated:

| non_build | version numbers of dependencies in nuget packages are wrong all dependencies other than oxyplot core are not updated | 0 |

93,818 | 15,946,418,110 | IssuesEvent | 2021-04-15 01:01:18 | jgeraigery/core | https://api.github.com/repos/jgeraigery/core | opened | CVE-2019-12086 (High) detected in jackson-databind-2.9.6.jar | security vulnerability | ## CVE-2019-12086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-12086 (High) detected in jackson-databind-2.9.6.jar - ## CVE-2019-12086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General... | non_build | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file core nimbus entity dsl pom xml ... | 0 |

419,489 | 28,146,365,952 | IssuesEvent | 2023-04-02 14:29:43 | kevin931/CytofDR | https://api.github.com/repos/kevin931/CytofDR | opened | Update reference for all relevant READMEs | documentation | The references section and the link to the article should be updated for all relevant README and documentation pages. We can retroactively update v1.0.x (but no new patch beyond EOL). | 1.0 | Update reference for all relevant READMEs - The references section and the link to the article should be updated for all relevant README and documentation pages. We can retroactively update v1.0.x (but no new patch beyond EOL). | non_build | update reference for all relevant readmes the references section and the link to the article should be updated for all relevant readme and documentation pages we can retroactively update x but no new patch beyond eol | 0 |

89,754 | 25,894,430,587 | IssuesEvent | 2022-12-14 20:58:09 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Build 146 for 8.3 with status FAILURE | automation ci-reported Team:Elastic-Agent-Data-Plane build-failures |

## :broken_heart: Tests Failed

<!-- BUILD BADGES-->

> _the below badges are clickable and redirect to their specific view in the CI or DOCS_

[](https://beats-ci.elastic.co/blue/organizations/jenkins/Beats%2Fbeats%2F8.3/detail/8.3/146//pipeline)... | 1.0 | Build 146 for 8.3 with status FAILURE -

## :broken_heart: Tests Failed

<!-- BUILD BADGES-->

> _the below badges are clickable and redirect to their specific view in the CI or DOCS_

[](https://beats-ci.elastic.co/blue/organizations/jenkins/Beats... | build | build for with status failure broken heart tests failed the below badges are clickable and redirect to their specific view in the ci or docs expand to view the summary build stats start time duration min sec test stats ... | 1 |

121,175 | 25,936,372,991 | IssuesEvent | 2022-12-16 14:30:40 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | closed | Short solution needed: "Feature selection" (python-scikit-learn) | help wanted good first issue code python-scikit-learn | Please help us write most modern and shortest code solution for this issue:

**Feature selection** (technology: [python-scikit-learn](https://onelinerhub.com/python-scikit-learn))

### Fast way

Just write the code solution in the comments.

### Preferred way

1. Create [pull request](https://github.com/Onelinerhub/oneline... | 1.0 | Short solution needed: "Feature selection" (python-scikit-learn) - Please help us write most modern and shortest code solution for this issue:

**Feature selection** (technology: [python-scikit-learn](https://onelinerhub.com/python-scikit-learn))

### Fast way

Just write the code solution in the comments.

### Preferred ... | non_build | short solution needed feature selection python scikit learn please help us write most modern and shortest code solution for this issue feature selection technology fast way just write the code solution in the comments preferred way create with a new code file inside don t forge... | 0 |

277,461 | 24,073,914,657 | IssuesEvent | 2022-09-18 14:44:26 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: tlp failed | C-test-failure O-robot O-roachtest release-blocker branch-release-22.2 | roachtest.tlp [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6504187?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6504187?buildTab=artifacts#/tlp) on release-22.2 @ [aac413cd4ca6... | 2.0 | roachtest: tlp failed - roachtest.tlp [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6504187?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6504187?buildTab=artifacts#/tlp) on rele... | non_build | roachtest tlp failed roachtest tlp with on release test artifacts and logs in artifacts tlp run tlp go tlp go test runner go expected unpartitioned and partitioned results to be equal attached stack trace stack trace github com cockroachdb cockroach pkg cmd roa... | 0 |

48,580 | 7,444,799,992 | IssuesEvent | 2018-03-28 00:36:32 | ReDEnergy/SessionSync | https://api.github.com/repos/ReDEnergy/SessionSync | opened | Improve documentation and explanations | documentation | Provide detailed information for configurations as well as all aspects that might be interpretable.

- session saving settings - explain in detail what they do

- saving history sessions

| 1.0 | Improve documentation and explanations - Provide detailed information for configurations as well as all aspects that might be interpretable.

- session saving settings - explain in detail what they do

- saving history sessions

| non_build | improve documentation and explanations provide detailed information for configurations as well as all aspects that might be interpretable session saving settings explain in detail what they do saving history sessions | 0 |

244,774 | 7,879,788,952 | IssuesEvent | 2018-06-26 14:18:07 | containous/traefik | https://api.github.com/repos/containous/traefik | closed | docker.tls.ca ignored when loading config from KV store | area/provider/kv kind/bug/confirmed priority/P1 | ### Do you want to request a *feature* or report a *bug*?

Report a bug

### What did you do?

* Use `traefik storeconfig` to store config in consul

* Launch traefik with `--consul` to load config

### What did you expect to see?

`docker.tls.ca` setting loaded successfully

### What did you see instead?

`docke... | 1.0 | docker.tls.ca ignored when loading config from KV store - ### Do you want to request a *feature* or report a *bug*?

Report a bug

### What did you do?

* Use `traefik storeconfig` to store config in consul

* Launch traefik with `--consul` to load config

### What did you expect to see?

`docker.tls.ca` setting lo... | non_build | docker tls ca ignored when loading config from kv store do you want to request a feature or report a bug report a bug what did you do use traefik storeconfig to store config in consul launch traefik with consul to load config what did you expect to see docker tls ca setting lo... | 0 |

42,782 | 11,072,351,086 | IssuesEvent | 2019-12-12 10:07:34 | spack/spack | https://api.github.com/repos/spack/spack | opened | Installation issue: motif | build-error | ### Steps to reproduce the issue

```console

$ spack install motif

==> Installing motif

==> Searching for binary cache of motif

==> No binary for motif found: installing from source

==> Fetching http://cfhcable.dl.sourceforge.net/project/motif/Motif%202.3.8%20Source%20Code/motif-2.3.8.tar.gz

###################... | 1.0 | Installation issue: motif - ### Steps to reproduce the issue

```console

$ spack install motif

==> Installing motif

==> Searching for binary cache of motif

==> No binary for motif found: installing from source

==> Fetching http://cfhcable.dl.sourceforge.net/project/motif/Motif%202.3.8%20Source%20Code/motif-2.3.8... | build | installation issue motif steps to reproduce the issue console spack install motif installing motif searching for binary cache of motif no binary for motif found installing from source fetching ... | 1 |

1,956 | 11,171,419,560 | IssuesEvent | 2019-12-28 19:35:36 | carlosjgp/kubernetes-config-collector | https://api.github.com/repos/carlosjgp/kubernetes-config-collector | closed | Implement release workflow | automation | Implement Travis steps to Tag the repository commit, generate the `CHANGELOG.md` and generate a GitHub release for that tag

Implement `CHANGELOG.md` generation using [`gitchangelog`](https://github.com/vaab/gitchangelog) before a release is created, add it to git and commit it through using the CI server | 1.0 | Implement release workflow - Implement Travis steps to Tag the repository commit, generate the `CHANGELOG.md` and generate a GitHub release for that tag

Implement `CHANGELOG.md` generation using [`gitchangelog`](https://github.com/vaab/gitchangelog) before a release is created, add it to git and commit it through us... | non_build | implement release workflow implement travis steps to tag the repository commit generate the changelog md and generate a github release for that tag implement changelog md generation using before a release is created add it to git and commit it through using the ci server | 0 |

11,324 | 4,959,020,945 | IssuesEvent | 2016-12-02 11:50:34 | open-power-host-os/builds | https://api.github.com/repos/open-power-host-os/builds | closed | Weekly build - November, 30th, 2016 | Weekly Build | A build is scheduled for Wednesday, November, 30th, 09:00 AM CT.

Please leave a comment if you have any reason to delay this build or to change its settings.

The build will use the HEAD of the following branches:

open-power-host-os/linux -> hostos-devel

open-power-host-os/qemu -> hostos-devel

open-power-host-os/... | 1.0 | Weekly build - November, 30th, 2016 - A build is scheduled for Wednesday, November, 30th, 09:00 AM CT.

Please leave a comment if you have any reason to delay this build or to change its settings.

The build will use the HEAD of the following branches:

open-power-host-os/linux -> hostos-devel

open-power-host-os/qem... | build | weekly build november a build is scheduled for wednesday november am ct please leave a comment if you have any reason to delay this build or to change its settings the build will use the head of the following branches open power host os linux hostos devel open power host os qemu hostos... | 1 |

60,725 | 14,909,854,933 | IssuesEvent | 2021-01-22 08:42:20 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Numeric response type > Default values of Minimum and Maximum values should be put into text fields | Bug P2 Process: Fixed Process: Tested dev Study builder | **Steps:**

1. Edit study

2. Add a questionnaire

3. Add numeric response type for question step/form step

4. Navigate to Response level attributes

5. Observe the minimum and maximum text fields

**Actual:** Currently default values are not mentioned in the UI part in these text fields

**Expected:** Minimum va... | 1.0 | [SB] Numeric response type > Default values of Minimum and Maximum values should be put into text fields - **Steps:**

1. Edit study

2. Add a questionnaire

3. Add numeric response type for question step/form step

4. Navigate to Response level attributes

5. Observe the minimum and maximum text fields

**Actual:**... | build | numeric response type default values of minimum and maximum values should be put into text fields steps edit study add a questionnaire add numeric response type for question step form step navigate to response level attributes observe the minimum and maximum text fields actual cu... | 1 |

65,339 | 16,238,727,811 | IssuesEvent | 2021-05-07 06:30:41 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | Sanity Tests & Unity Test checking fails | type:build/install | Commands:

- `tensorflow/tools/ci_build/ci_build.sh CPU tensorflow/tools/ci_build/ci_sanity.sh`

- `tensorflow/tools/ci_build/ci_build.sh CPU bazel test //tensorflow/...`

While running the above two commands, the test fails and stops because of a pip package installation error, as shown below.

```

2021-05-07 06:26:49 (1.75 ... | 1.0 | Sanity Tests & Unity Test checking fails - Commands:

- `tensorflow/tools/ci_build/ci_build.sh CPU tensorflow/tools/ci_build/ci_sanity.sh`

- `tensorflow/tools/ci_build/ci_build.sh CPU bazel test //tensorflow/...`

While running the above two commands, the test fails and stops because of a pip package installation error as s... | build | sanity tests unity test checking fails commands tensorflow tools ci build ci build sh cpu tensorflow tools ci build ci sanity sh tensorflow tools ci build ci build sh cpu bazel test tensorflow while running above two commands the test fails and stop because of pip package installation error as s... | 1 |

2,647 | 2,999,995,047 | IssuesEvent | 2015-07-23 21:59:16 | HunterGPlays/TerrocideSupport | https://api.github.com/repos/HunterGPlays/TerrocideSupport | closed | Build /warp upgrade immediately | build | This is of the HIGHEST priority. There needs to be pressure plates to open up a class confirmation thing. | 1.0 | Build /warp upgrade immediately - This is of the HIGHEST priority. There needs to be pressure plates to open up a class confirmation thing. | build | build warp upgrade immediately this is of the highest priority there needs to be pressure plates to open up a class confirmation thing | 1 |

70,188 | 18,049,075,941 | IssuesEvent | 2021-09-19 12:23:52 | trilinos/Trilinos | https://api.github.com/repos/trilinos/Trilinos | closed | undefined reference to `dggsvd3' | impacting: build MARKED_FOR_CLOSURE CLOSED_DUE_TO_INACTIVITY |

<!---

Assignees: If you know anyone who should likely tackle this issue, select them

from the Assignees drop-down on the right.

-->

@bartlettroscoe

@etphipp

@mhoemmen

I tried to build trilinos but I got some errors. This is my CMakeError.log:

Performing C++ SOURCE FILE Test HAVE_TEUCHOS_LAPACKLARND fa... | 1.0 | undefined reference to `dggsvd3' -

<!---

Assignees: If you know anyone who should likely tackle this issue, select them

from the Assignees drop-down on the right.

-->

@bartlettroscoe

@etphipp

@mhoemmen

I tried to build trilinos but I got some errors. This is my CMakeError.log:

Performing C++ SOURCE FI... | build | undefined reference to assignees if you know anyone who should likely tackle this issue select them from the assignees drop down on the right bartlettroscoe etphipp mhoemmen i tried to build trilinos but i got some errors this is my cmakeerror log performing c source file tes... | 1 |

80,669 | 23,276,156,690 | IssuesEvent | 2022-08-05 07:24:48 | reitmas32/Next | https://api.github.com/repos/reitmas32/Next | opened | Create a basic builder | builder | ## Builder that uses nothing in the base

### Example of config.yaml

```yaml

basic_release:

base: basic

c_compiler: gcc

cxx_compiler: g++

linker: ld

files_cxx:

- main.cpp

- src/func/suma.cpp

- src/structs/*.cc

files_c:

- main_of_c.c

- src/func/suma.... | 1.0 | Create a basic builder - ## Builder that uses nothing in the base

### Example of config.yaml

```yaml

basic_release:

base: basic

c_compiler: gcc

cxx_compiler: g++

linker: ld

files_cxx:

- main.cpp

- src/func/suma.cpp

- src/structs/*.cc

files_c:

- main_of_c.... | build | create a basic builder builder that uses nothing in the base example of config yaml yaml basic release base basic c compiler gcc cxx compiler g linker ld files cxx main cpp src func suma cpp src structs cc files c main of c ... | 1 |

58,353 | 14,368,044,132 | IssuesEvent | 2020-12-01 07:46:14 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | ng serve - assets folder with large video files causing heap out of memory, unable to compile | comp: devkit/build-angular freq1: low severity3: broken type: bug/fix | ng serve - assets folder with large video files causing heap out of memory, unable to compile | 1.0 | ng serve - assets folder with large video files causing heap out of memory, unable to compile - ng serve - assets folder with large video files causing heap out of memory, unable to compile | build | ng serve assets folder with large video files causing heap out of memory unable to compile ng serve assets folder with large video files causing heap out of memory unable to compile | 1 |

71,951 | 18,945,671,349 | IssuesEvent | 2021-11-18 09:54:33 | TransactionProcessing/CallbackHandler | https://api.github.com/repos/TransactionProcessing/CallbackHandler | closed | Investigate Nightly Build Failure | nightlybuild | Url is ${GITHUB_SERVER_URL}/${GITHUB_REPOSITORY}/actions/runs/${GITHUB_RUN_ID} | 1.0 | Investigate Nightly Build Failure - Url is ${GITHUB_SERVER_URL}/${GITHUB_REPOSITORY}/actions/runs/${GITHUB_RUN_ID} | build | investigate nightly build failure url is github server url github repository actions runs github run id | 1 |

262,888 | 27,989,474,122 | IssuesEvent | 2023-03-27 01:34:34 | AkshayMukkavilli/Tensorflow | https://api.github.com/repos/AkshayMukkavilli/Tensorflow | opened | CVE-2023-25670 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | Mend: dependency security vulnerability | ## CVE-2023-25670 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning... | True | CVE-2023-25670 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2023-25670 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-m... | non_build | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file tensorflow src requirements ... | 0 |

111,032 | 11,717,137,685 | IssuesEvent | 2020-03-09 16:45:28 | operator-framework/operator-sdk | https://api.github.com/repos/operator-framework/operator-sdk | closed | Allow using different log formats for the Operator | good first issue help wanted kind/documentation | ## Feature Request

**Is your feature request related to a problem? Please describe.**

Currently, Operator SDK uses Zap for logging, but it appears there's no obvious way to tweak the format zap outputs the logs in. For example, if one wishes to use logfmt, he could resort to something like https://github.com/jstern... | 1.0 | Allow using different log formats for the Operator - ## Feature Request

**Is your feature request related to a problem? Please describe.**

Currently, Operator SDK uses Zap for logging, but it appears there's no obvious way to tweak the format zap outputs the logs in. For example, if one wishes to use logfmt, he cou... | non_build | allow using different log formats for the operator feature request is your feature request related to a problem please describe currently operator sdk uses zap for logging but it appears there s no obvious way to tweak the format zap outputs the logs in for example if one wishes to use logfmt he cou... | 0 |

95,989 | 27,714,525,652 | IssuesEvent | 2023-03-14 16:07:22 | dotnet/msbuild | https://api.github.com/repos/dotnet/msbuild | opened | Node assignments pessimized in multitargeted->multitargeted refs with `BuildProjectReferences=false` | needs-design Area: Engine Performance-Scenario-Build needs-triage | Given a project that itself multitargets and references several multitargeted projects, like [pessimized_node_assignments.zip](https://github.com/dotnet/msbuild/files/10969331/pessimized_node_assignments.zip), the scheduler does a terrible job spreading the work around among nodes when the referencing project (here `Ag... | 1.0 | Node assignments pessimized in multitargeted->multitargeted refs with `BuildProjectReferences=false` - Given a project that itself multitargets and references several multitargeted projects, like [pessimized_node_assignments.zip](https://github.com/dotnet/msbuild/files/10969331/pessimized_node_assignments.zip), the sch... | build | node assignments pessimized in multitargeted multitargeted refs with buildprojectreferences false given a project that itself multitargets and references several multitargeted projects like the scheduler does a terrible job spreading the work around among nodes when the referencing project here aggregate ag... | 1 |

92,746 | 26,758,421,356 | IssuesEvent | 2023-01-31 03:32:07 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | closed | error: exponent has no digits | build-problem libc | Compiling LLVM-14.0.6 using GCC 12.1.0 I get several errors compiling `math_utils.cpp` `sincosf_utils.h` `sincosf_data.cpp` which appear to be due to an inability to understand C++17 floats correctly

Error type 1:

```console

/home/liam/Downloads/llvm-project-14.0.6.src/libc/src/math/generic/math_utils.cpp:18:57: e... | 1.0 | error: exponent has no digits - Compiling LLVM-14.0.6 using GCC 12.1.0 I get several errors compiling `math_utils.cpp` `sincosf_utils.h` `sincosf_data.cpp` which appear to be due to an inability to understand C++17 floats correctly

Error type 1:

```console

/home/liam/Downloads/llvm-project-14.0.6.src/libc/src/math... | build | error exponent has no digits compiling llvm using gcc i get several errors compiling math utils cpp sincosf utils h sincosf data cpp which appear to be due to an inability to understand c floats correctly error type console home liam downloads llvm project src libc src math gen... | 1 |

491,446 | 14,164,229,314 | IssuesEvent | 2020-11-12 04:29:11 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Unable to directly access dev portal with reduced permissions | Priority/High Severity/Critical bug console reviewed_511 ux | **Describe the Issue:**

A User with less, but required permissions to access some of the permissions required to login to dev-portal is unable to login.

**How To Reproduce:**

1. Create a new user "userA"

2. Create a new role "testRole"

3. Assign `login` and `user management` permissions to the "testRole"

4. A... | 1.0 | Unable to directly access dev portal with reduced permissions - **Describe the Issue:**

A User with less, but required permissions to access some of the permissions required to login to dev-portal is unable to login.

**How To Reproduce:**

1. Create a new user "userA"

2. Create a new role "testRole"

3. Assign `... | non_build | unable to directly access dev portal with reduced permissions describe the issue a user with less but required permissions to access some of the permissions required to login to dev portal is unable to login how to reproduce create a new user usera create a new role testrole assign ... | 0 |

78,570 | 22,307,748,369 | IssuesEvent | 2022-06-13 14:23:00 | ku-kim/issue-tracker | https://api.github.com/repos/ku-kim/issue-tracker | opened | [BE] Spring Boot project initial setup | 🏗️ build 🤖 BE | ## Feature request

Initial setup of the Spring server is needed.

## Request details

- Java 11

- Spring Boot

- dependencies

- spring web

- Data JPA

- Query DSL

- webflux(web client)

- jwt

- db

- h2, mysql

- test

- AssertJ

| 1.0 | [BE] Spring Boot project initial setup - ## Feature request

Initial setup of the Spring server is needed.

## Request details

- Java 11

- Spring Boot

- dependencies

- spring web

- Data JPA

- Query DSL

- webflux(web client)

- jwt

- db

- h2, mysql

- test

- AssertJ

| build | spring boot project initial setup feature request initial setup of the spring server is needed request details java spring boot dependencies spring web data jpa query dsl webflux web client jwt db mysql test assertj | 1 |

5,123 | 4,793,781,339 | IssuesEvent | 2016-10-31 19:08:32 | letsencrypt/boulder | https://api.github.com/repos/letsencrypt/boulder | opened | SA Max Open Connections Limit Ineffective | area/sa kind/bug kind/performance layer/storage | The SA creates its DbMap [setting the max open connections limit](https://github.com/letsencrypt/boulder/blob/32c03f942bd4f8d363544cf499c56985943d76d7/cmd/boulder-sa/main.go#L58) to `saConf.DBConfig.MaxDBConns`.

In practice we see more than this number of active connections from the SA to the production database ser... | True | SA Max Open Connections Limit Ineffective - The SA creates its DbMap [setting the max open connections limit](https://github.com/letsencrypt/boulder/blob/32c03f942bd4f8d363544cf499c56985943d76d7/cmd/boulder-sa/main.go#L58) to `saConf.DBConfig.MaxDBConns`.

In practice we see more than this number of active connection... | non_build | sa max open connections limit ineffective the sa creates its dbmap to saconf dbconfig maxdbconns in practice we see more than this number of active connections from the sa to the production database server potential causes we have a bug somewhere where we aren t properly closing connections or row ... | 0 |

53,171 | 13,129,956,443 | IssuesEvent | 2020-08-06 14:39:41 | google/or-tools | https://api.github.com/repos/google/or-tools | closed | [CMake] USE_SCIP=OFF not working | Bug Build: CMake | libscip (SCIP optimization library) is suddenly required for any cmake C++ build, ignoring USE_SCIP option.

This line: https://github.com/google/or-tools/blame/stable/cmake/cpp.cmake#L222 requires libscip, ignoring the USE_SCIP setting. Removing this line allows a successful build (assuming one does not wish to use... | 1.0 | [CMake] USE_SCIP=OFF not working - libscip (SCIP optimization library) is suddenly required for any cmake C++ build, ignoring USE_SCIP option.

This line: https://github.com/google/or-tools/blame/stable/cmake/cpp.cmake#L222 requires libscip, ignoring the USE_SCIP setting. Removing this line allows a successful build... | build | use scip off not working libscip scip optimization library is suddenly required for any cmake c build ignoring use scip option this line requires libscip ignoring the use scip setting removing this line allows a successful build assuming one does not wish to use scipopt library | 1 |

83,347 | 24,048,428,913 | IssuesEvent | 2022-09-16 10:26:54 | google/mediapipe | https://api.github.com/repos/google/mediapipe | closed | build issues while running mediapipe using bazel on mac os | platform:ios type:build/install MediaPipe stat:awaiting response stalled | dyld[1363]: symbol not found in flat namespace '_CFRelease'

zsh: abort bazel run --define MEDIAPIPE_DISABLE_GPU=1

This is the error message I get while I try to build mediapipe using bazel. I am currently running macOS 12.5 (Monterey).

| 1.0 | build issues while running mediapipe using bazel on mac os - dyld[1363]: symbol not found in flat namespace '_CFRelease'

zsh: abort bazel run --define MEDIAPIPE_DISABLE_GPU=1

This is the error message I get while I try to build mediapipe using bazel. I am currently running macOS 12.5 (Monterey).

| build | build issues while running mediapipe using bazel on mac os dyld symbol not found in flat namespace cfrelease zsh abort bazel run define mediapipe disable gpu this is the error message while i try to build mediapipe using bazel i am currently running macos mac monterey | 1 |

93,653 | 27,007,758,051 | IssuesEvent | 2023-02-10 13:06:27 | eclipse-edc/Connector | https://api.github.com/repos/eclipse-edc/Connector | opened | Build: nightlies should be actual releases | build | # Feature Request

Currently, nightly builds are technically snapshots, which makes them difficult to use for downstream projects, especially if they need repeatable builds. Snapshots can be deleted at any time.

## Which Areas Would Be Affected?

Build (Gradle Plugin)

## Why Is the Feature Desired?

Repeatabl... | 1.0 | Build: nightlies should be actual releases - # Feature Request

Currently, nightly builds are technically snapshots, which makes them difficult to use for downstream projects, especially if they need repeatable builds. Snapshots can be deleted at any time.

## Which Areas Would Be Affected?

Build (Gradle Plugin)... | build | build nightlies should be actual releases feature request currently nightly builds are technically snapshots which makes them difficult to use for downstream projects especially if they need repeatable builds snapshots can be deleted at any time which areas would be affected build gradle plugin ... | 1 |

27,834 | 8,039,204,552 | IssuesEvent | 2018-07-30 17:38:53 | fossasia/susi_skill_cms | https://api.github.com/repos/fossasia/susi_skill_cms | closed | Remember the choice of user between code view and UI view | Botbuilder enhancement | **Actual Behaviour**

When the user chooses UI view in any of the tabs in botbuilder, it switches back to code view in other tabs.

**Expected Behaviour**

When the user switches between code view and UI view in one of the tabs in the botbuilder, it should stay consistent in other tabs too.

**Would you like to... | 1.0 | Remember the choice of user between code view and UI view - **Actual Behaviour**

When the user chooses UI view in any of the tabs in botbuilder, it switches back to code view in other tabs.

**Expected Behaviour**

When the user switches between code view and UI view in one of the tabs in the botbuilder, it shou... | build | remember the choice of user between code view and ui view actual behaviour when the user chooses ui view in any of the tabs in botbuilder it switches back to code view in other tabs expected behaviour when the user switches between code view and ui view in one of the tabs in the botbuilder it shou... | 1 |

250,189 | 18,875,635,300 | IssuesEvent | 2021-11-14 00:18:07 | imran-mid/Hearth-Tale-v2 | https://api.github.com/repos/imran-mid/Hearth-Tale-v2 | opened | Update home page view to now include comic cards in a 'swipeable' container | documentation enhancement New Feature | # Tasks

- [ ] Create new comic view card

- [ ] Refactor story card: have a 'home' function which shows the 2 views using a swipeable container

- [ ] Fetch data from firebase like we do stories (related to #7 )

- [ ] Update documentation

## Refs

See [Figma](https://www.figma.com/proto/nEnGH8CuoWSkCzJw3QNnpe/Hear... | 1.0 | Update home page view to now include comic cards in a 'swipeable' container - # Tasks

- [ ] Create new comic view card

- [ ] Refactor story card: have a 'home' function which shows the 2 views using a swipeable container

- [ ] Fetch data from firebase like we do stories (related to #7 )

- [ ] Update documentation

... | non_build | update home page view to now include comic cards in a swipeable container tasks create new comic view card refactor story card have a home function which shows the views using a swipeable container fetch data from firebase like we do stories related to update documentation ref... | 0 |

40,460 | 10,532,450,584 | IssuesEvent | 2019-10-01 10:46:43 | icsharpcode/ILSpy | https://api.github.com/repos/icsharpcode/ILSpy | opened | Unit Test Failure on Build Server | Build Automation | eg https://ci.appveyor.com/project/icsharpcode/ilspy/build/job/83q5uw5vhaolta3s

Proposed solution: stop after successful compile, not full round-trip. | 1.0 | Unit Test Failure on Build Server - eg https://ci.appveyor.com/project/icsharpcode/ilspy/build/job/83q5uw5vhaolta3s

Proposed solution: stop after successful compile, not full round-trip. | build | unit test failure on build server eg proposed solution stop after successful compile not full round trip | 1 |

47,788 | 12,122,394,376 | IssuesEvent | 2020-04-22 10:53:48 | golang/go | https://api.github.com/repos/golang/go | closed | cmd/api: tests timing out on plan9-arm builder | Builders NeedsInvestigation OS-Plan9 Testing | [CL 224619](https://go-review.googlesource.com/c/go/+/224619) slowed the cmd/api tests on plan9_arm from best-case about 1 minute to best-case about 3 minutes, and worst-case timing out after 13 minutes, for example [here](https://build.golang.org/log/f4704e4e0bc49d1d31c722b21e994e3b614eb947).

The CL added code to... | 1.0 | cmd/api: tests timing out on plan9-arm builder - [CL 224619](https://go-review.googlesource.com/c/go/+/224619) slowed the cmd/api tests on plan9_arm from best-case about 1 minute to best-case about 3 minutes, and worst-case timing out after 13 minutes, for example [here](https://build.golang.org/log/f4704e4e0bc49d1d31c... | build | cmd api tests timing out on arm builder slowed the cmd api tests on arm from best case about minute to best case about minutes and worst case timing out after minutes for example the cl added code to the cmd api test to warm up the import cache by starting go list deps json std com... | 1 |

42,928 | 11,102,106,874 | IssuesEvent | 2019-12-16 22:59:46 | craftcms/cms | https://api.github.com/repos/craftcms/cms | closed | Feature Request: Add upload user to an asset | assets :file_folder: content governance :classical_building: enhancement | This may be a duplication, but I couldn't find it on GitHub.

A client has requested that they can see which user uploaded what file. As far as I can tell, Craft 3 currently doesn't link assets to users like Entries do.

It would be useful to have this option so that clients know who uploaded what. | 1.0 | Feature Request: Add upload user to an asset - This may be a duplication, but I couldn't find it on GitHub.

A client has requested that they can see which user uploaded what file. As far as I can tell, Craft 3 currently doesn't link assets to users like Entries do.

It would be useful to have this option so that c... | build | feature request add upload user to an asset this may be a duplication but i couldn t find it on github a client has requested that they can see which user uploaded what file as far as i can tell craft currently doesn t link assets to users like entries do it would be useful to have this option so that c... | 1 |

140,527 | 32,020,023,486 | IssuesEvent | 2023-09-22 03:09:11 | FerretDB/FerretDB | https://api.github.com/repos/FerretDB/FerretDB | closed | `dropDatabases` use new `PostgreSQL` backend | code/chore not ready | ### What should be done?

Use new backend in https://github.com/FerretDB/FerretDB/blob/main/internal/handlers/pg/msg_dropdatabase.go

### Where?

https://github.com/FerretDB/FerretDB/blob/main/internal/handlers/pg/msg_dropdatabase.go

https://github.com/FerretDB/FerretDB/tree/main/internal/backends/postgresql

### De... | 1.0 | `dropDatabases` use new `PostgreSQL` backend - ### What should be done?

Use new backend in https://github.com/FerretDB/FerretDB/blob/main/internal/handlers/pg/msg_dropdatabase.go

### Where?

https://github.com/FerretDB/FerretDB/blob/main/internal/handlers/pg/msg_dropdatabase.go

https://github.com/FerretDB/FerretDB/t... | non_build | dropdatabases use new postgresql backend what should be done use new backend in where definition of done spot refactorings done | 0 |

67,342 | 16,902,684,976 | IssuesEvent | 2021-06-24 00:31:01 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | MSI installs don't create system-wide icons | Bug Build/Install Windows | **Describe the bug**

Start Menu and Desktop icons are created only within the installing user's profile. Subsequent users of the computer are highly unlikely to even know that QGIS is installed, let alone know where/how to start it.

**How to Reproduce**

1. run `msiexec.exe /i QGIS-OSGeo4W-3.20.0-2.msi /qn /nore... | 1.0 | MSI installs don't create system-wide icons - **Describe the bug**

Start Menu and Desktop icons are created only within the installing user's profile. Subsequent users of the computer are highly unlikely to even know that QGIS is installed, let alone know where/how to start it.

**How to Reproduce**

1. run `msie... | build | msi installs don t create system wide icons describe the bug start menu and desktop icons are created only within the installing user s profile subsequent users of the computer are highly unlikely to even know that qgis is installed let alone know where how to start it how to reproduce run msie... | 1 |

214,531 | 16,566,105,006 | IssuesEvent | 2021-05-29 12:49:19 | LDSSA/wiki | https://api.github.com/repos/LDSSA/wiki | closed | Update current staff list | Documentation AOR priority:medium question | We have an outdated list of current staff in the Wiki: https://ldssa.github.io/wiki/About%20us/Member-Directory/#all-current-staff.

1. Could you give me a list of all current staff?

2. Should we create a new section for staff that has contributed but is no longer active?

Alternatively, I can ask each member of t... | 1.0 | Update current staff list - We have an outdated list of current staff in the Wiki: https://ldssa.github.io/wiki/About%20us/Member-Directory/#all-current-staff.

1. Could you give me a list of all current staff?

2. Should we create a new section for staff that has contributed but is no longer active?

Alternatively... | non_build | update current staff list we have an outdated list of current staff in the wiki could you give me a list of all current staff should we create a new section for staff that has contributed but is no longer active alternatively i can ask each member of the leaders slack one by one but it will take a... | 0 |

75,676 | 20,954,938,214 | IssuesEvent | 2022-03-27 01:25:24 | ModernFlyouts-Community/ModernFlyouts | https://api.github.com/repos/ModernFlyouts-Community/ModernFlyouts | closed | Show message when you turn on / off the Keyboard brightness | Community Would Need to build | Show a message also when you turn on or off the Keyboard brightness | 1.0 | Show message when you turn on / off the Keyboard brightness - Show a message also when you turn on or off the Keyboard brightness | build | show message when you turn on off the keyboard brightness show a message also when you turn on or off the keyboard brightness | 1 |

430,895 | 12,467,773,720 | IssuesEvent | 2020-05-28 17:39:33 | processing/p5.js-web-editor | https://api.github.com/repos/processing/p5.js-web-editor | closed | When fetching sketches via slug, API returns first sketch that matches slug | priority:high type:bug | <!--

Hi there! If you are here to report a bug, or to discuss a feature (new or existing), you can use the below template to get started quickly. Fill out all those parts which you're comfortable with, and delete the remaining ones.

-->

#### Nature of issue?

<!-- Select any one issue and delete the other two --... | 1.0 | When fetching sketches via slug, API returns first sketch that matches slug - <!--

Hi there! If you are here to report a bug, or to discuss a feature (new or existing), you can use the below template to get started quickly. Fill out all those parts which you're comfortable with, and delete the remaining ones.

-->

... | non_build | when fetching sketches via slug api returns first sketch that matches slug hi there if you are here to report a bug or to discuss a feature new or existing you can use the below template to get started quickly fill out all those parts which you re comfortable with and delete the remaining ones ... | 0 |

469,022 | 13,495,427,103 | IssuesEvent | 2020-09-11 23:51:30 | datasci4health/harena-space | https://api.github.com/repos/datasci4health/harena-space | opened | Invalid CSRF - Case creation form | bug help wanted high priority production | When attempting to create a case using axios and form results in Invalid CSRF. The error started to occur after 'token-validator.js'. The validator makes one GET request using axios, and somehow that's messing up the CSRF for the case creation POST. Need help to figure this out.

Ps. The error only occurs in the prod... | 1.0 | Invalid CSRF - Case creation form - When attempting to create a case using axios and form results in Invalid CSRF. The error started to occur after 'token-validator.js'. The validator makes one GET request using axios, and somehow that's messing up the CSRF for the case creation POST. Need help to figure this out.