Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 853) | labels (stringlengths, 4 to 898) | body (stringlengths, 2 to 262k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 250k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

44,348 | 7,106,326,261 | IssuesEvent | 2018-01-16 16:18:22 | unicef/magicbox | https://api.github.com/repos/unicef/magicbox | opened | Choose documentation platform | documentation needs feedback priority:crit | # Summary

Establish a documentation platform for hosted documentation about MagicBox projects

# Background

Effective documentation is critical in technical projects – open source or otherwise. However, it's especially helpful for MagicBox because of the small team size and need to engage with the open source c... | 1.0 | Choose documentation platform - # Summary

Establish a documentation platform for hosted documentation about MagicBox projects

# Background

Effective documentation is critical in technical projects – open source or otherwise. However, it's especially helpful for MagicBox because of the small team size and need ... | non_build | choose documentation platform summary establish a documentation platform for hosted documentation about magicbox projects background effective documentation is critical in technical projects – open source or otherwise however it s especially helpful for magicbox because of the small team size and need ... | 0 |

90,701 | 26,171,906,428 | IssuesEvent | 2023-01-02 01:42:45 | apache/beam | https://api.github.com/repos/apache/beam | closed | beam_PreCommit_PythonLint_Commit takes 50+ minutes to execute | build infra P3 bug jenkins | The beam_PreCommit_PythonLint_Commit phase takes 50+ minutes to execute as seen here:

[https://builds.apache.org/job/beam_PreCommit_PythonLint_Commit/4252/](https://builds.apache.org/job/beam_PreCommit_PythonLint_Commit/4252/) (53 minutes)

[https://builds.apache.org/job/beam_PreCommit_PythonLint_Commit/42... | 1.0 | beam_PreCommit_PythonLint_Commit takes 50+ minutes to execute - The beam_PreCommit_PythonLint_Commit phase takes 50+ minutes to execute as seen here:

[https://builds.apache.org/job/beam_PreCommit_PythonLint_Commit/4252/](https://builds.apache.org/job/beam_PreCommit_PythonLint_Commit/4252/) (53 minutes)

[h... | build | beam precommit pythonlint commit takes minutes to execute the beam precommit pythonlint commit phase takes minutes to execute as seen here minutes minutes according to the build blame report the mean time is minutes imported from jira original jira may contain... | 1 |

594,487 | 18,046,665,930 | IssuesEvent | 2021-09-19 02:09:16 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | C# WaitForReady is hard to set for a gRPC call | kind/enhancement lang/C# priority/P2 disposition/stale | `CallOptions` has a `WaitForReady` value and a fluent method for setting it to true. However, there is no option to set it to true on the CallOptions constructor.

Getter property:

https://github.com/grpc/grpc/blob/7125bbe5a564e30ad63c4f19bed89e7676cb7c29/src/csharp/Grpc.Core.Api/CallOptions.cs#L114-L122

Set method... | 1.0 | C# WaitForReady is hard to set for a gRPC call - `CallOptions` has a `WaitForReady` value and a fluent method for setting it to true. However, there is no option to set it to true on the CallOptions constructor.

Getter property:

https://github.com/grpc/grpc/blob/7125bbe5a564e30ad63c4f19bed89e7676cb7c29/src/csharp/G... | non_build | c waitforready is hard to set for a grpc call calloptions has a waitforready value and a fluent method for setting it to true however there is no option to set it to true on the calloptions constructor getter property set method ctor no option for wait for ready also there is no option ... | 0 |

342,162 | 10,312,882,784 | IssuesEvent | 2019-08-29 21:00:44 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | opened | [craftercms] Change our backup/restore to use tar and gzip | enhancement priority: medium | Since we no longer run in Windows (we support Linux Docker images in Windows), we can now support backing up with tar and gzip, instead of using our own zipping utility, since tar is more reliable.

| 1.0 | [craftercms] Change our backup/restore to use tar and gzip - Since we no longer run in Windows (we support Linux Docker images in Windows), we can now support backing up with tar and gzip, instead of using our own zipping utility, since tar is more reliable.

| non_build | change our backup restore to use tar and gzip since we no longer run in windows we support linux docker images in windows we can now support backing up with tar and gzip instead of using our own zipping utility since tar is more reliable | 0 |

38,857 | 10,256,921,612 | IssuesEvent | 2019-08-21 18:49:52 | tensorflow/tfjs | https://api.github.com/repos/tensorflow/tfjs | closed | tfjs-examples: Simple object detection: yarn train --gpu fails | type:build/install | #### TensorFlow.js version

1.2.2

#### Browser version

Windows Version 10.0.17134 Build 17134

Node v10.15.0

### Problem description

**Install appears to succeed (with warnings)**

```

yarn install v1.17.3

[1/5] Validating package.json...

[2/5] Resolving packages...

[3/5] Fetching packages...

info fsev... | 1.0 | tfjs-examples: Simple object detection: yarn train --gpu fails - #### TensorFlow.js version

1.2.2

#### Browser version

Windows Version 10.0.17134 Build 17134

Node v10.15.0

### Problem description

**Install appears to succeed (with warnings)**

```

yarn install v1.17.3

[1/5] Validating package.json...

[... | build | tfjs examples simple object detection yarn train gpu fails tensorflow js version browser version windows version build node problem description install appears to succeed with warnings yarn install validating package json resolving package... | 1 |

16,229 | 10,679,502,822 | IssuesEvent | 2019-10-21 19:24:41 | matomo-org/matomo | https://api.github.com/repos/matomo-org/matomo | closed | SegmentEditor: display readable values for some metrics (e.g. FF -> Firefox) | Enhancement Hacktoberfest c: Usability | When the segmenteditor is used, some select values are very technical / non user-friendly. These Values should be mapped / labeled to make them more meaningful.

For example this would make sense for:

- Browser - expects values like (FF, IE, MF, CM, IW, etc.) - This should be more readable (Firefox, Internet Explorer,?... | True | SegmentEditor: display readable values for some metrics (e.g. FF -> Firefox) - When the segmenteditor is used, some select values are very technical / non user-friendly. These Values should be mapped / labeled to make them more meaningful.

For example this would make sense for:

- Browser - expects values like (FF, IE,... | non_build | segmenteditor display readable values for some metrics e g ff firefox when the segmenteditor is used some select values are very technical non user friendly these values should be mapped labeled to make them more meaningful for example this would make sense for browser expects values like ff ie ... | 0 |

59,376 | 17,023,110,759 | IssuesEvent | 2021-07-03 00:25:25 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | ConcurrentModificationException when reselecting segment of way. | Component: applet Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 1.15am, Tuesday, 18th April 2006]**

Here's a fun one:

If you make a slight mistake when selecting the segments, deselect the segment then later reselect it, it thinks you're concurrently modifying things... ...with yourself.

The applet then becomes unresponsive... | 1.0 | ConcurrentModificationException when reselecting segment of way. - **[Submitted to the original trac issue database at 1.15am, Tuesday, 18th April 2006]**

Here's a fun one:

If you make a slight mistake when selecting the segments, deselect the segment then later reselect it, it thinks you're concurrently modifying ... | non_build | concurrentmodificationexception when reselecting segment of way here s a fun one if you make a slight mistake when selecting the segments deselect the segment then later reselect it it thinks you re concurrently modifying things with yourself the applet then becomes unresponsive with ... | 0 |

63,892 | 15,729,091,239 | IssuesEvent | 2021-03-29 14:29:58 | atlas-engineer/nyxt | https://api.github.com/repos/atlas-engineer/nyxt | opened | Guix reference scanner triggers all kinds of issues on grafted Nyxt builds | bug build high | Many users have reported breaking issues with the _grafted_ Nyxt build / install, such as GLib errors, etc.:#1103, #1241...

The issue is with Guix reference scanner, see https://issues.guix.gnu.org/33848. | 1.0 | Guix reference scanner triggers all kinds of issues on grafted Nyxt builds - Many users have reported breaking issues with the _grafted_ Nyxt build / install, such as GLib errors, etc.:#1103, #1241...

The issue is with Guix reference scanner, see https://issues.guix.gnu.org/33848. | build | guix reference scanner triggers all kinds of issues on grafted nyxt builds many users have reported breaking issues with the grafted nyxt build install such as glib errors etc the issue is with guix reference scanner see | 1 |

151,044 | 19,648,332,255 | IssuesEvent | 2022-01-10 01:27:42 | ekediala/ekediala | https://api.github.com/repos/ekediala/ekediala | opened | WS-2020-0042 (High) detected in acorn-6.3.0.tgz, acorn-5.7.3.tgz | security vulnerability | ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>acorn-6.3.0.tgz</b>, <b>acorn-5.7.3.tgz</b></p></summary>

<p>

<details><summary><b>acorn-6.3.0.tgz</b></p></summary>

... | True | WS-2020-0042 (High) detected in acorn-6.3.0.tgz, acorn-5.7.3.tgz - ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>acorn-6.3.0.tgz</b>, <b>acorn-5.7.3.tgz</b></p></sum... | non_build | ws high detected in acorn tgz acorn tgz ws high severity vulnerability vulnerable libraries acorn tgz acorn tgz acorn tgz ecmascript parser library home page a href path to dependency file package json path to vulnerable library node ... | 0 |

89,316 | 25,752,747,219 | IssuesEvent | 2022-12-08 14:22:23 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Installation process on dead pip | type:build/install subtype: ubuntu/linux TF 2.11 | <details><summary>Click to expand!</summary>

### Issue Type

Bug

### Source

source

### Tensorflow Version

2.11

### Custom Code

Yes

### OS Platform and Distribution

Linux Ubuntu 22.04

### Mobile device

_No response_

### Python version

3.10.6

### Bazel version

_No response_

### GCC/Compiler version

11... | 1.0 | Installation process on dead pip - <details><summary>Click to expand!</summary>

### Issue Type

Bug

### Source

source

### Tensorflow Version

2.11

### Custom Code

Yes

### OS Platform and Distribution

Linux Ubuntu 22.04

### Mobile device

_No response_

### Python version

3.10.6

### Bazel version

_No resp... | build | installation process on dead pip click to expand issue type bug source source tensorflow version custom code yes os platform and distribution linux ubuntu mobile device no response python version bazel version no response gcc compiler versi... | 1 |

46,336 | 11,825,276,480 | IssuesEvent | 2020-03-21 11:55:51 | nodejs/nodejs.dev | https://api.github.com/repos/nodejs/nodejs.dev | opened | CI: Live previews in forks | build discussion | The current setup provides live previews only when merging against https://github.com/nodejs/nodejs.dev. This means that folks who will be collaborating on forks will either need to open nested PRs or will have to defer CI related fixes when merging a single PR.

If multiple PRs are preferred, this creates unnecessar... | 1.0 | CI: Live previews in forks - The current setup provides live previews only when merging against https://github.com/nodejs/nodejs.dev. This means that folks who will be collaborating on forks will either need to open nested PRs or will have to defer CI related fixes when merging a single PR.

If multiple PRs are prefe... | build | ci live previews in forks the current setup provides live previews only when merging against this means that folks who will be collaborating on forks will either need to open nested prs or will have to defer ci related fixes when merging a single pr if multiple prs are preferred this creates unnecessary noise... | 1 |

40,897 | 10,591,039,193 | IssuesEvent | 2019-10-09 09:59:53 | htm-community/htm.core | https://api.github.com/repos/htm-community/htm.core | opened | Python setup.py develop broken | bug build python | I think the `develop` mode is broken, as it links to `build/Release/distr/src`

while the changes should be "live" on source files.

Intention of 'develop' py install is that you can make changes to the python files and the package (is linked) will immediately reflect that.

| 1.0 | Python setup.py develop broken - I think the `develop` mode is broken, as it links to `build/Release/distr/src`

while the changes should be "live" on source files.

Intention of 'develop' py install is that you can make changes to the python files and the package (is linked) will immediately reflect that.

| build | python setup py develop broken i think the develop mode is broken as it links to build release distr src while the changes should be live on source files intention of develop py install is that you can make changes to the python files and the package is linked will immediately reflect that | 1 |

73,710 | 7,350,245,511 | IssuesEvent | 2018-03-08 13:44:55 | LiskHQ/lisk | https://api.github.com/repos/LiskHQ/lisk | opened | Review unit test coverage of modules | *hard test | account

- some incomplete test

- refactor to stub

blocks

- all test pending

cache

- some tests are pending

- some test are incomplete

- needs refactor to stub.

dapps

- all test pending

delegates

- almost all test pending(25)

- refactor to stub

loader

- almost all test pending(25)

- refactor to... | 1.0 | Review unit test coverage of modules - account

- some incomplete test

- refactor to stub

blocks

- all test pending

cache

- some tests are pending

- some test are incomplete

- needs refactor to stub.

dapps

- all test pending

delegates

- almost all test pending(25)

- refactor to stub

loader

- alm... | non_build | review unit test coverage of modules account some incomplete test refactor to stub blocks all test pending cache some tests are pending some test are incomplete needs refactor to stub dapps all test pending delegates almost all test pending refactor to stub loader almo... | 0 |

337,455 | 30,248,167,444 | IssuesEvent | 2023-07-06 18:11:10 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: ycsb/A/nodes=3/cpu=32/mvcc-range-keys=global failed | C-test-failure O-robot O-roachtest release-blocker branch-release-23.1 | roachtest.ycsb/A/nodes=3/cpu=32/mvcc-range-keys=global [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797189?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797189?buildTab=arti... | 2.0 | roachtest: ycsb/A/nodes=3/cpu=32/mvcc-range-keys=global failed - roachtest.ycsb/A/nodes=3/cpu=32/mvcc-range-keys=global [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797189?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Co... | non_build | roachtest ycsb a nodes cpu mvcc range keys global failed roachtest ycsb a nodes cpu mvcc range keys global with on release cluster go run output in run workload run ycsb in workload run ycsb init insert count workload a concurrency splits histograms per... | 0 |

49,297 | 12,311,197,941 | IssuesEvent | 2020-05-12 12:00:13 | PowerShell/PowerShell | https://api.github.com/repos/PowerShell/PowerShell | closed | PowerShell on FreeBSD | Area-Build Issue-Question Resolution-Answered | Is there interest in PowerShell on FreeBSD?

I have been following dotnet/runtime for a while and building the preview releases on FreeBSD.

I was curious to see if PowerShell would build and did a little hacking to get things to compile.

There weren't many changes needed, but of course that's no guarantee that ever... | 1.0 | PowerShell on FreeBSD - Is there interest in PowerShell on FreeBSD?

I have been following dotnet/runtime for a while and building the preview releases on FreeBSD.

I was curious to see if PowerShell would build and did a little hacking to get things to compile.

There weren't many changes needed, but of course that'... | build | powershell on freebsd is there interest in powershell on freebsd i have been following dotnet runtime for a while and building the preview releases on freebsd i was curious to see if powershell would build and did a little hacking to get things to compile there weren t many changes needed but of course that ... | 1 |

31,936 | 8,775,335,280 | IssuesEvent | 2018-12-18 22:43:25 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | Build size: async load live chat | Build Happychat [Status] Stale [Type] Enhancement | https://github.com/Automattic/wp-calypso/pull/22487 added the ability to initiate a livechat right from the sidebar.

Seems to have unintentionally dragged in a lot of code to build. Lets figure out how to defer loading happychat code until we know the user is interested. | 1.0 | Build size: async load live chat - https://github.com/Automattic/wp-calypso/pull/22487 added the ability to initiate a livechat right from the sidebar.

Seems to have unintentionally dragged in a lot of code to build. Lets figure out how to defer loading happychat code until we know the user is interested. | build | build size async load live chat added the ability to initiate a livechat right from the sidebar seems to have unintentionally dragged in a lot of code to build lets figure out how to defer loading happychat code until we know the user is interested | 1 |

41,519 | 10,730,051,674 | IssuesEvent | 2019-10-28 16:40:58 | angular/angular | https://api.github.com/repos/angular/angular | closed | "test_ivy_aot" CircleCI job is failing randomly with i18n-related error | comp: build & ci comp: i18n hotlist: angular-core-team | That started to happen recently (9/17), the error message is:

```

1) runtime i18n should work correctly with event listeners

Message:

Expected Hello Angular to be equal to Bonjour Angular

Stack:

Error: Expected Hello Angular to be equal to Bonjour Angular

```

The problem goes away after CircleCI job... | 1.0 | "test_ivy_aot" CircleCI job is failing randomly with i18n-related error - That started to happen recently (9/17), the error message is:

```

1) runtime i18n should work correctly with event listeners

Message:

Expected Hello Angular to be equal to Bonjour Angular

Stack:

Error: Expected Hello Angular to ... | build | test ivy aot circleci job is failing randomly with related error that started to happen recently the error message is runtime should work correctly with event listeners message expected hello angular to be equal to bonjour angular stack error expected hello angular to be equa... | 1 |

5,860 | 3,684,102,770 | IssuesEvent | 2016-02-24 16:16:48 | edemo/PDOauth | https://api.github.com/repos/edemo/PDOauth | closed | fix travis build | 3 - Done build environment | With postgres-based tests travis is out of sync.

Should be rewritten to use the docker image.

<!---

@huboard:{"order":99.5,"milestone_order":3.25,"custom_state":""}

-->

| 1.0 | fix travis build - With postgres-based tests travis is out of sync.

Should be rewritten to use the docker image.

<!---

@huboard:{"order":99.5,"milestone_order":3.25,"custom_state":""}

-->

| build | fix travis build with postgres based tests travis is out of sync should be rewritten to use the docker image huboard order milestone order custom state | 1 |

60,447 | 14,851,523,342 | IssuesEvent | 2021-01-18 07:04:00 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Instruction step created in Questionnaires is not displaying in the list | Bug P1 Process: Fixed Process: Tested dev Study builder | Steps:-

1. Navigate to Questionnaires and click on add questionnaire

2. Fill the required details in the screen and click on save button

3. click on Add instruction Step and create a instruction step and click on Done button

4. Verify whether created Instruction step is displaying in the list once it is created

... | 1.0 | [SB] Instruction step created in Questionnaires is not displaying in the list - Steps:-

1. Navigate to Questionnaires and click on add questionnaire

2. Fill the required details in the screen and click on save button

3. click on Add instruction Step and create a instruction step and click on Done button

4. Veri... | build | instruction step created in questionnaires is not displaying in the list steps navigate to questionnaires and click on add questionnaire fill the required details in the screen and click on save button click on add instruction step and create a instruction step and click on done button verify ... | 1 |

126,807 | 17,109,740,716 | IssuesEvent | 2021-07-10 03:32:42 | ZeroK-RTS/Zero-K | https://api.github.com/repos/ZeroK-RTS/Zero-K | opened | Simple commands, consider hiding retreat until a zone is set. | design decision feature | This would free up space, and possibly allow selection rank to be added by default. Many people wanted to know how to re-enable selection rank, indicating that it is reasonably discoverable. Planes could retain the retreat state by default as they use it to return to repair pads.

This makes the "show/hide" state men... | 1.0 | Simple commands, consider hiding retreat until a zone is set. - This would free up space, and possibly allow selection rank to be added by default. Many people wanted to know how to re-enable selection rank, indicating that it is reasonably discoverable. Planes could retain the retreat state by default as they use it t... | non_build | simple commands consider hiding retreat until a zone is set this would free up space and possibly allow selection rank to be added by default many people wanted to know how to re enable selection rank indicating that it is reasonably discoverable planes could retain the retreat state by default as they use it t... | 0 |

72,516 | 19,299,266,233 | IssuesEvent | 2021-12-13 01:52:12 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | bazel-genfiles/ and *.pb.h not been generated with bazel-building tensorflow2.4.1 from source | stat:awaiting response type:build/install subtype:bazel TF 2.4 | **System information**

- OS Platform and Distribution ( Linux Ubuntu 18.04):

- TensorFlow installed from (source)

- TensorFlow version: Tags v2.4.1

- Python version: Python 3.7.6

- Installed using virtualenv? pip? conda?: conda

- Bazel version (if compiling from source): have tried bazel ... | 1.0 | bazel-genfiles/ and *.pb.h not been generated with bazel-building tensorflow2.4.1 from source - **System information**

- OS Platform and Distribution ( Linux Ubuntu 18.04):

- TensorFlow installed from (source)

- TensorFlow version: Tags v2.4.1

- Python version: Python 3.7.6

- Installed using virtual... | build | bazel genfiles and pb h not been generated with bazel building from source system information os platform and distribution linux ubuntu tensorflow installed from source tensorflow version tags python version python installed using virtualenv pip con... | 1 |

31,851 | 8,758,130,849 | IssuesEvent | 2018-12-15 00:40:07 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Bazel 0.20 fixes into release 1.17.x [request] | area/build lang/core | ### What version of gRPC and what language are you using?

I'm using gRPC from commit f8696b5136 (author: @nicolasnoble, reviewer: @jtattermusch)

which is from grpc/grpc#17363 which fixes an issue with **Bazel 0.20.0**

This is my WORKSPACE file:

```

##

# We need a specific unreleased version of gRPC:

# whi... | 1.0 | Bazel 0.20 fixes into release 1.17.x [request] - ### What version of gRPC and what language are you using?

I'm using gRPC from commit f8696b5136 (author: @nicolasnoble, reviewer: @jtattermusch)

which is from grpc/grpc#17363 which fixes an issue with **Bazel 0.20.0**

This is my WORKSPACE file:

```

##

# We ne... | build | bazel fixes into release x what version of grpc and what language are you using i m using grpc from commit author nicolasnoble reviewer jtattermusch which is from grpc grpc which fixes an issue with bazel this is my workspace file we need a specific unreleased... | 1 |

84,933 | 24,470,722,522 | IssuesEvent | 2022-10-07 19:36:38 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | Built with -ffast-math option in gcc/g++, boinc client doesn't recognize beignet (intel gpu) library on Linux | C: Client - Build C: Client - Daemon P: Major R: wontfix T: Defect E: to be determined C: Client - Linux | **Describe the bug**

A clear and concise description of what the bug is.

I am building boinc client from git master tree on Fedora 29 linux.

Building boinc client by configuring 'CFLAGS="-O4 -mavx2 -funroll-loops -fforce-addr -ffast-math" CXXFLAGS=$CFLAGS ./configure --disable-server --disable-manager', boinc client... | 1.0 | Built with -ffast-math option in gcc/g++, boinc client doesn't recognize beignet (intel gpu) library on Linux - **Describe the bug**

A clear and concise description of what the bug is.

I am building boinc client from git master tree on Fedora 29 linux.

Building boinc client by configuring 'CFLAGS="-O4 -mavx2 -funrol... | build | built with ffast math option in gcc g boinc client doesn t recognize beignet intel gpu library on linux describe the bug a clear and concise description of what the bug is i am building boinc client from git master tree on fedora linux building boinc client by configuring cflags funroll loop... | 1 |

48,636 | 12,225,505,905 | IssuesEvent | 2020-05-03 05:44:55 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Windows build error: 'mlir::FoldingHook': 'value' is not a valid template type argument for parameter 'ConcreteType' | type:build/install | **System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): Windows 10 Pro 10.0.18363

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device: N/A

- TensorFlow installed from (source or binary): source

- TensorFlow version: 2.1 (SHA-1 is c878390581fc81756... | 1.0 | Windows build error: 'mlir::FoldingHook': 'value' is not a valid template type argument for parameter 'ConcreteType' - **System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): Windows 10 Pro 10.0.18363

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile dev... | build | windows build error mlir foldinghook value is not a valid template type argument for parameter concretetype system information os platform and distribution e g linux ubuntu windows pro mobile device e g iphone pixel samsung galaxy if the issue happens on mobile device n a... | 1 |

280,633 | 24,319,860,109 | IssuesEvent | 2022-09-30 09:44:51 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [test-triage] chip_sw_rstmgr_alert_info | Component:TestTriage | ### Hierarchy of regression failure

Chip Level

### Failure Description

```

UVM_ERROR @ 3178.232878 us: (cip_base_scoreboard.sv:431) [uvm_test_top.env.scoreboard] Check failed item.d_error == exp_d_error (1 [0x1] vs 0 [0x0]) On interface chip_reg_block, TL item: req: (cip_tl_seq_item@107135) { a_addr: 'h200042... | 1.0 | [test-triage] chip_sw_rstmgr_alert_info - ### Hierarchy of regression failure

Chip Level

### Failure Description

```

UVM_ERROR @ 3178.232878 us: (cip_base_scoreboard.sv:431) [uvm_test_top.env.scoreboard] Check failed item.d_error == exp_d_error (1 [0x1] vs 0 [0x0]) On interface chip_reg_block, TL item: req: (... | non_build | chip sw rstmgr alert info hierarchy of regression failure chip level failure description uvm error us cip base scoreboard sv check failed item d error exp d error vs on interface chip reg block tl item req cip tl seq item a addr a data a mask hf ... | 0 |

332,302 | 24,340,443,848 | IssuesEvent | 2022-10-01 16:35:28 | SUI-Components/sui-components | https://api.github.com/repos/SUI-Components/sui-components | closed | Skeleton DEMO - Remove items from the examples section | documentation hacktoberfest ★★☆☆☆☆ Medium | **In order to simplify the [Skeleton](https://sui-components.vercel.app/workbench/atom/skeleton/demo) demo, please adjust the following:**

- Add Skeleton to the image placeholder as well

- Make all the Skeletons the same width

- For ALL examples, make a square example of 200px * 200px

https://user-images.github... | 1.0 | Skeleton DEMO - Remove items from the examples section - **In order to simplify the [Skeleton](https://sui-components.vercel.app/workbench/atom/skeleton/demo) demo, please adjust the following:**

- Add Skeleton to the image placeholder as well

- Make all the Skeletons the same width

- For ALL examples, make a squa... | non_build | skeleton demo remove items from the examples section in order to simplify the demo please adjust the following add skeleton to the image placeholder as well make all the skeletons the same width for all examples make a square example of remove of the examples leave just th... | 0 |

46,220 | 11,800,692,606 | IssuesEvent | 2020-03-18 18:00:22 | nosqlbench/nosqlbench | https://api.github.com/repos/nosqlbench/nosqlbench | opened | Build guidebook strictly as a secondary artifact | build | Presently, the guidebook application builds into the source tree as resource content.

This complicates commits after builds. We should try to move this content into an ephemeral artifact location like "target".

We can .gitignore the dev view and staging versions in the source tree, and stage the generated app in do... | 1.0 | Build guidebook strictly as a secondary artifact - Presently, the guidebook application builds into the source tree as resource content.

This complicates commits after builds. We should try to move this content into an ephemeral artifact location like "target".

We can .gitignore the dev view and staging versions... | build | build guidebook strictly as a secondary artifact presently the guidebook application builds into the source tree as resource content this complicates commits after builds we should try to move this content into an ephemeral artifact location like target we can gitignore the dev view and staging versions... | 1 |

590,006 | 17,768,626,644 | IssuesEvent | 2021-08-30 10:50:51 | Adversarial-Deep-Learning/code-soup | https://api.github.com/repos/Adversarial-Deep-Learning/code-soup | opened | Tensor Board Logging | good first issue Priority:Low | We need to log the updates via tensor board, this will be an extension of the current logging #23 | 1.0 | Tensor Board Logging - We need to log the updates via tensor board, this will be an extension of the current logging #23 | non_build | tensor board logging we need to log the updates via tensor board this will be an extension of the current logging | 0 |

311,156 | 23,373,431,784 | IssuesEvent | 2022-08-10 22:33:11 | cloudflare/cloudflare-docs | https://api.github.com/repos/cloudflare/cloudflare-docs | closed | WARP client Windows - Managed deployment - disable updates | documentation Backlog content:edit | ### Which Cloudflare product does this pertain to?

WARP Client

### Existing documentation URL(s)

https://developers.cloudflare.com/cloudflare-one/connections/connect-devices/warp/deployment/mdm-deployment/

### Section that requires update

Install WARP on Windows

### What needs to change?

In case there is any po... | 1.0 | WARP client Windows - Managed deployment - disable updates - ### Which Cloudflare product does this pertain to?

WARP Client

### Existing documentation URL(s)

https://developers.cloudflare.com/cloudflare-one/connections/connect-devices/warp/deployment/mdm-deployment/

### Section that requires update

Install WARP o... | non_build | warp client windows managed deployment disable updates which cloudflare product does this pertain to warp client existing documentation url s section that requires update install warp on windows what needs to change in case there is any possibility to disable or delay automatic client... | 0 |

13,444 | 5,374,818,019 | IssuesEvent | 2017-02-23 01:49:47 | Homebrew/homebrew-science | https://api.github.com/repos/Homebrew/homebrew-science | closed | madlib test failed against postgresql 9.5.2 | build-error | With `postgresql` 9.5.2 installed, `brew test madlib` is failing, both for me locally (from source or bottle), and on Jenkins test-bot runs where `madlib` is tested as part of a `postgresql` formula change. Looks like it's causing https://github.com/Homebrew/legacy-homebrew/pull/49612 to fail in the test stage.

With t... | 1.0 | madlib test failed against postgresql 9.5.2 - With `postgresql` 9.5.2 installed, `brew test madlib` is failing, both for me locally (from source or bottle), and on Jenkins test-bot runs where `madlib` is tested as part of a `postgresql` formula change. Looks like it's causing https://github.com/Homebrew/legacy-homebrew... | build | madlib test failed against postgresql with postgresql installed brew test madlib is failing both for me locally from source or bottle and on jenkins test bot runs where madlib is tested as part of a postgresql formula change looks like it s causing to fail in the test stage with the curr... | 1 |

83,085 | 16,088,095,822 | IssuesEvent | 2021-04-26 13:42:55 | gradle/gradle | https://api.github.com/repos/gradle/gradle | opened | Deprecate consumption of code quality + antlr plugin configurations | @core in:antlr-plugin in:checkstyle-plugin in:codenarc-plugin in:jacoco-plugin in:pmd-plugin | Code quality plugins (and antlr plugin) declare a configuration for dependencies of the specific tool. Those configurations are by default both consumable and resolvable. They are only meant to be resolved by the project applying a plugin and should not be consumed by other projects.

Adding attributes on such config... | 1.0 | Deprecate consumption of code quality + antlr plugin configurations - Code quality plugins (and antlr plugin) declare a configuration for dependencies of the specific tool. Those configurations are by default both consumable and resolvable. They are only meant to be resolved by the project applying a plugin and should ... | non_build | deprecate consumption of code quality antlr plugin configurations code quality plugins and antlr plugin declare a configuration for dependencies of the specific tool those configurations are by default both consumable and resolvable they are only meant to be resolved by the project applying a plugin and should ... | 0 |

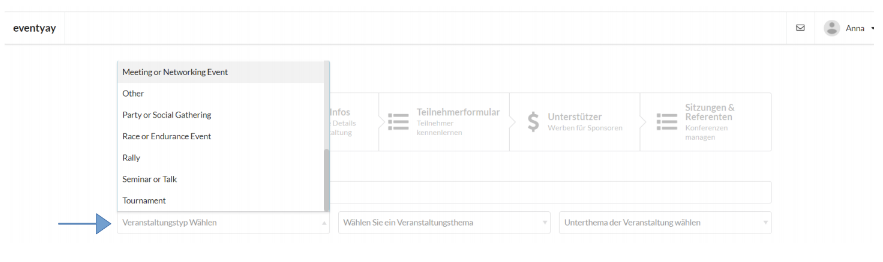

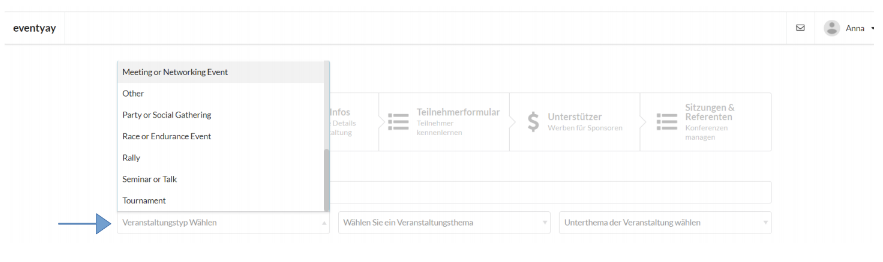

549,358 | 16,091,279,512 | IssuesEvent | 2021-04-26 17:00:17 | fossasia/open-event-frontend | https://api.github.com/repos/fossasia/open-event-frontend | closed | Wizard Step 1: Dropdown event types etc. are not translatable | Priority: High bug enhancement | The dropdown menus on the top of the event wizard step 1 are not translatable.

| 1.0 | Wizard Step 1: Dropdown event types etc. are not translatable - The dropdown menus on the top of the event wizard step 1 are not translatable.

| non_build | wizard step dropdown event types etc are not translatable the dropdown menus on the top of the event wizard step are not translatable | 0 |

93,607 | 3,906,348,037 | IssuesEvent | 2016-04-19 08:28:51 | OCHA-DAP/liverpool16 | https://api.github.com/repos/OCHA-DAP/liverpool16 | closed | Allowing each config layer to also draw a chart | enhancement Medium Priority | Basically, it should be possible to have more than 1 chart. | 1.0 | Allowing each config layer to also draw a chart - Basically, it should be possible to have more than 1 chart. | non_build | allowing each config layer to also draw a chart basically it should be possible to have more than chart | 0 |

14,175 | 24,582,919,394 | IssuesEvent | 2022-10-13 17:04:10 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Want to update git-subtree managed directories | type:feature status:requirements priority-5-triage | ### What would you like Renovate to be able to do?

Want to update [git-subtree](https://www.atlassian.com/git/tutorials/git-subtree) managed directories

### If you have any ideas on how this should be implemented, please tell us here.

N/A

### Is this a feature you are interested in implementing yourself?

No | 1.0 | Want to update git-subtree managed directories - ### What would you like Renovate to be able to do?

Want to update [git-subtree](https://www.atlassian.com/git/tutorials/git-subtree) managed directories

### If you have any ideas on how this should be implemented, please tell us here.

N/A

### Is this a feature you ar... | non_build | want to update git subtree managed directories what would you like renovate to be able to do want to update managed directories if you have any ideas on how this should be implemented please tell us here n a is this a feature you are interested in implementing yourself no | 0 |

13,021 | 2,732,875,397 | IssuesEvent | 2015-04-17 09:54:47 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | canConnect in stencilsSets disabled | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. try to add a canConnect function in the rules of a stencilSets (as described

in ORYX-SSS Manual : http://oryx-editor.googlecode.com/files/OryxSSS.pdf)

What is the expected output?

the function canConnect should be executed

What do you see instead?

the function canConnect... | 1.0 | canConnect in stencilsSets disabled - ```

What steps will reproduce the problem?

1. try to add a canConnect function in the rules of a stencilSets (as described

in ORYX-SSS Manual : http://oryx-editor.googlecode.com/files/OryxSSS.pdf)

What is the expected output?

the function canConnect should be executed

What do yo... | non_build | canconnect in stencilssets disabled what steps will reproduce the problem try to add a canconnect function in the rules of a stencilsets as described in oryx sss manual what is the expected output the function canconnect should be executed what do you see instead the function canconnect is not execu... | 0 |

506,009 | 14,656,485,998 | IssuesEvent | 2020-12-28 13:31:56 | danieleteti/delphimvcframework | https://api.github.com/repos/danieleteti/delphimvcframework | closed | Alternative server components | Priority-Medium enhancement | Is it possible to use any other server components? For example for realtime applications is now better to use websockets.

Is it anough to implement interfaces in MVCFramework.Server.pas to use some commercial websocket server components eg. from esegece? | 1.0 | Alternative server components - Is it possible to use any other server components? For example for realtime applications is now better to use websockets.

Is it anough to implement interfaces in MVCFramework.Server.pas to use some commercial websocket server components eg. from esegece? | non_build | alternative server components is it possible to use any other server components for example for realtime applications is now better to use websockets is it anough to implement interfaces in mvcframework server pas to use some commercial websocket server components eg from esegece | 0 |

62,622 | 12,227,942,017 | IssuesEvent | 2020-05-03 17:17:39 | RonAsis/Wsep202 | https://api.github.com/repos/RonAsis/Wsep202 | opened | "Owned store" 's menu | High priority code+test | When in "owned store" in the client, when pressing on an owned store, make sure that the owner's menu shows. | 1.0 | "Owned store" 's menu - When in "owned store" in the client, when pressing on an owned store, make sure that the owner's menu shows. | non_build | owned store s menu when in owned store in the client when pressing on an owned store make sure that the owner s menu shows | 0 |

93,447 | 26,957,445,581 | IssuesEvent | 2023-02-08 15:48:21 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | add test for CaseCourtReportContext in the case that all fields are populated | Help Wanted not-ready-to-build | **Description**

add tests for CaseCourtReportContext in the case that all fields are populated

Add test/s to fill in test data for these fields and test them in file `spec/models/case_court_report_context_spec.rb`

```

expect(context[:case_contacts]).to eq([]) # TODO test this

expect(context[:ca... | 1.0 | add test for CaseCourtReportContext in the case that all fields are populated - **Description**

add tests for CaseCourtReportContext in the case that all fields are populated

Add test/s to fill in test data for these fields and test them in file `spec/models/case_court_report_context_spec.rb`

```

expect... | build | add test for casecourtreportcontext in the case that all fields are populated description add tests for casecourtreportcontext in the case that all fields are populated add test s to fill in test data for these fields and test them in file spec models case court report context spec rb expect... | 1 |

30,456 | 8,551,963,302 | IssuesEvent | 2018-11-07 19:35:56 | general-language-syntax/GLS | https://api.github.com/repos/general-language-syntax/GLS | closed | Add an "--accept" equivalent for integration and end-to-end tests | build tooling testing utils | It can be annoying to update many integration and end-to-end test data files at once. If we know our change is right, or just want to see how it would affect the many test cases, it'd be nice to have a utility to override the `.cs`, `.java`, `.js`, etc. test files. | 1.0 | Add an "--accept" equivalent for integration and end-to-end tests - It can be annoying to update many integration and end-to-end test data files at once. If we know our change is right, or just want to see how it would affect the many test cases, it'd be nice to have a utility to override the `.cs`, `.java`, `.js`, etc... | build | add an accept equivalent for integration and end to end tests it can be annoying to update many integration and end to end test data files at once if we know our change is right or just want to see how it would affect the many test cases it d be nice to have a utility to override the cs java js etc... | 1 |

114,745 | 14,630,736,353 | IssuesEvent | 2020-12-23 18:20:34 | ufersa/plataforma-sabia | https://api.github.com/repos/ufersa/plataforma-sabia | closed | Public Calls Bank (Banco de Editais) feature | API UX/Design | ## Feature Description

<!-- Clearly describe what will be implemented -->

Along the same lines as the Idea Bank (Banco de Ideias), the public calls bank will make it possible to view the various public calls (editais) published by other institutions. We want to give our users the opportunity to find out which currently open calls... | 1.0 | Public Calls Bank (Banco de Editais) feature - ## Feature Description

<!-- Clearly describe what will be implemented -->

Along the same lines as the Idea Bank (Banco de Ideias), the public calls bank will make it possible to view the various public calls (editais) published by other institutions. We want to give our users the opportunity... | non_build | public calls bank banco de editais feature feature description along the same lines as the idea bank banco de ideias the public calls bank will make it possible to view the various public calls editais published by other institutions we want to give our users the opportunity to find out which currently open calls they can... | 0 |

775,654 | 27,234,942,148 | IssuesEvent | 2023-02-21 15:41:37 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | closed | AC-9 PREVIOUS LOGON (ACCESS) NOTIFICATION | Priority: P2 | PREVIOUS LOGON NOTIFICATION | SUCCESSFUL / UNSUCCESSFUL LOGONS

The information system notifies the user of the number of [Selection: successful logons/accesses; unsuccessful logon/access attempts; both] during [Assignment: organization-defined time period]. | 1.0 | AC-9 PREVIOUS LOGON (ACCESS) NOTIFICATION - PREVIOUS LOGON NOTIFICATION | SUCCESSFUL / UNSUCCESSFUL LOGONS

The information system notifies the user of the number of [Selection: successful logons/accesses; unsuccessful logon/access attempts; both] during [Assignment: organization-defined time period]. | non_build | ac previous logon access notification previous logon notification successful unsuccessful logons the information system notifies the user of the number of during | 0 |

147,085 | 11,771,140,627 | IssuesEvent | 2020-03-15 22:30:01 | Gregeg/Bezier-Curves-Processing | https://api.github.com/repos/Gregeg/Bezier-Curves-Processing | opened | Robot rotates correctly from bezier program | test | run a test to see if the robot rotates as instructed by the program | 1.0 | Robot rotates correctly from bezier program - run a test to see if the robot rotates as instructed by the program | non_build | robot rotates correctly from bezier program run a test to see if the robot rotates as instructed by the program | 0 |

642,108 | 20,867,551,739 | IssuesEvent | 2022-03-22 08:53:19 | googleapis/java-spanner | https://api.github.com/repos/googleapis/java-spanner | closed | spanner.it.ITDatabaseAdminDialectAwareTest: testCreateDatabaseWithDialect[dialect = POSTGRESQL] failed | type: bug priority: p1 api: spanner flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 2b54949ec5082f1aab4b3b5b46bf0bef94f73d9e

b... | 1.0 | spanner.it.ITDatabaseAdminDialectAwareTest: testCreateDatabaseWithDialect[dialect = POSTGRESQL] failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `fl... | non_build | spanner it itdatabaseadmindialectawaretest testcreatedatabasewithdialect failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output java util conc... | 0 |

1,989 | 2,869,084,920 | IssuesEvent | 2015-06-05 23:12:41 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | be more robust when resolution is not complete | Area-Pkg Pkg-PolymerBuild PolymerMilestone-Next Priority-High Triaged Type-Defect | For example, if people have broken code that doesn't resolve, smoke/recorder.dart might not be able to copy correctly the annotation. Right now this gives a terrible error message. | 1.0 | be more robust when resolution is not complete - For example, if people have broken code that doesn't resolve, smoke/recorder.dart might not be able to copy correctly the annotation. Right now this gives a terrible error message. | build | be more robust when resolution is not complete for example if people have broken code that doesn t resolve smoke recorder dart might not be able to copy correctly the annotation right now this gives a terrible error message | 1 |

461,662 | 13,233,991,143 | IssuesEvent | 2020-08-18 15:36:04 | status-im/status-react | https://api.github.com/repos/status-im/status-react | closed | Status is crashing on login when Passcode is turned off on device, but "Save password" is turned on in app | bug high-priority ios | # Bug Report

## Problem

Crash on login to Status app when Passcode is turned off on device, but before this "Save password" with biometric authentication was enabled.

Reproducible on phones with Touch and Face IDs

NOTE: after enabling Passcode back can login in the app

#### Expected behavior

Can login

##... | 1.0 | Status is crashing on login when Passcode is turned off on device, but "Save password" is turned on in app - # Bug Report

## Problem

Crash on login to Status app when Passcode is turned off on device, but before this "Save password" with biometric authentication was enabled.

Reproducible on phones with Touch and F... | non_build | status is crashing on login when passcode is turned off on device but save password is turned on in app bug report problem crash on login to status app when passcode is turned off on device but before this save password with biometric authentication was enabled reproducible on phones with touch and f... | 0 |

23,223 | 7,299,793,022 | IssuesEvent | 2018-02-26 21:18:36 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Azure builder not generating deployable Windows managed images | builder/azure | When using the packer script listed below, an Azure managed image is successfully generated, but when creating a VM, it gets stuck in the "creating" phase. The boot diagnostics show the lockscreen. If I delete the VM in the "creating" phase, attach the managed disk to another VM as a data disk and look at the sysprep... | 1.0 | Azure builder not generating deployable Windows managed images - When using the packer script listed below, an Azure managed image is successfully generated, but when creating a VM, it gets stuck in the "creating" phase. The boot diagnostics show the lockscreen. If I delete the VM in the "creating" phase, attach the ... | build | azure builder not generating deployable windows managed images when using the packer script listed below an azure managed image is successfully generated but when creating a vm it gets stuck in the creating phase the boot diagnostics show the lockscreen if i delete the vm in the creating phase attach the ... | 1 |

34,855 | 4,561,910,130 | IssuesEvent | 2016-09-14 13:23:45 | vector-im/vector-web | https://api.github.com/repos/vector-im/vector-web | closed | Create room 3 and 4 (invite people to room) screen: User invite flow to be improved on | design-signed-off rs2 ui/ux | Create room 3 and 4 (invite people to room) screen:

**Original requirements from Amandine on basecamp**

Like for room creation we believe and have been supported by the users, that the invite flow is not right.

Today we have a single invite field to search and invite by email or by ID. People are completely mi... | 1.0 | Create room 3 and 4 (invite people to room) screen: User invite flow to be improved on - Create room 3 and 4 (invite people to room) screen:

**Original requirements from Amandine on basecamp**

Like for room creation we believe and have been supported by the users, that the invite flow is not right.

Today we h... | non_build | create room and invite people to room screen user invite flow to be improved on create room and invite people to room screen original requirements from amandine on basecamp like for room creation we believe and have been supported by the users that the invite flow is not right today we h... | 0 |

15,472 | 5,967,400,526 | IssuesEvent | 2017-05-30 15:52:34 | curl/curl | https://api.github.com/repos/curl/curl | closed | OS400 fails to build 7.52.1 | build | [ There are collections of known issues to be aware of:

https://curl.haxx.se/docs/knownbugs.html https://curl.haxx.se/docs/todo.html ]

### I did this

Build 7.52.1 release on OS/400 V7R1M0

### I expected the following

Clean build

### curl/libcurl version

7.52.1

[curl -V output perhaps?]

### operating ... | 1.0 | OS400 fails to build 7.52.1 - [ There are collections of known issues to be aware of:

https://curl.haxx.se/docs/knownbugs.html https://curl.haxx.se/docs/todo.html ]

### I did this

Build 7.52.1 release on OS/400 V7R1M0

### I expected the following

Clean build

### curl/libcurl version

7.52.1

[curl -V outp... | build | fails to build there are collections of known issues to be aware of i did this build release on os i expected the following clean build curl libcurl version operating system os the build is failing because the definition of curlopt socks pr... | 1 |

855 | 2,648,779,841 | IssuesEvent | 2015-03-14 07:42:05 | Jasig/cas | https://api.github.com/repos/Jasig/cas | closed | Inclusion of clearpass dependency causes crash | Bug Build ClearPass Major | Similiar to what @leleuj reports here:

http://jasig.275507.n4.nabble.com/CAS-4-1-issue-with-the-webflow-stored-on-client-side-td4664313.html

I realized that including clearpass as a dependency in the webapp, which will retrieve cas-client-core and then opensaml causes transitive dependencies for `bcprov` to be incl... | 1.0 | Inclusion of clearpass dependency causes crash - Similiar to what @leleuj reports here:

http://jasig.275507.n4.nabble.com/CAS-4-1-issue-with-the-webflow-stored-on-client-side-td4664313.html

I realized that including clearpass as a dependency in the webapp, which will retrieve cas-client-core and then opensaml cause... | build | inclusion of clearpass dependency causes crash similiar to what leleuj reports here i realized that including clearpass as a dependency in the webapp which will retrieve cas client core and then opensaml causes transitive dependencies for bcprov to be included in the lib directory this causes a runtime cr... | 1 |

68,339 | 17,258,061,639 | IssuesEvent | 2021-07-22 00:42:26 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | MacOS failure to compile dylib with metal delegate | type:build/install | **System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): MacOS Big Sur (11.4)

- TensorFlow installed from (source or binary): source

- TensorFlow version: 2.5.0

- Python version: Python 3.9.1

- Bazel version (if compiling from source): bazel 3.7.2-homebrew

- GCC/Compiler version (if compi... | 1.0 | MacOS failure to compile dylib with metal delegate - **System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): MacOS Big Sur (11.4)

- TensorFlow installed from (source or binary): source

- TensorFlow version: 2.5.0

- Python version: Python 3.9.1

- Bazel version (if compiling from source): b... | build | macos failure to compile dylib with metal delegate system information os platform and distribution e g linux ubuntu macos big sur tensorflow installed from source or binary source tensorflow version python version python bazel version if compiling from source baze... | 1 |

101,476 | 4,118,528,052 | IssuesEvent | 2016-06-08 11:52:35 | Esri/solutions-webappbuilder-widgets | https://api.github.com/repos/Esri/solutions-webappbuilder-widgets | closed | Smart Editor - Test webmap with additional layer types | Mid Priority Smart Editor | ### Widget

Smart Editor

### Version of widget

Alpha

### Bug or Enhancement

Bug

### Repo Steps or Enhancement details

We need to test to ensure other layer types do not break the widget

#### Layer Types

Feature Collections with one layer

FC with more than one layer

Map Service

Tile Maps

Vector Maps

Table... | 1.0 | Smart Editor - Test webmap with additional layer types - ### Widget

Smart Editor

### Version of widget

Alpha

### Bug or Enhancement

Bug

### Repo Steps or Enhancement details

We need to test to ensure other layer types do not break the widget

#### Layer Types

Feature Collections with one layer

FC with more t... | non_build | smart editor test webmap with additional layer types widget smart editor version of widget alpha bug or enhancement bug repo steps or enhancement details we need to test to ensure other layer types do not break the widget layer types feature collections with one layer fc with more t... | 0 |

38,927 | 10,267,656,498 | IssuesEvent | 2019-08-23 02:38:51 | openndr/ndr-build-env | https://api.github.com/repos/openndr/ndr-build-env | closed | Isolate DPDK-related build dependents from generic build result paths. | build dpdk enhancement nbh | Currently, we use '/<target>/lib' & '/<target>/include' paths to store DPDK-related build dependents.

If a user wants to compile libraries, the above situation can cause confusion to the developer finding the result of compilations.

We must isolate DPDK-related build dependents from generic build result paths. | 1.0 | Isolate DPDK-related build dependents from generic build result paths. - Currently, we use '/<target>/lib' & '/<target>/include' paths to store DPDK-related build dependents.

If a user wants to compile libraries, the above situation can cause confusion to the developer finding the result of compilations.

We must isol... | build | isolate dpdk related build dependents from generic build result paths currently we use lib include paths to store dpdk related build dependents if a user wants to compile libraries the above situation can cause confusion to the developer finding the result of compilations we must isolate dpdk relat... | 1 |

72,073 | 31,152,939,809 | IssuesEvent | 2023-08-16 11:14:26 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | This deployment never works if I follow your instructions to deploy an ASP.NET CORE 6 website | app-service/svc triaged assigned-to-author product-question Pri1 |

[Enter feedback here]

---

#### Document Details

I created a sample ASP.NET Core 6 project, use dotnet publish command to build it, and package the publish folder into a zip file, then I run the command suggested by this page

`az webapp deploy --resource-group {resourceGroupName} --name {appServiceName} --src... | 1.0 | This deployment never works if I follow your instructions to deploy an ASP.NET CORE 6 website -

[Enter feedback here]

---

#### Document Details

I created a sample ASP.NET Core 6 project, use dotnet publish command to build it, and package the publish folder into a zip file, then I run the command suggested by ... | non_build | this deployment never works if i follow your instructions to deploy an asp net core website document details i created a sample asp net core project use dotnet publish command to build it and package the publish folder into a zip file then i run the command suggested by this page az weba... | 0 |

26,356 | 7,817,903,934 | IssuesEvent | 2018-06-13 10:29:03 | ShaikASK/Testing | https://api.github.com/repos/ShaikASK/Testing | closed | HR Admin /HR Users : Edit Hires : Application displays an error message when user try to edit "New Hire" | Defect HR Admin Module HR User Module New Hire P1 Release#2 Build#3 | Steps :

1.Launch the URL

2.Sign in as HR admin /HR Users

3.Go to New Hires

4.Create a New Hire and Save it

5.Edit the above created New Hire and save the changes

Experienced Behaviour : Observed that error message is displayed as "ERROR: Could not update New Hire when user try to edit the New Hire

Expec... | 1.0 | HR Admin /HR Users : Edit Hires : Application displays an error message when user try to edit "New Hire" - Steps :

1.Launch the URL

2.Sign in as HR admin /HR Users

3.Go to New Hires

4.Create a New Hire and Save it

5.Edit the above created New Hire and save the changes

Experienced Behaviour : Observed tha... | build | hr admin hr users edit hires application displays an error message when user try to edit new hire steps launch the url sign in as hr admin hr users go to new hires create a new hire and save it edit the above created new hire and save the changes experienced behaviour observed tha... | 1 |

8,295 | 4,216,910,442 | IssuesEvent | 2016-06-30 11:01:29 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | Builder has troubles with gettting src files from github. | area/build-release | ```

Fetching upstream changes from https://github.com/kubernetes/kubernetes

> /usr/bin/git -c core.askpass=true fetch --tags --progress https://github.com/kubernetes/kubernetes +refs/pull/28279/merge:refs/remotes/origin/pr/28279/merge # timeout=20

ERROR: Error fetching remote repo 'remote1'

hudson.plugins.git.GitE... | 1.0 | Builder has troubles with gettting src files from github. - ```

Fetching upstream changes from https://github.com/kubernetes/kubernetes

> /usr/bin/git -c core.askpass=true fetch --tags --progress https://github.com/kubernetes/kubernetes +refs/pull/28279/merge:refs/remotes/origin/pr/28279/merge # timeout=20

ERROR: E... | build | builder has troubles with gettting src files from github fetching upstream changes from usr bin git c core askpass true fetch tags progress refs pull merge refs remotes origin pr merge timeout error error fetching remote repo hudson plugins git gitexception failed to fetch from ... | 1 |

80,447 | 23,208,473,318 | IssuesEvent | 2022-08-02 08:03:27 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | closed | LLVM 15.0.0-rc1 fails to build: wrong member name in IntelJITEventListener | build-problem | Trying out the build of llvm 15.0.0-rc1 (as in: the folder `llvm/` in this repo) in https://github.com/conda-forge/llvmdev-feedstock/pull/163 runs into:

```console

[...]

[1561/3294] Building C object lib/ExecutionEngine/IntelJITEvents/CMakeFiles/LLVMIntelJITEvents.dir/jitprofiling.c.o

[1562/3294] Building CXX obj... | 1.0 | LLVM 15.0.0-rc1 fails to build: wrong member name in IntelJITEventListener - Trying out the build of llvm 15.0.0-rc1 (as in: the folder `llvm/` in this repo) in https://github.com/conda-forge/llvmdev-feedstock/pull/163 runs into:

```console

[...]

[1561/3294] Building C object lib/ExecutionEngine/IntelJITEvents/CMa... | build | llvm fails to build wrong member name in inteljiteventlistener trying out the build of llvm as in the folder llvm in this repo in runs into console building c object lib executionengine inteljitevents cmakefiles llvminteljitevents dir jitprofiling c o building cxx object lib e... | 1 |

10,854 | 4,834,152,562 | IssuesEvent | 2016-11-08 13:30:49 | liteguard/liteguard | https://api.github.com/repos/liteguard/liteguard | closed | 1.0.0 release | build docs in-progress | **Ready** when all other issues forming part of the release are **Done**.

- [x] run code analysis in VS in _Release_ mode and address violations (send a regular PR which must be merged before continuing)

- [x] check build, create release in GitHub UI including releaseNotes, mentioning non-owner contributors, if any

... | 1.0 | 1.0.0 release - **Ready** when all other issues forming part of the release are **Done**.

- [x] run code analysis in VS in _Release_ mode and address violations (send a regular PR which must be merged before continuing)

- [x] check build, create release in GitHub UI including releaseNotes, mentioning non-owner contri... | build | release ready when all other issues forming part of the release are done run code analysis in vs in release mode and address violations send a regular pr which must be merged before continuing check build create release in github ui including releasenotes mentioning non owner contributo... | 1 |

76,021 | 21,104,304,961 | IssuesEvent | 2022-04-04 17:11:00 | hvdwolf/jExifToolGUI | https://api.github.com/repos/hvdwolf/jExifToolGUI | closed | Translatable text (?) | (Fixed) in beta; no release build yet | hi,

I can't find in Weblate this string to translate

MacOS

JTG v1.10.0 | 1.0 | Translatable text (?) - hi,

I can't find in Weblate this string to translate

MacOS

JTG v1.10.0 | build | translatable text hi i can t find in weblate this string to translate macos jtg | 1 |

35,246 | 14,655,666,915 | IssuesEvent | 2020-12-28 11:33:33 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | IntelliSense within remote ssh broken | Language Service more info needed remote | **Type:** IntelliSense

**Describe the bug**

- OS and Version: Mac Mojave 10.14.6, remote with Linux 4.12.14-lp151.28.36-default x86_64

- VS Code Version: 1.42.1

- C/C++ Extension Version: 0.26.3

- Other extensions you installed (and if the issue persists after disabling them): C/C++ GNU Global (0.3.2), Visual S... | 1.0 | IntelliSense within remote ssh broken - **Type:** IntelliSense

**Describe the bug**

- OS and Version: Mac Mojave 10.14.6, remote with Linux 4.12.14-lp151.28.36-default x86_64

- VS Code Version: 1.42.1

- C/C++ Extension Version: 0.26.3

- Other extensions you installed (and if the issue persists after disabling t... | non_build | intellisense within remote ssh broken type intellisense describe the bug os and version mac mojave remote with linux default vs code version c c extension version other extensions you installed and if the issue persists after disabling them c c gnu... | 0 |

78,159 | 22,153,611,298 | IssuesEvent | 2022-06-03 19:43:39 | golang/go | https://api.github.com/repos/golang/go | closed | x/build, crypto/x509: macOS flakes due to "certificate is not standards compliant" | OS-Darwin Builders NeedsInvestigation release-blocker | Splitting the builder flakiness off from #51991.

https://github.com/golang/go/issues/51991#issuecomment-1104063192

@bcmills

> This is showing up on the darwin-amd64-10_15 builder as well, though curiously not on any of the other darwin builders.

>

> Marking as release-blocker for Go 1.19 because darwin/amd64 ... | 1.0 | x/build, crypto/x509: macOS flakes due to "certificate is not standards compliant" - Splitting the builder flakiness off from #51991.

https://github.com/golang/go/issues/51991#issuecomment-1104063192

@bcmills

> This is showing up on the darwin-amd64-10_15 builder as well, though curiously not on any of the othe... | build | x build crypto macos flakes due to certificate is not standards compliant splitting the builder flakiness off from bcmills this is showing up on the darwin builder as well though curiously not on any of the other darwin builders marking as release blocker for go because darwin... | 1 |

241,810 | 7,834,716,420 | IssuesEvent | 2018-06-16 17:35:23 | knowmetools/km-api | https://api.github.com/repos/knowmetools/km-api | closed | Account Image | Priority: Low Status: In Progress Type: Bug | ### Bug Report

The user list displays the hero image for the logged in user. This should be displaying the account image. | 1.0 | Account Image - ### Bug Report

The user list displays the hero image for the logged in user. This should be displaying the account image. | non_build | account image bug report the user list displays the hero image for the logged in user this should be displaying the account image | 0 |

97,027 | 12,197,750,652 | IssuesEvent | 2020-04-29 21:21:51 | phetsims/natural-selection | https://api.github.com/repos/phetsims/natural-selection | closed | should "Add a Mate", "Play", and "Start Over" buttons unpause the sim? | design:general status:ready-for-review | If the user had paused the sim, should the "Add a Mate", "Play", and "Start Over" buttons automatically unpause the sim? Or should we never automatically unpause the sim, and leave it to the user to figure out why pressing "Play" doesn't do anything?

I think the former (unpause the sim) is the least confusing, si... | 1.0 | should "Add a Mate", "Play", and "Start Over" buttons unpause the sim? - If the user had paused the sim, should the "Add a Mate", "Play", and "Start Over" buttons automatically unpause the sim? Or should we never automatically unpause the sim, and leave it to the user to figure out why pressing "Play" doesn't do anyth... | non_build | should add a mate play and start over buttons unpause the sim if the user had paused the sim should the add a mate play and start over buttons automatically unpause the sim or should we never automatically unpause the sim and leave it to the user to figure out why pressing play doesn t do anyth... | 0 |

223,978 | 24,760,210,446 | IssuesEvent | 2022-10-21 22:39:52 | BrianMcDonaldWS/deck.gl | https://api.github.com/repos/BrianMcDonaldWS/deck.gl | opened | CVE-2022-37598 (High) detected in multiple libraries | security vulnerability | ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>uglify-js-3.4.9.tgz</b>, <b>uglify-js-3.4.10.tgz</b>, <b>uglify-js-3.8.0.tgz</b></p></summary>

<p>

<details><summary... | True | CVE-2022-37598 (High) detected in multiple libraries - ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>uglify-js-3.4.9.tgz</b>, <b>uglify-js-3.4.10.tgz</b>, <b>uglif... | non_build | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries uglify js tgz uglify js tgz uglify js tgz uglify js tgz javascript parser mangler compressor and beautifier toolkit library home page a href path to d... | 0 |

63,518 | 15,613,757,855 | IssuesEvent | 2021-03-19 16:52:02 | aws/aws-cdk | https://api.github.com/repos/aws/aws-cdk | closed | [cdk-pipelines]: Typescript pipeline fails with module missing errors on code build | @aws-cdk/aws-codebuild @aws-cdk/pipelines bug needs-triage | It is exactly the same issue as mentioned here on stackoverflow

https://stackoverflow.com/questions/66590492/aws-codebuild-tsc-error-ts2307-cannot-find-module

If we follow https://cdkworkshop.com/20-typescript/70-advanced-topics/200-pipelines.html.

Essentially, npm i (or npm ci) + tsc works fine locally, but when... | 1.0 | [cdk-pipelines]: Typescript pipeline fails with module missing errors on code build - It is exactly the same issue as mentioned here on stackoverflow

https://stackoverflow.com/questions/66590492/aws-codebuild-tsc-error-ts2307-cannot-find-module

If we follow https://cdkworkshop.com/20-typescript/70-advanced-topics/20... | build | typescript pipeline fails with module missing errors on code build it exactly the same issue as mentioned here on stackoverflow if we follow essentially npm i or npm ci tsc works fine locally but when done over codebuild it appears my dependencies don t have their dependencies installed causin... | 1 |

10,503 | 4,779,643,511 | IssuesEvent | 2016-10-27 23:20:31 | Unidata/thredds | https://api.github.com/repos/Unidata/thredds | opened | Investigate Gradle composite builds | Area: Build / Release Area: NetCDF-Java Type: Cleanup Type: Enhancement Type: Feature | https://blog.gradle.org/introducing-composite-builds

> Splitting Monoliths

>

> Organizations that want to avoid the integration pains of multiple repositories tend to use a “monorepo”—a repository containing all projects, often including their dependencies and necessary tools. The upside is that all code is in one... | 1.0 | Investigate Gradle composite builds - https://blog.gradle.org/introducing-composite-builds

> Splitting Monoliths

>

> Organizations that want to avoid the integration pains of multiple repositories tend to use a “monorepo”—a repository containing all projects, often including their dependencies and necessary tools.... | build | investigate gradle composite builds splitting monoliths organizations that want to avoid the integration pains of multiple repositories tend to use a “monorepo”—a repository containing all projects often including their dependencies and necessary tools the upside is that all code is in one place and do... | 1 |

52,849 | 13,065,198,037 | IssuesEvent | 2020-07-30 19:23:44 | GoogleCloudPlatform/golang-samples | https://api.github.com/repos/GoogleCloudPlatform/golang-samples | closed | container_registry/container_analysis: TestPubSub failed | buildcop: flaky buildcop: issue priority: p1 sample type: bug | This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: 9d203dfe6a2bf97041383afc7c74880cdfbe... | 2.0 | container_registry/container_analysis: TestPubSub failed - This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop com... | build | container registry container analysis testpubsub failed this test failed to configure my behavior see if i m commenting on this issue too often add the buildcop quiet label and i will stop commenting commit buildurl status failed test output samples test go occurrencepubsub occ... | 1 |

58,018 | 14,265,004,642 | IssuesEvent | 2020-11-20 16:30:19 | root-project/root | https://api.github.com/repos/root-project/root | closed | macOS packaging broken in master | affects:master bug in:Build System | # Describe the bug

https://lcgapp-services.cern.ch/root-jenkins/job/root-release-master/ shows all Mac builds are broken with similar errors:

```

07:02:10 CPack: - Building component package: /build/jenkins/ws/BUILDTYPE/Release/LABEL/mac1014/V/master/build/_CPack_Packages/Darwin/productbuild/root_v6.23.01.macosx64... | 1.0 | macOS packaging broken in master - # Describe the bug

https://lcgapp-services.cern.ch/root-jenkins/job/root-release-master/ shows all Mac builds are broken with similar errors:

```

07:02:10 CPack: - Building component package: /build/jenkins/ws/BUILDTYPE/Release/LABEL/mac1014/V/master/build/_CPack_Packages/Darwin/... | build | macos packaging broken in master describe the bug shows all mac builds are broken with similar errors cpack building component package build jenkins ws buildtype release label v master build cpack packages darwin productbuild root contents packages root tests pk... | 1 |

599,431 | 18,273,360,465 | IssuesEvent | 2021-10-04 15:55:22 | cloudnativedaysjp/reviewapp-operator | https://api.github.com/repos/cloudnativedaysjp/reviewapp-operator | closed | Fix ArgoCD Applications created from an Application Template having their status written out explicitly | bug medium priority | * https://github.com/ShotaKitazawa/reviewapp-operator-demo-infra/blob/4b494d3aeff5c4ca639e8044656afa302a4d95cc/.apps/dev/sample-2.yaml#L27-L35

* Expected: no status field is written | 1.0 | Fix ArgoCD Applications created from an Application Template having their status written out explicitly - * https://github.com/ShotaKitazawa/reviewapp-operator-demo-infra/blob/4b494d3aeff5c4ca639e8044656afa302a4d95cc/.apps/dev/sample-2.yaml#L27-L35

* Expected: no status field is written | non_build | fix argocd applications created from an application template having their status written out explicitly expected no status field is written | 0 |

283,700 | 24,559,275,610 | IssuesEvent | 2022-10-12 18:41:28 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | closed | [v11] Enhanced recording doesn't work | bug testplan bpf server-access | The enhanced recording is broken on v11. The problem doesn't appear on v10.

Current behavior:

Server logs:

```

2022-10-08T13:04:07-04:00 DEBU [SESSION:N] Launching session 7c1ee1e7-c27b-4189-ba06-d19f2763cc90. srv/sess.go:870

2022-10-08T13:04:07-04:00 ERRO [NODE] Failed to open enhanced recording (inter... | 1.0 | [v11] Enhanced recording doesn't work - The enhanced recording is broken on v11. The problem doesn't appear on v10.

Current behavior:

Server logs:

```

2022-10-08T13:04:07-04:00 DEBU [SESSION:N] Launching session 7c1ee1e7-c27b-4189-ba06-d19f2763cc90. srv/sess.go:870

2022-10-08T13:04:07-04:00 ERRO [NODE] ... | non_build | enhanced recording doesn t work the enhanced recording is broken on the problem doesn t appear on current behavior server logs debu launching session srv sess go erro failed to open enhanced recording interactive session write ... | 0 |

116,058 | 11,899,408,957 | IssuesEvent | 2020-03-30 08:57:42 | OpenEnergyPlatform/oeplatform | https://api.github.com/repos/OpenEnergyPlatform/oeplatform | closed | Dataview: Add Graph / Map view not self-explanatory | SzenarienDB data view / modification documentation enhancement | The menu that is displayed after clicking the above is not self-explanatory. My suggestion would be to add some explanatory paragraph (yes more text), or delete these interaction possibilities (for the moment).

Map view:

, or delete these interaction possibilities (for the moment).

Map view:

| Incomplete Migration Migrated from Trac combo simulation defect | <details>