Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

82,035

| 31,865,584,590

|

IssuesEvent

|

2023-09-15 13:56:22

|

dotCMS/core

|

https://api.github.com/repos/dotCMS/core

|

reopened

|

[Containers] : Fix UI Issues in the Portlet

|

Type : Defect Team : Lunik OKR : Core Features Priority : 3 Average

|

### Parent

#23140

### Problem Statement

The UI for containers portlets in dotCMS does not display properly in some cases. The portlet displays as a blank box, and the content stored in the container is not visible or accessible.

```[tasklist]

### Tasks

- [x] Prevent the jump when clicking the "Pre post loop" button, [see video](https://github.com/dotCMS/core/assets/751424/79743a40-e060-4ae2-93f9-3964405fb63c)

- [ ] Prevent the jump to edit the title, [see image](https://github.com/dotCMS/core/assets/751424/f9b3a354-82c5-4285-981f-a81c179c4de6)

- [x] Make the content type selector wider to avoid some of the ellipsis, [see image](https://github.com/dotCMS/core/assets/751424/1bd4a0b3-0d32-40c1-a927-b331147e7e35)

- [x] Fix the buttons size and position, [see image](https://github.com/dotCMS/core/assets/751424/4384333a-a613-4da8-a8b4-843e33dca1c4)

- [x] Make the add container button primary, [see image](https://github.com/dotCMS/core/assets/751424/bb3e5ba1-dd94-4595-bd18-bbcb1ae12662)

- [x] Fix "Add variables" buttons, [see image](https://github.com/dotCMS/core/assets/751424/29d66caf-e70e-4244-aa80-b575a0a4d209)

- [x] Fix scrolling tabs, [see images](https://github.com/dotCMS/core/assets/751424/84337a1a-8390-4ecd-a5d7-13486c9224ed)

- [x] Avoid the jump in the tabs from loading to loaded, [see video](https://github.com/dotCMS/core/assets/751424/735ec4d7-af10-4872-83c4-0246f51f4605)

```

### Acceptance Criteria

- All UI issues attached to this ticket should be fixed

- The content stored in the containers portlets should be visible and accessible

### External Links

N/A

### Assumptions & Initiation Needs

- The developer should have knowledge of dotCMS and the dotCMS UI

- The developer should have access to the existing codebase and the support ticket

### Quality Assurance Notes & Workarounds

- The QA team should test the changes to ensure that all UI issues are fixed and the content is visible and accessible

- As a workaround, the content in the containers portlets can be manually accessed by the developer in the meantime

|

1.0

|

[Containers] : Fix UI Issues in the Portlet - ### Parent

#23140

### Problem Statement

The UI for containers portlets in dotCMS does not display properly in some cases. The portlet displays as a blank box, and the content stored in the container is not visible or accessible.

```[tasklist]

### Tasks

- [x] Prevent the jump when clicking the "Pre post loop" button, [see video](https://github.com/dotCMS/core/assets/751424/79743a40-e060-4ae2-93f9-3964405fb63c)

- [ ] Prevent the jump to edit the title, [see image](https://github.com/dotCMS/core/assets/751424/f9b3a354-82c5-4285-981f-a81c179c4de6)

- [x] Make the content type selector wider to avoid some of the ellipsis, [see image](https://github.com/dotCMS/core/assets/751424/1bd4a0b3-0d32-40c1-a927-b331147e7e35)

- [x] Fix the buttons size and position, [see image](https://github.com/dotCMS/core/assets/751424/4384333a-a613-4da8-a8b4-843e33dca1c4)

- [x] Make the add container button primary, [see image](https://github.com/dotCMS/core/assets/751424/bb3e5ba1-dd94-4595-bd18-bbcb1ae12662)

- [x] Fix "Add variables" buttons, [see image](https://github.com/dotCMS/core/assets/751424/29d66caf-e70e-4244-aa80-b575a0a4d209)

- [x] Fix scrolling tabs, [see images](https://github.com/dotCMS/core/assets/751424/84337a1a-8390-4ecd-a5d7-13486c9224ed)

- [x] Avoid the jump in the tabs from loading to loaded, [see video](https://github.com/dotCMS/core/assets/751424/735ec4d7-af10-4872-83c4-0246f51f4605)

```

### Acceptance Criteria

- All UI issues attached to this ticket should be fixed

- The content stored in the containers portlets should be visible and accessible

### External Links

N/A

### Assumptions & Initiation Needs

- The developer should have knowledge of dotCMS and the dotCMS UI

- The developer should have access to the existing codebase and the support ticket

### Quality Assurance Notes & Workarounds

- The QA team should test the changes to ensure that all UI issues are fixed and the content is visible and accessible

- As a workaround, the content in the containers portlets can be manually accessed by the developer in the meantime

|

defect

|

fix ui issues in the portlet parent problem statement the ui for containers portlets in dotcms is not properly displaying in some cases the portlet displays as a blank box and the content stored in the container is not visible or accessible tasks prevent the jump when click the pre post loop button prevent the jump to edit the title make the content type selector wider to avoid some of the ellipsis fix the buttons size and position make the add container button primary fix add variables buttons fix scrolling tabs avoid the jump in the tabs from loading to loaded acceptance criteria all ui issues attached to this ticket should be fixed the content stored in the containers portlets should be visible and accessible external links n a assumptions initiation needs the developer should have knowledge of dotcms and the dotcms ui the developer should have access to the existing codebase and the support ticket quality assurance notes workarounds the qa team should test the changes to ensure that all ui issues are fixed and the content is visible and accessible as a workaround the content in the containers portlets can be manually accessed by the developer in the meantime

| 1

|

33,874

| 7,286,415,962

|

IssuesEvent

|

2018-02-23 09:40:03

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

Cannot display faces messages bound to iterated elements with p:dataTable

|

defect

|

When using a `p:dataTable` (PF 5.3) like this:

```

<p:dataTable value="..." var="..." id="current">

...

<h:messages for="current" />

```

No messages will be displayed for `current` although I do have faces messages attached to client ids (current:0, current:1...). However, messages for sub-components (current:0:property) can be displayed.

When rewriting the same example with `h:dataTable` or `ui:repeat`, all messages can be displayed.

More details on [stackoverflow](http://stackoverflow.com/questions/34681454/displaying-faces-messages-bound-to-elements-being-iterated-by-pdatatable).

If needed, I can provide a complete example.

|

1.0

|

Cannot display faces messages bound to iterated elements with p:dataTable - When using a `p:dataTable` (PF 5.3) like this:

```

<p:dataTable value="..." var="..." id="current">

...

<h:messages for="current" />

```

No messages will be displayed for `current` although I do have faces messages attached to client ids (current:0, current:1...). However, messages for sub-components (current:0:property) can be displayed.

When rewriting the same example with `h:dataTable` or `ui:repeat`, all messages can be displayed.

More details on [stackoverflow](http://stackoverflow.com/questions/34681454/displaying-faces-messages-bound-to-elements-being-iterated-by-pdatatable).

If needed, I can provide a complete example.

|

defect

|

cannot display faces messages bound to iterated elements with p datatable when using a p datatable pf like this no messages will be displayed for current although i do have faces messages attached to client ids current current however messages for sub components current property can be displayed when rewriting the same example with h datatable or ui repeat all messages can be displayed more details on if needed i can provide a complete example

| 1

|

22,430

| 7,175,256,553

|

IssuesEvent

|

2018-01-31 04:14:28

|

Pterodactyl/Panel

|

https://api.github.com/repos/Pterodactyl/Panel

|

closed

|

FTP upload/permissions

|

Beta/RC Build Bug

|

* Panel or Daemon: Both

* Version of Panel/Daemon: Panel (dev 0.7.0) Daemon v0.5.0-beta.5

* Server's OS: Ubuntu 16.04

* Your Computer's OS & Browser: Windows 10, FileZilla

## Add Details Below:

Created a CSGO server and used the SFTP settings to upload the files for metamod and sourcemod.

Booted the server; it threw permission errors and crashed when a player joined.

Checked the files in /srv/daemon-data/SERVERID and noticed that the files I had just uploaded were owned by root:root instead of pterodactyl:ssh like the rest of the files.

https://gyazo.com/91d50168e8e59e59bb856a3ab3ae1e4a

|

1.0

|

FTP upload/permissions - * Panel or Daemon: Both

* Version of Panel/Daemon: Panel (dev 0.7.0) Daemon v0.5.0-beta.5

* Server's OS: Ubuntu 16.04

* Your Computer's OS & Browser: Windows 10, FileZilla

## Add Details Below:

Created a CSGO server and used the SFTP settings to upload the files for metamod and sourcemod.

Booted the server; it threw permission errors and crashed when a player joined.

Checked the files in /srv/daemon-data/SERVERID and noticed that the files I had just uploaded were owned by root:root instead of pterodactyl:ssh like the rest of the files.

https://gyazo.com/91d50168e8e59e59bb856a3ab3ae1e4a

|

non_defect

|

ftp upload permissions panel or daemon both version of panel daemon panel dev daemon beta server s os ubuntu your computer s os browser windows fillezilla add details below created a csgo server used the sftp settings to upload the files for metamod and sourcemod booted the server errors with permissions crashed when player joins checked the files in srv daemon data serverid and noticed that instead of pterodactyl ssh being the file owner like the rest of the files for what i just uploaded they were root root

| 0

|

5,203

| 2,610,183,131

|

IssuesEvent

|

2015-02-26 18:58:19

|

chrsmith/quchuseban

|

https://api.github.com/repos/chrsmith/quchuseban

|

opened

|

Tips on how to remove spots from the face

|

auto-migrated Priority-Medium Type-Defect

|

```

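[Summary]

Pigmented facial spots are becoming more and more common in daily life. Although they do not strike as fiercely as other conditions, the harm they cause should not be underestimated. According to market research, a woman spends on average one fifth of her household's income each year on spot removal, which shows how much women dread spots. This also bears out the whole series of questions about what to do when spots appear on the face and which product removes them best. Removing spots blindly is widespread today. To know which product works best, you first need to understand what causes spots and why they appear. How to remove spots from the face:

[Customer case]

I had chloasma for about ten years. At first it was only scattered dots that had not merged into patches.<br>

I took no measures during pregnancy. After the baby was born I waited for the patches to fade quickly, but I waited four or five months and my face still had many spots. I grew anxious: why had my friend's advice not come true? Probably because I already had spots before, I could never find their root cause. Once again I started grasping at any remedy. I won't go into the details, since friends with spots have surely had much the same experience. After trying countless spot-removal methods and folk remedies, I pinned my last hope on high-tech light therapy. After one course lasting 5 months and costing 6,000 to 7,000 yuan, the result was still very disappointing.<br>

My husband saw me tormented by these patches every day and felt sorry for me, so he went online and looked up many spot-removal products. In the end he chose "黛芙薇尔 essence". He said I should stop using hormone-based products and try a plant-based one, which could also regulate the endocrine system and help my body recover. He ordered two courses for me. I usually follow my husband's advice, and besides, I wanted to get better quickly. After one course the chloasma had faded, and the patches in particular had shrunk. The second course worked even faster: afterwards the two patches on my cheeks had faded almost to my skin tone, and the ones on my forehead were gone. Seeing the good result, my husband ordered one more course. After I finished it, my whole face had changed completely, from a spotty-faced woman into a woman with fresh, fair skin, or rather a beautiful mother. That is no exaggeration. My husband now always looks at me tenderly and keeps saying I do not look at all like someone who has had a child.

Having read how to remove spots from the face, now look at why the face is prone to spots:

[Causes of spots]

Internal factors

1. Stress

Under stress, the body secretes adrenaline to prepare to cope. Under long-term stress, the body's metabolic balance is disrupted, the supply of nutrients the skin needs slows down, and the pigment-producing cells become very active.

2. Hormonal imbalance

The female hormone estrogen contained in contraceptive pills stimulates melanin secretion and produces uneven spots. Spots caused by contraceptive pills stop forming once the pills are discontinued, but they remain on the skin for a long time. During pregnancy, because estrogen increases, spots tend to appear from the 4th or 5th month, and most of these disappear after delivery. However, abnormal metabolism, exposure of the skin to strong ultraviolet light, and mental stress all deepen the spots. Sometimes newly formed spots do not disappear after delivery, so extra care is needed.

3. Slow metabolism

Spots also appear when the liver's metabolic function is abnormal or ovarian function declines. Poor metabolism or endocrine imbalance puts the body in a sensitive state, which aggravates pigment problems. The common saying that constipation causes spots actually refers to spots formed when endocrine imbalance leads to an allergy-prone constitution. In addition, when the body is not in a normal state, ultraviolet exposure accelerates spot formation.

4. Incorrect use of cosmetics

Using cosmetics that do not suit your skin can cause skin allergies. If the skin is overexposed to ultraviolet light during treatment, it gathers melanin at the inflamed areas to defend against outside damage, which leads to pigmentation.

External factors

1. Ultraviolet light

When exposed to ultraviolet light, the body produces a lot of melanin in the basal layer to protect the skin, so more pigment gathers in sensitive areas. Frequent exposure to strong sunlight not only speeds up skin aging but also causes pigmentation disorders such as dark spots and freckles.

2. Poor cleansing habits

Harsh cleansing habits make the skin sensitive and irritate it. When the skin is sensitive, the melanocytes secrete a lot of melanin to protect it, and when there is excess pigment, spots, blemishes, and other pigmentation problems appear.

3. Genetics

If a parent has spots, the person's own chance of developing spots is high, and to some extent this can be attributed to genetics. People with spots in the family, especially among older relatives, should therefore avoid ultraviolet exposure, one of the major triggers of spots. This is essential for prevention.

[Questions answered]

1. Does 黛芙薇尔 essence really work? Can it really remove the chloasma on my face?

Answer: The DNA essence in 黛芙薇尔 essence can effectively repair hard-to-reach spots. Its unique natto ingredient provides essential nutrients for fair, bright skin and can effectively remove chloasma, butterfly-shaped patches, sunspots, pregnancy spots, and more. It breaks completely with traditional skin care, as if a cocktail with activating, regenerating, and nourishing effects were infused into the skin, while supplying the face with plenty of organic vitamin essence, so the change in the face is obvious. Since the product launched, existing customers have kept referring new ones: 71% of new customers come through referrals, and that is where our reputation comes from!

2. Does taking 黛芙薇尔 for whitening harm the body? Are there side effects?

Answer: 黛芙薇尔 essence uses a pure compound formula and leading classified spot-removal technology, and applies the "DNA skin beauty system" therapy to the product. It can thoroughly remove chloasma, butterfly-shaped patches, pregnancy spots, sunspots, and age spots, and effectively fade chloasma to near skin tone. Through the joint effort of experts in France, the United States, Taiwan, and elsewhere, and more than 10 years of research, 黛芙薇尔 uses a brand-new DNA skin repair technology to challenge traditional chemical skin care, tirelessly seeking to decode the beauty miracles of nature so that every woman who loves beauty can enjoy the natural beauty brought by technological innovation.

Developed specifically for Asian women's skin and caring for women's beauty, it has over the years relieved millions of women of chloasma and earned the trust of women everywhere!

3. Will the chloasma come back after removal?

Answer: Many people who once had chloasma solved it once and for all after choosing 黛芙薇尔 whitening. This spot-removal product was carefully developed by dozens of authoritative spot-removal experts according to how spots form. We let the facts speak and let consumers give the score, building an authoritative brand! Many of our new customers are referred by existing customers. If the results were poor, would customers refer others?

4. Your price is a bit high. Can it be cheaper?

Answer: Western medicine costs at least 2000 yuan, decocted herbal medicine at least 3000 yuan, and surgery at least 5000 yuan, and these will undoubtedly do nothing to remove your spots completely! You get what you pay for. What we are building is a reputation and a brand, and the price is not high. If spending this amount removes your chloasma completely, would you still find it expensive? Would you rather keep wasting so much money, fail to remove the spots, and make your skin worse and worse?

5. Is 黛芙薇尔 essence suitable for me?

Answer: 黛芙薇尔 is suitable for:

1. People with chloasma caused by physiological disorders

2. People with pregnancy spots caused by childbirth

3. People with age spots caused by aging

4. People with cosmetic pigment deposits or radiation spots

5. People with sunspots caused by long-term sun exposure

6. People with dull skin in urgent need of whitening

[Spot-removal tips]

How to remove spots from the face, along with a spot-removal tip for you

Chinese yam: the mucilage of Chinese yam is a base material for synthesizing estrogen and is very beneficial to the health of women under heavy pressure from work and study. The arginine in Chinese yam also strengthens the spleen and nourishes the lungs. Chinese yam contains a soluble fiber that helps control diet and improves the digestive system.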

```

-----

Original issue reported on code.google.com by `additive...@gmail.com` on 1 Jul 2014 at 3:00

|

1.0

|

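Tips on how to remove spots from the face - ```

[Summary]

Pigmented facial spots are becoming more and more common in daily life. Although they do not strike as fiercely as other conditions, the harm they cause should not be underestimated. According to market research, a woman spends on average one fifth of her household's income each year on spot removal, which shows how much women dread spots. This also bears out the whole series of questions about what to do when spots appear on the face and which product removes them best. Removing spots blindly is widespread today. To know which product works best, you first need to understand what causes spots and why they appear. How to remove spots from the face:

[Customer case]

I had chloasma for about ten years. At first it was only scattered dots that had not merged into patches.<br>

I took no measures during pregnancy. After the baby was born I waited for the patches to fade quickly, but I waited four or five months and my face still had many spots. I grew anxious: why had my friend's advice not come true? Probably because I already had spots before, I could never find their root cause. Once again I started grasping at any remedy. I won't go into the details, since friends with spots have surely had much the same experience. After trying countless spot-removal methods and folk remedies, I pinned my last hope on high-tech light therapy. After one course lasting 5 months and costing 6,000 to 7,000 yuan, the result was still very disappointing.<br>

My husband saw me tormented by these patches every day and felt sorry for me, so he went online and looked up many spot-removal products. In the end he chose "黛芙薇尔 essence". He said I should stop using hormone-based products and try a plant-based one, which could also regulate the endocrine system and help my body recover. He ordered two courses for me. I usually follow my husband's advice, and besides, I wanted to get better quickly. After one course the chloasma had faded, and the patches in particular had shrunk. The second course worked even faster: afterwards the two patches on my cheeks had faded almost to my skin tone, and the ones on my forehead were gone. Seeing the good result, my husband ordered one more course. After I finished it, my whole face had changed completely, from a spotty-faced woman into a woman with fresh, fair skin, or rather a beautiful mother. That is no exaggeration. My husband now always looks at me tenderly and keeps saying I do not look at all like someone who has had a child.

Having read how to remove spots from the face, now look at why the face is prone to spots:

[Causes of spots]

Internal factors

1. Stress

Under stress, the body secretes adrenaline to prepare to cope. Under long-term stress, the body's metabolic balance is disrupted, the supply of nutrients the skin needs slows down, and the pigment-producing cells become very active.

2. Hormonal imbalance

The female hormone estrogen contained in contraceptive pills stimulates melanin secretion and produces uneven spots. Spots caused by contraceptive pills stop forming once the pills are discontinued, but they remain on the skin for a long time. During pregnancy, because estrogen increases, spots tend to appear from the 4th or 5th month, and most of these disappear after delivery. However, abnormal metabolism, exposure of the skin to strong ultraviolet light, and mental stress all deepen the spots. Sometimes newly formed spots do not disappear after delivery, so extra care is needed.

3. Slow metabolism

Spots also appear when the liver's metabolic function is abnormal or ovarian function declines. Poor metabolism or endocrine imbalance puts the body in a sensitive state, which aggravates pigment problems. The common saying that constipation causes spots actually refers to spots formed when endocrine imbalance leads to an allergy-prone constitution. In addition, when the body is not in a normal state, ultraviolet exposure accelerates spot formation.

4. Incorrect use of cosmetics

Using cosmetics that do not suit your skin can cause skin allergies. If the skin is overexposed to ultraviolet light during treatment, it gathers melanin at the inflamed areas to defend against outside damage, which leads to pigmentation.

External factors

1. Ultraviolet light

When exposed to ultraviolet light, the body produces a lot of melanin in the basal layer to protect the skin, so more pigment gathers in sensitive areas. Frequent exposure to strong sunlight not only speeds up skin aging but also causes pigmentation disorders such as dark spots and freckles.

2. Poor cleansing habits

Harsh cleansing habits make the skin sensitive and irritate it. When the skin is sensitive, the melanocytes secrete a lot of melanin to protect it, and when there is excess pigment, spots, blemishes, and other pigmentation problems appear.

3. Genetics

If a parent has spots, the person's own chance of developing spots is high, and to some extent this can be attributed to genetics. People with spots in the family, especially among older relatives, should therefore avoid ultraviolet exposure, one of the major triggers of spots. This is essential for prevention.

[Questions answered]

1. Does 黛芙薇尔 essence really work? Can it really remove the chloasma on my face?

Answer: The DNA essence in 黛芙薇尔 essence can effectively repair hard-to-reach spots. Its unique natto ingredient provides essential nutrients for fair, bright skin and can effectively remove chloasma, butterfly-shaped patches, sunspots, pregnancy spots, and more. It breaks completely with traditional skin care, as if a cocktail with activating, regenerating, and nourishing effects were infused into the skin, while supplying the face with plenty of organic vitamin essence, so the change in the face is obvious. Since the product launched, existing customers have kept referring new ones: 71% of new customers come through referrals, and that is where our reputation comes from!

2. Does taking 黛芙薇尔 for whitening harm the body? Are there side effects?

Answer: 黛芙薇尔 essence uses a pure compound formula and leading classified spot-removal technology, and applies the "DNA skin beauty system" therapy to the product. It can thoroughly remove chloasma, butterfly-shaped patches, pregnancy spots, sunspots, and age spots, and effectively fade chloasma to near skin tone. Through the joint effort of experts in France, the United States, Taiwan, and elsewhere, and more than 10 years of research, 黛芙薇尔 uses a brand-new DNA skin repair technology to challenge traditional chemical skin care, tirelessly seeking to decode the beauty miracles of nature so that every woman who loves beauty can enjoy the natural beauty brought by technological innovation.

Developed specifically for Asian women's skin and caring for women's beauty, it has over the years relieved millions of women of chloasma and earned the trust of women everywhere!

3. Will the chloasma come back after removal?

Answer: Many people who once had chloasma solved it once and for all after choosing 黛芙薇尔 whitening. This spot-removal product was carefully developed by dozens of authoritative spot-removal experts according to how spots form. We let the facts speak and let consumers give the score, building an authoritative brand! Many of our new customers are referred by existing customers. If the results were poor, would customers refer others?

4. Your price is a bit high. Can it be cheaper?

Answer: Western medicine costs at least 2000 yuan, decocted herbal medicine at least 3000 yuan, and surgery at least 5000 yuan, and these will undoubtedly do nothing to remove your spots completely! You get what you pay for. What we are building is a reputation and a brand, and the price is not high. If spending this amount removes your chloasma completely, would you still find it expensive? Would you rather keep wasting so much money, fail to remove the spots, and make your skin worse and worse?

5. Is 黛芙薇尔 essence suitable for me?

Answer: 黛芙薇尔 is suitable for:

1. People with chloasma caused by physiological disorders

2. People with pregnancy spots caused by childbirth

3. People with age spots caused by aging

4. People with cosmetic pigment deposits or radiation spots

5. People with sunspots caused by long-term sun exposure

6. People with dull skin in urgent need of whitening

[Spot-removal tips]

How to remove spots from the face, along with a spot-removal tip for you

Chinese yam: the mucilage of Chinese yam is a base material for synthesizing estrogen and is very beneficial to the health of women under heavy pressure from work and study. The arginine in Chinese yam also strengthens the spleen and nourishes the lungs. Chinese yam contains a soluble fiber that helps control diet and improves the digestive system.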

```

-----

Original issue reported on code.google.com by `additive...@gmail.com` on 1 Jul 2014 at 3:00

|

defect

|

提示怎么祛脸上的色斑 《摘要》 在日常生活中色斑越来越普遍,色斑虽然不像其他疾病那样�� �世迅猛,但带来的危害也是不容小视。据市场调查发现,一� ��女性平均每年花在去色斑上面的费用占到整个家庭收入的五 分之一,可见女性对色斑的忌惮。这也印证了脸上长色斑了�� �么办,什么产品去色斑效果好这一系列问题。盲目祛斑是现� ��普遍存在的现象,想要知道什么产品去色斑效果好,首先要 了解色斑的原因是什么,为什么会出现色斑 怎么祛脸上的色� ��, 《客户案例》 我和黄褐斑长了大概十年了,最早是星星点点的,没有�� �成块。 在怀孕期间我没有采取任何措施,孩子出生后,我也在�� �待那些斑块快点消失,可是,我这一等就是四五个月,脸上� ��还是有好多斑,我也急了,好友说的话咋就不灵呢。估计是 因为之前我就有斑的缘故吧,始终找不到长斑的根源。我又�� �始了病急乱投医,这其中的过程我就不啰嗦了,想必长斑的� ��友们都有大同小异的遭遇。尝试过无数的祛斑方法、偏方后 我把最后的希望寄托在高科技的彩光上。 � �� 、 ,结果还是很让我失望。 我老公看到我天天被这些斑块折磨着,很是心疼,就上�� �找了很多关于祛斑的产品,最终他选定了「黛芙薇尔精华液� ��,他说不能再用那些激素产品了,植物产品可以试试,而且 还可以调理内分泌,对我身体恢复健康也有好处。就给我订�� �了两个周期的。一般老公的意见我都会听从,再说了我也想� ��点好。使用了一个周期后,黄褐斑就淡化了,特别是斑块变 小了。第二个周期见效更快,用完之后脸颊两块就都淡得接�� �肤色了,额头上也没有了。我老公看到效果不错,就又给我� ��购了一个周期的,使用完之后,天哪,我整张脸发生了天翻 地覆的变化啊,从一个斑脸婆就变成了一个水嫩白净的女人�� �应该说是漂亮的母亲。呵呵,这一点都不夸张。老公现在看� ��的眼神都总是含情脉脉的,还老说我一点不像生过孩子的样 子。 阅读了怎么祛脸上的色斑,再看脸上容易长斑的原因: 《色斑形成原因》 内部因素 一、压力 当人受到压力时,就会分泌肾上腺素,为对付压力而做�� �备。如果长期受到压力,人体新陈代谢的平衡就会遭到破坏� ��皮肤所需的营养供应趋于缓慢,色素母细胞就会变得很活跃 。 二、荷尔蒙分泌失调 避孕药里所含的女性荷尔蒙雌激素,会刺激麦拉宁细胞�� �分泌而形成不均匀的斑点,因避孕药而形成的斑点,虽然在� ��药中断后会停止,但仍会在皮肤上停留很长一段时间。怀孕 中因女性荷尔蒙雌激素的增加, — 现斑,这时候出现的斑点在产后大部分会消失。可是,新陈�� �谢不正常、肌肤裸露在强烈的紫外线下、精神上受到压力等� ��因,都会使斑加深。有时新长出的斑,产后也不会消失,所 以需要更加注意。 三、新陈代谢缓慢 肝的新陈代谢功能不正常或卵巢功能减退时也会出现斑�� �因为新陈代谢不顺畅、或内分泌失调,使身体处于敏感状态� ��,从而加剧色素问题。我们常说的便秘会形成斑,其实就是 内分泌失调导致过敏体质而形成的。另外,身体状态不正常�� �时候,紫外线的照射也会加速斑的形成。 四、错误的使用化妆品 使用了不适合自己皮肤的化妆品,会导致皮肤过敏。在�� �疗的过程中如过量照射到紫外线,皮肤会为了抵御外界的侵� ��,在有炎症的部位聚集麦拉宁色素,这样会出现色素沉着的 问题。 外部因素 一、紫外线 照射紫外线的时候,人体为了保护皮肤,会在基底层产�� �很多麦拉宁色素。所以为了保护皮肤,会在敏感部位聚集更� ��的色素。经常裸露在强烈的阳光底下不仅促进皮肤的老化, 还会引起黑斑、雀斑等色素沉着的皮肤疾患。 二、不良的清洁习惯 因强烈的清洁习惯使皮肤变得敏感,这样会刺激皮肤。�� �皮肤敏感时,人体为了保护皮肤,黑色素细胞会分泌很多麦� ��宁色素,当色素过剩时就出现了斑、瑕疵等皮肤色素沉着的 问题。 三、遗传基因 父母中有长斑的,则本人长斑的概率就很高,这种情况�� �一定程度上就可判定是遗传基因的作用。所以家里特别是长� ��有长斑的人,要注意避免引发长斑的重要因素之一——紫外 线照射,这是预防斑必须注意的。 《有疑问帮你解决》 黛芙薇尔精华液真的有效果吗 真的可以把脸上的黄褐�� �去掉吗 答:黛芙薇尔精华液dna精华能够有效的修复周围难以触�� �的色斑,其独有的纳豆成分为皮肤的美白与靓丽,提供了必� ��可少的营养物质,可以有效的去除黄褐斑,黄褐斑,黄褐斑 ,蝴蝶斑,晒斑、妊娠斑等。它它完全突破了传统的美肤时�� �,宛如在皮肤中注入了一杯兼具活化、再生、滋养等功效的� ��尾酒,同时为脸部提供大量有机维生素精华,脸部的改变显 而易见。自产品上市以来,老顾客纷纷介绍新顾客, 的新�� �客都是通过老顾客介绍而来,口碑由此而来 
,服用黛芙薇尔美白,会伤身体吗 有副作用吗 答:黛芙薇尔精华液应用了精纯复合配方和领先的分类�� �斑科技,并将“dna美肤系统”疗法应用到了该产品中,能彻� ��祛除黄褐斑,蝴蝶斑,妊娠斑,晒斑,黄褐斑,老年斑,有 效淡化黄褐斑至接近肤色。黛芙薇尔通过法国、美国、台湾�� �地的专家通力协作, �� �,挑战传统化学护肤理念,不懈追寻发现破译大自然的美丽� ��迹,令每一位爱美的女性都能享受到科技创新所带来的自然 之美。 专为亚洲女性肤质研制,精心呵护女性美丽,多年来,为数�� �百万计的女性解除了黄褐斑困扰。深得广大女性朋友的信赖 ,去除黄褐斑之后,会反弹吗 答:很多曾经长了黄褐斑的人士,自从选择了黛芙薇尔�� �白,就一劳永逸。这款祛斑产品是经过数十位权威祛斑专家� ��据斑的形成原因精心研制而成用事实说话,让消费者打分。 树立权威品牌 我们的很多新客户都是老客户介绍而来,请问� ��如果效果不好,会有客户转介绍吗 ,你们的价格有点贵,能不能便宜一点 答: , , ,而这些毫无疑问,不会对彻底去� ��你的斑点有任何帮助 一分价钱,一份价值,我们现在做的�� �是一个口碑,一个品牌,价钱并不高。如果花这点钱把你的� ��褐斑彻底去除,你还会觉得贵吗 你还会再去花那么多冤枉�� �,不但斑没去掉,还把自己的皮肤弄的越来越糟吗 ,我适合用黛芙薇尔精华液吗 答:黛芙薇尔适用人群: 、生理紊乱引起的黄褐斑人群 、生育引起的妊娠斑人群 、年纪增长引起的老年斑人群 、化妆品色素沉积、辐射斑人群 、长期日照引起的日晒斑人群 、肌肤暗淡急需美白的人群 《祛斑小方法》 怎么祛脸上的色斑,同时为您分享祛斑小方法 山药:山药的黏质液是合成雌激素的基础物质,对工作和学�� �较大压力的女性具有很好的健身功效。山药所含的精氨酸还� ��健脾补肺的功效。山药含有一种可溶性纤维,有助于控制饮 食,改善消化系统。 original issue reported on code google com by additive gmail com on jul at

| 1

|

7,321

| 2,610,363,360

|

IssuesEvent

|

2015-02-26 19:57:26

|

chrsmith/scribefire-chrome

|

https://api.github.com/repos/chrsmith/scribefire-chrome

|

closed

|

Firefox Portable with Scribefire Next cannot add a blog

|

auto-migrated Priority-Medium Type-Defect

|

```

What's the problem?

I installed the ScribeFire Next add-on to my Firefox Portable and I tried to

add a blog. I filled in my blog address and clicked on the securely authorize

ScribeFire button. It takes me to a wordpress.com login page to authorize

ScribeFire. I entered my login details and clicked "authorize", then ScribeFire

gave me the error message:

Well, this is embarrassing...

ScribeFire couldn't get the information it needed about your blog. Helpfully,

your blog returned this message: Bad login/pass combination.

What browser are you using?

Firefox Portable version 15 from PortableApps.com running on Windows XP.

What version of ScribeFire are you running?

ScribeFire Next 4.0

```

-----

Original issue reported on code.google.com by `talk...@gmail.com` on 2 Sep 2012 at 5:18

|

1.0

|

Firefox Portable with Scribefire Next cannot add a blog - ```

What's the problem?

I installed the ScribeFire Next add-on to my Firefox Portable and I tried to

add a blog. I filled in my blog address and clicked on the securely authorize

ScribeFire button. It takes me to a wordpress.com login page to authorize

ScribeFire. I entered my login details and clicked "authorize", then ScribeFire

gave me the error message:

Well, this is embarrassing...

ScribeFire couldn't get the information it needed about your blog. Helpfully,

your blog returned this message: Bad login/pass combination.

What browser are you using?

Firefox Portable version 15 from PortableApps.com running on Windows XP.

What version of ScribeFire are you running?

ScribeFire Next 4.0

```

-----

Original issue reported on code.google.com by `talk...@gmail.com` on 2 Sep 2012 at 5:18

|

defect

|

firefox portable with scribefire next cannot add a blog what s the problem i installed the scribefire next add on to my firefox portable and i tried to add a blog i filled in my blog address and clicked on the securely authorize scribefire button it takes me to a wordpress com login page to authorize scribefire i entered my login details and clicked authorize then scribefire give me the error message well this is embarrassing scribefire couldn t get the information it needed about your blog helpfully your blog returned this message bad login pass combination what browser are you using firefox portable version from portableapps com running on windows xp what version of scribefire are you running scribefire next original issue reported on code google com by talk gmail com on sep at

| 1

|

56,711

| 15,308,029,370

|

IssuesEvent

|

2021-02-24 21:48:12

|

ontop/ontop

|

https://api.github.com/repos/ontop/ontop

|

closed

|

use of R2RML predicateMaps throws error

|

type: defect

|

My R2RML mapping works as long as I do not include predicateMaps.

When adding the second predicateObjectMap with a predicateMap I get the error below.

```

<ActivityMapping>

a rr:TriplesMap;

rr:logicalTable [ rr:tableName "activity" ];

rr:subjectMap [

rr:template "http://data.kbodata.be/organisation/{EntityNumber}#id";

rr:class org:FormalOrganization;

rr:termType rr:IRI

];

rr:predicateObjectMap [

rr:predicate rov:orgActivity;

rr:objectMap [

rr:template "http://id.fedstats.be/nace{NaceVersion}/{NaceCode}#id";

rr:termType rr:IRI

]

];

rr:predicateObjectMap [

rr:predicateMap

[

rr:template "http://data.kbodata.be/def#{Classification}";

rr:termType rr:IRI

];

rr:objectMap [

rr:template "http://id.fedstats.be/nace{NaceVersion}/{NaceCode}#id";

rr:termType rr:IRI

]

];

.

```

Error

```

Error during r2rml import.

java.lang.RuntimeException: java.lang.NullPointerException

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappings(R2RMLManager.java:131)

at it.unibz.krdb.obda.r2rml.R2RMLReader.readMappings(R2RMLReader.java:110)

at org.semanticweb.ontop.protege.gui.action.R2RMLImportAction.actionPerformed(R2RMLImportAction.java:95)

at javax.swing.AbstractButton.fireActionPerformed(AbstractButton.java:2022)

at javax.swing.AbstractButton$Handler.actionPerformed(AbstractButton.java:2346)

at javax.swing.DefaultButtonModel.fireActionPerformed(DefaultButtonModel.java:402)

at javax.swing.DefaultButtonModel.setPressed(DefaultButtonModel.java:259)

at javax.swing.AbstractButton.doClick(AbstractButton.java:376)

at com.apple.laf.ScreenMenuItem.actionPerformed(ScreenMenuItem.java:125)

at java.awt.MenuItem.processActionEvent(MenuItem.java:669)

at java.awt.MenuItem.processEvent(MenuItem.java:628)

at java.awt.MenuComponent.dispatchEventImpl(MenuComponent.java:351)

at java.awt.MenuComponent.dispatchEvent(MenuComponent.java:339)

at java.awt.EventQueue.dispatchEventImpl(EventQueue.java:754)

at java.awt.EventQueue.access$500(EventQueue.java:97)

at java.awt.EventQueue$3.run(EventQueue.java:702)

at java.awt.EventQueue$3.run(EventQueue.java:696)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:75)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:86)

at java.awt.EventQueue$4.run(EventQueue.java:724)

at java.awt.EventQueue$4.run(EventQueue.java:722)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:75)

at java.awt.EventQueue.dispatchEvent(EventQueue.java:721)

at java.awt.EventDispatchThread.pumpOneEventForFilters(EventDispatchThread.java:201)

at java.awt.EventDispatchThread.pumpEventsForFilter(EventDispatchThread.java:116)

at java.awt.EventDispatchThread.pumpEventsForHierarchy(EventDispatchThread.java:105)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:101)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:93)

at java.awt.EventDispatchThread.run(EventDispatchThread.java:82)

Caused by: java.lang.NullPointerException

at it.unibz.krdb.obda.model.impl.PredicateImpl.<init>(PredicateImpl.java:36)

at it.unibz.krdb.obda.model.impl.OBDADataFactoryImpl.getPredicate(OBDADataFactoryImpl.java:92)

at it.unibz.krdb.obda.model.impl.OBDADataFactoryImpl.getPredicate(OBDADataFactoryImpl.java:37)

at it.unibz.krdb.obda.r2rml.R2RMLParser.getBodyPredicates(R2RMLParser.java:221)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappingTripleAtoms(R2RMLManager.java:254)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMapping(R2RMLManager.java:144)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappings(R2RMLManager.java:117)

... 30 more

```

|

1.0

|

use of R2RML predicateMaps throws error - My R2RML mapping works as long as I do not include predicateMaps.

When adding the second predicateObjectMap with a predicateMap I get the error below.

```

<ActivityMapping>

a rr:TriplesMap;

rr:logicalTable [ rr:tableName "activity" ];

rr:subjectMap [

rr:template "http://data.kbodata.be/organisation/{EntityNumber}#id";

rr:class org:FormalOrganization;

rr:termType rr:IRI

];

rr:predicateObjectMap [

rr:predicate rov:orgActivity;

rr:objectMap [

rr:template "http://id.fedstats.be/nace{NaceVersion}/{NaceCode}#id";

rr:termType rr:IRI

]

];

rr:predicateObjectMap [

rr:predicateMap

[

rr:template "http://data.kbodata.be/def#{Classification}";

rr:termType rr:IRI

];

rr:objectMap [

rr:template "http://id.fedstats.be/nace{NaceVersion}/{NaceCode}#id";

rr:termType rr:IRI

]

];

.

```

Error

```

Error during r2rml import.

java.lang.RuntimeException: java.lang.NullPointerException

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappings(R2RMLManager.java:131)

at it.unibz.krdb.obda.r2rml.R2RMLReader.readMappings(R2RMLReader.java:110)

at org.semanticweb.ontop.protege.gui.action.R2RMLImportAction.actionPerformed(R2RMLImportAction.java:95)

at javax.swing.AbstractButton.fireActionPerformed(AbstractButton.java:2022)

at javax.swing.AbstractButton$Handler.actionPerformed(AbstractButton.java:2346)

at javax.swing.DefaultButtonModel.fireActionPerformed(DefaultButtonModel.java:402)

at javax.swing.DefaultButtonModel.setPressed(DefaultButtonModel.java:259)

at javax.swing.AbstractButton.doClick(AbstractButton.java:376)

at com.apple.laf.ScreenMenuItem.actionPerformed(ScreenMenuItem.java:125)

at java.awt.MenuItem.processActionEvent(MenuItem.java:669)

at java.awt.MenuItem.processEvent(MenuItem.java:628)

at java.awt.MenuComponent.dispatchEventImpl(MenuComponent.java:351)

at java.awt.MenuComponent.dispatchEvent(MenuComponent.java:339)

at java.awt.EventQueue.dispatchEventImpl(EventQueue.java:754)

at java.awt.EventQueue.access$500(EventQueue.java:97)

at java.awt.EventQueue$3.run(EventQueue.java:702)

at java.awt.EventQueue$3.run(EventQueue.java:696)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:75)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:86)

at java.awt.EventQueue$4.run(EventQueue.java:724)

at java.awt.EventQueue$4.run(EventQueue.java:722)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.ProtectionDomain$1.doIntersectionPrivilege(ProtectionDomain.java:75)

at java.awt.EventQueue.dispatchEvent(EventQueue.java:721)

at java.awt.EventDispatchThread.pumpOneEventForFilters(EventDispatchThread.java:201)

at java.awt.EventDispatchThread.pumpEventsForFilter(EventDispatchThread.java:116)

at java.awt.EventDispatchThread.pumpEventsForHierarchy(EventDispatchThread.java:105)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:101)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:93)

at java.awt.EventDispatchThread.run(EventDispatchThread.java:82)

Caused by: java.lang.NullPointerException

at it.unibz.krdb.obda.model.impl.PredicateImpl.<init>(PredicateImpl.java:36)

at it.unibz.krdb.obda.model.impl.OBDADataFactoryImpl.getPredicate(OBDADataFactoryImpl.java:92)

at it.unibz.krdb.obda.model.impl.OBDADataFactoryImpl.getPredicate(OBDADataFactoryImpl.java:37)

at it.unibz.krdb.obda.r2rml.R2RMLParser.getBodyPredicates(R2RMLParser.java:221)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappingTripleAtoms(R2RMLManager.java:254)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMapping(R2RMLManager.java:144)

at it.unibz.krdb.obda.r2rml.R2RMLManager.getMappings(R2RMLManager.java:117)

... 30 more

```

|

defect

|

use of predicatemaps throws error my mapping works as long as i do not include predicatemaps when adding the second predicateobjectmap with a predicatemap i get the error below a rr triplesmap rr logicaltable rr subjectmap rr template rr class org formalorganization rr termtype rr iri rr predicateobjectmap rr predicate rov orgactivity rr objectmap rr template rr termtype rr iri rr predicateobjectmap rr predicatemap rr template rr termtype rr iri rr objectmap rr template rr termtype rr iri error error during import java lang runtimeexception java lang nullpointerexception at it unibz krdb obda getmappings java at it unibz krdb obda readmappings java at org semanticweb ontop protege gui action actionperformed java at javax swing abstractbutton fireactionperformed abstractbutton java at javax swing abstractbutton handler actionperformed abstractbutton java at javax swing defaultbuttonmodel fireactionperformed defaultbuttonmodel java at javax swing defaultbuttonmodel setpressed defaultbuttonmodel java at javax swing abstractbutton doclick abstractbutton java at com apple laf screenmenuitem actionperformed screenmenuitem java at java awt menuitem processactionevent menuitem java at java awt menuitem processevent menuitem java at java awt menucomponent dispatcheventimpl menucomponent java at java awt menucomponent dispatchevent menucomponent java at java awt eventqueue dispatcheventimpl eventqueue java at java awt eventqueue access eventqueue java at java awt eventqueue run eventqueue java at java awt eventqueue run eventqueue java at java security accesscontroller doprivileged native method at java security protectiondomain dointersectionprivilege protectiondomain java at java security protectiondomain dointersectionprivilege protectiondomain java at java awt eventqueue run eventqueue java at java awt eventqueue run eventqueue java at java security accesscontroller doprivileged native method at java security protectiondomain dointersectionprivilege protectiondomain java 
at java awt eventqueue dispatchevent eventqueue java at java awt eventdispatchthread pumponeeventforfilters eventdispatchthread java at java awt eventdispatchthread pumpeventsforfilter eventdispatchthread java at java awt eventdispatchthread pumpeventsforhierarchy eventdispatchthread java at java awt eventdispatchthread pumpevents eventdispatchthread java at java awt eventdispatchthread pumpevents eventdispatchthread java at java awt eventdispatchthread run eventdispatchthread java caused by java lang nullpointerexception at it unibz krdb obda model impl predicateimpl predicateimpl java at it unibz krdb obda model impl obdadatafactoryimpl getpredicate obdadatafactoryimpl java at it unibz krdb obda model impl obdadatafactoryimpl getpredicate obdadatafactoryimpl java at it unibz krdb obda getbodypredicates java at it unibz krdb obda getmappingtripleatoms java at it unibz krdb obda getmapping java at it unibz krdb obda getmappings java more

| 1

|

53,156

| 6,691,286,309

|

IssuesEvent

|

2017-10-09 12:37:24

|

desktop/desktop

|

https://api.github.com/repos/desktop/desktop

|

closed

|

Contrast - Red & Green

|

needs-design-input

|

<!--

Have you read GitHub Desktop's Code of Conduct? By filing an Issue, you are

expected to comply with it, including treating everyone with respect:

https://github.com/desktop/desktop/blob/master/CODE_OF_CONDUCT.md

-->

<!--

Are you encountering an issue where the “Minimize” tooltip stays visible

when you click the minimize button in the window? If so, that is an issue

with Electron, the framework the app uses. Please subscribe to the issue

at this link for updates on the issue:

https://github.com/electron/electron/issues/9943

-->

<!--

Please summarize the issue in the title, and then use the template below to

fill out the details so we can reproduce the issue on our end.

-->

### Description

The desktop client uses very light colored red and green to indicate deletes and additions. I'm not sure if I've always been color blind, but trying to see the difference between the two colors is nearly impossible for me. I really like the client, it's simple to use and it is helping me learn source control, but this color thing is becoming a real hurdle to get past. Would it be possible to get settings to adjust colors or contrast, or to have a dark mode so that the reds and greens stand out more? Thank you so much for all you do in providing this website and control and collaboration.

### Version

<!--

What version of GitHub Desktop are you running? This is displayed under the

`About GitHub Desktop` menu item. If you are running from source, include

the commit by running `git rev-parse HEAD` from your local repository.

-->

**GitHub Desktop version:** 1.0.3

<!--

The operating system you are running on may also help with reproducing the

issue:

- If you are on macOS, launch `About This Mac` and write down the OS version

listed.

- If you are on Windows, open `Command Prompt` and attach the output of this

command: `cmd /c ver`

-->

**OS version:** Windows 10

### Steps to Reproduce

1. open repo, make adds and deletes, hard to see if colorblind

<!--

If the issue involves a specific public repository, including the information

about that repository will make it easier to recreate the issue.

If you think screenshots or a GIF recording will help demonstrate the issue

better, feel free to add them here.

-->

**Expected behavior:** able to see difference between green and red on screen

**Actual behavior:** everything looks white

**Reproduces how often:** always, I think I'm colorblind

### Logs

<!--

There may be some relevant information in log files generated by GitHub

Desktop:

- If you are on macOS, attach the most recent log file from:

`~/Library/Application Support/GitHub Desktop/logs/*.desktop.production.log`

- If you are on Windows, attach the most recent log file from:

`%APPDATA%\GitHub Desktop\logs\*.desktop.production.log`

The log files are organized by date, so see if anything was generated for

today's date.

-->

#### Additional Information

<!--

Any additional information, configuration or data that might be necessary to

reproduce the issue.

If you are dealing with a performance issue or regression, attaching a

[Timeline profile](https://github.com/desktop/desktop/blob/master/docs/contributing/timeline-profile.md)

of the task will help the developers understand the runtime behavior of the

application on your machine.

-->

|

1.0

|

Contrast - Red & Green - <!--

Have you read GitHub Desktop's Code of Conduct? By filing an Issue, you are

expected to comply with it, including treating everyone with respect:

https://github.com/desktop/desktop/blob/master/CODE_OF_CONDUCT.md

-->

<!--

Are you encountering an issue where the “Minimize” tooltip stays visible

when you click the minimize button in the window? If so, that is an issue

with Electron, the framework the app uses. Please subscribe to the issue

at this link for updates on the issue:

https://github.com/electron/electron/issues/9943

-->

<!--

Please summarize the issue in the title, and then use the template below to

fill out the details so we can reproduce the issue on our end.

-->

### Description

The desktop client uses very light colored red and green to indicate deletes and additions. I'm not sure if I've always been color blind, but trying to see the difference between the two colors is nearly impossible for me. I really like the client, it's simple to use and it is helping me learn source control, but this color thing is becoming a real hurdle to get past. Would it be possible to get settings to adjust colors or contrast, or to have a dark mode so that the reds and greens stand out more? Thank you so much for all you do in providing this website and control and collaboration.

### Version

<!--

What version of GitHub Desktop are you running? This is displayed under the

`About GitHub Desktop` menu item. If you are running from source, include

the commit by running `git rev-parse HEAD` from your local repository.

-->

**GitHub Desktop version:** 1.0.3

<!--

The operating system you are running on may also help with reproducing the

issue:

- If you are on macOS, launch `About This Mac` and write down the OS version

listed.

- If you are on Windows, open `Command Prompt` and attach the output of this

command: `cmd /c ver`

-->

**OS version:** Windows 10

### Steps to Reproduce

1. open repo, make adds and deletes, hard to see if colorblind

<!--

If the issue involves a specific public repository, including the information

about that repository will make it easier to recreate the issue.

If you think screenshots or a GIF recording will help demonstrate the issue

better, feel free to add them here.

-->

**Expected behavior:** able to see difference between green and red on screen

**Actual behavior:** everything looks white

**Reproduces how often:** always, I think I'm colorblind

### Logs

<!--

There may be some relevant information in log files generated by GitHub

Desktop:

- If you are on macOS, attach the most recent log file from:

`~/Library/Application Support/GitHub Desktop/logs/*.desktop.production.log`

- If you are on Windows, attach the most recent log file from:

`%APPDATA%\GitHub Desktop\logs\*.desktop.production.log`

The log files are organized by date, so see if anything was generated for

today's date.

-->

#### Additional Information

<!--

Any additional information, configuration or data that might be necessary to

reproduce the issue.

If you are dealing with a performance issue or regression, attaching a

[Timeline profile](https://github.com/desktop/desktop/blob/master/docs/contributing/timeline-profile.md)

of the task will help the developers understand the runtime behavior of the

application on your machine.

-->

|

non_defect

|

contrast red green have you read github desktop s code of conduct by filing an issue you are expected to comply with it including treating everyone with respect are you encountering an issue where the “minimize” tooltip stays visible when you click the minimize button in the window if so that is an issue with electron the framework the app uses please subscribe to the issue at this link for updates on the issue please summarize the issue in the title and then use the template below to fill out the details so we can reproduce the issue on our end description the desktop client uses very light colored red and green to indicated deletes and additions i m not sure if i ve always been color blind but trying to see the difference between the two colors is nearly impossible for me i really like the client it s simple to use and it is helping me learn source control but this color thing is becoming a real hurdle to get passed would it be possible to get settings to adjust colors or contrast or to have a dark mode so that the reds and greens stand out more thank you so much for all you do in providing this website and control and collaboration version what version of github desktop are you running this is displayed under the about github desktop menu item if you are running from source include the commit by running git rev parse head from your local repository github desktop version the operating system you are running on may also help with reproducing the issue if you are on macos launch about this mac and write down the os version listed if you are on windows open command prompt and attach the output of this command cmd c ver os version windows steps to reproduce open repo make adds and deletes hard to see if colorblind if the issue involves a specific public repository including the information about that repository will make it is easier to recreate the issue if you think screenshots or a gif recording will help demonstrate the issue better feel free to add them here 
expected behavior able to see difference between green and red on screen actual behavior everything looks white reproduces how often always i think i m colorblind logs there may be some relevant information in log files generated by github desktop if you are on macos attach the most recent log file from library application support github desktop logs desktop production log if you are on windows attach the most recent log file from appdata github desktop logs desktop production log the log files are organized by date so see if anything was generated for today s date additional information any additional information configuration or data that might be necessary to reproduce the issue if you are dealing with a performance issue or regression attaching a of the task will help the developers understand the runtime behavior of the application on your machine

| 0

|

78,034

| 27,284,991,567

|

IssuesEvent

|

2023-02-23 12:53:54

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

Translator duplicates comment only content when retaining comments

|

T: Defect P: Medium E: All Editions C: Translator

|

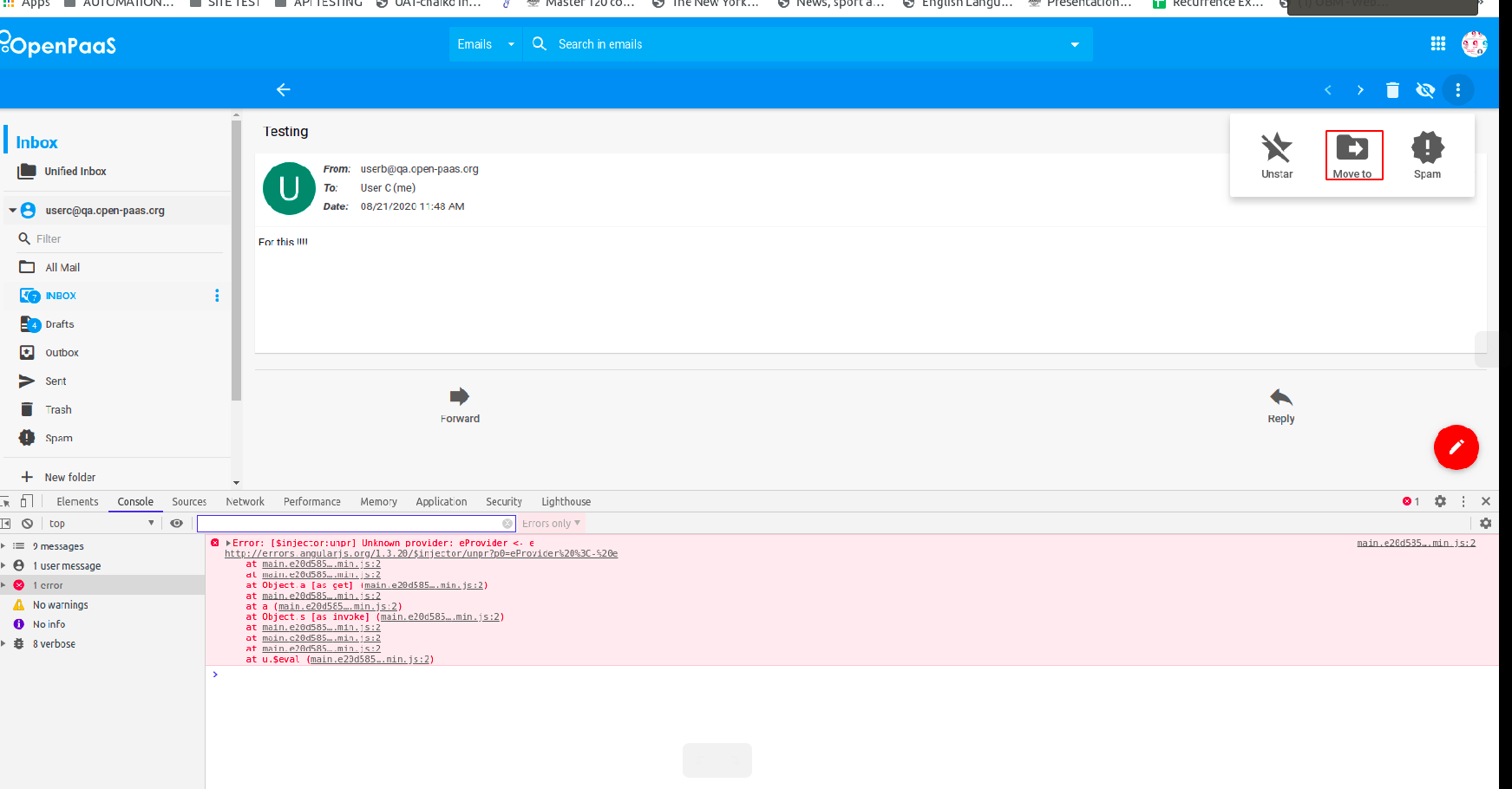

When translating comment only content, such as e.g.

```sql

/* a */

-- b

```

Then the translator duplicates it:

<img width="768" alt="image" src="https://user-images.githubusercontent.com/734593/220898060-5a4f24c2-0568-4ffa-9bbb-467215e56c90.png">

The problem doesn't appear when there's an actual query in the input:

<img width="776" alt="image" src="https://user-images.githubusercontent.com/734593/220898144-b74626e5-bf2b-426c-9a41-fe43222286e5.png">

|

1.0

|

Translator duplicates comment only content when retaining comments - When translating comment only content, such as e.g.

```sql

/* a */

-- b

```

Then the translator duplicates it:

<img width="768" alt="image" src="https://user-images.githubusercontent.com/734593/220898060-5a4f24c2-0568-4ffa-9bbb-467215e56c90.png">

The problem doesn't appear when there's an actual query in the input:

<img width="776" alt="image" src="https://user-images.githubusercontent.com/734593/220898144-b74626e5-bf2b-426c-9a41-fe43222286e5.png">

|

defect

|

translator duplicates comment only content when retaining comments when translating comment only content such as e g sql a b then the translator duplicates it img width alt image src the problem doesn t appear when there s an actual query in the input img width alt image src

| 1

|

51,218

| 13,207,396,526

|

IssuesEvent

|

2020-08-14 22:56:50

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

opened

|

libPyROOT on a mac not loading in python properly (Trac #57)

|

Incomplete Migration Migrated from Trac cmake defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/57">https://code.icecube.wisc.edu/projects/icecube/ticket/57</a>, reported by blaufuss and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-09T22:34:06",

"_ts": "1194647646000000",

"description": "trying to import libPyROOT on a mac gives issues:\n\n(root-v5.10.00)\n\nblaufuss@teufel[~/..work/V01-11-02/build]% ./env-shell.sh\nblaufuss@teufel[~/..work/V01-11-02/build](I3)% python\nPython 2.3.5 (#1, Jun 9 2007, 18:47:11)\n[GCC 4.0.1 (Apple Computer, Inc. build 5363)] on darwin\nType \"help\", \"copyright\", \"credits\" or \"license\" for more information.\n>>> from libPyROOT import *\nTraceback (most recent call last):\n File \"<stdin>\", line 1, in ?\nImportError: Inappropriate file type for dynamic loading\n>>>\n\nThis seems to work fine on Linux*\n\nThis thread seems to indicate that this is a python/root linking issue:\nhttp://root.cern.ch/phpBB2/viewtopic.php?p=13401&sid=17c3a761d528ae2926c35f1f6b0afc4d\n\n--Erik",

"reporter": "blaufuss",

"cc": "",

"resolution": "duplicate",

"time": "2007-06-11T13:53:13",

"component": "cmake",

"summary": "libPyROOT on a mac not loading in python properly",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

libPyROOT on a mac not loading in python properly (Trac #57) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/57">https://code.icecube.wisc.edu/projects/icecube/ticket/57</a>, reported by blaufuss and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-09T22:34:06",

"_ts": "1194647646000000",

"description": "trying to import libPyROOT on a mac gives issues:\n\n(root-v5.10.00)\n\nblaufuss@teufel[~/..work/V01-11-02/build]% ./env-shell.sh\nblaufuss@teufel[~/..work/V01-11-02/build](I3)% python\nPython 2.3.5 (#1, Jun 9 2007, 18:47:11)\n[GCC 4.0.1 (Apple Computer, Inc. build 5363)] on darwin\nType \"help\", \"copyright\", \"credits\" or \"license\" for more information.\n>>> from libPyROOT import *\nTraceback (most recent call last):\n File \"<stdin>\", line 1, in ?\nImportError: Inappropriate file type for dynamic loading\n>>>\n\nThis seems to work fine on Linux*\n\nThis thread seems to indicate that this is a python/root linking issue:\nhttp://root.cern.ch/phpBB2/viewtopic.php?p=13401&sid=17c3a761d528ae2926c35f1f6b0afc4d\n\n--Erik",

"reporter": "blaufuss",

"cc": "",

"resolution": "duplicate",

"time": "2007-06-11T13:53:13",

"component": "cmake",

"summary": "libPyROOT on a mac not loading in python properly",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

defect

|

libpyroot on a mac not loading in python properly trac migrated from json status closed changetime ts description trying to import libpyroot on a mac gives issues n n root n nblaufuss teufel env shell sh nblaufuss teufel python npython jun n on darwin ntype help copyright credits or license for more information n from libpyroot import ntraceback most recent call last n file line in nimporterror inappropriate file type for dynamic loading n n nthis seems to work fine on linux n nthis thread seems to indicate that this is a python root linking issue n reporter blaufuss cc resolution duplicate time component cmake summary libpyroot on a mac not loading in python properly priority normal keywords milestone owner troy type defect

| 1

|

2,387

| 2,607,899,878

|

IssuesEvent

|

2015-02-26 00:13:07

|

chrsmithdemos/zen-coding

|

https://api.github.com/repos/chrsmithdemos/zen-coding

|

closed

|

add '<' operator

|

auto-migrated Priority-Medium Type-Defect

|

```

using '<' operator parentNode :)

```

-----

Original issue reported on code.google.com by `tangoboy...@gmail.com` on 22 Dec 2009 at 4:41

|

1.0

|

add '<' operator - ```

using '<' operator parentNode :)

```

-----

Original issue reported on code.google.com by `tangoboy...@gmail.com` on 22 Dec 2009 at 4:41

|

defect

|

add operator using operator parentnode original issue reported on code google com by tangoboy gmail com on dec at

| 1

|

130,007

| 12,421,897,879

|

IssuesEvent

|

2020-05-23 19:09:57

|

mpls-landlord-db/landlord-lookup

|

https://api.github.com/repos/mpls-landlord-db/landlord-lookup

|

opened

|

Create glossary for relevant columns in `active_rental_licenses` data

|

documentation feature

|

The meanings of the columns `category` and `milestone` are unclear. When users look up information on the properties they rent, they should be able to understand the data.

|

1.0

|

Create glossary for relevant columns in `active_rental_licenses` data - The meanings of the columns `category` and `milestone` are unclear. When users look up information on the properties they rent, they should be able to understand the data.

|

non_defect

|

create glossary for relevant columns in active rental licenses data the meanings of the columns category and milestone are unclear when users lookup information on the properties they rent they should be able to understand the data

| 0

|

64,011

| 6,890,552,142

|

IssuesEvent

|

2017-11-22 14:23:42

|

Shadowss/TravianZ

|

https://api.github.com/repos/Shadowss/TravianZ

|

closed

|

Account activation issues

|

bug needs testing

|

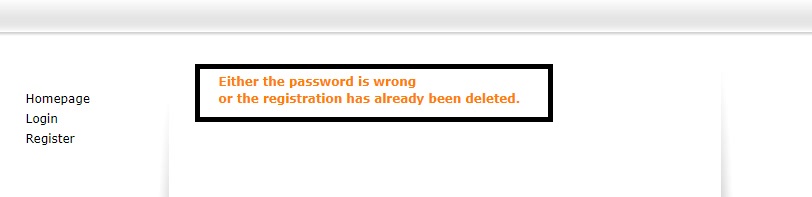

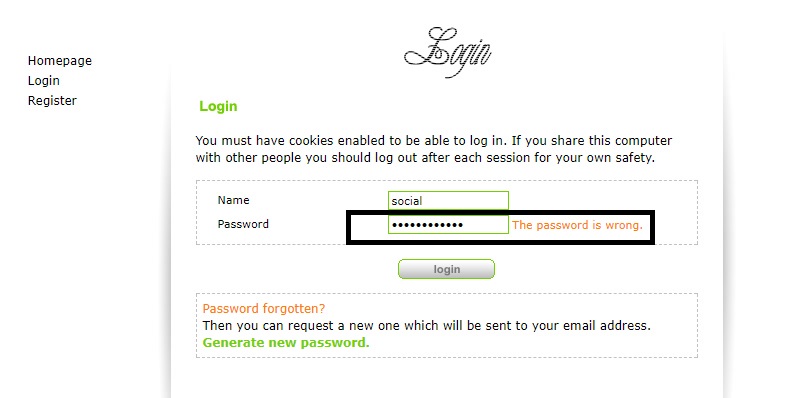

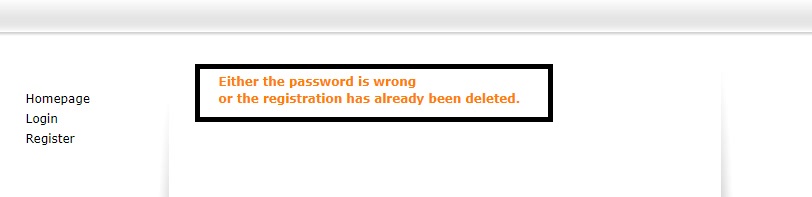

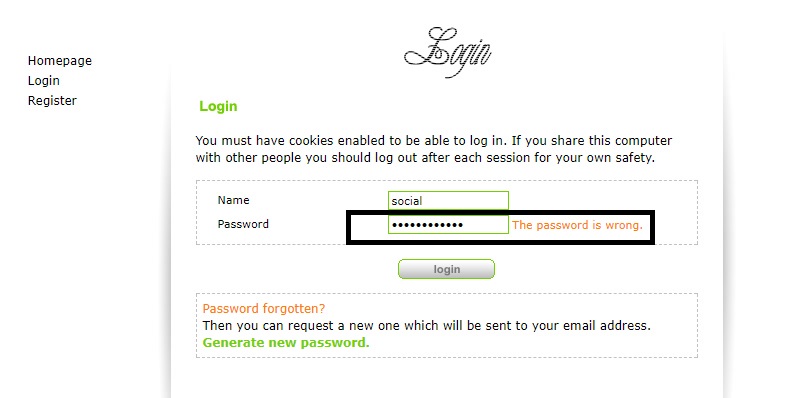

During the registration of the account, everything goes well. The letter comes to the post office. And then there are problems with activation of both the link and the activation code. Records that the wrong password or account has been deleted. There is everything in the database. The new user is in `s1_activate`.

This is most likely due to encryption of the password. Because without an activated account I can not log into my account. An incorrect password is displayed.

|

1.0

|

Account activation issues - During the registration of the account, everything goes well. The letter comes to the post office. And then there are problems with activation of both the link and the activation code. Records that the wrong password or account has been deleted. There is everything in the database. The new user is in `s1_activate`.

This is most likely due to encryption of the password. Because without an activated account I can not log into my account. An incorrect password is displayed.

|

non_defect

|

account activation issues during the registration of the account everything goes well the letter comes to the post office and then there are problems with activation of both the link and the activation code records that the wrong password or account has been deleted there is everything in the database the new user is in activate this is most likely due to encryption of the password because without an activated account i can not log into my account an incorrect password is displayed

| 0

|

51,380

| 13,207,459,348

|

IssuesEvent

|

2020-08-14 23:11:03

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

opened

|

TriggerType and TriggerMode/Situation (the DOMLaunch enums) not set (Trac #311)

|

Incomplete Migration Migrated from Trac combo simulation defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/311">https://code.icecube.wisc.edu/projects/icecube/ticket/311</a>, reported by icecube and owned by olivas, sflis</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-22T18:26:26",

"_ts": "1416680786877026",

"description": "The enums TriggerType and TriggerMode from DOMLaunch.h are not set in current MC. This would be very useful to understand trigger behaviour (like HLC/SLC/...).\n\nShould go in the old and upcoming new DOMsimulator, maybe?\n",

"reporter": "icecube",

"cc": "",

"resolution": "wontfix",

"time": "2011-09-27T16:18:15",

"component": "combo simulation",

"summary": "TriggerType and TriggerMode/Situation (the DOMLaunch enums) not set",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "olivas, sflis",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

TriggerType and TriggerMode/Situation (the DOMLaunch enums) not set (Trac #311) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/311">https://code.icecube.wisc.edu/projects/icecube/ticket/311</a>, reported by icecube and owned by olivas, sflis</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-22T18:26:26",

"_ts": "1416680786877026",

"description": "The enums TriggerType and TriggerMode from DOMLaunch.h are not set in current MC. This would be very useful to understand trigger behaviour (like HLC/SLC/...).\n\nShould go in the old and upcoming new DOMsimulator, maybe?\n",

"reporter": "icecube",

"cc": "",

"resolution": "wontfix",

"time": "2011-09-27T16:18:15",

"component": "combo simulation",

"summary": "TriggerType and TriggerMode/Situation (the DOMLaunch enums) not set",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "olivas, sflis",

"type": "defect"

}

```

</p>

</details>

|

defect

|

triggertype and triggermode situation the domlaunch enums not set trac migrated from json status closed changetime ts description the enums triggertype and triggermode from domlaunch h are not set in current mc this would be very useful to understand trigger behaviour like hlc slc n nshould go in the old and upcoming new domsimulator maybe n reporter icecube cc resolution wontfix time component combo simulation summary triggertype and triggermode situation the domlaunch enums not set priority normal keywords milestone owner olivas sflis type defect

| 1

|

348,293

| 31,495,245,190

|

IssuesEvent

|

2023-08-31 01:26:31

|

WordPress/gutenberg

|

https://api.github.com/repos/WordPress/gutenberg

|

closed

|

`TreeGrid`: write unit tests after recent a11y improvements

|

[Type] Enhancement [Type] Automated Testing [Package] Components [Feature] Component System

|

Hey folks, I noticed a few great accessibility fixes / improvements on `TreeGrid` recently!

It would be great if we could also add a few unit tests to make sure we don't introduce any regressions around these areas in the future— would that be possible?

_Originally posted by @ciampo in https://github.com/WordPress/gutenberg/issues/38679#issuecomment-1034744487_

|

1.0

|

`TreeGrid`: write unit tests after recent a11y improvements - Hey folks, I noticed a few great accessibility fixes / improvements on `TreeGrid` recently!

It would be great if we could also add a few unit tests to make sure we don't introduce any regressions around these areas in the future— would that be possible?

_Originally posted by @ciampo in https://github.com/WordPress/gutenberg/issues/38679#issuecomment-1034744487_

|

non_defect

|

treegrid write unit tests after recent improvements hey folks i noticed a few great accessibility fixes improvements on treegrid recently it would be great if we could also add a few unit tests to make sure we don t introduce any regressions around these areas in the future— would that be possible originally posted by ciampo in

| 0

|

4,599

| 2,610,121,475

|

IssuesEvent

|

2015-02-26 18:37:43

|

chrsmith/scribefire-chrome

|

https://api.github.com/repos/chrsmith/scribefire-chrome

|

closed

|

Red bar error

|

auto-migrated Priority-Medium Type-Defect

|

```

What's the problem?

-> When I open the extension I get a huge red bar saying some code: "<!DOCTYPE

html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN"..."

What browser are you using?

-> Google Chrome

What version of ScribeFire are you running?

-> 1.4.2.0

```

-----

Original issue reported on code.google.com by `thomazm...@gmail.com` on 26 Oct 2010 at 2:10

|

1.0

|

Red bar error - ```

What's the problem?

-> When I open the extension I get a huge red bar saying some code: "<!DOCTYPE

html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN"..."

What browser are you using?

-> Google Chrome

What version of ScribeFire are you running?

-> 1.4.2.0

```

-----

Original issue reported on code.google.com by `thomazm...@gmail.com` on 26 Oct 2010 at 2:10

|

defect

|

red bar error what s the problem when i open the extension i get a huge red bar saying some code doctype html public dtd xhtml transitional en what browser are you using google chrome what version of scribefire are you running original issue reported on code google com by thomazm gmail com on oct at

| 1

|

17,460

| 3,006,534,529

|

IssuesEvent

|

2015-07-27 11:04:27

|

bridgedotnet/Bridge

|

https://api.github.com/repos/bridgedotnet/Bridge

|

closed

|

[Array] Should implement ICollection, IEnumerable, IList, ICloneable

|

defect

|

```C#

using Bridge;

using Bridge.Html5;

using System;

using System.Collections.Generic;

using System.Linq;

namespace Demo

{

public class App

{

[Ready]

public static void Main()

{

Html5.Console.Log("Is IEnumerable: " + (arr is IEnumerable));

Html5.Console.Log("Is ICollection: " + (arr is ICollection));

Html5.Console.Log("Is ICloneable: " + (arr is ICloneable));

Html5.Console.Log("Is ICollection<int>: " + (arr is ICollection<int>));

Html5.Console.Log("Is IEnumerable<int>: " + (arr is IEnumerable<int>));

Html5.Console.Log("Is IList<int>: " + (arr is IList<int>));

}

}

}

```

Actual result: implements IEnumerable only.

Code snippet to test .Net code in LINQPad:

```C#

public static void Main(params object[] p)

{

object arr = new[] { 1, 2, 3 };

(arr is Array).Dump();

(arr is int[]).Dump();

(arr is ICollection).Dump();

(arr is IEnumerable).Dump();

(arr is ICloneable).Dump();

(arr is IList).Dump();

(arr is ICollection<int>).Dump();

(arr is IEnumerable<int>).Dump();

(arr is IList<int>).Dump();

}

```

|

1.0

|

[Array] Should implement ICollection, IEnumerable, IList, ICloneable - ```C#

using Bridge;

using Bridge.Html5;

using System;

using System.Collections.Generic;

using System.Linq;

namespace Demo

{

public class App

{

[Ready]

public static void Main()

{

Html5.Console.Log("Is IEnumerable: " + (arr is IEnumerable));

Html5.Console.Log("Is ICollection: " + (arr is ICollection));

Html5.Console.Log("Is ICloneable: " + (arr is ICloneable));

Html5.Console.Log("Is ICollection<int>: " + (arr is ICollection<int>));

Html5.Console.Log("Is IEnumerable<int>: " + (arr is IEnumerable<int>));

Html5.Console.Log("Is IList<int>: " + (arr is IList<int>));

}

}

}

```

Actual result: implements IEnumerable only.

Code snippet to test .Net code in LINQPad:

```C#

public static void Main(params object[] p)

{

object arr = new[] { 1, 2, 3 };

(arr is Array).Dump();

(arr is int[]).Dump();

(arr is ICollection).Dump();

(arr is IEnumerable).Dump();

(arr is ICloneable).Dump();

(arr is IList).Dump();

(arr is ICollection<int>).Dump();

(arr is IEnumerable<int>).Dump();

(arr is IList<int>).Dump();

}

```

|

defect

|

should implement icollection ienumerable ilist icloneable c using bridge using bridge using system using system collections generic using system linq namespace demo public class app public static void main console log is ienumerable arr is ienumerable console log is icollection arr is icollection console log is icloneable arr is icloneable console log is icollection arr is icollection console log is ienumerable arr is ienumerable console log is ilist arr is ilist actual result implements ienumerable only code snippet to test net code in linqpad c public static void main params object p object arr new arr is array dump arr is int dump arr is icollection dump arr is ienumerable dump arr is icloneable dump arr is ilist dump arr is icollection dump arr is ienumerable dump arr is ilist dump

| 1

|

49,229

| 13,445,715,013

|

IssuesEvent

|

2020-09-08 11:52:14

|

chaitanya00/aem-wknd

|

https://api.github.com/repos/chaitanya00/aem-wknd

|

opened

|

WS-2018-0224 (Medium) detected in mpath-0.1.1.tgz

|

security vulnerability

|

## WS-2018-0224 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mpath-0.1.1.tgz</b></p></summary>

<p>{G,S}et object values using MongoDB path notation</p>

<p>Library home page: <a href="https://registry.npmjs.org/mpath/-/mpath-0.1.1.tgz">https://registry.npmjs.org/mpath/-/mpath-0.1.1.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/aem-wknd/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/aem-wknd/node_modules/mpath/package.json</p>

<p>

Dependency Hierarchy:

- mongoose-4.2.4.tgz (Root Library)

- :x: **mpath-0.1.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/chaitanya00/aem-wknd/commit/3f4c2902a45eb04bc7915c408df14545aa90511c">3f4c2902a45eb04bc7915c408df14545aa90511c</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Mpath, versions 0.0.1--0.0.5, have a Prototype Pollution Vulnerability. An attacker can specify a path that includes the prototype object.

<p>Publish Date: 2018-08-30

<p>URL: <a href=https://github.com/aheckmann/mpath/commit/fe732eb05adebe29d1d741bdf3981afbae8ea94d>WS-2018-0224</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>6.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://hackerone.com/reports/390860">https://hackerone.com/reports/390860</a></p>

<p>Release Date: 2018-12-13</p>

<p>Fix Resolution: 0.5.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

WS-2018-0224 (Medium) detected in mpath-0.1.1.tgz - ## WS-2018-0224 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mpath-0.1.1.tgz</b></p></summary>

<p>{G,S}et object values using MongoDB path notation</p>

<p>Library home page: <a href="https://registry.npmjs.org/mpath/-/mpath-0.1.1.tgz">https://registry.npmjs.org/mpath/-/mpath-0.1.1.tgz</a></p>

<p>Path to dependency file: /tmp/ws-scm/aem-wknd/package.json</p>

<p>Path to vulnerable library: /tmp/ws-scm/aem-wknd/node_modules/mpath/package.json</p>

<p>

Dependency Hierarchy:

- mongoose-4.2.4.tgz (Root Library)

- :x: **mpath-0.1.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/chaitanya00/aem-wknd/commit/3f4c2902a45eb04bc7915c408df14545aa90511c">3f4c2902a45eb04bc7915c408df14545aa90511c</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Mpath, versions 0.0.1--0.0.5, have a Prototype Pollution Vulnerability. An attacker can specify a path that includes the prototype object.

<p>Publish Date: 2018-08-30

<p>URL: <a href=https://github.com/aheckmann/mpath/commit/fe732eb05adebe29d1d741bdf3981afbae8ea94d>WS-2018-0224</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>6.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://hackerone.com/reports/390860">https://hackerone.com/reports/390860</a></p>

<p>Release Date: 2018-12-13</p>

<p>Fix Resolution: 0.5.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

ws medium detected in mpath tgz ws medium severity vulnerability vulnerable library mpath tgz g s et object values using mongodb path notation library home page a href path to dependency file tmp ws scm aem wknd package json path to vulnerable library tmp ws scm aem wknd node modules mpath package json dependency hierarchy mongoose tgz root library x mpath tgz vulnerable library found in head commit a href vulnerability details mpath versions have a prototype pollution vulnerability an attacker can specify a path that include the prototype object publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

1,785

| 2,603,971,379

|

IssuesEvent

|

2015-02-24 19:00:20

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

Shenyang: ring of fleshy growths on the glans

|

auto-migrated Priority-Medium Type-Defect

|

```

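Shenyang: ring of fleshy growths on the glans〓Shenyang Military Region Political Department Hospital, STD department〓TEL:024-31023308〓Founded in 1946, it has focused on the research and treatment of sexually transmitted diseases for 68 years. It is located at No. 32 Erwei Road, Shenhe District, Shenyang. Established alongside the founding of New China, it is a long-established general hospital with advanced equipment, authoritative expertise, and many specialists, integrating prevention, health care, medical treatment, research, and rehabilitation. It is among the first public Grade-A military hospitals in the country and the first designated units for medical standards nationwide, and it is a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit.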

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 7:33

|

1.0

|

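Shenyang: ring of fleshy growths on the glans - ```

Shenyang: ring of fleshy growths on the glans〓Shenyang Military Region Political Department Hospital, STD department〓TEL:024-31023308〓Founded in 1946, it has focused on the research and treatment of sexually transmitted diseases for 68 years. It is located at No. 32 Erwei Road, Shenhe District, Shenyang. Established alongside the founding of New China, it is a long-established general hospital with advanced equipment, authoritative expertise, and many specialists, integrating prevention, health care, medical treatment, research, and rehabilitation. It is among the first public Grade-A military hospitals in the country and the first designated units for medical standards nationwide, and it is a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit.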

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 7:33

|

defect

|

沈阳龟头上长了一圈肉芽 沈阳龟头上长了一圈肉芽〓沈陽軍區政治部醫院性病〓tel: 〓 , � �� 。是一所與新中國同建立共輝� ��的歷史悠久、設備精良、技術權威、專家云集,是預防、保 健、醫療、科研康復為一體的綜合性醫院。是國家首批公立�� �等部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學� ��東南大學等知名高等院校的教學醫院。曾被中國人民解放軍 空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集�� �二等功。 original issue reported on code google com by gmail com on jun at

| 1

|

1,696

| 2,603,969,690

|

IssuesEvent

|

2015-02-24 18:59:56

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

Shenyang: small bumps on the foreskin

|

auto-migrated Priority-Medium Type-Defect

|

```

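Shenyang: small bumps on the foreskin〓Shenyang Military Region Political Department Hospital, STD department〓TEL:024-31023308〓Founded in 1946, it has focused on the research and treatment of sexually transmitted diseases for 68 years. It is located at No. 32 Erwei Road, Shenhe District, Shenyang. Established alongside the founding of New China, it is a long-established general hospital with advanced equipment, authoritative expertise, and many specialists, integrating prevention, health care, medical treatment, research, and rehabilitation. It is among the first public Grade-A military hospitals in the country and the first designated units for medical standards nationwide, and it is a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit.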

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 7:24

|

1.0

|

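Shenyang: small bumps on the foreskin - ```

Shenyang: small bumps on the foreskin〓Shenyang Military Region Political Department Hospital, STD department〓TEL:024-31023308〓Founded in 1946, it has focused on the research and treatment of sexually transmitted diseases for 68 years. It is located at No. 32 Erwei Road, Shenhe District, Shenyang. Established alongside the founding of New China, it is a long-established general hospital with advanced equipment, authoritative expertise, and many specialists, integrating prevention, health care, medical treatment, research, and rehabilitation. It is among the first public Grade-A military hospitals in the country and the first designated units for medical standards nationwide, and it is a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit.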

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 7:24

|

defect

|

沈阳包皮上起小疙瘩 沈阳包皮上起小疙瘩〓沈陽軍區政治部醫院性病〓tel: 〓 , 。位� �� 。是一所與新中國同建立共輝煌的� ��史悠久、設備精良、技術權威、專家云集,是預防、保健、 醫療、科研康復為一體的綜合性醫院。是國家首批公立甲等�� �隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、東� ��大學等知名高等院校的教學醫院。曾被中國人民解放軍空軍 后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二�� �功。 original issue reported on code google com by gmail com on jun at

| 1

|

31,686

| 13,616,160,783

|

IssuesEvent

|

2020-09-23 15:17:30

|

cityofaustin/atd-data-tech

|

https://api.github.com/repos/cityofaustin/atd-data-tech

|

closed

|

Map Projects for eCapris - Manuel Gallegos

|

Need: 1-Must Have Service: Geo Type: Data Workgroup: TED

|

> Please disregard my previous email; see below the updated list of TED projects that need to be mapped in eCapris:

>

> • 6598.056 - 2018 Bond Congress/Ramble Intersection Safety Improvements – 2018 Bond

> • 11580.030 – Safety and Mobility Improvements on West Gate Blvd. (from William Cannon to Cameron Loop) – Quarter Cent

> • 11580.058 – Intersection Improvements at N Lamar and Morrow St. – Quarter Cent

> • 11899.010 - Barton Springs Rd - South 1st St Intersection Safety Improvements – 2016 Bond

> • 11899.011 - IH-35 SR (NB) - 7th St Intersection Safety Improvements – 2016 Bond

> • 11899.013 - 8th St - IH-35 Intersection Safety Improvements – 2016 Bond

> • 11899.015 - US 183 SR (NB)/Lakeline Blvd, Intersection Safety Improvements – 2016 Bond

> • 11899.016 - IH 35 / Rundberg Ln, Intersection Safety Improvements – 2016 Bond

>

> The CPE website shows a pin for the following locations. Can we do an intersection, as with the other projects? (If that takes too much time or is more complicated, we can leave them as they are.)

>

> • 11899.009 - Braker Ln./Stonelake Blvd. Intersection Safety Improvements – 2016 Bond

> • 11899.014 - Lamar/St Johns Intersection Safety Improvements – 2016 Bond

|

1.0

|

Map Projects for eCapris - Manuel Gallegos - > Please disregard my previous email; see below the updated list of TED projects that need to be mapped in eCapris:

>

> • 6598.056 - 2018 Bond Congress/Ramble Intersection Safety Improvements – 2018 Bond

> • 11580.030 – Safety and Mobility Improvements on West Gate Blvd. (from William Cannon to Cameron Loop) – Quarter Cent

> • 11580.058 – Intersection Improvements at N Lamar and Morrow St. – Quarter Cent

> • 11899.010 - Barton Springs Rd - South 1st St Intersection Safety Improvements – 2016 Bond

> • 11899.011 - IH-35 SR (NB) - 7th St Intersection Safety Improvements – 2016 Bond

> • 11899.013 - 8th St - IH-35 Intersection Safety Improvements – 2016 Bond

> • 11899.015 - US 183 SR (NB)/Lakeline Blvd, Intersection Safety Improvements – 2016 Bond

> • 11899.016 - IH 35 / Rundberg Ln, Intersection Safety Improvements – 2016 Bond

>

> The CPE website shows a pin for the following locations. Can we do an intersection, as with the other projects? (If that takes too much time or is more complicated, we can leave them as they are.)

>

> • 11899.009 - Braker Ln./Stonelake Blvd. Intersection Safety Improvements – 2016 Bond

> • 11899.014 - Lamar/St Johns Intersection Safety Improvements – 2016 Bond

|

non_defect

|