Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

666,480 | 22,357,247,364 | IssuesEvent | 2022-06-15 16:44:42 | xlg8/APMIS-Project | https://api.github.com/repos/xlg8/APMIS-Project | opened | Country Request and Prioritization | functional concern country priority | <!--

If you've never submitted an issue to the SORMAS repository before or this is your first time using this template, please read the Contributing guidelines (https://github.com/hzi-braunschweig/SORMAS-Project/blob/development/docs/CONTRIBUTING.md) for an explanation of the information we need you to provide. You do... | 1.0 | Country Request and Prioritization - <!--

If you've never submitted an issue to the SORMAS repository before or this is your first time using this template, please read the Contributing guidelines (https://github.com/hzi-braunschweig/SORMAS-Project/blob/development/docs/CONTRIBUTING.md) for an explanation of the infor... | non_defect | country request and prioritization if you ve never submitted an issue to the sormas repository before or this is your first time using this template please read the contributing guidelines for an explanation of the information we need you to provide you don t have to remove this comment or any other commen... | 0 |

276,349 | 20,980,545,934 | IssuesEvent | 2022-03-28 19:27:33 | NicolasDuciaume/SpeechBot | https://api.github.com/repos/NicolasDuciaume/SpeechBot | closed | Update README | documentation | - add how to's for each component i.e. how to train the STT and Chatbot, how to run STT, Chatbot, TTS, GUI

- how to run the whole project (TBD since we haven't completed integration yet)

- expected outputs

- member roles | 1.0 | Update README - - add how to's for each component i.e. how to train the STT and Chatbot, how to run STT, Chatbot, TTS, GUI

- how to run the whole project (TBD since we haven't completed integration yet)

- expected outputs

- member roles | non_defect | update readme add how to s for each component i e how to train the stt and chatbot how to run stt chatbot tts gui how to run the whole project tbd since we haven t completed integration yet expected outputs member roles | 0 |

50,738 | 13,187,703,290 | IssuesEvent | 2020-08-13 04:17:36 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [millipede] documentation incomplete (Trac #1254) | Migrated from Trac combo reconstruction defect | the documentation in index.rst does not list all the options of millipede, e.g. the option ReadoutWindow is missing (there may be more missing, please check).

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1254">https://code.icecube.wisc.edu/ticket/1254</a>, reported by hdembinski ... | 1.0 | [millipede] documentation incomplete (Trac #1254) - the documentation in index.rst does not list all the options of millipede, e.g. the option ReadoutWindow is missing (there may be more missing, please check).

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1254">https://code.icecu... | defect | documentation incomplete trac the documentation in index rst does not list all the options of millipede e g the option readoutwindow is missing there may be more missing please check migrated from json status closed changetime description the docum... | 1 |

13,795 | 5,451,888,718 | IssuesEvent | 2017-03-08 00:47:07 | docker/docker | https://api.github.com/repos/docker/docker | opened | File cannot be excluded in .dockerignore when parent directory is included as an exception | area/builder version/unsupported | <!--

If you are reporting a new issue, make sure that we do not have any duplicates

already open. You can ensure this by searching the issue list for this

repository. If there is a duplicate, please close your issue and add a comment

to the existing issue instead.

If you suspect your issue is a bug, please edit ... | 1.0 | File cannot be excluded in .dockerignore when parent directory is included as an exception - <!--

If you are reporting a new issue, make sure that we do not have any duplicates

already open. You can ensure this by searching the issue list for this

repository. If there is a duplicate, please close your issue and add ... | non_defect | file cannot be excluded in dockerignore when parent directory is included as an exception if you are reporting a new issue make sure that we do not have any duplicates already open you can ensure this by searching the issue list for this repository if there is a duplicate please close your issue and add ... | 0 |

17,924 | 3,013,785,192 | IssuesEvent | 2015-07-29 11:12:52 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | Task appears as 'unnamed' in control center | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Create a workflow (see attachment)

2. Declare a local net variable a of type string

3. Create a task A using decompose to direct data transfer

4. Use variable a as input and output

5. Load the workflow into engine and start it

What is the expected output? What do you see ... | 1.0 | Task appears as 'unnamed' in control center - ```

What steps will reproduce the problem?

1. Create a workflow (see attachment)

2. Declare a local net variable a of type string

3. Create a task A using decompose to direct data transfer

4. Use variable a as input and output

5. Load the workflow into engine and start it

... | defect | task appears as unnamed in control center what steps will reproduce the problem create a workflow see attachment declare a local net variable a of type string create a task a using decompose to direct data transfer use variable a as input and output load the workflow into engine and start it ... | 1 |

38,292 | 8,736,975,655 | IssuesEvent | 2018-12-11 21:08:28 | telus/tds-core | https://api.github.com/repos/telus/tds-core | closed | [Bug] TDS PriceLockup - Component center alignment issues | owner: contributor priority: medium status: in progress type: defect :bug: | <!--

### IMPORTANT SECURITY NOTE ###

When opening issues, be sure NOT to include any private or personal

information such as secrets, passwords, or any source code that involves

data retrieval.

Also, do not include links to sites on staging.

-->

## Description

TDS PriceLockup does not centre c... | 1.0 | [Bug] TDS PriceLockup - Component center alignment issues - <!--

### IMPORTANT SECURITY NOTE ###

When opening issues, be sure NOT to include any private or personal

information such as secrets, passwords, or any source code that involves

data retrieval.

Also, do not include links to sites on staging.... | defect | tds pricelockup component center alignment issues important security note when opening issues be sure not to include any private or personal information such as secrets passwords or any source code that involves data retrieval also do not include links to sites on staging ... | 1 |

337,608 | 30,251,278,114 | IssuesEvent | 2023-07-06 20:47:05 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix math.test_jax_positive | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5475017266"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5475588308"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://gi... | 1.0 | Fix math.test_jax_positive - | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5475017266"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5475588308"><img src=https://img.shields.io/badge/-success-success></a>

|t... | non_defect | fix math test jax positive paddle a href src numpy a href src tensorflow a href src | 0 |

49,424 | 13,186,703,970 | IssuesEvent | 2020-08-13 01:02:46 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | I3TRandomService does not compile with ROOT 6.05/02 (Trac #1363) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1363">https://code.icecube.wisc.edu/ticket/1363</a>, reported by chraab and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-09-22T13:28:23",

"description": "Compiling I3TRandomService \n- in... | 1.0 | I3TRandomService does not compile with ROOT 6.05/02 (Trac #1363) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1363">https://code.icecube.wisc.edu/ticket/1363</a>, reported by chraab and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-09-2... | defect | does not compile with root trac migrated from json status closed changetime description compiling n in offline software release n configured with cmake dsystem packages true n and root installed from source into usr local n on ... | 1 |

48,649 | 25,736,719,440 | IssuesEvent | 2022-12-08 01:30:39 | WDscholia/scholia | https://api.github.com/repos/WDscholia/scholia | opened | On curation page for ```use```, add more ```LIMIT```s to query for missing author name strings | SPARQL missing-data performance P4510-describes-project-that-uses | **What query is this about**

https://github.com/WDscholia/scholia/blob/master/scholia/app/templates/use-curation_missing-author-items.sparql , e.g. as per

https://scholia.toolforge.org/use/Q1659584/curation#missing-author-items :

with version 0.4.6 it takes about 2 minutes

with version 0.4.5 it took about 2 seconds

this is the code I am using

var result = await PhotoManager.requestPermission();... | True | [BUG] performance problem with 0.4.6 - After upgrading to 0.4.6 I've noticed a massive performance loss when iterating through all my photos on my device (about 12.000 photos)

with version 0.4.6 it takes about 2 minutes

with version 0.4.5 it took about 2 seconds

this is the code I am using

var result = ... | non_defect | performance problem with after upgrading to i ve noticed a massive performance loss when iterating through all my photos on my device about photos with version it takes about minutes with version it took about seconds this is the code i am using var result await p... | 0 |

29,051 | 5,514,006,752 | IssuesEvent | 2017-03-17 14:09:09 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | RPZ TTL and defttl is ignored unless defpol is also set | defect rec | - Program: Recursor

- Issue type: Bug report

### Short description

When loading an RPZ file using `rpzFile("local.rpz", {})`, all returned answers get a TTL of zero. Setting `defttl=60` does not change that.

### Environment

- Operating system: Ubuntu 16.10

- Software version: master c5aa7800c87c5f869ca838... | 1.0 | RPZ TTL and defttl is ignored unless defpol is also set - - Program: Recursor

- Issue type: Bug report

### Short description

When loading an RPZ file using `rpzFile("local.rpz", {})`, all returned answers get a TTL of zero. Setting `defttl=60` does not change that.

### Environment

- Operating system: Ubuntu... | defect | rpz ttl and defttl is ignored unless defpol is also set program recursor issue type bug report short description when loading an rpz file using rpzfile local rpz all returned answers get a ttl of zero setting defttl does not change that environment operating system ubuntu ... | 1 |

11,218 | 2,641,932,454 | IssuesEvent | 2015-03-11 20:35:54 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Homepage Lacking <meta charset="utf-8"> | Priority-Medium Type-Defect | Original [issue 128](https://code.google.com/p/html5rocks/issues/detail?id=128) created by chrsmith on 2010-08-02T21:22:10.000Z:

Homepage should specify the character set via <meta charset="utf-8">

| 1.0 | Homepage Lacking <meta charset="utf-8"> - Original [issue 128](https://code.google.com/p/html5rocks/issues/detail?id=128) created by chrsmith on 2010-08-02T21:22:10.000Z:

Homepage should specify the character set via <meta charset="utf-8">

| defect | homepage lacking original created by chrsmith on homepage should specify the character set via lt meta charset quot utf quot gt | 1 |

30,657 | 6,218,147,487 | IssuesEvent | 2017-07-08 21:57:33 | networkx/networkx | https://api.github.com/repos/networkx/networkx | closed | label in gml output should be quoted | Defect Easy-Fix or Beginner Needs PR | The output of the `write_gml` function currently (1.11) does not quote the label values, but I do think it should according to the [GML spec.](http://www.fim.uni-passau.de/fileadmin/files/lehrstuhl/brandenburg/projekte/gml/gml-technical-report.pdf). Because of this, tools like Cytoscape, jhive and gml2gv fails to read ... | 1.0 | label in gml output should be quoted - The output of the `write_gml` function currently (1.11) does not quote the label values, but I do think it should according to the [GML spec.](http://www.fim.uni-passau.de/fileadmin/files/lehrstuhl/brandenburg/projekte/gml/gml-technical-report.pdf). Because of this, tools like Cyt... | defect | label in gml output should be quoted the output of the write gml function currently does not quote the label values but i do think it should according to the because of this tools like cytoscape jhive and fails to read the gml file a node from my output node id label ... | 1 |

365,298 | 10,780,519,555 | IssuesEvent | 2019-11-04 13:10:18 | CESARBR/knot-setup-android | https://api.github.com/repos/CESARBR/knot-setup-android | opened | Develop connectivity with KNoT Cloud | priority: medium | The KNoT Cloud uses WebSockets as its means of communication. In order to GET and POST data from the Cloud, one should have a networking infrastructure to communicate with the server that takes cares of connection and error handling. | 1.0 | Develop connectivity with KNoT Cloud - The KNoT Cloud uses WebSockets as its means of communication. In order to GET and POST data from the Cloud, one should have a networking infrastructure to communicate with the server that takes cares of connection and error handling. | non_defect | develop connectivity with knot cloud the knot cloud uses websockets as its means of communication in order to get and post data from the cloud one should have a networking infrastructure to communicate with the server that takes cares of connection and error handling | 0 |

57,092 | 15,682,395,914 | IssuesEvent | 2021-03-25 07:14:24 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Leaving a space doesn't make it disappear from the LLP | A-Spaces T-Defect Z-M2 | I've left a space I've created but it's still on my LLP and still shows notification counters etc - refreshing fixed it | 1.0 | Leaving a space doesn't make it disappear from the LLP - I've left a space I've created but it's still on my LLP and still shows notification counters etc - refreshing fixed it | defect | leaving a space doesn t make it disappear from the llp i ve left a space i ve created but it s still on my llp and still shows notification counters etc refreshing fixed it | 1 |

189,610 | 22,047,076,612 | IssuesEvent | 2022-05-30 03:50:43 | Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492 | https://api.github.com/repos/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492 | closed | CVE-2021-3760 (High) detected in linuxlinux-4.19.88 - autoclosed | security vulnerability | ## CVE-2021-3760 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ker... | True | CVE-2021-3760 (High) detected in linuxlinux-4.19.88 - autoclosed - ## CVE-2021-3760 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linu... | non_defect | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files lin... | 0 |

32,398 | 13,798,142,432 | IssuesEvent | 2020-10-10 00:10:40 | microsoft/botframework-solutions | https://api.github.com/repos/microsoft/botframework-solutions | closed | Creating Visual Studio 2019 Project using the Virtual Assistant Template fails | Bot Services Needs Triage Support Type: Bug customer-replied-to customer-reported | I was following the tutorial in order to create a Virtual Assistant. I downloaded and installed all necessary resources as described here:

https://microsoft.github.io/botframework-solutions/virtual-assistant/tutorials/create-assistant/csharp/2-download-and-install/

In the nextstep one is asked here

https://mic... | 1.0 | Creating Visual Studio 2019 Project using the Virtual Assistant Template fails - I was following the tutorial in order to create a Virtual Assistant. I downloaded and installed all necessary resources as described here:

https://microsoft.github.io/botframework-solutions/virtual-assistant/tutorials/create-assistant/c... | non_defect | creating visual studio project using the virtual assistant template fails i was following the tutorial in order to create a virtual assistant i downloaded and installed all necessary resources as described here in the nextstep one is asked here to create a virtual assistant project in visual studi... | 0 |

16,556 | 2,917,641,027 | IssuesEvent | 2015-06-24 00:00:16 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Add "error" and "info"-method to Logger | Area-Pkg Pkg-Logging Priority-Unassigned Triaged Type-Defect | *This issue was originally filed by off...@mikemitterer.at*

_____

**What steps will reproduce the problem?**

final Logger logger = new Logger("rest.DefeaultRESTErrorProcessor");

logger.error("Does not work");

**Please provide any additional information below.**

Most Logging-Frameworks use &quo... | 1.0 | Add "error" and "info"-method to Logger - *This issue was originally filed by off...@mikemitterer.at*

_____

**What steps will reproduce the problem?**

final Logger logger = new Logger("rest.DefeaultRESTErrorProcessor");

logger.error("Does not work");

**Please provide any additional information... | defect | add error and info method to logger this issue was originally filed by off mikemitterer at what steps will reproduce the problem final logger logger new logger quot rest defeaultresterrorprocessor quot logger error quot does not work quot please provide any additional information b... | 1 |

81,357 | 10,130,327,569 | IssuesEvent | 2019-08-01 16:40:55 | opencollective/opencollective | https://api.github.com/repos/opencollective/opencollective | opened | Collective Page→ Feedback Navigation Collective Page | design design → UI design → UX figma → Collectives | ## User story

Here under we have option A and B for Navigation in te collective page.

This is to collect feedback for both options pros and cons.

## Referenced Issues

#1928 #

| 3.0 | Collective Page→ Feedback Navigation Collective Page - ## User story

Here under we have option A and B for Navigation in te collective page.

This is to collect feedback for both options pros and cons.

## Referenced Issues

#1928 #

| non_defect | collective page→ feedback navigation collective page user story here under we have option a and b for navigation in te collective page this is to collect feedback for both options pros and cons referenced issues | 0 |

69,655 | 13,304,240,333 | IssuesEvent | 2020-08-25 16:37:39 | Abbassihraf/P-curiosity-LAB | https://api.github.com/repos/Abbassihraf/P-curiosity-LAB | closed | Team's functionality | Code In progress back-end | ### Team's back-end

- [x] Populate the table with data

- [x] Functionality for displaying team members

- [x] Functionality for adding team members to the db

- [x] Functionality for updating team members from the db

- [x] Functionality for deleting team members from the db

- [x] Security and refactor code

| 1.0 | Team's functionality - ### Team's back-end

- [x] Populate the table with data

- [x] Functionality for displaying team members

- [x] Functionality for adding team members to the db

- [x] Functionality for updating team members from the db

- [x] Functionality for deleting team members from the db

- [x] Security an... | non_defect | team s functionality team s back end populate the table with data functionality for displaying team members functionality for adding team members to the db functionality for updating team members from the db functionality for deleting team members from the db security and refactor c... | 0 |

19,542 | 25,864,263,203 | IssuesEvent | 2022-12-13 19:25:24 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | DISABLED test_success_non_blocking (__main__.ForkTest) | module: multiprocessing triaged module: flaky-tests skipped | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_success_non_blocking&suite=ForkTest&file=test_multiprocessing_spawn.py) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/7286770748).

Over the past 3 ... | 1.0 | DISABLED test_success_non_blocking (__main__.ForkTest) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_success_non_blocking&suite=ForkTest&file=test_multiprocessing_spawn.py) and the most recent trunk [workflow logs](https://githu... | non_defect | disabled test success non blocking main forktest platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with red and green cc vitalyfedyunin | 0 |

21,851 | 3,573,319,807 | IssuesEvent | 2016-01-27 05:25:32 | ariya/phantomjs | https://api.github.com/repos/ariya/phantomjs | closed | Infinite loop when setting page.content in page.open callback | old.Priority-Medium old.Status-New old.Type-Defect | _**[jare...@gmail.com](http://code.google.com/u/116019651359833698002/) commented:**_

> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b>

1.5

>

> <b>What steps will reproduce the problem?</b>

var page = require('webpage').create();

> page.open('http://google.com', function(status){

... | 1.0 | Infinite loop when setting page.content in page.open callback - _**[jare...@gmail.com](http://code.google.com/u/116019651359833698002/) commented:**_

> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b>

1.5

>

> <b>What steps will reproduce the problem?</b>

var page = require('webpage')... | defect | infinite loop when setting page content in page open callback commented which version of phantomjs are you using tip run phantomjs version what steps will reproduce the problem var page require webpage create page open function status page content quot quo... | 1 |

29,683 | 5,815,120,541 | IssuesEvent | 2017-05-05 07:29:23 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | hazelcast 3.7.2 NullPointerException ClientPartitionServiceImpl$RefreshTaskCallback | Team: Client Type: Defect | We are seeing intermittent null pointer exceptions in a background task coming from our hazelcast client using version 3.7.2.

```

ERROR com.hazelcast.client.spi.ClientInvocationService hz.client_0 [dev] [3.7.2] Failed asynchronous execution of execution callback: com.hazelcast.client.spi.impl.ClientPartitionService... | 1.0 | hazelcast 3.7.2 NullPointerException ClientPartitionServiceImpl$RefreshTaskCallback - We are seeing intermittent null pointer exceptions in a background task coming from our hazelcast client using version 3.7.2.

```

ERROR com.hazelcast.client.spi.ClientInvocationService hz.client_0 [dev] [3.7.2] Failed asynchronous... | defect | hazelcast nullpointerexception clientpartitionserviceimpl refreshtaskcallback we are seeing intermittent null pointer exceptions in a background task coming from our hazelcast client using version error com hazelcast client spi clientinvocationservice hz client failed asynchronous execution... | 1 |

66,442 | 20,199,345,574 | IssuesEvent | 2022-02-11 13:49:18 | decentraland/unity-renderer | https://api.github.com/repos/decentraland/unity-renderer | opened | Userprefs are not removed when uninstalling the application | defect | When the app is reinstalled, the settings are still as the last time the app was used for example if the audio volume was left at 0, the new installed app will still have no audio. | 1.0 | Userprefs are not removed when uninstalling the application - When the app is reinstalled, the settings are still as the last time the app was used for example if the audio volume was left at 0, the new installed app will still have no audio. | defect | userprefs are not removed when uninstalling the application when the app is reinstalled the settings are still as the last time the app was used for example if the audio volume was left at the new installed app will still have no audio | 1 |

54,020 | 13,312,292,084 | IssuesEvent | 2020-08-26 09:31:53 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | cluster split sql hd idx IllegalArgumentException Size must be positive AbstractPoolingMemoryManager.java:175 | Module: IMap Module: SQL Source: Internal Team: Core Type: Defect | sh hz-bench-run-jenkins-split hz/stable/hd/pool/hd-idx

http://jenkins.hazelcast.com/view/split/job/split-x/27/console

/disk1/jenkins/workspace/split-x/4.1-SNAPSHOT/2020_07_09-03_42_21/hd-idx Failed

fail HzClient4HZBB fn hzcmd.distributed.PutRandPerson threadId=0 java.lang.IllegalArgumentException: Size must be p... | 1.0 | cluster split sql hd idx IllegalArgumentException Size must be positive AbstractPoolingMemoryManager.java:175 - sh hz-bench-run-jenkins-split hz/stable/hd/pool/hd-idx

http://jenkins.hazelcast.com/view/split/job/split-x/27/console

/disk1/jenkins/workspace/split-x/4.1-SNAPSHOT/2020_07_09-03_42_21/hd-idx Failed

fai... | defect | cluster split sql hd idx illegalargumentexception size must be positive abstractpoolingmemorymanager java sh hz bench run jenkins split hz stable hd pool hd idx jenkins workspace split x snapshot hd idx failed fail fn hzcmd distributed putrandperson threadid java lang illegalargument... | 1 |

82,211 | 32,060,987,935 | IssuesEvent | 2023-09-24 16:57:48 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: selenium-server fails to launch intermittently | I-defect I-issue-template | ### What happened?

We are experiencing a rather strange behavior with selenium-server intermittently failing to start.

Basically we have an application which launches selenium using the following cmd:

```

java -jar -Djcommander.debug=true -Dwebdriver.ie.driver=wIEDriverServer.exe -Dwebdriver.gecko.driver="geckodr... | 1.0 | [🐛 Bug]: selenium-server fails to launch intermittently - ### What happened?

We are experiencing a rather strange behavior with selenium-server intermittently failing to start.

Basically we have an application which launches selenium using the following cmd:

```

java -jar -Djcommander.debug=true -Dwebdriver.ie.d... | defect | selenium server fails to launch intermittently what happened we are experiencing a rather strange behavior with selenium server intermittently failing to start basically we have an application which launches selenium using the following cmd java jar djcommander debug true dwebdriver ie driver w... | 1 |

37,491 | 8,406,272,167 | IssuesEvent | 2018-10-11 17:28:12 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | Surface out of bounds issues can occur with internal mass surfaces under some situations - possibly due to thin metal layer in the construction. (CR #8933) | Defect EnergyPlus PriorityLow SeverityMedium WontFix | #### Temperature stability problems - internal mass - possibly due to thin metal layer

###### Added on 2012-08-17 10:18 by @mjwitte

##

#### Description

From ticket:

2) When I run the model with 2010 weather, however, it crashes with the error message below. The crash occurs when the heating system is OFF and there is... | 1.0 | Surface out of bounds issues can occur with internal mass surfaces under some situations - possibly due to thin metal layer in the construction. (CR #8933) - #### Temperature stability problems - internal mass - possibly due to thin metal layer

###### Added on 2012-08-17 10:18 by @mjwitte

##

#### Description

From tic... | defect | surface out of bounds issues can occur with internal mass surfaces under some situations possibly due to thin metal layer in the construction cr temperature stability problems internal mass possibly due to thin metal layer added on by mjwitte description from ticket wh... | 1 |

43,747 | 11,826,032,309 | IssuesEvent | 2020-03-21 15:54:10 | DependencyTrack/dependency-track | https://api.github.com/repos/DependencyTrack/dependency-track | closed | Project Findings Audited %: Incorrect values (revisited) | defect p3 pending release | ### Current Behavior:

Project Overview pages display a "Findings Audited %" metric. This metric seems to be calculated incorrectly.

I have not tested whether the same metric is correct at the portfolio level.

#### Example:

* Audit tab displays 13 vulnerabilities, of which 3 are audited.

* Overview reports c... | 1.0 | Project Findings Audited %: Incorrect values (revisited) - ### Current Behavior:

Project Overview pages display a "Findings Audited %" metric. This metric seems to be calculated incorrectly.

I have not tested whether the same metric is correct at the portfolio level.

#### Example:

* Audit tab displays 13 vul... | defect | project findings audited incorrect values revisited current behavior project overview pages display a findings audited metric this metric seems to be calculated incorrectly i have not tested whether the same metric is correct at the portfolio level example audit tab displays vuln... | 1 |

69,860 | 22,700,234,045 | IssuesEvent | 2022-07-05 09:58:24 | hpi-swa-teaching/SVGMorph | https://api.github.com/repos/hpi-swa-teaching/SVGMorph | opened | Bezier: Self-intersecting shapes result in holes | defect |

In the image, the radius of the shape is smaller than half the stroke width, thus the stroke boundaries form a husk. The renderer uses evenodd fill rule. Hence, these husks appe... | 1.0 | Bezier: Self-intersecting shapes result in holes -

In the image, the radius of the shape is smaller than half the stroke width, thus the stroke boundaries form a husk. The rende... | defect | bezier self intersecting shapes result in holes in the image the radius of the shape is smaller than half the stroke width thus the stroke boundaries form a husk the renderer uses evenodd fill rule hence these husks appear as holes one solution would be to convert the shape to emulate the behavior of ... | 1 |

57,032 | 15,598,113,301 | IssuesEvent | 2021-03-18 17:42:10 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | Intermittent test failure: " Serialization failure: 1213 Deadlock found when trying to get lock;" | Defect Needs refining | **Describe the defect**

We are seeing intermittent failures of our test suite on DEV/STAGING with:

> Serialization failure: 1213 Deadlock found when trying to get lock; try restarting

Here is the latest example:

```

@BeforeFeature @mock_va_gov_urls # ... | 1.0 | Intermittent test failure: " Serialization failure: 1213 Deadlock found when trying to get lock;" - **Describe the defect**

We are seeing intermittent failures of our test suite on DEV/STAGING with:

> Serialization failure: 1213 Deadlock found when trying to get lock; try restarting

Here is the latest example: ... | defect | intermittent test failure serialization failure deadlock found when trying to get lock describe the defect we are seeing intermittent failures of our test suite on dev staging with serialization failure deadlock found when trying to get lock try restarting here is the latest example ... | 1 |

19,199 | 3,756,361,354 | IssuesEvent | 2016-03-13 09:04:53 | QubesOS/qubes-issues | https://api.github.com/repos/QubesOS/qubes-issues | reopened | Split-GPG is incompatible with Tor Birdy | C: other help wanted r3.0-dom0-testing r3.0-fc20-testing r3.0-fc21-testing r3.0-fc22-testing r3.0-fc23-testing r3.0-jessie-testing r3.0-wheezy-testing r3.1-dom0-stable r3.1-fc21-stable r3.1-fc22-stable r3.1-fc23-stable r3.1-jessie-testing r3.1-stretch-testing r3.1-wheezy-testing | Attempting to use Split-GPG and Tor Birdy at the same time results in no GPG notifications in Thunderbird at all when viewing signed or encrypted messages. | 10.0 | Split-GPG is incompatible with Tor Birdy - Attempting to use Split-GPG and Tor Birdy at the same time results in no GPG notifications in Thunderbird at all when viewing signed or encrypted messages. | non_defect | split gpg is incompatible with tor birdy attempting to use split gpg and tor birdy at the same time results in no gpg notifications in thunderbird at all when viewing signed or encrypted messages | 0 |

69,776 | 22,666,853,032 | IssuesEvent | 2022-07-03 02:30:11 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Returning null from transactionCoroutine throws NoSuchElementException | T: Defect | ### Expected behavior

Should be able to return null from the transactional block, which propagates back out as the return value of transactionCoroutine.

### Actual behavior

Throws:

```

java.util.NoSuchElementException: No value received via onNext for awaitFirst

at ???(Coroutine boundary.?(?)

at Databa... | 1.0 | Returning null from transactionCoroutine throws NoSuchElementException - ### Expected behavior

Should be able to return null from the transactional block, which propagates back out as the return value of transactionCoroutine.

### Actual behavior

Throws:

```

java.util.NoSuchElementException: No value receiv... | defect | returning null from transactioncoroutine throws nosuchelementexception expected behavior should be able to return null from the transactional block which propagates back out as the return value of transactioncoroutine actual behavior throws java util nosuchelementexception no value receiv... | 1 |

14,225 | 2,794,131,123 | IssuesEvent | 2015-05-11 15:09:02 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | Sparse matrix slice segmentation fault | defect scipy.sparse | I have a large csr_matrix with the following parameters:

In [5]: a.shape

Out[5]: (360417, 360417)

In [6]: a.nnz

Out[6]: 15464020

I generated it the following way:

a = sp.sparse.csr_matrix((values, (i_indices, j_indices)), shape=shape, dtype=np.float32)

where values is a list holding the values, i_indic... | 1.0 | Sparse matrix slice segmentation fault - I have a large csr_matrix with the following parameters:

In [5]: a.shape

Out[5]: (360417, 360417)

In [6]: a.nnz

Out[6]: 15464020

I generated it the following way:

a = sp.sparse.csr_matrix((values, (i_indices, j_indices)), shape=shape, dtype=np.float32)

where val... | defect | sparse matrix slice segmentation fault i have a large csr matrix with the following parameters in a shape out in a nnz out i generated it the following way a sp sparse csr matrix values i indices j indices shape shape dtype np where values is a list holding the value... | 1 |

73,162 | 24,480,166,330 | IssuesEvent | 2022-10-08 18:19:09 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | `test_powm1` failing in CI on Windows | defect scipy.special | The weekly wheel builds currently show a single failure on Windows, and that failure is consistent for all supported Python versions. Latest build log of weekly scheduled builds: https://github.com/scipy/scipy/actions/runs/3209882330

```

_______________ test_powm1[-1.25-751.0--6.017550852453444e+72] _____________... | 1.0 | `test_powm1` failing in CI on Windows - The weekly wheel builds currently show a single failure on Windows, and that failure is consistent for all supported Python versions. Latest build log of weekly scheduled builds: https://github.com/scipy/scipy/actions/runs/3209882330

```

_______________ test_powm1[-1.25-751... | defect | test failing in ci on windows the weekly wheel builds currently show a single failure on windows and that failure is consistent for all supported python versions latest build log of weekly scheduled builds test users runneradmin appdata local temp cibw run ... | 1 |
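
The failing parametrization can be sanity-checked without SciPy. The sketch below is a naive reference, not SciPy's implementation, and assumes `powm1(x, y)` means `x**y - 1`; the helper name is hypothetical. The naive formula is adequate here because the result is huge, but it would lose accuracy when `x**y` is close to 1.

```python
import math


def powm1_reference(x: float, y: float) -> float:
    """Naive x**y - 1; fine for large results, inaccurate when x**y is close to 1."""
    return math.pow(x, y) - 1.0


# Parameters and expected value taken from the failing test id.
value = powm1_reference(-1.25, 751.0)
print(math.isclose(value, -6.017550852453444e+72, rel_tol=1e-9))
```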

40,934 | 10,232,199,951 | IssuesEvent | 2019-08-18 15:38:54 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | Bug in scipy.special.hyp1f1 | defect scipy.special | I've been checking values in Scipy's implementation of hyp1f1 against other implementations and I noticed some discrepancies where Scipy's solution seems to be off.

scipy.special.hyp1f1(a,b,c) vs M(a,b,c) from (http://keisan.casio.com/exec/system/1349143651).

In particular, take small values of a, b, and c, say a = 1... | 1.0 | Bug in scipy.special.hyp1f1 - I've been checking values in Scipy's implementation of hyp1f1 against other implementations and I noticed some discrepancies where Scipy's solution seems to be off.

scipy.special.hyp1f1(a,b,c) vs M(a,b,c) from (http://keisan.casio.com/exec/system/1349143651).

In particular, take small va... | defect | bug in scipy special i ve been checking values in scipy s implementation of against other implementations and i noticed some discrepancies where scipy s solution seems to be off scipy special a b c vs m a b c from in particular take small values of a b and c say a b and c both equa... | 1 |
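
One way to cross-check values like those in this report is to sum the defining power series directly. The sketch below is not how SciPy computes `hyp1f1`; it is a plain series sum that is only reasonable for small arguments, and the function name and the `terms` default are assumptions. It also assumes `b` is not zero or a negative integer.

```python
import math


def hyp1f1_series(a: float, b: float, z: float, terms: int = 200) -> float:
    """Sum the Kummer series M(a, b, z) = sum_n (a)_n / (b)_n * z**n / n!."""
    total = 0.0
    term = 1.0  # the n = 0 term
    for n in range(terms):
        total += term
        # Ratio of consecutive terms: (a + n) / (b + n) * z / (n + 1)
        term *= (a + n) / (b + n) * z / (n + 1)
    return total


# Sanity check against a known identity: M(a, a, z) = exp(z).
print(math.isclose(hyp1f1_series(1.0, 1.0, 0.5), math.exp(0.5), rel_tol=1e-12))
```

Comparing this against `scipy.special.hyp1f1` for small `a`, `b` and `z` gives a second, independent reference alongside the online calculator mentioned in the report.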

108,677 | 13,646,013,664 | IssuesEvent | 2020-09-25 22:05:04 | near/near-explorer | https://api.github.com/repos/near/near-explorer | closed | Show avg block time | Item Design New Feature Priority 2 | Block time is a very important property of a blockchain: it shows how fast transactions will be processed and finalized. It would be great to show the average block time over, for example, the last 10 minutes. | 1.0 | Show avg block time - Block time is a very important property of a blockchain: it shows how fast transactions will be processed and finalized. It would be great to show the average block time over, for example, the last 10 minutes. | non_defect | show avg block time block time is a very important property of a blockchain it shows how fast transactions will be processed and finalized it would be great to show the average block time over for example the last minutes | 0 |

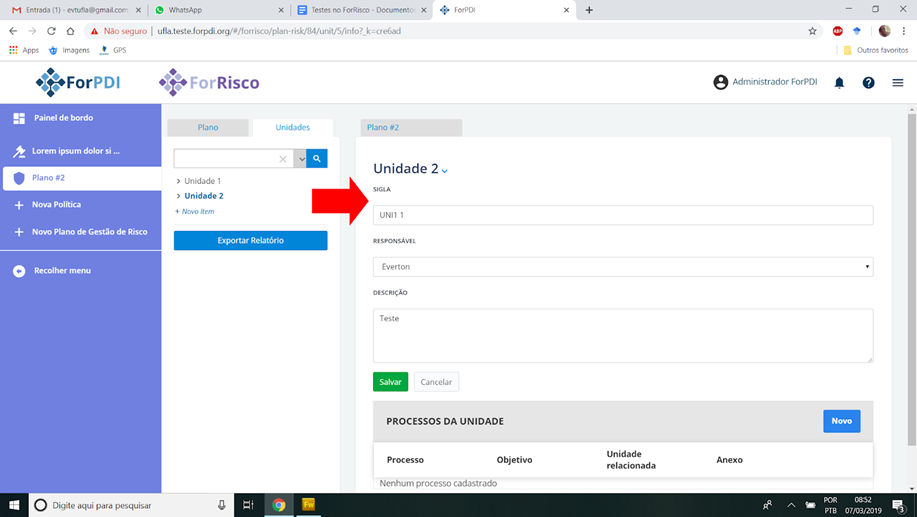

30,798 | 6,288,107,882 | IssuesEvent | 2017-07-19 16:14:38 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | SelectOneMenu not working after client side disable/enable | defect | ## 1) Environment

PrimeFaces version: 6.1.2

## 2) Expected behavior

After disabling and enabling a SelectOneMenu on client side via JavaScript it should work as before.

## 3) Actual behavior

When disabling a SelectOneMenu via JavaScript and enabling it again, mouse events (mouse over, click) on the menu's it... | 1.0 | SelectOneMenu not working after client side disable/enable - ## 1) Environment

PrimeFaces version: 6.1.2

## 2) Expected behavior

After disabling and enabling a SelectOneMenu on client side via JavaScript it should work as before.

## 3) Actual behavior

When disabling a SelectOneMenu via JavaScript and enablin... | defect | selectonemenu not working after client side disable enable environment primefaces version expected behavior after disabling and enabling a selectonemenu on client side via javascript it should work as before actual behavior when disabling a selectonemenu via javascript and enablin... | 1 |

190,835 | 14,580,108,357 | IssuesEvent | 2020-12-18 08:36:31 | rollbar/terraform-provider-rollbar | https://api.github.com/repos/rollbar/terraform-provider-rollbar | closed | Explore `go-vcr` for testing | question testing | Potentially [`go-vcr`](https://github.com/dnaeon/go-vcr) could be used to make a set of blazing fast pseudo-acceptance tests. Record the acceptance test suite run against the real API. Then the acceptance tests can be run against the playback. | 1.0 | Explore `go-vcr` for testing - Potentially [`go-vcr`](https://github.com/dnaeon/go-vcr) could be used to make a set of blazing fast pseudo-acceptance tests. Record the acceptance test suite run against the real API. Then the acceptance tests can be run against the playback. | non_defect | explore go vcr for testing potentially could be used to make a set of blazing fast pseudo acceptance tests record the acceptance test suite run against the real api then the acceptance tests can be run against the playback | 0 |

2,135 | 2,603,976,915 | IssuesEvent | 2015-02-24 19:01:44 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | 沈阳人乳头状病毒感染 | auto-migrated Priority-Medium Type-Defect | ```

沈阳人乳头状病毒感染〓沈陽軍區政治部醫院性病〓TEL:024-3

1023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。�

��于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌�

��歷史悠久、設備精良、技術權威、專家云集,是預防、保健

、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲��

�部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、�

��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空

軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體��

�等功。

```

-----

Original issue reported on code.google.com by `q964105... | 1.0 | 沈阳人乳头状病毒感染 - ```

沈阳人乳头状病毒感染〓沈陽軍區政治部醫院性病〓TEL:024-3

1023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。�

��于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌�

��歷史悠久、設備精良、技術權威、專家云集,是預防、保健

、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲��

�部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、�

��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空

軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體��

�等功。

```

-----

Original issue reported on code.google.co... | defect | 沈阳人乳头状病毒感染 沈阳人乳头状病毒感染〓沈陽軍區政治部醫院性病〓tel: 〓 , 。� �� 。是一所與新中國同建立共輝煌� ��歷史悠久、設備精良、技術權威、專家云集,是預防、保健 、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲�� �部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、� ��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空 軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體�� �等功。 original issue reported on code google com by gmail com on jun at | 1 |

120,618 | 17,644,243,825 | IssuesEvent | 2021-08-20 02:02:25 | fbennets/HCLC-GDPR-Bot | https://api.github.com/repos/fbennets/HCLC-GDPR-Bot | opened | CVE-2021-29558 (High) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29558 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learni... | True | CVE-2021-29558 (High) detected in tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-29558 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-2.1.0-cp27-cp27mu-... | non_defect | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file hclc gdpr bot requirements tx... | 0 |

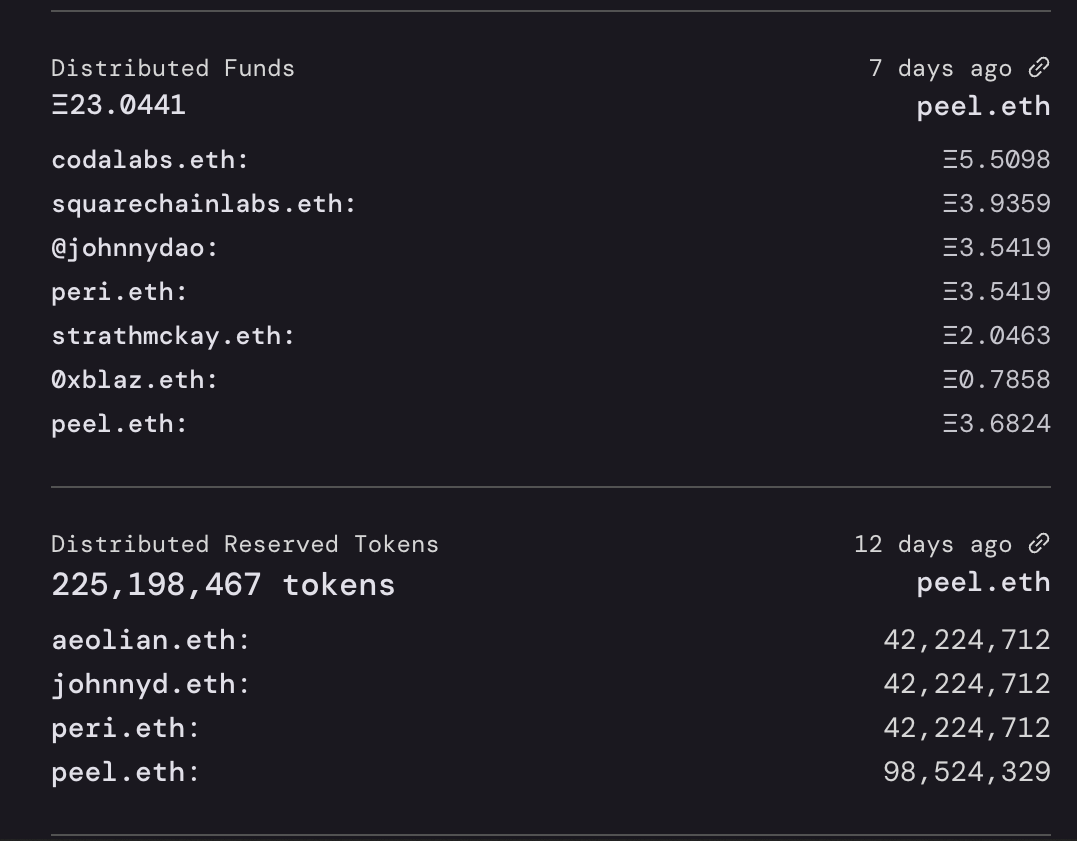

756,350 | 26,467,679,010 | IssuesEvent | 2023-01-17 02:33:24 | jbx-protocol/juice-interface | https://api.github.com/repos/jbx-protocol/juice-interface | closed | Font size on v1 activity feed for primary number is inconsistent | V1 priority:3 ux:general | ## Summary

Distributed Funds number is smaller than Distributed Reserved Tokens number.

## Relevant logs and/or screenshots

## Discord link

https://discord.com/channels/775859454780244028/106437539... | 1.0 | Font size on v1 activity feed for primary number is inconsistent - ## Summary

Distributed Funds number is smaller than Distributed Reserved Tokens number.

## Relevant logs and/or screenshots

## Disco... | non_defect | font size on activity feed for primary number is inconsistent summary distributed funds number is smaller than distributed reserved tokens number relevant logs and or screenshots discord link | 0 |

47,348 | 13,056,133,993 | IssuesEvent | 2020-07-30 03:45:41 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | GLShovel does not correctly show pulses/launches/i3particle if the viewing timewindow is not in range (Trac #390) | Migrated from Trac defect glshovel | GLShovel has problems with long hitseries on any DOM, when the viewing time excludes the first hit.

If for example a hitseries has two hits, 1 at 10 microseconds and one

at 100 microseconds, and we put the t_min to 50 and t_max to 200,

no hit will be rendered for that DOM.

The same is true for domlaunches and I3Pa... | 1.0 | GLShovel does not correctly show pulses/launches/i3particle if the viewing timewindow is not in range (Trac #390) - GLShovel has problems with long hitseries on any DOM, when the viewing time excludes the first hit.

If for example a hitseries has two hits, 1 at 10 microseconds and one

at 100 microseconds, and we put... | defect | glshovel does not correctly show pulses launches if the viewing timewindow is not in range trac glshovel has problems with long hitseries on any dom when the viewing time excludes the first hit if for example a hitseries has two hits at microseconds and one at microseconds and we put the t min to ... | 1 |
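
The behavior this report expects can be sketched as a per-hit filter. This is not GLShovel's code; the function name and the plain list of hit times are assumptions for illustration. The point is that each hit is tested against the window on its own, so an early hit outside the window does not hide later ones.

```python
def hits_in_window(hit_times, t_min, t_max):
    """Return every hit whose time lies inside [t_min, t_max], regardless of earlier hits."""
    return [t for t in hit_times if t_min <= t <= t_max]


# The example from the report: hits at 10 and 100 microseconds, window 50..200.
print(hits_in_window([10, 100], 50, 200))  # [100]
```

With this filter the DOM in the example would still show its hit at 100 microseconds, whereas the report says nothing is rendered.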

13,127 | 8,804,900,445 | IssuesEvent | 2018-12-26 16:17:51 | spring-cloud/spring-cloud-dataflow | https://api.github.com/repos/spring-cloud/spring-cloud-dataflow | closed | Provide example application that shows LDAP authentication in action | security-2.x-upgrade | Provide example application that shows LDAP authentication in action using the UAA.

child of spring-cloud/spring-cloud-dataflow#2574 | True | Provide example application that shows LDAP authentication in action - Provide example application that shows LDAP authentication in action using the UAA.

child of spring-cloud/spring-cloud-dataflow#2574 | non_defect | provide example application that shows ldap authentication in action provide example application that shows ldap authentication in action using the uaa child of spring cloud spring cloud dataflow | 0 |

77,195 | 26,833,994,188 | IssuesEvent | 2023-02-02 17:59:51 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | Nearest node transfer either errors or gives bad results for FIRST monomials | C: Framework T: defect P: normal | ## Bug Description

The `MultiAppNearestNodeTransfer` does not behave as expected when transferring data to/from FIRST MONOMIAL variables. The behavior is slightly different for transfers to/from FIRST MONOMIAL - at the end of this issue, I've attached input files you can use to reproduce this behavior. I've considered... | 1.0 | Nearest node transfer either errors or gives bad results for FIRST monomials - ## Bug Description

The `MultiAppNearestNodeTransfer` does not behave as expected when transferring data to/from FIRST MONOMIAL variables. The behavior is slightly different for transfers to/from FIRST MONOMIAL - at the end of this issue, I'... | defect | nearest node transfer either errors or gives bad results for first monomials bug description the multiappnearestnodetransfer does not behave as expected when transferring data to from first monomial variables the behavior is slightly different for transfers to from first monomial at the end of this issue i ... | 1 |

248,812 | 21,075,091,230 | IssuesEvent | 2022-04-02 02:59:55 | sourcegraph/sec-pr-audit-trail | https://api.github.com/repos/sourcegraph/sec-pr-audit-trail | opened | sourcegraph/sourcegraph#33324: "insights: add check for issue to be a code insights issue before adding to the project" | exception/test-plan sourcegraph/sourcegraph | https://github.com/sourcegraph/sourcegraph/pull/33324 "insights: add check for issue to be a code insights issue before adding to the project" **has no test plan**.

Learn more about test plans in our [testing guidelines](https://docs.sourcegraph.com/dev/background-information/testing_principles#test-plans).

@felixfbe... | 1.0 | sourcegraph/sourcegraph#33324: "insights: add check for issue to be a code insights issue before adding to the project" - https://github.com/sourcegraph/sourcegraph/pull/33324 "insights: add check for issue to be a code insights issue before adding to the project" **has no test plan**.

Learn more about test plans in o... | non_defect | sourcegraph sourcegraph insights add check for issue to be a code insights issue before adding to the project insights add check for issue to be a code insights issue before adding to the project has no test plan learn more about test plans in our felixfbecker please comment in this issue with ... | 0 |

13,874 | 2,789,431,111 | IssuesEvent | 2015-05-08 19:21:27 | orwant/google-visualization-issues | https://api.github.com/repos/orwant/google-visualization-issues | closed | Interactive time series chart displays inaccurately in Google Sites | Priority-Medium Type-Defect | Original [issue 40](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=40) created by orwant on 2009-09-01T13:51:57.000Z:

<b>What steps will reproduce the problem? Please provide a link to a</b>

<b>demonstration page if at all possible, or attach code.</b>

I have created a simple spreadsheet ... | 1.0 | Interactive time series chart displays inaccurately in Google Sites - Original [issue 40](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=40) created by orwant on 2009-09-01T13:51:57.000Z:

<b>What steps will reproduce the problem? Please provide a link to a</b>

<b>demonstration page if at al... | defect | interactive time series chart displays inaccurately in google sites original created by orwant on what steps will reproduce the problem please provide a link to a demonstration page if at all possible or attach code i have created a simple spreadsheet to record sales v forecast data and u... | 1 |

74,840 | 25,351,386,924 | IssuesEvent | 2022-11-19 20:25:41 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | opened | signature from "ArchZFS Bot <buildbot@archzfs.com>" is unknown trust | Type: Defect | <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable information can be found in the OpenZFS documentation

and mailing list arch... | 1.0 | signature from "ArchZFS Bot <buildbot@archzfs.com>" is unknown trust - <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable infor... | defect | signature from archzfs bot is unknown trust thank you for reporting an issue important please check our issue tracker before opening a new issue additional valuable information can be found in the openzfs documentation and mailing list archives please fill in as much of the template as p... | 1 |

2,425 | 25,286,354,051 | IssuesEvent | 2022-11-16 19:39:02 | NVIDIA/spark-rapids | https://api.github.com/repos/NVIDIA/spark-rapids | closed | [BUG] 30TB query95 fails on the join with illegal memory access with 200 partitions | bug ? - Needs Triage reliability | As a follow on to https://github.com/NVIDIA/spark-rapids/issues/6983, we ran the q95 query at 30TB with the fix in this PR (https://github.com/rapidsai/cudf/pull/12079) and we ended up failing during a couple of the joins later, an inner join and a left semi.

In both of those cases we are hitting instances of the ov... | True | [BUG] 30TB query95 fails on the join with illegal memory access with 200 partitions - As a follow on to https://github.com/NVIDIA/spark-rapids/issues/6983, we ran the q95 query at 30TB with the fix in this PR (https://github.com/rapidsai/cudf/pull/12079) and we ended up failing during a couple of the joins later, an in... | non_defect | fails on the join with illegal memory access with partitions as a follow on to we ran the query at with the fix in this pr and we ended up failing during a couple of the joins later an inner join and a left semi in both of those cases we are hitting instances of the overflowing strided loop issu... | 0 |

91,217 | 18,388,747,288 | IssuesEvent | 2021-10-12 00:43:05 | JakePember/cymetrics | https://api.github.com/repos/JakePember/cymetrics | closed | Test Coverage: utils/find.js | code coverage | **Is your feature request related to a problem? Please describe.**

Cymetrics is now published and open for others to use. It's important that the foundation code is stable and tested thoroughly to ensure consistent results.

**Describe the solution you'd like**

For utils/find.js

- 100% code coverage is expected... | 1.0 | Test Coverage: utils/find.js - **Is your feature request related to a problem? Please describe.**

Cymetrics is now published and open for others to use. It's important that the foundation code is stable and tested thoroughly to ensure consistent results.

**Describe the solution you'd like**

For utils/find.js

-... | non_defect | test coverage utils find js is your feature request related to a problem please describe cymetrics is now published and open for others to use it s important that the foundation code is stable and tested thoroughly to ensure consistent results describe the solution you d like for utils find js ... | 0 |

27,361 | 21,656,137,290 | IssuesEvent | 2022-05-06 14:17:07 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Microsoft.Windows.Compatibility should reference the latest System.Data.SqlClient | area-Infrastructure-libraries | System.Data.SqlClient got a servicing release last month to bump its latest version to `4.8.3`. However, Microsoft.Windows.Compatibility still references the old version `4.8.2`.

We should update Microsoft.Windows.Compatibility to the latest version. That way customers who use the `6.0.0` compat pack will get the la... | 1.0 | Microsoft.Windows.Compatibility should reference the latest System.Data.SqlClient - System.Data.SqlClient got a servicing release last month to bump its latest version to `4.8.3`. However, Microsoft.Windows.Compatibility still references the old version `4.8.2`.

We should update Microsoft.Windows.Compatibility to th... | non_defect | microsoft windows compatibility should reference the latest system data sqlclient system data sqlclient got a servicing release last month to bump its latest version to however microsoft windows compatibility still references the old version we should update microsoft windows compatibility to th... | 0 |

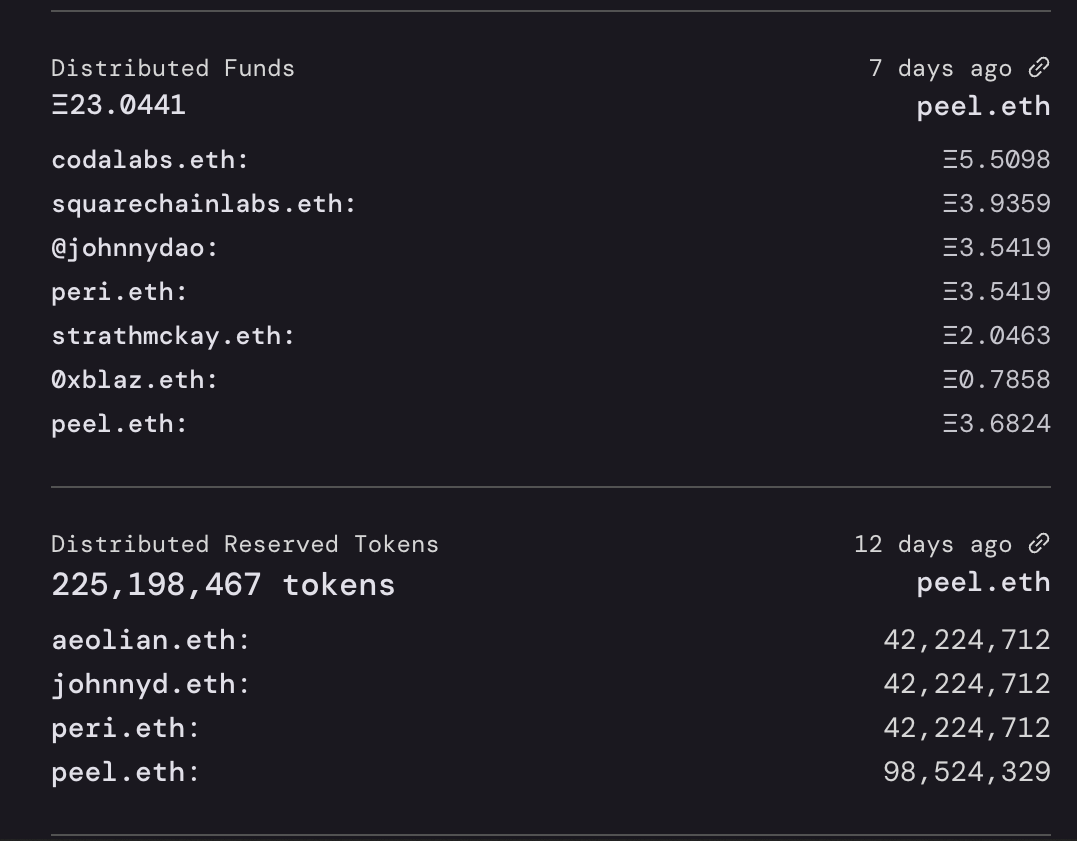

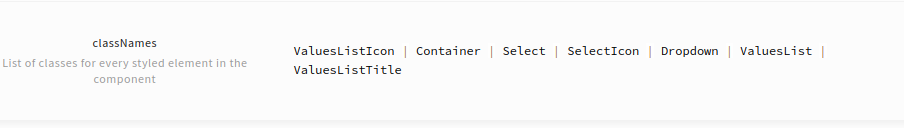

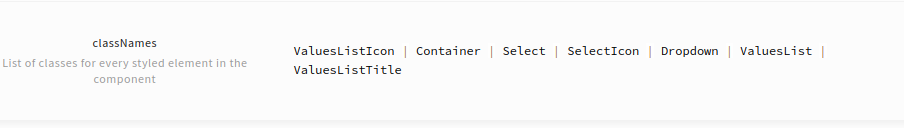

113,989 | 11,834,616,269 | IssuesEvent | 2020-03-23 09:13:46 | uStudioCompany/ustudio-ui | https://api.github.com/repos/uStudioCompany/ustudio-ui | closed | [BUG] (Select) Incorrect classNames list | bug documentation | **Describe the bug**

Incorrect classNames list in Single Select

**Screenshots**

| 1.0 | [BUG] (Select) Incorrect classNames list - **Describe the bug**

Incorrect classNames list in Single Select

**Screenshots**

);

vs.

AuthUtil::getDetailedUserrealmAccess(array(3,20,24));

Also, Need to check realms are correct in ... | 1.0 | Permissions Realms sometimes used incorrectly. - Need to check through everywhere realms are used to ensure they are doing it correctly. Sometimes check is done moving up the tree, sometimes downwards:

eg,

AuthUtil::getDetailedUserrealmAccess(array(24,20,3));

vs.

AuthUtil::getDetailedUserrealmAccess(array(3,20,24... | defect | permissions realms sometimes used incorrectly need to check through everywhere realms are used to ensure they are doing it correctly sometimes check is done moving up the tree sometimes downwards eg authutil getdetaileduserrealmaccess array vs authutil getdetaileduserrealmaccess array ... | 1 |

109,339 | 9,378,742,291 | IssuesEvent | 2019-04-04 13:33:51 | Flowminder/FlowKit | https://api.github.com/repos/Flowminder/FlowKit | closed | Diff tools used by ApprovalTests should be more easily configurable | docs tests | Currently the `opendiff` tool (which is Mac-only) is hard-coded in a few `conftest.py` files, so this is awkward to change. It should be more easily configurable, and the docs should mention how to configure this and how to update the `*.approved.txt` files after a code change that alters the reference output. | 1.0 | Diff tools used by ApprovalTests should be more easily configurable - Currently the `opendiff` tool (which is Mac-only) is hard-coded in a few `conftest.py` files, so this is awkward to change. It should be more easily configurable, and the docs should mention how to configure this and how to update the `*.approved.txt... | non_defect | diff tools used by approvaltests should be more easily configurable currently the opendiff tool which is mac only is hard coded in a few conftest py files so this is awkward to change it should be more easily configurable and the docs should mention how to configure this and how to update the approved txt... | 0 |

22,099 | 6,229,301,076 | IssuesEvent | 2017-07-11 03:12:44 | XceedBoucherS/TestImport5 | https://api.github.com/repos/XceedBoucherS/TestImport5 | closed | Exception using Memory Clear Button | CodePlex | <b>gusbigardi[CodePlex]</b> <br />When you try to calculate any expression that will result an error (like 8 divided by 0, that result ERROR in display text) and you hit the MC button or other button that try to parse the display value to Decimal (checked this looking in the source code),

the application will throw a... | 1.0 | Exception using Memory Clear Button - <b>gusbigardi[CodePlex]</b> <br />When you try to calculate any expression that will result an error (like 8 divided by 0, that result ERROR in display text) and you hit the MC button or other button that try to parse the display value to Decimal (checked this looking in the source... | non_defect | exception using memory clear button gusbigardi when you try to calculate any expression that will result an error like divided by that result error in display text and you hit the mc button or other button that try to parse the display value to decimal checked this looking in the source code the appli... | 0 |

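227,266 | 17,379,193,732 | IssuesEvent | 2021-07-31 10:28:32 | HoonHaChoi/Coin | https://api.github.com/repos/HoonHaChoi/Coin | closed | Journal screen UI demo design | documentation | <img width="336" alt="Screenshot 2021-07-31 AM 5 57 57" src="https://user-images.githubusercontent.com/33626693/127710854-dcfea635-e972-4a94-9324-183f5c454fa9.png">

Focus

- Designed so that all the information can be seen at a glance

- The left side lacked impact, so a band indicating rise or fall was added (an icon did not fit)

To be added

- Allow the date sort order to be switched between ascending and descending according to the user's choice | 1.0 | Journal screen UI demo design - <img width="336" alt="Screenshot 2021-07-31 AM 5 57 57" src="https://user-images.githubusercontent.com/33626693/127710854-dcfea635-e972-4a94-9324-183f5c454fa9.png">

Focus

- Designed so that all the information can be seen at a glance

- The left side lacked impact, so a band indicating rise or fall was added (an icon did not fit)

To be added

- Allow the date sort order to be switched between ascending and descending according to the user's choice | non_defect | journal screen ui demo design img width alt screenshot am src focus designed so that all the information can be seen at a glance the left side lacked impact so a band indicating rise or fall was added an icon did not fit to be added allow the date sort order to be switched between ascending and descending according to the user s choice | 0 |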

45,637 | 12,965,183,497 | IssuesEvent | 2020-07-20 21:50:57 | googlefonts/noto-fonts | https://api.github.com/repos/googlefonts/noto-fonts | closed | NotoSansDisplay-ItalicMM.glyphs has incompatible masters | Type-Defect |

These are the warnings from fontmake (they should be errors, since we cannot make a reasonable font):

WARNING:fontTools.varLib:glyph uni1D05 has incompatible masters; skipping

WARNING:fontTools.varLib:glyph uni1D06 has incompatible masters; skipping

WARNING:fontTools.varLib:glyph uni1D1A has incompatible masters; ... | 1.0 | NotoSansDisplay-ItalicMM.glyphs has incompatible masters -

These are the warnings from fontmake (they should be errors, since we cannot make a reasonable font):

WARNING:fontTools.varLib:glyph uni1D05 has incompatible masters; skipping

WARNING:fontTools.varLib:glyph uni1D06 has incompatible masters; skipping

WARNIN... | defect | notosansdisplay italicmm glyphs has incompatible masters these are the warnings from fontmake they should be errors since we cannot make a reasonable font warning fonttools varlib glyph has incompatible masters skipping warning fonttools varlib glyph has incompatible masters skipping warning fonttools ... | 1 |

56,449 | 23,784,092,509 | IssuesEvent | 2022-09-02 08:27:28 | PreMiD/Presences | https://api.github.com/repos/PreMiD/Presences | opened | YNOproject | service request | ### Website name

YNOproject

### Website URL

https://ynoproject.net/yume/

### Website logo

https://i.imgur.com/LlFNjpI.png

### Prerequisites

- [ ] It is a paid service

- [ ] It displays NSFW content

- [ ] It is region restricted

### Description

Display of what game the user is playing, and how much time has ela... | 1.0 | YNOproject - ### Website name

YNOproject

### Website URL

https://ynoproject.net/yume/

### Website logo

https://i.imgur.com/LlFNjpI.png

### Prerequisites

- [ ] It is a paid service

- [ ] It displays NSFW content

- [ ] It is region restricted

### Description

Display of what game the user is playing, and how much... | non_defect | ynoproject website name ynoproject website url website logo prerequisites it is a paid service it displays nsfw content it is region restricted description display of what game the user is playing and how much time has elapsed during that session | 0 |

36,277 | 7,875,985,102 | IssuesEvent | 2018-06-25 22:29:53 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | External method decorated with [ExpandParams] attribute | defect | Incorrect JavaScript produced when an external method decorated with `[ExpandParams]` attribute has a `params` argument and at least one plain argument. The logic emitting `.apply()` call seems being executed twice.

### Steps To Reproduce

https://deck.net/a69ee70c1dd126f220f0f65f91b70ea7

```csharp

public clas... | 1.0 | External method decorated with [ExpandParams] attribute - Incorrect JavaScript produced when an external method decorated with `[ExpandParams]` attribute has a `params` argument and at least one plain argument. The logic emitting `.apply()` call seems being executed twice.

### Steps To Reproduce

https://deck.net... | defect | external method decorated with attribute incorrect javascript produced when an external method decorated with attribute has a params argument and at least one plain argument the logic emitting apply call seems being executed twice steps to reproduce csharp public class program ... | 1 |

103,635 | 8,924,461,290 | IssuesEvent | 2019-01-21 18:47:02 | operator-framework/operator-sdk | https://api.github.com/repos/operator-framework/operator-sdk | closed | Ansible Molecule Test Fails CI on Version Bump | ansible-operator bug testing | The Ansible Molecule E2E tests currently depend on the image for the base ansible operator existing with the current image tag in quay. This works fine for master builds, as that uses the last version release, but when a new release is made, the tests run before the deploy stage, so the Ansible Molecule test fails. The... | 1.0 | Ansible Molecule Test Fails CI on Version Bump - The Ansible Molecule E2E tests currently depend on the image for the base ansible operator existing with the current image tag in quay. This works fine for master builds, as that uses the last version release, but when a new release is made, the tests run before the depl... | non_defect | ansible molecule test fails ci on version bump the ansible molecule tests currently depend on the image for the base ansible operator existing with the current image tag in quay this works fine for master builds as that uses the last version release but when a new release is made the tests run before the deploy... | 0 |

75,764 | 26,036,161,237 | IssuesEvent | 2022-12-22 05:19:17 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: Type ahead help suggesting incompatible methods for .window() | C-nodejs I-defect | ### What happened?

The type ahead help (seen in Visual Studio Code 1.73.0) is misleading when trying to get or set a screen size with .window(). The .getSize and .setSize are suggested even though they are only available for WebElements. When a user uses .window(), it is my understanding that the type ahead should s... | 1.0 | [🐛 Bug]: Type ahead help suggesting incompatible methods for .window() - ### What happened?

The type ahead help (seen in Visual Studios Code 1.73.0) is misleading when trying to get or set a screen size with .window(). The .getSize and .setSize are suggested even though they are only available for WebElements. When ... | defect | type ahead help suggesting incompatible methods for window what happened the type ahead help seen in visual studios code is misleading when trying to get or set a screen size with window the getsize and setsize are suggested even though they are only available for webelements when a user u... | 1 |

68,854 | 21,928,539,007 | IssuesEvent | 2022-05-23 07:39:49 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | Sending multiple invites to a room only works partially | T-Defect | ### Steps to reproduce

1. Create a room or get into a room where you can invite people.

2. Invite several people (at least 3 or 4).

### Outcome

#### What did you expect?

Everyone should be invited to the room.

#### What happened instead?

Only the first 1 or 2 people actually get invited.

### Your phone mo... | 1.0 | Sending multiple invites to a room only works partially - ### Steps to reproduce

1. Create a room or get into a room where you can invite people.

2. Invite several people (at least 3 or 4).

### Outcome

#### What did you expect?

Everyone should be invited to the room.

#### What happened instead?

Only the fi... | defect | sending multiple invites to a room only works partially steps to reproduce create a room or get into a room where you can invite people invite several people at least or outcome what did you expect everyone should be invited to the room what happened instead only the fi... | 1 |

80,413 | 15,586,284,095 | IssuesEvent | 2021-03-18 01:35:25 | attesch/myretail | https://api.github.com/repos/attesch/myretail | opened | CVE-2020-36181 (High) detected in jackson-databind-2.9.4.jar | security vulnerability | ## CVE-2020-36181 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36181 (High) detected in jackson-databind-2.9.4.jar - ## CVE-2020-36181 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>General... | non_defect | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file myretail build gradle path t... | 0 |

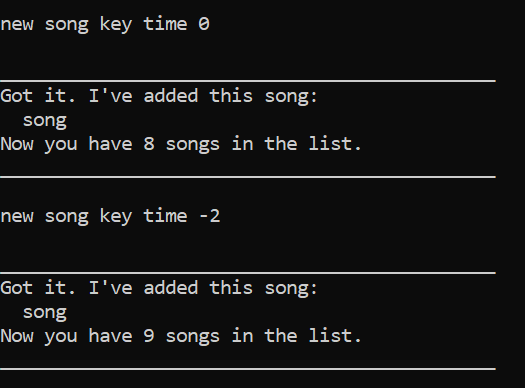

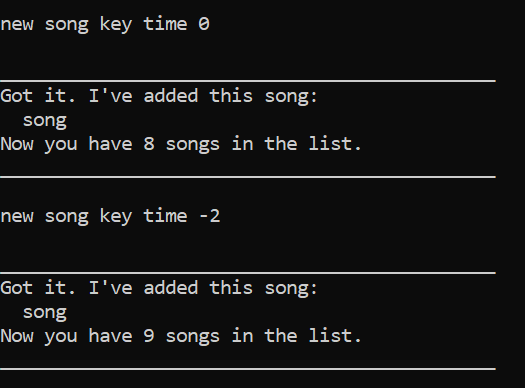

367,069 | 10,833,625,377 | IssuesEvent | 2019-11-11 13:22:07 | AY1920S1-CS2113T-F09-4/main | https://api.github.com/repos/AY1920S1-CS2113T-F09-4/main | closed | Add command tempo bug | priority.High severity.Medium status.Ongoing type.Bug | the tempo should not accept zero or negative integers as a valid input.

<hr><sub>[original: OungKennedy/ped#5]<br/>

</sub> | 1.0 | Add command tempo bug - the tempo should not accept zero or negative integers as a valid input.

<hr><sub>[original: OungKennedy/ped#5]<br/>

</sub> | non_defect | add command tempo bug the tempo should not accept zero or negative integers as a valid input | 0 |

223,626 | 7,459,248,574 | IssuesEvent | 2018-03-30 14:32:05 | metasfresh/metasfresh-webui-frontend | https://api.github.com/repos/metasfresh/metasfresh-webui-frontend | closed | Console error when grid view updates | priority:high type:bug | ### Is this a bug or feature request?

bug

### What is the current behavior?

Console error when grid view updates TypeError: Cannot read property 'ID' of undefined

#### Which are the steps to reproduce?

1. open settings:

https://w101.metasfresh.com:8443/window/53100/2188223

2. new tab: open user window in grid vi... | 1.0 | Console error when grid view updates - ### Is this a bug or feature request?

bug

### What is the current behavior?

Console error when grid view updates TypeError: Cannot read property 'ID' of undefined

#### Which are the steps to reproduce?

1. open settings:

https://w101.metasfresh.com:8443/window/53100/2188223

... | non_defect | console error when grid view updates is this a bug or feature request bug what is the current behavior console error when grid view updates typeerror cannot read property id of undefined which are the steps to reproduce open settings new tab open user window in grid view this is ... | 0 |

136,918 | 18,751,508,488 | IssuesEvent | 2021-11-05 03:00:22 | Dima2022/Resiliency-Studio | https://api.github.com/repos/Dima2022/Resiliency-Studio | closed | CVE-2020-11112 (High) detected in jackson-databind-2.8.6.jar - autoclosed | security vulnerability | ## CVE-2020-11112 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-11112 (High) detected in jackson-databind-2.8.6.jar - autoclosed - ## CVE-2020-11112 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.6.jar</b></p></summary... | non_defect | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file resiliency studio ... | 0 |

48,897 | 13,184,769,692 | IssuesEvent | 2020-08-12 20:03:39 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | I3Db services should always check the status of mysql connection (Trac #402) | I3Db Incomplete Migration Migrated from Trac defect | <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/402

, reported by blaufuss and owned by kohnen_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-07-05T10:56:21",

"description": "I3Db services that use connections to a mysql server should check that\nremote server is... | 1.0 | I3Db services should always check the status of mysql connection (Trac #402) - <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/402

, reported by blaufuss and owned by kohnen_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-07-05T10:56:21",

"description": "I3Db serv... | defect | services should always check the status of mysql connection trac migrated from reported by blaufuss and owned by kohnen json status closed changetime description services that use connections to a mysql server shoudl check that nremore server is stil... | 1 |

49,395 | 26,137,998,750 | IssuesEvent | 2022-12-29 14:33:08 | Bartalog/cool-maze | https://api.github.com/repos/Bartalog/cool-maze | closed | Stat multiple share: tte (Android) | performance Android Backend | Time-to-Encrypt each resource

The throughput for Multiple Share doesn't have the same meaning as Single Share, because many things are happening at the same time and interfering with each other's performance numbers. The numbers are still useful to gather and analyze though. | True | Stat multiple share: tte (Android) - Time-to-Encrypt each resource

The throughput for Multiple Share doesn't have the same meaning as Single Share, because many things are happening at the same time and interfering with each other's performance numbers. The numbers are still useful to gather and analyze though. | non_defect | stat multiple share tte android time to encrypt each resource the throughput for multiple share doesn t have the same meaning as single share because many things are happening at the same time and interfering with each other s performance numbers the numbers are still useful to gather and analyze though | 0 |

31,744 | 6,611,981,332 | IssuesEvent | 2017-09-20 00:40:07 | extnet/Ext.NET | https://api.github.com/repos/extnet/Ext.NET | closed | CanActivate option should work with not Ext.menu.Item | 2.x 3.x 4.x defect review-after-extjs-upgrade sencha sencha-disclaim | http://forums.ext.net/showthread.php?23262

http://www.sencha.com/forum/showthread.php?255184

Dup:

http://forums.ext.net/showthread.php?26213

Corrected the Toolbar/Menu/Controls_in_Menu example. Revert back after Sencha fix.

**Update:** the issue is no longer relevant for ExtJS 6, i.e. for Ext.NET 4. `CanActivate` ha... | 1.0 | CanActivate option should work with not Ext.menu.Item - http://forums.ext.net/showthread.php?23262

http://www.sencha.com/forum/showthread.php?255184

Dup:

http://forums.ext.net/showthread.php?26213

Corrected the Toolbar/Menu/Controls_in_Menu example. Revert back after Sencha fix.

**Update:** the issue is not actual a... | defect | canactivate option should work with not ext menu item dup corrected the toolbar menu controls in menu example revert back after sencha fix update the issue is not actual anymore for extjs i e for ext net canactivate has been removed | 1 |

67,698 | 14,886,595,206 | IssuesEvent | 2021-01-20 17:08:53 | anyulled/mws-restaurant-stage-1 | https://api.github.com/repos/anyulled/mws-restaurant-stage-1 | opened | CVE-2020-28481 (Medium) detected in socket.io-2.1.1.tgz | security vulnerability | ## CVE-2020-28481 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-2.1.1.tgz</b></p></summary>

<p>node.js realtime framework server</p>

<p>Library home page: <a href="https:... | True | CVE-2020-28481 (Medium) detected in socket.io-2.1.1.tgz - ## CVE-2020-28481 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>socket.io-2.1.1.tgz</b></p></summary>

<p>node.js realtime ... | non_defect | cve medium detected in socket io tgz cve medium severity vulnerability vulnerable library socket io tgz node js realtime framework server library home page a href path to dependency file mws restaurant stage package json path to vulnerable library mws restaurant st... | 0 |

1,131 | 2,597,107,632 | IssuesEvent | 2015-02-21 02:56:07 | STEllAR-GROUP/hpx | https://api.github.com/repos/STEllAR-GROUP/hpx | closed | Crashing in hpx::parcelset::policies::mpi::connection_handler::handle_messages() on SuperMIC | category: parcel transport type: defect | I am getting crashes when I run my fmmx code on SuperMIC on more than one node. This is using only host processors. The last HPX call on the stack (that I can tell) is hpx::parcelset::policies::mpi::connection_handler::handle_messages(). The stack trace is here:

https://gist.github.com/dmarce1/fc69d303159b2744115a

... | 1.0 | Crashing in hpx::parcelset::policies::mpi::connection_handler::handle_messages() on SuperMIC - I am getting crashes when I run my fmmx code on SuperMIC on more than one node. This is using only host processors. The last HPX call on the stack (that I can tell) is hpx::parcelset::policies::mpi::connection_handler::handle... | defect | crashing in hpx parcelset policies mpi connection handler handle messages on supermic i am getting crashes when i run my fmmx code on supermic on more than one node this is using only host processors the last hpx call on the stack that i can tell is hpx parcelset policies mpi connection handler handle... | 1 |