Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
218,655 | 7,332,033,375 | IssuesEvent | 2018-03-05 15:15:08 | enviroCar/enviroCar-app | https://api.github.com/repos/enviroCar/enviroCar-app | closed | Simultaneous upload causes track duplicates on server | Priority - 1 - High bug | The user can hit the "upload all" button and after that upload a single track via a long-click. This serializes the same track twice resulting in a duplication on the server side (as there are no duplication checks).<br>Implement a mutex (maybe using the UploadManager) and block uploading interaction as long as an upload... | 1.0 | Simultaneous upload causes track duplicates on server - The user can hit the "upload all" button and after that upload a single track via a long-click. This serializes the same track twice resulting in a duplication on the server side (as there are no duplication checks).<br>Implement a mutex (maybe using the UploadManag... | non_defect | simultaneous upload causes track duplicates on server the user can hit the upload all button and after that upload a single track via a long click this serializes the same track twice resulting in a duplication on the server side as there are no duplication checks implement a mutex maybe using the uploadmanag... | 0 |
39,146 | 9,218,651,631 | IssuesEvent | 2019-03-11 13:52:29 | STEllAR-GROUP/phylanx | https://api.github.com/repos/STEllAR-GROUP/phylanx | closed | PhySL interpreter inserts 'random' nil into output | category: examples type: defect | I should add that `random` sometimes has `nil` somewhere in its output. In physl:<br>```<br>random(make_list(5,10), list("binomial", 3, .5))<br>```<br>produces:<br>```<br>[[2, 2, 3nil,<br>0, 2, 1, 1, 1, 1, 2], [2, 2, 2, 0, 1, 1, 2, 1, 3, 2], [3, 2, 2, 1, 0, 1, 2, 2, 2, 0], [2, 0, 1, 2, 1, 3, 3, 1, 0, 2], [2, 1, 1, 2, 2, 2, 2, 2, 1,... | 1.0 | PhySL interpreter inserts 'random' nil into output - I should add that `random` sometimes has `nil` somewhere in its output. In physl:<br>```<br>random(make_list(5,10), list("binomial", 3, .5))<br>```<br>produces:<br>```<br>[[2, 2, 3nil,<br>0, 2, 1, 1, 1, 1, 2], [2, 2, 2, 0, 1, 1, 2, 1, 3, 2], [3, 2, 2, 1, 0, 1, 2, 2, 2, 0], [2, 0,... | defect | physl interpreter inserts random nil into output i should add that random sometimes has nil somewhere in its output in physl random make list list binomial produces originally posted by in | 1 |
31,042 | 6,413,752,441 | IssuesEvent | 2017-08-08 08:28:41 | oleg-shilo/cs-script | https://api.github.com/repos/oleg-shilo/cs-script | closed | Ambiguous call in dbg.inject<ID> | defect Done: waiting for release | While trying to compose a test script environment related to #71 I ran into this issue:<br>```<br><Workspace>\CSScript\Issue #79\Redirect>cscs.exe -cd RedirectRefLib.cs<br>C# Script execution engine. Version 3.27.0.0.<br>Copyright (C) 2004-2017 Oleg Shilo.<br>Error: Specified file could not be compiled.<br>csscript.CompilerExc... | 1.0 | Ambiguous call in dbg.inject<ID> - While trying to compose a test script environment related to #71 I ran into this issue:<br>```<br><Workspace>\CSScript\Issue #79\Redirect>cscs.exe -cd RedirectRefLib.cs<br>C# Script execution engine. Version 3.27.0.0.<br>Copyright (C) 2004-2017 Oleg Shilo.<br>Error: Specified file could not b... | defect | ambiguous call in dbg inject while trying to compose a test script environment related to i ran into this issue csscript issue redirect cscs exe cd redirectreflib cs c script execution engine version copyright c oleg shilo error specified file could not be compiled csscrip... | 1 |
75,071 | 25,513,554,408 | IssuesEvent | 2022-11-28 14:47:45 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | API endpoint /servers/{server_id}/zones/{zone_id}/check should exist but when calling there is an error and the endpoint cannot be found in the source code. | auth docs defect | <!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new features or send in complaints? Thanks! --><br><!-- Also please search the existing issues (both open and closed) to see if your report might be duplicate --><br><!-- Ple... | 1.0 | API endpoint /servers/{server_id}/zones/{zone_id}/check should exist but when calling there is an error and the endpoint cannot be found in the source code. - <!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new feature... | defect | api endpoint servers server id zones zone id check should exist but when calling there is an error and the endpoint cannot be found in the source code program authoritative issue type bug report short description get servers server id zones zone id check is a documented en... | 1 |
10,691 | 8,134,797,724 | IssuesEvent | 2018-08-19 20:05:23 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | docker exposes containers' ports to the world despite firewall module being enabled | 1.severity: security | ## Issue description<br>Docker has its own iptables chain and somehow¹ bypasses NixOS firewall, the firewall, which I thought would safeguard this from me. This is very dangerous when you have passwordless development containers running.<br>It seems [that](https://fralef.me/docker-and-iptables.html) `virtualisation.doc... | True | docker exposes containers' ports to the world despite firewall module being enabled - ## Issue description<br>Docker has its own iptables chain and somehow¹ bypasses NixOS firewall, the firewall, which I thought would safeguard this from me. This is very dangerous when you have passwordless development containers runn... | non_defect | docker exposes containers ports to the world despite firewall module being enabled issue description docker has its own iptables chain and somehow¹ bypasses nixos firewall the firewall which i thought would safeguard this from me this is very dangerous when you have passwordless development containers runn... | 0 |
10,783 | 2,622,188,814 | IssuesEvent | 2015-03-04 00:22:07 | byzhang/cudpp | https://api.github.com/repos/byzhang/cudpp | closed | satGL produces a garbled image | auto-migrated Milestone-Release1.1 OpSys-Linux Priority-Medium Type-Defect | ```<br>What steps will reproduce the problem?<br>1. Build and run the satGL sample app<br>What is the expected output? What do you see instead?<br>Correct output can be seen by running the device emulation version. In<br>release or debug builds, instead the results are a green and blue smear.<br>```<br>Original issue reported on cod... | 1.0 | satGL produces a garbled image - ```<br>What steps will reproduce the problem?<br>1. Build and run the satGL sample app<br>What is the expected output? What do you see instead?<br>Correct output can be seen by running the device emulation version. In<br>release or debug builds, instead the results are a green and blue smear.<br>``... | defect | satgl produces a garbled image what steps will reproduce the problem build and run the satgl sample app what is the expected output what do you see instead correct output can be seen by running the device emulation version in release or debug builds instead the results are a green and blue smear ... | 1 |
58,553 | 14,432,798,076 | IssuesEvent | 2020-12-07 03:01:21 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | Whl package not building due with a issue with sed - MacOS 11 | type:build/install | **System information**<br>- OS Platform and Distribution: Mac OS 11.0.1 Big Sur<br>- TensorFlow installed from (source or binary): Source - git<br>- TensorFlow version: 2.4.0-rc4<br>- Python version: 3.8.6 (macports)<br>- Installed using virtualenv? pip? conda?: No<br>- Bazel version (if compiling from source): 3.1.0<br>- GCC/Compil... | 1.0 | Whl package not building due with a issue with sed - MacOS 11 - **System information**<br>- OS Platform and Distribution: Mac OS 11.0.1 Big Sur<br>- TensorFlow installed from (source or binary): Source - git<br>- TensorFlow version: 2.4.0-rc4<br>- Python version: 3.8.6 (macports)<br>- Installed using virtualenv? pip? conda?: No<br>... | non_defect | whl package not building due with a issue with sed macos system information os platform and distribution mac os big sur tensorflow installed from source or binary source git tensorflow version python version macports installed using virtualenv pip conda no b... | 0 |
216,031 | 16,625,852,139 | IssuesEvent | 2021-06-03 09:26:46 | RotherOSS/doc-otobo-installation | https://api.github.com/repos/RotherOSS/doc-otobo-installation | opened | Update the requirements | documentation | https://doc.otobo.org/manual/installation/stable/en/content/requirements.html can be improved:<br>- [ ] No need to mention Node<br>- [ ] Add Redis as an optional dependency | 1.0 | Update the requirements - https://doc.otobo.org/manual/installation/stable/en/content/requirements.html can be improved:<br>- [ ] No need to mention Node<br>- [ ] Add Redis as an optional dependency | non_defect | update the requirements can be improved no need to mention node add redis as an optional dependency | 0 |
77,577 | 27,058,913,688 | IssuesEvent | 2023-02-13 18:08:45 | fecgov/fecfile-web-app | https://api.github.com/repos/fecgov/fecfile-web-app | closed | Defect - "Earmark Memo" the transactions table is not displaying the transaction type "Earmark Memo" | defect | This defect is being written for after creating an "Earmark Memo" the transactions table is not displaying the transaction type "Earmark Memo" as shown in screenshot below. This was tested in both DEV and STAGE environments.
and BN4.3 (Singularity). We need access to the Singularity API.<br>- [x] A script to calculate the following per minute for each crime: karma, combat stats, Charisma, Hack, and money.<br>- [x] Data on the amo... | 1.0 | crime: karma, combat stats, Charisma, Hack, and money per minute - Similar to #91, but we exclude the Intelligence stat.<br>- [x] A save file where we have destroyed BN1.3 (Genesis) and BN4.3 (Singularity). We need access to the Singularity API.<br>- [x] A script to calculate the following per minute for each crime: kar... | non_defect | crime karma combat stats charisma hack and money per minute similar to but we exclude the intelligence stat a save file where we have destroyed genesis and singularity we need access to the singularity api a script to calculate the following per minute for each crime karma comba... | 0 |
54,592 | 13,780,022,739 | IssuesEvent | 2020-10-08 14:26:46 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Problem in code generation for entities with @Type(type = "jsonb") from hibernate-types-52 library | T: Defect | ### Expected behavior<br>Upon executing the plugin _jooq-codegen-maven_ with the correct project structure as indicated in the jOOQ documentation, the expected generated classes from the existing project entities should be the following (e.g. for the sake of demonstration let´s use an entity called User):<br>1. Under th... | 1.0 | Problem in code generation for entities with @Type(type = "jsonb") from hibernate-types-52 library - ### Expected behavior<br>Upon executing the plugin _jooq-codegen-maven_ with the correct project structure as indicated in the jOOQ documentation, the expected generated classes from the existing project entities should ... | defect | problem in code generation for entities with type type jsonb from hibernate types library expected behavior upon executing the plugin jooq codegen maven with the correct project structure as indicated in the jooq documentation the expected generated classes from the existing project entities should b... | 1 |
33,967 | 7,314,613,137 | IssuesEvent | 2018-03-01 08:03:18 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | dnsdist 1.2.0 complains about incorrect option but starts anyway | defect dnsdist | <br>- Program: dnsdist <!-- delete the ones that do not apply --><br>- Issue type: Bug report<br>### Short description<br>dnsdist 1.2.0 complains about option; starts anyway<br><!--<br>If this is a bug report, use the following part of the the template and delete the part at the bottom<br>--><br>### Steps to reproduce<br>... | 1.0 | dnsdist 1.2.0 complains about incorrect option but starts anyway - <br>- Program: dnsdist <!-- delete the ones that do not apply --><br>- Issue type: Bug report<br>### Short description<br>dnsdist 1.2.0 complains about option; starts anyway<br><!--<br>If this is a bug report, use the following part of the the template a... | defect | dnsdist complains about incorrect option but starts anyway program dnsdist issue type bug report short description dnsdist complains about option starts anyway if this is a bug report use the following part of the the template and delete the part at the bottom ... | 1 |
30,293 | 6,086,427,670 | IssuesEvent | 2017-06-18 00:52:57 | jfabry/LiveRobotProgramming | https://api.github.com/repos/jfabry/LiveRobotProgramming | opened | PhaROS Bridge: context menu is not working | Bridge-PhaROS Component-UI Priority-Medium Type-Defect | When there is a pub or sub in the pharos bridge window, the context menu for deleting them is not working | 1.0 | PhaROS Bridge: context menu is not working - When there is a pub or sub in the pharos bridge window, the context menu for deleting them is not working | defect | pharos bridge context menu is not working when there is a pub or sub in the pharos bridge window the context menu for deleting them is not working | 1 |
678,206 | 23,190,668,710 | IssuesEvent | 2022-08-01 12:23:27 | SAP/xsk | https://api.github.com/repos/SAP/xsk | closed | [Core] Reconsider the authentication mechanisms in the XSK | wontfix core priority-medium effort-medium security investigation / discussion incomplete | Currently, when running the XSK locally, following the suggested way in the documentation, we use Form-based authentication provided by Tomcat. This leads to inconsistencies between the dev and production environments where we use OAuth.<br>Due to these differences, we have observed several problems:<br>- websockets are... | 1.0 | [Core] Reconsider the authentication mechanisms in the XSK - Currently, when running the XSK locally, following the suggested way in the documentation, we use Form-based authentication provided by Tomcat. This leads to inconsistencies between the dev and production environments where we use OAuth.<br>Due to these diff... | non_defect | reconsider the authentication mechanisms in the xsk currently when running the xsk locally following the suggested way in the documentation we use form based authentication provided by tomcat this leads to inconsistencies between the dev and production environments where we use oauth due to these differenc... | 0 |
17,213 | 2,984,426,524 | IssuesEvent | 2015-07-18 00:45:39 | google/omaha | https://api.github.com/repos/google/omaha | closed | google Chrom or Talk Bundle cannot be installed | auto-migrated Priority-Medium Type-Defect | ```<br>What steps will reproduce the problem?<br>1. build omaha with visual studio 2010, successfully pass build and all unit<br>tests<br>2. go into staging folder<br>3. input<br>GoogleUpdate.exe /install<br>"bundlename=Google%20Talk%20Bundle&appguid={D0AB2EBC-931B-4013-9FEB-C9C4C2225C8C<br>}&appname=Google%20Talk%20Plugin&needsadmin=Fal... | 1.0 | google Chrom or Talk Bundle cannot be installed - ```<br>What steps will reproduce the problem?<br>1. build omaha with visual studio 2010, successfully pass build and all unit<br>tests<br>2. go into staging folder<br>3. input<br>GoogleUpdate.exe /install<br>"bundlename=Google%20Talk%20Bundle&appguid={D0AB2EBC-931B-4013-9FEB-C9C4C2225C... | defect | google chrom or talk bundle cannot be installed what steps will reproduce the problem build omaha with visual studio successfully pass build and all unit tests go into staging folder input googleupdate exe install bundlename google appguid appname google needsadmin false l... | 1 |
50,596 | 13,187,609,136 | IssuesEvent | 2020-08-13 03:58:55 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | Link to PROPOSAL project (Trac #1019) | Migrated from Trac cmake defect | Hi,<br>Could you please provide a link to the paper describing how the PROPOSAL icesim meta-project work?<br>Thanks.<br><details><br><summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1019">https://code.icecube.wisc.edu/ticket/1019</a>, reported by icecube and owned by </em></summary><br><p><br>```json<br>{<br>"... | 1.0 | Link to PROPOSAL project (Trac #1019) - Hi,<br>Could you please provide a link to the paper describing how the PROPOSAL icesim meta-project work?<br>Thanks.<br><details><br><summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1019">https://code.icecube.wisc.edu/ticket/1019</a>, reported by icecube and owned... | defect | link to proposal project trac hi could you please provide a link to the paper describing how the proposal icesim meta project work thanks migrated from json status closed changetime description hi n ncould you please provide a link to the paper descr... | 1 |
62,650 | 17,105,641,678 | IssuesEvent | 2021-07-09 17:15:52 | Gogo1951/GogoLoot | https://api.github.com/repos/Gogo1951/GogoLoot | closed | Remove Lag from "Auto Destroy Grays" | GogoLoot - Application Type - Defect | There's a lag when you have "auto destroy grays" enabled. every time you loot there's a notable drop in frame rates. | 1.0 | Remove Lag from "Auto Destroy Grays" - There's a lag when you have "auto destroy grays" enabled. every time you loot there's a notable drop in frame rates. | defect | remove lag from auto destroy grays there s a lag when you have auto destroy grays enabled every time you loot there s a notable drop in frame rates | 1 |
55,376 | 14,410,691,869 | IssuesEvent | 2020-12-04 05:33:03 | AeroScripts/QuestieDev | https://api.github.com/repos/AeroScripts/QuestieDev | opened | Error when moving quest tracker while in combat | Type - Defect | ## Bug description<br>When trying to move the quest tracker while in combat using "Control + Left Click" i get this error:<br>`...s\Questie\Modules\Tracker\QuestieTrackerPrivates.lua:24: Frame Questie_BaseFrame is not movable<br>[C]: in function `StartMoving'<br>...s\Questie\Modules\Tracker\QuestieTrackerPrivates.lua:24: in fu... | 1.0 | Error when moving quest tracker while in combat - ## Bug description<br>When trying to move the quest tracker while in combat using "Control + Left Click" i get this error:<br>`...s\Questie\Modules\Tracker\QuestieTrackerPrivates.lua:24: Frame Questie_BaseFrame is not movable<br>[C]: in function `StartMoving'<br>...s\Questie\Mo... | defect | error when moving quest tracker while in combat bug description when trying to move the quest tracker while in combat using control left click i get this error s questie modules tracker questietrackerprivates lua frame questie baseframe is not movable in function startmoving s questie modul... | 1 |
10,072 | 7,888,843,040 | IssuesEvent | 2018-06-28 00:14:41 | 8xprotocol/contracts | https://api.github.com/repos/8xprotocol/contracts | closed | Create nonce field for VolumeSubscription | bug in progress security | 1. In volume subscription, create a new plan with a price and identifier<br>2. User subscribes to that plan<br>3. Delete the plan, but recreate it with the same identifier and different price OR interval (it'll have the same hash)<br>4. Users get charged more.<br>A simple resolution would be to hash the plan with the amount ... | True | Create nonce field for VolumeSubscription - 1. In volume subscription, create a new plan with a price and identifier<br>2. User subscribes to that plan<br>3. Delete the plan, but recreate it with the same identifier and different price OR interval (it'll have the same hash)<br>4. Users get charged more.<br>A simple resolutio... | non_defect | create nonce field for volumesubscription in volume subscription create a new plan with a price and identifier user subscribes to that plan delete the plan but recreate it with the same identifier and different price or interval it ll have the same hash users get charged more a simple resolutio... | 0 |
40,710 | 6,845,818,457 | IssuesEvent | 2017-11-13 09:45:48 | dgraph-io/badger | https://api.github.com/repos/dgraph-io/badger | closed | Need to document the sorting order of the keys | documentation | There is no document describing how keys are sorted, so I have to test it with the code below.<br>It seems the ordering should be described as something like "byte-wise prefix order", similar to big endian but the keys are aligned from the least significant byte, and "no byte" is smaller than "byte zero" (the shorter t... | 1.0 | Need to document the sorting order of the keys - There is no document describing how keys are sorted, so I have to test it with the code below.<br>It seems the ordering should be described as something like "byte-wise prefix order", similar to big endian but the keys are aligned from the least significant byte, and "no... | non_defect | need to document the sorting order of the keys there is no document describing how keys are sorted so i have to test it with the code below it seems the ordering should be described as something like byte wise prefix order similar to big endian but the keys are aligned from the least significant byte and no... | 0 |
142,213 | 5,460,265,240 | IssuesEvent | 2017-03-09 04:17:59 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | Logging (via status bar, output window, and warning/error window) requires UI thread and slows down Restore greatly. | Area: Perf Area: Restore Priority:0 Type:Bug | Split off from #4617 | 1.0 | Logging (via status bar, output window, and warning/error window) requires UI thread and slows down Restore greatly. - Split off from #4617 | non_defect | logging via status bar output window and warning error window requires ui thread and slows down restore greatly split off from | 0 |
58,672 | 16,678,901,142 | IssuesEvent | 2021-06-07 20:05:04 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | sitewide, forms — 508-defect-3 [SCREENREADER]: Consider updating phone number documentation in design system, to include spacing in the aria-label | 508-defect-3 508-issue-cognition 508-issue-semantic-markup 508/Accessibility components design system forms sitewide triage vsa-benefits-2 vsp-design-system-team | # [508-defect-3](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-3)<br>> Located in [VSA BAM2 MDOT MVP a11y spot check](https://github.com/department-of-veterans-affairs/va.gov-team/issues/5868#issuecomment-627425136)<br>> Pr... | 1.0 | sitewide, forms — 508-defect-3 [SCREENREADER]: Consider updating phone number documentation in design system, to include spacing in the aria-label - # [508-defect-3](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-3)<br>> Loc... | defect | sitewide forms — defect consider updating phone number documentation in design system to include spacing in the aria label located in previously documented feedback framework ❗️ must for if the feedback must be applied ⚠️should if the feedback is best practice ... | 1 |
100,627 | 12,541,716,684 | IssuesEvent | 2020-06-05 12:52:11 | XAMLMarkupExtensions/WPFLocalizationExtension | https://api.github.com/repos/XAMLMarkupExtensions/WPFLocalizationExtension | opened | Check Binding in Binding in Setter & Docu | Designtime Problem Docu Enhancement | designtime support in setters with non Binding Markupelement<br>Check if this is working<br>```xml<br><Setter Property="Text" Value="{Binding Source={lex:Loc {Binding test}}}" /><br>```<br>Docu the following cool workaround many thanks @karnah<br>```xml<br><TextBlock FontSize="20"><br><TextBlock.Style><br><Style TargetTy... | 1.0 | Check Binding in Binding in Setter & Docu - designtime support in setters with non Binding Markupelement<br>Check if this is working<br>```xml<br><Setter Property="Text" Value="{Binding Source={lex:Loc {Binding test}}}" /><br>```<br>Docu the following cool workaround many thanks @karnah<br>```xml<br><TextBlock FontSize="20"><br>... | non_defect | check binding in binding in setter docu designtime support in setters with non binding markupelement check if this is working xml docu the following cool workaround many thanks karnah xml ... | 0 |
53,629 | 13,261,998,102 | IssuesEvent | 2020-08-20 20:55:10 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | [clsim] pr2 is never used (Trac #1783) | Migrated from Trac cmake defect | unused varialbe cought by static analysis http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-0ca23c.html#EndPath<br>Also should there be an else at line 212?<br><details><br><summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1783">https://code.icecube.wisc.edu/projects/ic... | 1.0 | [clsim] pr2 is never used (Trac #1783) - unused varialbe cought by static analysis http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-0ca23c.html#EndPath<br>Also should there be an else at line 212?<br><details><br><summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1783">... | defect | is never used trac unused varialbe cought by static analysis also should there be an else at line migrated from json status closed changetime ts description unused varialbe cought by static analysis should there be an else at line ... | 1 |
21,817 | 3,561,887,460 | IssuesEvent | 2016-01-24 03:29:29 | ariya/phantomjs | https://api.github.com/repos/ariya/phantomjs | closed | Linux Redhat6 Enterprize version 1. 6 install... Korean site font broken | old.Priority-Medium old.Status-New old.Type-Defect | _**[yan...@mz.co.kr](http://code.google.com/u/109652123093259324656/) commented:**_<br>> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b><br>> /root/phantomjs-1.6.1/bin/phantomjs /root/phantomjs-1.6.1/examples/rasterize.js http://www.daum.net daum.png<br>><br>> <b>What steps will reproduce the... | 1.0 | Linux Redhat6 Enterprize version 1. 6 install... Korean site font broken - _**[yan...@mz.co.kr](http://code.google.com/u/109652123093259324656/) commented:**_<br>> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b><br>> /root/phantomjs-1.6.1/bin/phantomjs /root/phantomjs-1.6.1/examples/raster... | defect | linux enterprize version install korean site font broken commented which version of phantomjs are you using tip run phantomjs version root phantomjs bin phantomjs root phantomjs examples rasterize js daum png what steps will reproduce the problem hangu... | 1 |
71,015 | 23,411,386,814 | IssuesEvent | 2022-08-12 17:57:06 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | 508-defect-3: Links that open in a new tab should warn users ahead of time | 508/Accessibility 508-defect-3 Letters benefits-crew benefits-team-1 squad-1 | ### Point of contact<br>Josh Kim<br>### Severity level<br>3, Moderate. Should be fixed in 1-3 sprints post-launch.<br>### Details<br>Several issues exist when forcing a link to open in a new tab or window:<br>- Screen reader users, screen magnifier users, and users with certain cognitive impairments can become disoriented when the... | 1.0 | 508-defect-3: Links that open in a new tab should warn users ahead of time - ### Point of contact<br>Josh Kim<br>### Severity level<br>3, Moderate. Should be fixed in 1-3 sprints post-launch.<br>### Details<br>Several issues exist when forcing a link to open in a new tab or window:<br>- Screen reader users, screen magnifier users,... | defect | defect links that open in a new tab should warn users ahead of time point of contact josh kim severity level moderate should be fixed in sprints post launch details several issues exist when forcing a link to open in a new tab or window screen reader users screen magnifier users a... | 1 |
34,372 | 7,447,865,941 | IssuesEvent | 2018-03-28 13:48:19 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Import person names from Excel as 2 fields | C: AIS P: highest R: fixed T: defect | **Reported by aadikaljuvee on 19 Sep 2012 13:32 UTC**<br>Person names are in an unstructured form, although there are tables of cleaned-up data in which First name and Last name are separated. | 1.0 | Import person names from Excel as 2 fields - **Reported by aadikaljuvee on 19 Sep 2012 13:32 UTC**<br>Person names are in an unstructured form, although there are tables of cleaned-up data in which First name and Last name are separated. | defect | import person names from excel as fields reported by aadikaljuvee on sep utc person names are in an unstructured form although there are tables of cleaned up data in which first name and last name are separated | 1 |
75,455 | 25,856,419,534 | IssuesEvent | 2022-12-13 14:05:17 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | "You can't specify target table '...' for update in FROM clause" when target table has index hint in MySQL | T: Defect C: Functionality C: DB: MySQL P: Medium E: All Editions | When using the `USE INDEX` clause, or a similar index hint in MySQL, then the fix for #6583 doesn't work.<br>The reason is the same as #14387. We only traverse the join tree to find "unaliased" tables, not also "unwrapped" ones. Thus, this query is produced in an integration test:<br>```sql<br>update `test`.`t_author`<br>s... | 1.0 | "You can't specify target table '...' for update in FROM clause" when target table has index hint in MySQL - When using the `USE INDEX` clause, or a similar index hint in MySQL, then the fix for #6583 doesn't work.<br>The reason is the same as #14387. We only traverse the join tree to find "unaliased" tables, not also ... | defect | you can t specify target table for update in from clause when target table has index hint in mysql when using the use index clause or a similar index hint in mysql then the fix for doesn t work the reason is the same as we only traverse the join tree to find unaliased tables not also unwrap... | 1 |
65,791 | 19,694,627,944 | IssuesEvent | 2022-01-12 10:48:06 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Message bubbles have too much padding on the left of the timeline | T-Defect S-Minor A-Message-Bubbles O-Occasional | ### Steps to reproduce<br>1. Switch from Modern layout to Message Bubbles in the settings<br>### Outcome<br>#### What did you expect?<br>#### What happened instead?
| release process RxJava2.x | **Initial release notes**:<br>Fixed memory leak in `PreLollipopNetworkObservingStrategy` during disposing of an `Observable` - issue #219.<br>**Things to do**:<br>TBD. | 1.0 | Relase 0.12.1 (RxJava2.x) - **Initial release notes**:<br>Fixed memory leak in `PreLollipopNetworkObservingStrategy` during disposing of an `Observable` - issue #219.<br>**Things to do**:<br>TBD. | non_defect | relase x initial release notes fixed memory leak in prelollipopnetworkobservingstrategy during disposing of an observable issue things to do tbd | 0 |
47,116 | 13,056,034,204 | IssuesEvent | 2020-07-30 03:27:14 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | glshovel docs and plugin examples (Trac #15) | Migrated from Trac defect glshovel | <br>Migrated from https://code.icecube.wisc.edu/ticket/15<br>```json<br>{<br>"status": "closed",<br>"changetime": "2007-11-11T03:51:18",<br>"description": "\n",<br>"reporter": "troy",<br>"cc": "",<br>"resolution": "duplicate",<br>"_ts": "1194753078000000",<br>"component": "glshovel",<br>"summary": "glshovel d... | 1.0 | glshovel docs and plugin examples (Trac #15) - <br>Migrated from https://code.icecube.wisc.edu/ticket/15<br>```json<br>{<br>"status": "closed",<br>"changetime": "2007-11-11T03:51:18",<br>"description": "\n",<br>"reporter": "troy",<br>"cc": "",<br>"resolution": "duplicate",<br>"_ts": "1194753078000000",<br>"comp... | defect | glshovel docs and plugin examples trac migrated from json status closed changetime description n reporter troy cc resolution duplicate ts component glshovel summary glshovel docs and plugin ex... | 1 |
24,436 | 3,980,349,103 | IssuesEvent | 2016-05-06 06:59:47 | Quantum64/Arcade-Issues | https://api.github.com/repos/Quantum64/Arcade-Issues | opened | Kill Effect not working | cosmeticsmenu defect effects killeffect | The "Notes Kill Effect" does not work.

With the other kill effects it shows a piece of redstone but with the notes one it shows paper, Like what you would see with a title.

http://i.imgur.com/o7hHeYs.png | 1.0 | Kill Effect not working - The "Notes Kill Effect" does not work.

With the other kill effects it shows a piece of redstone but with the notes one it shows paper, Like what you would see with a title.

http://i.imgur.com/o7hHeYs.png | defect | kill effect not working the notes kill effect does not work with the other kill effects it shows a piece of redstone but with the notes one it shows paper like what you would see with a title | 1 |

40,417 | 9,984,513,603 | IssuesEvent | 2019-07-10 14:40:07 | telus/tds-core | https://api.github.com/repos/telus/tds-core | closed | TDS Tooltip - Accessibility & Language issues | accessibility :wheelchair: priority: medium status: in progress type: defect :bug: | ## Description

- The `aria-label` inside the icon "Reveal additional information" does not seem to be translatable in French, we can add more text after it but not actually change it. Additionally, the Wave extension seems to flag the button as an error ("Empty label") because it doesn't see any content inside it.

- ... | 1.0 | TDS Tooltip - Accessibility & Language issues - ## Description

- The `aria-label` inside the icon "Reveal additional information" does not seem to be translatable in French, we can add more text after it but not actually change it. Additionally, the Wave extension seems to flag the button as an error ("Empty label") b... | defect | tds tooltip accessibility language issues description the aria label inside the icon reveal additional information does not seem to be translatable in french we can add more text after it but not actually change it additionally the wave extension seems to flag the button as an error empty label b... | 1 |

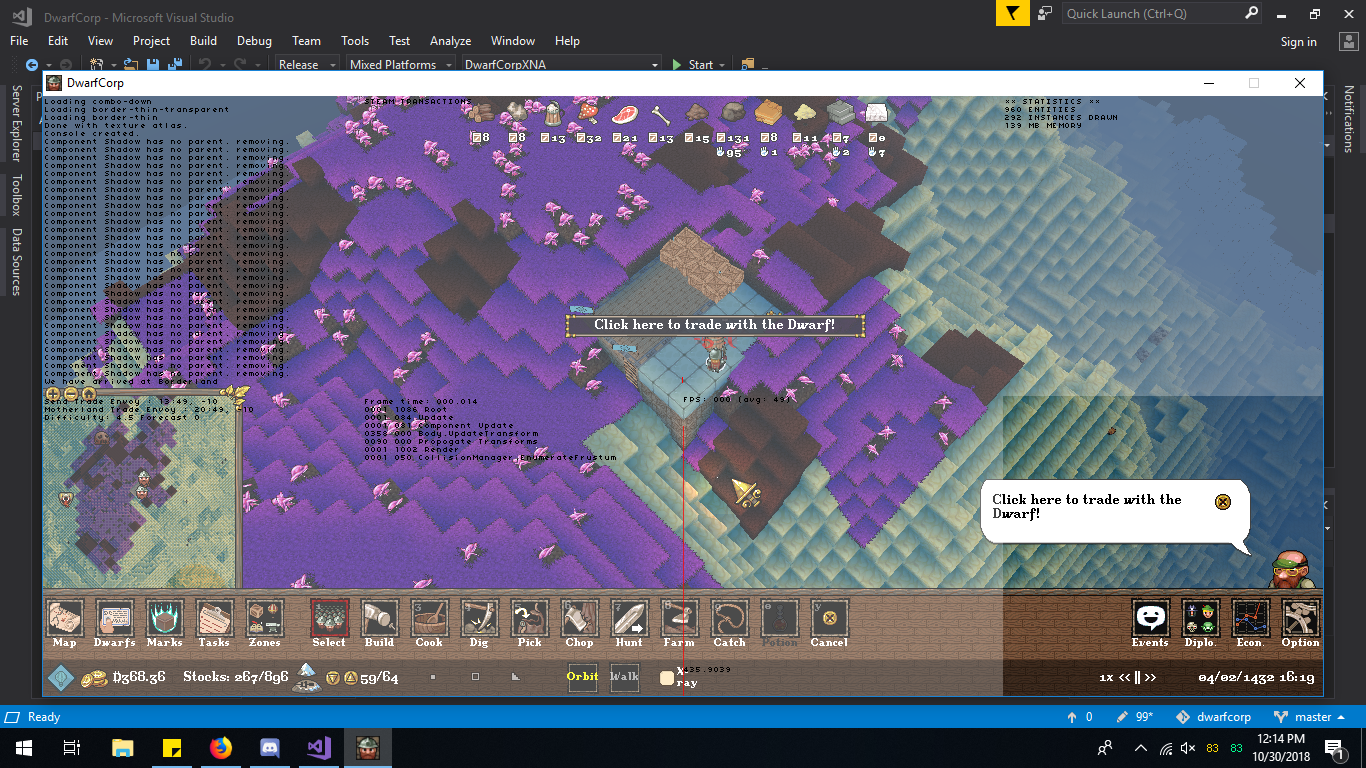

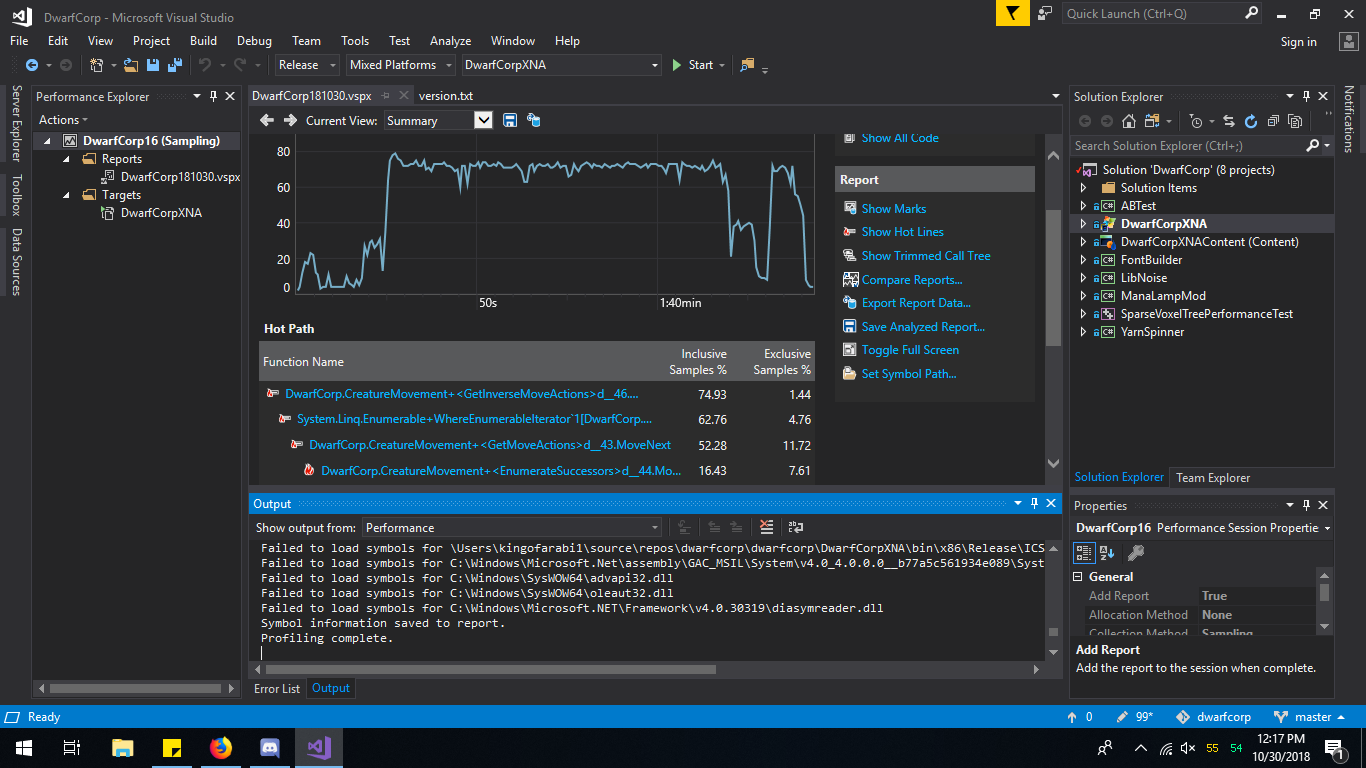

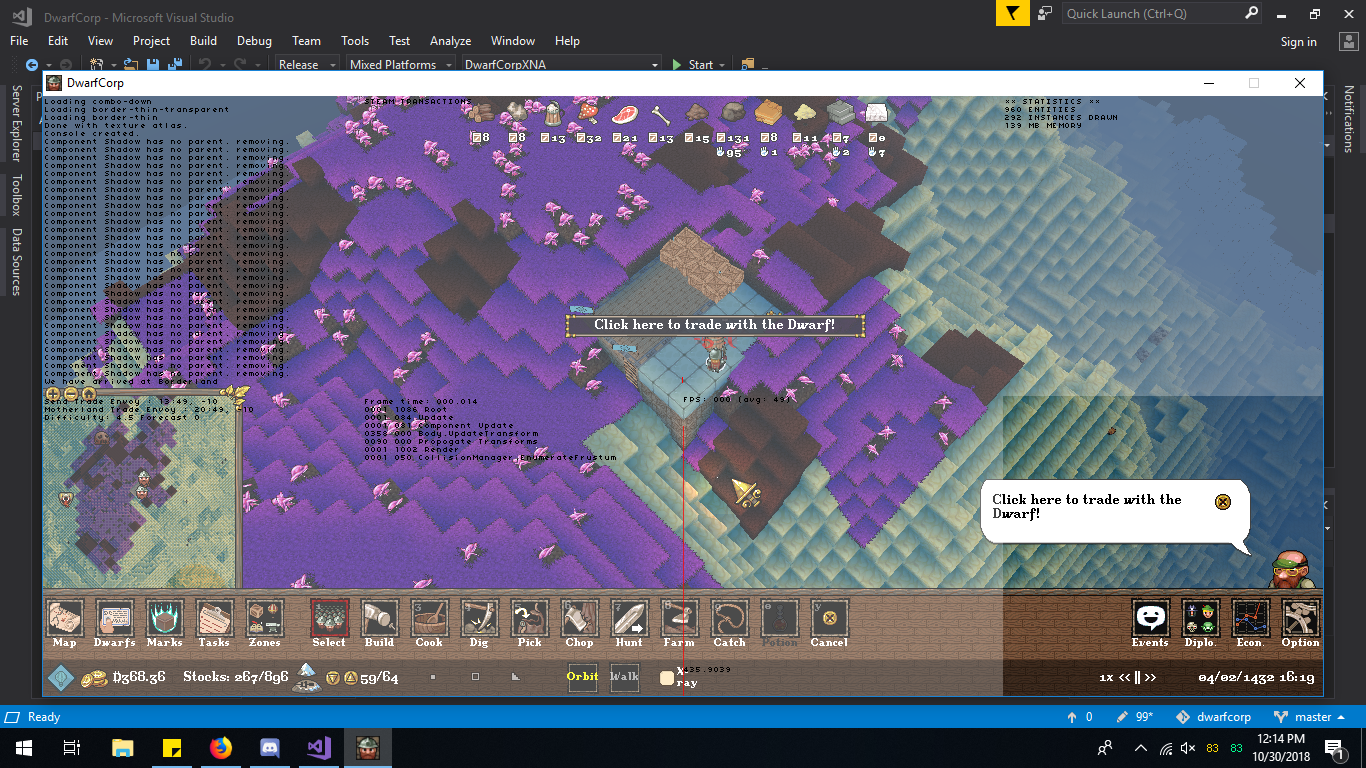

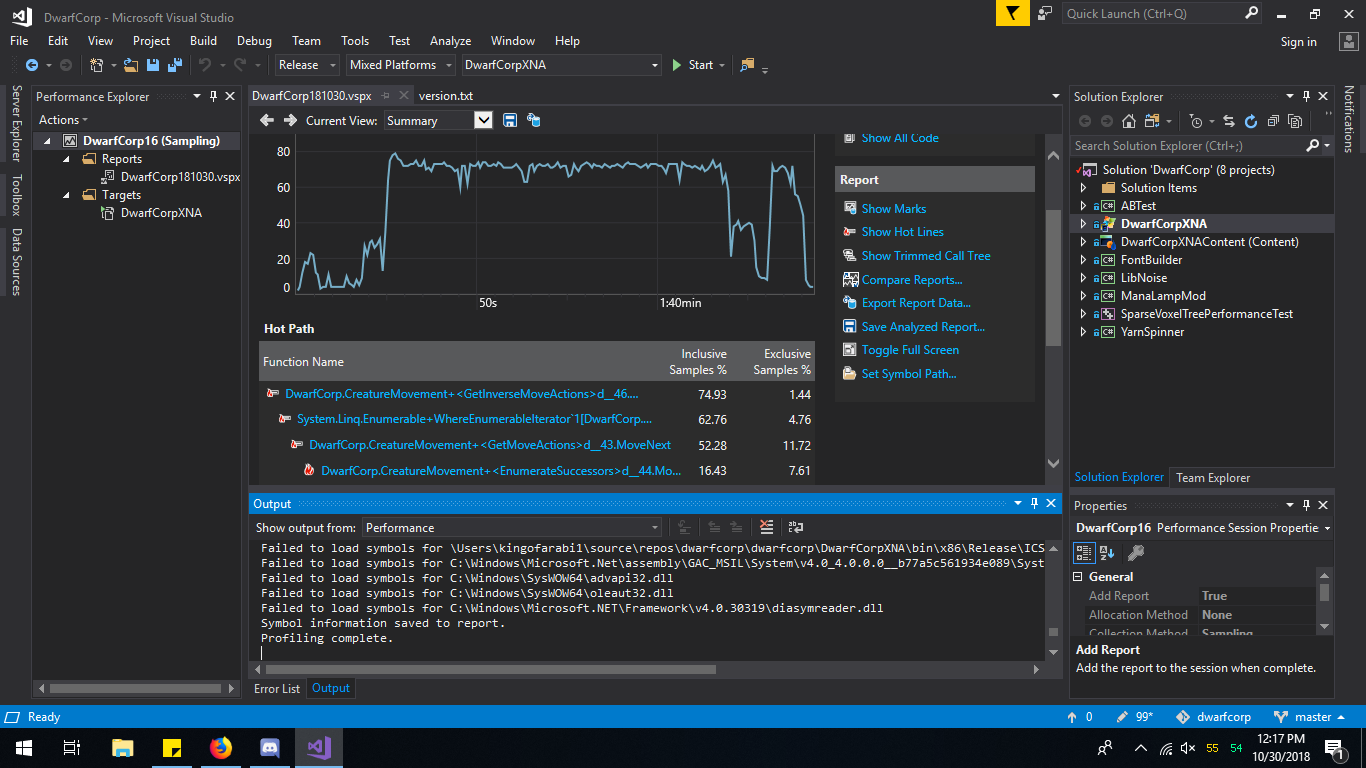

14,198 | 8,506,478,649 | IssuesEvent | 2018-10-30 16:39:57 | CompletelyFairGames/dwarfcorp | https://api.github.com/repos/CompletelyFairGames/dwarfcorp | opened | Performance: As soon as ten dwarves are in game, performance dies | A Bug Performance |

[Borderland_413_131853894854936125.zip](https://github.com/CompletelyFairGames/dwarfcorp/files/2... | True | Performance: As soon as ten dwarves are in game, performance dies -

[Borderland_413_131853894854... | non_defect | performance as soon as ten dwarves are in game performance dies performance is measurably worse it went from spikes of before to a sustained cpu on with ten dwarves or so fps is staying at fps i m guessing something in our refactor missed something because things we... | 0 |

264,908 | 28,214,112,133 | IssuesEvent | 2023-04-05 07:39:38 | hshivhare67/platform_device_renesas_kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/platform_device_renesas_kernel_v4.19.72 | closed | CVE-2022-0847 (High) detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-0847 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ke... | True | CVE-2022-0847 (High) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2022-0847 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Li... | non_defect | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch main vulnerable source files lib iov iter c lib iov iter c ... | 0 |

176,490 | 28,102,202,746 | IssuesEvent | 2023-03-30 20:27:36 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | opened | Add instructions for navigating sub-categories in Figma | 🟩 priority: low 🌟 goal: addition 🖼️ aspect: design 🧱 stack: frontend | ## Description

<!-- Describe the feature and how it solves the problem. -->

Originally reported by @sarayourfriend

> Could figma page have instructions for where to find the sub-categories? I forgot how to find the other pages in Figma and had to click around a bit before I could find it

Link: https://www.figma.com... | 1.0 | Add instructions for navigating sub-categories in Figma - ## Description

<!-- Describe the feature and how it solves the problem. -->

Originally reported by @sarayourfriend

> Could figma page have instructions for where to find the sub-categories? I forgot how to find the other pages in Figma and had to click around... | non_defect | add instructions for navigating sub categories in figma description originally reported by sarayourfriend could figma page have instructions for where to find the sub categories i forgot how to find the other pages in figma and had to click around a bit before i could find it link alternatives ... | 0 |

862 | 2,594,241,063 | IssuesEvent | 2015-02-20 01:02:03 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | closed | BALLView on Windows does not move light sources correctly | C: VIEW P: major R: fixed T: defect | **Reported by akdehof on 26 Aug 39272847 02:13 UTC**

In the 1.3-beta1 release on windows, light sources are not correctly adapted when moving the camera around. | 1.0 | BALLView on Windows does not move light sources correctly - **Reported by akdehof on 26 Aug 39272847 02:13 UTC**

In the 1.3-beta1 release on windows, light sources are not correctly adapted when moving the camera around. | defect | ballview on windows does not move light sources correctly reported by akdehof on aug utc in the release on windows light sources are not correctly adapted when moving the camera around | 1 |

53,916 | 13,262,511,048 | IssuesEvent | 2020-08-20 21:57:10 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | [PROPOSAL] Don't auto-generate tables. (Trac #2329) | Migrated from Trac combo simulation defect | When running a test in sim-services (propagator_state_storage.py) that test takes an unusually long time to finish. I strongly suspect PROPOSAL table generation is the culprit. This test runs for over 10 minutes on my machine.

Attached is the output.

PROPOSAL should not ever try to auto-generate tables. Demand tha... | 1.0 | [PROPOSAL] Don't auto-generate tables. (Trac #2329) - When running a test in sim-services (propagator_state_storage.py) that test takes an unusually long time to finish. I strongly suspect PROPOSAL table generation is the culprit. This test runs for over 10 minutes on my machine.

Attached is the output.

PROPOSAL sh... | defect | don t auto generate tables trac when running a test in sim services propagator state storage py that test takes an unusually long time to finish i strongly suspect proposal table generation is the culprit this test runs for over minutes on my machine attached is the output proposal should not ever... | 1 |

32,666 | 7,569,910,129 | IssuesEvent | 2018-04-23 07:10:17 | pywbem/pywbem | https://api.github.com/repos/pywbem/pywbem | closed | Deprecate tocimobj() | area: code release: optional resolution: fixed type: cleanup | cim_obj.py contains this TODO:

```

W:5450, 0: TODO: Move remaining internal uses of tocimobj() to cimvalue() and deprecate (fixme)

```

`cimvalue()` is a new function added in 0.12 that does pretty much what `tocimobj()` does, but with a cleaner approach to handling the different types. Because `tocimobj()` is an ex... | 1.0 | Deprecate tocimobj() - cim_obj.py contains this TODO:

```

W:5450, 0: TODO: Move remaining internal uses of tocimobj() to cimvalue() and deprecate (fixme)

```

`cimvalue()` is a new function added in 0.12 that does pretty much what `tocimobj()` does, but with a cleaner approach to handling the different types. Becaus... | non_defect | deprecate tocimobj cim obj py contains this todo w todo move remaining internal uses of tocimobj to cimvalue and deprecate fixme cimvalue is a new function added in that does pretty much what tocimobj does but with a cleaner approach to handling the different types because t... | 0 |

7,012 | 2,610,321,952 | IssuesEvent | 2015-02-26 19:43:50 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Gameplay Error | auto-migrated Priority-Medium Type-Defect | ```

I believe there are different observations already made by other testers, some

said it works, some said it not works.

For me, if i activate this ability of the Light Assault Cruiser, it gives me

the Beep sound as if the area is not accesible or it is out of range etc.

However, the Cruiser responds with a standa... | 1.0 | Gameplay Error - ```

I believe there are different observations already made by other testers, some

said it works, some said it not works.

For me, if i activate this ability of the Light Assault Cruiser, it gives me

the Beep sound as if the area is not accesible or it is out of range etc.

However, the Cruiser respo... | defect | gameplay error i believe there are different observations already made by other testers some said it works some said it not works for me if i activate this ability of the light assault cruiser it gives me the beep sound as if the area is not accesible or it is out of range etc however the cruiser respo... | 1 |

327,642 | 28,075,494,706 | IssuesEvent | 2023-03-29 23:02:00 | ray-project/ray | https://api.github.com/repos/ray-project/ray | closed | [Release][Data] Migrate `dataset_shuffle_random_shuffle_1tb` test to v2 stack and Anyscale Jobs | P1 datasets release-test ray-team-created | ### What happened + What you expected to happen

Migrate `dataset_shuffle_random_shuffle_1tb` test to v2 stack and Anyscale Jobs. If migration is impossible, consider rewriting the test or removing it. If there are features missing in v2 stack or/and Anyscale Jobs that make the migration impossible, please outline them... | 1.0 | [Release][Data] Migrate `dataset_shuffle_random_shuffle_1tb` test to v2 stack and Anyscale Jobs - ### What happened + What you expected to happen

Migrate `dataset_shuffle_random_shuffle_1tb` test to v2 stack and Anyscale Jobs. If migration is impossible, consider rewriting the test or removing it. If there are feature... | non_defect | migrate dataset shuffle random shuffle test to stack and anyscale jobs what happened what you expected to happen migrate dataset shuffle random shuffle test to stack and anyscale jobs if migration is impossible consider rewriting the test or removing it if there are features missing in stac... | 0 |

64,513 | 18,722,540,599 | IssuesEvent | 2021-11-03 13:22:38 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | DataTable: after filtering and changing value during ajax old filtered data is still shown | defect | **Describe the defect**

When you filter a Datatable and updates the value using ajax, the filtered old value will be still applied even if you set the filteredValue to null

**Reproducer**

https://github.com/neXus1987/primefaces-datatable-test.git

**Environment:**

- PF Version: _10.0_

- JSF + version: Mojar... | 1.0 | DataTable: after filtering and changing value during ajax old filtered data is still shown - **Describe the defect**

When you filter a Datatable and updates the value using ajax, the filtered old value will be still applied even if you set the filteredValue to null

**Reproducer**

https://github.com/neXus1987/prim... | defect | datatable after filtering and changing value during ajax old filtered data is still shown describe the defect when you filter a datatable and updates the value using ajax the filtered old value will be still applied even if you set the filteredvalue to null reproducer environment pf vers... | 1 |

76,728 | 15,496,181,874 | IssuesEvent | 2021-03-11 02:12:40 | mwilliams7197/zendo | https://api.github.com/repos/mwilliams7197/zendo | closed | WS-2019-0032 (Medium) detected in js-yaml-3.7.0.tgz - autoclosed | security vulnerability | ## WS-2019-0032 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>js-yaml-3.7.0.tgz</b></p></summary>

<p>YAML 1.2 parser and serializer</p>

<p>Library home page: <a href="https://regis... | True | WS-2019-0032 (Medium) detected in js-yaml-3.7.0.tgz - autoclosed - ## WS-2019-0032 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>js-yaml-3.7.0.tgz</b></p></summary>

<p>YAML 1.2 par... | non_defect | ws medium detected in js yaml tgz autoclosed ws medium severity vulnerability vulnerable library js yaml tgz yaml parser and serializer library home page a href path to dependency file zendo package json path to vulnerable library zendo node modules js yaml pac... | 0 |

64,871 | 18,949,777,713 | IssuesEvent | 2021-11-18 14:06:56 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | Push notifications sometimes disappear immediately | T-Defect A-Notifications | In a 1:1 encrypted chat, I've noticed that often (but not always, maybe 2/3 of the time), notifications disappear shortly after they appear and my phone vibrates. When this happens, the dot signifying new notifications on the element app icon also disappears.

I'm currently using version 1.2.1 on a Pixel 5 running Andr... | 1.0 | Push notifications sometimes disappear immediately - In a 1:1 encrypted chat, I've noticed that often (but not always, maybe 2/3 of the time), notifications disappear shortly after they appear and my phone vibrates. When this happens, the dot signifying new notifications on the element app icon also disappears.

I'm cu... | defect | push notifications sometimes disappear immediately in a encrypted chat i ve noticed that often but not always maybe of the time notifications disappear shortly after they appear and my phone vibrates when this happens the dot signifying new notifications on the element app icon also disappears i m cu... | 1 |

3,448 | 2,610,062,965 | IssuesEvent | 2015-02-26 18:18:30 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | 黄岩治不育一般需要多少钱 | auto-migrated Priority-Medium Type-Defect | ```

黄岩治不育一般需要多少钱【台州五洲生殖医院】24小时健康

咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台

州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、1

08、118、198及椒江一金清公交车直达枫南小区,乘坐107、105、

109、112、901、 902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家标�

��收费。尖端医疗设备,与世界同步。权... | 1.0 | 黄岩治不育一般需要多少钱 - ```

黄岩治不育一般需要多少钱【台州五洲生殖医院】24小时健康

咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台

州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、1

08、118、198及椒江一金清公交车直达枫南小区,乘坐107、105、

109、112、901、 902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家标�

��收费... | defect | 黄岩治不育一般需要多少钱 黄岩治不育一般需要多少钱【台州五洲生殖医院】 咨询热线 微信号tzwzszyy 医院地址 台 (枫南大转盘旁)乘车线路 、 、 、 , 、 、 、 、 、 ,步行即可到院。 诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,�� �精,无精。包皮包茎,精索静脉曲张,淋病等。 台州五洲生殖医院是台州最大的男科医院,权威专家在线免�� �咨询,拥有专业完善的男科检查治疗设备,严格按照国家标� ��收费。尖端医疗设备,与世界同步。权威专家,成就专业典 范。人性化服务,一切以患者为中心。 看男科就选台州五洲生殖医院,专业男科为男人。 origin... | 1 |

50,617 | 13,187,626,198 | IssuesEvent | 2020-08-13 04:02:01 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | muex option 'detail' is broken in trunk r135229 (Trac #1055) | Migrated from Trac combo reconstruction defect | If the detail option of the muex module is set to True it will seg fault.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1055">https://code.icecube.wisc.edu/ticket/1055</a>, reported by sflis and owned by dima</em></summary>

<p>

```json

{

"status": "closed",

"changetime":... | 1.0 | muex option 'detail' is broken in trunk r135229 (Trac #1055) - If the detail option of the muex module is set to True it will seg fault.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1055">https://code.icecube.wisc.edu/ticket/1055</a>, reported by sflis and owned by dima</em></sum... | defect | muex option detail is broken in trunk trac if the detail option of the muex module is set to true it will seg fault migrated from json status closed changetime description if the detail option of the muex module is set to true it will seg fault ... | 1 |

26,213 | 4,622,767,866 | IssuesEvent | 2016-09-27 08:46:29 | siddhartha-gadgil/ProvingGround | https://api.github.com/repos/siddhartha-gadgil/ProvingGround | closed | Very poor performance due to cascade of replacements. | defect | In generating from _Monoids_, but not from just _logic_, there is a huge slowdown, with most of the time spent in replace methods. This appears to be because:

* for the sake of safety, lambdas:

* create an inner variable

* in the case of lambda-fixed, check independence.

* both these involve replace... | 1.0 | Very poor performance due to cascade of replacements. - In generating from _Monoids_, but not from just _logic_, there is a huge slowdown, with most of the time spent in replace methods. This appears to be because:

* for the sake of safety, lambdas:

* create an inner variable

* in the case of lambda-... | defect | very poor performance due to cascade of replacements in generating from monoids but not from just logic there is a huge slowdown with most of the time spent in replace methods this appears to be because for the sake of safety lambdas create an inner variable in the case of lambda ... | 1 |

18,629 | 3,077,631,643 | IssuesEvent | 2015-08-21 02:27:04 | martingkelly/imms | https://api.github.com/repos/martingkelly/imms | closed | imms takes a while to record played songs in its database | auto-migrated Priority-Medium Type-Defect | ```

If you finish playing a song, imms does not immediately record it in its

database. I spent a while debugging this and tracked it to the buffering

behavior of the GIOChannel used on the immsd server. Basically, GIOChannel has

an internal buffer, and data doesn't get sent until that buffer fills up. The

size of t... | 1.0 | imms takes a while to record played songs in its database - ```

If you finish playing a song, imms does not immediately record it in its

database. I spent a while debugging this and tracked it to the buffering

behavior of the GIOChannel used on the immsd server. Basically, GIOChannel has

an internal buffer, and data... | defect | imms takes a while to record played songs in its database if you finish playing a song imms does not immediately record it in its database i spent a while debugging this and tracked it to the buffering behavior of the giochannel used on the immsd server basically giochannel has an internal buffer and data... | 1 |

41,668 | 10,563,396,765 | IssuesEvent | 2019-10-04 20:51:37 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [COGNITION]: Recommend showing all secondary specialties instead of show more/show less button | 508-defect-1 508/Accessibility facility locator frontend vsa-global-ux | ## Issue

The facility detail views sometimes have second-level lists that include a show more button. These buttons prepend additional `<li>` before the button, which causes an issue for assistive device users. This practice was flagged as an SC 1.3.1 issue. Screenshot attached below.

## Audit Finding

* Note 1, Defect... | 1.0 | [COGNITION]: Recommend showing all secondary specialties instead of show more/show less button - ## Issue

The facility detail views sometimes have second-level lists that include a show more button. These buttons prepend additional `<li>` before the button, which causes an issue for assistive device users. This practic... | defect | recommend showing all secondary specialties instead of show more show less button issue the facility detail views sometimes have second level lists that include a show more button these buttons prepend additional before the button which causes an issue for assistive device users this practice was flagged... | 1 |

34,481 | 7,452,016,728 | IssuesEvent | 2018-03-29 06:38:26 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Seostamine: Org/Isiku seostamine teise Org/Isikuga ning Kirjeldusüksusega | P: highest R: fixed T: defect | **Reported by maiu pevkur on 9 May 2013 13:13 UTC**

Organisatsiooni ja Isiku lisainfo vormidel on sakid Seotud kirjeldusüksused ja Seotud isikud/org, kus on vanast AISist kanud andmed (osaliselt need andmed küll veel puuduvad). Puudub aga võimalus lisada/kustutada seotud kirjeldusüksusi ja seotud isikuid/orge. Kui vaju... | 1.0 | Seostamine: Org/Isiku seostamine teise Org/Isikuga ning Kirjeldusüksusega - **Reported by maiu pevkur on 9 May 2013 13:13 UTC**

Organisatsiooni ja Isiku lisainfo vormidel on sakid Seotud kirjeldusüksused ja Seotud isikud/org, kus on vanast AISist kanud andmed (osaliselt need andmed küll veel puuduvad). Puudub aga võima... | defect | seostamine org isiku seostamine teise org isikuga ning kirjeldusüksusega reported by maiu pevkur on may utc organisatsiooni ja isiku lisainfo vormidel on sakid seotud kirjeldusüksused ja seotud isikud org kus on vanast aisist kanud andmed osaliselt need andmed küll veel puuduvad puudub aga võimalus l... | 1 |

48,126 | 13,067,466,136 | IssuesEvent | 2020-07-31 00:32:42 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | L1 filter for 2014 and 2015 (Trac #1828) | Migrated from Trac cmake defect | Running offline filter for 2014 and 2015:

/cvmfs/icecube.opensciencegrid.org/py2-v1/RHEL_6_x86_64/metaprojects/icerec/IC2014-L2_V14-02-00/lib/icecube/filterscripts/offlineL2/level1_SimulationFiltering.py

I receive the following error:

`RuntimeError: dlopen() dynamic loading error: /data/user/saxani/environments/buildf... | 1.0 | L1 filter for 2014 and 2015 (Trac #1828) - Running offline filter for 2014 and 2015:

/cvmfs/icecube.opensciencegrid.org/py2-v1/RHEL_6_x86_64/metaprojects/icerec/IC2014-L2_V14-02-00/lib/icecube/filterscripts/offlineL2/level1_SimulationFiltering.py

I receive the following error:

`RuntimeError: dlopen() dynamic loading e... | defect | filter for and trac running offline filter for and cvmfs icecube opensciencegrid org rhel metaprojects icerec lib icecube filterscripts simulationfiltering py i receive the following error runtimeerror dlopen dynamic loading error data user saxani environments buildfwd... | 1 |

47,769 | 19,716,000,457 | IssuesEvent | 2022-01-13 11:02:10 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | How should the AZ CLI script for importing the Helm images be run | container-service/svc triaged cxp needs-more-info product-issue Pri1 | I tried running those commands in PowerShell and get errors all over the place. I then figured I'd put them into a powershell script file and run that. Still no dice. What am I missing?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

*... | 1.0 | How should the AZ CLI script for importing the Helm images be run - I tried running those commands in PowerShell and get errors all over the place. I then figured I'd put them into a powershell script file and run that. Still no dice. What am I missing?

---

#### Document Details

⚠ *Do not edit this section.... | non_defect | how should the az cli script for importing the helm images be run i tried running those commands in powershell and get errors all over the place i then figured i d put them into a powershell script file and run that still no dice what am i missing document details ⚠ do not edit this section ... | 0 |

354,378 | 25,163,572,768 | IssuesEvent | 2022-11-10 18:45:13 | netbox-community/netbox | https://api.github.com/repos/netbox-community/netbox | opened | 'Installation & Upgrade' Netbox documentation page - Add note to 'Warning' section at bottom of the page to have user confirm virtual environment is still activated | type: documentation | ### Change Type

Addition

### Area

Installation/upgrade

### Proposed Changes

Add a basic troubleshooting note to the "Warning" section at the bottom of the "3. Netbox" page in the "Installation & Upgrade" section of the documentation.

Have user ensure that the virtual environment is still activated before perfor... | 1.0 | 'Installation & Upgrade' Netbox documentation page - Add note to 'Warning' section at bottom of the page to have user confirm virtual environment is still activated - ### Change Type

Addition

### Area

Installation/upgrade

### Proposed Changes

Add a basic troubleshooting note to the "Warning" section at the bottom ... | non_defect | installation upgrade netbox documentation page add note to warning section at bottom of the page to have user confirm virtual environment is still activated change type addition area installation upgrade proposed changes add a basic troubleshooting note to the warning section at the bottom ... | 0 |

447,142 | 31,624,355,073 | IssuesEvent | 2023-09-06 03:21:17 | Virtue-Digital-Indonesia/frontend-examples | https://api.github.com/repos/Virtue-Digital-Indonesia/frontend-examples | opened | Projects Tracker | documentation | This issue is used to list and track the progress of each project.

Here are the projects:

- [ ] Data Grid Filters and Data Grid Table

- [ ] Object List with MUI Data Grid

- [ ] Markers, Popups, and Clusters with Leaflet

- [ ] Date Range and Time Picker

- [ ] Create, show, edit, and delete Geofences with Leaflet | 1.0 | Projects Tracker - This issue is used to list and track the progress of each project.

Here are the projects:

- [ ] Data Grid Filters and Data Grid Table

- [ ] Object List with MUI Data Grid

- [ ] Markers, Popups, and Clusters with Leaflet

- [ ] Date Range and Time Picker

- [ ] Create, show, edit, and delete Geofe... | non_defect | projects tracker this issue is used to list and track the progress of each project here are the projects data grid filters and data grid table object list with mui data grid markers popups and clusters with leaflet date range and time picker create show edit and delete geofences with ... | 0 |

171,141 | 27,066,919,191 | IssuesEvent | 2023-02-14 01:36:52 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Expose IsInExpressionTree | Area-IDE Concept-API Need Design Review | Useful in code fixes as the rules are slightly different in expressions.

Preferable via a static mehtod and not via some service locator. Don't know if `SemanticFacts` is a thing. | 1.0 | Expose IsInExpressionTree - Useful in code fixes as the rules are slightly different in expressions.

Preferable via a static mehtod and not via some service locator. Don't know if `SemanticFacts` is a thing. | non_defect | expose isinexpressiontree useful in code fixes as the rules are slightly different in expressions preferable via a static mehtod and not via some service locator don t know if semanticfacts is a thing | 0 |

277,880 | 24,108,146,369 | IssuesEvent | 2022-09-20 09:09:21 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Test failure: BraveProfileManagerTest.ExcludeServicesInOTRAndGuestProfiles | ci-concern bot/type/test bot/channel/nightly bot/platform/android bot/arch/x86-mono bot/branch/v1.45 | Greetings human!

Bad news. `BraveProfileManagerTest.ExcludeServicesInOTRAndGuestProfiles` [failed on android x86-mono nightly v1.45.58](https://ci.brave.com/job/brave-browser-build-android-variant/5971/testReport/junit/(root)/BraveProfileManagerTest/test___test_browser___ExcludeServicesInOTRAndGuestProfiles).

<details... | 1.0 | Test failure: BraveProfileManagerTest.ExcludeServicesInOTRAndGuestProfiles - Greetings human!

Bad news. `BraveProfileManagerTest.ExcludeServicesInOTRAndGuestProfiles` [failed on android x86-mono nightly v1.45.58](https://ci.brave.com/job/brave-browser-build-android-variant/5971/testReport/junit/(root)/BraveProfileMana... | non_defect | test failure braveprofilemanagertest excludeservicesinotrandguestprofiles greetings human bad news braveprofilemanagertest excludeservicesinotrandguestprofiles stack trace braveprofilemanagertest excludeservicesinotrandguestprofiles tracing subscriber init success skus sdk skus sdk initia... | 0 |

166,790 | 12,972,020,216 | IssuesEvent | 2020-07-21 11:55:28 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Enum value 'false' breaks Prisma client create | bug/2-confirmed kind/bug status/needs-fix-confirmation team/engines topic: test-utils | ## Bug description

If an enum has 'false' as one of the possible values. Prisma client fails at runtime while providing 'false' as the value for that enum.

## How to reproduce

1. Run the following SQL in a MySQL (untested with Postgres, SQLite) database

```

CREATE TABLE `e` (

`id` bigint(20) unsigned ... | 1.0 | Enum value 'false' breaks Prisma client create - ## Bug description

If an enum has 'false' as one of the possible values. Prisma client fails at runtime while providing 'false' as the value for that enum.

## How to reproduce

1. Run the following SQL in a MySQL (untested with Postgres, SQLite) database

```... | non_defect | enum value false breaks prisma client create bug description if an enum has false as one of the possible values prisma client fails at runtime while providing false as the value for that enum how to reproduce run the following sql in a mysql untested with postgres sqlite database ... | 0 |

696,847 | 23,918,512,171 | IssuesEvent | 2022-09-09 14:42:18 | camsaul/methodical | https://api.github.com/repos/camsaul/methodical | closed | `trace!` and `untrace!` facilities to trace existing existing code without changing it | enhancement high-priority! | - It would be good to be able to trace all usages of a certain method without touching existing code

- It would be good to be able to trace method calls in an external library

Maybe we can use `alter-var-root!` or something swap out the untraced multimethod with a traced one | 1.0 | `trace!` and `untrace!` facilities to trace existing existing code without changing it - - It would be good to be able to trace all usages of a certain method without touching existing code

- It would be good to be able to trace method calls in an external library

Maybe we can use `alter-var-root!` or something swa... | non_defect | trace and untrace facilities to trace existing existing code without changing it it would be good to be able to trace all usages of a certain method without touching existing code it would be good to be able to trace method calls in an external library maybe we can use alter var root or something swa... | 0 |

567,896 | 16,918,839,507 | IssuesEvent | 2021-06-25 00:12:22 | googleapis/gapic-generator-python | https://api.github.com/repos/googleapis/gapic-generator-python | opened | `name 'warnings' is not defined` raised for clients with deprecated methods | priority: p2 type: bug | See [log](https://source.cloud.google.com/results/invocations/2f31c792-2c4e-453f-943b-08403508b66f/targets/github%2Fpython-container/tests) from https://github.com/googleapis/python-container/pull/115 for an example | 1.0 | `name 'warnings' is not defined` raised for clients with deprecated methods - See [log](https://source.cloud.google.com/results/invocations/2f31c792-2c4e-453f-943b-08403508b66f/targets/github%2Fpython-container/tests) from https://github.com/googleapis/python-container/pull/115 for an example | non_defect | name warnings is not defined raised for clients with deprecated methods see from for an example | 0 |

218,669 | 7,332,097,136 | IssuesEvent | 2018-03-05 15:25:31 | NCEAS/metacat | https://api.github.com/repos/NCEAS/metacat | closed | continue updating user documentation | Category: metacat Component: Bugzilla-Id Priority: Normal Status: Resolved Tracker: Bug | ---

Author Name: **Matt Jones** (Matt Jones)

Original Redmine Issue: 5516, https://projects.ecoinformatics.org/ecoinfo/issues/5516

Original Date: 2011-10-26

Original Assignee: ben leinfelder

---

Documentation needs editing to describe new 2.0.0 features, including support for new DataONE APIs, deprecation of older ... | 1.0 | continue updating user documentation - ---

Author Name: **Matt Jones** (Matt Jones)

Original Redmine Issue: 5516, https://projects.ecoinformatics.org/ecoinfo/issues/5516

Original Date: 2011-10-26

Original Assignee: ben leinfelder

---

Documentation needs editing to describe new 2.0.0 features, including support for ... | non_defect | continue updating user documentation author name matt jones matt jones original redmine issue original date original assignee ben leinfelder documentation needs editing to describe new features including support for new dataone apis deprecation of older servlet apis and gener... | 0 |

292,688 | 25,229,496,582 | IssuesEvent | 2022-11-14 18:33:13 | spack/spack | https://api.github.com/repos/spack/spack | closed | Testing issue: ginkgo@1.4.0%gcc@11.1.0 | test-error | ### Steps to reproduce the failure(s) or link(s) to test output(s)

@hartwiganzt @tcojean

Stand-alone tests failed during a Spack PR pipeline run. More information can be found at:

- Build: https://cdash.spack.io/build/1816848

- Stand-alone tests: https://cdash.spack.io/viewTest.php?buildid=1816848

### Error... | 1.0 | Testing issue: ginkgo@1.4.0%gcc@11.1.0 - ### Steps to reproduce the failure(s) or link(s) to test output(s)

@hartwiganzt @tcojean

Stand-alone tests failed during a Spack PR pipeline run. More information can be found at:

- Build: https://cdash.spack.io/build/1816848

- Stand-alone tests: https://cdash.spack.i... | non_defect | testing issue ginkgo gcc steps to reproduce the failure s or link s to test output s hartwiganzt tcojean stand alone tests failed during a spack pr pipeline run more information can be found at build stand alone tests error message build test software buildi... | 0 |

58,630 | 16,662,876,925 | IssuesEvent | 2021-06-06 16:49:36 | Questie/Questie | https://api.github.com/repos/Questie/Questie | opened | Questie error for the quest Gorgrom the dragon-Eater | Type - Defect | Hi, using questie 6.3.14

Got mass error when accepting Gorgrom the dragon-Eater in blades edge.

Questie: [ERROR] [QuestieQuest]: v6.3.14 - There was an error populating objectives for Gorgrom the Dragon-Eater 10723 1 No error

...erface\AddOns\Questie\Modules\Quest\QuestieQuest.lua:690: bad argument #1 to 'next' ... | 1.0 | Questie error for the quest Gorgrom the dragon-Eater - Hi, using questie 6.3.14

Got mass error when accepting Gorgrom the dragon-Eater in blades edge.

Questie: [ERROR] [QuestieQuest]: v6.3.14 - There was an error populating objectives for Gorgrom the Dragon-Eater 10723 1 No error

...erface\AddOns\Questie\Modules... | defect | questie error for the quest gorgrom the dragon eater hi using questie got mass error when accepting gorgrom the dragon eater in blades edge questie there was an error populating objectives for gorgrom the dragon eater no error erface addons questie modules quest questiequest lua ... | 1 |

69,057 | 22,098,352,015 | IssuesEvent | 2022-06-01 11:58:24 | DEVA9N/FlexTimeMonitor | https://api.github.com/repos/DEVA9N/FlexTimeMonitor | closed | The options (break time) are gone after reinstalling the programm | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. Reinstall or install a new version of Flex Time Monitor

2. Notice how the default options are used

What is the expected output? What do you see instead?

expected: the options will stay the same as before the new installation

instead: the default options are set

```

Orig... | 1.0 | The options (break time) are gone after reinstalling the programm - ```

What steps will reproduce the problem?

1. Reinstall or install a new version of Flex Time Monitor

2. Notice how the default options are used

What is the expected output? What do you see instead?

expected: the options will stay the same as before t... | defect | the options break time are gone after reinstalling the programm what steps will reproduce the problem reinstall or install a new version of flex time monitor notice how the default options are used what is the expected output what do you see instead expected the options will stay the same as before t... | 1 |

38,599 | 8,924,971,976 | IssuesEvent | 2019-01-21 20:44:11 | idaholab/moose | https://api.github.com/repos/idaholab/moose | opened | Python utils check file size can fail (race condition) | C: MOOSE Scripts C: TestHarness P: normal T: defect | ## Rationale

<!--What is the reason for this enhancement or what error are you reporting?-->

The python/mooseutils/tests.check_file_size test can fail due to a race condition. The problem is that this test runs concurrently with several other tests on the system and it does an os.walk() looking for files sizes to... | 1.0 | Python utils check file size can fail (race condition) - ## Rationale

<!--What is the reason for this enhancement or what error are you reporting?-->

The python/mooseutils/tests.check_file_size test can fail due to a race condition. The problem is that this test runs concurrently with several other tests on the s... | defect | python utils check file size can fail race condition rationale the python mooseutils tests check file size test can fail due to a race condition the problem is that this test runs concurrently with several other tests on the system and it does an os walk looking for files sizes to sum up it s possibl... | 1 |

56,058 | 14,916,170,434 | IssuesEvent | 2021-01-22 17:47:39 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [CI/CD]: Review coverage of accessibility checks in 996 end-to-end tests | 508-defect-3 508/Accessibility HLR testing vsa vsa-benefits | **Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

Applications **must** have thorough end-to-end tests that run in our continuous integration/continuous deployment (CI/CD) p... | 1.0 | [CI/CD]: Review coverage of accessibility checks in 996 end-to-end tests - **Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

Applications **must** have thorough end-to-end t... | defect | review coverage of accessibility checks in end to end tests feedback framework ❗️ must for if the feedback must be applied ⚠️should if the feedback is best practice ✔️ consider for suggestions enhancements description applications must have thorough end to end tests tha... | 1 |

29,023 | 8,250,827,653 | IssuesEvent | 2018-09-12 05:11:34 | avast-tl/retdec | https://api.github.com/repos/avast-tl/retdec | closed | Could NOT find PythonInterp: Found unsuitable version "2.7.14", but required is at least "3.4" (found /usr/local/bin/python) | C-build-system O-macos P-build | Someone please help me.

OSX, I have both python 2.7 installed and python 3.7 installed.

My PATH is /usr/local/opt/python3.7:/Library/Frameworks/Python.framework/Versions/3.7/bin/python3:/usr/local/opt/flex/bin:/usr/local/opt/bison/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin

When I try to cmake it always s... | 2.0 | Could NOT find PythonInterp: Found unsuitable version "2.7.14", but required is at least "3.4" (found /usr/local/bin/python) - Someone please help me.

OSX, I have both python 2.7 installed and python 3.7 installed.

My PATH is /usr/local/opt/python3.7:/Library/Frameworks/Python.framework/Versions/3.7/bin/pytho... | non_defect | could not find pythoninterp found unsuitable version but required is at least found usr local bin python someone please help me osx i have both python installed and python installed my path is usr local opt library frameworks python framework versions bin usr local... | 0 |

7,155 | 2,610,329,582 | IssuesEvent | 2015-02-26 19:46:12 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Map Issue | auto-migrated Priority-Low Type-Defect | ```

I've got a few bugs to report. I've noticed that both Sluis Van and Raxus Prime

do not have Reinforcement points when I play on them for some reason. When I

originally fought on the planets they had reinforcement points but they

dissapeared after I conquered them. Then, the CIS will launch assaults on these

wor... | 1.0 | Map Issue - ```

I've got a few bugs to report. I've noticed that both Sluis Van and Raxus Prime

do not have Reinforcement points when I play on them for some reason. When I

originally fought on the planets they had reinforcement points but they

dissapeared after I conquered them. Then, the CIS will launch assaults o... | defect | map issue i ve got a few bugs to report i ve noticed that both sluis van and raxus prime do not have reinforcement points when i play on them for some reason when i originally fought on the planets they had reinforcement points but they dissapeared after i conquered them then the cis will launch assaults o... | 1 |

40,380 | 9,977,380,804 | IssuesEvent | 2019-07-09 17:07:37 | mozilla/experimenter | https://api.github.com/repos/mozilla/experimenter | closed | The "Help" button for the "Lightning Advisory (Optional)" Sign-off is wrongly linked to the mana page/"Dependent Sign-offs" | Defect P1 - High Priority [QA]:Normal issue | **[Action Needed]:**

Please link the "Lightning Advising" help here: https://mana.mozilla.org/wiki/display/FIREFOX/Pref-Flip+and+Add-On+Experiments#Pref-FlipandAdd-OnExperiments-LightningAdvising

**[Notes]:**

- I logged this issue because the "Lightning Advisory (Optional)" Sign-off doesn't have any dependencies ... | 1.0 | The "Help" button for the "Lightning Advisory (Optional)" Sign-off is wrongly linked to the mana page/"Dependent Sign-offs" - **[Action Needed]:**

Please link the "Lightning Advising" help here: https://mana.mozilla.org/wiki/display/FIREFOX/Pref-Flip+and+Add-On+Experiments#Pref-FlipandAdd-OnExperiments-LightningAdvis... | defect | the help button for the lightning advisory optional sign off is wrongly linked to the mana page dependent sign offs please link the lightning advising help here i logged this issue because the lightning advisory optional sign off doesn t have any dependencies with the risk ques... | 1 |

187,095 | 14,426,956,619 | IssuesEvent | 2020-12-06 01:00:41 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | giantswarm/kvm-operator-node-controller: vendor/k8s.io/kubernetes/pkg/printers/internalversion/printers_test.go; 30 LoC | fresh small test vendored |

Found a possible issue in [giantswarm/kvm-operator-node-controller](https://www.github.com/giantswarm/kvm-operator-node-controller) at [vendor/k8s.io/kubernetes/pkg/printers/internalversion/printers_test.go](https://github.com/giantswarm/kvm-operator-node-controller/blob/7146561e54142d4f986daee0206336ebee3ceb18/vendor... | 1.0 | giantswarm/kvm-operator-node-controller: vendor/k8s.io/kubernetes/pkg/printers/internalversion/printers_test.go; 30 LoC -

Found a possible issue in [giantswarm/kvm-operator-node-controller](https://www.github.com/giantswarm/kvm-operator-node-controller) at [vendor/k8s.io/kubernetes/pkg/printers/internalversion/printer... | non_defect | giantswarm kvm operator node controller vendor io kubernetes pkg printers internalversion printers test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit you... | 0 |