Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

34,917 | 7,471,046,904 | IssuesEvent | 2018-04-03 07:56:54 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | selectOneMenu : disabled on using placeholder | 6.2.3 defect | Reported By PRO User;

> We are using p:selectOneMenu with placeholder option.

We noticed that, ui-state-disabled is added when placeholder is present which makes it look disabled.

ex:

```

<p:selectOneMenu id="console" value="" style="width:125px" placeholder="Select One">

<f:selectItem itemLabel="" itemValue="" />

<f:selectItem itemLabel="Xbox One" itemValue="Xbox One" />

<f:selectItem itemLabel="PS4" itemValue="PS4" />

<f:selectItem itemLabel="Wii U" itemValue="Wii U" />

</p:selectOneMenu>

``` | 1.0 | selectOneMenu : disabled on using placeholder - Reported By PRO User;

> We are using p:selectOneMenu with placeholder option.

We noticed that, ui-state-disabled is added when placeholder is present which makes it look disabled.

ex:

```

<p:selectOneMenu id="console" value="" style="width:125px" placeholder="Select One">

<f:selectItem itemLabel="" itemValue="" />

<f:selectItem itemLabel="Xbox One" itemValue="Xbox One" />

<f:selectItem itemLabel="PS4" itemValue="PS4" />

<f:selectItem itemLabel="Wii U" itemValue="Wii U" />

</p:selectOneMenu>

``` | defect | selectonemenu disabled on using placeholder reported by pro user we are using p selectonemenu with placeholder option we noticed that ui state disabled is added when placeholder is present which makes it look disabled ex | 1 |

61,538 | 17,023,719,703 | IssuesEvent | 2021-07-03 03:28:47 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | [landcover] Mapnik: Render Dune | Component: mapnik Priority: minor Resolution: duplicate Type: defect | **[Submitted to the original trac issue database at 1.46pm, Thursday, 9th June 2011]**

it would be nice to have a render-possiblity for the dune-object [1] because when map area near the coast this is a dominant and important object [2].

reagards Jan alias Lbeck :-)

[1] http://wiki.openstreetmap.org/wiki/Tag:natural%3Ddune

[2] http://www.openstreetmap.org/?lat=54.47866&lon=12.51828&zoom=15&layers=M | 1.0 | [landcover] Mapnik: Render Dune - **[Submitted to the original trac issue database at 1.46pm, Thursday, 9th June 2011]**

it would be nice to have a render-possiblity for the dune-object [1] because when map area near the coast this is a dominant and important object [2].

reagards Jan alias Lbeck :-)

[1] http://wiki.openstreetmap.org/wiki/Tag:natural%3Ddune

[2] http://www.openstreetmap.org/?lat=54.47866&lon=12.51828&zoom=15&layers=M | defect | mapnik render dune it would be nice to have a render possiblity for the dune object because when map area near the coast this is a dominant and important object reagards jan alias lbeck | 1 |

70,746 | 23,302,171,928 | IssuesEvent | 2022-08-07 13:40:38 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | opened | Warnings on compiling on Debian 11 AArch64 | Type: Defect | ### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | Debian

Distribution Version | 11.4

Kernel Version | 5.10.0-16-arm64

Architecture | AArch64

OpenZFS Version | 947465b9

### Describe the problem you're observing

Given the interest in being -Werror-clean, I thought this was worth reporting after seeing it while testing #13741:

```

lib/libzfs/libzfs_dataset.c: In function ‘zfs_rename’:

lib/libzfs/libzfs_dataset.c:4416:1: note: parameter passing for argument of type ‘renameflags_t’ {aka ‘struct renameflags’} changed in GCC 9.1

4416 | zfs_rename(zfs_handle_t *zhp, const char *target, renameflags_t flags)

| ^~~~~~~~~~

CC lib/libzfs/os/linux/libzfs_la-libzfs_mount_os.lo

CC lib/libzfs/os/linux/libzfs_la-libzfs_pool_os.lo

lib/libzfs/libzfs_pool.c: In function ‘zpool_valid_proplist’:

lib/libzfs/libzfs_pool.c:453:1: note: parameter passing for argument of type ‘prop_flags_t’ {aka ‘struct prop_flags’} changed in GCC 9.1

453 | zpool_valid_proplist(libzfs_handle_t *hdl, const char *poolname,

| ^~~~~~~~~~~~~~~~~~~~

lib/libzfs/libzfs_dataset.c: In function ‘zfs_rename’:

lib/libzfs/libzfs_dataset.c:4416:1: note: parameter passing for argument of type ‘renameflags_t’ {aka ‘struct renameflags’} changed in GCC 9.1

4416 | zfs_rename(zfs_handle_t *zhp, const char *target, renameflags_t flags)

| ^~~~~~~~~~

CC lib/libzfs/os/linux/libzfs_la-libzfs_util_os.lo

CC lib/libshare/libshare_la-libshare.lo

lib/libzfs/libzfs_pool.c: In function ‘zpool_valid_proplist’:

lib/libzfs/libzfs_pool.c:453:1: note: parameter passing for argument of type ‘prop_flags_t’ {aka ‘struct prop_flags’} changed in GCC 9.1

453 | zpool_valid_proplist(libzfs_handle_t *hdl, const char *poolname,

| ^~~~~~~~~~~~~~~~~~~~

```

```

$ gcc --version

gcc (Debian 10.2.1-6) 10.2.1 20210110

```

### Describe how to reproduce the problem

Build without --enable-debug. (I presume --enable-debug will also do this, but that's not what I was doing.)

### Include any warning/errors/backtraces from the system logs

Above. | 1.0 | Warnings on compiling on Debian 11 AArch64 - ### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | Debian

Distribution Version | 11.4

Kernel Version | 5.10.0-16-arm64

Architecture | AArch64

OpenZFS Version | 947465b9

### Describe the problem you're observing

Given the interest in being -Werror-clean, I thought this was worth reporting after seeing it while testing #13741:

```

lib/libzfs/libzfs_dataset.c: In function ‘zfs_rename’:

lib/libzfs/libzfs_dataset.c:4416:1: note: parameter passing for argument of type ‘renameflags_t’ {aka ‘struct renameflags’} changed in GCC 9.1

4416 | zfs_rename(zfs_handle_t *zhp, const char *target, renameflags_t flags)

| ^~~~~~~~~~

CC lib/libzfs/os/linux/libzfs_la-libzfs_mount_os.lo

CC lib/libzfs/os/linux/libzfs_la-libzfs_pool_os.lo

lib/libzfs/libzfs_pool.c: In function ‘zpool_valid_proplist’:

lib/libzfs/libzfs_pool.c:453:1: note: parameter passing for argument of type ‘prop_flags_t’ {aka ‘struct prop_flags’} changed in GCC 9.1

453 | zpool_valid_proplist(libzfs_handle_t *hdl, const char *poolname,

| ^~~~~~~~~~~~~~~~~~~~

lib/libzfs/libzfs_dataset.c: In function ‘zfs_rename’:

lib/libzfs/libzfs_dataset.c:4416:1: note: parameter passing for argument of type ‘renameflags_t’ {aka ‘struct renameflags’} changed in GCC 9.1

4416 | zfs_rename(zfs_handle_t *zhp, const char *target, renameflags_t flags)

| ^~~~~~~~~~

CC lib/libzfs/os/linux/libzfs_la-libzfs_util_os.lo

CC lib/libshare/libshare_la-libshare.lo

lib/libzfs/libzfs_pool.c: In function ‘zpool_valid_proplist’:

lib/libzfs/libzfs_pool.c:453:1: note: parameter passing for argument of type ‘prop_flags_t’ {aka ‘struct prop_flags’} changed in GCC 9.1

453 | zpool_valid_proplist(libzfs_handle_t *hdl, const char *poolname,

| ^~~~~~~~~~~~~~~~~~~~

```

```

$ gcc --version

gcc (Debian 10.2.1-6) 10.2.1 20210110

```

### Describe how to reproduce the problem

Build without --enable-debug. (I presume --enable-debug will also do this, but that's not what I was doing.)

### Include any warning/errors/backtraces from the system logs

Above. | defect | warnings on compiling on debian system information type version name distribution name debian distribution version kernel version architecture openzfs version describe the problem you re observing given the interest in being werror clean i thought this was worth reporting after seeing it while testing lib libzfs libzfs dataset c in function ‘zfs rename’ lib libzfs libzfs dataset c note parameter passing for argument of type ‘renameflags t’ aka ‘struct renameflags’ changed in gcc zfs rename zfs handle t zhp const char target renameflags t flags cc lib libzfs os linux libzfs la libzfs mount os lo cc lib libzfs os linux libzfs la libzfs pool os lo lib libzfs libzfs pool c in function ‘zpool valid proplist’ lib libzfs libzfs pool c note parameter passing for argument of type ‘prop flags t’ aka ‘struct prop flags’ changed in gcc zpool valid proplist libzfs handle t hdl const char poolname lib libzfs libzfs dataset c in function ‘zfs rename’ lib libzfs libzfs dataset c note parameter passing for argument of type ‘renameflags t’ aka ‘struct renameflags’ changed in gcc zfs rename zfs handle t zhp const char target renameflags t flags cc lib libzfs os linux libzfs la libzfs util os lo cc lib libshare libshare la libshare lo lib libzfs libzfs pool c in function ‘zpool valid proplist’ lib libzfs libzfs pool c note parameter passing for argument of type ‘prop flags t’ aka ‘struct prop flags’ changed in gcc zpool valid proplist libzfs handle t hdl const char poolname gcc version gcc debian describe how to reproduce the problem build without enable debug i presume enable debug will also do this but that s not what i was doing include any warning errors backtraces from the system logs above | 1 |

13,619 | 2,772,949,671 | IssuesEvent | 2015-05-03 05:40:52 | agronholm/pythonfutures | https://api.github.com/repos/agronholm/pythonfutures | closed | ThreadPoolExecutor#map() doesn't start tasks until generator is used | auto-migrated Priority-Medium Type-Defect | ```

See this simple source: http://paste.pound-python.org/show/ZTWE9gxRpAX5qsgMGS2H/

On Python 3, the prints happen before the generator is used at all. On Python

2, they don't happen and RuntimeError is raised when trying to iterate on the

returned generator.

Python 2.7.8 on OS X 10.9 with futures 2.1.6.

```

Original issue reported on code.google.com by `remirampin@gmail.com` on 30 Aug 2014 at 9:23 | 1.0 | ThreadPoolExecutor#map() doesn't start tasks until generator is used - ```

See this simple source: http://paste.pound-python.org/show/ZTWE9gxRpAX5qsgMGS2H/

On Python 3, the prints happen before the generator is used at all. On Python

2, they don't happen and RuntimeError is raised when trying to iterate on the

returned generator.

Python 2.7.8 on OS X 10.9 with futures 2.1.6.

```

Original issue reported on code.google.com by `remirampin@gmail.com` on 30 Aug 2014 at 9:23 | defect | threadpoolexecutor map doesn t start tasks until generator is used see this simple source on python the prints happen before the generator is used at all on python they don t happen and runtimeerror is raised when trying to iterate on the returned generator python on os x with futures original issue reported on code google com by remirampin gmail com on aug at | 1 |

355,509 | 10,581,611,542 | IssuesEvent | 2019-10-08 09:34:44 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | securedsearch.lavasoft.com - see bug description | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://securedsearch.lavasoft.com/?pr=vmn&id=webcompa&ent=hp_WCYID10057_344_191006

**Browser / Version**: Firefox 70.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Something else

**Description**: i dont want this

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/10/ad65116e-b7d8-4473-9824-eca99e83b755.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191002212950</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | securedsearch.lavasoft.com - see bug description - <!-- @browser: Firefox 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://securedsearch.lavasoft.com/?pr=vmn&id=webcompa&ent=hp_WCYID10057_344_191006

**Browser / Version**: Firefox 70.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Something else

**Description**: i dont want this

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/10/ad65116e-b7d8-4473-9824-eca99e83b755.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191002212950</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_defect | securedsearch lavasoft com see bug description url browser version firefox operating system windows tested another browser unknown problem type something else description i dont want this steps to reproduce browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |

31,352 | 6,501,655,809 | IssuesEvent | 2017-08-23 10:29:36 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Add required property to AutoComplete, Spinner and InputMask | defect | **I'm submitting a ...** (check one with "x")

```

[x] feature request

```

**What is the motivation / use case for changing the behavior?**

Looking at the documentation on PrimeNG site, it appears that p-dropdown has a "required" property, but the autocomplete does not. It seems highly important that required also be a property of autocomplete because it something that would be used in forms. In my case, I have in several places autocompletes inside table cells that I would like to set to required and use angulars built in validation classes to style them.

* **Angular version:** 4.3.1

<!-- Check whether this is still an issue in the most recent Angular version -->

* **PrimeNG version:** 4.1.1

| 1.0 | Add required property to AutoComplete, Spinner and InputMask - **I'm submitting a ...** (check one with "x")

```

[x] feature request

```

**What is the motivation / use case for changing the behavior?**

Looking at the documentation on PrimeNG site, it appears that p-dropdown has a "required" property, but the autocomplete does not. It seems highly important that required also be a property of autocomplete because it something that would be used in forms. In my case, I have in several places autocompletes inside table cells that I would like to set to required and use angulars built in validation classes to style them.

* **Angular version:** 4.3.1

<!-- Check whether this is still an issue in the most recent Angular version -->

* **PrimeNG version:** 4.1.1

| defect | add required property to autocomplete spinner and inputmask i m submitting a check one with x feature request what is the motivation use case for changing the behavior looking at the documentation on primeng site it appears that p dropdown has a required property but the autocomplete does not it seems highly important that required also be a property of autocomplete because it something that would be used in forms in my case i have in several places autocompletes inside table cells that i would like to set to required and use angulars built in validation classes to style them angular version primeng version | 1 |

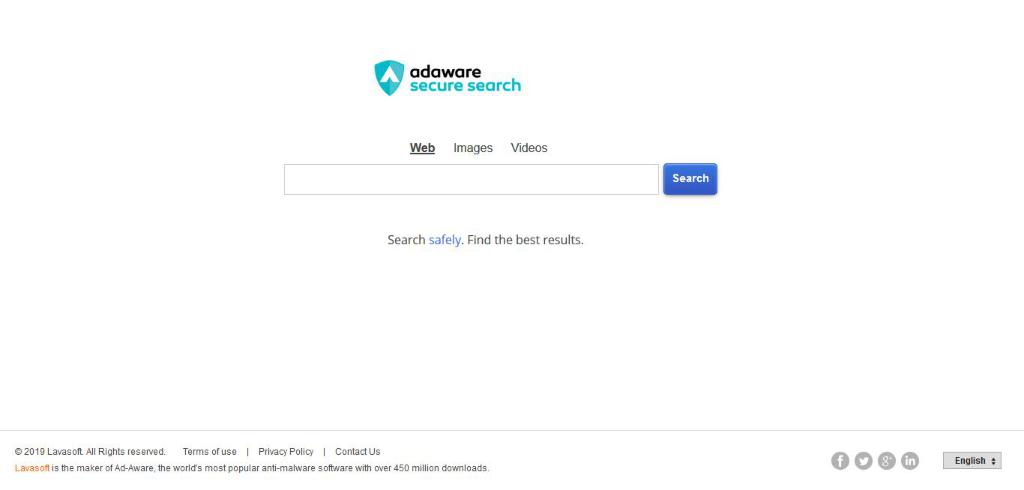

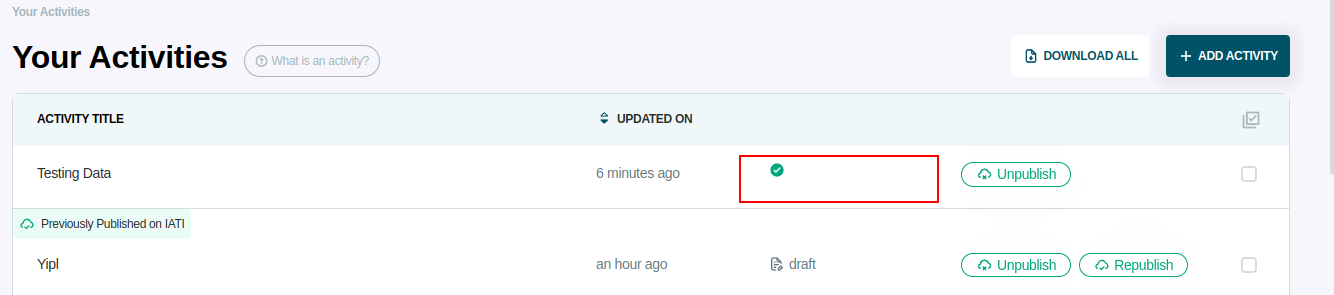

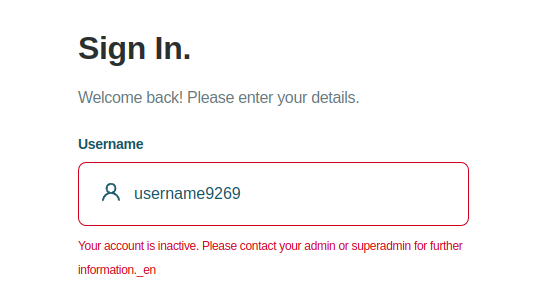

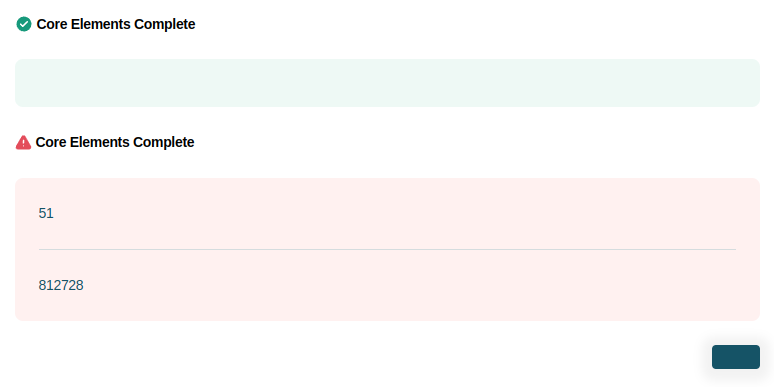

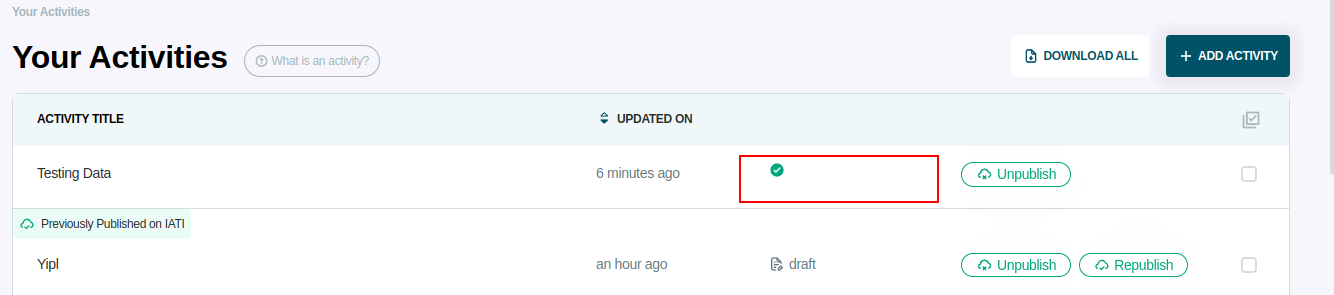

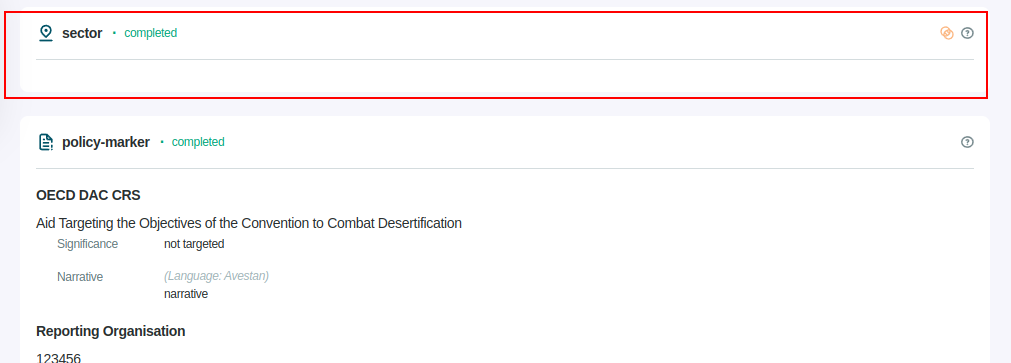

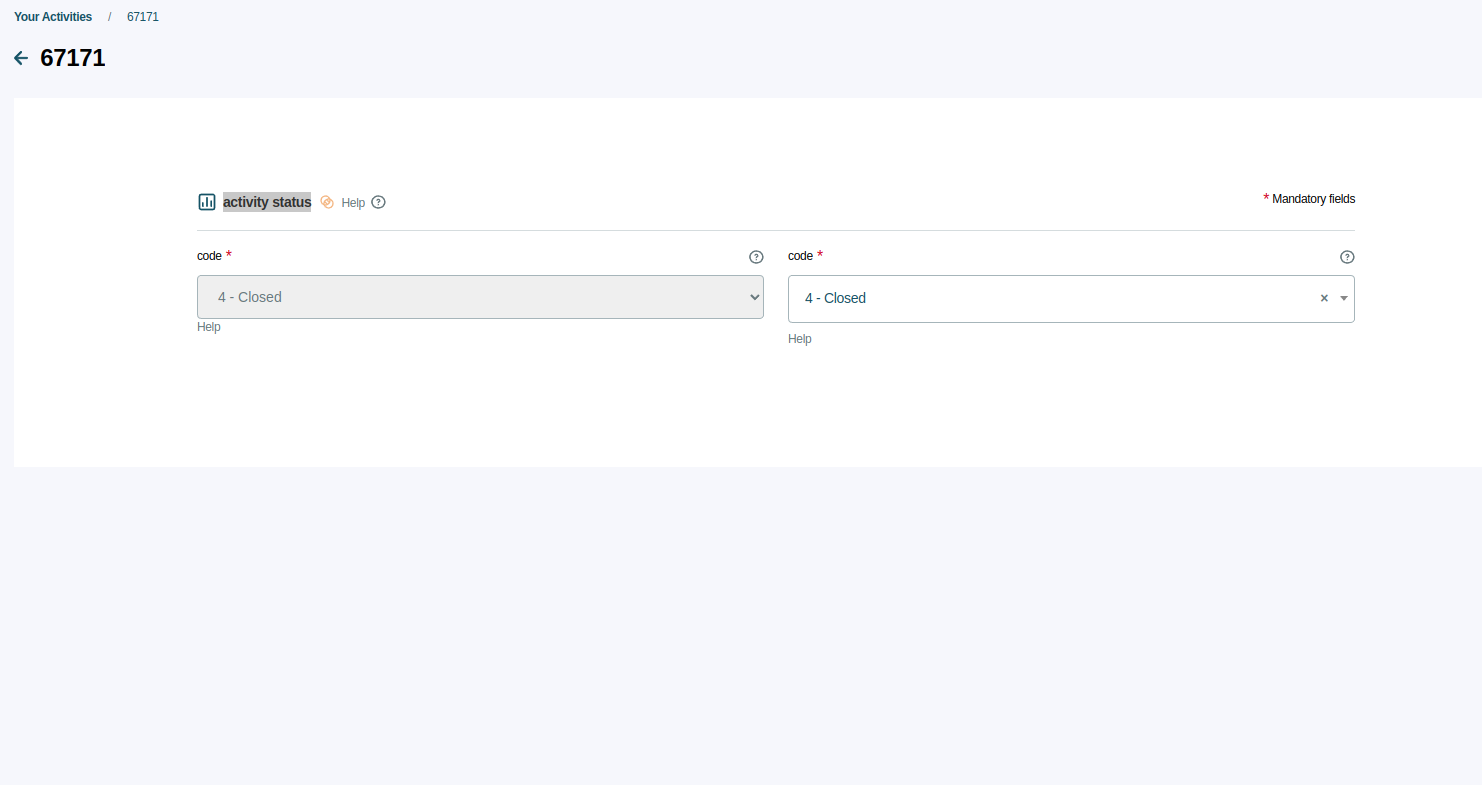

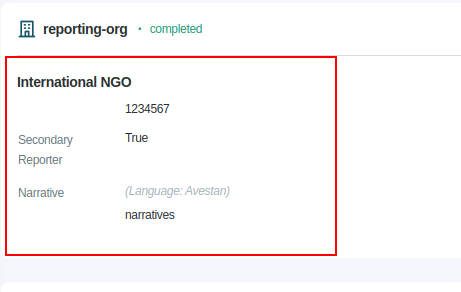

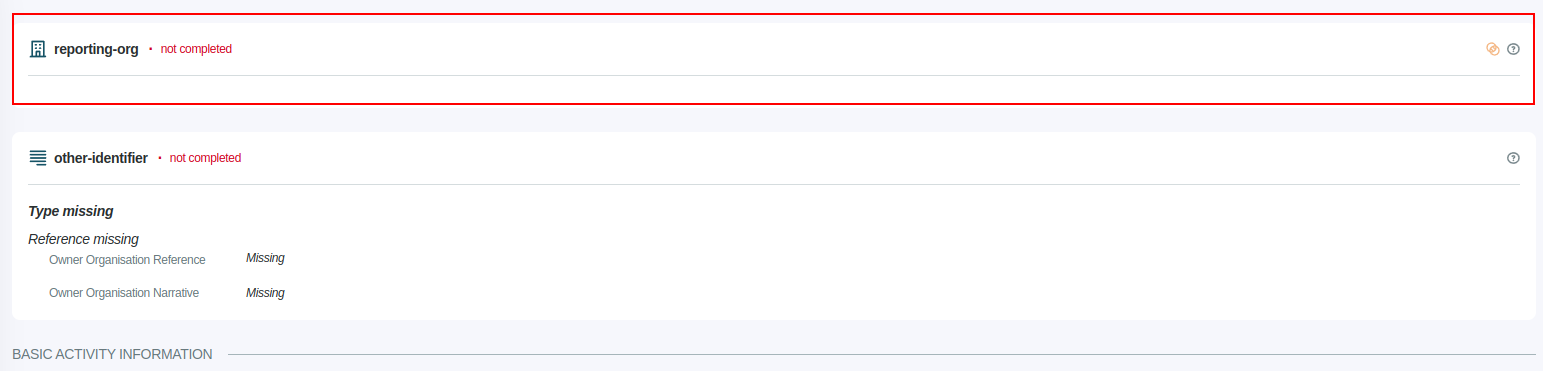

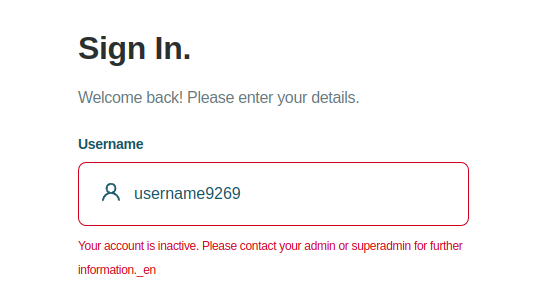

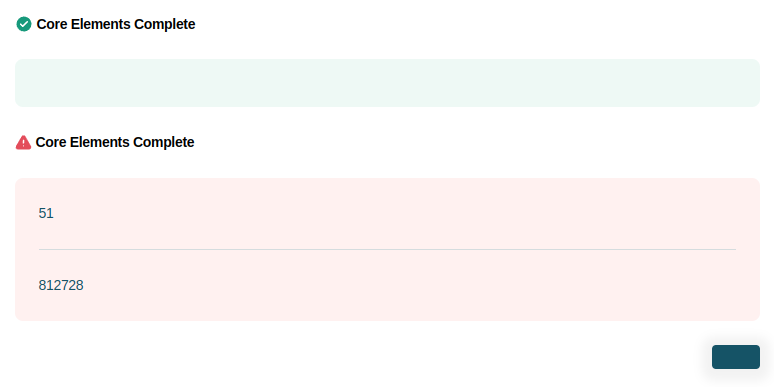

767,205 | 26,914,777,644 | IssuesEvent | 2023-02-07 05:00:23 | younginnovations/iatipublisher | https://api.github.com/repos/younginnovations/iatipublisher | closed | Issue found in translation | type: bug priority: high Backend | - [x] **Issue 1: The published text is missing from an activity detail page.**

- [x] **Issue 2: Inappropriate way to display the drop-down**

https://user-images.githubusercontent.com/78422663/216274366-191b0f50-0317-4c3a-a9f2-f3f04d883d69.mp4

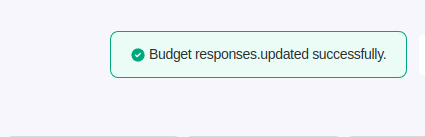

- [x] **Issue 3: Invalid toast message after publishing the activity.**

https://user-images.githubusercontent.com/78422663/216274566-3a1c7626-d9fd-4ba3-938c-f36ad2301cde.mp4

- [x] **Issue 4: Footer overflows**

https://user-images.githubusercontent.com/78422663/216274489-5b6949ea-ba1a-4e0d-8c3f-65b0f5c64d48.mp4

- [x] Issue 5: the sector is missing from the activity detail page

- [x] **Issue 6: Invalid toast message when an element is updated and deleted.**

- [x] **Issue 7: Error message cannot be expanded.**

- [x] **Issue 8: Invalid toast message during the creation, and deletion of the user.**

- [x] **Issue 9: The button is missing from the About us page.**

- [x] **Issue 10: The font size of the role and status is small.**

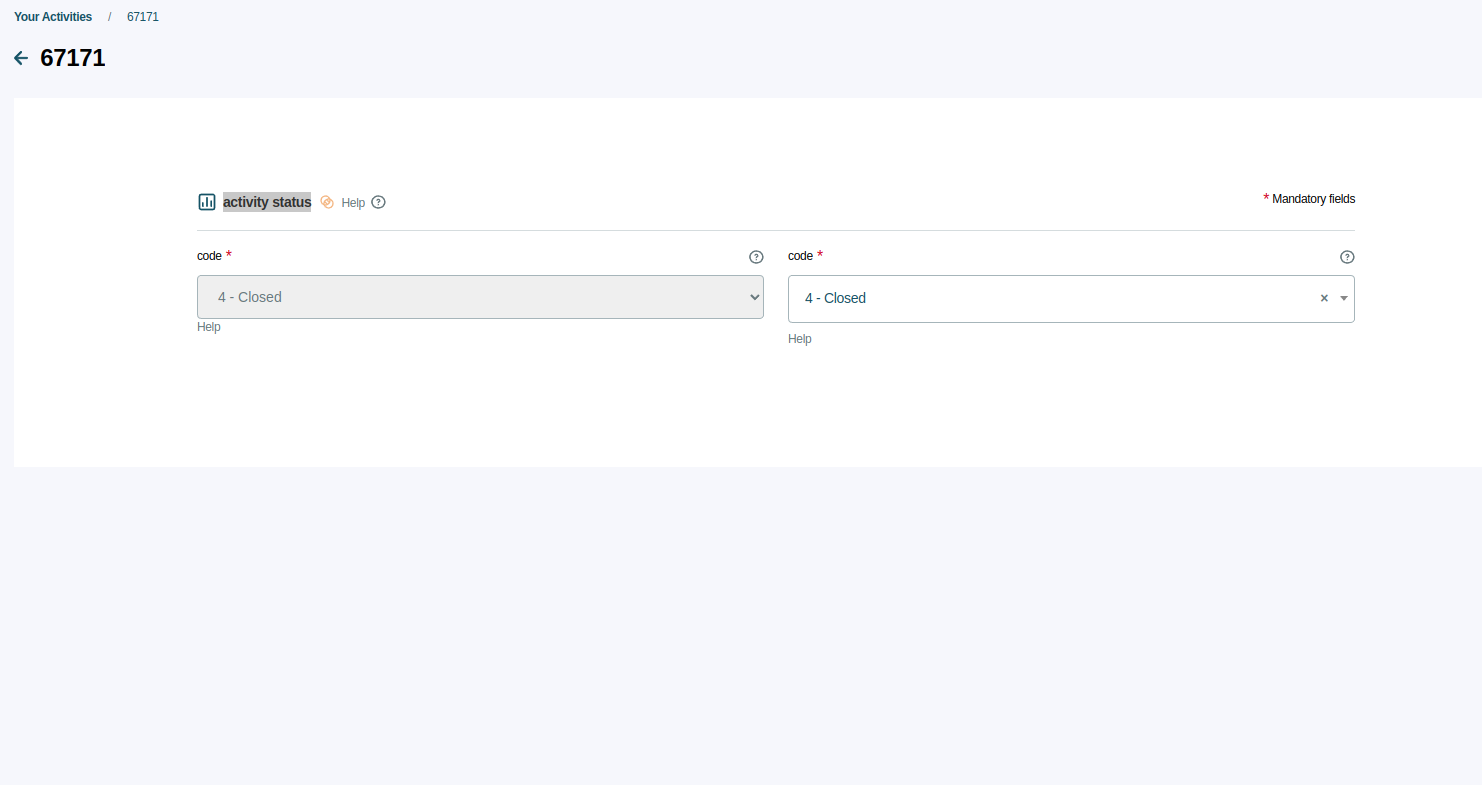

- [x] **Issue 11: Two drop-down options for activity status, capital spend, and default tied status.**

- [x] **Issue 12: The activity detail page does not display any element data aside from the iati-identifier and title.**

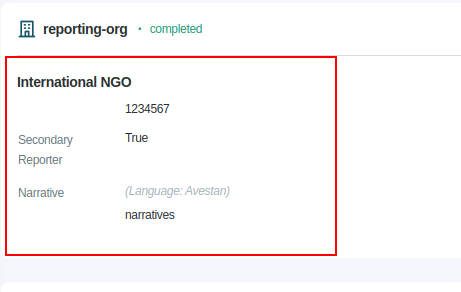

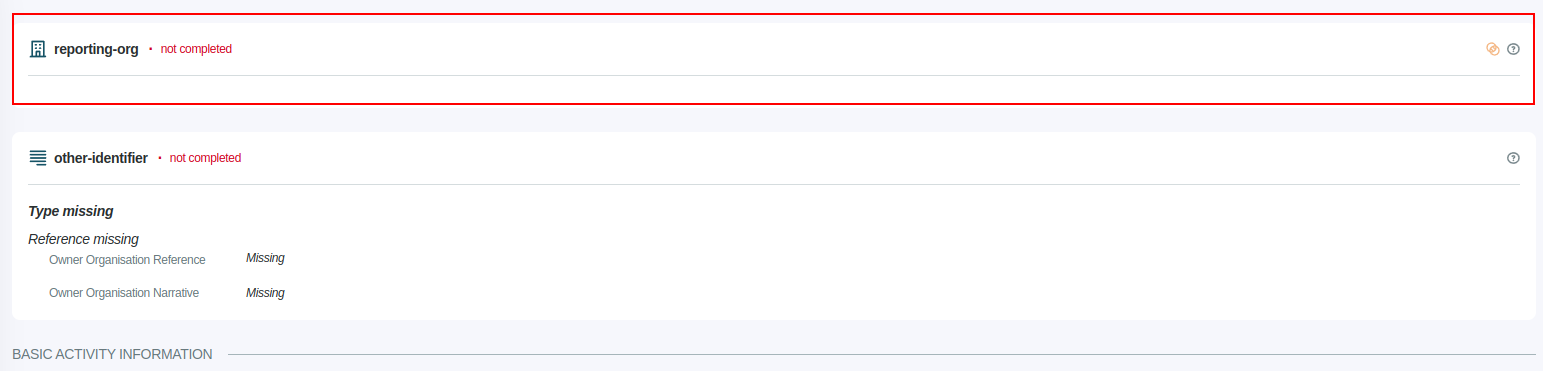

- [x] **Issue 13: UI issue for reporting-org**

- [x] **Issue 14: Reporting orgs not displaying on the activity detail page.**

- [x] **Issue 15: Invalid error message when the user is inactive and the user enter invalid credentials.**

- [x] **Issue 16: The profile icon is hiding when a user hovers over the icon.**

- [x] **Issue 17: None of the element data is displayed in org.**

https://user-images.githubusercontent.com/78422663/216281069-de2b339c-a01d-4aa3-adf6-5a4da9d6f681.mp4

- [x] **Issue 18: The footer is disappear from the login/sign-up page.**

- [x] **Issue 19: The password recovery page gets disappears.**

- [x] **Issue 20: Sign up through with iati register page gets disappears**

- [x] **Issue 21: Final page gets disappears.**

- [x] **Issue 22: When the user is publishing the activity in bulk button gets disappears.**

| 1.0 | Issue found in translation - - [x] **Issue 1: The published text is missing from an activity detail page.**

- [x] **Issue 2: Inappropriate way to display the drop-down**

https://user-images.githubusercontent.com/78422663/216274366-191b0f50-0317-4c3a-a9f2-f3f04d883d69.mp4

- [x] **Issue 3: Invalid toast message after publishing the activity.**

https://user-images.githubusercontent.com/78422663/216274566-3a1c7626-d9fd-4ba3-938c-f36ad2301cde.mp4

- [x] **Issue 4: Footer overflows**

https://user-images.githubusercontent.com/78422663/216274489-5b6949ea-ba1a-4e0d-8c3f-65b0f5c64d48.mp4

- [x] Issue 5: the sector is missing from the activity detail page

- [x] **Issue 6: Invalid toast message when an element is updated and deleted.**

- [x] **Issue 7: Error message cannot be expanded.**

- [x] **Issue 8: Invalid toast message during the creation, and deletion of the user.**

- [x] **Issue 9: The button is missing from the About us page.**

- [x] **Issue 10: The font size of the role and status is small.**

- [x] **Issue 11: Two drop-down options for activity status, capital spend, and default tied status.**

- [x] **Issue 12: The activity detail page does not display any element data aside from the iati-identifier and title.**

- [x] **Issue 13: UI issue for reporting-org**

- [x] **Issue 14: Reporting orgs not displaying on the activity detail page.**

- [x] **Issue 15: Invalid error message when the user is inactive and the user enter invalid credentials.**

- [x] **Issue 16: The profile icon is hiding when a user hovers over the icon.**

- [x] **Issue 17: None of the element data is displayed in org.**

https://user-images.githubusercontent.com/78422663/216281069-de2b339c-a01d-4aa3-adf6-5a4da9d6f681.mp4

- [x] **Issue 18: The footer is disappear from the login/sign-up page.**

- [x] **Issue 19: The password recovery page gets disappears.**

- [x] **Issue 20: Sign up through with iati register page gets disappears**

- [x] **Issue 21: Final page gets disappears.**

- [x] **Issue 22: When the user is publishing the activity in bulk button gets disappears.**

| non_defect | issue found in translation issue the published text is missing from an activity detail page issue inappropriate way to display the drop down issue invalid toast message after publishing the activity issue footer overflows issue the sector is missing from the activity detail page issue invalid toast message when an element is updated and deleted issue error message cannot be expanded issue invalid toast message during the creation and deletion of the user issue the button is missing from the about us page issue the font size of the role and status is small issue two drop down options for activity status capital spend and default tied status issue the activity detail page does not display any element data aside from the iati identifier and title issue ui issue for reporting org issue reporting orgs not displaying on the activity detail page issue invalid error message when the user is inactive and the user enter invalid credentials issue the profile icon is hiding when a user hovers over the icon issue none of the element data is displayed in org issue the footer is disappear from the login sign up page issue the password recovery page gets disappears issue sign up through with iati register page gets disappears issue final page gets disappears issue when the user is publishing the activity in bulk button gets disappears | 0 |

31,487 | 6,538,911,876 | IssuesEvent | 2017-09-01 08:49:44 | SublimeText/PackageDev | https://api.github.com/repos/SublimeText/PackageDev | closed | Valid settings are marked as invalid | defect | In current beta4 all settings in the user settings file are marked as invalid.

The console shows the following error which might point to the reason.

```

code: Unexpected character

Parse Error: ">✏</a>

</body>

``` | 1.0 | Valid settings are marked as invalid - In current beta4 all settings in the user settings file are marked as invalid.

The console shows the following error which might point to the reason.

```

code: Unexpected character

Parse Error: ">✏</a>

</body>

``` | defect | valid settings are marked as invalid in current all settings in the user settings file are marked as invalid the console shows the following error which might point to the reason code unexpected character parse error ✏ | 1 |

68,356 | 21,647,663,566 | IssuesEvent | 2022-05-06 05:20:36 | klubcoin/lcn-mobile | https://api.github.com/repos/klubcoin/lcn-mobile | opened | [Token Tipping][Send Tips] Fix send tip button must not be stuck at loading state. | Defect Must Have Critical Token Tipping Services | ### **Description:**

Send tip button must not be stuck at loading state.

**Build Environment:** Staging Environment

**Affects Version:** 1.0.0.staging.27

**Device Platform:** Android

**Device OS:** 11

**Test Device:** OnePlus 7T Pro

### **Pre-condition:**

1. User successfully installed Klubcoin App

2. User has an existing Klubcoin Wallet Account

3. User received request tip link

4. User is currently at third party messaging app where he/she received request tip link

### **Steps to Reproduce:**

1. Access request tip link

2. Tap "here" link

3. Enter accurate TIP amount

4. Tap Send button

### **Expected Result:**

Successfully sent KLUB Token

### **Actual Result:**

Send Button is stuck at loading state

### **Attachment/s:**

| 1.0 | [Token Tipping][Send Tips] Fix send tip button must not be stuck at loading state. - ### **Description:**

Send tip button must not be stuck at loading state.

**Build Environment:** Staging Environment

**Affects Version:** 1.0.0.staging.27

**Device Platform:** Android

**Device OS:** 11

**Test Device:** OnePlus 7T Pro

### **Pre-condition:**

1. User successfully installed Klubcoin App

2. User has an existing Klubcoin Wallet Account

3. User received request tip link

4. User is currently at third party messaging app where he/she received request tip link

### **Steps to Reproduce:**

1. Access request tip link

2. Tap "here" link

3. Enter accurate TIP amount

4. Tap Send button

### **Expected Result:**

Successfully sent KLUB Token

### **Actual Result:**

Send Button is stuck at loading state

### **Attachment/s:**

| defect | fix send tip button must not be stuck at loading state description send tip button must not be stuck at loading state build environment staging environment affects version staging device platform android device os test device oneplus pro pre condition user successfully installed klubcoin app user has an existing klubcoin wallet account user received request tip link user is currently at third party messaging app where he she received request tip link steps to reproduce access request tip link tap here link enter accurate tip amount tap send button expected result successfully sent klub token actual result send button is stuck at loading state attachment s | 1 |

63,826 | 18,011,838,343 | IssuesEvent | 2021-09-16 09:29:48 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | member restart persistence jvm crash memory.impl.AlignmentAwareMemoryAccessor.getInt | Type: Defect Source: Internal Module: Config Module: Hot Restart Module: HD Module: Persistence |

http://jenkins.hazelcast.com/view/hot-restart/job/hot-bounce/340/console

/disk1/workspace/hot-bounce/5.0-SNAPSHOT/2021_09_16-09_01_04/bounce

HzMember2HZ timeout restarting member node

./output/HZ/HzMember2HZ/hs_err_pid2972.log

```

--------------- T H R E A D ---------------

Current thread (0x0000ffff38004000): JavaThread "hz.confident_northcutt.s01.GC-thread" [_thread_in_Java, id=3057, stack(0x0000fffef4400000,0x0000fffef4600000)]

Stack: [0x0000fffef4400000,0x0000fffef4600000], sp=0x0000fffef45fdd70, free space=2039k

Native frames: (J=compiled Java code, A=aot compiled Java code, j=interpreted, Vv=VM code, C=native code)

J 4427 c2 com.hazelcast.internal.memory.impl.AlignmentAwareMemoryAccessor.getInt(J)I (29 bytes) @ 0x0000ffff9452fd80 [0x0000ffff9452fd40+0x0000000000000040]

J 4902 c2 com.hazelcast.internal.util.HashUtil.MurmurHash3_x86_32(Lcom/hazelcast/internal/util/HashUtil$LoadStrategy;Ljava/lang/Object;JII)I (252 bytes) @ 0x0000ffff94622d68 [0x0000ffff94622d00+0x00000000

00000068]

J 5118 c2 com.hazelcast.map.impl.recordstore.HDMapRamStoreImpl.copyEntry(Lcom/hazelcast/internal/hotrestart/KeyHandle;ILcom/hazelcast/internal/hotrestart/RecordDataSink;)Z (85 bytes) @ 0x0000ffff94666524

[0x0000ffff946660c0+0x0000000000000464]

J 5136 c1 com.hazelcast.internal.hotrestart.impl.gc.ValEvacuator.moveToSurvivors(Lcom/hazelcast/internal/hotrestart/impl/SortedBySeqRecordCursor;)V (277 bytes) @ 0x0000ffff8d84e778 [0x0000ffff8d84dc40+0x0

000000000000b38]

j com.hazelcast.internal.hotrestart.impl.gc.ValEvacuator.evacuate()V+52

J 7244 c1 com.hazelcast.internal.hotrestart.impl.gc.ChunkManager.valueGc(Lcom/hazelcast/internal/hotrestart/impl/gc/GcParams;Lcom/hazelcast/internal/hotrestart/impl/gc/MutatorCatchup;)Z (228 bytes) @ 0x00

00ffff8dc1bee0 [0x0000ffff8dc19bc0+0x0000000000002320]

J 5567% c1 com.hazelcast.internal.hotrestart.impl.gc.GcMainLoop.run()V (259 bytes) @ 0x0000ffff8d911d74 [0x0000ffff8d911540+0x0000000000000834]

j java.lang.Thread.run()V+11 java.base@11.0.12

v ~StubRoutines::call_stub

V [libjvm.so+0x74f574] JavaCalls::call_helper(JavaValue*, methodHandle const&, JavaCallArguments*, Thread*)+0x354

V [libjvm.so+0x74da40] JavaCalls::call_virtual(JavaValue*, Handle, Klass*, Symbol*, Symbol*, Thread*)+0x160

V [libjvm.so+0x7f23f0] thread_entry(JavaThread*, Thread*)+0x68

V [libjvm.so+0xcc5b84] JavaThread::thread_main_inner()+0xd8

V [libjvm.so+0xcc3674] Thread::call_run()+0x94

V [libjvm.so+0xa858b8] thread_native_entry(Thread*)+0x108

C [libpthread.so.0+0x71ec] start_thread+0xac

siginfo: si_signo: 11 (SIGSEGV), si_code: 1 (SEGV_MAPERR), si_addr: 0x0000fffef1bf8000

```

cat ./output/HZ/HzMember2HZ/out.txt

```

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 4624854758981714747

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -2064361361865565284

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 2683524247046331894

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -4732436703754734970

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 8199603974387877559

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 5213834991441292693

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -1718971108907240729

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -1718971108907240729

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 1663396822509246456

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 881310956686768009

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 7717987039307300113

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 7717987039307300113

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 8013580804879044008

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 334027424206507321

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 334027424206507321

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6531628355538506615

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.generic-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 247243056385334241

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 247243056385334241

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -8415570138419441570

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -7287584596533912231

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 6451397601491303925

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -5246391068467577919

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 498541680710622948

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6896661516595937509

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 2215759797616234785

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6718433272659507100

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 474974634914488802

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.generic-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 4712428711619366141

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 4712428711619366141

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -8907675406840087396

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

#

# A fatal error has been detected by the Java Runtime Environment:

#

# SIGSEGV (0xb) at pc=0x0000ffff9452fd80, pid=2972, tid=3057

#

# JRE version: OpenJDK Runtime Environment Corretto-11.0.12.7.1 (11.0.12+7) (build 11.0.12+7-LTS)

# Java VM: OpenJDK 64-Bit Server VM Corretto-11.0.12.7.1 (11.0.12+7-LTS, mixed mode, tiered, compressed oops, g1 gc, linux-aarch64)

# Problematic frame:

# J 4427 c2 com.hazelcast.internal.memory.impl.AlignmentAwareMemoryAccessor.getInt(J)I (29 bytes) @ 0x0000ffff9452fd80 [0x0000ffff9452fd40+0x0000000000000040]

#

# No core dump will be written. Core dumps have been disabled. To enable core dumping, try "ulimit -c unlimited" before starting Java again

#

# An error report file with more information is saved as:

# /home/ec2-user/hz-root/HzMember2HZ/hs_err_pid2972.log

Compiled method (c2) 30498 4902 4 com.hazelcast.internal.util.HashUtil::MurmurHash3_x86_32 (252 bytes)

total in heap [0x0000ffff94622a10,0x0000ffff946241a8] = 6040

relocation [0x0000ffff94622b80,0x0000ffff94622d00] = 384

main code [0x0000ffff94622d00,0x0000ffff94623580] = 2176

stub code [0x0000ffff94623580,0x0000ffff946237d0] = 592

oops [0x0000ffff946237d0,0x0000ffff946237d8] = 8

metadata [0x0000ffff946237d8,0x0000ffff94623848] = 112

scopes data [0x0000ffff94623848,0x0000ffff94623e78] = 1584

scopes pcs [0x0000ffff94623e78,0x0000ffff946240d8] = 608

dependencies [0x0000ffff946240d8,0x0000ffff946240e0] = 8

handler table [0x0000ffff946240e0,0x0000ffff94624140] = 96

nul chk table [0x0000ffff94624140,0x0000ffff946241a8] = 104

Compiled method (c2) 30499 4902 4 com.hazelcast.internal.util.HashUtil::MurmurHash3_x86_32 (252 bytes)

total in heap [0x0000ffff94622a10,0x0000ffff946241a8] = 6040

relocation [0x0000ffff94622b80,0x0000ffff94622d00] = 384

main code [0x0000ffff94622d00,0x0000ffff94623580] = 2176

stub code [0x0000ffff94623580,0x0000ffff946237d0] = 592

oops [0x0000ffff946237d0,0x0000ffff946237d8] = 8

metadata [0x0000ffff946237d8,0x0000ffff94623848] = 112

scopes data [0x0000ffff94623848,0x0000ffff94623e78] = 1584

scopes pcs [0x0000ffff94623e78,0x0000ffff946240d8] = 608

dependencies [0x0000ffff946240d8,0x0000ffff946240e0] = 8

handler table [0x0000ffff946240e0,0x0000ffff94624140] = 96

nul chk table [0x0000ffff94624140,0x0000ffff946241a8] = 104

Could not load hsdis-aarch64.so; library not loadable; PrintAssembly is disabled

#

# If you would like to submit a bug report, please visit:

# https://github.com/corretto/corretto-11/issues/

#

(base) [ec2-user@ip-10-0-0-252 bounce]$

``` | 1.0 | member restart persistence jvm crash memory.impl.AlignmentAwareMemoryAccessor.getInt -

http://jenkins.hazelcast.com/view/hot-restart/job/hot-bounce/340/console

/disk1/workspace/hot-bounce/5.0-SNAPSHOT/2021_09_16-09_01_04/bounce

HzMember2HZ timeout restarting member node

./output/HZ/HzMember2HZ/hs_err_pid2972.log

```

--------------- T H R E A D ---------------

Current thread (0x0000ffff38004000): JavaThread "hz.confident_northcutt.s01.GC-thread" [_thread_in_Java, id=3057, stack(0x0000fffef4400000,0x0000fffef4600000)]

Stack: [0x0000fffef4400000,0x0000fffef4600000], sp=0x0000fffef45fdd70, free space=2039k

Native frames: (J=compiled Java code, A=aot compiled Java code, j=interpreted, Vv=VM code, C=native code)

J 4427 c2 com.hazelcast.internal.memory.impl.AlignmentAwareMemoryAccessor.getInt(J)I (29 bytes) @ 0x0000ffff9452fd80 [0x0000ffff9452fd40+0x0000000000000040]

J 4902 c2 com.hazelcast.internal.util.HashUtil.MurmurHash3_x86_32(Lcom/hazelcast/internal/util/HashUtil$LoadStrategy;Ljava/lang/Object;JII)I (252 bytes) @ 0x0000ffff94622d68 [0x0000ffff94622d00+0x0000000000000068]

J 5118 c2 com.hazelcast.map.impl.recordstore.HDMapRamStoreImpl.copyEntry(Lcom/hazelcast/internal/hotrestart/KeyHandle;ILcom/hazelcast/internal/hotrestart/RecordDataSink;)Z (85 bytes) @ 0x0000ffff94666524 [0x0000ffff946660c0+0x0000000000000464]

J 5136 c1 com.hazelcast.internal.hotrestart.impl.gc.ValEvacuator.moveToSurvivors(Lcom/hazelcast/internal/hotrestart/impl/SortedBySeqRecordCursor;)V (277 bytes) @ 0x0000ffff8d84e778 [0x0000ffff8d84dc40+0x0000000000000b38]

j com.hazelcast.internal.hotrestart.impl.gc.ValEvacuator.evacuate()V+52

J 7244 c1 com.hazelcast.internal.hotrestart.impl.gc.ChunkManager.valueGc(Lcom/hazelcast/internal/hotrestart/impl/gc/GcParams;Lcom/hazelcast/internal/hotrestart/impl/gc/MutatorCatchup;)Z (228 bytes) @ 0x0000ffff8dc1bee0 [0x0000ffff8dc19bc0+0x0000000000002320]

J 5567% c1 com.hazelcast.internal.hotrestart.impl.gc.GcMainLoop.run()V (259 bytes) @ 0x0000ffff8d911d74 [0x0000ffff8d911540+0x0000000000000834]

j java.lang.Thread.run()V+11 java.base@11.0.12

v ~StubRoutines::call_stub

V [libjvm.so+0x74f574] JavaCalls::call_helper(JavaValue*, methodHandle const&, JavaCallArguments*, Thread*)+0x354

V [libjvm.so+0x74da40] JavaCalls::call_virtual(JavaValue*, Handle, Klass*, Symbol*, Symbol*, Thread*)+0x160

V [libjvm.so+0x7f23f0] thread_entry(JavaThread*, Thread*)+0x68

V [libjvm.so+0xcc5b84] JavaThread::thread_main_inner()+0xd8

V [libjvm.so+0xcc3674] Thread::call_run()+0x94

V [libjvm.so+0xa858b8] thread_native_entry(Thread*)+0x108

C [libpthread.so.0+0x71ec] start_thread+0xac

siginfo: si_signo: 11 (SIGSEGV), si_code: 1 (SEGV_MAPERR), si_addr: 0x0000fffef1bf8000

```

cat ./output/HZ/HzMember2HZ/out.txt

```

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 4624854758981714747

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -2064361361865565284

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 2683524247046331894

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -4732436703754734970

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 8199603974387877559

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 5213834991441292693

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -1718971108907240729

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -1718971108907240729

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 1663396822509246456

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 881310956686768009

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 7717987039307300113

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 7717987039307300113

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 8013580804879044008

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 334027424206507321

09:05:15 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 334027424206507321

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:15 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6531628355538506615

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.generic-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 247243056385334241

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 247243056385334241

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -8415570138419441570

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -7287584596533912231

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 6451397601491303925

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-1]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -5246391068467577919

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 498541680710622948

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.priority-generic-operation.thread-0]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6896661516595937509

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-3]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: 2215759797616234785

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-1]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-3]:52 Applying differential sync for mapBak1HD

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-2]:52 Applying differential sync for mapBak1HDIdle

09:05:16 INFO EnterpriseMapReplicationStateHolder [hz.confident_northcutt.partition-operation.thread-0]:52 Applying differential sync for mapBak1HDIdle

09:05:16 TRACE ClusterMetadataManager [hz.confident_northcutt.partition-operation.thread-2]:57 [10.0.0.102]:5701 [HZ] [5.0-SNAPSHOT] Will persist partition table with stamp: -6718433272659507100