Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

274,307 | 8,559,599,189 | IssuesEvent | 2018-11-08 21:47:10 | OpenSRP/opensrp-server-web | https://api.github.com/repos/OpenSRP/opensrp-server-web | opened | Update Sync API to include new domain objects | Priority: High | Depending on how it's implemented, we may need to update the sync API endpoint and underlying logic to support the addition of the new domain objects: locations, campaigns, and tasks.

- [ ] Review the new location, campaign and task entities that have been added to the OpenSRP server

- [ ] Develop a logic model on how to process each item

- [ ] Implement the change

- [ ] Test the sync functionality | 1.0 | Update Sync API to include new domain objects - Depending on how it's implemented, we may need to update the sync API endpoint and underlying logic to support the addition of the new domain objects: locations, campaigns, and tasks.

- [ ] Review the new location, campaign and task entities that have been added to the OpenSRP server

- [ ] Develop a logic model on how to process each item

- [ ] Implement the change

- [ ] Test the sync functionality | non_defect | update sync api to include new domain objects depending on how it s implemented we may need to update the sync api endpoint and underlying logic to support the addition of new domain objects locations campaigns and tasks review the new location campaign and task entities that have been added to the opensrp server develop a logic model on how to process each item implement the change test that sync functionality | 0 |

50,555 | 13,187,577,424 | IssuesEvent | 2020-08-13 03:52:20 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | look at getting nvidia drivers on the bots for clsim testing (Trac #935) | Migrated from Trac defect infrastructure | clsim testing and coverage is woefully weak.

look at getting the nvidia drivers on the bots w/ crusty nvidia cards, or scrounging for some half height cards.

<details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/935, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-04-17T00:10:22",

"description": "clsim testing and coverage is woefully weak.\n\nlook at getting the nvidia drivers on the bots w/ crusty nvidia cards, or scrounging for some half height cards.",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"_ts": "1429229422652487",

"component": "infrastructure",

"summary": "look at getting nvidia drivers on the bots for clsim testing",

"priority": "normal",

"keywords": "",

"time": "2015-04-14T20:08:36",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

| 1.0 | look at getting nvidia drivers on the bots for clsim testing (Trac #935) - clsim testing and coverage is woefully weak.

look at getting the nvidia drivers on the bots w/ crusty nvidia cards, or scrounging for some half height cards.

<details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/935, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-04-17T00:10:22",

"description": "clsim testing and coverage is woefully weak.\n\nlook at getting the nvidia drivers on the bots w/ crusty nvidia cards, or scrounging for some half height cards.",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"_ts": "1429229422652487",

"component": "infrastructure",

"summary": "look at getting nvidia drivers on the bots for clsim testing",

"priority": "normal",

"keywords": "",

"time": "2015-04-14T20:08:36",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

| defect | look at getting nvidia drivers on the bots for clsim testing trac clsim testing and coverage is woefully weak look at getting the nvidia drivers on the bots w crusty nvidia cards or scrounging for some half height cards migrated from reported by nega and owned by nega json status closed changetime description clsim testing and coverage is woefully weak n nlook at getting the nvidia drivers on the bots w crusty nvidia cards or scrounging for some half height cards reporter nega cc resolution fixed ts component infrastructure summary look at getting nvidia drivers on the bots for clsim testing priority normal keywords time milestone owner nega type defect | 1 |

69,264 | 22,304,682,366 | IssuesEvent | 2022-06-13 12:00:16 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Remove the "modern IDEs" section from the manual | T: Defect C: Documentation P: Medium R: Fixed E: All Editions | This section was never written, and it won't be, either. Let's just remove it:

https://www.jooq.org/doc/latest/manual/getting-started/tutorials/jooq-in-modern-ides/ | 1.0 | Remove the "modern IDEs" section from the manual - This section was never written, and it won't be, either. Let's just remove it:

https://www.jooq.org/doc/latest/manual/getting-started/tutorials/jooq-in-modern-ides/ | defect | remove the modern ides section from the manual this section was never written and it won t be either let s just remove it | 1 |

26,423 | 20,105,290,970 | IssuesEvent | 2022-02-07 09:54:22 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Problem building CairoContext and accessing netcore3.1 | bug interface/infrastructure | I have just pulled the latest version so I can run a user simulation, but I cannot build the project even after a clean solution and rebuild all.

1. Severity Code Description Project File Line Suppression State

Error CS8141 The tuple element names in the signature of method 'CairoContext.GetPixelExtents(string, bool, bool)' must match the tuple element names of interface method 'IDrawContext.GetPixelExtents(string, bool, bool)' (including on the return type). ApsimNG (netcoreapp3.1) C:\Data\Source\Repos\ApsimX\ApsimNG\Views\Sheet\CairoContext.cs 75 Active

Any advice to get back up and running? | 1.0 | Problem building CairoContext and accessing netcore3.1 - I have just pulled the latest version so I can run a user simulation, but I cannot build the project even after a clean solution and rebuild all.

1. Severity Code Description Project File Line Suppression State

Error CS8141 The tuple element names in the signature of method 'CairoContext.GetPixelExtents(string, bool, bool)' must match the tuple element names of interface method 'IDrawContext.GetPixelExtents(string, bool, bool)' (including on the return type). ApsimNG (netcoreapp3.1) C:\Data\Source\Repos\ApsimX\ApsimNG\Views\Sheet\CairoContext.cs 75 Active

Any advice to get back up and running? | non_defect | problem building cairocontext and accessing i have just pulled the latest version so i can run a user simulation but i cannot build the project even after a clean solution and rebuild all severity code description project file line suppression state error the tuple element names in the signature of method cairocontext getpixelextents string bool bool must match the tuple element names of interface method idrawcontext getpixelextents string bool bool including on the return type apsimng c data source repos apsimx apsimng views sheet cairocontext cs active any advice to get back up and running | 0 |

50,721 | 13,187,697,518 | IssuesEvent | 2020-08-13 04:16:20 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [coinc-twc] example script does not work + missing documentation (Trac #1234) | Migrated from Trac combo reconstruction defect | 1) example testCoincTWC.py does *not* work.

Loading libflat-ntuple....................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (I3Tray.py:36 in load)

Traceback (most recent call last):

File "testCoincTWC.py", line 15, in <module>

load("libflat-ntuple")

File "/home/tpalczewski/code-sprint/IceRec-V5/build/lib/I3Tray.py", line 36, in load

% (sys.exc_info()[0], sys.exc_info()[1]), "I3Tray")

File "/home/tpalczewski/code-sprint/IceRec-V5/build/lib/icecube/icetray/i3logging.py", line 150, in log_fatal

raise RuntimeError(message + " (in " + tb[2] + ")")

RuntimeError: Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (in load)

script tries to use the deprecated libflat-ntuple library.

In addition tests should be placed in the resources/test directory.

2) BTW the resources/scripts directory is empty.

3) Please convert RELEASE_NOTES to .rst format.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1234">https://code.icecube.wisc.edu/ticket/1234</a>, reported by tpalczewski and owned by sderidder</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "1) example testCoincTWC.py does *not* work. \n\nLoading libflat-ntuple....................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (I3Tray.py:36 in load)\nTraceback (most recent call last):\n File \"testCoincTWC.py\", line 15, in <module>\n load(\"libflat-ntuple\")\n File \"/home/tpalczewski/code-sprint/IceRec-V5/build/lib/I3Tray.py\", line 36, in load\n % (sys.exc_info()[0], sys.exc_info()[1]), \"I3Tray\")\n File \"/home/tpalczewski/code-sprint/IceRec-V5/build/lib/icecube/icetray/i3logging.py\", line 150, in log_fatal\n raise RuntimeError(message + \" (in \" + tb[2] + \")\")\nRuntimeError: Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (in load)\n\nscript tries to use the deprecated libflat-ntuple library. \n\nIn addition tests should be placed in the resources/test directory. \n\n2) BTW the resources/scripts directory is empty. \n\n3) Please convert RELEASE_NOTES to .rst format. \n",

"reporter": "tpalczewski",

"cc": "Sam.DeRidder@UGent.be",

"resolution": "fixed",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "[coinc-twc] example scrip does not work + missing documentation",

"priority": "blocker",

"keywords": "",

"time": "2015-08-20T06:51:30",

"milestone": "",

"owner": "sderidder",

"type": "defect"

}

```

</p>

</details>

| 1.0 | [coinc-twc] example script does not work + missing documentation (Trac #1234) - 1) example testCoincTWC.py does *not* work.

Loading libflat-ntuple....................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (I3Tray.py:36 in load)

Traceback (most recent call last):

File "testCoincTWC.py", line 15, in <module>

load("libflat-ntuple")

File "/home/tpalczewski/code-sprint/IceRec-V5/build/lib/I3Tray.py", line 36, in load

% (sys.exc_info()[0], sys.exc_info()[1]), "I3Tray")

File "/home/tpalczewski/code-sprint/IceRec-V5/build/lib/icecube/icetray/i3logging.py", line 150, in log_fatal

raise RuntimeError(message + " (in " + tb[2] + ")")

RuntimeError: Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (in load)

script tries to use the deprecated libflat-ntuple library.

In addition tests should be placed in the resources/test directory.

2) BTW the resources/scripts directory is empty.

3) Please convert RELEASE_NOTES to .rst format.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1234">https://code.icecube.wisc.edu/ticket/1234</a>, reported by tpalczewski and owned by sderidder</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "1) example testCoincTWC.py does *not* work. \n\nLoading libflat-ntuple....................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (I3Tray.py:36 in load)\nTraceback (most recent call last):\n File \"testCoincTWC.py\", line 15, in <module>\n load(\"libflat-ntuple\")\n File \"/home/tpalczewski/code-sprint/IceRec-V5/build/lib/I3Tray.py\", line 36, in load\n % (sys.exc_info()[0], sys.exc_info()[1]), \"I3Tray\")\n File \"/home/tpalczewski/code-sprint/IceRec-V5/build/lib/icecube/icetray/i3logging.py\", line 150, in log_fatal\n raise RuntimeError(message + \" (in \" + tb[2] + \")\")\nRuntimeError: Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /home/tpalczewski/code-sprint/IceRec-V5/build/lib/libflat-ntuple.so: cannot open shared object file: No such file or directory (in load)\n\nscript tries to use the deprecated libflat-ntuple library. \n\nIn addition tests should be placed in the resources/test directory. \n\n2) BTW the resources/scripts directory is empty. \n\n3) Please convert RELEASE_NOTES to .rst format. \n",

"reporter": "tpalczewski",

"cc": "Sam.DeRidder@UGent.be",

"resolution": "fixed",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "[coinc-twc] example scrip does not work + missing documentation",

"priority": "blocker",

"keywords": "",

"time": "2015-08-20T06:51:30",

"milestone": "",

"owner": "sderidder",

"type": "defect"

}

```

</p>

</details>

| defect | example scrip does not work missing documentation trac example testcoinctwc py does not work loading libflat ntuple fatal failed to load library dlopen dynamic loading error home tpalczewski code sprint icerec build lib libflat ntuple so cannot open shared object file no such file or directory py in load traceback most recent call last file testcoinctwc py line in load libflat ntuple file home tpalczewski code sprint icerec build lib py line in load sys exc info sys exc info file home tpalczewski code sprint icerec build lib icecube icetray py line in log fatal raise runtimeerror message in tb runtimeerror failed to load library dlopen dynamic loading error home tpalczewski code sprint icerec build lib libflat ntuple so cannot open shared object file no such file or directory in load script tries to use the deprecated libflat ntuple library in addition tests should be placed in the resources test directory btw the resources scripts directory is empty please convert release notes to rst format migrated from json status closed changetime description example testcoinctwc py does not work n nloading libflat ntuple fatal failed to load library dlopen dynamic loading error home tpalczewski code sprint icerec build lib libflat ntuple so cannot open shared object file no such file or directory py in load ntraceback most recent call last n file testcoinctwc py line in n load libflat ntuple n file home tpalczewski code sprint icerec build lib py line in load n sys exc info sys exc info n file home tpalczewski code sprint icerec build lib icecube icetray py line in log fatal n raise runtimeerror message in tb nruntimeerror failed to load library dlopen dynamic loading error home tpalczewski code sprint icerec build lib libflat ntuple so cannot open shared object file no such file or directory in load n nscript tries to use the deprecated libflat ntuple library n nin addition tests should be placed in the resources test directory n btw the resources scripts 
directory is empty n please convert release notes to rst format n reporter tpalczewski cc sam deridder ugent be resolution fixed ts component combo reconstruction summary example scrip does not work missing documentation priority blocker keywords time milestone owner sderidder type defect | 1 |

437,469 | 30,600,502,967 | IssuesEvent | 2023-07-22 10:10:02 | Wintespe/ScanIt | https://api.github.com/repos/Wintespe/ScanIt | opened | CheckReady command added to the console | documentation |

**@­CheckReady command added to the console**

_v2.1.5_

Some commands – like Restart 1 - cause Tasmota to restart. @­CheckReady tests if the device is ready after this command.

**Parameter list**

```

Pre Wait time in ms before the test.

Retry Number of loops during the test.

Until Waiting time in ms in a loop.

Post Waiting time in ms after the test.

```

Example

@CheckReady Pre:100; Retry:10; Until:200; Post:10

­ | 1.0 | CheckReady command added to the console -

**@­CheckReady command added to the console**

_v2.1.5_

Some commands – like Restart 1 - cause Tasmota to restart. @­CheckReady tests if the device is ready after this command.

**Parameter list**

```

Pre Wait time in ms before the test.

Retry Number of loops during the test.

Until Waiting time in ms in a loop.

Post Waiting time in ms after the test.

```

Example

@CheckReady Pre:100; Retry:10; Until:200; Post:10

­ | non_defect | checkready command added to the console checkready command added to the console some commands – like restart cause tasmota to restart checkready tests if the device is ready after this command parameter list pre wait time in ms before the test retry number of loops during the test until waiting time in ms in a loop post waiting time in ms after the test example checkready pre retry untll post | 0 |

6,121 | 2,610,221,533 | IssuesEvent | 2015-02-26 19:10:17 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | siemens gigaset 4000 classic manual | auto-migrated Priority-Medium Type-Defect | ```

'''Анвар Дементьев'''

Hi everyone, can anyone tell me where to find

the siemens gigaset 4000 classic manual? It was

posted here at some point

'''Герт Савельев'''

Here is a good site where you can download it

http://bit.ly/1h3T93s

'''Василько Гущин'''

Thanks, that seems to be it, but it asks you to enter a phone number

'''Вильям Лихачёв'''

Nope, all fine, nothing was charged on my end

'''Андрон Большаков'''

No, it doesn't affect your balance

File information: siemens gigaset 4000 classic

manual

Uploaded: this month

Downloads: 175

Rating: 799

Average download speed: 1237

Similar files: 11

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 11:57 | 1.0 | siemens gigaset 4000 classic manual - ```

'''Анвар Дементьев'''

Hi everyone, can anyone tell me where to find

the siemens gigaset 4000 classic manual? It was

posted here at some point

'''Герт Савельев'''

Here is a good site where you can download it

http://bit.ly/1h3T93s

'''Василько Гущин'''

Thanks, that seems to be it, but it asks you to enter a phone number

'''Вильям Лихачёв'''

Nope, all fine, nothing was charged on my end

'''Андрон Большаков'''

No, it doesn't affect your balance

File information: siemens gigaset 4000 classic

manual

Uploaded: this month

Downloads: 175

Rating: 799

Average download speed: 1237

Similar files: 11

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 11:57 | defect | siemens gigaset classic manual анвар дементьев hi everyone can anyone tell me where to find the siemens gigaset classic manual it was posted here at some point герт савельев here is a good site where you can download it василько гущин thanks that seems to be it but it asks you to enter a phone number вильям лихачёв nope all fine nothing was charged on my end андрон большаков no it doesn t affect your balance file information siemens gigaset classic manual uploaded this month downloads rating average download speed similar files original issue reported on code google com by kondense gmail com on dec at | 1 |

30,148 | 6,033,371,252 | IssuesEvent | 2017-06-09 08:07:03 | moosetechnology/Moose | https://api.github.com/repos/moosetechnology/Moose | closed | Not all shapes support borderColor/borderWidth | Priority-Medium Type-Defect | Originally reported on Google Code with ID 1097

```

Some shapes are using the default stroke and/or the default stroke width, even if the

user sets a borderColor/borderWidth

|v ver circle box poly es |

v := RTView new.

ver := (1 to:5)collect:[:i | Point r:100 degrees:(360/5*i)].

circle := RTEllipse new size: 200; color: Color red; borderWidth:5;borderColor: Color

green.

box := RTBox new size: 200; color: Color red; borderWidth:5;borderColor: Color green.

poly := RTPolygon new size: 200; vertices:ver; color: Color red; borderWidth:5;borderColor:

Color green.

es := circle elementOn:'hello'.

v add: es.

es := box elementOn:'hello'.

v add: es.

es := poly elementOn:'hello'.

v add: es.

v @ RTDraggableView .

RTGridLayout on: v elements.

v

"all shapes should use the provided borderWidth (5) and borderColor (Green)"

moose build 3147

* Type-Defect

* Component-Roassal2

```

Reported by `nicolaihess` on 2014-11-13 11:40:38

<hr>

- _Attachment: shapes.png<br>_

| 1.0 | Not all shapes support borderColor/borderWidth - Originally reported on Google Code with ID 1097

```

Some shapes are using the default stroke and/or the default stroke width, even if the

user sets a borderColor/borderWidth

|v ver circle box poly es |

v := RTView new.

ver := (1 to:5)collect:[:i | Point r:100 degrees:(360/5*i)].

circle := RTEllipse new size: 200; color: Color red; borderWidth:5;borderColor: Color

green.

box := RTBox new size: 200; color: Color red; borderWidth:5;borderColor: Color green.

poly := RTPolygon new size: 200; vertices:ver; color: Color red; borderWidth:5;borderColor:

Color green.

es := circle elementOn:'hello'.

v add: es.

es := box elementOn:'hello'.

v add: es.

es := poly elementOn:'hello'.

v add: es.

v @ RTDraggableView .

RTGridLayout on: v elements.

v

"all shapes should use the provided borderWidth (5) and borderColor (Green)"

moose build 3147

* Type-Defect

* Component-Roassal2

```

Reported by `nicolaihess` on 2014-11-13 11:40:38

<hr>

- _Attachment: shapes.png<br>_

| defect | not all shapes support bordercolor borderwidth originally reported on google code with id some shapes are using the default stroke and or the default stroke width even if the user sets a bordercolor borderwidth v ver circle box poly es v rtview new ver to collect circle rtellipse new size color color red borderwidth bordercolor color green box rtbox new size color color red borderwidth bordercolor color green poly rtpolygon new size vertices ver color color red borderwidth bordercolor color green es circle elementon hello v add es es box elementon hello v add es es poly elementon hello v add es v rtdraggableview rtgridlayout on v elements v all shapes should use the provided borderwidth and bordercolor green moose build type defect component reported by nicolaihess on attachment shapes png | 1 |

38,612 | 8,948,475,582 | IssuesEvent | 2019-01-25 02:34:42 | svigerske/ipopt-donotuse | https://api.github.com/repos/svigerske/ipopt-donotuse | closed | test bug report | Ipopt defect | Issue created by migration from Trac.

Original creator: andreasw@us.ibm.com

Original creation time: 2006-05-02 18:11:07

Assignee: andreasw

Version: 3.0

I'm just testing if the forwarding of ticket changes to the mailing list works... | 1.0 | test bug report - Issue created by migration from Trac.

Original creator: andreasw@us.ibm.com

Original creation time: 2006-05-02 18:11:07

Assignee: andreasw

Version: 3.0

I'm just testing if the forwarding of ticket changes to the mailing list works... | defect | test bug report issue created by migration from trac original creator andreasw us ibm com original creation time assignee andreasw version i m just testing if the forwarding of ticket changes to the mailing list works | 1 |

570,211 | 17,021,256,246 | IssuesEvent | 2021-07-02 19:31:59 | HHS81/c182s | https://api.github.com/repos/HHS81/c182s | closed | Animation: nose gear scissor animation | 3D model animations (XML) bug middle priority | when I found out how the tracking animation is working.....

| 1.0 | Animation: nose gear scissor animation - when I found out how the tracking animation is working.....

| non_defect | animation nose gear scissor animation when i found out how the tracking animation is working | 0 |

35,219 | 7,659,745,381 | IssuesEvent | 2018-05-11 07:57:01 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | dnsdist: setVerboseHealthChecks() is missing from the documentation | defect dnsdist docs | ### Short description


`setVerboseHealthChecks()` is not documented, it would be nice to document it.

| 1.0 | dnsdist: setVerboseHealthChecks() is missing from the documentation - ### Short description


`setVerboseHealthChecks()` is not documented, it would be nice to document it.

| defect | dnsdist setverbosehealthchecks is missing from the documentation short description setverbosehealthchecks is not documented it would be nice to document it | 1 |

29,206 | 5,592,675,754 | IssuesEvent | 2017-03-30 05:45:39 | CenturyLinkCloud/MDW | https://api.github.com/repos/CenturyLinkCloud/MDW | opened | Process version discrepancies | Defect | Workflow process versions are stored in the .proc JSON definition and also in .mdw/versions. Discrepancies can cause major headaches. Removing version from the .proc file would be a major change because it would affect our ability to handle in-flight processes. However, we should at least perform some checks during import/export and when initializing.

One problem this causes is when launching a process from the Workflow tab of MDWHub. If the versions don't match, then the backend REST service may return a 404 response. | 1.0 | Process version discrepancies - Workflow process versions are stored in the .proc JSON definition and also in .mdw/versions. Discrepancies can cause major headaches. Removing version from the .proc file would be a major change because it would affect our ability to handle in-flight processes. However, we should at least perform some checks during import/export and when initializing.

One problem this causes is when launching a process from the Workflow tab of MDWHub. If the versions don't match, then the backend REST service may return a 404 response. | defect | process version discrepancies workflow process versions are stored in the proc json definition and also in mdw versions discrepancies can cause major headaches removing version from the proc file would be a major change because it would affect our ability to handle in flight processes however we should at least perform some checks during import export and when initializing one problem this causes is when launching a process from the workflow tab of mdwhub if the versions don t match then the backend rest service may return a response | 1 |

30,582 | 4,210,361,304 | IssuesEvent | 2016-06-29 09:38:31 | redaxo/redaxo | https://api.github.com/repos/redaxo/redaxo | closed | Edit view background color | Design / CSS |

Because of background-color: #9ca5b2 in .rex-main-frame, the edit view has a completely different background color from every other screen in Redaxo. There, and only there. Is it just my eyes that hurt, or does anyone else find this distracting?

Without it, it would look like every other subpage:

| 1.0 | Edit view background color -

Because of background-color: #9ca5b2 in .rex-main-frame, the edit view has a completely different background color from every other screen in Redaxo. There, and only there. Is it just my eyes that hurt, or does anyone else find this distracting?

Without it, it would look like every other subpage:

| non_defect | edit view background color because of background color in rex main frame the edit view has a completely different background color from every other screen in redaxo there and only there is it just my eyes that hurt or does anyone else find this distracting without it it would look like every other subpage | 0 |

43,866 | 17,702,775,946 | IssuesEvent | 2021-08-25 01:33:42 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Cost doubt | container-service/svc triaged cxp product-question Pri1 | It's said that AKS is free, but what about the AKS load balancers? AFAIK, AKS uses a Standard LB by default. Is this still free?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 60a7a0a8-97e7-0fda-763c-1a9972f4e9bc

* Version Independent ID: 82b46441-43fc-fe48-97e2-0f3fca3d6eab

* Content: [Use a Public Load Balancer - Azure Kubernetes Service](https://docs.microsoft.com/en-us/azure/aks/load-balancer-standard)

* Content Source: [articles/aks/load-balancer-standard.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/aks/load-balancer-standard.md)

* Service: **container-service**

* GitHub Login: @palma21

* Microsoft Alias: **jpalma** | 1.0 | Cost doubt - It's said that AKS is free, but what about the AKS load balancers? AFAIK, AKS uses a Standard LB by default. Is this still free?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 60a7a0a8-97e7-0fda-763c-1a9972f4e9bc

* Version Independent ID: 82b46441-43fc-fe48-97e2-0f3fca3d6eab

* Content: [Use a Public Load Balancer - Azure Kubernetes Service](https://docs.microsoft.com/en-us/azure/aks/load-balancer-standard)

* Content Source: [articles/aks/load-balancer-standard.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/aks/load-balancer-standard.md)

* Service: **container-service**

* GitHub Login: @palma21

* Microsoft Alias: **jpalma** | non_defect | cost doubt it s said that aks is free but what about the aks load balancers afaik aks uses a standard lb by default is this still free document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service container service github login microsoft alias jpalma | 0 |

51,244 | 13,207,401,227 | IssuesEvent | 2020-08-14 22:57:53 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | Python on Mac defaulting to system (Trac #90) | IceTray Incomplete Migration Migrated from Trac defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/90">https://code.icecube.wisc.edu/projects/icecube/ticket/90</a>, reported by cgils and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-09-07T17:14:11",

"_ts": "1189185251000000",

"description": "15:47 < blaufuss@> blaufuss@teufel[~/..cework/offline/build](I3)% ./examples/resources/scripts/pass1.py\n\n15:47 < blaufuss@> Fatal Python error: Interpreter not initialized (version mismatch?)\n\n15:47 < blaufuss@> Abort trap\n\n15:47 < blaufuss@> blaufuss@teufel[~/..cework/offline/build](I3)% which python\n\n15:47 < blaufuss@> /Users/blaufuss/icework/i3tools/bin/python\n\n15:47 < blaufuss@> I reproduce Georges error on teufel\n\n15:49 < gekolu > OK so I'm not totally mad :-)\n\n15:49 < drool@> hrm\n\n15:50 < blaufuss@> otool -L libithon.so\n\n15:50 < blaufuss@> /System/Library/Frameworks/Python.framework/Versions/2.3/Python (compatibility version 2.3.0, current version 2.3.5)",

"reporter": "cgils",

"cc": "",

"resolution": "fixed",

"time": "2007-08-13T19:58:05",

"component": "IceTray",

"summary": "Python on Mac defaulting to system",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "cgils",

"type": "defect"

}

```

</p>

</details>
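
The log above compares `which python` with the Python framework the library is linked against. As a complementary check, a minimal sketch like the following reports which interpreter is actually running a script. The helper name `interpreter_summary` is hypothetical and not part of IceTray; it only reads standard `sys` attributes.

```python
import sys


def interpreter_summary():
    """Collect the details that identify the running Python interpreter."""
    return {
        # Full path of the interpreter binary executing this script.
        "executable": sys.executable,
        # Installation prefix; a system framework and a tools directory differ here.
        "prefix": sys.prefix,
        # Major.minor.micro version of the running interpreter.
        "version": "%d.%d.%d" % sys.version_info[:3],
    }


if __name__ == "__main__":
    for key, value in sorted(interpreter_summary().items()):
        print("%s: %s" % (key, value))
```

If the reported prefix or version differs from the Python that a compiled module was linked against, a mismatch like the one in the log is a plausible cause.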

| 1.0 | Python on Mac defaulting to system (Trac #90) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/90">https://code.icecube.wisc.edu/projects/icecube/ticket/90</a>, reported by cgils and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-09-07T17:14:11",

"_ts": "1189185251000000",

"description": "15:47 < blaufuss@> blaufuss@teufel[~/..cework/offline/build](I3)% ./examples/resources/scripts/pass1.py\n\n15:47 < blaufuss@> Fatal Python error: Interpreter not initialized (version mismatch?)\n\n15:47 < blaufuss@> Abort trap\n\n15:47 < blaufuss@> blaufuss@teufel[~/..cework/offline/build](I3)% which python\n\n15:47 < blaufuss@> /Users/blaufuss/icework/i3tools/bin/python\n\n15:47 < blaufuss@> I reproduce Georges error on teufel\n\n15:49 < gekolu > OK so I'm not totally mad :-)\n\n15:49 < drool@> hrm\n\n15:50 < blaufuss@> otool -L libithon.so\n\n15:50 < blaufuss@> /System/Library/Frameworks/Python.framework/Versions/2.3/Python (compatibility version 2.3.0, current version 2.3.5)",

"reporter": "cgils",

"cc": "",

"resolution": "fixed",

"time": "2007-08-13T19:58:05",

"component": "IceTray",

"summary": "Python on Mac defaulting to system",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "cgils",

"type": "defect"

}

```

</p>

</details>

| defect | python on mac defaulting to system trac migrated from json status closed changetime ts description blaufuss teufel examples resources scripts py n fatal python error interpreter not initialized version mismatch n abort trap n blaufuss teufel which python n users blaufuss icework bin python n i reproduce georges error on teufel n ok so i m not totally mad n hrm n otool l libithon so n system library frameworks python framework versions python compatibility version current version reporter cgils cc resolution fixed time component icetray summary python on mac defaulting to system priority normal keywords milestone owner cgils type defect | 1 |

42,756 | 11,256,140,351 | IssuesEvent | 2020-01-12 14:22:52 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | DataTable: DOM memory leak on widget.refresh() | defect | 1) Environment

- PrimeFaces version: 7.0+

- Does it work on the newest released PrimeFaces version? NO

- Does it work on the newest sources in GitHub? NO

- Application server + version: ALL

- Affected browsers: ALL

## 2) Expected behavior

When calling `widget.refresh(cfg)` there should not be multiple duplicate cloned THEAD objects.

## 3) Actual behavior

For a scrollable DataTable, if you open the F12 console and execute `widget.refresh(cfg)` to refresh the table, multiple identical cloned THEAD DOM elements are created.

```xml

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

```

## 4) Steps to reproduce

1. Create a Scrollable Datatable.

2. In F12 Console type `widget.refresh(widget.cfg)`

3. Observe duplicate THEAD.

## 5) Sample XHTML

```xml

<p:dataTable id="tblScroll" widgetVar="widget" var="car" value="#{dtScrollView.cars2}" scrollable="true" scrollWidth="100%" resizeMode="expand" paginator="true" rows="2" sortMode="multiple">

<p:column headerText="Id" footerText="Id" sortBy="#{car.id}" filterBy="#{car.id}">

<h:outputText value="#{car.id}" />

</p:column>

<p:column headerText="Year" footerText="Year">

<h:outputText value="#{car.year}" />

</p:column>

<p:column headerText="Brand" footerText="Brand">

<h:outputText value="#{car.brand}" />

</p:column>

<p:column headerText="Color" footerText="Color">

<h:outputText value="#{car.color}" />

</p:column>

</p:dataTable>

```

## 6) Sample bean

From Showcase.

| 1.0 | DataTable: DOM memory leak on widget.refresh() - 1) Environment

- PrimeFaces version: 7.0+

- Does it work on the newest released PrimeFaces version? NO

- Does it work on the newest sources in GitHub? NO

- Application server + version: ALL

- Affected browsers: ALL

## 2) Expected behavior

When calling `widget.refresh(cfg)` there should not be multiple duplicate cloned THEAD objects.

## 3) Actual behavior

For a scrollable DataTable, if you open the F12 console and execute `widget.refresh(cfg)` to refresh the table, multiple identical cloned THEAD DOM elements are created.

```xml

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

<thead class="ui-datatable-scrollable-theadclone" style="height: 0px;"></thead>

```

## 4) Steps to reproduce

1. Create a Scrollable Datatable.

2. In F12 Console type `widget.refresh(widget.cfg)`

3. Observe duplicate THEAD.

## 5) Sample XHTML

```xml

<p:dataTable id="tblScroll" widgetVar="widget" var="car" value="#{dtScrollView.cars2}" scrollable="true" scrollWidth="100%" resizeMode="expand" paginator="true" rows="2" sortMode="multiple">

<p:column headerText="Id" footerText="Id" sortBy="#{car.id}" filterBy="#{car.id}">

<h:outputText value="#{car.id}" />

</p:column>

<p:column headerText="Year" footerText="Year">

<h:outputText value="#{car.year}" />

</p:column>

<p:column headerText="Brand" footerText="Brand">

<h:outputText value="#{car.brand}" />

</p:column>

<p:column headerText="Color" footerText="Color">

<h:outputText value="#{car.color}" />

</p:column>

</p:dataTable>

```

## 6) Sample bean

From Showcase.

| defect | datatable dom memory leak on widget refresh environment primefaces version does it work on the newest released primefaces version no does it work on the newest sources in github no application server version all affected browsers all expected behavior when calling widget refresh cfg there should not be multiple duplicate cloned thead objects actual behavior for scrollable datatable if you open console and execute widget refresh cfg to refresh the datatable you will see multiple identical thead clone dom elements get created xml steps to reproduce create a scrollable datatable in console type widget refresh widget cfg observe duplicate thead sample xhtml xml sample bean from showcase | 1 |

8,133 | 2,611,453,798 | IssuesEvent | 2015-02-27 05:01:03 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | graphic bug any map does not show well | auto-migrated Priority-Medium Type-Defect | ```

When starting a game, the map displays correctly, but when the player moves, the

graphics do not display correctly. The background is limited to a small rectangle

and the hedgehogs seem to float in the air.

What is the expected output? What do you see instead?

stable graphic

What version of the product are you using? On what operating system?

0.9.14.1

Please provide any additional information below.

```

Original issue reported on code.google.com by `longb...@gmail.com` on 15 Dec 2010 at 8:31 | 1.0 | graphic bug any map does not show well - ```

When starting a game, the map displays correctly, but when the player moves, the

graphics do not display correctly. The background is limited to a small rectangle

and the hedgehogs seem to float in the air.

What is the expected output? What do you see instead?

stable graphic

What version of the product are you using? On what operating system?

0.9.14.1

Please provide any additional information below.

```

Original issue reported on code.google.com by `longb...@gmail.com` on 15 Dec 2010 at 8:31 | defect | graphic bug any map doesnot show well when starting a game map shows well but when player move the graphic does not show well the backgroud is limited to a small rectangle and the hedges seems to stay in the air what is the expected output what do you see instead stable graphic what version of the product are you using on what operating system please provide any additional information below original issue reported on code google com by longb gmail com on dec at | 1 |

68,268 | 21,573,455,381 | IssuesEvent | 2022-05-02 11:08:25 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Disable user location caching in LocationPicker | T-Defect O-Uncommon A-Location-Sharing | ### Steps to reproduce

1. Reset perms for element

2. Open the location sharing dialog, select 'own location'

3. Allow geolocation in the browser permissions popup

4. Close the location share dialog

5. Block geolocation permissions

6. Open the location sharing dialog again, select 'own location'

### Outcome

#### What did you expect?

'No permissions' error

#### What happened instead?

The map centered on my current location

Maplibre caches your last known position for performance reasons when `trackUserLocation` config is true.

### Operating system

_No response_

### Browser information

_No response_

### URL for webapp

_No response_

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

No | 1.0 | Disable user location caching in LocationPicker - ### Steps to reproduce

1. Reset perms for element

2. Open the location sharing dialog, select 'own location'

3. Allow geolocation in the browser permissions popup

4. Close the location share dialog

5. Block geolocation permissions

6. Open the location sharing dialog again, select 'own location'

### Outcome

#### What did you expect?

'No permissions' error

#### What happened instead?

The map centered on my current location

Maplibre caches your last known position for performance reasons when `trackUserLocation` config is true.

### Operating system

_No response_

### Browser information

_No response_

### URL for webapp

_No response_

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

No | defect | disable user location caching in locationpicker steps to reproduce reset perms for element open the location sharing dialog select own location allow geolocation in the browser permissions popup close the location share dialog block geolocation permissions open the location sharing dialog again select own location outcome what did you expect no permissions error what happened instead the map centered on my current location maplibre caches your last known position for performance reasons when trackuserlocation config is true operating system no response browser information no response url for webapp no response application version no response homeserver no response will you send logs no | 1 |

6,798 | 9,100,909,511 | IssuesEvent | 2019-02-20 09:47:00 | marcelm/cutadapt | https://api.github.com/repos/marcelm/cutadapt | opened | Remove --rest-file option | incompatible | The `--info-file` option should be used instead. Also document how to turn an info file into an equivalent rest file. | True | Remove --rest-file option - The `--info-file` option should be used instead. Also document how to turn an info file into an equivalent rest file. | non_defect | remove rest file option the info file option should be used instead also document how to turn an info file into an equivalent rest file | 0 |

41,601 | 6,922,852,548 | IssuesEvent | 2017-11-30 06:04:08 | KeevanDance/CFThrowdown-lite | https://api.github.com/repos/KeevanDance/CFThrowdown-lite | closed | QC Checklist | Documentation | **Admin**

- [ ] Clicking logout should logout the user and return to the public page removing access to write data

Workouts:

- [ ] Clicking workouts should show all workouts where default sort is RX (or scaled if RX is not an option)

- [ ] Clicking on a workout should take you to the details page of that workout displaying name, division, gender, score type, and steps

- [ ] Clicking on Add Workout should allow you to enter and submit a new workout. This should add the workout to all existing competitors of the same division and gender and to the division that was selected

- [ ] Clicking "submit workout scores" should iterate through all competitors that are part of this workout and place them according to their score for both Timed and Weighted workouts

Competitors:

- [ ] Clicking on Competitors should show all competitors with options to filter by gender and division

- [ ] Clicking Add Competitor should allow you to create a new competitor. This should add all of the existing relevant workouts to the scores array for that competitor based on division and gender

- [ ] Clicking on a Competitor should show more information for that competitor

- [ ] Clicking on Edit Mode should flip the button text to Save Changes and allow you to edit every part of the competitor. Updating gender and/or division should alter the scores array to only have workouts that match the combination

Divisions:

- [ ] Clicking on Divisions should show all available divisions

- [ ] Clicking on Add Division should give you the option to add a division

- [ ] Clicking on a division should give you a button to then delete the division

- [ ] Clicking the delete this division button should delete the division (including workoutIds under that division), workouts associated with this division, and update competitors removing scores and division for those that are associated with this division

**Public**

- [ ] Clicking Login here should take the user to a login page where they can enter admin credentials and be taken to an admin home page

Leaderboard:

Workouts:

- [ ] Clicking Workouts should display all of the workouts for the filter set (default is Men, RX)

Competitors:

- [ ] Clicking on Competitors should show all competitors allowing for the filtering by gender and division | 1.0 | QC Checklist - **Admin**

- [ ] Clicking logout should logout the user and return to the public page removing access to write data

Workouts:

- [ ] Clicking workouts should show all workouts where default sort is RX (or scaled if RX is not an option)

- [ ] Clicking on a workout should take you to the details page of that workout displaying name, division, gender, score type, and steps

- [ ] Clicking on Add Workout should allow you to enter and submit a new workout. This should add the workout to all existing competitors of the same division and gender and to the division that was selected

- [ ] Clicking "submit workout scores" should iterate through all competitors that are part of this workout and place them according to their score for both Timed and Weighted workouts

Competitors:

- [ ] Clicking on Competitors should show all competitors with options to filter by gender and division

- [ ] Clicking Add Competitor should allow you to create a new competitor. This should add all of the existing relevant workouts to the scores array for that competitor based on division and gender

- [ ] Clicking on a Competitor should show more information for that competitor

- [ ] Clicking on Edit Mode should flip the button text to Save Changes and allow you to edit every part of the competitor. Updating gender and/or division should alter the scores array to only have workouts that match the combination

Divisions:

- [ ] Clicking on Divisions should show all available divisions

- [ ] Clicking on Add Division should give you the option to add a division

- [ ] Clicking on a division should give you a button to then delete the division

- [ ] Clicking the delete this division button should delete the division (including workoutIds under that division), workouts associated with this division, and update competitors removing scores and division for those that are associated with this division

**Public**

- [ ] Clicking Login here should take the user to a login page where they can enter admin credentials and be taken to an admin home page

Leaderboard:

Workouts:

- [ ] Clicking Workouts should display all of the workouts for the filter set (default is Men, RX)

Competitors:

- [ ] Clicking on Competitors should show all competitors allowing for the filtering by gender and division | non_defect | qc checklist admin clicking logout should logout the user and return to the public page removing access to write data workouts clicking workouts should show all workouts where default sort is rx or scaled if rx is not an option clicking on a workout should take you to the details page of that workout displaying name division gender score type and steps clicking on add workout should allow you to enter and submit a new workout this should add the workout to all existing competitors of the same division and gender and to the division that was selected clicking submit workout scores should iterate through all competitors apart of this workout and place them according to their score for both timed and weighted workouts competitors click on competitors should show all competitors with options to filter by gender and division clicking add competitor should allow you to create a new competitor this should add all of the existing relevant workouts to the scores array for that competitor based on division and gender clicking on a competitor should show more information for that competitor clicking on edit mode should flip the button text to save changes and allow you to edit every part of the competitor updating gender and or division should alter the scores array to only have workouts that match the combination divisions clicking on divisions should show all available divisions clicking on add division should give you the option to add a division clicking on a division should give you a button to then delete the division clicking the delete this division button should delete the division including workoutids under that division workouts associated with this division and update competitors removing scores and division for those that are associated with this division public clicking login here should take the user to a login page where they can enter 
admin credentials and be taken to an admin home page leaderboard workouts clicking workouts should display all of the workouts for the filter set default is men rx competitors clicking on competitors should show all competitors allowing for the filtering by gender and division | 0 |

20,817 | 3,420,904,147 | IssuesEvent | 2015-12-08 16:35:55 | dkfans/keeperfx | https://api.github.com/repos/dkfans/keeperfx | closed | Imps never claim when gems are available (Stable) | Branch-Stable Priority-High Status-Fixed Type-Defect | Load the attached save and see Orcs break down a door. Notice Imps will not claim the path beyond it no matter how long you wait.

For players this is annoying, but for computer players this might be game breaking.

Save is from KeeperFX v0.4.6 r1737 patch, git 04d7924, dated 2015-11-05 18:59:28 | 1.0 | Imps never claim when gems are available (Stable) - Load the attached save and see Orcs break down a door. Notice Imps will not claim the path beyond it no matter how long you wait.

For players this is annoying, but for computer players this might be game breaking.

Save is from KeeperFX v0.4.6 r1737 patch, git 04d7924, dated 2015-11-05 18:59:28 | defect | imps never claim when gems are available stable load the attached save and see orcs break down a door notice imps will not claim the path beyond it no matter how long you wait for players this is annoying but for computer players this might be game breaking save is from keeperfx patch git dated | 1 |

313,081 | 9,556,782,403 | IssuesEvent | 2019-05-03 09:26:42 | OpenSourceEconomics/soepy | https://api.github.com/repos/OpenSourceEconomics/soepy | closed | PACKAGE_DIR path | pb package priority low size small | `PACKAGE_DIR` points one level above `soepy`, at the root of the repository. I would like us not to reference anything outside the package itself there. So, instead of

```python
PACKAGE_DIR = Path(__file__).parent.parent.absolute()
TEST_RESOURCES_DIR = PACKAGE_DIR / "soepy" / "test" / "resources"
```

we should use

```python
PACKAGE_DIR = Path(__file__).parent.absolute()
TEST_RESOURCES_DIR = PACKAGE_DIR / "test" / "resources"
```

However, this requires checking how the tests that use the two paths are affected.
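
To get a feel for the impact, here is a minimal sketch that compares the two definitions. It assumes the module defining these constants lives directly inside `soepy/`; the path `/repo/soepy/config.py` is a hypothetical stand-in for `__file__`. `PurePosixPath` is used and `.absolute()` is omitted so that the sketch never touches the filesystem.

```python
from pathlib import PurePosixPath


def resource_dirs(module_file):
    """Return the old and new PACKAGE_DIR / TEST_RESOURCES_DIR pairs."""
    module_file = PurePosixPath(module_file)

    # Current definition: two levels up, i.e. the repository root.
    old_package_dir = module_file.parent.parent
    old_resources = old_package_dir / "soepy" / "test" / "resources"

    # Proposed definition: one level up, i.e. the package itself.
    new_package_dir = module_file.parent
    new_resources = new_package_dir / "test" / "resources"

    return old_package_dir, old_resources, new_package_dir, new_resources


# Hypothetical location of the module that defines the constants.
old_pkg, old_res, new_pkg, new_res = resource_dirs("/repo/soepy/config.py")
print(old_pkg, new_pkg)
print(old_res == new_res)
```

Under that assumption both versions of `TEST_RESOURCES_DIR` resolve to the same directory, so only tests that use `PACKAGE_DIR` directly should need a closer look.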

| 1.0 | PACKAGE_DIR path - `PACKAGE_DIR` points one level above `soepy`, at the root of the repository. I would like us not to reference anything outside the package itself there. So, instead of

```python
PACKAGE_DIR = Path(__file__).parent.parent.absolute()
TEST_RESOURCES_DIR = PACKAGE_DIR / "soepy" / "test" / "resources"
```

we should use

```python
PACKAGE_DIR = Path(__file__).parent.absolute()
TEST_RESOURCES_DIR = PACKAGE_DIR / "test" / "resources"
```

However, this requires checking how the tests that use the two paths are affected.

| non_defect | package dir path package dir points one level higher than soepy and at the root of the repository i would like us to not reference anything outside package itself there so instead of package dir path file parent parent absolute test resources dir package dir soepy test resources we should use package dir path file parent absolute test resources dir package dir test resources however this requires to check how the tests that use the two paths are affected | 0 |

3,769 | 2,540,122,359 | IssuesEvent | 2015-01-27 19:38:30 | EFForg/privacybadgerchrome | https://api.github.com/repos/EFForg/privacybadgerchrome | closed | Enhancement: 1-click config fixes for common sites | bug High priority | ## Scenario

I'm on youtube, I've installed PB. It seems to work. I want to comment, click comment box. Popup opens then closes. Click it again, chrome blocks popup. Allow popups, click again. Popup opens & closes. Youtube appears to be trying to call to google+ or whatever for commenting. Check privacy badger:

* apis.google.com :yellow_heart:

* gg.google.com :red_circle:

* plus.google.com :yellow_heart:

* www.google.com :yellow_heart:

Ok.... what do I do now? "I just want to comment."

## Probable outcome for many people

Disable/remove pb which is "breaking" the site, or just disable blocking willy nilly (which sort of defeats the purpose of the tool).

## Proposal

It would be nice if the pb icon blinked or something in this scenario so I could click it & be presented with "Allow youtube commenting." These configurations would have to be tailor-made for each site/service, but I believe that a 1-click-fix on e.g. the top 10 services could alleviate 80+% of user pain around this. | 1.0 | Enhancement: 1-click config fixes for common sites - ## Scenario

I'm on youtube, I've installed PB. It seems to work. I want to comment, click comment box. Popup opens then closes. Click it again, chrome blocks popup. Allow popups, click again. Popup opens & closes. Youtube appears to be trying to call to google+ or whatever for commenting. Check privacy badger:

* apis.google.com :yellow_heart:

* gg.google.com :red_circle:

* plus.google.com :yellow_heart:

* www.google.com :yellow_heart:

Ok.... what do I do now? "I just want to comment."

## Probable outcome for many people

Disable/remove pb which is "breaking" the site, or just disable blocking willy nilly (which sort of defeats the purpose of the tool).

## Proposal

It would be nice if the pb icon blinked or something in this scenario so I could click it & be presented with "Allow youtube commenting." These configurations would have to be tailor-made for each site/service, but I believe that a 1-click-fix on e.g. the top 10 services could alleviate 80+% of user pain around this. | non_defect | enhancement click config fixes for common sites scenario i m on youtube i ve installed pb it seems to work i want to comment click comment box popup opens then closes click it again chrome blocks popup allow popups click again popup opens closes youtube appears to be trying to call to google or whatever for commenting check privacy badger apis google com yellow heart gg google com red circle plus google com yellow heart yellow heart ok what do i do now i just want to comment probable outcome for many people disable remove pb which is breaking the site or just disable blocking willy nilly which sort of defeats the purpose of the tool proposal it would be nice if the pb icon blinked or something in this scenario so i could click it be presented with allow youtube commenting these configurations would have to be tailor made for each site service but i believe that a click fix on e g the top services could alleviate of user pain around this | 0 |

9,909 | 2,616,009,823 | IssuesEvent | 2015-03-02 00:53:24 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | CoolDown shows zero for several types of Units | auto-migrated Component-Logic Priority-Medium Type-Defect Usability | ```

What steps will reproduce the problem?

1. unit->getAirWeaponCooldown() is always 0

2. unit->getGroundWeaponCooldown() is always 0

3. only happens for tank and goliath

What is the expected output? What do you see instead?

expect to find some non zero value when the unit is attacking.

What version of the product are you using? On what operating system?

BWAPI 2.6.1

Please provide any additional information below.

this happens only on tank and goliath (among the units I tested). nothing

is wrong for marine, ghost, ultralisk...

also, unit->isStartingAttack() always returns false for tank and goliath.

```

Original issue reported on code.google.com by `hero...@gmail.com` on 22 Jan 2010 at 7:55 | 1.0 | CoolDown shows zero for several types of Units - ```

What steps will reproduce the problem?

1. unit->getAirWeaponCooldown() is always 0

2. unit->getGroundWeaponCooldown() is always 0

3. only happens for tank and goliath

What is the expected output? What do you see instead?

expect to find some non zero value when the unit is attacking.

What version of the product are you using? On what operating system?

BWAPI 2.6.1

Please provide any additional information below.

this happens only on tank and goliath (among the units I tested). nothing

is wrong for marine, ghost, ultralisk...

also, unit->isStartingAttack() always returns false for tank and goliath.

```

Original issue reported on code.google.com by `hero...@gmail.com` on 22 Jan 2010 at 7:55 | defect | cooldown shows zero for several types of units what steps will reproduce the problem unit getairweaponcooldown is always unit getgroundweaponcooldown is always only happens for tank and goliath what is the expected output what do you see instead expect to find some non zero value when the unit is attacking what version of the product are you using on what operating system bwapi please provide any additional information below this happens only on tank and goliath among the units i tested nothing is wrong for marine ghost ultralisk also unit isstartingattack always return false for tank and goliath original issue reported on code google com by hero gmail com on jan at | 1 |

17,071 | 2,974,593,141 | IssuesEvent | 2015-07-15 02:10:23 | Reimashi/jotai | https://api.github.com/repos/Reimashi/jotai | closed | gadget loses info on shutdown/startup in WinXP | auto-migrated Priority-Medium Type-Defect wontfix | ```

Beta .4 works great with WinXP sp3, MSI 790GX-G65 mb & AMD Phenom II processor,

4g memory.

Only problem I've encountered is that the desktop gadget loses the applied info

(fan speed, cpu temp, etc) at system shutdown/startup and it has to be

reapplied. Have I missed something?

Thanks for a great utility.

```

Original issue reported on code.google.com by `ethe...@gmail.com` on 4 Apr 2012 at 11:22 | 1.0 | gadget loses info on shutdown/startup in WinXP - ```

Beta .4 works great with WinXP sp3, MSI 790GX-G65 mb & AMD Phenom II processor,

4g memory.

Only problem I've encountered is that the desktop gadget loses the applied info

(fan speed, cpu temp, etc) at system shutdown/startup and it has to be

reapplied. Have I missed something?

Thanks for a great utility.

```

Original issue reported on code.google.com by `ethe...@gmail.com` on 4 Apr 2012 at 11:22 | defect | gadget loses info on shutdown startup in winxp beta works great with winxp msi mb amd phenom ii processor memory only problem i ve encountered is that the desktop gadget loses the applied info fan speed cpu temp etc at system shutdown startup and it has to be reapplied have i missed something thanks for a great utility original issue reported on code google com by ethe gmail com on apr at | 1 |

120,689 | 17,644,257,790 | IssuesEvent | 2021-08-20 02:04:13 | DavidSpek/kale | https://api.github.com/repos/DavidSpek/kale | opened | CVE-2021-29518 (High) detected in tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl, tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29518 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</b>, <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>

<details><summary><b>tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/7b/c5/a97ed48fcc878e36bb05a3ea700c077360853c0994473a8f6b0ab4c2ddd2/tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/7b/c5/a97ed48fcc878e36bb05a3ea700c077360853c0994473a8f6b0ab4c2ddd2/tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: kale/examples/dog-breed-classification/requirements/requirements.txt</p>

<p>Path to vulnerable library: kale/examples/dog-breed-classification/requirements/requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</details>

<details><summary><b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: kale/examples/taxi-cab-classification/requirements.txt</p>

<p>Path to vulnerable library: kale/examples/taxi-cab-classification/requirements.txt</p>

<p>

Dependency Hierarchy:

- tfx_bsl-0.21.4-cp27-cp27mu-manylinux2010_x86_64.whl (Root Library)

- :x: **tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. In eager mode (default in TF 2.0 and later), session operations are invalid. However, users could still call the raw ops associated with them and trigger a null pointer dereference. The implementation(https://github.com/tensorflow/tensorflow/blob/eebb96c2830d48597d055d247c0e9aebaea94cd5/tensorflow/core/kernels/session_ops.cc#L104) dereferences the session state pointer without checking if it is valid. Thus, in eager mode, `ctx->session_state()` is nullptr and the call of the member function is undefined behavior. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29518>CVE-2021-29518</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-62gx-355r-9fhg">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-62gx-355r-9fhg</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>
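The patched releases named in the vulnerability details (2.5.0, plus the cherry-picks on 2.4.2, 2.3.3, 2.2.3 and 2.1.4) can be turned into a quick check against a pinned version. This is a minimal sketch, not part of the WhiteSource report: `parse_version` and `has_fix` are hypothetical helper names, and the parser assumes plain numeric `X.Y.Z` version strings.

```python
# Patched releases as listed in the advisory text: 2.5.0 and later,
# plus one cherry-picked patch release per older supported minor line.
FIRST_FIXED = (2, 5, 0)
MIN_PATCH_BY_MINOR = {(2, 1): 4, (2, 2): 3, (2, 3): 3, (2, 4): 2}


def parse_version(version):
    """Turn 'X.Y.Z' into a tuple of three ints (assumes numeric parts only)."""
    parts = [int(part) for part in version.split(".")[:3]]
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def has_fix(version):
    """Return True if the version is one of the releases that carries the fix."""
    parsed = parse_version(version)
    if parsed >= FIRST_FIXED:
        return True
    min_patch = MIN_PATCH_BY_MINOR.get(parsed[:2])
    return min_patch is not None and parsed[2] >= min_patch


# The two wheels flagged in this report.
for pinned in ("1.0.0", "2.1.0"):
    print(pinned, "has fix:", has_fix(pinned))
```

The function only answers whether a version is one of the releases the advisory lists as patched; it does not decide whether an older release is actually affected.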

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-29518 (High) detected in tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl, tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-29518 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</b>, <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>

<details><summary><b>tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/7b/c5/a97ed48fcc878e36bb05a3ea700c077360853c0994473a8f6b0ab4c2ddd2/tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/7b/c5/a97ed48fcc878e36bb05a3ea700c077360853c0994473a8f6b0ab4c2ddd2/tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: kale/examples/dog-breed-classification/requirements/requirements.txt</p>

<p>Path to vulnerable library: kale/examples/dog-breed-classification/requirements/requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</details>

<details><summary><b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ef/73/205b5e7f8fe086ffe4165d984acb2c49fa3086f330f03099378753982d2e/tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: kale/examples/taxi-cab-classification/requirements.txt</p>

<p>Path to vulnerable library: kale/examples/taxi-cab-classification/requirements.txt</p>

<p>

Dependency Hierarchy:

- tfx_bsl-0.21.4-cp27-cp27mu-manylinux2010_x86_64.whl (Root Library)

- :x: **tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an end-to-end open source platform for machine learning. In eager mode (default in TF 2.0 and later), session operations are invalid. However, users could still call the raw ops associated with them and trigger a null pointer dereference. The implementation (https://github.com/tensorflow/tensorflow/blob/eebb96c2830d48597d055d247c0e9aebaea94cd5/tensorflow/core/kernels/session_ops.cc#L104) dereferences the session state pointer without checking if it is valid. Thus, in eager mode, `ctx->session_state()` is nullptr and the call of the member function is undefined behavior. The fix will be included in TensorFlow 2.5.0. We will also cherrypick this commit on TensorFlow 2.4.2, TensorFlow 2.3.3, TensorFlow 2.2.3 and TensorFlow 2.1.4, as these are also affected and still in the supported range.

<p>Publish Date: 2021-05-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-29518>CVE-2021-29518</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-62gx-355r-9fhg">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-62gx-355r-9fhg</a></p>

<p>Release Date: 2021-05-14</p>

<p>Fix Resolution: tensorflow - 2.5.0, tensorflow-cpu - 2.5.0, tensorflow-gpu - 2.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high detected in tensorflow whl tensorflow whl cve high severity vulnerability vulnerable libraries tensorflow whl tensorflow whl tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file kale examples dog breed classification requirements requirements txt path to vulnerable library kale examples dog breed classification requirements requirements txt dependency hierarchy x tensorflow whl vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file kale examples taxi cab classification requirements txt path to vulnerable library kale examples taxi cab classification requirements txt dependency hierarchy tfx bsl whl root library x tensorflow whl vulnerable library found in base branch master vulnerability details tensorflow is an end to end open source platform for machine learning in eager mode default in tf and later session operations are invalid however users could still call the raw ops associated with them and trigger a null pointer dereference the implementation dereferences the session state pointer without checking if it is valid thus in eager mode ctx session state is nullptr and the call of the member function is undefined behavior the fix will be included in tensorflow we will also cherrypick this commit on tensorflow tensorflow tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type 
upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with whitesource | 0 |

181,451 | 14,020,733,713 | IssuesEvent | 2020-10-29 20:08:24 | lbowes/keep-to-calendar | https://api.github.com/repos/lbowes/keep-to-calendar | opened | test/pytest-config | incomplete type: test | ### Description

Investigate specifying `pytest` options in a config file
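As a starting point for that investigation, pytest reads a `[pytest]` section from a `pytest.ini` file, where `addopts` holds default command-line options and `testpaths` lists the directories to collect from. The sketch below is only an illustration: the option values are assumed, not taken from this repository, and `read_pytest_options` is a hypothetical helper that parses the text with the standard library so the contents can be inspected without running pytest.

```python
import configparser

# Example contents for a pytest.ini file. `addopts` and `testpaths` are real
# pytest ini keys; the values chosen here are assumptions for illustration.
PYTEST_INI = """\
[pytest]
addopts = -ra --strict-markers
testpaths = tests
"""


def read_pytest_options(ini_text):
    """Parse pytest.ini-style text and return the [pytest] section as a dict."""
    parser = configparser.ConfigParser()
    parser.read_string(ini_text)
    return dict(parser["pytest"])


options = read_pytest_options(PYTEST_INI)
print(options["addopts"])
```

The same options can also live under `[tool.pytest.ini_options]` in `pyproject.toml`, which may be preferable if the project already keeps its tooling configuration there.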

### TODO

todo | 1.0 | test/pytest-config - ### Description

Investigate specifying `pytest` options in a config file

### TODO

todo | non_defect | test pytest config description investigate specifying pytest options in a config file todo todo | 0 |

84,636 | 16,527,687,636 | IssuesEvent | 2021-05-26 22:50:20 | DIT112-V21/group-17 | https://api.github.com/repos/DIT112-V21/group-17 | opened | [PROBLEM] confirmPickupMessage(mailman,receiver) receiver object | Android Bug HighPriority Java code To improve | Description

The mailman object is not resolved properly: the method `Controller.confirmPickupMessage(mailman,receiver)` cannot find the mailman, and it crashes if used in another class.

related issue: #48 #65 | 1.0 | [PROBLEM] confirmPickupMessage(mailman,receiver) receiver object - Description

The mailman object is not resolved properly: the method `Controller.confirmPickupMessage(mailman,receiver)` cannot find the mailman, and it crashes if used in another class.

related issue: #48 #65 | non_defect | confirmpickupmessage mailman receiver receiver object description mailman object not found properly the confirmpickupmessage mailman receiver the method controller confirmpickupmessage mailman receiver can t find the mailman if used in another class it crashes related issue | 0 |

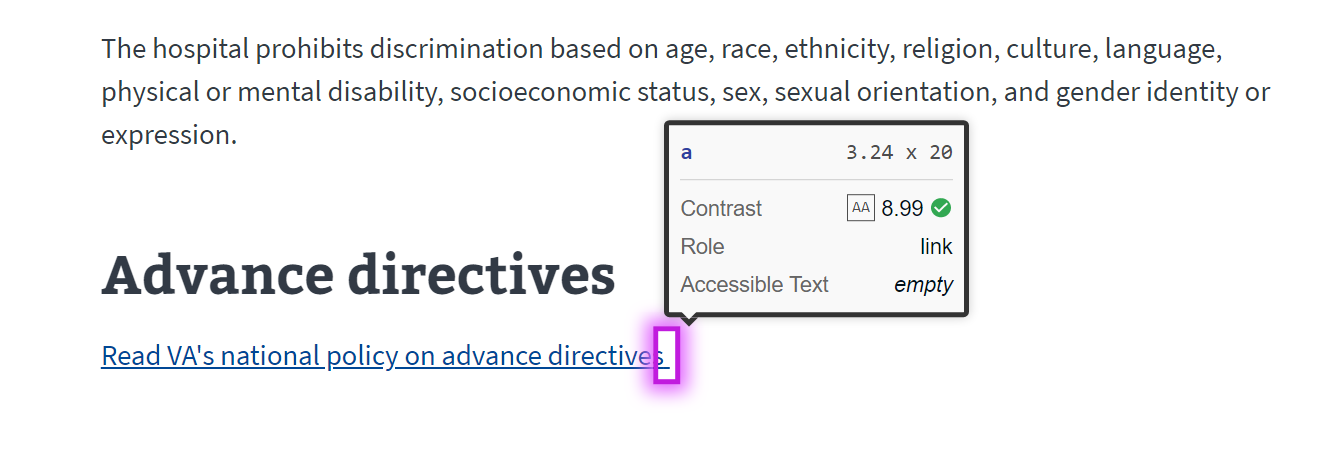

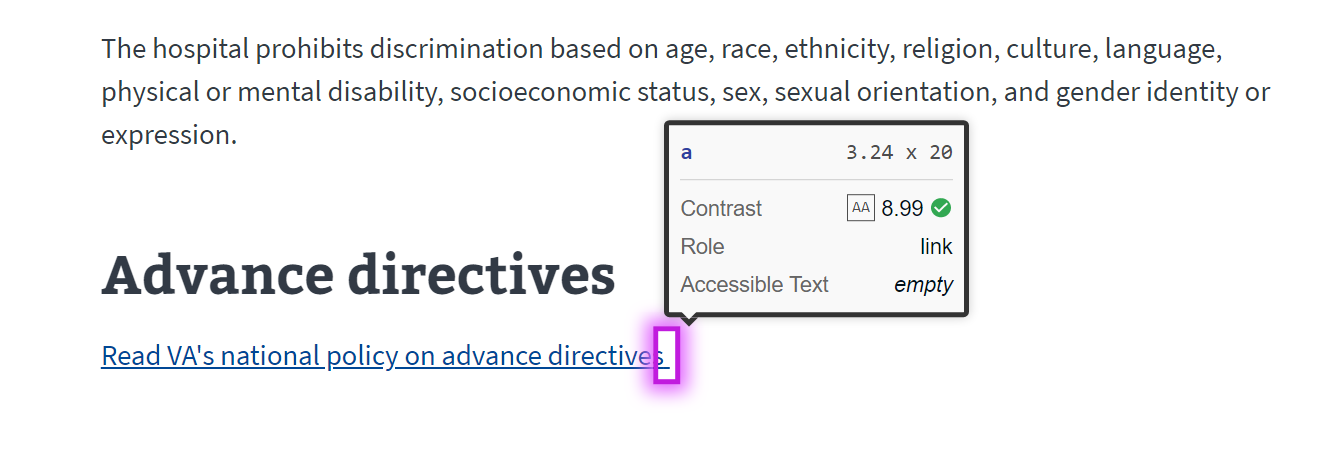

57,834 | 16,094,839,224 | IssuesEvent | 2021-04-26 21:30:37 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | Discovery issue for VAMC "Links must have discernible text" Defect-1 items | 508-defect-1 508/Accessibility frontend frontend-vamc stretch-goal vsa vsa-facilities | ## Issue Description

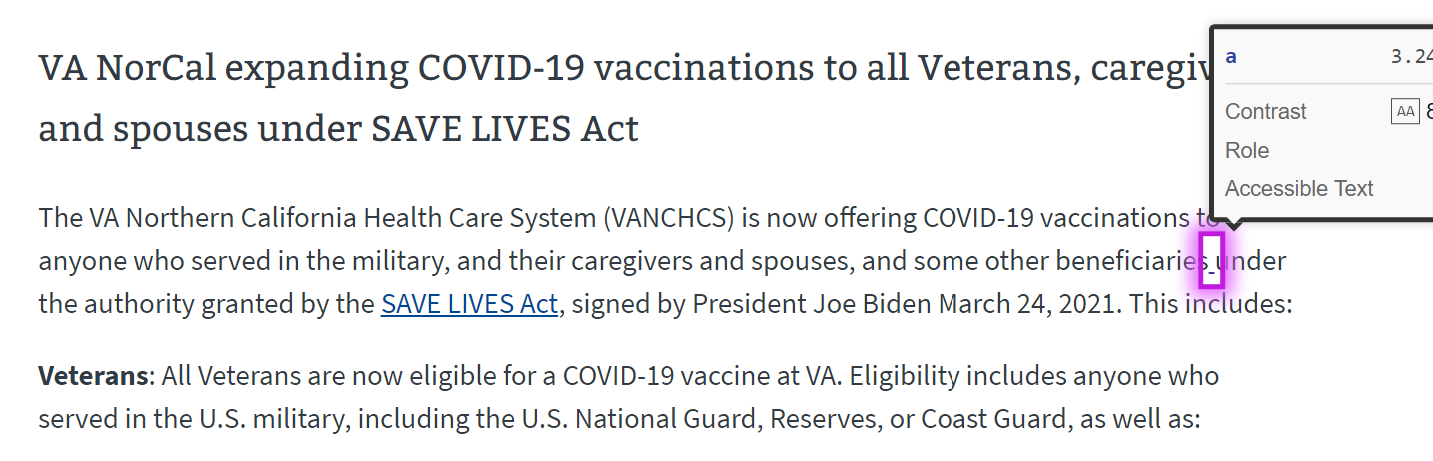

"Links must have discernible text" defects were discovered on several VAMC pages. We need to look at them individually to determine if they all have the same root cause and fix.

- https://www.va.gov/black-hills-health-care/policies/

- https://www.va.gov/minneapolis-health-care/programs/

- https://www.va.gov/northern-california-health-care/stories/

<details>

<summary> Policies page </summary>

</details>

<details>

<summary> Programs page </summary>

</details>

<details>

<summary>Stories page has two issues </summary>

</details>

---

## Tasks

- [ ] Dig into code to find source of error (content vs template, etc)

- [ ] For issues which can be addressed from front end, document tasks to resolve
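
To help with the first task, rendered HTML from each page can be scanned for links that have no text a screen reader could announce. The sketch below is a rough approximation written for illustration, not the accessibility checker's own rule: `EmptyLinkFinder` is a hypothetical class, and it only considers visible text, `aria-label`, `title`, and the `alt` text of nested images. It ignores cases such as `aria-labelledby` or visually hidden content.

```python
from html.parser import HTMLParser


class EmptyLinkFinder(HTMLParser):
    """Collect the href of every <a> element that has no discernible name."""

    def __init__(self):
        super().__init__()
        self.empty_links = []
        self._in_link = False
        self._href = None
        self._has_name = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a":
            self._in_link = True
            self._href = attributes.get("href")
            label = attributes.get("aria-label") or attributes.get("title") or ""
            self._has_name = bool(label.strip())
        elif tag == "img" and self._in_link:
            # An image inside a link names the link only if it has alt text.
            if (attributes.get("alt") or "").strip():
                self._has_name = True

    def handle_data(self, data):
        if self._in_link and data.strip():
            self._has_name = True

    def handle_endtag(self, tag):
        if tag == "a" and self._in_link:
            if not self._has_name:
                self.empty_links.append(self._href)
            self._in_link = False


def find_empty_links(html):
    finder = EmptyLinkFinder()
    finder.feed(html)
    return finder.empty_links


sample = '<a href="/a"><img src="x.png"></a><a href="/b">Read more</a>'
print(find_empty_links(sample))
```

Whether a flagged link comes from editor-entered content or from a template should show up in where the same markup pattern repeats across the three pages.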

## Acceptance Criteria

- [ ] Defects on the pages listed above have been investigated and a path for resolution is determined.

---

| 1.0 | Discovery issue for VAMC "Links must have discernible text" Defect-1 items - ## Issue Description

"Links must have discernible text" defects were discovered on several VAMC pages. We need to look at them individually to determine if they all have the same root cause and fix.

- https://www.va.gov/black-hills-health-care/policies/

- https://www.va.gov/minneapolis-health-care/programs/

- https://www.va.gov/northern-california-health-care/stories/

<details>

<summary> Policies page </summary>

</details>

<details>

<summary> Programs page </summary>

</details>

<details>

<summary>Stories page has two issues </summary>

</details>

---