Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9,588 | 2,615,163,170 | IssuesEvent | 2015-03-01 06:42:41 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | DIR-655 B1 WPS lock down | auto-migrated Priority-Triage Type-Defect | ```

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.): Latest (Backtrack5 R2)

1. What operating system are you using (Linux is the only supported OS)?

Backtrack5 R2

2. Is your wireless card in monitor mode (yes/no)? Yes

3. What is the signal strength of the Access Point you are trying to crack? 35

to 40

4. What is the manufacturer and model # of the device you are trying to

crack? DLink DIR-655 B1

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b **my BSSID** -vv --lock-delay=630

6. Please describe what you think the issue is.

After 0.19% progress, I got the warning "Detected AP rate limiting, waiting 60

seconds before re-checking. " So, I checked with wash command if the router had

actually locked down WPS. It turned out that WPS was indeed locked. So, I

increased the lock delay to 315 seconds, but it did not help. I increased it

further to 630 seconds, but that didn't help either. I guess the issue is that

we need to know the WPS lock duration/timeout for DIR 655 B1 router. Please

help.

7. Paste the output from Reaver below.

Detected AP rate limiting, waiting 60 seconds before re-checking.

```

Original issue reported on code.google.com by `varmasuy...@gmail.com` on 29 Jun 2012 at 10:22 | 1.0 | DIR-655 B1 WPS lock down - ```

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.): Latest (Backtrack5 R2)

1. What operating system are you using (Linux is the only supported OS)?

Backtrack5 R2

2. Is your wireless card in monitor mode (yes/no)? Yes

3. What is the signal strength of the Access Point you are trying to crack? 35

to 40

4. What is the manufacturer and model # of the device you are trying to

crack? DLink DIR-655 B1

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b **my BSSID** -vv --lock-delay=630

6. Please describe what you think the issue is.

After 0.19% progress, I got the warning "Detected AP rate limiting, waiting 60

seconds before re-checking. " So, I checked with wash command if the router had

actually locked down WPS. It turned out that WPS was indeed locked. So, I

increased the lock delay to 315 seconds, but it did not help. I increased it

further to 630 seconds, but that didn't help either. I guess the issue is that

we need to know the WPS lock duration/timeout for DIR 655 B1 router. Please

help.

7. Paste the output from Reaver below.

Detected AP rate limiting, waiting 60 seconds before re-checking.

```

Original issue reported on code.google.com by `varmasuy...@gmail.com` on 29 Jun 2012 at 10:22 | defect | dir wps lock down what version of reaver are you using only defects against the latest version will be considered latest what operating system are you using linux is the only supported os is your wireless card in monitor mode yes no yes what is the signal strength of the access point you are trying to crack to what is the manufacturer and model of the device you are trying to crack dlink dir what is the entire command line string you are supplying to reaver reaver i b my bssid vv lock delay please describe what you think the issue is after progress i got the warning detected ap rate limiting waiting seconds before re checking so i checked with wash command if the router had actually locked down wps it turned out that wps was indeed locked so i increased the lock delay to seconds but it did not help i increased it further to seconds but that didn t help either i guess the issue is that we need to know the wps lock duration timeout for dir router please help paste the output from reaver below detected ap rate limiting waiting seconds before re checking original issue reported on code google com by varmasuy gmail com on jun at | 1 |

277,499 | 30,659,260,374 | IssuesEvent | 2023-07-25 14:03:57 | rsoreq/zenbot | https://api.github.com/repos/rsoreq/zenbot | closed | CVE-2022-3517 (High) detected in minimatch-3.0.4.tgz - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-3517 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-3.0.4.tgz</b></p></summary>

<p>a glob matcher in javascript</p>

<p>Library home page: <a href="https://registry.npmjs.org/minimatch/-/minimatch-3.0.4.tgz">https://registry.npmjs.org/minimatch/-/minimatch-3.0.4.tgz</a></p>

<p>

Dependency Hierarchy:

- shelljs-0.8.4.tgz (Root Library)

- glob-7.1.6.tgz

- :x: **minimatch-3.0.4.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/rsoreq/zenbot/commit/7a24c0d7b98ee76e6bac827974cff490a7694378">7a24c0d7b98ee76e6bac827974cff490a7694378</a></p>

<p>Found in base branch: <b>unstable</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in the minimatch package. This flaw allows a Regular Expression Denial of Service (ReDoS) when calling the braceExpand function with specific arguments, resulting in a Denial of Service.

<p>Publish Date: 2022-10-17

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-3517>CVE-2022-3517</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-10-17</p>

<p>Fix Resolution: minimatch - 3.0.5</p>

</p>

</details>

<p></p>

| True | CVE-2022-3517 (High) detected in minimatch-3.0.4.tgz - autoclosed - ## CVE-2022-3517 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-3.0.4.tgz</b></p></summary>

<p>a glob matcher in javascript</p>

<p>Library home page: <a href="https://registry.npmjs.org/minimatch/-/minimatch-3.0.4.tgz">https://registry.npmjs.org/minimatch/-/minimatch-3.0.4.tgz</a></p>

<p>

Dependency Hierarchy:

- shelljs-0.8.4.tgz (Root Library)

- glob-7.1.6.tgz

- :x: **minimatch-3.0.4.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/rsoreq/zenbot/commit/7a24c0d7b98ee76e6bac827974cff490a7694378">7a24c0d7b98ee76e6bac827974cff490a7694378</a></p>

<p>Found in base branch: <b>unstable</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability was found in the minimatch package. This flaw allows a Regular Expression Denial of Service (ReDoS) when calling the braceExpand function with specific arguments, resulting in a Denial of Service.

<p>Publish Date: 2022-10-17

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-3517>CVE-2022-3517</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-10-17</p>

<p>Fix Resolution: minimatch - 3.0.5</p>

</p>

</details>

<p></p>

| non_defect | cve high detected in minimatch tgz autoclosed cve high severity vulnerability vulnerable library minimatch tgz a glob matcher in javascript library home page a href dependency hierarchy shelljs tgz root library glob tgz x minimatch tgz vulnerable library found in head commit a href found in base branch unstable vulnerability details a vulnerability was found in the minimatch package this flaw allows a regular expression denial of service redos when calling the braceexpand function with specific arguments resulting in a denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution minimatch | 0 |
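
The report above lists minimatch 3.0.5 as the fix resolution. A minimal sketch of a version check against that threshold follows; `parse_version` and `is_vulnerable` are hypothetical helper names, and the comparison assumes plain `major.minor.patch` strings with no pre-release tags. It is an illustration only, not part of Mend's or npm's tooling.

```python
def parse_version(text):
    """Turn a plain 'major.minor.patch' string into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_vulnerable(installed, fixed="3.0.5"):
    """Return True when the installed version sorts below the fixed version.

    Assumption: both strings are simple dotted integers. Real semver
    ranges and pre-release suffixes are not handled here.
    """
    return parse_version(installed) < parse_version(fixed)


if __name__ == "__main__":
    for version in ("3.0.4", "3.0.5"):
        print(version, "vulnerable" if is_vulnerable(version) else "ok")
```

Comparing tuples of integers avoids the string-comparison trap where "3.0.10" would sort before "3.0.5".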

22,233 | 6,229,921,655 | IssuesEvent | 2017-07-11 06:18:00 | XceedBoucherS/TestImport5 | https://api.github.com/repos/XceedBoucherS/TestImport5 | closed | Unable to commit changes | CodePlex | <b>Nimgoble[CodePlex]</b> <br />Hello,

I'm unable to commit changes that I've made.

I keep receiving a Commit Failed error. I've attached a screenshot of the error.

I'm using Visual Studio 2010 with VisualSVN.

Thank you

| 1.0 | Unable to commit changes - <b>Nimgoble[CodePlex]</b> <br />Hello,

I'm unable to commit changes that I've made.

I keep receiving a Commit Failed error. I've attached a screenshot of the error.

I'm using Visual Studio 2010 with VisualSVN.

Thank you

| non_defect | unable to commit changes nimgoble hello i m unable to commit changes that i ve made i keep receiving a commit failed error i ve attached a screenshot of the error i m using visual studio with visualsvn thank you | 0 |

38,042 | 8,639,913,268 | IssuesEvent | 2018-11-23 22:39:15 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Comparer<string>.Default not using System.String.compare | defect in-progress | Comparer<string>.Default.Compare(str1, str2) gives a different result than str1.CompareTo(str2).

The first case calls Bridge.compare, and seems to perform char sorting.

The second case calls System.String.compare and seems to correctly sort text.

https://deck.net/42fe359ec3524e6024107106ec9c9386

I would expect that Comparer<string>.Default would call System.String.compare as System.String implements IComparer<System.String>.

| 1.0 | Comparer<string>.Default not using System.String.compare - Comparer<string>.Default.Compare(str1, str2) gives a different result than str1.CompareTo(str2).

The first case calls Bridge.compare, and seems to perform char sorting.

The second case calls System.String.compare and seems to correctly sort text.

https://deck.net/42fe359ec3524e6024107106ec9c9386

I would expect that Comparer<string>.Default would call System.String.compare as System.String implements IComparer<System.String>.

| defect | comparer default not using system string compare comparer default compare gives a different result the the first case calls bridge compare and seems to perform char sorting the second case calls system string compare and seems to correctly sort text i would expect that comparer default would call system string compare as system string implements icomparer | 1 |
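
The difference the report describes, character-code ordering versus text ordering, can be illustrated with a small sketch. This is a Python stand-in, not Bridge's or .NET's implementation: `ordinal_order` and `text_order` are hypothetical names, and case folding is only a rough approximation of a culture-aware string comparison.

```python
def ordinal_order(words):
    """Sort by raw code points, so every uppercase ASCII letter sorts before lowercase."""
    return sorted(words)


def text_order(words):
    """Sort with case folded, a rough stand-in for a text-aware comparison."""
    return sorted(words, key=str.casefold)


if __name__ == "__main__":
    sample = ["b", "a", "B"]
    print(ordinal_order(sample))
    print(text_order(sample))
```

The two functions disagree as soon as the input mixes upper and lower case, which is the kind of mismatch the issue reports between the two comparison paths.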

32,090 | 8,793,887,899 | IssuesEvent | 2018-12-21 22:01:11 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | Less Sourcemap only references files inside 'styles' config | comp: devkit/build-angular freq2: medium severity2: inconvenient type: bug/fix | <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request

```

- [x] bug report

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing, output from: `node --version` and `npm --version`.

Windows (7/8/10). Linux (incl. distribution). macOS (El Capitan? Sierra?)

-->

@angular/cli: 1.0.0-rc.2

macOS El Capitan

### Repro steps.

<!--

Simple steps to reproduce this bug.

Please include: commands run, packages added, related code changes.

A link to a sample repo would help too.

-->

My `angular-cli.json`:

```

"styles": [

"styles.less"

],

```

My `styles.less`

```

@import "less/vars";

@import "less/base";

@import "less/layout";

// ...etc.

```

When I run `ng serve -ec -sm` I can see the sourcemap file generated, but Chrome's devtools lists `styles.less` as the sole source file instead of `base.less` or `layout.less`, etc.

### The log given by the failure.

<!-- Normally this include a stack trace and some more information. -->

Here's what I skimmed out of the generated sourcemap file. You can see there's no mention of the imported files whatsoever.

```

{"version":3,"sources":["webpack:///./src/styles.less"], ... \n\n\n\n// WEBPACK FOOTER //\n// ./src/styles.less"],"sourceRoot":""}

```

### Desired functionality.

<!--

What would like to see implemented?

What is the usecase?

-->

I should see the individual imported files in the Chrome devtools and not just the main file.

### Mention any other details that might be useful.

<!-- Please include a link to the repo if this is related to an OSS project. -->

Wonder if this is solved by today's release of [less-loader v4.0.0](https://github.com/webpack-contrib/less-loader/releases/tag/v4.0.0)?

| 1.0 | Less Sourcemap only references files inside 'styles' config - <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request

```

- [x] bug report

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing, output from: `node --version` and `npm --version`.

Windows (7/8/10). Linux (incl. distribution). macOS (El Capitan? Sierra?)

-->

@angular/cli: 1.0.0-rc.2

macOS El Capitan

### Repro steps.

<!--

Simple steps to reproduce this bug.

Please include: commands run, packages added, related code changes.

A link to a sample repo would help too.

-->

My `angular-cli.json`:

```

"styles": [

"styles.less"

],

```

My `styles.less`

```

@import "less/vars";

@import "less/base";

@import "less/layout";

// ...etc.

```

When I run `ng serve -ec -sm` I can see the sourcemap file generated, but Chrome's devtools lists `styles.less` as the sole source file instead of `base.less` or `layout.less`, etc.

### The log given by the failure.

<!-- Normally this include a stack trace and some more information. -->

Here's what I skimmed out of the generated sourcemap file. You can see there's no mention of the imported files whatsoever.

```

{"version":3,"sources":["webpack:///./src/styles.less"], ... \n\n\n\n// WEBPACK FOOTER //\n// ./src/styles.less"],"sourceRoot":""}

```

### Desired functionality.

<!--

What would like to see implemented?

What is the usecase?

-->

I should see the individual imported files in the Chrome devtools and not just the main file.

### Mention any other details that might be useful.

<!-- Please include a link to the repo if this is related to an OSS project. -->

Wonder if this is solved by today's release of [less-loader v4.0.0](https://github.com/webpack-contrib/less-loader/releases/tag/v4.0.0)?

| non_defect | less sourcemap only references files inside styles config if you don t fill out the following information your issue might be closed without investigating bug report or feature request bug report feature request versions output from ng version if nothing output from node version and npm version windows linux incl distribution macos el capitan sierra angular cli rc macos el capitan repro steps simple steps to reproduce this bug please include commands run packages added related code changes a link to a sample repo would help too my angular cli json styles styles less my styles less import less vars import less base import less layout etc when i run ng serve ec sm i can see the sourcemap file generated but chrome s devtools lists styles less as the sole source file instead of base less or layout less etc the log given by the failure here s what i skimmed out of the generated sourcemap file you can see there s no mention of the imported files whatsoever version sources n n n n webpack footer n src styles less sourceroot desired functionality what would like to see implemented what is the usecase i should see the individual imported files in the chrome devtools and not just the main file mention any other details that might be useful wonder if this is solved by today s release of | 0 |
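
The symptom in this report can be checked mechanically by reading the `sources` array of the generated sourcemap. A minimal sketch, assuming the map is available as a JSON string; `missing_sources` is a hypothetical helper and the file names come from the report itself.

```python
import json


def missing_sources(sourcemap_text, expected_names):
    """Return the expected file names that no entry in 'sources' ends with."""
    sources = json.loads(sourcemap_text).get("sources", [])
    # Compare on the last path segment so 'webpack:///./src/styles.less'
    # matches 'styles.less'.
    present = {src.rsplit("/", 1)[-1] for src in sources}
    return [name for name in expected_names if name not in present]


if __name__ == "__main__":
    sample = '{"version":3,"sources":["webpack:///./src/styles.less"],"sourceRoot":""}'
    print(missing_sources(sample, ["styles.less", "base.less", "layout.less"]))
```

An empty result would mean every imported Less file is represented in the map.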

114,668 | 17,258,891,998 | IssuesEvent | 2021-07-22 02:56:10 | nforgeio/neonKUBE | https://api.github.com/repos/nforgeio/neonKUBE | closed | neon-cluster-manager DB credentials are hardcoded and insecure | bug cluster-setup neon-kube security | I was going to copy/paste some code from **neon-cluster-manager** and noticed a couple issues:

- [ ] @marcusbooyah: You define `Service.connString` as a hardcoded string with an insecure password. You should create the database user with a random password when creating the cluster and then load that password via a secret k8s configuration passed to the service. I think you should be able to do this using the following steps while setting up the cluster:

* Generate a random password

* Persist the secret to the cluster configuration (this will require #1084)

* Create the database and user (you shouldn't be using the `postgres` user because it's the **superuser**)

* Grant only the permissions required

* Persist the connection string as a k8s secret

* Modify the **neon-cluster-manager** to read the configuration

- [x] I noticed that the `Service` class (that I renamed earlier as a simplifying convention) is `partial` but that the other parts of the definition were hard to find because their file names weren't prefixed by **Service.** The convention I've been using for `partial` classes is that the file with the constructor has the base name (**Service.cs** in this case) and that the remaining files are named with the base name, followed by a dot and a name that identifies what's implemented inside.

I went ahead and modified the other files to be named **Service.Kibana.cs**, **Service.LogPurger**, and **Service.Setup**. This makes it much easier to grok what's going on. | True | neon-cluster-manager DB credentials are hardcoded and insecure - I was going to copy/paste some code from **neon-cluster-manager** and noticed a couple issues:

- [ ] @marcusbooyah: You define `Service.connString` as a hardcoded string with an insecure password. You should create the database user with a random password when creating the cluster and then load that password via a secret k8s configuration passed to the service. I think you should be able to do this using the following steps while setting up the cluster:

* Generate a random password

* Persist the secret to the cluster configuration (this will require #1084)

* Create the database and user (you shouldn't be using the `postgres` user because it's the **superuser**)

* Grant only the permissions required

* Persist the connection string as a k8s secret

* Modify the **neon-cluster-manager** to read the configuration

- [x] I noticed that the `Service` class (that I renamed earlier as a simplifying convention) is `partial` but that the other parts of the definition were hard to find because their file names weren't prefixed by **Service.** The convention I've been using for `partial` classes is that the file with the constructor has the base name (**Service.cs** in this case) and that the remaining files are named with the base name, followed by a dot and a name that identifies what's implemented inside.

I went ahead and modified the other files to be named **Service.Kibana.cs**, **Service.LogPurger**, and **Service.Setup**. This makes it much easier to grok what's going on. | non_defect | neon cluster manager db credentials are hardcoded and insecure i was going to copy paste some code from neon cluster manager and noticed a couple issues marcusbooyah you define service connstring as a hardcoded string with an insecure password you should create the database user with a random password when creating the cluster and then loading that password via a secret configuration passed to the service i think you should be able to do this using the following steps while setting up the cluster generate a random password persist the secret to the cluster configuration this will require create the database and user you shouldn t be using the postgres user because its the superuser you grant only the permissions required persist the connection string as a secret modify the neon cluster manager to read the configuration nbsp i noticed that the service class that i renamed earlier as a simplifying convention is partial but that the other parts of the definition were hard to find because their file names weren t prefixed by service i ve been using the convention for partial classes is that the file with the constructor will be have the base name service cs in this case and that the remaining files will be named with the base name followed by a dot and a name that identifies what s implemented inside i went ahead and modified the other files to be named service kibana cs service logpurger and service setup this makes it much easier to grok what s going on | 0 |
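
The first steps proposed in this issue (generate a random password, then build the connection string from it instead of hardcoding it) might look like the sketch below. Everything here is an assumption for illustration: `new_connection_string`, the `cluster_manager` user, the `cluster` database, and the key/value connection string layout are hypothetical, and storing the result as a k8s secret is left out.

```python
import secrets


def new_connection_string(user, database, host="localhost", port=5432):
    """Generate a random password and a connection string that uses it.

    Assumption: a semicolon-separated key=value layout. token_urlsafe only
    emits letters, digits, '-' and '_', so the password needs no escaping.
    """
    password = secrets.token_urlsafe(32)
    connection = (
        f"Host={host};Port={port};Username={user};"
        f"Password={password};Database={database}"
    )
    return password, connection


if __name__ == "__main__":
    # Hypothetical non-superuser account and database names.
    _, conn = new_connection_string("cluster_manager", "cluster")
    print(conn.replace(conn.split("Password=")[1].split(";")[0], "***"))
```

Using the `secrets` module rather than `random` matters here because the value is a credential and needs a cryptographically strong source.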

72,951 | 24,381,715,324 | IssuesEvent | 2022-10-04 08:24:27 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | rec: Inconsistency in logging between lua and protobuf | rec defect | <!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new features or send in complaints? Thanks! -->

<!-- Also please search the existing issues (both open and closed) to see if your report might be duplicate -->

<!-- Please don't file an issue when you have a support question, send support questions to the mailinglist or ask them on IRC (https://www.powerdns.com/opensource.html) -->

<!-- Tell us what is issue is about -->

- Program: Recursor

- Issue type: Bug report

### Short description

Just a quick email to say that we're seeing an inconsistency in the PowerDNS (4.3.0 beta 2) output between lua and protobuf. When we request a domain that is listed in a whitelist RPZ, the response.appliedPolicy is not being set in the protobuf output. The lua scripting environment does appear to see the policy set, as shown by logging dq.appliedPolicy.policyName. Is it possible to confirm if this is a known and / or expected behaviour? Our assumption is that any RPZ policy matching applied and recorded in dq.appliedPolicy.policyName should also be reflected in the protobuf 'response' output.

### Environment

<!-- Tell us about the environment -->

- Operating system: Not sure, Centos I think

- Software version: 4.3.0 beta2

- Software source: PowerDNS repository

### Steps to reproduce

See above.

| 1.0 | rec: Inconsistency in logging between lua and protobuf - <!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new features or send in complaints? Thanks! -->

<!-- Also please search the existing issues (both open and closed) to see if your report might be duplicate -->

<!-- Please don't file an issue when you have a support question, send support questions to the mailinglist or ask them on IRC (https://www.powerdns.com/opensource.html) -->

<!-- Tell us what is issue is about -->

- Program: Recursor

- Issue type: Bug report

### Short description

Just a quick email to say that we're seeing an inconsistency in the PowerDNS (4.3.0 beta 2) output between lua and protobuf. When we request a domain that is listed in a whitelist RPZ, the response.appliedPolicy is not being set in the protobuf output. The lua scripting environment does appear to see the policy set, as shown by logging dq.appliedPolicy.policyName. Is it possible to confirm if this is a known and / or expected behaviour? Our assumption is that any RPZ policy matching applied and recorded in dq.appliedPolicy.policyName should also be reflected in the protobuf 'response' output.

### Environment

<!-- Tell us about the environment -->

- Operating system: Not sure, Centos I think

- Software version: 4.3.0 beta2

- Software source: PowerDNS repository

### Steps to reproduce

See above.

| defect | rec inconsistency in logging between lua and protobuf program recursor issue type bug report short description just a quick email to say that we re seeing an inconsistency in the powerdns beta output between lua and protobuf when we request a domain that is listed in a whitelist rpz the response appliedpolicy is not being set in the protobuf output the lua scripting environment does appear to see the policy set as shown by logging dq appliedpolicy policyname is it possible to confirm if this is a known and or expected behaviour our assumption is that any rpz policy matching applied and recorded in dq appliedpolicy policyname should also be reflected in the protobuf response output environment operating system not sure centos i think software version software source powerdns repository steps to reproduce see above | 1 |

90,007 | 3,808,117,188 | IssuesEvent | 2016-03-25 13:22:17 | iSoron/uhabits | https://api.github.com/repos/iSoron/uhabits | closed | Import data from HabitBull | low-priority new-feature | [HabitBull](https://play.google.com/store/apps/details?id=com.oristats.habitbull) is one of the most popular habit apps on Android and iOS. It does allow to export habits into a .csv file. Would be great, if Loop could import them. | 1.0 | Import data from HabitBull - [HabitBull](https://play.google.com/store/apps/details?id=com.oristats.habitbull) is one of the most popular habit apps on Android and iOS. It does allow to export habits into a .csv file. Would be great, if Loop could import them. | non_defect | import data from habitbull is one of the most popular habit apps on android and ios it does allow to export habits into a csv file would be great if loop could import them | 0 |

33,751 | 27,781,591,028 | IssuesEvent | 2023-03-16 21:35:55 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | Unnecessary ApiCompat CP0001 errors when type forwards used | bug area-Infrastructure-libraries |

See https://github.com/dotnet/runtime/pull/82453#discussion_r1139284637 for more detail.

Local errors for when the https://github.com/dotnet/runtime/tree/main/src/libraries/System.DirectoryServices/src/CompatibilitySuppressions.xml file is removed (please delete that file when this issue is fixed):

```

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/net6.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\packages\system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/net6.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/net6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/net6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/net6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/net6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/net7.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\packages\system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/net7.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/net7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/net7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/net7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/net7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/netstandard2.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\p

ackages\system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/netstandard2.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.Direct

oryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/netstandard2.0/Sys

tem.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/netstandard2

.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/netstanda

rd2.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/netstandard2.

0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net

standard2.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

``` | 1.0 | Unnecessary ApiCompat CP0001 errors when type forwards used -

See https://github.com/dotnet/runtime/pull/82453#discussion_r1139284637 for more detail.

Local errors produced when the https://github.com/dotnet/runtime/tree/main/src/libraries/System.DirectoryServices/src/CompatibilitySuppressions.xml file is removed (please delete that file once this issue is fixed):

```

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/net6.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\packages\

system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/net6.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.DirectoryServices\src\

System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/net6.0/System.Dire

ctoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/net6.0/Syste

m.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/net6.0/Sy

stem.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/net6.0/System

.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net

6.0/System.DirectoryServices.dll but not on lib/net6.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/net7.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\packages\

system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/net7.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.DirectoryServices\src\

System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/net7.0/System.Dire

ctoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/net7.0/Syste

m.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/net7.0/Sy

stem.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/net7.0/System

.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net

7.0/System.DirectoryServices.dll but not on lib/net7.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error : API compatibility errors between 'lib/netstandard2.0/System.DirectoryServices.dll' (C:\Users\sharter\.nuget\p

ackages\system.directoryservices\7.0.0\system.directoryservices.7.0.0.nupkg) and 'lib/netstandard2.0/System.DirectoryServices.dll' (C:\git\runtime1\artifacts\packages\Debug\Shipping\System.DirectoryServices.8.0.0-dev.nupkg): [C:\git\runtime1\src\libraries\System.Direct

oryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermission' exists on [Baseline] lib/netstandard2.0/Sys

tem.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAccess' exists on [Baseline] lib/netstandard2

.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionAttribute' exists on [Baseline] lib/netstanda

rd2.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntry' exists on [Baseline] lib/netstandard2.

0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

C:\Users\sharter\.nuget\packages\microsoft.dotnet.apicompat.task\8.0.100-preview.2.23107.1\build\Microsoft.NET.ApiCompat.ValidatePackage.targets(39,5): error CP0001: Type 'System.DirectoryServices.DirectoryServicesPermissionEntryCollection' exists on [Baseline] lib/net

standard2.0/System.DirectoryServices.dll but not on lib/netstandard2.0/System.DirectoryServices.dll [C:\git\runtime1\src\libraries\System.DirectoryServices\src\System.DirectoryServices.csproj]

``` | non_defect | unnecessary apicompat errors when type forwards used see for more detail local errors for when the file is removed please delete that file when this issue is fixed c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error api compatibility errors between lib system directoryservices dll c users sharter nuget packages system directoryservices system directoryservices nupkg and lib system directoryservices dll c git artifacts packages debug shipping system directoryservices dev nupkg c git src libraries system directoryservices src system directoryservices csproj c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermission exists on lib system dire ctoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionaccess exists on lib syste m directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionattribute exists on lib sy stem directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentry exists on lib system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentrycollection exists 
on lib net system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error api compatibility errors between lib system directoryservices dll c users sharter nuget packages system directoryservices system directoryservices nupkg and lib system directoryservices dll c git artifacts packages debug shipping system directoryservices dev nupkg c git src libraries system directoryservices src system directoryservices csproj c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermission exists on lib system dire ctoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionaccess exists on lib syste m directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionattribute exists on lib sy stem directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentry exists on lib system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentrycollection exists on lib net system directoryservices dll but not on lib system directoryservices dll c users sharter 
nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error api compatibility errors between lib system directoryservices dll c users sharter nuget p ackages system directoryservices system directoryservices nupkg and lib system directoryservices dll c git artifacts packages debug shipping system directoryservices dev nupkg c git src libraries system direct oryservices src system directoryservices csproj c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermission exists on lib sys tem directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionaccess exists on lib system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionattribute exists on lib netstanda system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentry exists on lib system directoryservices dll but not on lib system directoryservices dll c users sharter nuget packages microsoft dotnet apicompat task preview build microsoft net apicompat validatepackage targets error type system directoryservices directoryservicespermissionentrycollection exists on lib net system directoryservices dll but not on lib system directoryservices dll | 0 |

151,913 | 13,438,549,892 | IssuesEvent | 2020-09-07 18:24:50 | featherity/featherity | https://api.github.com/repos/featherity/featherity | opened | Update CONTRIBUTING file with rule for one PR for each icon or icon series. | documentation | Regarding the thread in #57, @llaenowyd said this:

> I think after re-reading the submission guidelines, maybe there should be one PR for each icon.

In a certain way, I think it is a good idea to write this down in the CONTRIBUTING file.

For me, it is something of an unwritten rule that icons or "icon series" should be submitted in separate pull requests, for several reasons:

- It's easier to review.

- It keeps the discussion and reviews of the icon(s) scoped to one thread.

- Both points above reduce the time it takes to merge PRs.

I'm curious what you think. | 1.0 | Update CONTRIBUTING file with rule for one PR for each icon or icon series. - Regarding the thread in #57, @llaenowyd said this:

> I think after re-reading the submission guidelines, maybe there should be one PR for each icon.

In a certain way, I think it is a good idea to write this down in the CONTRIBUTING file.

For me, it is something of an unwritten rule that icons or "icon series" should be submitted in separate pull requests, for several reasons:

- It's easier to review.

- It keeps the discussion and reviews of the icon(s) scoped to one thread.

- Both points above reduce the time it takes to merge PRs.

I'm curious what you think. | non_defect | update contributing file with rule for one pr for each icon or icon series regarding to the thread in llaenowyd said this i think after re reading the submission guidelines maybe there should be one pr for each icon i certain way i think this is a good idea to write this down in contributing file for me it s a little bit of an unwritten rule that you need to submit icons or icon series in separate pull requests for several reasons it s easier to review keeps the discussions and reviews about the icon s scoped and in one thread all points above contribute to less time to merge pr s i m curious what you think | 0 |

36,601 | 8,030,931,627 | IssuesEvent | 2018-07-27 21:41:47 | IBM/CAST | https://api.github.com/repos/IBM/CAST | opened | Possible Bug: csm_allocation_query_active_all | Comp: CSM Comp: CSM.api PhaseFound: Development Sev: 2 Status: Open Type: Defect | **Describe the bug**

The output of `csm_allocation_query_active_all` is sometimes inconsistent with the output of `csm_allocation_query` and `csm_cluster_query_state`, and with the CSM database. The number of nodes in an allocation sometimes seems to be off, and sometimes the `compute_nodes` field may not show all the nodes in that allocation.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

`csm_allocation_query_active_all` should match the output of other APIs and CSM database information.
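
One way to check this expectation is to diff the node lists reported by the two queries. The sketch below is only an illustration under stated assumptions: the dictionaries stand in for already-parsed query output, and the function name and input layout (allocation ID mapped to a list of node names) are hypothetical, not the actual CSM output format.

```python
def find_node_mismatches(active_all, per_allocation):
    """Compare node lists for every allocation ID in per_allocation.

    Both arguments map an allocation ID to the list of compute node names
    reported by one source (hypothetical, already-parsed output).
    Returns {allocation_id: (missing_from_active_all, extra_in_active_all)}.
    """
    mismatches = {}
    for alloc_id, expected_nodes in per_allocation.items():
        # An allocation absent from active_all is treated as reporting no nodes.
        reported = set(active_all.get(alloc_id, []))
        expected = set(expected_nodes)
        if reported != expected:
            mismatches[alloc_id] = (
                sorted(expected - reported),
                sorted(reported - expected),
            )
    return mismatches
```

An empty result would mean the two sources agree for every allocation that was checked.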

**Issue Source:**

@fpizzano noticed this issue on the summit cluster.

Issue ToDo:

- [ ] Nick please investigate.

- [ ] figure out why this behavior is occurring.

- [ ] ???

- [ ] Profit

| 1.0 | Possible Bug: csm_allocation_query_active_all - **Describe the bug**

The output of `csm_allocation_query_active_all` is sometimes inconsistent with the output of `csm_allocation_query` and `csm_cluster_query_state`, and with the CSM database. The number of nodes in an allocation sometimes seems to be off, and sometimes the `compute_nodes` field may not show all the nodes in that allocation.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

`csm_allocation_query_active_all` should match the output of other APIs and CSM database information.

**Issue Source:**

@fpizzano noticed this issue on the summit cluster.

Issue ToDo:

- [ ] Nick please investigate.

- [ ] figure out why this behavior is occurring.

- [ ] ???

- [ ] Profit

| defect | possible bug csm allocation query active all describe the bug there are some inconsistencies and mismatches between csm allocation query active all vs csm allocation query and csm cluster query state and the csm database the number of nodes in an allocation seems to be off sometimes and sometimes the compute nodes field may not show all the nodes inside that allocation to reproduce steps to reproduce the behavior go to click on scroll down to see error expected behavior csm allocation query active all should match the output of other apis and csm database information issue source fpizzano noticed this issue on the summit cluster issue todo nick please investigate figure out why this behavior is occurring profit | 1 |

134,763 | 10,928,811,435 | IssuesEvent | 2019-11-22 19:56:15 | status-im/nim-beacon-chain | https://api.github.com/repos/status-im/nim-beacon-chain | opened | Reenable BLS nd shuffling tests | test suite | See https://github.com/status-im/nim-beacon-chain/pull/585. It seems we removed the JSON tests too early.

So we need to update the shuffling and BLS tests to the YAML format. | 1.0 | Reenable BLS nd shuffling tests - See https://github.com/status-im/nim-beacon-chain/pull/585. It seems we removed the JSON tests too early.

So we need to update the shuffling and BLS tests to the YAML format. | non_defect | reenable bls nd shuffling tests see it seems like we removed the json tests too early so we need to update the shuffling and bls tests to the yaml format | 0 |

32,595 | 12,131,321,359 | IssuesEvent | 2020-04-23 04:21:17 | kenferrara/spark | https://api.github.com/repos/kenferrara/spark | opened | WS-2018-0021 (Medium) detected in bootstrap-2.1.0.min.js, bootstrap-2.1.0.js | security vulnerability | ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-2.1.0.min.js</b>, <b>bootstrap-2.1.0.js</b></p></summary>

<p>

<details><summary><b>bootstrap-2.1.0.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.min.js</a></p>

<p>Path to vulnerable library: /spark/docs/js/vendor/bootstrap.min.js,/spark/docs/js/vendor/bootstrap.min.js</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-2.1.0.min.js** (Vulnerable Library)

</details>

<details><summary><b>bootstrap-2.1.0.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.js</a></p>

<p>Path to vulnerable library: /spark/docs/js/vendor/bootstrap.js,/spark/docs/js/vendor/bootstrap.js</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-2.1.0.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/kenferrara/spark/commit/486b2d6a475cb2e6ab8e936905d6ceec71fc60ce">486b2d6a475cb2e6ab8e936905d6ceec71fc60ce</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XSS in data-target in bootstrap (3.3.7 and before)

<p>Publish Date: 2017-06-27

<p>URL: <a href=https://github.com/twbs/bootstrap/issues/20184>WS-2018-0021</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/issues/20184">https://github.com/twbs/bootstrap/issues/20184</a></p>

<p>Release Date: 2019-06-12</p>

<p>Fix Resolution: 3.4.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"twitter-bootstrap","packageVersion":"2.1.0","isTransitiveDependency":false,"dependencyTree":"twitter-bootstrap:2.1.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"},{"packageType":"JavaScript","packageName":"twitter-bootstrap","packageVersion":"2.1.0","isTransitiveDependency":false,"dependencyTree":"twitter-bootstrap:2.1.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"}],"vulnerabilityIdentifier":"WS-2018-0021","vulnerabilityDetails":"XSS in data-target in bootstrap (3.3.7 and before)","vulnerabilityUrl":"https://github.com/twbs/bootstrap/issues/20184","cvss2Severity":"medium","cvss2Score":"6.5","extraData":{}}</REMEDIATE> --> | True | WS-2018-0021 (Medium) detected in bootstrap-2.1.0.min.js, bootstrap-2.1.0.js - ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-2.1.0.min.js</b>, <b>bootstrap-2.1.0.js</b></p></summary>

<p>

<details><summary><b>bootstrap-2.1.0.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.min.js</a></p>

<p>Path to vulnerable library: /spark/docs/js/vendor/bootstrap.min.js,/spark/docs/js/vendor/bootstrap.min.js</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-2.1.0.min.js** (Vulnerable Library)

</details>

<details><summary><b>bootstrap-2.1.0.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/2.1.0/bootstrap.js</a></p>

<p>Path to vulnerable library: /spark/docs/js/vendor/bootstrap.js,/spark/docs/js/vendor/bootstrap.js</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-2.1.0.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/kenferrara/spark/commit/486b2d6a475cb2e6ab8e936905d6ceec71fc60ce">486b2d6a475cb2e6ab8e936905d6ceec71fc60ce</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XSS in data-target in bootstrap (3.3.7 and before)

<p>Publish Date: 2017-06-27

<p>URL: <a href=https://github.com/twbs/bootstrap/issues/20184>WS-2018-0021</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/issues/20184">https://github.com/twbs/bootstrap/issues/20184</a></p>

<p>Release Date: 2019-06-12</p>

<p>Fix Resolution: 3.4.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"twitter-bootstrap","packageVersion":"2.1.0","isTransitiveDependency":false,"dependencyTree":"twitter-bootstrap:2.1.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"},{"packageType":"JavaScript","packageName":"twitter-bootstrap","packageVersion":"2.1.0","isTransitiveDependency":false,"dependencyTree":"twitter-bootstrap:2.1.0","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"}],"vulnerabilityIdentifier":"WS-2018-0021","vulnerabilityDetails":"XSS in data-target in bootstrap (3.3.7 and before)","vulnerabilityUrl":"https://github.com/twbs/bootstrap/issues/20184","cvss2Severity":"medium","cvss2Score":"6.5","extraData":{}}</REMEDIATE> --> | non_defect | ws medium detected in bootstrap min js bootstrap js ws medium severity vulnerability vulnerable libraries bootstrap min js bootstrap js bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to vulnerable library spark docs js vendor bootstrap min js spark docs js vendor bootstrap min js dependency hierarchy x bootstrap min js vulnerable library bootstrap js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to vulnerable library spark docs js vendor bootstrap js spark docs js vendor bootstrap js dependency hierarchy x bootstrap js vulnerable library found in head commit a href vulnerability details xss in data target in bootstrap and before publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier ws vulnerabilitydetails xss in data target in bootstrap and before vulnerabilityurl | 0 |

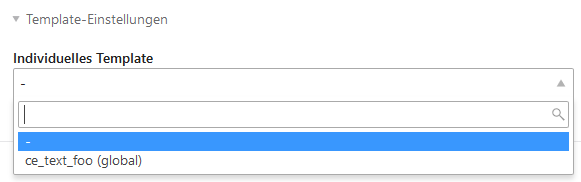

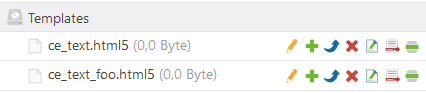

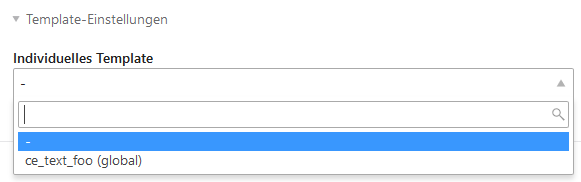

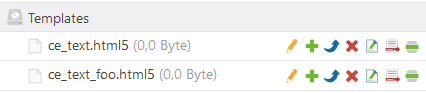

44,632 | 12,301,008,942 | IssuesEvent | 2020-05-11 14:48:21 | contao/contao | https://api.github.com/repos/contao/contao | closed | Overridden default template not shown in template selection | defect | **Affected version(s)**

Contao 4.8.5

**Description**

Currently the default template is not shown in the custom template selection, e.g. for a content element. However, this also applies to default templates that have been overridden in the `templates/` folder.

This is confusing, because you cannot see that your overridden default template is actually used - _and from which theme_ (wherever applicable).

**How to reproduce**

1. Log into the back end.

2. Go to _Templates_.

3. Create a new `ce_text` template and rename it to `ce_text_foo`.

4. Create a new default `ce_text` template.

5. Go to _Articles_ and create a new _Text_ content element.

The custom template selection will not show the custom default template.

| 1.0 | Overridden default template not shown in template selection - **Affected version(s)**

Contao 4.8.5

**Description**

Currently the default template is not shown in the custom template selection, e.g. for a content element. However, this also applies to default templates that have been overridden in the `templates/` folder.

This is confusing, because you cannot see that your overridden default template is actually used - _and from which theme_ (wherever applicable).

**How to reproduce**

1. Log into the back end.

2. Go to _Templates_.

3. Create a new `ce_text` template and rename it to `ce_text_foo`.

4. Create a new default `ce_text` template.

5. Go to _Articles_ and create a new _Text_ content element.

The custom template selection will not show the custom default template.

| defect | overridden default template not shown in template selection affected version s contao description currently the default template is not shown in the custom template selection e g for a content element however this also includes default templates that have been overriden in the templates folder this is confusing since now you cannot see that your overridden default template is actually used and from which theme wherever applicable how to reproduce log into the back end go to templates create a new ce text template and rename it to ce text foo create a new default ce text template go to articles and create a new text content element the custom template selection will not show the custom default template | 1 |

39,061 | 9,188,365,656 | IssuesEvent | 2019-03-06 07:09:24 | beefproject/beef | https://api.github.com/repos/beefproject/beef | closed | beefproject on Ubuntu can't run beef -error | Defect | Verify first that your issue/request has not been posted previously:

* https://github.com/beefproject/beef/issues

* https://github.com/beefproject/beef/wiki/FAQ

Ensure you're using the [latest version of BeEF](https://github.com/beefproject/beef/releases/tag/beef-0.4.7.1).

#### Environment

What version/revision of BeEF are you using? latest version

On what version of Ruby?

On what browser? Firefox

On what operating system? Ubuntu

#### Configuration

Are you using a non-default configuration? No

Have you enabled or disabled any BeEF extensions? No

#### Summary

Please provide a summary of the issue.

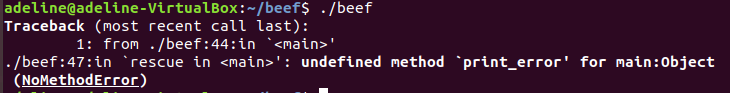

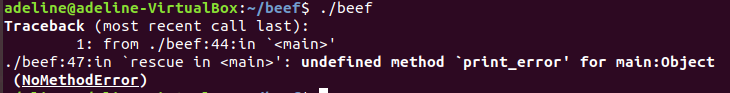

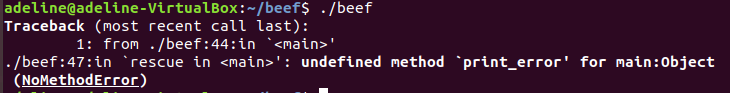

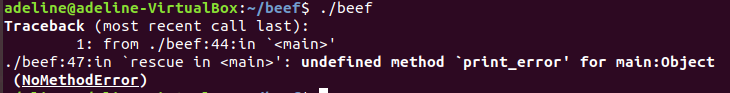

I cannot run the BeEF project on Ubuntu. The usual ./beef command fails, and the following error message appears instead:

Traceback (most recent call last):

1: from ./beef:44:in '<main>'

./beef:47:in 'rescue in <main>: undefined method ' print_error' for main:Object (NoMethodError)

#### Expected Behaviour

What was the expected result?

Once the ./beef command is entered, it should start the interface and show a link to the hook page.

#### Actual Behaviour

What was the actual result?

error message

#### Steps to Reproduce

Please provide steps to reproduce this issue.

First, I installed Ruby on my Ubuntu 18.04.2 image:

sudo apt install ruby ruby-dev

Next, I installed git:

sudo apt install git

Then I cloned the git project:

git clone https://github.com/beefproject/beef

Next, I moved into the beef directory:

cd beef

On Ubuntu, I ran:

sudo ./install

Once the installation was successfully completed, I ran:

./beef

I was quickly greeted with the following error. To fix this, I tried to tell BeEF that it needs this gem, so I modified the Gemfile following these steps:

rm Gemfile.lock. Click Y to remove it.

sudo nano Gemfile

In the file, add the following line:

gem ‘xmlrpc’

Save the file and re-run the installation:

sudo ./install

At this point, the installation should be successful. Try running the following command:

./beef

But another error message popped up and I am now stuck:

Traceback (most recent call last):

1: from ./beef:44:in '<main>'

./beef:47:in 'rescue in <main>: undefined method ' print_error' for main:Object (NoMethodError)

#### Additional Information

Please provide any additional information which may be useful in resolving this issue, such as debugging output and relevant screen shots. Debug output can be enabled by specifying `debug: true` in the `config.yaml` configuration file.

| 1.0 | beefproject on Ubuntu can't run beef -error - Verify first that your issue/request has not been posted previously:

* https://github.com/beefproject/beef/issues

* https://github.com/beefproject/beef/wiki/FAQ

Ensure you're using the [latest version of BeEF](https://github.com/beefproject/beef/releases/tag/beef-0.4.7.1).

#### Environment

What version/revision of BeEF are you using? latest version

On what version of Ruby?

On what browser? Firefox

On what operating system? Ubuntu

#### Configuration

Are you using a non-default configuration? No

Have you enabled or disabled any BeEF extensions? No

#### Summary

Please provide a summary of the issue.

I cannot run the BeEF project on Ubuntu. The usual ./beef command fails, and the following error message appears instead:

Traceback (most recent call last):

1: from ./beef:44:in '<main>'

./beef:47:in 'rescue in <main>: undefined method ' print_error' for main:Object (NoMethodError)

#### Expected Behaviour

What was the expected result?

Once the ./beef command is entered, it should start the interface and show a link to the hook page.

#### Actual Behaviour

What was the actual result?

error message

#### Steps to Reproduce

Please provide steps to reproduce this issue.

First, I installed Ruby on my Ubuntu 18.04.2 image:

sudo apt install ruby ruby-dev

Next, I installed git:

sudo apt install git

Then I cloned the git project:

git clone https://github.com/beefproject/beef

Next, I moved into the beef directory:

cd beef

On Ubuntu, I ran:

sudo ./install

Once the installation was successfully completed, I ran:

./beef

I was quickly greeted with the following error. To fix this, I tried to tell BeEF that it needs this gem, so I modified the Gemfile following these steps:

rm Gemfile.lock. Click Y to remove it.

sudo nano Gemfile

In the file, add the following line:

gem ‘xmlrpc’

Save the file and re-run the installation:

sudo ./install

At this point, the installation should be successful. Try running the following command:

./beef

But another error message popped up and I am now stuck:

Traceback (most recent call last):

1: from ./beef:44:in '<main>'

./beef:47:in 'rescue in <main>: undefined method ' print_error' for main:Object (NoMethodError)

#### Additional Information

Please provide any additional information which may be useful in resolving this issue, such as debugging output and relevant screen shots. Debug output can be enabled by specifying `debug: true` in the `config.yaml` configuration file.

| defect | beefproject on ubuntu can t run beef error verify first that your issue request has not been posted previously ensure you re using the environment what version revision of beef are you using latest version on what version of ruby on what browser firefox on what operating system ubuntu configuration are you using a non default configuration no have you enabled or disabled any beef extensions no summary please provide a summary of the issue i cannot run the beef project on ubuntu the usual beef command is not responding and an error message is popping instead traceback most recent call last from beef in beef in rescue in undefined method print error for main object nomethoderror expected behaviour what was the expected result once beef command is entered it should run the interface and show a link to the hookpage actual behaviour what was the actual result error message steps to reproduce please provide steps to reproduce this issue the first thing install ruby on my ubuntu image sudo apt install ruby ruby dev the next installed git sudo apt install git clone the git project git clone next move into the beef directory cd beef on ubuntu run sudo install once the installation was successfully completed i ran beef i was quickly greeted with the following error to fix this i tried to tell beef that it needs this gem so i modified the gemfile file following these steps rm gemfile lock click y to remove it sudo nano gemfile in the file add the following line gem ‘xmlrpc’ save the file and re run the installation sudo install at this point the installation should be successful try running the following command beef but another error message popped and i am now stocked traceback most recent call last from beef in beef in rescue in undefined method print error for main object nomethoderror additional information please provide any additional information which may be useful in resolving this issue such as debugging output and relevant screen shots debug output can 
be enabled by specifying debug true in the config yaml configuration file | 1 |

58,957 | 16,964,809,966 | IssuesEvent | 2021-06-29 09:34:54 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | UISIs after restart | T-Defect | This is almost certainly captured elsewhere, but:

- I went into the office for a day and didn't use my eleweb nightly on my desktop

- I came home and used it the next day

- I clicked update to update to the latest version

- It's 20 minutes later and a good chunk of my encrypted rooms are still full of UISIs

I suspect it'll sort itself out, but in the absence of any feedback or progress it feels pretty broken. | 1.0 | UISIs after restart - This is almost certainly captured elsewhere, but:

- I went into the office for a day and didn't use my eleweb nightly on my desktop

- I came home and used it the next day

- I clicked update to update to the latest version

- It's 20 minutes later and a good chunk of my encrypted rooms are still full of UISIs

I suspect it'll sort itself out, but in the absence of any feedback or progress it feels pretty broken. | defect | uisis after restart this is almost certainly captured elsewhere but i went into the office for a day and didn t use my eleweb nightly on my desktop i came home and used it the next day i clicked update to update to the latest version it s minutes later and a good chunk of my encrypted rooms are still full of uisis i suspect it ll sort itself out but in the absence of any feedback or progress it feels pretty broken | 1 |