Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

58,395 | 16,523,302,549 | IssuesEvent | 2021-05-26 16:47:13 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | InputMask: Disabled or ReadOnly breaks the page | defect | **Environment:**

- PF Version: _10.0_

- JSF + version: ALL

- Affected browsers: ALL

**To Reproduce**

[pf-inputmask.zip](https://github.com/primefaces/primefaces/files/6548359/pf-inputmask.zip)

Steps to reproduce the behavior:

1. Run the reproducer the menu and save buttons do not work and no JavaScript errors are throw.

2. Set `readonly="false"` and `disabled="false"` the page begins to work again.

**Expected behavior**

The page should work properly with `readonly` or `disabled` InputMasks.

**Example XHTML**

```html

<h:form id="frmTest">

<p:inputMask value="#{testView.string1}" mask="*****-*****-*****-*****" maxlength="23" size="55" readonly="true"/>

</h:form>

```

| 1.0 | InputMask: Disabled or ReadOnly breaks the page - **Environment:**

- PF Version: _10.0_

- JSF + version: ALL

- Affected browsers: ALL

**To Reproduce**

[pf-inputmask.zip](https://github.com/primefaces/primefaces/files/6548359/pf-inputmask.zip)

Steps to reproduce the behavior:

1. Run the reproducer the menu and save buttons do not work and no JavaScript errors are throw.

2. Set `readonly="false"` and `disabled="false"` the page begins to work again.

**Expected behavior**

The page should work properly with `readonly` or `disabled` InputMasks.

**Example XHTML**

```html

<h:form id="frmTest">

<p:inputMask value="#{testView.string1}" mask="*****-*****-*****-*****" maxlength="23" size="55" readonly="true"/>

</h:form>

```

| defect | inputmask disabled or readonly breaks the page environment pf version jsf version all affected browsers all to reproduce steps to reproduce the behavior run the reproducer the menu and save buttons do not work and no javascript errors are throw set readonly false and disabled false the page begins to work again expected behavior the page should work properly with readonly or disabled inputmasks example xhtml html | 1 |

79,386 | 28,142,123,600 | IssuesEvent | 2023-04-02 03:20:47 | FreeRADIUS/freeradius-server | https://api.github.com/repos/FreeRADIUS/freeradius-server | closed | [defect]: Python3 module still crashes freeradius | defect | ### What type of defect/bug is this?

Crash or memory corruption (segv, abort, etc...)

### How can the issue be reproduced?

see #4951 , debian bullseye (stable)

### Log output from the FreeRADIUS daemon

```shell

see #4951

```

### Relevant log output from client utilities

Does not apply, server unable to start

### Backtrace from LLDB or GDB

_No response_ | 1.0 | [defect]: Python3 module still crashes freeradius - ### What type of defect/bug is this?

Crash or memory corruption (segv, abort, etc...)

### How can the issue be reproduced?

see #4951 , debian bullseye (stable)

### Log output from the FreeRADIUS daemon

```shell

see #4951

```

### Relevant log output from client utilities

Does not apply, server unable to start

### Backtrace from LLDB or GDB

_No response_ | defect | module still crashes freeradius what type of defect bug is this crash or memory corruption segv abort etc how can the issue be reproduced see debian bullseye stable log output from the freeradius daemon shell see relevant log output from client utilities does not apply server unable to start backtrace from lldb or gdb no response | 1 |

52,969 | 13,249,541,341 | IssuesEvent | 2020-08-19 21:00:00 | ophrescue/RescueRails | https://api.github.com/repos/ophrescue/RescueRails | opened | AdopterSearcher not finding some applications | Defect | I think this bug was introduced in #1649

Users reported issues when searching for Adoption Applications, from initial testing it seems that Running and AdopterSearch will only return Unassigned applications.

Need to confirm bug, write tests to replicate and apply fix. | 1.0 | AdopterSearcher not finding some applications - I think this bug was introduced in #1649

Users reported issues when searching for Adoption Applications, from initial testing it seems that Running and AdopterSearch will only return Unassigned applications.

Need to confirm bug, write tests to replicate and apply fix. | defect | adoptersearcher not finding some applications i think this bug was introduced in users reported issues when searching for adoption applications from initial testing it seems that running and adoptersearch will only return unassigned applications need to confirm bug write tests to replicate and apply fix | 1 |

43,837 | 17,687,790,561 | IssuesEvent | 2021-08-24 05:42:52 | ambanum/test-repo | https://api.github.com/repos/ambanum/test-repo | opened | Add OnlyFans - Privacy Policy | add-document add-service |

New service addition requested through the contribution tool

You can see the work done by the awesome contributor here:

http://localhost:3000/en/contribute/service?documentType=Privacy%20Policy&expertMode=true&name=OnlyFans&selectedCss[]=.b-static-content&step=2&url=https%3A%2F%2Fonlyfans.com%2Fprivacy&expertMode=true

Or you can see the JSON generated here:

```json

{

"name": "OnlyFans",

"documents": {

"Privacy Policy": {

"fetch": "https://onlyfans.com/privacy",

"select": [

".b-static-content"

]

}

}

}

```

You will need to create the following file in the root of the project: `services/OnlyFans.json`

| 1.0 | Add OnlyFans - Privacy Policy -

New service addition requested through the contribution tool

You can see the work done by the awesome contributor here:

http://localhost:3000/en/contribute/service?documentType=Privacy%20Policy&expertMode=true&name=OnlyFans&selectedCss[]=.b-static-content&step=2&url=https%3A%2F%2Fonlyfans.com%2Fprivacy&expertMode=true

Or you can see the JSON generated here:

```json

{

"name": "OnlyFans",

"documents": {

"Privacy Policy": {

"fetch": "https://onlyfans.com/privacy",

"select": [

".b-static-content"

]

}

}

}

```

You will need to create the following file in the root of the project: `services/OnlyFans.json`

| non_defect | add onlyfans privacy policy new service addition requested through the contribution tool you can see the work done by the awesome contributor here b static content step url https com expertmode true or you can see the json generated here json name onlyfans documents privacy policy fetch select b static content you will need to create the following file in the root of the project services onlyfans json | 0 |

297,094 | 9,160,450,558 | IssuesEvent | 2019-03-01 07:27:44 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :190974]Integer handling issues in /subsys/net/ip/trickle.c | Coverity area: Networking bug priority: medium | Static code scan issues seen in File: /subsys/net/ip/trickle.c

Category: Integer handling issues

Function: net_trickle_create

Component: Networking

CID: 190974

Please fix or provide comments to square it off in coverity in the link: https://scan9.coverity.com/reports.htm#v32951/p12996 | 1.0 | [Coverity CID :190974]Integer handling issues in /subsys/net/ip/trickle.c - Static code scan issues seen in File: /subsys/net/ip/trickle.c

Category: Integer handling issues

Function: net_trickle_create

Component: Networking

CID: 190974

Please fix or provide comments to square it off in coverity in the link: https://scan9.coverity.com/reports.htm#v32951/p12996 | non_defect | integer handling issues in subsys net ip trickle c static code scan issues seen in file subsys net ip trickle c category integer handling issues function net trickle create component networking cid please fix or provide comments to square it off in coverity in the link | 0 |

57,991 | 16,244,885,584 | IssuesEvent | 2021-05-07 13:43:49 | Questie/Questie | https://api.github.com/repos/Questie/Questie | opened | Aurel Goldleaf (8331) | Type - Defect | Misplaced in the Journey feature.

Aurel Goldleaf (8331)

Appears as available in the Journey, even tho it's completed. (breadcrumb)

Placed in Available section (Silithus)

Should be placed in Completed section (Silithus)

https://classic.wowhead.com/quest=8331/aurel-goldleaf | 1.0 | Aurel Goldleaf (8331) - Misplaced in the Journey feature.

Aurel Goldleaf (8331)

Appears as available in the Journey, even tho it's completed. (breadcrumb)

Placed in Available section (Silithus)

Should be placed in Completed section (Silithus)

https://classic.wowhead.com/quest=8331/aurel-goldleaf | defect | aurel goldleaf misplaced in the journey feature aurel goldleaf appears as available in the journey even tho it s completed breadcrumb placed in available section silithus should be placed in completed section silithus | 1 |

33,676 | 7,196,344,093 | IssuesEvent | 2018-02-05 02:13:53 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Convert.ToChar fails when converting from object | defect in-progress | Assigning a char to object and then invoking Convert.ToChar on it throws an exception.

### Steps To Reproduce

https://deck.net/712252a7e05755c5a602d767c3ee9328

```csharp

public class Program

{

public static void Main()

{

object a = 'a';

Console.WriteLine(Convert.ToChar(a));

}

}

```

### Expected Result

```js

a

```

### Actual Result

```js

System.Exception: Uncaught System.InvalidCastException: Invalid cast from 'Object' to 'Char'.

Error: Invalid cast from 'Object' to 'Char'.

at ctor (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:88149)

at new ctor (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:93335)

at Object.throwInvalidCastEx (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:325127)

at Object.toChar (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:305220)

at Function.Main (https://deck.net/RunHandler.ashx?h=-2003126879:10:73)

at https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:39626

at HTMLDocument.i (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:5182)

at i (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:27151)

at Object.add [as done] (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:27450)

at n.fn.init.n.fn.ready (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:29515)

```

| 1.0 | Convert.ToChar fails when converting from object - Assigning a char to object and then invoking Convert.ToChar on it throws an exception.

### Steps To Reproduce

https://deck.net/712252a7e05755c5a602d767c3ee9328

```csharp

public class Program

{

public static void Main()

{

object a = 'a';

Console.WriteLine(Convert.ToChar(a));

}

}

```

### Expected Result

```js

a

```

### Actual Result

```js

System.Exception: Uncaught System.InvalidCastException: Invalid cast from 'Object' to 'Char'.

Error: Invalid cast from 'Object' to 'Char'.

at ctor (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:88149)

at new ctor (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:93335)

at Object.throwInvalidCastEx (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:325127)

at Object.toChar (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:305220)

at Function.Main (https://deck.net/RunHandler.ashx?h=-2003126879:10:73)

at https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:39626

at HTMLDocument.i (https://deck.net/resources/js/bridge/bridge.min.js?16.7.0:7:5182)

at i (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:27151)

at Object.add [as done] (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:27450)

at n.fn.init.n.fn.ready (https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.4/jquery.min.js:2:29515)

```

| defect | convert tochar fails when converting from object assigning a char to object and then invoking convert tochar on it throws an exception steps to reproduce csharp public class program public static void main object a a console writeline convert tochar a expected result js a actual result js system exception uncaught system invalidcastexception invalid cast from object to char error invalid cast from object to char at ctor at new ctor at object throwinvalidcastex at object tochar at function main at at htmldocument i at i at object add at n fn init n fn ready | 1 |

27,250 | 4,952,141,212 | IssuesEvent | 2016-12-01 10:57:36 | icatproject/ijp.torque | https://api.github.com/repos/icatproject/ijp.torque | closed | Walltime limit needs increasing | Priority-Medium Type-Defect | ```

I had a number of quincy jobs running simultaneously and they were running very slowly.

Returning to check on them later I found that Torque had killed them off, providing

the error message:

PBS: job killed: walltime 3610 exceeded limit 3600

```

Original issue reported on code.google.com by `kevin.phipps13` on 2013-05-15 15:55:31

| 1.0 | Walltime limit needs increasing - ```

I had a number of quincy jobs running simultaneously and they were running very slowly.

Returning to check on them later I found that Torque had killed them off, providing

the error message:

PBS: job killed: walltime 3610 exceeded limit 3600

```

Original issue reported on code.google.com by `kevin.phipps13` on 2013-05-15 15:55:31

| defect | walltime limit needs increasing i had a number of quincy jobs running simultaneously and they were running very slowly returning to check on them later i found that torque had killed them off providing the error message pbs job killed walltime exceeded limit original issue reported on code google com by kevin on | 1 |

35,187 | 12,321,114,799 | IssuesEvent | 2020-05-13 08:11:36 | tamirverthim/fitbit-api-example-java | https://api.github.com/repos/tamirverthim/fitbit-api-example-java | opened | CVE-2019-12814 (Medium) detected in jackson-databind-2.8.1.jar | security vulnerability | ## CVE-2019-12814 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/fitbit-api-example-java/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.1/jackson-databind-2.8.1.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.4.0.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/tamirverthim/fitbit-api-example-java/commits/1d4a86820b5ccc9e51b82198be488c68e9299e40">1d4a86820b5ccc9e51b82198be488c68e9299e40</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.x through 2.9.9. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has JDOM 1.x or 2.x jar in the classpath, an attacker can send a specifically crafted JSON message that allows them to read arbitrary local files on the server.

<p>Publish Date: 2019-06-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-12814>CVE-2019-12814</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2341">https://github.com/FasterXML/jackson-databind/issues/2341</a></p>

<p>Release Date: 2019-06-19</p>

<p>Fix Resolution: 2.7.9.6, 2.8.11.4, 2.9.9.1, 2.10.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.8.1","isTransitiveDependency":true,"dependencyTree":"org.springframework.boot:spring-boot-starter-web:1.4.0.RELEASE;com.fasterxml.jackson.core:jackson-databind:2.8.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"2.7.9.6, 2.8.11.4, 2.9.9.1, 2.10.0"}],"vulnerabilityIdentifier":"CVE-2019-12814","vulnerabilityDetails":"A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.x through 2.9.9. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has JDOM 1.x or 2.x jar in the classpath, an attacker can send a specifically crafted JSON message that allows them to read arbitrary local files on the server.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-12814","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2019-12814 (Medium) detected in jackson-databind-2.8.1.jar - ## CVE-2019-12814 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/fitbit-api-example-java/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.1/jackson-databind-2.8.1.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.4.0.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/tamirverthim/fitbit-api-example-java/commits/1d4a86820b5ccc9e51b82198be488c68e9299e40">1d4a86820b5ccc9e51b82198be488c68e9299e40</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.x through 2.9.9. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has JDOM 1.x or 2.x jar in the classpath, an attacker can send a specifically crafted JSON message that allows them to read arbitrary local files on the server.

<p>Publish Date: 2019-06-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-12814>CVE-2019-12814</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/FasterXML/jackson-databind/issues/2341">https://github.com/FasterXML/jackson-databind/issues/2341</a></p>

<p>Release Date: 2019-06-19</p>

<p>Fix Resolution: 2.7.9.6, 2.8.11.4, 2.9.9.1, 2.10.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.8.1","isTransitiveDependency":true,"dependencyTree":"org.springframework.boot:spring-boot-starter-web:1.4.0.RELEASE;com.fasterxml.jackson.core:jackson-databind:2.8.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"2.7.9.6, 2.8.11.4, 2.9.9.1, 2.10.0"}],"vulnerabilityIdentifier":"CVE-2019-12814","vulnerabilityDetails":"A Polymorphic Typing issue was discovered in FasterXML jackson-databind 2.x through 2.9.9. When Default Typing is enabled (either globally or for a specific property) for an externally exposed JSON endpoint and the service has JDOM 1.x or 2.x jar in the classpath, an attacker can send a specifically crafted JSON message that allows them to read arbitrary local files on the server.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-12814","cvss3Severity":"medium","cvss3Score":"5.9","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_defect | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm fitbit api example java pom xml path to vulnerable library root repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy spring boot starter web release jar root library x jackson databind jar vulnerable library found in head commit a href vulnerability details a polymorphic typing issue was discovered in fasterxml jackson databind x through when default typing is enabled either globally or for a specific property for an externally exposed json endpoint and the service has 
jdom x or x jar in the classpath an attacker can send a specifically crafted json message that allows them to read arbitrary local files on the server publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails a polymorphic typing issue was discovered in fasterxml jackson databind x through when default typing is enabled either globally or for a specific property for an externally exposed json endpoint and the service has jdom x or x jar in the classpath an attacker can send a specifically crafted json message that allows them to read arbitrary local files on the server vulnerabilityurl | 0 |

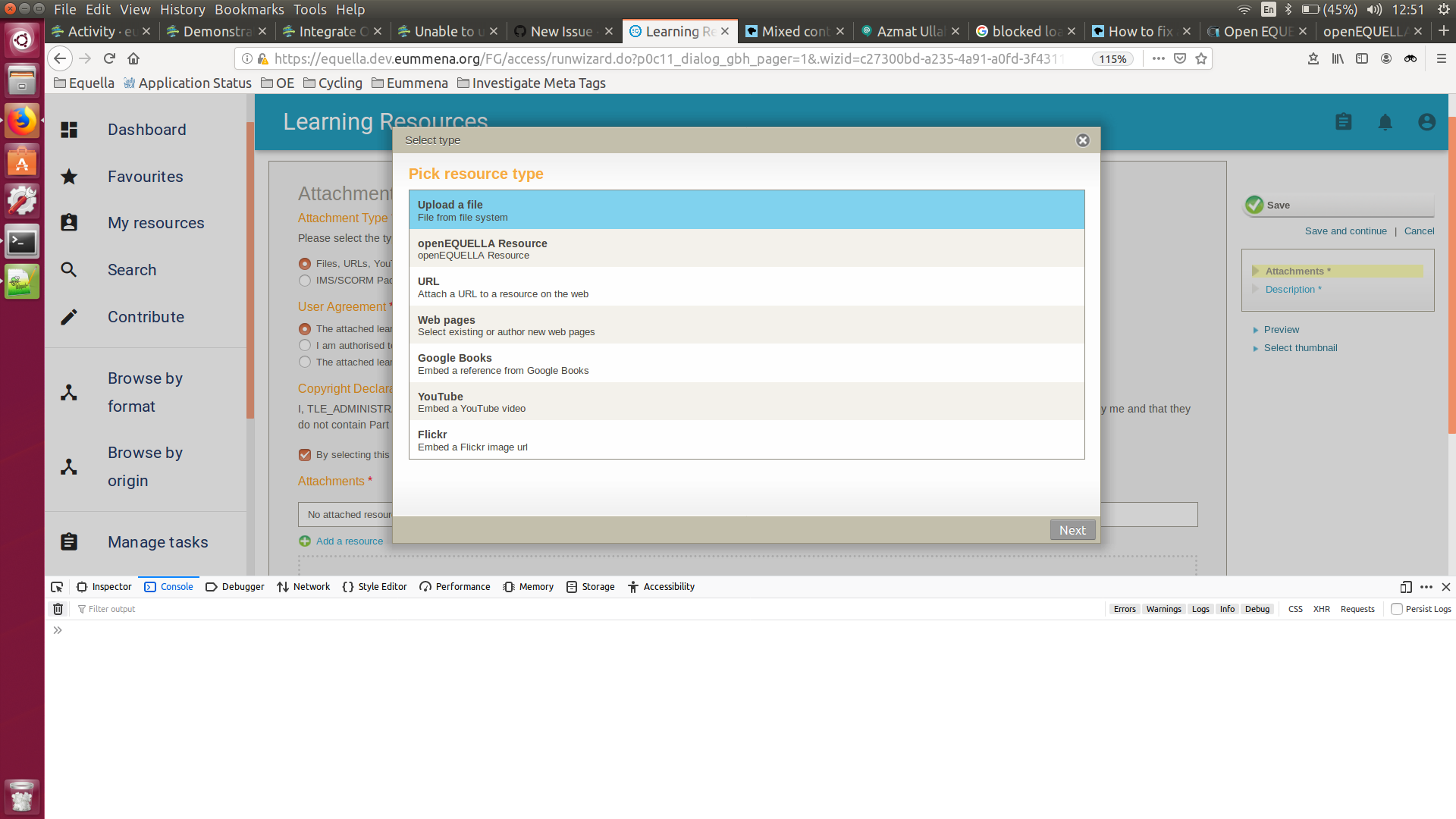

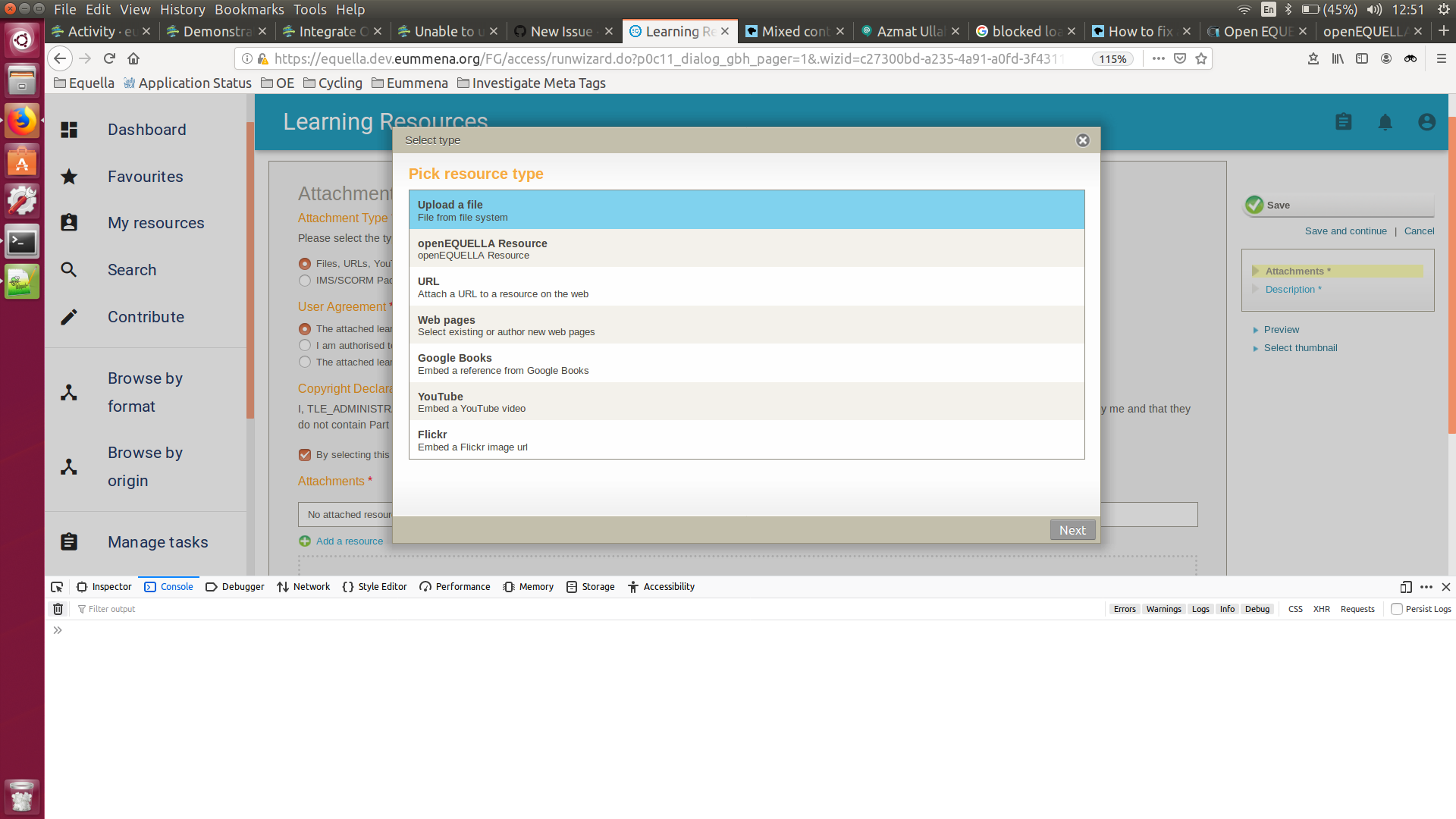

95,831 | 10,889,679,208 | IssuesEvent | 2019-11-18 18:46:34 | openequella/openEQUELLA | https://api.github.com/repos/openequella/openEQUELLA | closed | Unable to upload File | Documentation bug question | Unable to upload a file

Steps to reproduce the behavior:

1. Go to 'Contribute'

2. Click on 'Learning Resources'

3. Select 'Files, URLs, YoutTube'

4. Follow the process and Try to upload a file from your system.

**Expected behavior**

The file should be upload under the learning resource.

**Screenshots**

**Stacktrace**

`Blocked loading mixed active content “http://equella.dev.eummena.org/FG/api/content/ajax/access/ru…4311e8ad6e&pages.pg=0&event__=p0c11_dialog_fuh.uploadCommand`

**Platform:**

- OpenEquella Version: [Installer built from the dev branch manually]

- OS: [Ubuntu 16.04]

- Browser [Firefox]

The problem according to me is the **http** URL where the file upload request is directing to. I am running **SSL** but the file upload call is directing to **http** instead of **https**. | 1.0 | Unable to upload File - Unable to upload a file

Steps to reproduce the behavior:

1. Go to 'Contribute'

2. Click on 'Learning Resources'

3. Select 'Files, URLs, YoutTube'

4. Follow the process and Try to upload a file from your system.

**Expected behavior**

The file should be upload under the learning resource.

**Screenshots**

**Stacktrace**

`Blocked loading mixed active content “http://equella.dev.eummena.org/FG/api/content/ajax/access/ru…4311e8ad6e&pages.pg=0&event__=p0c11_dialog_fuh.uploadCommand`

**Platform:**

- OpenEquella Version: [Installer built from the dev branch manually]

- OS: [Ubuntu 16.04]

- Browser [Firefox]

The problem according to me is the **http** URL where the file upload request is directing to. I am running **SSL** but the file upload call is directing to **http** instead of **https**. | non_defect | unable to upload file unable to upload a file steps to reproduce the behavior go to contribute click on learning resources select files urls youttube follow the process and try to upload a file from your system expected behavior the file should be upload under the learning resource screenshots stacktrace blocked loading mixed active content “ platform openequella version os browser the problem according to me is the http url where the file upload request is directing to i am running ssl but the file upload call is directing to http instead of https | 0 |

38,934 | 6,714,950,390 | IssuesEvent | 2017-10-13 19:00:12 | blackbaud/skyux2 | https://api.github.com/repos/blackbaud/skyux2 | closed | Add `Sky-` class prefix requirement to contributing guidelines | documentation | All classes, directives, services, components, etc. should be prefixed with `Sky-`. | 1.0 | Add `Sky-` class prefix requirement to contributing guidelines - All classes, directives, services, components, etc. should be prefixed with `Sky-`. | non_defect | add sky class prefix requirement to contributing guidelines all classes directives services components etc should be prefixed with sky | 0 |

140,110 | 5,397,183,078 | IssuesEvent | 2017-02-27 14:02:04 | blipinsk/cortado | https://api.github.com/repos/blipinsk/cortado | opened | Add simplified names for methods without args | enhancement low-priority | Introduce simpler names, so the method chain sounds better. E.g.:

`isClickable()` -> `clickable()`

`isFocusable()` -> `focusable()`

`hasFocus()` -> `focused()`

... | 1.0 | Add simplified names for methods without args - Introduce simpler names, so the method chain sounds better. E.g.:

`isClickable()` -> `clickable()`

`isFocusable()` -> `focusable()`

`hasFocus()` -> `focused()`

... | non_defect | add simplified names for methods without args introduce simpler names so the method chain sounds better e g isclickable clickable isfocusable focusable hasfocus focused | 0 |

766,868 | 26,902,392,367 | IssuesEvent | 2023-02-06 16:29:36 | noctuelles/42-ft_transcendance | https://api.github.com/repos/noctuelles/42-ft_transcendance | closed | The match does not end if a user logs out mid-match | bug medium priority front back | Reproduction

Play a match against someone, then log out mid-match using the logout button (not by closing the window!) | 1.0 | The match does not end if a user logs out mid-match - Reproduction

Play a match against someone, then log out mid-match using the logout button (not by closing the window!) | non_defect | the match does not end if a user logs out mid match reproduction play a match against someone then log out mid match using the logout button not by closing the window | 0 |

564 | 2,886,770,357 | IssuesEvent | 2015-06-12 10:42:19 | xproc/specification | https://api.github.com/repos/xproc/specification | closed | 2.7.1 Syntax: allow <p:pipe step="name"/> | must requirement | XProc 1.0 offers relatively few default behaviors, requiring instead that pipelines specify every construct fully. User experience has demonstrated that this leads to very verbose pipelines and has been a constant source of complaint. XProc 2.0 will introduce a variety of syntactic simplifications as an aid to readability and usability, including but not limited to:

`<p:pipe step="name"/>` binds to the primary output port of the step named “name”.

| 1.0 | 2.7.1 Syntax: allow <p:pipe step="name"/> - XProc 1.0 offers relatively few default behaviors, requiring instead that pipelines specify every construct fully. User experience has demonstrated that this leads to very verbose pipelines and has been a constant source of complaint. XProc 2.0 will introduce a variety of syntactic simplifications as an aid to readability and usability, including but not limited to:

`<p:pipe step="name"/>` binds to the primary output port of the step named “name”.

| non_defect | syntax allow xproc offers relatively few default behaviors requiring instead that pipelines specify every construct fully user experience has demonstrated that this leads to very verbose pipelines and has been a constant source of complaint xproc will introduce a variety of syntactic simplifications as an aid to readability and usability including but not limited to binds to the primary output port of the step named “name” | 0 |

225,550 | 7,488,328,953 | IssuesEvent | 2018-04-06 00:32:32 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: Game requires login | High Priority | **Version:** 0.7.1.2 beta

**Steps to Reproduce:**

1. Launch the game from steam

**Expected behavior:**

Brought me to the main menu

**Actual behavior:**

requires me to login with my Strange Loop account. After repeatedly closing and reopening the game eventually it will show the main menu. Probably 7 out of 8 times it asks for login rather than display the menu. I also registered an account just in case and it would not recognize my credentials, but that's separate. | 1.0 | USER ISSUE: Game requires login - **Version:** 0.7.1.2 beta

**Steps to Reproduce:**

1. Launch the game from steam

**Expected behavior:**

Brought me to the main menu

**Actual behavior:**

requires me to login with my Strange Loop account. After repeatedly closing and reopening the game eventually it will show the main menu. Probably 7 out of 8 times it asks for login rather than display the menu. I also registered an account just in case and it would not recognize my credentials, but that's separate. | non_defect | user issue game requires login version beta steps to reproduce launch the game from steam expected behavior brought me to the main menu actual behavior requires me to login with my strange loop account after repeatedly closing and reopening the game eventually it will show the main menu probably out of times it asks for login rather than display the menu i also registered an account just in case and it would not recognize my credentials but that s separate | 0 |

15,967 | 2,870,247,961 | IssuesEvent | 2015-06-07 00:30:26 | pdelia/away3d | https://api.github.com/repos/pdelia/away3d | closed | events onMouseOver and onMouseOut don't works without looping view.render() | auto-migrated Priority-Medium Type-Defect | #15 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:06Z

```

What steps will reproduce the problem?

1. create Plane

_model = new Plane({material:faceMaterial, back:backMaterial, width:440,

height:820, segmentsW:1, segmentsH:1, yUp:true});

_model.ownCanvas = true;

_model.bothsides = true;

container.addChild( _model );

2. add event listeners

_model.addOnMouseOver(onMouseOver);

_model.addOnMouseOut(onMouseOut);

_model.addOnMouseDown(onMouseDown);

3. And now, if I have looped rendering then all ok, but if I don't have

looped rendering, then onMouseDown working fine, but onMouseOver and

onMouseOut are don't working. For repair this I need to execute

view.render() all the time during I moving the mouse...

What is the expected output? What do you see instead?

I expect events onMouseOver and onMouseOut without looping view.render()

What version of the product are you using? On what operating system?

rev. 593

Please provide any additional information below.

```

Original issue reported on code.google.com by `nauro...@gmail.com` on 23 Jul 2008 at 4:10 | 1.0 | events onMouseOver and onMouseOut don't works without looping view.render() - #15 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:06Z

```

What steps will reproduce the problem?

1. create Plane

_model = new Plane({material:faceMaterial, back:backMaterial, width:440,

height:820, segmentsW:1, segmentsH:1, yUp:true});

_model.ownCanvas = true;

_model.bothsides = true;

container.addChild( _model );

2. add event listeners

_model.addOnMouseOver(onMouseOver);

_model.addOnMouseOut(onMouseOut);

_model.addOnMouseDown(onMouseDown);

3. And now, if I have looped rendering then all ok, but if I don't have

looped rendering, then onMouseDown working fine, but onMouseOver and

onMouseOut are don't working. For repair this I need to execute

view.render() all the time during I moving the mouse...

What is the expected output? What do you see instead?

I expect events onMouseOver and onMouseOut without looping view.render()

What version of the product are you using? On what operating system?

rev. 593

Please provide any additional information below.

```

Original issue reported on code.google.com by `nauro...@gmail.com` on 23 Jul 2008 at 4:10 | defect | events onmouseover and onmouseout don t works without looping view render issue by googlecodeexporter created on what steps will reproduce the problem create plane model new plane material facematerial back backmaterial width height segmentsw segmentsh yup true model owncanvas true model bothsides true container addchild model add event listeners model addonmouseover onmouseover model addonmouseout onmouseout model addonmousedown onmousedown and now if i have looped rendering then all ok but if i don t have looped rendering then onmousedown working fine but onmouseover and onmouseout are don t working for repair this i need to execute view render all the time during i moving the mouse what is the expected output what do you see instead i expect events onmouseover and onmouseout without looping view render what version of the product are you using on what operating system rev please provide any additional information below original issue reported on code google com by nauro gmail com on jul at | 1 |

41,008 | 10,264,413,915 | IssuesEvent | 2019-08-22 16:18:25 | STEllAR-GROUP/phylanx | https://api.github.com/repos/STEllAR-GROUP/phylanx | closed | Phylanx failing build on Fedora | type: compatibility issue type: defect | My docker build is failing since yesterday when I try to run "make tests." I'm using the latest hpx, blaze, blaze-tensor, and pybind11, gcc 9.1.1, boost 1.69.0.

```

Configuration is:

RUN git clone https://github.com/STEllAR-GROUP/hpx.git && \

mkdir -p /hpx/build && \

cd /hpx/build && \

cmake -DCMAKE_BUILD_TYPE=Debug \

-DHPX_WITH_MALLOC=system \

-DHPX_WITH_MORE_THAN_64_THREADS=ON \

-DHPX_WITH_MAX_CPU_COUNT=80 \

-DHPX_WITH_EXAMPLES=Off \

.. && \

make -j ${CPUS} install

```

--Steve

```

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/phytype.cpp.o

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/primitive_component.cpp.o

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/primitive_component_base.cpp.o

In file included from /blaze_tensor/blaze_tensor/math/DenseArray.h:120,

from /blaze_tensor/blaze_tensor/math/CustomArray.h:48,

from /blaze_tensor/blaze_tensor/Math.h:48,

from /home/jovyan/phylanx/phylanx/ir/node_data.hpp:28,

from /home/jovyan/phylanx/phylanx/ast/node.hpp:12,

from /home/jovyan/phylanx/phylanx/execution_tree/primitives/base_primitive.hpp:12,

from /home/jovyan/phylanx/src/execution_tree/primitives/node_data_helpers2d.cpp:8:

/blaze_tensor/blaze_tensor/math/dense/DenseArray.h:55:10: fatal error: blaze/util/DecltypeAuto.h: No such file or directory

55 | #include <blaze/util/DecltypeAuto.h>

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~

compilation terminated.

In file included from /blaze_tensor/blaze_tensor/math/DenseArray.h:120,

from /blaze_tensor/blaze_tensor/math/CustomArray.h:48,

from /blaze_tensor/blaze_tensor/Math.h:48,

from /home/jovyan/phylanx/phylanx/ir/node_data.hpp:28,

from /home/jovyan/phylanx/phylanx/ast/node.hpp:12,

from /home/jovyan/phylanx/phylanx/ast/detail/is_placeholder.hpp:10,

from /home/jovyan/phylanx/src/ast/detail/is_placeholder.cpp:7:

/blaze_tensor/blaze_tensor/math/dense/DenseArray.h:55:10: fatal error: blaze/util/DecltypeAuto.h: No such file or directory

55 | #include <blaze/util/DecltypeAuto.h>

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~

``` | 1.0 | Phylanx failing build on Fedora - My docker build is failing since yesterday when I try to run "make tests." I'm using the latest hpx, blaze, blaze-tensor, and pybind11, gcc 9.1.1, boost 1.69.0.

```

Configuration is:

RUN git clone https://github.com/STEllAR-GROUP/hpx.git && \

mkdir -p /hpx/build && \

cd /hpx/build && \

cmake -DCMAKE_BUILD_TYPE=Debug \

-DHPX_WITH_MALLOC=system \

-DHPX_WITH_MORE_THAN_64_THREADS=ON \

-DHPX_WITH_MAX_CPU_COUNT=80 \

-DHPX_WITH_EXAMPLES=Off \

.. && \

make -j ${CPUS} install

```

--Steve

```

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/phytype.cpp.o

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/primitive_component.cpp.o

[ 15%] Building CXX object src/CMakeFiles/phylanx_component.dir/execution_tree/primitives/primitive_component_base.cpp.o

In file included from /blaze_tensor/blaze_tensor/math/DenseArray.h:120,

from /blaze_tensor/blaze_tensor/math/CustomArray.h:48,

from /blaze_tensor/blaze_tensor/Math.h:48,

from /home/jovyan/phylanx/phylanx/ir/node_data.hpp:28,

from /home/jovyan/phylanx/phylanx/ast/node.hpp:12,

from /home/jovyan/phylanx/phylanx/execution_tree/primitives/base_primitive.hpp:12,

from /home/jovyan/phylanx/src/execution_tree/primitives/node_data_helpers2d.cpp:8:

/blaze_tensor/blaze_tensor/math/dense/DenseArray.h:55:10: fatal error: blaze/util/DecltypeAuto.h: No such file or directory

55 | #include <blaze/util/DecltypeAuto.h>

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~

compilation terminated.

In file included from /blaze_tensor/blaze_tensor/math/DenseArray.h:120,

from /blaze_tensor/blaze_tensor/math/CustomArray.h:48,

from /blaze_tensor/blaze_tensor/Math.h:48,

from /home/jovyan/phylanx/phylanx/ir/node_data.hpp:28,

from /home/jovyan/phylanx/phylanx/ast/node.hpp:12,

from /home/jovyan/phylanx/phylanx/ast/detail/is_placeholder.hpp:10,

from /home/jovyan/phylanx/src/ast/detail/is_placeholder.cpp:7:

/blaze_tensor/blaze_tensor/math/dense/DenseArray.h:55:10: fatal error: blaze/util/DecltypeAuto.h: No such file or directory

55 | #include <blaze/util/DecltypeAuto.h>

| ^~~~~~~~~~~~~~~~~~~~~~~~~~~

``` | defect | phylanx failing build on fedora my docker build is failing since yesterday when i try to run make tests i m using the latest hpx blaze blaze tensor and gcc boost configuration is run git clone mkdir p hpx build cd hpx build cmake dcmake build type debug dhpx with malloc system dhpx with more than threads on dhpx with max cpu count dhpx with examples off make j cpus install steve building cxx object src cmakefiles phylanx component dir execution tree primitives phytype cpp o building cxx object src cmakefiles phylanx component dir execution tree primitives primitive component cpp o building cxx object src cmakefiles phylanx component dir execution tree primitives primitive component base cpp o in file included from blaze tensor blaze tensor math densearray h from blaze tensor blaze tensor math customarray h from blaze tensor blaze tensor math h from home jovyan phylanx phylanx ir node data hpp from home jovyan phylanx phylanx ast node hpp from home jovyan phylanx phylanx execution tree primitives base primitive hpp from home jovyan phylanx src execution tree primitives node data cpp blaze tensor blaze tensor math dense densearray h fatal error blaze util decltypeauto h no such file or directory include compilation terminated in file included from blaze tensor blaze tensor math densearray h from blaze tensor blaze tensor math customarray h from blaze tensor blaze tensor math h from home jovyan phylanx phylanx ir node data hpp from home jovyan phylanx phylanx ast node hpp from home jovyan phylanx phylanx ast detail is placeholder hpp from home jovyan phylanx src ast detail is placeholder cpp blaze tensor blaze tensor math dense densearray h fatal error blaze util decltypeauto h no such file or directory include | 1 |

47,120 | 13,056,035,568 | IssuesEvent | 2020-07-30 03:27:30 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | physics-timer (Trac #24) | IceTray Migrated from Trac defect | shouldn't display unless there are enough events

Migrated from https://code.icecube.wisc.edu/ticket/24

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "shouldn't display unless there are enough events",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "1194753078000000",

"component": "IceTray",

"summary": "physics-timer",

"priority": "normal",

"keywords": "",

"time": "2007-06-03T16:40:15",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

| 1.0 | physics-timer (Trac #24) - shouldn't display unless there are enough events

Migrated from https://code.icecube.wisc.edu/ticket/24

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "shouldn't display unless there are enough events",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "1194753078000000",

"component": "IceTray",

"summary": "physics-timer",

"priority": "normal",

"keywords": "",

"time": "2007-06-03T16:40:15",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

| defect | physics timer trac shouldn t display unless there are enough events migrated from json status closed changetime description shouldn t display unless there are enough events reporter troy cc resolution fixed ts component icetray summary physics timer priority normal keywords time milestone owner troy type defect | 1 |

61,839 | 17,023,790,210 | IssuesEvent | 2021-07-03 03:52:12 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Layering of railway=tram | Component: mapnik Priority: minor Resolution: duplicate Type: defect | **[Submitted to the original trac issue database at 1.17pm, Thursday, 5th April 2012]**

This is related to [http://trac.openstreetmap.org/ticket/4342].

Lines tagged as railway=tram now get drawn over roads, which principally is fine, as it means tram lines running parallel to roads are visible in lower zoom levels as well.

However, proper layering seems to be broken now, as a railway=tram passing under a highway=secondary with bridge=yes and layer=1 is now incorrectly drawn on top of the road.

See example here: [http://www.openstreetmap.org/?lat=49.114926&lon=8.479103&zoom=18&layers=M] | 1.0 | Layering of railway=tram - **[Submitted to the original trac issue database at 1.17pm, Thursday, 5th April 2012]**

This is related to [http://trac.openstreetmap.org/ticket/4342].

Lines tagged as railway=tram now get drawn over roads, which principally is fine, as it means tram lines running parallel to roads are visible in lower zoom levels as well.

However, proper layering seems to be broken now, as a railway=tram passing under a highway=secondary with bridge=yes and layer=1 is now incorrectly drawn on top of the road.

See example here: [http://www.openstreetmap.org/?lat=49.114926&lon=8.479103&zoom=18&layers=M] | defect | layering of railway tram this is related to lines tagged as railway tram now get drawn over roads which principally is fine as it means tram lines running parallel to roads are visible in lower zoom levels as well however proper layering seems to be broken now as a railway tram passing under a highway secondary with bridge yes and layer is now incorrectly drawn on top of the road see example here | 1 |

2,166 | 5,013,340,502 | IssuesEvent | 2016-12-13 14:17:59 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Add unique constraint to "records.source_url" | 3. In Development API data cleaning Processors | On #532 we found a few records with repeated `source_url` values, and found out that was a bug. We then added a functionality to the `record_remover` processor to remove trials with the same `source_url`. Now we need to make sure that doesn't happen again by adding a unique constraint on that table. After that's done and tested, we can open a new issue to remove the then-useless functionality from `record_remover`.

# Tasks

- [x] Investigate if we still have records with repeated `source_url` in our database.

- [x] If we still have bad data in our DB, find why they were created, fix the bug and run the `record_remover` processor to clean our DB

- [x] Add a unique constraint on `records.source_url`

- [x] Remove the logic on removing records with duplicated `source_url` from the `record_remover` processor, as with the constraint we'll guarantee that this will never happen | 1.0 | Add unique constraint to "records.source_url" - On #532 we found a few records with repeated `source_url` values, and found out that was a bug. We then added a functionality to the `record_remover` processor to remove trials with the same `source_url`. Now we need to make sure that doesn't happen again by adding a unique constraint on that table. After that's done and tested, we can open a new issue to remove the then-useless functionality from `record_remover`.

# Tasks

- [x] Investigate if we still have records with repeated `source_url` in our database.

- [x] If we still have bad data in our DB, find why they were created, fix the bug and run the `record_remover` processor to clean our DB

- [x] Add a unique constraint on `records.source_url`

- [x] Remove the logic on removing records with duplicated `source_url` from the `record_remover` processor, as with the constraint we'll guarantee that this will never happen | non_defect | add unique constraint to records source url on we found a few records with repeated source url values and found out that was a bug we then added a functionality to the record remover processor to remove trials with the same source url now we need to make sure that doesn t happen again by adding a unique constraint on that table after that s done and tested we can open a new issue to remove the then useless functionality from record remover tasks investigate if we still have records with repeated source url in our database if we still have bad data in our db find why they were created fix the bug and run the record remover processor to clean our db add a unique constraint on records source url remove the logic on removing records with duplicated source url from the record remover processor as with the constraint we ll guarantee that this will never happen | 0 |
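
The unique constraint requested in the record above could be sketched roughly as follows. This is only an illustration under stated assumptions: it uses an in-memory SQLite database as a stand-in for the project's real database, and the table layout and the `insert_record` helper are hypothetical, not taken from the opentrials code.

```python
import sqlite3


def create_records_table(conn):
    # Hypothetical, simplified stand-in for the real "records" table.
    # The UNIQUE constraint makes the database reject a repeated source_url.
    conn.execute(
        "CREATE TABLE records ("
        " id INTEGER PRIMARY KEY,"
        " source_url TEXT NOT NULL UNIQUE"
        ")"
    )


def insert_record(conn, source_url):
    """Insert a record; return False if source_url is already present."""
    try:
        # "with conn" commits on success and rolls back on error.
        with conn:
            conn.execute(
                "INSERT INTO records (source_url) VALUES (?)", (source_url,)
            )
        return True
    except sqlite3.IntegrityError:
        # Raised by SQLite when the UNIQUE constraint is violated.
        return False


conn = sqlite3.connect(":memory:")
create_records_table(conn)
first = insert_record(conn, "https://example.org/trial/1")
duplicate = insert_record(conn, "https://example.org/trial/1")
row_count = conn.execute("SELECT COUNT(*) FROM records").fetchone()[0]
```

With a constraint like this in place, a second insert with the same `source_url` fails at the database level, which is why the issue expects the duplicate-removal logic in `record_remover` to become unnecessary.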

29,097 | 8,287,153,418 | IssuesEvent | 2018-09-19 08:00:03 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | error: class "at::TensorImpl" has no member "set_data" | build | Observed the following error while building pytorch [76070fe](https://github.com/pytorch/pytorch/commit/76070fe73c5cce61cb9554990079594f83384629) using python setup.py install on ppc64le

```

[ 64%] Building NVCC (Device) object caffe2/CMakeFiles/caffe2_gpu.dir/__/aten/src/ATen/native/cuda/caffe2_gpu_generated_TensorTransformations.cu.o

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

```

| 1.0 | error: class "at::TensorImpl" has no member "set_data" - Observed the following error while building pytorch [76070fe](https://github.com/pytorch/pytorch/commit/76070fe73c5cce61cb9554990079594f83384629) using python setup.py install on ppc64le

```

[ 64%] Building NVCC (Device) object caffe2/CMakeFiles/caffe2_gpu.dir/__/aten/src/ATen/native/cuda/caffe2_gpu_generated_TensorTransformations.cu.o

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

/<path>/pytorch/torch/lib/tmp_install/include/ATen/Tensor.h(248): error: class "at::TensorImpl" has no member "set_data"

```

| non_defect | error class at tensorimpl has no member set data observed the following error while building pytorch using python setup py install on building nvcc device object cmakefiles gpu dir aten src aten native cuda gpu generated tensortransformations cu o pytorch torch lib tmp install include aten tensor h error class at tensorimpl has no member set data pytorch torch lib tmp install include aten tensor h error class at tensorimpl has no member set data pytorch torch lib tmp install include aten tensor h error class at tensorimpl has no member set data pytorch torch lib tmp install include aten tensor h error class at tensorimpl has no member set data | 0 |

15,815 | 20,718,350,030 | IssuesEvent | 2022-03-13 01:18:57 | BobsMods/bobsmods | https://api.github.com/repos/BobsMods/bobsmods | closed | Hidden void recipes | enhancement mod compatibility Bob's Metals Chemicals and Intermediates Bob's Revamp | Void recipes should not be marked as hidden. Instead should be marked as `hide_from_player_crafting` .

https://wiki.factorio.com/Prototype/Recipe#hide_from_player_crafting

This will assist helper mods such as FNEI and Helmod. | True | Hidden void recipes - Void recipes should not be marked as hidden. Instead should be marked as `hide_from_player_crafting` .

https://wiki.factorio.com/Prototype/Recipe#hide_from_player_crafting

This will assist helper mods such as FNEI and Helmod. | non_defect | hidden void recipes void recipes should not be marked as hidden instead should be marked as hide from player crafting this will assist helper mods such as fnei and helmod | 0 |

49,446 | 6,026,661,523 | IssuesEvent | 2017-06-08 11:56:02 | LDMW/app | https://api.github.com/repos/LDMW/app | closed | Ensure that feedback form works if only a few questions are answered | bug please-test | As a user, I want to be able to fill out as many questions on the feedback form as I like and not get an internal server error when I only fill in one question so that I trust the credibility of the service and don't feel under pressure to provide more information than I feel comfortable providing

- [x] Users can hit submit after having filled out one or more questions on the feedback page

- [x] Internal error message should not appear

| 1.0 | Ensure that feedback form works if only a few questions are answered - As a user, I want to be able to fill out as many questions on the feedback form as I like and not get an internal server error when I only fill in one question so that I trust the credibility of the service and don't feel under pressure to provide more information than I feel comfortable providing

- [x] Users can hit submit after having filled out one or more questions on the feedback page

- [x] Internal error message should not appear

| non_defect | ensure that feedback form works if only a few questions are answered as a user i want to be able to fill out as many questions on the feedback form as i like and not get an internal server error when i only fill in one question so that i trust the credibility of the service and don t feel under pressure to provide more information than i feel comfortable providing users can hit submit after having filled out one or more questions on the feedback page internal error message should not appear | 0 |

172,848 | 21,055,576,317 | IssuesEvent | 2022-04-01 02:38:31 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Security Solution]comma separated process.arg not wraps properly | bug triage_needed Team: SecuritySolution | **Describe the bug**

comma separated process.arg not wraps properly

**Build Details**

```

Version:8.2.0-SNAPSHOT

BUILD 51431

COMMIT a743498436a863e142592cb535b43f44c448851a

```

**Steps**

- Login to Kibana

- Generate some alert data , in our case we create a custom rule for process.name: "cmd.exe" and executed mutiple instance of cmd on windows host

- Click on Alert Flyout

- Observed that comma separated process.arg not wraps properly

**Screen-Shoot**

**Additional Details:**

- **_actual content copied in clipboard: process.args_**: "cmd,/c,rmdir,C:\Users\zeus\AppData\Local\Temp\peazip-tmp\.pztmp\neutral22033117,/s,/q"

- **_filter in of above process.args_**

| True | [Security Solution]comma separated process.arg not wraps properly - **Describe the bug**

comma separated process.arg not wraps properly

**Build Details**

```

Version:8.2.0-SNAPSHOT

BUILD 51431

COMMIT a743498436a863e142592cb535b43f44c448851a

```

**Steps**

- Login to Kibana

- Generate some alert data , in our case we create a custom rule for process.name: "cmd.exe" and executed mutiple instance of cmd on windows host

- Click on Alert Flyout

- Observed that comma separated process.arg not wraps properly

**Screen-Shoot**

**Additional Details:**

- **_actual content copied in clipboard: process.args_**: "cmd,/c,rmdir,C:\Users\zeus\AppData\Local\Temp\peazip-tmp\.pztmp\neutral22033117,/s,/q"

- **_filter in of above process.args_**

| non_defect | comma separated process arg not wraps properly describe the bug comma separated process arg not wraps properly build details version snapshot build commit steps login to kibana generate some alert data in our case we create a custom rule for process name cmd exe and executed mutiple instance of cmd on windows host click on alert flyout observed that comma separated process arg not wraps properly screen shoot additional details actual content copied in clipboard process args cmd c rmdir c users zeus appdata local temp peazip tmp pztmp s q filter in of above process args | 0 |

39,905 | 10,419,680,781 | IssuesEvent | 2019-09-15 18:20:49 | zerotier/ZeroTierOne | https://api.github.com/repos/zerotier/ZeroTierOne | closed | zerotier-containerized build doesn't work with Zerotier version 1.4.2 | bug build problem | If update the version of Zerotier in the current containerized build we will get a container where Zerotier can't be correctly started.

**To Reproduce**

1. Go to https://github.com/zerotier/ZeroTierOne/blob/1.4.2/ext/installfiles/linux/zerotier-containerized/Dockerfile and change "RUN apt-get update && apt-get install -y zerotier-one=1.2.12" -> "RUN apt-get update && apt-get install -y zerotier-one=1.4.2-2"

2. Build docker: docker build . -t zerotier/zerotier-containerized:1.4.2

3. Run docker: docker run -it --cap-add=NET_ADMIN --device=/dev/net/tun zerotier/zerotier-containerized:1.4.2 /bin/sh

4. Run inside the docker:

```

zerotier-cli

/bin/sh: zerotier-cli: not found

```

^ expected to work

```

apk add --update binutils

```

```

readelf -l /usr/sbin/zerotier-cli |grep "program interpreter"

[Requesting program interpreter: /lib64/ld-linux-x86-64.so.2]

```

^ which is unavailable

**Expected behavior**

Expect to get a docker that works the same way as it currently works with 1.2.12

**Proposed solution**

My quick fix can be useful: https://github.com/Spikhalskiy/ZeroTierOne/commit/b61b3da59fe24bb96c1c0b09e28deac5814306a7 | 1.0 | zerotier-containerized build doesn't work with Zerotier version 1.4.2 - If update the version of Zerotier in the current containerized build we will get a container where Zerotier can't be correctly started.

**To Reproduce**

1. Go to https://github.com/zerotier/ZeroTierOne/blob/1.4.2/ext/installfiles/linux/zerotier-containerized/Dockerfile and change "RUN apt-get update && apt-get install -y zerotier-one=1.2.12" -> "RUN apt-get update && apt-get install -y zerotier-one=1.4.2-2"

2. Build docker: docker build . -t zerotier/zerotier-containerized:1.4.2

3. Run docker: docker run -it --cap-add=NET_ADMIN --device=/dev/net/tun zerotier/zerotier-containerized:1.4.2 /bin/sh

4. Run inside the docker:

```

zerotier-cli

/bin/sh: zerotier-cli: not found

```

^ expected to work

```

apk add --update binutils

```

```

readelf -l /usr/sbin/zerotier-cli |grep "program interpreter"

[Requesting program interpreter: /lib64/ld-linux-x86-64.so.2]

```

^ which is unavailable

**Expected behavior**

Expect to get a docker that works the same way as it currently works with 1.2.12

**Proposed solution**

My quick fix can be useful: https://github.com/Spikhalskiy/ZeroTierOne/commit/b61b3da59fe24bb96c1c0b09e28deac5814306a7 | non_defect | zerotier containerized build doesn t work with zerotier version if update the version of zerotier in the current containerized build we will get a container where zerotier can t be correctly started to reproduce go to and change run apt get update apt get install y zerotier one run apt get update apt get install y zerotier one build docker docker build t zerotier zerotier containerized run docker docker run it cap add net admin device dev net tun zerotier zerotier containerized bin sh run inside the docker zerotier cli bin sh zerotier cli not found expected to work apk add update binutils readelf l usr sbin zerotier cli grep program interpreter which is unavailable expected behavior expect to get a docker that works the same way as it currently works with proposed solution my quick fix can be useful | 0 |

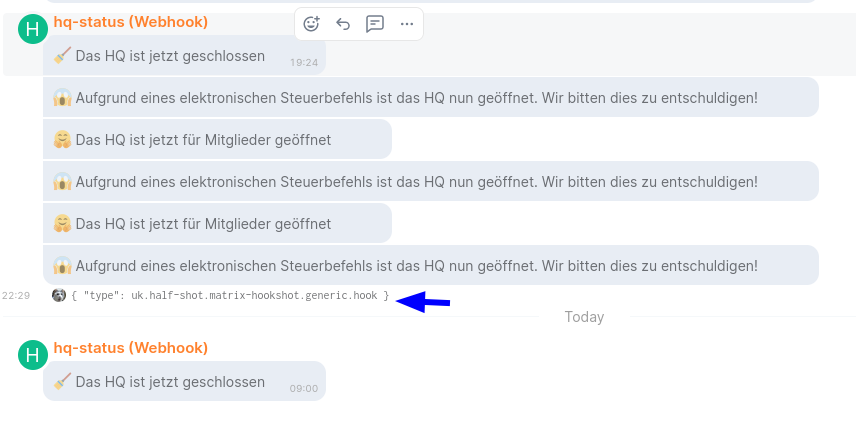

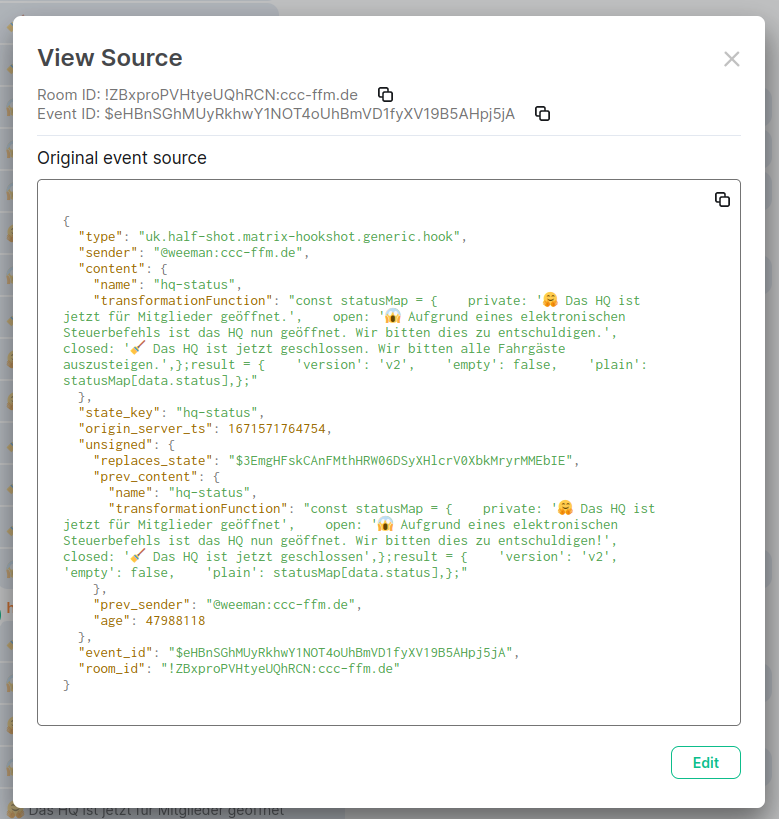

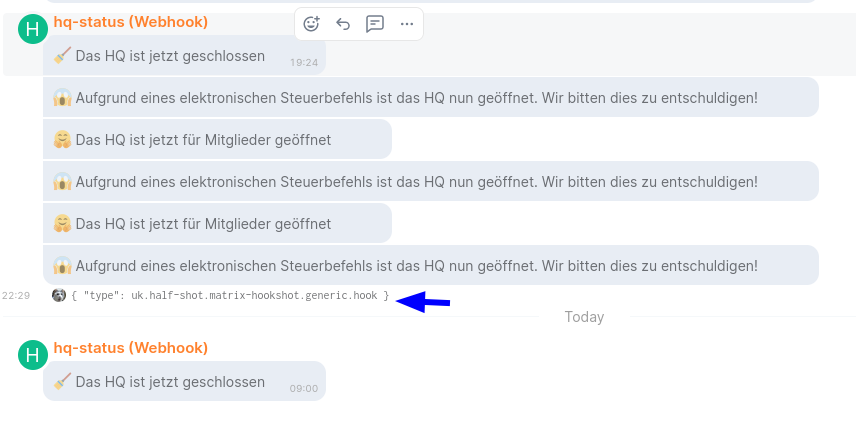

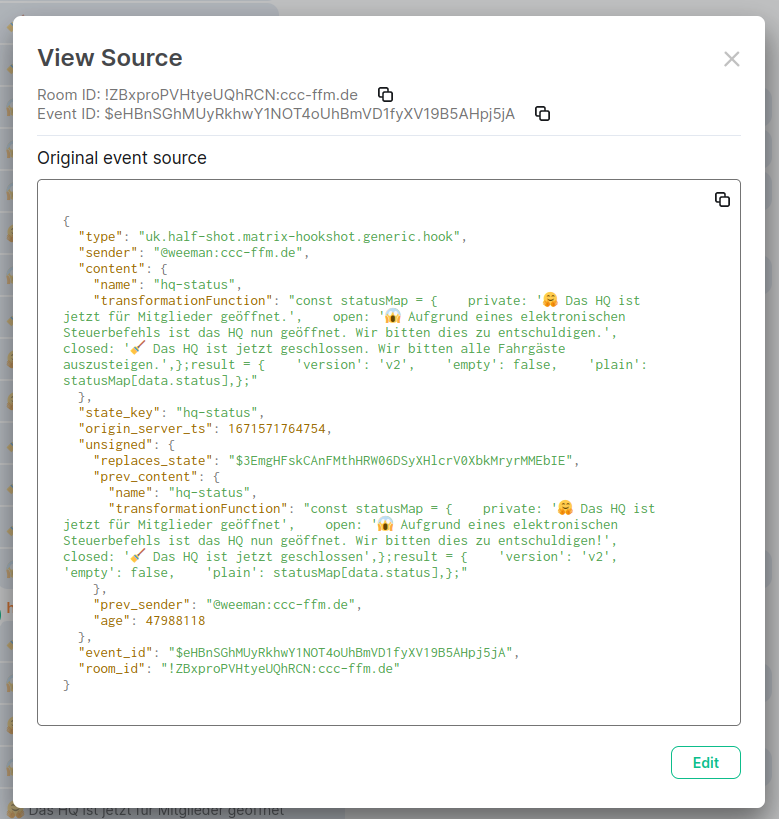

76,478 | 26,450,476,162 | IssuesEvent | 2023-01-16 10:48:26 | matrix-org/matrix-hookshot | https://api.github.com/repos/matrix-org/matrix-hookshot | closed | `transformationFunction` updates not applied | T-Defect | **Steps to reproduce the problem**

- Room with a configured webhook and `transformationFunction` set

- Update `transformationFunction`

- Trigger the webhook

**Expected:**

New transformation function applied

**What actually happens:**

Old transformation function applied

| 1.0 | `transformationFunction` updates not applied - **Steps to reproduce the problem**

- Room with a configured webhook and `transformationFunction` set

- Update `transformationFunction`

- Trigger the webhook

**Expected:**

New transformation function applied

**What actually happens:**

Old transformation function applied

| defect | transformationfunction updates not applied steps to reproduce the problem room with a configured webhook and transformationfunction set update transformationfunction trigger the webhook expected new transformation function applied what actually happens old transformation function applied | 1 |

9,343 | 2,615,145,274 | IssuesEvent | 2015-03-01 06:21:05 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Newspaper columns | auto-migrated Milestone-2 Priority-Medium Studio Type-Defect | ```

mostly done, need to apply same style

```

Original issue reported on code.google.com by `v...@google.com` on 29 Jul 2010 at 4:30 | 1.0 | Newspaper columns - ```

mostly done, need to apply same style

```

Original issue reported on code.google.com by `v...@google.com` on 29 Jul 2010 at 4:30 | defect | newspaper columns mostly done need to apply same style original issue reported on code google com by v google com on jul at | 1 |

127,804 | 27,129,807,517 | IssuesEvent | 2023-02-16 08:58:24 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | [Factions] Clown Event is global | Bug Need more info Code | ### Disclaimers

- [X] I have searched the issue tracker to check if the issue has already been reported.

- [ ] My issue happened while using mods.

### What happened?

### Key Information

Game mode: Campaign

Game type: Multiplayer

Campaign save version: Started in 100.13.0.0, issue occurred in version 100.15.0.0

Server type: Steam P2P

### Issue Report

Whilst inside the outpost, I was given text from one of the clown events. I hadn't interacted with any clown or NPC and the text was advancing on its own. I also asked others in the session if they received the text and had mixed results. Many players who did not get the text were not inside the outpost, so perhaps this is related? It could be a networking error though.

### Reproduction steps

_No response_

### Bug prevalence

Just once

### Version

Faction/endgame test branch

### -

_No response_

### Which operating system did you encounter this bug on?

Windows

### Relevant error messages and crash reports

_No response_ | 1.0 | [Factions] Clown Event is global - ### Disclaimers

- [X] I have searched the issue tracker to check if the issue has already been reported.

- [ ] My issue happened while using mods.

### What happened?

### Key Information

Game mode: Campaign

Game type: Multiplayer

Campaign save version: Started in 100.13.0.0, issue occurred in version 100.15.0.0

Server type: Steam P2P

### Issue Report

Whilst inside the outpost, I was given text from one of the clown events. I hadn't interacted with any clown or NPC and the text was advancing on its own. I also asked others in the session if they received the text and had mixed results. Many players who did not get the text were not inside the outpost, so perhaps this is related? It could be a networking error though.

### Reproduction steps

_No response_

### Bug prevalence

Just once

### Version

Faction/endgame test branch

### -

_No response_

### Which operating system did you encounter this bug on?

Windows

### Relevant error messages and crash reports

_No response_ | non_defect | clown event is global disclaimers i have searched the issue tracker to check if the issue has already been reported my issue happened while using mods what happened key information game mode campaign game type multiplayer campaign save version started in issue occurred in version server type steam issue report whilst inside the outpost i was given text from one of the clown events i hadn t interacted with any clown or npc and the text was advancing on its own i also asked others in the session if they received the text and had mixed results many players who did not get the text were not inside the outpost so perhaps this is related it could be a networking error though reproduction steps no response bug prevalence just once version faction endgame test branch no response which operating system did you encounter this bug on windows relevant error messages and crash reports no response | 0 |

23,244 | 4,004,306,806 | IssuesEvent | 2016-05-12 06:34:42 | magento/mtf | https://api.github.com/repos/magento/mtf | closed | Magento admin logging out automatically | magento test question | Hi Lanken,

command: vendor/bin/phpunit --debug tests/app/Magento/Catalog/Test/TestCase/Product/CreateSimpleProductEntityTest.php

If I run any test, it logs in to the backend and then logs out again automatically, so the test fails.

Any suggestions?

Thanks,

| 1.0 | Magento admin logging out automatically - Hi Lanken,

command: vendor/bin/phpunit --debug tests/app/Magento/Catalog/Test/TestCase/Product/CreateSimpleProductEntityTest.php

If I run any test, it logs in to the backend and then logs out again automatically, so the test fails.

Any suggestions?

Thanks,

| non_defect | magento admin logging out automatically hi lanken command vendor bin phpunit debug tests app magento catalog test testcase product createsimpleproductentitytest php if i run any test it is logging in to backend and again it is logging out automatically so test getting fail any suggestion thanks | 0 |

166,791 | 6,311,491,798 | IssuesEvent | 2017-07-23 20:00:15 | ericwbailey/empathy-prompts | https://api.github.com/repos/ericwbailey/empathy-prompts | closed | Mobile breakpoint design tweaks | [Priority] Low [Status] Accepted [Type] Enhancement | - [x] Fix about link being full-width

- [x] Add padding to focus | 1.0 | Mobile breakpoint design tweaks - - [x] Fix about link being full-width

- [x] Add padding to focus | non_defect | mobile breakpoint design tweaks fix about link being full width add padding to focus | 0 |

412,947 | 12,058,608,612 | IssuesEvent | 2020-04-15 17:45:57 | phetsims/aqua | https://api.github.com/repos/phetsims/aqua | closed | Future enhancements to automated testing | priority:5-deferred | After chipper 2.0 and some other work settles it would be good to discuss and prioritize some enhancements to automated testing.

I will bring this issue up at dev meeting for further discussion, but currently marking deferred.

A few comments already brought up:

> SR: It would be great to easily be able to run all tests locally (including fuzz tests and unit tests)

> AP: screenshot snapshot comparison

> AP: keyboard-navigation fuzz testing

> CM: list of shas for some column in CT report

> SR: instructions for how to reproduce a failed test locally

seems also somewhat related to https://github.com/phetsims/aqua/issues/15 | 1.0 | Future enhancements to automated testing - After chipper 2.0 and some other work settles it would be good to discuss and prioritize some enhancements to automated testing.

I will bring this issue up at dev meeting for further discussion, but currently marking deferred.

A few comments already brought up:

> SR: It would be great to easily be able to run all tests locally (including fuzz tests and unit tests)

> AP: screenshot snapshot comparison

> AP: keyboard-navigation fuzz testing

> CM: list of shas for some column in CT report

> SR: instructions for how to reproduce a failed test locally

seems also somewhat related to https://github.com/phetsims/aqua/issues/15 | non_defect | future enhancements to automated testing after chipper and some other work settles it would be good to discuss and prioritize some enhancements to automated testing i will bring this issue up at dev meeting for further discussion but currently marking deferred a few comments already brought up sr it would be great to easily be able to run all tests locally including fuzz tests and unit tests ap screenshot snapshot comparison ap keyboard navigation fuzz testing cm list of shas for some column in ct report sr instructions for how to reproduce a failed test locally seems also somewhat related to | 0 |

135,236 | 18,677,945,625 | IssuesEvent | 2021-10-31 21:49:02 | samq-ghdemo/easybuggy-private | https://api.github.com/repos/samq-ghdemo/easybuggy-private | opened | CVE-2016-4433 (High) detected in struts2-core-2.3.20.jar, xwork-core-2.3.20.jar | security vulnerability | ## CVE-2016-4433 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>struts2-core-2.3.20.jar</b>, <b>xwork-core-2.3.20.jar</b></p></summary>

<p>

<details><summary><b>struts2-core-2.3.20.jar</b></p></summary>

<p>Apache Struts 2</p>

<p>Path to dependency file: easybuggy-private/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/struts/struts2-core/2.3.20/struts2-core-2.3.20.jar</p>

<p>

Dependency Hierarchy:

- vulnpackage-1.0.jar (Root Library)

- struts2-rest-plugin-2.3.20.jar

- :x: **struts2-core-2.3.20.jar** (Vulnerable Library)

</details>

<details><summary><b>xwork-core-2.3.20.jar</b></p></summary>

<p>Apache Struts 2</p>

<p>Library home page: <a href="http://struts.apache.org/">http://struts.apache.org/</a></p>

<p>Path to dependency file: easybuggy-private/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/struts/xwork/xwork-core/2.3.20/xwork-core-2.3.20.jar</p>

<p>

Dependency Hierarchy:

- vulnpackage-1.0.jar (Root Library)

- struts2-rest-plugin-2.3.20.jar

- struts2-core-2.3.20.jar

- :x: **xwork-core-2.3.20.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/samq-ghdemo/easybuggy-private/commit/6ef2566cb8b39d29f6b8b76a1bd3860df7fac401">6ef2566cb8b39d29f6b8b76a1bd3860df7fac401</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache Struts 2 2.3.20 through 2.3.28.1 allows remote attackers to bypass intended access restrictions and conduct redirection attacks via a crafted request.

<p>Publish Date: 2016-07-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-4433>CVE-2016-4433</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/apache/struts/tree/STRUTS_2_3_29">https://github.com/apache/struts/tree/STRUTS_2_3_29</a></p>

<p>Release Date: 2016-07-04</p>

<p>Fix Resolution: org.apache.struts:struts2-core:2.3.29, org.apache.struts.xwork:xwork-core:2.3.29</p>

</p>

</details>

<p></p>
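
Because both vulnerable JARs arrive transitively (through `struts2-rest-plugin` in the hierarchy above), one way to apply the suggested fix is to pin them in the `dependencyManagement` section of `pom.xml`. The following is a minimal sketch, not a verified patch: the coordinates and the 2.3.29 version come from the fix resolution above, while the placement in this project's `pom.xml` and the compatibility of 2.3.29 with the 2.3.20 REST plugin are assumptions that would need testing.

```xml
<!-- Sketch only: place inside the <project> element of pom.xml. -->
<!-- Assumes no existing <dependencyManagement> block; merge if one exists. -->
<dependencyManagement>
  <dependencies>
    <!-- Overrides the transitive struts2-core 2.3.20 -->
    <dependency>
      <groupId>org.apache.struts</groupId>
      <artifactId>struts2-core</artifactId>
      <version>2.3.29</version>
    </dependency>
    <!-- Overrides the transitive xwork-core 2.3.20 -->
    <dependency>
      <groupId>org.apache.struts.xwork</groupId>
      <artifactId>xwork-core</artifactId>
      <version>2.3.29</version>
    </dependency>
  </dependencies>
</dependencyManagement>
```

Running `mvn dependency:tree` afterwards should show whether the resolved versions of both artifacts actually changed.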

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.apache.struts","packageName":"struts2-core","packageVersion":"2.3.20","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"wss:vulnpackage:1.0;org.apache.struts:struts2-rest-plugin:2.3.20;org.apache.struts:struts2-core:2.3.20","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.apache.struts:struts2-core:2.3.29,\torg.apache.struts.xwork:xwork-core:2.3.29"},{"packageType":"Java","groupId":"org.apache.struts.xwork","packageName":"xwork-core","packageVersion":"2.3.20","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"wss:vulnpackage:1.0;org.apache.struts:struts2-rest-plugin:2.3.20;org.apache.struts:struts2-core:2.3.20;org.apache.struts.xwork:xwork-core:2.3.20","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.apache.struts:struts2-core:2.3.29,\torg.apache.struts.xwork:xwork-core:2.3.29"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2016-4433","vulnerabilityDetails":"Apache Struts 2 2.3.20 through 2.3.28.1 allows remote attackers to bypass intended access restrictions and conduct redirection attacks via a crafted request.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-4433","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2016-4433 (High) detected in struts2-core-2.3.20.jar, xwork-core-2.3.20.jar - ## CVE-2016-4433 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>struts2-core-2.3.20.jar</b>, <b>xwork-core-2.3.20.jar</b></p></summary>

<p>

<details><summary><b>struts2-core-2.3.20.jar</b></p></summary>

<p>Apache Struts 2</p>

<p>Path to dependency file: easybuggy-private/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/struts/struts2-core/2.3.20/struts2-core-2.3.20.jar</p>

<p>

Dependency Hierarchy:

- vulnpackage-1.0.jar (Root Library)

- struts2-rest-plugin-2.3.20.jar

- :x: **struts2-core-2.3.20.jar** (Vulnerable Library)

</details>

<details><summary><b>xwork-core-2.3.20.jar</b></p></summary>

<p>Apache Struts 2</p>

<p>Library home page: <a href="http://struts.apache.org/">http://struts.apache.org/</a></p>

<p>Path to dependency file: easybuggy-private/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/struts/xwork/xwork-core/2.3.20/xwork-core-2.3.20.jar</p>

<p>

Dependency Hierarchy:

- vulnpackage-1.0.jar (Root Library)

- struts2-rest-plugin-2.3.20.jar

- struts2-core-2.3.20.jar

- :x: **xwork-core-2.3.20.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/samq-ghdemo/easybuggy-private/commit/6ef2566cb8b39d29f6b8b76a1bd3860df7fac401">6ef2566cb8b39d29f6b8b76a1bd3860df7fac401</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache Struts 2 2.3.20 through 2.3.28.1 allows remote attackers to bypass intended access restrictions and conduct redirection attacks via a crafted request.

<p>Publish Date: 2016-07-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-4433>CVE-2016-4433</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>