Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

73,172 | 24,482,443,672 | IssuesEvent | 2022-10-09 02:00:15 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: Upgrading from Matplotlib 3.5.3 to 3.6.0 Breaks voronoi_plot_2d() | defect scipy.spatial | ### Describe your issue.

Upgrading from Matplotlib 3.5.3 to 3.6.0 Breaks voronoi_plot_2d()

### Reproducing Code Example

```python
import matplotlib.pyplot as plt
import numpy as np
from scipy.spatial import Voronoi, voronoi_plot_2d

rng = np.random.default_rng()
points = rng.random((10, 2))
vor = Voronoi(points)

fig = voronoi_plot_2d(vor)

fig = voronoi_plot_2d(vor, show_vertices=False, line_colors='orange',
                      line_width=2, line_alpha=0.6, point_size=2)
plt.show()
```

### Error message

```shell
Traceback (most recent call last):
  File "C:\Users\cpgui\AppData\Local\Programs\Python\Python310\lib\code.py", line 90, in runcode
    exec(code, self.locals)
  File "<input>", line 1, in <module>
  File "C:\Program Files\JetBrains\PyCharm 2022.2\plugins\python\helpers\pydev\_pydev_bundle\pydev_umd.py", line 198, in runfile
    pydev_imports.execfile(filename, global_vars, local_vars) # execute the script
  File "C:\Program Files\JetBrains\PyCharm 2022.2\plugins\python\helpers\pydev\_pydev_imps\_pydev_execfile.py", line 18, in execfile
    exec(compile(contents+"\n", file, 'exec'), glob, loc)
  File "C:/Users/cpgui/PycharmProjects/Toyota-Research/main.py", line 34, in <module>
    fig = voronoi_plot_2d(vor)
  File "<decorator-gen-10>", line 2, in voronoi_plot_2d
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\scipy\spatial\_plotutils.py", line 12, in _held_figure
    fig = plt.figure()
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\_api\deprecation.py", line 454, in wrapper
    return func(*args, **kwargs)
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 771, in figure
    manager = new_figure_manager(
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 346, in new_figure_manager
    _warn_if_gui_out_of_main_thread()
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 336, in _warn_if_gui_out_of_main_thread
    if (_get_required_interactive_framework(_get_backend_mod()) and
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 206, in _get_backend_mod
    switch_backend(dict.__getitem__(rcParams, "backend"))
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 266, in switch_backend
    canvas_class = backend_mod.FigureCanvas
AttributeError: module 'backend_interagg' has no attribute 'FigureCanvas'. Did you mean: 'FigureCanvasAgg'?
```

### SciPy/NumPy/Python version information

scipy 1.9.1, numpy 1.23.3, python 3.10 | 1.0 | BUG: Upgrading from Matplotlib 3.5.3 to 3.6.0 Breaks voronoi_plot_2d() - ### Describe your issue.

Upgrading from Matplotlib 3.5.3 to 3.6.0 Breaks voronoi_plot_2d()

### Reproducing Code Example

```python
import matplotlib.pyplot as plt
import numpy as np
from scipy.spatial import Voronoi, voronoi_plot_2d

rng = np.random.default_rng()
points = rng.random((10, 2))
vor = Voronoi(points)

fig = voronoi_plot_2d(vor)

fig = voronoi_plot_2d(vor, show_vertices=False, line_colors='orange',
                      line_width=2, line_alpha=0.6, point_size=2)
plt.show()
```

### Error message

```shell
Traceback (most recent call last):
  File "C:\Users\cpgui\AppData\Local\Programs\Python\Python310\lib\code.py", line 90, in runcode
    exec(code, self.locals)
  File "<input>", line 1, in <module>
  File "C:\Program Files\JetBrains\PyCharm 2022.2\plugins\python\helpers\pydev\_pydev_bundle\pydev_umd.py", line 198, in runfile
    pydev_imports.execfile(filename, global_vars, local_vars) # execute the script
  File "C:\Program Files\JetBrains\PyCharm 2022.2\plugins\python\helpers\pydev\_pydev_imps\_pydev_execfile.py", line 18, in execfile
    exec(compile(contents+"\n", file, 'exec'), glob, loc)
  File "C:/Users/cpgui/PycharmProjects/Toyota-Research/main.py", line 34, in <module>
    fig = voronoi_plot_2d(vor)
  File "<decorator-gen-10>", line 2, in voronoi_plot_2d
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\scipy\spatial\_plotutils.py", line 12, in _held_figure
    fig = plt.figure()
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\_api\deprecation.py", line 454, in wrapper
    return func(*args, **kwargs)
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 771, in figure
    manager = new_figure_manager(
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 346, in new_figure_manager
    _warn_if_gui_out_of_main_thread()
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 336, in _warn_if_gui_out_of_main_thread
    if (_get_required_interactive_framework(_get_backend_mod()) and
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 206, in _get_backend_mod
    switch_backend(dict.__getitem__(rcParams, "backend"))
  File "C:\Users\cpgui\PycharmProjects\Toyota-Research\venv\lib\site-packages\matplotlib\pyplot.py", line 266, in switch_backend
    canvas_class = backend_mod.FigureCanvas
AttributeError: module 'backend_interagg' has no attribute 'FigureCanvas'. Did you mean: 'FigureCanvasAgg'?
```

### SciPy/NumPy/Python version information

scipy 1.9.1, numpy 1.23.3, python 3.10 | defect | bug upgrading from matplotlib to breaks voronoi plot describe your issue upgrading from matplotlib to breaks voronoi plot reproducing code example python rng np random default rng points rng random vor voronoi points fig voronoi plot vor fig voronoi plot vor show vertices false line colors orange line width line alpha point size plt show error message shell traceback most recent call last file c users cpgui appdata local programs python lib code py line in runcode exec code self locals file line in file c program files jetbrains pycharm plugins python helpers pydev pydev bundle pydev umd py line in runfile pydev imports execfile filename global vars local vars execute the script file c program files jetbrains pycharm plugins python helpers pydev pydev imps pydev execfile py line in execfile exec compile contents n file exec glob loc file c users cpgui pycharmprojects toyota research main py line in fig voronoi plot vor file line in voronoi plot file c users cpgui pycharmprojects toyota research venv lib site packages scipy spatial plotutils py line in held figure fig plt figure file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib api deprecation py line in wrapper return func args kwargs file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib pyplot py line in figure manager new figure manager file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib pyplot py line in new figure manager warn if gui out of main thread file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib pyplot py line in warn if gui out of main thread if get required interactive framework get backend mod and file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib pyplot py line in get backend mod switch backend dict getitem rcparams backend file c users cpgui pycharmprojects toyota research venv lib site packages matplotlib pyplot py line in switch backend canvas class backend mod figurecanvas attributeerror module backend interagg has no attribute figurecanvas did you mean figurecanvasagg scipy numpy python version information scipy numpy python | 1 |

125,696 | 4,963,665,581 | IssuesEvent | 2016-12-03 10:54:21 | gre/gl-react | https://api.github.com/repos/gre/gl-react | closed | Configure the linear interpolation of any sampler2D uniform | feature priority:medium | linear interpolation can be defined on almost everything: textures, content and fbos.

so we should be able to define a `disableLinearInterpolation: true` prop on a GL.Uniform or attached in a `{value, opts: { disableLinearInterpolation: true }}` object, like it's already possible for ndarray in DOM impl: https://github.com/ProjectSeptemberInc/gl-react-dom/blob/601180581a461760f541ee459552df9b8d2c52ef/Examples/Simple/Colorify.js#L36 / https://github.com/ProjectSeptemberInc/gl-react-dom/blob/6e4b9f4a8eb0045977c643a4920651082cb36fcb/Examples/Tests/index.js#L102-L111

use case:

you have a HelloGL 2x2 and you want to scale up to 200x200 but without linear interpolation so you preserve the 4 pixels in the display

| 1.0 | Configure the linear interpolation of any sampler2D uniform - linear interpolation can be defined on almost everything: textures, content and fbos.

so we should be able to define a `disableLinearInterpolation: true` prop on a GL.Uniform or attached in a `{value, opts: { disableLinearInterpolation: true }}` object, like it's already possible for ndarray in DOM impl: https://github.com/ProjectSeptemberInc/gl-react-dom/blob/601180581a461760f541ee459552df9b8d2c52ef/Examples/Simple/Colorify.js#L36 / https://github.com/ProjectSeptemberInc/gl-react-dom/blob/6e4b9f4a8eb0045977c643a4920651082cb36fcb/Examples/Tests/index.js#L102-L111

use case:

you have a HelloGL 2x2 and you want to scale up to 200x200 but without linear interpolation so you preserve the 4 pixels in the display

| non_defect | configure the linear interpolation of any uniform linear interpolation can be defined on almost everything textures content and fbos so we should be able to define a disablelinearinterpolation true prop on a gl uniform or attached in a value opts disablelinearinterpolation true object like it s already possible for ndarray in dom impl use case you have a hellogl and you want to scale up to but without linear interpolation so you preserve the pixels in the display | 0 |
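The gl-react use case above (scale a 2x2 HelloGL output up to 200x200 "without linear interpolation so you preserve the 4 pixels") comes down to nearest-neighbour versus bilinear sampling. The pure-Python sketch below contrasts the two on a grayscale image stored as a list of rows. `upscale_nearest`, `sample_bilinear` and `upscale_bilinear` are hypothetical helpers for illustration only, not gl-react APIs. The texel-centre convention and clamp-to-edge behaviour are assumptions meant to resemble GPU sampling (in WebGL the corresponding switch is the `TEXTURE_MAG_FILTER` parameter, `NEAREST` vs `LINEAR`).

```python
def upscale_nearest(img, out_w, out_h):
    """Scale a grayscale image (list of rows) by copying the nearest source pixel."""
    in_h, in_w = len(img), len(img[0])
    return [
        [img[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def sample_bilinear(img, u, v):
    """Sample at normalized (u, v) in [0, 1], blending the 4 surrounding texels."""
    in_h, in_w = len(img), len(img[0])
    # Texel centres sit at integer + 0.5; clamp so edges repeat the border texel.
    x = min(max(u * in_w - 0.5, 0.0), in_w - 1.0)
    y = min(max(v * in_h - 0.5, 0.0), in_h - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, in_w - 1), min(y0 + 1, in_h - 1)
    fx, fy = x - x0, y - y0
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def upscale_bilinear(img, out_w, out_h):
    """Scale by sampling each output pixel centre with bilinear filtering."""
    return [
        [sample_bilinear(img, (x + 0.5) / out_w, (y + 0.5) / out_h) for x in range(out_w)]
        for y in range(out_h)
    ]


hello = [[0, 255], [255, 0]]  # a 2x2 checker standing in for "HelloGL 2x2"
sharp = upscale_nearest(hello, 200, 200)
blurry = upscale_bilinear(hello, 200, 200)
print(sorted({v for row in sharp for v in row}))    # [0, 255]
print(len({v for row in blurry for v in row}) > 2)  # True
```

With nearest sampling each source pixel becomes a solid 100x100 block, which is what the proposed `disableLinearInterpolation: true` option is after; bilinear sampling produces intermediate values along the block boundaries.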

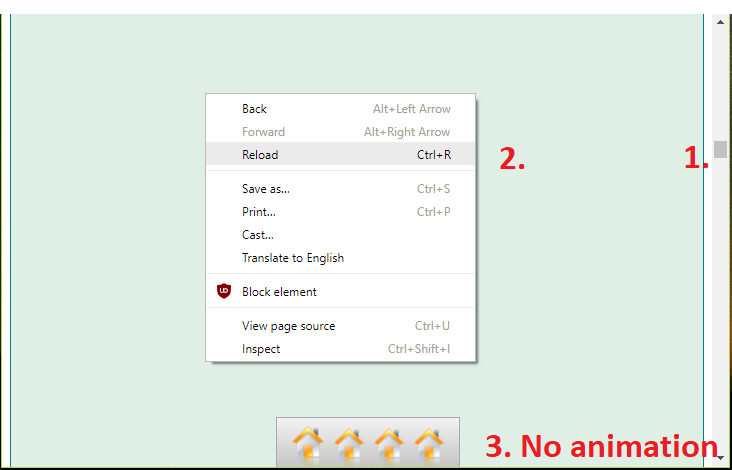

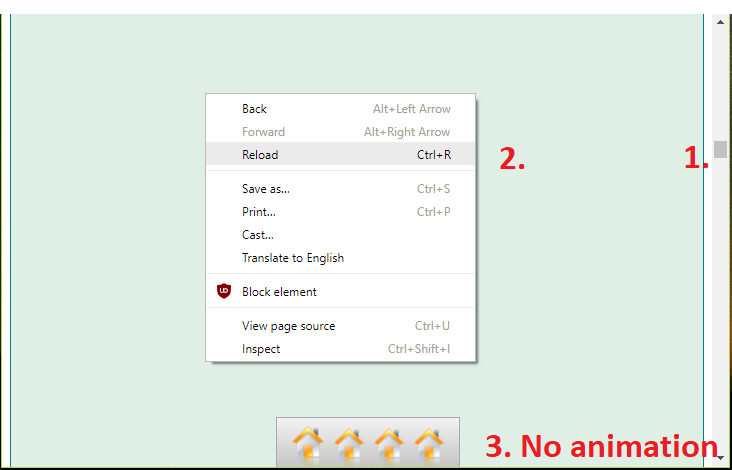

41,961 | 10,727,953,552 | IssuesEvent | 2019-10-28 12:57:10 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | Dock: problems when page has scrollbar | defect | Hi, I noticed some erroneous behaviour with the dock component on the latest 6.2 RC1

Problem 1:

When reloading the page, as scroll status is not the top (e.g. in the middle of the page) the dock does not animate correctly.

Problem 2:

When using halign="right" the dock moves into the scrollbar

Code to reproduce:

```xml
<html xmlns="http://www.w3.org/1999/xhtml"
      xmlns:h="http://java.sun.com/jsf/html"
      xmlns:p="http://primefaces.org/ui"
      xmlns:f="http://xmlns.jcp.org/jsf/core">
    <h:head>
    </h:head>
    <h:body>
        <h:form>
            <div
                style="height: 20000px; background: #e0efe5; border: 1px solid teal;">
                Lorem ipsum dolor sit amet, consetetur sadipscing elitr, sed diam
                nonumy eirmod tempor invidunt ut labore et dolore magna aliquyam
                erat, sed diam voluptua. At vero eos et accusam et justo duo dolores
                et ea rebum. Stet clita kasd gubergren, no sea takimata sanctus est
                Lorem ipsum dolor sit amet. Lorem ipsum dolor sit amet, consetetur
                sadipscing elitr, sed diam nonumy eirmod tempor invidunt ut labore et
                dolore magna aliquyam erat, sed diam voluptua. At vero eos et accusam
                et justo duo dolores et ea rebum. Stet clita kasd gubergren, no sea
                takimata sanctus est Lorem ipsum dolor sit amet.</div>
            <p:dock position="bottom">
                <p:menuitem value="123"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="456"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="789"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="abc"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
            </p:dock>
        </h:form>
    </h:body>
</html>
```

| 1.0 | Dock: problems when page has scrollbar - Hi, I noticed some erroneous behaviour with the dock component on the latest 6.2 RC1

Problem 1:

When reloading the page, as scroll status is not the top (e.g. in the middle of the page) the dock does not animate correctly.

Problem 2:

When using halign="right" the dock moves into the scrollbar

Code to reproduce:

```xml
<html xmlns="http://www.w3.org/1999/xhtml"
      xmlns:h="http://java.sun.com/jsf/html"
      xmlns:p="http://primefaces.org/ui"
      xmlns:f="http://xmlns.jcp.org/jsf/core">
    <h:head>
    </h:head>
    <h:body>
        <h:form>
            <div
                style="height: 20000px; background: #e0efe5; border: 1px solid teal;">
                Lorem ipsum dolor sit amet, consetetur sadipscing elitr, sed diam
                nonumy eirmod tempor invidunt ut labore et dolore magna aliquyam
                erat, sed diam voluptua. At vero eos et accusam et justo duo dolores
                et ea rebum. Stet clita kasd gubergren, no sea takimata sanctus est
                Lorem ipsum dolor sit amet. Lorem ipsum dolor sit amet, consetetur
                sadipscing elitr, sed diam nonumy eirmod tempor invidunt ut labore et
                dolore magna aliquyam erat, sed diam voluptua. At vero eos et accusam
                et justo duo dolores et ea rebum. Stet clita kasd gubergren, no sea
                takimata sanctus est Lorem ipsum dolor sit amet.</div>
            <p:dock position="bottom">
                <p:menuitem value="123"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="456"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="789"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
                <p:menuitem value="abc"
                    icon="https://www.primefaces.org/showcase/resources/demo/images/dock/home.png"
                    onclick="return false;" />
            </p:dock>
        </h:form>
    </h:body>
</html>
```

| defect | dock problems when page has scrollbar hi i noticed some erroneous behaviour with the dock component on the latest problem when reloading the page as scroll status is not the top e g in the middle of the page the dock does not animate correctly problem when using halign right the dock moves into the scrollbar code to reproduce xml html xmlns xmlns h xmlns p xmlns f div style height background border solid teal lorem ipsum dolor sit amet consetetur sadipscing elitr sed diam nonumy eirmod tempor invidunt ut labore et dolore magna aliquyam erat sed diam voluptua at vero eos et accusam et justo duo dolores et ea rebum stet clita kasd gubergren no sea takimata sanctus est lorem ipsum dolor sit amet lorem ipsum dolor sit amet consetetur sadipscing elitr sed diam nonumy eirmod tempor invidunt ut labore et dolore magna aliquyam erat sed diam voluptua at vero eos et accusam et justo duo dolores et ea rebum stet clita kasd gubergren no sea takimata sanctus est lorem ipsum dolor sit amet p menuitem value icon onclick return false p menuitem value icon onclick return false p menuitem value icon onclick return false p menuitem value abc icon onclick return false | 1 |

84,038 | 10,467,336,648 | IssuesEvent | 2019-09-22 04:04:00 | Original-heapsters/gitdrnk | https://api.github.com/repos/Original-heapsters/gitdrnk | closed | Add a user to a game if they join and havent been there before | API Backend DBDesign | user email needs to be appended to the game they join(if they havent before) | 1.0 | Add a user to a game if they join and havent been there before - user email needs to be appended to the game they join(if they havent before) | non_defect | add a user to a game if they join and havent been there before user email needs to be appended to the game they join if they havent before | 0 |
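The gitdrnk request (append the user's email to the game they join, but only if they have not joined before) is an idempotent "add if absent" operation. The sketch below is a minimal in-memory version. The `Game` record and `join_game` helper are hypothetical, because the issue does not show gitdrnk's real schema or storage layer.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Game:
    # Hypothetical game record; the real gitdrnk schema is not shown in the issue.
    name: str
    player_emails: List[str] = field(default_factory=list)


def join_game(game: Game, email: str) -> bool:
    """Record the user's email on their first join only.

    Returns True if the email was appended and False if the user had
    already joined, so repeated joins leave the list unchanged.
    """
    if email in game.player_emails:
        return False
    game.player_emails.append(email)
    return True


game = Game("friday")
print(join_game(game, "ana@example.com"))  # True: first visit, email appended
print(join_game(game, "ana@example.com"))  # False: already present
print(game.player_emails)                  # ['ana@example.com']
```

Emails are compared exactly as given. Whether to normalize case or whitespace first is a design decision the issue does not settle.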

104,081 | 4,194,991,296 | IssuesEvent | 2016-06-25 12:41:47 | fac-freelancers/website | https://api.github.com/repos/fac-freelancers/website | opened | Give the icons in the howWeWork section an animation | priority-2 T30min | They just jump suddenly to a larger size. They should smoothly enlarge and shrink on click | 1.0 | Give the icons in the howWeWork section an animation - They just jump suddenly to a larger size. They should smoothly enlarge and shrink on click | non_defect | give the icons in the howwework section an animation they just jump suddenly to a larger size they should smoothly enlarge and shrink on click | 0 |

53,302 | 3,038,120,174 | IssuesEvent | 2015-08-06 20:43:06 | sweet4lorie/TestRepo | https://api.github.com/repos/sweet4lorie/TestRepo | closed | Importing pymel outside of Maya for the benefit of context-specific auto-completion in a python text editor. | bug imported Milestone-0.7.x Priority-Medium | _From [olegalex...@gmail.com](https://code.google.com/u/117772517933350215565/) on November 19, 2007 12:03:29_

When attempting to import pymel outside of maya on Windows XP:

\>>> import pymel

Traceback (most recent call last):

File "<interactive input>", line 1, in \<module>

File "C:\Program Files\Autodesk\Maya2008\Python\lib\site-

packages\pymel\__init__.py", line 341, in \<module>

del(eval)

NameError: name 'eval' is not defined

_Original issue: http://code.google.com/p/pymel/issues/detail?id=1_ | 1.0 | Importing pymel outside of Maya for the benefit of context-specific auto-completion in a python text editor. - _From [olegalex...@gmail.com](https://code.google.com/u/117772517933350215565/) on November 19, 2007 12:03:29_

When attempting to import pymel outside of maya on Windows XP:

\>>> import pymel

Traceback (most recent call last):

File "<interactive input>", line 1, in \<module>

File "C:\Program Files\Autodesk\Maya2008\Python\lib\site-

packages\pymel\__init__.py", line 341, in \<module>

del(eval)

NameError: name 'eval' is not defined

_Original issue: http://code.google.com/p/pymel/issues/detail?id=1_ | non_defect | importing pymel outside of maya for the benefit of context specific auto completion in a python text editor from on november when attempting to import pymel outside of maya on windows xp import pymel traceback most recent call last file line in file c program files autodesk python lib site packages pymel init py line in del eval nameerror name eval is not defined original issue | 0 |
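The pymel traceback ends with `del(eval)` raising `NameError`. At module level, `del` removes only a name bound in the module's own namespace, and the built-in `eval` does not count as such a binding. The stdlib sketch below reproduces both cases by running a string as a module body with `exec`. It assumes, without confirmation from the issue, that pymel only bound its own `eval` when running inside Maya. `run_module` is a hypothetical helper, and the `globals().pop(...)` variant is one defensive alternative, not pymel's actual fix.

```python
def run_module(source: str) -> dict:
    """Execute `source` as if it were a module body and return its namespace."""
    namespace = {"__name__": "fake_module"}
    exec(source, namespace)
    return namespace


# Case 1: `eval` is bound in the module (e.g. by an import), so `del` succeeds.
ns = run_module("eval = lambda s: s\ndel(eval)")
print("eval" in ns)  # False: the module-level binding is gone

# Case 2: `eval` exists only as the built-in, so deleting it fails.
try:
    run_module("del(eval)")
except NameError as exc:
    print(exc)  # name 'eval' is not defined

# Defensive variant: drop the module binding only if it exists.
ns = run_module("globals().pop('eval', None)")
print("eval" in ns)  # False, and no exception is raised
```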

57,843 | 16,101,205,748 | IssuesEvent | 2021-04-27 09:31:02 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | opened | enclosure path not updated when it changes between reboots | Status: Triage Needed Type: Defect | <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable information can be found in the OpenZFS documentation

and mailing list archives.

Please fill in as much of the template as possible.

-->

### System information

<!-- add version after "|" character -->

Type | Version/Name
--- | ---
Distribution Name | Ubuntu
Distribution Version | 20.04.2 LTS
Linux Kernel | 5.4.0-72-generic
Architecture | x86_64
ZFS Version | 0.8.3-1ubuntu12.8 and 2.1.0-rc4
SPL Version | 0.8.3-1ubuntu12.8 and 2.1.0-rc4

<!--

Commands to find ZFS/SPL versions:

modinfo zfs | grep -iw version

modinfo spl | grep -iw version

-->

### Describe the problem you're observing

If enclosure path changes between reboots, the old enclosure path is still left in the configuration even though it should be updated on import, AIUI. Even after an explicit `zpool export` followed by `zpool import` of the pool the old/wrong enclosure path is still present. It should be noted that the config does update other properties, like path (there seems to be some regression with our vdev_id.conf so the disk numbers are different with 2.1.0-rc4).

```
# zdb -C blob3|tail -n 15
    children[11]:
        type: 'disk'
        id: 11
        guid: 15843257147329148638
        path: '/dev/disk/by-vdev/enc10d11-part1'
        devid: 'scsi-35000cca23b221f98-part1'
        phys_path: 'pci-0000:20:00.0-sas-exp0x5001438018375120-phy11-lun-0'
        vdev_enc_sysfs_path: '/sys/class/enclosure/2:0:7:0/11'
        whole_disk: 1
        DTL: 512
        create_txg: 4
        com.delphix:vdev_zap_leaf: 141
# ls -la /dev/disk/by-vdev/enc10d11
lrwxrwxrwx 1 root root 10 Apr 27 10:59 /dev/disk/by-vdev/enc10d11 -> ../../sdai
# ls -la /sys/block/sdai/device/enclosure_device*
lrwxrwxrwx 1 root root 0 Apr 27 11:01 /sys/block/sdai/device/enclosure_device:11 -> ../../../../port-2:0:12/end_device-2:0:12/target2:0:16/2:0:16:0/enclosure/2:0:16:0/11/
# ls /sys/class/enclosure/
1:0:12:0@ 2:0:16:0@
```

As can be seen above, enclosure 2:0:7:0 isn't present anymore, but 2:0:16:0 now exists.

### Describe how to reproduce the problem

Our enclosures are primarily HP D2700 and home-built ones based on HP DL180G6.

The enclosure device number seems to move depending on how many disks are inserted when plugged in. So for example if you have 3 disks in the enclosure, plug it in, and then fill it with disks, and then reboot the machine, you will find the enclosure on a different ID.

### Include any warning/errors/backtraces from the system logs

<!--

*IMPORTANT* - Please mark logs and text output from terminal commands

or else Github will not display them correctly.

An example is provided below.

Example:

```

this is an example how log text should be marked (wrap it with ```)

```

-->

| 1.0 | enclosure path not updated when it changes between reboots - <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable information can be found in the OpenZFS documentation

and mailing list archives.

Please fill in as much of the template as possible.

-->

### System information

<!-- add version after "|" character -->

Type | Version/Name
--- | ---
Distribution Name | Ubuntu
Distribution Version | 20.04.2 LTS
Linux Kernel | 5.4.0-72-generic
Architecture | x86_64
ZFS Version | 0.8.3-1ubuntu12.8 and 2.1.0-rc4
SPL Version | 0.8.3-1ubuntu12.8 and 2.1.0-rc4

<!--

Commands to find ZFS/SPL versions:

modinfo zfs | grep -iw version

modinfo spl | grep -iw version

-->

### Describe the problem you're observing

If enclosure path changes between reboots, the old enclosure path is still left in the configuration even though it should be updated on import, AIUI. Even after an explicit `zpool export` followed by `zpool import` of the pool the old/wrong enclosure path is still present. It should be noted that the config does update other properties, like path (there seems to be some regression with our vdev_id.conf so the disk numbers are different with 2.1.0-rc4).

```
# zdb -C blob3|tail -n 15
    children[11]:
        type: 'disk'
        id: 11
        guid: 15843257147329148638
        path: '/dev/disk/by-vdev/enc10d11-part1'
        devid: 'scsi-35000cca23b221f98-part1'
        phys_path: 'pci-0000:20:00.0-sas-exp0x5001438018375120-phy11-lun-0'
        vdev_enc_sysfs_path: '/sys/class/enclosure/2:0:7:0/11'
        whole_disk: 1
        DTL: 512
        create_txg: 4
        com.delphix:vdev_zap_leaf: 141
# ls -la /dev/disk/by-vdev/enc10d11
lrwxrwxrwx 1 root root 10 Apr 27 10:59 /dev/disk/by-vdev/enc10d11 -> ../../sdai
# ls -la /sys/block/sdai/device/enclosure_device*
lrwxrwxrwx 1 root root 0 Apr 27 11:01 /sys/block/sdai/device/enclosure_device:11 -> ../../../../port-2:0:12/end_device-2:0:12/target2:0:16/2:0:16:0/enclosure/2:0:16:0/11/
# ls /sys/class/enclosure/
1:0:12:0@ 2:0:16:0@
```

As can be seen above, enclosure 2:0:7:0 isn't present anymore, but 2:0:16:0 now exists.

### Describe how to reproduce the problem

Our enclosures are primarily HP D2700 and home-built ones based on HP DL180G6.

The enclosure device number seems to move depending on how many disks are inserted when plugged in. So for example if you have 3 disks in the enclosure, plug it in, and then fill it with disks, and then reboot the machine, you will find the enclosure on a different ID.

### Include any warning/errors/backtraces from the system logs

<!--

*IMPORTANT* - Please mark logs and text output from terminal commands

or else Github will not display them correctly.

An example is provided below.

Example:

```

this is an example how log text should be marked (wrap it with ```)

```

-->

| defect | enclosure path not updated when it changes between reboots thank you for reporting an issue important please check our issue tracker before opening a new issue additional valuable information can be found in the openzfs documentation and mailing list archives please fill in as much of the template as possible system information type version name distribution name ubuntu distribution version lts linux kernel generic architecture zfs version and spl version and commands to find zfs spl versions modinfo zfs grep iw version modinfo spl grep iw version describe the problem you re observing if enclosure path changes between reboots the old enclosure path is still left in the configuration even though it should be updated on import aiui even after an explicit zpool export followed by zpool import of the pool the old wrong enclosure path is still present it should be noted that the config does update other properties like path there seems to be some regression with our vdev id conf so the disk numbers are different with zdb c tail n children type disk id guid path dev disk by vdev devid scsi phys path pci sas lun vdev enc sysfs path sys class enclosure whole disk dtl create txg com delphix vdev zap leaf ls la dev disk by vdev lrwxrwxrwx root root apr dev disk by vdev sdai ls la sys block sdai device enclosure device lrwxrwxrwx root root apr sys block sdai device enclosure device port end device enclosure ls sys class enclosure as can be seen above enclosure isn t present anymore but now exists describe how to reproduce the problem our enclosures are primarily hp and home built ones based on hp the enclosure device number seems to move depending on how many disks are inserted when plugged in so for example if you have disks in the enclosure plug it in and then fill it with disks and then reboot the machine you will find the enclosure on a different id include any warning errors backtraces from the system logs important please mark logs and text output from 
terminal commands or else github will not display them correctly an example is provided below example this is an example how log text should be marked wrap it with | 1 |

564,481 | 16,726,766,583 | IssuesEvent | 2021-06-10 13:50:06 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Users can not update VM config | Category: Core & System Community Priority: Normal Status: Accepted Type: Bug | **Description**

When a regular user tries to update the VM's config (for example, a context var), it gets the following error:

```
one.vm.updateconf result FAILURE [one.vm.updateconf] User [2] : Template includes a restricted attribute DISK.
```

The specific setting from oned.conf creating the issue is:

```
VM_RESTRICTED_ATTR = "DISK/ORIGINAL_SIZE"
```

The weird part is that this setting exists also in older versions (for example, 5.10.5), but this issue is non existent on that version.

**To Reproduce**

Create a user (not belonging to oneadmin group), allow this user to use a specific vm (use permission, change owner, whatever). Try to update the VM's context.

**Details**

- Affected components: core

- Version: 5.12.3

**Additional context**

Add any other context about the problem here.

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [ ] Branch created

- [ ] Code committed to development branch

- [ ] Testing - QA

- [ ] Documentation

- [ ] Release notes - resolved issues, compatibility, known issues

- [ ] Code committed to upstream release/hotfix branches

- [ ] Documentation committed to upstream release/hotfix branches

| 1.0 | Users can not update VM config - **Description**

When a regular user tries to update the VM's config (for example, a context var), it gets the following error:

```
one.vm.updateconf result FAILURE [one.vm.updateconf] User [2] : Template includes a restricted attribute DISK.
```

The specific setting from oned.conf creating the issue is:

```
VM_RESTRICTED_ATTR = "DISK/ORIGINAL_SIZE"
```

The weird part is that this setting exists also in older versions (for example, 5.10.5), but this issue is non existent on that version.

**To Reproduce**

Create a user (not belonging to oneadmin group), allow this user to use a specific vm (use permission, change owner, whatever). Try to update the VM's context.

**Details**

- Affected components: core

- Version: 5.12.3

**Additional context**

Add any other context about the problem here.

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [ ] Branch created

- [ ] Code committed to development branch

- [ ] Testing - QA

- [ ] Documentation

- [ ] Release notes - resolved issues, compatibility, known issues

- [ ] Code committed to upstream release/hotfix branches

- [ ] Documentation committed to upstream release/hotfix branches

| non_defect | users can not update vm config description when a regular user tries to update the vm s config for example a context var it gets the following error one vm updateconf result failure user template includes a restricted attribute disk the specific setting from oned conf creating the issue is vm restricted attr disk original size the weird part is that this setting exists also in older versions for example but this issue is non existent on that version to reproduce create a user not belonging to oneadmin group allow this user to use a specific vm use permission change owner whatever try to update the vm s context details affected components core version additional context add any other context about the problem here progress status branch created code committed to development branch testing qa documentation release notes resolved issues compatibility known issues code committed to upstream release hotfix branches documentation committed to upstream release hotfix branches | 0 |
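The OpenNebula setting quoted above, `VM_RESTRICTED_ATTR = "DISK/ORIGINAL_SIZE"`, uses a `VECTOR/SUB_ATTRIBUTE` form. The Python sketch below illustrates that matching rule on a template modelled as a dict. It is an assumption-laden illustration, not OpenNebula's C++ implementation, and it does not explain why `one.vm.updateconf` trips the check in 5.12.3, which the issue leaves open. `first_restricted` is hypothetical. It reports a sub-attribute hit by its vector name, mirroring the error text in the issue, which names only `DISK`.

```python
from typing import Dict, Iterable, List, Optional, Union

# Hypothetical template model: plain attributes map to strings; vector
# attributes (which may repeat, e.g. several DISKs) map to lists of dicts.
Template = Dict[str, Union[str, List[Dict[str, str]]]]


def first_restricted(template: Template, restricted: Iterable[str]) -> Optional[str]:
    """Return the first restricted attribute present in the template, else None."""
    for rule in restricted:
        vector, _, sub = rule.partition("/")
        value = template.get(vector)
        if value is None:
            continue
        if not sub:
            return vector  # plain rule such as "CPU_COST"
        if isinstance(value, list) and any(sub in entry for entry in value):
            return vector  # "DISK/ORIGINAL_SIZE" hit, reported as "DISK"
    return None


rules = ["DISK/ORIGINAL_SIZE"]
context_only = {"CONTEXT": [{"MY_VAR": "1"}]}
with_disk = {"DISK": [{"IMAGE_ID": "3", "ORIGINAL_SIZE": "2048"}]}
print(first_restricted(context_only, rules))  # None
print(first_restricted(with_disk, rules))     # DISK
```

In this model, a context-only update touches no restricted attribute. That matches the reporter's expectation that updating a context variable should be allowed for a regular user.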

102,823 | 12,825,728,502 | IssuesEvent | 2020-07-06 15:23:53 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | opened | TC PD UX: Add tooltips to profile buttons | :spider: SPDDRS design protocol designer | ## Background

As a user I would like to know the difference between the + STEP and + CYCLE buttons

## Acceptance criteria

- [ ] Add tooltip to + STEP button

- [ ] Add tooltip to + CYCLE button

- [ ] Add tooltip to + STEP button within a cycle

## Copy

tbd | 2.0 | TC PD UX: Add tooltips to profile buttons - ## Background

As a user I would like to know the difference between the + STEP and + CYCLE buttons

## Acceptance criteria

- [ ] Add tooltip to + STEP button

- [ ] Add tooltip to + CYCLE button

- [ ] Add tooltip to + STEP button within a cycle

## Copy

tbd | non_defect | tc pd ux add tooltips to profile buttons background as a user i would like to know the difference between the step and cycle buttons acceptance criteria add tooltip to step button add tooltip to cycle button add tooltip to step button within a cycle copy tbd | 0 |

67,510 | 20,972,894,683 | IssuesEvent | 2022-03-28 13:01:11 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | p-autoComplete: Dropdown stays open when using iOS Safari next/prev keyboard buttons | defect | **I'm submitting a ...**

[x] bug report

**Current behavior**

Go to page with multiple input fields and an autocomplete, press safari next button on ipad keyboard. The focus jumps to next input field but the dropdown is not closed (example in attached image)

**Expected behavior**

After pressing the safari next button, the dropdown should close.

**Minimal reproduction of the problem with instructions**

1. Focus autocomplete field

2. Type a letter so suggestions are shown

3. Press next button on safari keyboard to next input

**Please tell us about your environment:**

Ipad air 2 - iOS 12 - Safari

* **Angular version:** 6.X

* **PrimeNG version:** 6.1.4

* **Browser:** iOS 12 Safari

| 1.0 | p-autoComplete: Dropdown stays open when using iOS Safari next/prev keyboard buttons - **I'm submitting a ...**

[x] bug report

**Current behavior**

Go to page with multiple input fields and an autocomplete, press safari next button on ipad keyboard. The focus jumps to next input field but the dropdown is not closed (example in attached image)

**Expected behavior**

After pressing the safari next button, the dropdown should close.

**Minimal reproduction of the problem with instructions**

1. Focus autocomplete field

2. Type a letter so suggestions are shown

3. Press next button on safari keyboard to next input

**Please tell us about your environment:**

Ipad air 2 - iOS 12 - Safari

* **Angular version:** 6.X

* **PrimeNG version:** 6.1.4

* **Browser:** iOS 12 Safari

| defect | p autocomplete dropdown stays open when using ios safari next prev keyboard buttons i m submitting a bug report current behavior go to page with multiple input fields and an autocomplete press safari next button on ipad keyboard the focus jumps to next input field but the dropdown is not closed example in attached image expected behavior after pressing the safari next button the dropdown should close minimal reproduction of the problem with instructions focus autocomplete field type a letter so suggestions are shown press next button on safari keyboard to next input please tell us about your environment ipad air ios safari angular version x primeng version browser ios safari | 1 |

34,980 | 6,398,168,044 | IssuesEvent | 2017-08-04 19:53:41 | wizeline/wizelink-back | https://api.github.com/repos/wizeline/wizelink-back | closed | Design and document architecture for Story 1 (Save Links) | documentation p0 | The resulting document will be augmented as more stories are included, and will probably grow organically. It's just important to get it started so that there's a place to document architectural decisions as we move along. | 1.0 | Design and document architecture for Story 1 (Save Links) - The resulting document will be augmented as more stories are included, and will probably grow organically. It's just important to get it started so that there's a place to document architectural decisions as we move along. | non_defect | design and document architecture for story save links the resulting document will be augmented as more stories are included and will probably grow organically it s just important to get it started so that there s a place to document architectural decisions as we move along | 0 |

22,823 | 3,703,133,875 | IssuesEvent | 2016-02-29 19:18:36 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Investigate enabling field length guard for non-final fields. | accepted area-vm priority-unassigned Type-Defect | Field guard length checking is only done for final fields. Investigate relaxing this. | 1.0 | Investigate enabling field length guard for non-final fields. - Field guard length checking is only done for final fields. Investigate relaxing this. | defect | investigate enabling field length guard for non final fields field guard length checking is only done for final fields investigate relaxing this | 1 |

67,770 | 28,045,622,753 | IssuesEvent | 2023-03-28 22:31:39 | cncf/cnf-testsuite | https://api.github.com/repos/cncf/cnf-testsuite | closed | [Workload] Check if processes use a sig term | 8 pts workload microservice sprint23.07 v0.41.3 | ## Title: [Workload] Microservices test: sig_term_handled

**Is your workload test idea related to a problem? Please describe.**

- SIGTERM

Problem 1: How the Linux kernel handles signals

The Linux kernel handles signals differently for the process that has PID 1 than it does for other processes. Signal handlers aren't automatically registered for this process, meaning that signals such as SIGTERM or SIGINT will have no effect by default. By default, you must kill processes by using SIGKILL, preventing any graceful shutdown. Depending on your app, using SIGKILL can result in user-facing errors, interrupted writes (for data stores), or unwanted alerts in your monitoring system.

https://cloud.google.com/architecture/best-practices-for-building-containers#problem_1_how_the_linux_kernel_handles_signals

**Describe the solution you'd like**

- Execute a termination and see if SIGTERM signal is passed into the child processes using strace.

**Test Category Name**

- Microservices

**Type of test (static or runtime)**

- runtime

---

### Documentation tasks:

- [ ] Update [installation instructions](https://github.com/cncf/cnf-testsuite/blob/main/install.md) if needed

- [ ] Update [Test Categories md](https://github.com/cncf/cnf-testsuite/blob/main/TEST-CATEGORIES.md) if needed

- [ ] Update [USAGE md](https://github.com/cncf/cnf-testsuite/blob/main/USAGE.md) if needed

- [ ] How to run

- [ ] Description and details

- [ ] What the best practice is

- [ ] Why are we testing this

- [ ] Remediation steps if test does not pass

### QA tasks

Dev Review:

- [ ] walk through A/C

- [ ] do you get the expected result?

- [ ] if yes,

- [ ] move to `Needs Peer Review` column

- [ ] create Pull Request and follow check list

- [ ] Assign 1 or more people for peer review

- [ ] if no, document what additional tasks will be needed

Peer review:

- [ ] walk through A/C

- [ ] do you get the expected result?

- [ ] if yes,

- [ ] move to `Reviewer Approved` column

- [ ] Approve pull request

- [ ] if no,

- [ ] document what did not go as expected, including error messages and screenshots (if possible)

- [ ] Add comment to pull request

- [ ] request changes to pull request

| 1.0 | [Workload] Check if processes use a sig term - ## Title: [Workload] Microservices test: sig_term_handled

**Is your workload test idea related to a problem? Please describe.**

- SIGTERM

Problem 1: How the Linux kernel handles signals

The Linux kernel handles signals differently for the process that has PID 1 than it does for other processes. Signal handlers aren't automatically registered for this process, meaning that signals such as SIGTERM or SIGINT will have no effect by default. By default, you must kill processes by using SIGKILL, preventing any graceful shutdown. Depending on your app, using SIGKILL can result in user-facing errors, interrupted writes (for data stores), or unwanted alerts in your monitoring system.

https://cloud.google.com/architecture/best-practices-for-building-containers#problem_1_how_the_linux_kernel_handles_signals

**Describe the solution you'd like**

- Execute a termination and see if SIGTERM signal is passed into the child processes using strace.

**Test Category Name**

- Microservices

**Type of test (static or runtime)**

- runtime

---

### Documentation tasks:

- [ ] Update [installation instructions](https://github.com/cncf/cnf-testsuite/blob/main/install.md) if needed

- [ ] Update [Test Categories md](https://github.com/cncf/cnf-testsuite/blob/main/TEST-CATEGORIES.md) if needed

- [ ] Update [USAGE md](https://github.com/cncf/cnf-testsuite/blob/main/USAGE.md) if needed

- [ ] How to run

- [ ] Description and details

- [ ] What the best practice is

- [ ] Why are we testing this

- [ ] Remediation steps if test does not pass

### QA tasks

Dev Review:

- [ ] walk through A/C

- [ ] do you get the expected result?

- [ ] if yes,

- [ ] move to `Needs Peer Review` column

- [ ] create Pull Request and follow check list

- [ ] Assign 1 or more people for peer review

- [ ] if no, document what additional tasks will be needed

Peer review:

- [ ] walk through A/C

- [ ] do you get the expected result?

- [ ] if yes,

- [ ] move to `Reviewer Approved` column

- [ ] Approve pull request

- [ ] if no,

- [ ] document what did not go as expected, including error messages and screenshots (if possible)

- [ ] Add comment to pull request

- [ ] request changes to pull request

| non_defect | check if processes use a sig term title microservices test sig term handled is your workload test idea related to a problem please describe sigterm problem how the linux kernel handles signals the linux kernel handles signals differently for the process that has pid than it does for other processes signal handlers aren t automatically registered for this process meaning that signals such as sigterm or sigint will have no effect by default by default you must kill processes by using sigkill preventing any graceful shutdown depending on your app using sigkill can result in user facing errors interrupted writes for data stores or unwanted alerts in your monitoring system describe the solution you d like execute a termination and see if sigterm signal is passed into the child processes using strace test category name microservices type of test static or runtime runtime documentation tasks update if needed update if needed update if needed how to run description and details what the best practice is why are we testing this remediation steps if test does not pass qa tasks dev review walk through a c do you get the expected result if yes move to needs peer review column create pull request and follow check list assign or more people for peer review if no document what additional tasks will be needed peer review walk through a c do you get the expected result if yes move to reviewer approved column approve pull request if no document what did not go as expected including error messages and screenshots if possible add comment to pull request request changes to pull request | 0 |

188,355 | 22,046,321,550 | IssuesEvent | 2022-05-30 02:24:49 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | closed | CVE-2020-13143 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed | security vulnerability | ## CVE-2020-13143 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/maddyCode23/linux-4.1.15/commit/f1f3d2b150be669390b32dfea28e773471bdd6e7">f1f3d2b150be669390b32dfea28e773471bdd6e7</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/gadget/configfs.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/gadget/configfs.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

gadget_dev_desc_UDC_store in drivers/usb/gadget/configfs.c in the Linux kernel 3.16 through 5.6.13 relies on kstrdup without considering the possibility of an internal '\0' value, which allows attackers to trigger an out-of-bounds read, aka CID-15753588bcd4.

<p>Publish Date: 2020-05-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-13143>CVE-2020-13143</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-13143">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-13143</a></p>

<p>Release Date: 2020-05-18</p>

<p>Fix Resolution: v5.7-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-13143 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2020-13143 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/maddyCode23/linux-4.1.15/commit/f1f3d2b150be669390b32dfea28e773471bdd6e7">f1f3d2b150be669390b32dfea28e773471bdd6e7</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/gadget/configfs.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/usb/gadget/configfs.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

gadget_dev_desc_UDC_store in drivers/usb/gadget/configfs.c in the Linux kernel 3.16 through 5.6.13 relies on kstrdup without considering the possibility of an internal '\0' value, which allows attackers to trigger an out-of-bounds read, aka CID-15753588bcd4.

<p>Publish Date: 2020-05-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-13143>CVE-2020-13143</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-13143">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-13143</a></p>

<p>Release Date: 2020-05-18</p>

<p>Fix Resolution: v5.7-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files drivers usb gadget configfs c drivers usb gadget configfs c vulnerability details gadget dev desc udc store in drivers usb gadget configfs c in the linux kernel through relies on kstrdup without considering the possibility of an internal value which allows attackers to trigger an out of bounds read aka cid publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

58,458 | 16,542,772,922 | IssuesEvent | 2021-05-27 19:06:38 | google/guava | https://api.github.com/repos/google/guava | closed | Null map field reference in ImmutableMultimap after deserialization | P3 package=collect status=triaged type=defect | When attempting to use Guava version 19.0, 20.0, and 21.0 `ImmutableListMultimap` in Apache Spark 2.1.0, I'm getting NPEs in unexpected places, leading me to believe that the transient `map` field reference is not being set when deserialized, e.g.

```

java.lang.NullPointerException

at com.google.common.collect.ImmutableMultimap.containsKey

(ImmutableMultimap.java:478)

...

java.lang.NullPointerException

at com.google.common.collect.ImmutableListMultimap.get

(ImmutableListMultimap.java:298)

at com.google.common.collect.ImmutableListMultimap.get

(ImmutableListMultimap.java:44)

``` | 1.0 | Null map field reference in ImmutableMultimap after deserialization - When attempting to use Guava version 19.0, 20.0, and 21.0 `ImmutableListMultimap` in Apache Spark 2.1.0, I'm getting NPEs in unexpected places, leading me to believe that the transient `map` field reference is not being set when deserialized, e.g.

```

java.lang.NullPointerException

at com.google.common.collect.ImmutableMultimap.containsKey

(ImmutableMultimap.java:478)

...

java.lang.NullPointerException

at com.google.common.collect.ImmutableListMultimap.get

(ImmutableListMultimap.java:298)

at com.google.common.collect.ImmutableListMultimap.get

(ImmutableListMultimap.java:44)

``` | defect | null map field reference in immutablemultimap after deserialization when attempting to use guava version and immutablelistmultimap in apache spark i m getting npes in unexpected places leading me to believe that the transient map field reference is not being set when deserialized e g java lang nullpointerexception at com google common collect immutablemultimap containskey immutablemultimap java java lang nullpointerexception at com google common collect immutablelistmultimap get immutablelistmultimap java at com google common collect immutablelistmultimap get immutablelistmultimap java | 1 |

199 | 2,521,958,151 | IssuesEvent | 2015-01-19 18:10:43 | numenta/nupic | https://api.github.com/repos/numenta/nupic | closed | Move from cmake to setuptools for building and preparation for distribution | build deployment P3 super | In order to properly distribute NuPIC as a pip package, we need to transfer from a cmake build to a complete setuptools build. This incorporates several related subtasks that are involved in this cleanup, listed below.

* * *

- [x] [Put all functionally of "CMakeLists.txt" into "setup.py"](https://github.com/numenta/nupic/issues/1573)

- [X] [Refactor bindings to adhere to setuptools standards](https://github.com/numenta/nupic/issues/1616)

- [x] [Use python test commands in README and travis (remove cmake commands)](https://github.com/numenta/nupic/issues/1618)

| 1.0 | Move from cmake to setuptools for building and preparation for distribution - In order to properly distribute NuPIC as a pip package, we need to transfer from a cmake build to a complete setuptools build. This incorporates several related subtasks that are involved in this cleanup, listed below.

* * *

- [x] [Put all functionally of "CMakeLists.txt" into "setup.py"](https://github.com/numenta/nupic/issues/1573)

- [X] [Refactor bindings to adhere to setuptools standards](https://github.com/numenta/nupic/issues/1616)

- [x] [Use python test commands in README and travis (remove cmake commands)](https://github.com/numenta/nupic/issues/1618)

| non_defect | move from cmake to setuptools for building and preparation for distribution in order to properly distribute nupic as a pip package we need to transfer from a cmake build to a complete setuptools build this incorporates several related subtasks that are involved in this cleanup listed below | 0 |

59,329 | 17,023,086,529 | IssuesEvent | 2021-07-03 00:19:23 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Feature requests and ideas from the wiki should be changed into trac tickets | Component: admin Priority: minor Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 12.57pm, Tuesday, 29th November 2005]**

We should move these pages to Trac:

http://www.openstreetmap.org/wiki/index.php/Roadmap_For_Version_1.0

http://www.openstreetmap.org/wiki/index.php/Ideas | 1.0 | Feature requests and ideas from the wiki should be changed into trac tickets - **[Submitted to the original trac issue database at 12.57pm, Tuesday, 29th November 2005]**

We should move these pages to Trac:

http://www.openstreetmap.org/wiki/index.php/Roadmap_For_Version_1.0

http://www.openstreetmap.org/wiki/index.php/Ideas | defect | feature requests and ideas from the wiki should be changed into trac tickets we should move these pages to trac | 1 |

4,545 | 4,427,233,279 | IssuesEvent | 2016-08-16 20:47:23 | grpc/grpc-java | https://api.github.com/repos/grpc/grpc-java | opened | Excess garbage in Metadata | performance | Metadata today is a Hashmap of ArrayLists, and each arraylist has an object array. This creates a lot of garbage since Metadata objects are short lived.

Some ideas on how to improve the situation:

- Recycle the objects

- Store headers as a flat array of an initial size, and swap to using a full map if slow. (and avoid Strings when possible) | True | Excess garbage in Metadata - Metadata today is a Hashmap of ArrayLists, and each arraylist has an object array. This creates a lot of garbage since Metadata objects are short lived.

Some ideas on how to improve the situation:

- Recycle the objects

- Store headers as a flat array of an initial size, and swap to using a full map if slow. (and avoid Strings when possible) | non_defect | excess garbage in metadata metadata today is a hashmap of arraylists and each arraylist has an object array this creates a lot of garbage since metadata objects are short lived some ideas on how to improve the situation recycle the objects store headers as a flat array of an initial size and swap to using a full map if slow and avoid strings when possible | 0 |

70,248 | 23,072,934,344 | IssuesEvent | 2022-07-25 19:58:00 | zed-industries/feedback | https://api.github.com/repos/zed-industries/feedback | closed | Can not insert diacritics | defect | I apologize if there’s already an issue open about this! I couldn’t find it, although #199 seems related.

**Describe the bug**

I can not add diacritics to any character in the editor using my Spanish keyboard layout.

**To reproduce**

Press the acute accent key followed by a vowel, like A. Observe that an alert sound is played, and then ‘a’, lacking the diacritical mark, appears.

**Expected behavior**

á is inserted.

**Environment:**

```

Zed 0.47.1 – /Volumes/Zed/Zed.app

macOS 12.4

architecture arm64

```

| 1.0 | Can not insert diacritics - I apologize if there’s already an issue open about this! I couldn’t find it, although #199 seems related.

**Describe the bug**

I can not add diacritics to any character in the editor using my Spanish keyboard layout.

**To reproduce**

Press the acute accent key followed by a vowel, like A. Observe that an alert sound is played, and then ‘a’, lacking the diacritical mark, appears.

**Expected behavior**

á is inserted.

**Environment:**

```

Zed 0.47.1 – /Volumes/Zed/Zed.app

macOS 12.4

architecture arm64

```

| defect | can not insert diacritics i apologize if there’s already an issue open about this i couldn’t find it although seems related describe the bug i can not add diacritics to any character in the editor using my spanish keyboard layout to reproduce press the acute accent key followed by a vowel like a observe that an alert sound is played and then ‘a’ lacking the diacritical mark appears expected behavior á is inserted environment zed – volumes zed zed app macos architecture | 1 |

16,624 | 4,074,260,797 | IssuesEvent | 2016-05-28 09:48:00 | petabyte-research/redflags | https://api.github.com/repos/petabyte-research/redflags | opened | Documentation: add to github | documentation | Commit http://docs.redflags.eu/ to Github (a catalogue? another branch?) so corrections can be done by anybody | 1.0 | Documentation: add to github - Commit http://docs.redflags.eu/ to Github (a catalogue? another branch?) so corrections can be done by anybody | non_defect | documentation add to github commit to github a catalogue another branch so corrections can be done by anybody | 0 |

16,774 | 2,945,068,549 | IssuesEvent | 2015-07-03 10:07:17 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | PF Mobile Tabview bug (rendering three times after switching tab) | 5.1.21 5.2.8 defect mobile | Hi, i am having exactly the same issue described here. (The shown code can be used for testing)

http://forum.primefaces.org/viewtopic.php?f=3&t=41479

PrimeFaces Version: 5.2.7 + Mojarra 2.2.11

Thanks, hope this can be fixed in a future patch, i am using a very ugly workaround up to now | 1.0 | PF Mobile Tabview bug (rendering three times after switching tab) - Hi, i am having exactly the same issue described here. (The shown code can be used for testing)

http://forum.primefaces.org/viewtopic.php?f=3&t=41479

PrimeFaces Version: 5.2.7 + Mojarra 2.2.11

Thanks, hope this can be fixed in a future patch, i am using a very ugly workaround up to now | defect | pf mobile tabview bug rendering three times after switching tab hi i am having exactly the same issue described here the shown code can be used for testing primefaces version mojarra thanks hope this can be fixed in a future patch i am using a very ugly workaround up to now | 1 |

10,223 | 2,618,942,904 | IssuesEvent | 2015-03-03 00:04:51 | marmarek/test | https://api.github.com/repos/marmarek/test | closed | Remove unused menus from Kickoff | C: desktop-linux P: minor R: duplicate T: defect | **Reported by joanna on 19 May 40420130 03:06 UTC**

* Computer/Places

* Recently Used/Documents | 1.0 | Remove unused menus from Kickoff - **Reported by joanna on 19 May 40420130 03:06 UTC**

* Computer/Places

* Recently Used/Documents | defect | remove unused menus from kickoff reported by joanna on may utc computer places recently used documents | 1 |

690,084 | 23,645,242,830 | IssuesEvent | 2022-08-25 21:18:07 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | closed | Add profile support to `skaffold verify` | kind/feature-request priority/p1 2.0.0 area/verify | Currently `skaffold verify` does not support profiles (no support for the `-p` flag, etc). This should be added to support various important use cases for testing across different envs/services | 1.0 | Add profile support to `skaffold verify` - Currently `skaffold verify` does not support profiles (no support for the `-p` flag, etc). This should be added to support various important use cases for testing across different envs/services | non_defect | add profile support to skaffold verify currently skaffold verify does not support profiles no support for the p flag etc this should be added to support various important use cases for testing across different envs services | 0 |

48,401 | 13,068,508,173 | IssuesEvent | 2020-07-31 03:48:04 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [payload-parsing] unit test fails (Trac #2337) | Migrated from Trac combo core defect | on cobalt with py2-v3.1.1

```text

Start 358: payload-parsing::test

358/479 Test #357: payload-parsing::test ..........................................***Failed 3.20 sec

Running all tests:

bad_decode.cxx...

size_zero_test..............................................FATAL (payload-parsing): 0 bytes in the buffer and that's not enough to get a type of size 4 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = unsigned int; size_t = long unsigned int])

ok

uint64_t_read_past_end......................................FATAL (payload-parsing): -10 bytes in the buffer and that's not enough to get a type of size 8 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = long unsigned int; size_t = long unsigned int])

ok

uint64_t_test...............................................0

FATAL (payload-parsing): 7 bytes in the buffer and that's not enough to get a type of size 8 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = long unsigned int; size_t = long unsigned int])

ok

uint64_t_test_from_middle...................................FATAL (payload-parsing): 7 bytes in the buffer and that's not enough to get a type of size 8 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = long unsigned int; size_t = long unsigned int])

ok

bitbuffertest.cxx...

remainingBits............................................... ok

test_read_in_middle.........................................

100=4 -4

100000=32 -32

00010010001=145 145

00000000001=1 1

000000=0 0

111=7 -1

01=1 1

00=0 0

1=1 -1

11=3 -1

00=0 0

1=1 -1

01=1 1

620

ok

test_read_in_middle_oneread.................................

64

100=4 -4

ok

the_test....................................................

100=4 -4

100000=32 -32

00010010001=145 145

00000000001=1 1

000000=0 0

111=7 -1

01=1 1

00=0 0

1=1 -1

11=3 -1

00=0 0

1=1 -1

01=1 1

ok

twos_complement.............................................0000=0 0

0001=1 1

0010=2 2

0011=3 3

0100=4 4

0101=5 5

0110=6 6

0111=7 7

1000=8 -8

1001=9 -7

1010=10 -6

1011=11 -5

1100=12 -4

1101=13 -3

1110=14 -2

1111=15 -1

ok

deltaTest.cxx...

Delta.......................................................FATAL (payload-parsing): expected type 13 and got 0 (deltaTest.cxx:231 in void DeltaDecode(int, std::string))

cannot read event size from file /scratch/kmeagher/testdata/trunk//DeltaWaveforms/input/physics_912_0_0_914.dat

cannot read event from file /scratch/kmeagher/testdata/trunk//DeltaWaveforms/input/physics_912_0_0_914.dat

UNCAUGHT:expected type 13 and got 0 (in void DeltaDecode(int, std::string))

Version5Waveforms...........................................FATAL (payload-parsing): expected type 21 and got 0 (deltaTest.cxx:313 in void V5Decode(int, std::string))

cannot read event size from file /scratch/kmeagher/testdata/trunk//V5Waveforms/physics_22964_0_0_2053.dat

cannot read event from file /scratch/kmeagher/testdata/trunk//V5Waveforms/physics_22964_0_0_2053.dat

UNCAUGHT:expected type 21 and got 0 (in void V5Decode(int, std::string))

endianness.cxx...

decode16.................................................... ok

decode32.................................................... ok

decode64.................................................... ok

decode_uint16_t............................................. ok

decode_uint16_t_end......................................... ok

decode_uint16_t_enoughdtata.................................FATAL (payload-parsing): 1 bytes in the buffer and that's not enough to get a type of size 2 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = short unsigned int; size_t = long unsigned int])

ok

decode_uint16_t_enoughdtata_BIG.............................FATAL (payload-parsing): 1 bytes in the buffer and that's not enough to get a type of size 2 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = short unsigned int; size_t = long unsigned int])

ok

decode_uint16_t_middle...................................... ok

decode_uint32_t............................................. ok

decode_uint32_t_end......................................... ok

decode_uint32_t_enoughdtata.................................FATAL (payload-parsing): 3 bytes in the buffer and that's not enough to get a type of size 4 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = unsigned int; size_t = long unsigned int])

ok

decode_uint32_t_enoughdtata_BIG.............................FATAL (payload-parsing): 3 bytes in the buffer and that's not enough to get a type of size 4 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = unsigned int; size_t = long unsigned int])

ok

decode_uint32_t_middle...................................... ok

decode_uint64_t............................................. ok

decode_uint64_t_end......................................... ok

decode_uint64_t_enoughdtata.................................FATAL (payload-parsing): 7 bytes in the buffer and that's not enough to get a type of size 8 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = long unsigned int; size_t = long unsigned int])

ok

decode_uint64_t_enoughdtata_BIG.............................FATAL (payload-parsing): 7 bytes in the buffer and that's not enough to get a type of size 8 (utility.h:46 in T payload_parsing::decode(payload_parsing::Endian, size_t, const std::vector<char>&) [with T = long unsigned int; size_t = long unsigned int])

ok

decode_uint64_t_middle...................................... ok

endian_swap_16.............................................. ok

endian_swap_32.............................................. ok

endian_swap_64.............................................. ok

signed_vs_unsigned.......................................... ok

read_type_3_hit.cxx...

read_type_3_payloads........................................ FAIL

/scratch/kmeagher/combo/src/payload-parsing/private/test/read_type_3_hit.cxx:14: FAIL

File: /scratch/kmeagher/combo/src/payload-parsing/private/test/read_type_3_hit.cxx

Line: 14

Predicate: ifs.good()

Message: unspecified

read_v2_file.cxx...

craps_out_with_not_type_13..................................FATAL (payload-parsing): Asked to decode a payload of type 13 and found payload type 50 instead (decode.h:294 in void payload_parsing::decode_payload(typename payload_parsing::DecodeTarget<payloadType>::type&, const payload_parsing::DecodeConfiguration&, std::vector<char>, unsigned int) [with unsigned int payloadType = 13u; typename payload_parsing::DecodeTarget<payloadType>::type = payload_parsing::DecodeTarget<13u>::type])

ok

endian_swapping.............................................Hi, Peter

ok

v2file......................................................EventID: 1

RunID: 87531

N inice hit DOM's: 15

N icetop hit DOM's: 0

...

--------------------------------------------------

2006

107380177990659673

107380177990837934

1168

3

28

0

ok

read_v5_file.cxx...

fails_when_not_type_21......................................FATAL (payload-parsing): Asked to decode a payload of type 21 and found payload type 50 instead (decode.h:294 in void payload_parsing::decode_payload(typename payload_parsing::DecodeTarget<payloadType>::type&, const payload_parsing::DecodeConfiguration&, std::vector<char>, unsigned int) [with unsigned int payloadType = 21u; typename payload_parsing::DecodeTarget<payloadType>::type = payload_parsing::DecodeTarget<21u>::type])

ok

v5file......................................................FATAL (I3PayloadParsingEventDecoder): expected type 13, 19, 20, 21, 22 and got 0 (I3PayloadParsingEventDecoder.cxx:77 in virtual I3Time I3PayloadParsingEventDecoder::FillEvent(I3Frame&, const std::vector<char>&) const)

cannot read event size from file /scratch/kmeagher/testdata/trunk//payload_testdata/physics-v5.dat

cannot read event from file /scratch/kmeagher/testdata/trunk//payload_testdata/physics-v5.dat

UNCAUGHT:expected type 13, 19, 20, 21, 22 and got 0 (in virtual I3Time I3PayloadParsingEventDecoder::FillEvent(I3Frame&, const std::vector<char>&) const)

===================================================================

Pass: 35

Fail: 4

***** THESE TESTS FAIL *****

deltaTest.cxx/Delta

deltaTest.cxx/Version5Waveforms

read_type_3_hit.cxx/read_type_3_payloads

read_v5_file.cxx/v5file

```
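The `test_read_in_middle`, `the_test` and `twos_complement` lines above print each bit field as `bits=unsigned signed`, for example `1001=9 -7` or `111=7 -1`. The following is a minimal sketch that reproduces those pairs. It assumes the signed column is the plain n-bit two's-complement reading of the field; that assumption is inferred from the printed values, not taken from the payload-parsing source. `bits_to_unsigned` and `sign_extend` are hypothetical helper names, not part of that library.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Hypothetical helper (not part of payload-parsing): parse a bit string
// such as "1001", most significant bit first, into an unsigned value.
uint64_t bits_to_unsigned(const std::string& bits) {
    uint64_t value = 0;
    for (char c : bits) {
        assert(c == '0' || c == '1');
        value = (value << 1) | static_cast<uint64_t>(c - '0');
    }
    return value;
}

// Hypothetical helper: reinterpret the low `nbits` bits of `value` as an
// nbits-wide two's-complement integer (valid for 1 <= nbits <= 63).
int64_t sign_extend(uint64_t value, unsigned nbits) {
    assert(nbits >= 1 && nbits <= 63);
    const uint64_t sign_bit = uint64_t{1} << (nbits - 1);
    value &= (sign_bit << 1) - 1;  // drop any bits above the field
    // Flipping the sign bit and subtracting its weight maps
    // [0, 2^(n-1)) to itself and [2^(n-1), 2^n) to [-2^(n-1), 0).
    return static_cast<int64_t>(value ^ sign_bit) - static_cast<int64_t>(sign_bit);
}
```

With the field width taken from the string length, `sign_extend(bits_to_unsigned("100000"), 6)` gives -32 and `sign_extend(bits_to_unsigned("1"), 1)` gives -1. These match the `100000=32 -32` and `1=1 -1` lines.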

Migrated from https://code.icecube.wisc.edu/ticket/2337

```json

{

"status": "closed",

"changetime": "2019-09-04T13:00:01",