Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

96,987 | 28,070,731,603 | IssuesEvent | 2023-03-29 18:51:45 | MinaProtocol/mina | https://api.github.com/repos/MinaProtocol/mina | closed | Merge the archive node artifact job with the main build-artifact job | Size: XS ~ 1 day buildkite archive-node build-pipeline-refactor | All of the binaries required for the archive node package are built as part of build-artifact anyway, this should reduce overall runtime / total cpu cycles | 2.0 | Merge the archive node artifact job with the main build-artifact job - All of the binaries required for the archive node package are built as part of build-artifact anyway, this should reduce overall runtime / total cpu cycles | non_defect | merge the archive node artifact job with the main build artifact job all of the binaries required for the archive node package are built as part of build artifact anyway this should reduce overall runtime total cpu cycles | 0 |

24,279 | 5,042,284,941 | IssuesEvent | 2016-12-19 13:29:39 | google/material-design-lite | https://api.github.com/repos/google/material-design-lite | closed | mdl-card__menu not documented | Cards Documentation v1-bug | Component : Card

What are you trying to do or find out more about?

Element class mdl-card__menu

Where have you looked?

http://www.getmdl.io/components/index.html#cards-section

Where did you expect to find this information?

In section "Configuration options"

What did I find out?

- Container for positioning child elements in top right card corner

- Docs show an example share button

Thanks.

| 1.0 | mdl-card__menu not documented - Component : Card

What are you trying to do or find out more about?

Element class mdl-card__menu

Where have you looked?

http://www.getmdl.io/components/index.html#cards-section

Where did you expect to find this information?

In section "Configuration options"

What did I find out?

- Container for positioning child elements in top right card corner

- Docs show an example share button

Thanks.

| non_defect | mdl card menu not documented component card what are you trying to do or find out more about element class mdl card menu where have you looked where did you expect to find this information in section configuration options what did i find out container for positioning child elements in top right card corner docs show an example share button thanks | 0 |

66,607 | 20,373,819,324 | IssuesEvent | 2022-02-21 13:49:33 | OpenMS/OpenMS | https://api.github.com/repos/OpenMS/OpenMS | closed | PSMFeatureextractor and Percolator | defect major TOPP usability | Dear all,

I have been trying to use the new Percolator adapter with no luck. I do searches with Comet, using the Comet adaptor in both OpenMS 2.2 (using TOPPAS) and the 2.3 nightly builds (last of 05.12.2017) in both TOPPAS and Knime.

Briefly my workflow is as follows, CometAdpator -> Peptideindexer -> PSMFeatureextractor -> Percolator.

If I run it in TOPPAS the PSMFeatureExtractor always crashes without any information. In Knime, PSMFeatureExtractor passes but Percolator always crashes. What I can get out of the log is: Merging Peptides IDs, merging Proteins IDs, Percolator problem... and then crashes.

If I use then the PSMFeatureExtractor result from Knime and use it as input for PercolatorAdapter in TOPPAS, I can run Percolator, however it always uses the -U option, even if I select -Y option (tdc true):

which my guess should be used as the search in Comet was done using a database containing decoys, and this should be indexed when using PeptideIndexer. Moreover, Percolator reports:

"Separate target and decoy search inputs detected, using target-decoy competition on Percolator scores", even though the -Y option is seen. Nonetheless it continues and finish. However if I then do IDFilter on that (q < 0.01) everything is gone. If I run Percolator including the -f option (Protein inference) I also can't filter the file. However, if I export this file doing text export without IDFilter and filter manually for proteins with q-value of less than 0.01 and compare this with the same search files processed with PeptideProphet/ProteinProphet I get an overlap of ~80% at the protein level. However, I would need to have the filtered file as input for IDMapper, to only map peptides at 1%FDR to features.

Maybe somebody has more experience on running PercolatorAdapter and I might be using it wrong.

Cheers,

Alejandro

| 1.0 | PSMFeatureextractor and Percolator - Dear all,

I have been trying to use the new Percolator adapter with no luck. I do searches with Comet, using the Comet adaptor in both OpenMS 2.2 (using TOPPAS) and the 2.3 nightly builds (last of 05.12.2017) in both TOPPAS and Knime.

Briefly my workflow is as follows, CometAdpator -> Peptideindexer -> PSMFeatureextractor -> Percolator.

If I run it in TOPPAS the PSMFeatureExtractor always crashes without any information. In Knime, PSMFeatureExtractor passes but Percolator always crashes. What I can get out of the log is: Merging Peptides IDs, merging Proteins IDs, Percolator problem... and then crashes.

If I use then the PSMFeatureExtractor result from Knime and use it as input for PercolatorAdapter in TOPPAS, I can run Percolator, however it always uses the -U option, even if I select -Y option (tdc true):

which my guess should be used as the search in Comet was done using a database containing decoys, and this should be indexed when using PeptideIndexer. Moreover, Percolator reports:

"Separate target and decoy search inputs detected, using target-decoy competition on Percolator scores", even though the -Y option is seen. Nonetheless it continues and finish. However if I then do IDFilter on that (q < 0.01) everything is gone. If I run Percolator including the -f option (Protein inference) I also can't filter the file. However, if I export this file doing text export without IDFilter and filter manually for proteins with q-value of less than 0.01 and compare this with the same search files processed with PeptideProphet/ProteinProphet I get an overlap of ~80% at the protein level. However, I would need to have the filtered file as input for IDMapper, to only map peptides at 1%FDR to features.

Maybe somebody has more experience on running PercolatorAdapter and I might be using it wrong.

Cheers,

Alejandro

| defect | psmfeatureextractor and percolator dear all i have been trying to use the new percolator adapter with no luck i do searches with comet using the comet adaptor in both openms using toppas and the nightly builds last of in both toppas and knime briefly my workflow is as follows cometadpator peptideindexer psmfeatureextractor percolator if i run it in toppas the psmfeatureextractor always crashes without any information in knime psmfeatureextractor passes but percolator always crashes what i can get out of the log is merging peptides ids merging proteins ids percolator problem and then crashes if i use then the psmfeatureextractor result from knime and use it as input for percolatoradapter in toppas i can run percolator however it always uses the u option even if i select y option tdc true which my guess should be used as the search in comet was done using a database containing decoys and this should be indexed when using peptideindexer moreover percolator reports separate target and decoy search inputs detected using target decoy competition on percolator scores even though the y option is seen nonetheless it continues and finish however if i then do idfilter on that q everything is gone if i run percolator including the f option protein inference i also can t filter the file however if i export this file doing text export without idfilter and filter manually for proteins with q value of less than and compare this with the same search files processed with peptideprophet proteinprophet i get an overlap of at the protein level however i would need to have the filtered file as input for idmapper to only map peptides at fdr to features maybe somebody has more experience on running percolatoradapter and i might be using it wrong cheers alejandro | 1 |

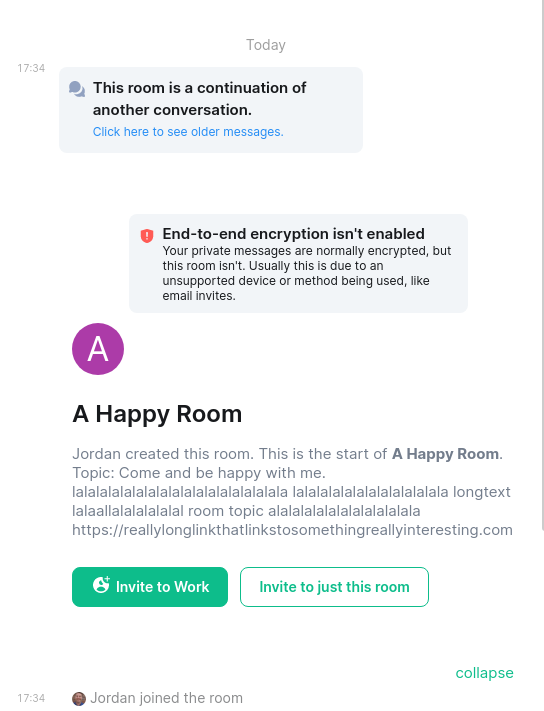

43,251 | 11,580,638,159 | IssuesEvent | 2020-02-21 20:37:04 | vector-im/riot-web | https://api.github.com/repos/vector-im/riot-web | closed | Pasting an mxid into the new invite dialog reliably coughs up bogus "failed to find users" error | bug defect p1 project:ftue-user-lists | To repro:

* copy an mxid like `@vincent_houlot:matrix.org`

* Paste it into the invite box

* See this error dialog

<img width="804" alt="Screenshot 2020-02-21 at 14 23 06" src="https://user-images.githubusercontent.com/1294269/75042102-cb02e600-54b5-11ea-99d1-7bd4c5be7d65.png">

| 1.0 | Pasting an mxid into the new invite dialog reliably coughs up bogus "failed to find users" error - To repro:

* copy an mxid like `@vincent_houlot:matrix.org`

* Paste it into the invite box

* See this error dialog

<img width="804" alt="Screenshot 2020-02-21 at 14 23 06" src="https://user-images.githubusercontent.com/1294269/75042102-cb02e600-54b5-11ea-99d1-7bd4c5be7d65.png">

| defect | pasting an mxid into the new invite dialog reliably coughs up bogus failed to find users error to repro copy an mxid like vincent houlot matrix org paste it into the invite box see this error dialog img width alt screenshot at src | 1 |

36,507 | 7,974,036,312 | IssuesEvent | 2018-07-17 02:51:59 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | `DateTimeType::marshal()` occasionally returns string instead of object | Defect ORM RFC | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.3.11

### What you did

Create a form containing a text input field for a datetime property.

```php
echo $this->Form->create($article);
echo $this->Form->input('published', ['type' => 'text']);
echo $this->Form->button('Submit');
echo $this->Form->end();
```

Dump the datetime property after `patchEntity()`.

```php
$article = $this->Articles->get(1);
$this->Articles->patchEntity($article, $this->request->data);
if ($this->Articles->save($article)) {
    var_dump($article->published);
}
$this->set('article', $article);
```

### What happened

When the posted value is `2017-01-01 00:00:00`, I get:

```
object(Cake\I18n\FrozenTime)#156 (3) {
  ["time"]=>
  string(25) "2017-01-01T00:00:00+09:00"
  ["timezone"]=>
  string(10) "Asia/Tokyo"
  ["fixedNowTime"]=>
  bool(false)
}
```

However, when the posted value is `2017-01-01 00:00`, I get:

```
string(16) "2017-01-01 00:00"
```

### What you expected to happen

I always get:

```
object(Cake\I18n\FrozenTime)#156 (3) {
  ["time"]=>
  string(25) "2017-01-01T00:00:00+09:00"
  ["timezone"]=>
  string(10) "Asia/Tokyo"
  ["fixedNowTime"]=>
  bool(false)
}
```

### Related code

When the input format is not `Y-m-d H:i:s`, `DateTimeType::marshal()` returns a string.

https://github.com/cakephp/cakephp/blob/3b341696e13ad6aed51e330d8e54479a41780512/src/Database/Type/DateTimeType.php#L164-L166

The following line would also return a string.

https://github.com/cakephp/cakephp/blob/3b341696e13ad6aed51e330d8e54479a41780512/src/Database/Type/DateTimeType.php#L170-L172 | 1.0 | `DateTimeType::marshal()` occasionally returns string instead of object - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.3.11

### What you did

Create a form containing a text input field for a datetime property.

```php
echo $this->Form->create($article);
echo $this->Form->input('published', ['type' => 'text']);
echo $this->Form->button('Submit');
echo $this->Form->end();
```

Dump the datetime property after `patchEntity()`.

```php
$article = $this->Articles->get(1);
$this->Articles->patchEntity($article, $this->request->data);
if ($this->Articles->save($article)) {
    var_dump($article->published);
}
$this->set('article', $article);
```

### What happened

When the posted value is `2017-01-01 00:00:00`, I get:

```
object(Cake\I18n\FrozenTime)#156 (3) {
  ["time"]=>
  string(25) "2017-01-01T00:00:00+09:00"
  ["timezone"]=>
  string(10) "Asia/Tokyo"
  ["fixedNowTime"]=>
  bool(false)
}
```

However, when the posted value is `2017-01-01 00:00`, I get:

```
string(16) "2017-01-01 00:00"
```

### What you expected to happen

I always get:

```
object(Cake\I18n\FrozenTime)#156 (3) {
  ["time"]=>
  string(25) "2017-01-01T00:00:00+09:00"
  ["timezone"]=>
  string(10) "Asia/Tokyo"
  ["fixedNowTime"]=>
  bool(false)
}
```

### Related code

When the input format is not `Y-m-d H:i:s`, `DateTimeType::marshal()` returns a string.

https://github.com/cakephp/cakephp/blob/3b341696e13ad6aed51e330d8e54479a41780512/src/Database/Type/DateTimeType.php#L164-L166

The following line would also return a string.

https://github.com/cakephp/cakephp/blob/3b341696e13ad6aed51e330d8e54479a41780512/src/Database/Type/DateTimeType.php#L170-L172 | defect | datetimetype marshal occasionally returns string instead of object this is a multiple allowed bug enhancement feature discussion rfc cakephp version what you did create a form containing a text input field for a datetime property php echo this form create article echo this form input published echo this form button submit echo this form end dump the datetime property after patchentity php article this articles get this articles patchentity article this request data if this articles save article var dump article published this set article article what happened when the posted value is i get object cake frozentime string string asia tokyo bool false however when the posted value is i get string what you expected to happen i always get object cake frozentime string string asia tokyo bool false related code when the input format is not y m d h i s datetimetype marshal returns a string the following line would also return a string | 1 |

18,966 | 11,102,187,081 | IssuesEvent | 2019-12-16 23:13:30 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Issue with "az aks get-credentials" command | AKS Question Service Attention | When I try to use "az aks get-credentials" command I get the following error:

$ az aks get-credentials --resource-group "$RESOURCE_GROUP" --name "$AKS_CLUSTER_NAME" --admin

The HTTP method 'POST' is not supported.

It worked OK few days ago.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: d75c3a8d-1187-bea1-a7d4-7ebba71c1790

* Version Independent ID: 75b0c352-762e-16ae-5cc6-d807ffc1dc3f

* Content: [az aks](https://docs.microsoft.com/en-us/cli/azure/aks?view=azure-cli-latest#az-aks-get-credentials)

* Content Source: [latest/docs-ref-autogen/aks.yml](https://github.com/Azure/azure-docs-cli-python/blob/live/latest/docs-ref-autogen/aks.yml)

* Service: **container-service**

* GitHub Login: @rloutlaw

* Microsoft Alias: **routlaw** | 1.0 | Issue with "az aks get-credentials" command - When I try to use "az aks get-credentials" command I get the following error:

$ az aks get-credentials --resource-group "$RESOURCE_GROUP" --name "$AKS_CLUSTER_NAME" --admin

The HTTP method 'POST' is not supported.

It worked OK few days ago.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: d75c3a8d-1187-bea1-a7d4-7ebba71c1790

* Version Independent ID: 75b0c352-762e-16ae-5cc6-d807ffc1dc3f

* Content: [az aks](https://docs.microsoft.com/en-us/cli/azure/aks?view=azure-cli-latest#az-aks-get-credentials)

* Content Source: [latest/docs-ref-autogen/aks.yml](https://github.com/Azure/azure-docs-cli-python/blob/live/latest/docs-ref-autogen/aks.yml)

* Service: **container-service**

* GitHub Login: @rloutlaw

* Microsoft Alias: **routlaw** | non_defect | issue with az aks get credentials command when i try to use az aks get credentials command i get the following error az aks get credentials resource group resource group name aks cluster name admin the http method post is not supported it worked ok few days ago document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service container service github login rloutlaw microsoft alias routlaw | 0 |

22,414 | 3,644,970,404 | IssuesEvent | 2016-02-15 12:31:56 | contao/core | https://api.github.com/repos/contao/core | closed | Anlegen neuer Templateordner ist im Backend nicht möglich | defect | Sowohl in Contao 3.5.5 als auch in Contao 3.5.6 können keine neuen Templateverzeichnisse angelegt werden.

Der Link dafür fehlt.

Wenn ich ein Verzeichnis via SSH anlege wird es angezeigt und kann verwendet werden.

Bei mir betrifft es sowohl Updates, als auch Neuinstallation. | 1.0 | Anlegen neuer Templateordner ist im Backend nicht möglich - Sowohl in Contao 3.5.5 als auch in Contao 3.5.6 können keine neuen Templateverzeichnisse angelegt werden.

Der Link dafür fehlt.

Wenn ich ein Verzeichnis via SSH anlege wird es angezeigt und kann verwendet werden.

Bei mir betrifft es sowohl Updates, als auch Neuinstallation. | defect | anlegen neuer templateordner ist im backend nicht möglich sowohl in contao als auch in contao können keine neuen templateverzeichnisse angelegt werden der link dafür fehlt wenn ich ein verzeichnis via ssh anlege wird es angezeigt und kann verwendet werden bei mir betrifft es sowohl updates als auch neuinstallation | 1 |

14,312 | 17,200,340,489 | IssuesEvent | 2021-07-17 04:53:17 | oilshell/oil | https://api.github.com/repos/oilshell/oil | closed | cell sublanguage: a[i] support in -n (nameref) and ${!ref} | compatibility osh-language | - usage from @abathur https://oilshell.zulipchat.com/#narrow/stream/121540-oil-discuss/topic/nameref.20implemented

- I think bash-completion used it in the `${!ref}` context, although I may have patched around it

| True | cell sublanguage: a[i] support in -n (nameref) and ${!ref} - - usage from @abathur https://oilshell.zulipchat.com/#narrow/stream/121540-oil-discuss/topic/nameref.20implemented

- I think bash-completion used it in the `${!ref}` context, although I may have patched around it

| non_defect | cell sublanguage a support in n nameref and ref usage from abathur i think bash completion used it in the ref context although i may have patched around it | 0 |

2,771 | 2,607,944,861 | IssuesEvent | 2015-02-26 00:32:52 | chrsmithdemos/switchlist | https://api.github.com/repos/chrsmithdemos/switchlist | opened | Have "print all" raise a dialog box to prompt for which documents to print | auto-migrated Priority-Medium Type-Defect | ```

Doing a print action from the main window causes all switch lists for all

trains to be printed. It would be nice if it also printed all the interesting

reports - car positions and yard reports. Open a dialog box where the user can

indicate which should be printed.

Requires making the reports printable using the "print all" view.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 24 Apr 2011 at 5:20 | 1.0 | Have "print all" raise a dialog box to prompt for which documents to print - ```

Doing a print action from the main window causes all switch lists for all

trains to be printed. It would be nice if it also printed all the interesting

reports - car positions and yard reports. Open a dialog box where the user can

indicate which should be printed.

Requires making the reports printable using the "print all" view.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 24 Apr 2011 at 5:20 | defect | have print all raise a dialog box to prompt for which documents to print doing a print action from the main window causes all switch lists for all trains to be printed it would be nice if it also printed all the interesting reports car positions and yard reports open a dialog box where the user can indicate which should be printed requires making the reports printable using the print all view original issue reported on code google com by rwbowdi gmail com on apr at | 1 |

12,783 | 3,645,676,886 | IssuesEvent | 2016-02-15 15:36:55 | dbpedia-spotlight/dbpedia-spotlight | https://api.github.com/repos/dbpedia-spotlight/dbpedia-spotlight | closed | Splitting Occs and Spotlight Live | bug documentation | - Mapping licenses in the pom and wiki

- Problem on Maven/Github - blob is too big for the dependencies:

<dependency>

<groupId>org.wiki.harvester.dependency</groupId>

<artifactId>morphadorner</artifactId>

<version>1.0</version>

</dependency>

<dependency>

<groupId>org.wiki.harvester.dependency</groupId>

<artifactId>pedia.uima.harvester</artifactId>

<version>1.0</version>

</dependency> | 1.0 | Splitting Occs and Spotlight Live - - Mapping licenses in the pom and wiki

- Problem on Maven/Github - blob is too big for the dependencies:

<dependency>

<groupId>org.wiki.harvester.dependency</groupId>

<artifactId>morphadorner</artifactId>

<version>1.0</version>

</dependency>

<dependency>

<groupId>org.wiki.harvester.dependency</groupId>

<artifactId>pedia.uima.harvester</artifactId>

<version>1.0</version>

</dependency> | non_defect | splitting occs and spotlight live mapping licenses in the pom and wiki problem on maven github blob is too big for the dependencies org wiki harvester dependency morphadorner org wiki harvester dependency pedia uima harvester | 0 |

72,339 | 24,059,166,461 | IssuesEvent | 2022-09-16 20:09:21 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | New CI failures in `sparse` with nightly numpy | defect scipy.sparse | If you have submitted a pull request recently, you probably noticed some new failures in the Azure job `prerelease_deps_coverage_64bit_blas` that are unrelated to your changes:

```

=========================== short test summary info ============================

FAILED scipy/sparse/tests/test_base.py::TestCOO::test_reshape_copy - Assertio...

FAILED scipy/sparse/tests/test_base.py::TestCOONonCanonical::test_reshape_copy

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_limit_10[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_no_64[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_random[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_all_32[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_all_64[TestCOO-test_reshape_copy]

```

This job is the one that uses a "nightly" NumPy build. The failures are the result of a [recent change](https://github.com/numpy/numpy/pull/21995) in NumPy; see https://github.com/numpy/numpy/pull/21995#issuecomment-1249447631 for a description of how the NumPy change breaks the tests in `scipy.sparse`.

Based on the follow-up comment and the tests in that PR that were approved, it looks like this is an intentional change that is not considered a backwards compatibility break. It changes behavior, but it is apparently behavior that was never guaranteed to always remain the same.

I think we'll be able to work-around the NumPy change pretty easily.

| 1.0 | New CI failures in `sparse` with nightly numpy - If you have submitted a pull request recently, you probably noticed some new failures in the Azure job `prerelease_deps_coverage_64bit_blas` that are unrelated to your changes:

```

=========================== short test summary info ============================

FAILED scipy/sparse/tests/test_base.py::TestCOO::test_reshape_copy - Assertio...

FAILED scipy/sparse/tests/test_base.py::TestCOONonCanonical::test_reshape_copy

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_limit_10[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_no_64[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_random[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_all_32[TestCOO-test_reshape_copy]

FAILED scipy/sparse/tests/test_base.py::Test64Bit::test_resiliency_all_64[TestCOO-test_reshape_copy]

```

This job is the one that uses a "nightly" NumPy build. The failures are the result of a [recent change](https://github.com/numpy/numpy/pull/21995) in NumPy; see https://github.com/numpy/numpy/pull/21995#issuecomment-1249447631 for a description of how the NumPy change breaks the tests in `scipy.sparse`.

Based on the follow-up comment and the tests in that PR that were approved, it looks like this is an intentional change that is not considered a backwards compatibility break. It changes behavior, but it is apparently behavior that was never guaranteed to always remain the same.

I think we'll be able to work-around the NumPy change pretty easily.

| defect | new ci failures in sparse with nightly numpy if you have submitted a pull request recently you probably noticed some new failures in the azure job prerelease deps coverage blas that are unrelated to your changes short test summary info failed scipy sparse tests test base py testcoo test reshape copy assertio failed scipy sparse tests test base py testcoononcanonical test reshape copy failed scipy sparse tests test base py test resiliency limit failed scipy sparse tests test base py test no failed scipy sparse tests test base py test resiliency random failed scipy sparse tests test base py test resiliency all failed scipy sparse tests test base py test resiliency all this job is the one that uses a nightly numpy build the failures are the result of a in numpy see for a description of how the numpy change breaks the tests in scipy sparse based on the follow up comment and the tests in that pr that were approved it looks like this is an intentional change that is not considered a backwards compatibility break it changes behavior but it is apparently behavior that was never guaranteed to always remain the same i think we ll be able to work around the numpy change pretty easily | 1 |

28,625 | 5,312,757,016 | IssuesEvent | 2017-02-13 10:03:22 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | FAIL: scipy.integrate - test_odeint_full_jac | defect scipy.integrate | teapot:scipy andrew$ python runtests.py -s integrate

Building, see build.log...

Build OK

Running unit tests for scipy.integrate

NumPy version 1.12.0

NumPy relaxed strides checking option: True

NumPy is installed in /Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy

SciPy version 0.19.0.dev0+

SciPy is installed in /Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy

Python version 3.5.2 |Continuum Analytics, Inc.| (default, Jul 2 2016, 17:52:12) [GCC 4.2.1 Compatible Apple LLVM 4.2 (clang-425.0.28)]

nose version 1.3.7

.......................................................................................................................................................................................................................F...K............................................

======================================================================

FAIL: test_odeint_jac.test_odeint_full_jac

----------------------------------------------------------------------

Traceback (most recent call last):

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/nose/case.py", line 198, in runTest

self.test(*self.arg)

File "/Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy/integrate/tests/test_odeint_jac.py", line 71, in test_odeint_full_jac

check_odeint(JACTYPE_FULL)

File "/Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy/integrate/tests/test_odeint_jac.py", line 66, in check_odeint

assert_allclose(yfinal, y1, rtol=1e-12)

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy/testing/utils.py", line 1411, in assert_allclose

verbose=verbose, header=header, equal_nan=equal_nan)

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy/testing/utils.py", line 796, in assert_array_compare

raise AssertionError(msg)

AssertionError:

Not equal to tolerance rtol=1e-12, atol=0

(mismatch 100.0%)

x: array([ 4.266167e-04, 2.668761e-05, 2.054494e-06, 2.566184e-08,

3.395174e-10])

y: array([ 4.266167e-04, 2.668761e-05, 2.054494e-06, 2.566184e-08,

3.395237e-10])

----------------------------------------------------------------------

Ran 264 tests in 2.622s

| 1.0 | FAIL: scipy.integrate - test_odeint_full_jac - teapot:scipy andrew$ python runtests.py -s integrate

Building, see build.log...

Build OK

Running unit tests for scipy.integrate

NumPy version 1.12.0

NumPy relaxed strides checking option: True

NumPy is installed in /Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy

SciPy version 0.19.0.dev0+

SciPy is installed in /Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy

Python version 3.5.2 |Continuum Analytics, Inc.| (default, Jul 2 2016, 17:52:12) [GCC 4.2.1 Compatible Apple LLVM 4.2 (clang-425.0.28)]

nose version 1.3.7

.......................................................................................................................................................................................................................F...K............................................

======================================================================

FAIL: test_odeint_jac.test_odeint_full_jac

----------------------------------------------------------------------

Traceback (most recent call last):

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/nose/case.py", line 198, in runTest

self.test(*self.arg)

File "/Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy/integrate/tests/test_odeint_jac.py", line 71, in test_odeint_full_jac

check_odeint(JACTYPE_FULL)

File "/Users/andrew/Documents/Andy/programming/scipy/build/testenv/lib/python3.5/site-packages/scipy/integrate/tests/test_odeint_jac.py", line 66, in check_odeint

assert_allclose(yfinal, y1, rtol=1e-12)

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy/testing/utils.py", line 1411, in assert_allclose

verbose=verbose, header=header, equal_nan=equal_nan)

File "/Users/andrew/miniconda3/envs/dev3/lib/python3.5/site-packages/numpy/testing/utils.py", line 796, in assert_array_compare

raise AssertionError(msg)

AssertionError:

Not equal to tolerance rtol=1e-12, atol=0

(mismatch 100.0%)

x: array([ 4.266167e-04, 2.668761e-05, 2.054494e-06, 2.566184e-08,

3.395174e-10])

y: array([ 4.266167e-04, 2.668761e-05, 2.054494e-06, 2.566184e-08,

3.395237e-10])

----------------------------------------------------------------------

Ran 264 tests in 2.622s

| defect | fail scipy integrate test odeint full jac teapot scipy andrew python runtests py s integrate building see build log build ok running unit tests for scipy integrate numpy version numpy relaxed strides checking option true numpy is installed in users andrew envs lib site packages numpy scipy version scipy is installed in users andrew documents andy programming scipy build testenv lib site packages scipy python version continuum analytics inc default jul nose version f k fail test odeint jac test odeint full jac traceback most recent call last file users andrew envs lib site packages nose case py line in runtest self test self arg file users andrew documents andy programming scipy build testenv lib site packages scipy integrate tests test odeint jac py line in test odeint full jac check odeint jactype full file users andrew documents andy programming scipy build testenv lib site packages scipy integrate tests test odeint jac py line in check odeint assert allclose yfinal rtol file users andrew envs lib site packages numpy testing utils py line in assert allclose verbose verbose header header equal nan equal nan file users andrew envs lib site packages numpy testing utils py line in assert array compare raise assertionerror msg assertionerror not equal to tolerance rtol atol mismatch x array y array ran tests in | 1 |

203,759 | 23,180,381,354 | IssuesEvent | 2022-08-01 01:07:04 | haeli05/source | https://api.github.com/repos/haeli05/source | opened | CVE-2022-2564 (High) detected in mongoose-5.4.15.tgz | security vulnerability | ## CVE-2022-2564 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mongoose-5.4.15.tgz</b></p></summary>

<p>Mongoose MongoDB ODM</p>

<p>Library home page: <a href="https://registry.npmjs.org/mongoose/-/mongoose-5.4.15.tgz">https://registry.npmjs.org/mongoose/-/mongoose-5.4.15.tgz</a></p>

<p>Path to dependency file: /BackEnd/package.json</p>

<p>Path to vulnerable library: /BackEnd/node_modules/mongoose/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mongoose-5.4.15.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/haeli05/source/commit/cd3dfb369e611687baef858fdd1cf268baed1b52">cd3dfb369e611687baef858fdd1cf268baed1b52</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype Pollution in GitHub repository automattic/mongoose prior to 6.4.6.

<p>Publish Date: 2022-07-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-2564>CVE-2022-2564</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-2564">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-2564</a></p>

<p>Release Date: 2022-07-28</p>

<p>Fix Resolution: 6.4.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-2564 (High) detected in mongoose-5.4.15.tgz - ## CVE-2022-2564 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mongoose-5.4.15.tgz</b></p></summary>

<p>Mongoose MongoDB ODM</p>

<p>Library home page: <a href="https://registry.npmjs.org/mongoose/-/mongoose-5.4.15.tgz">https://registry.npmjs.org/mongoose/-/mongoose-5.4.15.tgz</a></p>

<p>Path to dependency file: /BackEnd/package.json</p>

<p>Path to vulnerable library: /BackEnd/node_modules/mongoose/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mongoose-5.4.15.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/haeli05/source/commit/cd3dfb369e611687baef858fdd1cf268baed1b52">cd3dfb369e611687baef858fdd1cf268baed1b52</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype Pollution in GitHub repository automattic/mongoose prior to 6.4.6.

<p>Publish Date: 2022-07-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-2564>CVE-2022-2564</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-2564">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-2564</a></p>

<p>Release Date: 2022-07-28</p>

<p>Fix Resolution: 6.4.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high detected in mongoose tgz cve high severity vulnerability vulnerable library mongoose tgz mongoose mongodb odm library home page a href path to dependency file backend package json path to vulnerable library backend node modules mongoose package json dependency hierarchy x mongoose tgz vulnerable library found in head commit a href vulnerability details prototype pollution in github repository automattic mongoose prior to publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

316,735 | 23,645,612,330 | IssuesEvent | 2022-08-25 21:45:38 | awslabs/diagram-maker | https://api.github.com/repos/awslabs/diagram-maker | closed | What is the preferred way to update the store data? | documentation | **Describe the issue with documentation**

I'm tryng to dynamically update the consumerData on a particular node, after it was created.

What is the preffered way to update the store data?

It would be nice to include this in the documentation or in the examples.

| 1.0 | What is the preferred way to update the store data? - **Describe the issue with documentation**

I'm tryng to dynamically update the consumerData on a particular node, after it was created.

What is the preffered way to update the store data?

It would be nice to include this in the documentation or in the examples.

| non_defect | what is the preferred way to update the store data describe the issue with documentation i m tryng to dynamically update the consumerdata on a particular node after it was created what is the preffered way to update the store data it would be nice to include this in the documentation or in the examples | 0 |

9,513 | 2,615,154,434 | IssuesEvent | 2015-03-01 06:32:27 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | Restoring Session file | auto-migrated Priority-Triage Type-Defect | ```

Every time I try to restore a previous session file

reaver basically re-arranges the characters withing the file

and it start to use random character to crack the pin.

For example: 05:b5670 it actually used the bssid to try and crack

the pin. I would like to know if, there's a precise way, or a different

method to restoring a session file. Is this a problem that everyone is

getting?

```

Original issue reported on code.google.com by `SkeThVi...@gmail.com` on 1 Mar 2012 at 4:17 | 1.0 | Restoring Session file - ```

Every time I try to restore a previous session file

reaver basically re-arranges the characters withing the file

and it start to use random character to crack the pin.

For example: 05:b5670 it actually used the bssid to try and crack

the pin. I would like to know if, there's a precise way, or a different

method to restoring a session file. Is this a problem that everyone is

getting?

```

Original issue reported on code.google.com by `SkeThVi...@gmail.com` on 1 Mar 2012 at 4:17 | defect | restoring session file every time i try to restore a previous session file reaver basically re arranges the characters withing the file and it start to use random character to crack the pin for example it actually used the bssid to try and crack the pin i would like to know if there s a precise way or a different method to restoring a session file is this a problem that everyone is getting original issue reported on code google com by skethvi gmail com on mar at | 1 |

185,324 | 21,786,158,429 | IssuesEvent | 2022-05-14 06:44:45 | classicvalues/AA-ionic-login | https://api.github.com/repos/classicvalues/AA-ionic-login | closed | WS-2019-0379 (Medium) detected in commons-codec-1.10.jar - autoclosed | security vulnerability | ## WS-2019-0379 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-codec-1.10.jar</b></p></summary>

<p>The Apache Commons Codec package contains simple encoder and decoders for

various formats such as Base64 and Hexadecimal. In addition to these

widely used encoders and decoders, the codec package also maintains a

collection of phonetic encoding utilities.</p>

<p>Path to dependency file: /node_modules/@capacitor/status-bar/android/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar</p>

<p>

Dependency Hierarchy:

- lint-gradle-27.2.1.jar (Root Library)

- builder-4.2.1.jar

- sdklib-27.2.1.jar

- httpmime-4.5.6.jar

- httpclient-4.5.6.jar

- :x: **commons-codec-1.10.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/classicvalues/AA-ionic-login/commit/d4f4480b7ddd8c520e4b02ea2008621ded4be6ab">d4f4480b7ddd8c520e4b02ea2008621ded4be6ab</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache commons-codec before version “commons-codec-1.13-RC1” is vulnerable to information disclosure due to Improper Input validation.

<p>Publish Date: 2019-05-20

<p>URL: <a href=https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113>WS-2019-0379</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113">https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113</a></p>

<p>Release Date: 2019-05-20</p>

<p>Fix Resolution: commons-codec:commons-codec:1.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | WS-2019-0379 (Medium) detected in commons-codec-1.10.jar - autoclosed - ## WS-2019-0379 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-codec-1.10.jar</b></p></summary>

<p>The Apache Commons Codec package contains simple encoder and decoders for

various formats such as Base64 and Hexadecimal. In addition to these

widely used encoders and decoders, the codec package also maintains a

collection of phonetic encoding utilities.</p>

<p>Path to dependency file: /node_modules/@capacitor/status-bar/android/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/commons-codec/commons-codec/1.10/4b95f4897fa13f2cd904aee711aeafc0c5295cd8/commons-codec-1.10.jar</p>

<p>

Dependency Hierarchy:

- lint-gradle-27.2.1.jar (Root Library)

- builder-4.2.1.jar

- sdklib-27.2.1.jar

- httpmime-4.5.6.jar

- httpclient-4.5.6.jar

- :x: **commons-codec-1.10.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/classicvalues/AA-ionic-login/commit/d4f4480b7ddd8c520e4b02ea2008621ded4be6ab">d4f4480b7ddd8c520e4b02ea2008621ded4be6ab</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache commons-codec before version “commons-codec-1.13-RC1” is vulnerable to information disclosure due to Improper Input validation.

<p>Publish Date: 2019-05-20

<p>URL: <a href=https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113>WS-2019-0379</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113">https://github.com/apache/commons-codec/commit/48b615756d1d770091ea3322eefc08011ee8b113</a></p>

<p>Release Date: 2019-05-20</p>

<p>Fix Resolution: commons-codec:commons-codec:1.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | ws medium detected in commons codec jar autoclosed ws medium severity vulnerability vulnerable library commons codec jar the apache commons codec package contains simple encoder and decoders for various formats such as and hexadecimal in addition to these widely used encoders and decoders the codec package also maintains a collection of phonetic encoding utilities path to dependency file node modules capacitor status bar android build gradle path to vulnerable library home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar home wss scanner gradle caches modules files commons codec commons codec commons codec jar dependency hierarchy lint gradle jar root library builder jar sdklib jar httpmime jar httpclient jar x commons codec jar vulnerable library found in head commit a href found in base branch master vulnerability details apache commons codec before version “commons codec ” is vulnerable to information disclosure due to improper input validation publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution 
commons codec commons codec step up your open source security game with whitesource | 0 |

58,529 | 16,589,157,876 | IssuesEvent | 2021-06-01 04:52:15 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | closed | Bug: Platform Object Status - focus and tabbing issue for clickable object status | Defect Hunting Low QA Approved bug platform | #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

For linked object status, focus should happen after clicking. Also, clickable objects should be tabbable. Fundamental Styles supports tabbing https://fundamental-styles.netlify.app/?path=/docs/components-object-status--primary#clickable-object-status

#### Which versions of Angular and Fundamental Library for Angular are affected? (If this is a feature request, use current version.)

latest

#### If this is a bug, please provide steps for reproducing it.

1. Go to https://fundamental-ngx.netlify.app/#/platform/object-status

2. Go to Clickable Object Status example.

3. Try to tab through the clickable object statuses. None of them are tabbable.

Also, UI5 example shows focus for clickable object status, but it is not present here. Generally, if something is a link, one would expect focus and tabbing for it.

UI5:

| 1.0 | Bug: Platform Object Status - focus and tabbing issue for clickable object status - #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

For linked object status, focus should happen after clicking. Also, clickable objects should be tabbable. Fundamental Styles supports tabbing https://fundamental-styles.netlify.app/?path=/docs/components-object-status--primary#clickable-object-status

#### Which versions of Angular and Fundamental Library for Angular are affected? (If this is a feature request, use current version.)

latest

#### If this is a bug, please provide steps for reproducing it.

1. Go to https://fundamental-ngx.netlify.app/#/platform/object-status

2. Go to Clickable Object Status example.

3. Try to tab through the clickable object statuses. None of them are tabbable.

Also, UI5 example shows focus for clickable object status, but it is not present here. Generally, if something is a link, one would expect focus and tabbing for it.

UI5:

| defect | bug platform object status focus and tabbing issue for clickable object status is this a bug enhancement or feature request bug briefly describe your proposal for linked object status focus should happen after clicking also clickable objects should be tabbable fundamental styles supports tabbing which versions of angular and fundamental library for angular are affected if this is a feature request use current version latest if this is a bug please provide steps for reproducing it go to go to clickable object status example try to tab through the clickable object statuses none of them are tabbable also example shows focus for clickable object status but it is not present here generally if something is a link one would expect focus and tabbing for it | 1 |

7,019 | 2,610,322,341 | IssuesEvent | 2015-02-26 19:43:56 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Map Issue | auto-migrated Priority-Medium Type-Defect | ```

Default Fondor Reinforcement Points

No reinforcement points on fondor when defending as the republic, though they

were there when i attacked it which is weird.

Clones wars GC, normal as republic

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 11 May 2011 at 12:40 | 1.0 | Map Issue - ```

Default Fondor Reinforcement Points

No reinforcement points on fondor when defending as the republic, though they

were there when i attacked it which is weird.

Clones wars GC, normal as republic

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 11 May 2011 at 12:40 | defect | map issue default fondor reinforcement points no reinforcement points on fondor when defending as the republic though they were there when i attacked it which is weird clones wars gc normal as republic original issue reported on code google com by gmail com on may at | 1 |

416,329 | 28,076,878,131 | IssuesEvent | 2023-03-30 00:57:06 | f1tenth/f1tenth_gym | https://api.github.com/repos/f1tenth/f1tenth_gym | closed | Example agent Dockerfile | documentation enhancement | Add example files for setting up an agent using the gym environment.

- Template Dockerfile, including comments on how to add dependencies

- Build and run scripts

- (tentative) native installation script | 1.0 | Example agent Dockerfile - Add example files for setting up an agent using the gym environment.

- Template Dockerfile, including comments on how to add dependencies

- Build and run scripts

- (tentative) native installation script | non_defect | example agent dockerfile add example files for setting up an agent using the gym environment template dockerfile including comments on how to add dependencies build and run scripts tentative native installation script | 0 |

46,561 | 13,174,660,718 | IssuesEvent | 2020-08-11 23:06:55 | shaundmorris/ddf | https://api.github.com/repos/shaundmorris/ddf | closed | CVE-2014-0114 High Severity Vulnerability detected by WhiteSource | security vulnerability wontfix | ## CVE-2014-0114 - High Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-beanutils-1.7.0.jar</b></p></summary>

<p>The Java language provides Reflection and Introspection APIs (see the java.lang.reflect and java.beans packages in the JDK Javadocs). However, these APIs can be quite complex to understand and utilize. The BeanUtils component provides easy-to-use wrappers around these capabilities</p>

<p>path: /root/.m2/repository/commons-beanutils/commons-beanutils/1.7.0/commons-beanutils-1.7.0.jar</p>

<p>

<p>Library home page: <a href=http://jakarta.apache.org/commons/beanutils/>http://jakarta.apache.org/commons/beanutils/</a></p>

Dependency Hierarchy:

- commons-configuration-1.6.jar (Root Library)

- commons-digester-1.8.jar

- :x: **commons-beanutils-1.7.0.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache Commons BeanUtils, as distributed in lib/commons-beanutils-1.8.0.jar in Apache Struts 1.x through 1.3.10 and in other products requiring commons-beanutils through 1.9.2, does not suppress the class property, which allows remote attackers to "manipulate" the ClassLoader and execute arbitrary code via the class parameter, as demonstrated by the passing of this parameter to the getClass method of the ActionForm object in Struts 1.

<p>Publish Date: 2014-04-30

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2014-0114>CVE-2014-0114</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://issues.apache.org/jira/browse/BEANUTILS-463">https://issues.apache.org/jira/browse/BEANUTILS-463</a></p>

<p>Release Date: 2014-05-24</p>

<p>Fix Resolution: Upgrade to version 1.9.2 or greater</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2014-0114 High Severity Vulnerability detected by WhiteSource - ## CVE-2014-0114 - High Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-beanutils-1.7.0.jar</b></p></summary>

<p>The Java language provides Reflection and Introspection APIs (see the java.lang.reflect and java.beans packages in the JDK Javadocs). However, these APIs can be quite complex to understand and utilize. The BeanUtils component provides easy-to-use wrappers around these capabilities</p>

<p>path: /root/.m2/repository/commons-beanutils/commons-beanutils/1.7.0/commons-beanutils-1.7.0.jar</p>

<p>

<p>Library home page: <a href=http://jakarta.apache.org/commons/beanutils/>http://jakarta.apache.org/commons/beanutils/</a></p>

Dependency Hierarchy:

- commons-configuration-1.6.jar (Root Library)

- commons-digester-1.8.jar

- :x: **commons-beanutils-1.7.0.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Apache Commons BeanUtils, as distributed in lib/commons-beanutils-1.8.0.jar in Apache Struts 1.x through 1.3.10 and in other products requiring commons-beanutils through 1.9.2, does not suppress the class property, which allows remote attackers to "manipulate" the ClassLoader and execute arbitrary code via the class parameter, as demonstrated by the passing of this parameter to the getClass method of the ActionForm object in Struts 1.

<p>Publish Date: 2014-04-30

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2014-0114>CVE-2014-0114</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://issues.apache.org/jira/browse/BEANUTILS-463">https://issues.apache.org/jira/browse/BEANUTILS-463</a></p>

<p>Release Date: 2014-05-24</p>

<p>Fix Resolution: Upgrade to version 1.9.2 or greater</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high severity vulnerability detected by whitesource cve high severity vulnerability vulnerable library commons beanutils jar the java language provides reflection and introspection apis see the java lang reflect and java beans packages in the jdk javadocs however these apis can be quite complex to understand and utilize the beanutils component provides easy to use wrappers around these capabilities path root repository commons beanutils commons beanutils commons beanutils jar library home page a href dependency hierarchy commons configuration jar root library commons digester jar x commons beanutils jar vulnerable library vulnerability details apache commons beanutils as distributed in lib commons beanutils jar in apache struts x through and in other products requiring commons beanutils through does not suppress the class property which allows remote attackers to manipulate the classloader and execute arbitrary code via the class parameter as demonstrated by the passing of this parameter to the getclass method of the actionform object in struts publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution upgrade to version or greater step up your open source security game with whitesource | 0 |

2,555 | 2,607,927,771 | IssuesEvent | 2015-02-26 00:25:24 | chrsmithdemos/minify | https://api.github.com/repos/chrsmithdemos/minify | closed | Minify_Cache_File write empty files | auto-migrated Priority-Critical Type-Defect | ```

On at least one server tested, Minify 2.0.2b writes only zero-length

files. Removing the LOCK_EX option from file_put_contents() fixed the

issue, but I don't know why.

Both my test/prod are similar Apache/mod_php setups on Red Hat 5, but only

the production had the issue.

```

-----

Original issue reported on code.google.com by `mrclay....@gmail.com` on 23 Jul 2008 at 7:50 | 1.0 | Minify_Cache_File write empty files - ```

On at least one server tested, Minify 2.0.2b writes only zero-length

files. Removing the LOCK_EX option from file_put_contents() fixed the

issue, but I don't know why.

Both my test/prod are similar Apache/mod_php setups on Red Hat 5, but only

the production had the issue.

```

-----

Original issue reported on code.google.com by `mrclay....@gmail.com` on 23 Jul 2008 at 7:50 | defect | minify cache file write empty files on at least one server tested minify writes only zero length files removing the lock ex option from file put contents fixed the issue but i don t know why both my test prod are similar apache mod php setups on red hat but only the production had the issue original issue reported on code google com by mrclay gmail com on jul at | 1 |

21,112 | 3,461,696,089 | IssuesEvent | 2015-12-20 09:26:25 | arti01/jkursy | https://api.github.com/repos/arti01/jkursy | closed | Logowanie kursanta | auto-migrated Priority-Low Type-Defect | ```

Po nieudanym logowaniu powrót do głównej a nie do strony logowania

```

Original issue reported on code.google.com by `stasiom...@gmail.com` on 14 Mar 2011 at 9:35 | 1.0 | Logowanie kursanta - ```

Po nieudanym logowaniu powrót do głównej a nie do strony logowania

```

Original issue reported on code.google.com by `stasiom...@gmail.com` on 14 Mar 2011 at 9:35 | defect | logowanie kursanta po nieudanym logowaniu powrót do głównej a nie do strony logowania original issue reported on code google com by stasiom gmail com on mar at | 1 |

20,526 | 2,622,852,040 | IssuesEvent | 2015-03-04 08:05:46 | max99x/pagemon-chrome-ext | https://api.github.com/repos/max99x/pagemon-chrome-ext | closed | "Advanced Monitor" in Popup | auto-migrated Priority-Medium | ```

The majority of the pages I monitor require a selector.

It would be nice if there was an "Advanced Monitor" option in the popup that

would kick you straight to the advanced configuration of the new monitor. As

the process now stands, I must first add the page with the popup (via left

click), then open the button's context menu (via right click), find my new

monitor, and turn on advanced monitoring.

If cluttering the popup is a concern, maybe just make this behavior

configurable from advanced options. That way, those of us who need it can set

it once and forget it.

```

Original issue reported on code.google.com by `aftermar...@gmail.com` on 14 Oct 2012 at 12:08

* Merged into: #208 | 1.0 | "Advanced Monitor" in Popup - ```

The majority of the pages I monitor require a selector.

It would be nice if there was an "Advanced Monitor" option in the popup that

would kick you straight to the advanced configuration of the new monitor. As

the process now stands, I must first add the page with the popup (via left

click), then open the button's context menu (via right click), find my new

monitor, and turn on advanced monitoring.

If cluttering the popup is a concern, maybe just make this behavior

configurable from advanced options. That way, those of us who need it can set

it once and forget it.

```

Original issue reported on code.google.com by `aftermar...@gmail.com` on 14 Oct 2012 at 12:08

* Merged into: #208 | non_defect | advanced monitor in popup the majority of the pages i monitor require a selector it would be nice if there was an advanced monitor option in the popup that would kick you straight to the advanced configuration of the new monitor as the process now stands i must first add the page with the popup via left click then open the button s context menu via right click find my new monitor and turn on advanced monitoring if cluttering the popup is a concern maybe just make this behavior configurable from advanced options that way those of us who need it can set it once and forget it original issue reported on code google com by aftermar gmail com on oct at merged into | 0 |

20,110 | 10,459,781,771 | IssuesEvent | 2019-09-20 11:53:50 | Alfresco/alfresco-transform-core | https://api.github.com/repos/Alfresco/alfresco-transform-core | closed | CVE-2019-12402 (Medium) detected in commons-compress-1.18.jar | security vulnerability | ## CVE-2019-12402 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-compress-1.18.jar</b></p></summary>

<p>Apache Commons Compress software defines an API for working with

compression and archive formats. These include: bzip2, gzip, pack200,

lzma, xz, Snappy, traditional Unix Compress, DEFLATE, DEFLATE64, LZ4,

Brotli, Zstandard and ar, cpio, jar, tar, zip, dump, 7z, arj.</p>

<p>Library home page: <a href="https://commons.apache.org/proper/commons-compress/">https://commons.apache.org/proper/commons-compress/</a></p>

<p>Path to dependency file: /alfresco-transform-core/alfresco-docker-tika/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/apache/commons/commons-compress/1.18/commons-compress-1.18.jar,2/repository/org/apache/commons/commons-compress/1.18/commons-compress-1.18.jar</p>

<p>

Dependency Hierarchy:

- :x: **commons-compress-1.18.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Alfresco/alfresco-transform-core/commit/8142836caf3a42dc77a0e74346e16bbc3eaf9c7b">8142836caf3a42dc77a0e74346e16bbc3eaf9c7b</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The file name encoding algorithm used internally in Apache Commons Compress 1.15 to 1.18 can get into an infinite loop when faced with specially crafted inputs. This can lead to a denial of service attack if an attacker can choose the file names inside of an archive created by Compress.

<p>Publish Date: 2019-08-30

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402>CVE-2019-12402</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402</a></p>

<p>Release Date: 2019-08-30</p>

<p>Fix Resolution: 1.19</p>

</p>

</details>

<p></p>

| True | CVE-2019-12402 (Medium) detected in commons-compress-1.18.jar - ## CVE-2019-12402 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-compress-1.18.jar</b></p></summary>

<p>Apache Commons Compress software defines an API for working with

compression and archive formats. These include: bzip2, gzip, pack200,

lzma, xz, Snappy, traditional Unix Compress, DEFLATE, DEFLATE64, LZ4,

Brotli, Zstandard and ar, cpio, jar, tar, zip, dump, 7z, arj.</p>

<p>Library home page: <a href="https://commons.apache.org/proper/commons-compress/">https://commons.apache.org/proper/commons-compress/</a></p>

<p>Path to dependency file: /alfresco-transform-core/alfresco-docker-tika/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/org/apache/commons/commons-compress/1.18/commons-compress-1.18.jar,2/repository/org/apache/commons/commons-compress/1.18/commons-compress-1.18.jar</p>

<p>

Dependency Hierarchy:

- :x: **commons-compress-1.18.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Alfresco/alfresco-transform-core/commit/8142836caf3a42dc77a0e74346e16bbc3eaf9c7b">8142836caf3a42dc77a0e74346e16bbc3eaf9c7b</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The file name encoding algorithm used internally in Apache Commons Compress 1.15 to 1.18 can get into an infinite loop when faced with specially crafted inputs. This can lead to a denial of service attack if an attacker can choose the file names inside of an archive created by Compress.

<p>Publish Date: 2019-08-30

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402>CVE-2019-12402</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-12402</a></p>

<p>Release Date: 2019-08-30</p>

<p>Fix Resolution: 1.19</p>

</p>

</details>

<p></p>

| non_defect | cve medium detected in commons compress jar cve medium severity vulnerability vulnerable library commons compress jar apache commons compress software defines an api for working with compression and archive formats these include gzip lzma xz snappy traditional unix compress deflate brotli zstandard and ar cpio jar tar zip dump arj library home page a href path to dependency file alfresco transform core alfresco docker tika pom xml path to vulnerable library root repository org apache commons commons compress commons compress jar repository org apache commons commons compress commons compress jar dependency hierarchy x commons compress jar vulnerable library found in head commit a href vulnerability details the file name encoding algorithm used internally in apache commons compress to can get into an infinite loop when faced with specially crafted inputs this can lead to a denial of service attack if an attacker can choose the file names inside of an archive created by compress publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution | 0 |

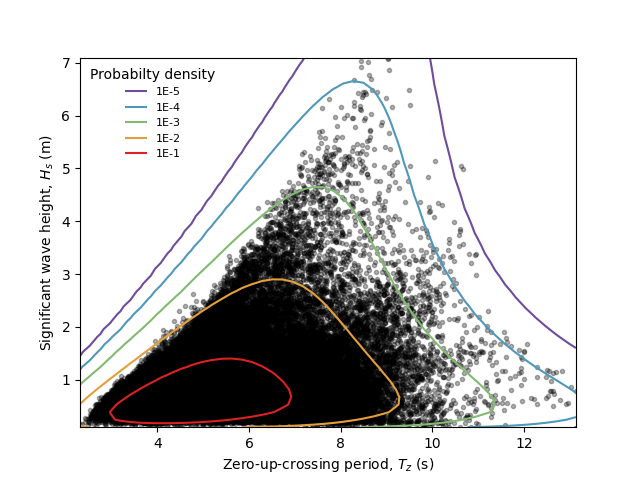

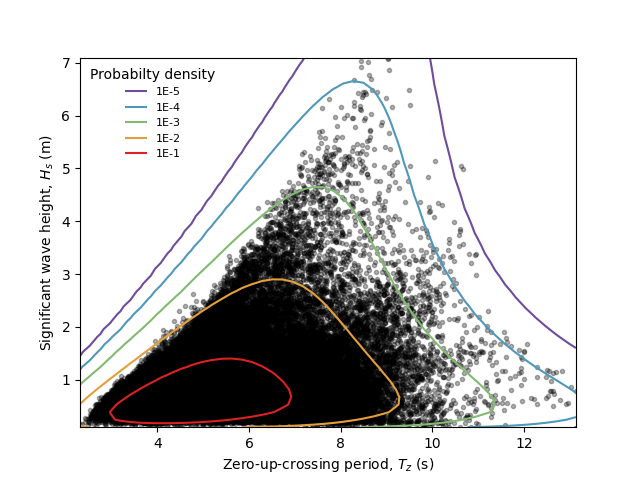

85,883 | 16,756,864,742 | IssuesEvent | 2021-06-13 00:48:34 | virocon-organization/virocon | https://api.github.com/repos/virocon-organization/virocon | closed | Axis limits of plot_2D_isodensity too small | code improvement | **I'm submitting a ...**

- [ ] bug report

- [ ] feature request

- [x] code improvement request

## Expected behavior

We should not have the impression that maybe not all datapoints are shown.

## Actual behavior

**How does it currently work (with the bug causing problems or without the feature)?**

## Steps to reproduce the problem (how to see the actual behavior)

import numpy as np

import matplotlib.pyplot as plt

from virocon import (

read_ec_benchmark_dataset,

GlobalHierarchicalModel,

ExponentiatedWeibullDistribution,

LogNormalDistribution,

DependenceFunction,

WidthOfIntervalSlicer,

IFORMContour,

plot_2D_isodensity

)

# Load sea state measurements from NDBC buoy 44007.

data = read_ec_benchmark_dataset("datasets/ec-benchmark_dataset_A.txt")

# Define the marginal distribution for Hs.

dist_description_hs = {

"distribution": ExponentiatedWeibullDistribution(),

"intervals": WidthOfIntervalSlicer(width=0.5, min_n_points=50),

}

# Define the conditional distribution for Tz

def _asymdecrease3(x, a, b, c):

return a + b / (1 + c * x)

def _lnsquare2(x, a, b, c):

return np.log(a + b * np.sqrt(np.divide(x, 9.81)))

bounds = [(0, None), (0, None), (None, None)]