Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

64,546 | 3,212,647,381 | IssuesEvent | 2015-10-06 16:20:47 | ceylon/ceylon-compiler | https://api.github.com/repos/ceylon/ceylon-compiler | closed | Cannot use spread operator to invoke member class | BUG high priority | This code produces a compile time backend error:

```ceylon

shared class C() {

shared class D() {}

value ds = { C() }*.D(); // Cannot find symbol symbol: method D$new$()

print("done");

}

shared void run() {

C();

}

```

The same happens if `C` is an interface, and also if everything is contained within `run`. | 1.0 | Cannot use spread operator to invoke member class - This code produces a compile time backend error:

```ceylon

shared class C() {

shared class D() {}

value ds = { C() }*.D(); // Cannot find symbol symbol: method D$new$()

print("done");

}

shared void run() {

C();

}

```

The same happens if `C` is an interface, and also if everything is contained within `run`. | non_defect | cannot use spread operator to invoke member class this code produces a compile time backend error ceylon shared class c shared class d value ds c d cannot find symbol symbol method d new print done shared void run c similar happens if c is an interface and also if everything is contained within run | 0 |

366,227 | 25,573,703,525 | IssuesEvent | 2022-11-30 20:00:41 | carlonicora/obsidian-rpg-manager | https://api.github.com/repos/carlonicora/obsidian-rpg-manager | closed | [Wrap-Up] Documentation - Beginners Guide | Documentation | **Current Stage**: Wrap-Up

## Final Tasks

- [x] Finalizing Layouts, Generating Pictures (pictures take a while)

- [x] Navigation link up top and bottom due to Github

- [x] Add to Wiki

- [x] Check by x1101 and carlonicora

- [x] Revisions

- [x] Second Check by x1101 and carlonicora

- [x] Publish

| 1.0 | [Wrap-Up] Documentation - Beginners Guide - **Current Stage**: Wrap-Up

## Final Tasks

- [x] Finalizing Layouts, Generating Pictures (pictures take a while)

- [x] Navigation link up top and bottom due to Github

- [x] Add to Wiki

- [x] Check by x1101 and carlonicora

- [x] Revisions

- [x] Second Check by x1101 and carlonicora

- [x] Publish

| non_defect | documentation beginners guide current stage wrap up final tasks finalizing layouts generating pictures pictures take a while navigation link up top and bottom due to github add to wiki check by and carlonicora revisions second check by and carlonicora publish | 0 |

52,459 | 13,224,736,321 | IssuesEvent | 2020-08-17 19:44:33 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [filterscripts] spe fit injector -> key error (Trac #2239) | Incomplete Migration Migrated from Trac analysis defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2239">https://code.icecube.wisc.edu/projects/icecube/ticket/2239</a>, reported by anna.obertacke and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-06-21T17:09:41",

"_ts": "1561136981975028",

"description": "When using the new SimulationFiltering_SPE and the --spe-file option the follwoing error occurs: \n\n\nWARN (Python): Module iceprod.modules not found. Will not define IceProd Class (SimulationFiltering_SPE.py:403 in <module>)\nWARN (I3PhotoSplineTable): No nGroup in table, using default! This is probably not what you want (I3PhotoSplineTable.cxx:81 in bool I3PhotoSplineTable::SetupTable(co$\nWARN (I3PhotoSplineTable): No nGroup in table, using default! This is probably not what you want (I3PhotoSplineTable.cxx:81 in bool I3PhotoSplineTable::SetupTable(co$\nERROR (I3Tray): Exception thrown while configuring module \"fixspe\". (I3Tray.cxx:385 in void I3Tray::Configure())\nfixspe (icecube.phys_services.spe_fit_injector.I3SPEFitInjector)\n Filename\n Description : JSON (may bz2 compressed) file with SPE fit data\n Default : ''\n Configured : '/cvmfs/icecube.opensciencegrid.org/py2-v3.0.1/metaprojects/icerec/V05-02-02-RC2/filterscripts/resources/data/pass3/IC86.2016_923_NewWaveDeform_liorm_lite.json'\n\nTraceback (most recent call last):\n File \"/data/user/aobertacke/31_Filter2019/L1/scripts_newWavedeform//SimulationFiltering_SPE.py\", line 420, in <module>\n main(opts)\n File \"/data/user/aobertacke/31_Filter2019/L1/scripts_newWavedeform//SimulationFiltering_SPE.py\", line 377, in main\n tray.Execute()\n File \"/data/user/aobertacke/software/icerec_IC2019_V05_02_02-RC1/build/lib/I3Tray.py\", line 256, in Execute\n super(I3Tray, self).Execute()\n File \"/data/user/aobertacke/software/icerec_IC2019_V05_02_02-RC1/build/lib/icecube/phys_services/spe_fit_injector.py\", line 41, in Configure\n if bool(data['JOINT_fit']['valid']) == False and \\\nKeyError: 'JOINT_fit'\n\n\n\nThe TFT is currently requesting filter checks, thus this bug is an issue to hold the deadline set by TFT for ppl who have to simulate their own signal.",

"reporter": "anna.obertacke",

"cc": "",

"resolution": "fixed",

"time": "2019-02-13T11:55:25",

"component": "analysis",

"summary": "[filterscripts] spe fit injector -> key error",

"priority": "major",

"keywords": "",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

| 1.0 | [filterscripts] spe fit injector -> key error (Trac #2239) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2239">https://code.icecube.wisc.edu/projects/icecube/ticket/2239</a>, reported by anna.obertacke and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-06-21T17:09:41",

"_ts": "1561136981975028",

"description": "When using the new SimulationFiltering_SPE and the --spe-file option the follwoing error occurs: \n\n\nWARN (Python): Module iceprod.modules not found. Will not define IceProd Class (SimulationFiltering_SPE.py:403 in <module>)\nWARN (I3PhotoSplineTable): No nGroup in table, using default! This is probably not what you want (I3PhotoSplineTable.cxx:81 in bool I3PhotoSplineTable::SetupTable(co$\nWARN (I3PhotoSplineTable): No nGroup in table, using default! This is probably not what you want (I3PhotoSplineTable.cxx:81 in bool I3PhotoSplineTable::SetupTable(co$\nERROR (I3Tray): Exception thrown while configuring module \"fixspe\". (I3Tray.cxx:385 in void I3Tray::Configure())\nfixspe (icecube.phys_services.spe_fit_injector.I3SPEFitInjector)\n Filename\n Description : JSON (may bz2 compressed) file with SPE fit data\n Default : ''\n Configured : '/cvmfs/icecube.opensciencegrid.org/py2-v3.0.1/metaprojects/icerec/V05-02-02-RC2/filterscripts/resources/data/pass3/IC86.2016_923_NewWaveDeform_liorm_lite.json'\n\nTraceback (most recent call last):\n File \"/data/user/aobertacke/31_Filter2019/L1/scripts_newWavedeform//SimulationFiltering_SPE.py\", line 420, in <module>\n main(opts)\n File \"/data/user/aobertacke/31_Filter2019/L1/scripts_newWavedeform//SimulationFiltering_SPE.py\", line 377, in main\n tray.Execute()\n File \"/data/user/aobertacke/software/icerec_IC2019_V05_02_02-RC1/build/lib/I3Tray.py\", line 256, in Execute\n super(I3Tray, self).Execute()\n File \"/data/user/aobertacke/software/icerec_IC2019_V05_02_02-RC1/build/lib/icecube/phys_services/spe_fit_injector.py\", line 41, in Configure\n if bool(data['JOINT_fit']['valid']) == False and \\\nKeyError: 'JOINT_fit'\n\n\n\nThe TFT is currently requesting filter checks, thus this bug is an issue to hold the deadline set by TFT for ppl who have to simulate their own signal.",

"reporter": "anna.obertacke",

"cc": "",

"resolution": "fixed",

"time": "2019-02-13T11:55:25",

"component": "analysis",

"summary": "[filterscripts] spe fit injector -> key error",

"priority": "major",

"keywords": "",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

| defect | spe fit injector key error trac migrated from json status closed changetime ts description when using the new simulationfiltering spe and the spe file option the follwoing error occurs n n nwarn python module iceprod modules not found will not define iceprod class simulationfiltering spe py in nwarn no ngroup in table using default this is probably not what you want cxx in bool setuptable co nwarn no ngroup in table using default this is probably not what you want cxx in bool setuptable co nerror exception thrown while configuring module fixspe cxx in void configure nfixspe icecube phys services spe fit injector n filename n description json may compressed file with spe fit data n default n configured cvmfs icecube opensciencegrid org metaprojects icerec filterscripts resources data newwavedeform liorm lite json n ntraceback most recent call last n file data user aobertacke scripts newwavedeform simulationfiltering spe py line in n main opts n file data user aobertacke scripts newwavedeform simulationfiltering spe py line in main n tray execute n file data user aobertacke software icerec build lib py line in execute n super self execute n file data user aobertacke software icerec build lib icecube phys services spe fit injector py line in configure n if bool data false and nkeyerror joint fit n n n nthe tft is currently requesting filter checks thus this bug is an issue to hold the deadline set by tft for ppl who have to simulate their own signal reporter anna obertacke cc resolution fixed time component analysis summary spe fit injector key error priority major keywords milestone owner olivas type defect | 1 |
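The traceback in the row above ends at `data['JOINT_fit']['valid']` raising `KeyError: 'JOINT_fit'`, which suggests the configured JSON file has no `JOINT_fit` entry at the place the module looks for it. The following is only a minimal sketch of a defensive lookup, not the fix that was applied in the ticket: the helper name `joint_fit_is_valid` and the sample dictionary layout are assumptions made for illustration.

```python
import json


def joint_fit_is_valid(entry):
    """Return True only if `entry` has a 'JOINT_fit' block marked valid.

    `entry` is a hypothetical per-DOM dictionary; the real file layout
    is not shown in the ticket, so this shape is an assumption.
    """
    joint_fit = entry.get("JOINT_fit")
    if joint_fit is None:
        # Missing key: report "not valid" instead of raising KeyError.
        return False
    return bool(joint_fit.get("valid", False))


# Hypothetical sample data, parsed the same way a JSON file would be.
sample = json.loads('{"JOINT_fit": {"valid": true}}')
print(joint_fit_is_valid(sample))
print(joint_fit_is_valid({}))
```

Whether a missing `JOINT_fit` block should be treated as "invalid" or as a hard configuration error is a design choice the ticket does not settle; the sketch picks the former only to show the lookup pattern.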

88,223 | 25,348,750,063 | IssuesEvent | 2022-11-19 14:11:38 | sile-typesetter/sile | https://api.github.com/repos/sile-typesetter/sile | opened | v0.14.5 release checklist | todo builds & releases | - [x] Shuffle any issues being put off to future milestones

- [x] Close all issues in current milestone (except this one)

- [x] Spring clean

- [x] Re-fetch tooling

- [x] Configure and build

- [x] Pass all tests

- [x] Remote CI

- [x] Local

- [x] Cut release

- [ ] Push release commit & tag to master

- Update website

- [ ] Copy and post manual, update 'latest' symlink and menu links

- [ ] Copy changelog and prefix with a summary as a blog post

- [ ] Tweak summary for gfm and edit into GitHub release notes

- Update downstream distro packages

- [ ] Arch Linux official: [community/sile](https://archlinux.org/packages/community/x86_64/sile/)

- [ ] Arch Linux AUR: [AUR/sile-luajit](https://aur.archlinux.org/packages/sile-luajit) <!-- – [pull request]() -->

- [ ] Homebrew: [Formula](https://github.com/Homebrew/homebrew-core/blob/master/Formula/sile.rb) <!-- – [pull request]() -->

- [ ] NixOS: [nixpkgs](https://github.com/NixOS/nixpkgs/blob/master/pkgs/tools/typesetting/sile/default.nix) <!-- – [pull request]() – [tracker](https://nixpk.gs/pr-tracker.html?pr=) -->

- [ ] Drop devel flag from any website examples that were using it

- [ ] Ubuntu: [ppa](https://launchpad.net/~sile-typesetter/+archive/ubuntu/sile)

- [ ] Docker Hub: [tags](https://hub.docker.com/repository/docker/siletypesetter/sile/tags)

- [ ] GHCR: [versions](https://github.com/orgs/sile-typesetter/packages/container/sile/versions)

- [ ] openSUSE: <!-- pinging @Pi-Cla re [specfile](https://build.opensuse.org/package/show/openSUSE%3AFactory/sile) -->

- [ ] NetBSD: <!-- pinging @jsonn re [pkgsrc](https://pkgsrc.se/print/sile) -->

- [ ] Void Linux: <!-- [pull request](https://github.com/void-linux/void-packages/pull/18306) -->

- [ ] OpenBSD: <!-- [mailing list thread](https://marc.info/?t=157907840200001) -->

- Bump downstream projects

- [ ] [FontProof](https://github.com/sile-typesetter/fontproof) (Docker image base plus CI matrix)

- [ ] [CaSILE](https://github.com/sile-typesetter/casile) (check, consider patch release)

- [ ] Eat cake

[](https://repology.org/project/sile/versions) | 1.0 | v0.14.5 release checklist - - [x] Shuffle any issues being put off to future milestones

- [x] Close all issues in current milestone (except this one)

- [x] Spring clean

- [x] Re-fetch tooling

- [x] Configure and build

- [x] Pass all tests

- [x] Remote CI

- [x] Local

- [x] Cut release

- [ ] Push release commit & tag to master

- Update website

- [ ] Copy and post manual, update 'latest' symlink and menu links

- [ ] Copy changelog and prefix with a summary as a blog post

- [ ] Tweak summary for gfm and edit into GitHub release notes

- Update downstream distro packages

- [ ] Arch Linux official: [community/sile](https://archlinux.org/packages/community/x86_64/sile/)

- [ ] Arch Linux AUR: [AUR/sile-luajit](https://aur.archlinux.org/packages/sile-luajit) <!-- – [pull request]() -->

- [ ] Homebrew: [Formula](https://github.com/Homebrew/homebrew-core/blob/master/Formula/sile.rb) <!-- – [pull request]() -->

- [ ] NixOS: [nixpkgs](https://github.com/NixOS/nixpkgs/blob/master/pkgs/tools/typesetting/sile/default.nix) <!-- – [pull request]() – [tracker](https://nixpk.gs/pr-tracker.html?pr=) -->

- [ ] Drop devel flag from any website examples that were using it

- [ ] Ubuntu: [ppa](https://launchpad.net/~sile-typesetter/+archive/ubuntu/sile)

- [ ] Docker Hub: [tags](https://hub.docker.com/repository/docker/siletypesetter/sile/tags)

- [ ] GHCR: [versions](https://github.com/orgs/sile-typesetter/packages/container/sile/versions)

- [ ] openSUSE: <!-- pinging @Pi-Cla re [specfile](https://build.opensuse.org/package/show/openSUSE%3AFactory/sile) -->

- [ ] NetBSD: <!-- pinging @jsonn re [pkgsrc](https://pkgsrc.se/print/sile) -->

- [ ] Void Linux: <!-- [pull request](https://github.com/void-linux/void-packages/pull/18306) -->

- [ ] OpenBSD: <!-- [mailing list thread](https://marc.info/?t=157907840200001) -->

- Bump downstream projects

- [ ] [FontProof](https://github.com/sile-typesetter/fontproof) (Docker image base plus CI matrix)

- [ ] [CaSILE](https://github.com/sile-typesetter/casile) (check, consider patch release)

- [ ] Eat cake

[](https://repology.org/project/sile/versions) | non_defect | release checklist shuffle any issues being put off to future milestones close all issues in current milestone except this one spring clean re fetch tooling configure and build pass all tests remote ci local cut release push release commit tag to master update website copy and post manual update latest symlink and menu links copy changelog and prefix with a summary as a blog post tweak summary for gfm and edit into github release notes update downstream distro packages arch linux official arch linux aur homebrew nixos drop devel flag from any website examples that were using it ubuntu docker hub ghcr opensuse netbsd void linux openbsd bump downstream projects docker image base plus ci matrix check consider patch release eat cake | 0 |

209,227 | 16,187,955,633 | IssuesEvent | 2021-05-04 01:42:58 | cabelitos/mentorship-team | https://api.github.com/repos/cabelitos/mentorship-team | closed | Missing Code of Conduct | documentation | The Node.JS Code of Conduct is missing and should be added to this repo. | 1.0 | Missing Code of Conduct - The Node.JS Code of Conduct is missing and should be added to this repo. | non_defect | missing code of conduct the node js code of conduct is missing and should be added to this repo | 0 |

47,275 | 13,056,094,379 | IssuesEvent | 2020-07-30 03:38:25 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | Fixed in revision 75773 with the inclusion of SimpleMajorityTrigger (Trac #264) | Migrated from Trac combo simulation defect | Generates more and shorter triggers.

Proposed solution in SMTrigger.cxx:

l.238 should be k = q +1

l.248 : j = k should be j = i + threshold -1

But might require also a test.

Migrated from https://code.icecube.wisc.edu/ticket/264

```json

{

"status": "closed",

"changetime": "2011-05-26T16:36:38",

"description": "Generates more and shorter triggers.\n\nProposed solution in SMTrigger.cxx: \nl.238 should be k = q +1\nl.248 : j = k should be j = i + threshold -1\n\nBut might require also a test.",

"reporter": "icecube",

"cc": "",

"resolution": "fixed",

"_ts": "1306427798000000",

"component": "combo simulation",

"summary": "Fixed in revision 75773 with the inclusion of SimpleMajorityTrigger",

"priority": "normal",

"keywords": "",

"time": "2011-05-17T15:59:32",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

| 1.0 | Fixed in revision 75773 with the inclusion of SimpleMajorityTrigger (Trac #264) - Generates more and shorter triggers.

Proposed solution in SMTrigger.cxx:

l.238 should be k = q +1

l.248 : j = k should be j = i + threshold -1

But might require also a test.

Migrated from https://code.icecube.wisc.edu/ticket/264

```json

{

"status": "closed",

"changetime": "2011-05-26T16:36:38",

"description": "Generates more and shorter triggers.\n\nProposed solution in SMTrigger.cxx: \nl.238 should be k = q +1\nl.248 : j = k should be j = i + threshold -1\n\nBut might require also a test.",

"reporter": "icecube",

"cc": "",

"resolution": "fixed",

"_ts": "1306427798000000",

"component": "combo simulation",

"summary": "Fixed in revision 75773 with the inclusion of SimpleMajorityTrigger",

"priority": "normal",

"keywords": "",

"time": "2011-05-17T15:59:32",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

| defect | fixed in revision with the inclusion of simplemajoritytrigger trac generates more and shorter triggers proposed solution in smtrigger cxx l should be k q l j k should be j i threshold but might require also a test migrated from json status closed changetime description generates more and shorter triggers n nproposed solution in smtrigger cxx nl should be k q nl j k should be j i threshold n nbut might require also a test reporter icecube cc resolution fixed ts component combo simulation summary fixed in revision with the inclusion of simplemajoritytrigger priority normal keywords time milestone owner olivas type defect | 1 |

9,583 | 2,615,163,040 | IssuesEvent | 2015-03-01 06:42:31 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | WARNING: Receive timeout occurred | auto-migrated Priority-Triage Type-Defect | ```

Answer the following questions for every issue submitted:

0. What version of Reaver are you using? Reaver v1.4

1. What operating system are you using? Ubuntu 12.04

2. Is your wireless card in monitor mode (yes/no)? yes, airmon-ng start wlan0,

and I'm using mon0 for reaver

3. What is the signal strength of the Access Point you are trying to crack? -19

4. What is the manufacturer and model # of the device you are trying to crack?

Telus communications, Actiontec V1000H, Firmware Version: 31.30L.55

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b "my router's mac" -c 1 -A -vv

6. Please describe what you think the issue is. I have absolutely no idea,

feels like I've looked everywhere and tried everything at this point

7. Paste the output from Reaver below.

root@halo:~# reaver -i mon0 -b A8:39:44:XX:XX:XX -c 1 -A -vv

Reaver v1.4 WiFi Protected Setup Attack Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

[+] Switching mon0 to channel 1

[+] Waiting for beacon from A8:39:44:XX:XX:XX

[+] Associated with A8:39:44:XX:XX:XX (ESSID: H****2)

[+] Trying pin 55555678

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

So I've been all through the forums, google and youtube, tried many variations

in command line and this is the furthest I got.

This is my home router and it's only about 6FT away from my computer.

Also note that other users on here say that they have success with the same

network card as me: broadcom BCM4312 running b43-fwcutter version# 5.100.138

It is worth noting that if I do not use the -A command then all I get is a

bunch of FAILED TO ASSOCIATE messages:

root@halo:~# reaver -i mon0 -b A8:39:44:XX:XX:XX -c 1 -vv

Reaver v1.4 WiFi Protected Setup Attack Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

[+] Switching mon0 to channel 1

[?] Restore previous session for A8:39:44:XX:XX:XX? [n/Y] y

[+] Restored previous session

[+] Waiting for beacon from A8:39:44:XX:XX:XX

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: HomeT2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

===================================================================

I ran wash and everything looks ok:

root@halo:~# wash -i mon0

Wash v1.4 WiFi Protected Setup Scan Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

BSSID Channel RSSI WPS Version WPS Locked

ESSID

--------------------------------------------------------------------------------

-------------------------------

A8:39:44:XX:X:XX 1 -19 1.0 No

H****2

==================================================================

pcap file is available for these attempts.

Please help me

```

Original issue reported on code.google.com by `aldoba...@gmail.com` on 21 Jun 2012 at 5:26 | 1.0 | WARNING: Receive timeout occurred - ```

Answer the following questions for every issue submitted:

0. What version of Reaver are you using? Reaver v1.4

1. What operating system are you using? Ubuntu 12.04

2. Is your wireless card in monitor mode (yes/no)? yes, airmon-ng start wlan0,

and I'm using mon0 for reaver

3. What is the signal strength of the Access Point you are trying to crack? -19

4. What is the manufacturer and model # of the device you are trying to crack?

Telus communications, Actiontec V1000H, Firmware Version: 31.30L.55

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b "my router's mac" -c 1 -A -vv

6. Please describe what you think the issue is. I have absolutely no idea,

feels like I've looked everywhere and tried everything at this point

7. Paste the output from Reaver below.

root@halo:~# reaver -i mon0 -b A8:39:44:XX:XX:XX -c 1 -A -vv

Reaver v1.4 WiFi Protected Setup Attack Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

[+] Switching mon0 to channel 1

[+] Waiting for beacon from A8:39:44:XX:XX:XX

[+] Associated with A8:39:44:XX:XX:XX (ESSID: H****2)

[+] Trying pin 55555678

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

[!] WARNING: Receive timeout occurred

[+] Sending EAPOL START request

So I've been all through the forums, google and youtube, tried many variations

in command line and this is the furthest I got.

This is my home router and it's only about 6FT away from my computer.

Also note that other users on here say that they have success with the same

network card as me: broadcom BCM4312 running b43-fwcutter version# 5.100.138

It is worth noting that if I do not use the -A command then all I get is a

bunch of FAILED TO ASSOCIATE messages:

root@halo:~# reaver -i mon0 -b A8:39:44:XX:XX:XX -c 1 -vv

Reaver v1.4 WiFi Protected Setup Attack Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

[+] Switching mon0 to channel 1

[?] Restore previous session for A8:39:44:XX:XX:XX? [n/Y] y

[+] Restored previous session

[+] Waiting for beacon from A8:39:44:XX:XX:XX

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: HomeT2)

[!] WARNING: Failed to associate with A8:39:44:XX:XX:XX (ESSID: H****2)

===================================================================

I ran wash and everything looks ok:

root@halo:~# wash -i mon0

Wash v1.4 WiFi Protected Setup Scan Tool

Copyright (c) 2011, Tactical Network Solutions, Craig Heffner

<cheffner@tacnetsol.com>

BSSID Channel RSSI WPS Version WPS Locked

ESSID

--------------------------------------------------------------------------------

-------------------------------

A8:39:44:XX:X:XX 1 -19 1.0 No

H****2

==================================================================

pcap file is available for these attempts.

Please help me

```

Original issue reported on code.google.com by `aldoba...@gmail.com` on 21 Jun 2012 at 5:26 | defect | warning receive timeout occurred answer the following questions for every issue submitted what version of reaver are you using reaver what operating system are you using ubuntu is your wireless card in monitor mode yes no yes airmon ng start and i m using for reaver what is the signal strength of the access point you are trying to crack what is the manufacturer and model of the device you are trying to crack telus communications actiontec firmware version what is the entire command line string you are supplying to reaver reaver i b my router s mac c a vv please describe what you think the issue is i have absolutely no idea feels like i ve looked everywhere and tried everything at this point paste the output from reaver below root halo reaver i b xx xx xx c a vv reaver wifi protected setup attack tool copyright c tactical network solutions craig heffner switching to channel waiting for beacon from xx xx xx associated with xx xx xx essid h trying pin sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request warning receive timeout occurred sending eapol start request so i ve been all through the forums google and youtube tried many variations in command line and this is the 
furthest i got this is my home router and it s only about away from my computer also note that other users on here say that they have success with the same network card as me broadcom running fwcutter version it is worth noting that if i do not use the a command then all i get is a bunch of failed to associate messages root halo reaver i b xx xx xx c vv reaver wifi protected setup attack tool copyright c tactical network solutions craig heffner switching to channel restore previous session for xx xx xx y restored previous session waiting for beacon from xx xx xx warning failed to associate with xx xx xx essid h warning failed to associate with xx xx xx essid h warning failed to associate with xx xx xx essid warning failed to associate with xx xx xx essid h i ran wash any everything looks ok root halo wash i wash wifi protected setup scan tool copyright c tactical network solutions craig heffner bssid channel rssi wps version wps locked essid xx x xx no h pcap file is available for these attempts please help me original issue reported on code google com by aldoba gmail com on jun at | 1 |

61,909 | 17,023,806,941 | IssuesEvent | 2021-07-03 03:57:39 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Map does not display on changeset browser | Component: website Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 11.47am, Saturday, 30th June 2012]**

For the last couple of days, the map on the right-hand side of the /browse/changesets history view is not appearing. JavaScript error:

Error: Event.observe is not a function

Source File: http://www.openstreetmap.org/browse/changesets

Line: 315

Fails in Firefox 13.0.1, IE 9.0 and Epiphany 3.4.1. Confirmed across multiple machines from multiple IP addresses. Last time I saw it working was probably no later than 27 June. Note that the individual changeset viewer /browse/changeset works as expected. The inline map script for the history page ends `Event.observe(window, "load", init);` while the individual changeset ends `window.onload = init;`. | 1.0 | Map does not display on changeset browser - **[Submitted to the original trac issue database at 11.47am, Saturday, 30th June 2012]**

For the last couple of days, the map on the right-hand side of the /browse/changesets history view is not appearing. JavaScript error:

Error: Event.observe is not a function

Source File: http://www.openstreetmap.org/browse/changesets

Line: 315

Fails in Firefox 13.0.1, IE 9.0 and Epiphany 3.4.1. Confirmed across multiple machines from multiple IP addresses. Last time I saw it working was probably no later than 27 June. Note that the individual changeset viewer /browse/changeset works as expected. The inline map script for the history page ends `Event.observe(window, "load", init);` while the individual changeset ends `window.onload = init;`. | defect | map does not display on changeset browser for the last couple of days the map on the right hand side of the browse changesets history view is not appearing javascript error error event observe is not a function source file line fails in firefox ie and epiphany confirmed across muleiple machines from multiple ip addresses last time i saw it working was probably no later than june note that the individual changeset viewer browse changeset works as expected the inline map script for the history page ends event observe window load init while the individual changeset ends window onload init | 1 |

19,585 | 3,227,243,093 | IssuesEvent | 2015-10-11 01:10:00 | krashanoff/cleanrip | https://api.github.com/repos/krashanoff/cleanrip | closed | wii.dat needed on Gamecube | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

Load Cleanrip v2.0.0 with only gc.dat on Gamecube

What is the expected output? What do you see instead?

It should tell you that the Redump DAT files have been found.

Instead, it prompts you that the files have not been found and asks if you want

to download them. This is pointless seeing as the Gamecube cannot dump Wii

games, so the check for wii.dat should not be there.

Workaround:

Download a proper wii.dat

OR

What also worked for me was copying the gc.dat, renaming it wii.dat, and it

would not prompt me anymore. I tried using a blank wii.dat but it did not

work, so it needs to be some kind of valid .dat file. This was easier than

looking for a legit wii.dat.

```

Original issue reported on code.google.com by `steventy...@gmail.com` on 1 May 2014 at 4:37 | 1.0 | wii.dat needed on Gamecube - ```

What steps will reproduce the problem?

Load Cleanrip v2.0.0 with only gc.dat on Gamecube

What is the expected output? What do you see instead?

It should tell you that the Redump DAT files have been found.

Instead, it prompts you that the files have not been found and asks if you want

to download them. This is pointless seeing as the Gamecube cannot dump Wii

games, so the check for wii.dat should not be there.

Workaround:

Download a proper wii.dat

OR

What also worked for me was copying the gc.dat, renaming it wii.dat, and it

would not prompt me anymore. I tried using a blank wii.dat but it did not

work, so it needs to be some kind of valid .dat file. This was easier than

looking for a legit wii.dat.

```

Original issue reported on code.google.com by `steventy...@gmail.com` on 1 May 2014 at 4:37 | defect | wii dat needed on gamecube what steps will reproduce the problem load cleanrip with only gc dat on gamecube what is the expected output what do you see instead it should tell you that the redump dat files have been found instead it prompts you that the files have not been found and asks if you want to download them this is pointless seeing as the gamecube cannot dump wii games so the check for wii dat should not be there workaround download a proper wii dat or what also worked for me was copying the gc dat renaming it wii dat and it would not prompt me anymore i tried using a blank wii dat but it did not work so it needs to be some kind of valid dat file this was easier than looking for a legit wii dat original issue reported on code google com by steventy gmail com on may at | 1 |

92 | 2,534,816,257 | IssuesEvent | 2015-01-25 11:06:06 | chrisalexander/Learn-Chinese-app | https://api.github.com/repos/chrisalexander/Learn-Chinese-app | closed | CurrentState should be called CurrentStatus and return IEnumerable<string> | LongRunningProcess | Also update the UI to render accordingly, plus the tests | 1.0 | CurrentState should be called CurrentStatus and return IEnumerable<string> - Also update the UI to render accordingly, plus the tests | non_defect | currentstate should be called currentstatus and return ienumerable also update the ui to render accordingly plus the tests | 0 |

50,649 | 13,187,660,532 | IssuesEvent | 2020-08-13 04:08:50 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | cmake - I3_USE_ROOT macro set w/o root installed (Trac #1131) | Migrated from Trac cmake defect | if USE_ROOT is given to cmake, the C macro I3_USE_ROOT is set even if root isn't found.

see #1115

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1131">https://code.icecube.wisc.edu/ticket/1131</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-01-11T23:59:15",

"description": "if USE_ROOT is given to cmake, the C macro I3_USE_ROOT is set even if root isn't found.\n\nsee #1121",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"_ts": "1547251155701656",

"component": "cmake",

"summary": "cmake - I3_USE_ROOT macro set w/o root installed",

"priority": "blocker",

"keywords": "",

"time": "2015-08-17T18:43:53",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

| 1.0 | cmake - I3_USE_ROOT macro set w/o root installed (Trac #1131) - if USE_ROOT is given to cmake, the C macro I3_USE_ROOT is set even if root isn't found.

see #1115

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1131">https://code.icecube.wisc.edu/ticket/1131</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-01-11T23:59:15",

"description": "if USE_ROOT is given to cmake, the C macro I3_USE_ROOT is set even if root isn't found.\n\nsee #1121",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"_ts": "1547251155701656",

"component": "cmake",

"summary": "cmake - I3_USE_ROOT macro set w/o root installed",

"priority": "blocker",

"keywords": "",

"time": "2015-08-17T18:43:53",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

| defect | cmake use root macro set w o root installed trac if use root is given to cmake the c macro use root is set even if root isn t found see migrated from json status closed changetime description if use root is given to cmake the c macro use root is set even if root isn t found n nsee reporter nega cc resolution fixed ts component cmake summary cmake use root macro set w o root installed priority blocker keywords time milestone owner nega type defect | 1 |

10,765 | 2,622,183,363 | IssuesEvent | 2015-03-04 00:19:48 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | closed | LevelDB 1.16 and 1.17 not available on downloads page | auto-migrated Priority-Medium Type-Defect | ```

https://code.google.com/p/leveldb/downloads/list

```

Original issue reported on code.google.com by `clemah...@gmail.com` on 18 Aug 2014 at 1:19

* Merged into: #240 | 1.0 | LevelDB 1.16 and 1.17 not available on downloads page - ```

https://code.google.com/p/leveldb/downloads/list

```

Original issue reported on code.google.com by `clemah...@gmail.com` on 18 Aug 2014 at 1:19

* Merged into: #240 | defect | leveldb and not available on downloads page original issue reported on code google com by clemah gmail com on aug at merged into | 1 |

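4,222 | 2,610,089,512 | IssuesEvent | 2015-02-26 18:27:06 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | How to get rid of acne in Shenzhen | auto-migrated Priority-Medium Type-Defect | ```

How to get rid of acne in Shenzhen [Shenzhen 韩方科颜 national hotline 400-869-1818, 24-hour QQ

4008691818] Shenzhen 韩方科颜 is a professional acne-removal chain. It is built around a Korean

secret formula, 韩方科颜, an authoritative therapeutic product with a national cosmetics approval

number and a fine acne remedy. The 韩方科颜 professional acne-removal chain uses the Korean secret

formula together with professional "no-rebound" healthy acne-removal technology and an advanced

"advanced deluxe color-light" device, pioneering contract-guaranteed professional treatment of

pimples and acne in China, and has successfully cleared the acne from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:36 | 1.0 | How to get rid of acne in Shenzhen - ```

How to get rid of acne in Shenzhen [Shenzhen 韩方科颜 national hotline 400-869-1818, 24-hour QQ

4008691818] Shenzhen 韩方科颜 is a professional acne-removal chain. It is built around a Korean

secret formula, 韩方科颜, an authoritative therapeutic product with a national cosmetics approval

number and a fine acne remedy. The 韩方科颜 professional acne-removal chain uses the Korean secret

formula together with professional "no-rebound" healthy acne-removal technology and an advanced

"advanced deluxe color-light" device, pioneering contract-guaranteed professional treatment of

pimples and acne in China, and has successfully cleared the acne from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:36 | defect | how to get rid of acne in shenzhen how to get rid of acne in shenzhen shenzhen 韩方科颜 national hotline hour qq shenzhen 韩方科颜 is a professional acne removal chain it is built around a korean secret formula 韩方科颜 an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy the 韩方科颜 professional acne removal chain uses the korean secret formula together with professional no rebound healthy acne removal technology and an advanced advanced deluxe color light device pioneering contract guaranteed professional treatment of pimples and acne in china and has successfully cleared the acne from many customers faces original issue reported on code google com by szft com on may at | 1 |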

19,893 | 3,273,628,523 | IssuesEvent | 2015-10-26 04:24:43 | npgall/cqengine | https://api.github.com/repos/npgall/cqengine | closed | Query CONSISTENTLY slower with the addition of NavigableIndex | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Run this code, see below.

What is the expected output? What do you see instead?

I expected the queries against a NavigableIndex to be similar to or faster

instead of nearly 2x slower, than without.

What version of the product are you using? On what operating system?

1.2.7 from Maven on Ubuntu 13.10

Running this code will spit out some times. If you run it with and without the

index line commented out you'll see that commenting it out is much faster.

Thanks for looking into it!

```

Original issue reported on code.google.com by `crlia...@gmail.com` on 30 May 2014 at 9:34

Attachments:

* [App.java](https://storage.googleapis.com/google-code-attachments/cqengine/issue-37/comment-0/App.java)

| 1.0 | Query CONSISTENTLY slower with the addition of NavigableIndex - ```

What steps will reproduce the problem?

1. Run this code, see below.

What is the expected output? What do you see instead?

I expected the queries against a NavigableIndex to be similar to or faster

instead of nearly 2x slower, than without.

What version of the product are you using? On what operating system?

1.2.7 from Maven on Ubuntu 13.10

Running this code will spit out some times. If you run it with and without the

index line commented out you'll see that commenting it out is much faster.

Thanks for looking into it!

```

Original issue reported on code.google.com by `crlia...@gmail.com` on 30 May 2014 at 9:34

Attachments:

* [App.java](https://storage.googleapis.com/google-code-attachments/cqengine/issue-37/comment-0/App.java)

| defect | query consistently slower with the addition of navigableindex what steps will reproduce the problem run this code see below what is the expected output what do you see instead i expected the queries against a navigableindex to be similar to or faster instead of nearly slower than without what version of the product are you using on what operating system from maven on ubuntu running this code will spit out some times if you run it with and without the index line commented out you ll see that commenting it out is much faster thanks for looking into it original issue reported on code google com by crlia gmail com on may at attachments | 1 |

63,537 | 14,656,736,755 | IssuesEvent | 2020-12-28 14:05:07 | fu1771695yongxie/next.js | https://api.github.com/repos/fu1771695yongxie/next.js | opened | CVE-2020-11023 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-2.2.0.min.js</b>, <b>jquery-3.2.1.min.js</b></p></summary>

<p>

<details><summary><b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/js-base64/test/index.html</p>

<p>Path to vulnerable library: next.js/node_modules/js-base64/test/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.1.4.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-2.2.0.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.0/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.0/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/lost/docs/_includes/footer.html</p>

<p>Path to vulnerable library: next.js/node_modules/lost/docs/_includes/footer.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.2.0.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-3.2.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.2.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/3.2.1/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/superagent/docs/tail.html</p>

<p>Path to vulnerable library: next.js/node_modules/superagent/docs/tail.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-3.2.1.min.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/fu1771695yongxie/next.js/commit/7da96cb602f4b841f912ded99ee8ea2109a96f0e">7da96cb602f4b841f912ded99ee8ea2109a96f0e</a></p>

<p>Found in base branch: <b>canary</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-11023 (Medium) detected in multiple libraries - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-2.2.0.min.js</b>, <b>jquery-3.2.1.min.js</b></p></summary>

<p>

<details><summary><b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/js-base64/test/index.html</p>

<p>Path to vulnerable library: next.js/node_modules/js-base64/test/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.1.4.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-2.2.0.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.0/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.2.0/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/lost/docs/_includes/footer.html</p>

<p>Path to vulnerable library: next.js/node_modules/lost/docs/_includes/footer.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.2.0.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-3.2.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.2.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/3.2.1/jquery.min.js</a></p>

<p>Path to dependency file: next.js/node_modules/superagent/docs/tail.html</p>

<p>Path to vulnerable library: next.js/node_modules/superagent/docs/tail.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-3.2.1.min.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/fu1771695yongxie/next.js/commit/7da96cb602f4b841f912ded99ee8ea2109a96f0e">7da96cb602f4b841f912ded99ee8ea2109a96f0e</a></p>

<p>Found in base branch: <b>canary</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11023</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries jquery min js jquery min js jquery min js jquery min js javascript library for dom operations library home page a href path to dependency file next js node modules js test index html path to vulnerable library next js node modules js test index html dependency hierarchy x jquery min js vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file next js node modules lost docs includes footer html path to vulnerable library next js node modules lost docs includes footer html dependency hierarchy x jquery min js vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file next js node modules superagent docs tail html path to vulnerable library next js node modules superagent docs tail html dependency hierarchy x jquery min js vulnerable library found in head commit a href found in base branch canary vulnerability details in jquery versions greater than or equal to and before passing html containing elements from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery step up your open source security game with whitesource | 0 |

68,900 | 21,945,989,945 | IssuesEvent | 2022-05-24 00:40:49 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | invalid homeserver/_matrix/client/r0/search for Dendrite | T-Defect | ### Steps to reproduce

1. Where are you starting? What can you see? search

2. What do you click? and found invalid url homeserver/_matrix/client/r0/search

It seems that https://matrix.org/docs/spec/client_server/r0.6.1 old version has this API, but the new version don't have, I am not sure

### Outcome

#### What did you expect?

can search for Dendrite

#### What happened instead?

invalid URL homeserver/_matrix/client/r0/search

### Operating system

Arch Linux

### Browser information

chromium 101.0.4951.64-1

### URL for webapp

element-web 1.10.12

### Application version

element-web 1.10.12

### Homeserver

dendrite 0.8.5

### Will you send logs?

No | 1.0 | invalid homeserver/_matrix/client/r0/search for Dendrite - ### Steps to reproduce

1. Where are you starting? What can you see? search

2. What do you click? and found invalid url homeserver/_matrix/client/r0/search

It seems that https://matrix.org/docs/spec/client_server/r0.6.1 old version has this API, but the new version don't have, I am not sure

### Outcome

#### What did you expect?

can search for Dendrite

#### What happened instead?

invalid URL homeserver/_matrix/client/r0/search

### Operating system

Arch Linux

### Browser information

chromium 101.0.4951.64-1

### URL for webapp

element-web 1.10.12

### Application version

element-web 1.10.12

### Homeserver

dendrite 0.8.5

### Will you send logs?

No | defect | invalid homeserver matrix client search for dendrite steps to reproduce where are you starting what can you see search what do you click and found invalid url homeserver matrix client search it seems that old version has this api but the new version don t have i am not sure outcome what did you expect can search for dendrite what happened instead invalid url homeserver matrix client search operating system arch linux browser information chromium url for webapp element web application version element web homeserver dendrite will you send logs no | 1 |

17,055 | 2,972,399,343 | IssuesEvent | 2015-07-14 13:36:42 | mabe02/lanterna | https://api.github.com/repos/mabe02/lanterna | closed | ActionListDialog should highlight entire selected item | auto-migrated Priority-Medium Type-Defect | ```

I was just experimenting with ListSelectDialog which uses ActionListDialog,

passing Strings as the Objects. The selected item is only indicated by the

cursor. It would be nicer if the entry row in the list was highlighted.

```

Original issue reported on code.google.com by `bem...@gmail.com` on 11 Sep 2012 at 9:22 | 1.0 | ActionListDialog should highlight entire selected item - ```

I was just experimenting with ListSelectDialog which uses ActionListDialog,

passing Strings as the Objects. The selected item is only indicated by the

cursor. It would be nicer if the entry row in the list was highlighted.

```

Original issue reported on code.google.com by `bem...@gmail.com` on 11 Sep 2012 at 9:22 | defect | actionlistdialog should highlight entire selected item i was just experimenting with listselectdialog which uses actionlistdialog passing strings as the objects the selected item is only indicated by the cursor it would be nicer if the entry row in the list was highlighted original issue reported on code google com by bem gmail com on sep at | 1 |

63,304 | 17,576,431,594 | IssuesEvent | 2021-08-15 17:51:11 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Microphone disappeared from settings after updating Element-Desktop | T-Defect X-Needs-Info X-Cannot-Reproduce A-VoIP A-Media | Please delete this - not an Element issue! | 1.0 | Microphone disappeared from settings after updating Element-Desktop - Please delete this - not an Element issue! | defect | microphone disappeared from settings after updating element desktop please delete this not an element issue | 1 |

288,112 | 21,685,845,527 | IssuesEvent | 2022-05-09 11:11:44 | mmastro31/talking-oscilloscope | https://api.github.com/repos/mmastro31/talking-oscilloscope | closed | Write PCB prototype section of report | documentation Medium | Complete the section of the final report regarding the PCB prototype. This can be done after the PCB prototype is completed. | 1.0 | Write PCB prototype section of report - Complete the section of the final report regarding the PCB prototype. This can be done after the PCB prototype is completed. | non_defect | write pcb prototype section of report complete the section of the final report regarding the pcb prototype this can be done after the pcb prototype is completed | 0 |

284,786 | 30,913,688,955 | IssuesEvent | 2023-08-05 02:37:15 | Nivaskumark/kernel_v4.19.72_old | https://api.github.com/repos/Nivaskumark/kernel_v4.19.72_old | reopened | CVE-2022-4379 (High) detected in linux-yoctov5.4.51 | Mend: dependency security vulnerability | ## CVE-2022-4379 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.yoctoproject.org/git/linux-yocto>https://git.yoctoproject.org/git/linux-yocto</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/fs/nfsd/nfs4proc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/fs/nfsd/nfs4proc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A use-after-free vulnerability was found in __nfs42_ssc_open() in fs/nfs/nfs4file.c in the Linux kernel. This flaw allows an attacker to conduct a remote denial

<p>Publish Date: 2023-01-10

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-4379>CVE-2022-4379</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-4379">https://www.linuxkernelcves.com/cves/CVE-2022-4379</a></p>

<p>Release Date: 2023-01-10</p>

<p>Fix Resolution: v6.1.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-4379 (High) detected in linux-yoctov5.4.51 - ## CVE-2022-4379 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.yoctoproject.org/git/linux-yocto>https://git.yoctoproject.org/git/linux-yocto</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/fs/nfsd/nfs4proc.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/fs/nfsd/nfs4proc.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A use-after-free vulnerability was found in __nfs42_ssc_open() in fs/nfs/nfs4file.c in the Linux kernel. This flaw allows an attacker to conduct a remote denial

<p>Publish Date: 2023-01-10

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-4379>CVE-2022-4379</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-4379">https://www.linuxkernelcves.com/cves/CVE-2022-4379</a></p>

<p>Release Date: 2023-01-10</p>

<p>Fix Resolution: v6.1.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files fs nfsd c fs nfsd c vulnerability details a use after free vulnerability was found in ssc open in fs nfs c in the linux kernel this flaw allows an attacker to conduct a remote denial publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

73,232 | 24,515,363,972 | IssuesEvent | 2022-10-11 04:11:43 | Optiboot/optiboot | https://api.github.com/repos/Optiboot/optiboot | opened | optiboot_x fails for >48k of flash (ie xTiny, AVRDx64, AVRDx128) | Type-Defect Priority-High Optiboot-X-specific | The current optiboot_x was written for ATmega4809, and assumes that all of flash memory is mapped into the RAM address space. Also, it doesn't handle any of the hacks for an extended address byte.

So optiboot_x will fail to work for more than the amount of flash that is directly mapped into the RAM address space by default. That's only 32k on the 64k and 128k devices.

https://github.com/avrdudes/avrdude/issues/1120#

@SpenceKonde @MCUdude @avrdudes

| 1.0 | optiboot_x fails for >48k of flash (ie xTiny, AVRDx64, AVRDx128) - The current optiboot_x was written for ATmega4809, and assumes that all of flash memory is mapped into the RAM address space. Also, it doesn't handle any of the hacks for an extended address byte.

So optiboot_x will fail to work for more than the amount of flash that is directly mapped into the RAM address space by default. That's only 32k on the 64k and 128k devices.

https://github.com/avrdudes/avrdude/issues/1120#

@SpenceKonde @MCUdude @avrdudes

| defect | optiboot x fails for of flash ie xtiny the current optiboot x was written for and assumes that all of flash memory is mapped into the ram address space also it doesn t handle any of the hacks for an extended address byte so optiboot x will fail to work for more than the amount of flash that is directly mapped into the ram address space by default that s only on the and devices spencekonde mcudude avrdudes | 1 |
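
As a rough illustration of the limitation described in the optiboot_x issue above, the following C sketch checks whether a flash range fits inside the part of flash that is mapped into the data address space. It is only a sketch under stated assumptions: the helper name `flash_range_is_mapped` and the window size passed to it are hypothetical and are not taken from optiboot_x; the 32k figure used in the note below is the one quoted in the issue.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical helper, not part of optiboot_x.
 * Returns true when the whole flash range [offset, offset + len) lies
 * inside a window of window_bytes that is mapped into the data address
 * space (assumption: the window starts at flash offset 0). */
bool flash_range_is_mapped(uint32_t offset, uint32_t len,
                           uint32_t window_bytes)
{
    /* A range longer than the window can never fit. */
    if (len > window_bytes) {
        return false;
    }
    /* Written this way to avoid overflow in offset + len. */
    return offset <= window_bytes - len;
}
```

With a 32k window, a 64k image fails this check, which matches the situation the issue reports for the 64k and 128k devices.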

255,108 | 8,108,831,037 | IssuesEvent | 2018-08-14 04:12:56 | Crizov/HealthClientApp | https://api.github.com/repos/Crizov/HealthClientApp | opened | US111 - Upload supplementary files from local storage | priority: H size: 3 | As a patient I want to upload supplementary files from local storage so that I can add local files to the data packet

**Acceptance Criteria:**

1. on the create packet screen, add a section that allows a user to upload a file.

2. When "select file" button is pressed, open the phone's local file explorer.

3. allow user to select a file, and get the selected file's ref.

4. when data packet is sent, save the file to firebase using the file ref | 1.0 | US111 - Upload supplementary files from local storage - As a patient I want to upload supplementary files from local storage so that I can add local files to the data packet

**Acceptance Criteria:**

1. on the create packet screen, add a section that allows a user to upload a file.

2. When "select file" button is pressed, open the phone's local file explorer.

3. allow user to select a file, and get the selected file's ref.

4. when data packet is sent, save the file to firebase using the file ref | non_defect | upload supplementary files from local storage as a patient i want to upload supplementary files from local storage so that i can add local files to the data packet acceptance criteria on the create packet screen add a section that allows a user to upload a file when select file button is pressed open the phone s local file explorer allow user to select a file and get the selected file s ref when data packet is sent save the file to firebase using the file ref | 0 |

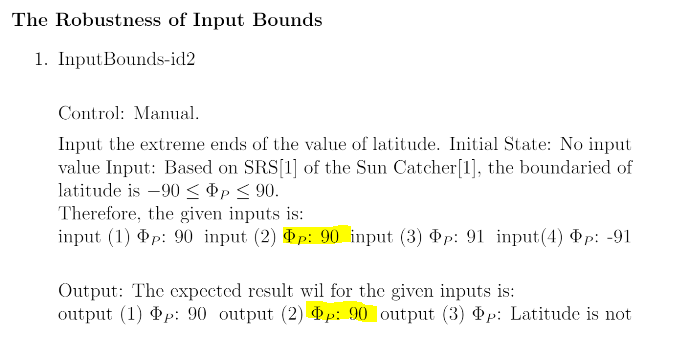

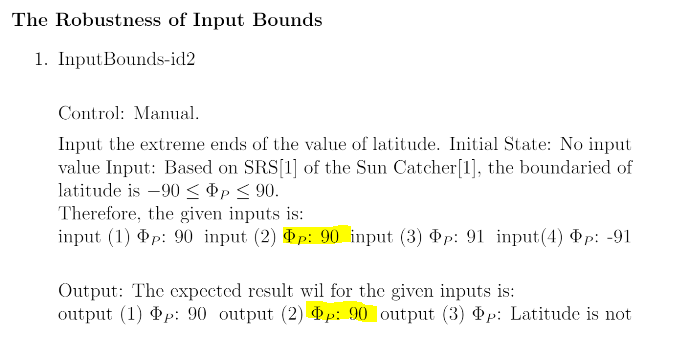

99,300 | 11,138,187,608 | IssuesEvent | 2019-12-20 21:34:29 | sharyuwu/optimum-tilt-of-solar-panels | https://api.github.com/repos/sharyuwu/optimum-tilt-of-solar-panels | closed | VnV Review: InputBounds-id2 - input(2) and output (2) should be -90 | documentation | Hi Sharon,

There seems to be a typo with input (2), as it reads 90 but should be -90.

The same applies to output (2) - both highlighted below.

| 1.0 | VnV Review: InputBounds-id2 - input(2) and output (2) should be -90 - Hi Sharon,

There seems to be a typo with input (2), as it reads 90 but should be -90.

The same applies to output (2) - both highlighted below.

| non_defect | vnv review inputbounds input and output should be hi sharon there seems to be a typo with input as it reads but should be the same applies to output both highlighted below | 0 |

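2,107 | 2,603,976,409 | IssuesEvent | 2015-02-24 19:01:37 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Shenyang HSV-IgG positive | auto-migrated Priority-Medium Type-Defect | ```

Shenyang HSV-IgG positive〓Shenyang Military Region Political Department Hospital, STD department〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at 32 Erwei Road, Shenhe District, Shenyang. It is a general hospital founded alongside New China, with a long and distinguished history, advanced equipment, authoritative techniques, and a large team of experts, integrating prevention, health care, medical treatment, research, and rehabilitation. It is one of the first national public Class-A military hospitals and one of the first designated units for standardized medical care in the country, and a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been awarded a collective second-class merit.

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:21 | 1.0 | Shenyang HSV-IgG positive - ```

Shenyang HSV-IgG positive〓Shenyang Military Region Political Department Hospital, STD department〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at 32 Erwei Road, Shenhe District, Shenyang. It is a general hospital founded alongside New China, with a long and distinguished history, advanced equipment, authoritative techniques, and a large team of experts, integrating prevention, health care, medical treatment, research, and rehabilitation. It is one of the first national public Class-A military hospitals and one of the first designated units for standardized medical care in the country, and a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been awarded a collective second-class merit.

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:21 | defect | shenyang hsv igg positive shenyang hsv igg positive〓shenyang military region political department hospital std department〓tel 〓founded in with years devoted to the research and treatment of sexually transmitted diseases located at erwei road shenhe district shenyang it is a general hospital founded alongside new china with a long and distinguished history advanced equipment authoritative techniques and a large team of experts integrating prevention health care medical treatment research and rehabilitation it is one of the first national public class a military hospitals and one of the first designated units for standardized medical care in the country and a teaching hospital for well known universities such as the fourth military medical university and southeast university it was named an advanced unit in health work by the health department of the logistics department of the people s liberation army air force and has twice been awarded a collective second class merit original issue reported on code google com by gmail com on jun at | 1 |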

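2,267 | 2,603,992,064 | IssuesEvent | 2015-02-24 19:06:53 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Shenyang: what are the symptoms of pseudo-condyloma | auto-migrated Priority-Medium Type-Defect | ```

Shenyang: what are the symptoms of pseudo-condyloma〓Shenyang Military Region Political Department Hospital, STD department〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at 32 Erwei Road, Shenhe District, Shenyang. It is a general hospital founded alongside New China, with a long and distinguished history, advanced equipment, authoritative techniques, and a large team of experts, integrating prevention, health care, medical treatment, research, and rehabilitation. It is one of the first national public Class-A military hospitals and one of the first designated units for standardized medical care in the country, and a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been awarded a collective second-class merit.

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:35 | 1.0 | Shenyang: what are the symptoms of pseudo-condyloma - ```

Shenyang: what are the symptoms of pseudo-condyloma〓Shenyang Military Region Political Department Hospital, STD department〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at 32 Erwei Road, Shenhe District, Shenyang. It is a general hospital founded alongside New China, with a long and distinguished history, advanced equipment, authoritative techniques, and a large team of experts, integrating prevention, health care, medical treatment, research, and rehabilitation. It is one of the first national public Class-A military hospitals and one of the first designated units for standardized medical care in the country, and a teaching hospital for well-known universities such as the Fourth Military Medical University and Southeast University. It was named an advanced unit in health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and has twice been awarded a collective second-class merit.

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:35 | defect | shenyang what are the symptoms of pseudo condyloma shenyang what are the symptoms of pseudo condyloma〓shenyang military region political department hospital std department〓tel 〓founded in with years devoted to the research and treatment of sexually transmitted diseases located at erwei road shenhe district shenyang it is a general hospital founded alongside new china with a long and distinguished history advanced equipment authoritative techniques and a large team of experts integrating prevention health care medical treatment research and rehabilitation it is one of the first national public class a military hospitals and one of the first designated units for standardized medical care in the country and a teaching hospital for well known universities such as the fourth military medical university and southeast university it was named an advanced unit in health work by the health department of the logistics department of the people s liberation army air force and has twice been awarded a collective second class merit original issue reported on code google com by gmail com on jun at | 1 |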

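809,105 | 30,174,630,571 | IssuesEvent | 2023-07-04 02:37:48 | TencentBlueKing/bk-cmdb | https://api.github.com/repos/TencentBlueKing/bk-cmdb | closed | [CMDB+v3.10.27-feature-field-template-alpha1] When submitting to sync template information after editing a template, the sync copy does not match the design draft | priority: Normal | Problem description

When submitting to sync template information after editing a template, the sync copy does not match the design draft

I. Preconditions

1. A template exists and is bound to a model

II. Steps to reproduce

1. Click the template to open the template details

2. Click to enter edit mode

3. Edit any template information, then click Next

4. Click Submit

Expected result

After submission, the sync message copy matches the design draft

III. Actual result

The sync copy does not match the design draft

| 1.0 | [CMDB+v3.10.27-feature-field-template-alpha1] When submitting to sync template information after editing a template, the sync copy does not match the design draft - Problem description

When submitting to sync template information after editing a template, the sync copy does not match the design draft

I. Preconditions

1. A template exists and is bound to a model

II. Steps to reproduce

1. Click the template to open the template details

2. Click to enter edit mode

3. Edit any template information, then click Next

4. Click Submit

Expected result

After submission, the sync message copy matches the design draft

III. Actual result

The sync copy does not match the design draft

| non_defect | cmdb feature field template when submitting to sync template information after editing a template the sync copy does not match the design draft problem description when submitting to sync template information after editing a template the sync copy does not match the design draft i preconditions a template exists and is bound to a model ii steps to reproduce click the template to open the template details click to enter edit mode edit any template information then click next click submit expected result after submission the sync message copy matches the design draft iii actual result the sync copy does not match the design draft | 0 |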

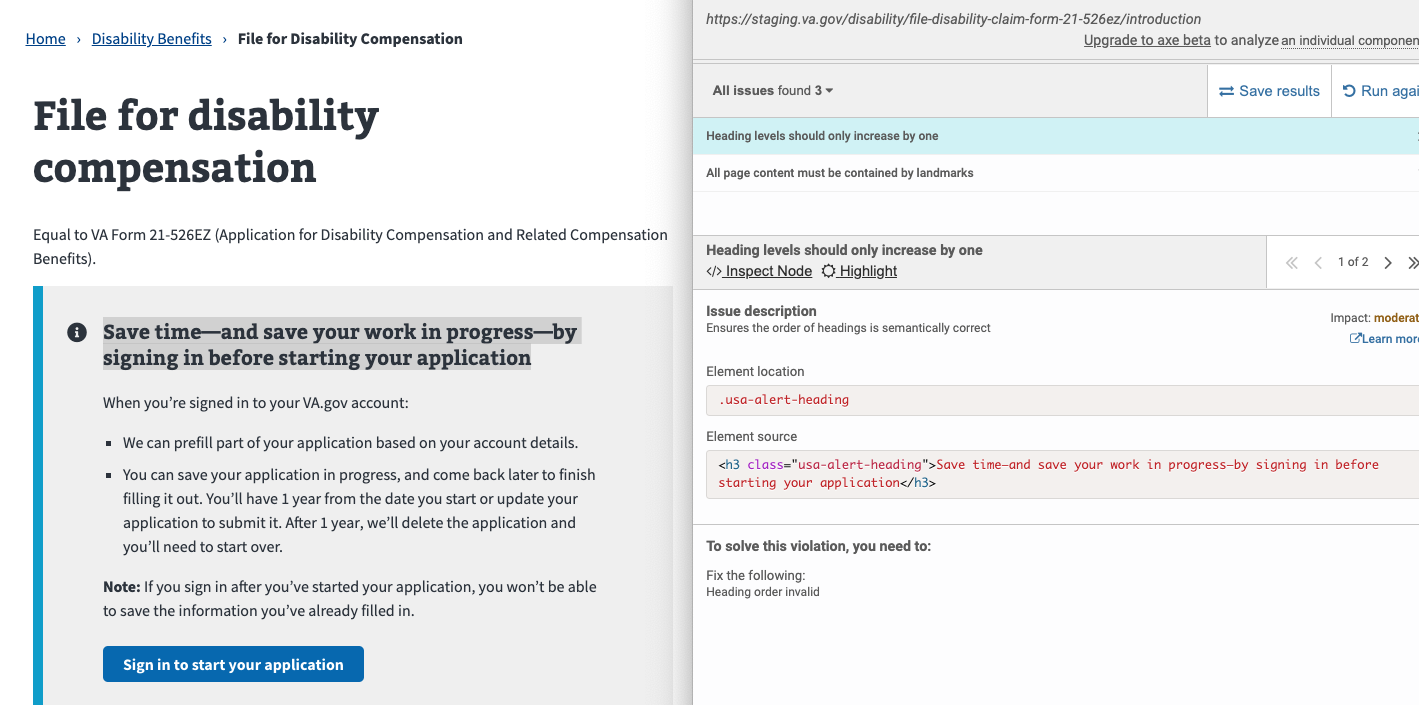

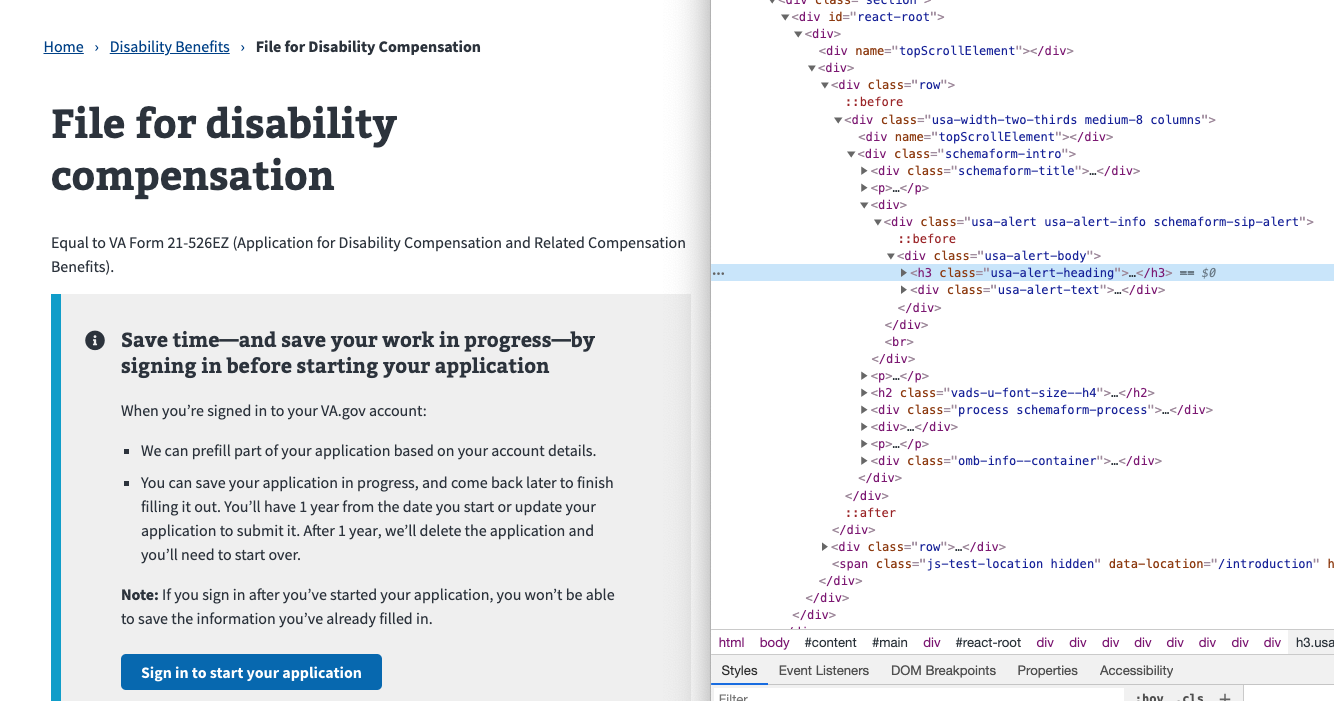

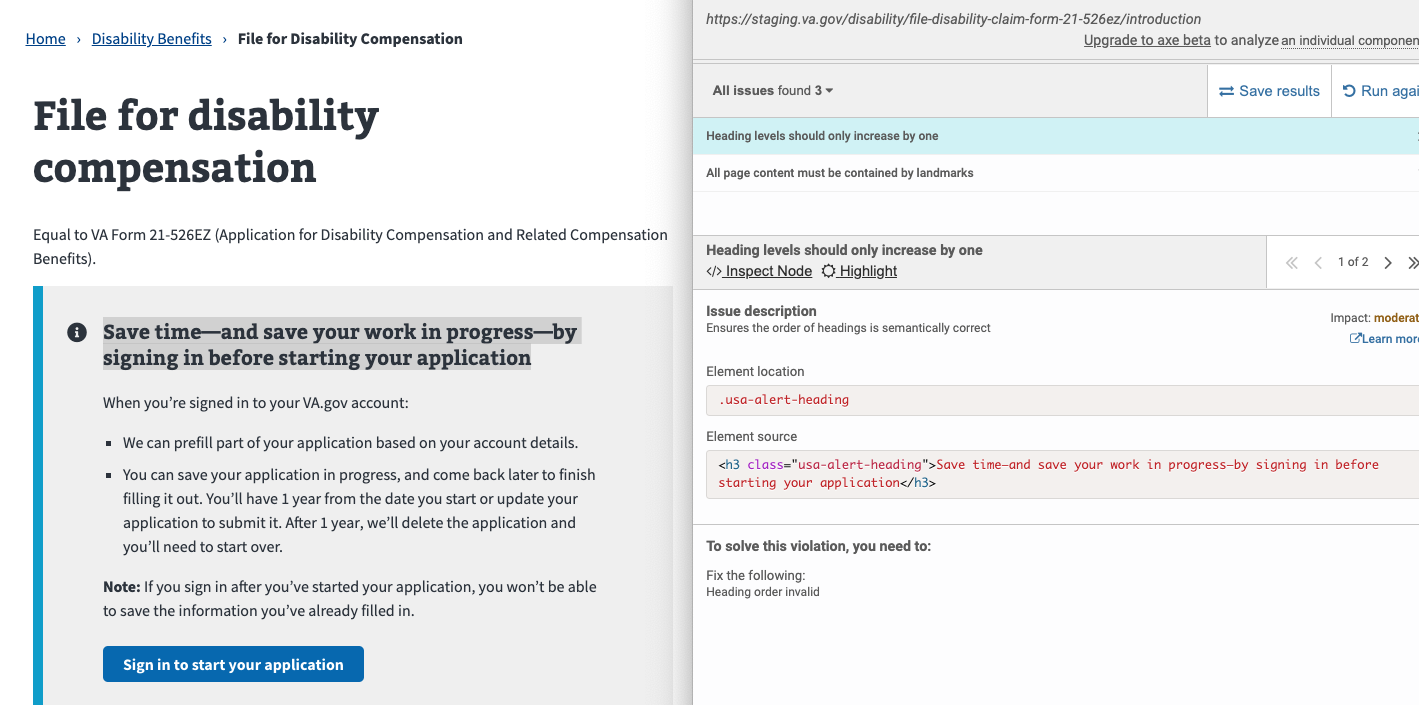

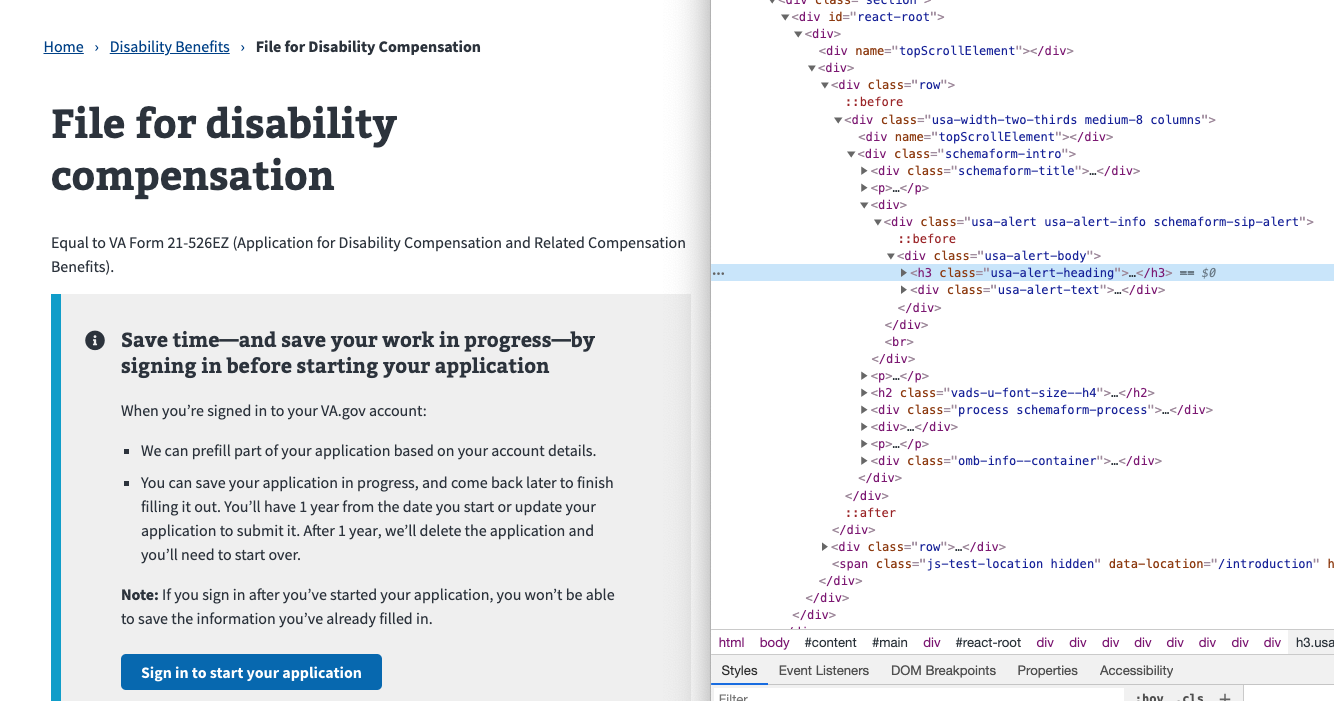

52,982 | 13,258,800,025 | IssuesEvent | 2020-08-20 15:51:23 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | 508-defect-2 [AXE-CORE]: Heading levels SHOULD increase by one | 508-defect-2 508-issue-headings 508/Accessibility vsa vsa-benefits | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

**Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

The alert box heading, "Save time—and save your work in progress—by signing in before starting your application," is currently an h3 but there isn't an h2 between it and the h1.

If this page is in the CMS, it is a CMS sitewide issue.

If this page is built in React, there is a level prop that needs to be adjusted to "2", and a utility class will style it to look like an h3.

## Point of Contact

**VFS Point of Contact:** Jennifer

## Acceptance Criteria

As a screen reader user, I want to navigate the hierarchy of the page content using heading levels to save time and frustration.

## Environment

- Operating System: all

- Browser: all

- Screenreading device: any

- Server destination: staging & production

## Steps to Recreate

1. Enter [URL] in browser

2. Have developer tools open, and the axe browser extension loaded

3. Run an axe audit

4. Verify that [heading code] is called out as an error of "Heading levels should only increase by one"

5. This error is repeated throughout the form process

## Possible Fixes (optional)

### Sample

**Current code**

```html

<h3 class="usa-alert-heading">Save time—and save your work in progress—by signing in before starting your application</h3>

```

**Recommended code**

```html

<h2 class="usa-alert-heading">Save time—and save your work in progress—by signing in before starting your application</h2>

```

## WCAG or Vendor Guidance (optional)

* [axe-core 3.4 - Heading levels should only increase by one](https://dequeuniversity.com/rules/axe/3.4/heading-order)

## Screenshots

| 1.0 | 508-defect-2 [AXE-CORE]: Heading levels SHOULD increase by one - # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

**Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

The alert box heading, "Save time—and save your work in progress—by signing in before starting your application," is currently an h3 but there isn't an h2 between it and the h1.

If this page is in the CMS, it is a CMS sitewide issue.

If this page is built in React, there is a level prop that needs to be adjusted to "2", and a utility class will style it to look like an h3.

## Point of Contact

**VFS Point of Contact:** Jennifer

## Acceptance Criteria

As a screen reader user, I want to navigate the hierarchy of the page content using heading levels to save time and frustration.

## Environment

- Operating System: all

- Browser: all

- Screenreading device: any

- Server destination: staging & production

## Steps to Recreate

1. Enter [URL] in browser

2. Have developer tools open, and the axe browser extension loaded

3. Run an axe audit

4. Verify that [heading code] is called out as an error of "Heading levels should only increase by one"

5. This error is repeated throughout the form process

## Possible Fixes (optional)

### Sample

**Current code**

```html

<h3 class="usa-alert-heading">Save time—and save your work in progress—by signing in before starting your application</h3>

```

**Recommended code**

```html

<h2 class="usa-alert-heading">Save time—and save your work in progress—by signing in before starting your application</h2>

```

## WCAG or Vendor Guidance (optional)

* [axe-core 3.4 - Heading levels should only increase by one](https://dequeuniversity.com/rules/axe/3.4/heading-order)

## Screenshots