| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

81,275 | 30,779,279,798 | IssuesEvent | 2023-07-31 08:54:11 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: Not able to download files when using --headless=new | I-defect needs-triaging | ### What happened?

After introducing --headless=new, I can no longer download files on Linux/Mac architecture.

I did test this with --headless=old, and the download works as expected.

I am not sure if this is because Chrome options are not taking in the given arguments, such as:

Map<String, Object> chromePre... | 1.0 | [🐛 Bug]: Not able to download files when using --headless=new - ### What happened?

After introducing --headless=new, I can no longer download files on Linux/Mac architecture.

I did test this with --headless=old, and the download works as expected.

I am not sure if this is because Chrome options are not taking i... | defect | not able to download files when using headless new what happened after introducing headless new i can no longer download files on linux mac architecture i did test this with headless old and the download works as expected i am not sure if this is because chrome options are not taking in the g... | 1 |

46,494 | 13,055,920,878 | IssuesEvent | 2020-07-30 03:07:31 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | ipdf - docs are out of date and incomplete (Trac #1298) | Incomplete Migration Migrated from Trac combo simulation defect | Migrated from https://code.icecube.wisc.edu/ticket/1298

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "rst docs are light, and barely gloss over IPDF usage. links are dead.\n\ndoxygen docs are incomplete (several \"Comming soon\"'s)\ndoxygen docs are out of date (refer ... | 1.0 | ipdf - docs are out of date and incomplete (Trac #1298) - Migrated from https://code.icecube.wisc.edu/ticket/1298

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "rst docs are light, and barely gloss over IPDF usage. links are dead.\n\ndoxygen docs are incomplete (several... | defect | ipdf docs are out of date and incomplete trac migrated from json status closed changetime description rst docs are light and barely gloss over ipdf usage links are dead n ndoxygen docs are incomplete several comming soon s ndoxygen docs are out of date r... | 1 |

40,891 | 10,213,355,272 | IssuesEvent | 2019-08-14 21:56:07 | Azure/batch-shipyard | https://api.github.com/repos/Azure/batch-shipyard | opened | Network direct RDMA VM provisioning fails | defect rdma | `3.8.0` has a regression preventing network direct RDMA VM instances (A8/A9/NC24rX/H16r/H16mr) from provisioning correctly.

Temporary mitigation until the hotfix is to perform the following (after installing/re-installing `3.8.0`):

```shell

# cd to the directory where you've cloned the repository

git checkout dev... | 1.0 | Network direct RDMA VM provisioning fails - `3.8.0` has a regression preventing network direct RDMA VM instances (A8/A9/NC24rX/H16r/H16mr) from provisioning correctly.

Temporary mitigation until the hotfix is to perform the following (after installing/re-installing `3.8.0`):

```shell

# cd to the directory where yo... | defect | network direct rdma vm provisioning fails has a regression preventing network direct rdma vm instances from provisioning correctly temporary mitigation until the hotfix is to perform the following after installing re installing shell cd to the directory where you ve cloned th... | 1 |

46,695 | 19,412,184,296 | IssuesEvent | 2021-12-20 10:49:23 | tuna/issues | https://api.github.com/repos/tuna/issues | closed | Downloading CentOS ISO with Wget returns 403 Forbidden | Service Issue | ### Prerequisites

- [X] I am sure that this problem has NEVER been discussed in [other issues](https://github.com/tuna/issues/issues).

### What happened

Downloading the CentOS ISO image with the Wget command-line tool returns 403 Forbidden, but downloading with a browser works normally.

Tried Debian and Ubuntu, which work fine, but CentOS... | 1.0 | Downloading CentOS ISO with Wget returns 403 Forbidden - ### Prerequisites

- [X] I am sure that this problem has NEVER been discussed in [other issues](https://github.com/tuna/issues/issues).

### What happened

Downloading the CentOS ISO image with the Wget command-line tool returns 403 Forbidden, but with a browser... | non_defect | downloading centos with wget returns forbidden prerequisites i am sure that this problem has never been discussed in what happened downloading the centos image with the wget command line tool returns forbidden but downloading with a browser works normally tried debian and ubuntu which work fine but centos fails centos wget d debug output created by wget on linux gnu re... | 0 |

70,989 | 23,395,281,644 | IssuesEvent | 2022-08-11 22:26:26 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Same suggested person appears many times | T-Defect S-Minor O-Occasional A-New-Search-Experience | ### Steps to reproduce

1. Have a DM with a user

2. Have another room with the same user

3. Open search

4. Click people

5. Search for the user id, e.g. `@user:example.com`

6. Clear the name (but not person search)

5. Search for the user id again, e.g. `@user:example.com`

### Outcome

#### What did you expe... | 1.0 | Same suggested person appears many times - ### Steps to reproduce

1. Have a DM with a user

2. Have another room with the same user

3. Open search

4. Click people

5. Search for the user id, e.g. `@user:example.com`

6. Clear the name (but not person search)

5. Search for the user id again, e.g. `@user:example.co... | defect | same suggested person appears many times steps to reproduce have a dm with a user have another room with the same user open search click people search for the user id e g user example com clear the name but not person search search for the user id again e g user example co... | 1 |

71,173 | 23,481,388,653 | IssuesEvent | 2022-08-17 10:53:29 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | closed | Missing "people" in all chats on iOS | T-Defect Z-AppLayout | ### Steps to reproduce

1. Enable filters

2. Go to all chats

### Outcome

#### What did you expect?

To see DMs in that list

#### What happened instead?

All DMs are missing in action

### Your phone model

_No response_

### Operating system version

_No response_

### Application version

_No response_

##... | 1.0 | Missing "people" in all chats on iOS - ### Steps to reproduce

1. Enable filters

2. Go to all chats

### Outcome

#### What did you expect?

To see DMs in that list

#### What happened instead?

All DMs are missing in action

### Your phone model

_No response_

### Operating system version

_No response_

###... | defect | missing people in all chats on ios steps to reproduce enable filters go to all chats outcome what did you expect to see dms in that list what happened instead all dms are missing in action your phone model no response operating system version no response ... | 1 |

46,276 | 13,055,884,567 | IssuesEvent | 2020-07-30 03:01:15 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [documentation] redirect Trac wiki pages elsewhere (Trac #915) | Incomplete Migration Migrated from Trac defect infrastructure | Migrated from https://code.icecube.wisc.edu/ticket/915

```json

{

"status": "closed",

"changetime": "2015-04-08T16:37:00",

"description": "Trac automatically creates links to wiki pages for CamelCase that it detects. If our documentation was in the Trac wiki, this would be awesome. \n\nSet up an easy way t... | 1.0 | [documentation] redirect Trac wiki pages elsewhere (Trac #915) - Migrated from https://code.icecube.wisc.edu/ticket/915

```json

{

"status": "closed",

"changetime": "2015-04-08T16:37:00",

"description": "Trac automatically creates links to wiki pages for CamelCase that it detects. If our documentation was ... | defect | redirect trac wiki pages elsewhere trac migrated from json status closed changetime description trac automatically creates links to wiki pages for camelcase that it detects if our documentation was in the trac wiki this would be awesome n nset up an easy way t... | 1 |

32,759 | 6,918,652,884 | IssuesEvent | 2017-11-29 13:01:16 | ontop/ontop | https://api.github.com/repos/ontop/ontop | closed | Parsing error with space in the mapping assertion | status: check if still relevant topic: mapping processing topic: protégé type: defect | Remove unnecessary space from the source part of the mapping assertion to avoid parsing problems.

Example of error message:

> MappingId = 'mapping--656471061'

> Line 54: Invalid input: (sourceQuery = null)` | 1.0 | Parsing error with space in the mapping assertion - Remove unnecessary space from the source part of the mapping assertion to avoid parsing problems.

Example of error message:

> MappingId = 'mapping--656471061'

> Line 54: Invalid input: (sourceQuery = null)` | defect | parsing error with space in the mapping assertion remove unnecessary space from the source part of the mapping assertion to avoid parsing problems example of error message mappingid mapping line invalid input sourcequery null | 1 |

41,693 | 10,567,712,928 | IssuesEvent | 2019-10-06 07:09:18 | markoteittinen/custom-maps | https://api.github.com/repos/markoteittinen/custom-maps | closed | Tracking | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. Any map

What is the expected output? What do you see instead?

1. It would be great if the app could record and draw a track

2. A page could show the statistics of the track (length, time, average speed,

moving time, moving speed, etc)

What version of the product are you... | 1.0 | Tracking - ```

What steps will reproduce the problem?

1. Any map

What is the expected output? What do you see instead?

1. It would be great if the app could record and draw a track

2. A page could show the statistics of the track (length, time, average speed,

moving time, moving speed, etc)

What version of the prod... | defect | tracking what steps will reproduce the problem any map what is the expected output what do you see instead it would be great if the app could record and draw a track a page could show the statistics of the track length time average speed moving time moving speed etc what version of the prod... | 1 |

63,414 | 15,598,031,563 | IssuesEvent | 2021-03-18 17:36:11 | RPTools/maptool | https://api.github.com/repos/RPTools/maptool | closed | Update TwelveMonkeys ImageIO plugins to 3.6.4 | build-configuration tested | Update build.gradle to use current version of plugins.

See https://github.com/haraldk/TwelveMonkeys/releases for various bug fixes/enhancements.

| 1.0 | Update TwelveMonkeys ImageIO plugins to 3.6.4 - Update build.gradle to use current version of plugins.

See https://github.com/haraldk/TwelveMonkeys/releases for various bug fixes/enhancements.

| non_defect | update twelvemonkeys imageio plugins to update build gradle to use current version of plugins see for various bug fixes enhancements | 0 |

47,572 | 13,056,256,899 | IssuesEvent | 2020-07-30 04:08:44 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | dataio - dataio::test_filestager.py needs to test against a better website (Trac #777) | Migrated from Trac combo core defect | not one that goes down like a prom dress.

Migrated from https://code.icecube.wisc.edu/ticket/777

```json

{

"status": "closed",

"changetime": "2014-10-09T18:22:41",

"description": "not one that goes down like a prom dress.",

"reporter": "nega",

"cc": "",

"resolution": "worksforme",

"_t... | 1.0 | dataio - dataio::test_filestager.py needs to test against a better website (Trac #777) - not one that goes down like a prom dress.

Migrated from https://code.icecube.wisc.edu/ticket/777

```json

{

"status": "closed",

"changetime": "2014-10-09T18:22:41",

"description": "not one that goes down like a prom d... | defect | dataio dataio test filestager py needs to test against a better website trac not one that goes down like a prom dress migrated from json status closed changetime description not one that goes down like a prom dress reporter nega cc ... | 1 |

27,942 | 5,140,721,921 | IssuesEvent | 2017-01-12 06:52:01 | netty/netty | https://api.github.com/repos/netty/netty | closed | HttpProxyHandler incorrectly formats IPv6 host and port in CONNECT request | defect | ### Expected behavior

The HttpProxyHandler is expected to be capable of issuing a valid CONNECT request for a tunneled connection to an IPv6 host. In this case we are passing an IPv6 address (eg fd00:c0de:42::c:293a:5736) rather than a host name.

### Actual behavior

The HttpProxyHandler does not properly concatena... | 1.0 | HttpProxyHandler incorrectly formats IPv6 host and port in CONNECT request - ### Expected behavior

The HttpProxyHandler is expected to be capable of issuing a valid CONNECT request for a tunneled connection to an IPv6 host. In this case we are passing an IPv6 address (eg fd00:c0de:42::c:293a:5736) rather than a host n... | defect | httpproxyhandler incorrectly formats host and port in connect request expected behavior the httpproxyhandler is expected to be capable of issuing a valid connect request for a tunneled connection to an host in this case we are passing an address eg c rather than a host name actual beh... | 1 |

221,765 | 7,395,953,151 | IssuesEvent | 2018-03-18 05:37:04 | langbakk/HSS | https://api.github.com/repos/langbakk/HSS | closed | Bug: the counter for bike doesn't stop at 150 | bug priority 3 | For some reason, it seems the bike-status doesn't stop at 150 | 1.0 | Bug: the counter for bike doesn't stop at 150 - For some reason, it seems the bike-status doesn't stop at 150 | non_defect | bug the counter for bike doesn t stop at for some reason it seems the bike status doesn t stop at | 0 |

265,453 | 20,099,524,108 | IssuesEvent | 2022-02-07 01:04:13 | jacksund/simmate | https://api.github.com/repos/jacksund/simmate | opened | Make archive after each ErrorHandler correction | documentation | It would make sense to archive the working directory after an error is found and correction applied. Currently, the files are just replaced. | 1.0 | Make archive after each ErrorHandler correction - It would make sense to archive the working directory after an error is found and correction applied. Currently, the files are just replaced. | non_defect | make archive after each errorhandler correction it would make sense to archive the working directory after an error is found and correction applied currently the files are just replaced | 0 |

91,913 | 10,731,553,759 | IssuesEvent | 2019-10-28 19:49:20 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | opened | Missing from what's new v0.22 | Blocker Documentation | I've tried to reconcile the commits in 0.21.3..master with the PR and Issue IDs in the change log. The following appear to be missing from `doc/whats_new/v0.22.rst` and entries should be added for them:

reconciled and found

* [ ] ENH faster polynomial features for dense matrices (#13290)

* [ ] ENH Adds roc weigh... | 1.0 | Missing from what's new v0.22 - I've tried to reconcile the commits in 0.21.3..master with the PR and Issue IDs in the change log. The following appear to be missing from `doc/whats_new/v0.22.rst` and entries should be added for them:

reconciled and found

* [ ] ENH faster polynomial features for dense matrices (#... | non_defect | missing from what s new i ve tried to reconcile the commits in master with the pr and issue ids in the change log the following appear to be missing from doc whats new rst and entries should be added for them reconciled and found enh faster polynomial features for dense matrices ... | 0 |

50,096 | 13,187,323,895 | IssuesEvent | 2020-08-13 03:03:02 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | error message hidden when linking to full disk? (Trac #113) | Migrated from Trac cmake defect |

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/113

, reported by troy and owned by troy_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:56",

"description": "",

"reporter": "troy",

"cc": "",

"resolution": "invalid",

"_ts": "141671... | 1.0 | error message hidden when linking to full disk? (Trac #113) -

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/113

, reported by troy and owned by troy_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:56",

"description": "",

"reporter": "troy",

... | defect | error message hidden when linking to full disk trac migrated from reported by troy and owned by troy json status closed changetime description reporter troy cc resolution invalid ts component ... | 1 |

37,015 | 8,201,990,764 | IssuesEvent | 2018-09-02 02:08:07 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | stats.statlib.spearman.f doesn't return prho (Trac #1271) | Migrated from Trac defect prio-normal scipy.stats | _Original ticket http://projects.scipy.org/scipy/ticket/1271 on 2010-09-07 by @josef-pkt, assigned to unknown._

scipy\stats\statlib\spearman.f doesn't return prho

currently not used, but it might give better small sample probabilities for stats.spearmanr

statlib.prho currently returns only a status code

| 1.0 | stats.statlib.spearman.f doesn't return prho (Trac #1271) - _Original ticket http://projects.scipy.org/scipy/ticket/1271 on 2010-09-07 by @josef-pkt, assigned to unknown._

scipy\stats\statlib\spearman.f doesn't return prho

currently not used, but it might give better small sample probabilities for stats.spearmanr

... | defect | stats statlib spearman f doesn t return prho trac original ticket on by josef pkt assigned to unknown scipy stats statlib spearman f doesn t return prho currently not used but it might give better small sample probabilities for stats spearmanr statlib prho currently returns only a status code | 1 |

27,036 | 27,580,156,226 | IssuesEvent | 2023-03-08 15:41:44 | redpanda-data/documentation | https://api.github.com/repos/redpanda-data/documentation | closed | engine: Provide more context in internal search results | website engine usability improvement P3 | ### Describe the Issue

When you enter a query into our search box, the results don't differentiate between Cloud and Platform. It would be useful to configure Algolia to display the product to which the search result applies.

The highlighted results are for Cloud, but the label is 'Documentation':

![2022-11-24... | True | engine: Provide more context in internal search results - ### Describe the Issue

When you enter a query into our search box, the results don't differentiate between Cloud and Platform. It would be useful to configure Algolia to display the product to which the search result applies.

The highlighted results are fo... | non_defect | engine provide more context in internal search results describe the issue when you enter a query into our search box the results don t differentiate between cloud and platform it would be useful to configure algolia to display the product to which the search result applies the highlighted results are fo... | 0 |

6,030 | 2,610,219,678 | IssuesEvent | 2015-02-26 19:09:49 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | x3daudio1 7 dll for windows 7 download | auto-migrated Priority-Medium Type-Defect | ```

'''Гавриил Щукин'''

Good day, I just cannot find .x3daudio1 7 dll

for windows 7 download. I saw it somewhere already

'''воин Стрелков'''

Here is a good site where you can download it

http://bit.ly/17vGvqb

'''Бернард Назаров'''

Thanks, that seems to be it, but it asks me to enter a phone number

'''Арсен Ершов'''

Nope, everything is OK, nothing was charged on my side

'''В... | 1.0 | x3daudio1 7 dll for windows 7 download - ```

'''Гавриил Щукин'''

Good day, I just cannot find .x3daudio1 7 dll

for windows 7 download. I saw it somewhere already

'''воин Стрелков'''

Here is a good site where you can download it

http://bit.ly/17vGvqb

'''Бернард Назаров'''

Thanks, that seems to be it, but it asks me to enter a phone number

'''Арсен Ершов'''

... | defect | dll for windows download гавриил щукин good day i just cannot find dll for windows download i saw it somewhere already воин стрелков here is a good site where you can download it бернард назаров thanks that seems to be it but it asks me to enter a phone number арсен ершов nope everything is ok nothing was charged on my side ... | 1 |

23,697 | 6,475,326,752 | IssuesEvent | 2017-08-17 20:08:24 | Microsoft/TypeScript | https://api.github.com/repos/Microsoft/TypeScript | closed | Intellisense not working at all | Needs More Info VS Code Tracked | _From @joshfttb on October 23, 2016 18:25_

I'm really trying to give vscode a go, but after two days I'm unable to make intellisense work.

I've tried configuring my project according to these instrustions:

https://code.visualstudio.com/Docs/languages/javascript

https://code.visualstudio.com/Docs/runtimes/nodejs

I'... | 1.0 | Intellisense not working at all - _From @joshfttb on October 23, 2016 18:25_

I'm really trying to give vscode a go, but after two days I'm unable to make intellisense work.

I've tried configuring my project according to these instrustions:

https://code.visualstudio.com/Docs/languages/javascript

https://code.visuals... | non_defect | intellisense not working at all from joshfttb on october i m really trying to give vscode a go but after two days i m unable to make intellisense work i ve tried configuring my project according to these instrustions i ve completely uninstalled and re installed both vscode and typings i ve tr... | 0 |

16,549 | 2,915,242,548 | IssuesEvent | 2015-06-23 11:16:06 | mintty/mintty | https://api.github.com/repos/mintty/mintty | closed | adb shell | Type-Defect | ```

open a conection whit adb shell for android terminal.

the line command is very well but the keys up and down don't print the last

typed command.

```

Original issue reported on code.google.com by `leone...@gmail.com` on 7 Mar 2013 at 6:32 | 1.0 | adb shell - ```

open a conection whit adb shell for android terminal.

the line command is very well but the keys up and down don't print the last

typed command.

```

Original issue reported on code.google.com by `leone...@gmail.com` on 7 Mar 2013 at 6:32 | defect | adb shell open a conection whit adb shell for android terminal the line command is very well but the keys up and down don t print the last typed command original issue reported on code google com by leone gmail com on mar at | 1 |

26,568 | 20,253,765,215 | IssuesEvent | 2022-02-14 20:40:57 | google/site-kit-wp | https://api.github.com/repos/google/site-kit-wp | closed | Update actions to run on PRs for feature branches | P0 QA: Eng Type: Infrastructure | ## Feature Description

We normally use feature flags to handle long-running development rather than using a separate branch until it is ready to go. In some cases, it makes sense to use a separate, shorter-lived branch when working on a few changes that will go in all together without a feature flag.

To support t... | 1.0 | Update actions to run on PRs for feature branches - ## Feature Description

We normally use feature flags to handle long-running development rather than using a separate branch until it is ready to go. In some cases, it makes sense to use a separate, shorter-lived branch when working on a few changes that will go in ... | non_defect | update actions to run on prs for feature branches feature description we normally use feature flags to handle long running development rather than using a separate branch until it is ready to go in some cases it makes sense to use a separate shorter lived branch when working on a few changes that will go in ... | 0 |

65,316 | 14,711,065,444 | IssuesEvent | 2021-01-05 06:42:18 | skeshari12/chef-server | https://api.github.com/repos/skeshari12/chef-server | opened | CVE-2015-8559 (High) detected in chef-12.22.5.gem | security vulnerability | ## CVE-2015-8559 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>chef-12.22.5.gem</b></p></summary>

<p>A systems integration framework, built to bring the benefits of configuration man... | True | CVE-2015-8559 (High) detected in chef-12.22.5.gem - ## CVE-2015-8559 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>chef-12.22.5.gem</b></p></summary>

<p>A systems integration framewo... | non_defect | cve high detected in chef gem cve high severity vulnerability vulnerable library chef gem a systems integration framework built to bring the benefits of configuration management to your entire infrastructure library home page a href dependency hierarchy x ch... | 0 |

38,217 | 8,701,619,739 | IssuesEvent | 2018-12-05 12:07:30 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | Tree: Drag&Drop of top-level nodes not working (JavaScript error) | defect | ## 1) Environment

- PrimeFaces version: Current Showcase (`Running PrimeFaces-6.2.9 on Mojarra-2.3.2`)

- Affected browsers: Tested on Chrome, probably all browsers

## 2) Expected behavior

Moving a top-level node of the tree via drag&drop to another top-level position should update the tree and the node should h... | 1.0 | Tree: Drag&Drop of top-level nodes not working (JavaScript error) - ## 1) Environment

- PrimeFaces version: Current Showcase (`Running PrimeFaces-6.2.9 on Mojarra-2.3.2`)

- Affected browsers: Tested on Chrome, probably all browsers

## 2) Expected behavior

Moving a top-level node of the tree via drag&drop to ano... | defect | tree drag drop of top level nodes not working javascript error environment primefaces version current showcase running primefaces on mojarra affected browsers tested on chrome probably all browsers expected behavior moving a top level node of the tree via drag drop to ano... | 1 |

376,865 | 26,220,848,093 | IssuesEvent | 2023-01-04 14:44:11 | NFDI4Chem/VibrationalSpectroscopyOntology | https://api.github.com/repos/NFDI4Chem/VibrationalSpectroscopyOntology | closed | [Docs] Why are there duplicate classes? | documentation | Some fields seem to have the same meaning:

- "excitation wavelength" = "excitation wavelength setting";

- "groove density" = "groove density setting" = "diffraction grating"

I suggest to choose only one and choose one coherent labelling (with or without "... setting") | 1.0 | [Docs] Why are there duplicate classes? - Some fields seem to have the same meaning:

- "excitation wavelength" = "excitation wavelength setting";

- "groove density" = "groove density setting" = "diffraction grating"

I suggest to choose only one and choose one coherent labelling (with or without "... setting") | non_defect | why are there duplicate classes some fields seem to have the same meaning excitation wavelength excitation wavelength setting groove density groove density setting diffraction grating i suggest to choose only one and choose one coherent labelling with or without setting | 0 |

290,960 | 25,110,512,374 | IssuesEvent | 2022-11-08 20:06:27 | LtxProgrammer/Changed-Minecraft-Mod | https://api.github.com/repos/LtxProgrammer/Changed-Minecraft-Mod | closed | Transfur noise fails to play when keepCon is off. | bug needs testing | Players and entities don't make noise. Only plays when using syringe. | 1.0 | Transfur noise fails to play when keepCon is off. - Players and entities don't make noise. Only plays when using syringe. | non_defect | transfur noise fails to play when keepcon is off players and entities don t make noise only plays when using syringe | 0 |

19,386 | 3,198,404,591 | IssuesEvent | 2015-10-01 12:03:03 | dmaidaniuk/content-management-faces | https://api.github.com/repos/dmaidaniuk/content-management-faces | closed | Buildfile doesn't work - build.xml | auto-migrated Priority-Medium Type-Defect | What steps will reproduce the problem?

1. try to run ant build in eclipse or on command line

What is the expected output? What do you see instead?

expected output should be: BUILD SUCCESSFUL

instead i see: dozens of exceptions due to missing dependencies

What version of the product are you using? On what oper... | 1.0 | Buildfile doesn't work - build.xml - What steps will reproduce the problem?

1. try to run ant build in eclipse or on command line

What is the expected output? What do you see instead?

expected output should be: BUILD SUCCESSFUL

instead i see: dozens of exceptions due to missing dependencies

What version of ... | defect | buildfile doesn t work build xml what steps will reproduce the problem try to run ant build in eclipse or on command line what is the expected output what do you see instead expected output should be build successful instead i see dozens of exceptions due to missing dependencies what version of ... | 1 |

79,444 | 28,263,010,791 | IssuesEvent | 2023-04-07 02:17:58 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | opened | Fedora 38 zfs-testing repo is not available | Type: Defect | <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable information can be found in the OpenZFS documentation

and mailing list arch... | 1.0 | Fedora 38 zfs-testing repo is not available - <!-- Please fill out the following template, which will help other contributors address your issue. -->

<!--

Thank you for reporting an issue.

*IMPORTANT* - Please check our issue tracker before opening a new issue.

Additional valuable information can be found in th... | defect | fedora zfs testing repo is not available thank you for reporting an issue important please check our issue tracker before opening a new issue additional valuable information can be found in the openzfs documentation and mailing list archives please fill in as much of the template as possib... | 1 |

24,657 | 4,057,939,087 | IssuesEvent | 2016-05-25 00:40:36 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | in the connector or stamping tool, do not show modal messages | defect | since they prevent stamping from continuing, and these are 'blind' processes.

when there is no internet connection, can retries be made?

that is what the client requested... | 1.0 | in the connector or stamping tool, do not show modal messages - since they prevent stamping from continuing, and these are 'blind' processes.

when there is no internet connection, can retries be made?

that is what the client requested... | defect | in the connector or stamping tool do not show modal messages since they prevent stamping from continuing and these are blind processes when there is no internet connection can retries be made that is what the client requested | 1 |

23,720 | 3,851,866,005 | IssuesEvent | 2016-04-06 05:28:07 | GPF/imame4all | https://api.github.com/repos/GPF/imame4all | closed | iCade Mobile controller remapping issue | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Access TAB menu by pressing two buttons.

2. Select specific input on iCade Mobile attempting to reassign

3. Press the desired button

What is the expected output? What do you see instead?

I expected to see the button I pressed reassigned to the selected input.

Instead ... | 1.0 | iCade Mobile controller remapping issue - ```

What steps will reproduce the problem?

1. Access TAB menu by pressing two buttons.

2. Select specific input on iCade Mobile attempting to reassign

3. Press the desired button

What is the expected output? What do you see instead?

I expected to see the button I pressed r... | defect | icade mobile controller remapping issue what steps will reproduce the problem access tab menu by pressing two buttons select specific input on icade mobile attempting to reassign press the desired button what is the expected output what do you see instead i expected to see the button i pressed r... | 1 |

33,845 | 7,268,381,074 | IssuesEvent | 2018-02-20 09:54:36 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | JCache 1.1 TCK - org.jsr107.tck.event.CacheListenerTest passes, but assertion errors are in the log | Interface: ICache Team: Core Type: Defect | The JCache 1.1 TCK passes for Hazelcast, but the log file contains assertion errors. It seems we experience 2 issues here:

* the TCK handles the result wrongly (the test should fail for Hazelcast);

* and we have a JCache incompatibility in the CacheListener

https://hazelcast-l337.ci.cloudbees.com/view/JCache/jo... | 1.0 | JCache 1.1 TCK - org.jsr107.tck.event.CacheListenerTest passes, but assertion errors are in the log - The JCache 1.1 TCK passes for Hazelcast, but the log file contains assertion errors. It seems we experience 2 issues here:

* the TCK handles the result wrongly (the test should fail for Hazelcast);

* and we have an... | defect | jcache tck org tck event cachelistenertest passes but assertion errors are in the log the jcache tck passes for hazelcast but the log file contains assertion errors it seems we experience issues here the tck handles the result wrongly the test should fail for hazelcast and we have an jcac... | 1 |

78,157 | 27,347,780,396 | IssuesEvent | 2023-02-27 07:03:46 | FalsehoodMC/Fabrication | https://api.github.com/repos/FalsehoodMC/Fabrication | closed | Toggle Sprint fails injection on forgery | k: Defect n: Forge | FabInjector failed to find injection point for net/minecraft/client/player/LocalPlayer;m_8107_()V Lnet/minecraft/client/KeyMapping;m_90857_()Z

located in com.unascribed.fabrication.mixin.b_utility.toggle_sprint.MixinClientPlayerEntity

nvm it wasnt your mod but still this part^ | 1.0 | Toggle Sprint fails injection on forgery - FabInjector failed to find injection point for net/minecraft/client/player/LocalPlayer;m_8107_()V Lnet/minecraft/client/KeyMapping;m_90857_()Z

located in com.unascribed.fabrication.mixin.b_utility.toggle_sprint.MixinClientPlayerEntity

nvm it wasnt your mod but still this ... | defect | toggle sprint fails injection on forgery fabinjector failed to find injection point for net minecraft client player localplayer m v lnet minecraft client keymapping m z located in com unascribed fabrication mixin b utility toggle sprint mixinclientplayerentity nvm it wasnt your mod but still this part | 1 |

7,097 | 2,610,326,856 | IssuesEvent | 2015-02-26 19:45:21 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Typo | auto-migrated Priority-Low Type-Defect | ```

B1 droids need a space after "numbers." in their description

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 9 Jun 2011 at 12:06

* Merged into: #575 | 1.0 | Typo - ```

B1 droids need a space after "numbers." in their description

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 9 Jun 2011 at 12:06

* Merged into: #575 | defect | typo droids need a space after numbers in their description original issue reported on code google com by gmail com on jun at merged into | 1 |

318,118 | 9,681,994,424 | IssuesEvent | 2019-05-23 08:06:26 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | reopened | scandinavianphoto.se - very slow browsing | browser-firefox-mobile priority-normal severity-important type-bad-performance | **URL**: www.scandinavianphoto.se

**Browser/Version**: Firefox Mobile 66.0

**Operating System**: Android

**What seems to be the trouble?(Required)**

- [ ] Desktop site instead of mobile site

- [x] Mobile site is not usable

- [ ] Video doesn't play

- [ ] Layout is messed up

- [ ] Text is not visible

- [ ]... | 1.0 | scandinavianphoto.se - very slow browsing - **URL**: www.scandinavianphoto.se

**Browser/Version**: Firefox Mobile 66.0

**Operating System**: Android

**What seems to be the trouble?(Required)**

- [ ] Desktop site instead of mobile site

- [x] Mobile site is not usable

- [ ] Video doesn't play

- [ ] Layout is... | non_defect | scandinavianphoto se very slow browsing url browser version firefox mobile operating system android what seems to be the trouble required desktop site instead of mobile site mobile site is not usable video doesn t play layout is messed up text is not visi... | 0 |

85,967 | 15,755,311,302 | IssuesEvent | 2021-03-31 01:33:11 | ysmanohar/Arrowlytics | https://api.github.com/repos/ysmanohar/Arrowlytics | opened | WS-2018-0625 (High) detected in xmlbuilder-2.6.5.tgz, xmlbuilder-8.2.2.tgz | security vulnerability | ## WS-2018-0625 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>xmlbuilder-2.6.5.tgz</b>, <b>xmlbuilder-8.2.2.tgz</b></p></summary>

<p>

<details><summary><b>xmlbuilder-2.6.5.tgz</b><... | True | WS-2018-0625 (High) detected in xmlbuilder-2.6.5.tgz, xmlbuilder-8.2.2.tgz - ## WS-2018-0625 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>xmlbuilder-2.6.5.tgz</b>, <b>xmlbuilder-8.... | non_defect | ws high detected in xmlbuilder tgz xmlbuilder tgz ws high severity vulnerability vulnerable libraries xmlbuilder tgz xmlbuilder tgz xmlbuilder tgz an xml builder for node js library home page a href path to dependency file arrowlytics bow... | 0 |

236,414 | 26,010,602,198 | IssuesEvent | 2022-12-21 01:03:51 | brogers588/spring-boot | https://api.github.com/repos/brogers588/spring-boot | closed | CVE-2022-31692 (High) detected in spring-security-web-5.5.0.jar - autoclosed | security vulnerability | ## CVE-2022-31692 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-security-web-5.5.0.jar</b></p></summary>

<p>Spring Security</p>

<p>Library home page: <a href="https://spring.i... | True | CVE-2022-31692 (High) detected in spring-security-web-5.5.0.jar - autoclosed - ## CVE-2022-31692 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-security-web-5.5.0.jar</b></p></s... | non_defect | cve high detected in spring security web jar autoclosed cve high severity vulnerability vulnerable library spring security web jar spring security library home page a href path to dependency file spring boot tests spring boot smoke tests spring boot smoke test actuat... | 0 |

12,111 | 8,599,960,293 | IssuesEvent | 2018-11-16 05:07:53 | istio/istio | https://api.github.com/repos/istio/istio | closed | Tracking: make local RBAC available in Istio 1.0 | area/security area/security/aaa | This is a tracking bug for making local RBAC available in Istio 1.0.

* Pilot:

- [x] Add authz plugin to construct RBAC policies (#5484)

- [x] Add `ON_WITH_INCLUSION` and `ON_WITH_EXCLUSION` mode (#6456)

- [x] Add destination attributes support in `ServiceRole` (#6554)

- [x] Add authentication attribu... | True | Tracking: make local RBAC available in Istio 1.0 - This is a tracking bug for making local RBAC available in Istio 1.0.

* Pilot:

- [x] Add authz plugin to construct RBAC policies (#5484)

- [x] Add `ON_WITH_INCLUSION` and `ON_WITH_EXCLUSION` mode (#6456)

- [x] Add destination attributes support in `Servic... | non_defect | tracking make local rbac available in istio this is a tracking bug for making local rbac available in istio pilot add authz plugin to construct rbac policies add on with inclusion and on with exclusion mode add destination attributes support in servicerole ... | 0 |

49,451 | 13,186,732,664 | IssuesEvent | 2020-08-13 01:08:22 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | Steamshovel linking to CVMFS Qt libs not system ones (Trac #1419) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1419">https://code.icecube.wisc.edu/ticket/1419</a>, reported by blaufuss and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:10",

"description": "Building offline-software t... | 1.0 | Steamshovel linking to CVMFS Qt libs not system ones (Trac #1419) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1419">https://code.icecube.wisc.edu/ticket/1419</a>, reported by blaufuss and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "20... | defect | steamshovel linking to cvmfs qt libs not system ones trac migrated from json status closed changetime description building offline software trunk using cvmfs n ni noted these scary warnings on cmake n ncmake warning at cmake project cmake add ex... | 1 |

103,058 | 4,164,330,985 | IssuesEvent | 2016-06-18 18:25:37 | RioBus/ionic | https://api.github.com/repos/RioBus/ionic | closed | Notify the user when there were no buses in the query | easy enhancement priority | Show a notification toast when the query returns nothing. | 1.0 | Notify the user when there were no buses in the query - Show a notification toast when the query returns nothing. | non_defect | notify the user when there were no buses in the query show a notification toast when the query returns nothing | 0 |

81,288 | 30,783,463,764 | IssuesEvent | 2023-07-31 11:41:58 | vector-im/element-x-android | https://api.github.com/repos/vector-im/element-x-android | opened | Room appears twice in timeline | T-Defect | ### Steps to reproduce

1. Look at timeline, see room twice

### Outcome

#### What did you expect?

Room appears once in timeline

#### What happened instead?

Room appears twice in timeline

### Your phone model

Pixel 6a

### Operating system version

Graphene OS

### Application version and app store

Nightly

##... | 1.0 | Room appears twice in timeline - ### Steps to reproduce

1. Look at timeline, see room twice

### Outcome

#### What did you expect?

Room appears once in timeline

#### What happened instead?

Room appears twice in timeline

### Your phone model

Pixel 6a

### Operating system version

Graphene OS

### Application v... | defect | room appears twice in timeline steps to reproduce look at timeline see room twice outcome what did you expect room appears once in timeline what happened instead room appears twice in timeline your phone model pixel operating system version graphene os application ve... | 1 |

570,204 | 17,021,044,764 | IssuesEvent | 2021-07-02 19:07:43 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | preload job skips generating preload for v1.20.8 | co/preload kind/regression priority/important-soon | while trying to bump k8s version I noticed it was slower on v1.20.8

and then I did

```

$mk start --kubernetes-version=v1.20.8 --download-only

😄 minikube v1.22.0-beta.0 on Darwin 11.4

✨ Using the docker driver based on existing profile

👍 Starting control plane node minikube in cluster minikube

🚜 Pulling... | 1.0 | preload job skips generating preload for v1.20.8 - while trying to bump k8s version I noticed it was slower on v1.20.8

and then I did

```

$mk start --kubernetes-version=v1.20.8 --download-only

😄 minikube v1.22.0-beta.0 on Darwin 11.4

✨ Using the docker driver based on existing profile

👍 Starting control ... | non_defect | preload job skips generating preload for while trying to bump version i noticed it was slower on and then i did mk start kubernetes version download only 😄 minikube beta on darwin ✨ using the docker driver based on existing profile 👍 starting control plane node ... | 0 |

312,619 | 26,873,404,019 | IssuesEvent | 2023-02-04 18:55:30 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Informações Institucionais - Leis Municipais - Raposos | generalization test development template - Betha (26) tag - Informações Institucionais subtag - Leis Municipais | DoD: Run the generalization test of the validator for the tag Informações Institucionais - Leis Municipais for the municipality of Raposos. | 1.0 | Generalization test for the tag Informações Institucionais - Leis Municipais - Raposos - DoD: Run the generalization test of the validator for the tag Informações Institucionais - Leis Municipais for the municipality of Raposos. | non_defect | generalization test for the tag informações institucionais leis municipais raposos dod run the generalization test of the validator for the tag informações institucionais leis municipais for the municipality of raposos | 0 |

89,990 | 15,856,044,272 | IssuesEvent | 2021-04-08 01:23:03 | Rossb0b/Swapi | https://api.github.com/repos/Rossb0b/Swapi | opened | CVE-2019-16769 (Medium) detected in serialize-javascript-1.5.0.tgz | security vulnerability | ## CVE-2019-16769 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>serialize-javascript-1.5.0.tgz</b></p></summary>

<p>Serialize JavaScript to a superset of JSON that includes regular... | True | CVE-2019-16769 (Medium) detected in serialize-javascript-1.5.0.tgz - ## CVE-2019-16769 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>serialize-javascript-1.5.0.tgz</b></p></summary>... | non_defect | cve medium detected in serialize javascript tgz cve medium severity vulnerability vulnerable library serialize javascript tgz serialize javascript to a superset of json that includes regular expressions and functions library home page a href path to dependency file sw... | 0 |

52,411 | 13,224,719,496 | IssuesEvent | 2020-08-17 19:42:27 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | Wrong datatype in steering file for 20208 -> unable to weight via from_simprod (Trac #2178) | Incomplete Migration Migrated from Trac analysis defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2178">https://code.icecube.wisc.edu/projects/icecube/ticket/2178</a>, reported by flauber</summary>

<p>

```json

{

"status": "closed",

"changetime": "2018-08-27T15:15:55",

"_ts": "1535382955013988",

"des... | 1.0 | Wrong datatype in steering file for 20208 -> unable to weight via from_simprod (Trac #2178) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2178">https://code.icecube.wisc.edu/projects/icecube/ticket/2178</a>, reported by flauber</summary>

<p>

```json

{

"statu... | defect | wrong datatype in steering file for unable to weight via from simprod trac migrated from json status closed changetime ts description hi n nanother problem with the steering file of n be either int or float not string nit being a strin... | 1 |

64,683 | 18,794,892,033 | IssuesEvent | 2021-11-08 21:02:17 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | SciPy on Python 3.10 Windows does not include msvcp140.dll | defect Official binaries | When downloading the wheels from https://pypi.org/project/scipy/#files, `scipy-1.7.2-cp310-cp310-win_amd64.whl` does not include `.libs/msvcp140.dll`, while `scipy-1.7.2-cp39-cp39-win_amd64.whl` does include `msvcp140.dll`.

When looking at the build logs for [Windows Python 3.9](https://ci.appveyor.com/project/scipy... | 1.0 | SciPy on Python 3.10 Windows does not include msvcp140.dll - When downloading the wheels from https://pypi.org/project/scipy/#files, `scipy-1.7.2-cp310-cp310-win_amd64.whl` does not include `.libs/msvcp140.dll`, while `scipy-1.7.2-cp39-cp39-win_amd64.whl` does include `msvcp140.dll`.

When looking at the build logs f... | defect | scipy on python windows does not include dll when downloading the wheels from scipy win whl does not include libs dll while scipy win whl does include dll when looking at the build logs for we see the line that shows dll is copied into the wheel copy... | 1 |
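The check described in this row (one wheel ships `msvcp140.dll`, the other does not) can be reproduced by listing the archive members, since a wheel is a zip file. The following is only a minimal sketch: the helper names and the `scipy/.libs/` member path are assumptions made for illustration, and the in-memory archives stand in for the real wheels, which are not downloaded here.

```python
import io
import zipfile


def wheel_has_file(wheel, basename):
    """Return True if any member of the wheel archive has the given file name."""
    with zipfile.ZipFile(wheel) as archive:
        return any(
            member.rsplit("/", 1)[-1].lower() == basename.lower()
            for member in archive.namelist()
        )


def make_fake_wheel(members):
    """Build an in-memory zip that stands in for a real wheel (illustration only)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name in members:
            archive.writestr(name, b"")  # empty placeholder content
    buffer.seek(0)
    return buffer


# Hypothetical member lists; the real wheels contain many more files.
cp39_like = make_fake_wheel(["scipy/__init__.py", "scipy/.libs/msvcp140.dll"])
cp310_like = make_fake_wheel(["scipy/__init__.py"])

print(wheel_has_file(cp39_like, "msvcp140.dll"))
print(wheel_has_file(cp310_like, "msvcp140.dll"))
```

With a downloaded wheel, `wheel_has_file` can be pointed at the file path instead of an in-memory buffer.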

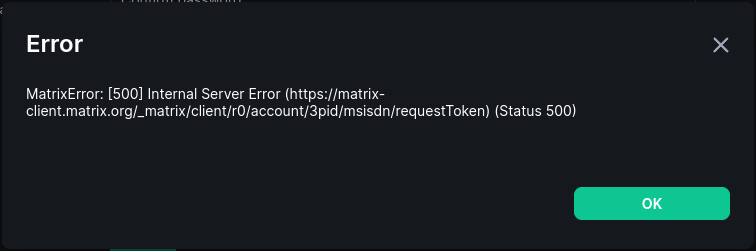

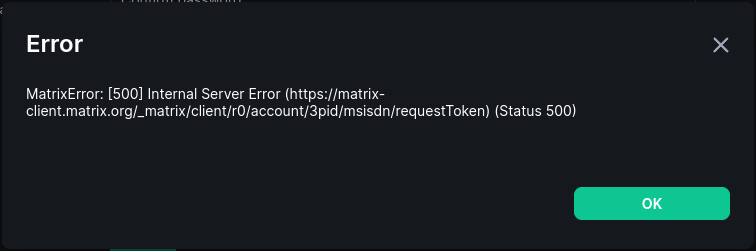

78,663 | 27,700,158,561 | IssuesEvent | 2023-03-14 07:17:08 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Adding a phone number fails | T-Defect | ### Steps to reproduce

1. Add a US phone number

2. Adding the phone number will fail

### Outcome

#### What did you expect?

I should be able to add a phone number to my account.

#### What happened ... | 1.0 | Adding a phone number fails - ### Steps to reproduce

1. Add a US phone number

2. Adding the phone number will fail

### Outcome

#### What did you expect?

I should be able to add a phone number to my a... | defect | adding a phone number fails steps to reproduce add a us phone number adding the phone number will fail outcome what did you expect i should be able to add a phone number to my account what happened instead i cannot add a phone number to my account operating system ... | 1 |

40,729 | 5,257,617,785 | IssuesEvent | 2017-02-02 21:02:23 | blockstack/designs | https://api.github.com/repos/blockstack/designs | closed | inverse color of ovals, rectangles and lines atlas illustration | design production | Create inverse version of the Blockstack Atlas Illustration. | 1.0 | inverse color of ovals, rectangles and lines atlas illustration - Create inverse version of the Blockstack Atlas Illustration. | non_defect | inverse color of ovals rectangles and lines atlas illustration create inverse version of the blockstack atlas illustration | 0 |

224,859 | 17,778,699,433 | IssuesEvent | 2021-08-30 23:26:28 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Flaky test: vscode - untitled automatic language detection | upstream integration-test-failure web | ```

1 failing

1) vscode - untitled automatic language detection

test automatic language detection works:

Error: asPromise TIMEOUT reached

at Timeout._onTimeout (extensions/vscode-api-tests/src/utils.ts:130:11)

at listOnTimeout (internal/timers.js:554:17)

```

https://dev.azure.com... | 1.0 | Flaky test: vscode - untitled automatic language detection - ```

1 failing

1) vscode - untitled automatic language detection

test automatic language detection works:

Error: asPromise TIMEOUT reached

at Timeout._onTimeout (extensions/vscode-api-tests/src/utils.ts:130:11)

at listOnTimeou... | non_defect | flaky test vscode untitled automatic language detection failing vscode untitled automatic language detection test automatic language detection works error aspromise timeout reached at timeout ontimeout extensions vscode api tests src utils ts at listontimeout ... | 0 |

132,728 | 18,268,859,764 | IssuesEvent | 2021-10-04 11:43:16 | artsking/linux-3.0.35 | https://api.github.com/repos/artsking/linux-3.0.35 | opened | CVE-2014-4655 (Medium) detected in linux-stable-rtv3.8.6 | security vulnerability | ## CVE-2014-4655 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home pag... | True | CVE-2014-4655 (Medium) detected in linux-stable-rtv3.8.6 - ## CVE-2014-4655 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>Julia Cartw... | non_defect | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source f... | 0 |

15,578 | 3,476,159,835 | IssuesEvent | 2015-12-26 15:06:59 | websharks/comment-mail | https://api.github.com/repos/websharks/comment-mail | opened | Queued (Pending) Notifications are never sent | bug needs research needs testing | I was testing Comment Mail Lite v151224 after importing StCR subscriptions when I tried posting two new replies to existing comments with subscriptions (i.e., I posted new replies to comments that had StCR subscriptions, which were imported into Comment Mail). The new Comment Mail subscriptions were created fine, and w... | 1.0 | Queued (Pending) Notifications are never sent - I was testing Comment Mail Lite v151224 after importing StCR subscriptions when I tried posting two new replies to existing comments with subscriptions (i.e., I posted new replies to comments that had StCR subscriptions, which were imported into Comment Mail). The new Com... | non_defect | queued pending notifications are never sent i was testing comment mail lite after importing stcr subscriptions when i tried posting two new replies to existing comments with subscriptions i e i posted new replies to comments that had stcr subscriptions which were imported into comment mail the new comment m... | 0 |

52,048 | 13,211,373,266 | IssuesEvent | 2020-08-15 22:40:19 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [recclasses] Non-offline dependencies (Trac #1567) | Incomplete Migration Migrated from Trac combo reconstruction defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1567">https://code.icecube.wisc.edu/projects/icecube/ticket/1567</a>, reported by olivasand owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"_ts": "... | 1.0 | [recclasses] Non-offline dependencies (Trac #1567) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1567">https://code.icecube.wisc.edu/projects/icecube/ticket/1567</a>, reported by olivasand owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

... | defect | non offline dependencies trac migrated from json status closed changetime ts description currently recclasses depends on portia and ophelia this is breakage that needs to be fixed one of the main reasons for the creation of the proje... | 1 |

33,372 | 9,106,305,539 | IssuesEvent | 2019-02-20 23:22:31 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | closed | ARM legs are failing in CI in release/2.0.0 | area-Build blocking-clean-ci blocking-official-build | It looks like these have never passed?

```

15:54:41 BUILDTEST: Commencing build of native test components for arm/Release

15:54:41 BUILDTEST: Using environment: "C:\Program Files (x86)\Microsoft Visual Studio\2017\Enterprise\Common7\Tools\\..\..\VC\Auxiliary\Build\vcvarsall.bat" x86_arm

15:54:41 ****************... | 2.0 | ARM legs are failing in CI in release/2.0.0 - It looks like these have never passed?

```

15:54:41 BUILDTEST: Commencing build of native test components for arm/Release

15:54:41 BUILDTEST: Using environment: "C:\Program Files (x86)\Microsoft Visual Studio\2017\Enterprise\Common7\Tools\\..\..\VC\Auxiliary\Build\vcv... | non_defect | arm legs are failing in ci in release it looks like these have never passed buildtest commencing build of native test components for arm release buildtest using environment c program files microsoft visual studio enterprise tools vc auxiliary build vcvarsall bat arm... | 0 |

35,572 | 7,781,135,492 | IssuesEvent | 2018-06-05 22:35:10 | google/sanitizers | https://api.github.com/repos/google/sanitizers | closed | remove the limit of the number of ever existed threads in asan | Priority-Medium ProjectAddressSanitizer Status-Accepted Type-Defect | Originally reported on Google Code with ID 273

```

Today asan will die after 4M threads are created, because we keep some

small amount of metadata for every thread that ever lived.

We should eventually remove this limitation.

```

Reported by `konstantin.s.serebryany` on 2014-03-11 08:51:27

| 1.0 | remove the limit of the number of ever existed threads in asan - Originally reported on Google Code with ID 273

```

Today asan will die after 4M threads are created, because we keep some

small amount of metadata for every thread that ever lived.

We should eventually remove this limitation.

```

Reported by `konsta... | defect | remove the limit of the number of ever existed threads in asan originally reported on google code with id today asan will die after threads are created because we keep some small amount of metadata for every thread that ever lived we should eventually remove this limitation reported by konstanti... | 1 |

55,520 | 14,531,078,663 | IssuesEvent | 2020-12-14 20:13:57 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | Wrong cast when reporting non-allowed rpc access | C: Client - Daemon E: 1 day P: Major T: Defect | For some time, reports from new installations of Linux show non-allowed access from ip addresses starting with 2. 2.x.x.x is typically a French address.

reference issue #3246. I could not find an issue like I put together here but I might have missed it.

In actuality, the 2 is the identifier AF_INET due to wrong ... | 1.0 | Wrong cast when reporting non-allowed rpc access - For some time, reports from new installations of Linux show non-allowed access from ip addresses starting with 2. 2.x.x.x is typically a French address.

reference issue #3246. I could not find an issue like I put together here but I might have missed it.

In actua... | defect | wrong cast when reporting non allowed rpc access for some time reports from new installations of linux show non allowed access from ip addresses starting with x x x is typically a french address reference issue i could not find an issue like i put together here but i might have missed it in actualit... | 1 |
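The symptom in this row (addresses that start with 2, where 2 is the value of `AF_INET`) is what one would expect if the start of a socket address structure is read as if it were the address itself. The sketch below only illustrates that effect. It assumes a Linux-style `sockaddr_in` layout on a little-endian machine, the helper names and the sample address and port are hypothetical, and it is not the BOINC code, which is not shown in the row.

```python
import socket
import struct

AF_INET = 2  # value of AF_INET on Linux, the platform the issue describes


def pack_sockaddr_in(ip, port):
    """Lay out the first 8 bytes of a sockaddr_in (assumed little-endian host)."""
    family = struct.pack("<H", AF_INET)  # sin_family, host byte order
    port_be = struct.pack("!H", port)    # sin_port, network byte order
    addr = socket.inet_aton(ip)          # sin_addr, 4 bytes
    return family + port_be + addr


def misread_address(raw):
    """Wrong: treat the first 4 bytes of the structure as the IPv4 address."""
    return socket.inet_ntoa(raw[:4])


def read_address(raw):
    """Right: skip sin_family and sin_port, then read sin_addr."""
    return socket.inet_ntoa(raw[4:8])


raw = pack_sockaddr_in("192.168.1.20", 50000)
print(misread_address(raw))  # starts with "2." because the family field leads
print(read_address(raw))     # the address that was actually packed
```

The misread value begins with the family identifier followed by the port bytes, which matches the pattern of bogus 2.x.x.x addresses described above.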

32,415 | 6,777,127,827 | IssuesEvent | 2017-10-27 20:43:48 | Stibbons/dopplerr | https://api.github.com/repos/Stibbons/dopplerr | closed | Docker image not working | Type: Defect | Docker image is not working at all. Process is running in the container but nothing is listening.

PID USER TIME COMMAND

1 root 0:00 s6-svscan -t0 /var/run/s6/services

32 root 0:00 s6-supervise s6-fdholderd

194 root 0:00 s6-supervise dopplerr

762 root 0:00 /bin/bash

1... | 1.0 | Docker image not working - Docker image is not working at all. Process is running in the container but nothing is listening.

PID USER TIME COMMAND

1 root 0:00 s6-svscan -t0 /var/run/s6/services

32 root 0:00 s6-supervise s6-fdholderd

194 root 0:00 s6-supervise dopplerr

762 ro... | defect | docker image not working docker image is not working at all process is running in the container but nothing is listening pid user time command root svscan var run services root supervise fdholderd root supervise dopplerr root ... | 1 |

31,357 | 14,940,691,919 | IssuesEvent | 2021-01-25 18:38:51 | hibernate/hibernate-reactive | https://api.github.com/repos/hibernate/hibernate-reactive | closed | Performance: pool() access from SessionFactoryImpl(s) to not invoke the ServiceRegistry | performance | Both `pool()` implementations in the Stage & Mutiny versions of the ReactiveSessionFactoryImpl should have faster access to the pool instance.

Accessing Services from the `ServiceRegistry` is quite flexible, but should only be done during initialization, or to handle occasional / non-hot needs. | True | Performance: pool() access from SessionFactoryImpl(s) to not invoke the ServiceRegistry - Both `pool()` implementations in the Stage & Mutiny versions of the ReactiveSessionFactoryImpl should have faster access to the pool instance.

Accessing Services from the `ServiceRegistry` is quite flexible, but should only ... | non_defect | performance pool access from sessionfactoryimpl s to not invoke the serviceregistry both pool implementations in the stage mutiny versions of the reactivesessionfactoryimpl should have faster access to the pool instance accessing services from the serviceregistry is quite flexible but should only ... | 0 |

56,527 | 8,081,423,873 | IssuesEvent | 2018-08-08 03:25:05 | servo/servo | https://api.github.com/repos/servo/servo | closed | Doc build is broken with latest rust update | A-documentation A-infrastructure | ```

error: `[cfg]` cannot be resolved, ignoring it...

--> /home/servo/.cargo/registry/src/github.com-1ecc6299db9ec823/cfg-if-0.1.2/src/lib.rs:1:28

|

1 | //! A macro for defining #[cfg] if-else statements.

| ^^^ cannot be resolved, ignoring

|

note: lint level defined here

--> /... | 1.0 | Doc build is broken with latest rust update - ```

error: `[cfg]` cannot be resolved, ignoring it...

--> /home/servo/.cargo/registry/src/github.com-1ecc6299db9ec823/cfg-if-0.1.2/src/lib.rs:1:28

|

1 | //! A macro for defining #[cfg] if-else statements.

| ^^^ cannot be resolved, ignori... | non_defect | doc build is broken with latest rust update error cannot be resolved ignoring it home servo cargo registry src github com cfg if src lib rs a macro for defining if else statements cannot be resolved ignoring note lint leve... | 0 |

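2,292 | 2,603,992,449 | IssuesEvent | 2015-02-24 19:06:58 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Which hospital in Shenyang is best for herpes | auto-migrated Priority-Medium Type-Defect | ```

Which hospital in Shenyang is best for herpes 〓 Shenyang Military Region Political Department Hospital, STD clinic 〓 TEL: 024-31023308 〓 Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases.

Located at No. 32 Erwei Road, Shenhe District, Shenyang. It is a comprehensive hospital, founded alongside New China, with a long history, fine equipment, authoritative expertise and many specialists, combining prevention, health care, medical treatment, research and rehabilitation.

It is among the country's first public Grade A military hospitals and first designated units for medical standards, and is a teaching hospital for the Fourth Military Medical University, �南 University and other well-known institutions of higher education.

It was named an advanced unit for health work by the Health Department of the Logistics Department of the PLA Air Force, and has twice been awarded a collective �-class merit.

```

-----

Original issue reported on code.google.com by `q964105... | 1.0 | Which hospital in Shenyang is best for herpes - ```

Which hospital in Shenyang is best for herpes 〓 Shenyang Military Region Political Department Hospital, STD clinic 〓 TEL: 024-31023308 〓 Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases.

Located at No. 32 Erwei Road, Shenhe District, Shenyang. It is a comprehensive hospital, founded alongside New China, with a long history, fine equipment, authoritative expertise and many specialists, combining prevention, health care, medical treatment, research and rehabilitation.

It is among the country's first public Grade A military hospitals and first designated units for medical standards, and is a teaching hospital for the Fourth Military Medical University, �南 University and other well-known institutions of higher education.

It was named an advanced unit for health work by the Health Department of the Logistics Department of the PLA Air Force, and has twice been awarded a collective �-class merit.

```

-----

Original issue reported on code.google.co... | defect | which hospital in shenyang is best for herpes which hospital in shenyang is best for herpes 〓 shenyang military region political department hospital std clinic 〓 tel 〓 it is a comprehensive hospital founded alongside new china with a long history fine equipment authoritative expertise and many specialists combining prevention health care medical treatment research and rehabilitation it is among the country s first public grade a military hospitals and first designated units for medical standards and is a teaching hospital for the fourth military medical university �南 university and other well known institutions of higher education it was named an advanced unit for health work by the health department of the logistics department of the pla air force and has twice been awarded a collective � class merit original issue reported on code google com by gmail com on jun at | 1 |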

109,226 | 23,740,176,539 | IssuesEvent | 2022-08-31 11:44:51 | sast-automation-dev/hackme-20 | https://api.github.com/repos/sast-automation-dev/hackme-20 | opened | Code Security Report: 15 high severity findings, 20 total findings | code security findings | # Code Security Report

**Latest Scan:** 2022-08-31 11:43am

**Total Findings:** 20

**Tested Project Files:** 44

**Detected Programming Languages:** 2

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END -->

## Language: JavaScript / Node.js

| Severity | CWE | Vulne... | 1.0 | Code Security Report: 15 high severity findings, 20 total findings - # Code Security Report

**Latest Scan:** 2022-08-31 11:43am

**Total Findings:** 20

**Tested Project Files:** 44

**Detected Programming Languages:** 2

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN... | non_defect | code security report high severity findings total findings code security report latest scan total findings tested project files detected programming languages check this box to manually trigger a scan language javascript node js severity cwe vulnerabi... | 0 |

295,412 | 22,215,492,059 | IssuesEvent | 2022-06-08 00:52:52 | Luizgsmkw/helpr | https://api.github.com/repos/Luizgsmkw/helpr | closed | Create RestController for listing a client in cliente.service. | documentation enhancement | - [x] Create a REST controller;

- [x] Create the client service;

- [x] Create methods for the RestController endpoint;

- [x] Wire up the client repositories;

- [x] Test the endpoint in Postman. | 1.0 | Create RestController for listing a client in cliente.service. - - [x] Create a REST controller;

- [x] Create the client service;

- [x] Create methods for the RestController endpoint;

- [x] Wire up the client repositories;

- [x] Test the endpoint in Postman. | non_defect | create restcontroller for listing a client in cliente service create a rest controller create the client service create methods for the restcontroller endpoint wire up the client repositories test the endpoint in postman | 0 |

11,435 | 2,651,545,003 | IssuesEvent | 2015-03-16 12:21:53 | micheles/papers | https://api.github.com/repos/micheles/papers | closed | kwargs dict is not filled with kwargs | auto-migrated Priority-Medium Type-Defect | ```

Hi Michele. Thanks for your great job!

Wrote a small decorator(django by default caches everything in 'default' cache):

def filesystem_cache(key_prefix, cache_time=None):

"""

Caches function based on key_prefix and function args/kwargs.

Stores function result in filesystem cache for a certain cache_ti... | 1.0 | kwargs dict is not filled with kwargs - ```

Hi Michele. Thanks for your great job!

Wrote a small decorator(django by default caches everything in 'default' cache):

def filesystem_cache(key_prefix, cache_time=None):

"""

Caches function based on key_prefix and function args/kwargs.

Stores function result in... | defect | kwargs dict is not filled with kwargs hi michele thanks for your great job wrote a small decorator django by default caches everything in default cache def filesystem cache key prefix cache time none caches function based on key prefix and function args kwargs stores function result in... | 1 |

29,129 | 5,542,560,954 | IssuesEvent | 2017-03-22 15:15:13 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | try...finally in async | defect in progress | Return keyword doesn't work correctly inside async try/catch block

### Steps To Reproduce

http://deck.net/2a511799409c10edd4cb311a322de94c

```c#

public class Program

{

public static async void Main()

{

try

{

var errors = await TestAsync();

if (errors.Length != 0)... | 1.0 | try...finally in async - Return keyword doesn't work correctly inside async try/catch block

### Steps To Reproduce

http://deck.net/2a511799409c10edd4cb311a322de94c

```c#

public class Program

{

public static async void Main()

{

try

{

var errors = await TestAsync();

... | defect | try finally in async return keyword doesn t work correctly inside async try catch block steps to reproduce c public class program public static async void main try var errors await testasync if errors length return ... | 1 |

28,535 | 5,286,901,480 | IssuesEvent | 2017-02-08 10:39:53 | AsyncHttpClient/async-http-client | https://api.github.com/repos/AsyncHttpClient/async-http-client | closed | Early termination of reactive streams subscriber should close connection | Defect | If a reactive streams subscriber cancels mid response, currently, AHC will drain the response, and return the connection to the queue once done. This is not what happens when `AsyncHandler.onBodyPartReceived` returns `ABORT`, for example, when that happens, the connection is closed (I think). The problem we're seeing... | 1.0 | Early termination of reactive streams subscriber should close connection - If a reactive streams subscriber cancels mid response, currently, AHC will drain the response, and return the connection to the queue once done. This is not what happens when `AsyncHandler.onBodyPartReceived` returns `ABORT`, for example, when ... | defect | early termination of reactive streams subscriber should close connection if a reactive streams subscriber cancels mid response currently ahc will drain the response and return the connection to the queue once done this is not what happens when asynchandler onbodypartreceived returns abort for example when ... | 1 |

4,517 | 2,610,111,850 | IssuesEvent | 2015-02-26 18:34:40 | chrsmith/scribefire-chrome | https://api.github.com/repos/chrsmith/scribefire-chrome | closed | server error requested method get wp.getusersblogs does not exist | auto-migrated Priority-Medium Type-Defect | ```

What's the problem?

This summary line is the error I get when I try to add my WP blog. FWIW,

it's a WP blog hosted at my domain. It worked fine with Windows Live

Writer.

What version of ScribeFire for Chrome are you running?

0.1.1.0

```

-----

Original issue reported on code.google.com by `barbaric...@gm... | 1.0 | server error requested method get wp.getusersblogs does not exist - ```

What's the problem?

This summary line is the error I get when I try to add my WP blog. FWIW,

it's a WP blog hosted at my domain. It worked fine with Windows Live

Writer.

What version of ScribeFire for Chrome are you running?

0.1.1.0

```

... | defect | server error requested method get wp getusersblogs does not exist what s the problem this summary line is the error i get when i try to add my wp blog fwiw it s a wp blog hosted at my domain it worked fine with windows live writer what version of scribefire for chrome are you running ... | 1 |

781,488 | 27,439,516,318 | IssuesEvent | 2023-03-02 10:01:03 | pmgbergen/porepy | https://api.github.com/repos/pmgbergen/porepy | opened | Specific volumes for interfaces | enhancement priority - high | Interfaces need a notion of specific volumes to allow integration. The specific volume accounts for the collapsed dimension(s), so that it matches the dimension of the boundary of its higher-dimensional neighbor. | 1.0 | Specific volumes for interfaces - Interfaces need a notion of specific volumes to allow integration. The specific volume accounts for the collapsed dimension(s), so that it matches the dimension of the boundary of its higher-dimensional neighbor. | non_defect | specific volumes for interfaces interfaces need a notion of specific volumes to allow integration the specific volume accounts for the collapsed dimension s so that it matches the dimension of the boundary of its higher dimensional neighbor | 0 |

121,047 | 15,834,508,867 | IssuesEvent | 2021-04-06 16:52:24 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Button Update Doesn't Make Centering and Moving of Block Obvious/Intuitive | Needs Design Feedback [Block] Buttons | Hi All,

I love Gutenberg! I create content in it every day for upwards of 30 unique sites.

The button update:

LOVE that I can do side-by-side

HATE that it's not UX friendly in terms of innate functionality being obvious and present (moving up and down/centering) when you click the plus button. See [short v... | 1.0 | Button Update Doesn't Make Centering and Moving of Block Obvious/Intuitive - Hi All,

I love Gutenberg! I create content in it every day for upwards of 30 unique sites.

The button update:

LOVE that I can do side-by-side

HATE that it's not UX friendly in terms of innate functionality being obvious and present... | non_defect | button update doesn t make centering and moving of block obvious intuitive hi all i love gutenberg i create content in it every day for upwards of unique sites the button update love that i can do side by side hate that it s not ux friendly in terms of innate functionality being obvious and present ... | 0 |

67,707 | 21,076,189,076 | IssuesEvent | 2022-04-02 07:02:27 | klubcoin/lcn-mobile | https://api.github.com/repos/klubcoin/lcn-mobile | closed | [Account Maintenance][SEED Phrase][Biometrics] Fix must accept correct password for Revealing Secret Recovery Phrase after unlocking the application via biometrics. | Defect Must Have Critical Account Maintenance Services | ### **Description:**

Must accept correct password for Backing up again the Secret Recovery Phrase after unlocking the application via biometrics.

**Build Environment:** Staging Environment

**Affects Version:** 1.0.0.22

**Device Platform:** Android

**Device OS:** 11

**Test Device:** OnePlus 7T Pro

### **Pre-c... | 1.0 | [Account Maintenance][SEED Phrase][Biometrics] Fix must accept correct password for Revealing Secret Recovery Phrase after unlocking the application via biometrics. - ### **Description:**

Must accept correct password for Backing up again the Secret Recovery Phrase after unlocking the application via biometrics.

**B... | defect | fix must accept correct password for revealing secret recovery phrase after unlocking the application via biometrics description must accept correct password for backing up again the secret recovery phrase after unlocking the application via biometrics build environment staging environment ... | 1 |

55,984 | 14,882,315,936 | IssuesEvent | 2021-01-20 11:43:29 | martinrotter/rssguard | https://api.github.com/repos/martinrotter/rssguard | closed | [BUG]: Application starting from version 3.8.2 does not start because of custom compiled QtWebEngine | Component-Web-Browser Status-Partially-Fixed Type-Defect Type-Deployment | #### Brief description of the issue.

Application starting from version 3.8.2 does not start

#### How to reproduce the bug?

1. Download the application and run the installation over the already installed

#### What was the expected result?

Application installed and worked

#### What actually happened?

... | 1.0 | [BUG]: Application starting from version 3.8.2 does not start because of custom compiled QtWebEngine - #### Brief description of the issue.

Application starting from version 3.8.2 does not start

#### How to reproduce the bug?

1. Download the application and run the installation over the already installed

##... | defect | application starting from version does not start because of custom compiled qtwebengine brief description of the issue application starting from version does not start how to reproduce the bug download the application and run the installation over the already installed w... | 1 |

197,335 | 22,591,809,236 | IssuesEvent | 2022-06-28 20:42:57 | billmcchesney1/jazz | https://api.github.com/repos/billmcchesney1/jazz | opened | CVE-2022-0691 (High) detected in url-parse-1.4.7.tgz | security vulnerability | ## CVE-2022-0691 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small footprint URL parser that works seamlessly across Node.js and browser en... | True | CVE-2022-0691 (High) detected in url-parse-1.4.7.tgz - ## CVE-2022-0691 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small footprint URL par... | non_defect | cve high detected in url parse tgz cve high severity vulnerability vulnerable library url parse tgz small footprint url parser that works seamlessly across node js and browser environments library home page a href path to dependency file templates react website templat... | 0 |

49,585 | 13,187,236,621 | IssuesEvent | 2020-08-13 02:46:48 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [steamshovel] ipdf I3DOMLikelihoodArtist causes shovelart to segfault on exit (Trac #1711) | Incomplete Migration Migrated from Trac combo reconstruction defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1711">https://code.icecube.wisc.edu/ticket/1711</a>, reported by kjmeagher and owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:14:55",