Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

96,792 | 12,157,448,750 | IssuesEvent | 2020-04-25 22:03:19 | ethereum/solidity | https://api.github.com/repos/ethereum/solidity | opened | Allow constants in interfaces | language design :rage4: | (Moved this from #3411 which, now confusingly, refers to a different feature.)

Since we allow accessing constants through contract names (#1290) and enums in interfaces (#4087), it may make sense to reconsider this feature, at least for some healthy discussion.

I am still not sure if it is a good idea or not (it ... | 1.0 | Allow constants in interfaces - (Moved this from #3411 which, now confusingly, refers to a different feature.)

Since we allow accessing constants through contract names (#1290) and enums in interfaces (#4087), it may make sense to reconsider this feature, at least for some healthy discussion.

I am still not sure ... | non_defect | allow constants in interfaces moved this from which now confusingly refers to a different feature since we allow accessing constants through contract names and enums in interfaces it may make sense to reconsider this feature at least for some healthy discussion i am still not sure if it is ... | 0 |

7,107 | 2,610,327,391 | IssuesEvent | 2015-02-26 19:45:30 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Typo | auto-migrated Priority-Low Type-Defect | ```

who is an should be between "Deltasquad" and "explosive" on Scorch's description

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 9 Jun 2011 at 12:21 | 1.0 | Typo - ```

who is an should be between "Deltasquad" and "explosive" on Scorch's description

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 9 Jun 2011 at 12:21 | defect | typo who is an should be between deltasquad and explosive on scorch s description original issue reported on code google com by gmail com on jun at | 1 |

49,132 | 13,185,249,346 | IssuesEvent | 2020-08-12 21:01:14 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [sim-services] sanity checker - tau samples (Trac #805) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/805

, reported by olivas and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:03",

"description": "https://icecube-spno.slack.com/files/dschultz/F02V2RF5A/v04-01-07_sanity_checker_er... | 1.0 | [sim-services] sanity checker - tau samples (Trac #805) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/805

, reported by olivas and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:03",

"description": "https://icecube-spno.slac... | defect | sanity checker tau samples trac migrated from reported by olivas and owned by olivas json status closed changetime description is likely due to the fact that the taus are inice but outside the active volume the stochastic aren t tracked in this c... | 1 |

626,768 | 19,842,560,418 | IssuesEvent | 2022-01-21 00:02:33 | SahilSawantUSA/Scouterdeck-Project | https://api.github.com/repos/SahilSawantUSA/Scouterdeck-Project | closed | Add electron base | Priority: High Type: Feature Hardware: RPI | Add the base for an electron app which will serve as the main application for the Scouter Deck. | 1.0 | Add electron base - Add the base for an electron app which will serve as the main application for the Scouter Deck. | non_defect | add electron base add the base for an electron app which will serve as the main application for the scouter deck | 0 |

451,462 | 13,036,287,920 | IssuesEvent | 2020-07-28 11:58:50 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | Model using component fails with error since update | priority: medium source: plugin:content-manager status: confirmed | **Describe the bug**

Any model referencing a component as an attribute fails to open admin collection type screen.

**Steps to reproduce the behavior**

1. Clicking on any collection type that uses a component within Strapi admin results in follow error:

```

Something went wrong.

Details

TypeError: S.include... | 1.0 | Model using component fails with error since update - **Describe the bug**

Any model referencing a component as an attribute fails to open admin collection type screen.

**Steps to reproduce the behavior**

1. Clicking on any collection type that uses a component within Strapi admin results in follow error:

```... | non_defect | model using component fails with error since update describe the bug any model referencing a component as an attribute fails to open admin collection type screen steps to reproduce the behavior clicking on any collection type that uses a component within strapi admin results in follow error ... | 0 |

78,723 | 27,734,516,200 | IssuesEvent | 2023-03-15 10:18:38 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: send_keys() is messing up indentation | I-defect I-issue-template | ### What happened?

When using "ctrl+c" and "ctrl+v" to copy and paste the code to the textare... | 1.0 | [🐛 Bug]: send_keys() is messing up indentation - ### What happened?

When using "ctrl+c" and ... | defect | send keys is messing up indentation what happened when using ctrl c and ctrl v to copy and paste the code to the textarea its indentation doesn t change but when we use send keys to enter the text in its indentation will be in a mess how can we reproduce the issue shell we... | 1 |

59,339 | 17,023,091,059 | IssuesEvent | 2021-07-03 00:20:32 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | interactive map edit queries | Component: admin Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 12.36pm, Friday, 16th December 2005]**

From the editor, or another interface, ability to query the edit history of points/segments. | 1.0 | interactive map edit queries - **[Submitted to the original trac issue database at 12.36pm, Friday, 16th December 2005]**

From the editor, or another interface, ability to query the edit history of points/segments. | defect | interactive map edit queries from the editor or another interface ability to query the edit history of points segments | 1 |

74,962 | 25,457,811,317 | IssuesEvent | 2022-11-24 15:35:52 | SAP/fundamental-styles | https://api.github.com/repos/SAP/fundamental-styles | closed | Input field truncation bug | Bug Defect Hunting version 1.0 | discussed with @manu-kr and the issue is coming from Input component, not related to File Uploader

It's coming from Fundamental-styles

#### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

- [ ] File Uploader with truncation loses truncation when a file is selected:

Ini... | 1.0 | Input field truncation bug - discussed with @manu-kr and the issue is coming from Input component, not related to File Uploader

It's coming from Fundamental-styles

#### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

- [ ] File Uploader with truncation loses truncation ... | defect | input field truncation bug discussed with manu kr and the issue is coming from input component not related to file uploader it s coming from fundamental styles is this a bug enhancement or feature request bug briefly describe your proposal file uploader with truncation loses truncation wh... | 1 |

810,336 | 30,237,292,845 | IssuesEvent | 2023-07-06 11:08:54 | slynch8/10x | https://api.github.com/repos/slynch8/10x | closed | AddBuildFinishedFunction always has result as true | bug Priority 2 trivial current done | Result passed to the function given to AddBuildFinishedFunction always seems to be true even tho there're build errors & warnings. Also it would be nice to have GetErrorCount & GetWarningCount. | 1.0 | AddBuildFinishedFunction always has result as true - Result passed to the function given to AddBuildFinishedFunction always seems to be true even tho there're build errors & warnings. Also it would be nice to have GetErrorCount & GetWarningCount. | non_defect | addbuildfinishedfunction always has result as true result passed to the function given to addbuildfinishedfunction always seems to be true even tho there re build errors warnings also it would be nice to have geterrorcount getwarningcount | 0 |

403,046 | 11,834,993,639 | IssuesEvent | 2020-03-23 09:53:38 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.youtube.com - desktop site instead of mobile site | browser-firefox-mobile engine-gecko priority-critical | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:68.0) Gecko/20100101 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50527 -->

**URL**: https://m.youtube.com/watch?v=-f0IF0NYmVI

**Browser / Version**:... | 1.0 | m.youtube.com - desktop site instead of mobile site - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0; Mobile; rv:68.0) Gecko/20100101 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50527 -->

**URL**: https://m.y... | non_defect | m youtube com desktop site instead of mobile site url browser version firefox mobile operating system android tested another browser yes problem type desktop site instead of mobile site description steps to reproduce browser configuration gfx ... | 0 |

49,079 | 13,185,220,561 | IssuesEvent | 2020-08-12 20:57:55 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | Update offline_trunk/metaproject/quickstart.html (Trac #701) | Incomplete Migration Migrated from Trac defect documentation | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/701

, reported by blaufuss and owned by blaufuss</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-02-11T19:37:48",

"description": "These are old and crufty. \n\nProbably best to leave specifics out, bur rather... | 1.0 | Update offline_trunk/metaproject/quickstart.html (Trac #701) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/701

, reported by blaufuss and owned by blaufuss</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-02-11T19:37:48",

"description": "These are old an... | defect | update offline trunk metaproject quickstart html trac migrated from reported by blaufuss and owned by blaufuss json status closed changetime description these are old and crufty n nprobably best to leave specifics out bur rather make it a collection ... | 1 |

14,953 | 2,832,206,518 | IssuesEvent | 2015-05-25 05:26:40 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | Footer moves down with Horizontal Scrolling and Selection | defect | In Internet Explorer 9 (IE9), with a DataTable if we enable Row Selection (single or multiple) and we enable scrollable with a scrollWidth small enough to cause horizontal scrollbar, the footer of the DataTable moves down the page whenever we mouseover the data rows. The more you move the mouse on mouseover, the more ... | 1.0 | Footer moves down with Horizontal Scrolling and Selection - In Internet Explorer 9 (IE9), with a DataTable if we enable Row Selection (single or multiple) and we enable scrollable with a scrollWidth small enough to cause horizontal scrollbar, the footer of the DataTable moves down the page whenever we mouseover the dat... | defect | footer moves down with horizontal scrolling and selection in internet explorer with a datatable if we enable row selection single or multiple and we enable scrollable with a scrollwidth small enough to cause horizontal scrollbar the footer of the datatable moves down the page whenever we mouseover the data ... | 1 |

333,171 | 24,365,774,611 | IssuesEvent | 2022-10-03 15:03:35 | Punpun1643/alpha6 | https://api.github.com/repos/Punpun1643/alpha6 | opened | Bug0 - trying all sort of markdown stuff | type.DocumentationBug severity.Medium | ---

__Advertisement :)__

- __[pica](https://nodeca.github.io/pica/demo/)__ - high quality and fast image

resize in browser.

- __[babelfish](https://github.com/nodeca/babelfish/)__ - developer friendly

i18n with plurals support and easy syntax.

You will like those projects!

---

# h1 Heading 8-)

## h2 Heading

###... | 1.0 | Bug0 - trying all sort of markdown stuff - ---

__Advertisement :)__

- __[pica](https://nodeca.github.io/pica/demo/)__ - high quality and fast image

resize in browser.

- __[babelfish](https://github.com/nodeca/babelfish/)__ - developer friendly

i18n with plurals support and easy syntax.

You will like those project... | non_defect | trying all sort of markdown stuff advertisement high quality and fast image resize in browser developer friendly with plurals support and easy syntax you will like those projects heading heading heading heading heading head... | 0 |

185,054 | 21,785,058,176 | IssuesEvent | 2022-05-14 02:19:36 | Yash-Handa/GitHub-Org-Geographics | https://api.github.com/repos/Yash-Handa/GitHub-Org-Geographics | closed | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz - autoclosed | security vulnerability | ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or delete a property from a nested object using a dot path</p>

<p>Lib... | True | CVE-2020-8116 (High) detected in dot-prop-4.2.0.tgz - autoclosed - ## CVE-2020-8116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dot-prop-4.2.0.tgz</b></p></summary>

<p>Get, set, or... | non_defect | cve high detected in dot prop tgz autoclosed cve high severity vulnerability vulnerable library dot prop tgz get set or delete a property from a nested object using a dot path library home page a href path to dependency file functions package json path to vulnera... | 0 |

157,646 | 6,010,260,995 | IssuesEvent | 2017-06-06 12:47:59 | theam/haskell-do | https://api.github.com/repos/theam/haskell-do | closed | Backend doesn't spawn on first opening | Bug High priority | It seems like the backend can't spawn when I reboot my laptop and pc on first opening (ubuntu 16.04 and windows 10). The web socket is right the first time I execute the app but it doesn't show in haskell-do. | 1.0 | Backend doesn't spawn on first opening - It seems like the backend can't spawn when I reboot my laptop and pc on first opening (ubuntu 16.04 and windows 10). The web socket is right the first time I execute the app but it doesn't show in haskell-do. | non_defect | backend doesn t spawn on first opening it seems like the backend can t spawn when i reboot my laptop and pc on first opening ubuntu and windows the web socket is right the first time i execute the app but it doesn t show in haskell do | 0 |

113,485 | 24,424,546,801 | IssuesEvent | 2022-10-06 00:42:16 | alexander-wise/RvLineList | https://api.github.com/repos/alexander-wise/RvLineList | closed | Are you using a floating point variable to store integers? | bug code quality | https://github.com/alexander-wise/RvLineList/blob/6f57cd6572fb8678bb252fe64019d1e76394317a/src/empirical_line_lists.jl#L72

If I were starting from scratch, I'd use a different data structure here, since (I think) you're storing different information in different columns. | 1.0 | Are you using a floating point variable to store integers? - https://github.com/alexander-wise/RvLineList/blob/6f57cd6572fb8678bb252fe64019d1e76394317a/src/empirical_line_lists.jl#L72

If I were starting from scratch, I'd use a different data structure here, since (I think) you're storing different information in diffe... | non_defect | are you using a floating point variable to store integers if i were starting from scratch i d use a different data structure here since i think you re storing different information in different columns | 0 |

244,870 | 18,768,653,829 | IssuesEvent | 2021-11-06 12:08:28 | girlscript/winter-of-contributing | https://api.github.com/repos/girlscript/winter-of-contributing | closed | Matrix | documentation GWOC21 Assigned C/CPP | ### Description

What is Matrix

Declaration, working, and traversal

Matrix will be a folder.

This is will be the intro matrix .md file

Further files will be added accordingly!

### Domain

C/CPP

### Type of Contribution

Documentation

### Code of Conduct

- [X] I follow [Contributing Guidelines](https://github.co... | 1.0 | Matrix - ### Description

What is Matrix

Declaration, working, and traversal

Matrix will be a folder.

This is will be the intro matrix .md file

Further files will be added accordingly!

### Domain

C/CPP

### Type of Contribution

Documentation

### Code of Conduct

- [X] I follow [Contributing Guidelines](https:/... | non_defect | matrix description what is matrix declaration working and traversal matrix will be a folder this is will be the intro matrix md file further files will be added accordingly domain c cpp type of contribution documentation code of conduct i follow of this project | 0 |

171 | 2,517,527,541 | IssuesEvent | 2015-01-16 15:28:18 | contao/core | https://api.github.com/repos/contao/core | closed | Input.php - Unset for static::$arrCache['postUnsafeRaw'] missing. | defect | With the new function "postUnsafeRaw" you added a new "static::$arrCache['postUnsafeRaw']".

See #4614. We have the same porblem again. It would be nice if the "setPost" function removes the "static::$arrCache['postUnsafeRaw'][$strKey]", too.

See https://github.com/contao/core/blob/support/3.2/system/modules/core/l... | 1.0 | Input.php - Unset for static::$arrCache['postUnsafeRaw'] missing. - With the new function "postUnsafeRaw" you added a new "static::$arrCache['postUnsafeRaw']".

See #4614. We have the same porblem again. It would be nice if the "setPost" function removes the "static::$arrCache['postUnsafeRaw'][$strKey]", too.

See h... | defect | input php unset for static arrcache missing with the new function postunsaferaw you added a new static arrcache see we have the same porblem again it would be nice if the setpost function removes the static arrcache too see | 1 |

25,981 | 4,540,072,141 | IssuesEvent | 2016-09-09 13:33:00 | stunpix/stacklessexamples | https://api.github.com/repos/stunpix/stacklessexamples | closed | receive bug in stacklesssocket30.py | auto-migrated Priority-Medium Type-Defect | ```

The bug is in stacklesssocket30.py.

What steps will reproduce the problem?

1. Try receiving data on a socket using the recv_into() function.

2.

3.

What is the expected output? What do you see instead?

It will fail like this:

File "stacklesssocket.py", line 249, in recv

self.recv_into(b, byteCount, flags)

F... | 1.0 | receive bug in stacklesssocket30.py - ```

The bug is in stacklesssocket30.py.

What steps will reproduce the problem?

1. Try receiving data on a socket using the recv_into() function.

2.

3.

What is the expected output? What do you see instead?

It will fail like this:

File "stacklesssocket.py", line 249, in recv

s... | defect | receive bug in py the bug is in py what steps will reproduce the problem try receiving data on a socket using the recv into function what is the expected output what do you see instead it will fail like this file stacklesssocket py line in recv self recv into b bytecount flags ... | 1 |

127,123 | 18,010,290,683 | IssuesEvent | 2021-09-16 07:48:40 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | opened | CVE-2017-9075 (High) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2017-9075 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page... | True | CVE-2017-9075 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2017-9075 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwri... | non_defect | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href vulnerable source files net sctp c ... | 0 |

289,424 | 21,782,752,342 | IssuesEvent | 2022-05-13 20:55:38 | lumeland/docker | https://api.github.com/repos/lumeland/docker | closed | Improve Docker Hub page | documentation | Lume's [Docker Hub](https://hub.docker.com/r/oscarotero/lume/) page is blank currently. The initial work for the Docker images is done, so it's about time to improve the Docker Hub page.

[There](https://hub.docker.com/_/gradle/) [are](https://hub.docker.com/_/eclipse-temurin) [good](https://hub.docker.com/r/denoland... | 1.0 | Improve Docker Hub page - Lume's [Docker Hub](https://hub.docker.com/r/oscarotero/lume/) page is blank currently. The initial work for the Docker images is done, so it's about time to improve the Docker Hub page.

[There](https://hub.docker.com/_/gradle/) [are](https://hub.docker.com/_/eclipse-temurin) [good](https:/... | non_defect | improve docker hub page lume s page is blank currently the initial work for the docker images is done so it s about time to improve the docker hub page examples to take a look at and probably use them as a guide | 0 |

330,726 | 24,274,747,089 | IssuesEvent | 2022-09-28 13:07:03 | Qiskit/qiskit.org | https://api.github.com/repos/Qiskit/qiskit.org | closed | Document local development instructions | documentation project: marketing | We need to document how to work on development tasks in the `q-site` project. | 1.0 | Document local development instructions - We need to document how to work on development tasks in the `q-site` project. | non_defect | document local development instructions we need to document how to work on development tasks in the q site project | 0 |

5,724 | 8,183,087,581 | IssuesEvent | 2018-08-29 07:58:00 | pingcap/tidb | https://api.github.com/repos/pingcap/tidb | closed | SHOW GRANTS FOR CURRENT_USER() does not work | type/compatibility | Hi.

I'm execute command(by mysql cli)

`SHOW GRANTS FOR CURRENT_USER();`

get the error

`

ERROR 1105 (HY000): line 1 column 28 near "()" (total length 30)

`

tidb version:

`5.7.1-TiDB-v1.1.0-alpha-459-ga2a48b3`

BTW .

tidb support MySQL Protocol , but not very compatible with MySQL's existing client tool... | True | SHOW GRANTS FOR CURRENT_USER() does not work - Hi.

I'm execute command(by mysql cli)

`SHOW GRANTS FOR CURRENT_USER();`

get the error

`

ERROR 1105 (HY000): line 1 column 28 near "()" (total length 30)

`

tidb version:

`5.7.1-TiDB-v1.1.0-alpha-459-ga2a48b3`

BTW .

tidb support MySQL Protocol , but not ve... | non_defect | show grants for current user does not work hi i m execute command(by mysql cli) show grants for current user get the error error line column near total length tidb version tidb alpha btw tidb support mysql protocol but not very compatible with ... | 0 |

40,813 | 10,167,638,399 | IssuesEvent | 2019-08-07 18:43:18 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | Allow evaporative coolers to cycle | Defect InProgress MigratedFromUserVoice SeverityHigh | Problem: Many residential evaporative cooling technologies (e.g., swamp coolers) are controlled to cycle on and off to meet the load. As stated in the I/O reference, these units cannot cycle, meaning that their pumps run continuously even when the required airflow rate is close to zero.

Solution: Give zone evaporati... | 1.0 | Allow evaporative coolers to cycle - Problem: Many residential evaporative cooling technologies (e.g., swamp coolers) are controlled to cycle on and off to meet the load. As stated in the I/O reference, these units cannot cycle, meaning that their pumps run continuously even when the required airflow rate is close to z... | defect | allow evaporative coolers to cycle problem many residential evaporative cooling technologies e g swamp coolers are controlled to cycle on and off to meet the load as stated in the i o reference these units cannot cycle meaning that their pumps run continuously even when the required airflow rate is close to z... | 1 |

393,066 | 26,971,414,758 | IssuesEvent | 2023-02-09 05:23:49 | SashenJayathilaka/Library_Management_System | https://api.github.com/repos/SashenJayathilaka/Library_Management_System | closed | Library Management System in java | documentation | # Library_Management_System

Library Management System in java

# MySql Database

Library Management System in java

# MySql Database

3. Replace /usr/bin/ld to use ld.mcld to link

4. Now, use 'gdb ./a.out' and 'b main'

Expected:

Breakpoint 1, main() at test.c:3

Result:

Breakpoint 1, GNU C 4.8.2 -mtune=generic -march=x86-64 ... | 1.0 | DebugInfo has wrong info when gdb debugging - ```

What steps will reproduce the problem?

1. Write a simple helloworld.c and gcc it to a.out (Use -v to dump detail

commands)

3. Replace /usr/bin/ld to use ld.mcld to link

4. Now, use 'gdb ./a.out' and 'b main'

Expected:

Breakpoint 1, main() at test.c:3

Result:

Breakpoin... | defect | debuginfo has wrong info when gdb debugging what steps will reproduce the problem write a simple helloworld c and gcc it to a out use v to dump detail commands replace usr bin ld to use ld mcld to link now use gdb a out and b main expected breakpoint main at test c result breakpoin... | 1 |

84,957 | 3,682,518,633 | IssuesEvent | 2016-02-24 10:03:58 | MoOx/statinamic | https://api.github.com/repos/MoOx/statinamic | closed | Internal link (from markdown) are normal link and trigger full page load | level: high-priority type: enhancement | That's a shame isn't it?

Before handling this, we need to work on #11 (cause this will handle markdown as react components instead of html). | 1.0 | Internal link (from markdown) are normal link and trigger full page load - That's a shame isn't it?

Before handling this, we need to work on #11 (cause this will handle markdown as react components instead of html). | non_defect | internal link from markdown are normal link and trigger full page load that s a shame isn t it before handling this we need to work on cause this will handle markdown as react components instead of html | 0 |

775,033 | 27,216,001,045 | IssuesEvent | 2023-02-20 21:55:22 | GoogleCloudPlatform/cloud-sql-python-connector | https://api.github.com/repos/GoogleCloudPlatform/cloud-sql-python-connector | closed | system.test_asyncpg_connection: test_connection_with_asyncpg failed | type: bug priority: p2 flakybot: issue | Note: #597 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 79d227784531f61cca70ebbaa8e9c75c438fd4f4

buildURL: https://github.com/GoogleCloudPlatform/cloud-sql-python-connector/actions/runs/4227214516

status: failed

<details><summary>Test output</summary><br><p... | 1.0 | system.test_asyncpg_connection: test_connection_with_asyncpg failed - Note: #597 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 79d227784531f61cca70ebbaa8e9c75c438fd4f4

buildURL: https://github.com/GoogleCloudPlatform/cloud-sql-python-connector/actions/runs/4... | non_defect | system test asyncpg connection test connection with asyncpg failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output pytest fixture name conn async def setup asyncgenerator initializ... | 0 |

39,995 | 6,793,831,045 | IssuesEvent | 2017-11-01 09:29:12 | commons-app/apps-android-commons | https://api.github.com/repos/commons-app/apps-android-commons | opened | Tidy up Commons:Mobile app page | documentation | https://commons.wikimedia.org/wiki/Commons:Mobile_app could potentially be due for a bit of a tidy-up, to reflect the current state of the app. Any ideas for how we could structure the information and what else to add? | 1.0 | Tidy up Commons:Mobile app page - https://commons.wikimedia.org/wiki/Commons:Mobile_app could potentially be due for a bit of a tidy-up, to reflect the current state of the app. Any ideas for how we could structure the information and what else to add? | non_defect | tidy up commons mobile app page could potentially be due for a bit of a tidy up to reflect the current state of the app any ideas for how we could structure the information and what else to add | 0 |

21,717 | 11,351,730,635 | IssuesEvent | 2020-01-24 11:57:06 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Production grade profile for Console Backend Service | area/console area/performance | **Description**

Looks like Console Backend Service hits its memory limits on some clusters (see [linked issue](https://github.com/kyma-project/kyma/issues/6667)). This usually happens right after pod starts. There seems to be a memory consumption peak that happens immediately after pod starts

Analyse realistic me... | True | Production grade profile for Console Backend Service - **Description**

Looks like Console Backend Service hits its memory limits on some clusters (see [linked issue](https://github.com/kyma-project/kyma/issues/6667)). This usually happens right after pod starts. There seems to be a memory consumption peak that happe... | non_defect | production grade profile for console backend service description looks like console backend service hits its memory limits on some clusters see this usually happens right after pod starts there seems to be a memory consumption peak that happens immediately after pod starts analyse realistic memory req... | 0 |

716,779 | 24,648,135,151 | IssuesEvent | 2022-10-17 16:19:05 | wazuh/wazuh-kibana-app | https://api.github.com/repos/wazuh/wazuh-kibana-app | closed | Blank window due to conflicts with the cached assets | bug priority/high compatibility cat-4 | | Wazuh | OpenSearch | Rev | Security |

| ----- | ------- | ---- | -------- |

| 4.3.6 | 1.2.0 | 4xxx | - |

| Browser |

| ------- |

| Chrome, Firefox, Safari, etc|

## Description

This is... | 1.0 | Blank window due to conflicts with the cached assets - | Wazuh | OpenSearch | Rev | Security |

| ----- | ------- | ---- | -------- |

| 4.3.6 | 1.2.0 | 4xxx | - |

| Browser |

| ------- |

| Chrome, Firefox, Safari, etc|

## Description

| auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. use Jinja2 as template engine via coffin (http://github.com/dcramer/coffin)

2. overriding pagination/pagination.html with own Jinja2 template

3. pagination templatetags are unknown

TemplateSyntaxError: unknown tag 'autopaginate' File ...

Try to add them:

from coffi... | 1.0 | Making it work with Jinja2 (coffin) - ```

What steps will reproduce the problem?

1. use Jinja2 as template engine via coffin (http://github.com/dcramer/coffin)

2. overriding pagination/pagination.html with own Jinja2 template

3. pagination templatetags are unknown

TemplateSyntaxError: unknown tag 'autopaginate' Fil... | defect | making it work with coffin what steps will reproduce the problem use as template engine via coffin overriding pagination pagination html with own template pagination templatetags are unknown templatesyntaxerror unknown tag autopaginate file try to add them from coffin templat... | 1 |

24,431 | 3,979,988,029 | IssuesEvent | 2016-05-06 03:54:47 | extnet/Ext.NET | https://api.github.com/repos/extnet/Ext.NET | opened | Ext.grid.plugin.RowExpander has expanded rows reset when view is refreshed | 3.x 4.x defect | Reported on this forum thread: [Illogical work collapse\expand in grouping gridpanel](http://forums.ext.net/showthread.php?61113)

The grid with an expanded row like this:

When a group is collap... | 1.0 | Ext.grid.plugin.RowExpander has expanded rows reset when view is refreshed - Reported on this forum thread: [Illogical work collapse\expand in grouping gridpanel](http://forums.ext.net/showthread.php?61113)

The grid with an expanded row like this:

**Expected:**

**hello.deployment.yaml** contains no schema validation erro... | 1.0 | Validation error in VS Code in hello.deployment.yaml when creating NodeJS K8s Hello World template - **Repro Steps:**

1. Create a new K8s NodeJS Hello World application in VS Code

2. Expand **kubernetes-manifests** and select **hello.deployment.yaml** (may require waiting a little or switching between files for LSP ... | non_defect | validation error in vs code in hello deployment yaml when creating nodejs hello world template repro steps create a new nodejs hello world application in vs code expand kubernetes manifests and select hello deployment yaml may require waiting a little or switching between files for lsp to c... | 0 |

56,245 | 14,993,835,179 | IssuesEvent | 2021-01-29 11:57:55 | radon-h2020/radon-defect-prediction-api | https://api.github.com/repos/radon-h2020/radon-defect-prediction-api | closed | R-T3.4-5: The defect-prediction tool MUST provide a set of rules that identify defect-prone scripts and an interpretation of the final decision | Defect prediction IDE MUST WP3 | ID | R-T3.4-5

-- | --

Section | WP3: Methodology and Quality Assurance Requirements

Type | FUNCTIONAL_SUITABILITY

User Story | As an Operations Engineer/QoS Engineer/Release Manager I want the tool to show me an interpretation of the final decision for the classification of a script as defective.

Requirement | The... | 1.0 | R-T3.4-5: The defect-prediction tool MUST provide a set of rules that identify defect-prone scripts and an interpretation of the final decision - ID | R-T3.4-5

-- | --

Section | WP3: Methodology and Quality Assurance Requirements

Type | FUNCTIONAL_SUITABILITY

User Story | As an Operations Engineer/QoS Engineer/Rele... | defect | r the defect prediction tool must provide a set of rules that identify defect prone scripts and an interpretation of the final decision id r section methodology and quality assurance requirements type functional suitability user story as an operations engineer qos engineer release ... | 1 |

189,157 | 6,794,662,945 | IssuesEvent | 2017-11-01 13:07:43 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Kafka: access.log to be limited to a single topic | area/proxy kind/microtask priority/high project/1.0-gap | For efficient logging purposes, we should have access.log logging records per topic. This includes request as well as responses. | 1.0 | Kafka: access.log to be limited to a single topic - For efficient logging purposes, we should have access.log logging records per topic. This includes request as well as responses. | non_defect | kafka access log to be limited to a single topic for efficient logging purposes we should have access log logging records per topic this includes request as well as responses | 0 |

227,772 | 17,399,186,051 | IssuesEvent | 2021-08-02 17:06:40 | Nyasita/HackBioAssignmentPauling | https://api.github.com/repos/Nyasita/HackBioAssignmentPauling | closed | Personal details/ Biographies | documentation | Hello everyone,

Please update your biography under contributors on the readme, complete with:

1. Your name

2. What you do/ affiliation

3. Your social media handles/ github profile

4. An image of yourself | 1.0 | Personal details/ Biographies - Hello everyone,

Please update your biography under contributors on the readme, complete with:

1. Your name

2. What you do/ affiliation

3. Your social media handles/ github profile

4. An image of yourself | non_defect | personal details biographies hello everyone please update your biography under contributors on the readme complete with your name what you do affiliation your social media handles github profile an image of yourself | 0 |

72,232 | 24,006,936,389 | IssuesEvent | 2022-09-14 15:27:36 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | IDFVersionUpdater not working for V22-1-0 files to V22-2-0-IOFreeze | Defect AuxiliaryTool | Issue overview

--------------

IDFVersionUpdater not working on Linux for V22-2-0-IOFreeze.

```shell

mkdir tmp

cd tmp

cp /usr/local/EnergyPlus-22-1-0/ExampleFiles/1ZoneUncontrolled.idf

grep Version 1ZoneUncontrolled.idf

> Version,22.1;

../EnergyPlus-22.2.0-56f3a890b4-Linux-Ubuntu20.04-x86_64/PreProcess... | 1.0 | IDFVersionUpdater not working for V22-1-0 files to V22-2-0-IOFreeze - Issue overview

--------------

IDFVersionUpdater not working on Linux for V22-2-0-IOFreeze.

```shell

mkdir tmp

cd tmp

cp /usr/local/EnergyPlus-22-1-0/ExampleFiles/1ZoneUncontrolled.idf

grep Version 1ZoneUncontrolled.idf

> Version,22.1... | defect | idfversionupdater not working for files to iofreeze issue overview idfversionupdater not working on linux for iofreeze shell mkdir tmp cd tmp cp usr local energyplus examplefiles idf grep version idf version energyplus linux p... | 1 |

157,589 | 13,697,315,764 | IssuesEvent | 2020-10-01 02:40:24 | Xcov19/mycovidconnect | https://api.github.com/repos/Xcov19/mycovidconnect | closed | Update the README file | Hacktoberfest documentation enhancement first-timers-only good first issue help wanted up-for-grabs | Follow this format:

- Project name: Your project’s name is the first thing people will see upon scrolling down to your README, and is included upon creation of your README file.

- Description: A description of your project follows. A good description is clear, short, and to the point. Describe the importance of y... | 1.0 | Update the README file - Follow this format:

- Project name: Your project’s name is the first thing people will see upon scrolling down to your README, and is included upon creation of your README file.

- Description: A description of your project follows. A good description is clear, short, and to the point. Des... | non_defect | update the readme file follow this format project name your project’s name is the first thing people will see upon scrolling down to your readme and is included upon creation of your readme file description a description of your project follows a good description is clear short and to the point des... | 0 |

10,463 | 2,622,165,027 | IssuesEvent | 2015-03-04 00:11:56 | byzhang/graphchi | https://api.github.com/repos/byzhang/graphchi | opened | Sharder crashes is input not in the right format | auto-migrated Priority-High Type-Defect | ```

If file was adjacency, but try to read with edgelist format -> segfault.

```

Original issue reported on code.google.com by `akyrola...@gmail.com` on 28 Jun 2012 at 5:08 | 1.0 | Sharder crashes is input not in the right format - ```

If file was adjacency, but try to read with edgelist format -> segfault.

```

Original issue reported on code.google.com by `akyrola...@gmail.com` on 28 Jun 2012 at 5:08 | defect | sharder crashes is input not in the right format if file was adjacency but try to read with edgelist format segfault original issue reported on code google com by akyrola gmail com on jun at | 1 |

342,090 | 24,728,579,223 | IssuesEvent | 2022-10-20 15:42:12 | hypothesis/frontend-shared | https://api.github.com/repos/hypothesis/frontend-shared | closed | Size documentation for `Button` is incomplete | documentation pattern library | Documentation for `size` prop on `ButtonPage` is incomplete. | 1.0 | Size documentation for `Button` is incomplete - Documentation for `size` prop on `ButtonPage` is incomplete. | non_defect | size documentation for button is incomplete documentation for size prop on buttonpage is incomplete | 0 |

182,377 | 14,912,867,277 | IssuesEvent | 2021-01-22 13:20:27 | KonstantinEger/Bau-Abrechnungsprogramm | https://api.github.com/repos/KonstantinEger/Bau-Abrechnungsprogramm | opened | Wiki not up to date with main branch | documentation | **Describe the bug**

The wiki is not up to date with the current and new features of the app.

**Solution**

Add a branch to the wiki, which is up to date and push the new stuff whenever there is a new release. | 1.0 | Wiki not up to date with main branch - **Describe the bug**

The wiki is not up to date with the current and new features of the app.

**Solution**

Add a branch to the wiki, which is up to date and push the new stuff whenever there is a new release. | non_defect | wiki not up to date with main branch describe the bug the wiki is not up to date with the current and new features of the app solution add a branch to the wiki which is up to date and push the new stuff whenever there is a new release | 0 |

334,352 | 29,831,928,230 | IssuesEvent | 2023-06-18 11:35:49 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix comparison_ops.test_torch_not_equal | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5303169054/jobs/9598603920"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5303169054/jobs/9598603920"><img src=https://img.shields.io/badge/-success-success><... | 1.0 | Fix comparison_ops.test_torch_not_equal - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5303169054/jobs/9598603920"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5303169054/jobs/9598603920"><img src=https... | non_defect | fix comparison ops test torch not equal tensorflow a href src torch a href src numpy a href src jax a href src paddle a href src | 0 |

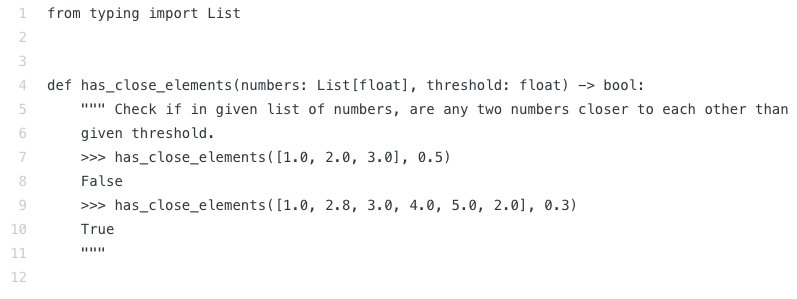

209,302 | 16,014,734,261 | IssuesEvent | 2021-04-20 14:46:13 | istio/istio | https://api.github.com/repos/istio/istio | closed | TestTraffic/virtualservice/shifting test flakes in multicluster | area/networking kind/test failure | ERROR: type should be string, got "https://prow.istio.io/view/gs/istio-prow/pr-logs/pull/istio_istio/32206/integ-pilot-multicluster-tests_istio/1382736986078449664\r\n\r\nWe are getting errors about unbalanced load. I verified the client side load balancing is correct - we send exactly 1/3 of traffic to each gw\r\n\r\nI think this is the current state:\r\n\r\n\r\nBasically because Envoy client will do HTTP connection pool, we may have only a single connection to the Gateways. Because its TCP routing, that connection will go to a single location. There is a 50/50 shot of both gateways choosing the same destination, at which point our test fails?\r\n\r\nI think envoy is not always using exactly 1 connection, but even with 2 connections each its a 12.% chance of failure, and with 3 connections each 1%\r\n\r\ncc @stevenctl \r\n" | 1.0 | TestTraffic/virtualservice/shifting test flakes in multicluster - https://prow.istio.io/view/gs/istio-prow/pr-logs/pull/istio_istio/32206/integ-pilot-multicluster-tests_istio/1382736986078449664

We are getting errors about unbalanced load. I verified the client side load balancing is correct - we send exactly 1/3 of... | non_defect | testtraffic virtualservice shifting test flakes in multicluster we are getting errors about unbalanced load i verified the client side load balancing is correct we send exactly of traffic to each gw i think this is the current state basically because envoy client will do http connection pool ... | 0 |

63,189 | 17,421,038,384 | IssuesEvent | 2021-08-04 01:23:43 | Cockatrice/Cockatrice | https://api.github.com/repos/Cockatrice/Cockatrice | closed | Both CI builds for windows are failing | CI Defect - Regression High Priority OS - Windows | I spotted this after the most recent merge to master happened (https://github.com/Cockatrice/Cockatrice/pull/4398).

64-bit and 32-bit builds are failing in the `Restore or setup vcpkg` step:

https://github.com/Cockatrice/Cockatrice/runs/3157059370

It looks like the issue was already visible in the PR itself: htt... | 1.0 | Both CI builds for windows are failing - I spotted this after the most recent merge to master happened (https://github.com/Cockatrice/Cockatrice/pull/4398).

64-bit and 32-bit builds are failing in the `Restore or setup vcpkg` step:

https://github.com/Cockatrice/Cockatrice/runs/3157059370

It looks like the issue ... | defect | both ci builds for windows are failing i spotted this after the most recent merge to master happened bit and bit builds are failing in the restore or setup vcpkg step it looks like the issue was already visible in the pr itself | 1 |

75,159 | 25,562,775,172 | IssuesEvent | 2022-11-30 12:06:44 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Informix CURRENT_TIMESTAMP emulation doesn't work as DDL DEFAULT expression | T: Defect C: Functionality C: DB: Informix P: Medium E: Enterprise Edition | This is valid in Informix:

```sql

SELECT CURRENT

```

But this isn't:

```sql

CREATE TABLE x (y DATETIME YEAR TO FRACTION (5) DEFAULT CURRENT);

```

Despite the datetime precision being clear from context, we have to supply it explicitly, as `CURRENT YEAR TO FRACTION (5)` | 1.0 | Informix CURRENT_TIMESTAMP emulation doesn't work as DDL DEFAULT expression - This is valid in Informix:

```sql

SELECT CURRENT

```

But this isn't:

```sql

CREATE TABLE x (y DATETIME YEAR TO FRACTION (5) DEFAULT CURRENT);

```

Despite the datetime precision being clear from context, we have to supply it ex... | defect | informix current timestamp emulation doesn t work as ddl default expression this is valid in informix sql select current but this isn t sql create table x y datetime year to fraction default current despite the datetime precision being clear from context we have to supply it ex... | 1 |

532,827 | 15,571,790,864 | IssuesEvent | 2021-03-17 05:46:27 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | sso.rajasthan.gov.in - see bug description | browser-fenix engine-gecko ml-needsdiagnosis-false priority-normal status-needsinfo | <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66450 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://sso.rajas... | 1.0 | sso.rajasthan.gov.in - see bug description - <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66450 -->

<!-- @extra_labels: ... | non_defect | sso rajasthan gov in see bug description url browser version firefox mobile operating system android tested another browser yes chrome problem type something else description popup steps to reproduce ok view the screenshot img alt ... | 0 |

22,622 | 3,670,924,093 | IssuesEvent | 2016-02-22 02:39:50 | gperftools/gperftools | https://api.github.com/repos/gperftools/gperftools | closed | Function names are not printed when running pprof for CPU profiler | Priority-Medium Status-New Type-Defect | Originally reported on Google Code with ID 520

```

What steps will reproduce the problem?

1.Compile the code with option -g, o, tcmalloc libraries and -lprofile

2.Run the executable with CPUPROFILE flag set and get profiled file ls.profile

3.Run pprof --callgrind /bin/ls > ls.callgrind

What is the expected output... | 1.0 | Function names are not printed when running pprof for CPU profiler - Originally reported on Google Code with ID 520

```

What steps will reproduce the problem?

1.Compile the code with option -g, o, tcmalloc libraries and -lprofile

2.Run the executable with CPUPROFILE flag set and get profiled file ls.profile

3.Run ppr... | defect | function names are not printed when running pprof for cpu profiler originally reported on google code with id what steps will reproduce the problem compile the code with option g o tcmalloc libraries and lprofile run the executable with cpuprofile flag set and get profiled file ls profile run pprof... | 1 |

10,320 | 2,622,143,910 | IssuesEvent | 2015-03-04 00:03:17 | byzhang/i7z | https://api.github.com/repos/byzhang/i7z | closed | double code in i7z.c | auto-migrated Priority-Medium Type-Defect | ```

The lines may be deleted from 396 to 414.

double code with 422 to 440

```

Original issue reported on code.google.com by `rmattusc...@gmx.net` on 1 Apr 2010 at 12:29 | 1.0 | double code in i7z.c - ```

The lines may be deleted from 396 to 414.

double code with 422 to 440

```

Original issue reported on code.google.com by `rmattusc...@gmx.net` on 1 Apr 2010 at 12:29 | defect | double code in c the lines may be deleted from to double code with to original issue reported on code google com by rmattusc gmx net on apr at | 1 |

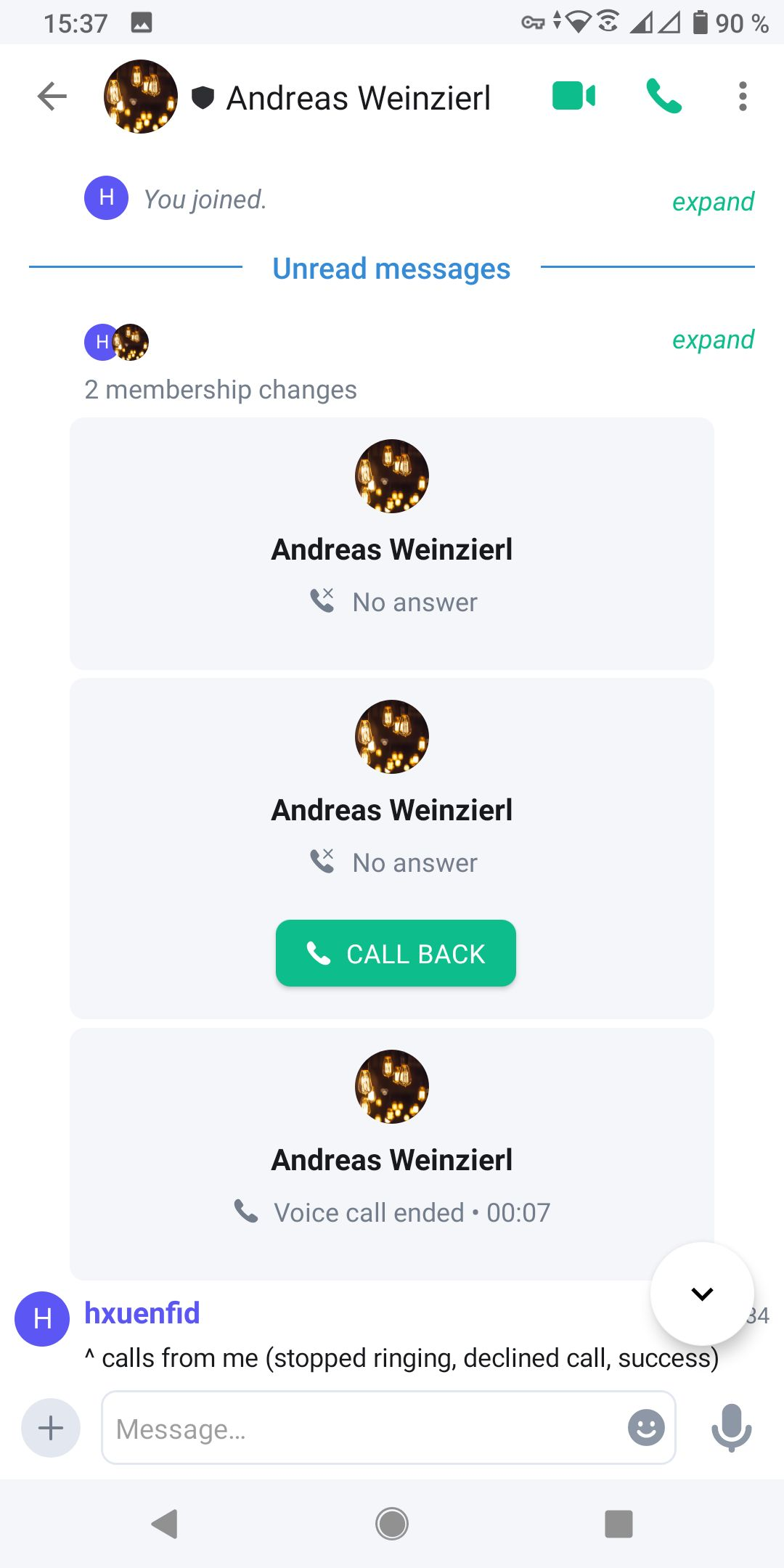

64,879 | 18,951,612,846 | IssuesEvent | 2021-11-18 15:41:58 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | opened | "CALL BACK" button sometimes is not displayed | T-Defect | ### Steps to reproduce

1. Where are you starting? What can you see?

I called someone and looked at different times into the chat.

First time I made a screenshot:

Second time I made a screenshot:

ega Isikut/Organisatsiooni (samuti ei reageeri mingitele täheühenditele).

Nende puuduste kohta on mingid varasemad ticke... | 1.0 | Täpse päringu lähteandmetesse ei saa valida Valdkonda ega Isik/Org. - **Reported by katrin vesterblom on 5 Jun 2013 11:30 UTC**

rahvusarhiiv.tietotest.ee

"Täpsemat otsingut" tehes ei saa valida lähteandmeteks Valdkonda (ei reageeri mingisugustele täheühenditele) ega Isikut/Organisatsiooni (samuti ei reageeri mingit... | defect | täpse päringu lähteandmetesse ei saa valida valdkonda ega isik org reported by katrin vesterblom on jun utc rahvusarhiiv tietotest ee täpsemat otsingut tehes ei saa valida lähteandmeteks valdkonda ei reageeri mingisugustele täheühenditele ega isikut organisatsiooni samuti ei reageeri mingitele t... | 1 |

44,792 | 12,391,241,769 | IssuesEvent | 2020-05-20 12:09:38 | ontopia/ontopia | https://api.github.com/repos/ontopia/ontopia | opened | XTMTopicMapReader always validates | Component-Engine Defect Newbie Syntax-XTM bug | Using `XTMTopicMapReader.setValidation(false)` has no effect | 1.0 | XTMTopicMapReader always validates - Using `XTMTopicMapReader.setValidation(false)` has no effect | defect | xtmtopicmapreader always validates using xtmtopicmapreader setvalidation false has no effect | 1 |

20,263 | 3,322,171,473 | IssuesEvent | 2015-11-09 13:13:05 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Awaiter in iterator block of for loop generates wrong state machine js code | defect | http://forums.bridge.net/forum/community/help/768?p=771#post771

```

for( var nextPage = await msgPage.nextPage(); nextPage != null; nextPage = await nextPage.nextPage())

{

foreach (Element item in await nextPage.removeOfflineParallel())

msgPage.appendItem(item);

}

``` | 1.0 | Awaiter in iterator block of for loop generates wrong state machine js code - http://forums.bridge.net/forum/community/help/768?p=771#post771

```

for( var nextPage = await msgPage.nextPage(); nextPage != null; nextPage = await nextPage.nextPage())

{

foreach (Element item in await nextPage.removeOfflinePar... | defect | awaiter in iterator block of for loop generates wrong state machine js code for var nextpage await msgpage nextpage nextpage null nextpage await nextpage nextpage foreach element item in await nextpage removeofflineparallel msgpage appenditem item | 1 |

13,382 | 3,330,579,177 | IssuesEvent | 2015-11-11 11:20:18 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | opened | OSIRIS FuryAndFuryFitMulti System test failing | Component: Direct Inelastic Misc: Bugfix Priority: High Quality: System Tests | After the update to VS2015, ISISIndirectInelastic system tests are failing on the OSIRISFuryAndFuryFitMulti test

http://builds.mantidproject.org/job/master_systemtests-win7/lastCompletedBuild/testReport/SystemTests/ISISIndirectInelastic/OSIRISFuryAndFuryFitMulti/ | 1.0 | OSIRIS FuryAndFuryFitMulti System test failing - After the update to VS2015, ISISIndirectInelastic system tests are failing on the OSIRISFuryAndFuryFitMulti test

http://builds.mantidproject.org/job/master_systemtests-win7/lastCompletedBuild/testReport/SystemTests/ISISIndirectInelastic/OSIRISFuryAndFuryFitMulti/ | non_defect | osiris furyandfuryfitmulti system test failing after the update to isisindirectinelastic system tests are failing on the osirisfuryandfuryfitmulti test | 0 |

389,988 | 26,842,092,210 | IssuesEvent | 2023-02-03 01:57:05 | ophub/amlogic-s9xxx-armbian | https://api.github.com/repos/ophub/amlogic-s9xxx-armbian | closed | Get eMMC partition info with nice-looking layout with single command / 一条命令简单获取结构清晰的eMMC分区信息 | documentation essence | On the terminal of the target Amlogic device that has Android on its eMMC, type the following command (copy&paste is enough):

在eMMC上尚有安卓的目标晶晨设备上,输入下面的命令(复制粘贴即可):

```

echo "https://7ji.github.io/ampart-web-reporter/?dsnapshot=$(ampart /dev/mmcblk2 --mode dsnapshot 2>/dev/null | head -n 1)&esnapshot=$(ampart /dev/mm... | 1.0 | Get eMMC partition info with nice-looking layout with single command / 一条命令简单获取结构清晰的eMMC分区信息 - On the terminal of the target Amlogic device that has Android on its eMMC, type the following command (copy&paste is enough):

在eMMC上尚有安卓的目标晶晨设备上,输入下面的命令(复制粘贴即可):

```

echo "https://7ji.github.io/ampart-web-reporter/?dsnap... | non_defect | get emmc partition info with nice looking layout with single command 一条命令简单获取结构清晰的emmc分区信息 on the terminal of the target amlogic device that has android on its emmc type the following command copy paste is enough 在emmc上尚有安卓的目标晶晨设备上,输入下面的命令(复制粘贴即可): echo dev mode dsnapshot dev null head n ... | 0 |

8,697 | 2,611,536,386 | IssuesEvent | 2015-02-27 06:06:08 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | opened | Artifacts/Flashing on the map island on AMD | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Select a big sized map on Island

2. play for a while (it may take 2 rounds till it happens)

3. wait for some strange flickering appearing for a split second

What version of the product are you using? On what operating system?

0.9.20

Please provide any additional informa... | 1.0 | Artifacts/Flashing on the map island on AMD - ```

What steps will reproduce the problem?

1. Select a big sized map on Island

2. play for a while (it may take 2 rounds till it happens)

3. wait for some strange flickering appearing for a split second

What version of the product are you using? On what operating system?

... | defect | artifacts flashing on the map island on amd what steps will reproduce the problem select a big sized map on island play for a while it may take rounds till it happens wait for some strange flickering appearing for a split second what version of the product are you using on what operating system ... | 1 |

65,295 | 6,954,775,533 | IssuesEvent | 2017-12-07 03:25:59 | equella/Equella | https://api.github.com/repos/equella/Equella | closed | This ResultSet is closed. | Ready for Testing | Did a fresh install on Windows server using JDK 8 152 and PostgreSQL 10.1. When first firing up the application I get a database error saying the result set is closed.

java.sql.SQLTransientConnectionException: HikariPool-1 - Connection is not available, request timed out after 30100ms.

at com.zaxxer.hikari.po... | 1.0 | This ResultSet is closed. - Did a fresh install on Windows server using JDK 8 152 and PostgreSQL 10.1. When first firing up the application I get a database error saying the result set is closed.

java.sql.SQLTransientConnectionException: HikariPool-1 - Connection is not available, request timed out after 30100ms.

... | non_defect | this resultset is closed did a fresh install on windows server using jdk and postgresql when first firing up the application i get a database error saying the result set is closed java sql sqltransientconnectionexception hikaripool connection is not available request timed out after at c... | 0 |

51,492 | 13,207,501,770 | IssuesEvent | 2020-08-14 23:21:02 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | remove remaining should-be-private headers from modules in core projects (Trac #525) | IceTray Incomplete Migration Migrated from Trac defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/525">https://code.icecube.wisc.edu/projects/icecube/ticket/525</a>, reported by troyand owned by blaufuss</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-02-11T19:42:30",

"_ts": "142368... | 1.0 | remove remaining should-be-private headers from modules in core projects (Trac #525) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/525">https://code.icecube.wisc.edu/projects/icecube/ticket/525</a>, reported by troyand owned by blaufuss</em></summary>

<p>

```jso... | defect | remove remaining should be private headers from modules in core projects trac migrated from json status closed changetime ts description especially the utility modules in icetray n n reporter troy cc resolution wont... | 1 |

57,359 | 15,731,471,829 | IssuesEvent | 2021-03-29 17:06:52 | danmar/testissues | https://api.github.com/repos/danmar/testissues | opened | False positive with "char a[]" (Trac #377) | False positive Incomplete Migration Migrated from Trac defect hyd_danmar | Migrated from https://trac.cppcheck.net/ticket/377

```json

{

"status": "closed",

"changetime": "2009-06-10T18:37:37",

"description": "{{{\nchar *f()\n{\n char a[] = new char[10];\n return a;\n}\n}}}\n\n[a.c:4]: (error) Returning pointer to local array variable",

"reporter": "aggro80",

"cc": ""... | 1.0 | False positive with "char a[]" (Trac #377) - Migrated from https://trac.cppcheck.net/ticket/377

```json

{

"status": "closed",

"changetime": "2009-06-10T18:37:37",

"description": "{{{\nchar *f()\n{\n char a[] = new char[10];\n return a;\n}\n}}}\n\n[a.c:4]: (error) Returning pointer to local array variabl... | defect | false positive with char a trac migrated from json status closed changetime description nchar f n n char a new char n return a n n n n error returning pointer to local array variable reporter cc resolution ... | 1 |

21,841 | 3,568,027,725 | IssuesEvent | 2016-01-26 02:15:50 | antang/NewCapstoneProject | https://api.github.com/repos/antang/NewCapstoneProject | closed | Defect - Not show status question in phase | Defect Medium priority | When user set up game then they don't know about questions have shown or not, it is setting questions for phase screen. | 1.0 | Defect - Not show status question in phase - When user set up game then they don't know about questions have shown or not, it is setting questions for phase screen. | defect | defect not show status question in phase when user set up game then they don t know about questions have shown or not it is setting questions for phase screen | 1 |

81,109 | 30,716,813,287 | IssuesEvent | 2023-07-27 13:32:19 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: | I-defect needs-triaging | ### What happened?

So I'm running several dockerized python crawlers from my k8s cluster. All of them are working just fine, some with proxy some without and I'm having issues with this one that just doesn't want to work anymore. It did work just fine a month ago and I can't figure out what's causing the issue. I did ... | 1.0 | [🐛 Bug]: - ### What happened?

So I'm running several dockerized python crawlers from my k8s cluster. All of them are working just fine, some with proxy some without and I'm having issues with this one that just doesn't want to work anymore. It did work just fine a month ago and I can't figure out what's causing the ... | defect | what happened so i m running several dockerized python crawlers from my cluster all of them are working just fine some with proxy some without and i m having issues with this one that just doesn t want to work anymore it did work just fine a month ago and i can t figure out what s causing the issue i ... | 1 |

94,570 | 11,886,278,093 | IssuesEvent | 2020-03-27 21:30:57 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | The Asking Questions Sample - a few questions | Needs investigation UX Design | Here are a few questions Steve (@WashingtonKayaker) and I have about the [AskingQuestions](https://github.com/microsoft/BotFramework-Composer/tree/stable/Composer/packages/server/assets/projects/AskingQuestionsSample/ComposerDialogs) Sample.

### 1. About _Always prompt_

Does **Max turn count** overwrite **Always ... | 1.0 | The Asking Questions Sample - a few questions - Here are a few questions Steve (@WashingtonKayaker) and I have about the [AskingQuestions](https://github.com/microsoft/BotFramework-Composer/tree/stable/Composer/packages/server/assets/projects/AskingQuestionsSample/ComposerDialogs) Sample.

### 1. About _Always prom... | non_defect | the asking questions sample a few questions here are a few questions steve washingtonkayaker and i have about the sample about always prompt does max turn count overwrite always prompt testing the always prompt functionality in the sample and no difference found in bot s behavior... | 0 |

79,985 | 29,810,203,749 | IssuesEvent | 2023-06-16 14:31:31 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Ambiguous match found when using aliases with implicit join and joining the same table twice | T: Defect C: Functionality P: Medium E: All Editions | Using the reproducer from a previous issue (https://github.com/jOOQ/jOOQ/issues/12455), then this query here:

```java

@Test

public void testMultisetMappingIntoJavaRecords() {

String atsType = "foobar";

AmPushRunMatch push_run_match = Tables.AM_PUSH_RUN_MATCH.as("push_run_match");

AmPushRun push_ru... | 1.0 | Ambiguous match found when using aliases with implicit join and joining the same table twice - Using the reproducer from a previous issue (https://github.com/jOOQ/jOOQ/issues/12455), then this query here:

```java

@Test

public void testMultisetMappingIntoJavaRecords() {

String atsType = "foobar";

AmPush... | defect | ambiguous match found when using aliases with implicit join and joining the same table twice using the reproducer from a previous issue then this query here java test public void testmultisetmappingintojavarecords string atstype foobar ampushrunmatch push run match tables am push r... | 1 |

80,212 | 7,742,288,801 | IssuesEvent | 2018-05-29 09:04:38 | italia/spid | https://api.github.com/repos/italia/spid | closed | Controllo metadati - Piattaforma Dimostrativa Datanet srl | Ambiente di Test | Salve,

vorremmo eseguire dei test di login attraverso spid su una piattaforma dimostrativa alle pubbliche amministrazioni.

Abbiamo effettuato l'installazione su piattaforma Wordpress utilizzando l'apposito plugin rilasciato da Marco Milesi.

Di seguito si trasmette link dei metadati ottenuti per la piattaforma di... | 1.0 | Controllo metadati - Piattaforma Dimostrativa Datanet srl - Salve,

vorremmo eseguire dei test di login attraverso spid su una piattaforma dimostrativa alle pubbliche amministrazioni.

Abbiamo effettuato l'installazione su piattaforma Wordpress utilizzando l'apposito plugin rilasciato da Marco Milesi.

Di seguito s... | non_defect | controllo metadati piattaforma dimostrativa datanet srl salve vorremmo eseguire dei test di login attraverso spid su una piattaforma dimostrativa alle pubbliche amministrazioni abbiamo effettuato l installazione su piattaforma wordpress utilizzando l apposito plugin rilasciato da marco milesi di seguito s... | 0 |

255,936 | 27,529,639,215 | IssuesEvent | 2023-03-06 20:55:00 | Enterprise-CMCS/eAPD | https://api.github.com/repos/Enterprise-CMCS/eAPD | closed | [Maintenance] Upgrade Knex | Development large security | ### Description and related issues

There is a vulnerability with the version of knex that we are using. Synk suggests upgrading knex to version 2.4.0 or higher.

This is a major upgrade, so confirm that all of the database functionality is still working.

### This task is done when…

- [ ] knex is upgraded to a version... | True | [Maintenance] Upgrade Knex - ### Description and related issues

There is a vulnerability with the version of knex that we are using. Synk suggests upgrading knex to version 2.4.0 or higher.

This is a major upgrade, so confirm that all of the database functionality is still working.

### This task is done when…

- [ ] ... | non_defect | upgrade knex description and related issues there is a vulnerability with the version of knex that we are using synk suggests upgrading knex to version or higher this is a major upgrade so confirm that all of the database functionality is still working this task is done when… knex is upgrad... | 0 |

28,108 | 13,532,761,582 | IssuesEvent | 2020-09-16 01:04:43 | doyougnu/VSmt | https://api.github.com/repos/doyougnu/VSmt | opened | Use Continuation Passing Style | enhancement memory performance | After tinkering around with variational arithmetic I've concluded I need a zipper to handle deeply nested choices. Consider this formula:

```

deepChoicesLHS :: IO Result

deepChoicesLHS = flip sat Nothing $

(1 - 2 - (3 - c)) .== 23

where c = iChc "AA" (iRef ("Aleft" :: Text)) (iRef "Aright") +

iCh... | True | Use Continuation Passing Style - After tinkering around with variational arithmetic I've concluded I need a zipper to handle deeply nested choices. Consider this formula:

```

deepChoicesLHS :: IO Result

deepChoicesLHS = flip sat Nothing $

(1 - 2 - (3 - c)) .== 23

where c = iChc "AA" (iRef ("Aleft" :: Text)) (... | non_defect | use continuation passing style after tinkering around with variational arithmetic i ve concluded i need a zipper to handle deeply nested choices consider this formula deepchoiceslhs io result deepchoiceslhs flip sat nothing c where c ichc aa iref aleft text i... | 0 |

11,518 | 2,653,053,394 | IssuesEvent | 2015-03-16 20:51:16 | portah/biowardrobe | https://api.github.com/repos/portah/biowardrobe | closed | For some genes RPKMs don't correlate with log change | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. DEseq

2. Filter on significant genes with either removing or not non coding

What is the expected output? What do you see instead?

NM_001198868,NM_001198869,NM_005186,NR_040008 CAPN1 chr11 64949304 64979477 + 16

8.8881362 168.8581147 -0.585938255 0.004820842 0.026719021

Wh... | 1.0 | For some genes RPKMs don't correlate with log change - ```

What steps will reproduce the problem?

1. DEseq

2. Filter on significant genes with either removing or not non coding

What is the expected output? What do you see instead?

NM_001198868,NM_001198869,NM_005186,NR_040008 CAPN1 chr11 64949304 64979477 + 16

8.8881... | defect | for some genes rpkms don t correlate with log change what steps will reproduce the problem deseq filter on significant genes with either removing or not non coding what is the expected output what do you see instead nm nm nm nr what version of the product are y... | 1 |

135,248 | 10,967,244,590 | IssuesEvent | 2019-11-28 09:09:38 | BEXIS2/Core | https://api.github.com/repos/BEXIS2/Core | closed | during excel upload and import not all values are displayed during preview | Priority: Medium Status: Testing Required Type: Bug | during excel upload and import not all values are displayed during preview - because of:

in general, excel cells are given numberformat and styles.

but there are cases where these values are not set in an excel file.

In these cases the values are not displayed.

| 1.0 | during excel upload and import not all values are displayed during preview - during excel upload and import not all values are displayed during preview - because of:

in general, excel cells are given numberformat and styles.

but there are cases where these values are not set in an excel file.

In these cases the v... | non_defect | during excel upload and import not all values are displayed during preview during excel upload and import not all values are displayed during preview because of in general excel cells are given numberformat and styles but there are cases where these values are not set in an excel file in these cases the v... | 0 |

679,852 | 23,247,587,032 | IssuesEvent | 2022-08-03 22:01:50 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | [Desktop] window size affects error message display in Rewards | bug feature/rewards priority/P3 QA/Yes OS/Desktop | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | [Desktop] window size affects error message display in Rewards - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE IS... | non_defect | window size affects error message display in rewards have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue clos... | 0 |

8,160 | 2,611,462,387 | IssuesEvent | 2015-02-27 05:08:18 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | The Code needs modifying | auto-migrated Priority-Low Type-Defect | ```

In the makefile the rule for wpscrack is incorrect as it hardcodes the

dependencies but has *.o in the compilation command line.

The practical implication is that it doesn't hardcode pins.o as a dependency so

even if it's getting modified, the problem is that when pins.c is modified it

should lead to rebuilding... | 1.0 | The Code needs modifying - ```

In the makefile the rule for wpscrack is incorrect as it hardcodes the

dependencies but has *.o in the compilation command line.

The practical implication is that it doesn't hardcode pins.o as a dependency so

even if it's getting modified, the problem is that when pins.c is modified it... | defect | the code needs modifying in the makefile the rule for wpscrack is incorrect as it hardcodes the dependencies but has o in the compilation command line the practical implication is that it doesn t hardcode pins o as a dependency so even if it s getting modified the problem is that when pins c is modified it... | 1 |

127,510 | 10,473,132,667 | IssuesEvent | 2019-09-23 11:55:58 | 7mind/izumi | https://api.github.com/repos/7mind/izumi | closed | Support ZIO Env-based tests in distage-testkit | api distage (di) distage-testkit good first issue scala-reflect zio | Explored in this `distage-sample` PR: https://github.com/7mind/distage-sample/pull/1

Allow constructing fixture dynamically from usages of ZIO Env forwarders.

Classic `distage-testkit` test:

```scala

"my test" in dio {

(service1: Service1[IO], service2: Service2[IO]) =>

for {

_ <- service1.ca... | 1.0 | Support ZIO Env-based tests in distage-testkit - Explored in this `distage-sample` PR: https://github.com/7mind/distage-sample/pull/1

Allow constructing fixture dynamically from usages of ZIO Env forwarders.

Classic `distage-testkit` test:

```scala

"my test" in dio {

(service1: Service1[IO], service2: Ser... | non_defect | support zio env based tests in distage testkit explored in this distage sample pr allow constructing fixture dynamically from usages of zio env forwarders classic distage testkit test scala my test in dio for yield ... | 0 |

45,692 | 13,021,341,022 | IssuesEvent | 2020-07-27 06:04:36 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | HASH index on BigDecimal cannot return entries when compareTo=0, but equals=false | Module: Query Module: SQL Source: Internal Team: Core Type: Defect | Consider the following query:

```

SELECT * FROM table WHERE big_decimal_column = CAST(2.0 as REAL)

```

If there is a `HASH` index on the column `big_decimal_column`, and there is an entry with the index key "new BigDecimal(2)", the corresponding entry will not be returned. But if a `SORTED` index is used, then the ... | 1.0 | HASH index on BigDecimal cannot return entries when compareTo=0, but equals=false - Consider the following query:

```

SELECT * FROM table WHERE big_decimal_column = CAST(2.0 as REAL)

```

If there is a `HASH` index on the column `big_decimal_column`, and there is an entry with the index key "new BigDecimal(2)", the ... | defect | hash index on bigdecimal cannot return entries when compareto but equals false consider the following query select from table where big decimal column cast as real if there is a hash index on the column big decimal column and there is an entry with the index key new bigdecimal the ... | 1 |

691,221 | 23,688,481,945 | IssuesEvent | 2022-08-29 08:39:31 | datatlas-erasme/front | https://api.github.com/repos/datatlas-erasme/front | closed | Merge code based filter color mapped on kepler poi color | styling front priority high template system | **Describe the solution you'd like**

merge the "industrie" color mapping feature to the dev branch | 1.0 | Merge code based filter color mapped on kepler poi color - **Describe the solution you'd like**

merge the "industrie" color mapping feature to the dev branch | non_defect | merge code based filter color mapped on kepler poi color describe the solution you d like merge the industrie color mapping feature to the dev branch | 0 |

130,885 | 10,675,326,238 | IssuesEvent | 2019-10-21 11:25:04 | club-soda/club-soda-guide | https://api.github.com/repos/club-soda/club-soda-guide | closed | Update production website? | please-test | The last time the production website has been updated was the 12th of July.

Since then, some PRs have been merge and deployed into staging:

@jessyclubsoda would you like the production website to be update... | 1.0 | Update production website? - The last time the production website has been updated was the 12th of July.

Since then, some PRs have been merge and deployed into staging:

@jessyclubsoda would you like the pr... | non_defect | update production website the last time the production website has been updated was the of july since then some prs have been merge and deployed into staging jessyclubsoda would you like the production website to be updated now or do you prefer to wait the end of the week once a few new prs have been... | 0 |

70,306 | 23,111,831,703 | IssuesEvent | 2022-07-27 13:38:32 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: initial approximations to eigenvectors in svds should not be with all positive components | defect scipy.sparse.linalg | ### Describe your issue.

https://github.com/scipy/scipy/blob/master/scipy/sparse/linalg/_eigen/_svds.py at least in one place sets initial approximations to eigenvectors with all components random uniform on [0, 1]. This is a poor choice as common sense suggests that vectors with only positive components cover the p... | 1.0 | BUG: initial approximations to eigenvectors in svds should not be with all positive components - ### Describe your issue.