Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,657 | 5,320,584,308 | IssuesEvent | 2017-02-14 10:55:09 | contao/installation-bundle | https://api.github.com/repos/contao/installation-bundle | closed | InstallTool logException | defect | In Contao Managed Version the path to the log file should be something like

`$this->rootDir.'/../var/logs/prod-'.date('Y-m-d').'.log'`

in InstallTool.php, Line 395

| 1.0 | InstallTool logException - In Contao Managed Version the path to the log file should be something like

`$this->rootDir.'/../var/logs/prod-'.date('Y-m-d').'.log'`

in InstallTool.php, Line 395

| defect | installtool logexception in contao managed version the path to the log file should be something like this rootdir var logs prod date y m d log in installtool php line | 1 |

97,714 | 11,030,213,195 | IssuesEvent | 2019-12-06 15:20:35 | pulumi/pulumi-vault | https://api.github.com/repos/pulumi/pulumi-vault | reopened | Group Alias example is different than terraforms and is missing vital information | documentation question | The `vault.identity.GroupAlias` example is missing vital information that the terraform documentation has.

https://github.com/terraform-providers/terraform-provider-vault/blob/master/website/docs/r/identity_group.html.md#example-usage

https://www.pulumi.com/docs/reference/pkg/nodejs/pulumi/vault/identity/#GroupAl... | 1.0 | Group Alias example is different than terraforms and is missing vital information - The `vault.identity.GroupAlias` example is missing vital information that the terraform documentation has.

https://github.com/terraform-providers/terraform-provider-vault/blob/master/website/docs/r/identity_group.html.md#example-usag... | non_defect | group alias example is different than terraforms and is missing vital information the vault identity groupalias example is missing vital information that the terraform documentation has notice that pulumi is missing the name for the groupalias without this the external groups will not work pulumi... | 0 |

62,905 | 17,260,371,930 | IssuesEvent | 2021-07-22 06:35:47 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | White screen | T-Defect | Hello!

after updating Element to version 1.7.33, suddenly just a white screen began to appear, only reinstalling the Element application helps, previously this was in version 1.36, this was fixed in version 1.4.1 and everything was fine.

Please fix it.

we use on the desktop version

OS: Win Server 2012R2. | 1.0 | White screen - Hello!

after updating Element to version 1.7.33, suddenly just a white screen began to appear, only reinstalling the Element application helps, previously this was in version 1.36, this was fixed in version 1.4.1 and everything was fine.

Please fix it.

we use on the desktop version

OS: Win Server 2... | defect | white screen hello after updating element to version suddenly just a white screen began to appear only reinstalling the element application helps previously this was in version this was fixed in version and everything was fine please fix it we use on the desktop version os win server | 1 |

74,996 | 25,474,191,154 | IssuesEvent | 2022-11-25 12:58:09 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Expand unqualified asterisk in MySQL when it's not leading | T: Defect C: Functionality P: Medium R: Fixed E: All Editions | This works in MySQL:

```sql

SELECT *, 'a' FROM (SELECT 1 x) t

```

Producing:

```

|x |a |

|---|---|

|1 |a |

```

But this doesn't work:

```sql

SELECT 'a', * FROM (SELECT 1 x) t

```

Raising:

> SQL Error [1064] [42000]: You have an error in your SQL syntax; check the manual that correspon... | 1.0 | Expand unqualified asterisk in MySQL when it's not leading - This works in MySQL:

```sql

SELECT *, 'a' FROM (SELECT 1 x) t

```

Producing:

```

|x |a |

|---|---|

|1 |a |

```

But this doesn't work:

```sql

SELECT 'a', * FROM (SELECT 1 x) t

```

Raising:

> SQL Error [1064] [42000]: You have... | defect | expand unqualified asterisk in mysql when it s not leading this works in mysql sql select a from select x t producing x a a but this doesn t work sql select a from select x t raising sql error you have an error i... | 1 |

515,686 | 14,967,380,318 | IssuesEvent | 2021-01-27 15:37:19 | StatCan/daaas | https://api.github.com/repos/StatCan/daaas | closed | Add backups for Minio | area/engineering component/storage kind/feature priority/blocker size/S | ### Target

Frequency: nightly

Retention: 30 days

_Requires incremental. Other strategy may be required._

| 1.0 | Add backups for Minio - ### Target

Frequency: nightly

Retention: 30 days

_Requires incremental. Other strategy may be required._

| non_defect | add backups for minio target frequency nightly retention days requires incremental other strategy may be required | 0 |

67,504 | 20,971,509,415 | IssuesEvent | 2022-03-28 11:50:41 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Select::getSelect and ::fields should return a single field when FOR XML or FOR JSON clause is present | T: Defect C: Functionality P: Medium E: Professional Edition E: Enterprise Edition | https://github.com/jOOQ/jOOQ/issues/10565 has fixed a few issues related to parsing `FOR XML` and `FOR JSON`, in case of which the resulting degree of the query is always 1. The clauses act like if they were wrapping the rest of the query in a derived table, and then projecting only `XMLAGG` or `JSON_ARRAYAGG` from the... | 1.0 | Select::getSelect and ::fields should return a single field when FOR XML or FOR JSON clause is present - https://github.com/jOOQ/jOOQ/issues/10565 has fixed a few issues related to parsing `FOR XML` and `FOR JSON`, in case of which the resulting degree of the query is always 1. The clauses act like if they were wrappin... | defect | select getselect and fields should return a single field when for xml or for json clause is present has fixed a few issues related to parsing for xml and for json in case of which the resulting degree of the query is always the clauses act like if they were wrapping the rest of the query in a derived tab... | 1 |

21,043 | 3,452,986,265 | IssuesEvent | 2015-12-17 08:57:13 | luigirizzo/netmap | https://api.github.com/repos/luigirizzo/netmap | closed | Run Netmap in a Fedora VM running in VirtualBox | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. install Fedora 20

2. install VirtualBox

3. create a VirtualBox Fedora20 VM with 3 vNICs e1000 (Virtual Box vNIC Intel

PRO/1000 T Server(82543GC), install Xen and try install Netmap from git.

4. install Netmap:

- cd netmap-release/LINUX

- ./configure

- make

Until now, every... | 1.0 | Run Netmap in a Fedora VM running in VirtualBox - ```

What steps will reproduce the problem?

1. install Fedora 20

2. install VirtualBox

3. create a VirtualBox Fedora20 VM with 3 vNICs e1000 (Virtual Box vNIC Intel

PRO/1000 T Server(82543GC), install Xen and try install Netmap from git.

4. install Netmap:

- cd netmap-r... | defect | run netmap in a fedora vm running in virtualbox what steps will reproduce the problem install fedora install virtualbox create a virtualbox vm with vnics virtual box vnic intel pro t server install xen and try install netmap from git install netmap cd netmap release linux conf... | 1 |

65,019 | 19,014,340,200 | IssuesEvent | 2021-11-23 12:51:54 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Infinite spinning when opening riot.im desktop | T-Defect P1 S-Major A-Electron T-Other | ### Description

When opening riot on my Windows 10 Desktop it shows a blank screen, a spinner in the middle and "logout" at the bottom. The symbol also shows "1 new message" but nothing happens, no matter how long i wait.

I'm starting with a .cmd with --proxy-server= included. I also tried without it, same bug.

... | 1.0 | Infinite spinning when opening riot.im desktop - ### Description

When opening riot on my Windows 10 Desktop it shows a blank screen, a spinner in the middle and "logout" at the bottom. The symbol also shows "1 new message" but nothing happens, no matter how long i wait.

I'm starting with a .cmd with --proxy-serv... | defect | infinite spinning when opening riot im desktop description when opening riot on my windows desktop it shows a blank screen a spinner in the middle and logout at the bottom the symbol also shows new message but nothing happens no matter how long i wait i m starting with a cmd with proxy serve... | 1 |

26,120 | 4,593,614,556 | IssuesEvent | 2016-09-21 02:02:27 | afisher1/GridLAB-D | https://api.github.com/repos/afisher1/GridLAB-D | closed | #43 The BUILD file does not describe how to make xerces and cppunit build for linux,

| defect | At this point the makefile does not build xerces or install it on linux systems if it is missing

,

| 1.0 | #43 The BUILD file does not describe how to make xerces and cppunit build for linux,

- At this point the makefile does not build xerces or install it on linux systems if it is missing

,

| defect | the build file does not describe how to make xerces and cppunit build for linux at this point the makefile does not build xerces or install it on linux systems if it is missing | 1 |

19,181 | 3,150,907,069 | IssuesEvent | 2015-09-16 03:08:53 | fuzzdb-project/fuzzdb | https://api.github.com/repos/fuzzdb-project/fuzzdb | closed | Move to Github | auto-migrated Priority-Medium Type-Defect | ```

With the close of google code will this project move to github?

```

Original issue reported on code.google.com by `Lordsai...@gmail.com` on 16 Mar 2015 at 11:59 | 1.0 | Move to Github - ```

With the close of google code will this project move to github?

```

Original issue reported on code.google.com by `Lordsai...@gmail.com` on 16 Mar 2015 at 11:59 | defect | move to github with the close of google code will this project move to github original issue reported on code google com by lordsai gmail com on mar at | 1 |

705,556 | 24,238,446,996 | IssuesEvent | 2022-09-27 03:09:42 | paperclip-ui/paperclip | https://api.github.com/repos/paperclip-ui/paperclip | closed | ability to register native elements for preview | priority: medium impact: high effort: medium | This could enable custom elements + plugins to be rendered in the preview. Will also need to be able to expose props that can be passed into these native elements.

| 1.0 | ability to register native elements for preview - This could enable custom elements + plugins to be rendered in the preview. Will also need to be able to expose props that can be passed into these native elements.

| non_defect | ability to register native elements for preview this could enable custom elements plugins to be rendered in the preview will also need to be able to expose props that can be passed into these native elements | 0 |

33,127 | 7,035,518,963 | IssuesEvent | 2017-12-28 00:43:01 | OGMS/ogms | https://api.github.com/repos/OGMS/ogms | closed | witnessed event | auto-migrated Priority-Medium Type-Defect | ```

Hi,

I need a term "witnessed loss of consciousness". It isn't a symptom,

because it has to be witnessed by somebody else than the patient, but it

doesn't have to be a sign, as it doesn't have to be witnessed by a

clinician (though it could be)

I suspect that OGMS would want a more general term "witnessed event" f... | 1.0 | witnessed event - ```

Hi,

I need a term "witnessed loss of consciousness". It isn't a symptom,

because it has to be witnessed by somebody else than the patient, but it

doesn't have to be a sign, as it doesn't have to be witnessed by a

clinician (though it could be)

I suspect that OGMS would want a more general term "... | defect | witnessed event hi i need a term witnessed loss of consciousness it isn t a symptom because it has to be witnessed by somebody else than the patient but it doesn t have to be a sign as it doesn t have to be witnessed by a clinician though it could be i suspect that ogms would want a more general term ... | 1 |

8,128 | 2,611,453,456 | IssuesEvent | 2015-02-27 05:00:50 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Problem with computer-run team: always does the same action as it does not know what to do | auto-migrated Priority-Low Type-Defect | ```

What steps will reproduce the problem?

I can't really say: watch the video, the green hedge has already shot once,

we're waiting for the second time, but it always does the same actions again

(he did it for about thirty seconds, before at least shooting to the right of

the screen).

What is the expected output? ... | 1.0 | Problem with computer-run team: always does the same action as it does not know what to do - ```

What steps will reproduce the problem?

I can't really say: watch the video, the green hedge has already shot once,

we're waiting for the second time, but it always does the same actions again

(he did it for about thirty s... | defect | problem with computer run team always does the same action as it does not know what to do what steps will reproduce the problem i can t really say watch the video the green hedge has already shot once we re waiting for the second time but it always does the same actions again he did it for about thirty s... | 1 |

161,548 | 12,551,182,076 | IssuesEvent | 2020-06-06 13:53:16 | astpl1998/Kanam-Latex | https://api.github.com/repos/astpl1998/Kanam-Latex | reopened | S&S-Commercial Invoice Print_PFS | 17.Testing2_Completed | Dear Team,

Here am created new issue for "Commercial Invoice Print development".

In future i have added PFS for further process.

Thanks and Regards,

M.Maheshwaran. | 1.0 | S&S-Commercial Invoice Print_PFS - Dear Team,

Here am created new issue for "Commercial Invoice Print development".

In future i have added PFS for further process.

Thanks and Regards,

M.Maheshwaran. | non_defect | s s commercial invoice print pfs dear team here am created new issue for commercial invoice print development in future i have added pfs for further process thanks and regards m maheshwaran | 0 |

75,468 | 20,825,501,747 | IssuesEvent | 2022-03-18 20:17:45 | golang/go | https://api.github.com/repos/golang/go | opened | x/build/cmd/relui: build releases | Builders NeedsFix | relui should subsume the functionality of x/build/cmd/releasebot and x/build/cmd/release. | 1.0 | x/build/cmd/relui: build releases - relui should subsume the functionality of x/build/cmd/releasebot and x/build/cmd/release. | non_defect | x build cmd relui build releases relui should subsume the functionality of x build cmd releasebot and x build cmd release | 0 |

85,114 | 24,515,219,161 | IssuesEvent | 2022-10-11 03:58:50 | apache/shardingsphere | https://api.github.com/repos/apache/shardingsphere | closed | upgrade all actions/cache | type: build | there are some cache are v2 and the rest are v3, need to use the same version.

take a look on the `restore-keys` of action cache, is this necessary ? | 1.0 | upgrade all actions/cache - there are some cache are v2 and the rest are v3, need to use the same version.

take a look on the `restore-keys` of action cache, is this necessary ? | non_defect | upgrade all actions cache there are some cache are and the rest are need to use the same version take a look on the restore keys of action cache is this necessary ? | 0 |

256,209 | 19,403,623,765 | IssuesEvent | 2021-12-19 16:17:46 | monitoring-plugins/monitoring-plugins | https://api.github.com/repos/monitoring-plugins/monitoring-plugins | closed | URL for check_game not available anymore [sf#3004910] | import bug compilation documentation | Submitted by madolphs on 2010-05-20 22:45:24

While ./configure it's been said that one should get qstat from http://www.activesw.com/people/steve/qstat.html. The site seems to be no longer available. http://www.qstat.org/ seems to be more appropriate.

configure, lines 19373,19374

Regards,

Mike Adolphs

| 1.0 | URL for check_game not available anymore [sf#3004910] - Submitted by madolphs on 2010-05-20 22:45:24

While ./configure it's been said that one should get qstat from http://www.activesw.com/people/steve/qstat.html. The site seems to be no longer available. http://www.qstat.org/ seems to be more appropriate.

configure,... | non_defect | url for check game not available anymore submitted by madolphs on while configure it s been said that one should get qstat from the site seems to be no longer available seems to be more appropriate configure lines regards mike adolphs | 0 |

8,776 | 3,787,948,354 | IssuesEvent | 2016-03-21 13:01:10 | stkent/amplify | https://api.github.com/repos/stkent/amplify | opened | Avoid potential run time exception if library user attempts to configure version rules before getting the shared Amplify instance | bug code difficulty-easy | See https://github.com/stkent/amplify/pull/130/files#r56817394 | 1.0 | Avoid potential run time exception if library user attempts to configure version rules before getting the shared Amplify instance - See https://github.com/stkent/amplify/pull/130/files#r56817394 | non_defect | avoid potential run time exception if library user attempts to configure version rules before getting the shared amplify instance see | 0 |

339,493 | 10,255,349,903 | IssuesEvent | 2019-08-21 15:15:22 | CLOSER-Cohorts/archivist | https://api.github.com/repos/CLOSER-Cohorts/archivist | closed | Importer not working for instrument DDI XML | Importer Low priority bug | I have tested the importer by using a downloaded instrument XML from Archivist and then importing it under a different prefix. Archivist message states that it has imported but it does not appear.

Note the importer works for USoc XML. | 1.0 | Importer not working for instrument DDI XML - I have tested the importer by using a downloaded instrument XML from Archivist and then importing it under a different prefix. Archivist message states that it has imported but it does not appear.

Note the importer works for USoc XML. | non_defect | importer not working for instrument ddi xml i have tested the importer by using a downloaded instrument xml from archivist and then importing it under a different prefix archivist message states that it has imported but it does not appear note the importer works for usoc xml | 0 |

50,071 | 13,187,319,900 | IssuesEvent | 2020-08-13 03:02:16 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | include new phys-service release (Trac #86) | Migrated from Trac defect offline-software | B01-13-01

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/86

, reported by blaufuss and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "B01-13-01",

"reporter": "blaufuss",

"cc": "",

"resolution": "... | 1.0 | include new phys-service release (Trac #86) - B01-13-01

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/86

, reported by blaufuss and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "B01-13-01",

"reporter": "... | defect | include new phys service release trac migrated from reported by blaufuss and owned by blaufuss json status closed changetime description reporter blaufuss cc resolution fixed ts compone... | 1 |

7,688 | 2,610,433,065 | IssuesEvent | 2015-02-26 20:21:52 | chrsmith/scribefire-chrome | https://api.github.com/repos/chrsmith/scribefire-chrome | closed | I cannot add a wordpress.com blog... | auto-migrated Priority-Medium Type-Defect | ```

What's the problem?

I cannot add a wordpress.com blog...

What browser are you using?

Latest version of chrome...

What version of ScribeFire are you running?

4.2.3

```

-----

Original issue reported on code.google.com by `toddlohe...@gmail.com` on 7 Feb 2014 at 2:11 | 1.0 | I cannot add a wordpress.com blog... - ```

What's the problem?

I cannot add a wordpress.com blog...

What browser are you using?

Latest version of chrome...

What version of ScribeFire are you running?

4.2.3

```

-----

Original issue reported on code.google.com by `toddlohe...@gmail.com` on 7 Feb 2014 at 2:11 | defect | i cannot add a wordpress com blog what s the problem i cannot add a wordpress com blog what browser are you using latest version of chrome what version of scribefire are you running original issue reported on code google com by toddlohe gmail com on feb at | 1 |

4,382 | 6,926,637,384 | IssuesEvent | 2017-11-30 19:52:25 | cerner/terra-core | https://api.github.com/repos/cerner/terra-core | closed | Cover Image component | cover-image Needs orion requirements new feature orion reviewed | # Issue Description

Placeholder for cover image component

## Issue Type

<!-- Is this a new feature request, enhancement, bug report, other? -->

- [x] New Feature

- [ ] Enhancement

- [ ] Bug

- [ ] Other

## Expected Behavior

TBD

## Current Behavior

N/A | 1.0 | Cover Image component - # Issue Description

Placeholder for cover image component

## Issue Type

<!-- Is this a new feature request, enhancement, bug report, other? -->

- [x] New Feature

- [ ] Enhancement

- [ ] Bug

- [ ] Other

## Expected Behavior

TBD

## Current Behavior

N/A | non_defect | cover image component issue description placeholder for cover image component issue type new feature enhancement bug other expected behavior tbd current behavior n a | 0 |

28,653 | 2,708,459,246 | IssuesEvent | 2015-04-08 09:05:41 | ondras/wwwsqldesigner | https://api.github.com/repos/ondras/wwwsqldesigner | closed | Postgresql: serial/bigserial data types must be converted to integer/bigint in foreign keys | imported Priority-Medium Type-Other | _From [i...@amarosia.com](https://code.google.com/u/115984126334797849605/) on April 23, 2009 04:25:12_

What steps will reproduce the problem? 1. 'create foreign key' from a primary key of data type 'serial' 2. 3. What is the expected output? What do you see instead? the resultant foreign key is of data type serial. S... | 1.0 | Postgresql: serial/bigserial data types must be converted to integer/bigint in foreign keys - _From [i...@amarosia.com](https://code.google.com/u/115984126334797849605/) on April 23, 2009 04:25:12_

What steps will reproduce the problem? 1. 'create foreign key' from a primary key of data type 'serial' 2. 3. What is the... | non_defect | postgresql serial bigserial data types must be converted to integer bigint in foreign keys from on april what steps will reproduce the problem create foreign key from a primary key of data type serial what is the expected output what do you see instead the resultant foreign key is o... | 0 |

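116,786 | 4,706,584,870 | IssuesEvent | 2016-10-13 17:36:31 | default1406/PhyLab | https://api.github.com/repos/default1406/PhyLab | opened | Collect and organize physics experiment materials | priority 2 size 5 | - Assignee: 程富瑞

- Estimated duration: 8h

- Details: After becoming familiar with and organizing the existing materials, determine which experiments need to be completed in the α phase (coverage should be essentially complete once the α phase is done), then collect and organize the relevant materials for those experiments. This part requires particular care to ensure the lab report templates are thorough and accurate. | 1.0 | Collect and organize physics experiment materials - - Assignee: 程富瑞

- Estimated duration: 8h

- Details: After becoming familiar with and organizing the existing materials, determine which experiments need to be completed in the α phase (coverage should be essentially complete once the α phase is done), then collect and organize the relevant materials for those experiments. This part requires particular care to ensure the lab report templates are thorough and accurate. | non_defect | collect and organize physics experiment materials assignee 程富瑞 estimated duration details after becoming familiar with and organizing the existing materials determine which experiments need to be completed in the α phase coverage should be essentially complete once the α phase is done then collect and organize the relevant materials for those experiments this part requires particular care to ensure the lab report templates are thorough and accurate | 0 |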

45,301 | 12,708,609,606 | IssuesEvent | 2020-06-23 10:51:14 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | PostgreSQL array of domain inconsistent behaviour | T: Defect | While most of it was already mentioned in related issues, it might help to have this consolidated test case.

### Expected behavior and actual behavior:

With the following SQL:

```sql

CREATE DOMAIN JSONB_DOM AS JSONB;

CREATE TABLE test

(

normal JSONB,

domain JSONB_DOM,

normal_array JSONB... | 1.0 | PostgreSQL array of domain inconsistent behaviour - While most of it was already mentioned in related issues, it might help to have this consolidated test case.

### Expected behavior and actual behavior:

With the following SQL:

```sql

CREATE DOMAIN JSONB_DOM AS JSONB;

CREATE TABLE test

(

normal JSO... | defect | postgresql array of domain inconsistent behaviour while most of it was already mentioned in related issues it might help to have this consolidated test case expected behavior and actual behavior with the following sql sql create domain jsonb dom as jsonb create table test normal jso... | 1 |

104,332 | 11,404,121,493 | IssuesEvent | 2020-01-31 09:06:17 | jonasjungaker/DD2480_A2_CI_Group10 | https://api.github.com/repos/jonasjungaker/DD2480_A2_CI_Group10 | opened | API documentation | documentation | A criterion for the assignment is to convert the JavaDoc into a browsable format, e.g. an HTML file. | 1.0 | API documentation - A criterion for the assignment is to convert the JavaDoc into a browsable format, e.g. an HTML file. | non_defect | api documentation a criterion for the assignment is to convert the javadoc into a browsable format e g an html file | 0 |

5,835 | 2,610,216,324 | IssuesEvent | 2015-02-26 19:08:57 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | Easter cross-stitch patterns in xsd format.pdf | auto-migrated Priority-Medium Type-Defect | ```

'''Анисим Никитин'''

Good day, I just cannot find the .Easter cross-stitch

patterns in xsd format.pdf. It was

posted here before

'''Арий Матвеев'''

Here is a good site where you can download it

http://bit.ly/1h43pJ3

'''Борислав Лебедев'''

It asks for a mobile number! Is it not dangerous?

'''Владлен Емельянов'''

Nope, everything is OK for me ... | 1.0 | Easter cross-stitch patterns in xsd format.pdf - ```

'''Анисим Никитин'''

Good day, I just cannot find the .Easter cross-stitch

patterns in xsd format.pdf. It was

posted here before

'''Арий Матвеев'''

Here is a good site where you can download it

http://bit.ly/1h43pJ3

'''Борислав Лебедев'''

It asks for a mobile number! Is it not dangerous ... | defect | easter cross stitch patterns in xsd format pdf анисим никитин good day i just cannot find the easter cross stitch patterns in xsd format pdf it was posted here before арий матвеев here is a good site where you can download it борислав лебедев it asks for a mobile number is it not dangerous владлен еме... | 1 |

407,097 | 27,598,103,578 | IssuesEvent | 2023-03-09 08:10:33 | gbowne1/dotfiles | https://api.github.com/repos/gbowne1/dotfiles | opened | My .bashrc needs help | bug documentation enhancement help wanted good first issue question | This isn't far from the default and could be a lot better.

I use Debian 10 and 11

I don't know a lot about bash or .bashrc but I am 100% sure it could be a lot better and could use help with this.

I also at times use tmux as well as bash inside vscode.

| 1.0 | My .bashrc needs help - This isn't far from the default and could be a lot better.

I use Debian 10 and 11

I don't know a lot about bash or .bashrc but I am 100% sure it could be a lot better and could use help with this.

I also at times use tmux as well as bash inside vscode.

| non_defect | my bashrc needs help this isn t far from the default and could be a lot better i use debian and i don t know a lot about bash or bashrc but i am sure it could be a lot better and could use help with this i also at times use tmux as well as bash inside vscode | 0 |

189,674 | 14,517,527,255 | IssuesEvent | 2020-12-13 19:58:33 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | The action 'Copy' is enabled in the context menu when selecting one blob and its blob snapshots | :beetle: regression :gear: blobs :heavy_check_mark: merged 🧪 testing | **Storage Explorer Version**: 1.17.0

**Build Number**: 20201210.19

**Branch**: rel/1.17.0

**Platform/OS**: Windows 10/ Linux Ubuntu 16.04 / MacOS Catalina

**Architecture**: ia32/x64

**Regression From**: Previous release(1.15.1)

## Steps to Reproduce ##

1. Expand one storage account -> Blob Containers.

2. Cr... | 1.0 | The action 'Copy' is enabled in the context menu when selecting one blob and its blob snapshots - **Storage Explorer Version**: 1.17.0

**Build Number**: 20201210.19

**Branch**: rel/1.17.0

**Platform/OS**: Windows 10/ Linux Ubuntu 16.04 / MacOS Catalina

**Architecture**: ia32/x64

**Regression From**: Previous rele... | non_defect | the action copy is enabled in the context menu when selecting one blob and its blob snapshots storage explorer version build number branch rel platform os windows linux ubuntu macos catalina architecture regression from previous release s... | 0 |

172,516 | 13,308,357,553 | IssuesEvent | 2020-08-26 00:44:46 | ignitionrobotics/ign-fuel-tools | https://api.github.com/repos/ignitionrobotics/ign-fuel-tools | opened | Command line tools failing on Windows and macOS | Windows bug macOS tests | The `ign_TEST`s fail on homebrew with:

```

31: Expected: (output.find(g_version)) != (std::string::npos), actual: 18446744073709551615 vs 18446744073709551615

31: I cannot find any available 'ign' command:

31: * Did you install any ignition library?

31: * Did you set the IGN_CONFIG_PATH environment variable?

... | 1.0 | Command line tools failing on Windows and macOS - The `ign_TEST`s fail on homebrew with:

```

31: Expected: (output.find(g_version)) != (std::string::npos), actual: 18446744073709551615 vs 18446744073709551615

31: I cannot find any available 'ign' command:

31: * Did you install any ignition library?

31: * Did y... | non_defect | command line tools failing on windows and macos the ign test s fail on homebrew with expected output find g version std string npos actual vs i cannot find any available ign command did you install any ignition library did you set the ign config path environment var... | 0 |

711,976 | 24,480,875,679 | IssuesEvent | 2022-10-08 20:23:29 | deckhouse/deckhouse | https://api.github.com/repos/deckhouse/deckhouse | reopened | [cloud-provider-yandex] Prevent machine checksum change if platformID is set to default value in instance class | area/cloud-provider type/bug status/rotten priority/backlog source/deckhouse-team | ### Preflight Checklist

- [X] I agree to follow the [Code of Conduct](https://github.com/deckhouse/deckhouse/blob/main/CODE_OF_CONDUCT.md) that this project adheres to.

- [X] I have searched the [issue tracker](https://github.com/deckhouse/deckhouse/issues) for an issue that matches the one I want to file, without suc... | 1.0 | [cloud-provider-yandex] Prevent machine checksum change if platformID is set to default value in instance class - ### Preflight Checklist

- [X] I agree to follow the [Code of Conduct](https://github.com/deckhouse/deckhouse/blob/main/CODE_OF_CONDUCT.md) that this project adheres to.

- [X] I have searched the [issue tra... | non_defect | prevent machine checksum change if platformid is set to default value in instance class preflight checklist i agree to follow the that this project adheres to i have searched the for an issue that matches the one i want to file without success version expected behavior machin... | 0 |

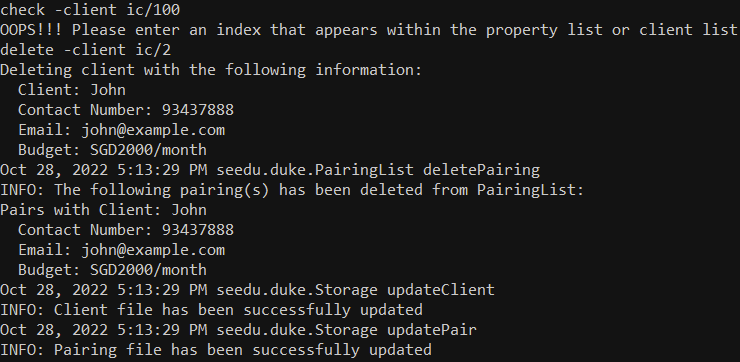

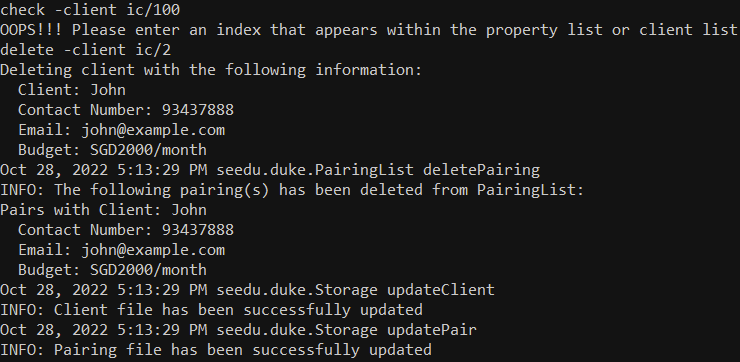

347,267 | 24,887,824,933 | IssuesEvent | 2022-10-28 09:18:02 | Franky4566/ped | https://api.github.com/repos/Franky4566/ped | opened | Formatting | type.DocumentationBug severity.Low | Can consider including line breaks inbetween as its a bit hard to read the long output everytime we add a client/ property, pair or delete

<!--session: 1666947272254-69b62f37-508a-4913-a024-1403c4ed68... | 1.0 | Formatting - Can consider including line breaks inbetween as its a bit hard to read the long output everytime we add a client/ property, pair or delete

<!--session: 1666947272254-69b62f37-508a-4913-a0... | non_defect | formatting can consider including line breaks inbetween as its a bit hard to read the long output everytime we add a client property pair or delete | 0 |

23,481 | 3,830,572,625 | IssuesEvent | 2016-03-31 15:00:00 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Cell templating ignored on scrollable table | defect | When a datatable is scrollable and has templating for columns, the templates are ignored.

```xml

<p-dataTable [value]="cars" scrollable="true" scrollHeight="200">

<header>Vertical</header>

<p-column field="vin" header="Vin"></p-column>

<p-column field="year" header="Year"></p-column>

... | 1.0 | Cell templating ignored on scrollable table - When a datatable is scrollable and has templating for columns, the templates are ignored.

```xml

<p-dataTable [value]="cars" scrollable="true" scrollHeight="200">

<header>Vertical</header>

<p-column field="vin" header="Vin"></p-column>

<p-col... | defect | cell templating ignored on scrollable table when a datatable is scrollable and has templating for columns the templates are ignored xml vertical car | 1 |

11,930 | 3,549,761,336 | IssuesEvent | 2016-01-20 19:17:01 | asciidoctor/asciidoctor.js | https://api.github.com/repos/asciidoctor/asciidoctor.js | opened | Add instructions to the contributing code guide about how to add a spec | documentation | Add information to the contributing code guide about how to add a new spec and how to run the specs. This will encourage participation and help grow the test suite. | 1.0 | Add instructions to the contributing code guide about how to add a spec - Add information to the contributing code guide about how to add a new spec and how to run the specs. This will encourage participation and help grow the test suite. | non_defect | add instructions to the contributing code guide about how to add a spec add information to the contributing code guide about how to add a new spec and how to run the specs this will encourage participation and help grow the test suite | 0 |

11,242 | 2,641,957,958 | IssuesEvent | 2015-03-11 20:44:32 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Broken links on website | Priority-Medium Type-Defect | Original [issue 164](https://code.google.com/p/html5rocks/issues/detail?id=164) created by chrsmith on 2010-08-17T08:36:38.000Z:

Very minor issue...the links in the footer of the "Samples Studio" do not work as the page is hosted on a new domain and the links are relative, e.g. http://studio.html5rocks.com/t... | 1.0 | Broken links on website - Original [issue 164](https://code.google.com/p/html5rocks/issues/detail?id=164) created by chrsmith on 2010-08-17T08:36:38.000Z:

Very minor issue...the links in the footer of the "Samples Studio" do not work as the page is hosted on a new domain and the links are relative, e.g. http... | defect | broken links on website original created by chrsmith on very minor issue the links in the footer of the quot samples studio quot do not work as the page is hosted on a new domain and the links are relative e g | 1 |

71,578 | 13,686,368,366 | IssuesEvent | 2020-09-30 08:36:10 | microsoft/azure-pipelines-tasks | https://api.github.com/repos/microsoft/azure-pipelines-tasks | closed | PublishCodeCoverageResults seems to publish to build artifacts which multi-stage pipeline fails when trying to auto-retrieve. | Area: CodeCoverage Area: Test bug | ## Note

Issues in this repo are for tracking bugs, feature requests and questions for the tasks in this repo

For a list:

https://github.com/Microsoft/azure-pipelines-tasks/tree/master/Tasks

If you have an issue or request for the Azure Pipelines service, use developer community instead:

https://develo... | 1.0 | PublishCodeCoverageResults seems to publish to build artifacts which multi-stage pipeline fails when trying to auto-retrieve. - ## Note

Issues in this repo are for tracking bugs, feature requests and questions for the tasks in this repo

For a list:

https://github.com/Microsoft/azure-pipelines-tasks/tree/mast... | non_defect | publishcodecoverageresults seems to publish to build artifacts which multi stage pipeline fails when trying to auto retrieve note issues in this repo are for tracking bugs feature requests and questions for the tasks in this repo for a list if you have an issue or request for the azure pipelines... | 0 |

11,931 | 4,321,393,288 | IssuesEvent | 2016-07-25 10:00:14 | KodrAus/elasticsearch-rs | https://api.github.com/repos/KodrAus/elasticsearch-rs | closed | Breaking Changes to API in 5.0 | codegen hyper notes rotor | See: https://www.elastic.co/guide/en/elasticsearch/reference/master/breaking_50_rest_api_changes.html

There aren't a whole lot of changes to the REST API, and those should be picked up automagically by updating the spec | 1.0 | Breaking Changes to API in 5.0 - See: https://www.elastic.co/guide/en/elasticsearch/reference/master/breaking_50_rest_api_changes.html

There aren't a whole lot of changes to the REST API, and those should be picked up automagically by updating the spec | non_defect | breaking changes to api in see there aren t a whole lot of changes to the rest api and those should be picked up automagically by updating the spec | 0 |

46,981 | 13,056,009,522 | IssuesEvent | 2020-07-30 03:22:49 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [dataio] Undefined behavior in dataio/icetray (Trac #2216) | Incomplete Migration Migrated from Trac combo core defect | Migrated from https://code.icecube.wisc.edu/ticket/2216

```json

{

"status": "closed",

"changetime": "2019-03-19T15:14:54",

"description": "I can elicit undefined behavior in dataio unit tests by the following:\n\n1. Run a_nocompression.py\n2. Run i_adds_mutineer.py\n3. Run j_fatals_reading_mutineer.py wit... | 1.0 | [dataio] Undefined behavior in dataio/icetray (Trac #2216) - Migrated from https://code.icecube.wisc.edu/ticket/2216

```json

{

"status": "closed",

"changetime": "2019-03-19T15:14:54",

"description": "I can elicit undefined behavior in dataio unit tests by the following:\n\n1. Run a_nocompression.py\n2. Ru... | defect | undefined behavior in dataio icetray trac migrated from json status closed changetime description i can elicit undefined behavior in dataio unit tests by the following n run a nocompression py run i adds mutineer py run j fatals reading mutineer py with ... | 1 |

29,501 | 14,146,616,225 | IssuesEvent | 2020-11-10 19:29:49 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | closed | CLS of 1.0 caused by "async" for https://cdn.ampproject.org/v0.js | Type: Discussion/Question Type: Performance WG: performance | ## What's the issue?

Async for https://cdn.ampproject.org/v0.js is causing 1 Cumulative Layout Shift that penalizes our amp-only site with a CLS of magnitude 1.0.

Removing async removes the CLS issue but the "async" for v0.js is mandatory for amp.

## How do we reproduce the issue?

Step 0. Make a boilerplate a... | True | CLS of 1.0 caused by "async" for https://cdn.ampproject.org/v0.js - ## What's the issue?

Async for https://cdn.ampproject.org/v0.js is causing 1 Cumulative Layout Shift that penalizes our amp-only site with a CLS of magnitude 1.0.

Removing async removes the CLS issue but the "async" for v0.js is mandatory for amp... | non_defect | cls of caused by async for what s the issue async for is causing cumulative layout shift that penalizes our amp only site with a cls of magnitude removing async removes the cls issue but the async for js is mandatory for amp how do we reproduce the issue step make a boilerpl... | 0 |

2,099 | 2,603,976,316 | IssuesEvent | 2015-02-24 19:01:35 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | 沈阳阴茎上长肉刺怎么回事 | auto-migrated Priority-Medium Type-Defect | ```

沈阳阴茎上长肉刺怎么回事〓沈陽軍區政治部醫院性病〓TEL��

�024-31023308〓成立于1946年,68年專注于性傳播疾病的研究和治�

��。位于沈陽市沈河區二緯路32號。是一所與新中國同建立共�

��煌的歷史悠久、設備精良、技術權威、專家云集,是預防、

保健、醫療、科研康復為一體的綜合性醫院。是國家首批公��

�甲等部隊醫院、全國首批醫療規范定點單位,是第四軍醫大�

��、東南大學等知名高等院校的教學醫院。曾被中國人民解放

軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立��

�體二等功。

```

-----

Original issue reported on code.google.com by `q96... | 1.0 | 沈阳阴茎上长肉刺怎么回事 - ```

沈阳阴茎上长肉刺怎么回事〓沈陽軍區政治部醫院性病〓TEL��

�024-31023308〓成立于1946年,68年專注于性傳播疾病的研究和治�

��。位于沈陽市沈河區二緯路32號。是一所與新中國同建立共�

��煌的歷史悠久、設備精良、技術權威、專家云集,是預防、

保健、醫療、科研康復為一體的綜合性醫院。是國家首批公��

�甲等部隊醫院、全國首批醫療規范定點單位,是第四軍醫大�

��、東南大學等知名高等院校的教學醫院。曾被中國人民解放

軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立��

�體二等功。

```

-----

Original issue reported on code.goo... | defect | 沈阳阴茎上长肉刺怎么回事 沈阳阴茎上长肉刺怎么回事〓沈陽軍區政治部醫院性病〓tel�� � 〓 , � ��。 。是一所與新中國同建立共� ��煌的歷史悠久、設備精良、技術權威、專家云集,是預防、 保健、醫療、科研康復為一體的綜合性醫院。是國家首批公�� �甲等部隊醫院、全國首批醫療規范定點單位,是第四軍醫大� ��、東南大學等知名高等院校的教學醫院。曾被中國人民解放 軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立�� �體二等功。 original issue reported on code google com by gmail com on jun at ... | 1 |

17,621 | 3,012,772,026 | IssuesEvent | 2015-07-29 02:25:49 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | Schema validation problem at runtime | auto-migrated Category-Component-ResService Priority-Medium Type-Defect | ```

Running the attached example and filling in the fields of the presented

form yields the error message attached.

```

Original issue reported on code.google.com by `arthurte...@gmail.com` on 18 Aug 2008 at 8:27

Attachments:

* [net57.xml](https://storage.googleapis.com/google-code-attachments/yawl/issue-105/comme... | 1.0 | Schema validation problem at runtime - ```

Running the attached example and filling in the fields of the presented

form yields the error message attached.

```

Original issue reported on code.google.com by `arthurte...@gmail.com` on 18 Aug 2008 at 8:27

Attachments:

* [net57.xml](https://storage.googleapis.com/googl... | defect | schema validation problem at runtime running the attached example and filling in the fields of the presented form yields the error message attached original issue reported on code google com by arthurte gmail com on aug at attachments | 1 |

43,129 | 11,494,661,174 | IssuesEvent | 2020-02-12 02:18:38 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | Crash when using a WaterHeater:HeatPump and also a simple (standalone) WaterHeaterMixed | Defect | Issue overview

--------------

I get a hard crash, in calcStandardRatings. Will start by creating a proper unit test to isolate the bug, then fix it.

### Details

Some additional details for this issue (if relevant):

- Platform (Operating system, version)

- Version of EnergyPlus (if using an intermediate buil... | 1.0 | Crash when using a WaterHeater:HeatPump and also a simple (standalone) WaterHeaterMixed - Issue overview

--------------

I get a hard crash, in calcStandardRatings. Will start by creating a proper unit test to isolate the bug, then fix it.

### Details

Some additional details for this issue (if relevant):

- Pla... | defect | crash when using a waterheater heatpump and also a simple standalone waterheatermixed issue overview i get a hard crash in calcstandardratings will start by creating a proper unit test to isolate the bug then fix it details some additional details for this issue if relevant pla... | 1 |

101,350 | 31,035,385,581 | IssuesEvent | 2023-08-10 14:55:44 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | gen_isr_tables: unable to support riscv plic interrupt mapping | bug priority: medium area: Kernel area: Build System area: RISCV | **Describe the bug**

Currently, in a multi-level (nested) interrupt controller arrangement, the maximum number of IRQs supported for a given level is silently hard-coded with a few "magic numbers".

https://github.com/zephyrproject-rtos/zephyr/blob/main/scripts/build/gen_isr_tables.py#L18-L28

The per-level limit ... | 1.0 | gen_isr_tables: unable to support riscv plic interrupt mapping - **Describe the bug**

Currently, in a multi-level (nested) interrupt controller arrangement, the maximum number of IRQs supported for a given level is silently hard-coded with a few "magic numbers".

https://github.com/zephyrproject-rtos/zephyr/blob/mai... | non_defect | gen isr tables unable to support riscv plic interrupt mapping describe the bug currently in a multi level nested interrupt controller arrangement the maximum number of irqs supported for a given level is silently hard coded with a few magic numbers the per level limit set in python is however ... | 0 |
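
The hard-coded per-level limit described in this issue can be illustrated with a small packing routine. This is a minimal Python sketch, not the code in `gen_isr_tables.py`: the function name `encode_irq`, the default widths of 8 bits per level, and the +1 offset for nested levels are all assumptions made for illustration. The point is only that the widths become parameters instead of magic numbers.

```python
def encode_irq(levels, bits_per_level=(8, 8, 8)):
    """Pack per-level IRQ numbers (outermost controller first) into one int.

    Hypothetical helper for illustration. Levels above the first are stored
    with a +1 offset so that 0 can mean "no interrupt at this level". The bit
    widths are parameters rather than hard-coded constants, so a level that
    needs more lines can be widened.
    """
    if len(levels) > len(bits_per_level):
        raise ValueError("too many interrupt levels")
    encoded = 0
    shift = 0
    for depth, (irq, bits) in enumerate(zip(levels, bits_per_level)):
        stored = irq if depth == 0 else irq + 1
        # Reject values that would overflow into the next level's bit field.
        if not 0 <= stored < (1 << bits):
            raise ValueError(
                f"IRQ {irq} does not fit in {bits} bits at level {depth + 1}"
            )
        encoded |= stored << shift
        shift += bits
    return encoded
```

With the default widths, a second-level line numbered 300 is rejected; passing `bits_per_level=(8, 10)` makes room for it.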

21,874 | 3,574,140,303 | IssuesEvent | 2016-01-27 10:25:58 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Parallel execution of MapStore#store method for the same key triggered by IMap#flush | Team: Core Type: Defect | Hi,

this issue exists in Hazelcast 3.3-EA and also a build of the 3.3 development branch from August 7, 2014. It is related to issue #2128.

If you have a map with write-behind and a map store configured (eviction is not needed), and you call the flush method in the IMap, the map store's store method can be calle... | 1.0 | Parallel execution of MapStore#store method for the same key triggered by IMap#flush - Hi,

this issue exists in Hazelcast 3.3-EA and also a build of the 3.3 development branch from August 7, 2014. It is related to issue #2128.

If you have a map with write-behind and a map store configured (eviction is not needed... | defect | parallel execution of mapstore store method for the same key triggered by imap flush hi this issue exists in hazelcast ea and also a build of the development branch from august it is related to issue if you have a map with write behind and a map store configured eviction is not needed and... | 1 |

194,072 | 15,396,361,415 | IssuesEvent | 2021-03-03 20:31:48 | njgibbon/fend | https://api.github.com/repos/njgibbon/fend | closed | Research | documentation | Complete testing and example config on at least 3 repositories and record this in the research section of the docs. For example and illustration. | 1.0 | Research - Complete testing and example config on at least 3 repositories and record this in the research section of the docs. For example and illustration. | non_defect | research complete testing and example config on at least repositories and record this in the research section of the docs for example and illustration | 0 |

72,559 | 24,182,491,061 | IssuesEvent | 2022-09-23 10:11:59 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | ClassNotFoundException for SQL query | Type: Defect | **Describe the bug**

An exception is thrown if an SQL query is run against a map containing custom classes.

Tested using 5.2.0-SNAPSHOT

```

Encountered an unexpected exception while executing the query:

Failed to deserialize query result value: java.lang.ClassNotFoundException: hazelcast.platform.demos.banking.t... | 1.0 | ClassNotFoundException for SQL query - **Describe the bug**

An exception is thrown if an SQL query is run against a map containing custom classes.

Tested using 5.2.0-SNAPSHOT

```

Encountered an unexpected exception while executing the query:

Failed to deserialize query result value: java.lang.ClassNotFoundExcept... | defect | classnotfoundexception for sql query describe the bug an exception is thrown if an sql query is run against a map containing custom classes tested using snapshot encountered an unexpected exception while executing the query failed to deserialize query result value java lang classnotfoundexcept... | 1 |

227,841 | 7,543,535,567 | IssuesEvent | 2018-04-17 15:45:51 | mandeep/sublime-text-conda | https://api.github.com/repos/mandeep/sublime-text-conda | closed | Renaming caused macOS 10.13 to not load old user configuration | Priority: High Status: Completed Type: Bug | The following line caused macOS 10.13 not to load the old user configuration file as Python's `open()` is case sensitive.

https://github.com/mandeep/sublime-text-conda/blob/44095806ae1f6b12e8f8a0f5f50eeb22ea355864/commands.py#L20

Since HFS+ and AFPS by default are case-insensitive, deleting and recreating the fil... | 1.0 | Renaming caused macOS 10.13 to not load old user configuration - The following line caused macOS 10.13 not to load the old user configuration file as Python's `open()` is case sensitive.

https://github.com/mandeep/sublime-text-conda/blob/44095806ae1f6b12e8f8a0f5f50eeb22ea355864/commands.py#L20

Since HFS+ and AFPS... | non_defect | renaming caused macos to not load old user configuration the following line caused macos not to load the old user configuration file as python s open is case sensitive since hfs and afps by default are case insensitive deleting and recreating the file will solve the problem | 0 |
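
One way to avoid depending on the exact case of a settings file name is to resolve it against the directory listing first. This is a minimal Python sketch under assumptions: `find_case_insensitive` is a hypothetical helper and is not taken from the plugin's `commands.py`.

```python
import os


def find_case_insensitive(directory, name):
    """Return the path of the entry in `directory` whose name matches
    `name` ignoring case, or None if there is no such entry.

    Hypothetical helper for illustration only.
    """
    wanted = name.casefold()
    for entry in os.listdir(directory):
        if entry.casefold() == wanted:
            return os.path.join(directory, entry)
    return None
```

The returned path uses the name as it is actually stored on disk, so the same lookup behaves identically on case-sensitive and case-insensitive file systems.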

32,685 | 13,912,126,030 | IssuesEvent | 2020-10-20 18:23:59 | an0rak-dev/gif | https://api.github.com/repos/an0rak-dev/gif | opened | Add a new Gif to a User's library | enhancement service:image | From the Home screen, the user will have the ability to open a form where she/he can upload a Gif in her/his library.

The required information is:

* the name of the gif

* the gif itself (uploaded from the user's computer) | 1.0 | Add a new Gif to a User's library - From the Home screen, the user will have the ability to open a form where she/he can upload a Gif in her/his library.

The required information is:

* the name of the gif

* the gif itself (uploaded from the user's computer) | non_defect | add a new gif to a user s library from the home screen the user will have the ability to open a form where she he can upload a gif in her his library here is the required informations the name of the gif the gif itself uploaded from the user s computer | 0 |

25,473 | 2,683,810,059 | IssuesEvent | 2015-03-28 10:30:59 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Emenu + Mouse RB | 2–5 stars bug imported Priority-Medium | _From [dreggy...@gmail.com](https://code.google.com/u/116951980404761225403/) on July 01, 2009 05:53:45_

OS version: Win XP SP2 (2002)

FAR version: Far 2.0 (build 1013)

ConEmu version: ConEmu .Maximus5.090628b.7z

EMenu version: drkns 19.06.2009 16:28:22 +0200 - build 31

When using the macro

[reg]

REGEDIT... | 1.0 | Emenu + Mouse RB - _From [dreggy...@gmail.com](https://code.google.com/u/116951980404761225403/) on July 01, 2009 05:53:45_

OS version: Win XP SP2 (2002)

FAR version: Far 2.0 (build 1013)

ConEmu version: ConEmu .Maximus5.090628b.7z

EMenu version: drkns 19.06.2009 16:28:22 +0200 - build 31

При использовании макр... | non_defect | emenu mouse rb from on july версия ос win xp версия far far build версия conemu conemu версия emenu drkns build при использовании макроса sequence mslclick mmode x enter mmode для отображения графического меню ме... | 0 |

70,361 | 23,139,892,890 | IssuesEvent | 2022-07-28 17:24:21 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | Supply fan heat effect is incorrect in standard ratings calculation for two speed DX cooling coil | Defect | Issue overview

--------------

Supply fan heat effect used to determine standard net cooling capacity for standard ratings calculation in CalcTwoSpeedDXCoilStandardRating function is missing supply air mass flow rate as a multiplier in equation below:

```

FanHeatCorrection = state.dataLoopNodes->Node(F... | 1.0 | Supply fan heat effect is incorrect in standard ratings calculation for two speed DX cooling coil - Issue overview

--------------

Supply fan heat effect used to determine standard net cooling capacity for standard ratings calculation in CalcTwoSpeedDXCoilStandardRating function is missing supply air mass flow rate as... | defect | supply fan heat effect is incorrect in standard ratings calculation for two speed dx cooling coil issue overview supply fan heat effect used to determine standard net cooling capacity for standard ratings calculation in calctwospeeddxcoilstandardrating function is missing supply air mass flow rate as... | 1 |

79,777 | 29,046,999,324 | IssuesEvent | 2023-05-13 17:42:27 | zed-industries/community | https://api.github.com/repos/zed-industries/community | closed | Changes aren't tracked when git repo is created with Zed open | defect git | ### Check for existing issues

- [X] Completed

### Describe the bug

1. Create a directory

2. Open zed in this directory

3. Add a file to this directory and open it

4. Initialize a git repo and commit the file from step 3

5. Add changes to the file -> notice that changes aren't tracked in the git gutter

I... | 1.0 | Changes aren't tracked when git repo is created with Zed open - ### Check for existing issues

- [X] Completed

### Describe the bug

1. Create a directory

2. Open zed in this directory

3. Add a file to this directory and open it

4. Initialize a git repo and commit the file from step 3

5. Add changes to the f... | defect | changes aren t tracked when git repo is created with zed open check for existing issues completed describe the bug create a directory open zed in this directory add a file to this directory and open it initialize a git repo and commit the file from step add changes to the fil... | 1 |

15,675 | 2,868,980,707 | IssuesEvent | 2015-06-05 22:21:10 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | "Please use --trace" message is busted on Windows | Area-Pub Priority-Unassigned Triaged Type-Defect | The message pub prints on an unexpected error requesting that users include the --trace results in the bug report uses single quotes for its arguments. The Windows command line interprets these as literal quotes, causing the suggested command not to work.

We should have some more advanced shell-escaping logic here. | 1.0 | "Please use --trace" message is busted on Windows - The message pub prints on an unexpected error requesting that users include the --trace results in the bug report uses single quotes for its arguments. The Windows command line interprets these as literal quotes, causing the suggested command not to work.

We should h... | defect | please use trace message is busted on windows the message pub prints on an unexpected error requesting that users include the trace results in the bug report uses single quotes for its arguments the windows command line interprets these as literal quotes causing the suggested command not to work we should h... | 1 |
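
The quoting problem in this issue is that single quotes are a POSIX shell convention, while Windows command lines conventionally use double quotes. The sketch below illustrates the difference in Python rather than Dart, using only standard-library calls; the function name `quote_for_shell` is hypothetical and this is not pub's implementation.

```python
import shlex
import subprocess


def quote_for_shell(args, windows):
    """Join `args` into one command line using the platform's quoting rules.

    On POSIX, shlex.quote wraps unsafe arguments in single quotes. On
    Windows, subprocess.list2cmdline applies the double-quote rules of the
    Microsoft C runtime, which is what most Windows programs expect.
    """
    if windows:
        return subprocess.list2cmdline(args)
    return " ".join(shlex.quote(arg) for arg in args)
```

For an argument containing a space, the POSIX branch produces `'my dir'` and the Windows branch produces `"my dir"`; arguments such as `--trace` are left unquoted in both.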

143,539 | 19,185,917,937 | IssuesEvent | 2021-12-05 07:18:22 | Maagan-Michael/invitease | https://api.github.com/repos/Maagan-Michael/invitease | closed | Replace Docker base image | enhancement security | The Docker base image is rather insecure when compared to the Alpine Docker for Python, better replace it. | True | Replace Docker base image - The Docker base image is rather insecure when compared to the Alpine Docker for Python, better replace it. | non_defect | replace docker base image the docker base image is rather insecure when compared to the alpine docker for python better replace it | 0 |

72,420 | 24,109,757,108 | IssuesEvent | 2022-09-20 10:20:36 | matrix-org/synapse | https://api.github.com/repos/matrix-org/synapse | closed | memory leak since 1.53.0 | A-Presence S-Minor T-Defect O-Occasional A-Memory-Usage | ### Description

Since upgrading to matrix-synapse-py3==1.53.0+focal1 from 1.49.2+bionic1, I have observed a memory leak on my instance.

The upgrade coincided with an OS upgrade from Ubuntu bionic => focal / Python 3.6 to 3.8.

We didn't change homeserver.yaml during the upgrade.

Our machine had 3 GB memory for 2 years and now 1... | 1.0 | memory leak since 1.53.0 - ### Description

Since upgrading to matrix-synapse-py3==1.53.0+focal1 from 1.49.2+bionic1, I have observed a memory leak on my instance.

The upgrade coincided with an OS upgrade from Ubuntu bionic => focal / Python 3.6 to 3.8.

We didn't change homeserver.yaml during the upgrade.

Our machine had 3 GB m... | defect | memory leak since description since upgrade tomatrix synapse from i observe memory leak on my instance the upgrade is concomitant to os upgrade from ubuntu bionic focal python to we didn t change homeserver yaml during upgrade our machine had gb memory for year... | 1 |

57,488 | 15,812,960,871 | IssuesEvent | 2021-04-05 06:46:47 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Password reset has no complexity requirements | A-Password-Reset A-User-Settings P1 T-Defect | So you can change your password to be a single character if you like :( | 1.0 | Password reset has no complexity requirements - So you can change your password to be a single character if you like :( | defect | password reset has no complexity requirements so you can change your password to be a single character if you like | 1 |

42,397 | 11,013,696,577 | IssuesEvent | 2019-12-04 21:02:33 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | sparse.linalg.expm of an empty matrix | defect scipy.linalg | Computing the matrix exponential of an empty matrix raises a ValueError exception.

#### Reproducing code example:

```

scipy.linalg.expm(np.zeros((0, 0)))

```

#### Error message:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/runner/.local/share/virtualenvs/pyt... | 1.0 | sparse.linalg.expm of an empty matrix - Computing the matrix exponential of an empty matrix raises a ValueError exception.

#### Reproducing code example:

```

scipy.linalg.expm(np.zeros((0, 0)))

```

#### Error message:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/... | defect | sparse linalg expm of an empty matrix computing the matrix exponential of an empty matrix raises a valueerror exception reproducing code example scipy linalg expm np zeros error message traceback most recent call last file line in file home runner ... | 1 |

83,610 | 16,240,904,667 | IssuesEvent | 2021-05-07 09:25:02 | fac21/Week7--Server-Side-App-AANS | https://api.github.com/repos/fac21/Week7--Server-Side-App-AANS | opened | Fantastic work ! | code review | Great job everyone ! I love the idea (ngl got a tad caught up in your game and ended up playing it a fair few number of times). I see you made a nice kanban board and worked through the issues there methodically :)

Would have liked to see your actual story points to understand whether your estimates were accurate o... | 1.0 | Fantastic work ! - Great job everyone ! I love the idea (ngl got a tad caught up in your game and ended up playing it a fair few number of times). I see you made a nice kanban board and worked through the issues there methodically :)

Would have liked to see your actual story points to understand whether your estima... | non_defect | fantastic work great job everyone i love the idea ngl got a tad caught up in your game and ended up playing it a fair few number of times i see you made a nice kanban board and worked through the issues there methodically would have liked to see your actual story points to understand whether your estima... | 0 |

367,748 | 10,861,459,216 | IssuesEvent | 2019-11-14 11:07:48 | MarcTowler/ItsLit-RPG-Tracker | https://api.github.com/repos/MarcTowler/ItsLit-RPG-Tracker | opened | Introduce abilities | GAPI Low Priority enhancement | Abilities can be used once a fight by clicking an extra symbol in monsterFight. Abilities need AP which need to be replenished with items...

e.g.

Magic (various magic types, i.e. firebolt, blizzard etc) | 1.0 | Introduce abilities - Abilities can be used once a fight by clicking an extra symbol in monsterFight. Abilities need AP which need to be replenished with items...

e.g.

Magic (various magic types, i.e. firebolt, blizzard etc) | non_defect | introduce abilities abilities can be used once a fight by clicking an extra symbol in monsterfight abilities need ap which need to be replenished with items e g magic various magic types i e firebolt blizzard etc | 0 |

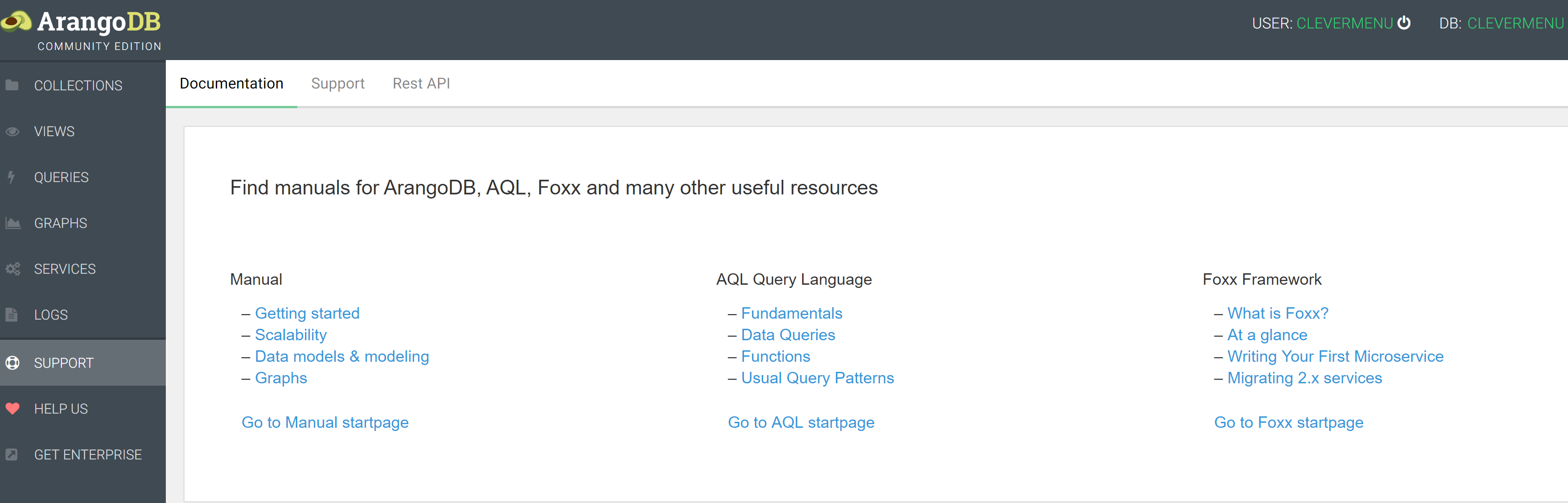

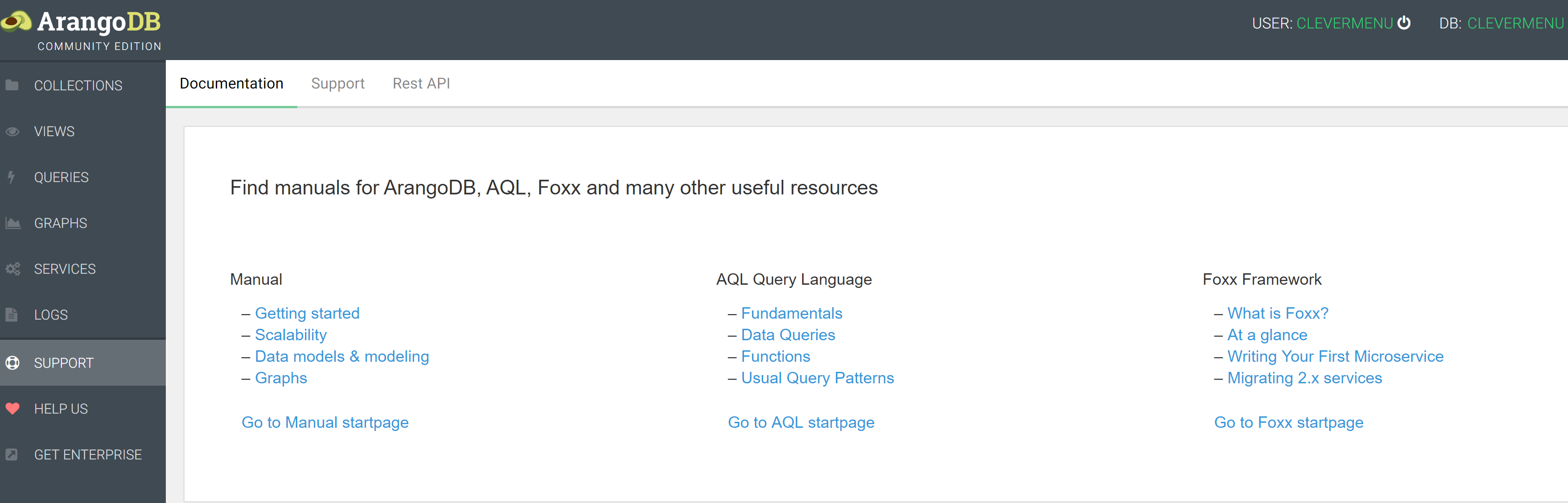

60,972 | 8,481,780,901 | IssuesEvent | 2018-10-25 16:37:43 | arangodb/arangodb | https://api.github.com/repos/arangodb/arangodb | closed | Support-Links should link to Documents corresponding the current version of Admin i.e. 3.4 ... | 1 Bug 2 Fixed 3 Documentation 3 UI | ArangoDb 3.4 RC1 - Admin

... instead of latest stable version i.e. 3.3

| 1.0 | Support-Links should link to Documents corresponding the current version of Admin i.e. 3.4 ... - ArangoDb 3.4 RC1 - Admin

... instead of latest stable version i.e. 3.3

| non_defect | support links should link to documents corresponding the current version of admin i e arangodb admin instead of latest stable version i e | 0 |

160,653 | 20,115,477,986 | IssuesEvent | 2022-02-07 19:02:59 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | Receiving 'The feature is not supported' in Microsoft.AspNetCore.Authentication.Negotiate | area-security | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Describe the bug

I created a simple application to test authentication in a linux container.

```c#

using Microsoft.AspNetCore.Authentication.Negotiate;

var builder = WebApplication.CreateBuilder(args);

// Add service... | True | Receiving 'The feature is not supported' in Microsoft.AspNetCore.Authentication.Negotiate - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Describe the bug

I created a simple application to test authentication in a linux container.

```c#

using Microsoft.AspNetCore.Aut... | non_defect | receiving the feature is not supported in microsoft aspnetcore authentication negotiate is there an existing issue for this i have searched the existing issues describe the bug i created a simple application to test authentication in a linux container c using microsoft aspnetcore authe... | 0 |

38,149 | 8,674,831,462 | IssuesEvent | 2018-11-30 09:04:58 | luigirizzo/netmap-ipfw | https://api.github.com/repos/luigirizzo/netmap-ipfw | closed | Compile error in Linux | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. git clone https://code.google.com/p/netmap-ipfw/

2. make NETMAP_INC=/home/user/netmap-ipfw/sys/

3.

What is the expected output? What do you see instead?

Successful compile. Instead error:

*make: *** No rule to make target `pkt-gen.o', needed by `pkt-gen'. Stop.*

What... | 1.0 | Compile error in Linux - ```

What steps will reproduce the problem?

1. git clone https://code.google.com/p/netmap-ipfw/

2. make NETMAP_INC=/home/user/netmap-ipfw/sys/

3.

What is the expected output? What do you see instead?

Successful compile. Instead error:

*make: *** No rule to make target `pkt-gen.o', needed by ... | defect | compile error in linux what steps will reproduce the problem git clone make netmap inc home user netmap ipfw sys what is the expected output what do you see instead successful compile instead error make no rule to make target pkt gen o needed by pkt gen stop what version of ... | 1 |

66,034 | 19,905,095,628 | IssuesEvent | 2022-01-25 11:57:55 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Nginx in docker on zfs must now use 'sendfile off;' | Type: Defect | Hi folks. I have an ArchLinux machine with zfs running a dokuwiki using this docker image: ghcr.io/linuxserver/dokuwiki:version-2020-07-29

It had worked very well for a long time.

But a few days ago, it stopped working and started filling the nginx log (in docker) with this kind of message:

```

[alert] 386#386: *310 sendf... | 1.0 | Nginx in docker on zfs must now use 'sendfile off;' - Hi folks. I have an ArchLinux machine with zfs running a dokuwiki using this docker image: ghcr.io/linuxserver/dokuwiki:version-2020-07-29

It had worked very well for a long time.

But a few days ago, it stopped working and started filling nginx log (in docker) with this k... | defect | nginx in docker on zfs must now use senfile off i folks i have an archlinux with zfs running a dokuwiki using this docker image ghcr io linuxserver dokuwiki version it worked very well since a long time but a few days ago it stopped working and started filling nginx log in docker with this kind o... | 1 |

309,627 | 9,477,692,265 | IssuesEvent | 2019-04-19 19:35:03 | abpframework/abp | https://api.github.com/repos/abpframework/abp | opened | Align AbpValidationActionFilter with MethodInvocationValidator | enhancement framework priority:high | MethodInvocationValidator works with IObjectMapper and it naturally supports data annotations and fluent validation. It also handles attributes like EnableValidationAttribute and DisableValidationAttribute.

However, AspNet Core side uses IModelStateValidator and it only validates model state errors using Microsoft's ... | 1.0 | Align AbpValidationActionFilter with MethodInvocationValidator - MethodInvocationValidator works with IObjectMapper and it naturally supports data annotations and fluent validation. It also handles attributes like EnableValidationAttribute and DisableValidationAttribute.

However, AspNet Core side uses IModelStateVali... | non_defect | align abpvalidationactionfilter with methodinvocationvalidator methodinvocationvalidator works with iobjectmapper and it naturally supports data annotations and fluent validation it also handles attributes like enablevalidationattribute and disablevalidationattribute however aspnet core side uses imodelstatevali... | 0 |

89,079 | 11,195,438,148 | IssuesEvent | 2020-01-03 06:27:14 | jackfirth/rebellion | https://api.github.com/repos/jackfirth/rebellion | closed | Support pattern matching on type instances | enhancement needs api design pattern matching | The `rebellion/type` libraries should cooperate with the `racket/match` library so that pattern matching on instances of custom types is easy. | 1.0 | Support pattern matching on type instances - The `rebellion/type` libraries should cooperate with the `racket/match` library so that pattern matching on instances of custom types is easy. | non_defect | support pattern matching on type instances the rebellion type libraries should cooperate with the racket match library so that pattern matching on instances of custom types is easy | 0 |

10,629 | 2,622,177,928 | IssuesEvent | 2015-03-04 00:17:30 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | opened | Build issue on Solaris | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Untar 1.4.0 sources on Solaris 10, run gmake

The environment (partly set by the OpenCSW build system):

HOME="/home/maciej"

PATH="/opt/csw/gnu:/home/maciej/src/opencsw/pkg/leveldb/trunk/work/solaris10-i38

6/install-isa-amd64/opt/csw/bin/amd64:/home/maciej/src/opencsw/pkg/l... | 1.0 | Build issue on Solaris - ```

What steps will reproduce the problem?

1. Untar 1.4.0 sources on Solaris 10, run gmake

The environment (partly set by the OpenCSW build system):

HOME="/home/maciej"

PATH="/opt/csw/gnu:/home/maciej/src/opencsw/pkg/leveldb/trunk/work/solaris10-i38

6/install-isa-amd64/opt/csw/bin/amd64:/home... | defect | build issue on solaris what steps will reproduce the problem untar sources on solaris run gmake the environment partly set by the opencsw build system home home maciej path opt csw gnu home maciej src opencsw pkg leveldb trunk work install isa opt csw bin home maciej src opencsw... | 1 |

4,883 | 3,897,418,257 | IssuesEvent | 2016-04-16 12:01:59 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 15615517: Application Loader: wrong tooltip regarding the minimum size of the Preview | classification:ui/usability reproducible:always status:open | #### Description

When I create new In-App Purchase in Application Loader the tooltip on the "Browse..." says the preview should be "minimum 320x460 in 72 dpi". When I submit a file in 352x460, however, I got this message

ERROR ITMS-9000: "Image dimensions '352x460' of image file 'preview5.6.7.jpg' do not match the ... | True | 15615517: Application Loader: wrong tooltip regarding the minimum size of the Preview - #### Description

When I create new In-App Purchase in Application Loader the tooltip on the "Browse..." says the preview should be "minimum 320x460 in 72 dpi". When I submit a file in 352x460, however, I got this message

ERROR I... | non_defect | application loader wrong tooltip regarding the minimum size of the preview description when i create new in app purchase in application loader the tooltip on the browse says the preview should be minimum in dpi when i submit a file in however i got this message error itms image dimens... | 0 |

7,552 | 2,610,405,155 | IssuesEvent | 2015-02-26 20:11:41 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | opened | Mechis III Super Battle Droids | auto-migrated Priority-Medium Type-Defect | ```

On Mechis III, Super Battle Droids have the following errors in their text:

1. Their names are "B1 Battledroid", instead of "B2 Super Battle Droid"

(battledroid should be spelled separately, checked it)

2. In their descriptions, it is written (36 units per squad), when they are not

actually in a squad

```

----... | 1.0 | Mechis III Super Battle Droids - ```

On Mechis III, Super Battle Droids have the following errors in their text:

1. Their names are "B1 Battledroid", instead of "B2 Super Battle Droid"

(battledroid should be spelled separately, checked it)

2. In their descriptions, it is written (36 units per squad), when they are n... | defect | mechis iii super battle droids on mechis iii super battle droids have the following errors in their text their names are battledroid instead of super battle droid battledroid should be spelled separately checked it in their descriptions it is written units per squad when they are not ... | 1 |

40,878 | 10,209,573,646 | IssuesEvent | 2019-08-14 13:02:20 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | SelectCheckboxMenu: Disabling a SelectItem does nothing | defect | ## 1) Environment

- PrimeFaces version: `7.0`

- Does it work on the newest released PrimeFaces version? Version?

- Does it work on the newest sources in GitHub? (Build by source -> https://github.com/primefaces/primefaces/wiki/Building-From-Source) `No`

- Application server + version: `Wildfly 13`

- Affected brows... | 1.0 | SelectCheckboxMenu: Disabling a SelectItem does nothing - ## 1) Environment

- PrimeFaces version: `7.0`

- Does it work on the newest released PrimeFaces version? Version?

- Does it work on the newest sources in GitHub? (Build by source -> https://github.com/primefaces/primefaces/wiki/Building-From-Source) `No`

- Ap... | defect | selectcheckboxmenu disabling a selectitem does nothing environment primefaces version does it work on the newest released primefaces version version does it work on the newest sources in github build by source no application server version wildfly affected browsers fir... | 1 |

53,568 | 13,261,922,076 | IssuesEvent | 2020-08-20 20:46:46 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | mysql DB I3OmDb at SPS does not have RDE values for Scintillator channels (Trac #1693) | Migrated from Trac defect other | The I3OmDb mysql DB does not have RDE values set for the 4 scintillator channels. This, combined with #1684, caused fatal crashes of filter clients during the start of the 24 hr test run last night. Good times were had by all trying to work around this.

<details>

<summary><em>Migrated from <a href="https://code.... | 1.0 | mysql DB I3OmDb at SPS does not have RDE values for Scintillator channels (Trac #1693) - The I3OmDb mysql DB does not have RDE values set for the 4 scintillator channels. This, combined with #1684, caused fatal crashes of filter clients during the start of the 24 hr test run last night. Good times were had by all tr... | defect | mysql db at sps does not have rde values for scintillator channels trac the mysql db does not have rde values set for the scintillator channels this combined with caused fatal crashes of filter clients during the start of the hr test run last night good times were had by all trying to work arou... | 1 |

2,911 | 3,249,373,482 | IssuesEvent | 2015-10-18 03:52:44 | stedolan/jq | https://api.github.com/repos/stedolan/jq | closed | from_entries does not support "name" | usability | I had an array containing:

```

[{"name": "foo", "value":1}]

```

According to the docs, from_entries accepts key, Key, Name as valid keys. It's a little surprising that it supports 'key' or 'Key' but not 'name' or 'Name'. The docs are accurate but I spent about 10 minutes wondering why my filter didn't work as I... | True | from_entries does not support "name" - I had an array containing:

```

[{"name": "foo", "value":1}]

```

According to the docs, from_entries accepts key, Key, Name as valid keys. It's a little surprising that it supports 'key' or 'Key' but not 'name' or 'Name'. The docs are accurate but I spent about 10 minutes w... | non_defect | from entries does not support name i had an array containing according to the docs from entries accepts key key name as valid keys it s a little surprising that it supports key or key but not name or name the docs are accurate but i spent about minutes wondering why my filter didn ... | 0 |

78,930 | 27,825,168,895 | IssuesEvent | 2023-03-19 17:27:56 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: scipy 1.5.4 and 1.10.0 return different p-values for same data | defect scipy.stats | ### Describe your issue.

Hello, I was calculating p-values for my evaluation results and I found that different versions of scipy can return different p-values for the same set of data.

scipy 1.5.4 and 1.10.0, which are the default versions for Ubuntu 18.04 and 20.04, return different mannwhitneyu p-values. I'm wonde... | 1.0 | BUG: scipy 1.5.4 and 1.10.0 return different p-values for same data - ### Describe your issue.

Hello, I was calculating p-values for my evaluation results and I found that different versions of scipy can return different p-values for the same set of data.

scipy 1.5.4 and 1.10.0, which are the default versions for ub... | defect | bug scipy and return different p values for same data describe your issue hello i was calculating p values for my evaluation results and i found that different versions of scipy can return different p values for the same set of data scipy and which are the default versions for ubun... | 1 |

71,241 | 23,501,552,210 | IssuesEvent | 2022-08-18 08:52:19 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Wild failure on Clear Cache and Reload | T-Defect | ### Steps to reproduce

My Element Nightly Desktop Mac wasn't showing all of the avatars, so I tried to clear cache and reload. Instead of clearing the cache and reloading, everything within the Element window was replaced with this business:

<img width="1440" alt="Screenshot 2022-08-18 at 09 47 54" src="https://use... | 1.0 | Wild failure on Clear Cache and Reload - ### Steps to reproduce

My Element Nightly Desktop Mac wasn't showing all of the avatars, so I tried to clear cache and reload. Instead of clearing the cache and reloading, everything within the Element window was replaced with this business:

<img width="1440" alt="Screenshot... | defect | wild failure on clear cache and reload steps to reproduce my element nightly desktop mac wasn t showing all of the avatars so i tried to clear cache and reload instead of clearing the cache and reloading everything within the element window was replaced with this business img width alt screenshot ... | 1 |

2,371 | 2,607,898,560 | IssuesEvent | 2015-02-26 00:12:31 | chrsmithdemos/zen-coding | https://api.github.com/repos/chrsmithdemos/zen-coding | closed | Cursor position in base html elements | auto-migrated Milestone-0.6 Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. expand div

What is the expected output? What do you see instead?

Expected <div>|</div>

Got <div></div>

What version of the product are you using? On what operating system?

version 0.5 on Python 2.6 under Windows 7

Please provide any additional information below.

In versi... | 1.0 | Cursor position in base html elements - ```

What steps will reproduce the problem?

1. expand div

What is the expected output? What do you see instead?

Expected <div>|</div>

Got <div></div>

What version of the product are you using? On what operating system?

version 0.5 on Python 2.6 under Windows 7

Please provide an... | defect | cursor position in base html elements what steps will reproduce the problem expand div what is the expected output what do you see instead expected got what version of the product are you using on what operating system version on python under windows please provide any additional infor... | 1 |