Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

757

| labels

stringlengths 4

664

| body

stringlengths 3

261k

| index

stringclasses 10

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

232k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

108,420

| 4,344,652,587

|

IssuesEvent

|

2016-07-29 09:16:16

|

zeit/hyperterm

|

https://api.github.com/repos/zeit/hyperterm

|

opened

|

Schema for plugins and extensions

|

Priority: High Status: Community feedback wanted Type: Enhancement

|

Since most (if not all) of the projects within the node ecosystem are using this techniques, I think we should adapt one of them in favour of making the experience consistent for all users.

## Technique 1

- **Plugins:** `hyperterm-plugin-<name>` (example: "hyperterm-plugin-power")

- **Themes:** `hyperterm-theme-<name>` (example: "hyperterm-theme-yellow")

## Technique 2

- **Plugins:** `hyperterm-<plugin-name>` (example: "hyperterm-power")

- **Themes:** `hyperterm-<theme-name>` (example: "hyperterm-yellow")

-

I'm for technique 1 because it's much more explicit and forces developers to use (existing) plugins for extending their theme with complex functionality.

What I'd like to do is create a "theme" property as well as keep the existing "plugins" array within the config file. This way, the user only needs to enter the name of the theme/plugin and the string will automatically get prefixed behind the curtains and downloaded from npm:

```js

plugins: [

'links',

'blink',

'cwd'

]

```

Results in these packages getting installed:

- hyperterm-plugin-links

- hyperterm-plugin-blink

- hyperterm-plugin-cwd

AND

```js

theme: 'yellow'

```

Results in the "hyperterm-theme-yellow" theme getting installed.

|

1.0

|

Schema for plugins and extensions - Since most (if not all) of the projects within the node ecosystem are using this techniques, I think we should adapt one of them in favour of making the experience consistent for all users.

## Technique 1

- **Plugins:** `hyperterm-plugin-<name>` (example: "hyperterm-plugin-power")

- **Themes:** `hyperterm-theme-<name>` (example: "hyperterm-theme-yellow")

## Technique 2

- **Plugins:** `hyperterm-<plugin-name>` (example: "hyperterm-power")

- **Themes:** `hyperterm-<theme-name>` (example: "hyperterm-yellow")

-

I'm for technique 1 because it's much more explicit and forces developers to use (existing) plugins for extending their theme with complex functionality.

What I'd like to do is create a "theme" property as well as keep the existing "plugins" array within the config file. This way, the user only needs to enter the name of the theme/plugin and the string will automatically get prefixed behind the curtains and downloaded from npm:

```js

plugins: [

'links',

'blink',

'cwd'

]

```

Results in these packages getting installed:

- hyperterm-plugin-links

- hyperterm-plugin-blink

- hyperterm-plugin-cwd

AND

```js

theme: 'yellow'

```

Results in the "hyperterm-theme-yellow" theme getting installed.

|

non_defect

|

schema for plugins and extensions since most if not all of the projects within the node ecosystem are using this techniques i think we should adapt one of them in favour of making the experience consistent for all users technique plugins hyperterm plugin example hyperterm plugin power themes hyperterm theme example hyperterm theme yellow technique plugins hyperterm example hyperterm power themes hyperterm example hyperterm yellow i m for technique because it s much more explicit and forces developers to use existing plugins for extending their theme with complex functionality what i d like to do is create a theme property as well as keep the existing plugins array within the config file this way the user only needs to enter the name of the theme plugin and the string will automatically get prefixed behind the curtains and downloaded from npm js plugins links blink cwd results in these packages getting installed hyperterm plugin links hyperterm plugin blink hyperterm plugin cwd and js theme yellow results in the hyperterm theme yellow theme getting installed

| 0

|

384,747

| 11,402,661,334

|

IssuesEvent

|

2020-01-31 04:12:04

|

grpc/grpc

|

https://api.github.com/repos/grpc/grpc

|

closed

|

ImportError: cannot import name 'proto_pb2' from 'grpc' in python

|

disposition/stale kind/question lang/Python priority/P2

|

Hi everyone : D

**I create a proto.proto file and then create proto_pb2.py and proto_pb2_grpc,py with protoc :**

`>> python3 -m grpc_tools.protoc -I. grpc/proto.proto --python_out . --grpc_python_out . `

but when run the server.py it's **bug** is shown :

```

Traceback (most recent call last):

File "server.py", line 3, in <module>

import proto_pb2_grpc as proto_pb2_grpc

File "/home/mehrdad/Downloads/profile-dgraph/grpc/proto_pb2_grpc.py", line 4, in <module>

from grpc import proto_pb2 as grpc_dot_proto__pb2

ImportError: cannot import name 'proto_pb2' from 'grpc' (/home/mehrdad/anaconda3/lib/python3.7/site-packages/grpc/__init__.py)

```

**the server.py include :**

```

import grpc

import proto_pb2

import proto_pb2_grpc

and ...

```

Can you help me ?!

|

1.0

|

ImportError: cannot import name 'proto_pb2' from 'grpc' in python - Hi everyone : D

**I create a proto.proto file and then create proto_pb2.py and proto_pb2_grpc,py with protoc :**

`>> python3 -m grpc_tools.protoc -I. grpc/proto.proto --python_out . --grpc_python_out . `

but when run the server.py it's **bug** is shown :

```

Traceback (most recent call last):

File "server.py", line 3, in <module>

import proto_pb2_grpc as proto_pb2_grpc

File "/home/mehrdad/Downloads/profile-dgraph/grpc/proto_pb2_grpc.py", line 4, in <module>

from grpc import proto_pb2 as grpc_dot_proto__pb2

ImportError: cannot import name 'proto_pb2' from 'grpc' (/home/mehrdad/anaconda3/lib/python3.7/site-packages/grpc/__init__.py)

```

**the server.py include :**

```

import grpc

import proto_pb2

import proto_pb2_grpc

and ...

```

Can you help me ?!

|

non_defect

|

importerror cannot import name proto from grpc in python hi everyone d i create a proto proto file and then create proto py and proto grpc py with protoc m grpc tools protoc i grpc proto proto python out grpc python out but when run the server py it s bug is shown traceback most recent call last file server py line in import proto grpc as proto grpc file home mehrdad downloads profile dgraph grpc proto grpc py line in from grpc import proto as grpc dot proto importerror cannot import name proto from grpc home mehrdad lib site packages grpc init py the server py include import grpc import proto import proto grpc and can you help me

| 0

|

190,635

| 6,820,848,265

|

IssuesEvent

|

2017-11-07 15:09:27

|

DecipherNow/gm-fabric-dashboard

|

https://api.github.com/repos/DecipherNow/gm-fabric-dashboard

|

closed

|

Refactor GMServiceView into a scene under `src/Main/scenes`

|

in progress priority-3 refactor

|

Currently the service dashboard is structured as a subcomponent of the Fabric View. In reality, this should be a distinct scene that's a sibling of Fabric or Instance.

|

1.0

|

Refactor GMServiceView into a scene under `src/Main/scenes` - Currently the service dashboard is structured as a subcomponent of the Fabric View. In reality, this should be a distinct scene that's a sibling of Fabric or Instance.

|

non_defect

|

refactor gmserviceview into a scene under src main scenes currently the service dashboard is structured as a subcomponent of the fabric view in reality this should be a distinct scene that s a sibling of fabric or instance

| 0

|

308,413

| 9,438,950,783

|

IssuesEvent

|

2019-04-14 05:45:15

|

CS2113-AY1819S2-M11-2/main

|

https://api.github.com/repos/CS2113-AY1819S2-M11-2/main

|

closed

|

As a user, I must be able to delete notes that I no longer want

|

priority.High type.Story v1.4

|

So that I can view relevant tasks easily

|

1.0

|

As a user, I must be able to delete notes that I no longer want - So that I can view relevant tasks easily

|

non_defect

|

as a user i must be able to delete notes that i no longer want so that i can view relevant tasks easily

| 0

|

203,677

| 15,886,929,665

|

IssuesEvent

|

2021-04-10 00:06:16

|

GigiaJ/JondTextTranslator

|

https://api.github.com/repos/GigiaJ/JondTextTranslator

|

closed

|

Finish adding comments to methods in need

|

documentation enhancement

|

Most things are commented fairly well, but some things could still use comments to explain what they do better.

|

1.0

|

Finish adding comments to methods in need - Most things are commented fairly well, but some things could still use comments to explain what they do better.

|

non_defect

|

finish adding comments to methods in need most things are commented fairly well but some things could still use comments to explain what they do better

| 0

|

341,440

| 10,297,558,571

|

IssuesEvent

|

2019-08-28 08:51:52

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

www.instagram.com - design is broken

|

browser-fenix engine-gecko priority-critical

|

<!-- @browser: Firefox Mobile 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:70.0) Gecko/70.0 Firefox/70.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.instagram.com/

**Browser / Version**: Firefox Mobile 70.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: Instagram stories doesn't appear well

**Steps to Reproduce**:

When you touch some story it shows in full screen horizontal and not in vertical

[](https://webcompat.com/uploads/2019/8/10b03147-c755-4409-a670-1312c32d3f5e.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

www.instagram.com - design is broken - <!-- @browser: Firefox Mobile 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:70.0) Gecko/70.0 Firefox/70.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.instagram.com/

**Browser / Version**: Firefox Mobile 70.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: Instagram stories doesn't appear well

**Steps to Reproduce**:

When you touch some story it shows in full screen horizontal and not in vertical

[](https://webcompat.com/uploads/2019/8/10b03147-c755-4409-a670-1312c32d3f5e.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_defect

|

design is broken url browser version firefox mobile operating system android tested another browser yes problem type design is broken description instagram stories doesn t appear well steps to reproduce when you touch some story it shows in full screen horizontal and not in vertical browser configuration none from with ❤️

| 0

|

56,091

| 14,926,943,799

|

IssuesEvent

|

2021-01-24 13:37:32

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

opened

|

DataTable: csv export does not work with field

|

defect

|

@melloware @Rapster The datatable exporter seems not work with the new column field attribute notation if i have not missed any additionally required adaptations.

Just add the following column to /showcase/ui/data/dataexporter/basic.xhtml to verify:

```

<p:column

field="code"

headerText="CodeNew"/>

```

The exporter delivers empty fields for such a column.

|

1.0

|

DataTable: csv export does not work with field - @melloware @Rapster The datatable exporter seems not work with the new column field attribute notation if i have not missed any additionally required adaptations.

Just add the following column to /showcase/ui/data/dataexporter/basic.xhtml to verify:

```

<p:column

field="code"

headerText="CodeNew"/>

```

The exporter delivers empty fields for such a column.

|

defect

|

datatable csv export does not work with field melloware rapster the datatable exporter seems not work with the new column field attribute notation if i have not missed any additionally required adaptations just add the following column to showcase ui data dataexporter basic xhtml to verify p column field code headertext codenew the exporter delivers empty fields for such a column

| 1

|

75,631

| 25,961,736,047

|

IssuesEvent

|

2022-12-19 00:21:00

|

vector-im/element-ios

|

https://api.github.com/repos/vector-im/element-ios

|

opened

|

Message text is truncated without warning

|

T-Defect

|

### Steps to reproduce

End of message is truncated on iOS element (and visable on web/elsewhere).

I sent said message into encrypted chat.

I haven't seen this issue since the iOS beta.

<img width="371" alt="non-truncated" src="https://user-images.githubusercontent.com/10473201/208327234-f5d5f133-d098-45b9-9354-d5e9ab8be2c7.png">

### Outcome

#### What did you expect?

Message to be displayed intact.

#### What happened instead?

Message is truncated.

### Your phone model

iPhone 14 Pro

### Operating system version

iOS 16.0.2

### Application version

Element iOS 1.9.13

### Homeserver

Synapse via matrix docker ansible deploy (not sure how to check version)

### Will you send logs?

Yes

|

1.0

|

Message text is truncated without warning - ### Steps to reproduce

End of message is truncated on iOS element (and visable on web/elsewhere).

I sent said message into encrypted chat.

I haven't seen this issue since the iOS beta.

<img width="371" alt="non-truncated" src="https://user-images.githubusercontent.com/10473201/208327234-f5d5f133-d098-45b9-9354-d5e9ab8be2c7.png">

### Outcome

#### What did you expect?

Message to be displayed intact.

#### What happened instead?

Message is truncated.

### Your phone model

iPhone 14 Pro

### Operating system version

iOS 16.0.2

### Application version

Element iOS 1.9.13

### Homeserver

Synapse via matrix docker ansible deploy (not sure how to check version)

### Will you send logs?

Yes

|

defect

|

message text is truncated without warning steps to reproduce end of message is truncated on ios element and visable on web elsewhere i sent said message into encrypted chat i haven t seen this issue since the ios beta img width alt non truncated src outcome what did you expect message to be displayed intact what happened instead message is truncated your phone model iphone pro operating system version ios application version element ios homeserver synapse via matrix docker ansible deploy not sure how to check version will you send logs yes

| 1

|

33,571

| 7,166,893,008

|

IssuesEvent

|

2018-01-29 18:43:20

|

cakephp/cakephp

|

https://api.github.com/repos/cakephp/cakephp

|

closed

|

Paginator numbers options first and last don't work like expected

|

Defect pagination

|

This is a (multiple allowed):

* [x] bug

* CakePHP Version: 3.5.10

* Platform and Target: Ubuntu 17.10 - Nginx 1.12.1 - PHP 7.1.11

### What you did

<?= $this->Paginator->numbers(['modulus' => 4, 'prev' => '<', 'next' => '>', 'first' => 1, 'last' => 1]) ?>

### What happened

This is the generated code for the last param

`<li class="page-item"><a href="/catalogue?page=247" class="page-link">1</a></li>`

### What you expected to happen

The page number should be the last aka 247, not 1 like the doc say about it, Whether you want first links generated, set to an integer to define the number of ‘first’ links to generate.

`<li class="page-item"><a href="/catalogue?page=247" class="page-link">247</a></li>`

### Possible fix

`if (is_int($last) && $params['page'] <= $lower) {`

[https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1090](https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1090)

`if (is_int($options['last']) && $params['page'] <= $lower) {`

The same goes for the first function

`if (is_int($first) && $params['page'] >= $first) {`

[https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1029](https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1029)

`if (is_int($options['first']) && $params['page'] >= $first) {`

|

1.0

|

Paginator numbers options first and last don't work like expected - This is a (multiple allowed):

* [x] bug

* CakePHP Version: 3.5.10

* Platform and Target: Ubuntu 17.10 - Nginx 1.12.1 - PHP 7.1.11

### What you did

<?= $this->Paginator->numbers(['modulus' => 4, 'prev' => '<', 'next' => '>', 'first' => 1, 'last' => 1]) ?>

### What happened

This is the generated code for the last param

`<li class="page-item"><a href="/catalogue?page=247" class="page-link">1</a></li>`

### What you expected to happen

The page number should be the last aka 247, not 1 like the doc say about it, Whether you want first links generated, set to an integer to define the number of ‘first’ links to generate.

`<li class="page-item"><a href="/catalogue?page=247" class="page-link">247</a></li>`

### Possible fix

`if (is_int($last) && $params['page'] <= $lower) {`

[https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1090](https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1090)

`if (is_int($options['last']) && $params['page'] <= $lower) {`

The same goes for the first function

`if (is_int($first) && $params['page'] >= $first) {`

[https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1029](https://github.com/cakephp/cakephp/blob/master/src/View/Helper/PaginatorHelper.php#L1029)

`if (is_int($options['first']) && $params['page'] >= $first) {`

|

defect

|

paginator numbers options first and last don t work like expected this is a multiple allowed bug cakephp version platform and target ubuntu nginx php what you did paginator numbers what happened this is the generated code for the last param what you expected to happen the page number should be the last aka not like the doc say about it whether you want first links generated set to an integer to define the number of ‘first’ links to generate possible fix if is int last params lower if is int options params lower the same goes for the first function if is int first params first if is int options params first

| 1

|

1,856

| 2,603,972,526

|

IssuesEvent

|

2015-02-24 19:00:37

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

沈阳男性生殖器疣

|

auto-migrated Priority-Medium Type-Defect

|

```

沈阳男性生殖器疣〓沈陽軍區政治部醫院性病〓TEL:024-3102330

8〓成立于1946年,68年專注于性傳播疾病的研究和治療。位于�

��陽市沈河區二緯路32號。是一所與新中國同建立共輝煌的歷�

��悠久、設備精良、技術權威、專家云集,是預防、保健、醫

療、科研康復為一體的綜合性醫院。是國家首批公立甲等部��

�醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南�

��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后

勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等��

�。

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:00

|

1.0

|

沈阳男性生殖器疣 - ```

沈阳男性生殖器疣〓沈陽軍區政治部醫院性病〓TEL:024-3102330

8〓成立于1946年,68年專注于性傳播疾病的研究和治療。位于�

��陽市沈河區二緯路32號。是一所與新中國同建立共輝煌的歷�

��悠久、設備精良、技術權威、專家云集,是預防、保健、醫

療、科研康復為一體的綜合性醫院。是國家首批公立甲等部��

�醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南�

��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后

勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等��

�。

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:00

|

defect

|

沈阳男性生殖器疣 沈阳男性生殖器疣〓沈陽軍區政治部醫院性病〓tel: 〓 , 。位于� �� 。是一所與新中國同建立共輝煌的歷� ��悠久、設備精良、技術權威、專家云集,是預防、保健、醫 療、科研康復為一體的綜合性醫院。是國家首批公立甲等部�� �醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南� ��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后 勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等�� �。 original issue reported on code google com by gmail com on jun at

| 1

|

66,386

| 20,169,425,762

|

IssuesEvent

|

2022-02-10 09:03:45

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

closed

|

Notifications right panel: Something went wrong!

|

T-Defect X-Regression

|

### Steps to reproduce

1. Not sure, this just appeared.

### Outcome

> Something went wrong!

> Please [create a new issue](https://github.com/vector-im/element-web/issues/new/choose) on GitHub so that we can investigate this bug.

### Operating system

mac

### Browser information

chrome

### URL for webapp

develop.element.io

### Application version

762fc53c6150-react-254dbeeccb26-js-41bf8c2d5f4a

### Homeserver

element.io

### Will you send logs?

Yes

|

1.0

|

Notifications right panel: Something went wrong! - ### Steps to reproduce

1. Not sure, this just appeared.

### Outcome

> Something went wrong!

> Please [create a new issue](https://github.com/vector-im/element-web/issues/new/choose) on GitHub so that we can investigate this bug.

### Operating system

mac

### Browser information

chrome

### URL for webapp

develop.element.io

### Application version

762fc53c6150-react-254dbeeccb26-js-41bf8c2d5f4a

### Homeserver

element.io

### Will you send logs?

Yes

|

defect

|

notifications right panel something went wrong steps to reproduce not sure this just appeared outcome something went wrong please on github so that we can investigate this bug operating system mac browser information chrome url for webapp develop element io application version react js homeserver element io will you send logs yes

| 1

|

15,720

| 2,869,002,190

|

IssuesEvent

|

2015-06-05 22:30:06

|

dart-lang/sdk

|

https://api.github.com/repos/dart-lang/sdk

|

closed

|

Allow transformers to generate/modify code in packages as a result of whole-code evaluation

|

Area-Pkg Pkg-Barback Priority-Medium Triaged Type-Defect

|

Right now transformers can only modify code in packages when that package is being transformed, but there are a number of scenarios where the transformation on the package needs to be done with the results of some whole-program analysis.

The specific scenario I'm running into is generating code for Angular in a lazy-loading environment. In a non-lazy environment the generated caches are all injected into the application entry point, but in the lazy-loaded scenario the code needs to be injected into the root of the lazy library.

The issue is that the calculation of what needs to be cached needs to take the entire app into account.

One approach discussed is the 'global transformers' which execute after all package transformers and can modify every package contents.

Marking as critical as it is required for Angular's transformers once lazy-loading is supported.

|

1.0

|

Allow transformers to generate/modify code in packages as a result of whole-code evaluation - Right now transformers can only modify code in packages when that package is being transformed, but there are a number of scenarios where the transformation on the package needs to be done with the results of some whole-program analysis.

The specific scenario I'm running into is generating code for Angular in a lazy-loading environment. In a non-lazy environment the generated caches are all injected into the application entry point, but in the lazy-loaded scenario the code needs to be injected into the root of the lazy library.

The issue is that the calculation of what needs to be cached needs to take the entire app into account.

One approach discussed is the 'global transformers' which execute after all package transformers and can modify every package contents.

Marking as critical as it is required for Angular's transformers once lazy-loading is supported.

|

defect

|

allow transformers to generate modify code in packages as a result of whole code evaluation right now transformers can only modify code in packages when that package is being transformed but there are a number of scenarios where the transformation on the package needs to be done with the results of some whole program analysis the specific scenario i m running into is generating code for angular in a lazy loading environment in a non lazy environment the generated caches are all injected into the application entry point but in the lazy loaded scenario the code needs to be injected into the root of the lazy library the issue is that the calculation of what needs to be cached needs to take the entire app into account one approach discussed is the global transformers which execute after all package transformers and can modify every package contents marking as critical as it is required for angular s transformers once lazy loading is supported

| 1

|

464,648

| 13,337,046,015

|

IssuesEvent

|

2020-08-28 08:35:54

|

onvif/specs

|

https://api.github.com/repos/onvif/specs

|

opened

|

Clarify RecordingJobStateChange data

|

Priority minor Recording Control

|

#2701

Current Problem

The DTT tests that the payload does not contain Sources information which absolutely makes sense to limit the size of the event.

The specification specifies that other information shall be provided .

Proposal

Add to 5.25.1

The device shall omit the Sources parameter when emitting the event.

Attachments (0)

Oldest first Newest first

Comments only

Change History (1)

Changed 4 months ago by hans.busch@de.bosch.com

Correction, our sample forgot to repeat the State information in the ElementItem. The DTT was just creating wrong output.

My conclusion is that the whole element item should be made optional since the only mandatory items state and token repeat the simple item parameters.

Note that this CR would probably require a relaxation of the DTT.

|

1.0

|

Clarify RecordingJobStateChange data - #2701

Current Problem

The DTT tests that the payload does not contain Sources information which absolutely makes sense to limit the size of the event.

The specification specifies that other information shall be provided .

Proposal

Add to 5.25.1

The device shall omit the Sources parameter when emitting the event.

Attachments (0)

Oldest first Newest first

Comments only

Change History (1)

Changed 4 months ago by hans.busch@de.bosch.com

Correction, our sample forgot to repeat the State information in the ElementItem. The DTT was just creating wrong output.

My conclusion is that the whole element item should be made optional since the only mandatory items state and token repeat the simple item parameters.

Note that this CR would probably require a relaxation of the DTT.

|

non_defect

|

clarify recordingjobstatechange data current problem the dtt tests that the payload does not contain sources information which absolutely makes sense to limit the size of the event the specification specifies that other information shall be provided proposal add to the device shall omit the sources parameter when emitting the event attachments oldest first newest first comments only change history changed months ago by hans busch de bosch com correction our sample forgot to repeat the state information in the elementitem the dtt was just creating wrong output my conclusion is that the whole element item should be made optional since the only mandatory items state and token repeat the simple item parameters note that this cr would probably require a relaxation of the dtt

| 0

|

38,703

| 8,952,343,428

|

IssuesEvent

|

2019-01-25 16:17:30

|

svigerske/ipopt-donotuse

|

https://api.github.com/repos/svigerske/ipopt-donotuse

|

closed

|

wget not found when building IPOPT on MinGW/MSYS

|

Ipopt defect

|

Issue created by migration from Trac.

Original creator: jdpipe

Original creation time: 2010-05-06 07:38:33

Assignee: ipopt-team

Version: 3.8

I use the 'wget' from the GnuWin32 project, which by default installs in c:\Program Files\GnuWin32\bin. I then add that folder to my PATH in MSYS and all GnuWin32 commands are then available straight away. These are much easier to install than the MSYS/MinGW stuff, and work fine.

Problem is that the various 'get.PACKAGENAME' scripts in the ThirdParty section of IPOPT fail to detect my 'wget', because the call 'which wget | wc -w' returns a number more than 1, but a value of 1 is the only allowable.

The solution to this bug, I believe, is a to simply change 'wc -w' to 'wc -l' for all of these 'wget' calls. Alternatively, I wonder if the return value of the 'which' command can be checked?

For ease of building, maybe a 'get.ThirdParty' script could be provided that automatically downloaded all possible third-party libraries (except HSL which can't be automated...easily).

|

1.0

|

wget not found when building IPOPT on MinGW/MSYS - Issue created by migration from Trac.

Original creator: jdpipe

Original creation time: 2010-05-06 07:38:33

Assignee: ipopt-team

Version: 3.8

I use the 'wget' from the GnuWin32 project, which by default installs in c:\Program Files\GnuWin32\bin. I then add that folder to my PATH in MSYS and all GnuWin32 commands are then available straight away. These are much easier to install than the MSYS/MinGW stuff, and work fine.

Problem is that the various 'get.PACKAGENAME' scripts in the ThirdParty section of IPOPT fail to detect my 'wget', because the call 'which wget | wc -w' returns a number more than 1, but a value of 1 is the only allowable.

The solution to this bug, I believe, is a to simply change 'wc -w' to 'wc -l' for all of these 'wget' calls. Alternatively, I wonder if the return value of the 'which' command can be checked?

For ease of building, maybe a 'get.ThirdParty' script could be provided that automatically downloaded all possible third-party libraries (except HSL which can't be automated...easily).

|

defect

|

wget not found when building ipopt on mingw msys issue created by migration from trac original creator jdpipe original creation time assignee ipopt team version i use the wget from the project which by default installs in c program files bin i then add that folder to my path in msys and all commands are then available straight away these are much easier to install than the msys mingw stuff and work fine problem is that the various get packagename scripts in the thirdparty section of ipopt fail to detect my wget because the call which wget wc w returns a number more than but a value of is the only allowable the solution to this bug i believe is a to simply change wc w to wc l for all of these wget calls alternatively i wonder if the return value of the which command can be checked for ease of building maybe a get thirdparty script could be provided that automatically downloaded all possible third party libraries except hsl which can t be automated easily

| 1

|

8,706

| 7,374,082,286

|

IssuesEvent

|

2018-03-13 19:10:30

|

SpiderLabs/ModSecurity

|

https://api.github.com/repos/SpiderLabs/ModSecurity

|

closed

|

LibModSecurity + Nginx + ClamAV

|

3.x Pending feedback Type - Usage libmodsecurity

|

Hi all

Using latest version of ModSecuity and nginx connector.

However even in debug level 9 it still wont show me what issue is.

I have a rule below:

`SecRule FILES_TMPNAMES "@inspectFile /usr/local/nginx/sbin/test.lua" "phase:2,t:none,log,deny,msg:'Malicous File Attachment Identified.',id:99999"`

And in modsec settings I have enabled secresponsebody and following:

`SecTmpSaveUploadedFiles On

SecUploadDir /usr/local/nginx/tmp

SecUploadKeepFiles On

SecUploadFileMode 0777

`

I have a test php page to upload a file to nginx server but even with test virus it does not pick anything up. Here is debug log:

https://pastebin.com/GjdXM3yF

and here is the lua script

https://gist.github.com/angeloxx/97714f9108b3642460564acdcd37b34a

thanks

|

True

|

LibModSecurity + Nginx + ClamAV - Hi all

Using latest version of ModSecuity and nginx connector.

However even in debug level 9 it still wont show me what issue is.

I have a rule below:

`SecRule FILES_TMPNAMES "@inspectFile /usr/local/nginx/sbin/test.lua" "phase:2,t:none,log,deny,msg:'Malicous File Attachment Identified.',id:99999"`

And in modsec settings I have enabled secresponsebody and following:

`SecTmpSaveUploadedFiles On

SecUploadDir /usr/local/nginx/tmp

SecUploadKeepFiles On

SecUploadFileMode 0777

`

I have a test php page to upload a file to nginx server but even with test virus it does not pick anything up. Here is debug log:

https://pastebin.com/GjdXM3yF

and here is the lua script

https://gist.github.com/angeloxx/97714f9108b3642460564acdcd37b34a

thanks

|

non_defect

|

libmodsecurity nginx clamav hi all using latest version of modsecuity and nginx connector however even in debug level it still wont show me what issue is i have a rule below secrule files tmpnames inspectfile usr local nginx sbin test lua phase t none log deny msg malicous file attachment identified id and in modsec settings i have enabled secresponsebody and following sectmpsaveuploadedfiles on secuploaddir usr local nginx tmp secuploadkeepfiles on secuploadfilemode i have a test php page to upload a file to nginx server but even with test virus it does not pick anything up here is debug log and here is the lua script thanks

| 0

|

50,120

| 13,187,333,606

|

IssuesEvent

|

2020-08-13 03:04:47

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

closed

|

ROOTSYS in `make tarball` tarball (Trac #149)

|

Migrated from Trac cmake defect

|

root under some circumstances rummages through various directories under ROOTSYS. What are they, does the 'make tarball' step need to copy more stuff under I3_BUILD/CMAKE_INSTALL_PREFIX/lib/?

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/149

, reported by troy and owned by troy_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2011-04-14T19:21:48",

"description": "root under some circumstances rummages through various directories under ROOTSYS. What are they, does the 'make tarball' step need to copy more stuff under I3_BUILD/CMAKE_INSTALL_PREFIX/lib/?\n\n",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "1302808908000000",

"component": "cmake",

"summary": "ROOTSYS in `make tarball` tarball",

"priority": "normal",

"keywords": "",

"time": "2008-11-18T14:04:08",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

ROOTSYS in `make tarball` tarball (Trac #149) - root under some circumstances rummages through various directories under ROOTSYS. What are they, does the 'make tarball' step need to copy more stuff under I3_BUILD/CMAKE_INSTALL_PREFIX/lib/?

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/149

, reported by troy and owned by troy_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2011-04-14T19:21:48",

"description": "root under some circumstances rummages through various directories under ROOTSYS. What are they, does the 'make tarball' step need to copy more stuff under I3_BUILD/CMAKE_INSTALL_PREFIX/lib/?\n\n",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "1302808908000000",

"component": "cmake",

"summary": "ROOTSYS in `make tarball` tarball",

"priority": "normal",

"keywords": "",

"time": "2008-11-18T14:04:08",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

defect

|

rootsys in make tarball tarball trac root under some circumstances rummages through various directories under rootsys what are they does the make tarball step need to copy more stuff under build cmake install prefix lib migrated from reported by troy and owned by troy json status closed changetime description root under some circumstances rummages through various directories under rootsys what are they does the make tarball step need to copy more stuff under build cmake install prefix lib n n reporter troy cc resolution fixed ts component cmake summary rootsys in make tarball tarball priority normal keywords time milestone owner troy type defect

| 1

|

26,580

| 4,768,870,588

|

IssuesEvent

|

2016-10-26 10:33:27

|

gbif/ipt

|

https://api.github.com/repos/gbif/ipt

|

closed

|

Project data are not saved

|

bug Metadata MetadataEditor Priority-High Type-Defect

|

Odd that I never noticed, but **project data has been removed** for all datasets where we populated this.

## Example: http://dataset.inbo.be/bird-tracking-gull-occurrences

* We definitely added project data to this dataset. We have a record of it in the [dataset metadata we maintain on GitHub](https://github.com/inbo/data-publication/blob/master/datasets/bird-tracking-gull-occurrences/metadata.md#project-data)

* All fields for project data are empty in the metadata editor

* No project information appears on the dataset page on [IPT](http://dataset.inbo.be/bird-tracking-gull-occurrences) nor [GBIF](http://www.gbif.org/dataset/83e20573-f7dd-4852-9159-21566e1e691e)

Oddly though, if I re-add project metadata in the editor (title & funding), that information is saved, but:

* Does not appear on the dataset preview page (http://data.inbo.be/ipt/resource/preview?r=bird-tracking-gull-occurrences)

* Is apparently lost on publication (i.e. all project data fields are empty again).

Does this bug only affects us? If not, since when do republication empty project information? Has project information ever appeared on GBIF?

|

1.0

|

Project data are not saved - Odd that I never noticed, but **project data has been removed** for all datasets where we populated this.

## Example: http://dataset.inbo.be/bird-tracking-gull-occurrences

* We definitely added project data to this dataset. We have a record of it in the [dataset metadata we maintain on GitHub](https://github.com/inbo/data-publication/blob/master/datasets/bird-tracking-gull-occurrences/metadata.md#project-data)

* All fields for project data are empty in the metadata editor

* No project information appears on the dataset page on [IPT](http://dataset.inbo.be/bird-tracking-gull-occurrences) nor [GBIF](http://www.gbif.org/dataset/83e20573-f7dd-4852-9159-21566e1e691e)

Oddly though, if I re-add project metadata in the editor (title & funding), that information is saved, but:

* Does not appear on the dataset preview page (http://data.inbo.be/ipt/resource/preview?r=bird-tracking-gull-occurrences)

* Is apparently lost on publication (i.e. all project data fields are empty again).

Does this bug only affects us? If not, since when do republication empty project information? Has project information ever appeared on GBIF?

|

defect

|

project data are not saved odd that i never noticed but project data has been removed for all datasets where we populated this example we definitely added project data to this dataset we have a record of it in the all fields for project data are empty in the metadata editor no project information appears on the dataset page on nor oddly though if i re add project metadata in the editor title funding that information is saved but does not appear on the dataset preview page is apparently lost on publication i e all project data fields are empty again does this bug only affects us if not since when do republication empty project information has project information ever appeared on gbif

| 1

|

62,204

| 17,023,872,188

|

IssuesEvent

|

2021-07-03 04:17:40

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

New zoom slower

|

Component: website Priority: minor Resolution: invalid Type: defect

|

**[Submitted to the original trac issue database at 2.25pm, Monday, 5th August 2013]**

Back when there was no animation during zooming, you could instantly zoom into a much higher magnification with a long scroll of the mouse wheel.

Now, every magnification is treated as an event during which no new commands are read.

That is, you have to wait a short time after each zooming event before you can zoom in again, making zooming a slower operation.

Now with the new map controls and the disappearance of the zoom scale, zooming is made further inconvenient.

Are there any benefits of this functionality? If not, can the old zooming behaviour be brought back?

|

1.0

|

New zoom slower - **[Submitted to the original trac issue database at 2.25pm, Monday, 5th August 2013]**

Back when there was no animation during zooming, you could instantly zoom into a much higher magnification with a long scroll of the mouse wheel.

Now, every magnification is treated as an event during which no new commands are read.

That is, you have to wait a short time after each zooming event before you can zoom in again, making zooming a slower operation.

Now with the new map controls and the disappearance of the zoom scale, zooming is made further inconvenient.

Are there any benefits of this functionality? If not, can the old zooming behaviour be brought back?

|

defect

|

new zoom slower back when there was no animation during zooming you could instantly zoom into a much higher magnification with a long scroll of the mouse wheel now every magnification is treated as an event during which no new commands are read that is you have to wait a short time after each zooming event before you can zoom in again making zooming a slower operation now with the new map controls and the disappearance of the zoom scale zooming is made further inconvenient are there any benefits of this functionality if not can the old zooming behaviour be brought back

| 1

|

11,532

| 5,028,663,002

|

IssuesEvent

|

2016-12-15 18:54:13

|

quicklisp/quicklisp-projects

|

https://api.github.com/repos/quicklisp/quicklisp-projects

|

closed

|

Please add stl

|

canbuild

|

Reads triangle data from binary STL files used in 3D graphics and for 3D printing.

Can be found here:

https://github.com/jl2/stl

|

1.0

|

Please add stl - Reads triangle data from binary STL files used in 3D graphics and for 3D printing.

Can be found here:

https://github.com/jl2/stl

|

non_defect

|

please add stl reads triangle data from binary stl files used in graphics and for printing can be found here

| 0

|

61,652

| 17,023,749,043

|

IssuesEvent

|

2021-07-03 03:38:15

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

osmarender vs Mapnik formats result in different latitudes for north edge of map

|

Component: admin Priority: minor Resolution: wontfix Type: defect

|

**[Submitted to the original trac issue database at 6.37am, Friday, 30th September 2011]**

Greetings,[[BR]]

This is my first post, please bear with me.[[BR]]

I've noticed that when generating map data from

[http://openstreetmap.org], that there appears to be some inconsistency with the latitude when exporting maps in different formats.[[BR]]

For example, if I export a map of Northern Ontario, Canada with manually entered coordinates:[[BR]]

57[[BR]]

-95.2 -79.5[[BR]]

52[[BR]]

[[BR]]

a. first in Mapnik format (png, 3050000)[[BR]]

b. then in Osmarender format (png, 7)[[BR]]

the two maps obviously differ in latitude: the osmarender map is further south. The differences are I believe mathematically significant. Map file outputs are attached.[[BR]]

I personally am most concerned about the coordinate accuracy of the

Osmarender generation. Generating different Osmarender images at

different Zooms gives a consistent approach to latitude, which is a good thing! 8-) [[BR]]

1. Ubuntu linux 10.04, Firefox 3.6.23[[BR]]

2. [http://openstreetmap.org] Export feature[[BR]]

3. step by step[[BR]]

3a open [http://openstreetmap.org] in firefox[[BR]]

3b click on Export button[[BR]]

3c click on Export button again to see N W E S text entry boxes[[BR]]

3d enter values above into respective coordinate text boxes[[BR]]

3e click on MapnikImage[[BR]]

3f enter maximum scale (2350000)[[BR]]

3g click Export, save image to disk (osm_nnontario_mapnik_2350000.png)[[BR]]

3h click OsmarenderImage[[BR]]

3i select Zoom of 7[[BR]]

3j click Export, save image to disk (osm_nnontario_osmarender_7b.png)[[BR]]

3k open both images and compare

4 Expected both images to cover the **same** coordinates.[[BR]]

5 Osmarender map is further south than Mapnik. Unsure which is at correct latitude.[[BR]]

CU,[[BR]]

james

|

1.0

|

osmarender vs Mapnik formats result in different latitudes for north edge of map - **[Submitted to the original trac issue database at 6.37am, Friday, 30th September 2011]**

Greetings,[[BR]]

This is my first post, please bear with me.[[BR]]

I've noticed that when generating map data from

[http://openstreetmap.org], that there appears to be some inconsistency with the latitude when exporting maps in different formats.[[BR]]

For example, if I export a map of Northern Ontario, Canada with manually entered coordinates:[[BR]]

57[[BR]]

-95.2 -79.5[[BR]]

52[[BR]]

[[BR]]

a. first in Mapnik format (png, 3050000)[[BR]]

b. then in Osmarender format (png, 7)[[BR]]

the two maps obviously differ in latitude: the osmarender map is further south. The differences are I believe mathematically significant. Map file outputs are attached.[[BR]]

I personally am most concerned about the coordinate accuracy of the

Osmarender generation. Generating different Osmarender images at

different Zooms gives a consistent approach to latitude, which is a good thing! 8-) [[BR]]

1. Ubuntu linux 10.04, Firefox 3.6.23[[BR]]

2. [http://openstreetmap.org] Export feature[[BR]]

3. step by step[[BR]]

3a open [http://openstreetmap.org] in firefox[[BR]]

3b click on Export button[[BR]]

3c click on Export button again to see N W E S text entry boxes[[BR]]

3d enter values above into respective coordinate text boxes[[BR]]

3e click on MapnikImage[[BR]]

3f enter maximum scale (2350000)[[BR]]

3g click Export, save image to disk (osm_nnontario_mapnik_2350000.png)[[BR]]

3h click OsmarenderImage[[BR]]

3i select Zoom of 7[[BR]]

3j click Export, save image to disk (osm_nnontario_osmarender_7b.png)[[BR]]

3k open both images and compare

4 Expected both images to cover the **same** coordinates.[[BR]]

5 Osmarender map is further south than Mapnik. Unsure which is at correct latitude.[[BR]]

CU,[[BR]]

james

|

defect

|

osmarender vs mapnik formats result in different latitudes for north edge of map greetings this is my first post please bear with me i ve noticed that when generating map data from that there appears to be some inconsistency with the latitude when exporting maps in different formats for example if i export a map of northern ontario canada with manually entered coordinates a first in mapnik format png b then in osmarender format png the two maps obviously differ in latitude the osmarender map is further south the differences are i believe mathematically significant map file outputs are attached i personally am most concerned about the coordinate accuracy of the osmarender generation generating different osmarender images at different zooms gives a consistent approach to latitude which is a good thing ubuntu linux firefox export feature step by step open in firefox click on export button click on export button again to see n w e s text entry boxes enter values above into respective coordinate text boxes click on mapnikimage enter maximum scale click export save image to disk osm nnontario mapnik png click osmarenderimage select zoom of click export save image to disk osm nnontario osmarender png open both images and compare expected both images to cover the same coordinates osmarender map is further south than mapnik unsure which is at correct latitude cu james

| 1

|

29,457

| 5,693,473,673

|

IssuesEvent

|

2017-04-15 01:52:32

|

hugotacito/django-pagination

|

https://api.github.com/repos/hugotacito/django-pagination

|

closed

|

InfinitePaginator should re-define start_index

|

auto-migrated Priority-Medium Type-Defect

|

```

The default django Page class defines start_index to have a special case when

self.paginator.count == 0. This will fail in the InfinitePaginator, which

needs to redefine start_index without that special case.

See trivial patch attached.

```

Original issue reported on code.google.com by `andrew.a...@gmail.com` on 14 Sep 2010 at 6:38

Attachments:

- [paginator.patch](https://storage.googleapis.com/google-code-attachments/django-pagination/issue-73/comment-0/paginator.patch)

|

1.0

|

InfinitePaginator should re-define start_index - ```

The default django Page class defines start_index to have a special case when

self.paginator.count == 0. This will fail in the InfinitePaginator, which

needs to redefine start_index without that special case.

See trivial patch attached.

```

Original issue reported on code.google.com by `andrew.a...@gmail.com` on 14 Sep 2010 at 6:38

Attachments:

- [paginator.patch](https://storage.googleapis.com/google-code-attachments/django-pagination/issue-73/comment-0/paginator.patch)

|

defect

|

infinitepaginator should re define start index the default django page class defines start index to have a special case when self paginator count this will fail in the infinitepaginator which needs to redefine start index without that special case see trivial patch attached original issue reported on code google com by andrew a gmail com on sep at attachments

| 1

|

193,379

| 6,884,457,625

|

IssuesEvent

|

2017-11-21 13:07:15

|

citusdata/citus

|

https://api.github.com/repos/citusdata/citus

|

closed

|

Feature regression on subquery pushdowns with reference tables from Citus 7 to 7.1

|

priority:high

|

@saicitus just reported that three queries that used to work in Citus 7, but that #no longer do on Citus 7.1. All queries are related to subquery pushdowns in reference tables in Citus.

@saicitus will share more details in the upcoming days.

<pre>

SELECT

DBE.id,

DBE.event,

DBE.userid,

EXTRACT(EPOCH FROM FSE.timestamp) AS my_time,

DBE.pointid,

NULL AS info

FROM database_event AS DBE

INNER JOIN (

SELECT DISTINCT test.id

FROM testpoint AS test

WHERE test.unique_id IN ('00cfdd')

) AS TEST_NAMES ON TEST_NAMES.id = DBE.pointid

ORDER BY DBE.my_time DESC

LIMIT 20

OFFSET 0;

</pre>

The `database_event` table is distributed on `pointid`. The `testpoint` table is a reference table.

In Citus 7, this query executes nicely. In Citus 7.1, we see the following error message:

<pre>

psql:q_distinct.sql:31: ERROR: cannot push down this subquery

DETAIL: Distinct on columns without partition column is currently unsupported

</pre>

Removing the `DISTINCT` clause also doesn't help. We then get this error message in Citus 7.1

<pre>

psql:q.sql:31: ERROR: cannot push down this subquery

DETAIL: Group by list without partition column is currently unsupported

</pre>

|

1.0

|

Feature regression on subquery pushdowns with reference tables from Citus 7 to 7.1 - @saicitus just reported that three queries that used to work in Citus 7, but that #no longer do on Citus 7.1. All queries are related to subquery pushdowns in reference tables in Citus.

@saicitus will share more details in the upcoming days.

<pre>

SELECT

DBE.id,

DBE.event,

DBE.userid,

EXTRACT(EPOCH FROM FSE.timestamp) AS my_time,

DBE.pointid,

NULL AS info

FROM database_event AS DBE

INNER JOIN (

SELECT DISTINCT test.id

FROM testpoint AS test

WHERE test.unique_id IN ('00cfdd')

) AS TEST_NAMES ON TEST_NAMES.id = DBE.pointid

ORDER BY DBE.my_time DESC

LIMIT 20

OFFSET 0;

</pre>

The `database_event` table is distributed on `pointid`. The `testpoint` table is a reference table.

In Citus 7, this query executes nicely. In Citus 7.1, we see the following error message:

<pre>

psql:q_distinct.sql:31: ERROR: cannot push down this subquery

DETAIL: Distinct on columns without partition column is currently unsupported

</pre>

Removing the `DISTINCT` clause also doesn't help. We then get this error message in Citus 7.1

<pre>

psql:q.sql:31: ERROR: cannot push down this subquery

DETAIL: Group by list without partition column is currently unsupported

</pre>

|

non_defect

|

feature regression on subquery pushdowns with reference tables from citus to saicitus just reported that three queries that used to work in citus but that no longer do on citus all queries are related to subquery pushdowns in reference tables in citus saicitus will share more details in the upcoming days select dbe id dbe event dbe userid extract epoch from fse timestamp as my time dbe pointid null as info from database event as dbe inner join select distinct test id from testpoint as test where test unique id in as test names on test names id dbe pointid order by dbe my time desc limit offset the database event table is distributed on pointid the testpoint table is a reference table in citus this query executes nicely in citus we see the following error message psql q distinct sql error cannot push down this subquery detail distinct on columns without partition column is currently unsupported removing the distinct clause also doesn t help we then get this error message in citus psql q sql error cannot push down this subquery detail group by list without partition column is currently unsupported

| 0

|

405,134

| 11,868,234,931

|

IssuesEvent

|

2020-03-26 08:48:31

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

AMP setting panel is not showing when ""WPML for AMP" pluign is active.

|

[Priority: HIGH] bug

|

The user is not able to see AMP Setting Panel(https://prnt.sc/rmbysh). getting blank page in amp settings. when we deactivate the "WPML for AMP" plugin then the AMP setting panel works properly.

Note: this issue is not occurring in the localhost.

Ref: https://secure.helpscout.net/conversation/1114266259/117604?folderId=3257665

|

1.0

|

AMP setting panel is not showing when ""WPML for AMP" pluign is active. - The user is not able to see AMP Setting Panel(https://prnt.sc/rmbysh). getting blank page in amp settings. when we deactivate the "WPML for AMP" plugin then the AMP setting panel works properly.

Note: this issue is not occurring in the localhost.

Ref: https://secure.helpscout.net/conversation/1114266259/117604?folderId=3257665

|

non_defect

|

amp setting panel is not showing when wpml for amp pluign is active the user is not able to see amp setting panel getting blank page in amp settings when we deactivate the wpml for amp plugin then the amp setting panel works properly note this issue is not occurring in the localhost ref

| 0

|

180,565

| 14,787,500,219

|

IssuesEvent

|

2021-01-12 07:43:32

|

PaxJaromeMalues/arma3_cram

|

https://api.github.com/repos/PaxJaromeMalues/arma3_cram

|

opened

|

hit chance

|

documentation enhancement

|

Currently the turret is set to fire for a random amount of time onto the targeted projectile. The length of those salvos is stored in `_shots`. For testing and development this provides enough variation in hit chances to be performant. Later on a real chance to hit might need to be implemented.

|

1.0

|

hit chance - Currently the turret is set to fire for a random amount of time onto the targeted projectile. The length of those salvos is stored in `_shots`. For testing and development this provides enough variation in hit chances to be performant. Later on a real chance to hit might need to be implemented.

|

non_defect

|

hit chance currently the turret is set to fire for a random amount of time onto the targeted projectile the length of those salvos is stored in shots for testing and development this provides enough variation in hit chances to be performant later on a real chance to hit might need to be implemented

| 0

|

42,098

| 17,029,352,332

|

IssuesEvent

|

2021-07-04 08:26:00

|

MOONGIFT/moongift.dev

|

https://api.github.com/repos/MOONGIFT/moongift.dev

|

opened

|

Napkin – Backend in the Browser

|

Backend Web API Web service

|

https://www.napkin.io/

Write, run, and deploy API endpoints, all from the browser. No infra. No boilerplate. No devops. Just code.

バックエンドサービス。

|

1.0

|

Napkin – Backend in the Browser - https://www.napkin.io/

Write, run, and deploy API endpoints, all from the browser. No infra. No boilerplate. No devops. Just code.

バックエンドサービス。

|

non_defect

|

napkin – backend in the browser write run and deploy api endpoints all from the browser no infra no boilerplate no devops just code バックエンドサービス。

| 0

|

77,294

| 26,902,772,390

|

IssuesEvent

|

2023-02-06 16:44:51

|

dotCMS/core

|

https://api.github.com/repos/dotCMS/core

|

opened

|

Error when update zero size file through Webdav.

|

Type : Defect Triage

|

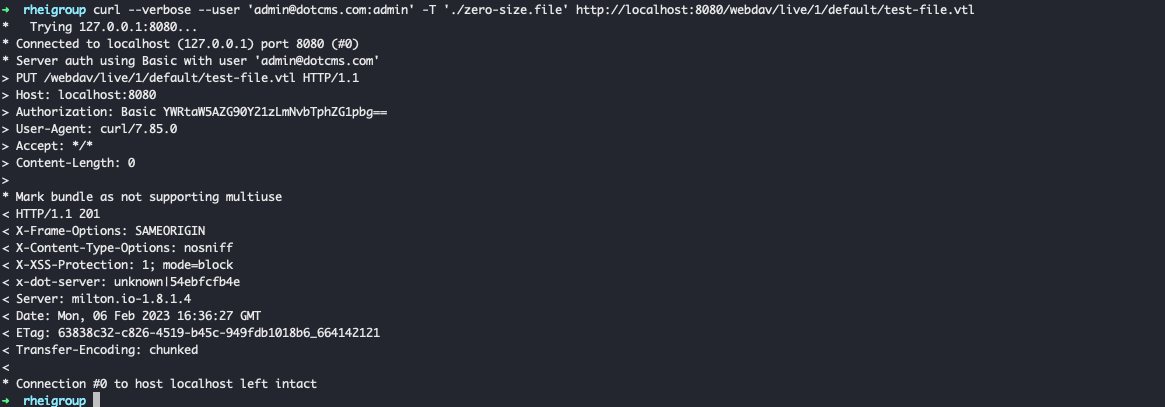

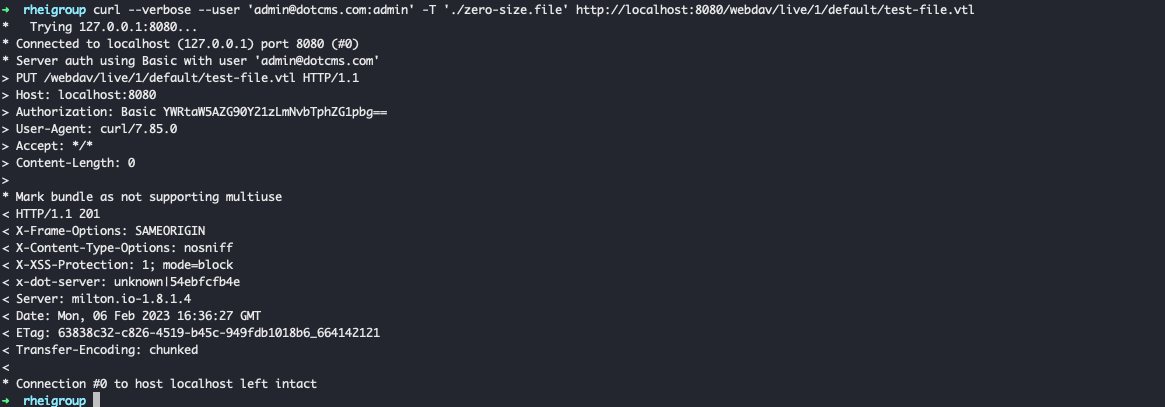

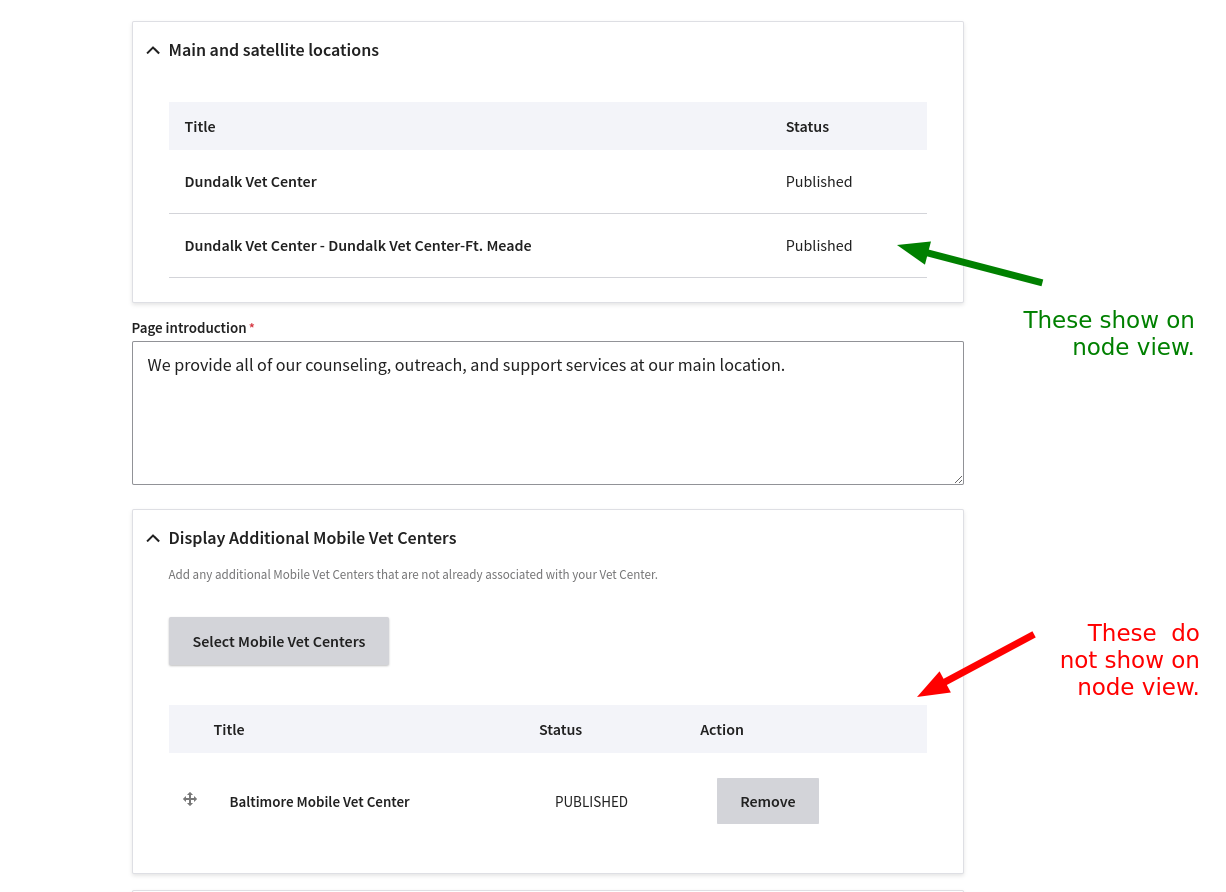

### Problem Statement

When try to update a zero size file through webdav an error appears, this error is related with a

`fk_contentlet_version_info_working` value.

### Steps to Reproduce

## Windows commands:

Create zero size file

`type nul > zero-size.file`

Create non-zero size file

`echo not empty > non-zero-size.file`

Check the file sizes

`dir *.file`

Upload non-zero size file with webdav using curl

`curl --verbose --user "admin@dotcms.com:admin" -T ".\non-zero-size.file" https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

Upload zero size file with webdav using curl

`curl --verbose --user "admin@dotcms.com:admin" -T ".\zero-size.file" https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

## Linux commands:

Create zero size file

`touch zero-size.file`

Create non-zero size file

`echo not empty > non-zero-size.file`

Check the file sizes

`ls *.file -al`

Upload non-zero size file with webdav using curl

`curl --verbose --user 'admin@dotcms.com:admin' -T './non-zero-size.file' https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

Upload zero size file with webdav using curl

`curl --verbose --user 'admin@dotcms.com:admin' -T './zero-size.file' https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

### Following error message appears when upload/update zero size file:

```

[06/02/23 16:37:51:138 UTC] WARN webdav.HostResourceImpl: An error occurred while creating new file: test-file.vtl in this path: /webdav/live/1/default/ ERROR: update or delete on table "contentlet" violates foreign key constraint "fk_contentlet_version_info_working" on table "contentlet_version_info"

Detail: Key (inode)=(3a5ebb93-7dd8-4898-bb30-3fb959d5b222) is still referenced from table "contentlet_version_info".{

"SQL": ["delete from contentlet where inode=?"],

"maxRows": [-1],

"offest": [0],

"params": "3a5ebb93-7dd8-4898-bb30-3fb959d5b222"

}

com.dotmarketing.exception.DotDataException: ERROR: update or delete on table "contentlet" violates foreign key constraint "fk_contentlet_version_info_working" on table "contentlet_version_info"

Detail: Key (inode)=(3a5ebb93-7dd8-4898-bb30-3fb959d5b222) is still referenced from table "contentlet_version_info".{

"SQL": ["delete from contentlet where inode=?"],

"maxRows": [-1],

"offest": [0],

"params": "3a5ebb93-7dd8-4898-bb30-3fb959d5b222"

}

at com.dotmarketing.common.db.DotConnect.loadResult(DotConnect.java:273) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentFactoryImpl.delete(ESContentFactoryImpl.java:603) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentFactoryImpl.deleteVersion(ESContentFactoryImpl.java:766) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl.deleteVersion_aroundBody88(ESContentletAPIImpl.java:2870) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl$AjcClosure89.run(ESContentletAPIImpl.java:1) ~[dotcms_22.03.2_999999.jar:?]

at org.aspectj.runtime.reflect.JoinPointImpl.proceed(JoinPointImpl.java:149) ~[aspectjrt-1.9.2.jar:?]

at com.dotcms.aspects.aspectj.AspectJDelegateMethodInvocation.proceed(AspectJDelegateMethodInvocation.java:42) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.db.LocalTransaction.wrapReturnWithListeners(LocalTransaction.java:119) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.aspects.interceptors.WrapInTransactionMethodInterceptor.invoke(WrapInTransactionMethodInterceptor.java:30) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.aspects.aspectj.WrapInTransactionAspect.invoke(WrapInTransactionAspect.java:41) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl.deleteVersion(ESContentletAPIImpl.java:2856) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.portlets.contentlet.business.ContentletAPIInterceptor.deleteVersion(ContentletAPIInterceptor.java:2362) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.webdav.DotWebdavHelper.setResourceContent(DotWebdavHelper.java:849) ~[dotcms_22.03.2_999999.jar:?]

```

f894b.png)

### Acceptance Criteria

In both cases (zero file size or non-zero file size) the file can be updated/upload and it's showing good results in backend dashboard, but those error messages still in the system logs.

### dotCMS Version

This was tested locally with 22.03.2 version and demo site 23.01.

### Proposed Objective

Application Performance

### Proposed Priority

Priority 3 - Average

### External Links... Slack Conversations, Support Tickets, Figma Designs, etc.

https://dotcms.zendesk.com/agent/tickets/109728

### Assumptions & Initiation Needs

_No response_

### Sub-Tasks & Estimates

_No response_

|

1.0

|

Error when update zero size file through Webdav. - ### Problem Statement

When try to update a zero size file through webdav an error appears, this error is related with a

`fk_contentlet_version_info_working` value.

### Steps to Reproduce

## Windows commands:

Create zero size file

`type nul > zero-size.file`

Create non-zero size file

`echo not empty > non-zero-size.file`

Check the file sizes

`dir *.file`

Upload non-zero size file with webdav using curl

`curl --verbose --user "admin@dotcms.com:admin" -T ".\non-zero-size.file" https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

Upload zero size file with webdav using curl

`curl --verbose --user "admin@dotcms.com:admin" -T ".\zero-size.file" https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

## Linux commands:

Create zero size file

`touch zero-size.file`

Create non-zero size file

`echo not empty > non-zero-size.file`

Check the file sizes

`ls *.file -al`

Upload non-zero size file with webdav using curl

`curl --verbose --user 'admin@dotcms.com:admin' -T './non-zero-size.file' https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

Upload zero size file with webdav using curl

`curl --verbose --user 'admin@dotcms.com:admin' -T './zero-size.file' https://demo.dotcms.com/webdav/live/1/demo.dotcms.com/test-file.vtl`

### Following error message appears when upload/update zero size file:

```

[06/02/23 16:37:51:138 UTC] WARN webdav.HostResourceImpl: An error occurred while creating new file: test-file.vtl in this path: /webdav/live/1/default/ ERROR: update or delete on table "contentlet" violates foreign key constraint "fk_contentlet_version_info_working" on table "contentlet_version_info"

Detail: Key (inode)=(3a5ebb93-7dd8-4898-bb30-3fb959d5b222) is still referenced from table "contentlet_version_info".{

"SQL": ["delete from contentlet where inode=?"],

"maxRows": [-1],

"offest": [0],

"params": "3a5ebb93-7dd8-4898-bb30-3fb959d5b222"

}

com.dotmarketing.exception.DotDataException: ERROR: update or delete on table "contentlet" violates foreign key constraint "fk_contentlet_version_info_working" on table "contentlet_version_info"

Detail: Key (inode)=(3a5ebb93-7dd8-4898-bb30-3fb959d5b222) is still referenced from table "contentlet_version_info".{

"SQL": ["delete from contentlet where inode=?"],

"maxRows": [-1],

"offest": [0],

"params": "3a5ebb93-7dd8-4898-bb30-3fb959d5b222"

}

at com.dotmarketing.common.db.DotConnect.loadResult(DotConnect.java:273) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentFactoryImpl.delete(ESContentFactoryImpl.java:603) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentFactoryImpl.deleteVersion(ESContentFactoryImpl.java:766) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl.deleteVersion_aroundBody88(ESContentletAPIImpl.java:2870) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl$AjcClosure89.run(ESContentletAPIImpl.java:1) ~[dotcms_22.03.2_999999.jar:?]

at org.aspectj.runtime.reflect.JoinPointImpl.proceed(JoinPointImpl.java:149) ~[aspectjrt-1.9.2.jar:?]

at com.dotcms.aspects.aspectj.AspectJDelegateMethodInvocation.proceed(AspectJDelegateMethodInvocation.java:42) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.db.LocalTransaction.wrapReturnWithListeners(LocalTransaction.java:119) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.aspects.interceptors.WrapInTransactionMethodInterceptor.invoke(WrapInTransactionMethodInterceptor.java:30) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.aspects.aspectj.WrapInTransactionAspect.invoke(WrapInTransactionAspect.java:41) ~[dotcms_22.03.2_999999.jar:?]

at com.dotcms.content.elasticsearch.business.ESContentletAPIImpl.deleteVersion(ESContentletAPIImpl.java:2856) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.portlets.contentlet.business.ContentletAPIInterceptor.deleteVersion(ContentletAPIInterceptor.java:2362) ~[dotcms_22.03.2_999999.jar:?]

at com.dotmarketing.webdav.DotWebdavHelper.setResourceContent(DotWebdavHelper.java:849) ~[dotcms_22.03.2_999999.jar:?]

```

f894b.png)

### Acceptance Criteria

In both cases (zero file size or non-zero file size) the file can be updated/upload and it's showing good results in backend dashboard, but those error messages still in the system logs.

### dotCMS Version

This was tested locally with 22.03.2 version and demo site 23.01.

### Proposed Objective

Application Performance

### Proposed Priority

Priority 3 - Average

### External Links... Slack Conversations, Support Tickets, Figma Designs, etc.

https://dotcms.zendesk.com/agent/tickets/109728

### Assumptions & Initiation Needs

_No response_

### Sub-Tasks & Estimates

_No response_

|

defect

|

error when update zero size file through webdav problem statement when try to update a zero size file through webdav an error appears this error is related with a fk contentlet version info working value steps to reproduce windows commands create zero size file type nul zero size file create non zero size file echo not empty non zero size file check the file sizes dir file upload non zero size file with webdav using curl curl verbose user admin dotcms com admin t non zero size file upload zero size file with webdav using curl curl verbose user admin dotcms com admin t zero size file linux commands create zero size file touch zero size file create non zero size file echo not empty non zero size file check the file sizes ls file al upload non zero size file with webdav using curl curl verbose user admin dotcms com admin t non zero size file upload zero size file with webdav using curl curl verbose user admin dotcms com admin t zero size file following error message appears when upload update zero size file warn webdav hostresourceimpl an error occurred while creating new file test file vtl in this path webdav live default error update or delete on table contentlet violates foreign key constraint fk contentlet version info working on table contentlet version info detail key inode is still referenced from table contentlet version info sql maxrows offest params com dotmarketing exception dotdataexception error update or delete on table contentlet violates foreign key constraint fk contentlet version info working on table contentlet version info detail key inode is still referenced from table contentlet version info sql maxrows offest params at com dotmarketing common db dotconnect loadresult dotconnect java at com dotcms content elasticsearch business escontentfactoryimpl delete escontentfactoryimpl java at com dotcms content elasticsearch business escontentfactoryimpl deleteversion escontentfactoryimpl java at com dotcms content elasticsearch business escontentletapiimpl deleteversion escontentletapiimpl java at com dotcms content elasticsearch business escontentletapiimpl run escontentletapiimpl java at org aspectj runtime reflect joinpointimpl proceed joinpointimpl java at com dotcms aspects aspectj aspectjdelegatemethodinvocation proceed aspectjdelegatemethodinvocation java at com dotmarketing db localtransaction wrapreturnwithlisteners localtransaction java at com dotcms aspects interceptors wrapintransactionmethodinterceptor invoke wrapintransactionmethodinterceptor java at com dotcms aspects aspectj wrapintransactionaspect invoke wrapintransactionaspect java at com dotcms content elasticsearch business escontentletapiimpl deleteversion escontentletapiimpl java at com dotmarketing portlets contentlet business contentletapiinterceptor deleteversion contentletapiinterceptor java at com dotmarketing webdav dotwebdavhelper setresourcecontent dotwebdavhelper java png acceptance criteria in both cases zero file size or non zero file size the file can be updated upload and it s showing good results in backend dashboard but those error messages still in the system logs dotcms version this was tested locally with version and demo site proposed objective application performance proposed priority priority average external links slack conversations support tickets figma designs etc assumptions initiation needs no response sub tasks estimates no response

| 1

|

14,676

| 2,831,388,436

|

IssuesEvent

|

2015-05-24 15:53:22

|

nobodyguy/dslrdashboard

|

https://api.github.com/repos/nobodyguy/dslrdashboard

|

closed

|

D600 Live View not working

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. Using Nikon D-600 camera with WU-1B wireless device and Samgsung Galaxy S4

phone

2.

3.

What is the expected output? What do you see instead?

Live View will not function - get message to use Scene or User Mode. All other

functions of app work.

What version of the product are you using? On what operating system?

Latest version of app (downloaded 3/28) and Android 4.3

Please provide any additional information below.

```

Original issue reported on code.google.com by `stevean...@gmail.com` on 29 Mar 2014 at 12:53

|

1.0

|

D600 Live View not working - ```

What steps will reproduce the problem?

1. Using Nikon D-600 camera with WU-1B wireless device and Samgsung Galaxy S4

phone

2.

3.

What is the expected output? What do you see instead?

Live View will not function - get message to use Scene or User Mode. All other

functions of app work.

What version of the product are you using? On what operating system?

Latest version of app (downloaded 3/28) and Android 4.3

Please provide any additional information below.

```

Original issue reported on code.google.com by `stevean...@gmail.com` on 29 Mar 2014 at 12:53

|

defect

|

live view not working what steps will reproduce the problem using nikon d camera with wu wireless device and samgsung galaxy phone what is the expected output what do you see instead live view will not function get message to use scene or user mode all other functions of app work what version of the product are you using on what operating system latest version of app downloaded and android please provide any additional information below original issue reported on code google com by stevean gmail com on mar at

| 1

|

161,046

| 20,120,388,715

|

IssuesEvent

|

2022-02-08 01:14:11

|