| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,245

| 2,986,622,894

|

IssuesEvent

|

2015-07-20 05:40:45

|

jayway/awaitility

|

https://api.github.com/repos/jayway/awaitility

|

opened

|

AssertionError from inside of supplier

|

auto-migrated Priority-Medium Type-Defect

|

```

We are using Awaitility inside our tests and some test are throwing

AssertionError from inside of Callable supplier. As the result we got

ConditionTimeoutException without any cause and it looked like Awaitility just

stopped calling our supplier for no reason. I think the problem is that

AssertionError is not an Exception, but Awaitility catches only Exceptions in

ConditionAwaiter line 57

What is the expected output? What do you see instead?

We expect to see stack trace of the AssertionError or it being re-thrown.

Instead we see ConditionTimeoutException which doesn't show the real cause

What version of the product are you using? On what operating system?

1.6.3 on any operating system

```

Original issue reported on code.google.com by `sho...@gmail.com` on 16 Jun 2015 at 12:32

|

1.0

|

AssertionError from inside of supplier - ```

We are using Awaitility inside our tests and some test are throwing

AssertionError from inside of Callable supplier. As the result we got

ConditionTimeoutException without any cause and it looked like Awaitility just

stopped calling our supplier for no reason. I think the problem is that

AssertionError is not an Exception, but Awaitility catches only Exceptions in

ConditionAwaiter line 57

What is the expected output? What do you see instead?

We expect to see stack trace of the AssertionError or it being re-thrown.

Instead we see ConditionTimeoutException which doesn't show the real cause

What version of the product are you using? On what operating system?

1.6.3 on any operating system

```

Original issue reported on code.google.com by `sho...@gmail.com` on 16 Jun 2015 at 12:32

|

defect

|

assertionerror from inside of supplier we are using awaitility inside our tests and some test are throwing assertionerror from inside of callable supplier as the result we got conditiontimeoutexception without any cause and it looked like awaitility just stopped calling our supplier for no reason i think the problem is that assertionerror is not an exception but awaitility catches only exceptions in conditionawaiter line what is the expected output what do you see instead we expect to see stack trace of the assertionerror or it being re thrown instead we see conditiontimeoutexception which doesn t show the real cause what version of the product are you using on what operating system on any operating system original issue reported on code google com by sho gmail com on jun at

| 1

|

1,967

| 2,603,974,195

|

IssuesEvent

|

2015-02-24 19:01:05

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

Is there a good way to treat herpes in Shenyang?

|

auto-migrated Priority-Medium Type-Defect

|

```

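Is there a good way to treat herpes in Shenyang? Shenyang Military Region Political Department Hospital, STD department. TEL: 024-31023308.

Founded in 1946, with 68 years dedicated to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

A hospital established alongside New China, with a long history, advanced equipment, authoritative expertise, and many specialists; a comprehensive hospital combining prevention, health care, medical treatment, research, and rehabilitation.

One of the country's first grade-A military hospitals and one of the first designated units for standardized medical care nationwide; a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

Named an advanced unit for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and twice awarded a collective second-class merit citation.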

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:10

|

1.0

|

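Is there a good way to treat herpes in Shenyang? - ```

Is there a good way to treat herpes in Shenyang? Shenyang Military Region Political Department Hospital, STD department. TEL: 024-31023308.

Founded in 1946, with 68 years dedicated to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

A hospital established alongside New China, with a long history, advanced equipment, authoritative expertise, and many specialists; a comprehensive hospital combining prevention, health care, medical treatment, research, and rehabilitation.

One of the country's first grade-A military hospitals and one of the first designated units for standardized medical care nationwide; a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

Named an advanced unit for health work by the Health Department of the Logistics Department of the People's Liberation Army Air Force, and twice awarded a collective second-class merit citation.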

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:10

|

defect

|

沈阳有治疗疱疹的好办法吗 沈阳有治疗疱疹的好办法吗〓沈陽軍區政治部醫院性病〓tel�� � 〓 , � ��。 。是一所與新中國同建立共� ��煌的歷史悠久、設備精良、技術權威、專家云集,是預防、 保健、醫療、科研康復為一體的綜合性醫院。是國家首批公�� �甲等部隊醫院、全國首批醫療規范定點單位,是第四軍醫大� ��、東南大學等知名高等院校的教學醫院。曾被中國人民解放 軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立�� �體二等功。 original issue reported on code google com by gmail com on jun at

| 1

|

338,538

| 30,304,245,507

|

IssuesEvent

|

2023-07-10 08:24:59

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

reopened

|

Fix layers.test_depthwise_conv2d

|

Sub Task Ivy Functional API Failing Test

|

| | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5469924771/jobs/9959404555"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-failure-red></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|

1.0

|

Fix layers.test_depthwise_conv2d - | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5469924771/jobs/9959404555"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-failure-red></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5505518661"><img src=https://img.shields.io/badge/-success-success></a>

|

non_defect

|

fix layers test depthwise jax a href src numpy a href src tensorflow a href src torch a href src paddle a href src

| 0

|

175,103

| 27,792,103,333

|

IssuesEvent

|

2023-03-17 09:42:47

|

GoogleChrome/web.dev

|

https://api.github.com/repos/GoogleChrome/web.dev

|

closed

|

Design a page for CSS triggers

|

P2 design

|

The team used to maintain csstriggers.com (the site which is currently live there has nothing to do with the old project), which listed all CSS properties and their impact on the browser rendering pipeline. The page looked like this: https://www.lmame-geek.com/css-triggers/

While we don't maintain the page anymore the team is currently looking into recollecting the data and making it available for web.dev to display. Therefore we need a design how this would look on web.dev.

|

1.0

|

Design a page for CSS triggers - The team used to maintain csstriggers.com (the site which is currently live there has nothing to do with the old project), which listed all CSS properties and their impact on the browser rendering pipeline. The page looked like this: https://www.lmame-geek.com/css-triggers/

While we don't maintain the page anymore the team is currently looking into recollecting the data and making it available for web.dev to display. Therefore we need a design how this would look on web.dev.

|

non_defect

|

design a page for css triggers the team used to maintain csstriggers com the site which is currently live there has nothing to do with the old project which listed all css properties and their impact on the browser rendering pipeline the page looked like this while we don t maintain the page anymore the team is currently looking into recollecting the data and making it available for web dev to display therefore we need a design how this would look on web dev

| 0

|

39,646

| 5,113,712,988

|

IssuesEvent

|

2017-01-06 16:10:16

|

harksys/HawkEye

|

https://api.github.com/repos/harksys/HawkEye

|

closed

|

Enlarge PR/Issue Icons

|

Design Good for beginners

|

Enlarging the PR and Issue icons in feed for a bit of a better visual cue on what type of notification you're looking at in the feed would be awesome.

The icons that I'm specifically referring to:

|

1.0

|

Enlarge PR/Issue Icons - Enlarging the PR and Issue icons in feed for a bit of a better visual cue on what type of notification you're looking at in the feed would be awesome.

The icons that I'm specifically referring to:

|

non_defect

|

enlarge pr issue icons enlarging the pr and issue icons in feed for a bit of a better visual cue on what type of notification you re looking at in the feed would be awesome the icons that i m specifically referring to

| 0

|

45,872

| 11,744,457,031

|

IssuesEvent

|

2020-03-12 07:46:10

|

ShaikASK/Testing

|

https://api.github.com/repos/ShaikASK/Testing

|

opened

|

Audit log : Candidate : In the Audit log 'Reject offer letter' should be updated with 'Decline Offer Letter'

|

Audit Log Defect P2 Release#7 Build#3

|

When the candidate 'Decline' the offer letter the Audit log is displayed as "Candidate accessed Reject offer letter screen"

Similarly also fix this issue when the candidate close the Decline pop or click on X symbol to close the window the audit log should be updated as " Candidate Cancelled Decline offer letter Screen'

|

1.0

|

Audit log : Candidate : In the Audit log 'Reject offer letter' should be updated with 'Decline Offer Letter' - When the candidate 'Decline' the offer letter the Audit log is displayed as "Candidate accessed Reject offer letter screen"

Similarly also fix this issue when the candidate close the Decline pop or click on X symbol to close the window the audit log should be updated as " Candidate Cancelled Decline offer letter Screen'

|

non_defect

|

audit log candidate in the audit log reject offer letter should be updated with decline offer letter when the candidate decline the offer letter the audit log is displayed as candidate accessed reject offer letter screen similarly also fix this issue when the candidate close the decline pop or click on x symbol to close the window the audit log should be updated as candidate cancelled decline offer letter screen

| 0

|

68,335

| 21,639,364,935

|

IssuesEvent

|

2022-05-05 17:06:35

|

department-of-veterans-affairs/va.gov-cms

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms

|

closed

|

Investigate accessibility tests time out in tugboat

|

Defect DevOps Platform CMS Team

|

## Description

There are accessibility timeout errors that frequently occur which require manual intervention from the engineers.

### Acceptance Criteria

- [ ] Investigate the timeout errors for accessibility tests in PR environments

- [ ] Provide a solution to get tests running in a more stable fashion

- [ ] Create followup ticket for resolution

### CMS Team

Please check the team(s) that will do this work.

- [ ] `CMS Program`

- [x] `Platform CMS Team`

- [ ] `Sitewide CMS Team ` (leave Sitewide unchecked and check the specific team instead)

- [ ] `⭐️ Content ops`

- [ ] `⭐️ CMS experience`

- [ ] `⭐️ Offices`

- [ ] `⭐️ Product support`

- [ ] `⭐️ User support`

|

1.0

|

Investigate accessibility tests time out in tugboat - ## Description

There are accessibility timeout errors that frequently occur which require manual intervention from the engineers.

### Acceptance Criteria

- [ ] Investigate the timeout errors for accessibility tests in PR environments

- [ ] Provide a solution to get tests running in a more stable fashion

- [ ] Create followup ticket for resolution

### CMS Team

Please check the team(s) that will do this work.

- [ ] `CMS Program`

- [x] `Platform CMS Team`

- [ ] `Sitewide CMS Team ` (leave Sitewide unchecked and check the specific team instead)

- [ ] `⭐️ Content ops`

- [ ] `⭐️ CMS experience`

- [ ] `⭐️ Offices`

- [ ] `⭐️ Product support`

- [ ] `⭐️ User support`

|

defect

|

investigate accessibility tests time out in tugboat description there are accessibility timeout errors that frequently occur which require manual intervention from the engineers acceptance criteria investigate the timeout errors for accessibility tests in pr environments provide a solution to get tests running in a more stable fashion create followup ticket for resolution cms team please check the team s that will do this work cms program platform cms team sitewide cms team leave sitewide unchecked and check the specific team instead ⭐️ content ops ⭐️ cms experience ⭐️ offices ⭐️ product support ⭐️ user support

| 1

|

71,208

| 23,490,794,347

|

IssuesEvent

|

2022-08-17 18:31:05

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

Terminal: AutoComplete not working on MyFaces

|

:lady_beetle: defect

|

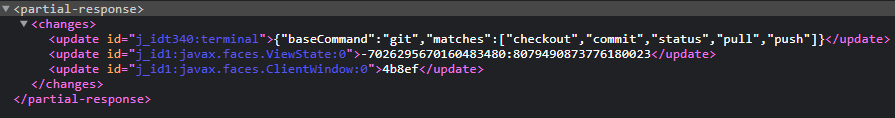

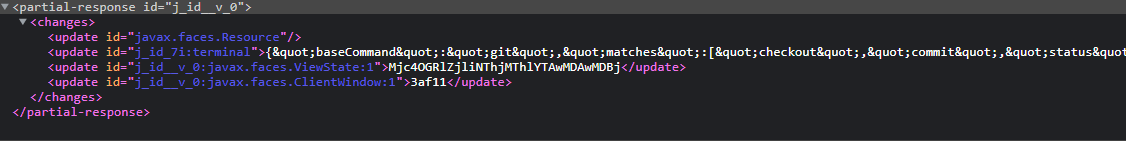

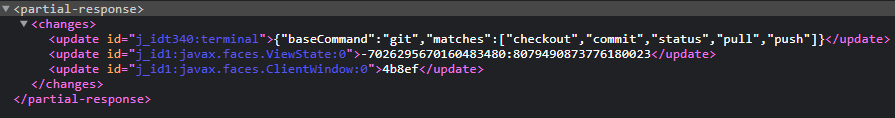

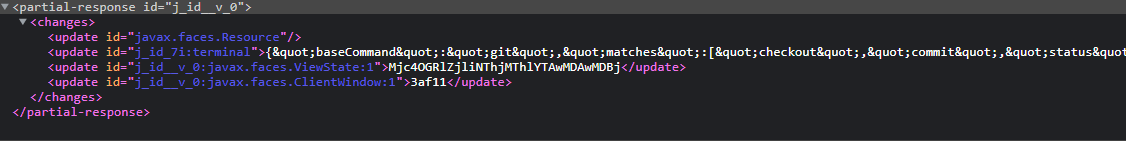

### Describe the bug

Originally Reported here: https://github.com/melloware/quarkus-faces/issues/165

**Mojarra:**

**MyFaces:**

Looks like the response JSON is being encoded.

### Reproducer

Run the showcase locally with ` mvn clean jetty:run -Pnon-ee,myfaces-next-2.3` and navigate to https://www.primefaces.org/showcase/ui/misc/terminal/autocomplete.xhtml and type `git + TAB`

### Expected behavior

Terminal Autocomplete works

### PrimeFaces edition

Community

### PrimeFaces version

12.0.0-RC2

### Theme

_No response_

### JSF implementation

MyFaces

### JSF version

2.3-next

### Browser(s)

_No response_

|

1.0

|

Terminal: AutoComplete not working on MyFaces - ### Describe the bug

Originally Reported here: https://github.com/melloware/quarkus-faces/issues/165

**Mojarra:**

**MyFaces:**

Looks like the response JSON is being encoded.

### Reproducer

Run the showcase locally with ` mvn clean jetty:run -Pnon-ee,myfaces-next-2.3` and navigate to https://www.primefaces.org/showcase/ui/misc/terminal/autocomplete.xhtml and type `git + TAB`

### Expected behavior

Terminal Autocomplete works

### PrimeFaces edition

Community

### PrimeFaces version

12.0.0-RC2

### Theme

_No response_

### JSF implementation

MyFaces

### JSF version

2.3-next

### Browser(s)

_No response_

|

defect

|

terminal autocomplete not working on myfaces describe the bug originally reported here mojarra myfaces looks like the response json is being encoded reproducer run the showcase locally with mvn clean jetty run pnon ee myfaces next and navigate to and type git tab expected behavior terminal autocomplete works primefaces edition community primefaces version theme no response jsf implementation myfaces jsf version next browser s no response

| 1

|

134,886

| 30,204,940,028

|

IssuesEvent

|

2023-07-05 08:48:14

|

dotnet/roslyn

|

https://api.github.com/repos/dotnet/roslyn

|

closed

|

[BUG] Analyzers and code fixes not working in C# Dev Kit?

|

Bug Area-Analyzers VSCode

|

From vscode-dotnettools created by [DaRosenberg](https://github.com/DaRosenberg): microsoft/vscode-dotnettools#61

### Describe the Issue

Not sure if this is exposing a bug or just a missing feature for now. Can you clarify whether analyzers and code fixes should be working yet or not?

### Steps To Reproduce

Works:

- Analyzers are run during build, and issues surfaced as problems (errors and warnings)

Does NOT work:

- Analyzers do not run automatically (neither for solution nor for open documents)

- No command to run analyzers imperatively either

- Detected issues are highlighted in source (squigglies) but no fixes appear

- No settings to enable analyzers or control analysis behavior

### Expected Behavior

I expect analyzers functionality to be on par with Visual Studio, or at least on par with the previous OmniSharp-powered C# extension.

### Environment Information

- OS: macOS Ventura

- VS Code: 1.78.2

- Extension: C# Dev Kit v0.1.8

|

1.0

|

[BUG] Analyzers and code fixes not working in C# Dev Kit? - From vscode-dotnettools created by [DaRosenberg](https://github.com/DaRosenberg): microsoft/vscode-dotnettools#61

### Describe the Issue

Not sure if this is exposing a bug or just a missing feature for now. Can you clarify whether analyzers and code fixes should be working yet or not?

### Steps To Reproduce

Works:

- Analyzers are run during build, and issues surfaced as problems (errors and warnings)

Does NOT work:

- Analyzers do not run automatically (neither for solution nor for open documents)

- No command to run analyzers imperatively either

- Detected issues are highlighted in source (squigglies) but no fixes appear

- No settings to enable analyzers or control analysis behavior

### Expected Behavior

I expect analyzers functionality to be on par with Visual Studio, or at least on par with the previous OmniSharp-powered C# extension.

### Environment Information

- OS: macOS Ventura

- VS Code: 1.78.2

- Extension: C# Dev Kit v0.1.8

|

non_defect

|

analyzers and code fixes not working in c dev kit from vscode dotnettools created by microsoft vscode dotnettools describe the issue not sure if this is exposing a bug or just a missing feature for now can you clarify whether analyzers and code fixes should be working yet or not steps to reproduce works analyzers are run during build and issues surfaced as problems errors and warnings does not work analyzers do not run automatically neither for solution nor for open documents no command to run analyzers imperatively either detected issues are highlighted in source squigglies but no fixes appear no settings to enable analyzers or control analysis behavior expected behavior i expect analyzers functionality to be on par with visual studio or at least on par with the previous omnisharp powered c extension environment information os macos ventura vs code extension c dev kit

| 0

|

51,288

| 13,207,409,152

|

IssuesEvent

|

2020-08-14 22:59:42

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

opened

|

GLshovel File-> Open I3 file on Mac OS 10.5 hangs (Trac #146)

|

IceTray Incomplete Migration Migrated from Trac defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/146">https://code.icecube.wisc.edu/projects/icecube/ticket/146</a>, reported by blaufuss and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-03-06T01:42:38",

"_ts": "1236303758000000",

"description": "",

"reporter": "blaufuss",

"cc": "",

"resolution": "fixed",

"time": "2008-10-22T16:12:45",

"component": "IceTray",

"summary": "GLshovel File-> Open I3 file on Mac OS 10.5 hangs",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

GLshovel File-> Open I3 file on Mac OS 10.5 hangs (Trac #146) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/146">https://code.icecube.wisc.edu/projects/icecube/ticket/146</a>, reported by blaufuss and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-03-06T01:42:38",

"_ts": "1236303758000000",

"description": "",

"reporter": "blaufuss",

"cc": "",

"resolution": "fixed",

"time": "2008-10-22T16:12:45",

"component": "IceTray",

"summary": "GLshovel File-> Open I3 file on Mac OS 10.5 hangs",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

defect

|

glshovel file open file on mac os hangs trac migrated from json status closed changetime ts description reporter blaufuss cc resolution fixed time component icetray summary glshovel file open file on mac os hangs priority normal keywords milestone owner troy type defect

| 1

|

682,094

| 23,332,271,651

|

IssuesEvent

|

2022-08-09 06:49:21

|

ooni/probe

|

https://api.github.com/repos/ooni/probe

|

opened

|

OONI Probe Mobile includes RiseupVPN option in Settings

|

bug ooni/probe-mobile priority/medium

|

**Describe the bug**

OONI Probe Mobile includes RiseupVPN in 'Test options' for 'Circumvention' tests while the RiseupVPN test is temporarily disabled.

**To Reproduce**

'Settings' > 'Test option' > 'Circumvention' > 'Test RiseupVPN'

**Expected behavior**

There should be left only two options in the menu ('Test Tor', 'Test Psiphon')

**Screenshots**

**System information (please complete the following information):**

- OONI Probe Mobile version: 3.7.0

**Additional context**

There is a chance that we might need to return this option to the menu.

|

1.0

|

OONI Probe Mobile includes RiseupVPN option in Settings - **Describe the bug**

OONI Probe Mobile includes RiseupVPN in 'Test options' for 'Circumvention' tests while the RiseupVPN test is temporarily disabled.

**To Reproduce**

'Settings' > 'Test option' > 'Circumvention' > 'Test RiseupVPN'

**Expected behavior**

There should be left only two options in the menu ('Test Tor', 'Test Psiphon')

**Screenshots**

**System information (please complete the following information):**

- OONI Probe Mobile version: 3.7.0

**Additional context**

There is a chance that we might need to return this option to the menu.

|

non_defect

|

ooni probe mobile includes riseupvpn option in settings describe the bug ooni probe mobile includes riseupvpn in test options for circumvention tests while the riseupvpn test is temporarily disabled to reproduce settings test option circumvention test riseupvpn expected behavior there should be left only two options in the menu test tor test psiphon screenshots system information please complete the following information ooni probe mobile version additional context there is a chance that we might need to return this option to the menu

| 0

|

76,594

| 7,541,080,431

|

IssuesEvent

|

2018-04-17 08:44:00

|

rancher/rancher

|

https://api.github.com/repos/rancher/rancher

|

closed

|

Update vSphere node template with API fails: "a Config field must be set"

|

area/host status/resolved status/to-test version/2.0

|

Trying to update my vSphere node template via API, but results in HTTP response status code 422 `MissingRequired: a Config field must be set`

According to:

https://github.com/rancher/rancher/blob/master/pkg/api/customization/nodetemplate/validation.go#L12

Seems like a bug, because according to the HTTP payload, the following JSON property is in the JSON: `"driver":"vmwarevsphere"`

**Rancher versions:**

rancher/server: 2.0.0-beta3

**Docker version: (`docker version`,`docker info` preferred)**

```

root@platania:~# docker version

Client:

Version: 18.04.0-ce

API version: 1.37

Go version: go1.9.4

Git commit: 3d479c0

Built: Tue Apr 10 18:20:32 2018

OS/Arch: linux/amd64

Experimental: false

Orchestrator: swarm

Server:

Engine:

Version: 18.04.0-ce

API version: 1.37 (minimum version 1.12)

Go version: go1.9.4

Git commit: 3d479c0

Built: Tue Apr 10 18:18:40 2018

OS/Arch: linux/amd64

Experimental: false

```

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

```

root@platania:~# cat /etc/os-release

NAME="Ubuntu"

VERSION="16.04.3 LTS (Xenial Xerus)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 16.04.3 LTS"

VERSION_ID="16.04"

HOME_URL="http://www.ubuntu.com/"

SUPPORT_URL="http://help.ubuntu.com/"

BUG_REPORT_URL="http://bugs.launchpad.net/ubuntu/"

VERSION_CODENAME=xenial

UBUNTU_CODENAME=xenial

```

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

vSphere (ESXi 6.0)

**Setup details: (single node rancher vs. HA rancher, internal DB vs. external DB)**

**Environment Template: (Cattle/Kubernetes/Swarm/Mesos)**

**Steps to Reproduce:**

```

curl --insecure -u "token-ncv2z:jkbwgj78jrnkl87sg75vjbmd9kz7t947xg2dwtjgvbzxv5qzkxqmvd" \

-X PUT \

-H 'Accept: application/json' \

-H 'Content-Type: application/json' \

-d '{"annotations":{}, "created":"2018-04-14T15:27:25Z", "creatorId":"user-hfcpg", "driver":"vmwarevsphere", "engineEnv":{}, "engineInsecureRegistry":[], "engineLabel":{}, "engineOpt":{}, "engineRegistryMirror":[], "id":"user-hfcpg:nodetemplate-bc57f", "labels":{}, "name":"vsphere", "ownerReferences":[], "removed":null, "state":"active", "transitioning":"no", "transitioningMessage":"", "uuid":"55be72ba-3ff8-11e8-a679-0242ac110002", "vmwarevsphereConfig":{"boot2dockerUrl":"https://github.com/boot2docker/boot2docker/releases/download/v17.03.2-ce/boot2docker.iso", "cloudinit":"", "cpuCount":"2", "datacenter":"dc", "datastore":"L09", "diskSize":"10000", "folder":"", "hostsystem":"host", "memorySize":"2048", "network":["dev"], "pool":"", "username":"***", "vcenter":"vcsrv", "vcenterPort":"443"}}' \

'https://platania/v3/nodeTemplates/user-hfcpg:nodetemplate-bc57f'

```

**Results:**

```

HTTP/1.1 422 Unprocessable Entity

Content-Type: application/json

Expires: Wed 24 Feb 1982 18:42:00 GMT

Set-Cookie: CSRF=51AADB9F99; Path=/

X-Api-Schemas: https://platania/v3/schemas

Date: Sat, 14 Apr 2018 15:38:19 GMT

Content-Length: 137

{"actions":{},"baseType":"error","code":"MissingRequired","links":{},"message":"a Config field must be set","status":422,"type":"error"}

```

|

1.0

|

Update vSphere node template with API fails: "a Config field must be set" - Trying to update my vSphere node template via API, but results in HTTP response status code 422 `MissingRequired: a Config field must be set`

According to:

https://github.com/rancher/rancher/blob/master/pkg/api/customization/nodetemplate/validation.go#L12

Seems like a bug, because according to the HTTP payload, the following JSON property is in the JSON: `"driver":"vmwarevsphere"`

**Rancher versions:**

rancher/server: 2.0.0-beta3

**Docker version: (`docker version`,`docker info` preferred)**

```

root@platania:~# docker version

Client:

Version: 18.04.0-ce

API version: 1.37

Go version: go1.9.4

Git commit: 3d479c0

Built: Tue Apr 10 18:20:32 2018

OS/Arch: linux/amd64

Experimental: false

Orchestrator: swarm

Server:

Engine:

Version: 18.04.0-ce

API version: 1.37 (minimum version 1.12)

Go version: go1.9.4

Git commit: 3d479c0

Built: Tue Apr 10 18:18:40 2018

OS/Arch: linux/amd64

Experimental: false

```

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

```

root@platania:~# cat /etc/os-release

NAME="Ubuntu"

VERSION="16.04.3 LTS (Xenial Xerus)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 16.04.3 LTS"

VERSION_ID="16.04"

HOME_URL="http://www.ubuntu.com/"

SUPPORT_URL="http://help.ubuntu.com/"

BUG_REPORT_URL="http://bugs.launchpad.net/ubuntu/"

VERSION_CODENAME=xenial

UBUNTU_CODENAME=xenial

```

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

vSphere (ESXi 6.0)

**Setup details: (single node rancher vs. HA rancher, internal DB vs. external DB)**

**Environment Template: (Cattle/Kubernetes/Swarm/Mesos)**

**Steps to Reproduce:**

```

curl --insecure -u "token-ncv2z:jkbwgj78jrnkl87sg75vjbmd9kz7t947xg2dwtjgvbzxv5qzkxqmvd" \

-X PUT \

-H 'Accept: application/json' \

-H 'Content-Type: application/json' \

-d '{"annotations":{}, "created":"2018-04-14T15:27:25Z", "creatorId":"user-hfcpg", "driver":"vmwarevsphere", "engineEnv":{}, "engineInsecureRegistry":[], "engineLabel":{}, "engineOpt":{}, "engineRegistryMirror":[], "id":"user-hfcpg:nodetemplate-bc57f", "labels":{}, "name":"vsphere", "ownerReferences":[], "removed":null, "state":"active", "transitioning":"no", "transitioningMessage":"", "uuid":"55be72ba-3ff8-11e8-a679-0242ac110002", "vmwarevsphereConfig":{"boot2dockerUrl":"https://github.com/boot2docker/boot2docker/releases/download/v17.03.2-ce/boot2docker.iso", "cloudinit":"", "cpuCount":"2", "datacenter":"dc", "datastore":"L09", "diskSize":"10000", "folder":"", "hostsystem":"host", "memorySize":"2048", "network":["dev"], "pool":"", "username":"***", "vcenter":"vcsrv", "vcenterPort":"443"}}' \

'https://platania/v3/nodeTemplates/user-hfcpg:nodetemplate-bc57f'

```

**Results:**

```

HTTP/1.1 422 Unprocessable Entity

Content-Type: application/json

Expires: Wed 24 Feb 1982 18:42:00 GMT

Set-Cookie: CSRF=51AADB9F99; Path=/

X-Api-Schemas: https://platania/v3/schemas

Date: Sat, 14 Apr 2018 15:38:19 GMT

Content-Length: 137

{"actions":{},"baseType":"error","code":"MissingRequired","links":{},"message":"a Config field must be set","status":422,"type":"error"}

```

|

non_defect

|

update vsphere node template with api fails a config field must be set trying to update my vsphere node template via api but results in http response status code missingrequired a config field must be set according to seems like a bug because according to the http payload the following json property is in the json driver vmwarevsphere rancher versions rancher server docker version docker version docker info preferred root platania docker version client version ce api version go version git commit built tue apr os arch linux experimental false orchestrator swarm server engine version ce api version minimum version go version git commit built tue apr os arch linux experimental false operating system and kernel cat etc os release uname r preferred root platania cat etc os release name ubuntu version lts xenial xerus id ubuntu id like debian pretty name ubuntu lts version id home url support url bug report url version codename xenial ubuntu codename xenial type provider of hosts virtualbox bare metal aws gce do vsphere esxi setup details single node rancher vs ha rancher internal db vs external db environment template cattle kubernetes swarm mesos steps to reproduce curl insecure u token x put h accept application json h content type application json d annotations created creatorid user hfcpg driver vmwarevsphere engineenv engineinsecureregistry enginelabel engineopt engineregistrymirror id user hfcpg nodetemplate labels name vsphere ownerreferences removed null state active transitioning no transitioningmessage uuid vmwarevsphereconfig cloudinit cpucount datacenter dc datastore disksize folder hostsystem host memorysize network pool username vcenter vcsrv vcenterport results http unprocessable entity content type application json expires wed feb gmt set cookie csrf path x api schemas date sat apr gmt content length actions basetype error code missingrequired links message a config field must be set status type error

| 0

|

65,913

| 19,786,249,004

|

IssuesEvent

|

2022-01-18 07:12:10

|

SeleniumHQ/selenium

|

https://api.github.com/repos/SeleniumHQ/selenium

|

opened

|

[🐛 Bug]: element.click() does not work when scaling is used

|

I-defect needs-triaging

|

### What happened?

I use docker-selenium-novnc as a web browser for my phone, so that I can access some website like a computer. Everything works fine when using when the scaling is set to 100%.

When I read comics, some picture are slightly too big, so most of the time I set scaling at 90%.

### How can we reproduce the issue?

```shell

set scale to 90% then click.

```

### Relevant log output

```shell

the ".click()" waits forever until I scale chrome back to default.

```

### Operating System

docker for win

### Selenium version

selenium/hub:4.1.0-20211123

### What are the browser(s) and version(s) where you see this issue?

selenium/node-chrome:4.1.0-20211123

### What are the browser driver(s) and version(s) where you see this issue?

selenium/node-chrome:4.1.0-20211123

### Are you using Selenium Grid?

selenium/hub:4.1.0-20211123

|

1.0

|

[🐛 Bug]: element.click() does not work when scaling is used - ### What happened?

I use docker-selenium-novnc as a web browser for my phone, so that I can access some website like a computer. Everything works fine when using when the scaling is set to 100%.

When I read comics, some picture are slightly too big, so most of the time I set scaling at 90%.

### How can we reproduce the issue?

```shell

set scale to 90% then click.

```

### Relevant log output

```shell

the ".click()" waits forever until I scale chrome back to default.

```

### Operating System

docker for win

### Selenium version

selenium/hub:4.1.0-20211123

### What are the browser(s) and version(s) where you see this issue?

selenium/node-chrome:4.1.0-20211123

### What are the browser driver(s) and version(s) where you see this issue?

selenium/node-chrome:4.1.0-20211123

### Are you using Selenium Grid?

selenium/hub:4.1.0-20211123

|

defect

|

element click does not work when scaling is used what happened i use docker selenium novnc as a web browser for my phone so that i can access some website like a computer everything works fine when using when the scaling is set to when i read comics some picture are slightly too big so most of the time i set scaling at how can we reproduce the issue shell set scale to then click relevant log output shell the click waits forever until i scale chrome back to default operating system docker for win selenium version selenium hub what are the browser s and version s where you see this issue selenium node chrome what are the browser driver s and version s where you see this issue selenium node chrome are you using selenium grid selenium hub

| 1

|

257,098

| 27,561,769,135

|

IssuesEvent

|

2023-03-07 22:45:12

|

samqws-marketing/box_box-ui-elements

|

https://api.github.com/repos/samqws-marketing/box_box-ui-elements

|

closed

|

CVE-2022-24772 (High) detected in node-forge-0.9.0.tgz - autoclosed

|

security vulnerability

|

## CVE-2022-24772 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.9.0.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PKI, message digests, and various utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-forge/-/node-forge-0.9.0.tgz">https://registry.npmjs.org/node-forge/-/node-forge-0.9.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/node-forge/package.json</p>

<p>

Dependency Hierarchy:

- webpack-dev-server-3.10.1.tgz (Root Library)

- selfsigned-1.10.7.tgz

- :x: **node-forge-0.9.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/box_box-ui-elements/commit/4fc776e2b95c8b497f6994cb2165365562ae1f82">4fc776e2b95c8b497f6994cb2165365562ae1f82</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Forge (also called `node-forge`) is a native implementation of Transport Layer Security in JavaScript. Prior to version 1.3.0, RSA PKCS#1 v1.5 signature verification code does not check for tailing garbage bytes after decoding a `DigestInfo` ASN.1 structure. This can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used. The issue has been addressed in `node-forge` version 1.3.0. There are currently no known workarounds.

<p>Publish Date: 2022-03-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-24772>CVE-2022-24772</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772</a></p>

<p>Release Date: 2022-03-18</p>

<p>Fix Resolution (node-forge): 1.3.0</p>

<p>Direct dependency fix Resolution (webpack-dev-server): 4.7.1</p>

</p>

</details>
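
The suggested fix above amounts to checking whether the installed `node-forge` version is older than 1.3.0. A minimal sketch of that comparison follows; the helper names `parseVersion` and `isBelow` are hypothetical, and the parsing assumes plain `major.minor.patch` strings (pre-release suffixes are simply dropped), so a real project would more likely rely on a dedicated semver library.

```typescript
// Hypothetical helper: turn "major.minor.patch" into numbers.
// Anything after a "-" (pre-release tag) is ignored in this sketch.
function parseVersion(v: string): number[] {
  return v.split("-")[0].split(".").map((part) => Number.parseInt(part, 10) || 0);
}

// Hypothetical helper: true when `version` is strictly older than `fixed`.
function isBelow(version: string, fixed: string): boolean {
  const a = parseVersion(version);
  const b = parseVersion(fixed);
  for (let i = 0; i < 3; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    if (x !== y) return x < y;
  }
  return false; // equal versions are not below the fix
}

// Fix resolution for node-forge, as stated in the report.
const NODE_FORGE_FIXED = "1.3.0";

console.log(isBelow("0.9.0", NODE_FORGE_FIXED)); // true: the reported version is affected
console.log(isBelow("1.3.0", NODE_FORGE_FIXED)); // false: the fixed version itself
```

Components are compared numerically rather than as strings, so a version such as `1.10.0` is correctly treated as newer than `1.3.0`.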

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

|

True

|

CVE-2022-24772 (High) detected in node-forge-0.9.0.tgz - autoclosed - ## CVE-2022-24772 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.9.0.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PKI, message digests, and various utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-forge/-/node-forge-0.9.0.tgz">https://registry.npmjs.org/node-forge/-/node-forge-0.9.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/node-forge/package.json</p>

<p>

Dependency Hierarchy:

- webpack-dev-server-3.10.1.tgz (Root Library)

- selfsigned-1.10.7.tgz

- :x: **node-forge-0.9.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/samqws-marketing/box_box-ui-elements/commit/4fc776e2b95c8b497f6994cb2165365562ae1f82">4fc776e2b95c8b497f6994cb2165365562ae1f82</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Forge (also called `node-forge`) is a native implementation of Transport Layer Security in JavaScript. Prior to version 1.3.0, RSA PKCS#1 v1.5 signature verification code does not check for tailing garbage bytes after decoding a `DigestInfo` ASN.1 structure. This can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used. The issue has been addressed in `node-forge` version 1.3.0. There are currently no known workarounds.

<p>Publish Date: 2022-03-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-24772>CVE-2022-24772</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-24772</a></p>

<p>Release Date: 2022-03-18</p>

<p>Fix Resolution (node-forge): 1.3.0</p>

<p>Direct dependency fix Resolution (webpack-dev-server): 4.7.1</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

|

non_defect

|

cve high detected in node forge tgz autoclosed cve high severity vulnerability vulnerable library node forge tgz javascript implementations of network transports cryptography ciphers pki message digests and various utilities library home page a href path to dependency file package json path to vulnerable library node modules node forge package json dependency hierarchy webpack dev server tgz root library selfsigned tgz x node forge tgz vulnerable library found in head commit a href found in base branch master vulnerability details forge also called node forge is a native implementation of transport layer security in javascript prior to version rsa pkcs signature verification code does not check for tailing garbage bytes after decoding a digestinfo asn structure this can allow padding bytes to be removed and garbage data added to forge a signature when a low public exponent is being used the issue has been addressed in node forge version there are currently no known workarounds publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution node forge direct dependency fix resolution webpack dev server check this box to open an automated fix pr

| 0

|

69,906

| 22,745,457,406

|

IssuesEvent

|

2022-07-07 08:47:02

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

Crash while cross-signing with my other Element Web device

|

T-Defect

|

### Steps to reproduce

1. Initiate a verification request from Element Web on my computer to Element Android on my phone

2. Use the QR Code scanning method to verify the device

3. After scanning the QR code, a spinning process indicator is shown on the phone

4. The app crashes

5. Element Web shows the green checkmark shield

### Outcome

#### What did you expect?

Element Android to not crash, and for verification to succeed.

#### What happened instead?

The app crashed. After reopening Element Android, in Security & Privacy it says:

Cross-Signing is enabled

Keys are trusted.

Private keys are not known

I'm not sure whether the last line is a cause for concern.

### Your phone model

Google Pixel 4a 5G

### Operating system version

Android 12

### Application version and app store

Element version 1.4.25 [40104252] (G-b9267), Matrix SDK Version 1.4.25 (1f34d368), olm version 3.2.12

### Homeserver

amorgan.xyz

### Will you send logs?

Yes

### Are you willing to provide a PR?

No

|

1.0

|

Crash while cross-signing with my other Element Web device - ### Steps to reproduce

1. Initiate a verification request from Element Web on my computer to Element Android on my phone

2. Use the QR Code scanning method to verify the device

3. After scanning the QR code, a spinning process indicator is shown on the phone

4. The app crashes

5. Element Web shows the green checkmark shield

### Outcome

#### What did you expect?

Element Android to not crash, and for verification to succeed.

#### What happened instead?

The app crashed. After reopening Element Android, in Security & Privacy it says:

Cross-Signing is enabled

Keys are trusted.

Private keys are not known

I'm not sure whether the last line is a cause for concern.

### Your phone model

Google Pixel 4a 5G

### Operating system version

Android 12

### Application version and app store

Element version 1.4.25 [40104252] (G-b9267), Matrix SDK Version 1.4.25 (1f34d368), olm version 3.2.12

### Homeserver

amorgan.xyz

### Will you send logs?

Yes

### Are you willing to provide a PR?

No

|

defect

|

crash while cross signing with my other element web device steps to reproduce initiate a verification request from element web on my computer to element android on my phone use the qr code scanning method to verify the device after scanning the qr code a spinning process indicator is shown on the phone the app crashes element web shows the green checkmark shield outcome what did you expect element android to not crash and for verification to succeed what happened instead the app crashed after reopening element android in security privacy it says cross signing is enabled keys are trusted private keys are not known i m not sure whether the last line is a cause for concern your phone model google pixel operating system version android application version and app store element version g matrix sdk version olm version homeserver amorgan xyz will you send logs yes are you willing to provide a pr no

| 1

|

6,610

| 2,590,202,378

|

IssuesEvent

|

2015-02-18 17:28:55

|

OpenSprites/OpenSprites

|

https://api.github.com/repos/OpenSprites/OpenSprites

|

opened

|

Please - connect to IRC everytime you want to do something!

|

high priority ongoing

|

Hello!

Every time we work on something without actively updating our files, someone else can make a commit that mixes things up, and it's hard to revert everything back.

We need to get everyone to join the IRC chat that @The-QullzToxic created a while ago. It's really important, and it lets us keep track of what everyone is doing.

Even if there's nobody online, stay there! I'm usually online on the IRC 12 hours a day, and I would love for someone else to talk there :sweat_smile:

https://webchat.freenode.net/ Username: Your GitHub name and connect to #OpenSprites

|

1.0

|

Please - connect to IRC everytime you want to do something! - Hello!

Every time we work on something without actively updating our files, someone else can make a commit that mixes things up, and it's hard to revert everything back.

We need to get everyone to join the IRC chat that @The-QullzToxic created a while ago. It's really important, and it lets us keep track of what everyone is doing.

Even if there's nobody online, stay there! I'm usually online on the IRC 12 hours a day, and I would love for someone else to talk there :sweat_smile:

https://webchat.freenode.net/ Username: Your GitHub name and connect to #OpenSprites

|

non_defect

|

please connect to irc everytime you want to do something hello everytime we are making something and we are not updating our files actively someone can do an commit and it mixes things up and its hard to revert everything back we need to get everyone to join the irc chat the qullztoxic created a while ago its really important and we can keep track on whats everyone doing even though theres nobody online stay there i m usually online hours a day on the irc and i would love for someone else to talk there sweat smile username your github name and connect to opensprites

| 0

|

27,607

| 4,059,765,996

|

IssuesEvent

|

2016-05-25 10:45:51

|

FraserCoyle/sermon-manager-issues

|

https://api.github.com/repos/FraserCoyle/sermon-manager-issues

|

opened

|

[UI] Menu Dropdowns

|

bug design

|

The dark highlight area behind 'settings' needs 15px of padding on the left and right.

The dropdown box is sitting on top of the menu and has a shadow.

It needs to be aligned.

|

1.0

|

[UI] Menu Dropdowns - The dark highlight area behind 'settings' needs 15px of padding on the left and right.

The dropdown box is sitting on top of the menu and has a shadow.

It needs to be aligned.

|

non_defect

|

menu dropdowns the dark highlight area behind settings needs padding left and right dropdown box is sitting onto of the menu and has shadow it needs aligned

| 0

|

66,888

| 8,973,706,759

|

IssuesEvent

|

2019-01-29 21:47:25

|

NervanaSystems/coach

|

https://api.github.com/repos/NervanaSystems/coach

|

closed

|

create a contributor guide

|

documentation priority/p2

|

Add expectations and flows for developers and reviewers. Name and place in the repo according to: https://help.github.com/articles/setting-guidelines-for-repository-contributors/

|

1.0

|

create a contributor guide - Add expectations and flows for developers and reviewers. Name and place in the repo according to: https://help.github.com/articles/setting-guidelines-for-repository-contributors/

|

non_defect

|

create a contributor guide add expectations and flows for developers and reviewers name and place in the repo according to

| 0

|

46,047

| 13,055,844,689

|

IssuesEvent

|

2020-07-30 02:54:23

|

icecube-trac/tix2

|

https://api.github.com/repos/icecube-trac/tix2

|

opened

|

'cannot import module' troubleshooting (Trac #506)

|

Incomplete Migration Migrated from Trac defect documentation

|

Migrated from https://code.icecube.wisc.edu/ticket/506

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"description": "19:59 < dipo> hi, have a quick question on pybindings, anybody here who can help?\n20:02 <@straszhm> hi\n20:02 <@straszhm> the jebclasses question?\n20:02 < dipo> yes\n20:03 <@straszhm> do you have a file $I3_BUILD/lib/icecube/jebclasses.so?\n20:03 < dipo> yes\n20:04 <@straszhm> hm\n20:05 <@straszhm> no other error messages from your python script?\n20:05 < dipo> no, I'm actually doing things interactively from the prompt\n20:06 <@straszhm> if you just put into a script\n20:06 <@straszhm> 'from icecube import icetray, dataclasses, jebclasses'\n20:06 <@straszhm> (only that)\n20:06 <@straszhm> does it fail?\n20:09 < dipo> sorry it took a while, trying it out, yes, it gave the same error:\n20:10 < dipo> ImportError: cannot import name jebclasses\n20:10 <@straszhm> ok. python has a flag '-v'\n20:10 <@straszhm> that shows verbose information about how it finds imporrts\n20:11 <@straszhm> maybe this will show something. \n20:11 <@straszhm> does it get icetray and dataclasses from the right directory?\n20:11 <@straszhm> (so, run python -v testscript.py)\n20:12 < dipo> yes\n20:12 <@straszhm> anything interesting where the jebclasses search starts?\n20:12 < dipo> import icecube.icetray # dynamically loaded from \n /home/ofadiran/std-processing/build/lib/icecube/icetray.so\n20:12 <@straszhm> right, that looks good\n20:12 < dipo> for icetray, but for jebclasses:\n20:12 < dipo> just the same short error msg that I pasted b4\n20:13 <@straszhm> what is the output of 'file $I3_BUILD/lib/icecube/jebclasses.so'\n20:14 < dipo> /home/ofadiran/std-processing/build/lib/icecube/jebclasses.so: cannot open \n (/home/ofadiran/std-processing/build/lib/icecube/jebclasses.so)\n20:14 <@straszhm> okay, then ls -l $I3_BUILD/lib/icecube\n20:15 <@straszhm> maybe you'll want to rm that jebclasses.so and redo 'make jebclasses-pybindings'\n20:16 <@straszhm> it isn't readable and i bet it is empty or corrupt or something\n20:16 < dipo> o.k, I'll do that ..thanks\n20:16 <@straszhm> 
obviously the file has to be readable to be loadable into python\n20:16 <@straszhm> what did the ls -l return\n20:16 <@straszhm> (of $I3_BUILD/lib/icecube/jebclasses.so)\n20:17 < dipo> list of .so files, cfirst.so, coordinate_service.so, ..... not including jebclasses\n20:18 <@straszhm> but you said that file existed\n20:18 <@straszhm> but you said that file existed\n20:18 <@straszhm> oops\n20:19 <@straszhm> 20:03 <@straszhm> do you have a file $I3_BUILD/lib/icecube/jebclasses.so?\n20:19 <@straszhm> 20:03 < dipo> yes\n20:19 < dipo> yes, ls -al in $BUILD/lib/ : -rwxrwxr-x 1 ofadiran ofadiran 644258 Dec 19 10:20 \n libjebclasses.so\n20:20 <@straszhm> that's not lib/icecube/jebclasses.so\n20:20 < dipo> I'm sorry, my mistake\n20:20 < dipo> I was looking in $BUILD/lib/\n20:21 < dipo> it does not exist in $BUILD/lib/icecube\n20:21 <@straszhm> ok, well good that what python was telling you makes sense\n20:21 <@straszhm> what happens when you 'make jebclasses-pybindings'\n20:22 < dipo> I'll do that now\n20:25 < dipo> works now, thanks for the help\n20:25 <@straszhm> sure\n",

"reporter": "troy",

"cc": "",

"resolution": "wontfix",

"_ts": "1416713877165085",

"component": "documentation",

"summary": "'cannot import module' troubleshooting",

"priority": "normal",

"keywords": "",

"time": "2008-12-20T01:27:47",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

|

1.0

|

'cannot import module' troubleshooting (Trac #506) - Migrated from https://code.icecube.wisc.edu/ticket/506

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"description": "19:59 < dipo> hi, have a quick question on pybindings, anybody here who can help?\n20:02 <@straszhm> hi\n20:02 <@straszhm> the jebclasses question?\n20:02 < dipo> yes\n20:03 <@straszhm> do you have a file $I3_BUILD/lib/icecube/jebclasses.so?\n20:03 < dipo> yes\n20:04 <@straszhm> hm\n20:05 <@straszhm> no other error messages from your python script?\n20:05 < dipo> no, I'm actually doing things interactively from the prompt\n20:06 <@straszhm> if you just put into a script\n20:06 <@straszhm> 'from icecube import icetray, dataclasses, jebclasses'\n20:06 <@straszhm> (only that)\n20:06 <@straszhm> does it fail?\n20:09 < dipo> sorry it took a while, trying it out, yes, it gave the same error:\n20:10 < dipo> ImportError: cannot import name jebclasses\n20:10 <@straszhm> ok. python has a flag '-v'\n20:10 <@straszhm> that shows verbose information about how it finds imporrts\n20:11 <@straszhm> maybe this will show something. \n20:11 <@straszhm> does it get icetray and dataclasses from the right directory?\n20:11 <@straszhm> (so, run python -v testscript.py)\n20:12 < dipo> yes\n20:12 <@straszhm> anything interesting where the jebclasses search starts?\n20:12 < dipo> import icecube.icetray # dynamically loaded from \n /home/ofadiran/std-processing/build/lib/icecube/icetray.so\n20:12 <@straszhm> right, that looks good\n20:12 < dipo> for icetray, but for jebclasses:\n20:12 < dipo> just the same short error msg that I pasted b4\n20:13 <@straszhm> what is the output of 'file $I3_BUILD/lib/icecube/jebclasses.so'\n20:14 < dipo> /home/ofadiran/std-processing/build/lib/icecube/jebclasses.so: cannot open \n (/home/ofadiran/std-processing/build/lib/icecube/jebclasses.so)\n20:14 <@straszhm> okay, then ls -l $I3_BUILD/lib/icecube\n20:15 <@straszhm> maybe you'll want to rm that jebclasses.so and redo 'make jebclasses-pybindings'\n20:16 <@straszhm> it isn't readable and i bet it is empty or corrupt or something\n20:16 < dipo> o.k, I'll do that ..thanks\n20:16 <@straszhm> 
obviously the file has to be readable to be loadable into python\n20:16 <@straszhm> what did the ls -l return\n20:16 <@straszhm> (of $I3_BUILD/lib/icecube/jebclasses.so)\n20:17 < dipo> list of .so files, cfirst.so, coordinate_service.so, ..... not including jebclasses\n20:18 <@straszhm> but you said that file existed\n20:18 <@straszhm> but you said that file existed\n20:18 <@straszhm> oops\n20:19 <@straszhm> 20:03 <@straszhm> do you have a file $I3_BUILD/lib/icecube/jebclasses.so?\n20:19 <@straszhm> 20:03 < dipo> yes\n20:19 < dipo> yes, ls -al in $BUILD/lib/ : -rwxrwxr-x 1 ofadiran ofadiran 644258 Dec 19 10:20 \n libjebclasses.so\n20:20 <@straszhm> that's not lib/icecube/jebclasses.so\n20:20 < dipo> I'm sorry, my mistake\n20:20 < dipo> I was looking in $BUILD/lib/\n20:21 < dipo> it does not exist in $BUILD/lib/icecube\n20:21 <@straszhm> ok, well good that what python was telling you makes sense\n20:21 <@straszhm> what happens when you 'make jebclasses-pybindings'\n20:22 < dipo> I'll do that now\n20:25 < dipo> works now, thanks for the help\n20:25 <@straszhm> sure\n",

"reporter": "troy",

"cc": "",

"resolution": "wontfix",

"_ts": "1416713877165085",

"component": "documentation",

"summary": "'cannot import module' troubleshooting",

"priority": "normal",

"keywords": "",

"time": "2008-12-20T01:27:47",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

|

defect

|

cannot import module troubleshooting trac migrated from json status closed changetime description hi have a quick question on pybindings anybody here who can help hi the jebclasses question yes do you have a file build lib icecube jebclasses so yes hm no other error messages from your python script no i m actually doing things interactively from the prompt if you just put into a script from icecube import icetray dataclasses jebclasses only that does it fail sorry it took a while trying it out yes it gave the same error importerror cannot import name jebclasses ok python has a flag v that shows verbose information about how it finds imporrts maybe this will show something does it get icetray and dataclasses from the right directory so run python v testscript py yes anything interesting where the jebclasses search starts import icecube icetray dynamically loaded from n home ofadiran std processing build lib icecube icetray so right that looks good for icetray but for jebclasses just the same short error msg that i pasted what is the output of file build lib icecube jebclasses so home ofadiran std processing build lib icecube jebclasses so cannot open n home ofadiran std processing build lib icecube jebclasses so okay then ls l build lib icecube maybe you ll want to rm that jebclasses so and redo make jebclasses pybindings it isn t readable and i bet it is empty or corrupt or something o k i ll do that thanks obviously the file has to be readable to be loadable into python what did the ls l return of build lib icecube jebclasses so list of so files cfirst so coordinate service so not including jebclasses but you said that file existed but you said that file existed oops do you have a file build lib icecube jebclasses so yes yes ls al in build lib rwxrwxr x ofadiran ofadiran dec n libjebclasses so that s not lib icecube jebclasses so i m sorry my mistake i was looking in build lib it does not exist in build lib icecube ok well good that what python was telling you 
makes sense what happens when you make jebclasses pybindings i ll do that now works now thanks for the help sure n reporter troy cc resolution wontfix ts component documentation summary cannot import module troubleshooting priority normal keywords time milestone owner nega type defect

| 1

|

15,416

| 2,852,292,100

|

IssuesEvent

|

2015-06-01 12:52:21

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

closed

|

[TEST-FAILURE] JCacheClientListenerTest timeout

|

Team: Client Type: Defect

|

```

01:55:30 Running com.hazelcast.client.cache.JCacheClientListenerTest

03:00:16 Build timed out (after 100 minutes). Marking the build as aborted.

03:00:59 FATAL: command execution failed

```

https://hazelcast-l337.ci.cloudbees.com/job/Hazelcast-3.x-OpenJDK9-Quality-Outreach/40/console

|

1.0

|

[TEST-FAILURE] JCacheClientListenerTest timeout - ```

01:55:30 Running com.hazelcast.client.cache.JCacheClientListenerTest

03:00:16 Build timed out (after 100 minutes). Marking the build as aborted.

03:00:59 FATAL: command execution failed

```

https://hazelcast-l337.ci.cloudbees.com/job/Hazelcast-3.x-OpenJDK9-Quality-Outreach/40/console

|

defect

|

jcacheclientlistenertest timeout running com hazelcast client cache jcacheclientlistenertest build timed out after minutes marking the build as aborted fatal command execution failed

| 1

|

55,701

| 14,640,707,241

|

IssuesEvent

|

2020-12-25 03:20:49

|

unascribed/Fabrication

|

https://api.github.com/repos/unascribed/Fabrication

|

closed

|

Sync Attacker Yaw not working

|

k: Defect

|

Sync Attacker Yaw seems to stop working (goes back to default behavior) after player dies

Mods:

- fabric api

- lithium

- starlight

- fabrication (obviously)

- overworld two

I am using Java 15 OpenJ9 (but I doubt that would be the problem)

|

1.0

|

Sync Attacker Yaw not working - Sync Attacker Yaw seems to stop working (goes back to default behavior) after player dies

Mods:

- fabric api

- lithium

- starlight

- fabrication (obviously)

- overworld two

I am using Java 15 OpenJ9 (but I doubt that would be the problem)

|

defect

|

sync attacker yaw not working sync attacker yaw seems to stop working goes back to default behavior after player dies mods fabric api lithium starlight fabrication obviously overworld two i am using java but i doubt that would be the problem

| 1

|

568,265

| 16,963,113,932

|

IssuesEvent

|

2021-06-29 07:38:30

|

eventespresso/barista

|

https://api.github.com/repos/eventespresso/barista

|

closed

|

Create GQL Schema and Types for Form Element

|

C: data systems 🗑 D: EDTR ✏️ P3: med priority 😐 S:1 new 👶🏻 T: feature request 🙏

|

plz see #757

Form Element (unless we decide on a better name) will be our TS/GQL representation for anything added to a form other than an input such as:

- separators

- images

- headings

- any HTML element other than an input

Form Elements will utilize the following fields (maybe more):

GQL Field | DB Field (*=new) | Type | ???

--- | --- | --- | ---

dbId | QST_ID | int |

id | UUID* | string | save UUID to DB?

content| content| string | text added to element

htmlClass | html_class* | string |

order | order | int |

tag | tag | string | html tag being used

[EDIT]

possible new field to allow for simpler relations and querying:

GQL Field | DB Field (*=new) | Type | ???

--- | --- | --- | ---

belongsTo | belongsTo | int/string | ID or UUID of parent form section

---

So suggested new schema would be

GQL Field | Type | ???

--- | --- | ---

id | string | UUID

belongsTo | int/string | UUID (or ID) of parent form section

content| string | text added to element

htmlClass | string |

order | int |

tag | string | html tag being used
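
The suggested schema above could be expressed as a TypeScript type along these lines. This is only a sketch: the `FormElement` name, the `sortByOrder` helper, and the choice of `string | number` for `belongsTo` are assumptions read off the table, not a settled design.

```typescript
// Hypothetical TS shape for a Form Element, following the suggested schema table.
interface FormElement {
  id: string;                 // UUID
  belongsTo: string | number; // UUID (or DB ID) of the parent form section
  content: string;            // text added to the element
  htmlClass: string;          // CSS class(es) applied to the element
  order: number;              // position within the parent form section
  tag: string;                // HTML tag being used, e.g. "hr" or "h2"
}

// Illustrative helper (not part of the issue): return a copy sorted by `order`.
function sortByOrder(elements: FormElement[]): FormElement[] {
  return [...elements].sort((a, b) => a.order - b.order);
}
```

Keeping `belongsTo` on the element itself means the elements of a section can be found by filtering on a single field, which is the simpler relation the edit above is aiming for.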

|

1.0

|

Create GQL Schema and Types for Form Element - plz see #757

Form Element (unless we decide on a better name) will be our TS/GQL representation for anything added to a form other than an input such as:

- separators

- images

- headings

- any HTML element other than an input

Form Elements will utilize the following fields (maybe more):

GQL Field | DB Field (*=new) | Type | ???

--- | --- | --- | ---

dbId | QST_ID | int |

id | UUID* | string | save UUID to DB?

content| content| string | text added to element

htmlClass | html_class* | string |

order | order | int |

tag | tag | string | html tag being used

[EDIT]

possible new field to allow for simpler relations and querying:

GQL Field | DB Field (*=new) | Type | ???

--- | --- | --- | ---

belongsTo | belongsTo | int/string | ID or UUID of parent form section

---

So suggested new schema would be

GQL Field | Type | ???

--- | --- | ---

id | string | UUID

belongsTo | int/string | UUID (or ID) of parent form section

content| string | text added to element

htmlClass | string |

order | int |

tag | string | html tag being used

|

non_defect

|

create gql schema and types for form element plz see form element unless we decide on a better name will be our ts gql representation for anything added to a form other than an input such as separators images headings any html element other than an input form elements will utilize the following fields maybe more gql field db field new type dbid qst id int id uuid string save uuid to db content content string text added to element htmlclass html class string order order int tag tag string html tag being used possible new field to allow for simpler relations and querying gql field db field new type belongsto belongsto int string id or uuid of parent form section so suggested new schema would be gql field type id string uuid belongsto int string uuid or id of parent form section content string text added to element htmlclass string order int tag string html tag being used

| 0

|

72,044

| 23,903,987,076

|

IssuesEvent

|

2022-09-08 21:46:48

|

department-of-veterans-affairs/va.gov-cms

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms

|

opened

|

CMS: Vet Center Locations pages do not show mobile on node:view.

|

Defect Drupal engineering ⭐️ Facilities Vet Center

|

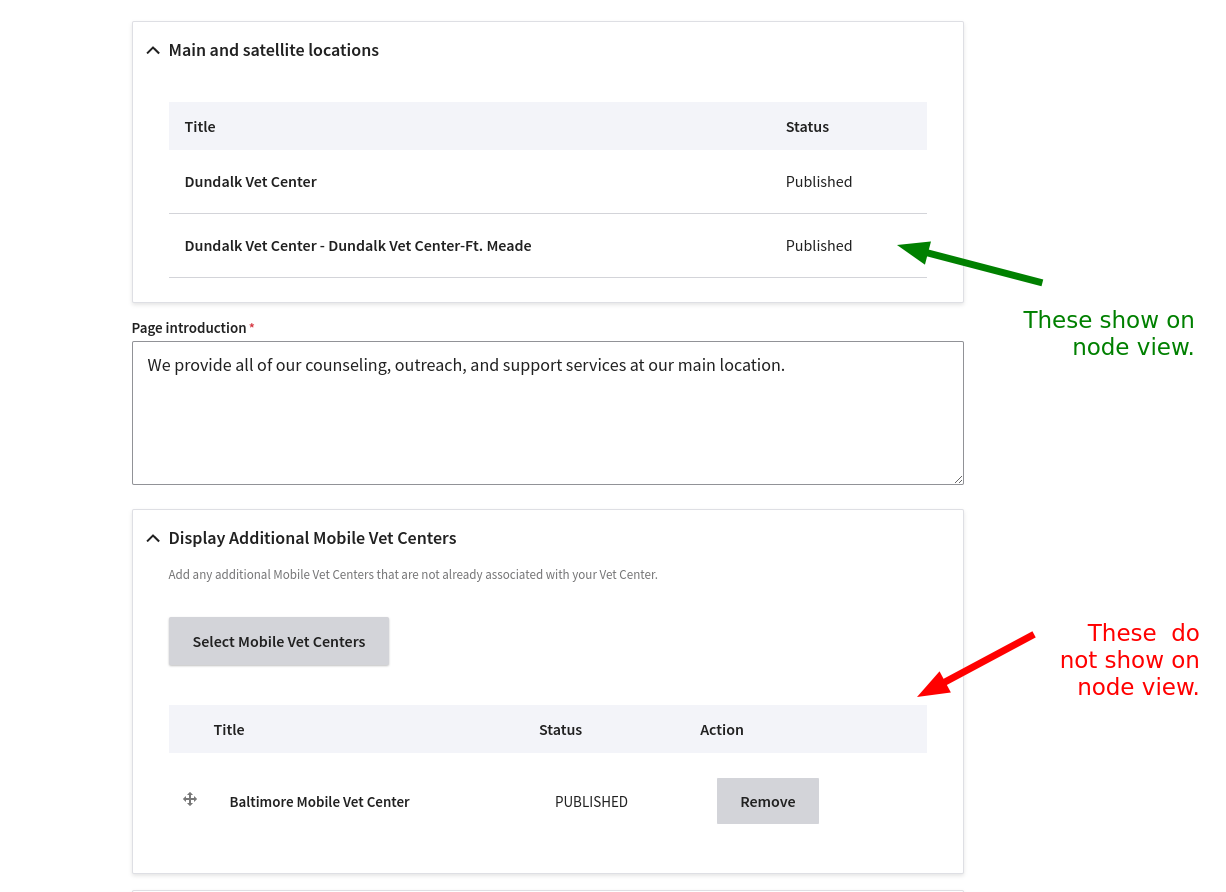

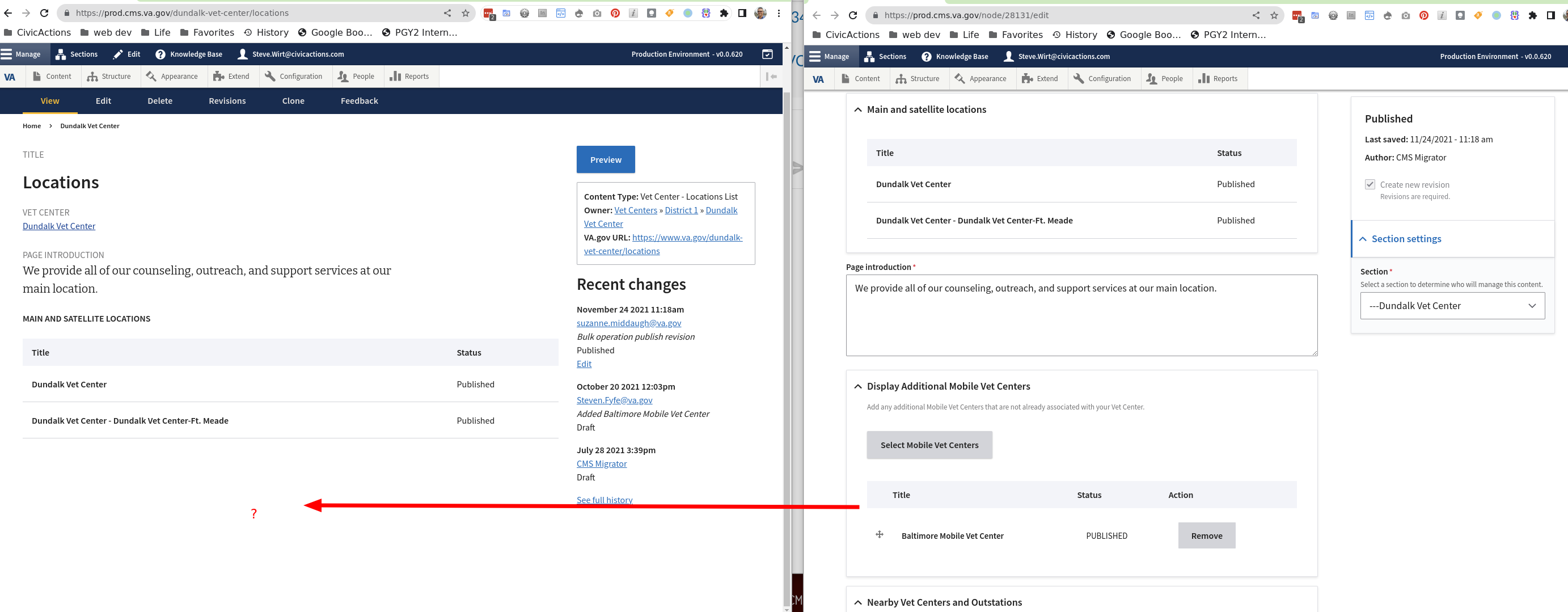

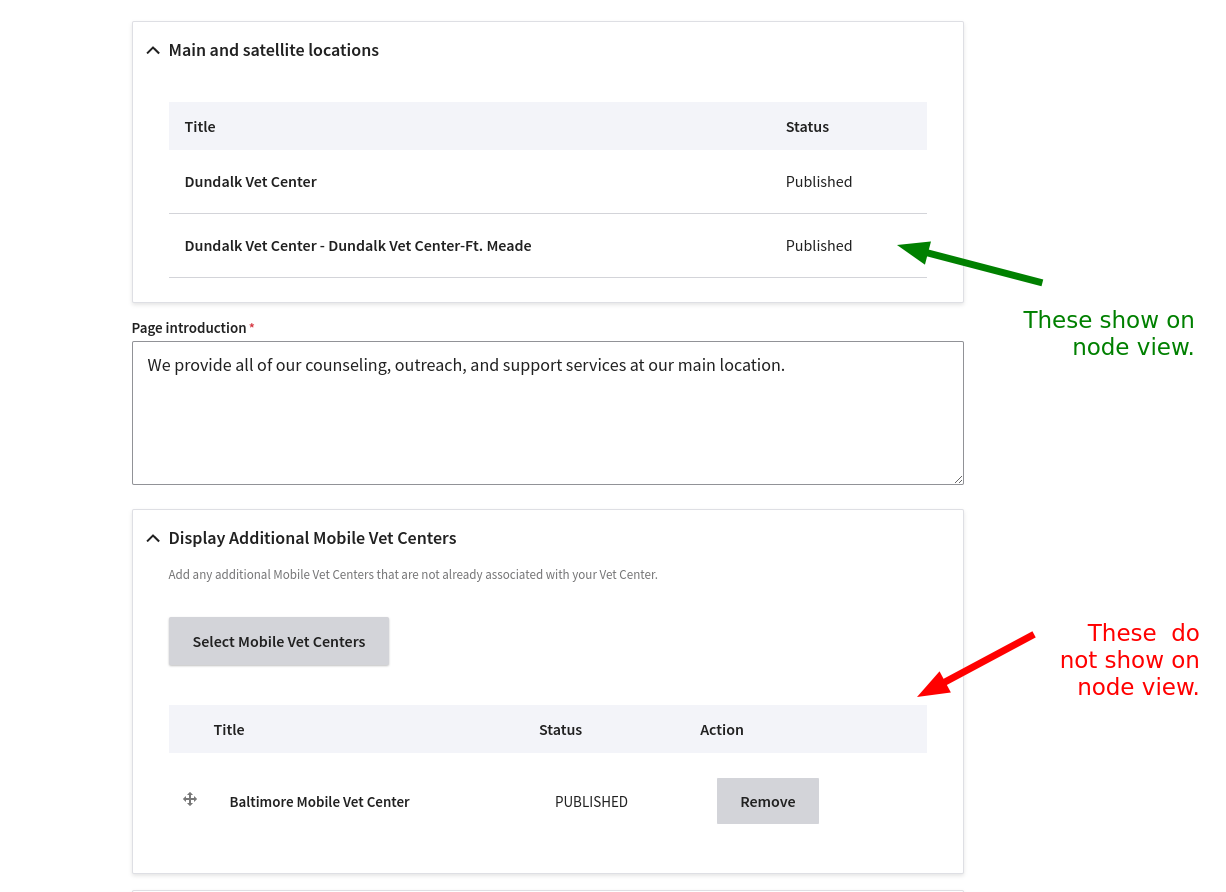

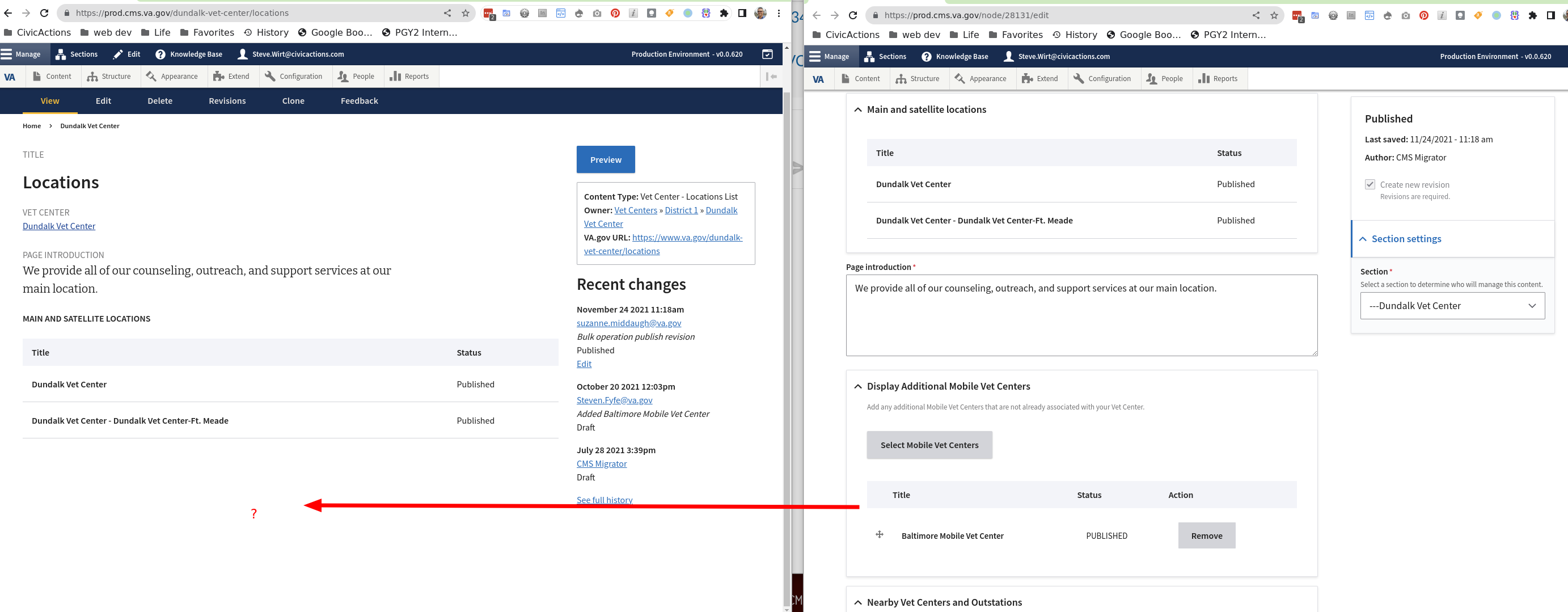

## Describe the defect

When I look at node:view on a Vet Center location page, I can see the "Main and satelite locations" but I can not see "Display additional mobile vet centers"

## To Reproduce

Steps to reproduce the behavior:

1. Go to '/node/28131/edit'

2. Notice that it has an entry for a mobile vet center

3. Click on the "View" tab and notice that the mobile vet center does not show.

4. It does show correctly on https://www.va.gov/dundalk-vet-center/locations and the preview server http://preview-prod.vfs.va.gov/preview?nodeId=28131

## AC / Expected behavior

- [ ] When on node view, the field and any entries for it are shown in a similar fashion as "Main and satelite locations"

## Screenshots

## Additional context

Add any other context about the problem here. Reach out to the Product Managers to determine if it should be escalated as critical (prevents users from accomplishing their work with no known workaround and needs to be addressed within 2 business days).

## Desktop (please complete the following information if relevant, or delete)

- OS: [e.g. iOS]

- Browser [e.g. chrome, safari]

- Version [e.g. 22]

## Labels

(You can delete this section once it's complete)

- [x] Issue type (red) (defaults to "Defect")

- [ ] CMS subsystem (green)

- [ ] CMS practice area (blue)

- [x] CMS workstream (orange) (not needed for bug tickets)

- [ ] CMS-supported product (black)

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- [ ] `Platform CMS Team`

- [ ] `Sitewide Crew`

- [ ] `⭐️ Sitewide CMS`

- [ ] `⭐️ Public Websites`

- [ ] `⭐️ Facilities`

- [ ] `⭐️ User support`

|

1.0

|

CMS: Vet Center Locations pages do not show mobile on node:view. - ## Describe the defect

When I look at node:view on a Vet Center location page, I can see the "Main and satelite locations" but I can not see "Display additional mobile vet centers"

## To Reproduce

Steps to reproduce the behavior:

1. Go to '/node/28131/edit'

2. Notice that it has an entry for a mobile vet center

3. Click on the "View" tab and notice that the mobile vet center does not show.

4. It does show correctly on https://www.va.gov/dundalk-vet-center/locations and the preview server http://preview-prod.vfs.va.gov/preview?nodeId=28131

## AC / Expected behavior

- [ ] When on node view, the field and any entries for it are shown in a similar fashion as "Main and satelite locations"

## Screenshots

## Additional context

Add any other context about the problem here. Reach out to the Product Managers to determine if it should be escalated as critical (prevents users from accomplishing their work with no known workaround and needs to be addressed within 2 business days).

## Desktop (please complete the following information if relevant, or delete)

- OS: [e.g. iOS]

- Browser [e.g. chrome, safari]

- Version [e.g. 22]

## Labels

(You can delete this section once it's complete)

- [x] Issue type (red) (defaults to "Defect")

- [ ] CMS subsystem (green)

- [ ] CMS practice area (blue)

- [x] CMS workstream (orange) (not needed for bug tickets)

- [ ] CMS-supported product (black)

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- [ ] `Platform CMS Team`

- [ ] `Sitewide Crew`

- [ ] `⭐️ Sitewide CMS`

- [ ] `⭐️ Public Websites`

- [ ] `⭐️ Facilities`

- [ ] `⭐️ User support`

|

defect

|

cms vet center locations pages do not show mobile on node view describe the defect when i look at node view on a vet center location page i can see the main and satelite locations but i can not see display additional mobile vet centers to reproduce steps to reproduce the behavior go to node edit notice that it has an entry for a mobile vet center click on the view tab and notice that the mobile vet center does not show it does show correctly on and the preview server ac expected behavior when on node view the field and any entries for it are shown in a similar fashion as main and satelite locations screenshots additional context add any other context about the problem here reach out to the product managers to determine if it should be escalated as critical prevents users from accomplishing their work with no known workaround and needs to be addressed within business days desktop please complete the following information if relevant or delete os browser version labels you can delete this section once it s complete issue type red defaults to defect cms subsystem green cms practice area blue cms workstream orange not needed for bug tickets cms supported product black cms team please check the team s that will do this work program platform cms team sitewide crew ⭐️ sitewide cms ⭐️ public websites ⭐️ facilities ⭐️ user support

| 1

|

104,117

| 8,961,885,927

|

IssuesEvent

|

2019-01-28 10:51:18

|

humera987/FXLabs-Test-Automation

|

https://api.github.com/repos/humera987/FXLabs-Test-Automation

|

closed

|

API Test 1 : ApiV1ProjectsIdAutoSuggestionsActivateSuitenameTcnumberGetPathParamTcnumberNullValue

|

API Test 1 API Test 1

|

Project : API Test 1

Job : JOB

Env : ENV

Category : null

Tags : null

Severity : null

Region : AliTest

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/api/v1/projects/{id}/auto-suggestions/activate/{suiteName}/null

Request :

Response :

Not enough variable values available to expand 'id'

Logs :

Assertion [@StatusCode != 401] resolved-to [500 != 401] result [Passed]

Assertion [@StatusCode != 500] resolved-to [500 != 500] result [Failed]

Assertion [@StatusCode != 200] resolved-to [500 != 200] result [Passed]

Assertion [@StatusCode != 404] resolved-to [500 != 404] result [Passed]

--- FX Bot ---

|

2.0

|

API Test 1 : ApiV1ProjectsIdAutoSuggestionsActivateSuitenameTcnumberGetPathParamTcnumberNullValue - Project : API Test 1

Job : JOB

Env : ENV

Category : null

Tags : null

Severity : null

Region : AliTest

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/api/v1/projects/{id}/auto-suggestions/activate/{suiteName}/null

Request :

Response :

Not enough variable values available to expand 'id'

Logs :

Assertion [@StatusCode != 401] resolved-to [500 != 401] result [Passed]

Assertion [@StatusCode != 500] resolved-to [500 != 500] result [Failed]

Assertion [@StatusCode != 200] resolved-to [500 != 200] result [Passed]

Assertion [@StatusCode != 404] resolved-to [500 != 404] result [Passed]

--- FX Bot ---

|

non_defect

|

api test project api test job job env env category null tags null severity null region alitest result fail status code headers endpoint request response not enough variable values available to expand id logs assertion resolved to result assertion resolved to result assertion resolved to result assertion resolved to result fx bot

| 0

|

48,838

| 13,184,754,331

|

IssuesEvent

|

2020-08-12 20:01:59

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

opened

|

Problems with PPC in GPU mode on Ubuntu 10.04 (Trac #298)

|

Incomplete Migration Migrated from Trac combo simulation defect

|

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/298

, reported by icecube and owned by yanezjua@ifh.de_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-02-02T16:12:13",