| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

326,984

| 28,036,026,679

|

IssuesEvent

|

2023-03-28 15:07:54

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

reopened

|

Fix comparison.test_numpy_greater_equal

|

NumPy Frontend Sub Task Failing Test

|

| | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

|

1.0

|

Fix comparison.test_numpy_greater_equal - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4530890837/jobs/7980387749" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_numpy/test_logic/test_comparison.py::test_numpy_greater_equal[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-27T09:45:10.7587592Z E IndexError: list index out of range

2023-03-27T09:45:10.7589489Z E Falsifying example: test_numpy_greater_equal(

2023-03-27T09:45:10.7590235Z E dtypes_values_casting=(['uint32', 'uint32'],

2023-03-27T09:45:10.7590833Z E [array(0, dtype=uint32), array(0, dtype=uint32)],

2023-03-27T09:45:10.7591316Z E 'no',

2023-03-27T09:45:10.7591685Z E None),

2023-03-27T09:45:10.7592104Z E where=[array(False)],

2023-03-27T09:45:10.7592569Z E test_flags=FrontendFunctionTestFlags(

2023-03-27T09:45:10.7647238Z E num_positional_args=2,

2023-03-27T09:45:10.7647505Z E with_out=False,

2023-03-27T09:45:10.7647747Z E inplace=False,

2023-03-27T09:45:10.7647992Z E as_variable=[False],

2023-03-27T09:45:10.7648244Z E native_arrays=[False],

2023-03-27T09:45:10.7648531Z E generate_frontend_arrays=True,

2023-03-27T09:45:10.7648769Z E ),

2023-03-27T09:45:10.7649223Z E fn_tree='ivy.functional.frontends.numpy.greater_equal',

2023-03-27T09:45:10.7649583Z E on_device='cpu',

2023-03-27T09:45:10.7649857Z E frontend='numpy',

2023-03-27T09:45:10.7650141Z E )

2023-03-27T09:45:10.7650330Z E

2023-03-27T09:45:10.7650891Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.0', b'AXicY2BgYGBkgBFgBgAALQAE') as a decorator on your test case

</details>

|

non_defect

|

fix comparison test numpy greater equal tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test numpy test logic test comparison py test numpy greater equal e indexerror list index out of range e falsifying example test numpy greater equal e dtypes values casting e e no e none e where e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e generate frontend arrays true e e fn tree ivy functional frontends numpy greater equal e on device cpu e frontend numpy e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test numpy test logic test comparison py test numpy greater equal e indexerror list index out of range e falsifying example test numpy greater equal e dtypes values casting e e no e none e where e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e generate frontend arrays true e e fn tree ivy functional frontends numpy greater equal e on device cpu e frontend numpy e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test numpy test logic test comparison py test numpy greater equal e indexerror list index out of range e falsifying example test numpy greater equal e dtypes values casting e e no e none e where e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e generate frontend arrays true e e fn tree ivy functional frontends numpy greater equal e on device cpu e frontend numpy e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test numpy test logic test comparison py test numpy greater equal e 
indexerror list index out of range e falsifying example test numpy greater equal e dtypes values casting e e no e none e where e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e generate frontend arrays true e e fn tree ivy functional frontends numpy greater equal e on device cpu e frontend numpy e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case

| 0

|

35,699

| 6,491,960,028

|

IssuesEvent

|

2017-08-21 11:28:50

|

angular/angular-cli

|

https://api.github.com/repos/angular/angular-cli

|

closed

|

Wiki: add link from ng serve page to proxy-config page

|

community: help wanted type: documentation

|

### Bug Report or Feature Request (mark with an `x`)

```

- [ ] bug report -> please search issues before submitting

- [ ] feature request

- [ x ] documentation change (github wiki)

```

Since I can't do a pull request regarding the wiki...

This page https://github.com/angular/angular-cli/wiki/serve should include information about the option `--proxy-config` documented here: https://github.com/angular/angular-cli/blob/master/docs/documentation/stories/proxy.md

|

1.0

|

Wiki: add link from ng serve page to proxy-config page - ### Bug Report or Feature Request (mark with an `x`)

```

- [ ] bug report -> please search issues before submitting

- [ ] feature request

- [ x ] documentation change (github wiki)

```

Since I can't do a pull request regarding the wiki...

This page https://github.com/angular/angular-cli/wiki/serve should include information about the option `--proxy-config` documented here: https://github.com/angular/angular-cli/blob/master/docs/documentation/stories/proxy.md

|

non_defect

|

wiki add link from ng serve page to proxy config page bug report or feature request mark with an x bug report please search issues before submitting feature request documentation change github wiki since i can t do a pull request regarding the wiki this page should include information about the option proxy config documented here

| 0

|

79,616

| 28,479,545,854

|

IssuesEvent

|

2023-04-18 00:39:03

|

zed-industries/community

|

https://api.github.com/repos/zed-industries/community

|

closed

|

Zed won't open

|

defect panic / crash

|

### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

After downloading both Zed 0.80.5 and Zed 0.79.1 (just to be sure, I tried different versions) and installing (opening the .dmg file and dragging to Applications), Zed will not open. I have clicked through from the Applications gui and tried to open using the `zed` binary under `Applications/Zed.app/Contents/MacOS`. In the terminal, I see the following:

```

>RUST_BACKTRACE=full ./zed

thread 'main' panicked at 'could not create config path: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }', crates/zed/src/main.rs:283:56

stack backtrace:

0: 0x106be6bc4 - <std::sys_common::backtrace::_print::DisplayBacktrace as core::fmt::Display>::fmt::h3c406a4521928e59

1: 0x106c054b8 - core::fmt::write::hc60df9bae744c40c

2: 0x106be0a68 - std::io::Write::write_fmt::h1c4129dfc94f7c33

3: 0x106be69d8 - std::sys_common::backtrace::print::h34db077f1fa49c76

4: 0x106be8530 - std::panicking::default_hook::{{closure}}::hff4fe3239c020cef

5: 0x106be8288 - std::panicking::default_hook::hd2988fbcc86fdc46

6: 0x106be8b54 - std::panicking::rust_panic_with_hook::h01730ad11d62092c

7: 0x106be8974 - std::panicking::begin_panic_handler::{{closure}}::h30f454e305d4a708

8: 0x106be702c - std::sys_common::backtrace::__rust_end_short_backtrace::hb7ff1894d55794a1

9: 0x106be86d0 - _rust_begin_unwind

10: 0x106d938c0 - core::panicking::panic_fmt::hf1070b3fc33229fa

11: 0x106d93bf8 - core::result::unwrap_failed::h334387d5f1f555c1

12: 0x104add188 - Zed::main::h3ede68c77edf0427

13: 0x104aef790 - std::sys_common::backtrace::__rust_begin_short_backtrace::hb0c48201b725886f

14: 0x104ac56e0 - std::rt::lang_start::{{closure}}::h231bd61fbeca8b79

15: 0x106bdaee0 - std::rt::lang_start_internal::h24d09c16ec322e75

16: 0x104adf460 - _main

```

### Environment

New Apple M2 MacBook Pro. Zed cannot be opened, so I cannot run `copy system specs` from the command palette.

### If applicable, add mockups / screenshots to help explain present your vision of the feature

n/a

### If applicable, attach your `~/Library/Logs/Zed/Zed.log` file to this issue.

If you only need the most recent lines, you can run the `zed: open log` command palette action to see the last 1000.

```

>cd ~/Library/Logs/Zed

cd: no such file or directory: /Users/jps/Library/Logs/Zed

```
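
The panic above comes from unwrapping a failed attempt to create the config path. As a language-agnostic illustration (written in Python, not taken from Zed's Rust source), a minimal sketch of creating a config directory and turning a permission error into a readable message instead of a crash might look like this. The function name `ensure_config_dir` and the paths used are hypothetical.

```python
import tempfile
from pathlib import Path


def ensure_config_dir(path: Path) -> tuple[bool, str]:
    """Try to create `path`; return (ok, message) instead of raising.

    Hypothetical helper for illustration only, not part of Zed.
    """
    try:
        path.mkdir(parents=True, exist_ok=True)
    except PermissionError as err:
        # errno 13 is the "Permission denied" seen in the report
        return False, f"could not create config path {path}: {err.strerror}"
    except OSError as err:
        # Any other filesystem failure (e.g. a parent that is a regular file)
        return False, f"could not create config path {path}: {err}"
    return True, f"config path ready: {path}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ok, message = ensure_config_dir(Path(tmp) / "config" / "zed")
        print(ok, message)
```

The caller can then decide whether to exit with the message or fall back to another location, rather than panicking at startup.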

|

1.0

|

Zed won't open - ### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

After downloading both Zed 0.80.5 and Zed 0.79.1 (just to be sure, I tried different versions) and installing (opening the .dmg file and dragging to Applications), Zed will not open. I have clicked through from the Applications gui and tried to open using the `zed` binary under `Applications/Zed.app/Contents/MacOS`. In the terminal, I see the following:

```

>RUST_BACKTRACE=full ./zed

thread 'main' panicked at 'could not create config path: Os { code: 13, kind: PermissionDenied, message: "Permission denied" }', crates/zed/src/main.rs:283:56

stack backtrace:

0: 0x106be6bc4 - <std::sys_common::backtrace::_print::DisplayBacktrace as core::fmt::Display>::fmt::h3c406a4521928e59

1: 0x106c054b8 - core::fmt::write::hc60df9bae744c40c

2: 0x106be0a68 - std::io::Write::write_fmt::h1c4129dfc94f7c33

3: 0x106be69d8 - std::sys_common::backtrace::print::h34db077f1fa49c76

4: 0x106be8530 - std::panicking::default_hook::{{closure}}::hff4fe3239c020cef

5: 0x106be8288 - std::panicking::default_hook::hd2988fbcc86fdc46

6: 0x106be8b54 - std::panicking::rust_panic_with_hook::h01730ad11d62092c

7: 0x106be8974 - std::panicking::begin_panic_handler::{{closure}}::h30f454e305d4a708

8: 0x106be702c - std::sys_common::backtrace::__rust_end_short_backtrace::hb7ff1894d55794a1

9: 0x106be86d0 - _rust_begin_unwind

10: 0x106d938c0 - core::panicking::panic_fmt::hf1070b3fc33229fa

11: 0x106d93bf8 - core::result::unwrap_failed::h334387d5f1f555c1

12: 0x104add188 - Zed::main::h3ede68c77edf0427

13: 0x104aef790 - std::sys_common::backtrace::__rust_begin_short_backtrace::hb0c48201b725886f

14: 0x104ac56e0 - std::rt::lang_start::{{closure}}::h231bd61fbeca8b79

15: 0x106bdaee0 - std::rt::lang_start_internal::h24d09c16ec322e75

16: 0x104adf460 - _main

```

### Environment

New Apple M2 MacBook Pro. Zed cannot be opened, so I cannot run `copy system specs` from the command palette.

### If applicable, add mockups / screenshots to help explain present your vision of the feature

n/a

### If applicable, attach your `~/Library/Logs/Zed/Zed.log` file to this issue.

If you only need the most recent lines, you can run the `zed: open log` command palette action to see the last 1000.

```

>cd ~/Library/Logs/Zed

cd: no such file or directory: /Users/jps/Library/Logs/Zed

```

|

defect

|

zed won t open check for existing issues completed describe the bug provide steps to reproduce it after downloading both zed and zed just to be sure i tried different versions and installing opening the dmg file and dragging to applications zed will not open i have clicked through from the applications gui and tried to open using the zed binary under applications zed app contents macos in the terminal i see the following rust backtrace full zed thread main panicked at could not create config path os code kind permissiondenied message permission denied crates zed src main rs stack backtrace fmt core fmt write std io write write fmt std sys common backtrace print std panicking default hook closure std panicking default hook std panicking rust panic with hook std panicking begin panic handler closure std sys common backtrace rust end short backtrace rust begin unwind core panicking panic fmt core result unwrap failed zed main std sys common backtrace rust begin short backtrace std rt lang start closure std rt lang start internal main environment new apple mac book pro cannot open zed hence cannot run copy system specs from the command palette if applicable add mockups screenshots to help explain present your vision of the feature n a if applicable attach your library logs zed zed log file to this issue if you only need the most recent lines you can run the zed open log command palette action to see the last cd library logs zed cd no such file or directory users jps library logs zed

| 1

|

23,078

| 20,980,510,802

|

IssuesEvent

|

2022-03-28 19:25:23

|

zcash/zcash

|

https://api.github.com/repos/zcash/zcash

|

opened

|

UX: zcashd: UIWarning() should also log to debug.log

|

usability A-logging

|

I'm getting this warning on the UI (metrics screen) when running `zcashd`:

```

Messages:

- Warning: Error reading wallet.dat! All keys read correctly, but transaction data or address book entries might be missing or incorrect.

```

but nothing is logged to `debug.log` for this condition. Perhaps this and possibly other warnings should appear in the log file. More generally, maybe `UIWarning()` should write its argument to `debug.log` (although we'd want to be careful not to log too much).
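
A minimal sketch of the suggestion, in Python rather than zcashd's C++: a hypothetical `ui_warning` helper that records the message for the metrics screen and also mirrors it to a log. The names `ui_warning`, `ui_messages`, and the logger name are illustrative assumptions, not zcashd APIs.

```python
import logging
import os
import tempfile

# Stand-in for the list of messages shown on the metrics screen (assumption).
ui_messages: list[str] = []
logger = logging.getLogger("debug_log_sketch")


def ui_warning(message: str) -> None:
    """Show a warning in the UI message list and mirror it to the log."""
    ui_messages.append(f"Warning: {message}")
    logger.warning("UI warning: %s", message)


if __name__ == "__main__":
    # Write to a throwaway debug.log in a temporary directory.
    log_path = os.path.join(tempfile.mkdtemp(), "debug.log")
    logging.basicConfig(filename=log_path, level=logging.INFO)
    ui_warning("Error reading wallet.dat!")
    print(ui_messages, log_path)
```

To avoid logging too much, a real implementation would probably also want rate limiting or de-duplication of repeated warnings; that is left out of this sketch.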

|

True

|

UX: zcashd: UIWarning() should also log to debug.log - I'm getting this warning on the UI (metrics screen) when running `zcashd`:

```

Messages:

- Warning: Error reading wallet.dat! All keys read correctly, but transaction data or address book entries might be missing or incorrect.

```

but nothing is logged to `debug.log` for this condition. Perhaps this and possibly other warnings should appear in the log file. More generally, maybe `UIWarning()` should write its argument to `debug.log` (although we'd want to be careful not to log too much).

|

non_defect

|

ux zcashd uiwarning should also log to debug log i m getting this warning on the ui metrics screen when running zcashd messages warning error reading wallet dat all keys read correctly but transaction data or address book entries might be missing or incorrect but nothing is logged to debug log for this condition perhaps this and possibly other warnings should appear in the log file more generally maybe uiwarning should write its argument to debug log although we d want to be careful not to log too much

| 0

|

185,511

| 15,024,021,473

|

IssuesEvent

|

2021-02-01 19:02:34

|

gessnerfl/terraform-provider-instana

|

https://api.github.com/repos/gessnerfl/terraform-provider-instana

|

closed

|

Application perspective to be added as arguments.

|

documentation question

|

Hey @gessnerfl, great work on the provider.

We would like application perspective to be supported in `instana_alerting_config`.

I can see no argument for it under the alert config, so it would need to be added in your repo to support application perspectives for our alerts.

We are able to use the DFQ and all-entities options, but not the application perspective.

How should we go about this? Can we get any help here?

|

1.0

|

Application perspective to be added as arguments. - Hey @gessnerfl, great work on the provider.

We would like application perspective to be supported in `instana_alerting_config`.

I can see no argument for it under the alert config, so it would need to be added in your repo to support application perspectives for our alerts.

We are able to use the DFQ and all-entities options, but not the application perspective.

How should we go about this? Can we get any help here?

|

non_defect

|

application perspective to be added as arguments hey gessnerfl doing great work wanted to have application perspective to be added to instana alerting config because i can see no argument under alert config so need to add that in your repo to support adding application perspective for our alerts we are able to use dfq all entities option but not the application perspective how to go with that can we get any help from here

| 0

|

764

| 3,250,901,201

|

IssuesEvent

|

2015-10-19 06:01:30

|

t3kt/vjzual2

|

https://api.github.com/repos/t3kt/vjzual2

|

opened

|

create a 3d color-based tiling module

|

enhancement video processing

|

also support using an external source for the tiling data

|

1.0

|

create a 3d color-based tiling module - also support using an external source for the tiling data

|

non_defect

|

create a color based tiling module also support using an external source for the tiling data

| 0

|

88,245

| 10,567,414,342

|

IssuesEvent

|

2019-10-06 03:56:35

|

ShivamSharma779/The-Rainbow

|

https://api.github.com/repos/ShivamSharma779/The-Rainbow

|

closed

|

Beauty Of Rainbow

|

documentation good first issue help wanted

|

Could you please be more creative with the **Beauty of the rainbow**

|

1.0

|

Beauty Of Rainbow - Could you please be more creative with the **Beauty of the rainbow**

|

non_defect

|

beauty of rainbow could you please be more creative with the beauty of the rainbow

| 0

|

42,809

| 11,273,854,222

|

IssuesEvent

|

2020-01-14 17:19:55

|

mozilla-lockwise/lockwise-android

|

https://api.github.com/repos/mozilla-lockwise/lockwise-android

|

closed

|

The password is not auto-hidden when creating a new entry

|

closed-invalid defect feature-CUD

|

## Steps to reproduce

1. Launch Lockwise.

2. Log in with valid credentials.

3. On the entries section, tap the `+` button to create a new entry.

4. Add the password for the account.

### Expected behavior

The password should be hidden while typing until the user wants to reveal it.

### Actual behavior

The password is not hidden when creating a new entry.

### Device & build information

* Device: **Google Pixel 3a XL(Android 10).**

* Build version: **Latest master version 3.3.0 from 1/13.**

### Notes

Attachments:

|

1.0

|

The password is not auto-hidden when creating a new entry - ## Steps to reproduce

1. Launch Lockwise.

2. Log in with valid credentials.

3. On the entries section, tap the `+` button to create a new entry.

4. Add the password for the account.

### Expected behavior

The password should be hidden while typing until the user wants to reveal it.

### Actual behavior

The password is not hidden when creating a new entry.

### Device & build information

* Device: **Google Pixel 3a XL(Android 10).**

* Build version: **Latest master version 3.3.0 from 1/13.**

### Notes

Attachments:

|

defect

|

the password is not auto hidden when creating a new entry steps to reproduce launch lockwise login with valid credentials on the entries section tap on the button in order to create a new entry add the password for the account expected behavior the password should be hidden while typing until the user wants to reveal it actual behavior the password is not hidden when creating a new entry device build information device google pixel xl android build version latest master version from notes attachments

| 1

|

54,380

| 13,634,617,282

|

IssuesEvent

|

2020-09-25 00:23:00

|

SAP/fundamental-styles

|

https://api.github.com/repos/SAP/fundamental-styles

|

closed

|

Defect Hunting 0.12.0

|

0.12.0 Bug Defect Hunting

|

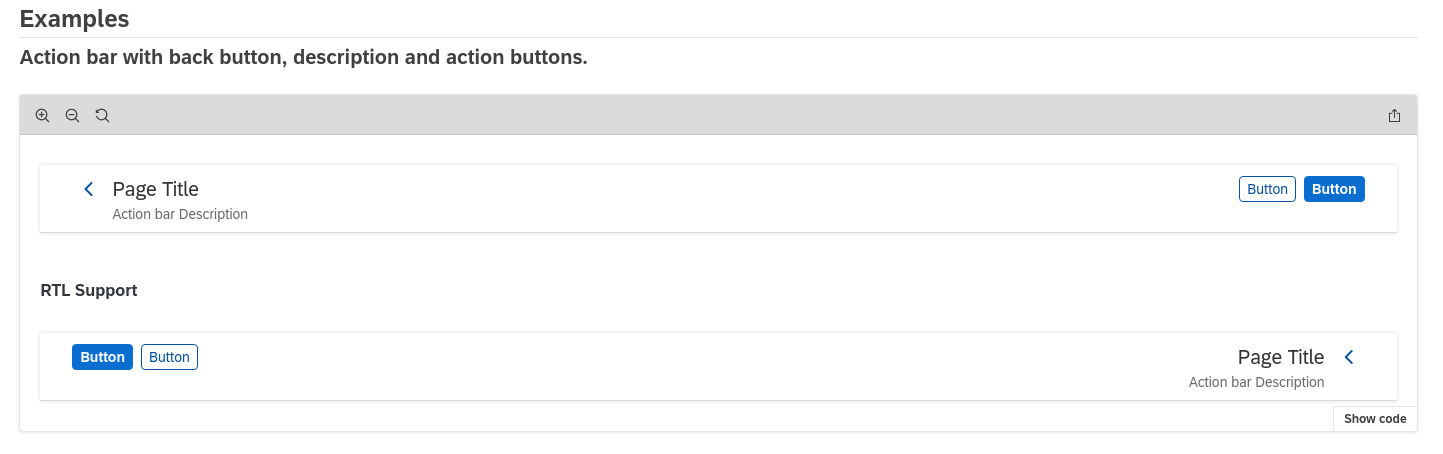

- [x] Button not inverted on RTL - Wrong Icon - https://github.com/SAP/fundamental-styles/pull/1694

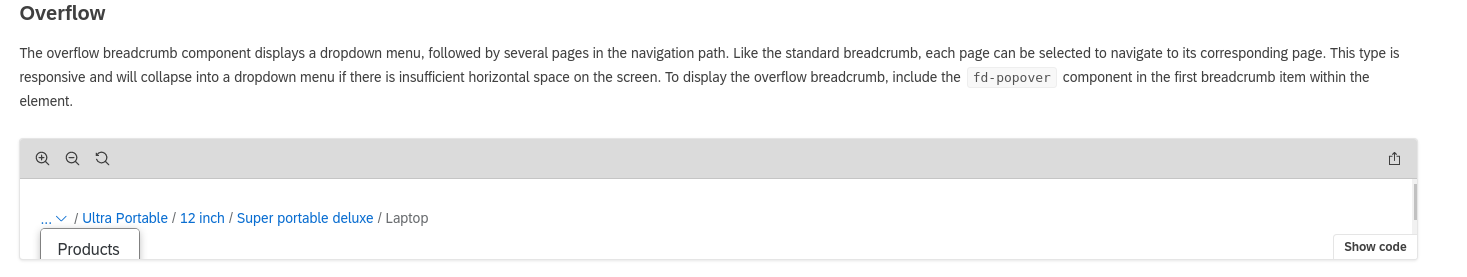

- [x] Breadcrumb iframe height with opened popover - https://github.com/SAP/fundamental-styles/pull/1694

- [x] empty checkbox focus double outline (example form cards with table) - https://github.com/SAP/fundamental-styles/pull/1662

- [x] Form Grid - Inputs/Labels got redundant ID/for attributes - Jedrzej

- [x] Form Messages - Popovers don't open - https://github.com/SAP/fundamental-styles/pull/1694

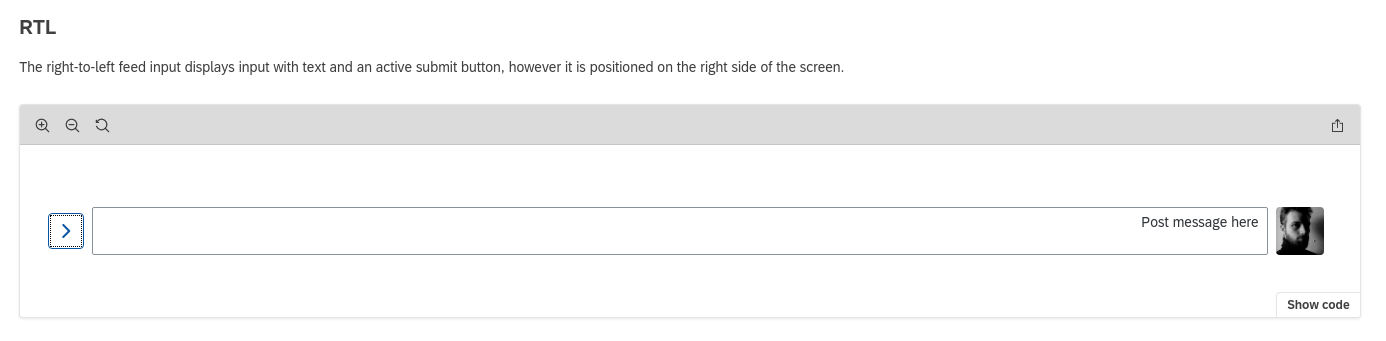

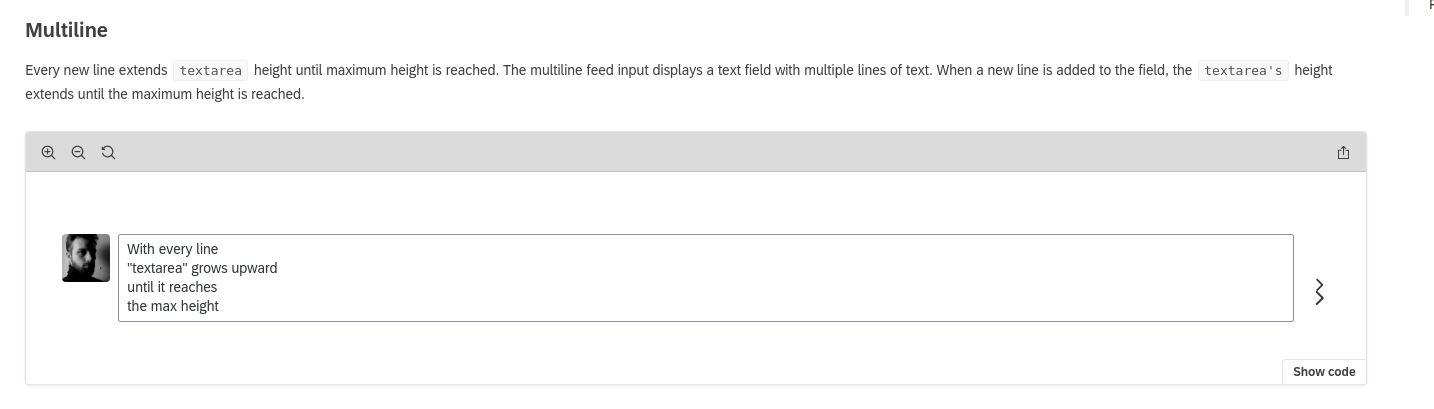

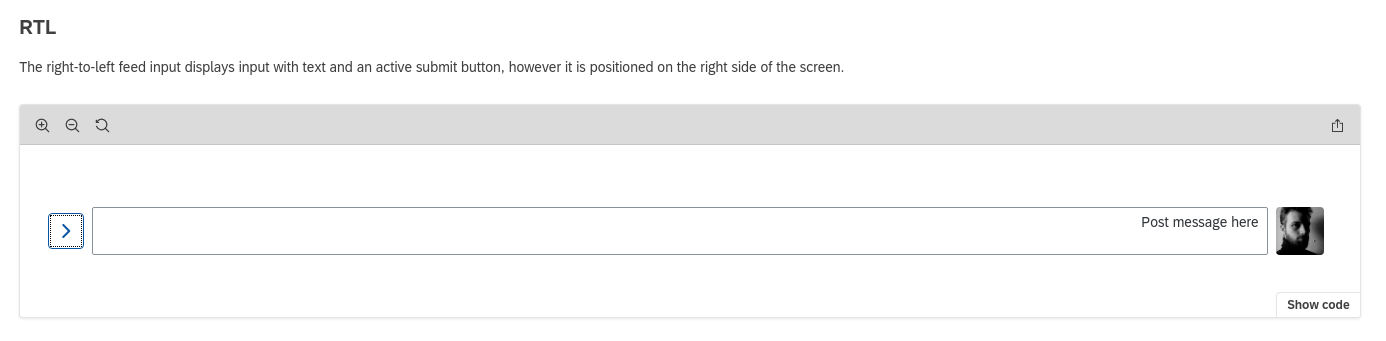

- [x] Feed Input - button - https://github.com/SAP/fundamental-styles/pull/1694

- [ ] Feed input - RTL button

- [x] File Uploader - Doesn't react on click, brings console error - https://github.com/SAP/fundamental-styles/pull/1694

- [x] Generic Tile - actions - https://github.com/SAP/fundamental-styles/pull/1698

- [x] List - checkboxes - Repeated ID/For attributes on RTL [#1693](https://github.com/SAP/fundamental-styles/pull/1693)

- [x] Side Navigation - nested list button with multiple focus outlines [#1691](https://github.com/SAP/fundamental-styles/pull/1691)

|

1.0

|

Defect Hunting 0.12.0 - - [x] Button not inverted on RTL - Wrong Icon - https://github.com/SAP/fundamental-styles/pull/1694

- [x] Breadcrumb iframe Height with opened popover - https://github.com/SAP/fundamental-styles/pull/1694

- [x] empty checkbox focus double outline (example form cards with table) - https://github.com/SAP/fundamental-styles/pull/1662

- [x] Form Grid - Inputs/Labels got redundant ID/for attributes - Jedrzej

- [x] Form Messages - Popovers don't open - https://github.com/SAP/fundamental-styles/pull/1694

- [x] Feed Input - button - https://github.com/SAP/fundamental-styles/pull/1694

- [ ] Feed input - RTL button

- [x] File Uploader - Doesn't react on click, brings console error - https://github.com/SAP/fundamental-styles/pull/1694

- [x] Generic Tile - actions - https://github.com/SAP/fundamental-styles/pull/1698

- [x] List - checkboxes - Repeated ID/For attributes on RTL [#1693](https://github.com/SAP/fundamental-styles/pull/1693)

- [x] Side Navigation - nested list button with multiple focus outlines [#1691](https://github.com/SAP/fundamental-styles/pull/1691)

|

defect

|

defect hunting button not inverted on rtl wrong icon breadcrump iframe height with opened popover empty checkbox focus double outline example form cards with table form grid inputs labels got redundant id for attributes jedrzej form messages popovers don t open feed input button feed input rtl button file uploader doesn t react on click brings console error generic tile actions list checkboxes repeated id for attributes on rtl side navigation nested list button with multiple focus outlines

| 1

|

43,274

| 7,035,814,555

|

IssuesEvent

|

2017-12-28 03:30:33

|

litehelpers/Cordova-sqlcipher-adapter

|

https://api.github.com/repos/litehelpers/Cordova-sqlcipher-adapter

|

closed

|

Ratchet CSS can't display correctly and cant navigate to other page

|

documentation invalid question user community help

|

Hi, I am using Cordova to build a mobile application, but I am having a problem with navigation/links to other pages. Are there any suggestions? Thanks in advance.

https://stackoverflow.com/questions/47439120/ratchet-css-cant-display-correctly-and-cant-navigate-to-other-page

|

1.0

|

Ratchet CSS can't display correctly and cant navigate to other page - Hi, I am using Cordova to build a mobile application, but I am having a problem with navigation/links to other pages. Are there any suggestions? Thanks in advance.

https://stackoverflow.com/questions/47439120/ratchet-css-cant-display-correctly-and-cant-navigate-to-other-page

|

non_defect

|

ratchet css can t display correctly and cant navigate to other page hi i am using cordova to build a mobile application but i having a problem with navigation link to other pages is there any suggestion for me thanks in advance url

| 0

|

38,017

| 8,634,509,534

|

IssuesEvent

|

2018-11-22 17:06:01

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

closed

|

The querying performance drop when running inside OSGI

|

Module: IMap Module: Query Source: Internal Team: Core Type: Perf. Defect

|

A problem seems to be related to the Java Reflection info caching implemented in `com.hazelcast.query.impl.getters.Extractors`:

```
Getter getGetter(InternalSerializationService serializationService, Object targetObject, String attributeName) {
    Getter getter = getterCache.getGetter(targetObject.getClass(), attributeName);
    if (getter == null) {
        getter = instantiateGetter(serializationService, targetObject, attributeName);
        if (getter.isCacheable()) {
            getterCache.putGetter(targetObject.getClass(), attributeName, getter);
        }
    }
    return getter;
}
```

In OSGi environments the method below returns `false`, because Hazelcast and the POJOs live in separate bundles and therefore have separate class loaders:

```
boolean isCacheable() {
    return ReflectionHelper.THIS_CL.equals(method.getDeclaringClass().getClassLoader());
}
```

It looks like this was introduced with [this commit](https://github.com/hazelcast/hazelcast/commit/57394ddb59a521190e3a7533c656af9f03a28526) as a fix for: https://github.com/hazelcast/hazelcast/issues/1503

Please reach out to me for more details.

|

1.0

|

The querying performance drop when running inside OSGI - A problem seems to be related to the Java Reflection info caching implemented in `com.hazelcast.query.impl.getters.Extractors`:

```
Getter getGetter(InternalSerializationService serializationService, Object targetObject, String attributeName) {
    Getter getter = getterCache.getGetter(targetObject.getClass(), attributeName);
    if (getter == null) {
        getter = instantiateGetter(serializationService, targetObject, attributeName);
        if (getter.isCacheable()) {
            getterCache.putGetter(targetObject.getClass(), attributeName, getter);
        }
    }
    return getter;
}
```

In OSGi environments the method below returns `false`, because Hazelcast and the POJOs live in separate bundles and therefore have separate class loaders:

```
boolean isCacheable() {
    return ReflectionHelper.THIS_CL.equals(method.getDeclaringClass().getClassLoader());
}
```

It looks like this was introduced with [this commit](https://github.com/hazelcast/hazelcast/commit/57394ddb59a521190e3a7533c656af9f03a28526) as a fix for: https://github.com/hazelcast/hazelcast/issues/1503

Please reach out to me for more details.

|

defect

|

the querying performance drop when running inside osgi a problem seems to be related to the java reflection info caching implemented in com hazelcast query impl getters extractors getter getgetter internalserializationservice serializationservice object targetobject string attributename getter getter gettercache getgetter targetobject getclass attributename if getter null getter instantiategetter serializationservice targetobject attributename if getter iscacheable gettercache putgetter targetobject getclass attributename getter return getter for osgi environments the given method will be returning false because obviously hazelcast and the pojos are in separate bundles thus having separate class loaders boolean iscacheable return reflectionhelper this cl equals method getdeclaringclass getclassloader it looks like this was introduced with as a fix for please reach out to me for more details

| 1

|

61,711

| 7,495,729,222

|

IssuesEvent

|

2018-04-08 00:32:03

|

jfurrow/flood

|

https://api.github.com/repos/jfurrow/flood

|

closed

|

Right click context menu breaks at browser bottom edge

|

bug design

|

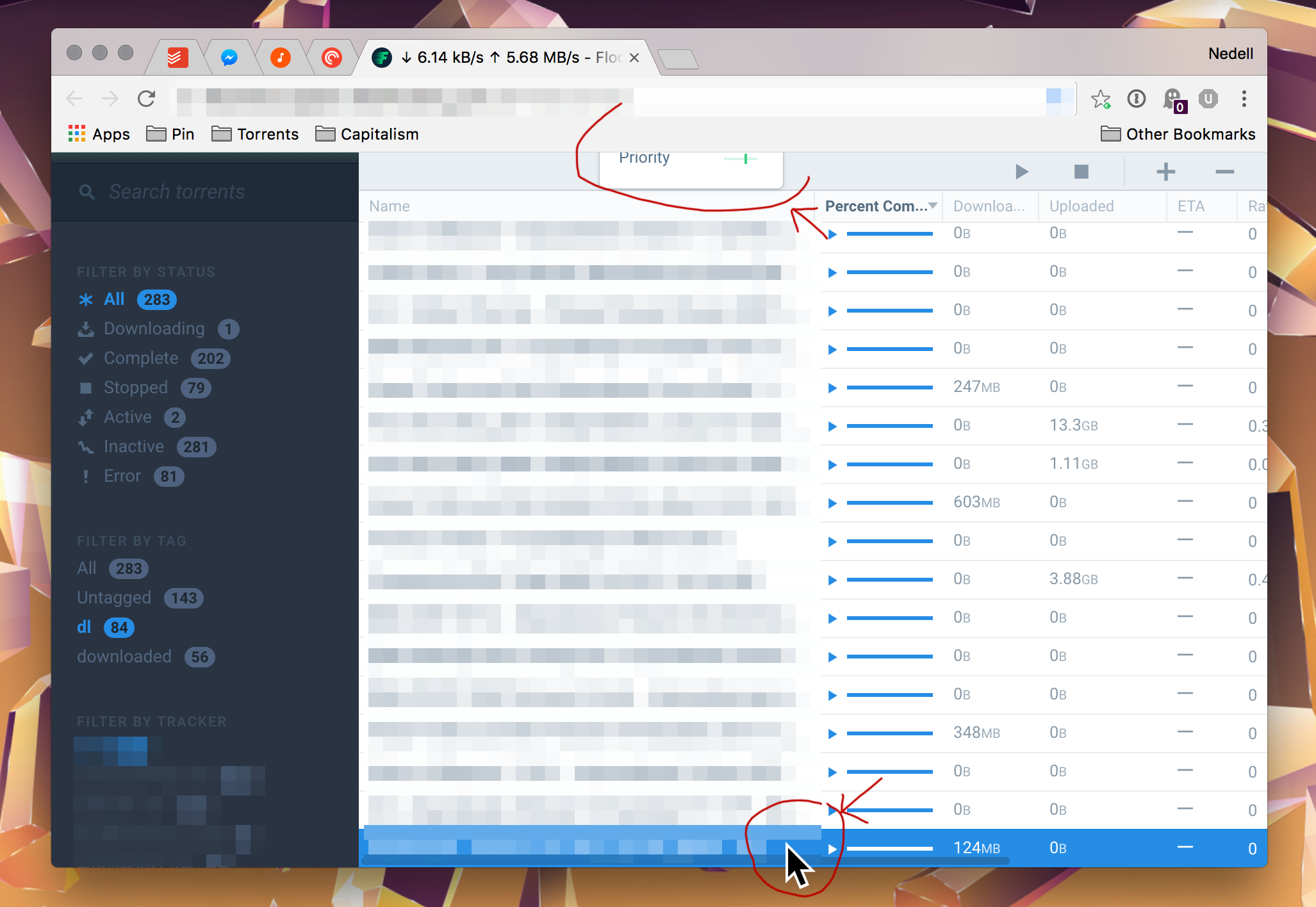

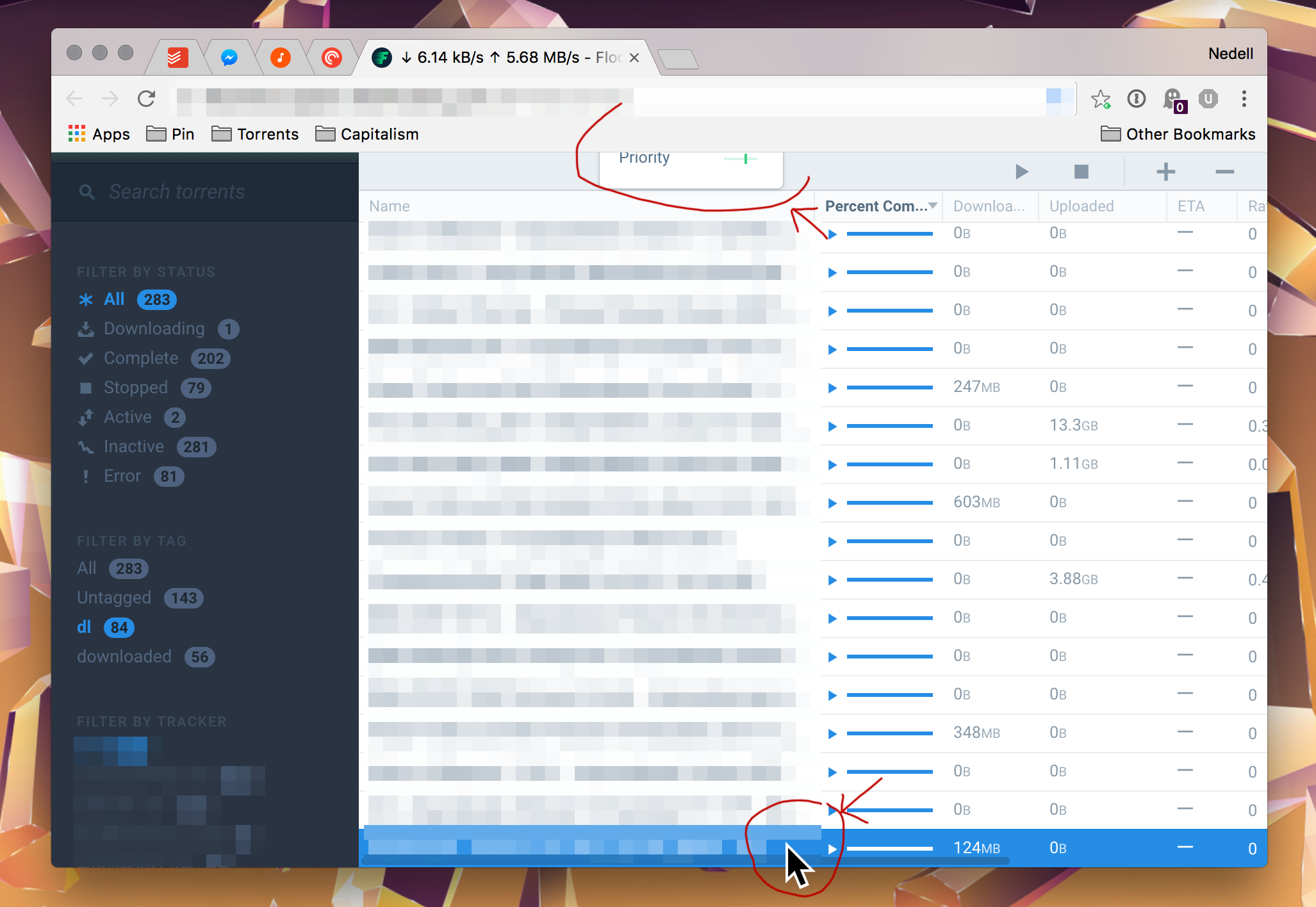

## Summary

When right clicking on torrents too close to the bottom of the list, the context menu appears off the top of the view.

## Expected Behavior

When the context menu would appear too close to the bottom of the screen to be practical, it should be nudged upwards relative to the click location, so that when the very last list element is clicked, the entire menu is above the click location.

## Current Behavior

If more than four list elements would overflow in the context menu, it is pushed off the top of the browser window and is mostly obscured.

## Possible Solution

Should adopt the standard functionality in right-click context menus
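
A minimal sketch of the placement logic described under Expected Behavior, assuming the menu size and the viewport size are both known at click time. `placeContextMenu` and its parameters are hypothetical names for illustration, not Flood's actual code:

```typescript
interface MenuPlacement {
  top: number;
  left: number;
}

// Hypothetical helper: returns where the menu's top-left corner should go.
function placeContextMenu(
  clickX: number,
  clickY: number,
  menuWidth: number,
  menuHeight: number,
  viewportWidth: number,
  viewportHeight: number,
): MenuPlacement {
  // How far the menu would extend past the bottom/right edge if it
  // opened with its top-left corner exactly at the click location.
  const overflowY = Math.max(0, clickY + menuHeight - viewportHeight);
  const overflowX = Math.max(0, clickX + menuWidth - viewportWidth);

  // Nudge the menu back by exactly the overflow, but never past the
  // top or left edge of the viewport.
  return {
    top: Math.max(0, clickY - overflowY),
    left: Math.max(0, clickX - overflowX),
  };
}
```

With this rule a click at the very bottom edge places the whole menu above the click, and a click with enough room below leaves the menu anchored at the click itself.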

## Steps to Reproduce (for bugs)

1. Re-size main window so that there are torrents extending to the bottom of the browser window

2. Right-click the last torrent in the list to bring up its context menu

3. Observe context menu behavior

## Context

Makes the context menu impossible to use for the last few torrents in the list

## Your Environment

* Version used:

+ Version (stable release) `v1.0.0`

+ Commit ID (development release) `293aa11d512ee089f1d559b24e6f8bbe707cfb8d`

* Environment name and version

+ Node `v9.0.0`

+ Chrome `65.0.3325.181 (Official Build) (64-bit)`

+ OS `Debian GNU/Linux 9.4`

* Operating System and version:

+ macOS `10.13.4`

|

1.0

|

Right click context menu breaks at browser bottom edge - ## Summary

When right clicking on torrents too close to the bottom of the list, the context menu appears off the top of the view.

## Expected Behavior

When the context menu would appear too close to the bottom of the screen to be practical, it should be nudged upwards relative to the click location, so that when the very last list element is clicked, the entire menu is above the click location.

## Current Behavior

If more than four list elements would overflow in the context menu, it is pushed off the top of the browser window and is mostly obscured.

## Possible Solution

Should adopt the standard functionality in right-click context menus

## Steps to Reproduce (for bugs)

1. Re-size main window so that there are torrents extending to the bottom of the browser window

2. Right-click the last torrent in the list to bring up its context menu

3. Observe context menu behavior

## Context

Makes the context menu impossible to use for the last few torrents in the list

## Your Environment

* Version used:

+ Version (stable release) `v1.0.0`

+ Commit ID (development release) `293aa11d512ee089f1d559b24e6f8bbe707cfb8d`

* Environment name and version

+ Node `v9.0.0`

+ Chrome `65.0.3325.181 (Official Build) (64-bit)`

+ OS `Debian GNU/Linux 9.4`

* Operating System and version:

+ macOS `10.13.4`

|

non_defect

|

right click context menu breaks at browser bottom edge summary when right clicking on torrents too close to the bottom of the list the context menu appears off the top of the view expected behavior when the context menu would appear too close to the bottom of the screen to be practical it should begin to be nudged upwards relative to the click location until when the very last list element is pressed the entire menu is above the click location current behavior if more than four list elements are trying to overflow in the context menu it is pushed to the top off the browser window and is mostly obscured possible solution should adopt the standard functionality in right click context menus steps to reproduce for bugs re size main window so that there are torrents extending to the bottom of the browser window right click the last torrent in the list to bring up its context menu observe context menu behavior context makes the context menu impossible to use for the last few torrents in the list your environment version used version stable release commit id development release environment name and version node chrome official build bit os debian gnu linux operating system and version macos

| 0

|

684,549

| 23,422,137,057

|

IssuesEvent

|

2022-08-13 21:35:27

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Developers targeting browser-wasm can use Web Crypto APIs

|

arch-wasm area-System.Security User Story Priority:1 Cost:L Team:Libraries

|

We want to avoid shipping OpenSSL for Browser: it does not align well with the web nature of WebAssembly, and it has a noticeable impact on the size of the final app. Avoiding it would also save us from having to deal with zero-day vulnerabilities, and from having to support crypto algorithms that are only secure when they use special CPU instructions (RNG and AES, for example).

Using the platform-native crypto functions is the preferred solution, as it adds no noticeable size and is also the most performant option. All browsers nowadays support the Crypto APIs, which we should be able to use to implement the core crypto functions required by the BCL.

Relevant documentation can be found at https://developer.mozilla.org/en-US/docs/Web/API/SubtleCrypto. The tricky part will be dealing with the async nature of these APIs, but we could introduce new async APIs to make the integration easier.

.NET also supports old or obscure algorithms and other features, such as certificates, that are not relevant in the browser space; for those, we would keep throwing PNSE (`PlatformNotSupportedException`).

### Design Proposal

The design proposal for how we will enable cryptographic algorithms in .NET for WebAssembly can be found here:

https://github.com/dotnet/designs/blob/main/accepted/2021/blazor-wasm-crypto.md

We intend to provide the most secure implementations of cryptographic algorithms when available. On browsers that support `SharedArrayBuffer`, we will use it as a synchronization mechanism to perform a sync-over-async operation, letting the browser's secure `SubtleCrypto` implementation act as our cryptographic primitive. On browsers that do not support `SharedArrayBuffer`, we will fall back to in-box managed implementations of these primitives.
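
The selection rule in the paragraph above can be sketched as a small feature check. This is only an illustration of the rule, not the runtime's implementation; `CryptoBackend`, `HostFeatures`, and `chooseCryptoBackend` are hypothetical names:

```typescript
// Hypothetical labels for the two paths described above.
type CryptoBackend = "subtle-crypto" | "managed-fallback";

// Minimal view of the host's global object: only the one feature
// the rule depends on.
interface HostFeatures {
  SharedArrayBuffer?: unknown;
}

function chooseCryptoBackend(host: HostFeatures): CryptoBackend {
  // With SharedArrayBuffer available, the sync-over-async path to
  // SubtleCrypto is possible; without it, use the managed algorithms.
  return typeof host.SharedArrayBuffer !== "undefined"
    ? "subtle-crypto"
    : "managed-fallback";
}
```

Taking the host as a parameter, rather than reading globals directly, keeps the check testable outside a browser.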

Where managed algorithm implementations are needed, they can be migrated from the .NET Framework Reference Source.

* https://github.com/microsoft/referencesource/tree/master/mscorlib/system/security/cryptography

* https://github.com/microsoft/referencesource/tree/master/System.Core/System/Security/Cryptography

### Work Items

- [x] SHA1

- [x] SHA256

- [x] SHA384

- [x] SHA512

- [x] HMACSHA1

- [x] HMACSHA256

- [x] HMACSHA384

- [x] HMACSHA512

- [x] AES-CBC

- [x] AES-ECB and AES-CFB should throw PlatformNotSupportedException, as they are not available in webcrypto

- [x] PBKDF2 (via [Rfc2898DeriveBytes](https://docs.microsoft.com/dotnet/api/system.security.cryptography.rfc2898derivebytes))

- [x] HKDF

### Acceptance Criteria

- [ ] Integrate into arch-wasm infrastructure

- [ ] Ensure a productive development/testing workflow with WebAssembly

- [ ] Get all existing unit tests for the algorithms passing in WebAssembly

- [ ] Look for opportunities to improve argument validation

- [ ] Look for opportunities to modernize the code, such as using Span

- [ ] Validate end-to-end scenarios in desktop browsers that support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in mobile browsers that support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in desktop browsers that do not support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in mobile browsers that do not support `SharedArrayBuffer`

### Issues to address

- [ ] https://github.com/dotnet/runtime/issues/69741

- [x] https://github.com/dotnet/runtime/issues/69740

- [x] https://github.com/dotnet/runtime/issues/69806

|

1.0

|

Developers targeting browser-wasm can use Web Crypto APIs - We want to avoid shipping OpenSSL for Browser: it does not align well with the web nature of WebAssembly, and it has a noticeable impact on the size of the final app. Avoiding it would also save us from having to deal with zero-day vulnerabilities, and from having to support crypto algorithms that are only secure when they use special CPU instructions (RNG and AES, for example).

Using the platform-native crypto functions is the preferred solution, as it adds no noticeable size and is also the most performant option. All browsers nowadays support the Crypto APIs, which we should be able to use to implement the core crypto functions required by the BCL.

Relevant documentation can be found at https://developer.mozilla.org/en-US/docs/Web/API/SubtleCrypto. The tricky part will be dealing with the async nature of these APIs, but we could introduce new async APIs to make the integration easier.

.NET also supports old or obscure algorithms and other features, such as certificates, that are not relevant in the browser space; for those, we would keep throwing PNSE (`PlatformNotSupportedException`).

### Design Proposal

The design proposal for how we will enable cryptographic algorithms in .NET for WebAssembly can be found here:

https://github.com/dotnet/designs/blob/main/accepted/2021/blazor-wasm-crypto.md

We intend to provide the most secure implementations of cryptographic algorithms when available. On browsers that support `SharedArrayBuffer`, we will use it as a synchronization mechanism to perform a sync-over-async operation, letting the browser's secure `SubtleCrypto` implementation act as our cryptographic primitive. On browsers that do not support `SharedArrayBuffer`, we will fall back to in-box managed implementations of these primitives.

Where managed algorithm implementations are needed, they can be migrated from the .NET Framework Reference Source.

* https://github.com/microsoft/referencesource/tree/master/mscorlib/system/security/cryptography

* https://github.com/microsoft/referencesource/tree/master/System.Core/System/Security/Cryptography

### Work Items

- [x] SHA1

- [x] SHA256

- [x] SHA384

- [x] SHA512

- [x] HMACSHA1

- [x] HMACSHA256

- [x] HMACSHA384

- [x] HMACSHA512

- [x] AES-CBC

- [x] AES-ECB and AES-CFB should throw PlatformNotSupportedException, as they are not available in webcrypto

- [x] PBKDF2 (via [Rfc2898DeriveBytes](https://docs.microsoft.com/dotnet/api/system.security.cryptography.rfc2898derivebytes))

- [x] HKDF

### Acceptance Criteria

- [ ] Integrate into arch-wasm infrastructure

- [ ] Ensure a productive development/testing workflow with WebAssembly

- [ ] Get all existing unit tests for the algorithms passing in WebAssembly

- [ ] Look for opportunities to improve argument validation

- [ ] Look for opportunities to modernize the code, such as using Span

- [ ] Validate end-to-end scenarios in desktop browsers that support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in mobile browsers that support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in desktop browsers that do not support `SharedArrayBuffer`

- [ ] Validate end-to-end scenarios in mobile browsers that do not support `SharedArrayBuffer`

### Issues to address

- [ ] https://github.com/dotnet/runtime/issues/69741

- [x] https://github.com/dotnet/runtime/issues/69740

- [x] https://github.com/dotnet/runtime/issues/69806

|

non_defect

|

developers targeting browser wasm can use web crypto apis we want to avoid shipping openssl for browser as that’s not something that aligns well with web nature of webassembly as well as it has noticeable impacts on the size of the final app this would also save us from having to deal with zero day vulnerabilities as well as support for crypto algorithms which are only secure if they use special cpu instructions rng and aes for example using the platform native crypto functions is the preferred solution as it does not have any noticeable size and it’s also the most performant solution all browser have nowadays support for crypto apis which we should be able to use to implement the core crypto functions required by bcl relevant documentation can be found at the tricky part will be to deal with the async nature of these apis but we could introduce new async apis to make the integration easier net has support for old obscure algorithms and also other features like certificates which are not relevant in browser space and for them we would keep throwing pnse design proposal the design proposal for how we will enable cryptographic algorithms in net for webassembly can be found here we intend on providing the most secure implementations of cryptographic algorithms when available on browsers which support sharedarraybuffer we will utilize that as a synchronization mechanism to perform a sync over async operation letting the browser s secure subtlecrypto implementation act as our cryptographic primitive on browsers which do not support sharedarraybuffer we will fall back to in box managed algorithm implementations of these primitives where managed algorithm implementations are needed they can be migrated from the net framework reference source work items aes cbc aes ecb and aes cfb should throw platformnotsupportedexception as they are not available in webcrypto via hkdf acceptance criteria integrate into arch wasm infrastructure ensure a productive development testing 
workflow with webassembly get all existing unit tests for the algorithms passing in webassembly look for opportunities to improve argument validation look for opportunities to modernize the code such as using span validate end to end scenarios in desktop browsers that support sharedarraybuffer validate end to end scenarios in mobile browsers that support sharedarraybuffer validate end to end scenarios in desktop browsers that do not support sharedarraybuffer validate end to end scenarios in mobile browsers that do not support sharedarraybuffer issues to address

| 0

|

68,968

| 22,035,810,962

|

IssuesEvent

|

2022-05-28 15:03:32

|

mautrix/telegram

|

https://api.github.com/repos/mautrix/telegram

|

closed

|

Images with captions don't store Matrix event ID of caption

|

requires db update bug: defect

|

Currently the database can only store one event ID per Telegram message (except for edits). However, images with captions are bridged as two messages. This means that:

* Replying to the image event from Matrix won't be bridged correctly

* Deleting the image event from Matrix won't be bridged correctly

* Deleting the message from Telegram will only delete the caption

Bridging image messages as inline images is already supported by the bridge, but not supported by most clients. The preferred solution would be implementing matrix-org/matrix-doc#2530 everywhere, but alternatively the database could be updated to support a many-to-one mapping.
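
To make the many-to-one option concrete, here is a purely illustrative in-memory sketch, not the bridge's schema or code. `BridgedMessageIndex` and its method names are hypothetical; the point is only that one Telegram message ID can resolve to several Matrix event IDs, and any of those events resolves back to the same Telegram message:

```typescript
// Hypothetical stand-in for a message table that allows several
// Matrix events per Telegram message.
class BridgedMessageIndex {
  private readonly eventsByTelegramId = new Map<number, string[]>();
  private readonly telegramIdByEvent = new Map<string, number>();

  // Record that a Matrix event belongs to a Telegram message.
  link(telegramMessageId: number, matrixEventId: string): void {
    const events = this.eventsByTelegramId.get(telegramMessageId) ?? [];
    events.push(matrixEventId);
    this.eventsByTelegramId.set(telegramMessageId, events);
    this.telegramIdByEvent.set(matrixEventId, telegramMessageId);
  }

  // All Matrix events to act on when the Telegram message is deleted.
  matrixEventsFor(telegramMessageId: number): string[] {
    return [...(this.eventsByTelegramId.get(telegramMessageId) ?? [])];
  }

  // The Telegram message to target when a Matrix event is replied to
  // or deleted, whether it is the image or the caption.
  telegramMessageFor(matrixEventId: string): number | undefined {
    return this.telegramIdByEvent.get(matrixEventId);
  }
}
```

With both the image and caption events linked to the same message, each of the three cases listed above has the lookup it needs.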

|

1.0

|

Images with captions don't store Matrix event ID of caption - Currently the database can only store one event ID per Telegram message (except for edits). However, images with captions are bridged as two messages. This means that:

* Replying to the image event from Matrix won't be bridged correctly

* Deleting the image event from Matrix won't be bridged correctly

* Deleting the message from Telegram will only delete the caption

Bridging image messages as inline images is already supported by the bridge, but not supported by most clients. The preferred solution would be implementing matrix-org/matrix-doc#2530 everywhere, but alternatively the database could be updated to support a many-to-one mapping.

|

defect

|

images with captions don t store matrix event id of caption currently the database can only store one event id per telegram message except for edits however images with captions are bridged as two messages this means that replying to the image event from matrix won t be bridged correctly deleting the image event from matrix won t be bridged correctly deleting the message from telegram will only delete the caption bridging image messages as inline images is already supported by the bridge but not supported by most clients the preferred solution would be implementing matrix org matrix doc everywhere but alternatively the database could be updated to support a many to one mapping

| 1

|

391,824

| 11,579,096,147

|

IssuesEvent

|

2020-02-21 17:11:06

|

googleapis/nodejs-pubsub

|

https://api.github.com/repos/googleapis/nodejs-pubsub

|

closed

|

"Failed to connect before the deadline" errors being seen

|

api: pubsub external priority: p2 type: bug

|

Since upgrading to the latest pubsub (`"@google-cloud/pubsub": "1.1.5"`) my node is still getting occasional errors from the underlying grpc library. My app is using grpc-js@0.6.9:

$ npm ls | grep grpc-js

│ │ ├─┬ @grpc/grpc-js@0.6.9

It's not as bad as it was. When I first upgraded to pubsub 1.x it simply didn't work at all, I'd get an error within a few seconds of the app starting and from then no messages would get delivered.

Now the problem is much less bad (my app is working), but I still see the following errors in my logs:

From ``subscription.on(`error`, (err) => {`` I see this:

{"code":4,"details":"Failed to connect before the deadline","metadata":{"internalRepr":{},"options":{}}}

And in my logs I've also noticed these (excuse the formatting):

`Error: Failed to add metadata entry @���: Wed, 13 Nov 2019 07:13:16 GMT. Metadata key "@���" contains illegal characters at validate (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:35:15) at Metadata.add (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:87:9) at values.split.forEach.v (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:226:63) at Array.forEach (<anonymous>) at Object.keys.forEach.key (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:226:43) at Array.forEach (<anonymous>) at Function.fromHttp2Headers (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:200:30) at ClientHttp2Stream.stream.on (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/call-stream.js:211:56) at ClientHttp2Stream.emit (events.js:193:13) at emit (internal/http2/core.js:244:8) at processTicksAndRejections (internal/process/task_queues.js:81:17)`

.. but the two don't seem to be related.

I will start running with the debug flags enabled and report back

|

1.0

|

"Failed to connect before the deadline" errors being seen - Since upgrading to the latest pubsub (`"@google-cloud/pubsub": "1.1.5"`) my node is still getting occasional errors from the underlying grpc library. My app is using grpc-js@0.6.9:

$ npm ls | grep grpc-js

│ │ ├─┬ @grpc/grpc-js@0.6.9

It's not as bad as it was. When I first upgraded to pubsub 1.x it simply didn't work at all, I'd get an error within a few seconds of the app starting and from then no messages would get delivered.

Now the problem is much less bad (my app is working), but I still see the following errors in my logs:

From ``subscription.on(`error`, (err) => {`` I see this:

{"code":4,"details":"Failed to connect before the deadline","metadata":{"internalRepr":{},"options":{}}}

And in my logs I've also noticed these (excuse the formatting):

`Error: Failed to add metadata entry @���: Wed, 13 Nov 2019 07:13:16 GMT. Metadata key "@���" contains illegal characters at validate (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:35:15) at Metadata.add (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:87:9) at values.split.forEach.v (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:226:63) at Array.forEach (<anonymous>) at Object.keys.forEach.key (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:226:43) at Array.forEach (<anonymous>) at Function.fromHttp2Headers (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/metadata.js:200:30) at ClientHttp2Stream.stream.on (/home/nodeapp/apps/crypto-trader-prototype/shared-code/node_modules/@grpc/grpc-js/build/src/call-stream.js:211:56) at ClientHttp2Stream.emit (events.js:193:13) at emit (internal/http2/core.js:244:8) at processTicksAndRejections (internal/process/task_queues.js:81:17)`

.. but the two don't seem to be related.

I will start running with the debug flags enabled and report back

|

non_defect

|

failed to connect before the deadline errors being seen since upgrading to the latest pubsub google cloud pubsub my node is still getting occasional errors from the underlying grpc library my app is using grpc js npm ls grep grpc js │ │ ├─┬ grpc grpc js it s not as bad as it was when i first upgraded to pubsub x it simply didn t work at all i d get an error within a few seconds of the app starting and from then no messages would get delivered now the problem is much less bad my app is working but i still see the following errors in my logs from subscription on error err i see this code details failed to connect before the deadline metadata internalrepr options and in my logs i ve also noticed these excuse the formatting error failed to add metadata entry ��� wed nov gmt metadata key ��� contains illegal characters at validate home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src metadata js at metadata add home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src metadata js at values split foreach v home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src metadata js at array foreach at object keys foreach key home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src metadata js at array foreach at function home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src metadata js at stream on home nodeapp apps crypto trader prototype shared code node modules grpc grpc js build src call stream js at emit events js at emit internal core js at processticksandrejections internal process task queues js but the two don t seem to be related i will start running with the debug flags enabled and report back

| 0

|

313

| 3,050,734,831

|

IssuesEvent

|

2015-08-12 01:13:32

|

mediapublic/mediapublic

|

https://api.github.com/repos/mediapublic/mediapublic

|

opened

|

Add remaining endpoints to pyramid server

|

architecture coding

|

We currently have:

/users

/user_types

/recording_categories

/organizations

/blogs

We are missing:

/people

/recordings

/howtos

/playlists

I believe these were not implemented by @ryansb during the re-org due to a lack of understanding of my initial "vision".

|

1.0

|

Add remaining endpoints to pyramid server - We currently have:

/users

/user_types

/recording_categories

/organizations

/blogs

We are missing:

/people

/recordings

/howtos

/playlists

I believe these were not implemented by @ryansb during the re-org due to a lack of understanding of my initial "vision".

|

non_defect

|

add remaining endpoints to pyramid server we currently have users user types recording categories organizations blogs we are missing people recordings howtos playlists i believe these were not implemented by ryansb during the re org due to a lack of understanding of what my initial vision

| 0

|

813,801

| 30,473,317,878

|

IssuesEvent

|

2023-07-17 14:54:02

|

broadinstitute/gnomad-browser

|

https://api.github.com/repos/broadinstitute/gnomad-browser

|

closed

|

Update "Feedback" page

|

Priority: High

|

<!--

* Please describe the feature you would like added and how it will help you. Please be detailed: the more information you provide about the desired feature, and the potential use cases, the more likely we are to be able to implement it.

-->

The "Feedback" [page](https://gnomad.broadinstitute.org/feedback) is currently not very informative. Would it be possible to update the page to add more information? Here are a few suggested edits:

- Update the name of the page from "Feedback" to "Contact" (this page is more about broadly how to contact us than give feedback specifically)

- Add text to point users to our Help/Docs & FAQ page and a note about individual level data, maybe:

> For questions about gnomAD, check out the [Docs & FAQ](https://gnomad.broadinstitute.org/help).

>

> Note that, for many reasons (including consent and data usage restrictions), we do not have (and cannot share) phenotype information. Overall, we have limited information that we can share for some cohorts, such as last known age in bins of 5 years (when known) and chromosomal sex.

>

> Report data problems or feature suggestions by [email](mailto:gnomad@broadinstitute.org).

- Update the text about reporting errors in the website to avoid pointing users to the email inbox:

> Report errors in the website on [GitHub](https://github.com/broadinstitute/gnomad-browser/issues).

|

1.0

|

Update "Feedback" page - <!--

* Please describe the feature you would like added and how it will help you. Please be detailed: the more information you provide about the desired feature, and the potential use cases, the more likely we are to be able to implement it.

-->

The "Feedback" [page](https://gnomad.broadinstitute.org/feedback) is currently not very informative. Would it be possible to update the page to add more information? Here are a few suggested edits:

- Update the name of the page from "Feedback" to "Contact" (this page is more about broadly how to contact us than give feedback specifically)

- Add text to point users to our Help/Docs & FAQ page and a note about individual level data, maybe:

> For questions about gnomAD, check out the [Docs & FAQ](https://gnomad.broadinstitute.org/help).

>

> Note that, for many reasons (including consent and data usage restrictions), we do not have (and cannot share) phenotype information. Overall, we have limited information that we can share for some cohorts, such as last known age in bins of 5 years (when known) and chromosomal sex.

>

> Report data problems or feature suggestions by [email](mailto:gnomad@broadinstitute.org).

- Update the text about reporting errors in the website to avoid pointing users to the email inbox:

> Report errors in the website on [GitHub](https://github.com/broadinstitute/gnomad-browser/issues).

|

non_defect

|

update feedback page please describe the feature you would like added and how it will help you please be detailed the more information you provide about the desired feature and the potential use cases the more likely we are to be able to implement it the feedback is currently not very informative would it be possible to update the page to add more information here are a few suggested edits update the name of the page from feedback to contact this page is more about broadly how to contact us than give feedback specifically add text to point users to our help docs faq page and a note about individual level data maybe for questions about gnomad check out the note that for many reasons including consent and data usage restrictions we do not have and cannot share phenotype information overall we have limited information that we can share for some cohorts such as last known age in bins of years when known and chromosomal sex report data problems or feature suggestions by mailto gnomad broadinstitute org update the text about reporting errors in the website to avoid pointing users to the email inbox report errors in the website on

| 0

|

594,133

| 18,024,567,531

|

IssuesEvent

|

2021-09-17 01:34:20

|

GoogleCloudPlatform/java-docs-samples

|

https://api.github.com/repos/GoogleCloudPlatform/java-docs-samples

|

closed

|

com.example.dataflow.SpannerReadIT: readTableEndToEnd failed

|

type: bug priority: p1 api: dataflow samples flakybot: issue

|

This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 51bc9349b73520baa0180c8201fe6560798cf861

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/71a84563-4015-4f92-a157-0f0b856f0f03), [Sponge](http://sponge2/71a84563-4015-4f92-a157-0f0b856f0f03)

status: failed

<details><summary>Test output</summary><br><pre>org.apache.beam.sdk.Pipeline$PipelineExecutionException: com.google.cloud.spanner.SpannerException: DEADLINE_EXCEEDED: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

at com.example.dataflow.SpannerReadIT.readTableEndToEnd(SpannerReadIT.java:181)

Caused by: com.google.cloud.spanner.SpannerException: DEADLINE_EXCEEDED: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: java.util.concurrent.ExecutionException: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

</pre></details>

|

1.0

|

com.example.dataflow.SpannerReadIT: readTableEndToEnd failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 51bc9349b73520baa0180c8201fe6560798cf861

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/71a84563-4015-4f92-a157-0f0b856f0f03), [Sponge](http://sponge2/71a84563-4015-4f92-a157-0f0b856f0f03)

status: failed

<details><summary>Test output</summary><br><pre>org.apache.beam.sdk.Pipeline$PipelineExecutionException: com.google.cloud.spanner.SpannerException: DEADLINE_EXCEEDED: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

at com.example.dataflow.SpannerReadIT.readTableEndToEnd(SpannerReadIT.java:181)

Caused by: com.google.cloud.spanner.SpannerException: DEADLINE_EXCEEDED: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: java.util.concurrent.ExecutionException: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: com.google.api.gax.rpc.DeadlineExceededException: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

Caused by: io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED: deadline exceeded after 29.999642728s. [remote_addr=batch-spanner.googleapis.com/74.125.197.95:443]

</pre></details>

|

non_defect

|

com example dataflow spannerreadit readtableendtoend failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output org apache beam sdk pipeline pipelineexecutionexception com google cloud spanner spannerexception deadline exceeded com google api gax rpc deadlineexceededexception io grpc statusruntimeexception deadline exceeded deadline exceeded after at com example dataflow spannerreadit readtableendtoend spannerreadit java caused by com google cloud spanner spannerexception deadline exceeded com google api gax rpc deadlineexceededexception io grpc statusruntimeexception deadline exceeded deadline exceeded after caused by java util concurrent executionexception com google api gax rpc deadlineexceededexception io grpc statusruntimeexception deadline exceeded deadline exceeded after caused by com google api gax rpc deadlineexceededexception io grpc statusruntimeexception deadline exceeded deadline exceeded after caused by io grpc statusruntimeexception deadline exceeded deadline exceeded after

| 0

|

20,295

| 3,331,545,040

|

IssuesEvent

|

2015-11-11 16:16:39

|

xmindltd/xmind

|

https://api.github.com/repos/xmindltd/xmind

|

closed

|

Please help me to come out from this issues

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. I am trying to develop plugin for xmind like same as previously available

but can you guide me how can i develop it. which type of plugin i have to

create.

What is the expected output? What do you see instead?

Now i am creating view plugin but I want to create plugin which is already

available in xmind

```

Original issue reported on code.google.com by `somani.a...@gmail.com` on 7 Sep 2013 at 6:26

|

1.0

|

Please help me to come out from this issues - ```

What steps will reproduce the problem?

1. I am trying to develop plugin for xmind like same as previously available

but can you guide me how can i develop it. which type of plugin i have to

create.

What is the expected output? What do you see instead?

Now i am creating view plugin but I want to create plugin which is already

available in xmind

```

Original issue reported on code.google.com by `somani.a...@gmail.com` on 7 Sep 2013 at 6:26

|

defect

|

please help me to come out from this issues what steps will reproduce the problem i am trying to develop plugin for xmind like same as previously available but can you guide me how can i develop it which type of plugin i have to create what is the expected output what do you see instead now i am creating view plugin but i want to create plugin which is already available in xmind original issue reported on code google com by somani a gmail com on sep at

| 1

|

50,884