| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

826

| 2,594,139,088

|

IssuesEvent

|

2015-02-20 00:05:58

|

BALL-Project/ball

|

https://api.github.com/repos/BALL-Project/ball

|

closed

|

acetic acid should not be recognized as an amino acid

|

C: BALL Core P: major R: fixed T: defect

|

**Reported by akdehof on 3 Dec 38777218 04:00 UTC**

Currently Peptides::isAminoAcid recognizes ACE-residues as amino acids.

This is probably wrong :-)
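A minimal sketch of the intended behaviour, written in Python for illustration only. It is not BALL's C++ `Peptides::isAminoAcid`, and the function name and residue sets below are assumptions. The idea is to accept only the standard three-letter residue names, so that capping groups such as ACE (the acetyl N-terminal cap) are rejected.

```python
# Hypothetical illustration; this is not the BALL API.
STANDARD_AMINO_ACIDS = frozenset({
    "ALA", "ARG", "ASN", "ASP", "CYS", "GLN", "GLU", "GLY", "HIS", "ILE",
    "LEU", "LYS", "MET", "PHE", "PRO", "SER", "THR", "TRP", "TYR", "VAL",
})

# Terminal capping groups that appear as residues but are not amino acids
# (ACE = acetyl N-cap, NME = N-methylamide C-cap). They are not in the
# standard set anyway; the explicit check just documents the intent.
CAPPING_GROUPS = frozenset({"ACE", "NME"})


def is_amino_acid(residue_name: str) -> bool:
    """Return True only for the 20 standard amino-acid residue names."""
    name = residue_name.strip().upper()
    if name in CAPPING_GROUPS:
        return False
    return name in STANDARD_AMINO_ACIDS
```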

|

1.0

|

acetic acid should not be recognized as an amino acid - **Reported by akdehof on 3 Dec 38777218 04:00 UTC**

Currently Peptides::isAminoAcid recognizes ACE-residues as amino acids.

This is probably wrong :-)

|

defect

|

acetic acid should not be recognized as an amino acid reported by akdehof on dec utc currently peptides isaminoacid recognizes ace residues as amino acids this is probably wrong

| 1

|

13,967

| 16,740,306,977

|

IssuesEvent

|

2021-06-11 08:58:01

|

STEllAR-GROUP/hpx

|

https://api.github.com/repos/STEllAR-GROUP/hpx

|

closed

|

Separate the datapar algorithms

|

category: algorithms project: GSoC type: compatibility issue type: enhancement

|

Currently, our parallel algorithms support being used with the `datapar` execution policy. This is a remnant of an older standardization proposal. This implementation is currently tightly entangled with the implementation of the parallel base algorithms.

We should do two things:

- separate the datapar implementations and expose them through separate algorithm specializations (based on the `tag_invoke` customization point mechanism we have implemented)

- adapt the implementation to support (and rely on) the data-parallel types introduced in section 9 of [N4755](https://wg21.link/n4755); this implies removing `datapar` in its current form.

This work could also go hand-in-hand with #2271.
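As a rough analogy only: Python has no `tag_invoke`, so this sketch uses `functools.singledispatch` as a stand-in for type-based customization-point dispatch, and every class and function name in it is hypothetical. It shows the shape of the proposed separation, where the base algorithm and the datapar specialization are independent registrations selected by the execution-policy type.

```python
from functools import singledispatch
from typing import Callable, List


class SequencedPolicy:
    """Hypothetical stand-in for a base execution policy."""


class DataparPolicy:
    """Hypothetical stand-in for a datapar execution policy."""

    def __init__(self, width: int = 4) -> None:
        self.width = width  # elements handled per "vector" chunk


@singledispatch
def transform(policy: object, data: List[int], fn: Callable[[int], int]) -> List[int]:
    # Fallback when no specialization is registered for the policy type.
    raise TypeError(f"no transform overload for {type(policy).__name__}")


@transform.register
def _(policy: SequencedPolicy, data, fn):
    # Base algorithm: one element at a time.
    return [fn(x) for x in data]


@transform.register
def _(policy: DataparPolicy, data, fn):
    # Separate specialization: walk the input in fixed-width chunks,
    # mimicking how a data-parallel implementation processes packs.
    out: List[int] = []
    for i in range(0, len(data), policy.width):
        out.extend(fn(x) for x in data[i:i + policy.width])
    return out
```

Adding or removing the datapar registration leaves the base overload untouched, which is the decoupling the issue asks for.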

|

True

|

Separate the datapar algorithms - Currently, our parallel algorithms support being used with the `datapar` execution policy. This is a remnant of an older standardization proposal. This implementation is currently tightly entangled with the implementation of the parallel base algorithms.

We should do two things:

- separate the datapar implementations and expose them through separate algorithm specializations (based on the `tag_invoke` customization point mechanism we have implemented)

- adapt the implementation to support (and rely on) the data-parallel types introduced in section 9 of [N4755](https://wg21.link/n4755); this implies removing `datapar` in its current form.

This work could also go hand-in-hand with #2271.

|

non_defect

|

separate the datapar algorithms currently our parallel algorithms support being used with the datapar execution policy this is a remnant of an older standardization proposal this implementation is currently tightly entangled with the implementation of the parallel base algorithms we should do two things separate the datapar implementations and expose them through separate algorithm specializations based on the tag invoke customization point mechanism we have implemented adapt the implementation to support and rely on the data parallel types introduced by section this implies removing datapar as it is today this work could also go hand in hand with

| 0

|

1,900

| 2,603,973,167

|

IssuesEvent

|

2015-02-24 19:00:48

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

Shenyang: what to do about blisters on the glans

|

auto-migrated Priority-Medium Type-Defect

|

```

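Shenyang: what to do about blisters on the glans 〓 Shenyang Military Region Political Department Hospital, STD department 〓 TEL: 024-31023308 〓

Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

A hospital founded alongside New China and sharing in its glory, with a long history, well-equipped facilities, authoritative expertise, and a large team of specialists; a comprehensive hospital integrating prevention, health care, medical treatment, research, and rehabilitation.

Among the first group of national public Grade-A military hospitals and the first group of nationally designated units for medical standards; a teaching hospital of the Fourth Military Medical University, […]nan University, and other well-known institutions of higher education.

Rated an advanced unit in health work by the Health Department of the Logistics Department of the PLA Air Force, and twice awarded a collective […]-class merit citation.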

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:04

|

1.0

|

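Shenyang: what to do about blisters on the glans - ```

Shenyang: what to do about blisters on the glans 〓 Shenyang Military Region Political Department Hospital, STD department 〓 TEL: 024-31023308 〓

Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

A hospital founded alongside New China and sharing in its glory, with a long history, well-equipped facilities, authoritative expertise, and a large team of specialists; a comprehensive hospital integrating prevention, health care, medical treatment, research, and rehabilitation.

Among the first group of national public Grade-A military hospitals and the first group of nationally designated units for medical standards; a teaching hospital of the Fourth Military Medical University, […]nan University, and other well-known institutions of higher education.

Rated an advanced unit in health work by the Health Department of the Logistics Department of the PLA Air Force, and twice awarded a collective […]-class merit citation.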

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:04

|

defect

|

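shenyang what to do about blisters on the glans shenyang what to do about blisters on the glans shenyang military region political department hospital std department tel a hospital founded alongside new china and sharing in its glory with a long history well equipped facilities authoritative expertise and a large team of specialists a comprehensive hospital integrating prevention health care medical treatment research and rehabilitation among the first group of national public grade a military hospitals and the first group of nationally designated units for medical standards a teaching hospital of the fourth military medical university nan university and other well known institutions of higher education rated an advanced unit in health work by the health department of the logistics department of the pla air force and twice awarded a collective class merit citation original issue reported on code google com by gmail com on jun at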

| 1

|

329,979

| 24,241,252,707

|

IssuesEvent

|

2022-09-27 06:54:00

|

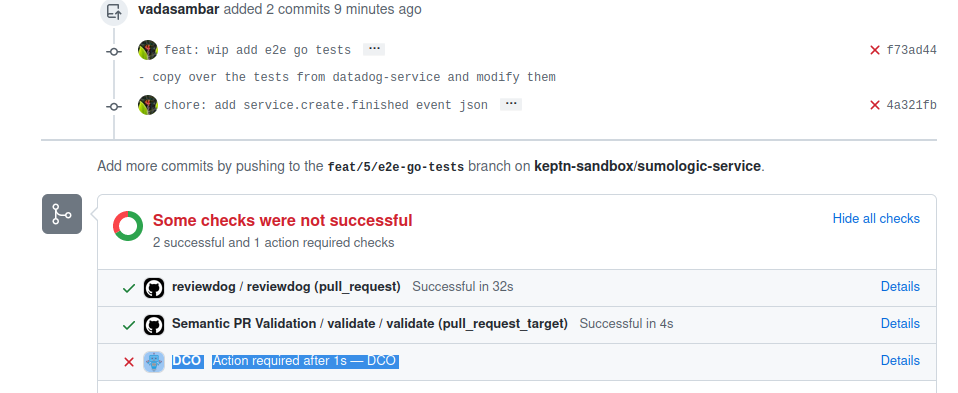

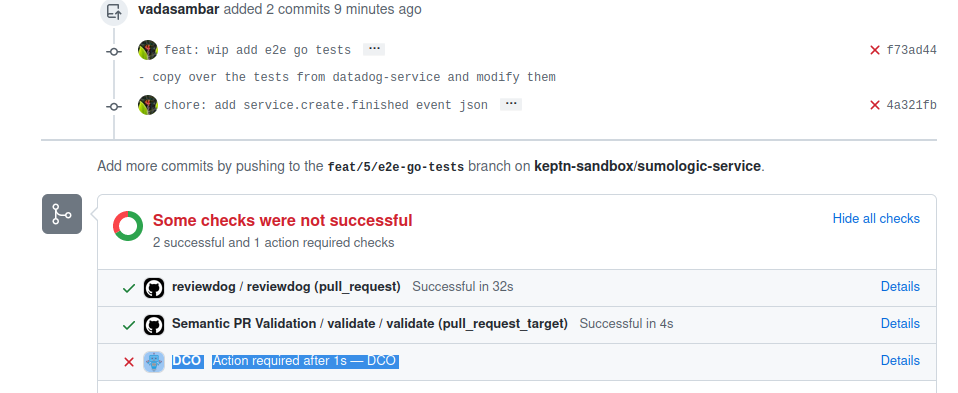

keptn-sandbox/sumologic-service

|

https://api.github.com/repos/keptn-sandbox/sumologic-service

|

opened

|

Add info about auto-signoff in the README

|

documentation

|

## Summary

We have a DCO check that runs on every PR to verify that each commit has been signed off.

Doing

```

git commit --amend --signoff

```

or something like

```

git rebase HEAD~2 --signoff

```

is inconvenient. Signing off can be automated by adding the following hook in the local `.git` folder:

```bash

#!/bin/sh

#

# An example hook script to prepare the commit log message.

# Called by "git commit" with the name of the file that has the

# commit message, followed by the description of the commit

# message's source. The hook's purpose is to edit the commit

# message file. If the hook fails with a non-zero status,

# the commit is aborted.

#

# To enable this hook, rename this file to "prepare-commit-msg".

# This hook includes three examples. The first comments out the

# "Conflicts:" part of a merge commit.

#

# The second includes the output of "git diff --name-status -r"

# into the message, just before the "git status" output. It is

# commented because it doesn't cope with --amend or with squashed

# commits.

#

# The third example adds a Signed-off-by line to the message, that can

# still be edited. This is rarely a good idea.

SOB=$(git var GIT_AUTHOR_IDENT | sed -n 's/^\(.*>\).*$/Signed-off-by: \1/p')

grep -qs "^$SOB" "$1" || echo "$SOB" >> "$1"

```

This needs to be put in the `.git/hooks/prepare-commit-msg` file (you need to create a new file called `prepare-commit-msg`), which must then be made executable using:

```

chmod +x .git/hooks/prepare-commit-msg

```
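For readers unfamiliar with the `sed`/`grep` pipeline in the hook, the same logic can be sketched in Python. The helper names are hypothetical and not part of the repository. The sketch derives the `Signed-off-by:` trailer from the `git var GIT_AUTHOR_IDENT` output, which has the form `Name <email> timestamp timezone`, and appends it only when no line of the message already starts with it.

```python
import re
from typing import Optional


def signoff_line(author_ident: str) -> Optional[str]:
    """Mirror the hook's sed expression: keep everything up to and
    including the last '>' (the email) and drop timestamp/timezone."""
    match = re.match(r"^(.*>)", author_ident)
    return f"Signed-off-by: {match.group(1)}" if match else None


def add_signoff(message: str, author_ident: str) -> str:
    sob = signoff_line(author_ident)
    if sob is None:
        return message
    # Like `grep -qs "^$SOB"`, but as a literal prefix match on each line.
    if any(line.startswith(sob) for line in message.splitlines()):
        return message
    # `echo "$SOB" >> "$1"` appends the line; a separating newline is
    # added first here in case the message does not end with one.
    if message and not message.endswith("\n"):
        message += "\n"
    return message + sob + "\n"
```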

## TODO

- [ ] Add info about this in the README after https://github.com/keptn-sandbox/sumologic-service#testing-cloud-events (create a heading called `Auto signoff commit messages`)

- [ ] Raise a PR and get it merged

|

1.0

|

Add info about auto-signoff in the README - ## Summary

We have a DCO check that runs on every PR to verify that each commit has been signed off.

Doing

```

git commit --amend --signoff

```

or something like

```

git rebase HEAD~2 --signoff

```

is inconvenient. Signing off can be automated by adding the following hook in the local `.git` folder:

```bash

#!/bin/sh

#

# An example hook script to prepare the commit log message.

# Called by "git commit" with the name of the file that has the

# commit message, followed by the description of the commit

# message's source. The hook's purpose is to edit the commit

# message file. If the hook fails with a non-zero status,

# the commit is aborted.

#

# To enable this hook, rename this file to "prepare-commit-msg".

# This hook includes three examples. The first comments out the

# "Conflicts:" part of a merge commit.

#

# The second includes the output of "git diff --name-status -r"

# into the message, just before the "git status" output. It is

# commented because it doesn't cope with --amend or with squashed

# commits.

#

# The third example adds a Signed-off-by line to the message, that can

# still be edited. This is rarely a good idea.

SOB=$(git var GIT_AUTHOR_IDENT | sed -n 's/^\(.*>\).*$/Signed-off-by: \1/p')

grep -qs "^$SOB" "$1" || echo "$SOB" >> "$1"

```

This needs to be put in the `.git/hooks/prepare-commit-msg` file (you need to create a new file called `prepare-commit-msg`), which must then be made executable using:

```

chmod +x .git/hooks/prepare-commit-msg

```

## TODO

- [ ] Add info about this in the README after https://github.com/keptn-sandbox/sumologic-service#testing-cloud-events (create a heading called `Auto signoff commit messages`)

- [ ] Raise a PR and get it merged

|

non_defect

|

add info about auto signoff in the readme summary we have a dco check which runs on every pr to check if the commit has been signed off doing git commit amend signoff or something like git rebase head signoff is inconvenient signing off can be automated by adding the following hook in the local git folder bash bin sh an example hook script to prepare the commit log message called by git commit with the name of the file that has the commit message followed by the description of the commit message s source the hook s purpose is to edit the commit message file if the hook fails with a non zero status the commit is aborted to enable this hook rename this file to prepare commit msg this hook includes three examples the first comments out the conflicts part of a merge commit the second includes the output of git diff name status r into the message just before the git status output it is commented because it doesn t cope with amend or with squashed commits the third example adds a signed off by line to the message that can still be edited this is rarely a good idea sob git var git author ident sed n s signed off by p grep qs sob echo sob this needs be put in the git hooks prepare commit msg file you need to create a new file called prepare commit msg and give it permission to execute using chmod x git hooks prepare commit msg todo add info about this in the readme after create a heading called auto signoff commit messages raise a pr and get it merged

| 0

|

7,232

| 2,610,359,321

|

IssuesEvent

|

2015-02-26 19:56:18

|

chrsmith/scribefire-chrome

|

https://api.github.com/repos/chrsmith/scribefire-chrome

|

opened

|

Lost entire post

|

auto-migrated Priority-Medium Type-Defect

|

```

What's the problem?

Lost entire post. I had composed a long post (for Wordpress.com) and then went

off to do other things. One of these things is debugging a problem I've been

having with certain Javascript functions being disabled - so I started

disabling extensions (one of course was Scribefire).

After re-enabling it, I noticed that the window was gone. Starting a new

Scribefire window showed it to be empty and no way to retrieve the lost post.

I found this article

http://leggetter.posterous.com/how-to-recover-a-lost-scribefire-noteblog-pos

but there is no equivalent in Linux, and searching the directory for Chromium

showed up no SQLite files and no apparent database files that might contain the

lost post.

Scribefire SHOULD automatically save the current post, retain it, and

automatically reload it when it is started. It should also automatically save

it as a draft post to the destination blog.

This is NOT the first bug post on this, nor is it the first time I've lost a

post!

The idea that Scribefire is losing posts this many years after it was started

is inconceivable. The fix is simple: save it! Word does it, and vi has done it

for longer than some bloggers have been alive. Why can't Scribefire do it too?

What browser are you using?

Google Chromium 14.0.835.202 (Developer Build 103287 Linux) Ubuntu 10.10

What version of ScribeFire are you running?

4

```

-----

Original issue reported on code.google.com by `ddouth...@gmail.com` on 6 Jan 2012 at 1:59
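The reporter's proposed fix ("save it!") amounts to periodically persisting the draft and reloading it on startup. Below is a minimal sketch of that idea in Python. It assumes a local JSON file as the store, and the file layout and function names are hypothetical; ScribeFire itself is a browser extension and would use browser storage instead. Writing to a temporary file and then calling `os.replace` means an interrupted save does not destroy the previous draft.

```python
import json
import os
import tempfile
from typing import Optional


def save_draft(path: str, title: str, body: str) -> None:
    """Persist the current draft to `path` without ever leaving a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump({"title": title, "body": body}, f)
        # Atomic on POSIX when both paths are on the same filesystem.
        os.replace(tmp_path, path)
    except BaseException:
        os.unlink(tmp_path)
        raise


def load_draft(path: str) -> Optional[dict]:
    """Return the saved draft, or None if nothing has been saved yet."""
    try:
        with open(path, encoding="utf-8") as f:
            return json.load(f)
    except FileNotFoundError:
        return None
```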

|

1.0

|

Lost entire post - ```

What's the problem?

Lost entire post. I had composed a long post (for Wordpress.com) and then went

off to do other things. One of these things is debugging a problem I've been

having with certain Javascript functions being disabled - so I started

disabling extensions (one of course was Scribefire).

After re-enabling it, I noticed that the window was gone. Starting a new

Scribefire window showed it to be empty and no way to retrieve the lost post.

I found this article

http://leggetter.posterous.com/how-to-recover-a-lost-scribefire-noteblog-pos

but there is no equivalent in Linux, and searching the directory for Chromium

showed up no SQLite files and no apparent database files that might contain the

lost post.

Scribefire SHOULD automatically save the current post, retain it, and

automatically reload it when it is started. It should also automatically save

it as a draft post to the destination blog.

This is NOT the first bug post on this, nor is it the first time I've lost a

post!

The idea that Scribefire is losing posts this many years after it was started

is inconceivable. The fix is simple: save it! Word does it, and vi has done it

for longer than some bloggers have been alive. Why can't Scribefire do it too?

What browser are you using?

Google Chromium 14.0.835.202 (Developer Build 103287 Linux) Ubuntu 10.10

What version of ScribeFire are you running?

4

```

-----

Original issue reported on code.google.com by `ddouth...@gmail.com` on 6 Jan 2012 at 1:59

|

defect

|

lost entire post what s the problem lost entire post i had composed a long post for wordpress com and then went off to do other things one of these things is debugging a problem i ve been having with certain javascript functions being disabled so i started disabling extensions one of course was scribefire after re enabling it i noticed that the window was gone starting a new scribefire window showed it to be empty and no way to retrieve the lost post i found this article but there is no equivalent in linux and searching the directory for chromium showed up no sqlite files and no apparent database files that might contain the lost post scribefire should automatically save the current post retain it and automatically reload it when it is started it should also automatically save it as a draft post to the destination blog this is not the first bug post on this nor is it the first time i ve lost a post the idea that scribefire is losing posts this many years after it was started is inconceivable the fix is simple save it word does it and vi has done it for longer than some bloggers have been alive why can t scribefire do it too what browser are you using google chromium developer build linux ubuntu what version of scribefire are you running original issue reported on code google com by ddouth gmail com on jan at

| 1

|

5,918

| 2,610,217,908

|

IssuesEvent

|

2015-02-26 19:09:20

|

chrsmith/somefinders

|

https://api.github.com/repos/chrsmith/somefinders

|

opened

|

download dxgi.dll for Call of Duty.rar

|

auto-migrated Priority-Medium Type-Defect

|

```

'''Август Андреев'''

Hi everyone, can anyone tell me where to find "download dxgi.dll for Call of Duty.rar"? I've seen it somewhere before

'''Атеист Лазарев'''

Here, take the link: http://bit.ly/177p3HZ

'''Болеслав Блинов'''

Thanks, that seems to be it, but it asks me to enter a phone number

'''Аполлинарий Комаров'''

Nope, it's all fine, nothing was charged to me

'''Гертруд Сысоев'''

Nope, it's all fine, nothing was charged to me

File information: download dxgi.dll for Call of Duty.rar

Uploaded: This month

Times downloaded: 1316

Rating: 144

Average download speed: 1328

Similar files: 28

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 12:31

|

1.0

|

download dxgi.dll for Call of Duty.rar - ```

'''Август Андреев'''

Hi everyone, can anyone tell me where to find "download dxgi.dll for Call of Duty.rar"? I've seen it somewhere before

'''Атеист Лазарев'''

Here, take the link: http://bit.ly/177p3HZ

'''Болеслав Блинов'''

Thanks, that seems to be it, but it asks me to enter a phone number

'''Аполлинарий Комаров'''

Nope, it's all fine, nothing was charged to me

'''Гертруд Сысоев'''

Nope, it's all fine, nothing was charged to me

File information: download dxgi.dll for Call of Duty.rar

Uploaded: This month

Times downloaded: 1316

Rating: 144

Average download speed: 1328

Similar files: 28

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 12:31

|

defect

|

download dxgi dll for call of duty rar август андреев hi everyone can anyone tell me where to find download dxgi dll for call of duty rar i ve seen it somewhere before атеист лазарев here take the link болеслав блинов thanks that seems to be it but it asks me to enter a phone number аполлинарий комаров nope it s all fine nothing was charged to me гертруд сысоев nope it s all fine nothing was charged to me file information download dxgi dll for call of duty rar uploaded this month times downloaded rating average download speed similar files original issue reported on code google com by kondense gmail com on dec at

| 1

|

44,387

| 12,124,674,033

|

IssuesEvent

|

2020-04-22 14:30:11

|

snowplow/snowplow-objc-tracker

|

https://api.github.com/repos/snowplow/snowplow-objc-tracker

|

closed

|

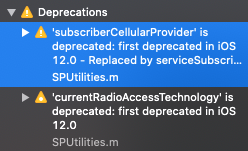

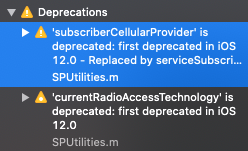

Replace deprecated CTTelephonyNetworkInfo methods

|

priority:high status:completed type:defect

|

iOS 12 added support for eSIMs, which means a device can have multiple providers. To support this, APIs were added to and deprecated in `CTTelephonyNetworkInfo`.

`SPUtilities` makes use of two deprecated APIs:

* `-[CTTelephonyNetworkInfo currentRadioAccessTechnology]`

* `-[CTTelephonyNetworkInfo subscriberCellularProvider]`

|

1.0

|

Replace deprecated CTTelephonyNetworkInfo methods - iOS 12 added support for eSIMs, which means a device can have multiple providers. To support this, APIs were added to and deprecated in `CTTelephonyNetworkInfo`.

`SPUtilities` makes use of two deprecated APIs:

* `-[CTTelephonyNetworkInfo currentRadioAccessTechnology]`

* `-[CTTelephonyNetworkInfo subscriberCellularProvider]`

|

defect

|

replace deprecated cttelephonynetworkinfo methods ios added support for e sims which means a device can have multiple providers apis were added deprecated in cttelephonynetworkinfo to support this sputilities makes use of two deprecated apis

| 1

|

171,955

| 6,497,285,966

|

IssuesEvent

|

2017-08-22 13:32:15

|

seanvree/logarr

|

https://api.github.com/repos/seanvree/logarr

|

closed

|

FEAT: Clear search results/highlighting

|

enhancement help wanted Priority: HIGH

|

Issue: The user must refresh the page to clear search results/highlighting.

Suggestion: Implement a feature to clear search results/highlighting without having to refresh the page.

|

1.0

|

FEAT: Clear search results/highlighting - Issue: The user must refresh the page to clear search results/highlighting.

Suggestion: Implement a feature to clear search results/highlighting without having to refresh the page.

|

non_defect

|

feat clear search results highlighting issue user must refresh page in order to clear search results highlighting suggestion implement a feature to clear search results highlighting w o having to refresh page

| 0

|

176,198

| 13,630,562,765

|

IssuesEvent

|

2020-09-24 16:37:41

|

microcks/microcks

|

https://api.github.com/repos/microcks/microcks

|

closed

|

Update Helm Chart and Operator for asynchronous API testing

|

component/install component/tests kind/feature type/Part

|

As part of #257, we now need a `microcks-async-minion` Kubernetes `Service` so that the webapp component of Microcks can launch tests on a minion.

The Helm Chart and Operator need to be released as well.

|

1.0

|

Update Helm Chart and Operator for asynchronous API testing - As part of #257, we now need a `microcks-async-minion` Kubernetes `Service` so that the webapp component of Microcks can launch tests on a minion.

The Helm Chart and Operator need to be released as well.

|

non_defect

|

update helm chart and operator for asynchronous api testing as part of we now need a microcks async minion kubernetes service so that webapp component of microcks will be able to launch tests on a minion helm chart and operator need to be released as well

| 0

|

64,528

| 18,724,649,562

|

IssuesEvent

|

2021-11-03 15:10:26

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

DataTable: filter/sort - wrong manipulation of list elements on Mojarra without filteredValue defined (non-lazy)

|

defect implementation-specific

|

### 1) Environment

PrimeFaces version: 10.0.0

Application server: Jetty 9.4.44, JSF implementation: Mojarra 2.3

### 2) Expected behavior

Run the sample project.

Follow these steps:

1. filter on name with value BB2

2. change all BB2 row values to BB3, press Save [result=OK]

3. remove filter BB2, press Save [result=OK]

4. sort on code, press Save [result=WRONG]: the data rows are swapped with PrimeFaces 10!

The result must be:

509, EUR, BB, BB3, A

512, EUR, BB, BB3, B

515, EUR, BB, BB3, C

516, EUR, AA, AA, D

517, EUR, AA, AA, E

### 3) Actual behavior

The result is:

509, USA, AA, AA, A

512, USA, AA, AA, B

515, EUR, BB, BB3, C

516, EUR, BB, BB3, D

517, EUR, BB, BB3, E

### 4) Steps to reproduce

See sample project.

This behavior occurs only in PrimeFaces 10; PrimeFaces 8 works fine.

Note that this is only one example. Different combinations of sorting and filtering the data give different results, including in combination with multiViewState. The behavior also changes somewhat in later PrimeFaces versions; I still managed to reproduce the problem in PrimeFaces 10.0.7 Elite (latest version).

### 5) Sample XHTML / Sample bean

See attached projects.

Primefaces 10:

[primefaces-test-datatable.zip](https://github.com/primefaces/primefaces/files/7389069/primefaces-test-datatable.zip)

Primefaces 8 (works as expected):

[primefaces-test-datatable-8.0.zip](https://github.com/primefaces/primefaces/files/7389071/primefaces-test-datatable-8.0.zip)

Run with:

`mvn clean jetty:run -Pmojarra23`
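A toy model of the failure mode described above, in plain Python rather than PrimeFaces/JSF code. The `Row` class and both save functions are hypothetical. When a sorted view is saved, each submitted value has to be matched to its row by key. If the i-th submitted value is instead applied to the i-th element of the unsorted backing list, values land on the wrong records, which resembles the reported symptom. This only illustrates the general pitfall; it is not a diagnosis of the PrimeFaces 10 code.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Row:
    code: int
    name: str


def save_by_position(rows: List[Row], displayed_codes: List[int], submitted: List[str]) -> None:
    """Buggy: assumes display row i is rows[i], ignoring the sort order."""
    for i, value in enumerate(submitted):
        rows[i].name = value


def save_by_key(rows: List[Row], displayed_codes: List[int], submitted: List[str]) -> None:
    """Correct: each submitted value goes to the row whose key was displayed there."""
    by_code: Dict[int, Row] = {row.code: row for row in rows}
    for code, value in zip(displayed_codes, submitted):
        by_code[code].name = value
```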

|

1.0

|

DataTable: filter/sort - wrong manipulation of list elements on Mojarra without filteredValue defined (non-lazy) - ### 1) Environment

PrimeFaces version: 10.0.0

Application server: Jetty 9.4.44, JSF implementation: Mojarra 2.3

### 2) Expected behavior

Run the sample project.

Follow these steps:

1. filter on name with value BB2

2. change all BB2 row values to BB3, press Save [result=OK]

3. remove filter BB2, press Save [result=OK]

4. sort on code, press Save [result=WRONG]: the data rows are swapped with PrimeFaces 10!

The result must be:

509, EUR, BB, BB3, A

512, EUR, BB, BB3, B

515, EUR, BB, BB3, C

516, EUR, AA, AA, D

517, EUR, AA, AA, E

### 3) Actual behavior

The result is:

509, USA, AA, AA, A

512, USA, AA, AA, B

515, EUR, BB, BB3, C

516, EUR, BB, BB3, D

517, EUR, BB, BB3, E

### 4) Steps to reproduce

See sample project.

This behavior occurs only in PrimeFaces 10; PrimeFaces 8 works fine.

Note that this is only one example. Different combinations of sorting and filtering the data give different results, including in combination with multiViewState. The behavior also changes somewhat in later PrimeFaces versions; I still managed to reproduce the problem in PrimeFaces 10.0.7 Elite (latest version).

### 5) Sample XHTML / Sample bean

See attached projects.

Primefaces 10:

[primefaces-test-datatable.zip](https://github.com/primefaces/primefaces/files/7389069/primefaces-test-datatable.zip)

Primefaces 8 (works as expected):

[primefaces-test-datatable-8.0.zip](https://github.com/primefaces/primefaces/files/7389071/primefaces-test-datatable-8.0.zip)

Run with:

`mvn clean jetty:run -Pmojarra23`

|

defect

|

datatable filter sort wrong manipulation of list elements on mojarra without filteredvalue defined non lazy environment primefaces version primefaces application server jetty version mojarra expected behavior run the sample project follow these steps filter on name with value change all row values to press save remove filter press save sort on code press save data rows switch with primefaces the result must be eur bb a eur bb b eur bb c eur aa aa d eur aa aa e actual behavior the result is usa aa aa a usa aa aa b eur bb c eur bb d eur bb e steps to reproduce see sample project this behavior is only in primefaces primefaces is fine as a remark i must mention this is only one example different combinations of sorting and filtering data gives different results even in combination with multiviewstate also the behavior changes somehow in later primefaces versions still managed to get it wrong in primefaces elite latest version sample xhtml sample bean see attached projects primefaces primefaces works as expected run with mvn clean jetty run

| 1

|

819,544

| 30,741,314,259

|

IssuesEvent

|

2023-07-28 11:45:10

|

PRIME-TU-Delft/Open-LA-Applets

|

https://api.github.com/repos/PRIME-TU-Delft/Open-LA-Applets

|

opened

|

[Issue]: Improve search

|

Low priority

|

### What needs to change?

Search currently updates the URL automatically, which is not the desired behaviour. It should only change the URL when the search form is submitted.

### Does this issue relate or depend on other issues?

_No response_

|

1.0

|

[Issue]: Improve search - ### What needs to change?

Search currently updates the URL automatically, which is not the desired behaviour. It should only change the URL when the search form is submitted.

### Does this issue relate or depend on other issues?

_No response_

|

non_defect

|

improve search what needs to change search is now automatically updating the url this is not a desired behaviour it should only change the url when the search form is submitted does this issue relate or depend on other issues no response

| 0

|

151,528

| 19,654,642,000

|

IssuesEvent

|

2022-01-10 11:10:13

|

theWhiteFox/react-tic-tac-toe

|

https://api.github.com/repos/theWhiteFox/react-tic-tac-toe

|

opened

|

CVE-2021-3803 (High) detected in nth-check-1.0.2.tgz

|

security vulnerability

|

## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.2.tgz</b></p></summary>

<p>performant nth-check parser & compiler</p>

<p>Library home page: <a href="https://registry.npmjs.org/nth-check/-/nth-check-1.0.2.tgz">https://registry.npmjs.org/nth-check/-/nth-check-1.0.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/nth-check/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.1.tgz (Root Library)

- html-webpack-plugin-4.0.0-beta.11.tgz

- pretty-error-2.1.1.tgz

- renderkid-2.0.3.tgz

- css-select-1.2.0.tgz

- :x: **nth-check-1.0.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/theWhiteFox/react-tic-tac-toe/commit/e334886398cc42d1544bbf041c785f1d8e0d5d00">e334886398cc42d1544bbf041c785f1d8e0d5d00</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

nth-check is vulnerable to Inefficient Regular Expression Complexity

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-3803>CVE-2021-3803</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
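The 7.5 base score can be reproduced from the listed metrics with the CVSS v3.0 base-score equations (the calculator linked above is the v3.0 one). The sketch hard-codes only the metric weights needed for this vector (AV:N/AC:L/PR:N/UI:N/S:U/C:N/I:N/A:H) and handles only an unchanged scope, so it is not a complete calculator.

```python
import math

# CVSS v3.0 weights for the metric values listed in this report.
AV_NETWORK = 0.85
AC_LOW = 0.77
PR_NONE = 0.85   # weight for Privileges Required: None
UI_NONE = 0.85
CIA_NONE = 0.0
CIA_HIGH = 0.56


def roundup(x: float) -> float:
    """CVSS v3.0 Roundup: smallest one-decimal value >= x."""
    return math.ceil(x * 10) / 10


def base_score_scope_unchanged(av, ac, pr, ui, c, i, a) -> float:
    iss = 1 - (1 - c) * (1 - i) * (1 - a)   # impact sub-score base
    impact = 6.42 * iss
    exploitability = 8.22 * av * ac * pr * ui
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


score = base_score_scope_unchanged(
    AV_NETWORK, AC_LOW, PR_NONE, UI_NONE, CIA_NONE, CIA_NONE, CIA_HIGH
)
print(score)  # 7.5
```

Here the impact term is 6.42 × 0.56 ≈ 3.60 and the exploitability term is 8.22 × 0.85 × 0.77 × 0.85 × 0.85 ≈ 3.89, so the sum ≈ 7.48 rounds up to 7.5.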

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/fb55/nth-check/compare/v2.0.0...v2.0.1">https://github.com/fb55/nth-check/compare/v2.0.0...v2.0.1</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution: nth-check - v2.0.1</p>

</p>

</details>
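Going by the fix resolution above (nth-check v2.0.1), a quick way to flag affected installs is a version comparison. This small Python check assumes, as the fix resolution implies, that every release below 2.0.1 is affected, and it only handles plain `MAJOR.MINOR.PATCH` strings without pre-release tags.

```python
from typing import Tuple

FIXED_VERSION = (2, 0, 1)  # from "Fix Resolution: nth-check - v2.0.1"


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse 'X.Y.Z' (an optional leading 'v' is allowed) into an int tuple."""
    major, minor, patch = version.lstrip("v").split(".")
    return int(major), int(minor), int(patch)


def is_affected(installed: str) -> bool:
    """True if the installed nth-check version predates the fix."""
    return parse_version(installed) < FIXED_VERSION
```

For the `nth-check-1.0.2.tgz` found here, `is_affected("1.0.2")` is true.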

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-3803 (High) detected in nth-check-1.0.2.tgz - ## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.2.tgz</b></p></summary>

<p>performant nth-check parser & compiler</p>

<p>Library home page: <a href="https://registry.npmjs.org/nth-check/-/nth-check-1.0.2.tgz">https://registry.npmjs.org/nth-check/-/nth-check-1.0.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/nth-check/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.1.tgz (Root Library)

- html-webpack-plugin-4.0.0-beta.11.tgz

- pretty-error-2.1.1.tgz

- renderkid-2.0.3.tgz

- css-select-1.2.0.tgz

- :x: **nth-check-1.0.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/theWhiteFox/react-tic-tac-toe/commit/e334886398cc42d1544bbf041c785f1d8e0d5d00">e334886398cc42d1544bbf041c785f1d8e0d5d00</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

nth-check is vulnerable to Inefficient Regular Expression Complexity

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-3803>CVE-2021-3803</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/fb55/nth-check/compare/v2.0.0...v2.0.1">https://github.com/fb55/nth-check/compare/v2.0.0...v2.0.1</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution: nth-check - v2.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in nth check tgz cve high severity vulnerability vulnerable library nth check tgz performant nth check parser compiler library home page a href path to dependency file package json path to vulnerable library node modules nth check package json dependency hierarchy react scripts tgz root library html webpack plugin beta tgz pretty error tgz renderkid tgz css select tgz x nth check tgz vulnerable library found in head commit a href found in base branch master vulnerability details nth check is vulnerable to inefficient regular expression complexity publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution nth check step up your open source security game with whitesource

| 0

|

39,299

| 9,381,362,429

|

IssuesEvent

|

2019-04-04 19:25:56

|

CenturyLinkCloud/mdw

|

https://api.github.com/repos/CenturyLinkCloud/mdw

|

opened

|

Activities API returns incorrect total count

|

defect

|

Incorrect count query:

```java

protected String buildActivityCountQuery(Query query, Date start) {

StringBuilder sqlBuff = new StringBuilder();

sqlBuff.append("SELECT count(pi.process_instance_id) ");

```

|

1.0

|

Activities API returns incorrect total count - Incorrect count query:

```java

protected String buildActivityCountQuery(Query query, Date start) {

StringBuilder sqlBuff = new StringBuilder();

sqlBuff.append("SELECT count(pi.process_instance_id) ");

```

|

defect

|

activities api returns incorrect total count incorrect count query java protected string buildactivitycountquery query query date start stringbuilder sqlbuff new stringbuilder sqlbuff append select count pi process instance id

| 1

|

22,328

| 3,634,560,409

|

IssuesEvent

|

2016-02-11 18:27:25

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

PropapageDown does not work correctly in Tree

|

5.2.20 5.3.7 defect

|

In checkbox mode, setting propagate down to false still selects descendants.

|

1.0

|

PropapageDown does not work correctly in Tree - In checkbox mode, setting propagate down to false still selects descendants.

|

defect

|

propapagedown does not work correctly in tree in checkbox mode setting propagate down to false still selects descendants

| 1

|

52,667

| 13,224,891,388

|

IssuesEvent

|

2020-08-17 20:03:27

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

pyroot plays nice with numpy (Trac #126)

|

Migrated from Trac defect documentation

|

recently discovered. very easy to make root histograms

from numpy data that has already had cuts/etc applied.

need examples/docs.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/126">https://code.icecube.wisc.edu/projects/icecube/ticket/126</a>, reported by troy and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-10-31T18:49:16",

"_ts": "1351709356000000",

"description": "recently discovered. very easy to make root histograms\nfrom numpy data that has already had cuts/etc applied.\nneed examples/docs.",

"reporter": "troy",

"cc": "",

"resolution": "wont or cant fix",

"time": "2008-09-07T13:40:39",

"component": "documentation",

"summary": "pyroot plays nice with numpy",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

pyroot plays nice with numpy (Trac #126) - recently discovered. very easy to make root histograms

from numpy data that has already had cuts/etc applied.

need examples/docs.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/126">https://code.icecube.wisc.edu/projects/icecube/ticket/126</a>, reported by troy and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-10-31T18:49:16",

"_ts": "1351709356000000",

"description": "recently discovered. very easy to make root histograms\nfrom numpy data that has already had cuts/etc applied.\nneed examples/docs.",

"reporter": "troy",

"cc": "",

"resolution": "wont or cant fix",

"time": "2008-09-07T13:40:39",

"component": "documentation",

"summary": "pyroot plays nice with numpy",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "troy",

"type": "defect"

}

```

</p>

</details>

|

defect

|

pyroot plays nice with numpy trac recently discovered very easy to make root histograms from numpy data that has already had cuts etc applied need examples docs migrated from json status closed changetime ts description recently discovered very easy to make root histograms nfrom numpy data that has already had cuts etc applied nneed examples docs reporter troy cc resolution wont or cant fix time component documentation summary pyroot plays nice with numpy priority normal keywords milestone owner troy type defect

| 1

|

399,192

| 27,230,173,781

|

IssuesEvent

|

2023-02-21 12:40:17

|

equinor/komodo

|

https://api.github.com/repos/equinor/komodo

|

closed

|

This repo has no docs

|

documentation

|

There doesn't seem to be any documentation for `komodo`. The closest thing I could find is https://fmu-docs.equinor.com/docs/komodo/index.html (not a public link), which I think is really documentation for `komodo-releases`.

I think the documentation could contain the following:

- Expand on the basic example in the README, see #257

- Explain how it relates to `komodoenv` and perhaps, with appropriate signals that it's not public, `komodo-releases` (likewise, those libraries should explain the relationship)

- Map the resulting release directory structure, files, etc.

- Explain all of the arguments to the `kmd` command, because most of them do not have expensive help in the CLI.

- Document the various commands: komodo-check-pypi, komodo-insert-proposals, komodo-post-messages, komodo-check-symlinks, komodo-lint, komodo-reverse-deps, komodo-clean-repository, komodo-lint-maturity, komodo-snyk-test, komodo-create-symlinks, komodo-lint-package-status, komodo-suggest-symlinks, komodo-extract-dep-graph, komodo-non-deployed, komodo-transpiler

- Show how (and if!) `komodo` is intended to be used as a library.

|

1.0

|

This repo has no docs - There doesn't seem to be any documentation for `komodo`. The closest thing I could find is https://fmu-docs.equinor.com/docs/komodo/index.html (not a public link), which I think is really documentation for `komodo-releases`.

I think the documentation could contain the following:

- Expand on the basic example in the README, see #257

- Explain how it relates to `komodoenv` and perhaps, with appropriate signals that it's not public, `komodo-releases` (likewise, those libraries should explain the relationship)

- Map the resulting release directory structure, files, etc.

- Explain all of the arguments to the `kmd` command, because most of them do not have expensive help in the CLI.

- Document the various commands: komodo-check-pypi, komodo-insert-proposals, komodo-post-messages, komodo-check-symlinks, komodo-lint, komodo-reverse-deps, komodo-clean-repository, komodo-lint-maturity, komodo-snyk-test, komodo-create-symlinks, komodo-lint-package-status, komodo-suggest-symlinks, komodo-extract-dep-graph, komodo-non-deployed, komodo-transpiler

- Show how (and if!) `komodo` is intended to be used as a library.

|

non_defect

|

this repo has no docs there doesn t seem to be any documentation for komodo the closest thing i could find is not a public link which i think is really documentation for komodo releases i think the documentation could contain the following expand on the basic example in the readme see explain how it relates to komodoenv and perhaps with appropriate signals that it s not public komodo releases likewise those libraries should explain the relationship map the resulting release directory structure files etc explain all of the arguments to the kmd command because most of them do not have expensive help in the cli document the various commands komodo check pypi komodo insert proposals komodo post messages komodo check symlinks komodo lint komodo reverse deps komodo clean repository komodo lint maturity komodo snyk test komodo create symlinks komodo lint package status komodo suggest symlinks komodo extract dep graph komodo non deployed komodo transpiler show how and if komodo is intended to be used as a library

| 0

|

18,129

| 3,025,475,450

|

IssuesEvent

|

2015-08-03 08:54:52

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

reopened

|

IMap.destroy() - map is destroyed and then immediately recreated

|

Team: Core Type: Defect

|

Creating this issue as suggested by @bilalyasar (see [discussion on the Hazelcast Google Groups forum](https://groups.google.com/forum/#!topic/hazelcast/G1P43tORJPA))

Hazelcast version that you use: ```3.4.2```, ```3.5```

Cluster size: ```1```

Number of the clients: ```1```

Version of Java: ```OracleJDK 1.7.0_80, x86_64```, ```OracleJDK 1.8.0_45, x86_64```

Operating system: ```OS X Yosemite 10.10.3 (14D136)```

Logs and stack traces: See the console output from executing the TestNG test case below

Detailed description of the steps to reproduce your issue: See the TestNG test case below

Unit test with the hazelcast.xml file:

```java

import static org.testng.Assert.assertEquals;

import static org.testng.Assert.assertNotNull;

import org.testng.annotations.AfterClass;

import org.testng.annotations.BeforeClass;

import org.testng.annotations.Test;

import com.hazelcast.client.HazelcastClient;

import com.hazelcast.core.DistributedObjectEvent;

import com.hazelcast.core.DistributedObjectListener;

import com.hazelcast.core.Hazelcast;

import com.hazelcast.core.HazelcastInstance;

import com.hazelcast.core.IMap;

public class IMapDestroyTest {

private HazelcastInstance server;

private HazelcastInstance client;

@BeforeClass

public void setUpBeforeClass() {

server = Hazelcast.newHazelcastInstance();

sleep(2000);

client = HazelcastClient.newHazelcastClient();

client.addDistributedObjectListener(new DistributedObjectListener() {

@Override public void distributedObjectDestroyed(final DistributedObjectEvent event) {

System.out.println("\n\tdistributedObjectDestroyed(): " + event);

}

@Override public void distributedObjectCreated(final DistributedObjectEvent event) {

System.out.println("\n\tdistributedObjectCreated(): " + event);

}

});

}

@AfterClass

public void tearDownAfterClass() {

client.shutdown();

server.shutdown();

}

@Test

public void testIMapDestroy() {

System.out.print("Creating 'foo' map... ");

IMap<String, String> fooMap = client.getMap("foo");

assertNotNull(fooMap);

System.out.println("done.");

System.out.println("All known distributed objects: " + client.getDistributedObjects());

System.out.print("Inserting an entry... ");

fooMap.put("fu", "bar");

System.out.print("done.\nRetrieving the entry... ");

String value = fooMap.get("fu");

assertEquals(value, "bar");

System.out.print("done.\nDestroying the map... ");

fooMap.destroy();

System.out.println("done.");

System.out.println("All known distributed objects: " + client.getDistributedObjects());

}

private void sleep(final int millis) {

try {

Thread.sleep(millis);

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

}

}

}

```

Console output:

```

Creating 'foo' map...

distributedObjectCreated(): DistributedObjectEvent{eventType=CREATED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

done.

All known distributed objects: [IMap{name='foo'}]

Inserting an entry... done.

Retrieving the entry... done.

Destroying the map...

distributedObjectDestroyed(): DistributedObjectEvent{eventType=DESTROYED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

distributedObjectCreated(): DistributedObjectEvent{eventType=CREATED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

done.

All known distributed objects: [IMap{name='foo'}]

```

|

1.0

|

IMap.destroy() - map is destroyed and then immediately recreated - Creating this issue as suggested by @bilalyasar (see [discussion on the Hazelcast Google Groups forum](https://groups.google.com/forum/#!topic/hazelcast/G1P43tORJPA))

Hazelcast version that you use: ```3.4.2```, ```3.5```

Cluster size: ```1```

Number of the clients: ```1```

Version of Java: ```OracleJDK 1.7.0_80, x86_64```, ```OracleJDK 1.8.0_45, x86_64```

Operating system: ```OS X Yosemite 10.10.3 (14D136)```

Logs and stack traces: See the console output from executing the TestNG test case below

Detailed description of the steps to reproduce your issue: See the TestNG test case below

Unit test with the hazelcast.xml file:

```java

import static org.testng.Assert.assertEquals;

import static org.testng.Assert.assertNotNull;

import org.testng.annotations.AfterClass;

import org.testng.annotations.BeforeClass;

import org.testng.annotations.Test;

import com.hazelcast.client.HazelcastClient;

import com.hazelcast.core.DistributedObjectEvent;

import com.hazelcast.core.DistributedObjectListener;

import com.hazelcast.core.Hazelcast;

import com.hazelcast.core.HazelcastInstance;

import com.hazelcast.core.IMap;

public class IMapDestroyTest {

private HazelcastInstance server;

private HazelcastInstance client;

@BeforeClass

public void setUpBeforeClass() {

server = Hazelcast.newHazelcastInstance();

sleep(2000);

client = HazelcastClient.newHazelcastClient();

client.addDistributedObjectListener(new DistributedObjectListener() {

@Override public void distributedObjectDestroyed(final DistributedObjectEvent event) {

System.out.println("\n\tdistributedObjectDestroyed(): " + event);

}

@Override public void distributedObjectCreated(final DistributedObjectEvent event) {

System.out.println("\n\tdistributedObjectCreated(): " + event);

}

});

}

@AfterClass

public void tearDownAfterClass() {

client.shutdown();

server.shutdown();

}

@Test

public void testIMapDestroy() {

System.out.print("Creating 'foo' map... ");

IMap<String, String> fooMap = client.getMap("foo");

assertNotNull(fooMap);

System.out.println("done.");

System.out.println("All known distributed objects: " + client.getDistributedObjects());

System.out.print("Inserting an entry... ");

fooMap.put("fu", "bar");

System.out.print("done.\nRetrieving the entry... ");

String value = fooMap.get("fu");

assertEquals(value, "bar");

System.out.print("done.\nDestroying the map... ");

fooMap.destroy();

System.out.println("done.");

System.out.println("All known distributed objects: " + client.getDistributedObjects());

}

private void sleep(final int millis) {

try {

Thread.sleep(millis);

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

}

}

}

```

Console output:

```

Creating 'foo' map...

distributedObjectCreated(): DistributedObjectEvent{eventType=CREATED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

done.

All known distributed objects: [IMap{name='foo'}]

Inserting an entry... done.

Retrieving the entry... done.

Destroying the map...

distributedObjectDestroyed(): DistributedObjectEvent{eventType=DESTROYED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

distributedObjectCreated(): DistributedObjectEvent{eventType=CREATED, serviceName='hz:impl:mapService', distributedObject=IMap{name='foo'}}

done.

All known distributed objects: [IMap{name='foo'}]

```

|

defect

|

imap destroy map is destroyed and then immediately recreated creating this issue as suggested by bilalyasar see hazelcast version that you use cluster size number of the clients version of java oraclejdk oraclejdk operating system os x yosemite logs and stack traces see the console output from executing the testng test case below detailed description of the steps to reproduce your issue see the testng test case below unit test with the hazelcast xml file java import static org testng assert assertequals import static org testng assert assertnotnull import org testng annotations afterclass import org testng annotations beforeclass import org testng annotations test import com hazelcast client hazelcastclient import com hazelcast core distributedobjectevent import com hazelcast core distributedobjectlistener import com hazelcast core hazelcast import com hazelcast core hazelcastinstance import com hazelcast core imap public class imapdestroytest private hazelcastinstance server private hazelcastinstance client beforeclass public void setupbeforeclass server hazelcast newhazelcastinstance sleep client hazelcastclient newhazelcastclient client adddistributedobjectlistener new distributedobjectlistener override public void distributedobjectdestroyed final distributedobjectevent event system out println n tdistributedobjectdestroyed event override public void distributedobjectcreated final distributedobjectevent event system out println n tdistributedobjectcreated event afterclass public void teardownafterclass client shutdown server shutdown test public void testimapdestroy system out print creating foo map imap foomap client getmap foo assertnotnull foomap system out println done system out println all known distributed objects client getdistributedobjects system out print inserting an entry foomap put fu bar system out print done nretrieving the entry string value foomap get fu assertequals value bar system out print done ndestroying the map foomap destroy system out 
println done system out println all known distributed objects client getdistributedobjects private void sleep final int millis try thread sleep millis catch interruptedexception e thread currentthread interrupt console output creating foo map distributedobjectcreated distributedobjectevent eventtype created servicename hz impl mapservice distributedobject imap name foo done all known distributed objects inserting an entry done retrieving the entry done destroying the map distributedobjectdestroyed distributedobjectevent eventtype destroyed servicename hz impl mapservice distributedobject imap name foo distributedobjectcreated distributedobjectevent eventtype created servicename hz impl mapservice distributedobject imap name foo done all known distributed objects

| 1

|

62,005

| 17,023,830,518

|

IssuesEvent

|

2021-07-03 04:04:20

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Potlatch 1 removed from dropdown

|

Component: website Priority: major Resolution: wontfix Type: defect

|

**[Submitted to the original trac issue database at 8.08pm, Saturday, 13th October 2012]**

If I'm not mistaken, it's still the only way to find deleted ways in an area. So it shouldn't be removed unless this feature is added to PL2.

|

1.0

|

Potlatch 1 removed from dropdown - **[Submitted to the original trac issue database at 8.08pm, Saturday, 13th October 2012]**

If I'm not mistaken, it's still the only way to find deleted ways in an area. So it shouldn't be removed unless this feature is added to PL2.

|

defect

|

potlatch removed from dropdown if i m not mistaken it s still the only way to find deleted ways in an area so it shouldn t be removed unless this feature is added to

| 1

|

26,817

| 4,793,196,110

|

IssuesEvent

|

2016-10-31 17:30:22

|

nelenkov/wwwjdic

|

https://api.github.com/repos/nelenkov/wwwjdic

|

closed

|

it just times out and doesn't work at all

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead? A search result. A

message saying there was an unexpected error and it timed out. Also these

numbers: 130.194.64.145:80

What version of the product are you using? On what operating system? I have an

Android but I don't know which one.

If possible, provide a stack trace (logcat).

As I can only test on HTC Magic and Nexus One, I can't reproduce device

specific issues. Without a stack trace, there is usually nothing I can do.

So do attach logcat output. That or, send me a phone :)

Please provide any additional information below.

```

Original issue reported on code.google.com by `Katwoods...@gmail.com` on 20 Oct 2014 at 4:19

|

1.0

|

it just times out and doesn't work at all - ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead? A search result. A

message saying there was an unexpected error and it timed out. Also these

numbers: 130.194.64.145:80

What version of the product are you using? On what operating system? I have an

Android but I don't know which one.

If possible, provide a stack trace (logcat).

As I can only test on HTC Magic and Nexus One, I can't reproduce device

specific issues. Without a stack trace, there is usually nothing I can do.

So do attach logcat output. That or, send me a phone :)

Please provide any additional information below.

```

Original issue reported on code.google.com by `Katwoods...@gmail.com` on 20 Oct 2014 at 4:19

|

defect

|

it just times out and doesn t work at all what steps will reproduce the problem what is the expected output what do you see instead a search result a message saying there was an unexpected error and it timed out also these numbers what version of the product are you using on what operating system i have an android but i don t know which one if possible provide a stack trace logcat as i can only test on htc magic and nexus one i can t reproduce device specific issues without a stack trace there is usually nothing i can do so do attach logcat output that or send me a phone please provide any additional information below original issue reported on code google com by katwoods gmail com on oct at

| 1

|

141,934

| 5,447,153,884

|

IssuesEvent

|

2017-03-07 12:46:40

|

vtyulb/BSA-Analytics

|

https://api.github.com/repos/vtyulb/BSA-Analytics

|

closed

|

New bug

|

High priority

|

In pulsar fourier analytics mode, when loading blocks, the blue arrows in almost all modules and beams jump to the very beginning of the file, skipping even the 1-second harmonic.

|

1.0

|

New bug - In pulsar fourier analytics mode, when loading blocks, the blue arrows in almost all modules and beams jump to the very beginning of the file, skipping even the 1-second harmonic.

|

non_defect

|

new bug in pulsar fourier analytics mode when loading blocks the blue arrows in almost all modules and beams jump to the very beginning of the file skipping even the harmonic at

| 0

|

24,639

| 4,053,706,226

|

IssuesEvent

|

2016-05-24 09:35:16

|

octavian-paraschiv/protone-suite

|

https://api.github.com/repos/octavian-paraschiv/protone-suite

|

closed

|

Inconsistency between time displayed in Player / media library and actual media position on some MP3 VBR files.

|

Category-Runtime OS-All Priority-P2 ReportSource-DevQA Resolution-Resolved Type-Defect

|

```

This bug is known since version 1.1.x.

Behavior: on some MP3 VBR files the Media Services report an incorrect time

elapsed for the played file. This time has no relation with the actual position

within the file and can yield to confusion amongst users.

```

Original issue reported on code.google.com by `octavian...@gmail.com` on 18 Apr 2013 at 4:43

|

1.0

|

Inconsistency between time displayed in Player / media library and actual media position on some MP3 VBR files. - ```

This bug is known since version 1.1.x.

Behavior: on some MP3 VBR files the Media Services report an incorrect time

elapsed for the played file. This time has no relation with the actual position

within the file and can yield to confusion amongst users.

```

Original issue reported on code.google.com by `octavian...@gmail.com` on 18 Apr 2013 at 4:43

|

defect

|

inconsistency between time displayed in player media library and actual media position on some vbr files this bug is known since version x behavior on some vbr files the media services report an incorrect time elapsed for the played file this time has no relation with the actual position within the file and can yield to confusion amongst users original issue reported on code google com by octavian gmail com on apr at

| 1

|

28,504

| 12,867,767,847

|

IssuesEvent

|

2020-07-10 07:35:27

|

terraform-providers/terraform-provider-azurerm

|

https://api.github.com/repos/terraform-providers/terraform-provider-azurerm

|

closed

|

azurerm_app_service_virtual_network_swift_connection Write Network Config HTTP 500 error

|

service/app-service

|

<!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

Terraform version **0.12.28**

AzureRM Provider version **2.17.0**

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm_app_service_virtual_network_swift_connection`

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl

terraform {

backend "azurerm" {

}

}

provider "azurerm" {

version = "=2.17.0"

features {}

}

# Data resource to existing Resource Group

data "azurerm_resource_group" "env-resourcegroup" {

name = "MyResourceGroup"

}

# Data resource to existing vNet

data "azurerm_virtual_network" "vnet" {

name = "vNet-AppServDO-001"

resource_group_name = "MyResourceGroup"

}

# Data resource to existing vNet subnet

data "azurerm_subnet" "vnetsubnet" {

name = "default"

virtual_network_name = data.azurerm_virtual_network.vnet.name

resource_group_name = "MyResourceGroup"

}

# Define App Service Plan

resource "azurerm_app_service_plan" "go-1" {

name = "APPPLAN-AppServDO-Plan01"

location = "WestEurope"

resource_group_name = data.azurerm_resource_group.env-resourcegroup.name

sku {

tier = "PremiumV2"

size = "P1v2"

}

}

# Define WebApp 1

resource "azurerm_app_service" "go-1" {

name = "WA-AppServDO-Site01"

location = "WestEurope"

resource_group_name = data.azurerm_resource_group.env-resourcegroup.name

app_service_plan_id = azurerm_app_service_plan.go-1.id

client_affinity_enabled = "true"

site_config {

scm_type = "VSTSRM"

always_on = "true"

use_32_bit_worker_process = "false"

}

}

resource "azurerm_app_service_virtual_network_swift_connection" "go-1" {

app_service_id = azurerm_app_service.go-1.id

subnet_id = data.azurerm_subnet.vnetsubnet.id

}

```

### Debug Output

```

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m40s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m50s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m40s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m50s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m40s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m50s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m40s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m50s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m40s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m50s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [7m0s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [7m10s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [7m20s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [7m30s elapsed]

azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [7m40s elapsed]

Error: Error creating/updating App Service VNet association between "WA-AppServDO-Site01" (Resource Group "MyResourceGroup") and Virtual Network "vNet-AppServDO-001": web.AppsClient#CreateOrUpdateSwiftVirtualNetworkConnection: Failure responding to request: StatusCode=500 -- Original Error: autorest/azure: Service returned an error. Status=500 Code="" Message="An error has occurred."

on main.tf line 71, in resource "azurerm_app_service_virtual_network_swift_connection" "go-1":

71: resource "azurerm_app_service_virtual_network_swift_connection" "go-1" {

##[error]Error: The process 'C:\hostedtoolcache\windows\terraform\0.12.28\x64\terraform.exe' failed with exit code 1

Finishing: Terraform : apply

```

### Panic Output

<!--- If Terraform produced a panic, please provide a link to a GitHub Gist containing the output of the `crash.log`. --->

### Expected Behavior

The App Service 'WA-AppServDO-Site01' is added to the subnet named 'default' in the vNet named 'vNet-AppServDO-001'

### Actual Behavior

Apply retries and produces errors inside the Azure Activity Log of the App Service 'WA-AppServDO-Site01'

Example

```

Operation name Write Network Config

Error code InternalServerError

Message An error has occurred.

```

The JSON inside these messages does not illustrate any useful error code, other than the HTTP 500

e.g.

```
"operationName": {
    "value": "Microsoft.Web/sites/networkConfig/write",
    "localizedValue": "Microsoft.Web/sites/networkConfig/write"
},
"status": {
    "value": "Failed",
    "localizedValue": "Failed"
},
"subStatus": {
    "value": "InternalServerError",
    "localizedValue": "Internal Server Error (HTTP Status Code: 500)"
},
"properties": {
    "statusCode": "InternalServerError",
    "serviceRequestId": null,
    "statusMessage": "{\"Message\":\"An error has occurred.\"}",
    "eventCategory": "Administrative"
}
```

### Steps to Reproduce

<!--- Please list the steps required to reproduce the issue. --->

1. Manually create a resource group named "MyResourceGroup" (or similar).

2. Create a vNet named "vNet-AppServDO-001" (or similar) with a "default" subnet, using the standard settings.

3. Run `terraform apply`.
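One thing that may be worth ruling out (an assumption, not something the error message confirms): regional VNet integration expects the integration subnet to be delegated to `Microsoft.Web/serverFarms`, and a subnet created with the standard settings has no delegation. The sketch below replaces the `data "azurerm_subnet" "vnetsubnet"` lookup from the reproduction configuration with a Terraform-managed, delegated subnet. The resource label `integration`, the subnet name and the address range are hypothetical; the range must fit inside the existing vNet. Delegating the existing "default" subnet in the portal would be an alternative way to test the same idea.

```hcl
# Hypothetical: a new subnet managed by Terraform and delegated to App Service.
resource "azurerm_subnet" "integration" {
  name                 = "snet-appservice-integration" # hypothetical name
  resource_group_name  = "MyResourceGroup"
  virtual_network_name = "vNet-AppServDO-001"

  # Hypothetical range: must lie inside the vNet address space and not overlap "default".
  # Provider 2.17.0 accepts address_prefix; later releases use address_prefixes = [...].
  address_prefix = "10.0.1.0/24"

  # Delegate the subnet to App Service plans (server farms).
  delegation {
    name = "appservice-delegation"

    service_delegation {
      name    = "Microsoft.Web/serverFarms"
      actions = ["Microsoft.Network/virtualNetworks/subnets/action"]
    }
  }
}

# Same connection as in the reproduction, pointed at the delegated subnet.
resource "azurerm_app_service_virtual_network_swift_connection" "go-1" {
  app_service_id = azurerm_app_service.go-1.id
  subnet_id      = azurerm_subnet.integration.id
}
```

If the apply succeeds against a delegated subnet, that would narrow the problem down to how the provider handles undelegated subnets rather than to the App Service itself.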

I have tried both the West Europe and UK South locations, and several App Service Plan SKUs, with the same result.

To work around this issue, I am running the following Azure CLI command outside of Terraform:

`az webapp vnet-integration add --name WA-AppServDO-Site01 --resource-group MyResourceGroup --subnet 'default' --vnet vNet-AppServDO-001`
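If the CLI step has to run in the same pipeline, it could be invoked from Terraform instead of the `azurerm_app_service_virtual_network_swift_connection` resource. This is a sketch only: it assumes the `hashicorp/null` provider is available and that the Azure CLI is installed and already logged in on the build agent, and it reuses the names from the reproduction configuration. The resource label `vnet_integration_workaround` is hypothetical.

```hcl
# Hypothetical workaround: run the same az command through a local-exec provisioner.
resource "null_resource" "vnet_integration_workaround" {
  # Re-run the command whenever the App Service or the subnet changes.
  triggers = {
    app_service_id = azurerm_app_service.go-1.id
    subnet_id      = data.azurerm_subnet.vnetsubnet.id
  }

  provisioner "local-exec" {
    # Build the command on one line so it also works with cmd.exe on Windows agents.
    command = join(" ", [
      "az webapp vnet-integration add",
      "--name ${azurerm_app_service.go-1.name}",
      "--resource-group ${data.azurerm_resource_group.env-resourcegroup.name}",
      "--vnet ${data.azurerm_virtual_network.vnet.name}",
      "--subnet ${data.azurerm_subnet.vnetsubnet.name}",
    ])
  }
}
```

With this approach Terraform only records that the command ran: it does not detect drift in the integration, and `terraform destroy` does not remove it.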

### Important Factoids

<!--- Is there anything atypical about your accounts that we should know about? For example: running in Azure China/Germany/Government? --->

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Such as vendor documentation?

--->

* #0000

|

2.0

|

azurerm_app_service_virtual_network_swift_connection Write Network Config HTTP 500 error - <!---

Please note the following situations in which an issue might belong in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments; they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform (and AzureRM Provider) Version

Terraform version **0.12.28**

AzureRM Provider version **2.17.0**

### Affected Resource(s)

<!--- Please list the affected resources and data sources. --->

* `azurerm_app_service_virtual_network_swift_connection`

### Terraform Configuration Files

<!--- Information about code formatting: https://help.github.com/articles/basic-writing-and-formatting-syntax/#quoting-code --->

```hcl
terraform {
  backend "azurerm" {
  }
}

provider "azurerm" {
  version = "=2.17.0"
  features {}
}

# Data source for the existing resource group
data "azurerm_resource_group" "env-resourcegroup" {
  name = "MyResourceGroup"
}

# Data source for the existing vNet
data "azurerm_virtual_network" "vnet" {
  name                = "vNet-AppServDO-001"
  resource_group_name = "MyResourceGroup"
}

# Data source for the existing vNet subnet
data "azurerm_subnet" "vnetsubnet" {
  name                 = "default"
  virtual_network_name = data.azurerm_virtual_network.vnet.name
  resource_group_name  = "MyResourceGroup"
}

# App Service Plan
resource "azurerm_app_service_plan" "go-1" {
  name                = "APPPLAN-AppServDO-Plan01"
  location            = "WestEurope"
  resource_group_name = data.azurerm_resource_group.env-resourcegroup.name

  sku {
    tier = "PremiumV2"
    size = "P1v2"
  }
}

# Web App 1
resource "azurerm_app_service" "go-1" {
  name                    = "WA-AppServDO-Site01"
  location                = "WestEurope"
  resource_group_name     = data.azurerm_resource_group.env-resourcegroup.name
  app_service_plan_id     = azurerm_app_service_plan.go-1.id
  client_affinity_enabled = "true"

  site_config {
    scm_type                  = "VSTSRM"
    always_on                 = "true"
    use_32_bit_worker_process = "false"
  }
}

# Regional VNet integration of Web App 1 with the existing subnet
resource "azurerm_app_service_virtual_network_swift_connection" "go-1" {
  app_service_id = azurerm_app_service.go-1.id
  subnet_id      = data.azurerm_subnet.vnetsubnet.id
}
```

### Debug Output

```
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m0s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m10s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m20s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m30s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m40s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [2m50s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m0s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m10s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m20s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m30s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m40s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [3m50s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m0s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m10s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m20s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m30s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m40s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [4m50s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m0s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m10s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m20s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m30s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m40s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [5m50s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m0s elapsed]
azurerm_app_service_virtual_network_swift_connection.go-1: Still creating... [6m10s elapsed]