Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

757

| labels

stringlengths 4

664

| body

stringlengths 3

261k

| index

stringclasses 10

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

232k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,962

| 2,603,974,140

|

IssuesEvent

|

2015-02-24 19:01:04

|

chrsmith/nishazi6

|

https://api.github.com/repos/chrsmith/nishazi6

|

opened

|

沈阳疱疹男科医院

|

auto-migrated Priority-Medium Type-Defect

|

```

沈阳疱疹男科医院〓沈陽軍區政治部醫院性病〓TEL:024-3102330

8〓成立于1946年,68年專注于性傳播疾病的研究和治療。位于�

��陽市沈河區二緯路32號。是一所與新中國同建立共輝煌的歷�

��悠久、設備精良、技術權威、專家云集,是預防、保健、醫

療、科研康復為一體的綜合性醫院。是國家首批公立甲等部��

�醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南�

��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后

勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等��

�。

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:09

|

1.0

|

沈阳疱疹男科医院 - ```

沈阳疱疹男科医院〓沈陽軍區政治部醫院性病〓TEL:024-3102330

8〓成立于1946年,68年專注于性傳播疾病的研究和治療。位于�

��陽市沈河區二緯路32號。是一所與新中國同建立共輝煌的歷�

��悠久、設備精良、技術權威、專家云集,是預防、保健、醫

療、科研康復為一體的綜合性醫院。是國家首批公立甲等部��

�醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南�

��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后

勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等��

�。

```

-----

Original issue reported on code.google.com by `q964105...@gmail.com` on 4 Jun 2014 at 8:09

|

defect

|

沈阳疱疹男科医院 沈阳疱疹男科医院〓沈陽軍區政治部醫院性病〓tel: 〓 , 。位于� �� 。是一所與新中國同建立共輝煌的歷� ��悠久、設備精良、技術權威、專家云集,是預防、保健、醫 療、科研康復為一體的綜合性醫院。是國家首批公立甲等部�� �醫院、全國首批醫療規范定點單位,是第四軍醫大學、東南� ��學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后 勤部衛生部評為衛生工作先進單位,先后兩次榮立集體二等�� �。 original issue reported on code google com by gmail com on jun at

| 1

|

40,611

| 10,065,198,617

|

IssuesEvent

|

2019-07-23 10:19:00

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

opened

|

split brain executors partition.count=1999 OOME

|

Module: Cluster Module: IExecutor Team: Core Type: Defect

|

http://jenkins.hazelcast.com/view/split/job/split-executors/31/console

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/

hz-root/HzMember4HZAA/HzMember4HZAA.hprof

hz-root/HzMember5HZAA/HzMember5HZAA.hprof

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/go

ops="${ops} -Dhazelcast.partition.count=1999"

1GB meamber heap

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/gc.html

higher partition.count=1999 gives a larger overhead, maybe the OOME hprof is not a leak

but the hprof could help find inefficiency

2 members encountered a OOME however rerunning the test with a 2GB heap passed

http://jenkins.hazelcast.com/view/split/job/split-executors/32/console

however the GC charts still look leaky

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-14_46_12/executors/gc.html

|

1.0

|

split brain executors partition.count=1999 OOME -

http://jenkins.hazelcast.com/view/split/job/split-executors/31/console

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/

hz-root/HzMember4HZAA/HzMember4HZAA.hprof

hz-root/HzMember5HZAA/HzMember5HZAA.hprof

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/go

ops="${ops} -Dhazelcast.partition.count=1999"

1GB meamber heap

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-09_52_54/executors/gc.html

higher partition.count=1999 gives a larger overhead, maybe the OOME hprof is not a leak

but the hprof could help find inefficiency

2 members encountered a OOME however rerunning the test with a 2GB heap passed

http://jenkins.hazelcast.com/view/split/job/split-executors/32/console

however the GC charts still look leaky

http://54.147.27.51/~jenkins/workspace/split-executors/3.12.1/2019_07_20-14_46_12/executors/gc.html

|

defect

|

split brain executors partition count oome hz root hprof hz root hprof ops ops dhazelcast partition count meamber heap higher partition count gives a larger overhead maybe the oome hprof is not a leak but the hprof could help find inefficiency members encountered a oome however rerunning the test with a heap passed however the gc charts still look leaky

| 1

|

2,916

| 2,607,966,064

|

IssuesEvent

|

2015-02-26 00:42:24

|

chrsmithdemos/leveldb

|

https://api.github.com/repos/chrsmithdemos/leveldb

|

closed

|

Create the form

|

auto-migrated Priority-Medium Type-Defect

|

```

Hello. Help please, I need to create the form for editing table data from the

database leveldb

```

-----

Original issue reported on code.google.com by `faN...@rambler.ru` on 31 May 2012 at 4:55

|

1.0

|

Create the form - ```

Hello. Help please, I need to create the form for editing table data from the

database leveldb

```

-----

Original issue reported on code.google.com by `faN...@rambler.ru` on 31 May 2012 at 4:55

|

defect

|

create the form hello help please i need to create the form for editing table data from the database leveldb original issue reported on code google com by fan rambler ru on may at

| 1

|

2,432

| 2,688,616,823

|

IssuesEvent

|

2015-03-31 01:58:59

|

gios-asu/text-geolocator

|

https://api.github.com/repos/gios-asu/text-geolocator

|

opened

|

NLP Documentation - developer's guide

|

documentation nlp

|

Ensure that the proper comments are in place in the NLP module to ensure a quality developer's guide is produced

|

1.0

|

NLP Documentation - developer's guide - Ensure that the proper comments are in place in the NLP module to ensure a quality developer's guide is produced

|

non_defect

|

nlp documentation developer s guide ensure that the proper comments are in place in the nlp module to ensure a quality developer s guide is produced

| 0

|

124,497

| 17,772,588,170

|

IssuesEvent

|

2021-08-30 15:13:26

|

kapseliboi/energy-futures-vis-avenir-energetique

|

https://api.github.com/repos/kapseliboi/energy-futures-vis-avenir-energetique

|

opened

|

CVE-2020-28477 (High) detected in immer-1.10.0.tgz

|

security vulnerability

|

## CVE-2020-28477 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>immer-1.10.0.tgz</b></p></summary>

<p>Create your next immutable state by mutating the current one</p>

<p>Library home page: <a href="https://registry.npmjs.org/immer/-/immer-1.10.0.tgz">https://registry.npmjs.org/immer/-/immer-1.10.0.tgz</a></p>

<p>Path to dependency file: energy-futures-vis-avenir-energetique/package.json</p>

<p>Path to vulnerable library: energy-futures-vis-avenir-energetique/node_modules/immer/package.json</p>

<p>

Dependency Hierarchy:

- react-5.3.19.tgz (Root Library)

- react-dev-utils-9.1.0.tgz

- :x: **immer-1.10.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kapseliboi/energy-futures-vis-avenir-energetique/commit/907b3c15edb7159764857453edc4f32b2432cdd4">907b3c15edb7159764857453edc4f32b2432cdd4</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package immer.

<p>Publish Date: 2021-01-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-28477>CVE-2020-28477</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/immerjs/immer/releases/tag/v8.0.1">https://github.com/immerjs/immer/releases/tag/v8.0.1</a></p>

<p>Release Date: 2021-01-19</p>

<p>Fix Resolution: v8.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-28477 (High) detected in immer-1.10.0.tgz - ## CVE-2020-28477 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>immer-1.10.0.tgz</b></p></summary>

<p>Create your next immutable state by mutating the current one</p>

<p>Library home page: <a href="https://registry.npmjs.org/immer/-/immer-1.10.0.tgz">https://registry.npmjs.org/immer/-/immer-1.10.0.tgz</a></p>

<p>Path to dependency file: energy-futures-vis-avenir-energetique/package.json</p>

<p>Path to vulnerable library: energy-futures-vis-avenir-energetique/node_modules/immer/package.json</p>

<p>

Dependency Hierarchy:

- react-5.3.19.tgz (Root Library)

- react-dev-utils-9.1.0.tgz

- :x: **immer-1.10.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kapseliboi/energy-futures-vis-avenir-energetique/commit/907b3c15edb7159764857453edc4f32b2432cdd4">907b3c15edb7159764857453edc4f32b2432cdd4</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package immer.

<p>Publish Date: 2021-01-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-28477>CVE-2020-28477</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/immerjs/immer/releases/tag/v8.0.1">https://github.com/immerjs/immer/releases/tag/v8.0.1</a></p>

<p>Release Date: 2021-01-19</p>

<p>Fix Resolution: v8.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in immer tgz cve high severity vulnerability vulnerable library immer tgz create your next immutable state by mutating the current one library home page a href path to dependency file energy futures vis avenir energetique package json path to vulnerable library energy futures vis avenir energetique node modules immer package json dependency hierarchy react tgz root library react dev utils tgz x immer tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects all versions of package immer publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

49,690

| 13,187,251,736

|

IssuesEvent

|

2020-08-13 02:49:39

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

opened

|

[clsim] fix hole ice params for all segments (Trac #1877)

|

Incomplete Migration Migrated from Trac combo simulation defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1877">https://code.icecube.wisc.edu/ticket/1877</a>, reported by david.schultz and owned by sebastian.sanchez</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:24",

"description": "Commit r149878/IceCube broke simprod-scripts because it didn't update `python/traysegments/I3CLSimMakeHits.py`.\n\n",

"reporter": "david.schultz",

"cc": "olivas, claudio.kopper",

"resolution": "fixed",

"_ts": "1550067204154158",

"component": "combo simulation",

"summary": "[clsim] fix hole ice params for all segments",

"priority": "blocker",

"keywords": "",

"time": "2016-10-01T15:27:26",

"milestone": "",

"owner": "sebastian.sanchez",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

[clsim] fix hole ice params for all segments (Trac #1877) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1877">https://code.icecube.wisc.edu/ticket/1877</a>, reported by david.schultz and owned by sebastian.sanchez</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:24",

"description": "Commit r149878/IceCube broke simprod-scripts because it didn't update `python/traysegments/I3CLSimMakeHits.py`.\n\n",

"reporter": "david.schultz",

"cc": "olivas, claudio.kopper",

"resolution": "fixed",

"_ts": "1550067204154158",

"component": "combo simulation",

"summary": "[clsim] fix hole ice params for all segments",

"priority": "blocker",

"keywords": "",

"time": "2016-10-01T15:27:26",

"milestone": "",

"owner": "sebastian.sanchez",

"type": "defect"

}

```

</p>

</details>

|

defect

|

fix hole ice params for all segments trac migrated from json status closed changetime description commit icecube broke simprod scripts because it didn t update python traysegments py n n reporter david schultz cc olivas claudio kopper resolution fixed ts component combo simulation summary fix hole ice params for all segments priority blocker keywords time milestone owner sebastian sanchez type defect

| 1

|

26,431

| 4,707,582,762

|

IssuesEvent

|

2016-10-13 20:34:39

|

literat/srazvs

|

https://api.github.com/repos/literat/srazvs

|

closed

|

Fatal Error: Call to a member function getPresenterName() on a non-object

|

1-defect 2-public 3-presenter 3-registration testing

|

Fatal Error: Call to a member function getPresenterName() on a non-object in /var/www/virtual/vodni/web/www/srazvs/app/bootstrap.php:137

source: http://vodni.skauting.cz/srazvs/registration/www.qrka.cz

|

1.0

|

Fatal Error: Call to a member function getPresenterName() on a non-object - Fatal Error: Call to a member function getPresenterName() on a non-object in /var/www/virtual/vodni/web/www/srazvs/app/bootstrap.php:137

source: http://vodni.skauting.cz/srazvs/registration/www.qrka.cz

|

defect

|

fatal error call to a member function getpresentername on a non object fatal error call to a member function getpresentername on a non object in var www virtual vodni web www srazvs app bootstrap php source

| 1

|

38,714

| 8,952,575,982

|

IssuesEvent

|

2019-01-25 16:53:50

|

svigerske/ipopt-donotuse

|

https://api.github.com/repos/svigerske/ipopt-donotuse

|

closed

|

Segmentation Fault using IPOPT

|

Ipopt defect

|

Issue created by migration from Trac.

Original creator: ascrelot

Original creation time: 2011-03-01 03:37:05

Assignee: ipopt-team

Version: 3.9

Hi,

I'm using IPOPT to solve the subproblem of a Trust Region Algorithm.

So my objective function is the model. I have to evaluate it by calling a method implemented in another class than the one where the eval_f, eval_g (and so on) are implemented.

I want to give some objects as arguments of the constructor which builds the TNLP to be able to call my method. I've read in the documentation that we have to use SmartPtr to avoid problem of erased reference. Is that only for object defined in IPOPt or for all objects? Cause I can't define such SmartPtr for my own objects (cause there are undefined methods) and using "raw" pointers causes Segmentation fault when I'm leaving the procedure where the call

status = app->OptimizeTNLP(mynlp)

is done.

Do you have some code example where objects are given as arguments to the constructor of the TNLP?

Thanks

Anne-Sophie Crelot

|

1.0

|

Segmentation Fault using IPOPT - Issue created by migration from Trac.

Original creator: ascrelot

Original creation time: 2011-03-01 03:37:05

Assignee: ipopt-team

Version: 3.9

Hi,

I'm using IPOPT to solve the subproblem of a Trust Region Algorithm.

So my objective function is the model. I have to evaluate it by calling a method implemented in another class than the one where the eval_f, eval_g (and so on) are implemented.

I want to give some objects as arguments of the constructor which builds the TNLP to be able to call my method. I've read in the documentation that we have to use SmartPtr to avoid problem of erased reference. Is that only for object defined in IPOPt or for all objects? Cause I can't define such SmartPtr for my own objects (cause there are undefined methods) and using "raw" pointers causes Segmentation fault when I'm leaving the procedure where the call

status = app->OptimizeTNLP(mynlp)

is done.

Do you have some code example where objects are given as arguments to the constructor of the TNLP?

Thanks

Anne-Sophie Crelot

|

defect

|

segmentation fault using ipopt issue created by migration from trac original creator ascrelot original creation time assignee ipopt team version hi i m using ipopt to solve the subproblem of a trust region algorithm so my objective function is the model i have to evaluate it by calling a method implemented in another class than the one where the eval f eval g and so on are implemented i want to give some objects as arguments of the constructor which builds the tnlp to be able to call my method i ve read in the documentation that we have to use smartptr to avoid problem of erased reference is that only for object defined in ipopt or for all objects cause i can t define such smartptr for my own objects cause there are undefined methods and using raw pointers causes segmentation fault when i m leaving the procedure where the call status app optimizetnlp mynlp is done do you have some code example where objects are given as arguments to the constructor of the tnlp thanks anne sophie crelot

| 1

|

71,980

| 23,879,709,763

|

IssuesEvent

|

2022-09-07 23:21:29

|

department-of-veterans-affairs/vets-design-system-documentation

|

https://api.github.com/repos/department-of-veterans-affairs/vets-design-system-documentation

|

closed

|

[FUNCTIONALITY]: Sortable Table - SHOULD use our base Table component

|

pattern-new 508-defect-4 accessibility 508-issue-semantic-markup

|

@1Copenut commented on [Mon May 18 2020](https://github.com/department-of-veterans-affairs/va.gov-team/issues/9197)

**Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

<!-- This is a detailed description of the issue. It should include a restatement of the title, and provide more background information. -->

Our [sortable table component](https://department-of-veterans-affairs.github.io/veteran-facing-services-tools/visual-design/components/sortabletable/) ~~would benefit greatly from a basic `<Table />` component~~ should be a prop-driven extension on top of our [Table component](https://github.com/department-of-veterans-affairs/component-library/blob/master/src/components/Table/Table.jsx). This way we could build out more complex tables as extensions than completely new components. Thinking:

* Tables with multiple heading rows

* Tables with rows or columns that span multiple

* Tables with sortable columns

## Related Issues

* #9193

* #9194

## Point of Contact

<!-- If this issue is being opened by a VFS team member, please add a point of contact. Usually this is the same person who enters the issue ticket.

-->

**VFS Point of Contact:** _Trevor_

## Acceptance Criteria

- [ ] HTML validates with W3C validator or other HTML5 checker

- [ ] No axe errors

- [ ] [Table component](https://github.com/department-of-veterans-affairs/component-library/blob/master/src/components/Table/Table.jsx) is used to create extended or enhanced table components

- [ ] Visual styling does not change, except where noted by issue tickets

<!-- As a keyboard user, I want to open the Level of Coverage widget by pressing Spacebar or pressing Enter. These keypress actions should not interfere with the mouse click event also opening the widget. -->

## Environment

* https://department-of-veterans-affairs.github.io/veteran-facing-services-tools/visual-design/components/sortabletable/

|

1.0

|

[FUNCTIONALITY]: Sortable Table - SHOULD use our base Table component - @1Copenut commented on [Mon May 18 2020](https://github.com/department-of-veterans-affairs/va.gov-team/issues/9197)

**Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

<!-- This is a detailed description of the issue. It should include a restatement of the title, and provide more background information. -->

Our [sortable table component](https://department-of-veterans-affairs.github.io/veteran-facing-services-tools/visual-design/components/sortabletable/) ~~would benefit greatly from a basic `<Table />` component~~ should be a prop-driven extension on top of our [Table component](https://github.com/department-of-veterans-affairs/component-library/blob/master/src/components/Table/Table.jsx). This way we could build out more complex tables as extensions than completely new components. Thinking:

* Tables with multiple heading rows

* Tables with rows or columns that span multiple

* Tables with sortable columns

## Related Issues

* #9193

* #9194

## Point of Contact

<!-- If this issue is being opened by a VFS team member, please add a point of contact. Usually this is the same person who enters the issue ticket.

-->

**VFS Point of Contact:** _Trevor_

## Acceptance Criteria

- [ ] HTML validates with W3C validator or other HTML5 checker

- [ ] No axe errors

- [ ] [Table component](https://github.com/department-of-veterans-affairs/component-library/blob/master/src/components/Table/Table.jsx) is used to create extended or enhanced table components

- [ ] Visual styling does not change, except where noted by issue tickets

<!-- As a keyboard user, I want to open the Level of Coverage widget by pressing Spacebar or pressing Enter. These keypress actions should not interfere with the mouse click event also opening the widget. -->

## Environment

* https://department-of-veterans-affairs.github.io/veteran-facing-services-tools/visual-design/components/sortabletable/

|

defect

|

sortable table should use our base table component commented on feedback framework ❗️ must for if the feedback must be applied ⚠️ should if the feedback is best practice ✔️ consider for suggestions enhancements description our would benefit greatly from a basic component should be a prop driven extension on top of our this way we could build out more complex tables as extensions than completely new components thinking tables with multiple heading rows tables with rows or columns that span multiple tables with sortable columns related issues point of contact if this issue is being opened by a vfs team member please add a point of contact usually this is the same person who enters the issue ticket vfs point of contact trevor acceptance criteria html validates with validator or other checker no axe errors is used to create extended or enhanced table components visual styling does not change except where noted by issue tickets environment

| 1

|

51,147

| 13,191,541,923

|

IssuesEvent

|

2020-08-13 12:19:27

|

OpenMS/OpenMS

|

https://api.github.com/repos/OpenMS/OpenMS

|

closed

|

TOPPView: Layers display not updated

|

TOPPView critical defect

|

In TOPPView, when a file is opened as a "new layer", it should appear in the "Layers" window. Due to a recent regression, this does not happen (immediately) any more. The layer is drawn in the main window, but the "Layers" window is not updated - that is, until I open another file, or minimise and un-minimise the TOPPView window. (There may be other triggers as well.)

Without knowing these workarounds, it is very confusing that the "Layer" entry is missing. This also prevents users from interacting with the layer (disabling it, setting it to active, removing it...).

This occurs with the current development version on Ubuntu 18.04.4.

|

1.0

|

TOPPView: Layers display not updated - In TOPPView, when a file is opened as a "new layer", it should appear in the "Layers" window. Due to a recent regression, this does not happen (immediately) any more. The layer is drawn in the main window, but the "Layers" window is not updated - that is, until I open another file, or minimise and un-minimise the TOPPView window. (There may be other triggers as well.)

Without knowing these workarounds, it is very confusing that the "Layer" entry is missing. This also prevents users from interacting with the layer (disabling it, setting it to active, removing it...).

This occurs with the current development version on Ubuntu 18.04.4.

|

defect

|

toppview layers display not updated in toppview when a file is opened as a new layer it should appear in the layers window due to a recent regression this does not happen immediately any more the layer is drawn in the main window but the layers window is not updated that is until i open another file or minimise and un minimise the toppview window there may be other triggers as well without knowing these workarounds it is very confusing that the layer entry is missing this also prevents users from interacting with the layer disabling it setting it to active removing it this occurs with the current development version on ubuntu

| 1

|

266,486

| 20,154,514,497

|

IssuesEvent

|

2022-02-09 15:20:11

|

Legal-and-General/canopy

|

https://api.github.com/repos/Legal-and-General/canopy

|

closed

|

Deprecation of SCSS only documentation

|

documentation question

|

We are tentatively deciding to deprecate documentation for those applications only using the SCSS/CSS. This refers to the manual documentation that can be found in the storybook notes.

If any projects are using SCSS/CSS only, things will still work as expected, but in the future you will have to inspect the code to determine what classes to provide.

- The manual documentation was providing quite a time overhead in terms of contributing new components.

- The manual documentation was difficult to keep up to date

- The manual documentation could draw a team into using only the scss/css where it would have been preferable to use the Angular components.

Initially the intention of this documentation was to help with teams not using Angular, it is however quite cumbersome and received little interest for the amount of effort put into it. If you are a team who use this approach, or would be interested in using Canopy not with Angular we do have some advanced experiments with Angular Elements. Please respond on this issue.

In time we will start to remove the `scss only` documentation, and will keep this issue up to date.

|

1.0

|

Deprecation of SCSS only documentation - We are tentatively deciding to deprecate documentation for those applications only using the SCSS/CSS. This refers to the manual documentation that can be found in the storybook notes.

If any projects are using SCSS/CSS only, things will still work as expected, but in the future you will have to inspect the code to determine what classes to provide.

- The manual documentation was providing quite a time overhead in terms of contributing new components.

- The manual documentation was difficult to keep up to date

- The manual documentation could draw a team into using only the scss/css where it would have been preferable to use the Angular components.

Initially the intention of this documentation was to help with teams not using Angular, it is however quite cumbersome and received little interest for the amount of effort put into it. If you are a team who use this approach, or would be interested in using Canopy not with Angular we do have some advanced experiments with Angular Elements. Please respond on this issue.

In time we will start to remove the `scss only` documentation, and will keep this issue up to date.

|

non_defect

|

deprecation of scss only documentation we are tentatively deciding to deprecate documentation for those applications only using the scss css this refers to the manual documentation that can be found in the storybook notes if any projects are using scss css only things will still work as expected but in the future you will have to inspect the code to determine what classes to provide the manual documentation was providing quite a time overhead in terms of contributing new components the manual documentation was difficult to keep up to date the manual documentation could draw a team into using only the scss css where it would have been preferable to use the angular components initially the intention of this documentation was to help with teams not using angular it is however quite cumbersome and received little interest for the amount of effort put into it if you are a team who use this approach or would be interested in using canopy not with angular we do have some advanced experiments with angular elements please respond on this issue in time we will start to remove the scss only documentation and will keep this issue up to date

| 0

|

191,156

| 6,826,366,247

|

IssuesEvent

|

2017-11-08 13:55:41

|

openshift/origin

|

https://api.github.com/repos/openshift/origin

|

opened

|

Metrics not working for oc cluster up --latest --metrics

|

component/cluster-up component/metrics kind/bug priority/P1

|

I ran the following command:

```

$ oc cluster up --version=latest --service-catalog --metrics

```

I see the following error in the openshift-ansible-metrics-job pod:

> fatal: [127.0.0.1]: FAILED! => {"failed": true, "msg": "The conditional check 'lookupip.stdout not in ansible_all_ipv4_addresses' failed. The error was: error while evaluating conditional (lookupip.stdout not in ansible_all_ipv4_addresses): Unable to look up a name or access an attribute in template string ({% if lookupip.stdout not in ansible_all_ipv4_addresses %} True {% else %} False {% endif %}).\nMake sure your variable name does not contain invalid characters like '-': argument of type 'StrictUndefined' is not iterable\n\nThe error appears to have been in '/usr/share/ansible/openshift-ansible/playbooks/common/openshift-cluster/validate_hostnames.yml': line 11, column 5, but may\nbe elsewhere in the file depending on the exact syntax problem.\n\nThe offending line appears to be:\n\n failed_when: false\n - name: Warn user about bad openshift_hostname values\n ^ here\n"}

Full log: [openshift-ansible-metrics-job-c8v7d.log](https://github.com/openshift/origin/files/1454095/openshift-ansible-metrics-job-c8v7d.log)

All of my metrics pods are failed:

```

$ oc get pods -n openshift-infra

NAME READY STATUS RESTARTS AGE

openshift-ansible-metrics-job-c8v7d 0/1 Error 0 46m

openshift-ansible-metrics-job-k4qbx 0/1 Error 0 47m

openshift-ansible-metrics-job-kqz2z 0/1 Error 0 51m

openshift-ansible-metrics-job-nn4bb 0/1 Error 0 49m

```

##### Version

oc v3.7.0-alpha.1+b953213-1499

kubernetes v1.7.6+a08f5eeb62

features: Basic-Auth

Server https://127.0.0.1:8443

openshift v3.7.0-rc.0+b953213-158

kubernetes v1.7.6+a08f5eeb62

cc @jwforres @csrwng @rhamilto

|

1.0

|

Metrics not working for oc cluster up --latest --metrics - I ran the following command:

```

$ oc cluster up --version=latest --service-catalog --metrics

```

I see the following error in the openshift-ansible-metrics-job pod:

> fatal: [127.0.0.1]: FAILED! => {"failed": true, "msg": "The conditional check 'lookupip.stdout not in ansible_all_ipv4_addresses' failed. The error was: error while evaluating conditional (lookupip.stdout not in ansible_all_ipv4_addresses): Unable to look up a name or access an attribute in template string ({% if lookupip.stdout not in ansible_all_ipv4_addresses %} True {% else %} False {% endif %}).\nMake sure your variable name does not contain invalid characters like '-': argument of type 'StrictUndefined' is not iterable\n\nThe error appears to have been in '/usr/share/ansible/openshift-ansible/playbooks/common/openshift-cluster/validate_hostnames.yml': line 11, column 5, but may\nbe elsewhere in the file depending on the exact syntax problem.\n\nThe offending line appears to be:\n\n failed_when: false\n - name: Warn user about bad openshift_hostname values\n ^ here\n"}

Full log: [openshift-ansible-metrics-job-c8v7d.log](https://github.com/openshift/origin/files/1454095/openshift-ansible-metrics-job-c8v7d.log)

All of my metrics pods are failed:

```

$ oc get pods -n openshift-infra

NAME READY STATUS RESTARTS AGE

openshift-ansible-metrics-job-c8v7d 0/1 Error 0 46m

openshift-ansible-metrics-job-k4qbx 0/1 Error 0 47m

openshift-ansible-metrics-job-kqz2z 0/1 Error 0 51m

openshift-ansible-metrics-job-nn4bb 0/1 Error 0 49m

```

##### Version

oc v3.7.0-alpha.1+b953213-1499

kubernetes v1.7.6+a08f5eeb62

features: Basic-Auth

Server https://127.0.0.1:8443

openshift v3.7.0-rc.0+b953213-158

kubernetes v1.7.6+a08f5eeb62

cc @jwforres @csrwng @rhamilto

|

non_defect

|

metrics not working for oc cluster up latest metrics i ran the following command oc cluster up version latest service catalog metrics i see the following error in the openshift ansible metrics job pod fatal failed failed true msg the conditional check lookupip stdout not in ansible all addresses failed the error was error while evaluating conditional lookupip stdout not in ansible all addresses unable to look up a name or access an attribute in template string if lookupip stdout not in ansible all addresses true else false endif nmake sure your variable name does not contain invalid characters like argument of type strictundefined is not iterable n nthe error appears to have been in usr share ansible openshift ansible playbooks common openshift cluster validate hostnames yml line column but may nbe elsewhere in the file depending on the exact syntax problem n nthe offending line appears to be n n failed when false n name warn user about bad openshift hostname values n here n full log all of my metrics pods are failed oc get pods n openshift infra name ready status restarts age openshift ansible metrics job error openshift ansible metrics job error openshift ansible metrics job error openshift ansible metrics job error version oc alpha kubernetes features basic auth server openshift rc kubernetes cc jwforres csrwng rhamilto

| 0

|

76,077

| 26,226,742,287

|

IssuesEvent

|

2023-01-04 19:26:43

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

Video playback is being cropped

|

T-Defect

|

### Steps to reproduce

1. I send a screen recording video from my android phone to a matrix group chat.

2. The video is successfully sent and a thumbnail is generated

3. I click the video thumbnail in the timeline and the top and bottom of the video are now unviewable.

### Outcome

#### What did you expect?

I expected to play the video from element exactly as It was recorded on my phone without any cropping.

https://user-images.githubusercontent.com/16907963/210633152-cb645478-e5d3-4a2e-bb3c-f606e55d1d07.mp4

#### What happened instead?

The video is cropped and I cannot see the entire content of the recording (top and bottom). Although the thumbnail is correct in the video, the actual playback is not.

https://user-images.githubusercontent.com/16907963/210633338-09352726-89ca-4288-a61c-8624be4a7e72.mp4

### Your phone model

Pixel 6

### Operating system version

13

### Application version and app store

_No response_

### Homeserver

matrix.org

### Will you send logs?

No

### Are you willing to provide a PR?

No

|

1.0

|

Video playback is being cropped - ### Steps to reproduce

1. I send a screen recording video from my android phone to a matrix group chat.

2. The video is successfully sent and a thumbnail is generated

3. I click the video thumbnail in the timeline and the top and bottom of the video are now unviewable.

### Outcome

#### What did you expect?

I expected to play the video from element exactly as It was recorded on my phone without any cropping.

https://user-images.githubusercontent.com/16907963/210633152-cb645478-e5d3-4a2e-bb3c-f606e55d1d07.mp4

#### What happened instead?

The video is cropped and I cannot see the entire content of the recording (top and bottom). Although the thumbnail is correct in the video, the actual playback is not.

https://user-images.githubusercontent.com/16907963/210633338-09352726-89ca-4288-a61c-8624be4a7e72.mp4

### Your phone model

Pixel 6

### Operating system version

13

### Application version and app store

_No response_

### Homeserver

matrix.org

### Will you send logs?

No

### Are you willing to provide a PR?

No

|

defect

|

video playback is being cropped steps to reproduce i send a screen recording video from my android phone to a matrix group chat the video is successfully sent and a thumbnail is generated i click the video thumbnail in the timeline and the top and bottom of the video are now unviewable outcome what did you expect i expected to play the video from element exactly as it was recorded on my phone without any cropping what happened instead the video is cropped and i cannot see the entire content of the recording top and bottom although the thumbnail is correct in the video the actual playback is not your phone model pixel operating system version application version and app store no response homeserver matrix org will you send logs no are you willing to provide a pr no

| 1

|

77,139

| 3,506,264,806

|

IssuesEvent

|

2016-01-08 05:06:12

|

OregonCore/OregonCore

|

https://api.github.com/repos/OregonCore/OregonCore

|

opened

|

[Teron Gorefiend] Shadow of Death (BB #200)

|

migrated Priority: Medium Type: Bug

|

This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 15.06.2010 13:48:38 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** new

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/200

<hr>

He don't cast shadow of death.

|

1.0

|

[Teron Gorefiend] Shadow of Death (BB #200) - This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 15.06.2010 13:48:38 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** new

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/200

<hr>

He don't cast shadow of death.

|

non_defect

|

shadow of death bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state new direct link he don t cast shadow of death

| 0

|

721,580

| 24,831,859,064

|

IssuesEvent

|

2022-10-26 04:45:49

|

MelchiorDahrk/MMM2022

|

https://api.github.com/repos/MelchiorDahrk/MMM2022

|

closed

|

AA22_i20: Abandoned House

|

priority-3

|

Typical abandoned house. Plenty of cobwebs, ruins, maybe even an ancestral ghost.

|

1.0

|

AA22_i20: Abandoned House - Typical abandoned house. Plenty of cobwebs, ruins, maybe even an ancestral ghost.

|

non_defect

|

abandoned house typical abandoned house plenty of cobwebs ruins maybe even an ancestral ghost

| 0

|

24,076

| 3,881,245,168

|

IssuesEvent

|

2016-04-13 02:57:37

|

department-of-veterans-affairs/veterans-employment-center

|

https://api.github.com/repos/department-of-veterans-affairs/veterans-employment-center

|

opened

|

text wrap looks off on C&E page

|

design

|

@gnakm is the text on this page supposed to wrap like this? it's only going partially across the page.

|

1.0

|

text wrap looks off on C&E page - @gnakm is the text on this page supposed to wrap like this? it's only going partially across the page.

|

non_defect

|

text wrap looks off on c e page gnakm is the text on this page supposed to wrap like this it s only going partially across the page

| 0

|

53,872

| 13,262,409,212

|

IssuesEvent

|

2020-08-20 21:44:02

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

[phys-services] I3GeometryDecomposer doesn't know about all OMTypes (Trac #2222)

|

Migrated from Trac analysis defect

|

I3GeometryDecomposer issues errors when given a relativly modern GCD file with OMTypes Scintillator and IceAct. I am guessing this is not actually an error. Either the logging should be downgraded to debug or IceAct and Scintillator should be added to the switch statement.

https://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/phys-services/trunk/private/phys-services/I3GeometryDecomposer.cxx?rev=149765#L215

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2222">https://code.icecube.wisc.edu/projects/icecube/ticket/2222</a>, reported by kjmeagherand owned by mjl5147</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-05-07T14:57:57",

"_ts": "1557241077906143",

"description": "I3GeometryDecomposer issues errors when given a relativly modern GCD file with OMTypes Scintillator and IceAct. I am guessing this is not actually an error. Either the logging should be downgraded to debug or IceAct and Scintillator should be added to the switch statement.\n\n\nhttps://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/phys-services/trunk/private/phys-services/I3GeometryDecomposer.cxx?rev=149765#L215",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"time": "2018-12-06T19:49:44",

"component": "analysis",

"summary": "[phys-services] I3GeometryDecomposer doesn't know about all OMTypes",

"priority": "normal",

"keywords": "",

"milestone": "Vernal Equinox 2019",

"owner": "mjl5147",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

[phys-services] I3GeometryDecomposer doesn't know about all OMTypes (Trac #2222) - I3GeometryDecomposer issues errors when given a relativly modern GCD file with OMTypes Scintillator and IceAct. I am guessing this is not actually an error. Either the logging should be downgraded to debug or IceAct and Scintillator should be added to the switch statement.

https://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/phys-services/trunk/private/phys-services/I3GeometryDecomposer.cxx?rev=149765#L215

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2222">https://code.icecube.wisc.edu/projects/icecube/ticket/2222</a>, reported by kjmeagherand owned by mjl5147</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-05-07T14:57:57",

"_ts": "1557241077906143",

"description": "I3GeometryDecomposer issues errors when given a relativly modern GCD file with OMTypes Scintillator and IceAct. I am guessing this is not actually an error. Either the logging should be downgraded to debug or IceAct and Scintillator should be added to the switch statement.\n\n\nhttps://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/phys-services/trunk/private/phys-services/I3GeometryDecomposer.cxx?rev=149765#L215",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"time": "2018-12-06T19:49:44",

"component": "analysis",

"summary": "[phys-services] I3GeometryDecomposer doesn't know about all OMTypes",

"priority": "normal",

"keywords": "",

"milestone": "Vernal Equinox 2019",

"owner": "mjl5147",

"type": "defect"

}

```

</p>

</details>

|

defect

|

doesn t know about all omtypes trac issues errors when given a relativly modern gcd file with omtypes scintillator and iceact i am guessing this is not actually an error either the logging should be downgraded to debug or iceact and scintillator should be added to the switch statement migrated from json status closed changetime ts description issues errors when given a relativly modern gcd file with omtypes scintillator and iceact i am guessing this is not actually an error either the logging should be downgraded to debug or iceact and scintillator should be added to the switch statement n n n reporter kjmeagher cc resolution fixed time component analysis summary doesn t know about all omtypes priority normal keywords milestone vernal equinox owner type defect

| 1

|

203,904

| 15,394,591,044

|

IssuesEvent

|

2021-03-03 18:06:12

|

openservicemesh/osm

|

https://api.github.com/repos/openservicemesh/osm

|

opened

|

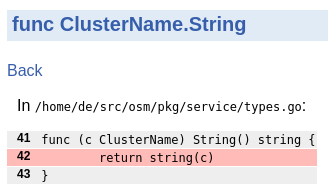

test: pkg/service ClusterName.String() method

|

tests

|

In `/home/de/src/osm/pkg/service/types.go` stringer does not have good unit test coverage.

It would be great to write a small test for this function.

|

1.0

|

test: pkg/service ClusterName.String() method - In `/home/de/src/osm/pkg/service/types.go` stringer does not have good unit test coverage.

It would be great to write a small test for this function.

|

non_defect

|

test pkg service clustername string method in home de src osm pkg service types go stringer does not have good unit test coverage it would be great to write a small test for this function

| 0

|

149,188

| 11,885,238,877

|

IssuesEvent

|

2020-03-27 19:11:59

|

bcgov/range-web

|

https://api.github.com/repos/bcgov/range-web

|

closed

|

RUP back button navigating to wrong page

|

bug ready to test

|

Refresh doesn’t fix it

Browser back arrow eventually gets me back to the list

|

1.0

|

RUP back button navigating to wrong page - Refresh doesn’t fix it

Browser back arrow eventually gets me back to the list

|

non_defect

|

rup back button navigating to wrong page refresh doesn’t fix it browser back arrow eventually gets me back to the list

| 0

|

774,484

| 27,199,262,592

|

IssuesEvent

|

2023-02-20 08:31:05

|

ballerina-platform/ballerina-dev-website

|

https://api.github.com/repos/ballerina-platform/ballerina-dev-website

|

closed

|

Need to Update the Observability Guide on Prometheus Tags

|

Priority/Highest Type/Improvement Points/0.5 Area/LearnPages Category/Content

|

**Description:**

Need to update the Observability guide on the list of tags, which need to be published when accessing Prometheus. This should be added under the "Creating your own dashboard" section.

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, Browser, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

1.0

|

Need to Update the Observability Guide on Prometheus Tags - **Description:**

Need to update the Observability guide on the list of tags, which need to be published when accessing Prometheus. This should be added under the "Creating your own dashboard" section.

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, Browser, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

non_defect

|

need to update the observability guide on prometheus tags description need to update the observability guide on the list of tags which need to be published when accessing prometheus this should be added under the creating your own dashboard section suggested labels suggested assignees affected product version os browser other environment details and versions steps to reproduce related issues

| 0

|

68,744

| 21,876,153,639

|

IssuesEvent

|

2022-05-19 10:19:03

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

F-Droid can't build - org.maplibre.gl:android-sdk pulls in non-FOSS Google Services

|

T-Defect

|

### Steps to reproduce

Try to build for fdroid... gradle dependencies for `fdroidRelease*` says:

```

+--- org.maplibre.gl:android-sdk:9.5.2

| +--- org.maplibre.gl:android-sdk-geojson:5.9.0

| | \--- com.google.code.gson:gson:2.8.6

| +--- com.mapbox.mapboxsdk:mapbox-android-gestures:0.7.0

| | +--- androidx.core:core:1.0.0 -> 1.7.0 (*)

| | \--- androidx.annotation:annotation:1.0.0 -> 1.3.0

| +--- org.maplibre.gl:android-sdk-turf:5.9.0

| | \--- org.maplibre.gl:android-sdk-geojson:5.9.0 (*)

| +--- androidx.annotation:annotation:1.0.0 -> 1.3.0

| +--- androidx.fragment:fragment:1.0.0 -> 1.4.1 (*)

| +--- com.squareup.okhttp3:okhttp:3.12.3 -> 4.9.3 (*)

| \--- com.google.android.gms:play-services-location:16.0.0

```

Since: https://github.com/vector-im/element-android/commit/824e713c51c5aa5b89a85ed5e4c105f5e76a4ba8

### Outcome

Can't build from FOSS deps

### Your phone model

_No response_

### Operating system version

_No response_

### Application version and app store

_No response_

### Homeserver

_No response_

### Will you send logs?

No

|

1.0

|

F-Droid can't build - org.maplibre.gl:android-sdk pulls in non-FOSS Google Services - ### Steps to reproduce

Try to build for fdroid... gradle dependencies for `fdroidRelease*` says:

```

+--- org.maplibre.gl:android-sdk:9.5.2

| +--- org.maplibre.gl:android-sdk-geojson:5.9.0

| | \--- com.google.code.gson:gson:2.8.6

| +--- com.mapbox.mapboxsdk:mapbox-android-gestures:0.7.0

| | +--- androidx.core:core:1.0.0 -> 1.7.0 (*)

| | \--- androidx.annotation:annotation:1.0.0 -> 1.3.0

| +--- org.maplibre.gl:android-sdk-turf:5.9.0

| | \--- org.maplibre.gl:android-sdk-geojson:5.9.0 (*)

| +--- androidx.annotation:annotation:1.0.0 -> 1.3.0

| +--- androidx.fragment:fragment:1.0.0 -> 1.4.1 (*)

| +--- com.squareup.okhttp3:okhttp:3.12.3 -> 4.9.3 (*)

| \--- com.google.android.gms:play-services-location:16.0.0

```

Since: https://github.com/vector-im/element-android/commit/824e713c51c5aa5b89a85ed5e4c105f5e76a4ba8

### Outcome

Can't build from FOSS deps

### Your phone model

_No response_

### Operating system version

_No response_

### Application version and app store

_No response_

### Homeserver

_No response_

### Will you send logs?

No

|

defect

|

f droid can t build org maplibre gl android sdk pulls in non foss google services steps to reproduce try to build for fdroid gradle dependencies for fdroidrelease says org maplibre gl android sdk org maplibre gl android sdk geojson com google code gson gson com mapbox mapboxsdk mapbox android gestures androidx core core androidx annotation annotation org maplibre gl android sdk turf org maplibre gl android sdk geojson androidx annotation annotation androidx fragment fragment com squareup okhttp com google android gms play services location since outcome can t build from foss deps your phone model no response operating system version no response application version and app store no response homeserver no response will you send logs no

| 1

|

11,516

| 7,583,149,638

|

IssuesEvent

|

2018-04-25 07:54:15

|

maowerner/sLapH-contractions

|

https://api.github.com/repos/maowerner/sLapH-contractions

|

closed

|

Phase factor in momentum uses exp too often

|

performance

|

**Branch**: phase-factor

- [x] Compare performance to old version

- [x] Do some correctness test with a larger lattice.

---

The momentum phase factor in the VdaggerV calls the `exp` function for each site on the lattice. Similar to the FFT one might be able to rewrite `exp(-i p x) = exp(-i p_x)^x` and then only call the `exp` function once. To go through the lattice, one just to multiply the phase factor with the cached value.

The `exp` function seems to use around 50 cycles ([source](https://streamhpc.com/blog/2012-07-16/how-expensive-is-an-operation-on-a-cpu/)).

|

True

|

Phase factor in momentum uses exp too often - **Branch**: phase-factor

- [x] Compare performance to old version

- [x] Do some correctness test with a larger lattice.

---

The momentum phase factor in the VdaggerV calls the `exp` function for each site on the lattice. Similar to the FFT one might be able to rewrite `exp(-i p x) = exp(-i p_x)^x` and then only call the `exp` function once. To go through the lattice, one just to multiply the phase factor with the cached value.

The `exp` function seems to use around 50 cycles ([source](https://streamhpc.com/blog/2012-07-16/how-expensive-is-an-operation-on-a-cpu/)).

|

non_defect

|

phase factor in momentum uses exp too often branch phase factor compare performance to old version do some correctness test with a larger lattice the momentum phase factor in the vdaggerv calls the exp function for each site on the lattice similar to the fft one might be able to rewrite exp i p x exp i p x x and then only call the exp function once to go through the lattice one just to multiply the phase factor with the cached value the exp function seems to use around cycles

| 0

|

19,617

| 3,228,437,930

|

IssuesEvent

|

2015-10-12 02:06:09

|

essandess/etv-comskip

|

https://api.github.com/repos/essandess/etv-comskip

|

closed

|

installer sets comskip directory to be mode 700 for user 501

|

auto-migrated Priority-Medium Type-Defect

|

```

i don't have an eyetv (just want to run comskip directly). I suppose that

might have confused the installer for some reason, but the comskip directory is

improperly permitted.

thanks for going to the trouble to put this all together.

version 2.0.2-10.6

sh-3.2# pwd

/Library/Application Support/ETVComskip

sh-3.2# ls -al

total 112

drwxr-xr-x@ 14 danno staff 476 Apr 3 11:29 .

drwxrwxr-x 27 root admin 918 Apr 3 11:28 ..

d-wx-wx-wt@ 2 501 staff 68 Jun 1 2010 .Trashes

-rw-r--r--@ 1 501 staff 975 Jun 1 2010 AUTHORS

-rw-r--r--@ 1 501 staff 1310 Jun 1 2010 CHANGELOG

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 ComSkipper.app

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 Install ETVComskip.app

-rw-r--r--@ 1 501 staff 17987 Jun 1 2010 LICENSE

-rw-r--r--@ 1 501 staff 18300 Jun 1 2010 LICENSE.rtf

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 MarkCommercials.app

-rw-r--r--@ 1 501 staff 4315 Jun 1 2010 README-EyeTV3

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 UnInstall ETVComskip.app

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 Wine.app

drwx------@ 13 501 staff 442 Jun 1 2010 comskip

```

Original issue reported on code.google.com by `danpri...@gmail.com` on 3 Apr 2011 at 3:50

|

1.0

|

installer sets comskip directory to be mode 700 for user 501 - ```

i don't have an eyetv (just want to run comskip directly). I suppose that

might have confused the installer for some reason, but the comskip directory is

improperly permitted.

thanks for going to the trouble to put this all together.

version 2.0.2-10.6

sh-3.2# pwd

/Library/Application Support/ETVComskip

sh-3.2# ls -al

total 112

drwxr-xr-x@ 14 danno staff 476 Apr 3 11:29 .

drwxrwxr-x 27 root admin 918 Apr 3 11:28 ..

d-wx-wx-wt@ 2 501 staff 68 Jun 1 2010 .Trashes

-rw-r--r--@ 1 501 staff 975 Jun 1 2010 AUTHORS

-rw-r--r--@ 1 501 staff 1310 Jun 1 2010 CHANGELOG

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 ComSkipper.app

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 Install ETVComskip.app

-rw-r--r--@ 1 501 staff 17987 Jun 1 2010 LICENSE

-rw-r--r--@ 1 501 staff 18300 Jun 1 2010 LICENSE.rtf

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 MarkCommercials.app

-rw-r--r--@ 1 501 staff 4315 Jun 1 2010 README-EyeTV3

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 UnInstall ETVComskip.app

drwxr-xr-x@ 3 501 staff 102 Jun 1 2010 Wine.app

drwx------@ 13 501 staff 442 Jun 1 2010 comskip

```

Original issue reported on code.google.com by `danpri...@gmail.com` on 3 Apr 2011 at 3:50

|

defect

|

installer sets comskip directory to be mode for user i don t have an eyetv just want to run comskip directly i suppose that might have confused the installer for some reason but the comskip directory is improperly permitted thanks for going to the trouble to put this all together version sh pwd library application support etvcomskip sh ls al total drwxr xr x danno staff apr drwxrwxr x root admin apr d wx wx wt staff jun trashes rw r r staff jun authors rw r r staff jun changelog drwxr xr x staff jun comskipper app drwxr xr x staff jun install etvcomskip app rw r r staff jun license rw r r staff jun license rtf drwxr xr x staff jun markcommercials app rw r r staff jun readme drwxr xr x staff jun uninstall etvcomskip app drwxr xr x staff jun wine app drwx staff jun comskip original issue reported on code google com by danpri gmail com on apr at

| 1

|

30,006

| 13,194,186,617

|

IssuesEvent

|

2020-08-13 16:23:35

|

terraform-providers/terraform-provider-aws

|

https://api.github.com/repos/terraform-providers/terraform-provider-aws

|

closed

|

aws s3 lifecycle bucket wants to set expiration to null when abort multipart upload is present

|

service/s3

|

_This issue was originally opened by @mpodber1971 as hashicorp/terraform#25788. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of the issue is below._

<hr>

when i add a lifecycle policy to delete abort multipart uploads and do NOT include an expiration on an AWS s3 bucket, terraform wants to set the expiration to null. It is misleading and a little concerning as to whether I accidentally implemented a lifecycle policy to delete our production data.

If the multi part upload isn't present, then terraform acts as expected.

I have attached the plan before apply, aws cli showing lifecycle post apply and a second terraform plan showing that it wants to apply these changes.

[test-bucket-post-apply-new-plan.log](https://github.com/hashicorp/terraform/files/5052441/test-bucket-post-apply-new-plan.log)

[test-bucket-lifecycle-post-apply.log](https://github.com/hashicorp/terraform/files/5052443/test-bucket-lifecycle-post-apply.log)

[test-bucket-pre-apply.log](https://github.com/hashicorp/terraform/files/5052444/test-bucket-pre-apply.log)

|

1.0

|

aws s3 lifecycle bucket wants to set expiration to null when abort multipart upload is present - _This issue was originally opened by @mpodber1971 as hashicorp/terraform#25788. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of the issue is below._

<hr>

when i add a lifecycle policy to delete abort multipart uploads and do NOT include an expiration on an AWS s3 bucket, terraform wants to set the expiration to null. It is misleading and a little concerning as to whether I accidentally implemented a lifecycle policy to delete our production data.

If the multi part upload isn't present, then terraform acts as expected.

I have attached the plan before apply, aws cli showing lifecycle post apply and a second terraform plan showing that it wants to apply these changes.

[test-bucket-post-apply-new-plan.log](https://github.com/hashicorp/terraform/files/5052441/test-bucket-post-apply-new-plan.log)

[test-bucket-lifecycle-post-apply.log](https://github.com/hashicorp/terraform/files/5052443/test-bucket-lifecycle-post-apply.log)

[test-bucket-pre-apply.log](https://github.com/hashicorp/terraform/files/5052444/test-bucket-pre-apply.log)

|

non_defect

|

aws lifecycle bucket wants to set expiration to null when abort multipart upload is present this issue was originally opened by as hashicorp terraform it was migrated here as a result of the the original body of the issue is below when i add a lifecycle policy to delete abort multipart uploads and do not include an expiration on an aws bucket terraform wants to set the expiration to null it is misleading and a little concerning as to whether i accidentally implemented a lifecycle policy to delete our production data if the multi part upload isn t present then terraform acts as expected i have attached the plan before apply aws cli showing lifecycle post apply and a second terraform plan showing that it wants to apply these changes

| 0

|

29,677

| 5,814,009,261

|

IssuesEvent

|

2017-05-05 01:09:05

|

cakephp/cakephp

|

https://api.github.com/repos/cakephp/cakephp

|

closed

|

Default value for __x() with context not returned if non default language translation is empty

|

Defect i18n On hold

|

This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.4.3

I am not sure if this is desired behavior or not. I have pot and po files generated by Cake's i18n shell.

I have following translation in non default language `pl`

```

msgctxt "Navigation menu"

msgid "Search"

msgstr ""

```

And now, behavior is different depending on language beeing default or not.

`__x("Navigation menu", "Search")` gives me an empty string in language `pl`. If i switch to any other language, so the default lang would be picked up (default.pot in my case) default value "Search" is returned as I would expect it to be.

Defining translation for example like this

```

msgctxt "Navigation menu"

msgid "Search"

msgstr "test test"

```

will result in returning "test test" for `pl` and "Search" for default language.

|

1.0

|

Default value for __x() with context not returned if non default language translation is empty - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.4.3

I am not sure if this is desired behavior or not. I have pot and po files generated by Cake's i18n shell.

I have following translation in non default language `pl`

```

msgctxt "Navigation menu"

msgid "Search"

msgstr ""

```

And now, behavior is different depending on language beeing default or not.

`__x("Navigation menu", "Search")` gives me an empty string in language `pl`. If i switch to any other language, so the default lang would be picked up (default.pot in my case) default value "Search" is returned as I would expect it to be.

Defining translation for example like this

```

msgctxt "Navigation menu"

msgid "Search"

msgstr "test test"

```

will result in returning "test test" for `pl` and "Search" for default language.

|

defect

|

default value for x with context not returned if non default language translation is empty this is a multiple allowed bug enhancement feature discussion rfc cakephp version i am not sure if this is desired behavior or not i have pot and po files generated by cake s shell i have following translation in non default language pl msgctxt navigation menu msgid search msgstr and now behavior is different depending on language beeing default or not x navigation menu search gives me an empty string in language pl if i switch to any other language so the default lang would be picked up default pot in my case default value search is returned as i would expect it to be defining translation for example like this msgctxt navigation menu msgid search msgstr test test will result in returning test test for pl and search for default language

| 1

|

244,858

| 20,725,254,533

|

IssuesEvent

|

2022-03-14 00:23:51

|

DnD-Montreal/session-tome

|

https://api.github.com/repos/DnD-Montreal/session-tome

|

opened

|

Accept: League Admin Event Control

|

acceptance test

|

## Description

Acceptance Test for #363

<!-- Provide a general summary of the test in the title above -->

[UAT Environment](https://session-tome.triassi.ca) for executing the acceptance flow

<!-- See #439 for automation of this flow -->

## Acceptance Flow

<!-- Describe the step by step procedure of the acceptance test -->

1. Log in to the admin account

2. navigate to the admin page via /admin

3. Navigate to the events page via the panel on the left

4. Attempt to create a league event

5. Verify that the event is created

6. Attempt to edit a league event

7. Verify that the changes you have made are saved to the event

8. Attempt to delete a league event.

9. Verify that the event is deleted.

|

1.0

|

Accept: League Admin Event Control - ## Description

Acceptance Test for #363

<!-- Provide a general summary of the test in the title above -->

[UAT Environment](https://session-tome.triassi.ca) for executing the acceptance flow

<!-- See #439 for automation of this flow -->

## Acceptance Flow

<!-- Describe the step by step procedure of the acceptance test -->

1. Log in to the admin account

2. navigate to the admin page via /admin

3. Navigate to the events page via the panel on the left

4. Attempt to create a league event

5. Verify that the event is created

6. Attempt to edit a league event

7. Verify that the changes you have made are saved to the event

8. Attempt to delete a league event.

9. Verify that the event is deleted.

|

non_defect

|

accept league admin event control description acceptance test for for executing the acceptance flow acceptance flow log in to the admin account navigate to the admin page via admin navigate to the events page via the panel on the left attempt to create a league event verify that the event is created attempt to edit a league event verify that the changes you have made are saved to the event attempt to delete a league event verify that the event is deleted

| 0

|

6,516

| 2,610,256,033

|

IssuesEvent

|

2015-02-26 19:21:49

|

chrsmith/dsdsdaadf

|

https://api.github.com/repos/chrsmith/dsdsdaadf

|

opened

|

深圳激光祛除痘坑多少钱

|

auto-migrated Priority-Medium Type-Defect

|

```

深圳激光祛除痘坑多少钱【深圳韩方科颜全国热线400-869-1818��

�24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以��

�国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品�

��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反

弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国��

�专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸�

��的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:30

|

1.0

|

深圳激光祛除痘坑多少钱 - ```

深圳激光祛除痘坑多少钱【深圳韩方科颜全国热线400-869-1818��

�24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以��

�国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品�

��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反

弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国��

�专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸�

��的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:30

|

defect

|

深圳激光祛除痘坑多少钱 深圳激光祛除痘坑多少钱【 �� � 】深圳韩方科颜专业祛痘连锁机构,机构以�� �国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品� ��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反 弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国�� �专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸� ��的痘痘。 original issue reported on code google com by szft com on may at

| 1

|

270,276

| 20,596,669,333

|

IssuesEvent

|

2022-03-05 16:06:16

|

42-AI/SentimentalBB

|

https://api.github.com/repos/42-AI/SentimentalBB

|

closed

|

docs (contrib): add agilmet as contributor

|

documentation

|

# 📖 Add a contributor

Add my name and 42 login to the README.md

# ✔️ Definition of done

Name and login added to README.md

|

1.0

|

docs (contrib): add agilmet as contributor - # 📖 Add a contributor

Add my name and 42 login to the README.md

# ✔️ Definition of done

Name and login added to README.md

|

non_defect

|

docs contrib add agilmet as contributor 📖 add a contributor add my name and login to the readme md ✔️ definition of done name and login added to readme md

| 0

|

56,213

| 14,981,573,301

|

IssuesEvent

|

2021-01-28 15:00:01

|

department-of-veterans-affairs/va.gov-team

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-team

|

closed

|

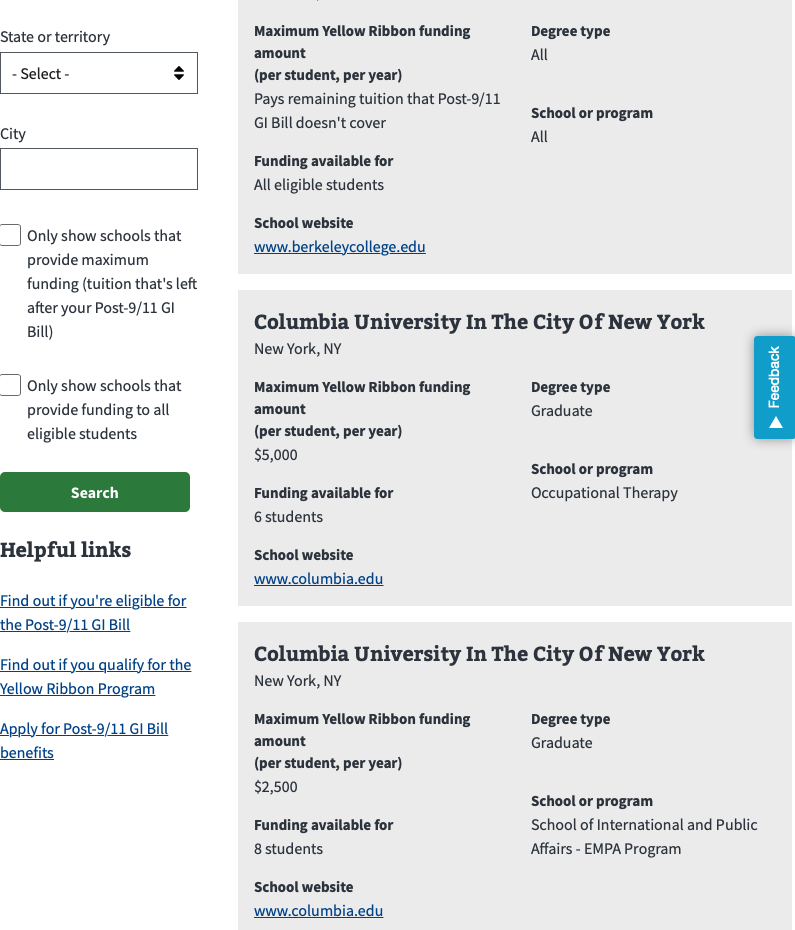

508-defect-3 [COGNITION, SEMANTIC MARKUP]: individual search items SHOULD read semantically

|

508-defect-3 508-issue-cognition 508-issue-semantic-markup 508/Accessibility dt-yellow-ribbon-schools-search vsa vsa-decision-tools

|

# [508-defect-3](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-3)

**Feedback framework**

- **❗️ Must** for if the feedback must be applied

- **⚠️Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

Each search result **should** have semantic structure, associating labels with values.

For the current code, the `p` elements are not associated with their values, and break out as if a separate sentence for screen readers. Non-sighted users do not get the same semantic content that sighted users get from the visual design.

Due to limitations of Formation, the design may need to be modified.

Recording of screen reader experience in Slack comment: https://dsva.slack.com/archives/C52CL1PKQ/p1588718480342600?thread_ts=1588710896.325800&cid=C52CL1PKQ

Consider adjusting the code for "(per student, per year)" so that the CSS has it inline, rather than breaking at some viewports, as shown in this screenshot.

## Point of Contact

**VFS Point of Contact:** Jennifer

## Acceptance Criteria

As a screen reader user, I want to understand the context of the search results content so that I may locate the search result that best meets my needs.

## Acceptance Criteria

- [ ] Defect is remediated

- [ ] Any changes that impact the design intent are reviewed/approved by Design team

- [ ] Changes are peer reviewed by Engineering

- [ ] Changes are reviewed on Staging by Product Owner and Product Manager

- [ ] Changes are merged and deployed to Production

## Potential fix

> **Please note:** If the `display` property is changed in the CSS it changes the semantics. For example, if a `ul` gets `display:block`, it removes the "list" semantics. [Source: "Fixing" Lists](https://www.scottohara.me/blog/2019/01/12/lists-and-safari.html)

### ~~Current~~ Previous code

```html

<div class="search-results vads-u-margin-top--2">