| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

758,022

| 26,540,641,917

|

IssuesEvent

|

2023-01-19 18:58:14

|

linkerd/linkerd2

|

https://api.github.com/repos/linkerd/linkerd2

|

closed

|

Dropped request with HTTP 400 and "GET request with illegal URI" in logs

|

area/proxy priority/P1 bug needs/repro

|

### What is the issue?

The application runs with linkerd-proxy injected and currently connects to another microservice that does not (yet) run linkerd-proxy, so mTLS encryption is not involved.

When the app starts it makes HTTP connections. The last three are:

1. HTTP GET http://2.2.2.2:9200/ - with response HTTP 200 in app log

2. HTTP POST http://2.2.2.2:9200/products/_open?xxxx - with response HTTP 200 in app log

3. HTTP GET http://2.2.2.2:9200/products - with response HTTP 400 Bad request in app log

All in one TCP stream.

A tcpdump capture shows that requests 1 and 2 leave the pod but request 3 does not: there is no HTTP GET http://1.2.3.4:9200/products in the capture.

This traffic works without any problems when linkerd-proxy is not injected.

From the logs (below) it looks like this `linkerd_proxy_http::h1: GET request with illegal URI: products` is a possible problem. But why?

### How can it be reproduced?

Inject linkerd-proxy into the microservice.

### Logs, error output, etc

Relevant debug logs from linkerd-proxy, with privacy changes:

```

[ 323.241735s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_service_profiles::client: Resolved profile

[ 323.241754s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_app_outbound::switch_logical: Profile describes a logical service

[ 323.241759s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_app_outbound::http::detect: Attempting HTTP protocol detection

[ 323.275412s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_detect: DetectResult protocol=Some(Http1) elapsed=33.62501ms

[ 323.275442s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Creating HTTP service version=Http1

[ 323.275462s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_cache: Caching new value key=Logical { protocol: Http1, profile: .., logical_addr: LogicalAddr(svcname.dev.svc.cluster.local:9200) }

[ 323.275508s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Handling as HTTP version=Http1

[ 323.275575s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_service_profiles::http::service: Updating HTTP routes routes=0

[ 323.275602s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_service_profiles::split: Updating targets=[Target { addr: svcname.dev.svc.cluster.local:9200, weight: 1 }]

[ 323.275618s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_api_resolve::resolve: Resolving dst=svcname.dev.svc.cluster.local:9200 context={"ns":"dev", "nodeName":"aks-microservice-123456-vmss000002"}

[ 323.275653s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_stack::failfast: HTTP Logical service has become unavailable

[ 323.275659s] DEBUG ThreadId(01) evict{key=Logical { protocol: Http1, profile: .., logical_addr: LogicalAddr(svcname.dev.svc.cluster.local:9200) }}: linkerd_cache: Awaiting idleness

[ 323.278074s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_stack::failfast: HTTP Balancer service has become unavailable

[ 323.278108s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_api_resolve::resolve: Add endpoints=2

[ 323.278122s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_discover::from_resolve: Changed change=Insert(2.2.2.3:9200, Endpoint { addr: Remote(ServerAddr(2.2.2.3:9200)), tls: None(NotProvidedByServiceDiscovery), metadata: Metadata { labels: {"namespace": "dev", "pod": "podname-1", "service": "podname", "serviceaccount": "default", "statefulset": "podname"}, protocol_hint: Unknown, opaque_transport_port: None, identity: None, authority_override: None }, logical_addr: Some(LogicalAddr(svcname.dev.svc.cluster.local:9200)), protocol: Http1, opaque_protocol: false })

[ 323.278154s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_discover::from_resolve: Changed change=Insert(2.2.2.4:9200, Endpoint { addr: Remote(ServerAddr(2.2.2.4:9200)), tls: None(NotProvidedByServiceDiscovery), metadata: Metadata { labels: {"namespace": "dev", "pod": "podname-0", "service": "podname", "serviceaccount": "default", "statefulset": "podname"}, protocol_hint: Unknown, opaque_transport_port: None, identity: None, authority_override: None }, logical_addr: Some(LogicalAddr(svcname.dev.svc.cluster.local:9200)), protocol: Http1, opaque_protocol: false })

[ 323.278182s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Disconnected backoff=false

[ 323.278195s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Creating service backoff=false

[ 323.278201s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_proxy_http::client: Building HTTP client settings=Http1

[ 323.278208s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Connected

[ 323.278218s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Disconnected backoff=false

[ 323.278226s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Creating service backoff=false

[ 323.278229s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_proxy_http::client: Building HTTP client settings=Http1

[ 323.278233s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Connected

[ 323.278262s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::client: method=GET uri=http://svcname:9200/ version=HTTP/1.1

[ 323.278275s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::client: headers={"qwerty", "content-length": "0", "host": "podname:9200", "user-agent": "asdf", "l5d-dst-canonical": "svcname.dev.svc.cluster.local:9200"}

[ 323.278289s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::h1: Caching new client use_absolute_form=false

[ 323.278327s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_tls::client: Peer does not support TLS reason=not_provided_by_service_discovery

[ 323.278340s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_transport::connect: Connecting server.addr=2.2.2.3:9200

[ 323.279156s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_transport::connect: Connected local.addr=1.1.1.1:37482 keepalive=Some(10s)

[ 323.279173s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_transport_metrics::client: client connection open

[ 323.320453s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::client: method=POST uri=http://svcname:9200/products/_open?xxx version=HTTP/1.1

[ 323.320481s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::client: headers={"qwerty", "content-length": "0", "host": "podname:9200", "user-agent": "asdf", "l5d-dst-canonical": "svcname.dev.svc.cluster.local:9200"}

[ 323.320493s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::h1: Caching new client use_absolute_form=false

[ 323.320544s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_tls::client: Peer does not support TLS reason=not_provided_by_service_discovery

[ 323.320553s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_transport::connect: Connecting server.addr=2.2.2.4:9200

[ 323.322329s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_transport::connect: Connected local.addr=1.1.1.1:60988 keepalive=Some(10s)

[ 323.322345s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_transport_metrics::client: client connection open

[ 323.330389s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: The client is shutting down the connection res=Ok(())

[ 323.330436s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}: linkerd_app_core::serve: Connection closed

[ 323.330467s] DEBUG ThreadId(01) evict{key=Accept { orig_dst: OrigDstAddr(2.2.2.2:9200), protocol: () }}: linkerd_cache: Awaiting idleness

[ 323.337492s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}: linkerd_detect: DetectResult protocol=Some(Http1) elapsed=2.483888ms

[ 323.337527s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Creating HTTP service version=Http1

[ 323.337556s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Handling as HTTP version=Http1

[ 323.337582s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::h1: GET request with illegal URI: products

[ 323.343875s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: The client is shutting down the connection res=Ok(())

[ 323.343915s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}: linkerd_app_core::serve: Connection closed

```

### output of `linkerd check -o short`

```

Status check results are √

```

### Environment

- Kubernetes 1.23.8

- AKS

- Ubuntu 18.04.6 LTS 5.4.0-1089-azure containerd://1.5.11+azure-2

- Linkerd 2.12.1

### Possible solution

As you can see in the logs:

- The first two HTTP requests are in one TCP stream (source port 40514).

- The third, problematic HTTP request is in a new TCP stream (source port 40520).

- Is `linkerd_proxy_http::h1: GET request with illegal URI: products` the possible problem?
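One possible reading of that log line, offered as a hypothesis rather than a confirmed diagnosis: the URI is logged as `products`, with no leading slash. HTTP/1.1 (RFC 9112, section 3.2) accepts a request-target only in origin-form (starting with `/`), absolute-form (a full URI), authority-form (for CONNECT) or asterisk-form (for OPTIONS). The sketch below is a simplified classifier written for illustration; the function name `request_target_form` is hypothetical, and this is not linkerd-proxy's actual validation code.

```python
from urllib.parse import urlsplit


def request_target_form(target: str, method: str = "GET") -> str:
    """Classify an HTTP/1.1 request-target (simplified view of RFC 9112, section 3.2)."""
    if target == "*":
        # asterisk-form is only meaningful for a server-wide OPTIONS request
        return "asterisk-form" if method == "OPTIONS" else "invalid"
    if target.startswith("/"):
        # origin-form: absolute path, optionally followed by "?query"
        return "origin-form"
    parts = urlsplit(target)
    if parts.scheme and parts.netloc:
        # absolute-form: scheme and authority are both present
        return "absolute-form"
    if method == "CONNECT" and ":" in target:
        # authority-form: host:port, used only with CONNECT
        return "authority-form"
    return "invalid"


# Request-targets modeled on the three requests in this report
for t in ("/", "/products/_open?xxxx", "http://2.2.2.2:9200/products", "products"):
    print(f"{t!r}: {request_target_form(t)}")
```

Under this simplified check, only the bare `products` target is classified as invalid. If the application really sends `GET products HTTP/1.1` on the wire, capturing the traffic between the application and the proxy (rather than the traffic leaving the pod) should show it.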

### Additional context

_No response_

### Would you like to work on fixing this bug?

_No response_

|

1.0

|

Dropped request with HTTP 400 and "GET request with illegal URI" in logs - ### What is the issue?

The application runs with linkerd-proxy injected and currently connects to another microservice that does not (yet) run linkerd-proxy, so mTLS encryption is not involved.

When the app starts it makes HTTP connections. The last three are:

1. HTTP GET http://2.2.2.2:9200/ - with response HTTP 200 in app log

2. HTTP POST http://2.2.2.2:9200/products/_open?xxxx - with response HTTP 200 in app log

3. HTTP GET http://2.2.2.2:9200/products - with response HTTP 400 Bad request in app log

All in one TCP stream.

A tcpdump capture shows that requests 1 and 2 leave the pod but request 3 does not: there is no HTTP GET http://1.2.3.4:9200/products in the capture.

This traffic works without any problems when linkerd-proxy is not injected.

From the logs (below) it looks like this `linkerd_proxy_http::h1: GET request with illegal URI: products` is a possible problem. But why?

### How can it be reproduced?

Inject linkerd-proxy into the microservice.

### Logs, error output, etc

Relevant debug logs from linkerd-proxy, with privacy changes:

```

[ 323.241735s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_service_profiles::client: Resolved profile

[ 323.241754s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_app_outbound::switch_logical: Profile describes a logical service

[ 323.241759s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_app_outbound::http::detect: Attempting HTTP protocol detection

[ 323.275412s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}: linkerd_detect: DetectResult protocol=Some(Http1) elapsed=33.62501ms

[ 323.275442s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Creating HTTP service version=Http1

[ 323.275462s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_cache: Caching new value key=Logical { protocol: Http1, profile: .., logical_addr: LogicalAddr(svcname.dev.svc.cluster.local:9200) }

[ 323.275508s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Handling as HTTP version=Http1

[ 323.275575s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_service_profiles::http::service: Updating HTTP routes routes=0

[ 323.275602s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_service_profiles::split: Updating targets=[Target { addr: svcname.dev.svc.cluster.local:9200, weight: 1 }]

[ 323.275618s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_api_resolve::resolve: Resolving dst=svcname.dev.svc.cluster.local:9200 context={"ns":"dev", "nodeName":"aks-microservice-123456-vmss000002"}

[ 323.275653s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}: linkerd_stack::failfast: HTTP Logical service has become unavailable

[ 323.275659s] DEBUG ThreadId(01) evict{key=Logical { protocol: Http1, profile: .., logical_addr: LogicalAddr(svcname.dev.svc.cluster.local:9200) }}: linkerd_cache: Awaiting idleness

[ 323.278074s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_stack::failfast: HTTP Balancer service has become unavailable

[ 323.278108s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_api_resolve::resolve: Add endpoints=2

[ 323.278122s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_discover::from_resolve: Changed change=Insert(2.2.2.3:9200, Endpoint { addr: Remote(ServerAddr(2.2.2.3:9200)), tls: None(NotProvidedByServiceDiscovery), metadata: Metadata { labels: {"namespace": "dev", "pod": "podname-1", "service": "podname", "serviceaccount": "default", "statefulset": "podname"}, protocol_hint: Unknown, opaque_transport_port: None, identity: None, authority_override: None }, logical_addr: Some(LogicalAddr(svcname.dev.svc.cluster.local:9200)), protocol: Http1, opaque_protocol: false })

[ 323.278154s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}: linkerd_proxy_discover::from_resolve: Changed change=Insert(2.2.2.4:9200, Endpoint { addr: Remote(ServerAddr(2.2.2.4:9200)), tls: None(NotProvidedByServiceDiscovery), metadata: Metadata { labels: {"namespace": "dev", "pod": "podname-0", "service": "podname", "serviceaccount": "default", "statefulset": "podname"}, protocol_hint: Unknown, opaque_transport_port: None, identity: None, authority_override: None }, logical_addr: Some(LogicalAddr(svcname.dev.svc.cluster.local:9200)), protocol: Http1, opaque_protocol: false })

[ 323.278182s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Disconnected backoff=false

[ 323.278195s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Creating service backoff=false

[ 323.278201s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_proxy_http::client: Building HTTP client settings=Http1

[ 323.278208s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}: linkerd_reconnect: Connected

[ 323.278218s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Disconnected backoff=false

[ 323.278226s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Creating service backoff=false

[ 323.278229s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_proxy_http::client: Building HTTP client settings=Http1

[ 323.278233s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}: linkerd_reconnect: Connected

[ 323.278262s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::client: method=GET uri=http://svcname:9200/ version=HTTP/1.1

[ 323.278275s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::client: headers={"qwerty", "content-length": "0", "host": "podname:9200", "user-agent": "asdf", "l5d-dst-canonical": "svcname.dev.svc.cluster.local:9200"}

[ 323.278289s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_http::h1: Caching new client use_absolute_form=false

[ 323.278327s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_tls::client: Peer does not support TLS reason=not_provided_by_service_discovery

[ 323.278340s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_transport::connect: Connecting server.addr=2.2.2.3:9200

[ 323.279156s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_proxy_transport::connect: Connected local.addr=1.1.1.1:37482 keepalive=Some(10s)

[ 323.279173s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.3:9200}:http1: linkerd_transport_metrics::client: client connection open

[ 323.320453s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::client: method=POST uri=http://svcname:9200/products/_open?xxx version=HTTP/1.1

[ 323.320481s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::client: headers={"qwerty", "content-length": "0", "host": "podname:9200", "user-agent": "asdf", "l5d-dst-canonical": "svcname.dev.svc.cluster.local:9200"}

[ 323.320493s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_http::h1: Caching new client use_absolute_form=false

[ 323.320544s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_tls::client: Peer does not support TLS reason=not_provided_by_service_discovery

[ 323.320553s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_transport::connect: Connecting server.addr=2.2.2.4:9200

[ 323.322329s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_proxy_transport::connect: Connected local.addr=1.1.1.1:60988 keepalive=Some(10s)

[ 323.322345s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}:logical{dst=svcname.dev.svc.cluster.local:9200}:concrete{addr=svcname.dev.svc.cluster.local:9200}:endpoint{server.addr=2.2.2.4:9200}:http1: linkerd_transport_metrics::client: client connection open

[ 323.330389s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: The client is shutting down the connection res=Ok(())

[ 323.330436s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40514}: linkerd_app_core::serve: Connection closed

[ 323.330467s] DEBUG ThreadId(01) evict{key=Accept { orig_dst: OrigDstAddr(2.2.2.2:9200), protocol: () }}: linkerd_cache: Awaiting idleness

[ 323.337492s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}: linkerd_detect: DetectResult protocol=Some(Http1) elapsed=2.483888ms

[ 323.337527s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Creating HTTP service version=Http1

[ 323.337556s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: Handling as HTTP version=Http1

[ 323.337582s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::h1: GET request with illegal URI: products

[ 323.343875s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}:proxy{addr=2.2.2.2:9200}:http{v=1.x}: linkerd_proxy_http::server: The client is shutting down the connection res=Ok(())

[ 323.343915s] DEBUG ThreadId(01) outbound:accept{client.addr=1.1.1.1:40520}: linkerd_app_core::serve: Connection closed

```

### output of `linkerd check -o short`

```

Status check results are √

```

### Environment

- Kubernetes 1.23.8

- AKS

- Ubuntu 18.04.6 LTS 5.4.0-1089-azure containerd://1.5.11+azure-2

- Linkerd 2.12.1

### Possible solution

As you can see in the logs:

- The first two HTTP requests are in one TCP stream (source port 40514).

- The third, problematic HTTP request is in a new TCP stream (source port 40520).

- Is `linkerd_proxy_http::h1: GET request with illegal URI: products` the possible problem?

### Additional context

_No response_

### Would you like to work on fixing this bug?

_No response_

|

non_defect

|

dropped request with http and get request with illegal uri in logs what is the issue application is configured with linkerd proxy and currently connects to other microservice which runs without linkerd proxy yet so mtls encryption is not involved when the app starts it makes http connections the last three are http get with response http in app log http post with response http in app log http get with response http bad request in app log all in one tcp stream taking tcpdump shows that requests are leaving the pod but request is not so no http get in tcpdump capture this traffic works without any problems without linkerd proxy injected from the logs below it looks like this linkerd proxy http get request with illegal uri products is a possible problem but why how can it be reproduced inject linkerd proxy into the microservice logs error output etc relevant debug logs from linkerd proxy with privacy changes debug threadid outbound accept client addr proxy addr linkerd service profiles client resolved profile debug threadid outbound accept client addr proxy addr linkerd app outbound switch logical profile describes a logical service debug threadid outbound accept client addr proxy addr linkerd app outbound http detect attempting http protocol detection debug threadid outbound accept client addr proxy addr linkerd detect detectresult protocol some elapsed debug threadid outbound accept client addr proxy addr http v x linkerd proxy http server creating http service version debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local linkerd cache caching new value key logical protocol profile logical addr logicaladdr svcname dev svc cluster local debug threadid outbound accept client addr proxy addr http v x linkerd proxy http server handling as http version debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local linkerd service profiles http service updating http routes routes 
debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local linkerd service profiles split updating targets debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local linkerd proxy api resolve resolve resolving dst svcname dev svc cluster local context ns dev nodename aks microservice debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local linkerd stack failfast http logical service has become unavailable debug threadid evict key logical protocol profile logical addr logicaladdr svcname dev svc cluster local linkerd cache awaiting idleness debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local linkerd stack failfast http balancer service has become unavailable debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local linkerd proxy api resolve resolve add endpoints debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local linkerd proxy discover from resolve changed change insert endpoint addr remote serveraddr tls none notprovidedbyservicediscovery metadata metadata labels namespace dev pod podname service podname serviceaccount default statefulset podname protocol hint unknown opaque transport port none identity none authority override none logical addr some logicaladdr svcname dev svc cluster local protocol opaque protocol false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local linkerd proxy discover from resolve changed change insert endpoint addr remote serveraddr tls none notprovidedbyservicediscovery metadata 
metadata labels namespace dev pod podname service podname serviceaccount default statefulset podname protocol hint unknown opaque transport port none identity none authority override none logical addr some logicaladdr svcname dev svc cluster local protocol opaque protocol false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect disconnected backoff false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect creating service backoff false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client building http client settings debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect connected debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect disconnected backoff false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect creating service backoff false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client building http client settings debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd reconnect connected 
debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client method get uri version http debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client headers qwerty content length host podname user agent asdf dst canonical svcname dev svc cluster local debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http caching new client use absolute form false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd tls client peer does not support tls reason not provided by service discovery debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy transport connect connecting server addr debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy transport connect connected local addr keepalive some debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd transport metrics client client connection open debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client method post uri version http debug threadid outbound accept client addr proxy addr http v x logical dst 
svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http client headers qwerty content length host podname user agent asdf dst canonical svcname dev svc cluster local debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy http caching new client use absolute form false debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd tls client peer does not support tls reason not provided by service discovery debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy transport connect connecting server addr debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd proxy transport connect connected local addr keepalive some debug threadid outbound accept client addr proxy addr http v x logical dst svcname dev svc cluster local concrete addr svcname dev svc cluster local endpoint server addr linkerd transport metrics client client connection open debug threadid outbound accept client addr proxy addr http v x linkerd proxy http server the client is shutting down the connection res ok debug threadid outbound accept client addr linkerd app core serve connection closed debug threadid evict key accept orig dst origdstaddr protocol linkerd cache awaiting idleness debug threadid outbound accept client addr proxy addr linkerd detect detectresult protocol some elapsed debug threadid outbound accept client addr proxy addr http v x linkerd proxy http server creating http service version debug threadid outbound accept client addr proxy addr http 
v x linkerd proxy http server handling as http version debug threadid outbound accept client addr proxy addr http v x linkerd proxy http get request with illegal uri products debug threadid outbound accept client addr proxy addr http v x linkerd proxy http server the client is shutting down the connection res ok debug threadid outbound accept client addr linkerd app core serve connection closed output of linkerd check o short status check results are √ environment kubernetes aks ubuntu lts azure containerd azure linkerd possible solution as you can see in the logs first two http request are in one tcp stream source port third problematic http request is in new tcp stream source port linkerd proxy http get request with illegal uri products as possible problem additional context no response would you like to work on fixing this bug no response

| 0

|

61,738

| 15,057,869,140

|

IssuesEvent

|

2021-02-03 22:23:51

|

grafana/grafana

|

https://api.github.com/repos/grafana/grafana

|

closed

|

Grafana MSI Installer does not upgrade cleanly

|

type/build-packaging

|

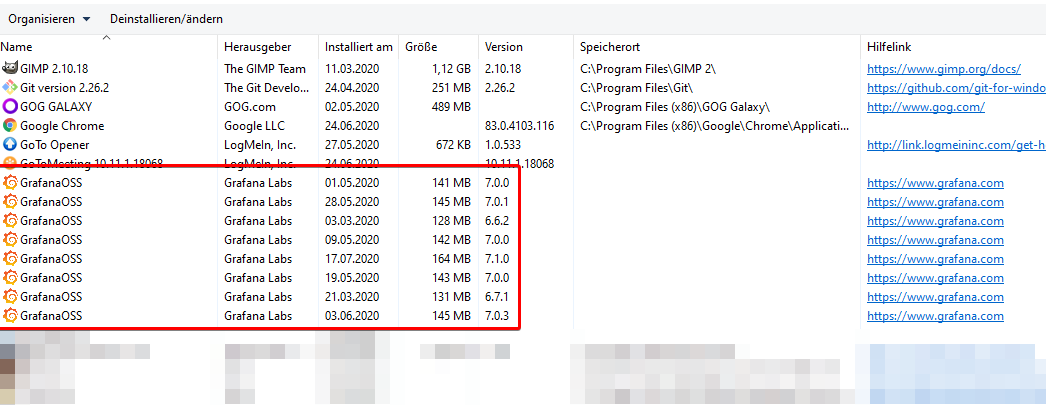

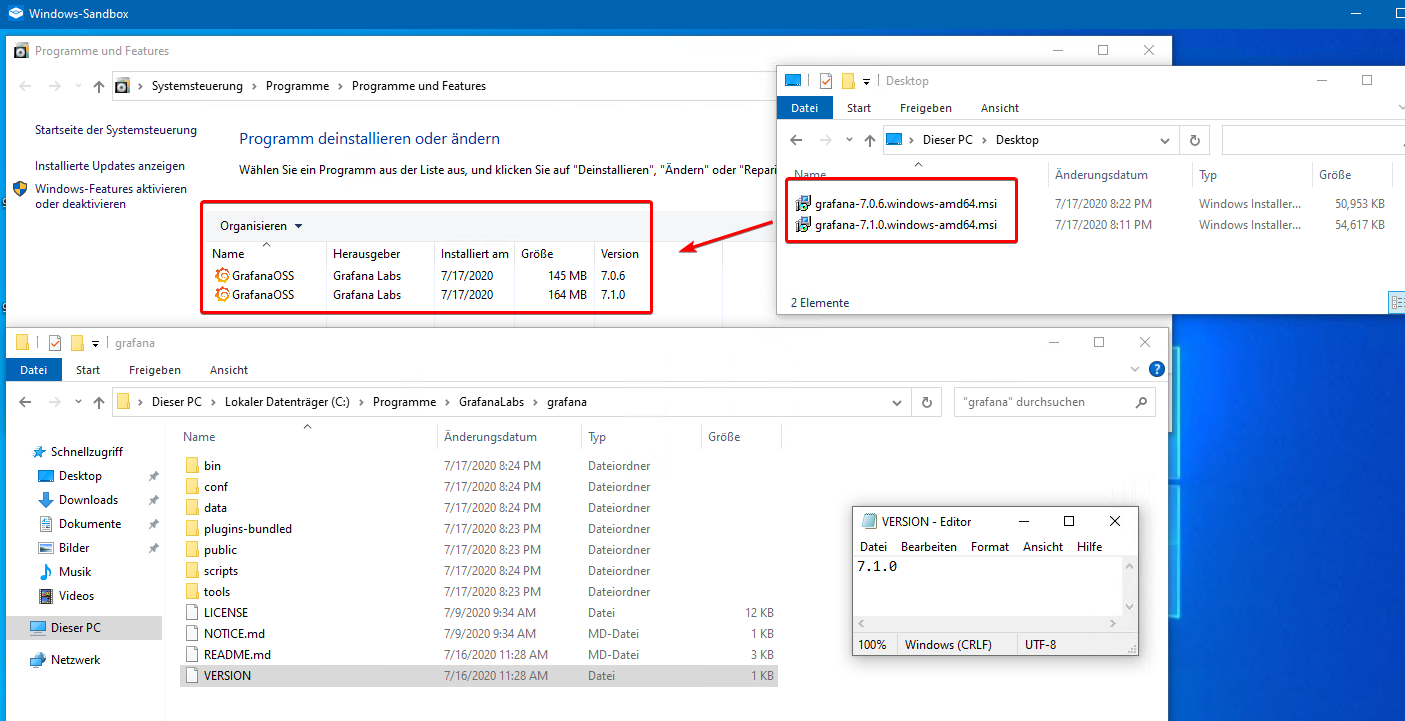

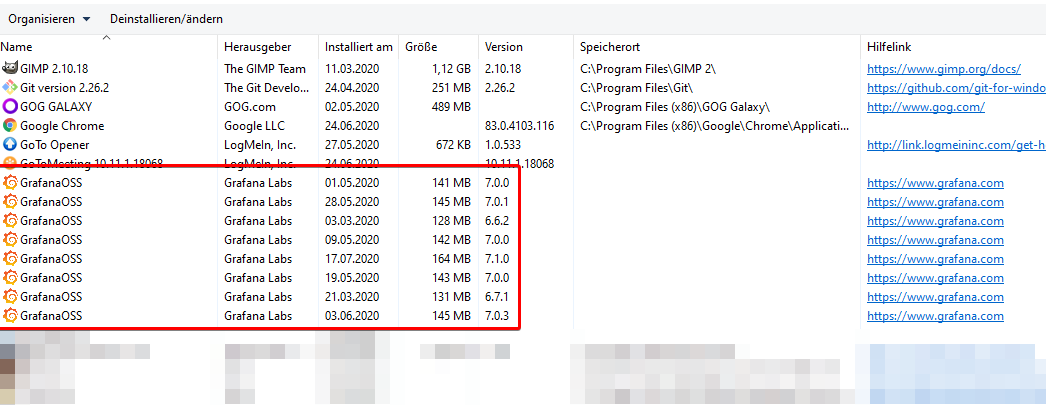

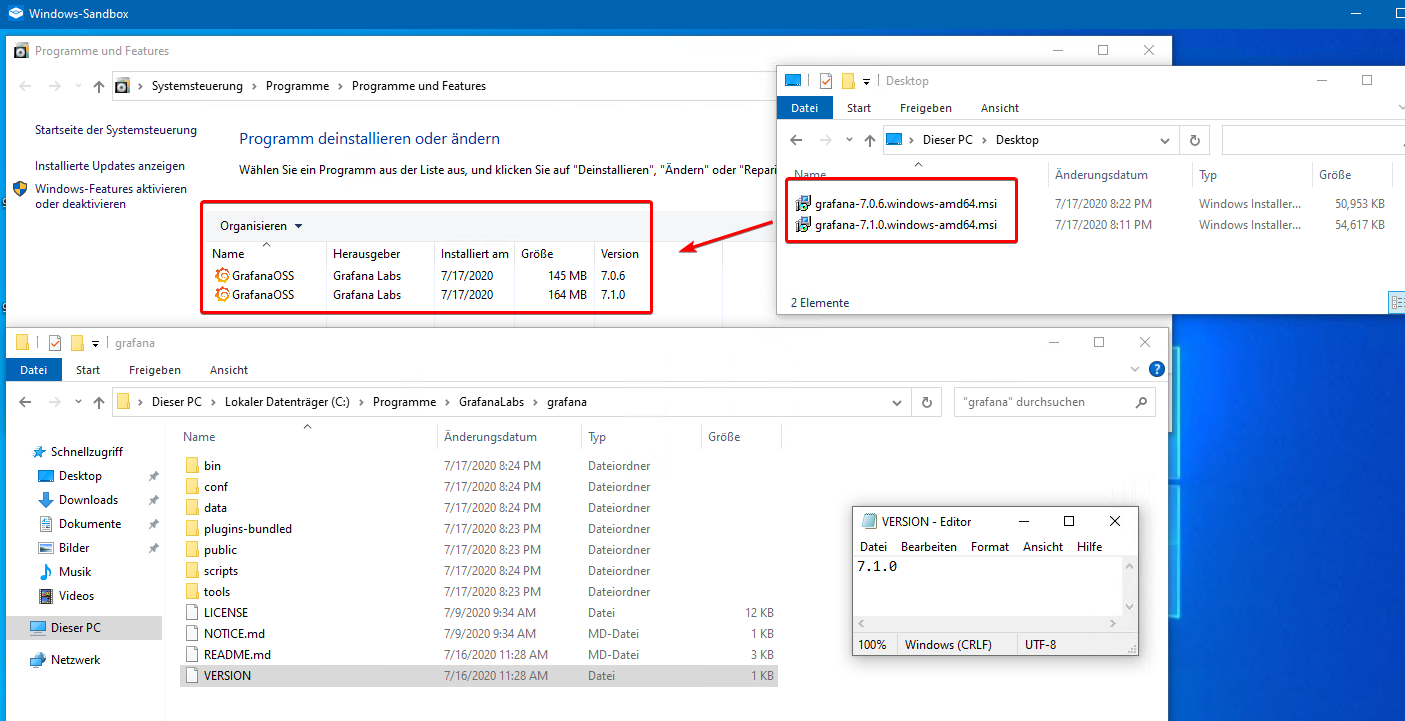

**What happened**:

After installing and UPDATING Grafana (OSS) on Windows several times using the MSI installer, I noticed that it does not cleanly upgrade the old version but instead installs each version separately in Windows, although it overwrites the installation directory.

Also the `INSTALLDIR` (*Speicherort*) information is missing from the MSI installation (column is empty).

> **⚠ Uninstalling an "older" version via the Windows software panel will UNINSTALL THE LATEST VERSION**

**What you expected to happen**:

The MSI installer should have a unique product GUID (?) and be able to detect that it's running an upgrade.

**How to reproduce it (as minimally and precisely as possible)**:

- Install `grafana-7.0.6.windows-amd64.msi` all default settings

- Install `grafana-7.1.0.windows-amd64.msi` all default settings

Now you have two entries in the apps list, easily reproducible in e.g. Windows Sandbox:

**Environment**:

- Grafana version: 6.6.2 till 7.1.0 ongoing

- OS Grafana is installed on: Windows 10 x64 2004
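
The duplicate entries described above are consistent with how Windows Installer relates packages: a new package normally replaces an old one only when both share the same UpgradeCode while their ProductCodes differ. The following is a minimal sketch of that rule, not Grafana's actual packaging logic. `MsiPackage`, `classify_install`, and the GUID strings are hypothetical, and the real behaviour also depends on the package's Upgrade table and the `RemoveExistingProducts` action.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class MsiPackage:
    product_code: str          # expected to change with every major version
    upgrade_code: str          # expected to stay constant for the product family
    version: Tuple[int, ...]   # e.g. (7, 1, 0)


def classify_install(installed: List[MsiPackage], new: MsiPackage) -> str:
    """Roughly classify how installing `new` relates to already installed packages."""
    # Only packages sharing the UpgradeCode are treated as related products.
    related = [p for p in installed if p.upgrade_code == new.upgrade_code]
    if not related:
        # Nothing related is found, so the new package sits next to the old entry.
        return "fresh install"
    if any(p.product_code == new.product_code for p in related):
        return "same product (repair or minor update)"
    if all(p.version < new.version for p in related):
        return "major upgrade (older entries removed)"
    return "downgrade or mixed versions"


# Hypothetical GUIDs, for illustration only.
old = MsiPackage("{PRODUCT-706}", "{FAMILY-A}", (7, 0, 6))
same_family = MsiPackage("{PRODUCT-710}", "{FAMILY-A}", (7, 1, 0))
other_family = MsiPackage("{PRODUCT-710}", "{FAMILY-B}", (7, 1, 0))

print(classify_install([old], same_family))
print(classify_install([old], other_family))
```

If each release were built with a fresh UpgradeCode, the second case would apply and every version would appear as its own entry. The issue itself does not confirm that this is the cause.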

|

1.0

|

Grafana MSI Installer does not upgrade cleanly - **What happened**:

After installing and UPDATING Grafana (OSS) on Windows several times using the MSI installer, I noticed that it does not cleanly upgrade the old version but instead installs each version separately in Windows, although it overwrites the installation directory.

Also the `INSTALLDIR` (*Speicherort*) information is missing from the MSI installation (column is empty).

> **⚠ Uninstalling an "older" version via the Windows software panel will UNINSTALL THE LATEST VERSION**

**What you expected to happen**:

The MSI installer should have a unique product GUID (?) and be able to detect that it's running an upgrade.

**How to reproduce it (as minimally and precisely as possible)**:

- Install `grafana-7.0.6.windows-amd64.msi` all default settings

- Install `grafana-7.1.0.windows-amd64.msi` all default settings

Now you have two entries in the apps list, easily reproducible in e.g. Windows Sandbox:

**Environment**:

- Grafana version: 6.6.2 till 7.1.0 ongoing

- OS Grafana is installed on: Windows 10 x64 2004

|

non_defect

|

grafana msi installer does not upgrade cleanly what happened after installing and updating grafana oss on windows several times using the msi installer i noticed it does not cleanly upgrade the old version but rather installs each version seperately in windows although its overriding the installation directory also the installdir speicherort information is missing from the msi installation column is empty ⚠ uninstalling an older version via windows software panel will uninstall the latest version what you expected to happen the msi installer should have a unique product guid and be able to detect its running an upgrade how to reproduce it as minimally and precisely as possible install grafana windows msi all default settings install grafana windows msi all default settings now you got two entries in apps list easily reproducible in e g windows sandbox environment grafana version till ongoing os grafana is installed on windows

| 0

|

19,717

| 3,248,233,683

|

IssuesEvent

|

2015-10-17 04:25:00

|

jimradford/superputty

|

https://api.github.com/repos/jimradford/superputty

|

closed

|

Shortcuts

|

auto-migrated Priority-Medium Type-Defect

|

```

Hello,

shortcuts are sometimes acting very weird:

A -> A [OK]

L Ctrl -> control+ControlKey

L Ctrl + A - > Ctrl+A [OK]

Alt -> Alt+Menu

R Alt -> Ctrl+Alt+Menu

R Ctrl -> control+ControlKey

L Ctrl+Alt -> Ctrl+Alt+Menu

L Ctrl+Alt+A -> Ctrl+Alt+Menu

I suppose that

A will give me A

CTRL+A -> CTRL+A

ALT+A -> ALT+A

CTRL+ALT+A -> CTRL+ALT+A

I can't map some combinations like this.

(1.4.0.4)

```

Original issue reported on code.google.com by `xsoft...@gmail.com` on 20 Jun 2014 at 2:13
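
The report above suggests that the shortcut editor records the modifier's own key code (`ControlKey`, `Menu`) as if it were the main key. A minimal sketch of one way to format a combination while ignoring modifier-only key codes follows. It is an illustration under stated assumptions, not SuperPuTTY's code: `format_shortcut` and `MODIFIER_ONLY_KEYS` are hypothetical names, and the key names are taken from the output quoted in the report.

```python
# Key codes that only represent a modifier being held down.
# "ControlKey" and "Menu" appear in the report; "ShiftKey" is assumed by analogy.
MODIFIER_ONLY_KEYS = {"ControlKey", "ShiftKey", "Menu"}


def format_shortcut(modifiers, key):
    """Build a display string such as 'Ctrl+Alt+A' from held modifiers and a key code."""
    # Emit modifiers in a fixed order so equal combinations render identically.
    parts = [name for name in ("Ctrl", "Alt", "Shift") if name in modifiers]
    # Skip the key code when it is just the modifier itself.
    if key not in MODIFIER_ONLY_KEYS:
        parts.append(key)
    return "+".join(parts)


def is_complete_shortcut(key):
    """A shortcut is only complete once a non-modifier key has been pressed."""
    return key not in MODIFIER_ONLY_KEYS


print(format_shortcut({"Ctrl", "Alt"}, "A"))
print(format_shortcut({"Ctrl"}, "ControlKey"))
```

Under this scheme an editor would keep waiting for input while `is_complete_shortcut` is false, so pressing Ctrl and Alt before A would no longer end the capture at `Ctrl+Alt+Menu`.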

|

1.0

|

Shortcuts - ```

Hello,

shortcuts are sometimes acting very weird:

A -> A [OK]

L Ctrl -> control+ControlKey

L Ctrl + A - > Ctrl+A [OK]

Alt -> Alt+Menu

R Alt -> Ctrl+Alt+Menu

R Ctrl -> control+ControlKey

L Ctrl+Alt -> Ctrl+Alt+Menu

L Ctrl+Alt+A -> Ctrl+Alt+Menu

I suppose that

A will give me A

CTRL+A -> CTRL+A

ALT+A -> ALT+A

CTRL+ALT+A -> CTRL+ALT+A

I can't map some combinations like this.

(1.4.0.4)

```

Original issue reported on code.google.com by `xsoft...@gmail.com` on 20 Jun 2014 at 2:13

|

defect

|

shourtcuts hello shourttucts are sometimes acting very wierd a a l ctrl control controlkey l ctrl a ctrl a alt alt menu r alt ctrl alt menu r ctrl control controlkey l ctrl alt ctrl alt menu l ctrl alt a ctrl alt menu i support stat a will give me a ctrl a ctrl a alt a alt a ctrl alt a ctrl alt a i cant map some combination like this original issue reported on code google com by xsoft gmail com on jun at

| 1

|

199,908

| 15,786,230,989

|

IssuesEvent

|

2021-04-01 17:26:58

|

FirebaseExtended/flutterfire

|

https://api.github.com/repos/FirebaseExtended/flutterfire

|

closed

|

[firebase_crashlytics] Example doesn't match the docs

|

plugin: crashlytics type: bug type: documentation

|

The docs [here](https://github.com/FirebaseExtended/flutterfire/tree/master/packages/firebase_crashlytics#use-the-plugin) say we just set `FlutterError.onError` and then call `runApp()`, later the docs say:

>If you want to catch errors that occur in runZoned, you can supply Crashlytics.instance.recordError to the onError parameter

But the example [here ](https://github.com/FirebaseExtended/flutterfire/blob/b49837250c619e7363463db732261737e093557d/packages/firebase_crashlytics/example/lib/main.dart#L20) does:

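```
void main() {  // enclosing function, implied by the trailing brace in the excerpt
  // Run the app inside a zone so uncaught asynchronous errors reach Crashlytics.
  runZoned<Future<void>>(() async {
    runApp(MyApp());
  }, onError: Crashlytics.instance.recordError);
}
```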

This is confusing, as there's no explanation of the benefits of wrapping `runApp` in a `runZoned`.

Also, the example doesn't show `recordError`, just `log`, which doesn't fully match the Android (`logException`) or the iOS (`recordError` with other parameters) docs, so this should be better explained as well.

|

1.0

|

[firebase_crashlytics] Example doesn't match the docs - The docs [here](https://github.com/FirebaseExtended/flutterfire/tree/master/packages/firebase_crashlytics#use-the-plugin) say we just set `FlutterError.onError` and then call `runApp()`, later the docs say:

>If you want to catch errors that occur in runZoned, you can supply Crashlytics.instance.recordError to the onError parameter

But the example [here ](https://github.com/FirebaseExtended/flutterfire/blob/b49837250c619e7363463db732261737e093557d/packages/firebase_crashlytics/example/lib/main.dart#L20) does:

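```
void main() {  // enclosing function, implied by the trailing brace in the excerpt
  // Run the app inside a zone so uncaught asynchronous errors reach Crashlytics.
  runZoned<Future<void>>(() async {
    runApp(MyApp());
  }, onError: Crashlytics.instance.recordError);
}
```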

This is confusing, as there's no explanation of the benefits of wrapping `runApp` in a `runZoned`.

Also, the example doesn't show `recordError`, just `log`, which doesn't fully match the Android (`logException`) or the iOS (`recordError` with other parameters) docs, so this should be better explained as well.

|

non_defect

|

example doesn t match the docs the docs say we just set fluttererror onerror and then call runapp later the docs say if you want to catch errors that occur in runzoned you can supply crashlytics instance recorderror to the onerror parameter but the example does runzoned async runapp myapp onerror crashlytics instance recorderror which is confusing as there s no explanation on the benefits of wrapping runapp in a runzoned also the examples doesn t show recorderror just log which doesn t fully match the android logexception or the ios recorderror with other parameters docs so this should be better explained as well

| 0

|

9,686

| 2,615,165,850

|

IssuesEvent

|

2015-03-01 06:46:11

|

chrsmith/reaver-wps

|

https://api.github.com/repos/chrsmith/reaver-wps

|

opened

|

Waiting for Beacon from BSSID: No obvious cause?

|

auto-migrated Priority-Triage Type-Defect

|

```

Answer the following questions for every issue submitted:

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.)

v1.4

1. What operating system are you using (Linux is the only supported OS)?

Ubuntu 12.04

2. Is your wireless card in monitor mode (yes/no)?

Yes

3. What is the signal strength of the Access Point you are trying to crack?

-12

4. What is the manufacturer and model # of the device you are trying to

crack?

2WIRE249 2701 HG-D

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b BSSID -c 9 -e 2WIRE249

6. Please describe what you think the issue is.

Process sticks on "Waiting for beacon from BSSID" after the above string is

entered. As noted, the string is entered while in monitor mode & the signal

strength is well above -50, so these should not be the cause. I ran through all

the support pages & comments to no avail. Found no evidence this router isn't

supported.

7. Paste the output from Reaver below.

[+] Switching mon0 to channel 9

[+] Waiting for beacon from BSSID

That's all I got. If more info is needed, lemme know.

```

Original issue reported on code.google.com by `bettysue...@gmail.com` on 12 Apr 2013 at 3:16

|

1.0

|

Waiting for Beacon from BSSID: No obvious cause? - ```

Answer the following questions for every issue submitted:

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.)

v1.4

1. What operating system are you using (Linux is the only supported OS)?

Ubuntu 12.04

2. Is your wireless card in monitor mode (yes/no)?

Yes

3. What is the signal strength of the Access Point you are trying to crack?

-12

4. What is the manufacturer and model # of the device you are trying to

crack?

2WIRE249 2701 HG-D

5. What is the entire command line string you are supplying to reaver?

reaver -i mon0 -b BSSID -c 9 -e 2WIRE249

6. Please describe what you think the issue is.

Process sticks on "Waiting for beacon from BSSID" after the above string is

entered. As noted, the string is entered while in monitor mode & the signal

strength is well above -50, so these should not be the cause. I ran through all

the support pages & comments to no avail. Found no evidence this router isn't

supported.

7. Paste the output from Reaver below.

[+] Switching mon0 to channel 9

[+] Waiting for beacon from BSSID

That's all I got. If more info is needed, lemme know.

```

Original issue reported on code.google.com by `bettysue...@gmail.com` on 12 Apr 2013 at 3:16

|

defect

|

waiting for beacon from bssid no obvious cause answer the following questions for every issue submitted what version of reaver are you using only defects against the latest version will be considered what operating system are you using linux is the only supported os ubuntu is your wireless card in monitor mode yes no yes what is the signal strength of the access point you are trying to crack what is the manufacturer and model of the device you are trying to crack hg d what is the entire command line string you are supplying to reaver reaver i b bssid c e please describe what you think the issue is process sticks on waiting for beacon from bssid after the above string is entered as noted the string is entered while in monitor mode the signal strength is well above so these should not be the cause i ran through all the support pages comments to no avail found no evidence this router isn t supported paste the output from reaver below switching to channel waiting for beacon from bssid that s all i got if more info is needed lemme know original issue reported on code google com by bettysue gmail com on apr at

| 1

|

36,118

| 7,866,943,517

|

IssuesEvent

|

2018-06-23 01:14:45

|

StrikeNP/trac_test

|

https://api.github.com/repos/StrikeNP/trac_test

|

closed

|

Troubleshooting the differences between DYCOMS II RF02 with K&K microphysics vs Morrison microphysics (Trac #450)

|

Migrated from Trac clubb_src defect dschanen@uwm.edu

|

**Problem Description**

While exploring issues with simulating the ARM 97 case in https://github.com/larson-group/climate_process_team/issues/53 we found that DYCOMS II RF02 differs sharply in the rain fields when using Morrison and Khairoutdinov Kogan microphysics. This seems problematic, since with local formulation (l_local_kk = .true.) for a marine Sc cloud the two should produce very similar fields. I've tried a few experiments thus far and come to a few conclusions:

* The simplest case is to simply compare Morrison and Khairoutdinov Kogan with Nc fixed and using the local formulation.

* Looking at past plots on my protected page I would conclude this problem has existed with CLUBB-Morrison for a year or more (perhaps always?). See, e.g., [this plot](http://www.larson-group.com/dschanen/protected/micro_compare_plot/).

* In the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as 10^-7^ on the first timestep and the Morrison simulation seems shifted down in altitude. Figuring out why that is seems like it should be easy to isolate.

* When I manually print out values for rrainm_auto and PRC (the Morrison variable for rain water mixing ratio autoconversion) they appear to be in agreement up to round off and on the correct levels. I have no budgets for the Morrison code, but rrainm_cond and rrainm_accr are zero in the K&K simulation. The difference seems to be something in the rrainm_mc budget other than autoconversion.
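
The apparent downward shift of the Morrison fields could be quantified by comparing the two vertical profiles at different level offsets. The following is a minimal sketch under stated assumptions: `best_level_shift` is a hypothetical helper that is not part of CLUBB, both profiles are assumed to be on the same vertical grid, and the numbers are synthetic rather than model output.

```python
def best_level_shift(reference, candidate, max_shift=3):
    """Return (shift, mean_abs_diff) such that candidate[k + shift] best matches reference[k].

    A negative shift means the candidate profile sits at lower level indices,
    i.e. it looks shifted down relative to the reference.
    """
    n = len(reference)
    best = None
    for shift in range(-max_shift, max_shift + 1):
        # Compare only the levels where both profiles are defined after shifting.
        pairs = [
            (reference[k], candidate[k + shift])
            for k in range(n)
            if 0 <= k + shift < n
        ]
        if not pairs:
            continue
        err = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if best is None or err < best[1]:
            best = (shift, err)
    return best


# Synthetic profiles: the second one is the first moved down by one level.
kk_profile = [0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0]
morrison_profile = [0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0]

print(best_level_shift(kk_profile, morrison_profile))
```

A non-zero best shift with a small residual would point to a level-indexing mismatch between the two schemes, while a zero shift with a large residual would point to a genuine difference in the process rates.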

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_explicit_ta_configs.maff)

[plot_new_pdf_config_1_plot_2.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_config_1_plot_2.maff)

[plot_combo_pdf_run_3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_combo_pdf_run_3.maff)

[plot_input_fields_rtp3_thlp3_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_input_fields_rtp3_thlp3_1.maff)

[plot_new_pdf_20180522_test_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_20180522_test_1.maff)

[plot_attempts_8_10.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempts_8_10.maff)

[plot_attempt_8_only.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempt_8_only.maff)

[plot_beta_1p3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3.maff)

[plot_beta_1p3_all.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3_all.maff)

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/450

```json

{

"status": "closed",

"changetime": "2011-08-25T18:17:17",

"description": "'''Problem Description'''\nWhile exploring issues with simulating the ARM 97 case in climate_process_team:ticket:53 we found that DYCOMS II RF02 differs sharply in the rain fields when using Morrison and Khairoutdinov Kogan microphysics. This seems problematic, since with local formulation (l_local_kk = .true.) for a marine Sc cloud the two should produce very similar fields. I've tried a few experiments thus far and come to a few conclusions:\n\n * The simplest case is to simply compare Morrison and Khairoutdinov Kogan with Nc fixed and using the local formulation. \n * Looking at past plots on my protected page I would conclude this problem has existed with CLUBB-Morrison for a year or more (perhaps always?). See for e.g. [http://www.larson-group.com/dschanen/protected/micro_compare_plot/ this plot].\n * In the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as 10^-7^ on the first timestep and the Morrison simulation seems shifted down in altitude. Figuring out why that is seems like it should be easy to isolate.\n * When I manually print out values for rrainm_auto and PRC (the Morrison variable for rain water mixing ratio autoconversion) they appear to be in agreement up to round off and on the correct levels. I have no budgets for the Morrison code, but rrainm_cond and rrainm_accr are zero in the K&K simulation. The difference seems to be something in the rrainm_mc budget other than autoconversion.\n\n",

"reporter": "dschanen@uwm.edu",

"cc": "vlarson@uwm.edu, roehl@uwm.edu",

"resolution": "wontfix",

"_ts": "1314296237000000",

"component": "clubb_src",

"summary": "Troubleshooting the differences between DYCOMS II RF02 with K&K microphysics vs Morrison microphysics",

"priority": "major",

"keywords": "",

"time": "2011-08-15T19:21:46",

"milestone": "",

"owner": "dschanen@uwm.edu",

"type": "defect"

}

```

|

1.0

|

Troubleshooting the differences between DYCOMS II RF02 with K&K microphysics vs Morrison microphysics (Trac #450) - **Problem Description**

While exploring issues with simulating the ARM 97 case in https://github.com/larson-group/climate_process_team/issues/53 we found that DYCOMS II RF02 differs sharply in the rain fields when using Morrison and Khairoutdinov Kogan microphysics. This seems problematic, since with local formulation (l_local_kk = .true.) for a marine Sc cloud the two should produce very similar fields. I've tried a few experiments thus far and come to a few conclusions:

* The simplest case is to simply compare Morrison and Khairoutdinov Kogan with Nc fixed and using the local formulation.

* Looking at past plots on my protected page I would conclude this problem has existed with CLUBB-Morrison for a year or more (perhaps always?). See, e.g., [this plot](http://www.larson-group.com/dschanen/protected/micro_compare_plot/).

* In the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as 10^-7^ on the first timestep and the Morrison simulation seems shifted down in altitude. Figuring out why that is seems like it should be easy to isolate.

* When I manually print out values for rrainm_auto and PRC (the Morrison variable for rain water mixing ratio autoconversion) they appear to be in agreement up to round off and on the correct levels. I have no budgets for the Morrison code, but rrainm_cond and rrainm_accr are zero in the K&K simulation. The difference seems to be something in the rrainm_mc budget other than autoconversion.

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_explicit_ta_configs.maff)

[plot_new_pdf_config_1_plot_2.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_config_1_plot_2.maff)

[plot_combo_pdf_run_3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_combo_pdf_run_3.maff)

[plot_input_fields_rtp3_thlp3_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_input_fields_rtp3_thlp3_1.maff)

[plot_new_pdf_20180522_test_1.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_new_pdf_20180522_test_1.maff)

[plot_attempts_8_10.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempts_8_10.maff)

[plot_attempt_8_only.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_attempt_8_only.maff)

[plot_beta_1p3.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3.maff)

[plot_beta_1p3_all.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_beta_1p3_all.maff)

Migrated from http://carson.math.uwm.edu/trac/clubb/ticket/450

```json

{

"status": "closed",

"changetime": "2011-08-25T18:17:17",

"description": "'''Problem Description'''\nWhile exploring issues with simulating the ARM 97 case in climate_process_team:ticket:53 we found that DYCOMS II RF02 differs sharply in the rain fields when using Morrison and Khairoutdinov Kogan microphysics. This seems problematic, since with local formulation (l_local_kk = .true.) for a marine Sc cloud the two should produce very similar fields. I've tried a few experiments thus far and come to a few conclusions:\n\n * The simplest case is to simply compare Morrison and Khairoutdinov Kogan with Nc fixed and using the local formulation. \n * Looking at past plots on my protected page I would conclude this problem has existed with CLUBB-Morrison for a year or more (perhaps always?). See for e.g. [http://www.larson-group.com/dschanen/protected/micro_compare_plot/ this plot].\n * In the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as 10^-7^ on the first timestep and the Morrison simulation seems shifted down in altitude. Figuring out why that is seems like it should be easy to isolate.\n * When I manually print out values for rrainm_auto and PRC (the Morrison variable for rain water mixing ratio autoconversion) they appear to be in agreement up to round off and on the correct levels. I have no budgets for the Morrison code, but rrainm_cond and rrainm_accr are zero in the K&K simulation. The difference seems to be something in the rrainm_mc budget other than autoconversion.\n\n",

"reporter": "dschanen@uwm.edu",

"cc": "vlarson@uwm.edu, roehl@uwm.edu",

"resolution": "wontfix",

"_ts": "1314296237000000",

"component": "clubb_src",

"summary": "Troubleshooting the differences between DYCOMS II RF02 with K&K microphysics vs Morrison microphysics",

"priority": "major",

"keywords": "",

"time": "2011-08-15T19:21:46",

"milestone": "",

"owner": "dschanen@uwm.edu",

"type": "defect"

}

```

|

defect

|

troubleshooting the differences between dycoms ii with k k microphysics vs morrison microphysics trac problem description while exploring issues with simulating the arm case in we found that dycoms ii differs sharply in the rain fields when using morrison and khairoutdinov kogan microphysics this seems problematic since with local formulation l local kk true for a marine sc cloud the two should produce very similar fields i ve tried a few experiments thus far and come to a few conclusions the simplest case is to simply compare morrison and khairoutdinov kogan with nc fixed and using the local formulation looking at past plots on my protected page i would conclude this problem has existed with clubb morrison for a year or more perhaps always see for e g in the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as on the first timestep and the morrison simulation seems shifted down in altitude figuring out why that is seems like it should be easy to isolate when i manually print out values for rrainm auto and prc the morrison variable for rain water mixing ratio autoconversion they appear to be in agreement up to round off and on the correct levels i have no budgets for the morrison code but rrainm cond and rrainm accr are zero in the k k simulation the difference seems to be something in the rrainm mc budget other than autoconversion attachments migrated from json status closed changetime description problem description nwhile exploring issues with simulating the arm case in climate process team ticket we found that dycoms ii differs sharply in the rain fields when using morrison and khairoutdinov kogan microphysics this seems problematic since with local formulation l local kk true for a marine sc cloud the two should produce very similar fields i ve tried a few experiments thus far and come to a few conclusions n n the simplest case is to simply compare morrison and khairoutdinov kogan with nc fixed and using the 
local formulation n looking at past plots on my protected page i would conclude this problem has existed with clubb morrison for a year or more perhaps always see for e g n in the simplest simulation the rain water mixing ratio and number concentration fields differ by as much as on the first timestep and the morrison simulation seems shifted down in altitude figuring out why that is seems like it should be easy to isolate n when i manually print out values for rrainm auto and prc the morrison variable for rain water mixing ratio autoconversion they appear to be in agreement up to round off and on the correct levels i have no budgets for the morrison code but rrainm cond and rrainm accr are zero in the k k simulation the difference seems to be something in the rrainm mc budget other than autoconversion n n reporter dschanen uwm edu cc vlarson uwm edu roehl uwm edu resolution wontfix ts component clubb src summary troubleshooting the differences between dycoms ii with k k microphysics vs morrison microphysics priority major keywords time milestone owner dschanen uwm edu type defect

| 1

|

37,008

| 8,201,463,770

|

IssuesEvent

|

2018-09-01 18:00:28

|

jccastillo0007/eFacturaT

|

https://api.github.com/repos/jccastillo0007/eFacturaT

|

opened

|

Navision - When the payment currency differs from the currency of the related CFDI, the exchange rate must be sent

|

bug defect

|

I sent you an email. I'm posting it here.

WHEN THE CURRENCY OF THE RELATED DOCUMENT DIFFERS FROM THE PAYMENT CURRENCY, THE EXCHANGE RATE MUST BE SENT AT THE DETAIL LEVEL:

IN THIS EXAMPLE, THE INVOICE WAS ISSUED IN USD AND THE PAYMENT IS IN MXN.

SO AT THE DETAIL LEVEL (HIGHLIGHTED IN YELLOW), THE EXCHANGE RATE WAS NOT REPORTED, IN EITHER THE XML OR THE PDF.

<pagos:Pagos xmlns:pagos="http://www.sat.gob.mx/Pagos" Version="1.0">

<pagos:Pago CtaBeneficiario="03504856627" CtaOrdenante="072306003233612088" FechaPago="2018-08-30T12:00:00" FormaDePagoP="03" MonedaP="MXN" Monto="7674.52" NumOperacion="1" RfcEmisorCtaBen="SIN9412025I4" RfcEmisorCtaOrd="BMN930209927">

<pagos:DoctoRelacionado Folio="188" IdDocumento="08b44fdd-c1d5-4226-8032-f9b745ce8806" ImpPagado="7674.52" ImpSaldoAnt="405.30" ImpSaldoInsoluto="0.00" MetodoDePagoDR="PUE" MonedaDR="USD" Serie="18FVMX"/>

</pagos:Pago>

</pagos:Pagos>
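
The rule described above can be checked mechanically. The following is a minimal Python sketch, not the Navision integration itself: it assumes the exchange rate is carried in a `TipoCambioDR` attribute on `pagos:DoctoRelacionado` (the name used by version 1.0 of the payments complement), and the helper name `missing_exchange_rates` is hypothetical. The sample is an abbreviated copy of the fragment above.

```python
import xml.etree.ElementTree as ET
from typing import List

# Namespace taken from the fragment in the issue.
PAGOS_URI = "http://www.sat.gob.mx/Pagos"
PAGOS_NS = {"pagos": PAGOS_URI}


def missing_exchange_rates(pagos_xml: str) -> List[str]:
    """Return the IdDocumento of every related document whose currency
    differs from the payment currency but carries no exchange rate.

    Assumption: the rate lives in a TipoCambioDR attribute.
    """
    root = ET.fromstring(pagos_xml)
    missing = []
    for pago in root.iter("{%s}Pago" % PAGOS_URI):
        payment_currency = pago.get("MonedaP")
        for docto in pago.findall("pagos:DoctoRelacionado", PAGOS_NS):
            differs = docto.get("MonedaDR") != payment_currency
            if differs and docto.get("TipoCambioDR") is None:
                missing.append(docto.get("IdDocumento"))
    return missing


# Abbreviated version of the fragment reported in the issue.
SAMPLE = """
<pagos:Pagos xmlns:pagos="http://www.sat.gob.mx/Pagos" Version="1.0">
  <pagos:Pago FechaPago="2018-08-30T12:00:00" FormaDePagoP="03" MonedaP="MXN" Monto="7674.52">
    <pagos:DoctoRelacionado Folio="188" IdDocumento="08b44fdd-c1d5-4226-8032-f9b745ce8806" ImpPagado="7674.52" MonedaDR="USD" Serie="18FVMX"/>
  </pagos:Pago>
</pagos:Pagos>
"""

if __name__ == "__main__":
    print(missing_exchange_rates(SAMPLE))
```

Run against the sample, the check flags the single related document, which matches the gap the reporter highlighted.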

|

1.0

|

Navision - When the payment currency differs from the currency of the related CFDI, the exchange rate must be sent - I sent you an email. I'm posting it here.

WHEN THE CURRENCY OF THE RELATED DOCUMENT DIFFERS FROM THE PAYMENT CURRENCY, THE EXCHANGE RATE MUST BE SENT AT THE DETAIL LEVEL:

IN THIS EXAMPLE, THE INVOICE WAS ISSUED IN USD AND THE PAYMENT IS IN MXN.

SO AT THE DETAIL LEVEL (HIGHLIGHTED IN YELLOW), THE EXCHANGE RATE WAS NOT REPORTED, IN EITHER THE XML OR THE PDF.

<pagos:Pagos xmlns:pagos="http://www.sat.gob.mx/Pagos" Version="1.0">

<pagos:Pago CtaBeneficiario="03504856627" CtaOrdenante="072306003233612088" FechaPago="2018-08-30T12:00:00" FormaDePagoP="03" MonedaP="MXN" Monto="7674.52" NumOperacion="1" RfcEmisorCtaBen="SIN9412025I4" RfcEmisorCtaOrd="BMN930209927">

<pagos:DoctoRelacionado Folio="188" IdDocumento="08b44fdd-c1d5-4226-8032-f9b745ce8806" ImpPagado="7674.52" ImpSaldoAnt="405.30" ImpSaldoInsoluto="0.00" MetodoDePagoDR="PUE" MonedaDR="USD" Serie="18FVMX"/>

</pagos:Pago>

</pagos:Pagos>

|

defect

|

navision when the payment currency differs from the currency of the related cfdi the exchange rate must be sent i sent you an email i m posting it here when the currency of the related document differs from the payment currency the exchange rate must be sent at the detail level in this example the invoice was issued in usd and the payment is in mxn so at the detail level highlighted in yellow the exchange rate was not reported in either the xml or the pdf

| 1

|

759,746

| 26,609,044,515

|

IssuesEvent

|

2023-01-23 22:00:35

|

coral-xyz/backpack

|

https://api.github.com/repos/coral-xyz/backpack

|

closed

|

recovery flow should support recover of multiple wallets if all derived from the same mnemonic

|

priority 1

|

Potentially spanning multiple blockchains.

|

1.0

|

recovery flow should support recover of multiple wallets if all derived from the same mnemonic - Potentially spanning multiple blockchains.

|

non_defect

|

recovery flow should support recover of multiple wallets if all derived from the same mnemonic potentially spanning multiple blockchains

| 0

|

18,206

| 3,035,168,298

|

IssuesEvent

|

2015-08-06 00:26:33

|

mkpanchal/phppi

|

https://api.github.com/repos/mkpanchal/phppi

|

closed

|

Wrong GD support check

|

auto-migrated Priority-Medium Type-Defect

|

```

Hi!

Now i'm having WD MyBookLive. It's Debian 5.0.4 as OS.

I've install phppi to this.

But install script say that "Your GD install is missing JPEG support" and show

notice in top: "Notice: Undefined index: JPEG Support in

/DataVolume/shares/www/photo_pub/phppi/admin/includes/classes/phppi.php on line

89".

But phpinfo() says:

gd

GD Support enabled

GD Version 2.0 or higher

FreeType Support enabled

FreeType Linkage with freetype

FreeType Version 2.3.7

T1Lib Support enabled

GIF Read Support enabled

GIF Create Support enabled

JPG Support enabled

PNG Support enabled

WBMP Support enabled

```

Original issue reported on code.google.com by `Uksus...@gmail.com` on 11 Apr 2013 at 11:47
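
The notice quoted above suggests that the installer indexes a `JPEG Support` key directly, while this GD build reports `JPG Support`. PHP's `gd_info()` used the `JPG Support` name before PHP 5.3, so a tolerant check would accept either key. The logic is sketched here in Python rather than PHP, with a plain dictionary standing in for the `gd_info()` result; the function name `gd_supports_jpeg` is hypothetical and this is not phppi's actual code.

```python
def gd_supports_jpeg(gd_info: dict) -> bool:
    """Return True if the GD capability map reports JPEG support under
    either the newer key name or the older one.

    Using .get() avoids the "Undefined index" style failure that a
    direct lookup of a missing key would cause.
    """
    return bool(gd_info.get("JPEG Support") or gd_info.get("JPG Support"))


# Capability map mirroring the phpinfo() output quoted in the report.
reported = {
    "GD Support": True,
    "GIF Read Support": True,
    "GIF Create Support": True,
    "JPG Support": True,
    "PNG Support": True,
    "WBMP Support": True,
}

if __name__ == "__main__":
    print(gd_supports_jpeg(reported))
```

The same two-key lookup could be written in the installer's PHP with `isset()` on both names before reading the value.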

|

1.0

|

Wrong GD support check - ```

Hi!

Now i'm having WD MyBookLive. It's Debian 5.0.4 as OS.

I've install phppi to this.

But install script say that "Your GD install is missing JPEG support" and show

notice in top: "Notice: Undefined index: JPEG Support in

/DataVolume/shares/www/photo_pub/phppi/admin/includes/classes/phppi.php on line

89".

But phpinfo() says:

gd

GD Support enabled

GD Version 2.0 or higher

FreeType Support enabled

FreeType Linkage with freetype

FreeType Version 2.3.7

T1Lib Support enabled

GIF Read Support enabled

GIF Create Support enabled

JPG Support enabled

PNG Support enabled

WBMP Support enabled

```

Original issue reported on code.google.com by `Uksus...@gmail.com` on 11 Apr 2013 at 11:47

|

defect

|

wrong gd support check hi now i m having wd mybooklive it s debian as os i ve install phppi to this but install script say that your gd install is missing jpeg support and show notice in top notice undefined index jpeg support in datavolume shares www photo pub phppi admin includes classes phppi php on line but phpinfo says gd gd support enabled gd version or higher freetype support enabled freetype linkage with freetype freetype version support enabled gif read support enabled gif create support enabled jpg support enabled png support enabled wbmp support enabled original issue reported on code google com by uksus gmail com on apr at

| 1

|

22,134

| 3,602,634,721

|

IssuesEvent

|

2016-02-03 16:17:12

|

department-of-veterans-affairs/gi-bill-comparison-tool

|

https://api.github.com/repos/department-of-veterans-affairs/gi-bill-comparison-tool

|

closed

|

Remove private repository dependency

|

cleanup Defect

|

Doing the initial setup, but couldn't get past `bundle install` because of dependency on a private repo:

Tried a work-around by not including `development` and `test` groups, but didn't work for some reason:

For now, just locally commenting out the group this dependency is enclosed in to get past.

I'm a newbie to Capistrano. Is this required? (cc @mphprogrammer )

|

1.0

|

Remove private repository dependency - Doing the initial setup, but couldn't get past `bundle install` because of dependency on a private repo:

Tried a work-around by not including `development` and `test` groups, but didn't work for some reason:

For now, just locally commenting out the group this dependency is enclosed in to get past.

I'm a newbie to Capistrano. Is this required? (cc @mphprogrammer )

|

defect

|

remove private repository dependency doing the initial setup but couldn t get past bundle install because of dependency on a private repo tried a work around by not including development and test groups but didn t work for some reason for now just locally commenting out the group this dependency is enclosed in to get past i m a newbie to capistrano is this required cc mphprogrammer

| 1

|

11,398

| 2,649,926,671

|

IssuesEvent

|

2015-03-15 12:52:10

|

karulis/pybluez

|

https://api.github.com/repos/karulis/pybluez

|

closed

|

No way to select device id for device discovery

|

auto-migrated Priority-Medium Type-Defect

|

```

My Linux device contains 3 dongles (class 1,2,3). I want to select one of them

for device discovery (user may select which to use via a GUI). Is there a way

to select hci0,1,2 through code?

All my tests with socket.bind did not work.

Any tips?

Thanks in advance!

Mart

```

Original issue reported on code.google.com by `mart.ste...@gmail.com` on 3 Feb 2015 at 6:56
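
One possible approach to the question above, offered as a sketch under assumptions: later PyBluez releases accept a `device_id` argument on `bluetooth.discover_devices` on Linux, and the numeric suffix of an adapter name such as `hci1` is assumed to be that id. Whether the installed PyBluez version supports the argument needs to be confirmed. The helper names `hci_device_id` and `discover_on` are hypothetical.

```python
import re


def hci_device_id(adapter: str) -> int:
    """Convert an adapter name such as 'hci1' into its numeric id."""
    match = re.fullmatch(r"hci(\d+)", adapter)
    if match is None:
        raise ValueError("not an HCI adapter name: %r" % adapter)
    return int(match.group(1))


def discover_on(adapter: str, duration: int = 8):
    """Run an inquiry on the chosen adapter.

    Assumption: the installed PyBluez exposes device_id on
    discover_devices. The import is deferred so the name parsing
    above can be used without Bluetooth hardware or PyBluez installed.
    """
    import bluetooth  # PyBluez

    return bluetooth.discover_devices(
        duration=duration,
        lookup_names=True,
        device_id=hci_device_id(adapter),
    )
```

A GUI could offer `hci0`, `hci1` and `hci2` as choices and pass the selected name to `discover_on`.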

|

1.0

|

No way to select device id for device discovery - ```

My Linux device contains 3 dongles (class 1,2,3). I want to select one of them

for device discovery (user may select which to use via a GUI). Is there a way

to select hci0,1,2 through code?

All my tests with socket.bind did not work.

Any tips?

Thanks in advance!

Mart

```

Original issue reported on code.google.com by `mart.ste...@gmail.com` on 3 Feb 2015 at 6:56

|

defect

|

no way to select device id for device discovery my linux device contains dongles class i want to select one of them for device discovery user may select which to use via a gui is there a way to select through code all my tests with socket bind did not work any tips thanks in advance mart original issue reported on code google com by mart ste gmail com on feb at

| 1

|

20,970

| 3,441,841,924

|

IssuesEvent

|

2015-12-14 20:06:50

|

wdg/blacktree-secrets

|

https://api.github.com/repos/wdg/blacktree-secrets

|

closed

|

Secrets Website is down.

|

auto-migrated Priority-Medium Type-Defect

|

```

The website is down. Unable to Update Secrets.

```

Original issue reported on code.google.com by `themacin...@gmail.com` on 11 May 2008 at 5:53

|

1.0

|

Secrets Website is down. - ```