Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 1.11k) | body (stringlengths, 1 to 261k) | index (stringclasses, 11 values) | text_combine (stringlengths, 95 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 250k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,160 | 3,051,045,219 | IssuesEvent | 2015-08-12 04:57:27 | girldevelopit/gdi-new-site | https://api.github.com/repos/girldevelopit/gdi-new-site | closed | About Page: Our Mission and Our Values Allignment | Beginner Friendly Help Wanted Suggestion UX/Design Needed | The our mission and our values paragraphs take a while for me to decipher because of the alignment.

Instead of having them right next to each other can we place the paragraphs on top of each other and still keep the center alignment like the rest of the page. I feel it would it would connect better and reduce the vis... | 1.0 | About Page: Our Mission and Our Values Allignment - The our mission and our values paragraphs take a while for me to decipher because of the alignment.

Instead of having them right next to each other can we place the paragraphs on top of each other and still keep the center alignment like the rest of the page. I feel... | design | about page our mission and our values allignment the our mission and our values paragraphs take a while for me to decipher because of the alignment instead of having them right next to each other can we place the paragraphs on top of each other and still keep the center alignment like the rest of the page i feel... | 1 |

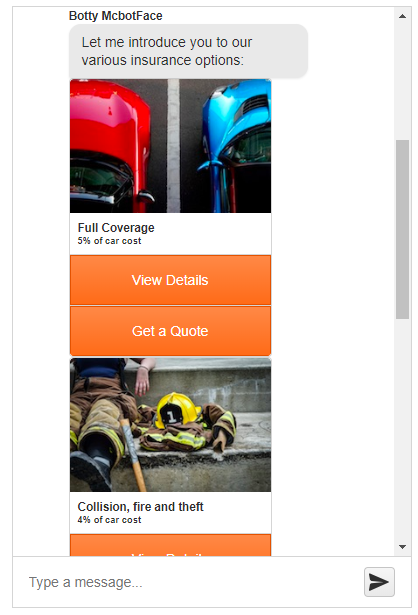

243,372 | 7,856,993,915 | IssuesEvent | 2018-06-21 09:22:29 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | Chat cards are not in a card deck | Bug C: Chat Kendo2 Priority 5 | ### Bug report

In R2 2018 SP1 Chat cards are not in a card deck.

### Reproduction of the problem

1. https://demos.telerik.com/kendo-ui/chat/index

1. Click on Get a Quote button

### Current behavior

... | 1.0 | Chat cards are not in a card deck - ### Bug report

In R2 2018 SP1 Chat cards are not in a card deck.

### Reproduction of the problem

1. https://demos.telerik.com/kendo-ui/chat/index

1. Click on Get a Quote button

### Current behavior

| documentation project | Currently I am refactoring the item system and I have come across a lot of loose naming conventions from our prototyping days.

I have corrected some of them as I go, like `facing` on `PhysicsBody`, now, renamed `is_facing_right`. I am unsure how many times I have had to double check whether this means that the body ... | 1.0 | Create style guide (naming conventions etc) - Currently I am refactoring the item system and I have come across a lot of loose naming conventions from our prototyping days.

I have corrected some of them as I go, like `facing` on `PhysicsBody`, now, renamed `is_facing_right`. I am unsure how many times I have had to ... | non_design | create style guide naming conventions etc currently i am refactoring the item system and i have come across a lot of loose naming conventions from our prototyping days i have corrected some of them as i go like facing on physicsbody now renamed is facing right i am unsure how many times i have had to ... | 0 |

107,607 | 13,490,110,489 | IssuesEvent | 2020-09-11 14:42:39 | patternfly/patternfly-org | https://api.github.com/repos/patternfly/patternfly-org | opened | Add additional button guidelines: Button guidelines & UX writing style guide | Content PF4 design Guidelines UX writing style guide | Links to docs:

- [Button design guidelines](https://www.patternfly.org/v4/design-guidelines/usage-and-behavior/buttons-and-links)

- [Punctuation page of UX writing style guide](https://www.patternfly.org/v4/design-guidelines/content/punctuation)

Issue: Some buttons in OpenStack dashboard have a "+" sign on the... | 1.0 | Add additional button guidelines: Button guidelines & UX writing style guide - Links to docs:

- [Button design guidelines](https://www.patternfly.org/v4/design-guidelines/usage-and-behavior/buttons-and-links)

- [Punctuation page of UX writing style guide](https://www.patternfly.org/v4/design-guidelines/content/punc... | design | add additional button guidelines button guidelines ux writing style guide links to docs issue some buttons in openstack dashboard have a sign on them created using punctuation not an icon and some don t to establish better icon usage on buttons we can add additional guidelines on w... | 1 |

176,568 | 28,121,714,239 | IssuesEvent | 2023-03-31 14:39:21 | aidenndev/Bookstore | https://api.github.com/repos/aidenndev/Bookstore | closed | Add models, views & controllers | enhancement system design back-end | - [x] Add Book Model (name, ID, customer & reserved time)

- [x] Add Customer Model (Id, name, email, password)

- [x] Add relationships

- [x] Add Controllers

- [x] Add Views | 1.0 | Add models, views & controllers - - [x] Add Book Model (name, ID, customer & reserved time)

- [x] Add Customer Model (Id, name, email, password)

- [x] Add relationships

- [x] Add Controllers

- [x] Add Views | design | add models views controllers add book model name id customer reserved time add customer model id name email password add relationships add controllers add views | 1 |

214,276 | 7,268,359,101 | IssuesEvent | 2018-02-20 09:49:57 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | Explain why we should do cache configuration when Deploying and configuring JWT client-handler artifacts | Affected/5.5.0-Alpha Priority/High Type/Docs | Explain why we should do cache configuration when Deploying and configuring JWT client-handler artifacts in this doc [1]

[1] https://docs.wso2.com/display/IS550/Private+Key+JWT+Client+Authentication+for+OIDC

In step 5 it says,

Do the cache configuration in <IS_HOME>/repository/conf/identity/identity.xml as shown b... | 1.0 | Explain why we should do cache configuration when Deploying and configuring JWT client-handler artifacts - Explain why we should do cache configuration when Deploying and configuring JWT client-handler artifacts in this doc [1]

[1] https://docs.wso2.com/display/IS550/Private+Key+JWT+Client+Authentication+for+OIDC

I... | non_design | explain why we should do cache configuration when deploying and configuring jwt client handler artifacts explain why we should do cache configuration when deploying and configuring jwt client handler artifacts in this doc in step it says do the cache configuration in repository conf identity identity ... | 0 |

117,522 | 15,110,133,104 | IssuesEvent | 2021-02-08 18:47:33 | mexyn/statev_v2_issues | https://api.github.com/repos/mexyn/statev_v2_issues | closed | Removal of blocking trash bins | gamedesign solved | <!-- Please fill out the template below completely -->

**Kalle Knutsen**

<!-- Which character triggered/observed the behavior in-game -->

**Static**

<!-- When exactly (date / time) was the bug observed -->

**Since V2 there have been 2 trash bins at the company hash pfWestVine21, whic... | 1.0 | Removal of blocking trash bins - <!-- Please fill out the template below completely -->

**Kalle Knutsen**

<!-- Which character triggered/observed the behavior in-game -->

**Static**

<!-- When exactly (date / time) was the bug observed -->

**Since V2 there have been 2 trash b... | design | removal of blocking trash bins kalle knutsen static since there have been trash bins at the company hash which block the normal sidewalk now you always have to step over a curb where it is easy to trip and which is also awkward every time from an rp perspective could the contai... | 1 |

433,459 | 30,329,475,347 | IssuesEvent | 2023-07-11 04:44:50 | Infleqtion/client-superstaq | https://api.github.com/repos/Infleqtion/client-superstaq | opened | Update tutorial install instructions | documentation | Update install instructions to:

```
try:
    import qiskit
    import qiskit_superstaq as qss
except ImportError:
    print("Installing qiskit-superstaq...")
    %pip install -q qiskit-superstaq[examples]
    print("Installed qiskit-superstaq")
``` | 1.0 | Update tutorial install instructions - Update install instructions to:

```
try:
    import qiskit
    import qiskit_superstaq as qss
except ImportError:
    print("Installing qiskit-superstaq...")
    %pip install -q qiskit-superstaq[examples]
    print("Installed qiskit-superstaq")
``` | non_design | update tutorial install instructions update install instructions to try import qiskit import qiskit superstaq as qss except importerror print installing qiskit superstaq pip install q qiskit superstaq print installed qiskit superstaq | 0 |

184,992 | 14,997,729,099 | IssuesEvent | 2021-01-29 17:19:25 | RoyMagnussen/Yummy-Recipe-Blog | https://api.github.com/repos/RoyMagnussen/Yummy-Recipe-Blog | closed | [CHANGE REQUEST] Wireframes | documentation enhancement | Linked Project: Documentation

#### Describe The Change Wanted

- Create a `Wireframes` section within the `UX` section of the `README.md` file.

- Add the wireframes to the `docs` folder.

- Add a link to the wireframes in the `Wireframes` section.

#### Reason For Change

This will help provide the developers... | 1.0 | [CHANGE REQUEST] Wireframes - Linked Project: Documentation

#### Describe The Change Wanted

- Create a `Wireframes` section within the `UX` section of the `README.md` file.

- Add the wireframes to the `docs` folder.

- Add a link to the wireframes in the `Wireframes` section.

#### Reason For Change

This wi... | non_design | wireframes linked project documentation describe the change wanted create a wireframes section within the ux section of the readme md file add the wireframes to the docs folder add a link to the wireframes in the wireframes section reason for change this will help provide... | 0 |

119,251 | 15,438,997,058 | IssuesEvent | 2021-03-07 22:29:34 | fga-eps-mds/2020-2-G4 | https://api.github.com/repos/fga-eps-mds/2020-2-G4 | opened | Prototype | Design EPS Product Owner | ## This issue should cover:

- Create the client registration screen

- Create the screen for viewing a list of clients

- Create the screen for updating client data

- Create the screen for viewing a single client

- Create the category registration screen

- Create the screen for viewing a list of categories

- Create the screen for updat... | 1.0 | Prototype - ## This issue should cover:

- Create the client registration screen

- Create the screen for viewing a list of clients

- Create the screen for updating client data

- Create the screen for viewing a single client

- Create the category registration screen

- Create the screen for viewing a list of categories

- Create the scr... | design | prototype this issue should cover create the client registration screen create the screen for viewing a list of clients create the screen for updating client data create the screen for viewing a single client create the category registration screen create the screen for viewing a list of categories create the scr... | 1 |

145,990 | 22,840,246,797 | IssuesEvent | 2022-07-12 20:58:51 | department-of-veterans-affairs/vets-design-system-documentation | https://api.github.com/repos/department-of-veterans-affairs/vets-design-system-documentation | closed | OMB Info - Audit | component-update vsp-design-system-team va-omb-info |

## Description

Audit of OMB Info. Identify as many instances of this component or pattern in use on VA.gov and share with the Design System Team.

## Tasks

- [ ] Work with engineers and the Governance team to find examples of this type of component

- [ ] Add screenshots of component usage examples to a Mural boa... | 1.0 | OMB Info - Audit -

## Description

Audit of OMB Info. Identify as many instances of this component or pattern in use on VA.gov and share with the Design System Team.

## Tasks

- [ ] Work with engineers and the Governance team to find examples of this type of component

- [ ] Add screenshots of component usage exam... | design | omb info audit description audit of omb info identify as many instances of this component or pattern in use on va gov and share with the design system team tasks work with engineers and the governance team to find examples of this type of component add screenshots of component usage examples... | 1 |

650 | 2,506,889,753 | IssuesEvent | 2015-01-12 14:44:51 | mathjax/MathJax | https://api.github.com/repos/mathjax/MathJax | closed | Alignment bug in 2.5 | Accepted Merged QA - Unit Test Wanted |

I found that equations that were centered in the column in 2.4 are now setting flush left in 2.5.

** [ Version: 1.0.0 Type: Bug Platform: All Category: n/a Rep... | 1.0 | Can't say to-money $1.00 - _Submitted by:_ _henrikmk_

```rebol

>> to-money $1

** Script error: cannot MAKE/TO money! from: $1.00

** Where: to to-money

** Near: to money! :value

```

---

<sup>**Imported from:** **[CureCode](https://www.curecode.org/rebol3/ticket.rsp?id=238)** [ Version: 1.0.0 Type: Bug Plat... | non_design | can t say to money submitted by henrikmk rebol to money script error cannot make to money from where to to money near to money value imported from imported from comments rebolbot added the type bug on ja... | 0 |

122,772 | 16,326,768,540 | IssuesEvent | 2021-05-12 02:29:26 | Uniswap/uniswap-interface | https://api.github.com/repos/Uniswap/uniswap-interface | closed | Position list item breaks with long strings | bug design p1 | If the token symbol is long, the position list item UI will break.

<img width="952" alt="Screen Shot 2021-05-05 at 3 11 50 PM" src="https://user-images.githubusercontent.com/1355319/117196411-70049880-adb4-11eb-8b0f-8269d5fe9767.png">

| 1.0 | Position list item breaks with long strings - If the token symbol is long, the position list item UI will break.

<img width="952" alt="Screen Shot 2021-05-05 at 3 11 50 PM" src="https://user-images.githubusercontent.com/1355319/117196411-70049880-adb4-11eb-8b0f-8269d5fe9767.png">

| design | position list item breaks with long strings if the token symbol is long the position list item ui will break img width alt screen shot at pm src | 1 |

66,559 | 8,031,690,222 | IssuesEvent | 2018-07-28 05:10:48 | ngs-lang/ngs | https://api.github.com/repos/ngs-lang/ngs | opened | Design NGS and modules versioning support | modules needs-design | * Installing different NGS versions - would they use same modules or each version of NGS will have it's own modules directory?

* What should be the modules directory/directories layout?

* Should `require()` support specifying the desired version? Maybe something as simple as `require("my_module/2.5.1/blah.ngs")`

* S... | 1.0 | Design NGS and modules versioning support - * Installing different NGS versions - would they use same modules or each version of NGS will have it's own modules directory?

* What should be the modules directory/directories layout?

* Should `require()` support specifying the desired version? Maybe something as simple a... | design | design ngs and modules versioning support installing different ngs versions would they use same modules or each version of ngs will have it s own modules directory what should be the modules directory directories layout should require support specifying the desired version maybe something as simple a... | 1 |

54,547 | 6,827,512,514 | IssuesEvent | 2017-11-08 17:15:15 | zooniverse/Panoptes-Front-End | https://api.github.com/repos/zooniverse/Panoptes-Front-End | closed | Improve UX for Add to Collection | design enhancement ui | The Add to Collection feature is not as intuitive a user experience as it could be.

- The pill drawer where you add collections could contain all the collections that this subject is part of (and show them on load). If it were done that way, the X would actually remove the subject from the collection, and you wouldn't... | 1.0 | Improve UX for Add to Collection - The Add to Collection feature is not as intuitive a user experience as it could be.

- The pill drawer where you add collections could contain all the collections that this subject is part of (and show them on load). If it were done that way, the X would actually remove the subject fr... | design | improve ux for add to collection the add to collection feature is not as intuitive a user experience as it could be the pill drawer where you add collections could contain all the collections that this subject is part of and show them on load if it were done that way the x would actually remove the subject fr... | 1 |

18,154 | 3,377,909,001 | IssuesEvent | 2015-11-25 07:52:38 | owncloud/client | https://api.github.com/repos/owncloud/client | closed | Protocol / Activities: Double click on row should open local file manager of that folder (if existing) | approved by QA Design & UX | ... and possibly select the file | 1.0 | Protocol / Activities: Double click on row should open local file manager of that folder (if existing) - ... and possibly select the file | design | protocol activities double click on row should open local file manager of that folder if existing and possibly select the file | 1 |

23,376 | 3,835,836,304 | IssuesEvent | 2016-04-01 15:42:38 | iTowns/itowns2 | https://api.github.com/repos/iTowns/itowns2 | opened | Classes doing work beyond their scope | design | There's a recurring design issue in the current implementation. A lot of classes do no limit themselves to their role and undertake actions out of their scope. This is very detrimental to the reusability of the classes.

Let's take [WMTS_Provider](https://github.com/iTowns/itowns2/blob/master/src/Core/Commander/Provi... | 1.0 | Classes doing work beyond their scope - There's a recurring design issue in the current implementation. A lot of classes do no limit themselves to their role and undertake actions out of their scope. This is very detrimental to the reusability of the classes.

Let's take [WMTS_Provider](https://github.com/iTowns/itow... | design | classes doing work beyond their scope there s a recurring design issue in the current implementation a lot of classes do no limit themselves to their role and undertake actions out of their scope this is very detrimental to the reusability of the classes let s take as an example the role of the wmts pro... | 1 |

51,433 | 6,522,689,438 | IssuesEvent | 2017-08-29 04:22:41 | FreeAndFair/ColoradoRLA | https://api.github.com/repos/FreeAndFair/ColoradoRLA | closed | Enforcing User Behavior | Ask CDOS blocked client design question ui/ux | How much will we be enforcing good behavior on the part of the user? This issue is a place where you can list opportunities we have for such enforcement.

- Will we disallow changes to the Risk Limit set originally by the Secretary of State? In other words, does the RLA Tool stop the SoS from logging in halfway throu... | 1.0 | Enforcing User Behavior - How much will we be enforcing good behavior on the part of the user? This issue is a place where you can list opportunities we have for such enforcement.

- Will we disallow changes to the Risk Limit set originally by the Secretary of State? In other words, does the RLA Tool stop the SoS fro... | design | enforcing user behavior how much will we be enforcing good behavior on the part of the user this issue is a place where you can list opportunities we have for such enforcement will we disallow changes to the risk limit set originally by the secretary of state in other words does the rla tool stop the sos fro... | 1 |

151,483 | 23,832,420,848 | IssuesEvent | 2022-09-05 23:35:22 | towhee-io/towhee | https://api.github.com/repos/towhee-io/towhee | closed | [DesignProposal]: Support mmap in DataFrame | stale needs-triage kind/design-proposal | ### Background and Motivation

DataCollection map supports multiple functions, dataframe should aswell for cmap.

### Design

Follow the style of DataCollection

### Pros and Cons

_No response_

### Anything else? (Additional Context)

_No response_ | 1.0 | [DesignProposal]: Support mmap in DataFrame - ### Background and Motivation

DataCollection map supports multiple functions, dataframe should aswell for cmap.

### Design

Follow the style of DataCollection

### Pros and Cons

_No response_

### Anything else? (Additional Context)

_No response_ | design | support mmap in dataframe background and motivation datacollection map supports multiple functions dataframe should aswell for cmap design follow the style of datacollection pros and cons no response anything else additional context no response | 1 |

74,982 | 9,181,617,158 | IssuesEvent | 2019-03-05 10:39:22 | ipfs-shipyard/pm-idm | https://api.github.com/repos/ipfs-shipyard/pm-idm | closed | Include the "Feedback message" in the style guide | design task w-1 | ## Description

Include the "Feedback Message" component in the styleguide.

## Acceptance Criteria

- [x] Have the "Feedback Message" component with all its scenarios in the styleguide document.

| 1.0 | Include the "Feedback message" in the style guide - ## Description

Include the "Feedback Message" component in the styleguide.

## Acceptance Criteria

- [x] Have the "Feedback Message" component with all its scenarios in the styleguide document.

| design | include the feedback message in the style guide description include the feedback message component in the styleguide acceptance criteria have the feedback message component with all its scenarios in the styleguide document | 1 |

69,045 | 8,369,359,527 | IssuesEvent | 2018-10-04 17:07:03 | 8bitPit/Niagara-Issues | https://api.github.com/repos/8bitPit/Niagara-Issues | closed | [Request] Black settings (Dark theme) | design | Hey,

Is it possible to have black Niagara settings when Dark theme is tick ?

Thanks | 1.0 | [Request] Black settings (Dark theme) - Hey,

Is it possible to have black Niagara settings when Dark theme is tick ?

Thanks | design | black settings dark theme hey is it possible to have black niagara settings when dark theme is tick thanks | 1 |

631,698 | 20,157,944,579 | IssuesEvent | 2022-02-09 18:15:52 | nevermined-io/sdk-js | https://api.github.com/repos/nevermined-io/sdk-js | closed | Add new graph events api support in the javascript sdk | enhancement priority:high sdk | Add support for the new GraphQL events api with Graph.

- Ability to query for past events

- Ability to subscribe to future events | 1.0 | Add new graph events api support in the javascript sdk - Add support for the new GraphQL events api with Graph.

- Ability to query for past events

- Ability to subscribe to future events | non_design | add new graph events api support in the javascript sdk add support for the new graphql events api with graph ability to query for past events ability to subscribe to future events | 0 |

124,989 | 16,680,722,450 | IssuesEvent | 2021-06-07 23:10:00 | chapel-lang/chapel | https://api.github.com/repos/chapel-lang/chapel | opened | Proposal for `.indices` on arrays | area: Language type: Design | As of PR #15039 or so, we added `.indices` queries to most indexable types as a means of writing code for them that refers to their indices abstractly rather than assuming the indices are, say, `1..size` or `0..<size`. At that time, `.indices` became a synonym for `.domain` for arrays. Issue #15103 proposed that we r... | 1.0 | Proposal for `.indices` on arrays - As of PR #15039 or so, we added `.indices` queries to most indexable types as a means of writing code for them that refers to their indices abstractly rather than assuming the indices are, say, `1..size` or `0..<size`. At that time, `.indices` became a synonym for `.domain` for arra... | design | proposal for indices on arrays as of pr or so we added indices queries to most indexable types as a means of writing code for them that refers to their indices abstractly rather than assuming the indices are say size or size at that time indices became a synonym for domain for arrays ... | 1 |

179,302 | 30,215,790,541 | IssuesEvent | 2023-07-05 15:29:19 | quicwg/multipath | https://api.github.com/repos/quicwg/multipath | closed | MUST for checking if peer has spare Connection IDs | has PR design | Section 4.1 of the Multipath draft states: _Following [[QUIC-TRANSPORT](https://quicwg.org/multipath/draft-ietf-quic-multipath.html#QUIC-TRANSPORT)], each endpoint uses NEW_CONNECTION_ID frames to issue usable connections IDs to reach it. Before an endpoint adds a new path by initiating path validation, it MUST check w... | 1.0 | MUST for checking if peer has spare Connection IDs - Section 4.1 of the Multipath draft states: _Following [[QUIC-TRANSPORT](https://quicwg.org/multipath/draft-ietf-quic-multipath.html#QUIC-TRANSPORT)], each endpoint uses NEW_CONNECTION_ID frames to issue usable connections IDs to reach it. Before an endpoint adds a ne... | design | must for checking if peer has spare connection ids section of the multipath draft states following each endpoint uses new connection id frames to issue usable connections ids to reach it before an endpoint adds a new path by initiating path validation it must check whether at least one unused connection i... | 1 |

381,067 | 11,272,987,877 | IssuesEvent | 2020-01-14 15:47:40 | flowkey/UIKit-cross-platform | https://api.github.com/repos/flowkey/UIKit-cross-platform | closed | Build Swift for x86 Android (also to support Android Emulator) | medium-priority | ## Motivation

At the moment we can only build for `armv7a`. While this covers the great proportion of Swift devices on the market today, it'd be great to build for x86 devices as well for two main reasons:

- x86 Devices make up about 10% of the total Android market, which is about 200 million devices.

- The And... | 1.0 | Build Swift for x86 Android (also to support Android Emulator) - ## Motivation

At the moment we can only build for `armv7a`. While this covers the great proportion of Swift devices on the market today, it'd be great to build for x86 devices as well for two main reasons:

- x86 Devices make up about 10% of the tot... | non_design | build swift for android also to support android emulator motivation at the moment we can only build for while this covers the great proportion of swift devices on the market today it d be great to build for devices as well for two main reasons devices make up about of the total android m... | 0 |

109,770 | 11,648,627,119 | IssuesEvent | 2020-03-01 21:49:01 | gatsbyjs/gatsby | https://api.github.com/repos/gatsbyjs/gatsby | reopened | gatsby-remark-images docs are inconsistent | stale? type: documentation | <!--

To make it easier for us to help you, please include as much useful information as possible.

Useful Links:

- Documentation: https://www.gatsbyjs.org/docs/

- Contributing: https://www.gatsbyjs.org/contributing/

Gatsby has several community support channels, try asking your question on:

- Dis... | 1.0 | gatsby-remark-images docs are inconsistent - <!--

To make it easier for us to help you, please include as much useful information as possible.

Useful Links:

- Documentation: https://www.gatsbyjs.org/docs/

- Contributing: https://www.gatsbyjs.org/contributing/

Gatsby has several community support cha... | non_design | gatsby remark images docs are inconsistent to make it easier for us to help you please include as much useful information as possible useful links documentation contributing gatsby has several community support channels try asking your question on discord spectru... | 0 |

5,986 | 2,798,669,323 | IssuesEvent | 2015-05-12 19:50:04 | mozilla/webmaker-android | https://api.github.com/repos/mozilla/webmaker-android | closed | Better selected page state UI | design enhancement | Can we get something more prominent than this? The shadow doesn't stand out enough when the grid is filled.

| 1.0 | Better selected page state UI - Can we get something more prominent than this? The shadow doesn't stand out enough when the grid is filled.

| design | better selected page state ui can we get something more prominent than this the shadow doesn t stand out enough when the grid is filled | 1 |

147,775 | 19,524,101,495 | IssuesEvent | 2021-12-30 02:30:14 | mireillehulent/maven-project | https://api.github.com/repos/mireillehulent/maven-project | opened | CVE-2021-44832 (Medium) detected in log4j-core-2.6.1.jar | security vulnerability | ## CVE-2021-44832 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.6.1.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Path to dependency file: /pom.xml</... | True | CVE-2021-44832 (Medium) detected in log4j-core-2.6.1.jar - ## CVE-2021-44832 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.6.1.jar</b></p></summary>

<p>The Apache Log4... | non_design | cve medium detected in core jar cve medium severity vulnerability vulnerable library core jar the apache implementation path to dependency file pom xml path to vulnerable library epository org apache logging core core jar dependency hierarchy ... | 0 |

156,130 | 24,575,474,767 | IssuesEvent | 2022-10-13 12:00:57 | Lundalogik/lime-elements | https://api.github.com/repos/Lundalogik/lime-elements | closed | `isolate` components that use `z-index` | bug good first issue usability visual design released on @next | ## Current behavior

Using `z-index` has always created problems in the UI as components overlap each other. For example 👇

## Expected behavior

However, it is possible to prevent thi... | 1.0 | `isolate` components that use `z-index` - ## Current behavior

Using `z-index` has always created problems in the UI as components overlap each other. For example 👇

## Expected behavi... | design | isolate components that use z index current behavior using z index has always created problems in the ui as components overlap each other for example 👇 expected behavior however it is possible to prevent this by isolating the components read more then we won t need to import the z ... | 1 |

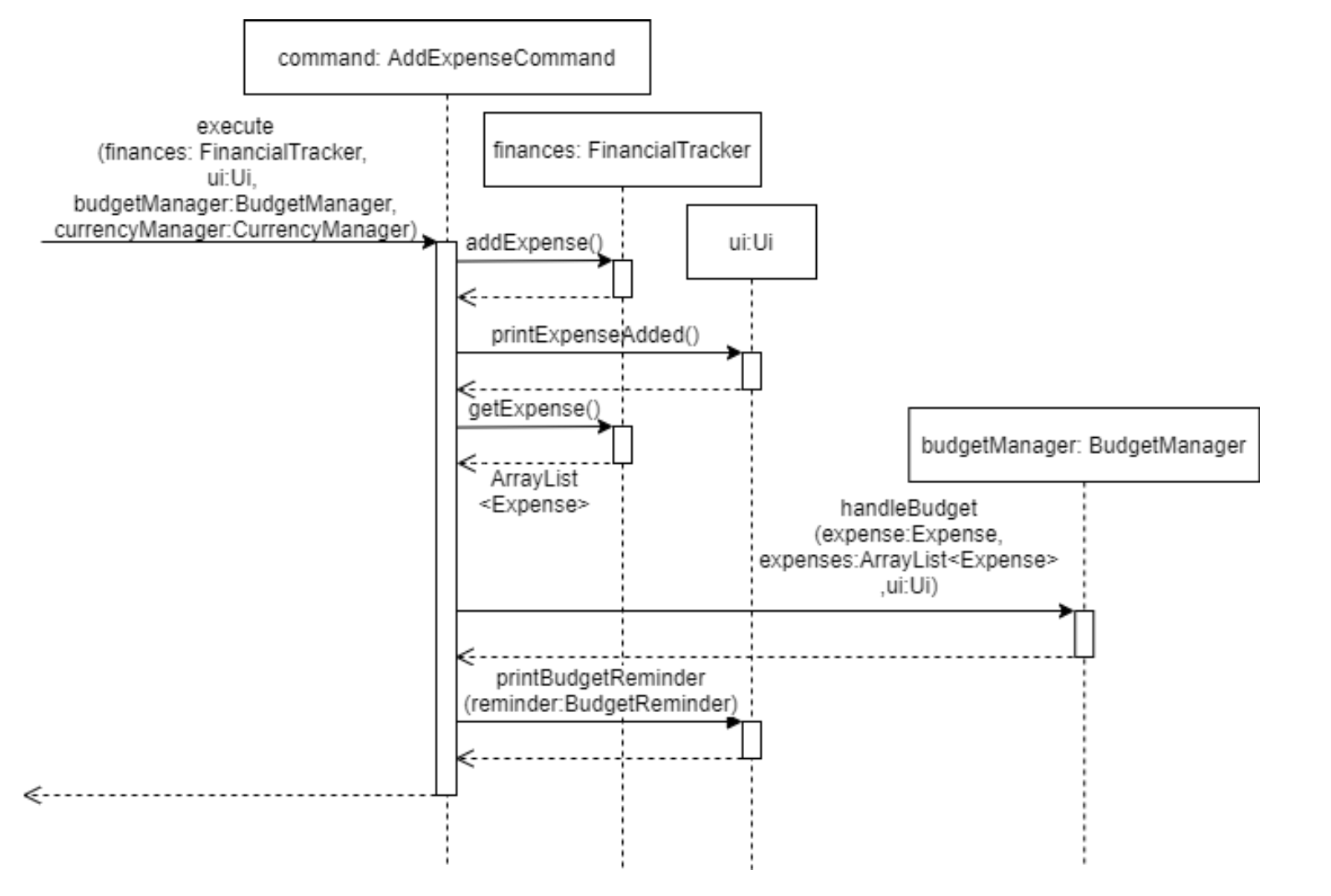

248,947 | 18,858,139,210 | IssuesEvent | 2021-11-12 09:25:42 | arcturusz/pe | https://api.github.com/repos/arcturusz/pe | opened | Very long command makes it harder to read sequence diagram | type.DocumentationBug severity.VeryLow | Sequence diagram of the command component can be simplified further? Instead of writing out all the parameters when calling the function, can be simplified to just execute().

... | 1.0 | Very long command makes it harder to read sequence diagram - Sequence diagram of the command component can be simplified further? Instead of writing out all the parameters when calling the function, can be simplified to just execute().

detected in mem-1.1.0.tgz | security vulnerability | ## WS-2019-0307 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mem-1.1.0.tgz</b></p></summary>

<p>Memoize functions - An optimization used to speed up consecutive function calls by ... | True | WS-2019-0307 (Medium) detected in mem-1.1.0.tgz - ## WS-2019-0307 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mem-1.1.0.tgz</b></p></summary>

<p>Memoize functions - An optimizati... | non_design | ws medium detected in mem tgz ws medium severity vulnerability vulnerable library mem tgz memoize functions an optimization used to speed up consecutive function calls by caching the result of calls with identical input library home page a href path to dependency file ... | 0 |

151,481 | 23,832,326,132 | IssuesEvent | 2022-09-05 23:22:45 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Make it easier to quickly turn duotone on/off | [Type] Enhancement [Feature] Design Tools | ## What problem does this address?

Currently, due to the following reasons, it's a bit tricky to easily turn on/off the duotone filter while continuing to try them out:

- The duotone popover isn't persistent and often closes once more causing you to have to open it again.

- It's not quite clear that clicking aga... | 1.0 | Make it easier to quickly turn duotone on/off - ## What problem does this address?

Currently, due to the following reasons, it's a bit tricky to easily turn on/off the duotone filter while continuing to try them out:

- The duotone popover isn't persistent and often closes once more causing you to have to open it ... | design | make it easier to quickly turn duotone on off what problem does this address currently due to a the following reasons it s a bit tricky to easily turn on off the duotone filter while continuing to try them out the duotone popover isn t persistent and often closes once more causing you to have to open it ... | 1 |

106,768 | 13,383,950,882 | IssuesEvent | 2020-09-02 11:11:39 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | 502 responses when deploying a Designer pod after AKS upgrade | kind/bug ops/ci-cd solution/studio/designer | ## Describe the bug

After we upgraded our AKS cluster for dev.studio we are experiencing issues when deploying the designer pod.

## To Reproduce

Steps to reproduce the behavior:

1. Deploy a designer pod

2. See that we are unable to reach the pod - 502.

## Expected behavior

Should take over for the older pod as expect... | 1.0 | 502 responses when deploying a Designer pod after AKS upgrade - ## Describe the bug

After we upgraded our AKS cluster for dev.studio we are experiencing issues when deploying the designer pod.

## To Reproduce

Steps to reproduce the behavior:

1. Deploy a designer pod

2. See that we are unable to reach the pod - 502.

#... | design | responses when deploying a designer pod after aks upgrade describe the bug after we upgraded our aks cluster for dev studio we are experiencing issues when deploying the designer pod to reproduce steps to reproduce the behavior deploy a designer pod see that we are unable to reach the pod ex... | 1 |

183,121 | 31,159,989,158 | IssuesEvent | 2023-08-16 15:21:41 | MetPX/sarracenia | https://api.github.com/repos/MetPX/sarracenia | closed | Dependency Management Strategies... | bug enhancement Design Developer Discussion_Needed crasher | # The Problem

Sarracenia uses a lot of other packages to provide functionality. These are called *dependencies*. In its native environment (Ubuntu Linux), most of these dependencies are easily resolved using the built-in Debian packaging tools (apt-get), but in many other environments it is more complex, like: ... | 1.0 | Dependency Management Strategies... - # The Problem

Sarracenia uses a lot of other packages to provide functionality. These are called *dependencies*. In its native environment (Ubuntu Linux), most of these dependencies are easily resolved using the built-in Debian packaging tools (apt-get), but in many other e... | design | dependency management strategies the problem sarracenia uses a lot of other packages to provide functionality these are called dependencies in it s native environment ubuntu linux most of these dependencies are easily resolved using the built in debian packaging tools apt get but in many other e... | 1 |

60,703 | 7,374,562,793 | IssuesEvent | 2018-03-13 20:45:18 | dbrgn/mcp3425-rs | https://api.github.com/repos/dbrgn/mcp3425-rs | closed | Design: Measurement Freshness | design | In continuous conversion mode, the ADC conversions are triggered periodically by a timer inside the device. If a user reads a measurement value twice in a row, the second value will be "stale" because no new measurement by the ADC has taken place since the previous value returned.

I could think of three ways to hand... | 1.0 | Design: Measurement Freshness - In continuous conversion mode, the ADC conversions are triggered periodically by a timer inside the device. If a user reads a measurement value twice in a row, the second value will be "stale" because no new measurement by the ADC has taken place since the previous value returned.

I c... | design | design measurement freshness in continuous conversion mode the adc conversions are triggered periodically by a timer inside the device if a user reads a measurement value twice in a row the second value will be stale because no new measurement by the adc has taken place since the previous value returned i c... | 1 |

162,993 | 25,731,608,872 | IssuesEvent | 2022-12-07 20:46:24 | bounswe/bounswe2022group8 | https://api.github.com/repos/bounswe/bounswe2022group8 | closed | FE-15: Recommendation Page | Effort: High Status: Completed Coding Design Team: Frontend | ### What's up?

As discussed in [Week #7 Frontend Meeting #3](https://github.com/bounswe/bounswe2022group8/wiki/Week-7-Frontend-Meeting-Notes-3) we need to design and implement a recommendation page.

### To Do

- [x] Carefully study the recommendation pages of art-related websites.

- [x] Decide the main functionalit... | 1.0 | FE-15: Recommendation Page - ### What's up?

As discussed in [Week #7 Frontend Meeting #3](https://github.com/bounswe/bounswe2022group8/wiki/Week-7-Frontend-Meeting-Notes-3) we need to design and implement a recommendation page.

### To Do

- [x] Carefully study the recommendation pages of art-related websites.

- [x]... | design | fe recommendation page what s up as discussed in we need to design and implement a recommendation page to do carefully study the recommendation pages of art related websites decide the main functionalities that should be on the page create a basic and useful recommendation page de... | 1 |

161,164 | 25,297,300,242 | IssuesEvent | 2022-11-17 07:59:22 | gohugoio/hugoDocs | https://api.github.com/repos/gohugoio/hugoDocs | closed | Navigation previous / next arrows. | Design | Hello,

I am very confused about the "previous" and "next" navigation arrows under the "What's on this page?" list.

For example I am on the [Organization](https://gohugo.io/content-management/organization/) page and press on the right arrow.

I'd expect to go to the next page in the structure on the left, which... | 1.0 | Navigation previous / next arrows. - Hello,

I am very confused about the "previous" and "next" navigation arrows under the "What's on this page?" list.

For example I am on the [Organization](https://gohugo.io/content-management/organization/) page and press on the right arrow.

I'd expect to go to the next pag... | design | navigation previous next arrows hello i am very confused about the previous and next navigation arrows under the what s on this page list for example i am on the page and press on the right arrow i d expect to go to the next page in the structure on the left which is but instead it lin... | 1 |

436,387 | 12,550,378,819 | IssuesEvent | 2020-06-06 10:54:17 | googleapis/google-api-java-client-services | https://api.github.com/repos/googleapis/google-api-java-client-services | opened | Synthesis failed for recommender | api: recommender autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate recommender. :broken_heart:

Here's the output from running `synth.py`:

```

2020-06-06 03:54:10,423 autosynth [INFO] > logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/google-api-java-client-services

2020-06-06 03:54:11,763 autosynth [DEBUG] > Running: git confi... | 1.0 | Synthesis failed for recommender - Hello! Autosynth couldn't regenerate recommender. :broken_heart:

Here's the output from running `synth.py`:

```

2020-06-06 03:54:10,423 autosynth [INFO] > logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/google-api-java-client-services

2020-06-06 03:54:11,763 aut... | non_design | synthesis failed for recommender hello autosynth couldn t regenerate recommender broken heart here s the output from running synth py autosynth logs will be written to tmpfs src github synthtool logs googleapis google api java client services autosynth running git c... | 0 |

270,904 | 20,614,239,498 | IssuesEvent | 2022-03-07 11:37:16 | uit-cosmo/user-guide | https://api.github.com/repos/uit-cosmo/user-guide | opened | Provide description of uit_sandpiles | documentation | @Sosnowsky Could you provide a short description of the uit_sandpiles repo in the user guide? | 1.0 | Provide description of uit_sandpiles - @Sosnowsky Could you provide a short description of the uit_sandpiles repo in the user guide? | non_design | provide description of uit sandpiles sosnowsky could you provide a short description of the uit sandpiles repo in the user guide | 0 |

113,100 | 17,115,744,552 | IssuesEvent | 2021-07-11 10:05:59 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | Vulnerability roundup 103: bluez-5.55: 2 advisories [5.7] | 1.severity: security | [search](https://search.nix.gsc.io/?q=bluez&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=bluez+in%3Apath&type=Code)

* [ ] [CVE-2021-0129](https://nvd.nist.gov/vuln/detail/CVE-2021-0129) CVSSv3=5.7 (nixos-20.09)

* [ ] [CVE-2021-3588](https://nvd.nist.gov/vuln/detail/CVE... | True | Vulnerability roundup 103: bluez-5.55: 2 advisories [5.7] - [search](https://search.nix.gsc.io/?q=bluez&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=bluez+in%3Apath&type=Code)

* [ ] [CVE-2021-0129](https://nvd.nist.gov/vuln/detail/CVE-2021-0129) CVSSv3=5.7 (nixos-20.09... | non_design | vulnerability roundup bluez advisories nixos nixos scanned versions nixos | 0 |

12,490 | 3,079,743,584 | IssuesEvent | 2015-08-21 18:01:34 | ThibaultLatrille/ControverSciences | https://api.github.com/repos/ThibaultLatrille/ControverSciences | closed | Choice of article themes | *** important design | The boxes are not all the same size because their contents differ in length. The simplest fix is probably to put every entry on two lines: the pictogram, then the word on the next line.

On the page https://www.controversciences.org/references/7

By: F. Giry

Browser: chrome modern windows webkit | 1.0 | Choice of article themes - The boxes are not all the same size because their contents differ in length. The simplest fix is probably to put every entry on two lines: the pictogram, then the word on the next line.

On the page https://www.controversciences.org/references/7

By: F. Giry

Browser: chrome modern w... | design | choice of article themes the boxes are not all the same size because their contents differ in length the simplest fix is probably to put every entry on two lines the pictogram then the word on the next line on the page by f giry browser chrome modern windows webkit | 1 |

38,869 | 10,258,119,089 | IssuesEvent | 2019-08-21 21:54:13 | Squalr/Squally | https://api.github.com/repos/Squalr/Squally | opened | PDB Generation Failing with LNK1201 Windows Debug/RelWithDebug | area:build-system good first issue (intermediate) | This issue popped up some time ago, and I've been unable to diagnose it so far. Reverting to older points in time in the repo didn't seem to solve it either for me.

This wasn't caught for awhile because I do most development on OSX. In the mean time, I'm continuing to do all testing on OSX, and just compiling in Rel... | 1.0 | PDB Generation Failing with LNK1201 Windows Debug/RelWithDebug - This issue popped up some time ago, and I've been unable to diagnose it so far. Reverting to older points in time in the repo didn't seem to solve it either for me.

This wasn't caught for awhile because I do most development on OSX. In the mean time, I... | non_design | pdb generation failing with windows debug relwithdebug this issue popped up some time ago and i ve been unable to diagnose it so far reverting to older points in time in the repo didn t seem to solve it either for me this wasn t caught for awhile because i do most development on osx in the mean time i m con... | 0 |

49,832 | 6,264,894,940 | IssuesEvent | 2017-07-16 12:47:07 | OpenBudget/budgetkey-app-search | https://api.github.com/repos/OpenBudget/budgetkey-app-search | closed | Redesign Faceted Search | design | _From @mushon on October 12, 2015 17:34_

<!---

@huboard:{"order":1.2197274440461925e-19,"milestone_order":0.00048828125}

-->

_Copied from original issue: OpenBudget/open-budget-frontend#279_ | 1.0 | Redesign Faceted Search - _From @mushon on October 12, 2015 17:34_

<!---

@huboard:{"order":1.2197274440461925e-19,"milestone_order":0.00048828125}

-->

_Copied from original issue: OpenBudget/open-budget-frontend#279_ | design | redesign faceted search from mushon on october huboard order milestone order copied from original issue openbudget open budget frontend | 1 |

55,207 | 6,892,579,272 | IssuesEvent | 2017-11-22 21:42:59 | webcompat/webcompat.com | https://api.github.com/repos/webcompat/webcompat.com | closed | Contributors guideline section remains highlighted when tapped/clicked on Chrome browser | lang: CSS scope: design scope: refactor | Browser / Version: Chrome 56.0.2924, Chrome 57.0.2987

Operating System: Windows 10 Pro, Android 6.0.1

**Steps to Reproduce**

1. Navigate to: https://webcompat.com/contributors.

2. Click/Tap a guideline section.

3. Observe behavior.

**Expected Behavior:**

Section is not highlighted.

**Actual Behavior:**

... | 1.0 | Contributors guideline section remains highlighted when tapped/clicked on Chrome browser - Browser / Version: Chrome 56.0.2924, Chrome 57.0.2987

Operating System: Windows 10 Pro, Android 6.0.1

**Steps to Reproduce**

1. Navigate to: https://webcompat.com/contributors.

2. Click/Tap a guideline section.

3. Observe... | design | contributors guideline section remains highlighted when tapped clicked on chrome browser browser version chrome chrome operating system windows pro android steps to reproduce navigate to click tap a guideline section observe behavior expected behavior secti... | 1 |

106,206 | 13,254,014,627 | IssuesEvent | 2020-08-20 08:35:37 | pupilfirst/pupilfirst | https://api.github.com/repos/pupilfirst/pupilfirst | opened | Revamp the /coaches page | design enhancement | The existing `/coaches` page is the only remaining Bootstrap-based page on the site. We should remove it to get rid of the dependency and improve it in the process.

1. The existing design contains a lot of older elements that are no longer required. We should preserve only the `name` and `connect_link` on the index ... | 1.0 | Revamp the /coaches page - The existing `/coaches` page is the only remaining Bootstrap-based page on the site. We should remove it to get rid of the dependency and improve it in the process.

1. The existing design contains a lot of older elements that are no longer required. We should preserve only the `name` and `... | design | revamp the coaches page the existing coaches page is the only remaining bootstrap based page on the site we should remove it to get rid of the dependency and improve it in the process the existing design contains a lot of older elements that are no longer required we should preserve only the name and ... | 1 |

93,879 | 11,825,199,596 | IssuesEvent | 2020-03-21 11:27:40 | nikodemus/foolang | https://api.github.com/repos/nikodemus/foolang | opened | indexed classes | design feature | as in Smalltalk. The basis for classes like Array.

- Syntax?

- Implementation?

Worth a design note.

| 1.0 | indexed classes - as in Smalltalk. The basis for classes like Array.

- Syntax?

- Implementation?

Worth a design note.

| design | indexed classes as in smalltalk the basis for classes like array syntax implementation worth a design note | 1 |

390,315 | 26,857,966,634 | IssuesEvent | 2023-02-03 16:00:27 | NixOS/nix | https://api.github.com/repos/NixOS/nix | opened | Missing or incorrect S3 documentation in the Manual | documentation | ## NB: I didn't test this, only moving here because the issue was opened on the wrong repo (https://github.com/NixOS/nixos-homepage/issues/731)

## Problem

for a S3 binary cache READ operations is incomplete - it is missing `"s3:ListBucket"` action. Whilst the current config is enough for basic nix-shell substitut... | 1.0 | Missing or incorrect S3 documentation in the Manual - ## NB: I didn't test this, only moving here because the issue was opened on the wrong repo (https://github.com/NixOS/nixos-homepage/issues/731)

## Problem

for a S3 binary cache READ operations is incomplete - it is missing `"s3:ListBucket"` action. Whilst the ... | non_design | missing or incorrect documentation in the manual nb i didn t test this only moving here because the issue was opened on the wrong repo problem for a binary cache read operations is incomplete it is missing listbucket action whilst the current config is enough for basic nix shell substitu... | 0 |

174,533 | 27,660,449,889 | IssuesEvent | 2023-03-12 13:09:33 | TeamAntPowerLifting/TeamAnt | https://api.github.com/repos/TeamAntPowerLifting/TeamAnt | closed | design: implement footer component | design | ## 🍦 Feature implementation

- Build the Footer component

## 🍭 Implementation approach

- To be reused as a component at the bottom of each page

## 🍪 Implementation result

<img src="https://user-images.githubusercontent.com/83527046/218497296-f0ce5172-3dc4-418d-baae-a16ffc5e54ef.png">

## 📚 References for the implementation

(reference links)

| 1.0 | design: implement footer component - ## 🍦 Feature implementation

- Build the Footer component

## 🍭 Implementation approach

- To be reused as a component at the bottom of each page

## 🍪 Implementation result

<img src="https://user-images.githubusercontent.com/83527046/218497296-f0ce5172-3dc4-418d-baae-a16ffc5e54ef.png">

## 📚 References for the implementation

(reference links)

| design | design implement footer component 🍦 feature implementation build the footer component 🍭 implementation approach to be reused as a component at the bottom of each page 🍪 implementation result img src 📚 references for the implementation reference links | 1 |

392,503 | 26,942,838,098 | IssuesEvent | 2023-02-08 04:42:04 | yosileyid/yosileyid | https://api.github.com/repos/yosileyid/yosileyid | closed | Change docs/CONTRIBUTING.md to be more similar to atom/CONTRIBUTING | documentation enhancement | The atom project has a much nicer contributing page. I want to update the current one to be more similar to theirs.

[Atom CONTRIBUTING page](https://github.com/atom/atom/blob/master/CONTRIBUTING.md) | 1.0 | Change docs/CONTRIBUTING.md to be more similar to atom/CONTRIBUTING - The atom project has a much nicer contributing page. I want to update the current one to be more similar to theirs.

[Atom CONTRIBUTING page](https://github.com/atom/atom/blob/master/CONTRIBUTING.md) | non_design | change docs contributing md to be more similar to atom contributing the atom project has a much nicer contributing page i want to update the current one to be more similar to theirs | 0 |

173,308 | 21,155,265,001 | IssuesEvent | 2022-04-07 02:02:48 | Aivolt1/u-i-u-x-volt-ai | https://api.github.com/repos/Aivolt1/u-i-u-x-volt-ai | reopened | CVE-2022-24771 (High) detected in node-forge-0.10.0.tgz | security vulnerability | ## CVE-2022-24771 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.10.0.tgz</b></p></summary>

<p>JavaScript implementations of network transports, cryptography, ciphers, PK... | True | CVE-2022-24771 (High) detected in node-forge-0.10.0.tgz - ## CVE-2022-24771 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.10.0.tgz</b></p></summary>

<p>JavaScript implem... | non_design | cve high detected in node forge tgz cve high severity vulnerability vulnerable library node forge tgz javascript implementations of network transports cryptography ciphers pki message digests and various utilities library home page a href path to dependency file ... | 0 |

196,603 | 15,603,347,722 | IssuesEvent | 2021-03-19 01:41:30 | stegnerw/penguin_swarm | https://api.github.com/repos/stegnerw/penguin_swarm | closed | Change license to MIT | documentation | Licenses are scary and I do not understand all of the terms in LGPLv3. I would feel much more comfortable under MIT, as it is written in plain English. | 1.0 | Change license to MIT - Licenses are scary and I do not understand all of the terms in LGPLv3. I would feel much more comfortable under MIT, as it is written in plain English. | non_design | change license to mit licenses are scary and i do not understand all of the terms in i would feel much more comfortable under mit as it is written in plain english | 0 |

514,053 | 14,932,200,666 | IssuesEvent | 2021-01-25 07:19:31 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.amazon.com - see bug description | browser-focus-geckoview engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66210 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.amazon.com/gp/aw/s?... | 1.0 | www.amazon.com - see bug description - <!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66210 -->

<!-- @extra_labels: browser-focus-geckoview -->

*... | non_design | see bug description url footwear browser version firefox mobile operating system android tested another browser yes opera problem type something else description didnt load steps to reproduce browser configuration none from with ❤️ | 0 |

64,702 | 16,014,306,009 | IssuesEvent | 2021-04-20 14:21:04 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Proxmox 6, vm Debian 9, problem with the preseed.cfg file, when we get to the storage configuration it does not continue with the installation, the screen remains blue and does not continue | bug builder/proxmox remote-plugin/proxmox waiting-reply | When filing a bug, please include the following headings if possible. Any

example text in this template can be deleted.

#### Overview of the Issue

Before we start, I apologize for my English.

When the packer reaches the boot_command and uploads the preseed.cfg file this file works the problem is when we get t... | 1.0 | Proxmox 6, vm Debian 9, problem with the preseed.cfg file, when we get to the storage configuration it does not continue with the installation, the screen remains blue and does not continue - When filing a bug, please include the following headings if possible. Any

example text in this template can be deleted.

####... | non_design | proxmox vm debian problem with the preseed cfg file when we get to the storage configuration it does not continue with the installation the screen remains blue and does not continue when filing a bug please include the following headings if possible any example text in this template can be deleted ... | 0 |

390,617 | 11,551,085,441 | IssuesEvent | 2020-02-19 00:19:17 | googleapis/java-trace | https://api.github.com/repos/googleapis/java-trace | closed | Synthesis failed for java-trace | api: cloudtrace autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate java-trace. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool > Executing /tmpfs/src/git/autosynt... | 1.0 | Synthesis failed for java-trace - Hello! Autosynth couldn't regenerate java-trace. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to branch 'autosynth'

Running synthtool

['/tmpfs/src/git/autosynth/env/bin/python3', '-m', 'synthtool', 'synth.py', '--']

synthtool >... | non_design | synthesis failed for java trace hello autosynth couldn t regenerate java trace broken heart here s the output from running synth py cloning into working repo switched to branch autosynth running synthtool synthtool executing tmpfs src git autosynth working repo synth py on branch autosynth n... | 0 |

57,989 | 7,111,057,592 | IssuesEvent | 2018-01-17 12:59:57 | pints-team/pints | https://api.github.com/repos/pints-team/pints | opened | Add documentation for contributors | design-and-infrastructure documentation | Things it should describe:

- [ ] Installation with `pip -e . [dev, docs, extras]`

- [ ] Python stuff? Maybe [pep8](https://www.python.org/dev/peps/pep-0008/) and flake8.

- [ ] Running tests before

- [ ] Writing tests

- [ ] Writing docs (rst) and building the docs (see #107)

- [ ] Creating branches and using PRs | 1.0 | Add documentation for contributors - Things it should describe:

- [ ] Installation with `pip -e . [dev, docs, extras]`

- [ ] Python stuff? Maybe [pep8](https://www.python.org/dev/peps/pep-0008/) and flake8.

- [ ] Running tests before

- [ ] Writing tests

- [ ] Writing docs (rst) and building the docs (see #107)

... | design | add documentation for contributors things it should describe installation with pip e python stuff maybe and running tests before writing tests writing docs rst and building the docs see creating branches and using prs | 1 |

33,359 | 6,198,561,969 | IssuesEvent | 2017-07-05 19:27:41 | stylelint/stylelint | https://api.github.com/repos/stylelint/stylelint | closed | Fix missing closing brace in example inside max-nesting-depth documentation | status: help wanted type: documentation | A closing brace is missing in the first example inside [max-nesting-depth documentation](https://github.com/stylelint/stylelint/blob/master/lib/rules/max-nesting-depth/README.md):

```css

a { & > b { top: 0; }

``` | 1.0 | Fix missing closing brace in example inside max-nesting-depth documentation - A closing brace is missing in the first example inside [max-nesting-depth documentation](https://github.com/stylelint/stylelint/blob/master/lib/rules/max-nesting-depth/README.md):

```css

a { & > b { top: 0; }

``` | non_design | fix missing closing brace in example inside max nesting depth documentation a closing brace is missing in the first example inside css a b top | 0 |
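
A minimal sketch of the corrected example, assuming the intended fix is simply to close the outer `a` block (the upstream README may format it differently):

```css
/* The nested rule and the outer rule each get their own closing brace. */
a { & > b { top: 0; } }
```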

575,414 | 17,030,455,799 | IssuesEvent | 2021-07-04 13:01:59 | samvdkris/dotobot | https://api.github.com/repos/samvdkris/dotobot | closed | Quote all formatting | enhancement low priority | Just like with the help message !quote all could use a new lick of paint. An easy way to not clog up the entire screen is by dumping a .txt file or in any other language with which we can manipulate syntax highlighting to maybe make the file more interesting.

Alternatively a lot of embeds can be used. Can we make an e... | 1.0 | Quote all formatting - Just like with the help message !quote all could use a new lick of paint. An easy way to not clog up the entire screen is by dumping a .txt file or in any other language with which we can manipulate syntax highlighting to maybe make the file more interesting.

Alternatively a lot of embeds can be... | non_design | quote all formatting just like with the help message quote all could use a new lick of paint an easy way to not clog up the entire screen is by dumping a txt file or in any other language with which we can manipulate syntax highlighting to maybe make the file more interesting alternatively a lot of embeds can be... | 0 |

73,886 | 8,951,655,322 | IssuesEvent | 2019-01-25 14:33:58 | webdev6/obscure-book-genres | https://api.github.com/repos/webdev6/obscure-book-genres | opened | Visual Design - Colour scheme | Design | - [ ] Find out colour scheme and look for web app

- [ ] Search colours

- [ ] Select colours

- [ ] Add to mood board

- [ ] Show team members and get approval | 1.0 | Visual Design - Colour scheme - - [ ] Find out colour scheme and look for web app

- [ ] Search colours

- [ ] Select colours

- [ ] Add to mood board

- [ ] Show team members and get approval | design | visual design colour scheme find out colour scheme and look for web app search colours select colours add to mood board show team members and get approval | 1 |

142,444 | 21,766,658,949 | IssuesEvent | 2022-05-13 03:15:00 | COS301-SE-2022/MathU-Similarity-Index | https://api.github.com/repos/COS301-SE-2022/MathU-Similarity-Index | closed | 💄 🩹 (Prototype): Added Styling for AppBar | scope:design priority:medium scope:ui scope:client status:ready type:bug type:change type:chore | Fixed confusion between saved page and view all page | 1.0 | 💄 🩹 (Prototype): Added Styling for AppBar - Fixed confusion between saved page and view all page | design | 💄 🩹 prototype added styling for appbar fixed confusion between saved page and view all page | 1 |

552,807 | 16,327,927,378 | IssuesEvent | 2021-05-12 05:10:09 | ppy/osu | https://api.github.com/repos/ppy/osu | closed | Hitsample-related hard crash after switching skins during gameplay loading | area:skinning priority:1 ruleset:osu!mania type:reliability | Reproduction steps (or [15s video](https://streamable.com/jg7jbh)):

1. Play any mania beatmap.

2. Change skins during the gameplay loading screen (for the best chance of reproducing, switch from this skin https://joppe27.s-ul.eu/FUjlsZfd to this one https://joppe27.s-ul.eu/xlubncdY).

3. Quickly press some keys when ... | 1.0 | Hitsample-related hard crash after switching skins during gameplay loading - Reproduction steps (or [15s video](https://streamable.com/jg7jbh)):

1. Play any mania beatmap.

2. Change skins during the gameplay loading screen (for the best chance of reproducing, switch from this skin https://joppe27.s-ul.eu/FUjlsZfd to ... | non_design | hitsample related hard crash after switching skins during gameplay loading reproduction steps or play any mania beatmap change skins during the gameplay loading screen for the best chance of reproducing switch from this skin to this one quickly press some keys when gameplay starts you can als... | 0 |

150,569 | 23,681,582,873 | IssuesEvent | 2022-08-28 21:47:12 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | closed | Providers Broken via Missing Type Name | kind/bug resolution/by-design impact/regression area/plugins | ### What happened?

**TL;DR:** [This commit](https://github.com/pulumi/pulumi/pull/10435/files) has introduced a change which has broken provider codegen for at least `@pulumi/command`.

I recently checked out `@pulumi/command` to see work on codegen changes. I noticed that codegen was not working, and that the err... | 1.0 | Providers Broken via MIssing Type Name - ### What happened?

**TL;DR:** [This commit](https://github.com/pulumi/pulumi/pull/10435/files) has introduced a change which has broken provider codegen for at least `@pulumi/command`.

I recently checked out `@pulumi/command` to see work on codegen changes. I noticed that ... | design | providers broken via missing type name what happened tl dr has introduced a change which has broken provider codegen for at least pulumi command i recently checked out pulumi command to see work on codegen changes i noticed that codegen was not working and that the error seemed legitimate... | 1 |

49,974 | 6,288,950,632 | IssuesEvent | 2017-07-19 18:07:28 | roschaefer/story.board | https://api.github.com/repos/roschaefer/story.board | closed | tc Channel select: Sensorstory as a default | design Priority: medium User Story | As a reporter

I want sensorstory as the default channel

in order to save one click. | 1.0 | tc Channel select: Sensorstory as a default - As a reporter

I want sensorstory as the default channel

in order to save one click. | design | tc channel select sensorstory as a default as a reporter i want sensorstory as the default channel in order to save one click | 1 |

425,422 | 12,339,994,568 | IssuesEvent | 2020-05-14 19:07:01 | google/knative-gcp | https://api.github.com/repos/google/knative-gcp | closed | Add installation documentation for gcp broker | area/broker kind/doc priority/1 release/1 | **Problem**

Add installation instructions in knative-gcp and a link from knative documentation as a pointer to alternate broker implementation.

* [x] Introduction to gcp broker and how is it different from channel based one

* [x] Installation, auth configuration: https://github.com/google/knative-gcp/pull/880

* [... | 1.0 | Add installation documentation for gcp broker - **Problem**

Add installation instructions in knative-gcp and a link from knative documentation as a pointer to alternate broker implementation.

* [x] Introduction to gcp broker and how is it different from channel based one

* [x] Installation, auth configuration: htt... | non_design | add installation documentation for gcp broker problem add installation instructions in knative gcp and a link from knative documentation as a pointer to alternate broker implementation introduction to gcp broker and how is it different from channel based one installation auth configuration ... | 0 |

98,680 | 12,344,814,928 | IssuesEvent | 2020-05-15 07:47:23 | nishidayoshikatsu/covid19-yamaguchi | https://api.github.com/repos/nishidayoshikatsu/covid19-yamaguchi | closed | The font in the PNG logo data is a Chinese font | design | ## Details of Improvement

- The font in the PNG version of the logo used on the site is a Chinese font

## Screenshot

Comment pointing this out on Slack

<!-- If it's a bug, attach a screenshot of the developer tool console -->

zsh: no matches found: glances[docker]

```

Adding quotes fixed it for me

`

pip install 'glance... | 1.0 | Must escape argument to install modules on OS X Catalina - On a newly installed glances I tried to add the docker module, with this result:

```

~❯ pip --version && pip install glances[docker] --user

pip 22.1.2 from /usr/local/lib/python3.9/site-packages/pip (python 3.9)

zsh: no matches found: glances[docker]

```... | non_design | must escape argument to install modules on os x catalina on a newly installed glances i tried to add the docker module with this result ❯ pip version pip install glances user pip from usr local lib site packages pip python zsh no matches found glances adding quotes fix... | 0 |

21,620 | 3,736,401,400 | IssuesEvent | 2016-03-08 15:50:35 | mysociety/fixmystreet | https://api.github.com/repos/mysociety/fixmystreet | closed | Individual report page needs to handle display of multiple photos | Design Reviewing | Reports can have multiple photos now. This needs to be displayed properly on the individual report page.

And updates can have multiple photos too. So whatever we come up with should work in the main report body and any updates below it. | 1.0 | Individual report page needs to handle display of multiple photos - Reports can have multiple photos now. This needs to be displayed properly on the individual report page.

And updates can have multiple photos too. So whatever we come up with should work in the main report body and any updates below it. | design | individual report page needs to handle display of multiple photos reports can have multiple photos now this needs to be displayed properly on the individual report page and updates can have multiple photos too so whatever we come up with should work in the main report body and any updates below it | 1 |

173,147 | 27,391,918,747 | IssuesEvent | 2023-02-28 16:48:53 | tijlleenders/ZinZen | https://api.github.com/repos/tijlleenders/ZinZen | closed | Make design for the calendar overview | design | The calendar overview can have the different feelings that have been logged over a month and additionally can provide a summary to the user | 1.0 | Make design for the calendar overview - The calendar overview can have the different feelings that have been logged over a month and additionally can provide a summary to the user | design | make design for the calendar overview the calendar overview can have the different feelings that have been logged over a month and additionally can provide a summary to the user | 1 |

82,395 | 3,606,294,256 | IssuesEvent | 2016-02-04 10:36:34 | EyeSeeTea/malariapp | https://api.github.com/repos/EyeSeeTea/malariapp | closed | Validate before create never assessment planning survey. | high priority | When we remove a deleted survey, it will be created as never assessment without check if exist. | 1.0 | Validate before create never assessment planning survey. - When we remove a deleted survey, it will be created as never assessment without check if exist. | non_design | validate before create never assessment planning survey when we remove a deleted survey it will be created as never assessment without check if exist | 0 |

76,421 | 7,527,901,937 | IssuesEvent | 2018-04-13 18:43:59 | istio/istio | https://api.github.com/repos/istio/istio | closed | pilot: glide Failures and private IPs | area/networking area/test and release | I seem to be running into errors trying to run glide update. Also for the main repo we should never have anything pulling from private IPs like 192.30.255.112. This is not only a bug but a security issue. Please fix ASAP. It's holding up development on multi-zone hybrid for the Dec release. We have a tight deadline.

... | 1.0 | pilot: glide Failures and private IPs - I seem to be running into errors trying to run glide update. Also for the main repo we should never have anything pulling from private IPs like 192.30.255.112. This is not only a bug but a security issue. Please fix ASAP. It's holding up development on multi-zone hybrid for the D... | non_design | pilot glide failures and private ips i seem to be running into errors trying to run glide update also for the main repo we should never have anything pulling from private ips like this is not only a bug but a security issue please fix asap it s holding up development on multi zone hybrid for the dec rele... | 0 |

734,367 | 25,346,367,509 | IssuesEvent | 2022-11-19 08:34:19 | googleapis/nodejs-ai-platform | https://api.github.com/repos/googleapis/nodejs-ai-platform | opened | AI platform predict tabular regression: should make predictions using the tabular regression model failed | type: bug priority: p1 flakybot: issue | Note: #443 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 04f7c858217f1a3ce7b1072c7bf8946d39947532

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/2c1b5aae-a46c-4a7a-a474-79da01e686eb), [Sponge](http://sponge2/2c1b5aae-a46c-4a7a-a... | 1.0 | AI platform predict tabular regression: should make predictions using the tabular regression model failed - Note: #443 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 04f7c858217f1a3ce7b1072c7bf8946d39947532

buildURL: [Build Status](https://source.cloud.google... | non_design | ai platform predict tabular regression should make predictions using the tabular regression model failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output command failed node predict tabular regres... | 0 |

158,563 | 24,855,306,672 | IssuesEvent | 2022-10-27 01:18:17 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | [DRAFT][Bug] My VA - Benefit payments - Recent direct-deposit - Data anomalies | bug backend design authenticated-experience dashboard front end my-va-payment-info | **[DRAFT]** Will finalize ticket details once all test-runs are completed.

## What happened?

On Staging **My VA** page, **Benefit payments** section, for User with recent direct-deposit payment, display of payment info appeared anomalous, as did related info on **Your VA payments** page:

- My VA page:

- Zero ... | 1.0 | [DRAFT][Bug] My VA - Benefit payments - Recent direct-deposit - Data anomalies - **[DRAFT]** Will finalize ticket details once all test-runs are completed.

## What happened?

On Staging **My VA** page, **Benefit payments** section, for User with recent direct-deposit payment, display of payment info appeared anomalo... | design | my va benefit payments recent direct deposit data anomalies will finalize ticket details once all test runs are completed what happened on staging my va page benefit payments section for user with recent direct deposit payment display of payment info appeared anomalous as did relat... | 1 |

35,188 | 4,635,777,866 | IssuesEvent | 2016-09-29 08:31:13 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | Feature: CompletionItems can have promises as additionalEdits | as-designed feature-request suggest | It would be a really nice feature, if the `CompletionItem` can actually return promises as their `additionalTextEdits`.

I'm imagining the following feature:

You are autocompleting something (in my example it's an import that is added to the programmers file) and there are other imports that have the same class name. ... | 1.0 | Feature: CompletionItems can have promises as additionalEdits - It would be a really nice feature, if the `CompletionItem` can actually return promises as their `additionalTextEdits`.

I'm imagining the following feature:

You are autocompleting something (in my example it's an import that is added to the programmers f... | design | feature completionitems can have promises as additionaledits it would be a really nice feature if the completionitem can actually return promises as their additionaltextedits i m imagining the following feature you are autocompleting something in my example it s an import that is added to the programmers f... | 1 |

343,385 | 24,769,440,256 | IssuesEvent | 2022-10-23 00:17:44 | gamerpotion/DarkRPG | https://api.github.com/repos/gamerpotion/DarkRPG | closed | Server IP has changed | documentation | Anyone having issues connecting to the mmo server, please get version 2.4f onwards.

or add **darkrpg.mcserver.us** to the server list. | 1.0 | Server IP has changed - Anyone having issues connecting to the mmo server, please get version 2.4f onwards.

or add **darkrpg.mcserver.us** to the server list. | non_design | server ip has changed anyone having issues connecting to the mmo server please get version onwards or add darkrpg mcserver us to the server list | 0 |

76,702 | 9,481,637,443 | IssuesEvent | 2019-04-21 07:21:01 | modelarious/FantasiaSource | https://api.github.com/repos/modelarious/FantasiaSource | opened | Students can bypass the token system | Critical Design bug | Steps to fool the system:

- Submit fake assignment that will have low clone count

- Get token from fake submission

- Submit fake token and real assignment to teacher

- Teacher asks Fantasia to validate the fake token

- Fantasia checks and sees the token is the same as the one on the server and returns OK

There ... | 1.0 | Students can bypass the token system - Steps to fool the system:

- Submit fake assignment that will have low clone count

- Get token from fake submission

- Submit fake token and real assignment to teacher

- Teacher asks Fantasia to validate the fake token

- Fantasia checks and sees the token is the same as the one... | design | students can bypass the token system steps to fool the system submit fake assignment that will have low clone count get token from fake submission submit fake token and real assignment to teacher teacher asks fantasia to validate the fake token fantasia checks and sees the token is the same as the one... | 1 |
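The steps in the row above describe a token that is checked only for existence on the server, not for which submission it was issued. The following is a hypothetical sketch, not Fantasia's actual code: `SERVER_SECRET`, `issue_token`, `validate_naive`, and `validate_bound` are all assumed names. It contrasts that check with one possible mitigation, binding the token to the submitted content with an HMAC. The fix proposed in the original issue is truncated and may differ.

```python
import hashlib
import hmac

# Assumption: a secret known only to the server (hypothetical value).
SERVER_SECRET = b"hypothetical-server-secret"

# Tokens the server has handed out so far.
issued_tokens = set()


def issue_token(submission: bytes) -> str:
    """Issue a token derived from the submitted content."""
    token = hmac.new(SERVER_SECRET, submission, hashlib.sha256).hexdigest()
    issued_tokens.add(token)
    return token


def validate_naive(token: str) -> bool:
    """Flawed check from the report: only asks whether the token exists."""
    return token in issued_tokens


def validate_bound(token: str, submission: bytes) -> bool:
    """Stricter check: recompute the token from the work actually handed in."""
    expected = hmac.new(SERVER_SECRET, submission, hashlib.sha256).hexdigest()
    return hmac.compare_digest(token, expected)


# The student submits a fake assignment to obtain a token...
fake = b"trivial file with a low clone count"
real = b"the assignment actually handed to the teacher"
token = issue_token(fake)

# ...then presents that token alongside the real assignment.
print(validate_naive(token))
print(validate_bound(token, real))
```

Under these assumptions the existence-only check accepts the token, while the bound check rejects it because the token was not derived from the real assignment.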

231,001 | 7,622,355,025 | IssuesEvent | 2018-05-03 11:54:18 | heptastique/onlygo | https://api.github.com/repos/heptastique/onlygo | closed | Estimation of Possible Distance in CentreInteret | priority: low | Estimate the distance that can be covered within the Centre d'Interet (point of interest) | 1.0 | Estimation of Possible Distance in CentreInteret - Estimate the distance that can be covered within the Centre d'Interet (point of interest) | non_design | estimation of possible distance in centreinteret estimate the distance that can be covered within the centre d interet point of interest | 0 |

109,665 | 13,797,123,246 | IssuesEvent | 2020-10-09 21:08:06 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | opened | Design Review 2020-10-14 20:00 UTC (Americas) | Type: Design Review | Time: [2020-10-14 20:00 UTC](https://www.timeanddate.com/worldclock/meeting.html?year=2020&month=10&day=14&iv=0) ([add to Google Calendar](http://www.google.com/calendar/event?action=TEMPLATE&text=AMP%20Project%20Design%20Review&dates=20201014T200000Z/20201014T210000Z&details=https%3A%2F%2Fbit.ly%2Famp-dr))