Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

164,189 | 6,220,846,767 | IssuesEvent | 2017-07-10 02:09:25 | nylas-mail-lives/nylas-mail | https://api.github.com/repos/nylas-mail-lives/nylas-mail | closed | Fix CI for Linux | Priority: High Type: Maintenance | Currently the Linux build is failing due to issue #8.

Once that issue is resolved, the Linux "build", actually the deploy, will fail due to missing AWS keys.

We need to figure out where we want to store our builds and how we want to push updates to end users.

[Edit] It appears that we will disable the "upload" functionality.

| 1.0 | Fix CI for Linux - Currently the Linux build is failing due to issue #8.

Once that issue is resolved, the Linux "build", actually the deploy, will fail due to missing AWS keys.

We need to figure out where we want to store our builds and how we want to push updates to end users.

[Edit] It appears that we will disable the "upload" functionality.

| priority | fix ci for linux currently the linux build is failing due to issue once that issue is resolved the linux build actually the deploy will fail due to missing aws keys we need to figure out where we want to store our builds and how we want to push updates to end users it appears that we will disable the upload functionality | 1 |

233,272 | 7,695,891,576 | IssuesEvent | 2018-05-18 13:47:00 | marklogic-community/data-explorer | https://api.github.com/repos/marklogic-community/data-explorer | closed | FERR-49 - Automatic version update | Component - UI JIRA Migration Priority - High Status - Complete Type - Task | **Original Reporter:** @markschiffner

**Created:** 25/Aug/17 8:05 AM

# Description

Need automatic revision number update based on checkin.

At minimum, we should have a file with the revision that we update and tag the baseline.

Currently UI says v0.1.0. | 1.0 | FERR-49 - Automatic version update - **Original Reporter:** @markschiffner

**Created:** 25/Aug/17 8:05 AM

# Description

Need automatic revision number update based on checkin.

At minimum, we should have a file with the revision that we update and tag the baseline.

Currently UI says v0.1.0. | priority | ferr automatic version update original reporter markschiffner created aug am description need automatic revision number update based on checkin at minimum we should have a file with the revision that we update and tag the baseline currently ui says | 1 |

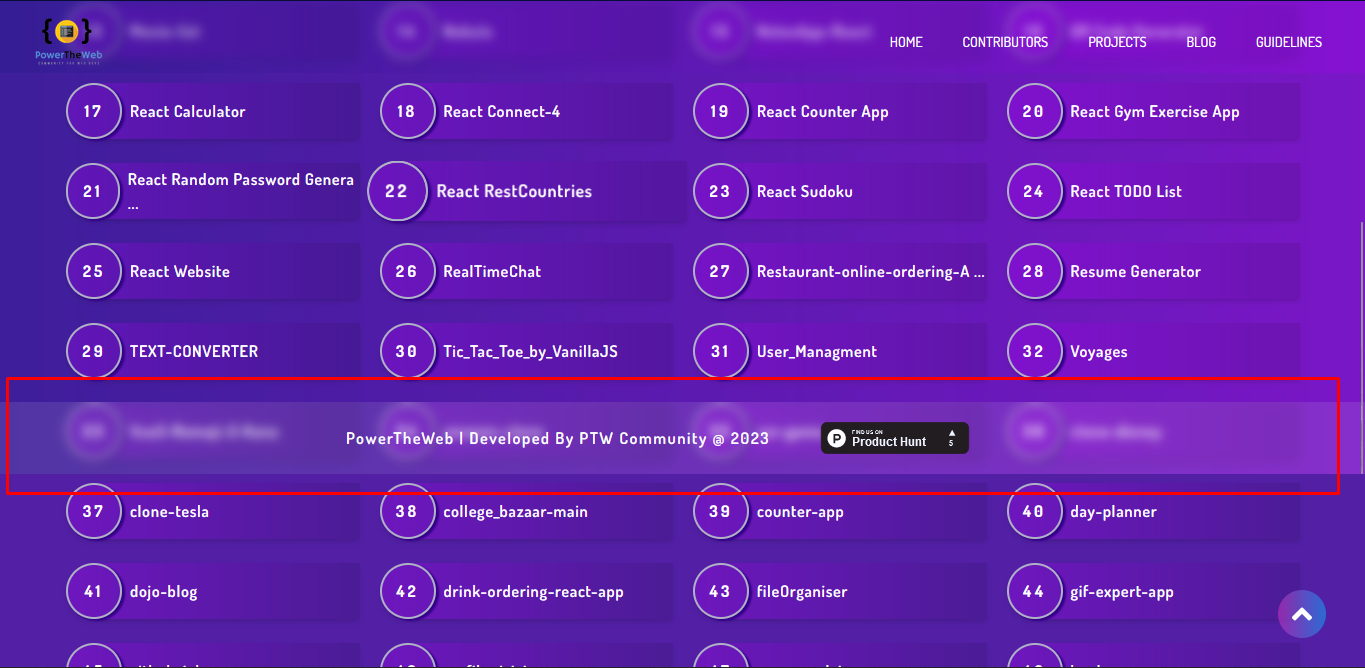

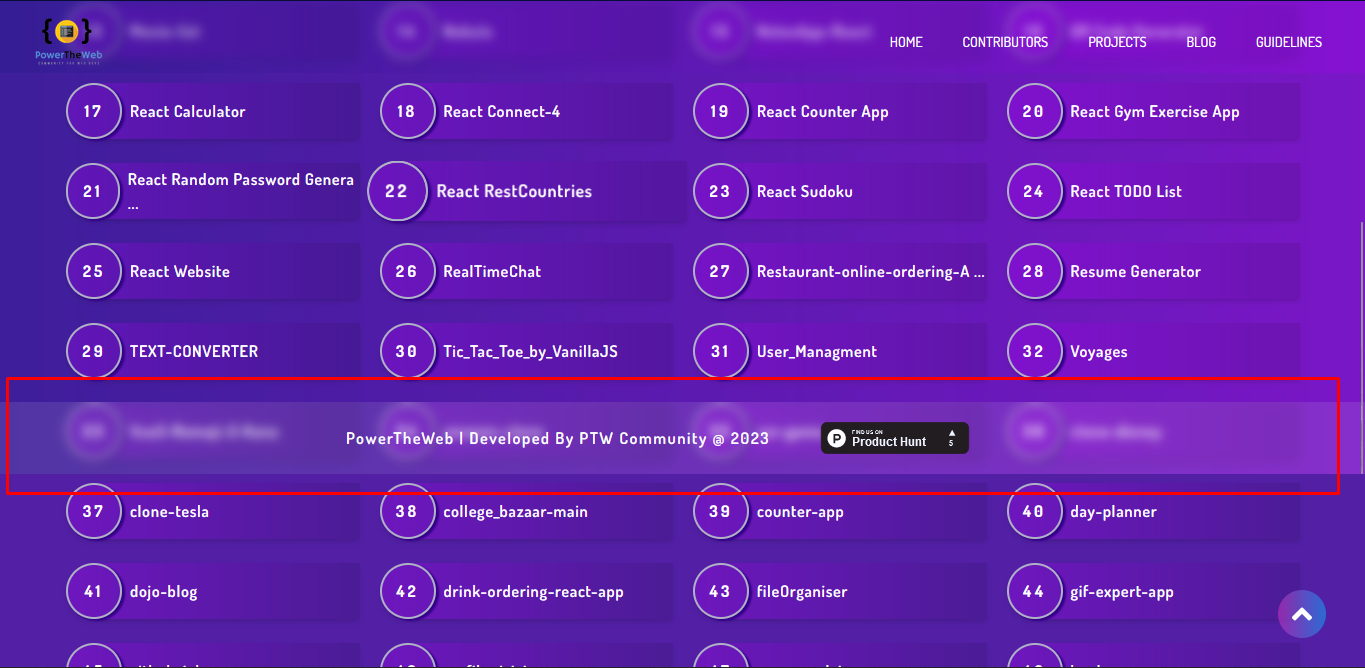

793,045 | 27,981,551,903 | IssuesEvent | 2023-03-26 07:49:31 | devvsakib/power-the-web | https://api.github.com/repos/devvsakib/power-the-web | closed | [BUG]: footer overlap in project page | bug [priority: high] | **Describe the bug**

Footer is overlapping on the project page.

**To Reproduce**

Steps to reproduce the behavior:

Check height, overflow

**Expected behavior**

The footer should sit at the bottom of the page.

**Desktop (please complete the following information):**

- OS: Windows

- Browser: Chrome, Firefox

- Version: 22

**Additional context**

Add any other context about the problem here.

## Checklist

- [x] I have read and followed the project's contributing guidelines

- [x] I have checked that this issue is not a duplicate of an existing issue

- [x] I have tested the bug in the latest version of the project

- [x] I have included all relevant information and examples in this issue description

- [x] I am available to collaborate on this issue and address any feedback from maintainers or other contributors

<!-- Do not delete this -->

**IMPORTANT**

If this is your first contribution to this project, please add your details to the Contributors file.

File path: `src/json/Contributors.json`. You **MUST** read the contributing guidelines before you start. [Read this Guideline Before you start](https://github.com/devvsakib/power-the-web/blob/main/CONTRIBUTING.md)

> If you do not add your details or are not assigned to the issue, your PR will be closed.

| 1.0 | [BUG]: footer overlap in project page - **Describe the bug**

Footer is overlapping on the project page.

**To Reproduce**

Steps to reproduce the behavior:

Check height, overflow

**Expected behavior**

The footer should sit at the bottom of the page.

**Desktop (please complete the following information):**

- OS: Windows

- Browser: Chrome, Firefox

- Version: 22

**Additional context**

Add any other context about the problem here.

## Checklist

- [x] I have read and followed the project's contributing guidelines

- [x] I have checked that this issue is not a duplicate of an existing issue

- [x] I have tested the bug in the latest version of the project

- [x] I have included all relevant information and examples in this issue description

- [x] I am available to collaborate on this issue and address any feedback from maintainers or other contributors

<!-- Do not delete this -->

**IMPORTANT**

If this is your first contribution to this project, please add your details to the Contributors file.

File path: `src/json/Contributors.json`. You **MUST** read the contributing guidelines before you start. [Read this Guideline Before you start](https://github.com/devvsakib/power-the-web/blob/main/CONTRIBUTING.md)

> If you do not add your details or are not assigned to the issue, your PR will be closed.

| priority | footer overlap in project page describe the bug footer is overlapping on the project page to reproduce steps to reproduce the behavior check height overflow expected behavior place in the bottom desktop please complete the following information os windows browser chrome firefox version additional context add any other context about the problem here checklist i have read and followed the project s contributing guidelines i have checked that this issue is not a duplicate of an existing issue i have tested the bug in the latest version of the project i have included all relevant information and examples in this issue description i am available to collaborate on this issue and address any feedback from maintainers or other contributors important if it s your first contribution to this project please add your details to the contributors file file path src json contributors json and you have to must read the contributing guideline before you start if you do not add your details or not assigned to issue your pr will be close | 1 |

781,554 | 27,441,742,791 | IssuesEvent | 2023-03-02 11:30:46 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | reopened | Tutorial rendering_multibody_plant broken on Deepnote | type: bug priority: high component: tutorials | Using the updated versions of #18000, the [rendering_multibody_plant.ipynb](https://deepnote.com/workspace/Drake-0b3b2c53-a7ad-441b-80f8-bf8350752305/project/Tutorials-2b4fc509-aef2-417d-a40d-6071dfed9199/%2Frendering_multibody_plant.ipynb) on Deepnote crashes partway through. | 1.0 | Tutorial rendering_multibody_plant broken on Deepnote - Using the updated versions of #18000, the [rendering_multibody_plant.ipynb](https://deepnote.com/workspace/Drake-0b3b2c53-a7ad-441b-80f8-bf8350752305/project/Tutorials-2b4fc509-aef2-417d-a40d-6071dfed9199/%2Frendering_multibody_plant.ipynb) on Deepnote crashes partway through. | priority | tutorial rendering multibody plant broken on deepnote using the updated versions of the on deepnote crashes partway through | 1 |

501,730 | 14,532,412,917 | IssuesEvent | 2020-12-14 22:23:45 | localstack/localstack | https://api.github.com/repos/localstack/localstack | closed | CloudFormation Ref of "AWS::ApiGatewayV2::Api" attempts to return "PhysicalResourceId" which is null (instead of "ApiId") | cannot-reproduce priority-high should-be-fixed | <!-- Love localstack? Please consider supporting our collective:

:point_right: https://opencollective.com/localstack/donate -->

# Type of request: This is a ...

[ X ] bug report

[ ] feature request

# Detailed description

I have an AWS::ApiGatewayV2::Api resource in my CloudFormation template.

Using "Ref" on this resource, should return the ApiId (for use across other resources in the stack).

Instead, the following error is observed:

```localstack_main | 2020-11-17T06:34:32:WARNING:localstack.utils.cloudformation.template_deployer: Unable to extract reference attribute PhysicalResourceId from resource: {'ApiEndpoint': 'ws://localhost:4514', 'ApiId': '48a6e425', 'Description': 'Serverless Websockets', 'Name': 'local-localstack-websockets-websockets', 'ProtocolType': 'WEBSOCKET', 'RouteSelectionExpression': '$request.body.action'}```

Note that this was working fine a few days ago. Related to this? https://github.com/localstack/localstack/pull/3244

## Expected behavior

Ref on AWS::ApiGatewayV2::Api should return the ApiId.

## Actual behavior

An exception is thrown, as it attempts to get PhysicalResourceId, which either does not exist or simply isn't populated.

# Steps to reproduce

Please refer to the steps to reproduce contained within this comment / parent issue, as it is able to replicate the issue.

https://github.com/localstack/localstack/issues/3210#issuecomment-723768889

┆Issue is synchronized with this [Jira Bug](https://localstack.atlassian.net/browse/LOC-329) by [Unito](https://www.unito.io/learn-more)

| 1.0 | CloudFormation Ref of "AWS::ApiGatewayV2::Api" attempts to return "PhysicalResourceId" which is null (instead of "ApiId") - <!-- Love localstack? Please consider supporting our collective:

:point_right: https://opencollective.com/localstack/donate -->

# Type of request: This is a ...

[ X ] bug report

[ ] feature request

# Detailed description

I have an AWS::ApiGatewayV2::Api resource in my CloudFormation template.

Using "Ref" on this resource, should return the ApiId (for use across other resources in the stack).

Instead, the following error is observed:

```localstack_main | 2020-11-17T06:34:32:WARNING:localstack.utils.cloudformation.template_deployer: Unable to extract reference attribute PhysicalResourceId from resource: {'ApiEndpoint': 'ws://localhost:4514', 'ApiId': '48a6e425', 'Description': 'Serverless Websockets', 'Name': 'local-localstack-websockets-websockets', 'ProtocolType': 'WEBSOCKET', 'RouteSelectionExpression': '$request.body.action'}```

Note that this was working fine a few days ago. Related to this? https://github.com/localstack/localstack/pull/3244

## Expected behavior

Ref on AWS::ApiGatewayV2::Api should return the ApiId.

## Actual behavior

An exception is thrown, as it attempts to get PhysicalResourceId, which either does not exist or simply isn't populated.

# Steps to reproduce

Please refer to the steps to reproduce contained within this comment / parent issue, as it is able to replicate the issue.

https://github.com/localstack/localstack/issues/3210#issuecomment-723768889

┆Issue is synchronized with this [Jira Bug](https://localstack.atlassian.net/browse/LOC-329) by [Unito](https://www.unito.io/learn-more)

| priority | cloudformation ref of aws api attempts to return physicalresourceid which is null instead of apiid love localstack please consider supporting our collective point right type of request this is a bug report feature request detailed description i have an aws api resource in my cloudformation template using ref on this resource should return the apiid for use across other resources in the stack instead the following error is observed localstack main warning localstack utils cloudformation template deployer unable to extract reference attribute physicalresourceid from resource apiendpoint ws localhost apiid description serverless websockets name local localstack websockets websockets protocoltype websocket routeselectionexpression request body action note that this was working fine a few days ago related to this expected behavior ref on aws api should return the apiid actual behavior an exception is thrown as it attempts to get physicalresourceid which either does not exist or simply isn t populated steps to reproduce please refer to the steps to reproduce contained within this comment parent issue as it is able to replicate the issue ┆issue is synchronized with this by | 1 |
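CloudFormation documents that `Ref` on an `AWS::ApiGatewayV2::Api` returns the API ID, which is present in the resource state shown in the warning above. A minimal sketch of the lookup the issue expects, assuming the deployer keeps a per-type table of which state key `Ref` should read; `REF_ATTRIBUTES` and `resolve_ref` are hypothetical names for illustration, not LocalStack's actual code:

```python
# Hypothetical sketch: which key of a deployed resource's state `Ref` resolves to.
# Only the ApiGatewayV2 entry is shown; a real table would cover many types.
REF_ATTRIBUTES = {
    "AWS::ApiGatewayV2::Api": "ApiId",
}


def resolve_ref(resource_type, state, physical_resource_id=None):
    """Return the value `Ref` should yield for a deployed resource."""
    attr = REF_ATTRIBUTES.get(resource_type)
    if attr is not None and state.get(attr):
        return state[attr]
    # Fall back to the generic physical ID only when one was actually recorded
    if physical_resource_id:
        return physical_resource_id
    raise KeyError(f"cannot resolve Ref for {resource_type}")


# State copied (abridged) from the warning in the issue
api_state = {
    "ApiEndpoint": "ws://localhost:4514",
    "ApiId": "48a6e425",
    "Name": "local-localstack-websockets-websockets",
    "ProtocolType": "WEBSOCKET",
}
print(resolve_ref("AWS::ApiGatewayV2::Api", api_state))  # 48a6e425
```

Checking the type-specific attribute first means a missing `PhysicalResourceId` no longer matters for types whose `Ref` value is already in the state.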

656,069 | 21,718,196,698 | IssuesEvent | 2022-05-10 20:14:24 | CCSI-Toolset/FOQUS | https://api.github.com/repos/CCSI-Toolset/FOQUS | closed | Revisit PyQt version(s) requirements | Priority:High | ## Motivation

- Currently, we're using `pyqt==5.13` in `setup.py`

- However, this has shortcomings

- AFAIK 5.13 is not a "super-stable"/LTS (long-term support) version for (Py)Qt 5 such as 5.12 and 5.15

- To be able to have a Conda package for FOQUS, it has to be compatible with the available versions

## Known constraints

- `pytest-qt` only works with Qt 5.11+

- AFAIK, PyQt 5.12.3 is the latest version available to install with Conda (through the `conda-forge` channel)

- PyQt 5.13 is not available for Windows and Python 3.9, leading to installation errors such as `ERROR: Could not find a version that satisfies the requirement PyQt5==5.13 (from ccsi-foqus) (from versions: 5.12.3, 5.14.0, 5.14.1, 5.14.2, 5.15.0, 5.15.1, 5.15.2, 5.15.3, 5.15.4, 5.15.5, 5.15.6)` | 1.0 | Revisit PyQt version(s) requirements - ## Motivation

- Currently, we're using `pyqt==5.13` in `setup.py`

- However, this has shortcomings

- AFAIK 5.13 is not a "super-stable"/LTS (long-term support) version for (Py)Qt 5 such as 5.12 and 5.15

- To be able to have a Conda package for FOQUS, it has to be compatible with the available versions

## Known constraints

- `pytest-qt` only works with Qt 5.11+

- AFAIK, PyQt 5.12.3 is the latest version available to install with Conda (through the `conda-forge` channel)

- PyQt 5.13 is not available for Windows and Python 3.9, leading to installation errors such as `ERROR: Could not find a version that satisfies the requirement PyQt5==5.13 (from ccsi-foqus) (from versions: 5.12.3, 5.14.0, 5.14.1, 5.14.2, 5.15.0, 5.15.1, 5.15.2, 5.15.3, 5.15.4, 5.15.5, 5.15.6)` | priority | revisit pyqt version s requirements motivation currently we re using pyqt in setup py however this has shortcomings afaik is not a super stable lts long term support version for py qt such as and to be able to have a conda package for foqus it has to be compatible with the available versions known constraints pytest qt only works with qt afaik pyqt is the latest version available to install with conda through the conda forge channel pyqt is not available for windows and python leading to installation errors such as error could not find a version that satisfies the requirement from ccsi foqus from versions | 1 |

481,788 | 13,891,889,024 | IssuesEvent | 2020-10-19 11:22:33 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | Maps using the CD matrix are not correctly modified by resample | Bug(?) Close? Effort High Package Intermediate Priority Medium map | resample only changes the CDELT flags not CD if present.

| 1.0 | Maps using the CD matrix are not correctly modified by resample - resample only changes the CDELT flags; the CD matrix, if present, is left unchanged.

| priority | maps using the cd matrix are not correctly modified by resample resample only changes the cdelt flags not cd if present | 1 |
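In the FITS WCS convention, `CDi_j` is the rate of change of world coordinate i with respect to pixel axis j. When a resample makes pixels along axis j `scale_j` times larger, column j of the CD matrix therefore has to be multiplied by `scale_j`. A minimal sketch, assuming a plain dict of uppercase FITS keywords rather than sunpy's metadata object; `rescale_cd_matrix` is a hypothetical helper, and a full resample must also update `CRPIXj` and `NAXISj`, which are not shown:

```python
def rescale_cd_matrix(header, scale_x, scale_y):
    """Return a copy of `header` with CDi_j keywords rescaled for a resample.

    scale_x, scale_y: old pixel count / new pixel count along pixel axis 1 (x)
    and pixel axis 2 (y); e.g. halving the image width gives scale_x = 2.
    """
    scales = {1: scale_x, 2: scale_y}  # pixel axis j -> scale factor
    new_header = dict(header)
    for i in (1, 2):      # world axis
        for j in (1, 2):  # pixel axis
            key = f"CD{i}_{j}"
            if key in header:
                # CDi_j = d(world_i)/d(pixel_j): larger pixels along j scale column j
                new_header[key] = header[key] * scales[j]
    return new_header


header = {"CD1_1": 0.6, "CD1_2": 0.1, "CD2_1": -0.1, "CD2_2": 0.6}
print(rescale_cd_matrix(header, 2.0, 4.0))
# {'CD1_1': 1.2, 'CD1_2': 0.4, 'CD2_1': -0.2, 'CD2_2': 2.4}
```

Scaling columns rather than rows keeps any rotation encoded in the off-diagonal terms consistent with the new pixel grid.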

79,224 | 3,521,991,365 | IssuesEvent | 2016-01-13 06:40:50 | paulkass/BirdBoxBuilder | https://api.github.com/repos/paulkass/BirdBoxBuilder | closed | Add a plank with a hole geometry | Important new feature Priority: High | Add a plank with a hole geometry for the front edge of the bird box. | 1.0 | Add a plank with a hole geometry - Add a plank with a hole geometry for the front edge of the bird box. | priority | add a plank with a hole geometry add a plank with a hole geometry for the front edge of the bird box | 1 |

87,589 | 3,755,991,559 | IssuesEvent | 2016-03-13 01:36:32 | devspace/devspace | https://api.github.com/repos/devspace/devspace | closed | Optimize mobile experience | bug high priority | Added a few columns but couldn't see them on iPhone or iPad since the app wouldn't scroll horizontally | 1.0 | Optimize mobile experience - Added a few columns but couldn't see them on iPhone or iPad since the app wouldn't scroll horizontally | priority | optimize mobile experience added a few columns but couldn t see them on iphone or ipad since the app wouldn t scroll horizontally | 1 |

252,702 | 8,039,294,016 | IssuesEvent | 2018-07-30 17:55:36 | systers/communities | https://api.github.com/repos/systers/communities | closed | Set up the repository with basic angular files | Category: Coding Difficulty: MEDIUM Priority: HIGH Program: GSoC Type: Enhancement | ## Description

As a user,

I need to set up the repository,

so that I can restart the project with angular framework

## Acceptance Criteria

- Basic working Angular App

- Basic files and modules established

### Update [Required]

- README

- Create multiple new files

## Definition of Done

- [ ] All of the required items are completed.

- [ ] Approval by 1 mentor.

## Estimation

1 hour

Can I be assigned this issue @Tharangi @divyanshu-rawat @Janiceilene @MeepyMay ? | 1.0 | Set up the repository with basic angular files - ## Description

As a user,

I need to set up the repository,

so that I can restart the project with angular framework

## Acceptance Criteria

- Basic working Angular App

- Basic files and modules established

### Update [Required]

- README

- Create multiple new files

## Definition of Done

- [ ] All of the required items are completed.

- [ ] Approval by 1 mentor.

## Estimation

1 hour

Can I be assigned this issue @Tharangi @divyanshu-rawat @Janiceilene @MeepyMay ? | priority | set up the repository with basic angular files description as a user i need set up the repository so that i can restart the project with angular framework acceptance criteria basic working angular app basic files and modules established update readme create multiple new files definition of done all of the required items are completed approval by mentor estimation hour can i be assigned this issue tharangi divyanshu rawat janiceilene meepymay | 1 |

342,376 | 10,315,971,380 | IssuesEvent | 2019-08-30 08:54:18 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | Feature shallow clone for Git brings error (Version 1.18.1) | complex: low component: scm priority: high stage: queue type: bug | To help us debug your issue please explain:

- [x] I've read the [CONTRIBUTING guide](https://github.com/conan-io/conan/blob/develop/.github/CONTRIBUTING.md).

- [x] I've specified the Conan version, operating system version and any tool that can be relevant.

- [x] I've explained the steps to reproduce the error or the motivation/use case of the question/suggestion.

Hello,

I am using Conan 1.18.1 on Windows 10.

I use `git describe` internally to build the version number of my package. It relies on the latest tag that has been pushed to git.

With the new `shallow clone` feature, which saves time and space, the cloned history contains no `tags`, so I am unable to generate my package version.

It would be very helpful to have a `shallow` property in the `scm` tool. Ideally it would default to `shallow=False`, so as not to break compatibility.

| 1.0 | Feature shallow clone for Git brings error (Version 1.18.1) - To help us debug your issue please explain:

- [x] I've read the [CONTRIBUTING guide](https://github.com/conan-io/conan/blob/develop/.github/CONTRIBUTING.md).

- [x] I've specified the Conan version, operating system version and any tool that can be relevant.

- [x] I've explained the steps to reproduce the error or the motivation/use case of the question/suggestion.

Hello,

I am using Conan 1.18.1 on Windows 10.

I use `git describe` internally to build the version number of my package. It relies on the latest tag that has been pushed to git.

With the new `shallow clone` feature, which saves time and space, the cloned history contains no `tags`, so I am unable to generate my package version.

It would be very helpful to have a `shallow` property in the `scm` tool. Ideally it would default to `shallow=False`, so as not to break compatibility.

| priority | feature shallow clone for git brings error version to help us debug your issue please explain i ve read the i ve specified the conan version operating system version and any tool that can be relevant i ve explained the steps to reproduce the error or the motivation use case of the question suggestion hello i am using conan on my windows i use internally git describe to build my version number of my package this uses the latest tag that has been put into git now with the new feature shallow clone to safe time and space i get the problem that there are no tags in the history and i am unable to generate my package version it would be very nice to have a property shallow in the scm tool the best would be to set the default to shallow false to not break compatibility | 1 |
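A shallow clone can lack the tags that `git describe` needs, which is why tag-derived versions break. A minimal sketch of such a tag-to-version step, assuming tags of the form `v1.2.3` and a `+N.gSHA` suffix for untagged commits (both conventions are assumptions for illustration, not Conan's); fetching the missing history and tags first, e.g. with `git fetch --unshallow --tags`, is one way to restore the input it expects:

```python
import re

# Assumed tag convention: optional "v" prefix, dotted numeric version
_DESCRIBE = re.compile(
    r"v?(?P<base>\d+(?:\.\d+)+)(?:-(?P<ahead>\d+)-g(?P<sha>[0-9a-f]+))?"
)


def version_from_describe(described):
    """Turn `git describe --tags` output into a version string.

    "v1.2.0"            -> "1.2.0"             (HEAD is exactly on the tag)
    "v1.2.0-5-g1a2b3c4" -> "1.2.0+5.g1a2b3c4"  (5 commits after the tag)
    Anything else, such as a bare hash from `--always` in a clone without
    tags, raises ValueError instead of producing a bogus version.
    """
    m = _DESCRIBE.fullmatch(described.strip())
    if m is None:
        raise ValueError(f"no tag-based version in {described!r}")
    if m.group("ahead") is None:
        return m.group("base")
    return f"{m.group('base')}+{m.group('ahead')}.g{m.group('sha')}"


print(version_from_describe("v1.2.0-5-g1a2b3c4"))  # 1.2.0+5.g1a2b3c4
```

Failing loudly on a tagless clone makes the shallow-clone problem visible at build time instead of silently producing a wrong package version.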

547,859 | 16,048,707,176 | IssuesEvent | 2021-04-22 16:23:57 | vaticle/typedb | https://api.github.com/repos/vaticle/typedb | closed | Inherited, overridable, scoped Roles | priority: high type: refactor | ## Problem to Solve

We extensively modify the behaviour of roles:

1. we scope roles to relations, allowing users to re-use names across exclusive relation hierarchies and therefore removing a large naming burden.

2. We include inheritance of roles in relations

3. We allow overriding relation roles (using the pre-existing `as`, which should make the child role playable, but block the parent role from being inherited as well)

## Proposed Solution

A larger piece of work including the following work items, for release with 1.9. It will induce a breaking DB change.

- [x] add role scoping to the grammar (https://github.com/graknlabs/graql/pull/140)

- [ ] update Grakn to reflect the above changes, scoping roles to relation within Grakn (https://github.com/graknlabs/grakn/pull/5802)

- [ ] implement role inheritance within Grakn Core

- [ ] implement role overriding within Grakn Core

- [ ] update the Protocol to ensure that concept type Labels have two portions: scope and name; role `relates` takes a string instead of a Role instance

- [ ] propagate all changes to other clients

- [ ] propagate all changes to examples

Re-enable tests:

`GraknClientIT` - testDeletingAConcept_TheConceptIsDeleted

Fix:

Server side implementation of RPC concept API | 1.0 | Inherited, overridable, scoped Roles - ## Problem to Solve

We extensively modify the behaviour of roles:

1. we scope roles to relations, allowing users to re-use names across exclusive relation hierarchies and therefore removing a large naming burden.

2. We include inheritance of roles in relations

3. We allow overriding relation roles (using the pre-existing `as`, which should make the child role playable, but block the parent role from being inherited as well)

## Proposed Solution

A larger piece of work including the following work items, for release with 1.9. It will induce a breaking DB change.

- [x] add role scoping to the grammar (https://github.com/graknlabs/graql/pull/140)

- [ ] update Grakn to reflect the above changes, scoping roles to relation within Grakn (https://github.com/graknlabs/grakn/pull/5802)

- [ ] implement role inheritance within Grakn Core

- [ ] implement role overriding within Grakn Core

- [ ] update the Protocol to ensure that concept type Labels have two portions: scope and name; role `relates` takes a string instead of a Role instance

- [ ] propagate all changes to other clients

- [ ] propagate all changes to examples

Re-enable tests:

`GraknClientIT` - testDeletingAConcept_TheConceptIsDeleted

Fix:

Server side implementation of RPC concept API | priority | inherited overridable scoped roles problem to solve we extensively modify the behaviour of roles we scope roles to relations allowing users to re use names across exclusive relation hierarchies and therefore removing a large naming burden we include inheritance of roles in relations we allow overriding relation roles using the pre existing as which should make the child role playable but block the parent role from being inherited as well proposed solution a larger piece of work including the following work items for release with it will induce a breaking db change add role scoping to the grammar update grakn to reflect the above changes scoping roles to relation within grakn implement role inheritance within grakn core implement role overriding within grakn core update the protocol to ensure that concept type labels have two portions scope and name role relates takes a string instead of a role instance propagate all changes to other clients propagate all changes to examples renable tests graknclientit testdeletingaconcept theconceptisdeleted fix server side implementation of rpc concept api | 1 |

270,674 | 8,468,383,262 | IssuesEvent | 2018-10-23 19:35:07 | ClinGen/clincoded | https://api.github.com/repos/ClinGen/clincoded | opened | Change MONDO ID for GDM | EP request GCI R23 external colleague priority: high | "PRPS1- deafness, X-linked 6" curation needs to be changed to PRPS1-Deficiency Disorders (MONDO:0100061) for this GDM:

https://curation.clinicalgenome.org/curation-central/?gdm=b8931f64-ec97-4171-8163-23ded968ea42

| 1.0 | Change MONDO ID for GDM - "PRPS1- deafness, X-linked 6" curation need to be changed to PRPS1-Deficiency Disorders (MONDO:0100061) for this GDM:

https://curation.clinicalgenome.org/curation-central/?gdm=b8931f64-ec97-4171-8163-23ded968ea42

| priority | change mondo id for gdm deafness x linked curation need to be changed to deficiency disorders mondo for this gdm | 1 |

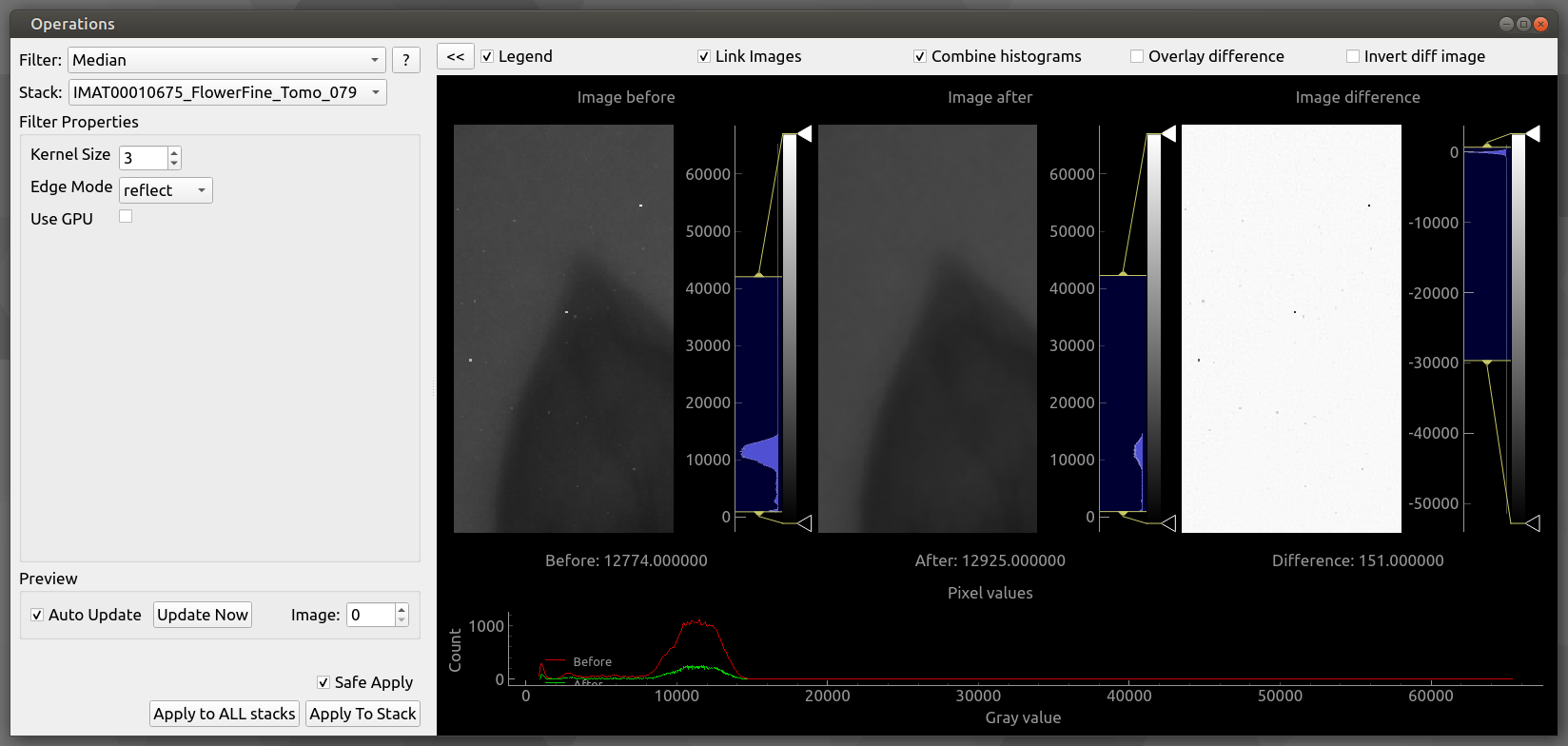

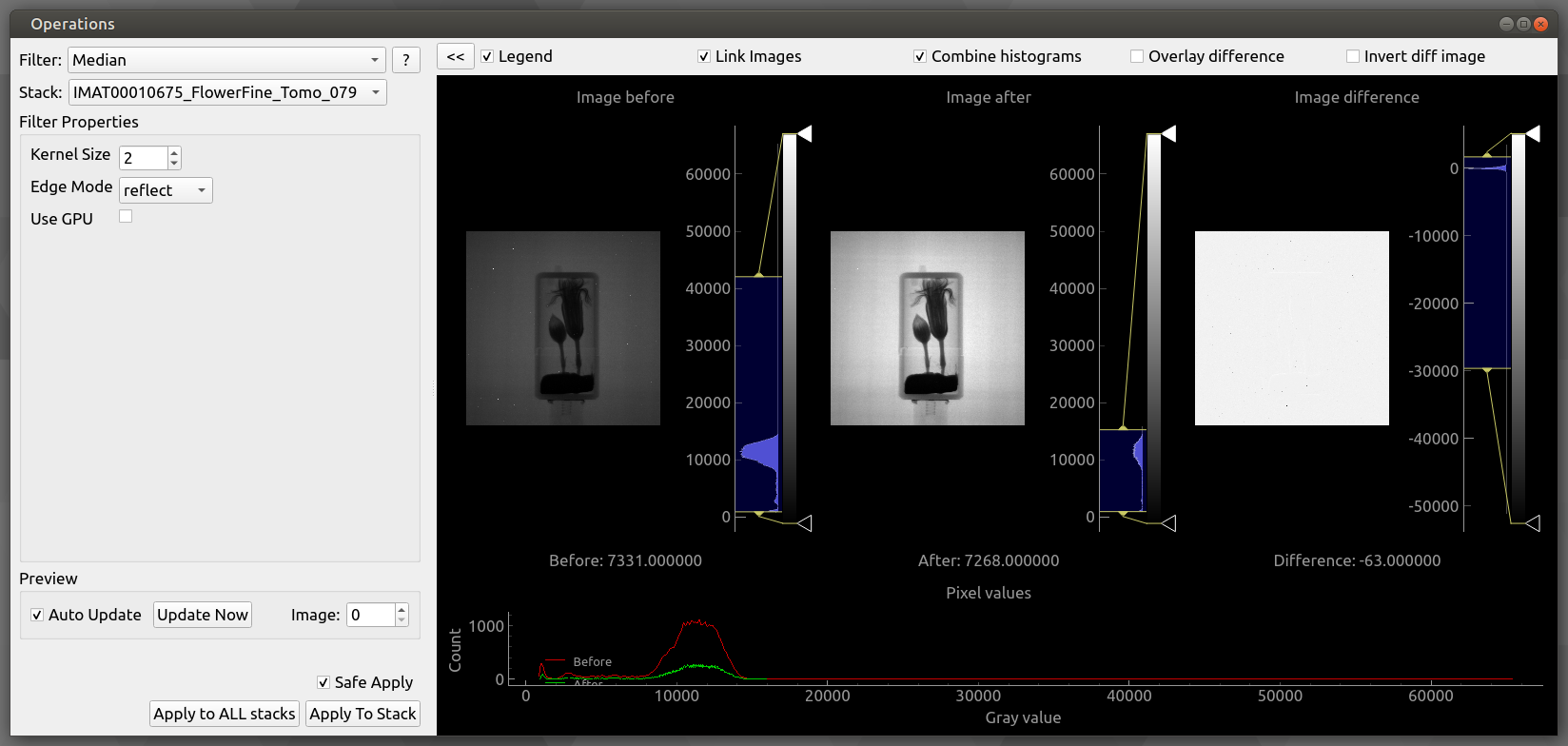

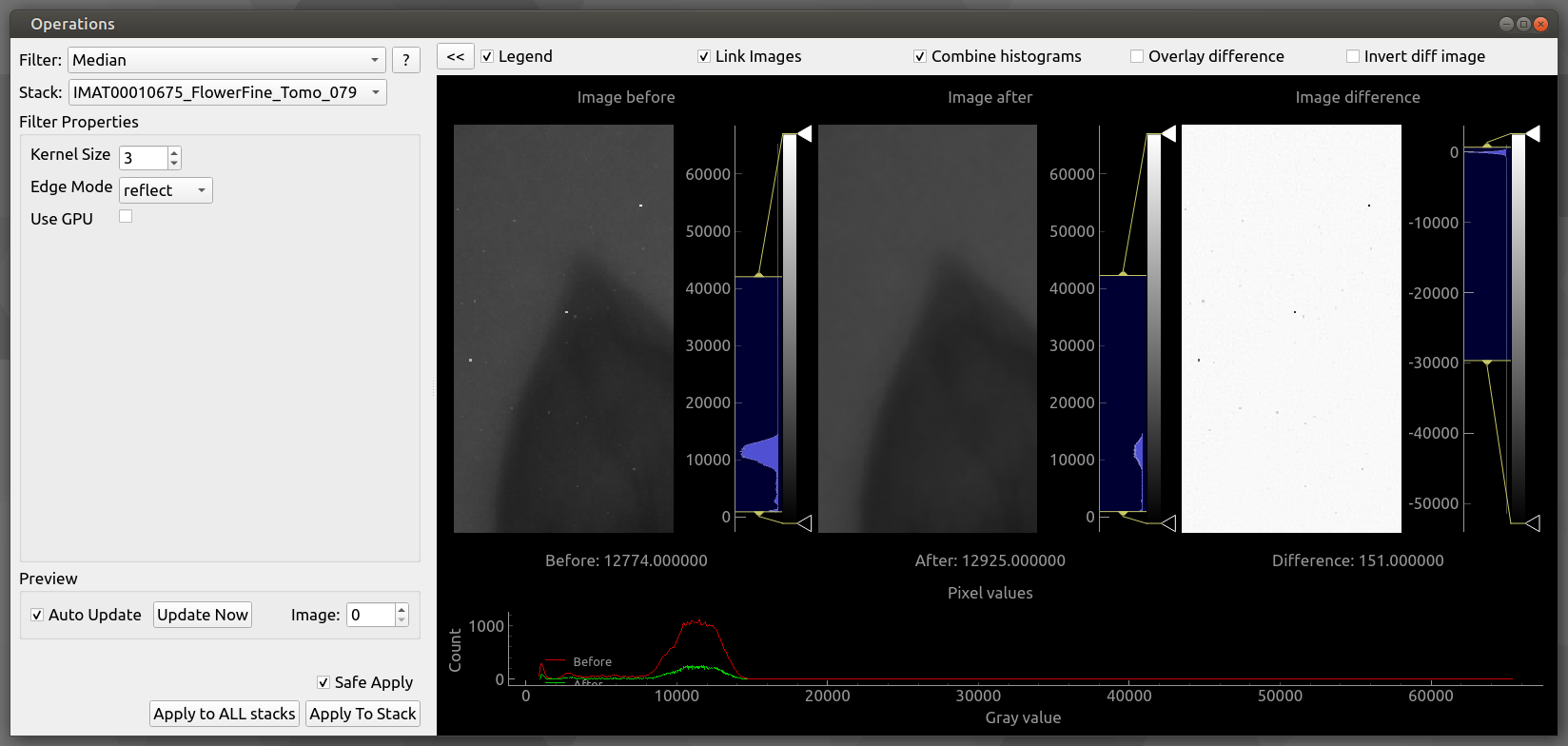

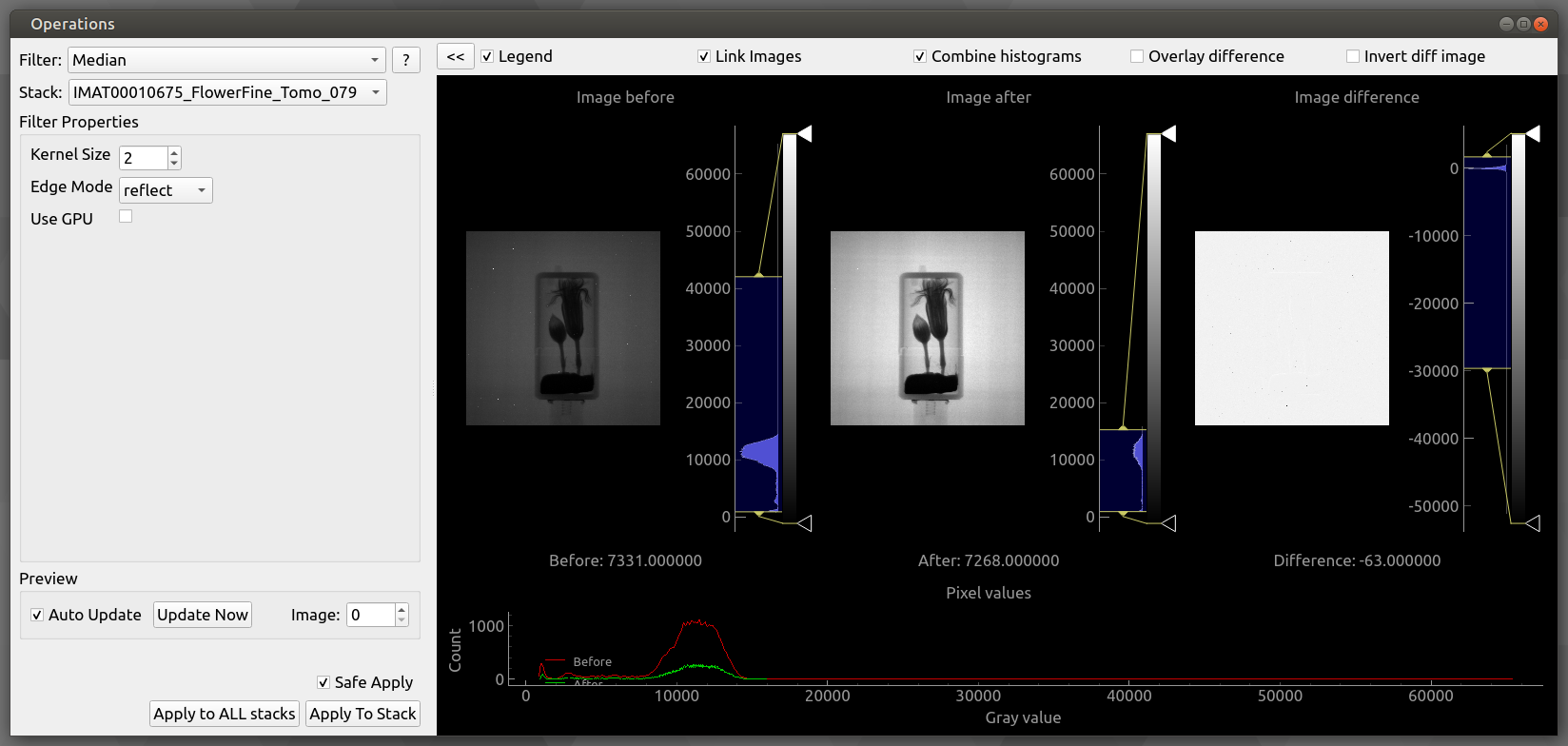

534,219 | 15,612,440,746 | IssuesEvent | 2021-03-19 15:23:24 | mantidproject/mantidimaging | https://api.github.com/repos/mantidproject/mantidimaging | closed | Don't reset zoom when changing operation parameters | Component: GUI High Priority Type: Bug | ### Summary

It is useful to be able to adjust parameters while zoomed in to see their effect.

### Steps To Reproduce

Open dataset, open operations window. Switch to median.

Zoom in so that pixel details are visible.

Change the kernel size

### Expected Behaviour

preview updates.

### Current Behaviour

preview zoom level resets

### Context

reported with 2.0. also occurs on master

### Screenshot(s)

Then click to adjust kernel size, and zoom resets.

| 1.0 | Don't reset zoom when changing operation parameters - ### Summary

It is useful to be able to adjust parameters while zoomed in to see their effect.

### Steps To Reproduce

Open dataset, open operations window. Switch to median.

Zoom in so that pixel details are visible.

Change the kernel size

### Expected Behaviour

preview updates.

### Current Behaviour

preview zoom level resets

### Context

reported with 2.0. also occurs on master

### Screenshot(s)

Then click to adjust kernel size, and zoom resets.

| priority | don t reset zoom when changing operation parameters summary it is useful to be able to adjust parameters while zoomed in to see their effect steps to reproduce open dataset open operations window switch to median zoom in so that pixel details are visible change the kernel size expected behaviour preview updates current behaviour preview zoom level resets context reported with also occurs on master screenshot s then click to adjust kernel size and zoom resets | 1 |

719,893 | 24,773,255,833 | IssuesEvent | 2022-10-23 12:15:32 | bounswe/bounswe2022group5 | https://api.github.com/repos/bounswe/bounswe2022group5 | closed | Creating initial mobile app structure | High Priority Type: Enhancement Status: Need Review | ***Description*:**

Initial flutter mobile app structure should be created and pushed to the repository.

***Todo's*:**

- [x] Initial screens should be created.

- [x] Initial model structure should be created. (Later it can be changed according to the backend team's decisions.)

- [x] This structure should be pushed to the repo.

***Reviewers*:** @enginoguzhansenol

***Task Deadline*:** 23.10.2022 14:00

***Review Deadline*:** 23.10.2022 15:00 | 1.0 | Creating initial mobile app structure - ***Description*:**

Initial flutter mobile app structure should be created and pushed to the repository.

***Todo's*:**

- [x] Initial screens should be created.

- [x] Initial model structure should be created. (Later it can be changed according to the backend team's decisions.)

- [x] This structure should be pushed to the repo.

***Reviewers*:** @enginoguzhansenol

***Task Deadline*:** 23.10.2022 14:00

***Review Deadline*:** 23.10.2022 15:00 | priority | creating initial mobile app structure description initial flutter mobile app structure should be created and pushed to the repository todo s initial screens should be created initial model structure should be created later it can be changed according to backend team s decisions this structure should be pushed to the repo reviewers enginoguzhansenol task deadline review deadline | 1 |

799,706 | 28,312,336,886 | IssuesEvent | 2023-04-10 16:30:17 | ggerganov/llama.cpp | https://api.github.com/repos/ggerganov/llama.cpp | closed | Fix quantize_row_q4_1() with ARM_NEON | bug high priority | It is currently bugged. See results of `quantize-stats` on M1:

```

$ ./quantize-stats -m models/7B/ggml-model-f16.bin

Loading model

llama.cpp: loading model from models/7B/ggml-model-f16.bin

llama_model_load_internal: format = ggjt v1 (latest)

llama_model_load_internal: n_vocab = 32000

llama_model_load_internal: n_ctx = 256

llama_model_load_internal: n_embd = 4096

llama_model_load_internal: n_mult = 256

llama_model_load_internal: n_head = 32

llama_model_load_internal: n_layer = 32

llama_model_load_internal: n_rot = 128

llama_model_load_internal: f16 = 1

llama_model_load_internal: n_ff = 11008

llama_model_load_internal: n_parts = 1

llama_model_load_internal: model size = 7B

llama_model_load_internal: ggml ctx size = 59.11 KB

llama_model_load_internal: mem required = 14645.07 MB (+ 2052.00 MB per state)

llama_init_from_file: kv self size = 256.00 MB

note: source model is f16

testing 291 layers with max size 131072000

q4_0 : rmse 0.00222150, maxerr 0.18429124, 95pct<0.0040, median<0.0018

q4_1 : rmse 0.00360044, maxerr 0.26373291, 95pct<0.0066, median<0.0028

main: total time = 93546.68 ms

```

The RMSE is too high - worse than Q4_0.

There is a bug in the following piece of code:

https://github.com/ggerganov/llama.cpp/blob/180b693a47b6b825288ef9f2c39d24b6eea4eea6/ggml.c#L922-L955

We should fix it | 1.0 | Fix quantize_row_q4_1() with ARM_NEON - It is currently bugged. See results of `quantize-stats` on M1:

```

$ ./quantize-stats -m models/7B/ggml-model-f16.bin

Loading model

llama.cpp: loading model from models/7B/ggml-model-f16.bin

llama_model_load_internal: format = ggjt v1 (latest)

llama_model_load_internal: n_vocab = 32000

llama_model_load_internal: n_ctx = 256

llama_model_load_internal: n_embd = 4096

llama_model_load_internal: n_mult = 256

llama_model_load_internal: n_head = 32

llama_model_load_internal: n_layer = 32

llama_model_load_internal: n_rot = 128

llama_model_load_internal: f16 = 1

llama_model_load_internal: n_ff = 11008

llama_model_load_internal: n_parts = 1

llama_model_load_internal: model size = 7B

llama_model_load_internal: ggml ctx size = 59.11 KB

llama_model_load_internal: mem required = 14645.07 MB (+ 2052.00 MB per state)

llama_init_from_file: kv self size = 256.00 MB

note: source model is f16

testing 291 layers with max size 131072000

q4_0 : rmse 0.00222150, maxerr 0.18429124, 95pct<0.0040, median<0.0018

q4_1 : rmse 0.00360044, maxerr 0.26373291, 95pct<0.0066, median<0.0028

main: total time = 93546.68 ms

```

The RMSE is too high - worse than Q4_0.

There is a bug in the following piece of code:

https://github.com/ggerganov/llama.cpp/blob/180b693a47b6b825288ef9f2c39d24b6eea4eea6/ggml.c#L922-L955

We should fix it | priority | fix quantize row with arm neon it is currently bugged see results of quantize stats on quantize stats m models ggml model bin loading model llama cpp loading model from models ggml model bin llama model load internal format ggjt latest llama model load internal n vocab llama model load internal n ctx llama model load internal n embd llama model load internal n mult llama model load internal n head llama model load internal n layer llama model load internal n rot llama model load internal llama model load internal n ff llama model load internal n parts llama model load internal model size llama model load internal ggml ctx size kb llama model load internal mem required mb mb per state llama init from file kv self size mb note source model is testing layers with max size rmse maxerr median rmse maxerr median main total time ms the rmse is too high worse than there is a bug in the following piece of code we should fix it | 1 |

340,889 | 10,279,592,704 | IssuesEvent | 2019-08-26 00:39:54 | okTurtles/group-income-simple | https://api.github.com/repos/okTurtles/group-income-simple | closed | Bugs in GroupsList (group switcher) | App:Frontend Kind:Bug Level:Starter Note:Up-for-grabs Priority:High | ### Problem

- Upon switching to another group, the group icon isn't updated

- Upon switching to another group, it doesn't seem to let you switch back to the first group

Also, possibly unrelated, there is log spam along these lines:

```

[Vue tip]: Prop "itemid" is passed to component <Anonymous>, but the declared prop name is "itemId". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "item-id" instead of "itemId". vue.common.dev.js:636:14

[Vue tip]: Prop "hasdivider" is passed to component <Anonymous>, but the declared prop name is "hasDivider". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "has-divider" instead of "hasDivider". vue.common.dev.js:636:14

[Vue tip]: Prop "disableradius" is passed to component <Anonymous>, but the declared prop name is "disableRadius". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "disable-radius" instead of "disableRadius". vue.common.dev.js:636:14

[Vue tip]: Prop "isactive" is passed to component <Anonymous>, but the declared prop name is "isActive". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "is-active" instead of "isActive".

```

### Solution

Fix | 1.0 | Bugs in GroupsList (group switcher) - ### Problem

- Upon switching to another group, the group icon isn't updated

- Upon switching to another group, it doesn't seem to let you switch back to the first group

Also, possibly unrelated, there is log spam along these lines:

```

[Vue tip]: Prop "itemid" is passed to component <Anonymous>, but the declared prop name is "itemId". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "item-id" instead of "itemId". vue.common.dev.js:636:14

[Vue tip]: Prop "hasdivider" is passed to component <Anonymous>, but the declared prop name is "hasDivider". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "has-divider" instead of "hasDivider". vue.common.dev.js:636:14

[Vue tip]: Prop "disableradius" is passed to component <Anonymous>, but the declared prop name is "disableRadius". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "disable-radius" instead of "disableRadius". vue.common.dev.js:636:14

[Vue tip]: Prop "isactive" is passed to component <Anonymous>, but the declared prop name is "isActive". Note that HTML attributes are case-insensitive and camelCased props need to use their kebab-case equivalents when using in-DOM templates. You should probably use "is-active" instead of "isActive".

```

### Solution

Fix | priority | bugs in groupslist group switcher problem upon switching to another group the group icon isn t updated upon switching to another group it doesn t seem to let you switch back to the first group also possibly unrelated there is log spam along these lines prop itemid is passed to component but the declared prop name is itemid note that html attributes are case insensitive and camelcased props need to use their kebab case equivalents when using in dom templates you should probably use item id instead of itemid vue common dev js prop hasdivider is passed to component but the declared prop name is hasdivider note that html attributes are case insensitive and camelcased props need to use their kebab case equivalents when using in dom templates you should probably use has divider instead of hasdivider vue common dev js prop disableradius is passed to component but the declared prop name is disableradius note that html attributes are case insensitive and camelcased props need to use their kebab case equivalents when using in dom templates you should probably use disable radius instead of disableradius vue common dev js prop isactive is passed to component but the declared prop name is isactive note that html attributes are case insensitive and camelcased props need to use their kebab case equivalents when using in dom templates you should probably use is active instead of isactive solution fix | 1 |

611,996 | 18,988,373,184 | IssuesEvent | 2021-11-22 01:59:04 | co-cart/co-cart | https://api.github.com/repos/co-cart/co-cart | closed | Custom Cart Item Data not returned, when added to cart | bug:confirmed priority:high good first issue status:changelog added API: Cart | ## Describe the bug

When I add an item to cart with custom `item_data` and set `return_item` to true, the cart Item is returned, but `cart_item_data` is empty. When the cartItem is fetched (`wp-json/cocart/v2/cart/items`), the `cart_item_data` property is returned correctly.

## Prerequisites

<!-- Mark completed items with an [x] -->

- [x ] I have searched for similar issues in both open and closed tickets and cannot find a duplicate.

- [ x] The issue still exists against the latest `master` branch of CoCart on GitHub (this is **not** the same version as on WordPress.org!)

- [x ] I have attempted to find the simplest possible steps to reproduce the issue.

- [ ] I have included a failing test as a pull request (Optional)

- [x ] I have installed the requirements to run this plugin.

## Steps to reproduce the issue

<!-- I need to be able to reproduce the bug in order to fix it so please be descriptive! -->

1. Submit an add-to-cart request

[POST] `wp-json/cocart/v2/cart/add-item`

```

{

"id":"<simple_product_id>",

"return_item":true,

"item_data": {

"my_test_data":"my-test-value"

}

}

```

2. Check the response: `cart_item_data` property is empty

3. Fetch the item via `wp-json/cocart/v2/cart/items`: `cart_item_data` is correct

## Expected/actual behaviour

`cart_item_data` should be returned with values after the add to cart request.

## Isolating the problem

<!-- Mark completed items with an [x] -->

- [ x] This bug happens with only WooCommerce and CoCart plugin are active.

- [ x] This bug happens with a default WordPress theme active.

- [ ] This bug happens with the WordPress theme Storefront active.

- [x ] This bug happens with the latest release of WooCommerce active.

- [ ] This bug happens only when I authenticate as a customer.

- [ ] This bug happens only when I authenticate as administrator.

- [x ] I can reproduce this bug consistently using the steps above.

| 1.0 | Custom Cart Item Data not returned, when added to cart - ## Describe the bug

When I add an item to cart with custom `item_data` and set `return_item` to true, the cart Item is returned, but `cart_item_data` is empty. When the cartItem is fetched (`wp-json/cocart/v2/cart/items`), the `cart_item_data` property is returned correctly.

## Prerequisites

<!-- Mark completed items with an [x] -->

- [x ] I have searched for similar issues in both open and closed tickets and cannot find a duplicate.

- [ x] The issue still exists against the latest `master` branch of CoCart on GitHub (this is **not** the same version as on WordPress.org!)

- [x ] I have attempted to find the simplest possible steps to reproduce the issue.

- [ ] I have included a failing test as a pull request (Optional)

- [x ] I have installed the requirements to run this plugin.

## Steps to reproduce the issue

<!-- I need to be able to reproduce the bug in order to fix it so please be descriptive! -->

1. Submit an add-to-cart request

[POST] `wp-json/cocart/v2/cart/add-item`

```

{

"id":"<simple_product_id>",

"return_item":true,

"item_data": {

"my_test_data":"my-test-value"

}

}

```

2. Check the response: `cart_item_data` property is empty

3. Fetch the item via `wp-json/cocart/v2/cart/items`: `cart_item_data` is correct

## Expected/actual behaviour

`cart_item_data` should be returned with values after the add to cart request.

## Isolating the problem

<!-- Mark completed items with an [x] -->

- [ x] This bug happens with only WooCommerce and CoCart plugin are active.

- [ x] This bug happens with a default WordPress theme active.

- [ ] This bug happens with the WordPress theme Storefront active.

- [x ] This bug happens with the latest release of WooCommerce active.

- [ ] This bug happens only when I authenticate as a customer.

- [ ] This bug happens only when I authenticate as administrator.

- [x ] I can reproduce this bug consistently using the steps above.

| priority | custom cart item data not returned when added to cart describe the bug when i add an item to cart with custom item data and set return item to true the cart item is returned but cart item data is empty when the cartitem is fetched wp json cocart cart items the cart item data property is returned correctly prerequisites i have searched for similar issues in both open and closed tickets and cannot find a duplicate the issue still exists against the latest master branch of cocart on github this is not the same version as on wordpress org i have attempted to find the simplest possible steps to reproduce the issue i have included a failing test as a pull request optional i have installed the requirements to run this plugin steps to reproduce the issue submit an add to cart request wp json cocart cart add item id return item true item data my test data my test value check the response cart item data property is empty fetch the item via wp json cocart cart items cart item data is correct expected actual behaviour cart item data should be retunred with values after the add to cart request isolating the problem this bug happens with only woocommerce and cocart plugin are active this bug happens with a default wordpress theme active this bug happens with the wordpress theme storefront active this bug happens with the latest release of woocommerce active this bug happens only when i authenticate as a customer this bug happens only when i authenticate as administrator i can reproduce this bug consistently using the steps above | 1 |

238,579 | 7,781,017,657 | IssuesEvent | 2018-06-05 22:03:52 | brijeshshah13/OpenCI | https://api.github.com/repos/brijeshshah13/OpenCI | closed | Implement Logout functionality | Priority: High Status: Completed | **Is it a ?**

- [ ] Bug Report

- [x] Feature Request

- [ ] Chore

### Feature Request

**Feature Description**

Log out the user upon click on `Logout`.

**Would you like to work on the issue?**

Yes. | 1.0 | Implement Logout functionality - **Is it a ?**

- [ ] Bug Report

- [x] Feature Request

- [ ] Chore

### Feature Request

**Feature Description**

Log out the user upon click on `Logout`.

**Would you like to work on the issue?**

Yes. | priority | implement logout functionality is it a bug report feature request chore feature request feature description log out the user upon click on logout would you like to work on the issue yes | 1 |

316,582 | 9,651,976,122 | IssuesEvent | 2019-05-18 13:08:38 | the-blue-alliance/the-blue-alliance-ios | https://api.github.com/repos/the-blue-alliance/the-blue-alliance-ios | closed | Fix TeamSummaryViewController.tableView(_:heightForRowAt:) | bug high priority | ```

Crashed: com.apple.main-thread

0 The Blue Alliance 0x100c5efa4 @objc TeamSummaryViewController.tableView(_:heightForRowAt:) (<compiler-generated>)

1 UIKitCore 0x24383c024 -[UITableView _classicHeightForRowAtIndexPath:] + 164

2 UIKitCore 0x24383c258 -[UITableView _heightForCell:atIndexPath:] + 180

3 UIKitCore 0x243823bcc __53-[UITableView _configureCellForDisplay:forIndexPath:]_block_invoke + 3192

4 UIKitCore 0x243a945d4 +[UIView(Animation) performWithoutAnimation:] + 104

5 UIKitCore 0x243822e70 -[UITableView _configureCellForDisplay:forIndexPath:] + 248

6 UIKitCore 0x243834ad8 -[UITableView _createPreparedCellForGlobalRow:withIndexPath:willDisplay:] + 840

7 UIKitCore 0x243834f38 -[UITableView _createPreparedCellForGlobalRow:willDisplay:] + 80

8 UIKitCore 0x243801740 -[UITableView _updateVisibleCellsNow:isRecursive:] + 2260

9 UIKitCore 0x24381ea60 -[UITableView layoutSubviews] + 140

10 UIKitCore 0x243aa1e54 -[UIView(CALayerDelegate) layoutSublayersOfLayer:] + 1292

11 QuartzCore 0x21b6721f0 -[CALayer layoutSublayers] + 184

12 QuartzCore 0x21b677198 CA::Layer::layout_if_needed(CA::Transaction*) + 332

13 QuartzCore 0x21b5da0a8 CA::Context::commit_transaction(CA::Transaction*) + 348

14 QuartzCore 0x21b608108 CA::Transaction::commit() + 640

15 UIKitCore 0x2436407b0 _afterCACommitHandler + 224

16 CoreFoundation 0x21717589c __CFRUNLOOP_IS_CALLING_OUT_TO_AN_OBSERVER_CALLBACK_FUNCTION__ + 32

17 CoreFoundation 0x2171705c4 __CFRunLoopDoObservers + 412

18 CoreFoundation 0x217170b40 __CFRunLoopRun + 1228

19 CoreFoundation 0x217170354 CFRunLoopRunSpecific + 436

20 GraphicsServices 0x21937079c GSEventRunModal + 104

21 UIKitCore 0x243619b68 UIApplicationMain + 212

22 The Blue Alliance 0x100bde018 main (main.swift:9)

23 libdyld.dylib 0x216c368e0 start + 4

``` | 1.0 | Fix TeamSummaryViewController.tableView(_:heightForRowAt:) - ```

Crashed: com.apple.main-thread

0 The Blue Alliance 0x100c5efa4 @objc TeamSummaryViewController.tableView(_:heightForRowAt:) (<compiler-generated>)

1 UIKitCore 0x24383c024 -[UITableView _classicHeightForRowAtIndexPath:] + 164

2 UIKitCore 0x24383c258 -[UITableView _heightForCell:atIndexPath:] + 180

3 UIKitCore 0x243823bcc __53-[UITableView _configureCellForDisplay:forIndexPath:]_block_invoke + 3192

4 UIKitCore 0x243a945d4 +[UIView(Animation) performWithoutAnimation:] + 104

5 UIKitCore 0x243822e70 -[UITableView _configureCellForDisplay:forIndexPath:] + 248

6 UIKitCore 0x243834ad8 -[UITableView _createPreparedCellForGlobalRow:withIndexPath:willDisplay:] + 840

7 UIKitCore 0x243834f38 -[UITableView _createPreparedCellForGlobalRow:willDisplay:] + 80

8 UIKitCore 0x243801740 -[UITableView _updateVisibleCellsNow:isRecursive:] + 2260

9 UIKitCore 0x24381ea60 -[UITableView layoutSubviews] + 140

10 UIKitCore 0x243aa1e54 -[UIView(CALayerDelegate) layoutSublayersOfLayer:] + 1292

11 QuartzCore 0x21b6721f0 -[CALayer layoutSublayers] + 184

12 QuartzCore 0x21b677198 CA::Layer::layout_if_needed(CA::Transaction*) + 332

13 QuartzCore 0x21b5da0a8 CA::Context::commit_transaction(CA::Transaction*) + 348

14 QuartzCore 0x21b608108 CA::Transaction::commit() + 640

15 UIKitCore 0x2436407b0 _afterCACommitHandler + 224

16 CoreFoundation 0x21717589c __CFRUNLOOP_IS_CALLING_OUT_TO_AN_OBSERVER_CALLBACK_FUNCTION__ + 32

17 CoreFoundation 0x2171705c4 __CFRunLoopDoObservers + 412

18 CoreFoundation 0x217170b40 __CFRunLoopRun + 1228

19 CoreFoundation 0x217170354 CFRunLoopRunSpecific + 436

20 GraphicsServices 0x21937079c GSEventRunModal + 104

21 UIKitCore 0x243619b68 UIApplicationMain + 212

22 The Blue Alliance 0x100bde018 main (main.swift:9)

23 libdyld.dylib 0x216c368e0 start + 4

``` | priority | fix teamsummaryviewcontroller tableview heightforrowat crashed com apple main thread the blue alliance objc teamsummaryviewcontroller tableview heightforrowat uikitcore uikitcore uikitcore block invoke uikitcore uikitcore uikitcore uikitcore uikitcore uikitcore uikitcore quartzcore quartzcore ca layer layout if needed ca transaction quartzcore ca context commit transaction ca transaction quartzcore ca transaction commit uikitcore aftercacommithandler corefoundation cfrunloop is calling out to an observer callback function corefoundation cfrunloopdoobservers corefoundation cfrunlooprun corefoundation cfrunlooprunspecific graphicsservices gseventrunmodal uikitcore uiapplicationmain the blue alliance main main swift libdyld dylib start | 1 |

695,681 | 23,868,289,464 | IssuesEvent | 2022-09-07 12:58:46 | PNNL-CompBio/Snekmer | https://api.github.com/repos/PNNL-CompBio/Snekmer | closed | Speed issue | bug HighPriority | For even moderately sized input files (5k, e.g.) kmerize is taking a long time (hour+) which is way too long. The problem was introduced by the previous fix for the memory issue, and it's in the vectorize.py/make_feature_matrix function, which is using a very slow way of constructing a matrix from individual lists. | 1.0 | Speed issue - For even moderately sized input files (5k, e.g.) kmerize is taking a long time (hour+) which is way too long. The problem was introduced by the previous fix for the memory issue, and it's in the vectorize.py/make_feature_matrix function, which is using a very slow way of constructing a matrix from individual lists. | priority | speed issue for even moderately sized input files e g kmerize is taking a long time hour which is way too long the problem was introduced by the previous fix for the memory issue and it s in the vectorize py make feature matrix function which is using a very slow way of constructing a matrix from individual lists | 1 |

783,036 | 27,516,207,504 | IssuesEvent | 2023-03-06 12:09:42 | bounswe/bounswe2023group5 | https://api.github.com/repos/bounswe/bounswe2023group5 | closed | Setting Up Communication Channel | Priority: High Type: Feature Status: Done | Establishing a communication channel to talk about tasks, make weekly plans and distribute the tasks in the team etc. | 1.0 | Setting Up Communication Channel - Establishing a communication channel to talk about tasks, make weekly plans and distribute the tasks in the team etc. | priority | setting up communication channel establishing a communication channel to talk about tasks make weekly plans and distribute the tasks in the team etc | 1 |

62,107 | 3,172,078,277 | IssuesEvent | 2015-09-23 04:37:10 | DynamoRIO/drmemory | https://api.github.com/repos/DynamoRIO/drmemory | closed | CRASH destroy_Rtl_heap() on chrome unit_tests | Bug-ToolCrash Component-FullMode Hotlist-Release OpSys-Win8.1 Priority-High Status-CannotReproduce Status-NeedInfo | Xref https://code.google.com/p/chromium/issues/detail?id=526903

When trying to repro on Win8.1, dev hit this crash:

```

[==========] Running 2 tests from 1 test case.

[----------] Global test environment set-up.

[----------] 2 tests from ChromeStabilityMetricsProviderTest

[ RUN ] ChromeStabilityMetricsProviderTest.BrowserChildProcessObserver

[ OK ] ChromeStabilityMetricsProviderTest.BrowserChildProcessObserver (6077 ms)

[ RUN ] ChromeStabilityMetricsProviderTest.NotificationObserver

[ OK ] ChromeStabilityMetricsProviderTest.NotificationObserver (379 ms)

[----------] 2 tests from ChromeStabilityMetricsProviderTest (6647 ms total)

[----------] Global test environment tear-down

[==========] 2 tests from 1 test case ran. (6728 ms total)

[ PASSED ] 2 tests.

<Application d:\src\gclient\src\out\Release\unit_tests.exe (940). Dr. Memory internal crash at PC 0x738348ca. Please report this at http://drmemory.org/issues. Program aborted.

0xc0000005 0x00000000 0x738348ca 0x738348ca 0x00000000 0x08e9002c

Base: 0x5cb50000

Registers: eax=0x23779c30 ebx=0x08e90000 ecx=0x2375e024 edx=0x08e90000

esi=0x00000000 edi=0x00000001 esp=0x21d8ed40 ebp=0x21d8ed64

eflags=0x000

1.9.0-0-(Aug 28 2015 22:56:18) win63

-no_dynamic_options -disasm_mask 8 -logdir 'C:\Users\wfh\AppData\LocalLow\vg_logs_bb0ii3\dynamorio' -client_lib 'd:\src\gclient\src\third_party\drmemory\unpacked\bin\release\drmemorylib.dll;0;-suppress `d:\src\gclient\src\tools\valgrind\drmemory\suppressions.txt` -suppress `d:\src\gclient\src\tools\valgrind\drmemory\supp

0x21d8ed64 0x738351a0

0x21d8edc8 0x21d47680

0x5cbfb52b 0x01b05d5e

d:\src\gclient\src\third_party\drmemory\unpacked\bin\release\drmemorylib.dll=0x73800000

d:\src\gclient\src\third_party\drmemory\unpacked\bin\release/dbghelp.dll=0x5ca30000

C:\Windows/system32/msvcrt.dll=0x067c0000

C:\Windows/system32/kernel32.dll=0x08070000

C:\Windows/system32/KERNELBASE.dll=0x081b0000>

~~Dr.M~~ WARNING: application exited with abnormal code 0xffffffff

```

05:23 PM ~/drmemory/releases/DrMemory-Windows-1.9.0-RC1

% bin/symquery.exe -e bin/release/drmemorylib.dll -f -a 0x348ca

destroy_Rtl_heap+0xa

d:\drmemory_package\common\alloc_replace.c:2867+0x3

% bin/symquery.exe -e bin/release/drmemorylib.dll -f -a 0x351a0

alloc_replace_exit+0xe0

d:\drmemory_package\common\alloc_replace.c:4758+0x10

Same crash with the DrMem ver in chromium third_party.

With debug ends up seeing an assert:

```

ASSERT FAILURE (thread 7324):

d:\drmemory_package\common\alloc_replace.c:4337: in_table (pre-us libc

missed in heap walk)

``` | 1.0 | CRASH destroy_Rtl_heap() on chrome unit_tests - Xref https://code.google.com/p/chromium/issues/detail?id=526903

When trying to repro on Win8.1, dev hit this crash:

```

[==========] Running 2 tests from 1 test case.

[----------] Global test environment set-up.

[----------] 2 tests from ChromeStabilityMetricsProviderTest

[ RUN ] ChromeStabilityMetricsProviderTest.BrowserChildProcessObserver

[ OK ] ChromeStabilityMetricsProviderTest.BrowserChildProcessObserver (6077 ms)

[ RUN ] ChromeStabilityMetricsProviderTest.NotificationObserver

[ OK ] ChromeStabilityMetricsProviderTest.NotificationObserver (379 ms)

[----------] 2 tests from ChromeStabilityMetricsProviderTest (6647 ms total)

[----------] Global test environment tear-down

[==========] 2 tests from 1 test case ran. (6728 ms total)

[ PASSED ] 2 tests.

<Application d:\src\gclient\src\out\Release\unit_tests.exe (940). Dr. Memory internal crash at PC 0x738348ca. Please report this at http://drmemory.org/issues. Program aborted.

0xc0000005 0x00000000 0x738348ca 0x738348ca 0x00000000 0x08e9002c

Base: 0x5cb50000

Registers: eax=0x23779c30 ebx=0x08e90000 ecx=0x2375e024 edx=0x08e90000

esi=0x00000000 edi=0x00000001 esp=0x21d8ed40 ebp=0x21d8ed64

eflags=0x000

1.9.0-0-(Aug 28 2015 22:56:18) win63

-no_dynamic_options -disasm_mask 8 -logdir 'C:\Users\wfh\AppData\LocalLow\vg_logs_bb0ii3\dynamorio' -client_lib 'd:\src\gclient\src\third_party\drmemory\unpacked\bin\release\drmemorylib.dll;0;-suppress `d:\src\gclient\src\tools\valgrind\drmemory\suppressions.txt` -suppress `d:\src\gclient\src\tools\valgrind\drmemory\supp

0x21d8ed64 0x738351a0

0x21d8edc8 0x21d47680

0x5cbfb52b 0x01b05d5e

d:\src\gclient\src\third_party\drmemory\unpacked\bin\release\drmemorylib.dll=0x73800000

d:\src\gclient\src\third_party\drmemory\unpacked\bin\release/dbghelp.dll=0x5ca30000

C:\Windows/system32/msvcrt.dll=0x067c0000

C:\Windows/system32/kernel32.dll=0x08070000

C:\Windows/system32/KERNELBASE.dll=0x081b0000>

~~Dr.M~~ WARNING: application exited with abnormal code 0xffffffff

```

05:23 PM ~/drmemory/releases/DrMemory-Windows-1.9.0-RC1

% bin/symquery.exe -e bin/release/drmemorylib.dll -f -a 0x348ca

destroy_Rtl_heap+0xa

d:\drmemory_package\common\alloc_replace.c:2867+0x3

% bin/symquery.exe -e bin/release/drmemorylib.dll -f -a 0x351a0

alloc_replace_exit+0xe0

d:\drmemory_package\common\alloc_replace.c:4758+0x10

Same crash with the DrMem ver in chromium third_party.

With debug ends up seeing an assert:

```

ASSERT FAILURE (thread 7324):

d:\drmemory_package\common\alloc_replace.c:4337: in_table (pre-us libc

missed in heap walk)

``` | priority | crash destroy rtl heap on chrome unit tests xref when trying to repro on dev hit this crash running tests from test case global test environment set up tests from chromestabilitymetricsprovidertest chromestabilitymetricsprovidertest browserchildprocessobserver chromestabilitymetricsprovidertest browserchildprocessobserver ms chromestabilitymetricsprovidertest notificationobserver chromestabilitymetricsprovidertest notificationobserver ms tests from chromestabilitymetricsprovidertest ms total global test environment tear down tests from test case ran ms total tests application d src gclient src out release unit tests exe dr memory internal crash at pc please report this at program aborted base registers eax ebx ecx edx esi edi esp ebp eflags aug no dynamic options disasm mask logdir c users wfh appdata locallow vg logs dynamorio client lib d src gclient src third party drmemory unpacked bin release drmemorylib dll suppress d src gclient src tools valgrind drmemory suppressions txt suppress d src gclient src tools valgrind drmemory supp d src gclient src third party drmemory unpacked bin release drmemorylib dll d src gclient src third party drmemory unpacked bin release dbghelp dll c windows msvcrt dll c windows dll c windows kernelbase dll dr m warning application exited with abnormal code pm drmemory releases drmemory windows bin symquery exe e bin release drmemorylib dll f a destroy rtl heap d drmemory package common alloc replace c bin symquery exe e bin release drmemorylib dll f a alloc replace exit d drmemory package common alloc replace c same crash with the drmem ver in chromium third party with debug ends up seeing an assert assert failure thread d drmemory package common alloc replace c in table pre us libc missed in heap walk | 1 |

378,698 | 11,206,659,029 | IssuesEvent | 2020-01-05 23:01:42 | Kipjr/ldap_login | https://api.github.com/repos/Kipjr/ldap_login | closed | [Vulnerability] data.dat exposed | Priority: High Section: Security Status: Confirmed Status: Review Needed Type: Bug | In a normal Piwigo installation, the plugin folders are not blocked. Therefore everyone can view every file in the directories. This is especially serious for the `data.dat` file which may contain sensitive data like an AD password.

To see if you are affected, open http(s)://<your_piwigo_installation>/plugins/Ldap_Login/data.dat in your browser.

As a workaround you should advise users to block the access to the file (or the whole plugins(/Ldap_Login) directory) in the server settings or with an .htaccess file.

A better way would be to store the plugin settings in a php file which will be interpreted by the server and not just displayed.

| 1.0 | [Vulnerability] data.dat exposed - In a normal Piwigo installation, the plugin folders are not blocked. Therefore everyone can view every file in the directories. This is especially serious for the `data.dat` file which may contain sensitive data like an AD password.

To see if you are affected, open http(s)://<your_piwigo_installation>/plugins/Ldap_Login/data.dat in your browser.

As a workaround you should advise users to block the access to the file (or the whole plugins(/Ldap_Login) directory) in the server settings or with an .htaccess file.

A better way would be to store the plugin settings in a php file which will be interpreted by the server and not just displayed.

| priority | data dat exposed in a normal piwigo installation the plugin folders are not blocked therefore everyone can view every file in the directories this is especially serious for the data dat file which may contain sensible data like an ad password to see if you are affected open http s plugins ldap login data dat in your browser as a workaround you should advise users to block the access to the file or the whole plugins ldap login directory in the server settings or with an htaccess file a better way would be to store the plugin settings in a php file which will be interpreted by the server and not just displayed | 1 |

680,360 | 23,267,265,918 | IssuesEvent | 2022-08-04 18:41:25 | Yoooi0/MultiFunPlayer | https://api.github.com/repos/Yoooi0/MultiFunPlayer | closed | Motion providers are causing bad IsDirty flag when axis is auto-homing | bug priority-high | When axis starts to auto-home it causes motion provider update method to return true dirty flag on next update tick even if its idle, which then breaks auto-home:

https://github.com/Yoooi0/MultiFunPlayer/blob/349f7a0e9096f2f8e9a6a60ca585988d5dc4cc06/MultiFunPlayer/UI/Controls/ViewModels/ScriptViewModel.cs#L381

Probably need to redesign the whole update loop/context to separate values/dirty flags. | 1.0 | Motion providers are causing bad IsDirty flag when axis is auto-homing - When axis starts to auto-home it causes motion provider update method to return true dirty flag on next update tick even if its idle, which then breaks auto-home:

https://github.com/Yoooi0/MultiFunPlayer/blob/349f7a0e9096f2f8e9a6a60ca585988d5dc4cc06/MultiFunPlayer/UI/Controls/ViewModels/ScriptViewModel.cs#L381

Probably need to redesign the whole update loop/context to separate values/dirty flags. | priority | motion providers are causing bad isdirty flag when axis is auto homing when axis starts to auto home it causes motion provider update method to return true dirty flag on next update tick even if its idle which then breaks auto home probably need to redesign the whole update loop context to separate values dirty flags | 1 |

789,307 | 27,786,157,871 | IssuesEvent | 2023-03-17 03:31:29 | AY2223S2-CS2103T-F12-2/tp | https://api.github.com/repos/AY2223S2-CS2103T-F12-2/tp | closed | As a student, I can find contacts by their modules | type.Story priority.High | so I can ask them what to expect when I take the modules or form teams/study with them if they are taking similar modules currently. | 1.0 | As a student, I can find contacts by their modules - so I can ask them what to expect when I take the modules or form teams/study with them if they are taking similar modules currently. | priority | as a student i can find contacts by their modules so i can ask them what to expect when i take the modules or form teams study with them if they are taking similar modules currently | 1 |

668,914 | 22,603,632,042 | IssuesEvent | 2022-06-29 11:22:44 | Skaant/rimarok-2 | https://api.github.com/repos/Skaant/rimarok-2 | closed | Typo : font-size base increase + headers margins | ⚡ high priority smallie | - [ ] Increase Bootstrap base font size variable

- [ ] Add base margins to headers range | 1.0 | Typo : font-size base increase + headers margins - - [ ] Increase Bootstrap base font size variable

- [ ] Add base margins to headers range | priority | typo font size base increase headers margins increase bootstrap base font size variable add base margins to headers range | 1 |

248,118 | 7,927,597,986 | IssuesEvent | 2018-07-06 08:37:35 | Signbank/Global-signbank | https://api.github.com/repos/Signbank/Global-signbank | closed | Cannot upload videos for sign | ASL bug high priority | Looks like you cannot add videos for https://aslsignbank.haskins.yale.edu//dictionary/gloss/1751/

I'm guessing the problem is the fact that lemma ID gloss is only 1 char.

EDIT: indeed, the videos are stored in glossvideo/I- , but the code that collects the video probably does not look there. | 1.0 | Cannot upload videos for sign - Looks like you cannot add videos for https://aslsignbank.haskins.yale.edu//dictionary/gloss/1751/

I'm guessing the problem is the fact that lemma ID gloss is only 1 char.

EDIT: indeed, the videos are stored in glossvideo/I- , but the code that collects the video probably does not look there. | priority | cannot upload videos for sign looks like you cannot add videos for i m guessing the problem is the fact that lemma id gloss is only char edit indeed the videos are stored in glossvideo i but the code that collects the video probably does not look there | 1 |

763,957 | 26,779,317,746 | IssuesEvent | 2023-01-31 19:44:32 | zulip/zulip-mobile | https://api.github.com/repos/zulip/zulip-mobile | closed | [iOS] Links get double-%-encoded, breaking downloads | help wanted a-iOS a-message list P1 high-priority | The effect of this is that a similar symptom to #3303 is still live on iOS. But the cause is unrelated, so making this a separate issue.

Originally described at https://github.com/zulip/zulip-mobile/pull/4089#issuecomment-639153366 and https://github.com/zulip/zulip-mobile/pull/4089#issuecomment-639195011 , just before I merged #4089 fixing #3303 .

To reproduce:

* Went to message list, found a message with an upload that wasn't an image. (To do that: in the webapp searched for `has:attachment`, and scrolled through history to find one that wasn't an image.) Specifically the file happened to be a PDF, with a `.pdf` extension on its name.

* Hit the link. Got a browser view. But after a few seconds of loading, the result was an error:

That appears to be from S3 directly, because that's in the location bar at the top. The error message begins:

> SignatureDoesNotMatch The request signature we calculated does not match the signature you provided. Check your key and signing method. [... then a bunch of details ...]

* Ditto on a second try. I watched the timing closely this time, and it was only about a second between me tapping the link and the error message appearing. So it's not an expiration issue -- there's something else wrong.

On further investigation, I have a partial diagnosis:

* From that error page, I hit the "share" icon and chose "Copy", to get the URL onto the clipboard. Then went and pasted it elsewhere (the compose box, as the handiest place.) Here it is:

`https://zulip-uploads.s3.amazonaws.com/1230/-Opc2L055IYelpraPb1oRDeU/sagas.pdf?Signature=2mpNGb0ysKFpVN8bkkMVsulQSVE%253D&Expires=1591317724&AWSAccessKeyId=AKIAIEVMBCAT2WD3M5KQ`

* I went and pulled up the same upload in the webapp, to compare. (It works fine there.) Here's the URL I find in the location bar there:

`https://zulip-uploads.s3.amazonaws.com/1230/-Opc2L055IYelpraPb1oRDeU/sagas.pdf?Signature=xipp%2FD69nk89xmkKha3cx6K%2FSSg%3D&Expires=1591317779&AWSAccessKeyId=AKIAIEVMBCAT2WD3M5KQ`

* I think the problem is that `%253D`. That's the percent-encoding of `%3D`, which is itself the percent-encoding of `=`. Note there's a `%3D` at the end of the `Signature` query-parameter in the successful URL. Both signatures look like base64, which very often ends with a `=` as padding.

* So it seems like we're double-encoding the URL, and as a result the decoding of it has a signature ending in `%3D` instead of in `=` and the signature doesn't validate.

Looking at the code, it's clear where that's happening -- in `src/utils/openLink.js`, just in the iOS branch, we call `encodeURI` on the URL. That sure will turn a `%3D` into a `%253D`.

Unfortunately it's going to be a bit trickier than just removing that call, because it was put there to fix another bug: 66a9e9d1a15e149ebdfa5a2e9cca06aaa3f29835 (#3507) fixed #3315.

So it seems like we need to, in `openLink` on iOS before passing the URL to `SafariView.show`:

* %-encode non-ASCII characters -- that's #3315;

* but *not* %-encode `%` itself, instead leave it alone;

* and presumably also leave alone all the other characters that `encodeURI` leaves alone, like `a` and `Z` and `/`;

* and it's not clear if we should %-encode the remaining characters that `encodeURI` affects, like ` ` and `"` and a bunch of other punctuation.
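The double-encoding described above can be reproduced in plain JavaScript. Below is a minimal sketch, not the project's actual fix. It assumes the goal is only to %-encode non-ASCII characters (#3315) while leaving existing `%` escapes untouched. The helper name `encodeNonAscii` and the `example.com` URLs are hypothetical.

```javascript
// encodeURI escapes '%' itself, so an already-encoded "%3D" becomes "%253D".
const signed = 'https://example.com/f.pdf?Signature=abc%3D';
console.log(encodeURI(signed)); // https://example.com/f.pdf?Signature=abc%253D

// Hypothetical helper: percent-encode only runs of non-ASCII characters
// (as UTF-8, via encodeURIComponent) and leave all ASCII, including '%', alone.
function encodeNonAscii(url) {
  // Matching whole runs keeps UTF-16 surrogate pairs together, so
  // encodeURIComponent never receives a lone surrogate (which would throw).
  return url.replace(/[^\x00-\x7F]+/g, (run) => encodeURIComponent(run));
}

console.log(encodeNonAscii(signed)); // unchanged: the %3D survives
console.log(encodeNonAscii('https://example.com/café.pdf')); // https://example.com/caf%C3%A9.pdf
```

This sketch deliberately leaves spaces, quotes and other ASCII punctuation alone. Whether those should also be encoded is the open question in the last bullet, and end-to-end testing would settle it.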

A key step in fixing this is going to be just end-to-end testing: make a URL filled with a ton of these characters, post it in a Zulip message, try following that link, and see what URL actually comes through.

| 1.0 | [iOS] Links get double-%-encoded, breaking downloads - The effect of this is that a similar symptom to #3303 is still live on iOS. But the cause is unrelated, so making this a separate issue.

Originally described at https://github.com/zulip/zulip-mobile/pull/4089#issuecomment-639153366 and https://github.com/zulip/zulip-mobile/pull/4089#issuecomment-639195011 , just before I merged #4089 fixing #3303 .

To reproduce:

* Went to message list, found a message with an upload that wasn't an image. (To do that: in the webapp searched for `has:attachment`, and scrolled through history to find one that wasn't an image.) Specifically the file happened to be a PDF, with a `.pdf` extension on its name.

* Hit the link. Got a browser view. But after a few seconds of loading, the result was an error:

That appears to be from S3 directly, because that's in the location bar at the top. The error message begins:

> SignatureDoesNotMatch The request signature we calculated does not match the signature you provided. Check your key and signing method. [... then a bunch of details ...]

* Ditto on a second try. I watched the timing closely this time, and it was only about a second between me tapping the link and the error message appearing. So it's not an expiration issue -- there's something else wrong.

On further investigation, I have a partial diagnosis:

* From that error page, I hit the "share" icon and chose "Copy", to get the URL onto the clipboard. Then went and pasted it elsewhere (the compose box, as the handiest place.) Here it is:

`https://zulip-uploads.s3.amazonaws.com/1230/-Opc2L055IYelpraPb1oRDeU/sagas.pdf?Signature=2mpNGb0ysKFpVN8bkkMVsulQSVE%253D&Expires=1591317724&AWSAccessKeyId=AKIAIEVMBCAT2WD3M5KQ`

* I went and pulled up the same upload in the webapp, to compare. (It works fine there.) Here's the URL I find in the location bar there:

`https://zulip-uploads.s3.amazonaws.com/1230/-Opc2L055IYelpraPb1oRDeU/sagas.pdf?Signature=xipp%2FD69nk89xmkKha3cx6K%2FSSg%3D&Expires=1591317779&AWSAccessKeyId=AKIAIEVMBCAT2WD3M5KQ`

* I think the problem is that `%253D`. That's the percent-encoding of `%3D`, which is itself the percent-encoding of `=`. Note there's a `%3D` at the end of the `Signature` query-parameter in the successful URL. Both signatures look like base64, which very often ends with a `=` as padding.

* So it seems like we're double-encoding the URL, and as a result the decoding of it has a signature ending in `%3D` instead of in `=` and the signature doesn't validate.

Looking at the code, it's clear where that's happening -- in `src/utils/openLink.js`, just in the iOS branch, we call `encodeURI` on the URL. That sure will turn a `%3D` into a `%253D`.

Unfortunately it's going to be a bit trickier than just removing that call, because it was put there to fix another bug: 66a9e9d1a15e149ebdfa5a2e9cca06aaa3f29835 (#3507) fixed #3315.

So it seems like we need to, in `openLink` on iOS before passing the URL to `SafariView.show`:

* %-encode non-ASCII characters -- that's #3315;

* but *not* %-encode `%` itself, instead leave it alone;

* and presumably also leave alone all the other characters that `encodeURI` leaves alone, like `a` and `Z` and `/`;

* and it's not clear if we should %-encode the remaining characters that `encodeURI` affects, like ` ` and `"` and a bunch of other punctuation.

A key step in fixing this is going to be just end-to-end testing: make a URL filled with a ton of these characters, post it in a Zulip message, try following that link, and see what URL actually comes through.

| priority | links get double encoded breaking downloads the effect of this is that a similar symptom to is still live on ios but the cause is unrelated so making this a separate issue originally described at and just before i merged fixing to reproduce went to message list found a message with an upload that wasn t an image to do that in the webapp searched for has attachment and scrolled through history to find one that wasn t an image specifically the file happened to be a pdf with a pdf extension on its name hit the link got a browser view but after a few seconds of loading the result was an error that appears to be from directly because that s in the location bar at the top the error message begins signaturedoesnotmatch the request signature we calculated does not match the signature you provided check your key and signing method ditto on a second try i watched the timing closely this time and it was only about a second between me tapping the link and the error message appearing so it s not an expiration issue there s something else wrong on further investigation i have a partial diagnosis from that error page i hit the share icon and chose copy to get the url onto the clipboard then went and pasted it elsewhere the compose box as the handiest place here it is i went and pulled up the same upload in the webapp to compare it works fine there here s the url i find in the location bar there i think the problem is that that s the percent encoding of which is itself the percent encoding of note there s a at the end of the signature query parameter in the successful url both signatures look like which very often ends with a as padding so it seems like we re double encoding the url and as a result the decoding of it has a signature ending in instead of in and the signature doesn t validate looking at the code it s clear where that s happening in src utils openlink js just in the ios branch we call encodeuri on the url that sure will turn a into a unfortunately it s 
going to be a bit trickier than just removing that call because it was put there to fix another bug fixed so it seems like we need to in openlink on ios before passing the url to safariview show encode non ascii characters that s but not encode itself instead leave it alone and presumably also leave alone all the other characters that encodeuri leaves alone like a and z and and it s not clear if we should encode the remaining characters that encodeuri affects like and and a bunch of other punctuation a key step in fixing this is going to be just end to end testing make a url filled with a ton of these characters post it in a zulip message try following that link and see what url actually comes through | 1 |

354,048 | 10,562,312,275 | IssuesEvent | 2019-10-04 18:00:52 | easybuilders/easybuild-framework | https://api.github.com/repos/easybuilders/easybuild-framework | closed | EB 4.0.0 breaks when there is another "eb" command before itself in the PATH despite being called with a full path | bug report priority:high | Setup:

```

grep robot-paths /hpc2n/eb/easybuild.cfg

robot-paths=/hpc2n/eb/custom/easyconfigs:%(DEFAULT_ROBOT_PATHS)s

```

/hpc2n/eb -> /cvmfs/ebsw.hpc2n.umu.se/amd64_ubuntu1604_skx/

(Last part arch dependent)

EB 3.9.4:

```

eb --show-config

robot-paths (F) = /hpc2n/eb/custom/easyconfigs, /hpc2n/eb/software/Core/EasyBuild/3.9.4/lib/python2.7/site-packages/easybuild_easyconfigs-3.9.4-py2.7.egg/easybuild/easyconfigs

```

EB 4.0.0:

```

eb --show-config

robot-paths (F) = /hpc2n/eb/custom/easyconfigs, /cvmfs/ebsw.hpc2n.umu.se/amd64_ubuntu1604_common/software/Core/EasyBuild/4.0.0/easybuild/easyconfigs

```

(Note that it also resolves the DEFAULT_ROBOT_PATHS which it shouldn't do)

```

cat /scratch/q/eb

#!/bin/bash

echo IIeeekkk, Im getting called

```

EB 3.9.4:

```

env PATH=/scratch/q:$PATH $EBROOTEASYBUILD/bin/eb --show-config

robot-paths (F) = /hpc2n/eb/custom/easyconfigs, /hpc2n/eb/software/Core/EasyBuild/3.9.4/lib/python2.7/site-packages/easybuild_easyconfigs-3.9.4-py2.7.egg/easybuild/easyconfigs

```

Still correct, but

EB 4.0.0:

```

env PATH=/scratch/q:$PATH $EBROOTEASYBUILD/bin/eb --show-config

robot-paths (F) = /hpc2n/eb/custom/easyconfigs

```

This breaks our setup completely and I'm now stuck. | 1.0 | EB 4.0.0 breaks when there is another "eb" command before itself in the PATH despite being called with a full path - Setup:

```

grep robot-paths /hpc2n/eb/easybuild.cfg

robot-paths=/hpc2n/eb/custom/easyconfigs:%(DEFAULT_ROBOT_PATHS)s

```