Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

48,666 | 2,999,433,987 | IssuesEvent | 2015-07-23 19:00:36 | jayway/powermock | https://api.github.com/repos/jayway/powermock | closed | Rename invocation to invoke in Mockito API | enhancement imported Milestone-Release1.3 Priority-High | _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on September 29, 2009 19:16:10_

do it

_Original issue: http://code.google.com/p/powermock/issues/detail?id=173_ | 1.0 | Rename invocation to invoke in Mockito API - _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on September 29, 2009 19:16:10_

do it

_Original issue: http://code.google.com/p/powermock/issues/detail?id=173_ | priority | rename invocation to invoke in mockito api from on september do it original issue | 1 |

739,410 | 25,595,513,772 | IssuesEvent | 2022-12-01 15:55:33 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Mobile: Backend Connection of Create Learning Space | priority-high Status: Completed mobile back-connection | ### Issue Description

Since the screen for creating a learning space is ready, it should also be connected to the backend of our application.

### Step Details

Steps that will be performed:

- [x] implement request model for post learning space

- [x] connect request to button

- [x] implement response model

- [x] match response model with inner model to transfer to other pages

- [x] implement request model for patch/edit learning space

- [x] connect edit request to appropriate use case

- [ ] implement unit tests for the implemented functionality

### Final Actions

Once all the steps have been completed, a PR will be opened for review by the mobile team members.

### Deadline of the Issue

30.11.2022

### Reviewer

Egemen Atik

### Deadline for the Review

03.12.2022 | 1.0 | Mobile: Backend Connection of Create Learning Space - ### Issue Description

Since the screen for creating a learning space is ready, it should also be connected to the backend of our application.

### Step Details

Steps that will be performed:

- [x] implement request model for post learning space

- [x] connect request to button

- [x] implement response model

- [x] match response model with inner model to transfer to other pages

- [x] implement request model for patch/edit learning space

- [x] connect edit request to appropriate use case

- [ ] implement unit tests for the implemented functionality

### Final Actions

Once all the steps have been completed, a PR will be opened for review by the mobile team members.

### Deadline of the Issue

30.11.2022

### Reviewer

Egemen Atik

### Deadline for the Review

03.12.2022 | priority | mobile backend connection of create learning space issue description since the screen for creating a learning space is ready it should also be connected to the backend of our application step details steps that will be performed implement request model for post learning space connect request to button implement response model match response model with inner model to transfer to other pages implement request model for patch edit learning space connect edit request to appropriate use case implement unit tests for the implemented functionality final actions once all the steps have been completed a pr will be opened to get the review of mobile team members deadline of the issue reviewer egemen atik deadline for the review | 1 |

441,230 | 12,709,645,135 | IssuesEvent | 2020-06-23 12:42:33 | Kameldrengene/cdioFinal_f2020 | https://api.github.com/repos/Kameldrengene/cdioFinal_f2020 | closed | General HTML | Front end HTML Heavy High Priority | - [ ] Always be able to see who you are logged in as? (2 lines to implement in all .html body files)

- [x] Log out button | 1.0 | General HTML - - [ ] Always be able to see who you are logged in as? (2 lines to implement in all .html body files)

- [x] Log out button | priority | general html always be able to see who you are logged in as lines to implement in all html body files log out button | 1 |

776,150 | 27,248,565,425 | IssuesEvent | 2023-02-22 05:34:33 | cbgaindia/district-dashboard | https://api.github.com/repos/cbgaindia/district-dashboard | closed | Change colour of download data tab | bug high priority | The colour is still from the constituency dashboard

<img width="843" alt="Screenshot 2023-02-21 at 4 40 09 PM" src="https://user-images.githubusercontent.com/67921422/220329418-0af00116-02aa-43df-a2ee-e1e679ecde82.png">

<img width="887" alt="Screenshot 2023-02-21 at 4 39 56 PM" src="https://user-images.githubusercontent.com/67921422/220329431-2e3b3835-4dd2-4ea8-8495-fb8fb015705d.png">

| 1.0 | Change colour of download data tab - The colour is still from the constituency dashboard

<img width="843" alt="Screenshot 2023-02-21 at 4 40 09 PM" src="https://user-images.githubusercontent.com/67921422/220329418-0af00116-02aa-43df-a2ee-e1e679ecde82.png">

<img width="887" alt="Screenshot 2023-02-21 at 4 39 56 PM" src="https://user-images.githubusercontent.com/67921422/220329431-2e3b3835-4dd2-4ea8-8495-fb8fb015705d.png">

| priority | change colour of download data tab the colour is still from the constituency dashboard img width alt screenshot at pm src img width alt screenshot at pm src | 1 |

189,489 | 6,798,145,699 | IssuesEvent | 2017-11-02 03:24:05 | OpenKore/openkore | https://api.github.com/repos/OpenKore/openkore | closed | xKore 1 and xKore 3 not have suport to encryptMessageID | bug help wanted network/poseidon priority: high | ###### Summary:

OpenKore xKore 1 and xKore 3 do not support encryptMessageID.

###### Affected configuration(s)/ file(s):

network\xkore

network\xkoreProxy

###### Impact:

No one can connect to a server that needs encryptMessageID.

###### Expected Behavior:

connect to servers using encryptMessageID

###### Actual Behavior:

Kore does not work with encryptMessageID when connecting to a server that needs it.

###### Steps to Reproduce:

Try to use xKore 1 or 3 on a server that has crypt keys.

References:

#1174

#1160

#1133

#977

#540

Initial support:

https://github.com/alisonrag/openkore/commit/3034f398f7aed74835c24ec24b171d2ee8a0ba88

bug in map with players when login, kore lost itself in keys or maybe in packet '-' | 1.0 | xKore 1 and xKore 3 not have suport to encryptMessageID - ###### Summary:

OpenKore xKore 1 and xKore 3 do not support encryptMessageID.

###### Affected configuration(s)/ file(s):

network\xkore

network\xkoreProxy

###### Impact:

No one can connect to a server that needs encryptMessageID.

###### Expected Behavior:

connect to servers using encryptMessageID

###### Actual Behavior:

Kore does not work with encryptMessageID when connecting to a server that needs it.

###### Steps to Reproduce:

Try to use xKore 1 or 3 on a server that has crypt keys.

References:

#1174

#1160

#1133

#977

#540

Initial support:

https://github.com/alisonrag/openkore/commit/3034f398f7aed74835c24ec24b171d2ee8a0ba88

bug in map with players when login, kore lost itself in keys or maybe in packet '-' | priority | xkore and xkore not have suport to encryptmessageid summary openkore xkore and xkore not have suport to encryptmessageid affected configuration s file s network xkore network xkoreproxy impact no one can connect to a server that need encryptmessageid expected behavior connect to servers using encryptmessageid actual behavior kore not work with encryptmessageid when we connect to a server that need steps to reproduce just try to use xkore or in a server that have crypt keys references innitial support bug in map with players when login kore lost itself in keys or maybe in packet | 1 |

659,806 | 21,941,995,948 | IssuesEvent | 2022-05-23 19:09:08 | LDSSA/wiki | https://api.github.com/repos/LDSSA/wiki | closed | Batch 6 Calendar proposal - Capstone | priority:high open discussion Batch 6 | This is a continuation of issue #346, limited to the capstone calendar only.

## The proposal

The main objectives are:

- Line up the calendar with the academic year, starting in September (suggested in #345)

- Adjust capstone deadlines in accordance with #345

I propose the following calendar, available in a google sheet and accessible through your LDSA account [here](https://docs.google.com/spreadsheets/d/1XtpbVT8YHB1mSvJxP8rvWFXqQTdXDPQTiV5or8uzLwI/edit?usp=sharing)

Here are the most important dates for the capstone, in the format of tables:

| Description | Date |

|-------------------------------------------------------------|------------------------------------------------------------------|

| Kick off | 2023-04-03 |

| Capstone Clarification email | 2023-04-09 |

| Trial round of requests | 2023-04-23 |

| Deadline Provisory report 1 and app launched | 2023-04-30 , 23h59 UTC |

| First round of requests | 2023-05-01 to 2023-05-07 |

| Comments to report 1 made by instructors | 2023-05-07 23h59 UTC |

| Deadline report 2 + redeploy + address comments to report 1 | 2023-05-28, 23h59 UTC |

| Second round of requests | 2023-05-29 to 2023-06-02 |

| Comments to report 2 made by instructors | 2023-06-04 , 23h59 UTC |

| Deadline address comments to report 2 | 2023-06-11, 23h59 UTC |

| Graduates announced | 2023-06-19 |

| 1.0 | Batch 6 Calendar proposal - Capstone - This is a continuation of issue #346, limited to the capstone calendar only.

## The proposal

The main objectives are:

- Line up the calendar with the academic year, starting in September (suggested in #345)

- Adjust capstone deadlines in accordance with #345

I propose the following calendar, available in a google sheet and accessible through your LDSA account [here](https://docs.google.com/spreadsheets/d/1XtpbVT8YHB1mSvJxP8rvWFXqQTdXDPQTiV5or8uzLwI/edit?usp=sharing)

Here are the most important dates for the capstone, in the format of tables:

| Description | Date |

|-------------------------------------------------------------|------------------------------------------------------------------|

| Kick off | 2023-04-03 |

| Capstone Clarification email | 2023-04-09 |

| Trial round of requests | 2023-04-23 |

| Deadline Provisory report 1 and app launched | 2023-04-30 , 23h59 UTC |

| First round of requests | 2023-05-01 to 2023-05-07 |

| Comments to report 1 made by instructors | 2023-05-07 23h59 UTC |

| Deadline report 2 + redeploy + address comments to report 1 | 2023-05-28, 23h59 UTC |

| Second round of requests | 2023-05-29 to 2023-06-02 |

| Comments to report 2 made by instructors | 2023-06-04 , 23h59 UTC |

| Deadline address comments to report 2 | 2023-06-11, 23h59 UTC |

| Graduates announced | 2023-06-19 |

| priority | batch calendar proposal capstone this is a continuation of issue limited to the capstone calendar only the proposal the main objectives are line up the calendar with the academic year starting in september suggested in adjust capstone deadlines in accordance with i propose the following calendar available in a google sheet and accessible through your ldsa account here are the most important dates for the capstone in the format of tables description date kick off capstone clarification email trial round of requests deadline provisory report and app launched utc first round of requests to comments to report made by instructors utc deadline report redeploy address comments to report utc second round of requests to comments to report made by instructors utc deadline address comments to report utc graduates announced | 1 |

594,181 | 18,026,014,551 | IssuesEvent | 2021-09-17 04:43:02 | input-output-hk/cardano-graphql | https://api.github.com/repos/input-output-hk/cardano-graphql | closed | Circulating and total supply computation | BUG PRIORITY:HIGH SEVERITY:MEDIUM | ### Environment

[Release 4.0.0 ](https://github.com/input-output-hk/cardano-graphql/commit/058873f7cfa6d5d287f859a58bf511b128da6494)

#### Platform

Linux / Other

**Platform version**: NixOS 21.05

### Steps to reproduce the bug

Query this:

```

query{

ada{

supply{

circulating

max

total

}

}

}

```

### What is the expected behavior?

Get correct number for circulating supply.

Get correct number for total supply.

1. Circulating supply:

I have a strong suspicion that circulating supply [calculation](https://github.com/input-output-hk/cardano-graphql/blob/master/packages/api-cardano-db-hasura/src/HasuraClient.ts#L136) might be off by ~billion.

The current calculation consists of sum of `utxos + (rewards - withdrawals)`, but if you look closely, `rewards-withdrawals` are in fact negative.

Querying the values directly from [db sync (9.0.0)](https://github.com/input-output-hk/cardano-db-sync/commit/0b054e18aec3b1e387a1c7b713c562ade37417a3).

Utxos:

```

SELECT SUM(txo.value)

FROM tx_out txo

LEFT JOIN tx_in txi ON (txo.tx_id = txi.tx_out_id)

AND (txo.index = txi.tx_out_index)

WHERE txi IS NULL;

-------------------

32247431809402515

(1 row)

```

We can also confirm this by querying graphql:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ utxos_aggregate { aggregate {sum {value}} } }"}' http://localhost:3002/graphql

{

"data": { "utxos_aggregate": { "aggregate": { "sum": { "value": "32247431809402516" } } } }

}

```

Next, we calculate rewards-withdrawals in dbsync:

```

SELECT

(SELECT COALESCE(SUM(amount), 0) FROM reward)

-

(SELECT SUM(amount) FROM withdrawal);

------------------

-159211187454300

(1 row)

```

Adding it up to utxos, we get: `32088220621948215`.

This corresponds to what can be seen in graphql as circulating supply:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ ada{ supply{ circulating max total } } }"}' http://localhost:3002/graphql

{

"data": {

"ada": {

"supply": {

"circulating": "32088220621948216",

"max": "45000000000000000",

"total": "32913667440643408"

}

}

}

}

```

However, this will yield circulating supply that is even lower than utxos and doesn't look right to me. `withdrawal` table also takes `treasury` and `reserve` into account, so I think the correct formula for calculating circulating supply should be `utxos + reward + treasury + reserve - withdrawal`

or represented as query in db sync:

```

SELECT

(SELECT COALESCE(SUM(amount), 0) FROM reward)

-

(SELECT COALESCE(SUM(amount),0) FROM withdrawal)

+

(SELECT COALESCE(SUM(amount),0) FROM reserve )

+

(SELECT COALESCE(SUM(amount),0) FROM treasury);

------------------

443141922376394

```

which yields a positive number of all withdrawals from all possibly available funds.

I have considered all the possibilities and I have reached the conclusion that the number from graphql might be wrong. Please, correct me if I'm at fault here, but it seems that circulating supply should be closer to `32690573731778909` than to the current value of `32088220621948216`.

Note: The outputs were collected a few hours ago, so they are not entirely up to date.

Note 2: COALESCE is needed especially for testnet, where the `reserve` table is empty.

2. Total supply (testnet)

Querying total supply on **testnet** in the current epoch doesn't look right as the circulating supply is higher than total supply.

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ ada{ supply{ circulating max total } } }"}' http://localhost:3002/graphql

{

"data": {

"ada": {

"supply": {

"circulating": "42050393097894181",

"max": "45000000000000000",

"total": "40233883596865181"

}

}

}

}

```

Note: Edited (replaced data from stuck public dandelion instance with local synced data).

However, if I got this correctly, total supply is calculated as the `max supply` - `reserves` and `reserves` is taken from the ledger state at the epoch boundary. This means there will be a similar issue in the current epoch, as the current reserves are `4766116403134819` but querying utxos in db sync yield a number higher than total supply (`40233883596865181`):

```

SELECT SUM(txo.value)

FROM tx_out txo

LEFT JOIN tx_in txi ON (txo.tx_id = txi.tx_out_id)

AND (txo.index = txi.tx_out_index)

WHERE txi IS NULL;

-------------------

42016202067224430

(1 row)

```

Again, querying graphql confirms the utxos value used in the computation is the one above:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ utxos_aggregate { aggregate {sum {value}} } }"}' http://localhost:3002/graphql

{"data":{"utxos_aggregate":{"aggregate":{"sum":{"value":"42016202067224430"}}}}}

```

Sorry for the trouble, I'm not sure if I'm doing something wrong so I'd appreciate your help and clarification if I'm mistaken. Thanks a million. | 1.0 | Circulating and total supply computation - ### Environment

[Release 4.0.0 ](https://github.com/input-output-hk/cardano-graphql/commit/058873f7cfa6d5d287f859a58bf511b128da6494)

#### Platform

Linux / Other

**Platform version**: NixOS 21.05

### Steps to reproduce the bug

Query this:

```

query{

ada{

supply{

circulating

max

total

}

}

}

```

### What is the expected behavior?

Get correct number for circulating supply.

Get correct number for total supply.

1. Circulating supply:

I have a strong suspicion that circulating supply [calculation](https://github.com/input-output-hk/cardano-graphql/blob/master/packages/api-cardano-db-hasura/src/HasuraClient.ts#L136) might be off by ~billion.

The current calculation consists of sum of `utxos + (rewards - withdrawals)`, but if you look closely, `rewards-withdrawals` are in fact negative.

Querying the values directly from [db sync (9.0.0)](https://github.com/input-output-hk/cardano-db-sync/commit/0b054e18aec3b1e387a1c7b713c562ade37417a3).

Utxos:

```

SELECT SUM(txo.value)

FROM tx_out txo

LEFT JOIN tx_in txi ON (txo.tx_id = txi.tx_out_id)

AND (txo.index = txi.tx_out_index)

WHERE txi IS NULL;

-------------------

32247431809402515

(1 row)

```

We can also confirm this by querying graphql:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ utxos_aggregate { aggregate {sum {value}} } }"}' http://localhost:3002/graphql

{

"data": { "utxos_aggregate": { "aggregate": { "sum": { "value": "32247431809402516" } } } }

}

```

Next, we calculate rewards-withdrawals in dbsync:

```

SELECT

(SELECT COALESCE(SUM(amount), 0) FROM reward)

-

(SELECT SUM(amount) FROM withdrawal);

------------------

-159211187454300

(1 row)

```

Adding it up to utxos, we get: `32088220621948215`.

This corresponds to what can be seen in graphql as circulating supply:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ ada{ supply{ circulating max total } } }"}' http://localhost:3002/graphql

{

"data": {

"ada": {

"supply": {

"circulating": "32088220621948216",

"max": "45000000000000000",

"total": "32913667440643408"

}

}

}

}

```

However, this will yield circulating supply that is even lower than utxos and doesn't look right to me. `withdrawal` table also takes `treasury` and `reserve` into account, so I think the correct formula for calculating circulating supply should be `utxos + reward + treasury + reserve - withdrawal`

or represented as query in db sync:

```

SELECT

(SELECT COALESCE(SUM(amount), 0) FROM reward)

-

(SELECT COALESCE(SUM(amount),0) FROM withdrawal)

+

(SELECT COALESCE(SUM(amount),0) FROM reserve )

+

(SELECT COALESCE(SUM(amount),0) FROM treasury);

------------------

443141922376394

```

which yields a positive number of all withdrawals from all possibly available funds.

I have considered all the possibilities and I have reached the conclusion that the number from graphql might be wrong. Please, correct me if I'm at fault here, but it seems that circulating supply should be closer to `32690573731778909` than to the current value of `32088220621948216`.

Note: The outputs were collected a few hours ago, so they are not entirely up to date.

Note 2: COALESCE is needed especially for testnet, where the `reserve` table is empty.

2. Total supply (testnet)

Querying total supply on **testnet** in the current epoch doesn't look right as the circulating supply is higher than total supply.

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ ada{ supply{ circulating max total } } }"}' http://localhost:3002/graphql

{

"data": {

"ada": {

"supply": {

"circulating": "42050393097894181",

"max": "45000000000000000",

"total": "40233883596865181"

}

}

}

}

```

Note: Edited (replaced data from stuck public dandelion instance with local synced data).

However, if I got this correctly, total supply is calculated as the `max supply` - `reserves` and `reserves` is taken from the ledger state at the epoch boundary. This means there will be a similar issue in the current epoch, as the current reserves are `4766116403134819` but querying utxos in db sync yield a number higher than total supply (`40233883596865181`):

```

SELECT SUM(txo.value)

FROM tx_out txo

LEFT JOIN tx_in txi ON (txo.tx_id = txi.tx_out_id)

AND (txo.index = txi.tx_out_index)

WHERE txi IS NULL;

-------------------

42016202067224430

(1 row)

```

Again, querying graphql confirms the utxos value used in the computation is the one above:

```

curl -X POST -H "Content-Type: application/json" -d '{"query": "{ utxos_aggregate { aggregate {sum {value}} } }"}' http://localhost:3002/graphql

{"data":{"utxos_aggregate":{"aggregate":{"sum":{"value":"42016202067224430"}}}}}

```

Sorry for the trouble, I'm not sure if I'm doing something wrong so I'd appreciate your help and clarification if I'm mistaken. Thanks a million. | priority | circulating and total supply computation environment platform linux other platform version nixos steps to reproduce the bug query this query ada supply circulating max total what is the expected behavior get correct number for circulating supply get correct number for total supply circulating supply i have a strong suspicion that circulating supply might be off by billion the current calculation consists of sum of utxos rewards withdrawals but if you look closely rewards withdrawals are in fact negative querying directly values from utxos select sum txo value from tx out txo left join tx in txi on txo tx id txi tx out id and txo index txi tx out index where txi is null row we can also confirm this by querying graphql curl x post h content type application json d query utxos aggregate aggregate sum value data utxos aggregate aggregate sum value next we calculate rewards withdrawals in dbsync select select coalesce sum amount from reward select sum amount from withdrawal row adding it up to utxos we get this corresponds to what can be seen in graphql as circulating supply curl x post h content type application json d query ada supply circulating max total data ada supply circulating max total however this will yield circulating supply that is even lower than utxos and doesn t look right to me withdrawal table also takes treasury and reserve into account so i think the correct formula for calculating circulating supply should be utxos reward treasury reserve withdrawal or represented as query in db sync select select coalesce sum amount from reward select coalesce sum amount from withdrawal select coalesce sum amount from reserve select coalesce sum amount from treasury which yields a positive number of all withdrawals from all possibly available funds i have considered all the possibilities and i have reached 
the conclusion that the number from graphql might be wrong please correct me if i m at fault here but it seems that circulating supply should be closer to than to the current value of note the outputs were collected few hours ago so they are not entirely up to date coalesce is needed especially for testnet where there is empty reserve table total supply testnet querying total supply on testnet in the current epoch doesn t look right as the circulating supply is higher than total supply curl x post h content type application json d query ada supply circulating max total data ada supply circulating max total note edited replaced data from stuck public dandelion instance with local synced data however if i got this correctly total supply is calculated as the max supply reserves and reserves is taken from the ledger state at the epoch boundary this means there will be a similar issue in the current epoch as the current reserves are but querying utxos in db sync yield a number higher than total supply select sum txo value from tx out txo left join tx in txi on txo tx id txi tx out id and txo index txi tx out index where txi is null row again querying graphql confirms the utxos value used in the computation is the one above curl x post h content type application json d query utxos aggregate aggregate sum value data utxos aggregate aggregate sum value sorry for the trouble i m not sure if i m doing something wrong so i d appreciate your help and clarification if i m mistaken thanks a million | 1 |
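
The arithmetic in the Cardano supply issue above can be rechecked with a short Python sketch. Every figure is copied from the query outputs quoted in the issue (amounts in lovelace). The variable and function names are illustrative assumptions and are not taken from cardano-graphql or cardano-db-sync, and the "proposed" formula is the issue author's suggestion, not a confirmed fix.

```python
# Figures quoted in the issue, in lovelace. Names are hypothetical.
MAX_SUPPLY = 45_000_000_000_000_000

# Mainnet values reported by the db-sync queries in the issue.
mainnet_utxos = 32_247_431_809_402_515
rewards_minus_withdrawals = -159_211_187_454_300
# reward - withdrawal + reserve + treasury, as proposed by the issue author.
all_sources_minus_withdrawals = 443_141_922_376_394

# Testnet values reported in the issue.
testnet_reserves = 4_766_116_403_134_819
testnet_utxos = 42_016_202_067_224_430


def circulating(utxo_sum: int, net_adjustment: int) -> int:
    """Circulating supply as the unspent output sum plus a net adjustment."""
    return utxo_sum + net_adjustment


def total_supply(max_supply: int, reserves: int) -> int:
    """Total supply as described in the issue: max supply minus reserves."""
    return max_supply - reserves


current = circulating(mainnet_utxos, rewards_minus_withdrawals)
proposed = circulating(mainnet_utxos, all_sources_minus_withdrawals)
testnet_total = total_supply(MAX_SUPPLY, testnet_reserves)

print("current formula: ", current)        # lower than the UTXO sum itself
print("proposed formula:", proposed)
print("testnet total:   ", testnet_total)
print("testnet UTXOs exceed total supply:", testnet_utxos > testnet_total)
```

The current formula lands one lovelace below the GraphQL value quoted in the issue (…215 versus …216), the same difference the issue shows between the SQL and GraphQL UTXO sums.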

714,568 | 24,566,692,817 | IssuesEvent | 2022-10-13 04:11:52 | encorelab/ck-board | https://api.github.com/repos/encorelab/ck-board | opened | Create "Learner Model" UI | enhancement high priority | The Learner Model UI displays graphs of student data (some data manually entered by the teacher and some gathered from the CK Board).

1. For instance, we may add the Learner Model UI as part of the CK Student Monitor UI (below the task monitoring tools)

<img width="212" alt="Screen Shot 2022-10-12 at 11 29 13 PM" src="https://user-images.githubusercontent.com/6416247/195492500-b9e2390c-5f3d-43b4-8ea2-49478ad2156d.png">

2. By selecting either View by Content or View by SEL, the teacher gets an overview of all students by that metric

<img width="794" alt="Screen Shot 2022-10-12 at 11 30 01 PM" src="https://user-images.githubusercontent.com/6416247/195492582-dd373af5-fe5c-4763-91c6-1794250c18ff.png">

3. By selecting a student's name, the teacher can view or modify data for each student. Displayed data includes: (1) Content knowledge - entered by the teacher based on diagnostic and formative assessments, (2) Social-emotional learning (SEL) data - entered by the teacher based on diagnostic and re-administration of SEL survey, and (3) dynamic system data - including goals set by the teacher

<img width="795" alt="Screen Shot 2022-10-12 at 11 32 19 PM" src="https://user-images.githubusercontent.com/6416247/195492850-3df71e94-26eb-40a2-8d76-5970e47fbaee.png">

4. TBD

To see demo code of the javascript for the above visualizations or to explore other interactive demos, please explore the resources below:

- [Highcharts demo code.zip](https://github.com/encorelab/ck-board/files/9770363/Highcharts.demo.code.zip)

- https://www.highcharts.com/demo/gauge-activity | 1.0 | Create "Learner Model" UI - The Learner Model UI displays graphs of student data (some data manually entered by the teacher and some gathered from the CK Board).

1. For instance, we may add the Learner Model UI as part of the CK Student Monitor UI (below the task monitoring tools)

<img width="212" alt="Screen Shot 2022-10-12 at 11 29 13 PM" src="https://user-images.githubusercontent.com/6416247/195492500-b9e2390c-5f3d-43b4-8ea2-49478ad2156d.png">

2. By selecting either View by Content or View by SEL, the teacher gets an overview of all students by that metric

<img width="794" alt="Screen Shot 2022-10-12 at 11 30 01 PM" src="https://user-images.githubusercontent.com/6416247/195492582-dd373af5-fe5c-4763-91c6-1794250c18ff.png">

3. By selecting a student's name, the teacher can view or modify data for each student. Displayed data includes: (1) Content knowledge - entered by the teacher based on diagnostic and formative assessments, (2) Social-emotional learning (SEL) data - entered by the teacher based on diagnostic and re-administration of SEL survey, and (3) dynamic system data - including goals set by the teacher

<img width="795" alt="Screen Shot 2022-10-12 at 11 32 19 PM" src="https://user-images.githubusercontent.com/6416247/195492850-3df71e94-26eb-40a2-8d76-5970e47fbaee.png">

4. TBD

To see demo code of the javascript for the above visualizations or to explore other interactive demos, please explore the resources below:

- [Highcharts demo code.zip](https://github.com/encorelab/ck-board/files/9770363/Highcharts.demo.code.zip)

- https://www.highcharts.com/demo/gauge-activity | priority | create learner model ui the learner model ui displays graphs of student data some data manually entered by the teacher and some gathered from the ck board for instance we may add the learner model ui as part of the ck student monitor ui below the task monitoring tools img width alt screen shot at pm src by selecting either view by content or view by sel the teacher gets an overview of all students by that metric img width alt screen shot at pm src by selecting a student s name the teacher can view or modify data for each student displayed data includes content knowledge entered by the teacher based on diagnostic and formative assessments social emotional learning sel data entered by the teacher based on diagnostic and re administration of sel survey and dynamic system data including goals set by the teacher img width alt screen shot at pm src tbd to see demo code of the javascript for the above visualizations or to explore other interactive demos please explore the resources below | 1 |

788,298 | 27,750,177,609 | IssuesEvent | 2023-03-15 20:04:39 | calcom/cal.com | https://api.github.com/repos/calcom/cal.com | closed | [CAL-1161] Events not being added to Outlook calendar | 🐛 bug High priority | If the email associated with [cal.com](http://cal.com) account isn't outlook, destination calendar for an event type set to outlook doesn't add it.

Created via Threads. See full discussion: [https://threads.com/34452351117](https://threads.com/34452351117)

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-1161](https://linear.app/calcom/issue/CAL-1161/events-not-being-added-to-outlook-calendar)</sub> | 1.0 | [CAL-1161] Events not being added to Outlook calendar - If the email associated with the [cal.com](http://cal.com) account isn't an Outlook address, events are not added when an event type's destination calendar is set to Outlook.

Created via Threads. See full discussion: [https://threads.com/34452351117](https://threads.com/34452351117)

<sub>From [SyncLinear.com](https://synclinear.com) | [CAL-1161](https://linear.app/calcom/issue/CAL-1161/events-not-being-added-to-outlook-calendar)</sub> | priority | events not being added to outlook calendar if the email associated with account isn t outlook destination calendar for an event type set to outlook doesn t add it created via threads see full discussion from | 1 |

434,991 | 12,530,190,958 | IssuesEvent | 2020-06-04 12:40:58 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | [AP] (Alliance Dashboard) Add Data Information for the QA process | Priority - High Type - Enhancement | Tonya will send the narrative for this process

- [ ] Include "Data and information is quality assured (for 2017 and 2018) b) Quality assurance is ongoing for 2019 data." in the dashboard.

**Move to Closed when:** The text is on the dashboard.

| 1.0 | [AP] (Alliance Dashboard) Add Data Information for the QA process - Tonya will send the narrative for this process

- [ ] Include "Data and information is quality assured (for 2017 and 2018) b) Quality assurance is ongoing for 2019 data." in the dashboard.

**Move to Closed when:** The text is on the dashboard.

| priority | alliance dashboard add data information for the qa process tonya will send the narrative for this process include data and information is quality assured for and b quality assurance is ongoing for data in the dashboard move to closed when the text is on the dashboard | 1 |

104,717 | 4,217,699,352 | IssuesEvent | 2016-06-30 13:55:51 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | ReqMgr2: status update via web UI is broken | High Priority | It returns a status Ok, however no changes were made to the request itself. The reason seems to be coming from the fact that you always _update_ the request priority as well, this is what is sent downstream from web interactions:

`{"RequestStatus":"aborted","RequestPriority":"250000"}`

which then does not end in an statusUpdate in Request.py, e.g.

https://github.com/dmwm/WMCore/blob/master/src/python/WMCore/ReqMgr/Service/Request.py#L508

I'm still checking on how to fix it.

| 1.0 | ReqMgr2: status update via web UI is broken - It returns a status Ok, however no changes were made to the request itself. The reason seems to be coming from the fact that you always _update_ the request priority as well, this is what is sent downstream from web interactions:

`{"RequestStatus":"aborted","RequestPriority":"250000"}`

which then does not end in an statusUpdate in Request.py, e.g.

https://github.com/dmwm/WMCore/blob/master/src/python/WMCore/ReqMgr/Service/Request.py#L508

I'm still checking on how to fix it.

| priority | status update via web ui is broken it returns a status ok however no changes were made to the request itself the reason seems to be coming from the fact that you always update the request priority as well this is what is sent downstream from web interactions requeststatus aborted requestpriority which then does not end in an statusupdate in request py e g i m still checking on how to fix it | 1 |
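
One way to picture the ReqMgr2 report above is a payload that carries a status change together with other fields, where the status branch is never reached. The sketch below is not WMCore code: `split_update` and `apply_update` are hypothetical names, and a plain dict stands in for the request document. It only illustrates handling the status separately so that a payload such as `{"RequestStatus":"aborted","RequestPriority":"250000"}` still changes the status. The actual cause was still under investigation in the report.

```python
def split_update(request_args):
    """Separate a status change from the other fields of one update payload."""
    args = dict(request_args)  # copy so the caller's dict is left untouched
    new_status = args.pop("RequestStatus", None)
    return new_status, args


def apply_update(request, request_args):
    """Apply the non-status fields first, then the status change if one was sent."""
    new_status, other_args = split_update(request_args)
    request.update(other_args)
    if new_status is not None:
        # Hypothetical stand-in for a real status transition with validation.
        request["RequestStatus"] = new_status
    return request
```

With this shape, sending only a priority leaves the status alone, and sending both fields updates both.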

255,034 | 8,102,699,709 | IssuesEvent | 2018-08-13 03:39:44 | mesg-foundation/core | https://api.github.com/repos/mesg-foundation/core | opened | logs command doesn't work anymore | bug high priority | I think because of the refactoring of the container package, the `logs` command doesn't work anymore and I'm pretty sure many other features might have the same problem.

I still have to investigate more but it seems that we are using `context.WithTimeout` and when we have streams of data from docker then the timeout is reached and the context is terminated.

We should use `context.Background` in these cases

This issue might occurs in:

- build the docker image

- log the container

Let's make sure to test again all the different features using the `./dev-core` and `./dev-cli` to do our manual tests

@ilgooz can you have a look and confirm my thoughts ? | 1.0 | logs command doesn't work anymore - I think because of the refactoring of the container package, the `logs` command doesn't work anymore and I'm pretty sure many other features might have the same problem.

I still have to investigate more but it seems that we are using `context.WithTimeout` and when we have streams of data from docker then the timeout is reached and the context is terminated.

We should use `context.Background` in these cases

This issue might occurs in:

- build the docker image

- log the container

Let's make sure to test again all the different features using the `./dev-core` and `./dev-cli` to do our manual tests

@ilgooz can you have a look and confirm my thoughts ? | priority | logs command doesn t work anymore i think because of the refactoring of the container package the logs command doesn t work anymore and i m pretty sure many other features might have the same problem i still have to investigate more but it seems that we are using context withtimeout and when we have streams of data from docker then the timeout is reached and the context is terminated we should use context background in these cases this issue might occurs in build the docker image log the container let s make sure to test again all the different features using the dev core and dev cli to do our manual tests ilgooz can you have a look and confirm my thoughts | 1 |

688,921 | 23,600,070,670 | IssuesEvent | 2022-08-24 00:24:15 | nanoframework/Home | https://api.github.com/repos/nanoframework/Home | closed | Starting, Stopping and Starting BLE Advertisement cause System Panic | Type: Bug Status: In progress Priority: High | ### Library/API/IoT binding

nanoFramework.Device.Bluetooth

### Visual Studio version

VS2022 v 17.2.5

### .NET nanoFramework extension version

2022.2.0.23

### Target name(s)

ESP32_BLE_REV0

### Firmware version

1.8.0.362

### Device capabilities

_No response_

### Description

System Panics after Starting-> Stopping -> Starting BLE Advertisment.

### How to reproduce

```

GattServiceProviderResult result = GattServiceProvider.Create(serviceUuid);

if (result.Error != BluetoothError.Success)

{

return;

}

GattServiceProvider serviceProvider = result.ServiceProvider;

// Get created Primary service from provider

GattLocalService service = serviceProvider.Service;

DataWriter sw = new DataWriter();

sw.WriteString("This is Bluetooth sample 3");

GattLocalCharacteristicResult characteristicResult = service.CreateCharacteristic(readStaticCharUuid,

new GattLocalCharacteristicParameters()

{

CharacteristicProperties = GattCharacteristicProperties.Read,

UserDescription = "My Static Characteristic",

//ReadProtectionLevel = GattProtectionLevel.EncryptionRequired,

StaticValue = sw.DetachBuffer()

});

if (characteristicResult.Error != BluetoothError.Success)

{

// An error occurred.

return;

}

DeviceInformationServiceService DifService = new DeviceInformationServiceService(

serviceProvider,

"BLE Sensor",

"Model-1",

"989898", // no serial number

"v1.0",

SystemInfo.Version.ToString(),

"");

BatteryService BatService = new BatteryService(serviceProvider);

// Update the Battery service the current battery level regularly. In this case 94%

BatService.BatteryLevel = 94;

Debug.WriteLine("Before Advertise");

serviceProvider.StartAdvertising(new GattServiceProviderAdvertisingParameters()

{

DeviceName = "BLE Sensor",

IsConnectable = true,

IsDiscoverable = true,

});

for (int i = 1; i < 5; i++)

{

Debug.WriteLine("Loop: " + i);

Thread.Sleep(2_000);

if (serviceProvider.AdvertisementStatus == GattServiceProviderAdvertisementStatus.Stopped)

{

Debug.WriteLine("Starting");

serviceProvider.StartAdvertising();

}

else

{

Debug.WriteLine("Stopping");

serviceProvider.StopAdvertising();

}

}

```

### Expected behaviour

Do not crash the system when stopping and starting BLE Advertisment.

### Screenshots

_No response_

### Sample project or code

_No response_

### Aditional information

_No response_ | 1.0 | Starting, Stopping and Starting BLE Advertisement cause System Panic - ### Library/API/IoT binding

nanoFramework.Device.Bluetooth

### Visual Studio version

VS2022 v 17.2.5

### .NET nanoFramework extension version

2022.2.0.23

### Target name(s)

ESP32_BLE_REV0

### Firmware version

1.8.0.362

### Device capabilities

_No response_

### Description

System Panics after Starting-> Stopping -> Starting BLE Advertisment.

### How to reproduce

```

GattServiceProviderResult result = GattServiceProvider.Create(serviceUuid);

if (result.Error != BluetoothError.Success)

{

return;

}

GattServiceProvider serviceProvider = result.ServiceProvider;

// Get created Primary service from provider

GattLocalService service = serviceProvider.Service;

DataWriter sw = new DataWriter();

sw.WriteString("This is Bluetooth sample 3");

GattLocalCharacteristicResult characteristicResult = service.CreateCharacteristic(readStaticCharUuid,

new GattLocalCharacteristicParameters()

{

CharacteristicProperties = GattCharacteristicProperties.Read,

UserDescription = "My Static Characteristic",

//ReadProtectionLevel = GattProtectionLevel.EncryptionRequired,

StaticValue = sw.DetachBuffer()

});

if (characteristicResult.Error != BluetoothError.Success)

{

// An error occurred.

return;

}

DeviceInformationServiceService DifService = new DeviceInformationServiceService(

serviceProvider,

"BLE Sensor",

"Model-1",

"989898", // no serial number

"v1.0",

SystemInfo.Version.ToString(),

"");

BatteryService BatService = new BatteryService(serviceProvider);

// Update the Battery service the current battery level regularly. In this case 94%

BatService.BatteryLevel = 94;

Debug.WriteLine("Before Advertise");

serviceProvider.StartAdvertising(new GattServiceProviderAdvertisingParameters()

{

DeviceName = "BLE Sensor",

IsConnectable = true,

IsDiscoverable = true,

});

for (int i = 1; i < 5; i++)

{

Debug.WriteLine("Loop: " + i);

Thread.Sleep(2_000);

if (serviceProvider.AdvertisementStatus == GattServiceProviderAdvertisementStatus.Stopped)

{

Debug.WriteLine("Starting");

serviceProvider.StartAdvertising();

}

else

{

Debug.WriteLine("Stopping");

serviceProvider.StopAdvertising();

}

}

```

### Expected behaviour

Do not crash the system when stopping and starting BLE Advertisment.

### Screenshots

_No response_

### Sample project or code

_No response_

### Aditional information

_No response_ | priority | starting stopping and starting ble advertisement cause system panic library api iot binding nanoframework device bluetooth visual studio version v net nanoframework extension version target name s ble firmware version device capabilities no response description system panics after starting stopping starting ble advertisment how to reproduce gattserviceproviderresult result gattserviceprovider create serviceuuid if result error bluetootherror success return gattserviceprovider serviceprovider result serviceprovider get created primary service from provider gattlocalservice service serviceprovider service datawriter sw new datawriter sw writestring this is bluetooth sample gattlocalcharacteristicresult characteristicresult service createcharacteristic readstaticcharuuid new gattlocalcharacteristicparameters characteristicproperties gattcharacteristicproperties read userdescription my static characteristic readprotectionlevel gattprotectionlevel encryptionrequired staticvalue sw detachbuffer if characteristicresult error bluetootherror success an error occurred return deviceinformationserviceservice difservice new deviceinformationserviceservice serviceprovider ble sensor model no serial number systeminfo version tostring batteryservice batservice new batteryservice serviceprovider update the battery service the current battery level regularly in this case batservice batterylevel debug writeline before advertise serviceprovider startadvertising new gattserviceprovideradvertisingparameters devicename ble sensor isconnectable true isdiscoverable true for int i i i debug writeline loop i thread sleep if serviceprovider advertisementstatus gattserviceprovideradvertisementstatus stopped debug writeline starting serviceprovider startadvertising else debug writeline stopping serviceprovider stopadvertising expected behaviour do not crash the system when stopping and starting ble advertisment screenshots no response sample project or code no 
response aditional information no response | 1 |

315,596 | 9,629,234,032 | IssuesEvent | 2019-05-15 09:09:09 | WoWManiaUK/Blackwing-Lair | https://api.github.com/repos/WoWManiaUK/Blackwing-Lair | closed | [NPC/SPELL] Baron Silverlaine - id 3887 -Shadowfang keep | Confirmed Dungeon/Raid Fixed in Dev Priority-High | **Links:**

NPC -https://www.wowhead.com/npc=3887/baron-silverlaine

SPELL -https://www.wowhead.com/spell=93857/summon-worgen-spirit

from WoWHead or our Armory

**What is happening:**

when NPC/BOSS is using the ability the spirit appears forming one of the worgen spirirts but instead of it using the abilities describe for that spirit form they are just exploding and wiping the party.

This spell has a random chance of calling on of the Pre cata worgen bosses back as a simple to kill add for this boss.

Summonable Worgens:

Rethilgore

Razorclaw the Butcher

Odo the Blindwatcher

Wolf Master Nandos with three Lupine Spectres

**What should happen:**

The spirits summoned should use the abilities described in the tooltip in ingame dungeon guide not exploding and causing mass damage

| 1.0 | [NPC/SPELL] Baron Silverlaine - id 3887 -Shadowfang keep - **Links:**

NPC -https://www.wowhead.com/npc=3887/baron-silverlaine

SPELL -https://www.wowhead.com/spell=93857/summon-worgen-spirit

from WoWHead or our Armory

**What is happening:**

when NPC/BOSS is using the ability the spirit appears forming one of the worgen spirirts but instead of it using the abilities describe for that spirit form they are just exploding and wiping the party.

This spell has a random chance of calling on of the Pre cata worgen bosses back as a simple to kill add for this boss.

Summonable Worgens:

Rethilgore

Razorclaw the Butcher

Odo the Blindwatcher

Wolf Master Nandos with three Lupine Spectres

**What should happen:**

The spirits summoned should use the abilities described in the tooltip in ingame dungeon guide not exploding and causing mass damage

| priority | baron silverlaine id shadowfang keep links npc spell from wowhead or our armory what is happening when npc boss is using the ability the spirit appears forming one of the worgen spirirts but instead of it using the abilities describe for that spirit form they are just exploding and wiping the party this spell has a random chance of calling on of the pre cata worgen bosses back as a simple to kill add for this boss summonable worgens rethilgore razorclaw the butcher odo the blindwatcher wolf master nandos with three lupine spectres what should happen the spirits summoned should use the abilities described in the tooltip in ingame dungeon guide not exploding and causing mass damage | 1 |

568,502 | 16,981,598,904 | IssuesEvent | 2021-06-30 09:32:38 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | opened | Stop Dynamic Kubo Toyabe fitting crashing if bin width outside acceptable range | High Priority ISIS Team: Spectroscopy Muon | ### Expected behavior

When using the Dynamic Kubo Toyabe fitting on MUSR if you enter a bin width <0.001 or >0.100 an exception should be thrown and error message provided for user.

### Actual behavior

Mantid is crashing.

### Steps to reproduce the behavior

1. Open Muon Analysis GUI

2. Load a run

3. Click on fitting tab and add the Dynamic Kubo Toyabe fit function

4. Change the bin width to 0.5

### Platforms affected

Windows 10 (but likely to be all) | 1.0 | Stop Dynamic Kubo Toyabe fitting crashing if bin width outside acceptable range - ### Expected behavior

When using the Dynamic Kubo Toyabe fitting on MUSR if you enter a bin width <0.001 or >0.100 an exception should be thrown and error message provided for user.

### Actual behavior

Mantid is crashing.

### Steps to reproduce the behavior

1. Open Muon Analysis GUI

2. Load a run

3. Click on fitting tab and add the Dynamic Kubo Toyabe fit function

4. Change the bin width to 0.5

### Platforms affected

Windows 10 (but likely to be all) | priority | stop dynamic kubo toyabe fitting crashing if bin width outside acceptable range expected behavior when using the dynamic kubo toyabe fitting on musr if you enter a bin width an exception should be thrown and error message provided for user actual behavior mantid is crashing steps to reproduce the behavior open muon analysis gui load a run click on fitting tab and add the dynamic kubo toyabe fit function change the bin width to platforms affected windows but likely to be all | 1 |
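
The expected behaviour in the Mantid report above (raise an exception with a message instead of crashing) could look roughly like the following. This is a minimal sketch, not Mantid's implementation: `validate_bin_width` and the two constants are assumed names, and treating the limits 0.001 and 0.100 as inclusive is an assumption based on the wording of the report.

```python
MIN_BIN_WIDTH = 0.001  # lower limit quoted in the report
MAX_BIN_WIDTH = 0.100  # upper limit quoted in the report


def validate_bin_width(bin_width):
    """Return bin_width unchanged, or raise ValueError if it is out of range."""
    if not (MIN_BIN_WIDTH <= bin_width <= MAX_BIN_WIDTH):
        raise ValueError(
            f"Bin width {bin_width} is outside the accepted range "
            f"[{MIN_BIN_WIDTH}, {MAX_BIN_WIDTH}]"
        )
    return bin_width
```

A caller in the GUI layer could catch the `ValueError` and show its message to the user rather than letting the fit proceed.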

795,154 | 28,063,869,416 | IssuesEvent | 2023-03-29 14:13:56 | xsuite/xsuite | https://api.github.com/repos/xsuite/xsuite | opened | Kernel for random number generator initialization not reused | bug High priority | ```python

In [4]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

In [5]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

In [6]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

``` | 1.0 | Kernel for random number generator initialization not reused - ```python

In [4]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

In [5]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

In [6]: p = xp.Particles(); p._init_random_number_generator()

Compiling ContextCpu kernels...

ld: warning: -pie being ignored. It is only used when linking a main executable

Done compiling ContextCpu kernels.

``` | priority | kernel for random number generator initialization not reused python in p xp particles p init random number generator compiling contextcpu kernels ld warning pie being ignored it is only used when linking a main executable done compiling contextcpu kernels in p xp particles p init random number generator compiling contextcpu kernels ld warning pie being ignored it is only used when linking a main executable done compiling contextcpu kernels in p xp particles p init random number generator compiling contextcpu kernels ld warning pie being ignored it is only used when linking a main executable done compiling contextcpu kernels | 1 |

120,818 | 4,794,766,962 | IssuesEvent | 2016-10-31 22:04:24 | Microsoft/TypeScript | https://api.github.com/repos/Microsoft/TypeScript | closed | Error introduced into 10/28 @next build | Bug High Priority | Some change between `typescript@2.1.0-dev.20161027` and `typescript@2.1.0-dev.20161028` introduced this error into my build. This issue also exists in `typescript@2.1.0-dev.20161029`

```

/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23590

result.declarations = symbol.declarations.slice(0);

^

TypeError: Cannot read property 'slice' of undefined

at cloneSymbol (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23590:54)

at mergeModuleAugmentation (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23683:90)

at initializeTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:41037:25)

at Object.createTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23527:9)

at getDiagnosticsProducingTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:60181:93)

at Object.getGlobalDiagnostics (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:60490:41)

at Tsifier.checkSemantics (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:219:37)

at Tsifier.compile (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:179:34)

at Tsifier.generateCache (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:156:8)

at DestroyableTransform.flush [as _flush] (/Users/ntilwalli/dev/spotlight/node_modules/tsify/index.js:73:12)

```

| 1.0 | Error introduced into 10/28 @next build - Some change between `typescript@2.1.0-dev.20161027` and `typescript@2.1.0-dev.20161028` introduced this error into my build. This issue also exists in `typescript@2.1.0-dev.20161029`

```

/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23590

result.declarations = symbol.declarations.slice(0);

^

TypeError: Cannot read property 'slice' of undefined

at cloneSymbol (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23590:54)

at mergeModuleAugmentation (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23683:90)

at initializeTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:41037:25)

at Object.createTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:23527:9)

at getDiagnosticsProducingTypeChecker (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:60181:93)

at Object.getGlobalDiagnostics (/Users/ntilwalli/dev/spotlight/node_modules/typescript/lib/typescript.js:60490:41)

at Tsifier.checkSemantics (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:219:37)

at Tsifier.compile (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:179:34)

at Tsifier.generateCache (/Users/ntilwalli/dev/spotlight/node_modules/tsify/lib/Tsifier.js:156:8)

at DestroyableTransform.flush [as _flush] (/Users/ntilwalli/dev/spotlight/node_modules/tsify/index.js:73:12)

```

| priority | error introduced into next build some change between typescript dev and typescript dev introduced this error into my build this issue also exists in typescript dev users ntilwalli dev spotlight node modules typescript lib typescript js result declarations symbol declarations slice typeerror cannot read property slice of undefined at clonesymbol users ntilwalli dev spotlight node modules typescript lib typescript js at mergemoduleaugmentation users ntilwalli dev spotlight node modules typescript lib typescript js at initializetypechecker users ntilwalli dev spotlight node modules typescript lib typescript js at object createtypechecker users ntilwalli dev spotlight node modules typescript lib typescript js at getdiagnosticsproducingtypechecker users ntilwalli dev spotlight node modules typescript lib typescript js at object getglobaldiagnostics users ntilwalli dev spotlight node modules typescript lib typescript js at tsifier checksemantics users ntilwalli dev spotlight node modules tsify lib tsifier js at tsifier compile users ntilwalli dev spotlight node modules tsify lib tsifier js at tsifier generatecache users ntilwalli dev spotlight node modules tsify lib tsifier js at destroyabletransform flush users ntilwalli dev spotlight node modules tsify index js | 1 |

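186,072 | 6,733,292,433 | IssuesEvent | 2017-10-18 14:25:31 | luccafort/otogi_novels | https://api.github.com/repos/luccafort/otogi_novels | closed | Foreword/afterword feature for each story of a novel | priority:high somebody:soon | ## Overview:

The digest feature refers to the foreword and afterword.

It shows a summary of the previous installment before the story body, or can be used for things such as announcing a preview of the next installment.

- [x] CRUD for forewords

- [x] CRUD for afterwords

- [x] Display of forewords/afterwords

Not a required field.

Authors can choose to show them before and after the text.

No image embedding or similar. | 1.0 | Foreword/afterword feature for each story of a novel - ## Overview:

The digest feature refers to the foreword and afterword.

It shows a summary of the previous installment before the story body, or can be used for things such as announcing a preview of the next installment.

- [x] CRUD for forewords

- [x] CRUD for afterwords

- [x] Display of forewords/afterwords

Not a required field.

Authors can choose to show them before and after the text.

No image embedding or similar. | priority | foreword afterword feature for each story of a novel overview the digest feature refers to the foreword and afterword it shows a summary of the previous installment before the story body or can be used for things such as announcing a preview of the next installment crud for forewords crud for afterwords display of forewords afterwords not a required field authors can choose to show them before and after the text no image embedding or similar | 1 |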

41,168 | 2,868,982,057 | IssuesEvent | 2015-06-05 22:21:42 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | opened | Easily allow the installation and usage of any web component | enhancement Priority-High | <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#20323_

----

There are a lot of great JavaScript web components out there. As a Dart developer, I would like to use all web components in my project. For example, the Google Web Components: https://github.com/GoogleWebComponents | 1.0 | Easily allow the installation and usage of any web component - <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#20323_

----

There are a lot of great JavaScript web components out there. As a Dart developer, I would like to use all web components in my project. For example, the Google Web Components: https://github.com/GoogleWebComponents | priority | easily allow the installation and usage of any web component issue by originally opened as dart lang sdk there are a lot of great javascript web components out there as a dart developer i would like to use all web components in my project for example the google web components | 1 |

598,868 | 18,257,551,565 | IssuesEvent | 2021-10-03 09:38:24 | AY2122S1-CS2103T-T11-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-T11-3/tp | opened | As a clinic receptionist, I can add a doctor’s personal details so I can better understand them and have a way of easily contacting them | type.Story priority.High | Create a command to add Doctors to the doctor records in plannerMD.

To be done after #42 and #43 | 1.0 | As a clinic receptionist, I can add a doctor’s personal details so I can better understand them and have a way of easily contacting them - Create a command to add Doctors to the doctor records in plannerMD.

To be done after #42 and #43 | priority | as a clinic receptionist i can add a doctor’s personal details so i can better understand them and have a way of easily contacting them create a command to add doctors to the doctor records in plannermd to be done after and | 1 |

376,658 | 11,149,893,653 | IssuesEvent | 2019-12-23 20:21:45 | bounswe/bounswe2019group8 | https://api.github.com/repos/bounswe/bounswe2019group8 | opened | Article Show Page Add Like and Dislike | Effort: High Mobile New feature Platform: Mobile Priority: High Status: Done | **Actions:**

1. Add like and dislike to show article page.

1. Connect with backend.

**Notes:**

- [x] Add like and dislike to show article page.

- [x] Connect with backend.

**Deadline:** 24.10.2019 - 18.43 | 1.0 | Article Show Page Add Like and Dislike - **Actions:**

1. Add like and dislike to show article page.

1. Connect with backend.

**Notes:**

- [x] Add like and dislike to show article page.

- [x] Connect with backend.

**Deadline:** 24.10.2019 - 18.43 | priority | article show page add like and dislike actions add like and dislike to show article page connect with backend notes add like and dislike to show article page connect with backend deadline | 1 |

550,041 | 16,103,849,580 | IssuesEvent | 2021-04-27 12:50:39 | georchestra/mapstore2-georchestra | https://api.github.com/repos/georchestra/mapstore2-georchestra | closed | Error opening the Embed map in geOrchestra GeoSolutions DEV | Priority: High bug | There is an error when loading a saved map in embed mode in GeoSolutions geOrchestra DEV.

Try for example this map.

https://georchestra.geo-solutions.it/mapstore/embedded.html#/3023

There is a Not fount error while loading the following:

https://georchestra.geo-solutions.it/mapstore/dist/geOrchestra-embedded.js

@offtherailz probably it is due to the latest update we did for the embedded part and you forgot some configuration. Please confirm.

| 1.0 | Error opening the Embed map in geOrchestra GeoSolutions DEV - There is an error when loading a saved map in embed mode in GeoSolutions geOrchestra DEV.

Try for example this map.

https://georchestra.geo-solutions.it/mapstore/embedded.html#/3023

There is a Not fount error while loading the following:

https://georchestra.geo-solutions.it/mapstore/dist/geOrchestra-embedded.js

@offtherailz probably it is due to the latest update we did for the embedded part and you forgot some configuration. Please confirm.

| priority | error opening the embed map in georchestra geosolutions dev there is an error when loading a saved map in embed mode in geosolutions georchestra dev try for example this map there is a not fount error while loading the following offtherailz probably it is due to the latest update we did for the embedded part and you forgot some configuration please confirm | 1 |

809,464 | 30,193,848,269 | IssuesEvent | 2023-07-04 18:12:54 | wizeline/wize-q-remix | https://api.github.com/repos/wizeline/wize-q-remix | closed | WizeQ is indexed by google | high priority | WizeQ app is indexed by google , it means that it can be search by the browser. | 1.0 | WizeQ is indexed by google - WizeQ app is indexed by google , it means that it can be search by the browser. | priority | wizeq is indexed by google wizeq app is indexed by google it means that it can be search by the browser | 1 |

103,431 | 4,173,004,224 | IssuesEvent | 2016-06-21 08:58:11 | bazingatechnologies/FSharp.Data.GraphQL | https://api.github.com/repos/bazingatechnologies/FSharp.Data.GraphQL | closed | Fix parser | bug graphql-spec high-priority | Current parser suffers from some issues, where most notable is being fragile on the newline/whitespaces in front of the query.

The issue is recreated by *parser should parse kitchen sink* test.

Known issues of the current parser:

- Problems with parsing queries having new lines in them, especially at the beginning of the query.

- Problems with parsing complex inputs as an arguments. | 1.0 | Fix parser - Current parser suffers from some issues, where most notable is being fragile on the newline/whitespaces in front of the query.

The issue is recreated by *parser should parse kitchen sink* test.

Known issues of the current parser:

- Problems with parsing queries having new lines in them, especially at the beginning of the query.

- Problems with parsing complex inputs as an arguments. | priority | fix parser current parser suffers from some issues where most notable is being fragile on the newline whitespaces in front of the query the issue is recreated by parser should parse kitchen sink test known issues of the current parser problems with parsing queries having new lines in them especially at the beginning of the query problems with parsing complex inputs as an arguments | 1 |

364,036 | 10,758,070,522 | IssuesEvent | 2019-10-31 14:23:44 | AY1920S1-CS2113T-F09-2/main | https://api.github.com/repos/AY1920S1-CS2113T-F09-2/main | closed | Reminder: customized by stock/date | priority.High type.Story | Create a feature that allows users to customize the reminders to their convenience | 1.0 | Reminder: customized by stock/date - Create a feature that allows users to customize the reminders to their convenience | priority | reminder customized by stock date create a feature that allows users to customize the reminders to their convenience | 1 |

253,792 | 8,066,023,476 | IssuesEvent | 2018-08-04 10:10:58 | AeneasPlatform/Aeneas | https://api.github.com/repos/AeneasPlatform/Aeneas | closed | Pre-mining setup fail handling | Aeneas:Core Priority 1 : High bug | **It is not any fail handling of mining timeout error.**

**To Reproduce**

Steps to reproduce the behavior:

1. Launch node at the first time.

2. Wait till pre-mining setup will end.

3. If setup value is bigger than it should be, you will see an error if mining timeout happens.

**Expected behavior**

It should get new block from the network, apply it to history and start new iteration.

**Desktop (please complete the following information):**

- OS: OS X El Capitan

- Device: Macbook Pro 2015 Early

- Version : commit № [839c3ed45c32687d14fc8af8178eb1138bda1ca1](https://github.com/AeneasPlatform/Aeneas/commit/839c3ed45c32687d14fc8af8178eb1138bda1ca1)

| 1.0 | Pre-mining setup fail handling - **It is not any fail handling of mining timeout error.**

**To Reproduce**

Steps to reproduce the behavior:

1. Launch node at the first time.

2. Wait till pre-mining setup will end.

3. If setup value is bigger than it should be, you will see an error if mining timeout happens.

**Expected behavior**

It should get new block from the network, apply it to history and start new iteration.

**Desktop (please complete the following information):**

- OS: OS X El Capitan

- Device: Macbook Pro 2015 Early

- Version : commit № [839c3ed45c32687d14fc8af8178eb1138bda1ca1](https://github.com/AeneasPlatform/Aeneas/commit/839c3ed45c32687d14fc8af8178eb1138bda1ca1)

| priority | pre mining setup fail handling it is not any fail handling of mining timeout error to reproduce steps to reproduce the behavior launch node at the first time wait till pre mining setup will end if setup value is bigger than it should be you will see an error if mining timeout happens expected behavior it should get new block from the network apply it to history and start new iteration desktop please complete the following information os os x el capitan device macbook pro early version commit № | 1 |

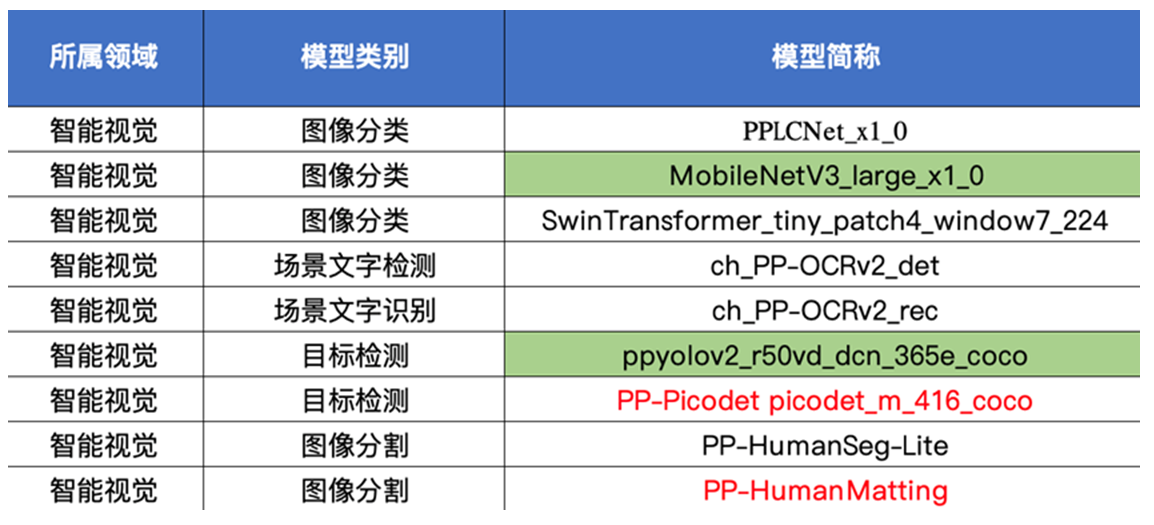

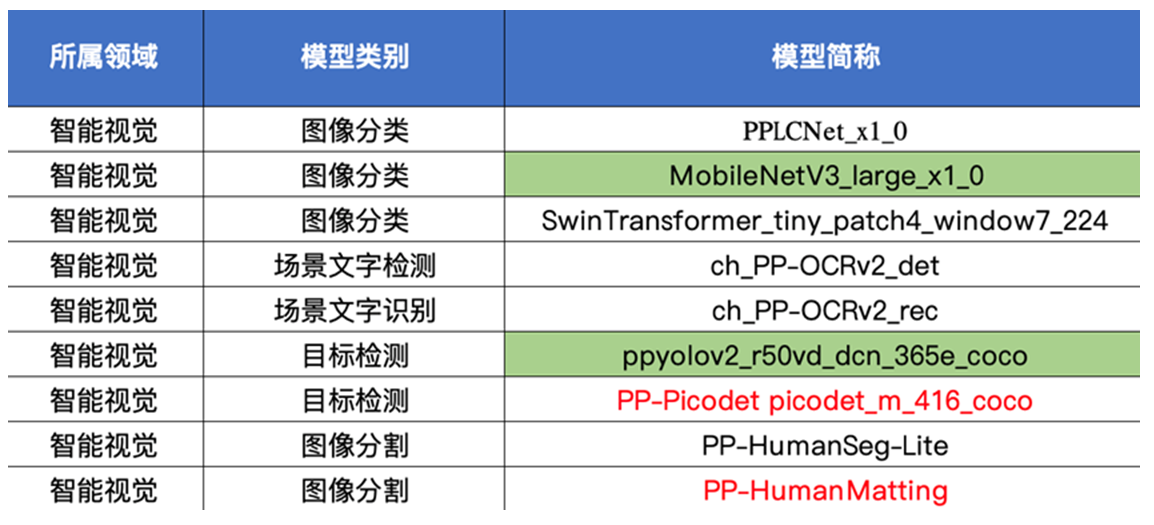

698,286 | 23,972,571,529 | IssuesEvent | 2022-09-13 08:58:01 | PaddlePaddle/Paddle | https://api.github.com/repos/PaddlePaddle/Paddle | closed | [Feature High priority] SPR 2+2+8 models AMX enabling and deployment | high priority Intel int8 | **Newest high priority model list from ligang, Green is highest priority.**

How to get the models:

**Detection model: Retinanet, faster-rcnn-r50-fpn**

# Clas

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/PPLCNet_x1_0_infer.tar

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/MobileNetV3_large_x1_0_infer.tar

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/SwinTransformer_tiny_patch4_window7_224_infer.tar

# OCR

wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_det_infer.tar

wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_rec_infer.tar

# Seg

# Human Pose 512x512

wget https://paddleseg.bj.bcebos.com/dygraph/humanseg/export/deeplabv3p_resnet50_os8_humanseg_512x512_100k_with_softmax.zip

# Human Pose 192x192

https://paddleseg.bj.bcebos.com/dygraph/humanseg/export/fcn_hrnetw18_small_v1_humanseg_192x192_with_softmax.zip

# Matting model export

https://github.com/PaddlePaddle/PaddleSeg/blob/release/2.3/contrib/Matting/README_CN.md

# Detection

The export resolution needs to be set.

In PaddleDetection, you can use the scripts linked below to set the resolution and then export the model.

https://github.com/PaddlePaddle/PaddleDetection/blob/develop/deploy/EXPORT_MODEL_en.md

Picodet: https://github.com/PaddlePaddle/PaddleDetection/blob/develop/configs/picodet/README_cn.md

PP-YOLOv2: https://github.com/PaddlePaddle/PaddleDetection/blob/release/2.3/configs/ppyolo/README_cn.md

| 1.0 | [Feature High priority] SPR 2+2+8 models AMX enabling and deployment - **Newest high priority model list from ligang, Green is highest priority.**

How to get the models:

**Detection model: Retinanet, faster-rcnn-r50-fpn**

# Clas

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/PPLCNet_x1_0_infer.tar

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/MobileNetV3_large_x1_0_infer.tar

wget https://paddle-imagenet-models-name.bj.bcebos.com/dygraph/inference/SwinTransformer_tiny_patch4_window7_224_infer.tar

# OCR

wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_det_infer.tar

wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_rec_infer.tar

# Seg

# Human Pose 512x512

wget https://paddleseg.bj.bcebos.com/dygraph/humanseg/export/deeplabv3p_resnet50_os8_humanseg_512x512_100k_with_softmax.zip

# Human Pose 192x192

https://paddleseg.bj.bcebos.com/dygraph/humanseg/export/fcn_hrnetw18_small_v1_humanseg_192x192_with_softmax.zip

# Matting model export

https://github.com/PaddlePaddle/PaddleSeg/blob/release/2.3/contrib/Matting/README_CN.md

# Detection

The export resolution needs to be set.

In PaddleDetection, you can use the scripts linked below to set the resolution and then export the model.

https://github.com/PaddlePaddle/PaddleDetection/blob/develop/deploy/EXPORT_MODEL_en.md

Picodet: https://github.com/PaddlePaddle/PaddleDetection/blob/develop/configs/picodet/README_cn.md

PP-YOLOv2: https://github.com/PaddlePaddle/PaddleDetection/blob/release/2.3/configs/ppyolo/README_cn.md

| priority | spr models amx enabling and deployment newest high priority model list from ligang green is highest priority how to get the models: detection model retinanet faster rcnn fpn clas wget wget wget ocr wget wget seg human pose wget human pose matting model export detection need to set export resolution ratio you can use these scripts and set exporting resolution ratio and export picodet pp | 1 |

353,292 | 10,551,121,026 | IssuesEvent | 2019-10-03 12:42:27 | boi123212321/porn-manager | https://api.github.com/repos/boi123212321/porn-manager | reopened | Tag aliases + Tags view | enhancement high priority | - [x] Allow user to create/delete/view/change new tags with optional aliases

- [ ] Automatically find tags in imported file names

- [ ] Optional tag image | 1.0 | Tag aliases + Tags view - - [x] Allow user to create/delete/view/change new tags with optional aliases

- [ ] Automatically find tags in imported file names

- [ ] Optional tag image | priority | tag aliases tags view allow user to create delete view change new tags with optional aliases automatically find tags in imported file names optional tag image | 1 |

212,314 | 7,235,730,960 | IssuesEvent | 2018-02-13 02:23:11 | City-Bureau/city-scrapers | https://api.github.com/repos/City-Bureau/city-scrapers | closed | Mapzen is shutting down :( | priority: high (must have) | https://mapzen.com/blog/shutdown/

There are [some alternatives](https://mapzen.com/blog/migration/#mapzen-search) listed on their [migration guide](https://mapzen.com/blog/migration/). We'll need to find a replacement that provides lat/long as well as community area.

cc @r-wei | 1.0 | Mapzen is shutting down :( - https://mapzen.com/blog/shutdown/

There are [some alternatives](https://mapzen.com/blog/migration/#mapzen-search) listed on their [migration guide](https://mapzen.com/blog/migration/). We'll need to find a replacement that provides lat/long as well as community area.

cc @r-wei | priority | mapzen is shutting down there are listed on their we ll need to find a replacement that provides lat long as well as community area cc r wei | 1 |

798,728 | 28,293,168,995 | IssuesEvent | 2023-04-09 13:17:21 | bounswe/bounswe2023group6 | https://api.github.com/repos/bounswe/bounswe2023group6 | closed | Creation of the Sequence Diagram for Creating and Editing Comment | priority: high type: task area: wiki area: milestone status: review-needed | ### Problem

The sequence diagram for creating and editing a comment should be prepared, as decided in the 13th meeting.

### Solution

_No response_

### Documentation

_No response_

### Additional notes

_No response_

### Reviewers

Ömer Bahadıroğlu

### Deadline

08.04.2023 - Saturday - 21:00 | 1.0 | Creation of the Sequence Diagram for Creating and Editing Comment - ### Problem

The sequence diagram for creating and editing a comment should be prepared, as decided in the 13th meeting.

### Solution

_No response_

### Documentation

_No response_

### Additional notes

_No response_

### Reviewers

Ömer Bahadıroğlu

### Deadline

08.04.2023 - Saturday - 21:00 | priority | creation of the sequence diagram for creating and editing comment problem the sequence diagram for the creating and editing comment should be implemented as decided on the meeting solution no response documentation no response additional notes no response reviewers ömer bahadıroğlu deadline saturday | 1 |

325,515 | 9,932,057,717 | IssuesEvent | 2019-07-02 08:58:57 | gama-platform/gama | https://api.github.com/repos/gama-platform/gama | closed | A tif file from Model Library cannot be opened in GAMA Image Viewer | > Bug Concerns Data Persistence Concerns Interface Concerns Models Library Priority High Version Git | **Describe the bug**

In the model Library, Data / Data Importation / includes, there is the file `land-cover.tif`.

When double-clicking on it, it should be opened in the Image Viewer view.

Instead, I get a white / empty Image Viewer, and an Exception in the Eclipse Console:

```

org.eclipse.swt.SWTException: Unsupported color depth

at org.eclipse.swt.SWT.error(SWT.java:4699)

at org.eclipse.swt.SWT.error(SWT.java:4614)

at org.eclipse.swt.SWT.error(SWT.java:4585)

at org.eclipse.swt.graphics.Image.createRepresentation(Image.java:994)

at org.eclipse.swt.graphics.Image.init(Image.java:1471)

at org.eclipse.swt.graphics.Image.<init>(Image.java:565)

at ummisco.gama.ui.viewers.image.ImageViewer.showImage(ImageViewer.java:371)

at ummisco.gama.ui.viewers.image.ImageViewer$2.lambda$0(ImageViewer.java:319)

at org.eclipse.swt.widgets.RunnableLock.run(RunnableLock.java:40)

at org.eclipse.swt.widgets.Synchronizer.runAsyncMessages(Synchronizer.java:185)

at org.eclipse.swt.widgets.Display.runAsyncMessages(Display.java:4102)

at org.eclipse.swt.widgets.Display.readAndDispatch(Display.java:3769)

at org.eclipse.e4.ui.internal.workbench.swt.PartRenderingEngine$5.run(PartRenderingEngine.java:1173)

at org.eclipse.core.databinding.observable.Realm.runWithDefault(Realm.java:338)

at org.eclipse.e4.ui.internal.workbench.swt.PartRenderingEngine.run(PartRenderingEngine.java:1062)

at org.eclipse.e4.ui.internal.workbench.E4Workbench.createAndRunUI(E4Workbench.java:155)

at org.eclipse.ui.internal.Workbench.lambda$3(Workbench.java:644)

at org.eclipse.core.databinding.observable.Realm.runWithDefault(Realm.java:338)

at org.eclipse.ui.internal.Workbench.createAndRunWorkbench(Workbench.java:566)

at org.eclipse.ui.PlatformUI.createAndRunWorkbench(PlatformUI.java:150)

at msi.gama.application.Application.start(Application.java:126)

at org.eclipse.equinox.internal.app.EclipseAppHandle.run(EclipseAppHandle.java:203)

at org.eclipse.core.runtime.internal.adaptor.EclipseAppLauncher.runApplication(EclipseAppLauncher.java:137)

at org.eclipse.core.runtime.internal.adaptor.EclipseAppLauncher.start(EclipseAppLauncher.java:107)

at org.eclipse.core.runtime.adaptor.EclipseStarter.run(EclipseStarter.java:400)

at org.eclipse.core.runtime.adaptor.EclipseStarter.run(EclipseStarter.java:255)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.eclipse.equinox.launcher.Main.invokeFramework(Main.java:661)

at org.eclipse.equinox.launcher.Main.basicRun(Main.java:597)

at org.eclipse.equinox.launcher.Main.run(Main.java:1476)

at org.eclipse.equinox.launcher.Main.main(Main.java:1449)

```

Note that another TIFF file, `bogota_grid.tif`, opens properly in the Image Viewer.

**Desktop (please complete the following information):**

- OS: macOS Mojave

- GAMA version: git

| 1.0 | A tif file from Model Library cannot be opened in GAMA Image Viewer - **Describe the bug**

In the model Library, Data / Data Importation / includes, there is the file `land-cover.tif`.

When double-clicking on it, it should be opened in the Image Viewer view.

Instead, I get a white / empty Image Viewer, and an Exception in the Eclipse Console:

```

org.eclipse.swt.SWTException: Unsupported color depth

at org.eclipse.swt.SWT.error(SWT.java:4699)