Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

630,976 | 20,122,708,411 | IssuesEvent | 2022-02-08 05:20:56 | airavata-courses/neo | https://api.github.com/repos/airavata-courses/neo | closed | Containerize API gateway with Docker and configure image build in docker-compose | Type: Task Status: In Progress Priority: High BE | For #13, create a Dockerfile for building a Docker image and test connection on localhost of host machine.

Configure the build of this Dockerfile in docker-compose YAML file which contains instructions for spinning up and connecting all other containers.

| 1.0 | Containerize API gateway with Docker and configure image build in docker-compose - For #13, create a Dockerfile for building a Docker image and test connection on localhost of host machine.

Configure the build of this Dockerfile in docker-compose YAML file which contains instructions for spinning up and connecting all other containers.

| priority | containerize api gateway with docker and configure image build in docker compose for create a dockerfile for building a docker image and test connection on localhost of host machine configure the build of this dockerfile in docker compose yaml file which contains instructions for spinning up and connecting all other containers | 1 |

124,239 | 4,894,099,022 | IssuesEvent | 2016-11-19 03:50:12 | caver456/issue_test | https://api.github.com/repos/caver456/issue_test | opened | lag at end of new entry | bug help wanted Priority:High | in newEntryPost do a oneshot for layoutChanged and everything it depends on; that way the main table can update quicker (i.e. newEntryPost can complete quicker) | 1.0 | lag at end of new entry - in newEntryPost do a oneshot for layoutChanged and everything it depends on; that way the main table can update quicker (i.e. newEntryPost can complete quicker) | priority | lag at end of new entry in newentrypost do a oneshot for layoutchanged and everything it depends on that way the main table can update quicker i e newentrypost can complete quicker | 1 |

768,496 | 26,965,923,091 | IssuesEvent | 2023-02-08 22:21:36 | Darunada/namechangr | https://api.github.com/repos/Darunada/namechangr | closed | Utah Sex Offender Registry Form is out of date | bug help-wanted high-priority | The form needs to be updated with the newest version issued by BCI. I reached out to BCI for a docx formatted version and they said talk to the court. The court said BCI provides the form so talk to them. Ah government. Someone good with Word will need to recreate it in docx format, since I can't fill a PDF, I think. | 1.0 | Utah Sex Offender Registry Form is out of date - The form needs to be updated with the newest version issued by BCI. I reached out to BCI for a docx formatted version and they said talk to the court. The court said BCI provides the form so talk to them. Ah government. Someone good with Word will need to recreate it in docx format, since I can't fill a PDF, I think. | priority | utah sex offender registry form is out of date the form needs to be updated with the newest version issued by bci i reached out to bci for a docx formatted version and they said talk to the court the court said bci provides the form so talk to them ah government someone good with word will need to recreate it in docx format since i can t fill a pdf i think | 1 |

523,373 | 15,179,329,302 | IssuesEvent | 2021-02-14 19:05:33 | jesus-collective/mobile | https://api.github.com/repos/jesus-collective/mobile | closed | Wrong phone number format | High Priority | Can we please filter out any characters that are not numbers from the phone number submission by a user in the "tell us more about you" screen when creating accounts?

Currently, if the user enters dashes or brackets, etc it doesn't go through, but some users don't see the feedback error. | 1.0 | Wrong phone number format - Can we please filter out any characters that are not numbers from the phone number submission by a user in the "tell us more about you" screen when creating accounts?

Currently, if the user enters dashes or brackets, etc it doesn't go through, but some users don't see the feedback error. | priority | wrong phone number format can we please filter out any characters that are not numbers from the phone number submission by a user in the tell us more about you screen when creating accounts currently if the user enters dashes or brackets etc it doesn t go through but some users don t see the feedback error | 1 |
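
The filtering requested in the phone number issue above can be sketched as follows. This is a minimal illustration in Python, not the app's actual code; the function name `digits_only` is hypothetical, and it assumes that only the ASCII digits 0-9 should be kept.

```python
import re


def digits_only(raw: str) -> str:
    """Return only the ASCII digits 0-9 from a user-entered phone number."""
    # Drop every character that is not 0-9: dashes, brackets, spaces, plus signs.
    return re.sub(r"[^0-9]", "", raw)


print(digits_only("(555) 123-4567"))  # prints 5551234567
```

Cleaning the input before validation means the user never hits the error state for dashes or brackets, which sidesteps the problem of the feedback message going unnoticed.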

102,395 | 4,155,344,654 | IssuesEvent | 2016-06-16 14:40:17 | RestComm/mediaserver | https://api.github.com/repos/RestComm/mediaserver | opened | Implement Concurrency Model for MGCP Stack | enhancement High-Priority MGCP task | Implement a proper concurrency model for the new MGCP stack. | 1.0 | Implement Concurrency Model for MGCP Stack - Implement a proper concurrency model for the new MGCP stack. | priority | implement concurrency model for mgcp stack implement a proper concurrency model for the new mgcp stack | 1 |

252,119 | 8,032,179,948 | IssuesEvent | 2018-07-28 12:16:01 | Extum/flarum-ext-material | https://api.github.com/repos/Extum/flarum-ext-material | opened | [Dark Mode] Admin dashboard color fix | bug dark mode priority: high | **Describe the bug**

With dark mode enabled, the admin dashboard colors look a bit messed up.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to admin panel

2. See error

**Expected behavior**

The sidebar background color should be darker.

**Screenshots**

**Environment (please complete the following information):**

- OS: macOS Mojave

- Browser: Firefox

- Flarum Version 0.1.0-beta.7 | 1.0 | [Dark Mode] Admin dashboard color fix - **Describe the bug**

With dark mode enabled, the admin dashboard colors look a bit messed up.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to admin panel

2. See error

**Expected behavior**

The sidebar background color should be darker.

**Screenshots**

**Environment (please complete the following information):**

- OS: macOS Mojave

- Browser: Firefox

- Flarum Version 0.1.0-beta.7 | priority | admin dashboard color fix describe the bug with dark mode enabled the admin dashboard colors look a bit messed up to reproduce steps to reproduce the behavior go to admin panel see error expected behavior the sidebar background color should be darker screenshots environment please complete the following information os macos mojave browser firefox flarum version beta | 1 |

237,754 | 7,763,882,291 | IssuesEvent | 2018-06-01 18:11:03 | IUNetSci/hoaxy-backend | https://api.github.com/repos/IUNetSci/hoaxy-backend | opened | Invalid transaction is causing TopArticles API to fail | high priority | The log shows this error:

```

StatementError: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

2018-06-01 14:04:45,055 - hoaxy(api) - ERROR: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

Traceback (most recent call last):

File "/home/data/apps/hoaxy-backend/hoaxy/backend/api.py", line 582, in query_top_articles

df = db_query_top_articles(engine, **q_kwargs)

File "/home/data/apps/hoaxy-backend/hoaxy/ir/search.py", line 895, in db_query_top_articles

upper_day = get_max(session, Top20ArticleMonthly.upper_day)

File "/home/data/apps/hoaxy-backend/hoaxy/database/functions.py", line 105, in get_max

return q.scalar()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2785, in scalar

ret = self.one()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2756, in one

ret = self.one_or_none()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2726, in one_or_none

ret = list(self)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2797, in __iter__

return self._execute_and_instances(context)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2820, in _execute_and_instances

result = conn.execute(querycontext.statement, self._params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 945, in execute

return meth(self, multiparams, params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/sql/elements.py", line 263, in _execute_on_connection

return connection._execute_clauseelement(self, multiparams, params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1053, in _execute_clauseelement

compiled_sql, distilled_params

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1121, in _execute_context

None, None)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1393, in _handle_dbapi_exception

exc_info

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/util/compat.py", line 202, in raise_from_cause

reraise(type(exception), exception, tb=exc_tb, cause=cause)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1114, in _execute_context

conn = self._revalidate_connection()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 424, in _revalidate_connection

"Can't reconnect until invalid "

StatementError: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

``` | 1.0 | Invalid transaction is causing TopArticles API to fail - The log shows this error:

```

StatementError: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

2018-06-01 14:04:45,055 - hoaxy(api) - ERROR: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

Traceback (most recent call last):

File "/home/data/apps/hoaxy-backend/hoaxy/backend/api.py", line 582, in query_top_articles

df = db_query_top_articles(engine, **q_kwargs)

File "/home/data/apps/hoaxy-backend/hoaxy/ir/search.py", line 895, in db_query_top_articles

upper_day = get_max(session, Top20ArticleMonthly.upper_day)

File "/home/data/apps/hoaxy-backend/hoaxy/database/functions.py", line 105, in get_max

return q.scalar()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2785, in scalar

ret = self.one()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2756, in one

ret = self.one_or_none()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2726, in one_or_none

ret = list(self)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2797, in __iter__

return self._execute_and_instances(context)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2820, in _execute_and_instances

result = conn.execute(querycontext.statement, self._params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 945, in execute

return meth(self, multiparams, params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/sql/elements.py", line 263, in _execute_on_connection

return connection._execute_clauseelement(self, multiparams, params)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1053, in _execute_clauseelement

compiled_sql, distilled_params

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1121, in _execute_context

None, None)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1393, in _handle_dbapi_exception

exc_info

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/util/compat.py", line 202, in raise_from_cause

reraise(type(exception), exception, tb=exc_tb, cause=cause)

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1114, in _execute_context

conn = self._revalidate_connection()

File "/u/truthy/miniconda3/envs/hoaxy-backend/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 424, in _revalidate_connection

"Can't reconnect until invalid "

StatementError: (sqlalchemy.exc.InvalidRequestError) Can't reconnect until invalid transaction is rolled back [SQL: u'SELECT max(top20_article_monthly.upper_day) AS max_1 \nFROM top20_article_monthly'] [parameters: [{}]]

``` | priority | invalid transaction is causing toparticles api to fail the log shows this error statementerror sqlalchemy exc invalidrequesterror can t reconnect until invalid transaction is rolled back hoaxy api error sqlalchemy exc invalidrequesterror can t reconnect until invalid transaction is rolled back traceback most recent call last file home data apps hoaxy backend hoaxy backend api py line in query top articles df db query top articles engine q kwargs file home data apps hoaxy backend hoaxy ir search py line in db query top articles upper day get max session upper day file home data apps hoaxy backend hoaxy database functions py line in get max return q scalar file u truthy envs hoaxy backend lib site packages sqlalchemy orm query py line in scalar ret self one file u truthy envs hoaxy backend lib site packages sqlalchemy orm query py line in one ret self one or none file u truthy envs hoaxy backend lib site packages sqlalchemy orm query py line in one or none ret list self file u truthy envs hoaxy backend lib site packages sqlalchemy orm query py line in iter return self execute and instances context file u truthy envs hoaxy backend lib site packages sqlalchemy orm query py line in execute and instances result conn execute querycontext statement self params file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in execute return meth self multiparams params file u truthy envs hoaxy backend lib site packages sqlalchemy sql elements py line in execute on connection return connection execute clauseelement self multiparams params file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in execute clauseelement compiled sql distilled params file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in execute context none none file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in handle dbapi exception exc info file u truthy envs hoaxy backend lib site 
packages sqlalchemy util compat py line in raise from cause reraise type exception exception tb exc tb cause cause file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in execute context conn self revalidate connection file u truthy envs hoaxy backend lib site packages sqlalchemy engine base py line in revalidate connection can t reconnect until invalid statementerror sqlalchemy exc invalidrequesterror can t reconnect until invalid transaction is rolled back | 1 |
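
The "Can't reconnect until invalid transaction is rolled back" error in the traceback above usually indicates that an earlier failure left the session's transaction open without a rollback. One common guard is sketched below. It assumes only that the session object exposes a `rollback()` method, as SQLAlchemy's `Session` does; the helper name `rollback_on_error` is hypothetical and is not part of hoaxy-backend.

```python
from contextlib import contextmanager


@contextmanager
def rollback_on_error(session):
    """Roll the session back if the wrapped block raises, then re-raise."""
    try:
        yield session
    except Exception:
        # Clear the failed transaction so later queries can use the session.
        session.rollback()
        raise
```

A query such as the `get_max` call in the traceback could then run inside `with rollback_on_error(session):`, so that one failed request does not leave the shared session unusable for the next one.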

661,949 | 22,097,224,642 | IssuesEvent | 2022-06-01 11:06:42 | ooni/ooni.org | https://api.github.com/repos/ooni/ooni.org | closed | Facilitate OONI training for civil society groups in Zimbabwe | priority/high workshop | On 1st June 2022 I'll be facilitating an OONI training for civil society groups in Zimbabwe. In preparation, I'll be creating relevant workshop slides and hands-on exercises. | 1.0 | Facilitate OONI training for civil society groups in Zimbabwe - On 1st June 2022 I'll be facilitating an OONI training for civil society groups in Zimbabwe. In preparation, I'll be creating relevant workshop slides and hands-on exercises. | priority | facilitate ooni training for civil society groups in zimbabwe on june i ll be facilitating an ooni training for civil society groups in zimbabwe in preparation i ll be creating relevant workshop slides and hands on exercises | 1 |

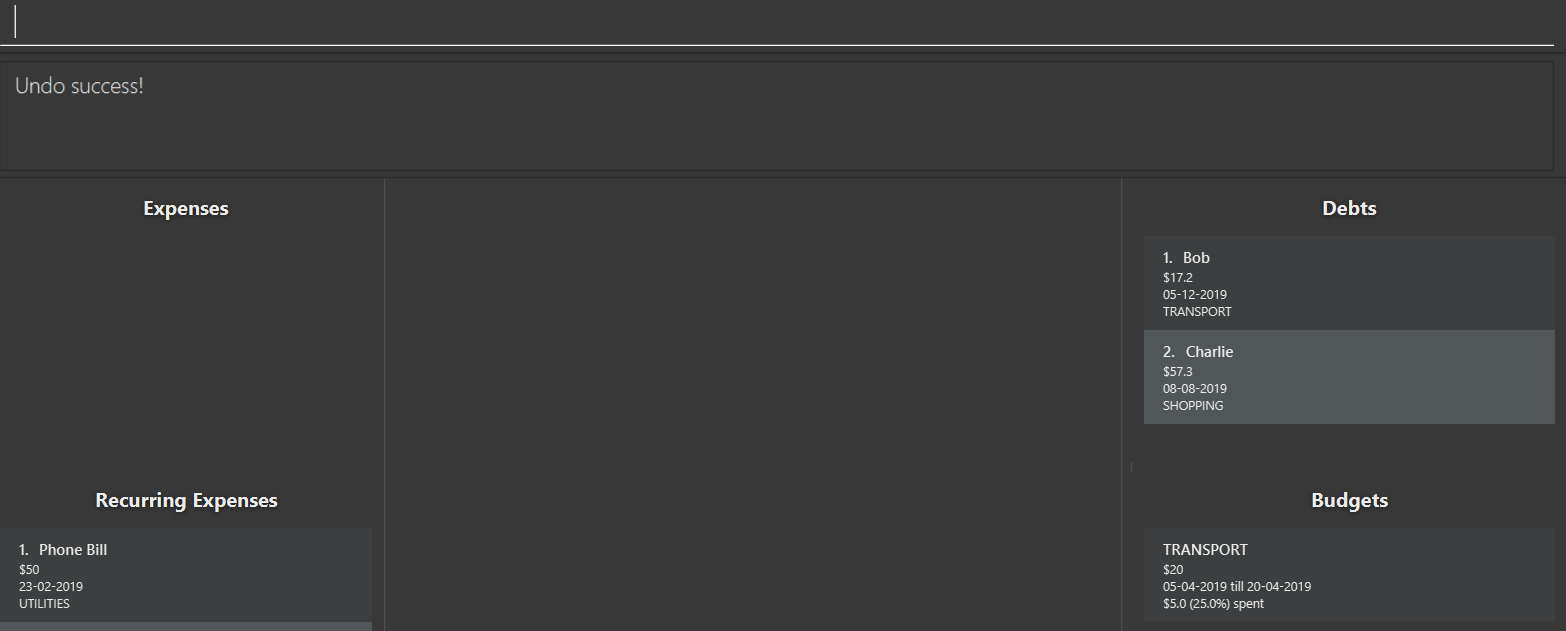

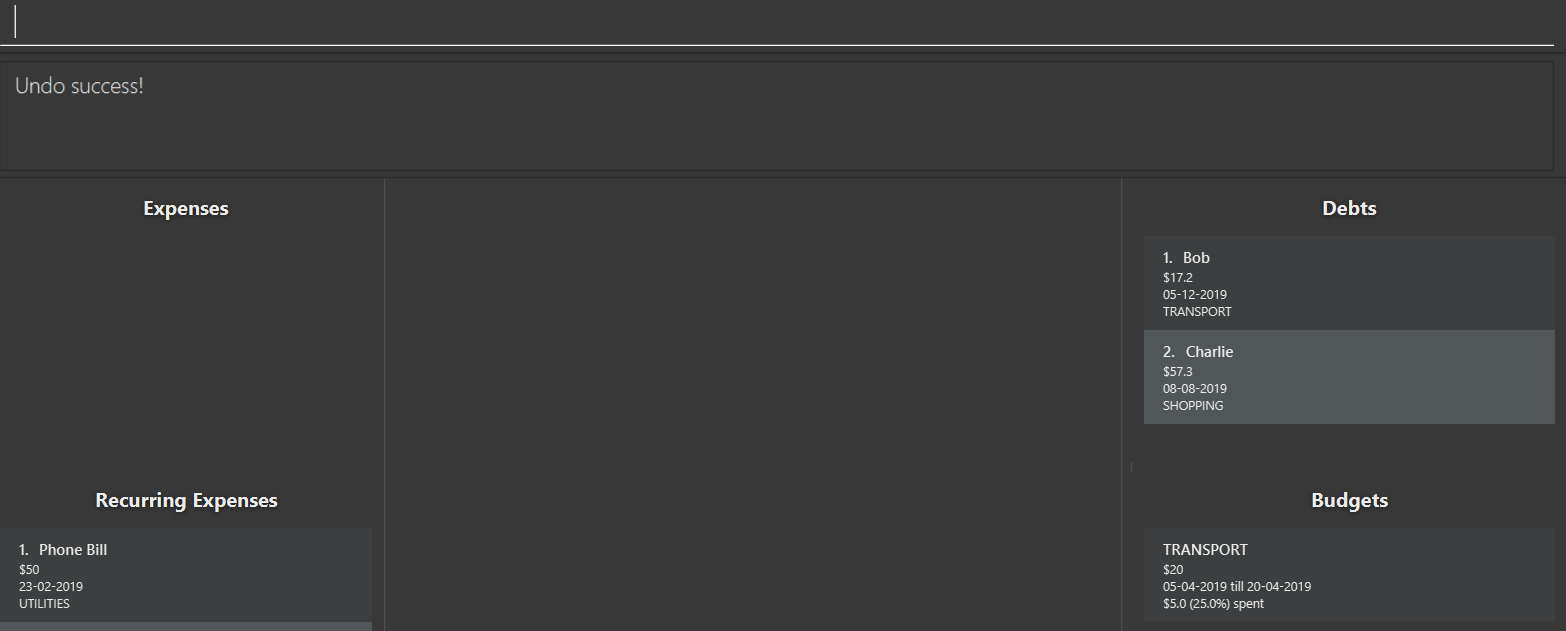

306,851 | 9,412,244,246 | IssuesEvent | 2019-04-10 03:08:42 | CS2103-AY1819S2-W15-2/main | https://api.github.com/repos/CS2103-AY1819S2-W15-2/main | closed | Undo command does not recalculate budget | bug priority.High | **Describe the bug**

Undo command does not undo the action on budget.

**To Reproduce**

Steps to reproduce the behavior:

1. Add a budget for transport with amount $20, start date 05-04-2019 and end date 20-04-2019.

2. Add an expense for "bus" with cost $5 and category TRANSPORT with date 05-04-2019. This expense is reflected on the transport budget.

3. Undo

**Expected behavior**

The transport budget should undo its update, and have an amount of $0 after the undo command.

**Screenshots**

If applicable, add screenshots to help explain your problem

**Additional context**

Add any other context about the problem here.

<hr>

**Reported by:** @kev-inc

**Severity:** Medium

<sub>[original: nus-cs2103-AY1819S2/pe-dry-run#623]</sub> | 1.0 | Undo command does not recalculate budget - **Describe the bug**

Undo command does not undo the action on budget.

**To Reproduce**

Steps to reproduce the behavior:

1. Add a budget for transport with amount $20, start date 05-04-2019 and end date 20-04-2019.

2. Add an expense for "bus" with cost $5 and category TRANSPORT with date 05-04-2019. This expense is reflected on the transport budget.

3. Undo

**Expected behavior**

The transport budget should undo its update, and have an amount of $0 after the undo command.

**Screenshots**

If applicable, add screenshots to help explain your problem

**Additional context**

Add any other context about the problem here.

<hr>

**Reported by:** @kev-inc

**Severity:** Medium

<sub>[original: nus-cs2103-AY1819S2/pe-dry-run#623]</sub> | priority | undo command does not recalculate budget describe the bug undo command does not undo the action on budget to reproduce steps to reproduce the behavior add a budget for transport with amount start date and end date add an expense for bus with cost and category transport with date this expense is reflected on the transport budget undo expected behavior the transport budget should undo its update and have an amount of after the undo command screenshots if applicable add screenshots to help explain your problem additional context add any other context about the problem here reported by kev inc severity medium | 1 |

677,229 | 23,155,667,612 | IssuesEvent | 2022-07-29 12:46:51 | RoyalHaskoningDHV/sam | https://api.github.com/repos/RoyalHaskoningDHV/sam | closed | BUG: SamQuantileMLP predict_ahead doesn't support Sequence | Priority: High Type: Bug | The type-hint for predict_ahead in SamQuantileMLP is

predict_ahead: Union[int, Sequence[int]] = 1,

But the parent BaseTimeseriesRegressor only supports List:

predict_ahead: Union[int, List[int]] = 1,

```python

>>> predict_ahead = (0,)

>>> isinstance(predict_ahead, Sequence)

True

>>> model = SamQuantileMLP(predict_ahead=predict_ahead)

>>> model.predict_ahead

[(0,)]

>>> model.validate_predict_ahead()

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "C:\Users\921266\source\repos\sam\sam\models\base_model.py", line 141, in validate_predict_ahead

if not all([p >= 0 for p in self.predict_ahead]):

File "C:\Users\921266\source\repos\sam\sam\models\base_model.py", line 141, in <listcomp>

if not all([p >= 0 for p in self.predict_ahead]):

TypeError: '>=' not supported between instances of 'tuple' and 'int'

```

This is caused by the following line:

```python

self.predict_ahead = (

    predict_ahead if isinstance(predict_ahead, List) else [predict_ahead]

)

``` | 1.0 | BUG: SamQuantileMLP predict_ahead doesn't support Sequence - The type-hint for predict_ahead in SamQuantileMLP is

predict_ahead: Union[int, Sequence[int]] = 1,

But the parent BaseTimeseriesRegressor only supports List:

predict_ahead: Union[int, List[int]] = 1,

```python

>>> predict_ahead = (0,)

>>> isinstance(predict_ahead, Sequence)

True

>>> model = SamQuantileMLP(predict_ahead=predict_ahead)

>>> model.predict_ahead

[(0,)]

>>> model.validate_predict_ahead()

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "C:\Users\921266\source\repos\sam\sam\models\base_model.py", line 141, in validate_predict_ahead

if not all([p >= 0 for p in self.predict_ahead]):

File "C:\Users\921266\source\repos\sam\sam\models\base_model.py", line 141, in <listcomp>

if not all([p >= 0 for p in self.predict_ahead]):

TypeError: '>=' not supported between instances of 'tuple' and 'int'

```

This is caused by the following line:

```python

self.predict_ahead = (

    predict_ahead if isinstance(predict_ahead, List) else [predict_ahead]

)

``` | priority | bug samquantilemlp predict ahead doesn t support sequence the type hint for predict ahead in samquantilemlp is predict ahead union but the parent basetimeseriesregressor only supports list predict ahead union python predict ahead isinstance predict ahead sequence true model samquantilemlp predict ahead predict ahead model predict ahead model validate predict ahead traceback most recent call last file line in file c users source repos sam sam models base model py line in validate predict ahead if not all file c users source repos sam sam models base model py line in if not all typeerror not supported between instances of tuple and int this is caused by the following line self predict ahead predict ahead if isinstance predict ahead list else | 1 |

786,640 | 27,660,945,488 | IssuesEvent | 2023-03-12 14:13:50 | AY2223S2-CS2103T-W11-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-W11-4/tp | closed | As a user I can add a person's medical condition | enhancement priority.High type.Enhancement | As a user, I can add a certain medical condition and add the relevant patients in the same category.

| 1.0 | As a user I can add a person's medical condition - As a user, I can add a certain medical condition and add the relevant patients in the same category.

| priority | as a user i can add a person s medical condition as a user i can add a certain medical condition and add the relevant patients in the same category | 1 |

335,880 | 10,167,904,174 | IssuesEvent | 2019-08-07 19:24:54 | BCcampus/edehr | https://api.github.com/repos/BCcampus/edehr | opened | Activity information not visible until refresh | Effort - Low Priority - High ~Bug | ### Description

When there are two or more activities and an instructor visits the tool, only the first activity displays its title and information. If the user refreshes the browser, the missing information appears.

**Expected behaviour**

The course listing page displays all activities with their title and information.

| 1.0 | Activity information not visible until refresh - ### Description

When there are two or more activities and an instructor visits the tool, only the first activity displays its title and information. If the user refreshes the browser, the missing information appears.

**Expected behaviour**

The course listing page displays all activities with their title and information.

| priority | activity information not visible until refresh description when there are two or more activities and an instructor visits the tool only the first activity displays it s title and information but the user can refresh the browser and this information appears expected behaviour the course listing page displays all activities with their title and information | 1 |

575,564 | 17,035,635,585 | IssuesEvent | 2021-07-05 06:39:12 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | scope of improvements in amp optimizer implementation | [Priority: HIGH] bug | Some unused CSS is loading on the page even with Tree shaking enabled.

some scripts are loading on the page, which is not required for that particular page.

For More information contact @MohammedKaludi and @ansaritalha | 1.0 | scope of improvements in amp optimizer implementation - Some unused CSS is loading on the page even with Tree shaking enabled.

some scripts are loading on the page, which is not required for that particular page.

For More information contact @MohammedKaludi and @ansaritalha | priority | scope of improvements in amp optimizer implementation some unused css is loading on the page even with tree shaking enabled some scripts are loading on the page which is not required for that particular page for more information contact mohammedkaludi and ansaritalha | 1 |

329,723 | 10,023,747,406 | IssuesEvent | 2019-07-16 20:01:04 | clearlinux/distribution | https://api.github.com/repos/clearlinux/distribution | closed | [Gnome3] Wrong default application for folders | bug desktop high priority | Opening a folder from the gnome search opens the gnome disk usage analyzer for the respective folder instead of the file browser:

```

robert@clear ~ $ xdg-mime query default inode/directory

org.gnome.baobab.desktop

```

Expected behavior: Open the corresponding folder in nautilus instead.

| 1.0 | [Gnome3] Wrong default application for folders - Opening a folder from the gnome search opens the gnome disk usage analyzer for the respective folder instead of the file browser:

```

robert@clear ~ $ xdg-mime query default inode/directory

org.gnome.baobab.desktop

```

Expected behavior: Open the corresponding folder in nautilus instead.

| priority | wrong default application for folders opening a folder from the gnome search opens the gnome disk usage analyzer for the respective folder instead of the file browser robert clear xdg mime query default inode directory org gnome baobab desktop expected behavior open the corresponding folder in nautilus instead | 1 |

253,764 | 8,065,650,443 | IssuesEvent | 2018-08-04 04:29:09 | sunwukonga/BBB | https://api.github.com/repos/sunwukonga/BBB | closed | [HomeScreen] -> [SearchResultScreen] Currently set to dummy page | high priority | 1. Remove dummy data

2. Pass search data to [SearchResultScreen]

3. Set off searchListing query | 1.0 | [HomeScreen] -> [SearchResultScreen] Currently set to dummy page - 1. Remove dummy data

2. Pass search data to [SearchResultScreen]

3. Set off searchListing query | priority | currently set to dummy page remove dummy data pass search data to set off searchlisting query | 1 |

637,985 | 20,692,630,881 | IssuesEvent | 2022-03-11 03:04:36 | AY2122S2-CS2113-F10-4/tp | https://api.github.com/repos/AY2122S2-CS2113-F10-4/tp | closed | Delete for contacts tracker | type.Story priority.High | As a user, I want to be able to delete a contact in case I make mistakes

| 1.0 | Delete for contacts tracker - As a user, I want to be able to delete a contact in case I make mistakes

| priority | delete for contacts tracker as a user i want to be able to delete a contact in case i make mistakes | 1 |

308,744 | 9,449,437,647 | IssuesEvent | 2019-04-16 01:54:28 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Wrong derivative backpropagating through Cholesky factorization? | high priority module: operators topic: derivatives triaged | ## 🐛 Bug

I am getting different derivatives when I compute `g(A)` via `A -> g(A)` versus `A -> LL' -> g(LL')`. The derivatives are off in a predictable way, and it's easy to correct (~2 lines of code), leading me to believe this is a bug.

## To Reproduce

Steps to reproduce the behavior:

```python

import torch

mat = torch.randn(4, 4, dtype=torch.float64)

mat = (mat @ mat.transpose(-1, -2)).div_(5).add_(torch.eye(4, dtype=torch.float64))

mat = mat.detach().clone().requires_grad_(True)

mat_clone = mat.detach().clone().requires_grad_(True)

# Way 1

inv_mat1 = mat_clone.inverse() # A^{-1} = A^{-1}

# Way 2

chol_mat = mat.cholesky()

chol_inv_mat = chol_mat.inverse().transpose(-2, -1)

inv_mat2 = chol_inv_mat @ chol_inv_mat.transpose(-2, -1) # A^{-1} = L^{-T}L^{-1}

# True

print('Are these both A^{-1}?', bool(torch.norm(inv_mat1 - inv_mat2) < 1e-8))

inv_mat1.trace().backward()

inv_mat2.trace().backward()

print('Way 1\n', mat_clone.grad)

print('Way 2\n', mat.grad) # :-(

corrected_deriv = mat.grad.clone() / 2

corrected_deriv = corrected_deriv.tril() + corrected_deriv.tril().t()

print('Corrected derivative\n', corrected_deriv) # Simple correction to derivative works.

# True

print('Is the corrected derivative correct?', bool(torch.norm(corrected_deriv - mat_clone.grad) < 1e-8))

```

## Expected behavior

Calling `inv_mat2.trace().backward()` should produce `corrected_deriv` in `mat.grad`, not what is currently going there. | 1.0 | Wrong derivative backpropagating through Cholesky factorization? - ## 🐛 Bug

I am getting different derivatives when I compute `g(A)` via `A -> g(A)` versus `A -> LL' -> g(LL')`. The derivatives are off in a predictable way, and it's easy to correct (~2 lines of code), leading me to believe this is a bug.

## To Reproduce

Steps to reproduce the behavior:

```python

import torch

mat = torch.randn(4, 4, dtype=torch.float64)

mat = (mat @ mat.transpose(-1, -2)).div_(5).add_(torch.eye(4, dtype=torch.float64))

mat = mat.detach().clone().requires_grad_(True)

mat_clone = mat.detach().clone().requires_grad_(True)

# Way 1

inv_mat1 = mat_clone.inverse() # A^{-1} = A^{-1}

# Way 2

chol_mat = mat.cholesky()

chol_inv_mat = chol_mat.inverse().transpose(-2, -1)

inv_mat2 = chol_inv_mat @ chol_inv_mat.transpose(-2, -1) # A^{-1} = L^{-T}L^{-1}

# True

print('Are these both A^{-1}?', bool(torch.norm(inv_mat1 - inv_mat2) < 1e-8))

inv_mat1.trace().backward()

inv_mat2.trace().backward()

print('Way 1\n', mat_clone.grad)

print('Way 2\n', mat.grad) # :-(

corrected_deriv = mat.grad.clone() / 2

corrected_deriv = corrected_deriv.tril() + corrected_deriv.tril().t()

print('Corrected derivative\n', corrected_deriv) # Simple correction to derivative works.

# True

print('Is the corrected derivative correct?', bool(torch.norm(corrected_deriv - mat_clone.grad) < 1e-8))

```

## Expected behavior

Calling `inv_mat2.trace().backward()` should produce `corrected_deriv` in `mat.grad`, not what is currently going there. | priority | wrong derivative backpropagating through cholesky factorization 🐛 bug i am getting different derivatives when i compute g a via a g a versus a ll g ll the derivatives are off in a predictable way and it s easy to correct lines of code leading me to believe this is a bug to reproduce steps to reproduce the behavior python import torch mat torch randn dtype torch mat mat mat transpose div add torch eye dtype torch mat mat detach clone requires grad true mat clone mat detach clone requires grad true way inv mat clone inverse a a way chol mat mat cholesky chol inv mat chol mat inverse transpose inv chol inv mat chol inv mat transpose a l t l true print are these both a bool torch norm inv inv inv trace backward inv trace backward print way n mat clone grad print way n mat grad corrected deriv mat grad clone corrected deriv corrected deriv tril corrected deriv tril t print corrected derivative n corrected deriv simple correction to derivative works true print is the corrected derivative correct bool torch norm corrected deriv mat clone grad expected behavior calling inv trace backward should produce corrected deriv in mat grad not what is currently going there | 1 |

80,017 | 3,549,574,437 | IssuesEvent | 2016-01-20 18:33:15 | Valhalla-Gaming/Tracker | https://api.github.com/repos/Valhalla-Gaming/Tracker | closed | [brewmaster] guard | Class-Monk Priority-High Type-Spell | "http://www.wowhead.com/spell=115295/guard

How it should work: consumes 2 chi and absorbs [1 * (Attack power * 18) * (1 + $versadmg)] damage.

What happens: it consumes 2 chi, but the absorbed damage is far too low (ca. 10%)." | 1.0 | [brewmaster] guard - "http://www.wowhead.com/spell=115295/guard

How it should work: consumes 2 chi and absorbs [1 * (Attack power * 18) * (1 + $versadmg)] damage.

What happens: it consumes 2 chi, but the absorbed damage is far too low (ca. 10%)." | priority | guard how it should work consumes chi and absorbs damage what happens it consumes chi but the absorbed damage is far too low ca | 1 |

212,293 | 7,235,431,741 | IssuesEvent | 2018-02-13 00:40:54 | wedeploy/marble | https://api.github.com/repos/wedeploy/marble | closed | Blog bullet points unaligned on Edge and IE11 | 1 - high priority bug | Edge version: 41.16299.15.0

<img width="712" alt="screen shot 2018-02-09 at 2 11 56 pm" src="https://user-images.githubusercontent.com/23219848/36052687-8985c8b0-0da3-11e8-93dd-50efee87e2b6.png">

| 1.0 | Blog bullet points unaligned on Edge and IE11 - Edge version: 41.16299.15.0

<img width="712" alt="screen shot 2018-02-09 at 2 11 56 pm" src="https://user-images.githubusercontent.com/23219848/36052687-8985c8b0-0da3-11e8-93dd-50efee87e2b6.png">

| priority | blog bullet points unaligned on edge and edge version img width alt screen shot at pm src | 1 |

795,982 | 28,095,301,809 | IssuesEvent | 2023-03-30 15:24:32 | AY2223S2-CS2103T-W12-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-W12-4/tp | closed | View patient details | type.Story priority.High | As a doctor, I can view patient details of a patient so that I can see everything I want and need to know about them at a glance. | 1.0 | View patient details - As a doctor, I can view patient details of a patient so that I can see everything I want and need to know about them at a glance. | priority | view patient details as a doctor i can view patient details of a patient so that i can see everything i want and need to know about them at a glance | 1 |

643,210 | 20,925,943,637 | IssuesEvent | 2022-03-24 22:57:04 | gilhrpenner/COMP4350 | https://api.github.com/repos/gilhrpenner/COMP4350 | opened | Return dummy URL of photos when in DEV mode | dev task high priority | ## Description

We are currently hosting our images with AWS S3, the free tier has a limit of 2k requests per month, since we are constantly developing and testing, this limit easily can be reached in a couple of days which is bad! So in order to not waste money we need to return dummy URLs while in dev mode.

## Acceptance Criteria

- production mode should still show the image hosted on S3

| 1.0 | Return dummy URL of photos when in DEV mode - ## Description

We are currently hosting our images with AWS S3, the free tier has a limit of 2k requests per month, since we are constantly developing and testing, this limit easily can be reached in a couple of days which is bad! So in order to not waste money we need to return dummy URLs while in dev mode.

## Acceptance Criteria

- production mode should still show the image hosted on S3

| priority | return dummy url of photos when in dev mode description we are currently hosting our images with aws the free tier has a limit of requests per month since we are constantly developing and testing this limit easily can be reached in a couple of days which is bad so in order to not waste money we need to return dummy urls while in dev mode acceptance criteria production mode should still show the image hosted on | 1 |

484,214 | 13,936,349,156 | IssuesEvent | 2020-10-22 12:49:50 | tomav/docker-mailserver | https://api.github.com/repos/tomav/docker-mailserver | closed | Build-Push-Action / Multiarch | enhancement frozen due to age help wanted kubernetes priority 1 [HIGH] roadmap | <!--- Provide a general summary of the issue in the Title above -->

When starting the server, got `standard_init_linux.go:190: exec user process caused "exec format error"`.

## Context

<!--- Provide a more detailed introduction to the issue itself -->

<!--- How has this issue affected you? What were you trying to accomplish? -->

Install docker and docker-compose on raspberry (OS raspbian), install and start the server

## Expected Behavior

<!--- Tell us what should happen -->

Should start

## Actual Behavior

<!--- Tell us what happens instead -->

Not starting

## Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the issue -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the issue in -->

* Amount of RAM available: 2GB

* Mailserver version used: master

* Docker version used: 18.09.0

* Environment settings relevant to the config: Raspberry Pi (ARM) on Raspbian

* Any relevant stack traces ("Full trace" preferred):

```

standard_init_linux.go:190: exec user process caused "exec format error"

```

| 1.0 | Build-Push-Action / Multiarch - <!--- Provide a general summary of the issue in the Title above -->

When starting the server, got `standard_init_linux.go:190: exec user process caused "exec format error"`.

## Context

<!--- Provide a more detailed introduction to the issue itself -->

<!--- How has this issue affected you? What were you trying to accomplish? -->

Install docker and docker-compose on raspberry (OS raspbian), install and start the server

## Expected Behavior

<!--- Tell us what should happen -->

Should start

## Actual Behavior

<!--- Tell us what happens instead -->

Not starting

## Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the issue -->

## Your Environment

<!--- Include as many relevant details about the environment you experienced the issue in -->

* Amount of RAM available: 2GB

* Mailserver version used: master

* Docker version used: 18.09.0

* Environment settings relevant to the config: Raspberry Pi (ARM) on Raspbian

* Any relevant stack traces ("Full trace" preferred):

```

standard_init_linux.go:190: exec user process caused "exec format error"

```

| priority | build push action multiarch when starting the server got standard init linux go exec user process caused exec format error context install docker and docker compose on raspberry os raspbian install and start the server expected behavior should start actual behavior not starting possible fix your environment amount of ram available mailserver version used master docker version used environment settings relevant to the config rasberry arm on raspbian any relevant stack traces full trace preferred standard init linux go exec user process caused exec format error | 1 |

318,284 | 9,690,307,671 | IssuesEvent | 2019-05-24 08:18:45 | status-im/status-react | https://api.github.com/repos/status-im/status-react | opened | Can't attach logs to email draft on android | android bug high-priority low-severity | # Problem

Error `can't add attachment` shown when trying to send logs on Android (7 and 8 at least). User can't send logs.

## Steps

1. install status and create account

2. profile -> dev mode -> send logs -> gmail

## Build

Reproduced in nightly build https://status-im.ams3.digitaloceanspaces.com/StatusIm-190524-025900-ee1277-nightly.apk

Devices: Android 7 (Xiaomi), Android 8 (Huawei)

| 1.0 | Can't attach logs to email draft on android - # Problem

Error `can't add attachment` shown when trying to send logs on Android (7 and 8 at least). User can't send logs.

## Steps

1. install status and create account

2. profile -> dev mode -> send logs -> gmail

## Build

Reproduced in nightly build https://status-im.ams3.digitaloceanspaces.com/StatusIm-190524-025900-ee1277-nightly.apk

Devices: Android 7 (Xiaomi), Android 8 (Huawei)

| priority | can t attach logs to email draft on android problem error can t add attachment shown when trying to send logs on android and at least user can t send logs steps install status and create account profile dev mode send logs gmail build reproduced in nightly build devices android xiaomi android huawei | 1 |

458,396 | 13,174,529,290 | IssuesEvent | 2020-08-11 22:44:06 | IslasGECI/dimorfismo | https://api.github.com/repos/IslasGECI/dimorfismo | closed | Minimum and maximum values are swapped in `best_logistic_model_parameters_laal_ig.json` | Priority: High Status: Available Type: Bug | Below you can see that the minimum value of the bill height (`altoPico`) is larger than its maximum value, and the same goes for the tarsus (`tarso`):

```

{

"parametrosNormalizacion": {

"valorMinimo": {

"longitudCraneo": [3.7037],

"altoPico": [165.58],

"longitudPico": [29.67],

"tarso": [101.78]

},

"valorMaximo": {

"longitudCraneo": [83.18],

"altoPico": [46.5],

"longitudPico": [193.22],

"tarso": [35.44]

}

},

"parametrosModelo": [

{

"Variables": "(Intercept)",

"Estimate": -18.948,

"_row": "(Intercept)"

},

{

"Variables": "longitudCraneo",

"Estimate": 6.576,

"_row": "longitudCraneo"

},

{

"Variables": "altoPico",

"Estimate": 8.816,

"_row": "altoPico"

},

{

"Variables": "longitudPico",

"Estimate": 7.172,

"_row": "longitudPico"

},

{

"Variables": "tarso",

"Estimate": 5.726,

"_row": "tarso"

}

]

}

``` | 1.0 | Minimum and maximum values are swapped in `best_logistic_model_parameters_laal_ig.json` - Below you can see that the minimum value of the bill height (`altoPico`) is larger than its maximum value, and the same goes for the tarsus (`tarso`):

```

{

"parametrosNormalizacion": {

"valorMinimo": {

"longitudCraneo": [3.7037],

"altoPico": [165.58],

"longitudPico": [29.67],

"tarso": [101.78]

},

"valorMaximo": {

"longitudCraneo": [83.18],

"altoPico": [46.5],

"longitudPico": [193.22],

"tarso": [35.44]

}

},

"parametrosModelo": [

{

"Variables": "(Intercept)",

"Estimate": -18.948,

"_row": "(Intercept)"

},

{

"Variables": "longitudCraneo",

"Estimate": 6.576,

"_row": "longitudCraneo"

},

{

"Variables": "altoPico",

"Estimate": 8.816,

"_row": "altoPico"

},

{

"Variables": "longitudPico",

"Estimate": 7.172,

"_row": "longitudPico"

},

{

"Variables": "tarso",

"Estimate": 5.726,

"_row": "tarso"

}

]

}

``` | priority | minimum and maximum values are swapped in best logistic model parameters laal ig json below you can see that the minimum value of the bill height altopico is larger than its maximum value and the same goes for the tarsus tarso parametrosnormalizacion valorminimo longitudcraneo altopico longitudpico tarso valormaximo longitudcraneo altopico longitudpico tarso parametrosmodelo variables intercept estimate row intercept variables longitudcraneo estimate row longitudcraneo variables altopico estimate row altopico variables longitudpico estimate row longitudpico variables tarso estimate row tarso | 1 |
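
The inconsistency reported in the issue above could be caught automatically with a small sanity check. The following is a minimal sketch, not code from the `dimorfismo` repository: the function name `find_inverted_ranges` is hypothetical, and the sample data only copies the `parametrosNormalizacion` block quoted in the issue, where each value is stored as a one-element list.

```python
import json


def find_inverted_ranges(normalization):
    """Return the variable names whose stored minimum exceeds the stored maximum."""
    minimums = normalization["valorMinimo"]
    maximums = normalization["valorMaximo"]
    inverted = []
    for name, min_values in minimums.items():
        # Each entry is a one-element list, as in the JSON quoted in the issue.
        if min_values[0] > maximums[name][0]:
            inverted.append(name)
    return inverted


# Normalization block copied from the issue body (the model parameters are omitted).
sample = json.loads("""
{
  "parametrosNormalizacion": {
    "valorMinimo": {
      "longitudCraneo": [3.7037],
      "altoPico": [165.58],
      "longitudPico": [29.67],
      "tarso": [101.78]
    },
    "valorMaximo": {
      "longitudCraneo": [83.18],
      "altoPico": [46.5],
      "longitudPico": [193.22],
      "tarso": [35.44]
    }
  }
}
""")

print(find_inverted_ranges(sample["parametrosNormalizacion"]))
# prints ['altoPico', 'tarso']
```

A check like this only flags entries where the minimum is larger than the maximum; it cannot tell whether the remaining values were assigned to the right variables.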

801,374 | 28,485,807,362 | IssuesEvent | 2023-04-18 07:47:33 | gamefreedomgit/Maelstrom | https://api.github.com/repos/gamefreedomgit/Maelstrom | closed | Guild reputation from killing raid bosses | Status: Need Info Status: Confirmed Priority: High | If you have guild raid group each killed boss should reward with 60 guild reputation. Currently there is no guild reputation given after killing raid bosses.

Source:

https://youtu.be/uqvmBnxUabE?t=294

https://youtu.be/h6fGunTR068?t=509 | 1.0 | Guild reputation from killing raid bosses - If you have guild raid group each killed boss should reward with 60 guild reputation. Currently there is no guild reputation given after killing raid bosses.

Source:

https://youtu.be/uqvmBnxUabE?t=294

https://youtu.be/h6fGunTR068?t=509 | priority | guild reputation from killing raid bosses if you have guild raid group each killed boss should reward with guild reputation currently there is no guild reputation given after killing raid bosses source | 1 |

710,953 | 24,445,201,973 | IssuesEvent | 2022-10-06 17:20:32 | fyusuf-a/ft_transcendence | https://api.github.com/repos/fyusuf-a/ft_transcendence | closed | Chat messages don't appear in DMs when they are first created | bug frontend HIGH PRIORITY | ### To Reproduce

- Create a new DM by clicking the plus in the ChannelList, clicking DM in the ChannelJoinDialog, and then entering a valid username in the text field and selecting the user from the list that appears

- The ChatWindow should open automatically

- Enter a message in the text area and submit it

- The message is successfully sent, but does not appear unless the channel is refreshed.

### Expected Behavior

- The message should appear when sent | 1.0 | Chat messages don't appear in DMs when they are first created - ### To Reproduce

- Create a new DM by clicking the plus in the ChannelList, clicking DM in the ChannelJoinDialog, and then entering a valid username in the text field and selecting the user from the list that appears

- The ChatWindow should open automatically

- Enter a message in the text area and submit it

- The message is successfully sent, but does not appear unless the channel is refreshed.

### Expected Behavior

- The message should appear when sent | priority | chat messages don t appear in dms when they are first created to reproduce create a new dm by clicking the plus in the channellist clicking dm in the channeljoindialog and then entering a valid username in the text field and selecting the user from the list that appears the chatwindow should open automatically enter a message in the text area and submit it the message is successfully sent but does not appear unless the channel is refreshed expected behavior the message should appear when sent | 1 |

633,115 | 20,245,312,993 | IssuesEvent | 2022-02-14 13:13:09 | PoProstuMieciek/wikipedia-scraper | https://api.github.com/repos/PoProstuMieciek/wikipedia-scraper | closed | feat/html-parser | priority: high type: feat | **AC**

- function:

- [ ] gets a html string

- [ ] parses the string using [`jsdom`](https://www.npmjs.com/package/jsdom) package

- [ ] returns `JsDom` instance

| 1.0 | feat/html-parser - **AC**

- function:

- [ ] gets a html string

- [ ] parses the string using [`jsdom`](https://www.npmjs.com/package/jsdom) package

- [ ] returns `JsDom` instance

| priority | feat html parser ac function gets a html string parses the string using package returns jsdom instance | 1 |

578,142 | 17,145,212,770 | IssuesEvent | 2021-07-13 13:58:44 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | closed | mysql does not come up bc of missing resource requests | dev experience priority: high (dev loop impact) type: bug | ### Bug description

The mysql container does not request any resources, and thus gets terminated in some situations ([here](https://console.cloud.google.com/kubernetes/pod/europe-west1-b/dev/staging-gpl-headless-log-content/mysql-0/details?project=gitpod-core-dev) and [here](https://console.cloud.google.com/kubernetes/pod/europe-west1-b/dev/staging-csweichel-ws-daemon-fails-mounting-4784/mysql-0/yaml/view?project=gitpod-core-dev)).

This results in broken deployments (timeout) and CrashLoopBackoffs, blocking those environments.

### Steps to reproduce

Start enough preview environments.

### Expected behavior

_No response_

### Example repository

_No response_

### Anything else?

_No response_ | 1.0 | mysql does not come up bc of missing resource requests - ### Bug description

The mysql container does not request any resources, and thus gets terminated in some situations ([here](https://console.cloud.google.com/kubernetes/pod/europe-west1-b/dev/staging-gpl-headless-log-content/mysql-0/details?project=gitpod-core-dev) and [here](https://console.cloud.google.com/kubernetes/pod/europe-west1-b/dev/staging-csweichel-ws-daemon-fails-mounting-4784/mysql-0/yaml/view?project=gitpod-core-dev)).

This results in broken deployments (timeout) and CrashLoopBackoffs, blocking those environments.

### Steps to reproduce

Start enough preview environments.

### Expected behavior

_No response_

### Example repository

_No response_

### Anything else?

_No response_ | priority | mysql does not come up bc of missing resource requests bug description the mysql container does not request any resources and thus gets terminated in some situations and this results in broken deployments timeout and crashloopbackoffs blocking those environments steps to reproduce start enough preview environments expected behavior no response example repository no response anything else no response | 1 |

629,568 | 20,036,263,486 | IssuesEvent | 2022-02-02 12:14:38 | eGirlsAreRuiningMyAC/IoT-AC | https://api.github.com/repos/eGirlsAreRuiningMyAC/IoT-AC | closed | Adjust device brightness | high priority 1p closed feature | Acceptance criteria: user is able to modify the device's brightness through our app | 1.0 | Adjust device brightness - Acceptance criteria: user is able to modify the device's brightness through our app | priority | adjust device brightness acceptance criteria user is able to modify the device s brightness through our app | 1 |

720,547 | 24,796,608,862 | IssuesEvent | 2022-10-24 17:50:30 | wazuh/wazuh-documentation | https://api.github.com/repos/wazuh/wazuh-documentation | closed | 5.0 Getting started Architecture rework | priority: highest type: refactor | Hello team!

The aim of this issue is to adapt the Architecture section in the Getting started for Wazuh 5.0.

We must also change the diagrams of the section.

Regards,

David | 1.0 | 5.0 Getting started Architecture rework - Hello team!

The aim of this issue is to adapt the Architecture section in the Getting started for Wazuh 5.0.

We must also change the diagrams of the section.

Regards,

David | priority | getting started architecture rework hello team the aim of this issue is to adapt the architecture section in the getting started for wazuh we must also change the diagrams of the section regards david | 1 |

303,033 | 9,301,482,892 | IssuesEvent | 2019-03-23 22:28:11 | codeforbtv/green-up-app | https://api.github.com/repos/codeforbtv/green-up-app | closed | Login fails without notifying user. | Priority: High Type: Bug V2 | To reproduce, login via Facebook with an account that already exists with the same email address but different sign-in credentials.

After seeing a flash of "Seeing green thoughts", the user is redirected back to the login screen without a message that the login failed.

User should receive a message that the login failed and if possible, with the reason why. | 1.0 | Login fails without notifying user. - To reproduce, login via Facebook with an account that already exists with the same email address but different sign-in credentials.

After seeing a flash of "Seeing green thoughts", the user is redirected back to the login screen without a message that the login failed.

User should receive a message that the login failed and if possible, with the reason why. | priority | login fails without notifying user to reproduce login via facebook with an account that already exists with the same email address but different sign in credentials after seeing a flash of seeing green thoughts the user is redirected back to the login screen without a message that the login failed user should receive a message that the login failed and if possible with the reason why | 1 |

460,136 | 13,205,336,544 | IssuesEvent | 2020-08-14 17:44:08 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Prototype implementation example script generation fails | Priority/High REST_API Type/Bug Type/React-UI | ### Description:

After creating an API with https://petstore.swagger.io/v2/swagger.json, below error log is printed in carbon logs when selecting the "Prototype Implementation" option.

```

com.fasterxml.jackson.core.JsonGenerationException: Can not write a string, expecting field name (context: Object)

at com.fasterxml.jackson.core.JsonGenerator._reportError(JsonGenerator.java:2080)

at com.fasterxml.jackson.core.json.JsonGeneratorImpl._reportCantWriteValueExpectName(JsonGeneratorImpl.java:248)

at com.fasterxml.jackson.core.json.JsonGeneratorImpl._verifyPrettyValueWrite(JsonGeneratorImpl.java:238)

at com.fasterxml.jackson.core.json.WriterBasedJsonGenerator._verifyValueWrite(WriterBasedJsonGenerator.java:894)

...

..

at com.fasterxml.jackson.databind.ObjectWriter.writeValueAsString(ObjectWriter.java:1005)

at io.swagger.util.Json.pretty(Json.java:23)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.getSchemaExample_aroundBody6(OAS2Parser.java:206)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.getSchemaExample(OAS2Parser.java:202)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.generateExample_aroundBody4(OAS2Parser.java:170)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.generateExample(OAS2Parser.java:115)

at org.wso2.carbon.apimgt.impl.definitions.OASParserUtil.generateExamples_aroundBody4(OASParserUtil.java:226)

at org.wso2.carbon.apimgt.impl.definitions.OASParserUtil.generateExamples(OASParserUtil.java:220)

at org.wso2.carbon.apimgt.rest.api.publisher.v1.impl.ApisApiServiceImpl.getGeneratedMockScriptsOfAPI(ApisApiServiceImpl.java:2186)

at org.wso2.carbon.apimgt.rest.api.publisher.v1.ApisApi.getGeneratedMockScriptsOfAPI(ApisApi.java:1050)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

```

Prototype Endpoint implementation scripts are not generated as expected. Only a simple script is generated, as below.

```

/* mc.setProperty('CONTENT_TYPE', 'application/json');

mc.setPayloadJSON('{ "data" : "sample JSON"}');*/

/*Uncomment the above comment block to send a sample response.*/

```

However, when we change something in a script and click "Reset", an advanced script is generated. If we then try to save it, the same error is thrown in the carbon logs. There is no issue when invoking the APIs, though.

BTW, this swagger file doesn't give backend errors: https://raw.githubusercontent.com/OAI/OpenAPI-Specification/master/examples/v2.0/yaml/petstore-expanded.yaml However, no advanced script is generated for this one either.

### Steps to reproduce:

### Affected Product Version:

apim-3.2.0-RC4

| 1.0 | Prototype implementation example script generation fails - ### Description:

After creating an API with https://petstore.swagger.io/v2/swagger.json, below error log is printed in carbon logs when selecting the "Prototype Implementation" option.

```

com.fasterxml.jackson.core.JsonGenerationException: Can not write a string, expecting field name (context: Object)

at com.fasterxml.jackson.core.JsonGenerator._reportError(JsonGenerator.java:2080)

at com.fasterxml.jackson.core.json.JsonGeneratorImpl._reportCantWriteValueExpectName(JsonGeneratorImpl.java:248)

at com.fasterxml.jackson.core.json.JsonGeneratorImpl._verifyPrettyValueWrite(JsonGeneratorImpl.java:238)

at com.fasterxml.jackson.core.json.WriterBasedJsonGenerator._verifyValueWrite(WriterBasedJsonGenerator.java:894)

...

..

at com.fasterxml.jackson.databind.ObjectWriter.writeValueAsString(ObjectWriter.java:1005)

at io.swagger.util.Json.pretty(Json.java:23)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.getSchemaExample_aroundBody6(OAS2Parser.java:206)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.getSchemaExample(OAS2Parser.java:202)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.generateExample_aroundBody4(OAS2Parser.java:170)

at org.wso2.carbon.apimgt.impl.definitions.OAS2Parser.generateExample(OAS2Parser.java:115)

at org.wso2.carbon.apimgt.impl.definitions.OASParserUtil.generateExamples_aroundBody4(OASParserUtil.java:226)

at org.wso2.carbon.apimgt.impl.definitions.OASParserUtil.generateExamples(OASParserUtil.java:220)

at org.wso2.carbon.apimgt.rest.api.publisher.v1.impl.ApisApiServiceImpl.getGeneratedMockScriptsOfAPI(ApisApiServiceImpl.java:2186)

at org.wso2.carbon.apimgt.rest.api.publisher.v1.ApisApi.getGeneratedMockScriptsOfAPI(ApisApi.java:1050)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

```

Prototype Endpoint implementation scripts are not generated as expected. Only a simple script is generated, as below.

```

/* mc.setProperty('CONTENT_TYPE', 'application/json');

mc.setPayloadJSON('{ "data" : "sample JSON"}');*/

/*Uncomment the above comment block to send a sample response.*/

```

However, when we change something in a script and click "Reset", an advanced script is generated. If we then try to save it, the same error is thrown in the carbon logs. There is no issue when invoking the APIs, though.

BTW, this swagger file doesn't give backend errors: https://raw.githubusercontent.com/OAI/OpenAPI-Specification/master/examples/v2.0/yaml/petstore-expanded.yaml However, no advanced script is generated for this one either.

### Steps to reproduce:

### Affected Product Version:

apim-3.2.0-RC4

| priority | prototype implementation example script generation fails description after creating an api with below error log is printed in carbon logs when selecting the prototype implementation option com fasterxml jackson core jsongenerationexception can not write a string expecting field name context object at com fasterxml jackson core jsongenerator reporterror jsongenerator java at com fasterxml jackson core json jsongeneratorimpl reportcantwritevalueexpectname jsongeneratorimpl java at com fasterxml jackson core json jsongeneratorimpl verifyprettyvaluewrite jsongeneratorimpl java at com fasterxml jackson core json writerbasedjsongenerator verifyvaluewrite writerbasedjsongenerator java at com fasterxml jackson databind objectwriter writevalueasstring objectwriter java at io swagger util json pretty json java at org carbon apimgt impl definitions getschemaexample java at org carbon apimgt impl definitions getschemaexample java at org carbon apimgt impl definitions generateexample java at org carbon apimgt impl definitions generateexample java at org carbon apimgt impl definitions oasparserutil generateexamples oasparserutil java at org carbon apimgt impl definitions oasparserutil generateexamples oasparserutil java at org carbon apimgt rest api publisher impl apisapiserviceimpl getgeneratedmockscriptsofapi apisapiserviceimpl java at org carbon apimgt rest api publisher apisapi getgeneratedmockscriptsofapi apisapi java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java prototype endpoint implementation scripts are not generated as expected only a simple script is generated as below mc setproperty content type application json mc setpayloadjson data sample json uncomment the above comment block to send a sample response but when we do some change in a 
script and click on reset then an advanced script is generated but then if we try to save this it will throw the same error in carbon logs anyway there is no any issue when invoking the apis btw this swagger file doesn t give backend errors but no advance script is gennerated for this one too steps to reproduce affected product version apim | 1 |

779,168 | 27,342,308,637 | IssuesEvent | 2023-02-26 23:02:28 | PlanktonTeam/planktonr | https://api.github.com/repos/PlanktonTeam/planktonr | closed | Problem with Sample_Depth in pr_get_NRSTrips | bug high priority | There is an option in `pr_get_NRSTrips` to use all of `"P", "Z" and "F"` to retrieve all the data but this causes trouble because the sample_depth is renamed depending on what is retrieved.

I suggest we only allow a single option. What do you think @clairedavies ? * But see alternative below.

In `pr_get_bgc` this also introduces problems with multiple sample_depths at the compilation stage at the end.

An alternative option to above, which will also solve the `pr_get_bgc` problem (I think) is to change output of `pr_get_NRSTrips` to long format so the biomass, AFW etc of Phyto, Zoo and Fish are returned in rows, rather than columns. This reduces the confusion about the sample_depth column names.

| 1.0 | Problem with Sample_Depth in pr_get_NRSTrips - There is an option in `pr_get_NRSTrips` to use all of `"P", "Z" and "F"` to retrieve all the data but this causes trouble because the sample_depth is renamed depending on what is retrieved.

I suggest we only allow a single option. What do you think @clairedavies ? * But see alternative below.

In `pr_get_bgc` this also introduces problems with multiple sample_depths at the compilation stage at the end.

An alternative option to above, which will also solve the `pr_get_bgc` problem (I think) is to change output of `pr_get_NRSTrips` to long format so the biomass, AFW etc of Phyto, Zoo and Fish are returned in rows, rather than columns. This reduces the confusion about the sample_depth column names.

| priority | problem with sample depth in pr get nrstrips there is an option in pr get nrstrips to use all of p z and f to retrieve all the data but this causes trouble because the sample depth is renamed depending on what is retrieved i suggest we only allow a single option what do you think clairedavies but see alternative below in pr get bgc this also introduces problems with multiple sample depths at the compilation stage at the end an alternative option to above which will also solve the pr get bgc problem i think is to change output of pr get nrstrips to long format so the biomass afw etc of phyto zoo and fish are returned in rows rather than columns this reduces the confusion about the sample depth column names | 1 |

338,920 | 10,239,418,019 | IssuesEvent | 2019-08-19 18:12:01 | EUCweb/BIS-F | https://api.github.com/repos/EUCweb/BIS-F | opened | Detect WVD Multi User | Priority: High | During BIS-F Sealing, it's necessary to detect WVD Multi User for BIS-F System Management and not run sysprep.

| 1.0 | Detect WVD Multi User - During BIS-F Sealing, it's necessary to detect WVD Multi User for BIS-F System Management and not run sysprep.

| priority | detect wvd multi user during bis f sealing it s necessary to detect wvd multi user for bis f system management and not run sysprep | 1 |

645,789 | 21,015,341,927 | IssuesEvent | 2022-03-30 10:27:36 | bitfoundation/bitframework | https://api.github.com/repos/bitfoundation/bitframework | closed | Missing 2-way bound `SelectedKey` implementations in `BitPivot` component | bug area / components high priority | The SelectItem process implemented in the BitPivot component is not a complete and correct 2-way bound process. it finally needs to have a call to the `SelectedKeyChanged` handler in order to finish the process which is missing now. | 1.0 | Missing 2-way bound `SelectedKey` implementations in `BitPivot` component - The SelectItem process implemented in the BitPivot component is not a complete and correct 2-way bound process. it finally needs to have a call to the `SelectedKeyChanged` handler in order to finish the process which is missing now. | priority | missing way bound selectedkey implementations in bitpivot component the selectitem process implemented in the bitpivot component is not a complete and correct way bound process it finally needs to have a call to the selectedkeychanged handler in order to finish the process which is missing now | 1 |

96,184 | 3,966,094,470 | IssuesEvent | 2016-05-03 11:25:32 | OCHA-DAP/liverpool16 | https://api.github.com/repos/OCHA-DAP/liverpool16 | closed | Map Explorer: Powerview load via url | enhancement High Priority | allow map explorer to load a powerview as json config (?url=....) (geojson, powerview) | 1.0 | Map Explorer: Powerview load via url - allow map explorer to load a powerview as json config (?url=....) (geojson, powerview) | priority | map explorer powerview load via url allow map explorer to load a powerview as json config url geojson powerview | 1 |

600,493 | 18,297,994,500 | IssuesEvent | 2021-10-05 22:36:04 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | Panorama console panel | Type: Feature Priority: High Where: Engine Size: Medium | Implement an ingame console based on Panorama to solve the input issues arising from having VGUI and Panorama active simultaneously

Relevant issues:

- #1355

- #1393

- #1178

- #1479

- #1420

Planned features:

- [x] Main panel

- [x] Receive console messages

- [x] Send console commands

- [x] Autocomplete (maybe a bit cooler?)

- [x] Draggable for move and resize (resize maybe not at every panel edge for simplicity's sake, c.f. Dota 2)

- [x] Proper input handling

- [x] Keep functionality separate from design principle (i.e. allow quake-style console if desired)

- [x] HUD panel (developer 1 and con_drawnotify 1)

- [x] work like VGUI

- [x] General considerations

- [x] Bunch up identical messages (maybe something more elaborate down the line, just `Missing material xyz (x7)` for now)

- [x] Try to prevent large perf impact on high message load

- [x] Filtering

| 1.0 | Panorama console panel - Implement an ingame console based on Panorama to solve the input issues arising from having VGUI and Panorama active simultaneously

Relevant issues:

- #1355

- #1393

- #1178

- #1479

- #1420

Planned features:

- [x] Main panel

- [x] Receive console messages

- [x] Send console commands

- [x] Autocomplete (maybe a bit cooler?)

- [x] Draggable for move and resize (resize maybe not at every panel edge for simplicity's sake, c.f. Dota 2)

- [x] Proper input handling

- [x] Keep functionality separate from design principle (i.e. allow quake-style console if desired)

- [x] HUD panel (developer 1 and con_drawnotify 1)

- [x] work like VGUI

- [x] General considerations

- [x] Bunch up identical messages (maybe something more elaborate down the line, just `Missing material xyz (x7)` for now)

- [x] Try to prevent large perf impact on high message load

- [x] Filtering

| priority | panorama console panel implement an ingame console based on panorama to solve the input issues arising from having vgui and panorama active simultaneously relevant issues planned features main panel receive console messages send console commands autocomplete maybe a bit cooler draggable for move and resize resize maybe not at every panel edge for simplicity s sake c f dota proper input handling keep functionality separate from design principle i e allow quake style console if desired hud panel developer and con drawnotify work like vgui general considerations bunch up identical messages maybe something more elaborate down the line just missing material xyz for now try to prevent large perf impact on high message load filtering | 1 |

35,999 | 2,794,530,361 | IssuesEvent | 2015-05-11 17:10:59 | TresysTechnology/clip | https://api.github.com/repos/TresysTechnology/clip | closed | local packages should be rebuilt only when changes have been made | bug High Priority | The build system is rebuilding the packages/ directory every time we run make. We need a better way to determine if there are changes within that directory, and then rebuild the rpms only in that case. | 1.0 | local packages should be rebuilt only when changes have been made - The build system is rebuilding the packages/ directory every time we run make. We need a better way to determine if there are changes within that directory, and then rebuild the rpms only in that case. | priority | local packages should be rebuilt only when changes have been made the build system is rebuilding the packages directory every time we run make we need a better way to determine if there are changes within that directory and then rebuild the rpms only in that case | 1 |

5,158 | 2,572,193,281 | IssuesEvent | 2015-02-10 21:00:33 | boxkite/ckanext-donneesqctheme | https://api.github.com/repos/boxkite/ckanext-donneesqctheme | opened | Add fields at organization level: contact email | High Priority | Add a field to provide a contact email at the organisation level. | 1.0 | Add fields at organization level: contact email - Add a field to provide a contact email at the organisation level. | priority | add fields at organization level contact email add a field to provide a contact email at the organisation level | 1 |

160,834 | 6,103,433,935 | IssuesEvent | 2017-06-20 18:42:06 | AZMAG/map-Employment | https://api.github.com/repos/AZMAG/map-Employment | closed | Cluster Definitions Excel Export Sheet is outdated | maintenance Priority: High | This sheet is outdated and needs to be replaced. Waiting on updated sheet!

| 1.0 | Cluster Definitions Excel Export Sheet is outdated - This sheet is outdated and needs to be replaced. Waiting on updated sheet!

| priority | cluster definitions excel export sheet is outdated this sheet is outdated and needs to be replaced waiting on updated sheet | 1 |

545,290 | 15,947,626,286 | IssuesEvent | 2021-04-15 03:58:32 | eliasfang/sp21-cse110-lab3 | https://api.github.com/repos/eliasfang/sp21-cse110-lab3 | opened | Style text | Level: 1 Priority: High Status: Backlog Type: Feature | **Is your feature request related to a problem? Please describe.**

All of the text of the page looks the same with very minimal size and style differences.

**Describe the solution you'd like**

Add more style to your text so that certain things pop out and look good.

**Describe alternatives you've considered**

N/A

**Additional context**

N/A | 1.0 | Style text - **Is your feature request related to a problem? Please describe.**

All of the text of the page looks the same with very minimal size and style differences.

**Describe the solution you'd like**

Add more style to your text so that certain things pop out and look good.

**Describe alternatives you've considered**

N/A

**Additional context**

N/A | priority | style text is your feature request related to a problem please describe all of the text of the page looks the same with very minimal size and style differences describe the solution you d like add more style to your text so that certain things pop out and look good describe alternatives you ve considered n a additional context n a | 1 |

715,930 | 24,615,738,119 | IssuesEvent | 2022-10-15 09:34:18 | AY2223S1-CS2103-F13-2/tp | https://api.github.com/repos/AY2223S1-CS2103-F13-2/tp | opened | Improve the edit command | type.Enhancement priority.High | Once survey is changed to let a person have multiple surveys, we can change the edit command to do an add instead of having to type every survey plus the new survey. | 1.0 | Improve the edit command - Once survey is changed to let a person have multiple surveys, we can change the edit command to do an add instead of having to type every survey plus the new survey. | priority | improve the edit command once survey is changed to let a person have multiple surveys we can change the edit command to do an add instead of having to type every survey plus the new survey | 1 |

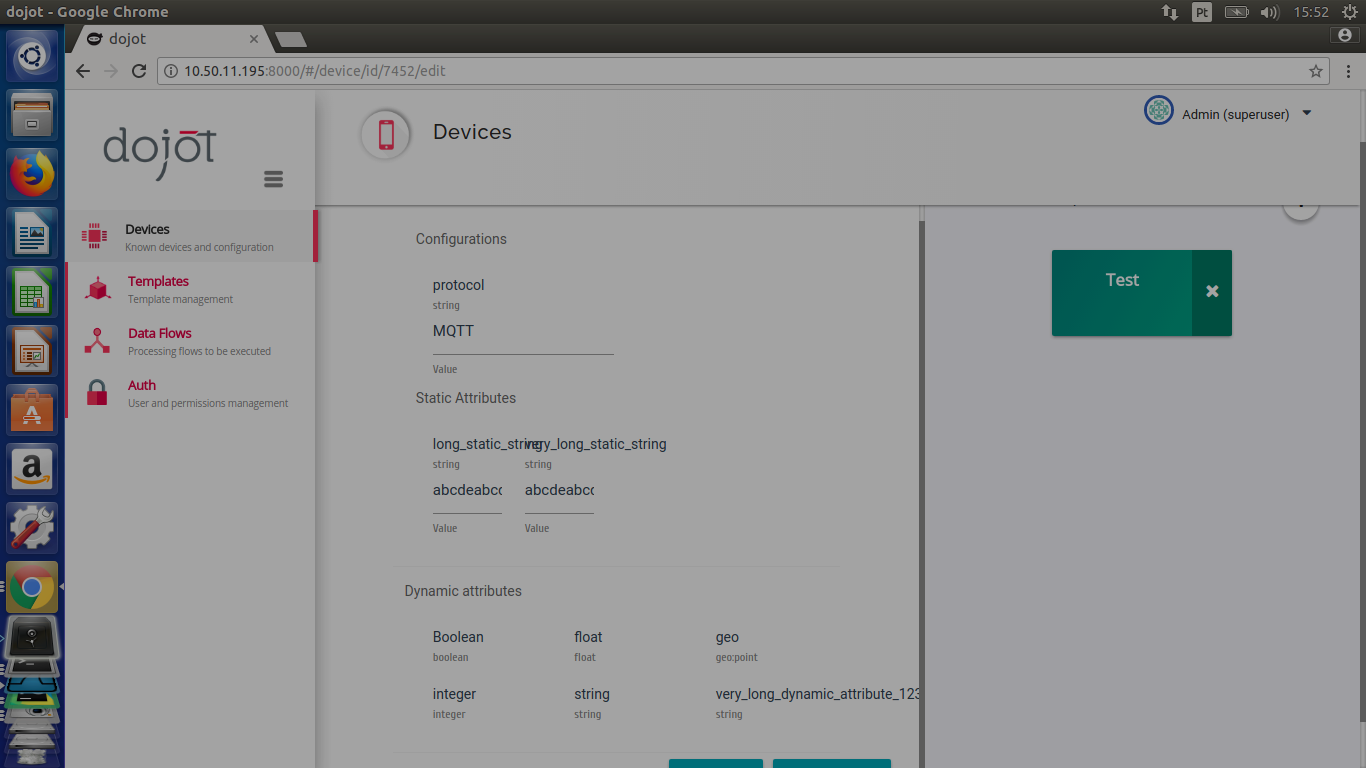

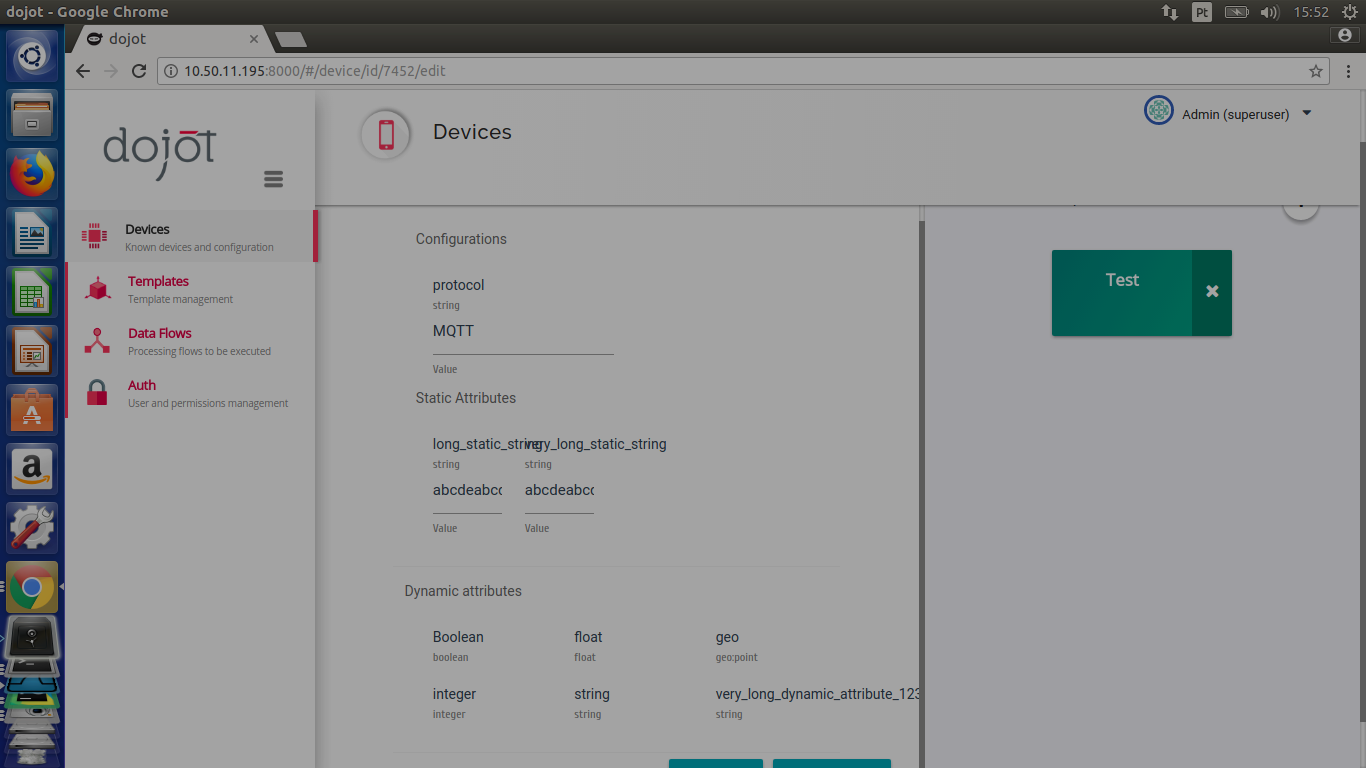

221,121 | 7,374,001,142 | IssuesEvent | 2018-03-13 18:55:03 | dojot/dojot | https://api.github.com/repos/dojot/dojot | opened | GUI - Problems with long strings in the device edit page | Priority:High bug | The device edit page doesn't handle long strings!

| 1.0 | GUI - Problems with long strings in the device edit page - The device edit page doesn't handle long strings!

| priority | gui problems with long strings in the device edit page the device edit page doesn t handle long strings | 1 |

639,210 | 20,748,991,976 | IssuesEvent | 2022-03-15 04:22:20 | tpickering223/2DGameProject | https://api.github.com/repos/tpickering223/2DGameProject | opened | Add remove function to the Inventory back-end. | enhancement High Priority | Implement the ability for the back-end to remove an item from its container (i.e. the list that actually holds the items).

| 1.0 | Add remove function to the Inventory back-end. - Implement the ability for the back-end to remove an item from its container (i.e. the list that actually holds the items).

| priority | add remove function to the inventory back end implement the ability for the back end to remove an item from its container i e the list that actually holds the items | 1 |

185,933 | 6,732,055,655 | IssuesEvent | 2017-10-18 09:57:14 | ballerinalang/composer | https://api.github.com/repos/ballerinalang/composer | closed | Cannot delete the try-catch block when finally is added | 0.94-pre-release Priority/High Severity/Major Type/Bug | 1. Add finally block from the source view

2. Try to delete the entire try-catch

Cannot delete the try-catch block when finally is added | 1.0 | Cannot delete the try-catch block when finally is added - 1. Add finally block from the source view

2. Try to delete the entire try-catch

Cannot delete the try-catch block when finally is added | priority | cannot delete the try catch block when finally is added add finally block from the source view try to delete the entire try catch cannot delete the try catch block when finally is added | 1 |

394,502 | 11,644,634,376 | IssuesEvent | 2020-02-29 19:46:02 | ODM2/ODM2DataSharingPortal | https://api.github.com/repos/ODM2/ODM2DataSharingPortal | opened | 500 Server Error when leaf species not selected | bug high priority leaf-pack | A user has reported getting a 500 error when submitting the leaf pack data entry form “when don’t check off a leaf species ... even if I fill out the “Other” field to indicate experimental materials.”

It sounds like the code is requiring leaf species. It should be optional because we offer the “Other” field not only for experimental materials but also for leaf species not listed (especially important for users outside of eastern US).

I’m marking this as high priority because we hope to push Leaf Pack Network traffic to Monitor My Watershed this week. Thanks! | 1.0 | 500 Server Error when leaf species not selected - A user has reported getting a 500 error when submitting the leaf pack data entry form “when don’t check off a leaf species ... even if I fill out the “Other” field to indicate experimental materials.”

It sounds like the code is requiring leaf species. It should be optional because we offer the “Other” field not only for experimental materials but also for leaf species not listed (especially important for users outside of eastern US).

I’m marking this as high priority because we hope to push Leaf Pack Network traffic to Monitor My Watershed this week. Thanks! | priority | server error when leaf species not selected a user has reported getting a error when submitting the leaf pack data entry form “when don’t check off a leaf species even if i fill out the “other” field to indicate experimental materials ” it sounds like the code is requiring leaf species it should be optional because we offer the “other” field not only for experimental materials but also for leaf species not listed especially important for users outside of eastern us i’m marking this as high priority because we hope to push leaf pack network traffic to monitor my watershed this week thanks | 1 |

133,465 | 5,203,883,228 | IssuesEvent | 2017-01-24 14:12:06 | ctsit/qipr_approver | https://api.github.com/repos/ctsit/qipr_approver | closed | Import information from Spreadsheet of Existing Projects | High Priority In progress New Feature | As a customer, I may have a spreadsheet that has a list of projects I would like to import. Please either import the data or give me an import tool.