Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

655,348 | 21,686,411,345 | IssuesEvent | 2022-05-09 11:44:37 | AgnostiqHQ/covalent | https://api.github.com/repos/AgnostiqHQ/covalent | closed | Decouple Dask from Covalent | feature priority / high covalent-mono | * Remove the dask dependency entirely from Covalent

* Use asyncio event loops to run workflows (single dispatch) at a time | 1.0 | Decouple Dask from Covalent - * Remove the dask dependency entirely from Covalent

* Use asyncio event loops to run workflows (single dispatch) at a time | priority | decouple dask from covalent remove the dask dependency entirely from covalent use asyncio event loops to run workflows single dispatch at a time | 1 |

351,412 | 10,518,134,108 | IssuesEvent | 2019-09-29 08:37:03 | phansch/dotfiles | https://api.github.com/repos/phansch/dotfiles | closed | Integrate ./util/dev fmt into Clippy workflow | Ansible enhancement priority:high | I don't think I want to run this on every save, but rather in a pre-commit hook. | 1.0 | Integrate ./util/dev fmt into Clippy workflow - I don't think I want to run this on every save, but rather in a pre-commit hook. | priority | integrate util dev fmt into clippy workflow i don t think i want to run this on every save but rather in a pre commit hook | 1 |

636,466 | 20,601,094,832 | IssuesEvent | 2022-03-06 09:10:17 | AY2122S2-CS2103-F09-3/tp | https://api.github.com/repos/AY2122S2-CS2103-F09-3/tp | reopened | Add appointment | type.Story priority.High | As an insurance agent, I can add new appointments so that I can note down any future meetings I have with my client | 1.0 | Add appointment - As an insurance agent, I can add new appointments so that I can note down any future meetings I have with my client | priority | add appointment as an insurance agent i can add new appointments so that i can note down any future meetings i have with my client | 1 |

506,638 | 14,669,592,529 | IssuesEvent | 2020-12-30 01:22:17 | napari/napari | https://api.github.com/repos/napari/napari | closed | Adding points on heavily-scaled data slices them on the wrong plane | bug high priority | ## 🐛 Bug

```python
import napari
from skimage import data

image = data.binary_blobs(128, n_dim=3)[:60]

with napari.gui_qt():
    v = napari.view_image(image, scale=[0.29, 0.26, 0.26])
    pts = v.add_points(name='interactive', size=1, ndim=3)
    pts.mode = 'add'
    v.dims.set_point(0, 30 * 0.29)  # middle plane
```

Clicking on points adds them on the right plane, but they are not shown. One must slice several planes lower to see them. When you switch to 3D mode, they appear in the middle plane correctly.

| 1.0 | Adding points on heavily-scaled data slices them on the wrong plane - ## 🐛 Bug

```python
import napari
from skimage import data

image = data.binary_blobs(128, n_dim=3)[:60]

with napari.gui_qt():
    v = napari.view_image(image, scale=[0.29, 0.26, 0.26])
    pts = v.add_points(name='interactive', size=1, ndim=3)
    pts.mode = 'add'
    v.dims.set_point(0, 30 * 0.29)  # middle plane
```

Clicking on points adds them on the right plane, but they are not shown. One must slice several planes lower to see them. When you switch to 3D mode, they appear in the middle plane correctly.

| priority | adding points on heavily scaled data slices them on the wrong plane 🐛 bug python import napari from skimage import data image data binary blobs n dim with napari gui qt v napari view image image scale pts v add points name interactive size ndim pts mode add v dims set point middle plane clicking on points adds them on the right plane but they are not shown one must slice several planes lower to see them when you switch to mode they appear in the middle plane correctly | 1 |

420,109 | 12,233,439,095 | IssuesEvent | 2020-05-04 11:38:33 | joonaspaakko/ScriptUI-Dialog-Builder-Joonas | https://api.github.com/repos/joonaspaakko/ScriptUI-Dialog-Builder-Joonas | opened | VerticalTabbedPanel + TabbedPanel → Automatic minimum size not working reliably | .BUG Priority: High ScriptUI - Disparity | I think I might've made some changes to the regular `TabbedPanel` when I added `VerticalTabbedPanel`, which caused this issue in both.

The expected behavior is for tabs to be the same size at all times.

Issue gif:

| 1.0 | VerticalTabbedPanel + TabbedPanel → Automatic minimum size not working reliably - I think I might've made some changes to the regular `TabbedPanel` when I added `VerticalTabbedPanel`, which caused this issue in both.

The expected behavior is for tabs to be the same size at all times.

Issue gif:

| priority | verticaltabbedpanel tabbedpanel → automatic minimum size not working reliably i think i might ve made some changes to the regular tabbedpanel when i added verticaltabbedpanel which caused this issue in both the expected behavior is for tabs to be the same size at all times issue gif | 1 |

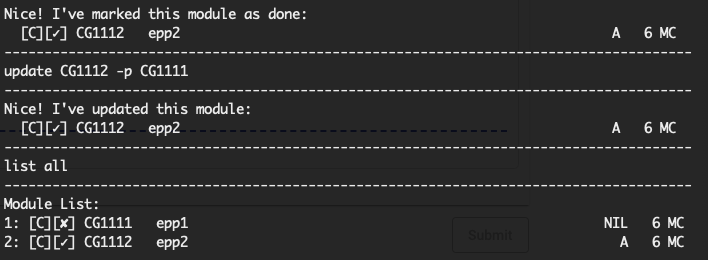

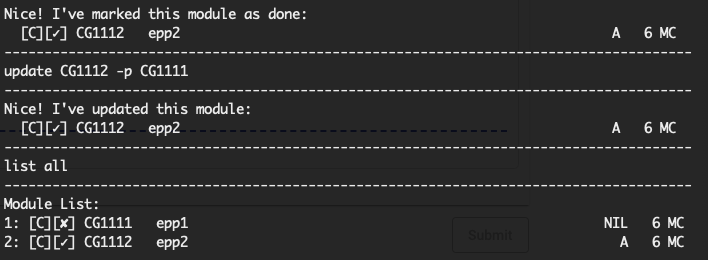

540,370 | 15,806,988,403 | IssuesEvent | 2021-04-04 08:17:10 | AY2021S2-CS2113T-W09-2/tp | https://api.github.com/repos/AY2021S2-CS2113T-W09-2/tp | closed | [PE-D] Update prerequisite after marked as done | priority.High severity.Low severity.Medium type.Bug | No details provided.

After marking a module as done, it was still possible to update the mod with a prerequisite of an undone mod. Perhaps can consider checking for this and throwing an error?

<!--session: 1617437456087-b11cd5d4-595b-4323-aa93-43e1ba798752-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: AlexanderTanJunAn/ped#5 | 1.0 | [PE-D] Update prerequisite after marked as done - No details provided.

After marking a module as done, it was still possible to update the mod with a prerequisite of an undone mod. Perhaps can consider checking for this and throwing an error?

<!--session: 1617437456087-b11cd5d4-595b-4323-aa93-43e1ba798752-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: AlexanderTanJunAn/ped#5 | priority | update prerequisite after marked as done no details provided after marking a module as done it was still possible to update the mod with a prerequisite of an undone mod perhaps can consider checking for this and throwing an error labels severity low type functionalitybug original alexandertanjunan ped | 1 |

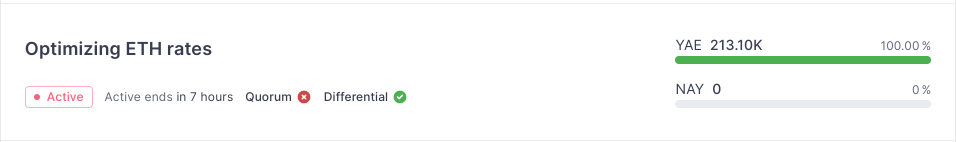

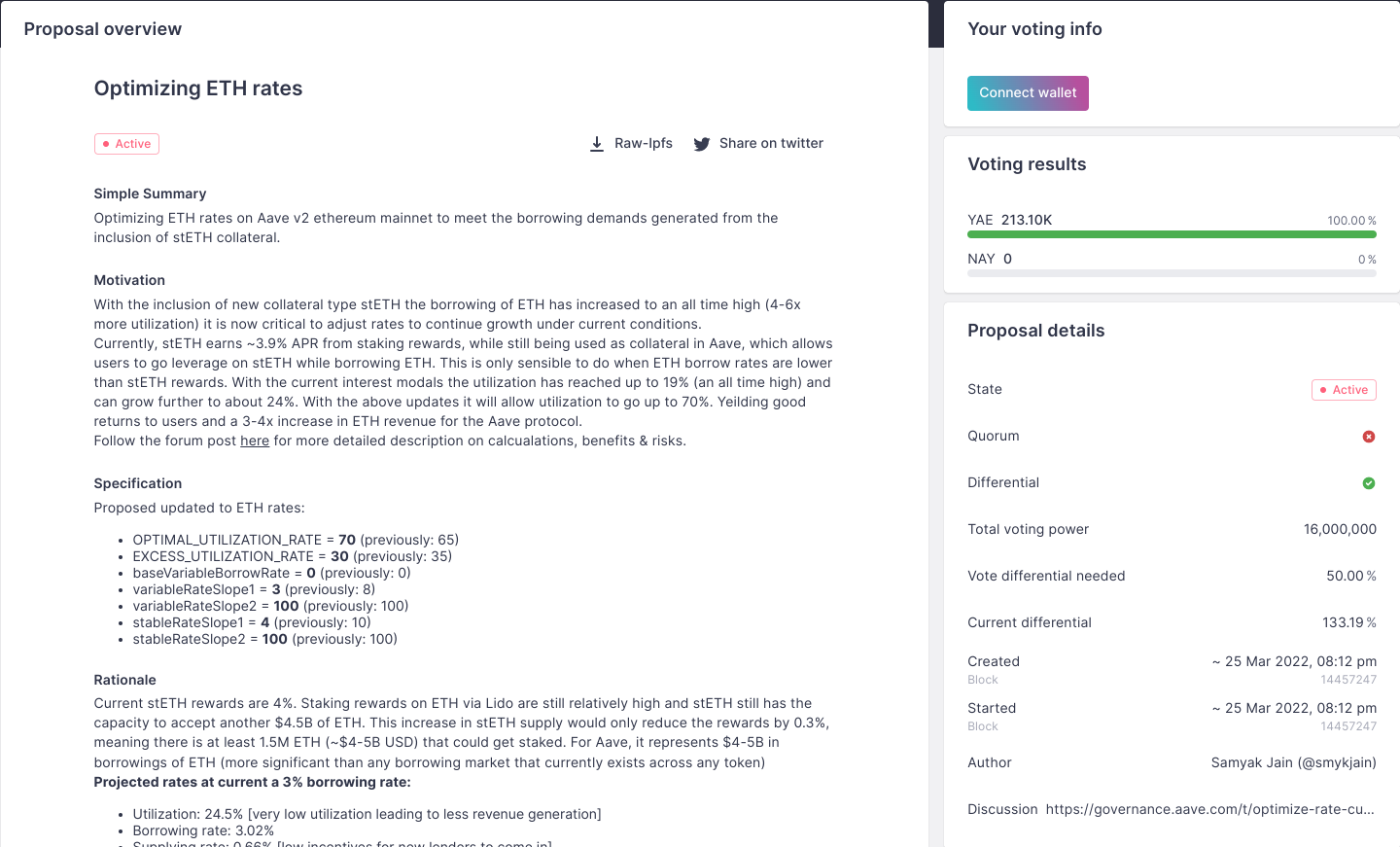

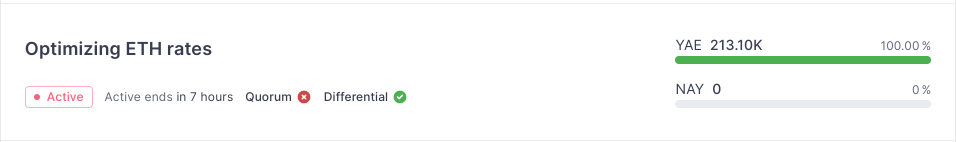

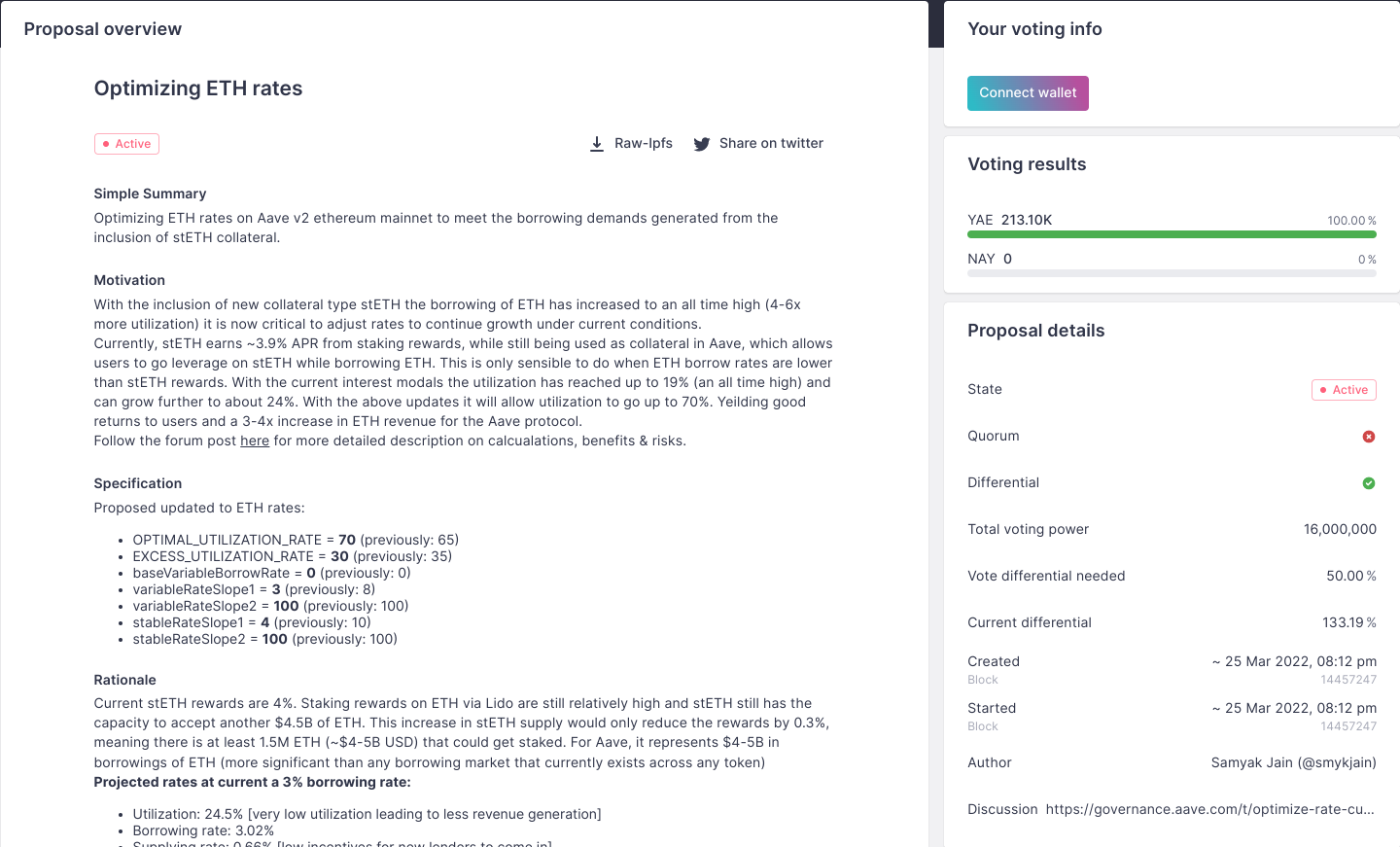

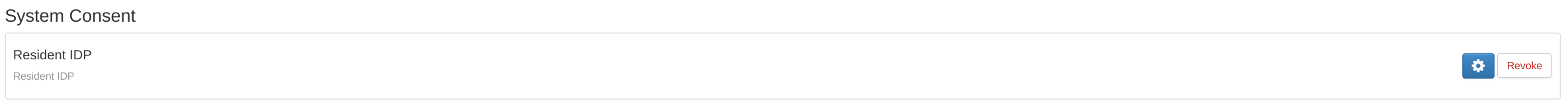

645,821 | 21,016,381,335 | IssuesEvent | 2022-03-30 11:25:06 | aave/interface | https://api.github.com/repos/aave/interface | closed | Governance page missing remaining time | feature priority:high | **Is your feature request related to a problem? Please describe.**

On the proposal page in governance it's not clear how much time is remaining:

https://app.aave.com/governance/ <- here it's visible

https://app.aave.com/governance/proposal/68/ <- here it's not

**Describe the solution you'd like**

Show the remaining time, as it's important information.

**Additional context**

| 1.0 | Governance page missing remaining time - **Is your feature request related to a problem? Please describe.**

On the proposal page in governance it's not clear how much time is remaining:

https://app.aave.com/governance/ <- here it's visible

https://app.aave.com/governance/proposal/68/ <- here it's not

**Describe the solution you'd like**

Show the remaining time, as it's important information.

**Additional context**

| priority | governance page missing remaining time is your feature request related to a problem please describe on the proposal page in governance it s not clear how much time is remaining here it s visible here it s not describe the solution you d like show the raiming time as it s an important information additional context | 1 |

715,554 | 24,603,846,535 | IssuesEvent | 2022-10-14 14:35:05 | epicmaxco/vuestic-ui | https://api.github.com/repos/epicmaxco/vuestic-ui | closed | Dropdown doesn't work on SSR | BUG HIGH PRIORITY | **Vuestic-ui version:** 1.5.0

Just doesn't open on click

Same with popover, very dead

| 1.0 | Dropdown doesn't work on SSR - **Vuestic-ui version:** 1.5.0

Just doesn't open on click

Same with popover, very dead

| priority | dropdown doesn t work on ssr vuestic ui version just doesn t open on click same with popover very dead | 1 |

598,855 | 18,257,127,919 | IssuesEvent | 2021-10-03 08:06:42 | AY2122S1-CS2113T-W12-1/tp | https://api.github.com/repos/AY2122S1-CS2113T-W12-1/tp | opened | Implement database class which contains all food data | priorityHigh | Implement database class that contains an ArrayList that stores all food data. | 1.0 | Implement database class which contains all food data - Implement database class that contains an ArrayList that stores all food data. | priority | implement database class which contains all food data implement database class that contains an arraylist that stores all food data | 1 |

455,885 | 13,133,734,399 | IssuesEvent | 2020-08-06 21:33:02 | CityOfNewYork/poletop-finder | https://api.github.com/repos/CityOfNewYork/poletop-finder | closed | Include symbology in details panel | priority: high | If equipment is installed vs not installed, appropriate icon should display next to the Poletop Reservation ID. | 1.0 | Include symbology in details panel - If equipment is installed vs not installed, appropriate icon should display next to the Poletop Reservation ID. | priority | include symbology in details panel if equipment is installed vs not installed appropriate icon should display next to the poletop reservation id | 1 |

689,836 | 23,636,003,648 | IssuesEvent | 2022-08-25 13:21:57 | kubermatic/kubermatic | https://api.github.com/repos/kubermatic/kubermatic | closed | Random failures with OIDC kubeconfig workflow | kind/bug priority/high | ### What happened?

<!-- Try to provide as much information as possible.

If you're reporting a security issue, please check the guidelines for reporting security issues:

https://github.com/kubermatic/kubermatic/blob/master/CONTRIBUTING.md#reporting-a-security-vulnerability -->

The OIDC Kubeconfig feature seems to be broken. While trying the workflow from the dashboard, we keep ending up with random errors, any one from this list:

```

- {"error":{"code":500,"message":"securecookie: base64 decode failed - caused by: illegal base64 data at input byte 3"}}

- {"error":{"code":400,"message":"incorrect value of state parameter: tmbhb7s9jj"}}

- {"error":{"code":400,"message":"incorrect value of cookie or cookie not set: http: named cookie not present"}}

- {"error":{"code":400,"message":"error while exchanging oidc code for token: oauth2: cannot fetch token: 400 Bad Request\nResponse: {\"error\":\"invalid_request\",\"error_description\":\"redirect_uri did not match URI from initial request.\"}"}}

```

Although without making any changes, sometimes it works.

### How to reproduce the issue?

<!-- Please provide as much information as possible, so we can reproduce the issue on our own. -->

Using our dashboard:

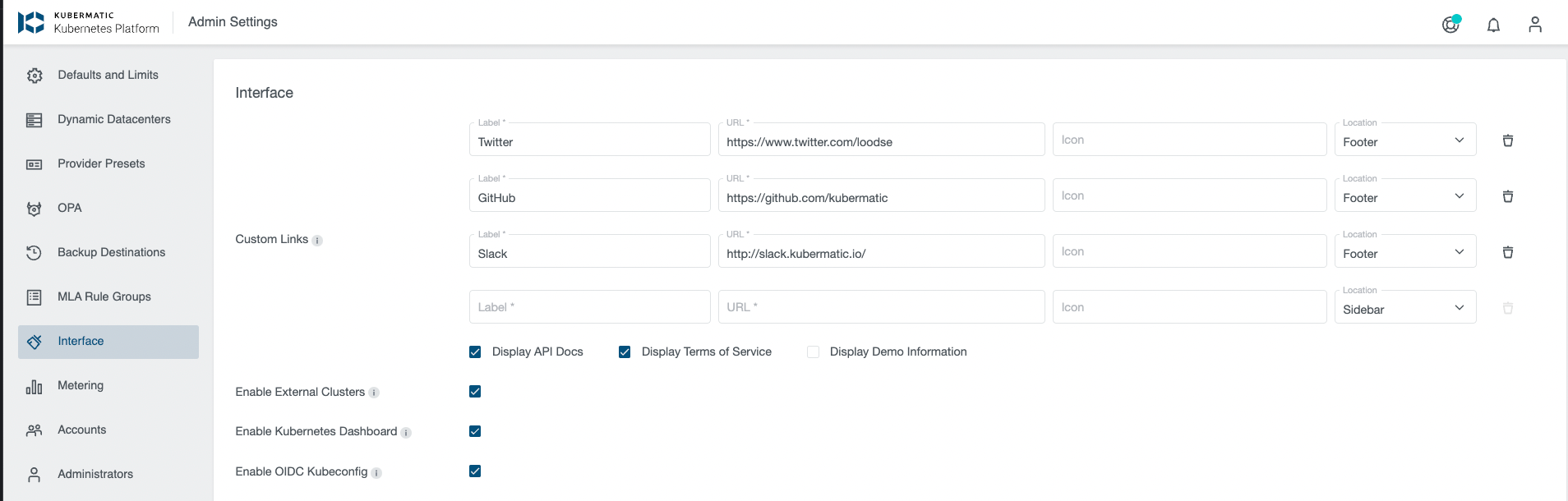

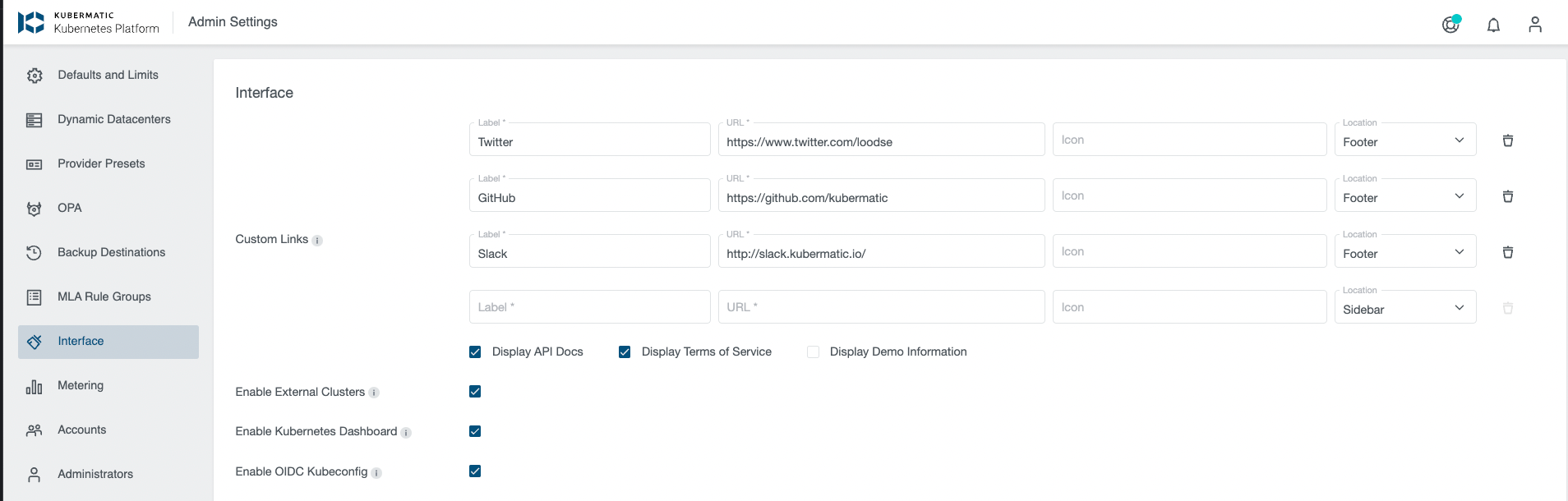

- Ensure that OIDC Kubeconfig is enabled in admin settings

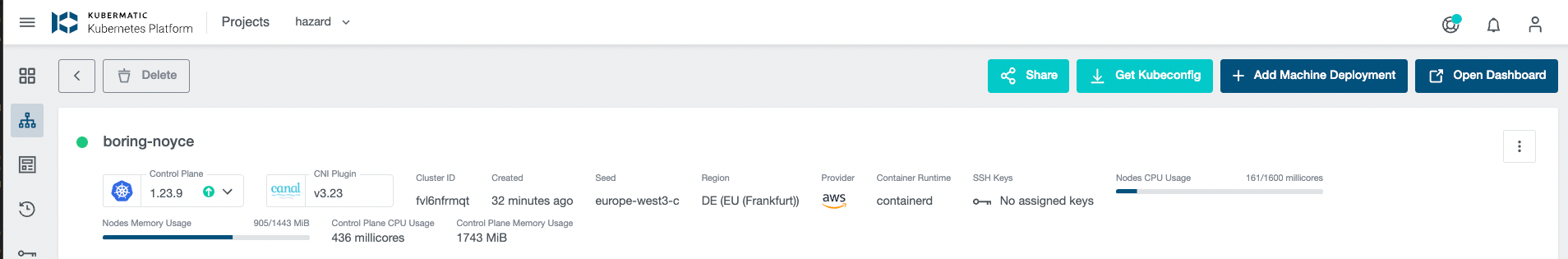

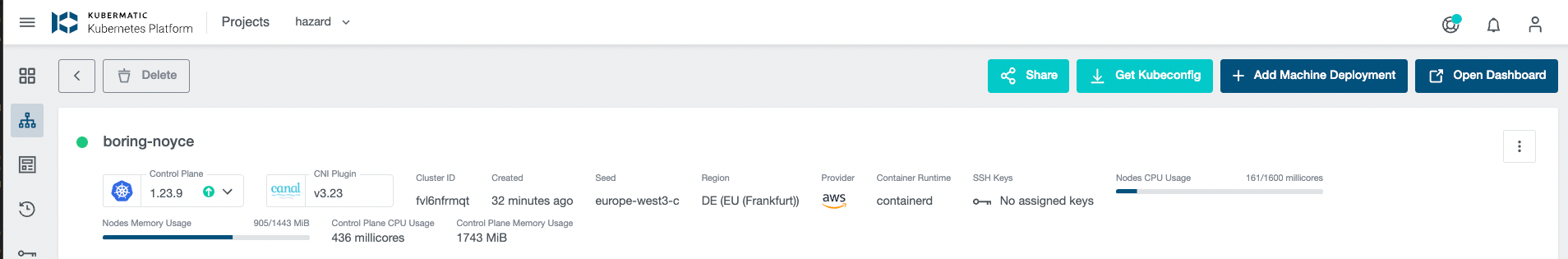

- Create a Kubernetes Cluster

- Click on `Get Kubeconfig` to retrieve the OIDC Kubeconfig

### How is your environment configured?

- KKP version:

- Shared or separate master/seed clusters?:

### Provide your KKP manifest here (if applicable)

<!-- Providing an applicable manifest (KubermaticConfiguration, Seed, Cluster or other resources) will help us to reproduce the issue.

Please make sure to redact all secrets (e.g. passwords, URLs...)! -->

<details>

```yaml

# paste manifest here

```

</details>

### What cloud provider are you running on?

<!-- AWS, Azure, DigitalOcean, GCP, Hetzner Cloud, Nutanix, OpenStack, Equinix Metal (Packet), VMware vSphere, Other (e.g. baremetal or non-natively supported provider) -->

### What operating system are you running in your user cluster?

<!-- Ubuntu 20.04, CentOS 7, Rocky Linux 8, Flatcar Linux, ... (optional, bug might not be related to user cluster) -->

### Additional information

<!-- Additional information about the bug you're reporting (optional). -->

| 1.0 | Random failures with OIDC kubeconfig workflow - ### What happened?

<!-- Try to provide as much information as possible.

If you're reporting a security issue, please check the guidelines for reporting security issues:

https://github.com/kubermatic/kubermatic/blob/master/CONTRIBUTING.md#reporting-a-security-vulnerability -->

The OIDC Kubeconfig feature seems to be broken. While trying the workflow from the dashboard, we keep ending up with random errors, any one from this list:

```

- {"error":{"code":500,"message":"securecookie: base64 decode failed - caused by: illegal base64 data at input byte 3"}}

- {"error":{"code":400,"message":"incorrect value of state parameter: tmbhb7s9jj"}}

- {"error":{"code":400,"message":"incorrect value of cookie or cookie not set: http: named cookie not present"}}

- {"error":{"code":400,"message":"error while exchanging oidc code for token: oauth2: cannot fetch token: 400 Bad Request\nResponse: {\"error\":\"invalid_request\",\"error_description\":\"redirect_uri did not match URI from initial request.\"}"}}

```

Although without making any changes, sometimes it works.

### How to reproduce the issue?

<!-- Please provide as much information as possible, so we can reproduce the issue on our own. -->

Using our dashboard:

- Ensure that OIDC Kubeconfig is enabled in admin settings

- Create a Kubernetes Cluster

- Click on `Get Kubeconfig` to retrieve the OIDC Kubeconfig

### How is your environment configured?

- KKP version:

- Shared or separate master/seed clusters?:

### Provide your KKP manifest here (if applicable)

<!-- Providing an applicable manifest (KubermaticConfiguration, Seed, Cluster or other resources) will help us to reproduce the issue.

Please make sure to redact all secrets (e.g. passwords, URLs...)! -->

<details>

```yaml

# paste manifest here

```

</details>

### What cloud provider are you running on?

<!-- AWS, Azure, DigitalOcean, GCP, Hetzner Cloud, Nutanix, OpenStack, Equinix Metal (Packet), VMware vSphere, Other (e.g. baremetal or non-natively supported provider) -->

### What operating system are you running in your user cluster?

<!-- Ubuntu 20.04, CentOS 7, Rocky Linux 8, Flatcar Linux, ... (optional, bug might not be related to user cluster) -->

### Additional information

<!-- Additional information about the bug you're reporting (optional). -->

| priority | random failures with oidc kubeconfig workflow what happened try to provide as much information as possible if you re reporting a security issue please check the guidelines for reporting security issues the oidc kubeconfig feature seems to be broken while trying the workflow from the dashboard we keep ending up with random errors any one from this list error code message securecookie decode failed caused by illegal data at input byte error code message incorrect value of state parameter error code message incorrect value of cookie or cookie not set http named cookie not present error code message error while exchanging oidc code for token cannot fetch token bad request nresponse error invalid request error description redirect uri did not match uri from initial request although without making any changes sometimes it works how to reproduce the issue using our dashboard ensure that oidc kubeconfig is enabled in admin settings create a kubernetes cluster click on get kubeconfig to retrieve the oidc kubeconfig how is your environment configured kkp version shared or separate master seed clusters provide your kkp manifest here if applicable providing an applicable manifest kubermaticconfiguration seed cluster or other resources will help us to reproduce the issue please make sure to redact all secrets e g passwords urls yaml paste manifest here what cloud provider are you running on what operating system are you running in your user cluster additional information | 1 |

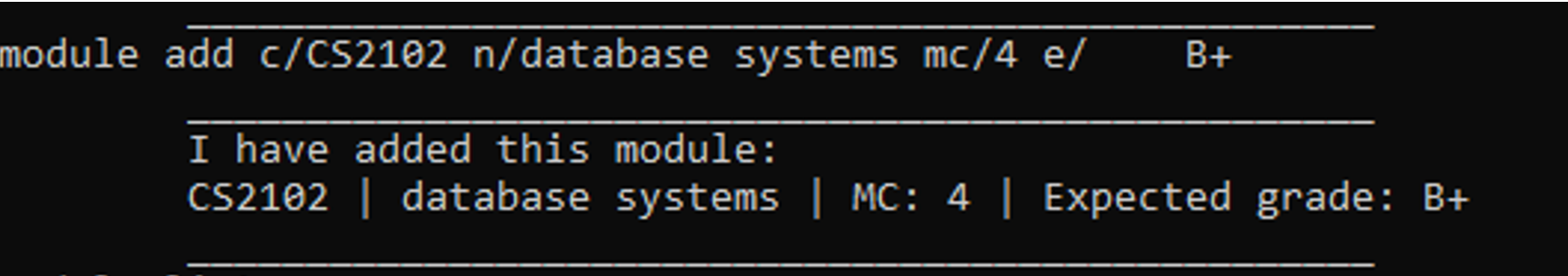

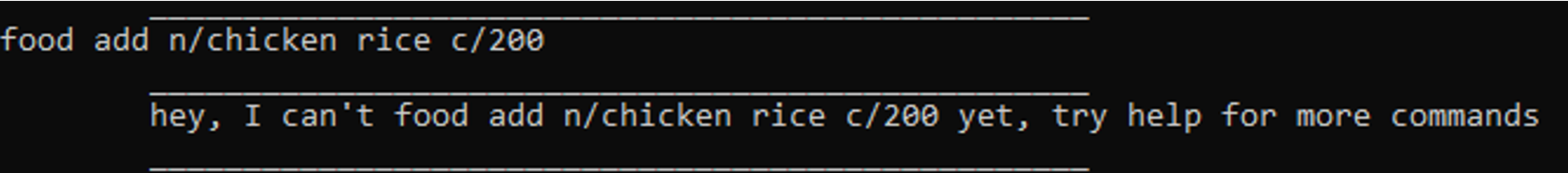

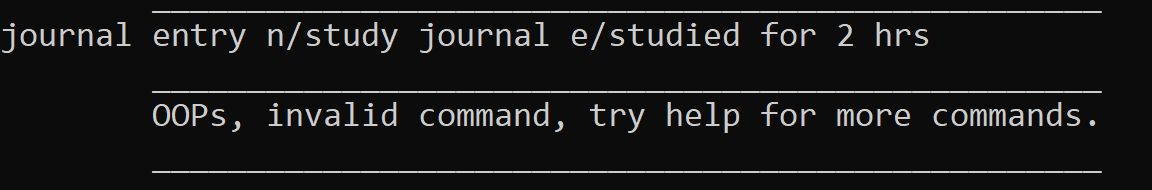

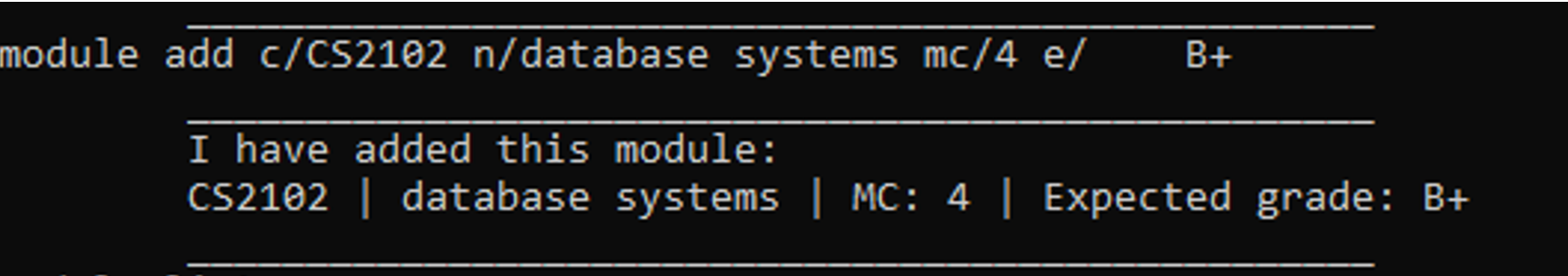

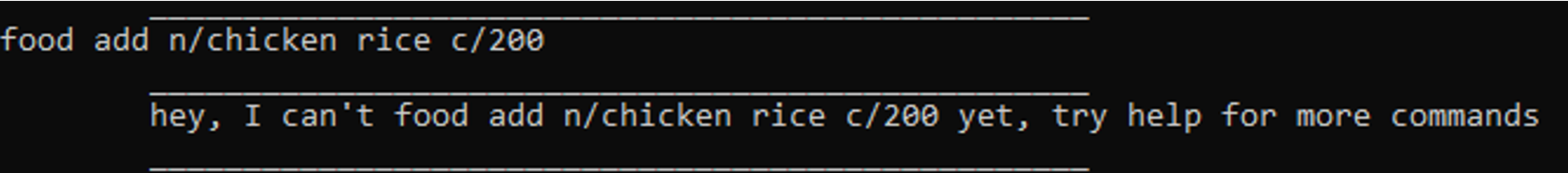

604,659 | 18,716,136,627 | IssuesEvent | 2021-11-03 05:10:48 | AY2122S1-CS2113T-T09-4/tp | https://api.github.com/repos/AY2122S1-CS2113T-T09-4/tp | closed | [PE-D] Inconsistent input flags and params | priority.High | Expected to be able to input with the presence of the ‘n/’ and ‘c/’ flag but required the presence of a space in front of input to be added. However, when adding in a similar format to modules it was allowed. Could make it consistent throughout the program.

-No space needed

-Space needed

Same problem for journal entries

<!--session: 1635513809369-5bee5e77-b44a-43bb-a435-5814eef45f7f--><!--Version: Web v3.4.1-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: STAung07/ped#2 | 1.0 | [PE-D] Inconsistent input flags and params - Expected to be able to input with the presence of the ‘n/’ and ‘c/’ flag but required the presence of a space in front of input to be added. However, when adding in a similar format to modules it was allowed. Could make it consistent throughout the program.

-No space needed

-Space needed

Same problem for journal entries

<!--session: 1635513809369-5bee5e77-b44a-43bb-a435-5814eef45f7f--><!--Version: Web v3.4.1-->

-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: STAung07/ped#2 | priority | inconsistent input flags and params expected to be able to input with the presence of the ‘n ’ and ‘c ’ flag but required the presence of a space in front of input to be added however when adding in a similar format to modules it was allowed could make it consistent throughout the program no space needed space needed same problem for journal entries labels severity medium type functionalitybug original ped | 1 |

214,304 | 7,268,685,607 | IssuesEvent | 2018-02-20 10:57:49 | TCA-Team/TumCampusApp | https://api.github.com/repos/TCA-Team/TumCampusApp | closed | Crash after upgrade to v1.5.2 | Bug High Priority | ## Problem

Since upgrading to v1.5.2 the app crashes all the time when I open it. It also crashes from time to time while the app is not even used at all. Chat was never used before.

## Stacktrace

### Environment

* Phone: Nexus 7 (2013)

* OS version: 6.0.1

* TUM Campus App version: v1.5.2

* Language: de

| 1.0 | Crash after upgrade to v1.5.2 - ## Problem

Since upgrading to v1.5.2 the app crashes all the time when I open it. It also crashes from time to time while the app is not even used at all. Chat was never used before.

## Stacktrace

### Environment

* Phone: Nexus 7 (2013)

* OS version: 6.0.1

* TUM Campus App version: v1.5.2

* Language: de

| priority | crash after upgrade to problem since upgrading to the app crashes all the time when i open it it also crashes from time to time while the app is not even used at all chat was never used before stacktrace environment phone nexus os version tum campus app version language de | 1 |

338,608 | 10,232,261,592 | IssuesEvent | 2019-08-18 16:05:49 | fossasia/open-event-attendee-android | https://api.github.com/repos/fossasia/open-event-attendee-android | closed | App is crashing due to invalid timezone | Priority: High bug | **Describe the bug**

```

D/AndroidRuntime: Shutting down VM

E/AndroidRuntime: FATAL EXCEPTION: main

Process: com.eventyay.attendee, PID: 17006

org.threeten.bp.DateTimeException: Invalid ID for region-based ZoneId, invalid format:

at org.threeten.bp.ZoneRegion.ofId(ZoneRegion.java:138)

at org.threeten.bp.ZoneId.of(ZoneId.java:358)

at org.fossasia.openevent.general.event.EventUtils.getEventDateTime(EventUtils.kt:40)

at org.fossasia.openevent.general.sessions.SessionViewHolder.bind(SessionViewHolder.kt:44)

at org.fossasia.openevent.general.sessions.SessionRecyclerAdapter.onBindViewHolder(SessionRecyclerAdapter.kt:29)

at org.fossasia.openevent.general.sessions.SessionRecyclerAdapter.onBindViewHolder(SessionRecyclerAdapter.kt:9)

```

**Would you like to work on the issue?**

<!-- Please let us know if you can work on it or the issue should be assigned to someone else. -->

yes

| 1.0 | App is crashing due to invalid timezone - **Describe the bug**

```

D/AndroidRuntime: Shutting down VM

E/AndroidRuntime: FATAL EXCEPTION: main

Process: com.eventyay.attendee, PID: 17006

org.threeten.bp.DateTimeException: Invalid ID for region-based ZoneId, invalid format:

at org.threeten.bp.ZoneRegion.ofId(ZoneRegion.java:138)

at org.threeten.bp.ZoneId.of(ZoneId.java:358)

at org.fossasia.openevent.general.event.EventUtils.getEventDateTime(EventUtils.kt:40)

at org.fossasia.openevent.general.sessions.SessionViewHolder.bind(SessionViewHolder.kt:44)

at org.fossasia.openevent.general.sessions.SessionRecyclerAdapter.onBindViewHolder(SessionRecyclerAdapter.kt:29)

at org.fossasia.openevent.general.sessions.SessionRecyclerAdapter.onBindViewHolder(SessionRecyclerAdapter.kt:9)

```

**Would you like to work on the issue?**

<!-- Please let us know if you can work on it or the issue should be assigned to someone else. -->

yes

| priority | app is crashing due to invalid timezone describe the bug d androidruntime shutting down vm e androidruntime fatal exception main process com eventyay attendee pid org threeten bp datetimeexception invalid id for region based zoneid invalid format at org threeten bp zoneregion ofid zoneregion java at org threeten bp zoneid of zoneid java at org fossasia openevent general event eventutils geteventdatetime eventutils kt at org fossasia openevent general sessions sessionviewholder bind sessionviewholder kt at org fossasia openevent general sessions sessionrecycleradapter onbindviewholder sessionrecycleradapter kt at org fossasia openevent general sessions sessionrecycleradapter onbindviewholder sessionrecycleradapter kt would you like to work on the issue yes | 1 |

228,010 | 7,545,065,962 | IssuesEvent | 2018-04-17 20:25:52 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | BSOD: CLOCK_WATCHDOG_TIMEOUT | High Priority | On Windows 10, while running the latest version of the game, I will randomly experience a blue screen crash. The first time it happened was the last time I played on this computer 2 years ago. That crash corrupted a world, which I then reported and then stopped playing until now. This time, instead of running my own server, I'm playing in another. The first time it happened with the latest build I was actively mining stone, however there were other programs running at the same time. The second time I was away from keyboard doing nothing and came back to the blue screen in progress.

I never get this with any other software, so something must be going on with Eco to trigger it. However, it doesn't seem to be caused by any specific in game action.

System info:

OS: Windows 10 Professional 64-bit

Processor: Intel Core i7-5820k CPU @ 3.30 Ghz (water cooled)

GPU: Nvidia 980 Ti (water cooled)

RAM: 64.0 GB

| 1.0 | BSOD: CLOCK_WATCHDOG_TIMEOUT - On Windows 10, while running the latest version of the game, I will randomly experience a blue screen crash. The first time it happened was the last time I played on this computer 2 years ago. That crash corrupted a world, which I then reported and then stopped playing until now. This time, instead of running my own server, I'm playing in another. The first time it happened with the latest build I was actively mining stone, however there were other programs running at the same time. The second time I was away from keyboard doing nothing and came back to the blue screen in progress.

I never get this with any other software, so something must be going on with Eco to trigger it. However, it doesn't seem to be caused by any specific in game action.

System info:

OS: Windows 10 Professional 64-bit

Processor: Intel Core i7-5820k CPU @ 3.30 Ghz (water cooled)

GPU: Nvidia 980 Ti (water cooled)

RAM: 64.0 GB

| priority | bsod clock watchdog timeout on windows while running the latest version of the game i will randomly experience a blue screen crash the first time it happened was the last time i played on this computer years ago that crash corrupted a world which i then reported and then stopped playing until now this time instead of running my own server i m playing in another the first time it happened with the latest build i was actively mining stone however there were other programs running at the same time the second time i was away from keyboard doing nothing and came back to the blue screen in progress i never get this with any other software so something must be going on with eco to trigger it however it doesn t seem to be caused by any specific in game action system info os windows professional bit processor intel core cpu ghz water cooled gpu nvidia ti water cooled ram gb | 1 |

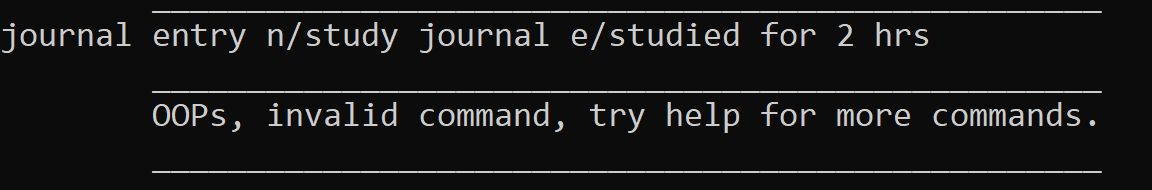

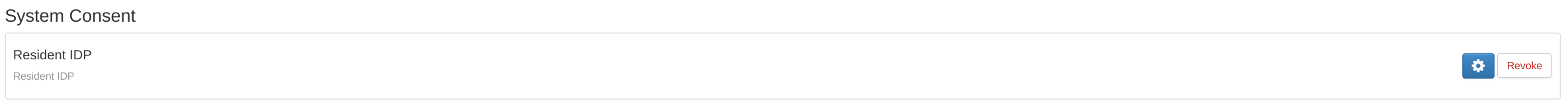

215,673 | 7,296,573,272 | IssuesEvent | 2018-02-26 11:14:12 | wso2/product-is | https://api.github.com/repos/wso2/product-is | opened | No tooltips for any of the buttons in the Dashboard | Affected/5.5.0-Alpha2 Priority/High Severity/Minor Type/Improvement | The following were noted in the Consent Management UI

| 1.0 | No tooltips for any of the buttons in the Dashboard - The following were noted in the Consent Management UI

| priority | no tooltips for any of the buttons in the dashboard the following were noted in the consent management ui | 1 |

675,074 | 23,077,694,048 | IssuesEvent | 2022-07-26 02:24:41 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | closed | Remove `-ing` Form From All BBE Titles | Priority/Highest Type/Task Area/BBEs | ## Description

There are BBEs with the `-ing` form added in the titles. Need to remove them. For example, [Ignoring return values and errors](https://ballerina.io/learn/by-example/ignoring-return-values-and-errors.html), [Controlling openness](https://ballerina.io/learn/by-example/controlling-openness.html), etc.

Area/BBEs

<!--Area/BBEs-->

<!--Area/HomePageSamples-->

<!--Area/LearnPages-->

<!--Area/CommonPages-->

<!--Area/Backend-->

<!--Area/UIUX-->

<!--Area/Workflows-->

<!--Area/Blog-->

## Describe your task(s)

> A detailed description of the task.

## Related issue(s) (optional)

> Any related issues such as sub tasks and issues reported in other repositories (e.g., component repositories), similar problems, etc.

## Suggested label(s) (optional)

> Optional comma-separated list of suggested labels. Non committers can’t assign labels to issues, and thereby, this will help issue creators who are not a committer to suggest possible labels.

## Suggested assignee(s) (optional)

> Optional comma-separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, and thereby, this will help issue creators who are not a committer to suggest possible assignees.

| 1.0 | Remove `-ing` Form From All BBE Titles - ## Description

There are BBEs with the `-ing` form added in the titles. Need to remove them. For example, [Ignoring return values and errors](https://ballerina.io/learn/by-example/ignoring-return-values-and-errors.html), [Controlling openness](https://ballerina.io/learn/by-example/controlling-openness.html), etc.

Area/BBEs

<!--Area/BBEs-->

<!--Area/HomePageSamples-->

<!--Area/LearnPages-->

<!--Area/CommonPages-->

<!--Area/Backend-->

<!--Area/UIUX-->

<!--Area/Workflows-->

<!--Area/Blog-->

## Describe your task(s)

> A detailed description of the task.

## Related issue(s) (optional)

> Any related issues such as sub tasks and issues reported in other repositories (e.g., component repositories), similar problems, etc.

## Suggested label(s) (optional)

> Optional comma-separated list of suggested labels. Non committers can’t assign labels to issues, and thereby, this will help issue creators who are not a committer to suggest possible labels.

## Suggested assignee(s) (optional)

> Optional comma-separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, and thereby, this will help issue creators who are not a committer to suggest possible assignees.

| priority | remove ing form from all bbe titles description there are bbes with the ing form added in the titles need to remove them for example etc area bbes describe your task s a detailed description of the task related issue s optional any related issues such as sub tasks and issues reported in other repositories e g component repositories similar problems etc suggested label s optional optional comma separated list of suggested labels non committers can’t assign labels to issues and thereby this will help issue creators who are not a committer to suggest possible labels suggested assignee s optional optional comma separated list of suggested team members who should attend the issue non committers can’t assign issues to assignees and thereby this will help issue creators who are not a committer to suggest possible assignees | 1 |

253,914 | 8,067,358,629 | IssuesEvent | 2018-08-05 06:12:37 | Entrana/EntranaBugs | https://api.github.com/repos/Entrana/EntranaBugs | opened | Bank Tabs | enhancement high priority server issue | With the new bank interface being packed, we need the bank configuration server sided to accept, save, configure, and load bank tabs. Reference Impact for configurations | 1.0 | Bank Tabs - With the new bank interface being packed, we need the bank configuration server sided to accept, save, configure, and load bank tabs. Reference Impact for configurations | priority | bank tabs with the new bank interface being packed we need the bank configuration server sided to accept save configure and load bank tabs reference impact for configurations | 1 |

130,223 | 5,111,988,579 | IssuesEvent | 2017-01-06 09:20:40 | hpi-swt2/workshop-portal | https://api.github.com/repos/hpi-swt2/workshop-portal | closed | List of event drafts | High Priority ready team-helene | **As**

organizer

**I want to**

see the list of event drafts on the events overview page

**in order to**

edit them after saving

# Acceptance criteria

- When an organizer creates an event and saves it without publishing it, the entry is shown in the list of events

- Drafts that are not yet published are visually marked as drafts (status: draft/published)

- The edit page of a draft has the publish button for making it visible to applicants

- Coaches and other users do not see the drafts in the list. (only organizers & admin can see them) | 1.0 | List of event drafts - **As**

organizer

**I want to**

see the list of event drafts on the events overview page

**in order to**

edit them after saving

# Acceptance criteria

- When an organizer creates an event and saves it without publishing it, the entry is shown in the list of events

- Drafts that are not yet published are visually marked as drafts (status: draft/published)

- The edit page of a draft has the publish button for making it visible to applicants

- Coaches and other users do not see the drafts in the list. (only organizers & admin can see them) | priority | list of event drafts as organizer i want to see the list of event drafts on the events overview page in order to edit them after saving acceptance criteria when an organizer creates an events and saves it without publishing it the entry is shown in the list of events draft that are not published yet are visually marked as drafts status draft published the edit page of a draft has the publish button for making it visible to applicants coaches and other users do not see the drafts in the list only organizers admin can see them | 1 |

311,300 | 9,531,666,039 | IssuesEvent | 2019-04-29 16:33:28 | minio/minio | https://api.github.com/repos/minio/minio | closed | Bucket not resolved when using MINIO_DOMAIN | community priority: high | ## Expected Behavior

When the environment variable MINIO_DOMAIN is set, MinIO should support virtual-host-style requests as explained in the [documentation](https://github.com/minio/minio/tree/master/docs/config#domain). For a request with a host `mybucket.miniotest.com` and with `MINIO_DOMAIN=miniotest.com`, MinIO should pick `mybucket` as the bucket.

I've been using MinIO locally with docker, and tracked down that the last release where it works according to this expected behaviour in *RELEASE.2019-03-27T22-35-21Z*, while in the release after that, *RELEASE.2019-04-04T18-31-46Z*, it does not work anymore.

## Current Behavior

From *RELEASE.2019-04-04T18-31-46Z* onwards, the result is that when requesting `mybucket.miniotest.com` with `MINIO_DOMAIN=miniotest.com`, the bucket isn't resolved correctly.

## Steps to Reproduce (for bugs)

1. Use this `docker-compose.yml`:

````

version: "3"

services:

minio:

image: minio/minio:RELEASE.2019-04-04T18-31-46Z

#image: minio/minio:RELEASE.2019-03-27T22-35-21Z # <- Works in this version

container_name: "miniotest"

hostname: miniotest.com

ports:

- "8001:9000"

volumes:

- "./minio-data:/data"

environment:

- "MINIO_ACCESS_KEY=minio"

- "MINIO_SECRET_KEY=miniopass"

- "MINIO_REGION=us-east-1"

- "MINIO_DOMAIN=miniotest.com"

command: server /data

````

2. `docker-compose up -d`

3. Put into /etc/hosts:

````

127.0.0.1 miniotest.com mybucket.miniotest.com

````

4. Doing a simple GET request with curl gives the following:

````

$ curl http://mybucket.miniotest.com:8001/mykey

<?xml version="1.0" encoding="UTF-8"?>

<Error><Code>AccessDenied</Code><Message>Access Denied.</Message><BucketName>mykey</BucketName><Resource>/mykey</Resource><RequestId>15985D7FA186B0D8</RequestId><HostId>c4afcac1-d638-4352-87b2-6374ce681e73</HostId></Error>

````

Here it incorrectly says BucketName=mykey when it should get the bucket from the host. Running the same with the older version *RELEASE.2019-03-27T22-35-21Z* gives:

````

$ curl http://mybucket.miniotest.com:8001/mykey

<?xml version="1.0" encoding="UTF-8"?>

<Error><Code>AccessDenied</Code><Message>Access Denied.</Message><Key>mykey</Key><BucketName>mybucket</BucketName><Resource>/mykey</Resource><RequestId>15985DDC715A2E78</RequestId><HostId>c4afcac1-d638-4352-87b2-6374ce681e73</HostId></Error>

````

Here the bucket is resolved correctly with BucketName=mybucket.

Tried also with having the parameter `--address miniotest.com:9000` when starting the service but that did not help.

## Context

Running MinIO locally in a docker environment which involves AWS client software that only supports virtual-host-style requests.

## Regression

Seems to be regression. Last version where it works is [RELEASE.2019-03-27T22-35-21Z](https://github.com/minio/minio/releases/tag/RELEASE.2019-03-27T22-35-21Z) when the next version [RELEASE.2019-04-04T18-31-46Z](https://github.com/minio/minio/releases/tag/RELEASE.2019-04-04T18-31-46Z) breaks the functionality.

## Your Environment

* Version used (`minio version`): RELEASE.2019-04-04T18-31-46Z

* Environment name and version (e.g. nginx 1.9.1): [MinIO docker images](https://hub.docker.com/r/minio/minio), Docker Version 2.0.0.3

| 1.0 | Bucket not resolved when using MINIO_DOMAIN - ## Expected Behavior

When the environment variable MINIO_DOMAIN is set, MinIO should support virtual-host-style requests as explained in the [documentation](https://github.com/minio/minio/tree/master/docs/config#domain). For a request with a host `mybucket.miniotest.com` and with `MINIO_DOMAIN=miniotest.com`, MinIO should pick `mybucket` as the bucket.

I've been using MinIO locally with docker, and tracked down that the last release where it works according to this expected behaviour in *RELEASE.2019-03-27T22-35-21Z*, while in the release after that, *RELEASE.2019-04-04T18-31-46Z*, it does not work anymore.

## Current Behavior

From *RELEASE.2019-04-04T18-31-46Z* onwards, the result is that when requesting `mybucket.miniotest.com` with `MINIO_DOMAIN=miniotest.com`, the bucket isn't resolved correctly.

## Steps to Reproduce (for bugs)

1. Use this `docker-compose.yml`:

````

version: "3"

services:

minio:

image: minio/minio:RELEASE.2019-04-04T18-31-46Z

#image: minio/minio:RELEASE.2019-03-27T22-35-21Z # <- Works in this version

container_name: "miniotest"

hostname: miniotest.com

ports:

- "8001:9000"

volumes:

- "./minio-data:/data"

environment:

- "MINIO_ACCESS_KEY=minio"

- "MINIO_SECRET_KEY=miniopass"

- "MINIO_REGION=us-east-1"

- "MINIO_DOMAIN=miniotest.com"

command: server /data

````

2. `docker-compose up -d`

3. Put into /etc/hosts:

````

127.0.0.1 miniotest.com mybucket.miniotest.com

````

4. Doing a simple GET request with curl gives the following:

````

$ curl http://mybucket.miniotest.com:8001/mykey

<?xml version="1.0" encoding="UTF-8"?>

<Error><Code>AccessDenied</Code><Message>Access Denied.</Message><BucketName>mykey</BucketName><Resource>/mykey</Resource><RequestId>15985D7FA186B0D8</RequestId><HostId>c4afcac1-d638-4352-87b2-6374ce681e73</HostId></Error>

````

Here it incorrectly says BucketName=mykey when it should get the bucket from the host. Running the same with the older version *RELEASE.2019-03-27T22-35-21Z* gives:

````

$ curl http://mybucket.miniotest.com:8001/mykey

<?xml version="1.0" encoding="UTF-8"?>

<Error><Code>AccessDenied</Code><Message>Access Denied.</Message><Key>mykey</Key><BucketName>mybucket</BucketName><Resource>/mykey</Resource><RequestId>15985DDC715A2E78</RequestId><HostId>c4afcac1-d638-4352-87b2-6374ce681e73</HostId></Error>

````

Here the bucket is resolved correctly with BucketName=mybucket.

Tried also with having the parameter `--address miniotest.com:9000` when starting the service but that did not help.

## Context

Running MinIO locally in a docker environment which involves AWS client software that only supports virtual-host-style requests.

## Regression

Seems to be regression. Last version where it works is [RELEASE.2019-03-27T22-35-21Z](https://github.com/minio/minio/releases/tag/RELEASE.2019-03-27T22-35-21Z) when the next version [RELEASE.2019-04-04T18-31-46Z](https://github.com/minio/minio/releases/tag/RELEASE.2019-04-04T18-31-46Z) breaks the functionality.

## Your Environment

* Version used (`minio version`): RELEASE.2019-04-04T18-31-46Z

* Environment name and version (e.g. nginx 1.9.1): [MinIO docker images](https://hub.docker.com/r/minio/minio), Docker Version 2.0.0.3

| priority | bucket not resolved when using minio domain expected behavior when the environment variable minio domain is set minio should support virtual host style requests as explained in the for a request with a host mybucket miniotest com and with minio domain miniotest com minio should pick mybucket as the bucket i ve been using minio locally with docker and tracked down that the last release where it works according to this expected behaviour in release while in the release after that release it does not work anymore current behavior from release onwards the result is that when requesting mybucket miniotest com with minio domain miniotest com the bucket isn t resolved correctly steps to reproduce for bugs use this docker compose yml version services minio image minio minio release image minio minio release works in this version container name miniotest hostname miniotest com ports volumes minio data data environment minio access key minio minio secret key miniopass minio region us east minio domain miniotest com command server data docker compose up d put into etc hosts miniotest com mybucket miniotest com doing a simple get request with curl gives the following curl accessdenied access denied mykey mykey here it incorrectly says bucketname mykey when it should get the bucket from the host running the same with the older version release gives curl accessdenied access denied mykey mybucket mykey here the bucket is resolved correctly with bucketname mybucket tried also with having the parameter address miniotest com when starting the service but that did not help context running minio locally in a docker environment which involves aws client software that only supports virtual host style requests regression seems to be regression last version where it works is when the next version breaks the functionality your environment version used minio version release environment name and version e g nginx docker version | 1 |

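757,610 | 26,521,116,865 | IssuesEvent | 2023-01-19 02:47:27 | EinStealth/EinStealth | https://api.github.com/repos/EinStealth/EinStealth | closed | Move the post-clear Result screen into a separate Fragment | Mobile Priority: High | # Overview

This is also currently handled directly in MainFragment, so move it to ResultFragment

# Related issues

#43

# References

<!-- Put reference materials here -->

# Checklist

- [ ] Have you set the priority tag?

| 1.0 | Move the post-clear Result screen into a separate Fragment - # Overview

This is also currently handled directly in MainFragment, so move it to ResultFragment

# Related issues

#43

# References

<!-- Put reference materials here -->

# Checklist

- [ ] Have you set the priority tag?

| priority | move the post clear result screen into a separate fragment overview this is also currently handled directly in mainfragment so move it to resultfragment related issues references checklist have you set the priority tag | 1 |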

273,907 | 8,554,718,254 | IssuesEvent | 2018-11-08 07:40:46 | BuildCraft/BuildCraft | https://api.github.com/repos/BuildCraft/BuildCraft | closed | Chunk loading checking code doesn't work quite right | complexity: simple priority: high status: fixed/implemented in dev type: bug version: 1.12.2 | `ChunkLoaderManager.loadChunksForTile` requests a ticket from forge, then checks the config. It should instead check to see if it can load *first* and exit immediately if it shouldn't.

It would also be good to have some more (flag-enabled) logging for when chunks are loaded. (Behind a new debug flag "lib.chunkloading"). | 1.0 | Chunk loading checking code doesn't work quite right - `ChunkLoaderManager.loadChunksForTile` requests a ticket from forge, then checks the config. It should instead check to see if it can load *first* and exit immediately if it shouldn't.

It would also be good to have some more (flag-enabled) logging for when chunks are loaded. (Behind a new debug flag "lib.chunkloading"). | priority | chunk loading checking code doesn t work quite right chunkloadermanager loadchunksfortile requests a ticket from forge then checks the config it should instead check to see if it can load first and exit immediately if it shouldn t it would also be good to have some more flag enabled logging for when chunks are loaded behind a new debug flag lib chunkloading | 1 |

223,307 | 7,451,919,893 | IssuesEvent | 2018-03-29 06:07:12 | ballerina-lang/ballerina | https://api.github.com/repos/ballerina-lang/ballerina | opened | Populate the endpoint attributes within, based on the endpoint type | Priority/Highest Severity/Major Type/Improvement component/language-server | **Description:**

When the endpoint template is added, currently the attribute list is empty. What if we fill the attributes with the completion template as well? | 1.0 | Populate the endpoint attributes within, based on the endpoint type - **Description:**

When the endpoint template is added, currently the attribute list is empty. What if we fill the attributes with the completion template as well? | priority | populate the endpoint attributes within based on the endpoint type description when the endpoint template is added currently the attribute list is empty what if we fill the attributes with the completion template as well | 1 |

525,567 | 15,256,342,552 | IssuesEvent | 2021-02-20 19:47:03 | actually-colab/editor | https://api.github.com/repos/actually-colab/editor | closed | Create active sessions table | database difficulty: medium priority: high socket | Create a table in dynamodb to store users with active sessions in a specific notebook

### Schema

```

uid: DUser['uid']

nb_id: DNotebook['nb_id']

time_connected: Datetime

time_disconnected?: Datetime

last_event: Datetime

```

Hash key: uid + nb_id

Range key: time_connected | 1.0 | Create active sessions table - Create a table in dynamodb to store users with active sessions in a specific notebook

### Schema

```

uid: DUser['uid']

nb_id: DNotebook['nb_id']

time_connected: Datetime

time_disconnected?: Datetime

last_event: Datetime

```

Hash key: uid + nb_id

Range key: time_connected | priority | create active sessions table create a table in dynamodb to store users with active sessions in a specific notebook schema uid duser nb id dnotebook time connected datetime time disconnected datetime last event datetime hash key uid nb id range key time connected | 1 |

266,033 | 8,361,940,696 | IssuesEvent | 2018-10-03 15:31:16 | LeedsCC/Helm-PHR-Project | https://api.github.com/repos/LeedsCC/Helm-PHR-Project | opened | OpenID Connect - end users | High Priority QEWD in progress | - [ ] 2FA

- [ ] PW complexity

- [ ] force change of temporary pw

- [ ] Password reset

-####-

Aligning with CitizenID, enforce timeout due to inactivity after 60minutes | 1.0 | OpenID Connect - end users - - [ ] 2FA

- [ ] PW complexity

- [ ] force change of temporary pw

- [ ] Password reset

-####-

Aligning with CitizenID, enforce timeout due to inactivity after 60minutes | priority | openid connect end users pw complexity force change of temporary pw password reset aligning with citizenid enforce timeout due to inactivity after | 1 |

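628,685 | 20,010,780,202 | IssuesEvent | 2022-02-01 06:01:33 | TeamSparker/Spark-iOS | https://api.github.com/repos/TeamSparker/Spark-iOS | closed | [Feat] Reflect member status stickers in the habit room | Feat 👼타락pOwEr천사현규 P1 / Priority High | ## 📌 Issue

<!-- Briefly describe the issue -->

- Other members' statuses are not reflected in the habit room

## 📝 To-do

<!-- Describe the work to be done -->

- [x] Other members' status stickers are not reflected in the habit room

| 1.0 | [Feat] Reflect member status stickers in the habit room - ## 📌 Issue

<!-- Briefly describe the issue -->

- Other members' statuses are not reflected in the habit room

## 📝 To-do

<!-- Describe the work to be done -->

- [x] Other members' status stickers are not reflected in the habit room

| priority | reflect member status stickers in the habit room 📌 issue other members statuses are not reflected in the habit room 📝 to do other members status stickers are not reflected in the habit room | 1 |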

647,428 | 21,103,505,753 | IssuesEvent | 2022-04-04 16:26:08 | AY2122S2-CS2103T-T12-4/tp | https://api.github.com/repos/AY2122S2-CS2103T-T12-4/tp | closed | [PE-D] Github Username needs to handle hyphens | priority.High | ## Steps to reproduce

1. enter `addc n/Nicole Lee p/12345678 e/nicole@stffhub.org u/nicole-lee` into the command line

2. System reports `Github usernames should only contain alphanumeric characters.`

## Expected

Usernames on GitHub [have hyphens in them](https://docs.github.com/en/site-policy/other-site-policies/github-username-policy). If a student's username already has a hyphen in it, NUS Classes will not be able to process it.

## Actual

NUS Classes was not able to create my `nicole-lee` contact

<!--session: 1648793079029-094b23bf-c065-4806-bff4-e64ce7b438fa--><!--Version: Web v3.4.2-->

-------------

Labels: `severity.Medium` `type.FeatureFlaw`

original: ian-from-dover/ped#5 | 1.0 | [PE-D] Github Username needs to handle hyphens - ## Steps to reproduce

1. enter `addc n/Nicole Lee p/12345678 e/nicole@stffhub.org u/nicole-lee` into the command line

2. System reports `Github usernames should only contain alphanumeric characters.`

## Expected

Usernames on GitHub [have hyphens in them](https://docs.github.com/en/site-policy/other-site-policies/github-username-policy). If a student's username already has a hyphen in it, NUS Classes will not be able to process it.

## Actual

NUS Classes was not able to create my `nicole-lee` contact

<!--session: 1648793079029-094b23bf-c065-4806-bff4-e64ce7b438fa--><!--Version: Web v3.4.2-->

-------------

Labels: `severity.Medium` `type.FeatureFlaw`

original: ian-from-dover/ped#5 | priority | github username needs to handle hyphens steps to reproduce enter addc n nicole lee p e nicole stffhub org u nicole lee into the command line system reports github usernames should only contain alphanumeric characters expected usernames on github if a student s username already has a hyphen in it nus classes will not be able to process it actual nus classes was not able to create my nicole lee contact labels severity medium type featureflaw original ian from dover ped | 1 |

340,553 | 10,273,176,593 | IssuesEvent | 2019-08-23 18:31:42 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Create a sample export plugin | addition: plugin priority: high | Create a simple sample export plugin which renders the map and watermark image on top of it for testing plugin loading and component positioning. | 1.0 | Create a sample export plugin - Create a simple sample export plugin which renders the map and watermark image on top of it for testing plugin loading and component positioning. | priority | create a sample export plugin create a simple sample export plugin which renders the map and watermark image on top of it for testing plugin loading and component positioning | 1 |

147,074 | 5,633,407,228 | IssuesEvent | 2017-04-05 18:48:53 | theam/haskell-do | https://api.github.com/repos/theam/haskell-do | closed | Console not working / empty | Bug High priority | No idea if I'm just doing something wrong here but the console panel is just empty grey rectangle with nothing in it. I can click a mouse at the top of it to get an input text field there and I can write text there but nothing happens, no matter how many times I press Enter. In the README instructions, there's at least `>` symbol visible but I don't have even that. Any ideas? | 1.0 | Console not working / empty - No idea if I'm just doing something wrong here but the console panel is just empty grey rectangle with nothing in it. I can click a mouse at the top of it to get an input text field there and I can write text there but nothing happens, no matter how many times I press Enter. In the README instructions, there's at least `>` symbol visible but I don't have even that. Any ideas? | priority | console not working empty no idea if i m just doing something wrong here but the console panel is just empty grey rectangle with nothing in it i can click a mouse at the top of it to get an input text field there and i can write text there but nothing happens no matter how many times i press enter in the readme instructions there s at least symbol visible but i don t have even that any ideas | 1 |

483,850 | 13,930,482,074 | IssuesEvent | 2020-10-22 02:34:24 | alibaba/sentinel-golang | https://api.github.com/repos/alibaba/sentinel-golang | closed | Purge existing panic operations | kind/enhancement priority/high | <!-- Here is for bug reports and feature requests ONLY!

If you're looking for help, please check our mail list and the Gitter room.

Please try to use English to describe your issue, or at least provide a snippet of English translation.

-->

## Issue Description

clean up panic

Type: *feature request*

### Describe what feature you want

Replace the existing panic operation in the project with log printing,any other better Suggestions?

### Additional context

Add any other context or screenshots about the feature request here.

| 1.0 | Purge existing panic operations - <!-- Here is for bug reports and feature requests ONLY!

If you're looking for help, please check our mail list and the Gitter room.

Please try to use English to describe your issue, or at least provide a snippet of English translation.

-->

## Issue Description

clean up panic

Type: *feature request*

### Describe what feature you want

Replace the existing panic operation in the project with log printing,any other better Suggestions?

### Additional context

Add any other context or screenshots about the feature request here.

| priority | purge existing panic operations here is for bug reports and feature requests only if you re looking for help please check our mail list and the gitter room please try to use english to describe your issue or at least provide a snippet of english translation issue description clean up panic type feature request describe what feature you want replace the existing panic operation in the project with log printing,any other better suggestions? additional context add any other context or screenshots about the feature request here | 1 |

207,813 | 7,134,078,192 | IssuesEvent | 2018-01-22 19:36:33 | PARINetwork/pari | https://api.github.com/repos/PARINetwork/pari | opened | Search does not work for Non-English languages | High Priority Small Work UI good first issue | https://ruralindiaonline.org/search/?q=تعلیم+کے+جنگل+میں+چاول+پر+بحث&type=article&type=video&type=audio&language=en

https://ruralindiaonline.org/search/?q=शिक्षा+के+वन+में+चावल+पर+बहस&type=article&type=video&type=audio&language=en

This is because the default search is English. Remove the default English option. | 1.0 | Search does not work for Non-English languages - https://ruralindiaonline.org/search/?q=تعلیم+کے+جنگل+میں+چاول+پر+بحث&type=article&type=video&type=audio&language=en

https://ruralindiaonline.org/search/?q=शिक्षा+के+वन+में+चावल+पर+बहस&type=article&type=video&type=audio&language=en

This is because the default search is English. Remove the default English option. | priority | search does not work for non english languages this is because the default search is english remove the default english option | 1 |

3,294 | 2,537,630,520 | IssuesEvent | 2015-01-26 21:54:04 | newca12/gapt | https://api.github.com/repos/newca12/gapt | closed | Implementation of First order logic | 1 star Component-Logic imported Milestone-Release1.0 Priority-High Type-Task | _From [shaoli...@gmail.com](https://code.google.com/u/113190107447576027220/) on October 07, 2009 10:46:22_

to implement FOL on top of higher order logic

_Original issue: http://code.google.com/p/gapt/issues/detail?id=14_ | 1.0 | Implementation of First order logic - _From [shaoli...@gmail.com](https://code.google.com/u/113190107447576027220/) on October 07, 2009 10:46:22_

to implement FOL on top of higher order logic

_Original issue: http://code.google.com/p/gapt/issues/detail?id=14_ | priority | implementation of first order logic from on october to implement fol on top of higher order logic original issue | 1 |

194,375 | 6,894,327,851 | IssuesEvent | 2017-11-23 09:35:14 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | Apply MIT license to our repository | Effort: Low Priority: High Status: Sprint | Now that we have decided to license Uwazi under the MIT license (per issue #840), we need to apply the license to our repository. Here is some [info on best practices from Github](https://help.github.com/articles/licensing-a-repository/):

Most people place their license text in a file named LICENSE.txt (or LICENSE.md) in the root of the repository; [here's an example from Hubot](https://github.com/github/hubot/blob/master/LICENSE.md).

Some projects include information about their license in their README. For example, a project's README may include a note saying "This project is licensed under the terms of the MIT license."

As a best practice, we encourage you to include the license file with your project.

| 1.0 | Apply MIT license to our repository - Now that we have decided to license Uwazi under the MIT license (per issue #840), we need to apply the license to our repository. Here is some [info on best practices from Github](https://help.github.com/articles/licensing-a-repository/):

Most people place their license text in a file named LICENSE.txt (or LICENSE.md) in the root of the repository; [here's an example from Hubot](https://github.com/github/hubot/blob/master/LICENSE.md).

Some projects include information about their license in their README. For example, a project's README may include a note saying "This project is licensed under the terms of the MIT license."

As a best practice, we encourage you to include the license file with your project.

| priority | apply mit license to our repository now that we have decided to license uwazi under the mit license per issue we need to apply the license to our repository here is some most people place their license text in a file named license txt or license md in the root of the repository some projects include information about their license in their readme for example a project s readme may include a note saying this project is licensed under the terms of the mit license as a best practice we encourage you to include the license file with your project | 1 |

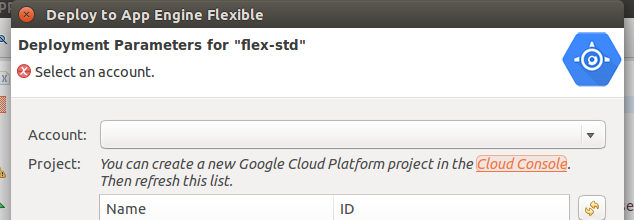

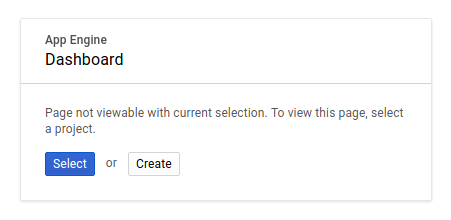

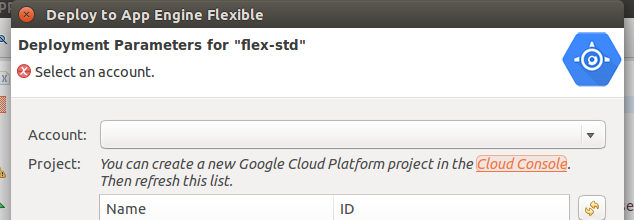

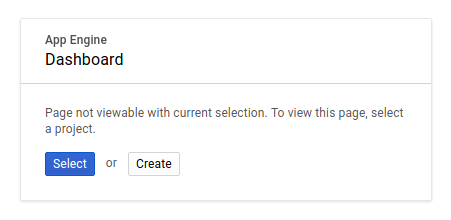

168,826 | 6,387,424,165 | IssuesEvent | 2017-08-03 13:39:03 | GoogleCloudPlatform/google-cloud-eclipse | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-eclipse | closed | Link in deploy dialog to create new GCP project doesn't seem to work | bug high priority | Not sure if we had a similar issue here or internally.

No matter which account is selected, I am presented with the following screen on the Developer Console:

| 1.0 | Link in deploy dialog to create new GCP project doesn't seem to work - Not sure if we had a similar issue here or internally.

No matter which account is selected, I am presented with the following screen on the Developer Console:

| priority | link in deploy dialog to create new gcp project doesn t seem to work not sure if we had a similar issue here or internally no matter which account is selected i am presented with the following screen on the developer console | 1 |

367,749 | 10,861,472,275 | IssuesEvent | 2019-11-14 11:09:31 | wso2/analytics-apim | https://api.github.com/repos/wso2/analytics-apim | closed | Dashboard DB cannot be used with PostgreSQL | APIM 3.0.0 Docs/Has Impact Priority/High | **Description:**

When a PostgreSQL database is set up for the dashboard DB, it gives the following error on startup:

`Caused by: java.sql.SQLFeatureNotSupportedException: Method org.postgresql.jdbc.PgConnection.createBlob() is not yet implemented.`

**OS, DB, other environment details and versions:**

Postgres DB version: 12.0

Postgres JDBC driver version: 42.2.8 | 1.0 | Dashboard DB cannot be used with PostgreSQL - **Description:**

When a PostgreSQL database is set up for the dashboard DB, it gives the following error on startup:

`Caused by: java.sql.SQLFeatureNotSupportedException: Method org.postgresql.jdbc.PgConnection.createBlob() is not yet implemented.`

**OS, DB, other environment details and versions:**

Postgres DB version: 12.0

Postgres JDBC driver version: 42.2.8 | priority | dashboard db cannot be used with postgresql description when a postgresql database is set up for the dashboard db it gives the following error on startup caused by java sql sqlfeaturenotsupportedexception method org postgresql jdbc pgconnection createblob is not yet implemented os db other environment details and versions postgres db version postgres jdbc driver version | 1 |

650,111 | 21,335,193,410 | IssuesEvent | 2022-04-18 13:48:11 | NEAR-Edu/near.academy | https://api.github.com/repos/NEAR-Edu/near.academy | opened | Install Google Analytics (probably via Google Tag Manager) properly | High Priority | https://github.com/NEAR-Edu/near.academy/blob/11d7421b3aeed804c92ec7800c3d40497648ee7a/src/frontend/src/app/App.analytics.tsx seems to have no options defined and seems to use https://github.com/react-ga/react-ga which seems not to be actively maintained. | 1.0 | Install Google Analytics (probably via Google Tag Manager) properly - https://github.com/NEAR-Edu/near.academy/blob/11d7421b3aeed804c92ec7800c3d40497648ee7a/src/frontend/src/app/App.analytics.tsx seems to have no options defined and seems to use https://github.com/react-ga/react-ga which seems not to be actively maintained. | priority | install google analytics probably via google tag manager properly seems to have no options defined and seems to use which seems not to be actively maintained | 1 |

403,039 | 11,834,782,391 | IssuesEvent | 2020-03-23 09:31:34 | FStarLang/FStar | https://api.github.com/repos/FStarLang/FStar | closed | Better pretty printer | area/syntax area/usability kind/enhancement priority/high | NS: better printing of terms would improve the readability of error messages immediately (and would also be useful groundwork for better visualizations in the future)

NS: About debug level low: It's not designed to be robust right now. Hence one of my tasks on the list I sent last night. It's using a very naive normalizer to print types. That thing blows up on anything but the smallest types.

| 1.0 | Better pretty printer - NS: better printing of terms would improve the readability of error messages immediately (and would also be useful groundwork for better visualizations in the future)

NS: About debug level low: It's not designed to be robust right now. Hence one of my tasks on the list I sent last night. It's using a very naive normalizer to print types. That thing blows up on anything but the smallest types.

| priority | better pretty printer ns better printing of terms would improve the readability of error messages immediately and would also be useful groundwork for better visualizations in the future ns about debug level low it s not designed to be robust right now hence one of my tasks on the list i sent last night it s using a very naive normalizer to print types that thing blows up on anything but the smallest types | 1 |

351,580 | 10,520,730,915 | IssuesEvent | 2019-09-30 02:43:18 | AY1920S1-CS2113T-W17-2/main | https://api.github.com/repos/AY1920S1-CS2113T-W17-2/main | closed | As a user, I can update my expenses record so that I can consistently keep track of my money. | priority.High type.Story | Expenses feature - Update record for tracking | 1.0 | As a user, I can update my expenses record so that I can consistently keep track of my money. - Expenses feature - Update record for tracking | priority | as a user i can update my expenses record so that i can consistently keep track of my money expenses feature update record for tracking | 1 |

714,550 | 24,566,061,201 | IssuesEvent | 2022-10-13 03:12:24 | AY2223S1-CS2103T-T09-4/tp | https://api.github.com/repos/AY2223S1-CS2103T-T09-4/tp | closed | Add the ability to edit next of kin phone numbers | priority.HIGH type.Task type.Task.Update | Update phone number that will be of 8 digits in length, starting with 8 or 9 | 1.0 | Add the ability to edit next of kin phone numbers - Update phone number that will be of 8 digits in length, starting with 8 or 9 | priority | add the ability to edit next of kin phone numbers update phone number that will be of digits in length starting with or | 1 |

152,312 | 5,844,270,139 | IssuesEvent | 2017-05-10 11:26:02 | GluuFederation/oxTrust | https://api.github.com/repos/GluuFederation/oxTrust | closed | Unable to remove Custom Script from oxTrust UI | bug High Priority | OS: CentOS 6.8 (possibly affecting all other)

Package: 2.4.4 sp2 upgraded to 3.0.1

Ticket: https://support.gluu.org/upgrade/3982/migrated-244sp2-301-unable-to-delete-unwanted-person-authentication-scripts/

Steps to reproduce: Install 2.4.4 and update to `2.4.4-sp2` be replacing the `identity.war, `oxauth.war` from maven and upgrade to 3.0.1. Log in and try to remove custom scripts it will throw error.

Report: https://github.com/GluuFederation/gluu-qa/wiki/Itemized-Reports#upgrade-script

Observation: Issue confirmed with similar stack trace given in the ticket. | 1.0 | Unable to remove Custom Script from oxTrust UI - OS: CentOS 6.8 (possibly affecting all other)

Package: 2.4.4 sp2 upgraded to 3.0.1

Ticket: https://support.gluu.org/upgrade/3982/migrated-244sp2-301-unable-to-delete-unwanted-person-authentication-scripts/

Steps to reproduce: Install 2.4.4 and update to `2.4.4-sp2` be replacing the `identity.war, `oxauth.war` from maven and upgrade to 3.0.1. Log in and try to remove custom scripts it will throw error.

Report: https://github.com/GluuFederation/gluu-qa/wiki/Itemized-Reports#upgrade-script

Observation: Issue confirmed, with a stack trace similar to the one given in the ticket. | priority | unable to remove custom script from oxtrust ui os centos possibly affecting all other package upgraded to ticket steps to reproduce install and update to be replacing the identity war oxauth war from maven and upgrade to log in and try to remove custom scripts it will throw error report observation issue confirmed with similar stack trace given in the ticket | 1 |

793,678 | 28,006,913,331 | IssuesEvent | 2023-03-27 15:48:54 | ccrcomplete/CaffeStore | https://api.github.com/repos/ccrcomplete/CaffeStore | closed | Edit Product | High Priority Useful | The admin should be able to edit the info of any product through a button in the list, next to the id | 1.0 | Edit Product - The admin should be able to edit the info of any product through a button in the list, next to the id | priority | edit product the admin could be edit the info of whatever product through a button in the list between the id | 1 |

543,270 | 15,879,340,069 | IssuesEvent | 2021-04-09 12:19:46 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | BPS profile name front end validation missing which must only contain letters and numbers | Affected/5.4.0 Affected/5.4.0-Update3 Priority/High bug | When creating a BPS profile, the **Profile Name** must contain only letters and numbers. However, the frontend does not validate it and only shows the error:

> Error when adding the BPS profile.

The following error can be seen in the backend:

```

[-1234] [] [2018-01-05 07:51:38,255] ERROR {org.wso2.carbon.identity.workflow.impl.WorkflowImplAdminService} - Server error when adding the profile bps_1

org.wso2.carbon.identity.workflow.impl.WorkflowImplException: Profile name should be a not null alpha numeric string, if its not the default embedded BPS.

at org.wso2.carbon.identity.workflow.impl.listener.WorkflowImplValidationListener.doPreAddBPSProfile(WorkflowImplValidationListener.java:46)

at org.wso2.carbon.identity.workflow.impl.WorkflowImplServiceImpl.addBPSProfile(WorkflowImplServiceImpl.java:85)

at org.wso2.carbon.identity.workflow.impl.WorkflowImplAdminService.addBPSProfile(WorkflowImplAdminService.java:50)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.axis2.rpc.receivers.RPCUtil.invokeServiceClass(RPCUtil.java:212)

at org.apache.axis2.rpc.receivers.RPCMessageReceiver.invokeBusinessLogic(RPCMessageReceiver.java:117)

at org.apache.axis2.receivers.AbstractInOutMessageReceiver.invokeBusinessLogic(AbstractInOutMessageReceiver.java:40)

at org.apache.axis2.receivers.AbstractMessageReceiver.receive(AbstractMessageReceiver.java:110)

at org.apache.axis2.engine.AxisEngine.receive(AxisEngine.java:180)

at org.apache.axis2.transport.local.LocalTransportReceiver.processMessage(LocalTransportReceiver.java:169)

at org.apache.axis2.transport.local.LocalTransportReceiver.processMessage(LocalTransportReceiver.java:82)

at org.wso2.carbon.core.transports.local.CarbonLocalTransportSender.finalizeSendWithToAddress(CarbonLocalTransportSender.java:45)

at org.apache.axis2.transport.local.LocalTransportSender.invoke(LocalTransportSender.java:77)

at org.apache.axis2.engine.AxisEngine.send(AxisEngine.java:442)

at org.apache.axis2.description.OutInAxisOperationClient.send(OutInAxisOperation.java:430)

at org.apache.axis2.description.OutInAxisOperationClient.executeImpl(OutInAxisOperation.java:225)

at org.apache.axis2.client.OperationClient.execute(OperationClient.java:149)

at org.wso2.carbon.identity.workflow.impl.stub.WorkflowImplAdminServiceStub.addBPSProfile(WorkflowImplAdminServiceStub.java:1354)

at org.wso2.carbon.identity.workflow.impl.ui.WorkflowImplAdminServiceClient.addBPSProfile(WorkflowImplAdminServiceClient.java:62)

at org.apache.jsp.workflow_002dimpl.update_002dbps_002dprofile_002dfinish_002dajaxprocessor_jsp._jspService(update_002dbps_002dprofile_002dfinish_002dajaxprocessor_jsp.java:157)

at org.apache.jasper.runtime.HttpJspBase.service(HttpJspBase.java:70)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.apache.jasper.servlet.JspServletWrapper.service(JspServletWrapper.java:439)

at org.apache.jasper.servlet.JspServlet.serviceJspFile(JspServlet.java:395)

at org.apache.jasper.servlet.JspServlet.service(JspServlet.java:339)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.wso2.carbon.ui.JspServlet.service(JspServlet.java:155)

at org.wso2.carbon.ui.TilesJspServlet.service(TilesJspServlet.java:80)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.eclipse.equinox.http.helper.ContextPathServletAdaptor.service(ContextPathServletAdaptor.java:37)

at org.eclipse.equinox.http.servlet.internal.ServletRegistration.service(ServletRegistration.java:61)

at org.eclipse.equinox.http.servlet.internal.ProxyServlet.processAlias(ProxyServlet.java:128)

at org.eclipse.equinox.http.servlet.internal.ProxyServlet.service(ProxyServlet.java:68)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.wso2.carbon.tomcat.ext.servlet.DelegationServlet.service(DelegationServlet.java:68)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:303)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:52)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:241)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.owasp.csrfguard.CsrfGuardFilter.doFilter(CsrfGuardFilter.java:88)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:241)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.apache.catalina.filters.HttpHeaderSecurityFilter.doFilter(HttpHeaderSecurityFilter.java:124)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:241)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.wso2.carbon.tomcat.ext.filter.CharacterSetFilter.doFilter(CharacterSetFilter.java:65)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:241)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.apache.catalina.filters.HttpHeaderSecurityFilter.doFilter(HttpHeaderSecurityFilter.java:124)

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:241)

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:208)

at org.apache.catalina.core.StandardWrapperValve.invoke(StandardWrapperValve.java:219)

at org.apache.catalina.core.StandardContextValve.invoke(StandardContextValve.java:110)

at org.apache.catalina.authenticator.AuthenticatorBase.invoke(AuthenticatorBase.java:506)

at org.apache.catalina.core.StandardHostValve.invoke(StandardHostValve.java:169)

at org.apache.catalina.valves.ErrorReportValve.invoke(ErrorReportValve.java:103)

at org.wso2.carbon.identity.context.rewrite.valve.TenantContextRewriteValve.invoke(TenantContextRewriteValve.java:80)

at org.wso2.carbon.identity.authz.valve.AuthorizationValve.invoke(AuthorizationValve.java:91)

at org.wso2.carbon.identity.auth.valve.AuthenticationValve.invoke(AuthenticationValve.java:60)

at org.wso2.carbon.tomcat.ext.valves.CompositeValve.continueInvocation(CompositeValve.java:99)

at org.wso2.carbon.tomcat.ext.valves.CarbonTomcatValve$1.invoke(CarbonTomcatValve.java:47)

at org.wso2.carbon.webapp.mgt.TenantLazyLoaderValve.invoke(TenantLazyLoaderValve.java:57)

at org.wso2.carbon.tomcat.ext.valves.TomcatValveContainer.invokeValves(TomcatValveContainer.java:47)

at org.wso2.carbon.tomcat.ext.valves.CompositeValve.invoke(CompositeValve.java:62)

at org.wso2.carbon.tomcat.ext.valves.CarbonStuckThreadDetectionValve.invoke(CarbonStuckThreadDetectionValve.java:159)

at org.apache.catalina.valves.AccessLogValve.invoke(AccessLogValve.java:962)

at org.wso2.carbon.tomcat.ext.valves.CarbonContextCreatorValve.invoke(CarbonContextCreatorValve.java:57)

at org.apache.catalina.core.StandardEngineValve.invoke(StandardEngineValve.java:116)

at org.apache.catalina.connector.CoyoteAdapter.service(CoyoteAdapter.java:445)

at org.apache.coyote.http11.AbstractHttp11Processor.process(AbstractHttp11Processor.java:1115)

at org.apache.coyote.AbstractProtocol$AbstractConnectionHandler.process(AbstractProtocol.java:637)

at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1775)

at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.run(NioEndpoint.java:1734)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61)

at java.lang.Thread.run(Thread.java:745)

TID

```: | 1.0 | BPS profile name front end validation missing which must only contain letters and numbers - When creating a BPS profile, the **Profile Name** must contain only letters and numbers. However, the frontend does not validate it and only shows the error:

> Error when adding the BPS profile.

The following error can be seen in the backend:

```

[-1234] [] [2018-01-05 07:51:38,255] ERROR {org.wso2.carbon.identity.workflow.impl.WorkflowImplAdminService} - Server error when adding the profile bps_1

org.wso2.carbon.identity.workflow.impl.WorkflowImplException: Profile name should be a not null alpha numeric string, if its not the default embedded BPS.

at org.wso2.carbon.identity.workflow.impl.listener.WorkflowImplValidationListener.doPreAddBPSProfile(WorkflowImplValidationListener.java:46)

at org.wso2.carbon.identity.workflow.impl.WorkflowImplServiceImpl.addBPSProfile(WorkflowImplServiceImpl.java:85)

at org.wso2.carbon.identity.workflow.impl.WorkflowImplAdminService.addBPSProfile(WorkflowImplAdminService.java:50)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.axis2.rpc.receivers.RPCUtil.invokeServiceClass(RPCUtil.java:212)

at org.apache.axis2.rpc.receivers.RPCMessageReceiver.invokeBusinessLogic(RPCMessageReceiver.java:117)

at org.apache.axis2.receivers.AbstractInOutMessageReceiver.invokeBusinessLogic(AbstractInOutMessageReceiver.java:40)

at org.apache.axis2.receivers.AbstractMessageReceiver.receive(AbstractMessageReceiver.java:110)

at org.apache.axis2.engine.AxisEngine.receive(AxisEngine.java:180)

at org.apache.axis2.transport.local.LocalTransportReceiver.processMessage(LocalTransportReceiver.java:169)

at org.apache.axis2.transport.local.LocalTransportReceiver.processMessage(LocalTransportReceiver.java:82)

at org.wso2.carbon.core.transports.local.CarbonLocalTransportSender.finalizeSendWithToAddress(CarbonLocalTransportSender.java:45)

at org.apache.axis2.transport.local.LocalTransportSender.invoke(LocalTransportSender.java:77)

at org.apache.axis2.engine.AxisEngine.send(AxisEngine.java:442)

at org.apache.axis2.description.OutInAxisOperationClient.send(OutInAxisOperation.java:430)

at org.apache.axis2.description.OutInAxisOperationClient.executeImpl(OutInAxisOperation.java:225)

at org.apache.axis2.client.OperationClient.execute(OperationClient.java:149)

at org.wso2.carbon.identity.workflow.impl.stub.WorkflowImplAdminServiceStub.addBPSProfile(WorkflowImplAdminServiceStub.java:1354)

at org.wso2.carbon.identity.workflow.impl.ui.WorkflowImplAdminServiceClient.addBPSProfile(WorkflowImplAdminServiceClient.java:62)

at org.apache.jsp.workflow_002dimpl.update_002dbps_002dprofile_002dfinish_002dajaxprocessor_jsp._jspService(update_002dbps_002dprofile_002dfinish_002dajaxprocessor_jsp.java:157)

at org.apache.jasper.runtime.HttpJspBase.service(HttpJspBase.java:70)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.apache.jasper.servlet.JspServletWrapper.service(JspServletWrapper.java:439)

at org.apache.jasper.servlet.JspServlet.serviceJspFile(JspServlet.java:395)

at org.apache.jasper.servlet.JspServlet.service(JspServlet.java:339)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:731)

at org.wso2.carbon.ui.JspServlet.service(JspServlet.java:155)

at org.wso2.carbon.ui.TilesJspServlet.service(TilesJspServlet.java:80)