Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

388,094 | 11,474,466,090 | IssuesEvent | 2020-02-10 04:24:48 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | [DOC] Account Recovery REST API page is not available | Affected/5.7.0 Complexity/Low Priority/High Severity/Blocker Status/In Progress Type/Docs | Document version - IS 5.7.0

'Using the Account Recovery REST APIs' link in the document [1] is not opening the page.

[1] https://docs.wso2.com/display/IS570/REST+APIs | 1.0 | [DOC] Account Recovery REST API page is not available - Document version - IS 5.7.0

'Using the Account Recovery REST APIs' link in the document [1] is not opening the page.

[1] https://docs.wso2.com/display/IS570/REST+APIs | priority | account recovery rest api page is not available document version is using the account recovery rest apis link in the document is not opening the page | 1 |

5,504 | 2,576,979,809 | IssuesEvent | 2015-02-12 14:25:07 | phusion/passenger | https://api.github.com/repos/phusion/passenger | closed | Vendor daemon_controller and crash-watch | Bounty/Easy Priority/High | We should vendor daemon_controller and crash-watch. That makes packaging much easier. | 1.0 | Vendor daemon_controller and crash-watch - We should vendor daemon_controller and crash-watch. That makes packaging much easier. | priority | vendor daemon controller and crash watch we should vendor daemon controller and crash watch that makes packaging much easier | 1 |

421,987 | 12,264,625,110 | IssuesEvent | 2020-05-07 04:58:25 | dedis/cothority | https://api.github.com/repos/dedis/cothority | closed | View change: not enough proofs | bug high priority | Sometimes the view change gets stuck with:

```

E 19/29/10 15:23:12.138834793: viewchange.go:336 (byzcoin.(*Service).verifyViewChange) - tls://fairywren.ch:7770 not enough proofs: %v <= %v 2 2

``` | 1.0 | View change: not enough proofs - Sometimes the view change gets stuck with:

```

E 19/29/10 15:23:12.138834793: viewchange.go:336 (byzcoin.(*Service).verifyViewChange) - tls://fairywren.ch:7770 not enough proofs: %v <= %v 2 2

``` | priority | view change not enough proofs sometimes the view change gets stuck with e viewchange go byzcoin service verifyviewchange tls fairywren ch not enough proofs v v | 1 |

532,992 | 15,574,885,060 | IssuesEvent | 2021-03-17 10:22:57 | KusinVitamin/Projekt-Hemsida | https://api.github.com/repos/KusinVitamin/Projekt-Hemsida | closed | Feedback efter test | Needs more info Priority: High | # User story

As a ..., I want to ..., so I can ...

*Ideally, this is in the issue title, but if not, you can put it here. If so, delete this section.*

# Acceptance criteria

- [ ] This is something that can be verified to show that this user story is satisfied.

# Sprint Ready Checklist

1. - [ ] Acceptance criteria defined

2. - [ ] Team understands acceptance criteria

3. - [ ] Team has defined solution / steps to satisfy acceptance criteria

4. - [ ] Acceptance criteria is verifiable / testable

5. - [ ] External / 3rd Party dependencies identified

| 1.0 | Feedback efter test - # User story

As a ..., I want to ..., so I can ...

*Ideally, this is in the issue title, but if not, you can put it here. If so, delete this section.*

# Acceptance criteria

- [ ] This is something that can be verified to show that this user story is satisfied.

# Sprint Ready Checklist

1. - [ ] Acceptance criteria defined

2. - [ ] Team understands acceptance criteria

3. - [ ] Team has defined solution / steps to satisfy acceptance criteria

4. - [ ] Acceptance criteria is verifiable / testable

5. - [ ] External / 3rd Party dependencies identified

| priority | feedback efter test user story as a i want to so i can ideally this is in the issue title but if not you can put it here if so delete this section acceptance criteria this is something that can be verified to show that this user story is satisfied sprint ready checklist acceptance criteria defined team understands acceptance criteria team has defined solution steps to satisfy acceptance criteria acceptance criteria is verifiable testable external party dependencies identified | 1 |

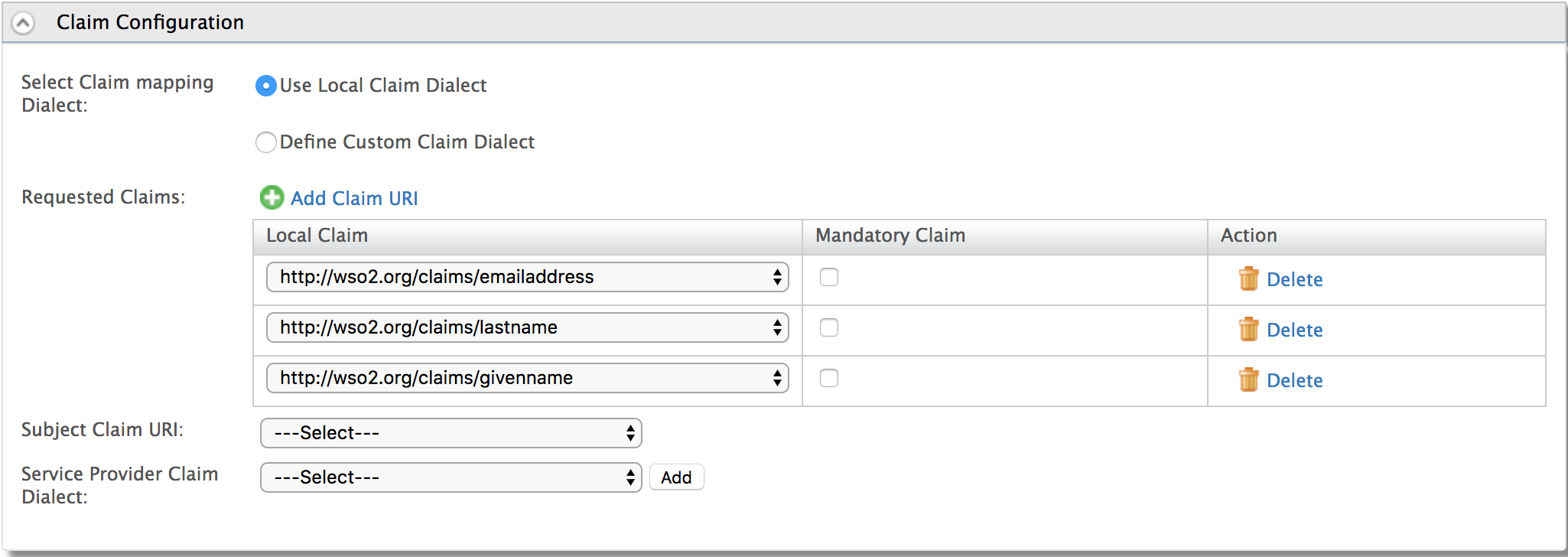

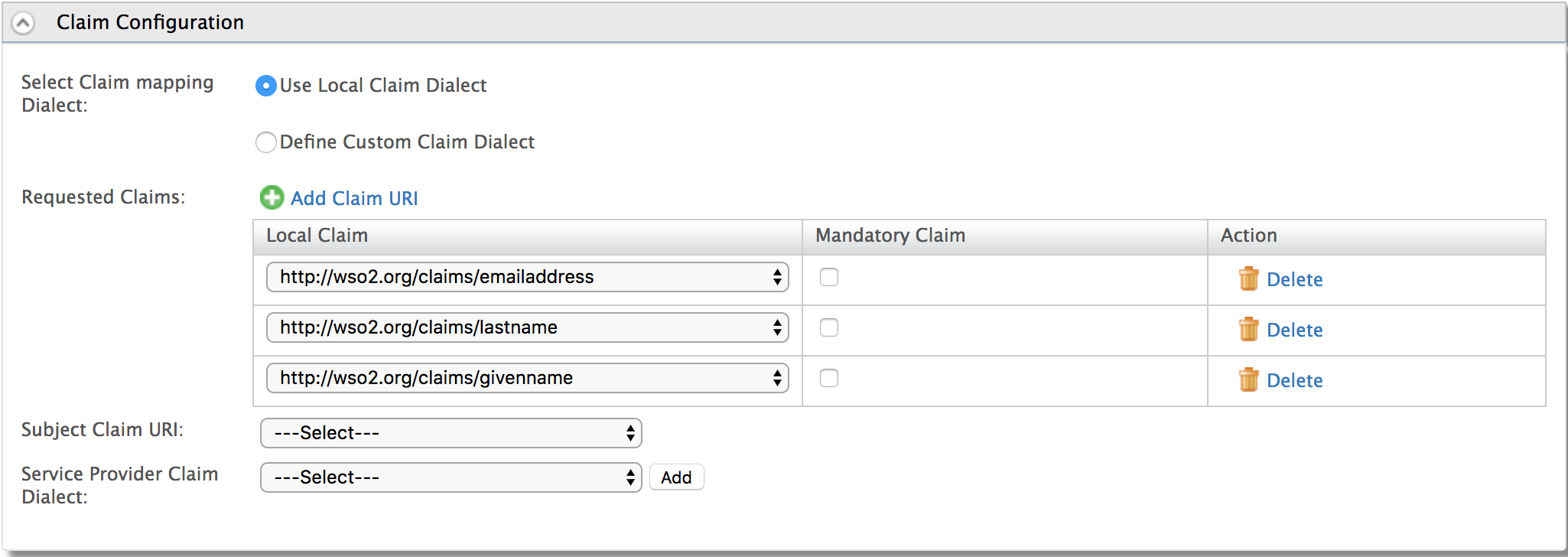

304,361 | 9,331,137,320 | IssuesEvent | 2019-03-28 09:03:42 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Claims not received in id token in response, or in id token for the code, returned via code id_token OIDC hybrid flow | Affected/5.8.0-Alpha2 Complexity/Medium Component/OAuth Priority/High Severity/Critical Type/Bug | **Environment:**

DB: DB2

JDK: Java 8

**Steps to reproduce:**

1. Create a tenant

2. Create a user in that tenant

3. Update first name -> http://wso2.org/claims/givenname, last name -> http://wso2.org/claims/lastname, email -> http://wso2.org/claims/emailaddress claims of that user

4. Create an oauth application

5. Configure claims section as below.

6. Make sure above claims are available for OIDC scopes as below.

given_name (mapped to http://wso2.org/claims/givenname) in openid scope

email (mapped to http://wso2.org/claims/lastname) only in email scope

family_name (mapped to http://wso2.org/claims/lastname) only in profile scope

gender (mapped to http://wso2.org/claims/gender)in openid scope

7. Initiate a request with OIDC Hybrid flow for response type **code id_token** only for openid scope

https://localhost:9443/oauth2/authorize?response_type=code id_token&client_id=xxx&nonce=asd&redirect_uri=http://localhost:8080/playground2/oauth2client&scope=openid

8. Note that only sub claim is returned in id token. Both given-name and sub expected.

9. Now initiate a token request for the code received. It returns only sub in id token. It should also return given-name

10. Perform step 7, 8 and 9 to openid email, openid email profile scope and note non responds with claims in requested scopes | 1.0 | Claims not received in id token in response, or in id token for the code, returned via code id_token OIDC hybrid flow - **Environment:**

DB: DB2

JDK: Java 8

**Steps to reproduce:**

1. Create a tenant

2. Create a user in that tenant

3. Update first name -> http://wso2.org/claims/givenname, last name -> http://wso2.org/claims/lastname, email -> http://wso2.org/claims/emailaddress claims of that user

4. Create an oauth application

5. Configure claims section as below.

6. Make sure above claims are available for OIDC scopes as below.

given_name (mapped to http://wso2.org/claims/givenname) in openid scope

email (mapped to http://wso2.org/claims/lastname) only in email scope

family_name (mapped to http://wso2.org/claims/lastname) only in profile scope

gender (mapped to http://wso2.org/claims/gender)in openid scope

7. Initiate a request with OIDC Hybrid flow for response type **code id_token** only for openid scope

https://localhost:9443/oauth2/authorize?response_type=code id_token&client_id=xxx&nonce=asd&redirect_uri=http://localhost:8080/playground2/oauth2client&scope=openid

8. Note that only sub claim is returned in id token. Both given-name and sub expected.

9. Now initiate a token request for the code received. It returns only sub in id token. It should also return given-name

10. Perform step 7, 8 and 9 to openid email, openid email profile scope and note non responds with claims in requested scopes | priority | claims not received in id token in response or in id token for the code returned via code id token oidc hybrid flow environment db jdk java steps to reproduce create a tenant create a user in that tenant update first name last name email claims of that user create an oauth application configure claims section as below make sure above claims are available for oidc scopes as below given name mapped to in openid scope email mapped to only in email scope family name mapped to only in profile scope gender mapped to openid scope initiate a request with oidc hybrid flow for response type code id token only for openid scope id token client id xxx nonce asd redirect uri note that only sub claim is returned in id token both given name and sub expected now initiate a token request for the code received it returns only sub in id token it should also return given name perform step and to openid email openid email profile scope and note non responds with claims in requested scopes | 1 |

526,942 | 15,305,334,570 | IssuesEvent | 2021-02-24 17:59:23 | netlify/explorers | https://api.github.com/repos/netlify/explorers | closed | Sends `mission-complete` data to activity table | gamification mscw: must priority: high to pair on type: dev | Picking up from #471 / #468

- use [missionComplete work in modalCongrats in stage.js](https://github.com/netlify/explorers/blob/58efe9fcd72d600639f1d9caf72c32932bee0272/src/pages/learn/%5Bmission%5D/%5Bstage%5D.js#L150) as a reference to send mission complete to activity table | 1.0 | Sends `mission-complete` data to activity table - Picking up from #471 / #468

- use [missionComplete work in modalCongrats in stage.js](https://github.com/netlify/explorers/blob/58efe9fcd72d600639f1d9caf72c32932bee0272/src/pages/learn/%5Bmission%5D/%5Bstage%5D.js#L150) as a reference to send mission complete to activity table | priority | sends mission complete data to activity table picking up from use as a reference to send mission complete to activity table | 1 |

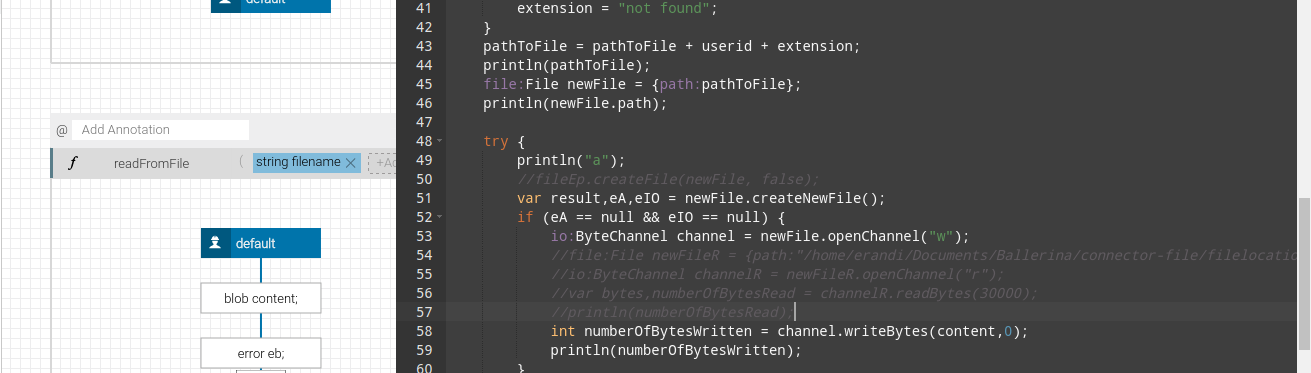

775,158 | 27,221,264,834 | IssuesEvent | 2023-02-21 05:39:49 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Handle keywords used as identifiers such as func-names | Type/Improvement Priority/High Team/CompilerFE Area/Parser | **Description:**

$subject.

Examples are `start()`, `schedular.start()`, `client->continue()`, `x.map()`

| 1.0 | Handle keywords used as identifiers such as func-names - **Description:**

$subject.

Examples are `start()`, `schedular.start()`, `client->continue()`, `x.map()`

| priority | handle keywords used as identifiers such as func names description subject examples are start schedular start client continue x map | 1 |

275,993 | 8,583,025,741 | IssuesEvent | 2018-11-13 18:34:22 | Qiskit/qiskit-terra | https://api.github.com/repos/Qiskit/qiskit-terra | closed | The Qobj concept has gotten overly complicated. | priority: high type: discussion | I feel the qobj has become larger than what it was intended to be. I want it to be a simple serialization of a list of circuits (an object that is dump that is sent to the api and decoded after). I don't care if it is JSON, binary or email :-)

Basically, it should not exists until we run

```

dags_2_qobj (or circuits_2_qobj as in #1083)

```

and then I don't mind what it is but it does not include results or a results object. It is run on a backend

using

```

job = backend.run(qobj)

```

and then it no longer is used and its flow has ended. The Result object (which is a different folder or module) is made by

```

result = job.result()

```

So my questions are why do we have the _results in the qobj folder. It should not and if we want to have an internal object that handles what is returned by the backend then it lives in the Result folder and used by the result object. I don't want to think of qobj as the new object that handles the API. It is only the input.

---

I see that we have functions for converting to the old version. Why do we have these? I see the need in the future when we qobj v1 to qobj v2 we should have a conversion, but why can't we, for now, have these as part of the run method in the backend. ie hidden from qiskit terra in the ibm_provider

---

other things with qobj

1. validate against schema

```python

qobj_schema = json.load("schemas/qobj_schema.json") # qiskit defines this

backend_qobj_schema = backend.schema() # backend defines this. qiskit gets it via API call

jsonschema.validate(qobj_to_json(qobj), qobj_schema)

jsonschema.validate(qobj_to_json(qobj), backend_qobj_schema)

```

2. convert to circuits

```python

circuits = qobj_to_circuits(qobj)

```

3. convert between versions. Say we make the version 2 and the backend is version 1 where does this happen. I feel this should happen in the dags_2_qobj as qobj is lossy and the backend just uses the schema to check the version it supports. If we need to have some utility functions like qobj1_to_qobj2 etc for versions that we can update then we can have them in tools.

4. run smart validation see #1057 | 1.0 | The Qobj concept has gotten overly complicated. - I feel the qobj has become larger than what it was intended to be. I want it to be a simple serialization of a list of circuits (an object that is dump that is sent to the api and decoded after). I don't care if it is JSON, binary or email :-)

Basically, it should not exists until we run

```

dags_2_qobj (or circuits_2_qobj as in #1083)

```

and then I don't mind what it is but it does not include results or a results object. It is run on a backend

using

```

job = backend.run(qobj)

```

and then it no longer is used and its flow has ended. The Result object (which is a different folder or module) is made by

```

result = job.result()

```

So my questions are why do we have the _results in the qobj folder. It should not and if we want to have an internal object that handles what is returned by the backend then it lives in the Result folder and used by the result object. I don't want to think of qobj as the new object that handles the API. It is only the input.

---

I see that we have functions for converting to the old version. Why do we have these? I see the need in the future when we qobj v1 to qobj v2 we should have a conversion, but why can't we, for now, have these as part of the run method in the backend. ie hidden from qiskit terra in the ibm_provider

---

other things with qobj

1. validate against schema

```python

qobj_schema = json.load("schemas/qobj_schema.json") # qiskit defines this

backend_qobj_schema = backend.schema() # backend defines this. qiskit gets it via API call

jsonschema.validate(qobj_to_json(qobj), qobj_schema)

jsonschema.validate(qobj_to_json(qobj), backend_qobj_schema)

```

2. convert to circuits

```python

circuits = qobj_to_circuits(qobj)

```

3. convert between versions. Say we make the version 2 and the backend is version 1 where does this happen. I feel this should happen in the dags_2_qobj as qobj is lossy and the backend just uses the schema to check the version it supports. If we need to have some utility functions like qobj1_to_qobj2 etc for versions that we can update then we can have them in tools.

4. run smart validation see #1057 | priority | the qobj concept has gotten overly complicated i feel the qobj has become larger than what it was intended to be i want it to be a simple serialization of a list of circuits an object that is dump that is sent to the api and decoded after i don t care if it is json binary or email basically it should not exists until we run dags qobj or circuits qobj as in and then i don t mind what it is but it does not include results or a results object it is run on a backend using job backend run qobj and then it no longer is used and its flow has ended the result object which is a different folder or module is made by result job result so my questions are why do we have the results in the qobj folder it should not and if we want to have an internal object that handles what is returned by the backend then it lives in the result folder and used by the result object i don t want to think of qobj as the new object that handles the api it is only the input i see that we have functions for converting to the old version why do we have these i see the need in the future when we qobj to qobj we should have a conversion but why can t we for now have these as part of the run method in the backend ie hidden from qiskit terra in the ibm provider other things with qobj validate against schema python qobj schema json load schemas qobj schema json qiskit defines this backend qobj schema backend schema backend defines this qiskit gets it via api call jsonschema validate qobj to json qobj qobj schema jsonschema validate qobj to json qobj backend qobj schema convert to circuits python circuits qobj to circuits qobj convert between versions say we make the version and the backend is version where does this happen i feel this should happen in the dags qobj as qobj is lossy and the backend just uses the schema to check the version it supports if we need to have some utility functions like to etc for versions that we can update then we can have them in 
tools run smart validation see | 1 |

466,757 | 13,433,176,817 | IssuesEvent | 2020-09-07 09:27:27 | chubaofs/chubaofs | https://api.github.com/repos/chubaofs/chubaofs | closed | Refactor and enhance node offline process for DataNode and Metanode. | enhancement priority/high | The current offline logic for DataNode and MetaNode relies on Raft algorithm and logic, which introduces the following problems:

1. When rejoining the partition that was removed, if Raft has a low number of logs, Raft will copy the logs and replay the logs to restore the partition data on the new node. The logs from which the partition was removed will be applied, causing problems with changes to the partition's replica members.

2. When the partition copy group has no leader, the partition copy members cannot be adjusted and the node cannot be taken offline.

It is necessary to adjust the offline logic of DataNode and MetaNode. When processing the logic of adjusting the members of the partition copy, it does not go through or rely on Raft related mechanisms. These adjustments may require proper reconstruction of the Raft module used by ChubaoFS. | 1.0 | Refactor and enhance node offline process for DataNode and Metanode. - The current offline logic for DataNode and MetaNode relies on Raft algorithm and logic, which introduces the following problems:

1. When rejoining the partition that was removed, if Raft has a low number of logs, Raft will copy the logs and replay the logs to restore the partition data on the new node. The logs from which the partition was removed will be applied, causing problems with changes to the partition's replica members.

2. When the partition copy group has no leader, the partition copy members cannot be adjusted and the node cannot be taken offline.

It is necessary to adjust the offline logic of DataNode and MetaNode. When processing the logic of adjusting the members of the partition copy, it does not go through or rely on Raft related mechanisms. These adjustments may require proper reconstruction of the Raft module used by ChubaoFS. | priority | refactor and enhance node offline process for datanode and metanode the current offline logic for datanode and metanode relies on raft algorithm and logic which introduces the following problems when rejoining the partition that was removed if raft has a low number of logs raft will copy the logs and replay the logs to restore the partition data on the new node the logs from which the partition was removed will be applied causing problems with changes to the partition s replica members when the partition copy group has no leader the partition copy members cannot be adjusted and the node cannot be taken offline it is necessary to adjust the offline logic of datanode and metanode when processing the logic of adjusting the members of the partition copy it does not go through or rely on raft related mechanisms these adjustments may require proper reconstruction of the raft module used by chubaofs | 1 |

444,084 | 12,806,167,768 | IssuesEvent | 2020-07-03 08:56:52 | sosy-lab/benchexec | https://api.github.com/repos/sosy-lab/benchexec | closed | Show expected verdict in HTML table | HTML table enhancement high priority | When expected verdicts where encoded in the file name, they were visible in all tables automatically. Now this is no longer the case, so we should show them explicitly (at least optionally).

Possibilities include:

- an extra column between task name and run results (where we also show the specification if necessary)

- coloring the task name and/or specification according to the expected result (could be confused with correct/wrong, though)

- some other visual indicator like an icon (would need less space than a text column) | 1.0 | Show expected verdict in HTML table - When expected verdicts where encoded in the file name, they were visible in all tables automatically. Now this is no longer the case, so we should show them explicitly (at least optionally).

Possibilities include:

- an extra column between task name and run results (where we also show the specification if necessary)

- coloring the task name and/or specification according to the expected result (could be confused with correct/wrong, though)

- some other visual indicator like an icon (would need less space than a text column) | priority | show expected verdict in html table when expected verdicts where encoded in the file name they were visible in all tables automatically now this is no longer the case so we should show them explicitly at least optionally possibilities include an extra column between task name and run results where we also show the specification if necessary coloring the task name and or specification according to the expected result could be confused with correct wrong though some other visual indicator like an icon would need less space than a text column | 1 |

114,152 | 4,614,848,144 | IssuesEvent | 2016-09-25 20:07:59 | c0gent/NeverClicker | https://api.github.com/repos/c0gent/NeverClicker | opened | Mouse clicking outside of client window | bug priority high | Copy/Pasted from @zeusome's https://github.com/c0gent/NeverClicker/issues/11:

>Issue: For some reason, I had an issue where Neverclicker went out of Neverwinter Window and the mouse cursor was moving around/clicking things on my desktop. I returned to PC and several desktop apps had been opened. Will monitor this problem and provide more info when available. | 1.0 | Mouse clicking outside of client window - Copy/Pasted from @zeusome's https://github.com/c0gent/NeverClicker/issues/11:

>Issue: For some reason, I had an issue where Neverclicker went out of Neverwinter Window and the mouse cursor was moving around/clicking things on my desktop. I returned to PC and several desktop apps had been opened. Will monitor this problem and provide more info when available. | priority | mouse clicking outside of client window copy pasted from zeusome s issue for some reason i had an issue where neverclicker went out of neverwinter window and the mouse cursor was moving around clicking things on my desktop i returned to pc and several desktop apps had been opened will monitor this problem and provide more info when available | 1 |

450,509 | 13,012,627,925 | IssuesEvent | 2020-07-25 06:50:19 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | reopened | Learndash changing the Edit page links in admin bar to homepage instead of groups page | bug priority: high | **Describe the bug**

Groups edit page in front end redirects to Homepage

**To Reproduce**

Steps to reproduce the behavior:

Issue can be replicated in Demo

1. Go to Groups page (frontend)

2. Click on Edit Page

3. See error

**Expected behavior**

When editing Groups page, it should not redirect to Homepage edit

**Screenshots**

https://drive.google.com/file/d/1W9XNhXcswICRddM7ruLi8LY2I7bgfiGp/view?usp=sharing

**Support ticket links**

https://secure.helpscout.net/conversation/1215499495/81761 | 1.0 | Learndash changing the Edit page links in admin bar to homepage instead of groups page - **Describe the bug**

Groups edit page in front end redirects to Homepage

**To Reproduce**

Steps to reproduce the behavior:

Issue can be replicated in Demo

1. Go to Groups page (frontend)

2. Click on Edit Page

3. See error

**Expected behavior**

When editing Groups page, it should not redirect to Homepage edit

**Screenshots**

https://drive.google.com/file/d/1W9XNhXcswICRddM7ruLi8LY2I7bgfiGp/view?usp=sharing

**Support ticket links**

https://secure.helpscout.net/conversation/1215499495/81761 | priority | learndash changing the edit page links in admin bar to homepage instead of groups page describe the bug groups edit page in front end redirects to homepage to reproduce steps to reproduce the behavior issue can be replicated in demo go to groups page frontend click on edit page see error expected behavior when editing groups page it should not redirect to homepage edit screenshots support ticket links | 1 |

59,459 | 3,113,700,151 | IssuesEvent | 2015-09-03 01:29:41 | cs2103aug2015-t10-1j/main | https://api.github.com/repos/cs2103aug2015-t10-1j/main | opened | A user can add a deadline | priority.high type.story | ... so that the user can get a reminded at a specific time before the deadline to keep track of things. | 1.0 | A user can add a deadline - ... so that the user can get a reminded at a specific time before the deadline to keep track of things. | priority | a user can add a deadline so that the user can get a reminded at a specific time before the deadline to keep track of things | 1 |

85,051 | 3,684,268,050 | IssuesEvent | 2016-02-24 16:47:44 | Aurorastation/Aurora.3 | https://api.github.com/repos/Aurorastation/Aurora.3 | closed | Round start borgs not slaved to malf AI | Bug High Priority | When a roundstart borg had their job preference to to AI high and are assigned as a borg for a fallback job they don't appear to be slaved to the AI in the malf AI gametype.

This can lead to the malf AI being at a huge disadvantage, as borgs are more likely to notice hacked APCs, weird AI behaviour and can see the binary channel, one of the only private ways for the malf AI to comunicate with its slaved borgs that join midround. | 1.0 | Round start borgs not slaved to malf AI - When a roundstart borg had their job preference to to AI high and are assigned as a borg for a fallback job they don't appear to be slaved to the AI in the malf AI gametype.

This can lead to the malf AI being at a huge disadvantage, as borgs are more likely to notice hacked APCs, weird AI behaviour and can see the binary channel, one of the only private ways for the malf AI to comunicate with its slaved borgs that join midround. | priority | round start borgs not slaved to malf ai when a roundstart borg had their job preference to to ai high and are assigned as a borg for a fallback job they don t appear to be slaved to the ai in the malf ai gametype this can lead to the malf ai being at a huge disadvantage as borgs are more likely to notice hacked apcs weird ai behaviour and can see the binary channel one of the only private ways for the malf ai to comunicate with its slaved borgs that join midround | 1 |

826,904 | 31,717,321,937 | IssuesEvent | 2023-09-10 02:02:51 | Ottatop/pinnacle | https://api.github.com/repos/Ottatop/pinnacle | closed | Freezing when opening a bunch of Alacritty windows at once | bug high priority | Opening a bunch of Alacritty windows quickly will freeze the screen. This probably also happens with other windows. | 1.0 | Freezing when opening a bunch of Alacritty windows at once - Opening a bunch of Alacritty windows quickly will freeze the screen. This probably also happens with other windows. | priority | freezing when opening a bunch of alacritty windows at once opening a bunch of alacritty windows quickly will freeze the screen this probably also happens with other windows | 1 |

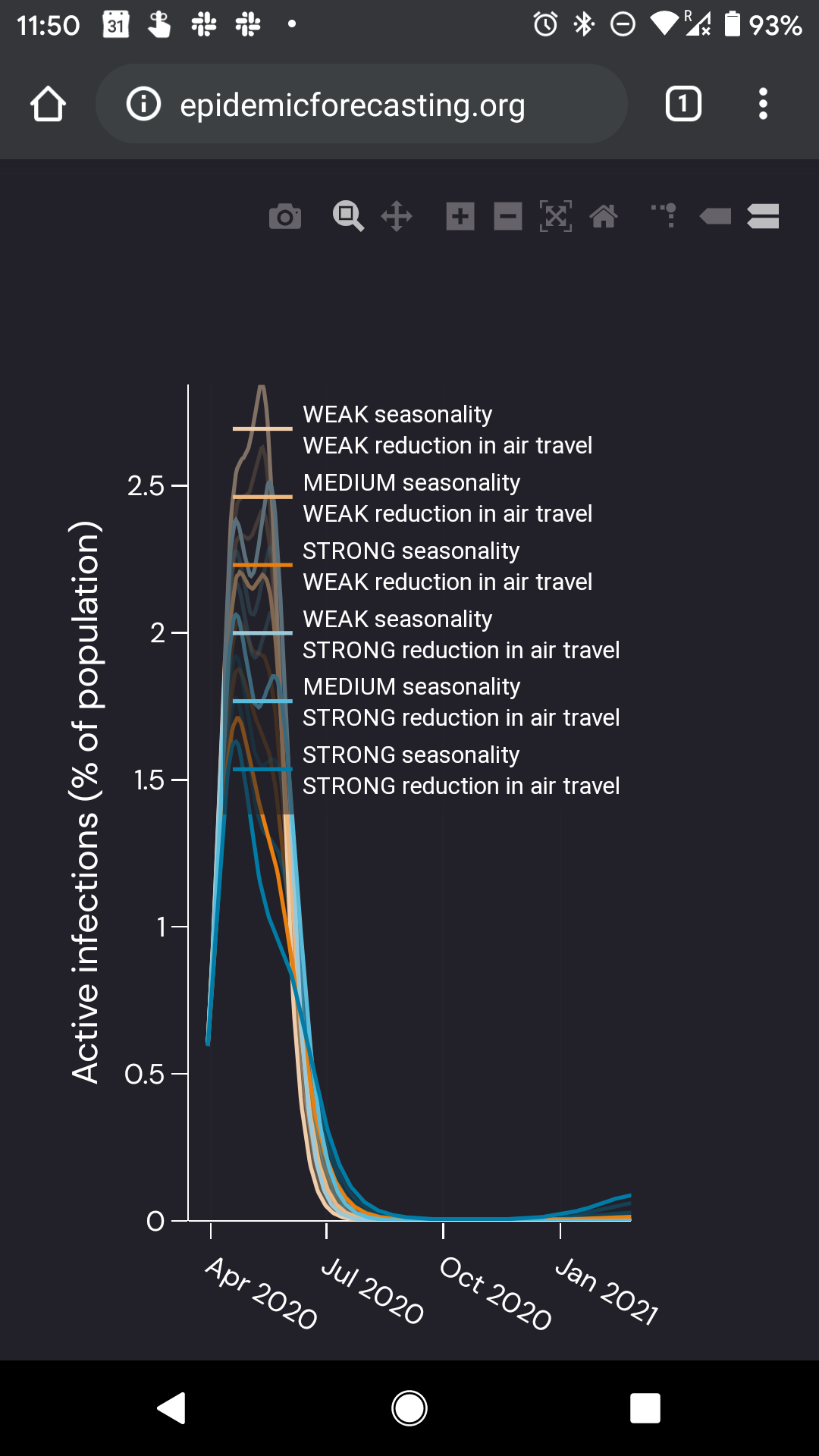

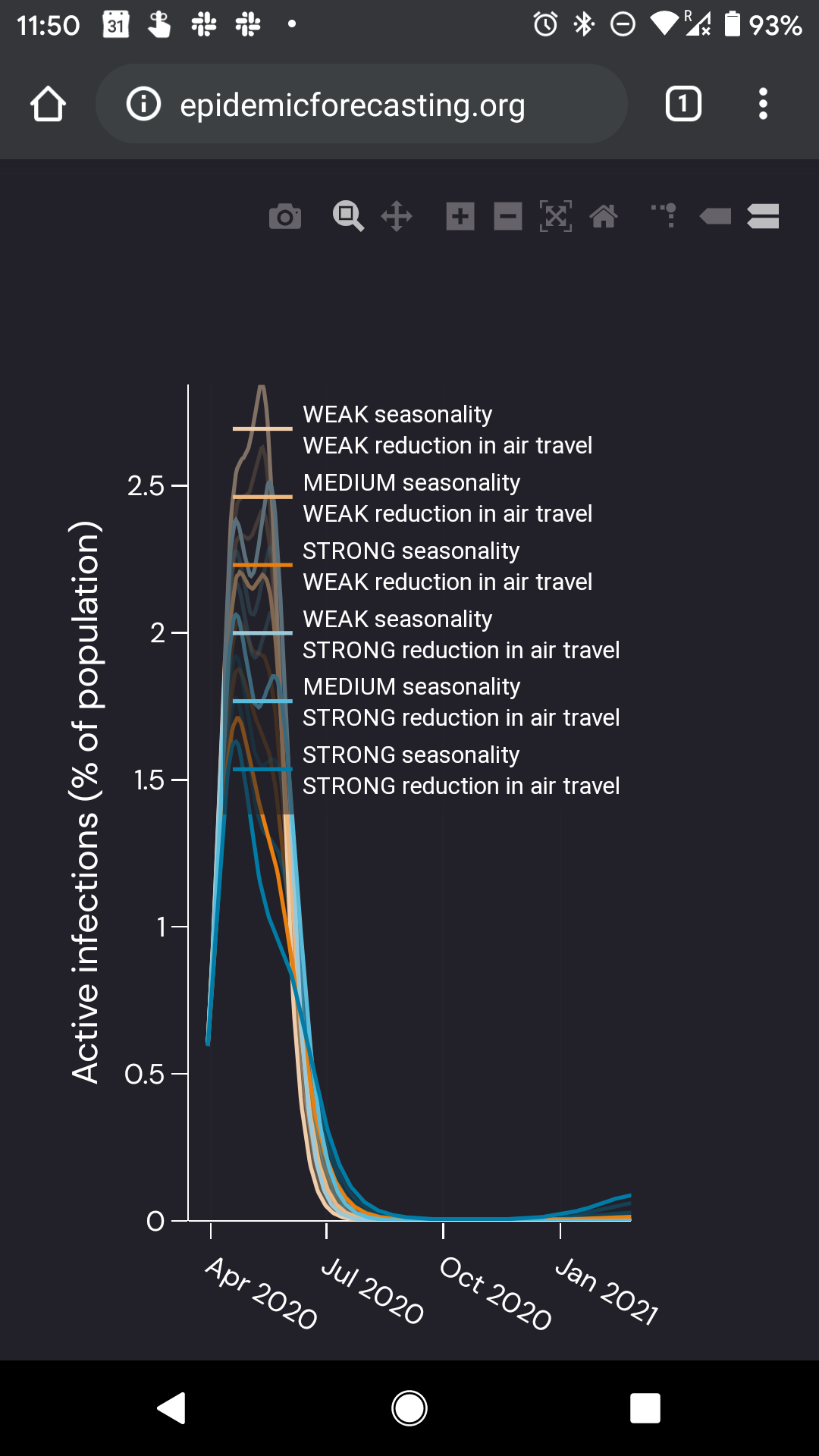

407,026 | 11,905,658,468 | IssuesEvent | 2020-03-30 18:57:31 | epidemics/covid | https://api.github.com/repos/epidemics/covid | opened | Smaller/no graph legend on mobile | high-priority | 60% of our users are on mobile, so it's important we support this use case.

However, currently the legend hides a lot of the graph:

I don't know how to solve this, but we should do _something_ about it. | 1.0 | Smaller/no graph legend on mobile - 60% of our users are on mobile, so it's important we support this use case.

However, currently the legend hides a lot of the graph:

I don't know how to solve this, but we should do _something_ about it. | priority | smaller no graph legend on mobile of our users are on mobile so it s important we support this use case however currently the legend hides a lot of the graph i don t know how to solve this but we should do something about it | 1 |

522,270 | 15,158,288,837 | IssuesEvent | 2021-02-12 00:46:45 | NOAA-GSL/MATS | https://api.github.com/repos/NOAA-GSL/MATS | closed | Single/multi-station plots display data an hour too early | Priority: High Project: MATS Status: Closed Type: Bug | ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 71103, https://vlab.ncep.noaa.gov/redmine/issues/71103

Original Date: 2019-11-08

Original Assignee: molly.b.smith

---

Timeseries plots of station data seem to be shifted an hour earlier than they should be.

| 1.0 | Single/multi-station plots display data an hour too early - ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 71103, https://vlab.ncep.noaa.gov/redmine/issues/71103

Original Date: 2019-11-08

Original Assignee: molly.b.smith

---

Timeseries plots of station data seem to be shifted an hour earlier than they should be.

| priority | single multi station plots display data an hour too early author name molly b smith mollybsmith noaa original redmine issue original date original assignee molly b smith timeseries plots of station data seem to be shifted an hour earlier than they should be | 1 |

606,049 | 18,753,971,881 | IssuesEvent | 2021-11-05 08:15:25 | AY2122S1-CS2113T-F14-1/tp | https://api.github.com/repos/AY2122S1-CS2113T-F14-1/tp | closed | [PE-D] Unhandled exception during edit of habits | priority.High | Upon changing of habit's interval to -1. Program crashes.

<!--session: 1635497040570-46ef78bd-b586-4c38-806b-ab369c91a526-->

<!--Version: Web v3.4.1-->

-------------

Labels: `type.FunctionalityBug` `severity.High`

original: andrewtkh1/ped#5 | 1.0 | [PE-D] Unhandled exception during edit of habits - Upon changing of habit's interval to -1. Program crashes.

<!--session: 1635497040570-46ef78bd-b586-4c38-806b-ab369c91a526-->

<!--Version: Web v3.4.1-->

-------------

Labels: `type.FunctionalityBug` `severity.High`

original: andrewtkh1/ped#5 | priority | unhandled exception during edit of habits upon changing of habit s interval to program crashes labels type functionalitybug severity high original ped | 1 |

115,305 | 4,662,924,288 | IssuesEvent | 2016-10-05 07:08:23 | CovertJaguar/Railcraft | https://api.github.com/repos/CovertJaguar/Railcraft | closed | Broken Forestry Module | bug priority-high | ```

org.apache.logging.log4j.core.appender.AppenderLoggingException: An exception occurred processing Appender ServerGuiConsole

at org.apache.logging.log4j.core.appender.DefaultErrorHandler.error(DefaultErrorHandler.java:73)

at org.apache.logging.log4j.core.config.AppenderControl.callAppender(AppenderControl.java:101)

at org.apache.logging.log4j.core.config.LoggerConfig.callAppenders(LoggerConfig.java:425)

at org.apache.logging.log4j.core.config.LoggerConfig.log(LoggerConfig.java:406)

at org.apache.logging.log4j.core.config.LoggerConfig.log(LoggerConfig.java:367)

at org.apache.logging.log4j.core.Logger.log(Logger.java:110)

at org.apache.logging.log4j.spi.AbstractLogger.log(AbstractLogger.java:1362)

at mods.railcraft.common.util.misc.Game.log(Game.java:80)

at mods.railcraft.common.util.misc.Game.log(Game.java:76)

at mods.railcraft.common.util.misc.Game.logThrowable(Game.java:115)

at mods.railcraft.common.util.misc.Game.logErrorAPI(Game.java:129)

at mods.railcraft.common.plugins.forestry.ForestryPlugin$ForestryPluginInstalled.addCarpenterRecipe(ForestryPlugin.java:294)

at mods.railcraft.common.modules.ModuleForestry$1.postInit(ModuleForestry.java:52)

at mods.railcraft.common.modules.RailcraftModulePayload$BaseModuleEventHandler.postInit(RailcraftModulePayload.java:82)

at mods.railcraft.common.modules.RailcraftModuleManager$Stage$4.passToModule(RailcraftModuleManager.java:278)

at mods.railcraft.common.modules.RailcraftModuleManager.processStage(RailcraftModuleManager.java:223)

at mods.railcraft.common.modules.RailcraftModuleManager.postInit(RailcraftModuleManager.java:210)

at mods.railcraft.common.core.Railcraft.postInit(Railcraft.java:191)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at net.minecraftforge.fml.common.FMLModContainer.handleModStateEvent(FMLModContainer.java:597)

at sun.reflect.GeneratedMethodAccessor10.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at com.google.common.eventbus.EventSubscriber.handleEvent(EventSubscriber.java:74)

at com.google.common.eventbus.SynchronizedEventSubscriber.handleEvent(SynchronizedEventSubscriber.java:47)

at com.google.common.eventbus.EventBus.dispatch(EventBus.java:322)

at com.google.common.eventbus.EventBus.dispatchQueuedEvents(EventBus.java:304)

at com.google.common.eventbus.EventBus.post(EventBus.java:275)

at net.minecraftforge.fml.common.LoadController.sendEventToModContainer(LoadController.java:239)

at net.minecraftforge.fml.common.LoadController.propogateStateMessage(LoadController.java:217)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at com.google.common.eventbus.EventSubscriber.handleEvent(EventSubscriber.java:74)

at com.google.common.eventbus.SynchronizedEventSubscriber.handleEvent(SynchronizedEventSubscriber.java:47)

at com.google.common.eventbus.EventBus.dispatch(EventBus.java:322)

at com.google.common.eventbus.EventBus.dispatchQueuedEvents(EventBus.java:304)

at com.google.common.eventbus.EventBus.post(EventBus.java:275)

at net.minecraftforge.fml.common.LoadController.distributeStateMessage(LoadController.java:142)

at net.minecraftforge.fml.common.Loader.initializeMods(Loader.java:795)

at net.minecraftforge.fml.server.FMLServerHandler.finishServerLoading(FMLServerHandler.java:107)

at net.minecraftforge.fml.common.FMLCommonHandler.onServerStarted(FMLCommonHandler.java:333)

at net.minecraft.server.dedicated.DedicatedServer.func_71197_b(DedicatedServer.java:214)

at net.minecraft.server.MinecraftServer.run(MinecraftServer.java:431)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.IllegalArgumentException: can't parse argument number: concrete

at java.text.MessageFormat.makeFormat(MessageFormat.java:1429)

at java.text.MessageFormat.applyPattern(MessageFormat.java:479)

at java.text.MessageFormat.<init>(MessageFormat.java:362)

at java.text.MessageFormat.format(MessageFormat.java:840)

at org.apache.logging.log4j.message.MessageFormatMessage.formatMessage(MessageFormatMessage.java:89)

at org.apache.logging.log4j.message.MessageFormatMessage.getFormattedMessage(MessageFormatMessage.java:61)

at org.apache.logging.log4j.core.pattern.MessagePatternConverter.format(MessagePatternConverter.java:68)

at org.apache.logging.log4j.core.pattern.PatternFormatter.format(PatternFormatter.java:36)

at org.apache.logging.log4j.core.pattern.RegexReplacementConverter.format(RegexReplacementConverter.java:93)

at org.apache.logging.log4j.core.pattern.PatternFormatter.format(PatternFormatter.java:36)

at org.apache.logging.log4j.core.layout.PatternLayout.toSerializable(PatternLayout.java:167)

at org.apache.logging.log4j.core.layout.PatternLayout.toSerializable(PatternLayout.java:52)

at com.mojang.util.QueueLogAppender.append(QueueLogAppender.java:39)

at org.apache.logging.log4j.core.config.AppenderControl.callAppender(AppenderControl.java:99)

... 47 more

Caused by: java.lang.NumberFormatException: For input string: "concrete"

at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.lang.Integer.parseInt(Integer.java:580)

at java.lang.Integer.parseInt(Integer.java:615)

at java.text.MessageFormat.makeFormat(MessageFormat.java:1427)

... 60 more

``` | 1.0 | Broken Forestry Module - ```

org.apache.logging.log4j.core.appender.AppenderLoggingException: An exception occurred processing Appender ServerGuiConsole

at org.apache.logging.log4j.core.appender.DefaultErrorHandler.error(DefaultErrorHandler.java:73)

at org.apache.logging.log4j.core.config.AppenderControl.callAppender(AppenderControl.java:101)

at org.apache.logging.log4j.core.config.LoggerConfig.callAppenders(LoggerConfig.java:425)

at org.apache.logging.log4j.core.config.LoggerConfig.log(LoggerConfig.java:406)

at org.apache.logging.log4j.core.config.LoggerConfig.log(LoggerConfig.java:367)

at org.apache.logging.log4j.core.Logger.log(Logger.java:110)

at org.apache.logging.log4j.spi.AbstractLogger.log(AbstractLogger.java:1362)

at mods.railcraft.common.util.misc.Game.log(Game.java:80)

at mods.railcraft.common.util.misc.Game.log(Game.java:76)

at mods.railcraft.common.util.misc.Game.logThrowable(Game.java:115)

at mods.railcraft.common.util.misc.Game.logErrorAPI(Game.java:129)

at mods.railcraft.common.plugins.forestry.ForestryPlugin$ForestryPluginInstalled.addCarpenterRecipe(ForestryPlugin.java:294)

at mods.railcraft.common.modules.ModuleForestry$1.postInit(ModuleForestry.java:52)

at mods.railcraft.common.modules.RailcraftModulePayload$BaseModuleEventHandler.postInit(RailcraftModulePayload.java:82)

at mods.railcraft.common.modules.RailcraftModuleManager$Stage$4.passToModule(RailcraftModuleManager.java:278)

at mods.railcraft.common.modules.RailcraftModuleManager.processStage(RailcraftModuleManager.java:223)

at mods.railcraft.common.modules.RailcraftModuleManager.postInit(RailcraftModuleManager.java:210)

at mods.railcraft.common.core.Railcraft.postInit(Railcraft.java:191)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at net.minecraftforge.fml.common.FMLModContainer.handleModStateEvent(FMLModContainer.java:597)

at sun.reflect.GeneratedMethodAccessor10.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at com.google.common.eventbus.EventSubscriber.handleEvent(EventSubscriber.java:74)

at com.google.common.eventbus.SynchronizedEventSubscriber.handleEvent(SynchronizedEventSubscriber.java:47)

at com.google.common.eventbus.EventBus.dispatch(EventBus.java:322)

at com.google.common.eventbus.EventBus.dispatchQueuedEvents(EventBus.java:304)

at com.google.common.eventbus.EventBus.post(EventBus.java:275)

at net.minecraftforge.fml.common.LoadController.sendEventToModContainer(LoadController.java:239)

at net.minecraftforge.fml.common.LoadController.propogateStateMessage(LoadController.java:217)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at com.google.common.eventbus.EventSubscriber.handleEvent(EventSubscriber.java:74)

at com.google.common.eventbus.SynchronizedEventSubscriber.handleEvent(SynchronizedEventSubscriber.java:47)

at com.google.common.eventbus.EventBus.dispatch(EventBus.java:322)

at com.google.common.eventbus.EventBus.dispatchQueuedEvents(EventBus.java:304)

at com.google.common.eventbus.EventBus.post(EventBus.java:275)

at net.minecraftforge.fml.common.LoadController.distributeStateMessage(LoadController.java:142)

at net.minecraftforge.fml.common.Loader.initializeMods(Loader.java:795)

at net.minecraftforge.fml.server.FMLServerHandler.finishServerLoading(FMLServerHandler.java:107)

at net.minecraftforge.fml.common.FMLCommonHandler.onServerStarted(FMLCommonHandler.java:333)

at net.minecraft.server.dedicated.DedicatedServer.func_71197_b(DedicatedServer.java:214)

at net.minecraft.server.MinecraftServer.run(MinecraftServer.java:431)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.IllegalArgumentException: can't parse argument number: concrete

at java.text.MessageFormat.makeFormat(MessageFormat.java:1429)

at java.text.MessageFormat.applyPattern(MessageFormat.java:479)

at java.text.MessageFormat.<init>(MessageFormat.java:362)

at java.text.MessageFormat.format(MessageFormat.java:840)

at org.apache.logging.log4j.message.MessageFormatMessage.formatMessage(MessageFormatMessage.java:89)

at org.apache.logging.log4j.message.MessageFormatMessage.getFormattedMessage(MessageFormatMessage.java:61)

at org.apache.logging.log4j.core.pattern.MessagePatternConverter.format(MessagePatternConverter.java:68)

at org.apache.logging.log4j.core.pattern.PatternFormatter.format(PatternFormatter.java:36)

at org.apache.logging.log4j.core.pattern.RegexReplacementConverter.format(RegexReplacementConverter.java:93)

at org.apache.logging.log4j.core.pattern.PatternFormatter.format(PatternFormatter.java:36)

at org.apache.logging.log4j.core.layout.PatternLayout.toSerializable(PatternLayout.java:167)

at org.apache.logging.log4j.core.layout.PatternLayout.toSerializable(PatternLayout.java:52)

at com.mojang.util.QueueLogAppender.append(QueueLogAppender.java:39)

at org.apache.logging.log4j.core.config.AppenderControl.callAppender(AppenderControl.java:99)

... 47 more

Caused by: java.lang.NumberFormatException: For input string: "concrete"

at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.lang.Integer.parseInt(Integer.java:580)

at java.lang.Integer.parseInt(Integer.java:615)

at java.text.MessageFormat.makeFormat(MessageFormat.java:1427)

... 60 more

``` | priority | broken forestry module org apache logging core appender appenderloggingexception an exception occurred processing appender serverguiconsole at org apache logging core appender defaulterrorhandler error defaulterrorhandler java at org apache logging core config appendercontrol callappender appendercontrol java at org apache logging core config loggerconfig callappenders loggerconfig java at org apache logging core config loggerconfig log loggerconfig java at org apache logging core config loggerconfig log loggerconfig java at org apache logging core logger log logger java at org apache logging spi abstractlogger log abstractlogger java at mods railcraft common util misc game log game java at mods railcraft common util misc game log game java at mods railcraft common util misc game logthrowable game java at mods railcraft common util misc game logerrorapi game java at mods railcraft common plugins forestry forestryplugin forestryplugininstalled addcarpenterrecipe forestryplugin java at mods railcraft common modules moduleforestry postinit moduleforestry java at mods railcraft common modules railcraftmodulepayload basemoduleeventhandler postinit railcraftmodulepayload java at mods railcraft common modules railcraftmodulemanager stage passtomodule railcraftmodulemanager java at mods railcraft common modules railcraftmodulemanager processstage railcraftmodulemanager java at mods railcraft common modules railcraftmodulemanager postinit railcraftmodulemanager java at mods railcraft common core railcraft postinit railcraft java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at net minecraftforge fml common fmlmodcontainer handlemodstateevent fmlmodcontainer java at sun reflect invoke unknown source at sun reflect delegatingmethodaccessorimpl 
invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at com google common eventbus eventsubscriber handleevent eventsubscriber java at com google common eventbus synchronizedeventsubscriber handleevent synchronizedeventsubscriber java at com google common eventbus eventbus dispatch eventbus java at com google common eventbus eventbus dispatchqueuedevents eventbus java at com google common eventbus eventbus post eventbus java at net minecraftforge fml common loadcontroller sendeventtomodcontainer loadcontroller java at net minecraftforge fml common loadcontroller propogatestatemessage loadcontroller java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at com google common eventbus eventsubscriber handleevent eventsubscriber java at com google common eventbus synchronizedeventsubscriber handleevent synchronizedeventsubscriber java at com google common eventbus eventbus dispatch eventbus java at com google common eventbus eventbus dispatchqueuedevents eventbus java at com google common eventbus eventbus post eventbus java at net minecraftforge fml common loadcontroller distributestatemessage loadcontroller java at net minecraftforge fml common loader initializemods loader java at net minecraftforge fml server fmlserverhandler finishserverloading fmlserverhandler java at net minecraftforge fml common fmlcommonhandler onserverstarted fmlcommonhandler java at net minecraft server dedicated dedicatedserver func b dedicatedserver java at net minecraft server minecraftserver run minecraftserver java at java lang thread run thread java caused by java lang illegalargumentexception can t parse argument number concrete at java text messageformat makeformat messageformat java at java text messageformat applypattern messageformat java 
at java text messageformat messageformat java at java text messageformat format messageformat java at org apache logging message messageformatmessage formatmessage messageformatmessage java at org apache logging message messageformatmessage getformattedmessage messageformatmessage java at org apache logging core pattern messagepatternconverter format messagepatternconverter java at org apache logging core pattern patternformatter format patternformatter java at org apache logging core pattern regexreplacementconverter format regexreplacementconverter java at org apache logging core pattern patternformatter format patternformatter java at org apache logging core layout patternlayout toserializable patternlayout java at org apache logging core layout patternlayout toserializable patternlayout java at com mojang util queuelogappender append queuelogappender java at org apache logging core config appendercontrol callappender appendercontrol java more caused by java lang numberformatexception for input string concrete at java lang numberformatexception forinputstring numberformatexception java at java lang integer parseint integer java at java lang integer parseint integer java at java text messageformat makeformat messageformat java more | 1 |

695,217 | 23,849,169,551 | IssuesEvent | 2022-09-06 16:17:26 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | 'Upload PDF' button doesn't change the uploading status when selecting other entity | Bug :lady_beetle: Sprint Priority: High Frontend :sunglasses: | **Describe the bug**

The label of the 'Upload PDF' button keeps the status of the last executed action across entities, ie: After a fail uploading a document, 'Upload PDF' keeps with the "An error occurred" label when selecting another entity.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the Library

2. Add an invalid document to an existing entity (the 'Upload button' will show the label 'An error occurred')

3. Select another existing entity

4. The button remains with the label 'An error occurred'

**Expected behavior**

When selecting another entity the button should show its status, by default, the action to add a new document (the same in the case of selecting again the document with the failure?)

**Screenshots**

Entity with error

<img width="988" alt="Screen Shot 2022-08-16 at 11 49 18" src="https://user-images.githubusercontent.com/5322716/184935007-fba309e9-77cf-4815-bc68-c74bf02ebcf0.png">

Another entity

<img width="1024" alt="Screen Shot 2022-08-16 at 11 50 03" src="https://user-images.githubusercontent.com/5322716/184935023-ab57cdbe-827d-4329-ab18-6a6a8c504874.png">

**Device (please select all that apply)**

- [x] Desktop

- [x] Mobile

**Browser**

Firefox, Chrome

| 1.0 | 'Upload PDF' button doesn't change the uploading status when selecting other entity - **Describe the bug**

The label of the 'Upload PDF' button keeps the status of the last executed action across entities, ie: After a fail uploading a document, 'Upload PDF' keeps with the "An error occurred" label when selecting another entity.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the Library

2. Add an invalid document to an existing entity (the 'Upload button' will show the label 'An error occurred')

3. Select another existing entity

4. The button remains with the label 'An error occurred'

**Expected behavior**

When selecting another entity the button should show its status, by default, the action to add a new document (the same in the case of selecting again the document with the failure?)

**Screenshots**

Entity with error

<img width="988" alt="Screen Shot 2022-08-16 at 11 49 18" src="https://user-images.githubusercontent.com/5322716/184935007-fba309e9-77cf-4815-bc68-c74bf02ebcf0.png">

Another entity

<img width="1024" alt="Screen Shot 2022-08-16 at 11 50 03" src="https://user-images.githubusercontent.com/5322716/184935023-ab57cdbe-827d-4329-ab18-6a6a8c504874.png">

**Device (please select all that apply)**

- [x] Desktop

- [x] Mobile

**Browser**

Firefox, Chrome

| priority | upload pdf button doesn t change the uploading status when selecting other entity describe the bug the label of the upload pdf button keeps the status of the last executed action across entities ie after a fail uploading a document upload pdf keeps with the an error occurred label when selecting another entity to reproduce steps to reproduce the behavior go to the library add an invalid document to an existing entity the upload button will show the label an error occurred select another existing entity the button remains with the label an error occurred expected behavior when selecting another entity the button should show its status by default the action to add a new document the same in the case of selecting again the document with the failure screenshots entity with error img width alt screen shot at src another entity img width alt screen shot at src device please select all that apply desktop mobile browser firefox chrome | 1 |

466,250 | 13,398,987,017 | IssuesEvent | 2020-09-03 13:54:15 | protofire/omen-exchange | https://api.github.com/repos/protofire/omen-exchange | closed | 'My Markets' not displaying markets that a user has created | bug priority:high | I assume this came about from making the change to start displaying markets that users have interacted with in the 'My Markets' view, but for some reason, it no longer returns a user's created markets. | 1.0 | 'My Markets' not displaying markets that a user has created - I assume this came about from making the change to start displaying markets that users have interacted with in the 'My Markets' view, but for some reason, it no longer returns a user's created markets. | priority | my markets not displaying markets that a user has created i assume this came about from making the change to start displaying markets that users have interacted with in the my markets view but for some reason it no longer returns a user s created markets | 1 |

444,629 | 12,815,186,294 | IssuesEvent | 2020-07-05 00:17:41 | acl-org/acl-2020-virtual-conference | https://api.github.com/repos/acl-org/acl-2020-virtual-conference | closed | Workshop: Open each workshop paper talk in a new page | priority:high volunteer needed | Currently, all pre-recorded talks of a workshop are all displayed in one page (e.g. https://virtual.acl2020.org/workshop_W1.html has about 50 talks all in one page).

Can we instead have a separate page for each talk and just have a link to that page in the main page? | 1.0 | Workshop: Open each workshop paper talk in a new page - Currently, all pre-recorded talks of a workshop are all displayed in one page (e.g. https://virtual.acl2020.org/workshop_W1.html has about 50 talks all in one page).

Can we instead have a separate page for each talk and just have a link to that page in the main page? | priority | workshop open each workshop paper talk in a new page currently all pre recorded talks of a workshop are all displayed in one page e g has about talks all in one page can we instead have a separate page for each talk and just have a link to that page in the main page | 1 |

625,236 | 19,722,928,365 | IssuesEvent | 2022-01-13 17:00:36 | DiscordBot-PMMP/DiscordBot | https://api.github.com/repos/DiscordBot-PMMP/DiscordBot | closed | Better description | Priority: High Type: Suggestion Status: In Progress | I cant understand what this plugin do it is a lib or something else? I dont think it is lib since it has token in resources but idk what this plugin do and how do we use this pls we need a better description to understand. | 1.0 | Better description - I cant understand what this plugin do it is a lib or something else? I dont think it is lib since it has token in resources but idk what this plugin do and how do we use this pls we need a better description to understand. | priority | better description i cant understand what this plugin do it is a lib or something else i dont think it is lib since it has token in resources but idk what this plugin do and how do we use this pls we need a better description to understand | 1 |

65,292 | 3,227,386,968 | IssuesEvent | 2015-10-11 05:10:20 | sohelvali/Test-Git-Issue | https://api.github.com/repos/sohelvali/Test-Git-Issue | closed | Uploaded document (Astellas) does not map Clinical Pharmacology Section | Completed High Priority Upload | Screenshot and uploaded doc attached. | 1.0 | Uploaded document (Astellas) does not map Clinical Pharmacology Section - Screenshot and uploaded doc attached. | priority | uploaded document astellas does not map clinical pharmacology section screenshot and uploaded doc attached | 1 |

312,048 | 9,542,337,350 | IssuesEvent | 2019-05-01 03:16:11 | vectorlit/unofficial_gencon_mobile | https://api.github.com/repos/vectorlit/unofficial_gencon_mobile | opened | Feature/Bug Fix: Database speed is slow | bug enhancement high priority | The database speed - both on commits and searches - is too slow. Whether this is related to the SQFlite (SQLite) implementation, the single-threaded nature of the app, the serialization/deserializations, or anything else is unknown. But the goal of this issue is to resolve general sluggishness in download commits, searches, and search result list bindings.

Use cases/examples:

- Displaying ALL events in the database (typically ~18,000 events) should take 3-5 seconds on a modern device. It currently takes ~45 seconds.

- Committing ALL events to the database, including network download (typically ~18,000 events) should take ~30 seconds on a modern device. It currently takes ~2 minutes. | 1.0 | Feature/Bug Fix: Database speed is slow - The database speed - both on commits and searches - is too slow. Whether this is related to the SQFlite (SQLite) implementation, the single-threaded nature of the app, the serialization/deserializations, or anything else is unknown. But the goal of this issue is to resolve general sluggishness in download commits, searches, and search result list bindings.

Use cases/examples:

- Displaying ALL events in the database (typically ~18,000 events) should take 3-5 seconds on a modern device. It currently takes ~45 seconds.

- Committing ALL events to the database, including network download (typically ~18,000 events) should take ~30 seconds on a modern device. It currently takes ~2 minutes. | priority | feature bug fix database speed is slow the database speed both on commits and searches is too slow whether this is related to the sqflite sqlite implementation the single threaded nature of the app the serialization deserializations or anything else is unknown but the goal of this issue is to resolve general sluggishness in download commits searches and search result list bindings use cases examples displaying all events in the database typically events should take seconds on a modern device it currently takes seconds committing all events to the database including network download typically events should take seconds on a modern device it currently takes minutes | 1 |

482,742 | 13,912,512,230 | IssuesEvent | 2020-10-20 18:59:13 | OpenLiberty/ci.maven | https://api.github.com/repos/OpenLiberty/ci.maven | closed | Track Dockerfile changes and rebuild image on changes | devMode devModeContainers enhancement high priority | Track changes to the Dockerfile itself and rebuild/restart container. | 1.0 | Track Dockerfile changes and rebuild image on changes - Track changes to the Dockerfile itself and rebuild/restart container. | priority | track dockerfile changes and rebuild image on changes track changes to the dockerfile itself and rebuild restart container | 1 |

726,931 | 25,016,793,225 | IssuesEvent | 2022-11-03 19:34:59 | Zefau/ioBroker.jarvis | https://api.github.com/repos/Zefau/ioBroker.jarvis | closed | After update to v3.1.0-beta.8, some values are no longer visible | :bug: bug HIGH PRIORITY | After updating from v3.1.0-beta.6 to v3.1.0-beta.8, my screens look rather sad:

Many values, and in some cases icons, are no longer loaded. Here is an example that should actually show many values:

The browser (Edge) shows this:

| 1.0 | After update to v3.1.0-beta.8, some values are no longer visible - After updating from v3.1.0-beta.6 to v3.1.0-beta.8, my screens look rather sad:

Many values, and in some cases icons, are no longer loaded. Here is an example that should actually show many values:

The browser (Edge) shows this:

| priority | after update to beta some values are no longer visible after updating from beta to beta my screens look rather sad many values and in some cases icons are no longer loaded here is an example that should actually show many values the browser edge shows this | 1 |

31,883 | 2,740,845,460 | IssuesEvent | 2015-04-21 06:51:22 | OCHA-DAP/hdx-ckan | https://api.github.com/repos/OCHA-DAP/hdx-ckan | closed | Custom location page: review of topline numbers and charts section | Priority-High | I think we need to review the way we are using the topline numbers and charts section to support several cases:

- topline name on 2 rows

- topline source on 2 rows

- different number of toplines vs charts | 1.0 | Custom location page: review of topline numbers and charts section - I think we need to review the way we are using the topline numbers and charts section to support several cases:

- topline name on 2 rows

- topline source on 2 rows

- different number of toplines vs charts | priority | custom location page review of topline numbers and charts section i think we need to review the way we are using the topline numbers and charts section to support several cases topline name on rows topline source on rows different number of toplines vs charts | 1 |

278,350 | 8,639,703,867 | IssuesEvent | 2018-11-23 20:53:46 | tophat/yvm | https://api.github.com/repos/tophat/yvm | closed | Getting a segfault when trying to do a `yarn install` using yvm | bug help wanted high priority must have | **Describe the bug**

When I run `yarn install` with `yvm` I'm getting a segfault:

```

75162/91112/Users/******/.yvm/yvm.sh: line 82: 60566 Segmentation fault: 11 node "${YVM_DIR}/yvm.js" $@

make: *** [node_modules] Error 139

``` | 1.0 | Getting a segfault when trying to do a `yarn install` using yvm - **Describe the bug**

When I run `yarn install` with `yvm` I'm getting a segfault:

```

75162/91112/Users/******/.yvm/yvm.sh: line 82: 60566 Segmentation fault: 11 node "${YVM_DIR}/yvm.js" $@

make: *** [node_modules] Error 139

``` | priority | getting a segfault when trying to do a yarn install using yvm describe the bug when i run yarn install with yvm i m getting a segfault users yvm yvm sh line segmentation fault node yvm dir yvm js make error | 1 |

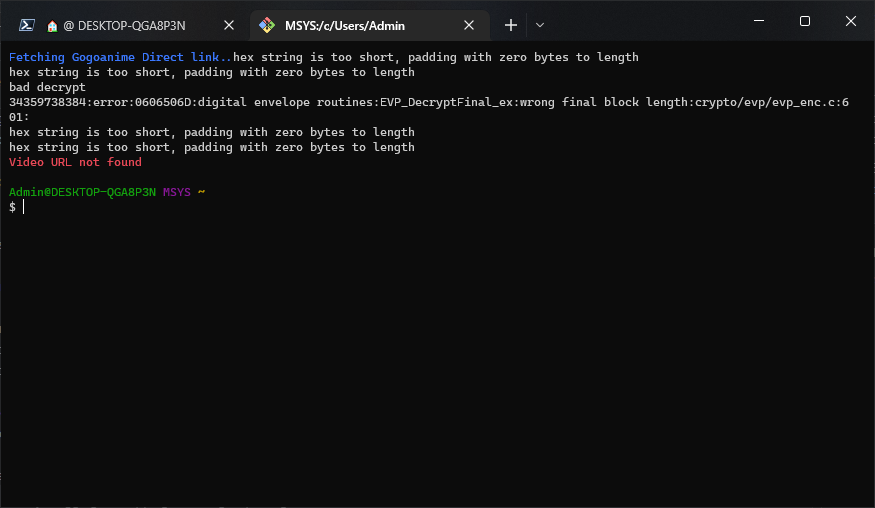

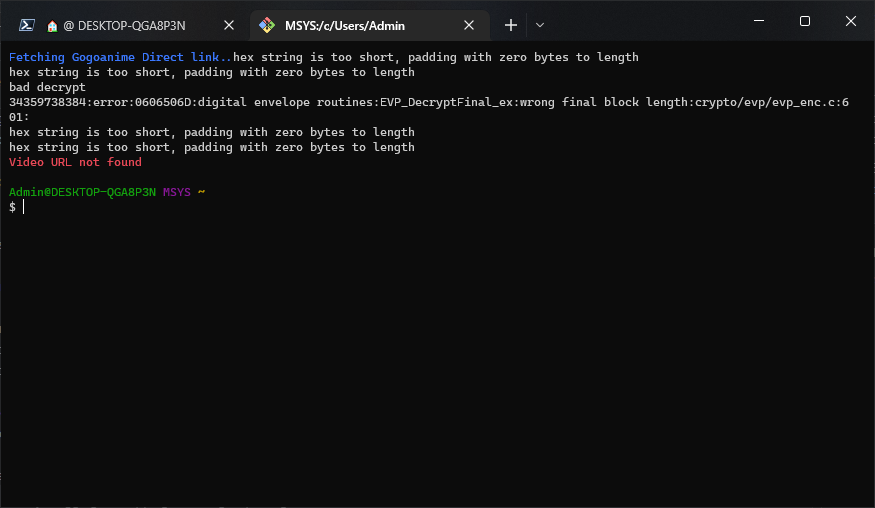

696,299 | 23,895,880,359 | IssuesEvent | 2022-09-08 14:44:58 | pystardust/ani-cli | https://api.github.com/repos/pystardust/ani-cli | closed | When I searched for a specific anime, the result returns to 'video URL not found' | type: bug category: url priority 1: high | **Metadata (please complete the following information)**

Version: 3.3

OS: Windows 10

Shell: git bash

Anime: Ore Dake Haireru Kakushi Dungeon

**Describe the bug**

After I enter the name of the anime and selected episode, it outputs hex errors and then results to 'video URL not found'

**Steps To Reproduce**

1. Open git bash normally

2. Run ani-cli

3. Input 'ore dake'

4. choose 4

5. choose 7 (episode)

**Expected behavior**

| 1.0 | When I searched for a specific anime, the result returns to 'video URL not found' - **Metadata (please complete the following information)**

Version: 3.3

OS: Windows 10

Shell: git bash

Anime: Ore Dake Haireru Kakushi Dungeon

**Describe the bug**

After I enter the name of the anime and selected episode, it outputs hex errors and then results to 'video URL not found'

**Steps To Reproduce**

1. Open git bash normally

2. Run ani-cli

3. Input 'ore dake'

4. choose 4

5. choose 7 (episode)

**Expected behavior**

| priority | when i searched for a specific anime the result returns to video url not found metadata please complete the following information version os windows shell git bash anime ore dake haireru kakushi dungeon describe the bug after i enter the name of the anime and selected episode it outputs hex errors and then results to video url not found steps to reproduce open git bash normally run ani cli input ore dake choose choose episode expected behavior | 1 |

25,373 | 2,680,828,958 | IssuesEvent | 2015-03-27 02:14:51 | JacobFrericks/HabitRPG-Native-Client | https://api.github.com/repos/JacobFrericks/HabitRPG-Native-Client | opened | "Does Not Repeat" text doesn't change when repeating | bug priority: high | Expected:

- add task

- set repeating to anything

- text should say "Repeating Monday, Wednesday" or "Repeating Weekdays" etc

Actual:

- add task

- set repeating to anything

- text still says "Does Not Repeat" | 1.0 | "Does Not Repeat" text doesn't change when repeating - Expected:

- add task

- set repeating to anything

- text should say "Repeating Monday, Wednesday" or "Repeating Weekdays" etc

Actual:

- add task

- set repeating to anything

- text still says "Does Not Repeat" | priority | does not repeat text doesn t change when repeating expected add task set repeating to anything text should say repeating monday wedensday or repeating weekdays etc actual add task set repeating to anything text still says does not repeat | 1 |

390,171 | 11,525,808,822 | IssuesEvent | 2020-02-15 11:08:57 | wso2/docs-is | https://api.github.com/repos/wso2/docs-is | closed | Grammar and Spelling errors in the Configuring SMSOTP doc | Affected/5.10.0 Priority/High Severity/Minor | **Description:**

Noted several grammar and spelling errors in [[1]](https://is.docs.wso2.com/en/next/learn/configuring-sms-otp/).

1. In **Enable SMSOTP** section > Value/Description table > row 2, column 2, "diable" should be "disable"

2. In **Enable SMSOTP** section > Value/Description table > row 7, column 2, "SMMSOTP" should be "SMSOTP"

3. In **Enable SMSOTP** section > Value/Description table > row 13, column 2, "unable" should be "enable"

4. In **Configuring the service provider** section > numbered point 1, "Navigate to Main tab -> Identity -> Identity Providers -> Add" should be "Navigate to Main tab -> Identity -> Service Providers -> Add" | 1.0 | Grammar and Spelling errors in the Configuring SMSOTP doc - **Description:**

Noted several grammar and spelling errors in [[1]](https://is.docs.wso2.com/en/next/learn/configuring-sms-otp/).

1. In **Enable SMSOTP** section > Value/Description table > row 2, column 2, "diable" should be "disable"

2. In **Enable SMSOTP** section > Value/Description table > row 7, column 2, "SMMSOTP" should be "SMSOTP"

3. In **Enable SMSOTP** section > Value/Description table > row 13, column 2, "unable" should be "enable"

4. In **Configuring the service provider** section > numbered point 1, "Navigate to Main tab -> Identity -> Identity Providers -> Add" should be "Navigate to Main tab -> Identity -> Service Providers -> Add" | priority | grammar and spelling errors in the configuring smsotp doc description noted several grammar and spelling errors in in enable smsotp section value description table row column diable should be disable in enable smsotp section value description table row column smmsotp should be smsotp in enable smsotp section value description table row column unable should be enable in configuring the service provider section numbered point navigate to main tab identity identity providers add should be navigate to main tab identity service providers add | 1 |

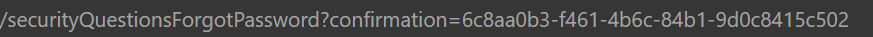

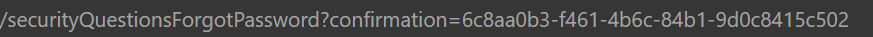

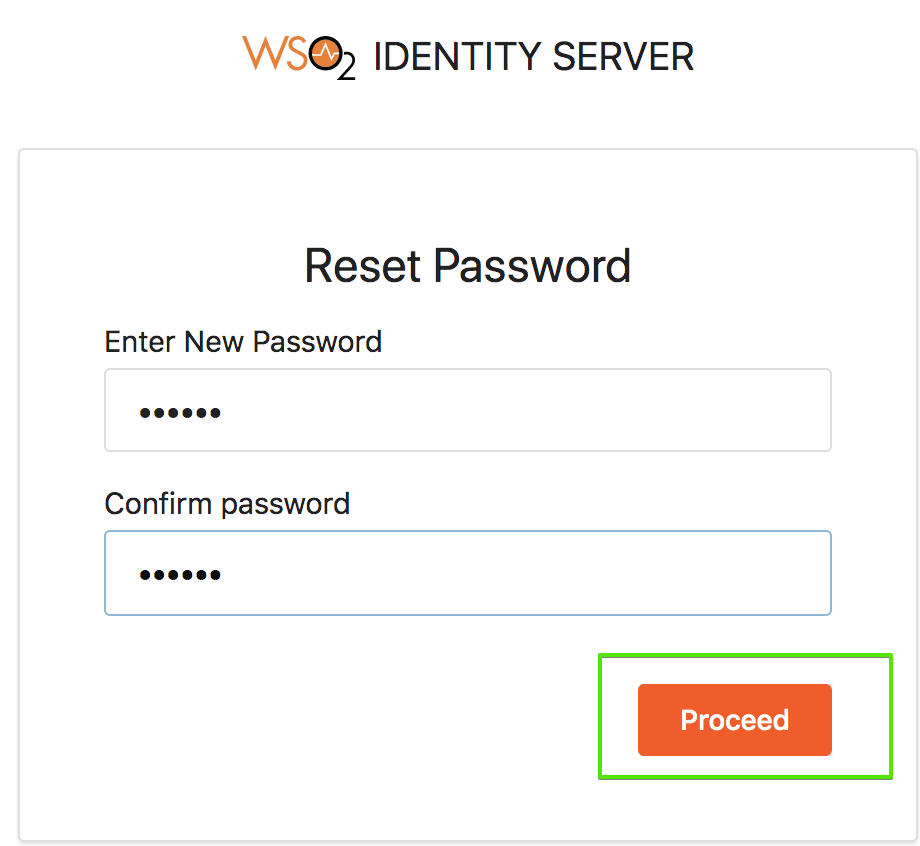

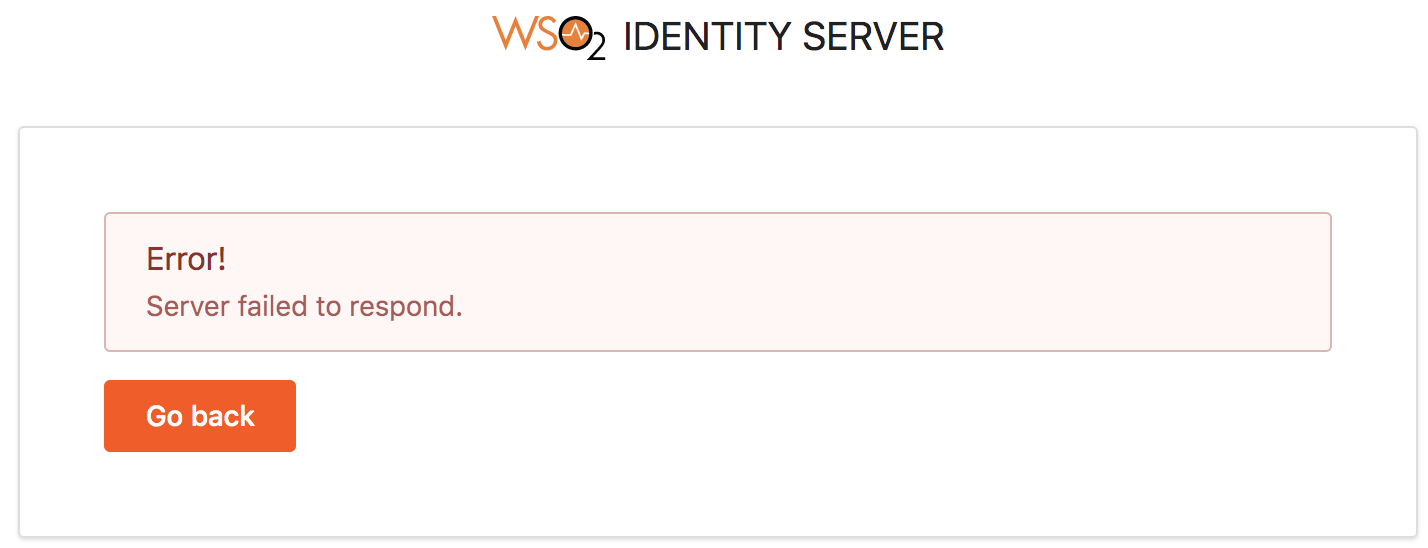

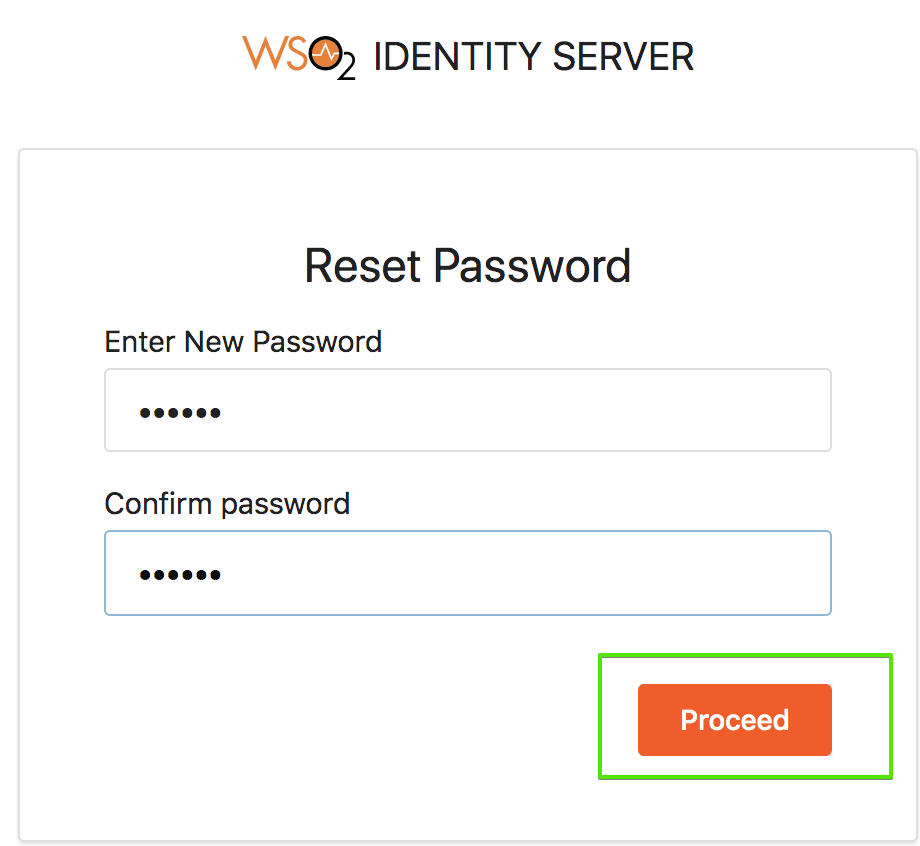

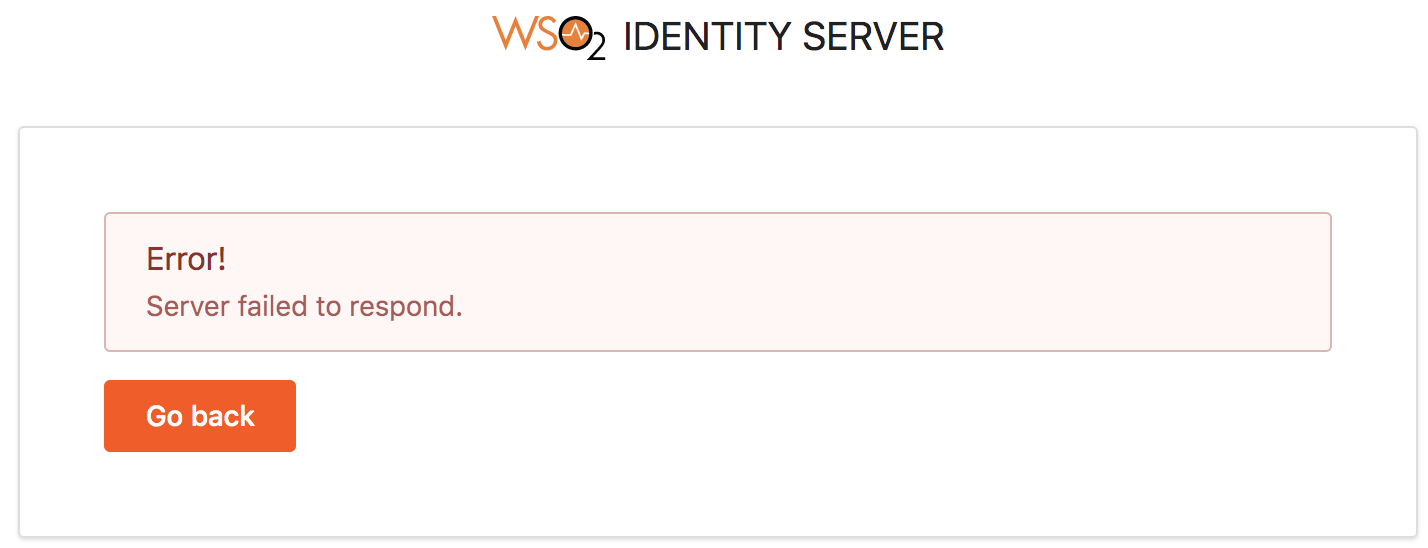

653,690 | 21,610,542,926 | IssuesEvent | 2022-05-04 09:38:32 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Password Reset confirmationcode not invalidating after first use | Priority/High Severity/Major bug 5.12.0-bug-fixing | **Describe the issue:**

We are able to use the same password reset email link to access the challenge questions and successfully reset the user's password multiple times before the link's 1440-minute expiration is up.

**How to reproduce:**

Step 1: Select 'Forgot Password?'

Step 2: Input email address

Step 3: Follow link in email to Change Password

Step 4: Correctly answer challenge questions and change password

Step 5: Click on same link which includes same confirmation code displayed in image above

Step 6: Correctly answer challenge questions and change password again

**Expected behavior:**

We expect the confirmation code to have been invalidated when the password was reset in Step 4, so at Step 5 the link should no longer allow the password to be changed again.
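
The expected behavior can be illustrated with a minimal sketch of a single-use confirmation code store. This is not WSO2 Identity Server code: the class name, method names, and in-memory dictionary are all hypothetical, and only the 1440-minute lifetime comes from the report. The point it shows is that redeeming a code removes it, so a second use of the same link fails even though the expiry time has not been reached.

```python
import secrets
import time


class ConfirmationCodeStore:
    """Hypothetical in-memory store for single-use password reset codes."""

    def __init__(self, ttl_seconds=1440 * 60, clock=time.time):
        self._ttl = ttl_seconds      # 1440 minutes, as in the report
        self._clock = clock          # injectable so expiry can be tested
        self._codes = {}             # code -> (username, expires_at)

    def issue(self, username):
        """Create a fresh random code for the given user."""
        code = secrets.token_urlsafe(32)
        self._codes[code] = (username, self._clock() + self._ttl)
        return code

    def redeem(self, code):
        """Return the username for a valid code, or None.

        The code is removed on every redemption attempt, so it cannot be
        replayed after the first successful password change.
        """
        entry = self._codes.pop(code, None)
        if entry is None:
            return None
        username, expires_at = entry
        if self._clock() >= expires_at:
            return None
        return username


store = ConfirmationCodeStore()
code = store.issue("alice")
first_use = store.redeem(code)    # corresponds to Step 4
second_use = store.redeem(code)   # corresponds to Step 5/6, same link again
```

In a real deployment the removal and the password change would need to happen atomically in the persistent store; the dictionary here only stands in for that.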

**Environment information:**

- Product Version: ISKM 5.10.0

- OS: RHEL 7.9

- Database: postgres13

- Userstore: JDBC

| 1.0 | Password Reset confirmationcode not invalidating after first use - **Describe the issue:**

We are able to use the same password reset email link to access the challenge questions and successfully reset the user's password multiple times before the link's 1440-minute expiration is up.

**How to reproduce:**

Step 1: Select 'Forgot Password?'

Step 2: Input email address

Step 3: Follow link in email to Change Password

Step 4: Correctly answer challenge questions and change password

Step 5: Click on same link which includes same confirmation code displayed in image above

Step 6: Correctly answer challenge questions and change password again

**Expected behavior:**

We expect the confirmation code to have been invalidated when the password was reset in Step 4, so at Step 5 the link should no longer allow the password to be changed again.

**Environment information:**

- Product Version: ISKM 5.10.0

- OS: RHEL 7.9

- Database: postgres13

- Userstore: JDBC

| priority | password reset confirmationcode not invalidating after first use describe the issue we are able to use the same password reset email link to access the challenge questions and successfully reset user passwords multiple times before the minutes are up on its time expiration how to reproduce step select forgot password step input email address step follow link in email to change password step correctly answer challenge questions and change password step click on same link which includes same confirmation code displayed in image above step correctly answer challenge questions and change password again expected behavior we expect that at step the confirmation code should have already been invalidated upon resetting the password in step so the link should no longer allow you to change the password again environment information product version iskm os rhel database userstore jdbc | 1 |

322,963 | 9,834,373,935 | IssuesEvent | 2019-06-17 09:32:25 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Issue with rank math,index should be same in both AMP and in Non-AMP in custom front page | NEED FAST REVIEW [Priority: HIGH] bug waiting | Des: Issue with rank math, the index should be same in both AMP and in Non-AMP in the custom front page

Ref: https://secure.helpscout.net/conversation/857663247/67968/

The settings file below is the Rank Math settings file from the user's site; the issue can also be reproduced locally.

[rank-math-settings-2019-06-04-08-12-18.txt](https://github.com/ahmedkaludi/accelerated-mobile-pages/files/3251330/rank-math-settings-2019-06-04-08-12-18.txt)

| 1.0 | Issue with rank math,index should be same in both AMP and in Non-AMP in custom front page - Des: Issue with rank math, the index should be same in both AMP and in Non-AMP in the custom front page

Ref: https://secure.helpscout.net/conversation/857663247/67968/

The settings file below is the Rank Math settings file from the user's site; the issue can also be reproduced locally.

[rank-math-settings-2019-06-04-08-12-18.txt](https://github.com/ahmedkaludi/accelerated-mobile-pages/files/3251330/rank-math-settings-2019-06-04-08-12-18.txt)

| priority | issue with rank math index should be same in both amp and in non amp in custom front page des issue with rank math the index should be same in both amp and in non amp in the custom front page ref the below settings file is the rank math settings file at the user end the issue is created in the local also | 1 |

187,619 | 6,759,735,909 | IssuesEvent | 2017-10-24 18:08:16 | blueprintmedicines/centromere | https://api.github.com/repos/blueprintmedicines/centromere | opened | Data Import Commons: Mutation Readers | Module: Core Priority: High Status: Investigating Type: Enhancement | Need better commons classes for reading mutation data files. This is tricky, since even though there are standards for mutation file formats (eg. [MAF](https://wiki.nci.nih.gov/display/TCGA/Mutation+Annotation+Format+(MAF)+Specification) and [VCF](http://www.internationalgenome.org/wiki/Analysis/Variant%20Call%20Format/vcf-variant-call-format-version-40/)), these are not REALLY standard, and a lot of the key fields vary by the tool used to generate them.

Suggested solution: generic, abstract implementation of readers for each file format that covers the common fields, and subclass implementations that cover specific tool output formats (e.g. Mutect or SNPEFF). | 1.0 | Data Import Commons: Mutation Readers - Need better commons classes for reading mutation data files. This is tricky, since even though there are standards for mutation file formats (e.g. [MAF](https://wiki.nci.nih.gov/display/TCGA/Mutation+Annotation+Format+(MAF)+Specification) and [VCF](http://www.internationalgenome.org/wiki/Analysis/Variant%20Call%20Format/vcf-variant-call-format-version-40/)), these are not REALLY standard, and a lot of the key fields vary by the tool used to generate them.

Suggested solution: generic, abstract implementation of readers for each file format that cover the common field, and subclass implementations that cover specific tool output formats (eg. Mutect or SNPEFF). | priority | data import commons mutation readers need better commons classes for reading mutation data files this is tricky since even though there are standards for mutation file formats eg and these are not really standard and a lot of the key fields vary by the tool used to generate them suggested solution generic abstract implementation of readers for each file format that cover the common field and subclass implementations that cover specific tool output formats eg mutect or snpeff | 1 |
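
The suggested solution in the mutation readers issue above (an abstract reader per file format plus tool-specific subclasses) might look roughly like the following sketch. It is written in Python for illustration only and is not Centromere code: the class names and the `example_score` column are hypothetical, while the five common column names are standard MAF headers.

```python
import csv
import io
from abc import ABC, abstractmethod


class MafReader(ABC):
    """Hypothetical base reader: parses the columns every MAF file shares."""

    COMMON_FIELDS = (
        "Hugo_Symbol",
        "Chromosome",
        "Start_Position",
        "Reference_Allele",
        "Tumor_Seq_Allele2",
    )

    def read(self, handle):
        # Skip '#' comment lines, then treat the first remaining line as the header.
        lines = (line for line in handle if not line.startswith("#"))
        for row in csv.DictReader(lines, delimiter="\t"):
            record = {name: row[name] for name in self.COMMON_FIELDS}
            record.update(self.tool_fields(row))
            yield record

    @abstractmethod
    def tool_fields(self, row):
        """Return the extra fields that one specific tool's output adds."""


class ExampleToolMafReader(MafReader):
    """Subclass for an imaginary tool that adds an 'example_score' column."""

    def tool_fields(self, row):
        return {"example_score": float(row["example_score"])}


sample = (
    "#version 2.4\n"
    "Hugo_Symbol\tChromosome\tStart_Position\tReference_Allele"
    "\tTumor_Seq_Allele2\texample_score\n"
    "TP53\t17\t7577120\tC\tT\t12.5\n"
)
records = list(ExampleToolMafReader().read(io.StringIO(sample)))
```

Keeping the shared columns in the base class means a new tool format only needs a small subclass that names its own extra columns.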

616,802 | 19,321,170,315 | IssuesEvent | 2021-12-14 05:51:56 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | closed | Tribal Citizenship is not reported correctly in the HL7 ReportStream produces | bug onboarding-ops HL7 high-priority data-issue | **Describe the bug**

The tribal citizenship is not coded correctly in the HL7 that ReportStream produces. Specifically, the PID-39 field is missing PID-39.2 and PID-39.3.

**Impact**

WA has complained that their system is not able to digest the PID-39 from ReportStream.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

PID-39 should be `<tribe_id>^<tribe_name>^HL70171`
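
As a rough illustration of the expected three-component value, here is a small Python sketch that joins the tribe identifier, tribe name, and the `HL70171` coding system with the HL7 component separator. The function name and the sample tribe values are hypothetical and are not taken from ReportStream; the escape sequences are the standard HL7 v2 ones for the default delimiters.

```python
def escape_hl7(value):
    """Escape the default HL7 v2 delimiters inside a component value."""
    return (
        value.replace("\\", "\\E\\")   # escape character first
        .replace("|", "\\F\\")         # field separator
        .replace("^", "\\S\\")         # component separator
        .replace("&", "\\T\\")         # subcomponent separator
        .replace("~", "\\R\\")         # repetition separator
    )


def format_pid39(tribe_id, tribe_name, coding_system="HL70171"):
    """Build PID-39 as <tribe_id>^<tribe_name>^<coding_system>."""
    return "^".join(
        [escape_hl7(tribe_id), escape_hl7(tribe_name), coding_system]
    )


# Placeholder values; real codes would come from the HL7 0171 table.
pid39 = format_pid39("338", "Example Tribe")
```

With only the first component populated, a receiver has no way to tell which code table the identifier belongs to, which may be why the downstream system rejects the field.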

**Additional context**

Problem reported by WA.

The `pdi-covid-19-0f6373fe-3695-4ca5-ad74-eab12ab1c19b-20211122163501.csv` report from simple_report contains a couple of real records with tribal affiliation set.

| 1.0 | Tribal Citizenship is not reported correctly in the HL7 ReportStream produces - **Describe the bug**

The tribal citizenship is not coded correctly in the HL7 that ReportStream produces. Specifically, the PID-39 field is missing PID-39.2 and PID-39.3.

**Impact**

WA has complained that their system is not able to digest the PID-39 from ReportStream.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

**Expected behavior**

PID-39 should be `<tribe_id>^<tribe_name>^HL70171`

**Additional context**

Problem reported by WA.

The `pdi-covid-19-0f6373fe-3695-4ca5-ad74-eab12ab1c19b-20211122163501.csv` report from simple_report contains a couple of real records with tribal affiliation set.

| priority | tribal citizenship is not reported correctly in the reportstream produces describe the bug the tribal citizenship is not coded correctly in the reportstream produces specifically the pid field is missing pid and pid impact wa has complained that their system is not able to digest the pid from reportstream to reproduce steps to reproduce the behavior go to click on scroll down to see error expected behavior pid should be additional context problem reported by wa the pdi covid csv report from simple report contains a couple of real data with tribal affiliation set | 1 |

372,153 | 11,009,808,183 | IssuesEvent | 2019-12-04 13:26:22 | firecracker-microvm/firecracker | https://api.github.com/repos/firecracker-microvm/firecracker | closed | Ratelimiter: Very high CPU usage when ratelimiter is throttling guest RX. | Feature: IO Virtualization Performance: IO Priority: High Quality: Bug | When rate limiting is enabled and the guest is receiving a lot of traffic that triggers throttling the emulation thread will use 100% CPU.

There are 2 possible approaches here:

- quick fix: keep the current code structure and configure edge triggered epoll for the tap fd.

- proper fix: rework the current state machine and use edge triggered epoll
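
The difference between the two epoll modes can be seen in a small sketch, assuming a Linux host (Python's `select.epoll` is Linux-only). It is written in Python for illustration and is not Firecracker's Rust code; a pipe stands in for the tap fd, and leaving the data unread stands in for a rate limiter that refuses to consume packets while throttled.

```python
import os
import select


def count_wakeups(flags, polls=3):
    """Write once to a pipe, never read it, and count how many polls fire."""
    read_fd, write_fd = os.pipe()
    ep = select.epoll()
    try:
        ep.register(read_fd, flags)
        os.write(write_fd, b"x")       # data arrives once
        wakeups = 0
        for _ in range(polls):
            if ep.poll(0.05):          # data is deliberately left unread
                wakeups += 1
        return wakeups
    finally:
        ep.close()
        os.close(read_fd)
        os.close(write_fd)


# Level-triggered: every poll reports the fd as readable while data is pending.
level_triggered = count_wakeups(select.EPOLLIN)

# Edge-triggered: only the transition to readable is reported.
edge_triggered = count_wakeups(select.EPOLLIN | select.EPOLLET)
```

In level-triggered mode the event loop is woken on every iteration for as long as unread data sits on the fd, which matches the busy-loop symptom described above. In edge-triggered mode the loop is woken once, so the handler has to remember that data is pending and drain it when the rate limiter allows.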

| 1.0 | Ratelimiter: Very high CPU usage when ratelimiter is throttling guest RX. - When rate limiting is enabled and the guest is receiving a lot of traffic that triggers throttling the emulation thread will use 100% CPU.

There are 2 possible approaches here:

- quick fix: keep the current code structure and configure edge triggered epoll for the tap fd.

- proper fix: rework the current state machine and use edge triggered epoll