Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

241,700 | 7,822,393,227 | IssuesEvent | 2018-06-14 02:12:50 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] spinner status on items deployed to staging is never removed | CI bug priority: high | ### Expected behavior

spinner over the file should be removed once the operation has completed

### Actual behavior

I think the spinner is waiting for the state to change to live. It never does - an item in staging is not live.

### Steps to reproduce the problem

* configure staging environment

* deploy item to staging

* note on dashboard and sidebar spinner is never removed.

### Log/stack trace (use https://gist.github.com)

N/A

### Specs

#### Version

Studio Version Number: 3.0.14-SNAPSHOT-bb5185

Build Number: bb518515b875d7784bb698317a7d0d3f36e2cbe0

Build Date/Time: 06-08-2018 09:50:34 -0400

#### OS

Any

#### Browser

Any | 1.0 | [studio-ui] spinner status on items deployed to staging is never removed - ### Expected behavior

spinner over the file should be removed once the operation has completed

### Actual behavior

I think the spinner is waiting for the state to change to live. It never does - an item in staging is not live.

### Steps to reproduce the problem

* configure staging environment

* deploy item to staging

* note on dashboard and sidebar spinner is never removed.

### Log/stack trace (use https://gist.github.com)

N/A

### Specs

#### Version

Studio Version Number: 3.0.14-SNAPSHOT-bb5185

Build Number: bb518515b875d7784bb698317a7d0d3f36e2cbe0

Build Date/Time: 06-08-2018 09:50:34 -0400

#### OS

Any

#### Browser

Any | priority | spinner status on items deployed to staging is never removed expected behavior spinner over the file should be removed once the operation has completed actual behavior i think the spinner is waiting for the state to change to live it never does an item in staging is not live steps to reproduce the problem configure staging environment deploy item to staging note on dashboard and sidebar spinner is never removed log stack trace use n a specs version studio version number snapshot build number build date time os any browser any | 1 |

611,502 | 18,957,065,638 | IssuesEvent | 2021-11-18 21:40:30 | bastislack/highline-freestyle | https://api.github.com/repos/bastislack/highline-freestyle | closed | Show combo stats | enhancement high priority | Have information like:

- minDiff

- maxDiff

- avgDiff

- summedDiff

- numTricks

- (and more ?)

show up on the combo details screen. | 1.0 | Show combo stats - Have information like:

- minDiff

- maxDiff

- avgDiff

- summedDiff

- numTricks

- (and more ?)

show up on the combo details screen. | priority | show combo stats have information like mindiff maxdiff avgdiff summeddiff numtricks and more show up on the combo details screen | 1 |

698,977 | 23,998,747,333 | IssuesEvent | 2022-09-14 09:39:56 | kapresoft/wow-addon-actionbar-plus | https://api.github.com/repos/kapresoft/wow-addon-actionbar-plus | closed | Lua Error | Bug Priority: High | Testing the new build and came across this:

```

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:39 2022

Count: 1

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:281: in function `UpdateCooldown'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:274: in function `UpdateState'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:279: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:279>

Locals: (*temporary) = nil

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:54 2022

Count: 8

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\WidgetUtil.lua"]:60: in function `UpdateUsable'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonUI.lua"]:228: in function <...ionbarPlus\Core\AddonLib\Widget\Buttons\ButtonUI.lua:226>

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:129: in function <...Lib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua:129>

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:29: in function <...Lib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua:25>

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:64: in function `Fire'

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\AceEvent-3.0\AceEvent-3.0.lua"]:120: in function <...rPlus\Core\ExtLib\Ace3\AceEvent-3.0\AceEvent-3.0.lua:119>

Locals: <none>

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:39 2022

Count: 1

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:281: in function `UpdateCooldown'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:274: in function `UpdateState'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:279: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:279>

```

| 1.0 | Lua Error - Testing the new build and came across this:

```

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:39 2022

Count: 1

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:281: in function `UpdateCooldown'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:274: in function `UpdateState'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:279: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:279>

Locals: (*temporary) = nil

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:54 2022

Count: 8

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\WidgetUtil.lua"]:60: in function `UpdateUsable'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonUI.lua"]:228: in function <...ionbarPlus\Core\AddonLib\Widget\Buttons\ButtonUI.lua:226>

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:129: in function <...Lib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua:129>

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:29: in function <...Lib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua:25>

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\CallbackHandler-1.0\CallbackHandler-1.0.lua"]:64: in function `Fire'

[string "@Interface\AddOns\ActionbarPlus\Core\ExtLib\Ace3\AceEvent-3.0\AceEvent-3.0.lua"]:120: in function <...rPlus\Core\ExtLib\Ace3\AceEvent-3.0\AceEvent-3.0.lua:119>

Locals: <none>

Message: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

Time: Tue Sep 13 23:51:39 2022

Count: 1

Stack: ...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:153: Usage: GetItemCooldown(itemID)

[string "=[C]"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua"]:153: in function <...erface\AddOns\ActionbarPlus\Core\Lib\API\TBC\API.lua:152>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:208: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:197>

[string "=(tail call)"]: ?

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:281: in function `UpdateCooldown'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:274: in function `UpdateState'

[string "@Interface\AddOns\ActionbarPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua"]:279: in function <...barPlus\Core\AddonLib\Widget\Buttons\ButtonMixin.lua:279>

```

| priority | lua error testing the new build and came across this message erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid time tue sep count stack erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid in function in function in function updatecooldown in function updatestate in function locals temporary nil message erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid time tue sep count stack erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid in function in function in function updateusable in function in function in function in function fire in function locals message erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid time tue sep count stack erface addons actionbarplus core lib api tbc api lua usage getitemcooldown itemid in function in function in function updatecooldown in function updatestate in function | 1 |

44,488 | 2,906,282,224 | IssuesEvent | 2015-06-19 09:00:04 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Python Algorithm Issue | High Priority Python | This issue was originally [TRAC 11767](http://trac.mantidproject.org/mantid/ticket/11767)

This came to Mantid Help:

Hi,

Not for this release (so don’t panic!)

I noticed and interesting feature when trying to create a python algorithm. The only difference between the scripts below is defining the properties. The script that doesn’t work, works if I only have 3 declareProperty lines. If a forth is added then I get an error self is not defined.

Enjoy!

Aidy

This script doesn’t work

```python

from mantid.api import * # PythonAlgorithm, registerAlgorithm, WorkspaceProperty

class ApplyNegMuCorrection(PythonAlgorithm):

def PyInit(self):

self.declareProperty(name="First Run Number",

defaultValue=1700,

doc="First Run Number")

self.declareProperty("Last Run Number",1701,doc="Last Run Number")

self.declareProperty("Last1 Run Number",1701,doc="Last Run Number")

self.declareProperty("Last2 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Gain for ISIS High E Detector A",

# 1.2,

# doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Offset for ISIS High E Detector, B",

# 0.0,

# doc="Offset for ISIS detector Ax+B")

#self.declareProperty("Detector RIKEN High E, A",1.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector RIKEN High E, B",0.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector ISIS Low E, A",1.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Detector ISIS Low E, B",0.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty(WorkspaceProperty("OutputWorkspace","",Direction.Output))

def category(self):

return "CorrectionFunctions;Muon"

def PyExec(self):

iws = self.getProperty("InputWorkspace").value

x1=self.getProperty("x1").value

y1=self.getProperty("y1").value

ows=WorkspaceFactory.create(iws)

for s in range(iws.getNumberHistograms()):

y=ows.getAxis(1).getValue(s)

print "y[",s,"]=",y

ows.dataX(s)[:]=iws.dataX(s)[:]

ows.dataE(s)[:]=iws.dataE(s)[:]

for t in range(len(ows.dataY(s))):

x=ows.dataX(s)[t]

ows.dataY(s)[t]=iws.dataY(s)[t]+x1*x+y1*y

self.setProperty("OutputWorkspace",ows)

AlgorithmFactory.subscribe(ApplyNegMuCorrection)

```

This script does

```python

from mantid.api import * # PythonAlgorithm, registerAlgorithm, WorkspaceProperty

class ApplyNegMuCorrection(PythonAlgorithm):

def PyInit(self):

self.declareProperty(name="First Run Number",defaultValue=1700,doc="First Run Number")

self.declareProperty(name="Last Run Number",defaultValue=1701,doc="Last Run Number")

self.declareProperty(name="First2 Run Number",defaultValue=1700,doc="First Run Number")

self.declareProperty(name="First3 Run Number",defaultValue=1700,doc="First Run Number")

#self.declareProperty("Last Run Number",1701,doc="Last Run Number")

#self.declareProperty("Last1 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Last2 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Gain for ISIS High E Detector A",

# 1.2,

# doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Offset for ISIS High E Detector, B",

# 0.0,

# doc="Offset for ISIS detector Ax+B")

#self.declareProperty("Detector RIKEN High E, A",1.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector RIKEN High E, B",0.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector ISIS Low E, A",1.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Detector ISIS Low E, B",0.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty(WorkspaceProperty("OutputWorkspace","",Direction.Output))

def category(self):

return "CorrectionFunctions;Muon"

def PyExec(self):

iws = self.getProperty("InputWorkspace").value

x1=self.getProperty("x1").value

y1=self.getProperty("y1").value

ows=WorkspaceFactory.create(iws)

for s in range(iws.getNumberHistograms()):

y=ows.getAxis(1).getValue(s)

print "y[",s,"]=",y

ows.dataX(s)[:]=iws.dataX(s)[:]

ows.dataE(s)[:]=iws.dataE(s)[:]

for t in range(len(ows.dataY(s))):

x=ows.dataX(s)[t]

ows.dataY(s)[t]=iws.dataY(s)[t]+x1*x+y1*y

self.setProperty("OutputWorkspace",ows)

AlgorithmFactory.subscribe(ApplyNegMuCorrection)

```

| 1.0 | Python Algorithm Issue - This issue was originally [TRAC 11767](http://trac.mantidproject.org/mantid/ticket/11767)

This came to Mantid Help:

Hi,

Not for this release (so don’t panic!)

I noticed and interesting feature when trying to create a python algorithm. The only difference between the scripts below is defining the properties. The script that doesn’t work, works if I only have 3 declareProperty lines. If a forth is added then I get an error self is not defined.

Enjoy!

Aidy

This script doesn’t work

```python

from mantid.api import * # PythonAlgorithm, registerAlgorithm, WorkspaceProperty

class ApplyNegMuCorrection(PythonAlgorithm):

def PyInit(self):

self.declareProperty(name="First Run Number",

defaultValue=1700,

doc="First Run Number")

self.declareProperty("Last Run Number",1701,doc="Last Run Number")

self.declareProperty("Last1 Run Number",1701,doc="Last Run Number")

self.declareProperty("Last2 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Gain for ISIS High E Detector A",

# 1.2,

# doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Offset for ISIS High E Detector, B",

# 0.0,

# doc="Offset for ISIS detector Ax+B")

#self.declareProperty("Detector RIKEN High E, A",1.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector RIKEN High E, B",0.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector ISIS Low E, A",1.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Detector ISIS Low E, B",0.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty(WorkspaceProperty("OutputWorkspace","",Direction.Output))

def category(self):

return "CorrectionFunctions;Muon"

def PyExec(self):

iws = self.getProperty("InputWorkspace").value

x1=self.getProperty("x1").value

y1=self.getProperty("y1").value

ows=WorkspaceFactory.create(iws)

for s in range(iws.getNumberHistograms()):

y=ows.getAxis(1).getValue(s)

print "y[",s,"]=",y

ows.dataX(s)[:]=iws.dataX(s)[:]

ows.dataE(s)[:]=iws.dataE(s)[:]

for t in range(len(ows.dataY(s))):

x=ows.dataX(s)[t]

ows.dataY(s)[t]=iws.dataY(s)[t]+x1*x+y1*y

self.setProperty("OutputWorkspace",ows)

AlgorithmFactory.subscribe(ApplyNegMuCorrection)

```

This script does

```python

from mantid.api import * # PythonAlgorithm, registerAlgorithm, WorkspaceProperty

class ApplyNegMuCorrection(PythonAlgorithm):

def PyInit(self):

self.declareProperty(name="First Run Number",defaultValue=1700,doc="First Run Number")

self.declareProperty(name="Last Run Number",defaultValue=1701,doc="Last Run Number")

self.declareProperty(name="First2 Run Number",defaultValue=1700,doc="First Run Number")

self.declareProperty(name="First3 Run Number",defaultValue=1700,doc="First Run Number")

#self.declareProperty("Last Run Number",1701,doc="Last Run Number")

#self.declareProperty("Last1 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Last2 Run Number",1701,doc="Last Run Number")

#self.declareProperty("Gain for ISIS High E Detector A",

# 1.2,

# doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Offset for ISIS High E Detector, B",

# 0.0,

# doc="Offset for ISIS detector Ax+B")

#self.declareProperty("Detector RIKEN High E, A",1.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector RIKEN High E, B",0.0,doc="Gain for RIKEN detector Ax+B")

#self.declareProperty("Detector ISIS Low E, A",1.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty("Detector ISIS Low E, B",0.0,doc="Gain for ISIS detector Ax+B")

#self.declareProperty(WorkspaceProperty("OutputWorkspace","",Direction.Output))

def category(self):

return "CorrectionFunctions;Muon"

def PyExec(self):

iws = self.getProperty("InputWorkspace").value

x1=self.getProperty("x1").value

y1=self.getProperty("y1").value

ows=WorkspaceFactory.create(iws)

for s in range(iws.getNumberHistograms()):

y=ows.getAxis(1).getValue(s)

print "y[",s,"]=",y

ows.dataX(s)[:]=iws.dataX(s)[:]

ows.dataE(s)[:]=iws.dataE(s)[:]

for t in range(len(ows.dataY(s))):

x=ows.dataX(s)[t]

ows.dataY(s)[t]=iws.dataY(s)[t]+x1*x+y1*y

self.setProperty("OutputWorkspace",ows)

AlgorithmFactory.subscribe(ApplyNegMuCorrection)

```

| priority | python algorithm issue this issue was originally this came to mantid help hi not for this release so don’t panic i noticed and interesting feature when trying to create a python algorithm the only difference between the scripts below is defining the properties the script that doesn’t work works if i only have declareproperty lines if a forth is added then i get an error self is not defined enjoy aidy this script doesn’t work python from mantid api import pythonalgorithm registeralgorithm workspaceproperty class applynegmucorrection pythonalgorithm def pyinit self self declareproperty name first run number defaultvalue doc first run number self declareproperty last run number doc last run number self declareproperty run number doc last run number self declareproperty run number doc last run number self declareproperty gain for isis high e detector a doc gain for isis detector ax b self declareproperty offset for isis high e detector b doc offset for isis detector ax b self declareproperty detector riken high e a doc gain for riken detector ax b self declareproperty detector riken high e b doc gain for riken detector ax b self declareproperty detector isis low e a doc gain for isis detector ax b self declareproperty detector isis low e b doc gain for isis detector ax b self declareproperty workspaceproperty outputworkspace direction output def category self return correctionfunctions muon def pyexec self iws self getproperty inputworkspace value self getproperty value self getproperty value ows workspacefactory create iws for s in range iws getnumberhistograms y ows getaxis getvalue s print y y ows datax s iws datax s ows datae s iws datae s for t in range len ows datay s x ows datax s ows datay s iws datay s x y self setproperty outputworkspace ows algorithmfactory subscribe applynegmucorrection this script does python from mantid api import pythonalgorithm registeralgorithm workspaceproperty class applynegmucorrection pythonalgorithm def pyinit self 
self declareproperty name first run number defaultvalue doc first run number self declareproperty name last run number defaultvalue doc last run number self declareproperty name run number defaultvalue doc first run number self declareproperty name run number defaultvalue doc first run number self declareproperty last run number doc last run number self declareproperty run number doc last run number self declareproperty run number doc last run number self declareproperty gain for isis high e detector a doc gain for isis detector ax b self declareproperty offset for isis high e detector b doc offset for isis detector ax b self declareproperty detector riken high e a doc gain for riken detector ax b self declareproperty detector riken high e b doc gain for riken detector ax b self declareproperty detector isis low e a doc gain for isis detector ax b self declareproperty detector isis low e b doc gain for isis detector ax b self declareproperty workspaceproperty outputworkspace direction output def category self return correctionfunctions muon def pyexec self iws self getproperty inputworkspace value self getproperty value self getproperty value ows workspacefactory create iws for s in range iws getnumberhistograms y ows getaxis getvalue s print y y ows datax s iws datax s ows datae s iws datae s for t in range len ows datay s x ows datax s ows datay s iws datay s x y self setproperty outputworkspace ows algorithmfactory subscribe applynegmucorrection | 1 |

138,551 | 5,343,833,227 | IssuesEvent | 2017-02-17 12:42:19 | arimhan/RawParser | https://api.github.com/repos/arimhan/RawParser | closed | Canon file have wrong color | bug help wanted High priority | The Lossless jpeg decompressor fails on canon. Looks like they use a different decompressor

| 1.0 | Canon file have wrong color - The Lossless jpeg decompressor fails on canon. Looks like they use a different decompressor

| priority | canon file have wrong color the lossless jpeg decompressor fails on canon looks like they use a different decompressor | 1 |

779,107 | 27,339,733,671 | IssuesEvent | 2023-02-26 16:54:15 | OceanDataTools/openrvdas | https://api.github.com/repos/OceanDataTools/openrvdas | closed | InfluxDB GPG key is broken | bug high priority | See: https://community.influxdata.com/t/gpg-key-for-repository-broken-since-jan-26/28377

Causes failure on attempted influxdb installation of "can not verify key"

Ostensible fix is to update name of retrieved key to: https://repos.influxdata.com/influxdata-archive_compat.key | 1.0 | InfluxDB GPG key is broken - See: https://community.influxdata.com/t/gpg-key-for-repository-broken-since-jan-26/28377

Causes failure on attempted influxdb installation of "can not verify key"

Ostensible fix is to update name of retrieved key to: https://repos.influxdata.com/influxdata-archive_compat.key | priority | influxdb gpg key is broken see causes failure on attempted influxdb installation of can not verify key ostensible fix is to update name of retrieved key to | 1 |

793,999 | 28,019,201,966 | IssuesEvent | 2023-03-28 03:00:28 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Torch 2 regression: `torch.asarray(np.array(1))` fail (scalar array) | high priority triaged module: numpy module: regression | ### 🐛 Describe the bug

It looks like `torch` 2 cannot convert `float` to `int` when the input is a scalar `np.ndarray`.

```python

torch.asarray(1., dtype=torch.int32) # Works

torch.asarray(np.array(1.), dtype=torch.int32) # Fail

torch.asarray(np.array([1.]), dtype=torch.int32) # Works

```

Traceback

```

>>> torch.asarray(np.asarray(1.), dtype=torch.int32)

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

TypeError: can't convert np.ndarray of type numpy.object_. The only supported types are: float64, float32, float16, complex64, complex128, int64, int32, int16, int8, uint8, and bool.

```

### Versions

2.0.0

cc @ezyang @gchanan @zou3519 @mruberry @rgommers | 1.0 | Torch 2 regression: `torch.asarray(np.array(1))` fail (scalar array) - ### 🐛 Describe the bug

It looks like `torch` 2 cannot convert `float` to `int` when the input is a scalar `np.ndarray`.

```python

torch.asarray(1., dtype=torch.int32) # Works

torch.asarray(np.array(1.), dtype=torch.int32) # Fail

torch.asarray(np.array([1.]), dtype=torch.int32) # Works

```

Traceback

```

>>> torch.asarray(np.asarray(1.), dtype=torch.int32)

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

TypeError: can't convert np.ndarray of type numpy.object_. The only supported types are: float64, float32, float16, complex64, complex128, int64, int32, int16, int8, uint8, and bool.

```

### Versions

2.0.0

cc @ezyang @gchanan @zou3519 @mruberry @rgommers | priority | torch regression torch asarray np array fail scalar array 🐛 describe the bug it looks like torch cannot convert float to int when the input is a scalar np ndarray python torch asarray dtype torch works torch asarray np array dtype torch fail torch asarray np array dtype torch works traceback torch asarray np asarray dtype torch traceback most recent call last file line in typeerror can t convert np ndarray of type numpy object the only supported types are and bool versions cc ezyang gchanan mruberry rgommers | 1 |

183,816 | 6,691,857,557 | IssuesEvent | 2017-10-09 14:31:04 | RigsOfRods/rigs-of-rods | https://api.github.com/repos/RigsOfRods/rigs-of-rods | closed | Collision limit for maps. | enhancement high-priority | So when .39+ was released, it was said that the collision limit for maps, had basically been removed, and you no longer had to worry about them. As I've been working on the Community map, I've noticed that not to be the case, as there seems to be a hard coded limit, that causes the log to repeatedly say `COLL: The hashtable is full.` once the limit is reached, thus freezing the game upon attempting to load said map. | 1.0 | Collision limit for maps. - So when .39+ was released, it was said that the collision limit for maps, had basically been removed, and you no longer had to worry about them. As I've been working on the Community map, I've noticed that not to be the case, as there seems to be a hard coded limit, that causes the log to repeatedly say `COLL: The hashtable is full.` once the limit is reached, thus freezing the game upon attempting to load said map. | priority | collision limit for maps so when was released it was said that the collision limit for maps had basically been removed and you no longer had to worry about them as i ve been working on the community map i ve noticed that not to be the case as there seems to be a hard coded limit that causes the log to repeatedly say coll the hashtable is full once the limit is reached thus freezing the game upon attempting to load said map | 1 |

145,565 | 5,577,864,117 | IssuesEvent | 2017-03-28 10:47:34 | uWebSockets/uWebSockets | https://api.github.com/repos/uWebSockets/uWebSockets | closed | v0.14.0 | high priority | This release will focus only on C++ features and be about removing libuv, creating a website and in other ways improving the library for C++ developers. I have had a Node.js overdose from doing v0.13.0 and I don't want to touch that again for a long time.

Features:

* Implement and default to raw epoll, fall back to libuv when in Node.js and non-Linux platforms

* Host a basic website with the C++ server to make sure it is pleasant to write a server in C++

* Fix C++ issues, improve interfaces & maybe look at C++17 networking as yet another backend | 1.0 | v0.14.0 - This release will focus only on C++ features and be about removing libuv, creating a website and in other ways improving the library for C++ developers. I have had a Node.js overdose from doing v0.13.0 and I don't want to touch that again for a long time.

Features:

* Implement and default to raw epoll, fall back to libuv when in Node.js and non-Linux platforms

* Host a basic website with the C++ server to make sure it is pleasant to write a server in C++

* Fix C++ issues, improve interfaces & maybe look at C++17 networking as yet another backend | priority | this release will focus only on c features and be about removing libuv creating a website and in other ways improving the library for c developers i have had a node js overdose from doing and i don t want to touch that again for a long time features implement and default to raw epoll fall back to libuv when in node js and non linux platforms host a basic website with the c server to make sure it is pleasant to write a server in c fix c issues improve interfaces maybe look at c networking as yet another backend | 1 |

134,121 | 5,220,528,224 | IssuesEvent | 2017-01-26 22:07:23 | rchavarria/mycantabria-visits | https://api.github.com/repos/rchavarria/mycantabria-visits | closed | Use falsy data for agents and customers | high priority | You can find inspiration in Dragon Ball TV series, about names, places, addresses, planets,... | 1.0 | Use falsy data for agents and customers - You can find inspiration in Dragon Ball TV series, about names, places, addresses, planets,... | priority | use falsy data for agents and customers you can find inspiration in dragon ball tv series about names places addresses planets | 1 |

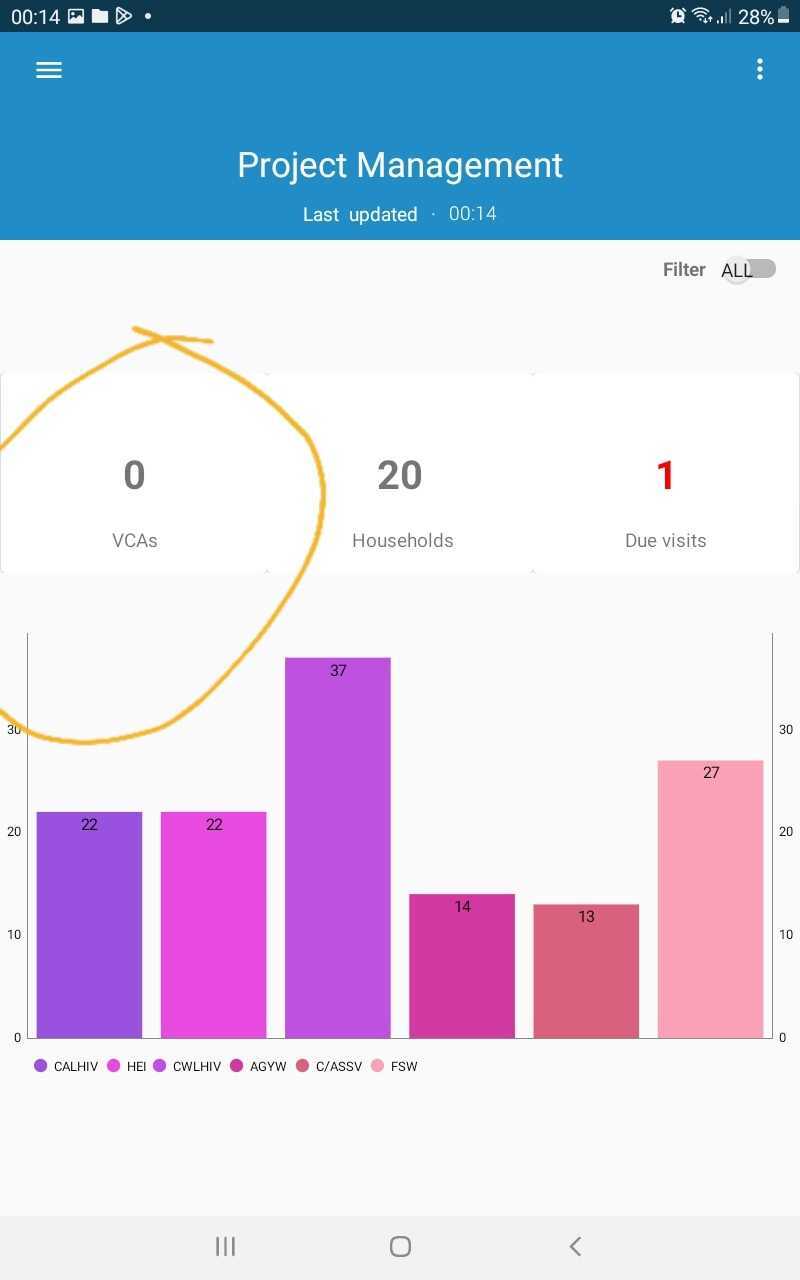

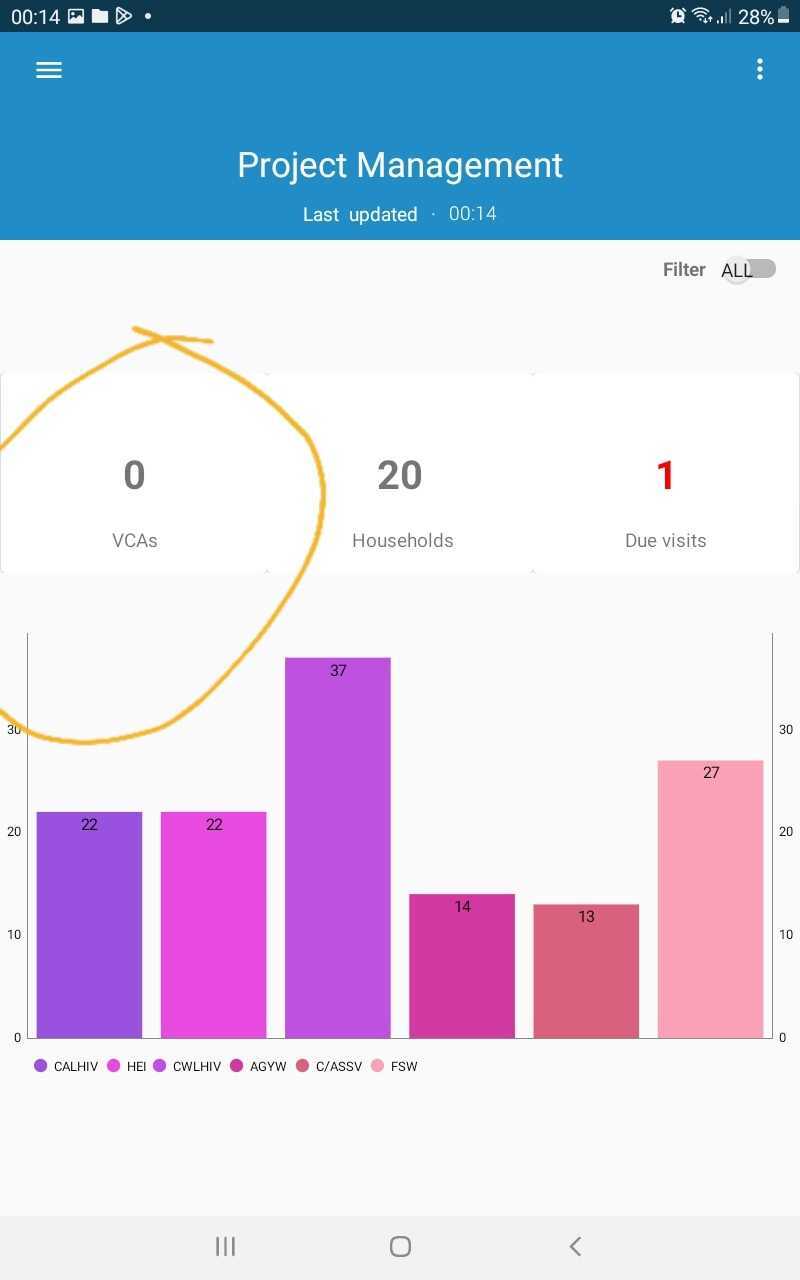

690,820 | 23,673,545,901 | IssuesEvent | 2022-08-27 18:40:28 | opensrp/fhircore | https://api.github.com/repos/opensrp/fhircore | closed | Quest/ANC - Toggle filter for Due Tasks/Services | Priority: high anc chw Quest Configurable Apps | **Description of feature**

Add the ability to filter Due and Upcoming services on the ANC app based on the [Task status ](https://www.hl7.org/fhir/valueset-task-status.html)

**Filter task by**

1. Due - [Ready](https://www.hl7.org/fhir/codesystem-task-status.html#task-status-requested). This will be included on the ANC Client/Family Register list Item with a circles red icon with count of all due services / tasks

2. Upcoming - [Requested](https://www.hl7.org/fhir/codesystem-task-status.html#task-status-ready) . This will be included on the ANC Client/Family Register list Item with a circles blue icon with count of all due services / tasks

**Filter on the TopBar menu item**

<img src="https://user-images.githubusercontent.com/4540684/153551771-fd2ee4cf-3660-4f80-b30d-c86767815d3c.png" width="200" height="400" />

**Acceptance Criteria**

- [ ] 1. Add the ability to activate/disable toggle filter on the Quest app based on a Config on the Top Bar navigation

- [ ] 2. Add the ability to Filter Due and Upcoming services for the Signed in CHW

| 1.0 | Quest/ANC - Toggle filter for Due Tasks/Services - **Description of feature**

Add the ability to filter Due and Upcoming services on the ANC app based on the [Task status ](https://www.hl7.org/fhir/valueset-task-status.html)

**Filter task by**

1. Due - [Ready](https://www.hl7.org/fhir/codesystem-task-status.html#task-status-requested). This will be included on the ANC Client/Family Register list Item with a circles red icon with count of all due services / tasks

2. Upcoming - [Requested](https://www.hl7.org/fhir/codesystem-task-status.html#task-status-ready) . This will be included on the ANC Client/Family Register list Item with a circles blue icon with count of all due services / tasks

**Filter on the TopBar menu item**

<img src="https://user-images.githubusercontent.com/4540684/153551771-fd2ee4cf-3660-4f80-b30d-c86767815d3c.png" width="200" height="400" />

**Acceptance Criteria**

- [ ] 1. Add the ability to activate/disable toggle filter on the Quest app based on a Config on the Top Bar navigation

- [ ] 2. Add the ability to Filter Due and Upcoming services for the Signed in CHW

| priority | quest anc toggle filter for due tasks services description of feature add the ability to filter due and upcoming services on the anc app based on the filter task by due this will be included on the anc client family register list item with a circles red icon with count of all due services tasks upcoming this will be included on the anc client family register list item with a circles blue icon with count of all due services tasks filter on the topbar menu item acceptance criteria add the ability to activate disable toggle filter on the quest app based on a config on the top bar navigation add the ability to filter due and upcoming services for the signed in chw | 1 |

317,117 | 9,661,105,217 | IssuesEvent | 2019-05-20 17:06:08 | datasnakes/OrthoEvolution | https://api.github.com/repos/datasnakes/OrthoEvolution | closed | Add granular installation of various tools/toolsets. | :jack_o_lantern: Hacktoberfest :jack_o_lantern: Priority: High :fire::fire::fire: Type: Enhancement :heart: | Add a way to install individuals tools. This can be accomplished by using the _extras_require_ option in the setup.py file. Using [airflow as an example](https://github.com/apache/incubator-airflow/blob/master/setup.py):

```python

# -*- coding: utf-8 -*-

#

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing,

# software distributed under the License is distributed on an

# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

# KIND, either express or implied. See the License for the

# specific language governing permissions and limitations

# under the License.

from setuptools import setup, find_packages, Command

from setuptools.command.test import test as TestCommand

import imp

import logging

import os

import sys

logger = logging.getLogger(__name__)

# Kept manually in sync with airflow.__version__

version = imp.load_source(

'airflow.version', os.path.join('airflow', 'version.py')).version

PY3 = sys.version_info[0] == 3

class Tox(TestCommand):

user_options = [('tox-args=', None, "Arguments to pass to tox")]

def initialize_options(self):

TestCommand.initialize_options(self)

self.tox_args = ''

def finalize_options(self):

TestCommand.finalize_options(self)

self.test_args = []

self.test_suite = True

def run_tests(self):

# import here, cause outside the eggs aren't loaded

import tox

errno = tox.cmdline(args=self.tox_args.split())

sys.exit(errno)

class CleanCommand(Command):

"""Custom clean command to tidy up the project root."""

user_options = []

def initialize_options(self):

pass

def finalize_options(self):

pass

def run(self):

os.system('rm -vrf ./build ./dist ./*.pyc ./*.tgz ./*.egg-info')

def git_version(version):

"""

Return a version to identify the state of the underlying git repo. The version will

indicate whether the head of the current git-backed working directory is tied to a

release tag or not : it will indicate the former with a 'release:{version}' prefix

and the latter with a 'dev0' prefix. Following the prefix will be a sha of the current

branch head. Finally, a "dirty" suffix is appended to indicate that uncommitted

changes are present.

"""

repo = None

try:

import git

repo = git.Repo('.git')

except ImportError:

logger.warning('gitpython not found: Cannot compute the git version.')

return ''

except Exception as e:

logger.warning('Cannot compute the git version. {}'.format(e))

return ''

if repo:

sha = repo.head.commit.hexsha

if repo.is_dirty():

return '.dev0+{sha}.dirty'.format(sha=sha)

# commit is clean

return '.release:{version}+{sha}'.format(version=version, sha=sha)

else:

return 'no_git_version'

def write_version(filename=os.path.join(*['airflow',

'git_version'])):

text = "{}".format(git_version(version))

with open(filename, 'w') as a:

a.write(text)

async = [

'greenlet>=0.4.9',

'eventlet>= 0.9.7',

'gevent>=0.13'

]

atlas = ['atlasclient>=0.1.2']

azure_blob_storage = ['azure-storage>=0.34.0']

azure_data_lake = [

'azure-mgmt-resource==1.2.2',

'azure-mgmt-datalake-store==0.4.0',

'azure-datalake-store==0.0.19'

]

cassandra = ['cassandra-driver>=3.13.0']

celery = [

'celery>=4.1.1, <4.2.0',

'flower>=0.7.3, <1.0'

]

cgroups = [

'cgroupspy>=0.1.4',

]

# major update coming soon, clamp to 0.x

cloudant = ['cloudant>=0.5.9,<2.0']

crypto = ['cryptography>=0.9.3']

dask = [

'distributed>=1.17.1, <2'

]

databricks = ['requests>=2.5.1, <3']

datadog = ['datadog>=0.14.0']

doc = [

'sphinx>=1.2.3',

'sphinx-argparse>=0.1.13',

'sphinx-rtd-theme>=0.1.6',

'Sphinx-PyPI-upload>=0.2.1'

]

docker = ['docker>=2.0.0']

druid = ['pydruid>=0.4.1']

elasticsearch = [

'elasticsearch>=5.0.0,<6.0.0',

'elasticsearch-dsl>=5.0.0,<6.0.0'

]

emr = ['boto3>=1.0.0']

gcp_api = [

'httplib2',

'google-api-python-client>=1.5.0, <1.6.0',

'oauth2client>=2.0.2, <2.1.0',

'PyOpenSSL',

'pandas-gbq'

]

github_enterprise = ['Flask-OAuthlib>=0.9.1']

hdfs = ['snakebite>=2.7.8']

hive = [

'hmsclient>=0.1.0',

'pyhive>=0.1.3',

'impyla>=0.13.3',

'thrift_sasl==0.2.1',

]

jdbc = ['jaydebeapi>=1.1.1']

jenkins = ['python-jenkins>=0.4.15']

jira = ['JIRA>1.0.7']

kerberos = ['pykerberos>=1.1.13',

'requests_kerberos>=0.10.0',

'thrift_sasl>=0.2.0',

'snakebite[kerberos]>=2.7.8']

kubernetes = ['kubernetes>=3.0.0',

'cryptography>=2.0.0']

ldap = ['ldap3>=0.9.9.1']

mssql = ['pymssql>=2.1.1']

mysql = ['mysqlclient>=1.3.6']

oracle = ['cx_Oracle>=5.1.2']

password = [

'bcrypt>=2.0.0',

'flask-bcrypt>=0.7.1',

]

pinot = ['pinotdb>=0.1.1']

postgres = ['psycopg2-binary>=2.7.4']

qds = ['qds-sdk>=1.9.6']

rabbitmq = ['librabbitmq>=1.6.1']

redis = ['redis>=2.10.5']

s3 = ['boto3>=1.7.0']

salesforce = ['simple-salesforce>=0.72']

samba = ['pysmbclient>=0.1.3']

segment = ['analytics-python>=1.2.9']

sendgrid = ['sendgrid>=5.2.0']

slack = ['slackclient>=1.0.0']

snowflake = ['snowflake-connector-python>=1.5.2',

'snowflake-sqlalchemy>=1.1.0']

ssh = ['paramiko>=2.1.1', 'pysftp>=0.2.9']

statsd = ['statsd>=3.0.1, <4.0']

vertica = ['vertica-python>=0.5.1']

webhdfs = ['hdfs[dataframe,avro,kerberos]>=2.0.4']

winrm = ['pywinrm==0.2.2']

zendesk = ['zdesk']

all_dbs = postgres + mysql + hive + mssql + hdfs + vertica + cloudant + druid + pinot \

+ cassandra

devel = [

'click',

'freezegun',

'jira',

'lxml>=3.3.4',

'mock',

'moto==1.1.19',

'nose',

'nose-ignore-docstring==0.2',

'nose-timer',

'parameterized',

'paramiko',

'pysftp',

'pywinrm',

'qds-sdk>=1.9.6',

'rednose',

'requests_mock'

]

devel_minreq = devel + kubernetes + mysql + doc + password + s3 + cgroups

devel_hadoop = devel_minreq + hive + hdfs + webhdfs + kerberos

devel_all = (sendgrid + devel + all_dbs + doc + samba + s3 + slack + crypto + oracle +

docker + ssh + kubernetes + celery + azure_blob_storage + redis + gcp_api +

datadog + zendesk + jdbc + ldap + kerberos + password + webhdfs + jenkins +

druid + pinot + segment + snowflake + elasticsearch + azure_data_lake, atlas)

# Snakebite & Google Cloud Dataflow are not Python 3 compatible :'(

if PY3:

devel_ci = [package for package in devel_all if package not in

['snakebite>=2.7.8', 'snakebite[kerberos]>=2.7.8']]

else:

devel_ci = devel_all

def do_setup():

write_version()

setup(

name='apache-airflow',

description='Programmatically author, schedule and monitor data pipelines',

license='Apache License 2.0',

version=version,

packages=find_packages(exclude=['tests*']),

package_data={'': ['airflow/alembic.ini', "airflow/git_version"]},

include_package_data=True,

zip_safe=False,

scripts=['airflow/bin/airflow'],

install_requires=[

'alembic>=0.8.3, <0.9',

'bleach==2.1.2',

'configparser>=3.5.0, <3.6.0',

'croniter>=0.3.17, <0.4',

'dill>=0.2.2, <0.3',

'flask>=0.12.4, <0.13',

'flask-appbuilder>=1.11.1, <2.0.0',

'flask-admin==1.4.1',

'flask-caching>=1.3.3, <1.4.0',

'flask-login==0.2.11',

'flask-swagger==0.2.13',

'flask-wtf>=0.14.2, <0.15',

'funcsigs==1.0.0',

'future>=0.16.0, <0.17',

'gitpython>=2.0.2',

'gunicorn>=19.4.0, <20.0',

'iso8601>=0.1.12',

'jinja2>=2.7.3, <2.9.0',

'lxml>=3.6.0, <4.0',

'markdown>=2.5.2, <3.0',

'pandas>=0.17.1, <1.0.0',

'pendulum==1.4.4',

'psutil>=4.2.0, <5.0.0',

'pygments>=2.0.1, <3.0',

'python-daemon>=2.1.1, <2.2',

'python-dateutil>=2.3, <3',

'python-nvd3==0.15.0',

'requests>=2.5.1, <3',

'setproctitle>=1.1.8, <2',

'sqlalchemy>=1.1.15, <1.2.0',

'sqlalchemy-utc>=0.9.0',

'tabulate>=0.7.5, <0.8.0',

'tenacity==4.8.0',

'thrift>=0.9.2',

'tzlocal>=1.4',

'unicodecsv>=0.14.1',

'werkzeug>=0.14.1, <0.15.0',

'zope.deprecation>=4.0, <5.0',

],

setup_requires=[

'docutils>=0.14, <1.0',

],

extras_require={

'all': devel_all,

'devel_ci': devel_ci,

'all_dbs': all_dbs,

'atlas': atlas,

'async': async,

'azure_blob_storage': azure_blob_storage,

'azure_data_lake': azure_data_lake,

'cassandra': cassandra,

'celery': celery,

'cgroups': cgroups,

'cloudant': cloudant,

'crypto': crypto,

'dask': dask,

'databricks': databricks,

'datadog': datadog,

'devel': devel_minreq,

'devel_hadoop': devel_hadoop,

'doc': doc,

'docker': docker,

'druid': druid,

'elasticsearch': elasticsearch,

'emr': emr,

'gcp_api': gcp_api,

'github_enterprise': github_enterprise,

'hdfs': hdfs,

'hive': hive,

'jdbc': jdbc,

'jira': jira,

'kerberos': kerberos,

'kubernetes': kubernetes,

'ldap': ldap,

'mssql': mssql,

'mysql': mysql,

'oracle': oracle,

'password': password,

'pinot': pinot,

'postgres': postgres,

'qds': qds,

'rabbitmq': rabbitmq,

'redis': redis,

's3': s3,

'salesforce': salesforce,

'samba': samba,

'sendgrid': sendgrid,

'segment': segment,

'slack': slack,

'snowflake': snowflake,

'ssh': ssh,

'statsd': statsd,

'vertica': vertica,

'webhdfs': webhdfs,

'winrm': winrm

},

classifiers=[

'Development Status :: 5 - Production/Stable',

'Environment :: Console',

'Environment :: Web Environment',

'Intended Audience :: Developers',

'Intended Audience :: System Administrators',

'License :: OSI Approved :: Apache Software License',

'Programming Language :: Python :: 2.7',

'Programming Language :: Python :: 3.4',

'Topic :: System :: Monitoring',

],

author='Apache Software Foundation',

author_email='dev@airflow.incubator.apache.org',

url='http://airflow.incubator.apache.org/',

download_url=(

'https://dist.apache.org/repos/dist/release/incubator/airflow/' + version),

cmdclass={

'test': Tox,

'extra_clean': CleanCommand,

},

)

if __name__ == "__main__":

do_setup()

```

| 1.0 | Add granular installation of various tools/toolsets. - Add a way to install individuals tools. This can be accomplished by using the _extras_require_ option in the setup.py file. Using [airflow as an example](https://github.com/apache/incubator-airflow/blob/master/setup.py):

```python

# -*- coding: utf-8 -*-

#

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional information

# regarding copyright ownership. The ASF licenses this file

# to you under the Apache License, Version 2.0 (the

# "License"); you may not use this file except in compliance

# with the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing,

# software distributed under the License is distributed on an

# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

# KIND, either express or implied. See the License for the

# specific language governing permissions and limitations

# under the License.

from setuptools import setup, find_packages, Command

from setuptools.command.test import test as TestCommand

import imp

import logging

import os

import sys

logger = logging.getLogger(__name__)

# Kept manually in sync with airflow.__version__

version = imp.load_source(

'airflow.version', os.path.join('airflow', 'version.py')).version

PY3 = sys.version_info[0] == 3

class Tox(TestCommand):

user_options = [('tox-args=', None, "Arguments to pass to tox")]

def initialize_options(self):

TestCommand.initialize_options(self)

self.tox_args = ''

def finalize_options(self):

TestCommand.finalize_options(self)

self.test_args = []

self.test_suite = True

def run_tests(self):

# import here, cause outside the eggs aren't loaded

import tox

errno = tox.cmdline(args=self.tox_args.split())

sys.exit(errno)

class CleanCommand(Command):

"""Custom clean command to tidy up the project root."""

user_options = []

def initialize_options(self):

pass

def finalize_options(self):

pass

def run(self):

os.system('rm -vrf ./build ./dist ./*.pyc ./*.tgz ./*.egg-info')

def git_version(version):

"""

Return a version to identify the state of the underlying git repo. The version will

indicate whether the head of the current git-backed working directory is tied to a

release tag or not : it will indicate the former with a 'release:{version}' prefix

and the latter with a 'dev0' prefix. Following the prefix will be a sha of the current

branch head. Finally, a "dirty" suffix is appended to indicate that uncommitted

changes are present.

"""

repo = None

try:

import git

repo = git.Repo('.git')

except ImportError:

logger.warning('gitpython not found: Cannot compute the git version.')

return ''

except Exception as e:

logger.warning('Cannot compute the git version. {}'.format(e))

return ''

if repo:

sha = repo.head.commit.hexsha

if repo.is_dirty():

return '.dev0+{sha}.dirty'.format(sha=sha)

# commit is clean

return '.release:{version}+{sha}'.format(version=version, sha=sha)

else:

return 'no_git_version'

def write_version(filename=os.path.join(*['airflow',

'git_version'])):

text = "{}".format(git_version(version))

with open(filename, 'w') as a:

a.write(text)

async = [

'greenlet>=0.4.9',

'eventlet>= 0.9.7',

'gevent>=0.13'

]

atlas = ['atlasclient>=0.1.2']

azure_blob_storage = ['azure-storage>=0.34.0']

azure_data_lake = [

'azure-mgmt-resource==1.2.2',

'azure-mgmt-datalake-store==0.4.0',

'azure-datalake-store==0.0.19'

]

cassandra = ['cassandra-driver>=3.13.0']

celery = [

'celery>=4.1.1, <4.2.0',

'flower>=0.7.3, <1.0'

]

cgroups = [

'cgroupspy>=0.1.4',

]

# major update coming soon, clamp to 0.x

cloudant = ['cloudant>=0.5.9,<2.0']

crypto = ['cryptography>=0.9.3']

dask = [

'distributed>=1.17.1, <2'

]

databricks = ['requests>=2.5.1, <3']

datadog = ['datadog>=0.14.0']

doc = [

'sphinx>=1.2.3',

'sphinx-argparse>=0.1.13',

'sphinx-rtd-theme>=0.1.6',

'Sphinx-PyPI-upload>=0.2.1'

]

docker = ['docker>=2.0.0']

druid = ['pydruid>=0.4.1']

elasticsearch = [

'elasticsearch>=5.0.0,<6.0.0',

'elasticsearch-dsl>=5.0.0,<6.0.0'

]

emr = ['boto3>=1.0.0']

gcp_api = [

'httplib2',

'google-api-python-client>=1.5.0, <1.6.0',

'oauth2client>=2.0.2, <2.1.0',

'PyOpenSSL',

'pandas-gbq'

]

github_enterprise = ['Flask-OAuthlib>=0.9.1']

hdfs = ['snakebite>=2.7.8']

hive = [

'hmsclient>=0.1.0',

'pyhive>=0.1.3',

'impyla>=0.13.3',

'thrift_sasl==0.2.1',

]

jdbc = ['jaydebeapi>=1.1.1']

jenkins = ['python-jenkins>=0.4.15']

jira = ['JIRA>1.0.7']

kerberos = ['pykerberos>=1.1.13',

'requests_kerberos>=0.10.0',

'thrift_sasl>=0.2.0',

'snakebite[kerberos]>=2.7.8']

kubernetes = ['kubernetes>=3.0.0',

'cryptography>=2.0.0']

ldap = ['ldap3>=0.9.9.1']

mssql = ['pymssql>=2.1.1']

mysql = ['mysqlclient>=1.3.6']

oracle = ['cx_Oracle>=5.1.2']

password = [

'bcrypt>=2.0.0',

'flask-bcrypt>=0.7.1',

]

pinot = ['pinotdb>=0.1.1']

postgres = ['psycopg2-binary>=2.7.4']

qds = ['qds-sdk>=1.9.6']

rabbitmq = ['librabbitmq>=1.6.1']

redis = ['redis>=2.10.5']

s3 = ['boto3>=1.7.0']

salesforce = ['simple-salesforce>=0.72']

samba = ['pysmbclient>=0.1.3']

segment = ['analytics-python>=1.2.9']

sendgrid = ['sendgrid>=5.2.0']

slack = ['slackclient>=1.0.0']

snowflake = ['snowflake-connector-python>=1.5.2',

'snowflake-sqlalchemy>=1.1.0']

ssh = ['paramiko>=2.1.1', 'pysftp>=0.2.9']

statsd = ['statsd>=3.0.1, <4.0']

vertica = ['vertica-python>=0.5.1']

webhdfs = ['hdfs[dataframe,avro,kerberos]>=2.0.4']

winrm = ['pywinrm==0.2.2']

zendesk = ['zdesk']

all_dbs = postgres + mysql + hive + mssql + hdfs + vertica + cloudant + druid + pinot \

+ cassandra

devel = [

'click',

'freezegun',

'jira',

'lxml>=3.3.4',

'mock',

'moto==1.1.19',

'nose',

'nose-ignore-docstring==0.2',

'nose-timer',

'parameterized',

'paramiko',

'pysftp',

'pywinrm',

'qds-sdk>=1.9.6',

'rednose',

'requests_mock'

]

devel_minreq = devel + kubernetes + mysql + doc + password + s3 + cgroups

devel_hadoop = devel_minreq + hive + hdfs + webhdfs + kerberos

devel_all = (sendgrid + devel + all_dbs + doc + samba + s3 + slack + crypto + oracle +

docker + ssh + kubernetes + celery + azure_blob_storage + redis + gcp_api +

datadog + zendesk + jdbc + ldap + kerberos + password + webhdfs + jenkins +

druid + pinot + segment + snowflake + elasticsearch + azure_data_lake, atlas)

# Snakebite & Google Cloud Dataflow are not Python 3 compatible :'(

if PY3:

devel_ci = [package for package in devel_all if package not in

['snakebite>=2.7.8', 'snakebite[kerberos]>=2.7.8']]

else:

devel_ci = devel_all

def do_setup():

write_version()

setup(

name='apache-airflow',

description='Programmatically author, schedule and monitor data pipelines',

license='Apache License 2.0',

version=version,

packages=find_packages(exclude=['tests*']),

package_data={'': ['airflow/alembic.ini', "airflow/git_version"]},

include_package_data=True,

zip_safe=False,

scripts=['airflow/bin/airflow'],

install_requires=[

'alembic>=0.8.3, <0.9',

'bleach==2.1.2',

'configparser>=3.5.0, <3.6.0',

'croniter>=0.3.17, <0.4',

'dill>=0.2.2, <0.3',

'flask>=0.12.4, <0.13',

'flask-appbuilder>=1.11.1, <2.0.0',

'flask-admin==1.4.1',

'flask-caching>=1.3.3, <1.4.0',

'flask-login==0.2.11',

'flask-swagger==0.2.13',

'flask-wtf>=0.14.2, <0.15',

'funcsigs==1.0.0',

'future>=0.16.0, <0.17',

'gitpython>=2.0.2',

'gunicorn>=19.4.0, <20.0',

'iso8601>=0.1.12',

'jinja2>=2.7.3, <2.9.0',

'lxml>=3.6.0, <4.0',

'markdown>=2.5.2, <3.0',

'pandas>=0.17.1, <1.0.0',

'pendulum==1.4.4',

'psutil>=4.2.0, <5.0.0',

'pygments>=2.0.1, <3.0',

'python-daemon>=2.1.1, <2.2',

'python-dateutil>=2.3, <3',

'python-nvd3==0.15.0',

'requests>=2.5.1, <3',

'setproctitle>=1.1.8, <2',

'sqlalchemy>=1.1.15, <1.2.0',

'sqlalchemy-utc>=0.9.0',

'tabulate>=0.7.5, <0.8.0',

'tenacity==4.8.0',

'thrift>=0.9.2',

'tzlocal>=1.4',

'unicodecsv>=0.14.1',

'werkzeug>=0.14.1, <0.15.0',

'zope.deprecation>=4.0, <5.0',

],

setup_requires=[

'docutils>=0.14, <1.0',

],

extras_require={

'all': devel_all,

'devel_ci': devel_ci,

'all_dbs': all_dbs,

'atlas': atlas,

'async': async,

'azure_blob_storage': azure_blob_storage,

'azure_data_lake': azure_data_lake,

'cassandra': cassandra,

'celery': celery,

'cgroups': cgroups,

'cloudant': cloudant,

'crypto': crypto,

'dask': dask,

'databricks': databricks,

'datadog': datadog,

'devel': devel_minreq,

'devel_hadoop': devel_hadoop,

'doc': doc,

'docker': docker,

'druid': druid,

'elasticsearch': elasticsearch,

'emr': emr,

'gcp_api': gcp_api,

'github_enterprise': github_enterprise,

'hdfs': hdfs,

'hive': hive,

'jdbc': jdbc,

'jira': jira,

'kerberos': kerberos,

'kubernetes': kubernetes,

'ldap': ldap,

'mssql': mssql,

'mysql': mysql,

'oracle': oracle,

'password': password,

'pinot': pinot,

'postgres': postgres,

'qds': qds,

'rabbitmq': rabbitmq,

'redis': redis,

's3': s3,

'salesforce': salesforce,

'samba': samba,

'sendgrid': sendgrid,

'segment': segment,

'slack': slack,

'snowflake': snowflake,

'ssh': ssh,

'statsd': statsd,

'vertica': vertica,

'webhdfs': webhdfs,

'winrm': winrm

},

classifiers=[

'Development Status :: 5 - Production/Stable',

'Environment :: Console',

'Environment :: Web Environment',

'Intended Audience :: Developers',

'Intended Audience :: System Administrators',

'License :: OSI Approved :: Apache Software License',

'Programming Language :: Python :: 2.7',

'Programming Language :: Python :: 3.4',

'Topic :: System :: Monitoring',

],

author='Apache Software Foundation',

author_email='dev@airflow.incubator.apache.org',

url='http://airflow.incubator.apache.org/',

download_url=(

'https://dist.apache.org/repos/dist/release/incubator/airflow/' + version),

cmdclass={

'test': Tox,

'extra_clean': CleanCommand,

},

)

if __name__ == "__main__":

do_setup()

```

| priority | add granular installation of various tools toolsets add a way to install individuals tools this can be accomplished by using the extras require option in the setup py file using python coding utf licensed to the apache software foundation asf under one or more contributor license agreements see the notice file distributed with this work for additional information regarding copyright ownership the asf licenses this file to you under the apache license version the license you may not use this file except in compliance with the license you may obtain a copy of the license at unless required by applicable law or agreed to in writing software distributed under the license is distributed on an as is basis without warranties or conditions of any kind either express or implied see the license for the specific language governing permissions and limitations under the license from setuptools import setup find packages command from setuptools command test import test as testcommand import imp import logging import os import sys logger logging getlogger name kept manually in sync with airflow version version imp load source airflow version os path join airflow version py version sys version info class tox testcommand user options def initialize options self testcommand initialize options self self tox args def finalize options self testcommand finalize options self self test args self test suite true def run tests self import here cause outside the eggs aren t loaded import tox errno tox cmdline args self tox args split sys exit errno class cleancommand command custom clean command to tidy up the project root user options def initialize options self pass def finalize options self pass def run self os system rm vrf build dist pyc tgz egg info def git version version return a version to identify the state of the underlying git repo the version will indicate whether the head of the current git backed working directory is tied to a release tag or not it will indicate 
the former with a release version prefix and the latter with a prefix following the prefix will be a sha of the current branch head finally a dirty suffix is appended to indicate that uncommitted changes are present repo none try import git repo git repo git except importerror logger warning gitpython not found cannot compute the git version return except exception as e logger warning cannot compute the git version format e return if repo sha repo head commit hexsha if repo is dirty return sha dirty format sha sha commit is clean return release version sha format version version sha sha else return no git version def write version filename os path join airflow git version text format git version version with open filename w as a a write text async greenlet eventlet gevent atlas azure blob storage azure data lake azure mgmt resource azure mgmt datalake store azure datalake store cassandra celery celery flower cgroups cgroupspy major update coming soon clamp to x cloudant crypto dask distributed databricks datadog doc sphinx sphinx argparse sphinx rtd theme sphinx pypi upload docker druid elasticsearch elasticsearch elasticsearch dsl emr gcp api google api python client pyopenssl pandas gbq github enterprise hdfs hive hmsclient pyhive impyla thrift sasl jdbc jenkins jira kerberos pykerberos requests kerberos thrift sasl snakebite kubernetes kubernetes cryptography ldap mssql mysql oracle password bcrypt flask bcrypt pinot postgres qds rabbitmq redis salesforce samba segment sendgrid slack snowflake snowflake connector python snowflake sqlalchemy ssh statsd vertica webhdfs winrm zendesk all dbs postgres mysql hive mssql hdfs vertica cloudant druid pinot cassandra devel click freezegun jira lxml mock moto nose nose ignore docstring nose timer parameterized paramiko pysftp pywinrm qds sdk rednose requests mock devel minreq devel kubernetes mysql doc password cgroups devel hadoop devel minreq hive hdfs webhdfs kerberos devel all sendgrid devel all dbs doc samba slack 
crypto oracle docker ssh kubernetes celery azure blob storage redis gcp api datadog zendesk jdbc ldap kerberos password webhdfs jenkins druid pinot segment snowflake elasticsearch azure data lake atlas snakebite google cloud dataflow are not python compatible if devel ci package for package in devel all if package not in else devel ci devel all def do setup write version setup name apache airflow description programmatically author schedule and monitor data pipelines license apache license version version packages find packages exclude package data include package data true zip safe false scripts install requires alembic bleach configparser croniter dill flask flask appbuilder flask admin flask caching flask login flask swagger flask wtf funcsigs future gitpython gunicorn lxml markdown pandas pendulum psutil pygments python daemon python dateutil python requests setproctitle sqlalchemy sqlalchemy utc tabulate tenacity thrift tzlocal unicodecsv werkzeug zope deprecation setup requires docutils extras require all devel all devel ci devel ci all dbs all dbs atlas atlas async async azure blob storage azure blob storage azure data lake azure data lake cassandra cassandra celery celery cgroups cgroups cloudant cloudant crypto crypto dask dask databricks databricks datadog datadog devel devel minreq devel hadoop devel hadoop doc doc docker docker druid druid elasticsearch elasticsearch emr emr gcp api gcp api github enterprise github enterprise hdfs hdfs hive hive jdbc jdbc jira jira kerberos kerberos kubernetes kubernetes ldap ldap mssql mssql mysql mysql oracle oracle password password pinot pinot postgres postgres qds qds rabbitmq rabbitmq redis redis salesforce salesforce samba samba sendgrid sendgrid segment segment slack slack snowflake snowflake ssh ssh statsd statsd vertica vertica webhdfs webhdfs winrm winrm classifiers development status production stable environment console environment web environment intended audience developers intended audience system 
administrators license osi approved apache software license programming language python programming language python topic system monitoring author apache software foundation author email dev airflow incubator apache org url download url version cmdclass test tox extra clean cleancommand if name main do setup | 1 |

541,618 | 15,830,139,425 | IssuesEvent | 2021-04-06 12:07:54 | nf-core/tools | https://api.github.com/repos/nf-core/tools | opened | `nf-core modules create` - new `--conda_name` cli flag | command line tools high-priority | In https://github.com/nf-core/tools/pull/961 @Erkison added a really nice feature where the user is prompted for a new name to find a bioconda package that is different from the nf-core/modules tool name. This kicks in if `nf-core modules create` cannot find a tool on bioconda by that name.

[I suggested](https://github.com/nf-core/tools/pull/961#pullrequestreview-619851378) that we should probably also make this available through the cli in addition to just a prompt, and of course the need for this has come around sooner than expected (as it always does), courtesy of @drpatelh

So - feature request is to add a new flag `--conda_name` into the command which is passed through the code and used to search bioconda if given, without using any interactive prompts. | 1.0 | `nf-core modules create` - new `--conda_name` cli flag - In https://github.com/nf-core/tools/pull/961 @Erkison added a really nice feature where the user is prompted for a new name to find a bioconda package that is different from the nf-core/modules tool name. This kicks in if `nf-core modules create` cannot find a tool on bioconda by that name.

[I suggested](https://github.com/nf-core/tools/pull/961#pullrequestreview-619851378) that we should probably also make this available through the cli in addition to just a prompt, and of course the need for this has come around sooner than expected (as it always does), courtesy of @drpatelh

So - feature request is to add a new flag `--conda_name` into the command which is passed through the code and used to search bioconda if given, without using any interactive prompts. | priority | nf core modules create new conda name cli flag in erkison added a really nice feature where the user is prompted for a new name to find a bioconda package that is different from the nf core modules tool name this kicks in if nf core modules create cannot find a tool on bioconda by that name that we should probably also make this available through the cli in addition to just a prompt and of course the need for this has come around sooner than expected as it always does courtesy of drpatelh so feature request is to add a new flag conda name into the command which is passed through the code and used to search bioconda if given without using any interactive prompts | 1 |

163,694 | 6,204,034,762 | IssuesEvent | 2017-07-06 13:23:37 | hotosm/tasking-manager | https://api.github.com/repos/hotosm/tasking-manager | closed | Contribute Map tab - lose selected task while editing, no "select again" button | High Priority | Sometimes the selected task is not staying selected while a mapper is mapping and they are having a hard time finding it again on the map.

We need a "selected it again" button like in the TM2 | 1.0 | Contribute Map tab - lose selected task while editing, no "select again" button - Sometimes the selected task is not staying selected while a mapper is mapping and they are having a hard time finding it again on the map.

We need a "selected it again" button like in the TM2 | priority | contribute map tab lose selected task while editing no select again button sometimes the selected task is not staying selected while a mapper is mapping and they are having a hard time finding it again on the map we need a selected it again button like in the | 1 |

803,704 | 29,187,120,618 | IssuesEvent | 2023-05-19 16:22:03 | stratosphererl/stratosphere | https://api.github.com/repos/stratosphererl/stratosphere | closed | [EPIC] Authentication | type: epic priority: high work: complicated [2] area: auth | ## Description

Concrete and detailed description. What is this epic trying to address? What would be the purpose of this epic? Why is this important?

To secure our website and separate user interactions and data into profiles we should provide some sort of login/signout functionality and user authentication for our website and potentially overlay. To do this we will have the user log in via a third-party platform, such as Steam or Epic Games.

## Story(s)

- #71

- #12

## Affected Personas

- Frontend engineers (implement auth services and implement them on the website and/or overlay)

- Database engineers (implement storage of profile data, etc.)

- Website/Overlay users (receive functionality to create an account and log in to it

## What are we planning to do about it?

- Implement auth functionality as described by Steam and Epic Games onto our website

- Store data provide from Steam and/or Epic Games in an auth/profile DB

- Restrict uploading replays to only logged in users

## How will we measure success?

Users can successfully log in by using their Steam and Epic Games account credentials

Replays cannot be uploaded without logging in / creating an account

Profile data is presented to the user on their profile on our website | 1.0 | [EPIC] Authentication - ## Description

Concrete and detailed description. What is this epic trying to address? What would be the purpose of this epic? Why is this important?

To secure our website and separate user interactions and data into profiles we should provide some sort of login/signout functionality and user authentication for our website and potentially overlay. To do this we will have the user log in via a third-party platform, such as Steam or Epic Games.

## Story(s)

- #71

- #12

## Affected Personas

- Frontend engineers (implement auth services and implement them on the website and/or overlay)

- Database engineers (implement storage of profile data, etc.)

- Website/Overlay users (receive functionality to create an account and log in to it

## What are we planning to do about it?

- Implement auth functionality as described by Steam and Epic Games onto our website

- Store data provide from Steam and/or Epic Games in an auth/profile DB

- Restrict uploading replays to only logged in users

## How will we measure success?

Users can successfully log in by using their Steam and Epic Games account credentials

Replays cannot be uploaded without logging in / creating an account

Profile data is presented to the user on their profile on our website | priority | authentication description concrete and detailed description what is this epic trying to address what would be the purpose of this epic why is this important to secure our website and separate user interactions and data into profiles we should provide some sort of login signout functionality and user authentication for our website and potentially overlay to do this we will have the user log in via a third party platform such as steam or epic games story s affected personas frontend engineers implement auth services and implement them on the website and or overlay database engineers implement storage of profile data etc website overlay users receive functionality to create an account and log in to it what are we planning to do about it implement auth functionality as described by steam and epic games onto our website store data provide from steam and or epic games in an auth profile db restrict uploading replays to only logged in users how will we measure success users can successfully log in by using their steam and epic games account credentials replays cannot be uploaded without logging in creating an account profile data is presented to the user on their profile on our website | 1 |

494,452 | 14,258,847,190 | IssuesEvent | 2020-11-20 07:08:12 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | Application layout responsiveness needs some improvement | Priority: High | A comment from @clagom that the juju team weren't able to use the vanilla panels because of responsiveness issues. Looking at the examples, there are a few things we can improve:

- ensure collapsed sidenav is wide enough to display icons centered in the column (or hide them)

- Beyond a certain width, the panel and main area become too narrow to be useful. At this point we should have an alternative mechanism like switching the panel to a slide from top/bottom.

@clagom @barrymcgee please expand on this if the above doesn't go into enough detail.

JAAS issue [here](https://github.com/canonical-web-and-design/jaas-dashboard/issues/683) | 1.0 | Application layout responsiveness needs some improvement - A comment from @clagom that the juju team weren't able to use the vanilla panels because of responsiveness issues. Looking at the examples, there are a few things we can improve:

- ensure collapsed sidenav is wide enough to display icons centered in the column (or hide them)

- Beyond a certain width, the panel and main area become too narrow to be useful. At this point we should have an alternative mechanism like switching the panel to a slide from top/bottom.

@clagom @barrymcgee please expand on this if the above doesn't go into enough detail.

JAAS issue [here](https://github.com/canonical-web-and-design/jaas-dashboard/issues/683) | priority | application layout responsiveness needs some improvement a comment from clagom that the juju team weren t able to use the vanilla panels because of responsiveness issues looking at the examples there are a few things we can improve ensure collapsed sidenav is wide enough to display icons centered in the column or hide them beyond a certain width the panel and main area become too narrow to be useful at this point we should have an alternative mechanism like switching the panel to a slide from top bottom clagom barrymcgee please expand on this if the above doesn t go into enough detail jaas issue | 1 |

384,839 | 11,404,784,230 | IssuesEvent | 2020-01-31 10:33:05 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Need to add nofollow feature in notification option | NEXT UPDATE [Priority: HIGH] enhancement | Need to add Nofollow feature in notification option

Screenshot:

https://monosnap.com/file/TV2lOrqEsWLvepW0TSoelzCITXzHNf

REF:https://secure.helpscout.net/conversation/945973540/80103/ | 1.0 | Need to add nofollow feature in notification option - Need to add Nofollow feature in notification option

Screenshot:

https://monosnap.com/file/TV2lOrqEsWLvepW0TSoelzCITXzHNf

REF:https://secure.helpscout.net/conversation/945973540/80103/ | priority | need to add nofollow feature in notification option need to add nofollow feature in notification option screenshot ref | 1 |

632,811 | 20,234,990,011 | IssuesEvent | 2022-02-14 00:13:42 | apache/dolphinscheduler | https://api.github.com/repos/apache/dolphinscheduler | closed | [BUG][DOCKER] docker compose api server failed | bug need to verify Stale priority:high | ### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/dolphinscheduler/issues?q=is%3Aissue) and found no similar issues.

### What happened

OS: Centos 7 kernel 3.10.0

memory: 8G

Followed the instruction at [https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html](https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html)

with Docker 20.10.7 and Docker Compose 2.2.1

Success in starting PostgreSQL, Zookeeper, the master and the worker server, failed to start the API server (keep restarting). Logs show that the connection to 127.0.0.1:5432 was refused.

### What you expected to happen

Failed to connect the api at 127.0.0.1:12345/dolphinscheduler.

### How to reproduce

Follow the instruction at [https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html](https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html)

### Anything else

_No response_

### Version

2.0.0

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)

| 1.0 | [BUG][DOCKER] docker compose api server failed - ### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/dolphinscheduler/issues?q=is%3Aissue) and found no similar issues.

### What happened

OS: Centos 7 kernel 3.10.0

memory: 8G

Followed the instruction at [https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html](https://dolphinscheduler.apache.org/en-us/docs/latest/user_doc/guide/installation/docker.html)