Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

407,781 | 11,937,350,444 | IssuesEvent | 2020-04-02 12:01:41 | flatlify/flatlify | https://api.github.com/repos/flatlify/flatlify | closed | Fix Id generation logic | Priority:High bug | Currently, the id is generated based on the amount of item. If one of the items deleted, newly created content overrides the last content entry.

Id should be incremented based on the largest id.

| 1.0 | Fix Id generation logic - Currently, the id is generated based on the amount of item. If one of the items deleted, newly created content overrides the last content entry.

Id should be incremented based on the largest id.

| priority | fix id generation logic currently the id is generated based on the amount of item if one of the items deleted newly created content overrides the last content entry id should be incremented based on the largest id | 1 |
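
The fix requested in the flatlify row above (derive the next id from the largest existing id rather than from the item count) can be sketched as follows. This is a minimal illustration, not flatlify's actual code: the function names are hypothetical, ids are assumed to be positive integers, and the count-based scheme is assumed to be "count + 1".

```python
def next_id(existing_ids):
    """Return an id one larger than the largest existing id (1 if none exist)."""
    return max(existing_ids, default=0) + 1


def next_id_by_count(existing_ids):
    """Count-based scheme as assumed from the issue text, shown for contrast."""
    return len(existing_ids) + 1


ids = [1, 2, 3]
ids.remove(2)                     # delete one item, leaving ids 1 and 3
collides = next_id_by_count(ids)  # same id as the last remaining entry
safe = next_id(ids)               # larger than every id still in use
```

With a max-based scheme a new id never equals an id still in use, although the id of a deleted last item could still be handed out again.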

53,081 | 3,035,201,709 | IssuesEvent | 2015-08-06 00:46:11 | pombase/canto | https://api.github.com/repos/pombase/canto | closed | "couldn't read the genotype list from the server" | bug high priority | If you click a link before a page with annotations has finished loading, there's a pop-up error. It might concern users so I've put it at high priority.

| 1.0 | "couldn't read the genotype list from the server" - If you click a link before a page with annotations has finished loading, there's a pop-up error. It might concern users so I've put it at high priority.

| priority | couldn t read the genotype list from the server if you click a link before a page with annotations has finished loading there s a pop up error it might concern users so i ve put it at high priority | 1 |

442,911 | 12,752,776,425 | IssuesEvent | 2020-06-27 18:16:25 | TimUntersberger/wwm | https://api.github.com/repos/TimUntersberger/wwm | closed | Keybindings don't work reliably or at all in some cases | bug difficulty: easy priority: high review | Similar to the report in a comment on Reddit, the keybindings are not reliable for me. I am excited about the possibilities with using WWM, but that is making it pretty difficult to use at this point. | 1.0 | Keybindings don't work reliably or at all in some cases - Similar to the report in a comment on Reddit, the keybindings are not reliable for me. I am excited about the possibilities with using WWM, but that is making it pretty difficult to use at this point. | priority | keybindings don t work reliably or at all in some cases similar to the report in a comment on reddit the keybindings are not reliable for me i am excited about the possibilities with using wwm but that is making it pretty difficult to use at this point | 1 |

429,808 | 12,427,897,801 | IssuesEvent | 2020-05-25 04:16:49 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | Better printing of values for `col_vals_between()` and `col_vals_not_between()` in agent reports | Difficulty: [3] Advanced Effort: [3] High Priority: [3] High Type: ★ Enhancement | Right now, only single, literal values are allowed. This is in contrast to the other, single comparison validation functions that allow the use of a literal value and a column of values. There should be four allowable possibilities in total that comprise the different combinations of column/literal values per side. | 1.0 | Better printing of values for `col_vals_between()` and `col_vals_not_between()` in agent reports - Right now, only single, literal values are allowed. This is in contrast to the other, single comparison validation functions that allow the use of a literal value and a column of values. There should be four allowable possibilities in total that comprise the different combinations of column/literal values per side. | priority | better printing of values for col vals between and col vals not between in agent reports right now only single literal values are allowed this is in contrast to the other single comparison validation functions that allow the use of a literal value and a column of values there should be four allowable possibilities in total that comprise the different combinations of column literal values per side | 1 |

442,684 | 12,749,132,023 | IssuesEvent | 2020-06-26 21:51:20 | BCcampus/edehr | https://api.github.com/repos/BCcampus/edehr | closed | Excessive loading | Priority - High ~Bug | **Describe the bug**

As user navigates the EHR pages they see a lengthy page load on each page visited. This should not be necessary because the page data has not changed

This defect was intentionally reintroduced because the load is needed to actually show data on the page.

In PageController go to and remove line 29. Then as you navigate around the EHR pages they show no data found. Refresh any of those pages and the data appears.

To resolve will need to determine which parts of the load process transfer data into the page.

| 1.0 | Excessive loading - **Describe the bug**

As user navigates the EHR pages they see a lengthy page load on each page visited. This should not be necessary because the page data has not changed

This defect was intentionally reintroduced because the load is needed to actually show data on the page.

In PageController go to and remove line 29. Then as you navigate around the EHR pages they show no data found. Refresh any of those pages and the data appears.

To resolve will need to determine which parts of the load process transfer data into the page.

| priority | excessive loading describe the bug as user navigates the ehr pages they see a lengthy page load on each page visited this should not be necessary because the page data has not changed this defect was intentionally reintroduced because the load is needed to actually show data on the page in pagecontroller go to and remove line then as you navigate around the ehr pages they show no data found refresh any of those pages and the data appears to resolve will need to determine which parts of the load process transfer data into the page | 1 |

242,737 | 7,846,190,975 | IssuesEvent | 2018-06-19 14:52:11 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Oneflow not working after upgrading from 5.4.* to 5.5.80 | Category: Core & System Community Priority: High Status: Accepted Type: Bug | # Bug Report

## Version of OpenNebula

- [ ] 5.2.2

- [ ] 5.4.0

- [ ] 5.4.1

- [ ] 5.4.2

- [ ] 5.4.3

- [ ] 5.4.4

- [ ] 5.4.5

- [ ] 5.4.6

- [ ] 5.4.7

- [ ] 5.4.8

- [ ] 5.4.9

- [ ] 5.4.10

- [ ] 5.4.11

- [ ] 5.4.12

- [ ] 5.4.13

- [ ] 5.4.14

- [x] Development build

## Component

- [ ] Authorization (LDAP, x509 certs...)

- [ ] Command Line Interface (CLI)

- [ ] Contextualization

- [ ] Documentation

- [ ] Federation and HA

- [ ] Host, Clusters and Monitorization

- [ ] KVM

- [ ] Networking

- [ ] Orchestration (OpenNebula Flow)

- [ ] Packages

- [ ] Scheduler

- [ ] Storage & Images

- [ ] Sunstone

- [x] Upgrades

- [ ] User, Groups, VDCs and ACL

- [ ] vCenter

## Description

After upgrading ONE from 5.4.*, if you try to see a service created in 5.4, you get: [one.document.info] Error getting document [ID]

### Expected Behavior

N/A

### Actual Behavior

Old services are broken after upgrading

## How to reproduce

- Create a service in 5.4.* version

- Upgrade one to 5.5.80

- Try to show that service | 1.0 | Oneflow not working after upgrading from 5.4.* to 5.5.80 - # Bug Report

## Version of OpenNebula

- [ ] 5.2.2

- [ ] 5.4.0

- [ ] 5.4.1

- [ ] 5.4.2

- [ ] 5.4.3

- [ ] 5.4.4

- [ ] 5.4.5

- [ ] 5.4.6

- [ ] 5.4.7

- [ ] 5.4.8

- [ ] 5.4.9

- [ ] 5.4.10

- [ ] 5.4.11

- [ ] 5.4.12

- [ ] 5.4.13

- [ ] 5.4.14

- [x] Development build

## Component

- [ ] Authorization (LDAP, x509 certs...)

- [ ] Command Line Interface (CLI)

- [ ] Contextualization

- [ ] Documentation

- [ ] Federation and HA

- [ ] Host, Clusters and Monitorization

- [ ] KVM

- [ ] Networking

- [ ] Orchestration (OpenNebula Flow)

- [ ] Packages

- [ ] Scheduler

- [ ] Storage & Images

- [ ] Sunstone

- [x] Upgrades

- [ ] User, Groups, VDCs and ACL

- [ ] vCenter

## Description

After upgrading ONE from 5.4.*, if you try to see a service created in 5.4, you get: [one.document.info] Error getting document [ID]

### Expected Behavior

N/A

### Actual Behavior

Old services are broken after upgrading

## How to reproduce

- Create a service in 5.4.* version

- Upgrade one to 5.5.80

- Try to show that service | priority | oneflow not working after upgrading from to bug report version of opennebula development build component authorization ldap certs command line interface cli contextualization documentation federation and ha host clusters and monitorization kvm networking orchestration opennebula flow packages scheduler storage images sunstone upgrades user groups vdcs and acl vcenter description after upgrading one from if you try to see a service created in you get error getting document expected behavior n a actual behavior old services are broken after upgrading how to reproduce create a service in version upgrade one to try to show that service | 1 |

321,588 | 9,806,100,117 | IssuesEvent | 2019-06-12 10:30:53 | PARINetwork/pari | https://api.github.com/repos/PARINetwork/pari | closed | Product to Staging database mirroring has stopped working | DevOps High Priority | We regularly copy the prod database to staging (as a backup also and..) so that we can connect to the staging database to create reports.

This process seems to have stopped working.

The last database copy from prod to staging seems to have happened on

2018-08-24 08:24:37.807405+00 | 1.0 | Product to Staging database mirroring has stopped working - We regularly copy the prod database to staging (as a backup also and..) so that we can connect to the staging database to create reports.

This process seems to have stopped working.

The last database copy from prod to staging seems to have happened on

2018-08-24 08:24:37.807405+00 | priority | product to staging database mirroring has stopped working we regularly copy the prod database to staging as a backup also and so that we can connect to the staging database to create reports this process seems to have stopped working the last database copy from prod to staging seems to have happened on | 1 |

234,287 | 7,719,321,397 | IssuesEvent | 2018-05-23 19:01:29 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | closed | onMeasureChanged lifecycle callback | Category: Runtime P1: High Priority | @cathyxz noticed a infinite async loop in her code:

1. It's common to use the `onLayoutMeasure` callback to mean "when my measurements change" (from page resize, bind change, etc)

2. In `onLayoutMeasure`, she issues a `mutateElement` to a child element to synchronize it's measurements with the amp-element.

3. `mutateElement` invalidates the amp-elements cached measurements, requiring the next pass to remeasure the amp-element.

4. Once the next pass remeasures the amp-element, `onLayoutMeasure` is called

Hence, we have an async cycle of measures and mutates.

It'd be useful to have a new callback `onMeasureChanged` that is only called when the measurements of an amp-element changes. This would not trigger for every measurement invalidation caused by a mutation.

Steps:

- Plumb a `onMeasureChanged` lifecycle callback into `BaseElement` and `CustomElement`.

- Call it when appropriate from `Resource`.

- Audit all uses of `onLayoutMeasure`

- If it's just using it to determine if measurements changed, change it to `onMeasureChanged`.

- If there are no more calls to `onLayoutMeasure`, remove it as a lifecycle callback. | 1.0 | onMeasureChanged lifecycle callback - @cathyxz noticed a infinite async loop in her code:

1. It's common to use the `onLayoutMeasure` callback to mean "when my measurements change" (from page resize, bind change, etc)

2. In `onLayoutMeasure`, she issues a `mutateElement` to a child element to synchronize it's measurements with the amp-element.

3. `mutateElement` invalidates the amp-elements cached measurements, requiring the next pass to remeasure the amp-element.

4. Once the next pass remeasures the amp-element, `onLayoutMeasure` is called

Hence, we have an async cycle of measures and mutates.

It'd be useful to have a new callback `onMeasureChanged` that is only called when the measurements of an amp-element changes. This would not trigger for every measurement invalidation caused by a mutation.

Steps:

- Plumb a `onMeasureChanged` lifecycle callback into `BaseElement` and `CustomElement`.

- Call it when appropriate from `Resource`.

- Audit all uses of `onLayoutMeasure`

- If it's just using it to determine if measurements changed, change it to `onMeasureChanged`.

- If there are no more calls to `onLayoutMeasure`, remove it as a lifecycle callback. | priority | onmeasurechanged lifecycle callback cathyxz noticed a infinite async loop in her code it s common to use the onlayoutmeasure callback to mean when my measurements change from page resize bind change etc in onlayoutmeasure she issues a mutateelement to a child element to synchronize it s measurements with the amp element mutateelement invalidates the amp elements cached measurements requiring the next pass to remeasure the amp element once the next pass remeasures the amp element onlayoutmeasure is called hence we have an async cycle of measures and mutates it d be useful to have a new callback onmeasurechanged that is only called when the measurements of an amp element changes this would not trigger for every measurement invalidation caused by a mutation steps plumb a onmeasurechanged lifecycle callback into baseelement and customelement call it when appropriate from resource audit all uses of onlayoutmeasure if it s just using it to determine if measurements changed change it to onmeasurechanged if there are no more calls to onlayoutmeasure remove it as a lifecycle callback | 1 |
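
The callback proposed in the amphtml row above can be sketched in a language-neutral way. This is not AMP runtime code: `Resource`, `RecordingElement`, and the snake_case method names are hypothetical stand-ins, and treating the very first measurement as a change is an assumption.

```python
class RecordingElement:
    """Hypothetical element that counts how often each callback fires."""

    def __init__(self):
        self.layout_measure_calls = 0
        self.measure_changed_calls = 0

    def on_layout_measure(self):
        self.layout_measure_calls += 1

    def on_measure_changed(self, box):
        self.measure_changed_calls += 1


class Resource:
    """Hypothetical owner of an element's cached layout box."""

    def __init__(self, element):
        self.element = element
        self._box = None  # last measured (left, top, width, height)

    def measure(self, box):
        previous, self._box = self._box, box
        # Fires on every remeasure, including ones triggered by a mutation.
        self.element.on_layout_measure()
        # Fires only when the box differs from the cached one
        # (assumption: the first measurement counts as a change).
        if previous != box:
            self.element.on_measure_changed(box)


element = RecordingElement()
resource = Resource(element)
resource.measure((0, 0, 100, 50))  # first measurement
resource.measure((0, 0, 100, 50))  # remeasure after a mutation, same box
resource.measure((0, 0, 120, 50))  # width actually changed
```

Work that mutates a child from `on_measure_changed` would then not be re-triggered by the remeasure it causes, provided that mutation leaves the element's own box unchanged.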

254,120 | 8,070,134,476 | IssuesEvent | 2018-08-06 08:46:20 | resin-io/resin-supervisor | https://api.github.com/repos/resin-io/resin-supervisor | opened | With an empty config.json the supervisor will try to read a config.txt | High priority Low-hanging fruit Needs more investigation type/bug | The config.txt file will only be present on a rpi family device, and the information for this comes from the config.json file.

To reproduce, start the supervisor with an empty config.json and watch the logs. | 1.0 | With an empty config.json the supervisor will try to read a config.txt - The config.txt file will only be present on a rpi family device, and the information for this comes from the config.json file.

To reproduce, start the supervisor with an empty config.json and watch the logs. | priority | with an empty config json the supervisor will try to read a config txt the config txt file will only be present on a rpi family device and the information for this comes from the config json file to reproduce start the supervisor with an empty config json and watch the logs | 1 |

65,467 | 3,228,437,964 | IssuesEvent | 2015-10-12 02:06:12 | biocore/qiita | https://api.github.com/repos/biocore/qiita | closed | Ensure unique IDs for metaanalysis | bug priority: high | ...in the case of the same samples run on multiple sequencing platforms, the resulting IDs need to remain unique. | 1.0 | Ensure unique IDs for metaanalysis - ...in the case of the same samples run on multiple sequencing platforms, the resulting IDs need to remain unique. | priority | ensure unique ids for metaanalysis in the case of the same samples run on multiple sequencing platforms the resulting ids need to remain unique | 1 |

373,694 | 11,047,442,235 | IssuesEvent | 2019-12-09 18:59:00 | coder3101/cp-editor2 | https://api.github.com/repos/coder3101/cp-editor2 | closed | Add files as input & Add more than three inputs | enhancement high_priority linux macOs windows | **Is your feature request related to a problem? Please describe.**

I can only add short inputs in the "Input or stdin" window. And I can only add three inputs.

**Describe the solution you'd like**

Support adding files as inputs. And support adding more than three inputs.

Maybe it's good to display the content of only three inputs, and others can be added by a popup window, or add from the webpage, which is described in #11.

**Describe alternatives you've considered**

Add a button "Run on file" which allows you to choose a file as the input.

**Additional context**

| 1.0 | Add files as input & Add more than three inputs - **Is your feature request related to a problem? Please describe.**

I can only add short inputs in the "Input or stdin" window. And I can only add three inputs.

**Describe the solution you'd like**

Support adding files as inputs. And support adding more than three inputs.

Maybe it's good to display the content of only three inputs, and others can be added by a popup window, or add from the webpage, which is described in #11.

**Describe alternatives you've considered**

Add a button "Run on file" which allows you to choose a file as the input.

**Additional context**

| priority | add files as input add more than three inputs is your feature request related to a problem please describe i can only add short inputs in the input or stdin window and i can only add three inputs describe the solution you d like support adding files as inputs and support adding more than three inputs maybe it s good to display the content of only three inputs and others can be added by a popup window or add from the webpage which is described in describe alternatives you ve considered add a button run on file which allows you to choose a file as the input additional context | 1 |

747,836 | 26,100,651,854 | IssuesEvent | 2022-12-27 06:26:46 | bounswe/bounswe2022group4 | https://api.github.com/repos/bounswe/bounswe2022group4 | closed | Mobile: Doctor comments should be highlighted. | Category - To Do Category - Enhancement Priority - High Status: In Progress Language - Kotlin Team - Mobile Mobile | ### Description:

Doctors should have outshined comments.

### What to do:

- [x] Doctor specific comment fragment

### Deadline

27.11.2022, 12.00(GMT+3) | 1.0 | Mobile: Doctor comments should be highlighted. - ### Description:

Doctors should have outshined comments.

### What to do:

- [x] Doctor specific comment fragment

### Deadline

27.11.2022, 12.00(GMT+3) | priority | mobile doctor comments should be highlighted description doctors should have outshined comments what to do doctor specific comment fragment deadline gmt | 1 |

825,931 | 31,479,262,176 | IssuesEvent | 2023-08-30 12:53:51 | gamefreedomgit/Maelstrom | https://api.github.com/repos/gamefreedomgit/Maelstrom | closed | Bug at last boss - ''Through a Glass, Darkly'' quest | Priority: High Quest | [//]: # (REMBEMBER! Add links to things related to the bug using for example:)

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

I'm currently doing this quest "Through a Glass, Darkly", which is part of the legendary weapon storyline in Firelands. I got to the final boss fight but unfortunately died on my first attempt. When I reentered the dungeon, I noticed a portal at the entrance that quickly took me to the last boss's room. However, after I died again, the portal was no longer there for instant teleportation. I also tried to manually run to the boss room, but I couldn't proceed because I needed to use an elevator. Unfortunately, there was no clickable option to board the elevator and reach the boss room.

**Solution:**

There should be a portal for instant teleport to last boss room once I enter the dungeon.

**Database links:**

| 1.0 | Bug at last boss - ''Through a Glass, Darkly'' quest - [//]: # (REMBEMBER! Add links to things related to the bug using for example:)

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

I'm currently doing this quest "Through a Glass, Darkly", which is part of the legendary weapon storyline in Firelands. I got to the final boss fight but unfortunately died on my first attempt. When I reentered the dungeon, I noticed a portal at the entrance that quickly took me to the last boss's room. However, after I died again, the portal was no longer there for instant teleportation. I also tried to manually run to the boss room, but I couldn't proceed because I needed to use an elevator. Unfortunately, there was no clickable option to board the elevator and reach the boss room.

**Solution:**

There should be a portal for instant teleport to last boss room once I enter the dungeon.

**Database links:**

| priority | bug at last boss through a glass darkly quest rembember add links to things related to the bug using for example cata twinhead twinstar cz i m currently doing this quest through a glass darkly which is part of the legendary weapon storyline in firelands i got to the final boss fight but unfortunately died on my first attempt when i reentered the dungeon i noticed a portal at the entrance that quickly took me to the last boss s room however after i died again the portal was no longer there for instant teleportation i also tried to manually run to the boss room but i couldn t proceed because i needed to use an elevator unfortunately there was no clickable option to board the elevator and reach the boss room solution there should be a portal for instant teleport to last boss room once i enter the dungeon database links | 1 |

327,368 | 9,974,681,317 | IssuesEvent | 2019-07-09 11:14:46 | JuliaDiffEq/OrdinaryDiffEq.jl | https://api.github.com/repos/JuliaDiffEq/OrdinaryDiffEq.jl | closed | Stiffness switching tests disabled | high-priority | Since they are no longer switching back, which isn't a bad thing but we need to update the tests. | 1.0 | Stiffness switching tests disabled - Since they are no longer switching back, which isn't a bad thing but we need to update the tests. | priority | stiffness switching tests disabled since they are no longer switching back which isn t a bad thing but we need to update the tests | 1 |

813,837 | 30,475,276,091 | IssuesEvent | 2023-07-17 16:03:41 | thunder-app/thunder | https://api.github.com/repos/thunder-app/thunder | closed | not all subscriptions showing | bug priority-high fixed in upcoming release | **Description**

In the sidebar not all my subscriptions are showing. See screenshots below

**Expected Behavior**

All subscribed communities should be shown

**Screenshots**

**Device & App Version:**

- Device: [e.g. iPhone6] Motorola One 5G Ace

- OS: [e.g. iOS8.1] Android 11

- Version [e.g. 22] https://github.com/hjiangsu/thunder/releases/tag/v0.2.1-prerelease%2B5

**Additional Context**

| 1.0 | not all subscriptions showing - **Description**

In the sidebar not all my subscriptions are showing. See screenshots below

**Expected Behavior**

All subscribed communities should be shown

**Screenshots**

**Device & App Version:**

- Device: [e.g. iPhone6] Motorola One 5G Ace

- OS: [e.g. iOS8.1] Android 11

- Version [e.g. 22] https://github.com/hjiangsu/thunder/releases/tag/v0.2.1-prerelease%2B5

**Additional Context**

| priority | not all subscriptions showing description in the sidebar not all my subscriptions are showing see screenshots below expected behavior all subscribed communities should be shown screenshots device app version device motorola one ace os android version additional context | 1 |

150,236 | 5,741,281,335 | IssuesEvent | 2017-04-24 04:46:14 | RestComm/Restcomm-Connect | https://api.github.com/repos/RestComm/Restcomm-Connect | closed | CDR resulting from sub-account-client inbound calls has parent 'accountSid' | CDR High-Priority in progress | When a registered client makes a call the related CDR record does not belong to the account the client belongs too but to its parent.

I've reproduced the issue like this:

* On a local instance i created a sub-account of administrator@company.com. I named it orestis@company.com

* Created a SIP client named 'orestis'

* Registered with 'orestis' using jitsi

* Made a call to the cloud instance at sip:1234@cloud.restcomm.com call succeeded.

* Checked CDRs using the Calls API. No CDRs under 'orestis@company.com' account.

* Check CDRs using parent administrator@company.com account. The call is there having account SID "ACae6e420f425248d6a26948c17a9e2acf" (administrator).

looks like the owner of the CDR is wrong

| 1.0 | CDR resulting from sub-account-client inbound calls has parent 'accountSid' - When a registered client makes a call the related CDR record does not belong to the account the client belongs too but to its parent.

I've reproduced the issue like this:

* On a local instance i created a sub-account of administrator@company.com. I named it orestis@company.com

* Created a SIP client named 'orestis'

* Registered with 'orestis' using jitsi

* Made a call to the cloud instance at sip:1234@cloud.restcomm.com call succeeded.

* Checked CDRs using the Calls API. No CDRs under 'orestis@company.com' account.

* Check CDRs using parent administrator@company.com account. The call is there having account SID "ACae6e420f425248d6a26948c17a9e2acf" (administrator).

looks like the owner of the CDR is wrong

| priority | cdr resulting from sub account client inbound calls has parent accountsid when a registered client makes a call the related cdr record does not belong to the account the client belongs too but to its parent i ve reproduced the issue like this on a local instance i created a sub account of administrator company com i named it orestis company com created a sip client named orestis registered with orestis using jitsi made a call to the cloud instance at sip cloud restcomm com call succeeded checked cdrs using the calls api no cdrs under orestis company com account check cdrs using parent administrator company com account the call is there having account sid administrator looks like the owner of the cdr is wrong | 1 |

432,298 | 12,490,659,187 | IssuesEvent | 2020-06-01 01:07:33 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | Add date pickers to `VaccinePage` | Docs: not needed Effort: small Feature Module: vaccines Priority: high | ## Is your feature request related to a problem? Please describe.

Currently you can only view the last 30 days of temperatures. It would be great if you could view any date!

## Describe the solution you'd like

Add date pickers, which set a start and end date for viewing temperatures

## Implementation

- Add a date picker for the start and end date, which determine which dates to select temperature logs from.

- Potentially it would be better that rather than having a list of fridges, have a single component which has a drop down to select a fridge to show temperatures for?

## Describe alternatives you've considered

N/A

## Additional context

There may be an issue here with potentially showing a years worth of temperatures. That's a lot of records (> 15000). Possibly need to have a limit on the number of days - but could just have a longer 'loading' time rather than restricting like this

| 1.0 | Add date pickers to `VaccinePage` - ## Is your feature request related to a problem? Please describe.

Currently you can only view the last 30 days of temperatures. It would be great if you could view any date!

## Describe the solution you'd like

Add date pickers, which set a start and end date for viewing temperatures

## Implementation

- Add a date picker for the start and end date, which determine which dates to select temperature logs from.

- Potentially it would be better that rather than having a list of fridges, have a single component which has a drop down to select a fridge to show temperatures for?

## Describe alternatives you've considered

N/A

## Additional context

There may be an issue here with potentially showing a years worth of temperatures. That's a lot of records (> 15000). Possibly need to have a limit on the number of days - but could just have a longer 'loading' time rather than restricting like this

| priority | add date pickers to vaccinepage is your feature request related to a problem please describe currently you can only view the last days of temperatures it would be great if you could view any date describe the solution you d like add date pickers which set a start and end date for viewing temperatures implementation add a date picker for the start and end date which determine which dates to select temperature logs from potentially it would be better that rather than having a list of fridges have a single component which has a drop down to select a fridge to show temperatures for describe alternatives you ve considered n a additional context there may be an issue here with potentially showing a years worth of temperatures that s a lot of records possibly need to have a limit on the number of days but could just have a longer loading time rather than restricting like this | 1 |

192,418 | 6,849,801,671 | IssuesEvent | 2017-11-13 23:38:20 | Polymer/polymer-modulizer | https://api.github.com/repos/Polymer/polymer-modulizer | closed | Add guard rails | Priority: High Status: Available Type: Enhancement | We should require that the directory either be a clean git repo or the user pass in a `--hurt-me-plenty` flag in order to run in non-workspace mode. | 1.0 | Add guard rails - We should require that the directory either be a clean git repo or the user pass in a `--hurt-me-plenty` flag in order to run in non-workspace mode. | priority | add guard rails we should require that the directory either be a clean git repo or the user pass in a hurt me plenty flag in order to run in non workspace mode | 1 |
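
The guard rail described in the polymer-modulizer row above can be sketched as a small check. This is an illustration in Python rather than the tool's own code: the function names and the `force` parameter (standing in for the proposed `--hurt-me-plenty` flag) are hypothetical, and a clean repository is assumed to be one for which `git status --porcelain` prints nothing.

```python
import subprocess


def read_git_status(directory):
    """Return the output of `git status --porcelain` for the given directory."""
    result = subprocess.run(
        ["git", "status", "--porcelain"],
        cwd=directory,
        capture_output=True,
        text=True,
        check=True,  # raises if the directory is not a git repository
    )
    return result.stdout


def may_run(porcelain_output, force=False):
    """Allow a run only on a clean working tree, unless explicitly forced."""
    return force or porcelain_output.strip() == ""
```

Keeping the decision separate from the `git` call lets the rule be checked without a repository, for example `may_run(read_git_status("."), force=False)` in the real entry point.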

633,795 | 20,266,025,703 | IssuesEvent | 2022-02-15 12:09:01 | ooni/backend | https://api.github.com/repos/ooni/backend | closed | API database optimization | ooni/api epic priority/high optimization | This issue is meant to track ongoing efforts to optimize the API queries against the database.

The main concern is that some queries can be slow and the API might time out during the execution.

Related to ooni/api#95 ooni/backend#141 ooni/backend#142 ooni/backend#143 ooni/backend#144

Some medium/long-term improvement ideas:

- measure current SQL queries runtimes

- first, measure how often the bottleneck is disk IO VS network IO VS CPU on the database.

- consider getting some hours from a DBA

- create more materialized view tables for common look-up patterns and use them from the API

- investigate if some slowness is due to full table scans

- benchmark Postgresql 11 and consider upgrading (better partitioning and parallel scans [1])

- benchmark running a replica of some tables on the API host itself

- benchmark denormalizing result/measurement/input tables

- benchmark adding multi-column indexes

- we could even consider caching some pre-digested data on the API host, if it makes sense, and do local lookup (e.g. LMDB)

- investigate using external datastores

[1] https://pgdash.io/blog/postgres-11-whats-new.html | 1.0 | API database optimization - This issue is meant to track ongoing efforts to optimize the API queries against the database.

The main concern is that some queries can be slow and the API might time out during the execution.

Related to ooni/api#95 ooni/backend#141 ooni/backend#142 ooni/backend#143 ooni/backend#144

Some medium/long-term improvement ideas:

- measure current SQL queries runtimes

- first, measure how often the bottleneck is disk IO VS network IO VS CPU on the database.

- consider getting some hours from a DBA

- create more materialized view tables for common look-up patterns and use them from the API

- investigate if some slowness is due to full table scans

- benchmark PostgreSQL 11 and consider upgrading (better partitioning and parallel scans [1])

- benchmark running a replica of some tables on the API host itself

- benchmark denormalizing result/measurement/input tables

- benchmark adding multi-column indexes

- we could even consider caching some pre-digested data on the API host, if it makes sense, and do local lookup (e.g. LMDB)

- investigate using external datastores

[1] https://pgdash.io/blog/postgres-11-whats-new.html | priority | api database optimization this issue is meant to track ongoing efforts to optimize the api queries against the database the main concern is that some queries can be slow and the api might time out during the execution related to ooni api ooni backend ooni backend ooni backend ooni backend some medium long term improvement ideas measure current sql queries runtimes first measure how often the bottleneck is disk io vs network io vs cpu on the database consider getting some hours from a dba create more materialized view tables for common look up patterns and use them from the api investigate if some slowness is due to full table scans benchmark postgresql and consider upgrading better partitioning and parallel scans benchmark running a replica of some tables on the api host itself benchmark denormalizing result measurement input tables benchmark adding multi column indexes we could even consider caching some pre digested data on the api host if it makes sense and do local lookup e g lmdb investigate using external datastores | 1 |
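
The first idea in the OONI issue above, measuring query runtimes, can be sketched as below. This is only an illustration under stated assumptions: it uses Python's built-in `sqlite3` as a stand-in for the real PostgreSQL database, and the `measurement` table, its `probe_cc` column, and the `timed_query` helper are hypothetical names chosen for the example.

```python
import sqlite3
import time


def timed_query(conn, sql, params=()):
    """Run a query and return (rows, elapsed_seconds) measured on the client."""
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    elapsed = time.perf_counter() - start
    return rows, elapsed


# In-memory stand-in database; the schema is invented for this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE measurement (id INTEGER PRIMARY KEY, probe_cc TEXT)")
conn.executemany(
    "INSERT INTO measurement (probe_cc) VALUES (?)",
    [("IT",), ("US",), ("IT",)],
)

rows, elapsed = timed_query(
    conn, "SELECT COUNT(*) FROM measurement WHERE probe_cc = ?", ("IT",)
)
print(f"{rows[0][0]} rows matched in {elapsed:.6f}s")
```

Client-side timing like this includes network and driver overhead, so it complements rather than replaces server-side measurements.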

174,032 | 6,535,839,833 | IssuesEvent | 2017-08-31 15:52:02 | ProjectSidewalk/SidewalkWebpage | https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage | closed | Write query to determine time users spend using Project Sidewalk | Admin Interface Priority: High | @r-holland has been working on this.

To get an accurate picture of how much time people are spending using the tool, we want to look at time spent on the audit page where the user is actively engaging with the tool (placing labels, panning in SV, moving along the street, etc).

After this query is written, it can be adapted to calculate...

* how long onboarding takes for an average user

* how long a 1000ft mission takes for an average user

* how much total time the average user spends using Project Sidewalk | 1.0 | Write query to determine time users spend using Project Sidewalk - @r-holland has been working on this.

To get an accurate picture of how much time people are spending using the tool, we want to look at time spent on the audit page where the user is actively engaging with the tool (placing labels, panning in SV, moving along the street, etc).

After this query is written, it can be adapted to calculate...

* how long onboarding takes for an average user

* how long a 1000ft mission takes for an average user

* how much total time the average user spends using Project Sidewalk | priority | write query to determine time users spend using project sidewalk r holland has been working on this to get an accurate picture of much time people are spending using the tool we want to look at time spent on the audit page where the user is actively engaging with the tool placing labels panning in sv moving along the street etc after this query is written it can be adapted to calculate how long onboarding takes for an average user how long a mission takes for an average user how much total time the average user spends using project sidewalk | 1 |
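
One way to approximate the "actively engaging" time described in the Project Sidewalk issue above is to sum only the gaps between consecutive interaction timestamps that are shorter than an idle threshold. The sketch below assumes interactions are available as timestamps in seconds; the function name `active_seconds` and the 30-second default threshold are assumptions for illustration, not values from the issue.

```python
def active_seconds(timestamps, max_gap=30.0):
    """Sum the gaps between consecutive interactions, skipping idle gaps.

    A gap longer than `max_gap` seconds is treated as the user being away
    and contributes nothing to the total.
    """
    ordered = sorted(timestamps)
    total = 0.0
    for prev, cur in zip(ordered, ordered[1:]):
        gap = cur - prev
        if gap <= max_gap:
            total += gap
    return total


# Interactions at 0s, 10s, 25s, then a long break, then 400s and 410s.
events = [0, 10, 25, 400, 410]
print(active_seconds(events))  # gaps counted: 10 + 15 + 10
```

The same function could be applied per user, per mission, or to onboarding interactions only, which matches the follow-up calculations listed in the issue.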

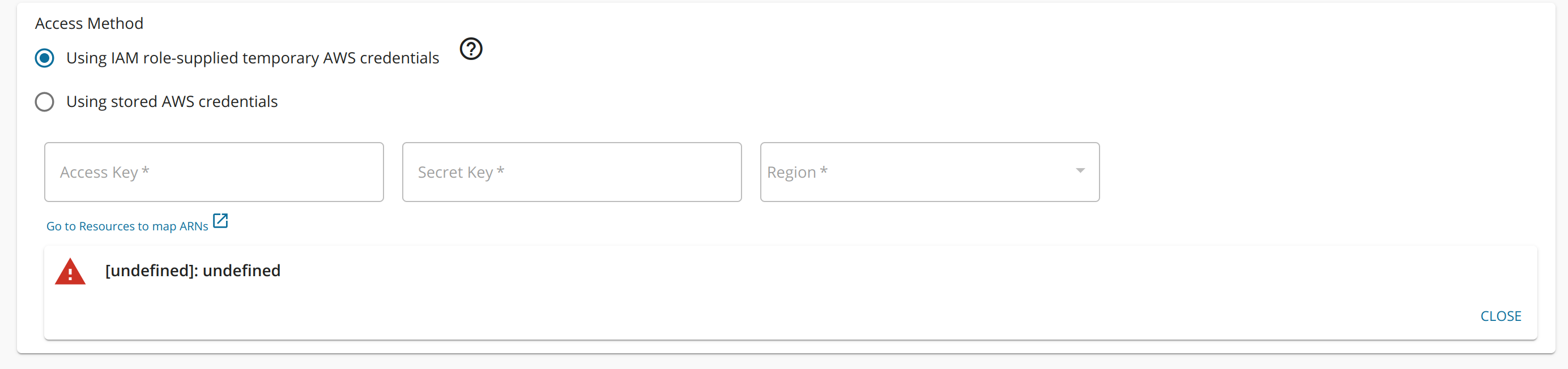

635,899 | 20,513,191,503 | IssuesEvent | 2022-03-01 09:05:51 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Error adding AWS Lambda endpoint Using IAM role-supplied temporary AWS credentials | Type/Bug Priority/High Feature/AWSLambda APIM - 4.1.0 | ### Description:

The following error can be seen when adding an AWS Lambda endpoint using IAM role-supplied temporary AWS credentials method.

### Steps to reproduce:

https://apim.docs.wso2.com/en/latest/tutorials/create-and-publish-awslambda-api/#create-and-publish-an-aws-lambda-api

### Affected Product Version:

4.1.0-alpha

| 1.0 | Error adding AWS Lambda endpoint Using IAM role-supplied temporary AWS credentials - ### Description:

The following error can be seen when adding an AWS Lambda endpoint using IAM role-supplied temporary AWS credentials method.

### Steps to reproduce:

https://apim.docs.wso2.com/en/latest/tutorials/create-and-publish-awslambda-api/#create-and-publish-an-aws-lambda-api

### Affected Product Version:

4.1.0-alpha

| priority | error adding aws lambda endpoint using iam role supplied temporary aws credentials description the following error can be seen when adding an aws lambda endpoint using iam role supplied temporary aws credentials method steps to reproduce affected product version alpha | 1 |

356,047 | 10,587,881,796 | IssuesEvent | 2019-10-08 23:41:47 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | AVT 1 - React Combobox has DAP violations | Severity 1 🚨 priority: high type: a11y ♿ | ## Environment

macOS Mojave version 10.14.5

Version 75.0.3770.100 (Official Build) (64-bit)

Carbon v10 - React

## Detailed Description

Run DAP on any of the three Combobox examples and the following DAP violations are present:

<img width="1077" alt="Screen Shot 2019-07-02 at 2 26 14 PM" src="https://user-images.githubusercontent.com/21676914/60541060-5258da80-9cd6-11e9-91ec-004172747e14.png">

| 1.0 | AVT 1 - React Combobox has DAP violations - ## Environment

macOS Mojave version 10.14.5

Version 75.0.3770.100 (Official Build) (64-bit)

Carbon v10 - React

## Detailed Description

Run DAP on any of the three Combobox examples and the following DAP violations are present:

<img width="1077" alt="Screen Shot 2019-07-02 at 2 26 14 PM" src="https://user-images.githubusercontent.com/21676914/60541060-5258da80-9cd6-11e9-91ec-004172747e14.png">

| priority | avt react combobox has dap violations environment macos mojave version version official build bit carbon react detailed description run dap on any of the three combobox examples and the following dap violations are present img width alt screen shot at pm src | 1 |

674,205 | 23,042,887,532 | IssuesEvent | 2022-07-23 12:33:32 | Daniel123643/RTIBot | https://api.github.com/repos/Daniel123643/RTIBot | opened | Slash Command: /setup | feature high priority slash commands | **Details:**

* `/setup application` is a subcommand group.

- `/setup application channel <channel>` sets the `<channel>` to receive member applications.

- `/setup application message <message_id>` puts a ✅ button on a message with the given `<message_id>` which people press to start their member application process.

- `/setup application add <user>` starts the application process dialogue for the given `<user>`.

* `/setup publishcategory <category_name>` sets the category with name `<category_name>` to be where published raids go **(autocompleted)**.

* `/setup unpublishcategory <category_name>` sets the category with name `<category_name>` to be where unpublished raids go **(autocompleted)**.

* `/setup schedulepost [channel]` posts the raid schedule embed, optionally to the given `[channel]`.

* `/setup reservesping <time_in_minutes>` defines the `<time_in_minutes>` during which auto-broadcasting to reserves is throttled, with `0` or a negative number disabling the notification altogether.

* `/setup region <region>` defines what region the community is in **(autocompleted to EU, NA, or ANY)**.

* `/setup raidreminder <time_in_minutes>` defines the `<time_in_minutes>` before which all raid participants are sent a reminder about the raid.

* `/setup roledefault` is a subcommand group.

- `/setup roledefault add <role>` adds a `<role>` that's given to a user by default when they complete their member application.

- `/setup roledefault remove <role>` removes the default `<role>` from the list of roles that are given to a user by default when they complete their member application.

* `/setup rolemember <role>` sets the member role to `<role>`, i.e. the main role given to people when they become a member.

* `/setup unregister <time_in_minutes>` sets the `<time_in_minutes>` before a raid during which the commander is notified of people unregistering.

* `/setup trainingrequestspost` is a subcommand group.

- `/setup trainingrequestspost stats` posts an embed with stats of the training requests (requests, fulfilled, etc).

- `/setup trainingrequestspost summary` posts a paginated embed with a list of all users with training requests and what requests they've made.

- `/setup trainingrequestspost wing <wing>` posts a paginated embed with a detailed list of training requests for the given `<wing>` **(autocompleted to Wing 1, Wing 2, Wing 3, Wing 4, Wing 5, Wing 6, Wing 7, or EoD Strikes)**.

* `/setup runmaintenancecommands <type>` runs the maintenance commands with the given `<type>` **(autocompleted to hourly or daily)**.

* `/setup trainingrequestsetup` is a subcommand group.

- `/setup trainingrequestsetup sync` syncs all the training requests with the GW2 API.

- `/setup trainingrequestsetup syncinterval <time_in_minutes>` sets how often (`<time_in_minutes>`) the training requests auto sync, with `0` or a negative number disabling the auto syncing altogether.

- `/setup trainingrequestsetup expiration <time_in_minutes>` sets when (`<time_in_minutes>`) the training requests expire, with `0` or a negative number disabling the expiration altogether.

Current functionality:

* `GlobalAutoBroadcastCommand`

* `GuildApplicationAddCommand`

* `GuildApplicationInfoCommand`

* `GuildApplicationSetChannelCommand`

* `GuildRegionCommand`

* `RaidScheduleCommand`

* `RaidSetCategoryCommand`

* `RaidSetDraftCategoryCommand`

* `ReminderNotificationCommand`

* `RoleAddAdditionalCommand`

* `RoleRemoveAdditionalCommand`

* `RunMaintenanceTasksCommand`

* `SetMemberRoleCommand`

* `TrainingRequestExpirationCommand`

* `TrainingRequestInfoCommand`

* `TrainingRequestSyncCommand`

* `TrainingRequestSyncIntervalCommand`

* `UnregisterNotificationCommand`

Notes:

* Ensure that there's validation on the Discord-related parameters (user, channel, role, etc).

* Error messages should be ephemeral. | 1.0 | Slash Command: /setup - **Details:**

* `/setup application` is a subcommand group.

- `/setup application channel <channel>` sets the `<channel>` to receive member applications.

- `/setup application message <message_id>` puts a ✅ button on a message with the given `<message_id>` which people press to start their member application process.

- `/setup application add <user>` starts the application process dialogue for the given `<user>`.

* `/setup publishcategory <category_name>` sets the category with name `<category_name>` to be where published raids go **(autocompleted)**.

* `/setup unpublishcategory <category_name>` sets the category with name `<category_name>` to be where unpublished raids go **(autocompleted)**.

* `/setup schedulepost [channel]` posts the raid schedule embed, optionally to the given `[channel]`.

* `/setup reservesping <time_in_minutes>` defines the `<time_in_minutes>` during which auto-broadcasting to reserves is throttled, with `0` or a negative number disabling the notification altogether.

* `/setup region <region>` defines what region the community is in **(autocompleted to EU, NA, or ANY)**.

* `/setup raidreminder <time_in_minutes>` defines the `<time_in_minutes>` before which all raid participants are sent a reminder about the raid.

* `/setup roledefault` is a subcommand group.

- `/setup roledefault add <role>` adds a `<role>` that's given to a user by default when they complete their member application.

- `/setup roledefault remove <role>` removes the default `<role>` from the list of roles that are given to a user by default when they complete their member application.

* `/setup rolemember <role>` sets the member role to `<role>`, i.e. the main role given to people when they become a member.

* `/setup unregister <time_in_minutes>` sets the `<time_in_minutes>` before a raid during which the commander is notified of people unregistering.

* `/setup trainingrequestspost` is a subcommand group.

- `/setup trainingrequestspost stats` posts an embed with stats of the training requests (requests, fulfilled, etc).

- `/setup trainingrequestspost summary` posts a paginated embed with a list of all users with training requests and what requests they've made.

- `/setup trainingrequestspost wing <wing>` posts a paginated embed with a detailed list of training requests for the given `<wing>` **(autocompleted to Wing 1, Wing 2, Wing 3, Wing 4, Wing 5, Wing 6, Wing 7, or EoD Strikes)**.

* `/setup runmaintenancecommands <type>` runs the maintenance commands with the given `<type>` **(autocompleted to hourly or daily)**.

* `/setup trainingrequestsetup` is a subcommand group.

- `/setup trainingrequestsetup sync` syncs all the training requests with the GW2 API.

- `/setup trainingrequestsetup syncinterval <time_in_minutes>` sets how often (`<time_in_minutes>`) the training requests auto sync, with `0` or a negative number disabling the auto syncing altogether.

- `/setup trainingrequestsetup expiration <time_in_minutes>` sets when (`<time_in_minutes>`) the training requests expire, with `0` or a negative number disabling the expiration altogether.

Current functionality:

* `GlobalAutoBroadcastCommand`

* `GuildApplicationAddCommand`

* `GuildApplicationInfoCommand`

* `GuildApplicationSetChannelCommand`

* `GuildRegionCommand`

* `RaidScheduleCommand`

* `RaidSetCategoryCommand`

* `RaidSetDraftCategoryCommand`

* `ReminderNotificationCommand`

* `RoleAddAdditionalCommand`

* `RoleRemoveAdditionalCommand`

* `RunMaintenanceTasksCommand`

* `SetMemberRoleCommand`

* `TrainingRequestExpirationCommand`

* `TrainingRequestInfoCommand`

* `TrainingRequestSyncCommand`

* `TrainingRequestSyncIntervalCommand`

* `UnregisterNotificationCommand`

Notes:

* Ensure that there's validation on the Discord-related parameters (user, channel, role, etc).

* Error messages should be ephemeral. | priority | slash command setup details setup application is a subcommand group setup application channel sets the to receive member applications setup application message puts a ✅ button on a message with the given which people press to start their member application process setup application add starts the application process dialogue for the given setup publishcategory sets the category with name to be where published raids go autocompleted setup unpublishcategory sets the category with name to be where unpublished raids go autocompleted setup schedulepost posts the raid schedule embed optionally to the given setup reservesping defines the during which auto broadcasting to reserves is throttled with or a negative number disabling the notification altogether setup region defines what region the community is in autocompleted to eu na or any setup raidreminder defines the before which all raid participants are sent a reminder about the raid setup roledefault is a subcommand group setup roledefault add adds a that s given to a user by default when they complete their member application setup roledefault remove removes the default from the list of roles that are given to a user by default when they complete their member application setup rolemember sets the member role to i e the main role given to people when they become a member setup unregister sets the before a raid during which the commander is notified of people unregistering setup trainingrequestspost is a subcommand group setup trainingrequestspost stats posts an embed with stats of the training requests requests fulfilled etc setup trainingrequestspost summary posts a paginated embed with a list of all users with training requests and what requests they ve made setup trainingrequestspost wing posts a paginated embed with a detailed list of training requests for the given autocompleted to wing wing wing wing wing wing wing or eod strikes setup runmaintenancecommands runs 
the maintenance commands with the given autocompleted to hourly or daily setup trainingrequestsetup is a subcommand group setup trainingrequestsetup sync syncs all the training requests with the api setup trainingrequestsetup syncinterval sets how often the training requests auto sync with or a negative number disabling the auto syncing altogether setup trainingrequestsetup expiration sets when the training requests expire with or a negative number disabling the expiration altogether current functionality globalautobroadcastcommand guildapplicationaddcommand guildapplicationinfocommand guildapplicationsetchannelcommand guildregioncommand raidschedulecommand raidsetcategorycommand raidsetdraftcategorycommand remindernotificationcommand roleaddadditionalcommand roleremoveadditionalcommand runmaintenancetaskscommand setmemberrolecommand trainingrequestexpirationcommand trainingrequestinfocommand trainingrequestsynccommand trainingrequestsyncintervalcommand unregisternotificationcommand notes ensure that there s validation on the discord related parameters user channel role etc error messages should be ephemeral | 1 |

664,836 | 22,290,147,877 | IssuesEvent | 2022-06-12 08:29:06 | nhoizey/images-responsiver | https://api.github.com/repos/nhoizey/images-responsiver | closed | Allow setting `sizes` attribute in the source HTML | type: enhancement 🧗♂️ priority: high 🟠 package: images-responsiver ⚙️ | If a `sizes` attribute is already there, leave it like that. | 1.0 | Allow setting `sizes` attribute in the source HTML - If a `sizes` attribute is already there, leave it like that. | priority | allow setting sizes attribute in the source html if a sizes attribute is already there leave it like that | 1 |

620,541 | 19,564,629,536 | IssuesEvent | 2022-01-03 21:36:47 | kak-lsp/kak-lsp | https://api.github.com/repos/kak-lsp/kak-lsp | closed | Race condition between didSave and completion | bug high priority | I kept getting the wrong typescript completions when writing `object.`, I got the completions for `object ` instead. I realized what the problem is: `lsp-completion` directly runs `lsp-did-change`:

https://github.com/ul/kak-lsp/blob/243da6cb8ffa4e5367f5777d2fc48a1a9c30b875/rc/lsp.kak#L103

However, `lsp-did-change` asynchronously sends the buffer contents to the `kak-lsp` binary. So there is a race between that and the message `lsp-completion` sends to the binary. And indeed didChange loses, presumably because it spawns other binaries such as `sed` and the script for completions is much simpler.

One way to solve this would be to make a command `lsp-did-change-and-then` taking an extra argument to run that will be guaranteed to run after the new buffer contents has been sent. | 1.0 | Race condition between didSave and completion - I kept getting the wrong typescript completions when writing `object.`, I got the completions for `object ` instead. I realized what the problem is: `lsp-completion` directly runs `lsp-did-change`:

https://github.com/ul/kak-lsp/blob/243da6cb8ffa4e5367f5777d2fc48a1a9c30b875/rc/lsp.kak#L103

However, `lsp-did-change` asynchronously sends the buffer contents to the `kak-lsp` binary. So there is a race between that and the message `lsp-completion` sends to the binary. And indeed didChange loses, presumably because it spawns other binaries such as `sed` and the script for completions is much simpler.

One way to solve this would be to make a command `lsp-did-change-and-then` taking an extra argument to run that will be guaranteed to run after the new buffer contents has been sent. | priority | race condition between didsave and completion i kept getting the wrong typescript completions when writing object i got the completions for object instead i realized what the problem is lsp completion directly runs lsp did change however lsp did change asynchronously sends the buffer contents to the kak lsp binary so there is as race between that and the message lsp completion sends to the binary and indeed didchange loses presumably because it spawns other binaries such as sed and the script for completions is much simpler one way to solve this would be to make a command lsp did change and then taking an extra argument to run that will be guaranteed to run after the new buffer contents has been sent | 1 |
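
The ordering guarantee proposed in the kak-lsp issue above (run a follow-up only after the new buffer contents have been sent) can be illustrated with a small Python model. This is not kak-lsp or Kakoune code: `OrderedSender` and its methods are hypothetical, and a worker thread stands in for the asynchronous send.

```python
import queue
import threading


class OrderedSender:
    """Hypothetical stand-in for the editor-to-server channel."""

    def __init__(self):
        self.sent = []  # messages in the order the "server" received them
        self._jobs = queue.Queue()
        worker = threading.Thread(target=self._run, daemon=True)
        worker.start()

    def _run(self):
        # Process jobs one at a time, in the order they were queued.
        while True:
            job = self._jobs.get()
            try:
                job()
            finally:
                self._jobs.task_done()

    def did_change_and_then(self, contents, follow_up):
        """Send the buffer contents, then run `follow_up` in the same job."""

        def job():
            self.sent.append(("didChange", contents))
            follow_up()  # cannot overtake the didChange above

        self._jobs.put(job)

    def completion(self, position):
        self.sent.append(("completion", position))

    def flush(self):
        """Block until every queued job has finished."""
        self._jobs.join()


sender = OrderedSender()
sender.did_change_and_then("object.", lambda: sender.completion((1, 7)))
sender.flush()
print(sender.sent)
```

Because the follow-up runs inside the same job as the change notification, the completion request is always issued against the updated buffer contents.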

626,059 | 19,784,278,804 | IssuesEvent | 2022-01-18 03:30:02 | solo-io/gloo | https://api.github.com/repos/solo-io/gloo | closed | Publish metrics for invalid resources | Type: Enhancement Size: L Impact: XL Priority: High Area: Observability | **Is your feature request related to a problem? Please describe.**

When Gloo isn't able to process an object (a Virtual Service, ...), it updates its status to provide information about the error, but there's no way to be alerted

**Describe the solution you'd like**

Publish metrics in Prometheus to allow users to create alerts when it occurs

Metrics that show if a configuration is ok/missing/wrong that can be consumed using Prometheus for example.

use case: Alerting based on these metrics

**Additional Context**

Duplicate of: https://github.com/solo-io/gloo/issues/3866 | 1.0 | Publish metrics for invalid resources - **Is your feature request related to a problem? Please describe.**

When Gloo isn't able to process an object (a Virtual Service, ...), it updates its status to provide information about the error, but there's no way to be alerted

**Describe the solution you'd like**

Publish metrics in Prometheus to allow users to create alerts when it occurs

Metrics that show if a configuration is ok/missing/wrong that can be consumed using Prometheus for example.

use case: Alerting based on these metrics

**Additional Context**

Duplicate of: https://github.com/solo-io/gloo/issues/3866 | priority | publish metrics for invalid resources is your feature request related to a problem please describe when gloo isn t able to process an object a virtual service it updates its status to provide information about the error but there s no way to be alerted describe the solution you d like publish metrics in prometheus to allow users to create alerts when it occurs metrics that show if a configuration is ok missing wrong that can be consumed using prometheus for example use case alerting based on these metrics additional context duplicate of | 1 |

217,361 | 7,320,999,819 | IssuesEvent | 2018-03-02 09:49:36 | getinsomnia/insomnia | https://api.github.com/repos/getinsomnia/insomnia | closed | Change body to JSON or XML for new requests not changing | High Priority |

[Bug] – Upgraded to 5.14.8 (1st March) not able to change body type

- Insomnia Version: 5.14.8

- Operating System: ubuntu 16.04

Clicking the body drop-down shows the menu, but selecting JSON or XML does not switch the body type. This works for existing requests but not new ones.

| 1.0 | Change body to JSON or XML for new requests not changing -

[Bug] – Upgraded to 5.14.8 (1st March) not able to change body type

- Insomnia Version: 5.14.8

- Operating System: ubuntu 16.04

Clicking the body drop-down shows the menu, but selecting JSON or XML does not switch the body type. This works for existing requests but not new ones.

| priority | change body to json or xml for new requests not changing – upgraded to march not able to change body type insomnia version operating system ubuntu clicking the body drop down shows menu selecting json or xml does not switch body type this works for existing requests but not new ones | 1 |

264,883 | 8,320,672,945 | IssuesEvent | 2018-09-25 20:55:52 | oughtinc/mosaic | https://api.github.com/repos/oughtinc/mosaic | closed | Add isolated workspace view (without navigation buttons) | high-priority in-preview | E.g. `/workspaces/d8757f60-7ba4-4af7-8d33-fe25b0cc42ae/standalone`. This way, the experiment manager can send this URL and the participants aren't constantly tempted to navigate to parents or children.

Only show "open", "to subtree", "to parent" in normal mode. | 1.0 | Add isolated workspace view (without navigation buttons) - E.g. `/workspaces/d8757f60-7ba4-4af7-8d33-fe25b0cc42ae/standalone`. This way, the experiment manager can send this URL and the participants aren't constantly tempted to navigate to parents or children.

Only show "open", "to subtree", "to parent" in normal mode. | priority | add isolated workspace view without navigation buttons e g workspaces standalone this way the experiment manager can send this url and the participants aren t constantly tempted to navigate to parents or children only show open to subtree to parent in normal mode | 1 |

174,326 | 6,539,212,721 | IssuesEvent | 2017-09-01 10:06:36 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | opened | ManagementContextAutoConfiguration should happen "last" | priority: high theme: actuator type: enhancement | Current `master` does not enable the web endpoint extensions because the `EndpointAutoConfiguration` is not explicitly processed _before_ `ManagementContextAutoConfiguration` that is responsible to process `@ManagementContextConfiguration` classes.

We need to make sure that `@ManagementContextConfiguration` happens last. And the `@ConditionalOnBean` should not have a search strategy. | 1.0 | ManagementContextAutoConfiguration should happen "last" - Current `master` does not enable the web endpoint extensions because the `EndpointAutoConfiguration` is not explicitly processed _before_ `ManagementContextAutoConfiguration` that is responsible to process `@ManagementContextConfiguration` classes.

We need to make sure that `@ManagementContextConfiguration` happens last. And the `@ConditionalOnBean` should not have a search strategy. | priority | managementcontextautoconfiguration should happen last current master does not enable the web endpoint extensions because the endpointautoconfiguration is not explicitly processed before managementcontextautoconfiguration that is responsible to process managementcontextconfiguration classes we need to make sure that managementcontextconfiguration happens last and the conditionalonbean should not have a search strategy | 1 |

733,065 | 25,286,129,781 | IssuesEvent | 2022-11-16 19:27:36 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Double-backward with `full_backward_hook` causes `RuntimeError` in PyTorch 1.13 | high priority module: autograd triaged module: regression actionable | Hi,

I am using double-backward calls to compute Hessian matrices, in combination with PyTorch's `full_backward_hook`s. After upgrading from `1.12.1` to `1.13.0`, I now run into the following error in the second backward pass:

```bash

RuntimeError: Module backward hook for grad_input is called before the grad_output one. This happens because the gradient in your nn.Module flows to the Module's input without passing through the Module's output. Make sure that the output depends on the input and that the loss is computed based on the output.

```

The following snippet reproduces my problem when I try to compute the Hessian of `f(x, y)` w.r.t. `x` where all symbols are scalars for simplicity.

```python
"""Compute the scalar-valued second-order derivative of f(x, y) w.r.t. x.

Use Hessian-vector products (double-backward pass) in combination with
full_backward_hook.
"""

from torch import ones_like, rand, rand_like
from torch.autograd import grad
from torch.nn import MSELoss

x = rand(1)
x.requires_grad_(True)
y = rand_like(x)

# without hook (working in 1.12.1 and 1.13.0)
lossfunc = MSELoss()
f = lossfunc(x, y)
(gradx_f,) = grad(f, x, create_graph=True)
(gradxgradx_f,) = grad(gradx_f @ ones_like(x), x)

# with hook (working in 1.12.1 and broken in 1.13.0)
lossfunc = MSELoss()


def hook(module, grad_input, grad_output):
    print("This is a test hook")


lossfunc.register_full_backward_hook(hook)
f = lossfunc(x, y)

# this line triggers the backward hook as expected
(gradx_f,) = grad(f, x, create_graph=True)

# the double-backward with hook crashes in 1.13, but used to work before
try:
    (gradxgradx_f,) = grad(gradx_f @ ones_like(x), x)
except RuntimeError as e:
    print(f"Caught RuntimeError: {e}")
```

Is this the intended behavior? If so, how do I compute higher-order derivatives through multiple backward calls, while using hooks, e.g. for monitoring?

Best,

Felix

### Versions

PyTorch version: 1.13.0+cu117

Is debug build: False

CUDA used to build PyTorch: 11.7

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.1 LTS (x86_64)

GCC version: (Ubuntu 11.3.0-1ubuntu1~22.04) 11.3.0

Clang version: Could not collect

CMake version: Could not collect

Libc version: glibc-2.10

Python version: 3.7.6 (default, Jan 8 2020, 19:59:22) [GCC 7.3.0] (64-bit runtime)

Python platform: Linux-5.15.0-52-generic-x86_64-with-debian-bookworm-sid

Is CUDA available: False

CUDA runtime version: No CUDA

CUDA_MODULE_LOADING set to: N/A

GPU models and configuration: No CUDA

Nvidia driver version: No CUDA

cuDNN version: No CUDA

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

Versions of relevant libraries:

[pip3] backpack-for-pytorch==1.5.1.dev14+g5401cde6

[pip3] mypy==0.940

[pip3] mypy-extensions==0.4.3

[pip3] numpy==1.20.1

[pip3] pytorch-memlab==0.2.3

[pip3] torch==1.13.0

[pip3] torchvision==0.10.0

[conda] backpack-for-pytorch 1.5.1.dev14+g5401cde6 dev_0 <develop>

[conda] numpy 1.20.1 pypi_0 pypi

[conda] pytorch-memlab 0.2.3 pypi_0 pypi

[conda] torch 1.13.0 pypi_0 pypi

[conda] torchvision 0.10.0 pypi_0 pypi

cc @ezyang @gchanan @zou3519 @albanD @gqchen @pearu @nikitaved @soulitzer @Lezcano @Varal7 | 1.0 | Double-backward with `full_backward_hook` causes `RuntimeError` in PyTorch 1.13 - Hi,

I am using double-backward calls to compute Hessian matrices, in combination with PyTorch's `full_backward_hook`s. After upgrading from `1.12.1` to `1.13.0`, I now run into the following error in the second backward pass:

```bash

RuntimeError: Module backward hook for grad_input is called before the grad_output one. This happens because the gradient in your nn.Module flows to the Module's input without passing through the Module's output. Make sure that the output depends on the input and that the loss is computed based on the output.

```

The following snippet reproduces my problem when I try to compute the Hessian of `f(x, y)` w.r.t. `x` where all symbols are scalars for simplicity.

```python
"""Compute the scalar-valued second-order derivative of f(x, y) w.r.t. x.

Use Hessian-vector products (double-backward pass) in combination with
full_backward_hook.
"""

from torch import ones_like, rand, rand_like
from torch.autograd import grad
from torch.nn import MSELoss

x = rand(1)
x.requires_grad_(True)
y = rand_like(x)

# without hook (working in 1.12.1 and 1.13.0)
lossfunc = MSELoss()
f = lossfunc(x, y)
(gradx_f,) = grad(f, x, create_graph=True)
(gradxgradx_f,) = grad(gradx_f @ ones_like(x), x)

# with hook (working in 1.12.1 and broken in 1.13.0)
lossfunc = MSELoss()


def hook(module, grad_input, grad_output):
    print("This is a test hook")


lossfunc.register_full_backward_hook(hook)
f = lossfunc(x, y)

# this line triggers the backward hook as expected
(gradx_f,) = grad(f, x, create_graph=True)

# the double-backward with hook crashes in 1.13, but used to work before
try:
    (gradxgradx_f,) = grad(gradx_f @ ones_like(x), x)
except RuntimeError as e:
    print(f"Caught RuntimeError: {e}")
```

Is this the intended behavior? If so, how do I compute higher-order derivatives through multiple backward calls, while using hooks, e.g. for monitoring?

Best,

Felix

### Versions

PyTorch version: 1.13.0+cu117

Is debug build: False

CUDA used to build PyTorch: 11.7

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.1 LTS (x86_64)

GCC version: (Ubuntu 11.3.0-1ubuntu1~22.04) 11.3.0

Clang version: Could not collect

CMake version: Could not collect

Libc version: glibc-2.10

Python version: 3.7.6 (default, Jan 8 2020, 19:59:22) [GCC 7.3.0] (64-bit runtime)

Python platform: Linux-5.15.0-52-generic-x86_64-with-debian-bookworm-sid

Is CUDA available: False

CUDA runtime version: No CUDA

CUDA_MODULE_LOADING set to: N/A

GPU models and configuration: No CUDA

Nvidia driver version: No CUDA

cuDNN version: No CUDA

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

Versions of relevant libraries:

[pip3] backpack-for-pytorch==1.5.1.dev14+g5401cde6

[pip3] mypy==0.940

[pip3] mypy-extensions==0.4.3

[pip3] numpy==1.20.1

[pip3] pytorch-memlab==0.2.3

[pip3] torch==1.13.0

[pip3] torchvision==0.10.0

[conda] backpack-for-pytorch 1.5.1.dev14+g5401cde6 dev_0 <develop>

[conda] numpy 1.20.1 pypi_0 pypi

[conda] pytorch-memlab 0.2.3 pypi_0 pypi

[conda] torch 1.13.0 pypi_0 pypi

[conda] torchvision 0.10.0 pypi_0 pypi

cc @ezyang @gchanan @zou3519 @albanD @gqchen @pearu @nikitaved @soulitzer @Lezcano @Varal7 | priority | double backward with full backward hook causes runtimeerror in pytorch hi i am using double backward calls to compute hessian matrices in combination with pytorch s full backward hook s after upgrading from to i now run into the following error in the second backward pass bash runtimeerror module backward hook for grad input is called before the grad output one this happens because the gradient in your nn module flows to the module s input without passing through the module s output make sure that the output depends on the input and that the loss is computed based on the output the following snippet reproduces my problem when i try to compute the hessian of f x y w r t x where all symbols are scalars for simplicity python compute the scalar valued second order derivative of f x y w r t x use hessian vector products double backward pass in combination with full backward hook from torch import ones like rand rand like from torch autograd import grad from torch nn import mseloss x rand x requires grad true y rand like x without hook working in and lossfunc mseloss f lossfunc x y gradx f grad f x create graph true gradxgradx f grad gradx f ones like x x with hook working in and broken in lossfunc mseloss def hook module grad input grad output print this is a test hook lossfunc register full backward hook hook f lossfunc x y this line triggers the backward hook as expected gradx f grad f x create graph true the double backward with hook crashes in but used to work before try gradxgradx f grad gradx f ones like x x except runtimeerror as e print f caught runtimeerror e is this the intended behavior if so how do i compute higher order derivatives through multiple backward calls while using hooks e g for monitoring best felix versions pytorch version is debug build false cuda used to build pytorch rocm used to build pytorch n a os ubuntu lts gcc version ubuntu clang 
version could not collect cmake version could not collect libc version glibc python version default jan bit runtime python platform linux generic with debian bookworm sid is cuda available false cuda runtime version no cuda cuda module loading set to n a gpu models and configuration no cuda nvidia driver version no cuda cudnn version no cuda hip runtime version n a miopen runtime version n a is xnnpack available true versions of relevant libraries backpack for pytorch mypy mypy extensions numpy pytorch memlab torch torchvision backpack for pytorch dev numpy pypi pypi pytorch memlab pypi pypi torch pypi pypi torchvision pypi pypi cc ezyang gchanan alband gqchen pearu nikitaved soulitzer lezcano | 1 |

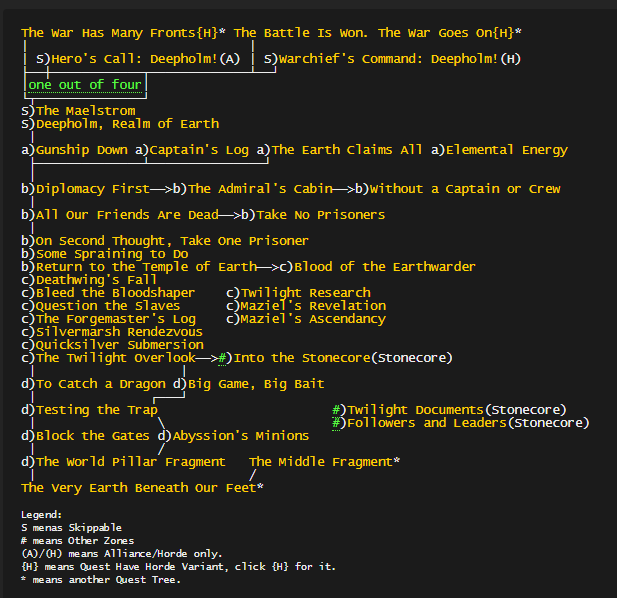

340,343 | 10,270,993,811 | IssuesEvent | 2019-08-23 13:07:03 | WoWManiaUK/Blackwing-Lair | https://api.github.com/repos/WoWManiaUK/Blackwing-Lair | closed | [QUEST] Deepholm: Quest Order: Upper World Pillar Fragment (part1) | Confirming fix in progress Fixed in Dev Priority-High Quest Order zone 80-85 Cata | **Links:**

https://www.wowhead.com/quest=26247/diplomacy-first

https://www.wowhead.com/npc=42684/stormcaller-mylra

https://www.wowhead.com/quest=26248/all-our-friends-are-dead

https://www.wowhead.com/quest=26249/the-admirals-cabin

https://www.wowhead.com/quest=26251

https://www.wowhead.com/quest=26427

https://www.wowhead.com/quest=26250

https://www.wowhead.com/quest=26254

https://www.wowhead.com/quest=26255/return-to-the-temple-of-earth

https://www.wowhead.com/quest=26258

https://www.wowhead.com/quest=26256/bleed-the-bloodshaper

https://www.wowhead.com/quest=26261/question-the-slaves

https://www.wowhead.com/quest=26260/the-forgemasters-log

https://www.wowhead.com/quest=27007/silvermarsh-rendezvous

https://www.wowhead.com/quest=27010/quicksilver-submersion

https://www.wowhead.com/quest=27061/the-twilight-overlook

https://www.wowhead.com/quest=26766/big-game-big-bait

https://www.wowhead.com/quest=26768/to-catch-a-dragon

https://www.wowhead.com/quest=28866/into-the-stonecore

https://www.wowhead.com/quest=26771/testing-the-trap

https://www.wowhead.com/quest=26857/abyssions-minions

https://www.wowhead.com/quest=26876/the-world-pillar-fragment

**Current situation of this post:**

NO ONE SHOULD COMMENT ON OR EDIT THIS TOPIC FOR NOW.

Testing with: Holytester (dev)

Stuck at: "Diplomacy First" -> QID 26247

Problem: I made 2 new comments for the fix. I will wait for the fix before continuing the test.

**What should happen:**

When you complete: "Diplomacy First" -> QID 26247

You unlock 2 quests:

1-> "All Our Friends Are Dead" -> 26248

2-> "The Admiral's Cabin" -> 26249

########

When you complete: "All Our Friends Are Dead" -> 26248

You unlock:

1-> "Take no Prisoners" -> 26251

########

When you complete: "The Admiral's Cabin" -> 26249

You unlock:

1-> "Without a Captain or Crew" -> 26427

########

When you complete: "Take no Prisoners" -> 26251

You unlock:

1-> "On second Thought, Take One Prisoner(26250)"

########

**When you complete: "On second Thought, Take One Prisoner(26250)"**

You unlock:

"Some Spraining to Do" -> 26254 (bugged) #2625

########

When you complete: "Some Spraining to Do -> 26254"

You unlock:

1->Return to the Temple of Earth -> 26255

########

When you complete: "Return to the Temple of Earth 26255"

You unlock:

1-> Deathwing's Fall -> 26258

########

When you complete: "Deathwing's Fall -> 26258"

You unlock:

1-> Bleed the Bloodshaper -> 26256

########

When you complete: "Bleed the Bloodshaper -> 26256"

You unlock:

1-> Question the Slaves -> 26261

########

When you complete: "Question the Slaves -> 26261"

You unlock:

1-> The Forgemaster's Log -> 26260

########

When you complete: "The Forgemaster's Log -> 26260 "

You unlock:

1-> Silvermarsh Rendezvous -> 27007

########

When you complete: "Silvermarsh Rendezvous -> 27007"

You unlock:

1-> Quicksilver Submersion -> 27010

########

When you complete: " Quicksilver Submersion -> 27010"

You unlock:

1-> The Twilight Overlook -> 27061

########

**When you complete: "The Twilight Overlook -> 27061"**

You unlock 3 quests:

1-> Big Game, Big Bait -> 26766 (you can't get 26766 and 26768 at the same time; it's a bug)

2-> To Catch a Dragon -> 26768

3-> Into the Stonecore -> 28866

########

When you complete: "Big Game, Big Bait -> 26766" + "To Catch a Dragon -> 26768"

You unlock:

1-> Testing the Trap -> 26771

########

When you complete: "Testing the Trap -> 26771"

You unlock:

1-> Abyssion's Minions -> 26857

########

When you complete: "Abyssion's Minions -> 26857"

You unlock:

1-> The World Pillar Fragment -> 26876 (bugged quest)

########

When you complete: The Middle Fragment (27938) + The World Pillar Fragment -> 26876 (bugged quest) #3123

You unlock:

1-> The Very Earth Beneath Our Feet (26326)

----Part1 was done---
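
The unlock order above can also be written down as a small lookup table, which makes it easier to check what a character should be offered at each step. This is only a sketch: the quest IDs are the ones listed above, but the `UNLOCKS` layout and the `available_quests` helper are made up for illustration and do not reflect how the server stores quest chains.

```python
# Maps a tuple of prerequisite quest IDs to the quest IDs they unlock.
# IDs come from the list above; the structure itself is hypothetical.
UNLOCKS = {
    (26247,): [26248, 26249],
    (26248,): [26251],
    (26249,): [26427],
    (26251,): [26250],
    (26250,): [26254],
    (26254,): [26255],
    (26255,): [26258],
    (26258,): [26256],
    (26256,): [26261],
    (26261,): [26260],
    (26260,): [27007],
    (27007,): [27010],
    (27010,): [27061],
    (27061,): [26766, 26768, 28866],
    (26766, 26768): [26771],  # both quests must be completed
    (26771,): [26857],
    (26857,): [26876],
    (27938, 26876): [26326],  # both quests must be completed
}


def available_quests(completed):
    """Return the sorted quest IDs unlocked by `completed` and not yet done."""
    done = set(completed)
    unlocked = set()
    for prereqs, quests in UNLOCKS.items():
        if done.issuperset(prereqs):
            unlocked.update(quests)
    return sorted(unlocked - done)


print(available_quests([26247]))
print(available_quests([26766, 26768]))
```

Note that the helper only looks at direct prerequisites; it does not verify that the completed quests were themselves reachable.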

| 1.0 | [QUEST] Deepholm: Quest Order: Upper World Pillar Fragment (part1) - **Links:**

https://www.wowhead.com/quest=26247/diplomacy-first

https://www.wowhead.com/npc=42684/stormcaller-mylra

https://www.wowhead.com/quest=26248/all-our-friends-are-dead

https://www.wowhead.com/quest=26249/the-admirals-cabin

https://www.wowhead.com/quest=26251

https://www.wowhead.com/quest=26427

https://www.wowhead.com/quest=26250

https://www.wowhead.com/quest=26254

https://www.wowhead.com/quest=26255/return-to-the-temple-of-earth

https://www.wowhead.com/quest=26258

https://www.wowhead.com/quest=26256/bleed-the-bloodshaper

https://www.wowhead.com/quest=26261/question-the-slaves

https://www.wowhead.com/quest=26260/the-forgemasters-log

https://www.wowhead.com/quest=27007/silvermarsh-rendezvous

https://www.wowhead.com/quest=27010/quicksilver-submersion

https://www.wowhead.com/quest=27061/the-twilight-overlook

https://www.wowhead.com/quest=26766/big-game-big-bait

https://www.wowhead.com/quest=26768/to-catch-a-dragon

https://www.wowhead.com/quest=28866/into-the-stonecore

https://www.wowhead.com/quest=26771/testing-the-trap

https://www.wowhead.com/quest=26857/abyssions-minions

https://www.wowhead.com/quest=26876/the-world-pillar-fragment

**Current situation of this post:**

NO ONE SHOULD COMMENT ON OR EDIT THIS TOPIC FOR NOW.

Testing with: Holytester (dev)

Stuck at: "Diplomacy First" -> QID 26247

Problem: I made 2 new comments for the fix. I will wait for the fix before continuing the test.

**What should happen:**

When you complete: "Diplomacy First" -> QID 26247

You unlock 2 quests:

1-> "All Our Friends Are Dead" -> 26248

2-> "The Admiral's Cabin" -> 26249

########

When you complete: "All Our Friends Are Dead" -> 26248

You unlock:

1-> "Take no Prisoners" -> 26251

########

When you complete: "The Admiral's Cabin" -> 26249

You unlock:

1-> "Without a Captain or Crew" -> 26427

########

When you complete: "Take no Prisoners" -> 26251

You unlock:

1-> "On second Thought, Take One Prisoner(26250)"

########

**When you complete: "On second Thought, Take One Prisoner(26250)"**

You unlock:

"Some Spraining to Do" -> 26254 (bugged) #2625

########

When you complete: "Some Spraining to Do -> 26254"

You unlock:

1->Return to the Temple of Earth -> 26255

########

When you complete: "Return to the Temple of Earth 26255"

You unlock:

1-> Deathwing's Fall -> 26258

########

When you complete: "Deathwing's Fall -> 26258"

You unlock:

1-> Bleed the Bloodshaper -> 26256

########

When you complete: "Bleed the Bloodshaper -> 26256"

You unlock:

1-> Question the Slaves -> 26261

########

When you complete: "Question the Slaves -> 26261"

You unlock:

1-> The Forgemaster's Log -> 26260

########

When you complete: "The Forgemaster's Log -> 26260 "

You unlock:

1-> Silvermarsh Rendezvous -> 27007

########

When you complete: "Silvermarsh Rendezvous -> 27007"

You unlock:

1-> Quicksilver Submersion -> 27010

########

When you complete: " Quicksilver Submersion -> 27010"

You unlock:

1-> The Twilight Overlook -> 27061

########

**When you complete: "The Twilight Overlook -> 27061"**

You unlock 3 quests:

1-> Big Game, Big Bait -> 26766 (you can't get 26766 and 26768 at the same time; it's a bug)

2-> To Catch a Dragon -> 26768

3-> Into the Stonecore -> 28866

########

When you complete: "Big Game, Big Bait -> 26766" + "To Catch a Dragon -> 26768"

You unlock:

1-> Testing the Trap -> 26771

########

When you complete: "Testing the Trap -> 26771"

You unlock:

1-> Abyssion's Minions -> 26857

########

When you complete: "Abyssion's Minions -> 26857"

You unlock:

1-> The World Pillar Fragment -> 26876 (bugged quest)

########

When you complete: The Middle Fragment (27938) + The World Pillar Fragment -> 26876 (bugged quest) #3123

You unlock:

1-> The Very Earth Beneath Our Feet (26326)

----Part1 was done---