Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

698,021 | 23,962,711,693 | IssuesEvent | 2022-09-12 20:43:10 | OpenPrinting/libcups | https://api.github.com/repos/OpenPrinting/libcups | closed | Public DNS-SD API for browsing, resolving, and publishing services | enhancement priority-high | Currently the various bits of CUPS, PAPPL, etc. have a conditional mess of code that use the Avahi or mDNSResponder APIs to do DNS-SD discovery and sharing. This continues to be a major pain, and CUPS should have a common API that developers can use to access whatever the local API/service is for this. Goals:

- Support the [Avahi](https://avahi.org), mDNSResponder, and [Win32](https://docs.microsoft.com/en-us/windows/win32/api/_dns/) APIs, with potential for others (systemd/D-Bus) in the future

- Support asynchronous browsing, resolution, and service publication with support for sub-types, domains, and LOC+TXT records

- Provide callbacks as needed

- Provide helper functions for renaming, getting the mDNS hostname, and getting/setting a list of DNS-SD domains

| 1.0 | Public DNS-SD API for browsing, resolving, and publishing services - Currently the various bits of CUPS, PAPPL, etc. have a conditional mess of code that use the Avahi or mDNSResponder APIs to do DNS-SD discovery and sharing. This continues to be a major pain, and CUPS should have a common API that developers can use to access whatever the local API/service is for this. Goals:

- Support the [Avahi](https://avahi.org), mDNSResponder, and [Win32](https://docs.microsoft.com/en-us/windows/win32/api/_dns/) APIs, with potential for others (systemd/D-Bus) in the future

- Support asynchronous browsing, resolution, and service publication with support for sub-types, domains, and LOC+TXT records

- Provide callbacks as needed

- Provide helper functions for renaming, getting the mDNS hostname, and getting/setting a list of DNS-SD domains

| priority | public dns sd api for browsing resolving and publishing services currently the various bits of cups pappl etc have a conditional mess of code that use the avahi or mdnsresponder apis to do dns sd discovery and sharing this continues to be a major pain and cups should have a common api that developers can use to access whatever the local api service is for this goals support the mdnsresponder and apis with potential for others systemd d bus in the future support asynchronous browsing resolution and service publication with support for sub types domains and loc txt records provide callbacks as needed provide helper functions for renaming getting the mdns hostname and getting setting a list of dns sd domains | 1 |

477,971 | 13,770,884,154 | IssuesEvent | 2020-10-07 20:59:00 | Viktor50/mymarket | https://api.github.com/repos/Viktor50/mymarket | opened | Link between a product in the catalog and the product in the store | High Priority | Besides creating a product in the store, we will also need to keep it up to date (specifications, price, availability), so we need to store a link between the product card in the catalog and the one in the store.

What does this mean, and what should the end result look like? If the store already has this product and we click the "Add/update product in store" button, all the data we send from the catalog must overwrite the existing data for this product in the store (rather than create a new product).

The basic product information (name, model, SKU, price, stock, specifications, meta tags, etc.) is straightforward in principle: we simply overwrite it if it has changed since the "add/update" button was last used.

If the product's images have been updated, we do the following (**we will work this part out together**):

1. In the DB, delete the old image links for this product and write the new ones

2. On the store side, delete this product's image folders from the cache. These folders are located in two places: /var/www/ezon.by/image/cache/catalog/Good and /var/www/ezon.by/image/cache/webp/catalog/Good

3. Start the image caching process for this product (using curl). | 1.0 | Link between a product in the catalog and the product in the store - Besides creating a product in the store, we will also need to keep it up to date (specifications, price, availability), so we need to store a link between the product card in the catalog and the one in the store.

What does this mean, and what should the end result look like? If the store already has this product and we click the "Add/update product in store" button, all the data we send from the catalog must overwrite the existing data for this product in the store (rather than create a new product).

The basic product information (name, model, SKU, price, stock, specifications, meta tags, etc.) is straightforward in principle: we simply overwrite it if it has changed since the "add/update" button was last used.

If the product's images have been updated, we do the following (**we will work this part out together**):

1. In the DB, delete the old image links for this product and write the new ones

2. On the store side, delete this product's image folders from the cache. These folders are located in two places: /var/www/ezon.by/image/cache/catalog/Good and /var/www/ezon.by/image/cache/webp/catalog/Good

3. Start the image caching process for this product (using curl). | priority | link between a product in the catalog and the product in the store besides creating a product in the store we will also need to keep it up to date specifications price availability so we need to store a link between the product card in the catalog and the one in the store what does this mean and what should the end result look like if the store already has this product and we click the add update product in store button all the data we send from the catalog must overwrite the existing data for this product in the store rather than create a new product the basic product information name model sku price stock specifications meta tags etc is straightforward in principle we simply overwrite it if it has changed since the add update button was last used if the product s images have been updated we do the following we will work this part out together in the db delete the old image links for this product and write the new ones on the store side delete this product s image folders from the cache these folders are located in two places var www ezon by image cache catalog good and var www ezon by image cache webp catalog good start the image caching process for this product using curl | 1 |
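
The update-or-create rule described in the issue above can be sketched as follows. This is a minimal illustration only: the in-memory `store` dict, the `catalog_id` key, and the `sync_product` name are hypothetical stand-ins for whatever the shop's database and API actually provide.

```python
def sync_product(store: dict, catalog_id: int, data: dict) -> str:
    """Create the store product on first sync, overwrite it on later syncs.

    `store` maps a catalog product id to the store-side record (hypothetical).
    """
    if catalog_id in store:
        # The link already exists: overwrite only the fields that changed,
        # instead of creating a second product.
        changed = {k: v for k, v in data.items() if store[catalog_id].get(k) != v}
        store[catalog_id].update(changed)
        return "updated"
    # No link yet: create the store record and remember the link.
    store[catalog_id] = dict(data)
    return "created"
```

Keying the store record by the catalog id is one simple way to keep the link; a real shop would more likely keep a separate mapping table.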

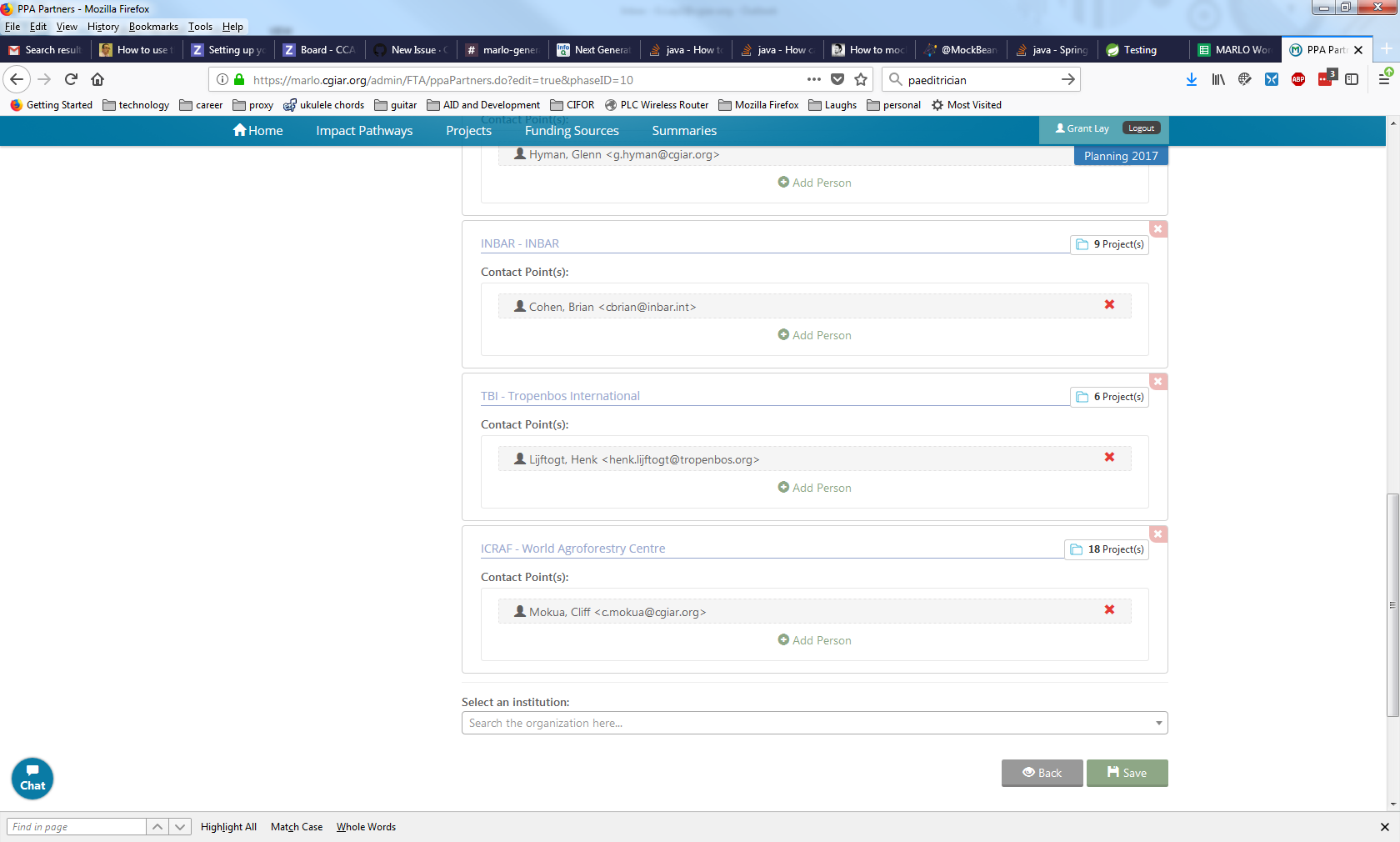

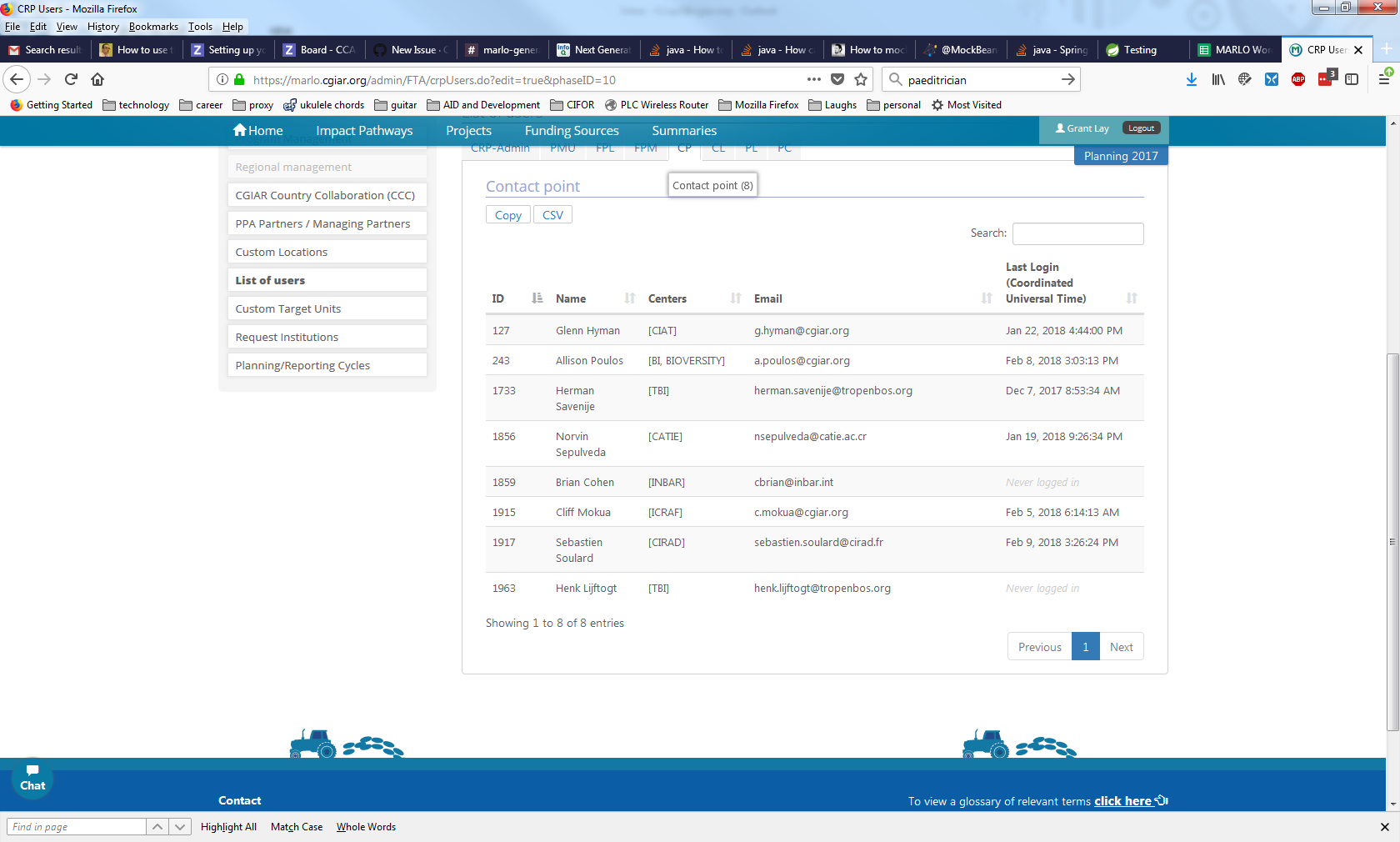

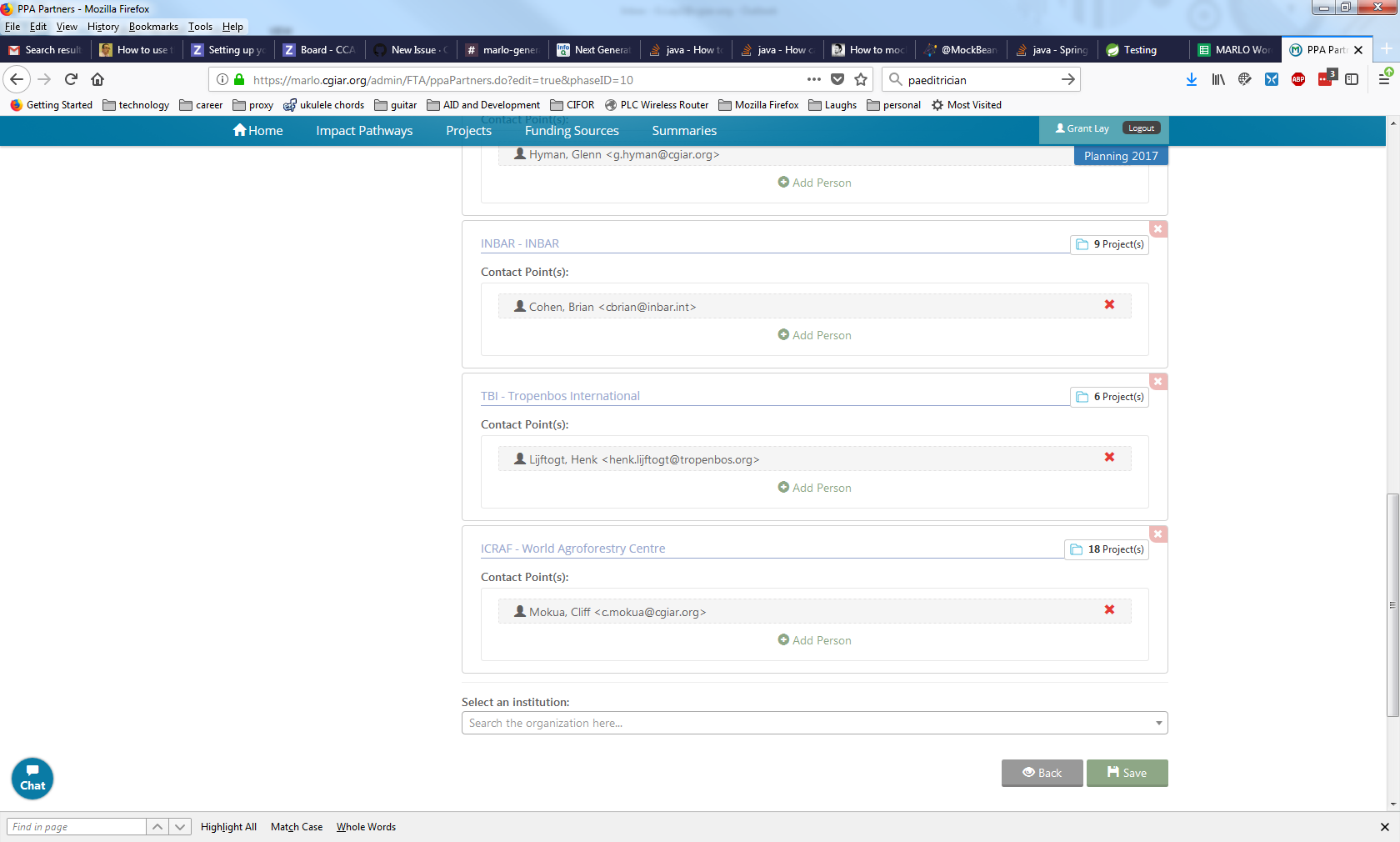

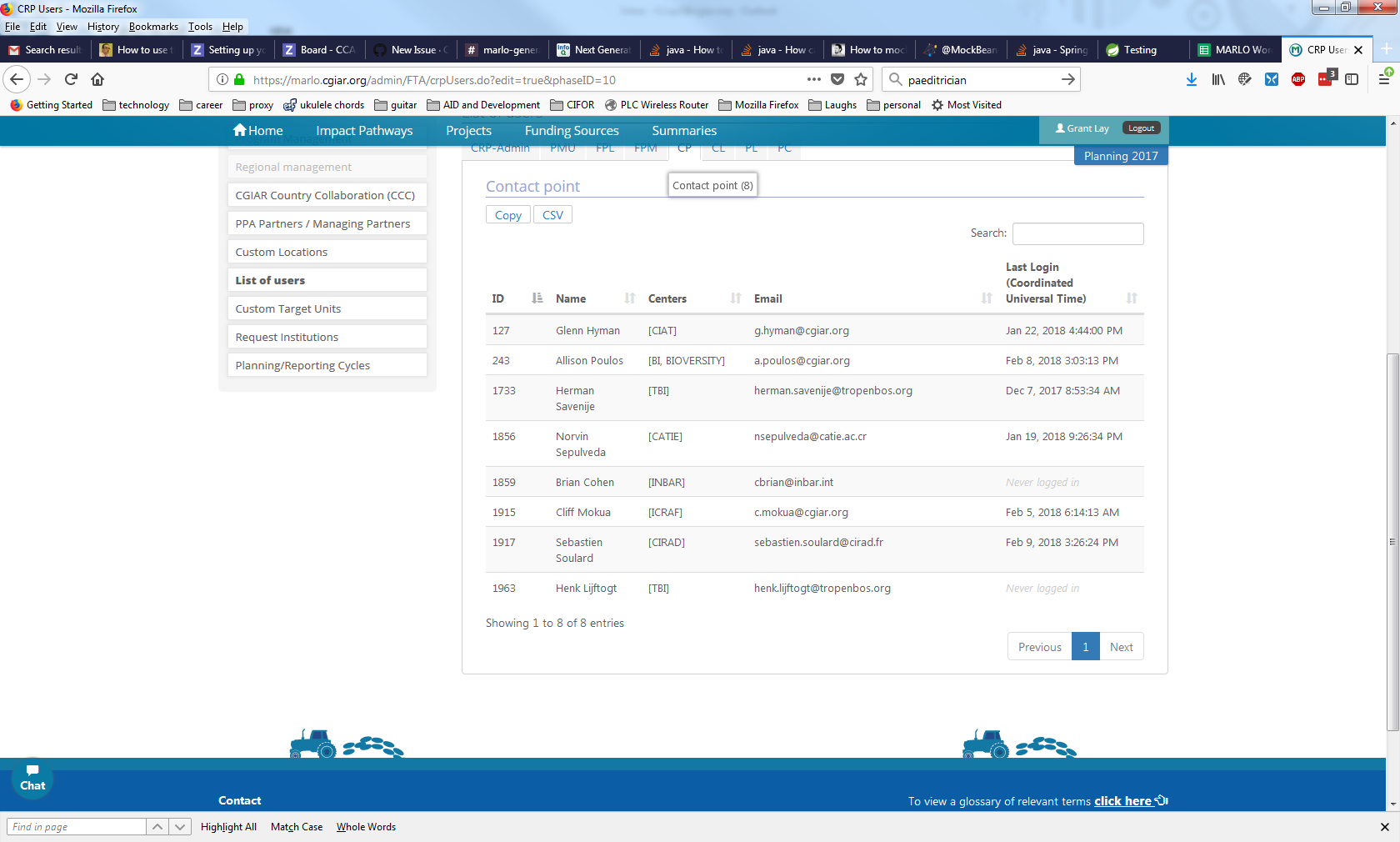

212,538 | 7,238,286,040 | IssuesEvent | 2018-02-13 14:09:43 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | Removing and adding center contact points (PPA Partners) is not working correctly | Priority - High Type - Bug | I tried removing a PPA Partner contact point from FTA (Institution Tropenbos) and add a new contact point and:

1. The old contact point can not be removed.

2. The new contact point does not display in the PPA Partner screen (note that when I go to the user list that the new contact point appears and an email notification was sent out).

I am unable to remove Henk Lijftogt and newly added user Herman Savenije does not appear

In the user screen I can see that Herman has been added and Henk has not been removed.

| 1.0 | Removing and adding center contact points (PPA Partners) is not working correctly - I tried removing a PPA Partner contact point from FTA (Institution Tropenbos) and add a new contact point and:

1. The old contact point can not be removed.

2. The new contact point does not display in the PPA Partner screen (note that when I go to the user list that the new contact point appears and an email notification was sent out).

I am unable to remove Henk Lijftogt and newly added user Herman Savenije does not appear

In the user screen I can see that Herman has been added and Henk has not been removed.

| priority | removing and adding center contact points ppa partners is not working correctly i tried removing a ppa partner contact point from fta institution tropenbos and add a new contact point and the old contact point can not be removed the new contact point does not display in the ppa partner screen note that when i go to the user list that the new contact point appears and an email notification was sent out i am unable to remove henk lijftogt and newly added user herman savenije does not appear in the user screen i can see that herman has been added and henk has not been removed | 1 |

209,660 | 7,178,411,402 | IssuesEvent | 2018-01-31 16:25:12 | metasfresh/metasfresh | https://api.github.com/repos/metasfresh/metasfresh | opened | Dunning Level is not set in invoice after generating dunning doc | priority:high type:bug | ### Is this a bug or feature request?

bug

### What is the current behavior?

if you create a dunning doc for an invoice the field c_dunning_level_id remains null

#### Which are the steps to reproduce?

1. follow http://docs.metasfresh.org/webui_collection/EN/Dunning_Run.html

1. open the invoice and check dunning level

1. NOK: it's null

### What is the expected or desired behavior?

the field should be filled so people can see the dunning level right on the invoice

if not filled the sql to find is quite heavy:

http://docs.metasfresh.org/sql_collection/c_dunning.html

| 1.0 | Dunning Level is not set in invoice after generating dunning doc - ### Is this a bug or feature request?

bug

### What is the current behavior?

if you create a dunning doc for an invoice the field c_dunning_level_id remains null

#### Which are the steps to reproduce?

1. follow http://docs.metasfresh.org/webui_collection/EN/Dunning_Run.html

1. open the invoice and check dunning level

1. NOK: it's null

### What is the expected or desired behavior?

the field should be filled so people can see the dunning level right on the invoice

if not filled the sql to find is quite heavy:

http://docs.metasfresh.org/sql_collection/c_dunning.html

| priority | dunning level is not set in invoice after generating dunning doc is this a bug or feature request bug what is the current behavior if you create a dunning doc for an invoice the field c dunning level id remains null which are the steps to reproduce follow open the invoice and check dunning level nok its null what is the expected or desired behavior the field should be filled so people can see the dunning level right on the invoice if not filled the sql to find is quite heavy | 1 |

665,512 | 22,320,596,891 | IssuesEvent | 2022-06-14 05:52:13 | younginnovations/iatipublisher | https://api.github.com/repos/younginnovations/iatipublisher | opened | #57 Bug: Any user is allowed to access all of the activities created by any user | type: bug priority: high Backend | ### Context ###

- Desktop

- Chrome 102.0.5005.61

### Precondition ###

https://stage.iatipublisher.yipl.com.np/

- Username: User_A

- Password: test1234

### Steps ###

- Go to

- https://stage.iatipublisher.yipl.com.np/activities/1

- https://stage.iatipublisher.yipl.com.np/activities/2

- https://stage.iatipublisher.yipl.com.np/activities/3

- https://stage.iatipublisher.yipl.com.np/activities/11

- https://stage.iatipublisher.yipl.com.np/activities/37

### Actual result ###

- The user can access all activities, including those that are not their own

### Expected result ###

- Only the user's own activities should be accessible

| 1.0 | #57 Bug: Any user is allowed to access all of the activities created by any user - ### Context ###

- Desktop

- Chrome 102.0.5005.61

### Precondition ###

https://stage.iatipublisher.yipl.com.np/

- Username: User_A

- Password: test1234

### Steps ###

- Go to

- https://stage.iatipublisher.yipl.com.np/activities/1

- https://stage.iatipublisher.yipl.com.np/activities/2

- https://stage.iatipublisher.yipl.com.np/activities/3

- https://stage.iatipublisher.yipl.com.np/activities/11

- https://stage.iatipublisher.yipl.com.np/activities/37

### Actual result ###

- The user can access all activities, including those that are not their own

### Expected result ###

- Only the user's own activities should be accessible

| priority | bug any user is allowed to access all of the activities created by any user context desktop chrome precondition username user a password steps go to actual result the user can access all activities including those that are not their own expected result only the user s own activities should be accessible | 1 |
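
The expected behaviour in the issue above amounts to an ownership check before an activity is returned. The sketch below is not the project's code; the `Activity` type, the `owner` field, and both function names are assumptions made for illustration, and the real application may scope access by organisation rather than by user.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    id: int
    owner: str  # hypothetical: the user who created the activity


def can_view(user: str, activity: Activity) -> bool:
    """Allow access only to the activity's owner."""
    return activity.owner == user


def get_activity(user: str, activity_id: int, activities: dict) -> Activity:
    """Return the activity, or refuse if it is missing or owned by someone else."""
    activity = activities.get(activity_id)
    if activity is None or not can_view(user, activity):
        # Same error for "missing" and "not yours", so ids cannot be probed.
        raise PermissionError(f"activity {activity_id} is not accessible")
    return activity
```

With such a check in place, opening `/activities/2` as `User_A` would fail unless `User_A` owns activity 2.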

372,954 | 11,030,987,586 | IssuesEvent | 2019-12-06 16:47:39 | ukwa/ukwa-ui | https://api.github.com/repos/ukwa/ukwa-ui | opened | Add more content to UKWA | Backend priority: high | Make 2015, 2016 (and 2017?) annual domain crawls accessible and searchable in UKWA.

Make 2019 Frequent crawls searchable in UKWA. | 1.0 | Add more content to UKWA - Make 2015, 2016 (and 2017?) annual domain crawls accessible and searchable in UKWA.

Make 2019 Frequent crawls searchable in UKWA. | priority | add more content to ukwa make and annual domain crawls accessible and searchable in ukwa make frequent crawls searchable in ukwa | 1 |

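522,588 | 15,162,533,464 | IssuesEvent | 2021-02-12 10:45:08 | Najaran/NAJARANTIS_Modpack | https://api.github.com/repos/Najaran/NAJARANTIS_Modpack | closed | Initial health is set to 3 hearts | high priority | <!--

When opening an issue about a problem, please follow the form below.

* As a rule, fill in the basic information and the problem summary completely.

* Reproducibility and screenshots are not mandatory, but please include them if they help with the explanation.

* Crash logs contain various information, not limited to Minecraft. Please handle them with care. (Mask them if necessary.)

-->

## Basic information

* Version : alpha-0.1.4

* Environment : Client

* Modifications to the modpack : None

* Modification details :

## Problem summary

Initial health is set to 3 hearts.

## Screenshots

## Crash log

None, as no crash occurred | 1.0 | Initial health is set to 3 hearts - <!--

When opening an issue about a problem, please follow the form below.

* As a rule, fill in the basic information and the problem summary completely.

* Reproducibility and screenshots are not mandatory, but please include them if they help with the explanation.

* Crash logs contain various information, not limited to Minecraft. Please handle them with care. (Mask them if necessary.)

-->

## Basic information

* Version : alpha-0.1.4

* Environment : Client

* Modifications to the modpack : None

* Modification details :

## Problem summary

Initial health is set to 3 hearts.

## Screenshots

## Crash log

None, as no crash occurred | priority | when opening an issue about a problem please follow the form below as a rule fill in the basic information and the problem summary completely reproducibility and screenshots are not mandatory but please include them if they help with the explanation crash logs contain various information not limited to minecraft please handle them with care mask them if necessary basic information version alpha environment client modifications to the modpack none modification details problem summary screenshots crash log none as no crash occurred | 1 |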

787,871 | 27,734,142,319 | IssuesEvent | 2023-03-15 10:04:46 | AY2223S2-CS2113-T13-1/tp | https://api.github.com/repos/AY2223S2-CS2113-T13-1/tp | closed | "withdraw" command | type.Story priority.High | As a working student, I can withdraw money from my accounts so that I can spend it as cash.

### Acceptance Criteria

- Withdraw the amount of money of specified currency.

- The balance of the account should decrease by the specified amount

- Handle error if no account for this currency exists

- Handle error if amount is too big

- Handle error if input is invalid, e.g. amount cannot be parsed to a decimal

- Format: `withdraw 100 $/MYR` | 1.0 | "withdraw" command - As a working student, I can withdraw money from my accounts so that I can spend it as cash.

### Acceptance Criteria

- Withdraw the amount of money of specified currency.

- The balance of the account should decrease by the specified amount

- Handle error if no account for this currency exists

- Handle error if amount is too big

- Handle error if input is invalid, e.g. amount cannot be parsed to a decimal

- Format: `withdraw 100 $/MYR` | priority | withdraw command as a working student i can withdraw money from my accounts so that i can spend it as cash acceptance criteria withdraw the amount of money of specified currency the balance of the account should decrease by the specified amount handle error if no account for this currency exists handle error if amount is too big handle error if input is invalid e g amount cannot be parsed to a decimal format withdraw myr | 1 |
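
The acceptance criteria above can be sketched as a small parser plus balance update. This is an illustration in Python, not the project's implementation: the `withdraw` function, the `accounts` dict keyed by currency code, and the choice of exceptions are all assumptions, and "amount is too big" is read here as "amount exceeds the account balance".

```python
from decimal import Decimal, InvalidOperation


def withdraw(accounts: dict, command: str) -> Decimal:
    """Apply a `withdraw AMOUNT $/CURRENCY` command and return the new balance."""
    parts = command.split()
    if len(parts) != 3 or parts[0] != "withdraw" or not parts[2].startswith("$/"):
        raise ValueError("expected format: withdraw AMOUNT $/CURRENCY")
    try:
        amount = Decimal(parts[1])
    except InvalidOperation:
        raise ValueError(f"amount is not a decimal number: {parts[1]!r}")
    # Reject NaN/Infinity first, then non-positive amounts.
    if not amount.is_finite() or amount <= 0:
        raise ValueError("amount must be a positive, finite number")
    currency = parts[2][2:]
    if currency not in accounts:
        raise KeyError(f"no account for currency {currency}")
    if amount > accounts[currency]:
        raise ValueError("amount exceeds the account balance")
    accounts[currency] -= amount
    return accounts[currency]
```

`Decimal` is used instead of `float` so that currency amounts are not subject to binary rounding.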

344,372 | 10,343,691,958 | IssuesEvent | 2019-09-04 09:29:47 | bbc/simorgh | https://api.github.com/repos/bbc/simorgh | closed | Confirm that the manifest.json is correct for Punjabi | high priority simorgh-core-stream ws-fp-phase2 ws-frontpage-stream | Blocked on https://github.com/bbc/simorgh/issues/3516

**Is your feature request related to a problem? Please describe.**

Confirm that the links in the manifest.json are correct for the assets added in https://github.com/bbc/simorgh/issues/3516

Confirm that the link to the manifest.json is correct - it should not live in /article it should live in the root eg bbc.com/punjabi/manifest.json

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Testing notes**

[Tester to complete]

Dev insight: Will Cypress tests be required or are unit tests sufficient? Will there be any potential regression? etc

- [ ] This feature is expected to need manual testing.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | Confirm that the manifest.json is correct for Punjabi - Blocked on https://github.com/bbc/simorgh/issues/3516

**Is your feature request related to a problem? Please describe.**

Confirm that the links in the manifest.json are correct for the assets added in https://github.com/bbc/simorgh/issues/3516

Confirm that the link to the manifest.json is correct - it should not live in /article it should live in the root eg bbc.com/punjabi/manifest.json

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Testing notes**

[Tester to complete]

Dev insight: Will Cypress tests be required or are unit tests sufficient? Will there be any potential regression? etc

- [ ] This feature is expected to need manual testing.

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | confirm that the manifest json is correct for punjabi blocked on is your feature request related to a problem please describe confirm that the links in the manifest json are correct for the assets added in confirm that the link to the manifest json is correct it should not live in article it should live in the root eg bbc com punjabi manifest json describe the solution you d like a clear and concise description of what you want to happen describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered testing notes dev insight will cypress tests be required or are unit tests sufficient will there be any potential regression etc this feature is expected to need manual testing additional context add any other context or screenshots about the feature request here | 1 |

80,643 | 3,572,677,747 | IssuesEvent | 2016-01-27 00:47:35 | pathwaysmedical/frasernw | https://api.github.com/repos/pathwaysmedical/frasernw | closed | CR: Hide Automatic Specialist / Clinic Updates | ChangeRequest High Priority New Feature | CR 13. November 2015. Priority 2.

Automatic **Latest Specialist / Clinic Updates** that appear on home page should be able to be edited or hidden if necessary.

#231 and this should be handled together.

------------

Comment by me: This could be tricky due to our use of `versions` via the `Papertrail` gem for generating Latest Specialist / Clinic Updates. | 1.0 | CR: Hide Automatic Specialist / Clinic Updates - CR 13. November 2015. Priority 2.

Automatic **Latest Specialist / Clinic Updates** that appear on home page should be able to be edited or hidden if necessary.

#231 and this should be handled together.

------------

Comment by me: This could be tricky due to our use of `versions` via the `Papertrail` gem for generating Latest Specialist / Clinic Updates. | priority | cr hide automatic specialist clinic updates cr november priority automatic latest specialist clinic updates that appear on home page should be able to be edited or hidden if necessary and this should be handled together comment by me this could be tricky due to our use of versions via the papertrail gem for generating latest specialist clinic updates | 1 |

540,289 | 15,805,655,086 | IssuesEvent | 2021-04-04 00:17:48 | LittleImprovementsCustom/LittleImprovementsCustom | https://api.github.com/repos/LittleImprovementsCustom/LittleImprovementsCustom | closed | Variated fungi doesn't show its image | category: resource packs priority: high scale: simple type: bug |

> This issue was created by an automation. It was authored in Discord by Nigelrex#8452, in Beatserver #lounge. | 1.0 | Variated fungi doesn't show its image -

> This issue was created by an automation. It was authored in Discord by Nigelrex#8452, in Beatserver #lounge. | priority | variated fungi doesn t show its image this issue was created by an automation it was authored in discord by nigelrex in beatserver lounge | 1 |

272,101 | 8,499,108,026 | IssuesEvent | 2018-10-29 16:22:09 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Travis build does not start on stable branch | Environment Priority: High pending review review | ### Description

Travis regex does not match stable branch names.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non expert users)

- [ ] Internet Explorer

- [ ] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- Indicate the browser version in which the issue has been found

*Steps to reproduce*

- open a pr on stable branch

*Expected Result*

- travis build will start

*Current Result*

- it does not start

### Other useful information (optional):

| 1.0 | Travis build does not start on stable branch - ### Description

Travis regex does not match stable branch names.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non expert users)

- [ ] Internet Explorer

- [ ] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- Indicate the browser version in which the issue has been found

*Steps to reproduce*

- open a pr on stable branch

*Expected Result*

- travis build will start

*Current Result*

- it does not start

### Other useful information (optional):

| priority | travis build does not start on stable branch description travis regex does not match stable branch names in case of bug otherwise remove this paragraph browser affected use this site for non expert users internet explorer chrome firefox safari browser version affected indicate the browser version in which the issue has been found steps to reproduce open a pr on stable branch expected result travis build will start current result it does not start other useful information optional | 1 |

116,613 | 4,704,304,169 | IssuesEvent | 2016-10-13 10:58:59 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Absorption for generic geometries - Improve UI, handling can materials etc | Component: Framework Misc: Roadmap Priority: High | The UI should focus on letting the user select from a list of possible geometries of sample holders etc. This may be central, central and filtered by instrument, or instrument specific (yet to decide). For a sample in a cylindrical sample holder, the sample depth would need to be entered, but most other things can be defined in advance.

There are some anvil shapes on this ticket for PEARL #3119 | 1.0 | Absorption for generic geometries - Improve UI, handling can materials etc - The UI should focus on letting the user select from a list of possible geometries of sample holders etc. This may be central, central and filtered by instrument, or instrument specific (yet to decide). For a sample in a cylindrical sample holder, the sample depth would need to be entered, but most other things can be defined in advance.

There are some anvil shapes on this ticket for PEARL #3119 | priority | absorption for generic geometries improve ui handling can materials etc the ui should focus on letting the user select from a list of possible geometries of sample holders etc this may be central central and filtered by instrument or instrument specific yet to decide for a sample in a cylindrical sample holder the sample depth would need to be entered but most other things can be defined in advance there are some anvil shapes on this ticket for pearl | 1 |

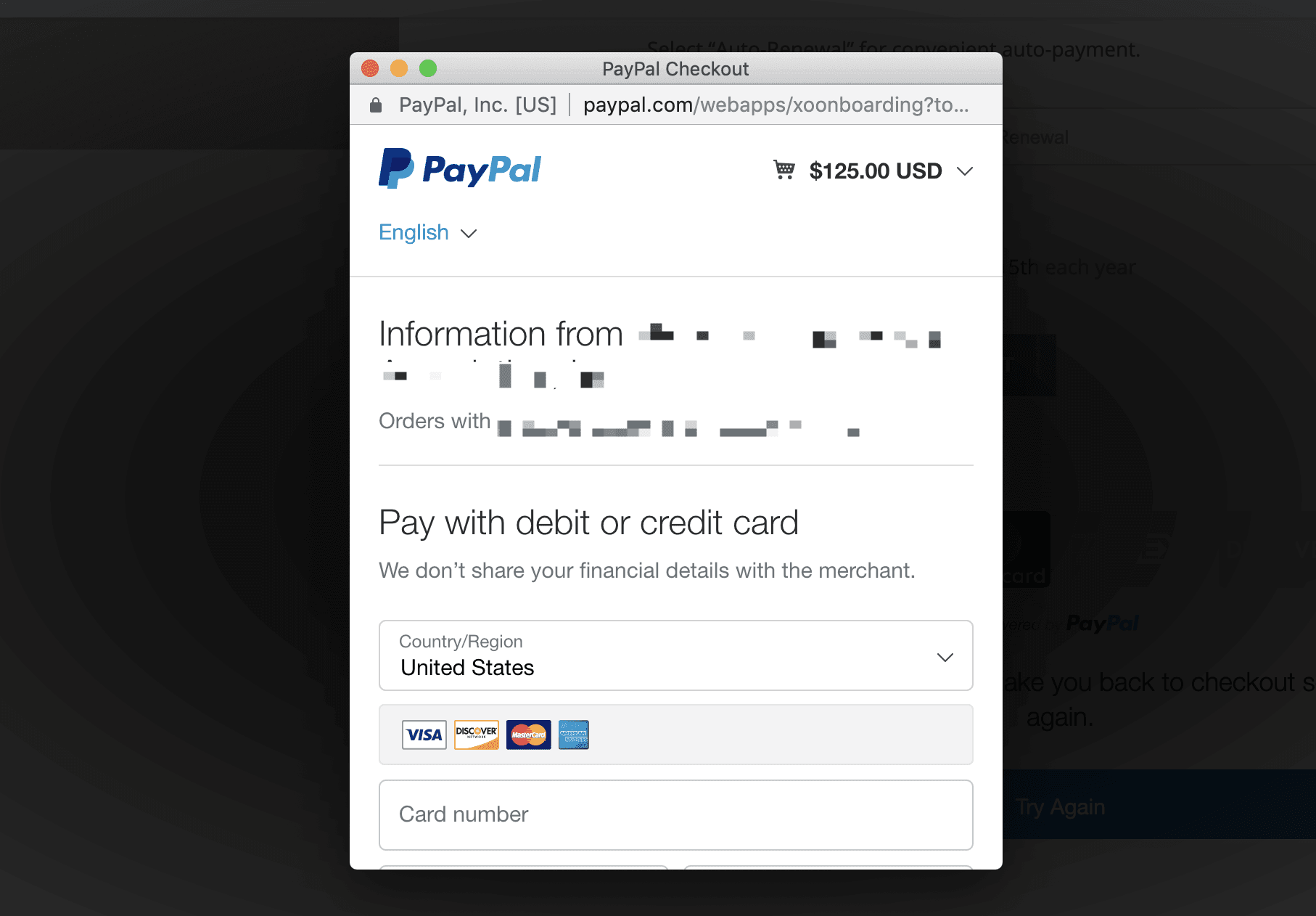

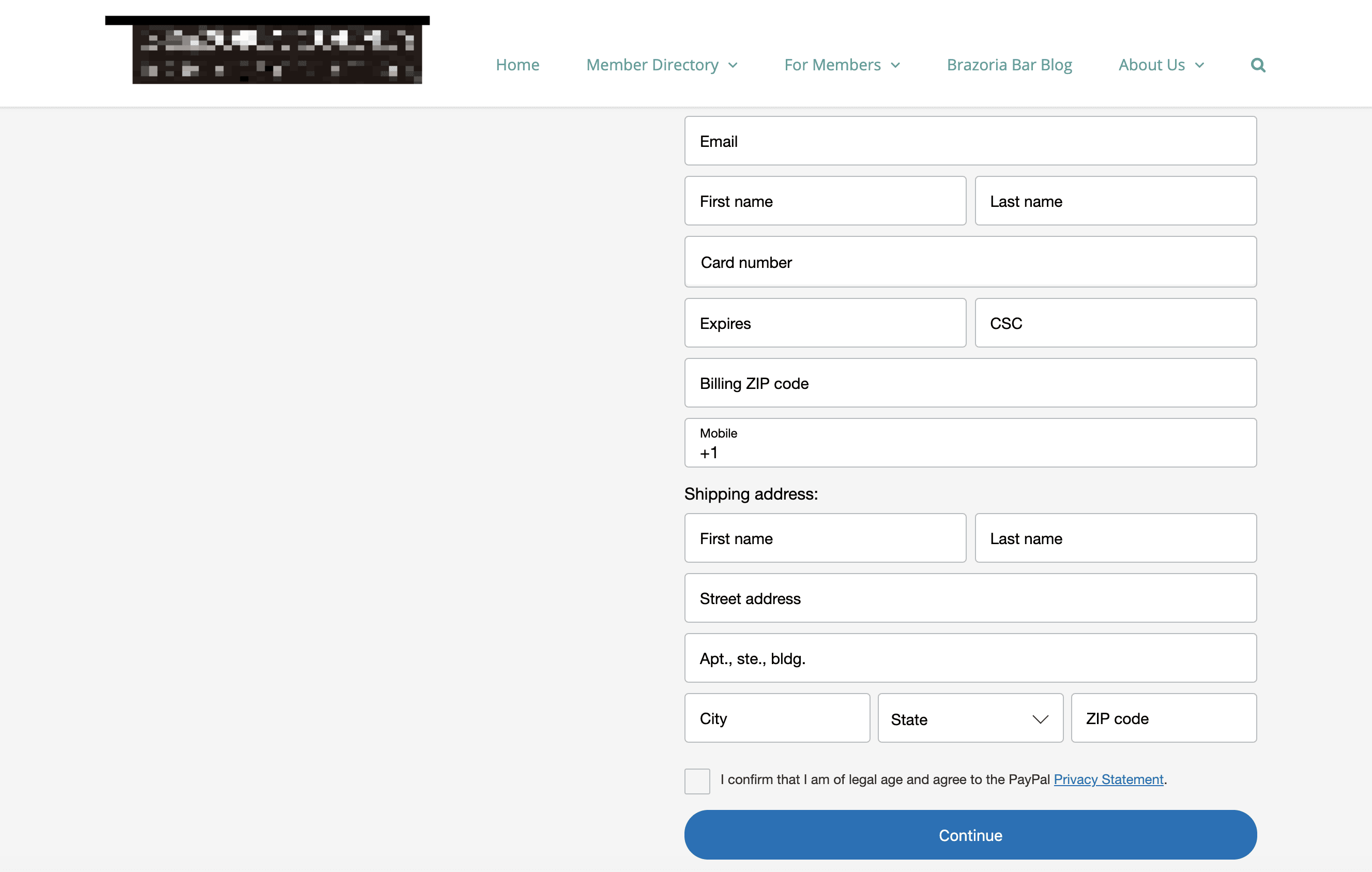

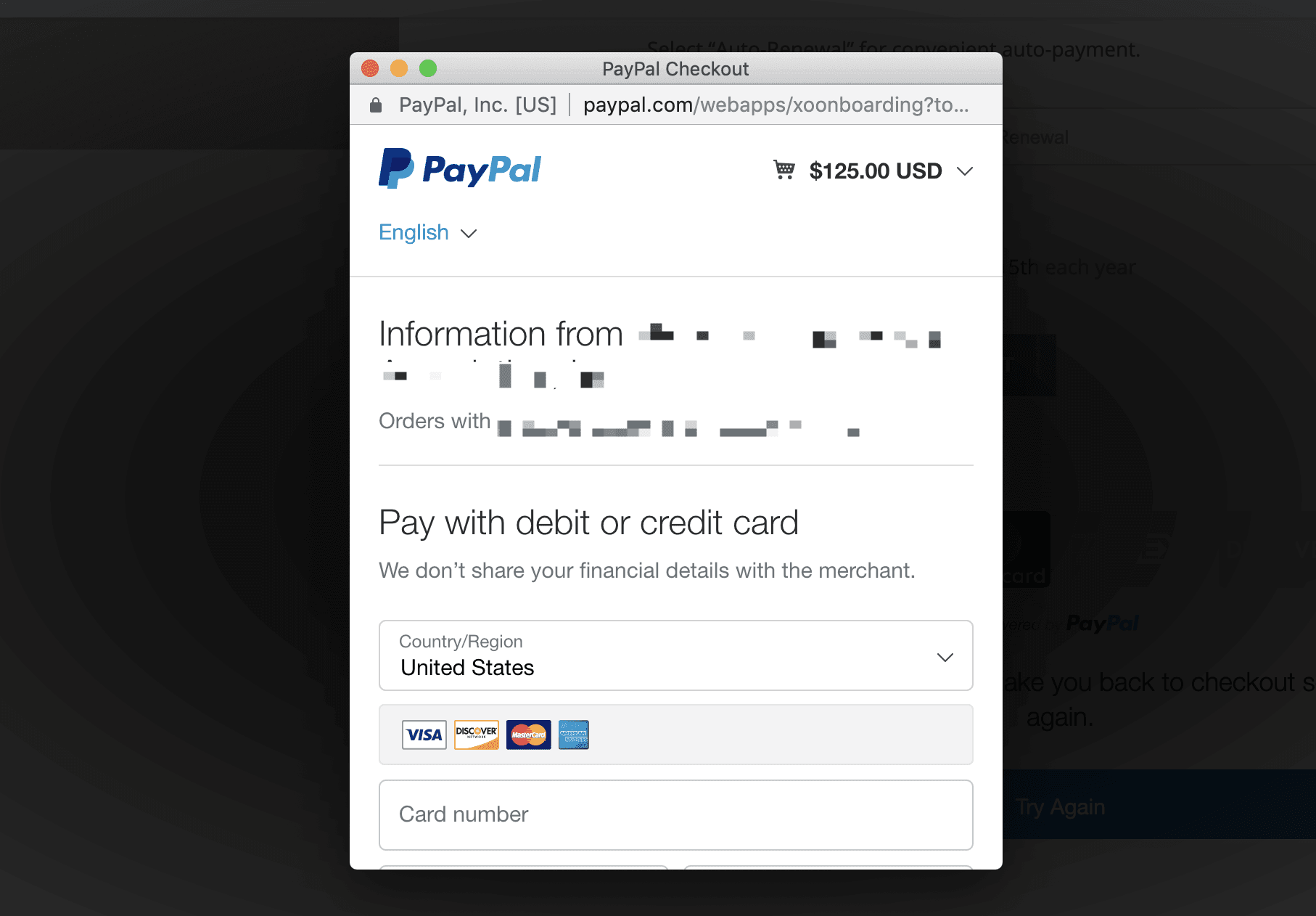

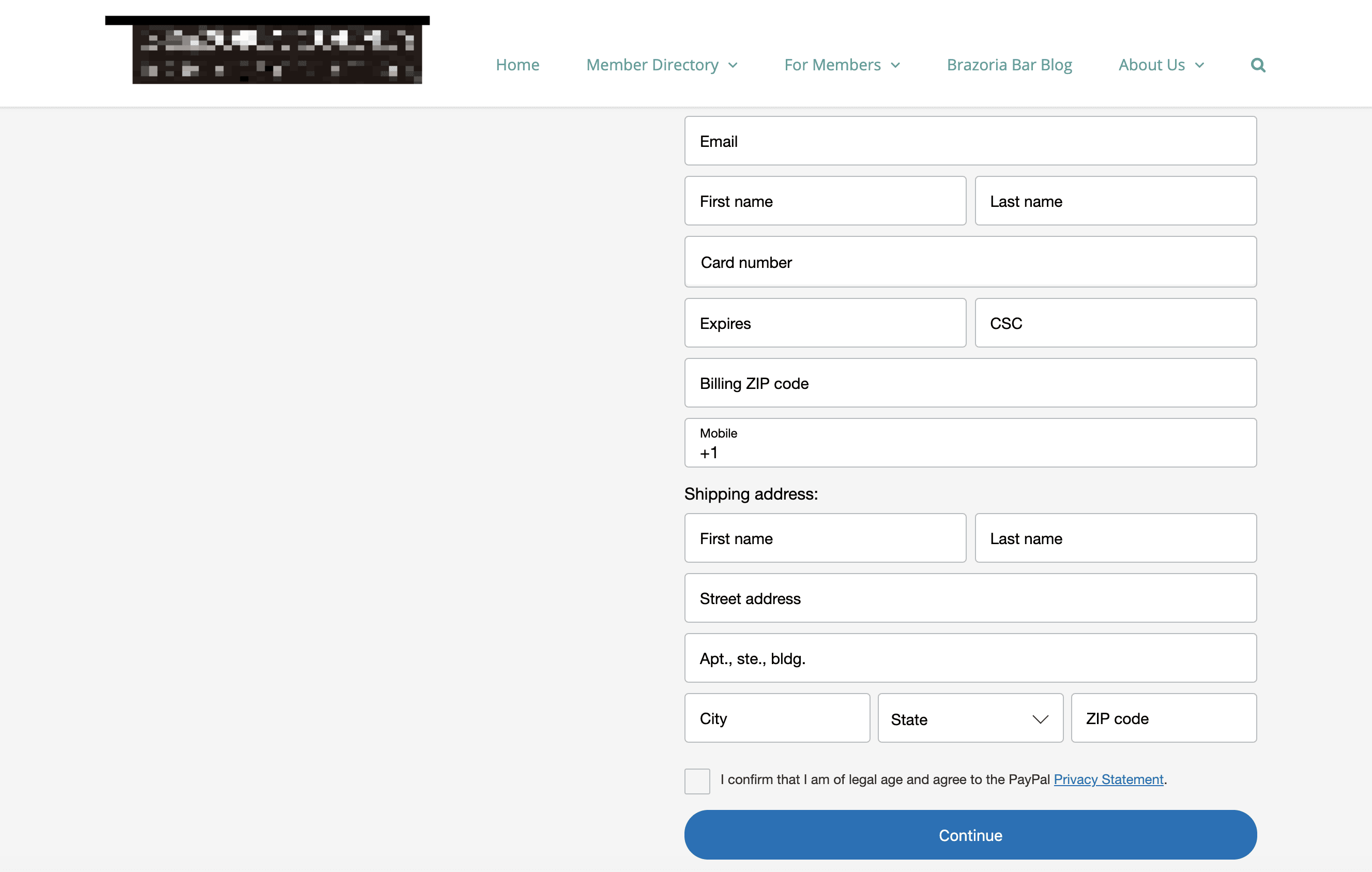

384,731 | 11,402,433,200 | IssuesEvent | 2020-01-31 03:11:08 | woocommerce/woocommerce-gateway-paypal-express-checkout | https://api.github.com/repos/woocommerce/woocommerce-gateway-paypal-express-checkout | closed | Smart Payment Buttons erroring with subscription products | Feature: Smart Payment Buttons Priority: High | 2293488-zd

## Synopsis

Clicking the credit card buttons, also known as _Smart Payment Buttons_, on the product page results in errors. This happens only on subscription products (variable and simple). Non-subscription products allow inline checkout on the single product page.

## Details

**1. Set up Smart payment buttons**

These are the Smart Button settings that will get these buttons to show up on the single product page.

**2. Click Smart payment buttons from a subscription product page**

On subscription product page, click Visa, MasterCard, or Discover, to get this error message:

If you click Try Again, the modal PayPal payment information window loads:

If you click Amex, the modal payment window loads on the first click.

We were able to replicate this issue with subscription products. Based on this, I think we may be looking at another version of this bug, or a different but related bug: https://github.com/woocommerce/woocommerce-gateway-paypal-express-checkout/issues/596

No WC fatal errors logged, no PayPal errors logged.

## Expected behavior

If inline checkout for subscription products isn't supported, this checkout method should default to the modal with no error message.

| 1.0 | Smart Payment Buttons erroring with subscription products - 2293488-zd

## Synopsis

Clicking the credit card buttons, also known as _Smart Payment Buttons_, on the product page results in errors. This happens only on subscription products (variable and simple). Non-subscription products allow inline checkout on the single product page.

## Details

**1. Set up Smart payment buttons**

These are the Smart Button settings that will get these buttons to show up on the single product page.

**2. Click Smart payment buttons from a subscription product page**

On subscription product page, click Visa, MasterCard, or Discover, to get this error message:

If you click Try Again, the modal PayPal payment information window loads:

If you click Amex, the modal payment window loads on the first click.

We were able to replicate this issue with subscription products. Based on this, I think we may be looking at another version of this bug, or a different but related bug: https://github.com/woocommerce/woocommerce-gateway-paypal-express-checkout/issues/596

No WC fatal errors logged, no PayPal errors logged.

## Expected behavior

If inline checkout for subscription products isn't supported, this checkout method should default to the modal with no error message.

| priority | smart payment buttons erroring with subscription products zd synopsis when clicking the credit card buttons also known as smart payment buttons on the product page results in errors this is only happening on subscription products variable and simple non subscription products allow inline checkout functionality for single product page details set up smart payment buttons these are the smart button settings that will get these buttons to show up on the single product page click smart payment buttons from a subscription product page on subscription product page click visa mastercard or discover to get this error message if you click try again the modal paypal payment information window loads if you click amex the modal payment window loads on the first click we were able to replicate this issue with subscription products based on this i think we may be looking at another version of this bug or a different but related bug no wc fatal errors logged no paypal errors logged expected behavior if inline checkout for subscription products isn t supported this checkout method should default to the modal with no error message | 1 |

103,223 | 4,165,523,729 | IssuesEvent | 2016-06-19 15:11:16 | ArdaCraft/IssueTracker | https://api.github.com/repos/ArdaCraft/IssueTracker | closed | Modblock torches missing | bug high priority mod | Noticed some of the modblock torch/lanterns were missing - may be an issue with the world converter | 1.0 | Modblock torches missing - Noticed some of the modblock torch/lanterns were missing - may be an issue with the world converter | priority | modblock torches missing noticed some of the modblock torch lanterns were missing may be an issue with the world converter | 1 |

300,520 | 9,211,330,352 | IssuesEvent | 2019-03-09 14:29:21 | qgisissuebot/QGIS | https://api.github.com/repos/qgisissuebot/QGIS | closed | Regression with expressions in Composer | Bug Priority: high Regression | ---

Author Name: **Pedro Venâncio** (Pedro Venâncio)

Original Redmine Issue: 21471, https://issues.qgis.org/issues/21471

---

I updated QGIS 3.4.5, 3.6.0 and 3.7.0 today to 3.4.5-3 (code revision 8ba90fc441), 3.6.0-4 (code revision ec30c48de9) and 3.7.0-4 (code revision 34a0650177) with OSGeo4W, and labels with expressions are not rendered as expected when the expression spans more than one line.

For instance,

```

[% CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now) %]

```

renders ok

```

March 2019

```

but

```

[%

CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now)

%]

```

renders

```

[%

CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now)

%]

```

I'm sure this is a regression, because it was working fine before today's update. Last week this was working fine.

| 1.0 | Regression with expressions in Composer - ---

Author Name: **Pedro Venâncio** (Pedro Venâncio)

Original Redmine Issue: 21471, https://issues.qgis.org/issues/21471

---

I updated QGIS 3.4.5, 3.6.0 and 3.7.0 today to 3.4.5-3 (code revision 8ba90fc441), 3.6.0-4 (code revision ec30c48de9) and 3.7.0-4 (code revision 34a0650177) with OSGeo4W, and labels with expressions are not rendered as expected when the expression spans more than one line.

For instance,

```

[% CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now) %]

```

renders ok

```

March 2019

```

but

```

[%

CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now)

%]

```

renders

```

[%

CASE WHEN month($now) = 3 THEN 'March' END || ' ' || year($now)

%]

```

I'm sure this is a regression, because it was working fine before today's update. Last week this was working fine.

| priority | regression with expressions in composer author name pedro venâncio pedro venâncio original redmine issue i updated qgis and today to code revision code revision and code revision with and the labels with expressions are not rendered as expected when are used more than one line for instance renders ok march but case when month now then march end year now renders case when month now then march end year now i m sure this is a regression because it was working fine before today s update last week this was working fine | 1 |

62,184 | 3,175,710,034 | IssuesEvent | 2015-09-24 02:12:37 | mozilla/mdn-tests | https://api.github.com/repos/mozilla/mdn-tests | closed | Upgrade py.test dependency to 2.7 | Community difficulty beginner priority high | We should update to the latest version of py.test. This can be done by updating the pinned versions of pytest, py, and any pytest plugins from `requirements.txt` to the latest versions. See https://github.com/mozilla/Addon-Tests/commit/bfa2336803debe57aaffaed4e1033e5d88f4c198 as an example.
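
Before bumping the pins, it can help to list what is currently pinned. The sketch below is one possible way to do that, assuming simple `name==version` lines; the `pinned_versions` helper and the sample contents (including the version numbers) are hypothetical and not taken from the repository.

```python
import re

# Matches simple "name==version" pins; extras, markers and URLs are ignored.
PIN = re.compile(r"^\s*([A-Za-z0-9_.\-]+)\s*==\s*([^\s;#]+)")

def pinned_versions(requirements_text: str) -> dict:
    """Return {package: version} for every exactly pinned requirement."""
    pins = {}
    for line in requirements_text.splitlines():
        match = PIN.match(line)
        if match:
            pins[match.group(1).lower()] = match.group(2)
    return pins

# Hypothetical requirements.txt contents, for illustration only.
sample = """\
# test dependencies
pytest==2.6.4
py==1.4.26
pytest-xdist==1.11
requests
"""
```

Calling `pinned_versions(sample)` returns only the three pinned packages; the comment line and the unpinned `requests` entry are skipped.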

We should also remove any dependencies that are not required, such as `pytest-xdist`, which is useful for running tests in parallel, but is not a hard requirement.

Before submitting a pull request please test the changes locally. If you need any assistance, either comment here or ask in #mozwebqa on irc.mozilla.org. Details of how to get onto IRC can be found [here](https://wiki.mozilla.org/IRC). | 1.0 | Upgrade py.test dependency to 2.7 - We should update to the latest version of py.test. This can be done by updating the pinned versions of pytest, py, and any pytest plugins from `requirements.txt` to the latest versions. See https://github.com/mozilla/Addon-Tests/commit/bfa2336803debe57aaffaed4e1033e5d88f4c198 as an example.

We should also remove any dependencies that are not required, such as `pytest-xdist`, which is useful for running tests in parallel, but is not a hard requirement.

Before submitting a pull request please test the changes locally. If you need any assistance, either comment here or ask in #mozwebqa on irc.mozilla.org. Details of how to get onto IRC can be found [here](https://wiki.mozilla.org/IRC). | priority | upgrade py test dependency to we should update to the latest version of py test this can be done by updating the pinned versions of pytest py and any pytest plugins from requirements txt to the latest versions see as an example we should also remove any dependencies that are not required such as pytest xdist which is useful for running tests in parallel but is not a hard requirement before submitting a pull request please test the changes locally if you need any assistance either comment here or ask in mozwebqa on irc mozilla org details of how to get onto irc can be found | 1 |

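766,299 | 26,877,412,694 | IssuesEvent | 2023-02-05 07:34:18 | nokotan/siv3d-studio | https://api.github.com/repos/nokotan/siv3d-studio | opened | Activity bar sometimes cannot be tapped on narrow-width devices | priority: high wontfix | ### Steps to reproduce

Open the Explorer with the cursor placed on the right side of the text editor

### Device used to reproduce

Android 12 + Google Chrome | 1.0 | Activity bar sometimes cannot be tapped on narrow-width devices - ### Steps to reproduce

Open the Explorer with the cursor placed on the right side of the text editor

### Device used to reproduce

Android 12 + Google Chrome | priority | activity bar sometimes cannot be tapped on narrow width devices steps to reproduce open the explorer with the cursor placed on the right side of the text editor device used to reproduce android google chrome | 1 |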

55,619 | 3,073,968,333 | IssuesEvent | 2015-08-20 02:20:08 | canadainc/ilmtest | https://api.github.com/repos/canadainc/ilmtest | opened | Implement client-server connection for download sessions | logic Priority-High task | To download the database and uncompress it. | 1.0 | Implement client-server connection for download sessions - To download the database and uncompress it. | priority | implement client server connection for download sessions to download the database and uncompress it | 1 |

684,946 | 23,439,206,702 | IssuesEvent | 2022-08-15 13:21:11 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Detect if WP Rocket is on one.com environment | type: enhancement priority: high effort: [S] one.com | Hi

Similar to what WP Rocket has for other hosting providers such as seen in:

https://github.com/wp-media/wp-rocket/blob/develop/inc/ThirdParty/Hostings/HostResolver.php

It would be great if this could also be done for domains on one.com environment.

We have a lot of server variables, for instance $_SERVER['ONECOM_DOMAIN_NAME'], that are only present on the one.com environment.

So if you have a domain, let's call it domain.tld, the ONECOM_DOMAIN_NAME server variable would be "domain.tld". Simply checking whether this variable has any value would be enough to detect whether the site is on a one.com environment.
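
The check described above amounts to testing whether one server variable is present and non-empty. WP Rocket itself is PHP, so the following Python sketch only illustrates the logic; `is_onecom_environment` and the dictionary standing in for `$_SERVER` are hypothetical.

```python
def is_onecom_environment(server_vars: dict) -> bool:
    """Return True when ONECOM_DOMAIN_NAME is present with a non-empty value."""
    # A missing key and an empty string are both treated as "not one.com".
    return bool(server_vars.get("ONECOM_DOMAIN_NAME"))
```

For example, `is_onecom_environment({"ONECOM_DOMAIN_NAME": "domain.tld"})` is true, while an empty dictionary is not detected as one.com.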

The reason for this request is that once this detection is in place, we can also improve some other things such as certain tailor-made pre-selected settings for domains on one.com environment but for those I will create separate tickets as to not make this one too cluttered.

Thank you and please let me know if any further information is required from my side. | 1.0 | Detect if WP Rocket is on one.com environment - Hi

Similar to what WP Rocket has for other hosting providers such as seen in:

https://github.com/wp-media/wp-rocket/blob/develop/inc/ThirdParty/Hostings/HostResolver.php

It would be great if this could also be done for domains on one.com environment.

We have a lot of server variables, for instance $_SERVER['ONECOM_DOMAIN_NAME'], that are only present on the one.com environment.

So if you have a domain, let's call it domain.tld, the ONECOM_DOMAIN_NAME server variable would be "domain.tld". Simply checking whether this variable has any value would be enough to detect whether the site is on a one.com environment.

The reason for this request is that once this detection is in place, we can also improve some other things such as certain tailor-made pre-selected settings for domains on one.com environment but for those I will create separate tickets as to not make this one too cluttered.

Thank you and please let me know if any further information is required from my side. | priority | detect if wp rocket is on one com environment hi similar to what wp rocket has for other hosting providers such as seen in it would be great if this could also be done for domains on one com environment we have a lot of server variables for instance server that would only be present on the one com environment so if you have a domain let s call it domain tld the onecom domain name server variable would be domain tld simply detecting if this variable gives any value would be good enough to detect if it is on a one com environment or not the reason for this request is that once this detection is in place we can also improve some other things such as certain tailor made pre selected settings for domains on one com environment but for those i will create separate tickets as to not make this one too cluttered thank you and please let me know if any further information is required from my side | 1 |

323,017 | 9,842,039,595 | IssuesEvent | 2019-06-18 08:24:07 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Ability to configure CORS header "Access-Control-Expose-Headers" in WSO2 API Manager | 2.6.0 Priority/High Type/Improvement commitment | The ability to configure the CORS header "Access-Control-Expose-Headers" is not available in the WSO2 API Manager at the moment.

It would be better if this feature can be added to a future release. | 1.0 | Ability to configure CORS header "Access-Control-Expose-Headers" in WSO2 API Manager - The ability to configure the CORS header "Access-Control-Expose-Headers" is not available in the WSO2 API Manager at the moment.

It would be better if this feature can be added to a future release. | priority | ability to configure cors header access control expose headers in api manager the ability to configure the cors header access control expose headers is not available in the api manager at the moment it would be better if this feature can be added to a future release | 1 |

570,080 | 17,018,452,473 | IssuesEvent | 2021-07-02 15:09:16 | lakeboy93/Money4Mobs | https://api.github.com/repos/lakeboy93/Money4Mobs | closed | Using /mk with no parameters throws an error in the console. | High-Priority bug | There appears to be no handler for /mk on its own, resulting in an ArrayIndexOutOfBoundsException.

```

[05:44:17 ERROR]: null

org.bukkit.command.CommandException: Unhandled exception executing command 'mk' in plugin Money4Mobs v1.6.4

at org.bukkit.command.PluginCommand.execute(PluginCommand.java:47) ~[patched_1.17.jar:git-Paper-69]

at org.bukkit.command.SimpleCommandMap.dispatch(SimpleCommandMap.java:159) ~[patched_1.17.jar:git-Paper-69]

at org.bukkit.craftbukkit.v1_17_R1.CraftServer.dispatchCommand(CraftServer.java:821) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleCommand(ServerGamePacketListenerImpl.java:2185) ~[?:?]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleChat(ServerGamePacketListenerImpl.java:1996) ~[?:?]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleChat(ServerGamePacketListenerImpl.java:1977) ~[?:?]

at net.minecraft.network.protocol.game.ServerboundChatPacket.handle(ServerboundChatPacket.java:46) ~[?:?]

at net.minecraft.network.protocol.game.ServerboundChatPacket.handle(ServerboundChatPacket.java:6) ~[?:?]

at net.minecraft.network.protocol.PacketUtils.lambda$ensureRunningOnSameThread$1(PacketUtils.java:36) ~[?:?]

at net.minecraft.server.TickTask.run(TickTask.java:18) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.doRunTask(BlockableEventLoop.java:149) ~[?:?]

at net.minecraft.util.thread.ReentrantBlockableEventLoop.doRunTask(ReentrantBlockableEventLoop.java:23) ~[?:?]

at net.minecraft.server.MinecraftServer.doRunTask(MinecraftServer.java:1340) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.shouldRun(MinecraftServer.java:193) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.pollTask(BlockableEventLoop.java:122) ~[?:?]

at net.minecraft.server.MinecraftServer.pollTaskInternal(MinecraftServer.java:1319) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.pollTask(MinecraftServer.java:1312) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.managedBlock(BlockableEventLoop.java:132) ~[?:?]

at net.minecraft.server.MinecraftServer.waitUntilNextTick(MinecraftServer.java:1273) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.runServer(MinecraftServer.java:1184) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.lambda$spin$0(MinecraftServer.java:320) ~[patched_1.17.jar:git-Paper-69]

at java.lang.Thread.run(Thread.java:831) [?:?]

Caused by: java.lang.ArrayIndexOutOfBoundsException: Index 0 out of bounds for length 0

at Latch.Money4Mobs.MkCommand.onCommand(MkCommand.java:764) ~[?:?]

at org.bukkit.command.PluginCommand.execute(PluginCommand.java:45) ~[patched_1.17.jar:git-Paper-69]

... 21 more

```

### Server Information

Paper 1.17 build 69 with the following plugins:

- 1.17---Money4Mobs-v1.6.4

- iEconomy Release 1.1

- LuckPerms Bukkit-5.3.48

- Vault

| 1.0 | Using /mk with no parameters throws an error in the console. - There appears to be no handler for /mk on its own, resulting in an ArrayIndexOutOfBoundsException.

```

[05:44:17 ERROR]: null

org.bukkit.command.CommandException: Unhandled exception executing command 'mk' in plugin Money4Mobs v1.6.4

at org.bukkit.command.PluginCommand.execute(PluginCommand.java:47) ~[patched_1.17.jar:git-Paper-69]

at org.bukkit.command.SimpleCommandMap.dispatch(SimpleCommandMap.java:159) ~[patched_1.17.jar:git-Paper-69]

at org.bukkit.craftbukkit.v1_17_R1.CraftServer.dispatchCommand(CraftServer.java:821) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleCommand(ServerGamePacketListenerImpl.java:2185) ~[?:?]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleChat(ServerGamePacketListenerImpl.java:1996) ~[?:?]

at net.minecraft.server.network.ServerGamePacketListenerImpl.handleChat(ServerGamePacketListenerImpl.java:1977) ~[?:?]

at net.minecraft.network.protocol.game.ServerboundChatPacket.handle(ServerboundChatPacket.java:46) ~[?:?]

at net.minecraft.network.protocol.game.ServerboundChatPacket.handle(ServerboundChatPacket.java:6) ~[?:?]

at net.minecraft.network.protocol.PacketUtils.lambda$ensureRunningOnSameThread$1(PacketUtils.java:36) ~[?:?]

at net.minecraft.server.TickTask.run(TickTask.java:18) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.doRunTask(BlockableEventLoop.java:149) ~[?:?]

at net.minecraft.util.thread.ReentrantBlockableEventLoop.doRunTask(ReentrantBlockableEventLoop.java:23) ~[?:?]

at net.minecraft.server.MinecraftServer.doRunTask(MinecraftServer.java:1340) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.shouldRun(MinecraftServer.java:193) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.pollTask(BlockableEventLoop.java:122) ~[?:?]

at net.minecraft.server.MinecraftServer.pollTaskInternal(MinecraftServer.java:1319) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.pollTask(MinecraftServer.java:1312) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.util.thread.BlockableEventLoop.managedBlock(BlockableEventLoop.java:132) ~[?:?]

at net.minecraft.server.MinecraftServer.waitUntilNextTick(MinecraftServer.java:1273) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.runServer(MinecraftServer.java:1184) ~[patched_1.17.jar:git-Paper-69]

at net.minecraft.server.MinecraftServer.lambda$spin$0(MinecraftServer.java:320) ~[patched_1.17.jar:git-Paper-69]

at java.lang.Thread.run(Thread.java:831) [?:?]

Caused by: java.lang.ArrayIndexOutOfBoundsException: Index 0 out of bounds for length 0

at Latch.Money4Mobs.MkCommand.onCommand(MkCommand.java:764) ~[?:?]

at org.bukkit.command.PluginCommand.execute(PluginCommand.java:45) ~[patched_1.17.jar:git-Paper-69]

... 21 more

```

### Server Information

Paper 1.17 build 69 with the following plugins:

- 1.17---Money4Mobs-v1.6.4

- iEconomy Release 1.1

- LuckPerms Bukkit-5.3.48

- Vault

| priority | using mk with no parameters throws an error in the console there appears to be no handler for mk on its own resulting on an arrayindexoutofboundsexception null org bukkit command commandexception unhandled exception executing command mk in plugin at org bukkit command plugincommand execute plugincommand java at org bukkit command simplecommandmap dispatch simplecommandmap java at org bukkit craftbukkit craftserver dispatchcommand craftserver java at net minecraft server network servergamepacketlistenerimpl handlecommand servergamepacketlistenerimpl java at net minecraft server network servergamepacketlistenerimpl handlechat servergamepacketlistenerimpl java at net minecraft server network servergamepacketlistenerimpl handlechat servergamepacketlistenerimpl java at net minecraft network protocol game serverboundchatpacket handle serverboundchatpacket java at net minecraft network protocol game serverboundchatpacket handle serverboundchatpacket java at net minecraft network protocol packetutils lambda ensurerunningonsamethread packetutils java at net minecraft server ticktask run ticktask java at net minecraft util thread blockableeventloop doruntask blockableeventloop java at net minecraft util thread reentrantblockableeventloop doruntask reentrantblockableeventloop java at net minecraft server minecraftserver doruntask minecraftserver java at net minecraft server minecraftserver shouldrun minecraftserver java at net minecraft util thread blockableeventloop polltask blockableeventloop java at net minecraft server minecraftserver polltaskinternal minecraftserver java at net minecraft server minecraftserver polltask minecraftserver java at net minecraft util thread blockableeventloop managedblock blockableeventloop java at net minecraft server minecraftserver waituntilnexttick minecraftserver java at net minecraft server minecraftserver runserver minecraftserver java at net minecraft server minecraftserver lambda spin minecraftserver java at java lang 
thread run thread java caused by java lang arrayindexoutofboundsexception index out of bounds for length at latch mkcommand oncommand mkcommand java at org bukkit command plugincommand execute plugincommand java more server information paper build with the following plugins ieconomy release luckperms bukkit vault | 1 |

83,449 | 3,634,984,495 | IssuesEvent | 2016-02-11 20:02:35 | ELVIS-Project/elvis-database | https://api.github.com/repos/ELVIS-Project/elvis-database | opened | 1-Click Dev Deployments | Priority: HIGH Status: IN PROGRESS Type: ENHANCEMENT | I'm writing a script to automate `dev` deployments. This technique will make it much easier to deploy builds.

The script clones the dev branch and sets up the virtual environment with its requirements. The current build is moved to a back-up location.

Later on, we will adapt this script for 1-Click Prod deployments. | 1.0 | 1-Click Dev Deployments - I'm writing a script to automate `dev` deployments. This technique will make it much easier to deploy builds.

The script clones the dev branch and sets up the virtual environment with its requirements. The current build is moved to a back-up location.

Later on, we will adapt this script for 1-Click Prod deployments. | priority | click dev deployments i m writing a script to automate dev deployments this technique will make it much easier to deploy builds the script clones the dev branch sets up the virtual environment with requirements the current build is moved to a back up location later on we will adapt this script for click prod deployments | 1 |

258,084 | 8,154,424,794 | IssuesEvent | 2018-08-23 03:12:03 | WordImpress/wp-business-reviews | https://api.github.com/repos/WordImpress/wp-business-reviews | opened | compat(collection): ensure collections render in Safari 10.X.X | high-priority | ## User Story

As a Safari user, I want collections to work in Safari 10.X.X so I can see my reviews.

## Current Behavior

Reviews do not appear in Safari 10.1.2 due to a console error related to JS uglification:

> SyntaxError: Cannot declare a let variable twice: 'e'.

The issue does not exist in Safari 11+.

## Expected Behavior

Collections render without console errors.

## Acceptance Criteria

- [ ] A collection renders in Safari 10.1.2 (confirm using BrowserStack).

- [ ] The [new Demos page](https://wpbusinessreviews.com/demos/) works in Safari 10.1.2.

## Possible Solution

The error and fix are documented in this [GitHub comment](https://github.com/mishoo/UglifyJS2/issues/1753#issuecomment-324814782). | 1.0 | compat(collection): ensure collections render in Safari 10.X.X - ## User Story

As a Safari user, I want collections to work in Safari 10.X.X so I can see my reviews.

## Current Behavior

Reviews do not appear in Safari 10.1.2 due to a console error related to JS uglification:

> SyntaxError: Cannot declare a let variable twice: 'e'.

The issue does not exist in Safari 11+.

## Expected Behavior

Collections render without console errors.

## Acceptance Criteria

- [ ] A collection renders in Safari 10.1.2 (confirm using BrowserStack).

- [ ] The [new Demos page](https://wpbusinessreviews.com/demos/) works in Safari 10.1.2.

## Possible Solution

The error and fix are documented in this [GitHub comment](https://github.com/mishoo/UglifyJS2/issues/1753#issuecomment-324814782). | priority | compat collection ensure collections render in safari x x user story as a safari user i want collections to work in safari x x so i can see my reviews current behavior reviews do not appear in safari due to a console error related to js uglification syntaxerror cannot declare a let variable twice e the issue does not exist in safari expected behavior collections render without console errors acceptance criteria a collection renders in safari confirm using browserstack the works in safari possible solution the error and fix are documented in this | 1 |

406,781 | 11,902,956,084 | IssuesEvent | 2020-03-30 14:40:42 | sous-chefs/java | https://api.github.com/repos/sous-chefs/java | closed | Add new attr for Homebrew Cask name | Feature Request Priority: High | Because Oracle likes to hide things behind a login wall, the natural push is towards `adoptopenjdk`, and this should extend to macOS + Homebrew.

At present Homebrew is hardcoded to `java` in https://github.com/sous-chefs/java/blob/master/recipes/homebrew.rb#L6

Propose a new attribute to allow for passing cask name like `adoptopenjdk`.

Will do up a PR, but looking for idea/input. | 1.0 | Add new attr for Homebrew Cask name - Because Oracle likes to hide things behind a login wall, the natural push is towards `adoptopenjdk`, and this should extend to macOS + Homebrew.

At present Homebrew is hardcoded to `java` in https://github.com/sous-chefs/java/blob/master/recipes/homebrew.rb#L6

Propose a new attribute to allow for passing cask name like `adoptopenjdk`.

Will do up a PR, but looking for idea/input. | priority | add new attr for homebrew cask name because oracle likes to hide things behind login wall naturally push is to go towards adoptopenjdk this should extend to macos homebrew at present homebrew is hardcode to java in propose a new attribute to allow for passing cask name like adoptopenjdk will do up a pr but looking for idea input | 1 |

658,193 | 21,880,274,778 | IssuesEvent | 2022-05-19 13:48:50 | bounswe/bounswe2022group8 | https://api.github.com/repos/bounswe/bounswe2022group8 | closed | Practice App: Feature-Comment | Effort: High Priority: High Status: in progress practice app | ### What's up?

GET, POST and DELETE endpoints for comments on an art item will be implemented once the serializers and database implementations are completed.

| API | API Method | API Description |

| :----: | :----: | :----: |

| ```api/v1/comments/artitem/<int:id>``` | ```GET``` | Get all of the comments of the specific art item (by id) |

| ```api/v1/comments/artitem/<int:id>``` | ```POST``` | Post comment for the specific art item (by id) |

| ```api/v1/user/<int:id>/comment/<int:commentid>``` | ```DELETE``` | Delete the comment by commentid posted by the user having id |

### To Do

- [x] Create new branch 'feature/comment' based on practice_app

- [x] Create endpoints to create, view, delete comments

- [x] Write unit tests

- [x] Create html page in templates

- [x] Create pull request and assign @serdarakol as reviewer as stated in [meeting notes #14](https://github.com/bounswe/bounswe2022group8/wiki/Week-10-Meeting-%2314)

### Deadline

15.05.2022 @20.00

### Additional Information

_No response_

### Reviewers

_No response_ | 1.0 | Practice App: Feature-Comment - ### What's up?

GET, POST and DELETE endpoints for comments on an art item will be implemented once the serializers and database implementations are completed.

| API | API Method | API Description |

| :----: | :----: | :----: |

| ```api/v1/comments/artitem/<int:id>``` | ```GET``` | Get all of the comments of the specific art item (by id) |

| ```api/v1/comments/artitem/<int:id>``` | ```POST``` | Post comment for the specific art item (by id) |

| ```api/v1/user/<int:id>/comment/<int:commentid>``` | ```DELETE``` | Delete the comment by commentid posted by the user having id |

### To Do

- [x] Create new branch 'feature/comment' based on practice_app

- [x] Create endpoints to create, view, delete comments

- [x] Write unit tests

- [x] Create html page in templates

- [x] Create pull request and assign @serdarakol as reviewer as stated in [meeting notes #14](https://github.com/bounswe/bounswe2022group8/wiki/Week-10-Meeting-%2314)

### Deadline

15.05.2022 @20.00

### Additional Information

_No response_

### Reviewers

_No response_ | priority | practice app feature comment what s up endpoints get delete post for comment on an art item will be implemented after the implementations of serializers and database are completed api api method api description api comments artitem get get all of the comments of the specific art item by id api comments artitem post post comment for the specific art item by id api user comment delete delete the comment by commentid posted by the user having id to do create new branch feature comment based on practice app create endpoints to create view delete comments write unit tests create html page in templates create pull request and assign serdarakol as reviewer as stated in deadline additional information no response reviewers no response | 1 |

365,785 | 10,797,729,419 | IssuesEvent | 2019-11-06 08:34:01 | oceanprotocol/commons | https://api.github.com/repos/oceanprotocol/commons | opened | Can't parse description field in asset registration | bug help wanted priority:high | ## Current Behavior

The following description will cause errors and break the registration flow:

```

Line 1

Line 2

```

Error trace (but just see for yourself)

```

Response message:

[{"message":"'Line 1\\n\\nLine 2' does not match '^(.*)$'","path":"base/description"}]

Error fetching querying metadata:

Response {type: "cors", url: "https://aquarius.commons.oceanprotocol.com/api/v1/aquarius/assets/ddo", redirected: false, status: 400, ok: false, …}

Logger.js:101

DDO stored

Logger.js:101 error: Cannot read property 'id' of null

```

This occurs in BMW, Commons, Nile, ...!

### Possible Solution

Plecos? Try the /validate endpoint in Aquarius service?
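
The error message quotes the pattern `^(.*)$`, which would explain the failure if the validator applies it with Python `re` semantics: `.` does not match a newline by default, so a multi-line description cannot match. That the Aquarius or Plecos validation works this way is an assumption; the sketch below only demonstrates the regex behaviour itself.

```python
import re

PATTERN = r"^(.*)$"  # pattern quoted in the error message
description = "Line 1\n\nLine 2"

# A single-line value satisfies the pattern.
single_line_ok = re.search(PATTERN, "Line 1") is not None

# "." stops at the first newline and "$" only matches at the very end,
# so the multi-line description is rejected.
multi_line_ok = re.search(PATTERN, description) is not None

# With DOTALL, "." also matches newlines and the same value is accepted.
dotall_ok = re.search(PATTERN, description, re.DOTALL) is not None
```

If the schema is the cause, relaxing the pattern (or dropping it) for `base/description` would be one option to evaluate.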

| 1.0 | Can't parse description field in asset registration - ## Current Behavior

The following description will cause errors and break the registration flow:

```

Line 1

Line 2

```

Error trace (but just see for yourself)

```

Response message:

[{"message":"'Line 1\\n\\nLine 2' does not match '^(.*)$'","path":"base/description"}]

Error fetching querying metadata:

Response {type: "cors", url: "https://aquarius.commons.oceanprotocol.com/api/v1/aquarius/assets/ddo", redirected: false, status: 400, ok: false, …}

Logger.js:101

DDO stored

Logger.js:101 error: Cannot read property 'id' of null

```

This occurs in BMW, Commons, Nile, ...!

### Possible Solution

Plecos? Try the /validate endpoint in Aquarius service?

| priority | can t parse description field in asset registration current behavior the following description will cause errors and break the registration flow line line error trace but just see for yourself response message error fetching querying metadata response type cors url redirected false status ok false … logger js ddo stored logger js error cannot read property id of null this occurs in bmw commons nile possible solution plecos try the validate endpoint in aquarius service | 1 |

695,923 | 23,876,610,866 | IssuesEvent | 2022-09-07 19:44:05 | Igalia/wpe-android | https://api.github.com/repos/Igalia/wpe-android | closed | Architecture redesign | enhancement priority-high | The current architecture is hard to keep robust and scalable because of several inflexible approaches in the current code.

For example:

All Java -> C++ calls are made via one Browser singleton and glue entity. This entry point keeps growing in size as we implement new features.

This task is to investigate better approaches and do design overhaul.

- Fix native access model (relates to #104 )

- Ensure thread safety and clean up threading model

- More straightforward 1:1 relationship between java and c++

- Reformat whole codebase using common code style and conventions | 1.0 | Architecture redesign - The current architecture is hard to keep robust and scalable because of several inflexible approaches in the current code.

For example:

All Java -> C++ calls are made via one Browser singleton and glue entity. This entry point keeps growing in size as we implement new features.

This task is to investigate better approaches and do design overhaul.

- Fix native access model (relates to #104 )

- Ensure thread safety and clean up threading model

- More straightforward 1:1 relationship between java and c++

- Reformat whole codebase using common code style and conventions | priority | architecture redesign current architecture is hard to keep robust and scalable because of many not so flexible approaches in current code for example all java c calls are doing via one browser singleton and glue entity this entry points keeps growing in size when we implement new features this task is to investigate better approaches and do design overhaul fix native access model relates to ensure thread safety and clean up threading model more straightforward relationship between java and c reformat whole codebase using common code style and convetions | 1 |

313,165 | 9,557,808,027 | IssuesEvent | 2019-05-03 12:42:03 | wso2/product-ei | https://api.github.com/repos/wso2/product-ei | opened | Update MicroIntegrator documentation for EI 6.5.0 | 6.5.0 Priority/Highest Type/Docs | The MicroIntegrator profile is moved out of the EI 6.5.0 distribution. This profile is going to be introduced as an independent distribution.

Documentation needs to be updated on all the related changes for the Micro Integrator profile.

[1] https://docs.wso2.com/display/EI6xx/Working+with+the+Micro+Integrator | 1.0 | Update MicroIntegrator documentation for EI 6.5.0 - The MicroIntegrator profile is moved out of the EI 6.5.0 distribution. This profile is going to be introduced as an independent distribution.

Documentation needs to be updated on all the related changes for the Micro Integrator profile.

[1] https://docs.wso2.com/display/EI6xx/Working+with+the+Micro+Integrator | priority | update microintegrator documentation for ei the microintegrator profile is moved out of the ei distribution this profile is going to be introduced as an independent distribution documentation needs to be updated on all the related changes for the micro integrator profile | 1 |

589,451 | 17,702,806,207 | IssuesEvent | 2021-08-25 01:37:50 | axinc-ai/ailia-models | https://api.github.com/repos/axinc-ai/ailia-models | closed | ADD mediapipe objectron | high priority | We want to add shoe objectron

https://ai.googleblog.com/2020/03/real-time-3d-object-detection-on-mobile.html

https://github.com/google-research-datasets/Objectron

Model conversion example (PintoModelZoo)

https://github.com/PINTO0309/PINTO_model_zoo/tree/main/036_Objectron

Runtime example (OpenVINO)

https://github.com/yas-sim/objectron-3d-object-detection-openvino | 1.0 | ADD mediapipe objectron - We want to add shoe objectron

https://ai.googleblog.com/2020/03/real-time-3d-object-detection-on-mobile.html

https://github.com/google-research-datasets/Objectron

Model conversion example (PintoModelZoo)

https://github.com/PINTO0309/PINTO_model_zoo/tree/main/036_Objectron

Runtime example (OpenVINO)

https://github.com/yas-sim/objectron-3d-object-detection-openvino | priority | add mediapipe objectron we want to add shoe objectron model conversion example pintomodelzoo runtime example openvino | 1 |

89,954 | 3,807,221,586 | IssuesEvent | 2016-03-25 06:23:42 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | [FVT]: Hard disk boot failed on NextScale SLES11 SP4 after diskful installation | component:os_provision priority:high status:pending type:bug | Test OS: SLES11 SP4

Test xCAT version: xCAT Version 2.10 (git commit 79c7f6949c09e0b49875f10ede4b75b18ad4a0e7, built Fri Jul 24 05:27:07 EDT 2015)

How to reproduce:

1. Define NextScale node as a compute node

2. Provision SLES11 SP4 on NextScale node

3. Reboot the NextScale node; the boot fails.

Here is the output from the console

```

System initializing

System initializing memory

System initializing...

System initializing

System initializing memory

System initializing...

Loading value-add drivers

Scanning system, connecting boot device(s)

<F1> Setup<F2> Diagnostics<F12> Select Boot Device

No key pressed. Preparing to boot normally...Optimized Boot ... 11

Boot Failed - SUSE Linux Enterprise Server 11 SP4: HD(1,GPT,E2A6F59C-6C93-4794-9B46-9FB1623565C0,0x800,0x42000)/\efi\SuSE\elilo.efi

Boot Failed - SUSE Linux Enterprise Server 11 SP4: HD(1,GPT,F245DF20-35F3-4B81-960C-9FFA6E2A6838,0x800,0x42000)/\efi\SuSE\elilo.efi

Boot Failed - PXE Network: PciRoot(0x0)/Pci(0x2,0x0)/Pci(0x0,0x0)/Ctrl(0x1)/MAC(E41D2D796471,0x0)/IPv4(0.0.0.0,0x0,DHCP,0.0.0.0,0.0.0.0,0.0.0.0)

Boot Failed - PXE Network: PciRoot(0x0)/Pci(0x2,0x0)/Pci(0x0,0x0)/Ctrl(0x2)/MAC(E41D2D796472,0x0)/IPv4(0.0.0.0,0x0,DHCP,0.0.0.0,0.0.0.0,0.0.0.0)

``` | 1.0 | [FVT]: Hard disk boot failed on NextScale SLES11 SP4 after diskful installation - Test OS: SLES11 SP4

Test xCAT version: xCAT Version 2.10 (git commit 79c7f6949c09e0b49875f10ede4b75b18ad4a0e7, built Fri Jul 24 05:27:07 EDT 2015)

How to reproduce:

1. Define NextScale node as a compute node

2. Provision SLES11 SP4 on NextScale node

3. Reboot the NextScale node and observe that the boot fails.

Here is the output from the console

```

System initializing

System initializing memory

System initializing...

System initializing

System initializing memory

System initializing...

Loading value-add drivers

Scanning system, connecting boot device(s)

<F1> Setup<F2> Diagnostics<F12> Select Boot Device

No key pressed. Preparing to boot normally...Optimized Boot ... 11

Boot Failed - SUSE Linux Enterprise Server 11 SP4: HD(1,GPT,E2A6F59C-6C93-4794-9B46-9FB1623565C0,0x800,0x42000)/\efi\SuSE\elilo.efi

Boot Failed - SUSE Linux Enterprise Server 11 SP4: HD(1,GPT,F245DF20-35F3-4B81-960C-9FFA6E2A6838,0x800,0x42000)/\efi\SuSE\elilo.efi

Boot Failed - PXE Network: PciRoot(0x0)/Pci(0x2,0x0)/Pci(0x0,0x0)/Ctrl(0x1)/MAC(E41D2D796471,0x0)/IPv4(0.0.0.0,0x0,DHCP,0.0.0.0,0.0.0.0,0.0.0.0)

Boot Failed - PXE Network: PciRoot(0x0)/Pci(0x2,0x0)/Pci(0x0,0x0)/Ctrl(0x2)/MAC(E41D2D796472,0x0)/IPv4(0.0.0.0,0x0,DHCP,0.0.0.0,0.0.0.0,0.0.0.0)

``` | priority | hard disk boot failed on nextscale after diskful installation test os test xcat version xcat version git commit built fri jul edt how to reproduce define nextscale node as a compute node provision on nextscale node reboot nextscale node and find the boot failed here is the output from the console system initializing system initializing memory system initializing system initializing system initializing memory system initializing loading value add drivers scanning system connecting boot device s setup diagnostics select boot device no key pressed preparing to boot normally optimized boot boot failed suse linux enterprise server hd gpt efi suse elilo efi boot failed suse linux enterprise server hd gpt efi suse elilo efi boot failed pxe network pciroot pci pci ctrl mac dhcp boot failed pxe network pciroot pci pci ctrl mac dhcp | 1 |

787,650 | 27,725,810,382 | IssuesEvent | 2023-03-15 02:03:02 | AY2223S2-CS2103T-W15-1/tp | https://api.github.com/repos/AY2223S2-CS2103T-W15-1/tp | closed | As a student, I want to be able to add a new contact without tags | type.Story priority.High | ...so I can add people who don't have a common CCA or module with me | 1.0 | As a student, I want to be able to add a new contact without tags - ...so I can add people who don't have a common CCA or module with me | priority | as a student i want to be able to add a new contact without tags so i can add people who don t have a common cca or module with me | 1 |

558,305 | 16,528,980,204 | IssuesEvent | 2021-05-27 01:36:31 | kobotoolbox/formpack | https://api.github.com/repos/kobotoolbox/formpack | closed | Handle pyxform v1.5.0 features | enhancement high priority | - Step 1: ensure exports succeed with new pyxform features

- Step 2: integrate features properly into exports

| 1.0 | Handle pyxform v1.5.0 features - - Step 1: ensure exports succeed with new pyxform features

- Step 2: integrate features properly into exports

| priority | handle pyxform features step ensure exports succeed with new pyxform features step integrate features properly into exports | 1 |

524,115 | 15,196,594,022 | IssuesEvent | 2021-02-16 08:31:38 | wso2/streaming-integrator-tooling | https://api.github.com/repos/wso2/streaming-integrator-tooling | closed | Export to Kubernetes - Outdated Docker images are referred | Priority/High Severity/Critical Type/Bug | **Description:**

In the Export to Kubernetes feature, outdated Docker images are referenced in the artifacts. This needs to be fixed.

**Affected Product Version:**

SI Tooling 1.1.0 | 1.0 | Export to Kubernetes - Outdated Docker images are referred - **Description:**

In the Export to Kubernetes feature, outdated Docker images are referenced in the artifacts. This needs to be fixed.

**Affected Product Version:**

SI Tooling 1.1.0 | priority | export to kubernetes outdated docker images are referred description in the export to kubernetes feature outdated docker images are referred in the artifacts this needs to be fixed affected product version si tooling | 1 |

812,634 | 30,345,467,097 | IssuesEvent | 2023-07-11 15:09:30 | calcom/cal.com | https://api.github.com/repos/calcom/cal.com | opened | Events and emails are being sent to an old, disconnected Google account | 🐛 bug High priority calendar-apps | Found a bug? Please fill out the sections below. 👍

### Issue Summary

I recently was asked if I was joining a call that someone had scheduled with me and I noticed the event was not put onto the calendar I have linked (keith@cal.com) but instead it was sent to an old, disconnected Google account that I had already completely removed from my Cal.com account.

### Steps to Reproduce

1. Link 1 Google account and set that as the default calendar for where events are added

2. Link another Google account

3. Delete the first Google account added and make sure the 2nd one is now the default.

4. Submit bookings to your account.

### Actual Results

- I'm getting Google calendar events and emails to an old, disconnected Google account instead of the one that is set in app.cal.com (it's the only one even connected).

### Expected Results

- To get events on the calendar that is linked and set as default/event types

### Evidence

<img width="791" alt="Screen Shot 2023-07-11 at 4 29 51 PM" src="https://github.com/calcom/cal.com/assets/2538462/9b757e62-33f4-4af8-b281-d2f936214eab">

<img width="1415" alt="Screen Shot 2023-07-11 at 5 04 38 PM" src="https://github.com/calcom/cal.com/assets/2538462/6b10928b-e411-4b62-be99-9b9c225e4d59">

| 1.0 | Events and emails are being sent to an old, disconnected Google account - Found a bug? Please fill out the sections below. 👍

### Issue Summary

I recently was asked if I was joining a call that someone had scheduled with me and I noticed the event was not put onto the calendar I have linked (keith@cal.com) but instead it was sent to an old, disconnected Google account that I had already completely removed from my Cal.com account.

### Steps to Reproduce

1. Link 1 Google account and set that as the default calendar for where events are added

2. Link another Google account

3. Delete the first Google account added and make sure the 2nd one is now the default.

4. Submit bookings to your account.

### Actual Results

- I'm getting Google calendar events and emails to an old, disconnected Google account instead of the one that is set in app.cal.com (it's the only one even connected).

### Expected Results

- To get events on the calendar that is linked and set as default/event types

### Evidence

<img width="791" alt="Screen Shot 2023-07-11 at 4 29 51 PM" src="https://github.com/calcom/cal.com/assets/2538462/9b757e62-33f4-4af8-b281-d2f936214eab">

<img width="1415" alt="Screen Shot 2023-07-11 at 5 04 38 PM" src="https://github.com/calcom/cal.com/assets/2538462/6b10928b-e411-4b62-be99-9b9c225e4d59">

| priority | events and emails are being sent to an old disconnected google account found a bug please fill out the sections below 👍 issue summary i recently was asked if i was joining a call that someone had scheduled with me and i noticed the event was not put onto the calendar i have linked keith cal com but instead it was sent to an old disconnected google account that i had already completely removed from my cal com account steps to reproduce link google account and set that as the default calendar for where events are added link another google account delete the first google account added and make sure the one is now the default submit bookings to your account actual results i m getting google calendar events and emails to an old disconnected google account instead of the one that is set in app cal com it s the only one even connected expected results to get events on the calendar that is linked and set as default event types evidence img width alt screen shot at pm src img width alt screen shot at pm src | 1 |

671,099 | 22,743,156,628 | IssuesEvent | 2022-07-07 06:41:11 | Elice-SW-2-Team14/Animal-Hospital | https://api.github.com/repos/Elice-SW-2-Team14/Animal-Hospital | closed | [FE] Basic setup (modules, global styles) | 🔩 setup ❗️high-priority 🖥 Frontend | ## 🔨 Feature description

Basic setup (modules, global styles)

## 📑 Completion criteria

Runs without errors

## 💭 Related backlog

[[FE] Initial setup]-[Frontend initial setup]-[Global style configuration]

## 💭 Estimated work time

(Work time) 0.5h

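| 1.0 | [FE] Basic setup (modules, global styles) - ## 🔨 Feature description

Basic setup (modules, global styles)

## 📑 Completion criteria

Runs without errors

## 💭 Related backlog

[[FE] Initial setup]-[Frontend initial setup]-[Global style configuration]

## 💭 Estimated work time

(Work time) 0.5h

| priority | basic setup modules global styles 🔨 feature description basic setup modules global styles 📑 completion criteria runs without errors 💭 related backlog initial setup 💭 estimated work time work time | 1 |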

240,241 | 7,800,708,545 | IssuesEvent | 2018-06-09 12:46:36 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0011034:

Uncaught TypeError when saving items | Bug Calendar Mantis high priority | **Reported by shochdoerfer on 13 May 2015 20:53**

**Version:** Koriander (2014.09.10)

When saving an item (e.g. adding an event in the calendar or adding a new timeaccount), I get the following JS error:

Uncaught TypeError: Cannot read property 'ext-comp-1225' of undefined

ext-all.js:7 Ext.Element

ext-all.js:7 h.get

ext-all.js:7 Ext.extend.onRender

ext-all.js:7 Ext.Panel.Ext.extend.onRender

ext-all.js:14 Ext.Window.Ext.extend.onRender

ext-all.js:7 Ext.extend.render

ext-all.js:14 Ext.MessageBox.getDialog

ext-all.js:14 Ext.MessageBox.show

ext-all.js:14 Ext.MessageBox.alert

index.php?method=Tinebase.getJsFiles:37 (anonymous function)

**Additional information:** I made sure that I cleared the browser cache so this should not be an issue.

| 1.0 | 0011034:

Uncaught TypeError when saving items - **Reported by shochdoerfer on 13 May 2015 20:53**

**Version:** Koriander (2014.09.10)

When saving an item (e.g. adding an event in the calendar or adding a new timeaccount), I get the following JS error:

Uncaught TypeError: Cannot read property 'ext-comp-1225' of undefined

ext-all.js:7 Ext.Element

ext-all.js:7 h.get

ext-all.js:7 Ext.extend.onRender

ext-all.js:7 Ext.Panel.Ext.extend.onRender

ext-all.js:14 Ext.Window.Ext.extend.onRender

ext-all.js:7 Ext.extend.render

ext-all.js:14 Ext.MessageBox.getDialog

ext-all.js:14 Ext.MessageBox.show

ext-all.js:14 Ext.MessageBox.alert

index.php?method=Tinebase.getJsFiles:37 (anonymous function)

**Additional information:** I made sure that I cleared the browser cache so this should not be an issue.

| priority | uncaught typeerror when saving items reported by shochdoerfer on may version koriander when saving an item e g add an event in the calendar add a new timeaccout i get the following js error uncaught typeerror cannot read property ext comp of undefined ext all js ext element ext all js h get ext all js ext extend onrender ext all js ext panel ext extend onrender ext all js ext window ext extend onrender ext all js ext extend render ext all js ext messagebox getdialog ext all js ext messagebox show ext all js ext messagebox alert index php method tinebase getjsfiles anonymous function additional information i made sure that i cleared the browser cache so this should not be an issue | 1 |

623,482 | 19,669,514,164 | IssuesEvent | 2022-01-11 04:47:00 | ballerina-platform/openapi-tools | https://api.github.com/repos/ballerina-platform/openapi-tools | opened | Add support to Typed headers for HTTP response | Type/Improvement Points/2 Priority/High BallerinaToOpenAPI | **Description:**

Related issue : https://github.com/ballerina-platform/ballerina-standard-library/issues/2562

```ballerina

public type RateLimitHeaders record {|

string x\-rate\-limit\-id;

int x\-rate\-limit\-remaining;

string[] x\-rate\-limit\-types;

|};

public type OkWithRateLmits record {|

*Ok;

RateLimitHeaders headers;

string body;

|};

service / on new http:Listener(9090) {