Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 5–112) | repo_url (stringlengths, 34–141) | action (stringclasses, 3 values) | title (stringlengths, 1–855) | labels (stringlengths, 4–721) | body (stringlengths, 1–261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96–261k) | label (stringclasses, 2 values) | text (stringlengths, 96–240k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

67,744 | 3,278,176,728 | IssuesEvent | 2015-10-27 07:38:40 | nim-lang/Nim | https://api.github.com/repos/nim-lang/Nim | closed | Bug with generic proc returning a {.global.} `var seq[T]` | High Priority Regression | This bug was introduced in a commit sometime over the last 1-2 weeks:

```nim

proc problem[T]: var seq[T] =

## Problem! Bug with generics makes every call to this proc generate

## a new seq[T] instead of retrieving the `items {.global.}` variable.

var items {.global.}: seq[T]

return items

proc workaroun... | 1.0 | Bug with generic proc returning a {.global.} `var seq[T]` - This bug was introduced in a commit sometime over the last 1-2 weeks:

```nim

proc problem[T]: var seq[T] =

## Problem! Bug with generics makes every call to this proc generate

## a new seq[T] instead of retrieving the `items {.global.}` variable.

va... | priority | bug with generic proc returning a global var seq this bug was introduced in a commit sometime over the last weeks nim proc problem var seq problem bug with generics makes every call to this proc generate a new seq instead of retrieving the items global variable var items ... | 1 |

162,676 | 6,157,798,270 | IssuesEvent | 2017-06-28 19:46:38 | igvteam/juicebox.js | https://api.github.com/repos/igvteam/juicebox.js | opened | Shift - crosshairs not working | bug high priority | Shift - crosshairs are not working in the latest code. Pressing the shift key does not cause them to appear. By doing some clicking pressing and moving the mouse in and out of the element I was able to get them to appear once, but they were frozen (not tracking the mouse). | 1.0 | Shift - crosshairs not working - Shift - crosshairs are not working in the latest code. Pressing the shift key does not cause them to appear. By doing some clicking pressing and moving the mouse in and out of the element I was able to get them to appear once, but they were frozen (not tracking the mouse). | priority | shift crosshairs not working shift crosshairs are not working in the latest code pressing the shift key does not cause them to appear by doing some clicking pressing and moving the mouse in and out of the element i was able to get them to appear once but they were frozen not tracking the mouse | 1 |

95,078 | 3,933,813,707 | IssuesEvent | 2016-04-25 20:26:00 | washingtonstateuniversity/WSUWP-Content-Syndicate | https://api.github.com/repos/washingtonstateuniversity/WSUWP-Content-Syndicate | opened | Add actions throughout to better support logging | enhancement priority:high | We have custom logging in several places, but that shouldn't be part of the core plugin once we make it more publicly available. Instead, we can create some kind of "failure" method and use an action in there to help logging. | 1.0 | Add actions throughout to better support logging - We have custom logging in several places, but that shouldn't be part of the core plugin once we make it more publicly available. Instead, we can create some kind of "failure" method and use an action in there to help logging. | priority | add actions throughout to better support logging we have custom logging in several places but that shouldn t be part of the core plugin once we make it more publicly available instead we can create some kind of failure method and use an action in there to help logging | 1 |

643,315 | 20,948,287,462 | IssuesEvent | 2022-03-26 07:23:42 | AY2122S2-CS2113T-T09-1/tp | https://api.github.com/repos/AY2122S2-CS2113T-T09-1/tp | closed | Show daily or weekly schedule (Parsing tasks for printing schedule) | priority.High type.Chore | Implement logic handling for filtering tasks by date | 1.0 | Show daily or weekly schedule (Parsing tasks for printing schedule) - Implement logic handling for filtering tasks by date | priority | show daily or weekly schedule parsing tasks for printing schedule implement logic handling for filtering tasks by date | 1 |

119,971 | 4,778,436,640 | IssuesEvent | 2016-10-27 19:17:34 | RepreZen/SwagEdit | https://api.github.com/repos/RepreZen/SwagEdit | closed | Deprecated simple reference should display with specialized warning message | High Priority | Reported by @tedepstein in [ZEN-3016](https://modelsolv.atlassian.net/browse/ZEN-3016):

> Discovered in [support ticket #176](https://support.reprezen.com/helpdesk/tickets/176):

We used to recognize these deprecated simple references, and provide a friendlier warning message. Now it's just giving an "invalid refere... | 1.0 | Deprecated simple reference should display with specialized warning message - Reported by @tedepstein in [ZEN-3016](https://modelsolv.atlassian.net/browse/ZEN-3016):

> Discovered in [support ticket #176](https://support.reprezen.com/helpdesk/tickets/176):

We used to recognize these deprecated simple references, and... | priority | deprecated simple reference should display with specialized warning message reported by tedepstein in discovered in we used to recognize these deprecated simple references and provide a friendlier warning message now it s just giving an invalid reference warning which doesn t tell the whole story ... | 1 |

663,798 | 22,207,419,242 | IssuesEvent | 2022-06-07 15:57:37 | voxel51/fiftyone | https://api.github.com/repos/voxel51/fiftyone | opened | [BUG] App cannot handle schema changes to an in-use dataset | bug app high priority | On `fiftyone>=0.16.0`, the App cannot handle schema changes to an in-use dataset:

```py

import fiftyone as fo

sample = fo.Sample(filepath="/Users/Brian/Desktop/test.png")

dataset = fo.Dataset()

dataset.add_sample(sample)

session = fo.launch_app(dataset)

sample["value"] = 1

sample.save()

# Raises er... | 1.0 | [BUG] App cannot handle schema changes to an in-use dataset - On `fiftyone>=0.16.0`, the App cannot handle schema changes to an in-use dataset:

```py

import fiftyone as fo

sample = fo.Sample(filepath="/Users/Brian/Desktop/test.png")

dataset = fo.Dataset()

dataset.add_sample(sample)

session = fo.launch_app... | priority | app cannot handle schema changes to an in use dataset on fiftyone the app cannot handle schema changes to an in use dataset py import fiftyone as fo sample fo sample filepath users brian desktop test png dataset fo dataset dataset add sample sample session fo launch app data... | 1 |

172,910 | 6,517,770,124 | IssuesEvent | 2017-08-28 03:08:08 | localstack/localstack | https://api.github.com/repos/localstack/localstack | closed | Localstack Lambda service does not accept python3.6 f-string syntax | enhancement priority-high | I found Localstack Lambda service does not accept python3.6 f-string syntax.

I have created a simple Lambda python below.

```

import json

print('Loading function')

def lambda_handler(event, context):

#print("Received event: " + json.dumps(event, indent=2))

event2 = {"Records": [3,4,5]}

print(f... | 1.0 | Localstack Lambda service does not accept python3.6 f-string syntax - I found Localstack Lambda service does not accept python3.6 f-string syntax.

I have created a simple Lambda python below.

```

import json

print('Loading function')

def lambda_handler(event, context):

#print("Received event: " + json.d... | priority | localstack lambda service does not accept f string syntax i found localstack lambda service does not accept f string syntax i have created a simple lambda python below import json print loading function def lambda handler event context print received event json dumps event ... | 1 |

380,023 | 11,253,345,107 | IssuesEvent | 2020-01-11 15:40:33 | William-Lake/NLP-API | https://api.github.com/repos/William-Lake/NLP-API | closed | Provide a history of the path actions in the response. | High Priority Low Urgency | E.g. if the entire requests fails or only one endpoint, return the appropriate feedback to the user. | 1.0 | Provide a history of the path actions in the response. - E.g. if the entire requests fails or only one endpoint, return the appropriate feedback to the user. | priority | provide a history of the path actions in the response e g if the entire requests fails or only one endpoint return the appropriate feedback to the user | 1 |

636,135 | 20,592,831,106 | IssuesEvent | 2022-03-05 03:20:21 | datajoint/element-calcium-imaging | https://api.github.com/repos/datajoint/element-calcium-imaging | closed | extra use of output_dir in the Processing | high priority | `output_dir` should not be redefined within the trigger condition | 1.0 | extra use of output_dir in the Processing - `output_dir` should not be redefined within the trigger condition | priority | extra use of output dir in the processing output dir should not be redefined within the trigger condition | 1 |

113,616 | 4,565,737,632 | IssuesEvent | 2016-09-15 02:15:48 | Aqueti/atl | https://api.github.com/repos/Aqueti/atl | opened | Memory leak in BaseKey | High Priority | BaseKey returns a uint8_t* from its hash function. If this memory is not freed by the calling function, it will be leaked.

I see two ways to solve this: either be very explicit that that memory needs to be freed in documentation, or pass in a buffer for BaseKey to write to.

I'm not sure which I prefer - we shoul... | 1.0 | Memory leak in BaseKey - BaseKey returns a uint8_t* from its hash function. If this memory is not freed by the calling function, it will be leaked.

I see two ways to solve this: either be very explicit that that memory needs to be freed in documentation, or pass in a buffer for BaseKey to write to.

I'm not sure ... | priority | memory leak in basekey basekey returns a t from its hash function if this memory is not freed by the calling function it will be leaked i see two ways to solve this either be very explicit that that memory needs to be freed in documentation or pass in a buffer for basekey to write to i m not sure whic... | 1 |

131,533 | 5,154,640,095 | IssuesEvent | 2017-01-15 01:29:00 | rm-code/On-The-Roadside | https://api.github.com/repos/rm-code/On-The-Roadside | closed | Health system | Priority: High Status: Accepted Type: Feature | Some more brain storming stuff ...

The bodies of all creatures in the game will be modelled as graph like the on above. These graphs will feature entry nodes, bones and organs.

## Nodes

- Entry nodes... | 1.0 | Health system - Some more brain storming stuff ...

The bodies of all creatures in the game will be modelled as graph like the on above. These graphs will feature entry nodes, bones and organs.

## Node... | priority | health system some more brain storming stuff the bodies of all creatures in the game will be modelled as graph like the on above these graphs will feature entry nodes bones and organs nodes entry nodes pink are used to represent the outside of the body these are points which can be targ... | 1 |

379,565 | 11,223,426,156 | IssuesEvent | 2020-01-07 22:39:58 | Sp2000/colplus-repo | https://api.github.com/repos/Sp2000/colplus-repo | closed | References issues with several GSDs | data orphan high priority | Some of the older GSDs were still using the legacy reference_id system and/or were missing database_ids in the reference table of the **2019 annual edition** of Assembly_Global. The GSDs include:

id | database | missing_database_id | missing_reference_code | fixed

-- | -- | -- | -- | --

9 | ETI WBD (Euphausiacea)... | 1.0 | References issues with several GSDs - Some of the older GSDs were still using the legacy reference_id system and/or were missing database_ids in the reference table of the **2019 annual edition** of Assembly_Global. The GSDs include:

id | database | missing_database_id | missing_reference_code | fixed

-- | -- | -- ... | priority | references issues with several gsds some of the older gsds were still using the legacy reference id system and or were missing database ids in the reference table of the annual edition of assembly global the gsds include id database missing database id missing reference code fixed ... | 1 |

89,099 | 3,789,737,198 | IssuesEvent | 2016-03-21 18:57:16 | CoderDojo/community-platform | https://api.github.com/repos/CoderDojo/community-platform | closed | Invite all feature for events | backlog events high priority top priority | We want to add a feature where in the events section you can invite all in the events section, and it will send an email to all members of a Dojo notifying them that there is a new event live on the Dojo.

Wireframe to follow. | 2.0 | Invite all feature for events - We want to add a feature where in the events section you can invite all in the events section, and it will send an email to all members of a Dojo notifying them that there is a new event live on the Dojo.

Wireframe to follow. | priority | invite all feature for events we want to add a feature where in the events section you can invite all in the events section and it will send an email to all members of a dojo notifying them that there is a new event live on the dojo wireframe to follow | 1 |

242,684 | 7,845,411,733 | IssuesEvent | 2018-06-19 12:53:11 | cms-gem-daq-project/gem-plotting-tools | https://api.github.com/repos/cms-gem-daq-project/gem-plotting-tools | closed | Feature Request: Identifying Original maskReason & Date Range of Burned Input | Priority: High Status: Help Wanted Type: Enhancement | <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

We have 30720 channels in CMS presently. And we need some automated way for tracking when changes occur. Ideally this... | 1.0 | Feature Request: Identifying Original maskReason & Date Range of Burned Input - <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

We have 30720 channels in CMS presently... | priority | feature request identifying original maskreason date range of burned input brief summary of issue we have channels in cms presently and we need some automated way for tracking when changes occur ideally this should be via the db but this is not up and running yet but we have an automated pro... | 1 |

537,062 | 15,722,388,259 | IssuesEvent | 2021-03-29 05:38:46 | wso2/integration-studio | https://api.github.com/repos/wso2/integration-studio | closed | [Tooling] Make the ESB Editor respect workspace / project / file encoding | 8.0.0 Priority/High | **Description:**

Make the ESB Editor component of the WSO2 EI Tooling honor the file encoding specified in the following hierarchy : OS / eclipse / workspace / project / file

<!-- Give a brief description of the issue -->

The ESB Editor doesn't currently respect the workspace / project or file encoding of WSO2 art... | 1.0 | [Tooling] Make the ESB Editor respect workspace / project / file encoding - **Description:**

Make the ESB Editor component of the WSO2 EI Tooling honor the file encoding specified in the following hierarchy : OS / eclipse / workspace / project / file

<!-- Give a brief description of the issue -->

The ESB Editor do... | priority | make the esb editor respect workspace project file encoding description make the esb editor component of the ei tooling honor the file encoding specified in the following hierarchy os eclipse workspace project file the esb editor doesn t currently respect the workspace project or file ... | 1 |

64,408 | 3,211,400,273 | IssuesEvent | 2015-10-06 10:30:34 | cs2103aug2015-w11-1j/main | https://api.github.com/repos/cs2103aug2015-w11-1j/main | closed | Parser fine tune | priority.high | To remove magic number, string etc and remove main method to integrate into logic | 1.0 | Parser fine tune - To remove magic number, string etc and remove main method to integrate into logic | priority | parser fine tune to remove magic number string etc and remove main method to integrate into logic | 1 |

292,148 | 8,953,678,894 | IssuesEvent | 2019-01-25 20:12:37 | CredentialEngine/CompetencyFrameworks | https://api.github.com/repos/CredentialEngine/CompetencyFrameworks | opened | complexityLevel missing from framework export | High Priority bug | The beta connecting credentials framework properly shows in the CASS editor:

https://credentialengine.org/publisher/Competencies

It correctly indicates/links to the relevant concepts from the Beta Connecting Credentials levels concept scheme. It does so via the `ceasn:complexityLevel` property - however, this prop... | 1.0 | complexityLevel missing from framework export - The beta connecting credentials framework properly shows in the CASS editor:

https://credentialengine.org/publisher/Competencies

It correctly indicates/links to the relevant concepts from the Beta Connecting Credentials levels concept scheme. It does so via the `ceas... | priority | complexitylevel missing from framework export the beta connecting credentials framework properly shows in the cass editor it correctly indicates links to the relevant concepts from the beta connecting credentials levels concept scheme it does so via the ceasn complexitylevel property however this proper... | 1 |

582,460 | 17,361,850,914 | IssuesEvent | 2021-07-29 22:00:34 | jessebw/activeVote | https://api.github.com/repos/jessebw/activeVote | closed | current poll view - No poll | High Priority enhancement | - [x] If there is no current poll, display a landing view which lets the user know there are no active polls.

| 1.0 | current poll view - No poll - - [x] If there is no current poll, display a landing view which lets the user know there are no active polls.

| priority | current poll view no poll if there is no current poll display a landing view which lets the user know there are no active polls | 1 |

59,628 | 3,115,156,898 | IssuesEvent | 2015-09-03 13:11:15 | USGCRP/gcis-ontology | https://api.github.com/repos/USGCRP/gcis-ontology | closed | gcis:Project, Model as property | high-priority | Like https://github.com/USGCRP/gcis-ontology/issues/95 this is a spinoff of #12. Here, the turtle for models, in addition to projects, incorrectly uses classes as properties:

https://github.com/USGCRP/gcis/blob/master/lib/Tuba/files/templates/model/object.ttl.tut

Example with instance data:

http://data.globalchan... | 1.0 | gcis:Project, Model as property - Like https://github.com/USGCRP/gcis-ontology/issues/95 this is a spinoff of #12. Here, the turtle for models, in addition to projects, incorrectly uses classes as properties:

https://github.com/USGCRP/gcis/blob/master/lib/Tuba/files/templates/model/object.ttl.tut

Example with inst... | priority | gcis project model as property like this is a spinoff of here the turtle for models in addition to projects incorrectly uses classes as properties example with instance data i was unable to locate a candidate property relating a model to a project like within dbpedia similarly with re... | 1 |

440,068 | 12,692,564,002 | IssuesEvent | 2020-06-21 23:21:03 | ctm/mb2-doc | https://api.github.com/repos/ctm/mb2-doc | closed | login typo protection | easy enhancement high priority | Currently if someone typos their nickname when logging in, they'll create a new one, which probably isn't what's wanted. If we add a dialog box that says "the account isn't known, would you like to create it?", that would solve the problem.

| 1.0 | login typo protection - Currently if someone typos their nickname when logging in, they'll create a new one, which probably isn't what's wanted. If we add a dialog box that says "the account isn't known, would you like to create it?", that would solve the problem.

| priority | login typo protection currently if someone typos their nickname when logging in they ll create a new one which probably isn t what s wanted if we add a dialog box that says the account isn t known would you like to create it that would solve the problem | 1 |

552,075 | 16,194,264,574 | IssuesEvent | 2021-05-04 12:49:35 | EvanQuan/Chubberino | https://api.github.com/repos/EvanQuan/Chubberino | opened | Refund heisters on quitting mid-heists | enhancement high priority medium effort | Currently if the program ends in the middle of any heists, all heisters lose all cheese they wagered.

Should return all cheese on quitting, preferrable with a message. | 1.0 | Refund heisters on quitting mid-heists - Currently if the program ends in the middle of any heists, all heisters lose all cheese they wagered.

Should return all cheese on quitting, preferrable with a message. | priority | refund heisters on quitting mid heists currently if the program ends in the middle of any heists all heisters lose all cheese they wagered should return all cheese on quitting preferrable with a message | 1 |

558,703 | 16,540,982,064 | IssuesEvent | 2021-05-27 16:45:25 | wazuh/wazuh-documentation | https://api.github.com/repos/wazuh/wazuh-documentation | opened | Current installation guide - Index | priority: highest type: refactor | Hello team!

The aim of this issue is to adapt the current installation guide index to the new structure proposed.

We must clarify the differences between the kinds of deployments type.

Regards,

David | 1.0 | Current installation guide - Index - Hello team!

The aim of this issue is to adapt the current installation guide index to the new structure proposed.

We must clarify the differences between the kinds of deployments type.

Regards,

David | priority | current installation guide index hello team the aim of this issue is to adapt the current installation guide index to the new structure proposed we must clarify the differences between the kinds of deployments type regards david | 1 |

767,523 | 26,929,648,649 | IssuesEvent | 2023-02-07 15:59:18 | GoogleCloudPlatform/dataproc-templates | https://api.github.com/repos/GoogleCloudPlatform/dataproc-templates | closed | [Publishing] Create Gcloud commands and rest API for below templates. Target JDBC | publishing python java high-priority | 1. Java - GCSToJDBC

2. Python - GCSToJDBC

3. Python - JDBCToJDBC

Add the commands in : go/go/dataproc-gcp-documentation-internal document also contains commands for reference from other templates | 1.0 | [Publishing] Create Gcloud commands and rest API for below templates. Target JDBC - 1. Java - GCSToJDBC

2. Python - GCSToJDBC

3. Python - JDBCToJDBC

Add the commands in : go/go/dataproc-gcp-documentation-internal document also contains commands for reference from other templates | priority | create gcloud commands and rest api for below templates target jdbc java gcstojdbc python gcstojdbc python jdbctojdbc add the commands in go go dataproc gcp documentation internal document also contains commands for reference from other templates | 1 |

130,436 | 5,116,029,280 | IssuesEvent | 2017-01-07 00:10:59 | meumobi/sitebuilder | https://api.github.com/repos/meumobi/sitebuilder | opened | UpdateFeedsWorker low not work | bug feeds high priority | It's status is NOK on /status page, and since last deploy we've observed a error `could not find cursor over collection meumobi_partners.extensions` on logs

```

[2017-01-07 01:11:02] sitebuilder.ERROR: Uncaught Exception MongoCursorException: "localhost:27017: could not find cursor over collection meumobi_partners.... | 1.0 | UpdateFeedsWorker low not work - It's status is NOK on /status page, and since last deploy we've observed a error `could not find cursor over collection meumobi_partners.extensions` on logs

```

[2017-01-07 01:11:02] sitebuilder.ERROR: Uncaught Exception MongoCursorException: "localhost:27017: could not find cursor ... | priority | updatefeedsworker low not work it s status is nok on status page and since last deploy we ve observed a error could not find cursor over collection meumobi partners extensions on logs sitebuilder error uncaught exception mongocursorexception localhost could not find cursor over collection meumobi ... | 1 |

395,137 | 11,672,137,572 | IssuesEvent | 2020-03-04 05:41:22 | gambitph/Stackable | https://api.github.com/repos/gambitph/Stackable | opened | Can't change the Text Color of the blocks inside the Accordion | [block] accordion bug high priority | Can't change the Text Color of the blocks inside the Accordion. This happens on Frontend and Backend.

:

```

<oml:task xmlns:oml="http://openml.org/openml">

<oml:task_id>2</oml:task_id>

<oml:task_name>Task 2 (Supervised Classification)</oml:task_name>

<oml:task_type_id>1</oml:task_type_id>

<oml:task_type>Supervised Classification</o... | 1.0 | Test server does no longer return dataset ID within task XML - [https://test.openml.org/api/v1/task/2](https://test.openml.org/api/v1/task/2):

```

<oml:task xmlns:oml="http://openml.org/openml">

<oml:task_id>2</oml:task_id>

<oml:task_name>Task 2 (Supervised Classification)</oml:task_name>

<oml:task_type_id>1</... | priority | test server does no longer return dataset id within task xml oml task xmlns oml task supervised classification supervised classification ... | 1 |

309,030 | 9,460,448,810 | IssuesEvent | 2019-04-17 11:00:16 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | Full integration with netdata of the new database implementation | area/database feature request priority/high | <!---

When creating a feature request please:

- Verify first that your issue is not already reported on GitHub

- Explain new feature briefly in "Feature idea summary" section

- Provide a clear and concise description of what you expect to happen.

--->

Complete integration of the new database with netdata, file ma... | 1.0 | Full integration with netdata of the new database implementation - <!---

When creating a feature request please:

- Verify first that your issue is not already reported on GitHub

- Explain new feature briefly in "Feature idea summary" section

- Provide a clear and concise description of what you expect to happen.

-... | priority | full integration with netdata of the new database implementation when creating a feature request please verify first that your issue is not already reported on github explain new feature briefly in feature idea summary section provide a clear and concise description of what you expect to happen ... | 1 |

724,392 | 24,928,519,834 | IssuesEvent | 2022-10-31 09:37:49 | alphagov/govuk-prototype-kit | https://api.github.com/repos/alphagov/govuk-prototype-kit | closed | Run v13 private beta | ⚠️ high priority user research | ## What

Running private beta for v13 5-23 September

## Why

To obtain feedback from our users on v13 and iterate before full release

## Who needs to work on this

The whole team?

## Done when

- [x] Users invited to beta @ruthhammond

- [x] EOI form re-shared to recruit more users to invite

- [x] Feedba... | 1.0 | Run v13 private beta - ## What

Running private beta for v13 5-23 September

## Why

To obtain feedback from our users on v13 and iterate before full release

## Who needs to work on this

The whole team?

## Done when

- [x] Users invited to beta @ruthhammond

- [x] EOI form re-shared to recruit more users ... | priority | run private beta what running private beta for september why to obtain feedback from our users on and iterate before full release who needs to work on this the whole team done when users invited to beta ruthhammond eoi form re shared to recruit more users to invite ... | 1 |

7,457 | 2,602,353,151 | IssuesEvent | 2015-02-24 07:57:53 | NebulousLabs/Sia | https://api.github.com/repos/NebulousLabs/Sia | closed | Add website to readme | bug High Priority | right now, people who find us through the github page (I mentioned Sia in a reddit comment earlier today) don't have any way to find the website and download the binaries. | 1.0 | Add website to readme - right now, people who find us through the github page (I mentioned Sia in a reddit comment earlier today) don't have any way to find the website and download the binaries. | priority | add website to readme right now people who find us through the github page i mentioned sia in a reddit comment earlier today don t have any way to find the website and download the binaries | 1 |

386,183 | 11,433,279,174 | IssuesEvent | 2020-02-04 15:26:40 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | Validations that work for numeric columns should also work for Date and datetime-type columns | Difficulty: ③ Advanced Effort: ③ High Priority: ③ High Type: ★ Enhancement | Right now date and datetime column values cannot be validated, and that’s a shame. | 1.0 | Validations that work for numeric columns should also work for Date and datetime-type columns - Right now date and datetime column values cannot be validated, and that’s a shame. | priority | validations that work for numeric columns should also work for date and datetime type columns right now date and datetime column values cannot be validated and that’s a shame | 1 |

292,473 | 8,958,543,877 | IssuesEvent | 2019-01-27 15:11:36 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Unifying conflation changing direction of one way streets - Maldives | Category: Algorithms Priority: High Status: Defined Type: Bug | Found this while working on #2867 and looking at Maldives results in JOSM. The issue was pre-existing to those changes, so logging it separately. The road in question is Buruzu Magu when using NOME as the secondary, where the reference had no one way tag. Not sure yet if this all affects Network roads.

Another af... | 1.0 | Unifying conflation changing direction of one way streets - Maldives - Found this while working on #2867 and looking at Maldives results in JOSM. The issue was pre-existing to those changes, so logging it separately. The road in question is Buruzu Magu when using NOME as the secondary, where the reference had no one ... | priority | unifying conflation changing direction of one way streets maldives found this while working on and looking at maldives results in josm the issue was pre existing to those changes so logging it separately the road in question is buruzu magu when using nome as the secondary where the reference had no one way... | 1 |

752,946 | 26,333,600,271 | IssuesEvent | 2023-01-10 12:45:28 | GSM-MSG/GUI-iOS | https://api.github.com/repos/GSM-MSG/GUI-iOS | opened | BaseViewController | 1️⃣ Priority: High ⚙ Setting | ### Describe

ViewController들이 사용할 BaseViewController

### Additional

_No response_ | 1.0 | BaseViewController - ### Describe

ViewController들이 사용할 BaseViewController

### Additional

_No response_ | priority | baseviewcontroller describe viewcontroller들이 사용할 baseviewcontroller additional no response | 1 |

788,791 | 27,766,788,340 | IssuesEvent | 2023-03-16 12:01:00 | AY2223S2-CS2113-T15-1/tp | https://api.github.com/repos/AY2223S2-CS2113-T15-1/tp | opened | Add FileManager support for saving modified Note objects | type.Story priority.High | After the implementation of sort by importance, there will be an additional attribute in the Note class. | 1.0 | Add FileManager support for saving modified Note objects - After the implementation of sort by importance, there will be an additional attribute in the Note class. | priority | add filemanager support for saving modified note objects after the implementation of sort by importance there will be an additional attribute in the note class | 1 |

113,196 | 4,544,367,006 | IssuesEvent | 2016-09-10 17:06:06 | Starblaster64/Vs-Saxton-Hale-2 | https://api.github.com/repos/Starblaster64/Vs-Saxton-Hale-2 | closed | Compile issue | bug high priority | Putting this here for recording purposes, since I've already mentioned it twice before.

The plugin currently will not compile unless you add "modules/" to the path of every #include that references a file within that folder. | 1.0 | Compile issue - Putting this here for recording purposes, since I've already mentioned it twice before.

The plugin currently will not compile unless you add "modules/" to the path of every #include that references a file within that folder. | priority | compile issue putting this here for recording purposes since i ve already mentioned it twice before the plugin currently will not compile unless you add modules to the path of every include that references a file within that folder | 1 |

171,918 | 6,496,778,320 | IssuesEvent | 2017-08-22 11:31:02 | wende/elchemy | https://api.github.com/repos/wende/elchemy | closed | Make wildcard work when importing all types of a union | bug Complexity:Advanced Language:Elm Priority:High Project:Compiler | Add:

## Example:

```elm

import Module exposing (Union(..))

``` | 1.0 | Make wildcard work when importing all types of a union - Add:

## Example:

```elm

import Module exposing (Union(..))

``` | priority | make wildcard work when importing all types of a union add example elm import module exposing union | 1 |

292,807 | 8,968,747,649 | IssuesEvent | 2019-01-29 09:01:10 | evangelos-ch/MangAdventure | https://api.github.com/repos/evangelos-ch/MangAdventure | opened | [TODO] Reader improvements | Priority: High Status: On Hold Type: Enhancement | - [ ] Use avelino/django-turbolinks to load pages faster

- [ ] More reading modes:

- [ ] Long strip mode

- [ ] Fit page to screen

- [ ] Double page

- [ ] Right to left direction

- [ ] Bidirectional page click events

- [ ] RSS feeds

- [ ] AniList ~~& MAL~~ integration | 1.0 | [TODO] Reader improvements - - [ ] Use avelino/django-turbolinks to load pages faster

- [ ] More reading modes:

- [ ] Long strip mode

- [ ] Fit page to screen

- [ ] Double page

- [ ] Right to left direction

- [ ] Bidirectional page click events

- [ ] RSS feeds

- [ ] AniList ~~& MAL~~ integration | priority | reader improvements use avelino django turbolinks to load pages faster more reading modes long strip mode fit page to screen double page right to left direction bidirectional page click events rss feeds anilist mal integration | 1 |

104,639 | 4,216,362,843 | IssuesEvent | 2016-06-30 09:00:44 | ari/jobsworth | https://api.github.com/repos/ari/jobsworth | closed | Test failure after email template upgrade | high priority | @k41n Sorry to do this to you. I just committed some improvements to the outbound email templates, but this caused test failures. Could you please take a look and see whether the test just needs adjusting or if I actually broke the emails.

https://travis-ci.org/ari/jobsworth/jobs/140697171#L1874 | 1.0 | Test failure after email template upgrade - @k41n Sorry to do this to you. I just committed some improvements to the outbound email templates, but this caused test failures. Could you please take a look and see whether the test just needs adjusting or if I actually broke the emails.

https://travis-ci.org/ari/jobswor... | priority | test failure after email template upgrade sorry to do this to you i just committed some improvements to the outbound email templates but this caused test failures could you please take a look and see whether the test just needs adjusting or if i actually broke the emails | 1 |

395,798 | 11,696,739,092 | IssuesEvent | 2020-03-06 10:21:41 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Syntax is getting disturb of analytics code and creating syntactic errors in advance analytics section editor | NEXT UPDATE [Priority: HIGH] bug | The syntax is getting disturbed of analytics code and creating syntactic errors in the advance analytics section editor.

https://secure.helpscout.net/conversation/1082087063/110966?folderId=2632030

| 1.0 | Syntax is getting disturb of analytics code and creating syntactic errors in advance analytics section editor - The syntax is getting disturbed of analytics code and creating syntactic errors in the advance analytics section editor.

https://secure.helpscout.net/conversation/1082087063/110966?folderId=2632030

| priority | syntax is getting disturb of analytics code and creating syntactic errors in advance analytics section editor the syntax is getting disturbed of analytics code and creating syntactic errors in the advance analytics section editor | 1 |

146,667 | 5,625,815,629 | IssuesEvent | 2017-04-04 20:19:41 | SCIInstitute/ALMA-TDA | https://api.github.com/repos/SCIInstitute/ALMA-TDA | closed | command line interface | enhancement high priority | need to be able to process cubes from the command line to fit into existing workflows. | 1.0 | command line interface - need to be able to process cubes from the command line to fit into existing workflows. | priority | command line interface need to be able to process cubes from the command line to fit into existing workflows | 1 |

207,155 | 7,125,122,618 | IssuesEvent | 2018-01-19 21:36:39 | tavorperry/ZooP | https://api.github.com/repos/tavorperry/ZooP | opened | Delete all scripts in the end of pages ? | High Priority ! question | In every page, we have links to Jquery scripts like this:

<script src="https://code.jquery.com/jquery-1.12.4.min.js" integrity="sha256-ZosEbRLbNQzLpnKIkEdrPv7lOy9C27hHQ+Xp8a4MxAQ=" crossorigin="anonymous"></script>

1. What does it do?

2. Do we need it?

3. I tried to remove it from Volunteer and nothing changed...... | 1.0 | Delete all scripts in the end of pages ? - In every page, we have links to Jquery scripts like this:

<script src="https://code.jquery.com/jquery-1.12.4.min.js" integrity="sha256-ZosEbRLbNQzLpnKIkEdrPv7lOy9C27hHQ+Xp8a4MxAQ=" crossorigin="anonymous"></script>

1. What does it do?

2. Do we need it?

3. I tried to remo... | priority | delete all scripts in the end of pages in every page we have links to jquery scripts like this what does it do do we need it i tried to remove it from volunteer and nothing changed thanks | 1 |

342,835 | 10,322,361,254 | IssuesEvent | 2019-08-31 11:34:37 | wso2/docs-is | https://api.github.com/repos/wso2/docs-is | opened | Scim2 documents contains .xml configs | Priority/High | https://is.docs.wso2.com/en/5.9.0/connectors/configuring-SCIM-2.0-Provisioning-Connector/#ConfiguringSCIM2.0ProvisioningConnector-/UsersEndpoint contains XML configurations. They should be changed to TOML. | 1.0 | Scim2 documents contains .xml configs - https://is.docs.wso2.com/en/5.9.0/connectors/configuring-SCIM-2.0-Provisioning-Connector/#ConfiguringSCIM2.0ProvisioningConnector-/UsersEndpoint contains XML configurations. They should be changed to TOML. | priority | documents contains xml configs contains xml configurations they should be changed to toml | 1 |

473,309 | 13,640,155,171 | IssuesEvent | 2020-09-25 12:17:48 | ExoCTK/exoctk | https://api.github.com/repos/ExoCTK/exoctk | closed | Update production environment | High Priority Web App | Upgrade production environment to `exoctk-3.6` including Python 3.6 since it is only running 2.7.5 right now! | 1.0 | Update production environment - Upgrade production environment to `exoctk-3.6` including Python 3.6 since it is only running 2.7.5 right now! | priority | update production environment upgrade production environment to exoctk including python since it is only running right now | 1 |

390,966 | 11,566,600,838 | IssuesEvent | 2020-02-20 12:49:50 | robotology/human-dynamics-estimation | https://api.github.com/repos/robotology/human-dynamics-estimation | closed | Add option to express net external wrench estimates of dummy source (hands) with orientation of world frame | complexity:medium component:HumanDynamicsEstimation component:HumanWrenchProvider priority:high type:enhancement type:task | Currently, the force-torque measurements from the ftShoes are expressed (both origin and orientation) with respect the human foot frames (`LeftFoot` and `RightFoot`). So, on calling [extractLinkNetExternalWrenchesFromDynamicVariables(const VectorDynSize& d, LinkNetExternalWrenches& netExtWrenches, const bool task1)](ht... | 1.0 | Add option to express net external wrench estimates of dummy source (hands) with orientation of world frame - Currently, the force-torque measurements from the ftShoes are expressed (both origin and orientation) with respect the human foot frames (`LeftFoot` and `RightFoot`). So, on calling [extractLinkNetExternalWrenc... | priority | add option to express net external wrench estimates of dummy source hands with orientation of world frame currently the force torque measurements from the ftshoes are expressed both origin and orientation with respect the human foot frames leftfoot and rightfoot so on calling the net external wrench ... | 1 |

236,887 | 7,753,360,142 | IssuesEvent | 2018-05-31 00:10:20 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | 7.5.0 Spawning the economy or placing a store crashes Eco | High Priority | Gabeux and I both replicated this by calling /spawneconomy and placing store objects, probably an issue with some deprecated currency features.

[store_crashIssue.txt](https://github.com/StrangeLoopGames/EcoIssues/files/2041162/store_crashIssue.txt)

| 1.0 | 7.5.0 Spawning the economy or placing a store crashes Eco - Gabeux and I both replicated this by calling /spawneconomy and placing store objects, probably an issue with some deprecated currency features.

[store_crashIssue.txt](https://github.com/StrangeLoopGames/EcoIssues/files/2041162/store_crashIssue.txt)

| priority | spawning the economy or placing a store crashes eco gabeux and i both replicated this by calling spawneconomy and placing store objects probably an issue with some deprecated currency features | 1 |

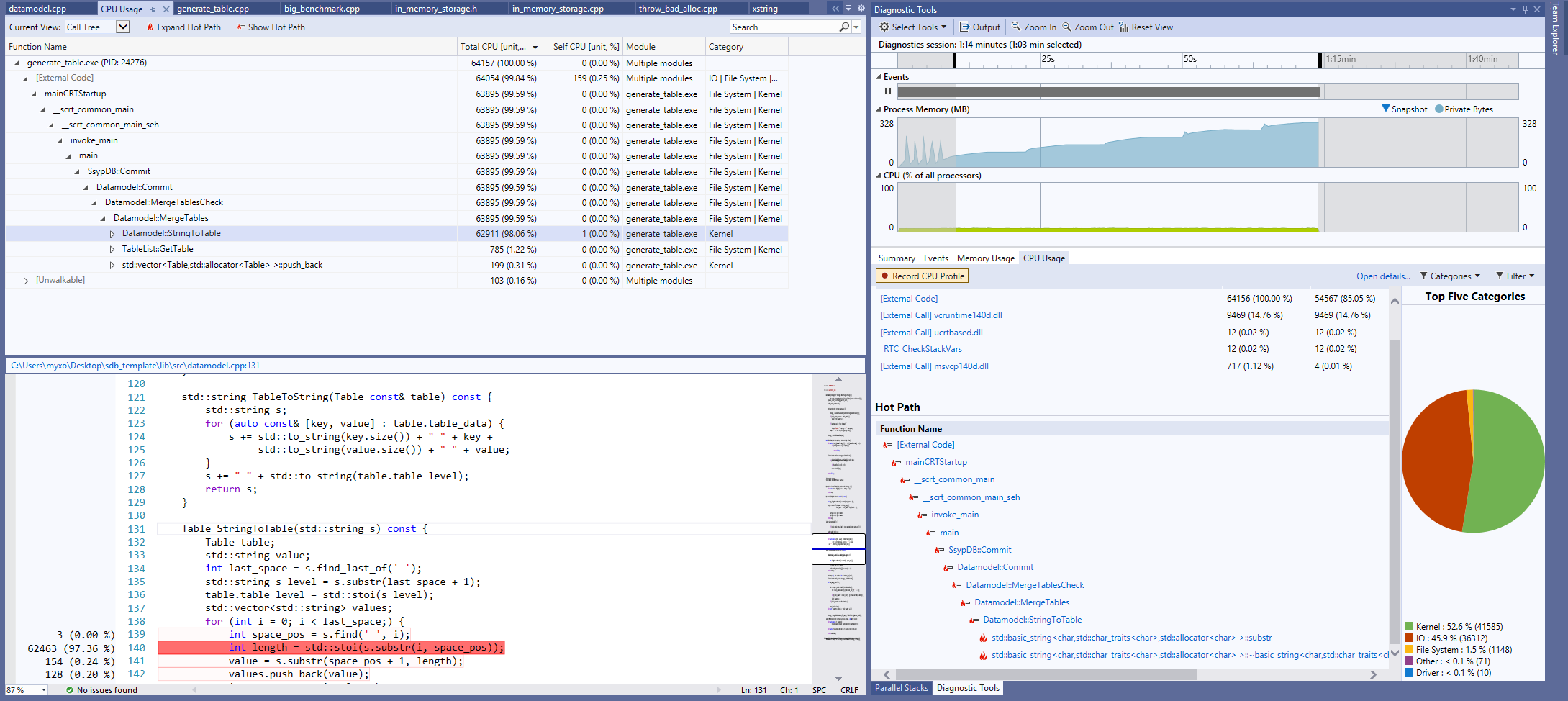

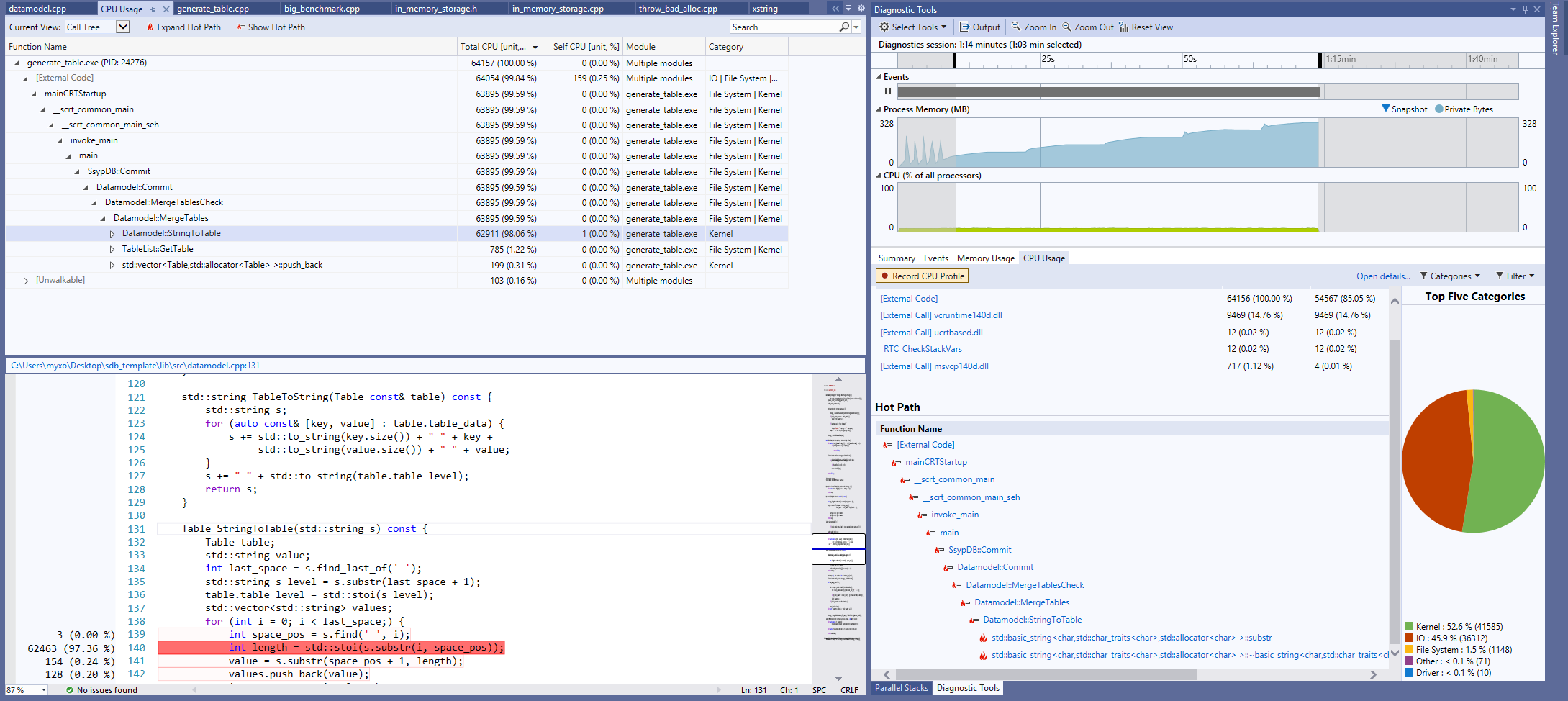

579,941 | 17,201,722,088 | IssuesEvent | 2021-07-17 11:26:54 | myxo/ssyp_db | https://api.github.com/repos/myxo/ssyp_db | closed | Datamodel: slow runtime | bug high priority | I decided to run generate_table and saw a huge drop in speed. Profiler output:

| 1.0 | Datamodel: slow runtime - I decided to run generate_table and saw a huge drop in speed. Profiler output:

| priority | datamodel slow runtime i decided to run generate table and saw a huge drop in speed profiler output | 1 |

331,905 | 10,081,428,467 | IssuesEvent | 2019-07-25 08:47:51 | ipfs/ipfs-cluster | https://api.github.com/repos/ipfs/ipfs-cluster | closed | [Daemon] Init should take a list of peers | difficulty:easy enhancement/feature help wanted priority:high ready | **Describe the feature you are proposing**

`ipfs-cluster-service init --peers <multiaddress,multiaddress>`

It should:

1) Add and write the given peers to the peerstore file

2) For raft config section, add the peer IDs to the init_raft_peerset

3) For crdt config section, add the peer IDs to the trusted_peers ... | 1.0 | [Daemon] Init should take a list of peers - **Describe the feature you are proposing**

`ipfs-cluster-service init --peers <multiaddress,multiaddress>`

It should:

1) Add and write the given peers to the peerstore file

2) For raft config section, add the peer IDs to the init_raft_peerset

3) For crdt config sec... | priority | init should take a list of peers describe the feature you are proposing ipfs cluster service init peers it should add and write the given peers to the peerstore file for raft config section add the peer ids to the init raft peerset for crdt config section add the peer ids to the tru... | 1 |

707,581 | 24,310,285,696 | IssuesEvent | 2022-09-29 21:30:40 | COS301-SE-2022/Office-Booker | https://api.github.com/repos/COS301-SE-2022/Office-Booker | closed | Prevent duplicate booking votings | Type: Enhance Priority: High Status: Busy Status: Needs-info | As it stands, a user is able to spam votes on a booking.

The currently suggested approach is to simply store an array of users who have already voted on a booking and disallow subsequent votes if they are already in that array. | 1.0 | Prevent duplicate booking votings - As it stands, a user is able to spam votes on a booking.

The currently suggested approach is to simply store an array of users who have already voted on a booking and disallow subsequent votes if they are already in that array. | priority | prevent duplicate booking votings as it stands a user is able to spam votes on a booking the currently suggested approach is to simply store an array of users who have already voted on a booking and disallow subsequent votes if they are already in that array | 1 |
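
The approach suggested in this issue (remember who has already voted on a booking and reject repeat votes) can be sketched as follows. This is a minimal illustration in Python, not the project's actual code: the `Booking` class, its field names, and the `vote` method are all hypothetical, and a set stands in for the array mentioned in the issue.

```python
from dataclasses import dataclass, field


@dataclass
class Booking:
    """Hypothetical booking record; names are illustrative only."""
    booking_id: int
    votes: int = 0
    # Ids of users who have already voted on this booking.
    voters: set = field(default_factory=set)

    def vote(self, user_id: str) -> bool:
        """Count at most one vote per user; return False for a repeat vote."""
        if user_id in self.voters:
            return False
        self.voters.add(user_id)
        self.votes += 1
        return True
```

A set makes the membership check cheap; a persisted array with a uniqueness check would behave the same way.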

777,620 | 27,288,462,530 | IssuesEvent | 2023-02-23 15:03:44 | Satellite-im/Uplink | https://api.github.com/repos/Satellite-im/Uplink | closed | Global - Changes requested from Matts PR #274 | High Priority Global | i recommend moving this and the use_future to the end of this function. the function was laid out so the `use_future`s were declared after `main_element`, which allowed a dev to see the end result of this function without having to scroll past all the `use_future`s

_Originally posted by @sdwoodbury in https://github... | 1.0 | Global - Changes requested from Matts PR #274 - i recommend moving this and the use_future to the end of this function. the function was laid out so the `use_future`s were declared after `main_element`, which allowed a dev to see the end result of this function without having to scroll past all the `use_future`s

_Or... | priority | global changes requested from matts pr i recommend moving this and the use future to the end of this function the function was laid out so the use future s were declared after main element which allowed a dev to see the end result of this function without having to scroll past all the use future s orig... | 1 |

426,281 | 12,370,378,655 | IssuesEvent | 2020-05-18 16:42:07 | Azure/ARO-RP | https://api.github.com/repos/Azure/ARO-RP | closed | CI GOROOT missing | priority-high | All CI is failing with:

```

go: cannot find GOROOT directory: /usr/local/go1.13

```

This might be change in the VM pool. This needs fixing asap.

https://msazure.visualstudio.com/AzureRedHatOpenShift/_build/results?buildId=31206518&view=logs&j=406f28f7-259e-5bfd-e153-dd013342e83f&t=50b0c634-7175-589f-aa2c-13d292... | 1.0 | CI GOROOT missing - All CI is failing with:

```

go: cannot find GOROOT directory: /usr/local/go1.13

```

This might be change in the VM pool. This needs fixing asap.

https://msazure.visualstudio.com/AzureRedHatOpenShift/_build/results?buildId=31206518&view=logs&j=406f28f7-259e-5bfd-e153-dd013342e83f&t=50b0c634-7... | priority | ci goroot missing all ci is failing with go cannot find goroot directory usr local this might be change in the vm pool this needs fixing asap | 1 |

56,247 | 3,078,627,002 | IssuesEvent | 2015-08-21 11:38:18 | nfprojects/nfengine | https://api.github.com/repos/nfprojects/nfengine | closed | Remove all useless #if ... #else ... #endif sections | bug high priority medium | Some parts of engine have code hidden by #if ... #else ... #endif sequence. Search for all of them and either remove them, or provide different way to determine which section to use (avoid preprocessor macros, we want the engine to be entirely compiled). | 1.0 | Remove all useless #if ... #else ... #endif sections - Some parts of engine have code hidden by #if ... #else ... #endif sequence. Search for all of them and either remove them, or provide different way to determine which section to use (avoid preprocessor macros, we want the engine to be entirely compiled). | priority | remove all useless if else endif sections some parts of engine have code hidden by if else endif sequence search for all of them and either remove them or provide different way to determine which section to use avoid preprocessor macros we want the engine to be entirely compiled | 1 |

580,234 | 17,213,594,245 | IssuesEvent | 2021-07-19 08:39:53 | GeneralMine/S2QUAT | https://api.github.com/repos/GeneralMine/S2QUAT | opened | Link question to factors or criterias | Enhancement Help wanted Priority: High | We need to establish a optional relationship from a question to a direct factor or criteria of the quality model. Thats necessary for #52 to connect the survey to the quality model in evaluation.

I guess its not possible to combine these two relationships to one, due to the fact that the relationship will always be an... | 1.0 | Link question to factors or criterias - We need to establish a optional relationship from a question to a direct factor or criteria of the quality model. Thats necessary for #52 to connect the survey to the quality model in evaluation.

I guess its not possible to combine these two relationships to one, due to the fact... | priority | link question to factors or criterias we need to establish a optional relationship from a question to a direct factor or criteria of the quality model thats necessary for to connect the survey to the quality model in evaluation i guess its not possible to combine these two relationships to one due to the fact ... | 1 |

92,569 | 3,872,420,348 | IssuesEvent | 2016-04-11 13:50:34 | DoSomething/gladiator | https://api.github.com/repos/DoSomething/gladiator | closed | Leaderboard | #leaderboard large priority-high | Columns for leaderboard:

Rank | Name | Number of x's y'd | Email | Flagged Status

| --- | --- | --- | --- | --- |

1 | Jerome | 50 | jeromoe@example.com | approved

2 | Hils | 35 | hils@clinton.com | approved

3 | Bernie | 20 | bernie@sanders.com | approved

Sort by number of x's y'd.

If there is a pending fil... | 1.0 | Leaderboard - Columns for leaderboard:

Rank | Name | Number of x's y'd | Email | Flagged Status

| --- | --- | --- | --- | --- |

1 | Jerome | 50 | jeromoe@example.com | approved

2 | Hils | 35 | hils@clinton.com | approved

3 | Bernie | 20 | bernie@sanders.com | approved

Sort by number of x's y'd.

If there is... | priority | leaderboard columns for leaderboard rank name number of x s y d email flagged status jerome jeromoe example com approved hils hils clinton com approved bernie bernie sanders com approved sort by number of x s y d if there is a ... | 1 |
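
The ordering rule in this issue (sort by the number of x's y'd, then show a rank) could look roughly like the sketch below. It is only an illustration: the `build_leaderboard` function and the `quantity` key are assumed names, and the sample rows reuse the values from the issue's table.

```python
def build_leaderboard(entries):
    """Sort entries by quantity, highest first, and attach a 1-based rank."""
    ordered = sorted(entries, key=lambda e: e["quantity"], reverse=True)
    return [{"rank": i, **e} for i, e in enumerate(ordered, start=1)]


# Sample rows taken from the table in the issue, deliberately out of order.
rows = [
    {"name": "Bernie", "quantity": 20},
    {"name": "Jerome", "quantity": 50},
    {"name": "Hils", "quantity": 35},
]
leaderboard = build_leaderboard(rows)
```

Python's `sorted` is stable, so entries with equal counts keep their input order; any tie-breaking rule beyond that is not specified in the issue.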

612,596 | 19,026,692,605 | IssuesEvent | 2021-11-24 05:06:57 | boostcampwm-2021/iOS05-Escaper | https://api.github.com/repos/boostcampwm-2021/iOS05-Escaper | closed | [E1 S1 T6] Set up the repository for the login screen. | feature High Priority | ### Epic - Story - Task

Epic : Login screen

Story : Users can log in to use the Escaper service.

Task : Set up the repository for the login screen. | 1.0 | [E1 S1 T6] Set up the repository for the login screen. - ### Epic - Story - Task

Epic : Login screen

Story : Users can log in to use the Escaper service.

Task : Set up the repository for the login screen. | priority | set up the repository for the login screen epic story task epic login screen story users can log in to use the escaper service task set up the repository for the login screen | 1 |

305,752 | 9,376,569,904 | IssuesEvent | 2019-04-04 08:17:27 | qlcchain/go-qlc | https://api.github.com/repos/qlcchain/go-qlc | closed | verify performance of sqlite | Priority: High Type: Enhancement | ### Description of the issue

verify performance of sqlite

### Issue-Type

- [ ] bug report

- [x] feature request

- [ ] Documentation improvement

| 1.0 | verify performance of sqlite - ### Description of the issue

verify performance of sqlite

### Issue-Type

- [ ] bug report

- [x] feature request

- [ ] Documentation improvement

| priority | verify performance of sqlite description of the issue verify performance of sqlite issue type bug report feature request documentation improvement | 1 |

601,749 | 18,429,868,892 | IssuesEvent | 2021-10-14 06:06:49 | ballerina-platform/ballerina-standard-library | https://api.github.com/repos/ballerina-platform/ballerina-standard-library | closed | Compiler plugin fails for wrong programs | Points/0.5 Priority/High Type/Improvement Team/PCP | Consider the below program where the io:println is not in the right place. Ideally it should be inside the init() function.

```ballerina

import ballerinax/kafka;

import ballerina/io;

kafka:ProducerConfiguration prod_config = {

clientId: "trainer-id",

acks: "all",

retryCount: 3

};

kafka:Producer... | 1.0 | Compiler plugin fails for wrong programs - Consider the below program where the io:println is not in the right place. Ideally it should be inside the init() function.

```ballerina

import ballerinax/kafka;

import ballerina/io;

kafka:ProducerConfiguration prod_config = {

clientId: "trainer-id",

acks: "a... | priority | compiler plugin fails for wrong programs consider the below program where the io println is not in the right place ideally it should be inside the init function ballerina import ballerinax kafka import ballerina io kafka producerconfiguration prod config clientid trainer id acks a... | 1 |

482,029 | 13,895,964,809 | IssuesEvent | 2020-10-19 16:31:16 | AY2021S1-CS2113T-F14-3/tp | https://api.github.com/repos/AY2021S1-CS2113T-F14-3/tp | closed | Removal of nested user inputs in RouteMapCommand class | priority.High type.Enhancement | Removal of nested user inputs in RouteMapCommand class to facilitate efficient use of the application | 1.0 | Removal of nested user inputs in RouteMapCommand class - Removal of nested user inputs in RouteMapCommand class to facilitate efficient use of the application | priority | removal of nested user inputs in routemapcommand class removal of nested user inputs in routemapcommand class to facilitate efficient use of the application | 1 |

741,782 | 25,818,555,010 | IssuesEvent | 2022-12-12 07:44:52 | xournalpp/xournalpp | https://api.github.com/repos/xournalpp/xournalpp | closed | Crash of unknown cause when writing | bug priority::high Crash | **Affects versions :**

- OS: Arch Linux

- (Linux only) Desktop environment: Gnome Wayland

- Which version of libgtk do you use 3.24.34

- Version of Xournal++: 1.12

- Installation method: flatpak

**Describe the bug**

Crash of unknown cause.

**To Reproduce**

Unknown. I was simply writing in a document i... | 1.0 | Crash of unknown cause when writing - **Affects versions :**

- OS: Arch Linux

- (Linux only) Desktop environment: Gnome Wayland

- Which version of libgtk do you use 3.24.34

- Version of Xournal++: 1.12

- Installation method: flatpak

**Describe the bug**

Crash of unknown cause.

**To Reproduce**

Unknown... | priority | crash of unknown cause when writing affects versions os arch linux linux only desktop environment gnome wayland which version of libgtk do you use version of xournal installation method flatpak describe the bug crash of unknown cause to reproduce unknown i... | 1 |

166,135 | 6,291,506,591 | IssuesEvent | 2017-07-20 01:04:56 | GoogleCloudPlatform/google-cloud-eclipse | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-eclipse | reopened | Cannot run StarterPipeline generated by Dataflow wizard (Dataflow 2.0.0) | bug high priority | ```

Exception in thread "main" java.lang.RuntimeException: Failed to construct instance from factory method DataflowRunner#fromOptions(interface org.apache.beam.sdk.options.PipelineOptions)

at org.apache.beam.sdk.util.InstanceBuilder.buildFromMethod(InstanceBuilder.java:233)

at org.apache.beam.sdk.util.InstanceBui... | 1.0 | Cannot run StarterPipeline generated by Dataflow wizard (Dataflow 2.0.0) - ```

Exception in thread "main" java.lang.RuntimeException: Failed to construct instance from factory method DataflowRunner#fromOptions(interface org.apache.beam.sdk.options.PipelineOptions)

at org.apache.beam.sdk.util.InstanceBuilder.buildFro... | priority | cannot run starterpipeline generated by dataflow wizard dataflow exception in thread main java lang runtimeexception failed to construct instance from factory method dataflowrunner fromoptions interface org apache beam sdk options pipelineoptions at org apache beam sdk util instancebuilder buildfro... | 1 |

722,322 | 24,858,631,949 | IssuesEvent | 2022-10-27 06:09:52 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | Platform permission 'cluster-management' can not authorized/unauthorized workspace | kind/bug priority/high | **Describe the Bug**

There is a user test, his platform permission is `cluster-management`, and did not invited to cluster host

**Versions Used**

KubeSphere: `v3.3.1-rc.5`

**How To Reproduce**

Steps to reproduce the behavior:

1. Login ks with user test

2. Go to cluster visibility of cluster host

3. au... | 1.0 | Platform permission 'cluster-management' can not authorized/unauthorized workspace - **Describe the Bug**

There is a user test, his platform permission is `cluster-management`, and did not invited to cluster host

**Versions Used**

KubeSphere: `v3.3.1-rc.5`

**How To Reproduce**

Steps to reproduce the beha... | priority | platform permission cluster management can not authorized unauthorized workspace describe the bug there is a user test his platform permission is cluster management and did not invited to cluster host versions used kubesphere rc how to reproduce steps to reproduce the behav... | 1 |

158,497 | 6,028,805,632 | IssuesEvent | 2017-06-08 16:30:50 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | test request - https | Enhancement Priority-High | Please visit https://arctos.database.museum - everything should be the same as the http site - and let me know if something doesn't work or if you somehow end up back on http://arctos.database.museum or experience any other problems.

If there are no problems found in the next few days we can probably safely update i... | 1.0 | test request - https - Please visit https://arctos.database.museum - everything should be the same as the http site - and let me know if something doesn't work or if you somehow end up back on http://arctos.database.museum or experience any other problems.

If there are no problems found in the next few days we can p... | priority | test request https please visit everything should be the same as the http site and let me know if something doesn t work or if you somehow end up back on or experience any other problems if there are no problems found in the next few days we can probably safely update incoming links and possibly force ... | 1 |

732,422 | 25,258,797,829 | IssuesEvent | 2022-11-15 20:38:48 | openaq/openaq-fetch | https://api.github.com/repos/openaq/openaq-fetch | reopened | Iran (Tehran) - Data Sources | help wanted new data high priority needs investigation | This is listed as high priority b/c Tehran, Iran is experiencing very bad AQ. Media outlets report schools shut/will be shutting down to AQ. Plus it is in a region where we have minimal to no coverage currently in our system.

Most useful info:

List of stations and coordinates: http://31.24.238.89/home/station.aspx

Hou... | 1.0 | Iran (Tehran) - Data Sources - This is listed as high priority b/c Tehran, Iran is experiencing very bad AQ. Media outlets report schools shut/will be shutting down to AQ. Plus it is in a region where we have minimal to no coverage currently in our system.

Most useful info:

List of stations and coordinates: http://31.... | priority | iran tehran data sources this is listed as high priority b c tehran iran is experiencing very bad aq media outlets report schools shut will be shutting down to aq plus it is in a region where we have minimal to no coverage currently in our system most useful info list of stations and coordinates hourly p... | 1 |

762,350 | 26,716,174,927 | IssuesEvent | 2023-01-28 14:45:42 | robertgouveia/JuiceJam | https://api.github.com/repos/robertgouveia/JuiceJam | opened | The user can see more of the level while in fullscreen. | bug priority: high | The game doesn't seem to be scaling properly. When I go into fullscreen I have an advantage because I can see much more of the level than I can in the small view.

Environment:

itch.io version 1 build

Reproducibility rate:

100%

Steps to reproduce:

1. Press the fullscreen button.

Expected result:

The game... | 1.0 | The user can see more of the level while in fullscreen. - The game doesn't seem to be scaling properly. When I go into fullscreen I have an advantage because I can see much more of the level than I can in the small view.

Environment:

itch.io version 1 build

Reproducibility rate:

100%

Steps to reproduce:

1. ... | priority | the user can see more of the level while in fullscreen the game doesn t seem to be scaling properly when i go into fullscreen i have an advantage because i can see much more of the level than i can in the small view environment itch io version build reproducibility rate steps to reproduce pr... | 1 |

758,690 | 26,565,172,186 | IssuesEvent | 2023-01-20 19:27:26 | meanstream-io/meanstream | https://api.github.com/repos/meanstream-io/meanstream | closed | USK Type Change Closes Panel | type: bug priority: high complexity: low impact: ux | When a user changes the type of upstream key e.g. from Luma to Chroma, the expansion panel closes and needs to be reopened. This is irritating for the user at best. | 1.0 | USK Type Change Closes Panel - When a user changes the type of upstream key e.g. from Luma to Chroma, the expansion panel closes and needs to be reopened. This is irritating for the user at best. | priority | usk type change closes panel when a user changes the type of upstream key e g from luma to chroma the expansion panel closes and needs to be reopened this is irritating for the user at best | 1 |

433,214 | 12,503,692,239 | IssuesEvent | 2020-06-02 07:43:59 | CatalogueOfLife/clearinghouse-ui | https://api.github.com/repos/CatalogueOfLife/clearinghouse-ui | closed | Removed decision shown in assembly source tree | bug high priority tiny | When I delete a decision from the source tree in the assembly it is gone.

But when I close the source tree on some higher node and reopen it the decision show up again.

Deleting it once more causes a 404 - so it is already gone in the db and this is just a rendering problem

https://data.dev.catalogue.life/catalogue/3/as... | 1.0 | Removed decision shown in assembly source tree - When I delete a decision from the source tree in the assembly it is gone.

But when I close the source tree on some higher node and reopen it the decision show up again.

Deleting it once more causes a 404 - so it is already gone in the db and this is just a rendering problem

... | priority | removed decision shown in assembly source tree when i delete a decision from the source tree in the assembly it is gone but when i close the source tree on some higher node and reopen it the decision show up again deleting it once more causes a so it in already gone in the db and just a rendering problem ... | 1 |

261,687 | 8,245,101,015 | IssuesEvent | 2018-09-11 08:42:06 | bitshares/bitshares-ui | https://api.github.com/repos/bitshares/bitshares-ui | reopened | [1][kapeer] Unable to update a smart coin's backing asset | bug high priority | **Describe the bug**

Should be able to update backing asset when supply is zero.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://wallet.bitshares.org/

2. Click on hamburg button, click assets

3. Click "update asset" of an smart coin which has zero supply

4. Click "smartcoin options" tab, scr... | 1.0 | [1][kapeer] Unable to update a smart coin's backing asset - **Describe the bug**

Should be able to update backing asset when supply is zero.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://wallet.bitshares.org/

2. Click on hamburg button, click assets

3. Click "update asset" of an smart coin ... | priority | unable to update a smart coin s backing asset describe the bug should be able to update backing asset when supply is zero to reproduce steps to reproduce the behavior go to click on hamburg button click assets click update asset of an smart coin which has zero supply click smar... | 1 |

439,909 | 12,690,330,025 | IssuesEvent | 2020-06-21 11:32:55 | aysegulsari/swe-573 | https://api.github.com/repos/aysegulsari/swe-573 | closed | Advanced search feature implementation | Priority: High Status: Pending Type: Development | Implement a search feature that will enable users to search for other user profiles and recipes. | 1.0 | Advanced search feature implementation - Implement a search feature that will enable users to search for other user profiles and recipes. | priority | advanced search feature implementation implement a search feature that will enable users to search for other user profiles and recipes | 1 |

388,229 | 11,484,868,434 | IssuesEvent | 2020-02-11 05:34:37 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | Can't select an ItemDirection | Bug: development Docs: not needed Effort: small Module: dispensary Priority: high | ## Describe the bug

`ItemDirection`s in the drop down aren't selectable

### To reproduce

Dispensing development bug

### Expected behaviour

Dispensing development bug

### Proposed Solution

Dispensing development bug

### Version and device info

Dispensing development bug

### Additional cont... | 1.0 | Can't select an ItemDirection - ## Describe the bug

`ItemDirection`s in the drop down aren't selectable

### To reproduce

Dispensing development bug

### Expected behaviour

Dispensing development bug

### Proposed Solution

Dispensing development bug

### Version and device info

Dispensing develo... | priority | can t select an itemdirection describe the bug itemdirection s in the drop down aren t selectable to reproduce dispensing development bug expected behaviour dispensing development bug proposed solution dispensing development bug version and device info dispensing develo... | 1 |

304,121 | 9,321,477,133 | IssuesEvent | 2019-03-27 04:06:08 | python/mypy | https://api.github.com/repos/python/mypy | closed | Type ignore comment has no effect after argument type comment | bug false-positive priority-0-high | This program generates an error on the first line even though there is a `# type: ignore` comment:

```py
def f(x,  # type: x  # type: ignore
      ):
    # type: (...) -> None
    pass
```

Similarly, this generates an error even though it shouldn't:

```py

def f(x=y, # type: int # type: ignore # Name '... | 1.0 | Type ignore comment has no effect after argument type comment - This program generates an error on the first line even though there is a `# type: ignore` comment:

```py
def f(x,  # type: x  # type: ignore
      ):
    # type: (...) -> None
    pass
```

Similarly, this generates an error even though it should... | priority | type ignore comment has no effect after argument type comment this program generates an error on the first line even though there is a type ignore comment py def f x type x type ignore type none pass similarly this generates an error even though it should... | 1 |

198,958 | 6,979,474,713 | IssuesEvent | 2017-12-12 21:10:40 | redhat-nfvpe/kube-centos-ansible | https://api.github.com/repos/redhat-nfvpe/kube-centos-ansible | closed | Ability to install optional packages on hosts | priority:high state:needs_review type:enhancement | Used to have some play where I installed packages that not everyone needs, but, that I need every single time I spin up a cluster. Especially an editor and network tracing tools (e.g. tcpdump).

I'm going to create a playbook that lets you set those packages if you need. | 1.0 | Ability to install optional packages on hosts - Used to have some play where I installed packages that not everyone needs, but, that I need every single time I spin up a cluster. Especially an editor and network tracing tools (e.g. tcpdump).

I'm going to create a playbook that lets you set those packages if you nee... | priority | ability to install optional packages on hosts used to have some play where i installed packages that not everyone needs but that i need every single time i spin up a cluster especially an editor and network tracing tools e g tcpdump i m going to create a playbook that lets you set those packages if you nee... | 1 |

166,587 | 6,307,208,868 | IssuesEvent | 2017-07-21 23:49:00 | haskell/cabal | https://api.github.com/repos/haskell/cabal | closed | Version number of Cabal on 2.0 branch is incorrect | priority: high | The version number listed in `Cabal.cabal` is 2.0.0.0 whereas the tag claims the release should be 2.0.0.1.

Thanks to @hvr for noticing this. | 1.0 | Version number of Cabal on 2.0 branch is incorrect - The version number listed in `Cabal.cabal` is 2.0.0.0 whereas the tag claims the release should be 2.0.0.1.

Thanks to @hvr for noticing this. | priority | version number of cabal on branch is incorrect the version number listed in cabal cabal is whereas the tag claims the release should be thanks to hvr for noticing this | 1 |

690,651 | 23,668,053,898 | IssuesEvent | 2022-08-27 00:45:34 | earth-chris/earthlib | https://api.github.com/repos/earth-chris/earthlib | opened | Create service account for testing `ee` functions in CI | high effort low priority | You need to run `ee.Initialize()` prior to running any earth engine calls, which requires setting up a service account. This should be done when the package is stable enough to handle this (lotsa refactoring goin' on right now).

Here's a useful [SO post](https://gis.stackexchange.com/questions/377222/creating-automa... | 1.0 | Create service account for testing `ee` functions in CI - You need to run `ee.Initialize()` prior to running any earth engine calls, which requires setting up a service account. This should be done when the package is stable enough to handle this (lotsa refactoring goin' on right now).

Here's a useful [SO post](http... | priority | create service account for testing ee functions in ci you need to run ee initialize prior to running any earth engine calls which requires setting up a service account this should be done when the package is stable enough to handle this lotsa refactoring goin on right now here s a useful to guide t... | 1 |

376,340 | 11,142,350,106 | IssuesEvent | 2019-12-22 09:01:35 | bounswe/bounswe2019group3 | https://api.github.com/repos/bounswe/bounswe2019group3 | closed | send exercise answers | Front-end Priority: High Status: In Progress | I added the exercises functionality but sending the answers part is not implemented yet. | 1.0 | send exercise answers - I added the exercises functionality but sending the answers part is not implemented yet. | priority | send exercise answers i added the exercises functionality but sending the answers part is not implemented yet | 1 |

495,697 | 14,286,474,068 | IssuesEvent | 2020-11-23 15:11:47 | PMEAL/OpenPNM | https://api.github.com/repos/PMEAL/OpenPNM | closed | GenericTransport._is_converged shouldn't raise Exception | bug easy high priority | `_is_converged` is only supposed to return `True/False`. | 1.0 | GenericTransport._is_converged shouldn't raise Exception - `_is_converged` is only supposed to return `True/False`. | priority | generictransport is converged shouldn t raise exception is converged is only supposed to return true false | 1 |

117,821 | 4,728,086,227 | IssuesEvent | 2016-10-18 15:07:07 | INN/largo-related-posts | https://api.github.com/repos/INN/largo-related-posts | closed | Incorrect display of related posts in widget | priority: high type: bug | From Mike:

When I publish the page, the related posts are not what I selected. Instead they seem to be completely different posts than what I have selected.

Example post: http://training-stage.publicbroadcasting.net/blog/test-post/ | 1.0 | Incorrect display of related posts in widget - From Mike:

When I publish the page, the related posts are not what I selected. Instead they seem to be completely different posts than what I have selected.

Example post: http://training-stage.publicbroadcasting.net/blog/test-post/ | priority | incorrect display of related posts in widget from mike when i publish the page the related posts are not what i selected instead they seem to be completely different posts than what i have selected example post | 1 |

492,463 | 14,213,719,794 | IssuesEvent | 2020-11-17 03:16:21 | neuropsychology/NeuroKit | https://api.github.com/repos/neuropsychology/NeuroKit | closed | Manuscript Revision Checklist - 5) Enhance Discussion | high priority :warning: | ### Enhance Discussion

- Clarify what high-level functions are still missing and the direction in which nk is going to implement these

- [x] Explain state of unittest, documentation, development, short-term targets

- Zen: since pytest is already mentioned in the body of the paragraph, we can talk about followi... | 1.0 | Manuscript Revision Checklist - 5) Enhance Discussion - ### Enhance Discussion

- Clarify what high-level functions are still missing and the direction in which nk is going to implement these

- [x] Explain state of unittest, documentation, development, short-term targets

- Zen: since pytest is already mentioned... | priority | manuscript revision checklist enhance discussion enhance discussion clarify what high level functions are still missing and the direction in which nk is going to implement these explain state of unittest documentation development short term targets zen since pytest is already mentioned i... | 1 |

87,502 | 3,755,545,648 | IssuesEvent | 2016-03-12 18:46:50 | cs2103jan2016-t11-3j/main | https://api.github.com/repos/cs2103jan2016-t11-3j/main | closed | Bug in edit | priority.high type.bug | Edits the wrong item sometimes, notably when the task list is first loaded.

Works fine if it's executed after "search"/"display" | 1.0 | Bug in edit - Edits the wrong item sometimes, notably when the task list is first loaded.

Works fine if it's executed after "search"/"display" | priority | bug in edit edits the wrong item sometimes notably when the task list is first loaded works fine if it s executed after search display | 1 |

477,004 | 13,753,856,924 | IssuesEvent | 2020-10-06 16:08:56 | rstudio/gt | https://api.github.com/repos/rstudio/gt | closed | save as RTF file with rowname_col specified | Difficulty: [3] Advanced Effort: [3] High Priority: ♨︎ Critical Type: ☹︎ Bug | When trying to save a gt object as an RTF file, I'm running into an issue if rowname_col is specified. I'm getting the error message "Error in row_splits[[i]] : subscript out of bounds".

I don't understand why the below code would lead to this error, but it's very possible this is something I don't understand rather ... | 1.0 | save as RTF file with rowname_col specified - When trying to save a gt object as an RTF file, I'm running into an issue if rowname_col is specified. I'm getting the error message "Error in row_splits[[i]] : subscript out of bounds".

I don't understand why the below code would lead to this error, but it's very possibl... | priority | save as rtf file with rowname col specified when trying to save a gt object as an rtf file i m running into an issue if rowname col is specified i m getting the error message error in row splits subscript out of bounds i don t understand why the below code would lead to this error but its very possible t... | 1 |

741,313 | 25,788,164,883 | IssuesEvent | 2022-12-09 23:09:28 | zulip/zulip-mobile | https://api.github.com/repos/zulip/zulip-mobile | closed | "Mark all as read" appears to mark all as _unread_ | P1 high-priority | (Marking P1 because this came up during work to have Flow check flagsReducer-test.js, toward https://github.com/zulip/zulip-mobile/issues/5102, disallow ancient servers.)

To reproduce:

- Arrange to have just a few unreads in the "All messages" view, and go to that view

- Tap "Mark all as read"

- See that the un... | 1.0 | "Mark all as read" appears to mark all as _unread_ - (Marking P1 because this came up during work to have Flow check flagsReducer-test.js, toward https://github.com/zulip/zulip-mobile/issues/5102, disallow ancient servers.)

To reproduce:

- Arrange to have just a few unreads in the "All messages" view, and go to t... | priority | mark all as read appears to mark all as unread marking because this came up during work to have flow check flagsreducer test js toward disallow ancient servers to reproduce arrange to have just a few unreads in the all messages view and go to that view tap mark all as read see that the... | 1 |

824,750 | 31,169,251,991 | IssuesEvent | 2023-08-16 22:52:51 | filamentphp/filament | https://api.github.com/repos/filamentphp/filament | closed | Builder broken - Undefined array key "type" | bug confirmed bug in dependency high priority | ### Package

filament/filament

### Package Version

v3.0.7

### Laravel Version

v10.17.1

### Livewire Version

_No response_

### PHP Version

PHP 8.2

### Problem description

Simple Builder setup causes error `Undefined array key "type"` when saving/deleting.

Error: https://flareapp.io/share/87ne66yP

### Expec... | 1.0 | Builder broken - Undefined array key "type" - ### Package

filament/filament

### Package Version

v3.0.7

### Laravel Version

v10.17.1

### Livewire Version

_No response_

### PHP Version

PHP 8.2

### Problem description

Simple Builder setup causes error `Undefined array key "type"` when saving/deleting.