| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

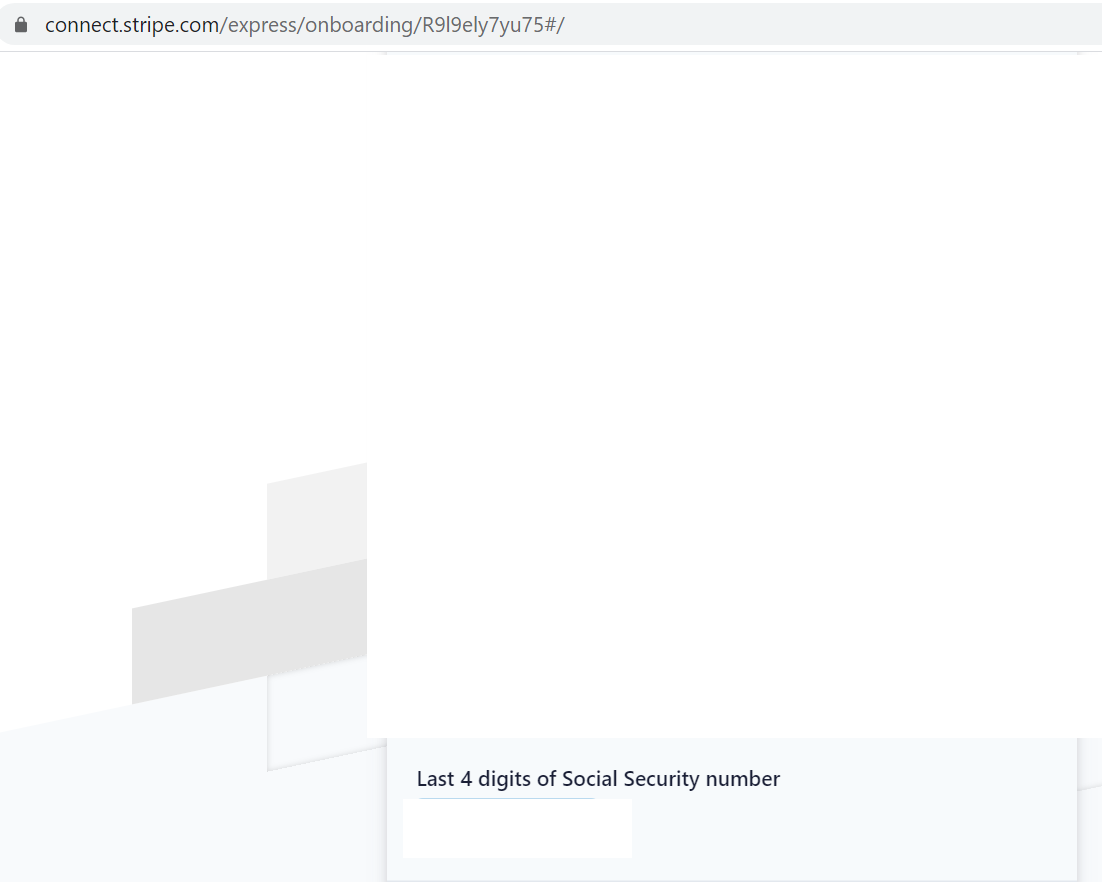

512,705 | 14,907,845,605 | IssuesEvent | 2021-01-22 04:17:00 | Plaxy-Technologies-Inc/YouPlanets-Bug-Report | https://api.github.com/repos/Plaxy-Technologies-Inc/YouPlanets-Bug-Report | closed | Bug/Feature Missing: Can't connect to Stripe without Social Security Number - creators can't get paid without one? | Emergency Priority: High |

| 1.0 | Bug/Feature Missing: Can't connect to Stripe without Social Security Number - creators can't get paid without one? -

| priority | bug feature missing can t connect to stripe without social security number creators can t get paid without one | 1 |

631,183 | 20,146,999,115 | IssuesEvent | 2022-02-09 08:39:28 | Disfactory/Disfactory | https://api.github.com/repos/Disfactory/Disfactory | closed | 無法上傳照片 (Unable to upload photos) | bug high priority | **Describe the bug**

User couldn't upload the photo.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to disfactory.tw

2. Click on "我想新增可疑工廠" ("I want to add a suspicious factory")

3. Click on '新增照片' ("Add photo"), choose one

4. See error

**Screenshots**

<img width="1440" alt="截圖 2022-02-07 上午10 51 32" src="https://user-images.githubusercontent.com/60970217/152717064-b8c8a2af-ba4d-4b9d-9308-d11770edb287.png">

**Desktop (please complete the following information):**

- OS: MacOS

- Browser: chrome

- Version: 97.0.4692.99

**Additional context**

Two users reported the same problem through the troubleshooting form, on 1/30 and 2/5 respectively. | 1.0 | 無法上傳照片 (Unable to upload photos) - **Describe the bug**

User couldn't upload the photo.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to disfactory.tw

2. Click on "我想新增可疑工廠" ("I want to add a suspicious factory")

3. Click on '新增照片' ("Add photo"), choose one

4. See error

**Screenshots**

<img width="1440" alt="截圖 2022-02-07 上午10 51 32" src="https://user-images.githubusercontent.com/60970217/152717064-b8c8a2af-ba4d-4b9d-9308-d11770edb287.png">

**Desktop (please complete the following information):**

- OS: MacOS

- Browser: chrome

- Version: 97.0.4692.99

**Additional context**

Two users reported the same problem through the troubleshooting form, on 1/30 and 2/5 respectively. | priority | 無法上傳照片 describe the bug user couldn t upload the photo to reproduce steps to reproduce the behavior go to disfactory tw click on 我想新增可疑工廠 click on 新增照片 choose one see error screenshots img width alt 截圖 src desktop please complete the following information os macos browser chrome version additional context there are two users respond that they experienced the same problem through troubleshooting form respectively on and | 1 |

335,813 | 10,167,022,266 | IssuesEvent | 2019-08-07 17:11:39 | worldmaking/msvr | https://api.github.com/repos/worldmaking/msvr | opened | running max causes module instancing error | Priority: High bug | @Zodsmar told me that he's able to reproduce an error where modules will not instantiate whenever max's audio rendering is turned on. | 1.0 | running max causes module instancing error - @Zodsmar told me that he's able to reproduce an error where modules will not instantiate whenever max's audio rendering is turned on. | priority | running max causes module instancing error zodsmar told me that he s able to reproduce an error where modules will not instantiate whenever max s audio rendering is turned on | 1 |

41,328 | 2,868,998,255 | IssuesEvent | 2015-06-05 22:28:20 | dart-lang/pub-dartlang | https://api.github.com/repos/dart-lang/pub-dartlang | closed | pub search not finding library that exists | bug notplanned Priority-High | <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#20755_

----

This has happened a few times now; there's something systemic going on.

This time, I can't find stagehand. Its first version has been up since 7/26. Yet, search can't find it. See screenshots.

There have been other bugs like this, and somehow it just starts working. But in this case, it's been over a month.

I think we should take a look at this.

______

**Attachments:**

[Screen Shot 2014-08-30 at 5.19.53 PM.png](https://storage.googleapis.com/google-code-attachments/dart/issue-20755/comment-0/Screen Shot 2014-08-30 at 5.19.53 PM.png) (32.68 KB)

[Screen Shot 2014-08-30 at 5.19.48 PM.png](https://storage.googleapis.com/google-code-attachments/dart/issue-20755/comment-0/Screen Shot 2014-08-30 at 5.19.48 PM.png) (40.56 KB) | 1.0 | pub search not finding library that exists - <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#20755_

----

This has happened a few times now; there's something systemic going on.

This time, I can't find stagehand. Its first version has been up since 7/26. Yet, search can't find it. See screenshots.

There have been other bugs like this, and somehow it just starts working. But in this case, it's been over a month.

I think we should take a look at this.

______

**Attachments:**

[Screen Shot 2014-08-30 at 5.19.53 PM.png](https://storage.googleapis.com/google-code-attachments/dart/issue-20755/comment-0/Screen Shot 2014-08-30 at 5.19.53 PM.png) (32.68 KB)

[Screen Shot 2014-08-30 at 5.19.48 PM.png](https://storage.googleapis.com/google-code-attachments/dart/issue-20755/comment-0/Screen Shot 2014-08-30 at 5.19.48 PM.png) (40.56 KB) | priority | pub search not finding library that exists issue by originally opened as dart lang sdk this has happened a few times now there s some systemic going on this time i can t find stagehand it s first version has been up since yet search can t find it see screenshots there have been other bugs like this and somehow it just starts working but in this case it s been over a month i think we should take a look at this attachments shot at pm png kb shot at pm png kb | 1 |

114,685 | 4,642,618,547 | IssuesEvent | 2016-09-30 10:18:05 | armadito/armadito-av | https://api.github.com/repos/armadito/armadito-av | opened | improve/simplify REST API | enhancement high priority | update REST API for the following enhancements:

- remove '/api/register' call and integrate returning the token in the JSON objects returned by other requests such as '/api/scan' or '/api/status'

- add arguments to '/api/scan': generate progress events, progress event frequency (these may be merged into one parameter: 0 for no progress events, > 0 for the frequency in seconds)

| 1.0 | improve/simplify REST API - update REST API for the following enhancements:

- remove '/api/register' call and integrate returning the token in the JSON objects returned by other requests such as '/api/scan' or '/api/status'

- add arguments to '/api/scan': generate progress events, progress event frequency (these may be merged into one parameter: 0 for no progress events, > 0 for the frequency in seconds)

| priority | improve simplify rest api update rest api for the following enhancements remove api register call and integrate returning the token in the json objects returned by other requests such as api scan or api status add arguments to api scan generate progress events progress event frequency may be merge in one parameter for no progress event for frequency in seconds | 1 |

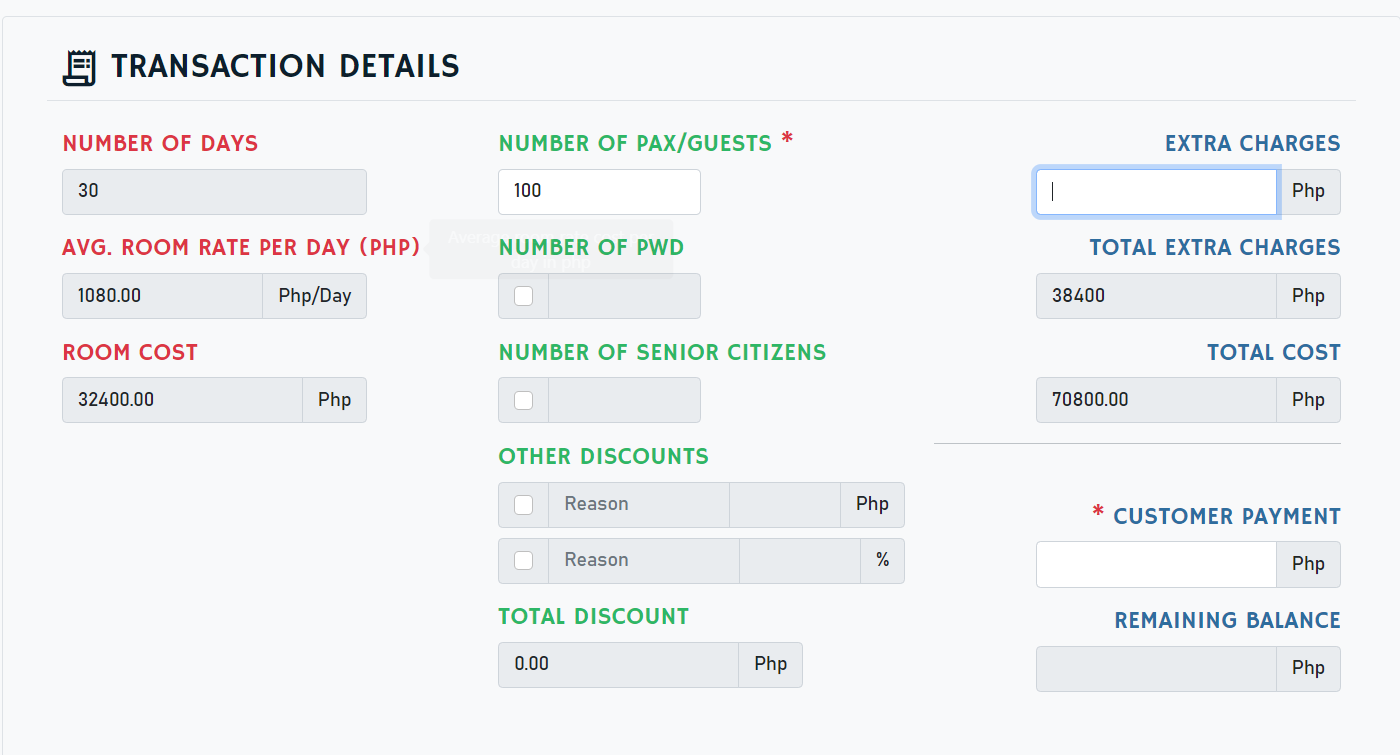

591,477 | 17,840,705,137 | IssuesEvent | 2021-09-03 09:41:53 | francheska-vicente/cssweng | https://api.github.com/repos/francheska-vicente/cssweng | opened | Rooms that offer monthly rate should not have extra persons | bug priority: high issue: back-end severity: medium issue: validation | ### Summary

- Rooms that offer monthly rates should not entertain extra pax.

### Steps to Reproduce

1. login

2. choose any date for booking

3. choose room 305

4. input 100 in the number of pax field

### Visual Proof

### Expected Results:

- Number of persons should be limited to at most 4 pax for twin bed (rm. 305).

### Actual Results:

- There is no limit to the number of extra pax for the monthly rate of twin bed (rm. 305).

| Additional Information | |

| ----------- | ----------- |

| Platform | V8 engine (Google) |

| Operating System | Windows 10 | | 1.0 | Rooms that offer monthly rate should not have extra persons - ### Summary

- Rooms that offer monthly rates should not entertain extra pax.

### Steps to Reproduce

1. login

2. choose any date for booking

3. choose room 305

4. input 100 in the number of pax field

### Visual Proof

### Expected Results:

- Number of persons should be limited to at most 4 pax for twin bed (rm. 305).

### Actual Results:

- There is no limit to the number of extra pax for the monthly rate of twin bed (rm. 305).

| Additional Information | |

| ----------- | ----------- |

| Platform | V8 engine (Google) |

| Operating System | Windows 10 | | priority | rooms that offer monthly rate should not have extra persons summary rooms that offer monthly rates should not entertain extra pax steps to reproduce login choose any date for booking choose room input in the number of pax field visual proof expected results number of persons should be limited to at most pax for twin bed rm actual results there is no limit to the number of extra pax for the monthly rate of twin bed rm additional information platform engine google operating system windows | 1 |

363,060 | 10,736,972,952 | IssuesEvent | 2019-10-29 12:07:04 | AY1920S1-CS2103T-W11-4/main | https://api.github.com/repos/AY1920S1-CS2103T-W11-4/main | closed | As a new user I want to see usage instructions | priority.High type.Story | So that I can refer to instructions when I forget how to use the App | 1.0 | As a new user I want to see usage instructions - So that I can refer to instructions when I forget how to use the App | priority | as a new user i want to see usage instructions so that i can refer to instructions when i forget how to use the app | 1 |

107,178 | 4,290,704,000 | IssuesEvent | 2016-07-18 10:54:05 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | opened | Disable current help tool Mobile | enhancement Mobile Priority: High | As a consequence of #845, we are removing the current help widget/tool from the mobile layout for now, until a more mobile-friendly widget is developed in a future release. | 1.0 | Disable current help tool Mobile - As a consequence of #845, we are removing the current help widget/tool from the mobile layout for now, until a more mobile-friendly widget is developed in a future release. | priority | disable current help tool mobile as a consequence of we are going for the moment to remove the current help widget tool from the mobile layout waiting for a more mobile friendly widget to be developed in future releases | 1 |

560,816 | 16,605,418,674 | IssuesEvent | 2021-06-02 02:43:04 | QuantEcon/quantecon-book-theme | https://api.github.com/repos/QuantEcon/quantecon-book-theme | closed | [google analytics] Check if Google Analytics is Setup | bug high-priority | @DrDrij @AakashGfude this issue tracks whether Google Analytics is set up correctly for

- [ ] python-programming.quantecon.org

- [ ] python.quantecon.org | 1.0 | [google analytics] Check if Google Analytics is Setup - @DrDrij @AakashGfude this issue tracks whether Google Analytics is set up correctly for

- [ ] python-programming.quantecon.org

- [ ] python.quantecon.org | priority | check if google analytics is setup drdrij aakashgfude this is an issue to track the status for looking to see if google analytics is setup correctly for python programming quantecon org python quantecon org | 1 |

323,563 | 9,856,515,180 | IssuesEvent | 2019-06-19 22:26:40 | NCIOCPL/cgov-digital-platform | https://api.github.com/repos/NCIOCPL/cgov-digital-platform | closed | Spanish CGOV - Missing Images and Infographics | High priority Migration bug | Images are missing from Spanish pages. These were migrated into Drupal, but they are not appearing on the front-end. Some example pages include:

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/que-es

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/sobrellevar/supervivencia/infancia

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/tipos/radioterapia/haz-externo

- Infographic on http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/desigualdades

---

AC - Adding Videos from #2020

http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/tipos/inmunoterapia

VERSUS https://www.cancer.gov/espanol/cancer/tratamiento/tipos/inmunoterapia

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/desigualdades

VERSUS https://www.cancer.gov/espanol/cancer/naturaleza/desigualdades

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/estudios-clinicos/seguridad-paciente

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/estudios-clinicos/pago (edited)

| 1.0 | Spanish CGOV - Missing Images and Infographics - Images are missing from Spanish pages. These were migrated into Drupal, but they are not appearing on the front-end. Some example pages include:

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/que-es

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/sobrellevar/supervivencia/infancia

- http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/tipos/radioterapia/haz-externo

- Infographic on http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/desigualdades

---

AC - Adding Videos from #2020

http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/tipos/inmunoterapia

VERSUS https://www.cancer.gov/espanol/cancer/tratamiento/tipos/inmunoterapia

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/naturaleza/desigualdades

VERSUS https://www.cancer.gov/espanol/cancer/naturaleza/desigualdades

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/estudios-clinicos/seguridad-paciente

• http://ncigovcdode176.prod.acquia-sites.com/espanol/cancer/tratamiento/estudios-clinicos/pago (edited)

| priority | spanish cgov missing images and infographics images are missing from spanish pages these got migrated into drupal but they are not appearing on the front end some examples pages include infographic on ac adding videos from versus • versus • • edited | 1 |

683,803 | 23,394,799,847 | IssuesEvent | 2022-08-11 21:47:03 | savvy-coders/sc-curriculum | https://api.github.com/repos/savvy-coders/sc-curriculum | closed | Investigate Parcel 2.7 potential 404 Error on npm run serve | bug high priority | While building out the SPA, `npm run serve` was run as a test to see whether it would build. It did build, but the browser gave a 404 error. After uninstalling Parcel 2.7 and installing Parcel 2.5, the error seemed to "fix itself". Need to investigate whether this was an isolated issue or whether conflicts exist with the new version of Parcel. | 1.0 | Investigate Parcel 2.7 potential 404 Error on npm run serve - While building out the SPA, `npm run serve` was run as a test to see whether it would build. It did build, but the browser gave a 404 error. After uninstalling Parcel 2.7 and installing Parcel 2.5, the error seemed to "fix itself". Need to investigate whether this was an isolated issue or whether conflicts exist with the new version of Parcel. | priority | investigate parcel potential error on npm run serve when trying to build out spa ran npm run serve as test to see if it would build it did build however the browser gave a error when uninstalling parcel and installed parcel the error seemed to fix itself need to investigate if this was an isolated issue or if conflicts exist with new version of parcel | 1 |

240,704 | 7,804,801,283 | IssuesEvent | 2018-06-11 08:45:38 | nimble-platform/common | https://api.github.com/repos/nimble-platform/common | closed | Each product within catalogue needs an assignment to a concept (domain ontology or eclass) | data schema very high priority | The current indexed data set (catalogue2) contains products which have no assignment to a concrete class. Current proposal is to add the field item_commodity_classification_uri in catalogue2.

For release 3: Each product within the catalogue2 needs a value for the field item_commodity_classification_uri | 1.0 | Each product within catalogue needs an assignment to a concept (domain ontology or eclass) - The current indexed data set (catalogue2) contains products which have no assignment to a concrete class. Current proposal is to add the field item_commodity_classification_uri in catalogue2.

For release 3: Each product within the catalogue2 needs a value for the field item_commodity_classification_uri | priority | each product within catalogue needs an assignment to a concept domain ontology or eclass the current indexed data set contains products which have no assignment to a concrete class current proposal is to add the field item commodity classification uri in for release each product within the needs a value for the field item commodity classification uri | 1 |

610,960 | 18,941,119,878 | IssuesEvent | 2021-11-18 03:03:50 | wso2/product-microgateway | https://api.github.com/repos/wso2/product-microgateway | closed | Error Applying API Key auth for petstore API | Type/Bug Priority/High | ### Description:

Error Applying API Key auth for petstore API

```log

adapter_1 | 2021-11-03 17:45:03 INFO [notification_listener.go:79] - [messaging.handleNotification] [-] Event REMOVE_API_FROM_GATEWAY is received

adapter_1 | 2021-11-03 17:45:03 INFO [notification_listener.go:79] - [messaging.handleNotification] [-] Event DEPLOY_API_IN_GATEWAY is received

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:163] - [synchronizer.FetchAPIsFromControlPlane] [-] API 2653577f-2fb4-4066-887c-0f887f85af76 is added/updated to APIList for label [Default]

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:64] - [synchronizer.FetchAPIs] [-] Fetching APIs from Control Plane.

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:171] - [synchronizer.FetchAPIsFromControlPlane] [-] Pushing data to router and enforcer

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:58] - [synchronizer.PushAPIProjects] [-] Start Deploying 1 API/s...

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:91] - [synchronizer.PushAPIProjects] [-] Start deploying api from file (API_ID:REVISION_ID).zip : 2653577f-2fb4-4066-887c-0f887f85af76-f9073d74-6da6-4d5a-a3cf-4017c930d430.zip

adapter_1 | 2021-11-03 17:45:03 INFO [apis_impl.go:230] - [api.ApplyAPIProjectFromAPIM] [-] Deploying api SwaggerPetstore:1.0.5 in Organization carbon.super

adapter_1 | 2021-11-03 17:45:03 INFO [apis_impl.go:240] - [api.ApplyAPIProjectFromAPIM] [-] API SwaggerPetstore:1.0.5 with UUID "2653577f-2fb4-4066-887c-0f887f85af76" already deployed to vhost: localhost

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[petstore_auth:[write:pets read:pets]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[default:[write:pets read:pets]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 2. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 2. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 ERRO [apis_impl.go:222] - [api.ApplyAPIProjectFromAPIM.func1] [-] Recovered from panic. Error encountered while applying API SwaggerPetstore:1.0.5 to localhost.

adapter_1 | 2021-11-03 17:45:03 ERRO [apis_fetcher.go:105] - [synchronizer.PushAPIProjects] [-] Error occurred while applying project SwaggerPetstore:1.0.5 with UUID "2653577f-2fb4-4066-887c-0f887f85af76"

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:113] - [synchronizer.PushAPIProjects] [-] Successfully deployed 1 API/s

```

### Steps to reproduce:

- Create API from https://petstore.swagger.io/v2/swagger.json

- Add API Key auth from runtime API Configs in APIM

- Deploy API

- check adapter logs

### Affected Product Version:

1.0.0-beta-snapshot

### Environment details (with versions):

- OS: mac

- Client: curl

- Env (Docker/K8s): docker compose

---

### Optional Fields

#### Related Issues:

<!-- Any related issues from this/other repositories-->

#### Suggested Labels:

<!--Only to be used by non-members-->

#### Suggested Assignees:

<!--Only to be used by non-members-->

| 1.0 | Error Applying API Key auth for petstore API - ### Description:

Error Applying API Key auth for petstore API

```log

adapter_1 | 2021-11-03 17:45:03 INFO [notification_listener.go:79] - [messaging.handleNotification] [-] Event REMOVE_API_FROM_GATEWAY is received

adapter_1 | 2021-11-03 17:45:03 INFO [notification_listener.go:79] - [messaging.handleNotification] [-] Event DEPLOY_API_IN_GATEWAY is received

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:163] - [synchronizer.FetchAPIsFromControlPlane] [-] API 2653577f-2fb4-4066-887c-0f887f85af76 is added/updated to APIList for label [Default]

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:64] - [synchronizer.FetchAPIs] [-] Fetching APIs from Control Plane.

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:171] - [synchronizer.FetchAPIsFromControlPlane] [-] Pushing data to router and enforcer

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:58] - [synchronizer.PushAPIProjects] [-] Start Deploying 1 API/s...

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:91] - [synchronizer.PushAPIProjects] [-] Start deploying api from file (API_ID:REVISION_ID).zip : 2653577f-2fb4-4066-887c-0f887f85af76-f9073d74-6da6-4d5a-a3cf-4017c930d430.zip

adapter_1 | 2021-11-03 17:45:03 INFO [apis_impl.go:230] - [api.ApplyAPIProjectFromAPIM] [-] Deploying api SwaggerPetstore:1.0.5 in Organization carbon.super

adapter_1 | 2021-11-03 17:45:03 INFO [apis_impl.go:240] - [api.ApplyAPIProjectFromAPIM] [-] API SwaggerPetstore:1.0.5 with UUID "2653577f-2fb4-4066-887c-0f887f85af76" already deployed to vhost: localhost

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[default:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[petstore_auth:[write:pets read:pets]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[default:[write:pets read:pets]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 2. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:213] - [model.checkAPIKeyInOperationArray] [-] Inside security scheme &[{api_key api_key api_key } {default oauth2 } {api_key apiKey api_key header} {petstore_auth oauth2 }].

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 0. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 1. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 INFO [open_api.go:217] - [model.checkAPIKeyInOperationArray] [-] New method key 2. Value: map[api_key:[]]

adapter_1 | 2021-11-03 17:45:03 ERRO [apis_impl.go:222] - [api.ApplyAPIProjectFromAPIM.func1] [-] Recovered from panic. Error encountered while applying API SwaggerPetstore:1.0.5 to localhost.

adapter_1 | 2021-11-03 17:45:03 ERRO [apis_fetcher.go:105] - [synchronizer.PushAPIProjects] [-] Error occurred while applying project SwaggerPetstore:1.0.5 with UUID "2653577f-2fb4-4066-887c-0f887f85af76"

adapter_1 | 2021-11-03 17:45:03 INFO [apis_fetcher.go:113] - [synchronizer.PushAPIProjects] [-] Successfully deployed 1 API/s

```

### Steps to reproduce:

- Create API from https://petstore.swagger.io/v2/swagger.json

- Add API Key auth from runtime API Configs in APIM

- Deploy API

- Check adapter logs

### Affected Product Version:

1.0.0-beta-snapshot

### Environment details (with versions):

- OS: mac

- Client: curl

- Env (Docker/K8s): docker compose

---

### Optional Fields

#### Related Issues:

<!-- Any related issues from this/other repositories-->

#### Suggested Labels:

<!--Only to be used by non-members-->

#### Suggested Assignees:

<!--Only to be used by non-members-->

| priority | error appling api key auth for petstore api description error appling api key auth for petstore api log adapter info event remove api from gateway is received adapter info event deploy api in gateway is received adapter info api is added updated to apilist for label adapter info fetching apis from control plane adapter info pushing data to router and enforcer adapter info start deploying api s adapter info start deploying api from file api id revision id zip zip adapter info deploying api swaggerpetstore in organization carbon super adapter info api swaggerpetstore with uuid already deployed to vhost localhost adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info new method key value map adapter info inside security scheme adapter info new method key value map adapter info new method key value map adapter info new method key value map adapter erro recovered from panic error encountered while applying api swaggerpetstore to localhost adapter erro error occurred while applying project swaggerpetstore with uuid adapter info successfully deployed api s steps to reproduce create api from add api key auth from runtime 
api configs in apim deploy api check adapter logs affected product version beta snapshot environment details with versions os mac client curl env docker docker compose optional fields related issues suggested labels suggested assignees | 1 |

149,889 | 5,730,556,549 | IssuesEvent | 2017-04-21 09:44:13 | RestComm/mediaserver | https://api.github.com/repos/RestComm/mediaserver | closed | MGCP Channel must filter incoming packets | High-Priority MGCP2 netty | Add a network guard to the new MGCP Channel pipeline that filters incoming packets.

Only packets coming from local network are accepted, while packets coming from unknown external networks are discarded. | 1.0 | MGCP Channel must filter incoming packets - Add a network guard to the new MGCP Channel pipeline that filters incoming packets.

Only packets coming from local network are accepted, while packets coming from unknown external networks are discarded. | priority | mgcp channel must filter incoming packets add a network guard to the new mgcp channel pipeline that filters incoming packets only packets coming from local network are accepted while packets coming from unknown external networks are discarded | 1 |
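A minimal sketch of the network guard described in this issue, written in Python for illustration only (the real pipeline is a netty channel). The function name `accept_packet` and the `192.168.1.0/24` subnet are assumptions, not taken from the mediaserver code base:

```python
import ipaddress

# Assumed local subnet; a real guard would read this from configuration.
LOCAL_NETWORK = ipaddress.ip_network("192.168.1.0/24")


def accept_packet(source_ip, local_network=LOCAL_NETWORK):
    """Return True when the packet's source address belongs to the local network."""
    return ipaddress.ip_address(source_ip) in local_network


# Packets from the local network pass; anything else would be discarded.
for ip in ("192.168.1.10", "8.8.8.8"):
    print(ip, "accepted" if accept_packet(ip) else "discarded")
```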

317,619 | 9,667,001,322 | IssuesEvent | 2019-05-21 12:16:34 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | Prepare for diff-rotations from different points of view. | Effort High Feature Request Package Intermediate Priority Medium coordinates | I've got this from a [draft document](https://issues.cosmos.esa.int/solarorbiterwiki/download/attachments/5801215/Triplet-TN%20SOL-SGS-TN-0020%20v0_2.pdf?version=1&modificationDate=1499950338000&api=v2) from [Solar Orbiter team](https://issues.cosmos.esa.int/solarorbiterwiki/display/SOSP/SOC+Documents):

> 4.3 SOC handling of the differential rotation

> SOC will use the following model of differential rotation to propagate from the triplet

> epoch:

> ω(Φ) = A + B sin²(Φ) + C sin⁴(Φ)

> Where ω is the rotation rate (in deg/day)

> And Φ is the solar latitude

>

> SOC will choose one of two sets of parameters:

> For magnetic features, meaning sunspots/active regions (derived from “Magnetic”

> in [DIFF])

>

> A = 14.252

> B = -1.678

> C = -2.401

> For non-magnetic features, e.g. coronal holes

> A = 14.705

> B = 0.0

> C = 0.0

> Note that this is a “rigid” rotation corresponding to 26.24 day synodic period from

> Earth (=> 24.48 day sidereal period).

None of the current values we've got match what they are planning.

```python

>>> howard.to(u.deg / u.day)

<Quantity [ 14.32632838, -2.11875209, -1.83163148] deg / d>

>>> snodgrass.to(u.deg / u.day)

<Quantity [ 14.1134631 , -1.69797189, -2.34646844] deg / d>

>>> allen.to(u.deg / u.day)

<Quantity [ 14.44, -3. , 0. ] deg / d>

```
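As a rough sketch, the SOC model quoted above can be evaluated in plain Python without sunpy. The function names `soc_rotation_rate` and `sidereal_period_days` and the dictionary keys are made up for illustration; only the A, B, C values come from the draft document:

```python
import math

# (A, B, C) in deg/day, copied from the SOC draft quoted above.
SOC_PARAMS = {
    "magnetic": (14.252, -1.678, -2.401),
    "non_magnetic": (14.705, 0.0, 0.0),
}


def soc_rotation_rate(latitude_deg, feature="magnetic"):
    """Return omega(phi) = A + B sin^2(phi) + C sin^4(phi) in deg/day."""
    a, b, c = SOC_PARAMS[feature]
    sin2 = math.sin(math.radians(latitude_deg)) ** 2
    return a + b * sin2 + c * sin2 ** 2


def sidereal_period_days(rate_deg_per_day):
    """Convert a rotation rate in deg/day to a rotation period in days."""
    return 360.0 / rate_deg_per_day


for lat in (0.0, 30.0, 60.0):
    print(lat, soc_rotation_rate(lat, "magnetic"), soc_rotation_rate(lat, "non_magnetic"))

# The rigid non-magnetic rate should reproduce the ~24.48 day sidereal period.
print(sidereal_period_days(soc_rotation_rate(0.0, "non_magnetic")))
```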

We do have a `synodic` correction as an option: `rotation -= 0.9856 * u.deg / u.day * duration` | 1.0 | Prepare for diff-rotations from different points of view. - I've got this from a [draft document](https://issues.cosmos.esa.int/solarorbiterwiki/download/attachments/5801215/Triplet-TN%20SOL-SGS-TN-0020%20v0_2.pdf?version=1&modificationDate=1499950338000&api=v2) from [Solar Orbiter team](https://issues.cosmos.esa.int/solarorbiterwiki/display/SOSP/SOC+Documents):

> 4.3 SOC handling of the differential rotation

> SOC will use the following model of differential rotation to propagate from the triplet

> epoch:

> ω(Φ) = A + B sin²(Φ) + C sin⁴(Φ)

> Where ω is the rotation rate (in deg/day)

> And Φ is the solar latitude

>

> SOC will choose one of two sets of parameters:

> For magnetic features, meaning sunspots/active regions (derived from “Magnetic”

> in [DIFF])

>

> A = 14.252

> B = -1.678

> C = -2.401

> For non-magnetic features, e.g. coronal holes

> A = 14.705

> B = 0.0

> C = 0.0

> Note that this is a “rigid” rotation corresponding to 26.24 day synodic period from

> Earth (=> 24.48 day sidereal period).

None of the current values we've got match what they are planning.

```python

>>> howard.to(u.deg / u.day)

<Quantity [ 14.32632838, -2.11875209, -1.83163148] deg / d>

>>> snodgrass.to(u.deg / u.day)

<Quantity [ 14.1134631 , -1.69797189, -2.34646844] deg / d>

>>> allen.to(u.deg / u.day)

<Quantity [ 14.44, -3. , 0. ] deg / d>

```

We do have a `synodic` correction as an option: `rotation -= 0.9856 * u.deg / u.day * duration` | priority | prepare for diff rotations from different points of view i ve got this from a from soc handling of the differential rotation soc will use the following model of differential rotation to propagate from the triplet epoch ω φ a b φ c φ where ω is the rotation rate in deg day and φ is the solar latitude soc will choose one of two sets of parameters for magnetic features meaning sunspots active regions derived from “magnetic” in a b c for non magnetic features e g coronal holes a b c note that this is a “rigid” rotation corresponding to day synodic period from earth day sidereal period none of the current values we ve got matches what they are planing python howard to u deg u day snodgrass to u deg u day allen to u deg u day we do have a synodic correction as an option rotation u deg u day duration | 1 |

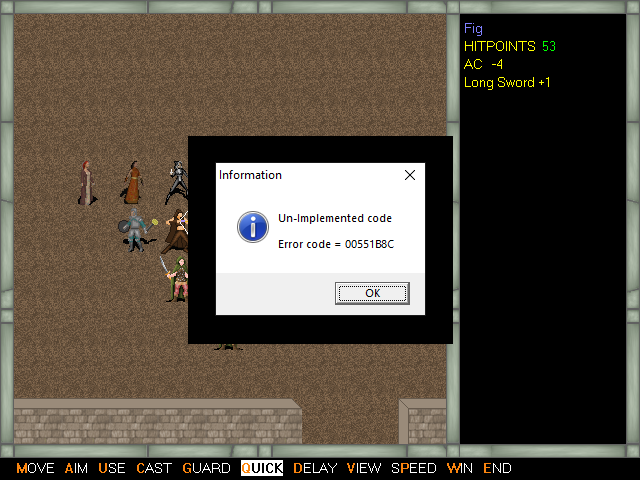

513,964 | 14,930,045,222 | IssuesEvent | 2021-01-25 01:43:21 | grannypron/uaf_levels | https://api.github.com/repos/grannypron/uaf_levels | closed | Error message when Quick is used in combat | Waiting For Approval bug game39 high priority | Get the following error when Q is pressed in combat for Quick:

Un-implemented code

Error Code = 00551B8C

| 1.0 | Error message when Quick is used in combat - Get the following error when Q is pressed in combat for Quick:

Un-implemented code

Error Code = 00551B8C

| priority | error message when quick is used in combat get the following error when q is pressed in combat for quick un implemented code error code | 1 |

447,153 | 12,884,600,776 | IssuesEvent | 2020-07-13 03:34:17 | mistifiedwarrior/Hackthon-Project | https://api.github.com/repos/mistifiedwarrior/Hackthon-Project | closed | Shopkeeper: see all bookings | easy high priority | As a shopkeeper

I want to see all my bookings

So that I can predict today's sales

Acceptance criteria

- [x] criteria 1

Given I am on the shopkeeper home page

When I click on the bookings

Then I can see all bookings

- [x] criteria 2

Given I am in the booking details

When I want to look at the booking history

Then I can see all history | 1.0 | Shopkeeper: see all bookings - As a shopkeeper

I want to see all my bookings

So that I can predict today's sales

Acceptance criteria

- [x] criteria 1

Given I am on the shopkeeper home page

When I click on the bookings

Then I can see all bookings

- [x] criteria 2

Given I am in the booking details

When I want to look at the booking history

Then I can see all history | priority | shopkeeper see all bookings as a shopkeeper i want to see my all bookings so that i can predict the today s selling acceptance criteria criteria given in shopkeeper home page when i click on the bookings then i can see all bookings criteria given in booking details when i want to look at the booking history then i can see all history | 1 |

338,817 | 10,237,788,662 | IssuesEvent | 2019-08-19 14:35:15 | IBM/carbon-addons-iot-react | https://api.github.com/repos/IBM/carbon-addons-iot-react | closed | [v2]: Upgrade Components to Carbon v10 | :computer: Development :fire: High priority v2 | Containing issue for the migration of our components to Carbon v10

High level steps/pieces:

- [x] upgrade carbon deps

- [x] upgrade storybook to v5 to support new prop-type definitions

- [x] Update/Fix Components

- [x] `ButtonEnhanced` refactored to `Button` wrapper

- [ ] ~`Table` renamed to `DataTable`, ensure sub components exported in the same fashion as Carbon to support custom composition of components. Avoid any breaking change from Carbon's documentation/usage for `DataTable`~

- [x] review visually for defects, fix as necessary

- [ ] ...

Maximo needs it and we need to support downstream teams | 1.0 | [v2]: Upgrade Components to Carbon v10 - Containing issue for the migration of our components to Carbon v10

High level steps/pieces:

- [x] upgrade carbon deps

- [x] upgrade storybook to v5 to support new prop-type definitions

- [x] Update/Fix Components

- [x] `ButtonEnhanced` refactored to `Button` wrapper

- [ ] ~`Table` renamed to `DataTable`, ensure sub components exported in the same fashion as Carbon to support custom composition of components. Avoid any breaking change from Carbon's documentation/usage for `DataTable`~

- [x] review visually for defects, fix as necessary

- [ ] ...

Maximo needs it and we need to support downstream teams | priority | upgrade components to carbon containing issue for the migration of our components to carbon high level steps pieces upgrade carbon deps upgrade storybook to to support new prop type definitions update fix components buttonenhanced refactored to button wrapper table renamed to datatable ensure sub components exported in the same fashion as carbon to support custom composition of components avoid any breaking change from carbon s documentation usage for datatable review visually for defects fix as necessary maximo needs it and we need to support downstream teams | 1 |

133,397 | 5,202,535,556 | IssuesEvent | 2017-01-24 09:52:25 | Promact/promact-oauth-server | https://api.github.com/repos/Promact/promact-oauth-server | closed | Restructure OAuth External Login Flow | Done high-priority OAuth Ready Support | Right now it's not following the rules of the OAuth 2.0 flow, so I need to restructure the OAuth flow and implement it according to the OAuth 2.0 rules.

Following this link - https://www.digitalocean.com/community/tutorials/an-introduction-to-oauth-2

This is the current flow:

1. When the user clicks the "Login with Promact" link, it triggers step 2.

2. Authorization Code Link: The link calls an API, which redirects to https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId=PromactAppClientId

3. User Authorizes Application: After step 2 completes successfully, the server redirects to the OAuth server. The OAuth server checks the app details against the clientId, and then the user needs to log in.

4. OAuth Request to Application: After step 3 completes successfully, the OAuth server sends a server-side request to the application, with a refresh token, to get the app’s secret and redirect URI. The OAuth server then receives the response and checks the app secret.

5. Application Receives User Details and Access Token: After step 4 completes successfully, the application receives the user details, adds the user to the application, and stores the data.

My proposal for new structure:

1. When the user clicks the "Login with Promact" link, it triggers step 2.

2. Authorization Code Link: The link calls an API, which redirects to

https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId=PromactAppClientId&redirectUri=redirectUri

Example:

https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId={PromactAppClientId}&redirectUri=”https://promactslack.azurewebsites.net/OAuth/Authorization?code={AuthorizationCode}”

PromactAppClientId = the Promact app’s client ID

redirectUri = the external login call URL

3. User Authorizes Application: After step 2 completes successfully, the server redirects to the OAuth server. The OAuth server checks the app details against the clientId and redirectUri, and then the user needs to log in.

4. Application Receives Authorization Code: After step 3 completes successfully, the server redirects to step 2’s redirectUri with the authorization code.

Example : https://promactslack.azurewebsites.net/OAuth/Authorization?code=DFSD45FGD45FG11

5. Application Requests Access Token: After step 4 completes successfully, the server calls an API, and the application requests the access token and user details from the OAuth server.

The HTTP request URL will look like this:

https://promactoauth.azurewebsites.net/OAuth/AccessToken?clientId=PromactAppClientId&authorizationcode=AuthorizationCode&clientSecret=PromactAppClientSecret

Example :

https://promactoauth.azurewebsites.net/OAuth/AccessToken?clientId={PromactAppClientId}&authorizationcode={AuthorizationCode}&clientSecret={PromactAppClientSecret}

PromactAppClientId = the Promact app’s client ID

AuthorizationCode = the code from step 4

PromactAppClientSecret = the Promact app’s client secret

6. Application Receives Access Token: After step 5 completes successfully and the authorization code, client secret, and details match, the application receives all required user details, creates a user for external login, and stores its Promact access token.
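Steps 2 and 5 of the proposal could be sketched as follows. This is only an illustration under stated assumptions: the helper names `external_login_url` and `access_token_url` and the sample client values are hypothetical, the endpoints and query parameter names are the ones proposed above, and the code only builds the URLs without calling the server:

```python
from urllib.parse import urlencode

# Base endpoint taken from the URLs in the proposal above.
OAUTH_BASE = "https://promactoauth.azurewebsites.net/OAuth"


def external_login_url(client_id, redirect_uri):
    """Step 2: URL the application redirects the user to for authorization."""
    query = urlencode({"clientId": client_id, "redirectUri": redirect_uri})
    return f"{OAUTH_BASE}/ExternalLogin?{query}"


def access_token_url(client_id, authorization_code, client_secret):
    """Step 5: URL the application requests to exchange the code for a token."""
    query = urlencode({
        "clientId": client_id,
        "authorizationcode": authorization_code,
        "clientSecret": client_secret,
    })
    return f"{OAUTH_BASE}/AccessToken?{query}"


# Hypothetical client values, for illustration only.
print(external_login_url(
    "my-client-id",
    "https://promactslack.azurewebsites.net/OAuth/Authorization",
))
print(access_token_url("my-client-id", "DFSD45FGD45FG11", "my-client-secret"))
```

Using `urlencode` percent-encodes the redirect URI, so it can be passed as a query parameter without wrapping it in quotes.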

| 1.0 | Restructure OAuth External Login Flow - Right now it's not following the rules of the OAuth 2.0 flow, so I need to restructure the OAuth flow and implement it according to the OAuth 2.0 rules.

Following this link - https://www.digitalocean.com/community/tutorials/an-introduction-to-oauth-2

This is the current flow:

1. When the user clicks the "Login with Promact" link, it triggers step 2.

2. Authorization Code Link: The link calls an API, which redirects to https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId=PromactAppClientId

3. User Authorizes Application: After step 2 completes successfully, the server redirects to the OAuth server. The OAuth server checks the app details against the clientId, and then the user needs to log in.

4. OAuth Request to Application: After step 3 completes successfully, the OAuth server sends a server-side request to the application, with a refresh token, to get the app’s secret and redirect URI. The OAuth server then receives the response and checks the app secret.

5. Application Receives User Details and Access Token: After step 4 completes successfully, the application receives the user details, adds the user to the application, and stores the data.

My proposal for new structure:

1. When the user clicks the "Login with Promact" link, it triggers step 2.

2. Authorization Code Link: The link calls an API, which redirects to

https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId=PromactAppClientId&redirectUri=redirectUri

Example:

https://promactoauth.azurewebsites.net/OAuth/ExternalLogin?clientId={PromactAppClientId}&redirectUri=”https://promactslack.azurewebsites.net/OAuth/Authorization?code={AuthorizationCode}”

PromactAppClientId = the Promact app’s client ID

redirectUri = the external login call URL

3. User Authorizes Application: After step 2 completes successfully, the server redirects to the OAuth server. The OAuth server checks the app details against the clientId and redirectUri, and then the user needs to log in.

4. Application Receives Authorization Code: After step 3 completes successfully, the server redirects to step 2’s redirectUri with the authorization code.

Example : https://promactslack.azurewebsites.net/OAuth/Authorization?code=DFSD45FGD45FG11

5. Application Requests Access Token: After step 4 completes successfully, the server calls an API, and the application requests the access token and user details from the OAuth server.

The HTTP request URL will look like this:

https://promactoauth.azurewebsites.net/OAuth/AccessToken?clientId=PromactAppClientId&authorizationcode=AuthorizationCode&clientSecret=PromactAppClientSecret

Example :

https://promactoauth.azurewebsites.net/OAuth/AccessToken?clientId={PromactAppClientId}&authorizationcode={AuthorizationCode}&clientSecret={PromactAppClientSecret}

PromactAppClientId = the Promact app’s client ID

AuthorizationCode = the code from step 4

PromactAppClientSecret = the Promact app’s client secret

6. Application Receives Access Token: After step 5 completes successfully and the authorization code, client secret, and details match, the application receives all required user details, creates a user for external login, and stores its Promact access token.

| priority | restructure oauth external login flow right now its not flowing the rule of oauth flow so i need to restructure the oauth flow and implement flow as oauth rules following this link this is the current flow when user click the link login with promact then it will call the point authorization code link the above link call a api and it will redirect to user authorizes application after successfully completion of point server will redirect to oauth server in oauth checking of app details with clientid will be done then user need to login oauth request to application after successfully completion of point an request server side call will be send from oauth to application with refresh token for getting app’s secret and redirect uri then oauth will receive the response and check for app secret application receives user details and access token after successfully completion of point application will receive user details and add user to application and store the data my proposal for new structure when user click the link login with promact then it will call the point authorization code link the above link call a api and it will redirect to example promactappclientid promact app’s client id redirecturi external login call url user authorizes application after successfully completion of point server will redirect to oauth server in oauth checking of app details with clientid and redirecturi will be done then user need to login application receives authorization code after successfully completion of point server will redirect to point ’s redirecturi with authorization code example application requests access token after successfully completion of point server will call a api and application will request the oauth for access token and user details http request url will be like this example promactappclientid promact app’s clientid authorizationcode point code promactappclientsecret – promact app’s client secret application receives access token after successfully 
completion of point and authorization code client secret and details match then application will receive all required details of user and create and user for external login and store its access token of promact | 1 |

309,360 | 9,473,837,112 | IssuesEvent | 2019-04-19 04:13:48 | ga4gh/data-repository-service-schemas | https://api.github.com/repos/ga4gh/data-repository-service-schemas | closed | refine the list of AccessMethod type values | Due: Apr Priority: Critical Priority: High Project: DRS | Following up on the initial `type` list for `AccessMethod`, as enumerated in #236:

- how do we want to support htsget? My first thought is it should be a `type` = `htsget` of its own, where the `access_url` is required to support the htsget protocol, vs. just being a place to fetch bytes.

- do we want our initial `type` list to only include methods that we expect somebody to have implemented by v1 launch, or should we lean further into the hypothetical future? I'm inclined to trim it back to only things that will be live in 2019, once we know what that list is.

- can we combine `http` and `https` into one? I expect most servers will only use one of them, and as a client I don't think I would ever have interesting logic to pick one vs. the other -- if I intend to use HTTP, I'll pick that `type` and use whatever `access_url` I'm given. | 2.0 | refine the list of AccessMethod type values - Following up on the initial `type` list for `AccessMethod`, as enumerated in #236:

- how do we want to support htsget? My first thought is it should be a `type` = `htsget` of its own, where the `access_url` is required to support the htsget protocol, vs. just being a place to fetch bytes.

- do we want our initial `type` list to only include methods that we expect somebody to have implemented by v1 launch, or should we lean further into the hypothetical future? I'm inclined to trim it back to only things that will be live in 2019, once we know what that list is.

- can we combine `http` and `https` into one? I expect most servers will only use one of them, and as a client I don't think I would ever have interesting logic to pick one vs. the other -- if I intend to use HTTP, I'll pick that `type` and use whatever `access_url` I'm given. | priority | refine the list of accessmethod type values following up on the initial type list for accessmethod as enumerated in how do we want to support htsget my first thought is it should be a type htsget of its own where the access url is required to support the htsget protocol vs just being a place to fetch bytes do we want our initial type list to only include methods that we expect somebody to have implemented by launch or should we lean further into the hypothetical future i m inclined to trim it back to only things that will be live in once we know what that list is can we combine http and https into one i expect most servers will only use one of them and as a client i don t think i would ever have interesting logic to pick one vs the other if i intend to use http i ll pick that type and use whatever access url i m given | 1 |

323,103 | 9,842,860,435 | IssuesEvent | 2019-06-18 10:12:46 | stageosu/Kaguya | https://api.github.com/repos/stageosu/Kaguya | closed | Music player frequently skips songs on its own. | Bug Command Related Help wanted High Priority Music | More often than not, Kaguya will seemingly automatically skip songs that are queued (when it is their turn to play). In the log, it shows that there are no more items in the queue, so that is the reason why it has stopped playing (when it's clearly not the case). | 1.0 | Music player frequently skips songs on its own. - More often than not, Kaguya will seemingly automatically skip songs that are queued (when it is their turn to play). In the log, it shows that there are no more items in the queue, so that is the reason why it has stopped playing (when it's clearly not the case). | priority | music player frequently skips songs on its own more often than not kaguya will seemingly automatically skip songs that are queued when it is their turn to play in the log it shows that there are no more items in the queue so that is the reason why it has stopped playing when it s clearly not the case | 1 |

790,611 | 27,830,476,095 | IssuesEvent | 2023-03-20 04:05:08 | AY2223S2-CS2103-F10-2/tp | https://api.github.com/repos/AY2223S2-CS2103-F10-2/tp | opened | As a user, I can navigate to a specific module/lecture and search for contents within its scope | type.Story priority.High | Integrate nav and find command to work together | 1.0 | As a user, I can navigate to a specific module/lecture and search for contents within its scope - Integrate nav and find command to work together | priority | as a user i can navigate to a specific module lecture and search for contents within it s scope integrate nav and find command to work together | 1 |

263,578 | 8,292,960,972 | IssuesEvent | 2018-09-20 03:54:52 | aspnet/websdk | https://api.github.com/repos/aspnet/websdk | reopened | Missing features when creating a WebDeploy package | High Priority | This is the next step of https://github.com/dotnet/cli/issues/6598.

I am now able to create a WebDeploy package using MSBuild v15.

However, I am not able to accomplish the same things as before (with ASP.NET "classic" and MSBuild v14):

- [ ] How can I add parameters to the `WebApplication.Full.Parameters.xml` file (produced by the build) ?

- [x] There is no `.cmd` file generated.

- [x] In v14, the `PackageLocation` parameter defined the folder in which to generate the package. Now, I have to use `DesktopBuildPackageLocation` and specify a file path. The former was very interesting for solutions that have multiple web applications (each web app generates its own package). With this new parameter, the zip file contains both sites, but is configured for only one. | 1.0 | Missing features when creating a WebDeploy package - This is the next step of https://github.com/dotnet/cli/issues/6598.

I am now able to create a WebDeploy package using MSBuild v15.

However, I am not able to accomplish the same things as before (with ASP.NET "classic" and MSBuild v14):

- [ ] How can I add parameters to the `WebApplication.Full.Parameters.xml` file (produced by the build) ?

- [x] There is no `.cmd` file generated.

- [x] In v14, the `PackageLocation` parameter defined the folder in which to generate the package. Now, I have to use `DesktopBuildPackageLocation` and specify a file path. The former was very interesting for solutions that have multiple web applications (each web app generates its own package). With this new parameter, the zip file contains both sites, but is configured for only one. | priority | missing features when creating a webdeploy package this is the next step of i am now able to create a webdeploy package using msbuild however i am not able to accomplish the same things than before with asp net classic and msbuild how can i add parameters to the webapplication full parameters xml file produced by the build there is no cmd file generated in the parameter packagelocation defined the folder where to generate the package now i have to utilize desktopbuildpackagelocation and specify a file path the former was very interresting for solutions that have multiple web applications each web app generates its own package with this new parameter the zip file contains both the sites but configured for only one | 1 |

310,266 | 9,487,814,890 | IssuesEvent | 2019-04-22 17:55:57 | CosminNechifor/IKHNAIE | https://api.github.com/repos/CosminNechifor/IKHNAIE | closed | Implement a market contract | High Priority | The market contract should take care of keeping track of the ``Components`` that are being sent for sale.

Functionalities: **TBD**

This is blocked by the development of the ``ComponentContract`` #11 #12. The functions ``submitedForSale`` and ``buyComponent`` should be accessible only from the ``Manager`` contract, and it should check whether the user who performs the action has enough ``ether`` or is the ``owner`` of the component.

| 1.0 | Implement a market contract - The market contract should take care of keeping track of the ``Components`` that are being sent for sale.

Functionalities: **TBD**

This is blocked by the development of the ``ComponentContract`` #11 #12. The functions ``submitedForSale`` and ``buyComponent`` should be accessible only from the ``Manager`` contract, and it should check whether the user who performs the action has enough ``ether`` or is the ``owner`` of the component.

| priority | implement a market contract the market contract should take care of keeping track of the components that are being sent for sale functionalities tbd this is block by the development fo the componentcontract the functions submitedforsale and buycomponent should be accessible only from the manager contract and it should take care if the user who performs the action has enough ether or is the owner of the component | 1 |

359,276 | 10,667,527,206 | IssuesEvent | 2019-10-19 13:08:26 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Need separate Save button when assigning subscription tiers for an API for better user experience. | 3.0.0-beta Priority/Highest Type/Improvement | Create an API and publish it. By default, we assign the Bronze tier. If someone needs it to have unlimited tier only, they need to first tick "Unlimited" tier and then untick the "Bronze" tier. But usually, people will untick the "Bronze tier" first and then tick the tier they want. In such a case, when the Bronze tier is unticked, an error is thrown, saying API should have a tier assigned if it is not in created or if it is not a prototype API. Hence for better UX, a separate Save button would be ideal to save after ticking/unticking the subscription policies. | 1.0 | Need separate Save button when assigning subscription tiers for an API for better user experience. - Create an API and publish it. By default, we assign the Bronze tier. If someone needs it to have unlimited tier only, they need to first tick "Unlimited" tier and then untick the "Bronze" tier. But usually, people will untick the "Bronze tier" first and then tick the tier they want. In such a case, when the Bronze tier is unticked, an error is thrown, saying API should have a tier assigned if it is not in created or if it is not a prototype API. Hence for better UX, a separate Save button would be ideal to save after ticking/unticking the subscription policies. 
| priority | need separate save button when assigning subscription tiers for an api for better user experience create an api and publish it by default we assign the bronze tier if someone needs it to have unlimited tier only they need to first tick unlimited tier and then untick the bronze tier but usually people will untick the bronze tier first and then tick the tier they want in such a case when the bronze tier is unticked an error is thrown saying api should have a tier assigned if it is not in created or if it is not a prototype api hence for better ux a separate save button would be ideal to save after ticking unticking the subscription policies | 1 |

164,983 | 6,259,769,831 | IssuesEvent | 2017-07-14 18:52:23 | wpninjas/ninja-forms | https://api.github.com/repos/wpninjas/ninja-forms | closed | reCaptcha - Submitting form without validating locks the user from submitting. | DIFFICULTY: Involved PRIORITY: High VALUE: Friendly VALUE: Painless | As the title suggests, submitting without validating reCaptcha locks the form with an error, rendering the user unable to submit the form.

The only solution in this case is a complete reload of the page, and resubmission whilst appropriately validating reCaptcha. | 1.0 | reCaptcha - Submitting form without validating locks the user from submitting. - As the title suggests, submitting without validating reCaptcha locks the form with an error, rendering the user unable to submit the form.

The only solution in this case is a complete reload of the page, and resubmission whilst appropriately validating reCaptcha. | priority | recaptcha submitting form without validating locks the user from submitting as the title suggests submitting without validating recaptcha locks the form with an error rendering the user unable to submit the form the only solution in this case is a complete reload of the page and resubmission whilst appropriately validating recaptcha | 1 |

554,839 | 16,440,319,445 | IssuesEvent | 2021-05-20 13:43:55 | anguaive/typelonger | https://api.github.com/repos/anguaive/typelonger | opened | New specification and basic feature set | cleanup high-priority | ## To do:

- [ ] Examine old specification, rethink what to include and what to not include

- [ ] Decide on the basic feature set | 1.0 | New specification and basic feature set - ## To do:

- [ ] Examine old specification, rethink what to include and what to not include

- [ ] Decide on the basic feature set | priority | new specification and basic feature set to do examine old specification rethink what to include and what to not include decide on the basic feature set | 1 |

586,436 | 17,577,481,689 | IssuesEvent | 2021-08-15 22:09:41 | ErnestoFGonzalez/django-amazon-sns-mobile-push-notification | https://api.github.com/repos/ErnestoFGonzalez/django-amazon-sns-mobile-push-notification | closed | Add `badge` argument to `client.Client.publish_to_ios` method | enhancement priority:high difficulty:low | From [Local and Remote Notification Programming Guide: Creating the Remote Notification Payload](https://developer.apple.com/library/archive/documentation/NetworkingInternet/Conceptual/RemoteNotificationsPG/CreatingtheNotificationPayload.html#//apple_ref/doc/uid/TP40008194-CH10-SW1), we see that the `"aps"` entry includes a `"badge"` entry itself, which accepts an integer to set the app's devices badge number.

We'll add an optional `badge` argument to `client.Client.publish_to_ios` in order to be able to pass a payload like

```

{

"aps" : {

"alert" : {

"title" : string,

"body" : string,

"sound": string

},

"badge" : number

},

"id" : string,

"type": string,

"serializer": object

}

```

In order to pass `badge` down to `publish_to_ios` we need to add the `badge` param to the following methods:

- [ ] `tasks.send_sns_mobile_push_notification_to_device`

- [ ] `models.Device.send`

- [ ] and finally `client.Client.publish_to_ios`.

| 1.0 | Add `badge` argument to `client.Client.publish_to_ios` method - From [Local and Remote Notification Programming Guide: Creating the Remote Notification Payload](https://developer.apple.com/library/archive/documentation/NetworkingInternet/Conceptual/RemoteNotificationsPG/CreatingtheNotificationPayload.html#//apple_ref/doc/uid/TP40008194-CH10-SW1), we see that the `"aps"` entry includes a `"badge"` entry itself, which accepts an integer to set the app's devices badge number.

We'll add an optional `badge` argument to `client.Client.publish_to_ios` in order to be able to pass a payload like

```

{

"aps" : {

"alert" : {

"title" : string,

"body" : string,

"sound": string

},

"badge" : number

},

"id" : string,

"type": string,

"serializer": object

}

```

In order to pass `badge` down to `publish_to_ios` we need to add the `badge` param to the following methods:

- [ ] `tasks.send_sns_mobile_push_notification_to_device`

- [ ] `models.Device.send`

- [ ] and finally `client.Client.publish_to_ios`.

| priority | add badge argument to client client publish to ios method from we see that the aps entry includes a badge entry itself which accepts an integer to set the app s devices badge number we ll add an optional badge argument to client client publish to ios in order to be able to pass a payload like aps alert title string body string sound string badge number id string type string serializer object in order to pass badge down to publish to ios we need to add the badge param to the following methods tasks send sns mobile push notification to device models device send and finally client client publish to ios | 1 |
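
The payload shape described in the issue above can be sketched in Python roughly as follows. This is a minimal illustration, not the library's implementation: `build_ios_payload` and all of its parameters are hypothetical names, and the key layout (including `sound` nested under `alert`) simply mirrors the JSON shown in the issue. The `badge` key is only added to `aps` when the caller supplies a value, so existing callers would be unaffected.

```python
import json
from typing import Any, Dict, Optional


def build_ios_payload(
    title: str,
    body: str,
    sound: str = "default",
    badge: Optional[int] = None,
    notification_id: str = "",
    notification_type: str = "",
    serializer: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Build a payload dict shaped like the JSON in the issue.

    Hypothetical helper: the function name and parameters are assumptions
    made for illustration only.
    """
    aps: Dict[str, Any] = {
        "alert": {"title": title, "body": body, "sound": sound},
    }
    # Only include "badge" when one was given, so the argument stays optional.
    if badge is not None:
        aps["badge"] = int(badge)
    return {
        "aps": aps,
        "id": notification_id,
        "type": notification_type,
        "serializer": serializer if serializer is not None else {},
    }


# Serialize the payload to a JSON string before handing it to a publisher.
payload_json = json.dumps(build_ios_payload("Hello", "New message", badge=3))
```

Under this sketch, each of the three methods listed in the issue would accept `badge` and forward it unchanged to the next one, ending at the point where the payload is built.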

681,168 | 23,299,414,375 | IssuesEvent | 2022-08-07 04:37:26 | AlexanderDefuria/FRC-Scouting | https://api.github.com/repos/AlexanderDefuria/FRC-Scouting | opened | Optimize Match Data Loading | Bug High Priority Back End | Currently matchdata is taking a long long time to load. Investigate. | 1.0 | Optimize Match Data Loading - Currently matchdata is taking a long long time to load. Investigate. | priority | optimize match data loading currently matchdata is taking a long long time to load investigate | 1 |

266,364 | 8,366,359,087 | IssuesEvent | 2018-10-04 08:54:24 | CS2113-AY1819S1-F10-3/Main | https://api.github.com/repos/CS2113-AY1819S1-F10-3/Main | closed | Morphing and implementing project specific classes | priority.high status.ongoing | Subject,PO and HQP classes (HQP is a sub-class of PO) | 1.0 | Morphing and implementing project specific classes - Subject,PO and HQP classes (HQP is a sub-class of PO) | priority | morphing and implementing project specific classes subject po and hqp classes hqp is a sub class of po | 1 |

550,229 | 16,107,446,812 | IssuesEvent | 2021-04-27 16:31:45 | pokt-network/pocket-core | https://api.github.com/repos/pokt-network/pocket-core | closed | Shift Tx Indexer to pocket core | enhancement high priority | **Is your feature request related to a problem? Please describe.**

Currently, using tendermint's `txIndexer` can lead to mis-indexing of a tx, as evidenced in #1188. This happens because the event does not necessarily match the signer.

**Describe the solution you'd like**

A custom `txIndexer` that allows us to specify how we wanna index and store tx's

**Describe alternatives you've considered**

Modifying the tx Indexer on tendermint was considered; however, it would've led to breaking the ABCI standard in order to be able to pass down the signer for comparison of event & signer

| 1.0 | Shift Tx Indexer to pocket core - **Is your feature request related to a problem? Please describe.**

Currently, using tendermint's `txIndexer` can lead to mis-indexing of a tx, as evidenced in #1188. This happens because the event does not necessarily match the signer.

**Describe the solution you'd like**

A custom `txIndexer` that allows us to specify how we wanna index and store tx's

**Describe alternatives you've considered**

Modifying the tx Indexer on tendermint was considered; however, it would've led to breaking the ABCI standard in order to be able to pass down the signer for comparison of event & signer

| priority | shift tx indexer to pocket core is your feature request related to a problem please describe currently using tendermint s txindexer can lead to mis indexing of a tx as evidenced in this happens because the event does not necessarily match the signer describe the solution you d like a custom txindexer that allows us to specify how we wanna index and store tx s describe alternatives you ve considered modifying the tx indexer on tendermint was considered however it would ve led to breaking the abci standard in order to be able to pass down the signer for comparison of event signer | 1 |

443,261 | 12,769,462,407 | IssuesEvent | 2020-06-30 03:41:26 | ArkEcosystem/core | https://api.github.com/repos/ArkEcosystem/core | closed | Remove container getters | Priority: High | When the migration from awilix to inversify started I added 2 helpers in the form of `app.log` and `app.events`. These should be removed and replaced with injection where the logger or event dispatcher is needed. This will ensure that developers use injection and don't use internal helpers.

https://github.com/ArkEcosystem/core/blob/develop/packages/core-kernel/src/application.ts#L340-L360 | 1.0 | Remove container getters - When the migration from awilix to inversify started I added 2 helpers in the form of `app.log` and `app.events`. These should be removed and replaced with injection where the logger or event dispatcher is needed. This will ensure that developers use injection and don't use internal helpers.

https://github.com/ArkEcosystem/core/blob/develop/packages/core-kernel/src/application.ts#L340-L360 | priority | remove container getters when the migration from awilix to inversify started i added helpers in the form of app log and app events these should be removed and replaced with injection where the logger or event dispatcher is needed this will ensure that developers use injection and don t use internal helpers | 1 |

248,258 | 7,928,597,720 | IssuesEvent | 2018-07-06 12:20:36 | jncc/topcat | https://api.github.com/repos/jncc/topcat | closed | Copy some updated data files into http://data.jncc.gov.uk/data/ | high priority | I will be redacting some data from a large number of data files that are currently stored in http://data.jncc.gov.uk/data/ for access from Data.gov.uk.

Once this work is complete I will need developer assistance to replace the existing files with the updated files. Sometime in mid-May would be ideal. Thank you. | 1.0 | Copy some updated data files into http://data.jncc.gov.uk/data/ - I will be redacting some data from a large number of data files that are currently stored in http://data.jncc.gov.uk/data/ for access from Data.gov.uk.

Once this work is complete I will need developer assistance to replace the existing files with the updated files. Sometime in mid-May would be ideal. Thank you. | priority | copy some updated data files into i will be redacting some data from a large number of data files that are currently stored in for access from data gov uk once this work is complete i will need developer assistance to replace the existing files with the updated files sometime in mid may would be ideal thank you | 1 |

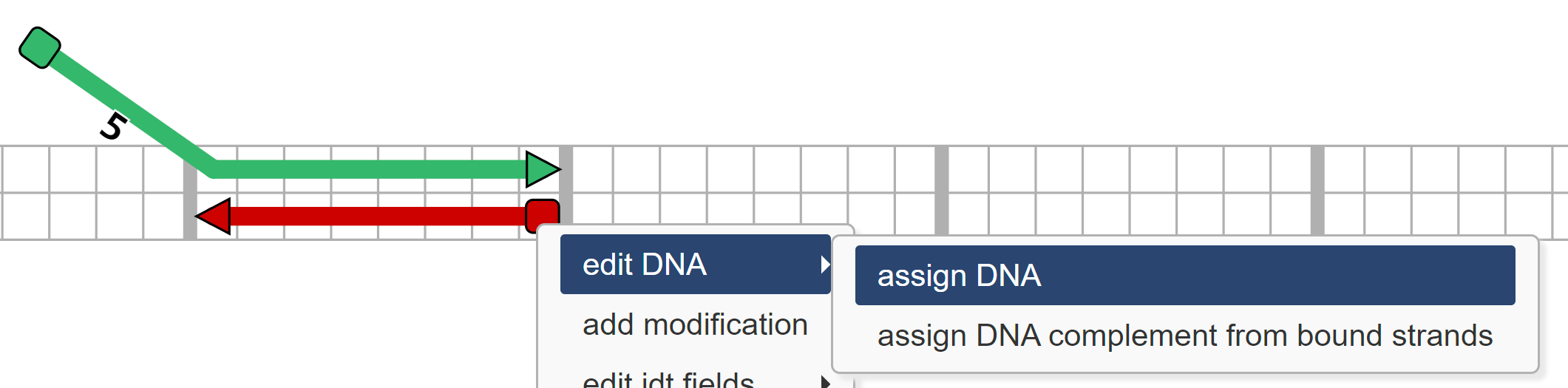

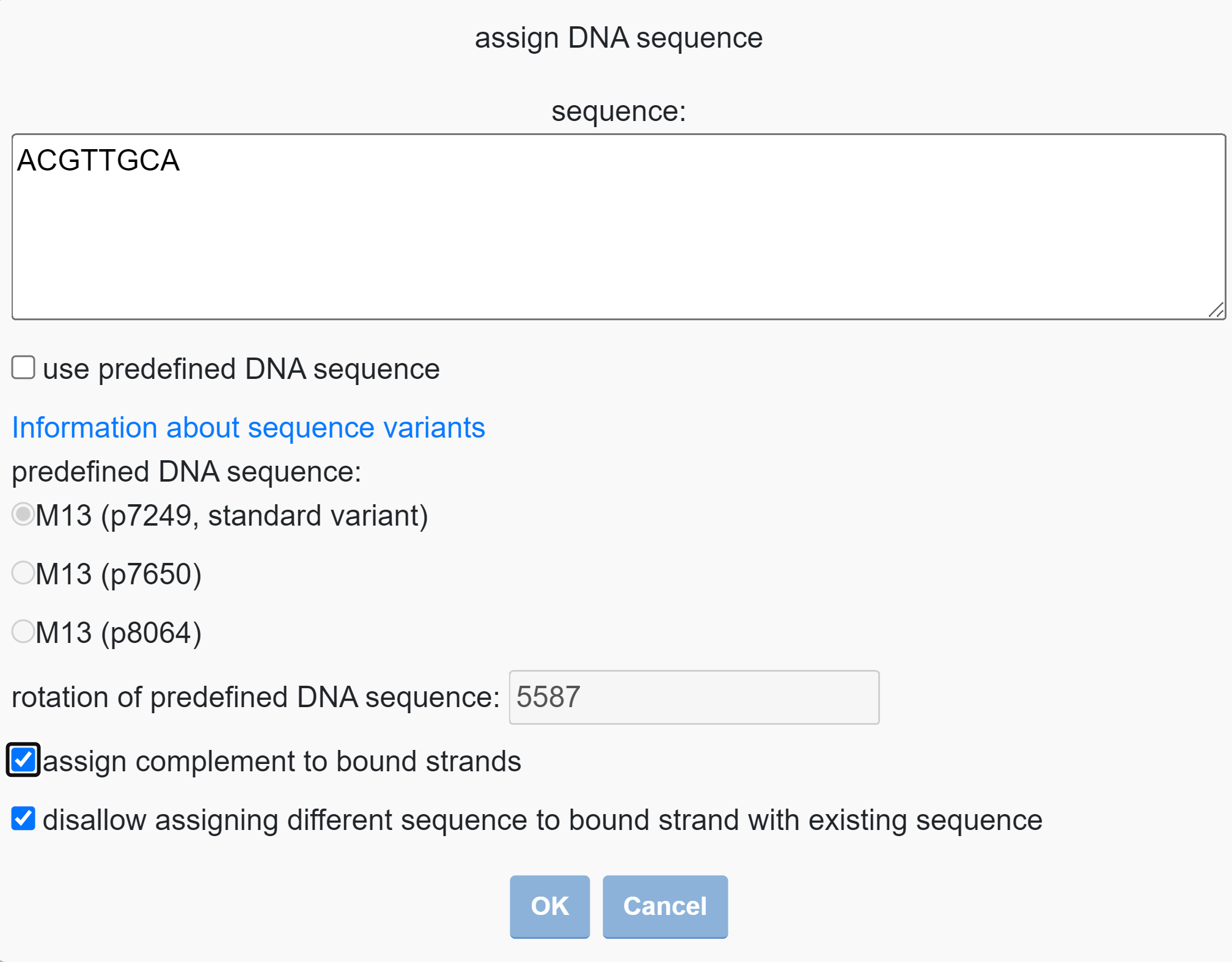

525,472 | 15,254,346,161 | IssuesEvent | 2021-02-20 11:36:51 | epam/Indigo | https://api.github.com/repos/epam/Indigo | closed | Calculate method does not return errors if structure is not valid | Bug High priority | **Step to reproduce**

1. Call indigo.calculate() for [R-Member.zip](https://github.com/epam/Indigo/files/5952295/R-Member.zip)

**Expected result**

Method throws not empty exception

**Actual result**

exception is empty

| 1.0 | Calculate method does not return errors if structure is not valid - **Step to reproduce**

1. Call indigo.calculate() for [R-Member.zip](https://github.com/epam/Indigo/files/5952295/R-Member.zip)

**Expected result**

Method throws not empty exception

**Actual result**

exception is empty

| priority | calculate method does not return errors if structure is not valid step to reproduce call indigo calculate for expected result method throws not empty exception actual result exception is empty | 1 |

570,925 | 17,023,209,413 | IssuesEvent | 2021-07-03 00:52:10 | microsoft/winget-create | https://api.github.com/repos/microsoft/winget-create | closed | Multiple URL support | High-Priority In-PR Issue-Feature | # Description of the new feature/enhancement

Add the possibility to add more than one installer in wingetcreate new and also in wingetcreate update

# Proposed technical implementation details (optional)

~I think this is a low priority feature request, so keep calm and develop the rest 😆~

I haven't seen the source code, so I'm ignorant | 1.0 | Multiple URL support - # Description of the new feature/enhancement

Add the possibility to add more than one installer in wingetcreate new and also in wingetcreate update

# Proposed technical implementation details (optional)

~I think this is a low priority feature request, so keep calm and develop the rest 😆~

I haven't seen the source code, so I'm ignorant | priority | multiple url support description of the new feature enhancement add the possibility to add more than one installer in wingetcreate new and also in wingetcreate update proposed technical implementation details optional i think this is a low priority feature request so keep calm and develop the rest 😆 i haven t seen the source code so i m ignorant | 1 |

497,814 | 14,394,341,895 | IssuesEvent | 2020-12-03 01:03:37 | OpenPrinting/cups | https://api.github.com/repos/OpenPrinting/cups | closed | Canon ts6200 with IPP everywhere has duplicated grayscale option in color mode | bug priority-high | os : arch linux

cups : 2.3.3op1

I installed canon ts6200 series with IPP everywhere and there are one color and two grayscale options in color mode.